Repository: D-X-Y/NATS-Bench

Branch: main

Commit: 1d4a304ad190

Files: 34

Total size: 199.1 KB

Directory structure:

gitextract_fmzpgwc0/
├── .github/
│   ├── CODE-OF-CONDUCT.md
│   ├── ISSUE_TEMPLATE/
│   │   ├── bug-report.md
│   │   └── question.md
│   └── workflows/
│       └── ci.yml
├── .gitignore
├── LICENSE.md
├── README.md
├── fake_torch_dir/
│   ├── NATS-sss-v1_0-50262-simple/
│   │   ├── 000000.pickle.pbz2
│   │   ├── 000011.pickle.pbz2
│   │   ├── 000284.pickle.pbz2
│   │   └── meta.pickle.pbz2
│   └── NATS-tss-v1_0-3ffb9-simple/
│       ├── 000000.pickle.pbz2
│       ├── 000011.pickle.pbz2
│       ├── 000284.pickle.pbz2
│       └── meta.pickle.pbz2
├── nats_bench/
│   ├── __init__.py
│   ├── api_size.py
│   ├── api_topology.py
│   ├── api_utils.py
│   └── genotype_utils.py
├── notebooks/
│   ├── README.md
│   ├── create-query-sss.ipynb
│   ├── find-largest.ipynb
│   ├── issue-11.ipynb
│   ├── issue-12.ipynb
│   ├── issue-21.ipynb
│   ├── issue-27.ipynb
│   ├── issue-30.ipynb
│   ├── issue-33.ipynb
│   ├── issue-36.ipynb
│   ├── issue-7.ipynb
│   └── random-search.ipynb
├── setup.py
└── tests/
    └── api_test.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/CODE-OF-CONDUCT.md

================================================

# Contributor Covenant Code of Conduct

## Our Pledge

In the interest of fostering an open and welcoming environment, we as

contributors and maintainers pledge to making participation in our project and

our community a harassment-free experience for everyone, regardless of age, body

size, disability, ethnicity, sex characteristics, gender identity and expression,

level of experience, education, socio-economic status, nationality, personal

appearance, race, religion, or sexual identity and orientation.

## Our Standards

Examples of behavior that contributes to creating a positive environment

include:

* Using welcoming and inclusive language

* Being respectful of differing viewpoints and experiences

* Gracefully accepting constructive criticism

* Focusing on what is best for the community

* Showing empathy towards other community members

Examples of unacceptable behavior by participants include:

* The use of sexualized language or imagery and unwelcome sexual attention or

advances

* Trolling, insulting/derogatory comments, and personal or political attacks

* Public or private harassment

* Publishing others' private information, such as a physical or electronic

address, without explicit permission

* Other conduct which could reasonably be considered inappropriate in a

professional setting

## Our Responsibilities

Project maintainers are responsible for clarifying the standards of acceptable

behavior and are expected to take appropriate and fair corrective action in

response to any instances of unacceptable behavior.

Project maintainers have the right and responsibility to remove, edit, or

reject comments, commits, code, wiki edits, issues, and other contributions

that are not aligned to this Code of Conduct, or to ban temporarily or

permanently any contributor for other behaviors that they deem inappropriate,

threatening, offensive, or harmful.

## Scope

This Code of Conduct applies both within project spaces and in public spaces

when an individual is representing the project or its community. Examples of

representing a project or community include using an official project e-mail

address, posting via an official social media account, or acting as an appointed

representative at an online or offline event. Representation of a project may be

further defined and clarified by project maintainers.

## Enforcement

Instances of abusive, harassing, or otherwise unacceptable behavior may be

reported by contacting the project team at dongxuanyi888@gmail.com. All

complaints will be reviewed and investigated and will result in a response that

is deemed necessary and appropriate to the circumstances. The project team is

obligated to maintain confidentiality with regard to the reporter of an incident.

Further details of specific enforcement policies may be posted separately.

Project maintainers who do not follow or enforce the Code of Conduct in good

faith may face temporary or permanent repercussions as determined by other

members of the project's leadership.

## Attribution

This Code of Conduct is adapted from the [Contributor Covenant][homepage], version 1.4,

available at https://www.contributor-covenant.org/version/1/4/code-of-conduct.html

[homepage]: https://www.contributor-covenant.org

For answers to common questions about this code of conduct, see

https://www.contributor-covenant.org/faq

================================================

FILE: .github/ISSUE_TEMPLATE/bug-report.md

================================================

---

name: Bug Report

about: Create a report to help us improve

title: ''

labels: ''

assignees: ''

---

**Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Please provide a small script to reproduce the behavior:

```

codes to reproduce the bug

```

Please let me know your OS, Python version, PyTorch version.

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

If applicable, add screenshots to help explain your problem.

================================================

FILE: .github/ISSUE_TEMPLATE/question.md

================================================

---

name: Questions about NATS-Bench

about: Ask questions about or discuss on NATS-Bench

title: ''

labels: ''

assignees: ''

---

**Describe the Question**

A clear and concise description of the question.

- Is it about the topology search space in NATS-Bench?

- Is it about the size search space in NATS-Bench?

- Which figure or table are you referring to in the paper?

================================================

FILE: .github/workflows/ci.yml

================================================

name: Run Python Tests

on:
  push:
    branches:
      - main
  pull_request:
    branches:
      - main

jobs:
  build:
    strategy:
      matrix:
        os: [ubuntu-18.04, ubuntu-20.04, macos-latest]
        python-version: [3.6, 3.7, 3.8, 3.9]

    runs-on: ${{ matrix.os }}
    steps:
      - uses: actions/checkout@v2

      - name: Set up Python ${{ matrix.python-version }}
        uses: actions/setup-python@v2
        with:
          python-version: ${{ matrix.python-version }}

      - name: Lint with Black
        run: |
          cd ..
          if [ "$RUNNER_OS" == "Windows" ]; then
            python.exe -m pip install black
            python.exe -m black NATS-Bench/nats_bench -l 88 --check --diff
            python.exe -m black NATS-Bench/tests -l 88 --check --diff
          else
            python -m pip install black
            python --version
            python -m black --version
            echo $PWD
            ls
            python -m black NATS-Bench/nats_bench -l 88 --check --diff --verbose
            python -m black NATS-Bench/tests -l 88 --check --diff --verbose
          fi
        shell: bash

      - name: Install nats_bench from source
        run: |
          pip install .

      - name: Run tests with pytest
        run: |
          export FAKE_TORCH_HOME="fake_torch_dir"
          python -m pip install pytest
          python -m pytest . --durations=0

      - name: Install nats_bench from pip with tests
        run: |
          pip uninstall -y nats_bench
          python -m pip install nats_bench
          export FAKE_TORCH_HOME="fake_torch_dir"
          python -m pip install pytest
          python -m pytest . --durations=0

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

env/

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

*.egg-info/

.installed.cfg

*.egg

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*,cover

.hypothesis/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# IPython Notebook

.ipynb_checkpoints

# pyenv

.python-version

# celery beat schedule file

celerybeat-schedule

# dotenv

.env

# virtualenv

venv/

ENV/

# Spyder project settings

.spyderproject

# Rope project settings

.ropeproject

# Pycharm project

.idea

snapshots

*.pytorch

*.tar.bz

data

.*.swp

*.sh

main_main.py

dist

build

*.egg-info

.DS_Store

================================================

FILE: LICENSE.md

================================================

MIT License

Copyright (c) since 2020 Xuanyi Dong (GitHub: https://github.com/D-X-Y)

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

# [NATS-Bench: Benchmarking NAS Algorithms for Architecture Topology and Size](https://arxiv.org/abs/2009.00437)

Xuanyi Dong, Lu Liu, Katarzyna Musial, Bogdan Gabrys

in IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2021

**Abstract**: Neural architecture search (NAS) has attracted a lot of attention and has been illustrated to bring tangible benefits in a large number of applications in the past few years. Network topology and network size have been regarded as two of the most important aspects for the performance of deep learning models and the community has spawned lots of searching algorithms for both of those aspects of the neural architectures. However, the performance gain from these searching algorithms is achieved under different search spaces and training setups. This makes the overall performance of the algorithms incomparable and the improvement from a sub-module of the searching model unclear.

In this paper, we propose NATS-Bench, a unified benchmark on searching for both topology and size, for (almost) any up-to-date NAS algorithm.

NATS-Bench includes the search space of 15,625 neural cell candidates for architecture topology and 32,768 for architecture size on three datasets.

We analyze the validity of our benchmark in terms of various criteria and performance comparison of all candidates in the search space.

We also show the versatility of NATS-Bench by benchmarking 13 recent state-of-the-art NAS algorithms on it. All logs and diagnostic information trained using the same setup for each candidate are provided.

This facilitates a much larger community of researchers to focus on developing better NAS algorithms in a more comparable and computationally effective environment.

**You can use `pip install nats_bench` to install the NATS-Bench library, or install it from source with `pip install .`.**

If you want to re-create NATS-Bench from scratch or reproduce the benchmarked results, please use [AutoDL-Projects](https://github.com/D-X-Y/AutoDL-Projects) and see these [instructions](https://github.com/D-X-Y/NATS-Bench#how-to-re-create-nats-bench-from-scratch).

If you have questions, please ask [here](https://github.com/D-X-Y/NATS-Bench/issues) or [email me](mailto:dongxuanyi888@gmail.com) :)

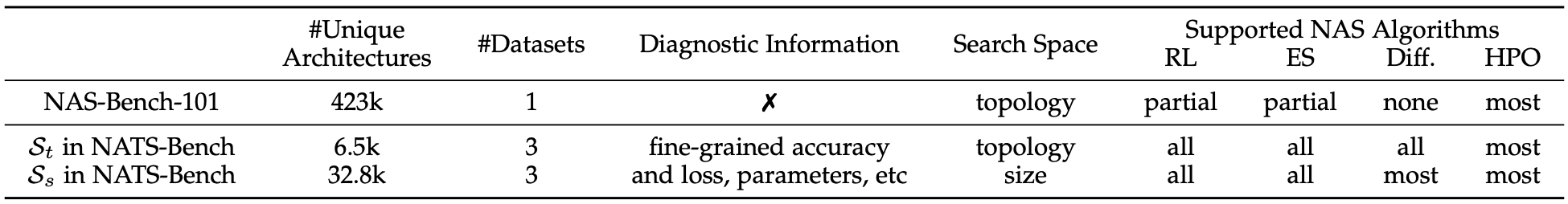

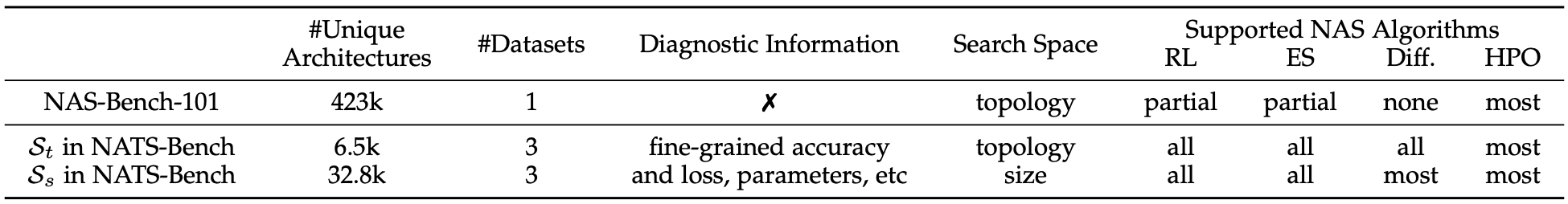

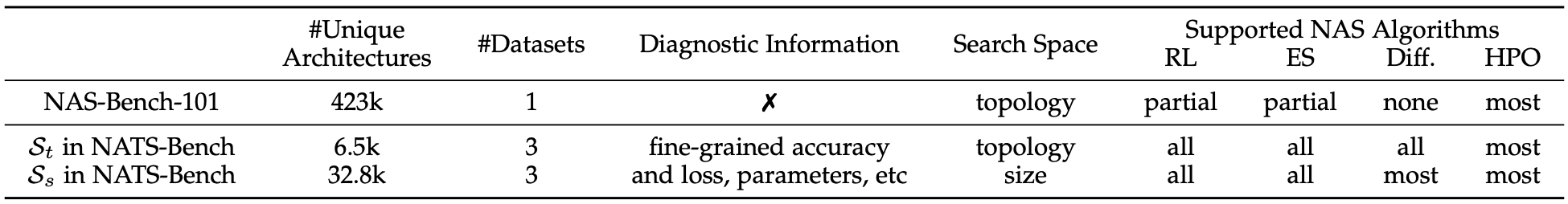

This figure shows the main differences between `NATS-Bench`, `NAS-Bench-101`, and `NAS-Bench-201`. The `topology search space` (`$\mathcal{S}_t$`) in `NATS-Bench` is the same as in `NAS-Bench-201`, but we provide results of more runs for the architecture candidates, and the benchmarked NAS algorithms use better hyperparameters.

## Preparation and Download

**Step-1: download raw vision datasets.** (you can skip this one if you do not use weight-sharing NAS or re-create NATS-Bench).

In NATS-Bench, we (create and) use three image datasets -- CIFAR-10, CIFAR-100, and ImageNet16-120.

For more details, please see Sec-3.2 in [the NATS-Bench paper](https://arxiv.org/pdf/2009.00437.pdf). To download these three datasets, please find them at [Google Drive](https://drive.google.com/drive/folders/1T3UIyZXUhMmIuJLOBMIYKAsJknAtrrO4?usp=sharing).

To create the `ImageNet16-120` PyTorch dataset, please call [AutoDL-Projects/lib/datasets/ImageNet16](https://github.com/D-X-Y/AutoDL-Projects/blob/main/xautodl/datasets/get_dataset_with_transform.py#L222-L225), by using:

```

train_data = ImageNet16(root, True , train_transform, 120)

test_data = ImageNet16(root, False, test_transform , 120)

```

**Step-2: download benchmark files of NATS-Bench.**

The **latest** benchmark file of NATS-Bench can be downloaded from [Google Drive](https://drive.google.com/drive/folders/1zjB6wMANiKwB2A1yil2hQ8H_qyeSe2yt?usp=sharing).

After downloading `NATS-[tss/sss]-[version]-[md5sum]-simple.tar`, please uncompress it using `tar xvf [file_name]`.

We highly recommend putting the downloaded benchmark file (`NATS-sss-v1_0-50262.pickle.pbz2` / `NATS-tss-v1_0-3ffb9.pickle.pbz2`) or the uncompressed archive (`NATS-sss-v1_0-50262-simple` / `NATS-tss-v1_0-3ffb9-simple`) into `$TORCH_HOME`.

In this way, our API will automatically find the path to these benchmark files, which is convenient for users. Otherwise, you need to specify the file path manually when creating the benchmark instance.
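As a rough illustration of the lookup described above, the sketch below builds the expected archive location under `$TORCH_HOME`. The helper name `default_benchmark_path` is hypothetical and the library's real lookup logic may differ; only the directory names are taken from this README.

```python
import os

# Archive directory names as listed in this README.
ARCHIVES = {
    "tss": "NATS-tss-v1_0-3ffb9-simple",
    "sss": "NATS-sss-v1_0-50262-simple",
}


def default_benchmark_path(search_space, torch_home=None):
    """Return the expected archive path under TORCH_HOME (hypothetical helper)."""
    torch_home = torch_home or os.environ.get("TORCH_HOME")
    if torch_home is None:
        raise ValueError("TORCH_HOME is not set; pass the benchmark path explicitly.")
    return os.path.join(torch_home, ARCHIVES[search_space])
```

If the resulting path does not exist on your machine, pass the actual location as the first argument of `create` instead of `None`.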

The history of benchmark files is as follows, where `tss` indicates the topology search space and `sss` indicates the size search space.

The benchmark file is used when creating the NATS-Bench instance with `fast_mode=False`.

The archive is used when `fast_mode=True`, where `archive` is a directory containing 15,625 files for tss or 32,768 files for sss.
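The two file counts follow directly from the search space definitions. The short check below uses the operation names, node count, channel candidates, and layer count found in `search_space_info` in `nats_bench/__init__.py`; the variable names `tss_size` and `sss_size` are only for illustration.

```python
# Topology search space: 4 nodes give 6 directed edges (i -> j for i < j),
# and each edge picks one of 5 operations.
op_names = ["none", "skip_connect", "nor_conv_1x1", "nor_conv_3x3", "avg_pool_3x3"]
num_nodes = 4
num_edges = num_nodes * (num_nodes - 1) // 2
tss_size = len(op_names) ** num_edges

# Size search space: 5 layers, each choosing one of 8 channel counts.
candidates = [8, 16, 24, 32, 40, 48, 56, 64]
num_layers = 5
sss_size = len(candidates) ** num_layers

print(tss_size, sss_size)  # 15625 32768
```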

Each file contains all the information for a specific architecture candidate.
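For readers who want to inspect a single per-candidate file directly, here is a minimal sketch. The six-digit file naming is inferred from the `fake_torch_dir` listing in this repository, and treating `.pbz2` as a bz2-compressed pickle is an assumption based on the extension; the function names are hypothetical. The package exports its own `pickle_load`, which should be preferred when available.

```python
import bz2
import os
import pickle


def candidate_file(archive_dir, index):
    """Path of the file for one candidate, e.g. 000011.pickle.pbz2."""
    return os.path.join(archive_dir, "{:06d}.pickle.pbz2".format(index))


def load_pbz2(path):
    """Load a bz2-compressed pickle (assumed format of .pbz2 files)."""
    with bz2.BZ2File(path, "rb") as handle:
        return pickle.load(handle)
```

As with any pickle, only load files obtained from a source you trust.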

The `full archive` is similar to `archive`, while each file in `full archive` contains **the trained weights**.

Since the full archive is too large, we use `split -b 30G file_name file_name` to split it into multiple 30G chunks.

To merge the chunks into the original full archive, you can use `cat file_name* > file_name`.

| Date | benchmark file (tss) | (unpacked benchmark file) archive (tss) | full archive (tss) | benchmark file (sss) | (unpacked benchmark file) archive (sss) | full archive (sss) |

|:-----------|:---------------------:|:-------------:|:------------------:|:-------------------------------:|:--------------------------:|:------------------:|

| 2020.08.31 | [NATS-tss-v1_0-3ffb9.pickle.pbz2](https://drive.google.com/file/d/1vzyK0UVH2D3fTpa1_dSWnp1gvGpAxRul/view?usp=sharing) | [NATS-tss-v1_0-3ffb9-simple.tar](https://drive.google.com/file/d/17_saCsj_krKjlCBLOJEpNtzPXArMCqxU/view?usp=sharing) | [NATS-tss-v1_0-3ffb9-full](https://drive.google.com/drive/folders/17S2Xg_rVkUul4KuJdq0WaWoUuDbo8ZKB?usp=sharing) | [NATS-sss-v1_0-50262.pickle.pbz2](https://drive.google.com/file/d/1IabIvzWeDdDAWICBzFtTCMXxYWPIOIOX/view?usp=sharing) | [NATS-sss-v1_0-50262-simple.tar](https://drive.google.com/file/d/1scOMTUwcQhAMa_IMedp9lTzwmgqHLGgA/view?usp=sharing) | [NATS-sss-v1_0-50262-full](https://drive.google.com/drive/folders/1xutPQJ4bHoUV1EMArsPD0c1bUqvtMuYY?usp=sharing) |

| 2021.04.22 (Baidu-Pan) | [NATS-tss-v1_0-3ffb9.pickle.pbz2](https://pan.baidu.com/s/10z20F5s2RRPzGwRO40fLTw) (code: 8duj) | [NATS-tss-v1_0-3ffb9-simple.tar](https://pan.baidu.com/s/1vOnrHLxCB4y8cxUDrHUYAg) (code: tu1e) | [NATS-tss-v1_0-3ffb9-full](https://pan.baidu.com/s/1qbPNlI8Y1I29qMdxTo_ycA) (code:ssub) | [NATS-sss-v1_0-50262.pickle.pbz2](https://pan.baidu.com/s/1M1UaXr6y1D_RqEYg95YJcA) (code: za2h) | [NATS-sss-v1_0-50262-simple.tar](https://pan.baidu.com/s/1ek-b89Pw2qdm9MP6KKkErA) (code: e4t9) | [NATS-sss-v1_0-50262-full](https://pan.baidu.com/s/1bIruQd9pPeyArej_wttg_A) (code: htif) |

These benchmark files (without pretrained weights) can also be downloaded from [Dropbox](https://www.dropbox.com/sh/ceeo70u1buow681/AAC2M-SbKOxiIqpB0UCgXNxja?dl=0), [OneDrive](https://1drv.ms/u/s!Aqkc27lrowWDf6SvuIkSXx0UQaI?e=nfvM5r) or [Baidu-Pan (extract code: h6pm)](https://pan.baidu.com/s/144VC2BDm6iXbAVzMUpqO7A).

For the full checkpoints in `NATS-*ss-*-full`, we split the file into multiple parts (`NATS-*ss-*-full.tara*`) since they are too large to upload.

Each file is about `30GB`. For Baidu Pan, since they restrict the maximum size of each file, we further split `NATS-*ss-*-full.tara*` into `NATS-*ss-*-full.tara*-aa` and `NATS-*ss-*-full.tara*-ab`.

All splits are created by the command `split`.
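On systems without `cat`, the chunks can be merged in Python. This is a minimal sketch of the same concatenation as `cat file_name* > file_name`; the function name `merge_chunks` is hypothetical, and it relies on the chunk suffixes (`aa`, `ab`, ...) sorting in the order they were produced.

```python
import glob
import shutil


def merge_chunks(prefix, output_path):
    """Concatenate all files matching prefix* in sorted order into output_path."""
    pattern = glob.escape(prefix) + "*"
    # Exclude the output file in case it also matches the pattern.
    chunk_paths = sorted(p for p in glob.glob(pattern) if p != output_path)
    with open(output_path, "wb") as merged:
        for chunk_path in chunk_paths:
            with open(chunk_path, "rb") as chunk:
                # Stream the data so 30GB chunks are never held in memory.
                shutil.copyfileobj(chunk, merged)
    return chunk_paths
```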

**Note:** if you encounter `quota exceeded` errors when downloading from Google Drive, please try to (1) log in to your personal Google account, (2) right-click and copy the files to your personal Google Drive, and (3) download them from your personal Google Drive.

## Usage

See more examples at [notebooks](notebooks).

#### 1, create the benchmark instance:

```

from nats_bench import create

# Create the API instance for the size search space in NATS

api = create(None, 'sss', fast_mode=True, verbose=True)

# Create the API instance for the topology search space in NATS

api = create(None, 'tss', fast_mode=True, verbose=True)

```

#### 2, query the performance:

```

# Show the architecture topology string of the 12-th architecture

# For the topology search space, the string is interpreted as

# arch = '|{}~0|+|{}~0|{}~1|+|{}~0|{}~1|{}~2|'.format(

# edge_node_0_to_node_1,

# edge_node_0_to_node_2,

# edge_node_1_to_node_2,

# edge_node_0_to_node_3,

# edge_node_1_to_node_3,

# edge_node_2_to_node_3,

# )

# For the size search space, the string is interpreted as

# arch = '{}:{}:{}:{}:{}'.format(out_channel_of_1st_conv_layer,

# out_channel_of_1st_cell_stage,

# out_channel_of_1st_residual_block,

# out_channel_of_2nd_cell_stage,

# out_channel_of_2nd_residual_block,

# )

architecture_str = api.arch(12)

print(architecture_str)

# Query the loss / accuracy / time for 1234-th candidate architecture on CIFAR-10

# info is a dict, where you can easily figure out the meaning by key

info = api.get_more_info(1234, 'cifar10')

# Query the flops, params, latency. info is a dict.

info = api.get_cost_info(12, 'cifar10')

# Simulate the training of the 1224-th candidate:

validation_accuracy, latency, time_cost, current_total_time_cost = api.simulate_train_eval(1224, dataset='cifar10', hp='12')

```
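The two string formats described in the comments above can be decoded with plain string operations. The following is a minimal sketch; `parse_topology` and `parse_size` are hypothetical helper names and are not part of the `nats_bench` API.

```python
def parse_topology(arch):
    """Map each edge (source_node, target_node) to its operation name."""
    edges = {}
    # Node groups are separated by '+'; the k-th group lists the inputs of node k.
    for target, group in enumerate(arch.split("+"), start=1):
        for item in group.strip("|").split("|"):
            op_name, source = item.split("~")
            edges[(int(source), target)] = op_name
    return edges


def parse_size(arch):
    """Return the five output-channel counts as integers."""
    return [int(channels) for channels in arch.split(":")]


topology = parse_topology(
    "|nor_conv_3x3~0|+|nor_conv_3x3~0|none~1|+|nor_conv_1x1~0|nor_conv_3x3~1|nor_conv_3x3~2|"
)
print(topology[(1, 2)])  # none
print(parse_size("8:16:24:32:40"))  # [8, 16, 24, 32, 40]
```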

#### 3, create the instance of an architecture candidate in `NATS-Bench`:

```

# Create the instance of the 12-th candidate for CIFAR-10.
# To keep the NATS-Bench repo concise, we do not include any model-related code here because it relies on PyTorch.
# The [models] package is defined at https://github.com/D-X-Y/AutoDL-Projects,
# so you need to import that package first.

import xautodl

from xautodl.models import get_cell_based_tiny_net

config = api.get_net_config(12, 'cifar10')

network = get_cell_based_tiny_net(config)

# Load the pre-trained weights: params is a dict, where the key is the seed and value is the weights.

params = api.get_net_param(12, 'cifar10', None)

network.load_state_dict(next(iter(params.values())))

```

#### 4, others:

```

# Clear the parameters of the 12-th candidate.

api.clear_params(12)

# Reload all information of the 12-th candidate.

api.reload(index=12)

```

Please see [`api_test.py`](https://github.com/D-X-Y/NATS-Bench/blob/main/tests/api_test.py) for more examples.

```

from nats_bench import api_test

api_test.test_nats_bench_tss('NATS-tss-v1_0-3ffb9-simple')

api_test.test_nats_bench_tss('NATS-sss-v1_0-50262-simple')

```

## How to Re-create NATS-Bench from Scratch

**You need to use the [AutoDL-Projects](https://github.com/D-X-Y/AutoDL-Projects) repo to re-create NATS-Bench from scratch.**

### The Size Search Space

The following command will train all architecture candidates in the size search space for 90 epochs with the random seed `777`.

If you want to use a different number of training epochs, please replace `90` with it, such as `01` or `12`.

```

bash ./scripts/NATS-Bench/train-shapes.sh 00000-32767 90 777

```

The checkpoints of all candidates are located at `output/NATS-Bench-size` by default.

After training these candidate architectures, please use the following command to re-organize all checkpoints into the official benchmark file.

```

python exps/NATS-Bench/sss-collect.py

```

### The Topology Search Space

The following command will train all architecture candidates in the topology search space for 200 epochs with the random seeds `777`/`888`/`999`.

If you want to use a different number of training epochs, please replace `200` with it, such as `12`.

```

bash scripts/NATS-Bench/train-topology.sh 00000-15624 200 '777 888 999'

```

The checkpoints of all candidates are located at `output/NATS-Bench-topology` by default.

After training these candidate architectures, please use the following command to re-organize all checkpoints into the official benchmark file.

```

python exps/NATS-Bench/tss-collect.py

```

## To Reproduce 13 Baseline NAS Algorithms in NATS-Bench

**You need to use the [AutoDL-Projects](https://github.com/D-X-Y/AutoDL-Projects) repo to run 13 baseline NAS methods.** Here is a brief introduction to running each algorithm ([NATS-algos](https://github.com/D-X-Y/AutoDL-Projects/tree/main/exps/NATS-algos)).

### Reproduce NAS methods on the topology search space

Please use the following commands to run different NAS methods on the topology search space:

```

Four multi-trial based methods:

python ./exps/NATS-algos/reinforce.py --dataset cifar100 --search_space tss --learning_rate 0.01

python ./exps/NATS-algos/regularized_ea.py --dataset cifar100 --search_space tss --ea_cycles 200 --ea_population 10 --ea_sample_size 3

python ./exps/NATS-algos/random_wo_share.py --dataset cifar100 --search_space tss

python ./exps/NATS-algos/bohb.py --dataset cifar100 --search_space tss --num_samples 4 --random_fraction 0.0 --bandwidth_factor 3

DARTS (first order):

python ./exps/NATS-algos/search-cell.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo darts-v1

python ./exps/NATS-algos/search-cell.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo darts-v1

python ./exps/NATS-algos/search-cell.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo darts-v1

DARTS (second order):

python ./exps/NATS-algos/search-cell.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo darts-v2

python ./exps/NATS-algos/search-cell.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo darts-v2

python ./exps/NATS-algos/search-cell.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo darts-v2

GDAS:

python ./exps/NATS-algos/search-cell.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo gdas

python ./exps/NATS-algos/search-cell.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo gdas

python ./exps/NATS-algos/search-cell.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo gdas

SETN:

python ./exps/NATS-algos/search-cell.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo setn

python ./exps/NATS-algos/search-cell.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo setn

python ./exps/NATS-algos/search-cell.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo setn

Random Search with Weight Sharing:

python ./exps/NATS-algos/search-cell.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo random

python ./exps/NATS-algos/search-cell.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo random

python ./exps/NATS-algos/search-cell.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo random

ENAS:

python ./exps/NATS-algos/search-cell.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo enas --arch_weight_decay 0 --arch_learning_rate 0.001 --arch_eps 0.001

python ./exps/NATS-algos/search-cell.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo enas --arch_weight_decay 0 --arch_learning_rate 0.001 --arch_eps 0.001

python ./exps/NATS-algos/search-cell.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo enas --arch_weight_decay 0 --arch_learning_rate 0.001 --arch_eps 0.001

```

### Reproduce NAS methods on the size search space

Please use the following commands to run different NAS methods on the size search space:

```

Four multi-trial based methods:

python ./exps/NATS-algos/reinforce.py --dataset cifar100 --search_space sss --learning_rate 0.01

python ./exps/NATS-algos/regularized_ea.py --dataset cifar100 --search_space sss --ea_cycles 200 --ea_population 10 --ea_sample_size 3

python ./exps/NATS-algos/random_wo_share.py --dataset cifar100 --search_space sss

python ./exps/NATS-algos/bohb.py --dataset cifar100 --search_space sss --num_samples 4 --random_fraction 0.0 --bandwidth_factor 3

Run Transformable Architecture Search (TAS), proposed in Network Pruning via Transformable Architecture Search, NeurIPS 2019

python ./exps/NATS-algos/search-size.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo tas --rand_seed 777

python ./exps/NATS-algos/search-size.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo tas --rand_seed 777

python ./exps/NATS-algos/search-size.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo tas --rand_seed 777

Run the channel search strategy in FBNet-V2 -- masking + Gumbel-Softmax :

python ./exps/NATS-algos/search-size.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo mask_gumbel --rand_seed 777

python ./exps/NATS-algos/search-size.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo mask_gumbel --rand_seed 777

python ./exps/NATS-algos/search-size.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo mask_gumbel --rand_seed 777

Run the channel search strategy in TuNAS -- masking + sampling :

python ./exps/NATS-algos/search-size.py --dataset cifar10 --data_path $TORCH_HOME/cifar.python --algo mask_rl --arch_weight_decay 0 --rand_seed 777 --use_api 0

python ./exps/NATS-algos/search-size.py --dataset cifar100 --data_path $TORCH_HOME/cifar.python --algo mask_rl --arch_weight_decay 0 --rand_seed 777

python ./exps/NATS-algos/search-size.py --dataset ImageNet16-120 --data_path $TORCH_HOME/cifar.python/ImageNet16 --algo mask_rl --arch_weight_decay 0 --rand_seed 777

```

### Final Discovered Architectures for Each Algorithm

The architecture index can be found by using `api.query_index_by_arch(architecture_string)`.
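To look up several architecture strings at once, a small wrapper is enough. This sketch assumes `api` is an instance returned by `create(None, 'tss', ...)` as shown in the Usage section; the helper name `indices_for` is hypothetical, and it relies only on the `query_index_by_arch` method named above.

```python
def indices_for(api, arch_strings):
    """Map each architecture string to the index reported by the API."""
    return {arch: api.query_index_by_arch(arch) for arch in arch_strings}
```

For example, `indices_for(api, [architecture_str])` returns a dict with one entry for the string obtained from `api.arch(12)`.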

The final discovered architecture ID on CIFAR-10:

```

DARTS (first order):

|skip_connect~0|+|skip_connect~0|skip_connect~1|+|skip_connect~0|skip_connect~1|skip_connect~2|

|skip_connect~0|+|skip_connect~0|skip_connect~1|+|skip_connect~0|skip_connect~1|skip_connect~2|

|skip_connect~0|+|skip_connect~0|skip_connect~1|+|skip_connect~0|skip_connect~1|skip_connect~2|

DARTS (second order):

|skip_connect~0|+|skip_connect~0|skip_connect~1|+|skip_connect~0|skip_connect~1|skip_connect~2|

|skip_connect~0|+|skip_connect~0|skip_connect~1|+|skip_connect~0|skip_connect~1|skip_connect~2|

|skip_connect~0|+|skip_connect~0|skip_connect~1|+|skip_connect~0|skip_connect~1|skip_connect~2|

GDAS:

|nor_conv_3x3~0|+|nor_conv_3x3~0|none~1|+|nor_conv_1x1~0|nor_conv_3x3~1|nor_conv_3x3~2|

|nor_conv_3x3~0|+|nor_conv_3x3~0|none~1|+|nor_conv_3x3~0|nor_conv_3x3~1|nor_conv_3x3~2|

|avg_pool_3x3~0|+|nor_conv_3x3~0|skip_connect~1|+|nor_conv_3x3~0|nor_conv_1x1~1|nor_conv_1x1~2|

```

The final discovered architecture ID on CIFAR-100:

```

DARTS (V1):

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|nor_conv_1x1~1|none~2|

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|nor_conv_1x1~1|none~2|

|skip_connect~0|+|skip_connect~0|none~1|+|skip_connect~0|nor_conv_1x1~1|nor_conv_3x3~2|

DARTS (V2):

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|nor_conv_1x1~1|skip_connect~2|

|skip_connect~0|+|nor_conv_3x3~0|none~1|+|skip_connect~0|none~1|none~2|

|skip_connect~0|+|nor_conv_1x1~0|none~1|+|nor_conv_3x3~0|skip_connect~1|none~2|

GDAS:

|nor_conv_3x3~0|+|nor_conv_1x1~0|none~1|+|avg_pool_3x3~0|nor_conv_3x3~1|nor_conv_3x3~2|

|avg_pool_3x3~0|+|nor_conv_1x1~0|none~1|+|nor_conv_3x3~0|avg_pool_3x3~1|nor_conv_1x1~2|

|avg_pool_3x3~0|+|nor_conv_3x3~0|none~1|+|nor_conv_3x3~0|nor_conv_1x1~1|nor_conv_1x1~2|

```

The final discovered architecture ID on ImageNet16-120:

```

DARTS (V1):

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|none~1|nor_conv_3x3~2|

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|none~1|nor_conv_3x3~2|

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|none~1|nor_conv_1x1~2|

DARTS (V2):

|none~0|+|skip_connect~0|none~1|+|skip_connect~0|none~1|skip_connect~2|

GDAS:

|none~0|+|none~0|none~1|+|nor_conv_3x3~0|none~1|none~2|

|none~0|+|none~0|none~1|+|nor_conv_3x3~0|none~1|none~2|

|none~0|+|none~0|none~1|+|nor_conv_3x3~0|none~1|none~2|

```

## Others

We use [`black`](https://github.com/psf/black) as the Python code formatter.

Please use `black . -l 120`.

## Citation

If you find that NATS-Bench helps your research, please consider citing it:

```

@article{dong2021nats,

title = {{NATS-Bench}: Benchmarking NAS Algorithms for Architecture Topology and Size},

author = {Dong, Xuanyi and Liu, Lu and Musial, Katarzyna and Gabrys, Bogdan},

doi = {10.1109/TPAMI.2021.3054824},

journal = {IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)},

year = {2021},

note = {\mbox{doi}:\url{10.1109/TPAMI.2021.3054824}}

}

@inproceedings{dong2020nasbench201,

title = {{NAS-Bench-201}: Extending the Scope of Reproducible Neural Architecture Search},

author = {Dong, Xuanyi and Yang, Yi},

booktitle = {International Conference on Learning Representations (ICLR)},

url = {https://openreview.net/forum?id=HJxyZkBKDr},

year = {2020}

}

```

================================================

FILE: nats_bench/__init__.py

================================================

##############################################################################

# Copyright (c) Xuanyi Dong [GitHub D-X-Y], 2020.08 ##########################

##############################################################################

# NATS-Bench: Benchmarking NAS Algorithms for Architecture Topology and Size #

##############################################################################

"""The official Application Programming Interface (API) for NATS-Bench."""

from typing import Text, Optional

from nats_bench.api_size import NATSsize

from nats_bench.api_topology import NATStopology

from nats_bench.api_utils import ArchResults

from nats_bench.api_utils import pickle_load

from nats_bench.api_utils import pickle_save

from nats_bench.api_utils import ResultsCount

NATS_BENCH_API_VERSIONs = [
    "v1.0",  # [2020.08.31] initialize
    "v1.1",  # [2020.12.20] add unit tests
    "v1.2",  # [2021.03.17] black re-formulate
    "v1.3",  # [2021.04.08] fix find_best issue for fast_mode=True
    "v1.4",  # [2021.04.30] add topology_str2structure
    "v1.5",  # [2021.12.09] make simulate_train_eval more robust
    "v1.6",  # [2022.01.19] fix the inconsistent flop/params which is caused by a legacy (weight migration) issue
    "v1.7",  # [2022.03.25] relax enforce_all kwargs and add a test
    "v1.8",  # [2022.10.06] fix bugs at issues/44
]

NATS_BENCH_SSS_NAMEs = ("sss", "size")

NATS_BENCH_TSS_NAMEs = ("tss", "topology")

def version():

return NATS_BENCH_API_VERSIONs[-1]

def create(file_path_or_dict, search_space, fast_mode=False, verbose=True):

"""Create an instance of the NATS-Bench API.

Args:

file_path_or_dict: None, a file path, a directory path, or a dict loaded from the benchmark file.

search_space: A string indicating the search space in NATS-Bench.

fast_mode: If True, we will not load all the data at initialization,

instead, the data for each candidate architecture will be loaded when

querying it; if False, we will load all the data during initialization.

verbose: A flag indicating whether to log additional information.

Raises:

ValueError: If the search space name does not match any known search space.

Returns:

The created NATS-Bench API.

"""

if search_space in NATS_BENCH_TSS_NAMEs:

return NATStopology(file_path_or_dict, fast_mode, verbose)

elif search_space in NATS_BENCH_SSS_NAMEs:

return NATSsize(file_path_or_dict, fast_mode, verbose)

else:

raise ValueError("invalid search space : {:}".format(search_space))

def search_space_info(main_tag: Text, aux_tag: Optional[Text]):

"""Obtain the search space information."""

nats_sss = dict(candidates=[8, 16, 24, 32, 40, 48, 56, 64], num_layers=5)

nats_tss = dict(

op_names=[

"none",

"skip_connect",

"nor_conv_1x1",

"nor_conv_3x3",

"avg_pool_3x3",

],

num_nodes=4,

)

if main_tag == "nats-bench":

if aux_tag in NATS_BENCH_SSS_NAMEs:

return nats_sss

elif aux_tag in NATS_BENCH_TSS_NAMEs:

return nats_tss

else:

raise ValueError("Unknown auxiliary tag: {:}".format(aux_tag))

elif main_tag == "nas-bench-201":

if aux_tag is not None:

raise ValueError("For NAS-Bench-201, the auxiliary tag should be None.")

return nats_tss

else:

raise ValueError("Unknown main tag: {:}".format(main_tag))

================================================

FILE: nats_bench/api_size.py

================================================

#####################################################

# Copyright (c) Xuanyi Dong [GitHub D-X-Y], 2020.08 #

##############################################################################

# NATS-Bench: Benchmarking NAS Algorithms for Architecture Topology and Size #

##############################################################################

# The history of benchmark files is as follows, #

# where the format is (the name is NATS-sss-[version]-[md5].pickle.pbz2) #

# [2020.08.31] NATS-sss-v1_0-50262.pickle.pbz2 #

##############################################################################

# pylint: disable=line-too-long

"""The API for size search space in NATS-Bench."""

import collections

import copy

import os

import random

from typing import Dict, Optional, Text, Union, Any

from nats_bench.api_utils import ArchResults

from nats_bench.api_utils import NASBenchMetaAPI

from nats_bench.api_utils import get_torch_home

from nats_bench.api_utils import nats_is_dir

from nats_bench.api_utils import nats_is_file

from nats_bench.api_utils import PICKLE_EXT

from nats_bench.api_utils import pickle_load

from nats_bench.api_utils import time_string

ALL_BASE_NAMES = ["NATS-sss-v1_0-50262"]

def print_information(information, extra_info=None, show=False):

"""Print out the information of a given ArchResults."""

dataset_names = information.get_dataset_names()

strings = [

information.arch_str,

"datasets : {:}, extra-info : {:}".format(dataset_names, extra_info),

]

def metric2str(loss, acc):

return "loss = {:.3f} & top1 = {:.2f}%".format(loss, acc)

for dataset in dataset_names:

metric = information.get_compute_costs(dataset)

flop, param, latency = metric["flops"], metric["params"], metric["latency"]

str1 = "{:14s} FLOP={:6.2f} M, Params={:.3f} MB, latency={:} ms.".format(

dataset,

flop,

param,

"{:.2f}".format(latency * 1000)

if latency is not None and latency > 0

else None,

)

train_info = information.get_metrics(dataset, "train")

if dataset == "cifar10-valid":

valid_info = information.get_metrics(dataset, "x-valid")

test__info = information.get_metrics(dataset, "ori-test")

str2 = "{:14s} train : [{:}], valid : [{:}], test : [{:}]".format(

dataset,

metric2str(train_info["loss"], train_info["accuracy"]),

metric2str(valid_info["loss"], valid_info["accuracy"]),

metric2str(test__info["loss"], test__info["accuracy"]),

)

elif dataset == "cifar10":

test__info = information.get_metrics(dataset, "ori-test")

str2 = "{:14s} train : [{:}], test : [{:}]".format(

dataset,

metric2str(train_info["loss"], train_info["accuracy"]),

metric2str(test__info["loss"], test__info["accuracy"]),

)

else:

valid_info = information.get_metrics(dataset, "x-valid")

test__info = information.get_metrics(dataset, "x-test")

str2 = "{:14s} train : [{:}], valid : [{:}], test : [{:}]".format(

dataset,

metric2str(train_info["loss"], train_info["accuracy"]),

metric2str(valid_info["loss"], valid_info["accuracy"]),

metric2str(test__info["loss"], test__info["accuracy"]),

)

strings += [str1, str2]

if show:

print("\n".join(strings))

return strings

class NATSsize(NASBenchMetaAPI):

"""This is the class for the API of size search space in NATS-Bench."""

def __init__(

self,

file_path_or_dict: Optional[Union[Text, Dict[Text, Any]]] = None,

fast_mode: bool = False,

verbose: bool = True,

):

"""The initialization function that takes the dataset file path (or a dict loaded from that path) as input."""

self._all_base_names = ALL_BASE_NAMES

self.filename = None

self._search_space_name = "size"

self._fast_mode = fast_mode

self._archive_dir = None

self._full_train_epochs = 90

self.reset_time()

if file_path_or_dict is None:

if self._fast_mode:

self._archive_dir = os.path.join(

get_torch_home(), "{:}-simple".format(ALL_BASE_NAMES[-1])

)

else:

file_path_or_dict = os.path.join(

get_torch_home(), "{:}.{:}".format(ALL_BASE_NAMES[-1], PICKLE_EXT)

)

print(

"{:} Try to use the default NATS-Bench (size) path from "

"fast_mode={:} and path={:}.".format(

time_string(), self._fast_mode, file_path_or_dict

)

)

if isinstance(file_path_or_dict, str):

file_path_or_dict = str(file_path_or_dict)

if verbose:

print(

"{:} Try to create the NATS-Bench (size) api "

"from {:} with fast_mode={:}".format(

time_string(), file_path_or_dict, fast_mode

)

)

if not nats_is_file(file_path_or_dict) and not nats_is_dir(

file_path_or_dict

):

raise ValueError(

"{:} is neither a file nor a dir.".format(file_path_or_dict)

)

self.filename = os.path.basename(file_path_or_dict)

if fast_mode:

if nats_is_file(file_path_or_dict):

raise ValueError(

"fast_mode={:} must feed the path for directory "

": {:}".format(fast_mode, file_path_or_dict)

)

else:

self._archive_dir = file_path_or_dict

else:

if nats_is_dir(file_path_or_dict):

raise ValueError(

"fast_mode={:} must feed the path for file "

": {:}".format(fast_mode, file_path_or_dict)

)

else:

file_path_or_dict = pickle_load(file_path_or_dict)

elif isinstance(file_path_or_dict, dict):

file_path_or_dict = copy.deepcopy(file_path_or_dict)

self.verbose = verbose

if isinstance(file_path_or_dict, dict):

keys = ("meta_archs", "arch2infos", "evaluated_indexes")

for key in keys:

if key not in file_path_or_dict:

raise ValueError("Can not find key[{:}] in the dict".format(key))

self.meta_archs = copy.deepcopy(file_path_or_dict["meta_archs"])

# NOTE(xuanyidong): This is a dict mapping each architecture to a dict,

# where the key is #epochs and the value is ArchResults

self.arch2infos_dict = collections.OrderedDict()

self._avaliable_hps = set()

for xkey in sorted(list(file_path_or_dict["arch2infos"].keys())):

all_infos = file_path_or_dict["arch2infos"][xkey]

hp2archres = collections.OrderedDict()

for hp_key, results in all_infos.items():

hp2archres[hp_key] = ArchResults.create_from_state_dict(results)

self._avaliable_hps.add(

hp_key

) # save the available hyper-parameter

self.arch2infos_dict[xkey] = hp2archres

self.evaluated_indexes = set(file_path_or_dict["evaluated_indexes"])

elif self.archive_dir is not None:

benchmark_meta = pickle_load(

"{:}/meta.{:}".format(self.archive_dir, PICKLE_EXT)

)

self.meta_archs = copy.deepcopy(benchmark_meta["meta_archs"])

self.arch2infos_dict = collections.OrderedDict()

self._avaliable_hps = set()

self.evaluated_indexes = set()

else:

raise ValueError(

"file_path_or_dict [{:}] must be a dict or archive_dir "

"must be set".format(type(file_path_or_dict))

)

self.archstr2index = {}

for idx, arch in enumerate(self.meta_archs):

if arch in self.archstr2index:

raise ValueError(

"This [{:}]-th arch {:} already in the "

"dict ({:}).".format(idx, arch, self.archstr2index[arch])

)

self.archstr2index[arch] = idx

if self.verbose:

print(

"{:} Create NATS-Bench (size) done with {:}/{:} architectures "

"avaliable.".format(

time_string(), len(self.evaluated_indexes), len(self.meta_archs)

)

)

@property

def is_size(self):

return True

@property

def is_topology(self):

return False

@property

def full_epochs_in_paper(self):

return 90

def query_info_str_by_arch(self, arch, hp: Text = "12"):

"""Query the information of a specific architecture.

Args:

arch: it can be an architecture index or an architecture string.

hp: the hyperparameter indicator, could be 01, 12, or 90. The difference

between these three configurations is the number of training epochs.

Returns:

ArchResults instance

"""

if self.verbose:

print(

"{:} Call query_info_str_by_arch with arch={:} "

"and hp={:}".format(time_string(), arch, hp)

)

return self._query_info_str_by_arch(arch, hp, print_information)

def get_more_info(

self, index, dataset, iepoch=None, hp: Text = "12", is_random: bool = True

):

"""Return the metric for the `index`-th architecture.

Args:

index: the architecture index.

dataset:

'cifar10-valid' : using the proposed train set of CIFAR-10 as the training set

'cifar10' : using the proposed train+valid set of CIFAR-10 as the training set

'cifar100' : using the proposed train set of CIFAR-100 as the training set

'ImageNet16-120' : using the proposed train set of ImageNet-16-120 as the training set

iepoch: the index of training epochs, from 0 to 0/11/89 depending on hp (01/12/90).

When iepoch=None, it will return the metric for the last training epoch

When iepoch=11, it will return the metric for the 11-th training epoch (starting from 0)

hp: indicates different hyper-parameters for training

When hp=01, it trains the network with 01 epochs and the LR decayed from 0.1 to 0 within 01 epochs

When hp=12, it trains the network with 12 epochs and the LR decayed from 0.1 to 0 within 12 epochs

When hp=90, it trains the network with 90 epochs and the LR decayed from 0.1 to 0 within 90 epochs

is_random:

When is_random=True, the performance of a randomly selected trial (seed) will be returned

When is_random=False, the performance of all trials will be averaged.

Returns:

a dict, where key is the metric name and value is its value.

"""

if self.verbose:

print(

"{:} Call the get_more_info function with index={:}, dataset={:}, "

"iepoch={:}, hp={:}, and is_random={:}.".format(

time_string(), index, dataset, iepoch, hp, is_random

)

)

index = self.query_index_by_arch(

index

) # Normalize the input, which may be a string or an architecture object, to an index

self._prepare_info(index)

if index not in self.arch2infos_dict:

raise ValueError("Did not find {:} from arch2infos_dict.".format(index))

archresult = self.arch2infos_dict[index][str(hp)]

# if randomly select one trial, select the seed at first

if isinstance(is_random, bool) and is_random:

seeds = archresult.get_dataset_seeds(dataset)

is_random = random.choice(seeds)

# collect the training information

train_info = archresult.get_metrics(

dataset, "train", iepoch=iepoch, is_random=is_random

)

total = train_info["iepoch"] + 1

xinfo = {

"train-loss": train_info["loss"],

"train-accuracy": train_info["accuracy"],

"train-per-time": train_info["all_time"] / total,

"train-all-time": train_info["all_time"],

}

# collect the evaluation information

if dataset == "cifar10-valid":

valid_info = archresult.get_metrics(

dataset, "x-valid", iepoch=iepoch, is_random=is_random

)

try:

test_info = archresult.get_metrics(

dataset, "ori-test", iepoch=iepoch, is_random=is_random

)

except Exception as unused_e: # pylint: disable=broad-except

test_info = None

valtest_info = None

xinfo[

"comment"

] = "In this dict, train-loss/accuracy/time is the metric on the train set of CIFAR-10. The test-loss/accuracy/time is the performance of the CIFAR-10 test set after training on the train set by {:} epochs. The per-time and total-time indicate the per epoch and total time costs, respectively.".format(

hp

)

else:

if dataset == "cifar10":

xinfo[

"comment"

] = "In this dict, train-loss/accuracy/time is the metric on the train+valid sets of CIFAR-10. The test-loss/accuracy/time is the performance of the CIFAR-10 test set after training on the train+valid sets by {:} epochs. The per-time and total-time indicate the per epoch and total time costs, respectively.".format(

hp

)

try: # collect results on the proposed test set

if dataset == "cifar10":

test_info = archresult.get_metrics(

dataset, "ori-test", iepoch=iepoch, is_random=is_random

)

else:

test_info = archresult.get_metrics(

dataset, "x-test", iepoch=iepoch, is_random=is_random

)

except Exception as unused_e: # pylint: disable=broad-except

test_info = None

try: # collect results on the proposed validation set

valid_info = archresult.get_metrics(

dataset, "x-valid", iepoch=iepoch, is_random=is_random

)

except Exception as unused_e: # pylint: disable=broad-except

valid_info = None

try:

if dataset != "cifar10":

valtest_info = archresult.get_metrics(

dataset, "ori-test", iepoch=iepoch, is_random=is_random

)

else:

valtest_info = None

except Exception as unused_e: # pylint: disable=broad-except

valtest_info = None

if valid_info is not None:

xinfo["valid-loss"] = valid_info["loss"]

xinfo["valid-accuracy"] = valid_info["accuracy"]

xinfo["valid-per-time"] = valid_info["all_time"] / total

xinfo["valid-all-time"] = valid_info["all_time"]

if test_info is not None:

xinfo["test-loss"] = test_info["loss"]

xinfo["test-accuracy"] = test_info["accuracy"]

xinfo["test-per-time"] = test_info["all_time"] / total

xinfo["test-all-time"] = test_info["all_time"]

if valtest_info is not None:

xinfo["valtest-loss"] = valtest_info["loss"]

xinfo["valtest-accuracy"] = valtest_info["accuracy"]

xinfo["valtest-per-time"] = valtest_info["all_time"] / total

xinfo["valtest-all-time"] = valtest_info["all_time"]

return xinfo

def show(self, index: int = -1) -> None:

"""Print the information of a specific (or all) architecture(s)."""

self._show(index, print_information)

================================================

FILE: nats_bench/api_topology.py

================================================

#####################################################

# Copyright (c) Xuanyi Dong [GitHub D-X-Y], 2020.08 #

##############################################################################

# NATS-Bench: Benchmarking NAS Algorithms for Architecture Topology and Size #

##############################################################################

# The history of benchmark files is as follows, #

# where the format is (the name is NATS-tss-[version]-[md5].pickle.pbz2) #

# [2020.08.31] NATS-tss-v1_0-3ffb9.pickle.pbz2 #

##############################################################################

# pylint: disable=line-too-long

"""The API for topology search space in NATS-Bench."""

import collections

import copy

import os

import random

from typing import Any, Dict, List, Optional, Text, Union

from nats_bench.api_utils import ArchResults

from nats_bench.api_utils import NASBenchMetaAPI

from nats_bench.api_utils import get_torch_home

from nats_bench.api_utils import nats_is_dir

from nats_bench.api_utils import nats_is_file

from nats_bench.api_utils import PICKLE_EXT

from nats_bench.api_utils import pickle_load

from nats_bench.api_utils import time_string

from nats_bench.genotype_utils import topology_str2structure

ALL_BASE_NAMES = ["NATS-tss-v1_0-3ffb9"]

def print_information(information, extra_info=None, show=False):

"""Print out the information of a given ArchResults."""

dataset_names = information.get_dataset_names()

strings = [

information.arch_str,

"datasets : {:}, extra-info : {:}".format(dataset_names, extra_info),

]

def metric2str(loss, acc):

return "loss = {:.3f} & top1 = {:.2f}%".format(loss, acc)

for dataset in dataset_names:

metric = information.get_compute_costs(dataset)

flop, param, latency = metric["flops"], metric["params"], metric["latency"]

str1 = "{:14s} FLOP={:6.2f} M, Params={:.3f} MB, latency={:} ms.".format(

dataset,

flop,

param,

"{:.2f}".format(latency * 1000)

if latency is not None and latency > 0

else None,

)

train_info = information.get_metrics(dataset, "train")

if dataset == "cifar10-valid":

valid_info = information.get_metrics(dataset, "x-valid")

str2 = "{:14s} train : [{:}], valid : [{:}]".format(

dataset,

metric2str(train_info["loss"], train_info["accuracy"]),

metric2str(valid_info["loss"], valid_info["accuracy"]),

)

elif dataset == "cifar10":

test__info = information.get_metrics(dataset, "ori-test")

str2 = "{:14s} train : [{:}], test : [{:}]".format(

dataset,

metric2str(train_info["loss"], train_info["accuracy"]),

metric2str(test__info["loss"], test__info["accuracy"]),

)

else:

valid_info = information.get_metrics(dataset, "x-valid")

test__info = information.get_metrics(dataset, "x-test")

str2 = "{:14s} train : [{:}], valid : [{:}], test : [{:}]".format(

dataset,

metric2str(train_info["loss"], train_info["accuracy"]),

metric2str(valid_info["loss"], valid_info["accuracy"]),

metric2str(test__info["loss"], test__info["accuracy"]),

)

strings += [str1, str2]

if show:

print("\n".join(strings))

return strings

class NATStopology(NASBenchMetaAPI):

"""This is the class for the API of topology search space in NATS-Bench."""

def __init__(

self,

file_path_or_dict: Optional[Union[Text, Dict[Text, Any]]] = None,

fast_mode: bool = False,

verbose: bool = True,

):

"""The initialization function that takes the dataset file path (or a dict loaded from that path) as input."""

self._all_base_names = ALL_BASE_NAMES

self.filename = None

self._search_space_name = "topology"

self._fast_mode = fast_mode

self._archive_dir = None

self._full_train_epochs = 200

self.reset_time()

if file_path_or_dict is None:

if self._fast_mode:

self._archive_dir = os.path.join(

get_torch_home(), "{:}-simple".format(ALL_BASE_NAMES[-1])

)

else:

file_path_or_dict = os.path.join(

get_torch_home(), "{:}.{:}".format(ALL_BASE_NAMES[-1], PICKLE_EXT)

)

print(

"{:} Try to use the default NATS-Bench (topology) path from "

"fast_mode={:} and path={:}.".format(

time_string(), self._fast_mode, file_path_or_dict

)

)

if isinstance(file_path_or_dict, str):

file_path_or_dict = str(file_path_or_dict)

if verbose:

print(

"{:} Try to create the NATS-Bench (topology) api "

"from {:} with fast_mode={:}".format(

time_string(), file_path_or_dict, fast_mode

)

)

if not nats_is_file(file_path_or_dict) and not nats_is_dir(

file_path_or_dict

):

raise ValueError(

"{:} is neither a file nor a dir.".format(file_path_or_dict)

)

self.filename = os.path.basename(file_path_or_dict)

if fast_mode:

if nats_is_file(file_path_or_dict):

raise ValueError(

"fast_mode={:} must feed the path for directory "

": {:}".format(fast_mode, file_path_or_dict)

)

else:

self._archive_dir = file_path_or_dict

else:

if nats_is_dir(file_path_or_dict):

raise ValueError(

"fast_mode={:} must feed the path for file "

": {:}".format(fast_mode, file_path_or_dict)

)

else:

file_path_or_dict = pickle_load(file_path_or_dict)

elif isinstance(file_path_or_dict, dict):

file_path_or_dict = copy.deepcopy(file_path_or_dict)

self.verbose = verbose

if isinstance(file_path_or_dict, dict):

keys = ("meta_archs", "arch2infos", "evaluated_indexes")

for key in keys:

if key not in file_path_or_dict:

raise ValueError("Can not find key[{:}] in the dict".format(key))

self.meta_archs = copy.deepcopy(file_path_or_dict["meta_archs"])

# NOTE(xuanyidong): This is a dict mapping each architecture to a dict,

# where the key is #epochs and the value is ArchResults

self.arch2infos_dict = collections.OrderedDict()

self._avaliable_hps = set()

for xkey in sorted(list(file_path_or_dict["arch2infos"].keys())):

all_infos = file_path_or_dict["arch2infos"][xkey]

hp2archres = collections.OrderedDict()

for hp_key, results in all_infos.items():

hp2archres[hp_key] = ArchResults.create_from_state_dict(results)

self._avaliable_hps.add(

hp_key

) # save the available hyper-parameter

self.arch2infos_dict[xkey] = hp2archres

self.evaluated_indexes = set(file_path_or_dict["evaluated_indexes"])

elif self.archive_dir is not None:

benchmark_meta = pickle_load(

"{:}/meta.{:}".format(self.archive_dir, PICKLE_EXT)

)

self.meta_archs = copy.deepcopy(benchmark_meta["meta_archs"])

self.arch2infos_dict = collections.OrderedDict()

self._avaliable_hps = set()

self.evaluated_indexes = set()

else:

raise ValueError(

"file_path_or_dict [{:}] must be a dict or archive_dir "

"must be set".format(type(file_path_or_dict))

)

self.archstr2index = {}

for idx, arch in enumerate(self.meta_archs):

if arch in self.archstr2index:

raise ValueError(

"This [{:}]-th arch {:} already in the "

"dict ({:}).".format(idx, arch, self.archstr2index[arch])

)

self.archstr2index[arch] = idx

if self.verbose:

print(

"{:} Create NATS-Bench (topology) done with {:}/{:} architectures "

"avaliable.".format(

time_string(), len(self.evaluated_indexes), len(self.meta_archs)

)

)

@property

def is_size(self):

return False

@property

def is_topology(self):

return True

@property

def full_epochs_in_paper(self):

return 200

def get_unique_str(self, arch):

"""Return a unique string shared by isomorphic architectures.

Args:

arch: it can be an architecture index or an architecture string.

Returns:

the unique string.

"""

index = self.query_index_by_arch(

arch

) # Normalize the input, which may be a string or an architecture object, to an index

arch_str = self.meta_archs[index]

structure = topology_str2structure(arch_str)

return structure.to_unique_str(consider_zero=True)

def query_info_str_by_arch(self, arch, hp: Text = "12"):

"""Query the information of a specific architecture.

Args:

arch: it can be an architecture index or an architecture string.

hp: the hyperparameter indicator, could be 12 or 200. The difference

between these two configurations is the number of training epochs.

Returns:

ArchResults instance

"""

if self.verbose:

print(

"{:} Call query_info_str_by_arch with arch={:} "

"and hp={:}".format(time_string(), arch, hp)

)

return self._query_info_str_by_arch(arch, hp, print_information)

def get_more_info(

self, index, dataset, iepoch=None, hp: Text = "12", is_random: bool = True

):

"""Return the metric for the `index`-th architecture."""

if self.verbose:

print(

"{:} Call the get_more_info function with index={:}, dataset={:}, "

"iepoch={:}, hp={:}, and is_random={:}.".format(

time_string(), index, dataset, iepoch, hp, is_random

)

)

index = self.query_index_by_arch(

index

) # Normalize the input, which may be a string or an architecture object, to an index

self._prepare_info(index)

if index not in self.arch2infos_dict:

raise ValueError("Did not find {:} from arch2infos_dict.".format(index))

archresult = self.arch2infos_dict[index][str(hp)]

# if randomly select one trial, select the seed at first

if isinstance(is_random, bool) and is_random:

seeds = archresult.get_dataset_seeds(dataset)

is_random = random.choice(seeds)

# collect the training information

train_info = archresult.get_metrics(

dataset, "train", iepoch=iepoch, is_random=is_random

)

total = train_info["iepoch"] + 1

xinfo = {

"train-loss": train_info["loss"],

"train-accuracy": train_info["accuracy"],

"train-per-time": train_info["all_time"] / total

if train_info["all_time"] is not None

else None,

"train-all-time": train_info["all_time"],

}

# collect the evaluation information

if dataset == "cifar10-valid":

valid_info = archresult.get_metrics(

dataset, "x-valid", iepoch=iepoch, is_random=is_random

)

try:

test_info = archresult.get_metrics(

dataset, "ori-test", iepoch=iepoch, is_random=is_random

)

except Exception as unused_e: # pylint: disable=broad-except

test_info = None

valtest_info = None

xinfo[

"comment"

] = "In this dict, train-loss/accuracy/time is the metric on the train set of CIFAR-10. The test-loss/accuracy/time is the performance of the CIFAR-10 test set after training on the train set by {:} epochs. The per-time and total-time indicate the per epoch and total time costs, respectively.".format(

hp

)

else:

if dataset == "cifar10":

xinfo[

"comment"

] = "In this dict, train-loss/accuracy/time is the metric on the train+valid sets of CIFAR-10. The test-loss/accuracy/time is the performance of the CIFAR-10 test set after training on the train+valid sets by {:} epochs. The per-time and total-time indicate the per epoch and total time costs, respectively.".format(

hp

)

try: # collect results on the proposed test set

if dataset == "cifar10":

test_info = archresult.get_metrics(

dataset, "ori-test", iepoch=iepoch, is_random=is_random

)

else:

test_info = archresult.get_metrics(

dataset, "x-test", iepoch=iepoch, is_random=is_random

)

except Exception as unused_e: # pylint: disable=broad-except

test_info = None

try: # collect results on the proposed validation set

valid_info = archresult.get_metrics(

dataset, "x-valid", iepoch=iepoch, is_random=is_random

)

except Exception as unused_e: # pylint: disable=broad-except

valid_info = None

try:

if dataset != "cifar10":

valtest_info = archresult.get_metrics(

dataset, "ori-test", iepoch=iepoch, is_random=is_random

)

else:

valtest_info = None

except Exception as unused_e: # pylint: disable=broad-except

valtest_info = None

if valid_info is not None:

xinfo["valid-loss"] = valid_info["loss"]

xinfo["valid-accuracy"] = valid_info["accuracy"]

xinfo["valid-per-time"] = (

valid_info["all_time"] / total

if valid_info["all_time"] is not None

else None

)

xinfo["valid-all-time"] = valid_info["all_time"]

if test_info is not None:

xinfo["test-loss"] = test_info["loss"]

xinfo["test-accuracy"] = test_info["accuracy"]

xinfo["test-per-time"] = (

test_info["all_time"] / total

if test_info["all_time"] is not None

else None

)

xinfo["test-all-time"] = test_info["all_time"]

if valtest_info is not None:

xinfo["valtest-loss"] = valtest_info["loss"]

xinfo["valtest-accuracy"] = valtest_info["accuracy"]

xinfo["valtest-per-time"] = (

valtest_info["all_time"] / total

if valtest_info["all_time"] is not None

else None

)

xinfo["valtest-all-time"] = valtest_info["all_time"]

return xinfo

def show(self, index: int = -1) -> None:

"""This function will print the information of a specific (or all) architecture(s)."""

self._show(index, print_information)

@staticmethod

def str2lists(arch_str: Text) -> List[Any]:

"""Shows how to read the string-based architecture encoding.

Args:

arch_str: a string indicating the architecture topology, such as

|nor_conv_1x1~0|+|none~0|none~1|+|none~0|none~1|skip_connect~2|

Returns:

a list of tuples, each containing the (op, input_node_index) pairs of one node.

[USAGE]

It is the same as the `str2structure` func in AutoDL-Projects:

`github.com/D-X-Y/AutoDL-Projects/lib/models/cell_searchs/genotypes.py`

```

arch = api.str2lists( '|nor_conv_1x1~0|+|none~0|none~1|+|none~0|none~1|skip_connect~2|' )

print ('there are {:} nodes in this arch'.format(len(arch)+1)) # arch is a list

for i, node in enumerate(arch):

print('the {:}-th node is the sum of these {:} nodes with op: {:}'.format(i+1, len(node), node))

```

"""

node_strs = arch_str.split("+")

genotypes = []

for unused_i, node_str in enumerate(node_strs):

inputs = list(

filter(lambda x: x != "", node_str.split("|"))

) # pylint: disable=g-explicit-bool-comparison

for xinput in inputs:

assert len(xinput.split("~")) == 2, "invalid input length : {:}".format(

xinput

)

inputs = (xi.split("~") for xi in inputs)

input_infos = tuple((op, int(idx)) for (op, idx) in inputs)

genotypes.append(input_infos)

return genotypes

@staticmethod

def str2matrix(

arch_str: Text,

search_space: List[Text] = (

"none",

"skip_connect",

"nor_conv_1x1",

"nor_conv_3x3",

"avg_pool_3x3",

),

):

"""Convert the string-based architecture encoding to the encoding strategy in NAS-Bench-101.

Args:

arch_str: a string indicating the architecture topology, such as

|nor_conv_1x1~0|+|none~0|none~1|+|none~0|none~1|skip_connect~2|

search_space: a list of operation strings; the default is the topology search space of NATS-Bench.

The default value should be consistent with this line: https://github.com/D-X-Y/AutoDL-Projects/blob/main/lib/models/cell_operations.py#L24

Returns:

the numpy matrix (2-D np.ndarray) representing the DAG of this architecture topology

[USAGE]

matrix = api.str2matrix( '|nor_conv_1x1~0|+|none~0|none~1|+|none~0|none~1|skip_connect~2|' )

This matrix is a 4-by-4 matrix representing a cell with 4 nodes (only the lower-left triangle is used).

[ [0, 0, 0, 0], # the first line represents the input (0-th) node

[2, 0, 0, 0], # the second line represents the 1-st node, is calculated by 2-th-op( 0-th-node )

[0, 0, 0, 0], # the third line represents the 2-nd node, is calculated by 0-th-op( 0-th-node ) + 0-th-op( 1-th-node )

[0, 0, 1, 0] ] # the fourth line represents the 3-rd node, is calculated by 0-th-op( 0-th-node ) + 0-th-op( 1-th-node ) + 1-th-op( 2-th-node )

In the topology search space in NATS-BENCH, 0-th-op is 'none', 1-th-op is 'skip_connect',

2-th-op is 'nor_conv_1x1', 3-th-op is 'nor_conv_3x3', 4-th-op is 'avg_pool_3x3'.

[NOTE]

If a node has two input edges from the same node, this function does not work: one edge will overwrite the other.

"""

import numpy as np

node_strs = arch_str.split("+")

num_nodes = len(node_strs) + 1

matrix = np.zeros((num_nodes, num_nodes))

for i, node_str in enumerate(node_strs):

inputs = list(

filter(lambda x: x != "", node_str.split("|"))

) # pylint: disable=g-explicit-bool-comparison

for xinput in inputs:

assert len(xinput.split("~")) == 2, "invalid input length : {:}".format(

xinput

)

for xi in inputs:

op, idx = xi.split("~")

if op not in search_space:

raise ValueError(

"this op ({:}) is not in {:}".format(op, search_space)

)

op_idx, node_idx = search_space.index(op), int(idx)

matrix[i + 1, node_idx] = op_idx

return matrix

================================================

FILE: nats_bench/api_utils.py

================================================

#####################################################

# Copyright (c) Xuanyi Dong [GitHub D-X-Y], 2020.07 #

##############################################################################

# NATS-Bench: Benchmarking NAS Algorithms for Architecture Topology and Size #

##############################################################################

"""In this file, we define NASBenchMetaAPI, ArchResults, and ResultsCount.

NASBenchMetaAPI is the abstract class for benchmark APIs.

We also define the class ArchResults, which contains all

information of a single architecture trained by one kind of hyper-parameters

on three datasets. We also define the class ResultsCount, which contains all

information of a single trial for a single architecture.

"""

import abc

import bz2

import collections

import copy

import os

import pickle

import random

import time

from typing import Any, Dict, Optional, Text, Union

import warnings

_FILE_SYSTEM = "default"

PICKLE_EXT = "pickle.pbz2"

def mean(xlist):

return sum(xlist) / len(xlist)

def time_string():

iso_time_format = "%Y-%m-%d %X"

string = "[{:}]".format(time.strftime(iso_time_format, time.gmtime(time.time())))

return string

def reset_file_system(lib: Text = "default"):

global _FILE_SYSTEM

_FILE_SYSTEM = lib

def get_file_system():

return _FILE_SYSTEM

def get_torch_home():

if "TORCH_HOME" in os.environ:

return os.environ["TORCH_HOME"]

elif "HOME" in os.environ:

return os.path.join(os.environ["HOME"], ".torch")

else:

raise ValueError(

"Did not find HOME in os.environ. "

"Please at least setup the path of HOME or TORCH_HOME "

"in the environment."

)

def nats_is_dir(file_path):

if _FILE_SYSTEM == "default":

return os.path.isdir(file_path)

elif _FILE_SYSTEM == "google":

import tensorflow as tf # pylint: disable=g-import-not-at-top

return tf.io.gfile.isdir(file_path)

else:

raise ValueError("Unknown file system lib: {:}".format(_FILE_SYSTEM))

def nats_is_file(file_path):

if _FILE_SYSTEM == "default":

return os.path.isfile(file_path)

elif _FILE_SYSTEM == "google":

import tensorflow as tf # pylint: disable=g-import-not-at-top

return tf.io.gfile.exists(file_path) and not tf.io.gfile.isdir(file_path)

else:

raise ValueError("Unknown file system lib: {:}".format(_FILE_SYSTEM))

def pickle_save(obj, file_path, ext=".pbz2", protocol=4):

"""Use pickle to save data (obj) into file_path.

Args:

obj: The object to be saved into a path.

file_path: The target saving path.

ext: The extension of file name.

protocol: The pickle protocol. According to this documentation

(https://docs.python.org/3/library/pickle.html#data-stream-format),

the protocol version 4 was added in Python 3.4. It adds support for very

large objects, pickling more kinds of objects, and some data format

optimizations. It is the default protocol starting with Python 3.8.

"""

# with open(file_path, 'wb') as cfile:

if _FILE_SYSTEM == "default":

with bz2.BZ2File(str(file_path) + ext, "wb") as cfile:

pickle.dump(

obj, cfile, protocol=protocol

) # pytype: disable=wrong-arg-types

else:

raise ValueError("Unknown file system lib: {:}".format(_FILE_SYSTEM))

def pickle_load(file_path, ext=".pbz2"):

"""Use pickle to load the file on different systems."""

# return pickle.load(open(file_path, "rb"))

if nats_is_file(str(file_path)):

xfile_path = str(file_path)

else:

xfile_path = str(file_path) + ext

if _FILE_SYSTEM == "default":

with bz2.BZ2File(xfile_path, "rb") as cfile:

return pickle.load(cfile) # pytype: disable=wrong-arg-types

elif _FILE_SYSTEM == "google":

import tensorflow as tf # pylint: disable=g-import-not-at-top

file_content = tf.io.gfile.GFile(xfile_path, mode="rb").read()

byte_content = bz2.decompress(file_content)

return pickle.loads(byte_content)

else:

raise ValueError("Unknown file system lib: {:}".format(_FILE_SYSTEM))
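
Illustrative sketch (not part of the original module): the save and load helpers above reduce to a bz2-compressed pickle round trip when the default file system is used. The record contents and the file name below are made up for the example; only standard-library calls are used.

```python
import bz2
import os
import pickle
import tempfile

record = {"arch_index": 11, "accuracy": [91.2, 93.5]}  # made-up data

with tempfile.TemporaryDirectory() as tmp_dir:
    base_path = os.path.join(tmp_dir, "000011.pickle")
    # Save: the extension is appended to the base path, as pickle_save does.
    with bz2.BZ2File(base_path + ".pbz2", "wb") as cfile:
        pickle.dump(record, cfile, protocol=4)
    # Load: fall back to base path + extension when the bare path is not a file,
    # which mirrors the path resolution at the top of pickle_load.
    load_path = base_path if os.path.isfile(base_path) else base_path + ".pbz2"
    with bz2.BZ2File(load_path, "rb") as cfile:
        restored = pickle.load(cfile)

print(restored == record)
```

Passing either the bare path or the path with the extension to the loader resolves to the same file, which is why callers can omit `.pbz2`.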

def remap_dataset_set_names(dataset, metric_on_set, verbose=False):

"""Re-map the metric_on_set to internal keys."""

if verbose:

print(

"Call internal function remap_dataset_set_names with dataset={:} "

"and metric_on_set={:}".format(dataset, metric_on_set)

)

if dataset == "cifar10" and metric_on_set == "valid":

dataset, metric_on_set = "cifar10-valid", "x-valid"

elif dataset == "cifar10" and metric_on_set == "test":

dataset, metric_on_set = "cifar10", "ori-test"

elif dataset == "cifar10" and metric_on_set == "train":

dataset, metric_on_set = "cifar10", "train"

elif (

dataset == "cifar100" or dataset == "ImageNet16-120"

) and metric_on_set == "valid":

metric_on_set = "x-valid"

elif (

dataset == "cifar100" or dataset == "ImageNet16-120"

) and metric_on_set == "test":

metric_on_set = "x-test"

if verbose:

print(

" return dataset={:} and metric_on_set={:}".format(dataset, metric_on_set)

)

return dataset, metric_on_set
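
Illustrative sketch (not part of the original module): the if/elif chain above is equivalent to a small lookup table, which makes the public-name to internal-key mapping easier to read at a glance. The names `_REMAP` and `remap_sketch` are hypothetical.

```python
# Hypothetical lookup table equivalent to the if/elif chain above.
_REMAP = {
    ("cifar10", "valid"): ("cifar10-valid", "x-valid"),
    ("cifar10", "test"): ("cifar10", "ori-test"),
    ("cifar10", "train"): ("cifar10", "train"),
    ("cifar100", "valid"): ("cifar100", "x-valid"),
    ("cifar100", "test"): ("cifar100", "x-test"),
    ("ImageNet16-120", "valid"): ("ImageNet16-120", "x-valid"),
    ("ImageNet16-120", "test"): ("ImageNet16-120", "x-test"),
}


def remap_sketch(dataset, metric_on_set):
    """Return the internal (dataset, metric_on_set) pair for a public pair."""
    # Pairs that are not listed pass through unchanged, as in the chain above.
    return _REMAP.get((dataset, metric_on_set), (dataset, metric_on_set))


print(remap_sketch("cifar10", "valid"))
print(remap_sketch("cifar100", "test"))
print(remap_sketch("cifar100", "train"))
```

Note that only CIFAR-10 changes the dataset key: its validation metrics live under `cifar10-valid`, while its test metrics use the `ori-test` split of `cifar10`.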

class NASBenchMetaAPI(metaclass=abc.ABCMeta):

"""The abstract class for NATS Bench API."""

@abc.abstractmethod

def __init__(

self,

file_path_or_dict: Optional[Union[Text, Dict[Text, Any]]] = None,

fast_mode: bool = False,

verbose: bool = True,

):

"""The initialization function that takes the dataset file path (or a dict loaded from that path) as input."""

# NOTE(xuanyidong): the following attributes must be initialized in the subclass

self.meta_archs = None

self.verbose = None

self.evaluated_indexes = None

self.arch2infos_dict = None

self.filename = None

self._fast_mode = None

self._archive_dir = None

self._avaliable_hps = None

self.archstr2index = None

def __getitem__(self, index: int):

return copy.deepcopy(self.meta_archs[index])

def arch(self, index: int):

"""Return the topology structure of the `index`-th architecture."""

if self.verbose:

print("Call the arch function with index={:}".format(index))

if index < 0 or index >= len(self.meta_archs):

raise ValueError(

"invalid index : {:} vs. {:}.".format(index, len(self.meta_archs))

)

return copy.deepcopy(self.meta_archs[index])

def __len__(self):

return len(self.meta_archs)

def __repr__(self):

return (

"{name}({num}/{total} architectures, fast_mode={fast_mode}, "

"file={filename})".format(

name=self.__class__.__name__,

num=len(self.evaluated_indexes),

total=len(self.meta_archs),

fast_mode=self.fast_mode,

filename=self.filename,

)

)

@property

def avaliable_hps(self):

return list(copy.deepcopy(self._avaliable_hps))

@property

def used_time(self):

return self._used_time

@property

def search_space_name(self):

return self._search_space_name

@property

def fast_mode(self):

return self._fast_mode

@property

def archive_dir(self):

return self._archive_dir

@property

def full_train_epochs(self):

return self._full_train_epochs

def reset_archive_dir(self, archive_dir):

self._archive_dir = archive_dir

def reset_fast_mode(self, fast_mode):

self._fast_mode = fast_mode

def reset_time(self):

self._used_time = 0

@abc.abstractmethod

def get_more_info(

self, index, dataset, iepoch=None, hp: Text = "12", is_random: bool = True

):

"""Return the metric for the `index`-th architecture."""

def simulate_train_eval(

self, arch, dataset, iepoch=None, hp="12", account_time=True

):

"""This function is used to simulate training and evaluating an arch."""

index = self.query_index_by_arch(arch)

all_names = ("cifar10", "cifar100", "ImageNet16-120")

if dataset not in all_names:

raise ValueError(

"Invalid dataset name : {:} vs {:}".format(dataset, all_names)

)

if dataset == "cifar10":

info = self.get_more_info(

index, "cifar10-valid", iepoch=iepoch, hp=hp, is_random=True

)

else:

info = self.get_more_info(

index, dataset, iepoch=iepoch, hp=hp, is_random=True

)

if "valid-accuracy" in info:

valid_acc, time_cost = (

info["valid-accuracy"],

info["train-all-time"] + info["valid-per-time"],

)

else:

valid_acc = info["valtest-accuracy"]

temp_info = self.get_more_info(

index, dataset, iepoch=None, hp=hp, is_random=True

)

time_cost = info["train-all-time"] + temp_info["valid-per-time"]

latency = self.get_latency(index, dataset, hp=hp)