Repository: Egrt/yolov7-obb

Branch: master

Commit: b602bf549be1

Files: 42

Total size: 336.9 KB

Directory structure:

gitextract__sd1byxa/

├── .gitignore

├── LICENSE

├── README.md

├── get_map.py

├── hrsc_annotation.py

├── kmeans_for_anchors.py

├── model_data/

│ ├── coco_classes.txt

│ ├── ssdd_classes.txt

│ ├── voc_classes.txt

│ └── yolo_anchors.txt

├── nets/

│ ├── __init__.py

│ ├── backbone.py

│ ├── yolo.py

│ └── yolo_training.py

├── predict.py

├── requirements.txt

├── summary.py

├── train.py

├── utils/

│ ├── __init__.py

│ ├── callbacks.py

│ ├── dataloader.py

│ ├── kld_loss.py

│ ├── nms_rotated/

│ │ ├── __init__.py

│ │ ├── nms_rotated_ext.cp38-win_amd64.pyd

│ │ ├── nms_rotated_wrapper.py

│ │ ├── setup.py

│ │ └── src/

│ │   ├── box_iou_rotated_utils.h

│ │   ├── nms_rotated_cpu.cpp

│ │   ├── nms_rotated_cuda.cu

│ │   ├── nms_rotated_ext.cpp

│ │   ├── poly_nms_cpu.cpp

│ │   └── poly_nms_cuda.cu

│ ├── utils.py

│ ├── utils_bbox.py

│ ├── utils_fit.py

│ ├── utils_map.py

│ └── utils_rbox.py

├── utils_coco/

│ ├── coco_annotation.py

│ └── get_map_coco.py

├── voc_annotation.py

├── yolo.py

└── 常见问题汇总.md

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# ignore map, miou, datasets

map_out/

miou_out/

VOCdevkit/

datasets/

Medical_Datasets/

lfw/

logs/

.temp_map_out/

2007_train.txt

2007_val.txt

# Byte-compiled / optimized / DLL files

__pycache__/

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

================================================

FILE: LICENSE

================================================

GNU GENERAL PUBLIC LICENSE

Version 3, 29 June 2007

Copyright (C) 2007 Free Software Foundation, Inc.

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU General Public License is a free, copyleft license for

software and other kinds of works.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

the GNU General Public License is intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users. We, the Free Software Foundation, use the

GNU General Public License for most of our software; it applies also to

any other work released this way by its authors. You can apply it to

your programs, too.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

To protect your rights, we need to prevent others from denying you

these rights or asking you to surrender the rights. Therefore, you have

certain responsibilities if you distribute copies of the software, or if

you modify it: responsibilities to respect the freedom of others.

For example, if you distribute copies of such a program, whether

gratis or for a fee, you must pass on to the recipients the same

freedoms that you received. You must make sure that they, too, receive

or can get the source code. And you must show them these terms so they

know their rights.

Developers that use the GNU GPL protect your rights with two steps:

(1) assert copyright on the software, and (2) offer you this License

giving you legal permission to copy, distribute and/or modify it.

For the developers' and authors' protection, the GPL clearly explains

that there is no warranty for this free software. For both users' and

authors' sake, the GPL requires that modified versions be marked as

changed, so that their problems will not be attributed erroneously to

authors of previous versions.

Some devices are designed to deny users access to install or run

modified versions of the software inside them, although the manufacturer

can do so. This is fundamentally incompatible with the aim of

protecting users' freedom to change the software. The systematic

pattern of such abuse occurs in the area of products for individuals to

use, which is precisely where it is most unacceptable. Therefore, we

have designed this version of the GPL to prohibit the practice for those

products. If such problems arise substantially in other domains, we

stand ready to extend this provision to those domains in future versions

of the GPL, as needed to protect the freedom of users.

Finally, every program is threatened constantly by software patents.

States should not allow patents to restrict development and use of

software on general-purpose computers, but in those that do, we wish to

avoid the special danger that patents applied to a free program could

make it effectively proprietary. To prevent this, the GPL assures that

patents cannot be used to render the program non-free.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,

procedures, authorization keys, or other information required to install

and execute modified versions of a covered work in that User Product from

a modified version of its Corresponding Source. The information must

suffice to ensure that the continued functioning of the modified object

code is in no case prevented or interfered with solely because

modification has been made.

If you convey an object code work under this section in, or with, or

specifically for use in, a User Product, and the conveying occurs as

part of a transaction in which the right of possession and use of the

User Product is transferred to the recipient in perpetuity or for a

fixed term (regardless of how the transaction is characterized), the

Corresponding Source conveyed under this section must be accompanied

by the Installation Information. But this requirement does not apply

if neither you nor any third party retains the ability to install

modified object code on the User Product (for example, the work has

been installed in ROM).

The requirement to provide Installation Information does not include a

requirement to continue to provide support service, warranty, or updates

for a work that has been modified or installed by the recipient, or for

the User Product in which it has been modified or installed. Access to a

network may be denied when the modification itself materially and

adversely affects the operation of the network or violates the rules and

protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,

in accord with this section must be in a format that is publicly

documented (and with an implementation available to the public in

source code form), and must require no special password or key for

unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this

License by making exceptions from one or more of its conditions.

Additional permissions that are applicable to the entire Program shall

be treated as though they were included in this License, to the extent

that they are valid under applicable law. If additional permissions

apply only to part of the Program, that part may be used separately

under those permissions, but the entire Program remains governed by

this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option

remove any additional permissions from that copy, or from any part of

it. (Additional permissions may be written to require their own

removal in certain cases when you modify the work.) You may place

additional permissions on material, added by you to a covered work,

for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you

add to a covered work, you may (if authorized by the copyright holders of

that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the

terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or

author attributions in that material or in the Appropriate Legal

Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or

requiring that modified versions of such material be marked in

reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or

authors of the material; or

e) Declining to grant rights under trademark law for use of some

trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that

material by anyone who conveys the material (or modified versions of

it) with contractual assumptions of liability to the recipient, for

any liability that these contractual assumptions directly impose on

those licensors and authors.

All other non-permissive additional terms are considered "further

restrictions" within the meaning of section 10. If the Program as you

received it, or any part of it, contains a notice stating that it is

governed by this License along with a term that is a further

restriction, you may remove that term. If a license document contains

a further restriction but permits relicensing or conveying under this

License, you may add to a covered work material governed by the terms

of that license document, provided that the further restriction does

not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you

must place, in the relevant source files, a statement of the

additional terms that apply to those files, or a notice indicating

where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the

form of a separately written license, or stated as exceptions;

the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly

provided under this License. Any attempt otherwise to propagate or

modify it is void, and will automatically terminate your rights under

this License (including any patent licenses granted under the third

paragraph of section 11).

However, if you cease all violation of this License, then your

license from a particular copyright holder is reinstated (a)

provisionally, unless and until the copyright holder explicitly and

finally terminates your license, and (b) permanently, if the copyright

holder fails to notify you of the violation by some reasonable means

prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is

reinstated permanently if the copyright holder notifies you of the

violation by some reasonable means, this is the first time you have

received notice of violation of this License (for any work) from that

copyright holder, and you cure the violation prior to 30 days after

your receipt of the notice.

Termination of your rights under this section does not terminate the

licenses of parties who have received copies or rights from you under

this License. If your rights have been terminated and not permanently

reinstated, you do not qualify to receive new licenses for the same

material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or

run a copy of the Program. Ancillary propagation of a covered work

occurring solely as a consequence of using peer-to-peer transmission

to receive a copy likewise does not require acceptance. However,

nothing other than this License grants you permission to propagate or

modify any covered work. These actions infringe copyright if you do

not accept this License. Therefore, by modifying or propagating a

covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically

receives a license from the original licensors, to run, modify and

propagate that work, subject to this License. You are not responsible

for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an

organization, or substantially all assets of one, or subdividing an

organization, or merging organizations. If propagation of a covered

work results from an entity transaction, each party to that

transaction who receives a copy of the work also receives whatever

licenses to the work the party's predecessor in interest had or could

give under the previous paragraph, plus a right to possession of the

Corresponding Source of the work from the predecessor in interest, if

the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that

any patent claim is infringed by making, using, selling, offering for

sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this

License of the Program or a work on which the Program is based. The

work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims

owned or controlled by the contributor, whether already acquired or

hereafter acquired, that would be infringed by some manner, permitted

by this License, of making, using, or selling its contributor version,

but do not include claims that would be infringed only as a

consequence of further modification of the contributor version. For

purposes of this definition, "control" includes the right to grant

patent sublicenses in a manner consistent with the requirements of

this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free

patent license under the contributor's essential patent claims, to

make, use, sell, offer for sale, import and otherwise run, modify and

propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express

agreement or commitment, however denominated, not to enforce a patent

(such as an express permission to practice a patent or covenant not to

sue for patent infringement). To "grant" such a patent license to a

party means to make such an agreement or commitment not to enforce a

patent against the party.

If you convey a covered work, knowingly relying on a patent license,

and the Corresponding Source of the work is not available for anyone

to copy, free of charge and under the terms of this License, through a

publicly available network server or other readily accessible means,

then you must either (1) cause the Corresponding Source to be so

available, or (2) arrange to deprive yourself of the benefit of the

patent license for this particular work, or (3) arrange, in a manner

consistent with the requirements of this License, to extend the patent

license to downstream recipients. "Knowingly relying" means you have

actual knowledge that, but for the patent license, your conveying the

covered work in a country, or your recipient's use of the covered work

in a country, would infringe one or more identifiable patents in that

country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or

arrangement, you convey, or propagate by procuring conveyance of, a

covered work, and grant a patent license to some of the parties

receiving the covered work authorizing them to use, propagate, modify

or convey a specific copy of the covered work, then the patent license

you grant is automatically extended to all recipients of the covered

work and works based on it.

A patent license is "discriminatory" if it does not include within

the scope of its coverage, prohibits the exercise of, or is

conditioned on the non-exercise of one or more of the rights that are

specifically granted under this License. You may not convey a covered

work if you are a party to an arrangement with a third party that is

in the business of distributing software, under which you make payment

to the third party based on the extent of your activity of conveying

the work, and under which the third party grants, to any of the

parties who would receive the covered work from you, a discriminatory

patent license (a) in connection with copies of the covered work

conveyed by you (or copies made from those copies), or (b) primarily

for and in connection with specific products or compilations that

contain the covered work, unless you entered into that arrangement,

or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting

any implied license or other defenses to infringement that may

otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot convey a

covered work so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you may

not convey it at all. For example, if you agree to terms that obligate you

to collect a royalty for further conveying from those to whom you convey

the Program, the only way you could satisfy both those terms and this

License would be to refrain entirely from conveying the Program.

13. Use with the GNU Affero General Public License.

Notwithstanding any other provision of this License, you have

permission to link or combine any covered work with a work licensed

under version 3 of the GNU Affero General Public License into a single

combined work, and to convey the resulting work. The terms of this

License will continue to apply to the part which is the covered work,

but the special requirements of the GNU Affero General Public License,

section 13, concerning interaction through a network will apply to the

combination as such.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of

the GNU General Public License from time to time. Such new versions will

be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the

Program specifies that a certain numbered version of the GNU General

Public License "or any later version" applies to it, you have the

option of following the terms and conditions either of that numbered

version or of any later version published by the Free Software

Foundation. If the Program does not specify a version number of the

GNU General Public License, you may choose any version ever published

by the Free Software Foundation.

If the Program specifies that a proxy can decide which future

versions of the GNU General Public License can be used, that proxy's

public statement of acceptance of a version permanently authorizes you

to choose that version for the Program.

Later license versions may give you additional or different

permissions. However, no additional obligations are imposed on any

author or copyright holder as a result of your choosing to follow a

later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided

above cannot be given local legal effect according to their terms,

reviewing courts shall apply local law that most closely approximates

an absolute waiver of all civil liability in connection with the

Program, unless a warranty or assumption of liability accompanies a

copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

state the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

<one line to give the program's name and a brief idea of what it does.>

Copyright (C) <year> <name of author>

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see <https://www.gnu.org/licenses/>.

Also add information on how to contact you by electronic and paper mail.

If the program does terminal interaction, make it output a short

notice like this when it starts in an interactive mode:

<program> Copyright (C) <year> <name of author>

This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

This is free software, and you are welcome to redistribute it

under certain conditions; type `show c' for details.

The hypothetical commands `show w' and `show c' should show the appropriate

parts of the General Public License. Of course, your program's commands

might be different; for a GUI interface, you would use an "about box".

You should also get your employer (if you work as a programmer) or school,

if any, to sign a "copyright disclaimer" for the program, if necessary.

For more information on this, and how to apply and follow the GNU GPL, see

<https://www.gnu.org/licenses/>.

The GNU General Public License does not permit incorporating your program

into proprietary programs. If your program is a subroutine library, you

may consider it more useful to permit linking proprietary applications with

the library. If this is what you want to do, use the GNU Lesser General

Public License instead of this License. But first, please read

<https://www.gnu.org/licenses/why-not-lgpl.html>.

================================================

FILE: README.md

================================================

## YOLOV7-OBB: A PyTorch Implementation of the You Only Look Once OBB Rotated Object Detection Model

---

## Table of Contents

1. [Top News](#仓库更新)
2. [Related Repositories](#相关仓库)
3. [Performance](#性能情况)
4. [Environment](#所需环境)
5. [Download](#文件下载)
6. [How to Train](#训练步骤)
7. [How to Predict](#预测步骤)
8. [How to Evaluate](#评估步骤)
9. [Reference](#Reference)

## Top News

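**`2023-02`**: **Repository created. Supports step and cosine learning-rate decay, a choice of Adam or SGD optimizers, learning-rate scaling by batch_size, image cropping, multi-GPU training, per-class object counting, heatmaps, and EMA.**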

## Related Repositories

| Object detection model | Path |
| :----- | :----- |
| YoloV7-OBB | https://github.com/Egrt/yolov7-obb |
| YoloV7-Tiny-OBB | https://github.com/Egrt/yolov7-tiny-obb |

## Performance

| Training dataset | Weight file | Test dataset | Input image size | mAP 0.5 |
| :-----: | :------: | :------: | :------: | :------: |
| SSDD | [yolov7_obb_ssdd.pth](https://github.com/Egrt/yolov7-obb/releases/download/V1.0.0/yolov7_obb_ssdd.pth) | SSDD-Val | 640x640 | 95.22 |

### Prediction Results

## Environment

torch==1.10.1

torchvision==0.11.2

To use AMP mixed precision, torch 1.7.1 or later is recommended.

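## Download

The SSDD dataset can be downloaded from the link below. It already contains the training, test, and validation sets (the validation set is the same as the test set), so no further splitting is needed:

Link: https://pan.baidu.com/s/1Lpg28ZvMSgNXq00abHMZ5Q

Extraction code: 2021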

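## How to Train

### a. Training on the VOC07+12 dataset

1. Prepare the dataset

**This project trains on VOC-format data. Before training, download the VOC07+12 dataset and extract it into the repository root.**

2. Process the dataset

Set annotation_mode=2 in voc_annotation.py, then run voc_annotation.py to generate 2007_train.txt and 2007_val.txt in the root directory.

Each line of the generated files has the format image_path, x1, y1, x2, y2, x3, y3, x4, y4 (polygon), class.

3. Start training

The default parameters in train.py are set up for the VOC dataset, so running train.py directly starts training.

4. Predict with the trained model

Prediction uses two files, yolo.py and predict.py. First edit model_path and classes_path in yolo.py; both parameters must be changed.

**model_path points to the trained weight file in the logs folder. classes_path points to the txt file listing the detection classes.**

After these changes, run predict.py and enter an image path when prompted to run detection.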
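To make the annotation format from step 2 concrete, here is a minimal sketch that parses one line of 2007_train.txt. It assumes the layout that hrsc_annotation.py writes: the image path first, then one space-separated field per object holding eight polygon coordinates and a class index, all comma-separated. `parse_annotation_line` is a hypothetical helper for illustration, not a function from this repository.

```python
from typing import List, Tuple


def parse_annotation_line(line: str) -> Tuple[str, List[Tuple[List[float], int]]]:
    """Split one annotation line into (image_path, [(polygon, class_id), ...])."""
    parts = line.strip().split()
    if not parts:
        raise ValueError("empty annotation line")
    image_path = parts[0]
    objects = []
    for field in parts[1:]:
        values = field.split(",")
        if len(values) != 9:
            raise ValueError("expected 8 coordinates and 1 class index, got %r" % field)
        polygon = [float(v) for v in values[:8]]   # x1, y1, ..., x4, y4
        class_id = int(values[8])                  # index into the classes file
        objects.append((polygon, class_id))
    return image_path, objects


# Illustrative line with two objects (made-up coordinates).
path, objects = parse_annotation_line(
    "img/a.jpg 1,2,3,4,5,6,7,8,0 10.5,20,30,40,50,60,70,80,1"
)
print(path, len(objects))
```

Because fields are separated by whitespace, this only works when the image path itself contains no spaces, which is the same restriction the annotation script enforces.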

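### b. Training on your own dataset

1. Prepare the dataset

**This project trains on VOC-format data, so you need to build your own dataset before training.**

Before training, place the label files in VOCdevkit/VOC2007/Annotations.

Before training, place the image files in VOCdevkit/VOC2007/JPEGImages.

2. Process the dataset

Once the dataset is in place, use voc_annotation.py to generate the 2007_train.txt and 2007_val.txt files used for training.

Edit the parameters in voc_annotation.py. For a first training run, changing only classes_path is enough; classes_path points to the txt file listing the detection classes.

When training on your own dataset, you can create a cls_classes.txt file listing the classes you want to distinguish.

The content of model_data/cls_classes.txt is:

```python
cat
dog
...
```

Set classes_path in voc_annotation.py to point to cls_classes.txt, then run voc_annotation.py.

3. Start training

**There are many training parameters, all in train.py; read the comments carefully after downloading the repository. The most important one is still classes_path in train.py.**

**classes_path points to the txt file listing the detection classes, and it is the same txt used in voc_annotation.py. It must be changed when training on your own dataset!**

After setting classes_path, run train.py to start training. After several epochs, weight files are saved in the logs folder.

4. Predict with the trained model

Prediction uses two files, yolo.py and predict.py. Edit model_path and classes_path in yolo.py.

**model_path points to the trained weight file in the logs folder. classes_path points to the txt file listing the detection classes.**

After these changes, run predict.py and enter an image path when prompted to run detection.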
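The classes file is a plain list with one class name per line, and the annotation scripts use a name's position in that list as its class index. The sketch below shows that mapping under those assumptions; `parse_class_names` and `class_index_map` are hypothetical names and are not the repository's own `get_classes` helper.

```python
from typing import Dict, List


def parse_class_names(text: str) -> List[str]:
    """Return the non-empty lines of a classes file, one class name per line."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def class_index_map(class_names: List[str]) -> Dict[str, int]:
    """Map each class name to its position in the file (its label index)."""
    return {name: index for index, name in enumerate(class_names)}


# Example: the contents of a two-class cls_classes.txt.
names = parse_class_names("cat\ndog\n")
print(names, class_index_map(names))
```

Keeping the same file for annotation, training, and prediction matters because reordering the lines silently changes which index each class receives.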

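## How to Predict

### a. Using pretrained weights

1. Download and unzip the repository, download the weights from Baidu Netdisk into model_data, run predict.py, and enter

```python
img/street.jpg
```

2. Settings in predict.py enable FPS testing and video detection.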

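### b. Using your own trained weights

1. Train the model by following the training steps.

2. In yolo.py, edit model_path and classes_path in the section shown below so that they match your trained files. **model_path is the weight file in the logs folder, and classes_path is the class list that model_path was trained with.**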

```python

_defaults = {

#--------------------------------------------------------------------------#

# 使用自己训练好的模型进行预测一定要修改model_path和classes_path!

# model_path指向logs文件夹下的权值文件,classes_path指向model_data下的txt

#

# 训练好后logs文件夹下存在多个权值文件,选择验证集损失较低的即可。

# 验证集损失较低不代表mAP较高,仅代表该权值在验证集上泛化性能较好。

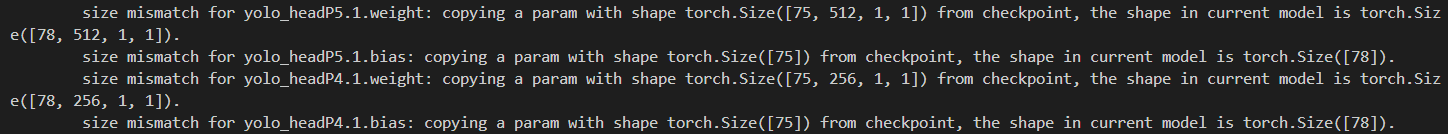

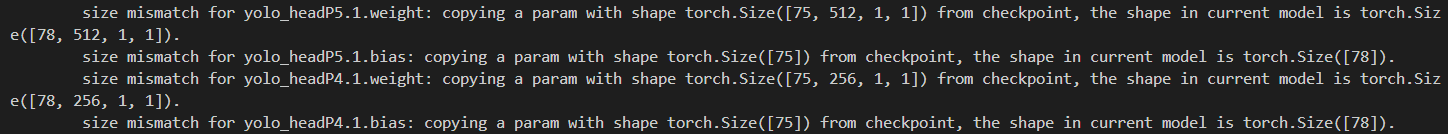

# 如果出现shape不匹配,同时要注意训练时的model_path和classes_path参数的修改

#--------------------------------------------------------------------------#

"model_path" : 'model_data/yolov7_weights.pth',

"classes_path" : 'model_data/coco_classes.txt',

#---------------------------------------------------------------------#

# anchors_path代表先验框对应的txt文件,一般不修改。

# anchors_mask用于帮助代码找到对应的先验框,一般不修改。

#---------------------------------------------------------------------#

"anchors_path" : 'model_data/yolo_anchors.txt',

"anchors_mask" : [[6, 7, 8], [3, 4, 5], [0, 1, 2]],

#---------------------------------------------------------------------#

# 输入图片的大小,必须为32的倍数。

#---------------------------------------------------------------------#

"input_shape" : [640, 640],

#------------------------------------------------------#

# 所使用到的yolov7的版本,本仓库一共提供两个:

# l : 对应yolov7

# x : 对应yolov7_x

#------------------------------------------------------#

"phi" : 'l',

#---------------------------------------------------------------------#

# 只有得分大于置信度的预测框会被保留下来

#---------------------------------------------------------------------#

"confidence" : 0.5,

#---------------------------------------------------------------------#

# 非极大抑制所用到的nms_iou大小

#---------------------------------------------------------------------#

"nms_iou" : 0.3,

#---------------------------------------------------------------------#

# 该变量用于控制是否使用letterbox_image对输入图像进行不失真的resize,

# 在多次测试后,发现关闭letterbox_image直接resize的效果更好

#---------------------------------------------------------------------#

"letterbox_image" : True,

#-------------------------------#

# 是否使用Cuda

# 没有GPU可以设置成False

#-------------------------------#

"cuda" : True,

}

```

3. Run predict.py and enter

```python
img/street.jpg
```

4. Settings in predict.py enable FPS testing and video detection.
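The `anchors_path` and `anchors_mask` settings work together: the anchors file is a flat, comma-separated list of width and height values, and each mask picks three anchors by index. The sketch below illustrates that relationship using the values shipped in model_data/yolo_anchors.txt. `parse_anchors` and `group_anchors` are hypothetical helper names, and which feature layer each group feeds is not shown here, so treat the grouping as an illustration only.

```python
from typing import List, Sequence, Tuple

Anchor = Tuple[float, float]


def parse_anchors(text: str) -> List[Anchor]:
    """Turn 'w0, h0, w1, h1, ...' into a list of (width, height) pairs."""
    values = [float(v) for v in text.split(",")]
    if len(values) % 2 != 0:
        raise ValueError("anchor file must hold an even number of values")
    return [(values[i], values[i + 1]) for i in range(0, len(values), 2)]


def group_anchors(anchors: Sequence[Anchor],
                  anchors_mask: Sequence[Sequence[int]]) -> List[List[Anchor]]:
    """Select the anchors named by each mask, one group per mask."""
    return [[anchors[i] for i in mask] for mask in anchors_mask]


anchors = parse_anchors(
    "12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401"
)
for group in group_anchors(anchors, [[6, 7, 8], [3, 4, 5], [0, 1, 2]]):
    print(group)
```

With the default mask, the first group holds the three largest anchors and the last group the three smallest.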

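## How to Evaluate

### a. Evaluating on the VOC07+12 test set

1. This project evaluates on VOC-format data. VOC07+12 already has a test split, so there is no need to run voc_annotation.py to generate the txt files under ImageSets.

2. Edit model_path and classes_path in yolo.py. **model_path points to the trained weight file in the logs folder. classes_path points to the txt file listing the detection classes.**

3. Run get_map.py to obtain the evaluation results, which are saved in the map_out folder.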
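Besides mAP, get_map.py reports Recall and Precision at a single score threshold (`score_threhold`, 0.5 by default), while detections are collected at a much lower confidence (0.001) so that the full curve can be computed. The sketch below shows why those two numbers depend on the threshold. It assumes each detection has already been matched against the ground truth (overlap above MINOVERLAP) and marked as a true or false positive; `precision_recall_at` is a hypothetical helper and not the repository's implementation.

```python
from typing import List, Tuple


def precision_recall_at(detections: List[Tuple[float, bool]],
                        num_ground_truth: int,
                        score_threshold: float = 0.5) -> Tuple[float, float]:
    """Precision and recall over the detections scoring at least score_threshold.

    detections: (score, is_true_positive) pairs, matching assumed done elsewhere.
    """
    kept = [is_tp for score, is_tp in detections if score >= score_threshold]
    true_positives = sum(kept)
    precision = true_positives / len(kept) if kept else 0.0
    recall = true_positives / num_ground_truth if num_ground_truth else 0.0
    return precision, recall


# Made-up detections for one class with four ground-truth objects.
detections = [(0.9, True), (0.8, False), (0.6, True), (0.3, True)]
print(precision_recall_at(detections, num_ground_truth=4, score_threshold=0.5))
print(precision_recall_at(detections, num_ground_truth=4, score_threshold=0.001))
```

Lowering the threshold keeps more boxes, which can raise recall while changing precision in either direction; AP summarizes the whole trade-off, which is why it needs nearly all boxes.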

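### b. Evaluating on your own dataset

1. This project evaluates on VOC-format data.

2. If voc_annotation.py was run before training, the code has already split the dataset into training, validation, and test sets. To change the test-set proportion, edit trainval_percent in voc_annotation.py. trainval_percent sets the ratio of (training + validation) to test data; by default (training + validation):test = 9:1. train_percent sets the ratio of training to validation data within (training + validation); by default training:validation = 9:1.

3. After splitting off the test set with voc_annotation.py, edit classes_path in get_map.py. classes_path points to the txt file listing the detection classes and must match the one used for training. This change is required when evaluating your own dataset.

4. Edit model_path and classes_path in yolo.py. **model_path points to the trained weight file in the logs folder. classes_path points to the txt file listing the detection classes.**

5. Run get_map.py to obtain the evaluation results, which are saved in the map_out folder.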
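To see how trainval_percent and train_percent from step 2 translate into set sizes, here is a minimal sketch of the two-stage random split, modeled on the logic in hrsc_annotation.py. `split_dataset` and its `seed` parameter are assumptions made for illustration; the exact sample order in the repository's scripts may differ.

```python
import random
from typing import Dict, List


def split_dataset(names: List[str],
                  trainval_percent: float = 0.9,
                  train_percent: float = 0.9,
                  seed: int = 0) -> Dict[str, List[str]]:
    """Split names into train/val/test with a two-stage random sample."""
    rng = random.Random(seed)
    num = len(names)
    tv = int(num * trainval_percent)   # size of train + val
    tr = int(tv * train_percent)       # size of train alone
    trainval = rng.sample(range(num), tv)
    train = set(rng.sample(trainval, tr))
    trainval_set = set(trainval)

    splits: Dict[str, List[str]] = {"train": [], "val": [], "test": []}
    for index, name in enumerate(names):
        if index in train:
            splits["train"].append(name)
        elif index in trainval_set:
            splits["val"].append(name)
        else:
            splits["test"].append(name)
    return splits


splits = split_dataset(["img%03d" % i for i in range(100)])
print({key: len(value) for key, value in splits.items()})
```

With the default ratios and 100 images, this gives 81 training, 9 validation, and 10 test images.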

## Citation

If this project helps you, please consider citing our paper:

```

@Article{app132011402,

AUTHOR = {Ye, Zixun and Zhang, Hongying and Gu, Jingliang and Li, Xue},

TITLE = {YOLOv7-3D: A Monocular 3D Traffic Object Detection Method from a Roadside Perspective},

JOURNAL = {Applied Sciences},

VOLUME = {13},

YEAR = {2023},

NUMBER = {20},

ARTICLE-NUMBER = {11402},

URL = {https://www.mdpi.com/2076-3417/13/20/11402},

ISSN = {2076-3417},

DOI = {10.3390/app132011402}

}

```

## Reference

https://github.com/WongKinYiu/yolov7

https://github.com/bubbliiiing/yolov7-pytorch

================================================

FILE: get_map.py

================================================

import os

import xml.etree.ElementTree as ET

import cv2

from PIL import Image

from tqdm import tqdm

import numpy as np

from utils.utils import get_classes

from utils.utils_map import get_coco_map, get_map

from yolo import YOLO

if __name__ == "__main__":
    '''
    Unlike AP, Recall and Precision are not area-based measures, so their values differ at different confidence thresholds.
    By default, the Recall and Precision computed by this script are the values at a confidence threshold of 0.5.

    Because of how mAP is computed, the network must output nearly all prediction boxes so that Recall and Precision can be evaluated under different thresholds.
    As a result, the txt files in map_out/detection-results/ usually contain more boxes than a direct predict run; the aim is to list every possible prediction box.
    '''
    #------------------------------------------------------------------------------------------------------------------#
    #   map_mode selects what this script computes when run.
    #   map_mode = 0: the whole mAP pipeline (get predictions, get ground-truth boxes, compute VOC_map).
    #   map_mode = 1: only get the prediction results.
    #   map_mode = 2: only get the ground-truth boxes.
    #   map_mode = 3: only compute VOC_map.
    #   map_mode = 4: compute the 0.50:0.95 mAP of the current dataset with the COCO toolbox.
    #                 Requires the predictions and ground-truth boxes to exist and pycocotools to be installed.
    #-------------------------------------------------------------------------------------------------------------------#
    map_mode        = 0
    #--------------------------------------------------------------------------------------#
    #   classes_path specifies the classes for which VOC_map is measured.
    #   It normally matches the classes_path used for training and prediction.
    #--------------------------------------------------------------------------------------#
    classes_path    = 'model_data/ssdd_classes.txt'
    #--------------------------------------------------------------------------------------#
    #   MINOVERLAP specifies which mAP0.x to compute.
    #   For example, set MINOVERLAP = 0.75 to compute mAP0.75.
    #
    #   A prediction box counts as a positive sample when its overlap with a ground-truth box
    #   exceeds MINOVERLAP, and as a negative sample otherwise.
    #   The larger MINOVERLAP is, the more accurate a box must be to count as positive,
    #   so the resulting mAP is lower.
    #--------------------------------------------------------------------------------------#
    MINOVERLAP      = 0.5
    #--------------------------------------------------------------------------------------#
    #   Because of how mAP is computed, the network must output nearly all prediction boxes.
    #   confidence should therefore be as small as possible so that every candidate box is kept.
    #
    #   This value is normally left unchanged.
    #   To get Recall and Precision at a different threshold, change score_threhold below.
    #--------------------------------------------------------------------------------------#
    confidence      = 0.001
    #--------------------------------------------------------------------------------------#
    #   NMS IoU threshold used at prediction time; a larger value makes NMS less strict.
    #
    #   This value is normally left unchanged.
    #--------------------------------------------------------------------------------------#
    nms_iou         = 0.5
    #---------------------------------------------------------------------------------------------------------------#
    #   Unlike AP, Recall and Precision are not area-based measures, so they change with the threshold.
    #
    #   By default, the Recall and Precision reported here are the values at a threshold of 0.5
    #   (defined here as score_threhold).
    #   Because mAP needs nearly all prediction boxes, the confidence defined above should not be changed casually.
    #   score_threhold is defined separately as the threshold at which Recall and Precision are read off
    #   during the mAP computation.
    #---------------------------------------------------------------------------------------------------------------#
    score_threhold  = 0.5
    #-------------------------------------------------------#
    #   map_vis toggles visualization of the VOC_map computation.
    #-------------------------------------------------------#
    map_vis         = False
    #-------------------------------------------------------#
    #   Folder containing the VOC dataset.
    #   Defaults to the VOC dataset in the root directory.
    #-------------------------------------------------------#
    VOCdevkit_path  = 'VOCdevkit'
    #-------------------------------------------------------#
    #   Output folder for the results; defaults to map_out.
    #-------------------------------------------------------#
    map_out_path    = 'map_out'

    with open(os.path.join(VOCdevkit_path, "VOC2007/ImageSets/Main/test.txt")) as f:
        image_ids = f.read().strip().split()

    if not os.path.exists(map_out_path):
        os.makedirs(map_out_path)
    if not os.path.exists(os.path.join(map_out_path, 'ground-truth')):
        os.makedirs(os.path.join(map_out_path, 'ground-truth'))
    if not os.path.exists(os.path.join(map_out_path, 'detection-results')):
        os.makedirs(os.path.join(map_out_path, 'detection-results'))
    if not os.path.exists(os.path.join(map_out_path, 'images-optional')):
        os.makedirs(os.path.join(map_out_path, 'images-optional'))

    class_names, _ = get_classes(classes_path)

    if map_mode == 0 or map_mode == 1:
        print("Load model.")
        yolo = YOLO(confidence = confidence, nms_iou = nms_iou)
        print("Load model done.")

        print("Get predict result.")
        for image_id in tqdm(image_ids):
            image_path  = os.path.join(VOCdevkit_path, "VOC2007/JPEGImages/"+image_id+".jpg")
            image       = Image.open(image_path)
            if map_vis:
                image.save(os.path.join(map_out_path, "images-optional/" + image_id + ".jpg"))
            yolo.get_map_txt(image_id, image, class_names, map_out_path)
        print("Get predict result done.")

    if map_mode == 0 or map_mode == 2:
        print("Get ground truth result.")
        for image_id in tqdm(image_ids):
            with open(os.path.join(map_out_path, "ground-truth/"+image_id+".txt"), "w") as new_f:
                root = ET.parse(os.path.join(VOCdevkit_path, "VOC2007/Annotations/"+image_id+".xml")).getroot()
                for obj in root.findall('object'):
                    difficult_flag = False
                    if obj.find('difficult') is not None:
                        difficult = obj.find('difficult').text
                        if int(difficult)==1:
                            difficult_flag = True
                    obj_name = obj.find('name').text
                    if obj_name not in class_names:
                        continue
                    # Read the four polygon corners of the rotated box.
                    bndbox  = obj.find('rotated_bndbox')
                    x1      = bndbox.find('x1').text
                    y1      = bndbox.find('y1').text
                    x2      = bndbox.find('x2').text
                    y2      = bndbox.find('y2').text
                    x3      = bndbox.find('x3').text
                    y3      = bndbox.find('y3').text
                    x4      = bndbox.find('x4').text
                    y4      = bndbox.find('y4').text
                    poly    = np.array([[x1, y1, x2, y2, x3, y3, x4, y4]], dtype=np.int32)
                    poly    = poly.reshape(4, 2)
                    # Convert the polygon to (center, size, angle).
                    (x, y), (w, h), angle = cv2.minAreaRect(poly) # θ ∈ [0, 90]

                    if difficult_flag:
                        new_f.write("%s %s %s %s %s %s difficult\n" % (obj_name, int(x), int(y), int(w), int(h),angle))
                    else:
                        new_f.write("%s %s %s %s %s %s\n" % (obj_name, int(x), int(y), int(w), int(h),angle))
        print("Get ground truth result done.")

    if map_mode == 0 or map_mode == 3:
        print("Get map.")
        get_map(MINOVERLAP, True, score_threhold = score_threhold, path = map_out_path)
        print("Get map done.")

    if map_mode == 4:
        print("Get map.")
        get_coco_map(class_names = class_names, path = map_out_path)
        print("Get map done.")

================================================

FILE: hrsc_annotation.py

================================================

import os

import random

import xml.etree.ElementTree as ET

import numpy as np

from utils.utils_rbox import *

from utils.utils import get_classes

#--------------------------------------------------------------------------------------------------------------------------------#
#   annotation_mode selects what this script computes when run.
#   annotation_mode = 0: the whole label pipeline, i.e. the txt files in VOCdevkit/VOC2007/ImageSets
#                        plus 2007_train.txt and 2007_val.txt for training.
#   annotation_mode = 1: only the txt files in VOCdevkit/VOC2007/ImageSets.
#   annotation_mode = 2: only 2007_train.txt and 2007_val.txt for training.
#--------------------------------------------------------------------------------------------------------------------------------#
annotation_mode     = 0
#-------------------------------------------------------------------#
#   Must be changed. Used to generate the object information in
#   2007_train.txt and 2007_val.txt.
#   It should match the classes_path used for training and prediction.
#   If the generated 2007_train.txt contains no object information,
#   the classes were not set correctly.
#   Only takes effect when annotation_mode is 0 or 2.
#-------------------------------------------------------------------#
classes_path        = 'model_data/hrsc_classes.txt'
#--------------------------------------------------------------------------------------------------------------------------------#
#   trainval_percent sets the ratio of (training + validation) to test data; by default (training + validation):test = 9:1.
#   train_percent sets the ratio of training to validation data within (training + validation); by default training:validation = 9:1.
#   Only takes effect when annotation_mode is 0 or 1.
#--------------------------------------------------------------------------------------------------------------------------------#
trainval_percent    = 0.9
train_percent       = 0.9
#-------------------------------------------------------#
#   Folder containing the VOC dataset.
#   Defaults to the VOC dataset in the root directory.
#-------------------------------------------------------#
VOCdevkit_path  = 'VOCdevkit'

VOCdevkit_sets  = [('2007_HRSC', 'train'), ('2007_HRSC', 'val')]
classes, _      = get_classes(classes_path)

#-------------------------------------------------------#
#   Count the number of objects.
#-------------------------------------------------------#
photo_nums  = np.zeros(len(VOCdevkit_sets))
nums        = np.zeros(len(classes))

def convert_annotation(year, image_id, list_file):
    # Parse the HRSC annotation file for this image.
    xml_path = os.path.join(VOCdevkit_path, 'VOC%s/Annotations/%s.xml'%(year, image_id))
    with open(xml_path, encoding='utf-8') as in_file:
        tree = ET.parse(in_file)
    root = tree.getroot().find('HRSC_Objects')

    for obj in root.iter('HRSC_Object'):
        difficult = 0
        if obj.find('difficult') is not None:
            difficult = obj.find('difficult').text
        cls = obj.find('name').text
        # Skip unknown classes, difficult objects, and objects without a rotated box.
        if cls not in classes or int(difficult)==1:
            continue
        if obj.find('mbox_cx') is None:
            continue
        cls_id = classes.index(cls)
        cx = float(obj.find('mbox_cx').text)
        cy = float(obj.find('mbox_cy').text)
        w  = float(obj.find('mbox_w').text)
        h  = float(obj.find('mbox_h').text)
        angle = float(obj.find('mbox_ang').text)
        # Convert (cx, cy, w, h, angle) to the eight polygon coordinates.
        b = np.array([[cx, cy, w, h, angle]], dtype=np.float32)
        b = rbox2poly(b)[0]
        b = (b[0], b[1], b[2], b[3], b[4], b[5], b[6], b[7])
        list_file.write(" " + ",".join([str(a) for a in b]) + ',' + str(cls_id))

        nums[cls_id] = nums[cls_id] + 1

if __name__ == "__main__":
    random.seed(0)
    if " " in os.path.abspath(VOCdevkit_path):
        raise ValueError("The dataset folder path and image names must not contain spaces, otherwise model training will be affected. Please rename them.")

    if annotation_mode == 0 or annotation_mode == 1:
        print("Generate txt in ImageSets.")
        xmlfilepath     = os.path.join(VOCdevkit_path, 'VOC2007_HRSC/Annotations')
        saveBasePath    = os.path.join(VOCdevkit_path, 'VOC2007_HRSC/ImageSets/Main')
        temp_xml        = os.listdir(xmlfilepath)
        total_xml       = []
        for xml in temp_xml:
            if xml.endswith(".xml"):
                total_xml.append(xml)

        num     = len(total_xml)
        indices = range(num)    # renamed from `list` to avoid shadowing the builtin
        tv      = int(num*trainval_percent)
        tr      = int(tv*train_percent)
        trainval= random.sample(indices,tv)
        train   = random.sample(trainval,tr)

        print("train and val size",tv)
        print("train size",tr)
        ftrainval   = open(os.path.join(saveBasePath,'trainval.txt'), 'w')
        ftest       = open(os.path.join(saveBasePath,'test.txt'), 'w')
        ftrain      = open(os.path.join(saveBasePath,'train.txt'), 'w')
        fval        = open(os.path.join(saveBasePath,'val.txt'), 'w')

        for i in indices:
            name=total_xml[i][:-4]+'\n'
            if i in trainval:
                ftrainval.write(name)
                if i in train:
                    ftrain.write(name)
                else:
                    fval.write(name)
            else:
                ftest.write(name)

        ftrainval.close()
        ftrain.close()
        fval.close()
        ftest.close()
        print("Generate txt in ImageSets done.")

    if annotation_mode == 0 or annotation_mode == 2:
        print("Generate 2007_train.txt and 2007_val.txt for train.")
        type_index = 0
        for year, image_set in VOCdevkit_sets:
            image_ids = open(os.path.join(VOCdevkit_path, 'VOC%s/ImageSets/Main/%s.txt'%(year, image_set)), encoding='utf-8').read().strip().split()
            list_file = open('%s_%s.txt'%(year, image_set), 'w', encoding='utf-8')
            for image_id in image_ids:
                list_file.write('%s/VOC%s/JPEGImages/%s.bmp'%(os.path.abspath(VOCdevkit_path), year, image_id))

                convert_annotation(year, image_id, list_file)
                list_file.write('\n')
            photo_nums[type_index] = len(image_ids)
            type_index += 1
            list_file.close()
        print("Generate 2007_train.txt and 2007_val.txt for train done.")

        def printTable(List1, List2):
            for i in range(len(List1[0])):
                print("|", end=' ')
                for j in range(len(List1)):
                    print(List1[j][i].rjust(int(List2[j])), end=' ')
                    print("|", end=' ')
                print()

        str_nums = [str(int(x)) for x in nums]
        tableData = [
            classes, str_nums
        ]
        colWidths = [0]*len(tableData)
        for i in range(len(tableData)):
            for j in range(len(tableData[i])):
                if len(tableData[i][j]) > colWidths[i]:
                    colWidths[i] = len(tableData[i][j])
        printTable(tableData, colWidths)

        if photo_nums[0] <= 500:
            print("The training set has 500 images or fewer, which is a small dataset. Set a larger number of epochs so that enough gradient-descent steps are taken.")

        if np.sum(nums) == 0:
            print("No objects were found in the dataset. Make sure classes_path matches your dataset and the label names are correct, otherwise training will have no effect!")
            print("No objects were found in the dataset. Make sure classes_path matches your dataset and the label names are correct, otherwise training will have no effect!")
            print("No objects were found in the dataset. Make sure classes_path matches your dataset and the label names are correct, otherwise training will have no effect!")
            print("(Important things are worth saying three times.)")

================================================

FILE: kmeans_for_anchors.py

================================================

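#-------------------------------------------------------------------------------------------------------#
#   k-means clusters the boxes in the dataset, but in many datasets the boxes are similar in size,
#   so the 9 clustered anchors differ very little, which actually hurts training.
#   Different feature layers suit anchors of different sizes: the smaller the feature map,
#   the larger the anchors it suits.
#   The anchors of the original network are already distributed across large, medium, and small sizes,
#   so good results are possible without clustering.
#-------------------------------------------------------------------------------------------------------#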

import glob

import xml.etree.ElementTree as ET

import matplotlib.pyplot as plt

import numpy as np

from tqdm import tqdm

def cas_ratio(box,cluster):
    ratios_of_box_cluster = box / cluster
    ratios_of_cluster_box = cluster / box
    ratios = np.concatenate([ratios_of_box_cluster, ratios_of_cluster_box], axis = -1)

    return np.max(ratios, -1)

def avg_ratio(box,cluster):
    return np.mean([np.min(cas_ratio(box[i],cluster)) for i in range(box.shape[0])])

def kmeans(box,k):
    #-------------------------------------------------------------#
    #   Total number of boxes
    #-------------------------------------------------------------#
    row = box.shape[0]

    #-------------------------------------------------------------#
    #   Distance from each box to each cluster center
    #-------------------------------------------------------------#
    distance = np.empty((row,k))

    #-------------------------------------------------------------#
    #   Cluster assignment from the previous iteration
    #-------------------------------------------------------------#
    last_clu = np.zeros((row,))

    # Note: seed() with no argument reseeds from OS entropy, so the
    # np.random.seed(0) call in __main__ does not make this step reproducible.
    np.random.seed()

    #-------------------------------------------------------------#
    #   Randomly pick k boxes as the initial cluster centers
    #-------------------------------------------------------------#
    cluster = box[np.random.choice(row,k,replace = False)]

    iteration = 0
    while True:
        #-------------------------------------------------------------#
        #   Width/height ratio between each box and each cluster center
        #-------------------------------------------------------------#
        for i in range(row):
            distance[i] = cas_ratio(box[i],cluster)

        #-------------------------------------------------------------#
        #   Index of the nearest cluster center for each box
        #-------------------------------------------------------------#
        near = np.argmin(distance,axis=1)

        if (last_clu == near).all():
            break

        #-------------------------------------------------------------#
        #   Update each center to the median of its assigned boxes
        #-------------------------------------------------------------#
        for j in range(k):
            cluster[j] = np.median(
                box[near == j],axis=0)

        last_clu = near
        if iteration % 5 == 0:
            print('iter: {:d}. avg_ratio:{:.2f}'.format(iteration, avg_ratio(box,cluster)))
        iteration += 1

    return cluster, near

def load_data(path):
    data = []
    #-------------------------------------------------------------#
    #   Look for boxes in every xml file.
    #   Note: this reads axis-aligned bndbox tags (xmin, ymin, xmax, ymax).
    #-------------------------------------------------------------#
    for xml_file in tqdm(glob.glob('{}/*xml'.format(path))):
        tree    = ET.parse(xml_file)
        height  = int(tree.findtext('./size/height'))
        width   = int(tree.findtext('./size/width'))
        if height<=0 or width<=0:
            continue

        #-------------------------------------------------------------#
        #   Get the normalized width and height of every object
        #-------------------------------------------------------------#
        for obj in tree.iter('object'):
            xmin = int(float(obj.findtext('bndbox/xmin'))) / width
            ymin = int(float(obj.findtext('bndbox/ymin'))) / height
            xmax = int(float(obj.findtext('bndbox/xmax'))) / width
            ymax = int(float(obj.findtext('bndbox/ymax'))) / height

            xmin = np.float64(xmin)
            ymin = np.float64(ymin)
            xmax = np.float64(xmax)
            ymax = np.float64(ymax)
            # Store width and height
            data.append([xmax-xmin,ymax-ymin])
    return np.array(data)

if __name__ == '__main__':
    np.random.seed(0)
    #-------------------------------------------------------------#
    #   Running this script processes the xml files in
    #   './VOCdevkit/VOC2007/Annotations' and generates yolo_anchors.txt
    #-------------------------------------------------------------#
    input_shape = [640, 640]
    anchors_num = 9
    #-------------------------------------------------------------#
    #   Dataset to load; VOC xml files can be used
    #-------------------------------------------------------------#
    path        = 'VOCdevkit/VOC2007/Annotations'

    #-------------------------------------------------------------#
    #   Load all xml files.
    #   Boxes are stored as width, height normalized to the image size.
    #-------------------------------------------------------------#
    print('Load xmls.')
    data = load_data(path)
    print('Load xmls done.')

    #-------------------------------------------------------------#
    #   Run k-means clustering
    #-------------------------------------------------------------#
    print('K-means boxes.')
    cluster, near   = kmeans(data, anchors_num)
    print('K-means boxes done.')
    data            = data * np.array([input_shape[1], input_shape[0]])
    cluster         = cluster * np.array([input_shape[1], input_shape[0]])

    #-------------------------------------------------------------#
    #   Plot the clusters
    #-------------------------------------------------------------#
    for j in range(anchors_num):
        plt.scatter(data[near == j][:,0], data[near == j][:,1])
        plt.scatter(cluster[j][0], cluster[j][1], marker='x', c='black')
    plt.savefig("kmeans_for_anchors.jpg")
    plt.show()
    print('Save kmeans_for_anchors.jpg in root dir.')

    # Sort the anchors by area, smallest first.
    cluster = cluster[np.argsort(cluster[:, 0] * cluster[:, 1])]
    print('avg_ratio:{:.2f}'.format(avg_ratio(data, cluster)))
    print(cluster)

    with open("yolo_anchors.txt", 'w') as f:
        row = np.shape(cluster)[0]
        for i in range(row):
            if i == 0:
                x_y = "%d,%d" % (cluster[i][0], cluster[i][1])
            else:
                x_y = ", %d,%d" % (cluster[i][0], cluster[i][1])
            f.write(x_y)

================================================

FILE: model_data/coco_classes.txt

================================================

person

bicycle

car

motorbike

aeroplane

bus

train

truck

boat

traffic light

fire hydrant

stop sign

parking meter

bench

bird

cat

dog

horse

sheep

cow

elephant

bear

zebra

giraffe

backpack

umbrella

handbag

tie

suitcase

frisbee

skis

snowboard

sports ball

kite

baseball bat

baseball glove

skateboard

surfboard

tennis racket

bottle

wine glass

cup

fork

knife

spoon

bowl

banana

apple

sandwich

orange

broccoli

carrot

hot dog

pizza

donut

cake

chair

sofa

pottedplant

bed

diningtable

toilet

tvmonitor

laptop

mouse

remote

keyboard

cell phone

microwave

oven

toaster

sink

refrigerator

book

clock

vase

scissors

teddy bear

hair drier

toothbrush

================================================

FILE: model_data/ssdd_classes.txt

================================================

ship

================================================

FILE: model_data/voc_classes.txt

================================================

aeroplane

bicycle

bird

boat

bottle

bus

car

cat

chair

cow

diningtable

dog

horse

motorbike

person

pottedplant

sheep

sofa

train

tvmonitor

================================================

FILE: model_data/yolo_anchors.txt

================================================

12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401

================================================

FILE: nets/__init__.py

================================================

#

================================================

FILE: nets/backbone.py

================================================

import torch

import torch.nn as nn

def autopad(k, p=None):

if p is None:

p = k // 2 if isinstance(k, int) else [x // 2 for x in k]

return p

class SiLU(nn.Module):

@staticmethod

def forward(x):

return x * torch.sigmoid(x)

class Conv(nn.Module):

def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=SiLU()): # ch_in, ch_out, kernel, stride, padding, groups

super(Conv, self).__init__()

self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)

self.bn = nn.BatchNorm2d(c2, eps=0.001, momentum=0.03)

self.act = nn.LeakyReLU(0.1, inplace=True) if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

def forward(self, x):

return self.act(self.bn(self.conv(x)))

def fuseforward(self, x):

return self.act(self.conv(x))

class Multi_Concat_Block(nn.Module):

def __init__(self, c1, c2, c3, n=4, e=1, ids=(0,)):

super(Multi_Concat_Block, self).__init__()

c_ = int(c2 * e)

self.ids = ids

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c1, c_, 1, 1)

self.cv3 = nn.ModuleList(

[Conv(c_ if i ==0 else c2, c2, 3, 1) for i in range(n)]

)

self.cv4 = Conv(c_ * 2 + c2 * (len(ids) - 2), c3, 1, 1)

def forward(self, x):

x_1 = self.cv1(x)

x_2 = self.cv2(x)

x_all = [x_1, x_2]

# Negative ids index x_all from the end; with n=4, x_all has 6 entries, so [-1, -3, -5, -6] => [5, 3, 1, 0]

for i in range(len(self.cv3)):

x_2 = self.cv3[i](x_2)

x_all.append(x_2)

out = self.cv4(torch.cat([x_all[i] for i in self.ids], 1))

return out
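# A hedged sketch, not part of the original module, of how the negative ids select
# entries of x_all and why cv4 expects c_ * 2 + c2 * (len(ids) - 2) input channels.
# The helper names resolve_ids and concat_channels are hypothetical. The value n=6
# used with the 'x' ids is an assumption: it is the smallest n for which -8 is a
# valid index into x_all.

```python
def resolve_ids(ids, n):
    # forward() builds x_all = [x_1, x_2, cv3[0] output, ..., cv3[n-1] output],
    # so the list has n + 2 entries and negative ids count from its end.
    length = n + 2
    return [i % length for i in ids]


def concat_channels(c_, c2, ids, n):
    # The first two entries (cv1 and cv2 outputs) have c_ channels each;
    # every cv3 output has c2 channels.
    widths = [c_, c_] + [c2] * n
    return sum(widths[i] for i in ids)


if __name__ == "__main__":
    ids_l = [-1, -3, -5, -6]
    print(resolve_ids(ids_l, n=4))              # positions picked for phi='l'
    print(concat_channels(64, 64, ids_l, n=4))  # channels fed into cv4
```

# The closed-form channel count used for cv4 holds only when the ids select both
# x_1 and x_2 plus len(ids) - 2 of the cv3 outputs, as the two id lists here do.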

class MP(nn.Module):

def __init__(self, k=2):

super(MP, self).__init__()

self.m = nn.MaxPool2d(kernel_size=k, stride=k)

def forward(self, x):

return self.m(x)

class Transition_Block(nn.Module):

def __init__(self, c1, c2):

super(Transition_Block, self).__init__()

self.cv1 = Conv(c1, c2, 1, 1)

self.cv2 = Conv(c1, c2, 1, 1)

self.cv3 = Conv(c2, c2, 3, 2)

self.mp = MP()

def forward(self, x):

# 160, 160, 256 => 80, 80, 256 => 80, 80, 128

x_1 = self.mp(x)

x_1 = self.cv1(x_1)

# 160, 160, 256 => 160, 160, 128 => 80, 80, 128

x_2 = self.cv2(x)

x_2 = self.cv3(x_2)

# 80, 80, 128 cat 80, 80, 128 => 80, 80, 256

return torch.cat([x_2, x_1], 1)

class Backbone(nn.Module):

def __init__(self, transition_channels, block_channels, n, phi, pretrained=False):

super().__init__()

#-----------------------------------------------#

# The input image is 640, 640, 3

#-----------------------------------------------#

ids = {

'l' : [-1, -3, -5, -6],

'x' : [-1, -3, -5, -7, -8],

}[phi]

# 640, 640, 3 => 640, 640, 32 => 320, 320, 64

self.stem = nn.Sequential(

Conv(3, transition_channels, 3, 1),

Conv(transition_channels, transition_channels * 2, 3, 2),

Conv(transition_channels * 2, transition_channels * 2, 3, 1),

)

# 320, 320, 64 => 160, 160, 128 => 160, 160, 256

self.dark2 = nn.Sequential(

Conv(transition_channels * 2, transition_channels * 4, 3, 2),

Multi_Concat_Block(transition_channels * 4, block_channels * 2, transition_channels * 8, n=n, ids=ids),

)

# 160, 160, 256 => 80, 80, 256 => 80, 80, 512

self.dark3 = nn.Sequential(

Transition_Block(transition_channels * 8, transition_channels * 4),

Multi_Concat_Block(transition_channels * 8, block_channels * 4, transition_channels * 16, n=n, ids=ids),

)

# 80, 80, 512 => 40, 40, 512 => 40, 40, 1024

self.dark4 = nn.Sequential(

Transition_Block(transition_channels * 16, transition_channels * 8),

Multi_Concat_Block(transition_channels * 16, block_channels * 8, transition_channels * 32, n=n, ids=ids),

)

# 40, 40, 1024 => 20, 20, 1024 => 20, 20, 1024

self.dark5 = nn.Sequential(

Transition_Block(transition_channels * 32, transition_channels * 16),

Multi_Concat_Block(transition_channels * 32, block_channels * 8, transition_channels * 32, n=n, ids=ids),

)

if pretrained:

url = {

"l" : 'https://github.com/bubbliiiing/yolov7-pytorch/releases/download/v1.0/yolov7_backbone_weights.pth',

"x" : 'https://github.com/bubbliiiing/yolov7-pytorch/releases/download/v1.0/yolov7_x_backbone_weights.pth',

}[phi]

checkpoint = torch.hub.load_state_dict_from_url(url=url, map_location="cpu", model_dir="./model_data")

self.load_state_dict(checkpoint, strict=False)

print("Load weights from " + url.split('/')[-1])

def forward(self, x):

x = self.stem(x)

x = self.dark2(x)

#-----------------------------------------------#

# dark3 outputs 80, 80, 512, which is one of the effective feature layers

#-----------------------------------------------#

x = self.dark3(x)

feat1 = x

#-----------------------------------------------#

# dark4 outputs 40, 40, 1024, which is one of the effective feature layers

#-----------------------------------------------#

x = self.dark4(x)

feat2 = x

#-----------------------------------------------#

# dark5 outputs 20, 20, 1024, which is one of the effective feature layers

#-----------------------------------------------#

x = self.dark5(x)

feat3 = x

return feat1, feat2, feat3
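# A minimal sketch, not part of the original module, that traces the spatial size and
# channel count after each Backbone stage. It assumes transition_channels=32 (consistent
# with the 640, 640, 32 shape comment on the stem) and an input size that is a multiple
# of 32, so that both branches of every Transition_Block produce the same spatial size.
# The function name backbone_shapes is hypothetical.

```python
def backbone_shapes(size=640, transition_channels=32):
    # Each entry is (stage name, spatial size, output channels), read off the
    # constructor arguments of Backbone above.
    if size % 32 != 0:
        raise ValueError("this sketch assumes an input size divisible by 32")
    t = transition_channels
    return [
        ("stem", size // 2, t * 2),     # one stride-2 Conv in the stem
        ("dark2", size // 4, t * 8),    # stride-2 Conv, then Multi_Concat_Block
        ("dark3", size // 8, t * 16),   # Transition_Block, then Multi_Concat_Block
        ("dark4", size // 16, t * 32),
        ("dark5", size // 32, t * 32),
    ]


if __name__ == "__main__":
    for name, hw, channels in backbone_shapes():
        print(f"{name}: {hw}, {hw}, {channels}")
```

# The last three entries correspond to feat1, feat2 and feat3 returned by forward().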

================================================

FILE: nets/yolo.py

================================================

import numpy as np

import torch

import torch.nn as nn

from nets.backbone import Backbone, Multi_Concat_Block, Conv, SiLU, Transition_Block, autopad

class SPPCSPC(nn.Module):

# CSP https://github.com/WongKinYiu/CrossStagePartialNetworks

def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5, k=(5, 9, 13)):

super(SPPCSPC, self).__init__()

c_ = int(2 * c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c1, c_, 1, 1)

self.cv3 = Conv(c_, c_, 3, 1)

self.cv4 = Conv(c_, c_, 1, 1)

self.m = nn.ModuleList([nn.MaxPool2d(kernel_size=x, stride=1, padding=x // 2) for x in k])

self.cv5 = Conv(4 * c_, c_, 1, 1)

self.cv6 = Conv(c_, c_, 3, 1)

# The number of output channels is c2

self.cv7 = Conv(2 * c_, c2, 1, 1)

def forward(self, x):

x1 = self.cv4(self.cv3(self.cv1(x)))

y1 = self.cv6(self.cv5(torch.cat([x1] + [m(x1) for m in self.m], 1)))

y2 = self.cv2(x)

return self.cv7(torch.cat((y1, y2), dim=1))

class RepConv(nn.Module):

# Re-parameterizable convolution (RepVGG-style): 3x3, 1x1 and identity branches that can be fused into a single 3x3 conv

# https://arxiv.org/abs/2101.03697

def __init__(self, c1, c2, k=3, s=1, p=None, g=1, act=SiLU(), deploy=False):

super(RepConv, self).__init__()

self.deploy = deploy

self.groups = g

self.in_channels = c1

self.out_channels = c2

assert k == 3

assert autopad(k, p) == 1

padding_11 = autopad(k, p) - k // 2

self.act = nn.LeakyReLU(0.1, inplace=True) if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

if deploy:

self.rbr_reparam = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=True)

else:

self.rbr_identity = (nn.BatchNorm2d(num_features=c1, eps=0.001, momentum=0.03) if c2 == c1 and s == 1 else None)

self.rbr_dense = nn.Sequential(

nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False),

nn.BatchNorm2d(num_features=c2, eps=0.001, momentum=0.03),

)

self.rbr_1x1 = nn.Sequential(

nn.Conv2d( c1, c2, 1, s, padding_11, groups=g, bias=False),

nn.BatchNorm2d(num_features=c2, eps=0.001, momentum=0.03),

)

def forward(self, inputs):

if hasattr(self, "rbr_reparam"):

return self.act(self.rbr_reparam(inputs))

if self.rbr_identity is None:

id_out = 0

else:

id_out = self.rbr_identity(inputs)

return self.act(self.rbr_dense(inputs) + self.rbr_1x1(inputs) + id_out)

def get_equivalent_kernel_bias(self):

kernel3x3, bias3x3 = self._fuse_bn_tensor(self.rbr_dense)

kernel1x1, bias1x1 = self._fuse_bn_tensor(self.rbr_1x1)

kernelid, biasid = self._fuse_bn_tensor(self.rbr_identity)

return (

kernel3x3 + self._pad_1x1_to_3x3_tensor(kernel1x1) + kernelid,

bias3x3 + bias1x1 + biasid,

)

def _pad_1x1_to_3x3_tensor(self, kernel1x1):

if kernel1x1 is None:

return 0

else:

return nn.functional.pad(kernel1x1, [1, 1, 1, 1])

def _fuse_bn_tensor(self, branch):

if branch is None:

return 0, 0

if isinstance(branch, nn.Sequential):

kernel = branch[0].weight