Repository: Evil0ctal/Douyin_TikTok_Download_API

Branch: main

Commit: 42784ffc83a7

Files: 75

Total size: 467.3 KB

Directory structure:

gitextract_6fzjbc2k/
├── .github/
│   ├── ISSUE_TEMPLATE/
│   │   ├── bug_report.md
│   │   ├── bug_report_CN.md
│   │   ├── feature_request.md
│   │   └── feature_request_CN.md
│   └── workflows/
│       ├── codeql-analysis.yml
│       ├── docker-image.yml
│       └── readme.yml
├── .gitignore
├── Dockerfile
├── LICENSE
├── Procfile
├── README.en.md
├── README.md
├── Screenshots/
│   ├── benchmarks/
│   │   └── info
│   └── v3_screenshots/
│       └── info
├── app/
│   ├── api/
│   │   ├── endpoints/
│   │   │   ├── bilibili_web.py
│   │   │   ├── douyin_web.py
│   │   │   ├── download.py
│   │   │   ├── hybrid_parsing.py
│   │   │   ├── ios_shortcut.py
│   │   │   ├── tiktok_app.py
│   │   │   └── tiktok_web.py
│   │   ├── models/
│   │   │   └── APIResponseModel.py
│   │   └── router.py
│   ├── main.py
│   └── web/
│       ├── app.py
│       └── views/
│           ├── About.py
│           ├── Document.py
│           ├── Downloader.py
│           ├── EasterEgg.py
│           ├── ParseVideo.py
│           ├── Shortcuts.py
│           └── ViewsUtils.py
├── bash/
│   ├── install.sh
│   └── update.sh
├── chrome-cookie-sniffer/
│   ├── README.md
│   ├── background.js
│   ├── manifest.json
│   ├── popup.html
│   └── popup.js
├── config.yaml
├── crawlers/
│   ├── base_crawler.py
│   ├── bilibili/
│   │   └── web/
│   │       ├── config.yaml
│   │       ├── endpoints.py
│   │       ├── models.py
│   │       ├── utils.py
│   │       ├── web_crawler.py
│   │       └── wrid.py
│   ├── douyin/
│   │   └── web/
│   │       ├── abogus.py
│   │       ├── config.yaml
│   │       ├── endpoints.py
│   │       ├── models.py
│   │       ├── utils.py
│   │       ├── web_crawler.py
│   │       └── xbogus.py
│   ├── hybrid/
│   │   └── hybrid_crawler.py
│   ├── tiktok/
│   │   ├── app/
│   │   │   ├── app_crawler.py
│   │   │   ├── config.yaml
│   │   │   ├── endpoints.py
│   │   │   └── models.py
│   │   └── web/
│   │       ├── config.yaml
│   │       ├── endpoints.py
│   │       ├── models.py
│   │       ├── utils.py
│   │       └── web_crawler.py
│   └── utils/
│       ├── api_exceptions.py
│       ├── deprecated.py
│       ├── logger.py
│       └── utils.py
├── daemon/
│   └── Douyin_TikTok_Download_API.service
├── docker-compose.yml
├── logo/
│   └── logo.txt
├── requirements.txt
├── start.py
└── start.sh

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/ISSUE_TEMPLATE/bug_report.md

================================================

---

name: Bug report

about: Please describe your problem in as much detail as possible so that it can be

solved faster

title: "[BUG] Brief and clear description of the problem"

labels: BUG, enhancement

assignees: Evil0ctal

---

***Platform where the error occurred?***

Such as: Douyin/TikTok

***The endpoint where the error occurred?***

Such as: API-V1/API-V2/Web APP

***Submitted input value?***

Such as: video link

***Have you tried again?***

Such as: Yes, the error still exists X amount of time after it first occurred.

***Have you checked the readme or interface documentation for this project?***

Such as: Yes, and I am sure that the problem is caused by the program.

================================================

FILE: .github/ISSUE_TEMPLATE/bug_report_CN.md

================================================

---

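name: Bug feedback

about: Please describe your problem in as much detail as possible so that it can be solved faster

title: "[BUG] Brief and clear description of the problem"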

labels: BUG

assignees: Evil0ctal

---

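***Platform where the error occurred?***

Such as: Douyin/TikTok

***The endpoint where the error occurred?***

Such as: API-V1/API-V2/Web APP

***Submitted input value?***

Such as: short video link

***Have you tried again?***

Such as: Yes, the error still exists X amount of time after it first occurred.

***Have you checked the readme or interface documentation for this project?***

Such as: Yes, and I am sure that the problem is caused by the program.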

================================================

FILE: .github/ISSUE_TEMPLATE/feature_request.md

================================================

---

name: Feature request

about: Suggest an idea for this project

title: "[Feature request] Brief and clear description of the problem"

labels: enhancement

assignees: Evil0ctal

---

**Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

================================================

FILE: .github/ISSUE_TEMPLATE/feature_request_CN.md

================================================

---

name: New feature request

about: Propose a new requirement or idea for this project

title: "[Feature request] Brief and clear description of the problem"

labels: enhancement

assignees: Evil0ctal

---

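**Is your feature request related to a problem? If so, please describe it.**

Such as: When using xxx, I feel it would be better if xxx could be improved.

**Describe the solution you'd like**

Such as: A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

Such as: A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.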

================================================

FILE: .github/workflows/codeql-analysis.yml

================================================

# For most projects, this workflow file will not need changing; you simply need
# to commit it to your repository.
#
# You may wish to alter this file to override the set of languages analyzed,
# or to provide custom queries or build logic.
#
# ******** NOTE ********
# We have attempted to detect the languages in your repository. Please check
# the `language` matrix defined below to confirm you have the correct set of
# supported CodeQL languages.
#
name: "CodeQL"

on:
  push:
    branches: [ main ]
  pull_request:
    # The branches below must be a subset of the branches above
    branches: [ main ]
  schedule:
    - cron: '22 7 * * 3'

jobs:
  analyze:
    name: Analyze
    runs-on: ubuntu-latest
    permissions:
      actions: read
      contents: read
      security-events: write

    strategy:
      fail-fast: false
      matrix:
        language: [ 'python' ]
        # CodeQL supports [ 'cpp', 'csharp', 'go', 'java', 'javascript', 'python', 'ruby' ]
        # Learn more about CodeQL language support at https://git.io/codeql-language-support

    steps:
    - name: Checkout repository
      uses: actions/checkout@v3

    # Initializes the CodeQL tools for scanning.
    - name: Initialize CodeQL
      uses: github/codeql-action/init@v2
      with:
        languages: ${{ matrix.language }}
        # If you wish to specify custom queries, you can do so here or in a config file.
        # By default, queries listed here will override any specified in a config file.
        # Prefix the list here with "+" to use these queries and those in the config file.
        # queries: ./path/to/local/query, your-org/your-repo/queries@main

    # Autobuild attempts to build any compiled languages (C/C++, C#, or Java).
    # If this step fails, then you should remove it and run the build manually (see below)
    - name: Autobuild
      uses: github/codeql-action/autobuild@v2

    # ℹ️ Command-line programs to run using the OS shell.
    # 📚 https://git.io/JvXDl

    # ✏️ If the Autobuild fails above, remove it and uncomment the following three lines
    #    and modify them (or add more) to build your code if your project
    #    uses a compiled language

    #- run: |
    #   make bootstrap
    #   make release

    - name: Perform CodeQL Analysis
      uses: github/codeql-action/analyze@v2

================================================

FILE: .github/workflows/docker-image.yml

================================================

# docker-image.yml
name: Publish Docker image   # Workflow name; all workflows are listed under "Actions" on the GitHub project page
on:   # Events that trigger the workflow
  push:
    branches:   # Run this workflow on pushes to the main branch
      - 'main'
    tags:       # Run this workflow when a tag is pushed
      - '*'
  workflow_dispatch:
    inputs:
      name:
        description: 'Person to greet'
        required: true
        default: 'Mona the Octocat'
      home:
        description: 'location'
        required: false
        default: 'The Octoverse'

# Environment variables used in later steps
# APP_NAME is passed to docker build-args
# DOCKERHUB_REPO is the name of the Docker Hub repository
env:
  APP_NAME: douyin_tiktok_download_api
  DOCKERHUB_REPO: evil0ctal/douyin_tiktok_download_api

jobs:
  main:
    # Run on Ubuntu
    runs-on: ubuntu-latest
    steps:
      # Check out the code with git
      - name: Checkout
        uses: actions/checkout@v2

      # Set up QEMU, which docker buildx depends on
      - name: Set up QEMU
        uses: docker/setup-qemu-action@v1

      # Set up Docker Buildx to build multi-platform images
      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v1

      # Log in to Docker Hub
      - name: Login to DockerHub
        uses: docker/login-action@v1
        with:
          # Add the Docker Hub credentials under GitHub Repo => Settings => Secrets
          # DOCKERHUB_USERNAME is the Docker Hub account name.
          # DOCKERHUB_TOKEN: create it under Docker Hub => Account Setting => Security.
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}

      # Read the current tag with git and store it in the APP_VERSION environment variable
      - name: Generate App Version
        run: echo APP_VERSION=`git describe --tags --always` >> $GITHUB_ENV

      # Build the Docker image and push it to Docker Hub
      - name: Build and push
        id: docker_build
        uses: docker/build-push-action@v2
        with:
          # Whether to docker push
          push: true
          # Build multi-platform images, see https://github.com/docker-library/bashbrew/blob/v0.1.1/architecture/oci-platform.go
          platforms: |
            linux/amd64
            linux/arm64
          # docker build args: inject APP_NAME/APP_VERSION
          build-args: |
            APP_NAME=${{ env.APP_NAME }}
            APP_VERSION=${{ env.APP_VERSION }}
          # Produce two docker tags: ${APP_VERSION} and latest
          tags: |
            ${{ env.DOCKERHUB_REPO }}:latest
            ${{ env.DOCKERHUB_REPO }}:${{ env.APP_VERSION }}

================================================

FILE: .github/workflows/readme.yml

================================================

name: Translate README

on:
  push:
    branches:
      - main
      - Dev
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2
      - name: Setup Node.js
        uses: actions/setup-node@v1
        with:
          node-version: 12.x
      # ISO Language Codes: https://cloud.google.com/translate/docs/languages
      - name: Adding README - English
        uses: dephraiim/translate-readme@main
        with:
          LANG: en

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

# pycharm

.idea

/app/api/endpoints/download/

/download/

================================================

FILE: Dockerfile

================================================

# Use the official slim Python 3.11 image
FROM python:3.11-slim

LABEL maintainer="Evil0ctal"

# Use non-interactive mode to avoid prompts during the Docker build
ENV DEBIAN_FRONTEND=noninteractive

# Set the working directory
WORKDIR /app

# Copy the application code into the container
COPY . /app

# Use the Aliyun mirror to speed up pip
RUN pip install -i https://mirrors.aliyun.com/pypi/simple/ -U pip \
    && pip config set global.index-url https://mirrors.aliyun.com/pypi/simple/

# Install dependencies
RUN pip install --no-cache-dir -r requirements.txt

# Make sure the startup script is executable
RUN chmod +x start.sh

# Set the container startup command
CMD ["./start.sh"]

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: Procfile

================================================

web: python3 start.py

================================================

FILE: README.en.md

================================================

Douyin_TikTok_Download_API (Douyin/TikTok API)

[English](./README.en.md) | [Simplified Chinese](./README.md)

🚀 "Douyin_TikTok_Download_API" is a high-performance, asynchronous [Douyin](https://www.douyin.com) | [TikTok](https://www.tiktok.com) | [Bilibili](https://www.bilibili.com) data crawling tool that works out of the box. It supports API calls, online batch parsing, and downloading.


## Sponsor

These sponsors have paid to be placed here. The **Douyin_TikTok_Download_API** project will always be free and open source. If you would like to become a sponsor of this project, please check out my [GitHub Sponsor Page](https://github.com/sponsors/evil0ctal).

[TikHub](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) provides more than 700 endpoints for obtaining and analyzing data from 14+ social media platforms, including videos, users, comments, stores, products, and trends, so that all data access and analysis can be done in one place.

By checking in every day, you can get free quota. Register with my invitation link, [https://user.tikhub.io/users/signup?referral_code=1wRL8eQk](https://user.tikhub.io/users/signup?referral_code=1wRL8eQk&utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad), or the invitation code `1wRL8eQk`, and top up to receive `$2` of quota.

[TikHub](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) provides the following services:

- Rich data interface

- Get free quota by signing in every day

- High-quality API services

- Official website:[https://tikhub.io/](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad)

- GitHub address:


## 🖥Demo site: I am very vulnerable...please do not stress test (·•᷄ࡇ•᷅ )

> 😾The online download function of the demo site has been turned off, and due to cookie reasons, Douyin's parsing and API services cannot guarantee availability on the Demo site.

🍔Web APP:

🌭TikHub API Documentation:

💾iOS Shortcut: [Shortcut release](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/discussions/104?sort=top)

📦️Desktop downloaders (recommended repositories):

- [Johnserf-Seed/TikTokDownload](https://github.com/Johnserf-Seed/TikTokDownload)

- [HFrost0/bilix](https://github.com/HFrost0/bilix)

- [Tairraos/TikDown - \[needs update\]](https://github.com/Tairraos/TikDown/)

## ⚗️Technology stack

- [/app/web](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/main/app/web) - [PyWebIO](https://www.pyweb.io/)

- [/app/api](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/main/app/api) - [FastAPI](https://fastapi.tiangolo.com/)

- [/crawlers](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/main/crawlers) - [HTTPX](https://www.python-httpx.org/)

> **_/crawlers_**

- Sends requests to the APIs of the different platforms and retrieves the data. After processing, the result is returned as a dictionary (dict). Asynchronous calls are supported.

> **_/app/api_**

- Reads the request parameters, uses the related `Crawlers` classes to process the data, and returns it as JSON. It also downloads videos and works with iOS Shortcuts for quick calls. Asynchronous calls are supported.

> **_/app/web_**

- A simple web program built with `PyWebIO`. It takes the values entered on the web page, processes them with the related `Crawlers` classes, and outputs the resulting data on the page.

**_Most of the parameters of the above modules can be modified in the corresponding `config.yaml`._**
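The layering described above (a crawler coroutine that returns a dict, and an API layer that serializes it to JSON) can be sketched roughly as follows. This is only an illustration under stated assumptions: `fetch_video_data`, `video_data_endpoint`, and the `code`/`data` response fields are hypothetical names, not the project's actual classes or response model, and the network call is replaced by a placeholder.

```python
import asyncio
import json


async def fetch_video_data(url: str) -> dict:
    """Hypothetical crawler-layer coroutine that returns a plain dict.

    A real crawler would send an HTTP request here (the project uses HTTPX);
    this stand-in only simulates the await point.
    """
    await asyncio.sleep(0)  # placeholder for network I/O
    platform = "douyin" if "douyin" in url else "tiktok"
    return {"url": url, "platform": platform}


async def video_data_endpoint(url: str) -> str:
    """Hypothetical API-layer handler: awaits the crawler, then serializes to JSON."""
    data = await fetch_video_data(url)
    return json.dumps({"code": 200, "data": data})


if __name__ == "__main__":
    print(asyncio.run(video_data_endpoint("https://www.douyin.com/video/123")))
```

Keeping the crawler's return value a plain dict means the same coroutine can be reused by both the JSON API and the PyWebIO front end.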

## 💡Project file structure

    ./Douyin_TikTok_Download_API
        ├─app
        │  ├─api
        │  │  ├─endpoints
        │  │  └─models
        │  ├─download
        │  └─web
        │      └─views
        └─crawlers
            ├─bilibili
            │  └─web
            ├─douyin
            │  └─web
            ├─hybrid
            ├─tiktok
            │  ├─app
            │  └─web
            └─utils

## ✨Supported functions:

- Batch parsing on the web page (supports Douyin/TikTok mixed parsing)

- Download videos or photo albums online.

- A [pip package](https://pypi.org/project/douyin-tiktok-scraper/) that can be imported into your projects quickly and conveniently

- [iOS Shortcuts that quickly call the API](https://apps.apple.com/cn/app/%E5%BF%AB%E6%8D%B7%E6%8C%87%E4%BB%A4/id915249334) to download watermark-free videos/photo albums in-app

- Complete API documentation ([Demo/Demonstration](https://api.douyin.wtf/docs))

- Rich API interface:
  - Douyin web version API
    - [x] Video data parsing
    - [x] Get user homepage works data
    - [x] Get data for works liked on the user's homepage
    - [x] Get data for works favorited on the user's homepage
    - [x] Get user homepage information
    - [x] Get user collection works data
    - [x] Get user live stream data
    - [x] Get the live stream data of a specified user
    - [x] Get the ranking of users who send gifts in a live room
    - [x] Get comment data for a single video
    - [x] Get reply data for comments on a specified video
    - [x] Generate msToken
    - [x] Generate verify_fp
    - [x] Generate s_v_web_id
    - [x] Generate X-Bogus parameters from an interface URL
    - [x] Generate A_Bogus parameters from an interface URL
    - [x] Extract a single user id
    - [x] Extract user ids from a list
    - [x] Extract a single work id
    - [x] Extract work ids from a list
    - [x] Extract a single live room number
    - [x] Extract live room numbers from a list
  - TikTok web version API
    - [x] Video data parsing
    - [x] Get user homepage works data
    - [x] Get data for works liked on the user's homepage
    - [x] Get user homepage information
    - [x] Get user homepage follower data
    - [x] Get user homepage following data
    - [x] Get user homepage collection works data
    - [x] Get user homepage favorites data
    - [x] Get user homepage playlist data
    - [x] Get comment data for a single video
    - [x] Get reply data for comments on a specified video
    - [x] Generate msToken
    - [x] Generate ttwid
    - [x] Generate X-Bogus parameters from an interface URL
    - [x] Extract a single user sec_user_id
    - [x] Extract user sec_user_id values from a list
    - [x] Extract a single work id
    - [x] Extract work ids from a list
    - [x] Get a user's unique_id
    - [x] Get unique_id values from a list
  - Bilibili web version API
    - [x] Get details of a single video
    - [x] Get video stream address
    - [x] Get data for videos published by a user
    - [x] Get all of a user's favorites folders
    - [x] Get video data in a specified favorites folder
    - [x] Get information about a specified user
    - [x] Get popular video information
    - [x] Get comments for a specified video
    - [x] Get replies to a specified comment under a video
    - [x] Get a specified user's updates
    - [x] Get real-time video danmaku (bullet comments)
    - [x] Get information about a specified live room
    - [x] Get live room video stream
    - [x] Get streamers currently live in a specified category
    - [x] Get a list of all live categories
    - [x] Get video part (multi-P) information by BV number

* * *

## 📦Call the parsing library (obsolete and needs to be updated):

> 💡PyPI:

Online:

**_API demo:_**

- Crawl video data (TikTok or Douyin hybrid parsing): `https://api.douyin.wtf/api/hybrid/video_data?url=[视频链接/Video URL]&minimal=false`

- Download videos/photo albums (TikTok or Douyin hybrid parsing): `https://api.douyin.wtf/api/download?url=[视频链接/Video URL]&prefix=true&with_watermark=false`
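The two demo URLs above can be assembled programmatically. The sketch below uses only the Python standard library and takes the host, paths, and query parameter names (`url`, `minimal`, `prefix`, `with_watermark`) from those examples. The helper names are hypothetical, and the code only builds URLs; it sends no requests. The video link must be percent-encoded when passed as a query value, which `urlencode` handles.

```python
from urllib.parse import urlencode

BASE_URL = "https://api.douyin.wtf"  # demo host taken from the examples above


def _flag(value: bool) -> str:
    """Render a Python bool the way the demo URLs spell it."""
    return "true" if value else "false"


def hybrid_video_data_url(video_url: str, minimal: bool = False,
                          base: str = BASE_URL) -> str:
    """Build the hybrid parsing URL for a Douyin or TikTok video link."""
    query = urlencode({"url": video_url, "minimal": _flag(minimal)})
    return f"{base}/api/hybrid/video_data?{query}"


def download_url(video_url: str, prefix: bool = True,
                 with_watermark: bool = False, base: str = BASE_URL) -> str:
    """Build the download URL for a Douyin or TikTok video link."""
    query = urlencode({
        "url": video_url,
        "prefix": _flag(prefix),
        "with_watermark": _flag(with_watermark),
    })
    return f"{base}/api/download?{query}"


if __name__ == "__main__":
    link = "https://www.tiktok.com/@user/video/1"
    print(hybrid_video_data_url(link))
    print(download_url(link))
```

For a self-hosted deployment, pass your own server address as `base`; the demo site has the download interface turned off.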

**_For more demonstrations, please see the documentation..._**

## ⚠️Preparation work before deployment (please read carefully):

- You need to handle cookie-based risk control for the crawlers yourself; otherwise the interfaces may become unusable. After modifying a configuration file, restart the service for the change to take effect. It is best to use cookies from an account that you are already logged in to.

- Douyin web cookie (obtain and replace the cookie in the configuration file below):

-

- TikTok web-side cookies (obtain and replace the cookies in the configuration file below):

-

- I turned off the online download function of the demo site. The video someone downloaded was so huge that it crashed the server. You can right-click on the web page parsing results page to save the video...

- The cookies of the demo site are my own and are not guaranteed to be valid for a long time. They only serve as a demonstration. If you deploy it yourself, please obtain the cookies yourself.

- If you need to directly access the video link returned by TikTok Web API, an HTTP 403 error will occur. Please use the API in this project.`/api/download`The interface downloads TikTok videos. This interface has been manually closed in the demo site, and you need to deploy this project by yourself.

- here is one**Video tutorial**You can refer to:**_

> Use script to deploy this project with one click

- This project provides a one-click deployment script that can quickly deploy this project on the server.

- The script was tested on Ubuntu 20.04 LTS. Other systems may have problems. If there are any problems, please solve them yourself.

- Use wget to download [install.sh](https://raw.githubusercontent.com/Evil0ctal/Douyin_TikTok_Download_API/main/bash/install.sh) to the server and run it:

- `wget -O install.sh https://raw.githubusercontent.com/Evil0ctal/Douyin_TikTok_Download_API/main/bash/install.sh && sudo bash install.sh`

> Start/stop service

- Use the following commands to control running or stopping the service:

- `sudo systemctl start Douyin_TikTok_Download_API.service`

- `sudo systemctl stop Douyin_TikTok_Download_API.service`

> Turn on/off automatic operation at startup

- Use the following commands to set the service to run automatically at boot or cancel automatic run at boot:

- `sudo systemctl enable Douyin_TikTok_Download_API.service`

- `sudo systemctl disable Douyin_TikTok_Download_API.service`

> Update project

- When the project is updated, make sure the update script runs inside the virtual environment and updates all dependencies. Enter the project's `bash` directory and run `update.sh`:

- `cd /www/wwwroot/Douyin_TikTok_Download_API/bash && sudo bash update.sh`

## 💽Deployment (Method 2 Docker)

> 💡Tip: Docker is the simplest deployment method and suits users who are not familiar with Linux. It provides environment consistency, isolation, and quick setup.

> Please use a server that can reach Douyin or TikTok normally; otherwise strange bugs may occur.

### Preparation

Before you begin, make sure Docker is installed on your system. If it is not, download and install it from the [official Docker website](https://www.docker.com/products/docker-desktop/).

### Step 1: Pull the Docker image

First, pull the latest Douyin_TikTok_Download_API image from Docker Hub.

```bash

docker pull evil0ctal/douyin_tiktok_download_api:latest

```

If needed, replace `latest` with the specific version tag you want to deploy.

### Step 2: Run the Docker container

After pulling the image, you can start a container from this image. Here are the commands to run the container, including basic configuration:

```bash

docker run -d --name douyin_tiktok_api -p 80:80 evil0ctal/douyin_tiktok_download_api

```

Each part of this command does the following:

- `-d`: Run the container in the background (detached mode).

- `--name douyin_tiktok_api`: Name the container `douyin_tiktok_api`.

- `-p 80:80`: Map port 80 on the host to port 80 in the container. Adjust the port numbers to match your configuration or port availability.

- `evil0ctal/douyin_tiktok_download_api`: The name of the Docker image to use.
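For scripted deployments, the same command can be assembled programmatically. The following is a minimal sketch, assuming you want to launch the container from Python; `docker_run_args` is a hypothetical helper, and only the flags described above are used.

```python
import shlex
from typing import List


def docker_run_args(image: str, name: str, host_port: int = 80,
                    container_port: int = 80) -> List[str]:
    """Assemble the argument list for the `docker run` command described above."""
    return [
        "docker", "run",
        "-d",                                   # detached mode
        "--name", name,                         # container name
        "-p", f"{host_port}:{container_port}",  # host:container port mapping
        image,                                  # image to start
    ]


if __name__ == "__main__":
    args = docker_run_args("evil0ctal/douyin_tiktok_download_api", "douyin_tiktok_api")
    # Print the command; pass `args` to subprocess.run(args, check=True) to actually start it.
    print(shlex.join(args))
```

Building the command as a list rather than a single string avoids shell-quoting problems when a name or port comes from user input.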

### Step 3: Verify the container is running

Check if your container is running using the following command:

```bash

docker ps

```

This lists all active containers. Look for `douyin_tiktok_api` to confirm that it is running.

### Step 4: Access the App

Once the container is running, you should be able to reach Douyin_TikTok_Download_API at `http://localhost` or through an API client. Adjust the URL if you configured a different port or are connecting from a remote machine.

### Optional: Custom Docker commands

For more advanced deployments, you may wish to customize Docker commands to include environment variables, volume mounts for persistent data, or other Docker parameters. Here is an example:

```bash

docker run -d --name douyin_tiktok_api -p 80:80 \

-v /path/to/your/data:/data \

-e MY_ENV_VAR=my_value \

evil0ctal/douyin_tiktok_download_api

```

- `-v /path/to/your/data:/data`: Mount the host directory `/path/to/your/data` at `/data` in the container, for persisting or sharing data.

- `-e MY_ENV_VAR=my_value`: Set the environment variable `MY_ENV_VAR` inside the container to `my_value`.

### Configuration file modification

Most of the project's configuration can be changed in the `config.yaml` files in the following directories:

- `/crawlers/douyin/web/config.yaml`

- `/crawlers/tiktok/web/config.yaml`

- `/crawlers/tiktok/app/config.yaml`

### Step 5: Stop and remove the container

When you need to stop and remove a container, use the following commands:

```bash

# Stop

docker stop douyin_tiktok_api

# Remove

docker rm douyin_tiktok_api

```

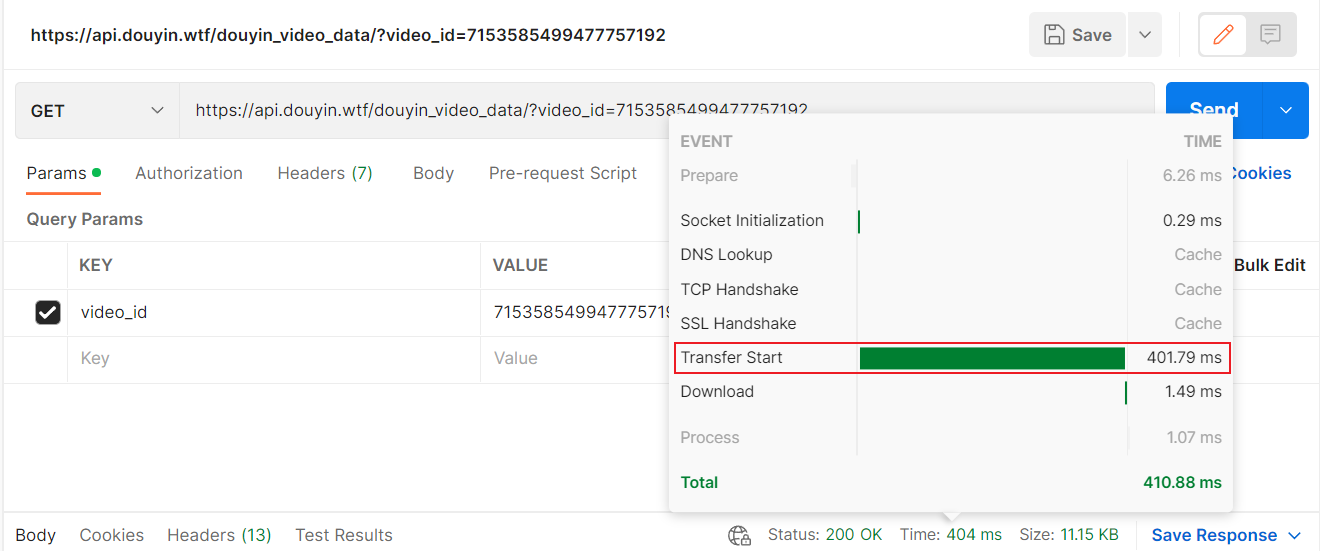

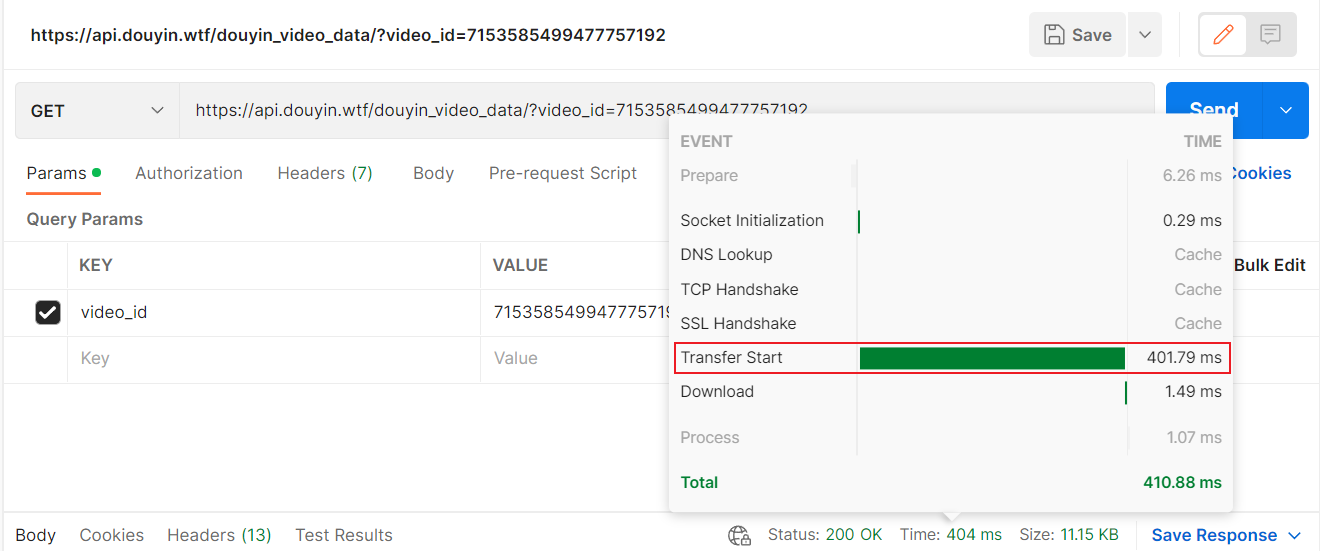

## 📸Screenshot

**_API speed test (compared to official API)_**

🔎Click to expand screenshots

Douyin official API:

API of this project:

TikTok official API:

API of this project:

🔎Click to expand screenshots

Web main interface:

Web main interface:

Douyin_TikTok_Download_API (Douyin/TikTok API)

[English](./README.en.md) | [简体中文](./README.md)

🚀"Douyin_TikTok_Download_API" is an out-of-the-box, high-performance asynchronous [Douyin](https://www.douyin.com)|[TikTok](https://www.tiktok.com)|[Bilibili](https://www.bilibili.com) data scraping tool that supports API calls and online batch parsing and downloading.


## Sponsors

These sponsors have paid to be placed here. The **Douyin_TikTok_Download_API** project will always be free and open source. If you would like to sponsor this project, please see my [GitHub Sponsors page](https://github.com/sponsors/evil0ctal).

[TikHub](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) offers more than 700 endpoints for retrieving and analyzing data from 14+ social media platforms, including videos, users, comments, shops, products, and trends, providing one-stop data access and analysis.

You can earn free credit by checking in daily. Sign up with my invitation link: [https://user.tikhub.io/users/signup?referral_code=1wRL8eQk](https://user.tikhub.io/users/signup?referral_code=1wRL8eQk&utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) or invitation code `1wRL8eQk`; registering and topping up earns you `$2` of credit.

[TikHub](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) provides the following services:

- A rich set of data interfaces

- Free credit through daily check-in

- High-quality API service

- Website: [https://tikhub.io/](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad)

- GitHub: [https://github.com/TikHubIO/](https://github.com/TikHubIO/)

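## 👻Introduction

> 🚨To run this project on a private server, see: [Deployment preparation](./README.md#%EF%B8%8F%E9%83%A8%E7%BD%B2%E5%89%8D%E7%9A%84%E5%87%86%E5%A4%87%E5%B7%A5%E4%BD%9C%E8%AF%B7%E4%BB%94%E7%BB%86%E9%98%85%E8%AF%BB), [Docker deployment](./README.md#%E9%83%A8%E7%BD%B2%E6%96%B9%E5%BC%8F%E4%BA%8C-docker), [One-click deployment](./README.md#%E9%83%A8%E7%BD%B2%E6%96%B9%E5%BC%8F%E4%B8%80-linux)

This project is a fast, asynchronous [Douyin](https://www.douyin.com/)/[TikTok](https://www.tiktok.com/) data scraping tool built on [PyWebIO](https://github.com/pywebio/PyWebIO), [FastAPI](https://fastapi.tiangolo.com/), and [HTTPX](https://www.python-httpx.org/). Its web interface provides online batch parsing and downloading of watermark-free videos or photo albums, and it also offers a data scraping API and watermark-free downloads through iOS Shortcuts. You can deploy or adapt the project yourself to add more features, call [scraper.py](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/Stable/scraper.py) directly from your own project, or install the existing [pip package](https://pypi.org/project/douyin-tiktok-scraper/) as a parsing library to scrape data easily.

*Some simple use cases:*

*Downloading videos that have downloads disabled, data analysis, watermark-free downloads on iOS (using the [built-in iOS Shortcuts app](https://apps.apple.com/cn/app/%E5%BF%AB%E6%8D%B7%E6%8C%87%E4%BB%A4/id915249334) together with this project's API to download inside the app or from the clipboard), and so on.*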

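## 🔊 V4 release notes

- If you are interested in working on this project together, add WeChat `Evil0ctal` with the note "GitHub project refactoring". Members can learn from each other in the group; advertising and illegal content are not allowed. The group is purely for making friends and technical exchange.

- This project uses the `X-Bogus` and `A_Bogus` algorithms to request the Douyin and TikTok Web APIs.

- Because of Douyin's risk control, after deploying this project **obtain the Douyin website cookie in your browser and replace the one in config.yaml.**

- Please read the documentation below before opening an issue; it covers the solutions to most problems.

- This project is completely free, but please comply with the [Apache-2.0 license](https://github.com/Evil0ctal/Douyin_TikTok_Download_API?tab=Apache-2.0-1-ov-file#readme) when using it.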

## 🔖TikHub.io API

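[TikHub.io](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) offers more than 700 endpoints for retrieving and analyzing data from 14+ social media platforms, including videos, users, comments, shops, products, and trends, providing one-stop data access and analysis.

If you want to support the development of [Douyin_TikTok_Download_API](https://github.com/Evil0ctal/Douyin_TikTok_Download_API), we strongly recommend choosing [TikHub.io](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad).

#### Features:

> 📦 Works out of the box

The packaged SDK simplifies usage so you can start developing quickly. All API interfaces follow a RESTful design and are described and documented with the OpenAPI specification, with example parameters included to make calls easier.

> 💰 Cost advantage

There are no preset plan limits and no monthly usage threshold. All usage is billed immediately by actual consumption, with tiered pricing based on your daily request volume. You can also earn free credit through daily check-in in the user dashboard, and that free credit does not expire.

> ⚡️ Fast support

We have a large Discord community server where administrators and other users reply quickly and help you solve your problem.

> 🎉 Embracing open source

Part of TikHub's source code is open-sourced on GitHub, and TikHub sponsors the authors of some open-source projects.

#### Registration and usage:

You can earn free credit by checking in daily. Sign up with my invitation link: [https://user.tikhub.io/users/signup?referral_code=1wRL8eQk](https://user.tikhub.io/users/signup?referral_code=1wRL8eQk&utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad) or invitation code `1wRL8eQk`; registering and topping up earns you `$2` of credit.

#### Related links:

- Website: [https://tikhub.io/](https://tikhub.io/?utm_source=github.com/Evil0ctal/Douyin_TikTok_Download_API&utm_medium=marketing_social&utm_campaign=retargeting&utm_content=carousel_ad)

- API documentation: [https://api.tikhub.io/docs](https://api.tikhub.io/docs)

- GitHub: [https://github.com/TikHubIO/](https://github.com/TikHubIO/)

- Discord: [https://discord.com/invite/aMEAS8Xsvz](https://discord.com/invite/aMEAS8Xsvz)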

## 🖥Demo site: I am fragile... please do not stress test (·•᷄ࡇ•᷅ )

> 😾The online download function of the demo site is turned off, and because of cookie issues the availability of Douyin parsing and the API service on the demo site cannot be guaranteed.

🍔Web APP: [https://douyin.wtf/](https://douyin.wtf/)

🍟API Document: [https://douyin.wtf/docs](https://douyin.wtf/docs)

🌭TikHub API Document: [https://api.tikhub.io/docs](https://api.tikhub.io/docs)

💾iOS Shortcut: [Shortcut release](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/discussions/104?sort=top)

📦️Desktop downloaders (recommended repositories):

- [Johnserf-Seed/TikTokDownload](https://github.com/Johnserf-Seed/TikTokDownload)

- [HFrost0/bilix](https://github.com/HFrost0/bilix)

- [Tairraos/TikDown - [needs update]](https://github.com/Tairraos/TikDown/)

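## ⚗️Tech stack

* [/app/web](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/main/app/web) - [PyWebIO](https://www.pyweb.io/)

* [/app/api](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/main/app/api) - [FastAPI](https://fastapi.tiangolo.com/)

* [/crawlers](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/main/crawlers) - [HTTPX](https://www.python-httpx.org/)

> ***/crawlers***

- Sends requests to each platform's API, retrieves the data, processes it, and returns a dictionary (dict); supports async.

> ***/app/api***

- Takes the request parameters, processes the data with the relevant `Crawlers` classes, and returns it as JSON; also handles video downloads and quick calls from iOS Shortcuts; supports async.

> ***/app/web***

- A simple web app built with `PyWebIO` that processes the values entered on the page, calls the relevant `Crawlers` classes, and displays the resulting data on the page.

***Most parameters of the above can be modified in the corresponding `config.yaml`***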

## 💡Project file structure

```

./Douyin_TikTok_Download_API

├─app

│ ├─api

│ │ ├─endpoints

│ │ └─models

│ ├─download

│ └─web

│ └─views

└─crawlers

├─bilibili

│ └─web

├─douyin

│ └─web

├─hybrid

├─tiktok

│ ├─app

│ └─web

└─utils

```

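## ✨Supported features:

- Batch parsing on the web page (supports mixed Douyin/TikTok input)

- Download videos or photo albums online.

- A [pip package](https://pypi.org/project/douyin-tiktok-scraper/) for quick import into your project

- [Call the API from iOS Shortcuts](https://apps.apple.com/cn/app/%E5%BF%AB%E6%8D%B7%E6%8C%87%E4%BB%A4/id915249334) to download watermark-free videos/photo albums inside the app

- Complete API documentation ([Demo](https://api.douyin.wtf/docs))

- Rich API interfaces:

- Douyin web API

- [x] Video data parsing

- [x] Get user homepage works data

- [x] Get user homepage liked works data

- [x] Get user homepage favorite works data

- [x] Get user homepage information

- [x] Get user collection works data

- [x] Get user live stream data

- [x] Get live stream data of a specified user

- [x] Get the ranking of users who sent gifts in a live room

- [x] Get comment data of a single video

- [x] Get comment reply data of a specified video

- [x] Generate msToken

- [x] Generate verify_fp

- [x] Generate s_v_web_id

- [x] Generate the X-Bogus parameter from an interface URL

- [x] Generate the A_Bogus parameter from an interface URL

- [x] Extract a single user id

- [x] Extract a list of user ids

- [x] Extract a single work id

- [x] Extract a list of work ids

- [x] Extract a list of live room numbers

- TikTok web API

- [x] Video data parsing

- [x] Get user homepage works data

- [x] Get user homepage liked works data

- [x] Get user homepage information

- [x] Get user homepage follower data

- [x] Get user homepage following data

- [x] Get user homepage collection works data

- [x] Get user homepage favorites data

- [x] Get user homepage playlist data

- [x] Get comment data of a single video

- [x] Get comment reply data of a specified video

- [x] Generate msToken

- [x] Generate ttwid

- [x] Generate the X-Bogus parameter from an interface URL

- [x] Extract a single user sec_user_id

- [x] Extract a list of user sec_user_id values

- [x] Extract a single work id

- [x] Extract a list of work ids

- [x] Get a user's unique_id

- [x] Get a list of unique_id values

- Bilibili web API

- [x] Get detailed information for a single video

- [x] Get video stream address

- [x] Get the videos published by a user

- [x] Get all of a user's favorites folders

- [x] Get video data in a specified favorites folder

- [x] Get information about a specified user

- [x] Get comprehensive popular video information

- [x] Get comments for a specified video

- [x] Get replies to a specified comment under a video

- [x] Get a specified user's updates

- [x] Get real-time video danmaku (bullet comments)

- [x] Get specified live room information

- [x] Get live room video stream

- [x] Get streamers currently live in a specified zone

- [x] Get a list of all live zones

- [x] Get a video's part (multi-P) information by BV number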

---

## 📦Call the parsing library (deprecated, needs updating):

> 💡PyPi: [https://pypi.org/project/douyin-tiktok-scraper/](https://pypi.org/project/douyin-tiktok-scraper/)

Install the parsing library: `pip install douyin-tiktok-scraper`

```python
import asyncio

from douyin_tiktok_scraper.scraper import Scraper

api = Scraper()


async def hybrid_parsing(url: str) -> dict:
    # Hybrid parsing (Douyin/TikTok URL)
    result = await api.hybrid_parsing(url)
    print(f"The hybrid parsing result:\n {result}")
    return result


asyncio.run(hybrid_parsing(url=input("Paste Douyin/TikTok/Bilibili share URL here: ")))
```

## 🗺️Supported input formats:

> 💡Tip: The examples below are not exhaustive. If a link fails to parse, please open a new [issue](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues)

- Douyin share text (copied in the app)

```text

7.43 pda:/ 让你在几秒钟之内记住我 https://v.douyin.com/L5pbfdP/ 复制此链接,打开Dou音搜索,直接观看视频!

```

- Douyin short URL (copied in the app)

```text

https://v.douyin.com/L4FJNR3/

```

- Douyin regular URL (copied from the web version)

```text

https://www.douyin.com/video/6914948781100338440

```

- Douyin discover page URL (copied in the app)

```text

https://www.douyin.com/discover?modal_id=7069543727328398622

```

- TikTok short URL (copied in the app)

```text

https://www.tiktok.com/t/ZTR9nDNWq/

```

- TikTok regular URL (copied from the web version)

```text

https://www.tiktok.com/@evil0ctal/video/7156033831819037994

```

- Douyin/TikTok batch URLs (no separator characters required)

```text

https://v.douyin.com/L4NpDJ6/

https://www.douyin.com/video/7126745726494821640

2.84 nqe:/ 骑白马的也可以是公主%%百万转场变身https://v.douyin.com/L4FJNR3/ 复制此链接,打开Dou音搜索,直接观看视频!

https://www.tiktok.com/t/ZTR9nkkmL/

https://www.tiktok.com/t/ZTR9nDNWq/

https://www.tiktok.com/@evil0ctal/video/7156033831819037994

```
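Because batch input needs no separators, the links have to be picked out of free-form text. The sketch below shows one way this could work with a regular expression; it is not the project's own `find_url` implementation, and both `find_urls` and its `max_urls` cap are hypothetical names chosen for illustration.

```python
import re
from typing import List, Optional

# Only characters that are valid in a URL are matched, so trailing Chinese
# text or whitespace ends the match.
URL_PATTERN = re.compile(r"https?://[A-Za-z0-9\-._~:/?#\[\]@!$&'()*+,;=%]+")


def find_urls(text: str, max_urls: Optional[int] = None) -> List[str]:
    """Return every http(s) URL found in free-form text, optionally capped."""
    urls = URL_PATTERN.findall(text)
    return urls if max_urls is None else urls[:max_urls]


if __name__ == "__main__":
    sample = (
        "7.43 pda:/ 让你在几秒钟之内记住我 https://v.douyin.com/L5pbfdP/ 复制此链接\n"
        "https://www.tiktok.com/@evil0ctal/video/7156033831819037994"
    )
    for url in find_urls(sample):
        print(url)
```

Capping the list mirrors the idea of limiting how many links a single submission may contain.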

## 🛰️API documentation

***API documentation:***

Local: [http://localhost/docs](http://localhost/docs)

Online: [https://api.douyin.wtf/docs](https://api.douyin.wtf/docs)

***API demo:***

- Crawl video data (TikTok or Douyin hybrid parsing)

`https://api.douyin.wtf/api/hybrid/video_data?url=[视频链接/Video URL]&minimal=false`

- Download videos/photo albums (TikTok or Douyin hybrid parsing)

`https://api.douyin.wtf/api/download?url=[视频链接/Video URL]&prefix=true&with_watermark=false`

***For more demonstrations, please see the documentation...***

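## ⚠️Preparation before deployment (please read carefully):

- You need to handle the crawler cookie risk-control problem yourself; otherwise the interface may become unusable. After modifying a configuration file, restart the service for the change to take effect. Cookies from an account that is already logged in work best.

- Douyin web cookie (obtain your own and replace the cookie in the configuration file below):

- https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/30e56e5a7f97f87d60b1045befb1f6db147f8590/crawlers/douyin/web/config.yaml#L7

- TikTok web cookie (obtain your own and replace the cookie in the configuration file below):

- https://github.com/Evil0ctal/Douyin_TikTok_Download_API/blob/30e56e5a7f97f87d60b1045befb1f6db147f8590/crawlers/tiktok/web/config.yaml#L6

- The online download function of the demo site is turned off: someone downloaded a video so large that it crashed the server. You can still right-click on the parsing results page to save the video.

- The demo site's cookies are my own and are not guaranteed to stay valid; they are for demonstration only. If you deploy the project yourself, obtain your own cookies.

- Accessing a video link returned by the TikTok Web API directly results in an HTTP 403 error. Use this project's `/api/download` interface to download TikTok videos instead. That interface is disabled on the demo site, so you need to deploy the project yourself.

- A **video tutorial** is available for reference: ***[https://www.bilibili.com/video/BV1vE421j7NR/](https://www.bilibili.com/video/BV1vE421j7NR/)***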

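## 💻Deployment (Method 1: Linux)

> 💡Tip: It is best to deploy this project on a server located in the United States; otherwise strange bugs may occur.

[Digitalocean](https://www.digitalocean.com/) servers are recommended because the sign-up credit lets you use them for free.

If you register with my referral link, you receive $200 in credit; when you spend $25 there, I also receive a $25 reward.

My referral link:

[https://m.do.co/c/9f72a27dec35](https://m.do.co/c/9f72a27dec35)

> Deploy this project with the one-click script

- This project provides a one-click deployment script that quickly deploys it on a server.

- The script was tested on Ubuntu 20.04 LTS. Other systems may have problems; if they do, please resolve them yourself.

- Use wget to download [install.sh](https://raw.githubusercontent.com/Evil0ctal/Douyin_TikTok_Download_API/main/bash/install.sh) to the server and run it: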

```

wget -O install.sh https://raw.githubusercontent.com/Evil0ctal/Douyin_TikTok_Download_API/main/bash/install.sh && sudo bash install.sh

```

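> Start/stop the service

- Use the following commands to start or stop the service:

- `sudo systemctl start Douyin_TikTok_Download_API.service`

- `sudo systemctl stop Douyin_TikTok_Download_API.service`

> Enable/disable start at boot

- Use the following commands to make the service start automatically at boot, or to cancel that:

- `sudo systemctl enable Douyin_TikTok_Download_API.service`

- `sudo systemctl disable Douyin_TikTok_Download_API.service`

> Update the project

- When the project is updated, make sure the update script runs inside the virtual environment and updates all dependencies. Enter the project's `bash` directory and run `update.sh`: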

- `cd /www/wwwroot/Douyin_TikTok_Download_API/bash && sudo bash update.sh`

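## 💽Deployment (Method 2: Docker)

> 💡Tip: Docker is the simplest deployment method and suits users who are not familiar with Linux. It provides environment consistency, isolation, and quick setup.

> Please use a server that can reach Douyin or TikTok normally; otherwise strange bugs may occur.

### Preparation

Before you begin, make sure Docker is installed on your system. If it is not, download and install it from the [official Docker website](https://www.docker.com/products/docker-desktop/).

### Step 1: Pull the Docker image

First, pull the latest Douyin_TikTok_Download_API image from Docker Hub.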

```bash

docker pull evil0ctal/douyin_tiktok_download_api:latest

```

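If needed, replace `latest` with the specific version tag you want to deploy.

### Step 2: Run the Docker container

After pulling the image, you can start a container from it. The following command runs the container with a basic configuration: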

```bash

docker run -d --name douyin_tiktok_api -p 80:80 evil0ctal/douyin_tiktok_download_api

```

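Each part of this command does the following:

* `-d`: Run the container in the background (detached mode).

* `--name douyin_tiktok_api`: Name the container `douyin_tiktok_api`.

* `-p 80:80`: Map port 80 on the host to port 80 in the container. Adjust the port numbers to match your configuration or port availability.

* `evil0ctal/douyin_tiktok_download_api`: The name of the Docker image to use.

### Step 3: Verify the container is running

Check whether your container is running with the following command: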

```bash

docker ps

```

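This lists all active containers. Look for `douyin_tiktok_api` to confirm that it is running.

### Step 4: Access the application

Once the container is running, you should be able to reach Douyin_TikTok_Download_API at `http://localhost` or through an API client. Adjust the URL if you configured a different port or are connecting from a remote machine.

### Optional: Custom Docker commands

For more advanced deployments, you may want to customize the Docker command with environment variables, volume mounts for persistent data, or other Docker parameters. Here is an example: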

```bash

docker run -d --name douyin_tiktok_api -p 80:80 \

-v /path/to/your/data:/data \

-e MY_ENV_VAR=my_value \

evil0ctal/douyin_tiktok_download_api

```

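* `-v /path/to/your/data:/data`: Mount the host directory `/path/to/your/data` at `/data` in the container, for persisting or sharing data.

* `-e MY_ENV_VAR=my_value`: Set the environment variable `MY_ENV_VAR` inside the container to `my_value`.

### Configuration file modification

Most of the project's configuration can be changed in the `config.yaml` files in the following directories: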

* `/crawlers/douyin/web/config.yaml`

* `/crawlers/tiktok/web/config.yaml`

* `/crawlers/tiktok/app/config.yaml`

### Step 5: Stop and remove the container

When you need to stop and remove the container, use the following commands:

```bash

# Stop

docker stop douyin_tiktok_api

# Remove

docker rm douyin_tiktok_api

```

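## 📸Screenshots

***API speed test (compared with the official API)***

🔎Click to expand screenshots

Douyin official API:

API of this project:

TikTok official API:

API of this project:

🔎Click to expand screenshots

Web main interface: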

Web main interface:

{title}

""")

        # Navbar
        put_row(
            [
                put_button(self.utils.t("快捷指令", 'iOS Shortcut'),
                           onclick=lambda: ios_pop_window(), link_style=True, small=True),
                put_button(self.utils.t("开放接口", 'Open API'),
                           onclick=lambda: api_document_pop_window(), link_style=True, small=True),
                put_button(self.utils.t("下载器", "Downloader"),
                           onclick=lambda: downloader_pop_window(), link_style=True, small=True),
                put_button(self.utils.t("关于", 'About'),
                           onclick=lambda: about_pop_window(), link_style=True, small=True),
            ])

        # Function selection
        options = [
            # Index: 0
            self.utils.t('🔍批量解析视频', '🔍Batch Parse Video'),
            # Index: 1
            self.utils.t('🔍解析用户主页视频', '🔍Parse User Homepage Video'),
            # Index: 2
            self.utils.t('🥚小彩蛋', '🥚Easter Egg'),
        ]
        select_options = select(
            self.utils.t('请在这里选择一个你想要的功能吧 ~', 'Please select a function you want here ~'),
            required=True,
            options=options,
            help_text=self.utils.t('📎选上面的选项然后点击提交', '📎Select the options above and click Submit')
        )
        # Run a different function depending on the selection
        if select_options == options[0]:
            parse_video()
        elif select_options == options[1]:
            put_markdown(self.utils.t('暂未开放,敬请期待~', 'Not yet open, please look forward to it~'))
        elif select_options == options[2]:
            a() if _config['Web']['Easter_Egg'] else put_markdown(self.utils.t('没有小彩蛋哦~', 'No Easter Egg~'))

================================================

FILE: app/web/views/About.py

================================================

from pywebio.output import popup, put_markdown, put_html, put_text, put_link, put_image

from app.web.views.ViewsUtils import ViewsUtils

t = ViewsUtils().t


# About pop-up
def about_pop_window():
    with popup(t('更多信息', 'More Information')):
        put_html('👀{} '.format(t('访问记录', 'Visit Record')))
        put_image('https://views.whatilearened.today/views/github/evil0ctal/TikTokDownload_PyWebIO.svg',
                  title='访问记录')
        put_html('⭐Github ')
        put_markdown('[Douyin_TikTok_Download_API](https://github.com/Evil0ctal/Douyin_TikTok_Download_API)')
        put_html('🎯{} '.format(t('反馈', 'Feedback')))
        put_markdown('{}:[issues](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues)'.format(
            t('Bug反馈', 'Bug Feedback')))
        put_html('💖WeChat ')
        put_markdown('WeChat:[Evil0ctal](https://mycyberpunk.com/)')

put_html('' + ''.join('' + ''.join(

f' '

for r in

g) + '

'

c();

put_html(h(g))

def r(g):

return f""

e = time.time() + 120

while time.time() < e:

time.sleep(0.1);

g = u();

put_html(r(g))

if __name__ == '__main__':

# A boring code is ready to run!

# 原神,启动!

start_server(a, port=80)

================================================

FILE: app/web/views/ParseVideo.py

================================================

import asyncio
import os
import time

import yaml
from pywebio.input import *
from pywebio.output import *
from pywebio_battery import put_video

from app.web.views.ViewsUtils import ViewsUtils
from crawlers.hybrid.hybrid_crawler import HybridCrawler

HybridCrawler = HybridCrawler()

# Read config.yaml from the project root (four dirname() calls up from this file)
config_path = os.path.join(os.path.dirname(os.path.dirname(os.path.dirname(os.path.dirname(__file__)))), 'config.yaml')
with open(config_path, 'r', encoding='utf-8') as file:
    config = yaml.safe_load(file)


# Validate input value
def valid_check(input_data: str):
    # Retrieve all links and return a list
    url_list = ViewsUtils.find_url(input_data)
    # Total number of links found
    total_urls = len(url_list)
    if total_urls == 0:
        warn_info = ViewsUtils.t('没有检测到有效的链接,请检查输入的内容是否正确。',
                                 'No valid link detected, please check if the input content is correct.')
        return warn_info
    else:
        # Maximum number of URLs accepted
        max_urls = config['Web']['Max_Take_URLs']
        if total_urls > int(max_urls):
            warn_info = ViewsUtils.t(f'输入的链接太多啦,当前只会处理输入的前{max_urls}个链接!',
                                     f'Too many links input, only the first {max_urls} links will be processed!')
            return warn_info


# Error handling
def error_do(reason: str, value: str) -> None:
    # Output a (rather useless) informational message
    put_html(f"⚠{ViewsUtils.t('详情', 'Details')} ")
    put_table([
        [
            ViewsUtils.t('原因', 'reason'),
            ViewsUtils.t('输入值', 'input value')
        ],
        [
            reason,
            value
        ]
    ])
    put_markdown(ViewsUtils.t('> 可能的原因:', '> Possible reasons:'))
    put_markdown(ViewsUtils.t("- 视频已被删除或者链接不正确。",
                              "- The video has been deleted or the link is incorrect."))
    put_markdown(ViewsUtils.t("- 接口风控,请求过于频繁。",
                              "- Interface risk control, request too frequent."))
    put_markdown(ViewsUtils.t("- 没有使用有效的Cookie,如果你部署后没有替换相应的Cookie,可能会导致解析失败。",
                              "- No valid Cookie is used. If you do not replace the corresponding Cookie after deployment, it may cause parsing failure."))
    put_markdown(ViewsUtils.t("> 寻求帮助:", "> Seek help:"))
    put_markdown(ViewsUtils.t(
        "- 你可以尝试再次解析,或者尝试自行部署项目,然后替换`./app/crawlers/平台文件夹/config.yaml`中的`cookie`值。",
        "- You can try to parse again, or try to deploy the project by yourself, and then replace the `cookie` value in `./app/crawlers/platform folder/config.yaml`."))
    put_markdown(
        "- GitHub Issue: [Evil0ctal/Douyin_TikTok_Download_API](https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues)")

put_html("

# Or download the ZIP file directly and extract it

```

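### 2. Load the extension in Chrome

1. **Open the Chrome extensions page**

   - Option 1: enter `chrome://extensions/` in the address bar

   - Option 2: Menu → More tools → Extensions

2. **Enable developer mode**

   - Turn on the "Developer mode" switch in the top-right corner of the extensions page

3. **Load the unpacked extension**

   - Click the "Load unpacked" button

   - Select the `chrome-cookie-sniffer` folder

   - Confirm the load

4. **Verify the installation**

   - "Cookie Sniffer" appears in the extension list

   - The extension icon appears in the browser toolbar

   - The status shows as "Enabled"

### 3. Confirm permissions

Chrome requests the following permissions during installation:

- `webRequest` - intercept network requests

- `storage` - local data storage

- `cookies` - read Cookie information

- `activeTab` - access the current tab

- `host_permissions` - access the douyin.com domain

## Usage

### Basic usage

1. **Visit the target site** - open Douyin or another supported site

2. **Trigger requests** - browse normally to trigger POST/GET requests

3. **View results** - click the extension icon to see the captured Cookie

### Configure the Webhook

1. **Open the extension popup**

2. **Enter the Webhook URL** - fill in the callback URL in the input box at the top

3. **Test the connection** - click the "🔧 测试" (Test) button to verify

4. **Automatic callback** - when the Cookie changes, it is automatically POSTed to the configured URL

### Webhook data format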

```json

{

"service": "douyin",

"cookie": "the actual Cookie string",

"timestamp": "2025-08-29T12:34:56.789Z"

}

```

Test callbacks additionally include:

```json

{

"test": true,

"message": "这是一个测试回调..."

}

```
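On the receiving side, the payload can be validated before the Cookie is used. The following is a minimal sketch, assuming a Python service receives the POST body; `CookieEvent` and `parse_webhook_body` are hypothetical names, and only the fields shown in the JSON samples above are read.

```python
import json
from dataclasses import dataclass


@dataclass
class CookieEvent:
    service: str
    cookie: str
    timestamp: str
    is_test: bool = False


def parse_webhook_body(body: bytes) -> CookieEvent:
    """Decode the JSON body POSTed by the extension into a CookieEvent."""
    data = json.loads(body.decode("utf-8"))
    # The three fields below appear in every payload shown above.
    missing = [key for key in ("service", "cookie", "timestamp") if key not in data]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    return CookieEvent(
        service=data["service"],
        cookie=data["cookie"],
        timestamp=data["timestamp"],
        is_test=bool(data.get("test", False)),  # only present on test callbacks
    )


if __name__ == "__main__":
    raw = json.dumps({
        "service": "douyin",
        "cookie": "test_cookie=test_value",
        "timestamp": "2025-08-29T12:34:56.789Z",
        "test": True,
    }).encode("utf-8")
    print(parse_webhook_body(raw))
```

Checking `is_test` lets a receiver ignore test callbacks instead of overwriting a real Cookie with placeholder data.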

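### Data management

- **📋 Copy Cookie** - click the copy button on the card

- **🗑️ Delete data** - delete the Cookie of a single service

- **🔄 Refresh** - manually refresh the displayed data

- **📤 Export** - export all data as a JSON file

- **🧹 Clear** - clear all Cookie data

## Debugging guide

### View logs

1. **Open the extensions page** (`chrome://extensions/`)

2. **Find the Cookie Sniffer extension**

3. **Click "service worker"** - it is shown as a blue link

4. **Check the console output** - all logs appear here

### FAQ

**Q: The extension is not working?**

- Check that developer mode is enabled

- Confirm that the permissions have been granted

- Check whether the service worker is running

**Q: No Cookie was captured?**

- Confirm that you are visiting a supported site

- Check whether a POST/GET request was triggered

- Check the service worker console logs

**Q: The Webhook test fails?**

- Check that the URL format is correct

- Confirm that the server supports cross-origin requests

- Verify that the server responds normally

### Developer options

Edit the `SERVICES` configuration in `background.js` to add a new site (the request listener's URL filter in `background.js` and `host_permissions` in `manifest.json` list only douyin.com, so a new site likely needs matching entries there as well):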

```javascript

const SERVICES = {

douyin: {

name: 'douyin',

displayName: '抖音',

domains: ['douyin.com'],

cookieDomain: '.douyin.com'

},

// Add a new service

bilibili: {

name: 'bilibili',

displayName: 'B站',

domains: ['bilibili.com'],

cookieDomain: '.bilibili.com'

}

};

```
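The extension's `background.js` maps a request to a service with a substring test (`url.includes(domain)`), which also matches a domain that merely appears in a path or query string. For comparison, here is a hedged Python sketch of a stricter hostname-suffix check; `service_for_url` is a hypothetical helper, and the table only mirrors the shape of `SERVICES` above.

```python
from typing import Optional
from urllib.parse import urlsplit

# Mirrors the shape of the SERVICES table above; values are illustrative.
SERVICES = {
    "douyin": {"domains": ["douyin.com"]},
    "bilibili": {"domains": ["bilibili.com"]},
}


def service_for_url(url: str) -> Optional[str]:
    """Return the service key whose domain equals, or is a parent of, the URL's host."""
    host = (urlsplit(url).hostname or "").lower()
    for key, service in SERVICES.items():
        for domain in service["domains"]:
            if host == domain or host.endswith("." + domain):
                return key
    return None


if __name__ == "__main__":
    print(service_for_url("https://www.douyin.com/video/6914948781100338440"))
    print(service_for_url("https://example.com/?next=douyin.com"))
```

In the extension itself the listener's URL filter already restricts which requests reach the lookup, so the looser test is mostly harmless there; the stricter form matters when the same table is reused elsewhere.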

## File structure

```
chrome-cookie-sniffer/
├── manifest.json # Extension configuration file
├── background.js # Background service script
├── popup.html # Popup interface
├── popup.js # Popup logic
└── README.md # Documentation
```

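## Notes

- ⚠️ **Lawful use only** - please comply with each website's terms of service

- 🔒 **Data security** - Cookie data is stored locally and is not uploaded

- 🔄 **Regular updates** - website changes may affect capturing

- 📱 **Chrome limitations** - some websites may have anti-crawler mechanisms

## License

This project is released under the MIT license.

## Contributing

Issues and Pull Requests that improve this project are welcome!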

================================================

FILE: chrome-cookie-sniffer/background.js

================================================

// Log on startup

console.log('Cookie Sniffer service worker 已启动');

// Service configuration

const SERVICES = {

douyin: {

name: 'douyin',

displayName: '抖音',

domains: ['douyin.com'],

cookieDomain: '.douyin.com'

}

};

// Look up the service that matches a URL

function getServiceFromUrl(url) {

for (const [key, service] of Object.entries(SERVICES)) {

if (service.domains.some(domain => url.includes(domain))) {

return service;

}

}

return null;

}

// Check whether a capture already happened within the last 5 minutes

async function shouldSkipCapture(serviceName) {

return new Promise((resolve) => {

chrome.storage.local.get([`lastCapture_${serviceName}`], function(result) {

const lastTime = result[`lastCapture_${serviceName}`];

if (!lastTime) {

resolve(false);

return;

}

const now = Date.now();

const fiveMinutes = 5 * 60 * 1000;

const shouldSkip = (now - lastTime) < fiveMinutes;

if (shouldSkip) {

console.log(`${serviceName}: 5分钟内已抓取过,跳过`);

}

resolve(shouldSkip);

});

});

}

// Check whether the Cookie has changed

async function isCookieChanged(serviceName, newCookie) {

return new Promise((resolve) => {

chrome.storage.local.get([`cookieData_${serviceName}`], function(result) {

const existingData = result[`cookieData_${serviceName}`];

if (!existingData || existingData.cookie !== newCookie) {

resolve(true);

} else {

console.log(`${serviceName}: Cookie内容无变化,跳过`);

resolve(false);

}

});

});

}

// Save Cookie data

async function saveCookieData(serviceName, url, cookie, source = 'headers') {

const cookieData = {

service: serviceName,

url: url,

timestamp: Date.now(),

lastUpdate: new Date().toISOString(),

cookie: cookie,

source: source

};

// Save the service data

chrome.storage.local.set({

[`cookieData_${serviceName}`]: cookieData,

[`lastCapture_${serviceName}`]: Date.now()

});

// Trigger the Webhook callback

await sendWebhook(serviceName, cookie);

console.log(`${serviceName}: Cookie已保存`);

}

// Webhook callback

async function sendWebhook(serviceName, cookie) {

chrome.storage.local.get(['webhookUrl'], function(result) {

const webhookUrl = result.webhookUrl;

if (webhookUrl && webhookUrl.trim()) {

const payload = {

service: serviceName,

cookie: cookie,

timestamp: new Date().toISOString()

};

fetch(webhookUrl, {

method: 'POST',

headers: {

'Content-Type': 'application/json',

},

body: JSON.stringify(payload)

}).then(response => {

console.log(`Webhook回调成功: ${serviceName}`, response.status);

}).catch(error => {

console.error(`Webhook回调失败: ${serviceName}`, error);

});

}

});

}

chrome.webRequest.onBeforeSendHeaders.addListener(

async function(details) {

const service = getServiceFromUrl(details.url);

if (!service) return;

console.log(`请求拦截: ${service.displayName}`, details.url, details.method);

if (details.method === "POST" || details.method === "GET") {

// Enforce the 5-minute limit

if (await shouldSkipCapture(service.name)) {

return;

}

let cookieFound = false;

// Try to read the Cookie from the request headers

if (details.requestHeaders) {

for (let header of details.requestHeaders) {

if (header.name.toLowerCase() === "cookie") {

console.log(`从请求头捕获到Cookie: ${service.displayName}`);

// Check whether the Cookie has changed

if (await isCookieChanged(service.name, header.value)) {

await saveCookieData(service.name, details.url, header.value, 'headers');

}

cookieFound = true;

break;

}

}

}

// If the request headers carry no Cookie, fall back to the cookies API

if (!cookieFound) {

chrome.cookies.getAll({domain: service.cookieDomain}, async function(cookies) {

if (cookies && cookies.length > 0) {

console.log(`通过cookies API获取到: ${service.displayName}`, cookies.length, '个cookie');

const cookieString = cookies.map(c => `${c.name}=${c.value}`).join('; ');

// Check whether the Cookie has changed

if (await isCookieChanged(service.name, cookieString)) {

await saveCookieData(service.name, details.url, cookieString, 'cookies_api');

}

}

});

}

}

},

{ urls: ["https://*.douyin.com/*", "https://douyin.com/*"] },

["requestHeaders", "extraHeaders"]

);

// Listen for storage changes

chrome.storage.onChanged.addListener((changes, areaName) => {

if (areaName === 'local') {

// Watch for changes to service data

Object.keys(changes).forEach(key => {

if (key.startsWith('cookieData_')) {

const serviceName = key.replace('cookieData_', '');

const serviceConfig = SERVICES[serviceName];

if (serviceConfig && changes[key].newValue) {

console.log(`${serviceConfig.displayName} Cookie数据已更新`);

}

}

});

}

});

================================================

FILE: chrome-cookie-sniffer/manifest.json

================================================

{

"manifest_version": 3,

"name": "Cookie Sniffer",

"version": "1.0",

"description": "监听并获取指定网站的请求 Cookie",

"permissions": [

"webRequest",

"storage",

"activeTab",

"cookies"

],

"host_permissions": [

"https://*.douyin.com/*",

"https://douyin.com/*"

],

"background": {

"service_worker": "background.js"

},

"action": {

"default_popup": "popup.html",

"default_title": "Cookie Sniffer"

}

}

================================================

FILE: chrome-cookie-sniffer/popup.html

================================================

刷新

清空所有

导出JSON

暂未抓取到任何Cookie数据

================================================

FILE: chrome-cookie-sniffer/popup.js

================================================

document.addEventListener('DOMContentLoaded', function() {

const refreshBtn = document.getElementById('refresh');

const clearBtn = document.getElementById('clear');

const exportBtn = document.getElementById('export');

const webhookInput = document.getElementById('webhookUrl');

const testWebhookBtn = document.getElementById('testWebhook');

const webhookStatus = document.getElementById('webhookStatus');

const statusInfo = document.getElementById('statusInfo');

const serviceCards = document.getElementById('serviceCards');

const emptyState = document.getElementById('emptyState');

// Service configuration

const SERVICES = {

douyin: { name: 'douyin', displayName: '抖音', icon: '🎵' }

};

// Load the Webhook configuration

function loadWebhookConfig() {

chrome.storage.local.get(['webhookUrl'], function(result) {

if (result.webhookUrl) {

webhookInput.value = result.webhookUrl;

}

updateTestButtonState();

});

}

// Save the Webhook configuration

function saveWebhookConfig() {

const url = webhookInput.value.trim();

chrome.storage.local.set({ webhookUrl: url });

showStatusInfo('Webhook地址已保存');

updateTestButtonState();

}

// Update the state of the test button

function updateTestButtonState() {

const url = webhookInput.value.trim();

testWebhookBtn.disabled = !url || !isValidUrl(url);

}

// Validate the URL format (must parse and use http or https)

function isValidUrl(string) {

try {

new URL(string);

return string.startsWith('http://') || string.startsWith('https://');

} catch (_) {

return false;

}

}

// Test the webhook callback

async function testWebhook() {

const url = webhookInput.value.trim();

if (!url) {

webhookStatus.textContent = 'Please enter a webhook URL first';

webhookStatus.style.color = '#dc3545';

return;

}

testWebhookBtn.disabled = true;

testWebhookBtn.textContent = '⏳ Testing...';

webhookStatus.textContent = 'Sending test request...';

webhookStatus.style.color = '#17a2b8';

// Use the stored cookie data if present; otherwise build mock test data

chrome.storage.local.get(['cookieData_douyin'], async function(result) {

let testData;

if (result.cookieData_douyin) {

// Use the existing data

testData = {

service: 'douyin',

cookie: result.cookieData_douyin.cookie,

timestamp: new Date().toISOString(),

test: true,

message: 'This is a test callback using real Cookie data'

};

} else {

// Use mock data

testData = {

service: 'douyin',

cookie: 'test_cookie=test_value; another_cookie=another_value',

timestamp: new Date().toISOString(),

test: true,

message: 'This is a test callback using mock Cookie data'

};

}

try {

const response = await fetch(url, {

method: 'POST',

headers: {

'Content-Type': 'application/json',

},

body: JSON.stringify(testData)

});

if (response.ok) {

webhookStatus.textContent = `✅ Test succeeded (${response.status})`;

webhookStatus.style.color = '#28a745';

} else {

webhookStatus.textContent = `❌ Server error (${response.status})`;

webhookStatus.style.color = '#dc3545';

}

} catch (error) {

console.error('Webhook test failed:', error);

if (error.name === 'TypeError' && error.message.includes('fetch')) {

webhookStatus.textContent = '❌ Network error or cross-origin restriction';

} else {

webhookStatus.textContent = `❌ Request failed: ${error.message}`;

}

webhookStatus.style.color = '#dc3545';

} finally {

testWebhookBtn.disabled = false;

testWebhookBtn.textContent = '🔧 Test';

updateTestButtonState();

// Clear the status message after 5 seconds

setTimeout(() => {

webhookStatus.textContent = '';

}, 5000);

}

});

}

// Show a status message, then hide it after 3 seconds

function showStatusInfo(message) {

statusInfo.textContent = message;

statusInfo.style.display = 'block';

setTimeout(() => {

statusInfo.style.display = 'none';

}, 3000);

}

// Load the stored data for each service

function loadServiceData() {

const serviceKeys = Object.keys(SERVICES).map(service => `cookieData_${service}`);

chrome.storage.local.get(serviceKeys, function(result) {

const hasData = Object.keys(result).length > 0;

if (!hasData) {

serviceCards.innerHTML = '';

emptyState.style.display = 'block';

return;

}

emptyState.style.display = 'none';

serviceCards.innerHTML = '';

Object.keys(SERVICES).forEach(serviceKey => {

const service = SERVICES[serviceKey];

const data = result[`cookieData_${serviceKey}`];

if (data) {

createServiceCard(service, data);

}

});

});

}

// Create a service card

function createServiceCard(service, data) {

const card = document.createElement('div');

card.className = 'service-card';

const isRecent = Date.now() - data.timestamp < 5 * 60 * 1000; // within the last 5 minutes

const lastUpdate = new Date(data.lastUpdate).toLocaleString();

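// Minimal markup: the class names and data-service attribute match the delegated click handler below

card.innerHTML = `

<div>Last updated: ${lastUpdate}</div>

<button class="copy-btn" data-service="${service.name}">📋 Copy Cookie</button>

<button class="delete-btn" data-service="${service.name}">🗑️ Delete</button>

`;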

serviceCards.appendChild(card);

}

// Copy the Cookie to the clipboard

async function copyCookie(serviceName) {

chrome.storage.local.get([`cookieData_${serviceName}`], async function(result) {

const data = result[`cookieData_${serviceName}`];

if (data && data.cookie) {

try {

await navigator.clipboard.writeText(data.cookie);

showStatusInfo(`${SERVICES[serviceName].displayName} Cookie copied to clipboard`);

} catch (err) {

// Fallback: copy through a temporary textarea when the Clipboard API is unavailable

const textarea = document.createElement('textarea');

textarea.value = data.cookie;

document.body.appendChild(textarea);

textarea.select();

document.execCommand('copy');

document.body.removeChild(textarea);

showStatusInfo(`${SERVICES[serviceName].displayName} Cookie copied to clipboard`);

}

}

});

}

// Delete the stored data for one service

function deleteService(serviceName) {

if (confirm(`Are you sure you want to delete the Cookie data for ${SERVICES[serviceName].displayName}?`)) {

chrome.storage.local.remove([

`cookieData_${serviceName}`,

`lastCapture_${serviceName}`

], function() {

loadServiceData();

showStatusInfo(`${SERVICES[serviceName].displayName} data deleted`);

});

}

}

// Clear all data

function clearAllData() {

if (confirm('Are you sure you want to clear all Cookie data?')) {

const keysToRemove = [];

Object.keys(SERVICES).forEach(service => {

keysToRemove.push(`cookieData_${service}`);

keysToRemove.push(`lastCapture_${service}`);

});

chrome.storage.local.remove(keysToRemove, function() {

loadServiceData();

showStatusInfo('All data cleared');

});

}

}

// Export the stored data as a JSON file

function exportData() {

const serviceKeys = Object.keys(SERVICES).map(service => `cookieData_${service}`);

chrome.storage.local.get(serviceKeys, function(result) {

const exported = {};

Object.keys(result).forEach(key => {

const serviceName = key.replace('cookieData_', '');

exported[serviceName] = result[key];

});

const blob = new Blob([JSON.stringify(exported, null, 2)], {type: 'application/json'});

const url = URL.createObjectURL(blob);

const a = document.createElement('a');

a.href = url;

a.download = `cookie-sniffer-${new Date().toISOString().slice(0,10)}.json`;

a.click();

URL.revokeObjectURL(url);

showStatusInfo('Data exported');

});

}

// Event bindings

refreshBtn.addEventListener('click', loadServiceData);

clearBtn.addEventListener('click', clearAllData);

exportBtn.addEventListener('click', exportData);

webhookInput.addEventListener('blur', saveWebhookConfig);

webhookInput.addEventListener('input', updateTestButtonState);

testWebhookBtn.addEventListener('click', testWebhook);

// Delegated click handling for the service card buttons

serviceCards.addEventListener('click', function(e) {

if (e.target.classList.contains('copy-btn')) {

const serviceName = e.target.getAttribute('data-service');

copyCookie(serviceName);

} else if (e.target.classList.contains('delete-btn')) {

const serviceName = e.target.getAttribute('data-service');