| | on tensors | on finite instructions |
|---|---|---|
| meaning | defining backward rules manually for functions on tensors | defining backward rules on a limited set of basic scalar operations, and generating gradient code using source code transformation |
| pros and cons | | |
| packages | Jax, PyTorch | Tapenade, Adept, NiLang.jl |

""", md"**Evaluating derivatives: principles and techniques of algorithmic differentiation**

By: Griewank, Andreas, and Andrea Walther

(2008)")

# ╔═╡ 4ff09f7c-aeac-48bd-9d58-8446137c3acd

md"""

## The AD ecosystem in Julia

Please check JuliaDiff: [https://juliadiff.org/](https://juliadiff.org/)

A short list:

* Forward mode AD: ForwardDiff.jl

* Reverse mode AD (tensor): ReverseDiff.jl/Zygote.jl

* Reverse mode AD (scalar): NiLang.jl

Warnings

* The main authors of `Tracker`, `ReverseDiff` and `Zygote` are not maintaining them anymore.

"""

#=

| | Rules | Favors Tensor? | Type |
| ---- | ---- | --- | --- |
| Zygote | C | ✓ | R |
| ReverseDiff | D | ✓ | R |
| Nabla | D→C | ✓ | R |
| Tracker | D | ✓ | R |
| Yota | C | ✓ | R |
| NiLang | - | × | R |
| Enzyme | - | × | R |
| ForwardDiff | - | × | F |
| Diffractor | ? | ? | ? |

* R: reverse mode
* F: forward mode
* C: ChainRules
* D: DiffRules

=#

# ╔═╡ ea44037b-9359-4fbd-990f-529d88d54351

md"# Quick summary

1. The history of AD is longer than many people think. People are most familiar with *reverse mode AD with primitives implemented on tensors*, which drove the boom in machine learning. There are also AD frameworks that differentiate a general program directly, without requiring users to define AD rules manually.

2. **Forward mode AD** propagates gradients forward. Its computational overhead is proportional to the number of input parameters.

3. **Backward mode AD** propagates gradients backward. Its computational overhead is proportional to the number of output parameters.

    * primitives on **tensors** vs. **scalars**

    * it is very expensive to reverse the program

4. Julia has one of the most active AD communities!

#### Forward vs. Backward

When is forward mode AD more useful?

* It is often combined with backward mode AD to obtain Hessians (forward over backward).

* When there are fewer than 20 input parameters.

When is backward mode AD more useful?

* In most variational optimizations, especially when training a neural network with ~100M parameters.
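
A minimal sketch of why forward mode costs one pass per input, using a hand-rolled dual number. The `Dual` type and `forward_gradient` are hypothetical names for illustration, not the ForwardDiff API, and only the three operations the toy function needs are overloaded.

```julia
# A hypothetical dual number: a value plus one directional derivative.
struct Dual
    val::Float64
    der::Float64
end

# Overload only what the example needs; each rule is the scalar chain rule.
Base.:+(a::Dual, b::Dual) = Dual(a.val + b.val, a.der + b.der)
Base.:*(a::Dual, b::Dual) = Dual(a.val * b.val, a.der * b.val + a.val * b.der)
Base.sin(a::Dual) = Dual(sin(a.val), cos(a.val) * a.der)

f(x, y) = x * y + sin(x)

# One forward pass per input: seed der = 1 on the input being differentiated.
function forward_gradient(x::Float64, y::Float64)
    dfdx = f(Dual(x, 1.0), Dual(y, 0.0)).der
    dfdy = f(Dual(x, 0.0), Dual(y, 1.0)).der
    return (dfdx, dfdy)
end

forward_gradient(2.0, 3.0)  # two passes for two inputs
```

With n inputs this pattern needs n passes, which is why backward mode wins once the input count is large and the output is a single scalar.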

"

# ╔═╡ e731a8e3-6462-4a60-83e9-6ab7ddfff50e

md"# How do AD libraries work?"

# ╔═╡ 685c2b28-b071-452c-a881-801128dcb6c3

md"`ForwardDiff` is based on operator overloading; much of its overhead can be optimized away by Julia's JIT compiler."

# ╔═╡ 177ddfc2-2cbe-4dba-9d05-2857633dd1ae

md"# [Tapenade](http://tapenade.inria.fr:8080/tapenade/index.jsp)

"

# ╔═╡ 6c2a3a93-385f-4758-9b6e-4cb594a8e856

md"## Example 1: Bessel Example"

# ╔═╡ fb8168c2-8489-418b-909b-cede57b5ae64

md"bessel.f90"

# ╔═╡ fdb39284-dbb1-49fa-9a1c-f360f9e6b765

md"""

```fortran

subroutine besselj(res, v, z, atol)
    implicit none
    integer, intent(in) :: v
    real*8, intent(in) :: z, atol
    real*8, intent(out) :: res
    real*8 :: s
    integer :: k, i, factv
    k = 0
    ! factv = v!
    factv = 1
    do i = 2,v
        factv = factv * i
    enddo
    ! first term of the series
    s = (z/2.0)**v / factv
    res = s
    ! add terms until they drop below atol
    do while(abs(s) > atol)
        k = k + 1
        s = -s / k / (k+v) * ((z/2) ** 2)
        res = res + s
    enddo
endsubroutine besselj

```
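
Reading the loop above, the routine appears to sum the power series of the Bessel function of the first kind for integer order ``v``, stopping once a term drops below `atol`. Written out, with ``s_k`` denoting the value of `s` after the ``k``-th iteration (notation introduced here for illustration):

```math
J_v(z) = \sum_{k=0}^{\infty} \frac{(-1)^k}{k!\,(k+v)!} \left(\frac{z}{2}\right)^{2k+v},
\qquad s_0 = \frac{(z/2)^v}{v!},
\qquad s_k = -\frac{s_{k-1}}{k\,(k+v)} \left(\frac{z}{2}\right)^{2}
```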

"""

# ╔═╡ 60214f22-c8bb-4a32-a882-4e6c727b29a9

md"""

besselj_d.f90 (forward mode)

```fortran

! Generated by TAPENADE (INRIA, Ecuador team)

! Tapenade 3.15 (master) - 15 Apr 2020 11:54

!

! Differentiation of besselj in forward (tangent) mode:

! variations of useful results: res

! with respect to varying inputs: z

! RW status of diff variables: res:out z:in

SUBROUTINE BESSELJ_D(res, resd, v, z, zd, atol)

IMPLICIT NONE

INTEGER, INTENT(IN) :: v

REAL*8, INTENT(IN) :: z, atol

REAL*8, INTENT(IN) :: zd

REAL*8, INTENT(OUT) :: res

REAL*8, INTENT(OUT) :: resd

REAL*8 :: s

REAL*8 :: sd

INTEGER :: k, i, factv

INTRINSIC ABS

REAL*8 :: abs0

REAL*8 :: pwx1

REAL*8 :: pwx1d

REAL*8 :: pwr1

REAL*8 :: pwr1d

INTEGER :: temp

k = 0

factv = 1

DO i=2,v

factv = factv*i

END DO

pwx1d = zd/2.0

pwx1 = z/2.0

IF (pwx1 .LE. 0.0 .AND. (v .EQ. 0.0 .OR. v .NE. INT(v))) THEN

pwr1d = 0.0_8

ELSE

pwr1d = v*pwx1**(v-1)*pwx1d

END IF

pwr1 = pwx1**v

sd = pwr1d/factv

s = pwr1/factv

resd = sd

res = s

DO WHILE (.true.)

IF (s .GE. 0.) THEN

abs0 = s

ELSE

abs0 = -s

END IF

IF (abs0 .GT. atol) THEN

k = k + 1

temp = k*(k+v)*(2*2)

sd = -((z**2*sd+s*2*z*zd)/temp)

s = -(s*(z*z)/temp)

resd = resd + sd

res = res + s

ELSE

EXIT

END IF

END DO

END SUBROUTINE BESSELJ_D

```

besselj_b.f90 (backward mode)

```fortran

! Generated by TAPENADE (INRIA, Ecuador team)

! Tapenade 3.15 (master) - 15 Apr 2020 11:54

!

! Differentiation of besselj in reverse (adjoint) mode:

! gradient of useful results: res z

! with respect to varying inputs: res z

! RW status of diff variables: res:in-zero z:incr

SUBROUTINE BESSELJ_B(res, resb, v, z, zb, atol)

IMPLICIT NONE

INTEGER, INTENT(IN) :: v

REAL*8, INTENT(IN) :: z, atol

REAL*8 :: zb

REAL*8 :: res

REAL*8 :: resb

REAL*8 :: s

REAL*8 :: sb

INTEGER :: k, i, factv

INTRINSIC ABS

REAL*8 :: abs0

REAL*8 :: tempb

INTEGER :: ad_count

INTEGER :: i0

INTEGER :: branch

k = 0

factv = 1

DO i=2,v

factv = factv*i

END DO

s = (z/2.0)**v/factv

ad_count = 1

DO WHILE (.true.)

IF (s .GE. 0.) THEN

abs0 = s

ELSE

abs0 = -s

END IF

IF (abs0 .GT. atol) THEN

CALL PUSHINTEGER4(k)

k = k + 1

CALL PUSHREAL8(s)

s = -(s/k/(k+v)*(z/2)**2)

ad_count = ad_count + 1

ELSE

GOTO 100

END IF

END DO

CALL PUSHCONTROL1B(0)

GOTO 110

100 CALL PUSHCONTROL1B(1)

110 DO i0=1,ad_count

IF (i0 .EQ. 1) THEN

CALL POPCONTROL1B(branch)

IF (branch .EQ. 0) THEN

sb = 0.0_8

ELSE

sb = 0.0_8

END IF

ELSE

sb = sb + resb

CALL POPREAL8(s)

tempb = -(sb/(k*(k+v)*2**2))

sb = z**2*tempb

zb = zb + 2*z*s*tempb

CALL POPINTEGER4(k)

END IF

END DO

sb = sb + resb

IF (.NOT.(z/2.0 .LE. 0.0 .AND. (v .EQ. 0.0 .OR. v .NE. INT(v)))) zb = &

& zb + v*(z/2.0)**(v-1)*sb/(2.0*factv)

resb = 0.0_8

END SUBROUTINE BESSELJ_B

```
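
The generated adjoint above saves every overwritten `s` on a stack during the forward sweep and pops it during the backward sweep. A hand-written Julia sketch of the same pattern follows. `besselj_with_grad` and its default `atol` are illustrative choices, not Tapenade output, and a plain `Vector` stands in for `PUSHREAL8`/`POPREAL8`.

```julia
# Reverse-mode sketch for the Bessel series with an explicit stack.
function besselj_with_grad(v::Int, z::Float64; atol=1e-8)
    factv = factorial(v)
    s = (z / 2)^v / factv
    res = s
    stack = Float64[]          # stands in for PUSHREAL8 / POPREAL8
    k = 0
    # forward sweep: run the loop and save each value of s before it is overwritten
    while abs(s) > atol
        k += 1
        push!(stack, s)
        s = -s / k / (k + v) * (z / 2)^2
        res += s
    end
    # backward sweep: visit the iterations in reverse order
    resb = 1.0                 # seed: d(res)/d(res)
    sb = 0.0
    zb = 0.0
    while !isempty(stack)
        sb += resb             # adjoint of res += s
        s_old = pop!(stack)
        tempb = -sb / (k * (k + v) * 4)
        zb += 2 * z * s_old * tempb
        sb = z^2 * tempb
        k -= 1
    end
    sb += resb                 # adjoint of res = s
    if v > 0                   # adjoint of s = (z/2)^v / factv
        zb += v * (z / 2)^(v - 1) * sb / (2 * factv)
    end
    return res, zb
end

besselj_with_grad(0, 1.0)
```

The stack grows by one value per loop iteration, which is the memory cost the following examples keep coming back to.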

"""

# ╔═╡ 7a6dbe09-cb7f-405f-b9b5-b350ca170e5f

md"## Example 2: Matrix multiplication"

# ╔═╡ 5dc4a849-76dd-4c4f-8828-755671839e5e

md"""

matmul_b.f90

```fortran

! Generated by TAPENADE (INRIA, Ecuador team)

! Tapenade 3.16 (develop) - 9 Apr 2021 17:40

!

! Differentiation of mymatmul in reverse (adjoint) mode:

! gradient of useful results: x y z

! with respect to varying inputs: x y z

! RW status of diff variables: x:incr y:incr z:in-out

SUBROUTINE MYMATMUL_B(z, zb, x, xb, y, yb, m, n, o)

IMPLICIT NONE

INTEGER, INTENT(IN) :: m, n, o

REAL*8, DIMENSION(:, :) :: z(m, n)

REAL*8 :: zb(m, n)

REAL*8, DIMENSION(:, :), INTENT(IN) :: x(m, o), y(o, n)

REAL*8 :: xb(m, o), yb(o, n)

REAL*8 :: temp

REAL*8 :: tempb

INTEGER :: i, j, k

DO j=n,1,-1

DO i=m,1,-1

tempb = zb(i, j)

zb(i, j) = 0.0_8

DO k=o,1,-1

xb(i, k) = xb(i, k) + y(k, j)*tempb

yb(k, j) = yb(k, j) + x(i, k)*tempb

END DO

END DO

END DO

END SUBROUTINE MYMATMUL_B

```
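
The loops above accumulate ``\bar{x} \mathrel{+}= \bar{z} y^T`` and ``\bar{y} \mathrel{+}= x^T \bar{z}`` one scalar at a time. A Julia sketch of the same loop nest, assuming the name `mymatmul_b!` and the argument order shown (both chosen here for illustration), makes that easy to check against the matrix formula:

```julia
# Adjoint of z = x * y written as explicit loops, mirroring the generated code.
function mymatmul_b!(xb, yb, zb, x, y)
    m, o = size(x)
    n = size(y, 2)
    for j in n:-1:1, i in m:-1:1
        tempb = zb[i, j]
        zb[i, j] = 0.0          # z is overwritten in the forward pass, so its adjoint is reset
        for k in o:-1:1
            xb[i, k] += y[k, j] * tempb
            yb[k, j] += x[i, k] * tempb
        end
    end
    return xb, yb
end

x = rand(3, 4); y = rand(4, 2); zb = rand(3, 2)
xb, yb = mymatmul_b!(zeros(3, 4), zeros(4, 2), copy(zb), x, y)
```

No stack is needed here because the backward rule only reads `x` and `y`, which the forward pass never overwrites.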

"""

# ╔═╡ b053f11b-9ed7-47ff-ab32-0c70b87e71ed

md"## Example 3: Pyramid"

# ╔═╡ 7b1aa6dd-647f-44cb-b580-b58e23e8b5a6

html"""

"""

# ╔═╡ b96bac75-b4ad-45f7-aeec-cb6a387eebf0

md"You will see a lot of allocations."

# ╔═╡ 5fe022eb-6a17-466e-a6d0-d67e82af23cd

md"pyramid.f90"

# ╔═╡ 92047e95-7eba-4021-9668-9bb4b92261d7

md"""

```fortran

! Differentiation of pyramid in reverse (adjoint) mode:

! gradient of useful results: v x

! with respect to varying inputs: v x

! RW status of diff variables: v:in-out x:incr

SUBROUTINE PYRAMID_B(v, vb, x, xb, n)

IMPLICIT NONE

INTEGER, INTENT(IN) :: n

REAL*8 :: v(n, n)

REAL*8 :: vb(n, n)

REAL*8, INTENT(IN) :: x(n)

REAL*8 :: xb(n)

INTEGER :: i, j

INTRINSIC SIN

INTRINSIC COS

INTEGER :: ad_to

DO j=1,n

v(1, j) = x(j)

END DO

DO i=1,n-1

DO j=1,n-i

CALL PUSHREAL8(v(i+1, j))

v(i+1, j) = SIN(v(i, j))*COS(v(i, j+1))

END DO

CALL PUSHINTEGER4(j - 1)

END DO

DO i=n-1,1,-1

CALL POPINTEGER4(ad_to)

DO j=ad_to,1,-1

CALL POPREAL8(v(i+1, j))

vb(i, j) = vb(i, j) + COS(v(i, j))*COS(v(i, j+1))*vb(i+1, j)

vb(i, j+1) = vb(i, j+1) - SIN(v(i, j+1))*SIN(v(i, j))*vb(i+1, j)

vb(i+1, j) = 0.0_8

END DO

END DO

DO j=n,1,-1

xb(j) = xb(j) + vb(1, j)

vb(1, j) = 0.0_8

END DO

END SUBROUTINE PYRAMID_B

```
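
A back-of-the-envelope count of the stack traffic in the forward sweep above, assuming one 8-byte value per `PUSHREAL8` call and taking ``n = 1000`` as an illustrative size:

```math
\sum_{i=1}^{n-1} (n-i) = \frac{n(n-1)}{2},
\qquad n = 1000 \;\Rightarrow\; 499500 \text{ values} \approx 4.0\ \text{MB}
```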

"""

# ╔═╡ e2ae1084-8759-4f27-8ad1-43a88e434a3d

md"## How does NiLang avoid too many allocations?"

# ╔═╡ edd3aea8-abdb-4e12-9ef9-12ac0fff835b

@i function pyramid!(y!, v!, x::AbstractVector{T}) where T
    @safe @assert size(v!,2) == size(v!,1) == length(x)
    @inbounds for j=1:length(x)
        v![1,j] += x[j]
    end
    @invcheckoff @inbounds for i=1:size(v!,1)-1
        for j=1:size(v!,2)-i
            @routine begin
                @zeros T c s
                c += cos(v![i,j+1])
                s += sin(v![i,j])
            end
            v![i+1,j] += c * s
            ~@routine
        end
    end
    y! += v![end,1]
end

# ╔═╡ a2904efb-186c-449d-b1aa-caf530f88e91

@i function power(x3, x)
    @routine begin
        x2 ← zero(x)
        x2 += x^2
    end
    x3 += x2 * x
    ~@routine
end

# ╔═╡ 14faaf82-ad3e-4192-8d48-84adfa30442d

ex = NiLangCore.precom_ex(NiLang, :(for j=1:size(v!,2)-i

@routine begin

@zeros T c s

c += cos(v![i,j+1])

s += sin(v![i,j])

end

v![i+1,j] += c * s

~@routine

end)) |> NiLangCore.rmlines

# ╔═╡ 5d141b88-ec07-4a02-8eb3-37405e5c9f5d

NiLangCore.dual_ex(NiLang, ex)

# ╔═╡ 0907e683-f216-4cf6-a210-ae5181fdc487

function pyramid0!(v!, x::AbstractVector{T}) where T
    @assert size(v!,2) == size(v!,1) == length(x)
    for j=1:length(x)
        v![1,j] = x[j]
    end
    @inbounds for i=1:size(v!,1)-1
        for j=1:size(v!,2)-i
            v![i+1,j] = cos(v![i,j+1]) * sin(v![i,j])
        end
    end
end

# ╔═╡ 0bbfa106-f465-4a7b-80a7-7732ba435822

x = randn(20);

# ╔═╡ 805c7072-98fa-4086-a69d-2e126c55af36

let

@benchmark pyramid0!(v, x) seconds=1 setup=(x=randn(1000); v=zeros(1000, 1000))

end

# ╔═╡ 7e527024-c294-4c16-8626-9953588d9b6a

let

@benchmark pyramid!(0.0, v, x) seconds=1 setup=(x=10*randn(1000); v=zeros(1000, 1000))

end

# ╔═╡ 3e59c65a-ceed-42ed-be64-a6964db016e7

pyramid!(0.0, zeros(20, 20), x)

# ╔═╡ 29f85d05-99fd-4843-9be0-5663e681dad7

html"""

"""

# ╔═╡ e7830e55-bd9e-4a8a-9239-4191a5f0b1d1

let

@benchmark NiLang.AD.gradient(Val(1), pyramid!, (0.0, v, x)) seconds=1 setup=(x=randn(1000); v=zeros(1000, 1000))

end

# ╔═╡ de2cd247-ba68-4ba4-9784-27a743478635

md"## NiLang's implementation"

# ╔═╡ dc929c23-7434-4848-847a-9fa696e84776

md"""

```math

\begin{align}
&v_{-1} &= & x_1 &=&1.5000\\
&v_0 &= & x_2 &=&0.5000\\
&v_1 &= & v_{-1}/v_0 &=&1.5000/0.5000 &= 3.0000\\
&v_2 &= & \sin(v_1)&=& \sin(3.0000) &= 0.1411\\
&v_3 &= & \exp(v_0)&=& \exp(0.5000) &= 1.6487\\
&v_4 &= & v_1 - v_3 &=&3.0000 - 1.6487 &= 1.3513\\
&v_5 &= & v_2 + v_4 &=&0.1411 + 1.3513 &= 1.4924\\
&v_6 &= & v_5 \times v_4 &=&1.4924 \times 1.3513 &= 2.0167\\
&y &= & v_6 &=&2.0167
\end{align}

```
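
The trace above can be walked backwards by hand to get the gradient. The plain-Julia sketch below does this without NiLang. The function name `trace_with_grad` and the `b` suffix for adjoint variables are conventions chosen here for illustration.

```julia
# Evaluate the trace, then propagate adjoints through it in reverse order.
function trace_with_grad(x1, x2)
    # forward sweep
    v1 = x1 / x2
    v2 = sin(v1)
    v3 = exp(x2)
    v4 = v1 - v3
    v5 = v2 + v4
    y = v5 * v4
    # backward sweep, seeded with dy/dy = 1
    v5b = v4                     # from y = v5 * v4
    v4b = v5 + v5b               # from y = v5 * v4 and v5 = v2 + v4
    v2b = v5b                    # from v5 = v2 + v4
    v3b = -v4b                   # from v4 = v1 - v3
    v1b = v4b + v2b * cos(v1)    # from v4 = v1 - v3 and v2 = sin(v1)
    x1b = v1b / x2               # from v1 = x1 / x2
    x2b = v3b * v3 - v1b * x1 / x2^2   # from v3 = exp(x2) and v1 = x1 / x2
    return y, (x1b, x2b)
end

trace_with_grad(1.5, 0.5)
```

Every intermediate value from the forward sweep is still alive when the backward sweep needs it. That is the property a reversible program obtains by uncomputing rather than by caching.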

"""

# ╔═╡ 4f1df03f-c315-47b1-b181-749e1231594c

html"""

"""

# ╔═╡ 7eccba6a-3ad5-440b-9c5d-392dc8dc7aba

@i function example_linear(y::T, x1::T, x2::T) where T
    @routine begin
        @zeros T v1 v2 v3 v4 v5
        v1 += x1 / x2
        v2 += sin(v1)
        v3 += exp(x2)
        v4 += v1 - v3
        v5 += v2 + v4
    end
    y += v5 * v4
    ~@routine
end

# ╔═╡ 4a858a3e-ce28-4642-b061-3975a3ed99ff

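md"NOTES:

* a statement changes values in place directly,

* there is no return statement; the function returns its input arguments directly,

* `@routine` records a block of statements, and `~@routine` runs its inverse to uncompute the intermediate variables.
"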

# ╔═╡ 2192a1de-1042-4b13-a313-b67de489124c

md"""

1. Divide the program into ``\delta`` segments, each segment having size ``\eta(\delta, \tau) = \frac{(\delta+\tau)!}{\delta! \tau!}``, where ``\delta=1,...,d`` and ``\tau=t-1``.

2. Cache the first state of each segment.

3. Compute gradients in the last segment.

4. Deallocate the last checkpoint.

5. Divide the second-to-last segment into two parts.

6. Recursively apply treeverse (steps 2-5).
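
A small sketch of the binomial bound from step 1. Reading ``d`` as the checkpoint budget and ``t`` as the number of sweeps is an assumption made here, since the text does not define them, and `eta` and `min_sweeps` are hypothetical helper names.

```julia
# Binomial bound from step 1: eta(delta, tau) = (delta + tau)! / (delta! * tau!)
eta(delta::Integer, tau::Integer) = binomial(delta + tau, delta)

# Smallest t such that eta(d, t) covers a program of n_steps steps, for a fixed d.
function min_sweeps(n_steps::Integer, d::Integer)
    t = 0
    while eta(d, t) < n_steps
        t += 1
    end
    return t
end

eta(3, 2)             # 10
min_sweeps(10^4, 10)  # sweeps needed for 10^4 steps with d = 10
```

Because the bound grows combinatorially in both arguments, modest values of ``d`` and ``t`` already cover long programs.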

"""

# ╔═╡ 01c709c7-806c-4389-bbb2-4081e64426d9

md"The total number of steps is ``T = \eta(d, t)``; both ``t`` and ``d`` can be logarithmic in ``T``."

# ╔═╡ b1e0cf83-4337-4044-a7d1-5fca8ae79268

md"## An example"

# ╔═╡ 71f4b476-027d-4c8f-b561-1ee418bc9e61

html"""

"""

md"**Matrix computations**

Golub, Gene H., and Charles F. Van Loan (2013)"

# ╔═╡ 4d373cf6-9b39-44bc-8f13-220933fc8f5c

function qrfactPivotedUnblocked!(A::AbstractMatrix)

m, n = size(A)

piv = Vector(UnitRange{LinearAlgebra.BlasInt}(1,n))

τ = Vector{eltype(A)}(undef, min(m,n))

for j = 1:min(m,n)

# Find column with maximum norm in trailing submatrix

jm = LinearAlgebra.indmaxcolumn(view(A, j:m, j:n)) + j - 1

if jm != j

# Flip elements in pivoting vector

tmpp = piv[jm]

piv[jm] = piv[j]

piv[j] = tmpp

# Update matrix with

for i = 1:m

tmp = A[i,jm]

A[i,jm] = A[i,j]

A[i,j] = tmp

end

end

# Compute reflector of columns j

x = view(A, j:m, j)

τj = LinearAlgebra.reflector!(x)

τ[j] = τj

# Update trailing submatrix with reflector

LinearAlgebra.reflectorApply!(x, τj, view(A, j:m, j+1:n))

end

return LinearAlgebra.QRPivoted{eltype(A), typeof(A)}(A, τ, piv)

end

# ╔═╡ 293a68ca-e02f-47b3-85ed-aeeb8995f3ec

struct Reflector{T,RT,VT<:AbstractVector{T}}

ξ::T

normu::RT

sqnormu::RT

r::T

y::VT

end

# ╔═╡ fa5716f9-8bff-4295-812b-691ccdc12832

struct QRPivotedRes{T,RT,VT}

factors::Matrix{T}

τ::Vector{T}

jpvt::Vector{Int}

reflectors::Vector{Reflector{T,RT,VT}}

vAs::Vector{Vector{T}}

jms::Vector{Int}

end

# ╔═╡ 8324f365-fd12-4ca3-8ca6-657e5917f946

# Elementary reflection similar to LAPACK. The reflector is not Hermitian but

# ensures that tridiagonalization of Hermitian matrices become real. See lawn72

@i function reflector!(R::Reflector{T,RT}, x::AbstractVector{T}) where {T,RT}

n ← length(x)

@inbounds @invcheckoff if n != 0

@zeros T ξ1

@zeros RT normu sqnormu

ξ1 += x[1]

sqnormu += abs2(ξ1)

for i = 2:n

sqnormu += abs2(x[i])

end

if !iszero(sqnormu)

normu += sqrt(sqnormu)

if real(ξ1) < 0

NEG(normu)

end

ξ1 += normu

R.y[1] -= normu

for i = 2:n

R.y[i] += x[i] / ξ1

end

R.r += ξ1/normu

end

SWAP(R.ξ, ξ1)

SWAP(R.normu, normu)

SWAP(R.sqnormu, sqnormu)

end

end

# ╔═╡ 70fb10ea-9229-46ef-8ba3-b1d3874b7929

# apply reflector from left

@i function reflectorApply!(vA::AbstractVector{T}, x::AbstractVector, τ::Number, A::StridedMatrix{T}) where T

(m, n) ← size(A)

if length(x) != m || length(vA) != n

@safe throw(DimensionMismatch("reflector has length ($(length(x)), $(length(vA))), which must match the first dimension of matrix A, ($m, $n)"))

end

@inbounds @invcheckoff if m != 0

for j = 1:n

# dot

@zeros T vAj vAj_τ

vAj += A[1, j]

for i = 2:m

vAj += x[i]'*A[i, j]

end

vAj_τ += τ' * vAj

# ger

A[1, j] -= vAj_τ

for i = 2:m

A[i, j] -= x[i]*vAj_τ

end

vAj_τ -= τ' * vAj

SWAP(vA[j], vAj)

end

end

end

# ╔═╡ 51504ba4-4711-48b7-aab9-d4f26c009659

function alloc(::typeof(reflector!), x::AbstractVector{T}) where T

RT = real(T)

Reflector(zero(T), zero(RT), zero(RT), zero(T), zero(x))

end

# ╔═╡ f267e315-3c19-4345-8fba-641bb0ea515b

@i function qr_pivoted!(res::QRPivotedRes, A::StridedMatrix{T}) where T

(m, n) ← size(A)

@invcheckoff @inbounds for j = 1:min(m,n)

# Find column with maximum norm in trailing submatrix

jm ← LinearAlgebra.indmaxcolumn(NiLang.value.(view(A, j:m, j:n))) + j - 1

if jm != j

# Flip elements in pivoting vector

SWAP(res.jpvt[jm], res.jpvt[j])

# Update matrix with

for i = 1:m

SWAP(A[i, jm], A[i, j])

end

end

# Compute reflector of columns j

R ← alloc(reflector!, A |> subarray(j:m, j))

vA ← zeros(T, n-j)

reflector!(R, A |> subarray(j:m, j))

# Update trailing submatrix with reflector

reflectorApply!(vA, R.y, R.r, A |> subarray(j:m, j+1:n))

for i=1:length(R.y)

SWAP(R.y[i], A[j+i-1, j])

end

PUSH!(res.reflectors, R)

PUSH!(res.vAs, vA)

PUSH!(res.jms, jm)

R → _zero(Reflector{T,real(T),Vector{T}})

vA → zeros(T, 0)

jm → 0

end

@inbounds for i=1:length(res.reflectors)

res.τ[i] += res.reflectors[i].r

end

res.factors += A

end

# ╔═╡ a07b93b1-742b-41d4-bd0f-bc899de55338

function alloc_qr(A::AbstractMatrix{T}) where T

(m, n) = size(A)

τ = zeros(T, min(m,n))

jpvt = collect(1:n)

reflectors = Reflector{T,real(T),Vector{T}}[]

vAs = Vector{T}[]

jms = Int[]

QRPivotedRes(zero(A), τ, jpvt, reflectors, vAs, jms)

end

# ╔═╡ 5f207f59-b9f4-477f-b79f-0aee743bdb8e

A = randn(ComplexF64, 20, 20);

# ╔═╡ f88517d6-b87d-45ba-bf3f-67074fa51fca

@test qr_pivoted!(alloc_qr(A), copy(A))[1].factors ≈ LinearAlgebra.qrfactPivotedUnblocked!(copy(A)).factors

# ╔═╡ 45aef837-9b2c-49b2-b815-e4d60f103f58

let

@testset "qr pivoted gradient" begin

# rank deficient initial matrix

n = 50

U = LinearAlgebra.qr(randn(n, n)).Q

Σ = Diagonal((x=randn(n); x[n÷2+1:end] .= 0; x))

A = U*Σ*U'

res = alloc_qr(A)

@test rank(A) == n ÷ 2

qrres = qr_pivoted!(deepcopy(res), copy(A))[1]

@test count(x->(x>1e-12), sum(abs2, QRPivoted(qrres.factors, qrres.τ, qrres.jpvt).R, dims=2)) == n ÷ 2

@i function loss(y, qrres, A)

qr_pivoted!(qrres, A)

y += abs(qrres.factors[1])

end

nrloss(A) = loss(0.0, deepcopy(res), A)[1]

ngA = zero(A)

δ = 1e-5

for j=1:size(A, 2)

for i=1:size(A, 1)

A_ = copy(A)

A_[i,j] -= δ/2

l1 = nrloss(copy(A_))

A_[i,j] += δ

l2 = nrloss(A_)

ngA[i,j] = (l2-l1)/δ

end

end

gA = NiLang.AD.gradient(loss, (0.0, res, A); iloss=1)[3]

@test real.(gA) ≈ ngA

end

end

# ╔═╡ Cell order:

# ╟─a1ef579e-4b66-4042-944e-7e27c660095e

# ╟─100b4293-fd1e-4b9c-a831-5b79bc2a5ebe

# ╟─f11023e5-8f7b-4f40-86d3-3407b61863d9

# ╟─9d11e058-a7d0-11eb-1d78-6592ff7a1b43

# ╟─b73157bf-1a77-47b8-8a06-8d6ec2045023

# ╟─ec13e0a9-64ff-4f66-a5a6-5fef53428fa1

# ╟─f8b0d1ce-99f7-4729-b46e-126da540cbbe

# ╟─435ac19e-1c0c-4ee5-942d-f2a97c8c4d80

# ╟─48ecd619-d01d-43ff-8b52-7c2566c3fa2b

# ╟─4878ce45-40ff-4fae-98e7-1be41e930e4d

# ╠═ce44f8bd-692e-4eab-9ba4-055b25e40c81

# ╠═b2c1936c-2c27-4fbb-8183-e38c5e858483

# ╠═8be1b812-fcac-404f-98aa-0571cb990f34

# ╟─33e0c762-c75e-44aa-bfe2-bff92dd1ace8

# ╟─c59c35ee-1907-4736-9893-e22c052150ca

# ╠═0ae13734-b826-4dbf-93d1-11044ce88bd4

# ╠═99187515-c8be-49c2-8d70-9c2998d9993c

# ╟─78ca6b08-84c4-4e4d-8412-ae6c28bfafce

# ╠═f12b25d8-7c78-4686-b46d-00b34e565605

# ╟─d90c3cc9-084d-4cf7-9db7-42cea043030b

# ╟─93c98cb2-18af-47df-afb3-8c5a34b4723c

# ╟─2dc74e15-e2ea-4961-b43f-0ada1a73d80a

# ╟─7ee75a15-eaea-462a-92b6-293813d2d4d7

# ╟─02a25b73-7353-43b1-8738-e7ca472d0cc7

# ╟─2afb984f-624e-4381-903f-ccc1d8a66a17

# ╟─7e5d5e69-90f2-4106-8edf-223c150a8168

# ╟─92d7a938-9463-4eee-8839-0b8c5f762c79

# ╟─4b1a0b59-ddc6-4b2d-b5f5-d92084c31e46

# ╟─81f16b8b-2f0b-4ba3-8c26-6669eabf48aa

# ╟─fb6c3a48-550a-4d2e-a00b-a1e40d86b535

# ╟─ab6fa4ac-29ed-4722-88ed-fa1caf2072f3

# ╟─8e72d934-e307-4505-ac82-c06734415df6

# ╟─e6ff86a9-9f54-474b-8111-a59a25eda506

# ╟─9c1d9607-a634-4350-aacd-2d40984d647d

# ╟─63db2fa2-50b2-4940-b8ee-0dc6e3966a57

# ╟─693167e7-e80c-401d-af89-55b5fae30848

# ╟─4cd70901-2142-4868-9a33-c46ca0d064ec

# ╟─89018a35-76f4-4f23-b15a-a600db046d6f

# ╟─1d219222-0778-4c37-9182-ed5ccbb3ef32

# ╟─4ff09f7c-aeac-48bd-9d58-8446137c3acd

# ╟─ea44037b-9359-4fbd-990f-529d88d54351

# ╟─e731a8e3-6462-4a60-83e9-6ab7ddfff50e

# ╟─685c2b28-b071-452c-a881-801128dcb6c3

# ╟─177ddfc2-2cbe-4dba-9d05-2857633dd1ae

# ╟─6c2a3a93-385f-4758-9b6e-4cb594a8e856

# ╟─fb8168c2-8489-418b-909b-cede57b5ae64

# ╟─fdb39284-dbb1-49fa-9a1c-f360f9e6b765

# ╟─60214f22-c8bb-4a32-a882-4e6c727b29a9

# ╟─7a6dbe09-cb7f-405f-b9b5-b350ca170e5f

# ╟─5dc4a849-76dd-4c4f-8828-755671839e5e

# ╟─b053f11b-9ed7-47ff-ab32-0c70b87e71ed

# ╟─7b1aa6dd-647f-44cb-b580-b58e23e8b5a6

# ╟─b96bac75-b4ad-45f7-aeec-cb6a387eebf0

# ╟─5fe022eb-6a17-466e-a6d0-d67e82af23cd

# ╟─92047e95-7eba-4021-9668-9bb4b92261d7

# ╟─e2ae1084-8759-4f27-8ad1-43a88e434a3d

# ╠═edd3aea8-abdb-4e12-9ef9-12ac0fff835b

# ╠═a2904efb-186c-449d-b1aa-caf530f88e91

# ╠═14faaf82-ad3e-4192-8d48-84adfa30442d

# ╠═5d141b88-ec07-4a02-8eb3-37405e5c9f5d

# ╠═0907e683-f216-4cf6-a210-ae5181fdc487

# ╠═805c7072-98fa-4086-a69d-2e126c55af36

# ╠═7e527024-c294-4c16-8626-9953588d9b6a

# ╠═0bbfa106-f465-4a7b-80a7-7732ba435822

# ╠═3e59c65a-ceed-42ed-be64-a6964db016e7

# ╟─29f85d05-99fd-4843-9be0-5663e681dad7

# ╠═9a46597c-b1ee-4e3b-aed1-fd2874b6e77a

# ╠═e7830e55-bd9e-4a8a-9239-4191a5f0b1d1

# ╟─de2cd247-ba68-4ba4-9784-27a743478635

# ╟─dc929c23-7434-4848-847a-9fa696e84776

# ╟─4f1df03f-c315-47b1-b181-749e1231594c

# ╠═ccd38f52-104d-434a-aea3-dd94e571374f

# ╠═7eccba6a-3ad5-440b-9c5d-392dc8dc7aba

# ╠═f4230251-ba54-434a-b86b-f972c7389217

# ╟─4a858a3e-ce28-4642-b061-3975a3ed99ff

# ╠═674bb3bb-637b-44f2-bf6d-d1678da03fbd

# ╠═5a59d96f-b2f1-4564-82c7-7f0fe181afb8

# ╠═55d2f8ee-4f77-4d44-b704-30643dbbab84

# ╠═14951168-97c2-43ae-8d5e-5506408a2bb2

# ╠═4f564581-6032-449c-8b15-3c741f44237a

# ╠═a36516e8-76c1-4bff-8a12-3e1e621b857d

# ╠═402b861c-d363-4d23-b9e9-eb088f57b5c4

# ╠═63975a80-1b41-4f55-91a1-4a316ad7bf26

# ╠═6f688f88-432a-42b2-a2db-19d6bb282e0a

# ╠═fb46db14-f7e0-4f01-9096-02334c62942d

# ╟─b2c3db3d-c250-4daa-8453-3c9a2734aede

# ╠═69dc2685-b70f-4a81-af30-f02e0054bd52

# ╠═9a986264-5ba7-4697-a00d-711f8efe29f0

# ╠═560cf3e9-0c14-4497-85b9-f07045eea32a

# ╠═8ab79efc-e8d0-4c6f-81df-a89008142bb7

# ╠═0eec318c-2c09-4dd6-9187-9c0273d29915

# ╠═1f0ef29c-0ad5-4d97-aeed-5ff44e86577a

# ╠═603d8fc2-5e7b-4d55-92b6-208b25ea6569

# ╟─2b3c765e-b505-4f07-9bcb-3c8cc47364ad

# ╠═e0f266da-7e65-4398-bfd4-a6c0b54e626b

# ╟─e1d35886-79d0-40a5-bd33-1c4e5f4a0a9a

# ╠═b63a30b0-c75b-4998-a2b2-0b79574cab81

# ╟─139bf020-c4a8-45c8-96fa-aeebc7ddaedc

# ╠═8967c0f0-89f8-4893-b11b-253333d1a823

# ╟─f2540450-5a07-4fb8-93fb-a6d48dd36a56

# ╠═3acb2cfd-fa29-4a2b-8f23-f5aaf474edd0

# ╟─aa1547f2-5edd-4b7e-b93e-bdfc4e4fc6d5

# ╟─6e76a107-4f51-4e32-b133-7b6e04d7d107

# ╟─999f7a8f-d72e-4ccd-8cbf-b5bbb7db1842

# ╟─32772c2a-6b80-4779-963c-06974ff0d832

# ╟─41642bd5-1321-490a-95ad-4c1d6363456f

# ╟─2a553e32-05ef-4c2d-aba7-41185c6035d4

# ╟─ab8345ce-e038-4d6b-9e1f-57e4f33bb67b

# ╟─bb9c9a4c-601a-4708-9b2d-04d1583938f2

# ╟─b9917e94-c33d-423f-a478-3252bacc2494

# ╟─4978f404-11ff-41b8-a673-f2d051b1f526

# ╟─73bd2e3b-902f-461b-860f-246257608ecd

# ╟─4dd47dc8-6dfa-47a4-a088-689b4b870762

# ╟─ecd975d2-9374-4f40-80ac-2cceda11e7fb

# ╟─832cc81d-a49d-46e7-9d2b-d8bde9bb1273

# ╟─2192a1de-1042-4b13-a313-b67de489124c

# ╟─01c709c7-806c-4389-bbb2-4081e64426d9

# ╟─b1e0cf83-4337-4044-a7d1-5fca8ae79268

# ╟─71f4b476-027d-4c8f-b561-1ee418bc9e61

# ╟─042013cf-9cd2-409d-827f-a311a2f8ce62

# ╟─82593cd0-1403-4597-8370-919c80494479

# ╟─f58720b5-2bcb-4950-b453-bd59f648c66a

# ╟─4576d791-6af7-4ba5-9b80-fe99c0bb2e88

# ╟─6e9d17f1-b17d-4e8d-82a3-921558a20c0f

# ╟─f18d89f5-1129-43e0-8b4a-5c1fcd618eab

# ╟─2912c7ed-75e3-4dfd-9c40-92115cc08194

# ╟─5d1517c0-562b-40db-bec2-32b5494de1b8

# ╟─ae096ad2-3ae9-4440-a959-0d7d9a174f1d

# ╟─8148bc1f-ef99-40a4-a5ce-0a42643f703d

# ╠═200f1848-0980-4185-919a-93ab2e7f788f

# ╠═bd86c5c2-16be-4cfd-ba7a-a0e2544d82d1

# ╟─11557d6b-3a1e-416d-874f-b8d217976f76

# ╟─48a10ea2-5d32-4a55-b8c0-f6a5e82eace9

# ╟─fafc1b0f-6469-4b6c-a00d-5272a45fc69b

# ╟─ad6cff7b-5cbf-4ab1-94f7-d21cbc171000

# ╠═30c191c5-642b-4062-98f3-643d314a054d

# ╠═fa5716f9-8bff-4295-812b-691ccdc12832

# ╠═f267e315-3c19-4345-8fba-641bb0ea515b

# ╠═4d373cf6-9b39-44bc-8f13-220933fc8f5c

# ╠═293a68ca-e02f-47b3-85ed-aeeb8995f3ec

# ╠═8324f365-fd12-4ca3-8ca6-657e5917f946

# ╠═70fb10ea-9229-46ef-8ba3-b1d3874b7929

# ╠═51504ba4-4711-48b7-aab9-d4f26c009659

# ╠═a07b93b1-742b-41d4-bd0f-bc899de55338

# ╠═864dbde7-b689-4165-a08e-6bbbd72190de

# ╠═5f207f59-b9f4-477f-b79f-0aee743bdb8e

# ╠═f88517d6-b87d-45ba-bf3f-67074fa51fca

# ╠═45aef837-9b2c-49b2-b815-e4d60f103f58


$(html"

", md"**Matrix computations**

Golub, Gene H., and Charles F. Van Loan (2013)")

# ╔═╡ 4d373cf6-9b39-44bc-8f13-220933fc8f5c

function qrfactPivotedUnblocked!(A::AbstractMatrix)

m, n = size(A)

piv = Vector(UnitRange{LinearAlgebra.BlasInt}(1,n))

τ = Vector{eltype(A)}(undef, min(m,n))

for j = 1:min(m,n)

# Find column with maximum norm in trailing submatrix

jm = LinearAlgebra.indmaxcolumn(view(A, j:m, j:n)) + j - 1

if jm != j

# Flip elements in pivoting vector

tmpp = piv[jm]

piv[jm] = piv[j]

piv[j] = tmpp

# Swap columns j and jm of the matrix

for i = 1:m

tmp = A[i,jm]

A[i,jm] = A[i,j]

A[i,j] = tmp

end

end

# Compute reflector of column j

x = view(A, j:m, j)

τj = LinearAlgebra.reflector!(x)

τ[j] = τj

# Update trailing submatrix with reflector

LinearAlgebra.reflectorApply!(x, τj, view(A, j:m, j+1:n))

end

return LinearAlgebra.QRPivoted{eltype(A), typeof(A)}(A, τ, piv)

end

# ╔═╡ 293a68ca-e02f-47b3-85ed-aeeb8995f3ec

struct Reflector{T,RT,VT<:AbstractVector{T}}

ξ::T

normu::RT

sqnormu::RT

r::T

y::VT

end

# ╔═╡ fa5716f9-8bff-4295-812b-691ccdc12832

struct QRPivotedRes{T,RT,VT}

factors::Matrix{T}

τ::Vector{T}

jpvt::Vector{Int}

reflectors::Vector{Reflector{T,RT,VT}}

vAs::Vector{Vector{T}}

jms::Vector{Int}

end

# ╔═╡ 8324f365-fd12-4ca3-8ca6-657e5917f946

# Elementary reflection similar to LAPACK. The reflector is not Hermitian but

# ensures that tridiagonalization of Hermitian matrices becomes real. See lawn72

@i function reflector!(R::Reflector{T,RT}, x::AbstractVector{T}) where {T,RT}

n ← length(x)

@inbounds @invcheckoff if n != 0

@zeros T ξ1

@zeros RT normu sqnormu

ξ1 += x[1]

sqnormu += abs2(ξ1)

for i = 2:n

sqnormu += abs2(x[i])

end

if !iszero(sqnormu)

normu += sqrt(sqnormu)

if real(ξ1) < 0

NEG(normu)

end

ξ1 += normu

R.y[1] -= normu

for i = 2:n

R.y[i] += x[i] / ξ1

end

R.r += ξ1/normu

end

SWAP(R.ξ, ξ1)

SWAP(R.normu, normu)

SWAP(R.sqnormu, sqnormu)

end

end
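
# ╔═╡ 6d2c1b7e-3f4a-4b8e-9a51-7c0e2d9f4a10
md"""
**Sketch: what the reflector computes.** The following plain-Julia sketch is not part of the original notebook. It assumes a real input vector, and `householder` is a hypothetical out-of-place helper that mirrors the arithmetic of `reflector!` above: it returns `v`, `τ` and `β` such that `(I - τ*v*v') * x == β*e1`.

```julia
using LinearAlgebra

# Hypothetical helper: Householder reflector of a real vector `x`.
# Returns (v, τ, β) such that (I - τ*v*v') * x == β*e1, with v[1] == 1 for nonzero x.
function householder(x::Vector{Float64})
    normx = norm(x)
    # A zero vector needs no reflection: τ = 0 gives the identity map.
    iszero(normx) && return (copy(x), 0.0, 0.0)
    ν = copysign(normx, x[1])   # same sign as x[1], which avoids cancellation in x[1] + ν
    ξ = x[1] + ν
    v = x ./ ξ
    v[1] = 1.0
    return (v, ξ / ν, -ν)
end

x = [3.0, 4.0]
v, τ, β = householder(x)
H = I - τ * v * v'   # explicit reflector matrix, for checking only
```

Here `H * x` has all of its weight in the first component, which is how each step of the QR factorization zeroes a column below the diagonal.
"""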

# ╔═╡ 70fb10ea-9229-46ef-8ba3-b1d3874b7929

# apply reflector from left

@i function reflectorApply!(vA::AbstractVector{T}, x::AbstractVector, τ::Number, A::StridedMatrix{T}) where T

(m, n) ← size(A)

if length(x) != m || length(vA) != n

@safe throw(DimensionMismatch("reflector has length ($(length(x)), $(length(vA))), which must match the first dimension of matrix A, ($m, $n)"))

end

@inbounds @invcheckoff if m != 0

for j = 1:n

# dot

@zeros T vAj vAj_τ

vAj += A[1, j]

for i = 2:m

vAj += x[i]'*A[i, j]

end

vAj_τ += τ' * vAj

# ger

A[1, j] -= vAj_τ

for i = 2:m

A[i, j] -= x[i]*vAj_τ

end

vAj_τ -= τ' * vAj

SWAP(vA[j], vAj)

end

end

end

# ╔═╡ 51504ba4-4711-48b7-aab9-d4f26c009659

function alloc(::typeof(reflector!), x::AbstractVector{T}) where T

RT = real(T)

Reflector(zero(T), zero(RT), zero(RT), zero(T), zero(x))

end

# ╔═╡ f267e315-3c19-4345-8fba-641bb0ea515b

@i function qr_pivoted!(res::QRPivotedRes, A::StridedMatrix{T}) where T

m, n ← size(A)

@invcheckoff @inbounds for j = 1:min(m,n)

# Find column with maximum norm in trailing submatrix

jm ← LinearAlgebra.indmaxcolumn(NiLang.value.(view(A, j:m, j:n))) + j - 1

if jm != j

# Flip elements in pivoting vector

SWAP(res.jpvt[jm], res.jpvt[j])

# Swap columns j and jm of the matrix

for i = 1:m

SWAP(A[i, jm], A[i, j])

end

end

# Compute reflector of column j

R ← alloc(reflector!, A |> subarray(j:m, j))

vA ← zeros(T, n-j)

reflector!(R, A |> subarray(j:m, j))

# Update trailing submatrix with reflector

reflectorApply!(vA, R.y, R.r, A |> subarray(j:m, j+1:n))

for i=1:length(R.y)

SWAP(R.y[i], A[j+i-1, j])

end

PUSH!(res.reflectors, R)

PUSH!(res.vAs, vA)

PUSH!(res.jms, jm)

R → _zero(Reflector{T,real(T),Vector{T}})

vA → zeros(T, 0)

jm → 0

end

@inbounds for i=1:length(res.reflectors)

res.τ[i] += res.reflectors[i].r

end

res.factors += A

end

# ╔═╡ a07b93b1-742b-41d4-bd0f-bc899de55338

function alloc_qr(A::AbstractMatrix{T}) where T

(m, n) = size(A)

τ = zeros(T, min(m,n))

jpvt = collect(1:n)

reflectors = Reflector{T,real(T),Vector{T}}[]

vAs = Vector{T}[]

jms = Int[]

QRPivotedRes(zero(A), τ, jpvt, reflectors, vAs, jms)

end

# ╔═╡ 5f207f59-b9f4-477f-b79f-0aee743bdb8e

A = randn(ComplexF64, 20, 20);

# ╔═╡ f88517d6-b87d-45ba-bf3f-67074fa51fca

@test qr_pivoted!(alloc_qr(A), copy(A))[1].factors ≈ LinearAlgebra.qrfactPivotedUnblocked!(copy(A)).factors

# ╔═╡ 45aef837-9b2c-49b2-b815-e4d60f103f58

let

@testset "qr pivoted gradient" begin

# rank deficient initial matrix

n = 50

U = LinearAlgebra.qr(randn(n, n)).Q

Σ = Diagonal((x=randn(n); x[n÷2+1:end] .= 0; x))

A = U*Σ*U'

res = alloc_qr(A)

@test rank(A) == n ÷ 2

qrres = qr_pivoted!(deepcopy(res), copy(A))[1]

@test count(x->(x>1e-12), sum(abs2, QRPivoted(qrres.factors, qrres.τ, qrres.jpvt).R, dims=2)) == n ÷ 2

@i function loss(y, qrres, A)

qr_pivoted!(qrres, A)

y += abs(qrres.factors[1])

end

nrloss(A) = loss(0.0, deepcopy(res), A)[1]

ngA = zero(A)

δ = 1e-5

for j=1:size(A, 2)

for i=1:size(A, 1)

A_ = copy(A)

A_[i,j] -= δ/2

l1 = nrloss(copy(A_))

A_[i,j] += δ

l2 = nrloss(A_)

ngA[i,j] = (l2-l1)/δ

end

end

gA = NiLang.AD.gradient(loss, (0.0, res, A); iloss=1)[3]

@test real.(gA) ≈ ngA

end

end
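
# ╔═╡ 2b9e4c70-8d15-4e3a-b6f2-51a7c3d08e94
md"""
**Sketch: the finite-difference check used in the test above.** This is an illustrative plain-Julia sketch under the assumption of a real scalar loss. `numeric_gradient` is a hypothetical helper, not a NiLang function. It applies the same central difference with step `δ` as the test loop and compares it against a gradient that is known in closed form.

```julia
# Hypothetical helper: central-difference gradient of a scalar function `f` at `x`.
function numeric_gradient(f, x::Vector{Float64}; δ=1e-5)
    g = similar(x)
    for i in eachindex(x)
        xp = copy(x); xp[i] += δ / 2
        xm = copy(x); xm[i] -= δ / 2
        g[i] = (f(xp) - f(xm)) / δ
    end
    return g
end

euclid(x) = sqrt(sum(abs2, x))   # Euclidean norm; its gradient is x / euclid(x)
x0 = [3.0, 4.0]
g = numeric_gradient(euclid, x0)
```

The central difference has an error of order `δ^2`, so agreement up to the default tolerance of `≈` is a reasonable expectation for smooth losses.
"""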

# ╔═╡ Cell order:

# ╟─a1ef579e-4b66-4042-944e-7e27c660095e

# ╟─100b4293-fd1e-4b9c-a831-5b79bc2a5ebe

# ╟─f11023e5-8f7b-4f40-86d3-3407b61863d9

# ╟─9d11e058-a7d0-11eb-1d78-6592ff7a1b43

# ╟─b73157bf-1a77-47b8-8a06-8d6ec2045023

# ╟─ec13e0a9-64ff-4f66-a5a6-5fef53428fa1

# ╟─f8b0d1ce-99f7-4729-b46e-126da540cbbe

# ╟─435ac19e-1c0c-4ee5-942d-f2a97c8c4d80

# ╟─48ecd619-d01d-43ff-8b52-7c2566c3fa2b

# ╟─4878ce45-40ff-4fae-98e7-1be41e930e4d

# ╠═ce44f8bd-692e-4eab-9ba4-055b25e40c81

# ╠═b2c1936c-2c27-4fbb-8183-e38c5e858483

# ╠═8be1b812-fcac-404f-98aa-0571cb990f34

# ╟─33e0c762-c75e-44aa-bfe2-bff92dd1ace8

# ╟─c59c35ee-1907-4736-9893-e22c052150ca

# ╠═0ae13734-b826-4dbf-93d1-11044ce88bd4

# ╠═99187515-c8be-49c2-8d70-9c2998d9993c

# ╟─78ca6b08-84c4-4e4d-8412-ae6c28bfafce

# ╠═f12b25d8-7c78-4686-b46d-00b34e565605

# ╟─d90c3cc9-084d-4cf7-9db7-42cea043030b

# ╟─93c98cb2-18af-47df-afb3-8c5a34b4723c

# ╟─2dc74e15-e2ea-4961-b43f-0ada1a73d80a

# ╟─7ee75a15-eaea-462a-92b6-293813d2d4d7

# ╟─02a25b73-7353-43b1-8738-e7ca472d0cc7

# ╟─2afb984f-624e-4381-903f-ccc1d8a66a17

# ╟─7e5d5e69-90f2-4106-8edf-223c150a8168

# ╟─92d7a938-9463-4eee-8839-0b8c5f762c79

# ╟─4b1a0b59-ddc6-4b2d-b5f5-d92084c31e46

# ╟─81f16b8b-2f0b-4ba3-8c26-6669eabf48aa

# ╟─fb6c3a48-550a-4d2e-a00b-a1e40d86b535

# ╟─ab6fa4ac-29ed-4722-88ed-fa1caf2072f3

# ╟─8e72d934-e307-4505-ac82-c06734415df6

# ╟─e6ff86a9-9f54-474b-8111-a59a25eda506

# ╟─9c1d9607-a634-4350-aacd-2d40984d647d

# ╟─63db2fa2-50b2-4940-b8ee-0dc6e3966a57

# ╟─693167e7-e80c-401d-af89-55b5fae30848

# ╟─4cd70901-2142-4868-9a33-c46ca0d064ec

# ╟─89018a35-76f4-4f23-b15a-a600db046d6f

# ╟─1d219222-0778-4c37-9182-ed5ccbb3ef32

# ╟─4ff09f7c-aeac-48bd-9d58-8446137c3acd

# ╟─ea44037b-9359-4fbd-990f-529d88d54351

# ╟─e731a8e3-6462-4a60-83e9-6ab7ddfff50e

# ╟─685c2b28-b071-452c-a881-801128dcb6c3

# ╟─177ddfc2-2cbe-4dba-9d05-2857633dd1ae

# ╟─6c2a3a93-385f-4758-9b6e-4cb594a8e856

# ╟─fb8168c2-8489-418b-909b-cede57b5ae64

# ╟─fdb39284-dbb1-49fa-9a1c-f360f9e6b765

# ╟─60214f22-c8bb-4a32-a882-4e6c727b29a9

# ╟─7a6dbe09-cb7f-405f-b9b5-b350ca170e5f

# ╟─5dc4a849-76dd-4c4f-8828-755671839e5e

# ╟─b053f11b-9ed7-47ff-ab32-0c70b87e71ed

# ╟─7b1aa6dd-647f-44cb-b580-b58e23e8b5a6

# ╟─b96bac75-b4ad-45f7-aeec-cb6a387eebf0

# ╟─5fe022eb-6a17-466e-a6d0-d67e82af23cd

# ╟─92047e95-7eba-4021-9668-9bb4b92261d7

# ╟─e2ae1084-8759-4f27-8ad1-43a88e434a3d

# ╠═edd3aea8-abdb-4e12-9ef9-12ac0fff835b

# ╠═a2904efb-186c-449d-b1aa-caf530f88e91

# ╠═14faaf82-ad3e-4192-8d48-84adfa30442d

# ╠═5d141b88-ec07-4a02-8eb3-37405e5c9f5d

# ╠═0907e683-f216-4cf6-a210-ae5181fdc487

# ╠═805c7072-98fa-4086-a69d-2e126c55af36

# ╠═7e527024-c294-4c16-8626-9953588d9b6a

# ╠═0bbfa106-f465-4a7b-80a7-7732ba435822

# ╠═3e59c65a-ceed-42ed-be64-a6964db016e7

# ╟─29f85d05-99fd-4843-9be0-5663e681dad7

# ╠═9a46597c-b1ee-4e3b-aed1-fd2874b6e77a

# ╠═e7830e55-bd9e-4a8a-9239-4191a5f0b1d1

# ╟─de2cd247-ba68-4ba4-9784-27a743478635

# ╟─dc929c23-7434-4848-847a-9fa696e84776

# ╟─4f1df03f-c315-47b1-b181-749e1231594c

# ╠═ccd38f52-104d-434a-aea3-dd94e571374f

# ╠═7eccba6a-3ad5-440b-9c5d-392dc8dc7aba

# ╠═f4230251-ba54-434a-b86b-f972c7389217

# ╟─4a858a3e-ce28-4642-b061-3975a3ed99ff

# ╠═674bb3bb-637b-44f2-bf6d-d1678da03fbd

# ╠═5a59d96f-b2f1-4564-82c7-7f0fe181afb8

# ╠═55d2f8ee-4f77-4d44-b704-30643dbbab84

# ╠═14951168-97c2-43ae-8d5e-5506408a2bb2

# ╠═4f564581-6032-449c-8b15-3c741f44237a

# ╠═a36516e8-76c1-4bff-8a12-3e1e621b857d

# ╠═402b861c-d363-4d23-b9e9-eb088f57b5c4

# ╠═63975a80-1b41-4f55-91a1-4a316ad7bf26

# ╠═6f688f88-432a-42b2-a2db-19d6bb282e0a

# ╠═fb46db14-f7e0-4f01-9096-02334c62942d

# ╟─b2c3db3d-c250-4daa-8453-3c9a2734aede

# ╠═69dc2685-b70f-4a81-af30-f02e0054bd52

# ╠═9a986264-5ba7-4697-a00d-711f8efe29f0

# ╠═560cf3e9-0c14-4497-85b9-f07045eea32a

# ╠═8ab79efc-e8d0-4c6f-81df-a89008142bb7

# ╠═0eec318c-2c09-4dd6-9187-9c0273d29915

# ╠═1f0ef29c-0ad5-4d97-aeed-5ff44e86577a

# ╠═603d8fc2-5e7b-4d55-92b6-208b25ea6569

# ╟─2b3c765e-b505-4f07-9bcb-3c8cc47364ad

# ╠═e0f266da-7e65-4398-bfd4-a6c0b54e626b

# ╟─e1d35886-79d0-40a5-bd33-1c4e5f4a0a9a

# ╠═b63a30b0-c75b-4998-a2b2-0b79574cab81

# ╟─139bf020-c4a8-45c8-96fa-aeebc7ddaedc

# ╠═8967c0f0-89f8-4893-b11b-253333d1a823

# ╟─f2540450-5a07-4fb8-93fb-a6d48dd36a56

# ╠═3acb2cfd-fa29-4a2b-8f23-f5aaf474edd0

# ╟─aa1547f2-5edd-4b7e-b93e-bdfc4e4fc6d5

# ╟─6e76a107-4f51-4e32-b133-7b6e04d7d107

# ╟─999f7a8f-d72e-4ccd-8cbf-b5bbb7db1842

# ╟─32772c2a-6b80-4779-963c-06974ff0d832

# ╟─41642bd5-1321-490a-95ad-4c1d6363456f

# ╟─2a553e32-05ef-4c2d-aba7-41185c6035d4

# ╟─ab8345ce-e038-4d6b-9e1f-57e4f33bb67b

# ╟─bb9c9a4c-601a-4708-9b2d-04d1583938f2

# ╟─b9917e94-c33d-423f-a478-3252bacc2494

# ╟─4978f404-11ff-41b8-a673-f2d051b1f526

# ╟─73bd2e3b-902f-461b-860f-246257608ecd

# ╟─4dd47dc8-6dfa-47a4-a088-689b4b870762

# ╟─ecd975d2-9374-4f40-80ac-2cceda11e7fb

# ╟─832cc81d-a49d-46e7-9d2b-d8bde9bb1273

# ╟─2192a1de-1042-4b13-a313-b67de489124c

# ╟─01c709c7-806c-4389-bbb2-4081e64426d9

# ╟─b1e0cf83-4337-4044-a7d1-5fca8ae79268

# ╟─71f4b476-027d-4c8f-b561-1ee418bc9e61

# ╟─042013cf-9cd2-409d-827f-a311a2f8ce62

# ╟─82593cd0-1403-4597-8370-919c80494479

# ╟─f58720b5-2bcb-4950-b453-bd59f648c66a

# ╟─4576d791-6af7-4ba5-9b80-fe99c0bb2e88

# ╟─6e9d17f1-b17d-4e8d-82a3-921558a20c0f

# ╟─f18d89f5-1129-43e0-8b4a-5c1fcd618eab

# ╟─2912c7ed-75e3-4dfd-9c40-92115cc08194

# ╟─5d1517c0-562b-40db-bec2-32b5494de1b8

# ╟─ae096ad2-3ae9-4440-a959-0d7d9a174f1d

# ╟─8148bc1f-ef99-40a4-a5ce-0a42643f703d

# ╠═200f1848-0980-4185-919a-93ab2e7f788f

# ╠═bd86c5c2-16be-4cfd-ba7a-a0e2544d82d1

# ╟─11557d6b-3a1e-416d-874f-b8d217976f76

# ╟─48a10ea2-5d32-4a55-b8c0-f6a5e82eace9

# ╟─fafc1b0f-6469-4b6c-a00d-5272a45fc69b

# ╟─ad6cff7b-5cbf-4ab1-94f7-d21cbc171000

# ╠═30c191c5-642b-4062-98f3-643d314a054d

# ╠═fa5716f9-8bff-4295-812b-691ccdc12832

# ╠═f267e315-3c19-4345-8fba-641bb0ea515b

# ╠═4d373cf6-9b39-44bc-8f13-220933fc8f5c

# ╠═293a68ca-e02f-47b3-85ed-aeeb8995f3ec

# ╠═8324f365-fd12-4ca3-8ca6-657e5917f946

# ╠═70fb10ea-9229-46ef-8ba3-b1d3874b7929

# ╠═51504ba4-4711-48b7-aab9-d4f26c009659

# ╠═a07b93b1-742b-41d4-bd0f-bc899de55338

# ╠═864dbde7-b689-4165-a08e-6bbbd72190de

# ╠═5f207f59-b9f4-477f-b79f-0aee743bdb8e

# ╠═f88517d6-b87d-45ba-bf3f-67074fa51fca

# ╠═45aef837-9b2c-49b2-b815-e4d60f103f58

================================================

FILE: notebooks/basic.jl

================================================

### A Pluto.jl notebook ###

# v0.14.5

using Markdown

using InteractiveUtils

# This Pluto notebook uses @bind for interactivity. When running this notebook outside of Pluto, the following 'mock version' of @bind gives bound variables a default value (instead of an error).

macro bind(def, element)

quote

local el = $(esc(element))

global $(esc(def)) = Core.applicable(Base.get, el) ? Base.get(el) : missing

el

end

end

# ╔═╡ 1ef174fa-16f0-11eb-328a-afc201effd2f

using Pkg, Printf

# ╔═╡ 55cfdab8-d792-11ea-271f-e7383e19997c

using PlutoUI;

# ╔═╡ 9e509f80-d485-11ea-0044-c5b7e750aacb

using NiLang

# ╔═╡ 37ed073a-d492-11ea-156f-1fb155128d0f

using Zygote, BenchmarkTools

# ╔═╡ 4d75f302-d492-11ea-31b9-bbbdb43f344e

using NiLang.AD

# ╔═╡ 627ea2fb-6530-4ea0-98ee-66be3db54411

html"""

"""

# ╔═╡ 94b2b962-e02a-11ea-09a5-81b3226891ed

md"""# 连猩猩都能懂的可逆编程

### (Reversible programming made simple)

[https://github.com/JuliaReverse/NiLangTutorial/](https://github.com/JuliaReverse/NiLangTutorial/)

$(html"")

**Jinguo Liu** (github: [GiggleLiu](https://github.com/GiggleLiu/))

*Postdoc, Institute of Physics, Chinese Academy of Sciences* (when doing this project)

*Consultant, QuEra Computing* (current)

*Postdoc, Harvard* (soon)
"""

# ╔═╡ a5ee60c8-e02a-11ea-3512-7f481e499f23
md"""
# Table of Contents
1. Reversible programming basics
2. Differentiate everything with a reversible programming language
3. Real world applications and benchmarks
"""

# ╔═╡ a11c4b60-d77d-11ea-1afe-1f2ab9621f42
md"""
## In this talk

We use [NiLang](https://github.com/GiggleLiu/NiLang.jl), a reversible eDSL embedded in [Julia](https://julialang.org/), as our reversible programming tool. It is a package that can differentiate everything.

Authors: [GiggleLiu](https://github.com/GiggleLiu), [Taine Zhao](https://github.com/thautwarm)
"""

# ╔═╡ e54a1be6-d485-11ea-0262-034c56e0fda8
md"""
## Sec I. Reversible programming basics
### Reversible function definition

A reversible function `f` is defined as
```julia
(~f)(f(x, y, z...)...) == (x, y, z...)
```
"""

# ╔═╡ d1628f08-ddfb-11ea-241a-c7e6c1a22212
md"""
## Example 1: reversible adder
```math
\begin{align}
f &: x, y → x+y, y\\
{\small \mathrel{\sim}}f &: x, y → x-y, y
\end{align}
```
"""

# ╔═╡ 278ac6b6-e02c-11ea-1354-cd7ecd1099be
md"The reversible macro `@i` defines two functions, the function itself and its inverse."

# ╔═╡ a28d38be-d486-11ea-2c40-a377b74a05c1
@i function reversible_plus(x, y)
    x += y
end

# ╔═╡ e93f0bf6-d487-11ea-1baa-21d51ddb4a20
reversible_plus(2.0, 3.0)

# ╔═╡ fc932606-d487-11ea-303e-75ca8b7a02f6
(~reversible_plus)(5.0, 3.0)

# ╔═╡ e3d2b23a-ddfb-11ea-0f5e-e72ed299bb45
md"## The difference from a regular programming language"

# ╔═╡ a961e048-ddf2-11ea-0262-6d19eb82b36b
md"**Comment 1**: The `return` statement is not allowed; a reversible function returns its input arguments directly."

# ╔═╡ 2d22f504-ddf1-11ea-28ec-5de6f4ee79bb
md"**Comment 2**: Every operation is reversible.
`+=` is considered reversible for integers and floating point numbers in NiLang, although for floating point numbers there are *rounding errors*."

# ╔═╡ 7d08ac24-e143-11ea-2085-539fd9e35889
md"### A case where `+=` is not reversible"

# ╔═╡ 9fcdd77c-e0df-11ea-09e6-49a2861137e5
let
    x, y = 1e-20, 1e20
    x += y
    x -= y
    (x, y)
end

# ╔═╡ 0a1a8594-ddfc-11ea-119a-1997c86cd91b
md"""
## Use this function
"""

# ╔═╡ 0b4edb1a-ddf0-11ea-220c-91f2df7452e7
@i function reversible_plus2(x, y)
    reversible_plus(x, y) # equivalent to `x += y`
    reversible_plus(x, y)
end

# ╔═╡ f875ecd6-ddef-11ea-22a1-619809d15b37
md"**Comment**: Inside a reversible function definition, a statement changes a variable *in place*."

# ╔═╡ e7557bee-e0cc-11ea-1788-411e759b4766
reversible_plus2(2.0, 3.0)

# ╔═╡ cd7b2a2e-ddf5-11ea-04c4-f7583bbb5a53
md"A statement can be **uncalled** with `~`"

# ╔═╡ bc98a824-ddf5-11ea-1a6a-1f795452d3d0
@i function do_nothing(x, y)
    reversible_plus(x, y)
    ~reversible_plus(x, y) # uncall the expression
end

# ╔═╡ 05f8b91c-e0cd-11ea-09e3-f3c5c0e07e63
do_nothing(2.0, 3.0)

# ╔═╡ ac302844-e07b-11ea-35dd-e3e06054401b
md"## Example 2: Compute $x^5$"

# ╔═╡ b722e098-e07b-11ea-3483-01360fb6954e
@i function naive_power5(y, x::T) where T
    y = one(T) # error 1: `=` is not reversible
    for i=1:5
        y *= x # error 2: `*=` is not reversible
    end
end

# ╔═╡ bf8b722c-dfa4-11ea-196a-719802bc23c5
md"""
## Compute $x^5$ reversibly
"""

# ╔═╡ 330edc28-dfac-11ea-35a5-3144c4afbfcf
md"note: `*=` is not reversible for usual number systems"

# ╔═╡ 0a679e04-dfa7-11ea-0288-a1fa490c4387
@i function power5(x5, x4, x3, x2, x1, x)
    x1 += x
    x2 += x1 * x
    x3 += x2 * x
    x4 += x3 * x
    x5 += x4 * x
end

# ╔═╡ cc32cae8-dfab-11ea-0d0b-c70ea8de720a
power5(0.0, 0.0, 0.0, 0.0, 0.0, 2.0)

# ╔═╡ b4240c16-dfac-11ea-3a40-33c54436e3a3
md"# Don't make me pass so many input arguments!"

# ╔═╡ ade52358-dfac-11ea-2dd3-d3a691e7a8a2
@i function power5_twoinputs(x5, x::T) where T
    x1 ← zero(T)
    x2 ← zero(T)
    x3 ← zero(T)
    x4 ← zero(T)
    x1 += x
    x2 += x1 * x
    x3 += x2 * x
    x4 += x3 * x
    x5 += x4 * x
    x4 -= x3 * x
    x3 -= x2 * x
    x2 -= x1 * x
    x1 -= x
    x4 → zero(T)
    x3 → zero(T)
    x2 → zero(T)
    x1 → zero(T)
end

# ╔═╡ d86e2e5e-dfab-11ea-0053-6d52f1164bc5
power5_twoinputs(0.0, 2.0)

# ╔═╡ 7951b9ec-e030-11ea-32ee-b1de49378186
md"""
**Comment**:
`n ← zero(T)` is the variable allocation operation. It means
```
if n is defined
    error
else
    n = zero(T)
end
```

Its inverse is `n → zero(T)`. It means
```
@assert n == zero(T)
deallocate(n)
```
"""

# ╔═╡ 6bc97f5e-dfad-11ea-0c43-e30b6620e6e8
md"# Shorter: compute-copy-uncompute"

# ╔═╡ 80d24e9e-dfad-11ea-1dae-49568d534f10
@i function power5_twoinputs_shorter(x5, x::T) where T
    @routine begin # compute
        @zeros T x1 x2 x3 x4
        x1 += x
        x2 += x1 * x
        x3 += x2 * x
        x4 += x3 * x
    end
    x5 += x4 * x # copy
    ~@routine # uncompute
end

# ╔═╡ a8092b18-dfad-11ea-0989-474f37d05f73
power5_twoinputs_shorter(0.0, 2.0)

# ╔═╡ 43f0c2fc-e030-11ea-25d9-b323e6496a35
md"""**Comment**:
```
@routine statement
~@routine
```
is equivalent to
```
statement
~(statement)
```

This is the famous `compute-copy-uncompute` design pattern in reversible computing.
Check this [reference](https://epubs.siam.org/doi/10.1137/0219046).
"""

# ╔═╡ b4ad5830-dfad-11ea-0057-055dda8cc9be
md"# How to compute x^1000?"

# ╔═╡ cf576d38-dfad-11ea-2682-7bd540db44a5
@i function power1000(x1000, x::T) where T
    @routine begin
        xs ← zeros(T, 1000)
        xs[1] += 1
        for i=2:1000
            xs[i] += xs[i-1] * x
        end
    end
    x1000 += xs[1000] * x
    ~@routine
end

# ╔═╡ 35fff53c-dfae-11ea-3602-918a17d5a5fa
power1000(0.0, 1.001)

# ╔═╡ 9b9b5328-e030-11ea-1d00-f3341572734a
html"""

For loop

for i=start:step:stop
    # do something
end

for i=stop:-step:start
    # undo something
end

If statement

if (precondition, postcondition)
    # do A
else
    # do B
end

if (postcondition, precondition)
    # undo A
else
    # undo B
end

Automatic differentiation?

"""

# ╔═╡ e1370f80-e0bc-11ea-2a90-d50cc762cbcb

md"When we start learning AD, we start by learning the backward rule of matrix multiplication."

# ╔═╡ 3098411c-e0bc-11ea-2754-eb0afbd663de

function mymul!(out::AbstractMatrix, A::AbstractMatrix, B::AbstractMatrix)

@assert size(A, 2) == size(B, 1) && size(out) == (size(A, 1), size(B, 2))

for k=1:size(B, 2)

for j=1:size(B, 1)

for i=1:size(A, 1)

@inbounds out[i, k] += A[i, j] * B[j, k]

end

end

end

return out

end

# ╔═╡ 3d0150ee-e0bd-11ea-0a5a-339465b496dc

md"Then we learn how to use the chain rule to chain different utilities."

# ╔═╡ 8016ff94-e0bc-11ea-3b9e-4f0676587edf

md"##### But wait! Why don't we start from the backward rules of `+` and `*`, then use the chain rule to derive the backward rule for matrix multiplication?"
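
# ╔═╡ 9f3d2a6c-1e47-4d0b-8c35-4b7a0e6d1f52
md"""
**Sketch: deriving the matrix-multiplication rule from `+` and `*`.** The following plain-Julia sketch is not part of the original talk. `mymul_backward` is a hypothetical helper that applies the backward rules of the scalar statement `out[i, k] += A[i, j] * B[j, k]` one at a time, assuming real matrices. The accumulated result agrees with the textbook tensor-level rule.

```julia
# Hypothetical helper: scalar-level backward pass of out[i, k] += A[i, j] * B[j, k].
# `gout` is the adjoint of `out`; the function returns the adjoints of `A` and `B`.
function mymul_backward(gout::Matrix{Float64}, A::Matrix{Float64}, B::Matrix{Float64})
    gA, gB = zero(A), zero(B)
    for k in 1:size(B, 2), j in 1:size(B, 1), i in 1:size(A, 1)
        gA[i, j] += gout[i, k] * B[j, k]   # backward rule of `*` with respect to A[i, j]
        gB[j, k] += A[i, j] * gout[i, k]   # backward rule of `*` with respect to B[j, k]
    end
    return gA, gB
end

A, B, gout = randn(3, 4), randn(4, 2), randn(3, 2)
gA, gB = mymul_backward(gout, A, B)
```

Summing the scalar rules reproduces `gA == gout * B'` and `gB == A' * gout` up to rounding, so the tensor rule is a consequence of the scalar ones rather than a separate primitive.
"""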

# ╔═╡ 99108ace-e0bc-11ea-2744-d1b18db50ae1

md"# They are different"

# ╔═╡ b2337f26-e0bb-11ea-3da0-9507c35101ae

md"""

### Domain-specific autodiff (DS-AD)

* **Tensor**Flow

* **PyTorch**

* **Jax**

* **Flux (Zygote backend)**

### General-purpose autodiff (GP-AD)

* **Tapenade**

* **NiLang**

"""

# ╔═╡ 48db515c-e084-11ea-2eec-018b8545fa34

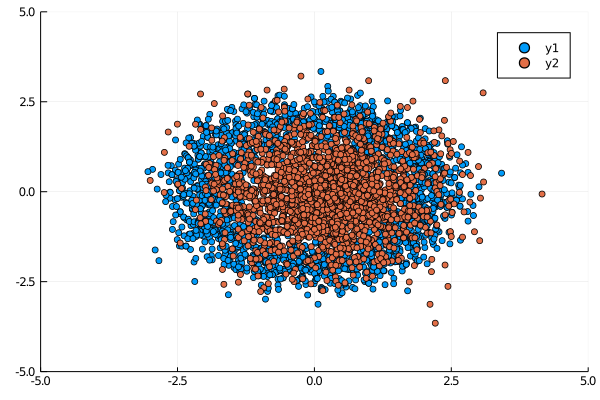

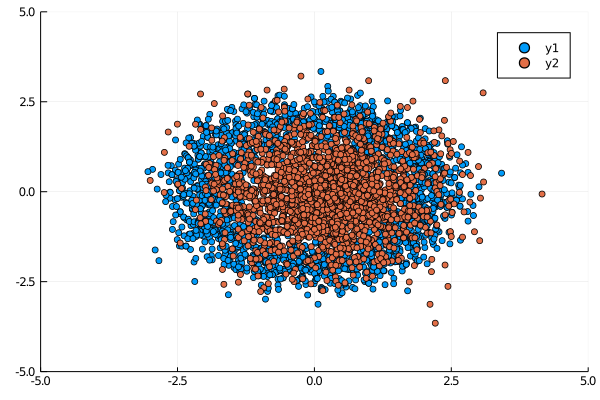

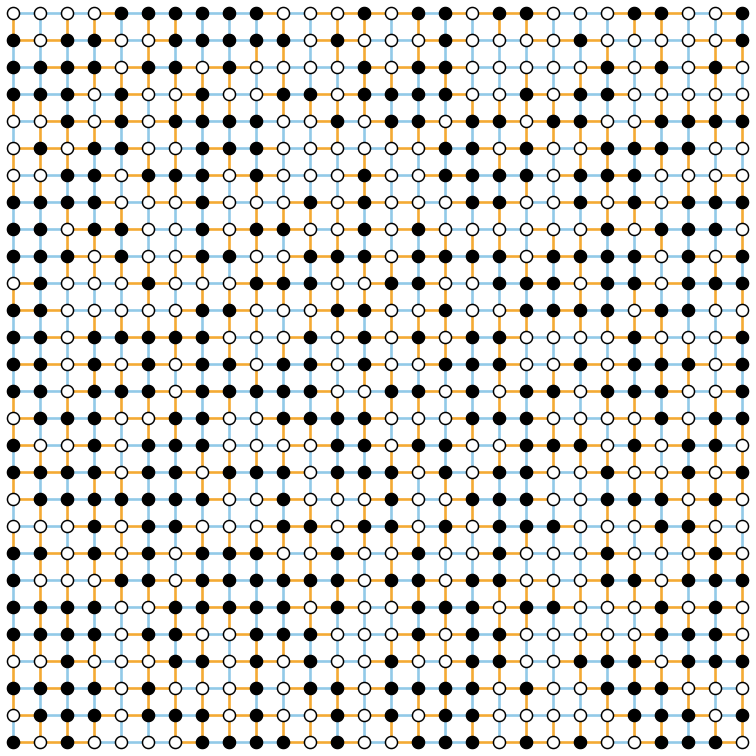

md"## Traditional AD uses checkpointing

Checkpoint every 100 steps. Here, state 1 and state 101 are cached. Blue objects denote computation and yellow ones denote re-computation. State 100 is the desired state.

"

# ╔═╡ f531f556-e083-11ea-2f7e-77e110d6c53a

md""
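
# ╔═╡ 4e8a7b15-c2d6-4f39-a0b8-6d3f1e5c9a27
md"""
**Sketch: checkpointing in a reverse sweep.** This plain-Julia sketch is not from the original talk. It assumes a toy scalar iteration; `advance`, `dadvance`, `grad_checkpointed` and `grad_direct` are hypothetical names. Only every `every`-th state is cached in the forward sweep, and each segment is re-computed from its checkpoint when the backward sweep reaches it.

```julia
advance(x) = x + 0.1 * sin(x)      # one forward step of a toy model
dadvance(x) = 1 + 0.1 * cos(x)     # derivative of one step

# Derivative of the final state with respect to x0, caching every `every`-th state.
function grad_checkpointed(x0::Float64, nsteps::Int, every::Int)
    checkpoints = Dict{Int,Float64}(0 => x0)
    x = x0
    for t in 1:nsteps                       # forward sweep
        x = advance(x)
        if t % every == 0
            checkpoints[t] = x
        end
    end
    g = 1.0
    hi = nsteps
    while hi > 0                            # backward sweep, one segment at a time
        lo = ((hi - 1) ÷ every) * every     # nearest checkpoint strictly below `hi`
        states = Vector{Float64}(undef, hi - lo)
        x = checkpoints[lo]
        for t in 1:(hi - lo)                # re-compute the states lo, ..., hi-1
            states[t] = x
            x = advance(x)
        end
        for t in (hi - lo):-1:1             # chain rule in reverse order
            g *= dadvance(states[t])
        end
        hi = lo
    end
    return g
end

# Reference: the same derivative accumulated during a single forward pass.
function grad_direct(x0::Float64, nsteps::Int)
    g, x = 1.0, x0
    for _ in 1:nsteps
        g *= dadvance(x)
        x = advance(x)
    end
    return g
end
```

With `nsteps = 250` and `every = 100`, at most 100 intermediate states are alive at a time in addition to the three checkpoints, at the cost of running the forward steps roughly twice.
"""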

# ╔═╡ 62643fbc-e084-11ea-1b1f-39b87ff32b9e

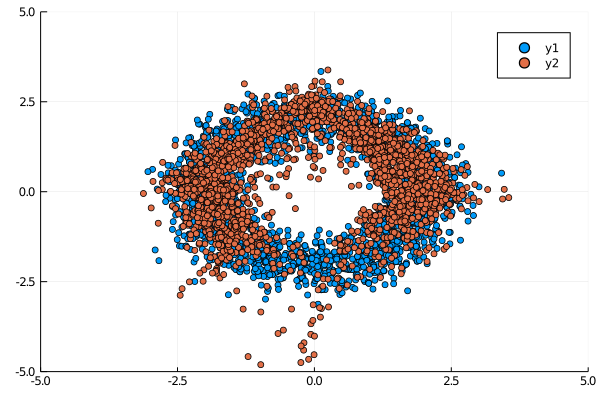

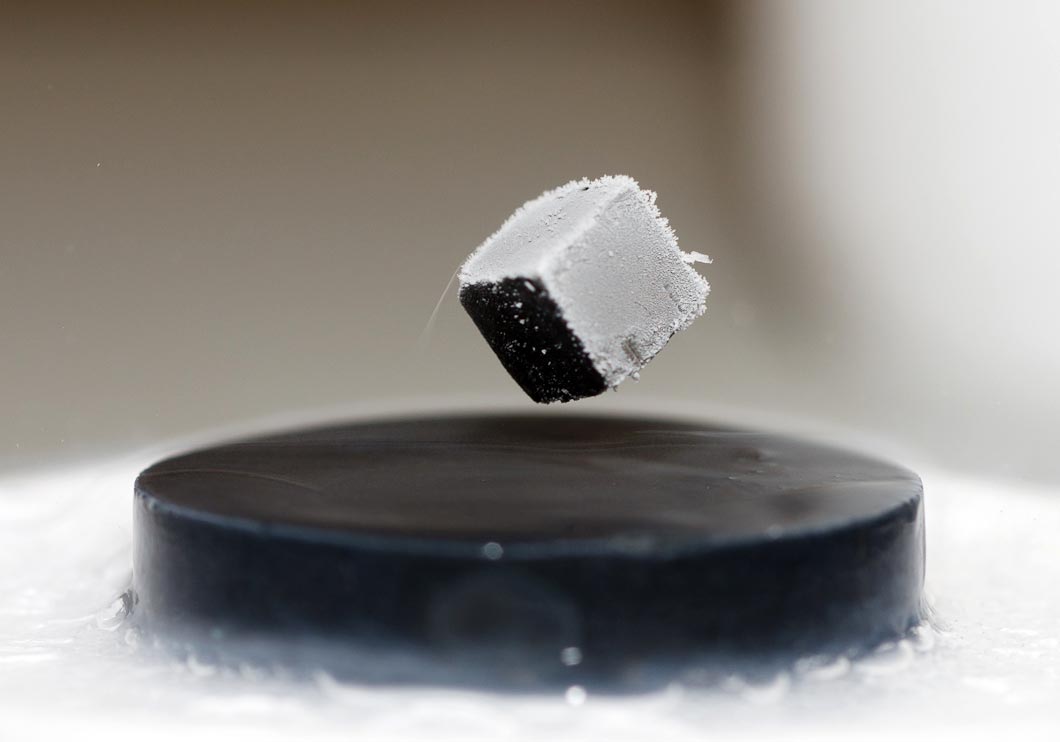

md"## Reverse Computing

A reversible computing approach to freeing up memory: (a) when no operations are reversible; (b) when all operations are reversible. Blue and yellow diamonds are reversible operations executed in the forward and backward directions; red cubes are garbage variables.

"

# ╔═╡ 0bf54b08-e084-11ea-3d11-7be65f3ec022

md""

# ╔═╡ 15f7c60a-e08e-11ea-31ea-a5cd055644db

md"## Difference Explained"

# ╔═╡ 55a3a260-d48e-11ea-06e2-1b7bd7bba6f5

md"""

"""

# ╔═╡ 38014ad0-e08e-11ea-1905-198038ab7e5f

md"# Obtaining the gradient of norm in Zygote"

# ╔═╡ 2e6fe4da-d79d-11ea-1e90-f5215190395c

md"**Obtaining the gradient of the norm function**"

# ╔═╡ 6560c28c-e08e-11ea-1094-d333b88071ce

function regular_norm(x::AbstractArray{T}) where T

res = zero(T)

for i=1:length(x)

@inbounds res += x[i]^2

end

return sqrt(res)

end

# ╔═╡ 744dd3c6-d492-11ea-0ed5-0fe02f99db1f

@benchmark Zygote.gradient($regular_norm, $(randn(1000))) seconds=1

# ╔═╡ f72246f8-e08e-11ea-3aa0-53f47a64f3e9

md"## The reversible counterpart"

# ╔═╡ f025e454-e08e-11ea-20d6-d139b9a6b301

@i function reversible_norm(res, y, x::AbstractArray{T}) where {T}

for i=1:length(x)

@inbounds y += x[i]^2

end

res += sqrt(y)

end

# ╔═╡ 8fedd65a-e08e-11ea-27f4-03bf9ed65875

let x = randn(1000)

@assert Zygote.gradient(regular_norm, x)[1] ≈ NiLang.AD.gradient(reversible_norm, (0.0, 0.0, x), iloss=1)[3]

end

# ╔═╡ 8ad60dc0-d492-11ea-2cb3-1750b39ddf86

@benchmark NiLang.AD.gradient($reversible_norm, (0.0, 0.0, $(randn(1000))), iloss=1)

# ╔═╡ 7bab4614-d77e-11ea-037c-8d1f432fc3b8

md"""

"""

#

# ╔═╡ fcca27ba-d4a4-11ea-213a-c3e2305869f1

#**1. The bundle adjustment jacobian benchmark**

#$(LocalResource("ba-origin.png"))

#

#**2. The Gaussian mixture model benchmark**

#$(LocalResource("gmm-origin.png"))

#

md"""

# Sec III. Real-world applications and benchmarks

"""

# ╔═╡ 519dc834-e092-11ea-2151-57ef23810b84

md"""

## 1. Bundle Adjustment (Jacobian)

*Srajer, Filip, Zuzana Kukelova, and Andrew Fitzgibbon. "A benchmark of selected algorithmic differentiation tools on some problems in computer vision and machine learning." Optimization Methods and Software 33.4-6 (2018): 889-906.*

### Benchmarks

**Devices**

* CPU: Intel(R) Xeon(R) Gold 6230 CPU @ 2.10GHz

* GPU: Nvidia Titan V.

**Github Repos**

* [https://github.com/microsoft/ADBench](https://github.com/microsoft/ADBench)

* [https://github.com/JuliaReverse/NiBundleAdjustment.jl](https://github.com/JuliaReverse/NiBundleAdjustment.jl)

"""

# ╔═╡ c89108f0-e092-11ea-0fe2-efad85008b28

html"""

Results from the original benchmark

"""

# ╔═╡ cc0d5622-d788-11ea-19cd-3bf6864d9263

md"""##### Including NiLang.AD

"""

# ╔═╡ a1646ef0-e091-11ea-00f1-e7c246e191ff

md"## 3. Solve the memory wall problem in machine learning"

# ╔═╡ b18b3ae8-e091-11ea-24a1-e968b70b217c

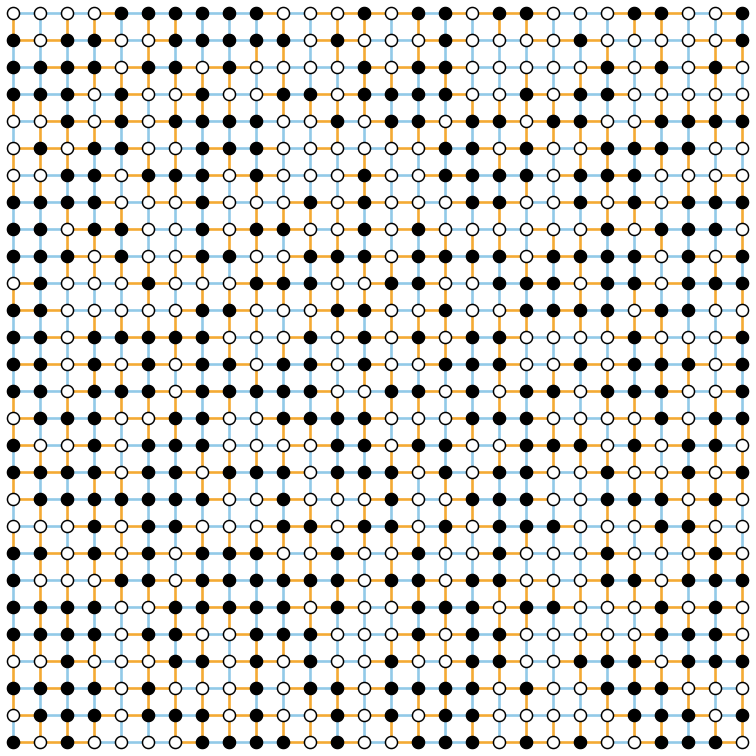

html"""

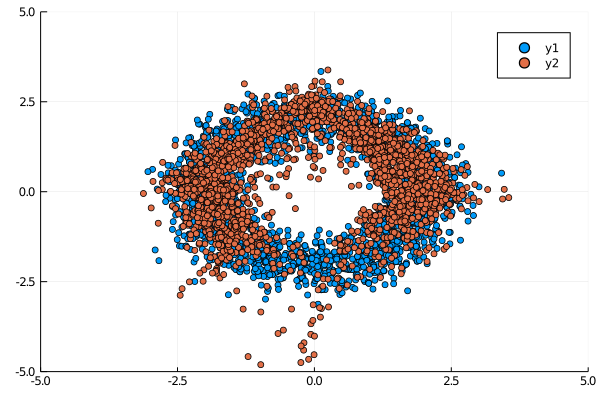

Learning a ring distribution with NICE network, before and after training

"""

# ╔═╡ cc0d5622-d788-11ea-19cd-3bf6864d9263

md"""##### Including NiLang.AD

"""

# ╔═╡ a1646ef0-e091-11ea-00f1-e7c246e191ff

md"## 3. Solve the memory wall problem in machine learning"

# ╔═╡ b18b3ae8-e091-11ea-24a1-e968b70b217c

html"""

Learning a ring distribution with NICE network, before and after training

References

"""

# ╔═╡ bf3774de-e091-11ea-3372-ef56452158e6

md"""

## 4. Solve the spinglass ground state configuration

Obtaining the optimal configuration of a spinglass problem on a $28 \times 28$ square lattice.

##### References

Jin-Guo Liu, Lei Wang, Pan Zhang, **arXiv 2008.06888**

"""

# ╔═╡ c8e4f7a6-e091-11ea-24a3-4399635a41a5

md"""

## 5. Optimizing problems in finance

Gradient-based optimization of the Sharpe ratio.

600x speedup compared with pure Zygote.

##### References

* Han Li's Github repo: [https://github.com/HanLi123/NiLang](https://github.com/HanLi123/NiLang) and his Zhihu blog [猴子掷骰子](https://zhuanlan.zhihu.com/c_1092471228488634368).

"""

# ╔═╡ bc872296-e09f-11ea-143b-9bfd5e52b14f

md"""## 6. Accelerate the performance-critical part of variational mean field

[https://github.com/quantumlang/NiLangTest/pull/1](https://github.com/quantumlang/NiLangTest/pull/1)

600x speedup compared with pure Zygote.

"""

# ╔═╡ e7b21fce-e091-11ea-180c-7b42e00598a9

md"""# Thank you!

Special thanks to my collaborator **Taine Zhao** and (ex-)advisor **Lei Wang**.

QuEra computing (a quantum computing company located in Boston) is hiring people.

"""

# ╔═╡ 7c79975c-d789-11ea-30b1-67ff05418cdb

md"""

"""

#

# ╔═╡ 5f1c3f6c-d48b-11ea-3eb0-357fd3ece4fc

md"""

## Sec IV. More about number systems

* Integers are reversible under (`+=`, `-=`).

* Floating point number system is **irreversible** under (`+=`, `-=`) and (`*=`, `/=`).

* [Fixed point number system](https://github.com/JuliaMath/FixedPointNumbers.jl) is reversible under (`+=`, `-=`)

* [Logarithmic number system](https://github.com/cjdoris/LogarithmicNumbers.jl) is reversible under (`*=`, `/=`)

"""

# ╔═╡ 11ddebfe-d488-11ea-223a-e9403f6ec8de

md"""

##### Example 1: Affine transformation with rounding error

```julia

y = W * x + b

```

"""

# ╔═╡ 030e592e-d488-11ea-060d-97a3bb6353b7

@i function reversible_affine!(y!::AbstractVector{T}, W::AbstractMatrix{T}, b::AbstractVector{T}, x::AbstractVector{T}) where T

@safe @assert size(W) == (length(y!), length(x)) && length(b) == length(y!)

for j=1:size(W, 2)

for i=1:size(W, 1)

@inbounds y![i] += W[i,j]*x[j]

end

end

for i=1:size(W, 1)

@inbounds y![i] += b[i]

end

end

# ╔═╡ c8d26856-d48a-11ea-3cd3-1124cd172f3a

begin

W = randn(10, 10)

b = randn(10)

x = randn(10)

end;

# ╔═╡ 37c4394e-d489-11ea-174c-b13bdddbe741

yout, Wout, bout, xout = reversible_affine!(zeros(10), W, b, x)

# ╔═╡ fef54688-d48a-11ea-340b-295b88d21382

# `yin` should be restored to 0, but it is not, because of rounding errors!

yin, Win, bin, xin = (~reversible_affine!)(yout, Wout, bout, xout)

# ╔═╡ 259a2852-d48c-11ea-0f01-b9634850e09d

md"""

### Reversible arithmetic functions

Computing basic functions like `power`, `exp` and `besselj` is not trivial for reversible programming.

There is no efficient constant memory algorithm using pure fixed point numbers only.

"""

# ╔═╡ f06fb004-d79f-11ea-0d60-8151019bf8c7

md"""

##### Example 2: Computing power function

To compute `x ^ n` reversibly with fixed point numbers,

we need to either allocate a vector of size $O(n)$ or suffer from polynomial time overhead. This shows no advantage over checkpointing.

"""

# ╔═╡ 26a8a42c-d7a1-11ea-24a3-45bc6e0674ea

@i function i_power_cache(y!::T, x::T, n::Int) where T

@routine @invcheckoff begin

cache ← zeros(T, n) # allocate a buffer of size n

cache[1] += x

for i=2:n

cache[i] += cache[i-1] * x

end

end

y! += cache[n]

~@routine # uncompute cache

end

# ╔═╡ 399552c4-d7a1-11ea-36bb-ad5ca42043cb

# To check the function

i_power_cache(Fixed43(0.0), Fixed43(0.99), 100)

# ╔═╡ 4bb19760-d7bf-11ea-12ed-4d9e4efb3482

md"""

##### Example 3: reversible thinker, the logarithmic number approach

With **logarithmic numbers**, we can still utilize reversibility. Fixed point numbers and logarithmic numbers can be converted via "a fast binary logarithm algorithm".

##### References

* [1] C. S. Turner, "A Fast Binary Logarithm Algorithm", IEEE Signal Processing Mag., pp. 124,140, Sep. 2010.

"""

# ╔═╡ 5a8ba8f4-d493-11ea-1839-8ba81f86799d

@i function i_power_lognumber(y::T, x::T, n::Int) where T

@routine @invcheckoff begin

lx ← one(ULogarithmic{T})

ly ← one(ULogarithmic{T})

## convert `x` to a logarithmic number

## Here, `*=` is reversible for log numbers

lx *= convert(x)

for i=1:n

ly *= lx

end

end

## convert back to fixed point numbers

y += convert(ly)

~@routine

end

# ╔═╡ a625a922-d493-11ea-1fe9-bdd4a694cde0

# To check the function

i_power_lognumber(Fixed43(0.0), Fixed43(0.99), 100)
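
# ╔═╡ 7a1c5e93-0b64-4d2f-9e78-3c6b2a4f8d05
md"""
**Sketch: why `*=` is reversible for logarithmic numbers.** The following plain-Julia sketch is not NiLang's implementation. `ToyLog`, `tolog` and `tofloat` are hypothetical names, and the 20 fractional bits are an arbitrary assumption. A positive number is stored as a fixed point logarithm, so multiplication becomes integer addition, which can be undone exactly.

```julia
# Hypothetical toy type: stores round(log2(x) * 2^20) as an integer.
struct ToyLog
    l::Int64
end

const TOY_SCALE = 2^20   # assumed number of fractional bits: 20

tolog(x::Float64) = ToyLog(round(Int64, log2(x) * TOY_SCALE))
tofloat(a::ToyLog) = exp2(a.l / TOY_SCALE)

# Multiplication and division act on the integer logarithms, so they are exact.
Base.:*(a::ToyLog, b::ToyLog) = ToyLog(a.l + b.l)
Base.:/(a::ToyLog, b::ToyLog) = ToyLog(a.l - b.l)

a, b = tolog(0.99), tolog(1e20)
c = a * b        # the forward operation
a_back = c / b   # the inverse operation recovers `a` bit for bit
```

The rounding happens only once, in `tolog`. In contrast, the floating point round trip `(1e-20 + 1e20) - 1e20` loses the small operand completely.
"""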

# ╔═╡ 4fd20ed2-d7a2-11ea-206e-13799234913f

md"**Less allocation, better speed**"

# ╔═╡ 692dfb44-d7a1-11ea-00da-af6550bc0622

@benchmark i_power_cache(Fixed43(0.0), Fixed43(0.99), 100)

# ╔═╡ 7e4ee09c-d7a1-11ea-0e56-c1921012bc30

@benchmark i_power_lognumber(Fixed43(0.0), Fixed43(0.99), 100)

# ╔═╡ 4c209bbe-d7b1-11ea-0628-33eb8d664f5b

md"""##### Example 4: The Bessel function of the first kind computed with a Taylor expansion

```math

J_\nu(z) = \sum\limits_{k=0}^{\infty} \frac{(z/2)^\nu}{\Gamma(k+1)\Gamma(k+\nu+1)} (-z^2/4)^{k}

```
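
Consecutive terms of this series differ by the factor `-(z/2)^2 / (k (k+ν))`, which is the update the reversible implementation applies to `s` in each iteration. As a cross-check, here is a plain (irreversible) Julia sketch of the same truncated series for integer order `ν ≥ 0`. The name `besselj_taylor` and the `tol` keyword are assumptions made for this example.

```julia
# Plain reference for the truncated Taylor series, integer order ν ≥ 0.
function besselj_taylor(ν::Int, z::Float64; tol=exp(-25))
    if z == 0
        return ν == 0 ? 1.0 : 0.0
    end
    s = (z / 2)^ν / factorial(ν)          # k = 0 term
    out = s
    k = 0
    while abs(s) > tol                    # stop once terms drop below e^-25
        k += 1
        s *= (z / 2)^2 / (k * (k + ν))    # magnitude of the k-th term
        out += iseven(k) ? s : -s         # terms alternate in sign
    end
    return out
end

println(besselj_taylor(2, 1.0))   # approximately 0.1149
```

The stopping threshold mirrors the `s.log > -25` condition in the reversible version, so both truncate the series at roughly the same term.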

"""

# ╔═╡ fd44a3d4-d7a4-11ea-24ea-09456ff2c53d

@i function ibesselj(y!::T, ν, z::T; atol=1e-8) where T
    if z == 0
        if ν == 0
            y! += 1
        end
    else
        @routine @invcheckoff begin
            k ← 0
            @ones ULogarithmic{T} lz halfz halfz_power_2 s
            @zeros T out_anc
            lz *= convert(z)
            halfz *= lz / 2
            halfz_power_2 *= halfz ^ 2
            # s *= (z/2)^ν/ factorial(ν)
            s *= halfz ^ ν
            for i=1:ν
                s /= i
            end
            out_anc += convert(s)
            while (s.log > -25, k!=0) # up to precision e^-25
                k += 1
                # s *= 1 / k / (k+ν) * (z/2)^2
                @routine begin
                    @zeros Int kkv kv
                    kv += k+ ν
                    kkv += kv*k
                end
                s *= halfz_power_2 / kkv
                if k%2 == 0
                    out_anc += convert(s)
                else
                    out_anc -= convert(s)
                end
                ~@routine
            end
        end
        y! += out_anc
        ~@routine
    end
end

# ╔═╡ 84272664-d7b7-11ea-2e37-dffd2023d8d6

md"z = $(@bind z Slider(0:0.01:10; default=1.0))"

# ╔═╡ 900e2ea4-d7b8-11ea-3511-6f12d95e638a

begin
    y = ibesselj(Fixed43(0.0), 2, Fixed43(z))[1]
    gz = NiLang.AD.gradient(Val(1), ibesselj, (Fixed43(0.0), 2, Fixed43(z)))[3]
end;

# ╔═╡ d76be888-d7b4-11ea-2989-2174682ead76

let
md"""
| ``z`` | ``y`` | ``\partial y/\partial z`` |
| ---- | ----- | -------- |
| $(@sprintf "%.5f" z) | $(@sprintf "%.5f" y) | $(@sprintf "%.5f" gz) |
"""
end

# ╔═╡ 85c9edcc-d789-11ea-14c8-71697cd6a047

md"""

"""

#

# ╔═╡ Cell order:

# ╟─1ef174fa-16f0-11eb-328a-afc201effd2f

# ╟─627ea2fb-6530-4ea0-98ee-66be3db54411

# ╟─94b2b962-e02a-11ea-09a5-81b3226891ed

# ╟─a5ee60c8-e02a-11ea-3512-7f481e499f23

# ╟─a11c4b60-d77d-11ea-1afe-1f2ab9621f42

# ╟─e54a1be6-d485-11ea-0262-034c56e0fda8

# ╟─55cfdab8-d792-11ea-271f-e7383e19997c

# ╟─d1628f08-ddfb-11ea-241a-c7e6c1a22212

# ╠═9e509f80-d485-11ea-0044-c5b7e750aacb

# ╟─278ac6b6-e02c-11ea-1354-cd7ecd1099be

# ╠═a28d38be-d486-11ea-2c40-a377b74a05c1

# ╠═e93f0bf6-d487-11ea-1baa-21d51ddb4a20

# ╠═fc932606-d487-11ea-303e-75ca8b7a02f6

# ╟─e3d2b23a-ddfb-11ea-0f5e-e72ed299bb45

# ╟─a961e048-ddf2-11ea-0262-6d19eb82b36b

# ╟─2d22f504-ddf1-11ea-28ec-5de6f4ee79bb

# ╟─7d08ac24-e143-11ea-2085-539fd9e35889

# ╠═9fcdd77c-e0df-11ea-09e6-49a2861137e5

# ╟─0a1a8594-ddfc-11ea-119a-1997c86cd91b

# ╠═0b4edb1a-ddf0-11ea-220c-91f2df7452e7

# ╟─f875ecd6-ddef-11ea-22a1-619809d15b37

# ╠═e7557bee-e0cc-11ea-1788-411e759b4766

# ╟─cd7b2a2e-ddf5-11ea-04c4-f7583bbb5a53

# ╠═bc98a824-ddf5-11ea-1a6a-1f795452d3d0

# ╠═05f8b91c-e0cd-11ea-09e3-f3c5c0e07e63

# ╟─ac302844-e07b-11ea-35dd-e3e06054401b

# ╠═b722e098-e07b-11ea-3483-01360fb6954e

# ╟─bf8b722c-dfa4-11ea-196a-719802bc23c5

# ╟─330edc28-dfac-11ea-35a5-3144c4afbfcf

# ╠═0a679e04-dfa7-11ea-0288-a1fa490c4387

# ╠═cc32cae8-dfab-11ea-0d0b-c70ea8de720a

# ╟─b4240c16-dfac-11ea-3a40-33c54436e3a3

# ╠═ade52358-dfac-11ea-2dd3-d3a691e7a8a2

# ╠═d86e2e5e-dfab-11ea-0053-6d52f1164bc5

# ╟─7951b9ec-e030-11ea-32ee-b1de49378186

# ╟─6bc97f5e-dfad-11ea-0c43-e30b6620e6e8

# ╠═80d24e9e-dfad-11ea-1dae-49568d534f10

# ╠═a8092b18-dfad-11ea-0989-474f37d05f73

# ╟─43f0c2fc-e030-11ea-25d9-b323e6496a35

# ╟─b4ad5830-dfad-11ea-0057-055dda8cc9be

# ╠═cf576d38-dfad-11ea-2682-7bd540db44a5

# ╠═35fff53c-dfae-11ea-3602-918a17d5a5fa

# ╟─9b9b5328-e030-11ea-1d00-f3341572734a

# ╟─f3b87892-e080-11ea-353d-8d81c52cf9ac

# ╠═b27a3974-e030-11ea-0bcd-7f7035d55165

# ╠═e5d47096-e030-11ea-1e87-5b9b1dbecfe0

# ╟─9c62289a-dfae-11ea-0fe0-b1cb80a87704

# ╟─88838bce-dfaf-11ea-1a72-7d15629cfcb0

# ╠═a593f970-dfae-11ea-2d79-876030850dee

# ╠═f448548e-dfaf-11ea-05c0-d5d177683445

# ╟─65cd13ca-e031-11ea-3fc6-977792eb5f8c

# ╟─53c02100-e08f-11ea-1f5d-8b2311b095d2

# ╟─75751b24-e0b8-11ea-2b37-9d138121345c

# ╠═76b84de4-e031-11ea-0bcf-39b86a6b4552

# ╠═b1984d24-e031-11ea-3b13-3bd0119a2bcb

# ╟─7f163d82-e0b8-11ea-2fe7-332bb4dee586

# ╠═ddc6329e-e031-11ea-0e6e-e7332fa26e22

# ╠═f3d5e1b0-e031-11ea-1a90-7bed88e28bad

# ╟─ab67419a-dfae-11ea-27ba-09321303ad62

# ╟─d5c2efbc-d779-11ea-11ad-1f5873b95628

# ╟─30af9642-e084-11ea-1f92-b52abfddcf06

# ╟─db1fab1c-e084-11ea-0bf0-b1fbe9e74b3f

# ╟─e1370f80-e0bc-11ea-2a90-d50cc762cbcb

# ╠═3098411c-e0bc-11ea-2754-eb0afbd663de

# ╟─3d0150ee-e0bd-11ea-0a5a-339465b496dc

# ╟─8016ff94-e0bc-11ea-3b9e-4f0676587edf

# ╟─99108ace-e0bc-11ea-2744-d1b18db50ae1

# ╟─b2337f26-e0bb-11ea-3da0-9507c35101ae

# ╟─48db515c-e084-11ea-2eec-018b8545fa34

# ╟─f531f556-e083-11ea-2f7e-77e110d6c53a

# ╟─62643fbc-e084-11ea-1b1f-39b87ff32b9e

# ╟─0bf54b08-e084-11ea-3d11-7be65f3ec022

# ╟─15f7c60a-e08e-11ea-31ea-a5cd055644db

# ╟─55a3a260-d48e-11ea-06e2-1b7bd7bba6f5

# ╟─38014ad0-e08e-11ea-1905-198038ab7e5f

# ╟─2e6fe4da-d79d-11ea-1e90-f5215190395c

# ╠═6560c28c-e08e-11ea-1094-d333b88071ce