Showing preview only (900K chars total). Download the full file or copy to clipboard to get everything.

Repository: JoinQuant/jqfactor_analyzer

Branch: master

Commit: 69e677dc0dd9

Files: 33

Total size: 23.5 MB

Directory structure:

gitextract_zj34mbsw/

├── .gitignore

├── LICENSE

├── MANiFEST.in

├── README.md

├── docs/

│ └── API文档.md

├── jqfactor_analyzer/

│ ├── __init__.py

│ ├── analyze.py

│ ├── attribution.py

│ ├── compat.py

│ ├── config.json

│ ├── data.py

│ ├── exceptions.py

│ ├── factor_cache.py

│ ├── performance.py

│ ├── plot_utils.py

│ ├── plotting.py

│ ├── prepare.py

│ ├── preprocess.py

│ ├── sample.py

│ ├── sample_data/

│ │ ├── VOL5.csv

│ │ ├── index_weight_info.csv

│ │ └── weight_info.csv

│ ├── utils.py

│ ├── version.py

│ └── when.py

├── requirements.txt

├── setup.cfg

├── setup.py

└── tests/

├── __init__.py

├── test_attribution.py

├── test_data.py

├── test_performance.py

└── test_prepare.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# pyenv

.python-version

# celery beat schedule file

celerybeat-schedule

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2019 JoinQuant

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: MANiFEST.in

================================================

include LICENSE

include *.txt

include jqfactor_analyzer/sample_data/*.csv

include jqfactor_analyzer/config.json

================================================

FILE: README.md

================================================

# jqfactor_analyzer

jqfactor_analyzer 是提供给用户配合 jqdatasdk 进行归因分析,因子数据缓存及单因子分析的开源工具。

### 安装

```pip install jqfactor_analyzer```

### 升级

```pip install -U jqfactor_analyzer```

### 具体使用方法

**详细用法请查看[API文档](https://github.com/JoinQuant/jqfactor_analyzer/blob/master/docs/API%E6%96%87%E6%A1%A3.md)**

## 归因分析使用示例

### 风格模型的基本概念

归因分析旨在通过对历史投资组合的收益进行分解,明确指出各个收益来源对组合的业绩贡献,能够更好地理解组合的表现是否符合预期,以及是否存在某一风格/行业暴露过高的风险。

多因子风险模型的基础理论认为,股票的收益是由一些共同的因子 (风格,行业和国家因子) 来驱动的,不能被这些因子解释的部分被称为股票的 “特异收益”, 而每只股票的特异收益之间是互不相关的。

(1) 风格因子,即影响股票收益的风格因素,如市值、成长、杠杆等。

(2) 行业因子,不同行业在不同时期可能优于或者差于其他行业,同一行业内的股票往往涨跌具有较强的关联性。

(3) 国家因子,表示股票市场整体涨落对投资组合的收益影响,对于任意投资组合,若他们投资的都是同一市场则其承担的国家因子和收益是相同的。

(4) 特异收益,即无法被多因子风险模型解释的部分,也就是影响个股收益的特殊因素,如公司经营能力、决策等。

根据上述多因子风险模型,股票的收益可以表达为 :

$$

R_i = \underbrace{1 \cdot f_c} _{\text{国家因子收益}} + \underbrace{\sum _{j=1}^{S} f _j^{style} \cdot X _{ij}^{style}} _{\text{风格因子收益}} + \underbrace{\sum _{j=1}^{I} f _j^{industry} \cdot X _{ij}^{industry}} _{\text{行业因子收益}} + \underbrace{u _i} _{\text{个股特异收益}}

$$

此公式可简化为:

$$

R_i = \underbrace{\sum_{j=1}^{K} f_j \cdot X_{ij}}_{\text{第 j 个因子 (含国家,风格和行业,总数为 K) 获得的收益}} + \underbrace{u_i} _{\text{个股特异收益}}

$$

其中:

- $R_i$ 是第 $i$ 只股票的收益

- $f_c$ 是国家因子的回报率

- $S$ 和 $I$ 分别是风格和行业因子的数量

- $f_j^{style}$ 是第 $j$ 个风格因子的回报率, $f_j^{industry}$ 是第 $j$ 个行业因子的回报率

- $X_{ij}^{style}$ 是第 $i$ 只股票在第 $j$ 个风格因子上的暴露, $X_{ij}^{industry}$ 是第 $i$ 只股票在第 $j$ 个行业因子上的暴露,因子暴露又称因子载荷/因子值 (通过<span style="color:red;">`jqdatasdk.get_factor_values`</span>可获取风格因子暴露及行业暴露哑变量)

- $u_i$ 是残差项,表示无法通过模型解释的部分 (即特异收益率)

根据上述公式,对市场上的股票 (一般采用中证全指作为股票池) 使用对数市值加权在横截面上进行加权最小二乘回归,可得到 :

- $f_j$ : 风格/行业因子和国家因子的回报率 , 通过 <span style="color:red;">`jqdatasdk.get_factor_style_returns`</span> 获取

- $u_i$ : 回归残差 (无法被模型解释的部分,即特异收益率), 通过<span style="color:red;"> `jqdatasdk.get_factor_specific_returns` </span>获取

### 使用上述已提供的数据进行归因分析 :

现已知你的投资组合 P 由权重 $w_n$ 构成,则投资组合第 j 个因子的暴露可表示为 :

$$

X^P_j = \sum_{i=1}^{n} w_i X_{ij}

$$

- $X^P_j$ 可通过 <span style="color:red;">`jqfactor_analyzer.AttributionAnalysis().exposure_portfolio` </span>获取

投资组合在第 j 个因子上获取到的收益率可以表示为 :

$$

R^P_j = X^P_j \cdot f_j

$$

- $R^P_j$ 可通过 <span style="color:red;">`jqfactor_analyzer.AttributionAnalysis().attr_daily_return` </span>获取

所以投资组合的收益率也可以被表示为 :

$$

R_P = \sum_{j=1}^{k} R^p_j \cdot f_j + \sum_{i-1}^{n} w_i u_i

$$

即理论上 $\sum_n w_n u_n$ 就是投资组合的特异收益 (alpha) $R_s$ (您也可以直接获取个股特异收益率与权重相乘直接进行计算),但现实中受到仓位,调仓时间,费用等其他因素的影响,此公式并非完全成立的,AttributionAnalysis 中是使用做差的方式来计算特异收益率,即:

$$

R_s = R_P - \sum_{j=1}^{k} R^p_j \cdot f_j

$$

### 以指数作为基准的归因分析

- jqdatasdk 已经根据指数权重计算好了指数的风格暴露 $X^B$,可通过<span style="color:red;">`jqdatasdk.get_index_style_exposure`</span> 获取

投资组合 P 相对于指数的第 j 个因子的暴露可表示为 :

$$

X^{P2B}_j = X^P_j - X^B_j

$$

- $X^{P2B}_j$ 可通过<span style="color:red;"> `jqfactor_analyzer.AttributionAnalysis().get_exposure2bench(index_symbol)` </span>获取

投资组合在第 j 个因子上相对于指数获取到的收益率可以表示为 :

$$

R^{P2B}_j = R^P_j - R^B_j = X^P_j \cdot f_j - X^B_j \cdot f_j = f_j \cdot X^{P2B}_j

$$

在 AttributionAnalysis 中,风格及行业因子部分,将指数的仓位和持仓的仓位进行了对齐;同时考虑了现金产生的收益 (国家因子在仓位对齐后不会产生暴露收益,现金收益为 0,现金相对于指数的收益即为:(-1) × 剩余仓位 × 指数收益)

所以投资组合相对于指数的收益可以被表示为:

$$

R_{P2B} = \sum_{j=1}^{k} R^{P2B}_j + R^{P2B}_s + 现金相对于指数的收益

$$

- $R_{P2B}$ 等可通过 <span style="color:red;">`jqfactor_analyzer.AttributionAnalysis().get_attr_daily_returns2bench(index_symbol)` </span>获取

### 累积收益的处理

上述 `attr_daily_return` 和 `get_attr_daily_returns2bench(index_symbol)` 获取到的均为单日收益率,在计算累积收益时需要考虑复利影响。

$$

N_t = \prod_{t=1}^{n} (R^p_t+1)

$$

$$

Rcum^p_{jt} = N_{t-1} \cdot R^P_{jt}

$$

其中 :

- $N_t$ 为投资组合在第 t 天盘后的净值

- $R^p_t$ 为投资组合在第 t 天的日度收益率

- $Rcum^p_{jt}$ 为投资组合 p 的第 j 个因子在 t 日的累积收益

- $R^P_{jt}$ 为投资组合 p 的第 j 个因子在 t 日的日收益率

- $N_t, Rcum^p_{jt}$ 均可通过<span style="color:red;"> `jqfactor_analyzer.AttributionAnalysis().attr_returns`</span> 获取

- 相对于基准的累积收益算法类似, 可通过 <span style="color:red;">`jqfactor_analyzer.AttributionAnalysis().get_attr_returns2bench` </span>获取

### 导入模块并登陆 jqdatasdk

```python

import jqdatasdk

import jqfactor_analyzer as ja

# 获取 jqdatasdk 授权,输入用户名、密码,申请地址:https://www.joinquant.com/default/index/sdk

# 聚宽官网,使用方法参见:https://www.joinquant.com/help/api/doc?name=JQDatadoc

jqdatasdk.auth("账号", "密码")

```

### 处理权重信息

此处使用的是 jqfactor_analyzer 提供的示例文件

数据格式要求 :

- 权重数据, 一个 dataframe, index 为日期, columns 为标的代码 (可使用 jqdatasdk.normalize_code 转为支持的格式), values 为权重, 每日的权重和应该小于 1

- 组合的日度收益数据, 一个 series, index 为日期, values 为日收益率

```python

import os

import pandas as pd

weight_path = os.path.join(os.path.dirname(ja.__file__), 'sample_data', 'weight_info.csv')

weight_infos = pd.read_csv(weight_path, index_col=0)

daily_return = weight_infos.pop("return")

```

```python

weight_infos.head(5)

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>000006.XSHE</th>

<th>000008.XSHE</th>

<th>000009.XSHE</th>

<th>000012.XSHE</th>

<th>000021.XSHE</th>

<th>000025.XSHE</th>

<th>000027.XSHE</th>

<th>000028.XSHE</th>

<th>000031.XSHE</th>

<th>000032.XSHE</th>

<th>...</th>

<th>603883.XSHG</th>

<th>603885.XSHG</th>

<th>603888.XSHG</th>

<th>603893.XSHG</th>

<th>603927.XSHG</th>

<th>603939.XSHG</th>

<th>603979.XSHG</th>

<th>603983.XSHG</th>

<th>605117.XSHG</th>

<th>605358.XSHG</th>

</tr>

</thead>

<tbody>

<tr>

<th>2020-01-02</th>

<td>0.000873</td>

<td>0.001244</td>

<td>0.002934</td>

<td>0.001219</td>

<td>0.001614</td>

<td>0.000433</td>

<td>0.001274</td>

<td>0.001181</td>

<td>0.001471</td>

<td>NaN</td>

<td>...</td>

<td>0.001294</td>

<td>0.001536</td>

<td>0.000781</td>

<td>NaN</td>

<td>NaN</td>

<td>0.001896</td>

<td>NaN</td>

<td>0.000482</td>

<td>NaN</td>

<td>NaN</td>

</tr>

<tr>

<th>2020-01-03</th>

<td>0.000897</td>

<td>0.001247</td>

<td>0.002679</td>

<td>0.001203</td>

<td>0.001708</td>

<td>0.000432</td>

<td>0.001293</td>

<td>0.001195</td>

<td>0.001463</td>

<td>NaN</td>

<td>...</td>

<td>0.001298</td>

<td>0.001505</td>

<td>0.000824</td>

<td>NaN</td>

<td>NaN</td>

<td>0.001912</td>

<td>NaN</td>

<td>0.000466</td>

<td>NaN</td>

<td>NaN</td>

</tr>

<tr>

<th>2020-01-06</th>

<td>0.000879</td>

<td>0.001216</td>

<td>0.002926</td>

<td>0.001225</td>

<td>0.001613</td>

<td>0.000434</td>

<td>0.001278</td>

<td>0.001228</td>

<td>0.001429</td>

<td>NaN</td>

<td>...</td>

<td>0.001238</td>

<td>0.001534</td>

<td>0.000767</td>

<td>NaN</td>

<td>NaN</td>

<td>0.001962</td>

<td>NaN</td>

<td>0.000488</td>

<td>NaN</td>

<td>NaN</td>

</tr>

<tr>

<th>2020-01-07</th>

<td>0.000883</td>

<td>0.001241</td>

<td>0.002591</td>

<td>0.001220</td>

<td>0.001536</td>

<td>0.000439</td>

<td>0.001294</td>

<td>0.001195</td>

<td>0.001488</td>

<td>NaN</td>

<td>...</td>

<td>0.001267</td>

<td>0.001575</td>

<td>0.000764</td>

<td>NaN</td>

<td>NaN</td>

<td>0.001959</td>

<td>NaN</td>

<td>0.000468</td>

<td>NaN</td>

<td>NaN</td>

</tr>

<tr>

<th>2020-01-08</th>

<td>0.000877</td>

<td>0.001231</td>

<td>0.002758</td>

<td>0.001205</td>

<td>0.001528</td>

<td>0.000429</td>

<td>0.001270</td>

<td>0.001208</td>

<td>0.001448</td>

<td>NaN</td>

<td>...</td>

<td>0.001277</td>

<td>0.001554</td>

<td>0.000749</td>

<td>NaN</td>

<td>NaN</td>

<td>0.001987</td>

<td>NaN</td>

<td>0.000474</td>

<td>NaN</td>

<td>NaN</td>

</tr>

</tbody>

</table>

<p>5 rows × 818 columns</p>

</div>

```python

weight_infos.sum(axis=1).head(5)

```

2020-01-02 0.752196

2020-01-03 0.750206

2020-01-06 0.752375

2020-01-07 0.752054

2020-01-08 0.748039

dtype: float64

### 进行归因分析

**具体用法请查看[API文档](https://github.com/JoinQuant/jqfactor_analyzer/blob/master/docs/API%E6%96%87%E6%A1%A3.md), 此处仅作示例**

```python

An = ja.AttributionAnalysis(weight_infos, daily_return, style_type='style', industry='sw_l1', use_cn=True, show_data_progress=True)

```

check/save factor cache : 100%|██████████| 54/54 [00:02<00:00, 25.75it/s]

calc_style_exposure : 100%|██████████| 1087/1087 [00:27<00:00, 39.52it/s]

calc_industry_exposure : 100%|██████████| 1087/1087 [00:19<00:00, 56.53it/s]

```python

An.exposure_portfolio.head(5) #查看暴露

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>size</th>

<th>beta</th>

<th>momentum</th>

<th>residual_volatility</th>

<th>non_linear_size</th>

<th>book_to_price_ratio</th>

<th>liquidity</th>

<th>earnings_yield</th>

<th>growth</th>

<th>leverage</th>

<th>...</th>

<th>801050</th>

<th>801040</th>

<th>801780</th>

<th>801970</th>

<th>801120</th>

<th>801790</th>

<th>801760</th>

<th>801890</th>

<th>801960</th>

<th>country</th>

</tr>

</thead>

<tbody>

<tr>

<th>2020-01-02</th>

<td>-0.487816</td>

<td>0.468947</td>

<td>-0.048262</td>

<td>0.104597</td>

<td>0.976877</td>

<td>-0.112042</td>

<td>0.278131</td>

<td>-0.311944</td>

<td>-0.000541</td>

<td>-0.356787</td>

<td>...</td>

<td>0.030234</td>

<td>0.023728</td>

<td>0.010499</td>

<td>NaN</td>

<td>0.017049</td>

<td>0.032292</td>

<td>0.042405</td>

<td>0.027871</td>

<td>NaN</td>

<td>0.752196</td>

</tr>

<tr>

<th>2020-01-03</th>

<td>-0.485128</td>

<td>0.461138</td>

<td>-0.044422</td>

<td>0.104270</td>

<td>0.970710</td>

<td>-0.110196</td>

<td>0.271739</td>

<td>-0.314469</td>

<td>-0.002360</td>

<td>-0.354623</td>

<td>...</td>

<td>0.030574</td>

<td>0.023712</td>

<td>0.010610</td>

<td>NaN</td>

<td>0.017071</td>

<td>0.033261</td>

<td>0.041491</td>

<td>0.027631</td>

<td>NaN</td>

<td>0.750206</td>

</tr>

<tr>

<th>2020-01-06</th>

<td>-0.477658</td>

<td>0.464642</td>

<td>-0.034905</td>

<td>0.116226</td>

<td>0.958563</td>

<td>-0.118501</td>

<td>0.277993</td>

<td>-0.320429</td>

<td>-0.001766</td>

<td>-0.352186</td>

<td>...</td>

<td>0.030807</td>

<td>0.023681</td>

<td>0.010619</td>

<td>NaN</td>

<td>0.016953</td>

<td>0.033203</td>

<td>0.042406</td>

<td>0.027906</td>

<td>NaN</td>

<td>0.752375</td>

</tr>

<tr>

<th>2020-01-07</th>

<td>-0.474913</td>

<td>0.456438</td>

<td>-0.030596</td>

<td>0.118867</td>

<td>0.953152</td>

<td>-0.117436</td>

<td>0.274219</td>

<td>-0.315071</td>

<td>-0.000874</td>

<td>-0.350100</td>

<td>...</td>

<td>0.030140</td>

<td>0.024215</td>

<td>0.010716</td>

<td>NaN</td>

<td>0.017240</td>

<td>0.033022</td>

<td>0.042867</td>

<td>0.027853</td>

<td>NaN</td>

<td>0.752054</td>

</tr>

<tr>

<th>2020-01-08</th>

<td>-0.474413</td>

<td>0.452745</td>

<td>-0.026417</td>

<td>0.123923</td>

<td>0.951369</td>

<td>-0.115294</td>

<td>0.271193</td>

<td>-0.305295</td>

<td>-0.000920</td>

<td>-0.345431</td>

<td>...</td>

<td>0.030176</td>

<td>0.023694</td>

<td>0.010671</td>

<td>NaN</td>

<td>0.017303</td>

<td>0.032777</td>

<td>0.040977</td>

<td>0.027820</td>

<td>NaN</td>

<td>0.748039</td>

</tr>

</tbody>

</table>

<p>5 rows × 43 columns</p>

</div>

```python

An.attr_daily_returns.head(5) #查看日度收益拆解

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>size</th>

<th>beta</th>

<th>momentum</th>

<th>residual_volatility</th>

<th>non_linear_size</th>

<th>book_to_price_ratio</th>

<th>liquidity</th>

<th>earnings_yield</th>

<th>growth</th>

<th>leverage</th>

<th>...</th>

<th>801970</th>

<th>801120</th>

<th>801790</th>

<th>801760</th>

<th>801890</th>

<th>801960</th>

<th>country</th>

<th>common_return</th>

<th>specific_return</th>

<th>total_return</th>

</tr>

</thead>

<tbody>

<tr>

<th>2020-01-02</th>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>...</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>0.000000</td>

<td>NaN</td>

<td>NaN</td>

</tr>

<tr>

<th>2020-01-03</th>

<td>0.000241</td>

<td>-0.000144</td>

<td>0.000130</td>

<td>0.000090</td>

<td>0.000955</td>

<td>-0.000039</td>

<td>-0.000045</td>

<td>0.000174</td>

<td>7.907650e-08</td>

<td>0.000148</td>

<td>...</td>

<td>NaN</td>

<td>-0.000168</td>

<td>-0.000019</td>

<td>0.000500</td>

<td>-0.000050</td>

<td>NaN</td>

<td>0.000860</td>

<td>0.003030</td>

<td>-0.001083</td>

<td>0.001948</td>

</tr>

<tr>

<th>2020-01-06</th>

<td>-0.000014</td>

<td>0.000151</td>

<td>0.000119</td>

<td>0.000199</td>

<td>0.002035</td>

<td>-0.000017</td>

<td>0.000025</td>

<td>0.000573</td>

<td>-1.457480e-07</td>

<td>0.000160</td>

<td>...</td>

<td>NaN</td>

<td>-0.000178</td>

<td>-0.000145</td>

<td>0.000286</td>

<td>0.000015</td>

<td>NaN</td>

<td>0.000949</td>

<td>0.004990</td>

<td>0.002358</td>

<td>0.007348</td>

</tr>

<tr>

<th>2020-01-07</th>

<td>0.000176</td>

<td>0.001208</td>

<td>0.000002</td>

<td>0.000236</td>

<td>0.001533</td>

<td>0.000012</td>

<td>-0.000213</td>

<td>-0.000627</td>

<td>8.726552e-07</td>

<td>0.000250</td>

<td>...</td>

<td>NaN</td>

<td>0.000077</td>

<td>-0.000003</td>

<td>0.000834</td>

<td>-0.000008</td>

<td>NaN</td>

<td>0.006875</td>

<td>0.009541</td>

<td>-0.000621</td>

<td>0.008920</td>

</tr>

<tr>

<th>2020-01-08</th>

<td>-0.000190</td>

<td>-0.001919</td>

<td>-0.000007</td>

<td>0.000019</td>

<td>0.000199</td>

<td>0.000027</td>

<td>-0.000134</td>

<td>0.000400</td>

<td>-8.393073e-09</td>

<td>-0.000140</td>

<td>...</td>

<td>NaN</td>

<td>0.000038</td>

<td>-0.000384</td>

<td>-0.000414</td>

<td>0.000104</td>

<td>NaN</td>

<td>-0.009655</td>

<td>-0.010019</td>

<td>-0.000516</td>

<td>-0.010535</td>

</tr>

</tbody>

</table>

<p>5 rows × 46 columns</p>

</div>

```python

An.attr_returns.head(5) #查看累积收益

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>size</th>

<th>beta</th>

<th>momentum</th>

<th>residual_volatility</th>

<th>non_linear_size</th>

<th>book_to_price_ratio</th>

<th>liquidity</th>

<th>earnings_yield</th>

<th>growth</th>

<th>leverage</th>

<th>...</th>

<th>801970</th>

<th>801120</th>

<th>801790</th>

<th>801760</th>

<th>801890</th>

<th>801960</th>

<th>country</th>

<th>common_return</th>

<th>specific_return</th>

<th>total_return</th>

</tr>

</thead>

<tbody>

<tr>

<th>2020-01-02</th>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>...</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

</tr>

<tr>

<th>2020-01-03</th>

<td>0.000241</td>

<td>-0.000144</td>

<td>0.000130</td>

<td>0.000090</td>

<td>0.000955</td>

<td>-0.000039</td>

<td>-0.000045</td>

<td>0.000174</td>

<td>7.907650e-08</td>

<td>0.000148</td>

<td>...</td>

<td>NaN</td>

<td>-0.000168</td>

<td>-0.000019</td>

<td>0.000500</td>

<td>-0.000050</td>

<td>NaN</td>

<td>0.000860</td>

<td>0.003030</td>

<td>-0.001083</td>

<td>0.001948</td>

</tr>

<tr>

<th>2020-01-06</th>

<td>0.000227</td>

<td>0.000007</td>

<td>0.000249</td>

<td>0.000290</td>

<td>0.002994</td>

<td>-0.000056</td>

<td>-0.000020</td>

<td>0.000748</td>

<td>-6.695534e-08</td>

<td>0.000308</td>

<td>...</td>

<td>NaN</td>

<td>-0.000346</td>

<td>-0.000164</td>

<td>0.000787</td>

<td>-0.000035</td>

<td>NaN</td>

<td>0.001812</td>

<td>0.008030</td>

<td>0.001280</td>

<td>0.009310</td>

</tr>

<tr>

<th>2020-01-07</th>

<td>0.000405</td>

<td>0.001226</td>

<td>0.000252</td>

<td>0.000528</td>

<td>0.004541</td>

<td>-0.000044</td>

<td>-0.000234</td>

<td>0.000115</td>

<td>8.138242e-07</td>

<td>0.000560</td>

<td>...</td>

<td>NaN</td>

<td>-0.000268</td>

<td>-0.000168</td>

<td>0.001629</td>

<td>-0.000043</td>

<td>NaN</td>

<td>0.008750</td>

<td>0.017660</td>

<td>0.000653</td>

<td>0.018313</td>

</tr>

<tr>

<th>2020-01-08</th>

<td>0.000212</td>

<td>-0.000728</td>

<td>0.000245</td>

<td>0.000547</td>

<td>0.004744</td>

<td>-0.000016</td>

<td>-0.000371</td>

<td>0.000522</td>

<td>8.052775e-07</td>

<td>0.000418</td>

<td>...</td>

<td>NaN</td>

<td>-0.000229</td>

<td>-0.000559</td>

<td>0.001207</td>

<td>0.000064</td>

<td>NaN</td>

<td>-0.001081</td>

<td>0.007457</td>

<td>0.000128</td>

<td>0.007585</td>

</tr>

</tbody>

</table>

<p>5 rows × 46 columns</p>

</div>

```python

An.get_attr_returns2bench('000905.XSHG').head(5) #查看相对指数的累积收益

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>size</th>

<th>beta</th>

<th>momentum</th>

<th>residual_volatility</th>

<th>non_linear_size</th>

<th>book_to_price_ratio</th>

<th>liquidity</th>

<th>earnings_yield</th>

<th>growth</th>

<th>leverage</th>

<th>...</th>

<th>801970</th>

<th>801120</th>

<th>801790</th>

<th>801760</th>

<th>801890</th>

<th>801960</th>

<th>common_return</th>

<th>cash</th>

<th>specific_return</th>

<th>total_return</th>

</tr>

</thead>

<tbody>

<tr>

<th>2020-01-02</th>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>...</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>NaN</td>

<td>0.000000</td>

<td>0.000000</td>

<td>0.000000</td>

<td>0.000000</td>

</tr>

<tr>

<th>2020-01-03</th>

<td>2.247752e-08</td>

<td>5.274612e-07</td>

<td>-1.010579e-06</td>

<td>-1.780933e-07</td>

<td>-5.018849e-09</td>

<td>-1.576053e-07</td>

<td>1.815168e-07</td>

<td>3.067299e-07</td>

<td>-8.676436e-08</td>

<td>3.843589e-07</td>

<td>...</td>

<td>NaN</td>

<td>-8.367921e-07</td>

<td>2.211999e-07</td>

<td>2.512287e-07</td>

<td>-2.031997e-07</td>

<td>NaN</td>

<td>-0.000006</td>

<td>-0.000670</td>

<td>-0.000079</td>

<td>-0.000755</td>

</tr>

<tr>

<th>2020-01-06</th>

<td>3.139000e-09</td>

<td>-2.167887e-06</td>

<td>1.005890e-06</td>

<td>-9.837778e-06</td>

<td>1.803920e-06</td>

<td>3.592758e-07</td>

<td>-3.082887e-07</td>

<td>-4.489268e-06</td>

<td>-1.570012e-07</td>

<td>-7.565016e-07</td>

<td>...</td>

<td>NaN</td>

<td>-4.620739e-06</td>

<td>-2.607788e-06</td>

<td>-8.734669e-06</td>

<td>-1.166518e-07</td>

<td>NaN</td>

<td>-0.000063</td>

<td>-0.003198</td>

<td>-0.000234</td>

<td>-0.003494</td>

</tr>

<tr>

<th>2020-01-07</th>

<td>-5.129552e-08</td>

<td>-2.485408e-05</td>

<td>9.140735e-07</td>

<td>-2.227106e-05</td>

<td>1.453669e-06</td>

<td>-5.066033e-08</td>

<td>4.500972e-06</td>

<td>4.348111e-06</td>

<td>1.794315e-07</td>

<td>-3.707358e-06</td>

<td>...</td>

<td>NaN</td>

<td>-1.876927e-06</td>

<td>-2.703177e-06</td>

<td>-3.476170e-05</td>

<td>-2.429496e-07</td>

<td>NaN</td>

<td>-0.000095</td>

<td>-0.006224</td>

<td>-0.000283</td>

<td>-0.006603</td>

</tr>

<tr>

<th>2020-01-08</th>

<td>-1.236180e-07</td>

<td>4.020758e-05</td>

<td>1.082783e-06</td>

<td>-2.386474e-05</td>

<td>1.502709e-06</td>

<td>-1.806807e-06</td>

<td>1.001751e-05</td>

<td>-7.241071e-06</td>

<td>1.893800e-07</td>

<td>-1.425501e-06</td>

<td>...</td>

<td>NaN</td>

<td>2.019730e-07</td>

<td>-1.379156e-05</td>

<td>-1.232299e-05</td>

<td>1.799073e-06</td>

<td>NaN</td>

<td>-0.000087</td>

<td>-0.002647</td>

<td>-0.000427</td>

<td>-0.003160</td>

</tr>

</tbody>

</table>

<p>5 rows × 46 columns</p>

</div>

```python

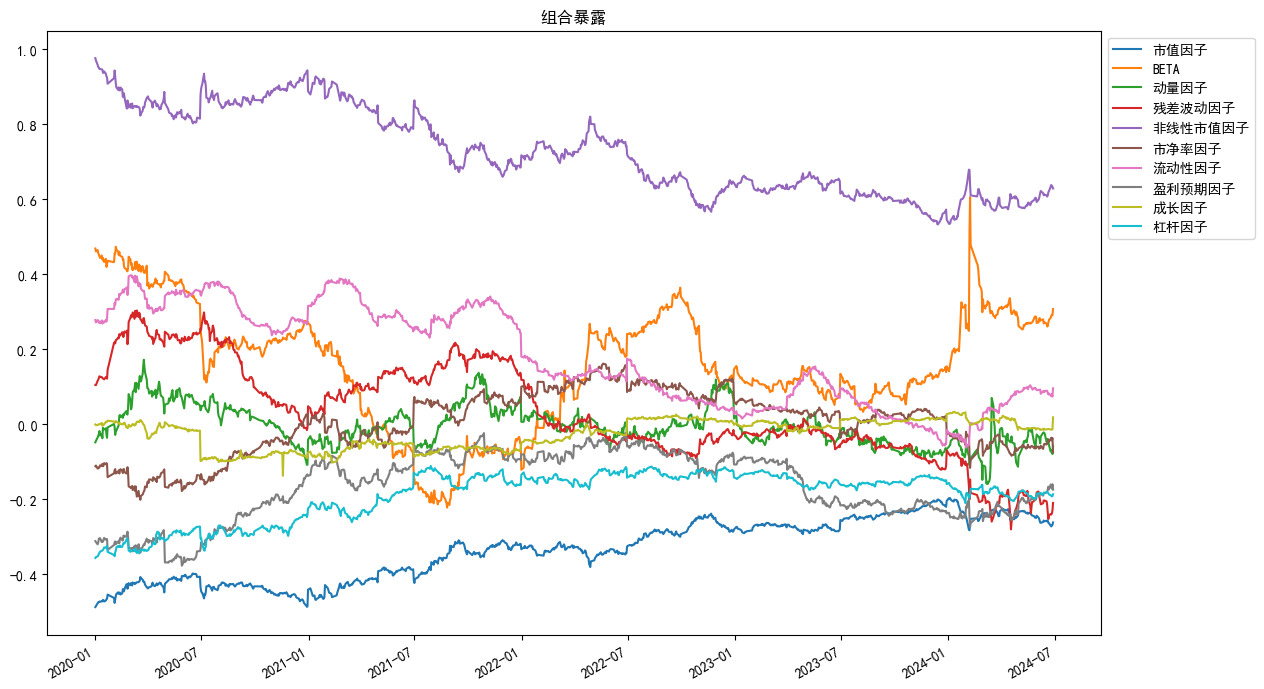

An.plot_exposure(factors='style',index_symbol=None,figsize=(15,7))

```

```python

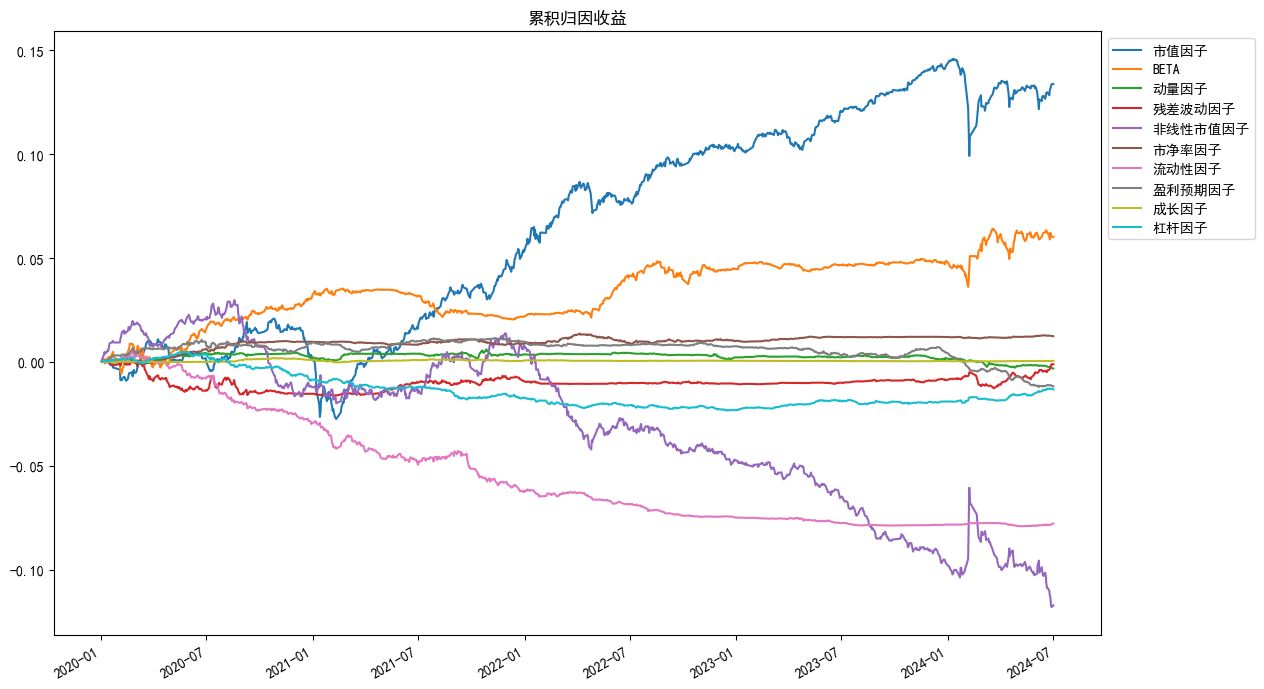

An.plot_returns(factors='style',index_symbol=None,figsize=(15,7))

```

```python

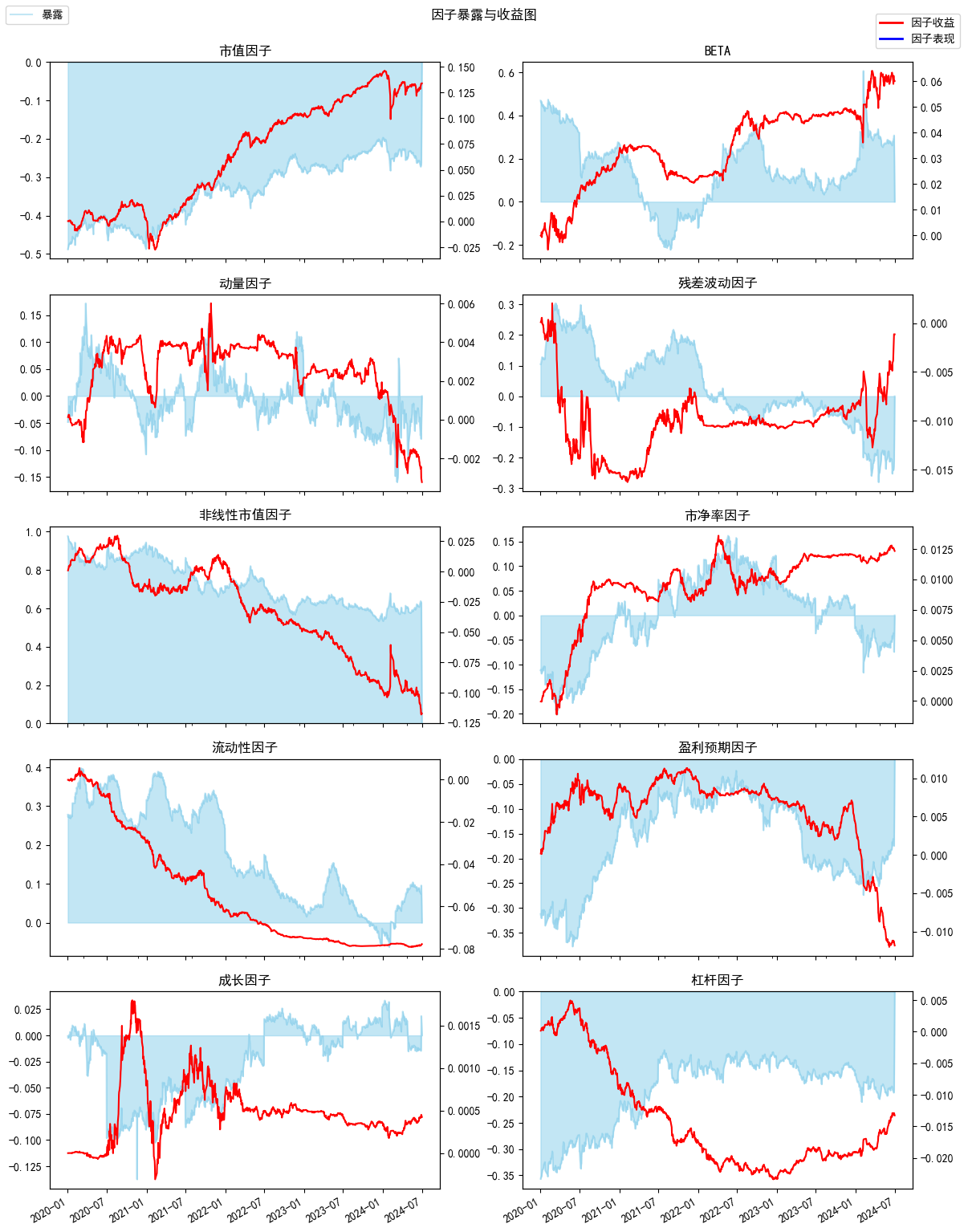

An.plot_exposure_and_returns(factors='style',index_symbol=None,show_factor_perf=False,figsize=(12,6))

```

## 因子数据本地缓存使用示例

**具体用法请查看[API文档](https://github.com/JoinQuant/jqfactor_analyzer/blob/master/docs/API%E6%96%87%E6%A1%A3.md), 此处仅作示例**

### 设置缓存目录

```python

from jqfactor_analyzer.factor_cache import set_cache_dir,get_cache_dir

# my_path = 'E:\\jqfactor_cache'

# set_cache_dir(my_path) #设置缓存目录为my_path

print(get_cache_dir()) #输出缓存目录

```

C:\Users\wq\jqfactor_datacache\bundle

### 缓存/检查缓存和读取已缓存数据

```python

from jqfactor_analyzer.factor_cache import save_factor_values_by_group,get_factor_values_by_cache,get_factor_folder,get_cache_dir

# import jqdatasdk as jq

# jq.auth("账号",'密码') #登陆jqdatasdk来从服务端缓存数据

all_factors = jqdatasdk.get_all_factors()

factor_names = all_factors[all_factors.category=='growth'].factor.tolist() #将聚宽因子库中的成长类因子作为一组因子

group_name = 'growth_factors' #因子组名定义为'growth_factors'

start_date = '2021-01-01'

end_date = '2021-06-01'

# 检查/缓存因子数据

factor_path = save_factor_values_by_group(start_date,end_date,factor_names=factor_names,group_name=group_name,overwrite=False,show_progress=True)

# factor_path = os.path.join(get_cache_dir(), get_factor_folder(factor_names,group_name=group_name) #等同于save_factor_values_by_group返回的路径

```

check/save factor cache : 100%|██████████| 6/6 [00:01<00:00, 5.87it/s]

```python

# 循环获取缓存的因子数据,并拼接

trade_days = jqdatasdk.get_trade_days(start_date,end_date)

factor_values = {}

for date in trade_days:

factor_values[date] = get_factor_values_by_cache(date,codes=None,factor_names=factor_names,group_name=group_name, factor_path=factor_path)#这里实际只需要指定group_name,factor_names参数的其中一个,缓存时指定了group_name时,factor_names不生效

factor_values = pd.concat(factor_values)

factor_values.head(5)

```

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th></th>

<th>financing_cash_growth_rate</th>

<th>net_asset_growth_rate</th>

<th>net_operate_cashflow_growth_rate</th>

<th>net_profit_growth_rate</th>

<th>np_parent_company_owners_growth_rate</th>

<th>operating_revenue_growth_rate</th>

<th>PEG</th>

<th>total_asset_growth_rate</th>

<th>total_profit_growth_rate</th>

</tr>

<tr>

<th></th>

<th>code</th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

</tr>

</thead>

<tbody>

<tr>

<th rowspan="5" valign="top">2021-01-04</th>

<th>000001.XSHE</th>

<td>4.218607</td>

<td>0.245417</td>

<td>-3.438636</td>

<td>-0.036129</td>

<td>-0.036129</td>

<td>0.139493</td>

<td>NaN</td>

<td>0.172409</td>

<td>-0.053686</td>

</tr>

<tr>

<th>000002.XSHE</th>

<td>-1.059306</td>

<td>0.236022</td>

<td>0.266020</td>

<td>0.009771</td>

<td>0.064828</td>

<td>0.115457</td>

<td>1.229423</td>

<td>0.107217</td>

<td>-0.013790</td>

</tr>

<tr>

<th>000004.XSHE</th>

<td>NaN</td>

<td>11.430834</td>

<td>-0.019530</td>

<td>-3.350306</td>

<td>-3.551808</td>

<td>-0.328126</td>

<td>NaN</td>

<td>10.912087</td>

<td>-3.888289</td>

</tr>

<tr>

<th>000005.XSHE</th>

<td>-1.014341</td>

<td>0.052103</td>

<td>-2.331018</td>

<td>-0.480705</td>

<td>-0.461062</td>

<td>-0.700859</td>

<td>NaN</td>

<td>-0.040798</td>

<td>-0.567470</td>

</tr>

<tr>

<th>000006.XSHE</th>

<td>-0.978757</td>

<td>0.112236</td>

<td>-1.509728</td>

<td>0.083089</td>

<td>0.044869</td>

<td>0.170041</td>

<td>1.931730</td>

<td>-0.005611</td>

<td>0.113066</td>

</tr>

</tbody>

</table>

</div>

## 单因子分析使用示例

**具体用法请查看[API文档](https://github.com/JoinQuant/jqfactor_analyzer/blob/master/docs/API%E6%96%87%E6%A1%A3.md), 此处仅作示例**

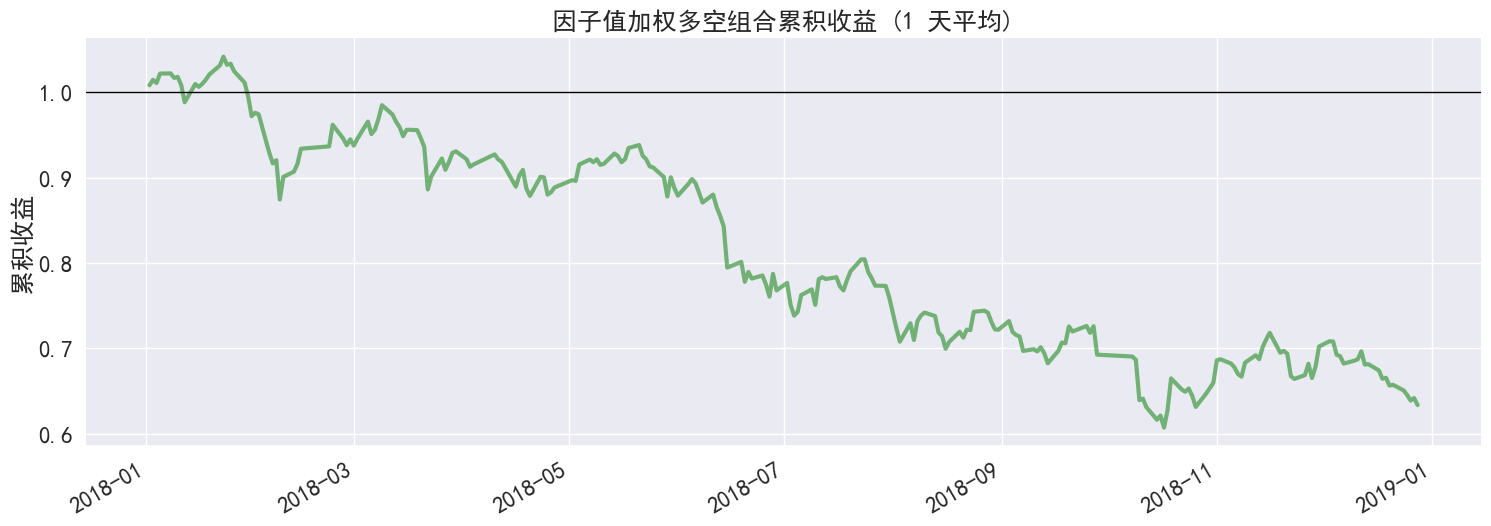

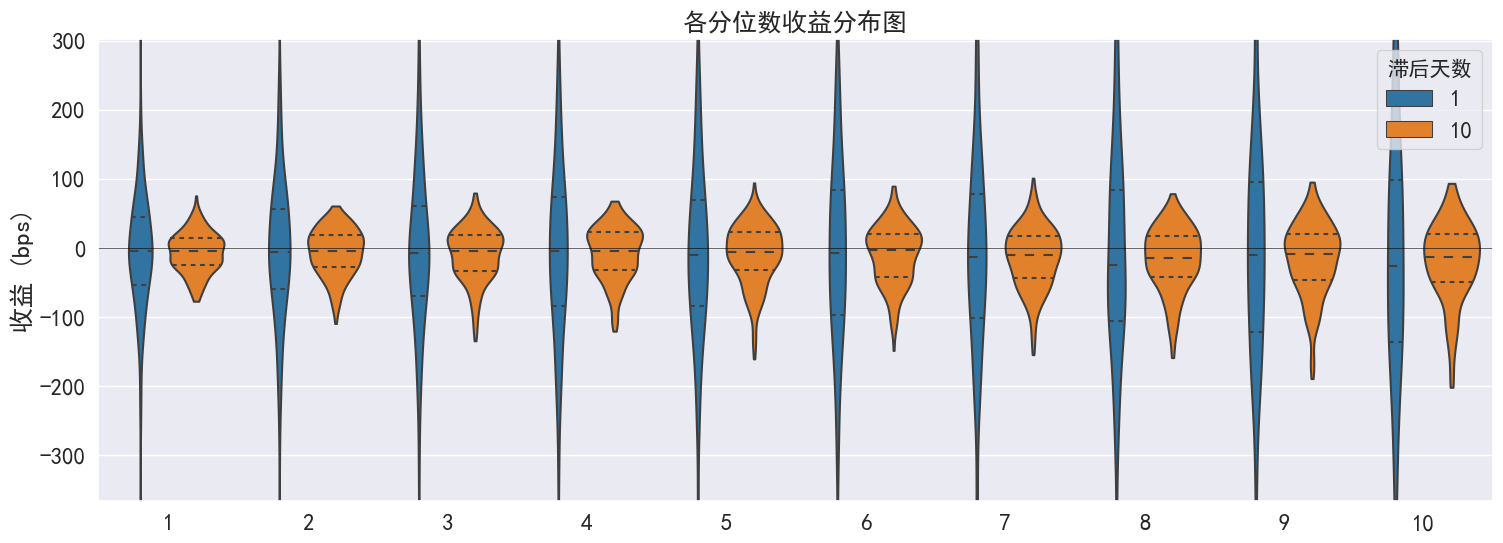

### 示例:5日平均换手率因子分析

```python

# 载入函数库

import pandas as pd

import jqfactor_analyzer as ja

# 获取5日平均换手率因子2018-01-01到2018-12-31之间的数据(示例用从库中直接调取)

# 聚宽因子库数据获取方法在下方

from jqfactor_analyzer.sample import VOL5

factor_data = VOL5

# 对因子进行分析

far = ja.analyze_factor(

factor_data, # factor_data 为因子值的 pandas.DataFrame

quantiles=10,

periods=(1, 10),

industry='jq_l1',

weight_method='avg',

max_loss=0.1

)

# 获取整理后的因子的IC值

far.ic

```

check/save price cache : 100%|██████████| 13/13 [00:00<00:00, 25.60it/s]

load price info : 100%|██████████| 253/253 [00:06<00:00, 38.09it/s]

load industry info : 100%|██████████| 243/243 [00:00<00:00, 331.46it/s]

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>period_1</th>

<th>period_10</th>

</tr>

<tr>

<th>date</th>

<th></th>

<th></th>

</tr>

</thead>

<tbody>

<tr>

<th>2018-01-02</th>

<td>0.141204</td>

<td>-0.058936</td>

</tr>

<tr>

<th>2018-01-03</th>

<td>0.082738</td>

<td>-0.176327</td>

</tr>

<tr>

<th>2018-01-04</th>

<td>-0.183788</td>

<td>-0.196901</td>

</tr>

<tr>

<th>2018-01-05</th>

<td>0.057023</td>

<td>-0.180102</td>

</tr>

<tr>

<th>2018-01-08</th>

<td>-0.025403</td>

<td>-0.187145</td>

</tr>

<tr>

<th>...</th>

<td>...</td>

<td>...</td>

</tr>

<tr>

<th>2018-12-24</th>

<td>0.098161</td>

<td>-0.198127</td>

</tr>

<tr>

<th>2018-12-25</th>

<td>-0.269072</td>

<td>-0.166092</td>

</tr>

<tr>

<th>2018-12-26</th>

<td>-0.430034</td>

<td>-0.117108</td>

</tr>

<tr>

<th>2018-12-27</th>

<td>-0.107514</td>

<td>-0.040684</td>

</tr>

<tr>

<th>2018-12-28</th>

<td>-0.013224</td>

<td>0.039446</td>

</tr>

</tbody>

</table>

<p>243 rows × 2 columns</p>

</div>

```python

# 生成统计图表

far.create_full_tear_sheet(

demeaned=False, group_adjust=False, by_group=False,

turnover_periods=None, avgretplot=(5, 15), std_bar=False

)

```

分位数统计

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>min</th>

<th>max</th>

<th>mean</th>

<th>std</th>

<th>count</th>

<th>count %</th>

</tr>

<tr>

<th>factor_quantile</th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

<th></th>

</tr>

</thead>

<tbody>

<tr>

<th>1</th>

<td>0.00000</td>

<td>0.30046</td>

<td>0.072019</td>

<td>0.056611</td>

<td>7293</td>

<td>10.054595</td>

</tr>

<tr>

<th>2</th>

<td>0.08846</td>

<td>0.49034</td>

<td>0.198844</td>

<td>0.066169</td>

<td>7266</td>

<td>10.017371</td>

</tr>

<tr>

<th>3</th>

<td>0.14954</td>

<td>0.65984</td>

<td>0.309961</td>

<td>0.089310</td>

<td>7219</td>

<td>9.952574</td>

</tr>

<tr>

<th>4</th>

<td>0.22594</td>

<td>0.80136</td>

<td>0.423978</td>

<td>0.111141</td>

<td>7248</td>

<td>9.992555</td>

</tr>

<tr>

<th>5</th>

<td>0.30904</td>

<td>0.99400</td>

<td>0.553684</td>

<td>0.133578</td>

<td>7280</td>

<td>10.036672</td>

</tr>

<tr>

<th>6</th>

<td>0.38860</td>

<td>1.23760</td>

<td>0.696531</td>

<td>0.166341</td>

<td>7211</td>

<td>9.941545</td>

</tr>

<tr>

<th>7</th>

<td>0.48394</td>

<td>1.56502</td>

<td>0.874488</td>

<td>0.204828</td>

<td>7240</td>

<td>9.981526</td>

</tr>

<tr>

<th>8</th>

<td>0.61900</td>

<td>2.09560</td>

<td>1.132261</td>

<td>0.265739</td>

<td>7226</td>

<td>9.962225</td>

</tr>

<tr>

<th>9</th>

<td>0.84984</td>

<td>3.30790</td>

<td>1.639863</td>

<td>0.436992</td>

<td>7261</td>

<td>10.010478</td>

</tr>

<tr>

<th>10</th>

<td>1.23172</td>

<td>40.47726</td>

<td>4.276270</td>

<td>3.640945</td>

<td>7290</td>

<td>10.050459</td>

</tr>

</tbody>

</table>

</div>

-------------------------

收益分析

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>period_1</th>

<th>period_10</th>

</tr>

</thead>

<tbody>

<tr>

<th>Ann. alpha</th>

<td>-0.087</td>

<td>-0.060</td>

</tr>

<tr>

<th>beta</th>

<td>1.218</td>

<td>1.238</td>

</tr>

<tr>

<th>Mean Period Wise Return Top Quantile (bps)</th>

<td>-20.913</td>

<td>-18.530</td>

</tr>

<tr>

<th>Mean Period Wise Return Bottom Quantile (bps)</th>

<td>-6.156</td>

<td>-6.452</td>

</tr>

<tr>

<th>Mean Period Wise Spread (bps)</th>

<td>-14.757</td>

<td>-13.177</td>

</tr>

</tbody>

</table>

</div>

<Figure size 640x480 with 0 Axes>

<Figure size 640x480 with 0 Axes>

......(图片过多,此处内容演示已省略,请参考api说明使用)

-------------------------

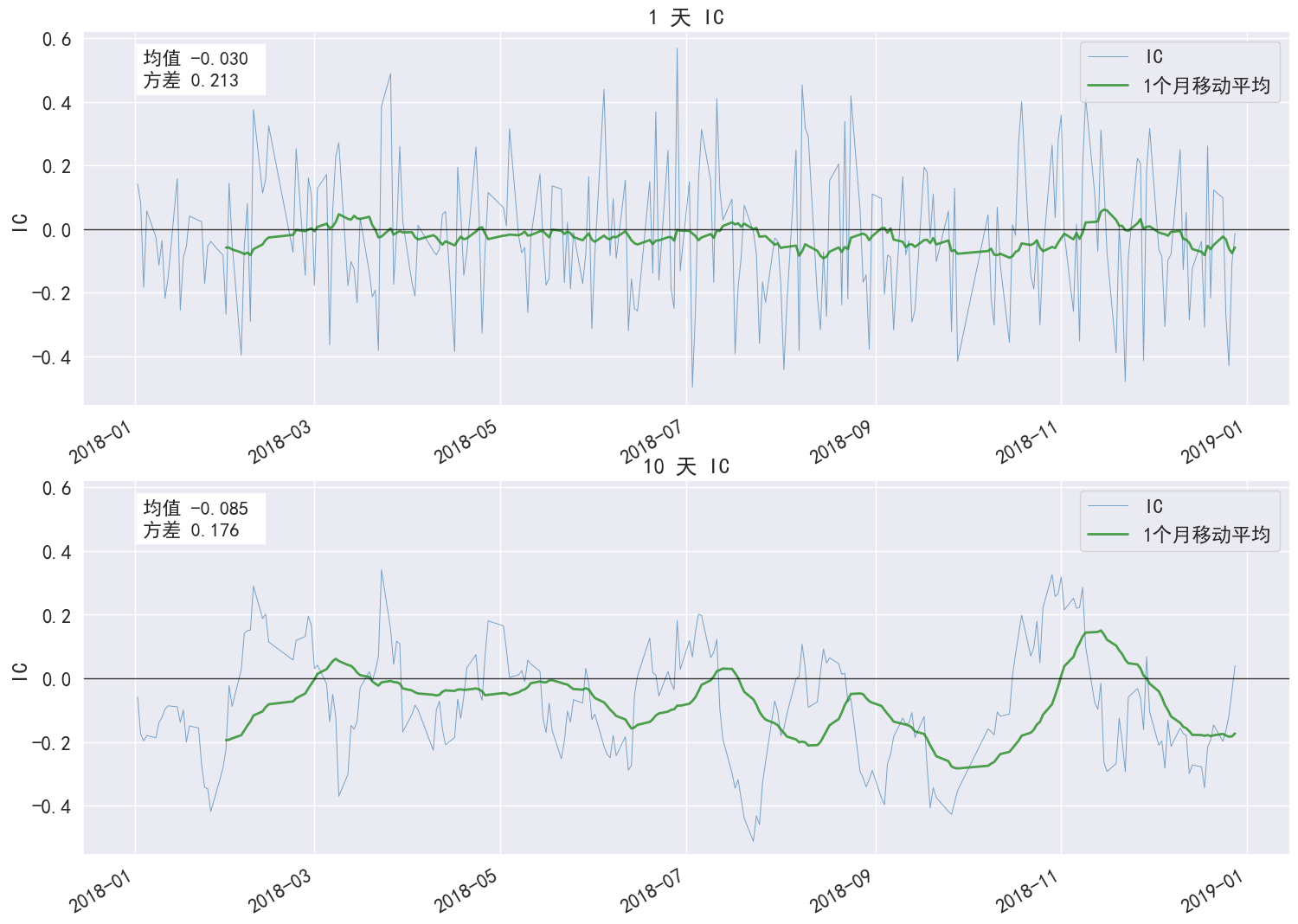

IC 分析

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>period_1</th>

<th>period_10</th>

</tr>

</thead>

<tbody>

<tr>

<th>IC Mean</th>

<td>-0.030</td>

<td>-0.085</td>

</tr>

<tr>

<th>IC Std.</th>

<td>0.213</td>

<td>0.176</td>

</tr>

<tr>

<th>IR</th>

<td>-0.140</td>

<td>-0.487</td>

</tr>

<tr>

<th>t-stat(IC)</th>

<td>-2.180</td>

<td>-7.587</td>

</tr>

<tr>

<th>p-value(IC)</th>

<td>0.030</td>

<td>0.000</td>

</tr>

<tr>

<th>IC Skew</th>

<td>0.240</td>

<td>0.091</td>

</tr>

<tr>

<th>IC Kurtosis</th>

<td>-0.420</td>

<td>-0.485</td>

</tr>

</tbody>

</table>

</div>

<Figure size 640x480 with 0 Axes>

<Figure size 640x480 with 0 Axes>

-------------------------

换手率分析

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>period_1</th>

<th>period_10</th>

</tr>

</thead>

<tbody>

<tr>

<th>Quantile 1 Mean Turnover</th>

<td>0.055</td>

<td>0.222</td>

</tr>

<tr>

<th>Quantile 2 Mean Turnover</th>

<td>0.136</td>

<td>0.447</td>

</tr>

<tr>

<th>Quantile 3 Mean Turnover</th>

<td>0.206</td>

<td>0.599</td>

</tr>

<tr>

<th>Quantile 4 Mean Turnover</th>

<td>0.268</td>

<td>0.680</td>

</tr>

<tr>

<th>Quantile 5 Mean Turnover</th>

<td>0.307</td>

<td>0.730</td>

</tr>

<tr>

<th>Quantile 6 Mean Turnover</th>

<td>0.337</td>

<td>0.742</td>

</tr>

<tr>

<th>Quantile 7 Mean Turnover</th>

<td>0.326</td>

<td>0.735</td>

</tr>

<tr>

<th>Quantile 8 Mean Turnover</th>

<td>0.279</td>

<td>0.708</td>

</tr>

<tr>

<th>Quantile 9 Mean Turnover</th>

<td>0.196</td>

<td>0.593</td>

</tr>

<tr>

<th>Quantile 10 Mean Turnover</th>

<td>0.073</td>

<td>0.283</td>

</tr>

</tbody>

</table>

</div>

<div>

<style scoped>

.dataframe tbody tr th:only-of-type {

vertical-align: middle;

}

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

</style>

<table border="1" class="dataframe">

<thead>

<tr style="text-align: right;">

<th></th>

<th>period_1</th>

<th>period_10</th>

</tr>

</thead>

<tbody>

<tr>

<th>Mean Factor Rank Autocorrelation</th>

<td>0.991</td>

<td>0.884</td>

</tr>

</tbody>

</table>

</div>

......(图片过多,此处内容演示已省略,请参考api说明使用)

### 获取聚宽因子库数据的方法

[聚宽因子库](https://www.joinquant.com/help/api/help#name:factor_values)包含数百个质量、情绪、风险等其他类目的因子

连接jqdatasdk获取数据包,数据接口需调用聚宽 [jqdatasdk](https://www.joinquant.com/help/api/doc?name=JQDatadoc) 接口获取金融数据 ([试用注册地址](https://www.joinquant.com/default/index/sdk))

```python

# 获取因子数据:以5日平均换手率为例,该数据可以直接用于因子分析

# 具体使用方法可以参照jqdatasdk的API文档

import jqdatasdk

jqdatasdk.auth('username', 'password')

# 获取聚宽因子库中的VOL5数据

factor_data=jqdatasdk.get_factor_values(

securities=jqdatasdk.get_index_stocks('000300.XSHG'),

factors=['VOL5'],

start_date='2018-01-01',

end_date='2018-12-31')['VOL5']

```

### 将自有因子值转换成 DataFrame 格式的数据

- index 为日期,格式为 pandas 日期通用的 DatetimeIndex

- columns 为股票代码,格式要求符合聚宽的代码定义规则(如:平安银行的股票代码为 000001.XSHE)

- 如果是深交所上市的股票,在股票代码后面需要加入 .XSHE

- 如果是上交所上市的股票,在股票代码后面需要加入 .XSHG

- 将 pandas.DataFrame 转换成满足格式要求数据格式

首先要保证 index 为 DatetimeIndex 格式,一般是通过 pandas 提供的 pandas.to_datetime 函数进行转换,在转换前应确保 index 中的值都为合理的日期格式, 如 '2018-01-01' / '20180101',之后再调用 pandas.to_datetime 进行转换;另外应确保 index 的日期是按照从小到大的顺序排列的,可以通过 sort_index 进行排序;最后请检查 columns 中的股票代码是否都满足聚宽的代码定义。

```python

import pandas as pd

sample_data = pd.DataFrame(

[[0.84, 0.43, 2.33, 0.86, 0.96],

[1.06, 0.51, 2.60, 0.90, 1.09],

[1.12, 0.54, 2.68, 0.94, 1.12],

[1.07, 0.64, 2.65, 1.33, 1.15],

[1.21, 0.73, 2.97, 1.65, 1.19]],

index=['2018-01-02', '2018-01-03', '2018-01-04', '2018-01-05', '2018-01-08'],

columns=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']

)

print(sample_data)

factor_data = sample_data.copy()

# 将 index 转换为 DatetimeIndex

factor_data.index = pd.to_datetime(factor_data.index)

# 将 DataFrame 按照日期顺序排列

factor_data = factor_data.sort_index()

# 检查 columns 是否满足聚宽股票代码格式

if not sample_data.columns.astype(str).str.match('\d{6}\.XSH[EG]').all():

print("有不满足聚宽股票代码格式的股票")

print(sample_data.columns[~sample_data.columns.astype(str).str.match('\d{6}\.XSH[EG]')])

print(factor_data)

```

- 将键为日期,值为各股票因子值的 Series 的 dict 转换成 pandas.DataFrame,可以直接利用 pandas.DataFrame 生成

```python

sample_data = \

{'2018-01-02': pd.Seris([0.84, 0.43, 2.33, 0.86, 0.96],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-03': pd.Seris([1.06, 0.51, 2.60, 0.90, 1.09],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-04': pd.Seris([1.12, 0.54, 2.68, 0.94, 1.12],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-05': pd.Seris([1.07, 0.64, 2.65, 1.33, 1.15],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-08': pd.Seris([1.21, 0.73, 2.97, 1.65, 1.19],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE'])}

import pandas as pd

# 直接调用 pd.DataFrame 将 dict 转换为 DataFrame

factor_data = pd.DataFrame(data).T

print(factor_data)

# 之后请按照 DataFrae 的方法转换成满足格式要求数据格式

```

================================================

FILE: docs/API文档.md

================================================

# **API文档**

## 一、因子缓存factor_cache模块

为了在本地进行分析时,为了提高数据获取的速度并避免反复从服务端获取数据,所以增加了本地数据缓存的方法。

注意缓存格式为pyarrow.feather格式,pyarrow库不同版本之间可能存在兼容问题,建议不要随意修改pyarrow库的版本,如果修改后产生大量缓存文件无法读取(提示已损坏)的情况,建议删除整个缓存目录后重新缓存。

### 1. 设置缓存目录

对于单因子分析和归因分析中使用到的市值/价格和风格因子等数据,默认会缓存到用户的主目录( `os.path.expanduser( '~/jqfactor_datacache/bundle')` )。 一般地,在 Unix 系统上可能是 `/home/username/jqfactor_datacache/bundle`,而在 Windows 系统上可能是 `C:\Users\username\jqfactor_datacache\bundle`。

您可以通过以下代码修改配置信息来设置为其他路径,设置过一次后后续都将沿用设置的这个路径,不用重复设置。

```python

from jqfactor_analyzer.factor_cache import set_cache_dir,get_cache_dir

set_cache_dir(my_path) #设置缓存目录为my_path

print(get_cache_dir()) #输出缓存目录

```

### 2. 缓存/检查缓存和读取已缓存数据

除过对单因子分析及归因分析依赖的数据进行缓存外,factor_cache还可以缓存自定义的因子组(仅限聚宽因子库中支持的因子)

```python

def save_factor_values_by_group(start_date,end_date,factor_names='prices',

group_name=None,overwrite=False,cache_dir=None,show_progress=True):

"""将因子库数据按因子组储存到本地,根据factor_names因子列表(顺序无关)自动生成因子组的名称

start_date : 开始时间

end_date : 结束时间

factor_names : 因子组所含因子的名称,除过因子库中支持的因子外,还支持指定为'prices'缓存价格数据

group_name : 因子组名称,不指定时使用get_factor_folder自动生成因子组名(即缓存文件夹名),如果指定则按照指定的名称生成文件夹名(使用get_factor_values_by_cache时,需要自行指定factor_path)

overwrite : 文件已存在时是否覆盖更新,默认为False即增量更新,文件已存在时跳过

cache_dir : 缓存的路径,如果没有指定则使用配置信息中的路径,一般不用指定

show_progress : 是否展示缓存进度,默认为True

返回 : 因子组储存的路径 , 文件以天为单位储存为feather文件,每天一个feather文件,每月一个文件夹,columns为因子名称, index为当天在市的所有标的代码

"""

def get_factor_values_by_cache(date,codes=None,factor_names=None,group_name=None,

factor_path=None):

"""从缓存的文件读取因子数据,文件不存在时返回空的dataframe

date : 日期

codes : 标的代码,默认为None获取当天在市的所有标的

factor_names : 因子列表(顺序无关),当指定factor_path/group_name时失效

group_name : 因子组名,如果缓存时指定了group_name,则获取时必须也指定group_name或factor_path

factor_path : 可选参数,因子组的路径,一般不用指定

返回:

如果缓存文件存在,则返回当天的因子数据,index是标的代码,columns是因子名

如果缓存文件不存在,则返回空的dataframe, 建议在使用get_factor_values_by_cache前,先运行save_factor_values_by_group检查时间区间内的缓存文件是否完整

"""

def get_factor_folder(factor_names,group_name=None):

"""获取因子组的文件夹名(文件夹位于get_cache_dir()获取的缓存目录下)

factor_names : 因子储存时,如果未指定group_name,则根据因子列表(顺序无关)获取md5值生成因子组名(即储存的文件夹名),使用此方法可以获取生成的文件夹名称

group_name : 如果储存时指定了因子组名,则直接返回此因子组名

"""

```

**示例**

```python

from jqfactor_analyzer.factor_cache import save_factor_values_by_group,get_factor_values_by_cache,get_factor_folder,get_cache_dir

# import jqdatasdk as jq

# jq.auth("账号",'密码') #登陆jqdatasdk来从服务端缓存数据

all_factors = jq.get_all_factors()

factor_names = all_factors[all_factors.category=='growth'].factor.tolist() #将聚宽因子库中的成长类因子作为一组因子

group_name = 'growth_factors' #因子组名定义为'growth_factors'

start_date = '2021-01-01'

end_date = '2021-06-01'

# 检查/缓存因子数据

factor_path = save_factor_values_by_group(start_date,end_date,factor_names=factor_names,group_name=group_name,overwrite=False,show_progress=True)

# factor_path = os.path.join(get_cache_dir(), get_factor_folder(factor_names,group_name=group_name) #等同于save_factor_values_by_group返回的路径

# 循环获取缓存的因子数据,并拼接

trade_days = jq.get_trade_days(start_date,end_date)

factor_values = {}

for date in trade_days:

factor_values[date] = get_factor_values_by_cache(date,codes=None,factor_names=factor_names,group_name=group_name, factor_path=factor_path)#这里实际只需要指定group_name,factor_names参数的其中一个,缓存时指定了group_name时,factor_names不生效

factor_values = pd.concat(factor_values)

```

## 二、归因分析模块

```python

from jqfactor_analyzer import AttributionAnalysis

AttributionAnalysis(weights,daily_return,style_type='style_pro',industry ='sw_l1',use_cn=True,show_data_progress=True)

```

**参数 :**

- `weights`:持仓权重信息,index是日期,columns是标的代码, value对应的是组合当天的仓位占比(单日仓位占比总和不为1时,剩余部分认为是当天的现金)

- `daily_return`:Series,index是日期,values为当天组合的收益率

- `style_type`:归因分析所使用的风格因子类型,可选'style'和'style_pro'中的一个

- `industry`:归因分析所使用的行业分类,可选'sw_l1'和'jq_l1'中的一个

- `use_cn`:绘图时是否使用中文

- `show_data_progress`:是否展示数据获取进度(使用本地缓存,第一次运行时速度较慢,后续对于本地不存在的数据将增量缓存)

**示例**

```python

import pandas as pd

# position_weights.csv 是一个储存了组合权重信息的csv文件,index是日期,columns是股票代码

# position_daily_return.csv 是一个储存了组合日收益率的csv文件,index是日期,daily_return列是日收益

weights = pd.read_csv("position_weights.csv",index_col=0)

returns = pd.read_csv("position_daily_return.csv",index_col=0)['daily_return']

An = AttributionAnalysis(weights , returns ,style_type='style_pro',industry ='sw_l1', show_data_progress=True )

```

### 1. 属性

- `style_exposure` : 组合的风格暴露

- `industry_exposure` : 组合的行业暴露

- `exposure_portfolio` : 组合的风格+行业及country暴露

- `attr_daily_returns` : 组合的\风格+行业及country日度归因收益率

- `attr_returns` : 组合的日度风格+行业及country累积归因收益率

### 2. 方法

#### (1) 获取组合相对于指数的暴露

```python

get_exposure2bench(index_symbol)

```

**参数 :**

- `index_symbol` : 基准指数, 可选`['000300.XSHG','000905.XSHG','000906.XSHG','000852.XSHG','932000.CSI','000985.XSHG']`中的一个

**返回 :**

- 一个dataframe,index为日期,columns为风格因子+行业因子+county , 其中country为股票总持仓占比

#### (2) 获取组合相对于指数的日度归因收益率

```python

get_attr_daily_returns2bench(index_symbol)

```

假设组合相对于指数的收益由以下部分构成 : 风格+行业暴露收益(common_return ) , 现金闲置收益(cash) ,策略本身的超额收益(specific_return)

**参数 :**

- `index_symbol` : 基准指数, 可选`['000300.XSHG','000905.XSHG','000906.XSHG','000852.XSHG','932000.CSI','000985.XSHG']`中的一个

**返回 :**

- 一个dataframe,index为日期,columns为`风格因子+行业因子+cash+common_return,specific_return,total_return`

其中:

cash是假设现金收益(0)相对指数带来的收益率

common_return 为风格+行业总收益率

specific_return 为特意收益率

total_return 为组合相对于指数的总收益

#### (3) 获取相对于指数的累积归因收益率

```python

get_attr_returns2bench(index_symbol)

```

假设组合相对于指数的收益由以下部分构成 : 风格+行业暴露收益(common_return ) , 现金闲置收益(cash) ,策略本身的超额收益(specific_return)

**参数 :**

`index_symbol` : 基准指数, 可选`['000300.XSHG','000905.XSHG','000906.XSHG','000852.XSHG','932000.CSI','000985.XSHG']`中的一个

**返回 :**

- 一个dataframe,index为日期,columns为`风格因子+行业因子+cash+common_return,specific_return,total_return`

其中:

cash是假设现金收益(0)相对指数带来的收益率

common_return 为风格+行业总收益率

specific_return 为特异收益率

total_return 为组合相对于指数的总收益(减法超额)

### 3. 绘图方法

#### (1) 绘制风格暴露时序图

```python

plot_exposure(factors='style',index_symbol=None,figsize=(15,7))

```

绘制风格暴露时序

**参数**

- factors : 绘制的暴露类型 , 可选 'style'(所有风格因子) , 'industry'(所有行业因子),也可以传递一个list,list为exposure_portfolio中columns的一个或者多个

- index_symbol : 基准指数代码,指定时绘制相对于指数的暴露 , 默认None为组合本身的暴露

- figsize : 画布大小

#### (2) 绘制归因分析收益时序图

```python

plot_returns(factors='style',index_symbol=None,figsize=(15,7))

```

绘制归因分析收益时序

**参数**

- factors : 绘制的暴露类型 , 可选 'style'(所有风格因子) , 'industry'(所有行业因子),也可以传递一个list,list为exposure_portfolio中columns的一个或者多个

同时也支持指定['common_return'(风格总收益),'specific_return'(特异收益),'total_return'(总收益)', 'country'(国家因子收益,当指定index_symbol时会用现金相对于指数的收益替代)]

- index_symbol : 基准指数代码,指定时绘制相对于指数的暴露 , 默认None为组合本身的暴露

- figsize : 画布大小

#### (3) 绘制暴露与收益对照图

```python

plot_exposure_and_returns(factors='style',index_symbol=None,show_factor_perf=False,figsize=(12,6))

```

将因子暴露与收益同时绘制在多个子图上

**参数**

- factors : 绘制的暴露类型 , 可选 'style'(所有风格因子) , 'industry'(所有行业因子,也可以传递一个list,list为exposure_portfolio中columns的一个或者多个

当指定index_symbol时,country会用现金相对于指数的收益替代)

- index_symbol : 基准指数代码,指定时绘制相对于指数的暴露及收益 , 默认None为组合本身的暴露和收益

- show_factor_perf : 是否同时绘制因子表现

- figsize : 画布大小,这里第一个参数是画布的宽度, 第二个参数为单个子图的高度

#### (4) 关闭中文图例显示

```python

plot_disable_chinese_label()

```

画图时默认会从系统中查找中文字体显示以中文图例

如果找不到中文字体则默认使用英文图例

当找到中文字体但中文显示乱码时, 可调用此 API 关闭中文图例显示而使用英文

## 三、单因子分析模块

```python

from jqfactor_analyzer import analyze_factor

analyze_factor(factor, industry='jq_l1', quantiles=5, periods=(1, 5, 10), weight_method='avg', max_loss=0.25, allow_cache=True, show_data_progress=True )

```

单因子分析函数

**参数**

* `factor`: 因子值,

pandas.DataFrame格式的数据

- index为日期,格式为pandas日期通用的DatetimeIndex,转换方法见[将自有因子值转换成 DataFrame 格式的数据](#将自有因子值转换成-dataframe-格式的数据)

- columns为股票代码,格式要求符合聚宽的代码定义规则(如:平安银行的股票代码为000001.XSHE)

- 如果是深交所上市的股票,在股票代码后面需要加入.XSHE

- 如果是上交所上市的股票,在股票代码后面需要加入.XSHG

或 pd.Series格式的数据

- index为日期和股票代码组成的MultiIndex

* `industry`: 行业分类, 默认为 `'jq_l1'`

* `'sw_l1'`: 申万一级行业

* `'sw_l2'`: 申万二级行业

* `'sw_l3'`: 申万三级行业

* `'jq_l1'`: 聚宽一级行业

* `'jq_l2'`: 聚宽二级行业

* `'zjw'`: 证监会行业

* `quantiles`: 分位数数量, 默认为 `5`

`int`

在因子分组中按照因子值大小平均分组的组数.

* `periods`: 调仓周期, 默认为 [1, 5, 10]

`int` or `list[int]`

* `weight_method`: 基于分位数收益时的加权方法, 默认为 `'avg'`

* `'avg'`: 等权重

* `'mktcap'`:按总市值加权

* `'ln_mktcap'`: 按总市值的对数加权

* `'cmktcap'`: 按流通市值加权

* `'ln_cmktcap'`: 按流通市值的对数加权

* `max_loss`: 因重复值或nan值太多而无效的因子值的最大占比, 默认为 0.25

`float`

允许的丢弃因子数据的最大百分比 (0.00 到 1.00),

计算比较输入因子索引中的项目数和输出 DataFrame 索引中的项目数.

因子数据本身存在缺陷 (例如 NaN),

没有提供足够的价格数据来计算所有因子值的远期收益,

或者因为分组失败, 因此可以部分地丢弃因子数据

* `allow_cache` : 是否允许对价格,市值等信息进行本地缓存(按天缓存,初次运行可能比较慢,但后续重新获取对应区间的数据将非常快,且分析时仅消耗较小的jqdatasdk流量)

* show_data_progress: 是否展示数据获取的进度信息

**示例**

```python

#载入函数库

import pandas as pd

import jqfactor_analyzer as ja

# 获取 jqdatasdk 授权

# 输入用户名、密码,申请地址:http://t.cn/EINDOxE

# 聚宽官网及金融终端,使用方法参见:http://t.cn/EINcS4j

import jqdatasdk

jqdatasdk.auth('username', 'password')

# 对因子进行分析

far = ja.analyze_factor(

factor_data, # factor_data 为因子值的 pandas.DataFrame

quantiles=10,

periods=(1, 10),

industry='jq_l1',

weight_method='avg',

max_loss=0.1

)

# 生成统计图表

far.create_full_tear_sheet(

demeaned=False, group_adjust=False, by_group=False,

turnover_periods=None, avgretplot=(5, 15), std_bar=False

)

```

### 1. 绘制结果

#### 展示全部分析

```

far.create_full_tear_sheet(demeaned=False, group_adjust=False, by_group=False,

turnover_periods=None, avgretplot=(5, 15), std_bar=False)

```

**参数:**

- demeaned:

- True: 使用超额收益计算 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False: 不使用超额收益

- group_adjust:

- True: 使用行业中性化后的收益计算 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False: 不使用行业中性化后的收益

- by_group:

- True: 按行业展示

- False: 不按行业展示

- turnover_periods: 调仓周期

- avgretplot: tuple 因子预测的天数-(计算过去的天数, 计算未来的天数)

- std_bar:

- True: 显示标准差

- False: 不显示标准差

#### 因子值特征分析

```

far.create_summary_tear_sheet(demeaned=False, group_adjust=False)

```

**参数:**

- demeaned:

- True: 对每日因子收益去均值求得因子收益表

- False: 因子收益表

- group_adjust:

- True: 按行业对因子收益去均值后求得因子收益表

- False: 因子收益表

#### 因子收益分析

```

far.create_returns_tear_sheet(demeaned=False, group_adjust=False, by_group=False)

```

**参数:**

- demeaned:

- True: 使用超额收益计算 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False: 不使用超额收益

- group_adjust:

- True: 使用行业中性化后的收益计算 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False: 不使用行业中性化后的收益

- by_group:

- True: 画各行业的各分位数平均收益图

- False: 不画各行业的各分位数平均收益图

#### 因子 IC 分析

```

far.create_information_tear_sheet(group_adjust=False, by_group=False)

```

**参数:**

- group_adjust:

- True: 使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False: 不使用行业中性收益

- by_group:

- True: 画按行业分组信息比率(IC)图

- False: 画月度信息比率(IC)图

#### 因子换手率分析

```

far.create_turnover_tear_sheet(turnover_periods=None)

```

**参数:**

- turnover_periods: 调仓周期

#### 因子预测能力分析

```

far.create_event_returns_tear_sheet(avgretplot=(5, 15),demeaned=False, group_adjust=False,std_bar=False)

```

**参数:**

- avgretplot: tuple 因子预测的天数-(计算过去的天数, 计算未来的天数)

- demeaned:

- True: 使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False: 不使用超额收益

- group_adjust:

- True: 使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False: 不使用行业中性化后的收益

- std_bar:

- True: 显示标准差

- False: 不显示标准差

#### 打印因子收益表

```

far.plot_returns_table(demeaned=False, group_adjust=False)

```

**参数:**

- demeaned:

- True:使用超额收益计算 (基准收益被认为是每日所有股票收益按照weight列中权重的加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

#### 打印换手率表

```

far.plot_turnover_table()

```

#### 打印信息比率(IC)相关表

```

far.plot_information_table(group_adjust=False, method='rank')

```

**参数:**

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

- method:

- 'rank':用秩相关系数计算IC值

- 'normal': 用相关系数计算IC值

#### 打印个分位数统计表

```

far.plot_quantile_statistics_table()

```

#### 画信息比率(IC)时间序列图

```

far.plot_ic_ts(group_adjust=False, method='rank')

```

**参数:**

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

- method:

- 'rank':用秩相关系数计算IC值

- 'normal': 用相关系数计算IC值

#### 画信息比率分布直方图

```

far.plot_ic_hist(group_adjust=False, method='rank')

```

**参数:**

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

- method:

- 'rank':用秩相关系数计算IC值

- 'normal': 用相关系数计算IC值

#### 画信息比率 qq 图

```

far.plot_ic_qq(group_adjust=False, method='rank', theoretical_dist='norm')

```

**参数:**

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

- method:

- 'rank':用秩相关系数计算IC值

- 'normal': 用相关系数计算IC值

- theoretical_dist:

- 'norm':正态分布

- 't':t分布

#### 画各分位数平均收益图

```

far.plot_quantile_returns_bar(by_group=False, demeaned=False, group_adjust=False)

```

**参数:**

- by_group:

- True:各行业的各分位数平均收益图

- False:各分位数平均收益图

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性化后的收益

#### 画最高分位减最低分位收益图

```

far.plot_mean_quantile_returns_spread_time_series(demeaned=False, group_adjust=False, bandwidth=1)

```

**参数:**

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性化后的收益

- bandwidth:n,加减n倍当日标准差

#### 画按行业分组信息比率(IC)图

```

far.plot_ic_by_group(group_adjust=False, method='rank')

```

**参数:**

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

- method:

- 'rank':用秩相关系数计算IC值

- 'normal': 用相关系数计算IC值

#### 画因子自相关图

```

far.plot_factor_auto_correlation(rank=True)

```

**参数:**

- rank:

- True:用秩相关系数

- False:用相关系数

#### 画最高最低分位换手率图

```

far.plot_top_bottom_quantile_turnover(periods=(1, 3, 9))

```

**参数:**

- periods:调仓周期

#### 画月度信息比率(IC)图

```

far.plot_monthly_ic_heatmap(group_adjust=False)

```

**参数:**

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

#### 画按因子值加权多空组合每日累积收益图

```

far.plot_cumulative_returns(period=1, demeaned=False, group_adjust=False)

```

**参数:**

- periods:调仓周期

- demeaned:

- True:对因子值加权组合每日收益的权重去均值 (每日权重 = 每日权重 - 每日权重的均值),使组合转换为cash-neutral多空组合

- False:不对权重去均值

- group_adjust:

- True:对权重分行业去均值 (每日权重 = 每日权重 - 每日各行业权重的均值),使组合转换为 industry-neutral 多空组合

- False:不对权重分行业去均值

#### 画做多最大分位数做空最小分位数组合每日累积收益图

```

far.plot_top_down_cumulative_returns(period=1, demeaned=False, group_adjust=False)

```

**参数:**

- periods:指定调仓周期

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性化后的收益

#### 画各分位数每日累积收益图

```

far.plot_cumulative_returns_by_quantile(period=(1, 3, 9), demeaned=False, group_adjust=False)

```

**参数:**

- periods:调仓周期

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性化后的收益

#### 因子预测能力平均累计收益图

```

far.plot_quantile_average_cumulative_return(periods_before=5, periods_after=10, by_quantile=False, std_bar=False, demeaned=False, group_adjust=False)

```

**参数:**

- periods_before: 计算过去的天数

- periods_after: 计算未来的天数

- by_quantile:

- True:各分位数分别显示因子预测能力平均累计收益图

- False:不用各分位数分别显示因子预测能力平均累计收益图

- std_bar:

- True:显示标准差

- False:不显示标准差

- demeaned:

- True: 使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False: 不使用超额收益

- group_adjust:

- True: 使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False: 不使用行业中性化后的收益

#### 画有效因子数量统计图

```

far.plot_events_distribution(num_days=1)

```

**参数:**

- num_days:统计间隔天数

#### 关闭中文图例显示

```

far.plot_disable_chinese_label()

```

### 2. 属性列表

用于访问因子分析的结果,大部分为惰性属性,在访问才会计算结果并返回

#### 查看因子值

```

far.factor_data

```

- 类型:pandas.Series

- index:为日期和股票代码的MultiIndex

#### 去除 nan/inf,整理后的因子值、forward_return 和分位数

```

far.clean_factor_data

```

- 类型:pandas.DataFrame index:为日期和股票代码的MultiIndex

- columns:根据period选择后的forward_return(如果调仓周期为1天,那么forward_return为[第二天的收盘价-今天的收盘价]/今天的收盘价)、因子值、行业分组、分位数数组、权重

#### 按分位数分组加权平均因子收益

```

far.mean_return_by_quantile

```

- 类型:pandas.DataFrame

- index:分位数分组

- columns:调仓周期

#### 按分位数分组加权因子收益标准差

```

far.mean_return_std_by_quantile

```

- 类型:pandas.DataFrame

- index:分位数分组

- columns:调仓周期

#### 按分位数及日期分组加权平均因子收益

```

far.mean_return_by_date

```

- 类型:pandas.DataFrame

- index:为日期和分位数的MultiIndex

- columns:调仓周期

#### 按分位数及日期分组加权因子收益标准差

```

far.mean_return_std_by_date

```

- 类型:pandas.DataFrame

- index:为日期和分位数的MultiIndex

- columns:调仓周期

#### 按分位数及行业分组加权平均因子收益

```

far.mean_return_by_group

```

- 类型:pandas.DataFrame

- index:为行业和分位数的MultiIndex

- columns:调仓周期

#### 按分位数及行业分组加权因子收益标准差

```

far.mean_return_std_by_group

```

- 类型:pandas.DataFrame

- index:为行业和分位数的MultiIndex

- columns:调仓周期

#### 最高分位数因子收益减最低分位数因子收益每日均值

```

far.mean_return_spread_by_quantile

```

- 类型:pandas.DataFrame

- index:日期

- columns:调仓周期

#### 最高分位数因子收益减最低分位数因子收益每日标准差

```

far.mean_return_spread_std_by_quantile

```

- 类型:pandas.DataFrame

- index:日期

- columns:调仓周期

#### 信息比率

```

far.ic

```

- 类型:pandas.DataFrame

- index:日期

- columns:调仓周期

#### 分行业信息比率

```

far.ic_by_group

```

- 类型:pandas.DataFrame

- index:行业

- columns:调仓周期

#### 月度信息比率

```

far.ic_monthly

```

- 类型:pandas.DataFrame

- index:月度

- columns:调仓周期表

#### 换手率

```

far.quantile_turnover

```

- 键:调仓周期

- 值: pandas.DataFrame 换手率

- index:日期

- columns:分位数分组

#### 计算按分位数分组加权因子收益和标准差

```

mean, std = far.calc_mean_return_by_quantile(by_date=True, by_group=False, demeaned=False, group_adjust=False)

```

**参数:**

- by_date:

- True:按天计算收益

- False:不按天计算收益

- by_group:

- True: 按行业计算收益

- False:不按行业计算收益

- demeaned:

- True:使用超额收益计算各分位数收益,超额收益=收益-基准收益 (基准收益被认为是每日所有股票收益按照weight列中权重的加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性收益计算各分位数收益,行业中性收益=收益-行业收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重的加权的均值)

- False:不使用行业中性收益

#### 计算按因子值加权多空组合每日收益

```

far.calc_factor_returns(demeaned=True, group_adjust=False)

```

权重 = 每日因子值 / 每日因子值的绝对值的和

正的权重代表买入, 负的权重代表卖出

**参数:**

- demeaned:

- True: 对权重去均值 (每日权重 = 每日权重 - 每日权重的均值), 使组合转换为 cash-neutral 多空组合

- False:不对权重去均值

- group_adjust:

- True:对权重分行业去均值 (每日权重 = 每日权重 - 每日各行业权重的均值),使组合转换为 industry-neutral 多空组合

- False:不对权重分行业去均值

#### 计算两个分位数相减的因子收益和标准差

```

mean, std = far.compute_mean_returns_spread(upper_quant=None, lower_quant=None, by_date=False, by_group=False, demeaned=False, group_adjust=False)

```

**参数:**

- upper_quant:用upper_quant选择的分位数减去lower_quant选择的分位数,只能在已有的范围内选择

- lower_quant:用upper_quant选择的分位数减去lower_quant选择的分位数,只能在已有的范围内选择

- by_date:

- True:按天计算两个分位数相减的因子收益和标准差

- False:不按天计算两个分位数相减的因子收益和标准差

- by_group:

- True: 分行业计算两个分位数相减的因子收益和标准差

- False:不分行业计算两个分位数相减的因子收益和标准差

- demeaned:

- True:使用超额收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性收益

#### 计算因子的 alpha 和 beta

```

far.calc_factor_alpha_beta(demeaned=True, group_adjust=False)

```

因子值加权组合每日收益 = beta * 市场组合每日收益 + alpha

因子值加权组合每日收益计算方法见 calc_factor_returns 函数

市场组合每日收益是每日所有股票收益按照weight列中权重加权的均值

结果中的 alpha 是年化 alpha

**参数:**

- demeaned:

- True: 对因子值加权组合每日收益的权重去均值 (每日权重 = 每日权重 - 每日权重的均值),使组合转换为cash-neutral多空组合

- False:不对权重去均值

- group_adjust:

- True:对权重分行业去均值 (每日权重 = 每日权重 - 每日各行业权重的均值),使组合转换为 industry-neutral 多空组合

- False:不对权重分行业去均值

#### 计算每日因子信息比率(IC值)

```

far.calc_factor_information_coefficient(group_adjust=False, by_group=False, method='rank')

```

**参数:**

- group_adjust:

- True:使用行业中性收益计算 IC (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性收益

- by_group:

- True:分行业计算 IC

- False:不分行业计算 IC

- method:

- 'rank':用秩相关系数计算IC值

- 'normal':用普通相关系数计算IC值

#### 计算因子信息比率均值(IC值均值)

```

far.calc_mean_information_coefficient(group_adjust=False, by_group=False, by_time=None, method='rank')

```

**参数:**

- group_adjust:

- True:使用行业中性收益计算 IC (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性收益

- by_group:

- True:分行业计算 IC

- False:不分行业计算 IC

- by_time:

- 'Y':按年求均值

- 'M':按月求均值

- None:对所有日期求均值

- method:

- 'rank':用秩相关系数计算IC值

- 'normal':用普通相关系数计算IC值

#### 按照当天的分位数算分位数未来和过去的收益均值和标准差

```

far.calc_average_cumulative_return_by_quantile(periods_before=5, periods_after=15, demeaned=False, group_adjust=False)

```

**参数:**

- periods_before:计算过去的天数

- periods_after:计算未来的天数

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益

- False:不使用行业中性化后的收益

#### 计算指定调仓周期的各分位数每日累积收益

```

far.calc_cumulative_return_by_quantile(period=None, demeaned=False, group_adjust=False)

```

**参数:**

- period:指定调仓周期

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益

- False:不使用行业中性化后的收益

#### 计算指定调仓周期的按因子值加权多空组合每日累积收益

```

far.calc_cumulative_returns(period=5, demeaned=False, group_adjust=False)

```

当 period > 1 时,组合的累积收益计算方法为:

组合每日收益 = (从第0天开始每period天一调仓的组合每日收益 + 从第1天开始每period天一调仓的组合每日收益 + ... + 从第period-1天开始每period天一调仓的组合每日收益) / period

组合累积收益 = 组合每日收益的累积

**参数:**

- period:指定调仓周期

- demeaned:

- True:对权重去均值 (每日权重 = 每日权重 - 每日权重的均值),使组合转换为 cash-neutral 多空组合

- False:不对权重去均值

- group_adjust:

- True:对权重分行业去均值 (每日权重 = 每日权重 - 每日各行业权重的均值),使组合转换为 industry-neutral 多空组合

- False:不对权重分行业去均值

#### 计算指定调仓周期和前面定义好的加权方式计算多空组合每日累计收益

```

far.calc_top_down_cumulative_returns(period=5, demeaned=False, group_adjust=False)

```

**参数:**

- period:指定调仓周期

- demeaned:

- True:使用超额收益计算累积收益 (基准收益被认为是每日所有股票收益按照weight列中权重加权的均值)

- False:不使用超额收益

- group_adjust:

- True:使用行业中性化后的收益计算累积收益 (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性化后的收益

#### 根据调仓周期确定滞后期的每天计算因子自相关性

```

far.calc_autocorrelation(rank=True)

```

**参数:**

- rank:

- True:秩相关系数

- False:普通相关系数

#### 滞后n天因子值自相关性

```

far.calc_autocorrelation_n_days_lag(n=9,rank=True)

```

**参数:**

- n:滞后n天到1天的因子值自相关性

- rank:

- True:秩相关系数

- False:普通相关系数

#### 各分位数滞后1天到n天的换手率均值

```

far.calc_quantile_turnover_mean_n_days_lag(n=10)

```

**参数:**

- n:滞后 1 天到 n 天的换手率

#### 滞后 0 - n 天因子收益信息比率(IC)的移动平均

```

far.calc_ic_mean_n_days_lag(n=10,group_adjust=False,by_group=False,method=None)

```

**参数:**

- n:滞后0-n天因子收益的信息比率(IC)的移动平均

- group_adjust:

- True:使用行业中性收益计算 IC (行业收益被认为是每日各个行业股票收益按照weight列中权重加权的均值)

- False:不使用行业中性收益

- by_group:

- True:分行业计算 IC

- False:不分行业计算 IC

- method:

- 'rank':用秩相关系数计算IC值

- 'normal':用普通相关系数计算IC值

### 3. 获取聚宽因子库数据的方法

1. [聚宽因子库](https://www.joinquant.com/help/api/help?name=factor_values)包含数百个质量、情绪、风险等其他类目的因子

2. 连接jqdatasdk获取数据包,数据接口需调用聚宽 [`jqdatasdk`](https://github.com/JoinQuant/jqdatasdk/blob/master/README.md) 接口获取金融数据([试用注册地址](http://t.cn/EINDOxE))

```python

# 获取因子数据:以5日平均换手率为例,该数据可以直接用于因子分析

# 具体使用方法可以参照jqdatasdk的API文档

import jqdatasdk

jqdatasdk.auth('username', 'password')

# 获取聚宽因子库中的VOL5数据

factor_data=jqdatasdk.get_factor_values(

securities=jqdatasdk.get_index_stocks('000300.XSHG'),

factors=['VOL5'],

start_date='2018-01-01',

end_date='2018-12-31')['VOL5']

```

### 4. 将自有因子值转换成 DataFrame 格式的数据

- index 为日期,格式为 pandas 日期通用的 DatetimeIndex

- columns 为股票代码,格式要求符合聚宽的代码定义规则(如:平安银行的股票代码为 000001.XSHE)

- 如果是深交所上市的股票,在股票代码后面需要加入.XSHE

- 如果是上交所上市的股票,在股票代码后面需要加入.XSHG

- 将 pandas.DataFrame 转换成满足格式要求数据格式

首先要保证 index 为 `DatetimeIndex` 格式

一般是通过 pandas 提供的 [`pandas.to_datetime`](https://pandas.pydata.org/pandas-docs/stable/reference/api/pandas.to_datetime.html) 函数进行转换, 在转换前应确保 index 中的值都为合理的日期格式, 如 `'2018-01-01'` / `'20180101'`, 之后再调用 `pandas.to_datetime` 进行转换

另外应确保 index 的日期是按照从小到大的顺序排列的, 可以通过 [`sort_index`](https://pandas.pydata.org/pandas-docs/version/0.23.3/generated/pandas.DataFrame.sort_index.html) 进行排序

最后请检查 columns 中的股票代码是否都满足聚宽的代码定义

```python

import pandas as pd

sample_data = pd.DataFrame(

[[0.84, 0.43, 2.33, 0.86, 0.96],

[1.06, 0.51, 2.60, 0.90, 1.09],

[1.12, 0.54, 2.68, 0.94, 1.12],

[1.07, 0.64, 2.65, 1.33, 1.15],

[1.21, 0.73, 2.97, 1.65, 1.19]],

index=['2018-01-02', '2018-01-03', '2018-01-04', '2018-01-05', '2018-01-08'],

columns=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']

)

print(sample_data)

factor_data = sample_data.copy()

# 将 index 转换为 DatetimeIndex

factor_data.index = pd.to_datetime(factor_data.index)

# 将 DataFrame 按照日期顺序排列

factor_data = factor_data.sort_index()

# 检查 columns 是否满足聚宽股票代码格式

if not sample_data.columns.astype(str).str.match('\d{6}\.XSH[EG]').all():

print("有不满足聚宽股票代码格式的股票")

print(sample_data.columns[~sample_data.columns.astype(str).str.match('\d{6}\.XSH[EG]')])

print(factor_data)

```

- 将键为日期, 值为各股票因子值的 `Series` 的 `dict` 转换成 `pandas.DataFrame`

可以直接利用 `pandas.DataFrame` 生成

```python

sample_data = \

{'2018-01-02': pd.Seris([0.84, 0.43, 2.33, 0.86, 0.96],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-03': pd.Seris([1.06, 0.51, 2.60, 0.90, 1.09],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-04': pd.Seris([1.12, 0.54, 2.68, 0.94, 1.12],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-05': pd.Seris([1.07, 0.64, 2.65, 1.33, 1.15],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE']),

'2018-01-08': pd.Seris([1.21, 0.73, 2.97, 1.65, 1.19],

index=['000001.XSHE', '000002.XSHE', '000063.XSHE', '000069.XSHE', '000100.XSHE'])}

import pandas as pd

# 直接调用 pd.DataFrame 将 dict 转换为 DataFrame

factor_data = pd.DataFrame(data).T

print(factor_data)

# 之后请按照 DataFrame 的方法转换成满足格式要求数据格式

```

## 四、数据处理函数

#### 1. 中性化

```python

from jqfactor_analyzer import neutralize

neutralize(data, how=None, date=None, axis=1, fillna=None, add_constant=False)

```

**参数 :**

- data: pd.Series/pd.DataFrame, 待中性化的序列, 序列的 index/columns 为股票的 code

- how: str list. 中性化使用的因子名称列表.

默认为 ['jq_l1', 'market_cap'], 支持的中性化方法有:

(1) 行业: sw_l1, sw_l2, sw_l3, jq_l1, jq_l2

(2) mktcap(总市值), ln_mktcap(对数总市值), cmktcap(流通市值), ln_cmktcap(对数流通市值)

(3)自定义的中性化数据: 支持同时传入额外的 Series 或者 DataFrame 用来进行中性化, index 必须是标的代码

数列表。

- date: 日期, 将用 date 这天的相关变量数据对 series 进行中性化 (注意依赖数据的实际可用时间, 如市值数据当天盘中是无法获取到的)

- axis: 默认为 1. 仅在 data 为 pd.DataFrame 时生效. 表示沿哪个方向做中性化, 0 为对每列做中性化, 1 为对每行做中性化

- fillna: 缺失值填充方式, 默认为None, 表示不填充. 支持的值:

'jq_l1': 聚宽一级行业

'jq_l2': 聚宽二级行业

'sw_l1': 申万一级行业

'sw_l2': 申万二级行业

'sw_l3': 申万三级行业 表示使用某行业分类的均值进行填充.

- add_constant: 中性化时是否添加常数项, 默认为 False

**返回 :**

- 中性化后的因子数据

#### 2. 去极值

```python

from jqfactor_analyzer import winsorize

winsorize(data, scale=None, range=None, qrange=None, inclusive=True, inf2nan=True, axis=1)