` in the commit message, at the start.\n 3. Rebase `dev` with your branch and push your changes.\n 4. Once you are confident in your code changes, create a pull request in your fork to the `dev` branch in the LambdaTest/test-at-scale base repository.\n 5. Link the issue of the base repository in your Pull request description. [Guide](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue)\n 6. Fill out the [Pull Request Template](./.github/pull_request_template.md) completely within the body of the PR. If you feel some areas are not relevant add `N/A` but don’t delete those sections.\n\n\n#### **Commit messages**\n\n- The first line should be a summary of the changes, not exceeding 50\n characters, followed by an optional body that has more details about the\n changes. Refer to [this link](https://github.com/erlang/otp/wiki/writing-good-commit-messages)\n for more information on writing good commit messages.\n\n- Don't add a period/dot (.) at the end of the summary line.\n"

},

{

"path": "LICENSE",

"content": "Apache License\n Version 2.0, January 2004\n http://www.apache.org/licenses/\n\n TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION\n\n 1. Definitions.\n\n \"License\" shall mean the terms and conditions for use, reproduction,\n and distribution as defined by Sections 1 through 9 of this document.\n\n \"Licensor\" shall mean the copyright owner or entity authorized by\n the copyright owner that is granting the License.\n\n \"Legal Entity\" shall mean the union of the acting entity and all\n other entities that control, are controlled by, or are under common\n control with that entity. For the purposes of this definition,\n \"control\" means (i) the power, direct or indirect, to cause the\n direction or management of such entity, whether by contract or\n otherwise, or (ii) ownership of fifty percent (50%) or more of the\n outstanding shares, or (iii) beneficial ownership of such entity.\n\n \"You\" (or \"Your\") shall mean an individual or Legal Entity\n exercising permissions granted by this License.\n\n \"Source\" form shall mean the preferred form for making modifications,\n including but not limited to software source code, documentation\n source, and configuration files.\n\n \"Object\" form shall mean any form resulting from mechanical\n transformation or translation of a Source form, including but\n not limited to compiled object code, generated documentation,\n and conversions to other media types.\n\n \"Work\" shall mean the work of authorship, whether in Source or\n Object form, made available under the License, as indicated by a\n copyright notice that is included in or attached to the work\n (an example is provided in the Appendix below).\n\n \"Derivative Works\" shall mean any work, whether in Source or Object\n form, that is based on (or derived from) the Work and for which the\n editorial revisions, annotations, elaborations, or other modifications\n represent, as a whole, an original work of authorship. 
For the purposes\n of this License, Derivative Works shall not include works that remain\n separable from, or merely link (or bind by name) to the interfaces of,\n the Work and Derivative Works thereof.\n\n \"Contribution\" shall mean any work of authorship, including\n the original version of the Work and any modifications or additions\n to that Work or Derivative Works thereof, that is intentionally\n submitted to Licensor for inclusion in the Work by the copyright owner\n or by an individual or Legal Entity authorized to submit on behalf of\n the copyright owner. For the purposes of this definition, \"submitted\"\n means any form of electronic, verbal, or written communication sent\n to the Licensor or its representatives, including but not limited to\n communication on electronic mailing lists, source code control systems,\n and issue tracking systems that are managed by, or on behalf of, the\n Licensor for the purpose of discussing and improving the Work, but\n excluding communication that is conspicuously marked or otherwise\n designated in writing by the copyright owner as \"Not a Contribution.\"\n\n \"Contributor\" shall mean Licensor and any individual or Legal Entity\n on behalf of whom a Contribution has been received by Licensor and\n subsequently incorporated within the Work.\n\n 2. Grant of Copyright License. Subject to the terms and conditions of\n this License, each Contributor hereby grants to You a perpetual,\n worldwide, non-exclusive, no-charge, royalty-free, irrevocable\n copyright license to reproduce, prepare Derivative Works of,\n publicly display, publicly perform, sublicense, and distribute the\n Work and such Derivative Works in Source or Object form.\n\n 3. Grant of Patent License. 
Subject to the terms and conditions of\n this License, each Contributor hereby grants to You a perpetual,\n worldwide, non-exclusive, no-charge, royalty-free, irrevocable\n (except as stated in this section) patent license to make, have made,\n use, offer to sell, sell, import, and otherwise transfer the Work,\n where such license applies only to those patent claims licensable\n by such Contributor that are necessarily infringed by their\n Contribution(s) alone or by combination of their Contribution(s)\n with the Work to which such Contribution(s) was submitted. If You\n institute patent litigation against any entity (including a\n cross-claim or counterclaim in a lawsuit) alleging that the Work\n or a Contribution incorporated within the Work constitutes direct\n or contributory patent infringement, then any patent licenses\n granted to You under this License for that Work shall terminate\n as of the date such litigation is filed.\n\n 4. Redistribution. You may reproduce and distribute copies of the\n Work or Derivative Works thereof in any medium, with or without\n modifications, and in Source or Object form, provided that You\n meet the following conditions:\n\n (a) You must give any other recipients of the Work or\n Derivative Works a copy of this License; and\n\n (b) You must cause any modified files to carry prominent notices\n stating that You changed the files; and\n\n (c) You must retain, in the Source form of any Derivative Works\n that You distribute, all copyright, patent, trademark, and\n attribution notices from the Source form of the Work,\n excluding those notices that do not pertain to any part of\n the Derivative Works; and\n\n (d) If the Work includes a \"NOTICE\" text file as part of its\n distribution, then any Derivative Works that You distribute must\n include a readable copy of the attribution notices contained\n within such NOTICE file, excluding those notices that do not\n pertain to any part of the Derivative Works, in at least one\n of 
the following places: within a NOTICE text file distributed\n as part of the Derivative Works; within the Source form or\n documentation, if provided along with the Derivative Works; or,\n within a display generated by the Derivative Works, if and\n wherever such third-party notices normally appear. The contents\n of the NOTICE file are for informational purposes only and\n do not modify the License. You may add Your own attribution\n notices within Derivative Works that You distribute, alongside\n or as an addendum to the NOTICE text from the Work, provided\n that such additional attribution notices cannot be construed\n as modifying the License.\n\n You may add Your own copyright statement to Your modifications and\n may provide additional or different license terms and conditions\n for use, reproduction, or distribution of Your modifications, or\n for any such Derivative Works as a whole, provided Your use,\n reproduction, and distribution of the Work otherwise complies with\n the conditions stated in this License.\n\n 5. Submission of Contributions. Unless You explicitly state otherwise,\n any Contribution intentionally submitted for inclusion in the Work\n by You to the Licensor shall be under the terms and conditions of\n this License, without any additional terms or conditions.\n Notwithstanding the above, nothing herein shall supersede or modify\n the terms of any separate license agreement you may have executed\n with Licensor regarding such Contributions.\n\n 6. Trademarks. This License does not grant permission to use the trade\n names, trademarks, service marks, or product names of the Licensor,\n except as required for reasonable and customary use in describing the\n origin of the Work and reproducing the content of the NOTICE file.\n\n 7. Disclaimer of Warranty. 
Unless required by applicable law or\n agreed to in writing, Licensor provides the Work (and each\n Contributor provides its Contributions) on an \"AS IS\" BASIS,\n WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or\n implied, including, without limitation, any warranties or conditions\n of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A\n PARTICULAR PURPOSE. You are solely responsible for determining the\n appropriateness of using or redistributing the Work and assume any\n risks associated with Your exercise of permissions under this License.\n\n 8. Limitation of Liability. In no event and under no legal theory,\n whether in tort (including negligence), contract, or otherwise,\n unless required by applicable law (such as deliberate and grossly\n negligent acts) or agreed to in writing, shall any Contributor be\n liable to You for damages, including any direct, indirect, special,\n incidental, or consequential damages of any character arising as a\n result of this License or out of the use or inability to use the\n Work (including but not limited to damages for loss of goodwill,\n work stoppage, computer failure or malfunction, or any and all\n other commercial damages or losses), even if such Contributor\n has been advised of the possibility of such damages.\n\n 9. Accepting Warranty or Additional Liability. While redistributing\n the Work or Derivative Works thereof, You may choose to offer,\n and charge a fee for, acceptance of support, warranty, indemnity,\n or other liability obligations and/or rights consistent with this\n License. 
However, in accepting such obligations, You may act only\n on Your own behalf and on Your sole responsibility, not on behalf\n of any other Contributor, and only if You agree to indemnify,\n defend, and hold each Contributor harmless for any liability\n incurred by, or claims asserted against, such Contributor by reason\n of your accepting any such warranty or additional liability.\n\n END OF TERMS AND CONDITIONS\n\n APPENDIX: How to apply the Apache License to your work.\n\n To apply the Apache License to your work, attach the following\n boilerplate notice, with the fields enclosed by brackets \"[]\"\n replaced with your own identifying information. (Don't include\n the brackets!) The text should be enclosed in the appropriate\n comment syntax for the file format. We also recommend that a\n file or class name and description of purpose be included on the\n same \"printed page\" as the copyright notice for easier\n identification within third-party archives.\n\n Copyright 2021 LambdaTest Inc.\n\n Licensed under the Apache License, Version 2.0 (the \"License\");\n you may not use this file except in compliance with the License.\n You may obtain a copy of the License at\n\n http://www.apache.org/licenses/LICENSE-2.0\n\n Unless required by applicable law or agreed to in writing, software\n distributed under the License is distributed on an \"AS IS\" BASIS,\n WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.\n See the License for the specific language governing permissions and\n limitations under the License.\n \n"

},

{

"path": "Makefile",

"content": "NUCLEUS_DOCKER_FILE ?= ./build/nucleus/Dockerfile\nNUCLEUS_IMAGE_NAME ?= lambdatest/nucleus:latest\n\nSYNAPSE_DOCKER_FILE ?= ./build/synapse/Dockerfile\nSYNAPSE_IMAGE_NAME ?= lambdatest/synapse:latest\n\nREV_LIST ?= $(shell git rev-list --tags --max-count=1)\nVERSION ?= $(shell git describe --tags ${REV_LIST})\n\nusage:\t\t\t\t\t\t## Show this help\n\t@fgrep -h \"##\" $(MAKEFILE_LIST) | fgrep -v fgrep | sed -e 's/:.*##\\s*/##/g' | awk -F'##' '{ printf \"%-25s -> %s\\n\", $$1, $$2 }'\n\nlint:\t\t\t\t\t\t## Runs linting\n\tgolangci-lint run\n\nbuild-nucleus-image:\t\t## Builds nucleus docker image\n\tdocker build --build-arg VERSION=${VERSION}-dev -t ${NUCLEUS_IMAGE_NAME} --file $(NUCLEUS_DOCKER_FILE) .\n\nbuild-nucleus-bin:\t\t\t## Builds nucleus binary\n\tbash build/nucleus/build.sh\n\nbuild-synapse-image:\t\t## Builds synapse docker image\n\tdocker build --build-arg VERSION=${VERSION}-dev -t ${SYNAPSE_IMAGE_NAME} --file $(SYNAPSE_DOCKER_FILE) .\n\nbuild-synapse-bin:\t\t\t## Builds synapse binary\n\tbash build/synapse/build.sh\n\ninstall-mockery-mac:\n\tbrew install mockery\n\ninstall-mockery-linux:\n\tapt update && apt install -y mockery\n\ngen-mock-files:\n\tmockery --dir=./pkg --all\n"

},

{

"path": "README.md",

"content": "\n  \n

\n

\nTest At Scale

\n\n\n\n\n Test Smarter, Release Faster with test-at-scale.\n


\n\n

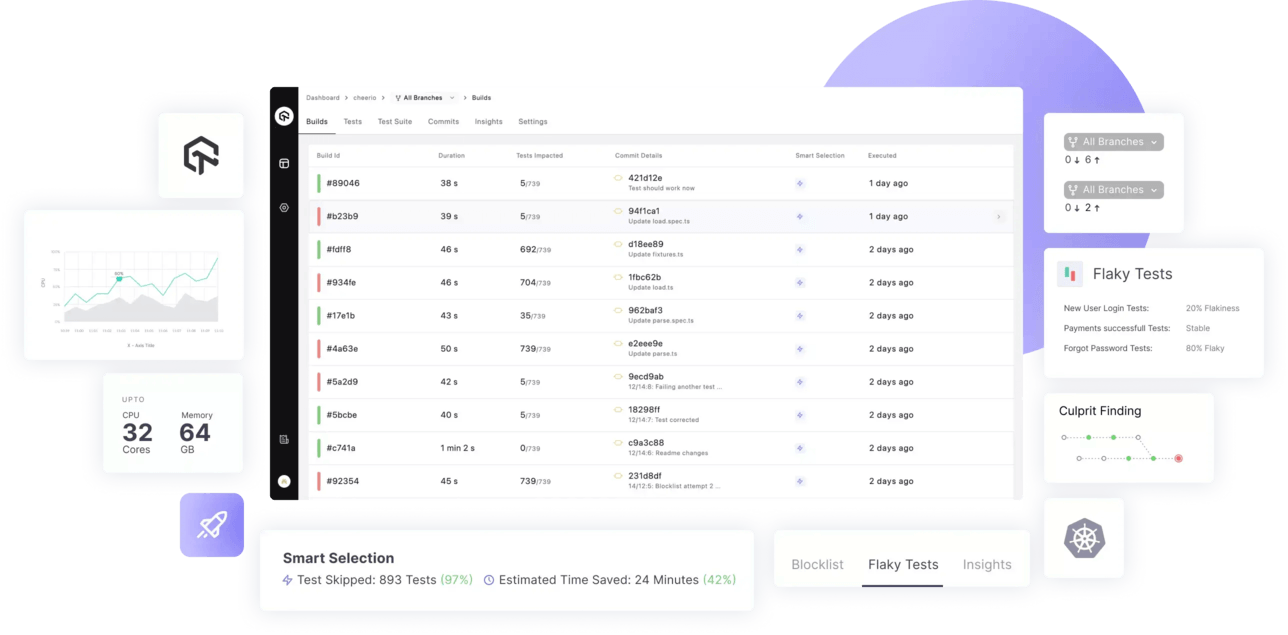

\n\n## Test at scale - TAS\nTAS helps you accelerate your testing, shorten job times and get faster feedback on code changes, manage flaky tests and keep master green at all times.\n

\n\nTo learn more about TAS features and capabilities, see our [product page](https://www.lambdatest.com/test-at-scale).\n\n## Features\n- Smart test selection to run only the subset of tests impacted by a commit ⚡\n- Smart auto-grouping of tests to evenly distribute test execution across multiple containers based on previous execution times\n- Deep insights about test runs and execution metrics\n- Support for status checks on pull requests\n- Advanced analytics to surface test performance and quality data\n- YAML-driven declarative workflow management\n- Natively integrates with GitHub and GitLab\n- Flexible workflow to run pre-merge and post-merge tests\n- Allows blocking and unblocking tests directly from the UI or a YAML directive. No more WIP commits!\n- Support for customizing the testing environment using raw commands in pre and post steps\n- Supports JavaScript monorepos\n- Smart dependency caching to speed up subsequent test runs\n- Easily customizable to support all major languages and frameworks\n- Available as a [hosted solution](https://lambdatest.com/test-at-scale) as well as a self-hosted open-source runner\n- [Upcoming] Smart flaky test management 🪄\n\n## Table of contents\n- 🚀 [Getting Started](#getting-started)\n- 💡 [Tutorials](#tutorials)\n- 💖 [Contribute](#contribute)\n- 📖 [Docs](https://www.lambdatest.com/support/docs/tas-overview)\n\n## Getting Started\n\n### Step 1 - Setting up a New Account\n\nTo create an account, visit the [TAS Login Page](https://tas.lambdatest.com/login/) (or the [TAS Home Page](https://tas.lambdatest.com/)).\n- Log in using a suitable git provider and select the organization you want to continue with.\n- Tell us your specialization and team size.\n- Select **TAS Self Hosted** and click on Proceed.\n- You will find your **LambdaTest Secret Key** on this page. It will be required in the next steps.\n\n

\n\n### Step 2 - Creating a configuration file for self hosted setup\n\nBefore installation we need to create a file that will be used for configuring test-at-scale.\n\n- Open any `Terminal` of your choice.\n- Move to your desired directory, or create a new directory and move into it, using the following commands.\n- Download our sample configuration file using the given `curl` command.\n\n```bash\nmkdir ~/test-at-scale\ncd ~/test-at-scale\ncurl https://raw.githubusercontent.com/LambdaTest/test-at-scale/main/.sample.synapse.json -o .synapse.json\n```\n\n- Open the downloaded `.synapse.json` configuration file in any editor of your choice such as `vi`, `nano`, `code`, etc.\n> **NOTE**: The `.synapse.json` file is hidden by default. You can list it using the `ls -la` command.\n- You will need to add the following in this file:\n - **LambdaTest Secret Key**, which you got at the end of **Step 1**.\n - **Git Token**, which is required to clone the repositories after Step 3. See the guides for generating a [GitHub](https://www.lambdatest.com/support/docs/tas-how-to-guides-gh-token) or [GitLab](https://www.lambdatest.com/support/docs/tas-how-to-guides-gl-token) personal access token.\n- This file is also used to store other parameters, such as **Repository Secrets** (Optional) and **Container Registry** (Optional), that might be required when configuring test-at-scale on your local/self-hosted environment. You can learn more about the configuration options [here](https://www.lambdatest.com/support/docs/tas-self-hosted-configuration#parameters).\n\n

\n\n### Step 3 - Installation\n\n#### Installation on Docker\n\n##### Prerequisites\n- [Docker](https://docs.docker.com/get-docker/) and [Docker-Compose](https://docs.docker.com/compose/install/) (Recommended)\n\n##### Docker Compose\n- Run the docker application.\n \n ```bash\n docker info --format \"CPU: {{.NCPU}}, RAM: {{.MemTotal}}\"\n ```\n- Execute the above command to ensure that the resources usable by Docker are at least `CPU: 2, RAM: 4294967296`.\n > **NOTE:** Running test-at-scale requires a minimum configuration of 2 CPU cores and 4 GiB of RAM.\n\n- The `.synapse.json` configuration file made in [Step 2](#step-2---creating-a-configuration-file-for-self-hosted-setup) will be required before executing the next command.\n- Download and run the docker compose file using the following command.\n \n ```bash\n cd ~/test-at-scale\n curl -L https://raw.githubusercontent.com/LambdaTest/test-at-scale/main/docker-compose.yml -o docker-compose.yml\n docker-compose up -d\n ```\n\n> **NOTE:** This docker-compose file will pull the latest version of test-at-scale and install it on your self-hosted environment.\n\n\nInstallation without Docker Compose

\n\nTo get up and running quickly, you can use the following instructions to set up Test at Scale on a self-hosted environment without docker-compose.\n\n- The `.synapse.json` configuration file made in [Step 2](#step-2---creating-a-configuration-file-for-self-hosted-setup) will be required before executing the next command.\n- Execute the following command to run the Test at Scale docker container.\n\n```bash\ncd ~/test-at-scale\ndocker network create --internal test-at-scale\ndocker run --name synapse --restart always \\\n -v /var/run/docker.sock:/var/run/docker.sock \\\n -v /tmp/synapse:/tmp/synapse \\\n -v ${PWD}/.synapse.json:/home/synapse/.synapse.json \\\n -v /etc/machine-id:/etc/machine-id \\\n --network=test-at-scale \\\n lambdatest/synapse:latest\n```\n> **WARNING:** We strongly recommend using docker-compose when running Test at Scale on a self-hosted environment.\n\n\nInstallation on Local Machine & Supported Cloud Platforms

\n\n- Local Machine - Setup using [docker](#docker).\n- Setup on [Azure](https://www.lambdatest.com/support/docs/tas-self-hosted-installation#azure)\n- Setup on [AWS](https://www.lambdatest.com/support/docs/tas-self-hosted-installation#aws)\n- Setup on [GCP](https://www.lambdatest.com/support/docs/tas-self-hosted-installation#gcp)\n \n\n- Once the installation is complete, go back to the TAS portal.\n- Click the 'Test Connection' button to ensure `test-at-scale` self hosted environment is connected and ready.\n- Hit `Proceed` to move forward to [Step 4](#step-4---importing-your-repo)\n\n

\n\n### Step 4 - Importing your repo\n> **NOTE:** Currently we support Mocha, Jest and Jasmine for testing JavaScript codebases.\n- Click the Import button for the `JS` repository you want to integrate with TAS.\n- Once it is imported successfully, click on `Go to Project` to proceed further.\n- You will be asked to set up a `post-merge` strategy here. We recommend proceeding with the default settings. (You can change these later.)\n\n

\n\n### Step 5 - Configuring TAS yml\nA `.tas.yml` file is a basic YAML configuration file that contains the steps required for installing necessary dependencies and executing the tests present in your repository.\n- To configure your imported repository, follow the steps given on the `.tas.yml` configuration page.\n- You can also learn more about the `.tas.yml` configuration parameters [here](https://www.lambdatest.com/support/docs/tas-configuring-tas-yml).\n- Placing the `.tas.yml` configuration file.\n - Create a new file named **.tas.yml** at the root level of your repository.\n - **Copy** the configuration from the TAS yml configuration page and **paste** it into the **.tas.yml** file you just created.\n - **Commit and Push** the changes to your repo.\n\n## **Language & Framework Support**\nCurrently we support Mocha, Jest and Jasmine for testing JavaScript codebases.\n\n## **Tutorials**\n- [Setting up your first repo on TAS - Cloud](https://www.lambdatest.com/support/docs/tas-getting-started-integrating-your-first-repo/)\n- [Setting up your first repo on TAS - Self Hosted](https://www.lambdatest.com/support/docs/tas-self-hosted-installation)\n- Sample repos: [Mocha](https://github.com/LambdaTest/mocha-demos), [Jest](https://github.com/LambdaTest/jest-demos), [Jasmine](https://github.com/LambdaTest/jasmine-node-js-example).\n- [How to configure a .tas.yml file](https://www.lambdatest.com/support/docs/tas-configuring-tas-yml)\n\n## **Contribute**\nWe love our contributors! 
If you'd like to contribute anything from a bug fix to a feature update, start here:\n\n- 📕 Read our [Code of Conduct](https://github.com/LambdaTest/test-at-scale/blob/main/CODE_OF_CONDUCT.md).\n- 📖 Learn more about [test-at-scale](https://github.com/LambdaTest/test-at-scale/blob/main/CONTRIBUTING.md#repo-overview) and contributing from our [Contribution Guide](https://github.com/LambdaTest/test-at-scale/blob/main/CONTRIBUTING.md).\n- 👾 Explore some [good first issues](https://github.com/LambdaTest/test-at-scale/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22).\n\n### **Join our community**\nEngage with Developers, SDETs, and Testers around the world.\n- Get the latest product updates.\n- Discuss testing philosophies and more.\n\nJoin the Test-at-scale Community on [Discord](https://discord.gg/Wyf8srhf6K). Click [here](https://discord.com/channels/940635450509504523/941297958954102846) if you are already a member.\n\n### **Support & Troubleshooting**\nThe documentation and community will help you troubleshoot most issues. If you have encountered a bug, you can contact us using one of the following channels:\n- Help yourself with our [Documentation](https://www.lambdatest.com/support/docs/tas-overview)📚 and [FAQs](https://www.lambdatest.com/support/docs/tas-faq-and-troubleshooting/).\n- For issues and bugs, go to [GitHub issues](https://github.com/LambdaTest/test-at-scale/issues)🐛.\n- For support and feedback, join our [Discord](https://discord.gg/Wyf8srhf6K) or reach out to us by [email](mailto:hello.tas@lambdatest.com)💬.\n\nWe are committed to fostering an open and welcoming environment in the community. Please see the Code of Conduct.\n\n## **License**\n\nTestAtScale is available under the [Apache License 2.0](https://github.com/LambdaTest/test-at-scale/blob/main/LICENSE). Use it wisely.\n"

},

{

"path": "build/nucleus/Dockerfile",

"content": "FROM golang:latest as builder\n\n# create a working directory\nCOPY . /nucleus\nWORKDIR /nucleus\n\n\n# Build binary\nRUN GOARCH=amd64 GOOS=linux go build -ldflags=\"-w -s\" -o nucleus cmd/nucleus/*.go\n# Uncomment only when build is highly stable. Compress binary.\n# RUN strip --strip-unneeded ts\n# RUN upx ts\n\n# use a minimal alpine image\nFROM nikolaik/python-nodejs:python3.10-nodejs16-slim\n\nARG VERSION\nENV VERSION=$VERSION\n\n# Installing chromium so that all linux libs get automatically installed for running puppeteer tests\nRUN apt update && apt install -y git zstd chromium curl unzip zip xmlstarlet build-essential\nRUN curl -LJO https://go.dev/dl/go1.18.3.linux-amd64.tar.gz\nRUN tar -C /usr/local -xzf go1.18.3.linux-amd64.tar.gz\n\nCOPY bundle /usr/local/bin/bundle\nRUN chmod +x /usr/local/bin/bundle\nENV SMART_BINARY=/usr/local/bin/bundle\n\n# Install Custom Runners\nRUN mkdir /custom-runners\nRUN mkdir /tmp/custom-runners\n\nWORKDIR /tmp/custom-runners\nRUN npm init -y\nRUN npm install -g pnpm\nRUN npm i --global-style --legacy-peer-deps \\\n @lambdatest/test-at-scale-jasmine-runner@~0.3.0 \\\n @lambdatest/test-at-scale-mocha-runner@~0.3.0 \\\n @lambdatest/test-at-scale-jest-runner@~0.3.0\nRUN npm i -g nyc@^15.1.0\n\nRUN tar -zcf /custom-runners/custom-runners.tgz node_modules\nRUN rm -rf /tmp/custom-runners\nRUN mkdir /home/nucleus\nRUN mkdir /home/nucleus/.nvm\nENV NVM_DIR=/home/nucleus/.nvm\n\nENV GOROOT /usr/local/go\nENV GOPATH /home/nucleus\nENV PATH /usr/local/go/bin:/home/nucleus/bin:$PATH\n\nCOPY ./build/nucleus/golang/server /home/nucleus\n\nRUN chmod 744 /home/nucleus/server\n\n# install nvm for nucleus user\nRUN curl -o- https://raw.githubusercontent.com/nvm-sh/nvm/v0.39.1/install.sh | /bin/bash\n\nWORKDIR /home/nucleus\n# copy the binary from builder\nCOPY --from=builder /nucleus/nucleus /usr/local/bin/\n# run the binary\nCOPY ./build/nucleus/entrypoint.sh /\n\nRUN apt update -y && apt upgrade -y\n\nRUN curl -s 
https://get.sdkman.io | bash\nRUN /bin/bash -c \"source $HOME/.sdkman/bin/sdkman-init.sh;sdk install java 18.0.1-oracle\"\n\nENV JAVA_HOME=\"/root/.sdkman/candidates/java/current\"\nENV PATH=$JAVA_HOME:$PATH\nENV PATH=$JAVA_HOME/bin:$PATH\n\nARG MAVEN_VERSION=3.6.3\n\n# Define a constant with the working directory\nARG USER_HOME_DIR=\"/root\"\n# Define the URL where maven can be downloaded from\nARG BASE_URL=https://apache.osuosl.org/maven/maven-3/${MAVEN_VERSION}/binaries\n\n# Create the directories, download maven, install it, remove the downloaded file and set links\nRUN mkdir -p /usr/share/maven /usr/share/maven/ref \\\n && echo \"Downloading maven\" \\\n && curl -fsSL -o /tmp/apache-maven.tar.gz ${BASE_URL}/apache-maven-${MAVEN_VERSION}-bin.tar.gz \\\n \\\n && echo \"Unzipping maven\" \\\n && tar -xzf /tmp/apache-maven.tar.gz -C /usr/share/maven --strip-components=1 \\\n \\\n && echo \"Cleaning and setting links\" \\\n && rm -f /tmp/apache-maven.tar.gz \\\n && ln -s /usr/share/maven/bin/mvn /usr/bin/mvn\n\n# Define environment variables required by Maven, like the MAVEN_HOME directory and where the maven repo is located\nENV MAVEN_HOME /usr/share/maven\nRUN mkdir -p /home/nucleus/.m2\n\n# update settings.xml file for new maven local repo location\nRUN xmlstarlet ed -O --inplace -N a='http://maven.apache.org/SETTINGS/1.0.0' -s /a:settings --type elem --name \"localRepository\" -v /home/nucleus/.m2/repository /usr/share/maven/conf/settings.xml\n\nCOPY ./build/nucleus/java/test-at-scale-java.jar /\nRUN curl -o /home/nucleus/junit-platform-console-standalone-1.8.2.jar https://repo1.maven.org/maven2/org/junit/platform/junit-platform-console-standalone/1.8.2/junit-platform-console-standalone-1.8.2.jar\nCOPY ./build/nucleus/entrypoint.sh /\nENTRYPOINT [\"/bin/sh\", \"/entrypoint.sh\"]"

},

{

"path": "build/nucleus/build.sh",

"content": "#!/usr/bin\n# exit when any command fails\nset -e\n\n# keep track of the last executed command\ntrap 'last_command=$current_command; current_command=$BASH_COMMAND' DEBUG\n# echo an error message before exiting\ntrap 'echo \"\\\"${last_command}\\\" command filed with exit code $?.\"' EXIT\n\necho 'Building binary'\ngo build -o nucleus ./cmd/nucleus/*.go\necho 'Binary successfully build by the name of `nucleus`'\n"

},

{

"path": "build/nucleus/entrypoint.sh",

"content": "#!/bin/sh\n\nexec /usr/local/bin/nucleus \"$@\"\n"

},

{

"path": "build/nucleus/java/test-at-scale-java.jar",

"content": ""

},

{

"path": "build/synapse/Dockerfile",

"content": "FROM golang:latest as builder\n\n# create a working directory\nCOPY . /synapse\nWORKDIR /synapse\n\n# Build binary\nRUN go build -o synapse cmd/synapse/*.go\n\n# use a minimal alpine image\nFROM docker:latest\n\nARG VERSION\nENV VERSION=$VERSION\n\n# add ca-certificates in case you need them\nRUN apk update && apk add ca-certificates && rm -rf /var/cache/apk/*\n\n# Create a group and user\nRUN addgroup -S synapse && adduser -S synapse -G synapse\n\n# set working directory\nWORKDIR /home/synapse\n\n# copy the binary from builder\nCOPY --chown=synapse:synapse --from=builder /synapse/synapse .\n\nCOPY ./build/synapse/entrypoint.sh /\n# run the binary\nENTRYPOINT [\"/bin/sh\", \"/entrypoint.sh\"]"

},

{

"path": "build/synapse/build.sh",

"content": "#!/usr/bin\n# exit when any command fails\nset -e\n\n# keep track of the last executed command\ntrap 'last_command=$current_command; current_command=$BASH_COMMAND' DEBUG\n# echo an error message before exiting\ntrap 'echo \"\\\"${last_command}\\\" command filed with exit code $?.\"' EXIT\n\necho 'Building binary'\ngo build -o synapse ./cmd/synapse/*.go\necho 'Binary successfully build by the name of `synapse`'\n"

},

{

"path": "build/synapse/entrypoint.sh",

"content": "#!/bin/sh\nexec -- /home/synapse/synapse \"$@\"\n"

},

{

"path": "bundle",

"content": "\n"

},

{

"path": "cmd/nucleus/bin.go",

"content": "package main\n\n// this is cmd/root_cmd.go\n\nimport (\n\t\"context\"\n\t\"fmt\"\n\t\"log\"\n\t\"os\"\n\t\"os/signal\"\n\t\"path/filepath\"\n\t\"strings\"\n\t\"sync\"\n\t\"time\"\n\n\t\"github.com/LambdaTest/test-at-scale/config\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/api\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/azure\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/blocktestservice\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/cachemanager\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/command\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/core\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/diffmanager\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/driver\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/gitmanager\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/global\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/listsubmoduleservice\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/lumber\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/payloadmanager\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/requestutils\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/secret\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/server\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/service/coverage\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/service/teststats\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/tasconfigmanager\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/task\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/testdiscoveryservice\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/testexecutionservice\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/zstd\"\n\t\"github.com/cenkalti/backoff/v4\"\n\t\"github.com/spf13/cobra\"\n)\n\n// RootCommand will setup and return the root command\nfunc RootCommand() *cobra.Command {\n\trootCmd := cobra.Command{\n\t\tUse: \"nucleus\",\n\t\tLong: `nucleus is a coordinator binary used as entrypoint in tas containers`,\n\t\tVersion: global.NucleusBinaryVersion,\n\t\tRun: run,\n\t}\n\n\t// define flags used for this 
command\n\tAttachCLIFlags(&rootCmd)\n\n\treturn &rootCmd\n}\n\nfunc run(cmd *cobra.Command, args []string) {\n\t// create a context that we can cancel\n\tctx, cancel := context.WithCancel(context.Background())\n\tdefer cancel()\n\n\t// graceful shutdown timeout (5 seconds)\n\tconst gracefulTimeout = 5000 * time.Millisecond\n\n\t// a WaitGroup for the goroutines to tell us they've stopped\n\twg := sync.WaitGroup{}\n\n\tcfg, err := config.LoadNucleusConfig(cmd)\n\tif err != nil {\n\t\t// pass the error as an argument so it is never interpreted as a format string\n\t\tfmt.Printf(\"[Error] Failed to load config: %v\\n\", err)\n\t\tos.Exit(1)\n\t}\n\n\t// patch logconfig file location with root level log file location\n\tif cfg.LogFile != \"\" {\n\t\tcfg.LogConfig.FileLocation = filepath.Join(cfg.LogFile, \"nucleus.log\")\n\t}\n\n\t// You can also use logrus implementation\n\t// by using lumber.InstanceLogrusLogger\n\tlogger, err := lumber.NewLogger(cfg.LogConfig, cfg.Verbose, lumber.InstanceZapLogger)\n\tif err != nil {\n\t\tlog.Fatalf(\"Could not instantiate logger %s\", err.Error())\n\t}\n\tlogger.Debugf(\"Running on local: %t\", cfg.LocalRunner)\n\n\tif cfg.LocalRunner {\n\t\tlogger.Infof(\"Local runner detected, changing IP from: %s to: %s\", global.NeuronHost, cfg.SynapseHost)\n\t\tglobal.SetNeuronHost(strings.TrimSpace(cfg.SynapseHost))\n\n\t\tlogger.Infof(\"change neuron host to %s\", global.NeuronHost)\n\t} else {\n\t\tglobal.SetNeuronHost(global.NeuronRemoteHost)\n\t}\n\tpl, err := core.NewPipeline(cfg, logger)\n\tif err != nil {\n\t\tlogger.Errorf(\"Unable to create the pipeline: %+v\\n\", err)\n\t\tlogger.Errorf(\"Aborting ...\")\n\t\tos.Exit(1)\n\t}\n\n\tts, err := teststats.New(cfg, logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"failed to initialize test stats service: %v\", err)\n\t}\n\tdefaultRequests := requestutils.New(logger, global.DefaultAPITimeout, backoff.NewExponentialBackOff())\n\n\tazureClient, err := azure.NewAzureBlobEnv(cfg, defaultRequests, logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"failed to initialize azure blob: %v\", 
err)\n\t}\n\tif err != nil && !cfg.LocalRunner {\n\t\tlogger.Fatalf(\"failed to initialize azure blob: %v\", err)\n\t}\n\n\t// attach plugins to pipeline\n\tpm := payloadmanager.NewPayloadManger(azureClient, logger, cfg, defaultRequests)\n\tsecretParser := secret.New(logger)\n\ttcm := tasconfigmanager.NewTASConfigManager(logger)\n\texecManager := command.NewExecutionManager(secretParser, azureClient, logger)\n\tgm := gitmanager.NewGitManager(logger, execManager)\n\tdm := diffmanager.NewDiffManager(cfg, logger)\n\n\ttdResChan := make(chan core.DiscoveryResult)\n\ttds := testdiscoveryservice.NewTestDiscoveryService(ctx, tdResChan, execManager, defaultRequests, logger)\n\ttes := testexecutionservice.NewTestExecutionService(cfg, defaultRequests, execManager, azureClient, ts, logger)\n\ttbs := blocktestservice.NewTestBlockTestService(cfg, defaultRequests, logger)\n\trouter := api.NewRouter(logger, ts, tdResChan)\n\n\tt, err := task.New(defaultRequests, logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"failed to initialize task: %v\", err)\n\t}\n\n\tzstd, err := zstd.New(execManager, logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"failed to initialize zstd compressor: %v\", err)\n\t}\n\tcache, err := cachemanager.New(zstd, azureClient, logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"failed to initialize cache manager: %v\", err)\n\t}\n\n\tcoverageService, err := coverage.New(execManager, azureClient, zstd, cfg, logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"failed to initialize coverage service: %v\", err)\n\t}\n\tlistsubmodule := listsubmoduleservice.New(defaultRequests, logger)\n\n\tbuilder := driver.Builder{\n\t\tLogger: logger,\n\t\tTestExecutionService: tes,\n\t\tTestDiscoveryService: tds,\n\t\tAzureClient: azureClient,\n\t\tBlockTestService: tbs,\n\t\tExecutionManager: execManager,\n\t\tTASConfigManager: tcm,\n\t\tCacheStore: cache,\n\t\tDiffManager: dm,\n\t\tListSubModuleService: listsubmodule,\n\t}\n\n\tpl.PayloadManager = pm\n\tpl.TASConfigManager = 
tcm\n\tpl.GitManager = gm\n\tpl.DiffManager = dm\n\tpl.TestDiscoveryService = tds\n\tpl.BlockTestService = tbs\n\tpl.TestExecutionService = tes\n\tpl.ExecutionManager = execManager\n\tpl.CoverageService = coverageService\n\tpl.TestStats = ts\n\tpl.Task = t\n\tpl.CacheStore = cache\n\tpl.SecretParser = secretParser\n\tpl.Builder = &builder\n\n\tlogger.Infof(\"LambdaTest Nucleus version: %s\", global.NucleusBinaryVersion)\n\n\twg.Add(1)\n\tgo func() {\n\t\tdefer cancel()\n\t\tdefer wg.Done()\n\t\t// starting pipeline\n\t\tpl.Start(ctx)\n\t}()\n\twg.Add(1)\n\tgo func() {\n\t\tdefer cancel()\n\t\tdefer wg.Done()\n\t\tserver.ListenAndServe(ctx, router, cfg, logger)\n\t}()\n\t// listen for C-c\n\tc := make(chan os.Signal, 1)\n\tsignal.Notify(c, os.Interrupt)\n\n\t// create channel to mark status of waitgroup\n\t// this is required to brutally kill application in case of\n\t// timeout\n\tdone := make(chan struct{})\n\n\t// asynchronously wait for all the go routines\n\tgo func() {\n\t\t// and wait for all go routines\n\t\twg.Wait()\n\t\tlogger.Debugf(\"main: all goroutines have finished.\")\n\t\tclose(done)\n\t}()\n\n\t// wait for signal channel\n\tselect {\n\tcase <-c:\n\t\t{\n\t\t\tlogger.Debugf(\"main: received C-c - attempting graceful shutdown ....\")\n\t\t\t// tell the goroutines to stop\n\t\t\tlogger.Debugf(\"main: telling goroutines to stop\")\n\t\t\tcancel()\n\t\t\tselect {\n\t\t\tcase <-done:\n\t\t\t\tlogger.Debugf(\"Go routines exited within timeout\")\n\t\t\tcase <-time.After(gracefulTimeout):\n\t\t\t\tlogger.Errorf(\"Graceful timeout exceeded. Brutally killing the application\")\n\t\t\t}\n\n\t\t}\n\tcase <-done:\n\t\tos.Exit(0)\n\t}\n\n}\n"

},

{

"path": "cmd/nucleus/flags.go",

"content": "package main\n\nimport (\n\t\"github.com/spf13/cobra\"\n)\n\n//AttachCLIFlags attaches command line flags to command\nfunc AttachCLIFlags(rootCmd *cobra.Command) error {\n\n\trootCmd.PersistentFlags().StringP(\"config\", \"c\", \"\", \"the config file to use\")\n\trootCmd.PersistentFlags().StringP(\"port\", \"p\", \"\", \"Port for api server to run\")\n\trootCmd.PersistentFlags().StringP(\"payloadAddress\", \"l\", \"\", \"Payload address\")\n\trootCmd.PersistentFlags().String(\"subModule\", \"\", \"submodule of a repo\")\n\trootCmd.PersistentFlags().BoolP(\"verbose\", \"\", false, \"Run in verbose mode\")\n\trootCmd.PersistentFlags().BoolP(\"coverage\", \"\", false, \"Run coverage only mode\")\n\trootCmd.PersistentFlags().BoolP(\"discover\", \"\", false, \"Run nucleus in test discovery mode\")\n\trootCmd.PersistentFlags().BoolP(\"execute\", \"\", false, \"Run nucleus in test execution mode\")\n\trootCmd.PersistentFlags().BoolP(\"flaky\", \"\", false, \"Run nucleus in flaky mode\")\n\trootCmd.PersistentFlags().BoolP(\"collectStats\", \"\", false, \"Collect test execution metrics\")\n\trootCmd.PersistentFlags().IntP(\"consecutiveRuns\", \"\", 1, \"The consecutive test execution runs\")\n\n\trootCmd.PersistentFlags().StringP(\"env\", \"e\", \"prod\", \"Environment.\")\n\trootCmd.PersistentFlags().String(\"taskID\", \"\", \"The unique ID for a task\")\n\trootCmd.PersistentFlags().String(\"locators\", \"\", \"The test locators for a task\")\n\trootCmd.PersistentFlags().String(\"locatorAddress\", \"\", \"The test locators address for a task\")\n\trootCmd.PersistentFlags().String(\"buildID\", \"\", \"The unique ID for a build\")\n\trootCmd.PersistentFlags().String(\"targetCommit\", \"\", \"The target commit for nucleus\")\n\trootCmd.PersistentFlags().String(\"baseCommit\", \"\", \"The base commit for nucleus\")\n\trootCmd.PersistentFlags().StringP(\"synapsehost\", \"\", \"\", \"Local Ip of proxy server.\")\n\trootCmd.PersistentFlags().BoolP(\"local\", \"\", 
false, \"local mode\")\n\n\treturn nil\n}\n"

},

{

"path": "cmd/nucleus/main.go",

"content": "package main\n\nimport (\n\t\"log\"\n)\n\n// Main function just executes root command `ts`\n// this project structure is inspired from `cobra` package\nfunc main() {\n\tif err := RootCommand().Execute(); err != nil {\n\t\tlog.Fatal(err)\n\t}\n}\n"

},

{

"path": "cmd/synapse/bin.go",

"content": "package main\n\n// this is cmd/root_cmd.go\n\nimport (\n\t\"context\"\n\t\"fmt\"\n\t\"log\"\n\t\"os\"\n\t\"os/signal\"\n\t\"path/filepath\"\n\t\"strconv\"\n\t\"sync\"\n\t\"time\"\n\n\t\"github.com/LambdaTest/test-at-scale/config\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/cron\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/global\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/lumber\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/proxyserver\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/runner/docker\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/secrets\"\n\tsynapsepkg \"github.com/LambdaTest/test-at-scale/pkg/synapse\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/tasconfigdownloader\"\n\t\"github.com/LambdaTest/test-at-scale/pkg/utils\"\n\t\"github.com/joho/godotenv\"\n\t\"github.com/spf13/cobra\"\n)\n\n// RootCommand will setup and return the root command\nfunc RootCommand() *cobra.Command {\n\trootCmd := cobra.Command{\n\t\tUse: \"synapse\",\n\t\tLong: `Synapse is an opensource runner for TAS`,\n\t\tVersion: global.SynapseBinaryVersion,\n\t\tRun: run,\n\t}\n\n\t// define flags used for this command\n\tif err := AttachCLIFlags(&rootCmd); err != nil {\n\t\tfmt.Println(\"Error in attaching cli flags\")\n\t}\n\n\treturn &rootCmd\n}\n\nfunc run(cmd *cobra.Command, args []string) {\n\t// create a context that we can cancel\n\tctx, cancel := context.WithCancel(context.Background())\n\tdefer cancel()\n\t// set necessary os env\n\tsetEnv()\n\t// a WaitGroup for the goroutines to tell us they've stopped\n\twg := sync.WaitGroup{}\n\n\t// Load environment variables from .env if available\n\terr := godotenv.Load()\n\tif err != nil {\n\t\tfmt.Printf(\"Warning: No .env file found\\n\")\n\t}\n\n\tcfg, err := config.LoadSynapseConfig(cmd)\n\tif err != nil {\n\t\tfmt.Printf(\"Failed to load config: %s\", err.Error())\n\t}\n\n\terr = config.LoadRepoSecrets(cmd, cfg)\n\tif err != nil {\n\t\tfmt.Printf(\"Error loading repository secrets: %v\", err)\n\t}\n\n\t// patch 
logconfig file location with root level log file location\n\tif cfg.LogFile != \"\" {\n\t\tcfg.LogConfig.FileLocation = filepath.Join(cfg.LogFile, \"synapse.log\")\n\t}\n\n\t// You can also use logrus implementation\n\t// by using lumber.InstanceLogrusLogger\n\tlogger, err := lumber.NewLogger(cfg.LogConfig, cfg.Verbose, lumber.InstanceZapLogger)\n\tif err != nil {\n\t\tlog.Fatalf(\"Could not instantiate logger %s\", err.Error())\n\t}\n\tif err := config.ValidateCfg(cfg, logger); err != nil {\n\t\tlogger.Fatalf(\"Error loading synapse config: %v\", err)\n\t}\n\tsecretsManager := secrets.New(cfg, logger)\n\n\trunner, err := docker.New(secretsManager, logger, cfg)\n\tif err != nil {\n\t\tlogger.Fatalf(\"could not instantiate k8s runner %v\", err)\n\t}\n\ttasConfigDownloader := tasconfigdownloader.New(logger)\n\tsynapse := synapsepkg.New(runner, logger, secretsManager, tasConfigDownloader)\n\n\tproxyHandler, err := proxyserver.NewProxyHandler(logger)\n\tif err != nil {\n\t\tlogger.Fatalf(\"Could not instantiate proxyhandler %v\", err)\n\t}\n\n\t// setting up cron handler\n\twg.Add(1)\n\tgo cron.Setup(ctx, &wg, logger, runner)\n\n\t// All attempts to connect to lambdatest server failed\n\tconnectionFailed := make(chan struct{})\n\n\twg.Add(1)\n\tgo synapse.InitiateConnection(ctx, &wg, connectionFailed)\n\n\twg.Add(1)\n\tgo func() {\n\t\tdefer cancel()\n\t\tdefer wg.Done()\n\t\tif err := proxyserver.ListenAndServe(ctx, proxyHandler, cfg, logger); err != nil {\n\t\t\tlogger.Fatalf(\"Error starting proxy server: %v\", err)\n\t\t}\n\t}()\n\n\t// listen for C-cInterrupt\n\tc := make(chan os.Signal, 1)\n\tsignal.Notify(c, os.Interrupt)\n\n\t// create channel to mark status of waitgroup\n\t// this is required to brutally kill application in case of\n\t// timeout\n\tdone := make(chan struct{})\n\n\t// asynchronously wait for all the go routines\n\tgo func() {\n\t\t// and wait for all go routines\n\t\twg.Wait()\n\t\tlogger.Debugf(\"main: all goroutines have 
finished.\")\n\t\tclose(done)\n\t}()\n\n\t// wait for signal channel\n\tselect {\n\tcase <-c:\n\t\t{\n\t\t\tlogger.Debugf(\"main: received OS Interrupt signal, attempting graceful shutdown ....\")\n\t\t\t// tell the goroutines to stop\n\t\t\tlogger.Debugf(\"main: telling goroutines to stop\")\n\t\t\tcancel()\n\t\t\tselect {\n\t\t\tcase <-done:\n\t\t\t\tlogger.Debugf(\"Go routines exited within timeout\")\n\t\t\tcase <-time.After(global.GracefulTimeout):\n\t\t\t\tlogger.Errorf(\"Graceful timeout exceeded. Brutally killing the application\")\n\t\t\t}\n\t\t}\n\n\tcase <-connectionFailed:\n\t\t{\n\t\t\tlogger.Debugf(\"main: all attempts to connect to lambdatest server failed ....\")\n\t\t\t// tell the goroutines to stop\n\t\t\tlogger.Debugf(\"main: telling goroutines to stop\")\n\t\t\tcancel()\n\t\t\tselect {\n\t\t\tcase <-done:\n\t\t\t\tlogger.Debugf(\"Go routines exited within timeout\")\n\t\t\tcase <-time.After(global.GracefulTimeout):\n\t\t\t\tlogger.Errorf(\"Graceful timeout exceeded. Brutally killing the application\")\n\t\t\t}\n\t\t\tos.Exit(0)\n\n\t\t}\n\tcase <-done:\n\t\tos.Exit(0)\n\t}\n\n}\n\nfunc setEnv() {\n\tos.Setenv(global.AutoRemoveEnv, strconv.FormatBool(global.AutoRemove))\n\tos.Setenv(global.LocalEnv, strconv.FormatBool(global.Local))\n\tos.Setenv(global.SynapseHostEnv, utils.GetOutboundIP())\n\tos.Setenv(global.NetworkEnvName, global.NetworkName)\n}\n"

},

{

"path": "cmd/synapse/flags.go",

"content": "package main\n\nimport (\n\t\"github.com/spf13/cobra\"\n)\n\n//AttachCLIFlags attaches command line flags to command\nfunc AttachCLIFlags(rootCmd *cobra.Command) error {\n\trootCmd.PersistentFlags().StringP(\"config\", \"c\", \"\", \"the config file to use\")\n\trootCmd.PersistentFlags().BoolP(\"verbose\", \"\", false, \"should every proxy request be logged to stdout\")\n\treturn nil\n}\n"

},

{

"path": "cmd/synapse/main.go",

"content": "package main\n\nimport (\n\t\"log\"\n\n\t\"github.com/LambdaTest/test-at-scale/pkg/global\"\n)\n\n// Main function just executes root command `ts`\n// this project structure is inspired from `cobra` package\nfunc main() {\n\tlog.Printf(\"Starting synapse %s\", global.SynapseBinaryVersion)\n\tif err := RootCommand().Execute(); err != nil {\n\t\tlog.Fatal(err)\n\t}\n}\n"

},

{

"path": "config/default.go",

"content": "package config\n\nimport (\n\t\"github.com/LambdaTest/test-at-scale/pkg/global\"\n\t\"github.com/spf13/viper\"\n)\n\nfunc setNucleusDefaultConfig() {\n\tviper.SetDefault(\"LogConfig.EnableConsole\", true)\n\tviper.SetDefault(\"LogConfig.ConsoleJSONFormat\", false)\n\tviper.SetDefault(\"LogConfig.ConsoleLevel\", \"debug\")\n\tviper.SetDefault(\"LogConfig.EnableFile\", true)\n\tviper.SetDefault(\"LogConfig.FileJSONFormat\", true)\n\tviper.SetDefault(\"LogConfig.FileLevel\", \"debug\")\n\tviper.SetDefault(\"LogConfig.FileLocation\", global.HomeDir+\"/nucleus.log\")\n\tviper.SetDefault(\"Env\", \"prod\")\n\tviper.SetDefault(\"Port\", \"9876\")\n\tviper.SetDefault(\"Verbose\", false)\n}\n\nfunc setSynapseDefaultConfig() {\n\tviper.SetDefault(\"LogConfig.EnableConsole\", true)\n\tviper.SetDefault(\"LogConfig.ConsoleJSONFormat\", false)\n\tviper.SetDefault(\"LogConfig.ConsoleLevel\", \"info\")\n\tviper.SetDefault(\"LogConfig.EnableFile\", true)\n\tviper.SetDefault(\"LogConfig.FileJSONFormat\", true)\n\tviper.SetDefault(\"LogConfig.FileLevel\", \"debug\")\n\tviper.SetDefault(\"LogConfig.FileLocation\", \"./mould.log\")\n\tviper.SetDefault(\"Env\", \"prod\")\n\tviper.SetDefault(\"Verbose\", false)\n}\n"

},

{

"path": "config/loader.go",

"content": "package config\n\nimport (\n\t\"encoding/json\"\n\t\"errors\"\n\t\"fmt\"\n\t\"io/ioutil\"\n\t\"strings\"\n\n\t\"github.com/LambdaTest/test-at-scale/pkg/lumber\"\n\t\"github.com/spf13/cobra\"\n\t\"github.com/spf13/viper\"\n)\n\n// GlobalNucleusConfig stores the config instance for global use\nvar GlobalNucleusConfig *NucleusConfig\n\n// GlobalSynapseConfig store the config instance for synapse global use\nvar GlobalSynapseConfig *SynapseConfig\n\ntype tempSecretReader struct {\n\tRepoSecrets map[string]map[string]string `json:\"RepoSecrets\" yaml:\"RepoSecrets\"`\n}\n\n// LoadNucleusConfig loads config from command instance to predefined config variables\nfunc LoadNucleusConfig(cmd *cobra.Command) (*NucleusConfig, error) {\n\terr := viper.BindPFlags(cmd.Flags())\n\tif err != nil {\n\t\treturn nil, err\n\t}\n\n\t// default viper configs\n\tviper.SetEnvKeyReplacer(strings.NewReplacer(\".\", \"_\"))\n\tviper.AutomaticEnv()\n\n\t// set default configs\n\tsetNucleusDefaultConfig()\n\n\tif configFile, _ := cmd.Flags().GetString(\"config\"); configFile != \"\" {\n\t\tviper.SetConfigFile(configFile)\n\t} else {\n\t\tviper.SetConfigName(\".nucleus\")\n\t\tviper.AddConfigPath(\"./\")\n\t\tviper.AddConfigPath(\"$HOME/.nucleus\")\n\t}\n\n\tif err := viper.ReadInConfig(); err != nil {\n\t\tfmt.Println(\"Warning: No configuration file found. 
Proceeding with defaults\")\n\t}\n\n\treturn populateNucleusConfig(new(NucleusConfig))\n}\n\n// LoadSynapseConfig loads config from command instance to predefined config variables\nfunc LoadSynapseConfig(cmd *cobra.Command) (*SynapseConfig, error) {\n\terr := viper.BindPFlags(cmd.Flags())\n\tif err != nil {\n\t\treturn nil, err\n\t}\n\n\t// default viper configs\n\tviper.SetEnvPrefix(\"SYN\")\n\tviper.SetEnvKeyReplacer(strings.NewReplacer(\".\", \"_\"))\n\tviper.AutomaticEnv()\n\n\t// set default configs\n\tsetSynapseDefaultConfig()\n\n\tif configFile, _ := cmd.Flags().GetString(\"config\"); configFile != \"\" {\n\t\tviper.SetConfigFile(configFile)\n\t} else {\n\t\tviper.SetConfigName(\".synapse\")\n\t\tviper.AddConfigPath(\"./\")\n\t\tviper.AddConfigPath(\"$HOME/.synapse\")\n\t}\n\n\tif err := viper.ReadInConfig(); err != nil {\n\t\tfmt.Println(\"Warning: No configuration file found. Proceeding with defaults\")\n\t}\n\treturn populateSynapseConfig(new(SynapseConfig))\n}\n\n// LoadRepoSecrets loads repo secrets from configuration file\nfunc LoadRepoSecrets(cmd *cobra.Command, synapseConfig *SynapseConfig) error {\n\tif configFile, _ := cmd.Flags().GetString(\"config\"); configFile != \"\" {\n\t\tviper.SetConfigFile(configFile)\n\t} else {\n\t\tviper.SetConfigName(\".synapse\")\n\t\tviper.AddConfigPath(\"./\")\n\t\tviper.AddConfigPath(\"$HOME/.synapse\")\n\t}\n\n\tif err := viper.ReadInConfig(); err != nil {\n\t\tfmt.Println(\"Warning: No configuration file found. 
Proceeding with defaults\")\n\t}\n\n\tsecretFile, err := ioutil.ReadFile(viper.GetViper().ConfigFileUsed())\n\tif err != nil {\n\t\tfmt.Printf(\"error in reading config file: %v\\n\", err)\n\t}\n\n\tvar tempSecret tempSecretReader\n\tif err := json.Unmarshal(secretFile, &tempSecret); err != nil {\n\t\tfmt.Printf(\"error in unmarshaling secrets: %v\\n\", err)\n\t}\n\n\tsynapseConfig.RepoSecrets = tempSecret.RepoSecrets\n\treturn nil\n}\n\n// ValidateCfg checks the validity of the config\nfunc ValidateCfg(cfg *SynapseConfig, logger lumber.Logger) error {\n\tif cfg.Lambdatest.SecretKey == \"\" {\n\t\treturn errors.New(\"error finding lambdatest secretkey in configuration file\")\n\t}\n\tif cfg.ContainerRegistry.Mode == \"\" {\n\t\treturn errors.New(\"error finding ContainerRegistry Mode in configuration file\")\n\t}\n\tif cfg.RepoSecrets == nil {\n\t\tlogger.Debugf(\"no RepoSecrets found in configuration file.\")\n\t\treturn nil\n\t}\n\treturn nil\n}\n"

},

{

"path": "config/nucleusmodel.go",

"content": "package config\n\nimport \"github.com/LambdaTest/test-at-scale/pkg/lumber\"\n\n// Model definition for configuration\n\n// NucleusConfig is the application's configuration\ntype NucleusConfig struct {\n\tConfig string\n\tPort string\n\tPayloadAddress string `json:\"payloadAddress\"`\n\tCollectStats bool `json:\"collectStats\"`\n\tConsecutiveRuns int `json:\"consecutiveRuns\"`\n\tLogFile string\n\tLogConfig lumber.LoggingConfig\n\tCoverageMode bool `json:\"coverage\"`\n\tDiscoverMode bool `json:\"discover\"`\n\tExecuteMode bool `json:\"execute\"`\n\tFlakyMode bool `json:\"flaky\"`\n\tTaskID string `json:\"taskID\" env:\"TASK_ID\"`\n\tBuildID string `json:\"buildID\" env:\"BUILD_ID\"`\n\tLocators string `json:\"locators\"`\n\tLocatorAddress string `json:\"locatorAddress\"`\n\tEnv string\n\tVerbose bool\n\tAzure Azure `env:\"AZURE\"`\n\tLocalRunner bool `env:\"local\"`\n\tSynapseHost string `env:\"synapsehost\"`\n\tSubModule string `json:\"subModule\"`\n}\n\n// Azure providers the storage configuration.\ntype Azure struct {\n\tContainerName string `env:\"CONTAINER_NAME\"`\n\tStorageAccountName string `env:\"STORAGE_ACCOUNT\"`\n\tStorageAccessKey string `env:\"STORAGE_ACCESS_KEY\"`\n}\n"

},

{

"path": "config/parse.go",

"content": "package config\n\nimport (\n\t\"errors\"\n\t\"fmt\"\n\t\"reflect\"\n\n\t\"github.com/spf13/viper\"\n)\n\nconst tagPrefix = \"viper\"\n\n// populateNucleusConfig is used to parse config read through viper\nfunc populateNucleusConfig(config *NucleusConfig) (*NucleusConfig, error) {\n\terr := recursivelySet(reflect.ValueOf(config), \"\")\n\tif err != nil {\n\t\treturn nil, err\n\t}\n\n\treturn config, nil\n}\n\n// populateSynapseConfig is used to parse config read through viper\nfunc populateSynapseConfig(config *SynapseConfig) (*SynapseConfig, error) {\n\terr := recursivelySet(reflect.ValueOf(config), \"\")\n\tif err != nil {\n\t\treturn nil, err\n\t}\n\n\treturn config, nil\n}\n\n// recursivelySet is used to recursively set conf read from\n// files to golang structs. Since nested values are accessed using periods\n// we need to recursively parse the values\nfunc recursivelySet(val reflect.Value, prefix string) error {\n\tif val.Kind() != reflect.Ptr {\n\t\treturn errors.New(\"WTF\")\n\t}\n\n\t// dereference\n\tval = reflect.Indirect(val)\n\tif val.Kind() != reflect.Struct {\n\t\treturn errors.New(\"FML\")\n\t}\n\n\t// grab the type for this instance\n\tvType := reflect.TypeOf(val.Interface())\n\n\t// go through child fields\n\tfor i := 0; i < val.NumField(); i++ {\n\t\tthisField := val.Field(i)\n\t\tthisType := vType.Field(i)\n\t\ttags := getTags(thisType)\n\t\t// try to fetch value for each key using multiple tags\n\t\tfor _, tag := range tags {\n\t\t\tkey := prefix + tag\n\t\t\tswitch thisField.Kind() {\n\t\t\tcase reflect.Struct:\n\t\t\t\tif err := recursivelySet(thisField.Addr(), key+\".\"); err != nil {\n\t\t\t\t\treturn err\n\t\t\t\t}\n\t\t\tcase reflect.Int:\n\t\t\t\tfallthrough\n\t\t\tcase reflect.Int32:\n\t\t\t\tfallthrough\n\t\t\tcase reflect.Int64:\n\t\t\t\t// you can only set with an int64 -> int\n\t\t\t\tconfigVal := int64(viper.GetInt(key))\n\t\t\t\t// skip the update if tag is not set in viper\n\t\t\t\tif viper.GetInt(key) == 0 && 
thisField.Int() != 0 {\n\t\t\t\t\tcontinue\n\t\t\t\t}\n\t\t\t\tthisField.SetInt(configVal)\n\t\t\tcase reflect.String:\n\t\t\t\t// skip the update if tag is not set in viper\n\t\t\t\tif viper.GetString(key) == \"\" && thisField.String() != \"\" {\n\t\t\t\t\tcontinue\n\t\t\t\t}\n\t\t\t\tthisField.SetString(viper.GetString(key))\n\t\t\tcase reflect.Bool:\n\t\t\t\t// skip the update if tag is not set in viper\n\t\t\t\tif !viper.GetBool(key) && thisField.Bool() {\n\t\t\t\t\tcontinue\n\t\t\t\t}\n\t\t\t\tthisField.SetBool(viper.GetBool(key))\n\t\t\tcase reflect.Map:\n\t\t\t\tcontinue\n\t\t\tdefault:\n\t\t\t\treturn fmt.Errorf(\"unexpected type detected ~ aborting: %s\", thisField.Kind())\n\t\t\t}\n\t\t}\n\t}\n\n\treturn nil\n}\n\nfunc getTags(field reflect.StructField) []string {\n\t// check if maybe we have a special magic tag\n\ttag := field.Tag\n\tvalues := []string{}\n\tif tag != \"\" {\n\t\tfor _, prefix := range []string{tagPrefix, \"yaml\", \"json\", \"env\", \"mapstructure\"} {\n\t\t\tif v := tag.Get(prefix); v != \"\" {\n\t\t\t\tvalues = append(values, v)\n\t\t\t}\n\t\t}\n\t\treturn values\n\t}\n\n\treturn []string{field.Name}\n}\n"

},

{

"path": "config/parse_test.go",

"content": "package config\n\nimport (\n\t\"reflect\"\n\t\"testing\"\n\n\t\"github.com/spf13/viper\"\n\t\"github.com/stretchr/testify/assert\"\n)\n\nfunc TestSimpleValues(t *testing.T) {\n\tc := struct {\n\t\tSimple string `json:\"simple\"`\n\t}{}\n\n\tviper.SetDefault(\"simple\", \"i am a simple string\")\n\n\tassert.Nil(t, recursivelySet(reflect.ValueOf(&c), \"\"))\n\tassert.Equal(t, \"i am a simple string\", c.Simple)\n}\n\nfunc TestNestedValues(t *testing.T) {\n\tc := struct {\n\t\tSimple string `json:\"simple\"`\n\t\tNested struct {\n\t\t\tBoolVal bool `json:\"bool\"`\n\t\t\tStringVal string `json:\"string\"`\n\t\t\tNumberVal int `json:\"number\"`\n\t\t} `json:\"nested\"`\n\t}{}\n\n\tviper.SetDefault(\"simple\", \"simple\")\n\tviper.SetDefault(\"nested.bool\", true)\n\tviper.SetDefault(\"nested.string\", \"i am a simple string\")\n\tviper.SetDefault(\"nested.number\", 4)\n\n\tassert.Nil(t, recursivelySet(reflect.ValueOf(&c), \"\"))\n\tassert.Equal(t, \"simple\", c.Simple)\n\tassert.Equal(t, 4, c.Nested.NumberVal)\n\tassert.Equal(t, \"i am a simple string\", c.Nested.StringVal)\n\tassert.Equal(t, true, c.Nested.BoolVal)\n}\n"

},

{

"path": "config/synapsemodel.go",

"content": "package config\n\nimport \"github.com/LambdaTest/test-at-scale/pkg/lumber\"\n\n// Model definition for configuration\n\n// SynapseConfig the application's configuration\ntype SynapseConfig struct {\n\tName string\n\tConfig string\n\tLogFile string\n\tLogConfig lumber.LoggingConfig\n\tEnv string\n\tVerbose bool\n\tLambdatest LambdatestConfig\n\tGit GitConfig\n\tContainerRegistry ContainerRegistryConfig\n\tRepoSecrets map[string]map[string]string\n}\n\n// LambdatestConfig contains credentials for lambdatest\ntype LambdatestConfig struct {\n\tSecretKey string\n}\n\n// GitConfig contains git token\ntype GitConfig struct {\n\tToken string\n\tTokenType string\n}\n\n// PullPolicyType defines when to pull docker image\ntype PullPolicyType string\n\n// ModeType define type of container repo\ntype ModeType string\n\n// ContainerRegistryConfig contains repo configuration if private repo is used\ntype ContainerRegistryConfig struct {\n\tPullPolicy PullPolicyType\n\tMode ModeType\n\tUsername string\n\tPassword string\n}\n\n// defines constant for docker config\nconst (\n\tPullAlways PullPolicyType = \"always\"\n\tPullNever PullPolicyType = \"never\"\n\tPrivateMode ModeType = \"private\"\n\tPublicMode ModeType = \"public\"\n)\n"

},

{

"path": "docker-compose.yml",

"content": "version: \"3.9\" \nservices:\n synapse:\n image: lambdatest/synapse:latest\n stop_signal: SIGINT\n restart: on-failure\n networks:\n - test-at-scale\n hostname: synapse\n container_name: synapse\n volumes:\n # synapse will needs socket access to create containers on host\n - \"/var/run/docker.sock:/var/run/docker.sock\"\n - \"/tmp/synapse:/tmp/synapse\"\n - \".synapse.json:/home/synapse/.synapse.json\"\n - \"/etc/machine-id:/etc/machine-id\"\n - \"./logs/synapse:/var/log/synapse\"\n\nnetworks:\n test-at-scale:\n external: false\n name: test-at-scale\n"

},

{

"path": "go.mod",

"content": "module github.com/LambdaTest/test-at-scale\n\ngo 1.17\n\nrequire (\n\tgithub.com/Azure/azure-sdk-for-go/sdk/azcore v0.21.1\n\tgithub.com/Azure/azure-sdk-for-go/sdk/storage/azblob v0.3.0\n\tgithub.com/bmatcuk/doublestar/v4 v4.0.2\n\tgithub.com/cenkalti/backoff/v4 v4.1.3\n\tgithub.com/denisbrodbeck/machineid v1.0.1\n\tgithub.com/docker/docker v20.10.12+incompatible\n\tgithub.com/docker/go-units v0.4.0\n\tgithub.com/gin-gonic/gin v1.7.7\n\tgithub.com/go-playground/locales v0.14.0\n\tgithub.com/go-playground/universal-translator v0.18.0\n\tgithub.com/go-playground/validator/v10 v10.10.0\n\tgithub.com/google/uuid v1.2.0\n\tgithub.com/gorilla/websocket v1.4.2\n\tgithub.com/joho/godotenv v1.4.0\n\tgithub.com/mholt/archiver/v3 v3.5.1\n\tgithub.com/robfig/cron/v3 v3.0.1\n\tgithub.com/shirou/gopsutil/v3 v3.21.1\n\tgithub.com/sirupsen/logrus v1.8.1\n\tgithub.com/spf13/cobra v1.3.0\n\tgithub.com/spf13/viper v1.10.1\n\tgithub.com/stretchr/testify v1.7.0\n\tgo.uber.org/zap v1.20.0\n\tgolang.org/x/sync v0.0.0-20210220032951-036812b2e83c\n\tgopkg.in/natefinch/lumberjack.v2 v2.0.0\n\tgopkg.in/yaml.v3 v3.0.0\n)\n\nrequire (\n\tgithub.com/Azure/azure-sdk-for-go/sdk/internal v0.8.3 // indirect\n\tgithub.com/Microsoft/go-winio v0.4.17 // indirect\n\tgithub.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d // indirect\n\tgithub.com/andybalholm/brotli v1.0.1 // indirect\n\tgithub.com/containerd/containerd v1.5.10 // indirect\n\tgithub.com/davecgh/go-spew v1.1.1 // indirect\n\tgithub.com/docker/distribution v2.8.0+incompatible // indirect\n\tgithub.com/docker/go-connections v0.4.0 // indirect\n\tgithub.com/dsnet/compress v0.0.2-0.20210315054119-f66993602bf5 // indirect\n\tgithub.com/fsnotify/fsnotify v1.5.1 // indirect\n\tgithub.com/gin-contrib/sse v0.1.0 // indirect\n\tgithub.com/go-ole/go-ole v1.2.6 // indirect\n\tgithub.com/gogo/protobuf v1.3.2 // indirect\n\tgithub.com/golang/protobuf v1.5.2 // indirect\n\tgithub.com/golang/snappy v0.0.3 // 
indirect\n\tgithub.com/gorilla/mux v1.8.0 // indirect\n\tgithub.com/hashicorp/hcl v1.0.0 // indirect\n\tgithub.com/inconshreveable/mousetrap v1.0.0 // indirect\n\tgithub.com/json-iterator/go v1.1.12 // indirect\n\tgithub.com/klauspost/compress v1.11.13 // indirect\n\tgithub.com/klauspost/pgzip v1.2.5 // indirect\n\tgithub.com/leodido/go-urn v1.2.1 // indirect\n\tgithub.com/magiconair/properties v1.8.5 // indirect\n\tgithub.com/mattn/go-isatty v0.0.14 // indirect\n\tgithub.com/mitchellh/mapstructure v1.4.3 // indirect\n\tgithub.com/moby/term v0.0.0-20210619224110-3f7ff695adc6 // indirect\n\tgithub.com/modern-go/concurrent v0.0.0-20180306012644-bacd9c7ef1dd // indirect\n\tgithub.com/modern-go/reflect2 v1.0.2 // indirect\n\tgithub.com/morikuni/aec v1.0.0 // indirect\n\tgithub.com/nwaples/rardecode v1.1.0 // indirect\n\tgithub.com/opencontainers/go-digest v1.0.0 // indirect\n\tgithub.com/opencontainers/image-spec v1.0.2 // indirect\n\tgithub.com/pelletier/go-toml v1.9.4 // indirect\n\tgithub.com/pierrec/lz4/v4 v4.1.2 // indirect\n\tgithub.com/pkg/errors v0.9.1 // indirect\n\tgithub.com/pmezard/go-difflib v1.0.0 // indirect\n\tgithub.com/spf13/afero v1.6.0 // indirect\n\tgithub.com/spf13/cast v1.4.1 // indirect\n\tgithub.com/spf13/jwalterweatherman v1.1.0 // indirect\n\tgithub.com/spf13/pflag v1.0.5 // indirect\n\tgithub.com/stretchr/objx v0.2.0 // indirect\n\tgithub.com/subosito/gotenv v1.2.0 // indirect\n\tgithub.com/ugorji/go/codec v1.1.7 // indirect\n\tgithub.com/ulikunitz/xz v0.5.9 // indirect\n\tgithub.com/xi2/xz v0.0.0-20171230120015-48954b6210f8 // indirect\n\tgo.uber.org/atomic v1.7.0 // indirect\n\tgo.uber.org/multierr v1.6.0 // indirect\n\tgolang.org/x/crypto v0.0.0-20210817164053-32db794688a5 // indirect\n\tgolang.org/x/net v0.0.0-20210813160813-60bc85c4be6d // indirect\n\tgolang.org/x/sys v0.0.0-20220111092808-5a964db01320 // indirect\n\tgolang.org/x/text v0.3.7 // indirect\n\tgoogle.golang.org/genproto v0.0.0-20211208223120-3a66f561d7aa // 
indirect\n\tgoogle.golang.org/grpc v1.43.0 // indirect\n\tgoogle.golang.org/protobuf v1.27.1 // indirect\n\tgopkg.in/ini.v1 v1.66.2 // indirect\n\tgopkg.in/yaml.v2 v2.4.0 // indirect\n\tgotest.tools/v3 v3.1.0 // indirect\n)\n"

},

{

"path": "go.sum",