Showing preview only (659K chars total). Download the full file or copy to clipboard to get everything.

Repository: MKLab-ITI/CUDA

Branch: master

Commit: e567d31391d5

Files: 59

Total size: 33.1 MB

Directory structure:

gitextract_hg6xup5t/

├── README.md

├── binaries/

│ ├── linux/

│ │ ├── COPYRIGHT

│ │ ├── FAQ.html

│ │ ├── README

│ │ ├── README-GPU

│ │ ├── svm-predict

│ │ ├── svm-scale

│ │ ├── svm-train

│ │ ├── svm-train-gpu

│ │ ├── tools/

│ │ │ ├── README

│ │ │ ├── checkdata.py

│ │ │ ├── easy.py

│ │ │ ├── grid.py

│ │ │ └── subset.py

│ │ └── train_set

│ └── windows/

│ ├── x64/

│ │ ├── COPYRIGHT

│ │ ├── FAQ.html

│ │ ├── README

│ │ ├── README-GPU

│ │ ├── tools/

│ │ │ ├── README

│ │ │ ├── checkdata.py

│ │ │ ├── easy.py

│ │ │ ├── grid.py

│ │ │ └── subset.py

│ │ └── train_set

│ └── x86/

│ ├── COPYRIGHT

│ ├── FAQ.html

│ ├── README

│ ├── README-GPU

│ ├── tools/

│ │ ├── README

│ │ ├── checkdata.py

│ │ ├── easy.py

│ │ ├── grid.py

│ │ └── subset.py

│ └── train_set

└── src/

├── linux/

│ ├── COPYRIGHT

│ ├── Makefile

│ ├── README

│ ├── README-GPU

│ ├── cross_validation_with_matrix_precomputation.c

│ ├── findcudalib.mk

│ ├── kernel_matrix_calculation.c

│ ├── readme.txt

│ ├── svm-train.c

│ ├── svm.cpp

│ └── svm.h

└── windows/

├── README-GPU

├── libsvm_train_dense_gpu/

│ ├── cross_validation_with_matrix_precomputation.c

│ ├── kernel_matrix_calculation.c

│ ├── libsvm_train_dense_gpu.vcxproj

│ ├── libsvm_train_dense_gpu.vcxproj.filters

│ ├── libsvm_train_dense_gpu.vcxproj.user

│ ├── svm-train.c

│ ├── svm.cpp

│ └── svm.h

├── libsvm_train_dense_gpu.ncb

├── libsvm_train_dense_gpu.sdf

├── libsvm_train_dense_gpu.sln

└── libsvm_train_dense_gpu.suo

================================================

FILE CONTENTS

================================================

================================================

FILE: README.md

================================================

CUDA: GPU-accelerated LIBSVM

====

**LIBSVM Accelerated with GPU using the CUDA Framework**

GPU-accelerated LIBSVM is a modification of the [original LIBSVM](http://www.csie.ntu.edu.tw/~cjlin/libsvm/) that exploits the CUDA framework to significantly reduce processing time while producing identical results. The functionality and interface of LIBSVM remains the same. The modifications were done in the kernel computation, that is now performed using the GPU.

Watch a [short video](http://www.youtube.com/watch?v=Fl99tQQd55U) on the capabilities of the GPU-accelerated LIBSVM package

###CHANGELOG

V1.2

Updated to LIBSVM version 3.17

Updated to CUDA SDK v5.5

Using CUBLAS_V2 which is compatible with the CUDA SDK v4.0 and up.

### FEATURES

Mode Supported

C-SVC classification with RBF kernel

Functionality / User interface

Same as LIBSVM

### PREREQUISITES

LIBSVM prerequisites

NVIDIA Graphics card with CUDA support

Latest NVIDIA drivers for GPU

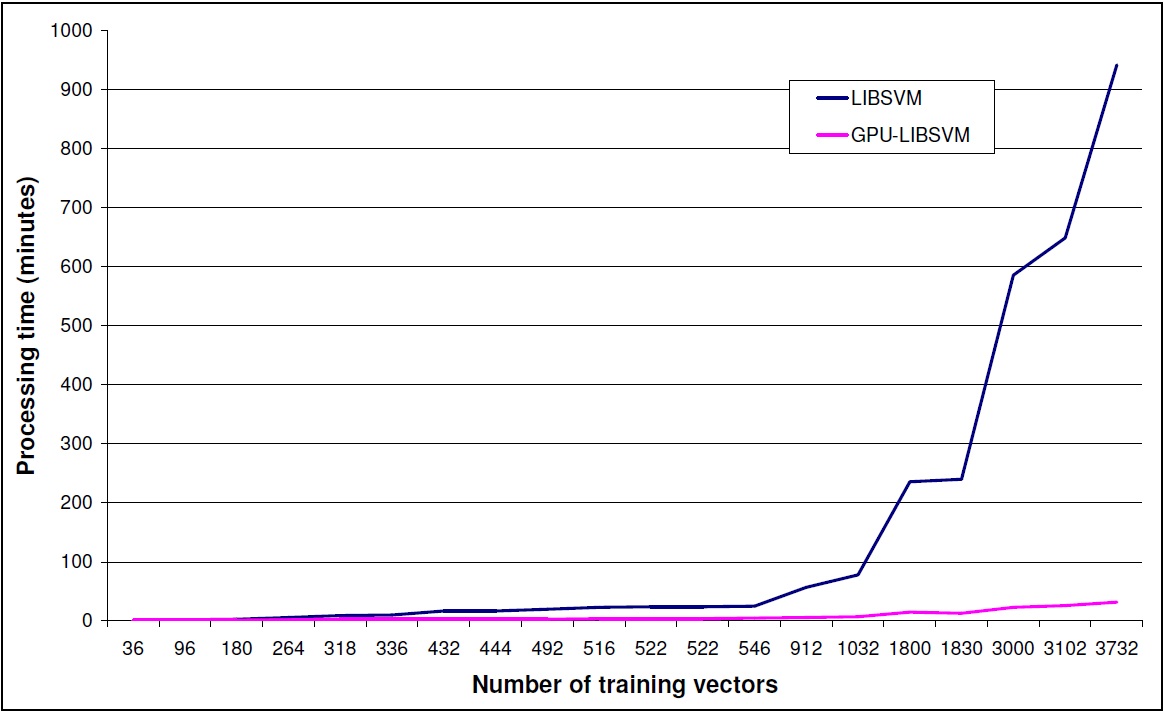

### PERFORMANCE COMPARISON

To showcase the performance gain using the GPU-accelerated LIBSVM we present an example run.

PC Setup

Quad core Intel Q6600 processor

3.5GB of DDR2 RAM

Windows-XP 32-bit OS

Input Data

TRECVID 2007 Dataset for the detection of high level features in video shots

Training vectors with a dimension of 6000

20 different feature models with a variable number of input training vectors ranging from 36 up to 3772

Classification parameters

c-svc

RBF kernel

Parameter optimization using the easy.py script provided by LIBSVM.

4 local workers

Discussion

GPU-accelerated LIBSVM gives a performance gain depending on the size of input data set.

This gain is increasing dramatically with the size of the dataset.

Please take into consideration input data size limitations that can occur from the memory

capacity of the graphics card that is used.

### PUBLICATION

A first document describing some of the work related to the GPU-Accelerated LIBSVM is the following; please cite it if you find this implementation useful in your work:

A. Athanasopoulos, A. Dimou, V. Mezaris, I. Kompatsiaris, "GPU Acceleration for Support Vector Machines", Proc. 12th International Workshop on Image Analysis for Multimedia Interactive Services (WIAMIS 2011), Delft, The Netherlands, April 2011.

### LICENSE

THIS SOFTWARE IS PROVIDED BY THE AUTHOR "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

### FREQUENTLY ASKED QUESTIONS (FAQ)

* Is there a GPU-accelerated LIBSVM version for Matlab?

We are interested in porting our imlementation in Matlab but due to our workload, it is not in our immediate plans. Everybody is welcome to make the port and we can host the ported software.

* Visual Studio will not let me build the provided project.

The project has been built in both 32/64 bit mode. If you are working on 32 bits, you might need the x64 compiler for Visual Studio 2010 to build the project.

* Building the project, I get linker error messages.

Please go to the project properties and check the library settings. CUDA libraries have different names for x32 / x64. Make sure that the correct path and filenames are given.

* I have built the project but the executables will not run (The application has failed to start because its side-by-side configuration is in

correct.)

Please update the VS2010 redistributables to the PC you are running your executable and install all the latest patches for visual studio.

* My GPU-accelerated LIBSVM is running smoothly but i do not see any speed-up.

GPU-accelerated LIBSVM is giving speed-ups mainly for big datasets. In the GPU-accelerated implementation some extra time is needed to load the data to the gpu memory. If the dataset is not big enough to give a significant performance gain, the gain is lost due to the gpu-memory -> cpu-memory, cpu-memory -> gpu-memory transfer time. Please refer to the graph above to have a better understanding of the performance gain for different dataset sizes.

Problems also seem to arise when the input dataset contains values with extreme differences (e.g. 107) if no scaling is performed. Such an example is the "breast-cancer" dataset provided in the official LIBSVM page.

### ACKNOWLEDGEMENTS

This work was supported by the EU FP7 projects GLOCAL (FP7-248984) and WeKnowIt (FP7-215453)

================================================

FILE: binaries/linux/COPYRIGHT

================================================

Copyright (c) 2000-2013 Chih-Chung Chang and Chih-Jen Lin

All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions

are met:

1. Redistributions of source code must retain the above copyright

notice, this list of conditions and the following disclaimer.

2. Redistributions in binary form must reproduce the above copyright

notice, this list of conditions and the following disclaimer in the

documentation and/or other materials provided with the distribution.

3. Neither name of copyright holders nor the names of its contributors

may be used to endorse or promote products derived from this software

without specific prior written permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

``AS IS'' AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR

A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE REGENTS OR

CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL,

EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO,

PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR

PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF

LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING

NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS

SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

================================================

FILE: binaries/linux/FAQ.html

================================================

<html>

<head>

<title>LIBSVM FAQ</title>

</head>

<body bgcolor="#ffffcc">

<a name="_TOP"><b><h1><a

href=http://www.csie.ntu.edu.tw/~cjlin/libsvm>LIBSVM</a> FAQ </h1></b></a>

<b>last modified : </b>

Wed, 19 Dec 2012 13:26:34 GMT

<class="categories">

<li><a

href="#_TOP">All Questions</a>(78)</li>

<ul><b>

<li><a

href="#/Q1:_Some_sample_uses_of_libsvm">Q1:_Some_sample_uses_of_libsvm</a>(2)</li>

<li><a

href="#/Q2:_Installation_and_running_the_program">Q2:_Installation_and_running_the_program</a>(13)</li>

<li><a

href="#/Q3:_Data_preparation">Q3:_Data_preparation</a>(7)</li>

<li><a

href="#/Q4:_Training_and_prediction">Q4:_Training_and_prediction</a>(34)</li>

<li><a

href="#/Q5:_Probability_outputs">Q5:_Probability_outputs</a>(3)</li>

<li><a

href="#/Q6:_Graphic_interface">Q6:_Graphic_interface</a>(3)</li>

<li><a

href="#/Q7:_Java_version_of_libsvm">Q7:_Java_version_of_libsvm</a>(4)</li>

<li><a

href="#/Q8:_Python_interface">Q8:_Python_interface</a>(1)</li>

<li><a

href="#/Q9:_MATLAB_interface">Q9:_MATLAB_interface</a>(11)</li>

</b></ul>

</li>

<ul><ul class="headlines">

<li class="headlines_item"><a href="#faq101">Some courses which have used libsvm as a tool</a></li>

<li class="headlines_item"><a href="#faq102">Some applications/tools which have used libsvm </a></li>

<li class="headlines_item"><a href="#f201">Where can I find documents/videos of libsvm ?</a></li>

<li class="headlines_item"><a href="#f202">Where are change log and earlier versions?</a></li>

<li class="headlines_item"><a href="#f203">How to cite LIBSVM?</a></li>

<li class="headlines_item"><a href="#f204">I would like to use libsvm in my software. Is there any license problem?</a></li>

<li class="headlines_item"><a href="#f205">Is there a repository of additional tools based on libsvm?</a></li>

<li class="headlines_item"><a href="#f206">On unix machines, I got "error in loading shared libraries" or "cannot open shared object file." What happened ? </a></li>

<li class="headlines_item"><a href="#f207">I have modified the source and would like to build the graphic interface "svm-toy" on MS windows. How should I do it ?</a></li>

<li class="headlines_item"><a href="#f208">I am an MS windows user but why only one (svm-toy) of those precompiled .exe actually runs ? </a></li>

<li class="headlines_item"><a href="#f209">What is the difference between "." and "*" outputed during training? </a></li>

<li class="headlines_item"><a href="#f210">Why occasionally the program (including MATLAB or other interfaces) crashes and gives a segmentation fault?</a></li>

<li class="headlines_item"><a href="#f211">How to build a dynamic library (.dll file) on MS windows?</a></li>

<li class="headlines_item"><a href="#f212">On some systems (e.g., Ubuntu), compiling LIBSVM gives many warning messages. Is this a problem and how to disable the warning message?</a></li>

<li class="headlines_item"><a href="#f213">In LIBSVM, why you don't use certain C/C++ library functions to make the code shorter?</a></li>

<li class="headlines_item"><a href="#f301">Why sometimes not all attributes of a data appear in the training/model files ?</a></li>

<li class="headlines_item"><a href="#f302">What if my data are non-numerical ?</a></li>

<li class="headlines_item"><a href="#f303">Why do you consider sparse format ? Will the training of dense data be much slower ?</a></li>

<li class="headlines_item"><a href="#f304">Why sometimes the last line of my data is not read by svm-train?</a></li>

<li class="headlines_item"><a href="#f305">Is there a program to check if my data are in the correct format?</a></li>

<li class="headlines_item"><a href="#f306">May I put comments in data files?</a></li>

<li class="headlines_item"><a href="#f307">How to convert other data formats to LIBSVM format?</a></li>

<li class="headlines_item"><a href="#f401">The output of training C-SVM is like the following. What do they mean?</a></li>

<li class="headlines_item"><a href="#f402">Can you explain more about the model file?</a></li>

<li class="headlines_item"><a href="#f403">Should I use float or double to store numbers in the cache ?</a></li>

<li class="headlines_item"><a href="#f404">How do I choose the kernel?</a></li>

<li class="headlines_item"><a href="#f405">Does libsvm have special treatments for linear SVM?</a></li>

<li class="headlines_item"><a href="#f406">The number of free support vectors is large. What should I do?</a></li>

<li class="headlines_item"><a href="#f407">Should I scale training and testing data in a similar way?</a></li>

<li class="headlines_item"><a href="#f408">Does it make a big difference if I scale each attribute to [0,1] instead of [-1,1]?</a></li>

<li class="headlines_item"><a href="#f409">The prediction rate is low. How could I improve it?</a></li>

<li class="headlines_item"><a href="#f410">My data are unbalanced. Could libsvm handle such problems?</a></li>

<li class="headlines_item"><a href="#f411">What is the difference between nu-SVC and C-SVC?</a></li>

<li class="headlines_item"><a href="#f412">The program keeps running (without showing any output). What should I do?</a></li>

<li class="headlines_item"><a href="#f413">The program keeps running (with output, i.e. many dots). What should I do?</a></li>

<li class="headlines_item"><a href="#f414">The training time is too long. What should I do?</a></li>

<li class="headlines_item"><a href="#f4141">Does shrinking always help?</a></li>

<li class="headlines_item"><a href="#f415">How do I get the decision value(s)?</a></li>

<li class="headlines_item"><a href="#f4151">How do I get the distance between a point and the hyperplane?</a></li>

<li class="headlines_item"><a href="#f416">On 32-bit machines, if I use a large cache (i.e. large -m) on a linux machine, why sometimes I get "segmentation fault ?"</a></li>

<li class="headlines_item"><a href="#f417">How do I disable screen output of svm-train?</a></li>

<li class="headlines_item"><a href="#f418">I would like to use my own kernel. Any example? In svm.cpp, there are two subroutines for kernel evaluations: k_function() and kernel_function(). Which one should I modify ?</a></li>

<li class="headlines_item"><a href="#f419">What method does libsvm use for multi-class SVM ? Why don't you use the "1-against-the rest" method?</a></li>

<li class="headlines_item"><a href="#f4191">How does LIBSVM perform parameter selection for multi-class problems? </a></li>

<li class="headlines_item"><a href="#f420">After doing cross validation, why there is no model file outputted ?</a></li>

<li class="headlines_item"><a href="#f4201">Why my cross-validation results are different from those in the Practical Guide?</a></li>

<li class="headlines_item"><a href="#f421">On some systems CV accuracy is the same in several runs. How could I use different data partitions? In other words, how do I set random seed in LIBSVM?</a></li>

<li class="headlines_item"><a href="#f422">I would like to solve L2-loss SVM (i.e., error term is quadratic). How should I modify the code ?</a></li>

<li class="headlines_item"><a href="#f424">How do I choose parameters for one-class svm as training data are in only one class?</a></li>

<li class="headlines_item"><a href="#f427">Why the code gives NaN (not a number) results?</a></li>

<li class="headlines_item"><a href="#f428">Why on windows sometimes grid.py fails?</a></li>

<li class="headlines_item"><a href="#f429">Why grid.py/easy.py sometimes generates the following warning message?</a></li>

<li class="headlines_item"><a href="#f430">Why the sign of predicted labels and decision values are sometimes reversed?</a></li>

<li class="headlines_item"><a href="#f431">I don't know class labels of test data. What should I put in the first column of the test file?</a></li>

<li class="headlines_item"><a href="#f432">How can I use OpenMP to parallelize LIBSVM on a multicore/shared-memory computer?</a></li>

<li class="headlines_item"><a href="#f433">How could I know which training instances are support vectors?</a></li>

<li class="headlines_item"><a href="#f425">Why training a probability model (i.e., -b 1) takes a longer time?</a></li>

<li class="headlines_item"><a href="#f426">Why using the -b option does not give me better accuracy?</a></li>

<li class="headlines_item"><a href="#f427">Why using svm-predict -b 0 and -b 1 gives different accuracy values?</a></li>

<li class="headlines_item"><a href="#f501">How can I save images drawn by svm-toy?</a></li>

<li class="headlines_item"><a href="#f502">I press the "load" button to load data points but why svm-toy does not draw them ?</a></li>

<li class="headlines_item"><a href="#f503">I would like svm-toy to handle more than three classes of data, what should I do ?</a></li>

<li class="headlines_item"><a href="#f601">What is the difference between Java version and C++ version of libsvm?</a></li>

<li class="headlines_item"><a href="#f602">Is the Java version significantly slower than the C++ version?</a></li>

<li class="headlines_item"><a href="#f603">While training I get the following error message: java.lang.OutOfMemoryError. What is wrong?</a></li>

<li class="headlines_item"><a href="#f604">Why you have the main source file svm.m4 and then transform it to svm.java?</a></li>

<li class="headlines_item"><a href="#f704">Except the python-C++ interface provided, could I use Jython to call libsvm ?</a></li>

<li class="headlines_item"><a href="#f801">I compile the MATLAB interface without problem, but why errors occur while running it?</a></li>

<li class="headlines_item"><a href="#f8011">On 64bit Windows I compile the MATLAB interface without problem, but why errors occur while running it?</a></li>

<li class="headlines_item"><a href="#f802">Does the MATLAB interface provide a function to do scaling?</a></li>

<li class="headlines_item"><a href="#f803">How could I use MATLAB interface for parameter selection?</a></li>

<li class="headlines_item"><a href="#f8031">I use MATLAB parallel programming toolbox on a multi-core environment for parameter selection. Why the program is even slower?</a></li>

<li class="headlines_item"><a href="#f8032">How do I use LIBSVM with OpenMP under MATLAB?</a></li>

<li class="headlines_item"><a href="#f804">How could I generate the primal variable w of linear SVM?</a></li>

<li class="headlines_item"><a href="#f805">Is there an OCTAVE interface for libsvm?</a></li>

<li class="headlines_item"><a href="#f806">How to handle the name conflict between svmtrain in the libsvm matlab interface and that in MATLAB bioinformatics toolbox?</a></li>

<li class="headlines_item"><a href="#f807">On Windows I got an error message "Invalid MEX-file: Specific module not found" when running the pre-built MATLAB interface in the windows sub-directory. What should I do?</a></li>

<li class="headlines_item"><a href="#f808">LIBSVM supports 1-vs-1 multi-class classification. If instead I would like to use 1-vs-rest, how to implement it using MATLAB interface?</a></li>

</ul></ul>

<hr size="5" noshade />

<p/>

<a name="/Q1:_Some_sample_uses_of_libsvm"></a>

<a name="faq101"><b>Q: Some courses which have used libsvm as a tool</b></a>

<br/>

<ul>

<li><a href=http://lmb.informatik.uni-freiburg.de/lectures/svm_seminar/>Institute for Computer Science,

Faculty of Applied Science, University of Freiburg, Germany

</a>

<li> <a href=http://www.cs.vu.nl/~elena/ml.html>

Division of Mathematics and Computer Science.

Faculteit der Exacte Wetenschappen

Vrije Universiteit, The Netherlands. </a>

<li>

<a href=http://www.cae.wisc.edu/~ece539/matlab/>

Electrical and Computer Engineering Department,

University of Wisconsin-Madison

</a>

<li>

<a href=http://www.hpl.hp.com/personal/Carl_Staelin/cs236601/project.html>

Technion (Israel Institute of Technology), Israel.

<li>

<a href=http://www.cise.ufl.edu/~fu/learn.html>

Computer and Information Sciences Dept., University of Florida</a>

<li>

<a href=http://www.uonbi.ac.ke/acad_depts/ics/course_material/machine_learning/ML_and_DM_Resources.html>

The Institute of Computer Science,

University of Nairobi, Kenya.</a>

<li>

<a href=http://cerium.raunvis.hi.is/~tpr/courseware/svm/hugbunadur.html>

Applied Mathematics and Computer Science, University of Iceland.

<li>

<a href=http://chicago05.mlss.cc/tiki/tiki-read_article.php?articleId=2>

SVM tutorial in machine learning

summer school, University of Chicago, 2005.

</a>

</ul>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q1:_Some_sample_uses_of_libsvm"></a>

<a name="faq102"><b>Q: Some applications/tools which have used libsvm </b></a>

<br/>

(and maybe liblinear).

<ul>

<li>

<a href=http://people.csail.mit.edu/jjl/libpmk/>LIBPMK: A Pyramid Match Toolkit</a>

</li>

<li><a href=http://maltparser.org/>Maltparser</a>:

a system for data-driven dependency parsing

</li>

<li>

<a href=http://www.pymvpa.org/>PyMVPA: python tool for classifying neuroimages</a>

</li>

<li>

<a href=http://solpro.proteomics.ics.uci.edu/>

SOLpro: protein solubility predictor

</a>

</li>

<li>

<a href=http://bdval.campagnelab.org>

BDVal</a>: biomarker discovery in high-throughput datasets.

</li>

<li><a href=http://johel.m.free.fr/demo_045.htm>

Realtime object recognition</a>

</li>

<li><a href=http://scikit-learn.sourceforge.net/>

scikits.learn: machine learning in Python</a>

</li>

</ul>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f201"><b>Q: Where can I find documents/videos of libsvm ?</b></a>

<br/>

<p>

<ul>

<li>

Official implementation document:

<br>

C.-C. Chang and

C.-J. Lin.

LIBSVM

: a library for support vector machines.

ACM Transactions on Intelligent

Systems and Technology, 2:27:1--27:27, 2011.

<a href="http://www.csie.ntu.edu.tw/~cjlin/papers/libsvm.pdf">pdf</a>, <a href=http://www.csie.ntu.edu.tw/~cjlin/papers/libsvm.ps.gz>ps.gz</a>,

<a href=http://portal.acm.org/citation.cfm?id=1961199&CFID=29950432&CFTOKEN=30974232>ACM digital lib</a>.

<li> Instructions for using LIBSVM are in the README files in the main directory and some sub-directories.

<br>

README in the main directory: details all options, data format, and library calls.

<br>

tools/README: parameter selection and other tools

<li>

A guide for beginners:

<br>

C.-W. Hsu, C.-C. Chang, and

C.-J. Lin.

<A HREF="http://www.csie.ntu.edu.tw/~cjlin/papers/guide/guide.pdf">

A practical guide to support vector classification

</A>

<li> An <a href=http://www.youtube.com/watch?v=gePWtNAQcK8>introductory video</a>

for windows users.

</ul>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f202"><b>Q: Where are change log and earlier versions?</b></a>

<br/>

<p>See <a href="http://www.csie.ntu.edu.tw/~cjlin/libsvm/log">the change log</a>.

<p> You can download earlier versions

<a href="http://www.csie.ntu.edu.tw/~cjlin/libsvm/oldfiles">here</a>.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f203"><b>Q: How to cite LIBSVM?</b></a>

<br/>

<p>

Please cite the following paper:

<p>

Chih-Chung Chang and Chih-Jen Lin, LIBSVM

: a library for support vector machines.

ACM Transactions on Intelligent Systems and Technology, 2:27:1--27:27, 2011.

Software available at http://www.csie.ntu.edu.tw/~cjlin/libsvm

<p>

The bibtex format is

<pre>

@article{CC01a,

author = {Chang, Chih-Chung and Lin, Chih-Jen},

title = {{LIBSVM}: A library for support vector machines},

journal = {ACM Transactions on Intelligent Systems and Technology},

volume = {2},

issue = {3},

year = {2011},

pages = {27:1--27:27},

note = {Software available at \url{http://www.csie.ntu.edu.tw/~cjlin/libsvm}}

}

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f204"><b>Q: I would like to use libsvm in my software. Is there any license problem?</b></a>

<br/>

<p>

The libsvm license ("the modified BSD license")

is compatible with many

free software licenses such as GPL. Hence, it is very easy to

use libsvm in your software.

Please check the COPYRIGHT file in detail. Basically

you need to

<ol>

<li>

Clearly indicate that LIBSVM is used.

</li>

<li>

Retain the LIBSVM COPYRIGHT file in your software.

</li>

</ol>

It can also be used in commercial products.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f205"><b>Q: Is there a repository of additional tools based on libsvm?</b></a>

<br/>

<p>

Yes, see <a href="http://www.csie.ntu.edu.tw/~cjlin/libsvmtools">libsvm

tools</a>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f206"><b>Q: On unix machines, I got "error in loading shared libraries" or "cannot open shared object file." What happened ? </b></a>

<br/>

<p>

This usually happens if you compile the code

on one machine and run it on another which has incompatible

libraries.

Try to recompile the program on that machine or use static linking.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f207"><b>Q: I have modified the source and would like to build the graphic interface "svm-toy" on MS windows. How should I do it ?</b></a>

<br/>

<p>

Build it as a project by choosing "Win32 Project."

On the other hand, for "svm-train" and "svm-predict"

you want to choose "Win32 Console Project."

After libsvm 2.5, you can also use the file Makefile.win.

See details in README.

<p>

If you are not using Makefile.win and see the following

link error

<pre>

LIBCMTD.lib(wwincrt0.obj) : error LNK2001: unresolved external symbol

_wWinMain@16

</pre>

you may have selected a wrong project type.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f208"><b>Q: I am an MS windows user but why only one (svm-toy) of those precompiled .exe actually runs ? </b></a>

<br/>

<p>

You need to open a command window

and type svmtrain.exe to see all options.

Some examples are in README file.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f209"><b>Q: What is the difference between "." and "*" outputed during training? </b></a>

<br/>

<p>

"." means every 1,000 iterations (or every #data

iterations is your #data is less than 1,000).

"*" means that after iterations of using

a smaller shrunk problem,

we reset to use the whole set. See the

<a href=../papers/libsvm.pdf>implementation document</a> for details.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f210"><b>Q: Why occasionally the program (including MATLAB or other interfaces) crashes and gives a segmentation fault?</b></a>

<br/>

<p>

Very likely the program consumes too much memory than what the

operating system can provide. Try a smaller data and see if the

program still crashes.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f211"><b>Q: How to build a dynamic library (.dll file) on MS windows?</b></a>

<br/>

<p>

The easiest way is to use Makefile.win.

See details in README.

Alternatively, you can use Visual C++. Here is

the example using Visual Studio .Net 2008:

<ol>

<li>Create a Win32 empty DLL project and set (in Project->$Project_Name

Properties...->Configuration) to "Release."

About how to create a new dynamic link library, please refer to

<a href=http://msdn2.microsoft.com/en-us/library/ms235636(VS.80).aspx>http://msdn2.microsoft.com/en-us/library/ms235636(VS.80).aspx</a>

<li> Add svm.cpp, svm.h to your project.

<li> Add __WIN32__ and _CRT_SECURE_NO_DEPRECATE to Preprocessor definitions (in

Project->$Project_Name Properties...->C/C++->Preprocessor)

<li> Set Create/Use Precompiled Header to Not Using Precompiled Headers

(in Project->$Project_Name Properties...->C/C++->Precompiled Headers)

<li> Set the path for the Modulation Definition File svm.def (in

Project->$Project_Name Properties...->Linker->input

<li> Build the DLL.

<li> Rename the dll file to libsvm.dll and move it to the correct path.

</ol>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f212"><b>Q: On some systems (e.g., Ubuntu), compiling LIBSVM gives many warning messages. Is this a problem and how to disable the warning message?</b></a>

<br/>

<p>

The warning message is like

<pre>

svm.cpp:2730: warning: ignoring return value of int fscanf(FILE*, const char*, ...), declared with attribute warn_unused_result

</pre>

This is not a problem; see <a href=https://wiki.ubuntu.com/CompilerFlags#-D_FORTIFY_SOURCE=2>this page</a> for more

details of ubuntu systems.

In the future we may modify the code

so that these messages do not appear.

At this moment, to disable the warning message you can replace

<pre>

CFLAGS = -Wall -Wconversion -O3 -fPIC

</pre>

with

<pre>

CFLAGS = -Wall -Wconversion -O3 -fPIC -U_FORTIFY_SOURCE

</pre>

in Makefile.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q2:_Installation_and_running_the_program"></a>

<a name="f213"><b>Q: In LIBSVM, why you don't use certain C/C++ library functions to make the code shorter?</b></a>

<br/>

<p>

For portability, we use only features defined in ISO C89. Note that features in ISO C99 may not be available everywhere.

Even the newest gcc lacks some features in C99 (see <a href=http://gcc.gnu.org/c99status.html>http://gcc.gnu.org/c99status.html</a> for details).

If the situation changes in the future,

we might consider using these newer features.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f301"><b>Q: Why sometimes not all attributes of a data appear in the training/model files ?</b></a>

<br/>

<p>

libsvm uses the so called "sparse" format where zero

values do not need to be stored. Hence a data with attributes

<pre>

1 0 2 0

</pre>

is represented as

<pre>

1:1 3:2

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f302"><b>Q: What if my data are non-numerical ?</b></a>

<br/>

<p>

Currently libsvm supports only numerical data.

You may have to change non-numerical data to

numerical. For example, you can use several

binary attributes to represent a categorical

attribute.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f303"><b>Q: Why do you consider sparse format ? Will the training of dense data be much slower ?</b></a>

<br/>

<p>

This is a controversial issue. The kernel

evaluation (i.e. inner product) of sparse vectors is slower

so the total training time can be at least twice or three times

of that using the dense format.

However, we cannot support only dense format as then we CANNOT

handle extremely sparse cases. Simplicity of the code is another

concern. Right now we decide to support

the sparse format only.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f304"><b>Q: Why sometimes the last line of my data is not read by svm-train?</b></a>

<br/>

<p>

We assume that you have '\n' in the end of

each line. So please press enter in the end

of your last line.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f305"><b>Q: Is there a program to check if my data are in the correct format?</b></a>

<br/>

<p>

The svm-train program in libsvm conducts only a simple check of the input data. To do a

detailed check, after libsvm 2.85, you can use the python script tools/checkdata.py. See tools/README for details.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f306"><b>Q: May I put comments in data files?</b></a>

<br/>

<p>

We don't officially support this. But, cureently LIBSVM

is able to process data in the following

format:

<pre>

1 1:2 2:1 # your comments

</pre>

Note that the character ":" should not appear in your

comments.

<!--

No, for simplicity we don't support that.

However, you can easily preprocess your data before

using libsvm. For example,

if you have the following data

<pre>

test.txt

1 1:2 2:1 # proten A

</pre>

then on unix machines you can do

<pre>

cut -d '#' -f 1 < test.txt > test.features

cut -d '#' -f 2 < test.txt > test.comments

svm-predict test.feature train.model test.predicts

paste -d '#' test.predicts test.comments | sed 's/#/ #/' > test.results

</pre>

-->

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q3:_Data_preparation"></a>

<a name="f307"><b>Q: How to convert other data formats to LIBSVM format?</b></a>

<br/>

<p>

It depends on your data format. A simple way is to use

libsvmwrite in the libsvm matlab/octave interface.

Take a CSV (comma-separated values) file

in UCI machine learning repository as an example.

We download <a href=http://archive.ics.uci.edu/ml/machine-learning-databases/spect/SPECTF.train>SPECTF.train</a>.

Labels are in the first column. The following steps produce

a file in the libsvm format.

<pre>

matlab> SPECTF = csvread('SPECTF.train'); % read a csv file

matlab> labels = SPECTF(:, 1); % labels from the 1st column

matlab> features = SPECTF(:, 2:end);

matlab> features_sparse = sparse(features); % features must be in a sparse matrix

matlab> libsvmwrite('SPECTFlibsvm.train', labels, features_sparse);

</pre>

The tranformed data are stored in SPECTFlibsvm.train.

<p>

Alternatively, you can use <a href="./faqfiles/convert.c">convert.c</a>

to convert CSV format to libsvm format.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f401"><b>Q: The output of training C-SVM is like the following. What do they mean?</b></a>

<br/>

<br>optimization finished, #iter = 219

<br>nu = 0.431030

<br>obj = -100.877286, rho = 0.424632

<br>nSV = 132, nBSV = 107

<br>Total nSV = 132

<p>

obj is the optimal objective value of the dual SVM problem.

rho is the bias term in the decision function

sgn(w^Tx - rho).

nSV and nBSV are number of support vectors and bounded support

vectors (i.e., alpha_i = C). nu-svm is a somewhat equivalent

form of C-SVM where C is replaced by nu. nu simply shows the

corresponding parameter. More details are in

<a href="http://www.csie.ntu.edu.tw/~cjlin/papers/libsvm.pdf">

libsvm document</a>.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f402"><b>Q: Can you explain more about the model file?</b></a>

<br/>

<p>

In the model file, after parameters and other informations such as labels , each line represents a support vector.

Support vectors are listed in the order of "labels" shown earlier.

(i.e., those from the first class in the "labels" list are

grouped first, and so on.)

If k is the total number of classes,

in front of a support vector in class j, there are

k-1 coefficients

y*alpha where alpha are dual solution of the

following two class problems:

<br>

1 vs j, 2 vs j, ..., j-1 vs j, j vs j+1, j vs j+2, ..., j vs k

<br>

and y=1 in first j-1 coefficients, y=-1 in the remaining

k-j coefficients.

For example, if there are 4 classes, the file looks like:

<pre>

+-+-+-+--------------------+

|1|1|1| |

|v|v|v| SVs from class 1 |

|2|3|4| |

+-+-+-+--------------------+

|1|2|2| |

|v|v|v| SVs from class 2 |

|2|3|4| |

+-+-+-+--------------------+

|1|2|3| |

|v|v|v| SVs from class 3 |

|3|3|4| |

+-+-+-+--------------------+

|1|2|3| |

|v|v|v| SVs from class 4 |

|4|4|4| |

+-+-+-+--------------------+

</pre>

See also

<a href="#f804"> an illustration using

MATLAB/OCTAVE.</a>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f403"><b>Q: Should I use float or double to store numbers in the cache ?</b></a>

<br/>

<p>

We have float as the default as you can store more numbers

in the cache.

In general this is good enough but for few difficult

cases (e.g. C very very large) where solutions are huge

numbers, it might be possible that the numerical precision is not

enough using only float.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f404"><b>Q: How do I choose the kernel?</b></a>

<br/>

<p>

In general we suggest you to try the RBF kernel first.

A recent result by Keerthi and Lin

(<a href=http://www.csie.ntu.edu.tw/~cjlin/papers/limit.pdf>

download paper here</a>)

shows that if RBF is used with model selection,

then there is no need to consider the linear kernel.

The kernel matrix using sigmoid may not be positive definite

and in general it's accuracy is not better than RBF.

(see the paper by Lin and Lin

(<a href=http://www.csie.ntu.edu.tw/~cjlin/papers/tanh.pdf>

download paper here</a>).

Polynomial kernels are ok but if a high degree is used,

numerical difficulties tend to happen

(thinking about dth power of (<1) goes to 0

and (>1) goes to infinity).

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f405"><b>Q: Does libsvm have special treatments for linear SVM?</b></a>

<br/>

<p>

No, libsvm solves linear/nonlinear SVMs by the

same way.

Some tricks may save training/testing time if the

linear kernel is used,

so libsvm is <b>NOT</b> particularly efficient for linear SVM,

especially when

C is large and

the number of data is much larger

than the number of attributes.

You can either

<ul>

<li>

Use small C only. We have shown in the following paper

that after C is larger than a certain threshold,

the decision function is the same.

<p>

<a href="http://guppy.mpe.nus.edu.sg/~mpessk/">S. S. Keerthi</a>

and

<B>C.-J. Lin</B>.

<A HREF="papers/limit.pdf">

Asymptotic behaviors of support vector machines with

Gaussian kernel

</A>

.

<I><A HREF="http://mitpress.mit.edu/journal-home.tcl?issn=08997667">Neural Computation</A></I>, 15(2003), 1667-1689.

<li>

Check <a href=http://www.csie.ntu.edu.tw/~cjlin/liblinear>liblinear</a>,

which is designed for large-scale linear classification.

</ul>

<p> Please also see our <a href=../papers/guide/guide.pdf>SVM guide</a>

on the discussion of using RBF and linear

kernels.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f406"><b>Q: The number of free support vectors is large. What should I do?</b></a>

<br/>

<p>

This usually happens when the data are overfitted.

If attributes of your data are in large ranges,

try to scale them. Then the region

of appropriate parameters may be larger.

Note that there is a scale program

in libsvm.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f407"><b>Q: Should I scale training and testing data in a similar way?</b></a>

<br/>

<p>

Yes, you can do the following:

<pre>

> svm-scale -s scaling_parameters train_data > scaled_train_data

> svm-scale -r scaling_parameters test_data > scaled_test_data

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f408"><b>Q: Does it make a big difference if I scale each attribute to [0,1] instead of [-1,1]?</b></a>

<br/>

<p>

For the linear scaling method, if the RBF kernel is

used and parameter selection is conducted, there

is no difference. Assume Mi and mi are

respectively the maximal and minimal values of the

ith attribute. Scaling to [0,1] means

<pre>

x'=(x-mi)/(Mi-mi)

</pre>

For [-1,1],

<pre>

x''=2(x-mi)/(Mi-mi)-1.

</pre>

In the RBF kernel,

<pre>

x'-y'=(x-y)/(Mi-mi), x''-y''=2(x-y)/(Mi-mi).

</pre>

Hence, using (C,g) on the [0,1]-scaled data is the

same as (C,g/2) on the [-1,1]-scaled data.

<p> Though the performance is the same, the computational

time may be different. For data with many zero entries,

[0,1]-scaling keeps the sparsity of input data and hence

may save the time.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f409"><b>Q: The prediction rate is low. How could I improve it?</b></a>

<br/>

<p>

Try to use the model selection tool grid.py in the python

directory find

out good parameters. To see the importance of model selection,

please

see my talk:

<A HREF="http://www.csie.ntu.edu.tw/~cjlin/talks/freiburg.pdf">

A practical guide to support vector

classification

</A>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f410"><b>Q: My data are unbalanced. Could libsvm handle such problems?</b></a>

<br/>

<p>

Yes, there is a -wi options. For example, if you use

<pre>

> svm-train -s 0 -c 10 -w1 1 -w-1 5 data_file

</pre>

<p>

the penalty for class "-1" is larger.

Note that this -w option is for C-SVC only.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f411"><b>Q: What is the difference between nu-SVC and C-SVC?</b></a>

<br/>

<p>

Basically they are the same thing but with different

parameters. The range of C is from zero to infinity

but nu is always between [0,1]. A nice property

of nu is that it is related to the ratio of

support vectors and the ratio of the training

error.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f412"><b>Q: The program keeps running (without showing any output). What should I do?</b></a>

<br/>

<p>

You may want to check your data. Each training/testing

data must be in one line. It cannot be separated.

In addition, you have to remove empty lines.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f413"><b>Q: The program keeps running (with output, i.e. many dots). What should I do?</b></a>

<br/>

<p>

In theory libsvm guarantees to converge.

Therefore, this means you are

handling ill-conditioned situations

(e.g. too large/small parameters) so numerical

difficulties occur.

<p>

You may get better numerical stability by replacing

<pre>

typedef float Qfloat;

</pre>

in svm.cpp with

<pre>

typedef double Qfloat;

</pre>

That is, elements in the kernel cache are stored

in double instead of single. However, this means fewer elements

can be put in the kernel cache.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f414"><b>Q: The training time is too long. What should I do?</b></a>

<br/>

<p>

For large problems, please specify enough cache size (i.e.,

-m).

Slow convergence may happen for some difficult cases (e.g. -c is large).

You can try to use a looser stopping tolerance with -e.

If that still doesn't work, you may train only a subset of the data.

You can use the program subset.py in the directory "tools"

to obtain a random subset.

<p>

If you have extremely large data and face this difficulty, please

contact us. We will be happy to discuss possible solutions.

<p> When using large -e, you may want to check if -h 0 (no shrinking) or -h 1 (shrinking) is faster.

See a related question below.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f4141"><b>Q: Does shrinking always help?</b></a>

<br/>

<p>

If the number of iterations is high, then shrinking

often helps.

However, if the number of iterations is small

(e.g., you specify a large -e), then

probably using -h 0 (no shrinking) is better.

See the

<a href=../papers/libsvm.pdf>implementation document</a> for details.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f415"><b>Q: How do I get the decision value(s)?</b></a>

<br/>

<p>

We print out decision values for regression. For classification,

we solve several binary SVMs for multi-class cases. You

can obtain values by easily calling the subroutine

svm_predict_values. Their corresponding labels

can be obtained from svm_get_labels.

Details are in

README of libsvm package.

<p>

If you are using MATLAB/OCTAVE interface, svmpredict can directly

give you decision values. Please see matlab/README for details.

<p>

We do not recommend the following. But if you would

like to get values for

TWO-class classification with labels +1 and -1

(note: +1 and -1 but not things like 5 and 10)

in the easiest way, simply add

<pre>

printf("%f\n", dec_values[0]*model->label[0]);

</pre>

after the line

<pre>

svm_predict_values(model, x, dec_values);

</pre>

of the file svm.cpp.

Positive (negative)

decision values correspond to data predicted as +1 (-1).

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f4151"><b>Q: How do I get the distance between a point and the hyperplane?</b></a>

<br/>

<p>

The distance is |decision_value| / |w|.

We have |w|^2 = w^Tw = alpha^T Q alpha = 2*(dual_obj + sum alpha_i).

Thus in svm.cpp please find the place

where we calculate the dual objective value

(i.e., the subroutine Solve())

and add a statement to print w^Tw.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f416"><b>Q: On 32-bit machines, if I use a large cache (i.e. large -m) on a linux machine, why sometimes I get "segmentation fault ?"</b></a>

<br/>

<p>

On 32-bit machines, the maximum addressable

memory is 4GB. The Linux kernel uses 3:1

split which means user space is 3G and

kernel space is 1G. Although there are

3G user space, the maximum dynamic allocation

memory is 2G. So, if you specify -m near 2G,

the memory will be exhausted. And svm-train

will fail when it asks more memory.

For more details, please read

<a href=http://groups.google.com/groups?hl=en&lr=&ie=UTF-8&selm=3BA164F6.BAFA4FB%40daimi.au.dk>

this article</a>.

<p>

The easiest solution is to switch to a

64-bit machine.

Otherwise, there are two ways to solve this. If your

machine supports Intel's PAE (Physical Address

Extension), you can turn on the option HIGHMEM64G

in Linux kernel which uses 4G:4G split for

kernel and user space. If you don't, you can

try a software `tub' which can eliminate the 2G

boundary for dynamic allocated memory. The `tub'

is available at

<a href=http://www.bitwagon.com/tub.html>http://www.bitwagon.com/tub.html</a>.

<!--

This may happen only when the cache is large, but each cached row is

not large enough. <b>Note:</b> This problem is specific to

gnu C library which is used in linux.

The solution is as follows:

<p>

In our program we have malloc() which uses two methods

to allocate memory from kernel. One is

sbrk() and another is mmap(). sbrk is faster, but mmap

has a larger address

space. So malloc uses mmap only if the wanted memory size is larger

than some threshold (default 128k).

In the case where each row is not large enough (#elements < 128k/sizeof(float)) but we need a large cache ,

the address space for sbrk can be exhausted. The solution is to

lower the threshold to force malloc to use mmap

and increase the maximum number of chunks to allocate

with mmap.

<p>

Therefore, in the main program (i.e. svm-train.c) you want

to have

<pre>

#include <malloc.h>

</pre>

and then in main():

<pre>

mallopt(M_MMAP_THRESHOLD, 32768);

mallopt(M_MMAP_MAX,1000000);

</pre>

You can also set the environment variables instead

of writing them in the program:

<pre>

$ M_MMAP_MAX=1000000 M_MMAP_THRESHOLD=32768 ./svm-train .....

</pre>

More information can be found by

<pre>

$ info libc "Malloc Tunable Parameters"

</pre>

-->

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f417"><b>Q: How do I disable screen output of svm-train?</b></a>

<br/>

<p>

For commend-line users, use the -q option:

<pre>

> ./svm-train -q heart_scale

</pre>

<p>

For library users, set the global variable

<pre>

extern void (*svm_print_string) (const char *);

</pre>

to specify the output format. You can disable the output by the following steps:

<ol>

<li>

Declare a function to output nothing:

<pre>

void print_null(const char *s) {}

</pre>

</li>

<li>

Assign the output function of libsvm by

<pre>

svm_print_string = &print_null;

</pre>

</li>

</ol>

Finally, a way used in earlier libsvm

is by updating svm.cpp from

<pre>

#if 1

void info(const char *fmt,...)

</pre>

to

<pre>

#if 0

void info(const char *fmt,...)

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f418"><b>Q: I would like to use my own kernel. Any example? In svm.cpp, there are two subroutines for kernel evaluations: k_function() and kernel_function(). Which one should I modify ?</b></a>

<br/>

<p>

An example is "LIBSVM for string data" in LIBSVM Tools.

<p>

The reason why we have two functions is as follows.

For the RBF kernel exp(-g |xi - xj|^2), if we calculate

xi - xj first and then the norm square, there are 3n operations.

Thus we consider exp(-g (|xi|^2 - 2dot(xi,xj) +|xj|^2))

and by calculating all |xi|^2 in the beginning,

the number of operations is reduced to 2n.

This is for the training. For prediction we cannot

do this so a regular subroutine using that 3n operations is

needed.

The easiest way to have your own kernel is

to put the same code in these two

subroutines by replacing any kernel.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f419"><b>Q: What method does libsvm use for multi-class SVM ? Why don't you use the "1-against-the rest" method?</b></a>

<br/>

<p>

It is one-against-one. We chose it after doing the following

comparison:

C.-W. Hsu and C.-J. Lin.

<A HREF="http://www.csie.ntu.edu.tw/~cjlin/papers/multisvm.pdf">

A comparison of methods

for multi-class support vector machines

</A>,

<I>IEEE Transactions on Neural Networks</A></I>, 13(2002), 415-425.

<p>

"1-against-the rest" is a good method whose performance

is comparable to "1-against-1." We do the latter

simply because its training time is shorter.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f4191"><b>Q: How does LIBSVM perform parameter selection for multi-class problems? </b></a>

<br/>

<p>

LIBSVM implements "one-against-one" multi-class method, so there are

k(k-1)/2 binary models, where k is the number of classes.

<p>

We can consider two ways to conduct parameter selection.

<ol>

<li>

For any two classes of data, a parameter selection procedure is conducted. Finally,

each decision function has its own optimal parameters.

</li>

<li>

The same parameters are used for all k(k-1)/2 binary classification problems.

We select parameters that achieve the highest overall performance.

</li>

</ol>

Each has its own advantages. A

single parameter set may not be uniformly good for all k(k-1)/2 decision functions.

However, as the overall accuracy is the final consideration, one parameter set

for one decision function may lead to over-fitting. In the paper

<p>

Chen, Lin, and Schölkopf,

<A HREF="../papers/nusvmtutorial.pdf">

A tutorial on nu-support vector machines.

</A>

Applied Stochastic Models in Business and Industry, 21(2005), 111-136,

<p>

they have experimentally

shown that the two methods give similar performance.

Therefore, currently the parameter selection in LIBSVM

takes the second approach by considering the same parameters for

all k(k-1)/2 models.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f420"><b>Q: After doing cross validation, why there is no model file outputted ?</b></a>

<br/>

<p>

Cross validation is used for selecting good parameters.

After finding them, you want to re-train the whole

data without the -v option.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f4201"><b>Q: Why my cross-validation results are different from those in the Practical Guide?</b></a>

<br/>

<p>

Due to random partitions of

the data, on different systems CV accuracy values

may be different.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f421"><b>Q: On some systems CV accuracy is the same in several runs. How could I use different data partitions? In other words, how do I set random seed in LIBSVM?</b></a>

<br/>

<p>

If you use GNU C library,

the default seed 1 is considered. Thus you always

get the same result of running svm-train -v.

To have different seeds, you can add the following code

in svm-train.c:

<pre>

#include <time.h>

</pre>

and in the beginning of main(),

<pre>

srand(time(0));

</pre>

Alternatively, if you are not using GNU C library

and would like to use a fixed seed, you can have

<pre>

srand(1);

</pre>

<p>

For Java, the random number generator

is initialized using the time information.

So results of two CV runs are different.

To fix the seed, after version 3.1 (released

in mid 2011), you can add

<pre>

svm.rand.setSeed(0);

</pre>

in the main() function of svm_train.java.

<p>

If you use CV to select parameters, it is recommended to use identical folds

under different parameters. In this case, you can consider fixing the seed.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f422"><b>Q: I would like to solve L2-loss SVM (i.e., error term is quadratic). How should I modify the code ?</b></a>

<br/>

<p>

It is extremely easy. Taking c-svc for example, to solve

<p>

min_w w^Tw/2 + C \sum max(0, 1- (y_i w^Tx_i+b))^2,

<p>

only two

places of svm.cpp have to be changed.

First, modify the following line of

solve_c_svc from

<pre>

s.Solve(l, SVC_Q(*prob,*param,y), minus_ones, y,

alpha, Cp, Cn, param->eps, si, param->shrinking);

</pre>

to

<pre>

s.Solve(l, SVC_Q(*prob,*param,y), minus_ones, y,

alpha, INF, INF, param->eps, si, param->shrinking);

</pre>

Second, in the class of SVC_Q, declare C as

a private variable:

<pre>

double C;

</pre>

In the constructor replace

<pre>

for(int i=0;i<prob.l;i++)

QD[i]= (Qfloat)(this->*kernel_function)(i,i);

</pre>

with

<pre>

this->C = param.C;

for(int i=0;i<prob.l;i++)

QD[i]= (Qfloat)(this->*kernel_function)(i,i)+0.5/C;

</pre>

Then in the subroutine get_Q, after the for loop, add

<pre>

if(i >= start && i < len)

data[i] += 0.5/C;

</pre>

<p>

For one-class svm, the modification is exactly the same. For SVR, you don't need an if statement like the above. Instead, you only need a simple assignment:

<pre>

data[real_i] += 0.5/C;

</pre>

<p>

For large linear L2-loss SVM, please use

<a href=../liblinear>LIBLINEAR</a>.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f424"><b>Q: How do I choose parameters for one-class svm as training data are in only one class?</b></a>

<br/>

<p>

You have pre-specified true positive rate in mind and then search for

parameters which achieve similar cross-validation accuracy.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f427"><b>Q: Why the code gives NaN (not a number) results?</b></a>

<br/>

<p>

This rarely happens, but few users reported the problem.

It seems that their

computers for training libsvm have the VPN client

running. The VPN software has some bugs and causes this

problem. Please try to close or disconnect the VPN client.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f428"><b>Q: Why on windows sometimes grid.py fails?</b></a>

<br/>

<p>

This problem shouldn't happen after version

2.85. If you are using earlier versions,

please download the latest one.

<!--

<p>

If you are using earlier

versions, the error message is probably

<pre>

Traceback (most recent call last):

File "grid.py", line 349, in ?

main()

File "grid.py", line 344, in main

redraw(db)

File "grid.py", line 132, in redraw

gnuplot.write("set term windows\n")

IOError: [Errno 22] Invalid argument

</pre>

<p>Please try to close gnuplot windows and rerun.

If the problem still occurs, comment the following

two lines in grid.py by inserting "#" in the beginning:

<pre>

redraw(db)

redraw(db,1)

</pre>

Then you get accuracy only but not cross validation contours.

-->

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f429"><b>Q: Why grid.py/easy.py sometimes generates the following warning message?</b></a>

<br/>

<pre>

Warning: empty z range [62.5:62.5], adjusting to [61.875:63.125]

Notice: cannot contour non grid data!

</pre>

<p>Nothing is wrong and please disregard the

message. It is from gnuplot when drawing

the contour.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f430"><b>Q: Why the sign of predicted labels and decision values are sometimes reversed?</b></a>

<br/>

<p>Nothing is wrong. Very likely you have two labels +1/-1 and the first instance in your data

has -1.

Think about the case of labels +5/+10. Since

SVM needs to use +1/-1, internally

we map +5/+10 to +1/-1 according to which

label appears first.

Hence a positive decision value implies

that we should predict the "internal" +1,

which may not be the +1 in the input file.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f431"><b>Q: I don't know class labels of test data. What should I put in the first column of the test file?</b></a>

<br/>

<p>Any value is ok. In this situation, what you will use is the output file of svm-predict, which gives predicted class labels.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f432"><b>Q: How can I use OpenMP to parallelize LIBSVM on a multicore/shared-memory computer?</b></a>

<br/>

<p>It is very easy if you are using GCC 4.2

or after.

<p> In Makefile, add -fopenmp to CFLAGS.

<p> In class SVC_Q of svm.cpp, modify the for loop

of get_Q to:

<pre>

#pragma omp parallel for private(j)

for(j=start;j<len;j++)

</pre>

<p> In the subroutine svm_predict_values of svm.cpp, add one line to the for loop:

<pre>

#pragma omp parallel for private(i)

for(i=0;i<l;i++)

kvalue[i] = Kernel::k_function(x,model->SV[i],model->param);

</pre>

For regression, you need to modify

class SVR_Q instead. The loop in svm_predict_values

is also different because you need

a reduction clause for the variable sum:

<pre>

#pragma omp parallel for private(i) reduction(+:sum)

for(i=0;i<model->l;i++)

sum += sv_coef[i] * Kernel::k_function(x,model->SV[i],model->param);

</pre>

<p> Then rebuild the package. Kernel evaluations in training/testing will be parallelized. An example of running this modification on

an 8-core machine using the data set

<a href=../libsvmtools/datasets/binary/ijcnn1.bz2>ijcnn1</a>:

<p> 8 cores:

<pre>

%setenv OMP_NUM_THREADS 8

%time svm-train -c 16 -g 4 -m 400 ijcnn1

27.1sec

</pre>

1 core:

<pre>

%setenv OMP_NUM_THREADS 1

%time svm-train -c 16 -g 4 -m 400 ijcnn1

79.8sec

</pre>

For this data, kernel evaluations take 80% of training time. In the above example, we assume you use csh. For bash, use

<pre>

export OMP_NUM_THREADS=8

</pre>

instead.

<p> For Python interface, you need to add the -lgomp link option:

<pre>

$(CXX) -lgomp -shared -dynamiclib svm.o -o libsvm.so.$(SHVER)

</pre>

<p> For MS Windows, you need to add /openmp in CFLAGS of Makefile.win

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q4:_Training_and_prediction"></a>

<a name="f433"><b>Q: How could I know which training instances are support vectors?</b></a>

<br/>

<p>

It's very simple. Since version 3.13, you can use the function

<pre>

void svm_get_sv_indices(const struct svm_model *model, int *sv_indices)

</pre>

to get indices of support vectors. For example, in svm-train.c, after

<pre>

model = svm_train(&prob, &param);

</pre>

you can add

<pre>

int nr_sv = svm_get_nr_sv(model);

int *sv_indices = Malloc(int, nr_sv);

svm_get_sv_indices(model, sv_indices);

for (int i=0; i<nr_sv; i++)

printf("instance %d is a support vector\n", sv_indices[i]);

</pre>

<p> If you use matlab interface, you can directly check

<pre>

model.sv_indices

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q5:_Probability_outputs"></a>

<a name="f425"><b>Q: Why training a probability model (i.e., -b 1) takes a longer time?</b></a>

<br/>

<p>

To construct this probability model, we internally conduct a

cross validation, which is more time consuming than

a regular training.

Hence, in general you do parameter selection first without

-b 1. You only use -b 1 when good parameters have been

selected. In other words, you avoid using -b 1 and -v

together.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q5:_Probability_outputs"></a>

<a name="f426"><b>Q: Why using the -b option does not give me better accuracy?</b></a>

<br/>

<p>

There is absolutely no reason the probability outputs guarantee

you better accuracy. The main purpose of this option is

to provide you the probability estimates, but not to boost

prediction accuracy. From our experience,

after proper parameter selections, in general with

and without -b have similar accuracy. Occasionally there

are some differences.

It is not recommended to compare the two under

just a fixed parameter

set as more differences will be observed.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q5:_Probability_outputs"></a>

<a name="f427"><b>Q: Why using svm-predict -b 0 and -b 1 gives different accuracy values?</b></a>

<br/>

<p>

Let's just consider two-class classification here. After probability information is obtained in training,

we do not have

<p>

prob > = 0.5 if and only if decision value >= 0.

<p>

So predictions may be different with -b 0 and 1.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q6:_Graphic_interface"></a>

<a name="f501"><b>Q: How can I save images drawn by svm-toy?</b></a>

<br/>

<p>

For Microsoft windows, first press the "print screen" key on the keyboard.

Open "Microsoft Paint"

(included in Windows)

and press "ctrl-v." Then you can clip

the part of picture which you want.

For X windows, you can

use the program "xv" or "import" to grab the picture of the svm-toy window.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q6:_Graphic_interface"></a>

<a name="f502"><b>Q: I press the "load" button to load data points but why svm-toy does not draw them ?</b></a>

<br/>

<p>

The program svm-toy assumes both attributes (i.e. x-axis and y-axis

values) are in (0,1). Hence you want to scale your

data to between a small positive number and

a number less than but very close to 1.

Moreover, class labels must be 1, 2, or 3

(not 1.0, 2.0 or anything else).

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q6:_Graphic_interface"></a>

<a name="f503"><b>Q: I would like svm-toy to handle more than three classes of data, what should I do ?</b></a>

<br/>

<p>

Taking windows/svm-toy.cpp as an example, you need to

modify it and the difference

from the original file is as the following: (for five classes of

data)

<pre>

30,32c30

< RGB(200,0,200),

< RGB(0,160,0),

< RGB(160,0,0)

---

> RGB(200,0,200)

39c37

< HBRUSH brush1, brush2, brush3, brush4, brush5;

---

> HBRUSH brush1, brush2, brush3;

113,114d110

< brush4 = CreateSolidBrush(colors[7]);

< brush5 = CreateSolidBrush(colors[8]);

155,157c151

< else if(v==3) return brush3;

< else if(v==4) return brush4;

< else return brush5;

---

> else return brush3;

325d318

< int colornum = 5;

327c320

< svm_node *x_space = new svm_node[colornum * prob.l];

---

> svm_node *x_space = new svm_node[3 * prob.l];

333,338c326,331

< x_space[colornum * i].index = 1;

< x_space[colornum * i].value = q->x;

< x_space[colornum * i + 1].index = 2;

< x_space[colornum * i + 1].value = q->y;

< x_space[colornum * i + 2].index = -1;

< prob.x[i] = &x_space[colornum * i];

---

> x_space[3 * i].index = 1;

> x_space[3 * i].value = q->x;

> x_space[3 * i + 1].index = 2;

> x_space[3 * i + 1].value = q->y;

> x_space[3 * i + 2].index = -1;

> prob.x[i] = &x_space[3 * i];

397c390

< if(current_value > 5) current_value = 1;

---

> if(current_value > 3) current_value = 1;

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q7:_Java_version_of_libsvm"></a>

<a name="f601"><b>Q: What is the difference between Java version and C++ version of libsvm?</b></a>

<br/>

<p>

They are the same thing. We just rewrote the C++ code

in Java.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q7:_Java_version_of_libsvm"></a>

<a name="f602"><b>Q: Is the Java version significantly slower than the C++ version?</b></a>

<br/>

<p>

This depends on the VM you used. We have seen good

VM which leads the Java version to be quite competitive with

the C++ code. (though still slower)

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q7:_Java_version_of_libsvm"></a>

<a name="f603"><b>Q: While training I get the following error message: java.lang.OutOfMemoryError. What is wrong?</b></a>

<br/>

<p>

You should try to increase the maximum Java heap size.

For example,

<pre>

java -Xmx2048m -classpath libsvm.jar svm_train ...

</pre>

sets the maximum heap size to 2048M.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q7:_Java_version_of_libsvm"></a>

<a name="f604"><b>Q: Why you have the main source file svm.m4 and then transform it to svm.java?</b></a>

<br/>

<p>

Unlike C, Java does not have a preprocessor built-in.

However, we need some macros (see first 3 lines of svm.m4).

</ul>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q8:_Python_interface"></a>

<a name="f704"><b>Q: Except the python-C++ interface provided, could I use Jython to call libsvm ?</b></a>

<br/>

<p> Yes, here are some examples:

<pre>

$ export CLASSPATH=$CLASSPATH:~/libsvm-2.91/java/libsvm.jar

$ ./jython

Jython 2.1a3 on java1.3.0 (JIT: jitc)

Type "copyright", "credits" or "license" for more information.

>>> from libsvm import *

>>> dir()

['__doc__', '__name__', 'svm', 'svm_model', 'svm_node', 'svm_parameter',

'svm_problem']

>>> x1 = [svm_node(index=1,value=1)]

>>> x2 = [svm_node(index=1,value=-1)]

>>> param = svm_parameter(svm_type=0,kernel_type=2,gamma=1,cache_size=40,eps=0.001,C=1,nr_weight=0,shrinking=1)

>>> prob = svm_problem(l=2,y=[1,-1],x=[x1,x2])

>>> model = svm.svm_train(prob,param)

*

optimization finished, #iter = 1

nu = 1.0

obj = -1.018315639346838, rho = 0.0

nSV = 2, nBSV = 2

Total nSV = 2

>>> svm.svm_predict(model,x1)

1.0

>>> svm.svm_predict(model,x2)

-1.0

>>> svm.svm_save_model("test.model",model)

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q9:_MATLAB_interface"></a>

<a name="f801"><b>Q: I compile the MATLAB interface without problem, but why errors occur while running it?</b></a>

<br/>

<p>

Your compiler version may not be supported/compatible for MATLAB.

Please check <a href=http://www.mathworks.com/support/compilers/current_release>this MATLAB page</a> first and then specify the version

number. For example, if g++ X.Y is supported, replace

<pre>

CXX = g++

</pre>

in the Makefile with

<pre>

CXX = g++-X.Y

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q9:_MATLAB_interface"></a>

<a name="f8011"><b>Q: On 64bit Windows I compile the MATLAB interface without problem, but why errors occur while running it?</b></a>

<br/>

<p>

Please make sure that you use

the -largeArrayDims option in make.m. For example,

<pre>

mex -largeArrayDims -O -c svm.cpp

</pre>

Moreover, if you use Microsoft Visual Studio,

probabally it is not properly installed.

See the explanation

<a href=http://www.mathworks.com/support/compilers/current_release/win64.html#n7>here</a>.

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q9:_MATLAB_interface"></a>

<a name="f802"><b>Q: Does the MATLAB interface provide a function to do scaling?</b></a>

<br/>

<p>

It is extremely easy to do scaling under MATLAB.

The following one-line code scale each feature to the range

of [0,1]:

<pre>

(data - repmat(min(data,[],1),size(data,1),1))*spdiags(1./(max(data,[],1)-min(data,[],1))',0,size(data,2),size(data,2))

</pre>

<p align="right">

<a href="#_TOP">[Go Top]</a>

<hr/>

<a name="/Q9:_MATLAB_interface"></a>

<a name="f803"><b>Q: How could I use MATLAB interface for parameter selection?</b></a>

<br/>

<p>

One can do this by a simple loop.

See the following example:

<pre>

bestcv = 0;

for log2c = -1:3,

for log2g = -4:1,

cmd = ['-v 5 -c ', num2str(2^log2c), ' -g ', num2str(2^log2g)];

cv = svmtrain(heart_scale_label, heart_scale_inst, cmd);

if (cv >= bestcv),