
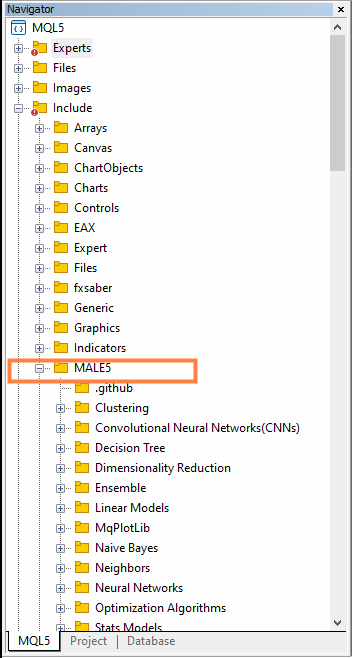

Repository: MegaJoctan/MALE5

Branch: MQL5-ML

Commit: d6541568aaba

Files: 47

Total size: 473.0 KB

Directory structure:

gitextract_ly_vy9wi/

├── .github/

│ └── FUNDING.yml

├── .gitignore

├── Examples/

│ ├── Classifier Model Example.mq5

│ └── Regressor Model Example.mq5

├── LICENSE

├── MqPlotLib/

│ └── plots.mqh

├── Neural Networks/

│ ├── Pattern Nets.mqh

│ ├── README.md

│ ├── Regressor Nets.mqh

│ ├── initializers.mqh

│ ├── kohonen maps.mqh

│ └── optimizers.mqh

├── Numpy/

│ └── Numpy.mqh

├── Pandas/

│ ├── Incremental LE.mqh

│ └── pandas.mqh

├── README.md

├── Sklearn/

│ ├── Cluster/

│ │ ├── DBSCAN.mqh

│ │ ├── Hierachical Clustering.mqh

│ │ ├── KMeans.mqh

│ │ └── base.mqh

│ ├── Decomposition/

│ │ ├── LDA.mqh

│ │ ├── NMF.mqh

│ │ ├── PCA.mqh

│ │ ├── README.md

│ │ ├── TruncatedSVD.mqh

│ │ └── base.mqh

│ ├── Ensemble/

│ │ ├── AdaBoost.mqh

│ │ ├── README.md

│ │ └── Random Forest.mqh

│ ├── Linear Models/

│ │ ├── Linear Regression.mqh

│ │ ├── Logistic Regression.mqh

│ │ ├── README.md

│ │ └── Ridge.mqh

│ ├── Naive Bayes/

│ │ ├── Naive Bayes.mqh

│ │ ├── README.md

│ │ └── naive bayes visuals.py

│ ├── Neighbors/

│ │ └── KNN_nearest_neighbors.mqh

│ ├── Tree/

│ │ ├── README.md

│ │ └── tree.mqh

│ ├── metrics.mqh

│ └── preprocessing.mqh

├── Stats Models/

│ ├── ADF.mqh

│ ├── ARIMA.mqh

│ └── OLS.mqh

├── Tensors.mqh

├── Utils.mqh

└── requirements.txt

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/FUNDING.yml

================================================

# These are supported funding model platforms

ko_fi: omegajoctan

custom: ['https://www.mql5.com/en/users/omegajoctan/seller', 'https://www.buymeacoffee.com/omegajoctan']

================================================

FILE: .gitignore

================================================

*.ex5

*.psd

*.zip

*.rar

*.xlsx

Todo's.txt

logisticwiki.txt

/venv

/Neural Nets Pro

================================================

FILE: Examples/Classifier Model Example.mq5

================================================

//+------------------------------------------------------------------+

//| Classifier Model Sample.mq5 |

//| Copyright 2023, Omega Joctan |

//| https://www.mql5.com/en/users/omegajoctan |

//+------------------------------------------------------------------+

#property copyright "Copyright 2023, Omega Joctan"

#property link "https://www.mql5.com/en/users/omegajoctan"

#property version "1.00"

#include <MALE5\Decision Tree\tree.mqh>

#include <MALE5\preprocessing.mqh>

#include <MALE5\MatrixExtend.mqh> //helper functions for data manipulation

#include <MALE5\metrics.mqh> //for measuring the performance

StandardizationScaler scaler; //standardization scaler from preprocessing.mqh

CDecisionTreeClassifier *decision_tree; //a decision tree classifier model

MqlRates rates[];

//+------------------------------------------------------------------+

//| Expert initialization function |

//+------------------------------------------------------------------+

int OnInit()

{

//--- Model selection

decision_tree = new CDecisionTreeClassifier(2, 5); //a decision tree classifier from DecisionTree class

//---

vector open, high, low, close;

int data_size = 1000;

//--- Getting the open, high, low and close values for the past 1000 bars, starting from the most recently closed bar (index 1)

open.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_OPEN, 1, data_size);

high.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_HIGH, 1, data_size);

low.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_LOW, 1, data_size);

close.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_CLOSE, 1, data_size);

matrix X(data_size, 3); //creating the x matrix

//--- Assigning the open, high, and low price values to the x matrix

X.Col(open, 0);

X.Col(high, 1);

X.Col(low, 2);

//--- The target variable y is derived from the close price: 1 when the candle closed above its open (bullish), 0 otherwise

vector y(data_size);

for (int i=0; i<data_size; i++)

{

if (close[i]>open[i]) //a bullish candle appeared

y[i] = 1; //buy signal

else

{

y[i] = 0; //sell signal

}

}

//--- We split the data into training and testing samples for training and evaluation

matrix X_train, X_test;

vector y_train, y_test;

double train_size = 0.7; //70% of the data is used for training, the remaining 30% for testing

int random_state = 42; //seed for shuffling the data before the split, so the split is reproducible and the model learns the patterns rather than the order of the dataset

MatrixExtend::TrainTestSplitMatrices(X, y, X_train, y_train, X_test, y_test, train_size, random_state); // we split the x and y data into training and testing samples

//--- Normalizing the independent variables

X_train = scaler.fit_transform(X_train); //fit the scaler on the training data and transform it in one step

X_test = scaler.transform(X_test); //transform the test data using the statistics learned from the training data

//--- Training the model

decision_tree.fit(X_train, y_train); //The training function

//--- Measuring predictive accuracy

vector train_predictions = decision_tree.predict_bin(X_train);

Print("Training results classification report");

Metrics::classification_report(y_train, train_predictions);

//--- Evaluating the model on out-of-sample predictions

vector test_predictions = decision_tree.predict_bin(X_test);

Print("Testing results classification report");

Metrics::classification_report(y_test, test_predictions);

//---

ArraySetAsSeries(rates, true);

return(INIT_SUCCEEDED);

}

//+------------------------------------------------------------------+

//| Expert deinitialization function |

//+------------------------------------------------------------------+

void OnDeinit(const int reason)

{

//---

delete (decision_tree); //We have to delete the AI model object from the memory

}

//+------------------------------------------------------------------+

//| Expert tick function |

//+------------------------------------------------------------------+

void OnTick()

{

//--- Making predictions live from the market

CopyRates(Symbol(), PERIOD_D1, 1, 3, rates); //Get the very recent information from the market

vector x = {rates[0].open, rates[0].high, rates[0].low}; //Assigning data from the recent candle in a similar way to the training data

x = scaler.transform(x);

int signal = (int)decision_tree.predict_bin(x);

Comment("Signal = ",signal==1?"BUY":"SELL"); //Ternary operator for checking if the signal is either buy or sell

}

//+------------------------------------------------------------------+

================================================

FILE: Examples/Regressor Model Example.mq5

================================================

//+------------------------------------------------------------------+

//| Regressor Model sample.mq5 |

//| Copyright 2023, Omega Joctan |

//| https://www.mql5.com/en/users/omegajoctan |

//+------------------------------------------------------------------+

#property copyright "Copyright 2023, Omega Joctan"

#property link "https://www.mql5.com/en/users/omegajoctan"

#property version "1.00"

#include <MALE5\Decision Tree\tree.mqh>

#include <MALE5\preprocessing.mqh>

#include <MALE5\MatrixExtend.mqh> //helper functions for data manipulation

#include <MALE5\metrics.mqh> //for measuring the performance

StandardizationScaler scaler; //standardization scaler from preprocessing.mqh

CDecisionTreeRegressor *decision_tree; //a decision tree regressor model

MqlRates rates[];

//+------------------------------------------------------------------+

//| Expert initialization function |

//+------------------------------------------------------------------+

int OnInit()

{

//--- Model selection

decision_tree = new CDecisionTreeRegressor(2, 5); //a decision tree regressor from the DecisionTree class

vector open, high, low, close;

int data_size = 1000; //bars

//--- Getting the open, high, low and close values for the past 1000 bars, starting from the most recently closed bar (index 1)

open.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_OPEN, 1, data_size);

high.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_HIGH, 1, data_size);

low.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_LOW, 1, data_size);

close.CopyRates(Symbol(), PERIOD_D1, COPY_RATES_CLOSE, 1, data_size);

matrix X(data_size, 3); //creating the x matrix

//--- Assigning the open, high, and low price values to the x matrix

X.Col(open, 0);

X.Col(high, 1);

X.Col(low, 2);

vector y = close; //The target variable is the close price: the open, high and low of each bar are used to predict that bar's closing price

//--- We split the data into training and testing samples for training and evaluation

matrix X_train, X_test;

vector y_train, y_test;

double train_size = 0.7; //70% of the data is used for training, the remaining 30% for testing

int random_state = 42; //seed for shuffling the data before the split, so the split is reproducible and the model learns the patterns rather than the order of the dataset

MatrixExtend::TrainTestSplitMatrices(X, y, X_train, y_train, X_test, y_test, train_size, random_state); // we split the x and y data into training and testing samples

//--- Normalizing the independent variables

X_train = scaler.fit_transform(X_train); //fit the scaler on the training data and transform it in one step

X_test = scaler.transform(X_test); //transform the test data using the statistics learned from the training data

//--- Training the model

decision_tree.fit(X_train, y_train); //The training function

//--- Measuring predictive accuracy

vector train_predictions = decision_tree.predict(X_train);

printf("Decision tree training r2_score = %.3f ",Metrics::RegressionMetric(y_train, train_predictions, METRIC_R_SQUARED));

//--- Evaluating the model on out-of-sample predictions

vector test_predictions = decision_tree.predict(X_test);

printf("Decision tree out-of-sample r2_score = %.3f ",Metrics::r_squared(y_test, test_predictions));

ArraySetAsSeries(rates, true); //index 0 of rates[] is the most recent bar, as OnTick expects

return(INIT_SUCCEEDED);

}

//+------------------------------------------------------------------+

//| Expert deinitialization function |

//+------------------------------------------------------------------+

void OnDeinit(const int reason)

{

//---

delete (decision_tree); //We have to delete the AI model object from the memory

}

//+------------------------------------------------------------------+

//| Expert tick function |

//+------------------------------------------------------------------+

void OnTick()

{

//--- Making predictions live from the market

CopyRates(Symbol(), PERIOD_D1, 1, 3, rates); //Get the very recent information from the market

vector x = {rates[0].open, rates[0].high, rates[0].low}; //Assigning data from the recent candle in a similar way to the training data

x = scaler.transform(x);

double predicted_close_price = decision_tree.predict(x);

Comment("Predicted closing price = ",predicted_close_price);

}

//+------------------------------------------------------------------+

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2023 Omega Joctan

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: MqPlotLib/plots.mqh

================================================

//+------------------------------------------------------------------+

//| plots.mqh |

//| Copyright 2022, Fxalgebra.com |

//| https://www.mql5.com/en/users/omegajoctan |

//+------------------------------------------------------------------+

#property copyright "Copyright 2022, Fxalgebra.com"

#property link "https://www.mql5.com/en/users/omegajoctan"

//+------------------------------------------------------------------+

//| defines |

//+------------------------------------------------------------------+

#include <Graphics\Graphic.mqh>

#include <MALE5\MatrixExtend.mqh>

class CPlots

{

protected:

CGraphic *graph;

long m_chart_id;

int m_subwin;

int m_x1, m_x2;

int m_y1, m_y2;

string m_font_family;

bool m_chart_show;

string m_plot_names[];

ENUM_CURVE_TYPE m_curve_type;

bool GraphCreate(string plot_name);

vector m_x, m_y;

string x_label, y_label;

public:

CPlots(long chart_id=0, int sub_win=0 ,int x1=30, int y1=40, int x2=550, int y2=310, string font_family="Consolas", bool chart_show=true);

~CPlots(void);

bool Plot(string plot_name, vector& x, vector& y, string x_axis_label, string y_axis_label, string label, ENUM_CURVE_TYPE curve_type=CURVE_POINTS_AND_LINES,color clr = clrDodgerBlue, bool points_fill = true);

bool AddPlot(vector &v,string label="plt",color clr=clrOrange);

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

CPlots::CPlots(long chart_id=0, int sub_win=0 ,int x1=30, int y1=40, int x2=550, int y2=310, string font_family="Consolas", bool chart_show=true):

m_chart_id(chart_id),

m_subwin(sub_win),

m_x1(x1),

m_y1(y1),

m_x2(x2),

m_y2(y2),

m_font_family(font_family),

m_chart_show(chart_show)

{

graph = new CGraphic();

ChartRedraw(m_chart_id);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

CPlots::~CPlots(void)

{

for (int i=0; i<ArraySize(m_plot_names); i++)

ObjectDelete(m_chart_id,m_plot_names[i]);

delete(graph);

ChartRedraw(m_chart_id);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

bool CPlots::GraphCreate(string plot_name)

{

ChartRedraw(m_chart_id);

ArrayResize(m_plot_names,ArraySize(m_plot_names)+1);

m_plot_names[ArraySize(m_plot_names)-1] = plot_name;

ChartSetInteger(m_chart_id, CHART_SHOW, m_chart_show);

if(!graph.Create(m_chart_id, plot_name, m_subwin, m_x1, m_y1, m_x2, m_y2))

{

printf("Failed to Create graphical object on the Main chart Err = %d", GetLastError());

return(false);

}

return (true);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

bool CPlots::Plot(

string plot_name,

vector& x,

vector& y,

string x_axis_label,

string y_axis_label,

string label,

ENUM_CURVE_TYPE curve_type=CURVE_POINTS_AND_LINES,

color clr = clrDodgerBlue,

bool points_fill = true

)

{

if (!this.GraphCreate(plot_name))

return (false);

//---

this.m_x = x;

this.m_y = y;

this.x_label = x_axis_label;

this.y_label = y_axis_label;

double x_arr[], y_arr[];

MatrixExtend::VectorToArray(x, x_arr);

MatrixExtend::VectorToArray(y, y_arr);

m_curve_type = curve_type;

graph.CurveAdd(x_arr, y_arr, ColorToARGB(clr), m_curve_type, label);

graph.XAxis().Name(x_axis_label);

graph.XAxis().NameSize(13);

graph.YAxis().Name(y_axis_label);

graph.YAxis().NameSize(13);

graph.FontSet(m_font_family, 13);

graph.CurvePlotAll();

graph.Update();

return(true);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

bool CPlots::AddPlot(vector &v, string label="plt",color clr=clrOrange)

{

double x_arr[], y_arr[];

MatrixExtend::VectorToArray(this.m_x, x_arr);

MatrixExtend::VectorToArray(v, y_arr);

if (!graph.CurveAdd(x_arr, y_arr, ColorToARGB(clr), m_curve_type, label))

{

printf("%s failed to add a plot to the existing plot Err =%d",__FUNCTION__,GetLastError());

return false;

}

graph.XAxis().Name(this.x_label);

graph.XAxis().NameSize(13);

graph.YAxis().Name(this.y_label);

graph.YAxis().NameSize(13);

graph.FontSet(m_font_family, 13);

graph.CurvePlotAll();

graph.Update();

return(true);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

================================================

FILE: Neural Networks/Pattern Nets.mqh

================================================

//+------------------------------------------------------------------+

//| Pattern Nets.mqh |

//| Copyright 2022, Fxalgebra.com |

//| https://www.mql5.com/en/users/omegajoctan |

//+------------------------------------------------------------------+

#property copyright "Copyright 2022, Fxalgebra.com"

#property link "https://www.mql5.com/en/users/omegajoctan"

//+------------------------------------------------------------------+

//| Neural network for pattern recognition; it can be used to |

//| predict discrete target variables. These networks are widely |

//| known as classification neural networks |

//+------------------------------------------------------------------+

#include <MALE5\MatrixExtend.mqh>

#include <MALE5\preprocessing.mqh>

#ifndef RANDOM_STATE

#define RANDOM_STATE 42

#endif

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

enum activation

{

AF_HARD_SIGMOID_ = AF_HARD_SIGMOID,

AF_SIGMOID_ = AF_SIGMOID,

AF_SWISH_ = AF_SWISH,

AF_SOFTSIGN_ = AF_SOFTSIGN,

AF_TANH_ = AF_TANH

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

class CPatternNets

{

private:

vector W_CONFIG;

vector W; //Weights vector

vector B; //Bias vector

activation A_FX;

protected:

ulong inputs;

ulong outputs;

ulong rows;

vector HL_CONFIG;

bool SoftMaxLayer;

vector classes;

void SoftMaxLayerFX(matrix<double> &mat);

public:

CPatternNets(matrix &xmatrix, vector &yvector,vector &HL_NODES, activation ActivationFx, bool SoftMaxLyr=false);

~CPatternNets(void);

int PatternNetFF(vector &in_vector);

vector PatternNetFF(matrix &xmatrix);

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

CPatternNets::CPatternNets(matrix &xmatrix, vector &yvector,vector &HL_NODES, activation ActivationFx, bool SoftMaxLyr=false)

{

A_FX = ActivationFx;

inputs = xmatrix.Cols();

rows = xmatrix.Rows();

SoftMaxLayer = SoftMaxLyr;

//--- Validate data dimensions

if (rows != yvector.Size())

{

Print(__FUNCTION__," FATAL | Number of rows in the x matrix is not the same as the y vector size ");

return;

}

classes = MatrixExtend::Unique(yvector);

outputs = classes.Size();

HL_CONFIG.Copy(HL_NODES);

HL_CONFIG.Resize(HL_CONFIG.Size()+1); //Add the output layer

HL_CONFIG[HL_CONFIG.Size()-1] = (int)outputs; //The output layer has one node per class

//---

W_CONFIG.Resize(HL_CONFIG.Size());

B.Resize((ulong)HL_CONFIG.Sum());

//--- GENERATE WEIGHTS

ulong layer_input = inputs;

for (ulong i=0; i<HL_CONFIG.Size(); i++)

{

W_CONFIG[i] = layer_input*HL_CONFIG[i];

layer_input = (ulong)HL_CONFIG[i];

}

W.Resize((ulong)W_CONFIG.Sum());

W = MatrixExtend::Random(0.0, 1.0, (int)W.Size(),RANDOM_STATE); //Gen weights

B = MatrixExtend::Random(0.0,1.0,(int)B.Size(),RANDOM_STATE); //Gen bias

//---

#ifdef DEBUG_MODE

Comment(

"< - - - P A T T E R N N E T S - - - >\n",

"HIDDEN LAYERS + OUTPUT ",HL_CONFIG,"\n",

"INPUTS ",inputs," | OUTPUTS ",outputs," W CONFIG ",W_CONFIG,"\n",

"activation ",EnumToString(A_FX)," SoftMaxLayer = ",bool(SoftMaxLayer)

);

Print("WEIGHTS ",W,"\nBIAS ",B);

#endif

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

CPatternNets::~CPatternNets(void)

{

ZeroMemory(W);

ZeroMemory(B);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

int CPatternNets::PatternNetFF(vector &in_vector)

{

matrix L_INPUT = {}, L_OUTPUT={}, L_WEIGHTS = {};

vector v_weights ={};

ulong w_start = 0;

L_INPUT = MatrixExtend::VectorToMatrix(in_vector);

vector L_BIAS_VECTOR = {};

matrix L_BIAS_MATRIX = {};

ulong b_start = 0;

for (ulong i=0; i<W_CONFIG.Size(); i++)

{

MatrixExtend::Copy(W,v_weights,w_start,ulong(W_CONFIG[i]));

L_WEIGHTS = MatrixExtend::VectorToMatrix(v_weights,L_INPUT.Rows());

MatrixExtend::Copy(B,L_BIAS_VECTOR,b_start,(ulong)HL_CONFIG[i]);

L_BIAS_MATRIX = MatrixExtend::VectorToMatrix(L_BIAS_VECTOR);

#ifdef DEBUG_MODE

Print("--> ",i);

Print("L_WEIGHTS\n",L_WEIGHTS,"\nL_INPUT\n",L_INPUT,"\nL_BIAS\n",L_BIAS_MATRIX);

#endif

L_OUTPUT = L_WEIGHTS.MatMul(L_INPUT);

L_OUTPUT = L_OUTPUT+L_BIAS_MATRIX; //Add bias

//---

if (i==W_CONFIG.Size()-1) //Last layer

{

if (SoftMaxLayer)

{

#ifdef DEBUG_MODE

Print("Before softmax\n",L_OUTPUT);

#endif

SoftMaxLayerFX(L_OUTPUT);

#ifdef DEBUG_MODE

Print("After softmax\n",L_OUTPUT);

#endif

}

else

L_OUTPUT.Activation(L_OUTPUT, ENUM_ACTIVATION_FUNCTION(A_FX));

}

else

L_OUTPUT.Activation(L_OUTPUT, ENUM_ACTIVATION_FUNCTION(A_FX));

//---

L_INPUT.Copy(L_OUTPUT); //Assign outputs to the inputs

w_start += (ulong)W_CONFIG[i]; //New weights copy

b_start += (ulong)HL_CONFIG[i];

}

#ifdef DEBUG_MODE

Print("--> outputs\n ",L_OUTPUT);

#endif

vector v_out = MatrixExtend::MatrixToVector(L_OUTPUT);

return((int)classes[v_out.ArgMax()]);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

void CPatternNets::SoftMaxLayerFX(matrix<double> &mat)

{

vector<double> ret = MatrixExtend::MatrixToVector(mat);

ret.Activation(ret, AF_SOFTMAX);

mat = MatrixExtend::VectorToMatrix(ret, mat.Cols());

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

vector CPatternNets::PatternNetFF(matrix &xmatrix)

{

vector v(xmatrix.Rows());

for (ulong i=0; i<xmatrix.Rows(); i++)

v[i] = PatternNetFF(xmatrix.Row(i));

return (v);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

================================================

FILE: Neural Networks/README.md

================================================

## Kohonen Maps (Self-Organizing Maps)

This documentation explains the `CKohonenMaps` class in MQL5, which implements **Kohonen Maps**, also known as **Self-Organizing Maps (SOM)**, for clustering and visualization tasks.

**I. Kohonen Maps Theory:**

Kohonen Maps are a type of **artificial neural network** used for unsupervised learning, specifically for **clustering** and **visualization** of high-dimensional data. They work by:

1. **Initializing a grid of neurons:** Each neuron is associated with a weight vector representing its position in the high-dimensional space.

2. **Iteratively presenting data points:**

* For each data point:

* Find the **winning neuron** (closest neuron in terms of distance) based on the weight vectors.

* Update the weights of the winning neuron and its **neighborhood** towards the data point, with decreasing influence as the distance from the winning neuron increases.

3. **Convergence:** After a certain number of iterations (epochs), the weight vectors of the neurons become organized in a way that reflects the underlying structure of the data.
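The three steps above can be sketched in standalone C++ (MQL5 syntax is derived from C++, but this is not the `CKohonenMaps` source). The sketch assumes a one-dimensional grid of neurons, uniform random initial weights in [0, 1), a Gaussian neighborhood whose width `sigma` is measured in grid steps, and a fixed learning rate `alpha`; the names `Som1D`, `winner` and `sigma` are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <random>
#include <vector>

using Vec = std::vector<double>;

// Euclidean distance between two equally sized vectors.
double euclidean(const Vec& a, const Vec& b) {
    assert(a.size() == b.size());
    double s = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) s += (a[i] - b[i]) * (a[i] - b[i]);
    return std::sqrt(s);
}

// Hypothetical minimal SOM: neurons laid out on a line (1-D grid).
struct Som1D {
    std::vector<Vec> w;  // one weight vector per neuron
    double alpha;        // fixed learning rate (assumption: no decay)
    double sigma;        // neighborhood width in grid steps (assumption: no decay)

    Som1D(std::size_t neurons, std::size_t features, double alpha_, double sigma_, unsigned seed)
        : w(neurons, Vec(features)), alpha(alpha_), sigma(sigma_) {
        // Step 1: initialize every weight vector with seeded uniform random values.
        std::mt19937 gen(seed);
        std::uniform_real_distribution<double> u(0.0, 1.0);
        for (auto& v : w)
            for (auto& x : v) x = u(gen);
    }

    // Index of the winning neuron (closest weight vector) for sample x.
    std::size_t winner(const Vec& x) const {
        std::size_t best = 0;
        double best_d = std::numeric_limits<double>::max();
        for (std::size_t j = 0; j < w.size(); ++j) {
            double d = euclidean(w[j], x);
            if (d < best_d) { best_d = d; best = j; }
        }
        return best;
    }

    // Step 2, repeated for a number of epochs (step 3).
    void fit(const std::vector<Vec>& data, unsigned epochs) {
        for (unsigned e = 0; e < epochs; ++e)
            for (const Vec& x : data) {
                std::size_t bmu = winner(x);
                for (std::size_t j = 0; j < w.size(); ++j) {
                    double grid = double(j) - double(bmu);
                    // Neighborhood factor: 1 at the winner, smaller further away on the grid.
                    double h = std::exp(-(grid * grid) / (2.0 * sigma * sigma));
                    for (std::size_t k = 0; k < x.size(); ++k)
                        w[j][k] += alpha * h * (x[k] - w[j][k]);
                }
            }
    }
};
```

Usage: after training on two well-separated groups of points, each group should be mapped to a different neuron. A full SOM implementation usually also shrinks `alpha` and `sigma` over the epochs, which this sketch omits for brevity.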

**II. CKohonenMaps Class:**

The `CKohonenMaps` class provides functionalities for implementing Kohonen Maps in MQL5:

**Public Functions:**

* `CKohonenMaps(uint clusters=2, double alpha=0.01, uint epochs=100, int random_state=42)` Constructor; sets the hyperparameters:

* `clusters`: Number of clusters (default: 2).

* `alpha`: Learning rate (default: 0.01).

* `epochs`: Number of training epochs (default: 100).

* `random_state`: Random seed for reproducibility (default: 42).

* `~CKohonenMaps(void)` Destructor.

* `void fit(const matrix &x)` Trains the model on the provided data (`x`).

* `int predict(const vector &x)` Predicts the cluster label for a single data point (`x`).

* `vector predict(const matrix &x)` Predicts cluster labels for multiple data points (`x`).

**Internal Functions:**

* `Euclidean_distance(const vector &v1, const vector &v2)`: Calculates the Euclidean distance between two vectors.

* `CalcTimeElapsed(double seconds)`: Converts seconds to a human-readable format (not relevant for core functionality).

**III. Additional Notes:**

* The class internally uses the `CPlots` class (not documented here) for potential visualization purposes.

* The `c_matrix` and `w_matrix` member variables store the cluster assignments and weight matrix, respectively.

* Choosing the appropriate number of clusters is crucial for the quality of the results.

With these building blocks, MQL5 users can apply the `CKohonenMaps` class to:

* **Clustering:** Group similar data points together based on their features.

* **Data visualization:** Project the high-dimensional data onto a lower-dimensional space (e.g., a 2D grid of neurons) for easier visualization, potentially using the `CPlots` class.

## Pattern Recognition Neural Network

This documentation explains the `CPatternNets` class in MQL5, which implements a **feed-forward neural network** for pattern recognition tasks.

**I. Neural Network Theory:**

A feed-forward neural network consists of layers of interconnected **neurons**. Each neuron computes a weighted sum of the previous layer's outputs, adds a bias, applies an **activation function** to the result, and passes the output to the next layer. Information flows in one direction only, from the input layer to the output layer.

**II. CPatternNets Class:**

The `CPatternNets` class provides functionalities for training and using a feed-forward neural network for pattern recognition in MQL5:

**Public Functions:**

* `CPatternNets(matrix &xmatrix, vector &yvector,vector &HL_NODES, activation ActivationFx, bool SoftMaxLyr=false)` Constructor:

* `xmatrix`: Input data matrix (rows: samples, columns: features).

* `yvector`: Target labels vector (corresponding labels for each sample in `xmatrix`).

* `HL_NODES`: Vector specifying the number of neurons in each hidden layer.

* `ActivationFx`: Activation function applied by the neurons; one of the `activation` enum values `AF_HARD_SIGMOID_`, `AF_SIGMOID_`, `AF_SWISH_`, `AF_SOFTSIGN_` or `AF_TANH_`.

* `SoftMaxLyr`: Flag indicating whether to use a SoftMax layer in the output (default: `false`).

* `~CPatternNets(void)` Destructor.

* `int PatternNetFF(vector &in_vector)` Performs a forward pass on the network with a single input vector and returns the predicted class label.

* `vector PatternNetFF(matrix &xmatrix)` Performs a forward pass on the network for all rows in the input matrix and returns a vector of predicted class labels.

**Internal Functions:**

* `SoftMaxLayerFX(matrix<double> &mat)`: Applies the SoftMax function to a matrix (used for the output layer if `SoftMaxLyr` is `true`).

**III. Class Functionality:**

1. **Initialization:**

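* The constructor checks that the number of rows in `xmatrix` matches the size of `yvector`, and derives the number of output neurons from the unique class labels in `yvector`.

* The network architecture consists of the hidden layers given in `HL_NODES`, followed by an output layer with one neuron per class.

* Weights (connections between neurons) and biases (individual offsets for each neuron) are initialized with random values between 0 and 1, seeded with `RANDOM_STATE` (42 unless defined before the file is included).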

2. **Forward Pass:**

* The provided input vector is fed into the first layer.

* Each layer performs the following steps:

* Calculates the weighted sum of the previous layer's outputs.

* Adds the bias term to the weighted sum.

* Applies the chosen activation function to the result.

* This process continues through all layers until the final output layer is reached.

3. **SoftMax Layer (Optional)**

* If the `SoftMaxLyr` flag is `true`, the output layer applies the SoftMax function instead of the chosen activation function, so the output values sum to 1 and can be read as class probabilities.

4. **Prediction:**

* For single-sample prediction (`PatternNetFF(vector &in_vector)`), the class label with the **highest output value** is returned.

* For batch prediction (`PatternNetFF(matrix &xmatrix)`), a vector containing the predicted class label for each sample in the input matrix is returned.
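The forward pass and prediction steps can be sketched in standalone C++ as follows. Like `CPatternNets`, the sketch keeps all weights in one flat vector and all biases in another, consuming one block per layer; the row-major (neurons × inputs) layout of each block, the sigmoid activation and the names `forward_predict` and `softmax` are assumptions for illustration, not the library's code.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <iterator>
#include <vector>

using Vec = std::vector<double>;

double sigmoid(double z) { return 1.0 / (1.0 + std::exp(-z)); }

// Numerically stable SoftMax: subtract the maximum before exponentiating.
Vec softmax(const Vec& z) {
    double m = *std::max_element(z.begin(), z.end());
    Vec out(z.size());
    double sum = 0.0;
    for (std::size_t i = 0; i < z.size(); ++i) { out[i] = std::exp(z[i] - m); sum += out[i]; }
    for (double& v : out) v /= sum;
    return out;
}

// layers:  neurons per layer (hidden layers first, output layer last)
// W:       all weights concatenated; layer l uses a (layers[l] x fan_in) row-major block
// B:       all biases concatenated; layer l uses layers[l] entries
// classes: class label for each output neuron
int forward_predict(const Vec& x, const std::vector<std::size_t>& layers,
                    const Vec& W, const Vec& B, const Vec& classes, bool softmax_out) {
    Vec in = x;
    std::size_t w_start = 0, b_start = 0;
    for (std::size_t l = 0; l < layers.size(); ++l) {
        std::size_t n_out = layers[l], n_in = in.size();
        assert(w_start + n_out * n_in <= W.size() && b_start + n_out <= B.size());
        Vec z(n_out);
        for (std::size_t r = 0; r < n_out; ++r) {
            double s = B[b_start + r];  // bias term
            for (std::size_t c = 0; c < n_in; ++c) s += W[w_start + r * n_in + c] * in[c];
            z[r] = s;                   // weighted sum plus bias
        }
        bool last = (l + 1 == layers.size());
        if (last && softmax_out) z = softmax(z);
        else for (double& v : z) v = sigmoid(v);
        w_start += n_out * n_in;        // move to the next layer's weight block
        b_start += n_out;
        in = z;                         // outputs become the next layer's inputs
    }
    assert(in.size() == classes.size());
    std::size_t arg = static_cast<std::size_t>(
        std::distance(in.begin(), std::max_element(in.begin(), in.end())));
    return static_cast<int>(classes[arg]);  // label of the highest output
}
```

In this sketch, with a sigmoid output, enabling SoftMax turns the output values into probabilities without changing the predicted label, because both functions preserve the ordering of their inputs.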

**IV. Additional Notes:**

* The class contains debug statements that print intermediate calculations; they are compiled only when `DEBUG_MODE` is defined.

* The code uses helper functions from the `MatrixExtend` class (not documented here) for matrix and vector operations.

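* The class implements only the forward pass; it has no training routine, so predictions are made with the randomly initialized weights. Choosing an appropriate network architecture and activation function, and adding a learning approach, is crucial for good performance on specific tasks.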

Feed-forward networks of this kind are commonly used for pattern recognition tasks, including:

* **Classification:** Classifying data points into predefined categories based on their features.

* **Anomaly detection:** Identifying data points that deviate significantly from the expected patterns.

* **Feature learning:** Extracting hidden patterns or representations from the data.

## Regression Neural Network

This documentation explains the `CRegressorNets` class in MQL5, which implements a **Multi-Layer Perceptron (MLP)** for regression tasks.

**I. Regression vs. Classification:**

* **Regression:** Predicts continuous output values.

* **Classification:** Assigns data points to predefined categories.

**II. MLP Neural Network:**

An MLP is a type of **feed-forward neural network** used for supervised learning tasks like regression. It consists of:

* **Input layer:** Receives the input data.

* **Hidden layers:** Process and transform the information.

* **Output layer:** Produces the final prediction (continuous value in regression).

**III. CRegressorNets Class:**

The `CRegressorNets` class provides functionalities for training and using an MLP for regression in MQL5:

**Public Functions:**

* `CRegressorNets(vector &HL_NODES, activation ActivationFX=AF_RELU_)` Constructor:

* `HL_NODES`: Vector specifying the number of neurons in each hidden layer.

* `ActivationFX`: Activation function (enum specifying the type of activation function).

* `~CRegressorNets(void)` Destructor.

* `void fit(matrix &x, vector &y)` Trains the model on the provided data (`x` - features, `y` - target values).

* `double predict(vector &x)` Predicts the output value for a single input vector.

* `vector predict(matrix &x)` Predicts output values for all rows in the input matrix.

**Internal Functions (not directly accessible):**

* `CalcTimeElapsed(double seconds)`: Calculates and returns a string representing the elapsed time in a human-readable format (not relevant for core functionality).

* `RegressorNetsBackProp(matrix& x, vector &y, uint epochs, double alpha, loss LossFx=LOSS_MSE_, optimizer OPTIMIZER=OPTIMIZER_ADAM)`: Performs backpropagation for training (details not provided but likely involve calculating gradients and updating weights and biases).

* Optimizer functions (e.g., `AdamOptimizerW`, `AdamOptimizerB`) Implement specific optimization algorithms like Adam for updating weights and biases during training.
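Inside `backpropagation`, each sample is first passed through `predict`. The layers are then visited from last to first:

* The output layer's error term (`DELTA`) is the loss gradient.
* Each earlier layer's error is the next layer's transposed weights times that layer's `DELTA`, multiplied element-wise by the activation derivative.
* The gradients are `dW = DELTA · input^T` and `dB = DELTA`.

The C++ sketch below applies the same rule with a plain SGD update to a hypothetical 1-1-1 network (`TinyNet`). The ReLU activation, the squared-error gradient `2 * (pred - y)` and the fixed learning rate are assumptions for illustration. The library's optimizer classes and the scaling used by MQL5's `LossGradient` may differ.

```cpp
#include <cassert>

// Hypothetical 1-input, 1-hidden-neuron, 1-output network with ReLU on both layers.
struct TinyNet {
    double w1, b1;  // hidden layer
    double w2, b2;  // output layer
};

double relu(double z) { return z > 0.0 ? z : 0.0; }
double relu_grad(double z) { return z > 0.0 ? 1.0 : 0.0; }

double predict(const TinyNet& n, double x) {
    return relu(n.w2 * relu(n.w1 * x + n.b1) + n.b2);
}

// One per-sample SGD step using backpropagated error terms.
void sgd_step(TinyNet& n, double x, double y, double lr) {
    assert(lr > 0.0);
    // forward pass, keeping each layer's input and pre-activation
    const double z1 = n.w1 * x + n.b1;
    const double a1 = relu(z1);
    const double z2 = n.w2 * a1 + n.b2;
    const double pred = relu(z2);

    // output layer: d/dpred of (pred - y)^2, times the output activation derivative
    const double delta2 = 2.0 * (pred - y) * relu_grad(z2);
    // hidden layer: propagate through the next layer's weight (W^T * DELTA)
    const double delta1 = n.w2 * delta2 * relu_grad(z1);

    // gradients dW = DELTA * input^T and dB = DELTA, then a plain SGD update
    n.w2 -= lr * delta2 * a1;
    n.b2 -= lr * delta2;
    n.w1 -= lr * delta1 * x;
    n.b1 -= lr * delta1;
}
```

The sketch differs from the MQL5 loop in two deliberate ways:

* It multiplies the output error by the output activation's derivative. The source uses the loss gradient alone, which is equivalent only when that derivative is 1.
* It computes every error term before changing any weight. The source updates layer `l` before layer `l-1` reads those weights.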

**Other Class Members:**

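* `mlp_struct mlp`: Stores the network dimensions: the number of inputs, the number of layers (hidden layers plus the output layer), and the number of outputs (always 1).

* `CTensors*` pointers (`Weights_tensor`, `Bias_tensor`, `Input_tensor`, `Output_tensor`): Hold one matrix per layer for the weights and biases. During training they also hold the inputs and outputs of each layer. `CTensors` comes from the library's `Tensors.mqh`.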

* `vector` variables: Store the network configuration. `HL_CONFIG` holds the number of neurons in each layer, and `W_CONFIG` holds the number of weights in each layer.

* `bool isBackProp`: Flag set while backpropagation runs (protected). It makes `predict` store each layer's inputs and outputs for the gradient calculations.

* `matrix` variables: Hold intermediate results during backpropagation (`ACTIVATIONS`, `Partial_Derivatives`).
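During training, the output layer is appended to `HL_CONFIG`, and `W_CONFIG[i]` is set to `inputs_to_layer_i * neurons_in_layer_i`. The C++ sketch below derives the same per-layer shapes. It also computes the factor `sqrt(2 / (fan_in + fan_out))` that the source multiplies its random weights and biases by. `layer_shapes` and `LayerShape` are hypothetical names used only for illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct LayerShape {
    std::size_t rows;     // neurons in this layer (rows of W and B)
    std::size_t cols;     // inputs to this layer (columns of W)
    std::size_t weights;  // rows * cols, the value stored in W_CONFIG
    double scale;         // sqrt(2 / (fan_in + fan_out)), applied to the random draws
};

// `hidden` plays the role of HL_NODES; one output neuron is appended,
// as the backpropagation code does with HL_CONFIG.
std::vector<LayerShape> layer_shapes(std::size_t inputs, std::vector<std::size_t> hidden) {
    assert(inputs > 0);
    hidden.push_back(1);  // single-neuron output layer
    std::vector<LayerShape> shapes;
    std::size_t layer_input = inputs;
    for (std::size_t neurons : hidden) {
        const double scale = std::sqrt(2.0 / static_cast<double>(layer_input + neurons));
        shapes.push_back({neurons, layer_input, neurons * layer_input, scale});
        layer_input = neurons;  // next layer consumes this layer's outputs
    }
    return shapes;
}
```

The source draws weights from `[0, 1)` and biases from `[0, 0.5)` before applying this factor, assuming `MatrixExtend::Random(min, max, ...)` samples uniformly between its bounds. All initial values are therefore non-negative. Textbook Glorot (Xavier) initialization instead samples from a zero-centred distribution, for example a normal distribution with standard deviation `sqrt(2 / (fan_in + fan_out))`.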

**IV. Additional Notes:**

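* The class supports the activation functions `AF_ELU_`, `AF_EXP_`, `AF_GELU_`, `AF_LINEAR_`, `AF_LRELU_`, `AF_RELU_`, `AF_SELU_`, `AF_TRELU_` and `AF_SOFTPLUS_`. It supports the loss functions Mean Squared Error, Mean Absolute Error, Mean Squared Logarithmic Error and Poisson. The selected loss is minimized during training and reported for both the training and validation data.

* Six optimizers are available through the `fit` overloads: SGD, AdaDelta, AdaGrad, Adam, Nadam and RMSprop.

* Training runs for the requested number of epochs.
  * With `batch_size=0` the weights and biases are updated after every sample.
  * Otherwise the rows are split into `floor(rows / batch_size)` consecutive batches and leftover rows are skipped. The parameters are still updated sample by sample within each batch.
  * After every epoch, the validation loss is averaged over 10 folds produced by `CCrossValidation::KFoldCV` from the training data itself, so it is not measured on held-out data.
  * Losses that are NaN or larger than `1e6` are recorded as `1e6`.
  * The "accuracy" printed in the log is the R² score.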
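For reference, below are the textbook definitions of the four supported losses over $n$ samples with targets $y_i$ and predictions $\hat{y}_i$. The exact scaling and epsilon handling inside MQL5's built-in `vector::Loss` may differ.

$$
\begin{aligned}
\text{MSE} &= \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2 \\
\text{MAE} &= \frac{1}{n}\sum_{i=1}^{n}\left|y_i - \hat{y}_i\right| \\
\text{MSLE} &= \frac{1}{n}\sum_{i=1}^{n}\left(\ln(1 + y_i) - \ln(1 + \hat{y}_i)\right)^2 \\
\text{Poisson} &= \frac{1}{n}\sum_{i=1}^{n}\left(\hat{y}_i - y_i \ln \hat{y}_i\right)
\end{aligned}
$$

MSLE is only defined for values greater than $-1$, and the Poisson loss requires positive predictions.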

**References:**

* [Data Science and Machine Learning (Part 12): Can Self-Training Neural Networks Help You Outsmart the Stock Market?](https://www.mql5.com/en/articles/12209)

* [Data Science and Machine Learning — Neural Network (Part 01): Feed Forward Neural Network demystified](https://www.mql5.com/en/articles/11275)

* [Data Science and Machine Learning — Neural Network (Part 02): Feed forward NN Architectures Design](https://www.mql5.com/en/articles/11334)

================================================

FILE: Neural Networks/Regressor Nets.mqh

================================================

//+------------------------------------------------------------------+

//| neural_nn_lib.mqh |

//| Copyright 2022, Omega Joctan. |

//| https://www.mql5.com/en/users/omegajoctan |

//+------------------------------------------------------------------+

#property copyright "Copyright 2022, Omega Joctan."

#property link "https://www.mql5.com/en/users/omegajoctan"

//+------------------------------------------------------------------+

//| Regressor Neural Networks | Neural Networks for solving |

//| regression problems in contrast to classification problems, |

//| here we deal with continuous variables |

//+------------------------------------------------------------------+

#include <MALE5\preprocessing.mqh>

#include <MALE5\MatrixExtend.mqh>

#include <MALE5\Metrics.mqh>

#include <MALE5\Tensors.mqh>

#include <MALE5\cross_validation.mqh>

#include "optimizers.mqh"

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

enum activation

{

AF_ELU_ = AF_ELU,

AF_EXP_ = AF_EXP,

AF_GELU_ = AF_GELU,

AF_LINEAR_ = AF_LINEAR,

AF_LRELU_ = AF_LRELU,

AF_RELU_ = AF_RELU,

AF_SELU_ = AF_SELU,

AF_TRELU_ = AF_TRELU,

AF_SOFTPLUS_ = AF_SOFTPLUS

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

enum loss

{

LOSS_MSE_ = LOSS_MSE, // Mean Squared Error

LOSS_MAE_ = LOSS_MAE, // Mean Absolute Error

LOSS_MSLE_ = LOSS_MSLE, // Mean Squared Logarithmic Error

LOSS_POISSON_ = LOSS_POISSON // Poisson Loss

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

struct backprop //This structure returns the loss information obtained from the backpropagation function

{

vector training_loss,

validation_loss;

void Init(ulong epochs)

{

training_loss.Resize(epochs);

validation_loss.Resize(epochs);

}

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

struct mlp_struct //multi layer perceptron information structure

{

ulong inputs;

ulong hidden_layers;

ulong outputs;

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

class CRegressorNets

{

mlp_struct mlp;

CTensors *Weights_tensor; //Weight Tensor

CTensors *Bias_tensor;

CTensors *Input_tensor;

CTensors *Output_tensor;

protected:

activation A_FX;

loss m_loss_function;

bool trained;

string ConvertTime(double seconds);

//-- for backpropagation

vector W_CONFIG;

vector HL_CONFIG;

bool isBackProp;

matrix<double> ACTIVATIONS;

matrix<double> Partial_Derivatives;

int m_random_state;

private:

virtual backprop backpropagation(const matrix& x, const vector &y, OptimizerSGD *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual backprop backpropagation(const matrix& x, const vector &y, OptimizerAdaDelta *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual backprop backpropagation(const matrix& x, const vector &y, OptimizerAdaGrad *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual backprop backpropagation(const matrix& x, const vector &y, OptimizerAdam *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual backprop backpropagation(const matrix& x, const vector &y, OptimizerNadam *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual backprop backpropagation(const matrix& x, const vector &y, OptimizerRMSprop *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

public:

CRegressorNets(vector &HL_NODES, activation AF_=AF_RELU_, loss m_loss_function=LOSS_MSE_, int random_state=42);

~CRegressorNets(void);

virtual void fit(const matrix &x, const vector &y, OptimizerSGD *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual void fit(const matrix &x, const vector &y, OptimizerAdaDelta *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual void fit(const matrix &x, const vector &y, OptimizerAdaGrad *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual void fit(const matrix &x, const vector &y, OptimizerAdam *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual void fit(const matrix &x, const vector &y, OptimizerNadam *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual void fit(const matrix &x, const vector &y, OptimizerRMSprop *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false);

virtual double predict(const vector &x);

virtual vector predict(const matrix &x);

};

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

CRegressorNets::CRegressorNets(vector &HL_NODES, activation AF_=AF_RELU_, loss LOSS_=LOSS_MSE_, int random_state=42)

:A_FX(AF_),

m_loss_function(LOSS_),

trained(false),

isBackProp(false),

m_random_state(random_state)

{

HL_CONFIG.Copy(HL_NODES);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

CRegressorNets::~CRegressorNets(void)

{

if (CheckPointer(this.Weights_tensor) != POINTER_INVALID) delete(this.Weights_tensor);

if (CheckPointer(this.Bias_tensor) != POINTER_INVALID) delete(this.Bias_tensor);

if (CheckPointer(this.Input_tensor) != POINTER_INVALID) delete(this.Input_tensor);

if (CheckPointer(this.Output_tensor) != POINTER_INVALID) delete(this.Output_tensor);

isBackProp = false;

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

double CRegressorNets::predict(const vector &x)

{

if (!trained)

{

printf("%s Train the model first before using it to make predictions | call the fit function first",__FUNCTION__);

return 0;

}

matrix L_INPUT = MatrixExtend::VectorToMatrix(x);

matrix L_OUTPUT ={};

for(ulong i=0; i<mlp.hidden_layers; i++)

{

if (isBackProp) //if we are on backpropagation store the inputs to be used for finding derivatives

this.Input_tensor.Add(L_INPUT, i);

L_OUTPUT = this.Weights_tensor.Get(i).MatMul(L_INPUT) + this.Bias_tensor.Get(i); //Weights x INputs + Bias

L_OUTPUT.Activation(L_OUTPUT, ENUM_ACTIVATION_FUNCTION(A_FX)); //Activation

L_INPUT = L_OUTPUT; //Next layer inputs = previous layer outputs

if (isBackProp) this.Output_tensor.Add(L_OUTPUT, i); //if we are on backpropagation store the outputs to be used for finding derivatives

}

return(L_OUTPUT[0][0]);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

vector CRegressorNets::predict(const matrix &x)

{

ulong size = x.Rows();

vector v(size);

if (x.Cols() != mlp.inputs)

{

Print("Can't pass this matrix to the MLP: its number of columns differs from the number of inputs the model was trained on");

return (v);

}

for (ulong i=0; i<size; i++)

v[i] = predict(x.Row(i));

return (v);

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

string CRegressorNets::ConvertTime(double seconds)

{

string time_str = "";

uint minutes = 0, hours = 0;

if (seconds >= 60)

{

minutes = (uint)(seconds / 60.0) ;

seconds = fmod(seconds, 60.0); //seconds left over after removing the whole minutes

time_str = StringFormat("%d Minutes and %.3f Seconds", minutes, seconds);

}

if (minutes >= 60)

{

hours = (uint)(minutes / 60.0);

minutes = minutes % 60;

time_str = StringFormat("%d Hours and %d Minutes", hours, minutes);

}

if (time_str == "")

{

time_str = StringFormat("%.3f Seconds", seconds);

}

return time_str;

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

backprop CRegressorNets::backpropagation(const matrix& x, const vector &y, OptimizerSGD *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false)

{

isBackProp = true;

//---

backprop backprop_struct;

backprop_struct.Init(epochs);

ulong rows = x.Rows();

mlp.inputs = x.Cols();

mlp.outputs = 1;

//---

vector v2 = {(double)mlp.outputs}; //Adding the output layer to the mix of hidden layers

HL_CONFIG = MatrixExtend::concatenate(HL_CONFIG, v2);

mlp.hidden_layers = HL_CONFIG.Size();

W_CONFIG.Resize(HL_CONFIG.Size());

//---

if (y.Size() != rows)

{

Print(__FUNCTION__," FATAL | Number of rows in the x matrix is not the same as the y vector size ");

return backprop_struct;

}

matrix W, B;

//--- GENERATE WEIGHTS

this.Weights_tensor = new CTensors((uint)mlp.hidden_layers);

this.Bias_tensor = new CTensors((uint)mlp.hidden_layers);

this.Input_tensor = new CTensors((uint)mlp.hidden_layers);

this.Output_tensor = new CTensors((uint)mlp.hidden_layers);

ulong layer_input = mlp.inputs;

for (ulong i=0; i<mlp.hidden_layers; i++)

{

W_CONFIG[i] = layer_input*HL_CONFIG[i];

W = MatrixExtend::Random(0.0, 1.0,(ulong)HL_CONFIG[i],layer_input, m_random_state);

W = W * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Weights_tensor.Add(W, i);

B = MatrixExtend::Random(0.0, 0.5,(ulong)HL_CONFIG[i],1,m_random_state);

B = B * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Bias_tensor.Add(B, i);

layer_input = (ulong)HL_CONFIG[i];

}

//---

if (MQLInfoInteger(MQL_DEBUG))

Comment("<------------------- R E G R E S S O R N E T S ------------------------->\n",

"HL_CONFIG ",HL_CONFIG," TOTAL HL(S) ",mlp.hidden_layers,"\n",

"W_CONFIG ",W_CONFIG," ACTIVATION ",EnumToString(A_FX),"\n",

"NN INPUTS ",mlp.inputs," OUTPUT ",mlp.outputs

);

//--- Optimizer

OptimizerSGD optimizer_weights = optimizer;

OptimizerSGD optimizer_bias = optimizer;

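//-- Note: OptimizerMinBGD objects declared inside an "if (batch_size>0) {...}" block would only

//-- shadow the two optimizers above and be destroyed at the closing brace, so the optimizer

//-- passed in is used for both the per-sample and the mini-batch modes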

//--- Cross validation

CCrossValidation cross_validation;

CTensors *cv_tensor;

matrix validation_data = MatrixExtend::concatenate(x, y);

matrix validation_x;

vector validation_y;

cv_tensor = cross_validation.KFoldCV(validation_data, 10); //k-fold cross validation | 10 folds selected

//---

matrix DELTA = {};

double actual=0, pred=0;

matrix temp_inputs ={};

matrix dB = {}; //Bias Derivatives

matrix dW = {}; //Weight Derivatives

for (ulong epoch=0; epoch<epochs && !IsStopped(); epoch++)

{

double epoch_start = GetTickCount();

uint num_batches = batch_size>0 ? (uint)(x.Rows()/batch_size) : 0; //number of complete mini-batches, unused when batch_size==0

vector batch_loss(num_batches),

batch_accuracy(num_batches);

vector actual_v(1), pred_v(1), LossGradient = {};

if (batch_size==0) //Stochastic Gradient Descent

{

for (ulong iter=0; iter<rows; iter++) //iterate through all data points

{

pred = predict(x.Row(iter));

actual = y[iter];

pred_v[0] = pred;

actual_v[0] = actual;

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{

Partial_Derivatives = this.Output_tensor.Get(int(layer));

temp_inputs = this.Input_tensor.Get(int(layer));

Partial_Derivatives.Derivative(Partial_Derivatives, ENUM_ACTIVATION_FUNCTION(A_FX));

if (mlp.hidden_layers-1 == layer) //Last layer

{

LossGradient = pred_v.LossGradient(actual_v, ENUM_LOSS_FUNCTION(m_loss_function));

DELTA.Col(LossGradient, 0);

}

else

{

W = this.Weights_tensor.Get(layer+1);

DELTA = (W.Transpose().MatMul(DELTA)) * Partial_Derivatives;

}

//-- Observation | DELTA matrix is the same size as the bias matrix

W = this.Weights_tensor.Get(layer);

B = this.Bias_tensor.Get(layer);

//--- Derivatives wrt weights and bias

dB = DELTA;

dW = DELTA.MatMul(temp_inputs.Transpose());

//--- Weights updates

optimizer_weights.update(W, dW);

optimizer_bias.update(B, dB);

this.Weights_tensor.Add(W, layer);

this.Bias_tensor.Add(B, layer);

}

}

}

else //Batch Gradient Descent

{

for (uint batch=0, batch_start=0, batch_end=batch_size; batch<num_batches; batch++, batch_start+=batch_size, batch_end=batch_start+batch_size) //keep every batch the same size as the first one

{

matrix batch_x = MatrixExtend::Get(x, batch_start, batch_end-1);

vector batch_y = MatrixExtend::Get(y, batch_start, batch_end-1);

rows = batch_x.Rows();

for (ulong iter=0; iter<rows ; iter++) //iterate through all data points

{

pred_v[0] = predict(batch_x.Row(iter));

actual_v[0] = batch_y[iter]; //target of the same row within the current batch

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{

Partial_Derivatives = this.Output_tensor.Get(int(layer));

temp_inputs = this.Input_tensor.Get(int(layer));

Partial_Derivatives.Derivative(Partial_Derivatives, ENUM_ACTIVATION_FUNCTION(A_FX));

if (mlp.hidden_layers-1 == layer) //Last layer

{

LossGradient = pred_v.LossGradient(actual_v, ENUM_LOSS_FUNCTION(m_loss_function));

DELTA.Col(LossGradient, 0);

}

else

{

W = this.Weights_tensor.Get(layer+1);

DELTA = (W.Transpose().MatMul(DELTA)) * Partial_Derivatives;

}

//-- Observation | DELTA matrix is the same size as the bias matrix

W = this.Weights_tensor.Get(layer);

B = this.Bias_tensor.Get(layer);

//--- Derivatives wrt weights and bias

dB = DELTA;

dW = DELTA.MatMul(temp_inputs.Transpose());

//--- Weights updates

optimizer_weights.update(W, dW);

optimizer_bias.update(B, dB);

this.Weights_tensor.Add(W, layer);

this.Bias_tensor.Add(B, layer);

}

}

pred_v = predict(batch_x);

batch_loss[batch] = pred_v.Loss(batch_y, ENUM_LOSS_FUNCTION(m_loss_function));

batch_loss[batch] = MathIsValidNumber(batch_loss[batch]) ? (batch_loss[batch]>1e6 ? 1e6 : batch_loss[batch]) : 1e6; //Check for nan and return some large value if it is nan

batch_accuracy[batch] = Metrics::r_squared(batch_y, pred_v);

if (show_batch_progress)

printf("----> batch[%d/%d] batch-loss %.5f accuracy %.3f",batch+1,num_batches,batch_loss[batch], batch_accuracy[batch]);

}

}

//--- End of an epoch

vector validation_loss(cv_tensor.SIZE);

vector validation_acc(cv_tensor.SIZE);

for (ulong i=0; i<cv_tensor.SIZE; i++)

{

validation_data = cv_tensor.Get(i);

MatrixExtend::XandYSplitMatrices(validation_data, validation_x, validation_y);

vector val_preds = this.predict(validation_x);

validation_loss[i] = val_preds.Loss(validation_y, ENUM_LOSS_FUNCTION(m_loss_function));

validation_acc[i] = Metrics::r_squared(validation_y, val_preds);

}

pred_v = this.predict(x);

if (batch_size==0)

{

backprop_struct.training_loss[epoch] = pred_v.Loss(y, ENUM_LOSS_FUNCTION(m_loss_function));

backprop_struct.training_loss[epoch] = MathIsValidNumber(backprop_struct.training_loss[epoch]) ? (backprop_struct.training_loss[epoch]>1e6 ? 1e6 : backprop_struct.training_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

backprop_struct.validation_loss[epoch] = validation_loss.Mean();

backprop_struct.validation_loss[epoch] = MathIsValidNumber(backprop_struct.validation_loss[epoch]) ? (backprop_struct.validation_loss[epoch]>1e6 ? 1e6 : backprop_struct.validation_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

}

else

{

backprop_struct.training_loss[epoch] = batch_loss.Mean();

backprop_struct.training_loss[epoch] = MathIsValidNumber(backprop_struct.training_loss[epoch]) ? (backprop_struct.training_loss[epoch]>1e6 ? 1e6 : backprop_struct.training_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

backprop_struct.validation_loss[epoch] = validation_loss.Mean();

backprop_struct.validation_loss[epoch] = MathIsValidNumber(backprop_struct.validation_loss[epoch]) ? (backprop_struct.validation_loss[epoch]>1e6 ? 1e6 : backprop_struct.validation_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

}

double epoch_stop = GetTickCount();

printf("--> Epoch [%d/%d] training -> loss %.8f accuracy %.3f validation -> loss %.5f accuracy %.3f | Elapsed %s ",epoch+1,epochs,backprop_struct.training_loss[epoch],Metrics::r_squared(y, pred_v),backprop_struct.validation_loss[epoch],validation_acc.Mean(),this.ConvertTime((epoch_stop-epoch_start)/1000.0));

}

isBackProp = false;

if (CheckPointer(this.Input_tensor) != POINTER_INVALID) delete(this.Input_tensor);

if (CheckPointer(this.Output_tensor) != POINTER_INVALID) delete(this.Output_tensor);

if (CheckPointer(optimizer)!=POINTER_INVALID)

delete optimizer;

return backprop_struct;

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

backprop CRegressorNets::backpropagation(const matrix& x, const vector &y, OptimizerAdaDelta *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false)

{

isBackProp = true;

//---

backprop backprop_struct;

backprop_struct.Init(epochs);

ulong rows = x.Rows();

mlp.inputs = x.Cols();

mlp.outputs = 1;

//---

vector v2 = {(double)mlp.outputs}; //Adding the output layer to the mix of hidden layers

HL_CONFIG = MatrixExtend::concatenate(HL_CONFIG, v2);

mlp.hidden_layers = HL_CONFIG.Size();

W_CONFIG.Resize(HL_CONFIG.Size());

//---

if (y.Size() != rows)

{

Print(__FUNCTION__," FATAL | Number of rows in the x matrix is not the same as the y vector size ");

return backprop_struct;

}

matrix W, B;

//--- GENERATE WEIGHTS

this.Weights_tensor = new CTensors((uint)mlp.hidden_layers);

this.Bias_tensor = new CTensors((uint)mlp.hidden_layers);

this.Input_tensor = new CTensors((uint)mlp.hidden_layers);

this.Output_tensor = new CTensors((uint)mlp.hidden_layers);

ulong layer_input = mlp.inputs;

for (ulong i=0; i<mlp.hidden_layers; i++)

{

W_CONFIG[i] = layer_input*HL_CONFIG[i];

W = MatrixExtend::Random(0.0, 1.0,(ulong)HL_CONFIG[i],layer_input, m_random_state);

W = W * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Weights_tensor.Add(W, i);

B = MatrixExtend::Random(0.0, 0.5,(ulong)HL_CONFIG[i],1,m_random_state);

B = B * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Bias_tensor.Add(B, i);

layer_input = (ulong)HL_CONFIG[i];

}

//---

if (MQLInfoInteger(MQL_DEBUG))

Comment("<------------------- R E G R E S S O R N E T S ------------------------->\n",

"HL_CONFIG ",HL_CONFIG," TOTAL HL(S) ",mlp.hidden_layers,"\n",

"W_CONFIG ",W_CONFIG," ACTIVATION ",EnumToString(A_FX),"\n",

"NN INPUTS ",mlp.inputs," OUTPUT ",mlp.outputs

);

//--- Optimizer

OptimizerAdaDelta optimizer_weights = optimizer;

OptimizerAdaDelta optimizer_bias = optimizer;

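//-- Note: OptimizerMinBGD objects declared inside an "if (batch_size>0) {...}" block would only

//-- shadow the two optimizers above and be destroyed at the closing brace, so the optimizer

//-- passed in is used for both the per-sample and the mini-batch modes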

//--- Cross validation

CCrossValidation cross_validation;

CTensors *cv_tensor;

matrix validation_data = MatrixExtend::concatenate(x, y);

matrix validation_x;

vector validation_y;

cv_tensor = cross_validation.KFoldCV(validation_data, 10); //k-fold cross validation | 10 folds selected

//---

matrix DELTA = {};

double actual=0, pred=0;

matrix temp_inputs ={};

matrix dB = {}; //Bias Derivatives

matrix dW = {}; //Weight Derivatives

for (ulong epoch=0; epoch<epochs && !IsStopped(); epoch++)

{

double epoch_start = GetTickCount();

uint num_batches = batch_size>0 ? (uint)(x.Rows()/batch_size) : 0; //number of complete mini-batches, unused when batch_size==0

vector batch_loss(num_batches),

batch_accuracy(num_batches);

vector actual_v(1), pred_v(1), LossGradient = {};

if (batch_size==0) //Stochastic Gradient Descent

{

for (ulong iter=0; iter<rows; iter++) //iterate through all data points

{

pred = predict(x.Row(iter));

actual = y[iter];

pred_v[0] = pred;

actual_v[0] = actual;

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{

Partial_Derivatives = this.Output_tensor.Get(int(layer));

temp_inputs = this.Input_tensor.Get(int(layer));

Partial_Derivatives.Derivative(Partial_Derivatives, ENUM_ACTIVATION_FUNCTION(A_FX));

if (mlp.hidden_layers-1 == layer) //Last layer

{

LossGradient = pred_v.LossGradient(actual_v, ENUM_LOSS_FUNCTION(m_loss_function));

DELTA.Col(LossGradient, 0);

}

else

{

W = this.Weights_tensor.Get(layer+1);

DELTA = (W.Transpose().MatMul(DELTA)) * Partial_Derivatives;

}

//-- Observation | DELTA matrix is the same size as the bias matrix

W = this.Weights_tensor.Get(layer);

B = this.Bias_tensor.Get(layer);

//--- Derivatives wrt weights and bias

dB = DELTA;

dW = DELTA.MatMul(temp_inputs.Transpose());

//--- Weights updates

optimizer_weights.update(W, dW);

optimizer_bias.update(B, dB);

this.Weights_tensor.Add(W, layer);

this.Bias_tensor.Add(B, layer);

}

}

}

else //Batch Gradient Descent

{

for (uint batch=0, batch_start=0, batch_end=batch_size; batch<num_batches; batch++, batch_start+=batch_size, batch_end=batch_start+batch_size) //keep every batch the same size as the first one

{

matrix batch_x = MatrixExtend::Get(x, batch_start, batch_end-1);

vector batch_y = MatrixExtend::Get(y, batch_start, batch_end-1);

rows = batch_x.Rows();

for (ulong iter=0; iter<rows ; iter++) //iterate through all data points

{

pred_v[0] = predict(batch_x.Row(iter));

actual_v[0] = batch_y[iter]; //target of the same row within the current batch

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{

Partial_Derivatives = this.Output_tensor.Get(int(layer));

temp_inputs = this.Input_tensor.Get(int(layer));

Partial_Derivatives.Derivative(Partial_Derivatives, ENUM_ACTIVATION_FUNCTION(A_FX));

if (mlp.hidden_layers-1 == layer) //Last layer

{

LossGradient = pred_v.LossGradient(actual_v, ENUM_LOSS_FUNCTION(m_loss_function));

DELTA.Col(LossGradient, 0);

}

else

{

W = this.Weights_tensor.Get(layer+1);

DELTA = (W.Transpose().MatMul(DELTA)) * Partial_Derivatives;

}

//-- Observation | DELTA matrix is the same size as the bias matrix

W = this.Weights_tensor.Get(layer);

B = this.Bias_tensor.Get(layer);

//--- Derivatives wrt weights and bias

dB = DELTA;

dW = DELTA.MatMul(temp_inputs.Transpose());

//--- Weights updates

optimizer_weights.update(W, dW);

optimizer_bias.update(B, dB);

this.Weights_tensor.Add(W, layer);

this.Bias_tensor.Add(B, layer);

}

}

pred_v = predict(batch_x);

batch_loss[batch] = pred_v.Loss(batch_y, ENUM_LOSS_FUNCTION(m_loss_function));

batch_loss[batch] = MathIsValidNumber(batch_loss[batch]) ? (batch_loss[batch]>1e6 ? 1e6 : batch_loss[batch]) : 1e6; //Check for nan and return some large value if it is nan

batch_accuracy[batch] = Metrics::r_squared(batch_y, pred_v);

if (show_batch_progress)

printf("----> batch[%d/%d] batch-loss %.5f accuracy %.3f",batch+1,num_batches,batch_loss[batch], batch_accuracy[batch]);

}

}

//--- End of an epoch

vector validation_loss(cv_tensor.SIZE);

vector validation_acc(cv_tensor.SIZE);

for (ulong i=0; i<cv_tensor.SIZE; i++)

{

validation_data = cv_tensor.Get(i);

MatrixExtend::XandYSplitMatrices(validation_data, validation_x, validation_y);

vector val_preds = this.predict(validation_x);

validation_loss[i] = val_preds.Loss(validation_y, ENUM_LOSS_FUNCTION(m_loss_function));

validation_acc[i] = Metrics::r_squared(validation_y, val_preds);

}

pred_v = this.predict(x);

if (batch_size==0)

{

backprop_struct.training_loss[epoch] = pred_v.Loss(y, ENUM_LOSS_FUNCTION(m_loss_function));

backprop_struct.training_loss[epoch] = MathIsValidNumber(backprop_struct.training_loss[epoch]) ? (backprop_struct.training_loss[epoch]>1e6 ? 1e6 : backprop_struct.training_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

backprop_struct.validation_loss[epoch] = validation_loss.Mean();

backprop_struct.validation_loss[epoch] = MathIsValidNumber(backprop_struct.validation_loss[epoch]) ? (backprop_struct.validation_loss[epoch]>1e6 ? 1e6 : backprop_struct.validation_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

}

else

{

backprop_struct.training_loss[epoch] = batch_loss.Mean();

backprop_struct.training_loss[epoch] = MathIsValidNumber(backprop_struct.training_loss[epoch]) ? (backprop_struct.training_loss[epoch]>1e6 ? 1e6 : backprop_struct.training_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

backprop_struct.validation_loss[epoch] = validation_loss.Mean();

backprop_struct.validation_loss[epoch] = MathIsValidNumber(backprop_struct.validation_loss[epoch]) ? (backprop_struct.validation_loss[epoch]>1e6 ? 1e6 : backprop_struct.validation_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

}

double epoch_stop = GetTickCount();

printf("--> Epoch [%d/%d] training -> loss %.8f accuracy %.3f validation -> loss %.5f accuracy %.3f | Elapsed %s ",epoch+1,epochs,backprop_struct.training_loss[epoch],Metrics::r_squared(y, pred_v),backprop_struct.validation_loss[epoch],validation_acc.Mean(),this.ConvertTime((epoch_stop-epoch_start)/1000.0));

}

isBackProp = false;

if (CheckPointer(this.Input_tensor) != POINTER_INVALID) delete(this.Input_tensor);

if (CheckPointer(this.Output_tensor) != POINTER_INVALID) delete(this.Output_tensor);

if (CheckPointer(optimizer)!=POINTER_INVALID)

delete optimizer;

return backprop_struct;

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

backprop CRegressorNets::backpropagation(const matrix& x, const vector &y, OptimizerAdaGrad *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false)

{

isBackProp = true;

//---

backprop backprop_struct;

backprop_struct.Init(epochs);

ulong rows = x.Rows();

mlp.inputs = x.Cols();

mlp.outputs = 1;

//---

vector v2 = {(double)mlp.outputs}; //Adding the output layer to the mix of hidden layers

HL_CONFIG = MatrixExtend::concatenate(HL_CONFIG, v2);

mlp.hidden_layers = HL_CONFIG.Size();

W_CONFIG.Resize(HL_CONFIG.Size());

//---

if (y.Size() != rows)

{

Print(__FUNCTION__," FATAL | Number of rows in the x matrix is not the same as the y vector size ");

return backprop_struct;

}

matrix W, B;

//--- GENERATE WEIGHTS

this.Weights_tensor = new CTensors((uint)mlp.hidden_layers);

this.Bias_tensor = new CTensors((uint)mlp.hidden_layers);

this.Input_tensor = new CTensors((uint)mlp.hidden_layers);

this.Output_tensor = new CTensors((uint)mlp.hidden_layers);

ulong layer_input = mlp.inputs;

for (ulong i=0; i<mlp.hidden_layers; i++)

{

W_CONFIG[i] = layer_input*HL_CONFIG[i];

W = MatrixExtend::Random(0.0, 1.0,(ulong)HL_CONFIG[i],layer_input, m_random_state);

W = W * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Weights_tensor.Add(W, i);

B = MatrixExtend::Random(0.0, 0.5,(ulong)HL_CONFIG[i],1,m_random_state);

B = B * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Bias_tensor.Add(B, i);

layer_input = (ulong)HL_CONFIG[i];

}

//---

if (MQLInfoInteger(MQL_DEBUG))

Comment("<------------------- R E G R E S S O R N E T S ------------------------->\n",

"HL_CONFIG ",HL_CONFIG," TOTAL HL(S) ",mlp.hidden_layers,"\n",

"W_CONFIG ",W_CONFIG," ACTIVATION ",EnumToString(A_FX),"\n",

"NN INPUTS ",mlp.inputs," OUTPUT ",mlp.outputs

);

//--- Optimizer

OptimizerAdaGrad optimizer_weights = optimizer;

OptimizerAdaGrad optimizer_bias = optimizer;

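//-- Note: OptimizerMinBGD objects declared inside an "if (batch_size>0) {...}" block would only

//-- shadow the two optimizers above and be destroyed at the closing brace, so the optimizer

//-- passed in is used for both the per-sample and the mini-batch modes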

//--- Cross validation

CCrossValidation cross_validation;

CTensors *cv_tensor;

matrix validation_data = MatrixExtend::concatenate(x, y);

matrix validation_x;

vector validation_y;

cv_tensor = cross_validation.KFoldCV(validation_data, 10); //k-fold cross validation | 10 folds selected

//---

matrix DELTA = {};

double actual=0, pred=0;

matrix temp_inputs ={};

matrix dB = {}; //Bias Derivatives

matrix dW = {}; //Weight Derivatives

for (ulong epoch=0; epoch<epochs && !IsStopped(); epoch++)

{

double epoch_start = GetTickCount();

uint num_batches = batch_size>0 ? (uint)(x.Rows()/batch_size) : 0; //number of complete mini-batches, unused when batch_size==0

vector batch_loss(num_batches),

batch_accuracy(num_batches);

vector actual_v(1), pred_v(1), LossGradient = {};

if (batch_size==0) //Stochastic Gradient Descent

{

for (ulong iter=0; iter<rows; iter++) //iterate through all data points

{

pred = predict(x.Row(iter));

actual = y[iter];

pred_v[0] = pred;

actual_v[0] = actual;

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{

Partial_Derivatives = this.Output_tensor.Get(int(layer));

temp_inputs = this.Input_tensor.Get(int(layer));

Partial_Derivatives.Derivative(Partial_Derivatives, ENUM_ACTIVATION_FUNCTION(A_FX));

if (mlp.hidden_layers-1 == layer) //Last layer

{

LossGradient = pred_v.LossGradient(actual_v, ENUM_LOSS_FUNCTION(m_loss_function));

DELTA.Col(LossGradient, 0);

}

else

{

W = this.Weights_tensor.Get(layer+1);

DELTA = (W.Transpose().MatMul(DELTA)) * Partial_Derivatives;

}

//-- Observation | DELTA matrix is the same size as the bias matrix

W = this.Weights_tensor.Get(layer);

B = this.Bias_tensor.Get(layer);

//--- Derivatives wrt weights and bias

dB = DELTA;

dW = DELTA.MatMul(temp_inputs.Transpose());

//--- Weights updates

optimizer_weights.update(W, dW);

optimizer_bias.update(B, dB);

this.Weights_tensor.Add(W, layer);

this.Bias_tensor.Add(B, layer);

}

}

}

else //Batch Gradient Descent

{

for (uint batch=0, batch_start=0, batch_end=batch_size; batch<num_batches; batch++, batch_start+=batch_size, batch_end=batch_start+batch_size) //keep every batch the same size as the first one

{

matrix batch_x = MatrixExtend::Get(x, batch_start, batch_end-1);

vector batch_y = MatrixExtend::Get(y, batch_start, batch_end-1);

rows = batch_x.Rows();

for (ulong iter=0; iter<rows ; iter++) //iterate through all data points

{

pred_v[0] = predict(batch_x.Row(iter));

actual_v[0] = batch_y[iter]; //target must come from the current batch, not the start of y

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{

Partial_Derivatives = this.Output_tensor.Get(int(layer));

temp_inputs = this.Input_tensor.Get(int(layer));

Partial_Derivatives.Derivative(Partial_Derivatives, ENUM_ACTIVATION_FUNCTION(A_FX));

if (mlp.hidden_layers-1 == layer) //Last layer

{

LossGradient = pred_v.LossGradient(actual_v, ENUM_LOSS_FUNCTION(m_loss_function));

DELTA.Col(LossGradient, 0);

}

else

{

W = this.Weights_tensor.Get(layer+1);

DELTA = (W.Transpose().MatMul(DELTA)) * Partial_Derivatives;

}

//-- Note: the DELTA matrix has the same shape as the bias matrix

W = this.Weights_tensor.Get(layer);

B = this.Bias_tensor.Get(layer);

//--- Derivatives wrt weights and bias

dB = DELTA;

dW = DELTA.MatMul(temp_inputs.Transpose());

//--- Weights updates

optimizer_weights.update(W, dW);

optimizer_bias.update(B, dB);

this.Weights_tensor.Add(W, layer);

this.Bias_tensor.Add(B, layer);

}

}

pred_v = predict(batch_x);

batch_loss[batch] = pred_v.Loss(batch_y, ENUM_LOSS_FUNCTION(m_loss_function));

batch_loss[batch] = MathIsValidNumber(batch_loss[batch]) ? (batch_loss[batch]>1e6 ? 1e6 : batch_loss[batch]) : 1e6; //Check for nan and return some large value if it is nan

batch_accuracy[batch] = Metrics::r_squared(batch_y, pred_v);

if (show_batch_progress)

printf("----> batch[%d/%d] batch-loss %.5f accuracy %.3f",batch+1,num_batches,batch_loss[batch], batch_accuracy[batch]);

}

}

//--- End of an epoch

vector validation_loss(cv_tensor.SIZE);

vector validation_acc(cv_tensor.SIZE);

for (ulong i=0; i<cv_tensor.SIZE; i++)

{

validation_data = cv_tensor.Get(i);

MatrixExtend::XandYSplitMatrices(validation_data, validation_x, validation_y);

vector val_preds = this.predict(validation_x);

validation_loss[i] = val_preds.Loss(validation_y, ENUM_LOSS_FUNCTION(m_loss_function));

validation_acc[i] = Metrics::r_squared(validation_y, val_preds);

}

pred_v = this.predict(x);

if (batch_size==0)

{

backprop_struct.training_loss[epoch] = pred_v.Loss(y, ENUM_LOSS_FUNCTION(m_loss_function));

backprop_struct.training_loss[epoch] = MathIsValidNumber(backprop_struct.training_loss[epoch]) ? (backprop_struct.training_loss[epoch]>1e6 ? 1e6 : backprop_struct.training_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

backprop_struct.validation_loss[epoch] = validation_loss.Mean();

backprop_struct.validation_loss[epoch] = MathIsValidNumber(backprop_struct.validation_loss[epoch]) ? (backprop_struct.validation_loss[epoch]>1e6 ? 1e6 : backprop_struct.validation_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

}

else

{

backprop_struct.training_loss[epoch] = batch_loss.Mean();

backprop_struct.training_loss[epoch] = MathIsValidNumber(backprop_struct.training_loss[epoch]) ? (backprop_struct.training_loss[epoch]>1e6 ? 1e6 : backprop_struct.training_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

backprop_struct.validation_loss[epoch] = validation_loss.Mean();

backprop_struct.validation_loss[epoch] = MathIsValidNumber(backprop_struct.validation_loss[epoch]) ? (backprop_struct.validation_loss[epoch]>1e6 ? 1e6 : backprop_struct.validation_loss[epoch]) : 1e6; //Check for nan and return some large value if it is nan

}

double epoch_stop = GetTickCount();

printf("--> Epoch [%d/%d] training -> loss %.8f accuracy %.3f validation -> loss %.5f accuracy %.3f | Elapsed %s ",epoch+1,epochs,backprop_struct.training_loss[epoch],Metrics::r_squared(y, pred_v),backprop_struct.validation_loss[epoch],validation_acc.Mean(),this.ConvertTime((epoch_stop-epoch_start)/1000.0));

}

isBackProp = false;

if (CheckPointer(this.Input_tensor) != POINTER_INVALID) delete(this.Input_tensor);

if (CheckPointer(this.Output_tensor) != POINTER_INVALID) delete(this.Output_tensor);

if (CheckPointer(optimizer)!=POINTER_INVALID)

delete optimizer;

return backprop_struct;

}

//+------------------------------------------------------------------+

//| |

//+------------------------------------------------------------------+

backprop CRegressorNets::backpropagation(const matrix& x, const vector &y, OptimizerAdam *optimizer, const uint epochs, uint batch_size=0, bool show_batch_progress=false)

{

isBackProp = true;

//---

backprop backprop_struct;

backprop_struct.Init(epochs);

ulong rows = x.Rows();

mlp.inputs = x.Cols();

mlp.outputs = 1;

//---

vector v2 = {(double)mlp.outputs}; //Adding the output layer to the mix of hidden layers

HL_CONFIG = MatrixExtend::concatenate(HL_CONFIG, v2);

mlp.hidden_layers = HL_CONFIG.Size();

W_CONFIG.Resize(HL_CONFIG.Size());

//---

if (y.Size() != rows)

{

Print(__FUNCTION__," FATAL | Number of rows in the x matrix is not the same as the y vector size ");

return backprop_struct;

}

matrix W, B;

//--- GENERATE WEIGHTS

this.Weights_tensor = new CTensors((uint)mlp.hidden_layers);

this.Bias_tensor = new CTensors((uint)mlp.hidden_layers);

this.Input_tensor = new CTensors((uint)mlp.hidden_layers);

this.Output_tensor = new CTensors((uint)mlp.hidden_layers);

ulong layer_input = mlp.inputs;

for (ulong i=0; i<mlp.hidden_layers; i++)

{

W_CONFIG[i] = layer_input*HL_CONFIG[i];

W = MatrixExtend::Random(0.0, 1.0,(ulong)HL_CONFIG[i],layer_input, m_random_state);

W = W * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot

this.Weights_tensor.Add(W, i);

B = MatrixExtend::Random(0.0, 0.5,(ulong)HL_CONFIG[i],1,m_random_state);

B = B * sqrt(2/((double)layer_input + HL_CONFIG[i])); //glorot
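//--- Initialization note: sqrt(2/(fan_in+fan_out)), with fan_in = layer_input and fan_out = HL_CONFIG[i], is the Glorot/Xavier standard deviation.

//    MatrixExtend::Random(0.0, 1.0, ...) appears to sample in [0.0, 1.0] (assumption based on its arguments), so the scaled weights are all

//    non-negative rather than zero-mean as in the standard Glorot scheme.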

this.Bias_tensor.Add(B, i);

layer_input = (ulong)HL_CONFIG[i];

}

//---

if (MQLInfoInteger(MQL_DEBUG))

Comment("<------------------- R E G R E S S O R N E T S ------------------------->\n",

"HL_CONFIG ",HL_CONFIG," TOTAL HL(S) ",mlp.hidden_layers,"\n",

"W_CONFIG ",W_CONFIG," ACTIVATION ",EnumToString(A_FX),"\n",

"NN INPUTS ",mlp.inputs," OUTPUT ",mlp.outputs

);

//--- Optimizer

OptimizerAdam optimizer_weights = optimizer;

OptimizerAdam optimizer_bias = optimizer;


//--- Cross validation

CCrossValidation cross_validation;

CTensors *cv_tensor;

matrix validation_data = MatrixExtend::concatenate(x, y);

matrix validation_x;

vector validation_y;

cv_tensor = cross_validation.KFoldCV(validation_data, 10); //k-fold cross validation | 10 folds selected
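//--- Note: the folds are drawn from concatenate(x, y), the same rows the network is trained on below,

//    so the reported validation loss reflects fit on training data rather than on a held-out set.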

//---

matrix DELTA = {};

double actual=0, pred=0;

matrix temp_inputs ={};

matrix dB = {}; //Bias Derivatives

matrix dW = {}; //Weight Derivatives

for (ulong epoch=0; epoch<epochs && !IsStopped(); epoch++)

{

double epoch_start = GetTickCount();

uint num_batches = batch_size>0 ? (uint)(x.Rows()/batch_size) : 0; //Number of full mini-batches; 0 for stochastic gradient descent (avoids dividing by DBL_EPSILON)

vector batch_loss(num_batches),

batch_accuracy(num_batches);

vector actual_v(1), pred_v(1), LossGradient = {};

if (batch_size==0) //Stochastic Gradient Descent

{

for (ulong iter=0; iter<rows; iter++) //iterate through all data points

{

pred = predict(x.Row(iter));

actual = y[iter];

pred_v[0] = pred;

actual_v[0] = actual;

//---

DELTA.Resize(mlp.outputs,1);

for (int layer=(int)mlp.hidden_layers-1; layer>=0 && !IsStopped(); layer--) //Loop through the network backward from last to first layer

{