[

{

"path": ".gitignore",

"content": "### Basic ignore file\n\n# Binaries for programs and plugins\nvsphere-influxdb\n\n# Test binary, build with `go test -c`\n*.test\n\n# Output of the go coverage tool, specifically when used with LiteIDE\n*.out\n\n# Configuration file\nvsphere-influxdb.json\n\n# Vim swap files\n*.swp\n"

},

{

"path": ".travis.yml",

"content": "language: go\nsudo: required\ngo:\n - 1.9\nenv:\n - PATH=/home/travis/gopath/bin:$PATH\nbefore_install:\n - sudo apt-get -qq update\n - sudo apt-get install -y ruby ruby-dev build-essential rpm \n - go get -u github.com/golang/dep/cmd/dep\n - go get -u github.com/alecthomas/gometalinter\ninstall:\n - dep ensure\nbefore_script:\n - gometalinter --install\n # - gometalinter --vendor ./...\nscript:\n - git status\nafter_success:\n# - gem install --no-ri --no-rdoc fpm\n - test -n \"$TRAVIS_TAG\" && curl -sL https://git.io/goreleaser | bash\n\n"

},

{

"path": "Dockerfile",

"content": "FROM golang:1.12-alpine3.10 as builder\n\nWORKDIR /go/src/vsphere-influxdb-go\nCOPY . .\nRUN apk --update add --virtual build-deps git \nRUN go get -d -v ./...\nRUN CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo\n\nFROM alpine:3.10\nRUN apk update \\\n && apk upgrade \\\n && apk add ca-certificates \\\n && addgroup -S spock && adduser -S spock -G spock\nCOPY --from=0 /go/src/vsphere-influxdb-go/vsphere-influxdb-go /vsphere-influxdb-go\n\nUSER spock\n\nCMD [\"/vsphere-influxdb-go\"]\n"

},

{

"path": "Gopkg.toml",

"content": "\n# Gopkg.toml example\n#\n# Refer to https://github.com/golang/dep/blob/master/docs/Gopkg.toml.md\n# for detailed Gopkg.toml documentation.\n#\n# required = [\"github.com/user/thing/cmd/thing\"]\n# ignored = [\"github.com/user/project/pkgX\", \"bitbucket.org/user/project/pkgA/pkgY\"]\n#\n# [[constraint]]\n# name = \"github.com/user/project\"\n# version = \"1.0.0\"\n#\n# [[constraint]]\n# name = \"github.com/user/project2\"\n# branch = \"dev\"\n# source = \"github.com/myfork/project2\"\n#\n# [[override]]\n# name = \"github.com/x/y\"\n# version = \"2.4.0\"\n\n\n[[constraint]]\n name = \"github.com/davecgh/go-spew\"\n version = \"1.1.0\"\n\n[[constraint]]\n name = \"github.com/influxdata/influxdb\"\n version = \"1.3.6\"\n\n[[constraint]]\n name = \"github.com/vmware/govmomi\"\n version = \"0.15.0\"\n\n[[constraint]]\n branch = \"master\"\n name = \"golang.org/x/net\"\n"

},

{

"path": "LICENSE.txt",

"content": " GNU GENERAL PUBLIC LICENSE\n Version 3, 29 June 2007\n\n Copyright (C) 2007 Free Software Foundation, Inc. \n Everyone is permitted to copy and distribute verbatim copies\n of this license document, but changing it is not allowed.\n\n Preamble\n\n The GNU General Public License is a free, copyleft license for\nsoftware and other kinds of works.\n\n The licenses for most software and other practical works are designed\nto take away your freedom to share and change the works. By contrast,\nthe GNU General Public License is intended to guarantee your freedom to\nshare and change all versions of a program--to make sure it remains free\nsoftware for all its users. We, the Free Software Foundation, use the\nGNU General Public License for most of our software; it applies also to\nany other work released this way by its authors. You can apply it to\nyour programs, too.\n\n When we speak of free software, we are referring to freedom, not\nprice. Our General Public Licenses are designed to make sure that you\nhave the freedom to distribute copies of free software (and charge for\nthem if you wish), that you receive source code or can get it if you\nwant it, that you can change the software or use pieces of it in new\nfree programs, and that you know you can do these things.\n\n To protect your rights, we need to prevent others from denying you\nthese rights or asking you to surrender the rights. Therefore, you have\ncertain responsibilities if you distribute copies of the software, or if\nyou modify it: responsibilities to respect the freedom of others.\n\n For example, if you distribute copies of such a program, whether\ngratis or for a fee, you must pass on to the recipients the same\nfreedoms that you received. You must make sure that they, too, receive\nor can get the source code. 
And you must show them these terms so they\nknow their rights.\n\n Developers that use the GNU GPL protect your rights with two steps:\n(1) assert copyright on the software, and (2) offer you this License\ngiving you legal permission to copy, distribute and/or modify it.\n\n For the developers' and authors' protection, the GPL clearly explains\nthat there is no warranty for this free software. For both users' and\nauthors' sake, the GPL requires that modified versions be marked as\nchanged, so that their problems will not be attributed erroneously to\nauthors of previous versions.\n\n Some devices are designed to deny users access to install or run\nmodified versions of the software inside them, although the manufacturer\ncan do so. This is fundamentally incompatible with the aim of\nprotecting users' freedom to change the software. The systematic\npattern of such abuse occurs in the area of products for individuals to\nuse, which is precisely where it is most unacceptable. Therefore, we\nhave designed this version of the GPL to prohibit the practice for those\nproducts. If such problems arise substantially in other domains, we\nstand ready to extend this provision to those domains in future versions\nof the GPL, as needed to protect the freedom of users.\n\n Finally, every program is threatened constantly by software patents.\nStates should not allow patents to restrict development and use of\nsoftware on general-purpose computers, but in those that do, we wish to\navoid the special danger that patents applied to a free program could\nmake it effectively proprietary. To prevent this, the GPL assures that\npatents cannot be used to render the program non-free.\n\n The precise terms and conditions for copying, distribution and\nmodification follow.\n\n TERMS AND CONDITIONS\n\n 0. 
Definitions.\n\n \"This License\" refers to version 3 of the GNU General Public License.\n\n \"Copyright\" also means copyright-like laws that apply to other kinds of\nworks, such as semiconductor masks.\n\n \"The Program\" refers to any copyrightable work licensed under this\nLicense. Each licensee is addressed as \"you\". \"Licensees\" and\n\"recipients\" may be individuals or organizations.\n\n To \"modify\" a work means to copy from or adapt all or part of the work\nin a fashion requiring copyright permission, other than the making of an\nexact copy. The resulting work is called a \"modified version\" of the\nearlier work or a work \"based on\" the earlier work.\n\n A \"covered work\" means either the unmodified Program or a work based\non the Program.\n\n To \"propagate\" a work means to do anything with it that, without\npermission, would make you directly or secondarily liable for\ninfringement under applicable copyright law, except executing it on a\ncomputer or modifying a private copy. Propagation includes copying,\ndistribution (with or without modification), making available to the\npublic, and in some countries other activities as well.\n\n To \"convey\" a work means any kind of propagation that enables other\nparties to make or receive copies. Mere interaction with a user through\na computer network, with no transfer of a copy, is not conveying.\n\n An interactive user interface displays \"Appropriate Legal Notices\"\nto the extent that it includes a convenient and prominently visible\nfeature that (1) displays an appropriate copyright notice, and (2)\ntells the user that there is no warranty for the work (except to the\nextent that warranties are provided), that licensees may convey the\nwork under this License, and how to view a copy of this License. If\nthe interface presents a list of user commands or options, such as a\nmenu, a prominent item in the list meets this criterion.\n\n 1. 
Source Code.\n\n The \"source code\" for a work means the preferred form of the work\nfor making modifications to it. \"Object code\" means any non-source\nform of a work.\n\n A \"Standard Interface\" means an interface that either is an official\nstandard defined by a recognized standards body, or, in the case of\ninterfaces specified for a particular programming language, one that\nis widely used among developers working in that language.\n\n The \"System Libraries\" of an executable work include anything, other\nthan the work as a whole, that (a) is included in the normal form of\npackaging a Major Component, but which is not part of that Major\nComponent, and (b) serves only to enable use of the work with that\nMajor Component, or to implement a Standard Interface for which an\nimplementation is available to the public in source code form. A\n\"Major Component\", in this context, means a major essential component\n(kernel, window system, and so on) of the specific operating system\n(if any) on which the executable work runs, or a compiler used to\nproduce the work, or an object code interpreter used to run it.\n\n The \"Corresponding Source\" for a work in object code form means all\nthe source code needed to generate, install, and (for an executable\nwork) run the object code and to modify the work, including scripts to\ncontrol those activities. However, it does not include the work's\nSystem Libraries, or general-purpose tools or generally available free\nprograms which are used unmodified in performing those activities but\nwhich are not part of the work. 
For example, Corresponding Source\nincludes interface definition files associated with source files for\nthe work, and the source code for shared libraries and dynamically\nlinked subprograms that the work is specifically designed to require,\nsuch as by intimate data communication or control flow between those\nsubprograms and other parts of the work.\n\n The Corresponding Source need not include anything that users\ncan regenerate automatically from other parts of the Corresponding\nSource.\n\n The Corresponding Source for a work in source code form is that\nsame work.\n\n 2. Basic Permissions.\n\n All rights granted under this License are granted for the term of\ncopyright on the Program, and are irrevocable provided the stated\nconditions are met. This License explicitly affirms your unlimited\npermission to run the unmodified Program. The output from running a\ncovered work is covered by this License only if the output, given its\ncontent, constitutes a covered work. This License acknowledges your\nrights of fair use or other equivalent, as provided by copyright law.\n\n You may make, run and propagate covered works that you do not\nconvey, without conditions so long as your license otherwise remains\nin force. You may convey covered works to others for the sole purpose\nof having them make modifications exclusively for you, or provide you\nwith facilities for running those works, provided that you comply with\nthe terms of this License in conveying all material for which you do\nnot control copyright. Those thus making or running the covered works\nfor you must do so exclusively on your behalf, under your direction\nand control, on terms that prohibit them from making any copies of\nyour copyrighted material outside their relationship with you.\n\n Conveying under any other circumstances is permitted solely under\nthe conditions stated below. Sublicensing is not allowed; section 10\nmakes it unnecessary.\n\n 3. 
Protecting Users' Legal Rights From Anti-Circumvention Law.\n\n No covered work shall be deemed part of an effective technological\nmeasure under any applicable law fulfilling obligations under article\n11 of the WIPO copyright treaty adopted on 20 December 1996, or\nsimilar laws prohibiting or restricting circumvention of such\nmeasures.\n\n When you convey a covered work, you waive any legal power to forbid\ncircumvention of technological measures to the extent such circumvention\nis effected by exercising rights under this License with respect to\nthe covered work, and you disclaim any intention to limit operation or\nmodification of the work as a means of enforcing, against the work's\nusers, your or third parties' legal rights to forbid circumvention of\ntechnological measures.\n\n 4. Conveying Verbatim Copies.\n\n You may convey verbatim copies of the Program's source code as you\nreceive it, in any medium, provided that you conspicuously and\nappropriately publish on each copy an appropriate copyright notice;\nkeep intact all notices stating that this License and any\nnon-permissive terms added in accord with section 7 apply to the code;\nkeep intact all notices of the absence of any warranty; and give all\nrecipients a copy of this License along with the Program.\n\n You may charge any price or no price for each copy that you convey,\nand you may offer support or warranty protection for a fee.\n\n 5. Conveying Modified Source Versions.\n\n You may convey a work based on the Program, or the modifications to\nproduce it from the Program, in the form of source code under the\nterms of section 4, provided that you also meet all of these conditions:\n\n a) The work must carry prominent notices stating that you modified\n it, and giving a relevant date.\n\n b) The work must carry prominent notices stating that it is\n released under this License and any conditions added under section\n 7. 
This requirement modifies the requirement in section 4 to\n \"keep intact all notices\".\n\n c) You must license the entire work, as a whole, under this\n License to anyone who comes into possession of a copy. This\n License will therefore apply, along with any applicable section 7\n additional terms, to the whole of the work, and all its parts,\n regardless of how they are packaged. This License gives no\n permission to license the work in any other way, but it does not\n invalidate such permission if you have separately received it.\n\n d) If the work has interactive user interfaces, each must display\n Appropriate Legal Notices; however, if the Program has interactive\n interfaces that do not display Appropriate Legal Notices, your\n work need not make them do so.\n\n A compilation of a covered work with other separate and independent\nworks, which are not by their nature extensions of the covered work,\nand which are not combined with it such as to form a larger program,\nin or on a volume of a storage or distribution medium, is called an\n\"aggregate\" if the compilation and its resulting copyright are not\nused to limit the access or legal rights of the compilation's users\nbeyond what the individual works permit. Inclusion of a covered work\nin an aggregate does not cause this License to apply to the other\nparts of the aggregate.\n\n 6. 
Conveying Non-Source Forms.\n\n You may convey a covered work in object code form under the terms\nof sections 4 and 5, provided that you also convey the\nmachine-readable Corresponding Source under the terms of this License,\nin one of these ways:\n\n a) Convey the object code in, or embodied in, a physical product\n (including a physical distribution medium), accompanied by the\n Corresponding Source fixed on a durable physical medium\n customarily used for software interchange.\n\n b) Convey the object code in, or embodied in, a physical product\n (including a physical distribution medium), accompanied by a\n written offer, valid for at least three years and valid for as\n long as you offer spare parts or customer support for that product\n model, to give anyone who possesses the object code either (1) a\n copy of the Corresponding Source for all the software in the\n product that is covered by this License, on a durable physical\n medium customarily used for software interchange, for a price no\n more than your reasonable cost of physically performing this\n conveying of source, or (2) access to copy the\n Corresponding Source from a network server at no charge.\n\n c) Convey individual copies of the object code with a copy of the\n written offer to provide the Corresponding Source. This\n alternative is allowed only occasionally and noncommercially, and\n only if you received the object code with such an offer, in accord\n with subsection 6b.\n\n d) Convey the object code by offering access from a designated\n place (gratis or for a charge), and offer equivalent access to the\n Corresponding Source in the same way through the same place at no\n further charge. You need not require recipients to copy the\n Corresponding Source along with the object code. 
If the place to\n copy the object code is a network server, the Corresponding Source\n may be on a different server (operated by you or a third party)\n that supports equivalent copying facilities, provided you maintain\n clear directions next to the object code saying where to find the\n Corresponding Source. Regardless of what server hosts the\n Corresponding Source, you remain obligated to ensure that it is\n available for as long as needed to satisfy these requirements.\n\n e) Convey the object code using peer-to-peer transmission, provided\n you inform other peers where the object code and Corresponding\n Source of the work are being offered to the general public at no\n charge under subsection 6d.\n\n A separable portion of the object code, whose source code is excluded\nfrom the Corresponding Source as a System Library, need not be\nincluded in conveying the object code work.\n\n A \"User Product\" is either (1) a \"consumer product\", which means any\ntangible personal property which is normally used for personal, family,\nor household purposes, or (2) anything designed or sold for incorporation\ninto a dwelling. In determining whether a product is a consumer product,\ndoubtful cases shall be resolved in favor of coverage. For a particular\nproduct received by a particular user, \"normally used\" refers to a\ntypical or common use of that class of product, regardless of the status\nof the particular user or of the way in which the particular user\nactually uses, or expects or is expected to use, the product. 
A product\nis a consumer product regardless of whether the product has substantial\ncommercial, industrial or non-consumer uses, unless such uses represent\nthe only significant mode of use of the product.\n\n \"Installation Information\" for a User Product means any methods,\nprocedures, authorization keys, or other information required to install\nand execute modified versions of a covered work in that User Product from\na modified version of its Corresponding Source. The information must\nsuffice to ensure that the continued functioning of the modified object\ncode is in no case prevented or interfered with solely because\nmodification has been made.\n\n If you convey an object code work under this section in, or with, or\nspecifically for use in, a User Product, and the conveying occurs as\npart of a transaction in which the right of possession and use of the\nUser Product is transferred to the recipient in perpetuity or for a\nfixed term (regardless of how the transaction is characterized), the\nCorresponding Source conveyed under this section must be accompanied\nby the Installation Information. But this requirement does not apply\nif neither you nor any third party retains the ability to install\nmodified object code on the User Product (for example, the work has\nbeen installed in ROM).\n\n The requirement to provide Installation Information does not include a\nrequirement to continue to provide support service, warranty, or updates\nfor a work that has been modified or installed by the recipient, or for\nthe User Product in which it has been modified or installed. 
Access to a\nnetwork may be denied when the modification itself materially and\nadversely affects the operation of the network or violates the rules and\nprotocols for communication across the network.\n\n Corresponding Source conveyed, and Installation Information provided,\nin accord with this section must be in a format that is publicly\ndocumented (and with an implementation available to the public in\nsource code form), and must require no special password or key for\nunpacking, reading or copying.\n\n 7. Additional Terms.\n\n \"Additional permissions\" are terms that supplement the terms of this\nLicense by making exceptions from one or more of its conditions.\nAdditional permissions that are applicable to the entire Program shall\nbe treated as though they were included in this License, to the extent\nthat they are valid under applicable law. If additional permissions\napply only to part of the Program, that part may be used separately\nunder those permissions, but the entire Program remains governed by\nthis License without regard to the additional permissions.\n\n When you convey a copy of a covered work, you may at your option\nremove any additional permissions from that copy, or from any part of\nit. (Additional permissions may be written to require their own\nremoval in certain cases when you modify the work.) 
You may place\nadditional permissions on material, added by you to a covered work,\nfor which you have or can give appropriate copyright permission.\n\n Notwithstanding any other provision of this License, for material you\nadd to a covered work, you may (if authorized by the copyright holders of\nthat material) supplement the terms of this License with terms:\n\n a) Disclaiming warranty or limiting liability differently from the\n terms of sections 15 and 16 of this License; or\n\n b) Requiring preservation of specified reasonable legal notices or\n author attributions in that material or in the Appropriate Legal\n Notices displayed by works containing it; or\n\n c) Prohibiting misrepresentation of the origin of that material, or\n requiring that modified versions of such material be marked in\n reasonable ways as different from the original version; or\n\n d) Limiting the use for publicity purposes of names of licensors or\n authors of the material; or\n\n e) Declining to grant rights under trademark law for use of some\n trade names, trademarks, or service marks; or\n\n f) Requiring indemnification of licensors and authors of that\n material by anyone who conveys the material (or modified versions of\n it) with contractual assumptions of liability to the recipient, for\n any liability that these contractual assumptions directly impose on\n those licensors and authors.\n\n All other non-permissive additional terms are considered \"further\nrestrictions\" within the meaning of section 10. If the Program as you\nreceived it, or any part of it, contains a notice stating that it is\ngoverned by this License along with a term that is a further\nrestriction, you may remove that term. 
If a license document contains\na further restriction but permits relicensing or conveying under this\nLicense, you may add to a covered work material governed by the terms\nof that license document, provided that the further restriction does\nnot survive such relicensing or conveying.\n\n If you add terms to a covered work in accord with this section, you\nmust place, in the relevant source files, a statement of the\nadditional terms that apply to those files, or a notice indicating\nwhere to find the applicable terms.\n\n Additional terms, permissive or non-permissive, may be stated in the\nform of a separately written license, or stated as exceptions;\nthe above requirements apply either way.\n\n 8. Termination.\n\n You may not propagate or modify a covered work except as expressly\nprovided under this License. Any attempt otherwise to propagate or\nmodify it is void, and will automatically terminate your rights under\nthis License (including any patent licenses granted under the third\nparagraph of section 11).\n\n However, if you cease all violation of this License, then your\nlicense from a particular copyright holder is reinstated (a)\nprovisionally, unless and until the copyright holder explicitly and\nfinally terminates your license, and (b) permanently, if the copyright\nholder fails to notify you of the violation by some reasonable means\nprior to 60 days after the cessation.\n\n Moreover, your license from a particular copyright holder is\nreinstated permanently if the copyright holder notifies you of the\nviolation by some reasonable means, this is the first time you have\nreceived notice of violation of this License (for any work) from that\ncopyright holder, and you cure the violation prior to 30 days after\nyour receipt of the notice.\n\n Termination of your rights under this section does not terminate the\nlicenses of parties who have received copies or rights from you under\nthis License. 
If your rights have been terminated and not permanently\nreinstated, you do not qualify to receive new licenses for the same\nmaterial under section 10.\n\n 9. Acceptance Not Required for Having Copies.\n\n You are not required to accept this License in order to receive or\nrun a copy of the Program. Ancillary propagation of a covered work\noccurring solely as a consequence of using peer-to-peer transmission\nto receive a copy likewise does not require acceptance. However,\nnothing other than this License grants you permission to propagate or\nmodify any covered work. These actions infringe copyright if you do\nnot accept this License. Therefore, by modifying or propagating a\ncovered work, you indicate your acceptance of this License to do so.\n\n 10. Automatic Licensing of Downstream Recipients.\n\n Each time you convey a covered work, the recipient automatically\nreceives a license from the original licensors, to run, modify and\npropagate that work, subject to this License. You are not responsible\nfor enforcing compliance by third parties with this License.\n\n An \"entity transaction\" is a transaction transferring control of an\norganization, or substantially all assets of one, or subdividing an\norganization, or merging organizations. If propagation of a covered\nwork results from an entity transaction, each party to that\ntransaction who receives a copy of the work also receives whatever\nlicenses to the work the party's predecessor in interest had or could\ngive under the previous paragraph, plus a right to possession of the\nCorresponding Source of the work from the predecessor in interest, if\nthe predecessor has it or can get it with reasonable efforts.\n\n You may not impose any further restrictions on the exercise of the\nrights granted or affirmed under this License. 
For example, you may\nnot impose a license fee, royalty, or other charge for exercise of\nrights granted under this License, and you may not initiate litigation\n(including a cross-claim or counterclaim in a lawsuit) alleging that\nany patent claim is infringed by making, using, selling, offering for\nsale, or importing the Program or any portion of it.\n\n 11. Patents.\n\n A \"contributor\" is a copyright holder who authorizes use under this\nLicense of the Program or a work on which the Program is based. The\nwork thus licensed is called the contributor's \"contributor version\".\n\n A contributor's \"essential patent claims\" are all patent claims\nowned or controlled by the contributor, whether already acquired or\nhereafter acquired, that would be infringed by some manner, permitted\nby this License, of making, using, or selling its contributor version,\nbut do not include claims that would be infringed only as a\nconsequence of further modification of the contributor version. For\npurposes of this definition, \"control\" includes the right to grant\npatent sublicenses in a manner consistent with the requirements of\nthis License.\n\n Each contributor grants you a non-exclusive, worldwide, royalty-free\npatent license under the contributor's essential patent claims, to\nmake, use, sell, offer for sale, import and otherwise run, modify and\npropagate the contents of its contributor version.\n\n In the following three paragraphs, a \"patent license\" is any express\nagreement or commitment, however denominated, not to enforce a patent\n(such as an express permission to practice a patent or covenant not to\nsue for patent infringement). 
To \"grant\" such a patent license to a\nparty means to make such an agreement or commitment not to enforce a\npatent against the party.\n\n If you convey a covered work, knowingly relying on a patent license,\nand the Corresponding Source of the work is not available for anyone\nto copy, free of charge and under the terms of this License, through a\npublicly available network server or other readily accessible means,\nthen you must either (1) cause the Corresponding Source to be so\navailable, or (2) arrange to deprive yourself of the benefit of the\npatent license for this particular work, or (3) arrange, in a manner\nconsistent with the requirements of this License, to extend the patent\nlicense to downstream recipients. \"Knowingly relying\" means you have\nactual knowledge that, but for the patent license, your conveying the\ncovered work in a country, or your recipient's use of the covered work\nin a country, would infringe one or more identifiable patents in that\ncountry that you have reason to believe are valid.\n\n If, pursuant to or in connection with a single transaction or\narrangement, you convey, or propagate by procuring conveyance of, a\ncovered work, and grant a patent license to some of the parties\nreceiving the covered work authorizing them to use, propagate, modify\nor convey a specific copy of the covered work, then the patent license\nyou grant is automatically extended to all recipients of the covered\nwork and works based on it.\n\n A patent license is \"discriminatory\" if it does not include within\nthe scope of its coverage, prohibits the exercise of, or is\nconditioned on the non-exercise of one or more of the rights that are\nspecifically granted under this License. 
You may not convey a covered\nwork if you are a party to an arrangement with a third party that is\nin the business of distributing software, under which you make payment\nto the third party based on the extent of your activity of conveying\nthe work, and under which the third party grants, to any of the\nparties who would receive the covered work from you, a discriminatory\npatent license (a) in connection with copies of the covered work\nconveyed by you (or copies made from those copies), or (b) primarily\nfor and in connection with specific products or compilations that\ncontain the covered work, unless you entered into that arrangement,\nor that patent license was granted, prior to 28 March 2007.\n\n Nothing in this License shall be construed as excluding or limiting\nany implied license or other defenses to infringement that may\notherwise be available to you under applicable patent law.\n\n 12. No Surrender of Others' Freedom.\n\n If conditions are imposed on you (whether by court order, agreement or\notherwise) that contradict the conditions of this License, they do not\nexcuse you from the conditions of this License. If you cannot convey a\ncovered work so as to satisfy simultaneously your obligations under this\nLicense and any other pertinent obligations, then as a consequence you may\nnot convey it at all. For example, if you agree to terms that obligate you\nto collect a royalty for further conveying from those to whom you convey\nthe Program, the only way you could satisfy both those terms and this\nLicense would be to refrain entirely from conveying the Program.\n\n 13. Use with the GNU Affero General Public License.\n\n Notwithstanding any other provision of this License, you have\npermission to link or combine any covered work with a work licensed\nunder version 3 of the GNU Affero General Public License into a single\ncombined work, and to convey the resulting work. 
The terms of this\nLicense will continue to apply to the part which is the covered work,\nbut the special requirements of the GNU Affero General Public License,\nsection 13, concerning interaction through a network will apply to the\ncombination as such.\n\n 14. Revised Versions of this License.\n\n The Free Software Foundation may publish revised and/or new versions of\nthe GNU General Public License from time to time. Such new versions will\nbe similar in spirit to the present version, but may differ in detail to\naddress new problems or concerns.\n\n Each version is given a distinguishing version number. If the\nProgram specifies that a certain numbered version of the GNU General\nPublic License \"or any later version\" applies to it, you have the\noption of following the terms and conditions either of that numbered\nversion or of any later version published by the Free Software\nFoundation. If the Program does not specify a version number of the\nGNU General Public License, you may choose any version ever published\nby the Free Software Foundation.\n\n If the Program specifies that a proxy can decide which future\nversions of the GNU General Public License can be used, that proxy's\npublic statement of acceptance of a version permanently authorizes you\nto choose that version for the Program.\n\n Later license versions may give you additional or different\npermissions. However, no additional obligations are imposed on any\nauthor or copyright holder as a result of your choosing to follow a\nlater version.\n\n 15. Disclaimer of Warranty.\n\n THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY\nAPPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT\nHOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM \"AS IS\" WITHOUT WARRANTY\nOF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,\nTHE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR\nPURPOSE. 
THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM\nIS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF\nALL NECESSARY SERVICING, REPAIR OR CORRECTION.\n\n 16. Limitation of Liability.\n\n IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING\nWILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS\nTHE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY\nGENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE\nUSE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF\nDATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD\nPARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),\nEVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF\nSUCH DAMAGES.\n\n 17. Interpretation of Sections 15 and 16.\n\n If the disclaimer of warranty and limitation of liability provided\nabove cannot be given local legal effect according to their terms,\nreviewing courts shall apply local law that most closely approximates\nan absolute waiver of all civil liability in connection with the\nProgram, unless a warranty or assumption of liability accompanies a\ncopy of the Program in return for a fee.\n\n END OF TERMS AND CONDITIONS\n\n How to Apply These Terms to Your New Programs\n\n If you develop a new program, and you want it to be of the greatest\npossible use to the public, the best way to achieve this is to make it\nfree software which everyone can redistribute and change under these terms.\n\n To do so, attach the following notices to the program. 
It is safest\nto attach them to the start of each source file to most effectively\nstate the exclusion of warranty; and each file should have at least\nthe \"copyright\" line and a pointer to where the full notice is found.\n\n \n Copyright (C) \n\n This program is free software: you can redistribute it and/or modify\n it under the terms of the GNU General Public License as published by\n the Free Software Foundation, either version 3 of the License, or\n (at your option) any later version.\n\n This program is distributed in the hope that it will be useful,\n but WITHOUT ANY WARRANTY; without even the implied warranty of\n MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the\n GNU General Public License for more details.\n\n You should have received a copy of the GNU General Public License\n along with this program. If not, see .\n\nAlso add information on how to contact you by electronic and paper mail.\n\n If the program does terminal interaction, make it output a short\nnotice like this when it starts in an interactive mode:\n\n Copyright (C) \n This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.\n This is free software, and you are welcome to redistribute it\n under certain conditions; type `show c' for details.\n\nThe hypothetical commands `show w' and `show c' should show the appropriate\nparts of the General Public License. Of course, your program's commands\nmight be different; for a GUI interface, you would use an \"about box\".\n\n You should also get your employer (if you work as a programmer) or school,\nif any, to sign a \"copyright disclaimer\" for the program, if necessary.\nFor more information on this, and how to apply and follow the GNU GPL, see\n.\n\n The GNU General Public License does not permit incorporating your program\ninto proprietary programs. If your program is a subroutine library, you\nmay consider it more useful to permit linking proprietary applications with\nthe library. 
If this is what you want to do, use the GNU Lesser General\nPublic License instead of this License. But first, please read\n.\n"

},

{

"path": "README.md",

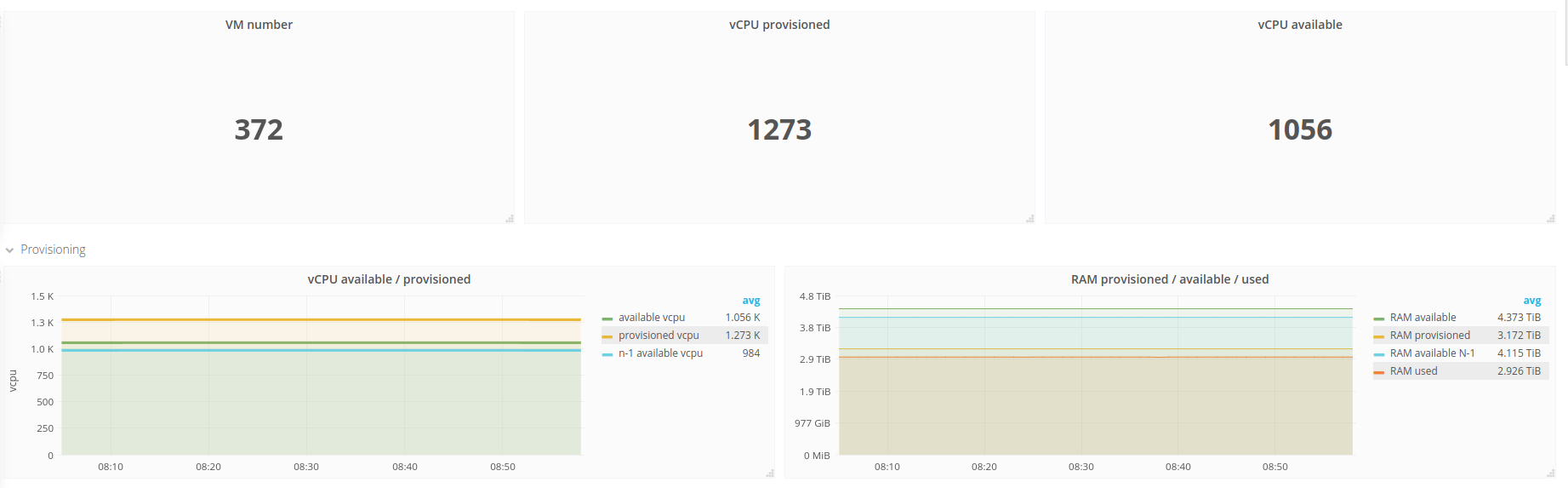

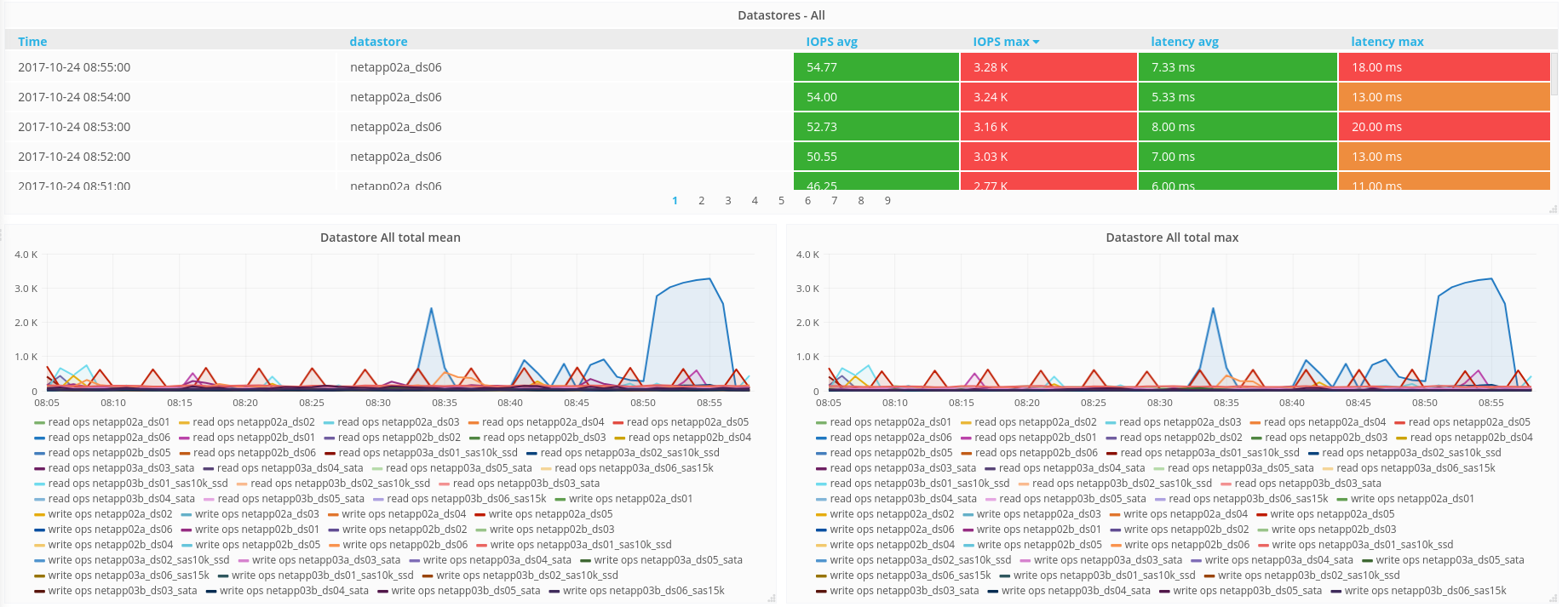

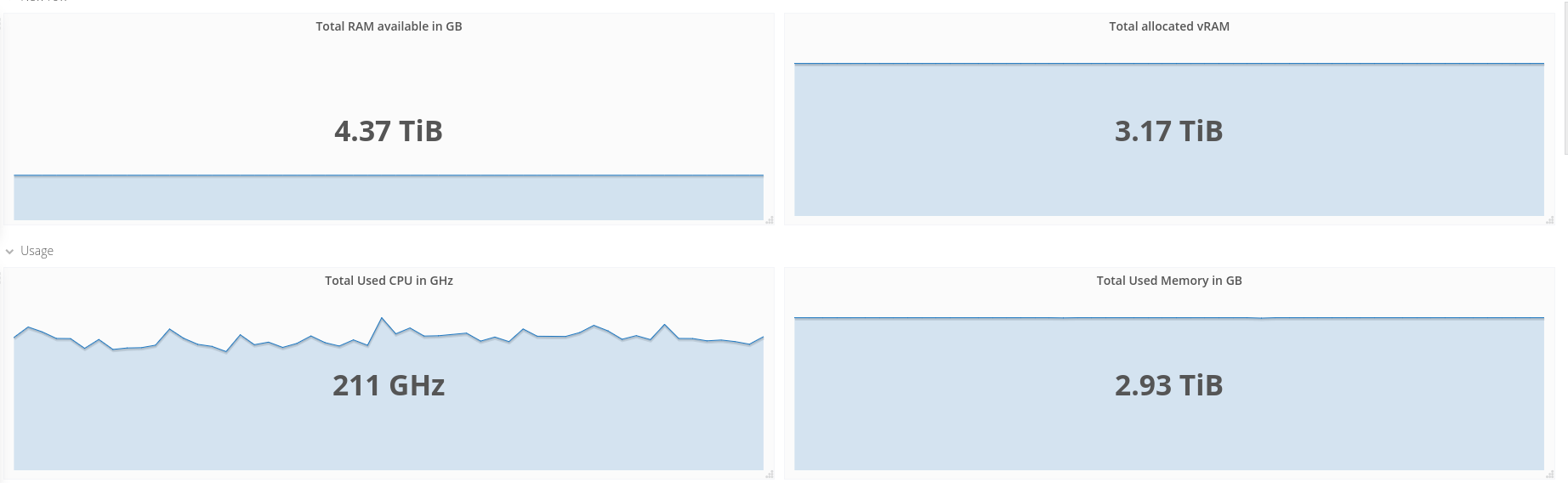

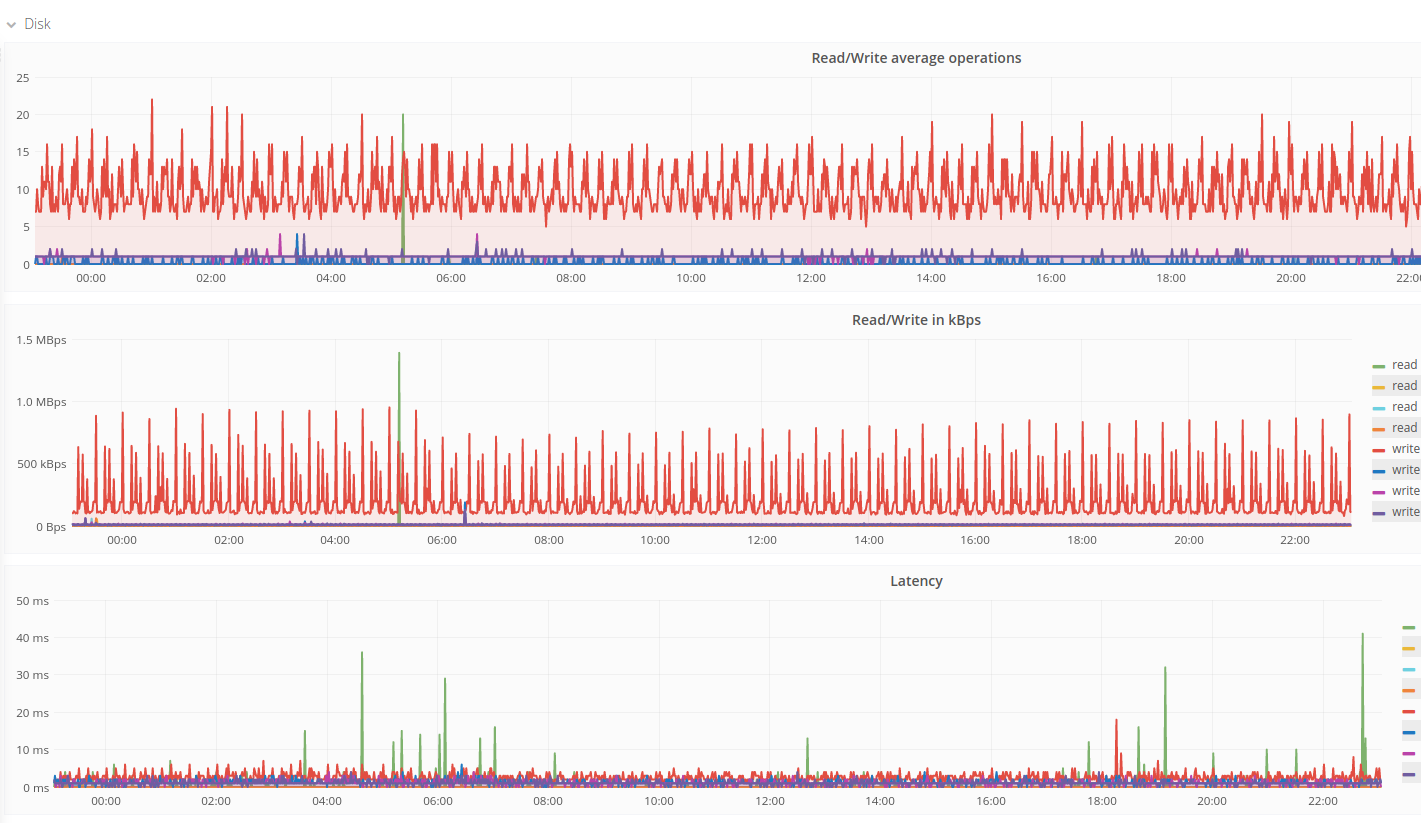

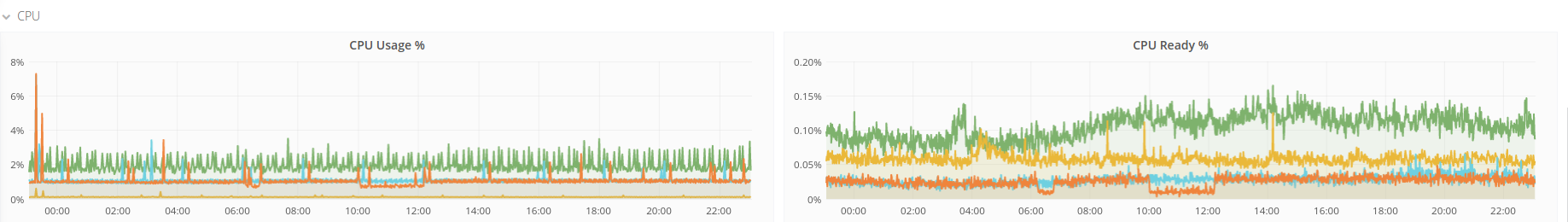

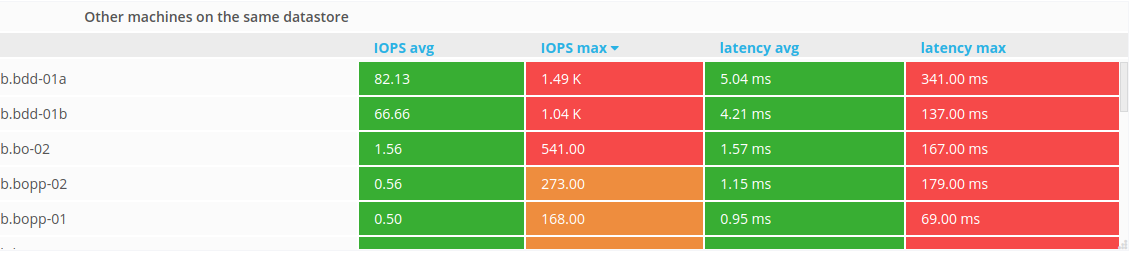

"content": "[](https://github.com/Oxalide/vsphere-influxdb-go/releases/latest) [](https://travis-ci.org/Oxalide/vsphere-influxdb-go) [](https://goreportcard.com/report/github.com/Oxalide/vsphere-influxdb-go)\n\n# Collect VMware vCenter and ESXi performance metrics and send them to InfluxDB\n\n# Screenshots of Grafana dashboards\n\n\n\n\n\n\n\n# Description and Features\nThis is a tool written in Go that helps you do your own custom tailored monitoring, capacity planning and performance debugging of VMware based infrastructures. It collects all possible metrics from vCenters and ESXi hypervisors about hosts, clusters, resource pools, datastores and virtual machines and sends them to an [InfluxDB database](https://github.com/influxdata/influxdb) (a popular open source time series database project written in Go), which you can then visualise in Grafana (links to sample dashboards [below](#example-dashboards)) or Chronograf, and use Grafana, Kapacitor or custom scripts to do alerting based on your needs, KPIs, capacity plannings/expectations.\n\n# Install \nGrab the [latest release](https://github.com/Oxalide/vsphere-influxdb-go/releases/latest) for your OS (deb, rpm packages, exes, archives for Linux, Darwin, Windows, FreeBSD on amd64, arm6, arm7, arm64 are available) and install it.\n\nFor Debian/Ubuntu on adm64:\n```\ncurl -L -O $(curl -s https://api.github.com/repos/Oxalide/vsphere-influxdb-go/releases | grep browser_download_url | grep '64[.]deb' | head -n 1 | cut -d '\"' -f 4)\ndpkg -i vsphere-influxdb-go*.deb\n```\n\nCentOS/Red Hat on amd64:\n```\ncurl -L -O $(curl -s https://api.github.com/repos/Oxalide/vsphere-influxdb-go/releases | grep browser_download_url | grep '64[.]rpm' | head -n 1 | cut -d '\"' -f 4)\nrpm -i vsphere-influxdb-go*.rpm\n```\n\nThis will install vsphere-influxdb-go in /usr/local/bin/vsphere-influxdb-go and an example configuration file in /etc/vsphere-influxdb-go.json that needs to be edited.\n\n\n# Configure\n\nThe JSON configuration file 
in /etc/vsphere-influxdb-go.json contains all your vCenters/ESXi to connect to, the InfluxDB connection details (url, username/password, database to use), and the metrics to collect (full list [here](http://www.virten.net/2015/05/vsphere-6-0-performance-counter-description/)).\n\n**Note: Not all metrics are available directly; you might need to change your metric collection level.**\nA table with the level needed for each metric is available [here](http://www.virten.net/2015/05/which-performance-counters-are-available-in-each-statistic-level/), and you can find a Python script to change the collection level in the [tools folder of the project](./tools/).\n\nAdditionally, you can provide a vCenter/ESXi server and InfluxDB connection details via environment variables, which is extremely helpful when running inside a container:\n\nFor InfluxDB:\n* INFLUX\\_HOSTNAME\n* INFLUX\\_USERNAME\n* INFLUX\\_PASSWORD\n* INFLUX\\_DATABASE\n\nFor vSphere:\n* VSPHERE\\_HOSTNAME\n* VSPHERE\\_USERNAME\n* VSPHERE\\_PASSWORD \n\nKeep in mind that currently only one vCenter/ESXi can be added via environment variables.\n\nIf you set a domain, it will be automatically removed from the names of the found objects.\n\nMetrics collected are defined by associating ObjectType groups with Metric groups.\n\nThere have been reports of the script not working correctly when the time is incorrect on the vSphere or vCenter host. 
Make sure that the time is valid or activate the NTP service on the machine.\n\n# Run as a service\n\nCreate a crontab entry to run it every X minutes (one minute is fine - in our case, ~30 vCenters, ~100 ESXi and ~1400 VMs take approximately 25s to collect all metrics - rather impressive, I might add).\n```\n* * * * * /usr/local/bin/vsphere-influxdb-go\n```\n\n# Example dashboards\n* https://grafana.com/dashboards/1299 (thanks to @exbane )\n* https://grafana.com/dashboards/3556 (VMware cloud overview, mostly provisioning/global cloud usage stats)\n* https://grafana.com/dashboards/3571 (VMware performance, mostly VM oriented performance stats)\n\nContributions welcome!\n\n\n# Compile from source\n\n```\n\ngo get github.com/oxalide/vsphere-influxdb-go\n\n```\nThis will install the project in your $GOBIN ($GOPATH/bin). If you have appended $GOBIN to your $PATH, you will be able to call it directly. Otherwise, you'll have to call it with its full path.\nExample:\n```\nvsphere-influxdb-go\n```\nor:\n```\n$GOBIN/vsphere-influxdb-go\n```\n\n# TODO before v1.0\n* Add service discovery (or probably something like [Viper](https://github.com/spf13/viper) for easier and more flexible configuration with multiple backends)\n* Daemonize\n* Provide a ready-to-use Dockerfile\n\n# Contributing\nYou are welcome to contribute!\n\n# License \n\nThe original project, upon which this one is based, is written by cblomart, sends the data to Graphite, and is available [here](https://github.com/cblomart/vsphere-graphite). \n\nThis one is licensed under GPLv3. You can find a copy of the license in [LICENSE.txt](./LICENSE.txt)\n\n\n"

},

{

"path": "goreleaser.yml",

"content": "project_name: vsphere-influxdb-go\nbuilds:\n - binary: vsphere-influxdb-go\n goos:\n - windows\n - darwin\n - linux\n - freebsd\n goarch:\n - amd64\n - arm\n - arm64\n goarm:\n - 6\n - 7\n\narchive:\n format: tar.gz\n files:\n - LICENSE.txt\n - README.md\nnfpm:\n # Your app's vendor.\n # Default is empty.\n vendor: Oxalide\n # Your app's homepage.\n homepage: https://github.com/Oxalide/vsphere-influxdb-go\n\n # Your app's maintainer (probably you).\n maintainer: Adrian Todorov \n\n # Your app's description.\n description: Collect VMware vSphere, vCenter and ESXi performance metrics and send them to InfluxDB\n\n # Your app's license.\n license: GPL 3.0\n\n # Formats to be generated.\n formats:\n - deb\n - rpm\n # Files or directories to add to your package (beyond the binary).\n # Keys are source paths to get the files from.\n # Values are the destination locations of the files in the package.\n files:\n \"vsphere-influxdb.json.sample\": \"/etc/vsphere-influxdb-go.json\"\n \n"

},

{

"path": "tools/README.md",

"content": "# Change vCenter metric collection level\n\n```\ngit clone https://github.com/Oxalide/vsphere-influxdb-go.git\npip install -r tools/requirements.txt\n./tools/change_metric_collection_level.py\n```\n"

},

{

"path": "tools/change_metric_collection_level.py",

"content": "#!/usr/bin/python\n#============================================\n# Script: change_metric_collection_level.py \n# Description: Change the metric collection level of an interval in a vCenter\n# Copyright 2017 Adrian Todorov, Oxalide ato@oxalide.com\n# This program is free software: you can redistribute it and/or modify\n# it under the terms of the GNU General Public License as published by\n# the Free Software Foundation, either version 3 of the License, or\n# (at your option) any later version.\n# This program is distributed in the hope that it will be useful,\n# but WITHOUT ANY WARRANTY; without even the implied warranty of\n# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the\n# GNU General Public License for more details.\n# You should have received a copy of the GNU General Public License\n# along with this program. If not, see .\n#\n#============================================\n\nfrom pyVim.connect import SmartConnect, Disconnect\nfrom pyVmomi import vim\nimport atexit\nimport sys\nimport requests\nimport argparse\nimport getpass\nimport linecache\n\nrequests.packages.urllib3.disable_warnings()\n\ndef PrintException():\n\t exc_type, exc_obj, tb = sys.exc_info()\n\t f = tb.tb_frame\n\t lineno = tb.tb_lineno\n\t filename = f.f_code.co_filename\n\t linecache.checkcache(filename)\n\t line = linecache.getline(filename, lineno, f.f_globals)\n\t print 'EXCEPTION IN ({}, LINE {} \"{}\"): {}'.format(filename, lineno, line.strip(), exc_obj)\n\n\ndef get_args():\n parser = argparse.ArgumentParser(description='Arguments for talking to vCenter and modifying a PerfManager collection interval')\n\n parser.add_argument('-s', '--host', required=True,action='store',help='vSpehre service to connect to')\n parser.add_argument('-o', '--port', type=int, default=443, action='store', help='Port to connect on')\n parser.add_argument('-u', '--user', required=True, action='store', help='User name to use')\n parser.add_argument('-p', '--password', required=False, 
action='store', help='Password to use')\n parser.add_argument('--interval-name', required=False, action='store', dest='intervalName', help='The name of the interval to modify')\n parser.add_argument('--interval-key', required=False, action='store', dest='intervalKey', help='The key of the interval to modify')\n parser.add_argument('--interval-level', type=int, required=True, default=4, action='store', dest='intervalLevel', help='The collection level wanted for the interval')\n\n args = parser.parse_args()\n\n if not args.password:\n args.password = getpass.getpass(prompt='Enter password:\\n')\n\tif not args.intervalName and not args.intervalKey:\n\t\tprint \"An interval name or key is needed\"\n\t\texit(2)\n\t\n\treturn args\n\ndef change_level(host, user, pwd, port, level, key, name):\n\ttry:\n\t\tprint user\n\t\tprint pwd\n\t\tprint host\n\t\tserviceInstance = SmartConnect(host=host,user=user,pwd=pwd,port=port)\n\t\tatexit.register(Disconnect, serviceInstance)\n\t\tcontent = serviceInstance.RetrieveContent()\n\t\tpm = content.perfManager\n\n\t\tfor hi in pm.historicalInterval:\n\t\t\tif (key and int(hi.key) == int(key)) or (name and str(hi.name) == str(name)):\n\t\t\t\tprint \"Changing interval '\" + str(hi.name) + \"'\"\n\t\t\t\tnewobj = hi\n\t\t\t\tnewobj.level = level\n\t\t\t\tpm.UpdatePerfInterval(newobj)\n\n\t\tprint \"Intervals are now configured as follows: \"\n\t\tprint \"Name | Level\"\n\t\tpm2 = content.perfManager\n\t\tfor hi2 in pm2.historicalInterval:\n\t\t\tprint hi2.name + \" | \" + str(hi2.level)\n\n\texcept Exception, e:\n\t\tprint \"Error: %s \" % (e)\n\t\tPrintException()\n\t\texit(2)\n\n\nif __name__ == \"__main__\":\n\targs = get_args()\n\tchange_level(args.host, args.user, args.password, args.port, args.intervalLevel, args.intervalKey, args.intervalName)\n\n\n"

},

{

"path": "tools/requirements.txt",

"content": "pyVmomi\nrequests\nargparse\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/.gitignore",

"content": "# Compiled Object files, Static and Dynamic libs (Shared Objects)\n*.o\n*.a\n*.so\n\n# Folders\n_obj\n_test\n\n# Architecture specific extensions/prefixes\n*.[568vq]\n[568vq].out\n\n*.cgo1.go\n*.cgo2.c\n_cgo_defun.c\n_cgo_gotypes.go\n_cgo_export.*\n\n_testmain.go\n\n*.exe\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/.travis.yml",

"content": "language: go\ngo:\n - 1.5.4\n - 1.6.3\n - 1.7\ninstall:\n - go get -v golang.org/x/tools/cmd/cover\nscript:\n - go test -v -tags=safe ./spew\n - go test -v -tags=testcgo ./spew -covermode=count -coverprofile=profile.cov\nafter_success:\n - go get -v github.com/mattn/goveralls\n - export PATH=$PATH:$HOME/gopath/bin\n - goveralls -coverprofile=profile.cov -service=travis-ci\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/LICENSE",

"content": "ISC License\n\nCopyright (c) 2012-2016 Dave Collins \n\nPermission to use, copy, modify, and distribute this software for any\npurpose with or without fee is hereby granted, provided that the above\ncopyright notice and this permission notice appear in all copies.\n\nTHE SOFTWARE IS PROVIDED \"AS IS\" AND THE AUTHOR DISCLAIMS ALL WARRANTIES\nWITH REGARD TO THIS SOFTWARE INCLUDING ALL IMPLIED WARRANTIES OF\nMERCHANTABILITY AND FITNESS. IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR\nANY SPECIAL, DIRECT, INDIRECT, OR CONSEQUENTIAL DAMAGES OR ANY DAMAGES\nWHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS, WHETHER IN AN\nACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING OUT OF\nOR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/README.md",

"content": "go-spew\n=======\n\n[]\n(https://travis-ci.org/davecgh/go-spew) [![ISC License]\n(http://img.shields.io/badge/license-ISC-blue.svg)](http://copyfree.org) [![Coverage Status]\n(https://img.shields.io/coveralls/davecgh/go-spew.svg)]\n(https://coveralls.io/r/davecgh/go-spew?branch=master)\n\n\nGo-spew implements a deep pretty printer for Go data structures to aid in\ndebugging. A comprehensive suite of tests with 100% test coverage is provided\nto ensure proper functionality. See `test_coverage.txt` for the gocov coverage\nreport. Go-spew is licensed under the liberal ISC license, so it may be used in\nopen source or commercial projects.\n\nIf you're interested in reading about how this package came to life and some\nof the challenges involved in providing a deep pretty printer, there is a blog\npost about it\n[here](https://web.archive.org/web/20160304013555/https://blog.cyphertite.com/go-spew-a-journey-into-dumping-go-data-structures/).\n\n## Documentation\n\n[]\n(http://godoc.org/github.com/davecgh/go-spew/spew)\n\nFull `go doc` style documentation for the project can be viewed online without\ninstalling this package by using the excellent GoDoc site here:\nhttp://godoc.org/github.com/davecgh/go-spew/spew\n\nYou can also view the documentation locally once the package is installed with\nthe `godoc` tool by running `godoc -http=\":6060\"` and pointing your browser to\nhttp://localhost:6060/pkg/github.com/davecgh/go-spew/spew\n\n## Installation\n\n```bash\n$ go get -u github.com/davecgh/go-spew/spew\n```\n\n## Quick Start\n\nAdd this import line to the file you're working in:\n\n```Go\nimport \"github.com/davecgh/go-spew/spew\"\n```\n\nTo dump a variable with full newlines, indentation, type, and pointer\ninformation use Dump, Fdump, or Sdump:\n\n```Go\nspew.Dump(myVar1, myVar2, ...)\nspew.Fdump(someWriter, myVar1, myVar2, ...)\nstr := spew.Sdump(myVar1, myVar2, ...)\n```\n\nAlternatively, if you would prefer to use format strings with a compacted 
inline\nprinting style, use the convenience wrappers Printf, Fprintf, etc with %v (most\ncompact), %+v (adds pointer addresses), %#v (adds types), or %#+v (adds types\nand pointer addresses): \n\n```Go\nspew.Printf(\"myVar1: %v -- myVar2: %+v\", myVar1, myVar2)\nspew.Printf(\"myVar3: %#v -- myVar4: %#+v\", myVar3, myVar4)\nspew.Fprintf(someWriter, \"myVar1: %v -- myVar2: %+v\", myVar1, myVar2)\nspew.Fprintf(someWriter, \"myVar3: %#v -- myVar4: %#+v\", myVar3, myVar4)\n```\n\n## Debugging a Web Application Example\n\nHere is an example of how you can use `spew.Sdump()` to help debug a web application. Please be sure to wrap your output using the `html.EscapeString()` function for safety reasons. You should also only use this debugging technique in a development environment, never in production.\n\n```Go\npackage main\n\nimport (\n \"fmt\"\n \"html\"\n \"net/http\"\n\n \"github.com/davecgh/go-spew/spew\"\n)\n\nfunc handler(w http.ResponseWriter, r *http.Request) {\n w.Header().Set(\"Content-Type\", \"text/html\")\n fmt.Fprintf(w, \"Hi there, %s!\", r.URL.Path[1:])\n fmt.Fprintf(w, \"<!--\\n\" + html.EscapeString(spew.Sdump(w)) + \"\\n-->\")\n}\n\nfunc main() {\n http.HandleFunc(\"/\", handler)\n http.ListenAndServe(\":8080\", nil)\n}\n```\n\n## Sample Dump Output\n\n```\n(main.Foo) {\n unexportedField: (*main.Bar)(0xf84002e210)({\n flag: (main.Flag) flagTwo,\n data: (uintptr) <nil>\n }),\n ExportedField: (map[interface {}]interface {}) {\n (string) \"one\": (bool) true\n }\n}\n([]uint8) {\n 00000000 11 12 13 14 15 16 17 18 19 1a 1b 1c 1d 1e 1f 20 |............... 
|\n 00000010 21 22 23 24 25 26 27 28 29 2a 2b 2c 2d 2e 2f 30 |!\"#$%&'()*+,-./0|\n 00000020 31 32 |12|\n}\n```\n\n## Sample Formatter Output\n\nDouble pointer to a uint8:\n```\n\t %v: <**>5\n\t %+v: <**>(0xf8400420d0->0xf8400420c8)5\n\t %#v: (**uint8)5\n\t%#+v: (**uint8)(0xf8400420d0->0xf8400420c8)5\n```\n\nPointer to circular struct with a uint8 field and a pointer to itself:\n```\n\t %v: <*>{1 <*><shown>}\n\t %+v: <*>(0xf84003e260){ui8:1 c:<*>(0xf84003e260)<shown>}\n\t %#v: (*main.circular){ui8:(uint8)1 c:(*main.circular)<shown>}\n\t%#+v: (*main.circular)(0xf84003e260){ui8:(uint8)1 c:(*main.circular)(0xf84003e260)<shown>}\n```\n\n## Configuration Options\n\nConfiguration of spew is handled by fields in the ConfigState type. For\nconvenience, all of the top-level functions use a global state available via the\nspew.Config global.\n\nIt is also possible to create a ConfigState instance that provides methods\nequivalent to the top-level functions. This allows concurrent configuration\noptions. See the ConfigState documentation for more details.\n\n```\n* Indent\n\tString to use for each indentation level for Dump functions.\n\tIt is a single space by default. A popular alternative is \"\\t\".\n\n* MaxDepth\n\tMaximum number of levels to descend into nested data structures.\n\tThere is no limit by default.\n\n* DisableMethods\n\tDisables invocation of error and Stringer interface methods.\n\tMethod invocation is enabled by default.\n\n* DisablePointerMethods\n\tDisables invocation of error and Stringer interface methods on types\n\twhich only accept pointer receivers from non-pointer variables. This option\n\trelies on access to the unsafe package, so it will not have any effect when\n\trunning in environments without access to the unsafe package such as Google\n\tApp Engine or with the \"safe\" build tag specified.\n\tPointer method invocation is enabled by default.\n\n* DisablePointerAddresses\n\tDisablePointerAddresses specifies whether to disable the printing of\n\tpointer addresses. 
This is useful when diffing data structures in tests.\n\n* DisableCapacities\n\tDisableCapacities specifies whether to disable the printing of capacities\n\tfor arrays, slices, maps and channels. This is useful when diffing data\n\tstructures in tests.\n\n* ContinueOnMethod\n\tEnables recursion into types after invoking error and Stringer interface\n\tmethods. Recursion after method invocation is disabled by default.\n\n* SortKeys\n\tSpecifies map keys should be sorted before being printed. Use\n\tthis to have a more deterministic, diffable output. Note that\n\tonly native types (bool, int, uint, floats, uintptr and string)\n\tand types which implement error or Stringer interfaces are supported,\n\twith other types sorted according to the reflect.Value.String() output\n\twhich guarantees display stability. Natural map order is used by\n\tdefault.\n\n* SpewKeys\n\tSpewKeys specifies that, as a last resort attempt, map keys should be\n\tspewed to strings and sorted by those strings. This is only considered\n\tif SortKeys is true.\n\n```\n\n## Unsafe Package Dependency\n\nThis package relies on the unsafe package to perform some of the more advanced\nfeatures, however it also supports a \"limited\" mode which allows it to work in\nenvironments where the unsafe package is not available. By default, it will\noperate in this mode on Google App Engine and when compiled with GopherJS. The\n\"safe\" build tag may also be specified to force the package to build without\nusing the unsafe package.\n\n## License\n\nGo-spew is licensed under the [copyfree](http://copyfree.org) ISC License.\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/cov_report.sh",

"content": "#!/bin/sh\n\n# This script uses gocov to generate a test coverage report.\n# The gocov tool my be obtained with the following command:\n# go get github.com/axw/gocov/gocov\n#\n# It will be installed to $GOPATH/bin, so ensure that location is in your $PATH.\n\n# Check for gocov.\nif ! type gocov >/dev/null 2>&1; then\n\techo >&2 \"This script requires the gocov tool.\"\n\techo >&2 \"You may obtain it with the following command:\"\n\techo >&2 \"go get github.com/axw/gocov/gocov\"\n\texit 1\nfi\n\n# Only run the cgo tests if gcc is installed.\nif type gcc >/dev/null 2>&1; then\n\t(cd spew && gocov test -tags testcgo | gocov report)\nelse\n\t(cd spew && gocov test | gocov report)\nfi\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/spew/bypass.go",

"content": "// Copyright (c) 2015-2016 Dave Collins \n//\n// Permission to use, copy, modify, and distribute this software for any\n// purpose with or without fee is hereby granted, provided that the above\n// copyright notice and this permission notice appear in all copies.\n//\n// THE SOFTWARE IS PROVIDED \"AS IS\" AND THE AUTHOR DISCLAIMS ALL WARRANTIES\n// WITH REGARD TO THIS SOFTWARE INCLUDING ALL IMPLIED WARRANTIES OF\n// MERCHANTABILITY AND FITNESS. IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR\n// ANY SPECIAL, DIRECT, INDIRECT, OR CONSEQUENTIAL DAMAGES OR ANY DAMAGES\n// WHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS, WHETHER IN AN\n// ACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING OUT OF\n// OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.\n\n// NOTE: Due to the following build constraints, this file will only be compiled\n// when the code is not running on Google App Engine, compiled by GopherJS, and\n// \"-tags safe\" is not added to the go build command line. The \"disableunsafe\"\n// tag is deprecated and thus should not be used.\n// +build !js,!appengine,!safe,!disableunsafe\n\npackage spew\n\nimport (\n\t\"reflect\"\n\t\"unsafe\"\n)\n\nconst (\n\t// UnsafeDisabled is a build-time constant which specifies whether or\n\t// not access to the unsafe package is available.\n\tUnsafeDisabled = false\n\n\t// ptrSize is the size of a pointer on the current arch.\n\tptrSize = unsafe.Sizeof((*byte)(nil))\n)\n\nvar (\n\t// offsetPtr, offsetScalar, and offsetFlag are the offsets for the\n\t// internal reflect.Value fields. These values are valid before golang\n\t// commit ecccf07e7f9d which changed the format. The are also valid\n\t// after commit 82f48826c6c7 which changed the format again to mirror\n\t// the original format. 
Code in the init function updates these offsets\n\t// as necessary.\n\toffsetPtr = uintptr(ptrSize)\n\toffsetScalar = uintptr(0)\n\toffsetFlag = uintptr(ptrSize * 2)\n\n\t// flagKindWidth and flagKindShift indicate various bits that the\n\t// reflect package uses internally to track kind information.\n\t//\n\t// flagRO indicates whether or not the value field of a reflect.Value is\n\t// read-only.\n\t//\n\t// flagIndir indicates whether the value field of a reflect.Value is\n\t// the actual data or a pointer to the data.\n\t//\n\t// These values are valid before golang commit 90a7c3c86944 which\n\t// changed their positions. Code in the init function updates these\n\t// flags as necessary.\n\tflagKindWidth = uintptr(5)\n\tflagKindShift = uintptr(flagKindWidth - 1)\n\tflagRO = uintptr(1 << 0)\n\tflagIndir = uintptr(1 << 1)\n)\n\nfunc init() {\n\t// Older versions of reflect.Value stored small integers directly in the\n\t// ptr field (which is named val in the older versions). Versions\n\t// between commits ecccf07e7f9d and 82f48826c6c7 added a new field named\n\t// scalar for this purpose which unfortunately came before the flag\n\t// field, so the offset of the flag field is different for those\n\t// versions.\n\t//\n\t// This code constructs a new reflect.Value from a known small integer\n\t// and checks if the size of the reflect.Value struct indicates it has\n\t// the scalar field. When it does, the offsets are updated accordingly.\n\tvv := reflect.ValueOf(0xf00)\n\tif unsafe.Sizeof(vv) == (ptrSize * 4) {\n\t\toffsetScalar = ptrSize * 2\n\t\toffsetFlag = ptrSize * 3\n\t}\n\n\t// Commit 90a7c3c86944 changed the flag positions such that the low\n\t// order bits are the kind. This code extracts the kind from the flags\n\t// field and ensures it's the correct type. 
When it's not, the flag\n\t// order has been changed to the newer format, so the flags are updated\n\t// accordingly.\n\tupf := unsafe.Pointer(uintptr(unsafe.Pointer(&vv)) + offsetFlag)\n\tupfv := *(*uintptr)(upf)\n\tflagKindMask := uintptr((1<<flagKindWidth - 1) << flagKindShift)\n\tif (upfv&flagKindMask)>>flagKindShift != uintptr(reflect.Int) {\n\t\tflagKindShift = 0\n\t\tflagRO = 1 << 5\n\t\tflagIndir = 1 << 6\n\n\t\t// Commit adf9b30e5594 modified the flags to separate the\n\t\t// flagRO flag into two bits which specifies whether or not the\n\t\t// field is embedded. This causes flagIndir to move over a bit\n\t\t// and means that flagRO is the combination of either of the\n\t\t// original flagRO bit and the new bit.\n\t\t//\n\t\t// This code detects the change by extracting what used to be\n\t\t// the indirect bit to ensure it's set. When it's not, the flag\n\t\t// order has been changed to the newer format, so the flags are\n\t\t// updated accordingly.\n\t\tif upfv&flagIndir == 0 {\n\t\t\tflagRO = 3 << 5\n\t\t\tflagIndir = 1 << 7\n\t\t}\n\t}\n}\n\n// unsafeReflectValue converts the passed reflect.Value into one that bypasses\n// the typical safety restrictions preventing access to unaddressable and\n// unexported data. 
It works by digging the raw pointer to the underlying\n// value out of the protected value and generating a new unprotected (unsafe)\n// reflect.Value to it.\n//\n// This allows us to check for implementations of the Stringer and error\n// interfaces to be used for pretty printing ordinarily unaddressable and\n// inaccessible values such as unexported struct fields.\nfunc unsafeReflectValue(v reflect.Value) (rv reflect.Value) {\n\tindirects := 1\n\tvt := v.Type()\n\tupv := unsafe.Pointer(uintptr(unsafe.Pointer(&v)) + offsetPtr)\n\trvf := *(*uintptr)(unsafe.Pointer(uintptr(unsafe.Pointer(&v)) + offsetFlag))\n\tif rvf&flagIndir != 0 {\n\t\tvt = reflect.PtrTo(v.Type())\n\t\tindirects++\n\t} else if offsetScalar != 0 {\n\t\t// The value is in the scalar field when it's not one of the\n\t\t// reference types.\n\t\tswitch vt.Kind() {\n\t\tcase reflect.Uintptr:\n\t\tcase reflect.Chan:\n\t\tcase reflect.Func:\n\t\tcase reflect.Map:\n\t\tcase reflect.Ptr:\n\t\tcase reflect.UnsafePointer:\n\t\tdefault:\n\t\t\tupv = unsafe.Pointer(uintptr(unsafe.Pointer(&v)) +\n\t\t\t\toffsetScalar)\n\t\t}\n\t}\n\n\tpv := reflect.NewAt(vt, upv)\n\trv = pv\n\tfor i := 0; i < indirects; i++ {\n\t\trv = rv.Elem()\n\t}\n\treturn rv\n}\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/spew/bypasssafe.go",

"content": "// Copyright (c) 2015-2016 Dave Collins \n//\n// Permission to use, copy, modify, and distribute this software for any\n// purpose with or without fee is hereby granted, provided that the above\n// copyright notice and this permission notice appear in all copies.\n//\n// THE SOFTWARE IS PROVIDED \"AS IS\" AND THE AUTHOR DISCLAIMS ALL WARRANTIES\n// WITH REGARD TO THIS SOFTWARE INCLUDING ALL IMPLIED WARRANTIES OF\n// MERCHANTABILITY AND FITNESS. IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR\n// ANY SPECIAL, DIRECT, INDIRECT, OR CONSEQUENTIAL DAMAGES OR ANY DAMAGES\n// WHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS, WHETHER IN AN\n// ACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING OUT OF\n// OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.\n\n// NOTE: Due to the following build constraints, this file will only be compiled\n// when the code is running on Google App Engine, compiled by GopherJS, or\n// \"-tags safe\" is added to the go build command line. The \"disableunsafe\"\n// tag is deprecated and thus should not be used.\n// +build js appengine safe disableunsafe\n\npackage spew\n\nimport \"reflect\"\n\nconst (\n\t// UnsafeDisabled is a build-time constant which specifies whether or\n\t// not access to the unsafe package is available.\n\tUnsafeDisabled = true\n)\n\n// unsafeReflectValue typically converts the passed reflect.Value into a one\n// that bypasses the typical safety restrictions preventing access to\n// unaddressable and unexported data. However, doing this relies on access to\n// the unsafe package. This is a stub version which simply returns the passed\n// reflect.Value when the unsafe package is not available.\nfunc unsafeReflectValue(v reflect.Value) reflect.Value {\n\treturn v\n}\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/spew/common.go",

"content": "/*\n * Copyright (c) 2013-2016 Dave Collins \n *\n * Permission to use, copy, modify, and distribute this software for any\n * purpose with or without fee is hereby granted, provided that the above\n * copyright notice and this permission notice appear in all copies.\n *\n * THE SOFTWARE IS PROVIDED \"AS IS\" AND THE AUTHOR DISCLAIMS ALL WARRANTIES\n * WITH REGARD TO THIS SOFTWARE INCLUDING ALL IMPLIED WARRANTIES OF\n * MERCHANTABILITY AND FITNESS. IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR\n * ANY SPECIAL, DIRECT, INDIRECT, OR CONSEQUENTIAL DAMAGES OR ANY DAMAGES\n * WHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS, WHETHER IN AN\n * ACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING OUT OF\n * OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.\n */\n\npackage spew\n\nimport (\n\t\"bytes\"\n\t\"fmt\"\n\t\"io\"\n\t\"reflect\"\n\t\"sort\"\n\t\"strconv\"\n)\n\n// Some constants in the form of bytes to avoid string overhead. This mirrors\n// the technique used in the fmt package.\nvar (\n\tpanicBytes = []byte(\"(PANIC=\")\n\tplusBytes = []byte(\"+\")\n\tiBytes = []byte(\"i\")\n\ttrueBytes = []byte(\"true\")\n\tfalseBytes = []byte(\"false\")\n\tinterfaceBytes = []byte(\"(interface {})\")\n\tcommaNewlineBytes = []byte(\",\\n\")\n\tnewlineBytes = []byte(\"\\n\")\n\topenBraceBytes = []byte(\"{\")\n\topenBraceNewlineBytes = []byte(\"{\\n\")\n\tcloseBraceBytes = []byte(\"}\")\n\tasteriskBytes = []byte(\"*\")\n\tcolonBytes = []byte(\":\")\n\tcolonSpaceBytes = []byte(\": \")\n\topenParenBytes = []byte(\"(\")\n\tcloseParenBytes = []byte(\")\")\n\tspaceBytes = []byte(\" \")\n\tpointerChainBytes = []byte(\"->\")\n\tnilAngleBytes = []byte(\"\")\n\tmaxNewlineBytes = []byte(\"\\n\")\n\tmaxShortBytes = []byte(\"\")\n\tcircularBytes = []byte(\"\")\n\tcircularShortBytes = []byte(\"\")\n\tinvalidAngleBytes = []byte(\"\")\n\topenBracketBytes = []byte(\"[\")\n\tcloseBracketBytes = []byte(\"]\")\n\tpercentBytes = []byte(\"%\")\n\tprecisionBytes 
= []byte(\".\")\n\topenAngleBytes = []byte(\"<\")\n\tcloseAngleBytes = []byte(\">\")\n\topenMapBytes = []byte(\"map[\")\n\tcloseMapBytes = []byte(\"]\")\n\tlenEqualsBytes = []byte(\"len=\")\n\tcapEqualsBytes = []byte(\"cap=\")\n)\n\n// hexDigits is used to map a decimal value to a hex digit.\nvar hexDigits = \"0123456789abcdef\"\n\n// catchPanic handles any panics that might occur during the handleMethods\n// calls.\nfunc catchPanic(w io.Writer, v reflect.Value) {\n\tif err := recover(); err != nil {\n\t\tw.Write(panicBytes)\n\t\tfmt.Fprintf(w, \"%v\", err)\n\t\tw.Write(closeParenBytes)\n\t}\n}\n\n// handleMethods attempts to call the Error and String methods on the underlying\n// type the passed reflect.Value represents and outputs the result to Writer w.\n//\n// It handles panics in any called methods by catching and displaying the error\n// as the formatted value.\nfunc handleMethods(cs *ConfigState, w io.Writer, v reflect.Value) (handled bool) {\n\t// We need an interface to check if the type implements the error or\n\t// Stringer interface. However, the reflect package won't give us an\n\t// interface on certain things like unexported struct fields in order\n\t// to enforce visibility rules. 
We use unsafe, when it's available,\n\t// to bypass these restrictions since this package does not mutate the\n\t// values.\n\tif !v.CanInterface() {\n\t\tif UnsafeDisabled {\n\t\t\treturn false\n\t\t}\n\n\t\tv = unsafeReflectValue(v)\n\t}\n\n\t// Choose whether or not to do error and Stringer interface lookups against\n\t// the base type or a pointer to the base type depending on settings.\n\t// Technically calling one of these methods with a pointer receiver can\n\t// mutate the value, however, types which choose to satisfy an error or\n\t// Stringer interface with a pointer receiver should not be mutating their\n\t// state inside these interface methods.\n\tif !cs.DisablePointerMethods && !UnsafeDisabled && !v.CanAddr() {\n\t\tv = unsafeReflectValue(v)\n\t}\n\tif v.CanAddr() {\n\t\tv = v.Addr()\n\t}\n\n\t// Is it an error or Stringer?\n\tswitch iface := v.Interface().(type) {\n\tcase error:\n\t\tdefer catchPanic(w, v)\n\t\tif cs.ContinueOnMethod {\n\t\t\tw.Write(openParenBytes)\n\t\t\tw.Write([]byte(iface.Error()))\n\t\t\tw.Write(closeParenBytes)\n\t\t\tw.Write(spaceBytes)\n\t\t\treturn false\n\t\t}\n\n\t\tw.Write([]byte(iface.Error()))\n\t\treturn true\n\n\tcase fmt.Stringer:\n\t\tdefer catchPanic(w, v)\n\t\tif cs.ContinueOnMethod {\n\t\t\tw.Write(openParenBytes)\n\t\t\tw.Write([]byte(iface.String()))\n\t\t\tw.Write(closeParenBytes)\n\t\t\tw.Write(spaceBytes)\n\t\t\treturn false\n\t\t}\n\t\tw.Write([]byte(iface.String()))\n\t\treturn true\n\t}\n\treturn false\n}\n\n// printBool outputs a boolean value as true or false to Writer w.\nfunc printBool(w io.Writer, val bool) {\n\tif val {\n\t\tw.Write(trueBytes)\n\t} else {\n\t\tw.Write(falseBytes)\n\t}\n}\n\n// printInt outputs a signed integer value to Writer w.\nfunc printInt(w io.Writer, val int64, base int) {\n\tw.Write([]byte(strconv.FormatInt(val, base)))\n}\n\n// printUint outputs an unsigned integer value to Writer w.\nfunc printUint(w io.Writer, val uint64, base int) 
{\n\tw.Write([]byte(strconv.FormatUint(val, base)))\n}\n\n// printFloat outputs a floating point value using the specified precision,\n// which is expected to be 32 or 64bit, to Writer w.\nfunc printFloat(w io.Writer, val float64, precision int) {\n\tw.Write([]byte(strconv.FormatFloat(val, 'g', -1, precision)))\n}\n\n// printComplex outputs a complex value using the specified float precision\n// for the real and imaginary parts to Writer w.\nfunc printComplex(w io.Writer, c complex128, floatPrecision int) {\n\tr := real(c)\n\tw.Write(openParenBytes)\n\tw.Write([]byte(strconv.FormatFloat(r, 'g', -1, floatPrecision)))\n\ti := imag(c)\n\tif i >= 0 {\n\t\tw.Write(plusBytes)\n\t}\n\tw.Write([]byte(strconv.FormatFloat(i, 'g', -1, floatPrecision)))\n\tw.Write(iBytes)\n\tw.Write(closeParenBytes)\n}\n\n// printHexPtr outputs a uintptr formatted as hexadecimal with a leading '0x'\n// prefix to Writer w.\nfunc printHexPtr(w io.Writer, p uintptr) {\n\t// Null pointer.\n\tnum := uint64(p)\n\tif num == 0 {\n\t\tw.Write(nilAngleBytes)\n\t\treturn\n\t}\n\n\t// Max uint64 is 16 bytes in hex + 2 bytes for '0x' prefix\n\tbuf := make([]byte, 18)\n\n\t// It's simpler to construct the hex string right to left.\n\tbase := uint64(16)\n\ti := len(buf) - 1\n\tfor num >= base {\n\t\tbuf[i] = hexDigits[num%base]\n\t\tnum /= base\n\t\ti--\n\t}\n\tbuf[i] = hexDigits[num]\n\n\t// Add '0x' prefix.\n\ti--\n\tbuf[i] = 'x'\n\ti--\n\tbuf[i] = '0'\n\n\t// Strip unused leading bytes.\n\tbuf = buf[i:]\n\tw.Write(buf)\n}\n\n// valuesSorter implements sort.Interface to allow a slice of reflect.Value\n// elements to be sorted.\ntype valuesSorter struct {\n\tvalues []reflect.Value\n\tstrings []string // either nil or same len and values\n\tcs *ConfigState\n}\n\n// newValuesSorter initializes a valuesSorter instance, which holds a set of\n// surrogate keys on which the data should be sorted. 
It uses flags in\n// ConfigState to decide if and how to populate those surrogate keys.\nfunc newValuesSorter(values []reflect.Value, cs *ConfigState) sort.Interface {\n\tvs := &valuesSorter{values: values, cs: cs}\n\tif canSortSimply(vs.values[0].Kind()) {\n\t\treturn vs\n\t}\n\tif !cs.DisableMethods {\n\t\tvs.strings = make([]string, len(values))\n\t\tfor i := range vs.values {\n\t\t\tb := bytes.Buffer{}\n\t\t\tif !handleMethods(cs, &b, vs.values[i]) {\n\t\t\t\tvs.strings = nil\n\t\t\t\tbreak\n\t\t\t}\n\t\t\tvs.strings[i] = b.String()\n\t\t}\n\t}\n\tif vs.strings == nil && cs.SpewKeys {\n\t\tvs.strings = make([]string, len(values))\n\t\tfor i := range vs.values {\n\t\t\tvs.strings[i] = Sprintf(\"%#v\", vs.values[i].Interface())\n\t\t}\n\t}\n\treturn vs\n}\n\n// canSortSimply tests whether a reflect.Kind is a primitive that can be sorted\n// directly, or whether it should be considered for sorting by surrogate keys\n// (if the ConfigState allows it).\nfunc canSortSimply(kind reflect.Kind) bool {\n\t// This switch parallels valueSortLess, except for the default case.\n\tswitch kind {\n\tcase reflect.Bool:\n\t\treturn true\n\tcase reflect.Int8, reflect.Int16, reflect.Int32, reflect.Int64, reflect.Int:\n\t\treturn true\n\tcase reflect.Uint8, reflect.Uint16, reflect.Uint32, reflect.Uint64, reflect.Uint:\n\t\treturn true\n\tcase reflect.Float32, reflect.Float64:\n\t\treturn true\n\tcase reflect.String:\n\t\treturn true\n\tcase reflect.Uintptr:\n\t\treturn true\n\tcase reflect.Array:\n\t\treturn true\n\t}\n\treturn false\n}\n\n// Len returns the number of values in the slice. It is part of the\n// sort.Interface implementation.\nfunc (s *valuesSorter) Len() int {\n\treturn len(s.values)\n}\n\n// Swap swaps the values at the passed indices. 
It is part of the\n// sort.Interface implementation.\nfunc (s *valuesSorter) Swap(i, j int) {\n\ts.values[i], s.values[j] = s.values[j], s.values[i]\n\tif s.strings != nil {\n\t\ts.strings[i], s.strings[j] = s.strings[j], s.strings[i]\n\t}\n}\n\n// valueSortLess returns whether the first value should sort before the second\n// value. It is used by valuesSorter.Less as part of the sort.Interface\n// implementation.\nfunc valueSortLess(a, b reflect.Value) bool {\n\tswitch a.Kind() {\n\tcase reflect.Bool:\n\t\treturn !a.Bool() && b.Bool()\n\tcase reflect.Int8, reflect.Int16, reflect.Int32, reflect.Int64, reflect.Int:\n\t\treturn a.Int() < b.Int()\n\tcase reflect.Uint8, reflect.Uint16, reflect.Uint32, reflect.Uint64, reflect.Uint:\n\t\treturn a.Uint() < b.Uint()\n\tcase reflect.Float32, reflect.Float64:\n\t\treturn a.Float() < b.Float()\n\tcase reflect.String:\n\t\treturn a.String() < b.String()\n\tcase reflect.Uintptr:\n\t\treturn a.Uint() < b.Uint()\n\tcase reflect.Array:\n\t\t// Compare the contents of both arrays.\n\t\tl := a.Len()\n\t\tfor i := 0; i < l; i++ {\n\t\t\tav := a.Index(i)\n\t\t\tbv := b.Index(i)\n\t\t\tif av.Interface() == bv.Interface() {\n\t\t\t\tcontinue\n\t\t\t}\n\t\t\treturn valueSortLess(av, bv)\n\t\t}\n\t}\n\treturn a.String() < b.String()\n}\n\n// Less returns whether the value at index i should sort before the\n// value at index j. It is part of the sort.Interface implementation.\nfunc (s *valuesSorter) Less(i, j int) bool {\n\tif s.strings == nil {\n\t\treturn valueSortLess(s.values[i], s.values[j])\n\t}\n\treturn s.strings[i] < s.strings[j]\n}\n\n// sortValues is a sort function that handles both native types and any type that\n// can be converted to error or Stringer. Other inputs are sorted according to\n// their Value.String() value to ensure display stability.\nfunc sortValues(values []reflect.Value, cs *ConfigState) {\n\tif len(values) == 0 {\n\t\treturn\n\t}\n\tsort.Sort(newValuesSorter(values, cs))\n}\n"

},

{

"path": "vendor/github.com/davecgh/go-spew/spew/common_test.go",