Repository: Pandora-Intelligence/concise-concepts

Branch: main

Commit: f31d1c3aa5a9

Files: 20

Total size: 49.2 KB

Directory structure:

gitextract_05q4s6rh/

├── .github/

│ └── workflows/

│ ├── python-package.yml

│ └── python-publish.yml

├── .gitignore

├── .pre-commit-config.yaml

├── CITATION.cff

├── LICENSE

├── README.md

├── concise_concepts/

│ ├── __init__.py

│ ├── conceptualizer/

│ │ ├── Conceptualizer.py

│ │ └── __init__.py

│ └── examples/

│ ├── __init__.py

│ ├── data.py

│ ├── example_gensim_custom_model.py

│ ├── example_gensim_custom_path.py

│ ├── example_gensim_default.py

│ └── example_spacy.py

├── pyproject.toml

├── setup.cfg

└── tests/

├── __init__.py

└── test_model_import.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/workflows/python-package.yml

================================================

# This workflow will install Python dependencies, run tests and lint with a variety of Python versions

# For more information see: https://help.github.com/actions/language-and-framework-guides/using-python-with-github-actions

name: Python package

on:

push:

branches: [main]

pull_request:

branches: [main]

jobs:

build:

runs-on: ubuntu-latest

strategy:

fail-fast: false

matrix:

python-version: ["3.8", "3.9", "3.10"]

steps:

- uses: actions/checkout@v3

- name: Set up Python ${{ matrix.python-version }}

uses: actions/setup-python@v3

with:

python-version: ${{ matrix.python-version }}

- name: Install dependencies

run: |

python -m pip install --upgrade pip

python -m pip install flake8 pytest pytest-cov

python -m pip install poetry

poetry export -f requirements.txt -o requirements.txt --without-hashes

if [ -f requirements.txt ]; then pip install -r requirements.txt; fi

python -m spacy download en_core_web_md

- name: Lint with flake8

run: |

# stop the build if there are Python syntax errors or undefined names

flake8 . --count --max-complexity=18 --enable=W0614 --select=C,E,F,W,B,B950 --ignore=E203,E266,E501,W503 --exclude=.git,__pycache__,build,dist --max-line-length=119 --show-source --statistics

- name: Test with pytest

run: |

pytest --doctest-modules --junitxml=junit/test-results.xml --cov=com --cov-report=xml --cov-report=html

================================================

FILE: .github/workflows/python-publish.yml

================================================

# This workflow will upload a Python Package using Twine when a release is created

# For more information see: https://help.github.com/en/actions/language-and-framework-guides/using-python-with-github-actions#publishing-to-package-registries

# This workflow uses actions that are not certified by GitHub.

# They are provided by a third-party and are governed by

# separate terms of service, privacy policy, and support

# documentation.

name: Upload Python Package

on:

release:

types: [created]

permissions:

contents: read

jobs:

deploy:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v3

- name: Set up Python

uses: actions/setup-python@v3

with:

python-version: '3.x'

- name: Install dependencies

run: |

python -m pip install --upgrade pip

pip install build

- name: Build package

run: python -m build

- name: Publish package

uses: pypa/gh-action-pypi-publish@27b31702a0e7fc50959f5ad993c78deac1bdfc29

with:

user: __token__

password: ${{ secrets.PYPI_API_TOKEN }}

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

/test_spacy.py

.model

/concise_concepts/word2vec.model.vectors.npy

/test.html

# Downloaded models

*.model

*.model.*

*.json

test.py

s2v_old

================================================

FILE: .pre-commit-config.yaml

================================================

repos:

- repo: https://github.com/pre-commit/pre-commit-hooks

rev: v4.0.1

hooks:

- id: check-added-large-files

- id: end-of-file-fixer

- id: check-ast

- id: check-case-conflict

- id: check-docstring-first

- id: check-merge-conflict

- id: check-symlinks

- id: check-toml

- id: check-xml

- id: check-yaml

- id: destroyed-symlinks

- id: detect-private-key

- id: fix-encoding-pragma

- repo: https://github.com/psf/black

rev: 22.3.0

hooks:

- id: black

- id: black-jupyter

# Execute isort on all changed files (make sure the version is the same as in pyproject)

- repo: https://github.com/pycqa/isort

rev: 5.10.1

hooks:

- id: isort

# Execute flake8 on all changed files (make sure the version is the same as in pyproject)

- repo: https://github.com/pycqa/flake8

rev: 4.0.1

hooks:

- id: flake8

additional_dependencies:

["flake8-docstrings", "flake8-bugbear", "pep8-naming"]

================================================

FILE: CITATION.cff

================================================

cff-version: 1.0.0

message: "If you use this software, please cite it as below."

authors:

- family-names: David

given-names: Berenstein

title: "Concise Concepts - an easy and intuitive approach to few-shot NER using most similar expansion over spaCy embeddings."

version: 0.7.3

date-released: 2022-12-31

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2022 Pandora Intelligence

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

# Concise Concepts

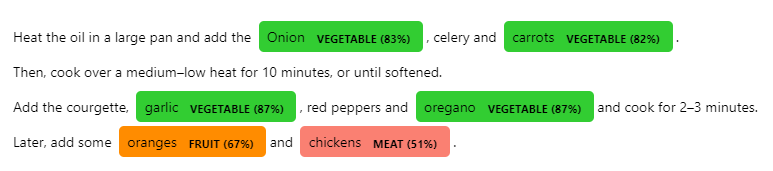

When wanting to apply NER to concise concepts, it is really easy to come up with examples, but pretty difficult to train an entire pipeline. Concise Concepts uses few-shot NER based on word embedding similarity to get you going

with easy! Now with entity scoring!

[](https://github.com/Pandora-Intelligence/concise-concepts/actions/workflows/python-package.yml)

[](https://github.com/pandora-intelligence/concise-concepts/releases)

[](https://pypi.org/project/concise-concepts/)

[](https://pypi.org/project/concise-concepts/)

[](https://github.com/ambv/black)

## Usage

This library defines matching patterns based on the most similar words found in each group, which are used to fill a [spaCy EntityRuler](https://spacy.io/api/entityruler). To better understand the rule definition, I recommend playing around with the [spaCy Rule-based Matcher Explorer](https://demos.explosion.ai/matcher).

### Tutorials

- [TechVizTheDataScienceGuy](https://www.youtube.com/c/TechVizTheDataScienceGuy) created a [nice tutorial](https://prakhar-mishra.medium.com/few-shot-named-entity-recognition-in-natural-language-processing-92d31f0d1143) on how to use it.

- [I](https://www.linkedin.com/in/david-berenstein-1bab11105/) created a [tutorial](https://www.rubrix.ml/blog/concise-concepts-rubrix/) in collaboration with Rubrix.

The section [Matching Pattern Rules](#matching-pattern-rules) expands on the construction, analysis and customization of these matching patterns.

# Install

```

pip install concise-concepts

```

# Quickstart

Take a look at the [configuration section](#configuration) for more info.

## Spacy Pipeline Component

Note that, [custom embedding models](#custom-embedding-models) are passed via `model_path`.

```python

import spacy

from spacy import displacy

data = {

"fruit": ["apple", "pear", "orange"],

"vegetable": ["broccoli", "spinach", "tomato"],

"meat": ['beef', 'pork', 'turkey', 'duck']

}

text = """

Heat the oil in a large pan and add the Onion, celery and carrots.

Then, cook over a medium–low heat for 10 minutes, or until softened.

Add the courgette, garlic, red peppers and oregano and cook for 2–3 minutes.

Later, add some oranges and chickens. """

nlp = spacy.load("en_core_web_md", disable=["ner"])

nlp.add_pipe(

"concise_concepts",

config={

"data": data,

"ent_score": True, # Entity Scoring section

"verbose": True,

"exclude_pos": ["VERB", "AUX"],

"exclude_dep": ["DOBJ", "PCOMP"],

"include_compound_words": False,

"json_path": "./fruitful_patterns.json",

"topn": (100,500,300)

},

)

doc = nlp(text)

options = {

"colors": {"fruit": "darkorange", "vegetable": "limegreen", "meat": "salmon"},

"ents": ["fruit", "vegetable", "meat"],

}

ents = doc.ents

for ent in ents:

new_label = f"{ent.label_} ({ent._.ent_score:.0%})"

options["colors"][new_label] = options["colors"].get(ent.label_.lower(), None)

options["ents"].append(new_label)

ent.label_ = new_label

doc.ents = ents

displacy.render(doc, style="ent", options=options)

```

## Standalone

This might be useful when iterating over few_shot training data when not wanting to reload larger models continuously.

Note that, [custom embedding models](#custom-embedding-models) are passed via `model`.

```python

import gensim

import spacy

from concise_concepts import Conceptualizer

model = gensim.downloader.load("fasttext-wiki-news-subwords-300")

nlp = spacy.load("en_core_web_sm")

data = {

"disease": ["cancer", "diabetes", "heart disease", "influenza", "pneumonia"],

"symptom": ["headache", "fever", "cough", "nausea", "vomiting", "diarrhea"],

}

conceptualizer = Conceptualizer(nlp, data, model)

conceptualizer.nlp("I have a headache and a fever.").ents

data = {

"disease": ["cancer", "diabetes"],

"symptom": ["headache", "fever"],

}

conceptualizer = Conceptualizer(nlp, data, model)

conceptualizer.nlp("I have a headache and a fever.").ents

```

# Configuration

## Matching Pattern Rules

A general introduction about the usage of matching patterns in the [usage section](#usage).

### Customizing Matching Pattern Rules

Even though the baseline parameters provide a decent result, the construction of these matching rules can be customized via the config passed to the spaCy pipeline.

- `exclude_pos`: A list of POS tags to be excluded from the rule-based match.

- `exclude_dep`: A list of dependencies to be excluded from the rule-based match.

- `include_compound_words`: If True, it will include compound words in the entity. For example, if the entity is "New York", it will also include "New York City" as an entity.

- `case_sensitive`: Whether to match the case of the words in the text.

### Analyze Matching Pattern Rules

To motivate actually looking at the data and support interpretability, the matching patterns that have been generated are stored as `./main_patterns.json`. This behavior can be changed by using the `json_path` variable via the config passed to the spaCy pipeline.

## Fuzzy matching using `spaczz`

- `fuzzy`: A boolean value that determines whether to use fuzzy matching

```python

data = {

"fruit": ["apple", "pear", "orange"],

"vegetable": ["broccoli", "spinach", "tomato"],

"meat": ["beef", "pork", "fish", "lamb"]

}

nlp.add_pipe("concise_concepts", config={"data": data, "fuzzy": True})

```

## Most Similar Word Expansion

- `topn`: Use a specific number of words to expand over.

```python

data = {

"fruit": ["apple", "pear", "orange"],

"vegetable": ["broccoli", "spinach", "tomato"],

"meat": ["beef", "pork", "fish", "lamb"]

}

topn = [50, 50, 150]

assert len(topn) == len

nlp.add_pipe("concise_concepts", config={"data": data, "topn": topn})

```

## Entity Scoring

- `ent_score`: Use embedding based word similarity to score entities against their groups

```python

import spacy

data = {

"ORG": ["Google", "Apple", "Amazon"],

"GPE": ["Netherlands", "France", "China"],

}

text = """Sony was founded in Japan."""

nlp = spacy.load("en_core_web_lg")

nlp.add_pipe("concise_concepts", config={"data": data, "ent_score": True, "case_sensitive": True})

doc = nlp(text)

print([(ent.text, ent.label_, ent._.ent_score) for ent in doc.ents])

# output

#

# [('Sony', 'ORG', 0.5207586), ('Japan', 'GPE', 0.7371268)]

```

## Custom Embedding Models

- `model_path`: Use custom `sense2vec.Sense2Vec`, `gensim.Word2vec` `gensim.FastText`, or `gensim.KeyedVectors`, or a pretrained model from [gensim](https://radimrehurek.com/gensim/downloader.html) library or a custom model path. For using a `sense2vec.Sense2Vec` take a look [here](https://github.com/explosion/sense2vec#pretrained-vectors).

- `model`: within [standalone usage](#standalone), it is possible to pass these models directly.

```python

data = {

"fruit": ["apple", "pear", "orange"],

"vegetable": ["broccoli", "spinach", "tomato"],

"meat": ["beef", "pork", "fish", "lamb"]

}

# model from https://radimrehurek.com/gensim/downloader.html or path to local file

model_path = "glove-wiki-gigaword-300"

nlp.add_pipe("concise_concepts", config={"data": data, "model_path": model_path})

````

================================================

FILE: concise_concepts/__init__.py

================================================

# -*- coding: utf-8 -*-

from typing import List, Union

from gensim.models import FastText, Word2Vec

from gensim.models.keyedvectors import KeyedVectors

from spacy.language import Language

from .conceptualizer import Conceptualizer

@Language.factory(

"concise_concepts",

default_config={

"data": None,

"topn": None,

"model_path": None,

"word_delimiter": "_",

"ent_score": False,

"exclude_pos": [

"VERB",

"AUX",

"ADP",

"DET",

"CCONJ",

"PUNCT",

"ADV",

"ADJ",

"PART",

"PRON",

],

"exclude_dep": [],

"include_compound_words": False,

"fuzzy": False,

"case_sensitive": False,

"json_path": "./matching_patterns.json",

"verbose": True,

},

)

def make_concise_concepts(

nlp: Language,

name: str,

data: Union[dict, list],

topn: Union[list, None],

model_path: Union[str, FastText, Word2Vec, KeyedVectors, None],

word_delimiter: str,

ent_score: bool,

exclude_pos: List[str],

exclude_dep: List[str],

include_compound_words: bool,

fuzzy: bool,

case_sensitive: bool,

json_path: str,

verbose: bool,

):

return Conceptualizer(

nlp=nlp,

data=data,

topn=topn,

model=model_path,

word_delimiter=word_delimiter,

ent_score=ent_score,

exclude_pos=exclude_pos,

exclude_dep=exclude_dep,

include_compound_words=include_compound_words,

fuzzy=fuzzy,

case_sensitive=case_sensitive,

json_path=json_path,

verbose=verbose,

name=name,

)

================================================

FILE: concise_concepts/conceptualizer/Conceptualizer.py

================================================

# -*- coding: utf-8 -*-

import json

import logging

import re

import types

from copy import deepcopy

from pathlib import Path

from typing import List, Union

import gensim.downloader

import spaczz # noqa: F401

from gensim import matutils # utility fnc for pickling, common scipy operations etc

from gensim.models import FastText, Word2Vec

from gensim.models.keyedvectors import KeyedVectors

from numpy import argmax, dot

from sense2vec import Sense2Vec

from spacy import Language, util

from spacy.tokens import Doc, Span

logger = logging.getLogger(__name__)

POS_LIST = [

"ADJ",

"ADP",

"ADV",

"AUX",

"CONJ",

"CCONJ",

"DET",

"INTJ",

"NOUN",

"NUM",

"PART",

"PRON",

"PROPN",

"PUNCT",

"SCONJ",

"SYM",

"VERB",

"X",

"SPACE",

]

class Conceptualizer:

def __init__(

self,

nlp: Language,

data: dict = {},

model: Union[str, FastText, KeyedVectors, Word2Vec] = None,

topn: list = None,

word_delimiter: str = "_",

ent_score: bool = False,

exclude_pos: list = None,

exclude_dep: list = None,

include_compound_words: bool = False,

case_sensitive: bool = False,

fuzzy: bool = False,

json_path: str = "./matching_patterns.json",

verbose: bool = True,

name: str = "concise_concepts",

):

"""

The function takes in a dictionary of words and their synonyms, and then creates a new dictionary of words and

their synonyms, but with the words in the new dictionary all in uppercase

:param nlp: The spaCy model to use.

:type nlp: Language

:param name: The name of the entity.

:type name: str

:param data: A dictionary of the words you want to match. The keys are the classes you want to match,

and the values are the words you want to expand over.

:type data: dict

:param topn: The number of words to be returned for each class.

:type topn: list

:param model_path: The path to the model you want to use. If you don't have a model, you can use the spaCy one.

:param word_delimiter: The delimiter used to separate words in model the dictionary, defaults to _ (optional)

:param ent_score: If True, the extension "ent_score" will be added to the Span object. This will be the score of

the entity, defaults to False (optional)

:param exclude_pos: A list of POS tags to exclude from the rule based match

:param exclude_dep: list of dependencies to exclude from the rule based match

:param include_compound_words: If True, it will include compound words in the entity. For example,

if the entity is "New York", it will also include "New York City" as an entity, defaults to False (optional)

:param case_sensitive: Whether to match the case of the words in the text, defaults to False (optional)

"""

assert data, ValueError("You must provide a dictionary of words to match")

self.verbose = verbose

self.log_cache = {"key": list(), "word": list(), "key_word": list()}

if Span.has_extension("ent_score"):

Span.remove_extension("ent_score")

if ent_score:

Span.set_extension("ent_score", default=None)

self.ent_score = ent_score

self.data = data

self.name = name

self.nlp = nlp

self.fuzzy = fuzzy

self.topn = topn

self.model = model

self.match_rule = {}

self.set_exclude_pos(exclude_pos)

self.set_exclude_dep(exclude_dep)

self.json_path = json_path

self.include_compound_words = include_compound_words

self.case_sensitive = case_sensitive

self.word_delimiter = word_delimiter

if "lemmatizer" not in self.nlp.component_names:

logger.warning(

"No lemmatizer found in spacy pipeline. Consider adding it for matching"

" on LEMMA instead of exact text."

)

self.match_key = "TEXT"

else:

self.match_key = "LEMMA"

for ruler in ["entity_ruler", "spaczz_ruler"]:

if ruler in self.nlp.component_names:

logger.warning(

f"{ruler} already exists in the pipeline. Removing old rulers"

)

self.nlp.remove_pipe(ruler)

self.run()

def set_exclude_dep(self, exclude_dep: list):

if exclude_dep is None:

exclude_dep = []

if exclude_dep:

self.match_rule["DEP"] = {"NOT_IN": exclude_dep}

def set_exclude_pos(self, exclude_pos: list):

if exclude_pos is None:

exclude_pos = [

"VERB",

"AUX",

"ADP",

"DET",

"CCONJ",

"PUNCT",

"ADV",

"ADJ",

"PART",

"PRON",

]

if exclude_pos:

self.match_rule["POS"] = {"NOT_IN": exclude_pos}

self.exclude_pos = exclude_pos

else:

self.exclude_pos = []

def run(self) -> None:

self.check_validity_path()

self.set_gensim_model()

self.verify_data(self.verbose)

self.determine_topn()

self.expand_concepts()

# settle words around overlapping concepts

for _ in range(5):

self.expand_concepts()

self.infer_original_data()

self.resolve_overlapping_concepts()

self.infer_original_data()

self.create_conceptual_patterns()

self.set_concept_dict()

if not self.ent_score:

del self.kv

self.data_upper = {k.upper(): v for k, v in self.data.items()}

def check_validity_path(self) -> None:

"""

If the path is a file, create the parent directory if it doesn't exist. If the path is a directory, create the

directory and set the path to the default file name

"""

if self.json_path:

if Path(self.json_path).suffix:

Path(self.json_path).parents[0].mkdir(parents=True, exist_ok=True)

else:

Path(self.json_path).mkdir(parents=True, exist_ok=True)

old_path = str(self.json_path)

self.json_path = Path(self.json_path) / "matching_patterns.json"

logger.warning(

f"Path ´{old_path} is a directory, not a file. Setting"

f" ´json_path´to {self.json_path}"

)

def determine_topn(self) -> None:

"""

If the user doesn't specify a topn value for each class,

then the topn value for each class is set to 100

"""

if self.topn is None:

self.topn_dict = {key: 100 for key in self.data}

else:

num_classes = len(self.data)

assert (

len(self.topn) == num_classes

), f"Provide a topn integer for each of the {num_classes} classes."

self.topn_dict = dict(zip(self.data, self.topn))

def set_gensim_model(self) -> None:

"""

If the model_path is not None, then we try to load the model from the path.

If it's not a valid path, then we raise an exception.

If the model_path is None, then we load the model from the internal embeddings of the spacy model

"""

if isinstance(self.model, str):

if self.model:

available_models = gensim.downloader.info()["models"]

if self.model in available_models:

self.kv = gensim.downloader.load(self.model)

else:

try:

self.kv = Sense2Vec().from_disk(self.model)

except Exception as e0:

try:

self.kv = FastText.load(self.model).wv

except Exception as e1:

try:

self.kv = Word2Vec.load(self.model).wv

except Exception as e2:

try:

self.kv = KeyedVectors.load(self.model)

except Exception as e3:

try:

self.kv = KeyedVectors.load_word2vec_format(

self.model, binary=True

)

except Exception as e4:

raise Exception(

"Not a valid model.Sense2Vec, FastText,"

f" Word2Vec, KeyedVectors.\n {e0}\n {e1}\n"

f" {e2}\n {e3}\n {e4}"

)

elif isinstance(self.model, (FastText, Word2Vec)):

self.kv = self.model.wv

elif isinstance(self.model, KeyedVectors):

self.kv = self.model

elif isinstance(self.model, Sense2Vec):

self.kv = self.model

else:

wordList = []

vectorList = []

assert len(

self.nlp.vocab.vectors

), "Choose a spaCy model with internal embeddings, e.g. md or lg."

for key, vector in self.nlp.vocab.vectors.items():

wordList.append(self.nlp.vocab.strings[key])

vectorList.append(vector)

self.kv = KeyedVectors(self.nlp.vocab.vectors_length)

self.kv.add_vectors(wordList, vectorList)

def verify_data(self, verbose: bool = True) -> None:

"""

It takes a dictionary of lists of words, and returns a dictionary of lists of words,

where each word in the list is present in the word2vec model

"""

verified_data: dict[str, list[str]] = dict()

for key, value in self.data.items():

verified_values = []

present_key = self._check_presence_vocab(key)

if present_key:

key = present_key

if not present_key and verbose and key not in self.log_cache["key"]:

logger.warning(f"key ´{key}´ not present in vector model")

self.log_cache["key"].append(key)

for word in value:

present_word = self._check_presence_vocab(word)

if present_word:

verified_values.append(present_word)

elif verbose and word not in self.log_cache["word"]:

logger.warning(

f"word ´{word}´ from key ´{key}´ not present in vector model"

)

self.log_cache["word"].append(word)

verified_data[key] = verified_values

if not len(verified_values):

msg = (

f"None of the entries for key {key} are present in the vector"

" model. "

)

if present_key:

logger.warning(

msg + f"Using {present_key} as word to expand over instead."

)

verified_data[key] = present_key

else:

raise Exception(msg)

self.data = deepcopy(verified_data)

self.original_data = deepcopy(verified_data)

def expand_concepts(self) -> None:

"""

For each key in the data dictionary, find the topn most similar words to the key and the values in the data

dictionary, and add those words to the values in the data dictionary

"""

for key in self.data:

present_key = self._check_presence_vocab(key)

if present_key:

key_list = [present_key]

else:

key_list = []

if isinstance(self.kv, Sense2Vec):

similar = self.kv.most_similar(

self.data[key] + key_list,

n=self.topn_dict[key],

)

else:

similar = self.kv.most_similar(

self.data[key] + key_list,

topn=self.topn_dict[key],

)

self.data[key] = list({word for word, _ratio in similar})

def resolve_overlapping_concepts(self) -> None:

"""

It removes words from the data that are in other concepts, and then removes words that are not closest to the

centroid of the concept

"""

for key in self.data:

self.data[key] = [

word

for word in self.data[key]

if key == self.most_similar_to_given(word, list(self.data.keys()))

]

def most_similar_to_given(self, key1, keys_list):

"""Get the `key` from `keys_list` most similar to `key1`."""

return keys_list[argmax([self.similarity(key1, key) for key in keys_list])]

def similarity(self, w1, w2):

"""Compute cosine similarity between two keys.

Parameters

----------

w1 : str

Input key.

w2 : str

Input key.

Returns

-------

float

Cosine similarity between `w1` and `w2`.

"""

return dot(matutils.unitvec(self.kv[w1]), matutils.unitvec(self.kv[w2]))

def infer_original_data(self) -> None:

"""

It takes the original data and adds the new data to it, then removes the new data from the original data.

"""

for key in self.data:

self.data[key] = list(set(self.data[key] + self.original_data[key]))

for key_x in self.data:

for key_y in self.data:

if key_x != key_y:

self.data[key_x] = [

word

for word in self.data[key_x]

if word not in self.original_data[key_y]

]

def lemmatize_concepts(self) -> None:

"""

For each key in the data dictionary,

the function takes the list of concepts associated with that key, and lemmatizes

each concept.

"""

for key in self.data:

self.data[key] = list(

set([doc[0].lemma_ for doc in self.nlp.pipe(self.data[key])])

)

def create_conceptual_patterns(self) -> None:

"""

For each key in the data dictionary,

create a pattern for each word in the list of words associated with that key.

The pattern is a dictionary with three keys:

1. "lemma"

2. "POS"

3. "DEP"

The value for each key is another dictionary with one key and one value.

The key is either "regex" or "NOT_IN" or "IN".

The value is either a regular expression or a list of strings.

The regular expression is the word associated with the key in the data dictionary.

The list of strings is either ["VERB"] or ["nsubjpass"] or ["amod", "compound"].

The regular expression is case insensitive.

The pattern is

"""

lemma_patterns = []

fuzzy_patterns = []

def add_patterns(input_dict: dict) -> None:

"""

It creates a list of dictionaries that can be used for a spaCy entity ruler

:param input_dict: a dictionary

:type input_dict: dict

"""

if isinstance(self.kv, Sense2Vec):

input_dict = {

key.split("|")[0]: [word.split("|")[0] for word in value]

for key, value in input_dict.items()

}

for key in input_dict:

words = input_dict[key]

for word in words:

if word != key:

word_parts = self._split_word(word)

op_pattern = {

"TEXT": {

"REGEX": "|".join([" ", "-", "_", "/"]),

"OP": "*",

}

}

partial_pattern_parts = []

lemma_pattern_parts = []

for partial_pattern in word_parts:

word_part = partial_pattern

if self.fuzzy:

partial_pattern = {

"FUZZY": word_part,

}

partial_pattern = {"TEXT": partial_pattern}

lemma_pattern_parts.append({self.match_key: word_part})

lemma_pattern_parts.append(op_pattern)

partial_pattern_parts.append(partial_pattern)

partial_pattern_parts.append(op_pattern)

pattern = {

"label": key.upper(),

"pattern": partial_pattern_parts[:-1],

"id": f"{word}_individual",

}

# add fuzzy matching formatting if fuzzy matching is enabled

fuzzy_patterns.append(pattern)

# add lemmma matching

if lemma_pattern_parts:

lemma_pattern = {

"label": key.upper(),

"pattern": lemma_pattern_parts[:-1],

"id": f"{word}_lemma_individual",

}

lemma_patterns.append(lemma_pattern)

if self.include_compound_words:

compound_rule = [

{

"DEP": {"IN": ["amod", "compound"]},

"OP": "*",

}

]

partial_pattern_parts.append(

{

"label": key.upper(),

"pattern": compound_rule

+ partial_pattern_parts[:-1]

+ compound_rule,

"id": f"{word}_compound",

}

)

if lemma_pattern_parts:

lemma_patterns.append(

{

"label": key.upper(),

"pattern": compound_rule

+ lemma_pattern_parts[:-1]

+ compound_rule,

"id": f"{word}_lemma_compound",

}

)

add_patterns(self.data)

if self.json_path:

with open(self.json_path, "w") as f:

json.dump(lemma_patterns + fuzzy_patterns, f)

config = {"overwrite_ents": True}

if self.case_sensitive:

config["phrase_matcher_attr"] = "LOWER"

self.ruler = self.nlp.add_pipe("entity_ruler", config=config)

self.ruler.add_patterns(lemma_patterns)

# Add spaczz entity ruler if fuzzy

if self.fuzzy:

for pattern in fuzzy_patterns:

pattern["type"] = "token"

self.fuzzy_ruler = self.nlp.add_pipe("spaczz_ruler", config=config)

self.fuzzy_ruler.add_patterns(fuzzy_patterns)

def __call__(self, doc: Doc) -> Doc:

"""

It takes a doc object and assigns a score to each entity in the doc object

:param doc: Doc

:type doc: Doc

"""

if isinstance(doc, str):

doc = self.nlp(doc)

elif isinstance(doc, Doc):

if self.ent_score:

doc = self.assign_score_to_entities(doc)

return doc

def pipe(self, stream, batch_size=128) -> Doc:

"""

It takes a stream of documents, and for each document,

it assigns a score to each entity in the document

:param stream: a generator of documents

:param batch_size: The number of documents to be processed at a time, defaults to 128 (optional)

"""

if isinstance(stream, str):

stream = [stream]

if not isinstance(stream, types.GeneratorType):

stream = self.nlp.pipe(stream, batch_size=batch_size)

for docs in util.minibatch(stream, size=batch_size):

for doc in docs:

if self.ent_score:

doc = self.assign_score_to_entities(doc)

yield doc

def assign_score_to_entities(self, doc: Doc) -> Doc:

"""

The function takes a spaCy document as input and assigns a score to each entity in the document. The score is

calculated using the word embeddings of the entity and the concept.

The score is assigned to the entity using the

`._.ent_score` attribute

:param doc: Doc

:type doc: Doc

:return: The doc object with the entities and their scores.

"""

ents = doc.ents

for ent in ents:

if ent.label_ in self.data_upper:

ent_text = ent.text

# get word part representations

if self._check_presence_vocab(ent_text):

entity = [self._check_presence_vocab(ent_text)]

else:

entity = []

for part in self._split_word(ent_text):

present_part = self._check_presence_vocab(part)

if present_part:

entity.append(present_part)

# get concepts to match

concept = self.concept_data.get(ent.label_, None)

# compare set similarities

if entity and concept:

ent._.ent_score = self.kv.n_similarity(entity, concept)

else:

ent._.ent_score = 0

if self.verbose:

if f"{ent_text}_{concept}" not in self.log_cache["key_word"]:

logger.warning(

f"Entity ´{ent.text}´ and/or label ´{concept}´ not"

" found in vector model. Nothing to compare to, so"

" setting ent._.ent_score to 0."

)

self.log_cache["key_word"].append(f"{ent_text}_{concept}")

else:

ent._.ent_score = 0

if self.verbose:

if ent.text not in self.log_cache["word"]:

logger.warning(

f"Entity ´{ent.text}´ not found in vector model. Nothing to"

" compare to, so setting ent._.ent_score to 0."

)

self.log_cache["word"].append(ent.text)

doc.ents = ents

return doc

def set_concept_dict(self):

self.concept_data = {k.upper(): v for k, v in self.data.items()}

for ent_label in self.concept_data:

concept = []

for word in self.concept_data[ent_label]:

present_word = self._check_presence_vocab(word)

if present_word:

concept.append(present_word)

self.concept_data[ent_label] = concept

def _split_word(self, word: str) -> List[str]:

"""

It splits a word into a list of subwords, using the word delimiter

:param word: str

:type word: str

:return: A list of strings or any.

"""

return re.split(f"[{re.escape(self.word_delimiter)}]+", word)

def _check_presence_vocab(self, word: str) -> str:

"""

If the word is not lowercase and the case_sensitive flag is set to False, then check if the lowercase version of

the word is in the vocabulary. If it is, return the lowercase version of the word. Otherwise, return the word

itself

:param word: The word to check for presence in the vocabulary

:type word: str

:return: The word itself if it is present in the vocabulary, otherwise the word with the highest probability of

being the word that was intended.

"""

word = word.replace(" ", "_")

if not word.islower() and not self.case_sensitive:

present_word = self.__check_presence_vocab(word.lower())

if present_word:

return present_word

return self.__check_presence_vocab(word)

def __check_presence_vocab(self, word: str) -> str:

"""

If the word is in the vocabulary, return the word. If not, replace spaces and dashes with the word delimiter and

check if the new word is in the vocabulary. If so, return the new word

:param word: str - the word to check

:type word: str

:return: The word or the check_word

"""

if isinstance(self.kv, Sense2Vec):

return self.kv.get_best_sense(word, (set(POS_LIST) - set(self.exclude_pos)))

else:

if word in self.kv:

return word

================================================

FILE: concise_concepts/conceptualizer/__init__.py

================================================

# -*- coding: utf-8 -*-

from .Conceptualizer import Conceptualizer

__all__ = ["Conceptualizer"]

================================================

FILE: concise_concepts/examples/__init__.py

================================================

================================================

FILE: concise_concepts/examples/data.py

================================================

# -*- coding: utf-8 -*-

text = """

Heat the oil in a large pan and add the Onion, celery and carrots.

Then, cook over a medium–low heat for 10 minutes, or until softened.

Add the courgette, garlic, red peppers and oregano and cook for 2–3 minutes.

Later, add some oranges, chickens. """

text_fuzzy = """

Heat the oil in a large pan and add the Onion, celery and carots.

Then, cook over a medium–low heat for 10 minutes, or until softened.

Add the courgette, garlic, red peppers and oregano and cook for 2–3 minutes.

Later, add some oranges, chickens. """

data = {

"fruit": ["apple", "pear", "orange"],

"vegetable": ["broccoli", "spinach", "tomato"],

"meat": ["chicken", "beef", "pork", "fish", "lamb"],

}

================================================

FILE: concise_concepts/examples/example_gensim_custom_model.py

================================================

# -*- coding: utf-8 -*-

import spacy

from gensim.models import Word2Vec

from gensim.test.utils import common_texts

import concise_concepts # noqa: F401

data = {"human": ["trees"], "interface": ["computer"]}

text = (

"believe me, it's the slowest mobile I saw. Don't go on screen and Battery, it is"

" an extremely slow mobile phone and takes ages to open and navigate. Forget about"

" heavy use, it can't handle normal regular use. I made a huge mistake but pls"

" don't buy this mobile. It's only a few months and I am thinking to change it. Its"

" dam SLOW SLOW SLOW."

)

model = Word2Vec(

sentences=common_texts, vector_size=100, window=5, min_count=1, workers=4

)

model.save("word2vec.model")

model_path = "word2vec.model"

nlp = spacy.blank("en")

nlp.add_pipe("concise_concepts", config={"data": data, "model_path": model_path})

================================================

FILE: concise_concepts/examples/example_gensim_custom_path.py

================================================

# -*- coding: utf-8 -*-

import gensim.downloader as api

import spacy

import concise_concepts # noqa: F401

from .data import data, text

model_path = "word2vec.model"

model = api.load("glove-twitter-25")

model.save(model_path)

nlp = spacy.blank("en")

nlp.add_pipe("concise_concepts", config={"data": data, "model_path": model_path})

doc = nlp(text)

print([(ent.text, ent.label_) for ent in doc.ents])

================================================

FILE: concise_concepts/examples/example_gensim_default.py

================================================

# -*- coding: utf-8 -*-

import spacy

import concise_concepts # noqa: F401

from .data import data, text

model_path = "glove-twitter-25"

nlp = spacy.blank("en")

nlp.add_pipe("concise_concepts", config={"data": data, "model_path": model_path})

doc = nlp(text)

print([(ent.text, ent.label_) for ent in doc.ents])

================================================

FILE: concise_concepts/examples/example_spacy.py

================================================

# -*- coding: utf-8 -*-

import spacy

import concise_concepts # noqa: F401

from .data import data, text

nlp = spacy.load("en_core_web_md")

nlp.add_pipe("concise_concepts", config={"data": data})

doc = nlp(text)

print([(ent.text, ent.label_) for ent in doc.ents])

================================================

FILE: pyproject.toml

================================================

[tool.poetry]

name = "concise-concepts"

version = "0.8.1"

description = "This repository contains an easy and intuitive approach to few-shot NER using most similar expansion over spaCy embeddings. Now with entity confidence scores!"

authors = ["David Berenstein <david.m.berenstein@gmail.com>"]

license = "MIT"

readme = "README.md"

homepage = "https://github.com/pandora-intelligence/concise-concepts"

repository = "https://github.com/pandora-intelligence/concise-concepts"

documentation = "https://github.com/pandora-intelligence/concise-concepts"

keywords = ["spacy", "NER", "few-shot classification", "nlu"]

classifiers = [

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3.8",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Topic :: Scientific/Engineering",

"Topic :: Software Development"

]

packages = [{include = "concise_concepts"}]

[tool.poetry.dependencies]

python = ">=3.8,<3.12"

spacy = "^3"

scipy = "^1.7"

gensim = "^4"

spaczz = "^0.5.4"

sense2vec = "^2.0.1"

[tool.poetry.plugins]

[tool.poetry.plugins."spacy_factories"]

"spacy" = "concise_concepts.__init__:make_concise_concepts"

[tool.poetry.group.dev.dependencies]

black = "^22"

flake8 = "^5"

pytest = "^7.1"

pre-commit = "^2.20"

pep8-naming = "^0.13"

flake8-bugbear = "^22.9"

flake8-docstrings = "^1.6"

ipython = "^8.7.0"

ipykernel = "^6.17.1"

[build-system]

requires = ["poetry-core>=1.0.0"]

build-backend = "poetry.core.masonry.api"

[tool.pytest.ini_options]

testpaths = "tests"

[tool.black]

preview = true

[tool.isort]

profile = "black"

src_paths = ["concise_concepts"]

================================================

FILE: setup.cfg

================================================

[flake8]

max-line-length = 119

max-complexity = 18

docstring-convention=google

exclude = .git,__pycache__,build,dist

select = C,E,F,W,B,B950

ignore =

E203,E266,E501,W503

enable =

W0614

per-file-ignores =

test_*.py: D

================================================

FILE: tests/__init__.py

================================================

================================================

FILE: tests/test_model_import.py

================================================

# -*- coding: utf-8 -*-

def test_spacy_embeddings():

from concise_concepts.examples import example_spacy # noqa: F401

def test_gensim_default():

from concise_concepts.examples import example_gensim_default # noqa: F401

def test_gensim_custom_path():

from concise_concepts.examples import example_gensim_custom_path # noqa: F401

def test_gensim_custom_model():

from concise_concepts.examples import example_gensim_custom_model # noqa: F401

def test_standalone_spacy():

import spacy

from concise_concepts import Conceptualizer

nlp = spacy.load("en_core_web_md")

data = {

"disease": ["cancer", "diabetes", "heart disease", "influenza", "pneumonia"],

"symptom": ["headache", "fever", "cough", "nausea", "vomiting", "diarrhea"],

}

conceptualizer = Conceptualizer(nlp, data)

assert (

list(conceptualizer.pipe(["I have a headache and a fever."]))[0].to_json()

== list(conceptualizer.nlp.pipe(["I have a headache and a fever."]))[

0

].to_json()

)

assert (

conceptualizer("I have a headache and a fever.").to_json()

== conceptualizer.nlp("I have a headache and a fever.").to_json()

)

data = {

"disease": ["cancer", "diabetes"],

"symptom": ["headache", "fever"],

}

conceptualizer = Conceptualizer(nlp, data)

def test_standalone_gensim():

import gensim

import spacy

from concise_concepts import Conceptualizer

model_path = "glove-twitter-25"

model = gensim.downloader.load(model_path)

nlp = spacy.load("en_core_web_md")

data = {

"disease": ["cancer", "diabetes", "heart disease", "influenza", "pneumonia"],

"symptom": ["headache", "fever", "cough", "nausea", "vomiting", "diarrhea"],

}

conceptualizer = Conceptualizer(nlp, data, model=model)

print(list(conceptualizer.pipe(["I have a headache and a fever."]))[0].ents)

print(list(conceptualizer.nlp.pipe(["I have a headache and a fever."]))[0].ents)

print(conceptualizer("I have a headache and a fever.").ents)

print(conceptualizer.nlp("I have a headache and a fever.").ents)

def test_spaczz():

# -*- coding: utf-8 -*-

import spacy

import concise_concepts # noqa: F401

from concise_concepts.examples.data import data, text, text_fuzzy

nlp = spacy.load("en_core_web_md")

nlp.add_pipe("concise_concepts", config={"data": data, "fuzzy": True})

assert len(nlp(text).ents) == len(nlp(text_fuzzy).ents)

def test_sense2vec():

# -*- coding: utf-8 -*-

import requests

import spacy

import concise_concepts # noqa: F401

from concise_concepts.examples.data import data, text

model_path = "s2v_old"

# download .tar.gz file an URL

# and extract it to a folder

url = "https://github.com/explosion/sense2vec/releases/download/v1.0.0/s2v_reddit_2015_md.tar.gz"

r = requests.get(url, allow_redirects=True)

open("s2v_reddit_2015_md.tar.gz", "wb").write(r.content)

# extract tar.gz file

filename = "s2v_reddit_2015_md.tar.gz"

import tarfile

tar = tarfile.open(filename, "r:gz")

tar.extractall()

tar.close()

nlp = spacy.load("en_core_web_md")

nlp.add_pipe("concise_concepts", config={"data": data, "model_path": model_path})

assert len(nlp(text).ents)

gitextract_05q4s6rh/

├── .github/

│ └── workflows/

│ ├── python-package.yml

│ └── python-publish.yml

├── .gitignore

├── .pre-commit-config.yaml

├── CITATION.cff

├── LICENSE

├── README.md

├── concise_concepts/

│ ├── __init__.py

│ ├── conceptualizer/

│ │ ├── Conceptualizer.py

│ │ └── __init__.py

│ └── examples/

│ ├── __init__.py

│ ├── data.py

│ ├── example_gensim_custom_model.py

│ ├── example_gensim_custom_path.py

│ ├── example_gensim_default.py

│ └── example_spacy.py

├── pyproject.toml

├── setup.cfg

└── tests/

├── __init__.py

└── test_model_import.py

SYMBOL INDEX (32 symbols across 3 files)

FILE: concise_concepts/__init__.py

function make_concise_concepts (line 39) | def make_concise_concepts(

FILE: concise_concepts/conceptualizer/Conceptualizer.py

class Conceptualizer (line 45) | class Conceptualizer:

method __init__ (line 46) | def __init__(

method set_exclude_dep (line 124) | def set_exclude_dep(self, exclude_dep: list):

method set_exclude_pos (line 130) | def set_exclude_pos(self, exclude_pos: list):

method run (line 150) | def run(self) -> None:

method check_validity_path (line 170) | def check_validity_path(self) -> None:

method determine_topn (line 187) | def determine_topn(self) -> None:

method set_gensim_model (line 201) | def set_gensim_model(self) -> None:

method verify_data (line 257) | def verify_data(self, verbose: bool = True) -> None:

method expand_concepts (line 296) | def expand_concepts(self) -> None:

method resolve_overlapping_concepts (line 319) | def resolve_overlapping_concepts(self) -> None:

method most_similar_to_given (line 331) | def most_similar_to_given(self, key1, keys_list):

method similarity (line 335) | def similarity(self, w1, w2):

method infer_original_data (line 353) | def infer_original_data(self) -> None:

method lemmatize_concepts (line 369) | def lemmatize_concepts(self) -> None:

method create_conceptual_patterns (line 380) | def create_conceptual_patterns(self) -> None:

method __call__ (line 512) | def __call__(self, doc: Doc) -> Doc:

method pipe (line 527) | def pipe(self, stream, batch_size=128) -> Doc:

method assign_score_to_entities (line 547) | def assign_score_to_entities(self, doc: Doc) -> Doc:

method set_concept_dict (line 601) | def set_concept_dict(self):

method _split_word (line 611) | def _split_word(self, word: str) -> List[str]:

method _check_presence_vocab (line 621) | def _check_presence_vocab(self, word: str) -> str:

method __check_presence_vocab (line 639) | def __check_presence_vocab(self, word: str) -> str:

FILE: tests/test_model_import.py

function test_spacy_embeddings (line 2) | def test_spacy_embeddings():

function test_gensim_default (line 6) | def test_gensim_default():

function test_gensim_custom_path (line 10) | def test_gensim_custom_path():

function test_gensim_custom_model (line 14) | def test_gensim_custom_model():

function test_standalone_spacy (line 18) | def test_standalone_spacy():

function test_standalone_gensim (line 47) | def test_standalone_gensim():

function test_spaczz (line 67) | def test_spaczz():

function test_sense2vec (line 81) | def test_sense2vec():

Condensed preview — 20 files, each showing path, character count, and a content snippet. Download the .json file or copy for the full structured content (54K chars).

[

{

"path": ".github/workflows/python-package.yml",

"chars": 1560,

"preview": "# This workflow will install Python dependencies, run tests and lint with a variety of Python versions\n# For more inform"

},

{

"path": ".github/workflows/python-publish.yml",

"chars": 1089,

"preview": "# This workflow will upload a Python Package using Twine when a release is created\n# For more information see: https://h"

},

{

"path": ".gitignore",

"chars": 1938,

"preview": "# Byte-compiled / optimized / DLL files\n__pycache__/\n*.py[cod]\n*$py.class\n\n# C extensions\n*.so\n\n# Distribution / packagi"

},

{

"path": ".pre-commit-config.yaml",

"chars": 1031,

"preview": "repos:\n - repo: https://github.com/pre-commit/pre-commit-hooks\n rev: v4.0.1\n hooks:\n - id: check-added-large"

},

{

"path": "CITATION.cff",

"chars": 310,

"preview": "cff-version: 1.0.0\nmessage: \"If you use this software, please cite it as below.\"\nauthors:\n - family-names: David\n gi"

},

{

"path": "LICENSE",

"chars": 1077,

"preview": "MIT License\n\nCopyright (c) 2022 Pandora Intelligence\n\nPermission is hereby granted, free of charge, to any person obtain"

},

{

"path": "README.md",

"chars": 7952,

"preview": "# Concise Concepts\nWhen wanting to apply NER to concise concepts, it is really easy to come up with examples, but pretty"

},

{

"path": "concise_concepts/__init__.py",

"chars": 1715,

"preview": "# -*- coding: utf-8 -*-\nfrom typing import List, Union\n\nfrom gensim.models import FastText, Word2Vec\nfrom gensim.models."

},

{

"path": "concise_concepts/conceptualizer/Conceptualizer.py",

"chars": 25630,

"preview": "# -*- coding: utf-8 -*-\nimport json\nimport logging\nimport re\nimport types\nfrom copy import deepcopy\nfrom pathlib import "

},

{

"path": "concise_concepts/conceptualizer/__init__.py",

"chars": 97,

"preview": "# -*- coding: utf-8 -*-\nfrom .Conceptualizer import Conceptualizer\n\n__all__ = [\"Conceptualizer\"]\n"

},

{

"path": "concise_concepts/examples/__init__.py",

"chars": 0,

"preview": ""

},

{

"path": "concise_concepts/examples/data.py",

"chars": 751,

"preview": "# -*- coding: utf-8 -*-\ntext = \"\"\"\n Heat the oil in a large pan and add the Onion, celery and carrots.\n Then, cook"

},

{

"path": "concise_concepts/examples/example_gensim_custom_model.py",

"chars": 862,

"preview": "# -*- coding: utf-8 -*-\nimport spacy\nfrom gensim.models import Word2Vec\nfrom gensim.test.utils import common_texts\n\nimpo"

},

{

"path": "concise_concepts/examples/example_gensim_custom_path.py",

"chars": 405,

"preview": "# -*- coding: utf-8 -*-\nimport gensim.downloader as api\nimport spacy\n\nimport concise_concepts # noqa: F401\n\nfrom .data "

},

{

"path": "concise_concepts/examples/example_gensim_default.py",

"chars": 316,

"preview": "# -*- coding: utf-8 -*-\nimport spacy\n\nimport concise_concepts # noqa: F401\n\nfrom .data import data, text\n\nmodel_path = "

},

{

"path": "concise_concepts/examples/example_spacy.py",

"chars": 268,

"preview": "# -*- coding: utf-8 -*-\nimport spacy\n\nimport concise_concepts # noqa: F401\n\nfrom .data import data, text\n\nnlp = spacy.l"

},

{

"path": "pyproject.toml",

"chars": 1810,

"preview": "[tool.poetry]\nname = \"concise-concepts\"\nversion = \"0.8.1\"\ndescription = \"This repository contains an easy and intuitive "

},

{

"path": "setup.cfg",

"chars": 229,

"preview": "[flake8]\nmax-line-length = 119\nmax-complexity = 18\ndocstring-convention=google\nexclude = .git,__pycache__,build,dist\nsel"

},

{

"path": "tests/__init__.py",

"chars": 0,

"preview": ""

},

{

"path": "tests/test_model_import.py",

"chars": 3333,

"preview": "# -*- coding: utf-8 -*-\ndef test_spacy_embeddings():\n from concise_concepts.examples import example_spacy # noqa: F4"

}

]

About this extraction

This page contains the full source code of the Pandora-Intelligence/concise-concepts GitHub repository, extracted and formatted as plain text for AI agents and large language models (LLMs). The extraction includes 20 files (49.2 KB), approximately 12.5k tokens, and a symbol index with 32 extracted functions, classes, methods, constants, and types. Use this with OpenClaw, Claude, ChatGPT, Cursor, Windsurf, or any other AI tool that accepts text input. You can copy the full output to your clipboard or download it as a .txt file.

Extracted by GitExtract — free GitHub repo to text converter for AI. Built by Nikandr Surkov.