Repository: RUCAIBox/CRSLab

Branch: main

Commit: 649793891999

Files: 253

Total size: 590.2 KB

Directory structure:

gitextract_o03n7yor/

├── .gitattributes

├── .gitignore

├── .readthedocs.yml

├── LICENSE

├── README.md

├── README_CN.md

├── config/

│ ├── conversation/

│ │ ├── gpt2/

│ │ │ ├── durecdial.yaml

│ │ │ ├── gorecdial.yaml

│ │ │ ├── inspired.yaml

│ │ │ ├── opendialkg.yaml

│ │ │ ├── redial.yaml

│ │ │ └── tgredial.yaml

│ │ └── transformer/

│ │ ├── durecdial.yaml

│ │ ├── gorecdial.yaml

│ │ ├── inspired.yaml

│ │ ├── opendialkg.yaml

│ │ ├── redial.yaml

│ │ └── tgredial.yaml

│ ├── crs/

│ │ ├── inspired/

│ │ │ ├── durecdial.yaml

│ │ │ ├── gorecdial.yaml

│ │ │ ├── inspired.yaml

│ │ │ ├── opendialkg.yaml

│ │ │ ├── redial.yaml

│ │ │ └── tgredial.yaml

│ │ ├── kbrd/

│ │ │ ├── durecdial.yaml

│ │ │ ├── gorecdial.yaml

│ │ │ ├── inspired.yaml

│ │ │ ├── opendialkg.yaml

│ │ │ ├── redial.yaml

│ │ │ └── tgredial.yaml

│ │ ├── kgsf/

│ │ │ ├── durecdial.yaml

│ │ │ ├── gorecdial.yaml

│ │ │ ├── inspired.yaml

│ │ │ ├── opendialkg.yaml

│ │ │ ├── redial.yaml

│ │ │ └── tgredial.yaml

│ │ ├── ntrd/

│ │ │ └── tgredial.yaml

│ │ ├── redial/

│ │ │ ├── durecdial.yaml

│ │ │ ├── gorecdial.yaml

│ │ │ ├── inspired.yaml

│ │ │ ├── opendialkg.yaml

│ │ │ ├── redial.yaml

│ │ │ └── tgredial.yaml

│ │ └── tgredial/

│ │ ├── durecdial.yaml

│ │ ├── gorecdial.yaml

│ │ ├── inspired.yaml

│ │ ├── opendialkg.yaml

│ │ ├── redial.yaml

│ │ └── tgredial.yaml

│ ├── policy/

│ │ ├── conv_bert/

│ │ │ └── tgredial.yaml

│ │ ├── mgcg/

│ │ │ └── tgredial.yaml

│ │ ├── pmi/

│ │ │ └── tgredial.yaml

│ │ ├── profile_bert/

│ │ │ └── tgredial.yaml

│ │ └── topic_bert/

│ │ └── tgredial.yaml

│ └── recommendation/

│ ├── bert/

│ │ ├── durecdial.yaml

│ │ ├── gorecdial.yaml

│ │ ├── inspired.yaml

│ │ ├── opendialkg.yaml

│ │ ├── redial.yaml

│ │ └── tgredial.yaml

│ ├── gru4rec/

│ │ ├── durecdial.yaml

│ │ ├── gorecdial.yaml

│ │ ├── inspired.yaml

│ │ ├── opendialkg.yaml

│ │ ├── redial.yaml

│ │ └── tgredial.yaml

│ ├── popularity/

│ │ ├── durecdial.yaml

│ │ ├── gorecdial.yaml

│ │ ├── inspired.yaml

│ │ ├── opendialkg.yaml

│ │ ├── redial.yaml

│ │ └── tgredial.yaml

│ ├── sasrec/

│ │ ├── durecdial.yaml

│ │ ├── gorecdial.yaml

│ │ ├── inspired.yaml

│ │ ├── opendialkg.yaml

│ │ ├── redial.yaml

│ │ └── tgredial.yaml

│ └── textcnn/

│ ├── durecdial.yaml

│ ├── gorecdial.yaml

│ ├── inspired.yaml

│ ├── opendialkg.yaml

│ ├── redial.yaml

│ └── tgredial.yaml

├── crslab/

│ ├── __init__.py

│ ├── config/

│ │ ├── __init__.py

│ │ └── config.py

│ ├── data/

│ │ ├── __init__.py

│ │ ├── dataloader/

│ │ │ ├── __init__.py

│ │ │ ├── base.py

│ │ │ ├── inspired.py

│ │ │ ├── kbrd.py

│ │ │ ├── kgsf.py

│ │ │ ├── ntrd.py

│ │ │ ├── redial.py

│ │ │ ├── tgredial.py

│ │ │ └── utils.py

│ │ └── dataset/

│ │ ├── __init__.py

│ │ ├── base.py

│ │ ├── durecdial/

│ │ │ ├── __init__.py

│ │ │ ├── durecdial.py

│ │ │ └── resources.py

│ │ ├── gorecdial/

│ │ │ ├── __init__.py

│ │ │ ├── gorecdial.py

│ │ │ └── resources.py

│ │ ├── inspired/

│ │ │ ├── __init__.py

│ │ │ ├── inspired.py

│ │ │ └── resources.py

│ │ ├── opendialkg/

│ │ │ ├── __init__.py

│ │ │ ├── opendialkg.py

│ │ │ └── resources.py

│ │ ├── redial/

│ │ │ ├── __init__.py

│ │ │ ├── redial.py

│ │ │ └── resources.py

│ │ └── tgredial/

│ │ ├── __init__.py

│ │ ├── resources.py

│ │ └── tgredial.py

│ ├── download.py

│ ├── evaluator/

│ │ ├── __init__.py

│ │ ├── base.py

│ │ ├── conv.py

│ │ ├── embeddings.py

│ │ ├── end2end.py

│ │ ├── metrics/

│ │ │ ├── __init__.py

│ │ │ ├── base.py

│ │ │ ├── gen.py

│ │ │ └── rec.py

│ │ ├── rec.py

│ │ ├── standard.py

│ │ └── utils.py

│ ├── model/

│ │ ├── __init__.py

│ │ ├── base.py

│ │ ├── conversation/

│ │ │ ├── __init__.py

│ │ │ ├── gpt2/

│ │ │ │ ├── __init__.py

│ │ │ │ └── gpt2.py

│ │ │ └── transformer/

│ │ │ ├── __init__.py

│ │ │ └── transformer.py

│ │ ├── crs/

│ │ │ ├── __init__.py

│ │ │ ├── inspired/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── inspired_conv.py

│ │ │ │ ├── inspired_rec.py

│ │ │ │ └── modules.py

│ │ │ ├── kbrd/

│ │ │ │ ├── __init__.py

│ │ │ │ └── kbrd.py

│ │ │ ├── kgsf/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── kgsf.py

│ │ │ │ ├── modules.py

│ │ │ │ └── resources.py

│ │ │ ├── ntrd/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── modules.py

│ │ │ │ ├── ntrd.py

│ │ │ │ └── resources.py

│ │ │ ├── redial/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── modules.py

│ │ │ │ ├── redial_conv.py

│ │ │ │ └── redial_rec.py

│ │ │ └── tgredial/

│ │ │ ├── __init__.py

│ │ │ ├── tg_conv.py

│ │ │ ├── tg_policy.py

│ │ │ └── tg_rec.py

│ │ ├── policy/

│ │ │ ├── __init__.py

│ │ │ ├── conv_bert/

│ │ │ │ ├── __init__.py

│ │ │ │ └── conv_bert.py

│ │ │ ├── mgcg/

│ │ │ │ ├── __init__.py

│ │ │ │ └── mgcg.py

│ │ │ ├── pmi/

│ │ │ │ ├── __init__.py

│ │ │ │ └── pmi.py

│ │ │ ├── profile_bert/

│ │ │ │ ├── __init__.py

│ │ │ │ └── profile_bert.py

│ │ │ └── topic_bert/

│ │ │ ├── __init__.py

│ │ │ └── topic_bert.py

│ │ ├── pretrained_models.py

│ │ ├── recommendation/

│ │ │ ├── __init__.py

│ │ │ ├── bert/

│ │ │ │ ├── __init__.py

│ │ │ │ └── bert.py

│ │ │ ├── gru4rec/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── gru4rec.py

│ │ │ │ └── modules.py

│ │ │ ├── popularity/

│ │ │ │ ├── __init__.py

│ │ │ │ └── popularity.py

│ │ │ ├── sasrec/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── modules.py

│ │ │ │ └── sasrec.py

│ │ │ └── textcnn/

│ │ │ ├── __init__.py

│ │ │ └── textcnn.py

│ │ └── utils/

│ │ ├── __init__.py

│ │ ├── functions.py

│ │ └── modules/

│ │ ├── __init__.py

│ │ ├── attention.py

│ │ └── transformer.py

│ ├── quick_start/

│ │ ├── __init__.py

│ │ └── quick_start.py

│ └── system/

│ ├── __init__.py

│ ├── base.py

│ ├── inspired.py

│ ├── kbrd.py

│ ├── kgsf.py

│ ├── ntrd.py

│ ├── redial.py

│ ├── tgredial.py

│ └── utils/

│ ├── __init__.py

│ ├── functions.py

│ └── lr_scheduler.py

├── docs/

│ ├── Makefile

│ ├── make.bat

│ ├── requirements.txt

│ ├── requirements_geometric.txt

│ ├── requirements_sphinx.txt

│ ├── requirements_torch.txt

│ └── source/

│ ├── api/

│ │ ├── crslab.config.rst

│ │ ├── crslab.data.dataloader.rst

│ │ ├── crslab.data.dataset.durecdial.rst

│ │ ├── crslab.data.dataset.gorecdial.rst

│ │ ├── crslab.data.dataset.inspired.rst

│ │ ├── crslab.data.dataset.opendialkg.rst

│ │ ├── crslab.data.dataset.redial.rst

│ │ ├── crslab.data.dataset.rst

│ │ ├── crslab.data.dataset.tgredial.rst

│ │ ├── crslab.data.rst

│ │ ├── crslab.evaluator.metrics.rst

│ │ ├── crslab.evaluator.rst

│ │ ├── crslab.model.conversation.gpt2.rst

│ │ ├── crslab.model.conversation.rst

│ │ ├── crslab.model.conversation.transformer.rst

│ │ ├── crslab.model.crs.kbrd.rst

│ │ ├── crslab.model.crs.kgsf.rst

│ │ ├── crslab.model.crs.redial.rst

│ │ ├── crslab.model.crs.rst

│ │ ├── crslab.model.crs.tgredial.rst

│ │ ├── crslab.model.policy.conv_bert.rst

│ │ ├── crslab.model.policy.mgcg.rst

│ │ ├── crslab.model.policy.pmi.rst

│ │ ├── crslab.model.policy.profile_bert.rst

│ │ ├── crslab.model.policy.rst

│ │ ├── crslab.model.policy.topic_bert.rst

│ │ ├── crslab.model.recommendation.bert.rst

│ │ ├── crslab.model.recommendation.gru4rec.rst

│ │ ├── crslab.model.recommendation.popularity.rst

│ │ ├── crslab.model.recommendation.rst

│ │ ├── crslab.model.recommendation.sasrec.rst

│ │ ├── crslab.model.recommendation.textcnn.rst

│ │ ├── crslab.model.rst

│ │ ├── crslab.model.utils.modules.rst

│ │ ├── crslab.model.utils.rst

│ │ ├── crslab.quick_start.rst

│ │ ├── crslab.rst

│ │ ├── crslab.system.rst

│ │ └── modules.rst

│ ├── conf.py

│ └── index.md

├── requirements.txt

├── run_crslab.py

└── setup.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitattributes

================================================

* text=auto eol=lf

*.{cmd,[cC][mM][dD]} text eol=crlf

*.{bat,[bB][aA][tT]} text eol=crlf

================================================

FILE: .gitignore

================================================

# Created by .ignore support plugin (hsz.mobi)

### Project

data

log

save

!crslab/data

runs

### VisualStudioCode template

.vscode/*

!.vscode/settings.json

!.vscode/tasks.json

!.vscode/launch.json

!.vscode/extensions.json

*.code-workspace

# Local History for Visual Studio Code

.history/

### Python template

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

cover/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

.pybuilder/

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

# For a library or package, you might want to ignore these files since the code is

# intended to run in multiple environments; otherwise, check them in:

# .python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

# pytype static type analyzer

.pytype/

# Cython debug symbols

cython_debug/

### JetBrains template

# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

# User-specific stuff

.idea/**/workspace.xml

.idea/**/tasks.xml

.idea/**/usage.statistics.xml

.idea/**/dictionaries

.idea/**/shelf

# Generated files

.idea/**/contentModel.xml

# Sensitive or high-churn files

.idea/**/dataSources/

.idea/**/dataSources.ids

.idea/**/dataSources.local.xml

.idea/**/sqlDataSources.xml

.idea/**/dynamic.xml

.idea/**/uiDesigner.xml

.idea/**/dbnavigator.xml

# Gradle

.idea/**/gradle.xml

.idea/**/libraries

# Gradle and Maven with auto-import

# When using Gradle or Maven with auto-import, you should exclude module files,

# since they will be recreated, and may cause churn. Uncomment if using

# auto-import.

# .idea/artifacts

# .idea/compiler.xml

# .idea/jarRepositories.xml

# .idea/modules.xml

# .idea/*.iml

# .idea/modules

# *.iml

# *.ipr

# CMake

cmake-build-*/

# Mongo Explorer plugin

.idea/**/mongoSettings.xml

# File-based project format

*.iws

# IntelliJ

.idea

*.iml

out

gen

# mpeltonen/sbt-idea plugin

.idea_modules/

# JIRA plugin

atlassian-ide-plugin.xml

# Cursive Clojure plugin

.idea/replstate.xml

# Crashlytics plugin (for Android Studio and IntelliJ)

com_crashlytics_export_strings.xml

crashlytics.properties

crashlytics-build.properties

fabric.properties

# Editor-based Rest Client

.idea/httpRequests

# Android studio 3.1+ serialized cache file

.idea/caches/build_file_checksums.ser

### JupyterNotebooks template

# gitignore template for Jupyter Notebooks

# website: http://jupyter.org/

*/.ipynb_checkpoints/*

# Remove previous ipynb_checkpoints

# git rm -r .ipynb_checkpoints/

### macOS template

# General

.DS_Store

.AppleDouble

.LSOverride

# Icon must end with two \r

Icon

# Thumbnails

._*

# Files that might appear in the root of a volume

.DocumentRevisions-V100

.fseventsd

.Spotlight-V100

.TemporaryItems

.Trashes

.VolumeIcon.icns

.com.apple.timemachine.donotpresent

# Directories potentially created on remote AFP share

.AppleDB

.AppleDesktop

Network Trash Folder

Temporary Items

.apdisk

================================================

FILE: .readthedocs.yml

================================================

# Required

version: 2

# Build documentation in the docs/ directory with Sphinx

sphinx:

configuration: docs/source/conf.py

# Build documentation with MkDocs

#mkdocs:

# configuration: mkdocs.yml

# Optionally build your docs in additional formats such as PDF

formats: all

# Optionally set the version of Python and requirements required to build your docs

python:

version: 3.6

install:

- requirements: docs/requirements_torch.txt

- requirements: docs/requirements_geometric.txt

- requirements: docs/requirements.txt

- requirements: docs/requirements_sphinx.txt

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2021 RUCAIBox

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

# CRSLab

[](https://pypi.org/project/crslab)

[](https://github.com/rucaibox/crslab/releases)

[](./LICENSE)

[](https://arxiv.org/abs/2101.00939)

[](https://crslab.readthedocs.io/en/latest/?badge=latest)

[Paper](https://arxiv.org/pdf/2101.00939.pdf) | [Docs](https://crslab.readthedocs.io/en/latest/?badge=latest)

| [中文版](./README_CN.md)

**CRSLab** is an open-source toolkit for building Conversational Recommender System (CRS). It is developed based on

Python and PyTorch. CRSLab has the following highlights:

- **Comprehensive benchmark models and datasets**: We have integrated commonly-used 6 datasets and 18 models, including graph neural network and pre-training models such as R-GCN, BERT and GPT-2. We have preprocessed these datasets to support these models, and release for downloading.

- **Extensive and standard evaluation protocols**: We support a series of widely-adopted evaluation protocols for testing and comparing different CRS.

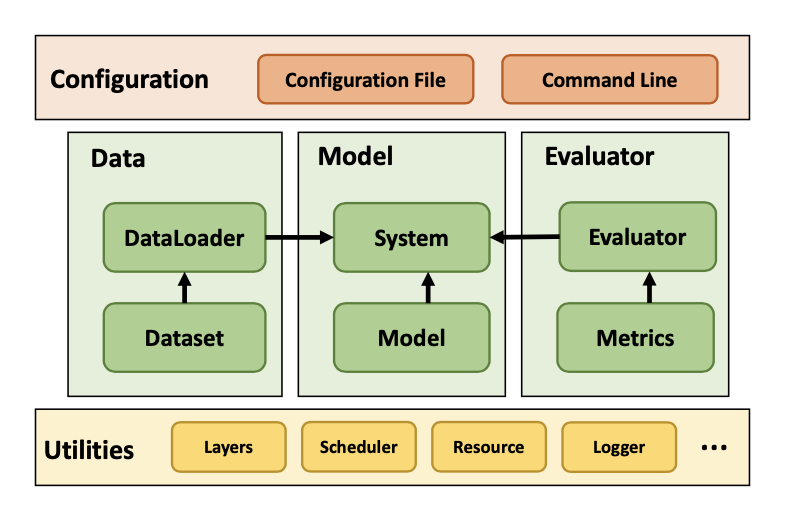

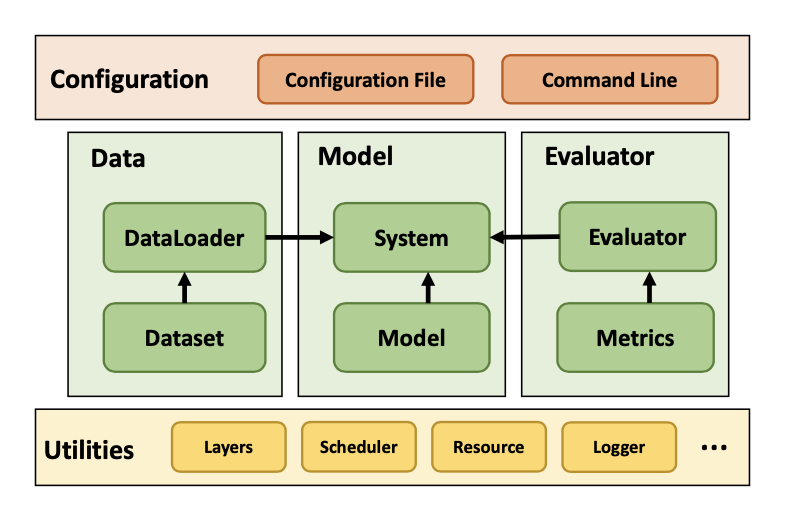

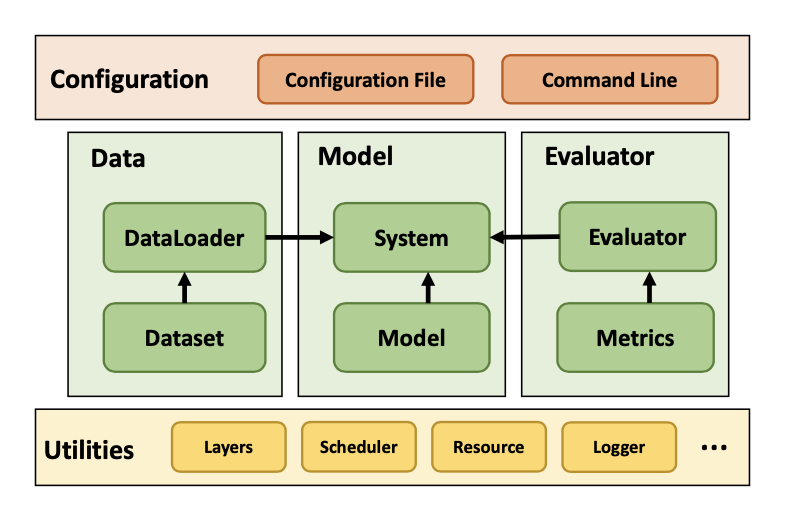

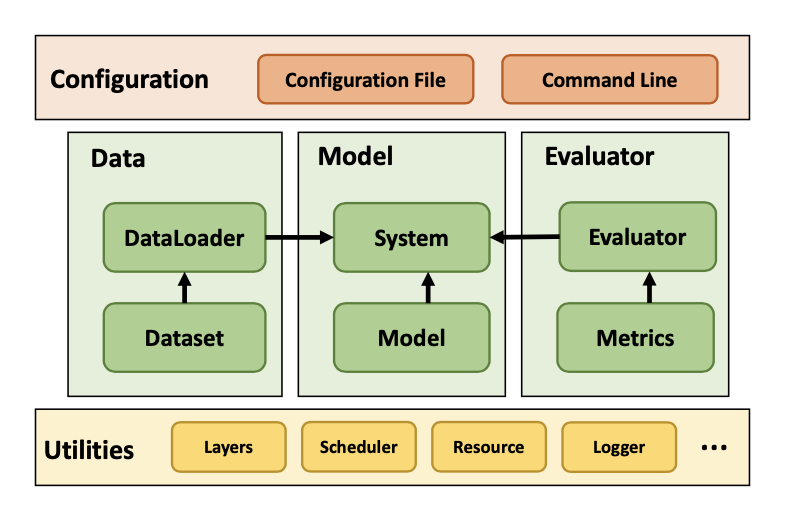

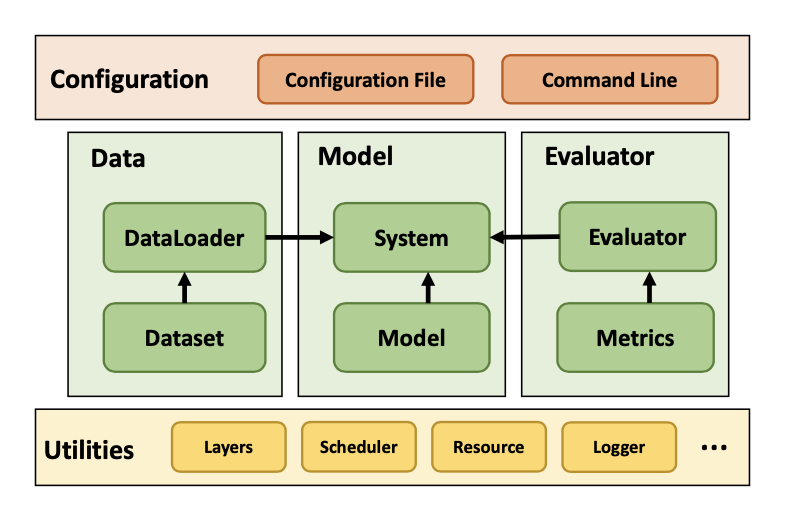

- **General and extensible structure**: We design a general and extensible structure to unify various conversational recommendation datasets and models, in which we integrate various built-in interfaces and functions for quickly development.

- **Easy to get started**: We provide simple yet flexible configuration for new researchers to quickly start in our library.

- **Human-machine interaction interfaces**: We provide flexible human-machine interaction interfaces for researchers to conduct qualitative analysis.

Figure 1: The overall framework of CRSLab

- [Installation](#Installation)

- [Quick-Start](#Quick-Start)

- [Models](#Models)

- [Datasets](#Datasets)

- [Performance](#Performance)

- [Releases](#Releases)

- [Contributions](#Contributions)

- [Citing](#Citing)

- [Team](#Team)

- [License](#License)

## Installation

CRSLab works with the following operating systems:

- Linux

- Windows 10

- macOS X

CRSLab requires Python version 3.7 or later.

CRSLab requires torch version 1.8. If you want to use CRSLab with GPU, please ensure that CUDA or CUDAToolkit version is 10.2 or later. Please use the combinations shown in this [Link](https://pytorch-geometric.com/whl/) to ensure the normal operation of PyTorch Geometric.

### Install PyTorch

Use PyTorch [Locally Installation](https://pytorch.org/get-started/locally/) or [Previous Versions Installation](https://pytorch.org/get-started/previous-versions/) commands to install PyTorch. For example, on Linux and Windows 10:

```bash

# CUDA 10.2

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=10.2 -c pytorch

# CUDA 11.1

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=11.1 -c pytorch -c conda-forge

# CPU Only

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cpuonly -c pytorch

```

If you want to use CRSLab with GPU, make sure the following command prints `True` after installation:

```bash

$ python -c "import torch; print(torch.cuda.is_available())"

>>> True

```

### Install PyTorch Geometric

Ensure that at least PyTorch 1.8.0 is installed:

```bash

$ python -c "import torch; print(torch.__version__)"

>>> 1.8.0

```

Find the CUDA version PyTorch was installed with:

```bash

$ python -c "import torch; print(torch.version.cuda)"

>>> 11.1

```

For Linux:

Install the relevant packages:

```

conda install pyg -c pyg

```

For others:

Check PyG [installation documents](https://pytorch-geometric.readthedocs.io/en/latest/install/installation.html) to install the relevant packages.

### Install CRSLab

You can install from pip:

```bash

pip install crslab

```

OR install from source:

```bash

git clone https://github.com/RUCAIBox/CRSLab && cd CRSLab

pip install -e .

```

## Quick-Start

With the source code, you can use the provided script for initial usage of our library with cpu by default:

```bash

python run_crslab.py --config config/crs/kgsf/redial.yaml

```

The system will complete the data preprocessing, and training, validation, testing of each model in turn. Finally it will get the evaluation results of specified models.

If you want to save pre-processed datasets and training results of models, you can use the following command:

```bash

python run_crslab.py --config config/crs/kgsf/redial.yaml --save_data --save_system

```

In summary, there are following arguments in `run_crslab.py`:

- `--config` or `-c`: relative path for configuration file(yaml).

- `--gpu` or `-g`: specify GPU id(s) to use, we now support multiple GPUs. Defaults to CPU(-1).

- `--save_data` or `-sd`: save pre-processed dataset.

- `--restore_data` or `-rd`: restore pre-processed dataset from file.

- `--save_system` or `-ss`: save trained system.

- `--restore_system` or `-rs`: restore trained system from file.

- `--debug` or `-d`: use validation dataset to debug your system.

- `--interact` or `-i`: interact with your system instead of training.

- `--tensorboard` or `-tb`: enable tensorboard to monitor train performance.

## Models

In CRSLab, we unify the task description of conversational recommendation into three sub-tasks, namely recommendation (recommend user-preferred items), conversation (generate proper responses) and policy (select proper interactive action). The recommendation and conversation sub-tasks are the core of a CRS and have been studied in most of works. The policy sub-task is needed by recent works, by which the CRS can interact with users through purposeful strategy.

As the first release version, we have implemented 18 models in the four categories of CRS model, Recommendation model, Conversation model and Policy model.

| Category | Model | Graph Neural Network? | Pre-training Model? |

| :------------------: | :----------------------------------------------------------: | :-----------------------------: | :-----------------------------: |

| CRS Model | [ReDial](https://arxiv.org/abs/1812.07617)

[KBRD](https://arxiv.org/abs/1908.05391)

[KGSF](https://arxiv.org/abs/2007.04032)

[TG-ReDial](https://arxiv.org/abs/2010.04125)

[INSPIRED](https://www.aclweb.org/anthology/2020.emnlp-main.654.pdf) | ×

√

√

×

× | ×

×

×

√

√ |

| Recommendation model | Popularity

[GRU4Rec](https://arxiv.org/abs/1511.06939)

[SASRec](https://arxiv.org/abs/1808.09781)

[TextCNN](https://arxiv.org/abs/1408.5882)

[R-GCN](https://arxiv.org/abs/1703.06103)

[BERT](https://arxiv.org/abs/1810.04805) | ×

×

×

×

√

× | ×

×

×

×

×

√ |

| Conversation model | [HERD](https://arxiv.org/abs/1507.04808)

[Transformer](https://arxiv.org/abs/1706.03762)

[GPT-2](http://www.persagen.com/files/misc/radford2019language.pdf) | ×

×

× | ×

×

√ |

| Policy model | PMI

[MGCG](https://arxiv.org/abs/2005.03954)

[Conv-BERT](https://arxiv.org/abs/2010.04125)

[Topic-BERT](https://arxiv.org/abs/2010.04125)

[Profile-BERT](https://arxiv.org/abs/2010.04125) | ×

×

×

×

× | ×

×

√

√

√ |

Among them, the four CRS models integrate the recommendation model and the conversation model to improve each other, while others only specify an individual task.

For Recommendation model and Conversation model, we have respectively implemented the following commonly-used automatic evaluation metrics:

| Category | Metrics |

| :--------------------: | :----------------------------------------------------------: |

| Recommendation Metrics | Hit@{1, 10, 50}, MRR@{1, 10, 50}, NDCG@{1, 10, 50} |

| Conversation Metrics | PPL, BLEU-{1, 2, 3, 4}, Embedding Average/Extreme/Greedy, Distinct-{1, 2, 3, 4} |

| Policy Metrics | Accuracy, Hit@{1,3,5} |

## Datasets

We have collected and preprocessed 6 commonly-used human-annotated datasets, and each dataset was matched with proper KGs as shown below:

| Dataset | Dialogs | Utterances | Domains | Task Definition | Entity KG | Word KG |

| :----------------------------------------------------------: | :-----: | :--------: | :----------: | :-------------: | :--------: | :--------: |

| [ReDial](https://redialdata.github.io/website/) | 10,006 | 182,150 | Movie | -- | DBpedia | ConceptNet |

| [TG-ReDial](https://github.com/RUCAIBox/TG-ReDial) | 10,000 | 129,392 | Movie | Topic Guide | CN-DBpedia | HowNet |

| [GoRecDial](https://arxiv.org/abs/1909.03922) | 9,125 | 170,904 | Movie | Action Choice | DBpedia | ConceptNet |

| [DuRecDial](https://arxiv.org/abs/2005.03954) | 10,200 | 156,000 | Movie, Music | Goal Plan | CN-DBpedia | HowNet |

| [INSPIRED](https://github.com/sweetpeach/Inspired) | 1,001 | 35,811 | Movie | Social Strategy | DBpedia | ConceptNet |

| [OpenDialKG](https://github.com/facebookresearch/opendialkg) | 13,802 | 91,209 | Movie, Book | Path Generate | DBpedia | ConceptNet |

## Performance

We have trained and test the integrated models on the TG-Redial dataset, which is split into training, validation and test sets using a ratio of 8:1:1. For each conversation, we start from the first utterance, and generate reply utterances or recommendations in turn by our model. We perform the evaluation on the three sub-tasks.

### Recommendation Task

| Model | Hit@1 | Hit@10 | Hit@50 | MRR@1 | MRR@10 | MRR@50 | NDCG@1 | NDCG@10 | NDCG@50 |

| :-------: | :---------: | :--------: | :--------: | :---------: | :--------: | :--------: | :---------: | :--------: | :--------: |

| SASRec | 0.000446 | 0.00134 | 0.0160 | 0.000446 | 0.000576 | 0.00114 | 0.000445 | 0.00075 | 0.00380 |

| TextCNN | 0.00267 | 0.0103 | 0.0236 | 0.00267 | 0.00434 | 0.00493 | 0.00267 | 0.00570 | 0.00860 |

| BERT | 0.00722 | 0.00490 | 0.0281 | 0.00722 | 0.0106 | 0.0124 | 0.00490 | 0.0147 | 0.0239 |

| KBRD | 0.00401 | 0.0254 | 0.0588 | 0.00401 | 0.00891 | 0.0103 | 0.00401 | 0.0127 | 0.0198 |

| KGSF | 0.00535 | **0.0285** | **0.0771** | 0.00535 | 0.0114 | **0.0135** | 0.00535 | **0.0154** | **0.0259** |

| TG-ReDial | **0.00793** | 0.0251 | 0.0524 | **0.00793** | **0.0122** | 0.0134 | **0.00793** | 0.0152 | 0.0211 |

### Conversation Task

| Model | BLEU@1 | BLEU@2 | BLEU@3 | BLEU@4 | Dist@1 | Dist@2 | Dist@3 | Dist@4 | Average | Extreme | Greedy | PPL |

| :---------: | :-------: | :-------: | :--------: | :--------: | :------: | :------: | :------: | :------: | :-------: | :-------: | :-------: | :------: |

| HERD | 0.120 | 0.0141 | 0.00136 | 0.000350 | 0.181 | 0.369 | 0.847 | 1.30 | 0.697 | 0.382 | 0.639 | 472 |

| Transformer | 0.266 | 0.0440 | 0.0145 | 0.00651 | 0.324 | 0.837 | 2.02 | 3.06 | 0.879 | 0.438 | 0.680 | 30.9 |

| GPT2 | 0.0858 | 0.0119 | 0.00377 | 0.0110 | **2.35** | **4.62** | **8.84** | **12.5** | 0.763 | 0.297 | 0.583 | 9.26 |

| KBRD | 0.267 | 0.0458 | 0.0134 | 0.00579 | 0.469 | 1.50 | 3.40 | 4.90 | 0.863 | 0.398 | 0.710 | 52.5 |

| KGSF | **0.383** | **0.115** | **0.0444** | **0.0200** | 0.340 | 0.910 | 3.50 | 6.20 | **0.888** | **0.477** | **0.767** | 50.1 |

| TG-ReDial | 0.125 | 0.0204 | 0.00354 | 0.000803 | 0.881 | 1.75 | 7.00 | 12.0 | 0.810 | 0.332 | 0.598 | **7.41** |

### Policy Task

| Model | Hit@1 | Hit@10 | Hit@50 | MRR@1 | MRR@10 | MRR@50 | NDCG@1 | NDCG@10 | NDCG@50 |

| :--------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: |

| MGCG | 0.591 | 0.818 | 0.883 | 0.591 | 0.680 | 0.683 | 0.591 | 0.712 | 0.729 |

| Conv-BERT | 0.597 | 0.814 | 0.881 | 0.597 | 0.684 | 0.687 | 0.597 | 0.716 | 0.731 |

| Topic-BERT | 0.598 | 0.828 | 0.885 | 0.598 | 0.690 | 0.693 | 0.598 | 0.724 | 0.737 |

| TG-ReDial | **0.600** | **0.830** | **0.893** | **0.600** | **0.693** | **0.696** | **0.600** | **0.727** | **0.741** |

The above results were obtained from our CRSLab in preliminary experiments. However, these algorithms were implemented and tuned based on our understanding and experiences, which may not achieve their optimal performance. If you could yield a better result for some specific algorithm, please kindly let us know. We will update this table after the results are verified.

## Releases

| Releases | Date | Features |

| :------: | :-----------: | :----------: |

| v0.1.1 | 1 / 4 / 2021 | Basic CRSLab |

| v0.1.2 | 3 / 28 / 2021 | CRSLab |

## Contributions

Please let us know if you encounter a bug or have any suggestions by [filing an issue](https://github.com/RUCAIBox/CRSLab/issues).

We welcome all contributions from bug fixes to new features and extensions.

We expect all contributions discussed in the issue tracker and going through PRs.

We thank the nice contributions through PRs from [@shubaoyu](https://github.com/shubaoyu), [@ToheartZhang](https://github.com/ToheartZhang).

## Citing

If you find CRSLab useful for your research or development, please cite our [Paper](https://arxiv.org/pdf/2101.00939.pdf):

```

@article{crslab,

title={CRSLab: An Open-Source Toolkit for Building Conversational Recommender System},

author={Kun Zhou, Xiaolei Wang, Yuanhang Zhou, Chenzhan Shang, Yuan Cheng, Wayne Xin Zhao, Yaliang Li, Ji-Rong Wen},

year={2021},

journal={arXiv preprint arXiv:2101.00939}

}

```

## Team

**CRSLab** was developed and maintained by [AI Box](http://aibox.ruc.edu.cn/) group in RUC.

## License

**CRSLab** uses [MIT License](./LICENSE).

================================================

FILE: README_CN.md

================================================

# CRSLab

[](https://pypi.org/project/crslab)

[](https://github.com/rucaibox/crslab/releases)

[](./LICENSE)

[](https://arxiv.org/abs/2101.00939)

[](https://crslab.readthedocs.io/en/latest/?badge=latest)

[论文](https://arxiv.org/pdf/2101.00939.pdf) | [文档](https://crslab.readthedocs.io/en/latest/?badge=latest)

| [English Version](./README.md)

**CRSLab** 是一个用于构建对话推荐系统(CRS)的开源工具包,其基于 PyTorch 实现、主要面向研究者使用,并具有如下特色:

- **全面的基准模型和数据集**:我们集成了常用的 6 个数据集和 18 个模型,包括基于图神经网络和预训练模型,比如 GCN,BERT 和 GPT-2;我们还对数据集进行相关处理以支持这些模型,并提供预处理后的版本供大家下载。

- **大规模的标准评测**:我们支持一系列被广泛认可的评估方式来测试和比较不同的 CRS。

- **通用和可扩展的结构**:我们设计了通用和可扩展的结构来统一各种对话推荐数据集和模型,并集成了多种内置接口和函数以便于快速开发。

- **便捷的使用方法**:我们为新手提供了简单而灵活的配置,方便其快速启动集成在 CRSLab 中的模型。

- **人性化的人机交互接口**:我们提供了人性化的人机交互界面,以供研究者对比和测试不同的模型系统。

图片: CRSLab 的总体架构

- [安装](#安装)

- [快速上手](#快速上手)

- [模型](#模型)

- [数据集](#数据集)

- [评测结果](#评测结果)

- [发行版本](#发行版本)

- [贡献](#贡献)

- [引用](#引用)

- [项目团队](#项目团队)

- [免责声明](#免责声明)

## 安装

CRSLab 可以在以下几种系统上运行:

- Linux

- Windows 10

- macOS X

CRSLab 需要在 Python 3.7 或更高的环境下运行。

CRSLab 要求 torch 版本为1.8,如果你想在 GPU 上运行 CRSLab,请确保你的 CUDA 版本或者 CUDAToolkit 版本在 10.2 及以上。为保证 PyTorch Geometric 库的正常运行,请使用[链接](https://pytorch-geometric.com/whl/)所示的安装方式。

### 安装 PyTorch

使用 PyTorch [本地安装](https://pytorch.org/get-started/locally/)命令或者[先前版本安装](https://pytorch.org/get-started/previous-versions/)命令安装 PyTorch,比如在 Linux 和 Windows 下:

```bash

# CUDA 10.2

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=10.2 -c pytorch

# CUDA 11.1

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=11.1 -c pytorch -c conda-forge

# CPU Only

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cpuonly -c pytorch

```

安装完成后,如果你想在 GPU 上运行 CRSLab,请确保如下命令输出`True`:

```bash

$ python -c "import torch; print(torch.cuda.is_available())"

>>> True

```

### 安装 PyTorch Geometric

确保安装的 PyTorch 版本至少为 1.8.0:

```bash

$ python -c "import torch; print(torch.__version__)"

>>> 1.8.0

```

找到安装好的 PyTorch 对应的 CUDA 版本:

```bash

$ python -c "import torch; print(torch.version.cuda)"

>>> 11.1

```

在Linux下:

安装相关的包:

```bash

conda install pyg -c pyg

```

在其他系统下:

查看PyG[官方下载文档](https://pytorch-geometric.readthedocs.io/en/latest/install/installation.html)安装相关的包。

### 安装 CRSLab

你可以通过 pip 来安装:

```bash

pip install crslab

```

也可以通过源文件进行进行安装:

```bash

git clone https://github.com/RUCAIBox/CRSLab && cd CRSLab

pip install -e .

```

## 快速上手

从 GitHub 下载 CRSLab 后,可以使用提供的脚本快速运行和测试,默认使用CPU:

```bash

python run_crslab.py --config config/crs/kgsf/redial.yaml

```

系统将依次完成数据的预处理,以及各模块的训练、验证和测试,并得到指定的模型评测结果。

如果你希望保存数据预处理结果与模型训练结果,可以使用如下命令:

```bash

python run_crslab.py --config config/crs/kgsf/redial.yaml --save_data --save_system

```

总的来说,`run_crslab.py`有如下参数可供调用:

- `--config` 或 `-c`:配置文件的相对路径,以指定运行的模型与数据集。

- `--gpu` or `-g`:指定 GPU id,支持多 GPU,默认使用 CPU(-1)。

- `--save_data` 或 `-sd`:保存预处理的数据。

- `--restore_data` 或 `-rd`:从文件读取预处理的数据。

- `--save_system` 或 `-ss`:保存训练好的 CRS 系统。

- `--restore_system` 或 `-rs`:从文件载入提前训练好的系统。

- `--debug` 或 `-d`:用验证集代替训练集以方便调试。

- `--interact` 或 `-i`:与你的系统进行对话交互,而非进行训练。

- `--tensorboard` or `-tb`:使用 tensorboardX 组件来监测训练表现。

## 模型

在第一个发行版中,我们实现了 4 类共 18 个模型。这里我们将对话推荐任务主要拆分成三个任务:推荐任务(生成推荐的商品),对话任务(生成对话的回复)和策略任务(规划对话推荐的策略)。其中所有的对话推荐系统都具有对话和推荐任务,他们是对话推荐系统的核心功能。而策略任务是一个辅助任务,其致力于更好的控制对话推荐系统,在不同的模型中的实现也可能不同(如 TG-ReDial 采用一个主题预测模型,DuRecDial 中采用一个对话规划模型等):

| 类别 | 模型 | Graph Neural Network? | Pre-training Model? |

| :------: | :----------------------------------------------------------: | :-----------------------------: | :-----------------------------: |

| CRS 模型 | [ReDial](https://arxiv.org/abs/1812.07617)

[KBRD](https://arxiv.org/abs/1908.05391)

[KGSF](https://arxiv.org/abs/2007.04032)

[TG-ReDial](https://arxiv.org/abs/2010.04125)

[INSPIRED](https://www.aclweb.org/anthology/2020.emnlp-main.654.pdf) | ×

√

√

×

× | ×

×

×

√

√ |

| 推荐模型 | Popularity

[GRU4Rec](https://arxiv.org/abs/1511.06939)

[SASRec](https://arxiv.org/abs/1808.09781)

[TextCNN](https://arxiv.org/abs/1408.5882)

[R-GCN](https://arxiv.org/abs/1703.06103)

[BERT](https://arxiv.org/abs/1810.04805) | ×

×

×

×

√

× | ×

×

×

×

×

√ |

| 对话模型 | [HERD](https://arxiv.org/abs/1507.04808)

[Transformer](https://arxiv.org/abs/1706.03762)

[GPT-2](http://www.persagen.com/files/misc/radford2019language.pdf) | ×

×

× | ×

×

√ |

| 策略模型 | PMI

[MGCG](https://arxiv.org/abs/2005.03954)

[Conv-BERT](https://arxiv.org/abs/2010.04125)

[Topic-BERT](https://arxiv.org/abs/2010.04125)

[Profile-BERT](https://arxiv.org/abs/2010.04125) | ×

×

×

×

× | ×

×

√

√

√ |

其中,CRS 模型是指直接融合推荐模型和对话模型,以相互增强彼此的效果,故其内部往往已经包含了推荐、对话和策略模型。其他如推荐模型、对话模型、策略模型往往只关注以上任务中的某一个。

我们对于这几类模型,我们还分别实现了如下的自动评测指标模块:

| 类别 | 指标 |

| :------: | :----------------------------------------------------------: |

| 推荐指标 | Hit@{1, 10, 50}, MRR@{1, 10, 50}, NDCG@{1, 10, 50} |

| 对话指标 | PPL, BLEU-{1, 2, 3, 4}, Embedding Average/Extreme/Greedy, Distinct-{1, 2, 3, 4} |

| 策略指标 | Accuracy, Hit@{1,3,5} |

## 数据集

我们收集了 6 个常用的人工标注数据集,并对它们进行了预处理(包括引入外部知识图谱),以融入统一的 CRS 任务中。如下为相关数据集的统计数据:

| Dataset | Dialogs | Utterances | Domains | Task Definition | Entity KG | Word KG |

| :----------------------------------------------------------: | :-----: | :--------: | :----------: | :-------------: | :--------: | :--------: |

| [ReDial](https://redialdata.github.io/website/) | 10,006 | 182,150 | Movie | -- | DBpedia | ConceptNet |

| [TG-ReDial](https://github.com/RUCAIBox/TG-ReDial) | 10,000 | 129,392 | Movie | Topic Guide | CN-DBpedia | HowNet |

| [GoRecDial](https://arxiv.org/abs/1909.03922) | 9,125 | 170,904 | Movie | Action Choice | DBpedia | ConceptNet |

| [DuRecDial](https://arxiv.org/abs/2005.03954) | 10,200 | 156,000 | Movie, Music | Goal Plan | CN-DBpedia | HowNet |

| [INSPIRED](https://github.com/sweetpeach/Inspired) | 1,001 | 35,811 | Movie | Social Strategy | DBpedia | ConceptNet |

| [OpenDialKG](https://github.com/facebookresearch/opendialkg) | 13,802 | 91,209 | Movie, Book | Path Generate | DBpedia | ConceptNet |

## 评测结果

我们在 TG-ReDial 数据集上对模型进行了训练和测试,这里我们将数据集按照 8:1:1 切分。其中对于每条数据,我们从对话的第一轮开始,一轮一轮的进行推荐、策略生成、回复生成任务。下表记录了相关的评测结果。

### 推荐任务

| 模型 | Hit@1 | Hit@10 | Hit@50 | MRR@1 | MRR@10 | MRR@50 | NDCG@1 | NDCG@10 | NDCG@50 |

| :-------: | :---------: | :--------: | :--------: | :---------: | :--------: | :--------: | :---------: | :--------: | :--------: |

| SASRec | 0.000446 | 0.00134 | 0.0160 | 0.000446 | 0.000576 | 0.00114 | 0.000445 | 0.00075 | 0.00380 |

| TextCNN | 0.00267 | 0.0103 | 0.0236 | 0.00267 | 0.00434 | 0.00493 | 0.00267 | 0.00570 | 0.00860 |

| BERT | 0.00722 | 0.00490 | 0.0281 | 0.00722 | 0.0106 | 0.0124 | 0.00490 | 0.0147 | 0.0239 |

| KBRD | 0.00401 | 0.0254 | 0.0588 | 0.00401 | 0.00891 | 0.0103 | 0.00401 | 0.0127 | 0.0198 |

| KGSF | 0.00535 | **0.0285** | **0.0771** | 0.00535 | 0.0114 | **0.0135** | 0.00535 | **0.0154** | **0.0259** |

| TG-ReDial | **0.00793** | 0.0251 | 0.0524 | **0.00793** | **0.0122** | 0.0134 | **0.00793** | 0.0152 | 0.0211 |

### 对话任务

| 模型 | BLEU@1 | BLEU@2 | BLEU@3 | BLEU@4 | Dist@1 | Dist@2 | Dist@3 | Dist@4 | Average | Extreme | Greedy | PPL |

| :---------: | :-------: | :-------: | :--------: | :--------: | :------: | :------: | :------: | :------: | :-------: | :-------: | :-------: | :------: |

| HERD | 0.120 | 0.0141 | 0.00136 | 0.000350 | 0.181 | 0.369 | 0.847 | 1.30 | 0.697 | 0.382 | 0.639 | 472 |

| Transformer | 0.266 | 0.0440 | 0.0145 | 0.00651 | 0.324 | 0.837 | 2.02 | 3.06 | 0.879 | 0.438 | 0.680 | 30.9 |

| GPT2 | 0.0858 | 0.0119 | 0.00377 | 0.0110 | **2.35** | **4.62** | **8.84** | **12.5** | 0.763 | 0.297 | 0.583 | 9.26 |

| KBRD | 0.267 | 0.0458 | 0.0134 | 0.00579 | 0.469 | 1.50 | 3.40 | 4.90 | 0.863 | 0.398 | 0.710 | 52.5 |

| KGSF | **0.383** | **0.115** | **0.0444** | **0.0200** | 0.340 | 0.910 | 3.50 | 6.20 | **0.888** | **0.477** | **0.767** | 50.1 |

| TG-ReDial | 0.125 | 0.0204 | 0.00354 | 0.000803 | 0.881 | 1.75 | 7.00 | 12.0 | 0.810 | 0.332 | 0.598 | **7.41** |

### 策略任务

| 模型 | Hit@1 | Hit@10 | Hit@50 | MRR@1 | MRR@10 | MRR@50 | NDCG@1 | NDCG@10 | NDCG@50 |

| :--------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: | :-------: |

| MGCG | 0.591 | 0.818 | 0.883 | 0.591 | 0.680 | 0.683 | 0.591 | 0.712 | 0.729 |

| Conv-BERT | 0.597 | 0.814 | 0.881 | 0.597 | 0.684 | 0.687 | 0.597 | 0.716 | 0.731 |

| Topic-BERT | 0.598 | 0.828 | 0.885 | 0.598 | 0.690 | 0.693 | 0.598 | 0.724 | 0.737 |

| TG-ReDial | **0.600** | **0.830** | **0.893** | **0.600** | **0.693** | **0.696** | **0.600** | **0.727** | **0.741** |

上述结果是我们使用 CRSLab 进行实验得到的。然而,这些算法是根据我们的经验和理解来实现和调参的,可能还没有达到它们的最佳性能。如果您能在某个具体算法上得到更好的结果,请告知我们。验证结果后,我们会更新该表。

## 发行版本

| 版本号 | 发行日期 | 特性 |

| :----: | :-----------: | :----------: |

| v0.1.1 | 1 / 4 / 2021 | Basic CRSLab |

| v0.1.2 | 3 / 28 / 2021 | CRSLab |

## 贡献

如果您遇到错误或有任何建议,请通过 [Issue](https://github.com/RUCAIBox/CRSLab/issues) 进行反馈

我们欢迎关于修复错误、添加新特性的任何贡献。

如果想贡献代码,请先在 Issue 中提出问题,然后再提 PR。

我们感谢 [@shubaoyu](https://github.com/shubaoyu), [@ToheartZhang](https://github.com/ToheartZhang) 通过 PR 为项目贡献的新特性。

## 引用

如果你觉得 CRSLab 对你的科研工作有帮助,请引用我们的[论文](https://arxiv.org/pdf/2101.00939.pdf):

```

@article{crslab,

title={CRSLab: An Open-Source Toolkit for Building Conversational Recommender System},

author={Kun Zhou, Xiaolei Wang, Yuanhang Zhou, Chenzhan Shang, Yuan Cheng, Wayne Xin Zhao, Yaliang Li, Ji-Rong Wen},

year={2021},

journal={arXiv preprint arXiv:2101.00939}

}

```

## 项目团队

**CRSLab** 由中国人民大学 [AI Box](http://aibox.ruc.edu.cn/) 小组开发和维护。

## 免责声明

**CRSLab** 基于 [MIT License](./LICENSE) 进行开发,本项目的所有数据和代码只能被用于学术目的。

================================================

FILE: config/conversation/gpt2/durecdial.yaml

================================================

# dataset

dataset: DuRecDial

tokenize:

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

conv_model: GPT2

# optim

conv:

epoch: 1

batch_size: 8

gradient_clip: 1.0

update_freq: 1

optimizer:

name: AdamW

lr: !!float 1.5e-4

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 2000

================================================

FILE: config/conversation/gpt2/gorecdial.yaml

================================================

# dataset

dataset: GoRecDial

tokenize:

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

conv_model: GPT2

# optim

conv:

epoch: 1

batch_size: 4

gradient_clip: 1.0

update_freq: 1

optimizer:

name: AdamW

lr: !!float 1.5e-4

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 2000

================================================

FILE: config/conversation/gpt2/inspired.yaml

================================================

# dataset

dataset: Inspired

tokenize:

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

conv_model: GPT2

# optim

conv:

epoch: 1

batch_size: 8

gradient_clip: 1.0

update_freq: 1

optimizer:

name: AdamW

lr: !!float 1.5e-4

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 2000

================================================

FILE: config/conversation/gpt2/opendialkg.yaml

================================================

# dataset

dataset: OpenDialKG

tokenize:

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

conv_model: GPT2

# optim

conv:

epoch: 1

batch_size: 8

gradient_clip: 1.0

update_freq: 1

optimizer:

name: AdamW

lr: !!float 1.5e-4

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 2000

================================================

FILE: config/conversation/gpt2/redial.yaml

================================================

# dataset

dataset: ReDial

tokenize:

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

conv_model: GPT2

# optim

conv:

epoch: 1

batch_size: 8

gradient_clip: 1.0

update_freq: 1

optimizer:

name: AdamW

lr: !!float 1.5e-4

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 2000

================================================

FILE: config/conversation/gpt2/tgredial.yaml

================================================

# dataset

dataset: TGReDial

tokenize:

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

conv_model: GPT2

# optim

conv:

epoch: 50

batch_size: 8

gradient_clip: 1.0

update_freq: 1

early_stop: true

stop_mode: min

impatience: 3

optimizer:

name: AdamW

lr: !!float 1.5e-4

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 2000

================================================

FILE: config/conversation/transformer/durecdial.yaml

================================================

# dataset

dataset: DuRecDial

tokenize:

conv: jieba

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

conv_model: Transformer

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

conv:

epoch: 1

batch_size: 64

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/conversation/transformer/gorecdial.yaml

================================================

# dataset

dataset: GoRecDial

tokenize:

conv: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

conv_model: Transformer

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

early_stop: true

stop_mode: min

impatience: 3

================================================

FILE: config/conversation/transformer/inspired.yaml

================================================

# dataset

dataset: Inspired

tokenize:

conv: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

conv_model: Transformer

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

early_stop: true

stop_mode: min

impatience: 3

================================================

FILE: config/conversation/transformer/opendialkg.yaml

================================================

# dataset

dataset: OpenDialKG

tokenize:

conv: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

conv_model: Transformer

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

early_stop: true

stop_mode: min

impatience: 3

================================================

FILE: config/conversation/transformer/redial.yaml

================================================

# dataset

dataset: ReDial

tokenize:

conv: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

conv_model: Transformer

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

conv:

epoch: 1

batch_size: 64

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/conversation/transformer/tgredial.yaml

================================================

# dataset

dataset: TGReDial

tokenize:

conv: pkuseg

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

conv_model: Transformer

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

conv:

epoch: 50

batch_size: 64

early_stop: True

stop_mode: min

patience: 3

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

factor: 0.5

================================================

FILE: config/crs/inspired/durecdial.yaml

================================================

# dataset

dataset: DuRecDial

tokenize:

rec: bert

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

# rec

rec_model: InspiredRec

# conv

conv_model: InspiredConv

# embedding: word2vec

embedding_dim: 300

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 1e-3

weight_decay: !!float 0.0000

early_stop: true

stop_mode: max

impatience: 3

lr_bert: !!float 1e-5

conv:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 3e-5

eps: !!float 1e-06

weight_decay: !!float 0.01

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 100

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/inspired/gorecdial.yaml

================================================

# dataset

dataset: GoRecDial

tokenize:

rec: bert

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

# rec

rec_model: InspiredRec

# conv

conv_model: InspiredConv

# embedding: word2vec

embedding_dim: 300

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 1e-3

weight_decay: !!float 0.0000

early_stop: true

stop_mode: max

impatience: 3

lr_bert: !!float 1e-5

conv:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 3e-5

eps: !!float 1e-06

weight_decay: !!float 0.01

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 100

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/inspired/inspired.yaml

================================================

# dataset

dataset: Inspired

tokenize:

rec: bert

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

# rec

rec_model: InspiredRec

# conv

conv_model: InspiredConv

# optim

rec:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 1e-3

weight_decay: !!float 0.0000

early_stop: true

stop_mode: max

impatience: 3

lr_bert: !!float 1e-5

conv:

epoch: 50

batch_size: 1

optimizer:

name: AdamW

lr: !!float 3e-5

eps: !!float 1e-06

weight_decay: !!float 0.01

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 100

early_stop: true

impatience: 3

stop_mode: min

label_smoothing: -1

================================================

FILE: config/crs/inspired/opendialkg.yaml

================================================

# dataset

dataset: OpenDialKG

tokenize:

rec: bert

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

# rec

rec_model: InspiredRec

# conv

conv_model: InspiredConv

# embedding: word2vec

embedding_dim: 300

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 1e-3

weight_decay: !!float 0.0000

early_stop: true

stop_mode: max

impatience: 3

lr_bert: !!float 1e-5

conv:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 3e-5

eps: !!float 1e-06

weight_decay: !!float 0.01

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 100

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/inspired/redial.yaml

================================================

# dataset

dataset: ReDial

tokenize:

rec: bert

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

# rec

rec_model: InspiredRec

# conv

conv_model: InspiredConv

# embedding: word2vec

embedding_dim: 300

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 1e-3

weight_decay: !!float 0.0000

early_stop: true

stop_mode: max

impatience: 3

lr_bert: !!float 1e-5

conv:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 3e-5

eps: !!float 1e-06

weight_decay: !!float 0.01

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 100

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/inspired/tgredial.yaml

================================================

# dataset

dataset: TGReDial

tokenize:

rec: bert

conv: gpt2

# dataloader

context_truncate: 256

response_truncate: 30

item_truncate: 100

scale: 1

# model

# rec

rec_model: InspiredRec

# conv

conv_model: InspiredConv

# embedding: word2vec

embedding_dim: 300

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 1e-3

weight_decay: !!float 0.0000

early_stop: true

stop_mode: max

impatience: 3

lr_bert: !!float 1e-5

conv:

epoch: 1

batch_size: 8

optimizer:

name: AdamW

lr: !!float 3e-5

eps: !!float 1e-06

weight_decay: !!float 0.01

lr_scheduler:

name: TransformersLinearLR

warmup_steps: 100

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/kbrd/durecdial.yaml

================================================

# dataset

dataset: DuRecDial

tokenize: jieba

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

model: KBRD

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

user_proj_dim: 512

# optim

rec:

epoch: 1

batch_size: 4096

optimizer:

name: Adam

lr: !!float 3e-3

conv:

epoch: 1

batch_size: 64

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/crs/kbrd/gorecdial.yaml

================================================

# dataset

dataset: GoRecDial

tokenize: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

model: KBRD

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

user_proj_dim: 512

# optim

rec:

epoch: 1

batch_size: 4096

optimizer:

name: Adam

lr: !!float 3e-3

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

early_stop: true

stop_mode: min

impatience: 3

================================================

FILE: config/crs/kbrd/inspired.yaml

================================================

# dataset

dataset: Inspired

tokenize: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

model: KBRD

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

user_proj_dim: 512

# optim

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 3e-3

conv:

epoch: 1

batch_size: 64

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/crs/kbrd/opendialkg.yaml

================================================

# dataset

dataset: OpenDialKG

tokenize: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

model: KBRD

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

user_proj_dim: 512

# optim

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 3e-3

conv:

epoch: 1

batch_size: 64

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/crs/kbrd/redial.yaml

================================================

# dataset

dataset: ReDial

tokenize: nltk

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

model: KBRD

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

user_proj_dim: 512

# optim

rec:

epoch: 10

batch_size: 4096

optimizer:

name: Adam

lr: !!float 3e-3

conv:

epoch: 10

batch_size: 32

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/crs/kbrd/tgredial.yaml

================================================

# dataset

dataset: TGReDial

tokenize: pkuseg

# dataloader

context_truncate: 1024

response_truncate: 1024

scale: 1

# model

model: KBRD

token_emb_dim: 300

n_relation: 56

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

user_proj_dim: 512

# optim

rec:

epoch: 100

batch_size: 64

early_stop: True

stop_mode: max

patience: 3

optimizer:

name: Adam

lr: !!float 3e-3

conv:

epoch: 100

batch_size: 16

early_stop: True

stop_mode: min

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

================================================

FILE: config/crs/kgsf/durecdial.yaml

================================================

# dataset

dataset: DuRecDial

tokenize: jieba

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: KGSF

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

pretrain:

epoch: 1

batch_size: 4096

optimizer:

name: Adam

lr: !!float 3e-3

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 3e-3

early_stop: true

stop_mode: max

impatience: 3

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/kgsf/gorecdial.yaml

================================================

# dataset

dataset: GoRecDial

tokenize: nltk

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: KGSF

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

pretrain:

epoch: 1

batch_size: 64

optimizer:

name: Adam

lr: !!float 3e-3

rec:

epoch: 1

batch_size: 64

optimizer:

name: Adam

lr: !!float 3e-3

early_stop: true

stop_mode: max

impatience: 3

conv:

epoch: 1

batch_size: 64

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/kgsf/inspired.yaml

================================================

# dataset

dataset: Inspired

tokenize: nltk

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: KGSF

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

pretrain:

epoch: 1

batch_size: 4096

optimizer:

name: Adam

lr: !!float 3e-3

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 3e-3

early_stop: true

stop_mode: max

impatience: 3

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/kgsf/opendialkg.yaml

================================================

# dataset

dataset: OpenDialKG

tokenize: nltk

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: KGSF

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

pretrain:

epoch: 1

batch_size: 4096

optimizer:

name: Adam

lr: !!float 3e-3

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 3e-3

early_stop: true

stop_mode: max

impatience: 3

conv:

epoch: 1

batch_size: 256

optimizer:

name: Adam

lr: !!float 3e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/kgsf/redial.yaml

================================================

# dataset

dataset: ReDial

tokenize: nltk

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: KGSF

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

pretrain:

epoch: 3

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

rec:

epoch: 9

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

conv:

epoch: 90

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/kgsf/tgredial.yaml

================================================

# dataset

dataset: TGReDial

tokenize: pkuseg

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: KGSF

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

# optim

pretrain:

epoch: 50

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

rec:

epoch: 20

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

stop_mode: max

impatience: 3

conv:

epoch: 10

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/ntrd/tgredial.yaml

================================================

# dataset

dataset: TGReDial

tokenize: pkuseg

embedding: word2vec.npy

# dataloader

context_truncate: 256

response_truncate: 30

scale: 1

# model

model: NTRD

token_emb_dim: 300

kg_emb_dim: 128

num_bases: 8

n_heads: 2

n_layers: 2

ffn_size: 300

dropout: 0.1

attention_dropout: 0.0

relu_dropout: 0.1

learn_positional_embeddings: false

embeddings_scale: true

reduction: false

n_positions: 1024

gen_loss_weight: 5

n_movies: 62287

replace_token: '[ITEM]'

# optim

pretrain:

epoch: 50

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

rec:

epoch: 20

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

stop_mode: max

impatience: 3

conv:

epoch: 10

batch_size: 64

optimizer:

name: Adam

lr: !!float 1e-3

lr_scheduler:

name: ReduceLROnPlateau

patience: 3

factor: 0.5

gradient_clip: 0.1

================================================

FILE: config/crs/redial/durecdial.yaml

================================================

# dataset

dataset: DuRecDial

tokenize:

rec: jieba

conv: jieba

# dataloader

utterance_truncate: 80

conversation_truncate: 40

scale: 1

# model

# rec

rec_model: ReDialRec

autorec_layer_sizes: [ 1000 ]

autorec_f: sigmoid

autorec_g: sigmoid

# conv

conv_model: ReDialConv

# embedding: word2vec

embedding_dim: 300

utterance_encoder_hidden_size: 256

dialog_encoder_hidden_size: 256

dialog_encoder_num_layers: 1

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

conv:

epoch: 1

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/redial/gorecdial.yaml

================================================

# dataset

dataset: GoRecDial

tokenize:

rec: nltk

conv: nltk

# dataloader

utterance_truncate: 80

conversation_truncate: 40

scale: 1

# model

# rec

rec_model: ReDialRec

autorec_layer_sizes: [ 1000 ]

autorec_f: sigmoid

autorec_g: sigmoid

# conv

conv_model: ReDialConv

#embedding: word2vec

embedding_dim: 300

utterance_encoder_hidden_size: 256

dialog_encoder_hidden_size: 256

dialog_encoder_num_layers: 1

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

conv:

epoch: 1

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/redial/inspired.yaml

================================================

# dataset

dataset: Inspired

tokenize:

rec: nltk

conv: nltk

# dataloader

utterance_truncate: 80

conversation_truncate: 40

scale: 1

# model

# rec

rec_model: ReDialRec

autorec_layer_sizes: [ 1000 ]

autorec_f: sigmoid

autorec_g: sigmoid

# conv

conv_model: ReDialConv

# embedding: word2vec

embedding_dim: 300

utterance_encoder_hidden_size: 256

dialog_encoder_hidden_size: 256

dialog_encoder_num_layers: 1

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

conv:

epoch: 1

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

================================================

FILE: config/crs/redial/opendialkg.yaml

================================================

# dataset

dataset: OpenDialKG

tokenize:

rec: nltk

conv: nltk

# dataloader

utterance_truncate: 80

conversation_truncate: 40

scale: 1

# model

# rec

rec_model: ReDialRec

autorec_layer_sizes: [ 1000 ]

autorec_f: sigmoid

autorec_g: sigmoid

# conv

conv_model: ReDialConv

# embedding: word2vec

embedding_dim: 300

utterance_encoder_hidden_size: 256

dialog_encoder_hidden_size: 256

dialog_encoder_num_layers: 1

use_dropout: False

dropout: 0.3

decoder_hidden_size: 256

decoder_num_layers: 1

# optim

rec:

epoch: 1

batch_size: 1024

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min

conv:

epoch: 1

batch_size: 128

optimizer:

name: Adam

lr: !!float 1e-3

early_stop: true

impatience: 3

stop_mode: min