Repository: Sibozhu/MotionBlur-detection-by-CNN

Branch: master

Commit: 93ee3775a087

Files: 19

Total size: 55.4 MB

Directory structure:

gitextract_1_ro849x/

├── Prediction/

│ └── prediction_start.py

├── Processing/

│ ├── blur_utils.py

│ └── processing_utils.py

├── README.md

├── Report/

│ ├── nicefrac.sty

│ ├── nips_2017.aux

│ ├── nips_2017.log

│ ├── nips_2017.out

│ ├── nips_2017.sty

│ └── nips_2017.tex

├── cnn.py

├── image_slice.py

├── mergingpatches.py

├── motionblur.h5

├── motionblur.py

├── processing_utils.py

├── slicing.py

├── test_img_generator.py

└── trainingdatacreate.py

================================================

FILE CONTENTS

================================================

================================================

FILE: Prediction/prediction_start.py

================================================

import keras

================================================

FILE: Processing/blur_utils.py

================================================

================================================

FILE: Processing/processing_utils.py

================================================

'''

utility function package for image processing

by Sibo Zhu, Kieran Xiao Wang

2017.08.24

'''

import numpy as np

import cv2

import random

from PIL import Image

import PIL

from natsort import natsorted

import os, os.path

def save_image(image_np_array, image_save_path):
    '''
    Save a numpy array as an image file.
    :param image_np_array: 3-D numpy array
    :param image_save_path: string
    :return: None
    '''
    im = Image.fromarray(image_np_array)
    im.save(image_save_path)

def patch_merge_to_one_from_folder(patch_dir):
    '''
    Merge patches into a whole image.

    Patch file names encode the picture index, column and row, followed by the
    blur flag, e.g. "0_3_5,blur.jpg". patch_dir is supposed to contain all
    patches of an image (blurred or not); avoid keeping any other .jpg file in
    that folder (e.g. do not save the whole image as .jpg there).
    :param patch_dir: [string] directory to the folder that contains all patches (of an image)
    :return: [2-d array] whole image as a grayscale np array
    '''
    valid_images = [".jpg"]

    # Collect the paths of all patch images in the directory.
    patch_paths = []
    for f in os.listdir(patch_dir):
        ext = os.path.splitext(f)[1]
        if ext.lower() not in valid_images:
            continue
        patch_paths.append(os.path.join(patch_dir, f))
    print(str(len(patch_paths)) + " patches in total")

    def patch_indices(path):
        # "<picture>_<column>_<row>,<blur flag>.jpg" -> [picture, column, row]
        name = os.path.basename(path).split(",")[0]
        return [int(part) for part in name.split("_")]

    def concat_img_horizon(list_imgs):
        imgs = [PIL.Image.open(i) for i in list_imgs]
        # pick the smallest image and resize the others to match it
        min_shape = sorted([(np.sum(i.size), i.size) for i in imgs])[0][1]
        imgs_comb = np.hstack([np.asarray(i.resize(min_shape)) for i in imgs])
        return PIL.Image.fromarray(imgs_comb)

    def concat_img_vertical(list_imgs):
        imgs = [PIL.Image.open(i) for i in list_imgs]
        # pick the smallest image and resize the others to match it
        min_shape = sorted([(np.sum(i.size), i.size) for i in imgs])[0][1]
        # np.vstack needs a sequence of arrays, not a generator
        imgs_comb = np.vstack([np.asarray(i.resize(min_shape)) for i in imgs])
        return PIL.Image.fromarray(imgs_comb)

    def concat_temp_horizon(list_imgs):
        imgs_comb = np.hstack([np.asarray(i) for i in list_imgs])
        return PIL.Image.fromarray(imgs_comb)

    # Count the whole pictures in the folder and place each patch with its picture.
    max_index = max(patch_indices(p)[0] for p in patch_paths)
    pic_index = {k: [] for k in range(max_index + 1)}
    for path in patch_paths:
        pic_index[patch_indices(path)[0]].append(path)
    print('the first picture contains ' + str(len(pic_index[0])) + ' patches')

    def get_total_column(paths):
        # largest column index among the given patches
        return max(patch_indices(p)[1] for p in paths)

    def get_total_row(paths):
        # largest row index among the given patches
        return max(patch_indices(p)[2] for p in paths)

    print("this picture's total column is " + str(get_total_column(pic_index[0])))
    print("this picture's total row is " + str(get_total_row(pic_index[0])))

    # Global merging: stack every column vertically, then join the columns side by side.
    for a in range(len(pic_index)):
        max_col = get_total_column(pic_index[a])
        pic_index[a] = natsorted(pic_index[a])  # natural order keeps rows in sequence
        col_index = {k: [] for k in range(max_col + 1)}
        for path in pic_index[a]:
            col_index[patch_indices(path)[1]].append(path)
        # columns are indexed 0..max_col, so max_col + 1 columns are merged
        saver = [concat_img_vertical(col_index[i]) for i in range(max_col + 1)]
        res = concat_temp_horizon(saver)

    # res is already a PIL image, so it is converted directly
    return np.array(res.convert("L"))

def mass_patch_merge_to_one_from_folder(patch_dir,save_dir):
    '''
    Merge the patches of several images back into whole images.

    patch_dir may contain patches that come from different images; they are grouped by the
    picture index in their file names (the naming used when slicing the images), merged, and
    saved as partially blurry whole images.
    :param patch_dir: [string] directory to the folder that contains all patches (of one or more images)
    :param save_dir: [string] directory to the folder used to save the merged images; it is used as a
    file-name prefix, so it should end with a path separator
    :return: None
    '''
    result_dir = save_dir
    valid_images = [".jpg"]

    # Collect the paths of all patch images in the directory.
    patch_paths = []
    for f in os.listdir(patch_dir):
        ext = os.path.splitext(f)[1]
        if ext.lower() not in valid_images:
            continue
        patch_paths.append(os.path.join(patch_dir, f))
    print(str(len(patch_paths)) + " patches in total")

    def patch_indices(path):
        # "<picture>_<column>_<row>,<blur flag>.jpg" -> [picture, column, row]
        name = os.path.basename(path).split(",")[0]
        return [int(part) for part in name.split("_")]

    def concat_img_vertical(list_imgs):
        imgs = [PIL.Image.open(i) for i in list_imgs]
        # pick the smallest image and resize the others to match it
        min_shape = sorted([(np.sum(i.size), i.size) for i in imgs])[0][1]
        # np.vstack needs a sequence of arrays, not a generator
        imgs_comb = np.vstack([np.asarray(i.resize(min_shape)) for i in imgs])
        return PIL.Image.fromarray(imgs_comb)

    def concat_temp_horizon(list_imgs):
        imgs_comb = np.hstack([np.asarray(i) for i in list_imgs])
        return PIL.Image.fromarray(imgs_comb)

    # Count the whole pictures in the folder and place each patch with its picture.
    max_index = max(patch_indices(p)[0] for p in patch_paths)
    pic_index = {k: [] for k in range(max_index + 1)}
    for path in patch_paths:
        pic_index[patch_indices(path)[0]].append(path)
    print('the first picture contains ' + str(len(pic_index[0])) + ' patches')

    def get_total_column(paths):
        # largest column index among the given patches
        return max(patch_indices(p)[1] for p in paths)

    def get_total_row(paths):
        # largest row index among the given patches
        return max(patch_indices(p)[2] for p in paths)

    print("this picture's total column is " + str(get_total_column(pic_index[0])))
    print("this picture's total row is " + str(get_total_row(pic_index[0])))

    # Global merging: stack every column vertically, join the columns, save one file per picture.
    for a in range(len(pic_index)):
        max_col = get_total_column(pic_index[a])
        pic_index[a] = natsorted(pic_index[a])  # natural order keeps rows in sequence
        col_index = {k: [] for k in range(max_col + 1)}
        for path in pic_index[a]:
            col_index[patch_indices(path)[1]].append(path)
        # columns are indexed 0..max_col, so max_col + 1 columns are merged
        saver = [concat_img_vertical(col_index[i]) for i in range(max_col + 1)]
        res = concat_temp_horizon(saver)
        res.save(result_dir + str(a) + '.jpg')

def image_to_patch(image_path, patch_size, patch_dir):
    '''
    Cut an image into square patches of a given size.
    :param image_path: [string] path to the image (e.g. ./whole_image.jpg)
    :param patch_size: [integer] side length of a patch in pixels, e.g. 30 for 30x30 patches
    :param patch_dir: [string] dir where to save the patches (patch dir is defined to be the folder that contains all
    image patches of an image); it is used as a file-name prefix, so it should end with a path separator
    :return: None
    '''
    img = Image.open(image_path)
    (imageWidth, imageHeight) = img.size
    gridx = patch_size
    gridy = patch_size
    # integer division: partial patches at the right and bottom edges are dropped
    rangex = imageWidth // gridx
    rangey = imageHeight // gridy
    print(rangex * rangey)
    for x in range(rangex):
        for y in range(rangey):
            bbox = (x * gridx, y * gridy, x * gridx + gridx, y * gridy + gridy)
            slice_bit = img.crop(bbox)
            slice_bit.save(patch_dir + str(x) + '_' + str(y) + '.jpg', optimize=True,
                           bits=6)
            print(patch_dir + str(x) + '_' + str(y) + '.jpg')
    print(imageWidth)

def directory_to_patch(patch_size,original_path,no_blur_path,blur_path,all_img_path):
    '''
    We take a directory that contains several whole pictures and cut them into patches of a custom size,
    then blur each patch with 50% chance and save the patches into a blurry folder, a non-blurry folder,
    and a folder that contains all blurry and non-blurry patches in order.
    The destination paths are used as file-name prefixes, so they should end with a path separator.
    :param patch_size: [integer] The custom patch size we want, e.g. for 30x30 patches, enter 30
    :param original_path: [string] The original path that contains all the original pictures without any modification
    :param no_blur_path: [string] The destination path that contains all the non-blurry patches in order
    :param blur_path: [string] The destination path that contains all the blurry patches in order
    :param all_img_path: [string] The destination path that contains all the patches in order
    :return: None; all the modified patches are saved into the destination paths
    '''
    # motion blur preset: a horizontal averaging kernel (middle row filled with 1/size)
    size = 15
    gridx = patch_size
    gridy = patch_size
    kernel_motion_blur = np.zeros((size, size))
    kernel_motion_blur[int((size - 1) / 2), :] = np.ones(size)
    kernel_motion_blur = kernel_motion_blur / size

    # go through every image in source folder
    print('begin loading images')
    pi_imgs = []
    valid_images = [".jpg"]
    for f in os.listdir(original_path):
        ext = os.path.splitext(f)[1]
        if ext.lower() not in valid_images:
            continue
        pi_imgs.append(Image.open(os.path.join(original_path, f)))
    print('finished loading images')

    # cut every image into patches; each patch is blurred with 50% chance
    for i in range(len(pi_imgs)):
        img = pi_imgs[i]
        (imageWidth, imageHeight) = img.size
        # integer division: partial patches at the right and bottom edges are dropped
        rangex = imageWidth // gridx
        rangey = imageHeight // gridy
        for x in range(rangex):
            for y in range(rangey):
                bbox = (x * gridx, y * gridy, x * gridx + gridx, y * gridy + gridy)
                slice_bit = img.crop(bbox)
                name = str(i) + '_' + str(x) + '_' + str(y)
                if random.randrange(2) == 0:
                    slice_bit.save(no_blur_path + name + ',noblur.jpg', optimize=True, bits=6)
                    slice_bit.save(all_img_path + name + ',noblur.jpg', optimize=True, bits=6)
                else:
                    # save the patch, re-read it with OpenCV and overwrite it with the blurred version
                    slice_bit.save(blur_path + name + ',blur.jpg', optimize=True, bits=6)
                    img1 = cv2.imread(blur_path + name + ',blur.jpg')
                    output = cv2.filter2D(img1, -1, kernel_motion_blur)
                    cv2.imwrite(blur_path + name + ',blur.jpg', output)
                    cv2.imwrite(all_img_path + name + ',blur.jpg', output)
                print(str(i))

================================================

FILE: README.md

================================================

# MotionBlur-detection-by-CNN

```

To run the CNN model, open "cnn.py" and run it. Training may take a
couple of hours on a personal computer. Using a server or a GPU
significantly lowers the running time.

```

## Abstract

```

Our project aims to detect motion blur from a single, blurry image. We propose

a deep learning approach to predict the probabilistic distribution of motion blur at

the patch level using a Convolutional Neural Network (CNN).

```

## 1 Our approach

```

We approached the problem by slicing 100 images into 30x30 patches, and applied our own motion

blur algorithm to them (with a random rate of 50%). We then labeled the blurry and non-blurry

patches with 0s and 1s (0 for still, 1 for blurry), and loaded the modified images in as our training

data.

```
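The motion blur used in this repository (see `directory_to_patch` in `processing_utils.py`) is a 15 × 15 kernel of zeros whose middle row is filled with 1/15, so each output pixel is the average of 15 horizontal neighbours. The sketch below rebuilds that kernel and applies it with plain NumPy instead of `cv2.filter2D`. The helper names and the edge-replication padding are assumptions made for illustration, not the repository's code.

```python
import numpy as np


def motion_blur_kernel(size=15):
    """Horizontal motion-blur kernel: the middle row averages `size` pixels."""
    kernel = np.zeros((size, size))
    kernel[(size - 1) // 2, :] = 1.0
    return kernel / size


def blur_patch(patch, kernel):
    """Correlate a 2-D patch with the kernel, replicating edge pixels (assumed padding)."""
    k = kernel.shape[0]
    pad = k // 2
    padded = np.pad(patch.astype(float), pad, mode="edge")
    out = np.zeros(patch.shape, dtype=float)
    for r in range(patch.shape[0]):
        for c in range(patch.shape[1]):
            out[r, c] = np.sum(padded[r:r + k, c:c + k] * kernel)
    return out


kernel = motion_blur_kernel(15)
patch = np.zeros((30, 30))
patch[:, 15] = 255.0          # a sharp vertical line in a 30x30 patch
blurred = blur_patch(patch, kernel)
```

The sharp line is smeared across 15 columns while the total intensity of each row stays the same, which is the streak pattern the classifier has to recognise.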

```

1.1 Generating the training data

```

We generated the training data using images from the Pascal Visual Object Classes Challenge 2010
(VOC2010) data set. Our work was done in Python using the PIL, numpy, OpenCV, and os libraries.

```

Once we had the original images from Pascal, we had to modify them to fit our needs. We needed to
have 100 images, each partially blurred and with a corresponding matrix indicating which part of the
image is blurred.

We achieved this by:

```

1. Making a blurred copy of the original image.

2. Cutting both images (original and blurry) into 30 × 30 patches.

3. Creating a 2D list in Python of size 30 × 30 to represent each image patch. We initialize
each element to 0 (to represent non-blurry).
4. Picking half the patches from the list and marking them as 1 (to represent blurry).
5. Putting the final image together to get a partially-blurred, qualifying image (and its
corresponding matrix).
6. Saving the image as "n.jpg" (where n is the serial number of the image), and adding the
matrix to a list (to form a 3D "list of lists") containing the matrices of all the images.
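Steps 3, 4 and 6 can be sketched in a few lines of plain Python. This is a minimal illustration, assuming a small hypothetical grid of 4 × 6 patches and a helper named `make_label_matrix`; neither comes from the repository. It marks exactly half of the cells, as described above, whereas `directory_to_patch` in `processing_utils.py` instead flips a coin for every patch.

```python
import random


def make_label_matrix(rows, cols, seed=None):
    """Return a rows x cols grid of 0s with half of the cells set to 1 (blurry)."""
    rng = random.Random(seed)
    labels = [[0] * cols for _ in range(rows)]
    cells = [(r, c) for r in range(rows) for c in range(cols)]
    # pick half of the patch positions without repetition and mark them as blurry
    for r, c in rng.sample(cells, len(cells) // 2):
        labels[r][c] = 1
    return labels


# one matrix per image, collected into a 3-D "list of lists"
all_labels = [make_label_matrix(4, 6, seed=n) for n in range(3)]
blurry_count = sum(map(sum, all_labels[1]))
```

Indexing `all_labels[n]` then gives the matrix that belongs to image "n.jpg".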

```

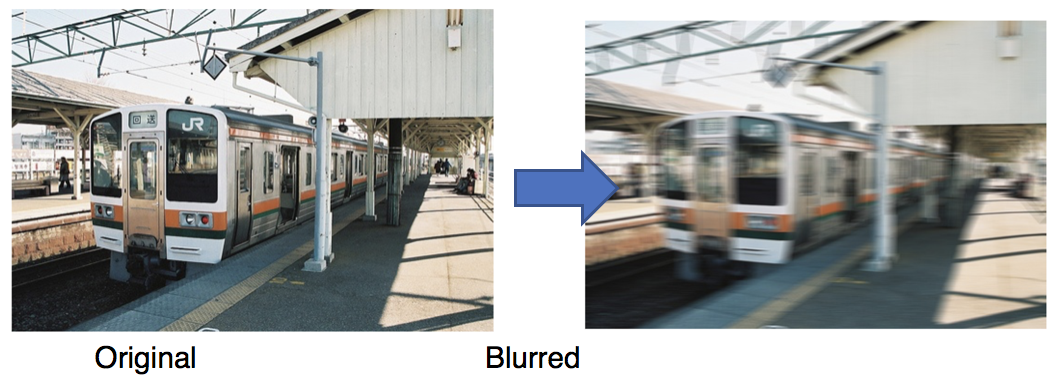

Image of the original-to-blur process.

```

```

Image of the image splitting process.

```

```

The final image with its corresponding matrix.

```

We repeat the above for all 100 images, until we end up with a folder containing partially-blurred
images "0.jpg" through "100.jpg", and a 3D list (named "labels") that contains 100 matrices. This
lets us access the matrix for image "31.jpg", for example, by querying for "labels[31]".

## 2 Learning the Convolutional Neural Network (CNN)

Once we had the prepared images, we loaded them into our training set. We ran into a problem
loading the images into a numpy array, where our images were of the form (30,30,3), while
the Keras Conv2D layer required input to be of the form (3,30,30). We solved this by using the
numpy.swapaxes() function to alter the images' shape in order to fit the convolutional layer.
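A minimal sketch of that reshaping step follows. The text does not say which axes were swapped, so the call `np.swapaxes(patch, 0, 2)` is an assumption. It yields the shape (3,30,30) but also exchanges rows and columns, while `np.transpose(patch, (2, 0, 1))` gives the same shape with the spatial layout unchanged.

```python
import numpy as np

patch = np.arange(30 * 30 * 3).reshape(30, 30, 3)   # (height, width, channels)

swapped = np.swapaxes(patch, 0, 2)        # (channels, width, height): rows and columns trade places
moved = np.transpose(patch, (2, 0, 1))    # (channels, height, width): spatial layout preserved

print(swapped.shape, moved.shape)
```

Both results have three leading channel planes; they differ only in whether each plane is transposed.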

We then apply the CNN learning model. First, we apply a Convolution2D layer with 7 × 7 filters,
followed by a ReLU function. The Conv layer's parameters consist of a set of learnable filters. Each
filter is small spatially, but extends through the depth of the input volume.

During the forward pass, we slide each filter across the width and height of the input volume and
compute the dot products between the entries of the filter and the input at any position. ReLU is the
rectifier function, an activation function that can be used by neurons, just like any other activation
function. A node using the rectifier activation function is called a ReLU node. ReLU sets all negative
values in the matrix x to 0, and all other values are kept constant. ReLU is computed after the
convolution, and is thus a nonlinear activation function (like tanh or sigmoid).
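The forward pass described above can be sketched for a single channel and a single filter. This is an illustration only: the 7 × 7 filter weights are hypothetical, no padding is used (an assumption), and a real Conv2D layer also sums over the depth of the input and learns its weights.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding) and take a dot product at each position."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for r in range(out_h):
        for c in range(out_w):
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return out


def relu(x):
    """Set negative entries to 0 and leave the rest unchanged."""
    return np.maximum(x, 0)


rng = np.random.default_rng(0)
image = rng.random((30, 30))              # one channel of a 30x30 patch
kernel = np.full((7, 7), -1.0)
kernel[3, 3] = 48.0                       # hypothetical filter whose weights sum to 0
feature_map = relu(conv2d_valid(image, kernel))
```

Without padding, a 7 × 7 filter turns a 30 × 30 input into a 24 × 24 feature map, and ReLU leaves no negative values in it.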

After that, we add a MaxPooling2D layer with a pool size of 2 × 2. MaxPooling is a sample-based
discretization process. The objective is to down-sample an input representation, reducing
its dimensionality and allowing for assumptions to be made about features contained in the binned
sub-regions.
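A small NumPy sketch of 2 × 2 max pooling on one feature map, assuming non-overlapping blocks (stride 2); the helper name `max_pool_2x2` is illustrative:

```python
import numpy as np


def max_pool_2x2(feature_map):
    """Keep the largest value in each non-overlapping 2x2 block."""
    h, w = feature_map.shape
    trimmed = feature_map[:h - h % 2, :w - w % 2]     # drop an odd trailing row or column
    blocks = trimmed.reshape(h // 2, 2, w // 2, 2)    # blocks[i, a, j, b] = trimmed[2i + a, 2j + b]
    return blocks.max(axis=(1, 3))


feature_map = np.array([[1, 3, 2, 0],
                        [4, 2, 1, 5],
                        [0, 1, 7, 2],
                        [6, 2, 3, 3]])
pooled = max_pool_2x2(feature_map)
# pooled is [[4, 5], [6, 7]]
```

Each output value summarises one 2 × 2 region, so the width and height are halved.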

We then add a Dropout layer with a dropout rate of 0.2 to regularize training.
Randomly ignoring nodes is useful in CNN models because it prevents interdependencies
from emerging between nodes. This allows the network to learn more robust
features. We then do the "Conv2D, ReLU, MaxPooling2D, Dropout" cycle again. Finally, we add
a fully-connected layer with ReLU, and then apply softmax to the result. Softmax is the classifier at the end of
the neural network: a generalization of logistic regression that normalizes the outputs to values between 0 and 1 that sum to 1.
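Dropout and softmax can both be sketched in plain Python. The dropout shown here is the common "inverted" formulation (survivors are rescaled by 1 / (1 - rate), and the layer is only active during training); the score values and helper names are hypothetical.

```python
import math
import random


def dropout(values, rate, rng):
    """Inverted dropout: zero each value with probability `rate`, rescale the survivors."""
    keep = 1.0 - rate
    return [v / keep if rng.random() >= rate else 0.0 for v in values]


def softmax(scores):
    """Map raw scores to positive values that sum to 1."""
    shift = max(scores)                       # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


rng = random.Random(0)
activations = dropout([0.5, 1.5, 2.0, 0.1], rate=0.2, rng=rng)
probs = softmax([2.0, 0.5])                   # hypothetical scores for (still, blurry)
```

The larger score receives the larger probability, and the two probabilities add up to 1, so the output can be read as the predicted chance that a patch is blurry.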

We set our model's learning rate to 0.01. This might generally be too big, but we made this
decision for the sake of brevity: it was the fastest way to show a result. We chose a batch size of 126
(because we had large training data). We also chose Adam as our optimizer, as it was the most efficient
optimizer for our model.

After training for 100 epochs, we reached a testing accuracy of 92%, which is a strong result for
our model. Our trained model is saved in an HDF5 file, "motionblur.h5".

## 3 Conclusion

In this report, we have proposed a novel CNN-based motion blur detection approach. We learn an
effective CNN for estimating motion blur from local patches. In the future, we are interested in
designing a CNN for estimating motion kernels. We are also interested in designing a CNN-based non-uniform
motion deblurring method.

## Acknowledgement

This report has been prepared for the Boston University Machine Learning course (CS 542), taken
over the Summer 2, 2017 semester by the listed authors. It is intended to be used in compliance with
the requirements of the course.

## References

[1] Jian Sun, Wenfei Cao, Zongben Xu, Jean Ponce. Learning a convolutional neural network for non-uniform
motion blur removal. CVPR 2015 - IEEE Conference on Computer Vision and Pattern Recognition, Jun
2015, Boston, United States. IEEE, 2015.

[2] “Visual Object Classes Challenge 2010 (VOC2010).” The PASCAL Visual Object Classes Challenge 2010

(VOC2010), PASCAL, 2010, host.robots.ox.ac.uk/pascal/VOC/voc2010/.

================================================

FILE: Report/nicefrac.sty

================================================

%%

%% This is file `nicefrac.sty',

%% generated with the docstrip utility.

%%

%% The original source files were:

%%

%% units.dtx (with options: `nicefrac')

%%

%% LaTeX package for typesetting nice fractions

%%

%% Copyright (C) 1998 Axel Reichert

%% See the files README and COPYING.

%%

%% \CharacterTable

%% {Upper-case \A\B\C\D\E\F\G\H\I\J\K\L\M\N\O\P\Q\R\S\T\U\V\W\X\Y\Z

%% Lower-case \a\b\c\d\e\f\g\h\i\j\k\l\m\n\o\p\q\r\s\t\u\v\w\x\y\z

%% Digits \0\1\2\3\4\5\6\7\8\9

%% Exclamation \! Double quote \" Hash (number) \#

%% Dollar \$ Percent \% Ampersand \&

%% Acute accent \' Left paren \( Right paren \)

%% Asterisk \* Plus \+ Comma \,

%% Minus \- Point \. Solidus \/

%% Colon \: Semicolon \; Less than \<

%% Equals \= Greater than \> Question mark \?

%% Commercial at \@ Left bracket \[ Backslash \\

%% Right bracket \] Circumflex \^ Underscore \_

%% Grave accent \` Left brace \{ Vertical bar \|

%% Right brace \} Tilde \~}

\NeedsTeXFormat{LaTeX2e}[1995/12/01]

\ProvidesPackage{nicefrac}[1998/08/04 v0.9b Nice fractions]

\newlength{\L@UnitsRaiseDisplaystyle}

\newlength{\L@UnitsRaiseTextstyle}

\newlength{\L@UnitsRaiseScriptstyle}

\RequirePackage{ifthen}

\DeclareRobustCommand*{\@UnitsNiceFrac}[3][]{%

\ifthenelse{\boolean{mmode}}{%

\settoheight{\L@UnitsRaiseDisplaystyle}{%

\ensuremath{\displaystyle#1{M}}%

}%

\settoheight{\L@UnitsRaiseTextstyle}{%

\ensuremath{\textstyle#1{M}}%

}%

\settoheight{\L@UnitsRaiseScriptstyle}{%

\ensuremath{\scriptstyle#1{M}}%

}%

\settoheight{\@tempdima}{%

\ensuremath{\scriptscriptstyle#1{M}}%

}%

\addtolength{\L@UnitsRaiseDisplaystyle}{%

-\L@UnitsRaiseScriptstyle%

}%

\addtolength{\L@UnitsRaiseTextstyle}{%

-\L@UnitsRaiseScriptstyle%

}%

\addtolength{\L@UnitsRaiseScriptstyle}{-\@tempdima}%

\mathchoice

{%

\raisebox{\L@UnitsRaiseDisplaystyle}{%

\ensuremath{\scriptstyle#1{#2}}%

}%

}%

{%

\raisebox{\L@UnitsRaiseTextstyle}{%

\ensuremath{\scriptstyle#1{#2}}%

}%

}%

{%

\raisebox{\L@UnitsRaiseScriptstyle}{%

\ensuremath{\scriptscriptstyle#1{#2}}%

}%

}%

{%

\raisebox{\L@UnitsRaiseScriptstyle}{%

\ensuremath{\scriptscriptstyle#1{#2}}%

}%

}%

\mkern-2mu/\mkern-1mu%

\bgroup

\mathchoice

{\scriptstyle}%

{\scriptstyle}%

{\scriptscriptstyle}%

{\scriptscriptstyle}%

#1{#3}%

\egroup

}%

{%

\settoheight{\L@UnitsRaiseTextstyle}{#1{M}}%

\settoheight{\@tempdima}{%

\ensuremath{%

\mbox{\fontsize\sf@size\z@\selectfont#1{M}}%

}%

}%

\addtolength{\L@UnitsRaiseTextstyle}{-\@tempdima}%

\raisebox{\L@UnitsRaiseTextstyle}{%

\ensuremath{%

\mbox{\fontsize\sf@size\z@\selectfont#1{#2}}%

}%

}%

\ensuremath{\mkern-2mu}/\ensuremath{\mkern-1mu}%

\ensuremath{%

\mbox{\fontsize\sf@size\z@\selectfont#1{#3}}%

}%

}%

}

\DeclareRobustCommand*{\@UnitsUglyFrac}[3][]{%

\ifthenelse{\boolean{mmode}}{%

\frac{#1{#2}}{#1{#3}}%

}%

{%

#1{#2}/#1{#3}%

\PackageWarning{nicefrac}{%

You used \protect\nicefrac\space or

\protect\unitfrac\space in text mode\MessageBreak

and specified the ``ugly'' option.\MessageBreak

The fraction may be ambiguous or wrong.\MessageBreak

Please make sure the denominator is

correct.\MessageBreak

If it is, you can safely ignore\MessageBreak

this warning

}%

}%

}

\DeclareOption{nice}{%

\DeclareRobustCommand*{\nicefrac}{\@UnitsNiceFrac}%

}

\DeclareOption{ugly}{%

\DeclareRobustCommand*{\nicefrac}{\@UnitsUglyFrac}%

}

\ExecuteOptions{nice}

\ProcessOptions*

\endinput

%%

%% End of file `nicefrac.sty'.

================================================

FILE: Report/nips_2017.aux

================================================

\relax

\providecommand\hyper@newdestlabel[2]{}

\providecommand\HyperFirstAtBeginDocument{\AtBeginDocument}

\HyperFirstAtBeginDocument{\ifx\hyper@anchor\@undefined

\global\let\oldcontentsline\contentsline

\gdef\contentsline#1#2#3#4{\oldcontentsline{#1}{#2}{#3}}

\global\let\oldnewlabel\newlabel

\gdef\newlabel#1#2{\newlabelxx{#1}#2}

\gdef\newlabelxx#1#2#3#4#5#6{\oldnewlabel{#1}{{#2}{#3}}}

\AtEndDocument{\ifx\hyper@anchor\@undefined

\let\contentsline\oldcontentsline

\let\newlabel\oldnewlabel

\fi}

\fi}

\global\let\hyper@last\relax

\gdef\HyperFirstAtBeginDocument#1{#1}

\providecommand\HyField@AuxAddToFields[1]{}

\providecommand\HyField@AuxAddToCoFields[2]{}

\@writefile{toc}{\contentsline {section}{\numberline {1}Our approach}{1}{section.1}}

\@writefile{toc}{\contentsline {subsection}{\numberline {1.1}Generating the training data}{1}{subsection.1.1}}

\@writefile{toc}{\contentsline {section}{\numberline {2}Learning the Convolutional Neural Network (CNN)}{3}{section.2}}

\@writefile{toc}{\contentsline {paragraph}{We then apply the CNN learning model.}{3}{section*.1}}

\@writefile{toc}{\contentsline {paragraph}{During the forward pass,}{3}{section*.2}}

\@writefile{toc}{\contentsline {paragraph}{We set our model's learning rate to be $0.01$.}{3}{section*.3}}

\@writefile{toc}{\contentsline {section}{\numberline {3}Conclusion}{3}{section.3}}

================================================

FILE: Report/nips_2017.log

================================================

This is pdfTeX, Version 3.14159265-2.6-1.40.17 (TeX Live 2016/Debian) (preloaded format=pdflatex 2017.7.8) 12 AUG 2017 15:30

entering extended mode

restricted \write18 enabled.

%&-line parsing enabled.

**nips_2017.tex

(./nips_2017.tex

LaTeX2e <2017/01/01> patch level 3

Babel <3.9r> and hyphenation patterns for 3 language(s) loaded.

(/usr/share/texlive/texmf-dist/tex/latex/base/article.cls

Document Class: article 2014/09/29 v1.4h Standard LaTeX document class

(/usr/share/texlive/texmf-dist/tex/latex/base/size10.clo

File: size10.clo 2014/09/29 v1.4h Standard LaTeX file (size option)

)

\c@part=\count79

\c@section=\count80

\c@subsection=\count81

\c@subsubsection=\count82

\c@paragraph=\count83

\c@subparagraph=\count84

\c@figure=\count85

\c@table=\count86

\abovecaptionskip=\skip41

\belowcaptionskip=\skip42

\bibindent=\dimen102

) (./nips_2017.sty

Package: nips_2017 2017/03/20 NIPS 2017 submission/camera-ready style file

(/usr/share/texlive/texmf-dist/tex/latex/natbib/natbib.sty

Package: natbib 2010/09/13 8.31b (PWD, AO)

\bibhang=\skip43

\bibsep=\skip44

LaTeX Info: Redefining \cite on input line 694.

\c@NAT@ctr=\count87

)

(/usr/share/texlive/texmf-dist/tex/latex/geometry/geometry.sty

Package: geometry 2010/09/12 v5.6 Page Geometry

(/usr/share/texlive/texmf-dist/tex/latex/graphics/keyval.sty

Package: keyval 2014/10/28 v1.15 key=value parser (DPC)

\KV@toks@=\toks14

)

(/usr/share/texlive/texmf-dist/tex/generic/oberdiek/ifpdf.sty

Package: ifpdf 2016/05/14 v3.1 Provides the ifpdf switch

)

(/usr/share/texlive/texmf-dist/tex/generic/oberdiek/ifvtex.sty

Package: ifvtex 2016/05/16 v1.6 Detect VTeX and its facilities (HO)

Package ifvtex Info: VTeX not detected.

)

(/usr/share/texlive/texmf-dist/tex/generic/ifxetex/ifxetex.sty

Package: ifxetex 2010/09/12 v0.6 Provides ifxetex conditional

)

\Gm@cnth=\count88

\Gm@cntv=\count89

\c@Gm@tempcnt=\count90

\Gm@bindingoffset=\dimen103

\Gm@wd@mp=\dimen104

\Gm@odd@mp=\dimen105

\Gm@even@mp=\dimen106

\Gm@layoutwidth=\dimen107

\Gm@layoutheight=\dimen108

\Gm@layouthoffset=\dimen109

\Gm@layoutvoffset=\dimen110

\Gm@dimlist=\toks15

)

\@nipsabovecaptionskip=\skip45

\@nipsbelowcaptionskip=\skip46

)

(/usr/share/texlive/texmf-dist/tex/latex/base/inputenc.sty

Package: inputenc 2015/03/17 v1.2c Input encoding file

\inpenc@prehook=\toks16

\inpenc@posthook=\toks17

(/usr/share/texlive/texmf-dist/tex/latex/base/utf8.def

File: utf8.def 2017/01/28 v1.1t UTF-8 support for inputenc

Now handling font encoding OML ...

... no UTF-8 mapping file for font encoding OML

Now handling font encoding T1 ...

... processing UTF-8 mapping file for font encoding T1

(/usr/share/texlive/texmf-dist/tex/latex/base/t1enc.dfu

File: t1enc.dfu 2017/01/28 v1.1t UTF-8 support for inputenc

defining Unicode char U+00A0 (decimal 160)

defining Unicode char U+00A1 (decimal 161)

defining Unicode char U+00A3 (decimal 163)

defining Unicode char U+00AB (decimal 171)

defining Unicode char U+00AD (decimal 173)

defining Unicode char U+00BB (decimal 187)

defining Unicode char U+00BF (decimal 191)

defining Unicode char U+00C0 (decimal 192)

defining Unicode char U+00C1 (decimal 193)

defining Unicode char U+00C2 (decimal 194)

defining Unicode char U+00C3 (decimal 195)

defining Unicode char U+00C4 (decimal 196)

defining Unicode char U+00C5 (decimal 197)

defining Unicode char U+00C6 (decimal 198)

defining Unicode char U+00C7 (decimal 199)

defining Unicode char U+00C8 (decimal 200)

defining Unicode char U+00C9 (decimal 201)

defining Unicode char U+00CA (decimal 202)

defining Unicode char U+00CB (decimal 203)

defining Unicode char U+00CC (decimal 204)

defining Unicode char U+00CD (decimal 205)

defining Unicode char U+00CE (decimal 206)

defining Unicode char U+00CF (decimal 207)

defining Unicode char U+00D0 (decimal 208)

defining Unicode char U+00D1 (decimal 209)

defining Unicode char U+00D2 (decimal 210)

defining Unicode char U+00D3 (decimal 211)

defining Unicode char U+00D4 (decimal 212)

defining Unicode char U+00D5 (decimal 213)

defining Unicode char U+00D6 (decimal 214)

defining Unicode char U+00D8 (decimal 216)

defining Unicode char U+00D9 (decimal 217)

defining Unicode char U+00DA (decimal 218)

defining Unicode char U+00DB (decimal 219)

defining Unicode char U+00DC (decimal 220)

defining Unicode char U+00DD (decimal 221)

defining Unicode char U+00DE (decimal 222)

defining Unicode char U+00DF (decimal 223)

defining Unicode char U+00E0 (decimal 224)

defining Unicode char U+00E1 (decimal 225)

defining Unicode char U+00E2 (decimal 226)

defining Unicode char U+00E3 (decimal 227)

defining Unicode char U+00E4 (decimal 228)

defining Unicode char U+00E5 (decimal 229)

defining Unicode char U+00E6 (decimal 230)

defining Unicode char U+00E7 (decimal 231)

defining Unicode char U+00E8 (decimal 232)

defining Unicode char U+00E9 (decimal 233)

defining Unicode char U+00EA (decimal 234)

defining Unicode char U+00EB (decimal 235)

defining Unicode char U+00EC (decimal 236)

defining Unicode char U+00ED (decimal 237)

defining Unicode char U+00EE (decimal 238)

defining Unicode char U+00EF (decimal 239)

defining Unicode char U+00F0 (decimal 240)

defining Unicode char U+00F1 (decimal 241)

defining Unicode char U+00F2 (decimal 242)

defining Unicode char U+00F3 (decimal 243)

defining Unicode char U+00F4 (decimal 244)

defining Unicode char U+00F5 (decimal 245)

defining Unicode char U+00F6 (decimal 246)

defining Unicode char U+00F8 (decimal 248)

defining Unicode char U+00F9 (decimal 249)

defining Unicode char U+00FA (decimal 250)

defining Unicode char U+00FB (decimal 251)

defining Unicode char U+00FC (decimal 252)

defining Unicode char U+00FD (decimal 253)

defining Unicode char U+00FE (decimal 254)

defining Unicode char U+00FF (decimal 255)

defining Unicode char U+0100 (decimal 256)

defining Unicode char U+0101 (decimal 257)

defining Unicode char U+0102 (decimal 258)

defining Unicode char U+0103 (decimal 259)

defining Unicode char U+0104 (decimal 260)

defining Unicode char U+0105 (decimal 261)

defining Unicode char U+0106 (decimal 262)

defining Unicode char U+0107 (decimal 263)

defining Unicode char U+0108 (decimal 264)

defining Unicode char U+0109 (decimal 265)

defining Unicode char U+010A (decimal 266)

defining Unicode char U+010B (decimal 267)

defining Unicode char U+010C (decimal 268)

defining Unicode char U+010D (decimal 269)

defining Unicode char U+010E (decimal 270)

defining Unicode char U+010F (decimal 271)

defining Unicode char U+0110 (decimal 272)

defining Unicode char U+0111 (decimal 273)

defining Unicode char U+0112 (decimal 274)

defining Unicode char U+0113 (decimal 275)

defining Unicode char U+0114 (decimal 276)

defining Unicode char U+0115 (decimal 277)

defining Unicode char U+0116 (decimal 278)

defining Unicode char U+0117 (decimal 279)

defining Unicode char U+0118 (decimal 280)

defining Unicode char U+0119 (decimal 281)

defining Unicode char U+011A (decimal 282)

defining Unicode char U+011B (decimal 283)

defining Unicode char U+011C (decimal 284)

defining Unicode char U+011D (decimal 285)

defining Unicode char U+011E (decimal 286)

defining Unicode char U+011F (decimal 287)

defining Unicode char U+0120 (decimal 288)

defining Unicode char U+0121 (decimal 289)

defining Unicode char U+0122 (decimal 290)

defining Unicode char U+0123 (decimal 291)

defining Unicode char U+0124 (decimal 292)

defining Unicode char U+0125 (decimal 293)

defining Unicode char U+0128 (decimal 296)

defining Unicode char U+0129 (decimal 297)

defining Unicode char U+012A (decimal 298)

defining Unicode char U+012B (decimal 299)

defining Unicode char U+012C (decimal 300)

defining Unicode char U+012D (decimal 301)

defining Unicode char U+012E (decimal 302)

defining Unicode char U+012F (decimal 303)

defining Unicode char U+0130 (decimal 304)

defining Unicode char U+0131 (decimal 305)

defining Unicode char U+0132 (decimal 306)

defining Unicode char U+0133 (decimal 307)

defining Unicode char U+0134 (decimal 308)

defining Unicode char U+0135 (decimal 309)

defining Unicode char U+0136 (decimal 310)

defining Unicode char U+0137 (decimal 311)

defining Unicode char U+0139 (decimal 313)

defining Unicode char U+013A (decimal 314)

defining Unicode char U+013B (decimal 315)

defining Unicode char U+013C (decimal 316)

defining Unicode char U+013D (decimal 317)

defining Unicode char U+013E (decimal 318)

defining Unicode char U+0141 (decimal 321)

defining Unicode char U+0142 (decimal 322)

defining Unicode char U+0143 (decimal 323)

defining Unicode char U+0144 (decimal 324)

defining Unicode char U+0145 (decimal 325)

defining Unicode char U+0146 (decimal 326)

defining Unicode char U+0147 (decimal 327)

defining Unicode char U+0148 (decimal 328)

defining Unicode char U+014A (decimal 330)

defining Unicode char U+014B (decimal 331)

defining Unicode char U+014C (decimal 332)

defining Unicode char U+014D (decimal 333)

defining Unicode char U+014E (decimal 334)

defining Unicode char U+014F (decimal 335)

defining Unicode char U+0150 (decimal 336)

defining Unicode char U+0151 (decimal 337)

defining Unicode char U+0152 (decimal 338)

defining Unicode char U+0153 (decimal 339)

defining Unicode char U+0154 (decimal 340)

defining Unicode char U+0155 (decimal 341)

defining Unicode char U+0156 (decimal 342)

defining Unicode char U+0157 (decimal 343)

defining Unicode char U+0158 (decimal 344)

defining Unicode char U+0159 (decimal 345)

defining Unicode char U+015A (decimal 346)

defining Unicode char U+015B (decimal 347)

defining Unicode char U+015C (decimal 348)

defining Unicode char U+015D (decimal 349)

defining Unicode char U+015E (decimal 350)

defining Unicode char U+015F (decimal 351)

defining Unicode char U+0160 (decimal 352)

defining Unicode char U+0161 (decimal 353)

defining Unicode char U+0162 (decimal 354)

defining Unicode char U+0163 (decimal 355)

defining Unicode char U+0164 (decimal 356)

defining Unicode char U+0165 (decimal 357)

defining Unicode char U+0168 (decimal 360)

defining Unicode char U+0169 (decimal 361)

defining Unicode char U+016A (decimal 362)

defining Unicode char U+016B (decimal 363)

defining Unicode char U+016C (decimal 364)

defining Unicode char U+016D (decimal 365)

defining Unicode char U+016E (decimal 366)

defining Unicode char U+016F (decimal 367)

defining Unicode char U+0170 (decimal 368)

defining Unicode char U+0171 (decimal 369)

defining Unicode char U+0172 (decimal 370)

defining Unicode char U+0173 (decimal 371)

defining Unicode char U+0174 (decimal 372)

defining Unicode char U+0175 (decimal 373)

defining Unicode char U+0176 (decimal 374)

defining Unicode char U+0177 (decimal 375)

defining Unicode char U+0178 (decimal 376)

defining Unicode char U+0179 (decimal 377)

defining Unicode char U+017A (decimal 378)

defining Unicode char U+017B (decimal 379)

defining Unicode char U+017C (decimal 380)

defining Unicode char U+017D (decimal 381)

defining Unicode char U+017E (decimal 382)

defining Unicode char U+01CD (decimal 461)

defining Unicode char U+01CE (decimal 462)

defining Unicode char U+01CF (decimal 463)

defining Unicode char U+01D0 (decimal 464)

defining Unicode char U+01D1 (decimal 465)

defining Unicode char U+01D2 (decimal 466)

defining Unicode char U+01D3 (decimal 467)

defining Unicode char U+01D4 (decimal 468)

defining Unicode char U+01E2 (decimal 482)

defining Unicode char U+01E3 (decimal 483)

defining Unicode char U+01E6 (decimal 486)

defining Unicode char U+01E7 (decimal 487)

defining Unicode char U+01E8 (decimal 488)

defining Unicode char U+01E9 (decimal 489)

defining Unicode char U+01EA (decimal 490)

defining Unicode char U+01EB (decimal 491)

defining Unicode char U+01F0 (decimal 496)

defining Unicode char U+01F4 (decimal 500)

defining Unicode char U+01F5 (decimal 501)

defining Unicode char U+0218 (decimal 536)

defining Unicode char U+0219 (decimal 537)

defining Unicode char U+021A (decimal 538)

defining Unicode char U+021B (decimal 539)

defining Unicode char U+0232 (decimal 562)

defining Unicode char U+0233 (decimal 563)

defining Unicode char U+1E02 (decimal 7682)

defining Unicode char U+1E03 (decimal 7683)

defining Unicode char U+200C (decimal 8204)

defining Unicode char U+2010 (decimal 8208)

defining Unicode char U+2011 (decimal 8209)

defining Unicode char U+2012 (decimal 8210)

defining Unicode char U+2013 (decimal 8211)

defining Unicode char U+2014 (decimal 8212)

defining Unicode char U+2015 (decimal 8213)

defining Unicode char U+2018 (decimal 8216)

defining Unicode char U+2019 (decimal 8217)

defining Unicode char U+201A (decimal 8218)

defining Unicode char U+201C (decimal 8220)

defining Unicode char U+201D (decimal 8221)

defining Unicode char U+201E (decimal 8222)

defining Unicode char U+2030 (decimal 8240)

defining Unicode char U+2031 (decimal 8241)

defining Unicode char U+2039 (decimal 8249)

defining Unicode char U+203A (decimal 8250)

defining Unicode char U+2423 (decimal 9251)

defining Unicode char U+1E20 (decimal 7712)

defining Unicode char U+1E21 (decimal 7713)

)

Now handling font encoding OT1 ...

... processing UTF-8 mapping file for font encoding OT1

(/usr/share/texlive/texmf-dist/tex/latex/base/ot1enc.dfu

File: ot1enc.dfu 2017/01/28 v1.1t UTF-8 support for inputenc

defining Unicode char U+00A0 (decimal 160)

defining Unicode char U+00A1 (decimal 161)

defining Unicode char U+00A3 (decimal 163)

defining Unicode char U+00AD (decimal 173)

defining Unicode char U+00B8 (decimal 184)

defining Unicode char U+00BF (decimal 191)

defining Unicode char U+00C5 (decimal 197)

defining Unicode char U+00C6 (decimal 198)

defining Unicode char U+00D8 (decimal 216)

defining Unicode char U+00DF (decimal 223)

defining Unicode char U+00E6 (decimal 230)

defining Unicode char U+00EC (decimal 236)

defining Unicode char U+00ED (decimal 237)

defining Unicode char U+00EE (decimal 238)

defining Unicode char U+00EF (decimal 239)

defining Unicode char U+00F8 (decimal 248)

defining Unicode char U+0131 (decimal 305)

defining Unicode char U+0141 (decimal 321)

defining Unicode char U+0142 (decimal 322)

defining Unicode char U+0152 (decimal 338)

defining Unicode char U+0153 (decimal 339)

defining Unicode char U+0174 (decimal 372)

defining Unicode char U+0175 (decimal 373)

defining Unicode char U+0176 (decimal 374)

defining Unicode char U+0177 (decimal 375)

defining Unicode char U+0218 (decimal 536)

defining Unicode char U+0219 (decimal 537)

defining Unicode char U+021A (decimal 538)

defining Unicode char U+021B (decimal 539)

defining Unicode char U+2013 (decimal 8211)

defining Unicode char U+2014 (decimal 8212)

defining Unicode char U+2018 (decimal 8216)

defining Unicode char U+2019 (decimal 8217)

defining Unicode char U+201C (decimal 8220)

defining Unicode char U+201D (decimal 8221)

)

Now handling font encoding OMS ...

... processing UTF-8 mapping file for font encoding OMS

(/usr/share/texlive/texmf-dist/tex/latex/base/omsenc.dfu

File: omsenc.dfu 2017/01/28 v1.1t UTF-8 support for inputenc

defining Unicode char U+00A7 (decimal 167)

defining Unicode char U+00B6 (decimal 182)

defining Unicode char U+00B7 (decimal 183)

defining Unicode char U+2020 (decimal 8224)

defining Unicode char U+2021 (decimal 8225)

defining Unicode char U+2022 (decimal 8226)

)

Now handling font encoding OMX ...

... no UTF-8 mapping file for font encoding OMX

Now handling font encoding U ...

... no UTF-8 mapping file for font encoding U

defining Unicode char U+00A9 (decimal 169)

defining Unicode char U+00AA (decimal 170)

defining Unicode char U+00AE (decimal 174)

defining Unicode char U+00BA (decimal 186)

defining Unicode char U+02C6 (decimal 710)

defining Unicode char U+02DC (decimal 732)

defining Unicode char U+200C (decimal 8204)

defining Unicode char U+2026 (decimal 8230)

defining Unicode char U+2122 (decimal 8482)

defining Unicode char U+2423 (decimal 9251)

))

(/usr/share/texlive/texmf-dist/tex/latex/base/fontenc.sty

Package: fontenc 2017/02/22 v2.0g Standard LaTeX package

(/usr/share/texlive/texmf-dist/tex/latex/base/t1enc.def

File: t1enc.def 2017/02/22 v2.0g Standard LaTeX file

LaTeX Font Info: Redeclaring font encoding T1 on input line 48.

))

(/usr/share/texlive/texmf-dist/tex/latex/hyperref/hyperref.sty

Package: hyperref 2016/06/24 v6.83q Hypertext links for LaTeX

(/usr/share/texlive/texmf-dist/tex/generic/oberdiek/hobsub-hyperref.sty

Package: hobsub-hyperref 2016/05/16 v1.14 Bundle oberdiek, subset hyperref (HO)

(/usr/share/texlive/texmf-dist/tex/generic/oberdiek/hobsub-generic.sty

Package: hobsub-generic 2016/05/16 v1.14 Bundle oberdiek, subset generic (HO)

Package: hobsub 2016/05/16 v1.14 Construct package bundles (HO)

Package: infwarerr 2016/05/16 v1.4 Providing info/warning/error messages (HO)

Package: ltxcmds 2016/05/16 v1.23 LaTeX kernel commands for general use (HO)

Package: ifluatex 2016/05/16 v1.4 Provides the ifluatex switch (HO)

Package ifluatex Info: LuaTeX not detected.

Package hobsub Info: Skipping package `ifvtex' (already loaded).

Package: intcalc 2016/05/16 v1.2 Expandable calculations with integers (HO)

Package hobsub Info: Skipping package `ifpdf' (already loaded).

Package: etexcmds 2016/05/16 v1.6 Avoid name clashes with e-TeX commands (HO)

Package etexcmds Info: Could not find \expanded.

(etexcmds) That can mean that you are not using pdfTeX 1.50 or

(etexcmds) that some package has redefined \expanded.

(etexcmds) In the latter case, load this package earlier.

Package: kvsetkeys 2016/05/16 v1.17 Key value parser (HO)

Package: kvdefinekeys 2016/05/16 v1.4 Define keys (HO)

Package: pdftexcmds 2016/05/21 v0.22 Utility functions of pdfTeX for LuaTeX (HO

)

Package pdftexcmds Info: LuaTeX not detected.

Package pdftexcmds Info: \pdf@primitive is available.

Package pdftexcmds Info: \pdf@ifprimitive is available.

Package pdftexcmds Info: \pdfdraftmode found.

Package: pdfescape 2016/05/16 v1.14 Implements pdfTeX's escape features (HO)

Package: bigintcalc 2016/05/16 v1.4 Expandable calculations on big integers (HO

)

Package: bitset 2016/05/16 v1.2 Handle bit-vector datatype (HO)

Package: uniquecounter 2016/05/16 v1.3 Provide unlimited unique counter (HO)

)

Package hobsub Info: Skipping package `hobsub' (already loaded).

Package: letltxmacro 2016/05/16 v1.5 Let assignment for LaTeX macros (HO)

Package: hopatch 2016/05/16 v1.3 Wrapper for package hooks (HO)

Package: xcolor-patch 2016/05/16 xcolor patch

Package: atveryend 2016/05/16 v1.9 Hooks at the very end of document (HO)

Package atveryend Info: \enddocument detected (standard20110627).

Package: atbegshi 2016/06/09 v1.18 At begin shipout hook (HO)

Package: refcount 2016/05/16 v3.5 Data extraction from label references (HO)

Package: hycolor 2016/05/16 v1.8 Color options for hyperref/bookmark (HO)

)

(/usr/share/texlive/texmf-dist/tex/latex/oberdiek/auxhook.sty

Package: auxhook 2016/05/16 v1.4 Hooks for auxiliary files (HO)

)

(/usr/share/texlive/texmf-dist/tex/latex/oberdiek/kvoptions.sty

Package: kvoptions 2016/05/16 v3.12 Key value format for package options (HO)

)

\@linkdim=\dimen111

\Hy@linkcounter=\count91

\Hy@pagecounter=\count92

(/usr/share/texlive/texmf-dist/tex/latex/hyperref/pd1enc.def

File: pd1enc.def 2016/06/24 v6.83q Hyperref: PDFDocEncoding definition (HO)

Now handling font encoding PD1 ...

... no UTF-8 mapping file for font encoding PD1

)

\Hy@SavedSpaceFactor=\count93

(/usr/share/texlive/texmf-dist/tex/latex/latexconfig/hyperref.cfg

File: hyperref.cfg 2002/06/06 v1.2 hyperref configuration of TeXLive

)

Package hyperref Info: Hyper figures OFF on input line 4486.

Package hyperref Info: Link nesting OFF on input line 4491.

Package hyperref Info: Hyper index ON on input line 4494.

Package hyperref Info: Plain pages OFF on input line 4501.

Package hyperref Info: Backreferencing OFF on input line 4506.

Package hyperref Info: Implicit mode ON; LaTeX internals redefined.

Package hyperref Info: Bookmarks ON on input line 4735.

\c@Hy@tempcnt=\count94

(/usr/share/texlive/texmf-dist/tex/latex/url/url.sty

\Urlmuskip=\muskip10

Package: url 2013/09/16 ver 3.4 Verb mode for urls, etc.

)

LaTeX Info: Redefining \url on input line 5088.

\XeTeXLinkMargin=\dimen112

\Fld@menulength=\count95

\Field@Width=\dimen113

\Fld@charsize=\dimen114

Package hyperref Info: Hyper figures OFF on input line 6342.

Package hyperref Info: Link nesting OFF on input line 6347.

Package hyperref Info: Hyper index ON on input line 6350.

Package hyperref Info: backreferencing OFF on input line 6357.

Package hyperref Info: Link coloring OFF on input line 6362.

Package hyperref Info: Link coloring with OCG OFF on input line 6367.

Package hyperref Info: PDF/A mode OFF on input line 6372.

LaTeX Info: Redefining \ref on input line 6412.

LaTeX Info: Redefining \pageref on input line 6416.

\Hy@abspage=\count96

\c@Item=\count97

\c@Hfootnote=\count98

)

Package hyperref Message: Driver (autodetected): hpdftex.

(/usr/share/texlive/texmf-dist/tex/latex/hyperref/hpdftex.def

File: hpdftex.def 2016/06/24 v6.83q Hyperref driver for pdfTeX

\Fld@listcount=\count99

\c@bookmark@seq@number=\count100

(/usr/share/texlive/texmf-dist/tex/latex/oberdiek/rerunfilecheck.sty

Package: rerunfilecheck 2016/05/16 v1.8 Rerun checks for auxiliary files (HO)

Package uniquecounter Info: New unique counter `rerunfilecheck' on input line 2

82.

)

\Hy@SectionHShift=\skip47

)

(/usr/share/texlive/texmf-dist/tex/latex/booktabs/booktabs.sty

Package: booktabs 2016/04/27 v1.618033 publication quality tables

\heavyrulewidth=\dimen115

\lightrulewidth=\dimen116

\cmidrulewidth=\dimen117

\belowrulesep=\dimen118

\belowbottomsep=\dimen119

\aboverulesep=\dimen120

\abovetopsep=\dimen121

\cmidrulesep=\dimen122

\cmidrulekern=\dimen123

\defaultaddspace=\dimen124

\@cmidla=\count101

\@cmidlb=\count102

\@aboverulesep=\dimen125

\@belowrulesep=\dimen126

\@thisruleclass=\count103

\@lastruleclass=\count104

\@thisrulewidth=\dimen127

)

(/usr/share/texlive/texmf-dist/tex/latex/amsfonts/amsfonts.sty

Package: amsfonts 2013/01/14 v3.01 Basic AMSFonts support

\@emptytoks=\toks18

\symAMSa=\mathgroup4

\symAMSb=\mathgroup5

LaTeX Font Info: Overwriting math alphabet `\mathfrak' in version `bold'

(Font) U/euf/m/n --> U/euf/b/n on input line 106.

) (./nicefrac.sty

Package: nicefrac 1998/08/04 v0.9b Nice fractions

\L@UnitsRaiseDisplaystyle=\skip48

\L@UnitsRaiseTextstyle=\skip49

\L@UnitsRaiseScriptstyle=\skip50

(/usr/share/texlive/texmf-dist/tex/latex/base/ifthen.sty

Package: ifthen 2014/09/29 v1.1c Standard LaTeX ifthen package (DPC)

))

(/usr/share/texlive/texmf-dist/tex/latex/microtype/microtype.sty

Package: microtype 2016/05/14 v2.6a Micro-typographical refinements (RS)

\MT@toks=\toks19

\MT@count=\count105

LaTeX Info: Redefining \textls on input line 774.

\MT@outer@kern=\dimen128

LaTeX Info: Redefining \textmicrotypecontext on input line 1310.

\MT@listname@count=\count106

(/usr/share/texlive/texmf-dist/tex/latex/microtype/microtype-pdftex.def

File: microtype-pdftex.def 2016/05/14 v2.6a Definitions specific to pdftex (RS)

LaTeX Info: Redefining \lsstyle on input line 916.

LaTeX Info: Redefining \lslig on input line 916.

\MT@outer@space=\skip51

)

Package microtype Info: Loading configuration file microtype.cfg.

(/usr/share/texlive/texmf-dist/tex/latex/microtype/microtype.cfg

File: microtype.cfg 2016/05/14 v2.6a microtype main configuration file (RS)

))

(/usr/share/texlive/texmf-dist/tex/latex/graphics/graphicx.sty

Package: graphicx 2014/10/28 v1.0g Enhanced LaTeX Graphics (DPC,SPQR)

(/usr/share/texlive/texmf-dist/tex/latex/graphics/graphics.sty

Package: graphics 2016/10/09 v1.0u Standard LaTeX Graphics (DPC,SPQR)

(/usr/share/texlive/texmf-dist/tex/latex/graphics/trig.sty

Package: trig 2016/01/03 v1.10 sin cos tan (DPC)

)

(/usr/share/texlive/texmf-dist/tex/latex/graphics-cfg/graphics.cfg

File: graphics.cfg 2016/06/04 v1.11 sample graphics configuration

)

Package graphics Info: Driver file: pdftex.def on input line 99.

(/usr/share/texlive/texmf-dist/tex/latex/graphics-def/pdftex.def

File: pdftex.def 2017/01/12 v0.06k Graphics/color for pdfTeX

\Gread@gobject=\count107

))

\Gin@req@height=\dimen129

\Gin@req@width=\dimen130

)

(./nips_2017.aux)

\openout1 = `nips_2017.aux'.

LaTeX Font Info: Checking defaults for OML/cmm/m/it on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Checking defaults for T1/cmr/m/n on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Checking defaults for OT1/cmr/m/n on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Checking defaults for OMS/cmsy/m/n on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Checking defaults for OMX/cmex/m/n on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Checking defaults for U/cmr/m/n on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Checking defaults for PD1/pdf/m/n on input line 30.

LaTeX Font Info: ... okay on input line 30.

LaTeX Font Info: Try loading font information for T1+ptm on input line 30.

(/usr/share/texlive/texmf-dist/tex/latex/psnfss/t1ptm.fd

File: t1ptm.fd 2001/06/04 font definitions for T1/ptm.

)

*geometry* driver: auto-detecting

*geometry* detected driver: pdftex

*geometry* verbose mode - [ preamble ] result:

* driver: pdftex

* paper: letterpaper

* layout: <same size as paper>

* layoutoffset:(h,v)=(0.0pt,0.0pt)

* modes:

* h-part:(L,W,R)=(92.14519pt, 430.00462pt, 92.14519pt)

* v-part:(T,H,B)=(95.39737pt, 556.47656pt, 143.09605pt)

* \paperwidth=614.295pt

* \paperheight=794.96999pt

* \textwidth=430.00462pt

* \textheight=556.47656pt

* \oddsidemargin=19.8752pt

* \evensidemargin=19.8752pt

* \topmargin=-13.87262pt

* \headheight=12.0pt

* \headsep=25.0pt

* \topskip=10.0pt

* \footskip=30.0pt

* \marginparwidth=65.0pt

* \marginparsep=11.0pt

* \columnsep=10.0pt

* \skip\footins=9.0pt plus 4.0pt minus 2.0pt

* \hoffset=0.0pt

* \voffset=0.0pt

* \mag=1000

* \@twocolumnfalse

* \@twosidefalse

* \@mparswitchfalse

* \@reversemarginfalse

* (1in=72.27pt=25.4mm, 1cm=28.453pt)

*geometry* verbose mode - [ newgeometry ] result:

* driver: pdftex

* paper: letterpaper

* layout: <same size as paper>

* layoutoffset:(h,v)=(0.0pt,0.0pt)

* modes:

* h-part:(L,W,R)=(108.405pt, 397.48499pt, 108.40501pt)

* v-part:(T,H,B)=(72.26999pt, 650.43pt, 72.27pt)

* \paperwidth=614.295pt

* \paperheight=794.96999pt

* \textwidth=397.48499pt

* \textheight=650.43pt

* \oddsidemargin=36.13501pt

* \evensidemargin=36.13501pt

* \topmargin=-37.0pt

* \headheight=12.0pt

* \headsep=25.0pt

* \topskip=10.0pt

* \footskip=30.0pt

* \marginparwidth=65.0pt

* \marginparsep=11.0pt

* \columnsep=10.0pt

* \skip\footins=9.0pt plus 4.0pt minus 2.0pt

* \hoffset=0.0pt

* \voffset=0.0pt

* \mag=1000

* \@twocolumnfalse

* \@twosidefalse

* \@mparswitchfalse

* \@reversemarginfalse

* (1in=72.27pt=25.4mm, 1cm=28.453pt)

\AtBeginShipoutBox=\box26

Package hyperref Info: Link coloring OFF on input line 30.

(/usr/share/texlive/texmf-dist/tex/latex/hyperref/nameref.sty

Package: nameref 2016/05/21 v2.44 Cross-referencing by name of section

(/usr/share/texlive/texmf-dist/tex/generic/oberdiek/gettitlestring.sty

Package: gettitlestring 2016/05/16 v1.5 Cleanup title references (HO)

)

\c@section@level=\count108

)

LaTeX Info: Redefining \ref on input line 30.

LaTeX Info: Redefining \pageref on input line 30.

LaTeX Info: Redefining \nameref on input line 30.

(./nips_2017.out) (./nips_2017.out)

\@outlinefile=\write3

\openout3 = `nips_2017.out'.

LaTeX Info: Redefining \microtypecontext on input line 30.

Package microtype Info: Generating PDF output.

Package microtype Info: Character protrusion enabled (level 2).

Package microtype Info: Using default protrusion set `alltext'.

Package microtype Info: Automatic font expansion enabled (level 2),

(microtype) stretch: 20, shrink: 20, step: 1, non-selected.

Package microtype Info: Using default expansion set `basictext'.

Package microtype Info: No adjustment of tracking.

Package microtype Info: No adjustment of interword spacing.

Package microtype Info: No adjustment of character kerning.

(/usr/share/texlive/texmf-dist/tex/latex/microtype/mt-ptm.cfg

File: mt-ptm.cfg 2006/04/20 v1.7 microtype config. file: Times (RS)

)

(/usr/share/texlive/texmf-dist/tex/context/base/mkii/supp-pdf.mkii

[Loading MPS to PDF converter (version 2006.09.02).]

\scratchcounter=\count109

\scratchdimen=\dimen131

\scratchbox=\box27

\nofMPsegments=\count110

\nofMParguments=\count111

\everyMPshowfont=\toks20

\MPscratchCnt=\count112

\MPscratchDim=\dimen132

\MPnumerator=\count113

\makeMPintoPDFobject=\count114

\everyMPtoPDFconversion=\toks21

) (/usr/share/texlive/texmf-dist/tex/latex/oberdiek/epstopdf-base.sty

Package: epstopdf-base 2016/05/15 v2.6 Base part for package epstopdf

(/usr/share/texlive/texmf-dist/tex/latex/oberdiek/grfext.sty

Package: grfext 2016/05/16 v1.2 Manage graphics extensions (HO)

)

Package epstopdf-base Info: Redefining graphics rule for `.eps' on input line 4

38.

Package grfext Info: Graphics extension search list:

(grfext) [.png,.pdf,.jpg,.mps,.jpeg,.jbig2,.jb2,.PNG,.PDF,.JPG,.JPE

G,.JBIG2,.JB2,.eps]

(grfext) \AppendGraphicsExtensions on input line 456.

(/usr/share/texlive/texmf-dist/tex/latex/latexconfig/epstopdf-sys.cfg

File: epstopdf-sys.cfg 2010/07/13 v1.3 Configuration of (r)epstopdf for TeX Liv

e

))

LaTeX Font Info: Font shape `T1/ptm/bx/n' in size <17.28> not available

(Font) Font shape `T1/ptm/b/n' tried instead on input line 33.

(/usr/share/texlive/texmf-dist/tex/latex/microtype/mt-cmr.cfg

File: mt-cmr.cfg 2013/05/19 v2.2 microtype config. file: Computer Modern Roman

(RS)

)

LaTeX Font Info: Try loading font information for U+msa on input line 33.

(/usr/share/texlive/texmf-dist/tex/latex/amsfonts/umsa.fd

File: umsa.fd 2013/01/14 v3.01 AMS symbols A

)

(/usr/share/texlive/texmf-dist/tex/latex/microtype/mt-msa.cfg

File: mt-msa.cfg 2006/02/04 v1.1 microtype config. file: AMS symbols (a) (RS)

)

LaTeX Font Info: Try loading font information for U+msb on input line 33.

(/usr/share/texlive/texmf-dist/tex/latex/amsfonts/umsb.fd

File: umsb.fd 2013/01/14 v3.01 AMS symbols B

)

(/usr/share/texlive/texmf-dist/tex/latex/microtype/mt-msb.cfg

File: mt-msb.cfg 2005/06/01 v1.0 microtype config. file: AMS symbols (b) (RS)

)

LaTeX Font Info: Font shape `T1/ptm/bx/n' in size <10> not available

(Font) Font shape `T1/ptm/b/n' tried instead on input line 33.

LaTeX Font Info: Font shape `T1/ptm/bx/n' in size <12> not available

(Font) Font shape `T1/ptm/b/n' tried instead on input line 34.

<images/blur.png, id=21, 530.98375pt x 193.72375pt>

File: images/blur.png Graphic file (type png)

<use images/blur.png>

Package pdftex.def Info: images/blur.png used on input line 71.

(pdftex.def) Requested size: 397.48499pt x 145.0205pt.

<images/patches.png, id=23, 465.74pt x 290.08376pt>

File: images/patches.png Graphic file (type png)

<use images/patches.png>

Package pdftex.def Info: images/patches.png used on input line 73.

(pdftex.def) Requested size: 397.48499pt x 247.58154pt.

<images/matrix.png, id=24, 562.1pt x 274.02374pt>

File: images/matrix.png Graphic file (type png)

<use images/matrix.png>

Package pdftex.def Info: images/matrix.png used on input line 75.

(pdftex.def) Requested size: 397.48499pt x 193.77214pt.

Underfull \hbox (badness 10000) in paragraph at lines 71--77

[]

Underfull \vbox (badness 10000) has occurred while \output is active []

[1

{/var/lib/texmf/fonts/map/pdftex/updmap/pdftex.map}]

Underfull \vbox (badness 10000) has occurred while \output is active []

[2 <./images/blur.png> <./images/patches.png> <./images/matrix.png>]

LaTeX Font Info: Font shape `T1/ptm/bx/it' in size <10> not available

(Font) Font shape `T1/ptm/b/it' tried instead on input line 105.

<images/accuracy.jpeg, id=49, 642.4pt x 115.43124pt>

File: images/accuracy.jpeg Graphic file (type jpg)

<use images/accuracy.jpeg>

Package pdftex.def Info: images/accuracy.jpeg used on input line 128.

(pdftex.def) Requested size: 397.48499pt x 71.42395pt.

Underfull \hbox (badness 10000) in paragraph at lines 128--129

[]

Underfull \vbox (badness 1137) has occurred while \output is active []

[3 <./images/accuracy.jpeg>]

Package atveryend Info: Empty hook `BeforeClearDocument' on input line 152.

[4]

Package atveryend Info: Empty hook `AfterLastShipout' on input line 152.

(./nips_2017.aux)

Package atveryend Info: Executing hook `AtVeryEndDocument' on input line 152.

Package atveryend Info: Executing hook `AtEndAfterFileList' on input line 152.

Package rerunfilecheck Info: File `nips_2017.out' has not changed.

(rerunfilecheck) Checksum: 1DC773C805F4BC12F6E4365B1AA15BC9;253.

Package atveryend Info: Empty hook `AtVeryVeryEnd' on input line 152.

)

Here is how much of TeX's memory you used:

8271 strings out of 494945

123632 string characters out of 6181033

239876 words of memory out of 5000000

11331 multiletter control sequences out of 15000+600000

34120 words of font info for 106 fonts, out of 8000000 for 9000

14 hyphenation exceptions out of 8191

31i,8n,38p,213b,327s stack positions out of 5000i,500n,10000p,200000b,80000s

{/usr/share/texlive/texmf-dist/fonts/enc/dvips/base/8r.enc}</usr/share/texliv

e/texmf-dist/fonts/type1/public/amsfonts/cm/cmmi10.pfb></usr/share/texlive/texm

f-dist/fonts/type1/public/amsfonts/cm/cmr10.pfb></usr/share/texlive/texmf-dist/

fonts/type1/public/amsfonts/cm/cmsy10.pfb></usr/share/texlive/texmf-dist/fonts/

type1/urw/times/utmb8a.pfb></usr/share/texlive/texmf-dist/fonts/type1/urw/times

/utmbi8a.pfb></usr/share/texlive/texmf-dist/fonts/type1/urw/times/utmr8a.pfb></

usr/share/texlive/texmf-dist/fonts/type1/urw/times/utmri8a.pfb>

Output written on nips_2017.pdf (4 pages, 2502387 bytes).

PDF statistics:

96 PDF objects out of 1000 (max. 8388607)

75 compressed objects within 1 object stream

20 named destinations out of 1000 (max. 500000)

18485 words of extra memory for PDF output out of 20736 (max. 10000000)

================================================

FILE: Report/nips_2017.out

================================================

\BOOKMARK [1][-]{section.1}{Our approach}{}% 1

\BOOKMARK [2][-]{subsection.1.1}{Generating the training data}{section.1}% 2

\BOOKMARK [1][-]{section.2}{Learning the Convolutional Neural Network \(CNN\)}{}% 3

\BOOKMARK [1][-]{section.3}{Conclusion}{}% 4

================================================

FILE: Report/nips_2017.sty

================================================

% partial rewrite of the LaTeX2e package for submissions to the

% Conference on Neural Information Processing Systems (NIPS):

%

% - uses more LaTeX conventions

% - line numbers at submission time replaced with aligned numbers from

% lineno package

% - \nipsfinalcopy replaced with [final] package option

% - automatically loads times package for authors

% - loads natbib automatically; this can be suppressed with the

% [nonatbib] package option

% - adds foot line to first page identifying the conference

%

% Roman Garnett (garnett@wustl.edu) and the many authors of

% nips15submit_e.sty, including MK and drstrip@sandia

%

% last revision: March 2017
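%
% Usage sketch (illustrative only, not part of the original package notes;
% it assumes an article-class document, which this file does not itself
% require). The options shown are the ones declared below:
%
%   \documentclass{article}
%   \PassOptionsToPackage{numbers}{natbib} % optional; must come before loading
%   \usepackage[final]{nips_2017}          % camera-ready; drop [final] to get
%                                          % the line-numbered submission copy
%   % \usepackage[nonatbib]{nips_2017}     % alternative: skip loading natbib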

\NeedsTeXFormat{LaTeX2e}

\ProvidesPackage{nips_2017}[2017/03/20 NIPS 2017 submission/camera-ready style file]

% declare final option, which creates camera-ready copy

\newif\if@nipsfinal\@nipsfinalfalse

\DeclareOption{final}{

\@nipsfinaltrue

}

% declare nonatbib option, which does not load natbib in case of

% package clash (users can pass options to natbib via

% \PassOptionsToPackage)

\newif\if@natbib\@natbibtrue

\DeclareOption{nonatbib}{

\@natbibfalse

}

\ProcessOptions\relax

% fonts

\renewcommand{\rmdefault}{ptm}

\renewcommand{\sfdefault}{phv}

% change this every year for notice string at bottom

\newcommand{\@nipsordinal}{31st}

\newcommand{\@nipsyear}{2017}

\newcommand{\@nipslocation}{Long Beach, CA, USA}

% handle tweaks for camera-ready copy vs. submission copy

\if@nipsfinal

\newcommand{\@noticestring}{%

\@nipsordinal\/ Conference on Neural Information Processing Systems

(NIPS \@nipsyear), \@nipslocation.%

}

\else

\newcommand{\@noticestring}{%

Submitted to \@nipsordinal\/ Conference on Neural Information

Processing Systems (NIPS \@nipsyear). Do not distribute.%

}

% line numbers for submission

\RequirePackage{lineno}

\linenumbers

% fix incompatibilities between lineno and amsmath, if required, by

% transparently wrapping linenomath environments around amsmath

% environments

\AtBeginDocument{%

\@ifpackageloaded{amsmath}{%

\newcommand*\patchAmsMathEnvironmentForLineno[1]{%

\expandafter\let\csname old#1\expandafter\endcsname\csname #1\endcsname

\expandafter\let\csname oldend#1\expandafter\endcsname\csname end#1\endcsname

\renewenvironment{#1}%

{\linenomath\csname old#1\endcsname}%

{\csname oldend#1\endcsname\endlinenomath}%

}%

\newcommand*\patchBothAmsMathEnvironmentsForLineno[1]{%

\patchAmsMathEnvironmentForLineno{#1}%

\patchAmsMathEnvironmentForLineno{#1*}%

}%

\patchBothAmsMathEnvironmentsForLineno{equation}%

\patchBothAmsMathEnvironmentsForLineno{align}%

\patchBothAmsMathEnvironmentsForLineno{flalign}%

\patchBothAmsMathEnvironmentsForLineno{alignat}%

\patchBothAmsMathEnvironmentsForLineno{gather}%

\patchBothAmsMathEnvironmentsForLineno{multline}%

}{}

}

\fi

% load natbib unless told otherwise

\if@natbib

\RequirePackage{natbib}

\fi

% set page geometry

\usepackage[verbose=true,letterpaper]{geometry}

\AtBeginDocument{

\newgeometry{

textheight=9in,

textwidth=5.5in,

top=1in,

headheight=12pt,

headsep=25pt,

footskip=30pt

}

\@ifpackageloaded{fullpage}

{\PackageWarning{nips_2017}{fullpage package not allowed! Overwriting formatting.}}

{}

}

\widowpenalty=10000

\clubpenalty=10000

\flushbottom

\sloppy

% font sizes with reduced leading

\renewcommand{\normalsize}{%

\@setfontsize\normalsize\@xpt\@xipt

\abovedisplayskip 7\p@ \@plus 2\p@ \@minus 5\p@

\abovedisplayshortskip \z@ \@plus 3\p@

\belowdisplayskip \abovedisplayskip

\belowdisplayshortskip 4\p@ \@plus 3\p@ \@minus 3\p@

}

\normalsize

\renewcommand{\small}{%

\@setfontsize\small\@ixpt\@xpt

\abovedisplayskip 6\p@ \@plus 1.5\p@ \@minus 4\p@

\abovedisplayshortskip \z@ \@plus 2\p@

\belowdisplayskip \abovedisplayskip

\belowdisplayshortskip 3\p@ \@plus 2\p@ \@minus 2\p@

}

\renewcommand{\footnotesize}{\@setfontsize\footnotesize\@ixpt\@xpt}

\renewcommand{\scriptsize}{\@setfontsize\scriptsize\@viipt\@viiipt}

\renewcommand{\tiny}{\@setfontsize\tiny\@vipt\@viipt}

\renewcommand{\large}{\@setfontsize\large\@xiipt{14}}

\renewcommand{\Large}{\@setfontsize\Large\@xivpt{16}}

\renewcommand{\LARGE}{\@setfontsize\LARGE\@xviipt{20}}

\renewcommand{\huge}{\@setfontsize\huge\@xxpt{23}}

\renewcommand{\Huge}{\@setfontsize\Huge\@xxvpt{28}}

% sections with less space

\providecommand{\section}{}

\renewcommand{\section}{%

\@startsection{section}{1}{\z@}%

{-2.0ex \@plus -0.5ex \@minus -0.2ex}%

{ 1.5ex \@plus 0.3ex \@minus 0.2ex}%

{\large\bf\raggedright}%

}

\providecommand{\subsection}{}

\renewcommand{\subsection}{%

\@startsection{subsection}{2}{\z@}%

{-1.8ex \@plus -0.5ex \@minus -0.2ex}%

{ 0.8ex \@plus 0.2ex}%

{\normalsize\bf\raggedright}%

}

\providecommand{\subsubsection}{}

\renewcommand{\subsubsection}{%

\@startsection{subsubsection}{3}{\z@}%

{-1.5ex \@plus -0.5ex \@minus -0.2ex}%

{ 0.5ex \@plus 0.2ex}%

{\normalsize\bf\raggedright}%

}

\providecommand{\paragraph}{}

\renewcommand{\paragraph}{%

\@startsection{paragraph}{4}{\z@}%

{1.5ex \@plus 0.5ex \@minus 0.2ex}%

{-1em}%

{\normalsize\bf}%

}

\providecommand{\subparagraph}{}

\renewcommand{\subparagraph}{%

\@startsection{subparagraph}{5}{\z@}%

{1.5ex \@plus 0.5ex \@minus 0.2ex}%

{-1em}%

{\normalsize\bf}%

}

\providecommand{\subsubsubsection}{}

\renewcommand{\subsubsubsection}{%

\vskip5pt{\noindent\normalsize\rm\raggedright}%

}

% float placement

\renewcommand{\topfraction }{0.85}

\renewcommand{\bottomfraction }{0.4}

\renewcommand{\textfraction }{0.1}

\renewcommand{\floatpagefraction}{0.7}

\newlength{\@nipsabovecaptionskip}\setlength{\@nipsabovecaptionskip}{7\p@}

\newlength{\@nipsbelowcaptionskip}\setlength{\@nipsbelowcaptionskip}{\z@}

\setlength{\abovecaptionskip}{\@nipsabovecaptionskip}

\setlength{\belowcaptionskip}{\@nipsbelowcaptionskip}

% swap above/belowcaptionskip lengths for tables

\renewenvironment{table}

{\setlength{\abovecaptionskip}{\@nipsbelowcaptionskip}%

\setlength{\belowcaptionskip}{\@nipsabovecaptionskip}%

\@float{table}}

{\end@float}

% footnote formatting

\setlength{\footnotesep }{6.65\p@}

\setlength{\skip\footins}{9\p@ \@plus 4\p@ \@minus 2\p@}

\renewcommand{\footnoterule}{\kern-3\p@ \hrule width 12pc \kern 2.6\p@}

\setcounter{footnote}{0}

% paragraph formatting

\setlength{\parindent}{\z@}

\setlength{\parskip }{5.5\p@}

% list formatting

\setlength{\topsep }{4\p@ \@plus 1\p@ \@minus 2\p@}

\setlength{\partopsep }{1\p@ \@plus 0.5\p@ \@minus 0.5\p@}

\setlength{\itemsep }{2\p@ \@plus 1\p@ \@minus 0.5\p@}

\setlength{\parsep }{2\p@ \@plus 1\p@ \@minus 0.5\p@}

\setlength{\leftmargin }{3pc}

\setlength{\leftmargini }{\leftmargin}

\setlength{\leftmarginii }{2em}

\setlength{\leftmarginiii}{1.5em}

\setlength{\leftmarginiv }{1.0em}

\setlength{\leftmarginv }{0.5em}

\def\@listi {\leftmargin\leftmargini}

\def\@listii {\leftmargin\leftmarginii

\labelwidth\leftmarginii

\advance\labelwidth-\labelsep

\topsep 2\p@ \@plus 1\p@ \@minus 0.5\p@

\parsep 1\p@ \@plus 0.5\p@ \@minus 0.5\p@

\itemsep \parsep}

\def\@listiii{\leftmargin\leftmarginiii

\labelwidth\leftmarginiii

\advance\labelwidth-\labelsep

\topsep 1\p@ \@plus 0.5\p@ \@minus 0.5\p@

\parsep \z@

\partopsep 0.5\p@ \@plus 0\p@ \@minus 0.5\p@

\itemsep \topsep}

\def\@listiv {\leftmargin\leftmarginiv

\labelwidth\leftmarginiv

\advance\labelwidth-\labelsep}

\def\@listv {\leftmargin\leftmarginv

\labelwidth\leftmarginv

\advance\labelwidth-\labelsep}

\def\@listvi {\leftmargin\leftmarginvi

\labelwidth\leftmarginvi

\advance\labelwidth-\labelsep}

% create title

\providecommand{\maketitle}{}

\renewcommand{\maketitle}{%

\par

\begingroup

\renewcommand{\thefootnote}{\fnsymbol{footnote}}

% for perfect author name centering

\renewcommand{\@makefnmark}{\hbox to \z@{$^{\@thefnmark}$\hss}}

% The footnote-mark was overlapping the footnote-text,

% added the following to fix this problem (MK)

\long\def\@makefntext##1{%

\parindent 1em\noindent

\hbox to 1.8em{\hss $\m@th ^{\@thefnmark}$}##1

}

\thispagestyle{empty}

\@maketitle

\@thanks

\@notice

\endgroup

\let\maketitle\relax

\let\thanks\relax

}

% rules for title box at top of first page

\newcommand{\@toptitlebar}{

\hrule height 4\p@

\vskip 0.25in

\vskip -\parskip%

}

\newcommand{\@bottomtitlebar}{

\vskip 0.29in

\vskip -\parskip

\hrule height 1\p@

\vskip 0.09in%

}

% create title (includes both anonymized and non-anonymized versions)

\providecommand{\@maketitle}{}

\renewcommand{\@maketitle}{%

\vbox{%

\hsize\textwidth

\linewidth\hsize

\vskip 0.1in

\@toptitlebar

\centering

{\LARGE\bf \@title\par}

\@bottomtitlebar

\if@nipsfinal

\def\And{%

\end{tabular}\hfil\linebreak[0]\hfil%

\begin{tabular}[t]{c}\bf\rule{\z@}{24\p@}\ignorespaces%

}

\def\AND{%

\end{tabular}\hfil\linebreak[4]\hfil%

\begin{tabular}[t]{c}\bf\rule{\z@}{24\p@}\ignorespaces%

}

\begin{tabular}[t]{c}\bf\rule{\z@}{24\p@}\@author\end{tabular}%

\else

\begin{tabular}[t]{c}\bf\rule{\z@}{24\p@}

Anonymous Author(s) \\

Affiliation \\

Address \\

\texttt{email} \\

\end{tabular}%

\fi

\vskip 0.3in \@minus 0.1in

}

}

% add conference notice to bottom of first page

\newcommand{\ftype@noticebox}{8}

\newcommand{\@notice}{%

% give a bit of extra room back to authors on first page

\enlargethispage{2\baselineskip}%

\@float{noticebox}[b]%

\footnotesize\@noticestring%

\end@float%

}

% abstract styling

\renewenvironment{abstract}%

{%

\vskip 0.075in%

\centerline%

{\large\bf Abstract}%

\vspace{0.5ex}%

\begin{quote}%

}

{

\par%

\end{quote}%

\vskip 1ex%

}

\endinput

================================================

FILE: Report/nips_2017.tex

================================================

\documentclass{article}

\usepackage[final]{nips_2017}

% the [final] option selects the camera-ready version; omit it to build the anonymized submission copy

\usepackage[utf8]{inputenc} % allow utf-8 input

\usepackage[T1]{fontenc} % use 8-bit T1 fonts

\usepackage{hyperref} % hyperlinks