
Repository: VainF/Diff-Pruning

Branch: main

Commit: 71e922e54275

Files: 439

Total size: 8.8 MB

Directory structure:

gitextract_bsd98edx/

├── .gitignore

├── LICENSE

├── README.md

├── ddpm_exp/

│ ├── .gitignore

│ ├── LICENSE

│ ├── README.md

│ ├── calc_fid.py

│ ├── compute_flops.py

│ ├── compute_pruned_ssim_curve.py

│ ├── compute_ssim.py

│ ├── compute_ssim_vis.py

│ ├── configs/

│ │ ├── bedroom.yml

│ │ ├── celeba.yml

│ │ ├── church.yml

│ │ ├── cifar10.yml

│ │ └── cifar10_pruning.yml

│ ├── datasets/

│ │ ├── __init__.py

│ │ ├── celeba.py

│ │ ├── ffhq.py

│ │ ├── lsun.py

│ │ ├── utils.py

│ │ └── vision.py

│ ├── draw_ssim_pruned_curve.py

│ ├── extract_cifar10.py

│ ├── fid_score.py

│ ├── finetune.py

│ ├── finetune_simple.py

│ ├── functions/

│ │ ├── __init__.py

│ │ ├── ckpt_util.py

│ │ ├── denoising.py

│ │ └── losses.py

│ ├── inception.py

│ ├── main.py

│ ├── models/

│ │ ├── diffusion.py

│ │ └── ema.py

│ ├── prune.py

│ ├── prune_kd.py

│ ├── prune_ssim.py

│ ├── prune_test.py

│ ├── runners/

│ │ ├── __init__.py

│ │ ├── diffusion.py

│ │ └── diffusion_simple.py

│ ├── scripts/

│ │ ├── finetune_bedroom_ddpm.sh

│ │ ├── finetune_celeba_ddpm.sh

│ │ ├── finetune_celeba_ddpm_kd.sh

│ │ ├── finetune_church_ddpm.sh

│ │ ├── finetune_cifar_ddpm.sh

│ │ ├── finetune_cifar_ddpm_kd.sh

│ │ ├── finetune_cifar_ddpm_random.sh

│ │ ├── finetune_cifar_ddpm_taylor.sh

│ │ ├── old/

│ │ │ ├── run_bedroom_sample_pratrained.sh

│ │ │ ├── run_celeba_pruning_scratch.sh

│ │ │ ├── run_celeba_pruning_taylor.sh

│ │ │ ├── run_celeba_sample_pratrained.sh

│ │ │ ├── run_church_pruning_taylor.sh

│ │ │ ├── run_cifar_pruning_first_order_taylor.sh

│ │ │ ├── run_cifar_pruning_magnitude.sh

│ │ │ ├── run_cifar_pruning_random.sh

│ │ │ ├── run_cifar_pruning_random_kd.sh

│ │ │ ├── run_cifar_pruning_scratch.sh

│ │ │ ├── run_cifar_pruning_second_order_taylor.sh

│ │ │ ├── run_cifar_pruning_taylor.sh

│ │ │ ├── run_cifar_pruning_taylor_kd.sh

│ │ │ └── run_cifar_train.sh

│ │ ├── prune_bedroom_ddpm.sh

│ │ ├── prune_bedroom_ddpm_test.sh

│ │ ├── prune_celeba_ddpm.sh

│ │ ├── prune_celeba_ddpm_ssim.sh

│ │ ├── prune_church_ddpm.sh

│ │ ├── prune_church_ddpm_test.sh

│ │ ├── prune_cifar_ddpm.sh

│ │ ├── prune_cifar_ddpm_ssim.sh

│ │ ├── prune_cifar_ddpm_test.sh

│ │ ├── run_celeba.sh

│ │ ├── sample_bedroom_ddpm_pretrained.sh

│ │ ├── sample_bedroom_ddpm_pruning.sh

│ │ ├── sample_celeba_ddpm_pruning.sh

│ │ ├── sample_celeba_pretrained.sh

│ │ ├── sample_church_ddpm_pruning.sh

│ │ ├── sample_church_ddpm_pruning_old.sh

│ │ ├── sample_church_ddpm_test.sh

│ │ ├── sample_church_pretrained.sh

│ │ ├── sample_cifar_ddpm_pruning.sh

│ │ ├── sample_cifar_pretrained.sh

│ │ ├── simple_celeba_our.sh

│ │ └── simple_cifar_our.sh

│ ├── tools/

│ │ ├── extract_cifar10.py

│ │ └── transform_weights.py

│ ├── torch_pruning/

│ │ ├── __init__.py

│ │ ├── _helpers.py

│ │ ├── dependency.py

│ │ ├── importance.py

│ │ ├── ops.py

│ │ ├── pruner/

│ │ │ ├── __init__.py

│ │ │ ├── algorithms/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── batchnorm_scale_pruner.py

│ │ │ │ ├── group_norm_pruner.py

│ │ │ │ ├── magnitude_based_pruner.py

│ │ │ │ ├── metapruner.py

│ │ │ │ ├── scaling_factor_pruner.py

│ │ │ │ ├── scheduler.py

│ │ │ │ └── taylor_pruner.py

│ │ │ └── function.py

│ │ └── utils/

│ │ ├── __init__.py

│ │ ├── op_counter.py

│ │ └── utils.py

│ └── utils.py

├── ddpm_prune.py

├── ddpm_sample.py

├── ddpm_train.py

├── diffusers/

│ ├── __init__.py

│ ├── commands/

│ │ ├── __init__.py

│ │ ├── diffusers_cli.py

│ │ └── env.py

│ ├── configuration_utils.py

│ ├── dependency_versions_check.py

│ ├── dependency_versions_table.py

│ ├── experimental/

│ │ ├── README.md

│ │ ├── __init__.py

│ │ └── rl/

│ │ ├── __init__.py

│ │ └── value_guided_sampling.py

│ ├── image_processor.py

│ ├── loaders.py

│ ├── models/

│ │ ├── README.md

│ │ ├── __init__.py

│ │ ├── attention.py

│ │ ├── attention_flax.py

│ │ ├── attention_processor.py

│ │ ├── autoencoder_kl.py

│ │ ├── controlnet.py

│ │ ├── controlnet_flax.py

│ │ ├── cross_attention.py

│ │ ├── dual_transformer_2d.py

│ │ ├── embeddings.py

│ │ ├── embeddings_flax.py

│ │ ├── modeling_flax_pytorch_utils.py

│ │ ├── modeling_flax_utils.py

│ │ ├── modeling_pytorch_flax_utils.py

│ │ ├── modeling_utils.py

│ │ ├── prior_transformer.py

│ │ ├── resnet.py

│ │ ├── resnet_flax.py

│ │ ├── t5_film_transformer.py

│ │ ├── transformer_2d.py

│ │ ├── transformer_temporal.py

│ │ ├── unet_1d.py

│ │ ├── unet_1d_blocks.py

│ │ ├── unet_2d.py

│ │ ├── unet_2d_blocks.py

│ │ ├── unet_2d_blocks_flax.py

│ │ ├── unet_2d_condition.py

│ │ ├── unet_2d_condition_flax.py

│ │ ├── unet_3d_blocks.py

│ │ ├── unet_3d_condition.py

│ │ ├── vae.py

│ │ ├── vae_flax.py

│ │ └── vq_model.py

│ ├── optimization.py

│ ├── pipeline_utils.py

│ ├── pipelines/

│ │ ├── README.md

│ │ ├── __init__.py

│ │ ├── alt_diffusion/

│ │ │ ├── __init__.py

│ │ │ ├── modeling_roberta_series.py

│ │ │ ├── pipeline_alt_diffusion.py

│ │ │ └── pipeline_alt_diffusion_img2img.py

│ │ ├── audio_diffusion/

│ │ │ ├── __init__.py

│ │ │ ├── mel.py

│ │ │ └── pipeline_audio_diffusion.py

│ │ ├── audioldm/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_audioldm.py

│ │ ├── controlnet/

│ │ │ ├── __init__.py

│ │ │ ├── multicontrolnet.py

│ │ │ ├── pipeline_controlnet.py

│ │ │ ├── pipeline_controlnet_img2img.py

│ │ │ ├── pipeline_controlnet_inpaint.py

│ │ │ └── pipeline_flax_controlnet.py

│ │ ├── dance_diffusion/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_dance_diffusion.py

│ │ ├── ddim/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_ddim.py

│ │ ├── ddpm/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_ddpm.py

│ │ ├── deepfloyd_if/

│ │ │ ├── __init__.py

│ │ │ ├── pipeline_if.py

│ │ │ ├── pipeline_if_img2img.py

│ │ │ ├── pipeline_if_img2img_superresolution.py

│ │ │ ├── pipeline_if_inpainting.py

│ │ │ ├── pipeline_if_inpainting_superresolution.py

│ │ │ ├── pipeline_if_superresolution.py

│ │ │ ├── safety_checker.py

│ │ │ ├── timesteps.py

│ │ │ └── watermark.py

│ │ ├── dit/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_dit.py

│ │ ├── latent_diffusion/

│ │ │ ├── __init__.py

│ │ │ ├── pipeline_latent_diffusion.py

│ │ │ └── pipeline_latent_diffusion_superresolution.py

│ │ ├── latent_diffusion_uncond/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_latent_diffusion_uncond.py

│ │ ├── onnx_utils.py

│ │ ├── paint_by_example/

│ │ │ ├── __init__.py

│ │ │ ├── image_encoder.py

│ │ │ └── pipeline_paint_by_example.py

│ │ ├── pipeline_flax_utils.py

│ │ ├── pipeline_utils.py

│ │ ├── pndm/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_pndm.py

│ │ ├── repaint/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_repaint.py

│ │ ├── score_sde_ve/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_score_sde_ve.py

│ │ ├── semantic_stable_diffusion/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_semantic_stable_diffusion.py

│ │ ├── spectrogram_diffusion/

│ │ │ ├── __init__.py

│ │ │ ├── continous_encoder.py

│ │ │ ├── midi_utils.py

│ │ │ ├── notes_encoder.py

│ │ │ └── pipeline_spectrogram_diffusion.py

│ │ ├── stable_diffusion/

│ │ │ ├── README.md

│ │ │ ├── __init__.py

│ │ │ ├── convert_from_ckpt.py

│ │ │ ├── pipeline_cycle_diffusion.py

│ │ │ ├── pipeline_flax_stable_diffusion.py

│ │ │ ├── pipeline_flax_stable_diffusion_controlnet.py

│ │ │ ├── pipeline_flax_stable_diffusion_img2img.py

│ │ │ ├── pipeline_flax_stable_diffusion_inpaint.py

│ │ │ ├── pipeline_onnx_stable_diffusion.py

│ │ │ ├── pipeline_onnx_stable_diffusion_img2img.py

│ │ │ ├── pipeline_onnx_stable_diffusion_inpaint.py

│ │ │ ├── pipeline_onnx_stable_diffusion_inpaint_legacy.py

│ │ │ ├── pipeline_onnx_stable_diffusion_upscale.py

│ │ │ ├── pipeline_stable_diffusion.py

│ │ │ ├── pipeline_stable_diffusion_attend_and_excite.py

│ │ │ ├── pipeline_stable_diffusion_controlnet.py

│ │ │ ├── pipeline_stable_diffusion_depth2img.py

│ │ │ ├── pipeline_stable_diffusion_diffedit.py

│ │ │ ├── pipeline_stable_diffusion_image_variation.py

│ │ │ ├── pipeline_stable_diffusion_img2img.py

│ │ │ ├── pipeline_stable_diffusion_inpaint.py

│ │ │ ├── pipeline_stable_diffusion_inpaint_legacy.py

│ │ │ ├── pipeline_stable_diffusion_instruct_pix2pix.py

│ │ │ ├── pipeline_stable_diffusion_k_diffusion.py

│ │ │ ├── pipeline_stable_diffusion_latent_upscale.py

│ │ │ ├── pipeline_stable_diffusion_model_editing.py

│ │ │ ├── pipeline_stable_diffusion_panorama.py

│ │ │ ├── pipeline_stable_diffusion_pix2pix_zero.py

│ │ │ ├── pipeline_stable_diffusion_sag.py

│ │ │ ├── pipeline_stable_diffusion_upscale.py

│ │ │ ├── pipeline_stable_unclip.py

│ │ │ ├── pipeline_stable_unclip_img2img.py

│ │ │ ├── safety_checker.py

│ │ │ ├── safety_checker_flax.py

│ │ │ └── stable_unclip_image_normalizer.py

│ │ ├── stable_diffusion_safe/

│ │ │ ├── __init__.py

│ │ │ ├── pipeline_stable_diffusion_safe.py

│ │ │ └── safety_checker.py

│ │ ├── stochastic_karras_ve/

│ │ │ ├── __init__.py

│ │ │ └── pipeline_stochastic_karras_ve.py

│ │ ├── text_to_video_synthesis/

│ │ │ ├── __init__.py

│ │ │ ├── pipeline_text_to_video_synth.py

│ │ │ └── pipeline_text_to_video_zero.py

│ │ ├── unclip/

│ │ │ ├── __init__.py

│ │ │ ├── pipeline_unclip.py

│ │ │ ├── pipeline_unclip_image_variation.py

│ │ │ └── text_proj.py

│ │ ├── versatile_diffusion/

│ │ │ ├── __init__.py

│ │ │ ├── modeling_text_unet.py

│ │ │ ├── pipeline_versatile_diffusion.py

│ │ │ ├── pipeline_versatile_diffusion_dual_guided.py

│ │ │ ├── pipeline_versatile_diffusion_image_variation.py

│ │ │ └── pipeline_versatile_diffusion_text_to_image.py

│ │ └── vq_diffusion/

│ │ ├── __init__.py

│ │ └── pipeline_vq_diffusion.py

│ ├── schedulers/

│ │ ├── README.md

│ │ ├── __init__.py

│ │ ├── scheduling_ddim.py

│ │ ├── scheduling_ddim_flax.py

│ │ ├── scheduling_ddim_inverse.py

│ │ ├── scheduling_ddpm.py

│ │ ├── scheduling_ddpm_flax.py

│ │ ├── scheduling_deis_multistep.py

│ │ ├── scheduling_dpmsolver_multistep.py

│ │ ├── scheduling_dpmsolver_multistep_flax.py

│ │ ├── scheduling_dpmsolver_multistep_inverse.py

│ │ ├── scheduling_dpmsolver_sde.py

│ │ ├── scheduling_dpmsolver_singlestep.py

│ │ ├── scheduling_euler_ancestral_discrete.py

│ │ ├── scheduling_euler_discrete.py

│ │ ├── scheduling_heun_discrete.py

│ │ ├── scheduling_ipndm.py

│ │ ├── scheduling_k_dpm_2_ancestral_discrete.py

│ │ ├── scheduling_k_dpm_2_discrete.py

│ │ ├── scheduling_karras_ve.py

│ │ ├── scheduling_karras_ve_flax.py

│ │ ├── scheduling_lms_discrete.py

│ │ ├── scheduling_lms_discrete_flax.py

│ │ ├── scheduling_pndm.py

│ │ ├── scheduling_pndm_flax.py

│ │ ├── scheduling_repaint.py

│ │ ├── scheduling_sde_ve.py

│ │ ├── scheduling_sde_ve_flax.py

│ │ ├── scheduling_sde_vp.py

│ │ ├── scheduling_unclip.py

│ │ ├── scheduling_unipc_multistep.py

│ │ ├── scheduling_utils.py

│ │ ├── scheduling_utils_flax.py

│ │ └── scheduling_vq_diffusion.py

│ ├── training_utils.py

│ └── utils/

│ ├── __init__.py

│ ├── accelerate_utils.py

│ ├── constants.py

│ ├── deprecation_utils.py

│ ├── doc_utils.py

│ ├── dummy_flax_and_transformers_objects.py

│ ├── dummy_flax_objects.py

│ ├── dummy_note_seq_objects.py

│ ├── dummy_onnx_objects.py

│ ├── dummy_pt_objects.py

│ ├── dummy_torch_and_librosa_objects.py

│ ├── dummy_torch_and_scipy_objects.py

│ ├── dummy_torch_and_torchsde_objects.py

│ ├── dummy_torch_and_transformers_and_k_diffusion_objects.py

│ ├── dummy_torch_and_transformers_and_onnx_objects.py

│ ├── dummy_torch_and_transformers_objects.py

│ ├── dummy_transformers_and_torch_and_note_seq_objects.py

│ ├── dynamic_modules_utils.py

│ ├── hub_utils.py

│ ├── import_utils.py

│ ├── logging.py

│ ├── model_card_template.md

│ ├── outputs.py

│ ├── pil_utils.py

│ ├── testing_utils.py

│ └── torch_utils.py

├── fid_score.py

├── inception.py

├── ldm_exp/

│ ├── LICENSE

│ ├── README.md

│ ├── configs/

│ │ ├── autoencoder/

│ │ │ ├── autoencoder_kl_16x16x16.yaml

│ │ │ ├── autoencoder_kl_32x32x4.yaml

│ │ │ ├── autoencoder_kl_64x64x3.yaml

│ │ │ └── autoencoder_kl_8x8x64.yaml

│ │ ├── latent-diffusion/

│ │ │ ├── celebahq-ldm-vq-4.yaml

│ │ │ ├── cin-ldm-vq-f8.yaml

│ │ │ ├── cin256-v2.yaml

│ │ │ ├── ffhq-ldm-vq-4.yaml

│ │ │ ├── lsun_bedrooms-ldm-vq-4.yaml

│ │ │ ├── lsun_churches-ldm-kl-8.yaml

│ │ │ └── txt2img-1p4B-eval.yaml

│ │ └── retrieval-augmented-diffusion/

│ │ └── 768x768.yaml

│ ├── environment.yaml

│ ├── fid_score.py

│ ├── inception.py

│ ├── ldm/

│ │ ├── lr_scheduler.py

│ │ ├── models/

│ │ │ ├── autoencoder.py

│ │ │ └── diffusion/

│ │ │ ├── __init__.py

│ │ │ ├── classifier.py

│ │ │ ├── ddim.py

│ │ │ ├── ddpm.py

│ │ │ └── plms.py

│ │ ├── modules/

│ │ │ ├── __init__.py

│ │ │ ├── attention.py

│ │ │ ├── diffusionmodules/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── model.py

│ │ │ │ ├── openaimodel.py

│ │ │ │ └── util.py

│ │ │ ├── distributions/

│ │ │ │ ├── __init__.py

│ │ │ │ └── distributions.py

│ │ │ ├── ema.py

│ │ │ ├── encoders/

│ │ │ │ ├── __init__.py

│ │ │ │ └── modules.py

│ │ │ ├── image_degradation/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── bsrgan.py

│ │ │ │ ├── bsrgan_light.py

│ │ │ │ └── utils_image.py

│ │ │ ├── losses/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── contperceptual.py

│ │ │ │ └── vqperceptual.py

│ │ │ └── x_transformer.py

│ │ └── util.py

│ ├── main.py

│ ├── models/

│ │ ├── first_stage_models/

│ │ │ ├── kl-f16/

│ │ │ │ └── config.yaml

│ │ │ ├── kl-f32/

│ │ │ │ └── config.yaml

│ │ │ ├── kl-f4/

│ │ │ │ └── config.yaml

│ │ │ ├── kl-f8/

│ │ │ │ └── config.yaml

│ │ │ ├── vq-f16/

│ │ │ │ └── config.yaml

│ │ │ ├── vq-f4/

│ │ │ │ └── config.yaml

│ │ │ ├── vq-f4-noattn/

│ │ │ │ └── config.yaml

│ │ │ ├── vq-f8/

│ │ │ │ └── config.yaml

│ │ │ └── vq-f8-n256/

│ │ │ └── config.yaml

│ │ └── ldm/

│ │ ├── bsr_sr/

│ │ │ └── config.yaml

│ │ ├── celeba256/

│ │ │ └── config.yaml

│ │ ├── cin256/

│ │ │ └── config.yaml

│ │ ├── ffhq256/

│ │ │ └── config.yaml

│ │ ├── inpainting_big/

│ │ │ └── config.yaml

│ │ ├── layout2img-openimages256/

│ │ │ └── config.yaml

│ │ ├── lsun_beds256/

│ │ │ └── config.yaml

│ │ ├── lsun_churches256/

│ │ │ └── config.yaml

│ │ ├── semantic_synthesis256/

│ │ │ └── config.yaml

│ │ ├── semantic_synthesis512/

│ │ │ └── config.yaml

│ │ └── text2img256/

│ │ └── config.yaml

│ ├── notebook_helpers.py

│ ├── profile_ldm.py

│ ├── profile_ldm_pretrained.py

│ ├── profile_model.py

│ ├── prune_ldm.py

│ ├── prune_ldm_no_grad.py

│ ├── run.sh

│ ├── sample_for_FID.py

│ ├── sample_imagenet.py

│ ├── sample_pruned.py

│ ├── scripts/

│ │ ├── download_first_stages.sh

│ │ ├── download_models.sh

│ │ ├── inpaint.py

│ │ ├── knn2img.py

│ │ ├── latent_imagenet_diffusion.ipynb

│ │ ├── sample_diffusion.py

│ │ ├── train_searcher.py

│ │ └── txt2img.py

│ ├── setup.py

│ ├── test_criterion.py

│ └── test_diffusion.py

├── ldm_prune.py

├── requirements.txt

├── scripts/

│ ├── finetune_ddpm_cifar10.sh

│ ├── prune_ddpm_cifar10.sh

│ ├── prune_ddpm_ema_bedroom_random.sh

│ ├── prune_ddpm_ema_church_random.sh

│ ├── prune_ldm.sh

│ ├── sample_ddpm_cifar10_pretrained.sh

│ ├── sample_ddpm_cifar10_pretrained_distributed.sh

│ └── sample_ddpm_cifar10_pruned.sh

├── tools/

│ ├── convert_cifar10_ddpm_ema.sh

│ ├── convert_ddpm_original_checkpoint_to_diffusers_cifar10.py

│ ├── convert_ldm_original_checkpoint_to_diffusers.py

│ ├── ddpm_cifar10_config.json

│ ├── extract_cifar10.py

│ └── ldm_unet_config.json

└── utils.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Initially taken from Github's Python gitignore file

run2

run

pretrained

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

checkpoints

*.ckpt

# C extensions

*.so

cache

# tests and logs

tests/fixtures/cached_*_text.txt

logs/

lightning_logs/

lang_code_data/

docker

doc

_typos.toml

data

docs

ldm_generated_image.png

*.log

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# celery beat schedule file

celerybeat-schedule

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

# vscode

.vs

.vscode

# Pycharm

.idea

# TF code

tensorflow_code

# Models

proc_data

# examples

runs

/runs_old

/wandb

/examples/runs

/examples/**/*.args

/examples/rag/sweep

# data

/data

serialization_dir

# emacs

*.*~

debug.env

# vim

.*.swp

#ctags

tags

# pre-commit

.pre-commit*

# .lock

*.lock

# DS_Store (MacOS)

.DS_Store

# RL pipelines may produce mp4 outputs

*.mp4

# dependencies

/transformers

# ruff

.ruff_cache

wandb

__pycache__

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [2023] [Gongfan Fang]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

# Diff-Pruning: Structural Pruning for Diffusion Models

<div align="center">

<img src="assets/framework.png" width="80%"></img>

</div>

## Update

Check our latest work [DeepCache](https://horseee.github.io/Diffusion_DeepCache/), a **training-free and almost lossless** method for diffusion model acceleration. It can be viewed as a special pruning technique that dynamically drops deep layers and only runs shallow ones during inference.

## Introduction

> **Structural Pruning for Diffusion Models** [[arxiv]](https://arxiv.org/abs/2305.10924)

> *[Gongfan Fang](https://fangggf.github.io/), [Xinyin Ma](https://horseee.github.io/), [Xinchao Wang](https://sites.google.com/site/sitexinchaowang/)*

> *National University of Singapore*

This work presents *Diff-Pruning*, an efficient structural pruning method for diffusion models. Our empirical assessment highlights two primary features:

1) ``Efficiency``: It enables approximately a 50% reduction in FLOPs at a mere 10% to 20% of the original training expenditure;

2) ``Consistency``: The pruned diffusion models inherently preserve generative behavior congruent with the pre-trained ones.

<div align="center">

<img src="assets/LSUN.png" width="80%"></img>

</div>

### Supported Methods

- [x] Magnitude Pruning

- [x] Random Pruning

- [x] Taylor Pruning

- [x] Diff-Pruning (a Taylor-based method proposed in our paper)
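
As a rough illustration of how the first three criteria differ, the sketch below scores the output channels of a single toy layer. The function names, weights, and gradients are hypothetical and are not taken from the repository's `torch_pruning` code; the Taylor score uses one common first-order form, and Diff-Pruning itself is not reproduced here.

```python
import random


def magnitude_importance(weights):
    """Score each output channel by the L1 norm of its weights."""
    return [sum(abs(w) for w in channel) for channel in weights]


def random_importance(weights, seed=0):
    """Assign each output channel a random score (baseline criterion)."""
    rng = random.Random(seed)
    return [rng.random() for _ in weights]


def taylor_importance(weights, grads):
    """First-order Taylor score per channel: |sum(w * dL/dw)|."""
    return [
        abs(sum(w * g for w, g in zip(cw, cg)))
        for cw, cg in zip(weights, grads)
    ]


def channels_to_prune(scores, ratio):
    """Return the indices of the lowest-scoring channels for a given ratio."""
    n_prune = int(len(scores) * ratio)
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    return sorted(order[:n_prune])


# Toy layer with 4 output channels and 3 weights each (hypothetical values).
weights = [[0.9, -0.8, 0.7], [0.05, 0.02, -0.01], [0.4, -0.3, 0.2], [1.0, 1.0, -1.0]]
grads = [[0.0, 0.0, 0.0], [0.5, 0.5, -0.5], [0.1, 0.1, 0.1], [0.2, 0.2, 0.2]]

print("magnitude:", channels_to_prune(magnitude_importance(weights), 0.5))
print("taylor:   ", channels_to_prune(taylor_importance(weights, grads), 0.5))
```

In this toy setup the two criteria disagree: channel 0 has large weights but zero gradient, so the magnitude score keeps it while the Taylor score marks it as removable.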

### TODO List

- [ ] Support more diffusion models from Diffusers

- [ ] Upload checkpoints of pruned models

- [ ] Training scripts for CelebA-HQ, LSUN Church & LSUN Bedroom

- [ ] Align the performance with the [DDIM Repo](https://github.com/ermongroup/ddim).

## Our Exp Code (Unorganized)

### Pruning with DDIM codebase

This example shows how to prune a DDPM model pre-trained on CIFAR-10 using the [DDIM codebase](https://github.com/ermongroup/ddim). Because [Huggingface Diffusers](https://github.com/huggingface/diffusers) does not support [``skip_type='quad'``](https://github.com/ermongroup/ddim/issues/3) in DDIM, you may get slightly worse FID scores with Diffusers for both pre-trained models (FID=4.5) and pruned models (FID=5.6). We are working on implementing the quad strategy for Diffusers. For reproducibility, we provide the original **but unorganized** experiment code for the paper in [ddpm_exp](ddpm_exp).

```bash

cd ddpm_exp

# Prune & Finetune

bash scripts/simple_cifar_our.sh 0.05 # the pre-trained model and data will be automatically prepared

# Sampling

bash scripts/sample_cifar_ddpm_pruning.sh run/finetune_simple_v2/cifar10_ours_T=0.05.pth/logs/post_training/ckpt_100000.pth run/sample

```

For FID, please refer to [this section](https://github.com/VainF/Diff-Pruning#4-fid-score).

Output:

```

Found 49984 files.

100%|██████████████████████████████████████████████████████████████████████████████████████████████████████| 391/391 [00:49<00:00, 7.97it/s]

FID: 5.242662673752534

```
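
For context on the ``skip_type='quad'`` setting mentioned above: it spaces the sampling timesteps quadratically rather than uniformly, so more of them fall near t = 0. The sketch below is a plain-Python approximation of that idea. The function names, the 1000 training timesteps, and the 0.8 scaling factor are assumptions for illustration; please check the DDIM repository for the authoritative version.

```python
import math


def uniform_timesteps(num_train_timesteps=1000, num_steps=100):
    """Evenly spaced timesteps, e.g. 0, 10, 20, ... for 1000 -> 100 steps."""
    skip = num_train_timesteps // num_steps
    return list(range(0, num_train_timesteps, skip))


def quad_timesteps(num_train_timesteps=1000, num_steps=100, scale=0.8):
    """Quadratically spaced timesteps: square a linear grid on [0, sqrt(scale * T)]."""
    if num_steps < 2:
        return [0]
    end = math.sqrt(num_train_timesteps * scale)
    return [int((end * i / (num_steps - 1)) ** 2) for i in range(num_steps)]


uniform = uniform_timesteps()
quad = quad_timesteps()
print("uniform:", uniform[:5], "...", uniform[-1])
print("quad:   ", quad[:5], "...", quad[-1])
```

Because the quadratic grid contains repeated and densely packed small timesteps, the two schedules visit different noise levels, which is one reason FID scores obtained with the two codebases are not directly comparable.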

### Pruning with LDM codebase

Please check [ldm_exp/run.sh](ldm_exp/run.sh) for an example of pruning a pre-trained LDM model on ImageNet. This codebase is still unorganized. We will clean it up in the future.

## Pruning with Huggingface Diffusers

The following pipeline prunes a pre-trained DDPM on CIFAR-10 with [Huggingface Diffusers](https://github.com/huggingface/diffusers).

### 0. Requirements, Data and Pretrained Model

* Requirements

```bash

pip install -r requirements.txt

```

* Data

Download and extract CIFAR-10 images to *data/cifar10_images* for training and evaluation.

```bash

python tools/extract_cifar10.py --output data

```

* Pretrained Models

The following script downloads an official DDPM model and converts it to the Huggingface Diffusers format. You can find the converted model at *pretrained/ddpm_ema_cifar10*. It is an EMA version of [google/ddpm-cifar10-32](https://huggingface.co/google/ddpm-cifar10-32).

```bash

bash tools/convert_cifar10_ddpm_ema.sh

```

(Optional) You can also download a pre-converted model using wget:

```bash

wget https://github.com/VainF/Diff-Pruning/releases/download/v0.0.1/ddpm_ema_cifar10.zip

```

### 1. Pruning

Create a pruned model at *run/pruned/ddpm_cifar10_pruned*

```bash

bash scripts/prune_ddpm_cifar10.sh 0.3 # pruning ratio = 30%

```
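As a rough guide to what a given pruning ratio buys, the arithmetic below estimates how the parameter count of a single convolution shrinks when the same fraction of input and output channels is removed. It is a back-of-the-envelope sketch: the helpers `conv_params` and `pruned_channels` and the layer sizes are hypothetical, and the reduction for a full U-Net will differ because some layers (for example the first and last convolutions) keep fixed input or output channels.

```python
def conv_params(c_in, c_out, kernel_size=3):
    """Number of weights plus biases in a 2D convolution."""
    return c_in * c_out * kernel_size * kernel_size + c_out


def pruned_channels(channels, ratio):
    """Channels left after removing `ratio` of them (rounded down)."""
    return channels - int(channels * ratio)


ratio = 0.3
c_in, c_out = 128, 256  # hypothetical layer sizes

before = conv_params(c_in, c_out)
after = conv_params(pruned_channels(c_in, ratio), pruned_channels(c_out, ratio))
print(f"kept {after / before:.1%} of the parameters")
```

Because both the input and the output width shrink, the kept fraction is close to `(1 - ratio) ** 2`, so a 30% channel ratio removes roughly half of the weights in such a layer.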

### 2. Finetuning (Post-Training)

Finetune the model and save it at *run/finetuned/ddpm_cifar10_pruned_post_training*

```bash

bash scripts/finetune_ddpm_cifar10.sh

```

### 3. Sampling

**Pruned:** Sample and save images to *run/sample/ddpm_cifar10_pruned*

```bash

bash scripts/sample_ddpm_cifar10_pruned.sh

```

**Pretrained:** Sample and save images to *run/sample/ddpm_cifar10_pretrained*

```bash

bash scripts/sample_ddpm_cifar10_pretrained.sh

```

### 4. FID Score

This script was modified from https://github.com/mseitzer/pytorch-fid.

```bash

# pre-compute the stats of CIFAR-10 dataset

python fid_score.py --save-stats data/cifar10_images run/fid_stats_cifar10.npz --device cuda:0 --batch-size 256

```

```bash

# Compute the FID score of sampled images

python fid_score.py run/sample/ddpm_cifar10_pruned run/fid_stats_cifar10.npz --device cuda:0 --batch-size 256

```
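For readers unfamiliar with the metric, FID is the Fréchet distance between two Gaussians fitted to Inception features: `||mu1 - mu2||^2 + Tr(sigma1 + sigma2 - 2 * sqrt(sigma1 @ sigma2))`. The NumPy sketch below evaluates this formula from precomputed statistics. It is an illustration only and not the code in `fid_score.py`: the function name `frechet_distance` is hypothetical, and the trace of the matrix square root is obtained from eigenvalues rather than from an explicit matrix square root.

```python
import numpy as np


def frechet_distance(mu1, sigma1, mu2, sigma2):
    """Frechet distance between the Gaussians N(mu1, sigma1) and N(mu2, sigma2)."""
    diff = mu1 - mu2
    # Tr(sqrt(sigma1 @ sigma2)) equals the sum of the square roots of the
    # eigenvalues of sigma1 @ sigma2, which are real and non-negative for
    # positive semi-definite covariances (up to numerical noise).
    eigvals = np.linalg.eigvals(sigma1 @ sigma2)
    tr_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    return float(diff @ diff + np.trace(sigma1) + np.trace(sigma2) - 2.0 * tr_sqrt)


mu = np.zeros(3)
sigma = np.eye(3)
same = frechet_distance(mu, sigma, mu, sigma)           # identical statistics
shifted = frechet_distance(mu, sigma, mu + 1.0, sigma)  # every mean shifted by one
print(same, shifted)
```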

### 5. (Optional) Distributed Training and Sampling with Accelerate

This project supports distributed training and sampling.

```bash

python -m torch.distributed.launch --nproc_per_node=8 --master_port 22222 --use_env <ddpm_sample.py|ddpm_train.py> ...

```

A multi-processing example can be found at [scripts/sample_ddpm_cifar10_pretrained_distributed.sh](scripts/sample_ddpm_cifar10_pretrained_distributed.sh).

## Prune Pre-trained DPMs from [HuggingFace Diffusers](https://huggingface.co/models?library=diffusers)

### :rocket: [Denoising Diffusion Probabilistic Models (DDPMs)](https://arxiv.org/abs/2006.11239)

Example: [google/ddpm-ema-bedroom-256](https://huggingface.co/google/ddpm-ema-bedroom-256)

```bash

python ddpm_prune.py \
--dataset "<path/to/imagefolder>" \
--model_path google/ddpm-ema-bedroom-256 \
--save_path run/pruned/ddpm_ema_bedroom_256_pruned \
--pruning_ratio 0.05 \
--pruner "<random|magnitude|reinit|taylor|diff-pruning>" \
--batch_size 4 \
--thr 0.05 \
--device cuda:0

```

The ``dataset`` and ``thr`` arguments only take effect for the ``taylor`` and ``diff-pruning`` pruners.
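To give an intuition for ``thr``, the sketch below keeps only the timesteps whose loss is at least `thr` times the largest loss, which is one plausible reading of a relative-loss threshold. It is an assumption made for illustration, not the logic of `ddpm_prune.py`; the function `select_informative_steps` is hypothetical and the loss values are made up.

```python
def select_informative_steps(losses, thr):
    """Return indices of timesteps whose loss is at least `thr` * max(losses)."""
    max_loss = max(losses)
    return [t for t, loss in enumerate(losses) if loss >= thr * max_loss]


# Made-up per-timestep losses, for illustration only.
losses = [1.00, 0.60, 0.20, 0.04, 0.01]
selected = select_informative_steps(losses, thr=0.05)
print(selected)  # steps 0, 1 and 2 pass the threshold
```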

### :rocket: [Latent Diffusion Models (LDMs)](https://arxiv.org/abs/2112.10752)

Example: [CompVis/ldm-celebahq-256](https://huggingface.co/CompVis/ldm-celebahq-256)

```bash

python ldm_prune.py \
--model_path CompVis/ldm-celebahq-256 \
--save_path run/pruned/ldm_celeba_pruned \
--pruning_ratio 0.05 \
--pruner "<random|magnitude|reinit>" \
--device cuda:0 \
--batch_size 4

```

## Results

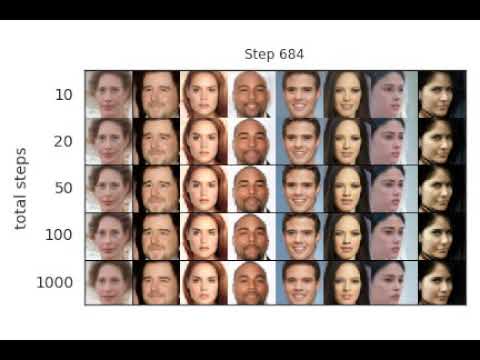

* **DDPM on Cifar-10, CelebA and LSUN**

<div align="center">

<img src="assets/exp.png" width="75%"></img>

<img src="https://github.com/VainF/Diff-Pruning/assets/18592211/39b3a7ad-2abb-4934-9ee0-07724029660b" width="75%"></img>

</div>

* **Conditional LDM on ImageNet-1K 256**

We also have some results on Conditional LDM for ImageNet-1K 256x256, where we finetune a pruned LDM for only 4 epochs. We will release the training script soon.

<div align="center">

<img src="https://github.com/VainF/Diff-Pruning/assets/18592211/31dbf489-2ca2-4625-ba54-5a5ff4e4a626" width="75%"></img>

<img src="https://github.com/VainF/Diff-Pruning/assets/18592211/20d546c5-9012-4ba9-80b2-96ed29da7d07" width="85%"></img>

</div>

## Acknowledgement

This project is heavily based on [Diffusers](https://github.com/huggingface/diffusers), [Torch-Pruning](https://github.com/VainF/Torch-Pruning), and [pytorch-fid](https://github.com/mseitzer/pytorch-fid). Our experiments were conducted on [ddim](https://github.com/ermongroup/ddim) and [LDM](https://github.com/CompVis/latent-diffusion).

## Citation

If you find this work helpful, please cite:

```

@inproceedings{fang2023structural,

title={Structural pruning for diffusion models},

author={Gongfan Fang and Xinyin Ma and Xinchao Wang},

booktitle={Advances in Neural Information Processing Systems},

year={2023},

}

```

```

@inproceedings{fang2023depgraph,

title={Depgraph: Towards any structural pruning},

author={Fang, Gongfan and Ma, Xinyin and Song, Mingli and Mi, Michael Bi and Wang, Xinchao},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={16091--16101},

year={2023}

}

```

================================================

FILE: ddpm_exp/.gitignore

================================================

.vscode

__pycache__

*.log

run

data

*.png

================================================

FILE: ddpm_exp/LICENSE

================================================

MIT License

Copyright (c) 2020 Jiaming Song

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: ddpm_exp/README.md

================================================

# Denoising Diffusion Implicit Models (DDIM)

[Jiaming Song](http://tsong.me), [Chenlin Meng](http://cs.stanford.edu/~chenlin) and [Stefano Ermon](http://cs.stanford.edu/~ermon), Stanford

Implements sampling from an implicit model that is trained with the same procedure as [Denoising Diffusion Probabilistic Model](https://hojonathanho.github.io/diffusion/), but costs much less time and compute if you want to sample from it (click image below for a video demo):

<a href="http://www.youtube.com/watch?v=WCKzxoSduJQ" target="_blank"></a>

## **Integration with 🤗 Diffusers library**

DDIM is now also available in 🧨 Diffusers and accessible via the [DDIMPipeline](https://huggingface.co/docs/diffusers/api/pipelines/ddim).

Diffusers allows you to test DDIM in PyTorch in just a couple lines of code.

You can install diffusers as follows:

```

pip install diffusers torch accelerate

```

And then try out the model with just a couple lines of code:

```python

from diffusers import DDIMPipeline

model_id = "google/ddpm-cifar10-32"

# load model and scheduler

ddim = DDIMPipeline.from_pretrained(model_id)

# run pipeline in inference (sample random noise and denoise)

image = ddim(num_inference_steps=50).images[0]

# save image

image.save("ddim_generated_image.png")

```

More DDPM/DDIM models compatible with the DDIM pipeline can be found directly [on the Hub](https://huggingface.co/models?library=diffusers&sort=downloads&search=ddpm).

To better understand the DDIM scheduler, you can check out [this introductory Google Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb).

The DDIM scheduler can also be used with more powerful diffusion models such as [Stable Diffusion](https://huggingface.co/docs/diffusers/v0.7.0/en/api/pipelines/stable_diffusion#stable-diffusion-pipelines).

You simply need to [accept the license on the Hub](https://huggingface.co/runwayml/stable-diffusion-v1-5), login with `huggingface-cli login` and install transformers:

```

pip install transformers

```

Then you can run:

```python

from diffusers import StableDiffusionPipeline, DDIMScheduler

ddim = DDIMScheduler.from_config("runwayml/stable-diffusion-v1-5", subfolder="scheduler")

pipeline = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", scheduler=ddim)

image = pipeline("An astronaut riding a horse.").images[0]

image.save("astronaut_riding_a_horse.png")

```

## Running the Experiments

The code has been tested on PyTorch 1.6.

### Train a model

Training is exactly the same as DDPM with the following:

```

python main.py --config {DATASET}.yml --exp {PROJECT_PATH} --doc {MODEL_NAME} --ni

```

### Sampling from the model

#### Sampling from the generalized model for FID evaluation

```

python main.py --config {DATASET}.yml --exp {PROJECT_PATH} --doc {MODEL_NAME} --sample --fid --timesteps {STEPS} --eta {ETA} --ni

```

where

- `ETA` controls the scale of the variance (0 is DDIM, and 1 is one type of DDPM).

- `STEPS` controls how many timesteps are used in the sampling process.

- `MODEL_NAME` finds the pre-trained checkpoint according to its inferred path.
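To make the role of `ETA` concrete, the sketch below computes the per-step noise scale from the DDIM paper, `sigma = eta * sqrt((1 - a_prev) / (1 - a_t)) * sqrt(1 - a_t / a_prev)`, where `a_t` and `a_prev` are the cumulative products of `1 - beta` at the current and the previous sampling timestep. The function name `ddim_sigma` and the example values are illustrative assumptions.

```python
import math


def ddim_sigma(alpha_bar_t, alpha_bar_prev, eta):
    """Std of the noise injected when stepping from t to the previous timestep."""
    return (
        eta
        * math.sqrt((1.0 - alpha_bar_prev) / (1.0 - alpha_bar_t))
        * math.sqrt(1.0 - alpha_bar_t / alpha_bar_prev)
    )


# Hypothetical cumulative alphas; alpha_bar decreases as t grows.
sigma_ddim = ddim_sigma(0.5, 0.8, eta=0.0)  # zero noise: deterministic DDIM update
sigma_ddpm = ddim_sigma(0.5, 0.8, eta=1.0)  # non-zero noise: stochastic, DDPM-like update
print(sigma_ddim, sigma_ddpm)
```

Values of `eta` between 0 and 1 interpolate between the two behaviours.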

If you want to use the DDPM pretrained model:

```

python main.py --config {DATASET}.yml --exp {PROJECT_PATH} --use_pretrained --sample --fid --timesteps {STEPS} --eta {ETA} --ni

```

The `--use_pretrained` option will automatically load the model according to the dataset.

We provide a CelebA 64x64 model [here](https://drive.google.com/file/d/1R_H-fJYXSH79wfSKs9D-fuKQVan5L-GR/view?usp=sharing), and use the DDPM version for CIFAR10 and LSUN.

If you want to use the version with the larger variance in DDPM: use the `--sample_type ddpm_noisy` option.

#### Sampling from the model for image inpainting

Use `--interpolation` option instead of `--fid`.

#### Sampling from the sequence of images that lead to the sample

Use `--sequence` option instead.

The above two cases contain some hard-coded lines specific to producing the image, so modify them according to your needs.

## References and Acknowledgements

```

@article{song2020denoising,

title={Denoising Diffusion Implicit Models},

author={Song, Jiaming and Meng, Chenlin and Ermon, Stefano},

journal={arXiv:2010.02502},

year={2020},

month={October},

abbr={Preprint},

url={https://arxiv.org/abs/2010.02502}

}

```

This implementation is based on / inspired by:

- [https://github.com/hojonathanho/diffusion](https://github.com/hojonathanho/diffusion) (the DDPM TensorFlow repo),

- [https://github.com/pesser/pytorch_diffusion](https://github.com/pesser/pytorch_diffusion) (PyTorch helper that loads the DDPM model), and

- [https://github.com/ermongroup/ncsnv2](https://github.com/ermongroup/ncsnv2) (code structure).

================================================

FILE: ddpm_exp/calc_fid.py

================================================

from cleanfid import fid

import argparse

parser = argparse.ArgumentParser(description=globals()["__doc__"])

parser.add_argument('--path1', type=str, required=True, help='Path to the images')

parser.add_argument('--path2', type=str, required=True, help='Path to the images')

args = parser.parse_args()

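if args.path2 == "cifar10":
    # Compare against clean-fid's precomputed CIFAR-10 training statistics.
    # The generated images live in --path1 (the parser defines no --dir argument).
    score = fid.compute_fid(args.path1, dataset_name="cifar10", dataset_res=32, dataset_split="train")
else:
    # Compare two image folders directly.
    score = fid.compute_fid(args.path1, args.path2)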

print("FID: ", score)

================================================

FILE: ddpm_exp/compute_flops.py

================================================

import torch

import random, os

import argparse

from PIL import Image

import torchvision

import numpy as np

import pytorch_msssim

from utils import UnlabeledImageFolder

from tqdm import tqdm

import torch_pruning as tp

parser = argparse.ArgumentParser()

parser.add_argument('--restore_from', type=str, required=True)

args = parser.parse_args()

model = torch.load(args.restore_from, map_location='cpu')[0]

example_inputs = {'x': torch.randn(1, 3, 32, 32), 't': torch.ones(1)}

macs, params = tp.utils.count_ops_and_params(model, example_inputs)

print("model: {}, macs: {} G, params: {} M".format(args.restore_from, macs/1e9, params/1e6))

================================================

FILE: ddpm_exp/compute_pruned_ssim_curve.py

================================================

import pytorch_msssim

import os

import torch

from PIL import Image

import torchvision

base_folder_name = 'run/prune_ssim_2/0'

folder_name = [os.path.join('run/prune_ssim_2', '{}'.format(k)) for k in range(50, 1000+1, 50)]

n_samples = 32

# test ssim for each folder

folder_ssim = []

for f in folder_name:
    ssim_list = []
    for img_id in range(n_samples):
        img1 = Image.open(os.path.join(base_folder_name, f'{img_id}.png'))
        img2 = Image.open(os.path.join(f, f'{img_id}.png'))
        img1_tensor = torchvision.transforms.ToTensor()(img1)
        img2_tensor = torchvision.transforms.ToTensor()(img2)
        img1_tensor = img1_tensor.unsqueeze(0)
        img2_tensor = img2_tensor.unsqueeze(0)
        ssim = pytorch_msssim.ssim(img1_tensor, img2_tensor, data_range=1.0, size_average=True)
        ssim_list.append(ssim)
    ssim = sum(ssim_list) / len(ssim_list)
    folder_ssim.append(ssim.item())

print(folder_ssim)

================================================

FILE: ddpm_exp/compute_ssim.py

================================================

import torch

import random, os

import argparse

from PIL import Image

import torchvision

import numpy as np

import pytorch_msssim

from utils import UnlabeledImageFolder

from tqdm import tqdm

parser = argparse.ArgumentParser()

parser.add_argument('--path', type=str, required=True, nargs='+')

args = parser.parse_args()

# generate random index

nrow = 16

img_index = random.sample(list(range(50000)), nrow*nrow)

path1 = args.path[0]

path2 = args.path[1]

print(path1, path2)

img_dst1 = UnlabeledImageFolder(path1, transform=torchvision.transforms.ToTensor(), exts=["png"])

img_dst2 = UnlabeledImageFolder(path2, transform=torchvision.transforms.ToTensor(), exts=["png"])

print(len(img_dst1), len(img_dst2))

loader1 = torch.utils.data.DataLoader(
    img_dst1,
    batch_size=100,
    shuffle=False,
    num_workers=4,
    drop_last=False,
)

loader2 = torch.utils.data.DataLoader(
    img_dst2,
    batch_size=100,
    shuffle=False,
    num_workers=4,
    drop_last=False,
)

with torch.no_grad():
    ssim_list = []
    mse_list = []
    for i, (img1, img2) in tqdm(enumerate(zip(loader1, loader2))):
        ssim = pytorch_msssim.ssim(img1.cuda(), img2.cuda(), data_range=1.0, size_average=False)
        ssim_list.append(ssim.cpu())
        mse = torch.nn.functional.mse_loss(img1.cuda(), img2.cuda(), reduction='none').mean(dim=(1,2,3))
        mse_list.append(mse.cpu())

ssim = torch.cat(ssim_list, dim=0)
mse = torch.cat(mse_list, dim=0)
ssim_avg = ssim.mean()
mse_avg = mse.mean()

print("path1: {}, path2: {}, ssim: {}, mse: {}".format(path1, path2, ssim_avg, mse_avg))

================================================

FILE: ddpm_exp/compute_ssim_vis.py

================================================

import torch

import random, os

import argparse

from PIL import Image

import torchvision

import numpy as np

import pytorch_msssim

from utils import UnlabeledImageFolder

from tqdm import tqdm

img_ids = [159, 149, 144, 127, 86, 41]

image_folder1 = 'run/sample_v2/bedroom_250k/image_samples/images/0'

image_folder2 = 'run/sample_v2/bedroom_official/image_samples/images/0'

base_img_id = 0

ssim_list = []

for iid in img_ids:
    img1 = Image.open(os.path.join(image_folder1, f'{iid}.png'))
    img2 = Image.open(os.path.join(image_folder2, f'{iid}.png'))
    img1_tensor = torchvision.transforms.ToTensor()(img1).unsqueeze(0)
    img2_tensor = torchvision.transforms.ToTensor()(img2).unsqueeze(0)
    ssim = pytorch_msssim.ssim(img1_tensor, img2_tensor, data_range=1.0, size_average=True)
    ssim_list.append(ssim.item())

print(ssim_list)

================================================

FILE: ddpm_exp/configs/bedroom.yml

================================================

data:
    dataset: "LSUN"
    category: "bedroom"
    image_size: 256
    channels: 3
    logit_transform: false
    uniform_dequantization: false
    gaussian_dequantization: false
    random_flip: true
    rescaled: true
    num_workers: 32

model:
    type: "simple"
    in_channels: 3
    out_ch: 3
    ch: 128
    ch_mult: [1, 1, 2, 2, 4, 4]
    num_res_blocks: 2
    attn_resolutions: [16, ]
    dropout: 0.0
    var_type: fixedsmall
    ema_rate: 0.999
    ema: True
    resamp_with_conv: True

diffusion:
    beta_schedule: linear
    beta_start: 0.0001
    beta_end: 0.02
    num_diffusion_timesteps: 1000

training:
    batch_size: 8
    n_epochs: 10000
    n_iters: 5000000
    snapshot_freq: 5000
    validation_freq: 2000

sampling:
    batch_size: 16
    last_only: True

optim:
    weight_decay: 0.000
    optimizer: "Adam"
    lr: 0.000002
    beta1: 0.9
    amsgrad: false
    eps: 0.00000001

================================================

FILE: ddpm_exp/configs/celeba.yml

================================================

data:
    dataset: "CELEBA"
    image_size: 64
    channels: 3
    logit_transform: false
    uniform_dequantization: false
    gaussian_dequantization: false
    random_flip: true
    rescaled: true
    num_workers: 4

model:
    type: "simple"
    in_channels: 3
    out_ch: 3
    ch: 128
    ch_mult: [1, 2, 2, 2, 4]
    num_res_blocks: 2
    attn_resolutions: [16, ]
    dropout: 0.1
    var_type: fixedlarge
    ema_rate: 0.9999
    ema: True
    resamp_with_conv: True

diffusion:
    beta_schedule: linear
    beta_start: 0.0001
    beta_end: 0.02
    num_diffusion_timesteps: 1000

training:
    batch_size: 96 # 128
    n_epochs: 10000
    n_iters: 5000000
    snapshot_freq: 5000
    validation_freq: 20000

sampling:
    batch_size: 32
    last_only: True

optim:
    weight_decay: 0.000
    optimizer: "Adam"
    lr: 0.0002
    beta1: 0.9
    amsgrad: false
    eps: 0.00000001
    grad_clip: 1.0

================================================

FILE: ddpm_exp/configs/church.yml

================================================

data:
    dataset: "LSUN"
    category: "church_outdoor"
    image_size: 256
    channels: 3
    logit_transform: false
    uniform_dequantization: false
    gaussian_dequantization: false
    random_flip: true
    rescaled: true
    num_workers: 32

model:
    type: "simple"
    in_channels: 3
    out_ch: 3
    ch: 128
    ch_mult: [1, 1, 2, 2, 4, 4]
    num_res_blocks: 2
    attn_resolutions: [16, ]
    dropout: 0.0
    var_type: fixedsmall
    ema_rate: 0.999
    ema: True
    resamp_with_conv: True

diffusion:
    beta_schedule: linear
    beta_start: 0.0001
    beta_end: 0.02
    num_diffusion_timesteps: 1000

training:
    batch_size: 8 # 64
    n_epochs: 10000
    n_iters: 5000000
    snapshot_freq: 5000
    validation_freq: 2000

sampling:
    batch_size: 16
    last_only: True

optim:
    weight_decay: 0.000
    optimizer: "Adam"
    lr: 0.00002
    beta1: 0.9
    amsgrad: false
    eps: 0.00000001

================================================

FILE: ddpm_exp/configs/cifar10.yml

================================================

data:
    dataset: "CIFAR10"
    image_size: 32
    channels: 3
    logit_transform: false
    uniform_dequantization: false
    gaussian_dequantization: false
    random_flip: true
    rescaled: true
    num_workers: 4

model:
    type: "simple"
    in_channels: 3
    out_ch: 3
    ch: 128
    ch_mult: [1, 2, 2, 2]
    num_res_blocks: 2
    attn_resolutions: [16, ]
    dropout: 0.1
    var_type: fixedlarge
    ema_rate: 0.9999
    ema: True
    resamp_with_conv: True

diffusion:
    beta_schedule: linear
    beta_start: 0.0001
    beta_end: 0.02
    num_diffusion_timesteps: 1000

training:
    batch_size: 128
    n_epochs: 10000
    n_iters: 5000000
    snapshot_freq: 5000
    validation_freq: 2000

sampling:
    batch_size: 64
    last_only: True

optim:
    weight_decay: 0.000
    optimizer: "Adam"
    lr: 0.0002
    beta1: 0.9
    amsgrad: false
    eps: 0.00000001
    grad_clip: 1.0

================================================

FILE: ddpm_exp/configs/cifar10_pruning.yml

================================================

data:
    dataset: "CIFAR10"
    image_size: 32
    channels: 3
    logit_transform: false
    uniform_dequantization: false
    gaussian_dequantization: false
    random_flip: true
    rescaled: true
    num_workers: 4

model:
    type: "simple"
    in_channels: 3
    out_ch: 3
    ch: 128
    ch_mult: [1, 2, 2, 2]
    num_res_blocks: 2
    attn_resolutions: [16, ]
    dropout: 0.1
    var_type: fixedlarge
    ema_rate: 0.9999
    ema: True
    resamp_with_conv: True

diffusion:
    beta_schedule: linear
    beta_start: 0.0001
    beta_end: 0.02
    num_diffusion_timesteps: 1000

training:
    batch_size: 128
    n_epochs: 10000
    n_iters: 5000000
    snapshot_freq: 5000
    validation_freq: 2000

sampling:
    batch_size: 64
    last_only: True

optim:
    weight_decay: 0.000
    optimizer: "Adam"
    lr: 0.00002
    beta1: 0.9
    amsgrad: false
    eps: 0.00000001
    grad_clip: 1.0

================================================

FILE: ddpm_exp/datasets/__init__.py

================================================

import os

import torch

import numbers

import torchvision.transforms as transforms

import torchvision.transforms.functional as F

from torchvision.datasets import CIFAR10

from datasets.celeba import CelebA

from datasets.ffhq import FFHQ

from datasets.lsun import LSUN

from torch.utils.data import Subset

import numpy as np

class Crop(object):
    def __init__(self, x1, x2, y1, y2):
        self.x1 = x1
        self.x2 = x2
        self.y1 = y1
        self.y2 = y2

    def __call__(self, img):
        return F.crop(img, self.x1, self.y1, self.x2 - self.x1, self.y2 - self.y1)

    def __repr__(self):
        return self.__class__.__name__ + "(x1={}, x2={}, y1={}, y2={})".format(
            self.x1, self.x2, self.y1, self.y2
        )

def get_dataset(args, config):
    if config.data.random_flip is False:
        tran_transform = test_transform = transforms.Compose(
            [transforms.Resize(config.data.image_size), transforms.ToTensor()]
        )
    else:
        tran_transform = transforms.Compose(
            [
                transforms.Resize(config.data.image_size),
                transforms.RandomHorizontalFlip(p=0.5),
                transforms.ToTensor(),
            ]
        )
        test_transform = transforms.Compose(
            [transforms.Resize(config.data.image_size), transforms.ToTensor()]
        )

    if config.data.dataset == "CIFAR10":
        dataset = CIFAR10(
            os.path.join('data', "cifar10"),
            train=True,
            download=True,
            transform=tran_transform,
        )
        test_dataset = CIFAR10(
            os.path.join('data', "cifar10"),
            train=False,
            download=True,
            transform=test_transform,
        )

    elif config.data.dataset == "CELEBA":
        cx = 89
        cy = 121
        x1 = cy - 64
        x2 = cy + 64
        y1 = cx - 64
        y2 = cx + 64
        if config.data.random_flip:
            dataset = CelebA(
                root=os.path.join("data", "celeba"),
                split="train",
                transform=transforms.Compose(
                    [
                        Crop(x1, x2, y1, y2),
                        transforms.Resize(config.data.image_size),
                        transforms.RandomHorizontalFlip(),
                        transforms.ToTensor(),
                    ]
                ),
                download=False,
            )
        else:
            dataset = CelebA(
                root=os.path.join("data", "celeba"),
                split="train",
                transform=transforms.Compose(
                    [
                        Crop(x1, x2, y1, y2),
                        transforms.Resize(config.data.image_size),
                        transforms.ToTensor(),
                    ]
                ),
                download=False,
            )

        test_dataset = CelebA(
            root=os.path.join("data", "celeba"),
            split="test",
            transform=transforms.Compose(
                [
                    Crop(x1, x2, y1, y2),
                    transforms.Resize(config.data.image_size),
                    transforms.ToTensor(),
                ]
            ),
            download=True,
        )

    elif config.data.dataset == "LSUN":
        train_folder = "{}_train".format(config.data.category)
        val_folder = "{}_val".format(config.data.category)
        if config.data.random_flip:
            dataset = LSUN(
                root=os.path.join("data", "lsun"),
                classes=[train_folder],
                transform=transforms.Compose(
                    [
                        transforms.Resize(config.data.image_size),
                        transforms.CenterCrop(config.data.image_size),
                        transforms.RandomHorizontalFlip(p=0.5),
                        transforms.ToTensor(),
                    ]
                ),
            )
        else:
            dataset = LSUN(
                root=os.path.join("data", "lsun"),
                classes=[train_folder],
                transform=transforms.Compose(
                    [
                        transforms.Resize(config.data.image_size),
                        transforms.CenterCrop(config.data.image_size),
                        transforms.ToTensor(),
                    ]
                ),
            )

        test_dataset = LSUN(
            root=os.path.join("data", "lsun"),
            classes=[val_folder],
            transform=transforms.Compose(
                [
                    transforms.Resize(config.data.image_size),
                    transforms.CenterCrop(config.data.image_size),
                    transforms.ToTensor(),
                ]
            ),
        )

    elif config.data.dataset == "FFHQ":
        if config.data.random_flip:
            dataset = FFHQ(
                path=os.path.join("data", "FFHQ"),
                transform=transforms.Compose(
                    [transforms.RandomHorizontalFlip(p=0.5), transforms.ToTensor()]
                ),
                resolution=config.data.image_size,
            )
        else:
            dataset = FFHQ(
                path=os.path.join("data", "FFHQ"),
                transform=transforms.ToTensor(),
                resolution=config.data.image_size,
            )

        # Deterministic 90/10 train/test split that leaves the global RNG state untouched.
        num_items = len(dataset)
        indices = list(range(num_items))
        random_state = np.random.get_state()
        np.random.seed(2019)
        np.random.shuffle(indices)
        np.random.set_state(random_state)
        train_indices, test_indices = (
            indices[: int(num_items * 0.9)],
            indices[int(num_items * 0.9) :],
        )
        test_dataset = Subset(dataset, test_indices)
        dataset = Subset(dataset, train_indices)
    else:
        dataset, test_dataset = None, None

    return dataset, test_dataset

def logit_transform(image, lam=1e-6):
    image = lam + (1 - 2 * lam) * image
    return torch.log(image) - torch.log1p(-image)


def data_transform(config, X):
    if config.data.uniform_dequantization:
        X = X / 256.0 * 255.0 + torch.rand_like(X) / 256.0
    if config.data.gaussian_dequantization:
        X = X + torch.randn_like(X) * 0.01

    if config.data.rescaled:
        X = 2 * X - 1.0
    elif config.data.logit_transform:
        X = logit_transform(X)

    if hasattr(config, "image_mean"):
        return X - config.image_mean.to(X.device)[None, ...]

    return X


def inverse_data_transform(config, X):
    if hasattr(config, "image_mean"):
        X = X + config.image_mean.to(X.device)[None, ...]

    if config.data.logit_transform:
        X = torch.sigmoid(X)
    elif config.data.rescaled:
        X = (X + 1.0) / 2.0

    return torch.clamp(X, 0.0, 1.0)

================================================

FILE: ddpm_exp/datasets/celeba.py

================================================

import torch

import os

import PIL

from .vision import VisionDataset

from .utils import download_file_from_google_drive, check_integrity

class CelebA(VisionDataset):
    """`Large-scale CelebFaces Attributes (CelebA) Dataset <http://mmlab.ie.cuhk.edu.hk/projects/CelebA.html>`_ Dataset.

    Args:
        root (string): Root directory where images are downloaded to.
        split (string): One of {'train', 'valid', 'test'}.
            Accordingly dataset is selected.
        target_type (string or list, optional): Type of target to use, ``attr``, ``identity``, ``bbox``,
            or ``landmarks``. Can also be a list to output a tuple with all specified target types.
            The targets represent:
                ``attr`` (np.array shape=(40,) dtype=int): binary (0, 1) labels for attributes
                ``identity`` (int): label for each person (data points with the same identity are the same person)
                ``bbox`` (np.array shape=(4,) dtype=int): bounding box (x, y, width, height)
                ``landmarks`` (np.array shape=(10,) dtype=int): landmark points (lefteye_x, lefteye_y, righteye_x,
                    righteye_y, nose_x, nose_y, leftmouth_x, leftmouth_y, rightmouth_x, rightmouth_y)
            Defaults to ``attr``.
        transform (callable, optional): A function/transform that takes in an PIL image
            and returns a transformed version. E.g, ``transforms.ToTensor``
        target_transform (callable, optional): A function/transform that takes in the
            target and transforms it.
        download (bool, optional): If true, downloads the dataset from the internet and
            puts it in root directory. If dataset is already downloaded, it is not
            downloaded again.
    """

    base_folder = "Img"
    # There currently does not appear to be a easy way to extract 7z in python (without introducing additional
    # dependencies). The "in-the-wild" (not aligned+cropped) images are only in 7z, so they are not available
    # right now.
    file_list = [
        # File ID                         MD5 Hash                            Filename
        ("0B7EVK8r0v71pZjFTYXZWM3FlRnM", "00d2c5bc6d35e252742224ab0c1e8fcb", "img_align_celeba.zip"),
        # ("0B7EVK8r0v71pbWNEUjJKdDQ3dGc", "b6cd7e93bc7a96c2dc33f819aa3ac651", "img_align_celeba_png.7z"),
        # ("0B7EVK8r0v71peklHb0pGdDl6R28", "b6cd7e93bc7a96c2dc33f819aa3ac651", "img_celeba.7z"),
        ("0B7EVK8r0v71pblRyaVFSWGxPY0U", "75e246fa4810816ffd6ee81facbd244c", "list_attr_celeba.txt"),
        ("1_ee_0u7vcNLOfNLegJRHmolfH5ICW-XS", "32bd1bd63d3c78cd57e08160ec5ed1e2", "identity_CelebA.txt"),
        ("0B7EVK8r0v71pbThiMVRxWXZ4dU0", "00566efa6fedff7a56946cd1c10f1c16", "list_bbox_celeba.txt"),
        ("0B7EVK8r0v71pd0FJY3Blby1HUTQ", "cc24ecafdb5b50baae59b03474781f8c", "list_landmarks_align_celeba.txt"),
        # ("0B7EVK8r0v71pTzJIdlJWdHczRlU", "063ee6ddb681f96bc9ca28c6febb9d1a", "list_landmarks_celeba.txt"),
        ("0B7EVK8r0v71pY0NSMzRuSXJEVkk", "d32c9cbf5e040fd4025c592c306e6668", "list_eval_partition.txt"),
    ]

    def __init__(self, root,
                 split="train",
                 target_type="attr",
                 transform=None, target_transform=None,
                 download=False):
        import pandas
        super(CelebA, self).__init__(root)
        self.split = split
        if isinstance(target_type, list):
            self.target_type = target_type
        else:
            self.target_type = [target_type]
        self.transform = transform
        self.target_transform = target_transform

        #if download:
        #    self.download()

        #if not self._check_integrity():
        #    raise RuntimeError('Dataset not found or corrupted.' +
        #                       ' You can use download=True to download it')

        self.transform = transform
        self.target_transform = target_transform

        if split.lower() == "train":
            split = 0
        elif split.lower() == "valid":
            split = 1
        elif split.lower() == "test":
            split = 2
        else:
            raise ValueError('Wrong split entered! Please use split="train" '
                             'or split="valid" or split="test"')

        with open(os.path.join(self.root, 'Eval', "list_eval_partition.txt"), "r") as f:
            splits = pandas.read_csv(f, delim_whitespace=True, header=None, index_col=0)

        with open(os.path.join(self.root, 'Anno', "identity_CelebA.txt"), "r") as f:
            self.identity = pandas.read_csv(f, delim_whitespace=True, header=None, index_col=0)

        with open(os.path.join(self.root, 'Anno', "list_bbox_celeba.txt"), "r") as f:
            self.bbox = pandas.read_csv(f, delim_whitespace=True, header=1, index_col=0)

        with open(os.path.join(self.root, 'Anno', "list_landmarks_align_celeba.txt"), "r") as f:
            self.landmarks_align = pandas.read_csv(f, delim_whitespace=True, header=1)

        with open(os.path.join(self.root, 'Anno', "list_attr_celeba.txt"), "r") as f:
            self.attr = pandas.read_csv(f, delim_whitespace=True, header=1)

        mask = (splits[1] == split)
        self.filename = splits[mask].index.values
        self.identity = torch.as_tensor(self.identity[mask].values)
        self.bbox = torch.as_tensor(self.bbox[mask].values)
        self.landmarks_align = torch.as_tensor(self.landmarks_align[mask].values)
        self.attr = torch.as_tensor(self.attr[mask].values)
        self.attr = (self.attr + 1) // 2  # map from {-1, 1} to {0, 1}

def _check_integrity(self):

for (_, md5, filename) in self.file_list:

fpath = os.path.join(self.root, self.base_folder, filename)

_, ext = os.path.splitext(filename)

# Allow original archive to be deleted (zip and 7z)

# Only need the extracted images

if ext not in [".zip", ".7z"] and not check_integrity(fpath, md5):

return False

# Should check a hash of the images

return os.path.isdir(os.path.join(self.root, self.base_folder, "img_align_celeba"))

def download(self):

import zipfile

if self._check_integrity():

print('Files already downloaded and verified')

return

for (file_id, md5, filename) in self.file_list:

download_file_from_google_drive(file_id, os.path.join(self.root, self.base_folder), filename, md5)

with zipfile.ZipFile(os.path.join(self.root, self.base_folder, "img_align_celeba.zip"), "r") as f:

f.extractall(os.path.join(self.root, self.base_folder))

    def __getitem__(self, index):
        X = PIL.Image.open(os.path.join(self.root, self.base_folder, "img_align_celeba", self.filename[index]))

        # Collect one entry per requested target type, in the order given
        target = []
        for t in self.target_type:
            if t == "attr":
                target.append(self.attr[index, :])
            elif t == "identity":
                target.append(self.identity[index, 0])
            elif t == "bbox":
                target.append(self.bbox[index, :])
            elif t == "landmarks":
                target.append(self.landmarks_align[index, :])
            else:
                raise ValueError("Target type \"{}\" is not recognized.".format(t))
        # A single target is returned bare; several targets are returned as a tuple
        target = tuple(target) if len(target) > 1 else target[0]

        if self.transform is not None:
            X = self.transform(X)

        if self.target_transform is not None:
            target = self.target_transform(target)

        return X, target

    def __len__(self):
        return len(self.attr)

    def extra_repr(self):
        lines = ["Target type: {target_type}", "Split: {split}"]
        return '\n'.join(lines).format(**self.__dict__)
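
# Illustrative sketch, not part of the original module: the two small conventions
# used by the CelebA class above (the {-1, 1} -> {0, 1} attribute remap and the
# "tuple only when there are several targets" packing) restated on plain Python
# lists. The helper names `to_binary` and `pack_targets` are hypothetical, and
# lists stand in for the tensors the real class uses.

```python
def to_binary(attrs):
    """Map attribute labels from {-1, 1} to {0, 1}, mirroring (attr + 1) // 2."""
    return [(a + 1) // 2 for a in attrs]


def pack_targets(targets):
    """Return a tuple for several targets, or the bare value for exactly one."""
    # Like the original expression, this raises IndexError on an empty list.
    return tuple(targets) if len(targets) > 1 else targets[0]


if __name__ == "__main__":
    print(to_binary([-1, 1, 1, -1]))
    print(pack_targets(["attr_row"]))
    print(pack_targets(["attr_row", "identity"]))
```

# With a single requested target type the caller receives the value itself, so
# downstream code should not assume the target is always a tuple.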

================================================

FILE: ddpm_exp/datasets/ffhq.py

================================================

from io import BytesIO

import lmdb
from PIL import Image
from torch.utils.data import Dataset


class FFHQ(Dataset):
    def __init__(self, path, transform, resolution=8):
        # Open the LMDB environment read-only, without file locking
        self.env = lmdb.open(
            path,
            max_readers=32,
            readonly=True,
            lock=False,
            readahead=False,
            meminit=False,
        )

        if not self.env:
            raise IOError(f'Cannot open lmdb dataset: {path}')

        # The number of samples is stored under the 'length' key
        with self.env.begin(write=False) as txn:
            self.length = int(txn.get('length'.encode('utf-8')).decode('utf-8'))

        self.resolution = resolution
        self.transform = transform

    def __len__(self):
        return self.length

    def __getitem__(self, index):
        # Keys have the form '<resolution>-<index zero-padded to 5 digits>'
        with self.env.begin(write=False) as txn:
            key = f'{self.resolution}-{str(index).zfill(5)}'.encode('utf-8')
            img_bytes = txn.get(key)

        buffer = BytesIO(img_bytes)
        img = Image.open(buffer)
        img = self.transform(img)
        target = 0  # FFHQ has no labels; a constant placeholder is returned

        return img, target
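
# Illustrative sketch, not part of the original module: how the LMDB keys read by
# FFHQ.__getitem__ are laid out. The helper name `make_key` is hypothetical; it
# only repeats the f-string used above so the key format can be checked without
# an LMDB database at hand.

```python
def make_key(resolution, index):
    """Build '<resolution>-<index zero-padded to 5 digits>' as UTF-8 bytes."""
    return f"{resolution}-{str(index).zfill(5)}".encode("utf-8")


if __name__ == "__main__":
    print(make_key(8, 42))       # b'8-00042'
    print(make_key(8, 123456))   # b'8-123456' (zfill pads, it never truncates)
```

# Whatever tool writes the database has to use the same resolution prefix and
# padding width, otherwise txn.get(key) returns None and decoding the image fails.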

================================================

FILE: ddpm_exp/datasets/lsun.py

================================================

from .vision import VisionDataset

from PIL import Image

import os

import os.path

import io

from collections.abc import Iterable

import pickle

from torchvision.datasets.utils import verify_str_arg, iterable_to_str

class LSUNClass(VisionDataset):
    def __init__(self, root, transform=None, target_transform=None):
        import lmdb

        super(LSUNClass, self).__init__(
            root, transform=transform, target_transform=target_transform
        )

        self.env = lmdb.open(
            root,
            max_readers=1,
            readonly=True,
            lock=False,
            readahead=False,
            meminit=False,
        )
        with self.env.begin(write=False) as txn:
            self.length = txn.stat()["entries"]
        # Cache the key list next to the LMDB directory to avoid a full scan on every start
        root_split = root.split("/")
        cache_file = os.path.join("/".join(root_split[:-1]), f"_cache_{root_split[-1]}")
        if os.path.isfile(cache_file):
            # Use context managers so the cache file handle is always closed
            with open(cache_file, "rb") as f:
                self.keys = pickle.load(f)
        else:
            with self.env.begin(write=False) as txn:
                self.keys = [key for key, _ in txn.cursor()]
            with open(cache_file, "wb") as f:
                pickle.dump(self.keys, f)

    def __getitem__(self, index):
        img, target = None, None
        env = self.env
        with env.begin(write=False) as txn:
            imgbuf = txn.get(self.keys[index])

        buf = io.BytesIO()
        buf.write(imgbuf)
        buf.seek(0)
        img = Image.open(buf).convert("RGB")

        if self.transform is not None:
            img = self.transform(img)

        if self.target_transform is not None:
            target = self.target_transform(target)

        return img, target

    def __len__(self):
        return self.length

class LSUN(VisionDataset):
    """
    `LSUN <https://www.yf.io/p/lsun>`_ dataset.

    Args:
        root (string): Root directory for the database files.
        classes (string or list): One of {'train', 'val', 'test'} or a list of
            categories to load, e.g. ['bedroom_train', 'church_outdoor_train'].
        transform (callable, optional): A function/transform that takes in a PIL image
            and returns a transformed version. E.g., ``transforms.RandomCrop``
        target_transform (callable, optional): A function/transform that takes in the
            target and transforms it.
    """

    def __init__(self, root, classes="train", transform=None, target_transform=None):
        super(LSUN, self).__init__(
            root, transform=transform, target_transform=target_transform
        )
        self.classes = self._verify_classes(classes)

        # for each class, create an LSUNClassDataset
        self.dbs = []
        for c in self.classes:
            self.dbs.append(
                LSUNClass(root=root + "/" + c + "_lmdb", transform=transform)
            )

        # self.indices holds the cumulative sample count after each class database
        self.indices = []
        count = 0
        for db in self.dbs:
            count += len(db)
            self.indices.append(count)

        self.length = count

    def _verify_classes(self, classes):
        categories = [
            "bedroom",
            "bridge",
            "church_outdoor",
            "classroom",
            "conference_room",
            "dining_room",
            "kitchen",
            "living_room",
            "restaurant",
            "tower",
        ]
        dset_opts = ["train", "val", "test"]

        try:
            verify_str_arg(classes, "classes", dset_opts)
            if classes == "test":
                classes = [classes]
            else:
                classes = [c + "_" + classes for c in categories]
        except ValueError:
            if not isinstance(classes, Iterable):
                msg = (
                    "Expected type str or Iterable for argument classes, "
                    "but got type {}."
                )
                raise ValueError(msg.format(type(classes)))

            classes = list(classes)
            # Kept under its own name: the loop below rebinds msg_fmtstr, and reusing
            # that name here would make .format(type(c)) fail on the second element.
            msg_fmtstr_type = (
                "Expected type str for elements in argument classes, "
                "but got type {}."
            )
            for c in classes:
                verify_str_arg(c, custom_msg=msg_fmtstr_type.format(type(c)))
                c_short = c.split("_")
                category, dset_opt = "_".join(c_short[:-1]), c_short[-1]

                msg_fmtstr = "Unknown value '{}' for {}. Valid values are {{{}}}."
                msg = msg_fmtstr.format(
                    category, "LSUN class", iterable_to_str(categories)
                )
                verify_str_arg(category, valid_values=categories, custom_msg=msg)