
Repository: Vonng/pg_exporter
Branch: main
Commit: e303f2ad915c
Files: 158
Total size: 1.5 MB

Directory structure:

gitextract_340wwud1/
├── .github/
│   └── workflows/
│       ├── release.yaml
│       └── test-release.yaml
├── .gitignore
├── .goreleaser.yml
├── Dockerfile
├── Dockerfile.goreleaser
├── LICENSE
├── Makefile
├── README.md
├── config/
│   ├── 0000-doc.yml
│   ├── 0110-pg.yml
│   ├── 0120-pg_meta.yml
│   ├── 0130-pg_setting.yml
│   ├── 0210-pg_repl.yml
│   ├── 0220-pg_sync_standby.yml
│   ├── 0230-pg_downstream.yml
│   ├── 0240-pg_slot.yml
│   ├── 0250-pg_recv.yml
│   ├── 0260-pg_sub.yml
│   ├── 0270-pg_origin.yml
│   ├── 0300-pg_io.yml
│   ├── 0310-pg_size.yml
│   ├── 0320-pg_archiver.yml
│   ├── 0330-pg_bgwriter.yml
│   ├── 0331-pg_checkpointer.yml
│   ├── 0340-pg_ssl.yml
│   ├── 0350-pg_checkpoint.yml
│   ├── 0355-pg_timeline.yml
│   ├── 0360-pg_recovery.yml
│   ├── 0370-pg_slru.yml
│   ├── 0380-pg_shmem.yml
│   ├── 0390-pg_wal.yml
│   ├── 0410-pg_activity.yml
│   ├── 0420-pg_wait.yml
│   ├── 0430-pg_backend.yml
│   ├── 0440-pg_xact.yml
│   ├── 0450-pg_lock.yml
│   ├── 0460-pg_query.yml
│   ├── 0510-pg_vacuuming.yml
│   ├── 0520-pg_indexing.yml
│   ├── 0530-pg_clustering.yml
│   ├── 0540-pg_backup.yml
│   ├── 0610-pg_db.yml
│   ├── 0620-pg_db_confl.yml
│   ├── 0640-pg_pubrel.yml
│   ├── 0650-pg_subrel.yml
│   ├── 0700-pg_table.yml
│   ├── 0710-pg_index.yml
│   ├── 0720-pg_func.yml
│   ├── 0730-pg_seq.yml
│   ├── 0740-pg_relkind.yml
│   ├── 0750-pg_defpart.yml
│   ├── 0810-pg_table_size.yml
│   ├── 0820-pg_table_bloat.yml
│   ├── 0830-pg_index_bloat.yml
│   ├── 0910-pgbouncer_list.yml
│   ├── 0920-pgbouncer_database.yml
│   ├── 0930-pgbouncer_stat.yml
│   ├── 0940-pgbouncer_pool.yml
│   ├── 1000-pg_wait_event.yml
│   ├── 1800-pg_tsdb_hypertable.yml
│   ├── 1900-pg_citus.yml
│   └── 2000-pg_heartbeat.yml
├── docker/
│   ├── .dockerignore
│   ├── README.md
│   ├── build.sh
│   └── release.sh
├── exporter/
│   ├── arg.go
│   ├── args_normalize.go
│   ├── args_normalize_test.go
│   ├── collector.go
│   ├── column.go
│   ├── concurrency_test.go
│   ├── config.go
│   ├── config_coverage_pg9_test.go
│   ├── config_coverage_test.go
│   ├── config_merged_test.go
│   ├── config_style_test.go
│   ├── config_test.go
│   ├── exporter.go
│   ├── exporter_handlers_opts_test.go
│   ├── global.go
│   ├── health_state_test.go
│   ├── main.go
│   ├── metrics_lifecycle_test.go
│   ├── pgurl.go
│   ├── pgurl_test.go
│   ├── predicate_cache_test.go
│   ├── probehealth_pgbouncer_test.go
│   ├── prom_validate.go
│   ├── query.go
│   ├── query_column_test.go
│   ├── reload_signals_unix.go
│   ├── reload_signals_windows.go
│   ├── reload_test.go
│   ├── server.go
│   ├── server_exporter_test.go
│   ├── testmain_test.go
│   ├── utils.go
│   ├── utils_test.go
│   ├── validate_labels.go
│   └── validate_labels_test.go
├── go.mod
├── go.sum
├── hugo.yaml
├── legacy/
│   ├── README.md
│   ├── config/
│   │   ├── 0000-doc.yml
│   │   ├── 0110-pg.yml
│   │   ├── 0120-pg_meta.yml
│   │   ├── 0130-pg_setting.yml
│   │   ├── 0210-pg_repl.yml
│   │   ├── 0220-pg_sync_standby.yml
│   │   ├── 0230-pg_downstream.yml
│   │   ├── 0240-pg_slot.yml
│   │   ├── 0250-pg_recv.yml
│   │   ├── 0270-pg_origin.yml
│   │   ├── 0310-pg_size.yml
│   │   ├── 0320-pg_archiver.yml
│   │   ├── 0330-pg_bgwriter.yml
│   │   ├── 0331-pg_checkpointer.yml
│   │   ├── 0340-pg_ssl.yml
│   │   ├── 0350-pg_checkpoint.yml
│   │   ├── 0355-pg_timeline.yml
│   │   ├── 0360-pg_recovery.yml
│   │   ├── 0410-pg_activity.yml
│   │   ├── 0420-pg_wait.yml
│   │   ├── 0440-pg_xact.yml
│   │   ├── 0450-pg_lock.yml
│   │   ├── 0460-pg_query.yml
│   │   ├── 0610-pg_db.yml
│   │   ├── 0620-pg_db_confl.yml
│   │   ├── 0700-pg_table.yml
│   │   ├── 0710-pg_index.yml
│   │   ├── 0720-pg_func.yml
│   │   ├── 0740-pg_relkind.yml
│   │   ├── 0810-pg_table_size.yml
│   │   ├── 0820-pg_table_bloat.yml
│   │   ├── 0830-pg_index_bloat.yml
│   │   ├── 0910-pgbouncer_list.yml
│   │   ├── 0920-pgbouncer_database.yml
│   │   ├── 0930-pgbouncer_stat.yml
│   │   ├── 0940-pgbouncer_pool.yml
│   │   ├── 1800-pg_tsdb_hypertable.yml
│   │   ├── 1900-pg_citus.yml
│   │   └── 2000-pg_heartbeat.yml
│   └── pg_exporter.yml
├── main.go
├── monitor/
│   ├── initdb.sh
│   ├── pgrds-instance.json
│   └── pgsql-exporter.json
├── package/
│   ├── nfpm-amd64-deb.yaml
│   ├── nfpm-amd64-rpm.yaml
│   ├── nfpm-arm64-deb.yaml
│   ├── nfpm-arm64-rpm.yaml
│   ├── pg_exporter.default
│   ├── pg_exporter.service
│   └── preinstall.sh
└── pg_exporter.yml

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/workflows/release.yaml

================================================

name: Release

on:
  push:
    tags:
      - 'v*.*.*'

permissions:
  contents: write

jobs:
  release:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version-file: 'go.mod'
          cache: true

      - name: Run unit tests
        run: go test ./...

      - name: Run go vet
        run: go vet ./...

      - name: Set up QEMU
        uses: docker/setup-qemu-action@v3

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3

      - name: Login to Docker Hub
        uses: docker/login-action@v3
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}

      - name: Run GoReleaser
        uses: goreleaser/goreleaser-action@v6
        with:
          distribution: goreleaser
          version: latest
          args: release --clean
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

      - name: Upload artifacts
        uses: actions/upload-artifact@v4
        if: always()
        with:
          name: dist
          path: dist/

================================================

FILE: .github/workflows/test-release.yaml

================================================

name: Test Release

on:
  workflow_dispatch: # allow manual triggering
  pull_request:
    paths:
      - '.goreleaser.yml'
      - '.github/workflows/release.yaml'
      - '.github/workflows/test-release.yaml'

permissions:
  contents: read

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version-file: 'go.mod'
          cache: true

      - name: Set up QEMU
        uses: docker/setup-qemu-action@v3

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3

      - name: Run unit tests
        run: go test ./...

      - name: Run go vet
        run: go vet ./...

      - name: Test GoReleaser config
        uses: goreleaser/goreleaser-action@v6
        with:
          distribution: goreleaser
          version: latest
          args: check

      - name: Build snapshot
        uses: goreleaser/goreleaser-action@v6
        with:
          distribution: goreleaser
          version: latest
          args: release --snapshot --clean --skip=publish,docker

      - name: List artifacts
        run: |
          echo "Generated artifacts:"
          ls -lh dist/*.tar.gz || true
          echo ""
          echo "Checksums:"
          cat dist/checksums.txt || true

================================================

FILE: .gitignore

================================================

# binary files
pg_exporter

# tmp files
test/
deploy/
upload.sh
temp/
dist/
.DS_Store

# IDE files
.vscode/
.idea/
.code/
.claude
.codex/
.codex_tmp/
pg_exporter.iml
CLAUDE.md
.hugo_build.lock
public/
resources/
tmp/

================================================

FILE: .goreleaser.yml

================================================

version: 2

env:
  - CGO_ENABLED=0

before:
  hooks:
    - go mod download
    - go mod tidy

builds:
  - id: pg_exporter
    main: ./main.go
    binary: pg_exporter
    goos:
      - linux
      - darwin
      - windows
    goarch:
      - amd64
      - arm64
      - ppc64le
    goarm:
      - 6
      - 7
    goamd64:
      - v1
    ignore:
      # Darwin only supports amd64 and arm64
      - goos: darwin
        goarch: ppc64le
      # Windows: build amd64 only
      - goos: windows
        goarch: arm64
      - goos: windows
        goarch: arm
      - goos: windows
        goarch: ppc64le
    ldflags:
      - -s -w
      - -extldflags "-static"
      - -X 'pg_exporter/exporter.Version={{.Version}}'
      - -X 'pg_exporter/exporter.Branch={{.Branch}}'
      - -X 'pg_exporter/exporter.Revision={{.ShortCommit}}'
      - -X 'pg_exporter/exporter.BuildDate={{.Date}}'
    flags:
      - -a

archives:
  - id: pg_exporter
    name_template: >-
      {{ .ProjectName }}-{{ .Version }}.{{ .Os }}-
      {{- if eq .Arch "amd64" }}amd64
      {{- else if eq .Arch "386" }}386
      {{- else if eq .Arch "arm64" }}arm64
      {{- else if eq .Arch "arm" }}armv{{ .Arm }}
      {{- else if eq .Arch "ppc64le" }}ppc64le
      {{- else }}{{ .Arch }}{{ end }}
    files:
      - pg_exporter.yml
      - LICENSE
      - package/pg_exporter.default
      - package/pg_exporter.service

nfpms:
  - id: pg_exporter_rpm
    package_name: pg_exporter
    file_name_template: >-
      {{ .PackageName }}-{{ .Version }}-{{ .Release }}.
      {{- if eq .Arch "amd64" }}x86_64
      {{- else if eq .Arch "arm64" }}aarch64
      {{- else }}{{ .Arch }}{{ end }}
    vendor: PGSTY
    homepage: https://pigsty.io/docs/pg_exporter
    maintainer: Ruohang Feng <rh@vonng.com>
    description: |
      Prometheus exporter for PostgreSQL / Pgbouncer server metrics.
      Supported versions: Postgres 9.4 - 17+ & Pgbouncer 1.8 - 1.24+
      Part of Project Pigsty -- Batteries-Included PostgreSQL Distribution
      with ultimate observability support: https://pigsty.io/docs
    license: Apache-2.0
    formats:
      - rpm
    bindir: /usr/bin
    release: "1"
    section: database
    priority: optional
    contents:
      - src: pg_exporter.yml
        dst: /etc/pg_exporter.yml
        type: config|noreplace
        file_info:
          mode: 0700
          owner: prometheus
          group: prometheus
      - src: package/pg_exporter.default
        dst: /etc/default/pg_exporter
        type: config|noreplace
        file_info:
          mode: 0700
          owner: prometheus
          group: prometheus
      - src: package/pg_exporter.service
        dst: /usr/lib/systemd/system/pg_exporter.service
        type: config
      - src: LICENSE
        dst: /usr/share/doc/pg_exporter/LICENSE
        file_info:
          mode: 0644
    scripts:
      preinstall: package/preinstall.sh
    rpm:
      compression: gzip
      prefixes:
        - /usr/bin

  - id: pg_exporter_deb
    package_name: pg-exporter
    file_name_template: >-
      {{ .PackageName }}_{{ .Version }}-{{ .Release }}_
      {{- if eq .Arch "amd64" }}amd64
      {{- else if eq .Arch "arm64" }}arm64
      {{- else }}{{ .Arch }}{{ end }}
    vendor: PGSTY
    homepage: https://pigsty.io/docs/pg_exporter
    maintainer: Ruohang Feng <rh@vonng.com>
    description: |
      Prometheus exporter for PostgreSQL / Pgbouncer server metrics.
      Supported versions: Postgres 9.4 - 17+ & Pgbouncer 1.8 - 1.24+
      Part of Project Pigsty -- Batteries-Included PostgreSQL Distribution
      with ultimate observability support: https://pigsty.io/docs
    license: Apache-2.0
    formats:
      - deb
    bindir: /usr/bin
    release: "1"
    section: database
    priority: optional
    contents:
      - src: pg_exporter.yml
        dst: /etc/pg_exporter.yml
        type: config|noreplace
        file_info:
          mode: 0700
          owner: prometheus
          group: prometheus
      - src: package/pg_exporter.default
        dst: /etc/default/pg_exporter
        type: config|noreplace
        file_info:
          mode: 0700
          owner: prometheus
          group: prometheus
      - src: package/pg_exporter.service
        dst: /lib/systemd/system/pg_exporter.service
        type: config
      - src: LICENSE
        dst: /usr/share/doc/pg_exporter/LICENSE
        file_info:
          mode: 0644
    scripts:
      preinstall: package/preinstall.sh

checksum:
  name_template: 'checksums.txt'
  algorithm: sha256

snapshot:
  version_template: "{{ .Tag }}-next"

changelog:
  sort: asc
  filters:
    exclude:
      - '^docs:'
      - '^test:'
      - '^chore:'
      - 'Merge pull request'
      - 'Merge branch'

release:
  github:
    owner: pgsty
    name: pg_exporter
  draft: false
  prerelease: false
  mode: replace                     # Replace existing release with same tag
  replace_existing_artifacts: true  # Replace existing artifacts
  name_template: "{{.ProjectName}}-v{{.Version}}"
  disable: false
  discussion_category_name: ""      # Skip discussion creation

announce:
  skip: true  # Skip all announcements

# Docker configuration for multi-arch images
dockers:
  - id: pg_exporter_amd64
    ids:
      - pg_exporter
    goos: linux
    goarch: amd64
    image_templates:
      - "pgsty/pg_exporter:{{ .Version }}-amd64"
      - "pgsty/pg_exporter:latest-amd64"
    dockerfile: Dockerfile.goreleaser
    use: buildx
    build_flag_templates:
      - "--platform=linux/amd64"
      - "--label=org.opencontainers.image.version={{.Version}}"
      - "--label=org.opencontainers.image.created={{.Date}}"
      - "--label=org.opencontainers.image.revision={{.FullCommit}}"
    extra_files:
      - pg_exporter.yml
      - LICENSE

  - id: pg_exporter_arm64
    ids:
      - pg_exporter
    goos: linux
    goarch: arm64
    image_templates:
      - "pgsty/pg_exporter:{{ .Version }}-arm64"
      - "pgsty/pg_exporter:latest-arm64"
    dockerfile: Dockerfile.goreleaser
    use: buildx
    build_flag_templates:
      - "--platform=linux/arm64"
      - "--label=org.opencontainers.image.version={{.Version}}"
      - "--label=org.opencontainers.image.created={{.Date}}"
      - "--label=org.opencontainers.image.revision={{.FullCommit}}"
    extra_files:
      - pg_exporter.yml
      - LICENSE

docker_manifests:
  - name_template: "pgsty/pg_exporter:{{ .Version }}"
    image_templates:
      - "pgsty/pg_exporter:{{ .Version }}-amd64"
      - "pgsty/pg_exporter:{{ .Version }}-arm64"
  - name_template: "pgsty/pg_exporter:latest"
    image_templates:
      - "pgsty/pg_exporter:latest-amd64"
      - "pgsty/pg_exporter:latest-arm64"

================================================

FILE: Dockerfile

================================================

# syntax=docker/dockerfile:1
FROM golang:1.26.2-alpine AS builder-env

ARG GOPROXY=https://proxy.golang.org,direct
ARG GOSUMDB=sum.golang.org
ENV GOPROXY=${GOPROXY}
ENV GOSUMDB=${GOSUMDB}

# Build a self-contained pg_exporter container with a clean environment and no
# dependencies.
#
# build with
#
#   docker buildx build -f Dockerfile --tag pg_exporter .
#
WORKDIR /build

COPY go.mod go.sum ./
RUN \
  --mount=type=cache,target=/go/pkg/mod \
  --mount=type=cache,target=/root/.cache/go-build \
  CGO_ENABLED=0 GOOS=linux go mod download

COPY . /build
RUN \
  --mount=type=cache,target=/go/pkg/mod \
  --mount=type=cache,target=/root/.cache/go-build \
  CGO_ENABLED=0 GOOS=linux go build -a -o /pg_exporter .

FROM scratch
LABEL org.opencontainers.image.authors="Ruohang Feng <rh@vonng.com>, Craig Ringer <craig.ringer@enterprisedb.com>" \
      org.opencontainers.image.url="https://github.com/pgsty/pg_exporter" \
      org.opencontainers.image.source="https://github.com/pgsty/pg_exporter" \
      org.opencontainers.image.licenses="Apache-2.0" \
      org.opencontainers.image.title="pg_exporter" \
      org.opencontainers.image.description="PostgreSQL/Pgbouncer metrics exporter for Prometheus"

WORKDIR /bin
COPY --from=builder-env /pg_exporter /bin/pg_exporter
COPY pg_exporter.yml /etc/pg_exporter.yml
EXPOSE 9630/tcp
ENTRYPOINT ["/bin/pg_exporter"]

================================================

FILE: Dockerfile.goreleaser

================================================

# Dockerfile for goreleaser
# This uses pre-built binaries from goreleaser instead of building from source
FROM scratch

LABEL org.opencontainers.image.authors="Ruohang Feng <rh@vonng.com>" \
      org.opencontainers.image.url="https://github.com/pgsty/pg_exporter" \
      org.opencontainers.image.source="https://github.com/pgsty/pg_exporter" \
      org.opencontainers.image.licenses="Apache-2.0" \
      org.opencontainers.image.title="pg_exporter" \
      org.opencontainers.image.description="PostgreSQL/Pgbouncer metrics exporter for Prometheus"

WORKDIR /bin
COPY pg_exporter /bin/pg_exporter
COPY pg_exporter.yml /etc/pg_exporter.yml
COPY LICENSE /LICENSE
EXPOSE 9630/tcp
ENTRYPOINT ["/bin/pg_exporter"]

================================================

FILE: LICENSE

================================================

                                 Apache License
                           Version 2.0, January 2004
                        http://www.apache.org/licenses/

   TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

   1. Definitions.

      "License" shall mean the terms and conditions for use, reproduction,
      and distribution as defined by Sections 1 through 9 of this document.

      "Licensor" shall mean the copyright owner or entity authorized by
      the copyright owner that is granting the License.

      "Legal Entity" shall mean the union of the acting entity and all
      other entities that control, are controlled by, or are under common
      control with that entity. For the purposes of this definition,
      "control" means (i) the power, direct or indirect, to cause the
      direction or management of such entity, whether by contract or
      otherwise, or (ii) ownership of fifty percent (50%) or more of the
      outstanding shares, or (iii) beneficial ownership of such entity.

      "You" (or "Your") shall mean an individual or Legal Entity
      exercising permissions granted by this License.

      "Source" form shall mean the preferred form for making modifications,
      including but not limited to software source code, documentation
      source, and configuration files.

      "Object" form shall mean any form resulting from mechanical
      transformation or translation of a Source form, including but
      not limited to compiled object code, generated documentation,
      and conversions to other media types.

      "Work" shall mean the work of authorship, whether in Source or
      Object form, made available under the License, as indicated by a
      copyright notice that is included in or attached to the work
      (an example is provided in the Appendix below).

      "Derivative Works" shall mean any work, whether in Source or Object
      form, that is based on (or derived from) the Work and for which the
      editorial revisions, annotations, elaborations, or other modifications
      represent, as a whole, an original work of authorship. For the purposes
      of this License, Derivative Works shall not include works that remain
      separable from, or merely link (or bind by name) to the interfaces of,
      the Work and Derivative Works thereof.

      "Contribution" shall mean any work of authorship, including
      the original version of the Work and any modifications or additions
      to that Work or Derivative Works thereof, that is intentionally
      submitted to Licensor for inclusion in the Work by the copyright owner
      or by an individual or Legal Entity authorized to submit on behalf of
      the copyright owner. For the purposes of this definition, "submitted"
      means any form of electronic, verbal, or written communication sent
      to the Licensor or its representatives, including but not limited to
      communication on electronic mailing lists, source code control systems,
      and issue tracking systems that are managed by, or on behalf of, the
      Licensor for the purpose of discussing and improving the Work, but
      excluding communication that is conspicuously marked or otherwise
      designated in writing by the copyright owner as "Not a Contribution."

      "Contributor" shall mean Licensor and any individual or Legal Entity
      on behalf of whom a Contribution has been received by Licensor and
      subsequently incorporated within the Work.

   2. Grant of Copyright License. Subject to the terms and conditions of
      this License, each Contributor hereby grants to You a perpetual,
      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
      copyright license to reproduce, prepare Derivative Works of,
      publicly display, publicly perform, sublicense, and distribute the
      Work and such Derivative Works in Source or Object form.

   3. Grant of Patent License. Subject to the terms and conditions of
      this License, each Contributor hereby grants to You a perpetual,
      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
      (except as stated in this section) patent license to make, have made,
      use, offer to sell, sell, import, and otherwise transfer the Work,
      where such license applies only to those patent claims licensable
      by such Contributor that are necessarily infringed by their
      Contribution(s) alone or by combination of their Contribution(s)
      with the Work to which such Contribution(s) was submitted. If You
      institute patent litigation against any entity (including a
      cross-claim or counterclaim in a lawsuit) alleging that the Work
      or a Contribution incorporated within the Work constitutes direct
      or contributory patent infringement, then any patent licenses
      granted to You under this License for that Work shall terminate
      as of the date such litigation is filed.

   4. Redistribution. You may reproduce and distribute copies of the
      Work or Derivative Works thereof in any medium, with or without
      modifications, and in Source or Object form, provided that You
      meet the following conditions:

      (a) You must give any other recipients of the Work or
          Derivative Works a copy of this License; and

      (b) You must cause any modified files to carry prominent notices
          stating that You changed the files; and

      (c) You must retain, in the Source form of any Derivative Works
          that You distribute, all copyright, patent, trademark, and
          attribution notices from the Source form of the Work,
          excluding those notices that do not pertain to any part of
          the Derivative Works; and

      (d) If the Work includes a "NOTICE" text file as part of its
          distribution, then any Derivative Works that You distribute must
          include a readable copy of the attribution notices contained
          within such NOTICE file, excluding those notices that do not
          pertain to any part of the Derivative Works, in at least one
          of the following places: within a NOTICE text file distributed
          as part of the Derivative Works; within the Source form or
          documentation, if provided along with the Derivative Works; or,
          within a display generated by the Derivative Works, if and
          wherever such third-party notices normally appear. The contents
          of the NOTICE file are for informational purposes only and
          do not modify the License. You may add Your own attribution
          notices within Derivative Works that You distribute, alongside
          or as an addendum to the NOTICE text from the Work, provided
          that such additional attribution notices cannot be construed
          as modifying the License.

      You may add Your own copyright statement to Your modifications and
      may provide additional or different license terms and conditions
      for use, reproduction, or distribution of Your modifications, or
      for any such Derivative Works as a whole, provided Your use,
      reproduction, and distribution of the Work otherwise complies with
      the conditions stated in this License.

   5. Submission of Contributions. Unless You explicitly state otherwise,
      any Contribution intentionally submitted for inclusion in the Work
      by You to the Licensor shall be under the terms and conditions of
      this License, without any additional terms or conditions.
      Notwithstanding the above, nothing herein shall supersede or modify
      the terms of any separate license agreement you may have executed
      with Licensor regarding such Contributions.

   6. Trademarks. This License does not grant permission to use the trade
      names, trademarks, service marks, or product names of the Licensor,
      except as required for reasonable and customary use in describing the
      origin of the Work and reproducing the content of the NOTICE file.

   7. Disclaimer of Warranty. Unless required by applicable law or
      agreed to in writing, Licensor provides the Work (and each
      Contributor provides its Contributions) on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
      implied, including, without limitation, any warranties or conditions
      of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
      PARTICULAR PURPOSE. You are solely responsible for determining the
      appropriateness of using or redistributing the Work and assume any
      risks associated with Your exercise of permissions under this License.

   8. Limitation of Liability. In no event and under no legal theory,
      whether in tort (including negligence), contract, or otherwise,
      unless required by applicable law (such as deliberate and grossly
      negligent acts) or agreed to in writing, shall any Contributor be
      liable to You for damages, including any direct, indirect, special,
      incidental, or consequential damages of any character arising as a
      result of this License or out of the use or inability to use the
      Work (including but not limited to damages for loss of goodwill,
      work stoppage, computer failure or malfunction, or any and all
      other commercial damages or losses), even if such Contributor
      has been advised of the possibility of such damages.

   9. Accepting Warranty or Additional Liability. While redistributing
      the Work or Derivative Works thereof, You may choose to offer,
      and charge a fee for, acceptance of support, warranty, indemnity,
      or other liability obligations and/or rights consistent with this
      License. However, in accepting such obligations, You may act only
      on Your own behalf and on Your sole responsibility, not on behalf
      of any other Contributor, and only if You agree to indemnify,
      defend, and hold each Contributor harmless for any liability
      incurred by, or claims asserted against, such Contributor by reason
      of your accepting any such warranty or additional liability.

   END OF TERMS AND CONDITIONS

   APPENDIX: How to apply the Apache License to your work.

      To apply the Apache License to your work, attach the following
      boilerplate notice, with the fields enclosed by brackets "[]"
      replaced with your own identifying information. (Don't include
      the brackets!) The text should be enclosed in the appropriate
      comment syntax for the file format. We also recommend that a
      file or class name and description of purpose be included on the
      same "printed page" as the copyright notice for easier
      identification within third-party archives.

   Copyright [2019-2025] [Ruohang Feng](rh@vonng.com)

   Licensed under the Apache License, Version 2.0 (the "License");
   you may not use this file except in compliance with the License.
   You may obtain a copy of the License at

       http://www.apache.org/licenses/LICENSE-2.0

   Unless required by applicable law or agreed to in writing, software
   distributed under the License is distributed on an "AS IS" BASIS,
   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
   See the License for the specific language governing permissions and
   limitations under the License.

================================================

FILE: Makefile

================================================

#==============================================================#
# File      :   Makefile
# Mtime     :   2025-08-14
# License   :   Apache-2.0 @ https://github.com/pgsty/pg_exporter
# Copyright :   2018-2026  Ruohang Feng / Vonng (rh@vonng.com)
#==============================================================#
VERSION ?= v1.2.2
BUILD_DATE := $(shell date '+%Y%m%d%H%M%S')
GIT_BRANCH := $(shell git rev-parse --abbrev-ref HEAD 2>/dev/null || echo "unknown")
GIT_REVISION := $(shell git rev-parse --short HEAD 2>/dev/null || echo "HEAD")
LDFLAGS_META := -X 'pg_exporter/exporter.Version=$(VERSION)' \
                -X 'pg_exporter/exporter.Branch=$(GIT_BRANCH)' \
                -X 'pg_exporter/exporter.Revision=$(GIT_REVISION)' \
                -X 'pg_exporter/exporter.BuildDate=$(BUILD_DATE)'
LDFLAGS_STATIC := -s -w -extldflags \"-static\" $(LDFLAGS_META)

# Release Dir
LINUX_AMD_DIR:=dist/$(VERSION)/pg_exporter-$(VERSION).linux-amd64
LINUX_ARM_DIR:=dist/$(VERSION)/pg_exporter-$(VERSION).linux-arm64
DARWIN_AMD_DIR:=dist/$(VERSION)/pg_exporter-$(VERSION).darwin-amd64
DARWIN_ARM_DIR:=dist/$(VERSION)/pg_exporter-$(VERSION).darwin-arm64
WINDOWS_DIR:=dist/$(VERSION)/pg_exporter-$(VERSION).windows-amd64

###############################################################
#                        Shortcuts                            #
###############################################################
build:
	go build -ldflags "$(LDFLAGS_META)" -o pg_exporter
clean:
	rm -rf pg_exporter

build-darwin-amd64:
	CGO_ENABLED=0 GOOS=darwin GOARCH=amd64 go build -a -ldflags "$(LDFLAGS_STATIC)" -o pg_exporter
build-darwin-arm64:
	CGO_ENABLED=0 GOOS=darwin GOARCH=arm64 go build -a -ldflags "$(LDFLAGS_STATIC)" -o pg_exporter
build-linux-amd64:
	CGO_ENABLED=0 GOOS=linux GOARCH=amd64 go build -a -ldflags "$(LDFLAGS_STATIC)" -o pg_exporter
build-linux-arm64:
	CGO_ENABLED=0 GOOS=linux GOARCH=arm64 go build -a -ldflags "$(LDFLAGS_STATIC)" -o pg_exporter

r: release
release: release-linux release-darwin

release-linux: linux-amd64 linux-arm64
linux-amd64: clean build-linux-amd64
	rm -rf $(LINUX_AMD_DIR) && mkdir -p $(LINUX_AMD_DIR)
	nfpm package --packager rpm --config package/nfpm-amd64-rpm.yaml --target dist/$(VERSION)
	nfpm package --packager deb --config package/nfpm-amd64-deb.yaml --target dist/$(VERSION)
	cp pg_exporter $(LINUX_AMD_DIR)/pg_exporter
	cp pg_exporter.yml $(LINUX_AMD_DIR)/pg_exporter.yml
	cp LICENSE $(LINUX_AMD_DIR)/LICENSE
	tar -czf dist/$(VERSION)/pg_exporter-$(VERSION).linux-amd64.tar.gz -C dist/$(VERSION) pg_exporter-$(VERSION).linux-amd64
	rm -rf $(LINUX_AMD_DIR)

linux-arm64: clean build-linux-arm64
	rm -rf $(LINUX_ARM_DIR) && mkdir -p $(LINUX_ARM_DIR)
	nfpm package --packager rpm --config package/nfpm-arm64-rpm.yaml --target dist/$(VERSION)
	nfpm package --packager deb --config package/nfpm-arm64-deb.yaml --target dist/$(VERSION)
	cp pg_exporter $(LINUX_ARM_DIR)/pg_exporter
	cp pg_exporter.yml $(LINUX_ARM_DIR)/pg_exporter.yml
	cp LICENSE $(LINUX_ARM_DIR)/LICENSE
	tar -czf dist/$(VERSION)/pg_exporter-$(VERSION).linux-arm64.tar.gz -C dist/$(VERSION) pg_exporter-$(VERSION).linux-arm64
	rm -rf $(LINUX_ARM_DIR)

release-darwin: darwin-amd64 darwin-arm64
darwin-amd64: clean build-darwin-amd64
	rm -rf $(DARWIN_AMD_DIR) && mkdir -p $(DARWIN_AMD_DIR)
	cp pg_exporter $(DARWIN_AMD_DIR)/pg_exporter
	cp pg_exporter.yml $(DARWIN_AMD_DIR)/pg_exporter.yml
	cp LICENSE $(DARWIN_AMD_DIR)/LICENSE
	tar -czf dist/$(VERSION)/pg_exporter-$(VERSION).darwin-amd64.tar.gz -C dist/$(VERSION) pg_exporter-$(VERSION).darwin-amd64
	rm -rf $(DARWIN_AMD_DIR)

darwin-arm64: clean build-darwin-arm64
	rm -rf $(DARWIN_ARM_DIR) && mkdir -p $(DARWIN_ARM_DIR)
	cp pg_exporter $(DARWIN_ARM_DIR)/pg_exporter
	cp pg_exporter.yml $(DARWIN_ARM_DIR)/pg_exporter.yml
	cp LICENSE $(DARWIN_ARM_DIR)/LICENSE
	tar -czf dist/$(VERSION)/pg_exporter-$(VERSION).darwin-arm64.tar.gz -C dist/$(VERSION) pg_exporter-$(VERSION).darwin-arm64
	rm -rf $(DARWIN_ARM_DIR)

###############################################################
#                      Configuration                          #
###############################################################
# generate merged config from separated configuration
conf:
	rm -rf pg_exporter.yml
	cat config/*.yml >> pg_exporter.yml

# generate legacy merged config for PostgreSQL 9.1 - 9.6
conf9:
	rm -rf legacy/pg_exporter.yml
	cat legacy/config/*.yml >> legacy/pg_exporter.yml

# Backward-compatible alias (deprecated)
conf-pg9: conf9

###############################################################
#                         Release                             #
###############################################################
release-dir:
	mkdir -p dist/$(VERSION)

release-clean:
	rm -rf dist/$(VERSION)

###############################################################
#                        GoReleaser                           #
###############################################################
# Install goreleaser if not present
goreleaser-install:
	@which goreleaser > /dev/null || (echo "Installing goreleaser..." && go install github.com/goreleaser/goreleaser/v2@latest)

# Build snapshot release (without publishing)
goreleaser-snapshot: goreleaser-install
	goreleaser release --snapshot --clean --skip=publish

# Build release locally (without git tag)
goreleaser-build: goreleaser-install
	goreleaser build --snapshot --clean

# Build release locally without snapshot suffix (requires clean git)
goreleaser-local: goreleaser-install
	goreleaser release --clean --skip=publish

# Release with goreleaser (requires git tag)
goreleaser-release: goreleaser-install
	goreleaser release --clean

# Test release (creates prerelease, no notifications)
goreleaser-test-release: goreleaser-install
	@echo "Creating test release (prerelease mode, no notifications)..."
	goreleaser release --clean

# Production release (set prerelease to false in config first)
goreleaser-prod-release: goreleaser-install
	@echo "Creating production release (will notify subscribers if announce.skip is false)..."
	goreleaser release --clean

# Check goreleaser configuration
goreleaser-check: goreleaser-install
	goreleaser check

# New main release task using goreleaser
release-new: goreleaser-release

# build docker image
docker: docker-build
docker-build:
	./docker/build.sh
docker-release:
	./docker/release.sh

###############################################################
#                         Develop                             #
###############################################################
install: build
	sudo install -m 0755 pg_exporter /usr/bin/pg_exporter
uninstall:
	sudo rm -rf /usr/bin/pg_exporter
runb:
	./pg_exporter --log.level=info --config=pg_exporter.yml --auto-discovery
run:
	go run main.go --log.level=info --config=pg_exporter.yml --auto-discovery
debug:
	go run main.go --log.level=debug --config=pg_exporter.yml --auto-discovery
curl:
	curl localhost:9630/metrics | grep -v '#' | grep pg_
upload:
	./upload.sh
d: dev
dev:
	hugo serve

.PHONY: build clean build-darwin build-linux \
        release release-darwin release-linux release-windows docker docker-build docker-release \
        install uninstall debug curl upload \
        goreleaser-install goreleaser-snapshot goreleaser-build goreleaser-release goreleaser-test-release \
        goreleaser-check release-new goreleaser-local

================================================

FILE: README.md

================================================

<p align="center">

<img src="static/logo.png" alt="PG Exporter Logo" height="128" align="middle">

</p>

# PG EXPORTER

[Docs](https://pigsty.io/docs/pg_exporter) •
[Docker Hub](https://hub.docker.com/r/pgsty/pg_exporter) •
[Release v1.2.2](https://github.com/pgsty/pg_exporter/releases/tag/v1.2.2) •
[License](https://github.com/pgsty/pg_exporter/blob/main/LICENSE) •
[Star History](https://star-history.com/#pgsty/pg_exporter&Date) •
[Go Report Card](https://goreportcard.com/report/github.com/pgsty/pg_exporter)

> **Advanced [PostgreSQL](https://www.postgresql.org) & [pgBouncer](https://www.pgbouncer.org/) metrics [exporter](https://prometheus.io/docs/instrumenting/exporters/) for [Prometheus](https://prometheus.io/)**

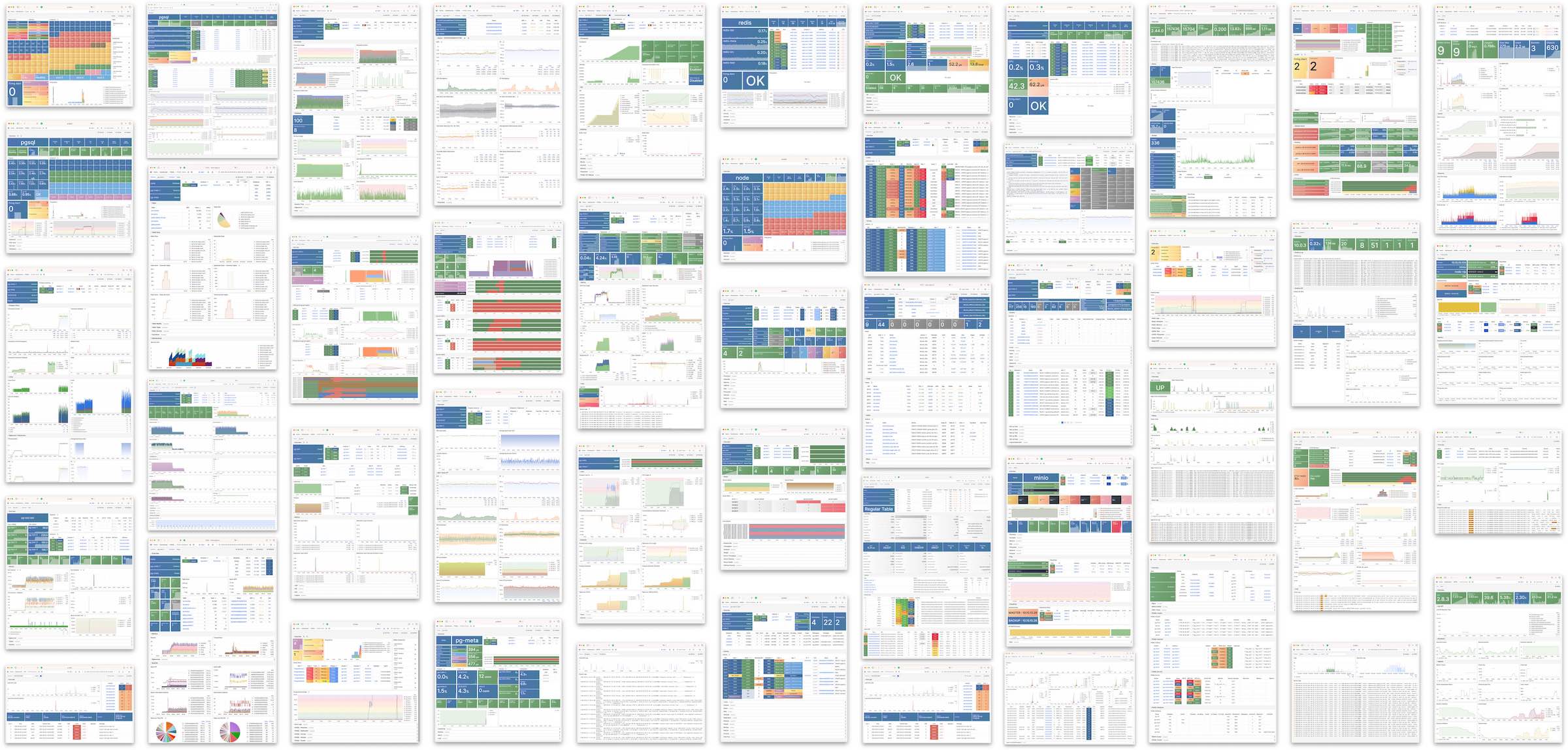

PG Exporter brings the ultimate monitoring experience to PostgreSQL with **declarative config**, **dynamic planning**, and **customizable collectors**.

It provides **600+** metrics and ~3K time series per instance, covering everything you need for PostgreSQL observability.

Check [**https://demo.pigsty.io**](https://demo.pigsty.io/ui/) for a live demo built on this exporter by [**Pigsty**](https://pigsty.io).

<div align="center">

<a href="https://pigsty.io/docs/pg_exporter">Docs</a> •

<a href="#quick-start">Quick Start</a> •

<a href="#features">Features</a> •

<a href="#usage">Usage</a> •

<a href="#api">API</a> •

<a href="#deployment">Deployment</a> •

<a href="#collectors">Collectors</a> •

<a href="https://demo.pigsty.io/ui/">Demo</a>

</div><br>

[Live demo](https://demo.pigsty.io)

--------

## Features

- **Highly Customizable**: Define almost all metrics through declarative YAML configs

- **Full Coverage**: Monitor PostgreSQL (10-18+) and pgBouncer (1.8-1.25+) in a single exporter

- **Fine-grained Control**: Configure timeout, caching, skip conditions, and fatality per collector

- **Dynamic Planning**: Define multiple query branches based on different conditions

- **Self-monitoring**: Rich metrics about pg_exporter [itself](https://demo.pigsty.io/d/pgsql-exporter) for complete observability

- **Production-Ready**: Battle-tested in real-world environments across 12K+ cores for 6+ years

- **Auto-discovery**: Automatically discover and monitor multiple databases within an instance

- **Health Check APIs**: Comprehensive HTTP endpoints for service health and traffic routing

- **Extension Support**: `timescaledb`, `citus`, `pg_stat_statements`, `pg_wait_sampling`,...

- **Local-first URL behavior**: Built for on-host deployment, with implicit local target fallback and automatic `sslmode=disable` when omitted

> PostgreSQL 9.x is also supported via the [legacy config bundle](legacy/).

--------

## Quick Start

RPM, DEB, and tarball packages are available on the GitHub [release page](https://github.com/pgsty/pg_exporter/releases) and in Pigsty's YUM / APT [Infra Repo](https://pigsty.io/docs/repo/infra).

To run the exporter, pass the PostgreSQL/pgBouncer connection URL via an environment variable or a command-line argument:

```bash

PG_EXPORTER_URL='postgres://user:pass@host:port/postgres' pg_exporter

curl http://localhost:9630/metrics # access metrics

```

There are built-in metrics such as `pg_up`, `pg_version`, `pg_in_recovery`, `pg_exporter_build_info`, and exporter self-metrics under `pg_exporter_*` (disable with `--disable-intro`).

**All other metrics are defined in the [`pg_exporter.yml`](pg_exporter.yml) config file**.
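For scripted checks, the `/metrics` output can also be read programmatically. The Go sketch below is a minimal, hedged example: `sampleValue` is a hypothetical helper (not part of pg_exporter) that scans Prometheus text-format output and returns the first sample of an exact metric name, ignoring labels. The sample body and its `ins` label are made up for illustration; a production tool would more likely use the Prometheus `expfmt` package.

```go
package main

import (
	"bufio"
	"fmt"
	"strconv"
	"strings"
)

// sampleValue is a hypothetical helper: it returns the value of the first sample
// named exactly `name` in Prometheus text exposition output, ignoring labels.
func sampleValue(exposition, name string) (float64, bool) {
	sc := bufio.NewScanner(strings.NewReader(exposition))
	for sc.Scan() {
		line := strings.TrimSpace(sc.Text())
		if line == "" || strings.HasPrefix(line, "#") {
			continue // skip blank lines and # HELP / # TYPE comments
		}
		// The metric name ends at the first '{' (label set) or space (value).
		end := strings.IndexAny(line, "{ ")
		if end < 0 || line[:end] != name {
			continue
		}
		rest := line[end:]
		if line[end] == '{' {
			closing := strings.LastIndex(line, "}")
			if closing < end {
				continue // malformed label set
			}
			rest = line[closing+1:]
		}
		// rest is " <value> [timestamp]"; the value is the first field.
		fields := strings.Fields(rest)
		if len(fields) == 0 {
			continue
		}
		if v, err := strconv.ParseFloat(fields[0], 64); err == nil {
			return v, true
		}
	}
	return 0, false
}

func main() {
	// Made-up excerpt of a /metrics response, for illustration only.
	body := `# HELP pg_up (help text omitted)
# TYPE pg_up gauge
pg_up{ins="pg-test-1"} 1
pg_in_recovery{ins="pg-test-1"} 0
`
	for _, name := range []string{"pg_up", "pg_in_recovery"} {
		if v, ok := sampleValue(body, name); ok {
			fmt.Printf("%s = %g\n", name, v)
		} else {
			fmt.Printf("%s not found\n", name)
		}
	}
}
```

Against a live exporter, you would fetch `http://localhost:9630/metrics` with `net/http` and pass the response body to the helper.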

There are two monitoring dashboards in the [`monitor/`](monitor/) directory.

You can use [**Pigsty**](https://pigsty.io) to monitor an existing PostgreSQL cluster or RDS instance; it will set up pg_exporter for you.

--------

## Usage

```bash
usage: pg_exporter [<flags>]

Flags:
  -h, --[no-]help            Show context-sensitive help (also try --help-long and --help-man).
  -u, --url=URL              postgres target url
  -c, --config=CONFIG        path to config dir or file
      --web.listen-address=:9630 ...
                             Addresses on which to expose metrics and web interface.
      --web.config.file=""   Path to configuration file that can enable TLS or authentication.
  -l, --label=""             constant labels: comma separated list of label=value pair ($PG_EXPORTER_LABEL)
  -t, --tag=""               tags: comma separated list of server tag ($PG_EXPORTER_TAG)
  -C, --[no-]disable-cache   force not using cache ($PG_EXPORTER_DISABLE_CACHE)
  -m, --[no-]disable-intro   disable internal/exporter self metrics ($PG_EXPORTER_DISABLE_INTRO)
  -a, --[no-]auto-discovery  automatically scrape all database for given server ($PG_EXPORTER_AUTO_DISCOVERY)
  -x, --exclude-database="template0,template1,postgres"
                             excluded databases when enabling auto-discovery ($PG_EXPORTER_EXCLUDE_DATABASE)
  -i, --include-database=""  included databases when enabling auto-discovery ($PG_EXPORTER_INCLUDE_DATABASE)
  -n, --namespace=""         prefix of built-in metrics, (pg|pgbouncer) by default ($PG_EXPORTER_NAMESPACE)
  -f, --[no-]fail-fast       fail fast instead of waiting during start-up ($PG_EXPORTER_FAIL_FAST)
  -T, --connect-timeout=100  connect timeout in ms, 100 by default ($PG_EXPORTER_CONNECT_TIMEOUT)
  -P, --web.telemetry-path="/metrics"
                             URL path under which to expose metrics. ($PG_EXPORTER_TELEMETRY_PATH)
  -D, --[no-]dry-run         dry run and print raw configs
  -E, --[no-]explain         explain server planned queries
      --log.level="info"     log level: debug|info|warn|error
      --log.format="logfmt"  log format: logfmt|json
      --[no-]version         Show application version.
```

Parameters can be given via command-line args or environment variables.

| CLI Arg                | Environment Variable           | Default Value                    |
|------------------------|--------------------------------|----------------------------------|
| `--url`                | `PG_EXPORTER_URL`              | `postgresql:///?sslmode=disable` |
| `--config`             | `PG_EXPORTER_CONFIG`           | `pg_exporter.yml`                |
| `--label`              | `PG_EXPORTER_LABEL`            |                                  |
| `--tag`                | `PG_EXPORTER_TAG`              |                                  |
| `--auto-discovery`     | `PG_EXPORTER_AUTO_DISCOVERY`   | `true`                           |
| `--disable-cache`      | `PG_EXPORTER_DISABLE_CACHE`    | `false`                          |
| `--fail-fast`          | `PG_EXPORTER_FAIL_FAST`        | `false`                          |
| `--exclude-database`   | `PG_EXPORTER_EXCLUDE_DATABASE` | `template0,template1,postgres`   |
| `--include-database`   | `PG_EXPORTER_INCLUDE_DATABASE` |                                  |
| `--namespace`          | `PG_EXPORTER_NAMESPACE`        | `pg\|pgbouncer`                  |
| `--connect-timeout`    | `PG_EXPORTER_CONNECT_TIMEOUT`  | `100` (ms)                       |
| `--dry-run`            |                                | `false`                          |
| `--explain`            |                                | `false`                          |
| `--log.level`          |                                | `info`                           |
| `--log.format`         |                                | `logfmt`                         |
| `--web.listen-address` |                                | `:9630`                          |
| `--web.config.file`    |                                | `""`                             |
| `--web.telemetry-path` | `PG_EXPORTER_TELEMETRY_PATH`   | `/metrics`                       |

### Connection URL Defaults

- If `--url` / `PG_EXPORTER_URL` is not provided, pg_exporter falls back to a local-first default URL: `postgresql:///?sslmode=disable`.

- If `sslmode` is not explicitly set in the URL, pg_exporter injects `sslmode=disable` by default.

- This is an intentional design choice for common on-host deployments (`pg_exporter` and PostgreSQL/PgBouncer on the same machine), where loopback TLS adds overhead with little practical gain.

- If you need TLS for remote targets, provide `sslmode` explicitly in the connection URL (for example: `sslmode=require`, `verify-ca`, `verify-full`).
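The defaulting rules above can be sketched in Go with the standard `net/url` package. This is an illustration under assumptions, not pg_exporter's actual implementation: `withLocalDefaults` is a hypothetical helper, `dbuser_monitor` and `db.example.com` are placeholder names, and only URL-style connection strings (not `key=value` DSNs) are handled.

```go
package main

import (
	"fmt"
	"net/url"
)

// localDefaultURL is the documented fallback used when no URL is given.
const localDefaultURL = "postgresql:///?sslmode=disable"

// withLocalDefaults is a hypothetical helper, not pg_exporter's own code.
// It handles URL-style connection strings only (not key=value DSNs).
func withLocalDefaults(raw string) (string, error) {
	if raw == "" {
		return localDefaultURL, nil
	}
	u, err := url.Parse(raw)
	if err != nil {
		return "", err
	}
	q := u.Query()
	if !q.Has("sslmode") {
		// Only inject when absent; an explicit sslmode (require, verify-ca,
		// verify-full, ...) is always respected. Note that Encode sorts keys.
		q.Set("sslmode", "disable")
		u.RawQuery = q.Encode()
	}
	return u.String(), nil
}

func main() {
	for _, raw := range []string{
		"",
		"postgres://dbuser_monitor@127.0.0.1:5432/postgres",
		"postgres://dbuser_monitor@db.example.com:5432/postgres?sslmode=verify-full",
	} {
		s, err := withLocalDefaults(raw)
		if err != nil {
			fmt.Println("invalid URL:", err)
			continue
		}
		fmt.Printf("%q -> %s\n", raw, s)
	}
}
```

The key design point is that the default is injected only when `sslmode` is missing, so a remote target configured with `verify-full` keeps its TLS requirement untouched.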

------

## API

PG Exporter provides a rich set of HTTP endpoints (REST APIs):

```bash

# Fetch metrics (customizable)

curl localhost:9630/metrics

# Reload configuration

curl -X POST localhost:9630/reload

# Explain configuration

curl localhost:9630/explain

# Print Statistics

curl localhost:9630/stat

# Aliveness health check (200 up, 503 down)

curl localhost:9630/up

curl localhost:9630/health

curl localhost:9630/liveness

curl localhost:9630/readiness

# traffic route health check

### 200 if not in recovery, 404 if in recovery, 503 if server is down

curl localhost:9630/primary

curl localhost:9630/leader

curl localhost:9630/master

curl localhost:9630/read-write

curl localhost:9630/rw

### 200 if in recovery, 404 if not in recovery, 503 if server is down

curl localhost:9630/replica

curl localhost:9630/standby

curl localhost:9630/read-only

curl localhost:9630/ro

### 200 if server is ready for read traffic (including primary), 503 if server is down

curl localhost:9630/read

```

--------

## Build

To build a static, stand-alone binary (e.g. for a `scratch` Docker image):

```bash

CGO_ENABLED=0 GOOS=linux go build -a -ldflags '-extldflags "-static"' -o pg_exporter

```

Or [download](https://github.com/pgsty/pg_exporter/releases) the latest prebuilt binaries from the release page.

Pre-packaged RPM / DEB packages are also available in the [Pigsty Infra Repo](https://pigsty.io/docs/repo/infra/).

--------

## Docker

You can find pre-built amd64/arm64 docker images here: [pgsty/pg_exporter](https://hub.docker.com/r/pgsty/pg_exporter)

--------

## Deployment

Red Hat RPM and Debian/Ubuntu DEB packages are built with `nfpm` for `x86/arm64` and install:

* `/usr/bin/pg_exporter`: the pg_exporter binary.

* [`/etc/pg_exporter.yml`](pg_exporter.yml): the config file

* [`/usr/lib/systemd/system/pg_exporter.service`](package/pg_exporter.service): systemd service file

* [`/etc/default/pg_exporter`](package/pg_exporter.default): systemd service envs & options

These packages are also available from Pigsty's [Infra Repo](https://pigsty.io/docs/repo/infra).

------

## Collectors

Configs lie at the core of `pg_exporter`; in fact, this project contains more lines of YAML than Go.

* A monolithic, batteries-included config file: [`pg_exporter.yml`](pg_exporter.yml)

* Separate metric definitions in [`config/`](config/)

* Example of how to write a config file: [`doc.yml`](config/0000-doc.yml)

* Legacy config bundle for PostgreSQL 9.1 - 9.6: [`legacy/`](legacy/) ([`legacy/README.md`](legacy/README.md))

The current `pg_exporter` ships with the following metrics collector definition files:

- [0000-doc.yml](config/0000-doc.yml)

- [0110-pg.yml](config/0110-pg.yml)

- [0120-pg_meta.yml](config/0120-pg_meta.yml)

- [0130-pg_setting.yml](config/0130-pg_setting.yml)

- [0210-pg_repl.yml](config/0210-pg_repl.yml)

- [0220-pg_sync_standby.yml](config/0220-pg_sync_standby.yml)

- [0230-pg_downstream.yml](config/0230-pg_downstream.yml)

- [0240-pg_slot.yml](config/0240-pg_slot.yml)

- [0250-pg_recv.yml](config/0250-pg_recv.yml)

- [0260-pg_sub.yml](config/0260-pg_sub.yml)

- [0270-pg_origin.yml](config/0270-pg_origin.yml)

- [0300-pg_io.yml](config/0300-pg_io.yml)

- [0310-pg_size.yml](config/0310-pg_size.yml)

- [0320-pg_archiver.yml](config/0320-pg_archiver.yml)

- [0330-pg_bgwriter.yml](config/0330-pg_bgwriter.yml)

- [0331-pg_checkpointer.yml](config/0331-pg_checkpointer.yml)

- [0340-pg_ssl.yml](config/0340-pg_ssl.yml)

- [0350-pg_checkpoint.yml](config/0350-pg_checkpoint.yml)

- [0355-pg_timeline.yml](config/0355-pg_timeline.yml)

- [0360-pg_recovery.yml](config/0360-pg_recovery.yml)

- [0370-pg_slru.yml](config/0370-pg_slru.yml)

- [0380-pg_shmem.yml](config/0380-pg_shmem.yml)

- [0390-pg_wal.yml](config/0390-pg_wal.yml)

- [0410-pg_activity.yml](config/0410-pg_activity.yml)

- [0420-pg_wait.yml](config/0420-pg_wait.yml)

- [0430-pg_backend.yml](config/0430-pg_backend.yml)

- [0440-pg_xact.yml](config/0440-pg_xact.yml)

- [0450-pg_lock.yml](config/0450-pg_lock.yml)

- [0460-pg_query.yml](config/0460-pg_query.yml)

- [0510-pg_vacuuming.yml](config/0510-pg_vacuuming.yml)

- [0520-pg_indexing.yml](config/0520-pg_indexing.yml)

- [0530-pg_clustering.yml](config/0530-pg_clustering.yml)

- [0540-pg_backup.yml](config/0540-pg_backup.yml)

- [0610-pg_db.yml](config/0610-pg_db.yml)

- [0620-pg_db_confl.yml](config/0620-pg_db_confl.yml)

- [0640-pg_pubrel.yml](config/0640-pg_pubrel.yml)

- [0650-pg_subrel.yml](config/0650-pg_subrel.yml)

- [0700-pg_table.yml](config/0700-pg_table.yml)

- [0710-pg_index.yml](config/0710-pg_index.yml)

- [0720-pg_func.yml](config/0720-pg_func.yml)

- [0730-pg_seq.yml](config/0730-pg_seq.yml)

- [0740-pg_relkind.yml](config/0740-pg_relkind.yml)

- [0750-pg_defpart.yml](config/0750-pg_defpart.yml)

- [0810-pg_table_size.yml](config/0810-pg_table_size.yml)

- [0820-pg_table_bloat.yml](config/0820-pg_table_bloat.yml)

- [0830-pg_index_bloat.yml](config/0830-pg_index_bloat.yml)

- [0910-pgbouncer_list.yml](config/0910-pgbouncer_list.yml)

- [0920-pgbouncer_database.yml](config/0920-pgbouncer_database.yml)

- [0930-pgbouncer_stat.yml](config/0930-pgbouncer_stat.yml)

- [0940-pgbouncer_pool.yml](config/0940-pgbouncer_pool.yml)

- [1000-pg_wait_event.yml](config/1000-pg_wait_event.yml)

- [1800-pg_tsdb_hypertable.yml](config/1800-pg_tsdb_hypertable.yml)

- [1900-pg_citus.yml](config/1900-pg_citus.yml)

- [2000-pg_heartbeat.yml](config/2000-pg_heartbeat.yml)

> #### Note
>
> Supported versions: PostgreSQL 10, 11, 12, 13, 14, 15, 16, 17, 18+
>
> PostgreSQL 9.1 - 9.6 are still supported by switching to the [`legacy/pg_exporter.yml`](legacy/pg_exporter.yml) config.

`pg_exporter` generates approximately 600 metrics for a brand-new database cluster.

For a real-world database with 10 ~ 100 tables, it may generate anywhere from 1K to 10K metrics.

On databases with several thousand tables or more, you may need to modify or disable some database-level collectors so that each scrape completes in time.

Config files use YAML format. There are many examples in the [config](https://github.com/pgsty/pg_exporter/tree/main/config) dir, and here is a [sample](config/0000-doc.yml) config.

```

#==============================================================#

# 1. Config File

#==============================================================#

# The configuration file for pg_exporter is a YAML file.

# Default configurations are retrieved via following precedence:

# 1. command line args: --config=<config path>

# 2. environment variables: PG_EXPORTER_CONFIG=<config path>

# 3. pg_exporter.yml (Current directory)

# 4. /etc/pg_exporter.yml (config file)

# 5. /etc/pg_exporter (config dir)

#==============================================================#

# 2. Config Format

#==============================================================#

# pg_exporter config could be a single YAML file, or a directory containing a series of separated YAML files.

# Each YAML config file consists of one or more metrics Collector definition, which are top-level objects.

# If a directory is provided, all YAML files in that directory (non-recursive; subdirectories are ignored) will be merged in alphabetic order.

# Collector definition examples are shown below.

#==============================================================#

# 3. Collector Example

#==============================================================#

# # Here is an example of a metrics collector definition

# pg_primary_only: # Collector branch name. Must be UNIQUE among the entire configuration

# name: pg # Collector namespace, used as METRIC PREFIX, set to branch name by default, can be overridden

# # the same namespace may contain multiple collector branches. It's the user's responsibility

# # to make sure that AT MOST ONE collector is picked for each namespace.

#

# desc: PostgreSQL basic information (on primary) # Collector description

# query: | # Metrics Query SQL

#

# SELECT extract(EPOCH FROM CURRENT_TIMESTAMP) AS timestamp,

# pg_current_wal_lsn() - '0/0' AS lsn,

# pg_current_wal_insert_lsn() - '0/0' AS insert_lsn,

# pg_current_wal_lsn() - '0/0' AS write_lsn,

# pg_current_wal_flush_lsn() - '0/0' AS flush_lsn,

# extract(EPOCH FROM now() - pg_postmaster_start_time()) AS uptime,

# extract(EPOCH FROM now() - pg_conf_load_time()) AS conf_reload_time,

# pg_is_in_backup() AS is_in_backup,

# extract(EPOCH FROM now() - pg_backup_start_time()) AS backup_time;

#

# # [OPTIONAL] metadata fields, control collector behavior

# ttl: 10 # Cache TTL: in seconds, how long pg_exporter caches this collector's query result.

# timeout: 0.1 # Query Timeout: in seconds, queries that exceed this limit will be canceled.

# min_version: 100000 # minimal supported version, boundary IS included, in server version number format

# max_version: 130000 # maximal supported version, boundary NOT included, in server version number format

# fatal: false # If a collector marked `fatal` fails, the entire scrape aborts immediately and is marked as failed

# skip: false # Collector marked `skip` will not be installed during the planning procedure

#

# tags: [cluster, primary] # Collector tags, used for planning and scheduling

#

# # tags are a list of strings, which could be:

# # * `cluster` marks this query as cluster level, so it will only execute once for the same PostgreSQL Server

# # * `primary` or `master` marks this query as primary-only (it WILL NOT execute if pg_is_in_recovery())

# # * `standby` or `replica` marks this query as replica-only (it WILL execute only if pg_is_in_recovery())

# # some special tag prefixes have special interpretations:

# # * `dbname:<dbname>` means this query will ONLY be executed on database with name `<dbname>`

# # * `username:<user>` means this query will only be executed when connected as user `<user>`

# # * `extension:<extname>` means this query will only be executed when extension `<extname>` is installed

# # * `schema:<nspname>` means this query will only be executed when schema `<nspname>` exists

# # * `not:<negtag>` means this query WILL NOT be executed when exporter is tagged with `<negtag>`

# # * `<tag>` means this query WILL be executed when exporter is tagged with `<tag>`

# #   (<tag> cannot be a special tag such as cluster, primary, standby, master, replica, etc.)

#

# # One or more "predicate queries" may be defined for a metric query. These

# # are run before the main metric query (after any cache hit check). If all

# # of them, when run sequentially, return a single row with a single column

# # boolean true result, the main metric query is executed. If any of them

# # return false or return zero rows, the main query is skipped. If any

# # predicate query returns more than one row, a non-boolean result, or fails

# # with an error, the whole query is marked failed. Predicate queries can be

# # used to check for the presence of specific functions, tables, extensions,

# # settings, and vendor-specific pg features before running the main query.

#

# predicate_queries:

# - name: predicate query name

# predicate_query: |

# SELECT EXISTS (SELECT 1 FROM information_schema.routines WHERE routine_schema = 'pg_catalog' AND routine_name = 'pg_backup_start_time');

#

# metrics: # List of returned columns, each column must have a `name` and `usage`; `rename`, `description`, `default` and `scale` are optional

# - timestamp: # Column name, should be exactly the same as returned column name

# usage: GAUGE # Metric type, `usage` could be one of:

# * DISCARD: completely ignore this field

# * LABEL: use columnName=columnValue as a label in metric

# * GAUGE: Mark column as a gauge metric, full name will be `<query.name>_<column.name>`

# * COUNTER: Same as above, except it is a counter rather than a gauge.

# rename: ts # [OPTIONAL] Alias, used instead of the column name

# description: xxxx # [OPTIONAL] Description of the column, will be used as a metric description

# default: 0 # [OPTIONAL] Default value, will be used when column is NULL

# scale: 1000 # [OPTIONAL] Scale the value by this factor

# - lsn:

# usage: COUNTER

# description: log sequence number, current write location (on primary)

# - insert_lsn:

# usage: COUNTER

# description: primary only, location of current wal inserting

# - write_lsn:

# usage: COUNTER

# description: primary only, location of current wal writing

# - flush_lsn:

# usage: COUNTER

# description: primary only, location of current wal syncing

# - uptime:

# usage: GAUGE

# description: seconds since postmaster start

# - conf_reload_time:

# usage: GAUGE

# description: seconds since last configuration reload

# - is_in_backup:

# usage: GAUGE

# description: 1 if backup is in progress

# - backup_time:

# usage: GAUGE

# description: seconds since the current backup start, null if no backup is in progress

#

# .... # you can also use rename & scale to customize the metric name and value:

# - checkpoint_write_time:

# rename: write_time

# usage: COUNTER

# scale: 1e-3

# description: Total amount of time that has been spent in the portion of checkpoint processing where files are written to disk, in seconds

#==============================================================#

# 4. Collector Presets

#==============================================================#

# pg_exporter is shipped with a series of preset collectors (already numbered and ordered by filename)

#

# 1xx Basic metrics: basic info, metadata, settings

# 2xx Replication metrics: replication, walreceiver, downstream, sync standby, slots, subscription

# 3xx Persist metrics: size, wal, background writer, checkpointer, ssl, checkpoint, recovery, slru cache, shmem usage

# 4xx Activity metrics: backend count group by state, wait event, locks, xacts, queries

# 5xx Progress metrics: clustering, vacuuming, indexing, basebackup, copy

# 6xx Database metrics: pg_database, publication, subscription

# 7xx Object metrics: pg_class, table, index, function, sequence, default partition

# 8xx Optional metrics: optional metrics collector (disabled by default, slow queries)

# 9xx Pgbouncer metrics: metrics from pgbouncer admin database `pgbouncer`

#

# 100-599 Metrics for entire database cluster (scraped once)

# 600-899 Metrics for single database instance (scraped for each database, except for pg_db itself)

#==============================================================#

# 5. Cache TTL

#==============================================================#

# Caching reduces query overhead; it is enabled by setting a non-zero value for `ttl`.

# It is highly recommended to use caching to avoid duplicate scrapes, especially when multiple Prometheus

# servers scrape the same instance with slow monitoring queries. Setting `ttl` to zero or leaving it blank

# disables result caching, which is the default behavior.

#

# TTL has to be smaller than your scrape interval. A 15s scrape interval with a 10s TTL is a good start for

# production environments. Some expensive monitoring queries (such as size/bloat checks) have a longer `ttl`,

# which also serves as a way to give them a lower effective scrape frequency.

#==============================================================#

# 6. Query Timeout

#==============================================================#

# Collectors can be configured with an optional timeout. If a collector's query runs longer than that

# timeout, it will be canceled immediately. Setting `timeout` to 0 or leaving it blank resets it to the

# default timeout of 0.1 (100ms). Setting it to any negative number disables the query timeout.

# Since all queries have a default timeout of 100ms, a slow query is canceled instead of piling up into an

# avalanche. You can explicitly override that option, but beware: in extreme cases, if all your timeouts

# sum up to more than your scrape/cache interval (usually 15s), queries may still jam.

# Alternatively, you can simply disable potentially slow queries.

#==============================================================#

# 7. Version Compatibility

#==============================================================#

# Each collector has two optional version compatibility parameters: `min_version` and `max_version`.

# These two parameters specify the version compatibility of the collector. If the target postgres/pgbouncer

# version is lower than `min_version`, or greater than or equal to `max_version`, the collector will not be installed.

# These two parameters use the PostgreSQL server version number format (`server_version_num`), an integer

# computed as major * 10000 + minor for PostgreSQL 10+, and major * 10000 + minor * 100 + patch for 9.x.

# For example, 90600 stands for 9.6.0, 120001 stands for 12.1, and 160000 stands for 16.0.

# Beware that the version compatibility range is left-inclusive and right-exclusive: [min, max). Setting either

# bound to zero or leaving it blank makes that side unbounded (-inf or +inf).

#==============================================================#

# 8. Fatality

#==============================================================#

# If a collector marked `fatal` fails, the entire scrape operation will be marked as failed and the key metric

# `pg_up` / `pgbouncer_up` will be reset to 0. It is always a good practice to set up AT LEAST ONE fatal

# collector for pg_exporter. `pg.pg_primary_only` and `pgbouncer_list` are the default fatal collectors.

#

# If a collector without the `fatal` flag fails, it will increase global failure counters, but the scrape operation

# will carry on. The entire scrape result will not be marked as failed, thus it will not affect the `<xx>_up` metric.

#==============================================================#

# 9. Skip

#==============================================================#

# Collector with `skip` flag set to true will NOT be installed.

# This could be a handy option to disable collectors

#==============================================================#

# 10. Tags and Planning

#==============================================================#

# Tags are designed for collector planning and scheduling. They make it easy to customize which queries run

# on which instances, so you can use one single monolithic config for multiple environments.

#

# Tags are a list of strings, each string could be:

# Pre-defined special tags

# * `cluster` marks this collector as cluster level, so it will ONLY BE EXECUTED ONCE for the same PostgreSQL Server

# * `primary` or `master` mark this collector as primary-only, so it WILL NOT work iff pg_is_in_recovery()

# * `standby` or `replica` mark this collector as replica-only, so it WILL work iff pg_is_in_recovery()

# Special tag prefix which have different interpretation:

# * `dbname:<dbname>` means this collector will ONLY work on database with name `<dbname>`

# * `username:<user>` means this collector will ONLY work when connected as user `<user>`

# * `extension:<extname>` means this collector will ONLY work when extension `<extname>` is installed

# * `schema:<nspname>` means this collector will only work when schema `<nspname>` exists

# Customized positive tags (filter) and negative tags (taint)

# * `not:<negtag>` means this collector WILL NOT work when exporter is tagged with `<negtag>`

# * `<tag>` means this query WILL work if exporter is tagged with `<tag>` (special tags not included)

#

# pg_exporter triggers the planning procedure after connecting to the target. It gathers database facts

# and matches them against tags and other metadata (such as the supported version range). A collector will

# be installed if and only if it is compatible with the target server.

```
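To make the planning rules concrete, here is a simplified Go sketch of how version ranges and tags could be combined into an install decision. It is written from the rules described in the sample config above, not from pg_exporter's source: `Facts`, `Collector`, `versionOK`, `tagOK`, and `install` are hypothetical names, and the `cluster` tag is treated as compatibility-neutral because it only controls where a collector runs.

```go
package main

import (
	"fmt"
	"strings"
)

// Facts is a hypothetical summary of what planning learns about a target server.
type Facts struct {
	Version    int             // server_version_num, e.g. 160002 for PostgreSQL 16.2
	InRecovery bool            // result of pg_is_in_recovery()
	DBName     string          // current database
	Username   string          // connecting user
	Extensions map[string]bool // installed extensions
	Schemas    map[string]bool // existing schemas
	Tags       map[string]bool // tags passed to the exporter via --tag
}

// Collector keeps only the planning-related fields of a collector definition.
type Collector struct {
	MinVersion int // inclusive lower bound, 0 = unbounded
	MaxVersion int // exclusive upper bound, 0 = unbounded
	Skip       bool
	Tags       []string
}

// versionOK applies the [min_version, max_version) range.
func versionOK(c Collector, v int) bool {
	if c.MinVersion != 0 && v < c.MinVersion {
		return false
	}
	if c.MaxVersion != 0 && v >= c.MaxVersion {
		return false
	}
	return true
}

// tagOK checks a single collector tag against the target facts.
func tagOK(tag string, f Facts) bool {
	prefix, arg, found := strings.Cut(tag, ":")
	switch {
	case tag == "cluster":
		return true // in this sketch, cluster only controls where a collector runs
	case tag == "primary" || tag == "master":
		return !f.InRecovery
	case tag == "standby" || tag == "replica":
		return f.InRecovery
	case found && prefix == "dbname":
		return f.DBName == arg
	case found && prefix == "username":
		return f.Username == arg
	case found && prefix == "extension":
		return f.Extensions[arg]
	case found && prefix == "schema":
		return f.Schemas[arg]
	case found && prefix == "not":
		return !f.Tags[arg] // negative tag (taint)
	default:
		return f.Tags[tag] // positive tag (filter)
	}
}

// install reports whether a collector would be planned for this target.
func install(c Collector, f Facts) bool {
	if c.Skip || !versionOK(c, f.Version) {
		return false
	}
	for _, tag := range c.Tags {
		if !tagOK(tag, f) {
			return false
		}
	}
	return true
}

func main() {
	facts := Facts{
		Version:    160002,
		DBName:     "postgres",
		Extensions: map[string]bool{"pg_stat_statements": true},
	}
	candidates := []struct {
		name string
		c    Collector
	}{
		{"primary_only", Collector{MinVersion: 100000, Tags: []string{"cluster", "primary"}}},
		{"replica_only", Collector{Tags: []string{"cluster", "replica"}}},
		{"needs_citus", Collector{Tags: []string{"extension:citus"}}},
		{"before_pg13", Collector{MaxVersion: 130000}},
	}
	for _, cand := range candidates {
		fmt.Printf("%-13s install=%v\n", cand.name, install(cand.c, facts))
	}
}
```

Every condition must hold for a collector to be installed, which is why a single `primary` tag is enough to keep a collector off replicas regardless of its other tags.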

--------------------

## About

Author: [Vonng](https://vonng.com/en) ([rh@vonng.com](mailto:rh@vonng.com))

Contributors: https://github.com/pgsty/pg_exporter/graphs/contributors

License: [Apache-2.0](LICENSE)

Copyright: 2018-2026 rh@vonng.com

<p align="center">

<img src="static/logo.png" alt="PG Exporter Logo" height="128" align="middle">

</p>

================================================

FILE: config/0000-doc.yml

================================================

#==============================================================#

# Desc : pg_exporter metrics collector definition

# Ver : PostgreSQL 10 ~ 18+ and pgbouncer 1.9~1.25+

# Ctime : 2019-12-09

# Mtime : 2026-03-21

# Homepage : https://pigsty.io

# Author : Ruohang Feng (rh@vonng.com)

# License : Apache-2.0 @ https://github.com/pgsty/pg_exporter

# Copyright : 2018-2026 Ruohang Feng / Vonng (rh@vonng.com)

#==============================================================#

#==============================================================#

# 1. Config File

#==============================================================#

# The configuration file for pg_exporter is a YAML file.

# Default configurations are retrieved via following precedence:

# 1. command line args: --config=<config path>

# 2. environment variables: PG_EXPORTER_CONFIG=<config path>

# 3. pg_exporter.yml (Current directory)

# 4. /etc/pg_exporter.yml (config file)

# 5. /etc/pg_exporter (config dir)

#==============================================================#

# 2. Config Format

#==============================================================#

# pg_exporter config could be a single YAML file, or a directory containing a series of separated YAML files.

# Each YAML config file consists of one or more metrics Collector definition, which are top-level objects.

# If a directory is provided, all YAML files in that directory (non-recursive; subdirectories are ignored) will be merged in alphabetic order.

# Collector definition examples are shown below.

#==============================================================#

# 3. Collector Example

#==============================================================#

# # Here is an example of a metrics collector definition

# pg_primary_only: # Collector branch name. Must be UNIQUE among the entire configuration

# name: pg # Collector namespace, used as METRIC PREFIX, set to branch name by default, can be overridden

# # the same namespace may contain multiple collector branches. It's the user's responsibility

# # to make sure that AT MOST ONE collector is picked for each namespace.

#

# desc: PostgreSQL basic information (on primary) # Collector description

# query: | # Metrics Query SQL

#

# SELECT extract(EPOCH FROM CURRENT_TIMESTAMP) AS timestamp,

# pg_current_wal_lsn() - '0/0' AS lsn,

# pg_current_wal_insert_lsn() - '0/0' AS insert_lsn,

# pg_current_wal_lsn() - '0/0' AS write_lsn,

# pg_current_wal_flush_lsn() - '0/0' AS flush_lsn,

# extract(EPOCH FROM now() - pg_postmaster_start_time()) AS uptime,

# extract(EPOCH FROM now() - pg_conf_load_time()) AS conf_reload_time,

# pg_is_in_backup() AS is_in_backup,

# extract(EPOCH FROM now() - pg_backup_start_time()) AS backup_time;

#

# # [OPTIONAL] metadata fields, control collector behavior

# ttl: 10 # Cache TTL: in seconds, how long pg_exporter caches this collector's query result.

# timeout: 0.1 # Query Timeout: in seconds, queries that exceed this limit will be canceled.

# min_version: 100000 # minimal supported version, boundary IS included, in server version number format

# max_version: 130000 # maximal supported version, boundary NOT included, in server version number format

# fatal: false # If a collector marked `fatal` fails, the entire scrape aborts immediately and is marked as failed

# skip: false # Collector marked `skip` will not be installed during the planning procedure

#

# tags: [cluster, primary] # Collector tags, used for planning and scheduling

#

# # tags are a list of strings, which could be:

# # * `cluster` marks this query as cluster level, so it will only execute once for the same PostgreSQL Server

# # * `primary` or `master` marks this query as primary-only (it WILL NOT execute if pg_is_in_recovery())

# # * `standby` or `replica` marks this query as replica-only (it WILL execute only if pg_is_in_recovery())

# # some special tag prefixes have special interpretations:

# # * `dbname:<dbname>` means this query will ONLY be executed on database with name `<dbname>`

# # * `username:<user>` means this query will only be executed when connected as user `<user>`

# # * `extension:<extname>` means this query will only be executed when extension `<extname>` is installed

# # * `schema:<nspname>` means this query will only be executed when schema `<nspname>` exists

# # * `not:<negtag>` means this query WILL NOT be executed when exporter is tagged with `<negtag>`

# # * `<tag>` means this query WILL be executed when exporter is tagged with `<tag>`

# #   (<tag> cannot be a special tag such as cluster, primary, standby, master, replica, etc.)

#

#     # One or more "predicate queries" may be defined for a metric query. These
#     # are run before the main metric query (after any cache hit check). If all
#     # of them, when run sequentially, return a single row with a single boolean
#     # column whose value is true, the main metric query is executed. If any of
#     # them returns false or zero rows, the main query is skipped. If any
#     # predicate query returns more than one row, a non-boolean result, or fails
#     # with an error, the whole query is marked as failed. Predicate queries can be
#     # used to check for the presence of specific functions, tables, extensions,
#     # settings, and vendor-specific PostgreSQL features before running the main query.
#
#     predicate_queries:
#       - name: predicate query name
#         predicate_query: |
#           SELECT EXISTS (SELECT 1 FROM information_schema.routines WHERE routine_schema = 'pg_catalog' AND routine_name = 'pg_backup_start_time');
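#       # A second, hypothetical predicate (illustration only) that checks a setting instead of a
#       # function. current_setting() returns text such as 'on' or 'off', which PostgreSQL can cast
#       # to boolean, so the query yields the single boolean row required above. Every predicate
#       # in the list must return true for the main query to run.
#       - name: track_io_timing enabled
#         predicate_query: |
#           SELECT current_setting('track_io_timing')::bool;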

#
#     metrics:                 # List of returned columns. Each column must have a name and a `usage`;
#                              # `rename`, `description`, `default` and `scale` are optional
#       - timestamp:           # Column name, must be exactly the same as the returned column name
#           usage: GAUGE       # Metric type, `usage` could be:
#                              #  * DISCARD: completely ignore this column
#                              #  * LABEL:   use columnName=columnValue as a label on the metric
#                              #  * GAUGE:   mark the column as a gauge metric, full name will be `<query.name>_<column.name>`
#                              #  * COUNTER: same as above, except it is a counter rather than a gauge
#           rename: ts         # [OPTIONAL] Alias, used instead of the column name
#           description: xxxx  # [OPTIONAL] Description of the column, used as the metric description
#           default: 0         # [OPTIONAL] Default value, used when the column is NULL
#           scale: 1000        # [OPTIONAL] Multiply the value by this factor
#       - lsn:
#           usage: COUNTER
#           description: log sequence number, current write location (on primary)
#       - insert_lsn:
#           usage: COUNTER
#           description: primary only, current WAL insert location
#       - write_lsn:
#           usage: COUNTER
#           description: primary only, current WAL write location
#       - flush_lsn:
#           usage: COUNTER
#           description: primary only, current WAL flush location
#       - uptime:
#           usage: GAUGE
#           description: seconds since postmaster start
#       - conf_reload_time:
#           usage: GAUGE
#           description: seconds since last configuration reload
#       - is_in_backup:
#           usage: GAUGE
#           description: 1 if backup is in progress
#       - backup_time:
#           usage: GAUGE
#           description: seconds since the current backup started, NULL if no backup is in progress
#
#       ....                   # you can also use `rename` and `scale` to customize the metric name and value:
#       - checkpoint_write_time:
#           rename: write_time
#           usage: COUNTER
#           scale: 1e-3
#           description: Total amount of time that has been spent in the portion of checkpoint processing where files are written to disk, in seconds
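#
# A second, minimal collector sketch showing the special tag prefixes. The branch name `pgss_example`
# and the exporter tag `lite` are hypothetical and used for illustration only. The query reads the
# pg_stat_statements view, and the `extension:` tag ensures it only runs when that extension is installed.
#
#   pgss_example:              # hypothetical branch name
#     name: pgss_example
#     desc: number of statements tracked by pg_stat_statements (illustrative sketch)
#     query: SELECT count(*) AS statements FROM pg_stat_statements;
#     ttl: 10
#     tags: [ cluster, "extension:pg_stat_statements", "not:lite" ]
#                              # once per server, only if pg_stat_statements is installed,
#                              # and never on an exporter tagged with the hypothetical `lite` tag
#     metrics:
#       - statements:
#           usage: GAUGE
#           description: number of distinct statements currently tracked by pg_stat_statements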

#==============================================================#
# 4. Collector Presets
#==============================================================#
# pg_exporter ships with a series of preset collectors (already numbered and ordered by filename)
#
#   1xx  Basic metrics:       basic info, metadata, settings
#   2xx  Replication metrics: replication, walreceiver, downstream, sync standby, slots, subscription
#   3xx  Persist metrics:     size, wal, background writer, checkpointer, ssl, checkpoint, recovery, slru cache, shmem usage
#   4xx  Activity metrics:    backend count grouped by state, wait event, locks, xacts, queries
#   5xx  Progress metrics:    clustering, vacuuming, indexing, basebackup, copy
#   6xx  Database metrics:    pg_database, publication, subscription
#   7xx  Object metrics:      pg_class, table, index, function, sequence, default partition
#   8xx  Optional metrics:    optional collectors (disabled by default because their queries are slow)
#   9xx  Pgbouncer metrics:   metrics from the pgbouncer admin database `pgbouncer`
#
#   100-599  Metrics for the entire database cluster (scraped once)
#   600-899  Metrics for a single database (scraped for each database, except for pg_db itself)
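#
# For instance, applying these ranges to a few of the preset files in config/:
#
#   0310-pg_size.yml         3xx persist metrics    (100-599: queried once per server)
#   0610-pg_db.yml           6xx database metrics   (the pg_db exception: not repeated per database)
#   0710-pg_index.yml        7xx object metrics     (600-899: queried once for each database)
#   0820-pg_table_bloat.yml  8xx optional metrics   (slow queries, disabled by default)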

#==============================================================#
# 5. Cache TTL
#==============================================================#
# A cache reduces query overhead. Enable it by setting a non-zero `ttl` on a collector.
# Caching is highly recommended to avoid duplicate queries, especially when multiple Prometheus
# servers scrape the same instance with slow monitoring queries. Setting `ttl` to zero or leaving it
# blank disables result caching, which is the default behavior.
#
# The TTL has to be smaller than your scrape interval. A 15s scrape interval with a 10s TTL is a good
# start for production environments. Some expensive monitoring queries (such as size/bloat checks)
# use a longer `ttl`, which effectively gives them a lower scrape frequency than the other collectors.
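#
# A minimal sketch of both cases. The branch names and queries are hypothetical illustrations, not the
# shipped presets. With a 15s scrape interval, `cheap_example` re-runs its query on every scrape (its
# 10s cache has expired by then), while a second Prometheus scraping within those 10s is served from
# the cache. `expensive_example` hits the database at most once every 300s, however often it is scraped.
#
#   cheap_example:
#     name: cheap_example
#     query: SELECT count(*) AS backends FROM pg_stat_activity;
#     ttl: 10                  # smaller than the 15s scrape interval
#     metrics:
#       - backends:
#           usage: GAUGE
#           description: number of backends
#
#   expensive_example:
#     name: expensive_example
#     query: SELECT sum(pg_total_relation_size(oid)) AS relation_bytes FROM pg_class WHERE relkind = 'r';
#     ttl: 300                 # cached for 5 minutes: a lower effective scrape frequency
#     timeout: 2               # slow queries like this may also need more than the 0.1s default timeout
#     metrics:
#       - relation_bytes:
#           usage: GAUGE
#           description: total size of ordinary tables in the current database, including their indexes and TOAST data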

#==============================================================#
# 6. Query Timeout
#==============================================================#
# Collectors can be configured with an optional `timeout` (in seconds). If a collector's query runs
# longer than that, it is canceled immediately. Setting `timeout` to 0 or leaving it blank resets it
# to the default timeout of 0.1 (100ms); setting it to any negative number disables the query timeout.
# With the default in place, every query that runs longer than 100ms is canceled immediately to avoid
# an avalanche. You can explicitly override that option, but beware: in some extreme cases, if the sum
# of all your timeouts exceeds your scrape/cache interval (usually 15s), the queries may still jam up.
# Alternatively, you can simply disable potentially slow queries.
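#
# A sketch of the possible `timeout` values (hypothetical branch names, all other fields omitted):
#
#   default_example:
#     timeout: 0               # same as leaving it blank: falls back to the 0.1s (100ms) default
#   relaxed_example:
#     timeout: 2               # canceled if the query runs for more than 2 seconds
#   unbounded_example:
#     timeout: -1              # any negative number disables the timeout for this collector
#   disabled_example:
#     skip: true               # never installed, so it can never stall a scrape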

#==============================================================#
# 7. Version Compatibility
#==============================================================#
# Each collector has two optional version compatibility parameters: `min_version` and `max_version`.
# They specify which target postgres/pgbouncer versions the collector supports: if the target's version
# is lower than `min_version`, or greater than or equal to `max_version`, the collector will not be installed.
#
# Both parameters use the PostgreSQL server version number format (the value of `server_version_num`).
# Since PostgreSQL 10 it is a 6-digit integer <major:2 digits><minor:4 digits>, i.e. major * 10000 + minor;
# before 10 it was <major:2 digits><minor:2 digits><patch:2 digits>.
# For example, 090600 (i.e. 90600) stands for 9.6.0, and 120001 stands for 12.1.
#
# Beware that the version compatibility range is left-inclusive and right-exclusive: [min, max).
# Setting either parameter to zero or leaving it blank makes that bound -inf (min) or +inf (max).
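#
# A worked example of the [min, max) rule, with illustrative bounds:
#
#     min_version: 100000      # 10.0, included
#     max_version: 130000      # 13.0, excluded
#
#   target 9.6   ->  90600 < 100000                   -> not installed
#   target 10.0  -> 100000 <= 100000 < 130000         -> installed
#   target 12.1  -> 100000 <= 120001 < 130000         -> installed
#   target 13.0  -> 130000 >= 130000 (max excluded)   -> not installed
#
# Leaving `max_version` at 0 (or blank) removes the upper bound, so with the same `min_version`
# the collector would be installed on 10.0 and every later release.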

#==============================================================#
# 8. Fatality
#==============================================================#
# If a collector marked `fatal` fails, the entire scrape operation is marked as failed and the key
# metrics `pg_up` / `pgbouncer_up` are reset to 0. It is always a good practice to set up AT LEAST ONE
# fatal collector for pg_exporter. `pg.pg_primary_only` and `pgbouncer_list` are the default fatal collectors.
#
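# A minimal sketch of marking a collector `fatal` (hypothetical branch name and query, illustration
# only): if even this trivial query fails, the scrape is marked as failed and `pg_up` is reset to 0,
# so the failure is visible instead of the exporter returning an incomplete set of metrics.
#
#   liveness_example:
#     name: liveness_example
#     desc: trivial query used as a fatal liveness check (illustrative sketch)
#     query: SELECT 1 AS alive;
#     fatal: true              # any failure here aborts the whole scrape
#     metrics:
#       - alive:
#           usage: GAUGE
#           description: always 1 when the query succeeds
#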