Repository: WellyZhang/RAVEN

Branch: master

Commit: 77927ba3fe26

Files: 30

Total size: 192.3 KB

Directory structure:

gitextract_dccjdwzt/
├── .gitignore
├── LICENSE
├── README.md
├── assets/
│   ├── README.md
│   └── embedding.npy
├── requirements.txt
└── src/
    ├── dataset/
    │   ├── AoT.py
    │   ├── Attribute.py
    │   ├── Rule.py
    │   ├── __init__.py
    │   ├── api.py
    │   ├── build_tree.py
    │   ├── const.py
    │   ├── constraints.py
    │   ├── main.py
    │   ├── rendering.py
    │   ├── sampling.py
    │   ├── serialize.py
    │   └── solver.py
    └── model/
        ├── __init__.py
        ├── basic_model.py
        ├── cnn_lstm.py
        ├── cnn_mlp.py
        ├── const/
        │   ├── __init__.py
        │   └── const.py
        ├── fc_tree_net.py
        ├── main.py
        ├── resnet18.py
        └── utility/
            ├── __init__.py
            └── dataset_utility.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# pyenv

.python-version

# celery beat schedule file

celerybeat-schedule

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

# vscode

.vscode

# experiments

/experiments

================================================

FILE: LICENSE

================================================

GNU GENERAL PUBLIC LICENSE

Version 3, 29 June 2007

Copyright (C) 2007 Free Software Foundation, Inc. <https://fsf.org/>

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU General Public License is a free, copyleft license for

software and other kinds of works.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

the GNU General Public License is intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users. We, the Free Software Foundation, use the

GNU General Public License for most of our software; it applies also to

any other work released this way by its authors. You can apply it to

your programs, too.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

To protect your rights, we need to prevent others from denying you

these rights or asking you to surrender the rights. Therefore, you have

certain responsibilities if you distribute copies of the software, or if

you modify it: responsibilities to respect the freedom of others.

For example, if you distribute copies of such a program, whether

gratis or for a fee, you must pass on to the recipients the same

freedoms that you received. You must make sure that they, too, receive

or can get the source code. And you must show them these terms so they

know their rights.

Developers that use the GNU GPL protect your rights with two steps:

(1) assert copyright on the software, and (2) offer you this License

giving you legal permission to copy, distribute and/or modify it.

For the developers' and authors' protection, the GPL clearly explains

that there is no warranty for this free software. For both users' and

authors' sake, the GPL requires that modified versions be marked as

changed, so that their problems will not be attributed erroneously to

authors of previous versions.

Some devices are designed to deny users access to install or run

modified versions of the software inside them, although the manufacturer

can do so. This is fundamentally incompatible with the aim of

protecting users' freedom to change the software. The systematic

pattern of such abuse occurs in the area of products for individuals to

use, which is precisely where it is most unacceptable. Therefore, we

have designed this version of the GPL to prohibit the practice for those

products. If such problems arise substantially in other domains, we

stand ready to extend this provision to those domains in future versions

of the GPL, as needed to protect the freedom of users.

Finally, every program is threatened constantly by software patents.

States should not allow patents to restrict development and use of

software on general-purpose computers, but in those that do, we wish to

avoid the special danger that patents applied to a free program could

make it effectively proprietary. To prevent this, the GPL assures that

patents cannot be used to render the program non-free.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,

procedures, authorization keys, or other information required to install

and execute modified versions of a covered work in that User Product from

a modified version of its Corresponding Source. The information must

suffice to ensure that the continued functioning of the modified object

code is in no case prevented or interfered with solely because

modification has been made.

If you convey an object code work under this section in, or with, or

specifically for use in, a User Product, and the conveying occurs as

part of a transaction in which the right of possession and use of the

User Product is transferred to the recipient in perpetuity or for a

fixed term (regardless of how the transaction is characterized), the

Corresponding Source conveyed under this section must be accompanied

by the Installation Information. But this requirement does not apply

if neither you nor any third party retains the ability to install

modified object code on the User Product (for example, the work has

been installed in ROM).

The requirement to provide Installation Information does not include a

requirement to continue to provide support service, warranty, or updates

for a work that has been modified or installed by the recipient, or for

the User Product in which it has been modified or installed. Access to a

network may be denied when the modification itself materially and

adversely affects the operation of the network or violates the rules and

protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,

in accord with this section must be in a format that is publicly

documented (and with an implementation available to the public in

source code form), and must require no special password or key for

unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this

License by making exceptions from one or more of its conditions.

Additional permissions that are applicable to the entire Program shall

be treated as though they were included in this License, to the extent

that they are valid under applicable law. If additional permissions

apply only to part of the Program, that part may be used separately

under those permissions, but the entire Program remains governed by

this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option

remove any additional permissions from that copy, or from any part of

it. (Additional permissions may be written to require their own

removal in certain cases when you modify the work.) You may place

additional permissions on material, added by you to a covered work,

for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you

add to a covered work, you may (if authorized by the copyright holders of

that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the

terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or

author attributions in that material or in the Appropriate Legal

Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or

requiring that modified versions of such material be marked in

reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or

authors of the material; or

e) Declining to grant rights under trademark law for use of some

trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that

material by anyone who conveys the material (or modified versions of

it) with contractual assumptions of liability to the recipient, for

any liability that these contractual assumptions directly impose on

those licensors and authors.

All other non-permissive additional terms are considered "further

restrictions" within the meaning of section 10. If the Program as you

received it, or any part of it, contains a notice stating that it is

governed by this License along with a term that is a further

restriction, you may remove that term. If a license document contains

a further restriction but permits relicensing or conveying under this

License, you may add to a covered work material governed by the terms

of that license document, provided that the further restriction does

not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you

must place, in the relevant source files, a statement of the

additional terms that apply to those files, or a notice indicating

where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the

form of a separately written license, or stated as exceptions;

the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly

provided under this License. Any attempt otherwise to propagate or

modify it is void, and will automatically terminate your rights under

this License (including any patent licenses granted under the third

paragraph of section 11).

However, if you cease all violation of this License, then your

license from a particular copyright holder is reinstated (a)

provisionally, unless and until the copyright holder explicitly and

finally terminates your license, and (b) permanently, if the copyright

holder fails to notify you of the violation by some reasonable means

prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is

reinstated permanently if the copyright holder notifies you of the

violation by some reasonable means, this is the first time you have

received notice of violation of this License (for any work) from that

copyright holder, and you cure the violation prior to 30 days after

your receipt of the notice.

Termination of your rights under this section does not terminate the

licenses of parties who have received copies or rights from you under

this License. If your rights have been terminated and not permanently

reinstated, you do not qualify to receive new licenses for the same

material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or

run a copy of the Program. Ancillary propagation of a covered work

occurring solely as a consequence of using peer-to-peer transmission

to receive a copy likewise does not require acceptance. However,

nothing other than this License grants you permission to propagate or

modify any covered work. These actions infringe copyright if you do

not accept this License. Therefore, by modifying or propagating a

covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically

receives a license from the original licensors, to run, modify and

propagate that work, subject to this License. You are not responsible

for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an

organization, or substantially all assets of one, or subdividing an

organization, or merging organizations. If propagation of a covered

work results from an entity transaction, each party to that

transaction who receives a copy of the work also receives whatever

licenses to the work the party's predecessor in interest had or could

give under the previous paragraph, plus a right to possession of the

Corresponding Source of the work from the predecessor in interest, if

the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that

any patent claim is infringed by making, using, selling, offering for

sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this

License of the Program or a work on which the Program is based. The

work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims

owned or controlled by the contributor, whether already acquired or

hereafter acquired, that would be infringed by some manner, permitted

by this License, of making, using, or selling its contributor version,

but do not include claims that would be infringed only as a

consequence of further modification of the contributor version. For

purposes of this definition, "control" includes the right to grant

patent sublicenses in a manner consistent with the requirements of

this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free

patent license under the contributor's essential patent claims, to

make, use, sell, offer for sale, import and otherwise run, modify and

propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express

agreement or commitment, however denominated, not to enforce a patent

(such as an express permission to practice a patent or covenant not to

sue for patent infringement). To "grant" such a patent license to a

party means to make such an agreement or commitment not to enforce a

patent against the party.

If you convey a covered work, knowingly relying on a patent license,

and the Corresponding Source of the work is not available for anyone

to copy, free of charge and under the terms of this License, through a

publicly available network server or other readily accessible means,

then you must either (1) cause the Corresponding Source to be so

available, or (2) arrange to deprive yourself of the benefit of the

patent license for this particular work, or (3) arrange, in a manner

consistent with the requirements of this License, to extend the patent

license to downstream recipients. "Knowingly relying" means you have

actual knowledge that, but for the patent license, your conveying the

covered work in a country, or your recipient's use of the covered work

in a country, would infringe one or more identifiable patents in that

country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or

arrangement, you convey, or propagate by procuring conveyance of, a

covered work, and grant a patent license to some of the parties

receiving the covered work authorizing them to use, propagate, modify

or convey a specific copy of the covered work, then the patent license

you grant is automatically extended to all recipients of the covered

work and works based on it.

A patent license is "discriminatory" if it does not include within

the scope of its coverage, prohibits the exercise of, or is

conditioned on the non-exercise of one or more of the rights that are

specifically granted under this License. You may not convey a covered

work if you are a party to an arrangement with a third party that is

in the business of distributing software, under which you make payment

to the third party based on the extent of your activity of conveying

the work, and under which the third party grants, to any of the

parties who would receive the covered work from you, a discriminatory

patent license (a) in connection with copies of the covered work

conveyed by you (or copies made from those copies), or (b) primarily

for and in connection with specific products or compilations that

contain the covered work, unless you entered into that arrangement,

or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting

any implied license or other defenses to infringement that may

otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot convey a

covered work so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you may

not convey it at all. For example, if you agree to terms that obligate you

to collect a royalty for further conveying from those to whom you convey

the Program, the only way you could satisfy both those terms and this

License would be to refrain entirely from conveying the Program.

13. Use with the GNU Affero General Public License.

Notwithstanding any other provision of this License, you have

permission to link or combine any covered work with a work licensed

under version 3 of the GNU Affero General Public License into a single

combined work, and to convey the resulting work. The terms of this

License will continue to apply to the part which is the covered work,

but the special requirements of the GNU Affero General Public License,

section 13, concerning interaction through a network will apply to the

combination as such.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of

the GNU General Public License from time to time. Such new versions will

be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the

Program specifies that a certain numbered version of the GNU General

Public License "or any later version" applies to it, you have the

option of following the terms and conditions either of that numbered

version or of any later version published by the Free Software

Foundation. If the Program does not specify a version number of the

GNU General Public License, you may choose any version ever published

by the Free Software Foundation.

If the Program specifies that a proxy can decide which future

versions of the GNU General Public License can be used, that proxy's

public statement of acceptance of a version permanently authorizes you

to choose that version for the Program.

Later license versions may give you additional or different

permissions. However, no additional obligations are imposed on any

author or copyright holder as a result of your choosing to follow a

later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided

above cannot be given local legal effect according to their terms,

reviewing courts shall apply local law that most closely approximates

an absolute waiver of all civil liability in connection with the

Program, unless a warranty or assumption of liability accompanies a

copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

state the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

<one line to give the program's name and a brief idea of what it does.>

Copyright (C) <year> <name of author>

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see <https://www.gnu.org/licenses/>.

Also add information on how to contact you by electronic and paper mail.

If the program does terminal interaction, make it output a short

notice like this when it starts in an interactive mode:

<program> Copyright (C) <year> <name of author>

This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

This is free software, and you are welcome to redistribute it

under certain conditions; type `show c' for details.

The hypothetical commands `show w' and `show c' should show the appropriate

parts of the General Public License. Of course, your program's commands

might be different; for a GUI interface, you would use an "about box".

You should also get your employer (if you work as a programmer) or school,

if any, to sign a "copyright disclaimer" for the program, if necessary.

For more information on this, and how to apply and follow the GNU GPL, see

<https://www.gnu.org/licenses/>.

The GNU General Public License does not permit incorporating your program

into proprietary programs. If your program is a subroutine library, you

may consider it more useful to permit linking proprietary applications with

the library. If this is what you want to do, use the GNU Lesser General

Public License instead of this License. But first, please read

<https://www.gnu.org/licenses/why-not-lgpl.html>.

================================================

FILE: README.md

================================================

# RAVEN

This repo contains code for our CVPR 2019 paper.

[RAVEN: A Dataset for <u>R</u>elational and <u>A</u>nalogical <u>V</u>isual r<u>E</u>aso<u>N</u>ing](http://wellyzhang.github.io/attach/cvpr19zhang.pdf)

Chi Zhang*, Feng Gao*, Baoxiong Jia, Yixin Zhu, Song-Chun Zhu

*Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)*, 2019

(* indicates equal contribution.)

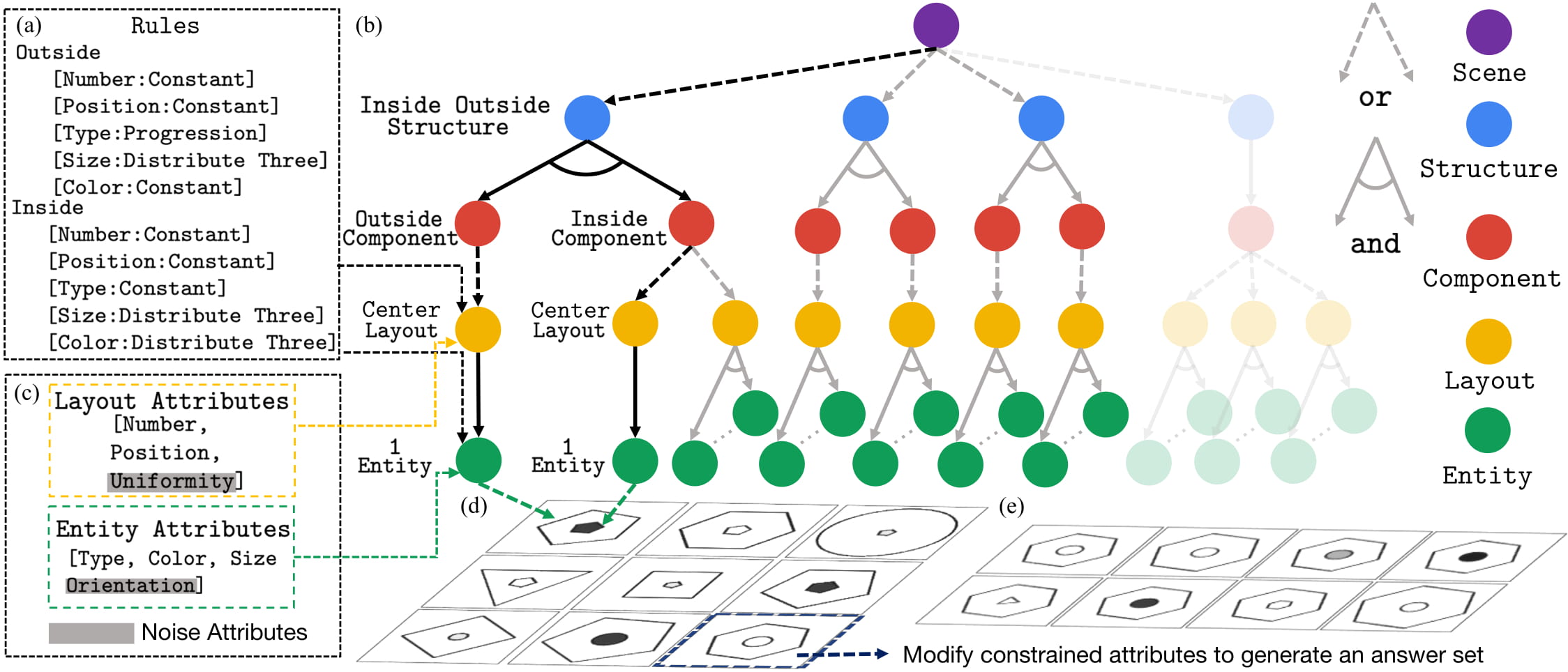

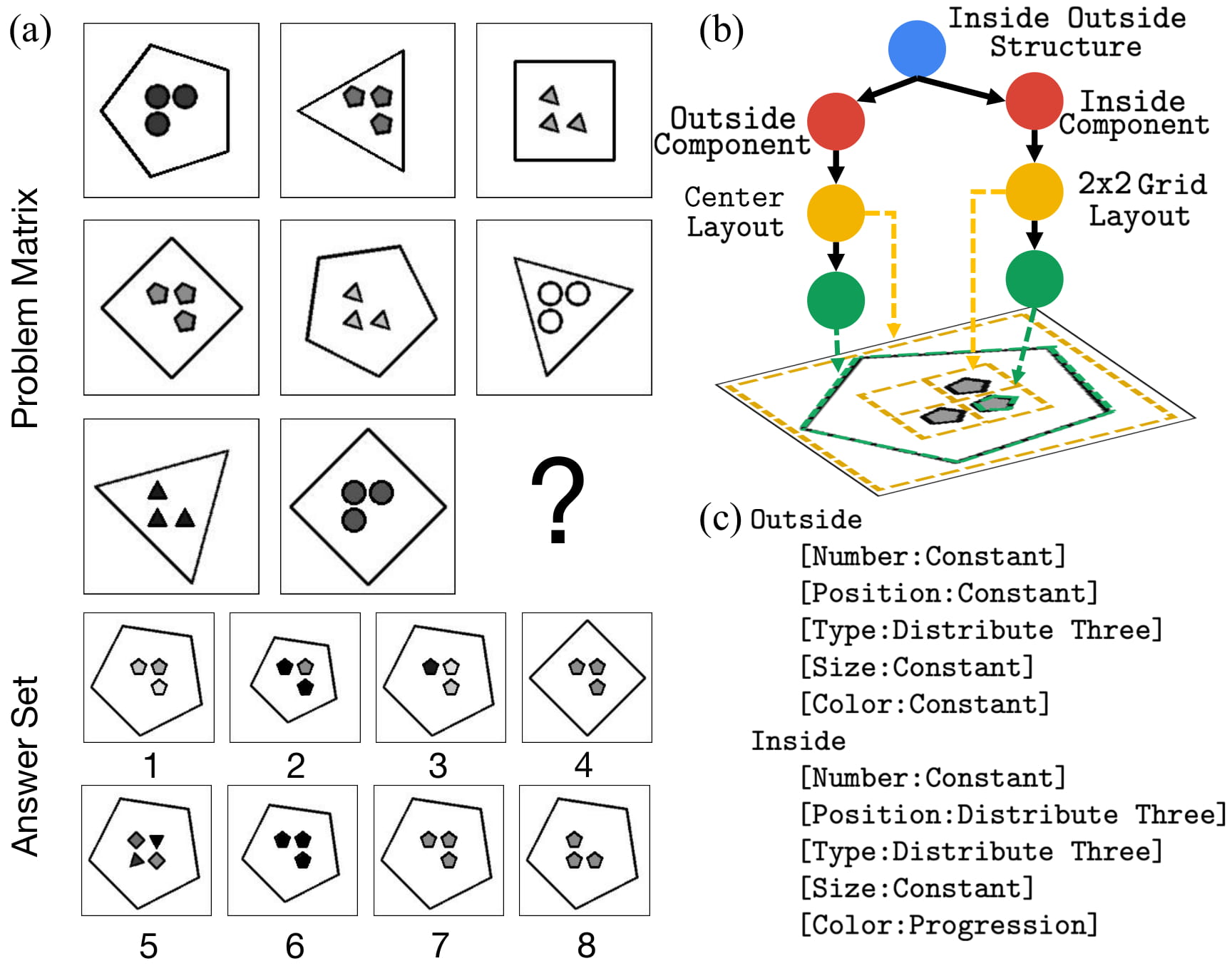

Dramatic progress has been witnessed in basic vision tasks involving low-level perception, such as object recognition, detection, and tracking. Unfortunately, there is still an enormous performance gap between artificial vision systems and human intelligence in terms of higher-level vision problems, especially ones involving reasoning. Earlier attempts in equipping machines with high-level reasoning have hovered around Visual Question Answering (VQA), one typical task associating vision and language understanding. In this work, we propose a new dataset, built in the context of Raven's Progressive Matrices (RPM) and aimed at lifting machine intelligence by associating vision with structural, relational, and analogical reasoning in a hierarchical representation. Unlike previous works in measuring abstract reasoning using RPM, we establish a semantic link between vision and reasoning by providing structure representation. This addition enables a new type of abstract reasoning by jointly operating on the structure representation. Machine reasoning ability using modern computer vision is evaluated in this newly proposed dataset. Additionally, we also provide human performance as a reference. Finally, we show consistent improvement across all models by incorporating a simple neural module that combines visual understanding and structure reasoning.

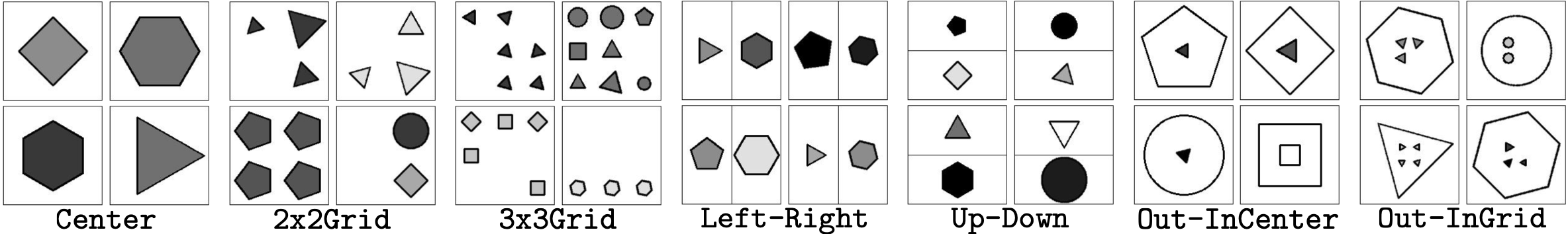

# Dataset

The dataset is generated using the attributed stochastic image grammar. An example is shown below.

The grammatical design makes the dataset flexible and extendable. In total, we come up with 7 different figural configurations.

The dataset formatting document is in ```assets/README.md```. To download the dataset, please check [our project page](http://wellyzhang.github.io/project/raven.html#dataset).

# Performance

We show performance of models in the following table. For details, please check our [paper](http://wellyzhang.github.io/attach/cvpr19zhang.pdf).

| Method | Acc | Center | 2x2Grid | 3x3Grid | L-R | U-D | O-IC | O-IG |
| :--- | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: |
| LSTM | 13.07% | 13.19% | 14.13% | 13.69% | 12.84% | 12.35% | 12.15% | 12.99% |
| WReN | 14.69% | 13.09% | 28.62% | 28.27% | 7.49% | 6.34% | 8.38% | 10.56% |
| CNN | 36.97% | 33.58% | 30.30% | 33.53% | 39.43% | 41.26% | 43.20% | 37.54% |
| ResNet | 53.43% | 52.82% | 41.86% | 44.29% | 58.77% | 60.16% | 63.19% | 53.12% |
| LSTM+DRT | 13.96% | 14.29% | 15.08% | 14.09% | 13.79% | 13.24% | 13.99% | 13.29% |
| WReN+DRT | 15.02% | 15.38% | 23.26% | 29.51% | 6.99% | 8.43% | 8.93% | 12.35% |
| CNN+DRT | 39.42% | 37.30% | 30.06% | 34.57% | 45.49% | 45.54% | 45.93% | 37.54% |
| ResNet+DRT | **59.56%** | **58.08%** | **46.53%** | **50.40%** | **65.82%** | **67.11%** | **69.09%** | **60.11%** |
| Human | 84.41% | 95.45% | 81.82% | 79.55% | 86.36% | 81.81% | 86.36% | 81.81% |
| Solver | 100% | 100% | 100% | 100% | 100% | 100% | 100% | 100% |

# Dependencies

**Important**

* Python 2.7

* OpenCV

* PyTorch

* CUDA and cuDNN expected

See ```requirements.txt``` for a full list of packages required.

# Usage

## Dataset Generation

Code to generate the dataset resides in the ```src/dataset``` folder. To generate a dataset, run

```

python src/dataset/main.py --num-samples <number of samples per configuration> --save-dir <directory to save the dataset>

```

Check the ```main.py``` file for a full list of arguments you can adjust.

## Benchmarking

Code to benchmark the dataset resides in ```src/model```. To run the code, first put ```assets/embedding.npy``` in the dataset folder, as specified in ```src/model/utility/dataset_utility.py```. Then run

```

python src/model/main.py --model <model name> --path <path to the dataset>

```

You can check the ```main.py``` file for a full list of arguments. This repo only supports ```Resnet18_MLP```, ```CNN_MLP```, and ```CNN_LSTM```. For WReN, please check the implementation in [the WReN repo](https://github.com/Fen9/WReN).

Note that for batch processing, we implement the DRT as a maximum tree of all possible tree structures and prune the branches during training based on an indicator.
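The batching idea in the note above can be pictured as masking: every sample carries features for all branches of the maximal tree, and a 0/1 indicator switches off the branches that its own structure does not contain. The following is a minimal NumPy sketch of that idea only, not the PyTorch code in this repo; the function name `masked_branch_sum`, the tensor shapes, and the choice of summation as the aggregation are all assumptions made for illustration.

```python
import numpy as np


def masked_branch_sum(branch_features, indicator):
    """Aggregate branch features of a maximal tree, skipping pruned branches.

    branch_features: array of shape (batch, num_branches, dim)  [assumed layout]
    indicator: 0/1 array of shape (batch, num_branches); 1 keeps a branch
    """
    branch_features = np.asarray(branch_features, dtype=float)
    # Broadcast the indicator over the feature dimension.
    mask = np.asarray(indicator, dtype=float)[:, :, None]
    # Pruned branches are multiplied by zero and so contribute nothing.
    return (branch_features * mask).sum(axis=1)
```

Because the mask is applied per sample, samples with different tree structures can share one batch without any per-sample control flow.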

# Citation

If you find the paper and/or the code helpful, please cite us.

```

@inproceedings{zhang2019raven,

title={RAVEN: A Dataset for Relational and Analogical Visual rEasoNing},

author={Zhang, Chi and Gao, Feng and Jia, Baoxiong and Zhu, Yixin and Zhu, Song-Chun},

booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2019}

}

```

# Acknowledgement

We'd like to express our gratitude to all the colleagues and anonymous reviewers who helped us improve the paper. The project would have been impossible to finish without the following open-source implementation.

* [WReN](https://github.com/Fen9/WReN)

================================================

FILE: assets/README.md

================================================

# Dataset Format

The dataset folder is organized as follows:

```

center_single/

RAVEN_0_train.npz

RAVEN_0_train.xml

...

RAVEN_6_val.npz

RAVEN_6_val.xml

...

RAVEN_8_test.npz

RAVEN_8_test.xml

...

distribute_four/

...

distribute_nine/

...

in_center_single_out_center_single/

...

in_distribute_four_out_center_single/

...

left_center_single_right_center_single/

...

up_center_single_down_center_single/

...

```

Note that each npz file comes with an xml file.

These 7 folders correspond to the 7 figure configurations in the paper. Specifically,

* Center = center_single

* 2x2Grid = distribute_four

* 3x3Grid = distribute_nine

* Left-Right = left_center_single_right_center_single

* Up-Down = up_center_single_down_center_single

* Out-InCenter = in_center_single_out_center_single

* Out-InGrid = in_distribute_four_out_center_single
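For scripts that switch between the paper's names and the folder names, the list above can be written down as a small lookup table. This is only a convenience sketch: `CONFIG_FOLDERS` and `config_dir` are hypothetical names and are not part of this repo.

```python
import os

# Paper name -> dataset folder name, copied from the list above.
CONFIG_FOLDERS = {
    "Center": "center_single",
    "2x2Grid": "distribute_four",
    "3x3Grid": "distribute_nine",
    "Left-Right": "left_center_single_right_center_single",
    "Up-Down": "up_center_single_down_center_single",
    "Out-InCenter": "in_center_single_out_center_single",
    "Out-InGrid": "in_distribute_four_out_center_single",
}


def config_dir(dataset_root, paper_name):
    """Return the folder that holds one figure configuration."""
    return os.path.join(dataset_root, CONFIG_FOLDERS[paper_name])
```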

## Naming

You might notice that the actual naming in this dataset is slightly different from what's reported in our paper. This is mostly due to the fact that things like **2x2** or **3x3** do not have corresponding word vectors. They are now **distribute_four** and **distribute_nine**. To make the paper concise, we also remove certain adjectives. **Center** was **Center_Single** and sometimes came with a component name.

As described in the paper, embeddings for each of them are obtained from pre-trained GloVe vectors and held fixed during training.

## NPZ file

Each npz file contains the following:

* image: a (16, 160, 160) array where all 16 figures in each problem are stacked on the first dimension. Note that the first 8 figures compose the problem matrix and the last 8 figures are the choices.

* target: the index of the correct answer in the answer set. Note that it starts from 0 and you should offset it by 8 if you want to retrieve it from the image array.

* structure: the tree structure annotation for the problem. It's serialized into a sequence using pre-order traversal.

* meta_matrix: similar to that in PGM. The detailed ordering can be found in ```src/dataset/const.py```.

* meta_target: bitwise-or of meta_matrix on all rows.

* meta_structure: it's similar to meta_matrix. Detailed ordering is in ```src/dataset/const.py```.
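As a rough illustration of the fields listed above, the sketch below loads one problem, splits the 16 panels into context and choices, and looks up the correct answer panel by offsetting `target` by 8. The helper names `split_problem` and `meta_target_from` are hypothetical, and the code assumes that `target` is stored as a scalar and that `meta_matrix` is an integer array; check a real file before relying on either.

```python
import numpy as np


def split_problem(npz_path):
    """Return (context, choices, answer) panels from one RAVEN npz file."""
    data = np.load(npz_path)
    image = data["image"]          # all 16 panels stacked on the first axis
    target = int(data["target"])   # index within the 8 choices (assumed scalar)
    context = image[:8]            # the problem matrix
    choices = image[8:]            # the answer set
    answer = image[8 + target]     # same panel as choices[target]
    return context, choices, answer


def meta_target_from(meta_matrix):
    """Bitwise-or of all rows of meta_matrix (assumed integer dtype)."""
    return np.bitwise_or.reduce(np.asarray(meta_matrix), axis=0)
```

A call such as `split_problem("center_single/RAVEN_0_train.npz")` would follow the folder layout shown earlier.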

## XML file

Each xml file contains the following:

* Context panels and choice panels: each Panel could be further decomposed into Struct, Component, Layout, and Entity.

* Each layer comes with its name and id if necessary.

* Layout has its own attributes, whose values are indices into the value set (see also ```src/dataset/const.py```), except Position. Position is a list of slots that entities can occupy, each denoted by its center and width/height.

* Entity's attributes follow the same annotation. The bbox is retrieved from the Position array in its parent Layout, and the real_bbox is the actual bounding box, denoted by its center and width/height. The mask is encoded using run-length encoding. To decode it, use the ```rle_decode``` function in ```src/dataset/api.py```.

* Rules: rules are divided into groups, each of which applies to the corresponding component with the same id number.

* ```attr``` could be ```Number/Position``` when the rule is ```Constant``` as these two attributes are deeply coupled.

* When there is a rule on ```Number``` or ```Position```, the rule on the other attribute is omitted: that attribute should be taken **as is**, *i.e.*, it simply follows from the stated rule (and may remain unchanged).

* Therefore, each rule group has 4 rules.

================================================

FILE: requirements.txt

================================================

numpy

scipy

matplotlib

pillow

scikit-image

opencv-contrib-python

tqdm

torch

torchvision

================================================

FILE: src/dataset/AoT.py

================================================

# -*- coding: utf-8 -*-

import copy

import numpy as np

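# scipy.misc.comb is deprecated and removed in newer SciPy releases; scipy.special.comb is the same function

from scipy.special import comb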

from Attribute import Angle, Color, Number, Position, Size, Type, Uniformity

from constraints import rule_constraint

class AoTNode(object):

"""Superclass of AoT.

"""

levels_next = {"Root": "Structure",

"Structure": "Component",

"Component": "Layout",

"Layout": "Entity"}

def __init__(self, name, level, node_type, is_pg=False):

self.name = name

self.level = level

self.node_type = node_type

self.children = []

self.is_pg = is_pg

def insert(self, node):

"""Insert a child node (public interface).

Arguments:

node(AoTNode): a node to insert

"""

assert isinstance(node, AoTNode)

assert self.node_type != "leaf"

assert node.level == self.levels_next[self.level]

self.children.append(node)

def _insert(self, node):

"""Insert a child node (internal use).

Arguments:

node(AoTNode): a node to insert

"""

assert isinstance(node, AoTNode)

assert self.node_type != "leaf"

assert node.level == self.levels_next[self.level]

self.children.append(node)

def _resample(self, change_number):

"""Resample the layout. If the number of entities changes, also resample the

position distribution; otherwise only resample each attribute for each entity.

Arguments:

change_number(bool): whether to resample the number of entities

"""

assert self.is_pg

if self.node_type == "and":

for child in self.children:

child._resample(change_number)

else:

self.children[0]._resample(change_number)

def __repr__(self):

return self.level + "." + self.name

def __str__(self):

return self.level + "." + self.name

class Root(AoTNode):

def __init__(self, name, is_pg=False):

super(Root, self).__init__(name, level="Root", node_type="or", is_pg=is_pg)

def sample(self):

"""The function returns a separate AoT that is correctly parsed.

Note that a new node is needed so that modification does not alter settings

in the original tree.

Returns:

new_node(Root): a newly instantiated node

"""

if self.is_pg:

raise ValueError("Could not sample on a PG")

new_node = Root(self.name, True)

selected = np.random.choice(self.children)

new_node.insert(selected._sample())

return new_node

def resample(self, change_number=False):

self._resample(change_number)

def prune(self, rule_groups):

"""Prune the AoT such that all branches satisfy the constraints.

Arguments:

rule_groups(list of list of Rule): each list of Rule applies to a component

Returns:

new_node(Root): a newly instantiated node with branches all satisfying the constraints;

None if no branches satisfy all the constraints

"""

new_node = Root(self.name)

for structure in self.children:

if len(structure.children) == len(rule_groups):

new_child = structure._prune(rule_groups)

if new_child is not None:

new_node.insert(new_child)

# during real execution, this should never happen

if len(new_node.children) == 0:

new_node = None

return new_node

def prepare(self):

"""This function prepares the AoT for rendering.

Returns:

structure.name(str): used for rendering structure

entities(list of Entity): used for rendering each entity

"""

assert self.is_pg

assert self.level == "Root"

structure = self.children[0]

components = []

for child in structure.children:

components.append(child)

entities = []

for component in components:

for child in component.children[0].children:

entities.append(child)

return structure.name, entities

def sample_new(self, component_idx, attr_name, min_level, max_level, root):

"""Sample a new configuration. This is used for generating answers.

Arguments:

component_idx(int): the component we will sample

attr_name(str): name of the attribute to sample

min_level(int): lower bound of value level for the attribute

max_level(int): upper bound of value level for the attribute

root(AoTNode): the answer AoT, used for storing previous value levels for each attribute

"""

assert self.is_pg

self.children[0]._sample_new(component_idx, attr_name, min_level, max_level, root.children[0])

class Structure(AoTNode):

def __init__(self, name, is_pg=False):

super(Structure, self).__init__(name, level="Structure", node_type="and", is_pg=is_pg)

def _sample(self):

if self.is_pg:

raise ValueError("Could not sample on a PG")

new_node = Structure(self.name, True)

for child in self.children:

new_node.insert(child._sample())

return new_node

def _prune(self, rule_groups):

new_node = Structure(self.name)

for i in range(len(self.children)):

child = self.children[i]

# if any of the components fails to satisfy the constraint

# the structure could not be chosen

new_child = child._prune(rule_groups[i])

if new_child is None:

return None

new_node.insert(new_child)

return new_node

def _sample_new(self, component_idx, attr_name, min_level, max_level, structure):

self.children[component_idx]._sample_new(attr_name, min_level, max_level, structure.children[component_idx])

class Component(AoTNode):

def __init__(self, name, is_pg=False):

super(Component, self).__init__(name, level="Component", node_type="or", is_pg=is_pg)

def _sample(self):

if self.is_pg:

raise ValueError("Could not sample on a PG")

new_node = Component(self.name, True)

selected = np.random.choice(self.children)

new_node.insert(selected._sample())

return new_node

def _prune(self, rule_group):

new_node = Component(self.name)

for child in self.children:

new_child = child._update_constraint(rule_group)

if new_child is not None:

new_node.insert(new_child)

if len(new_node.children) == 0:

new_node = None

return new_node

def _sample_new(self, attr_name, min_level, max_level, component):

self.children[0]._sample_new(attr_name, min_level, max_level, component.children[0])

class Layout(AoTNode):

"""Layout is the highest level of the hierarchy that has attributes (Number, Position and Uniformity).

To copy a Layout, please use deepcopy such that newly instantiated and separated attributes are created.

"""

def __init__(self, name, layout_constraint, entity_constraint,

orig_layout_constraint=None, orig_entity_constraint=None,

sample_new_num_count=None, is_pg=False):

super(Layout, self).__init__(name, level="Layout", node_type="and", is_pg=is_pg)

self.layout_constraint = layout_constraint

self.entity_constraint = entity_constraint

self.number = Number(min_level=layout_constraint["Number"][0], max_level=layout_constraint["Number"][1])

self.position = Position(pos_type=layout_constraint["Position"][0], pos_list=layout_constraint["Position"][1])

self.uniformity = Uniformity(min_level=layout_constraint["Uni"][0], max_level=layout_constraint["Uni"][1])

self.number.sample()

self.position.sample(self.number.get_value())

self.uniformity.sample()

# store initial layout_constraint and entity_constraint for answer generation

if orig_layout_constraint is None:

self.orig_layout_constraint = copy.deepcopy(self.layout_constraint)

else:

self.orig_layout_constraint = orig_layout_constraint

if orig_entity_constraint is None:

self.orig_entity_constraint = copy.deepcopy(self.entity_constraint)

else:

self.orig_entity_constraint = orig_entity_constraint

if sample_new_num_count is None:

self.sample_new_num_count = dict()

most_num = len(self.position.values)

for i in range(layout_constraint["Number"][0], layout_constraint["Number"][1] + 1):

self.sample_new_num_count[i] = [comb(most_num, i + 1), []]

else:

self.sample_new_num_count = sample_new_num_count

def add_new(self, *bboxes):

"""Add new entities into this level.

Arguments:

*bboxes(tuple of bbox): bboxes of new entities

"""

name = self.number.get_value()

uni = self.uniformity.get_value()

for i in range(len(bboxes)):

name += i

bbox = bboxes[i]

new_entity = copy.deepcopy(self.children[0])

new_entity.name = str(name)

new_entity.bbox = bbox

if not uni:

new_entity.resample()

self._insert(new_entity)

def resample(self, change_number=False):

self._resample(change_number)

def _sample(self):

"""Though Layout is an "and" node, we do not enumerate all possible configurations, but rather

we treat it as a sampling process such that different configurations are sampled. After the

sampling, the lower level Entities are instantiated.

Returns:

new_node(Layout): a separated node with independent attributes

"""

pos = self.position.get_value()

new_node = copy.deepcopy(self)

new_node.is_pg = True

if self.uniformity.get_value():

node = Entity(name=str(0), bbox=pos[0], entity_constraint=self.entity_constraint)

new_node._insert(node)

for i in range(1, len(pos)):

bbox = pos[i]

node = copy.deepcopy(node)

node.name = str(i)

node.bbox = bbox

new_node._insert(node)

else:

for i in range(len(pos)):

bbox = pos[i]

node = Entity(name=str(i), bbox=bbox, entity_constraint=self.entity_constraint)

new_node._insert(node)

return new_node

def _resample(self, change_number):

"""Resample each attribute for every child.

This function is called across rows.

Arguments:

change_number(bool): whether to resample a number

"""

if change_number:

self.number.sample()

del self.children[:]

self.position.sample(self.number.get_value())

pos = self.position.get_value()

if self.uniformity.get_value():

node = Entity(name=str(0), bbox=pos[0], entity_constraint=self.entity_constraint)

self._insert(node)

for i in range(1, len(pos)):

bbox = pos[i]

node = copy.deepcopy(node)

node.name = str(i)

node.bbox = bbox

self._insert(node)

else:

for i in range(len(pos)):

bbox = pos[i]

node = Entity(name=str(i), bbox=bbox, entity_constraint=self.entity_constraint)

self._insert(node)

def _update_constraint(self, rule_group):

"""Update the constraint of the layout. If one constraint is not satisfied, return None

such that this structure is discarded.

Arguments:

rule_group(list of Rule): all rules to apply to this layout

Returns:

Layout(Layout): a new Layout node with independent attributes

"""

num_min = self.layout_constraint["Number"][0]

num_max = self.layout_constraint["Number"][1]

uni_min = self.layout_constraint["Uni"][0]

uni_max = self.layout_constraint["Uni"][1]

type_min = self.entity_constraint["Type"][0]

type_max = self.entity_constraint["Type"][1]

size_min = self.entity_constraint["Size"][0]

size_max = self.entity_constraint["Size"][1]

color_min = self.entity_constraint["Color"][0]

color_max = self.entity_constraint["Color"][1]

new_constraints = rule_constraint(rule_group, num_min, num_max,

uni_min, uni_max,

type_min, type_max,

size_min, size_max,

color_min, color_max)

new_layout_constraint, new_entity_constraint = new_constraints

new_num_min = new_layout_constraint["Number"][0]

new_num_max = new_layout_constraint["Number"][1]

if new_num_min > new_num_max:

return None

new_uni_min = new_layout_constraint["Uni"][0]

new_uni_max = new_layout_constraint["Uni"][1]

if new_uni_min > new_uni_max:

return None

new_type_min = new_entity_constraint["Type"][0]

new_type_max = new_entity_constraint["Type"][1]

if new_type_min > new_type_max:

return None

new_size_min = new_entity_constraint["Size"][0]

new_size_max = new_entity_constraint["Size"][1]

if new_size_min > new_size_max:

return None

new_color_min = new_entity_constraint["Color"][0]

new_color_max = new_entity_constraint["Color"][1]

if new_color_min > new_color_max:

return None

new_layout_constraint = copy.deepcopy(self.layout_constraint)

new_layout_constraint["Number"][:] = [new_num_min, new_num_max]

new_layout_constraint["Uni"][:] = [new_uni_min, new_uni_max]

new_entity_constraint = copy.deepcopy(self.entity_constraint)

new_entity_constraint["Type"][:] = [new_type_min, new_type_max]

new_entity_constraint["Size"][:] = [new_size_min, new_size_max]

new_entity_constraint["Color"][:] = [new_color_min, new_color_max]

return Layout(self.name, new_layout_constraint, new_entity_constraint,

self.orig_layout_constraint, self.orig_entity_constraint,

self.sample_new_num_count)

def reset_constraint(self, attr):

attr_name = attr.lower()

instance = getattr(self, attr_name)

instance.min_level = self.layout_constraint[attr][0]

instance.max_level = self.layout_constraint[attr][1]

def _sample_new(self, attr_name, min_level, max_level, layout):

if attr_name == "Number":

while True:

value_level = self.number.sample_new(min_level, max_level)

if layout.sample_new_num_count[value_level][0] == 0:

continue

new_num = self.number.get_value(value_level)

new_value_idx = self.position.sample_new(new_num)

set_new_value_idx = set(new_value_idx)

if set_new_value_idx not in layout.sample_new_num_count[value_level][1]:

layout.sample_new_num_count[value_level][0] -= 1

layout.sample_new_num_count[value_level][1].append(set_new_value_idx)

break

self.number.set_value_level(value_level)

self.position.set_value_idx(new_value_idx)

pos = self.position.get_value()

del self.children[:]

for i in range(len(pos)):

bbox = pos[i]

node = Entity(name=str(i), bbox=bbox, entity_constraint=self.entity_constraint)

self._insert(node)

elif attr_name == "Position":

new_value_idx = self.position.sample_new(self.number.get_value())

layout.position.previous_values.append(new_value_idx)

self.position.set_value_idx(new_value_idx)

pos = self.position.get_value()

for i in range(len(pos)):

bbox = pos[i]

self.children[i].bbox = bbox

elif attr_name == "Type":

for index in range(len(self.children)):

new_value_level = self.children[index].type.sample_new(min_level, max_level)

self.children[index].type.set_value_level(new_value_level)

layout.children[index].type.previous_values.append(new_value_level)

elif attr_name == "Size":

for index in range(len(self.children)):

new_value_level = self.children[index].size.sample_new(min_level, max_level)

self.children[index].size.set_value_level(new_value_level)

layout.children[index].size.previous_values.append(new_value_level)

elif attr_name == "Color":

for index in range(len(self.children)):

new_value_level = self.children[index].color.sample_new(min_level, max_level)

self.children[index].color.set_value_level(new_value_level)

layout.children[index].color.previous_values.append(new_value_level)

else:

raise ValueError("Unsupported operation")

class Entity(AoTNode):

def __init__(self, name, bbox, entity_constraint):

super(Entity, self).__init__(name, level="Entity", node_type="leaf", is_pg=True)

# Attributes

# Sample each attribute such that the value lies in the admissible range

# Otherwise, random sample

self.entity_constraint = entity_constraint

self.bbox = bbox

self.type = Type(min_level=entity_constraint["Type"][0], max_level=entity_constraint["Type"][1])

self.type.sample()

self.size = Size(min_level=entity_constraint["Size"][0], max_level=entity_constraint["Size"][1])

self.size.sample()

self.color = Color(min_level=entity_constraint["Color"][0], max_level=entity_constraint["Color"][1])

self.color.sample()

self.angle = Angle(min_level=entity_constraint["Angle"][0], max_level=entity_constraint["Angle"][1])

self.angle.sample()

def reset_constraint(self, attr, min_level, max_level):

attr_name = attr.lower()

self.entity_constraint[attr][:] = [min_level, max_level]

instance = getattr(self, attr_name)

instance.min_level = min_level

instance.max_level = max_level

def resample(self):

self.type.sample()

self.size.sample()

self.color.sample()

self.angle.sample()

================================================

FILE: src/dataset/Attribute.py

================================================

# -*- coding: utf-8 -*-

import numpy as np

from const import (ANGLE_MAX, ANGLE_MIN, ANGLE_VALUES, COLOR_MAX, COLOR_MIN,

COLOR_VALUES, NUM_MAX, NUM_MIN, NUM_VALUES, SIZE_MAX,

SIZE_MIN, SIZE_VALUES, TYPE_MAX, TYPE_MIN, TYPE_VALUES,

UNI_MAX, UNI_MIN, UNI_VALUES)

class Attribute(object):

"""Super-class for all attributes. This should not be instantiated.

In the sub-class, each attribute should have a pre-defined value set

and a member to indicate the index in the value set. This design enables

setting a value by modifying the index only. Also, each instance should

come with value index boundaries, set as min_level and max_level. Boundaries

are good when we want to set constraints on the value set.

Before accessing the value, we should sample a value level by calling

the sample function.

"""

def __init__(self, name):

self.name = name

self.level = "Attribute"

# memory to store previous values

self.previous_values = []

def sample(self):

pass

def get_value(self):

pass

def set_value(self):

pass

def __repr__(self):

return self.level + "." + self.name

def __str__(self):

return self.level + "." + self.name

class Number(Attribute):

    def __init__(self, min_level=NUM_MIN, max_level=NUM_MAX):
        super(Number, self).__init__("Number")
        self.value_level = 0
        self.values = NUM_VALUES
        self.min_level = min_level
        self.max_level = max_level

    def sample(self, min_level=NUM_MIN, max_level=NUM_MAX):
        # min_level: min level index
        # max_level: max level index
        min_level = max(self.min_level, min_level)
        max_level = min(self.max_level, max_level)
        self.value_level = np.random.choice(range(min_level, max_level + 1))

    def sample_new(self, min_level=None, max_level=None, previous_values=None):
        """Sample new values for generating the answer set.

        Returns:
            new_idx(int): a new value_level
        """
        if min_level is None or max_level is None:
            values = range(self.min_level, self.max_level + 1)
        else:
            values = range(min_level, max_level + 1)
        if not previous_values:
            available = set(values) - set(self.previous_values) - set([self.value_level])
        else:
            available = set(values) - set(previous_values) - set([self.value_level])
        new_idx = np.random.choice(list(available))
        return new_idx

    def get_value_level(self):
        return self.value_level

    def set_value_level(self, value_level):
        self.value_level = value_level

    def get_value(self, value_level=None):
        if value_level is None:
            value_level = self.value_level
        return self.values[value_level]
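
# A minimal sketch of the exclusion-based draw that sample_new performs above:
# build the candidate levels, drop the current level and any previously used
# levels, then pick uniformly from what remains. The helper name, its
# parameters and the use of the standard-library random module in place of
# np.random are assumptions made for illustration only.
import random


def sketch_sample_new_level(min_level, max_level, current_level,
                            previous_levels, rng=random):
    """Return an unused level in [min_level, max_level] (hypothetical helper)."""
    candidates = set(range(min_level, max_level + 1))
    available = candidates - set(previous_levels) - {current_level}
    if not available:
        # np.random.choice raises on an empty candidate list; this sketch
        # reports the exhausted range explicitly instead.
        raise ValueError("no unused level left in the requested range")
    # Sort first so the choice is taken from a well-defined sequence.
    return rng.choice(sorted(available))

# With levels 0..3, current level 1 and previous levels [0, 2], the only
# level left to draw is 3.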

class Type(Attribute):

    def __init__(self, min_level=TYPE_MIN, max_level=TYPE_MAX):
        super(Type, self).__init__("Type")
        self.value_level = 0
        self.values = TYPE_VALUES
        self.min_level = min_level
        self.max_level = max_level

    def sample(self, min_level=TYPE_MIN, max_level=TYPE_MAX):
        min_level = max(self.min_level, min_level)
        max_level = min(self.max_level, max_level)
        self.value_level = np.random.choice(range(min_level, max_level + 1))

    def sample_new(self, min_level=None, max_level=None, previous_values=None):
        if min_level is None or max_level is None:
            values = range(self.min_level, self.max_level + 1)
        else:
            values = range(min_level, max_level + 1)
        if not previous_values:
            available = set(values) - set(self.previous_values) - set([self.value_level])
        else:
            available = set(values) - set(previous_values) - set([self.value_level])
        new_idx = np.random.choice(list(available))
        return new_idx

    def get_value_level(self):
        return self.value_level

    def set_value_level(self, value_level):
        self.value_level = value_level

    def get_value(self, value_level=None):
        if value_level is None:
            value_level = self.value_level
        return self.values[value_level]

class Size(Attribute):

    def __init__(self, min_level=SIZE_MIN, max_level=SIZE_MAX):
        super(Size, self).__init__("Size")
        self.value_level = 3
        self.values = SIZE_VALUES
        self.min_level = min_level
        self.max_level = max_level

    def sample(self, min_level=SIZE_MIN, max_level=SIZE_MAX):
        min_level = max(self.min_level, min_level)
        max_level = min(self.max_level, max_level)
        self.value_level = np.random.choice(range(min_level, max_level + 1))

    def sample_new(self, min_level=None, max_level=None, previous_values=None):
        if min_level is None or max_level is None:
            values = range(self.min_level, self.max_level + 1)
        else:
            values = range(min_level, max_level + 1)
        if not previous_values:
            available = set(values) - set(self.previous_values) - set([self.value_level])
        else:
            available = set(values) - set(previous_values) - set([self.value_level])
        new_idx = np.random.choice(list(available))
        return new_idx

    def get_value_level(self):
        return self.value_level

    def set_value_level(self, value_level):
        self.value_level = value_level

    def get_value(self, value_level=None):
        if value_level is None:
            value_level = self.value_level
        return self.values[value_level]

class Color(Attribute):

    def __init__(self, min_level=COLOR_MIN, max_level=COLOR_MAX):
        super(Color, self).__init__("Color")
        self.value_level = 0
        self.values = COLOR_VALUES
        self.min_level = min_level
        self.max_level = max_level

    def sample(self, min_level=COLOR_MIN, max_level=COLOR_MAX):
        min_level = max(self.min_level, min_level)
        max_level = min(self.max_level, max_level)
        self.value_level = np.random.choice(range(min_level, max_level + 1))

    def sample_new(self, min_level=None, max_level=None, previous_values=None):
        if min_level is None or max_level is None:
            values = range(self.min_level, self.max_level + 1)
        else:
            values = range(min_level, max_level + 1)
        if not previous_values:
            available = set(values) - set(self.previous_values) - set([self.value_level])
        else:
            available = set(values) - set(previous_values) - set([self.value_level])
        new_idx = np.random.choice(list(available))
        return new_idx

    def get_value_level(self):
        return self.value_level

    def set_value_level(self, value_level):
        self.value_level = value_level

    def get_value(self, value_level=None):
        if value_level is None:
            value_level = self.value_level
        return self.values[value_level]

class Angle(Attribute):

    def __init__(self, min_level=ANGLE_MIN, max_level=ANGLE_MAX):
        super(Angle, self).__init__("Angle")
        self.value_level = 3
        self.values = ANGLE_VALUES
        self.min_level = min_level
        self.max_level = max_level

    def sample(self, min_level=ANGLE_MIN, max_level=ANGLE_MAX):
        min_level = max(self.min_level, min_level)
        max_level = min(self.max_level, max_level)
        self.value_level = np.random.choice(range(min_level, max_level + 1))

    def sample_new(self, min_level=None, max_level=None, previous_values=None):
        if min_level is None or max_level is None:
            values = range(self.min_level, self.max_level + 1)
        else:
            values = range(min_level, max_level + 1)
        if not previous_values:
            available = set(values) - set(self.previous_values) - set([self.value_level])
        else:
            available = set(values) - set(previous_values) - set([self.value_level])
        new_idx = np.random.choice(list(available))
        return new_idx

    def get_value_level(self):
        return self.value_level

    def set_value_level(self, value_level):
        self.value_level = value_level

    def get_value(self, value_level=None):
        if value_level is None:
            value_level = self.value_level
        return self.values[value_level]

class Uniformity(Attribute):

    def __init__(self, min_level=UNI_MIN, max_level=UNI_MAX):
        super(Uniformity, self).__init__("Uniformity")
        self.value_level = 0
        self.values = UNI_VALUES
        self.min_level = min_level
        self.max_level = max_level

    def sample(self):
        self.value_level = np.random.choice(range(self.min_level, self.max_level + 1))

    def sample_new(self):
        # Should not resample uniformity
        pass

    def set_value_level(self, value_level):
        self.value_level = value_level

    def get_value_level(self):
        return self.value_level

    def get_value(self, value_level=None):
        if value_level is None:
            value_level = self.value_level
        return self.values[value_level]

class Position(Attribute):
    """Position is a special case. There are two kinds: the planar position
    and the angular position. Planar position allows translation in the plane,
    while angular position performs rotation around an axis perpendicular to
    the plane.
    """

    def __init__(self, pos_type, pos_list):
        """Instantiate the Position attribute by passing a position type
        and a pre-defined position distribution on the plane. This attribute
        is strongly coupled with Number and hence value index boundaries are
        not needed.

        Arguments:
            pos_type(str): either "planar" or "angular"
            pos_list(list of list of numbers): actual distribution on the plane
        """
        super(Position, self).__init__("Position")
        # planar: [x_c, y_c, max_w, max_h]
        # angular: [x_c, y_c, max_w, max_h, x_r, y_r, omega]
        assert pos_type in ("planar", "angular")
        self.pos_type = pos_type
        self.values = pos_list
        self.value_idx = None

    def sample(self, num):
        """Sample multiple positions at the same time.

        Arguments:
            num(int): the number of positions to sample
        """
        length = len(self.values)
        assert num <= length
        self.value_idx = np.random.choice(range(length), num, False)

    def sample_new(self, num, previous_values=None):
        # Rejection sampling: redraw until the sampled index set differs from
        # the current one and from every previously used one
        length = len(self.values)
        if not previous_values:
            constraints = self.previous_values
        else:
            constraints = previous_values
        while True:
            finished = True
            new_value_idx = np.random.choice(length, num, False)
            if set(new_value_idx) == set(self.value_idx):
                continue
            for previous_value in constraints:
                if set(new_value_idx) == set(previous_value):
                    finished = False
                    break
            if finished:
                break
        return new_value_idx

    def sample_add(self, num):
        """Sample additional number of positions.

        Arguments:
            num(int): the number of additional positions to sample
        Returns:
            ret(list of position): new positions to add to the layout
        """
        ret = []
        available = set(range(len(self.values))) - set(self.value_idx)
        idxes_2_add = np.random.choice(list(available), num, False)
        for index in idxes_2_add:
            self.value_idx = np.insert(self.value_idx, 0, index)
            ret.append(self.values[index])
        return ret

    def get_value_idx(self):
        return self.value_idx

    def set_value_idx(self, value_idx):
        # Note that after sampling self.value_idx is a NumPy array
        self.value_idx = value_idx
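

# A minimal sketch of the rejection sampling that Position.sample_new relies
# on: draw `num` distinct slot indices and redraw whenever the drawn set
# equals the current layout or a previously used layout. The helper name, its
# parameters and the standard-library random module (in place of np.random)
# are assumptions for illustration. The retry cap max_tries is also an
# addition; the loop in sample_new has no such cap.
import random


def sketch_sample_new_positions(num_slots, num, current_idx, previous_idx_sets,
                                rng=random, max_tries=1000):
    """Return `num` slot indices forming an unused layout (hypothetical helper)."""
    # Layouts are compared as sets, so the order of indices does not matter.
    taken = {frozenset(current_idx)}
    taken.update(frozenset(prev) for prev in previous_idx_sets)
    for _ in range(max_tries):
        candidate = rng.sample(range(num_slots), num)
        if frozenset(candidate) not in taken:
            return candidate
    raise RuntimeError("no unused layout found; all combinations may be taken")

# With 3 slots, 2 positions, current layout [0, 1] and previous layout [1, 2],
# the only layout that can be accepted is {0, 2}.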