Repository: YadiraF/GAN_Theories

Branch: master

Commit: 6b781bb9821e

Files: 8

Total size: 38.7 KB

Directory structure:

gitextract_vowq7xlg/

├── README.md

├── began.py

├── dcgan.py

├── ebgan.py

├── utils/

│ ├── datas.py

│ └── nets.py

├── vae.py

└── wgan.py

================================================

FILE CONTENTS

================================================

================================================

FILE: README.md

================================================

All have been tested with python2.7+ and tensorflow1.0+ in linux.

* Samples: save generated data, each folder contains a figure to show the results.

* utils: contains 2 files

* data.py: prepreocessing data.

* nets.py: Generator and Discriminator are saved here.

For research purpose,

**Network architecture**: all GANs used the same network architecture(the Discriminator of EBGAN and BEGAN are the combination of traditional D and G)

**Learning rate**: all initialized by 1e-4 and decayed by a factor of 2 each 5000 epoches (Maybe it is unfair for some GANs, but the influences are small, so I ignored)

**Dataset**: celebA cropped with 128 and resized to 64, users should copy all celebA images to `./Datas/celebA` for training

- [x] DCGAN

- [x] EBGAN

- [x] WGAN

- [x] BEGAN

And for comparsion, I added VAE here.

- [x] VAE

The generated results are shown in the end of this page.

***************

# Theories

:sparkles:DCGAN

--------

**Main idea: Techniques(of architecture) to stabilize GAN**

[Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks](https://arxiv.org/pdf/1511.06434.pdf)[2015]

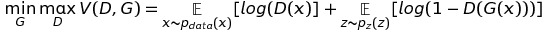

**Loss Function** (the same as Vanilla GAN)

**Architecture guidelines for stable Deep Convolutional GANs**

* Replace any pooling layers with strided convolutions (discriminator) and fractional-strided convolutions (generator).

* Use batchnorm in both the generator and the discriminator

* Remove fully connected hidden layers for deeper architectures. Just use average pooling at the end.

* Use ReLU activation in generator for all layers except for the output, which uses Tanh.

* Use LeakyReLU activation in the discriminator for all layers.

***************

:sparkles:EBGAN

--------

**Main idea: Views the discriminator as an energy function**

[Energy-based Generative Adversarial Network](https://arxiv.org/pdf/1609.03126.pdf)[2016]

(Here introduce EBGAN just for BEGAN, they use the same network structure)

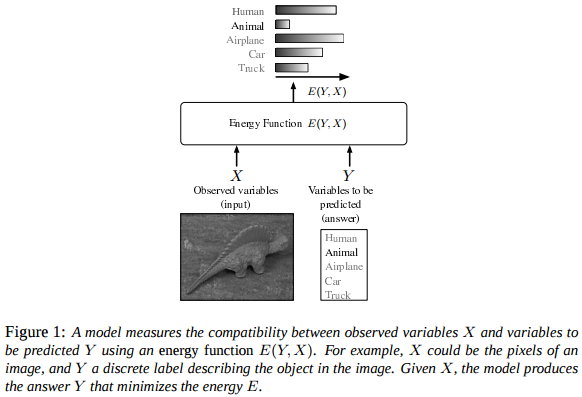

What is energy function?

The figure is from [LeCun, Yann, et al. "A tutorial on energy-based learning." ](http://yann.lecun.com/exdb/publis/pdf/lecun-06.pdf)

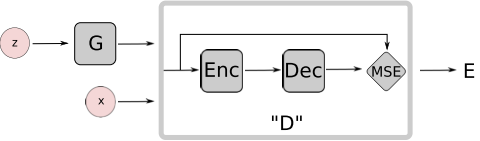

In EBGAN, we want the Discriminator to distinguish the real images and the generated(fake) images. How? A simple idea is to set X as the real image and Y as the reconstructed image, and then minimize the energy of X and Y. So we need a auto-encoder to get Y from X, and a measure to calcuate the energy (here are MSE, so simple).

Finally we get the structure of Discriminator as shown below.

So the task of D is to minimize the MSE of real image and the corresponding reconstructed image, and maximize the MSE of fake image from the G and the corresponding reconstructed fake image. And G is to do the adversarial task: minimize the MSE of fake images...

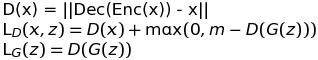

Then obviously the loss function can be written as:

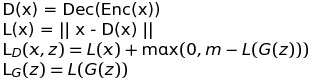

And for comparison with BEGAN, we can set the D only as the auto-encoder and L(*) for the MSE loss.

**Loss Function**

m is a positive margin here, when L(G(z)) is close to zero, the L_D is L(x) + m, which means to train D more heavily, and on the contrary, when L(G(z))>m, the L_D is L(x), which means the the D loosens the judgement of the fake images.

Finally, there is a quetion for EBGAN, why use auto-encoder in D instead of the traditonal one? What are the benifits?

I have not read the paper carefully, but one reason I think is that (said in the paper) auto-encoders have the ability to learn an energy manifold without supervision or negative examples. So, rather than simply judge the real or fake of images, the new D can catch the primary distribution of data then distinguish them. And the generated result shown in EBGAN also illustrated that(my understanding): the generated images of celebA from dcgan can hardly distinguish the face and the complex background, but the images from EBGAN focus more heavily on generating faces.

***************

:sparkles:Wasserstein GAN

--------

**Main idea: Stabilize the training by using Wasserstein-1 distance instead of Jenson-Shannon(JS) divergence**

GAN before using JS divergence has the problem of non-overlapping, leading to mode collapse and convergence difficulty.

Use EM distance or Wasserstein-1 distance, so GAN can solve the two problems above without particular architecture (like dcgan).

[Wasserstein GAN](https://arxiv.org/pdf/1701.07875.pdf)[2017]

**Mathmatics Analysis**

Why JS divergence has problems? pleas see [Towards Principled Methods for Training Generative Adversarial Networks](https://arxiv.org/pdf/1701.04862.pdf)

Anyway, this highlights the fact that **the KL, JS, and TV distances are not sensible

cost functions** when learning distributions supported by low dimensional manifolds.

so the author use Wasserstein distance

Apparently, the G is to maximize the distance, while the D is to minimize the distance.

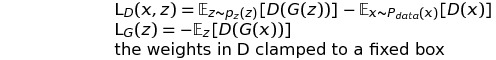

However, it is difficult to directly calculate the original formula, ||f||_L<=1 is hard to express. So the authors change it to the clip of varibales in D after some mathematical analysis, then the Wasserstein distance version of GAN loss function can be:

**Loss Function**

**Algorithm guidelines for stable GANs**

* No log in the loss. The output of D is no longer a probability, hence we do not apply sigmoid at the output of D

>

G_loss = -tf.reduce_mean(D_fake)

D_loss = tf.reduce_mean(D_fake) - tf.reduce_mean(D_real)

* Clip the weight of D (0.01)

>

self.clip_D = [var.assign(tf.clip_by_value(var, -0.01, 0.01)) for var in self.discriminator.vars]

* Train D more than G (5:1)

* Use RMSProp instead of ADAM

* Lower learning rate (0.00005)

****************

:sparkles: BEGAN

--------

**Main idea: Match auto-encoder loss distributions using a loss derived from the Wasserstein distance**

[BEGAN: Boundary Equilibrium Generative Adversarial Networks](https://arxiv.org/pdf/1703.10717.pdf)[2017]

**Mathmatics Analysis**

We have already introduced the structure of EBGAN, which is also used in BEGAN.

Then, instead of calculating the Wasserstein distance of the samples distribution in WGAN, BEGAN calculates the wasserstein distance of loss distribution.

(The mathematical analysis in BEGAN I think is more clear and intuitive than in WGAN)

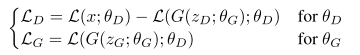

So, simply replace the E of L, we get the loss function:

Then, the most intereting part is comming:

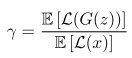

a new hyper-paramer to control the trade-off between image diversity and visual quality.

Lower values of γ lead to lower image diversity because the discriminator focuses more heavily on auto-encoding real images.

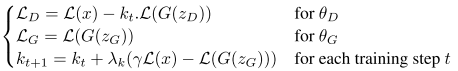

The final loss function is:

**Loss Function**

The intuition behind the function is easy to understand:

(Here I describe my understanding roughly...)

(1). In the beginning, the G and D are initialized randomly and k_0 = 0, so the L_real is larger than L_fake, leading to a short increase of k.

(2). After several iterations, the D easily learned how to reconstruct the real data, so gamma x L_real - L_fake is negative, k decreased to 0, now D is only to reconstruct the real data and G is to learn real data distrubition so as to minimize the reconstruction error in D.

(3). Along with the improvement of the ability of G to generate images like real data, L_fake becomes smaller and k becomes larger, so D focuses more on discriminating the real and fake data, then G trained more following.

(4). In the end, k becomes a constant, which means gamma x L_real - L_fake=0, so the optimization is done.

And the global loss is defined the addition of L_real (how well D learns the distribution of real data) and |gamma*L_real - L_fake| (how closed of the generated data from G and the real data)

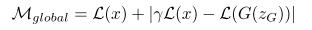

I set gamma=0.75, learning rate of k = 0.001, then the learning curve of loss and k is shown below.

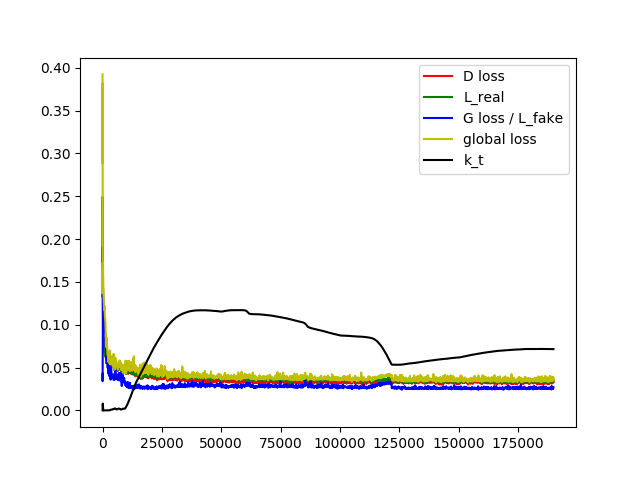

# Results

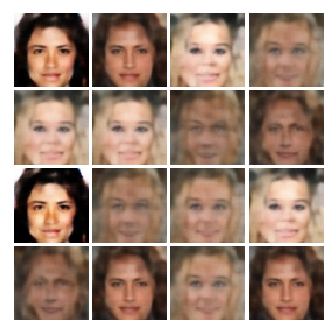

DCGAN

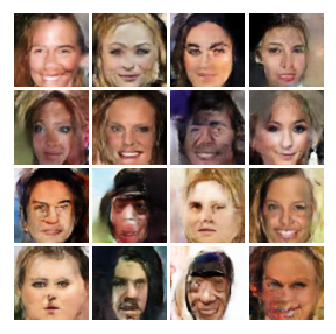

EBGAN (not trained enough)

WGAN (not trained enough)

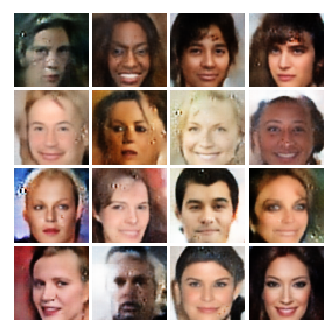

BEGAN: gamma=0.75 learning rate of k=0.001

BEGAN: gamma= 0.5 learning rate of k = 0.002

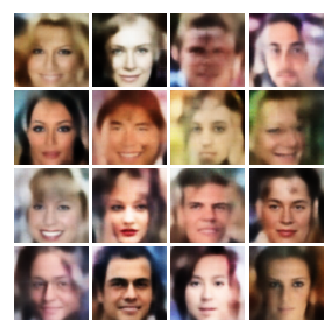

VAE

# References

http://wiseodd.github.io/techblog/2016/12/10/variational-autoencoder/ (a good blog to introduce VAE)

https://github.com/wiseodd/generative-models/tree/master/GAN

https://github.com/artcg/BEGAN

# Others

Tensorflow style: https://www.tensorflow.org/community/style_guide

A good website to convert latex equation to img(then insert into README):

http://www.sciweavers.org/free-online-latex-equation-editor

================================================

FILE: began.py

================================================

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import numpy as np

import matplotlib as mpl

mpl.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

import os,sys

sys.path.append('utils')

from nets import *

from datas import *

def sample_z(m, n):

return np.random.uniform(-1., 1., size=[m, n])

class BEGAN():

def __init__(self, generator, discriminator, data):

self.generator = generator

self.discriminator = discriminator

self.data = data

# data

self.z_dim = self.data.z_dim

self.size = self.data.size

self.channel = self.data.channel

self.X = tf.placeholder(tf.float32, shape=[None, self.size, self.size, self.channel])

self.z = tf.placeholder(tf.float32, shape=[None, self.z_dim])

# began parameters

self.k_t = tf.placeholder(tf.float32, shape=[]) # weighting parameter which constantly updates during training

gamma = 0.75 # diversity ratio, used to control model equibilibrium.

lambda_k = 0.001 # learning rate for k. Berthelot et al. use 0.001

# nets

self.G_sample = self.generator(self.z)

self.D_real = self.discriminator(self.X)

self.D_fake = self.discriminator(self.G_sample, reuse = True)

# loss

L_real = tf.reduce_mean(tf.abs(self.X - self.D_real))

L_fake = tf.reduce_mean(tf.abs(self.G_sample - self.D_fake))

self.D_loss = L_real - self.k_t * L_fake

self.G_loss = L_fake

self.k_tn = self.k_t + lambda_k * (gamma*L_real - L_fake)

self.M_global = L_real + tf.abs(gamma*L_real - L_fake)

# solver

self.learning_rate = tf.placeholder(tf.float32, shape=[])

self.D_solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.D_loss, var_list=self.discriminator.vars)

self.G_solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.G_loss, var_list=self.generator.vars)

self.saver = tf.train.Saver()

gpu_options = tf.GPUOptions(allow_growth=True)

self.sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

self.model_name = 'Models/began.ckpt'

def train(self, sample_dir, training_epoches = 500000, batch_size = 16):

fig_count = 0

self.sess.run(tf.global_variables_initializer())

#self.saver.restore(self.sess, self.model_name)

k_tn = 0

learning_rate_initial = 1e-4

for epoch in range(training_epoches):

learning_rate = learning_rate_initial * pow(0.5, epoch // 50000)

# update D and G

X_b = self.data(batch_size)

_, _, k_tn = self.sess.run(

[self.D_solver, self.G_solver, self.k_tn],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim), self.k_t: min(max(k_tn, 0.), 1.), self.learning_rate: learning_rate}

)

# save img, model. print loss

if epoch % 100 == 0 or epoch < 100:

D_loss_curr, G_loss_curr, M_global_curr = self.sess.run(

[self.D_loss, self.G_loss, self.M_global],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim), self.k_t: min(max(k_tn, 0.), 1.)})

print('Iter: {}; D loss: {:.4}; G_loss: {:.4}; M_global: {:.4}; k_t: {:.6}; learning_rate:{:.8}'.format(epoch, D_loss_curr, G_loss_curr, M_global_curr, min(max(k_tn, 0.), 1.), learning_rate))

if epoch % 1000 == 0:

X_s, real, samples = self.sess.run([self.X, self.D_real, self.G_sample], feed_dict={self.X: X_b[:16,:,:,:], self.z: sample_z(16, self.z_dim)})

fig = self.data.data2fig(X_s)

plt.savefig('{}/{}.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig = self.data.data2fig(real)

plt.savefig('{}/{}_d.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig = self.data.data2fig(samples)

plt.savefig('{}/{}_r.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig_count += 1

if epoch % 5000 == 0:

self.saver.save(self.sess, self.model_name)

if __name__ == '__main__':

# constraint GPU

os.environ['CUDA_VISIBLE_DEVICES'] = '1'

# save generated images

sample_dir = 'Samples/began'

if not os.path.exists(sample_dir):

os.makedirs(sample_dir)

# param

generator = G_conv()

discriminator = D_autoencoder()

data = cifar()

# run

began = BEGAN(generator, discriminator, data)

began.train(sample_dir)

================================================

FILE: dcgan.py

================================================

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import numpy as np

import matplotlib as mpl

mpl.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

import os,sys

sys.path.append('utils')

from nets import *

from datas import *

def sample_z(m, n):

return np.random.uniform(-1., 1., size=[m, n])

class DCGAN():

def __init__(self, generator, discriminator, data):

self.generator = generator

self.discriminator = discriminator

self.data = data

# data

self.z_dim = self.data.z_dim

self.size = self.data.size

self.channel = self.data.channel

self.X = tf.placeholder(tf.float32, shape=[None, self.size, self.size, self.channel])

self.z = tf.placeholder(tf.float32, shape=[None, self.z_dim])

# nets

self.G_sample = self.generator(self.z)

self.D_real = self.discriminator(self.X)

self.D_fake = self.discriminator(self.G_sample, reuse = True)

# loss

self.D_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(logits=self.D_real, labels=tf.ones_like(self.D_real))) + tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(logits=self.D_fake, labels=tf.zeros_like(self.D_fake)))

self.G_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(logits=self.D_fake, labels=tf.ones_like(self.D_fake)))

# solver

self.learning_rate = tf.placeholder(tf.float32, shape=[])

self.D_solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.D_loss, var_list=self.discriminator.vars)

self.G_solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.G_loss, var_list=self.generator.vars)

self.saver = tf.train.Saver()

gpu_options = tf.GPUOptions(allow_growth=True)

self.sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

self.model_name = 'Models/dcgan.ckpt'

def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

fig_count = 0

self.sess.run(tf.global_variables_initializer())

#self.saver.restore(self.sess, self.model_name)

learning_rate_initial = 1e-4

for epoch in range(training_epoches):

learning_rate = learning_rate_initial * pow(0.5, epoch // 50000)

# update D

X_b = self.data(batch_size)

self.sess.run(

self.D_solver,

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim), self.learning_rate: learning_rate}

)

# update G

for _ in range(1):

self.sess.run(

self.G_solver,

feed_dict={self.z: sample_z(batch_size, self.z_dim), self.learning_rate: learning_rate}

)

# save img, model. print loss

if epoch % 100 == 0 or epoch < 100:

D_loss_curr, G_loss_curr = self.sess.run(

[self.D_loss, self.G_loss],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim)})

print('Iter: {}; D loss: {:.4}; G_loss: {:.4}'.format(epoch, D_loss_curr, G_loss_curr))

if epoch % 1000 == 0:

samples = self.sess.run(self.G_sample, feed_dict={self.z: sample_z(16, self.z_dim)})

fig = self.data.data2fig(samples)

plt.savefig('{}/{}.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

fig_count += 1

plt.close(fig)

if epoch % 5000 == 0:

self.saver.save(self.sess, self.model_name)

if __name__ == '__main__':

# constraint GPU

os.environ['CUDA_VISIBLE_DEVICES'] = '2'

# save generated images

sample_dir = 'Samples/dcgan'

if not os.path.exists(sample_dir):

os.makedirs(sample_dir)

# param

generator = G_conv()

discriminator = D_conv()

data = celebA()

# run

dcgan = DCGAN(generator, discriminator, data)

dcgan.train(sample_dir)

================================================

FILE: ebgan.py

================================================

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import numpy as np

import matplotlib as mpl

mpl.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

import os,sys

sys.path.append('utils')

from nets import *

from datas import *

def sample_z(m, n):

return np.random.uniform(-1., 1., size=[m, n])

class EBGAN():

def __init__(self, generator, discriminator, data):

self.generator = generator

self.discriminator = discriminator

self.data = data

# data

self.z_dim = self.data.z_dim

self.size = self.data.size

self.channel = self.data.channel

self.X = tf.placeholder(tf.float32, shape=[None, self.size, self.size, self.channel])

self.z = tf.placeholder(tf.float32, shape=[None, self.z_dim])

# ebgan parameters

margin = 50. #

# nets

self.G_sample = self.generator(self.z)

self.D_real = self.discriminator(self.X)

self.D_fake = self.discriminator(self.G_sample, reuse = True)

# loss

#L_real = tf.reduce_mean((self.X - self.D_real)**2, [1,2,3])

#L_fake = tf.reduce_mean((self.G_sample - self.D_fake)**2, [1,2,3])

L_real = tf.nn.l2_loss(self.X - self.D_real)

L_fake = tf.nn.l2_loss(self.G_sample - self.D_fake)

self.D_loss = L_real + tf.maximum(0., margin - L_fake)

self.G_loss = L_fake

# solver

self.learning_rate = tf.placeholder(tf.float32, shape=[])

self.D_solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.D_loss, var_list=self.discriminator.vars)

self.G_solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.G_loss, var_list=self.generator.vars)

self.saver = tf.train.Saver()

gpu_options = tf.GPUOptions(allow_growth=True)

self.sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

self.model_name = 'Models/ebgan.ckpt'

def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

fig_count = 0

self.sess.run(tf.global_variables_initializer())

#self.saver.restore(self.sess, self.model_name)

learning_rate_initial = 1e-4

for epoch in range(training_epoches):

learning_rate = learning_rate_initial * pow(0.5, epoch // 50000)

# update D and G

X_b = self.data(batch_size)

self.sess.run(

[self.D_solver, self.G_solver],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim), self.learning_rate: learning_rate}

)

# save img, model. print loss

if epoch % 100 == 0 or epoch < 100:

D_loss_curr, G_loss_curr = self.sess.run(

[self.D_loss, self.G_loss],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim)})

print('Iter: {}; D loss: {:.4}; G_loss: {:.4};'.format(epoch, D_loss_curr, G_loss_curr))

if epoch % 1000 == 0:

X_s, real, samples = self.sess.run([self.X, self.D_real, self.G_sample], feed_dict={self.X: X_b[:16,:,:,:], self.z: sample_z(16, self.z_dim)})

fig = self.data.data2fig(X_s)

plt.savefig('{}/{}.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig = self.data.data2fig(real)

plt.savefig('{}/{}_d.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig = self.data.data2fig(samples)

plt.savefig('{}/{}_r.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig_count += 1

if epoch % 5000 == 0:

self.saver.save(self.sess, self.model_name)

if __name__ == '__main__':

# constraint GPU

os.environ['CUDA_VISIBLE_DEVICES'] = '1'

# save generated images

sample_dir = 'Samples/ebgan'

if not os.path.exists(sample_dir):

os.makedirs(sample_dir)

# param

generator = G_conv()

discriminator = D_autoencoder()

data = celebA()

# run

ebgan = EBGAN(generator, discriminator, data)

ebgan.train(sample_dir)

================================================

FILE: utils/datas.py

================================================

import os,sys

from PIL import Image

import scipy.misc

from glob import glob

import numpy as np

import matplotlib as mpl

mpl.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

from tensorflow.examples.tutorials.mnist import input_data

prefix = './Datas/'

def get_img(img_path, is_crop=True, crop_h=256, resize_h=64):

img=scipy.misc.imread(img_path).astype(np.float)

resize_w = resize_h

if is_crop:

crop_w = crop_h

h, w = img.shape[:2]

j = int(round((h - crop_h)/2.))

i = int(round((w - crop_w)/2.))

cropped_image = scipy.misc.imresize(img[j:j+crop_h, i:i+crop_w],[resize_h, resize_w])

else:

cropped_image = scipy.misc.imresize(img,[resize_h, resize_w])

return np.array(cropped_image)/255.0

class celebA():

def __init__(self):

datapath = prefix + 'celebA'

self.z_dim = 100

self.size = 64

self.channel = 3

self.data = glob(os.path.join(datapath, '*.jpg'))

self.batch_count = 0

def __call__(self,batch_size):

batch_number = len(self.data)/batch_size

if self.batch_count < batch_number-2:

self.batch_count += 1

else:

self.batch_count = 0

path_list = self.data[self.batch_count*batch_size:(self.batch_count+1)*batch_size]

batch = [get_img(img_path, True, 128, self.size) for img_path in path_list]

batch_imgs = np.array(batch).astype(np.float32)

return batch_imgs

def data2fig(self, samples):

fig = plt.figure(figsize=(4, 4))

gs = gridspec.GridSpec(4, 4)

gs.update(wspace=0.05, hspace=0.05)

for i, sample in enumerate(samples):

ax = plt.subplot(gs[i])

plt.axis('off')

ax.set_xticklabels([])

ax.set_yticklabels([])

ax.set_aspect('equal')

plt.imshow(sample)

return fig

class cifar():

def __init__(self):

datapath = prefix + 'cifar10'

self.z_dim = 100

self.size = 64

self.channel = 3

self.data = glob(os.path.join(datapath, '*'))

self.batch_count = 0

def __call__(self,batch_size):

batch_number = len(self.data)/batch_size

if self.batch_count < batch_number-2:

self.batch_count += 1

else:

self.batch_count = 0

path_list = self.data[self.batch_count*batch_size:(self.batch_count+1)*batch_size]

batch = [get_img(img_path, False, 128, self.size) for img_path in path_list]

batch_imgs = np.array(batch).astype(np.float32)

return batch_imgs

def data2fig(self, samples):

fig = plt.figure(figsize=(4, 4))

gs = gridspec.GridSpec(4, 4)

gs.update(wspace=0.05, hspace=0.05)

for i, sample in enumerate(samples):

ax = plt.subplot(gs[i])

plt.axis('off')

ax.set_xticklabels([])

ax.set_yticklabels([])

ax.set_aspect('equal')

plt.imshow(sample)

return fig

class mnist():

def __init__(self):

datapath = prefix + 'mnist'

self.z_dim = 100

self.size = 64

self.channel = 1

self.data = input_data.read_data_sets(datapath, one_hot=True)

def __call__(self,batch_size):

batch_imgs = np.zeros([batch_size, self.size, self.size, self.channel])

batch_x,y = self.data.train.next_batch(batch_size)

batch_x = np.reshape(batch_x, (batch_size, 28, 28, self.channel))

for i in range(batch_size):

img = batch_x[i,:,:,0]

batch_imgs[i,:,:,0] = scipy.misc.imresize(img, [self.size, self.size])

batch_imgs /= 255.

return batch_imgs, y

def data2fig(self, samples):

fig = plt.figure(figsize=(4, 4))

gs = gridspec.GridSpec(4, 4)

gs.update(wspace=0.05, hspace=0.05)

for i, sample in enumerate(samples):

ax = plt.subplot(gs[i])

plt.axis('off')

ax.set_xticklabels([])

ax.set_yticklabels([])

ax.set_aspect('equal')

plt.imshow(sample.reshape(self.size,self.size), cmap='Greys_r')

return fig

if __name__ == '__main__':

data = mnist()

imgs,_ = data(20)

fig = mnist.data2fig(imgs[:16,:,:])

plt.savefig('Samples/test.png', bbox_inches='tight')

plt.close(fig)

================================================

FILE: utils/nets.py

================================================

import tensorflow as tf

import tensorflow.contrib as tc

import tensorflow.contrib.layers as tcl

def lrelu(x, leak=0.2, name="lrelu"):

with tf.variable_scope(name):

f1 = 0.5 * (1 + leak)

f2 = 0.5 * (1 - leak)

return f1 * x + f2 * abs(x)

class G_conv(object):

def __init__(self, channel=3, name='G_conv'):

self.name = name

self.size = 64/16

self.channel = channel

def __call__(self, z):

with tf.variable_scope(self.name) as scope:

g = tcl.fully_connected(z, self.size * self.size * 512, activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm)

g = tf.reshape(g, (-1, self.size, self.size, 512)) # size

g = tcl.conv2d_transpose(g, 256, 3, stride=2, # size*2

activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

g = tcl.conv2d_transpose(g, 128, 3, stride=2, # size*4

activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

g = tcl.conv2d_transpose(g, 64, 3, stride=2, # size*8 32x32x64

activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

g = tcl.conv2d_transpose(g, self.channel, 3, stride=2, # size*16

activation_fn=tf.nn.sigmoid, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

return g

@property

def vars(self):

return tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES, scope=self.name)

class D_conv(object):

def __init__(self, name='D_conv'):

self.name = name

def __call__(self, x, reuse=False):

with tf.variable_scope(self.name) as scope:

if reuse:

scope.reuse_variables()

size = 64

d = tcl.conv2d(x, num_outputs=size, kernel_size=3, # bzx64x64x3 -> bzx32x32x64

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 2, kernel_size=3, # 16x16x128

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 4, kernel_size=3, # 8x8x256

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 8, kernel_size=3, # 4x4x512

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.fully_connected(tcl.flatten(d), 256, activation_fn=lrelu, weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.fully_connected(d, 1, activation_fn=None, weights_initializer=tf.random_normal_initializer(0, 0.02))

return d

@property

def vars(self):

return tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES, scope=self.name)

# for ebgan and began

class D_autoencoder(object):

def __init__(self, n_hidden=256, name='D_autoencoder'):

self.name = name

self.n_hidden = n_hidden

def __call__(self, x, reuse=False):

with tf.variable_scope(self.name) as scope:

if reuse:

scope.reuse_variables()

# --- conv

size = 64

d = tcl.conv2d(x, num_outputs=size, kernel_size=3, # bzx64x64x3 -> bzx32x32x64

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 2, kernel_size=3, # 16x16x128

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 4, kernel_size=3, # 8x8x256

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 8, kernel_size=3, # 4x4x512

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

h = tcl.fully_connected(tcl.flatten(d), self.n_hidden, activation_fn=lrelu, weights_initializer=tf.random_normal_initializer(0, 0.02))

# -- deconv

d = tcl.fully_connected(h, 4 * 4 * 512, activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm)

d = tf.reshape(d, (-1, 4, 4, 512)) # size

d = tcl.conv2d_transpose(d, 256, 3, stride=2, # size*2

activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d_transpose(d, 128, 3, stride=2, # size*4

activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d_transpose(d, 64, 3, stride=2, # size*8

activation_fn=tf.nn.relu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d_transpose(d, 3, 3, stride=2, # size*16

activation_fn=tf.nn.sigmoid, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

return d

@property

def vars(self):

return tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES, scope=self.name)

# for vae

class D_vae(object):

def __init__(self, name='D_vae'):

self.name = name

def __call__(self, x, reuse=False):

with tf.variable_scope(self.name) as scope:

if reuse:

scope.reuse_variables()

size = 64

d = tcl.conv2d(x, num_outputs=size, kernel_size=3, # bzx64x64x3 -> bzx32x32x64

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 2, kernel_size=3, # 16x16x128

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 4, kernel_size=3, # 8x8x256

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.conv2d(d, num_outputs=size * 8, kernel_size=3, # 4x4x512

stride=2, activation_fn=lrelu, normalizer_fn=tcl.batch_norm, padding='SAME', weights_initializer=tf.random_normal_initializer(0, 0.02))

d = tcl.fully_connected(tcl.flatten(d), 256, activation_fn=lrelu, weights_initializer=tf.random_normal_initializer(0, 0.02))

mu = tcl.fully_connected(d, 100, activation_fn=None, weights_initializer=tf.random_normal_initializer(0, 0.02))

sigma = tcl.fully_connected(d, 100, activation_fn=None, weights_initializer=tf.random_normal_initializer(0, 0.02))

return mu, sigma

@property

def vars(self):

return tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES, scope=self.name)

================================================

FILE: vae.py

================================================

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import numpy as np

import matplotlib as mpl

mpl.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

import os,sys

sys.path.append('utils')

from nets import *

from datas import *

def sample_z(m, n):

return np.random.uniform(0, 1., size=[m, n])

class VAE():

def __init__(self, generator, discriminator, data):

self.generator = generator

self.discriminator = discriminator

self.data = data

# data

self.z_dim = self.data.z_dim

self.size = self.data.size

self.channel = self.data.channel

self.X = tf.placeholder(tf.float32, shape=[None, self.size, self.size, self.channel])

self.z = tf.placeholder(tf.float32, shape=[None, self.z_dim])

# nets

mu, sigma = self.discriminator(self.X)

latent_code = mu + tf.exp(sigma/2)*self.z

self.G_real = self.generator(latent_code)

self.G_sample = self.generator(self.z)

# loss

# E[log P(X|z)]

epsilon = 1e-8

self.recon = tf.reduce_sum(-self.X * tf.log(self.G_real + epsilon) -(1.0 - self.X) * tf.log(1.0 - self.G_real + epsilon))

# D_KL(Q(z|X) || P(z|X)); calculate in closed form as both dist. are Gaussian

self.kl = 0.5 * tf.reduce_sum(tf.exp(sigma) + tf.square(mu) - 1. - sigma)

self.loss = self.recon + self.kl

# solver

self.learning_rate = tf.placeholder(tf.float32, shape=[])

self.solver = tf.train.AdamOptimizer(learning_rate=self.learning_rate).minimize(self.loss, var_list=self.generator.vars + self.discriminator.vars)

self.saver = tf.train.Saver()

gpu_options = tf.GPUOptions(allow_growth=True)

self.sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

self.model_name = 'Models/vae_cifar.ckpt'

def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

fig_count = 0

self.sess.run(tf.global_variables_initializer())

#self.saver.restore(self.sess, self.model_name)

learning_rate_initial = 1e-4

for epoch in range(training_epoches):

learning_rate = learning_rate_initial * pow(0.5, epoch // 50000)

X_b = self.data(batch_size)

self.sess.run(

self.solver,

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim), self.learning_rate: learning_rate}

)

# save img, model. print loss

if epoch % 100 == 0 or epoch < 100:

loss_curr = self.sess.run(

self.loss,

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim)})

print('Iter: {}; loss: {:.4}'.format(epoch, loss_curr))

if epoch % 1000 == 0:

real, samples = self.sess.run([self.G_real, self.G_sample], feed_dict={self.X: X_b[:16,:,:,:], self.z: sample_z(16, self.z_dim)})

fig = self.data.data2fig(real)

plt.savefig('{}/{}.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig = self.data.data2fig(samples)

plt.savefig('{}/{}_s.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

plt.close(fig)

fig_count += 1

if epoch % 5000 == 0:

self.saver.save(self.sess, self.model_name)

if __name__ == '__main__':

# constraint GPU

os.environ['CUDA_VISIBLE_DEVICES'] = '2'

# save generated images

sample_dir = 'Samples/vae'

if not os.path.exists(sample_dir):

os.makedirs(sample_dir)

# param

generator = G_conv()

discriminator = D_vae()

data = celebA()

# run

vae = VAE(generator, discriminator, data)

vae.train(sample_dir)

================================================

FILE: wgan.py

================================================

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import numpy as np

import matplotlib as mpl

mpl.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

import os,sys

sys.path.append('utils')

from nets import *

from datas import *

def sample_z(m, n):

return np.random.uniform(-1., 1., size=[m, n])

class WGAN():

def __init__(self, generator, discriminator, data):

self.generator = generator

self.discriminator = discriminator

self.data = data

# data

self.z_dim = self.data.z_dim

self.size = self.data.size

self.channel = self.data.channel

self.X = tf.placeholder(tf.float32, shape=[None, self.size, self.size, self.channel])

self.z = tf.placeholder(tf.float32, shape=[None, self.z_dim])

# nets

self.G_sample = self.generator(self.z)

self.D_real = self.discriminator(self.X)

self.D_fake = self.discriminator(self.G_sample, reuse = True)

# loss

self.D_loss = - tf.reduce_mean(self.D_real) + tf.reduce_mean(self.D_fake)

self.G_loss = - tf.reduce_mean(self.D_fake)

# clip

self.clip_D = [var.assign(tf.clip_by_value(var, -0.01, 0.01)) for var in self.discriminator.vars]

# solver

self.learning_rate = tf.placeholder(tf.float32, shape=[])

self.D_solver = tf.train.RMSPropOptimizer(learning_rate=self.learning_rate).minimize(self.D_loss, var_list=self.discriminator.vars)

self.G_solver = tf.train.RMSPropOptimizer(learning_rate=self.learning_rate).minimize(self.G_loss, var_list=self.generator.vars)

gpu_options = tf.GPUOptions(allow_growth=True)

self.sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

self.saver = tf.train.Saver()

self.model_name = 'Models/wgan.ckpt'

def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

fig_count = 0

self.sess.run(tf.global_variables_initializer())

#self.saver.restore(self.sess, self.model_name)

learning_rate_initial = 1e-4

for epoch in range(training_epoches):

learning_rate = learning_rate_initial * pow(0.5, epoch // 50000)

# update D

n_d = 100 if epoch < 25 or (epoch+1) % 500 == 0 else 5

for _ in range(n_d):

X_b = self.data(batch_size)

self.sess.run(

[self.clip_D,self.D_solver],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim), self.learning_rate: learning_rate}

)

# update G

for _ in range(1):

self.sess.run(

self.G_solver,

feed_dict={self.z: sample_z(batch_size, self.z_dim), self.learning_rate: learning_rate}

)

# save img, model. print loss

if epoch % 100 == 0 or epoch < 100:

D_loss_curr, G_loss_curr = self.sess.run(

[self.D_loss, self.G_loss],

feed_dict={self.X: X_b, self.z: sample_z(batch_size, self.z_dim)})

print('Iter: {}; D loss: {:.4}; G_loss: {:.4}'.format(epoch, D_loss_curr, G_loss_curr))

if epoch % 1000 == 0:

samples = self.sess.run(self.G_sample, feed_dict={self.z: sample_z(16, self.z_dim)})

fig = self.data.data2fig(samples)

plt.savefig('{}/{}.png'.format(sample_dir, str(fig_count).zfill(3)), bbox_inches='tight')

fig_count += 1

plt.close(fig)

if epoch % 5000 == 0:

self.saver.save(self.sess, self.model_name)

if __name__ == '__main__':

# constraint GPU

os.environ['CUDA_VISIBLE_DEVICES'] = '1'

# save generated images

sample_dir = 'Samples/wgan'

if not os.path.exists(sample_dir):

os.makedirs(sample_dir)

# param

generator = G_conv()

discriminator = D_conv()

data = celebA()

# run

wgan = WGAN(generator, discriminator, data)

wgan.train(sample_dir)

gitextract_vowq7xlg/ ├── README.md ├── began.py ├── dcgan.py ├── ebgan.py ├── utils/ │ ├── datas.py │ └── nets.py ├── vae.py └── wgan.py

SYMBOL INDEX (50 symbols across 7 files)

FILE: began.py

function sample_z (line 14) | def sample_z(m, n):

class BEGAN (line 17) | class BEGAN():

method __init__ (line 18) | def __init__(self, generator, discriminator, data):

method train (line 62) | def train(self, sample_dir, training_epoches = 500000, batch_size = 16):

FILE: dcgan.py

function sample_z (line 14) | def sample_z(m, n):

class DCGAN (line 17) | class DCGAN():

method __init__ (line 18) | def __init__(self, generator, discriminator, data):

method train (line 51) | def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

FILE: ebgan.py

function sample_z (line 14) | def sample_z(m, n):

class EBGAN (line 17) | class EBGAN():

method __init__ (line 18) | def __init__(self, generator, discriminator, data):

method train (line 59) | def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

FILE: utils/datas.py

function get_img (line 15) | def get_img(img_path, is_crop=True, crop_h=256, resize_h=64):

class celebA (line 29) | class celebA():

method __init__ (line 30) | def __init__(self):

method __call__ (line 39) | def __call__(self,batch_size):

method data2fig (line 53) | def data2fig(self, samples):

class cifar (line 67) | class cifar():

method __init__ (line 68) | def __init__(self):

method __call__ (line 77) | def __call__(self,batch_size):

method data2fig (line 91) | def data2fig(self, samples):

class mnist (line 106) | class mnist():

method __init__ (line 107) | def __init__(self):

method __call__ (line 114) | def __call__(self,batch_size):

method data2fig (line 125) | def data2fig(self, samples):

FILE: utils/nets.py

function lrelu (line 5) | def lrelu(x, leak=0.2, name="lrelu"):

class G_conv (line 11) | class G_conv(object):

method __init__ (line 12) | def __init__(self, channel=3, name='G_conv'):

method __call__ (line 17) | def __call__(self, z):

method vars (line 32) | def vars(self):

class D_conv (line 36) | class D_conv(object):

method __init__ (line 37) | def __init__(self, name='D_conv'):

method __call__ (line 40) | def __call__(self, x, reuse=False):

method vars (line 60) | def vars(self):

class D_autoencoder (line 64) | class D_autoencoder(object):

method __init__ (line 65) | def __init__(self, n_hidden=256, name='D_autoencoder'):

method __call__ (line 69) | def __call__(self, x, reuse=False):

method vars (line 101) | def vars(self):

class D_vae (line 105) | class D_vae(object):

method __init__ (line 106) | def __init__(self, name='D_vae'):

method __call__ (line 109) | def __call__(self, x, reuse=False):

method vars (line 130) | def vars(self):

FILE: vae.py

function sample_z (line 14) | def sample_z(m, n):

class VAE (line 17) | class VAE():

method __init__ (line 18) | def __init__(self, generator, discriminator, data):

method train (line 57) | def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

FILE: wgan.py

function sample_z (line 14) | def sample_z(m, n):

class WGAN (line 17) | class WGAN():

method __init__ (line 18) | def __init__(self, generator, discriminator, data):

method train (line 54) | def train(self, sample_dir, training_epoches = 500000, batch_size = 32):

Condensed preview — 8 files, each showing path, character count, and a content snippet. Download the .json file or copy for the full structured content (43K chars).

[

{

"path": "README.md",

"chars": 10262,

"preview": "All have been tested with python2.7+ and tensorflow1.0+ in linux. \n\n* Samples: save generated data, each folder contain"

},

{

"path": "began.py",

"chars": 4275,

"preview": "import tensorflow as tf\nfrom tensorflow.examples.tutorials.mnist import input_data\nimport numpy as np\nimport matplotlib "

},

{

"path": "dcgan.py",

"chars": 3596,

"preview": "import tensorflow as tf\nfrom tensorflow.examples.tutorials.mnist import input_data\nimport numpy as np\nimport matplotlib "

},

{

"path": "ebgan.py",

"chars": 3810,

"preview": "import tensorflow as tf\nfrom tensorflow.examples.tutorials.mnist import input_data\nimport numpy as np\nimport matplotlib "

},

{

"path": "utils/datas.py",

"chars": 3769,

"preview": "import os,sys\nfrom PIL import Image\nimport scipy.misc\nfrom glob import glob\nimport numpy as np\nimport matplotlib as mpl\n"

},

{

"path": "utils/nets.py",

"chars": 6902,

"preview": "import tensorflow as tf\nimport tensorflow.contrib as tc\nimport tensorflow.contrib.layers as tcl\n\ndef lrelu(x, leak=0.2, "

},

{

"path": "vae.py",

"chars": 3438,

"preview": "import tensorflow as tf\nfrom tensorflow.examples.tutorials.mnist import input_data\nimport numpy as np\nimport matplotlib "

},

{

"path": "wgan.py",

"chars": 3566,

"preview": "import tensorflow as tf\nfrom tensorflow.examples.tutorials.mnist import input_data\nimport numpy as np\nimport matplotlib "

}

]

About this extraction

This page contains the full source code of the YadiraF/GAN_Theories GitHub repository, extracted and formatted as plain text for AI agents and large language models (LLMs). The extraction includes 8 files (38.7 KB), approximately 11.4k tokens, and a symbol index with 50 extracted functions, classes, methods, constants, and types. Use this with OpenClaw, Claude, ChatGPT, Cursor, Windsurf, or any other AI tool that accepts text input. You can copy the full output to your clipboard or download it as a .txt file.

Extracted by GitExtract — free GitHub repo to text converter for AI. Built by Nikandr Surkov.