Repository: ZJULearning/efanna

Branch: master

Commit: a65bb84e5cd5

Files: 28

Total size: 176.6 KB

Directory structure:

gitextract_2l4uult3/

├── LICENSE

├── Makefile

├── Makefile.debug

├── Makefile.silent

├── README.md

├── algorithm/

│ ├── base_index.hpp

│ ├── hashing_index.hpp

│ ├── init_indices.hpp

│ └── kdtreeub_index.hpp

├── efanna.hpp

├── general/

│ ├── distance.hpp

│ ├── matrix.hpp

│ └── params.hpp

├── matlab/

│ ├── .gitignore

│ ├── README.md

│ ├── efanna.m

│ ├── findex.cc

│ ├── fvecs_read.m

│ ├── handle_wrapper.hpp

│ └── samples/

│ ├── example_buildall.m

│ ├── example_buildgraph.m

│ ├── example_buildtree.m

│ └── example_search.m

└── samples/

├── efanna_index_buildall.cc

├── efanna_index_buildgraph.cc

├── efanna_index_buildtrees.cc

├── efanna_search.cc

└── evaluate.cc

================================================

FILE CONTENTS

================================================

================================================

FILE: LICENSE

================================================

BSD License

-----------

Copyright (c) 2016 Cong Fu, Deng Cai (http://wiki.zjulearning.org:8081/wiki/Main_Page) All rights reserved.

Redistribution and use in source and binary forms, with or without modification,

are permitted provided that the following conditions are met:

* Redistributions of source code must retain the above copyright notice, this

list of conditions and the following disclaimer.

* Redistributions in binary form must reproduce the above copyright notice, this

list of conditions and the following disclaimer in the documentation and/or

other materials provided with the distribution.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND

ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED

WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE FOR

ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES

(INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES;

LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON

ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT

(INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS

SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

================================================

FILE: Makefile

================================================

GXX=g++ -std=c++11

#OPTM=-O3 -msse2 -msse4 -fopenmp

OPTM=-O3 -march=native -fopenmp

CPFLAGS=$(OPTM) -Wall -DINFO

LDFLAGS=$(OPTM) -Wall -lboost_timer -lboost_chrono -lboost_system -DINFO

INCLUDES=-I./ -I./algorithm -I./general

SAMPLES=$(patsubst %.cc, %, $(wildcard samples/*.cc samples_hashing/*.cc))

SAMPLE_OBJS=$(foreach sample, $(SAMPLES), $(sample).o)

HEADERS=$(wildcard ./*.hpp ./*/*.hpp)

#EFNN is currently header only, so only samples will be compiled

#SHARED_LIB=libefnn.so

#OBJS=src/efnn.o

all: $(SHARED_LIB) $(SAMPLES)

#$(SHARED_LIB): $(OBJS)

# $(GXX) $(LDFLAGS) $(LIBS) $(OBJS) -shared -o $(SHARED_LIB)

$(SAMPLES): %: %.o

$(GXX) $^ -o $@ $(LDFLAGS) $(LIBS)

%.o: %.cpp $(HEADERS)

$(GXX) $(CPFLAGS) $(INCLUDES) -c $*.cpp -o $@

%.o: %.cc $(HEADERS)

$(GXX) $(CPFLAGS) $(INCLUDES) -c $*.cc -o $@

clean:

rm -rf $(OBJS)

rm -rf $(SHARED_LIB)

rm -rf $(SAMPLES)

rm -rf $(SAMPLE_OBJS)

================================================

FILE: Makefile.debug

================================================

GXX=g++ -std=c++11

OPTM=-O2 -msse4

CPFLAGS=$(OPTM) -Wall -Werror -g

LDFLAGS=$(OPTM) -Wall

INCLUDES=-I./ -I./algorithm -I./general

SAMPLES=$(patsubst %.cc, %, $(wildcard samples/*.cc samples_hashing/*.cc))

SAMPLE_OBJS=$(foreach sample, $(SAMPLES), $(sample).o)

HEADERS=$(wildcard ./*.hpp ./*/*.hpp)

#EFNN is currently header only, so only samples will be compiled

#SHARED_LIB=libefnn.so

#OBJS=src/efnn.o

all: $(SHARED_LIB) $(SAMPLES)

#$(SHARED_LIB): $(OBJS)

# $(GXX) $(LDFLAGS) $(LIBS) $(OBJS) -shared -o $(SHARED_LIB)

$(SAMPLES): %: %.o

$(GXX) $(LDFLAGS) $(LIBS) $^ -o $@

%.o: %.cpp $(HEADERS)

$(GXX) $(CPFLAGS) $(INCLUDES) -c $*.cpp -o $@

%.o: %.cc $(HEADERS)

$(GXX) $(CPFLAGS) $(INCLUDES) -c $*.cc -o $@

clean:

rm -rf $(OBJS)

rm -rf $(SHARED_LIB)

rm -rf $(SAMPLES)

rm -rf $(SAMPLE_OBJS)

================================================

FILE: Makefile.silent

================================================

GXX=g++ -std=c++11

#OPTM=-O3 -msse2 -msse4 -fopenmp

OPTM=-O3 -march=native -fopenmp

CPFLAGS=$(OPTM) -Wall

LDFLAGS=$(OPTM) -Wall -lboost_timer -lboost_system

INCLUDES=-I./ -I./algorithm -I./general

SAMPLES=$(patsubst %.cc, %, $(wildcard samples/*.cc samples_hashing/*.cc))

SAMPLE_OBJS=$(foreach sample, $(SAMPLES), $(sample).o)

HEADERS=$(wildcard ./*.hpp ./*/*.hpp)

#EFNN is currently header only, so only samples will be compiled

#SHARED_LIB=libefnn.so

#OBJS=src/efnn.o

all: $(SHARED_LIB) $(SAMPLES)

#$(SHARED_LIB): $(OBJS)

# $(GXX) $(LDFLAGS) $(LIBS) $(OBJS) -shared -o $(SHARED_LIB)

$(SAMPLES): %: %.o

$(GXX) $(LDFLAGS) $(LIBS) $^ -o $@

%.o: %.cpp $(HEADERS)

$(GXX) $(CPFLAGS) $(INCLUDES) -c $*.cpp -o $@

%.o: %.cc $(HEADERS)

$(GXX) $(CPFLAGS) $(INCLUDES) -c $*.cc -o $@

clean:

rm -rf $(OBJS)

rm -rf $(SHARED_LIB)

rm -rf $(SAMPLES)

rm -rf $(SAMPLE_OBJS)

================================================

FILE: README.md

================================================

EFANNA: an Extremely Fast Approximate Nearest Neighbor search Algorithm framework based on kNN graph

============

EFANNA is a ***flexible*** and ***efficient*** library for approximate nearest neighbor search (ANN search) on large scale data. It implements the algorithms of our paper [EFANNA : Extremely Fast Approximate Nearest Neighbor Search Algorithm Based on kNN Graph](http://arxiv.org/abs/1609.07228).

EFANNA provides fast solutions on both ***approximate nearest neighbor graph construction*** and ***ANN search*** problems.

EFANNA is also flexible enough to adopt all kinds of hierarchical structures for initialization, such as random projection trees, hierarchical clustering trees, [multi-table hashing](https://github.com/fc731097343/efanna/tree/master/samples_hashing), and so on.

What's new

-------

+ **Please see our more advanced search algorithm [NSG](https://github.com/ZJULearning/nsg)** Jan 13, 2018

+ **The [paper](http://arxiv.org/abs/1609.07228) updated significantly.** Dec 6, 2016

+ **Algorithm improved and AVX instructions supported.** Nov 30, 2016

+ **Parallelism with OpenMP.** Sep 26, 2016

Benchmark data set

-------

* [SIFT1M and GIST1M](http://corpus-texmex.irisa.fr/)

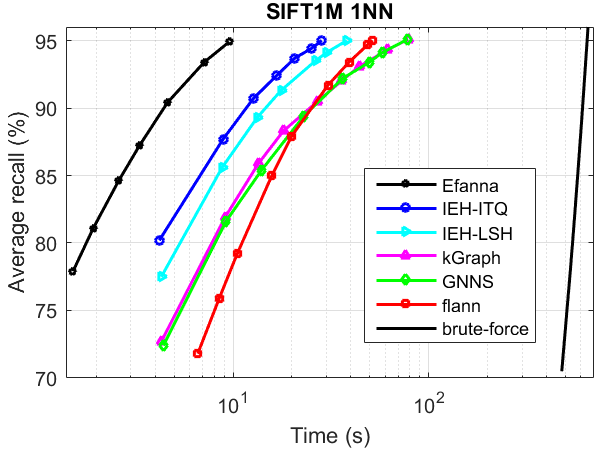

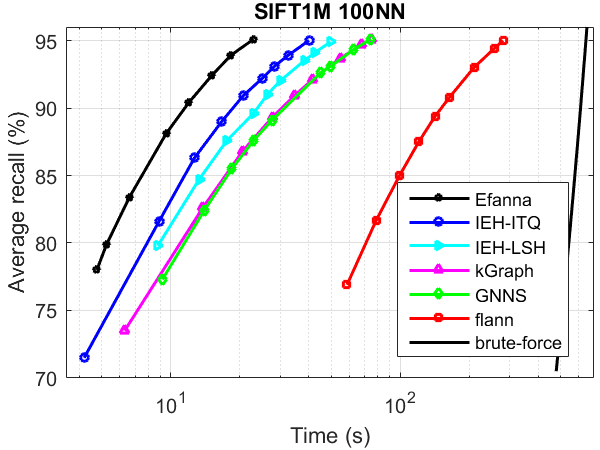

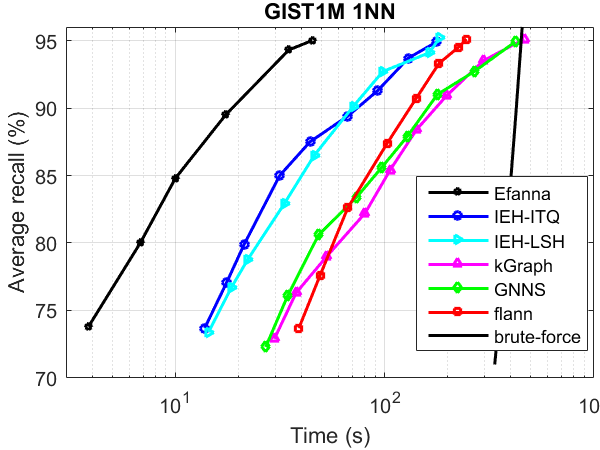

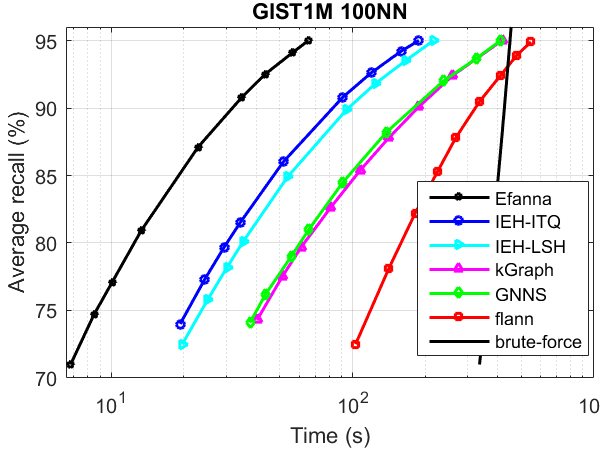

ANN search performance

------

The performance was tested without parallelism.

Compared Algorithms:

* [kGraph](http://www.kgraph.org)

* [flann](http://www.cs.ubc.ca/research/flann/)

* [IEH](http://ieeexplore.ieee.org/document/6734715/) : Fast and accurate hashing via iterative nearest neighbors expansion

* [GNNS](https://webdocs.cs.ualberta.ca/~abbasiya/gnns.pdf) : Fast Approximate Nearest-Neighbor Search with k-Nearest Neighbor Graph

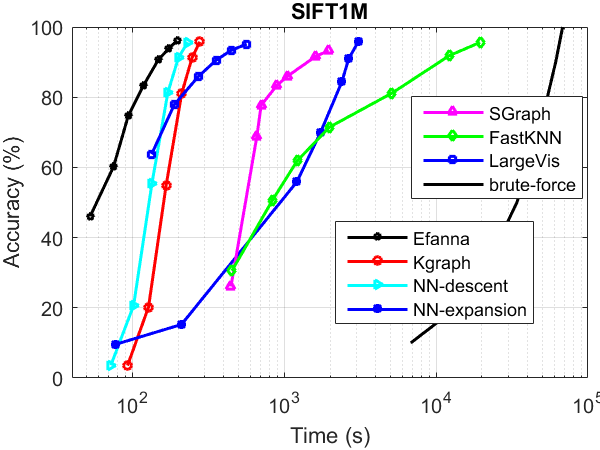

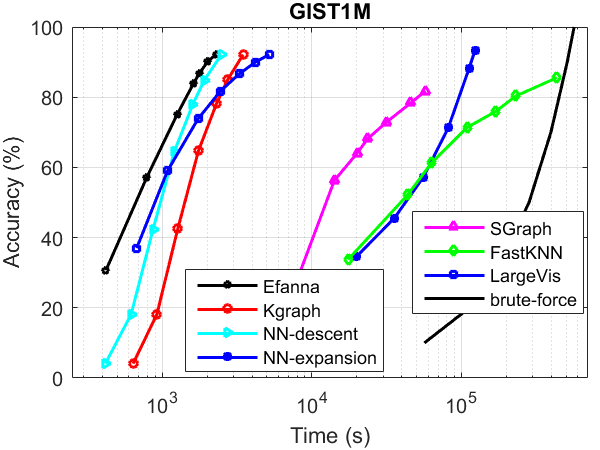

kNN Graph Construction Performance

------

The performance was tested without parallelism.

Compared Algorithms:

* [Kgraph](http://www.kgraph.org) (same as NN-descent)

* [NN-expansion](https://webdocs.cs.ualberta.ca/~abbasiya/gnns.pdf) (same as GNNS)

* [SGraph](http://ieeexplore.ieee.org/document/6247790/) : Scalable k-NN graph construction for visual descriptors

* [FastKNN](http://link.springer.com/chapter/10.1007/978-3-642-40991-2_42) : Fast kNN Graph Construction with Locality Sensitive Hashing

* [LargeVis](http://dl.acm.org/citation.cfm?id=2883041) : Visualizing Large-scale and High-dimensional Data

How To Compile

-------

Go to the root directory of EFANNA and make.

cd efanna/

make

How To Use

------

EFANNA uses a composite index to carry out ANN search, which includes an approximate kNN graph and a number of tree structures. They can be built by this library as a whole or separately.

You may build the kNN graph separately for other uses, such as other graph-based machine learning algorithms.

Below are some demos.

* kNN graph building :

cd efanna/samples/

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 8 30 25 10 10

Meaning of the parameters(from left to right):

sift_base.fvecs -- database points

sift.graph -- graph built by EFANNA

8 -- number of trees used to build the graph (larger is more accurate but slower)

8 -- conquer-to-depth (smaller is more accurate but slower)

8 -- number of iterations to build the graph

30 -- L (larger is more accurate but slower, no smaller than K)

25 -- check (larger is more accurate but slower, no smaller than K)

10 -- K, for KNN graph

10 -- S (larger is more accurate but slower)

* tree building :

cd efanna/samples/

./efanna_index_buildtrees sift_base.fvecs sift.trees 32

Meaning of the parameters(from left to right):

sift_base.fvecs -- database points

sift.trees -- truncated KD-trees built by EFANNA

32 -- number of trees to build

* index building at one time:

cd efanna/samples/

./efanna_index_buildall sift_base.fvecs sift.graph sift.trees 32 8 8 200 200 100 10 8

Meaning of the parameters(from left to right)

sift_base.fvecs -- database points

sift.trees -- truncated KD-trees built by EFANNA

sift.graph -- approximate KNN graph built by EFANNA

32 -- number of trees in total for building index

8 -- conquer-to-depth

8 -- iteration number

200 -- L (larger is more accurate but slower, no smaller than K)

200 -- check (larger is more accurate but slower, no smaller than K)

100 -- K, for KNN graph

10 -- S (larger is more accurate but slower)

8 -- 8 out of 32 trees are used for building graph

* ANN search

cd efanna/samples/

./efanna_search sift_base.fvecs sift.trees sift.graph sift_query.fvecs sift.results 16 4 1200 200 10

Meaning of the parameters(from left to right):

sift_base.fvecs -- database points

sift.trees -- prebuilt truncated KD-trees used for search

sift.graph -- prebuilt kNN graph

sift_query.fvecs -- query points

sift.results -- path to save ANN search results of given query

16 -- number of trees to use (no greater than the number of prebuilt trees)

4 -- number of epochs

1200 -- pool size factor (larger is more accurate but slower, usually 6~10 times larger than extend factor)

200 -- extend factor (larger is more accurate but slower)

10 -- required number of returned neighbors (i.e. k of k-NN)

* Evaluation

cd efanna/samples/

./evaluate sift.results sift_groundtruth.ivecs 10

Meaning of the parameters(from left to right):

sift.results -- search results file

sift_groundtruth.ivecs -- ground truth file

10 -- evaluate the 10-NN accuracy (only the first 10 points returned by the algorithm are examined, counting how many of them are among the true 10 nearest neighbors of the query)
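The accuracy measure described for the evaluation step can be sketched as follows. This is only an illustration of the counting rule stated above, not the code in `samples/evaluate.cc`; the helper name `recallAtK` and its signature are assumptions made for this example.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper, not part of EFANNA.
// Returns the fraction of the first k returned ids that also appear among
// the first k ground-truth ids of the same query.
inline double recallAtK(const std::vector<int>& returned,
                        const std::vector<int>& truth,
                        std::size_t k) {
    if (k == 0) return 0.0;
    const std::size_t nr = std::min(k, returned.size());
    const std::size_t nt = std::min(k, truth.size());
    const auto truth_end = truth.begin() + static_cast<std::ptrdiff_t>(nt);
    std::size_t hits = 0;
    for (std::size_t i = 0; i < nr; ++i) {
        // Linear scan is fine for small k such as 10.
        if (std::find(truth.begin(), truth_end, returned[i]) != truth_end) ++hits;
    }
    return static_cast<double>(hits) / static_cast<double>(k);
}
```

Averaging this value over all queries gives one accuracy number per run. Dividing by k even when fewer than k ids were returned is a choice made for this sketch.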

See our paper or user manual for more details about the parameters and interfaces.

Output format

------

The approximate kNN graph and the ANN search results are saved in the same file format.

Suppose the database has N points, numbered from 0 to N-1, and you want to build an approximate kNN graph. The graph can be regarded as an N * k matrix, and the binary graph file stores this matrix row by row. The first 4 bytes of each row store the int value of k, the next 4 bytes store the value of M, and the next 4 bytes store the float value of the norm of the point. Then follow k*4 bytes storing the indices of the k nearest neighbors of the respective point. The N rows are stored contiguously without separating characters.

Similarly, suppose the query data has n points, numbered from 0 to n-1, and you want EFANNA to return the k nearest neighbors of each query. The result file stores n rows, one per query, in the same row-by-row manner. Each row starts with 4 bytes recording the value of k, followed by k*4 bytes recording the neighbors' indices.
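A minimal sketch of reading a result file back, assuming the row layout described above (a 4-byte count followed by that many 4-byte indices), which matches what `saveResults` in `algorithm/base_index.hpp` writes. The names `readResultRows` and `demoReadResultRows` are hypothetical and not part of EFANNA. The sketch also assumes a 32-bit `int` and the byte order of the machine that wrote the file. The graph file carries the extra M and norm fields per row, so this reader does not apply to it unchanged.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <istream>
#include <sstream>
#include <utility>
#include <vector>

// Hypothetical helper, not part of EFANNA. Parses rows laid out as
// [int32 k][k x int32 neighbor index] until the stream ends.
inline std::vector<std::vector<std::int32_t>> readResultRows(std::istream& in) {
    std::vector<std::vector<std::int32_t>> rows;
    std::int32_t k = 0;
    while (in.read(reinterpret_cast<char*>(&k), sizeof(k))) {
        if (k < 0) break;  // malformed row header: stop instead of guessing
        std::vector<std::int32_t> row(static_cast<std::size_t>(k));
        if (k > 0) {
            in.read(reinterpret_cast<char*>(row.data()),
                    static_cast<std::streamsize>(row.size() * sizeof(std::int32_t)));
            if (!in) break;  // truncated row: drop it
        }
        rows.push_back(std::move(row));
    }
    return rows;
}

// In-memory round trip with two rows, {7, 9} and {4}.
inline std::vector<std::vector<std::int32_t>> demoReadResultRows() {
    const std::int32_t raw[] = {2, 7, 9, 1, 4};
    std::stringstream buf(std::ios::in | std::ios::out | std::ios::binary);
    buf.write(reinterpret_cast<const char*>(raw), sizeof(raw));
    assert(buf.good());
    return readResultRows(buf);
}
```

For a file on disk, pass a `std::ifstream` opened with `std::ios::binary` instead of the in-memory buffer.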

Input of EFANNA

------

Because there is no unified format for input data, you may need to write an input function to read your own data. You may imitate the input function in our sample code (samples/efanna\_index\_buildgraph.cc) to load the data into our matrix.

To use the SIMD optimizations, pay attention to the data alignment requirements of SSE / AVX instructions.
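As a starting point for such an input function, here is a minimal sketch of a reader for the `.fvecs` files used in the demos. In that format each vector is stored as a 4-byte integer dimension followed by that many 4-byte floats. The names `readFvecs` and `demoReadFvecs` are hypothetical; this is not the loader from the sample code, and it does not show how to hand the data to EFANNA's matrix type. A plain `std::vector<float>` also gives no SSE / AVX alignment guarantee, so the data may need to be copied into a suitably aligned buffer first.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <istream>
#include <sstream>
#include <vector>

// Hypothetical helper, not part of EFANNA. Reads repeated
// [int32 dim][dim x float32] records into one flat row-major buffer.
// On return, dim holds the common dimension (0 if nothing was read).
inline std::vector<float> readFvecs(std::istream& in, std::size_t& dim) {
    std::vector<float> data;
    dim = 0;
    std::int32_t d = 0;
    while (in.read(reinterpret_cast<char*>(&d), sizeof(d))) {
        if (d <= 0) break;                                 // malformed header
        if (dim == 0) dim = static_cast<std::size_t>(d);
        else if (dim != static_cast<std::size_t>(d)) break; // inconsistent rows
        const std::size_t old_size = data.size();
        data.resize(old_size + dim);
        in.read(reinterpret_cast<char*>(data.data() + old_size),
                static_cast<std::streamsize>(dim * sizeof(float)));
        if (!in) {                                         // truncated record
            data.resize(old_size);
            break;
        }
    }
    return data;
}

// In-memory round trip with two 2-dimensional vectors.
inline std::vector<float> demoReadFvecs(std::size_t& dim) {
    const std::int32_t d = 2;
    const float rows[2][2] = {{1.0f, 2.0f}, {3.0f, 4.0f}};
    std::stringstream buf(std::ios::in | std::ios::out | std::ios::binary);
    for (const auto& row : rows) {
        buf.write(reinterpret_cast<const char*>(&d), sizeof(d));
        buf.write(reinterpret_cast<const char*>(row), sizeof(row));
    }
    assert(buf.good());
    return readFvecs(buf, dim);
}
```

The number of points is `data.size() / dim` when `dim` is non-zero.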

Compare with EFANNA without parallelism and SSE/AVX instructions

------

To disable the parallelism, there is no need to modify the code. Simply

export OMP_NUM_THREADS=1

before you run the code. Then the code will only use one thread. This is a very convenient way to control the number of threads used.

To disable SSE/AVX instructions, you need to modify samples/xxxx.cc, find the line

FIndex<float> index(dataset, new L2DistanceAVX<float>(), efanna::KDTreeUbIndexParams(true, trees ,mlevel ,epochs,checkK,L, kNN, trees, S));

Change **L2DistanceAVX** to **L2Distance** and build the project. Now the SSE/AVX instructions are disabled.

If you want to try SSE instead of AVX, try **L2DistanceSSE**
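For reference, a plain scalar distance loop of the kind a non-SIMD baseline relies on might look like the sketch below. The function `squaredL2` is a hypothetical illustration, not the `L2Distance` class from `general/distance.hpp`; whether EFANNA's classes return the squared or the rooted distance is not shown here, and this sketch returns the squared one.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical scalar baseline, not part of EFANNA.
// Computes the squared Euclidean distance between two dim-dimensional points.
inline float squaredL2(const float* a, const float* b, std::size_t dim) {
    float sum = 0.0f;
    for (std::size_t i = 0; i < dim; ++i) {
        const float diff = a[i] - b[i];
        sum += diff * diff;
    }
    return sum;
}
```

Since squaring preserves the order of non-negative distances, the squared value is enough for ranking neighbors.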

Parameters to get the Fig. 4/5 (10-NN approximate graph construction) in our paper

------

SIFT1M:

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 0 20 10 10 10

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 1 20 10 10 10

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 2 20 10 10 10

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 3 20 10 10 10

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 5 20 10 10 10

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 6 20 20 10 10

./efanna_index_buildgraph sift_base.fvecs sift.graph 8 8 6 20 30 10 10

GIST1M:

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 2 30 30 10 10

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 3 30 30 10 10

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 4 30 30 10 10

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 5 30 30 10 10

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 6 30 30 10 10

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 7 30 30 10 10

./efanna_index_buildgraph gist_base.fvecs gist.graph 8 8 10 30 40 10 10

Acknowledgment

------

Our code framework imitates [Flann](http://www.cs.ubc.ca/research/flann/) to make it scalable, and the implementation of NN-descent is taken from [Kgraph](http://www.kgraph.org), whose authors proposed the NN-descent algorithm. Many thanks to them for inspiration.

What to do

-------

* Add more choices of initialization algorithm

================================================

FILE: algorithm/base_index.hpp

================================================

#ifndef EFANNA_BASE_INDEX_H_

#define EFANNA_BASE_INDEX_H_

#include "general/params.hpp"

#include "general/distance.hpp"

#include "general/matrix.hpp"

#include <boost/dynamic_bitset.hpp>

#include <fstream>

#include <iostream>

#include <omp.h>

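#include <unordered_set>

// Standard headers for std::sort / random_shuffle, clock(), std::set and std::vector used below.

#include <algorithm>

#include <ctime>

#include <set>

#include <vector>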

#include <mutex>

#include "boost/smart_ptr/detail/spinlock.hpp"

#include <memory>

//#define BATCH_SIZE 200

namespace efanna{

typedef boost::detail::spinlock Lock;

typedef std::lock_guard<Lock> LockGuard;

struct Point {

unsigned id;

float dist;

bool flag;

Point () {}

Point (unsigned i, float d, bool f = true): id(i), dist(d), flag(f) {

}

bool operator < (const Point &n) const{

return this->dist < n.dist;

}

};

typedef std::vector<Point> Points;

static inline unsigned InsertIntoKnn (Point *addr, unsigned K, Point nn) {

// find the location to insert

unsigned j;

unsigned i = K;

while (i > 0) {

j = i - 1;

if (addr[j].dist <= nn.dist) break;

i = j;

}

// check for equal ID

unsigned l = i;

while (l > 0) {

j = l - 1;

if (addr[j].dist < nn.dist) break;

if (addr[j].id == nn.id) return K + 1;

l = j;

}

// i <= K-1

j = K;

while (j > i) {

addr[j] = addr[j-1];

--j;

}

addr[i] = nn;

return i;

}

struct Neighbor {

std::shared_ptr<Lock> lock;

float radius;

float radiusM;

Points pool;

unsigned L;

unsigned Range;

bool found;

std::vector<unsigned> nn_old;

std::vector<unsigned> nn_new;

std::vector<unsigned> rnn_old;

std::vector<unsigned> rnn_new;

Neighbor() : lock(std::make_shared<Lock>())

{

}

unsigned insert (unsigned id, float dist) {

if (dist > radius) return pool.size();

LockGuard guard(*lock);

unsigned l = InsertIntoKnn(&pool[0], L, Point(id, dist, true));

if (l <= L) {

if (L + 1 < pool.size()) {

++L;

}

else {

radius = pool[L-1].dist;

}

}

return l;

}

template <typename C>

void join (C callback) const {

for (unsigned const i: nn_new) {

for (unsigned const j: nn_new) {

if (i < j) {

callback(i, j);

}

}

for (unsigned j: nn_old) {

callback(i, j);

}

}

}

};

template <typename DataType>

class InitIndex{

public:

InitIndex(const Matrix<DataType>& features, const Distance<DataType>* d, const IndexParams& params):

features_(features),

distance_(d),

params_(params)

{

}

virtual ~InitIndex() {};

virtual void buildTrees(){}

virtual void buildIndex()

{

buildIndexImpl();

}

virtual void buildIndexImpl() = 0;

virtual void loadIndex(char* filename) = 0;

virtual void saveIndex(char* filename) = 0;

virtual void loadTrees(char* filename) = 0;

virtual void saveTrees(char* filename) = 0;

virtual void loadGraph(char* filename) = 0;

virtual void saveGraph(char* filename) = 0;

virtual void outputVisitBucketNum() = 0;

void saveResults(char* filename){

std::ofstream out(filename,std::ios::binary);

std::vector<std::vector<int>>::iterator i;

//std::cout<<nn_results.size()<<std::endl;

for(i = nn_results.begin(); i!= nn_results.end(); i++){

std::vector<int>::iterator j;

int dim = i->size();

//std::cout<<dim<<std::endl;

out.write((char*)&dim, sizeof(int));

for(j = i->begin(); j != i->end(); j++){

int id = *j;

out.write((char*)&id, sizeof(int));

}

}

out.close();

}

SearchParams SP;

void setSearchParams(int epochs, int init_num, int extend_to,int search_trees, int search_lv, int search_method){

SP.search_epoches = epochs;

SP.search_init_num = init_num;

if(extend_to>init_num) SP.extend_to = init_num;

else SP.extend_to = extend_to;

SP.search_depth = search_lv;

SP.tree_num = search_trees;

SP.search_method = search_method;

}

void nnExpansion_kgraph(size_t K, const DataType* qNow, std::vector<unsigned int>& pool, std::vector<Point>& results){

unsigned int base_n = features_.get_rows();

boost::dynamic_bitset<> tbflag(base_n, false);

boost::dynamic_bitset<> newflag(base_n, false);

std::vector<Point> knn(K + SP.extend_to +1);

int remainder = SP.search_init_num % SP.extend_to;

int nSeg = SP.search_init_num / SP.extend_to;

//clock_t s,f;

int Iter = nSeg;

if (remainder > 0) Iter++;

int Jter = SP.extend_to;

for(int i = 0; i<Iter; i++){

if((remainder > 0) && (i == Iter-1)) Jter=remainder;

unsigned int L = 0;

for(int j=0; j <Jter ; j++){

if(!tbflag.test(pool[i*SP.extend_to+j])){

knn[L++].id = pool[i*SP.extend_to+j];

}

}

for (unsigned int k = 0; k < L; ++k) {

knn[k].dist = distance_->compare(qNow, features_.get_row(knn[k].id), features_.get_cols());

newflag.set(knn[k].id);

}

std::sort(knn.begin(), knn.begin() + L);

//s = clock();

unsigned int k = 0;

while (k < L) {

unsigned int nk = L;

if (newflag.test(knn[k].id)){

newflag.reset(knn[k].id);

tbflag.set(knn[k].id);

typename CandidateHeap::reverse_iterator neighbor = knn_graph[knn[k].id].rbegin();

for(size_t nnk = 0;nnk < params_.K && neighbor != knn_graph[knn[k].id].rend(); neighbor++, nnk++){

if(tbflag.test(neighbor->row_id))continue;

tbflag.set(neighbor->row_id);

newflag.set(neighbor->row_id);

float dist = distance_->compare(qNow, features_.get_row(neighbor->row_id), features_.get_cols());

Point nn(neighbor->row_id, dist);

unsigned int r = InsertIntoKnn(&knn[0], L, nn);

if ( (r <= L) && (L + 1 < knn.size())) ++L;

if (r < nk) nk = r;

}

}

if (nk <= k) k = nk;

else ++k;

}

//f = clock();

//sum = sum + f-s;

if (L > K) L = K;

if (results.empty()) {

results.reserve(K + 1);

results.resize(L + 1);

std::copy(knn.begin(), knn.begin() + L, results.begin());

}

else {

for (unsigned int l = 0; l < L; ++l) {

unsigned r = InsertIntoKnn(&results[0], results.size() - 1, knn[l]);

if (r < results.size() /* inserted */ && results.size() < (K + 1)) {

results.resize(results.size() + 1);

}

}

}

}

results.pop_back();

}

void nnExpansion(size_t K, const DataType* qNow, std::vector<unsigned int>& pool, std::vector<int>& res){

unsigned int base_n = features_.get_rows();

boost::dynamic_bitset<> tbflag(base_n, false);

boost::dynamic_bitset<> newflag(base_n, false);

CandidateHeap Candidates;

int remainder = SP.search_init_num % SP.extend_to;

int nSeg = SP.search_init_num / SP.extend_to;

int segIter = nSeg;

if (remainder > 0) segIter++;

int Jter = SP.extend_to;

CandidateHeap Results;

for(int seg = 0; seg<segIter; seg++){

if((remainder > 0) && (seg == segIter-1)) Jter=remainder;

for(int j=0; j <Jter ; j++){

unsigned int nn = pool[seg*SP.extend_to+j];

//if(nn>=base_n) std::cout << "query:" << cur << " Init "<< nn << std::endl;

if(!tbflag.test(nn)){

newflag.set(nn);

Candidate<DataType> c(nn, distance_->compare(qNow, features_.get_row(nn), features_.get_cols()));

Candidates.insert(c);

}

}

std::vector<unsigned int> ids;

int iter=0;

while(iter++ < SP.search_epoches){

//the heap is max heap

ids.clear();

typename CandidateHeap::reverse_iterator it = Candidates.rbegin();

for(unsigned j = 0; j < SP.extend_to && it != Candidates.rend(); j++,it++){

// if(it->row_id>=base_n) std::cout<<"query:"<< cur<<" Judge node "<<it->row_id<<std::endl;

if(newflag.test(it->row_id)){

newflag.reset(it->row_id);

typename CandidateHeap::reverse_iterator neighbor = knn_graph[it->row_id].rbegin();

for(; neighbor != knn_graph[it->row_id].rend(); neighbor++){

// if(neighbor->row_id>=base_n) std::cout<<"query:"<< cur<<" Judge neighbor "<<neighbor->row_id<<std::endl;

if(tbflag.test(neighbor->row_id))continue;

tbflag.set(neighbor->row_id);

ids.push_back(neighbor->row_id);

}

}

}

for(size_t j = 0; j < ids.size(); j++){

Candidate<DataType> c(ids[j], distance_->compare(qNow, features_.get_row(ids[j]), features_.get_cols()) );

Candidates.insert(c);

newflag.set(ids[j]);

if(Candidates.size() > (unsigned int)SP.extend_to)Candidates.erase(Candidates.begin());

}

}

typename CandidateHeap::reverse_iterator it = Candidates.rbegin();

for(unsigned int j = 0; j < K && it != Candidates.rend(); j++,it++){

Results.insert(*it);

if(Results.size() > K)Results.erase(Results.begin());

}

}

typename CandidateHeap::reverse_iterator it = Results.rbegin();

for(unsigned int j = 0; j < K && it != Results.rend(); j++,it++){

res.push_back(it->row_id);

}

}

virtual void knnSearch(int K, const Matrix<DataType>& query){

getNeighbors(K,query);

}

virtual void getNeighbors(size_t K, const Matrix<DataType>& query) = 0;

virtual void initGraph() = 0;

//std::vector<unsigned> Range;

std::vector<std::vector<Point> > graph;

std::vector<Neighbor> nhoods;

void join(){

size_t dim = features_.get_cols();

size_t cc = 0;

#pragma omp parallel for default(shared) schedule(dynamic, 100) reduction(+:cc)

for(size_t i = 0; i < nhoods.size(); i++){

size_t uu = 0;

nhoods[i].found = false;

/*

for(size_t newi = 0; newi < nhoods[i].nn_new.size(); newi++){

for(size_t newj = newi+1; newj < nhoods[i].nn_new.size(); newj++){

unsigned a = nhoods[i].nn_new[newi];

unsigned b = nhoods[i].nn_new[newj];

DataType dist = distance_->compare(

features_.get_row(a), features_.get_row(b), dim);

unsigned r = nhoods[a].insert(b,dist);

if(r < params_.Check_K){uu += 2;}

nhoods[b].insert(a,dist);

}

for(size_t oldj = 0; oldj < nhoods[i].nn_old.size(); oldj++){

unsigned a = nhoods[i].nn_new[newi];

unsigned b = nhoods[i].nn_old[oldj];

DataType dist = distance_->compare(

features_.get_row(a), features_.get_row(b), dim);

unsigned r = nhoods[a].insert(b,dist);

if(r < params_.Check_K){uu += 2;}

nhoods[b].insert(a,dist);

}

}

*/

nhoods[i].join([&](unsigned i, unsigned j) {

DataType dist = distance_->compare(

features_.get_row(i), features_.get_row(j), dim);

++cc;

unsigned r;

r = nhoods[i].insert(j, dist);

if (r < params_.Check_K) ++uu;

nhoods[j].insert(i, dist);

if (r < params_.Check_K) ++uu;

});

nhoods[i].found = uu > 0;

}

}

void update (int paramL) {

for (size_t i = 0; i < nhoods.size(); i++) {

nhoods[i].nn_new.clear();

nhoods[i].nn_old.clear();

nhoods[i].rnn_new.clear();

nhoods[i].rnn_old.clear();

nhoods[i].radius = nhoods[i].pool.back().dist;

}

//find longest new

#pragma omp parallel for

for(size_t i = 0; i < nhoods.size(); i++){

if(nhoods[i].found){

unsigned maxl = nhoods[i].Range + params_.S < nhoods[i].L ? nhoods[i].Range + params_.S : nhoods[i].L;

unsigned c = 0;

unsigned l = 0;

while ((l < maxl) && (c < params_.S)) {

if (nhoods[i].pool[l].flag) ++c;

++l;

}

nhoods[i].Range = l;

}

nhoods[i].radiusM = nhoods[i].pool[nhoods[i].Range-1].dist;

}

#pragma omp parallel for

for (unsigned n = 0; n < nhoods.size(); ++n) {

Neighbor &nhood = nhoods[n];

std::vector<unsigned> &nn_new = nhood.nn_new;

std::vector<unsigned> &nn_old = nhood.nn_old;

for (unsigned l = 0; l < nhood.Range; ++l) {

Point &nn = nhood.pool[l];

Neighbor &nhood_o = nhoods[nn.id]; // nhood on the other side of the edge

if (nn.flag) {

nn_new.push_back(nn.id);

if (nn.dist > nhood_o.radiusM) {

LockGuard guard(*nhood_o.lock);

nhood_o.rnn_new.push_back(n);

}

nn.flag = false;

}

else {

nn_old.push_back(nn.id);

if (nn.dist > nhood_o.radiusM) {

LockGuard guard(*nhood_o.lock);

nhood_o.rnn_old.push_back(n);

}

}

}

}

for (unsigned i = 0; i < nhoods.size(); ++i) {

std::vector<unsigned> &nn_new = nhoods[i].nn_new;

std::vector<unsigned> &nn_old = nhoods[i].nn_old;

std::vector<unsigned> &rnn_new = nhoods[i].rnn_new;

std::vector<unsigned> &rnn_old = nhoods[i].rnn_old;

if (paramL && (rnn_new.size() > (unsigned int)paramL)) {

random_shuffle(rnn_new.begin(), rnn_new.end());

rnn_new.resize(paramL);

}

nn_new.insert(nn_new.end(), rnn_new.begin(), rnn_new.end());

if (paramL && (rnn_old.size() > (unsigned int)paramL)) {

random_shuffle(rnn_old.begin(), rnn_old.end());

rnn_old.resize(paramL);

}

nn_old.insert(nn_old.end(), rnn_old.begin(), rnn_old.end());

}

}

void refineGraph(){

std::cout << " refineGraph" << std::endl;

int iter = 0;

clock_t s,f;

s = clock();unsigned int l=100;

while(iter++ < params_.build_epoches){

join();//std::cout<<"after join"<<std::endl;

update(l);

f = clock();

std::cout << "iteration "<< iter << " time: "<< (f-s)*1.0/CLOCKS_PER_SEC<<" seconds"<< std::endl;

}

//calculate_norm();

std::cout << "saving graph" << std::endl;

/* knn_graph.clear();

for(size_t i = 0; i < nhoods.size(); i++){

CandidateHeap can;

for(size_t j = 0; j < params_.K; j++){

Candidate<DataType> c(nhoods[i].pool[j].id,nhoods[i].pool[j].dist);

can.insert(c);

}

while(can.size()<params_.K){

unsigned id = rand() % nhoods.size();

DataType dist = distance_->compare(features_.get_row(i), features_.get_row(id),features_.get_cols());

Candidate<DataType> c(id, dist);

can.insert(c);

}

knn_graph.push_back(can);

}

*/

g.resize(nhoods.size());

M.resize(nhoods.size());

gs.resize(nhoods.size());

for(unsigned i = 0; i < nhoods.size();i++){

M[i] = nhoods[i].Range;

g[i].resize(nhoods[i].pool.size());

std::copy(nhoods[i].pool.begin(), nhoods[i].pool.end(), g[i].begin());

gs[i].resize(params_.K);

for(unsigned j = 0; j < params_.K;j++)

gs[i][j] = g[i][j].id;

}

}

void calculate_norm(){

unsigned N = features_.get_rows();

unsigned D = features_.get_cols();

norms.resize(N);

#pragma omp parallel for

for (unsigned n = 0; n < N; ++n) {

norms[n] = distance_->norm(features_.get_row(n),D);

}

}

typedef std::set<Candidate<DataType>, std::greater<Candidate<DataType>> > CandidateHeap;

typedef std::vector<unsigned int> IndexVec;

size_t getGraphSize(){return gs.size();}

std::vector<unsigned> getGraphRow(unsigned row_id){

std::vector<unsigned> row;

if(gs.size() > row_id){

for(unsigned i = 0; i < gs[row_id].size(); i++)row.push_back(gs[row_id][i]);

}

return row;

}

protected:

const Matrix<DataType> features_;

const Distance<DataType>* distance_;

const IndexParams params_;

std::vector<std::vector<int> > knn_table_gt;

std::vector<std::vector<Point> > g;

std::vector<std::vector<unsigned> > gs;

std::vector<unsigned> M;

//std::vector<std::vector<int>> knn_graph;

std::vector<CandidateHeap> knn_graph;

std::vector<DataType> norms;

std::vector<std::vector<int> > nn_results;

DataType* Radius;

};

#define USING_BASECLASS_SYMBOLS \

using InitIndex<DataType>::distance_;\

using InitIndex<DataType>::params_;\

using InitIndex<DataType>::features_;\

using InitIndex<DataType>::buildIndex;\

using InitIndex<DataType>::knn_table_gt;\

using InitIndex<DataType>::nn_results;\

using InitIndex<DataType>::saveResults;\

using InitIndex<DataType>::knn_graph;\

using InitIndex<DataType>::refineGraph;\

using InitIndex<DataType>::nhoods;\

using InitIndex<DataType>::SP;\

using InitIndex<DataType>::nnExpansion;\

using InitIndex<DataType>::nnExpansion_kgraph;\

using InitIndex<DataType>::g;\

using InitIndex<DataType>::gs;\

using InitIndex<DataType>::M;\

using InitIndex<DataType>::norms;

}

#endif

================================================

FILE: algorithm/hashing_index.hpp

================================================

#ifndef EFANNA_HASHING_INDEX_H_

#define EFANNA_HASHING_INDEX_H_

#include "algorithm/base_index.hpp"

#include <boost/dynamic_bitset.hpp>

#include <time.h>

//for Debug

#include <iostream>

#include <fstream>

#include <string>

#include <unordered_map>

#include <map>

#include <sstream>

#include <set>

//#define MAX_RADIUS 6

namespace efanna{

struct HASHINGIndexParams : public IndexParams

{

HASHINGIndexParams(int codelen, int TableNum,int UpperBits, int HashRadius, char*& BaseCodeFile, char*& QueryCodeFile, int codelenShift = 0)

{

init_index_type = HASHING;

ValueType len;

len.int_val = codelen;

extra_params.insert(std::make_pair("codelen",len));

ValueType nTab;

nTab.int_val = TableNum;

extra_params.insert(std::make_pair("tablenum",nTab));

ValueType upb;

upb.int_val = UpperBits;

extra_params.insert(std::make_pair("upbits",upb));

ValueType radius;

radius.int_val = HashRadius;

extra_params.insert(std::make_pair("radius",radius));

ValueType bcf;

bcf.str_pt = BaseCodeFile;

extra_params.insert(std::make_pair("bcfile",bcf));

ValueType qcf;

qcf.str_pt = QueryCodeFile;

extra_params.insert(std::make_pair("qcfile",qcf));

ValueType lenShift;

lenShift.int_val = codelenShift;

extra_params.insert(std::make_pair("lenshift",lenShift));

}

};

template <typename DataType>

class HASHINGIndex : public InitIndex<DataType>

{

public:

typedef InitIndex<DataType> BaseClass;

typedef std::vector<unsigned int> Codes;

typedef std::unordered_map<unsigned int, std::vector<unsigned int> > HashBucket;

typedef std::vector<HashBucket> HashTable;

typedef std::vector<unsigned long> Codes64;

typedef std::unordered_map<unsigned long, std::vector<unsigned int> > HashBucket64;

typedef std::vector<HashBucket64> HashTable64;

HASHINGIndex(const Matrix<DataType>& dataset, const Distance<DataType>* d, const IndexParams& params = HASHINGIndexParams(0,NULL,NULL)) :

BaseClass(dataset,d,params)

{

std::cout<<"HASHING initial, max code length : 64" <<std::endl;

ExtraParamsMap::const_iterator it = params_.extra_params.find("codelen");

if(it != params_.extra_params.end()){

codelength = (it->second).int_val;

std::cout << "use "<<codelength<< " bit code"<< std::endl;

}

else{

std::cout << "error: no code length setting" << std::endl;

}

it = params_.extra_params.find("lenshift");

if(it != params_.extra_params.end()){

codelengthshift = (it->second).int_val;

int actuallen = codelength - codelengthshift;

if(actuallen > 0){

std::cout << "Actually use "<< actuallen<< " bit code"<< std::endl;

}else{

std::cout << "lenShift error: could not be larger than the code length! "<< std::endl;

}

}

else{

codelengthshift = 0;

}

it = params_.extra_params.find("tablenum");

if(it != params_.extra_params.end()){

tablenum = (it->second).int_val;

std::cout << "use "<<tablenum<< " hashtables"<< std::endl;

}

else{

std::cout << "error: no table number setting" << std::endl;

}

it = params_.extra_params.find("upbits");

if(it != params_.extra_params.end()){

upbits = (it->second).int_val;

std::cout << "use upper "<<upbits<< " bits as first level index of hashtable"<< std::endl;

std::cout << "use lower "<<codelength - codelengthshift - upbits<< " bits as second level index of hashtable"<< std::endl;

}

else{

std::cout << "error: no upper bits number setting" << std::endl;

}

if(upbits >= codelength-codelengthshift){

std::cout << "upbits should be smaller than the actual codelength!" << std::endl;

return;

}

int actuallen = codelength - codelengthshift;

it = params_.extra_params.find("radius");

if(it != params_.extra_params.end()){

radius = (it->second).int_val;

if(actuallen<=32){

if(radius > 13){

std::cout << "radius greater than 13 not supported yet!" << std::endl;

radius = 13;

}

}else if(actuallen<=36){

if(radius > 11){

std::cout << "radius greater than 11 not supported yet!" << std::endl;

radius = 11;

}

}else if(actuallen<=40){

if(radius > 10){

std::cout << "radius greater than 10 not supported yet!" << std::endl;

radius = 10;

}

}else if(actuallen<=48){

if(radius > 9){

std::cout << "radius greater than 9 not supported yet!" << std::endl;

radius = 9;

}

}else if(actuallen<=60){

if(radius > 8){

std::cout << "radius greater than 8 not supported yet!" << std::endl;

radius = 8;

}

}else{ //actuallen<=64

if(radius > 7){

std::cout << "radius greater than 7 not supported yet!" << std::endl;

radius = 7;

}

}

std::cout << "search hamming radius "<<radius<< std::endl;

}

else{

std::cout << "error: no radius number setting" << std::endl;

}

it = params_.extra_params.find("bcfile");

if(it != params_.extra_params.end()){

char* fpath = (it->second).str_pt;

std::string str(fpath);

std::cout << "Loading base code from " << str << std::endl;

if (codelength <= 32 ){

LoadCode32(fpath, BaseCode);

}else if(codelength <= 64 ){

LoadCode64(fpath, BaseCode64);

}else{

std::cout<<"code length not supported yet!"<<std::endl;

}

std::cout << "code length is "<<codelength<<std::endl;

}

else{

std::cout << "error: no base code file" << std::endl;

}

it = params_.extra_params.find("qcfile");

if(it != params_.extra_params.end()){

char* fpath = (it->second).str_pt;

std::string str(fpath);

std::cout << "Loading query code from " << str << std::endl;

if (codelength <= 32 ){

LoadCode32(fpath, QueryCode);

}else if(codelength <= 64 ){

LoadCode64(fpath, QueryCode64);

}else{

std::cout<<"code length not supported yet!"<<std::endl;

}

std::cout << "code length is "<<codelength<<std::endl;

}

else{

std::cout << "error: no query code file" << std::endl;

}

}

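/**

* Loads 32-bit hash codes, one file per table: <filename>_1 ... <filename>_<tablenum>.

* Each file starts with three 4-byte ints: a header value that must be 1, the code

* length (which overwrites the codelength member), and the number of points.

* One 4-byte code per point follows; each code is right-shifted by codelengthshift.

*/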

void LoadCode32(char* filename, std::vector<Codes>& baseAll){

if (tablenum < 1){

std::cout<<"Total hash table num error! "<<std::endl;

}

int actuallen = codelength-codelengthshift;

unsigned int maxValue = 1;

for(int i=1;i<actuallen;i++){

maxValue = maxValue << 1;

maxValue ++;

}

std::stringstream ss;

for(int j = 0; j < tablenum; j++){

ss << filename << "_" << j+1 ;

std::string codeFile;

ss >> codeFile;

ss.clear();

std::ifstream in(codeFile.c_str(), std::ios::binary);

if(!in.is_open()){std::cout<<"open file " << codeFile <<" error"<< std::endl;return;}

int codeNum;

in.read((char*)&codeNum,4);

if (codeNum != 1){

std::cout<<"Codefile "<< j << " error!"<<std::endl;

}

in.read((char*)&codelength,4);

//std::cout<<"codelength: "<<codelength<<std::endl;

int num;

in.read((char*)&num,4);

//std::cout<<"point num: "<<num<<std::endl;

Codes base;

for(int i = 0; i < num; i++){

unsigned int codetmp;

in.read((char*)&codetmp,4);

codetmp = codetmp >> codelengthshift;

if (codetmp > maxValue){

std::cout<<"codetmp: "<< codetmp <<std::endl;

std::cout<<"codelengthshift: "<<codelengthshift<<std::endl;

std::cout<<"codefile "<< codeFile << " error! Exceed maximum value"<<std::endl;

in.close();

return;

}

base.push_back(codetmp);

}

baseAll.push_back(base);

in.close();

}

}

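// Same file layout as LoadCode32, but each code is read as 8 bytes.

// Note: this assumes unsigned long is 8 bytes wide (true on LP64 platforms such as 64-bit Linux).

void LoadCode64(char* filename, std::vector<Codes64>& baseAll){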

if (tablenum < 1){

std::cout<<"Total hash table num error! "<<std::endl;

}

int actuallen = codelength-codelengthshift;

unsigned long maxValue = 1;

for(int i=1;i<actuallen;i++){

maxValue = maxValue << 1;

maxValue ++;

}

std::stringstream ss;

for(int j = 0; j < tablenum; j++){

ss << filename << "_" << j+1 ;

std::string codeFile;

ss >> codeFile;

ss.clear();

std::ifstream in(codeFile.c_str(), std::ios::binary);

if(!in.is_open()){std::cout<<"open file " << codeFile <<" error"<< std::endl;return;}

int codeNum;

in.read((char*)&codeNum,4);

if (codeNum != 1){

std::cout<<"Codefile "<< j << " error!"<<std::endl;

}

in.read((char*)&codelength,4);

//std::cout<<"codelength: "<<codelength<<std::endl;

int num;

in.read((char*)&num,4);

//std::cout<<"point num: "<<num<<std::endl;

Codes64 base;

for(int i = 0; i < num; i++){

unsigned long codetmp;

in.read((char*)&codetmp,8);

codetmp = codetmp >> codelengthshift;

if (codetmp > maxValue){

std::cout<<"codetmp: "<< codetmp <<std::endl;

std::cout<<"codelengthshift: "<<codelengthshift<<std::endl;

std::cout<<"codefile "<< codeFile << " error! Exceed maximum value"<<std::endl;

in.close();

return;

}

base.push_back(codetmp);

}

baseAll.push_back(base);

in.close();

}

}

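// Builds one two-level hash table per code set in baseAll.

// The upper upbits bits of a code select one of 2^upbits buckets (first level);

// the lower lowbits bits are the key into that bucket's unordered_map (second level),

// whose value is the list of point ids sharing the code.

void BuildHashTable32(int upbits, int lowbits, std::vector<Codes>& baseAll ,std::vector<HashTable>& tbAll){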

for(size_t h=0; h < baseAll.size(); h++){

Codes& base = baseAll[h];

HashTable tb;

for(int i = 0; i < (1 << upbits); i++){

HashBucket emptyBucket;

tb.push_back(emptyBucket);

}

for(size_t i = 0; i < base.size(); i ++){

unsigned int idx1 = base[i] >> lowbits;

unsigned int idx2 = base[i] - (idx1 << lowbits);

// operator[] creates an empty id list the first time a key is seen

tb[idx1][idx2].push_back(i);

}

tbAll.push_back(tb);

}

}

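// Precomputes the XOR masks that enumerate a Hamming ball around a query code.

// Masks are appended in order of increasing bit count; after each radius r,

// HammingRadius[r] holds the number of masks with at most r bits set.

void generateMask32(){

// mask 0 leaves the code unchanged (Hamming distance 0)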

HammingBallMask.push_back(0);

HammingRadius.push_back(HammingBallMask.size());

if(radius>0){

//radius 1

for(int i = 0; i < codelength; i++){

unsigned int mask = 1 << i;

HammingBallMask.push_back(mask);

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>1){

//radius 2

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

unsigned int mask = (1<<i) | (1<<j);

HammingBallMask.push_back(mask);

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>2){

//radius 3

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k);

HammingBallMask.push_back(mask);

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>3){

//radius 4

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a);

HammingBallMask.push_back(mask);

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>4){

//radius 5

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b);

HammingBallMask.push_back(mask);

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>5){

//radius 6

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>6){

//radius 7

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>7){

//radius 8

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d)| (1<<e);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>8){

//radius 9

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d)| (1<<e)| (1<<f);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>9){

//radius 10

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

for(int g = f+1; g < codelength; g++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d)| (1<<e)| (1<<f)| (1<<g);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>10){

//radius 11

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

for(int g = f+1; g < codelength; g++){

for(int h = g+1; h < codelength; h++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d)| (1<<e)| (1<<f)| (1<<g)| (1<<h);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>11){

//radius 12

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

for(int g = f+1; g < codelength; g++){

for(int h = g+1; h < codelength; h++){

for(int l = h+1; l < codelength; l++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d)| (1<<e)| (1<<f)| (1<<g)| (1<<h)| (1<<l);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

if(radius>12){

//radius 13

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

for(int g = f+1; g < codelength; g++){

for(int h = g+1; h < codelength; h++){

for(int l = h+1; l < codelength; l++){

for(int m = l+1; m < codelength; m++){

unsigned int mask = (1<<i) | (1<<j) | (1<<k)| (1<<a)| (1<<b)| (1<<c)| (1<<d)| (1<<e)| (1<<f)| (1<<g)| (1<<h)| (1<<l)| (1<<m);

HammingBallMask.push_back(mask);

}

}

}

}

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask.size());

}

}

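// 64-bit variant of BuildHashTable32: same two-level layout, with unsigned long second-level keys.

void BuildHashTable64(int upbits, int lowbits, std::vector<Codes64>& baseAll ,std::vector<HashTable64>& tbAll){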

for(size_t h=0; h < baseAll.size(); h++){

Codes64& base = baseAll[h];

HashTable64 tb;

for(int i = 0; i < (1 << upbits); i++){

HashBucket64 emptyBucket;

tb.push_back(emptyBucket);

}

for(size_t i = 0; i < base.size(); i ++){

unsigned int idx1 = base[i] >> lowbits;

unsigned long idx2 = base[i] - ((unsigned long)idx1 << lowbits);

// operator[] creates an empty id list the first time a key is seen

tb[idx1][idx2].push_back(i);

}

tbAll.push_back(tb);

}

}

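// 64-bit variant of generateMask32. Only radii up to 11 are generated here.

void generateMask64(){

// mask 0 leaves the code unchanged (Hamming distance 0)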

HammingBallMask64.push_back(0);

HammingRadius.push_back(HammingBallMask64.size());

unsigned long One = 1;

if(radius>0){

//radius 1

for(int i = 0; i < codelength; i++){

unsigned long mask = One << i;

HammingBallMask64.push_back(mask);

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>1){

//radius 2

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

unsigned long mask = (One<<i) | (One<<j);

HammingBallMask64.push_back(mask);

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>2){

//radius 3

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k);

HammingBallMask64.push_back(mask);

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>3){

//radius 4

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a);

HammingBallMask64.push_back(mask);

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>4){

//radius 5

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b);

HammingBallMask64.push_back(mask);

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>5){

//radius 6

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b)| (One<<c);

HammingBallMask64.push_back(mask);

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>6){

//radius 7

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b)| (One<<c)| (One<<d);

HammingBallMask64.push_back(mask);

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>7){

//radius 8

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b)| (One<<c)| (One<<d)| (One<<e);

HammingBallMask64.push_back(mask);

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>8){

//radius 9

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b)| (One<<c)| (One<<d)| (One<<e)| (One<<f);

HammingBallMask64.push_back(mask);

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>9){

//radius 10

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

for(int g = f+1; g < codelength; g++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b)| (One<<c)| (One<<d)| (One<<e)| (One<<f)| (One<<g);

HammingBallMask64.push_back(mask);

}

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

if(radius>10){

//radius 11

for(int i = 0; i < codelength; i++){

for(int j = i+1; j < codelength; j++){

for(int k = j+1; k < codelength; k++){

for(int a = k+1; a < codelength; a++){

for(int b = a+1; b < codelength; b++){

for(int c = b+1; c < codelength; c++){

for(int d = c+1; d < codelength; d++){

for(int e = d+1; e < codelength; e++){

for(int f = e+1; f < codelength; f++){

for(int g = f+1; g < codelength; g++){

for(int h = g+1; h < codelength; h++){

unsigned long mask = (One<<i) | (One<<j) | (One<<k)| (One<<a)| (One<<b)| (One<<c)| (One<<d)| (One<<e)| (One<<f)| (One<<g)| (One<<h);

HammingBallMask64.push_back(mask);

}

}

}

}

}

}

}

}

}

}

}

HammingRadius.push_back(HammingBallMask64.size());

}

}

void buildIndexImpl()

{

std::cout<<"HASHING building hashing table"<<std::endl;

if (codelength <= 32 ){

codelength = codelength - codelengthshift;

BuildHashTable32(upbits, codelength-upbits, BaseCode ,htb);

generateMask32();

}else if(codelength <= 64 ){

codelength = codelength - codelengthshift;

BuildHashTable64(upbits, codelength-upbits, BaseCode64 ,htb64);

generateMask64();

}else{

std::cout<<"code length not supported yet!"<<std::endl;

}

}

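// Dispatches the search. If no kNN graph is loaded (gs does not cover every base point),

// only the hash tables are used. Otherwise the hashing candidates are refined on the graph:

// SP.search_method 0 selects the kgraph variant, 1 selects the nnexp variant.

void getNeighbors(size_t K, const Matrix<DataType>& query){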

if(gs.size() != features_.get_rows()){

if (codelength <= 32 ){

getNeighbors32(K,query);

}else if(codelength <= 64 ){

getNeighbors64(K,query);

}else{

std::cout<<"code length not supported yet!"<<std::endl;

}

}else{

switch(SP.search_method){

case 0:

if (codelength <= 32 ){

getNeighborsIEH32_kgraph(K, query);

}else if(codelength <= 64 ){

getNeighborsIEH64_kgraph(K, query);

}else{

std::cout<<"code length not supported yet!"<<std::endl;

}

break;

case 1:

if (codelength <= 32 ){

getNeighborsIEH32_nnexp(K, query);

}else if(codelength <= 64 ){

getNeighborsIEH64_nnexp(K, query);

}else{

std::cout<<"code length not supported yet!"<<std::endl;

}

break;

default:

std::cout<<"no such searching method"<<std::endl;

}

}

}

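// Hashing-only search. For each query, buckets of every table are probed in order of

// increasing Hamming distance until SP.search_init_num candidates are collected.

// If fewer than K were found, the pool is padded with random points. Candidates are

// then ranked by true distance and the K closest are stored in nn_results.

void getNeighbors32(size_t K, const Matrix<DataType>& query){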

int lowbits = codelength - upbits;

unsigned int MaxCheck=HammingRadius[radius];

std::cout<<"maxcheck : "<<MaxCheck<<std::endl;

boost::dynamic_bitset<> tbflag(features_.get_rows(), false);

nn_results.clear();

VisitBucketNum.clear();

VisitBucketNum.resize(radius+2);

for(size_t cur = 0; cur < query.get_rows(); cur++){

std::vector<unsigned int> pool(SP.search_init_num);

unsigned int p = 0;

tbflag.reset();

unsigned int j = 0;

for(; j < MaxCheck; j++){

for(unsigned int h=0; h < QueryCode.size(); h++){

unsigned int searchcode = QueryCode[h][cur] ^ HammingBallMask[j];

unsigned int idx1 = searchcode >> lowbits;

unsigned int idx2 = searchcode - (idx1 << lowbits);

HashBucket::iterator bucket= htb[h][idx1].find(idx2);

if(bucket != htb[h][idx1].end()){

const std::vector<unsigned int>& vp = bucket->second; // reference: avoid copying the id list

for(size_t k = 0; k < vp.size() && p < (unsigned int)SP.search_init_num; k++){

if(tbflag.test(vp[k]))continue;

tbflag.set(vp[k]);

pool[p++]=(vp[k]);

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p < (unsigned int)SP.search_init_num){

VisitBucketNum[radius+1]++;

}else{

for(int r=0;r<=radius;r++){

if(j<=HammingRadius[r]){

VisitBucketNum[r]++;

break;

}

}

}

if (p<K){

int base_n = features_.get_rows();

while(p < K){

unsigned int nn = rand() % base_n;

if(tbflag.test(nn)) continue;

tbflag.set(nn);

pool[p++] = (nn);

}

}

std::vector<std::pair<float,size_t>> result;

for(unsigned int i=0; i<p;i++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(pool[i]), features_.get_cols()),pool[i]));

}

std::partial_sort(result.begin(), result.begin() + K, result.end());

std::vector<int> res;

for(unsigned int j = 0; j < K; j++) res.push_back(result[j].second);

nn_results.push_back(res);

}

//std::cout<<"bad query number: " << VisitBucketNum[radius+1] << std::endl;

}

void getNeighbors64(size_t K, const Matrix<DataType>& query){

int lowbits = codelength - upbits;

unsigned int MaxCheck=HammingRadius[radius];

std::cout<<"maxcheck : "<<MaxCheck<<std::endl;

boost::dynamic_bitset<> tbflag(features_.get_rows(), false);

nn_results.clear();

VisitBucketNum.clear();

VisitBucketNum.resize(radius+2);

for(size_t cur = 0; cur < query.get_rows(); cur++){

std::vector<unsigned int> pool(SP.search_init_num);

unsigned int p = 0;

tbflag.reset();

unsigned int j = 0;

for(; j < MaxCheck; j++){

for(unsigned int h=0; h < QueryCode64.size(); h++){

unsigned long searchcode = QueryCode64[h][cur] ^ HammingBallMask64[j];

unsigned int idx1 = searchcode >> lowbits;

unsigned long idx2 = searchcode - (( unsigned long)idx1 << lowbits);

HashBucket64::iterator bucket= htb64[h][idx1].find(idx2);

if(bucket != htb64[h][idx1].end()){

const std::vector<unsigned int>& vp = bucket->second; // reference: avoid copying the id list

for(size_t k = 0; k < vp.size() && p < (unsigned int)SP.search_init_num; k++){

if(tbflag.test(vp[k]))continue;

tbflag.set(vp[k]);

pool[p++]=(vp[k]);

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p < (unsigned int)SP.search_init_num){

VisitBucketNum[radius+1]++;

}else{

for(int r=0;r<=radius;r++){

if(j<=HammingRadius[r]){

VisitBucketNum[r]++;

break;

}

}

}

if (p<K){

int base_n = features_.get_rows();

while(p < K){

unsigned int nn = rand() % base_n;

if(tbflag.test(nn)) continue;

tbflag.set(nn);

pool[p++] = (nn);

}

}

std::vector<std::pair<float,size_t>> result;

for(unsigned int i=0; i<p;i++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(pool[i]), features_.get_cols()),pool[i]));

}

std::partial_sort(result.begin(), result.begin() + K, result.end());

std::vector<int> res;

for(unsigned int j = 0; j < K; j++) res.push_back(result[j].second);

nn_results.push_back(res);

}

//std::cout<<"bad query number: " <<VisitBucketNum[radius+1]<< std::endl;

}

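// Hashing candidates refined by neighbor expansion on the graph gs.

// The pool is filled to SP.search_init_num (padded with random points), ranked by true

// distance and cut to max(K, SP.extend_to). Each of the SP.search_epoches rounds then adds

// the unvisited graph neighbors of the not yet expanded top SP.extend_to entries and re-ranks.

void getNeighborsIEH32_nnexp(size_t K, const Matrix<DataType>& query){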

int lowbits = codelength - upbits;

unsigned int MaxCheck=HammingRadius[radius];

std::cout<<"maxcheck : "<<MaxCheck<<std::endl;

int resultSize = SP.extend_to;

if (K > (unsigned)SP.extend_to)

resultSize = K;

boost::dynamic_bitset<> tbflag(features_.get_rows(), false);

nn_results.clear();

VisitBucketNum.clear();

VisitBucketNum.resize(radius+2);

for(size_t cur = 0; cur < query.get_rows(); cur++){

tbflag.reset();

std::vector<int> pool(SP.search_init_num);

unsigned int p = 0;

unsigned int j = 0;

for(; j < MaxCheck; j++){

for(size_t h=0; h < QueryCode.size(); h++){

unsigned int searchcode = QueryCode[h][cur] ^ HammingBallMask[j];

unsigned int idx1 = searchcode >> lowbits;

unsigned int idx2 = searchcode - (idx1 << lowbits);

HashBucket::iterator bucket= htb[h][idx1].find(idx2);

if(bucket != htb[h][idx1].end()){

const std::vector<unsigned int>& vp = bucket->second; // reference: avoid copying the id list

for(size_t k = 0; k < vp.size() && p < (unsigned int)SP.search_init_num; k++){

if(tbflag.test(vp[k]))continue;

tbflag.set(vp[k]);

pool[p++]=(vp[k]);

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p < (unsigned int)SP.search_init_num){

VisitBucketNum[radius+1]++;

}else{

for(int r=0;r<=radius;r++){

if(j<=HammingRadius[r]){

VisitBucketNum[r]++;

break;

}

}

}

int base_n = features_.get_rows();

while(p < (unsigned int)SP.search_init_num){

unsigned int nn = rand() % base_n;

if(tbflag.test(nn)) continue;

tbflag.set(nn);

pool[p++] = (nn);

}

//sorting the pool

std::vector<std::pair<float,size_t>> result;

for(unsigned int i=0; i<pool.size();i++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(pool[i]), features_.get_cols()),pool[i]));

}

std::partial_sort(result.begin(), result.begin() + resultSize, result.end());

result.resize(resultSize);

pool.clear();

for(int j = 0; j < resultSize; j++)

pool.push_back(result[j].second);

//nn_exp

boost::dynamic_bitset<> newflag(features_.get_rows(), true);

newflag.set();

int iter=0;

std::vector<int> ids;

while(iter++ < SP.search_epoches){

//collect unvisited graph neighbors of the pool entries that have not been expanded yet

ids.clear();

for(unsigned j = 0; j < SP.extend_to ; j++){

if(newflag.test( pool[j] )){

newflag.reset(pool[j]);

for(unsigned neighbor=0; neighbor < gs[pool[j]].size(); neighbor++){

unsigned id = gs[pool[j]][neighbor];

if(tbflag.test(id))continue;

else tbflag.set(id);

ids.push_back(id);

}

}

}

for(size_t j = 0; j < ids.size(); j++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(ids[j]), features_.get_cols()),ids[j]));

}

std::partial_sort(result.begin(), result.begin() + resultSize, result.end());

result.resize(resultSize);

pool.clear();

for(int j = 0; j < resultSize; j++)

pool.push_back(result[j].second);

}

if(K<(unsigned)SP.extend_to)

pool.resize(K);

nn_results.push_back(pool);

}

}

void getNeighborsIEH32_kgraph(size_t K, const Matrix<DataType>& query){

int lowbits = codelength - upbits;

unsigned int MaxCheck=HammingRadius[radius];

std::cout<<"maxcheck : "<<MaxCheck<<std::endl;

nn_results.clear();

boost::dynamic_bitset<> tbflag(features_.get_rows(), false);

bool bSorted = true;

unsigned pool_size = SP.search_epoches * SP.extend_to;

if (pool_size >= (unsigned)SP.search_init_num){

SP.search_init_num = pool_size;

bSorted = false;

}

VisitBucketNum.clear();

VisitBucketNum.resize(radius+2);

for(size_t cur = 0; cur < query.get_rows(); cur++){

tbflag.reset();

std::vector<unsigned int> pool(SP.search_init_num);

unsigned int p = 0;

unsigned int j = 0;

for(; j < MaxCheck; j++){

for(size_t h=0; h < QueryCode.size(); h++){

unsigned int searchcode = QueryCode[h][cur] ^ HammingBallMask[j];

unsigned int idx1 = searchcode >> lowbits;

unsigned int idx2 = searchcode - (idx1 << lowbits);

HashBucket::iterator bucket= htb[h][idx1].find(idx2);

if(bucket != htb[h][idx1].end()){

const std::vector<unsigned int>& vp = bucket->second; // reference: avoid copying the id list

for(size_t k = 0; k < vp.size() && p < (unsigned int)SP.search_init_num; k++){

if(tbflag.test(vp[k]))continue;

tbflag.set(vp[k]);

pool[p++]=(vp[k]);

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p < (unsigned int)SP.search_init_num){

VisitBucketNum[radius+1]++;

}else{

for(int r=0;r<=radius;r++){

if(j<=HammingRadius[r]){

VisitBucketNum[r]++;

break;

}

}

}

int base_n = features_.get_rows();

while(p < (unsigned int)SP.search_init_num){

unsigned int nn = rand() % base_n;

if(tbflag.test(nn)) continue;

tbflag.set(nn);

pool[p++] = (nn);

}

std::vector<std::pair<float,size_t>> result;

for(unsigned int i=0; i<pool.size();i++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(pool[i]), features_.get_cols()),pool[i]));

}

if(bSorted){

std::partial_sort(result.begin(), result.begin() + pool_size, result.end());

result.resize(pool_size);

}

tbflag.reset();

std::vector<Point> knn(K + SP.extend_to +1);

std::vector<Point> results;

for (unsigned iter = 0; iter < (unsigned)SP.search_epoches; iter++) {

unsigned L = 0;

for(unsigned j=0; j < SP.extend_to ; j++){

if(!tbflag.test(result[iter*SP.extend_to+j].second)){

tbflag.set(result[iter*SP.extend_to+j].second);

knn[L].id = result[iter*SP.extend_to+j].second;

knn[L].dist = result[iter*SP.extend_to+j].first;

knn[L].flag = true;

L++;

}

}

if(!bSorted){ // logical not; ~ on a bool is always nonzero, so the sort ran unconditionally

std::sort(knn.begin(), knn.begin() + L);

}

unsigned int k = 0;

while (k < L) {

unsigned int nk = L;

if (knn[k].flag) {

knn[k].flag = false;

unsigned n = knn[k].id;

for(unsigned neighbor=0; neighbor < gs[n].size(); neighbor++){

unsigned id = gs[n][neighbor];

if(tbflag.test(id))continue;

tbflag.set(id);

float dist = distance_->compare(query.get_row(cur), features_.get_row(id), features_.get_cols());

Point nn(id, dist);

unsigned int r = InsertIntoKnn(&knn[0], L, nn);

//if ( (r <= L) && (L + 1 < knn.size())) ++L;

if ( L + 1 < knn.size()) ++L;

if (r < nk) nk = r;

}

}

if (nk <= k) k = nk;

else ++k;

}

if (L > K) L = K;

if (results.empty()) {

results.reserve(K + 1);

results.resize(L + 1);

std::copy(knn.begin(), knn.begin() + L, results.begin());

}

else {

for (unsigned int l = 0; l < L; ++l) {

unsigned r = InsertIntoKnn(&results[0], results.size() - 1, knn[l]);

if (r < results.size() /* inserted */ && results.size() < (K + 1)) {

results.resize(results.size() + 1);

}

}

}

}

std::vector<int> res;

for(size_t i = 0; i < K && i < results.size();i++)

res.push_back(results[i].id);

nn_results.push_back(res);

}

}

void getNeighborsIEH64_nnexp(size_t K, const Matrix<DataType>& query){

int lowbits = codelength - upbits;

unsigned int MaxCheck=HammingRadius[radius];

std::cout<<"maxcheck : "<<MaxCheck<<std::endl;

int resultSize = SP.extend_to;

if (K > (unsigned)SP.extend_to)

resultSize = K;

boost::dynamic_bitset<> tbflag(features_.get_rows(), false);

nn_results.clear();

VisitBucketNum.clear();

VisitBucketNum.resize(radius+2);

for(size_t cur = 0; cur < query.get_rows(); cur++){

std::vector<int> pool(SP.search_init_num);

unsigned int p = 0;

tbflag.reset();

unsigned int j = 0;

for(; j < MaxCheck; j++){

for(unsigned int h=0; h < QueryCode64.size(); h++){

unsigned long searchcode = QueryCode64[h][cur] ^ HammingBallMask64[j];

unsigned int idx1 = searchcode >> lowbits;

unsigned long idx2 = searchcode - (( unsigned long)idx1 << lowbits);

HashBucket64::iterator bucket= htb64[h][idx1].find(idx2);

if(bucket != htb64[h][idx1].end()){

const std::vector<unsigned int>& vp = bucket->second; // reference: avoid copying the id list

for(size_t k = 0; k < vp.size() && p < (unsigned int)SP.search_init_num; k++){

if(tbflag.test(vp[k]))continue;

tbflag.set(vp[k]);

pool[p++]=(vp[k]);

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p < (unsigned int)SP.search_init_num){

VisitBucketNum[radius+1]++;

}else{

for(int r=0;r<=radius;r++){

if(j<=HammingRadius[r]){

VisitBucketNum[r]++;

break;

}

}

}

int base_n = features_.get_rows();

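// Pad the pool with random unvisited points. This requires

// base_n >= SP.search_init_num; otherwise the loop never terminates.

while(p < (unsigned int)SP.search_init_num){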

unsigned int nn = rand() % base_n;

if(tbflag.test(nn)) continue;

tbflag.set(nn);

pool[p++] = (nn);

}

// Rank the candidate pool by distance to the query and keep the resultSize closest.

std::vector<std::pair<float,size_t>> result;

for(unsigned int i=0; i<pool.size();i++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(pool[i]), features_.get_cols()),pool[i]));

}

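// Clamp the sort boundary: resultSize can exceed the number of candidates

// when SP.search_init_num is smaller than max(K, SP.extend_to).

std::partial_sort(result.begin(), result.begin() + std::min<size_t>(resultSize, result.size()), result.end());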

result.resize(resultSize);

pool.clear();

for(int j = 0; j < resultSize; j++)

pool.push_back(result[j].second);

//nn_exp

// newflag marks points whose graph neighbors have not been expanded yet.

// Start with every bit set.

boost::dynamic_bitset<> newflag(features_.get_rows());

newflag.set();

int iter=0;

std::vector<int> ids;

while(iter++ < SP.search_epoches){

// Collect unvisited graph neighbors of pool entries that have not been expanded yet.

ids.clear();

for(unsigned j = 0; j < (unsigned)SP.extend_to ; j++){

if(newflag.test( pool[j] )){

newflag.reset(pool[j]);

for(unsigned neighbor=0; neighbor < gs[pool[j]].size(); neighbor++){

unsigned id = gs[pool[j]][neighbor];

if(tbflag.test(id))continue;

else tbflag.set(id);

ids.push_back(id);

}

}

}

for(size_t j = 0; j < ids.size(); j++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(ids[j]), features_.get_cols()),ids[j]));

}

std::partial_sort(result.begin(), result.begin() + resultSize, result.end());

result.resize(resultSize);

pool.clear();

for(int j = 0; j < resultSize; j++)

pool.push_back(result[j].second);

}

if(K<(unsigned)SP.extend_to)

pool.resize(K);

nn_results.push_back(pool);

}

}

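// IEH search over 64-bit hash codes with KGraph-style refinement.

// Candidates are gathered from the hash buckets as in getNeighborsIEH64_nnexp,

// then consumed SP.extend_to at a time as seeds, one batch per search epoch.

void getNeighborsIEH64_kgraph(size_t K, const Matrix<DataType>& query){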

int lowbits = codelength - upbits;

unsigned int MaxCheck=HammingRadius[radius];

std::cout<<"maxcheck : "<<MaxCheck<<std::endl;

nn_results.clear();

boost::dynamic_bitset<> tbflag(features_.get_rows(), false);

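// The refinement loop consumes SP.search_epoches * SP.extend_to seeds.

// If the initial pool would not be larger than that, grow it to match

// (this overwrites SP.search_init_num) and skip the partial sort, since

// every candidate is then used as a seed.

bool bSorted = true;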

unsigned pool_size = SP.search_epoches * SP.extend_to;

if (pool_size >= (unsigned)SP.search_init_num){

SP.search_init_num = pool_size;

bSorted = false;

}

VisitBucketNum.clear();

VisitBucketNum.resize(radius+2);

for(size_t cur = 0; cur < query.get_rows(); cur++){

std::vector<unsigned int> pool(SP.search_init_num);

unsigned int p = 0;

tbflag.reset();

unsigned int j = 0;

for(; j < MaxCheck; j++){

for(unsigned int h=0; h < QueryCode64.size(); h++){

unsigned long searchcode = QueryCode64[h][cur] ^ HammingBallMask64[j];

unsigned int idx1 = searchcode >> lowbits;

unsigned long idx2 = searchcode - (( unsigned long)idx1 << lowbits);

HashBucket64::iterator bucket= htb64[h][idx1].find(idx2);

if(bucket != htb64[h][idx1].end()){

// Bind by const reference to avoid copying the bucket on every probe.

const std::vector<unsigned int>& vp = bucket->second;

for(size_t k = 0; k < vp.size() && p < (unsigned int)SP.search_init_num; k++){

if(tbflag.test(vp[k]))continue;

tbflag.set(vp[k]);

pool[p++]=(vp[k]);

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p >= (unsigned int)SP.search_init_num) break;

}

if(p < (unsigned int)SP.search_init_num){

VisitBucketNum[radius+1]++;

}else{

for(int r=0;r<=radius;r++){

if(j<=HammingRadius[r]){

VisitBucketNum[r]++;

break;

}

}

}

int base_n = features_.get_rows();

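// Pad the pool with random unvisited points. This requires

// base_n >= SP.search_init_num; otherwise the loop never terminates.

while(p < (unsigned int)SP.search_init_num){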

unsigned int nn = rand() % base_n;

if(tbflag.test(nn)) continue;

tbflag.set(nn);

pool[p++] = (nn);

}

std::vector<std::pair<float,size_t>> result;

for(unsigned int i=0; i<pool.size();i++){

result.push_back(std::make_pair(distance_->compare(query.get_row(cur), features_.get_row(pool[i]), features_.get_cols()),pool[i]));

}

if(bSorted){

std::partial_sort(result.begin(), result.begin() + pool_size, result.end());

result.resize(pool_size);

}

tbflag.reset();

std::vector<Point> knn(K + SP.extend_to +1);

std::vector<Point> results;

for (unsigned iter = 0; iter < (unsigned)SP.search_epoches; iter++) {

unsigned L = 0;

for(unsigned j=0; j < (unsigned)SP.extend_to ; j++){

if(!tbflag.test(result[iter*SP.extend_to+j].second)){

tbflag.set(result[iter*SP.extend_to+j].second);

knn[L].id = result[iter*SP.extend_to+j].second;

knn[L].dist = result[iter*SP.extend_to+j].first;

knn[L].flag = true;

L++;

}

}