Repository: airtai/fastkafka

Branch: main

Commit: 249d485f219a

Files: 352

Total size: 4.0 MB

Directory structure:

gitextract_q28pcl6l/

├── .github/

│ └── workflows/

│ ├── codeql.yml

│ ├── dependency-review.yml

│ ├── deploy.yaml

│ ├── index-docs-for-fastkafka-chat.yaml

│ └── test.yaml

├── .gitignore

├── .pre-commit-config.yaml

├── .semgrepignore

├── CHANGELOG.md

├── CNAME

├── CONTRIBUTING.md

├── LICENSE

├── MANIFEST.in

├── README.md

├── docker/

│ ├── .semgrepignore

│ └── dev.yml

├── docusaurus/

│ ├── babel.config.js

│ ├── docusaurus.config.js

│ ├── package.json

│ ├── scripts/

│ │ ├── build_docusaurus_docs.sh

│ │ ├── install_docusaurus_deps.sh

│ │ ├── serve_docusaurus_docs.sh

│ │ └── update_readme.sh

│ ├── sidebars.js

│ ├── src/

│ │ ├── components/

│ │ │ ├── BrowserWindow/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ ├── HomepageCommunity/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ ├── HomepageFAQ/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ ├── HomepageFastkafkaChat/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ ├── HomepageFeatures/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ ├── HomepageWhatYouGet/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ └── RobotFooterIcon/

│ │ │ ├── index.js

│ │ │ └── styles.module.css

│ │ ├── css/

│ │ │ └── custom.css

│ │ ├── pages/

│ │ │ ├── demo/

│ │ │ │ ├── index.js

│ │ │ │ └── styles.module.css

│ │ │ ├── index.js

│ │ │ └── index.module.css

│ │ └── utils/

│ │ ├── prismDark.mjs

│ │ └── prismLight.mjs

│ ├── static/

│ │ ├── .nojekyll

│ │ └── CNAME

│ ├── versioned_docs/

│ │ ├── version-0.5.0/

│ │ │ ├── CHANGELOG.md

│ │ │ ├── CNAME

│ │ │ ├── api/

│ │ │ │ └── fastkafka/

│ │ │ │ ├── FastKafka.md

│ │ │ │ ├── KafkaEvent.md

│ │ │ │ ├── encoder/

│ │ │ │ │ └── avsc_to_pydantic.md

│ │ │ │ └── testing/

│ │ │ │ ├── ApacheKafkaBroker.md

│ │ │ │ ├── LocalRedpandaBroker.md

│ │ │ │ └── Tester.md

│ │ │ ├── cli/

│ │ │ │ ├── fastkafka.md

│ │ │ │ └── run_fastkafka_server_process.md

│ │ │ ├── guides/

│ │ │ │ ├── Guide_00_FastKafka_Demo.md

│ │ │ │ ├── Guide_01_Intro.md

│ │ │ │ ├── Guide_02_First_Steps.md

│ │ │ │ ├── Guide_03_Authentication.md

│ │ │ │ ├── Guide_04_Github_Actions_Workflow.md

│ │ │ │ ├── Guide_05_Lifespan_Handler.md

│ │ │ │ ├── Guide_06_Benchmarking_FastKafka.md

│ │ │ │ ├── Guide_07_Encoding_and_Decoding_Messages_with_FastKafka.md

│ │ │ │ ├── Guide_11_Consumes_Basics.md

│ │ │ │ ├── Guide_21_Produces_Basics.md

│ │ │ │ ├── Guide_22_Partition_Keys.md

│ │ │ │ ├── Guide_30_Using_docker_to_deploy_fastkafka.md

│ │ │ │ └── Guide_31_Using_redpanda_to_test_fastkafka.md

│ │ │ ├── index.md

│ │ │ └── overrides/

│ │ │ ├── css/

│ │ │ │ └── extra.css

│ │ │ └── js/

│ │ │ ├── extra.js

│ │ │ ├── math.js

│ │ │ └── mathjax.js

│ │ ├── version-0.6.0/

│ │ │ ├── CHANGELOG.md

│ │ │ ├── CNAME

│ │ │ ├── CONTRIBUTING.md

│ │ │ ├── LICENSE.md

│ │ │ ├── api/

│ │ │ │ └── fastkafka/

│ │ │ │ ├── EventMetadata.md

│ │ │ │ ├── FastKafka.md

│ │ │ │ ├── KafkaEvent.md

│ │ │ │ ├── encoder/

│ │ │ │ │ ├── AvroBase.md

│ │ │ │ │ ├── avro_decoder.md

│ │ │ │ │ ├── avro_encoder.md

│ │ │ │ │ ├── avsc_to_pydantic.md

│ │ │ │ │ ├── json_decoder.md

│ │ │ │ │ └── json_encoder.md

│ │ │ │ ├── executors/

│ │ │ │ │ ├── DynamicTaskExecutor.md

│ │ │ │ │ └── SequentialExecutor.md

│ │ │ │ └── testing/

│ │ │ │ ├── ApacheKafkaBroker.md

│ │ │ │ ├── LocalRedpandaBroker.md

│ │ │ │ └── Tester.md

│ │ │ ├── cli/

│ │ │ │ ├── fastkafka.md

│ │ │ │ └── run_fastkafka_server_process.md

│ │ │ ├── guides/

│ │ │ │ ├── Guide_00_FastKafka_Demo.md

│ │ │ │ ├── Guide_01_Intro.md

│ │ │ │ ├── Guide_02_First_Steps.md

│ │ │ │ ├── Guide_03_Authentication.md

│ │ │ │ ├── Guide_04_Github_Actions_Workflow.md

│ │ │ │ ├── Guide_05_Lifespan_Handler.md

│ │ │ │ ├── Guide_06_Benchmarking_FastKafka.md

│ │ │ │ ├── Guide_07_Encoding_and_Decoding_Messages_with_FastKafka.md

│ │ │ │ ├── Guide_11_Consumes_Basics.md

│ │ │ │ ├── Guide_21_Produces_Basics.md

│ │ │ │ ├── Guide_22_Partition_Keys.md

│ │ │ │ ├── Guide_23_Batch_Producing.md

│ │ │ │ ├── Guide_30_Using_docker_to_deploy_fastkafka.md

│ │ │ │ └── Guide_31_Using_redpanda_to_test_fastkafka.md

│ │ │ ├── index.md

│ │ │ └── overrides/

│ │ │ ├── css/

│ │ │ │ └── extra.css

│ │ │ └── js/

│ │ │ ├── extra.js

│ │ │ ├── math.js

│ │ │ └── mathjax.js

│ │ ├── version-0.7.0/

│ │ │ ├── CHANGELOG.md

│ │ │ ├── CNAME

│ │ │ ├── CONTRIBUTING.md

│ │ │ ├── LICENSE.md

│ │ │ ├── api/

│ │ │ │ └── fastkafka/

│ │ │ │ ├── EventMetadata.md

│ │ │ │ ├── FastKafka.md

│ │ │ │ ├── KafkaEvent.md

│ │ │ │ ├── encoder/

│ │ │ │ │ ├── AvroBase.md

│ │ │ │ │ ├── avro_decoder.md

│ │ │ │ │ ├── avro_encoder.md

│ │ │ │ │ ├── avsc_to_pydantic.md

│ │ │ │ │ ├── json_decoder.md

│ │ │ │ │ └── json_encoder.md

│ │ │ │ ├── executors/

│ │ │ │ │ ├── DynamicTaskExecutor.md

│ │ │ │ │ └── SequentialExecutor.md

│ │ │ │ └── testing/

│ │ │ │ ├── ApacheKafkaBroker.md

│ │ │ │ ├── LocalRedpandaBroker.md

│ │ │ │ └── Tester.md

│ │ │ ├── cli/

│ │ │ │ ├── fastkafka.md

│ │ │ │ └── run_fastkafka_server_process.md

│ │ │ ├── guides/

│ │ │ │ ├── Guide_00_FastKafka_Demo.md

│ │ │ │ ├── Guide_01_Intro.md

│ │ │ │ ├── Guide_02_First_Steps.md

│ │ │ │ ├── Guide_03_Authentication.md

│ │ │ │ ├── Guide_04_Github_Actions_Workflow.md

│ │ │ │ ├── Guide_05_Lifespan_Handler.md

│ │ │ │ ├── Guide_06_Benchmarking_FastKafka.md

│ │ │ │ ├── Guide_07_Encoding_and_Decoding_Messages_with_FastKafka.md

│ │ │ │ ├── Guide_11_Consumes_Basics.md

│ │ │ │ ├── Guide_12_Batch_Consuming.md

│ │ │ │ ├── Guide_21_Produces_Basics.md

│ │ │ │ ├── Guide_22_Partition_Keys.md

│ │ │ │ ├── Guide_23_Batch_Producing.md

│ │ │ │ ├── Guide_24_Using_Multiple_Kafka_Clusters.md

│ │ │ │ ├── Guide_30_Using_docker_to_deploy_fastkafka.md

│ │ │ │ ├── Guide_31_Using_redpanda_to_test_fastkafka.md

│ │ │ │ └── Guide_32_Using_fastapi_to_run_fastkafka_application.md

│ │ │ ├── index.md

│ │ │ └── overrides/

│ │ │ ├── css/

│ │ │ │ └── extra.css

│ │ │ └── js/

│ │ │ ├── extra.js

│ │ │ ├── math.js

│ │ │ └── mathjax.js

│ │ ├── version-0.7.1/

│ │ │ ├── CHANGELOG.md

│ │ │ ├── CNAME

│ │ │ ├── CONTRIBUTING.md

│ │ │ ├── LICENSE.md

│ │ │ ├── api/

│ │ │ │ └── fastkafka/

│ │ │ │ ├── EventMetadata.md

│ │ │ │ ├── FastKafka.md

│ │ │ │ ├── KafkaEvent.md

│ │ │ │ ├── encoder/

│ │ │ │ │ ├── AvroBase.md

│ │ │ │ │ ├── avro_decoder.md

│ │ │ │ │ ├── avro_encoder.md

│ │ │ │ │ ├── avsc_to_pydantic.md

│ │ │ │ │ ├── json_decoder.md

│ │ │ │ │ └── json_encoder.md

│ │ │ │ ├── executors/

│ │ │ │ │ ├── DynamicTaskExecutor.md

│ │ │ │ │ └── SequentialExecutor.md

│ │ │ │ └── testing/

│ │ │ │ ├── ApacheKafkaBroker.md

│ │ │ │ ├── LocalRedpandaBroker.md

│ │ │ │ └── Tester.md

│ │ │ ├── cli/

│ │ │ │ ├── fastkafka.md

│ │ │ │ └── run_fastkafka_server_process.md

│ │ │ ├── guides/

│ │ │ │ ├── Guide_00_FastKafka_Demo.md

│ │ │ │ ├── Guide_01_Intro.md

│ │ │ │ ├── Guide_02_First_Steps.md

│ │ │ │ ├── Guide_03_Authentication.md

│ │ │ │ ├── Guide_04_Github_Actions_Workflow.md

│ │ │ │ ├── Guide_05_Lifespan_Handler.md

│ │ │ │ ├── Guide_06_Benchmarking_FastKafka.md

│ │ │ │ ├── Guide_07_Encoding_and_Decoding_Messages_with_FastKafka.md

│ │ │ │ ├── Guide_11_Consumes_Basics.md

│ │ │ │ ├── Guide_12_Batch_Consuming.md

│ │ │ │ ├── Guide_21_Produces_Basics.md

│ │ │ │ ├── Guide_22_Partition_Keys.md

│ │ │ │ ├── Guide_23_Batch_Producing.md

│ │ │ │ ├── Guide_24_Using_Multiple_Kafka_Clusters.md

│ │ │ │ ├── Guide_30_Using_docker_to_deploy_fastkafka.md

│ │ │ │ ├── Guide_31_Using_redpanda_to_test_fastkafka.md

│ │ │ │ └── Guide_32_Using_fastapi_to_run_fastkafka_application.md

│ │ │ ├── index.md

│ │ │ └── overrides/

│ │ │ ├── css/

│ │ │ │ └── extra.css

│ │ │ └── js/

│ │ │ ├── extra.js

│ │ │ ├── math.js

│ │ │ └── mathjax.js

│ │ └── version-0.8.0/

│ │ ├── CHANGELOG.md

│ │ ├── CNAME

│ │ ├── CONTRIBUTING.md

│ │ ├── LICENSE.md

│ │ ├── api/

│ │ │ └── fastkafka/

│ │ │ ├── EventMetadata.md

│ │ │ ├── FastKafka.md

│ │ │ ├── KafkaEvent.md

│ │ │ ├── encoder/

│ │ │ │ ├── AvroBase.md

│ │ │ │ ├── avro_decoder.md

│ │ │ │ ├── avro_encoder.md

│ │ │ │ ├── avsc_to_pydantic.md

│ │ │ │ ├── json_decoder.md

│ │ │ │ └── json_encoder.md

│ │ │ ├── executors/

│ │ │ │ ├── DynamicTaskExecutor.md

│ │ │ │ └── SequentialExecutor.md

│ │ │ └── testing/

│ │ │ ├── ApacheKafkaBroker.md

│ │ │ ├── LocalRedpandaBroker.md

│ │ │ └── Tester.md

│ │ ├── cli/

│ │ │ ├── fastkafka.md

│ │ │ └── run_fastkafka_server_process.md

│ │ ├── guides/

│ │ │ ├── Guide_00_FastKafka_Demo.md

│ │ │ ├── Guide_01_Intro.md

│ │ │ ├── Guide_02_First_Steps.md

│ │ │ ├── Guide_03_Authentication.md

│ │ │ ├── Guide_04_Github_Actions_Workflow.md

│ │ │ ├── Guide_05_Lifespan_Handler.md

│ │ │ ├── Guide_06_Benchmarking_FastKafka.md

│ │ │ ├── Guide_07_Encoding_and_Decoding_Messages_with_FastKafka.md

│ │ │ ├── Guide_11_Consumes_Basics.md

│ │ │ ├── Guide_12_Batch_Consuming.md

│ │ │ ├── Guide_21_Produces_Basics.md

│ │ │ ├── Guide_22_Partition_Keys.md

│ │ │ ├── Guide_23_Batch_Producing.md

│ │ │ ├── Guide_24_Using_Multiple_Kafka_Clusters.md

│ │ │ ├── Guide_30_Using_docker_to_deploy_fastkafka.md

│ │ │ ├── Guide_31_Using_redpanda_to_test_fastkafka.md

│ │ │ └── Guide_32_Using_fastapi_to_run_fastkafka_application.md

│ │ ├── index.md

│ │ └── overrides/

│ │ ├── css/

│ │ │ └── extra.css

│ │ └── js/

│ │ ├── extra.js

│ │ ├── math.js

│ │ └── mathjax.js

│ ├── versioned_sidebars/

│ │ ├── version-0.5.0-sidebars.json

│ │ ├── version-0.6.0-sidebars.json

│ │ ├── version-0.7.0-sidebars.json

│ │ ├── version-0.7.1-sidebars.json

│ │ └── version-0.8.0-sidebars.json

│ └── versions.json

├── fastkafka/

│ ├── __init__.py

│ ├── _aiokafka_imports.py

│ ├── _application/

│ │ ├── __init__.py

│ │ ├── app.py

│ │ └── tester.py

│ ├── _cli.py

│ ├── _cli_docs.py

│ ├── _cli_testing.py

│ ├── _components/

│ │ ├── __init__.py

│ │ ├── _subprocess.py

│ │ ├── aiokafka_consumer_loop.py

│ │ ├── asyncapi.py

│ │ ├── benchmarking.py

│ │ ├── docs_dependencies.py

│ │ ├── encoder/

│ │ │ ├── __init__.py

│ │ │ ├── avro.py

│ │ │ └── json.py

│ │ ├── helpers.py

│ │ ├── logger.py

│ │ ├── meta.py

│ │ ├── producer_decorator.py

│ │ ├── task_streaming.py

│ │ └── test_dependencies.py

│ ├── _docusaurus_helper.py

│ ├── _helpers.py

│ ├── _modidx.py

│ ├── _server.py

│ ├── _testing/

│ │ ├── __init__.py

│ │ ├── apache_kafka_broker.py

│ │ ├── in_memory_broker.py

│ │ ├── local_redpanda_broker.py

│ │ └── test_utils.py

│ ├── encoder.py

│ ├── executors.py

│ └── testing.py

├── mkdocs/

│ ├── docs_overrides/

│ │ ├── css/

│ │ │ └── extra.css

│ │ └── js/

│ │ ├── extra.js

│ │ ├── math.js

│ │ └── mathjax.js

│ ├── mkdocs.yml

│ ├── overrides/

│ │ └── main.html

│ ├── site_overrides/

│ │ ├── main.html

│ │ └── partials/

│ │ └── copyright.html

│ └── summary_template.txt

├── mypy.ini

├── nbs/

│ ├── .gitignore

│ ├── 000_AIOKafkaImports.ipynb

│ ├── 000_Testing_export.ipynb

│ ├── 001_InMemoryBroker.ipynb

│ ├── 002_ApacheKafkaBroker.ipynb

│ ├── 003_LocalRedpandaBroker.ipynb

│ ├── 004_Test_Utils.ipynb

│ ├── 005_Application_executors_export.ipynb

│ ├── 006_TaskStreaming.ipynb

│ ├── 010_Application_export.ipynb

│ ├── 011_ConsumerLoop.ipynb

│ ├── 013_ProducerDecorator.ipynb

│ ├── 014_AsyncAPI.ipynb

│ ├── 015_FastKafka.ipynb

│ ├── 016_Tester.ipynb

│ ├── 017_Benchmarking.ipynb

│ ├── 018_Avro_Encode_Decoder.ipynb

│ ├── 019_Json_Encode_Decoder.ipynb

│ ├── 020_Encoder_Export.ipynb

│ ├── 021_FastKafkaServer.ipynb

│ ├── 022_Subprocess.ipynb

│ ├── 023_CLI.ipynb

│ ├── 024_CLI_Docs.ipynb

│ ├── 025_CLI_Testing.ipynb

│ ├── 096_Docusaurus_Helper.ipynb

│ ├── 096_Meta.ipynb

│ ├── 097_Docs_Dependencies.ipynb

│ ├── 098_Test_Dependencies.ipynb

│ ├── 099_Test_Service.ipynb

│ ├── 998_Internal_Helpers.ipynb

│ ├── 999_Helpers.ipynb

│ ├── Logger.ipynb

│ ├── _quarto.yml

│ ├── guides/

│ │ ├── .gitignore

│ │ ├── Guide_00_FastKafka_Demo.ipynb

│ │ ├── Guide_01_Intro.ipynb

│ │ ├── Guide_02_First_Steps.ipynb

│ │ ├── Guide_03_Authentication.ipynb

│ │ ├── Guide_04_Github_Actions_Workflow.ipynb

│ │ ├── Guide_05_Lifespan_Handler.ipynb

│ │ ├── Guide_06_Benchmarking_FastKafka.ipynb

│ │ ├── Guide_07_Encoding_and_Decoding_Messages_with_FastKafka.ipynb

│ │ ├── Guide_11_Consumes_Basics.ipynb

│ │ ├── Guide_12_Batch_Consuming.ipynb

│ │ ├── Guide_21_Produces_Basics.ipynb

│ │ ├── Guide_22_Partition_Keys.ipynb

│ │ ├── Guide_23_Batch_Producing.ipynb

│ │ ├── Guide_24_Using_Multiple_Kafka_Clusters.ipynb

│ │ ├── Guide_30_Using_docker_to_deploy_fastkafka.ipynb

│ │ ├── Guide_31_Using_redpanda_to_test_fastkafka.ipynb

│ │ ├── Guide_32_Using_fastapi_to_run_fastkafka_application.ipynb

│ │ └── Guide_33_Using_Tester_class_to_test_fastkafka.ipynb

│ ├── index.ipynb

│ ├── nbdev.yml

│ ├── sidebar.yml

│ └── styles.css

├── run_jupyter.sh

├── set_variables.sh

├── settings.ini

├── setup.py

└── stop_jupyter.sh

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/workflows/codeql.yml

================================================

# For most projects, this workflow file will not need changing; you simply need
# to commit it to your repository.
#
# You may wish to alter this file to override the set of languages analyzed,
# or to provide custom queries or build logic.
#
# ******** NOTE ********
# We have attempted to detect the languages in your repository. Please check
# the `language` matrix defined below to confirm you have the correct set of
# supported CodeQL languages.
#
name: "CodeQL"

on:
  push:
    branches: [ "main" ]
  pull_request:
    # The branches below must be a subset of the branches above
    branches: [ "main" ]
  schedule:
    - cron: '34 11 * * 4'

jobs:
  analyze:
    name: Analyze
    runs-on: ubuntu-latest
    permissions:
      actions: read
      contents: read
      security-events: write

    strategy:
      fail-fast: false
      matrix:
        language: [ 'javascript', 'python' ]
        # CodeQL supports [ 'cpp', 'csharp', 'go', 'java', 'javascript', 'python', 'ruby' ]
        # Use only 'java' to analyze code written in Java, Kotlin or both
        # Use only 'javascript' to analyze code written in JavaScript, TypeScript or both
        # Learn more about CodeQL language support at https://aka.ms/codeql-docs/language-support

    steps:
    - name: Checkout repository
      uses: actions/checkout@v3

    # Initializes the CodeQL tools for scanning.
    - name: Initialize CodeQL
      uses: github/codeql-action/init@v2 # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
      with:
        languages: ${{ matrix.language }}
        # If you wish to specify custom queries, you can do so here or in a config file.
        # By default, queries listed here will override any specified in a config file.
        # Prefix the list here with "+" to use these queries and those in the config file.
        # For details on CodeQL's query packs, refer to: https://docs.github.com/en/code-security/code-scanning/automatically-scanning-your-code-for-vulnerabilities-and-errors/configuring-code-scanning#using-queries-in-ql-packs
        # queries: security-extended,security-and-quality

    # Autobuild attempts to build any compiled languages (C/C++, C#, Go, or Java).
    # If this step fails, then you should remove it and run the build manually (see below)
    - name: Autobuild
      uses: github/codeql-action/autobuild@v2 # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha

    # ℹ️ Command-line programs to run using the OS shell.
    # 📚 See https://docs.github.com/en/actions/using-workflows/workflow-syntax-for-github-actions#jobsjob_idstepsrun

    #   If the Autobuild fails above, remove it and uncomment the following three lines.
    #   Modify them (or add more) to build your code if your project needs it; refer to the example below for guidance.

    # - run: |
    #     echo "Run, Build Application using script"
    #     ./location_of_script_within_repo/buildscript.sh

    - name: Perform CodeQL Analysis
      uses: github/codeql-action/analyze@v2 # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
      with:
        category: "/language:${{matrix.language}}"
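The CodeQL workflow's `schedule` trigger uses five-field cron syntax (minute, hour, day of month, month, day of week), so `'34 11 * * 4'` fires at 11:34 every Thursday; GitHub Actions evaluates schedules in UTC. The sketch below, assuming only the Python standard library, computes the next run after a given time. It handles just this fixed weekly pattern and is not a general cron parser; `next_weekly_run` is a hypothetical helper name.

```python
from datetime import datetime, timedelta, timezone

# Fields of the cron expression '34 11 * * 4'.
CRON_MINUTE = 34
CRON_HOUR = 11
CRON_WEEKDAY = 4  # cron numbering: 0 = Sunday ... 6 = Saturday, so 4 = Thursday


def next_weekly_run(after: datetime) -> datetime:
    """Return the first time strictly after `after` that matches '34 11 * * 4' in UTC.

    Hypothetical helper: expects a timezone-aware datetime and only supports a
    fixed minute/hour/weekday pattern, not the full cron grammar.
    """
    after = after.astimezone(timezone.utc)
    # Convert cron weekday (Sunday=0) to Python's weekday() numbering (Monday=0).
    target_weekday = (CRON_WEEKDAY - 1) % 7
    days_ahead = (target_weekday - after.weekday()) % 7
    candidate = (after + timedelta(days=days_ahead)).replace(
        hour=CRON_HOUR, minute=CRON_MINUTE, second=0, microsecond=0
    )
    # Same weekday but the slot has already passed: move to next week.
    if candidate <= after:
        candidate += timedelta(days=7)
    return candidate


if __name__ == "__main__":
    monday_morning = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)  # 2024-01-01 is a Monday
    print(next_weekly_run(monday_morning))  # 2024-01-04 11:34:00+00:00
```

Converting between the two weekday numberings is the only non-obvious step: cron counts from Sunday, while `datetime.weekday()` counts from Monday.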

================================================

FILE: .github/workflows/dependency-review.yml

================================================

# Dependency Review Action
#
# This Action will scan dependency manifest files that change as part of a Pull Request, surfacing known-vulnerable versions of the packages declared or updated in the PR. Once installed, if the workflow run is marked as required, PRs introducing known-vulnerable packages will be blocked from merging.
#
# Source repository: https://github.com/actions/dependency-review-action
# Public documentation: https://docs.github.com/en/code-security/supply-chain-security/understanding-your-software-supply-chain/about-dependency-review#dependency-review-enforcement
name: 'Dependency Review'
on: [pull_request]

permissions:
  contents: read

jobs:
  dependency-review:
    runs-on: ubuntu-latest
    steps:
      - name: 'Checkout Repository'
        uses: actions/checkout@v3
      - name: 'Dependency Review'
        uses: actions/dependency-review-action@v2

================================================

FILE: .github/workflows/deploy.yaml

================================================

name: Deploy FastKafka documentation to the GitHub Pages

on:
  push:
    branches: [ "main", "master"]
  workflow_dispatch:

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: airtai/workflows/fastkafka-docusaurus-ghp@main # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha

================================================

FILE: .github/workflows/index-docs-for-fastkafka-chat.yaml

================================================

name: Index docs for fastkafka chat application

on:
  workflow_run:
    workflows: ["pages-build-deployment"]
    types: [completed]

env:
  OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}
  PERSONAL_ACCESS_TOKEN: ${{ secrets.PERSONAL_ACCESS_TOKEN }}

jobs:
  on-success:
    name: Index docs for fastkafka chat application
    runs-on: ubuntu-latest
    permissions:
      contents: write
    if: ${{ github.event.workflow_run.conclusion == 'success' }}
    steps:
      - name: Checkout airtai/fastkafkachat repo
        uses: actions/checkout@v3
        with:
          token: ${{ secrets.PERSONAL_ACCESS_TOKEN }}
          ref: ${{ github.head_ref }}
          repository: airtai/fastkafkachat

      - name: Setup Python
        uses: actions/setup-python@v4
        with:
          python-version: "3.9"
          cache: "pip"
          cache-dependency-path: settings.ini

      - name: Install Dependencies
        shell: bash
        run: |
          set -ux
          python -m pip install --upgrade pip
          test -f setup.py && pip install -e ".[dev]"

      - name: Index the fastkafka docs
        shell: bash
        run: |
          index_website_data

      - name: Push updated index to airtai/fastkafkachat repo
        uses: stefanzweifel/git-auto-commit-action@v4 # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
        with:
          commit_message: "Update fastkafka docs index file"
          file_pattern: "data/website_index.zip"

================================================

FILE: .github/workflows/test.yaml

================================================

name: CI
on: [workflow_dispatch, push]

jobs:
  mypy_static_analysis:
    runs-on: ubuntu-latest
    steps:
      - uses: airtai/workflows/airt-mypy-check@main # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
  bandit_static_analysis:
    runs-on: ubuntu-latest
    steps:
      - uses: airtai/workflows/airt-bandit-check@main # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
  semgrep_static_analysis:
    runs-on: ubuntu-latest
    steps:
      - uses: airtai/workflows/airt-semgrep-check@main # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha

  test:
    timeout-minutes: 60
    strategy:
      fail-fast: false
      matrix:
        os: [ubuntu, windows]
        version: ["3.8", "3.9", "3.10", "3.11"]
    runs-on: ${{ matrix.os }}-latest
    defaults:
      run:
        shell: bash
    steps:
      - name: Configure Pagefile
        if: matrix.os == 'windows'
        uses: al-cheb/configure-pagefile-action@v1.2 # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
        with:
          minimum-size: 8GB
          maximum-size: 8GB
          disk-root: "C:"
      - name: Install quarto
        uses: quarto-dev/quarto-actions/setup@v2 # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
      - name: Prepare nbdev env
        uses: fastai/workflows/nbdev-ci@master # nosemgrep: yaml.github-actions.security.third-party-action-not-pinned-to-commit-sha.third-party-action-not-pinned-to-commit-sha
        with:
          version: ${{ matrix.version }}
          skip_test: true
      - name: List pip deps
        run: |
          pip list
      - name: Install testing deps
        run: |
          fastkafka docs install_deps
          fastkafka testing install_deps
      - name: Run nbdev tests
        run: |
          nbdev_test --timing --do_print --n_workers 1 --file_glob "*_CLI*" # Run CLI tests first, one at a time, because their npm installations clash with other tests
          nbdev_test --timing --do_print --skip_file_glob "*_CLI*"
      - name: Test building docs with nbdev-mkdocs
        if: matrix.os != 'windows'
        run: |
          nbdev_mkdocs docs
          if [ -f "mkdocs/site/index.html" ]; then
            echo "docs built successfully."
          else
            echo "index page not found in rendered docs."
            ls -la
            ls -la mkdocs/site/
            exit 1
          fi

  # https://github.com/marketplace/actions/alls-green#why
  check: # This job does nothing and is only used for the branch protection
    if: always()
    needs:
      - test
      - mypy_static_analysis
      - bandit_static_analysis
      - semgrep_static_analysis
    runs-on: ubuntu-latest
    steps:
      - name: Decide whether the needed jobs succeeded or failed
        uses: re-actors/alls-green@release/v1 # nosemgrep
        with:
          jobs: ${{ toJSON(needs) }}
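The `check` job above runs with `if: always()`, so it executes even when a job it depends on fails, and it passes the serialized `needs` context (each job id mapped to an object with a `result` such as `success`, `failure`, `cancelled` or `skipped`) to the alls-green action. The sketch below is a simplified model of that decision, not the action's actual implementation (which has extra options this sketch ignores): every non-`success` result counts as a failure. `decide` is a hypothetical helper name.

```python
import json
from typing import Any, Dict, List, Tuple


def decide(needs_json: str) -> Tuple[bool, List[str]]:
    """Return (all_green, failing_job_ids) for a `${{ toJSON(needs) }}`-style string.

    Simplified, hypothetical model: any result other than "success"
    (failure, cancelled, skipped) marks the aggregate check as failed.
    """
    needs: Dict[str, Dict[str, Any]] = json.loads(needs_json)
    failing = sorted(job for job, info in needs.items() if info.get("result") != "success")
    return (not failing, failing)


if __name__ == "__main__":
    example = json.dumps({
        "test": {"result": "failure", "outputs": {}},
        "mypy_static_analysis": {"result": "success", "outputs": {}},
        "bandit_static_analysis": {"result": "success", "outputs": {}},
        "semgrep_static_analysis": {"result": "skipped", "outputs": {}},
    })
    ok, failing = decide(example)
    print(ok, failing)  # False ['semgrep_static_analysis', 'test']
```

Aggregating into a single job means branch protection only needs to require `check`, instead of every matrix combination of `test` plus the static-analysis jobs.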

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# docusaurus documentation

docusaurus/node_modules

docusaurus/docs

docusaurus/build

docusaurus/.docusaurus

docusaurus/.cache-loader

docusaurus/.DS_Store

docusaurus/.env.local

docusaurus/.env.development.local

docusaurus/.env.test.local

docusaurus/.env.production.local

docusaurus/npm-debug.log*

docusaurus/yarn-debug.log*

docusaurus/yarn-error.log*

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

# PyCharm

.idea

# nbdev related stuff

.gitattributes

.gitconfig

_proc

_docs

nbs/asyncapi

nbs/guides/asyncapi

nbs/.last_checked

nbs/_*.ipynb

token

*.bak

# nbdev_mkdocs

mkdocs/docs/

mkdocs/site/

# Ignore trashbins

.Trash*

.vscode
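Several patterns in this `.gitignore` rely on glob character classes: `*.py[cod]` matches `.pyc`, `.pyo` and `.pyd` files, and `*$py.class` matches the class files Jython produces. The sketch below checks a few of these basename patterns with Python's `fnmatch.fnmatchcase`. This is only an approximation of gitignore matching for simple, slash-free patterns; it does not implement gitignore's directory, anchoring or `!` negation rules. `is_ignored` is a hypothetical helper name.

```python
from fnmatch import fnmatchcase
from typing import Iterable

# A few slash-free patterns copied from the .gitignore above.
PATTERNS = ["*.py[cod]", "*$py.class", "*.so", "*.egg", "*.log", "*.bak"]


def is_ignored(filename: str, patterns: Iterable[str] = PATTERNS) -> bool:
    """Approximate gitignore matching of a bare file name against basename patterns."""
    return any(fnmatchcase(filename, pattern) for pattern in patterns)


if __name__ == "__main__":
    for name in ["module.pyc", "module.pyo", "module.py", "Foo$py.class", "notes.bak"]:
        print(name, is_ignored(name))
```

`fnmatchcase` is used instead of `fnmatch` so matching stays case-sensitive on every OS, closer to Git's default behaviour on Linux.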

================================================

FILE: .pre-commit-config.yaml

================================================

# See https://pre-commit.com for more information
# See https://pre-commit.com/hooks.html for more hooks
repos:
  - repo: https://github.com/pre-commit/pre-commit-hooks
    rev: "v4.4.0"
    hooks:
      # - id: trailing-whitespace
      # - id: end-of-file-fixer
      # - id: check-yaml
      - id: check-added-large-files
  - repo: https://github.com/PyCQA/bandit
    rev: '1.7.5'
    hooks:
      - id: bandit
  #- repo: https://github.com/returntocorp/semgrep
  #  rev: "v1.14.0"
  #  hooks:
  #    - id: semgrep
  #      name: Semgrep
  #      args: ["--config", "auto", "--error"]
  #      exclude: ^docker/

================================================

FILE: .semgrepignore

================================================

docker/

================================================

FILE: CHANGELOG.md

================================================

# Release notes

<!-- do not remove -->

## 0.8.0

### New Features

- Add support for Pydantic v2 ([#408](https://github.com/airtai/fastkafka/issues/408)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)
  - FastKafka now uses Pydantic v2 for serialization/deserialization of messages
- Enable nbdev_test on Windows and run CI tests on Windows ([#356](https://github.com/airtai/fastkafka/pull/356)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

### Bugs Squashed

- Fix `fastkafka testing install_deps` failing ([#385](https://github.com/airtai/fastkafka/pull/385)), thanks to [@Sternakt](https://github.com/Sternakt)

- Create asyncapi docs directory only while building asyncapi docs ([#368](https://github.com/airtai/fastkafka/pull/368)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

- Add retries to the producer when a `KafkaTimeoutError` is raised ([#423](https://github.com/airtai/fastkafka/pull/423)), thanks to [@Sternakt](https://github.com/Sternakt)
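The 0.8.0 fix above retries producer sends that raise `KafkaTimeoutError`. A common shape for this is an async retry loop with exponential backoff. The sketch below is an illustration only: `KafkaTimeoutError` is a local stand-in class, `send_with_retries` is a hypothetical helper, and the attempt count and delays are assumptions rather than FastKafka's actual settings.

```python
import asyncio
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


class KafkaTimeoutError(Exception):
    """Hypothetical stand-in for the producer's timeout exception."""


async def send_with_retries(
    send: Callable[[], Awaitable[T]],
    max_attempts: int = 3,
    base_delay: float = 0.01,
) -> T:
    """Await `send()`, retrying on KafkaTimeoutError with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return await send()
        except KafkaTimeoutError:
            if attempt >= max_attempts:
                raise  # out of attempts: surface the last timeout to the caller
            # Wait base_delay, 2 * base_delay, 4 * base_delay, ... between attempts.
            await asyncio.sleep(base_delay * 2 ** (attempt - 1))
            attempt += 1


async def _demo() -> None:
    calls = {"n": 0}

    async def flaky_send() -> str:
        # Simulated broker hiccup: times out twice, then succeeds.
        calls["n"] += 1
        if calls["n"] < 3:
            raise KafkaTimeoutError()
        return "sent"

    result = await send_with_retries(flaky_send)
    print(result, calls["n"])  # sent 3


if __name__ == "__main__":
    asyncio.run(_demo())
```

Only the timeout exception is retried; any other error propagates immediately, so genuine failures (for example serialization errors) are not masked by retries.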

## 0.7.1

### Bugs Squashed

- Limit pydantic version to <2.0 ([#427](https://github.com/airtai/fastkafka/issues/427))

- Fix Kafka broker version installation issues ([#427](https://github.com/airtai/fastkafka/issues/427))

- Fix ApacheKafkaBroker startup issues ([#427](https://github.com/airtai/fastkafka/issues/427))

## 0.7.0

### New Features

- Optional description argument to consumes and produces decorators implemented ([#338](https://github.com/airtai/fastkafka/pull/338)), thanks to [@Sternakt](https://github.com/Sternakt)
  - Consumes and produces decorators now have an optional `description` argument that, when specified, is used instead of the function docstring in AsyncAPI doc generation
- FastKafka Windows OS support enabled ([#326](https://github.com/airtai/fastkafka/pull/326)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)
  - FastKafka can now run on Windows
- FastKafka and FastAPI integration implemented ([#304](https://github.com/airtai/fastkafka/pull/304)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)
  - FastKafka can now be run alongside FastAPI
- Batch consuming option to consumers implemented ([#298](https://github.com/airtai/fastkafka/pull/298)), thanks to [@Sternakt](https://github.com/Sternakt)
  - Consumers can consume events in batches by specifying the msg type of the consuming function as `List[YourMsgType]`
- Removed support for synchronous produce functions ([#295](https://github.com/airtai/fastkafka/pull/295)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)
- Added default broker values and updated docs ([#292](https://github.com/airtai/fastkafka/pull/292)), thanks to [@Sternakt](https://github.com/Sternakt)
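The batch-consuming entry above says a consumer receives batches when its message parameter is annotated as `List[YourMsgType]`. One way a decorator could detect this is by inspecting the function's type hints with `typing.get_origin` and `typing.get_args`. The sketch below uses a hypothetical `consumes_mode` helper and a plain `HelloWorld` class in place of a Pydantic model; it is not FastKafka's implementation.

```python
import inspect
import typing
from typing import Any, Callable, List, Tuple


class HelloWorld:
    """Hypothetical message type standing in for a Pydantic model."""


def consumes_mode(func: Callable[..., Any]) -> Tuple[str, Any]:
    """Return ("batch", T) if the first parameter is typed List[T], else ("single", T).

    Hypothetical helper for illustration only.
    """
    params = list(inspect.signature(func).parameters)
    if not params:
        raise TypeError(f"{func.__name__} must accept a message parameter")
    annotation = typing.get_type_hints(func).get(params[0])
    if annotation is None:
        raise TypeError(f"parameter {params[0]!r} of {func.__name__} needs a type hint")
    # List[T] (and list[T] on Python 3.9+) both report `list` as their origin.
    if typing.get_origin(annotation) is list:
        (item_type,) = typing.get_args(annotation)
        return "batch", item_type
    return "single", annotation


async def on_hello(msg: HelloWorld) -> None:
    """Would receive one decoded message per call."""


async def on_hellos(msgs: List[HelloWorld]) -> None:
    """Would receive a list of decoded messages per call."""


if __name__ == "__main__":
    for handler in (on_hello, on_hellos):
        mode, item_type = consumes_mode(handler)
        print(handler.__name__, mode, item_type.__name__)
```

Driving the mode from the annotation keeps the decorator call itself unchanged; the item type recovered from `List[T]` is what a decoder would use for each message in the batch.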

### Bugs Squashed

- Fix index.ipynb to be runnable in Colab ([#342](https://github.com/airtai/fastkafka/issues/342))
- Use the `root_path` CLI option in the `docs generate` and `docs serve` CLI commands ([#341](https://github.com/airtai/fastkafka/pull/341)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)
- Fix incorrect AsyncAPI docs path in the `fastkafka docs serve` command ([#335](https://github.com/airtai/fastkafka/pull/335)), thanks to [@Sternakt](https://github.com/Sternakt)
  - Serving docs now takes the app's `root_path` argument into consideration when it is specified
- Fix typo (supress_timestamps->suppress_timestamps) and remove fix for enabling timestamps ([#315](https://github.com/airtai/fastkafka/issues/315))
- Fix logs printing timestamps ([#308](https://github.com/airtai/fastkafka/issues/308))
- Fix topics with dots causing failure of tester instantiation ([#306](https://github.com/airtai/fastkafka/pull/306)), thanks to [@Sternakt](https://github.com/Sternakt)
  - Specified topics can now have "." in their names

## 0.6.0

### New Features

- Timestamps added to CLI commands ([#283](https://github.com/airtai/fastkafka/pull/283)), thanks to [@davorrunje](https://github.com/davorrunje)
- Added option to process messages concurrently ([#278](https://github.com/airtai/fastkafka/pull/278)), thanks to [@Sternakt](https://github.com/Sternakt)
  - A new `executor` option is added that supports either sequential processing for tasks with small latencies or concurrent processing for tasks with larger latencies.
- Add consumes and produces functions to app ([#274](https://github.com/airtai/fastkafka/pull/274)), thanks to [@Sternakt](https://github.com/Sternakt)
- Add batching for producers ([#273](https://github.com/airtai/fastkafka/pull/273)), thanks to [@Sternakt](https://github.com/Sternakt)
  - requirement(batch): batch support is a real need! And I see it on the issue list... so hopefully we do not need to wait too long.
    https://discord.com/channels/1085457301214855171/1090956337938182266/1098592795557630063
- Fix broken links in guides ([#272](https://github.com/airtai/fastkafka/pull/272)), thanks to [@harishmohanraj](https://github.com/harishmohanraj)
- Generate the Docusaurus sidebar dynamically by parsing summary.md ([#270](https://github.com/airtai/fastkafka/pull/270)), thanks to [@harishmohanraj](https://github.com/harishmohanraj)
- Metadata passed to consumer ([#269](https://github.com/airtai/fastkafka/pull/269)), thanks to [@Sternakt](https://github.com/Sternakt)
  - requirement(key): read the key value somehow. Maybe I missed something in the docs.
    requirement(header): read header values. Reason: I use CDC | Debezium and in the current system the header values are important to differentiate between the CRUD operations.
    https://discord.com/channels/1085457301214855171/1090956337938182266/1098592795557630063
- Contribution guide with instructions on how to build and test added ([#255](https://github.com/airtai/fastkafka/pull/255)), thanks to [@Sternakt](https://github.com/Sternakt)
- Export encoders and decoders from `fastkafka.encoder` ([#246](https://github.com/airtai/fastkafka/pull/246)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)
- Create a GitHub Actions workflow to automatically index the website and commit the index to the fastkafkachat repository ([#239](https://github.com/airtai/fastkafka/issues/239))
- UI Improvement: Post screenshots with links to the actual messages in the testimonials section ([#228](https://github.com/airtai/fastkafka/issues/228))
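The 0.6.0 `executor` option above trades ordering for throughput. The sketch below contrasts the two strategies using plain `asyncio`: `run_sequentially` awaits each handler call in turn, while `run_concurrently` puts all calls in flight with `asyncio.gather`. The function names and simulated latencies are illustrative assumptions, not the code of FastKafka's `SequentialExecutor` or `DynamicTaskExecutor`.

```python
import asyncio
from typing import Awaitable, Callable, List, Tuple

# A handler receives a message and its simulated processing latency (seconds).
Handler = Callable[[str, float], Awaitable[None]]


async def run_sequentially(handler: Handler, msgs: List[Tuple[str, float]]) -> None:
    # One message at a time: completion order always matches arrival order.
    for msg, latency in msgs:
        await handler(msg, latency)


async def run_concurrently(handler: Handler, msgs: List[Tuple[str, float]]) -> None:
    # All messages in flight at once: a slow message no longer blocks fast ones,
    # but completion order follows latency rather than arrival order.
    await asyncio.gather(*(handler(msg, latency) for msg, latency in msgs))


def make_handler(done: List[str]) -> Handler:
    async def handler(msg: str, latency: float) -> None:
        await asyncio.sleep(latency)  # stand-in for I/O-bound work
        done.append(msg)

    return handler


async def main() -> None:
    msgs = [("a", 0.15), ("b", 0.05), ("c", 0.10)]
    for strategy in (run_sequentially, run_concurrently):
        done: List[str] = []
        await strategy(make_handler(done), msgs)
        print(strategy.__name__, done)


if __name__ == "__main__":
    asyncio.run(main())
```

With these latencies the sequential run takes roughly the sum of the delays (about 0.3 s) and finishes `a, b, c`, while the concurrent run takes roughly the longest delay (about 0.15 s) and finishes `b, c, a`. This matches the changelog's guidance: sequential processing suits low-latency tasks, concurrent processing suits high-latency ones.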

### Bugs Squashed

- Batch testing fix ([#280](https://github.com/airtai/fastkafka/pull/280)), thanks to [@Sternakt](https://github.com/Sternakt)

- Tester breaks when using Batching or KafkaEvent producers ([#279](https://github.com/airtai/fastkafka/issues/279))

- Consumer loop callbacks are not executing in parallel ([#276](https://github.com/airtai/fastkafka/issues/276))

## 0.5.0

### New Features

- Significant speedup of Kafka producer ([#236](https://github.com/airtai/fastkafka/pull/236)), thanks to [@Sternakt](https://github.com/Sternakt)

- Added support for AVRO encoding/decoding ([#231](https://github.com/airtai/fastkafka/pull/231)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

### Bugs Squashed

- Fixed sidebar to include guides in docusaurus documentation ([#238](https://github.com/airtai/fastkafka/pull/238)), thanks to [@Sternakt](https://github.com/Sternakt)

- Fixed link to symbols in docusaurus docs ([#227](https://github.com/airtai/fastkafka/pull/227)), thanks to [@harishmohanraj](https://github.com/harishmohanraj)

- Removed bootstrap servers from constructor ([#220](https://github.com/airtai/fastkafka/pull/220)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

## 0.4.0

### New Features

- Integrate FastKafka chat ([#208](https://github.com/airtai/fastkafka/pull/208)), thanks to [@harishmohanraj](https://github.com/harishmohanraj)

- Add benchmarking ([#206](https://github.com/airtai/fastkafka/pull/206)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

- Enable fast testing without running kafka locally ([#198](https://github.com/airtai/fastkafka/pull/198)), thanks to [@Sternakt](https://github.com/Sternakt)

- Generate docs using Docusaurus ([#194](https://github.com/airtai/fastkafka/pull/194)), thanks to [@harishmohanraj](https://github.com/harishmohanraj)

- Add test cases for LocalRedpandaBroker ([#189](https://github.com/airtai/fastkafka/pull/189)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

- Reimplement patch and delegates from fastcore ([#188](https://github.com/airtai/fastkafka/pull/188)), thanks to [@Sternakt](https://github.com/Sternakt)

- Rename existing functions into start and stop and add lifespan handler ([#117](https://github.com/airtai/fastkafka/issues/117))

- https://www.linkedin.com/posts/tiangolo_fastapi-activity-7038907638331404288-Oar3/?utm_source=share&utm_medium=member_ios

## 0.3.1

- README.md file updated

## 0.3.0

### New Features

- Guide for FastKafka produces using partition key ([#172](https://github.com/airtai/fastkafka/pull/172)), thanks to [@Sternakt](https://github.com/Sternakt)

- Closes #161

- Add support for Redpanda for testing and deployment ([#181](https://github.com/airtai/fastkafka/pull/181)), thanks to [@kumaranvpl](https://github.com/kumaranvpl)

- Remove `bootstrap_servers` from `__init__` and use the name of broker as an option when running/testing ([#134](https://github.com/airtai/fastkafka/issues/134))

- Add a GH action file to check for broken links in the docs ([#163](https://github.com/airtai/fastkafka/issues/163))

- Optimize requirements for testing and docs ([#151](https://github.com/airtai/fastkafka/issues/151))

- Break requirements into base and optional for testing and dev ([#124](https://github.com/airtai/fastkafka/issues/124))

- Minimize base requirements needed just for running the service.

- Add link to example git repo into guide for building docs using actions ([#81](https://github.com/airtai/fastkafka/issues/81))

- Add logging for run_in_background ([#46](https://github.com/airtai/fastkafka/issues/46))

- Implement partition Key mechanism for producers ([#16](https://github.com/airtai/fastkafka/issues/16))

### Bugs Squashed

- Implement checks for npm installation and version ([#176](https://github.com/airtai/fastkafka/pull/176)), thanks to [@Sternakt](https://github.com/Sternakt)

- Closes #158 by checking whether npx is installed and adding more verbose error handling

- Fix the helper.py link in CHANGELOG.md ([#165](https://github.com/airtai/fastkafka/issues/165))

- fastkafka docs install_deps fails ([#157](https://github.com/airtai/fastkafka/issues/157))

- Unexpected internal error: [Errno 2] No such file or directory: 'npx'

- Broken links in docs ([#141](https://github.com/airtai/fastkafka/issues/141))

- fastkafka run is not showing up in CLI docs ([#132](https://github.com/airtai/fastkafka/issues/132))

## 0.2.3

- Fixed broken links on PyPi index page

## 0.2.2

### New Features

- Extract JDK and Kafka installation out of LocalKafkaBroker ([#131](https://github.com/airtai/fastkafka/issues/131))

- PyYAML version relaxed ([#119](https://github.com/airtai/fastkafka/pull/119)), thanks to [@davorrunje](https://github.com/davorrunje)

- Replace docker based kafka with local ([#68](https://github.com/airtai/fastkafka/issues/68))

- [x] replace docker compose with a simple docker run (standard run_jupyter.sh should do)

- [x] replace all tests to use LocalKafkaBroker

- [x] update documentation

### Bugs Squashed

- Fix broken link for FastKafka docs in index notebook ([#145](https://github.com/airtai/fastkafka/issues/145))

- Fix encoding issues when loading setup.py on windows OS ([#135](https://github.com/airtai/fastkafka/issues/135))

## 0.2.0

### New Features

- Replace kafka container with LocalKafkaBroker ([#112](https://github.com/airtai/fastkafka/issues/112))

- [x] Replace kafka container with LocalKafkaBroker in tests

- [x] Remove kafka container from tests environment

- [x] Fix failing tests

### Bugs Squashed

- Fix random failing in CI ([#109](https://github.com/airtai/fastkafka/issues/109))

## 0.1.3

- Version update in `__init__.py`

## 0.1.2

### New Features

- Git workflow action for publishing Kafka docs ([#78](https://github.com/airtai/fastkafka/issues/78))

### Bugs Squashed

- Include missing requirement ([#110](https://github.com/airtai/fastkafka/issues/110))

- [x] Typer is imported in this [file](https://github.com/airtai/fastkafka/blob/main/fastkafka/_components/helpers.py) but it is not included in [settings.ini](https://github.com/airtai/fastkafka/blob/main/settings.ini)

- [x] Add aiohttp which is imported in this [file](https://github.com/airtai/fastkafka/blob/main/fastkafka/_helpers.py)

- [x] Add nbformat, which is imported in `_components/helpers.py`

- [x] Add nbconvert, which is imported in `_components/helpers.py`

## 0.1.1

### Bugs Squashed

- JDK install fails on Python 3.8 ([#106](https://github.com/airtai/fastkafka/issues/106))

## 0.1.0

Initial release

================================================

FILE: CNAME

================================================

fastkafka.airt.ai

================================================

FILE: CONTRIBUTING.md

================================================

# Contributing to FastKafka

First off, thanks for taking the time to contribute! ❤️

All types of contributions are encouraged and valued. See the [Table of Contents](#table-of-contents) for different ways to help and details about how this project handles them. Please make sure to read the relevant section before making your contribution. It will make it a lot easier for us maintainers and smooth out the experience for all involved. The community looks forward to your contributions. 🎉

> And if you like the project, but just don't have time to contribute, that's fine. There are other easy ways to support the project and show your appreciation, which we would also be very happy about:

> - Star the project

> - Tweet about it

> - Refer this project in your project's readme

> - Mention the project at local meetups and tell your friends/colleagues

## Table of Contents

- [I Have a Question](#i-have-a-question)
- [I Want To Contribute](#i-want-to-contribute)
  - [Reporting Bugs](#reporting-bugs)
  - [Suggesting Enhancements](#suggesting-enhancements)
  - [Your First Code Contribution](#your-first-code-contribution)
- [Development](#development)
  - [Prepare the dev environment](#prepare-the-dev-environment)
  - [Way of working](#way-of-working)
  - [Before a PR](#before-a-pr)

## I Have a Question

> If you want to ask a question, we assume that you have read the available [Documentation](https://fastkafka.airt.ai/docs).

Before you ask a question, it is best to search for existing [Issues](https://github.com/airtai/fastkafka/issues) that might help you. In case you have found a suitable issue and still need clarification, you can write your question in this issue.

If you then still feel the need to ask a question and need clarification, we recommend the following:

- Contact us on [Discord](https://discord.com/invite/CJWmYpyFbc)

- Open an [Issue](https://github.com/airtai/fastkafka/issues/new)

- Provide as much context as you can about what you're running into

We will then take care of the issue as soon as possible.

## I Want To Contribute

> ### Legal Notice

> When contributing to this project, you must agree that you have authored 100% of the content, that you have the necessary rights to the content and that the content you contribute may be provided under the project license.

### Reporting Bugs

#### Before Submitting a Bug Report

A good bug report shouldn't leave others needing to chase you up for more information. Therefore, we ask you to investigate carefully, collect information and describe the issue in detail in your report. Please complete the following steps in advance to help us fix any potential bug as fast as possible.

- Make sure that you are using the latest version.

- Determine if your bug is really a bug and not an error on your side e.g. using incompatible environment components/versions (Make sure that you have read the [documentation](https://fastkafka.airt.ai/docs). If you are looking for support, you might want to check [this section](#i-have-a-question)).

- To see if other users have experienced (and potentially already solved) the same issue you are having, check if there is not already a bug report existing for your bug or error in the [bug tracker](https://github.com/airtai/fastkafka/issues?q=label%3Abug).

- Also make sure to search the internet (including Stack Overflow) to see if users outside of the GitHub community have discussed the issue.

- Collect information about the bug:
  - Stack trace (Traceback)
  - OS, Platform and Version (Windows, Linux, macOS, x86, ARM)
  - Python version
  - Possibly your input and the output
  - Can you reliably reproduce the issue? And can you also reproduce it with older versions?
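Most of the environment details listed above can be gathered with Python's standard library. The sketch below is a hypothetical helper (`collect_environment_info` is not part of FastKafka) and assumes Python 3.8+ for `importlib.metadata`; its output can be pasted into your report alongside the stack trace.

```python
import importlib.metadata
import platform
from typing import Dict


def collect_environment_info(package: str = "fastkafka") -> Dict[str, str]:
    """Gather OS, platform and version details for a bug report (hypothetical helper)."""
    try:
        package_version = importlib.metadata.version(package)
    except importlib.metadata.PackageNotFoundError:
        package_version = "not installed"
    return {
        "os": platform.system(),              # e.g. "Linux", "Darwin", "Windows"
        "os_release": platform.release(),
        "architecture": platform.machine(),   # e.g. "x86_64", "arm64"
        "python": platform.python_version(),
        "python_implementation": platform.python_implementation(),
        package: package_version,
    }


if __name__ == "__main__":
    for key, value in collect_environment_info().items():
        print(f"{key}: {value}")
```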

#### How Do I Submit a Good Bug Report?

We use GitHub issues to track bugs and errors. If you run into an issue with the project:

- Open an [Issue](https://github.com/airtai/fastkafka/issues/new). (Since we can't be sure at this point whether it is a bug or not, we ask you not to talk about a bug yet and not to label the issue.)

- Explain the behavior you would expect and the actual behavior.

- Please provide as much context as possible and describe the *reproduction steps* that someone else can follow to recreate the issue on their own. This usually includes your code. For good bug reports you should isolate the problem and create a reduced test case.

- Provide the information you collected in the previous section.

Once it's filed:

- The project team will label the issue accordingly.

- A team member will try to reproduce the issue with your provided steps. If there are no reproduction steps or no obvious way to reproduce the issue, the team will ask you for those steps and mark the issue as `needs-repro`. Bugs with the `needs-repro` tag will not be addressed until they are reproduced.

- If the team is able to reproduce the issue, it will be marked `needs-fix`, as well as possibly other tags (such as `critical`), and the issue will be left to be implemented.

### Suggesting Enhancements

This section guides you through submitting an enhancement suggestion for FastKafka, **including completely new features and minor improvements to existing functionality**. Following these guidelines will help maintainers and the community to understand your suggestion and find related suggestions.

#### Before Submitting an Enhancement

- Make sure that you are using the latest version.

- Read the [documentation](https://fastkafka.airt.ai/docs) carefully and find out if the functionality is already covered, maybe by an individual configuration.

- Perform a [search](https://github.com/airtai/fastkafka/issues) to see if the enhancement has already been suggested. If it has, add a comment to the existing issue instead of opening a new one.

- Find out whether your idea fits with the scope and aims of the project. It's up to you to make a strong case to convince the project's developers of the merits of this feature. Keep in mind that we want features that will be useful to the majority of our users and not just a small subset. If you're just targeting a minority of users, consider writing an add-on/plugin library.

- If you are not sure or would like to discuss the enhancement with us directly, you can always contact us on [Discord](https://discord.com/invite/CJWmYpyFbc).

#### How Do I Submit a Good Enhancement Suggestion?

Enhancement suggestions are tracked as [GitHub issues](https://github.com/airtai/fastkafka/issues).

- Use a **clear and descriptive title** for the issue to identify the suggestion.

- Provide a **step-by-step description of the suggested enhancement** in as much detail as possible.

- **Describe the current behavior** and **explain which behavior you expected to see instead** and why. At this point you can also tell which alternatives do not work for you.

- **Explain why this enhancement would be useful** to most FastKafka users. You may also want to point out the other projects that solved it better and which could serve as inspiration.

### Your First Code Contribution

A great way to start contributing to FastKafka would be by solving an issue tagged with "good first issue". To find a list of issues that are tagged as "good first issue" and are suitable for newcomers, please visit the following link: [Good first issues](https://github.com/airtai/fastkafka/labels/good%20first%20issue)

These issues are beginner-friendly and provide a great opportunity to get started with contributing to FastKafka. Choose an issue that interests you, follow the contribution process mentioned in [Way of working](#way-of-working) and [Before a PR](#before-a-pr), and help us make FastKafka even better!

If you have any questions or need further assistance, feel free to reach out to us. Happy coding!

## Development

### Prepare the dev environment

To start contributing to FastKafka, you first have to prepare the development environment.

#### Clone the FastKafka repository

To clone the repository, run the following command in the CLI:

```shell

git clone https://github.com/airtai/fastkafka.git

```

#### Optional: create a virtual python environment

To prevent library version clashes with your other projects, it is recommended that you create a virtual Python environment for your FastKafka project by running:

```shell

python3 -m venv fastkafka-env

```

And to activate your virtual environment run:

```shell

source fastkafka-env/bin/activate

```

To learn more about virtual environments, please have a look at [official python documentation](https://docs.python.org/3/library/venv.html#:~:text=A%20virtual%20environment%20is%20created,the%20virtual%20environment%20are%20available.)
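To confirm that the environment is actually active, a quick check like the following can help. It relies on the documented `venv` behaviour that `sys.prefix` points at the environment directory while `sys.base_prefix` still points at the base Python installation; the `in_virtualenv` name is only for illustration.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the running interpreter belongs to a venv."""
    # Inside a venv, sys.prefix is the environment directory,
    # while sys.base_prefix is the base Python installation.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
```

Run it with `python` after `source fastkafka-env/bin/activate`; it should report `True`.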

#### Install FastKafka

To install FastKafka, navigate to the root directory of the cloned FastKafka project and run:

```shell
pip install -e ".[dev]"
```

#### Install JRE and Kafka toolkit

To be able to run tests and use all the functionalities of FastKafka, you have to have JRE and Kafka toolkit installed on your machine. To do this, you have two options:

1. Use our `fastkafka testing install-deps` CLI command which will install JRE and Kafka toolkit for you in your .local folder

OR

2. Install JRE and Kafka manually.

To do this, please refer to [JDK and JRE installation guide](https://docs.oracle.com/javase/9/install/toc.htm) and [Apache Kafka quickstart](https://kafka.apache.org/quickstart)

#### Install npm

You must have npm installed to run the tests, because they include documentation generation. To do this, you have two options:

1. Use our `fastkafka docs install_deps` CLI command which will install npm for you in your .local folder

OR

2. Install npm manually.

To do this, please refer to [NPM installation guide](https://docs.npmjs.com/downloading-and-installing-node-js-and-npm)

#### Install docusaurus

To generate the documentation, you need Docusaurus. To install it, run `docusaurus/scripts/install_docusaurus_deps.sh` in the root of the FastKafka project.

#### Check if everything works

After installing FastKafka and all the necessary dependencies, run `nbdev_test` in the root of the FastKafka project. This will take a couple of minutes, as it runs all the tests in the FastKafka project. If everything is set up correctly, you will get a "Success." message in your terminal; otherwise, please revisit the previous steps.
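Before running `nbdev_test`, you can check that the command-line tools from the steps above resolve on your `PATH`. This is a hypothetical helper, and the tool list is an assumption based on this guide. If you installed dependencies into your `.local` folder with the `fastkafka` CLI, they may not be on `PATH`, so treat a missing entry as a hint rather than a definitive failure.

```python
import shutil
from typing import Callable, Dict, Iterable, Optional

# Tools this guide expects to be available; an assumption, adjust to your setup.
REQUIRED_TOOLS = ("java", "npm", "npx", "nbdev_test")


def check_tools(
    tools: Iterable[str] = REQUIRED_TOOLS,
    *,
    which: Callable[[str], Optional[str]] = shutil.which,
) -> Dict[str, Optional[str]]:
    """Map each tool name to its resolved path, or None if it is not on PATH."""
    return {tool: which(tool) for tool in tools}


if __name__ == "__main__":
    missing = [tool for tool, path in check_tools().items() if path is None]
    if missing:
        print("Missing from PATH:", ", ".join(missing))
    else:
        print("All tools found; you can run nbdev_test.")
```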

### Way of working

The development of FastKafka is done in Jupyter notebooks. Inside the `nbs` directory you will find all the source code of FastKafka, this is where you will implement your changes.

Testing, cleanup and exporting of the code are handled by `nbdev`. Before you start working on FastKafka, please get familiar with it by reading the [nbdev documentation](https://nbdev.fast.ai/getting_started.html).

The general philosophy you should follow when writing code for FastKafka is:

- Each function should implement one atomic piece of functionality and be short and concise
  - Good rule of thumb: your function should usually be 5-10 lines long
- If there are more than 2 params, enforce keyword arguments using `*`
  - E.g.: `def function(param1, *, param2, param3): ...`
- Define the types of the arguments and the return value
  - If you don't, mypy tests will fail and a lot of easily avoidable bugs will go undetected
- After the function cell, write test cells using the `assert` keyword
  - Whenever you implement something, you should test that functionality immediately in the cells below
- Add Google style Python docstrings once the function is implemented and tested
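As an illustration of these conventions, here is a small hypothetical function (`scale_values` is not part of FastKafka) followed by its tests. In a notebook these would be two cells: the function cell, marked with nbdev's `#| export` directive so that `nbdev_export` includes it in the library, and a test cell with plain `assert` statements.

```python
#| export
from typing import List


def scale_values(values: List[float], *, factor: float, offset: float = 0.0) -> List[float]:
    """Scale and shift a list of numbers.

    Args:
        values: Numbers to transform.
        factor: Multiplier applied to every value.
        offset: Constant added after scaling.

    Returns:
        A new list where each element is ``value * factor + offset``.
    """
    return [v * factor + offset for v in values]


# Test cell: check the behaviour immediately after the function cell.
assert scale_values([1.0, 2.0], factor=2.0) == [2.0, 4.0]
assert scale_values([1.0], factor=3.0, offset=0.5) == [3.5]
```

Because `factor` and `offset` come after `*`, a call such as `scale_values([1.0], 2.0)` raises `TypeError`, which keeps call sites explicit.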

### Before a PR

After you have implemented your changes, you will want to open a pull request to merge them into our main branch. To make this as smooth as possible for you and for us, please do the following before opening the request (all commands are to be run in the root of the FastKafka project):

1. Format your notebooks: `nbqa black nbs`

2. Close, shutdown, and clean the metadata from your notebooks: `nbdev_clean`

3. Export your code: `nbdev_export`

4. Run the tests: `nbdev_test`

5. Test code typing: `mypy fastkafka`

6. Test code safety with bandit: `bandit -r fastkafka`

7. Test code safety with semgrep: `semgrep --config auto -r fastkafka`
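If you prefer to run the steps above in one go, they can be wrapped in a small script. This is a sketch, not a tool shipped with FastKafka: `run_pre_pr_checks` and `PRE_PR_COMMANDS` are hypothetical names, the commands are copied verbatim from the list, and it assumes every tool is installed and on your `PATH`. It stops at the first failing step.

```python
import subprocess
from typing import Callable, List, Sequence

# The pre-PR steps from the list above, in order.
PRE_PR_COMMANDS: List[List[str]] = [
    ["nbqa", "black", "nbs"],
    ["nbdev_clean"],
    ["nbdev_export"],
    ["nbdev_test"],
    ["mypy", "fastkafka"],
    ["bandit", "-r", "fastkafka"],
    ["semgrep", "--config", "auto", "-r", "fastkafka"],
]


def _run_command(cmd: Sequence[str]) -> int:
    # A missing executable raises FileNotFoundError from subprocess.run.
    return subprocess.run(list(cmd)).returncode


def run_pre_pr_checks(
    commands: Sequence[Sequence[str]] = PRE_PR_COMMANDS,
    *,
    run: Callable[[Sequence[str]], int] = _run_command,
) -> List[str]:
    """Run each command in order and stop at the first non-zero exit code.

    Returns the executed commands as strings; raises RuntimeError on failure.
    """
    executed: List[str] = []
    for cmd in commands:
        executed.append(" ".join(cmd))
        returncode = run(cmd)
        if returncode != 0:
            raise RuntimeError(f"Step failed with exit code {returncode}: {' '.join(cmd)}")
    return executed
```

Call `run_pre_pr_checks()` from the root of the repository. The `run` parameter exists mainly so the sequencing can be tested without invoking the real tools.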

When you have done this and all the tests are passing, your code should be ready for a merge. Please commit and push your code, open a pull request and assign it to one of the core developers. We will then review your changes and, if everything is in order, approve your merge.

## Attribution

This guide is based on the **contributing-gen**. [Make your own](https://github.com/bttger/contributing-gen)!

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: MANIFEST.in

================================================

include settings.ini

include LICENSE

include CONTRIBUTING.md

include README.md

recursive-exclude * __pycache__

================================================

FILE: README.md

================================================

# FastKafka

<!-- WARNING: THIS FILE WAS AUTOGENERATED! DO NOT EDIT! -->

<b>Effortless Kafka integration for your web services</b>

## Deprecation notice

This project is superseded by [FastStream](https://github.com/airtai/faststream).

FastStream is a new package based on the ideas and experiences gained from [FastKafka](https://github.com/airtai/fastkafka) and [Propan](https://github.com/lancetnik/propan). By joining our forces, we picked up the best from both packages and created a unified way to write services capable of processing streamed data regardless of the underlying protocol.

We’ll continue to maintain the FastKafka package, but new development will be in [FastStream](https://github.com/airtai/faststream). If you are starting a new service, [FastStream](https://github.com/airtai/faststream) is the recommended way to do it.

------------------------------------------------------------------------


[FastKafka](https://fastkafka.airt.ai/) is a powerful and easy-to-use Python library for building asynchronous services that interact with Kafka topics. Built on top of [Pydantic](https://docs.pydantic.dev/), [AIOKafka](https://github.com/aio-libs/aiokafka) and [AsyncAPI](https://www.asyncapi.com/), FastKafka simplifies the process of writing producers and consumers for Kafka topics, handling all the parsing, networking, task scheduling and data generation automatically. With FastKafka, you can quickly prototype and develop high-performance Kafka-based services with minimal code, making it an ideal choice for developers looking to streamline their workflow and accelerate their projects.

------------------------------------------------------------------------

#### ⭐⭐⭐ Stay in touch ⭐⭐⭐

Please show your support and stay in touch by:

- giving our [GitHub repository](https://github.com/airtai/fastkafka/) a star, and
- joining our [Discord server](https://discord.gg/CJWmYpyFbc).

Your support helps us to stay in touch with you and encourages us to continue developing and improving the library. Thank you for your support!

------------------------------------------------------------------------

#### 🐝🐝🐝 We were busy lately 🐝🐝🐝

## Install

FastKafka works on Windows, macOS, Linux, and most Unix-style operating systems. You can install the base version of FastKafka with `pip` as usual:

``` sh
pip install fastkafka
```

To install FastKafka with testing features, please use:

``` sh
pip install fastkafka[test]
```

To install FastKafka with AsyncAPI docs support, please use:

``` sh
pip install fastkafka[docs]
```

To install FastKafka with all the features, please use:

``` sh
pip install fastkafka[test,docs]
```

## Tutorial

You can start an interactive tutorial in Google Colab by clicking the button below:

<a href="https://colab.research.google.com/github/airtai/fastkafka/blob/main/nbs/index.ipynb" target="_blank">
<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open in Colab" />
</a>

## Writing server code

To demonstrate how simple FastKafka's `@produces` and `@consumes` decorators are to use, we will focus on a simple app.

The app will consume JSON messages containing non-negative floats from one topic, log them, and then produce incremented values to another topic.

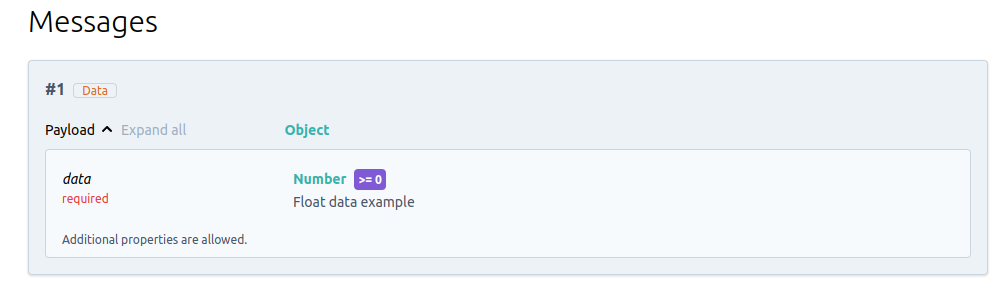

### Messages

FastKafka uses [Pydantic](https://docs.pydantic.dev/) to parse input JSON-encoded data into Python objects, making it easy to work with structured data in your Kafka-based applications. Pydantic’s [`BaseModel`](https://docs.pydantic.dev/usage/models/) class allows you to define messages using a declarative syntax, making it easy to specify the fields and types of your messages.

This example defines one `Data` message class. This class models the consumed and produced data in our demo app. It contains one `NonNegativeFloat` field, `data`, which will be logged and “processed” before being produced to another topic.

This message class will be used to parse and validate incoming data in Kafka consumers and producers.

``` python
from pydantic import BaseModel, Field, NonNegativeFloat


class Data(BaseModel):
    # A single non-negative float; negative values fail validation
    data: NonNegativeFloat = Field(
        ..., example=0.5, description="Float data example"
    )
```
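To make the parse-and-validate step concrete without depending on Pydantic, here is a minimal stdlib-only sketch. `DataSketch` is a hypothetical stand-in and is not how Pydantic is implemented. It decodes a JSON payload and, like `NonNegativeFloat`, rejects negative values.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSketch:
    """Hypothetical stdlib stand-in for the Pydantic `Data` model."""

    data: float

    def __post_init__(self) -> None:
        # Mirror the NonNegativeFloat constraint: reject negative values.
        if self.data < 0:
            raise ValueError(f"data must be >= 0, got {self.data}")

    @classmethod
    def parse_raw(cls, raw: bytes) -> "DataSketch":
        # Decode the JSON payload, then coerce and validate the single field.
        payload = json.loads(raw)
        return cls(data=float(payload["data"]))


print(DataSketch.parse_raw(b'{"data": 0.5}'))
try:
    DataSketch.parse_raw(b'{"data": -1}')
except ValueError as err:
    print("rejected:", err)
```

Pydantic does much more than this (type coercion rules, error reports, schema generation). The sketch only shows why an invalid message never reaches a consumer function.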

### Application

This example shows how to initialize a FastKafka application.

It starts by defining a dictionary called `kafka_brokers`, which contains two entries, `"localhost"` and `"production"`, specifying the local development and production Kafka brokers. Each entry specifies the URL, port, and other details of a Kafka broker. This dictionary is used both for generating the documentation and, later, for running the actual server against one of the given Kafka brokers.

Next, an object of the [`FastKafka`](https://airtai.github.io/fastkafka/docs/api/fastkafka#fastkafka.FastKafka) class is initialized with the minimum set of arguments:

- `kafka_brokers`: the dictionary of brokers defined above, used for generating the documentation and for selecting a broker to run against

We will also import and create a logger so that we can log the incoming data in our consuming function.

``` python
from logging import getLogger

from fastkafka import FastKafka

logger = getLogger("Demo Kafka app")

# Broker definitions used both for the AsyncAPI docs and at run time
kafka_brokers = {
    "localhost": {
        "url": "localhost",
        "description": "local development kafka broker",
        "port": 9092,
    },
    "production": {
        "url": "kafka.airt.ai",
        "description": "production kafka broker",
        "port": 9092,
        "protocol": "kafka-secure",
        "security": {"type": "plain"},
    },
}

kafka_app = FastKafka(
    title="Demo Kafka app",
    kafka_brokers=kafka_brokers,
)
```
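The log output of the test run further down shows a producer created with `'bootstrap_servers': 'localhost:9092'`, which suggests that a broker entry is reduced to a `url:port` pair. The hypothetical helper `bootstrap_servers_for` below sketches that mapping under this assumption. It is not part of the FastKafka API.

```python
from typing import Any, Dict

# Same shape as the `kafka_brokers` dictionary in the example above.
kafka_brokers: Dict[str, Dict[str, Any]] = {
    "localhost": {
        "url": "localhost",
        "description": "local development kafka broker",
        "port": 9092,
    },
    "production": {
        "url": "kafka.airt.ai",
        "description": "production kafka broker",
        "port": 9092,
        "protocol": "kafka-secure",
        "security": {"type": "plain"},
    },
}


def bootstrap_servers_for(brokers: Dict[str, Dict[str, Any]], name: str) -> str:
    """Hypothetical helper: turn one named broker entry into a "host:port" string."""
    entry = brokers[name]
    return f"{entry['url']}:{entry['port']}"


print(bootstrap_servers_for(kafka_brokers, "localhost"))  # localhost:9092
```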

### Function decorators

FastKafka provides two function decorators, `@kafka_app.consumes` and `@kafka_app.produces`. They hand the following work from your user-defined functions to the framework:

- consuming and producing data to Kafka, and
- decoding and encoding JSON messages.

The FastKafka framework in turn delegates these jobs to the AIOKafka and Pydantic libraries.

These decorators make it easy to specify the processing logic for your Kafka consumers and producers, allowing you to focus on the core business logic of your application without worrying about the underlying Kafka integration.

The following example shows how to use the `@kafka_app.consumes` and `@kafka_app.produces` decorators in a FastKafka application:

- The `@kafka_app.consumes` decorator is applied to the `on_input_data` function, which specifies that this function should be called whenever a message is received on the “input_data” Kafka topic. The `on_input_data` function takes a single argument, which is expected to be an instance of the `Data` message class. Annotating that argument with the `Data` type instructs the framework to call Pydantic's `Data.parse_raw()` on the consumed message before passing it to the user-defined function `on_input_data`.
- The `@kafka_app.produces` decorator is applied to the `to_output_data` function, which specifies that this function should produce a message to the “output_data” Kafka topic whenever it is called. The `to_output_data` function takes a single float argument, `data`. It increments the data and returns it wrapped in a `Data` object. The framework will call `Data.json().encode("utf-8")` on the returned value and produce it to the specified topic.
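The division of labour described above can be illustrated with a small, self-contained sketch. The `consumes`, `produces` and `deliver` functions and the `handlers`/`produced` dictionaries are hypothetical stand-ins, not FastKafka's implementation, and plain dictionaries replace the `Data` model. The framework-side code decodes bytes before calling the user function and JSON/UTF-8-encodes its return value, so the user functions contain only business logic.

```python
import asyncio
import json
from functools import wraps
from typing import Any, Awaitable, Callable, Dict, List

# Hypothetical in-memory stand-ins for Kafka:
# one handler per consumed topic, and a list of produced payloads per topic.
handlers: Dict[str, Callable[[Dict[str, Any]], Awaitable[None]]] = {}
produced: Dict[str, List[bytes]] = {}


def consumes(topic: str):
    """Register the decorated function as the handler for `topic`."""
    def decorator(func):
        handlers[topic] = func
        return func
    return decorator


def produces(topic: str):
    """Encode the decorated function's return value and append it to `topic`."""
    def decorator(func):
        @wraps(func)
        async def wrapper(*args: Any, **kwargs: Any) -> Dict[str, Any]:
            result = await func(*args, **kwargs)
            produced.setdefault(topic, []).append(json.dumps(result).encode("utf-8"))
            return result
        return wrapper
    return decorator


async def deliver(topic: str, raw: bytes) -> None:
    # Framework side: decode raw bytes before calling the user function.
    await handlers[topic](json.loads(raw))


@consumes(topic="input_data")
async def on_input_data(msg: Dict[str, Any]) -> None:
    await to_output_data(msg["data"])


@produces(topic="output_data")
async def to_output_data(data: float) -> Dict[str, Any]:
    return {"data": data + 1.0}


asyncio.run(deliver("input_data", b'{"data": 0.1}'))
print(produced["output_data"])  # [b'{"data": 1.1}']
```

Because the decorators own serialization, `on_input_data` and `to_output_data` never touch raw bytes. That is the separation the FastKafka decorators are described as providing.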

``` python
@kafka_app.consumes(topic="input_data", auto_offset_reset="latest")
async def on_input_data(msg: Data):
    # msg has already been parsed and validated into a Data instance
    logger.info(f"Got data: {msg.data}")
    await to_output_data(msg.data)


@kafka_app.produces(topic="output_data")
async def to_output_data(data: float) -> Data:
    # The returned Data object is encoded and sent to "output_data"
    processed_data = Data(data=data + 1.0)
    return processed_data
```

## Testing the service

The service can be tested using [`Tester`](https://airtai.github.io/fastkafka/docs/api/fastkafka/testing/Tester#fastkafka.testing.Tester) instances, which internally start an in-memory implementation of a Kafka broker.

The Tester redirects your `consumes`- and `produces`-decorated functions to the in-memory Kafka broker, so you can quickly test your app without a running Kafka broker and all its dependencies.
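The idea behind an in-memory broker can be sketched with one `asyncio.Queue` per topic. In this hypothetical sketch, which does not reflect the actual `Tester` internals, a stand-in app consumes from `input_data` and produces to `output_data`. The test waits for the reply with a 2-second timeout, similar in spirit to `assert_awaited_with(..., timeout=2)` in the example that follows.

```python
import asyncio
import json


async def main() -> float:
    # Hypothetical in-memory "topics": one asyncio.Queue per topic name.
    topics = {"input_data": asyncio.Queue(), "output_data": asyncio.Queue()}

    async def app_loop() -> None:
        # Stand-in for the app under test: consume, increment, produce.
        raw = await topics["input_data"].get()
        value = json.loads(raw)["data"]
        reply = json.dumps({"data": value + 1.0}).encode("utf-8")
        await topics["output_data"].put(reply)

    task = asyncio.create_task(app_loop())
    # "Send" a message to the input_data topic.
    await topics["input_data"].put(b'{"data": 0.1}')
    # Wait for the reply, raising asyncio.TimeoutError if nothing arrives within 2 s.
    reply = await asyncio.wait_for(topics["output_data"].get(), timeout=2)
    await task
    return json.loads(reply)["data"]


result = asyncio.run(main())
print(result)  # 1.1
```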

``` python
import asyncio

from fastkafka.testing import Tester

msg = Data(
    data=0.1,
)


async def main() -> None:
    # Start Tester app and create InMemory Kafka broker for testing
    async with Tester(kafka_app) as tester:
        # Send Data message to input_data topic
        await tester.to_input_data(msg)

        # Assert that the kafka_app responded with incremented data in output_data topic
        await tester.awaited_mocks.on_output_data.assert_awaited_with(
            Data(data=1.1), timeout=2
        )


# In a notebook you can use `async with` at the top level;
# in a plain Python script, run the coroutine explicitly:
asyncio.run(main())
```

[INFO] fastkafka._testing.in_memory_broker: InMemoryBroker._start() called

[INFO] fastkafka._testing.in_memory_broker: InMemoryBroker._patch_consumers_and_producers(): Patching consumers and producers!

[INFO] fastkafka._testing.in_memory_broker: InMemoryBroker starting

[INFO] fastkafka._application.app: _create_producer() : created producer using the config: '{'bootstrap_servers': 'localhost:9092'}'

[INFO] fastkafka._testing.in_memory_broker: AIOKafkaProducer patched start() called()

[INFO] fastkafka._application.app: _create_producer() : created producer using the config: '{'bootstrap_servers': 'localhost:9092'}'

[INFO] fastkafka._testing.in_memory_broker: AIOKafkaProducer patched start() called()

[INFO] fastkafka._components.aiokafka_consumer_loop: aiokafka_consumer_loop() starting...

[INFO] fastkafka._components.aiokafka_consumer_loop: aiokafka_consumer_loop(): Consumer created using the following parameters: {'bootstrap_servers': 'localhost:9092', 'auto_offset_reset': 'latest', 'max_poll_records': 100}

[INFO] fastkafka._testing.in_memory_broker: AIOKafkaConsumer patched start() called()