Repository: ankandrew/fast-alpr

Branch: master

Commit: c5a94706f175

Files: 29

Total size: 51.6 KB

Directory structure:

gitextract_zyjy08no/
├── .editorconfig
├── .github/
│   ├── actionlint-matcher.json
│   └── workflows/
│       ├── ci.yaml
│       ├── close-inactive-issues.yaml
│       ├── codeql-analysis.yaml
│       ├── release.yaml
│       ├── secret-scanning.yaml
│       ├── test.yaml
│       └── workflow-lint.yaml
├── .gitignore
├── .yamllint.yaml
├── LICENSE
├── Makefile
├── README.md
├── docs/
│   ├── contributing.md
│   ├── custom_models.md
│   ├── index.md
│   ├── installation.md
│   ├── quick_start.md
│   └── reference.md
├── fast_alpr/
│   ├── __init__.py
│   ├── alpr.py
│   ├── base.py
│   ├── default_detector.py
│   └── default_ocr.py
├── mkdocs.yml
├── pyproject.toml
└── test/
    ├── __init__.py
    └── test_alpr.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .editorconfig

================================================

# EditorConfig helps maintain consistent coding styles for multiple developers working on the same

# project across various editors and IDEs. The EditorConfig project consists of a file format for

# defining coding styles and a collection of text editor plugins that enable editors to read the

# file format and adhere to defined styles. EditorConfig files are easily readable and they work

# nicely with version control systems. https://editorconfig.org/

# top-most EditorConfig file

root = true

# Unix-style newlines with a newline ending every file

[*]

end_of_line = lf

insert_final_newline = true

charset = utf-8

trim_trailing_whitespace = true

indent_style = space

# 4 space indentation

[*.py]

indent_size = 4

================================================

FILE: .github/actionlint-matcher.json

================================================

{
  "problemMatcher": [
    {
      "owner": "actionlint",
      "pattern": [
        {
          "regexp": "^(?:\\x1b\\[\\d+m)?(.+?)(?:\\x1b\\[\\d+m)*:(?:\\x1b\\[\\d+m)*(\\d+)(?:\\x1b\\[\\d+m)*:(?:\\x1b\\[\\d+m)*(\\d+)(?:\\x1b\\[\\d+m)*: (?:\\x1b\\[\\d+m)*(.+?)(?:\\x1b\\[\\d+m)* \\[(.+?)\\]$",
          "file": 1,
          "line": 2,
          "column": 3,
          "message": 4,
          "code": 5
        }
      ]
    }
  ]
}

================================================

FILE: .github/workflows/ci.yaml

================================================

name: CI
on:
  push:
    branches: [ master ]
  pull_request:
    branches: [ master ]
jobs:
  test:
    uses: ./.github/workflows/test.yaml

================================================

FILE: .github/workflows/close-inactive-issues.yaml

================================================

name: Close inactive issues
on:
  schedule:
    - cron: "30 1 * * *" # Runs daily at 1:30 AM UTC

jobs:
  close-issues:
    runs-on: ubuntu-latest
    permissions:
      issues: write
      pull-requests: write
    steps:
      - uses: actions/stale@v9
        with:
          days-before-issue-stale: 90 # The number of days old an issue can be before marking it stale
          days-before-issue-close: 14 # The number of days to wait to close an issue after it being marked stale
          stale-issue-label: "stale"
          stale-issue-message: "This issue is stale because it has been open for 90 days with no activity."
          close-issue-message: "This issue was closed because it has been inactive for 14 days since being marked as stale."
          days-before-pr-stale: -1 # Disables stale behavior for PRs
          days-before-pr-close: -1 # Disables closing behavior for PRs
          repo-token: ${{ secrets.GITHUB_TOKEN }}

================================================

FILE: .github/workflows/codeql-analysis.yaml

================================================

name: "CodeQL Analysis"

on:
  pull_request:
    branches: [ master ]
  push:
    branches: [ master ]
  schedule:
    - cron: '31 0 * * 1'

permissions:
  contents: read
  security-events: write

jobs:
  analyze:
    name: Analyze Code
    runs-on: ubuntu-latest
    permissions:
      contents: read
      security-events: write
    strategy:
      fail-fast: false
      matrix:
        language: [ 'python' ]
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4

      - name: Initialize CodeQL
        uses: github/codeql-action/init@v3
        with:
          languages: ${{ matrix.language }}
          build-mode: none

      - name: Run CodeQL Analysis
        uses: github/codeql-action/analyze@v3
        with:
          category: "security"

================================================

FILE: .github/workflows/release.yaml

================================================

name: Release
on:
  push:
    tags: [ 'v*' ]

jobs:
  test:
    uses: ./.github/workflows/test.yaml

  publish-to-pypi:
    name: Build and Publish to PyPI
    needs:
      - test
    if: "startsWith(github.ref, 'refs/tags/v')"
    runs-on: ubuntu-latest
    environment:
      name: pypi
      url: https://pypi.org/p/fast-alpr
    permissions:
      id-token: write
    steps:
      - uses: actions/checkout@v4

      - name: Install uv (and Python 3.10)
        uses: astral-sh/setup-uv@v6
        with:
          version: "latest"
          python-version: "3.10"
          enable-cache: true

      - name: Build distributions (sdist + wheel)
        run: uv build --no-sources

      - name: Publish distribution 📦 to PyPI
        uses: pypa/gh-action-pypi-publish@release/v1

  github-release:
    name: Create GitHub release
    needs:
      - publish-to-pypi
    runs-on: ubuntu-latest
    permissions:
      contents: write
    steps:
      - uses: actions/checkout@v4

      - name: Check package version matches tag
        id: check-version
        uses: samuelcolvin/check-python-version@v4.1
        with:
          version_file_path: 'pyproject.toml'

      - name: Create GitHub Release
        env:
          GITHUB_TOKEN: ${{ github.token }}
          tag: ${{ github.ref_name }}
        run: |
          gh release create "$tag" \
              --repo="$GITHUB_REPOSITORY" \
              --title="${GITHUB_REPOSITORY#*/} ${tag#v}" \
              --generate-notes

  update_docs:
    name: Update documentation
    needs:
      - github-release
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Install uv (and Python 3.10)
        uses: astral-sh/setup-uv@v6
        with:
          version: "latest"
          python-version: "3.10"
          enable-cache: true

      - name: Configure Git user
        run: |
          git config --local user.email "github-actions[bot]@users.noreply.github.com"
          git config --local user.name "github-actions[bot]"

      - name: Retrieve version
        id: check-version
        uses: samuelcolvin/check-python-version@v4.1
        with:
          version_file_path: 'pyproject.toml'
          skip_env_check: true

      - name: Install docs dependencies
        run: uv sync --locked --no-default-groups --group docs

      - name: Deploy the docs
        run: |
          uv run mike deploy \
            --update-aliases \
            --push \
            --branch docs-site \
            ${{ steps.check-version.outputs.VERSION_MAJOR_MINOR }} latest

================================================

FILE: .github/workflows/secret-scanning.yaml

================================================

on:
  push:
    branches:
      - master
  pull_request:
    branches:
      - '**'

name: Secret Leaks
jobs:
  trufflehog:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v4
        with:
          fetch-depth: 0
      - name: Secret Scanning
        uses: trufflesecurity/trufflehog@main
        with:
          extra_args: --results=verified,unknown

================================================

FILE: .github/workflows/test.yaml

================================================

name: Test
on:
  workflow_call:
jobs:
  test:
    name: Test
    strategy:
      fail-fast: false
      matrix:
        python-version: [ '3.10', '3.11', '3.12', '3.13' ]
        os: [ 'ubuntu-latest' ]
    runs-on: ${{ matrix.os }}
    steps:
      - uses: actions/checkout@v4

      - name: Install uv
        uses: astral-sh/setup-uv@v6
        with:
          version: "latest"
          python-version: ${{ matrix.python-version }}
          enable-cache: true

      - name: Install the project
        run: make install

      - name: Check format
        run: make check_format

      - name: Run linters
        run: make lint

      - name: Run tests
        run: make test

================================================

FILE: .github/workflows/workflow-lint.yaml

================================================

name: Lint GitHub Actions workflows
on:
  pull_request:
    paths:
      - '.github/workflows/**/*.yaml'
      - '.github/workflows/**/*.yml'
  push:
    branches: [ master ]
    paths:
      - '.github/workflows/**/*.yaml'
      - '.github/workflows/**/*.yml'

jobs:
  actionlint:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Enable matcher for actionlint
        run: echo "::add-matcher::.github/actionlint-matcher.json"
      - name: Download and run actionlint
        run: |
          bash <(curl https://raw.githubusercontent.com/rhysd/actionlint/main/scripts/download-actionlint.bash)
          ./actionlint -color
        shell: bash

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

cover/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

.pybuilder/

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

# For a library or package, you might want to ignore these files since the code is

# intended to run in multiple environments; otherwise, check them in:

# .python-version

# pdm

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

#pdm.lock

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

# in version control.

# https://pdm.fming.dev/#use-with-ide

.pdm.toml

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

# pytype static type analyzer

.pytype/

# Cython debug symbols

cython_debug/

# pyenv

.python-version

# CUDA DNN

cudnn64_7.dll

# Train folder

train_val_set/

# VS Code

.vscode/

# JetBrains IDEs

.idea/

*.iml

# macOS

.DS_Store

.AppleDouble

.LSOverride

# Windows

Thumbs.db

ehthumbs.db

Desktop.ini

# Linux

.directory

# Logs/runtime files

*.pid

*.tmp

*.bak

*.swp

*.swo

# Notebooks

**/.ipynb_checkpoints/

# Trained models

**/trained_models/

# Model artifacts

*.keras

*.h5

*.hdf5

*.weights.h5

# TensorFlow ckpts

checkpoint

*.ckpt

*.ckpt.*

*.index

*.data-*

# TF SavedModel

saved_model/

**/saved_model/

**/saved_model.pb

**/variables/

# Training outputs / logs

logs/

**/logs/

runs/

tb_logs/

tensorboard/

# ONNX related

*.onnx

*.ort

# Other Export formats

*.tflite

*.mlmodel

*.mlpackage

# Accelerator caches/artifacts

*.engine

*.plan

trt_engine_cache/

tensorrt/

================================================

FILE: .yamllint.yaml

================================================

# yamllint configuration file: https://yamllint.readthedocs.io/
extends: relaxed

rules:
  line-length: disable

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2024 ankandrew

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: Makefile

================================================

# Directories
SRC_PATHS := fast_alpr/ test/
YAML_PATHS := .github/ mkdocs.yml

# Tasks
.PHONY: help
help:
	@echo "Available targets:"
	@echo "  help         : Show this help message"
	@echo "  install      : Install project with all required dependencies"
	@echo "  format       : Format code using Ruff format"
	@echo "  check_format : Check code formatting with Ruff format"
	@echo "  ruff         : Run Ruff linter"
	@echo "  yamllint     : Run yamllint linter"
	@echo "  pylint       : Run Pylint linter"
	@echo "  mypy         : Run Mypy static type checker"
	@echo "  lint         : Run linters (Ruff, yamllint, Pylint and Mypy)"
	@echo "  test         : Run tests using pytest"
	@echo "  checks       : Format, lint, and test"
	@echo "  clean        : Clean up caches and build artifacts"

.PHONY: install
install:
	@echo "==> Installing project with all required dependencies..."
	uv sync --locked --all-groups --extra onnx

.PHONY: format
format:
	@echo "==> Sorting imports..."
	@# Currently, the Ruff formatter does not sort imports, see https://docs.astral.sh/ruff/formatter/#sorting-imports
	@uv run ruff check --select I --fix $(SRC_PATHS)
	@echo "=====> Formatting code..."
	@uv run ruff format $(SRC_PATHS)

.PHONY: check_format
check_format:
	@echo "=====> Checking format..."
	@uv run ruff format --check --diff $(SRC_PATHS)
	@echo "=====> Checking imports are sorted..."
	@uv run ruff check --select I --exit-non-zero-on-fix $(SRC_PATHS)

.PHONY: ruff
ruff:
	@echo "=====> Running Ruff..."
	@uv run ruff check $(SRC_PATHS)

.PHONY: yamllint
yamllint:
	@echo "=====> Running yamllint..."
	@uv run yamllint $(YAML_PATHS)

.PHONY: pylint
pylint:
	@echo "=====> Running Pylint..."
	@uv run pylint $(SRC_PATHS)

.PHONY: mypy
mypy:
	@echo "=====> Running Mypy..."
	@uv run mypy $(SRC_PATHS)

.PHONY: lint
lint: ruff yamllint pylint mypy

.PHONY: test
test:
	@echo "=====> Running tests..."
	@uv run pytest test/

.PHONY: clean
clean:
	@echo "=====> Cleaning caches..."
	@uv run ruff clean
	@rm -rf .cache .pytest_cache .mypy_cache build dist *.egg-info

.PHONY: checks
checks: format lint test

================================================

FILE: README.md

================================================

# FastALPR

[](https://youtu.be/-TPJot7-HTs?t=652)

**FastALPR** is a high-performance, customizable Automatic License Plate Recognition (ALPR) system. We offer fast and

efficient ONNX models by default, but you can easily swap in your own models if needed.

For Optical Character Recognition (**OCR**), we use [fast-plate-ocr](https://github.com/ankandrew/fast-plate-ocr) by

default, and for **license plate detection**, we

use [open-image-models](https://github.com/ankandrew/open-image-models). However, you can integrate any OCR or detection

model of your choice.

## 📋 Table of Contents

* [✨ Features](#-features)

* [📦 Installation](#-installation)

* [🚀 Quick Start](#-quick-start)

* [🛠️ Customization and Flexibility](#-customization-and-flexibility)

* [📖 Documentation](#-documentation)

* [🤝 Contributing](#-contributing)

* [🙏 Acknowledgements](#-acknowledgements)

* [📫 Contact](#-contact)

## ✨ Features

- **High Accuracy**: Uses advanced models for precise license plate detection and OCR.

- **Customizable**: Easily switch out detection and OCR models.

- **Easy to Use**: Quick setup with a simple API.

- **Out-of-the-Box Models**: Includes ready-to-use detection and OCR models.

- **Fast Performance**: Optimized with ONNX Runtime for speed.

## 📦 Installation

```shell

pip install fast-alpr[onnx-gpu]

```

By default, **no ONNX runtime is installed**. To run inference, you **must** install at least one ONNX backend using an appropriate extra.

| Platform/Use Case  | Install Command                        | Notes                |
|--------------------|----------------------------------------|----------------------|
| CPU (default)      | `pip install fast-alpr[onnx]`          | Cross-platform       |
| NVIDIA GPU (CUDA)  | `pip install fast-alpr[onnx-gpu]`      | Linux/Windows        |
| Intel (OpenVINO)   | `pip install fast-alpr[onnx-openvino]` | Best on Intel CPUs   |
| Windows (DirectML) | `pip install fast-alpr[onnx-directml]` | For DirectML support |
| Qualcomm (QNN)     | `pip install fast-alpr[onnx-qnn]`      | Qualcomm chipsets    |

## 🚀 Quick Start

> [!TIP]

> Try `fast-alpr` in [Hugging Face Spaces](https://huggingface.co/spaces/ankandrew/fast-alpr).

Here's how to get started with FastALPR:

```python
from fast_alpr import ALPR

# You can also initialize the ALPR with custom plate detection and OCR models.
alpr = ALPR(
    detector_model="yolo-v9-t-384-license-plate-end2end",
    ocr_model="cct-xs-v2-global-model",
)

# The "assets/test_image.png" can be found in repo root dir
alpr_results = alpr.predict("assets/test_image.png")
print(alpr_results)
```

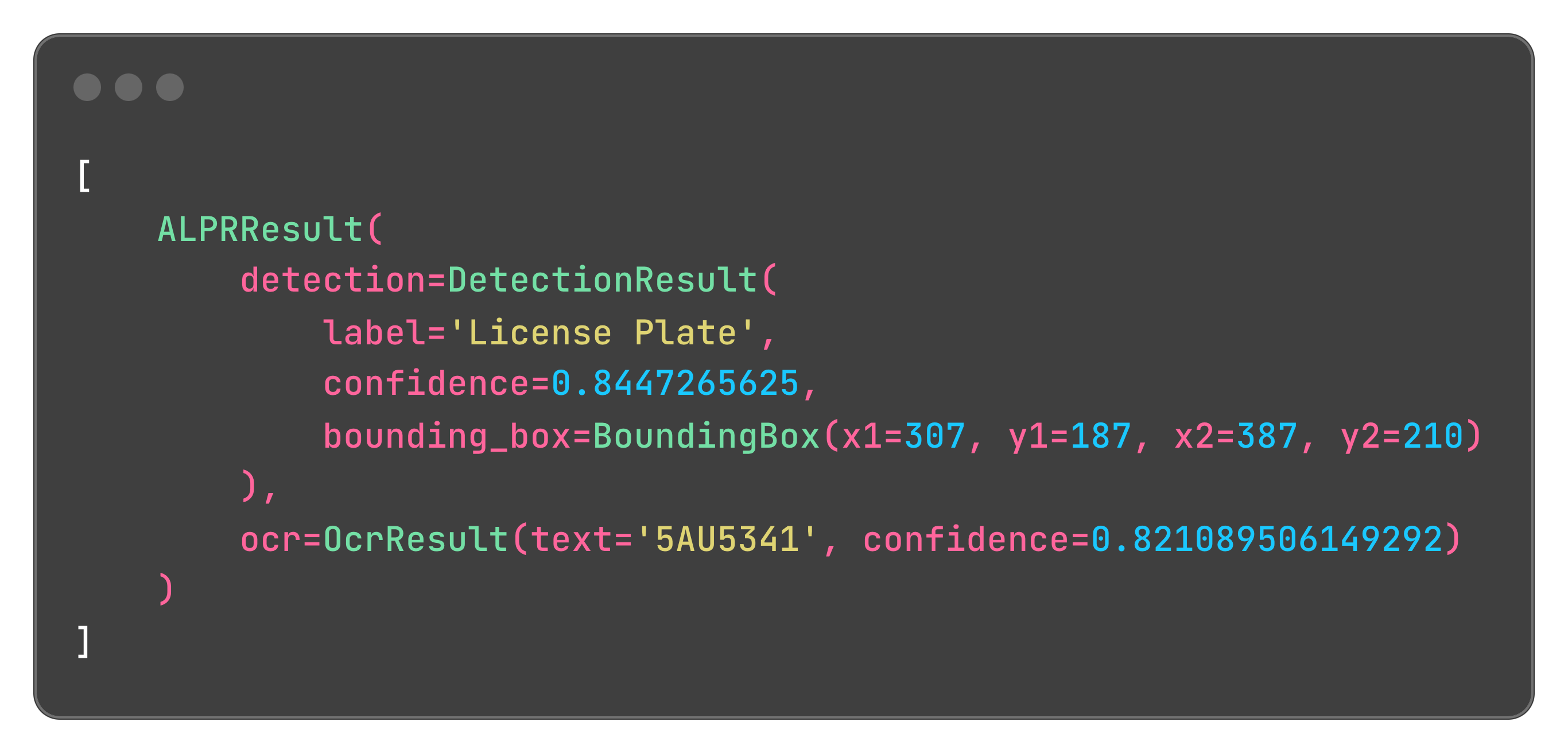

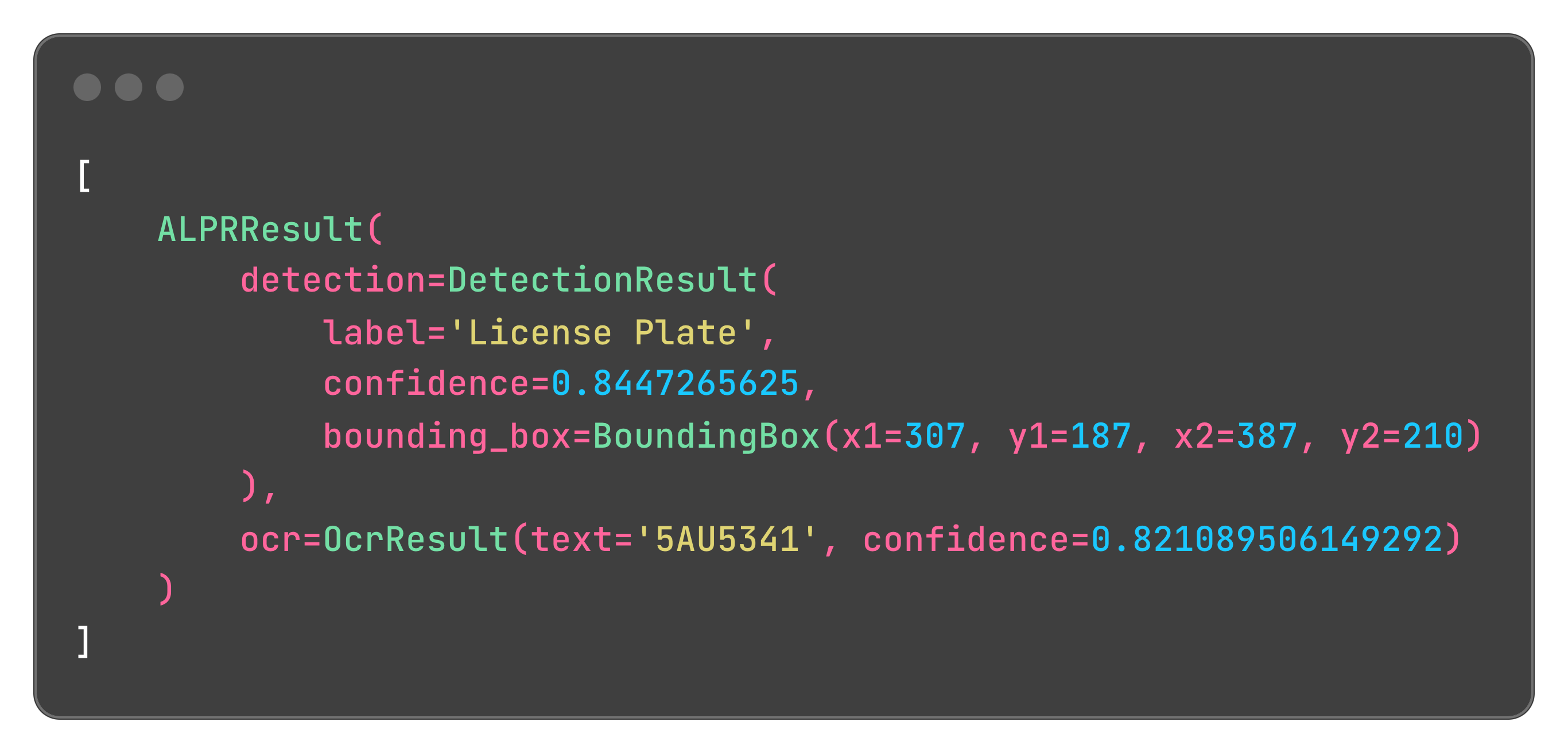

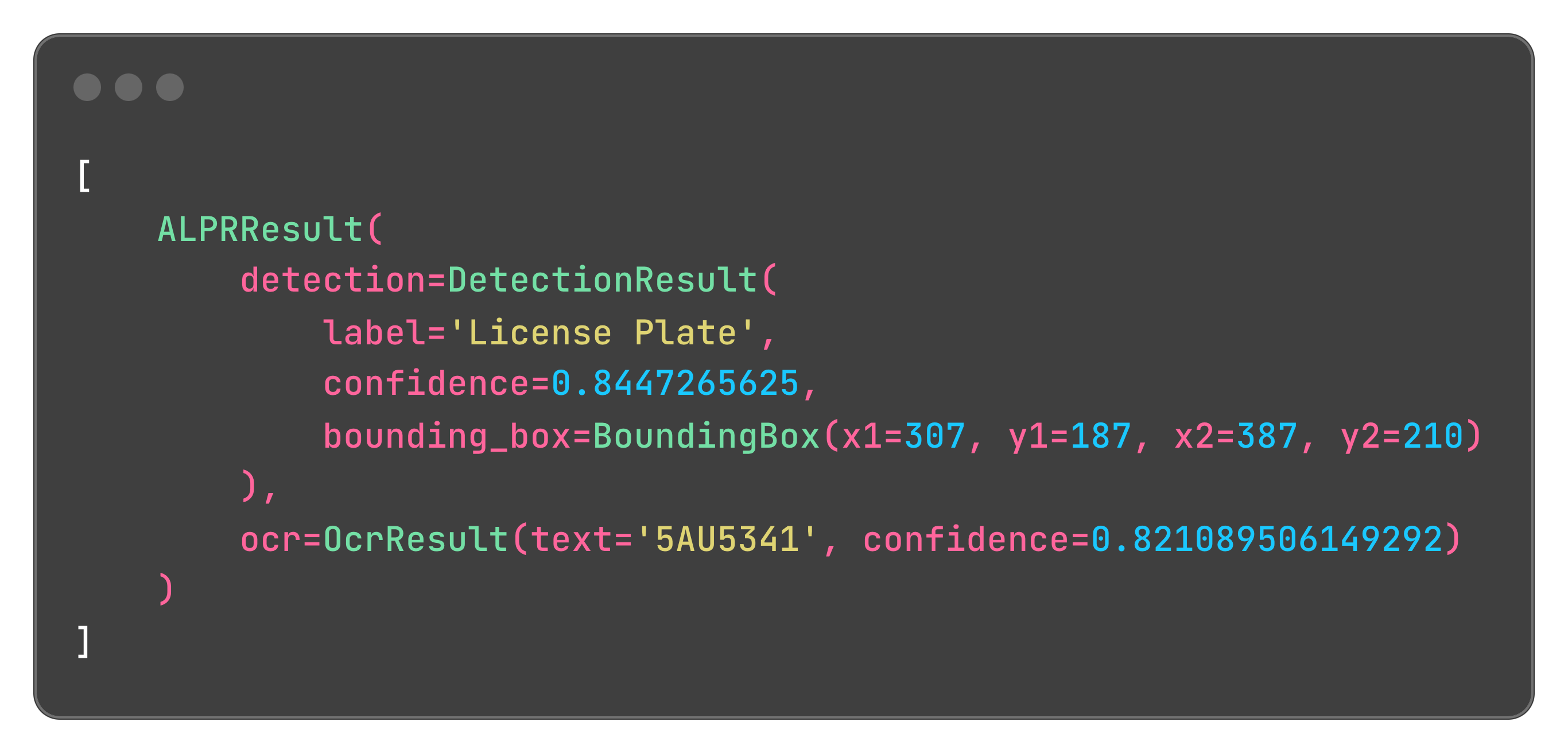

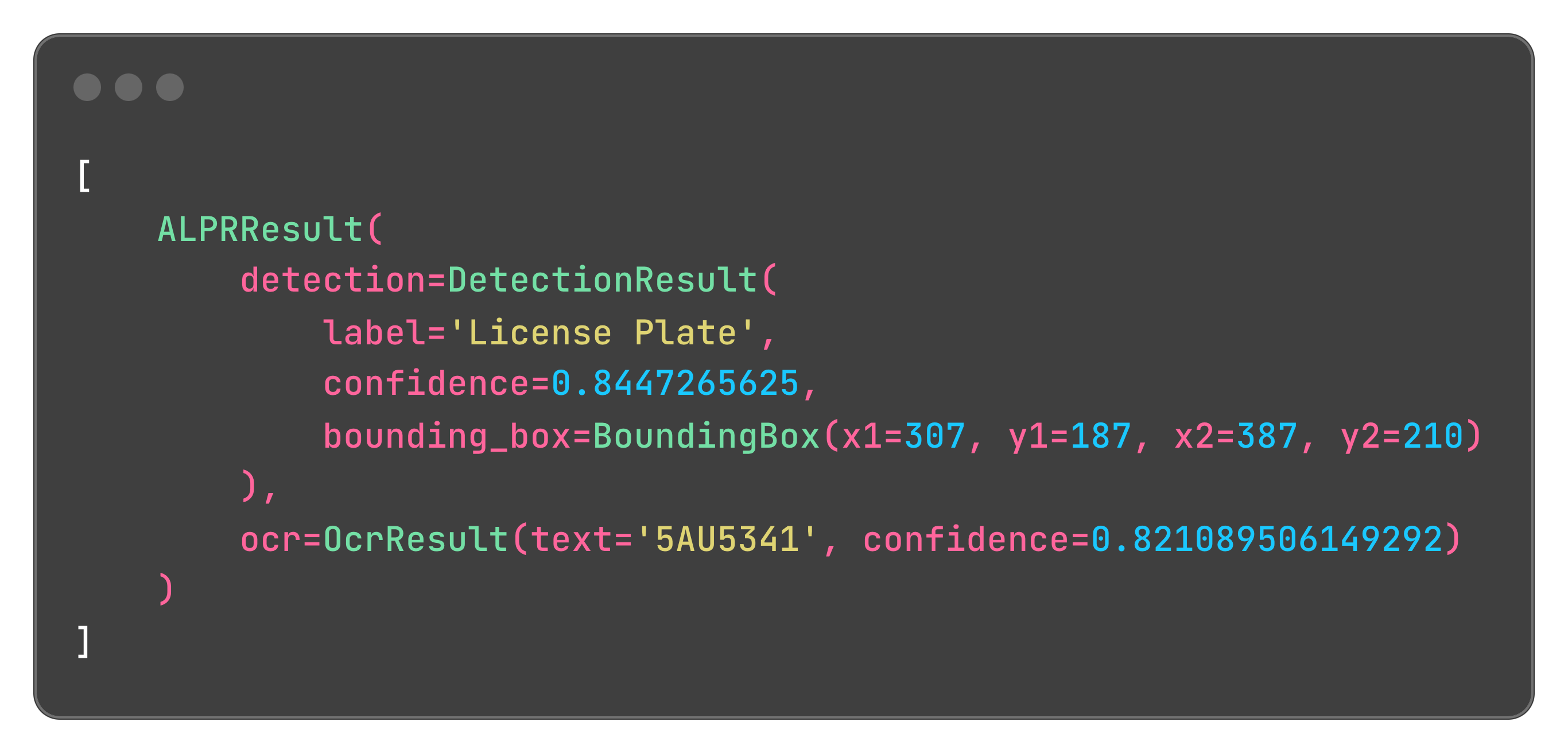

Output:

You can also draw the predictions directly on the image:

```python
import cv2

from fast_alpr import ALPR

# Initialize the ALPR
alpr = ALPR(
    detector_model="yolo-v9-t-384-license-plate-end2end",
    ocr_model="cct-xs-v2-global-model",
)

# Load the image
image_path = "assets/test_image.png"
frame = cv2.imread(image_path)

# Draw predictions on the image and get the ALPR results
drawn = alpr.draw_predictions(frame)
annotated_frame = drawn.image
results = drawn.results
```

Annotated frame:

## 🛠️ Customization and Flexibility

FastALPR is designed to be flexible. You can customize the detector and OCR models according to your requirements.

You can very easily integrate with **Tesseract** OCR to leverage its capabilities:

```python
import re
from statistics import mean

import numpy as np
import pytesseract

from fast_alpr.alpr import ALPR, BaseOCR, OcrResult


class PytesseractOCR(BaseOCR):
    def __init__(self) -> None:
        """
        Init PytesseractOCR.
        """

    def predict(self, cropped_plate: np.ndarray) -> OcrResult | None:
        if cropped_plate is None:
            return None
        # You can change 'eng' to the appropriate language code as needed
        data = pytesseract.image_to_data(
            cropped_plate,
            lang="eng",
            config="--oem 3 --psm 6",
            output_type=pytesseract.Output.DICT,
        )
        plate_text = " ".join(data["text"]).strip()
        # Keep only ASCII letters and digits
        plate_text = re.sub(r"[^A-Za-z0-9]", "", plate_text)
        # Keep only positive confidences; mean() raises on an empty sequence,
        # so fall back to 0.0 when nothing was recognized
        confidences = [conf for conf in data["conf"] if conf > 0]
        avg_confidence = mean(confidences) / 100.0 if confidences else 0.0
        return OcrResult(text=plate_text, confidence=avg_confidence)


alpr = ALPR(detector_model="yolo-v9-t-384-license-plate-end2end", ocr=PytesseractOCR())
alpr_results = alpr.predict("assets/test_image.png")
print(alpr_results)
```

> [!TIP]

> See the [docs](https://ankandrew.github.io/fast-alpr/) for more examples!
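
The `re.sub` call in the Tesseract example above strips everything except ASCII letters and digits from the raw OCR text. A minimal standalone sketch of that normalization step, using only the standard library; the helper name `normalize_plate_text` is hypothetical and not part of FastALPR:

```python
import re


def normalize_plate_text(raw_text: str) -> str:
    """Keep only ASCII letters and digits, mirroring the regex in the example above."""
    return re.sub(r"[^A-Za-z0-9]", "", raw_text)


# Spaces, dashes, and newlines produced by the OCR engine are dropped
print(normalize_plate_text(" AB 123-CD\n"))
```

Note that this pattern also discards non-ASCII characters, so plates using other scripts would need a different character class.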

## 📖 Documentation

Comprehensive documentation is available [here](https://ankandrew.github.io/fast-alpr/), including detailed API

references and additional examples.

## 🤝 Contributing

Contributions to the repo are greatly appreciated. Whether it's bug fixes, feature enhancements, or new models,

your contributions are warmly welcomed.

To start contributing or to begin development, you can follow these steps:

1. Clone repo

```shell

git clone https://github.com/ankandrew/fast-alpr.git

```

2. Install all dependencies (make sure you have [uv](https://docs.astral.sh/uv/getting-started/installation/) installed):

```shell

make install

```

3. To ensure your changes pass linting and tests before submitting a PR:

```shell

make checks

```

## 🙏 Acknowledgements

- [fast-plate-ocr](https://github.com/ankandrew/fast-plate-ocr) for default **OCR** models.

- [open-image-models](https://github.com/ankandrew/open-image-models) for default plate **detection** models.

## 📫 Contact

For questions or suggestions, feel free to open an issue.

================================================

FILE: docs/contributing.md

================================================

Contributions to the repo are greatly appreciated. Whether it's bug fixes, feature enhancements, or new models,

your contributions are warmly welcomed.

To start contributing or to begin development, you can follow these steps:

1. Clone repo

```shell

git clone https://github.com/ankandrew/fast-alpr.git

```

2. Install all dependencies (make sure you have [uv](https://docs.astral.sh/uv/getting-started/installation/) installed):

```shell

make install

```

3. To ensure your changes pass linting and tests before submitting a PR:

```shell

make checks

```

================================================

FILE: docs/custom_models.md

================================================

## 🛠️ Customization and Flexibility

FastALPR is designed to be flexible. You can customize the detector and OCR models according to your requirements.

### Using Tesseract OCR

You can very easily integrate with **Tesseract** OCR to leverage its capabilities:

```python title="tesseract_ocr.py"
import re
from statistics import mean

import numpy as np
import pytesseract

from fast_alpr.alpr import ALPR, BaseOCR, OcrResult


class PytesseractOCR(BaseOCR):
    def __init__(self) -> None:
        """
        Init PytesseractOCR.
        """

    def predict(self, cropped_plate: np.ndarray) -> OcrResult | None:
        if cropped_plate is None:
            return None
        # You can change 'eng' to the appropriate language code as needed
        data = pytesseract.image_to_data(
            cropped_plate,
            lang="eng",
            config="--oem 3 --psm 6",
            output_type=pytesseract.Output.DICT,
        )
        plate_text = " ".join(data["text"]).strip()
        # Keep only ASCII letters and digits
        plate_text = re.sub(r"[^A-Za-z0-9]", "", plate_text)
        # Keep only positive confidences; mean() raises on an empty sequence,
        # so fall back to 0.0 when nothing was recognized
        confidences = [conf for conf in data["conf"] if conf > 0]
        avg_confidence = mean(confidences) / 100.0 if confidences else 0.0
        return OcrResult(text=plate_text, confidence=avg_confidence)


alpr = ALPR(detector_model="yolo-v9-t-384-license-plate-end2end", ocr=PytesseractOCR())
alpr_results = alpr.predict("assets/test_image.png")
print(alpr_results)
```

???+ tip

You can implement this with any OCR you want! For example, [EasyOCR](https://github.com/JaidedAI/EasyOCR).
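
When adapting another OCR engine, the per-word confidences usually have to be reduced to the single score that `OcrResult` carries. The following is a minimal sketch, assuming the engine reports percentages and uses non-positive values for boxes without text (the Tesseract example filters with `conf > 0` for the same reason). The helper `average_confidence` is hypothetical and not part of FastALPR:

```python
from __future__ import annotations

from collections.abc import Iterable
from statistics import mean


def average_confidence(raw_confidences: Iterable[float | int | str]) -> float:
    """Average positive percentage confidences and scale the result to [0, 1]."""
    # Some engines or versions return confidences as strings, so coerce first
    values = [float(conf) for conf in raw_confidences]
    positive = [value for value in values if value > 0]
    # statistics.mean raises StatisticsError on empty input, so guard explicitly
    if not positive:
        return 0.0
    return mean(positive) / 100.0


print(average_confidence([-1, 90, 80]))
print(average_confidence([]))
```

Returning `0.0` for an empty input is a design choice of this sketch; returning `None` from `predict` instead may suit pipelines that prefer to drop unreadable plates.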

================================================

FILE: docs/index.md

================================================

# FastALPR

[](https://youtu.be/-TPJot7-HTs?t=652)

## Intro

**FastALPR** is a high-performance, customizable Automatic License Plate Recognition (ALPR) system. We offer fast and

efficient ONNX models by default, but you can easily swap in your own models if needed.

For Optical Character Recognition (**OCR**), we use [fast-plate-ocr](https://github.com/ankandrew/fast-plate-ocr) by

default, and for **license plate detection**, we

use [open-image-models](https://github.com/ankandrew/open-image-models). However, you can integrate any OCR or detection

model of your choice.

## Features

- **🔍 High Accuracy**: Uses advanced models for precise license plate detection and OCR.

- **🔧 Customizable**: Easily switch out detection and OCR models.

- **🚀 Easy to Use**: Quick setup with a simple API.

- **📦 Out-of-the-Box Models**: Includes ready-to-use detection and OCR models.

- **⚡ Fast Performance**: Optimized with ONNX Runtime for speed.

================================================

FILE: docs/installation.md

================================================

## Installation

For **inference**, install:

```shell

pip install fast-alpr[onnx-gpu]

```

???+ warning
    By default, **no ONNX runtime is installed**.

    To run inference, you **must install** one of the ONNX extras:

    - `onnx` - for CPU inference (cross-platform)
    - `onnx-gpu` - for NVIDIA GPUs (CUDA)
    - `onnx-openvino` - for Intel CPUs / VPUs
    - `onnx-directml` - for Windows devices via DirectML
    - `onnx-qnn` - for Qualcomm chips on mobile

Dependencies for inference are kept **minimal by default**. Inference-related packages like **ONNX runtimes** are

**optional** and not installed unless **explicitly requested via extras**.
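
Because the runtime is optional, it can help to check what is actually available before running inference. The sketch below is not part of FastALPR; it assumes the extras install a package exposing the `onnxruntime` module and uses ONNX Runtime's `get_available_providers()`. The helper name `available_onnx_providers` is hypothetical:

```python
import importlib.util


def available_onnx_providers() -> list[str]:
    """Return ONNX Runtime execution providers, or [] if onnxruntime is missing."""
    # find_spec avoids an ImportError when no ONNX extra has been installed
    if importlib.util.find_spec("onnxruntime") is None:
        return []
    import onnxruntime as ort

    return list(ort.get_available_providers())


providers = available_onnx_providers()
if providers:
    print("ONNX Runtime providers:", providers)
else:
    print("No ONNX Runtime found; install one of the extras listed above.")
```

An empty list suggests that no ONNX extra was installed in the current environment.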

================================================

FILE: docs/quick_start.md

================================================

## 🚀 Quick Start

Here's how to get started with FastALPR:

### Predictions

```python
from fast_alpr import ALPR

# You can also initialize the ALPR with custom plate detection and OCR models.
alpr = ALPR(
    detector_model="yolo-v9-t-384-license-plate-end2end",
    ocr_model="cct-xs-v2-global-model",
)

# The "assets/test_image.png" can be found in repo root dir
# You can also pass a NumPy array (BGR image) instead of a file path
alpr_results = alpr.predict("assets/test_image.png")
print(alpr_results)
```

???+ note
    See [reference](reference.md) for the available models.

Output:

### Draw Results

You can also **draw** the predictions directly on the image:

```python
import cv2

from fast_alpr import ALPR

# Initialize the ALPR
alpr = ALPR(
    detector_model="yolo-v9-t-384-license-plate-end2end",
    ocr_model="cct-xs-v2-global-model",
)

# Load the image
image_path = "assets/test_image.png"
frame = cv2.imread(image_path)

# Draw predictions on the image and get the ALPR results
drawn = alpr.draw_predictions(frame)
annotated_frame = drawn.image
results = drawn.results
```



================================================

FILE: docs/reference.md

================================================

# Reference

This page shows the public API of FastALPR.

## At a Glance

- Use `ALPR.predict()` to get structured ALPR results

- Use `ALPR.draw_predictions()` to get an annotated image and the same ALPR results

- `BoundingBox` and `DetectionResult` come from `open-image-models`

## Imports

```python

from fast_alpr import ALPR, ALPRResult, DrawPredictionsResult, OcrResult

```

## Common Inputs

- A NumPy image in BGR format

- A string path to an image file
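Both `predict` and `draw_predictions` treat the input as a path only when it is a `str` (the check in the source is `isinstance(frame, str)`), so a `pathlib.Path` should be converted first, as the test suite does with `str(img_path)`. A minimal sketch of such a conversion follows; `as_alpr_input` is a hypothetical helper name.

```python
import os
from pathlib import Path
from typing import Any


def as_alpr_input(source: Any) -> Any:
    """Convert path-like objects to str; return anything else (e.g. a NumPy array) unchanged."""
    if isinstance(source, os.PathLike):
        return os.fspath(source)
    return source


print(as_alpr_input(Path("assets") / "test_image.png"))
```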

## Common Returns

- `ALPR.predict(...)` returns `list[ALPRResult]`

- `ALPR.draw_predictions(...)` returns `DrawPredictionsResult`

`ALPRResult` contains:

- `detection`: box, label, and detection confidence

- `ocr`: recognized text and OCR confidence, or `None`

`DrawPredictionsResult` contains:

- `image`: the image with boxes and text drawn on it

- `results`: the same ALPR results used for drawing
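Because `ocr` may be `None`, code that consumes the results should guard against it. The sketch below collects plate texts in the same way the test suite does; `plate_texts` is a hypothetical helper, and the `SimpleNamespace` objects are stand-ins shaped like `ALPRResult` so the snippet runs without any models.

```python
from types import SimpleNamespace
from typing import Any, Iterable


def plate_texts(results: Iterable[Any]) -> list[str]:
    """Return recognized texts in order, skipping plates where OCR produced no result."""
    return [r.ocr.text for r in results if r.ocr is not None]


# Stand-ins shaped like ALPRResult: a `detection` plus an `ocr` that may be None.
fake_results = [
    SimpleNamespace(detection=None, ocr=SimpleNamespace(text="5AU5341", confidence=[0.99])),
    SimpleNamespace(detection=None, ocr=None),
]
print(plate_texts(fake_results))
```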

## Available Models

See the available detection models in [open-image-models](https://ankandrew.github.io/open-image-models/0.4/reference/#open_image_models.detection.core.hub.PlateDetectorModel)
and OCR models in [fast-plate-ocr](https://ankandrew.github.io/fast-plate-ocr/1.0/inference/model_zoo/).

## Main Class

::: fast_alpr.alpr.ALPR
    options:
        show_root_heading: true
        show_root_toc_entry: false

## Result Types

::: fast_alpr.alpr.ALPRResult
    options:
        show_root_heading: true
        show_root_toc_entry: false

::: fast_alpr.alpr.DrawPredictionsResult
    options:
        show_root_heading: true
        show_root_toc_entry: false

::: fast_alpr.base.OcrResult
    options:
        show_root_heading: true
        show_root_toc_entry: false

## Interfaces

::: fast_alpr.base.BaseDetector
    options:
        show_root_heading: true
        show_root_toc_entry: false

::: fast_alpr.base.BaseOCR
    options:
        show_root_heading: true
        show_root_toc_entry: false

## External Types

See [`BoundingBox`][open_image_models.detection.core.base.BoundingBox]
and [`DetectionResult`][open_image_models.detection.core.base.DetectionResult].

================================================

FILE: fast_alpr/__init__.py

================================================

"""

FastALPR package.

"""

from fast_alpr.alpr import ALPR, ALPRResult, DrawPredictionsResult

from fast_alpr.base import BaseDetector, BaseOCR, DetectionResult, OcrResult

__all__ = [

"ALPR",

"ALPRResult",

"BaseDetector",

"BaseOCR",

"DetectionResult",

"DrawPredictionsResult",

"OcrResult",

]

================================================

FILE: fast_alpr/alpr.py

================================================

"""
ALPR module.
"""

import os
import statistics
from collections.abc import Sequence
from dataclasses import dataclass
from typing import Literal

import cv2
import numpy as np
import onnxruntime as ort
from fast_plate_ocr.inference.hub import OcrModel
from open_image_models.detection.core.hub import PlateDetectorModel

from fast_alpr.base import BaseDetector, BaseOCR, DetectionResult, OcrResult
from fast_alpr.default_detector import DefaultDetector
from fast_alpr.default_ocr import DefaultOCR

# pylint: disable=too-many-arguments, too-many-locals
# ruff: noqa: PLR0913


@dataclass(frozen=True)
class ALPRResult:
    """
    Detection and OCR output for one license plate.

    Attributes:
        detection: Detector output for the plate.
        ocr: OCR output for the plate, or None if OCR does not return a result.
    """

    detection: DetectionResult
    ocr: OcrResult | None


@dataclass(frozen=True, slots=True)
class DrawPredictionsResult:
    """
    Return value from draw_predictions.

    Attributes:
        image: The input image with boxes and text drawn on it.
        results: The ALPR results used to draw the annotations.
    """

    image: np.ndarray
    results: list[ALPRResult]


class ALPR:
    """
    Automatic License Plate Recognition (ALPR) system class.

    This class combines a detector and an OCR model to recognize license plates in images.
    """

    def __init__(
        self,
        detector: BaseDetector | None = None,
        ocr: BaseOCR | None = None,
        detector_model: PlateDetectorModel = "yolo-v9-t-384-license-plate-end2end",
        detector_conf_thresh: float = 0.4,
        detector_providers: Sequence[str | tuple[str, dict]] | None = None,
        detector_sess_options: ort.SessionOptions | None = None,
        ocr_model: OcrModel | None = "cct-xs-v2-global-model",
        ocr_device: Literal["cuda", "cpu", "auto"] = "auto",
        ocr_providers: Sequence[str | tuple[str, dict]] | None = None,
        ocr_sess_options: ort.SessionOptions | None = None,
        ocr_model_path: str | os.PathLike | None = None,
        ocr_config_path: str | os.PathLike | None = None,
        ocr_force_download: bool = False,
    ) -> None:
        """
        Initialize the ALPR system.

        Parameters:
            detector: An instance of BaseDetector. If None, the DefaultDetector is used.
            ocr: An instance of BaseOCR. If None, the DefaultOCR is used.
            detector_model: The name of the detector model or a PlateDetectorModel enum instance.
                Defaults to "yolo-v9-t-384-license-plate-end2end".
            detector_conf_thresh: Confidence threshold for the detector.
            detector_providers: Execution providers for the detector.
            detector_sess_options: Session options for the detector.
            ocr_model: The name of the OCR model from the model hub. This can be None, in which
                case `ocr_model_path` and `ocr_config_path` are expected and are passed on to
                the `fast-plate-ocr` library.
            ocr_device: The device to run the OCR model on ("cuda", "cpu", or "auto").
            ocr_providers: Execution providers for the OCR. If None, the default providers are used.
            ocr_sess_options: Session options for the OCR. If None, default session options are
                used.
            ocr_model_path: Custom model path for the OCR. If None, the model is downloaded from the
                hub or cache.
            ocr_config_path: Custom config path for the OCR. If None, the default configuration is
                used.
            ocr_force_download: Whether to force download the OCR model.
        """
        # Initialize the detector
        self.detector = detector or DefaultDetector(
            model_name=detector_model,
            conf_thresh=detector_conf_thresh,
            providers=detector_providers,
            sess_options=detector_sess_options,
        )
        # Initialize the OCR
        self.ocr = ocr or DefaultOCR(
            hub_ocr_model=ocr_model,
            device=ocr_device,
            providers=ocr_providers,
            sess_options=ocr_sess_options,
            model_path=ocr_model_path,
            config_path=ocr_config_path,
            force_download=ocr_force_download,
        )

    def predict(self, frame: np.ndarray | str) -> list[ALPRResult]:
        """
        Run plate detection and OCR on an image.

        Parameters:
            frame: Unprocessed frame (Colors in order: BGR) or image path.

        Returns:
            A list of ALPRResult objects, one for each detected plate.
        """
        if isinstance(frame, str):
            img_path = frame
            img = cv2.imread(img_path)
            if img is None:
                raise ValueError(f"Failed to load image from path: {img_path}")
        else:
            img = frame

        plate_detections = self.detector.predict(img)
        alpr_results: list[ALPRResult] = []
        for detection in plate_detections:
            # Clamp the box to the image bounds before cropping
            bbox = detection.bounding_box
            x1, y1 = max(bbox.x1, 0), max(bbox.y1, 0)
            x2, y2 = min(bbox.x2, img.shape[1]), min(bbox.y2, img.shape[0])
            cropped_plate = img[y1:y2, x1:x2]
            ocr_result = self.ocr.predict(cropped_plate)
            alpr_result = ALPRResult(detection=detection, ocr=ocr_result)
            alpr_results.append(alpr_result)
        return alpr_results

    def draw_predictions(self, frame: np.ndarray | str) -> DrawPredictionsResult:
        """
        Draw detections and OCR results on an image.

        Parameters:
            frame: The original frame or image path. An ndarray is annotated in place.

        Returns:
            A DrawPredictionsResult with the annotated image and the ALPR results.
        """
        # If frame is a string, assume it's an image path and load it
        if isinstance(frame, str):
            img_path = frame
            img = cv2.imread(img_path)
            if img is None:
                raise ValueError(f"Failed to load image from path: {img_path}")
        else:
            img = frame

        # Get ALPR results using the ndarray
        alpr_results = self.predict(img)

        for result in alpr_results:
            detection = result.detection
            ocr_result = result.ocr
            bbox = detection.bounding_box
            x1, y1, x2, y2 = bbox.x1, bbox.y1, bbox.x2, bbox.y2
            # Draw the bounding box
            cv2.rectangle(img, (x1, y1), (x2, y2), (36, 255, 12), 2)
            if ocr_result is None or not ocr_result.text or not ocr_result.confidence:
                continue
            confidence: float = (
                statistics.mean(ocr_result.confidence)
                if isinstance(ocr_result.confidence, list)
                else ocr_result.confidence
            )
            font_scale = min(1.25, max(0.4, img.shape[1] / 1000))
            text_thickness = 1 if font_scale < 0.75 else 2
            outline_thickness = text_thickness + max(3, round(font_scale * 3))
            display_lines = [f"{ocr_result.text} {confidence * 100:.0f}%"]
            if ocr_result.region:
                region_text = ocr_result.region
                if ocr_result.region_confidence is not None:
                    region_text = f"{region_text} {ocr_result.region_confidence * 100:.0f}%"
                display_lines.insert(0, region_text)
            _, text_height = cv2.getTextSize(
                display_lines[0], cv2.FONT_HERSHEY_SIMPLEX, font_scale, text_thickness
            )[0]
            line_gap = max(14, round(text_height * 0.6))
            line_height = text_height + line_gap
            text_y = y1 - 10 - ((len(display_lines) - 1) * line_height)
            if text_y - text_height < 0:
                text_y = y2 + text_height + 10
            for idx, line in enumerate(display_lines):
                text_width, current_text_height = cv2.getTextSize(
                    line, cv2.FONT_HERSHEY_SIMPLEX, font_scale, text_thickness
                )[0]
                text_x = min(max(x1, 5), max(5, img.shape[1] - text_width - 5))
                current_y = min(
                    max(text_y + (idx * line_height), current_text_height + 5),
                    img.shape[0] - 5,
                )
                # Draw black background for better readability
                cv2.putText(
                    img=img,
                    text=line,
                    org=(text_x, current_y),
                    fontFace=cv2.FONT_HERSHEY_SIMPLEX,
                    fontScale=font_scale,
                    color=(0, 0, 0),
                    thickness=outline_thickness,
                    lineType=cv2.LINE_AA,
                )
                # Draw white text
                cv2.putText(
                    img=img,
                    text=line,
                    org=(text_x, current_y),
                    fontFace=cv2.FONT_HERSHEY_SIMPLEX,
                    fontScale=font_scale,
                    color=(255, 255, 255),
                    thickness=text_thickness,
                    lineType=cv2.LINE_AA,
                )
        return DrawPredictionsResult(image=img, results=alpr_results)

================================================

FILE: fast_alpr/base.py

================================================

"""
Base module.
"""

from abc import ABC, abstractmethod
from dataclasses import dataclass

import numpy as np
from open_image_models.detection.core.base import DetectionResult


@dataclass(frozen=True)
class OcrResult:
    """
    OCR output for one cropped plate image.

    Attributes:
        text: Recognized plate text.
        confidence: OCR confidence as one value or one value per character.
        region: Optional region or country prediction.
        region_confidence: Confidence for the region prediction.
    """

    text: str
    confidence: float | list[float]
    region: str | None = None
    region_confidence: float | None = None


class BaseDetector(ABC):
    @abstractmethod
    def predict(self, frame: np.ndarray) -> list[DetectionResult]:
        """Perform detection on the input frame and return a list of detections."""


class BaseOCR(ABC):
    @abstractmethod
    def predict(self, cropped_plate: np.ndarray) -> OcrResult | None:
        """Perform OCR on the cropped plate image and return the recognized text and character
        probabilities."""

================================================

FILE: fast_alpr/default_detector.py

================================================

"""
Default Detector module.
"""

from collections.abc import Sequence

import numpy as np
import onnxruntime as ort
from open_image_models import LicensePlateDetector
from open_image_models.detection.core.hub import PlateDetectorModel

from fast_alpr.base import BaseDetector, DetectionResult


class DefaultDetector(BaseDetector):
    """
    Default detector class for license plate detection using ONNX models.

    This class utilizes the `LicensePlateDetector` from the `open_image_models` package
    to perform detection on input frames.
    """

    def __init__(
        self,
        model_name: PlateDetectorModel = "yolo-v9-t-384-license-plate-end2end",
        conf_thresh: float = 0.4,
        providers: Sequence[str | tuple[str, dict]] | None = None,
        sess_options: ort.SessionOptions | None = None,
    ) -> None:
        """
        Initialize the DefaultDetector with the specified parameters. Uses `open-image-models`'s
        `LicensePlateDetector`.

        Parameters:
            model_name: The name of the detector model. See `PlateDetectorModel` for the available
                models.
            conf_thresh: Confidence threshold for the detector. Defaults to 0.4.
            providers: The execution providers to use in ONNX Runtime. If None, the default
                providers are used.
            sess_options: Custom session options for ONNX Runtime. If None, default session options
                are used.
        """
        self.detector = LicensePlateDetector(
            detection_model=model_name,
            conf_thresh=conf_thresh,
            providers=providers,
            sess_options=sess_options,
        )

    def predict(self, frame: np.ndarray) -> list[DetectionResult]:
        """
        Perform detection on the input frame and return a list of detections.

        Parameters:
            frame: The input image/frame in which to detect license plates.

        Returns:
            A list of detection results, each containing the label,
            confidence, and bounding box of a detected license plate.
        """
        return self.detector.predict(frame)

================================================

FILE: fast_alpr/default_ocr.py

================================================

"""
Default OCR module.
"""

import os
from collections.abc import Sequence
from typing import Literal

import cv2
import numpy as np
import onnxruntime as ort
from fast_plate_ocr import LicensePlateRecognizer
from fast_plate_ocr.inference.hub import OcrModel

from fast_alpr.base import BaseOCR, OcrResult


class DefaultOCR(BaseOCR):
    """
    Default OCR class for license plate recognition using `fast-plate-ocr` models.
    """

    def __init__(
        self,
        hub_ocr_model: OcrModel | None = None,
        device: Literal["cuda", "cpu", "auto"] = "auto",
        providers: Sequence[str | tuple[str, dict]] | None = None,
        sess_options: ort.SessionOptions | None = None,
        model_path: str | os.PathLike | None = None,
        config_path: str | os.PathLike | None = None,
        force_download: bool = False,
    ) -> None:
        """
        Initialize the DefaultOCR with the specified parameters. Uses `fast-plate-ocr`'s
        `LicensePlateRecognizer`.

        Parameters:
            hub_ocr_model: The name of the OCR model from the model hub.
            device: The device to run the model on. Options are "cuda", "cpu", or "auto". Defaults
                to "auto".
            providers: The execution providers to use in ONNX Runtime. If None, the default
                providers are used.
            sess_options: Custom session options for ONNX Runtime. If None, default session options
                are used.
            model_path: Path to a custom OCR model file. If None, the model is downloaded from the
                hub or cache.
            config_path: Path to a custom configuration file. If None, the default configuration is
                used.
            force_download: If True, forces the download of the model and overwrites any existing
                files.
        """
        self.ocr_model = LicensePlateRecognizer(
            hub_ocr_model=hub_ocr_model,
            device=device,
            providers=providers,
            sess_options=sess_options,
            onnx_model_path=model_path,
            plate_config_path=config_path,
            force_download=force_download,
        )

    def predict(self, cropped_plate: np.ndarray) -> OcrResult | None:
        """
        Perform OCR on a cropped license plate image.

        Parameters:
            cropped_plate: The cropped image of the license plate in BGR format.

        Returns:
            An OcrResult containing the recognized text, the per-character confidence, and the
            optional region prediction, or None if `cropped_plate` is None.
        """
        if cropped_plate is None:
            return None
        # Convert from BGR to the color mode the OCR model was configured for
        if self.ocr_model.config.image_color_mode == "grayscale":
            cropped_plate = cv2.cvtColor(cropped_plate, cv2.COLOR_BGR2GRAY)
        elif self.ocr_model.config.image_color_mode == "rgb":
            cropped_plate = cv2.cvtColor(cropped_plate, cv2.COLOR_BGR2RGB)
        prediction = self.ocr_model.run_one(cropped_plate, return_confidence=True)
        char_probs = prediction.char_probs
        confidence: float | list[float] = (
            0.0 if char_probs is None else [float(x) for x in char_probs.tolist()]
        )
        return OcrResult(
            text=prediction.plate,
            confidence=confidence,
            region=prediction.region,
            region_confidence=prediction.region_prob,
        )

================================================

FILE: mkdocs.yml

================================================

site_name: FastALPR
site_author: ankandrew
site_description: Fast ALPR.
repo_url: https://github.com/ankandrew/fast-alpr
theme:
  name: material
  features:
    - content.code.copy
    - content.code.select
    - content.footnote.tooltips
    - header.autohide
    - navigation.expand
    - navigation.footer
    - navigation.instant
    - navigation.instant.progress
    - navigation.path
    - navigation.sections
    - search.highlight
    - search.suggest
    - toc.follow
  palette:
    - scheme: default
      toggle:
        icon: material/lightbulb-outline
        name: Switch to dark mode
    - scheme: slate
      toggle:
        icon: material/lightbulb
        name: Switch to light mode
nav:
  - Introduction: index.md
  - Installation: installation.md
  - Quick Start: quick_start.md
  - Custom Models: custom_models.md
  - Contributing: contributing.md
  - Reference: reference.md
plugins:
  - search
  - mike:
      alias_type: symlink
      canonical_version: latest
  - mkdocstrings:
      handlers:
        python:
          paths: [ fast_alpr ]
          load_external_modules: true
          inventories:
            - https://ankandrew.github.io/open-image-models/0.5/objects.inv
            - https://ankandrew.github.io/fast-plate-ocr/1.1/objects.inv
          options:
            members_order: source
            separate_signature: true
            filters: [ "!^_" ]
            show_category_heading: true
            docstring_options:
              ignore_init_summary: true
            show_signature: true
            show_source: true
            heading_level: 2
            show_root_full_path: false
            merge_init_into_class: true
            show_signature_annotations: true
            signature_crossrefs: true
extra:
  version:
    provider: mike
  generator: false
markdown_extensions:
  - admonition
  - pymdownx.highlight:
      anchor_linenums: true
      line_spans: __span
      pygments_lang_class: true
  - pymdownx.inlinehilite
  - pymdownx.snippets
  - pymdownx.details
  - pymdownx.superfences
  - toc:
      permalink: true
      title: Page contents

================================================

FILE: pyproject.toml

================================================

[project]

name = "fast-alpr"

version = "0.4.0"

description = "Fast Automatic License Plate Recognition."

authors = [{ name = "ankandrew", email = "61120139+ankandrew@users.noreply.github.com" }]

requires-python = ">=3.10,<4.0"

readme = "README.md"

license = "MIT"

keywords = [

"image-processing",

"computer-vision",

"deep-learning",

"object-detection",

"plate-detection",

"license-plate-ocr",

"onnx",

]

classifiers = [

"Typing :: Typed",

"Intended Audience :: Developers",

"Intended Audience :: Education",

"Intended Audience :: Science/Research",

"Operating System :: OS Independent",

"Topic :: Software Development",

"Topic :: Scientific/Engineering",

"Topic :: Software Development :: Libraries",

"Topic :: Software Development :: Build Tools",

"Topic :: Scientific/Engineering :: Artificial Intelligence",

"Topic :: Software Development :: Libraries :: Python Modules",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3 :: Only",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13"

]

dependencies = [

"fast-plate-ocr>=1.1.0",

"open-image-models>=0.5.1",

"opencv-python-headless>=4.9.0.80",

]

[project.optional-dependencies]

onnx = ["onnxruntime>=1.19.2"]

onnx-gpu = ["onnxruntime-gpu>=1.19.2"]

onnx-openvino = ["onnxruntime-openvino>=1.19.2"]

onnx-directml = ["onnxruntime-directml>=1.19.2"]

onnx-qnn = ["onnxruntime-qnn>=1.19.2"]

[dependency-groups]

test = ["pytest"]

dev = [

"mypy",

"ruff",

"pylint",

"types-pyyaml",

"yamllint",

]

docs = [

"mkdocs-material",

"mkdocstrings[python]",

"mike",

]

[tool.uv]

default-groups = [

"test",

"dev",

"docs",

]

[build-system]

requires = ["hatchling"]

build-backend = "hatchling.build"

[tool.ruff]

line-length = 100

target-version = "py310"

[tool.ruff.lint]

select = [

# pycodestyle

"E",

"W",

# Pyflakes

"F",

# pep8-naming

"N",

# pyupgrade

"UP",

# flake8-bugbear

"B",

# flake8-simplify

"SIM",

# flake8-unused-arguments

"ARG",

# Pylint

"PL",

# Perflint

"PERF",

# Ruff-specific rules

"RUF",

# pandas-vet

"PD",

]

ignore = ["N812", "PLR2004", "PD011"]

fixable = ["ALL"]

unfixable = []

[tool.ruff.lint.pylint]

max-args = 8

[tool.ruff.format]

line-ending = "lf"

[tool.mypy]

disable_error_code = "import-untyped"

[tool.pylint.typecheck]

generated-members = ["cv2.*"]

signature-mutators = [

"click.decorators.option",

"click.decorators.argument",

"click.decorators.version_option",

"click.decorators.help_option",

"click.decorators.pass_context",

"click.decorators.confirmation_option"

]

[tool.pylint.format]

max-line-length = 100

[tool.pylint."messages control"]

disable = ["missing-class-docstring", "missing-function-docstring", "too-many-positional-arguments"]

[tool.pylint.design]

max-args = 8

min-public-methods = 1

[tool.pylint.basic]

no-docstring-rgx = "^__|^test_"

================================================

FILE: test/__init__.py

================================================

================================================

FILE: test/test_alpr.py

================================================

"""
Test ALPR end-to-end.
"""

from pathlib import Path

import cv2
import numpy as np
import pytest
from fast_plate_ocr.inference.hub import OcrModel
from open_image_models.detection.core.hub import PlateDetectorModel

from fast_alpr.alpr import ALPR

ASSETS_DIR = Path(__file__).resolve().parent.parent / "assets"


@pytest.fixture(scope="module", name="alpr")
def alpr_fixture() -> ALPR:
    return ALPR(
        detector_model="yolo-v9-t-384-license-plate-end2end",
        ocr_model="cct-xs-v2-global-model",
    )


@pytest.mark.parametrize(
    "img_path, expected_plates", [(ASSETS_DIR / "test_image.png", {"5AU5341"})]
)
@pytest.mark.parametrize("detector_model", ["yolo-v9-t-384-license-plate-end2end"])
@pytest.mark.parametrize(
    "ocr_model",
    [
        "cct-s-v2-global-model",
        "cct-xs-v2-global-model",
        "cct-s-v1-global-model",
        "cct-xs-v1-global-model",
        "global-plates-mobile-vit-v2-model",
        "european-plates-mobile-vit-v2-model",
    ],
)
def test_default_alpr(
    img_path: Path,
    expected_plates: set[str],
    detector_model: PlateDetectorModel,
    ocr_model: OcrModel,
) -> None:
    # pylint: disable=too-many-locals
    im = cv2.imread(str(img_path))
    assert im is not None, "Failed to load test image"
    alpr = ALPR(
        detector_model=detector_model,
        ocr_model=ocr_model,
    )
    actual_result = alpr.predict(im)
    actual_plates = {x.ocr.text for x in actual_result if x.ocr is not None}
    assert actual_plates == expected_plates

    for res in actual_result:
        bbox = res.detection.bounding_box
        height, width = im.shape[:2]
        x1, y1 = max(bbox.x1, 0), max(bbox.y1, 0)
        x2, y2 = min(bbox.x2, width), min(bbox.y2, height)
        assert 0 <= x1 < width, f"x1 coordinate {x1} out of bounds (0, {width})"
        assert 0 <= x2 <= width, f"x2 coordinate {x2} out of bounds (0, {width})"
        assert 0 <= y1 < height, f"y1 coordinate {y1} out of bounds (0, {height})"
        assert 0 <= y2 <= height, f"y2 coordinate {y2} out of bounds (0, {height})"
        assert x1 < x2, f"x1 ({x1}) should be less than x2 ({x2})"
        assert y1 < y2, f"y1 ({y1}) should be less than y2 ({y2})"
        if res.ocr is not None:
            conf = res.ocr.confidence
            if isinstance(conf, list):
                assert all(0.0 <= x <= 1.0 for x in conf)
            elif isinstance(conf, float):
                assert 0.0 <= conf <= 1.0
            else:
                raise TypeError(f"Unexpected type for confidence: {type(conf).__name__}")


@pytest.mark.parametrize("img_path", [ASSETS_DIR / "test_image.png"])
def test_draw_predictions(img_path: Path, alpr: ALPR) -> None:
    im = cv2.imread(str(img_path))
    assert im is not None, "Failed to load test image"
    h, w, c = im.shape

    # ndarray input
    drawn_nd = alpr.draw_predictions(im.copy())
    assert isinstance(drawn_nd.image, np.ndarray)
    assert drawn_nd.image.shape == (h, w, c)
    assert drawn_nd.results
    diff_nd = cv2.absdiff(drawn_nd.image, im)
    assert int(diff_nd.sum()) > 0

    # string path input
    drawn_path = alpr.draw_predictions(str(img_path))
    assert isinstance(drawn_path.image, np.ndarray)
    assert drawn_path.image.shape == (h, w, c)
    assert drawn_path.results
    diff_path = cv2.absdiff(drawn_path.image, im)
    assert int(diff_path.sum()) > 0

================================================

FILE: docs/reference.md

================================================

# Reference

This page shows the public API of FastALPR.

## At a Glance

- Use `ALPR.predict()` to get structured ALPR results

- Use `ALPR.draw_predictions()` to get an annotated image and the same ALPR results

- `BoundingBox` and `DetectionResult` come from `open-image-models`

## Imports

```python

from fast_alpr import ALPR, ALPRResult, DrawPredictionsResult, OcrResult

```

## Common Inputs

- A NumPy image in BGR format

- A string path to an image file

## Common Returns

- `ALPR.predict(...)` returns `list[ALPRResult]`

- `ALPR.draw_predictions(...)` returns `DrawPredictionsResult`

`ALPRResult` contains:

- `detection`: box, label, and detection confidence

- `ocr`: recognized text and OCR confidence, or `None`

`DrawPredictionsResult` contains:

- `image`: the image with boxes and text drawn on it

- `results`: the same ALPR results used for drawing

## Available Models

See the available detection models in [open-image-models](https://ankandrew.github.io/open-image-models/0.4/reference/#open_image_models.detection.core.hub.PlateDetectorModel)

and OCR models in [fast-plate-ocr](https://ankandrew.github.io/fast-plate-ocr/1.0/inference/model_zoo/).

## Main Class

::: fast_alpr.alpr.ALPR

options:

show_root_heading: true

show_root_toc_entry: false

## Result Types

::: fast_alpr.alpr.ALPRResult

options:

show_root_heading: true

show_root_toc_entry: false

::: fast_alpr.alpr.DrawPredictionsResult

options:

show_root_heading: true

show_root_toc_entry: false

::: fast_alpr.base.OcrResult

options:

show_root_heading: true

show_root_toc_entry: false

## Interfaces

::: fast_alpr.base.BaseDetector

options:

show_root_heading: true

show_root_toc_entry: false

::: fast_alpr.base.BaseOCR

options:

show_root_heading: true

show_root_toc_entry: false

## External Types

See [`BoundingBox`][open_image_models.detection.core.base.BoundingBox]

and [`DetectionResult`][open_image_models.detection.core.base.DetectionResult].

================================================

FILE: fast_alpr/__init__.py

================================================

"""

FastALPR package.

"""

from fast_alpr.alpr import ALPR, ALPRResult, DrawPredictionsResult

from fast_alpr.base import BaseDetector, BaseOCR, DetectionResult, OcrResult

__all__ = [

"ALPR",

"ALPRResult",

"BaseDetector",

"BaseOCR",

"DetectionResult",

"DrawPredictionsResult",

"OcrResult",

]

================================================

FILE: fast_alpr/alpr.py

================================================

"""

ALPR module.

"""

import os

import statistics

from collections.abc import Sequence

from dataclasses import dataclass

from typing import Literal

import cv2

import numpy as np

import onnxruntime as ort

from fast_plate_ocr.inference.hub import OcrModel

from open_image_models.detection.core.hub import PlateDetectorModel

from fast_alpr.base import BaseDetector, BaseOCR, DetectionResult, OcrResult

from fast_alpr.default_detector import DefaultDetector

from fast_alpr.default_ocr import DefaultOCR

# pylint: disable=too-many-arguments, too-many-locals

# ruff: noqa: PLR0913

@dataclass(frozen=True)

class ALPRResult:

"""

Detection and OCR output for one license plate.

Attributes:

detection: Detector output for the plate.

ocr: OCR output for the plate, or None if OCR does not return a result.

"""

detection: DetectionResult

ocr: OcrResult | None

@dataclass(frozen=True, slots=True)

class DrawPredictionsResult:

"""

Return value from draw_predictions.

Attributes:

image: The input image with boxes and text drawn on it.

results: The ALPR results used to draw the annotations.

"""

image: np.ndarray

results: list[ALPRResult]

class ALPR:

"""

Automatic License Plate Recognition (ALPR) system class.

This class combines a detector and an OCR model to recognize license plates in images.

"""

def __init__(

self,

detector: BaseDetector | None = None,

ocr: BaseOCR | None = None,

detector_model: PlateDetectorModel = "yolo-v9-t-384-license-plate-end2end",

detector_conf_thresh: float = 0.4,

detector_providers: Sequence[str | tuple[str, dict]] | None = None,

detector_sess_options: ort.SessionOptions = None,

ocr_model: OcrModel | None = "cct-xs-v2-global-model",

ocr_device: Literal["cuda", "cpu", "auto"] = "auto",

ocr_providers: Sequence[str | tuple[str, dict]] | None = None,

ocr_sess_options: ort.SessionOptions | None = None,

ocr_model_path: str | os.PathLike | None = None,

ocr_config_path: str | os.PathLike | None = None,

ocr_force_download: bool = False,

) -> None:

"""

Initialize the ALPR system.

Parameters:

detector: An instance of BaseDetector. If None, the DefaultDetector is used.

ocr: An instance of BaseOCR. If None, the DefaultOCR is used.

detector_model: The name of the detector model or a PlateDetectorModel enum instance.

Defaults to "yolo-v9-t-384-license-plate-end2end".

detector_conf_thresh: Confidence threshold for the detector.

detector_providers: Execution providers for the detector.

detector_sess_options: Session options for the detector.

ocr_model: The name of the OCR model from the model hub. This can be none and

`ocr_model_path` and `ocr_config_path` parameters are expected to pass them to

`fast-plate-ocr` library.

ocr_device: The device to run the OCR model on ("cuda", "cpu", or "auto").

ocr_providers: Execution providers for the OCR. If None, the default providers are used.

ocr_sess_options: Session options for the OCR. If None, default session options are

used.

ocr_model_path: Custom model path for the OCR. If None, the model is downloaded from the

hub or cache.

ocr_config_path: Custom config path for the OCR. If None, the default configuration is

used.

ocr_force_download: Whether to force download the OCR model.

"""

# Initialize the detector

self.detector = detector or DefaultDetector(

model_name=detector_model,

conf_thresh=detector_conf_thresh,

providers=detector_providers,

sess_options=detector_sess_options,

)

# Initialize the OCR

self.ocr = ocr or DefaultOCR(

hub_ocr_model=ocr_model,

device=ocr_device,

providers=ocr_providers,

sess_options=ocr_sess_options,

model_path=ocr_model_path,

config_path=ocr_config_path,

force_download=ocr_force_download,

)

def predict(self, frame: np.ndarray | str) -> list[ALPRResult]:

"""

Run plate detection and OCR on an image.

Parameters:

frame: Unprocessed frame (Colors in order: BGR) or image path.

Returns:

A list of ALPRResult objects, one for each detected plate.

"""

if isinstance(frame, str):

img_path = frame

img = cv2.imread(img_path)

if img is None:

raise ValueError(f"Failed to load image from path: {img_path}")

else:

img = frame

plate_detections = self.detector.predict(img)

alpr_results: list[ALPRResult] = []

for detection in plate_detections:

bbox = detection.bounding_box

x1, y1 = max(bbox.x1, 0), max(bbox.y1, 0)

x2, y2 = min(bbox.x2, img.shape[1]), min(bbox.y2, img.shape[0])

cropped_plate = img[y1:y2, x1:x2]

ocr_result = self.ocr.predict(cropped_plate)

alpr_result = ALPRResult(detection=detection, ocr=ocr_result)

alpr_results.append(alpr_result)

return alpr_results
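# A minimal, standalone sketch of the clamp-then-crop step used in `predict` above,
# assuming integer pixel coordinates. `clamp_box` is a hypothetical helper written for
# illustration only; it is not part of fast_alpr.

```python
def clamp_box(
    x1: int, y1: int, x2: int, y2: int, width: int, height: int
) -> tuple[int, int, int, int]:
    """Clamp a box to the image bounds, mirroring the min/max calls in `predict`."""
    return max(x1, 0), max(y1, 0), min(x2, width), min(y2, height)


# A box spilling past the left and bottom edges of a 200x100 (width x height) image.
cx1, cy1, cx2, cy2 = clamp_box(-10, 60, 50, 140, width=200, height=100)
print((cx1, cy1, cx2, cy2))  # (0, 60, 50, 100)

# A box entirely outside the image ends up with x2 <= x1, so slicing
# img[y1:y2, x1:x2] would produce an empty crop.
ox1, oy1, ox2, oy2 = clamp_box(300, 10, 400, 20, width=200, height=100)
is_empty = ox2 <= ox1 or oy2 <= oy1
print(is_empty)  # True
```

# Whether an empty crop should be skipped before OCR is a design choice left to the
# caller; the code above passes the crop to the OCR as-is.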

def draw_predictions(self, frame: np.ndarray | str) -> DrawPredictionsResult:

"""

Draw detections and OCR results on an image.

Parameters:

frame: The original frame or image path. An array input is annotated in place.

Returns:

A DrawPredictionsResult with the annotated image and the ALPR results.

"""

# If frame is a string, assume it's an image path and load it

if isinstance(frame, str):

img_path = frame

img = cv2.imread(img_path)

if img is None:

raise ValueError(f"Failed to load image from path: {img_path}")

else:

img = frame

# Get ALPR results using the ndarray

alpr_results = self.predict(img)

for result in alpr_results:

detection = result.detection

ocr_result = result.ocr

bbox = detection.bounding_box

x1, y1, x2, y2 = bbox.x1, bbox.y1, bbox.x2, bbox.y2

# Draw the bounding box

cv2.rectangle(img, (x1, y1), (x2, y2), (36, 255, 12), 2)

if ocr_result is None or not ocr_result.text or not ocr_result.confidence:

continue

confidence: float = (

statistics.mean(ocr_result.confidence)

if isinstance(ocr_result.confidence, list)

else ocr_result.confidence

)

font_scale = min(1.25, max(0.4, img.shape[1] / 1000))

text_thickness = 1 if font_scale < 0.75 else 2

outline_thickness = text_thickness + max(3, round(font_scale * 3))

display_lines = [f"{ocr_result.text} {confidence * 100:.0f}%"]

if ocr_result.region:

region_text = ocr_result.region

if ocr_result.region_confidence is not None:

region_text = f"{region_text} {ocr_result.region_confidence * 100:.0f}%"

display_lines.insert(0, region_text)

_, text_height = cv2.getTextSize(

display_lines[0], cv2.FONT_HERSHEY_SIMPLEX, font_scale, text_thickness

)[0]

line_gap = max(14, round(text_height * 0.6))

line_height = text_height + line_gap

text_y = y1 - 10 - ((len(display_lines) - 1) * line_height)

if text_y - text_height < 0:

text_y = y2 + text_height + 10

for idx, line in enumerate(display_lines):

text_width, current_text_height = cv2.getTextSize(

line, cv2.FONT_HERSHEY_SIMPLEX, font_scale, text_thickness

)[0]

text_x = min(max(x1, 5), max(5, img.shape[1] - text_width - 5))

current_y = min(

max(text_y + (idx * line_height), current_text_height + 5),

img.shape[0] - 5,

)

# Draw a thicker black outline first for better readability

cv2.putText(

img=img,

text=line,

org=(text_x, current_y),

fontFace=cv2.FONT_HERSHEY_SIMPLEX,

fontScale=font_scale,

color=(0, 0, 0),

thickness=outline_thickness,

lineType=cv2.LINE_AA,

)

# Draw white text

cv2.putText(

img=img,

text=line,

org=(text_x, current_y),

fontFace=cv2.FONT_HERSHEY_SIMPLEX,

fontScale=font_scale,

color=(255, 255, 255),

thickness=text_thickness,

lineType=cv2.LINE_AA,

)

return DrawPredictionsResult(image=img, results=alpr_results)
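# The label placement arithmetic in `draw_predictions` can be sketched without OpenCV.
# This is an illustrative approximation: `label_layout` is a hypothetical helper, and
# `text_height` is passed in directly, whereas the code above measures it with
# `cv2.getTextSize`.

```python
def label_layout(
    img_width: int, y1: int, y2: int, text_height: int, n_lines: int
) -> tuple[float, int]:
    """Return (font_scale, baseline y of the first label line)."""
    # Scale the font with the image width, bounded to [0.4, 1.25].
    font_scale = min(1.25, max(0.4, img_width / 1000))
    line_gap = max(14, round(text_height * 0.6))
    line_height = text_height + line_gap
    # Prefer stacking the lines above the box...
    text_y = y1 - 10 - (n_lines - 1) * line_height
    # ...but fall back to below the box when the first line would leave the image.
    if text_y - text_height < 0:
        text_y = y2 + text_height + 10
    return font_scale, text_y


# Large frame, box well below the top edge: two lines fit above the box.
above = label_layout(img_width=1920, y1=300, y2=360, text_height=30, n_lines=2)
print(above)  # (1.25, 242)

# Small frame, box near the top edge: the label moves below the box.
below = label_layout(img_width=320, y1=20, y2=60, text_height=12, n_lines=1)
print(below)  # (0.4, 82)
```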

================================================

FILE: fast_alpr/base.py

================================================

"""

Base module.

"""

from abc import ABC, abstractmethod

from dataclasses import dataclass

import numpy as np

from open_image_models.detection.core.base import DetectionResult

@dataclass(frozen=True)

class OcrResult:

"""

OCR output for one cropped plate image.

Attributes:

text: Recognized plate text.

confidence: OCR confidence as one value or one value per character.

region: Optional region or country prediction.

region_confidence: Confidence for the region prediction.

"""

text: str

confidence: float | list[float]

region: str | None = None

region_confidence: float | None = None

class BaseDetector(ABC):

@abstractmethod

def predict(self, frame: np.ndarray) -> list[DetectionResult]:

"""Perform detection on the input frame and return a list of detections."""

class BaseOCR(ABC):

@abstractmethod

def predict(self, cropped_plate: np.ndarray) -> OcrResult | None:

"""Perform OCR on the cropped plate image and return an OcrResult with the recognized

text and confidence, or None."""

================================================

FILE: fast_alpr/default_detector.py

================================================

"""

Default Detector module.

"""

from collections.abc import Sequence

import numpy as np

import onnxruntime as ort