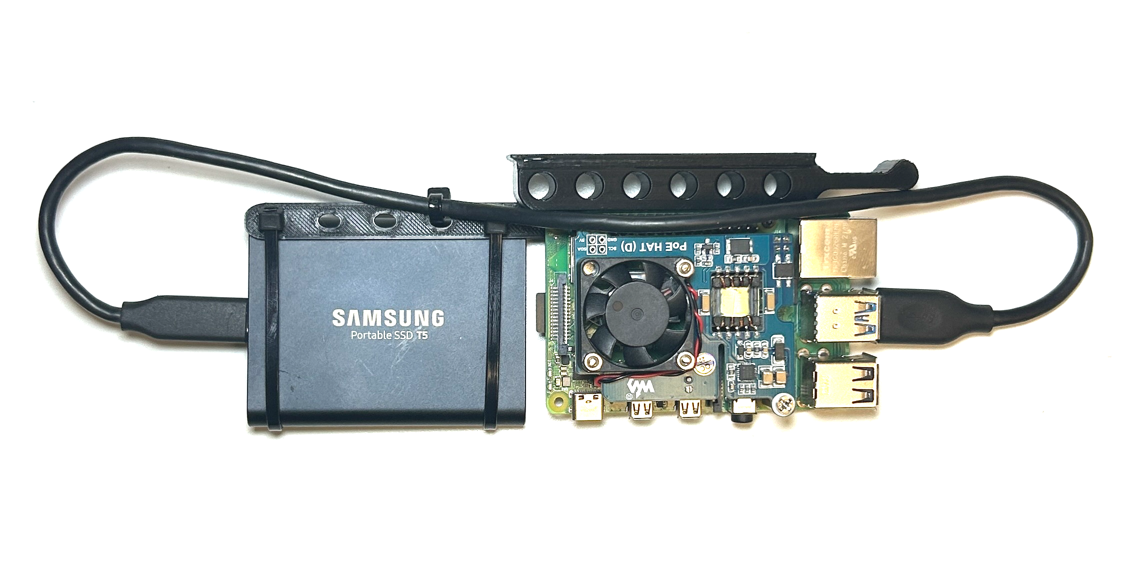

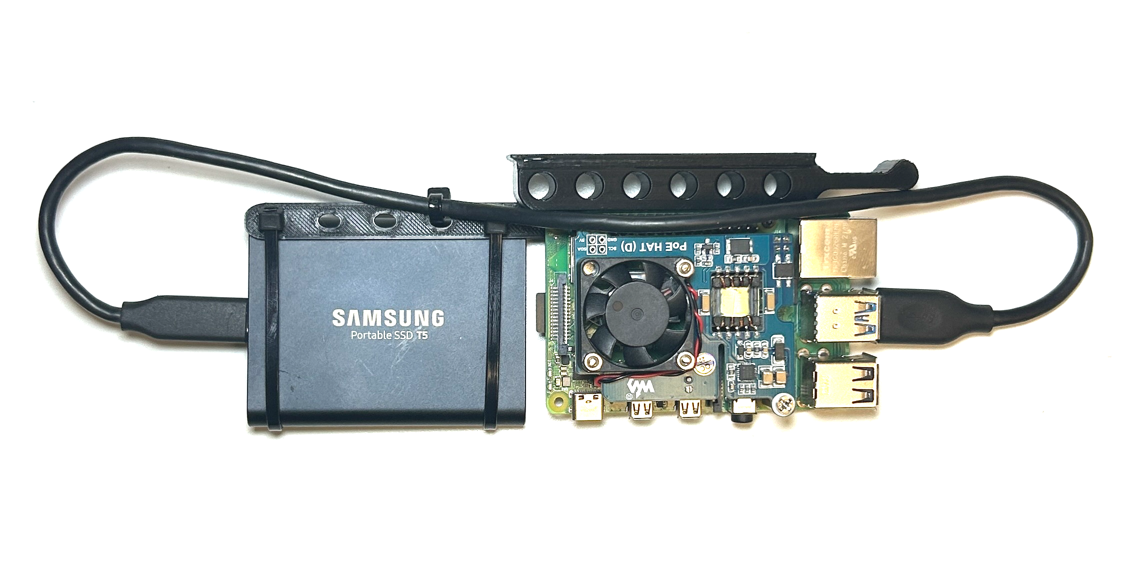

Since this project needs to be "enterprise-grade", we need a distinct, replicable compute unit that we can buy and build in bulk. I call this a "Node": a Raspberry Pi with a 1TB SSD and a PoE HAT. I have also 3D-printed a rack-mount solution (source: [Merocle From UpTimeLabs](https://www.thingiverse.com/thing:4756812)) for easy installation into a rack.

### [Console](./sections/console.md)

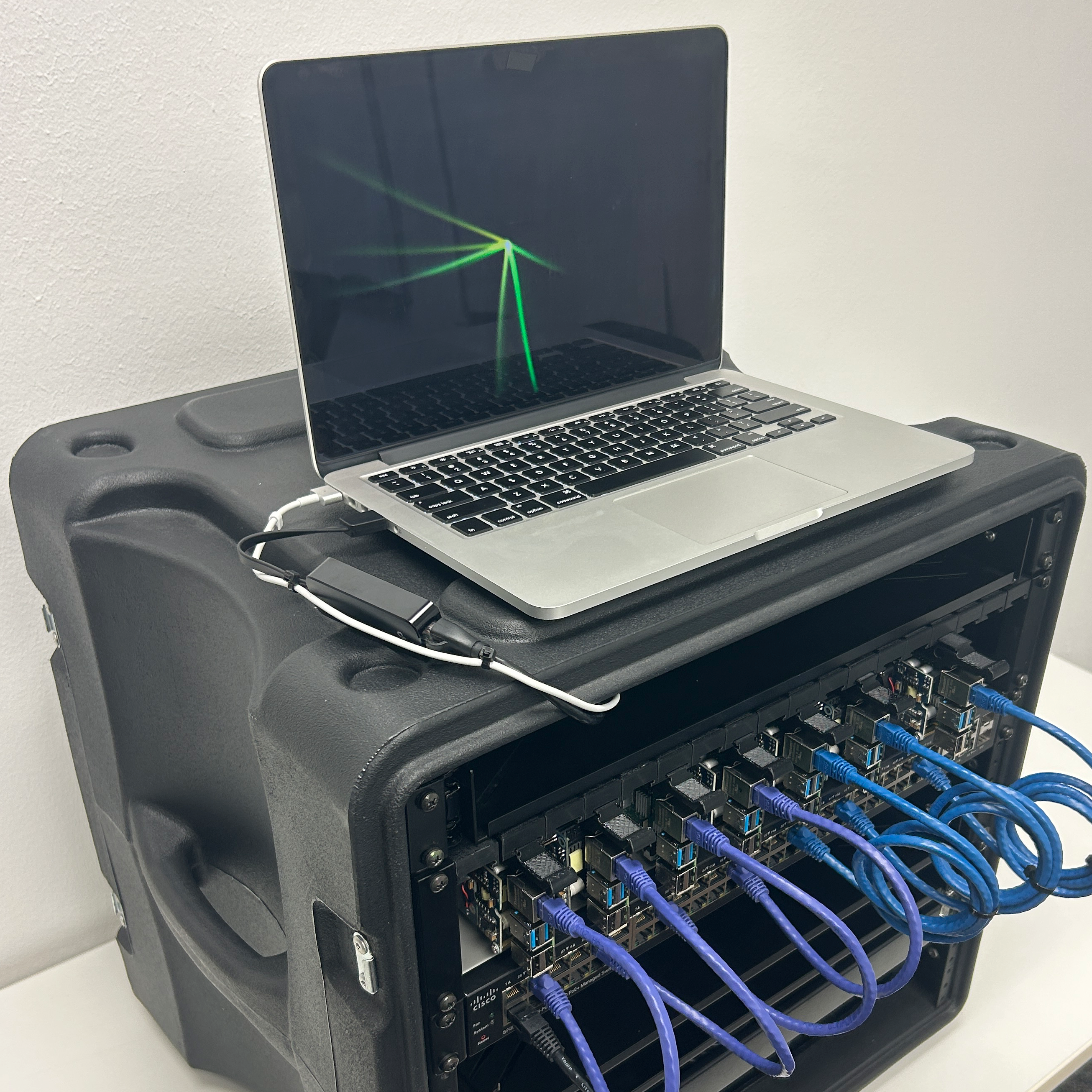

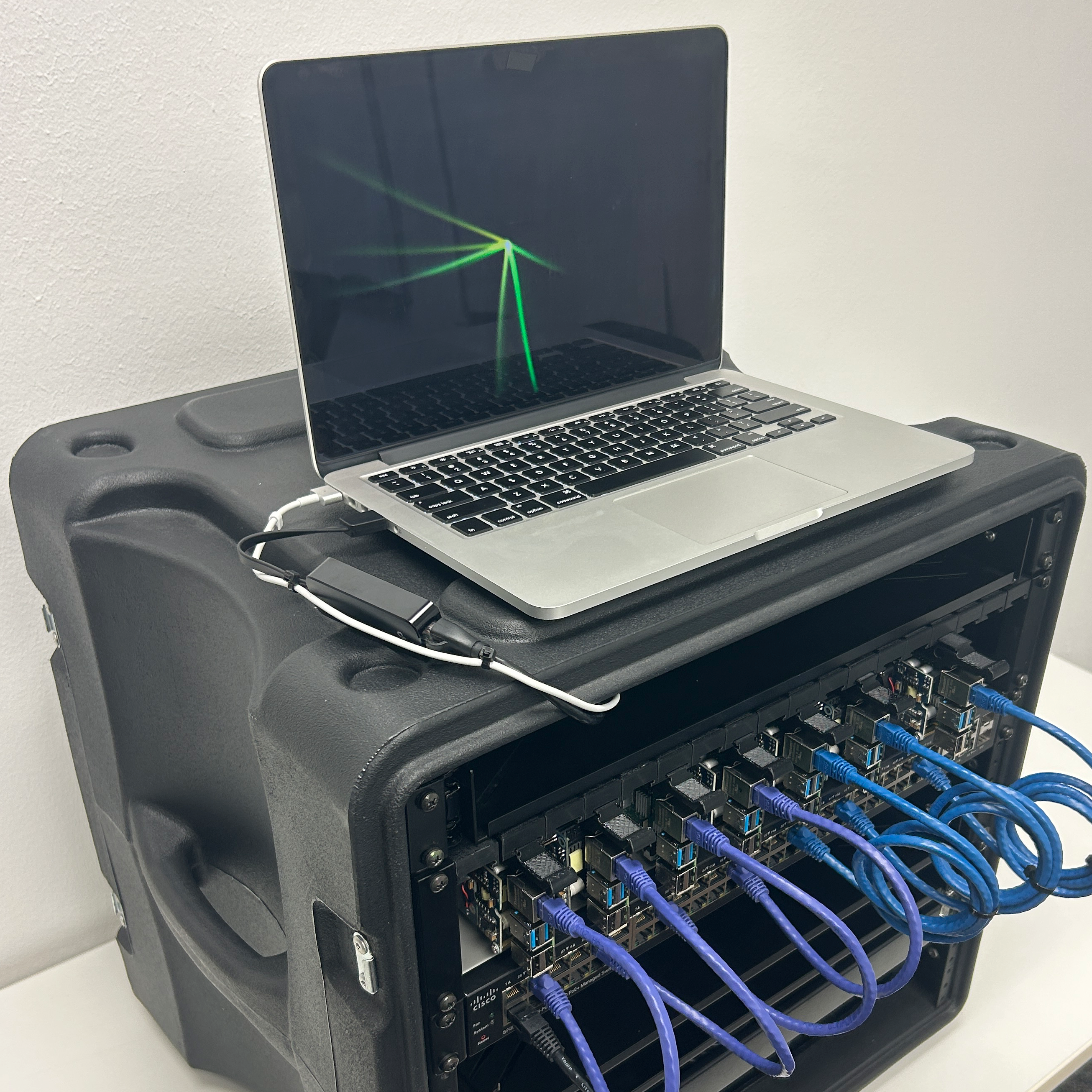

We will need a "console" so we can interact with the infrastructure locally. In the past I have tried using a Raspberry Pi with a monitor and keyboard attached, but I have found that an old MacBook Pro works best for this. In this section I explain how to set up the console so you can use it to store secrets, manage the network, provision K3s clusters and deploy pods.

### [Frontend](./frontend/ReadMe.md)

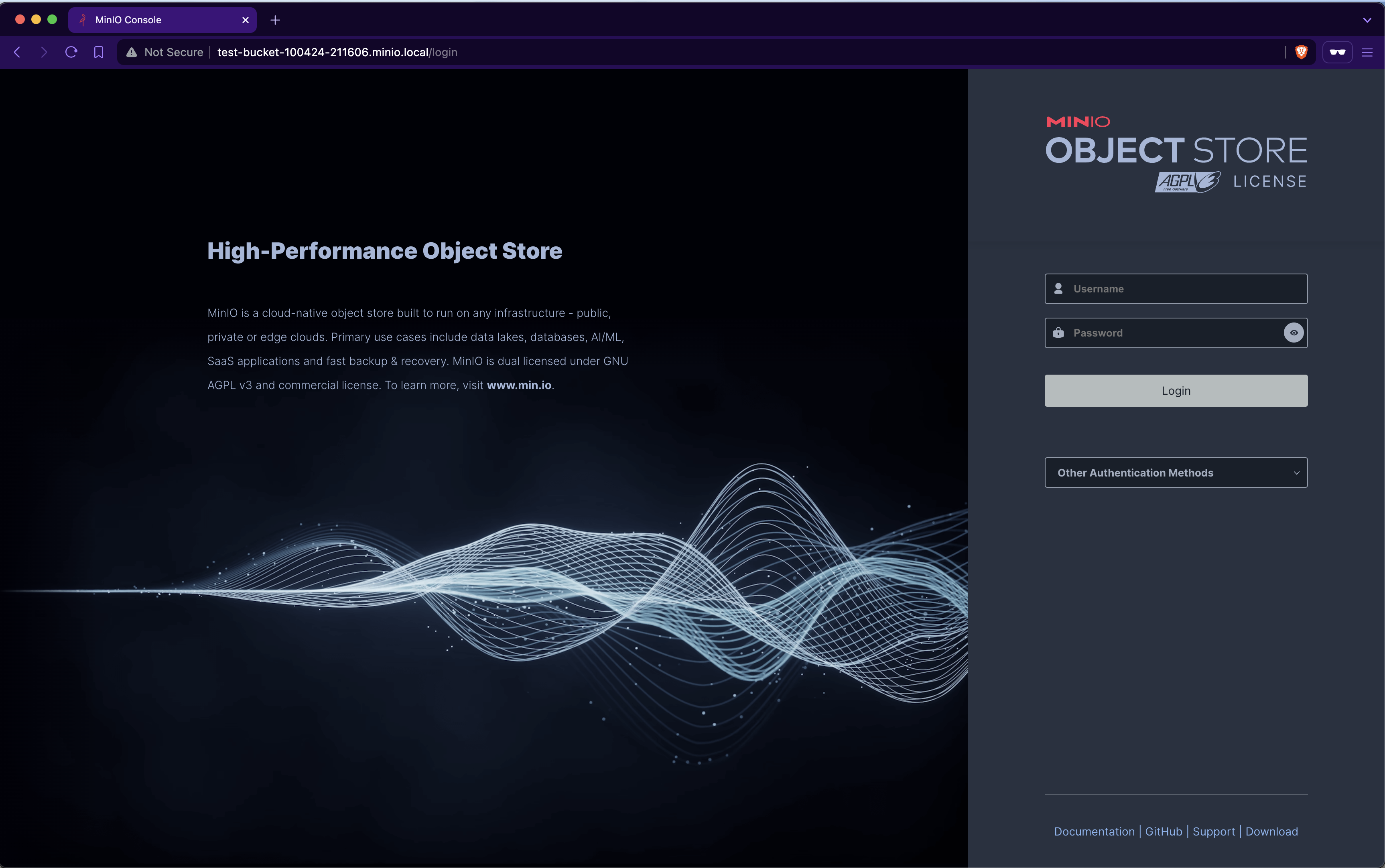

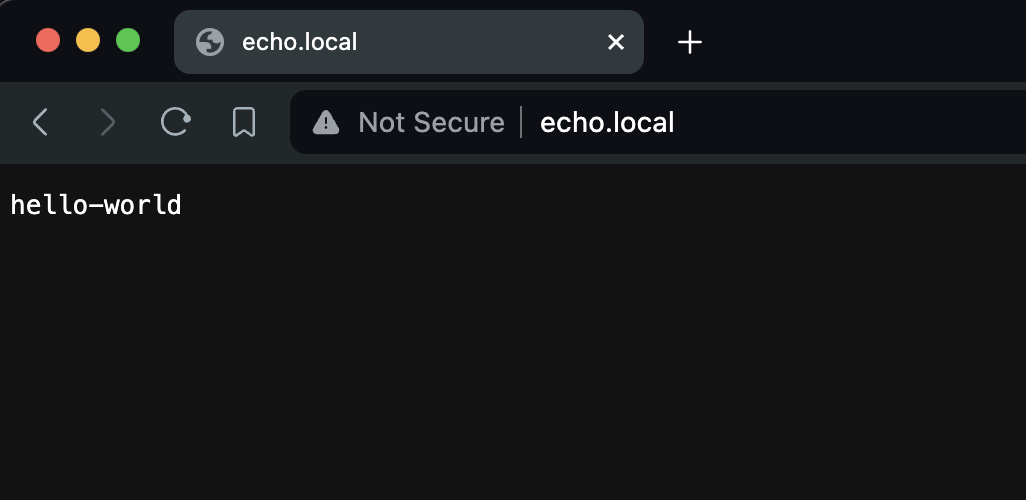

This represents the AWS management console found at [aws.amazon.com/console](https://aws.amazon.com/console/). This is a Vue.js SPA that makes HTTP requests to the [S3 REST API](./api/ReadMe.md) for users to create, manage and delete their S3 buckets.

### [API](./api/ReadMe.md)

```sh
curl -X POST \
  -H "Authorization: Bearer $JWT" \
  -H 'Content-Type: application/json' \
  -d '{ "name":"s3-test-bucket"}' \
  https://s3-api.anthonybudd.io/buckets
```

This API simulates the back-end of the AWS Console. A user can sign up, log in, create a bucket and then delete the bucket.

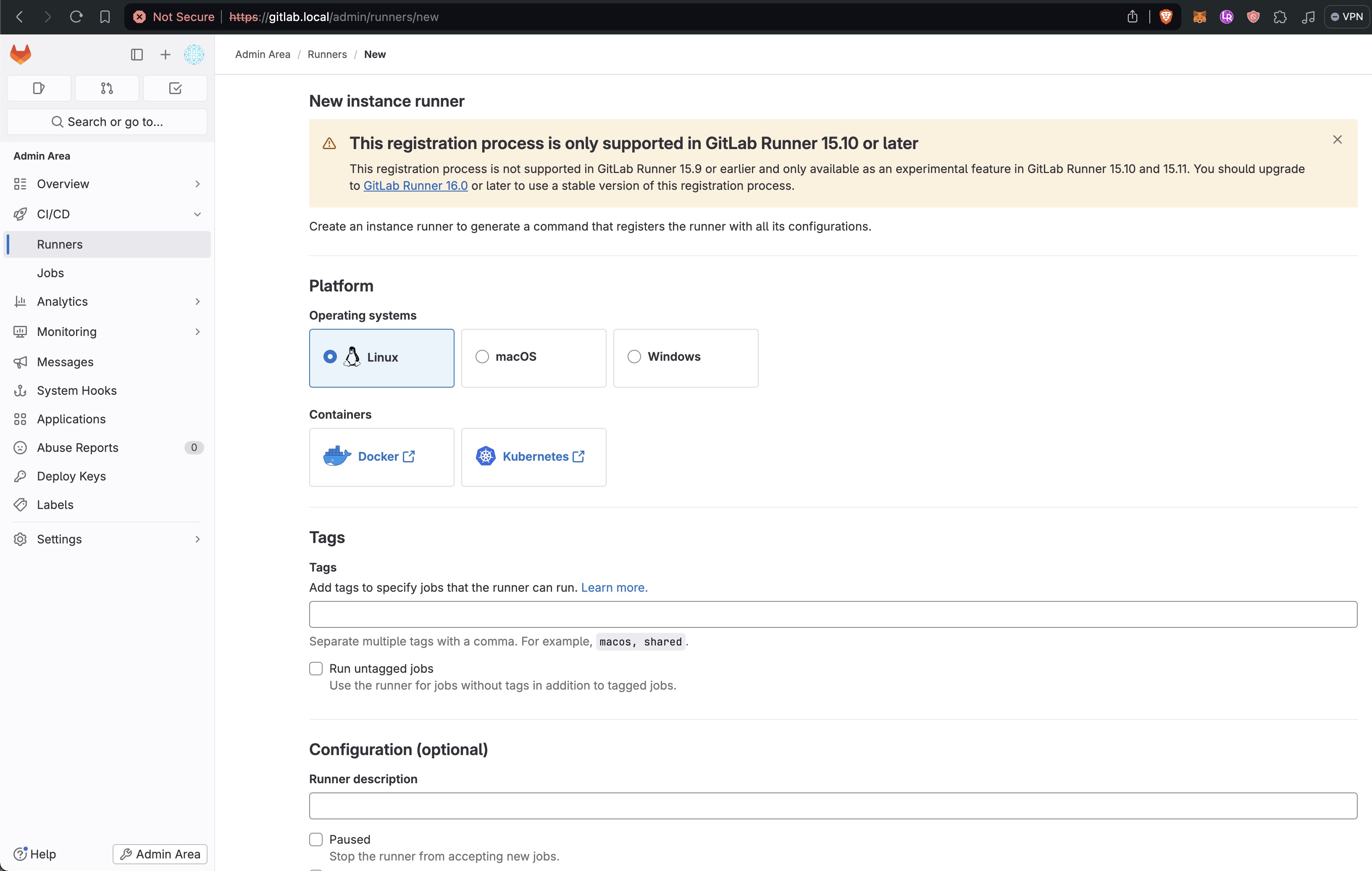

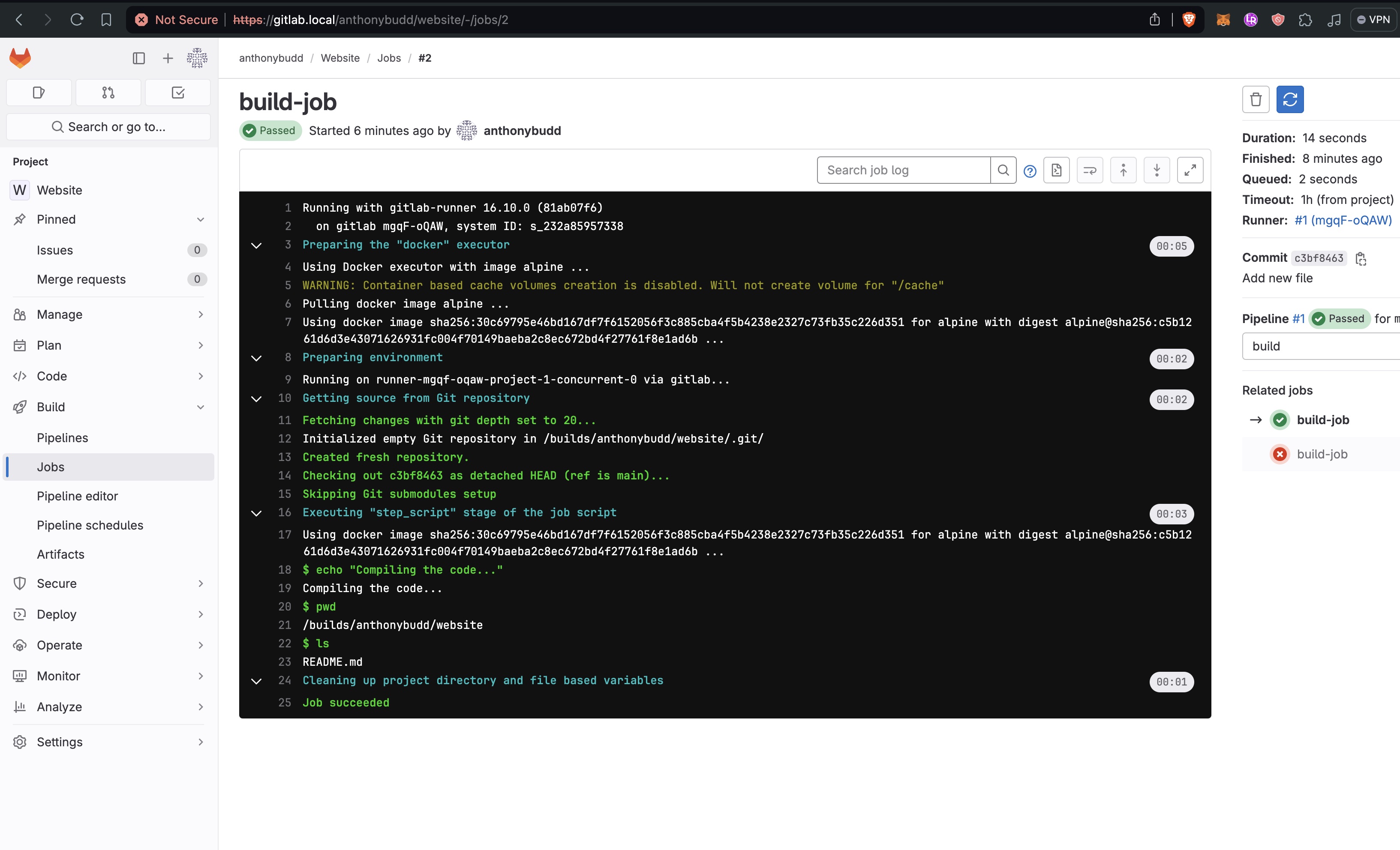

### [Source Control](./sections/gitlab.md)

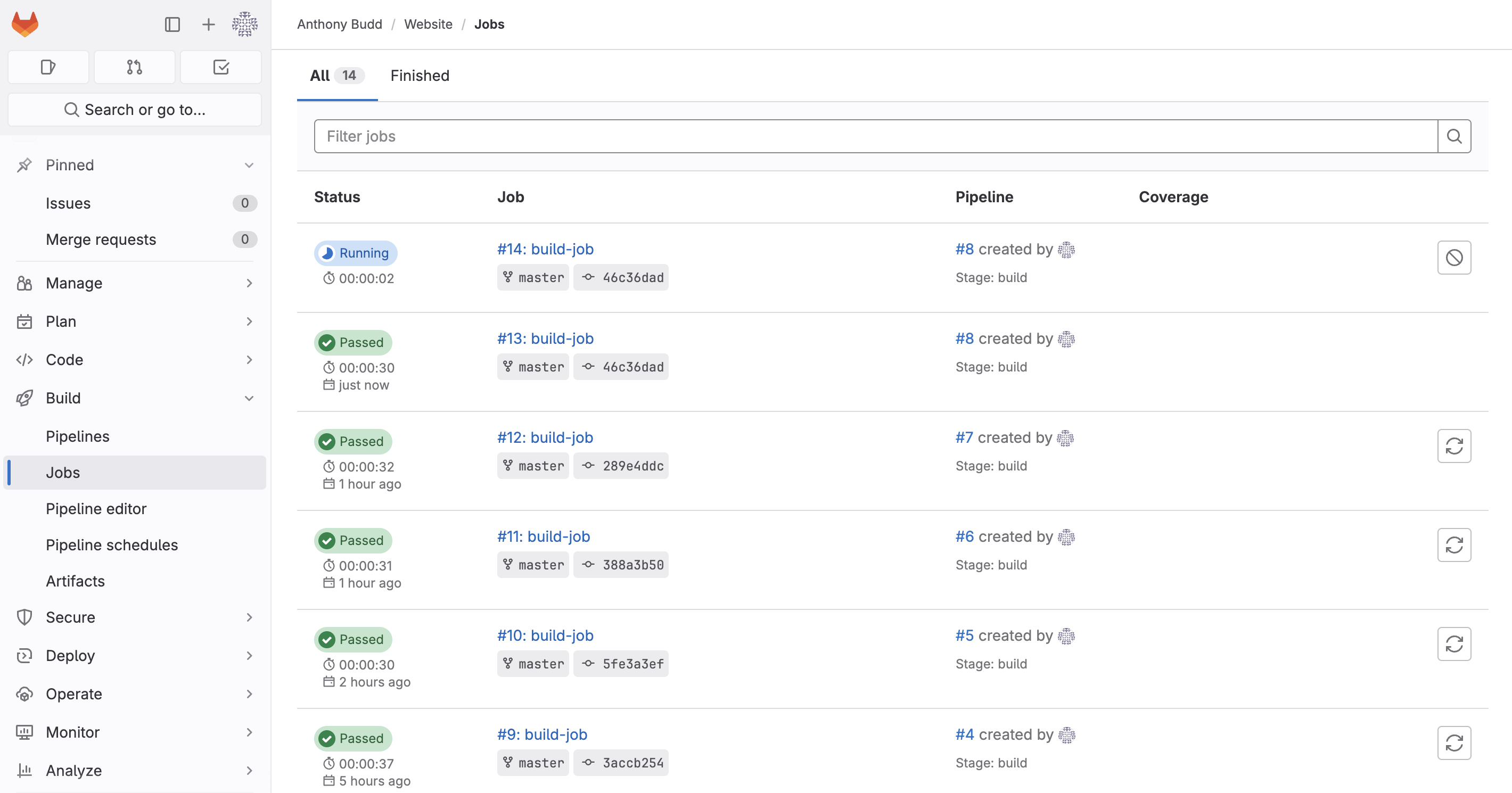

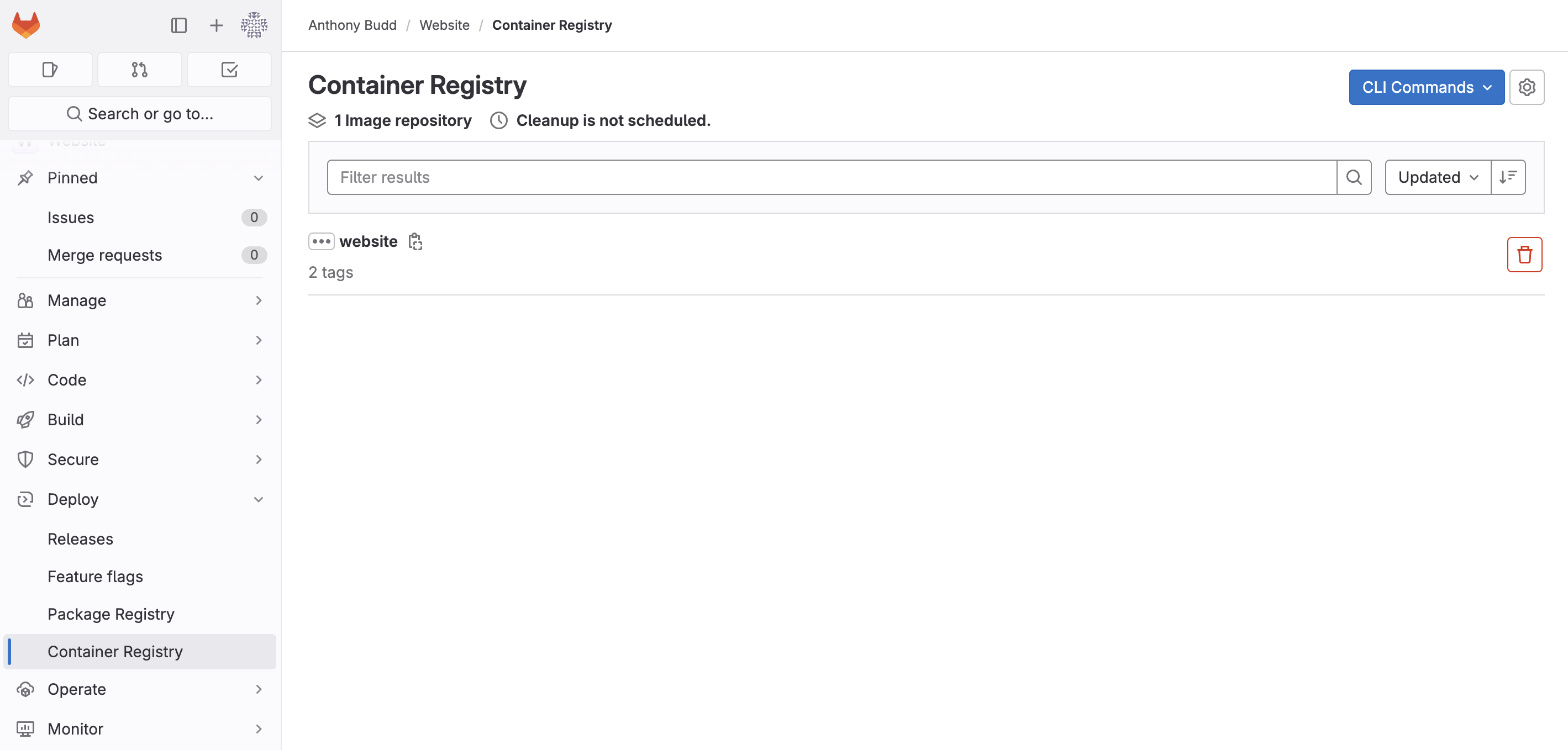

We will need a network for the nodes to communicate. For the router I have chosen OpenWrt. This allows me to use a Raspberry Pi with a USB 3.0 Ethernet adapter as a router between the internet and the datacenter.

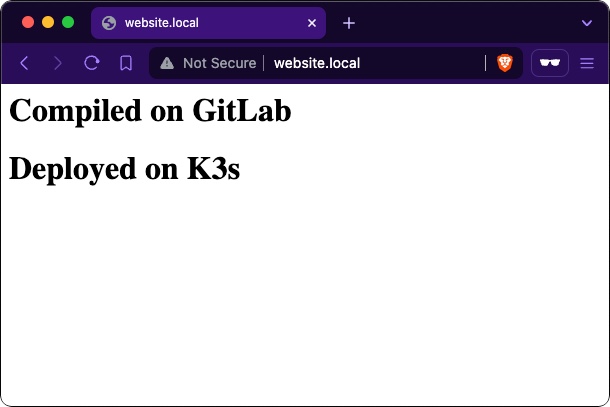

### [Automation](./sections/automated-bucket-deployment.md)

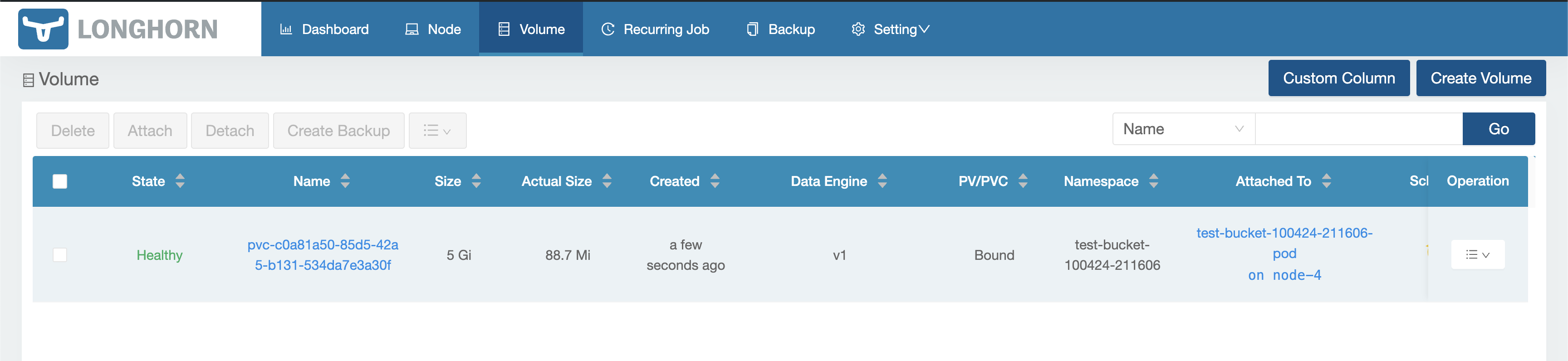

When you create an S3 bucket on AWS, everything is automated; there isn't a human in a datacenter somewhere typing out CLI commands to get your bucket scheduled. I want my project to work the same way: when a user wants to create a bucket, provisioning and deploying it must not require any human input.

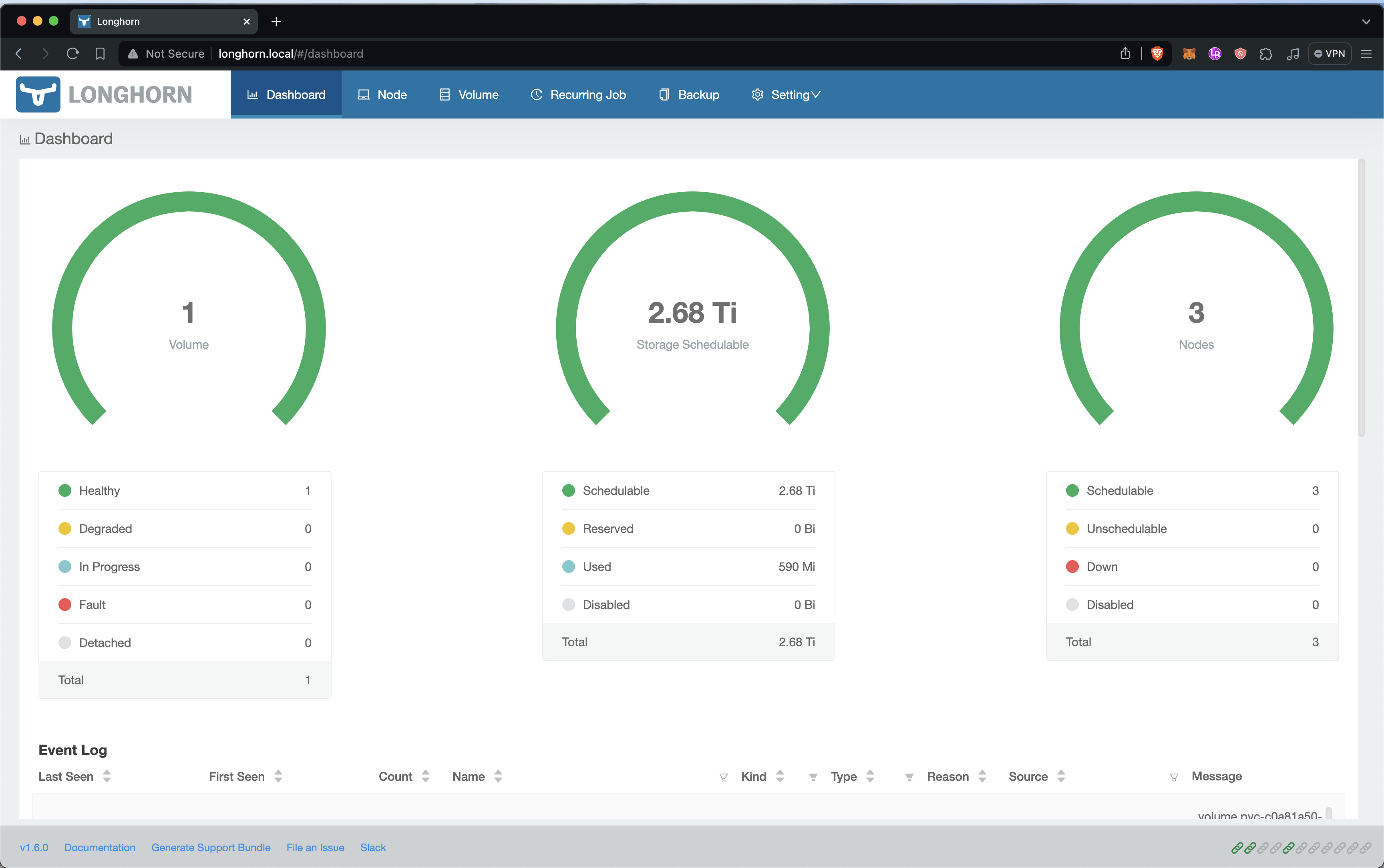

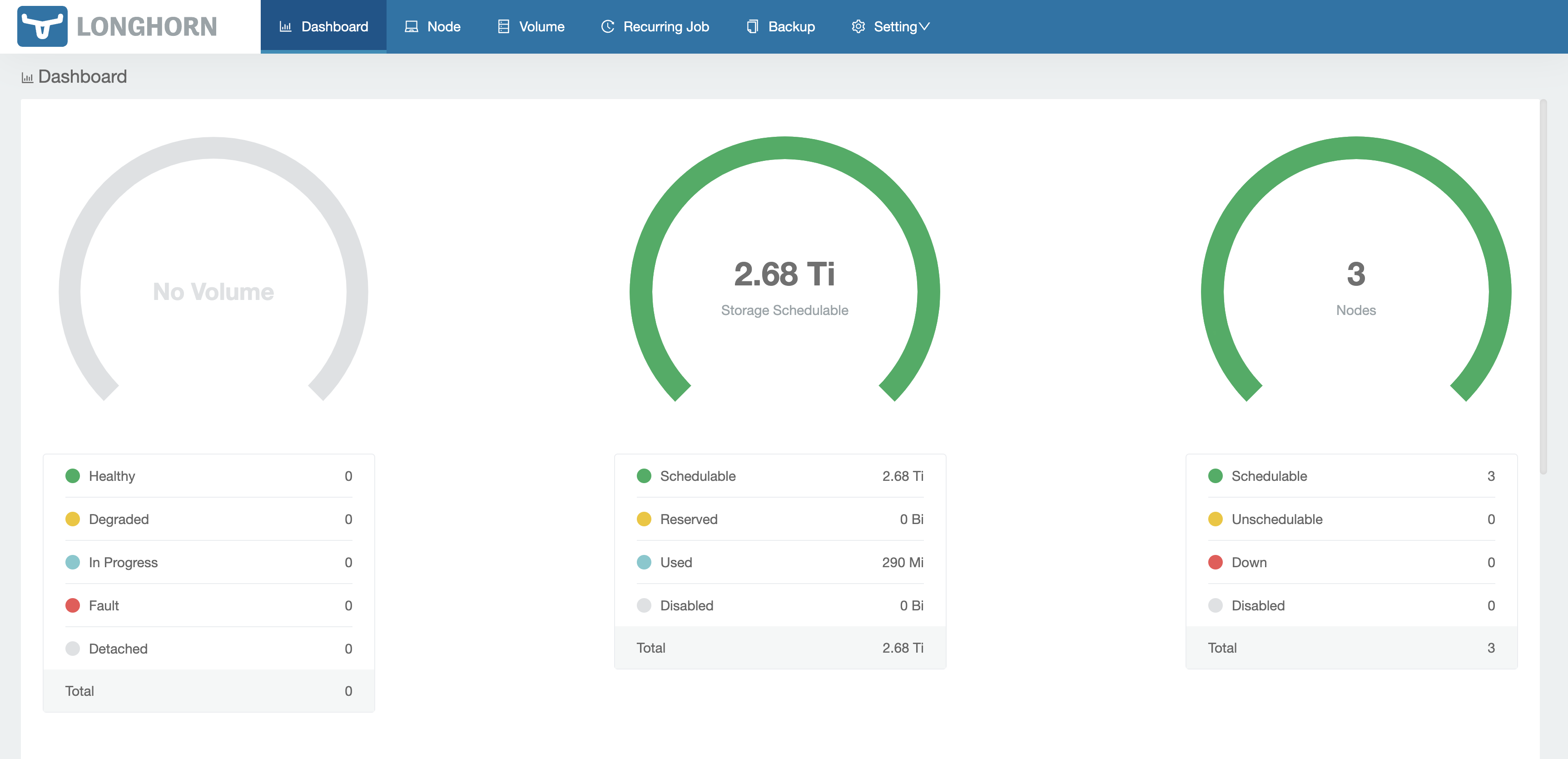

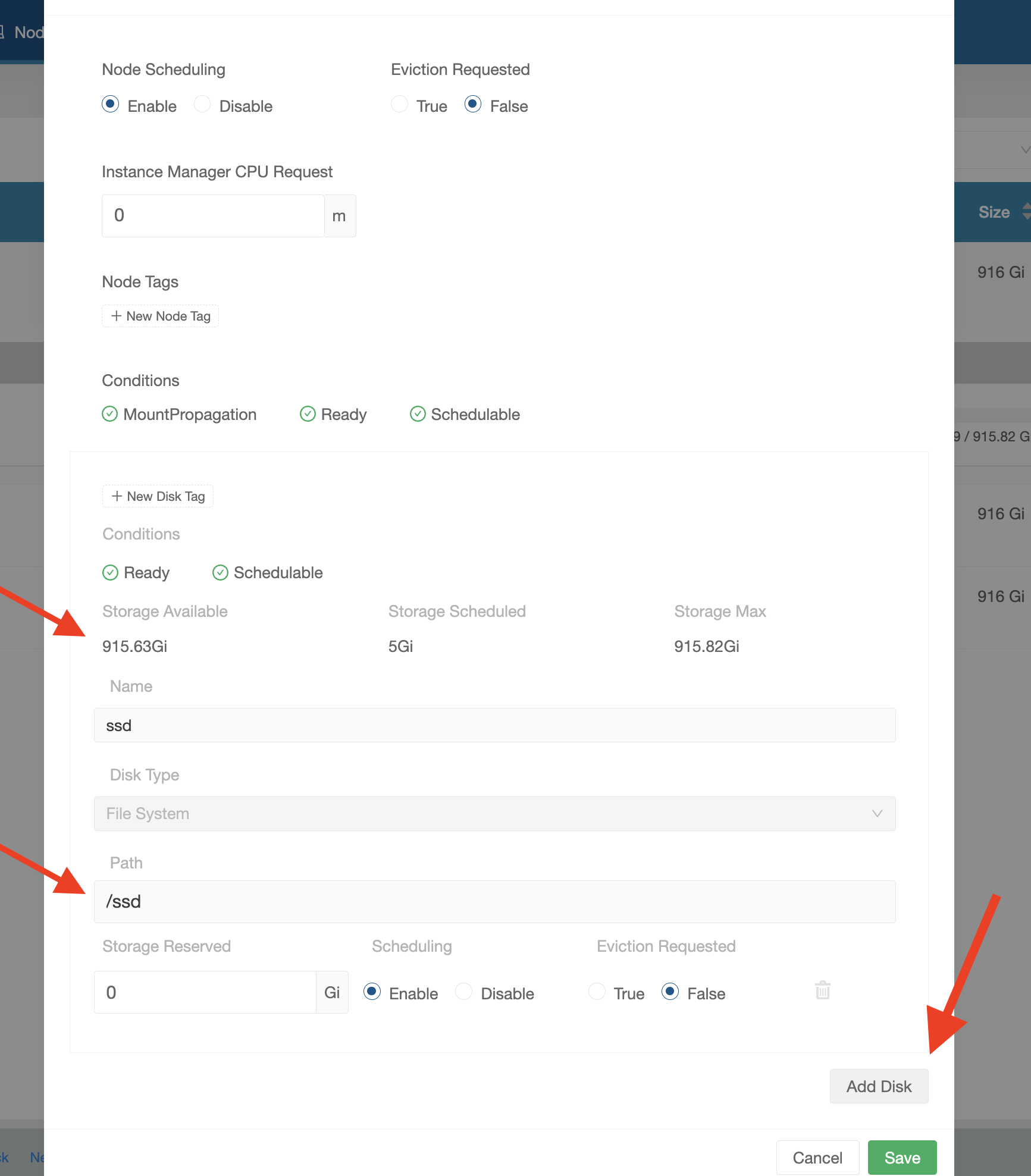

### [Resource Utilization](./sections/storage-cluster.md)

AWS doesn't give each user their own dedicated server with a hard drive attached. Instead, the hardware is virtualized, allowing multiple tenants to share a single physical CPU. Similarly, it would not be practical to assign a whole node and SSD to each bucket. To maximize resource utilization, my platform must allow multiple tenants to share the pool of compute and SSD storage space available. In addition, AWS S3 buckets can store an unlimited amount of data, so my platform will also need to give each user a dynamically growing volume that auto-scales based on the storage space required.

### Notes

You will need to SSH into multiple devices simultaneously, so I have added an annotation (example: `[Console] nano /boot/config.txt`) to every command in this repo to show where you should execute it. Generally you will see `[Console]` and `[Node X]`.

Because this is still very much a work in progress, you will see my notes in italics ("_AB:_") throughout; please ignore them.

================================================

FILE: ansible/.gitignore

================================================

Notes.md

================================================

FILE: ansible/.yamllint

================================================

---
extends: default

rules:
  line-length:
    max: 120
    level: warning
  truthy:
    allowed-values: ['true', 'false', 'yes', 'no']

================================================

FILE: ansible/README.md

================================================

# Ansible

Source: [https://github.com/k3s-io/k3s-ansible](https://github.com/k3s-io/k3s-ansible)

================================================

FILE: ansible/ansible.cfg

================================================

[defaults]

nocows = True

roles_path = ./roles

inventory = ./hosts.ini

remote_tmp = $HOME/.ansible/tmp

local_tmp = $HOME/.ansible/tmp

pipelining = True

become = True

host_key_checking = False

deprecation_warnings = False

callback_whitelist = profile_tasks

================================================

FILE: ansible/inventory/.gitignore

================================================

*-cluster/

!example/

!.gitignore

!sample/

================================================

FILE: ansible/inventory/example/group_vars/all.yml

================================================

---

k3s_version: v1.26.9+k3s1

ansible_user: node

systemd_dir: /etc/systemd/system

master_ip: "{{ hostvars[groups['master'][0]]['ansible_host'] | default(groups['master'][0]) }}"

extra_server_args: ""

extra_agent_args: ""

================================================

FILE: ansible/inventory/example/hosts.ini

================================================

[master]

10.0.0.5

[node]

10.0.0.5

10.0.0.6

10.0.0.7

[k3s_cluster:children]

master

node

================================================

FILE: ansible/reset.yml

================================================

---
- hosts: k3s_cluster
  gather_facts: yes
  become: yes
  roles:
    - role: reset

================================================

FILE: ansible/roles/download/tasks/main.yml

================================================

---
- name: Download k3s binary x64
  get_url:
    url: https://github.com/k3s-io/k3s/releases/download/{{ k3s_version }}/k3s
    checksum: sha256:https://github.com/k3s-io/k3s/releases/download/{{ k3s_version }}/sha256sum-amd64.txt
    dest: /usr/local/bin/k3s
    owner: root
    group: root
    mode: 0755
  when: ansible_facts.architecture == "x86_64"

- name: Download k3s binary arm64
  get_url:
    url: https://github.com/k3s-io/k3s/releases/download/{{ k3s_version }}/k3s-arm64
    checksum: sha256:https://github.com/k3s-io/k3s/releases/download/{{ k3s_version }}/sha256sum-arm64.txt
    dest: /usr/local/bin/k3s
    owner: root
    group: root
    mode: 0755
  when:
    - ( ansible_facts.architecture is search("arm") and
        ansible_facts.userspace_bits == "64" ) or
      ansible_facts.architecture is search("aarch64")

- name: Download k3s binary armhf
  get_url:
    url: https://github.com/k3s-io/k3s/releases/download/{{ k3s_version }}/k3s-armhf
    checksum: sha256:https://github.com/k3s-io/k3s/releases/download/{{ k3s_version }}/sha256sum-arm.txt
    dest: /usr/local/bin/k3s
    owner: root
    group: root
    mode: 0755
  when:
    - ansible_facts.architecture is search("arm")
    - ansible_facts.userspace_bits == "32"

================================================

FILE: ansible/roles/k3s/master/tasks/main.yml

================================================

---
- name: Copy K3s service file
  register: k3s_service
  template:
    src: "k3s.service.j2"
    dest: "{{ systemd_dir }}/k3s.service"
    owner: root
    group: root
    mode: 0644

- name: Enable and check K3s service
  systemd:
    name: k3s
    daemon_reload: yes
    state: restarted
    enabled: yes

- name: Wait for node-token
  wait_for:
    path: /var/lib/rancher/k3s/server/node-token

- name: Register node-token file access mode
  stat:
    path: /var/lib/rancher/k3s/server
  register: p

- name: Change file access node-token
  file:
    path: /var/lib/rancher/k3s/server
    mode: "g+rx,o+rx"

- name: Read node-token from master
  slurp:
    src: /var/lib/rancher/k3s/server/node-token
  register: node_token

- name: Store Master node-token
  set_fact:
    token: "{{ node_token.content | b64decode | regex_replace('\n', '') }}"

- name: Restore node-token file access
  file:
    path: /var/lib/rancher/k3s/server
    mode: "{{ p.stat.mode }}"

- name: Create directory .kube
  file:
    path: ~{{ ansible_user }}/.kube
    state: directory
    owner: "{{ ansible_user }}"
    mode: "u=rwx,g=rx,o="

- name: Copy config file to user home directory
  copy:
    src: /etc/rancher/k3s/k3s.yaml
    dest: ~{{ ansible_user }}/.kube/config
    remote_src: yes
    owner: "{{ ansible_user }}"
    mode: "u=rw,g=,o="

- name: Replace https://localhost:6443 by https://master-ip:6443
  command: >-
    k3s kubectl config set-cluster default
    --server=https://{{ master_ip }}:6443
    --kubeconfig ~{{ ansible_user }}/.kube/config
  changed_when: true

- name: Create kubectl symlink
  file:
    src: /usr/local/bin/k3s
    dest: /usr/local/bin/kubectl
    state: link

- name: Create crictl symlink
  file:
    src: /usr/local/bin/k3s
    dest: /usr/local/bin/crictl
    state: link

================================================

FILE: ansible/roles/k3s/master/templates/k3s.service.j2

================================================

[Unit]

Description=Lightweight Kubernetes

Documentation=https://k3s.io

After=network-online.target

[Service]

Type=notify

ExecStartPre=-/sbin/modprobe br_netfilter

ExecStartPre=-/sbin/modprobe overlay

ExecStart=/usr/local/bin/k3s server {{ extra_server_args | default("") }}

KillMode=process

Delegate=yes

# Having non-zero Limit*s causes performance problems due to accounting overhead

# in the kernel. We recommend using cgroups to do container-local accounting.

LimitNOFILE=1048576

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

TimeoutStartSec=0

Restart=always

RestartSec=5s

[Install]

WantedBy=multi-user.target

================================================

FILE: ansible/roles/k3s/node/tasks/main.yml

================================================

---
- name: Copy K3s service file
  template:
    src: "k3s.service.j2"
    dest: "{{ systemd_dir }}/k3s-node.service"
    owner: root
    group: root
    mode: 0755

- name: Enable and check K3s service
  systemd:
    name: k3s-node
    daemon_reload: yes
    state: restarted
    enabled: yes

================================================

FILE: ansible/roles/k3s/node/templates/k3s.service.j2

================================================

[Unit]

Description=Lightweight Kubernetes

Documentation=https://k3s.io

After=network-online.target

[Service]

Type=notify

ExecStartPre=-/sbin/modprobe br_netfilter

ExecStartPre=-/sbin/modprobe overlay

ExecStart=/usr/local/bin/k3s agent --server https://{{ master_ip }}:6443 --token {{ hostvars[groups['master'][0]]['token'] }} {{ extra_agent_args | default("") }}

KillMode=process

Delegate=yes

# Having non-zero Limit*s causes performance problems due to accounting overhead

# in the kernel. We recommend using cgroups to do container-local accounting.

LimitNOFILE=1048576

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

TimeoutStartSec=0

Restart=always

RestartSec=5s

[Install]

WantedBy=multi-user.target

================================================

FILE: ansible/roles/prereq/tasks/main.yml

================================================

---
- name: Set SELinux to disabled state
  selinux:
    state: disabled
  when: ansible_distribution in ['CentOS', 'Red Hat Enterprise Linux']

- name: Enable IPv4 forwarding
  sysctl:
    name: net.ipv4.ip_forward
    value: "1"
    state: present
    reload: yes

- name: Enable IPv6 forwarding
  sysctl:
    name: net.ipv6.conf.all.forwarding
    value: "1"
    state: present
    reload: yes

- name: Add br_netfilter to /etc/modules-load.d/
  copy:
    content: "br_netfilter"
    dest: /etc/modules-load.d/br_netfilter.conf
    mode: "u=rw,g=,o="
  when: ansible_distribution in ['CentOS', 'Red Hat Enterprise Linux']

- name: Load br_netfilter
  modprobe:
    name: br_netfilter
    state: present
  when: ansible_distribution in ['CentOS', 'Red Hat Enterprise Linux']

- name: Set bridge-nf-call-iptables (just to be sure)
  sysctl:
    name: "{{ item }}"
    value: "1"
    state: present
    reload: yes
  when: ansible_distribution in ['CentOS', 'Red Hat Enterprise Linux']
  loop:
    - net.bridge.bridge-nf-call-iptables
    - net.bridge.bridge-nf-call-ip6tables

- name: Add /usr/local/bin to sudo secure_path
  lineinfile:
    line: 'Defaults secure_path = /sbin:/bin:/usr/sbin:/usr/bin:/usr/local/bin'
    regexp: "Defaults(\\s)*secure_path(\\s)*="
    state: present
    insertafter: EOF
    path: /etc/sudoers
    validate: 'visudo -cf %s'
  when: ansible_distribution in ['CentOS', 'Red Hat Enterprise Linux']

================================================

FILE: ansible/roles/raspberrypi/handlers/main.yml

================================================

---
- name: reboot
  reboot:

================================================

FILE: ansible/roles/raspberrypi/tasks/main.yml

================================================

---
- name: Test for raspberry pi /proc/cpuinfo
  command: grep -E "Raspberry Pi|BCM2708|BCM2709|BCM2835|BCM2836" /proc/cpuinfo
  register: grep_cpuinfo_raspberrypi
  failed_when: false
  changed_when: false

- name: Test for raspberry pi /proc/device-tree/model
  command: grep -E "Raspberry Pi" /proc/device-tree/model
  register: grep_device_tree_model_raspberrypi
  failed_when: false
  changed_when: false

- name: Set raspberry_pi fact to true
  set_fact:
    raspberry_pi: true
  when:
    grep_cpuinfo_raspberrypi.rc == 0 or grep_device_tree_model_raspberrypi.rc == 0

- name: Set detected_distribution to Raspbian
  set_fact:
    detected_distribution: Raspbian
  when: >
    raspberry_pi|default(false) and
    ( ansible_facts.lsb.id|default("") == "Raspbian" or
    ansible_facts.lsb.description|default("") is match("[Rr]aspbian.*") )

- name: Set detected_distribution to Raspbian (ARM64 on Debian Buster)
  set_fact:
    detected_distribution: Raspbian
  when:
    - ansible_facts.architecture is search("aarch64")
    - raspberry_pi|default(false)
    - ansible_facts.lsb.description|default("") is match("Debian.*buster")

- name: Set detected_distribution_major_version
  set_fact:
    detected_distribution_major_version: "{{ ansible_facts.lsb.major_release }}"
  when:
    - detected_distribution | default("") == "Raspbian"

- name: execute OS related tasks on the Raspberry Pi
  include_tasks: "{{ item }}"
  with_first_found:
    - "prereq/{{ detected_distribution }}-{{ detected_distribution_major_version }}.yml"
    - "prereq/{{ detected_distribution }}.yml"
    - "prereq/{{ ansible_distribution }}-{{ ansible_distribution_major_version }}.yml"
    - "prereq/{{ ansible_distribution }}.yml"
    - "prereq/default.yml"
  when:
    - raspberry_pi|default(false)

================================================

FILE: ansible/roles/raspberrypi/tasks/prereq/CentOS.yml

================================================

---
- name: Enable cgroup via boot commandline if not already enabled for Centos
  lineinfile:
    path: /boot/cmdline.txt
    backrefs: yes
    regexp: '^((?!.*\bcgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory\b).*)$'
    line: '\1 cgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory'
  notify: reboot

================================================

FILE: ansible/roles/raspberrypi/tasks/prereq/Raspbian.yml

================================================

---
- name: Activating cgroup support
  lineinfile:
    path: /boot/cmdline.txt
    regexp: '^((?!.*\bcgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory\b).*)$'
    line: '\1 cgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory'
    backrefs: true
  notify: reboot

- name: Flush iptables before changing to iptables-legacy
  iptables:
    flush: true
  changed_when: false  # iptables flush always returns changed

- name: Changing to iptables-legacy
  alternatives:
    path: /usr/sbin/iptables-legacy
    name: iptables
  register: ip4_legacy

- name: Changing to ip6tables-legacy
  alternatives:
    path: /usr/sbin/ip6tables-legacy
    name: ip6tables
  register: ip6_legacy

================================================

FILE: ansible/roles/raspberrypi/tasks/prereq/Ubuntu.yml

================================================

---

- name: Enable cgroup via boot commandline if not already enabled for Ubuntu on a Raspberry Pi
  lineinfile:
    path: /boot/firmware/cmdline.txt
    backrefs: yes
    regexp: '^((?!.*\bcgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory\b).*)$'
    line: '\1 cgroup_enable=cpuset cgroup_memory=1 cgroup_enable=memory'
  notify: reboot

================================================

FILE: ansible/roles/raspberrypi/tasks/prereq/default.yml

================================================

---

================================================

FILE: ansible/roles/reset/tasks/main.yml

================================================

---
- name: Disable services
  systemd:
    name: "{{ item }}"
    state: stopped
    enabled: no
  failed_when: false
  with_items:
    - k3s
    - k3s-node

- name: pkill -9 -f "k3s/data/[^/]+/bin/containerd-shim-runc"
  register: pkill_containerd_shim_runc
  command: pkill -9 -f "k3s/data/[^/]+/bin/containerd-shim-runc"
  changed_when: "pkill_containerd_shim_runc.rc == 0"
  failed_when: false

- name: Umount k3s filesystems
  include_tasks: umount_with_children.yml
  with_items:
    - /run/k3s
    - /var/lib/kubelet
    - /run/netns
    - /var/lib/rancher/k3s
  loop_control:
    loop_var: mounted_fs

- name: Remove service files, binaries and data
  file:
    name: "{{ item }}"
    state: absent
  with_items:
    - /usr/local/bin/k3s
    - "{{ systemd_dir }}/k3s.service"
    - "{{ systemd_dir }}/k3s-node.service"
    - /etc/rancher/k3s
    - /var/lib/kubelet
    - /var/lib/rancher/k3s

- name: daemon_reload
  systemd:
    daemon_reload: yes

================================================

FILE: ansible/roles/reset/tasks/umount_with_children.yml

================================================

---
- name: Get the list of mounted filesystems
  shell: set -o pipefail && cat /proc/mounts | awk '{ print $2}' | grep -E "^{{ mounted_fs }}"
  register: get_mounted_filesystems
  args:
    executable: /bin/bash
  failed_when: false
  changed_when: get_mounted_filesystems.stdout | length > 0
  check_mode: false

- name: Umount filesystem
  mount:
    path: "{{ item }}"
    state: unmounted
  with_items:
    "{{ get_mounted_filesystems.stdout_lines | reverse | list }}"

================================================

FILE: ansible/site.yml

================================================

---
- hosts: k3s_cluster
  gather_facts: yes
  become: yes
  roles:
    - role: prereq
    - role: download
    - role: raspberrypi

- hosts: master
  become: yes
  roles:
    - role: k3s/master

- hosts: node
  become: yes
  roles:
    - role: k3s/node

================================================

FILE: api/.dockerignore

================================================

node_modules

package-lock.json

================================================

FILE: api/.eslintignore

================================================

src/database/

tests

================================================

FILE: api/.gitignore

================================================

node_modules/

.vol/

.env

dev.js

private.pem

public.pem

.DS_Store

*/.DS_Store

k8s/secrets.yml

kubeconfig.yml

================================================

FILE: api/.gitlab-ci.yml

================================================

stages:
  - build

build-job:
  image: docker:dind
  stage: build
  services:
    - docker:dind
  variables:
    IMAGE_TAG: $CI_REGISTRY_IMAGE:$CI_COMMIT_REF_SLUG
  script:
    - docker login $CI_REGISTRY -u $CI_REGISTRY_USER -p $CI_REGISTRY_PASSWORD
    - docker build -t $IMAGE_TAG .
    - docker push $IMAGE_TAG

================================================

FILE: api/.sequelizerc

================================================

const path = require('path');

module.exports = {
    'config': path.resolve('src/providers', 'connections.js'),
    'models-path': path.resolve('src/', 'models'),
    'seeders-path': path.resolve('src/database', 'seeders'),
    'migrations-path': path.resolve('src/database', 'migrations')
}

================================================

FILE: api/Dockerfile

================================================

FROM node:20

RUN apt-get update && apt-get install -y curl nano

RUN curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/arm64/kubectl"

RUN install -o root -g root -m 0755 kubectl /usr/local/bin/kubectl

RUN npm install -g nodemon mocha sequelize sequelize-cli mysql2 eslint

WORKDIR /app

COPY . /app

RUN npm install

ENTRYPOINT [ "node", "/app/src/index.js" ]

================================================

FILE: api/LICENSE

================================================

The MIT License

Copyright Anthony C. Budd

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in

all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

THE SOFTWARE.

================================================

FILE: api/ReadMe.md

================================================

# S3 API

This API simulates the back-end of the AWS Console. A user can sign up, log in, create a bucket and then delete the bucket.

This API was built using my project [anthonybudd/express-api-boilerplate](https://github.com/anthonybudd/express-api-boilerplate).

### Main Files

- Auth Controller: [./src/routes/Auth.js](./src/routes/Auth.js)

- Bucket Controller: [./src/routes/Buckets.js](./src/routes/Buckets.js)

- Model: [./src/models/Bucket.js](./src/models/Bucket.js)

### Set-up

```
cp .env.example .env
npm install

# Private RSA key for JWT signing
openssl genrsa -out private.pem 2048
openssl rsa -in private.pem -outform PEM -pubout -out public.pem

# Start the app
docker compose up
npm run _db:refresh
npm run _test
```

### Routes

| Method      | Route                       | Description                                        | Payload                           | Response         |
| ----------- | --------------------------- | -------------------------------------------------- | --------------------------------- | ---------------- |
| **Buckets** |                             |                                                    |                                   |                  |
| GET         | `/api/v1/buckets`           | Get all buckets for the current user               | --                                | [Bucket, Bucket] |
| POST        | `/api/v1/buckets`           | Create new bucket                                  | { name: "test-bucket" }           | {Bucket}         |
| GET         | `/api/v1/buckets/:bucketID` | Get a single bucket                                | --                                | {Bucket}         |
| DELETE      | `/api/v1/buckets/:bucketID` | Delete a bucket (returns HTTP 202)                 | --                                | {bucketID}       |
| **Auth**    |                             |                                                    |                                   |                  |
| POST        | `/api/v1/auth/login`        | Login                                              | {email, password}                 | {accessToken}    |
| POST        | `/api/v1/auth/sign-up`      | Sign-up                                            | {email, password, firstName, tos} | {accessToken}    |
| GET         | `/api/v1/_authcheck`        | Returns {auth: true} if the request is authenticated | --                              | {auth: true}     |
| **User**    |                             |                                                    |                                   |                  |
| GET         | `/api/v1/user`              | Get the current user                               | --                                | {User}           |
| POST        | `/api/v1/user`              | Update the current user                            | {firstName, lastName}             | {User}           |
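
The routes above can be exercised from a shell. The following is a minimal sketch, not part of the repo: the helper names (`bucket_payload`, `login`, `create_bucket`, `delete_bucket`) are hypothetical, the base URL assumes the local `docker compose` set-up (host port 8888), and `jq` is assumed to be installed for reading `accessToken` from the login response.

```sh
# Base URL is an assumption: docker-compose.yml publishes the API on host port 8888.
API_BASE="${API_BASE:-http://localhost:8888/api/v1}"

# Build the JSON body for POST /buckets.
# Assumes the bucket name contains no characters that need JSON escaping.
bucket_payload() {
  printf '{"name":"%s"}' "$1"
}

# POST /auth/login with an email and password; prints the accessToken (needs jq).
login() {
  curl -sS -X POST "$API_BASE/auth/login" \
    -H 'Content-Type: application/json' \
    -d "{\"email\":\"$1\",\"password\":\"$2\"}" | jq -r '.accessToken'
}

# POST /buckets with a bearer token and a bucket name; prints the response body.
create_bucket() {
  curl -sS -X POST "$API_BASE/buckets" \
    -H "Authorization: Bearer $1" \
    -H 'Content-Type: application/json' \
    -d "$(bucket_payload "$2")"
}

# DELETE /buckets/:bucketID with a bearer token and a bucket ID.
delete_bucket() {
  curl -sS -X DELETE "$API_BASE/buckets/$2" \
    -H "Authorization: Bearer $1"
}
```

With the API running, a session might look like `JWT="$(login you@example.com your-password)"` followed by `create_bucket "$JWT" test-bucket`, then `delete_bucket "$JWT" <bucketID>` using the ID from the create response.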

================================================

FILE: api/docker-compose.yml

================================================

version: "3"
services:
  s3-api:
    build: .
    entrypoint: "nodemon /app/src/index.js --watch /app --legacy-watch"
    container_name: s3-api
    volumes:
      - ./:/app
      - ./.vol/tmp:/tmp
    links:
      - s3-api-db
      - s3-api-db-test
    ports:
      - "8888:80"
    environment:
      PORT: 80

  s3-api-db:
    image: mysql:oracle
    container_name: s3-api-db
    ports:
      - "3306:3306"
    volumes:
      - ./.vol/s3-api:/var/lib/mysql
    environment:
      MYSQL_ROOT_PASSWORD: supersecret
      MYSQL_DATABASE: $DB_DATABASE
      MYSQL_USER: $DB_USERNAME
      MYSQL_PASSWORD: $DB_PASSWORD

  s3-api-db-test:
    image: mysql:oracle
    container_name: s3-api-db-test
    ports:
      - "3307:3306"
    volumes:
      - ./.vol/s3-api-test:/var/lib/mysql
    environment:
      MYSQL_ROOT_PASSWORD: supersecret
      MYSQL_DATABASE: $DB_DATABASE
      MYSQL_USER: $DB_USERNAME
      MYSQL_PASSWORD: $DB_PASSWORD

================================================

FILE: api/k8s/Deploy.md

================================================

# Deploy

kubectl --kubeconfig=.kube/config create namespace s3-api

namespace/s3-api created

[Console]:~> kubectl --kubeconfig=.kube/config apply -f db.yml

service/s3-db created

deployment.apps/s3-db created

----

Find & Replace (case-sensitive, whole repo): "s3-api" => "your-api-name"

Save kubeconfig.yml to root of repo

### Namespace

Create a namespace

`kubectl --kubeconfig=./kubeconfig.yml create namespace s3-api`

### JWT

```
openssl genrsa -out private.pem 2048
openssl rsa -in private.pem -outform PEM -pubout -out public.pem

kubectl --kubeconfig=.kube/config --namespace=s3-api create secret generic s3-api-jwt-secret \
  --from-file=./private.pem \
  --from-file=./public.pem

rm ./private.pem ./public.pem
```
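
Before creating the secret it can be worth checking that `public.pem` really belongs to `private.pem`. The sketch below is an optional extra, not a step from the repo: it generates a throwaway key pair in a temporary directory with the same `openssl` commands as above, re-derives the public key from the private key and compares the two. The variable names (`workdir`, `KEYPAIR_STATUS`) are illustrative.

```sh
# Work in a throwaway directory so no real keys are touched.
workdir="$(mktemp -d)"

# Same commands as the JWT step above, writing into the temp directory.
openssl genrsa -out "$workdir/private.pem" 2048 2>/dev/null
openssl rsa -in "$workdir/private.pem" -outform PEM -pubout \
  -out "$workdir/public.pem" 2>/dev/null

# Re-derive the public key from the private key and compare it with public.pem.
if openssl rsa -in "$workdir/private.pem" -pubout 2>/dev/null \
    | cmp -s - "$workdir/public.pem"; then
  KEYPAIR_STATUS="match"
else
  KEYPAIR_STATUS="mismatch"
fi

echo "key pair: $KEYPAIR_STATUS"

# Clean up the temporary keys.
rm -rf "$workdir"
```

To check real keys, point the same comparison at `./private.pem` and `./public.pem` before they are removed.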

### Secrets

Make a new secrets config file

`cp secrets.example.yml secrets.yml`

__Add Secrets in Base64__

Hint: `echo -n 'my-secret-string' | base64`
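
The hint above can be wrapped in two small helpers. This is a minimal sketch: the function names `encode_secret` and `decode_secret` are hypothetical, and `my-secret-string` is the placeholder value from the hint. `printf '%s'` is used instead of `echo -n` because it never appends a trailing newline, which would otherwise end up inside the secret.

```sh
# Encode a value for the `data:` section of secrets.yml.
encode_secret() {
  printf '%s' "$1" | base64
}

# Decode a value again to double-check what will be stored in the cluster.
decode_secret() {
  printf '%s' "$1" | base64 --decode
}

DB_PASSWORD_B64="$(encode_secret 'my-secret-string')"
echo "DB_PASSWORD: $DB_PASSWORD_B64"
# prints: DB_PASSWORD: bXktc2VjcmV0LXN0cmluZw==
```

Paste the encoded value after the matching key in `secrets.yml`; `decode_secret "$DB_PASSWORD_B64"` should print the original string back.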

Create the secrets

`kubectl --kubeconfig=./kubeconfig.yml apply -f ./k8s/secrets.yml`

### Build & Push Container Image

```
docker buildx build --platform linux/amd64 --push -t registry.digitalocean.com/s3-api/app:latest .
```

### Create Deployment

```
kubectl --kubeconfig=./kubeconfig.yml apply -f ./k8s/api.deployment.yml
kubectl --kubeconfig=./kubeconfig.yml apply -f ./k8s/api.service.yml
```

### Deploy

```
docker buildx build --platform linux/amd64 --push -t registry.digitalocean.com/s3-api/app:latest . && \
kubectl --kubeconfig=./kubeconfig.yml rollout restart deployment s3-api && \
kubectl --kubeconfig=./kubeconfig.yml get pods -w
```

### Migrate

Migrate the DB

```
export POD="$(kubectl --kubeconfig=kubeconfig.yml --namespace=s3-api get pods --field-selector=status.phase==Running --no-headers -o custom-columns=":metadata.name")"
kubectl --kubeconfig=./kubeconfig.yml --namespace=s3-api exec -ti $POD -- /bin/bash -c 'sequelize db:migrate:undo:all && sequelize db:migrate && sequelize db:seed:all'
```

### SSL

Read more: https://www.digitalocean.com/community/tutorials/how-to-set-up-an-nginx-ingress-with-cert-manager-on-digitalocean-kubernetes

```
kubectl --kubeconfig=./kubeconfig.yml apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.1.1/deploy/static/provider/do/deploy.yaml
kubectl --kubeconfig=./kubeconfig.yml get pods -n ingress-nginx -l app.kubernetes.io/name=ingress-nginx --watch
kubectl --kubeconfig=./kubeconfig.yml apply -f ./k8s/api.ingress.yml
kubectl --kubeconfig=./kubeconfig.yml apply -f https://github.com/jetstack/cert-manager/releases/download/v1.7.1/cert-manager.yaml
kubectl --kubeconfig=./kubeconfig.yml get pods --namespace cert-manager
kubectl --kubeconfig=./kubeconfig.yml create -f k8s/prod.clusterissuer.yml
```

### Useful K8S commands

##### Set $POD as the name of the pod in K8s

`export POD="$(kubectl --kubeconfig=kubeconfig.yml --namespace=s3-api get pods --field-selector=status.phase==Running --no-headers -o custom-columns=":metadata.name")"`

##### Execute bash script inside running container

`kubectl --kubeconfig=kubeconfig.yml exec -ti $POD -- /bin/bash -c "sequelize db:migrate"`

##### Get logs for $POD

`kubectl --kubeconfig=kubeconfig.yml logs $POD`

##### Create a cron job

`kubectl --kubeconfig=kubeconfig.yml create job --from=cronjob/s3-api-cron-job s3-api-cron-job`

##### Delete all failed cron jobs

`kubectl --kubeconfig=kubeconfig.yml delete jobs --field-selector status.successful=0`

================================================

FILE: api/k8s/api.deployment.yml

================================================

apiVersion: apps/v1
kind: Deployment
metadata:
  name: s3-api
  namespace: s3-api
spec:
  replicas: 1
  selector:
    matchLabels:
      app: s3-api
  template:
    metadata:
      labels:
        app: s3-api
    spec:
      volumes:
        - name: s3-api-jwt-secret
          secret:
            secretName: s3-api-jwt-secret
        - name: storage-cluster-config
          secret:
            secretName: storage-cluster-config
      containers:
        - name: s3-api
          image: gitlab.local:5050/anthonybudd/api:master
          imagePullPolicy: Always
          lifecycle:
            postStart:
              exec:
                command: ["/bin/bash", "-c", "sequelize db:migrate"]
          ports:
            - containerPort: 80
          volumeMounts:
            - name: s3-api-jwt-secret
              mountPath: "/app/private.pem"
              subPath: private.pem
            - name: s3-api-jwt-secret
              mountPath: "/app/public.pem"
              subPath: public.pem
            # Must match a volume name declared under `volumes` above
            - name: storage-cluster-config
              mountPath: "/app/config"
              subPath: storage-config
          env:
            - name: S3_ROOT
              value: "s3.anthonybudd.io"
            - name: K8S_CONFIG_PATH
              value: "/app/k8s-config"
            - name: NODE_ENV
              value: "production"
            - name: FRONTEND_URL
              value: "https://s3.anthonybudd.io"
            - name: BACKEND_URL
              value: "https://s3-api.anthonybudd.io/api/v1"
            - name: PORT
              value: "80"
            - name: PRIVATE_KEY_PATH
              value: "/app/private.pem"
            - name: PUBLIC_KEY_PATH
              value: "/app/public.pem"
            - name: DB_HOST
              value: "s3-db"
            - name: DB_PORT
              value: "3306"
            - name: DB_USERNAME
              value: "app"
            - name: DB_DATABASE
              value: "app"
            - name: DB_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: s3-api-secrets
                  key: DB_PASSWORD
            - name: HCAPTCHA_SECRET
              valueFrom:
                secretKeyRef:
                  name: s3-api-secrets
                  key: HCAPTCHA_SECRET

================================================

FILE: api/k8s/api.ingress.yml

================================================

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  namespace: s3-api
  name: s3-ingress
  annotations:
    kubernetes.io/ingress.class: "traefik"
spec:
  rules:
    - host: api.local
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: s3-api
                port:
                  number: 80

================================================

FILE: api/k8s/api.service.yml

================================================

apiVersion: v1
kind: Service
metadata:
  name: s3-api
  namespace: s3-api
spec:
  ports:
    - port: 80
      targetPort: 80
  selector:
    app: s3-api

================================================

FILE: api/k8s/api.ssl.ingress.yml

================================================

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  namespace: s3-api
  name: s3-ingress
  annotations:
    cert-manager.io/cluster-issuer: "letsencrypt-prod"
    kubernetes.io/ingress.class: "traefik"
spec:
  tls:
    - hosts:
        - s3-api.anthonybudd.io
      secretName: s3-api-anthonybudd-io-cert
  rules:
    - host: s3-api.anthonybudd.io
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: s3-api
                port:
                  number: 80

================================================

FILE: api/k8s/db.yml

================================================

apiVersion: v1

kind: Service

metadata:

name: s3-db

namespace: s3-api

spec:

selector:

app: s3-db

ports:

- protocol: TCP

port: 3306

targetPort: 3306

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: s3-db

namespace: s3-api

spec:

selector:

matchLabels:

app: s3-db

strategy:

type: Recreate

template:

metadata:

labels:

app: s3-db

spec:

containers:

- image: mysql:8

name: s3-db

env:

- name: MYSQL_ROOT_PASSWORD

value: password

- name: MYSQL_DATABASE

value: app

- name: MYSQL_USER

value: app

- name: MYSQL_PASSWORD

value: password

ports:

- containerPort: 3306

name: s3-db

# volumeMounts:

# - name: mysql-persistent-storage

# mountPath: /var/lib/mysql

# volumes:

# - name: mysql-persistent-storage

# persistentVolumeClaim:

# claimName: mysql-pv-claim

================================================

FILE: api/k8s/prod.clusterissuer.yml

================================================

apiVersion: cert-manager.io/v1

kind: ClusterIssuer

metadata:

name: letsencrypt-prod

namespace: cert-manager

spec:

acme:

email: YOUR_EMAIL_ADDRESS

server: https://acme-v02.api.letsencrypt.org/directory

privateKeySecretRef:

name: letsencrypt-prod

solvers:

- http01:

ingress:

class: traefik

================================================

FILE: api/k8s/secrets.example.yml

================================================

apiVersion: v1

kind: Secret

metadata:

name: s3-api-secrets

namespace: s3-api

type: Opaque

data:

# Values must be base64-encoded.

DB_PASSWORD:

HCAPTCHA_SECRET:

================================================

FILE: api/k8s/sync.job.yml

================================================

apiVersion: batch/v1

kind: CronJob

metadata:

name: sync

namespace: s3-api

spec:

schedule: "* * * * *"

jobTemplate:

spec:

template:

spec:

restartPolicy: OnFailure

volumes:

- name: storage-k8s-config

secret:

secretName: storage-k8s-config

containers:

- name: s3-api

image: gitlab.local:5050/anthonybudd/api:main

imagePullPolicy: Always

command:

- /bin/bash

- -c

- "node /app/src/scripts/sync.js"

volumeMounts:

- name: storage-k8s-config

mountPath: "/app/storage-config"

subPath: storage-config

env:

- name: S3_ROOT

value: "s3.anthonybudd.io"

- name: K8S_CONFIG_PATH

value: "/app/storage-config"

- name: NODE_ENV

value: "production"

- name: FRONTEND_URL

value: "https://s3.anthonybudd.io"

- name: BACKEND_URL

value: "https://s3-api.anthonybudd.io/api/v1"

- name: PORT

value: "80"

- name: DB_HOST

value: "s3-db"

- name: DB_PORT

value: "3306"

- name: DB_USERNAME

value: "app"

- name: DB_DATABASE

value: "app"

- name: DB_PASSWORD

valueFrom:

secretKeyRef:

name: s3-api-secrets

key: DB_PASSWORD

- name: HCAPTCHA_SECRET

valueFrom:

secretKeyRef:

name: s3-api-secrets

key: HCAPTCHA_SECRET

================================================

FILE: api/package.json

================================================

{

"name": "s3-api-boilerplate",

"version": "1.0.0",

"main": "./src/index.js",

"author": "Anthony Budd",

"scripts": {

"start": "node ./src/",

"lint": "eslint src",

"_lint": "docker exec -ti s3-api npm run lint",

"jwt": "node ./src/scripts/jwt.js",

"_jwt": "docker exec -ti s3-api npm run jwt",

"env": "./src/scripts/env",

"db:migrate": "sequelize db:migrate",

"db:seed": "./src/scripts/seed",

"db:refresh": "./src/scripts/refresh",

"_db:refresh": "docker exec -ti s3-api npm run db:refresh",

"db:refresh-test": "node_modules/.bin/sequelize db:migrate:undo:all --env test && node_modules/.bin/sequelize db:migrate --env test && node_modules/.bin/sequelize db:seed:all --env test",

"test": "npm run db:refresh-test && mocha --exit --timeout 10000 tests",

"_test": "docker exec -ti s3-api npm run test"

},

"eslintConfig": {

"extends": "eslint:recommended",

"parserOptions": {

"ecmaVersion": 8,

"sourceType": "module"

},

"env": {

"node": true,

"es6": true

},

"rules": {

"no-console": 0,

"no-unused-vars": 1

}

},

"dependencies": {

"axios": "^0.24.0",

"bcrypt-nodejs": "0.0.3",

"cors": "^2.4.1",

"dotenv": "^10.0.0",

"express": "^4.8.5",

"express-fileupload": "^1.4.0",

"express-jwt": "^6.1.0",

"express-validator": "^6.13.0",

"faker": "^4.1.0",

"i": "^0.3.6",

"install": "^0.12.1",

"jsonwebtoken": "^5.7.0",

"jwt-decode": "^2.2.0",

"lodash": "^4.17.21",

"minimist": "^1.2.6",

"moment": "^2.30.1",

"morgan": "^1.9.1",

"mustache": "^3.2.1",

"mysql2": "^2.2.5",

"npm": "^7.20.6",

"passport": "^0.4.0",

"passport-jwt": "^4.0.0",

"passport-local": "^1.0.0",

"sequelize": "^6.11.0",

"sequelize-cli": "^6.3.0",

"sha256": "^0.2.0",

"uuid": "^3.4.0"

},

"devDependencies": {

"chai": "^3.2.0",

"chai-http": "^4.3.0",

"eslint": "^5.8.0",

"mocha": "^9.1.3",

"nyc": "^14.1.1",

"prettier": "^1.18.2"

}

}

================================================

FILE: api/postman.json

================================================

{

"info": {

"_postman_id": "994858ef-e55e-425c-9aac-1cf12496a933",

"name": "s3-api-Boilerplate",

"schema": "https://schema.getpostman.com/json/collection/v2.1.0/collection.json"

},

"item": [

{

"name": "Auth",

"item": [

{

"name": "/auth",

"event": [

{

"listen": "prerequest",

"script": {

"exec": [

""

],

"type": "text/javascript"

}

}

],

"protocolProfileBehavior": {

"disableBodyPruning": true

},

"request": {

"method": "GET",

"header": [

{

"key": "Authorization",

"value": "Bearer {{accessToken}}",

"type": "text"

}

],

"body": {

"mode": "urlencoded",

"urlencoded": []

},

"url": {

"raw": "{{hostname}}/_authcheck",

"host": [

"{{hostname}}"

],

"path": [

"_authcheck"

]

},

"description": "The body must have `username` and `password`. It returns `id_token` and `access_token` are signed with the secret located at the `config.json` file. The `id_token` will contain the `username` and the `extra` information sent, while the `access_token` will contain the `audience`, `jti`, `issuer` and `scope`."

},

"response": []

},

{

"name": "/auth/login",

"event": [

{

"listen": "test",

"script": {

"exec": [

"var jsonData = pm.response.json()",

"pm.collectionVariables.set(\"accessToken\", jsonData.data.accessToken);",

""

],

"type": "text/javascript"

}

},

{

"listen": "prerequest",

"script": {

"exec": [

""

],

"type": "text/javascript"

}

}

],

"request": {

"method": "POST",

"header": [

{

"key": "Content-Type",

"value": "application/x-www-form-urlencoded"

}

],

"body": {

"mode": "urlencoded",

"urlencoded": [

{

"key": "email",

"value": "user@example.com",

"type": "text"

},

{

"key": "password",

"value": "password",

"type": "text"

}

]

},

"url": {

"raw": "{{hostname}}/auth/login",

"host": [

"{{hostname}}"

],

"path": [

"auth",

"login"

]

},

"description": "The body must have `username` and `password`. It returns `id_token` and `access_token` are signed with the secret located at the `config.json` file. The `id_token` will contain the `username` and the `extra` information sent, while the `access_token` will contain the `audience`, `jti`, `issuer` and `scope`."

},

"response": []

},

{

"name": "/auth/sign-up",

"event": [

{

"listen": "test",

"script": {

"exec": [

"var jsonData = pm.response.json()",

"pm.collectionVariables.set(\"accessToken\", jsonData.data.accessToken);",

""

],

"type": "text/javascript"

}

}

],

"request": {

"method": "POST",

"header": [

{

"warning": "This is a duplicate header and will be overridden by the Content-Type header generated by Postman.",

"key": "Content-Type",

"value": "application/json"

}

],

"body": {

"mode": "urlencoded",

"urlencoded": [

{

"key": "email",

"value": "anthonybudd@example.com",

"type": "text"

},

{

"key": "password",

"value": "password",

"type": "text"

},

{

"key": "firstName",

"value": "Anthony",

"type": "text"

},

{

"key": "lastName",

"value": "Budd",

"type": "text"

},

{

"key": "groupName",

"value": "GitHub",

"type": "text"

},

{

"key": "tos",

"value": "2021-21-19",

"type": "text"

}

]

},

"url": {

"raw": "{{hostname}}/auth/sign-up",

"host": [

"{{hostname}}"

],

"path": [

"auth",

"sign-up"

]

},

"description": "The body must have `username` and `password`. It returns `id_token` and `access_token` are signed with the secret located at the `config.json` file. The `id_token` will contain the `username` and the `extra` information sent, while the `access_token` will contain the `audience`, `jti`, `issuer` and `scope`."

},

"response": []

}

]

}

],

"event": [

{

"listen": "prerequest",

"script": {

"type": "text/javascript",

"exec": [

""

]

}

},

{

"listen": "test",

"script": {

"type": "text/javascript",

"exec": [

""

]

}

}

],

"variable": [

{

"key": "hostname",

"value": "http://localhost:8888/api/v1"

},

{

"key": "accessToken",

"value": ""

}

]

}

================================================

FILE: api/requests.http

================================================

# Install VS Code extension rest-client

# URL: https://marketplace.visualstudio.com/items?itemName=humao.rest-client

@host=http://localhost:8888/api/v1

# @host=http://api.local/api/v1

@AccessToken=eyJ0eXAiOiJKV1QiLCJhbGciOiJSUzUxMiJ9.eyJpZCI6ImM0NjQ0NzMzLWRlZWEtNDdkOC1iMzVhLTg2ZjMwZmY5NjE4ZSIsImVtYWlsIjoidXNlckBleGFtcGxlLmNvbSIsImZpcnN0TmFtZSI6IlVzZXIiLCJsYXN0TmFtZSI6Ik9uZSIsImlhdCI6MTcxNTIxMzk0MiwiZXhwIjozNDMwNTE0MjgzfQ.khGH3zHxztsWmpwL9bWpwGr_VXcPFxGTCtgoCYJq9tz0H638kWKH_k_zLgjCQ1rD6N0fWh31pTE4l53RgUGz2iL8lAoYmq0ScwSgMmiWMKm6d1vxaN3UK0CivvZPku2Pn4MQ6p12xrfRxTUVCzxI_xP9hHEhG1VUbCA07JJnl-OJFQCwYVQWCmdK5daFe8wybddYLUCG0oAGpy7Kaf0_CBbJAeIccVCKI7fILgBxowVTwl7nqruzr3-k0biXuitkegNfHPyPwbs4AvIIYxdyLXZiT-Zz0JUazphQZncw4WBqB_PX4Eyoflf8xzQNRtgvdV3ANc6ZKeMG05jAp1IV3A

###########################################

# Auth

POST {{host}}/auth/login

content-type: application/json

{

"email": "user@example.com",

"password": "Password@1234"

}

### Check auth

GET {{host}}/_authcheck

Authorization: Bearer {{AccessToken}}

###########################################

# Buckets

GET {{host}}/buckets

Authorization: Bearer {{AccessToken}}

### Create Bucket

POST {{host}}/buckets

Authorization: Bearer {{AccessToken}}

content-type: application/json

{

"namespace": "x--xxctest",

"name": "x-0testx"

}

### Delete Bucket

DELETE {{host}}/buckets/fae8a1fb-bc90-4565-b567-1fe6846544de

Authorization: Bearer {{AccessToken}}
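
The `@AccessToken` value used in these requests is a JWT, so its payload segment can be inspected locally when a request unexpectedly returns 401. The following is a minimal sketch and not part of the repository: `decodeJwtPayload` is a hypothetical helper, and it only decodes the token without verifying its signature.

```javascript
// Hypothetical helper: decodes (does NOT verify) the payload segment of a JWT.
function decodeJwtPayload(token) {
    const parts = token.split('.');
    if (parts.length !== 3) throw new Error('Expected header.payload.signature');
    // Node's 'base64' decoder also accepts the URL-safe alphabet used by JWTs.
    return JSON.parse(Buffer.from(parts[1], 'base64').toString('utf8'));
}

// Usage: paste the token from the @AccessToken line and check fields such as `exp`.
// const claims = decodeJwtPayload(process.env.ACCESS_TOKEN);
// console.log(new Date(claims.exp * 1000));
```

Because the signature is not checked, this is only suitable for debugging and never for authorising a request.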

================================================

FILE: api/src/database/migrations/20180726090304-create-Users.js

================================================

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.createTable('Users', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

email: {

type: Sequelize.STRING,

allowNull: false,

unique: true

},

password: Sequelize.STRING,

firstName: Sequelize.STRING,

lastName: Sequelize.STRING,

bio: Sequelize.TEXT,

tos: Sequelize.STRING,

inviteKey: Sequelize.STRING,

passwordResetKey: Sequelize.STRING,

emailVerificationKey: Sequelize.STRING,

emailVerified: {

type: Sequelize.BOOLEAN,

defaultValue: false,

allowNull: false,

},

lastLoginAt: {

type: Sequelize.DATE,

allowNull: true,

},

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

updatedAt: {

type: Sequelize.DATE,

allowNull: true,

},

}),

down: (queryInterface, Sequelize) => queryInterface.dropTable('Users'),

};

================================================

FILE: api/src/database/migrations/20180726090404-create-Groups.js

================================================

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.createTable('Groups', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

name: Sequelize.STRING,

ownerID: Sequelize.UUID,

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

updatedAt: {

type: Sequelize.DATE,

allowNull: true,

},

deletedAt: {

type: Sequelize.DATE,

allowNull: true,

},

}),

down: (queryInterface, Sequelize) => queryInterface.dropTable('Groups')

};

================================================

FILE: api/src/database/migrations/20180726090405-create-GroupsUsers.js

================================================

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.createTable('GroupsUsers', {

id: { // Not used. Required by the MySQL system variable sql_require_primary_key

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

groupID: {

type: Sequelize.UUID,

},

userID: {

type: Sequelize.UUID,

},

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

}).then(() => queryInterface.addConstraint('GroupsUsers', {

fields: ['groupID', 'userID'],

type: 'unique',

name: 'groupID_userID_index'

})),

down: (queryInterface, Sequelize) => queryInterface.dropTable('GroupsUsers'),

};

================================================

FILE: api/src/database/migrations/20240411041313-create-Buckets.js

================================================

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.createTable('Buckets', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

updatedAt: {

type: Sequelize.DATE,

allowNull: true,

},

deletedAt: {

type: Sequelize.DATE,

allowNull: true,

},

userID: {

type: Sequelize.UUID,

allowNull: true,

},

namespace: {

type: Sequelize.STRING,

allowNull: false,

},

name: {

type: Sequelize.STRING,

allowNull: false,

},

status: {

type: Sequelize.STRING,

allowNull: false,

},

bucketCreated: {

type: Sequelize.BOOLEAN,

allowNull: false,

defaultValue: false,

},

endpoint: {

type: Sequelize.STRING,

allowNull: false,

},

stdout: {

type: Sequelize.TEXT,

allowNull: true,

},

stderr: {

type: Sequelize.TEXT,

allowNull: true,

},

}),

down: (queryInterface, Sequelize) => queryInterface.dropTable('Buckets'),

};

================================================

FILE: api/src/database/migrations/20240430101608-create-Blacklist.js

================================================

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.createTable('Blacklist', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

value: {

type: Sequelize.STRING,

allowNull: false,

},

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

updatedAt: {

type: Sequelize.DATE,

allowNull: true,

},

}),

down: (queryInterface, Sequelize) => queryInterface.dropTable('Blacklist'),

};

================================================

FILE: api/src/database/seeders/20180726092449-Users.js

================================================

const bcrypt = require('bcrypt-nodejs');

const moment = require('moment');

const faker = require('faker');

const insert = [{

id: 'c4644733-deea-47d8-b35a-86f30ff9618e',

email: 'user@example.com',

password: bcrypt.hashSync('Password@1234', bcrypt.genSaltSync(10)),

firstName: 'User',

lastName: 'One',

tos: 'tos-version-2023-07-13',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

updatedAt: moment().format('YYYY-MM-DD HH:mm:ss'),

}, {

id: 'd700932c-4a11-427f-9183-d6c4b69368f9',

email: 'other.user@foobar.com',

password: bcrypt.hashSync('Password@1234', bcrypt.genSaltSync(10)),

firstName: faker.name.firstName(),

lastName: faker.name.lastName(),

tos: 'tos-version-2023-07-13',

inviteKey: '86f30ff9618e',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

updatedAt: moment().format('YYYY-MM-DD HH:mm:ss'),

}];

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.bulkInsert('Users', insert).catch(err => console.log(err)),

down: (queryInterface, Sequelize) => { }

};

================================================

FILE: api/src/database/seeders/20180726093449-Group.js

================================================

const moment = require('moment');

const insert = [{

id: 'fdab7a99-2c38-444b-bcb3-f7cef61c275b',

ownerID: 'c4644733-deea-47d8-b35a-86f30ff9618e',

name: 'Group A',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

updatedAt: moment().format('YYYY-MM-DD HH:mm:ss'),

}, {

id: 'be1fcb4e-caf9-41c2-ac27-c06fa24da36a',

ownerID: 'd700932c-4a11-427f-9183-d6c4b69368f9',

name: 'Group B',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

updatedAt: moment().format('YYYY-MM-DD HH:mm:ss'),

}];

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.bulkInsert('Groups', insert).catch(err => console.log(err)),

down: (queryInterface, Sequelize) => { }

};

================================================

FILE: api/src/database/seeders/20180726093449-GroupsUsers.js

================================================

const moment = require('moment');

const insert = [

{

id: '1872dcde-b79d-4f28-a36b-a22af519ac23',

userID: 'c4644733-deea-47d8-b35a-86f30ff9618e',

groupID: 'fdab7a99-2c38-444b-bcb3-f7cef61c275b',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

},

{

id: 'f4444505-cec7-4f91-948f-cdf3d4471c9e',

userID: 'c4644733-deea-47d8-b35a-86f30ff9618e',

groupID: 'be1fcb4e-caf9-41c2-ac27-c06fa24da36a',

createdAt: moment().add(1, 'minute').format('YYYY-MM-DD HH:mm:ss'),

},

{

id: 'ed748a2d-453b-4bc8-b80d-bf1056e2b920',

userID: 'd700932c-4a11-427f-9183-d6c4b69368f9',

groupID: 'be1fcb4e-caf9-41c2-ac27-c06fa24da36a',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

}

];

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.bulkInsert('GroupsUsers', insert).catch(err => console.log(err)),

down: (queryInterface, Sequelize) => { }

};

================================================

FILE: api/src/database/seeders/20240411041313-Buckets.js

================================================

const moment = require('moment');

const insert = [{

id: 'fae8a1fb-bc90-4565-b567-1fe6846544de',

createdAt: moment().format('YYYY-MM-DD HH:mm:ss'),

updatedAt: moment().format('YYYY-MM-DD HH:mm:ss'),

userID: 'c4644733-deea-47d8-b35a-86f30ff9618e',

namespace: 'test-bucket',

name: 'test-bucket',

status: 'Provisioned',

bucketCreated: 1,

endpoint: `test-bucket.${process.env.S3_ROOT}`,

}];

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.bulkInsert('Buckets', insert).catch(err => console.log(err)),

down: (queryInterface, Sequelize) => { }

};

================================================

FILE: api/src/database/seeders/20240430101608-Blacklist.js

================================================

const { v4: uuidv4 } = require('uuid');

const blacklist = [

'about',

'aboutu',

'abuse',

'acme',

'ad',

'admanager',

'admin',

'admindashboard',

'administrator',

'ads',

'adsense',

'adult',

'adword',

'affiliate',

'affiliatepage',

'afp',

'alpha',

'anal',

'analytic',

'android',

'answer',

'anu',

'anus',

'ap',

'api',

'app',

'appengine',

'application',

'appnew',

'arse',

'asdf',

'a',

'as',

'ass',

'asset',

'asshole',

'atf',

'backup',

'ball',

'balls',

'ballsack',

'bank',

'base',

'bastard',

'beginner',

'beta',

'biatch',

'billing',

'binarie',

'binary',

'bitch',

'biz',

'blackberry',

'blog',

'blogsearch',

'bloody',

'blowjob',

'blowjobs',

'bollock',

'boner',

'boob',

'boobs',

'book',

'bugger',

'bum',

'butt',

'buttplug',

'buy',

'buzz',

'c',

'cache',

'calendar',

'cart',

'catalog',

'ceo',

'chart',

'chat',

'checkout',

'ci',

'cia',

'client',

'clitori',

'clitoris',

'cname',

'cnarne',

'cock',

'code',

'community',

'confirm',

'confirmation',

'contact',

'contact-u',

'contactu',

'content',

'controlpanel',

'coon',

'core',

'corp',

'countrie',

'country',

'cp',

'cpanel',

'crap',

'cs',

'cunt',

'cv',

'damn',

'dashboard',

'data',

'demo',

'deploy',

'deployment',

'desktop',

'dev',

'devel',

'developement',

'developer',

'development',

'dick',

'dike',

'dildo',

'dir',

'directory',

'discussion',

'dl',

'doc',

'document',

'donate',

'download',

'dyke',

'e',

'earth',

'email',

'enable',

'encrypted',

'engine',

'error',

'errorlog',

'fag',

'faggot',

'fbi',

'feature',

'feck',

'feed',

'feedburner',

'feedproxy',

'felching',

'fellate',

'fellatio',

'file',

'finance',

'flange',

'folder',

'forgotpassword',

'forum',

'friend',

'ftp',

'fuck',

'fudgepacker',

'fun',

'fusion',

'gadget',

'gear',

'geographic',

'gettingstarted',

'git',

'gitlab',

'gmail',

'go',

'goddamn',

'goto',

'gov',

'graph',

'group',

'hell',

'help',

'home',

'homo',

'html',

'htrnl',

'http',

'i',

'image',

'img',

'investor',

'invoice',

'io',

'ios',

'ipad',

'iphone',

'irnage',

'irng',

'item',

'j',

'jenkin',

'jerk',

'jira',

'jizz',

'job',

'join',

'js',

'knobend',

'lab',

'labia',

'legal',

'lesbo',

'list',

'lmao',

'lmfao',

'local',

'locale',

'location',

'log',

'login',

'logout',

'm',

'mail',

'manage',

'manager',

'map',

'marketing',

'me',

'media',

'message',

'misc',

'mm',

'mms',

'mobile',

'model',

'money',

'movie',

'muff',

'my',

'mystore',

'n',

'net',

'network',

'new',

'newsite',

'nigga',

'nigger',

'npm',

'ns',

'omg',

'online',

'order',

'org',

'other',

'p0rn',

'pack',

'packagist',

'page',

'partner',

'partnerpage',

'password',

'payment',

'peni',

'penis',

'people',

'person',

'pi',

'pis',

'piss',

'place',

'podcast',

'policy',

'poop',

'pop',

'pop3',

'popular',

'porn',

'pr0n',

'pricing',

'prick',

'print',

'privacy',

'private',

'prod',

'product',

'production',

'profile',

'promo',

'promotion',

'proxie',

'proxies',

'proxy',

'pube',

'public',

'purchase',

'pussy',

'queer',

'querie',

'queries',

'query',

'r',

'radio',

'random',

'reader',

'recover',

'redirect',

'register',

'registration',

'release',

'report',

'research',

'resolve',

'resolver',

'rnail',

'rnicrosoft',

'root',

'rs',

'rss',

'sale',

'sandbox',

'scholar',

'scrotum',

'search',

'secure',

'seminar',

'server',

'service',

'sex',

'sftp',

'sh1t',

'shit',

'shop',

'shopping',

'shortcut',

'signin',

'signup',

'site',

'sitemap',

'sitenew',

'sketchup',

'sky',

'slash',

'slashinvoice',

'slut',

'sm',

'smegma',

'sms',

'smtp',

'soap',

'software',

'sorry',

'spreadsheet',

'spunk',

'srntp',

'ssh',

'ssl',

'stage',

'staging',

'stat',

'static',

'statistic',

'statu',

'store',

'suggest',

'suggestquerie',

'suggestquery',

'support',

'survey',

'surveytool',

'svn',

'sync',

'sysadmin',

'talk',

'talkgadget',

'test',

'tester',

'testing',

'text',

'tit',

'tits',

'tool',

'toolbar',

'tosser',

'trac',

'translate',

'translation',

'translator',

'trend',

'turd',

'twat',

'txt',

'ul',

'upload',

'vagina',

'validation',

'vid',

'video',

'video-stat',

'voice',

'w',

'wank',

'wave',

'webdisk',

'webmail',

'webmaster',

'webrnail',

'whm',

'whoi',

'whore',

'wifi',

'wiki',

'wtf',

'ww',

'www',

'wwww',

'xhtml',

'xhtrnl',

'xml',

'xxx',

];

module.exports = {

up: (queryInterface, Sequelize) => queryInterface.bulkInsert('Blacklist', blacklist.map((value) => ({

id: uuidv4(),

value,

}))).catch(err => console.log(err)),

down: (queryInterface, Sequelize) => { }

};
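
The seeded `Blacklist` values read like reserved names that should not be accepted as bucket names or namespaces. How the API performs that check is not shown in this section, so the following is only a sketch under that assumption: `isReserved` is a hypothetical helper, and exact matching is assumed because the list contains single letters such as `a` and `c` that would reject almost every name under substring matching.

```javascript
// Hypothetical helper, not part of the repository: returns true when `name`
// equals one of the reserved values after trimming and lower-casing.
function isReserved(name, reservedValues) {
    const reserved = new Set(reservedValues.map((v) => v.toLowerCase()));
    return reserved.has(String(name).trim().toLowerCase());
}

const sample = ['admin', 'api', 'www']; // three values taken from the seeded list
console.log(isReserved('Admin', sample));     // true
console.log(isReserved('my-bucket', sample)); // false
```

In the API the `reservedValues` array would presumably come from the `Blacklist` table rather than from a literal.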

================================================

FILE: api/src/index.js

================================================

require('dotenv').config();

require('./providers/passport');

const fileUpload = require('express-fileupload');

const express = require('express');

const morgan = require('morgan');

const cors = require('cors');

console.log('*************************************');

console.log('* Express API Boilerplate');

console.log('*');

console.log('* ENV');

console.log(`* NODE_ENV: ${process.env.NODE_ENV}`);

console.log(`* TEMP_FILE_DIR: ${process.env.TEMP_FILE_DIR}`);

if (!process.env.H_CAPTCHA_SECRET) console.log(`* H_CAPTCHA_SECRET: null ⚠️ Login/Sign-up requests will not require captcha validation!`);

console.log('*');

console.log('*');

////////////////////////////////////////////////

// Express

const app = express();

app.disable('x-powered-by');

app.use(cors({

origin: '*',

credentials: true,

allowedHeaders: ['Content-Type', 'Authorization']

}));

app.use(express.json());

app.use(express.urlencoded({ extended: true }));

app.use(fileUpload({

limits: { fileSize: 50 * 1024 * 1024 },

tempFileDir: process.env.TEMP_FILE_DIR,

useTempFiles: true,

parseNested: true,

}));

app.get('/_readiness', (req, res) => res.send('healthy'));

app.get('/api/v1/_healthcheck', (req, res) => res.json({ status: 'ok' }));

if (typeof global.it !== 'function') app.use(morgan('[:date[iso]] HTTP/:http-version :status :method :url :response-time ms'));

////////////////////////////////////////////////

// HTTP

app.use('/api/v1/', require('./routes/auth'));

app.use('/api/v1/', require('./routes/user'));

app.use('/api/v1/', require('./routes/groups'));

app.use('/api/v1/', require('./routes/Buckets')); // AB: gen

////////////////////////////////////////////////

// Listen

let port = process.env.PORT || 80;

if (typeof global.it === 'function') port = 7777;

app.listen(port, () => console.log(`* Listening: http://127.0.0.1:${port}`));

module.exports = app;

================================================

FILE: api/src/models/Blacklist.js

================================================

const Sequelize = require('sequelize');

const db = require('./../providers/db');

const Blacklist = db.define('Blacklist', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

value: {

type: Sequelize.STRING,

allowNull: false,

},

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

updatedAt: {

type: Sequelize.DATE,

allowNull: true,

},

}, {

tableName: 'Blacklist',

defaultScope: {

attributes: {

exclude: [

]

}

},

});

module.exports = Blacklist;

================================================

FILE: api/src/models/Bucket.js

================================================

const { exec } = require('child_process');

const db = require('./../providers/db');

const Sequelize = require('sequelize');

const tmp = require('tmp');

const fs = require('fs');

const Bucket = db.define('Bucket', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

createdAt: {

type: Sequelize.DATE,

allowNull: true,

},

updatedAt: {

type: Sequelize.DATE,

allowNull: true,

},

deletedAt: {

type: Sequelize.DATE,

allowNull: true,

},

userID: {

type: Sequelize.UUID,

allowNull: true,

},

namespace: {

type: Sequelize.STRING,

allowNull: false,

},

name: {

type: Sequelize.STRING,

allowNull: false,

},

status: {

type: Sequelize.STRING,

allowNull: false,

},

bucketCreated: {

type: Sequelize.BOOLEAN,

allowNull: false,

defaultValue: false,

},

endpoint: {

type: Sequelize.STRING,

allowNull: false,

},

stdout: {

type: Sequelize.TEXT,

allowNull: true,

},

stderr: {

type: Sequelize.TEXT,

allowNull: true,

},

}, {

tableName: 'Buckets',

paranoid: true,

defaultScope: {

attributes: {

exclude: []

}

},

});

Bucket.prototype.createK3sAssets = async function () {

const generateAccessKeyID = () => {

const charSet = 'ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz23456789';

const length = 20;

let randomString = '';

for (let i = 0; i < length; i++) {

const randomIndex = Math.floor(Math.random() * charSet.length);

randomString += charSet.charAt(randomIndex);

}

return randomString;

};

const generateSecretAccessKey = () => {

const length = 40;

const charset = 'abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789+/';

let randomString = '';

for (let i = 0; i < length; i++) {

const randomIndex = Math.floor(Math.random() * charset.length);

randomString += charset.charAt(randomIndex);

}

return randomString;

};

const accessKeyID = generateAccessKeyID();

const secretAccessKey = generateSecretAccessKey();

tmp.file((err, path) => {

if (err) throw err;

fs.readFile('/app/src/providers/bucket.yml', 'utf8', (err, data) => {

if (err) throw err;

const result = data.replace(/NAMESPACE_HERE/g, this.namespace)

.replace(/BUCKETNAME_HERE/g, this.name)

.replace(/ROOTUSER/g, accessKeyID)

.replace(/ROOTPASSWORD/g, secretAccessKey);

fs.writeFile(path, result, 'utf8', (err) => {

if (err) throw err;

exec(`kubectl --kubeconfig=${process.env.K8S_CONFIG_PATH} apply -f ${path}`, (err, stdout, stderr) => {

if (err) console.error(err);

console.log(`stdout: ${stdout}`);

console.log(`stderr: ${stderr}`);

let status = 'Provisioning';

if (stderr) status = 'Error';

this.update({

status,

stdout,

stderr,

});

});

});

});

});

return {

accessKeyID,

secretAccessKey

};

};

Bucket.prototype.createBucket = async function () {

const command = `kubectl --kubeconfig=${process.env.K8S_CONFIG_PATH} -n ${this.namespace} exec minio-pod -- ./s3-create-bucket-script/create-bucket.sh`;

console.log(command);

exec(command, (err, stdout, stderr) => {

if (err) console.error(err);

console.log(`stdout: ${stdout}`);

console.log(`stderr: ${stderr}`);

if (stderr) {

this.update({

status: 'Error',

stderr: `2: ${stderr}`, // the model defines `stderr`; there is no `createStderr` column

});

} else {

this.update({ bucketCreated: true });

}

});

};

Bucket.prototype.sync = async function () {

if (this.status !== 'Error') {

exec(`kubectl --kubeconfig=${process.env.K8S_CONFIG_PATH} -n ${this.namespace} get pod minio-pod --no-headers -o custom-columns=":status.phase"`, (err, stdout, stderr) => {

if (err) console.error(err);

console.log(`stdout: ${stdout}`);

console.log(`stderr: ${stderr}`);

switch (stdout.trim()) {

case 'Running':

if (this.status !== 'Provisioned') this.update({ status: 'Provisioned' });

if (!this.bucketCreated) this.createBucket();

break;

}

});

}

};

Bucket.prototype.deleteK3sAssets = async function () {

exec(`kubectl --kubeconfig=${process.env.K8S_CONFIG_PATH} -n ${this.namespace} delete pod/minio-pod service/minio-svc ingress/minio-ing persistentvolumeclaim/minio-pvc namespace/${this.namespace}`, (err, stdout, stderr) => {

if (err) console.error(err);

if (stderr) console.log(`stderr: ${stderr}`);

console.log(`stdout: ${stdout}`);

this.update({

stdout,

stderr,

});

});

};

module.exports = Bucket;
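
`createK3sAssets` builds the MinIO root credentials with `Math.random()`, which is not a cryptographically secure source. A minimal sketch of the same two generators on top of Node's built-in `crypto.randomInt` could look like the block below. `randomString` is a hypothetical helper name; the character sets and lengths are copied from the model above.

```javascript
const crypto = require('crypto');

// Character sets copied from Bucket.prototype.createK3sAssets.
const ACCESS_KEY_CHARSET = 'ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz23456789';
const SECRET_KEY_CHARSET = 'abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789+/';

// Hypothetical helper: builds a string of `length` characters drawn uniformly
// from `charset` using crypto.randomInt (CSPRNG) instead of Math.random.
function randomString(length, charset) {
    let out = '';
    for (let i = 0; i < length; i++) {
        out += charset.charAt(crypto.randomInt(charset.length));
    }
    return out;
}

const generateAccessKeyID = () => randomString(20, ACCESS_KEY_CHARSET);
const generateSecretAccessKey = () => randomString(40, SECRET_KEY_CHARSET);
```

These two functions could replace the inline generators without changing the shape of the returned `{ accessKeyID, secretAccessKey }` object.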

================================================

FILE: api/src/models/Group.js

================================================

const Sequelize = require('sequelize');

const db = require('./../providers/db');

module.exports = db.define('Group', {

id: {

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

name: Sequelize.STRING,

ownerID: Sequelize.UUID,

deletedAt: {

type: Sequelize.DATE,

allowNull: true,

},

}, {

tableName: 'Groups',

paranoid: true,

});

================================================

FILE: api/src/models/GroupsUsers.js

================================================

const Sequelize = require('sequelize');

const db = require('./../providers/db');

module.exports = db.define('GroupsUsers', {

id: { // Not used. Required by the MySQL system variable sql_require_primary_key

type: Sequelize.UUID,

defaultValue: Sequelize.UUIDV4,

primaryKey: true,

allowNull: false,

unique: true

},

userID: Sequelize.UUID,

groupID: Sequelize.UUID,

}, {

tableName: 'GroupsUsers',

updatedAt: false,

});

================================================

FILE: api/src/models/User.js

================================================

const Sequelize = require('sequelize');
const db = require('./../providers/db');

module.exports = db.define('User', {
  id: {
    type: Sequelize.UUID,
    defaultValue: Sequelize.UUIDV4,
    primaryKey: true,
    allowNull: false,
    unique: true
  },
  email: {
    type: Sequelize.STRING,
    allowNull: false,
    unique: true
  },
  password: Sequelize.STRING,
  firstName: Sequelize.STRING,
  lastName: Sequelize.STRING,
  bio: Sequelize.TEXT,
  tos: Sequelize.STRING,
  inviteKey: Sequelize.STRING,
  passwordResetKey: Sequelize.STRING,
  emailVerificationKey: Sequelize.STRING,
  emailVerified: {
    type: Sequelize.BOOLEAN,
    defaultValue: false,
    allowNull: false,
  },
  lastLoginAt: {
    type: Sequelize.DATE,
    allowNull: true,
  },
}, {
  tableName: 'Users',
  // Omit sensitive columns from query results unless a caller opts out with User.unscoped().
  defaultScope: {
    attributes: {
      exclude: [
        'password',
        'passwordResetKey',
      ]
    }
  },
});

================================================

FILE: api/src/models/index.js

================================================

const User = require('./User');
const Group = require('./Group');
const GroupsUsers = require('./GroupsUsers');
const Bucket = require('./Bucket');
const Blacklist = require('./Blacklist');

User.belongsToMany(Group, {
  through: GroupsUsers,
  foreignKey: 'userID',
  otherKey: 'groupID',
});

Group.belongsToMany(User, {
  through: GroupsUsers,
  foreignKey: 'groupID',
  otherKey: 'userID',
});

module.exports = {
  User,
  Group,
  GroupsUsers,
  Bucket,
  Blacklist,
};
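
The two `belongsToMany` calls above link users and groups through rows in `GroupsUsers`. The following is a rough, database-free illustration of what that join resolves to. The function `groupsForUser` and the sample rows are hypothetical, and the soft-delete filter is an assumption about how the `paranoid` option on `Group` affects results.

```javascript
// Hypothetical helper: resolves a user's groups from in-memory rows shaped like
// the GroupsUsers and Groups tables.
function groupsForUser(userID, groupsUsersRows, groupRows) {
  // Collect the groupIDs linked to this user in the join table.
  const groupIDs = new Set(
    groupsUsersRows
      .filter((row) => row.userID === userID)
      .map((row) => row.groupID)
  );
  // Assumption: soft-deleted groups (deletedAt set) are hidden, mirroring `paranoid: true`.
  return groupRows.filter((group) => groupIDs.has(group.id) && group.deletedAt == null);
}

// Made-up sample data for illustration only.
const groups = [
  { id: 'g1', name: 'admins', deletedAt: null },
  { id: 'g2', name: 'archived', deletedAt: new Date(0) },
  { id: 'g3', name: 'staff', deletedAt: null },
];
const links = [
  { userID: 'u1', groupID: 'g1' },
  { userID: 'u1', groupID: 'g2' },
  { userID: 'u2', groupID: 'g3' },
];

console.log(groupsForUser('u1', links, groups).map((group) => group.name)); // [ 'admins' ]
```

With the real models, the equivalent lookup would typically go through Sequelize itself, for example `User.findByPk(id, { include: Group })`.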

================================================

FILE: api/src/providers/bucket.yml

================================================

apiVersion: v1
kind: Namespace
metadata:
  name: NAMESPACE_HERE
  labels:
    name: NAMESPACE_HERE
---
apiVersion: v1
kind: Pod
metadata:
  labels:
    app: minio-pod
  name: minio-pod
  namespace: NAMESPACE_HERE
spec:
  containers:
    - name: minio-pod
      image: quay.io/minio/minio:latest
      env:
        - name: MINIO_ROOT_USER
          value: ROOTUSER
        - name: MINIO_ROOT_PASSWORD
          value: ROOTPASSWORD
        - name: S3_NAMESPACE
          value: NAMESPACE_HERE
        - name: S3_BUCKET_NAME
          value: BUCKETNAME_HERE
      command:
        - /bin/bash
        - -c
      args:
        - minio server /data --console-address :9001
      ports:
        - name: http
          containerPort: 80
        - name: https
          containerPort: 443
        - name: console
          containerPort: 9001
        - name: api
          containerPort: 9000
      volumeMounts:
        - name: longhornvolume
          mountPath: /data
        - name: s3-create-bucket-script
          mountPath: /s3-create-bucket-script
  volumes:
    - name: s3-create-bucket-script
      configMap:
        name: s3-create-bucket-script
        defaultMode: 0777
        items:
          - key: create-bucket.sh
            path: create-bucket.sh
    - name: longhornvolume
      persistentVolumeClaim:
        claimName: minio-pvc

---

apiVersion: v1
kind: Service
metadata:
  name: minio-svc
  namespace: NAMESPACE_HERE
spec: