Repository: azat/chdig

Branch: main

Commit: 7394b22c63a3

Files: 89

Total size: 747.5 KB

Directory structure:

gitextract_a1a8yrqt/
├── .cargo/
│   ├── audit.toml
│   └── config.toml
├── .exrc
├── .github/
│   └── workflows/
│       ├── build.yml
│       ├── pre_release.yml
│       ├── pull_request.yml
│       └── release.yml
├── .gitignore
├── .pre-commit-config.yaml
├── .yamllint
├── Cargo.toml
├── Documentation/
│   ├── Actions.md
│   ├── Bugs.md
│   ├── Developers.md
│   └── FAQ.md
├── LICENSE
├── Makefile
├── README.md
├── chdig-nfpm.yaml
├── rustfmt.toml
├── src/
│   ├── actions.rs
│   ├── bin.rs
│   ├── common/
│   │   ├── mod.rs
│   │   ├── relative_date_time.rs
│   │   ├── sparkline.rs
│   │   └── stopwatch.rs
│   ├── interpreter/
│   │   ├── background_runner.rs
│   │   ├── clickhouse.rs
│   │   ├── clickhouse_quirks.rs
│   │   ├── context.rs
│   │   ├── debug_metrics.rs
│   │   ├── flamegraph.rs
│   │   ├── mod.rs
│   │   ├── options.rs
│   │   ├── perfetto.rs
│   │   ├── query.rs
│   │   └── worker.rs
│   ├── lib.rs
│   ├── main.rs
│   ├── pastila.rs
│   ├── utils.rs
│   └── view/
│       ├── log_view.rs
│       ├── mod.rs
│       ├── navigation.rs
│       ├── provider.rs
│       ├── providers/
│       │   ├── asynchronous_inserts.rs
│       │   ├── background_schedule_pool.rs
│       │   ├── background_schedule_pool_log.rs
│       │   ├── backups.rs
│       │   ├── client.rs
│       │   ├── dictionaries.rs
│       │   ├── errors.rs
│       │   ├── logger_names.rs
│       │   ├── merges.rs
│       │   ├── mod.rs
│       │   ├── mutations.rs
│       │   ├── object_storage_queue.rs
│       │   ├── part_log.rs
│       │   ├── queries.rs
│       │   ├── replicas.rs
│       │   ├── replicated_fetches.rs
│       │   ├── replication_queue.rs
│       │   ├── server_logs.rs
│       │   ├── table_parts.rs
│       │   └── tables.rs
│       ├── queries_view.rs
│       ├── query_view.rs
│       ├── registry.rs
│       ├── search_history.rs
│       ├── settings_view.rs
│       ├── sql_query_view.rs
│       ├── summary_view.rs
│       ├── table_view.rs
│       ├── text_log_view.rs
│       └── utils.rs
├── tests/
│   └── configs/
│       ├── accept_invalid_certificate.yaml
│       ├── basic.xml
│       ├── basic.yaml
│       ├── chdig_basic.yaml
│       ├── chdig_empty.yaml
│       ├── chdig_partial.yaml
│       ├── connections.yaml
│       ├── empty.xml
│       ├── empty.yaml
│       ├── tls.xml
│       ├── tls.yaml
│       ├── unknown_directives.xml
│       └── unknown_directives.yaml
└── typos.toml

================================================

FILE CONTENTS

================================================

================================================

FILE: .cargo/audit.toml

================================================

# https://docs.rs/crate/cargo-audit/0.10.0/source/audit.toml.example

[advisories]

ignore = [

# time: Potential segfault in the time crate

# chdig should not be affected by this, waiting for upstream.

"RUSTSEC-2020-0071",

# ansi_term is Unmaintained

"RUSTSEC-2021-0139",

# term_size is Unmaintained

"RUSTSEC-2020-0163",

# stdweb is unmaintained

"RUSTSEC-2020-0056",

# Waiting for upstream

# owning_ref: Multiple soundness issues in `owning_ref`

"RUSTSEC-2022-0040",

# nix: Out-of-bounds write in nix::unistd::getgrouplist

"RUSTSEC-2021-0119",

# rustc-serialize: Stack overflow in rustc_serialize when parsing deeply nested JSON

"RUSTSEC-2022-0004",

# atty: Potential unaligned read

"RUSTSEC-2021-0145",

]

================================================

FILE: .cargo/config.toml

================================================

[build]

rustflags = ["--cfg", "tokio_unstable"]

================================================

FILE: .exrc

================================================

"

" Add this into your .vimrc, to allow vim handle this file.

"

" set exrc

" set secure " even after this this is kind of dangerous

"

set tabstop=4

set softtabstop=4

set shiftwidth=4

set expandtab

let detectindent_preferred_indent=4

let g:detectindent_preferred_expandtab=1

================================================

FILE: .github/workflows/build.yml

================================================

---

name: Build chdig

on:

workflow_call:

inputs: {}

env:

CARGO_TERM_COLOR: always

jobs:

lint:

name: Run linters

runs-on: ubuntu-22.04

steps:

- uses: actions/checkout@v3

with:

persist-credentials: false

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: cargo check

run: cargo check

- name: cargo clippy

run: cargo clippy

build-linux:

name: Build Linux (x86_64)

runs-on: ubuntu-22.04

steps:

- uses: actions/checkout@v3

with:

# To fetch tags, but can this be improved using blobless checkout?

# [1]. But anyway right now it is not important, and unlikely will be,

# since the repository is small.

#

# [1]: https://github.blog/2020-12-21-get-up-to-speed-with-partial-clone-and-shallow-clone/

fetch-depth: 0

persist-credentials: false

# Workaround for https://github.com/actions/checkout/issues/882

- name: Fix tags for release

# will break on a lightweight tag

run: git fetch origin +refs/tags/*:refs/tags/*

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: Install dependencies

run: |

# nfpm

curl -sS -Lo /tmp/nfpm.deb "https://github.com/goreleaser/nfpm/releases/download/v2.43.4/nfpm_2.43.4_amd64.deb"

sudo dpkg -i /tmp/nfpm.deb

# for building cityhash for clickhouse-rs

sudo apt-get install -y musl-tools

# gcc cannot do cross compile, and there is no musl-g++ in musl-tools

sudo ln -srf /usr/bin/clang /usr/bin/musl-g++

# musl for static binaries

rustup target add x86_64-unknown-linux-musl

- name: Run tests

run: make test

- name: Build

run: |

set -x

make packages target=x86_64-unknown-linux-musl

ls -l

declare -A mapping

mapping[chdig*.x86_64.rpm]=chdig-latest.x86_64.rpm

mapping[chdig*-x86_64.pkg.tar.zst]=chdig-latest-x86_64.pkg.tar.zst

mapping[chdig*-x86_64.tar.gz]=chdig-latest-x86_64.tar.gz

mapping[chdig*_amd64.deb]=chdig-latest_amd64.deb

mapping[target/chdig]=chdig-amd64

for pattern in "${!mapping[@]}"; do

cp $pattern ${mapping[$pattern]}

done

- name: Check package

run: |

sudo dpkg -i chdig-latest_amd64.deb

chdig --help

- name: Archive Packages

uses: actions/upload-artifact@v4

with:

name: linux-packages-amd64

path: |

chdig-amd64

*.deb

*.rpm

*.tar.*

build-linux-no-features:

name: Build Linux (no features)

runs-on: ubuntu-22.04

steps:

- uses: actions/checkout@v3

with:

persist-credentials: false

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: Run tests

run: make test

- name: Build

run: |

cargo build --no-default-features

- name: Check package

run: |

cargo run --no-default-features -- --help

build-macos-x86_64:

name: Build MacOS (x86_64)

runs-on: macos-15-intel

steps:

- uses: actions/checkout@v3

with:

# To fetch tags, but can this be improved using blobless checkout?

# [1]. But anyway right now it is not important, and unlikely will be,

# since the repository is small.

#

# [1]: https://github.blog/2020-12-21-get-up-to-speed-with-partial-clone-and-shallow-clone/

fetch-depth: 0

persist-credentials: false

# Workaround for https://github.com/actions/checkout/issues/882

- name: Fix tags for release

# will break on a lightweight tag

run: git fetch origin +refs/tags/*:refs/tags/*

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: Worker info

run: |

# SDKs versions

ls -al /Library/Developer/CommandLineTools/SDKs/

- name: Build

run: |

set -x

make deploy-binary

cp target/chdig chdig-macos-x86_64

- name: Check package

run: |

./chdig-macos-x86_64 --help

- name: Archive Packages

uses: actions/upload-artifact@v4

with:

name: macos-packages-x86_64

path: |

chdig-macos-x86_64

build-macos-arm64:

name: Build MacOS (arm64)

runs-on: macos-26

steps:

- uses: actions/checkout@v3

with:

# To fetch tags, but can this be improved using blobless checkout?

# [1]. But anyway right now it is not important, and unlikely will be,

# since the repository is small.

#

# [1]: https://github.blog/2020-12-21-get-up-to-speed-with-partial-clone-and-shallow-clone/

fetch-depth: 0

persist-credentials: false

# Workaround for https://github.com/actions/checkout/issues/882

- name: Fix tags for release

# will break on a lightweight tag

run: git fetch origin +refs/tags/*:refs/tags/*

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: Worker info

run: |

# SDKs versions

ls -al /Library/Developer/CommandLineTools/SDKs/

- name: Build

run: |

set -x

make deploy-binary

cp target/chdig chdig-macos-arm64

- name: Check package

run: |

./chdig-macos-arm64 --help

- name: Archive Packages

uses: actions/upload-artifact@v4

with:

name: macos-packages-arm64

path: |

chdig-macos-arm64

build-windows:

name: Build Windows

runs-on: windows-latest

steps:

- uses: actions/checkout@v3

with:

# To fetch tags, but can this be improved using blobless checkout?

# [1]. But anyway right now it is not important, and unlikely will be,

# since the repository is small.

#

# [1]: https://github.blog/2020-12-21-get-up-to-speed-with-partial-clone-and-shallow-clone/

fetch-depth: 0

persist-credentials: false

# Workaround for https://github.com/actions/checkout/issues/882

- name: Fix tags for release

# will break on a lightweight tag

run: git fetch origin +refs/tags/*:refs/tags/*

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: Build

run: |

make deploy-binary

cp target/chdig.exe chdig-windows-x86_64.exe

- name: Archive Packages

uses: actions/upload-artifact@v4

with:

name: windows-packages-x86_64

path: |

chdig-windows-x86_64.exe

build-linux-aarch64:

name: Build Linux (aarch64)

runs-on: ubuntu-22.04-arm

steps:

- uses: actions/checkout@v3

with:

# To fetch tags, but can this be improved using blobless checkout?

# [1]. But anyway right now it is not important, and unlikely will be,

# since the repository is small.

#

# [1]: https://github.blog/2020-12-21-get-up-to-speed-with-partial-clone-and-shallow-clone/

fetch-depth: 0

persist-credentials: false

# Workaround for https://github.com/actions/checkout/issues/882

- name: Fix tags for release

# will break on a lightweight tag

run: git fetch origin +refs/tags/*:refs/tags/*

- uses: Swatinem/rust-cache@v2

with:

cache-on-failure: true

- name: Install dependencies

run: |

# nfpm

curl -sS -Lo /tmp/nfpm.deb "https://github.com/goreleaser/nfpm/releases/download/v2.43.4/nfpm_2.43.4_arm64.deb"

sudo dpkg -i /tmp/nfpm.deb

# for building cityhash for clickhouse-rs

sudo apt-get install -y musl-tools

# gcc cannot do cross compile, and there is no musl-g++ in musl-tools

sudo ln -srf /usr/bin/clang /usr/bin/musl-g++

# "Compiler family detection failed due to error: ToolNotFound: failed to find tool "aarch64-linux-musl-g++": No such file or directory"

sudo ln -srf /usr/bin/clang /usr/bin/aarch64-linux-musl-g++

# musl for static binaries

rustup target add aarch64-unknown-linux-musl

- name: Run tests

run: make test

- name: Build

run: |

set -x

make packages target=aarch64-unknown-linux-musl

ls -l

declare -A mapping

mapping[chdig*.aarch64.rpm]=chdig-latest.aarch64.rpm

mapping[chdig*-aarch64.pkg.tar.zst]=chdig-latest-aarch64.pkg.tar.zst

mapping[chdig*-aarch64.tar.gz]=chdig-latest-aarch64.tar.gz

mapping[chdig*_arm64.deb]=chdig-latest_arm64.deb

mapping[target/chdig]=chdig-aarch64

for pattern in "${!mapping[@]}"; do

cp $pattern ${mapping[$pattern]}

done

- name: Check package

run: |

sudo dpkg -i chdig-latest_arm64.deb

chdig --help

- name: Archive Packages

uses: actions/upload-artifact@v4

with:

name: linux-packages-aarch64

path: |

chdig-aarch64

*.deb

*.rpm

*.tar.*

================================================

FILE: .github/workflows/pre_release.yml

================================================

---

name: pre-release

on:

push:

branches:

- main

jobs:

build:

uses: ./.github/workflows/build.yml

publish-pre-release:

name: Publish Pre Release

runs-on: ubuntu-22.04

permissions:

contents: write

needs:

- build

steps:

- name: Download artifacts

uses: actions/download-artifact@v4

- uses: "marvinpinto/action-automatic-releases@latest"

with:

repo_token: "${{ secrets.GITHUB_TOKEN }}"

prerelease: true

automatic_release_tag: "latest"

title: "Development Build"

files: |

macos-packages-x86_64/*

macos-packages-arm64/*

windows-packages-x86_64/*

linux-packages-amd64/*

linux-packages-aarch64/*

================================================

FILE: .github/workflows/pull_request.yml

================================================

---

name: pull_request

on:

pull_request:

types:

- synchronize

- reopened

- opened

branches:

- main

paths-ignore:

- '**.md'

- 'Documentation/**'

jobs:

spellcheck:

name: Spell Check with Typos

runs-on: ubuntu-latest

steps:

- name: Checkout Actions Repository

uses: actions/checkout@v4

- name: Spell Check Repo

uses: crate-ci/typos@v1.31.1

with:

config: typos.toml

build:

needs: spellcheck

uses: ./.github/workflows/build.yml

================================================

FILE: .github/workflows/release.yml

================================================

---

name: release

on:

push:

tags:

- "v*"

jobs:

build:

uses: ./.github/workflows/build.yml

publish-release:

name: Publish Release

runs-on: ubuntu-22.04

permissions:

contents: write

needs:

- build

steps:

- name: Download artifacts

uses: actions/download-artifact@v4

- uses: "marvinpinto/action-automatic-releases@latest"

with:

repo_token: "${{ secrets.GITHUB_TOKEN }}"

prerelease: false

files: |

macos-packages-x86_64/*

macos-packages-arm64/*

windows-packages-x86_64/*

linux-packages-amd64/*

linux-packages-aarch64/*

- name: Generate PKGBUILD

run: |

set -x

VERSION="${GITHUB_REF##*/}"

VERSION="${VERSION#v}"

SHA256_x86_64=$(sha256sum linux-packages-amd64/chdig-$VERSION-1-x86_64.pkg.tar.zst | cut -d' ' -f1)

SHA256_aarch64=$(sha256sum linux-packages-aarch64/chdig-$VERSION-1-aarch64.pkg.tar.zst | cut -d' ' -f1)

cat > PKGBUILD <<EOL

# shellcheck disable=SC2034,SC2154

# - SC2034 - appears unused.

# - SC2154 - pkgdir is referenced but not assigned.

# Maintainer: Azat Khuzhin <a3at.mail@gmail.com>

pkgname=chdig-bin

pkgver=$VERSION

pkgrel=1

pkgdesc="Dig into ClickHouse with TUI interface (binaries for latest stable version)"

arch=('x86_64' 'aarch64')

conflicts=("chdig")

provides=("chdig")

url="https://github.com/azat/chdig"

license=('MIT')

source_x86_64=("https://github.com/azat/chdig/releases/download/v\$pkgver/chdig-\$pkgver-1-x86_64.pkg.tar.zst")

source_aarch64=("https://github.com/azat/chdig/releases/download/v\$pkgver/chdig-\$pkgver-1-aarch64.pkg.tar.zst")

sha256sums_x86_64=('$SHA256_x86_64')

sha256sums_aarch64=('$SHA256_aarch64')

package() {

tar -C "\$pkgdir" -xvf chdig-\$pkgver-1-\$(uname -m).pkg.tar.zst

rm -f "\$pkgdir/.PKGINFO"

rm -f "\$pkgdir/.MTREE"

}

# vim set: ts=4 sw=4 et

EOL

cat PKGBUILD

- name: Publish to the AUR

uses: KSXGitHub/github-actions-deploy-aur@v4.1.3

if: ${{ github.event.repository.fork == false }}

with:

pkgname: chdig-bin

pkgbuild: PKGBUILD

commit_username: Azat Khuzhin

commit_email: a3at.mail@gmail.com

ssh_private_key: ${{ secrets.AUR_SSH_PRIVATE_KEY }}

commit_message: Release ${{ github.ref_name }}

# force_push: 'true'

================================================

FILE: .gitignore

================================================

# cargo

target

/vendor

# distribution

dist

# packages

*.deb

*.tar.*

*.tar

*.rpm

# intellij

.idea/

================================================

FILE: .pre-commit-config.yaml

================================================

---

repos:

- repo: https://github.com/pre-commit/pre-commit-hooks

rev: v4.5.0

hooks:

- id: check-byte-order-marker

- id: check-yaml

- id: end-of-file-fixer

- id: mixed-line-ending

- id: trailing-whitespace

- repo: https://github.com/pre-commit/pre-commit

rev: v3.6.0

hooks:

- id: validate_manifest

- repo: https://github.com/doublify/pre-commit-rust

rev: v1.0

hooks:

- id: fmt

pass_filenames: false

- id: cargo-check

- id: clippy

- repo: https://github.com/adrienverge/yamllint.git

rev: v1.35.1

hooks:

- id: yamllint

================================================

FILE: .yamllint

================================================

# vi: ft=yaml

---

extends: default

rules:

indentation:

spaces: 2

level: error

indent-sequences: false

line-length:

max: 250

braces:

max-spaces-inside: 1

truthy:

allowed-values: ['true', 'false', 'yes', 'no']

check-keys: true

comments:

# this is useful to distinguish commented code from comments

require-starting-space: false

================================================

FILE: Cargo.toml

================================================

[package]

name = "chdig"

authors = ["Azat Khuzhin <a3at.mail@gmail.com>"]

homepage = "https://github.com/azat/chdig"

repository = "https://github.com/azat/chdig"

readme = "README.md"

description = "Dig into ClickHouse with TUI interface"

license = "MIT"

version = "26.4.3"

edition = "2024"

[lib]

name = "chdig"

crate-type = ["staticlib", "lib"]

path = "src/lib.rs"

[[bin]]

name = "chdig"

path = "src/main.rs"

[features]

default = ["tls"]

tls = ["clickhouse-rs/tls-rustls"]

tokio-console = ["dep:console-subscriber", "tokio/tracing"]

[patch.crates-io]

cursive = { git = "https://github.com/azat-rust/cursive", branch = "chdig-next" }

cursive_core = { git = "https://github.com/azat-rust/cursive", branch = "chdig-next" }

[dependencies]

# Basic

anyhow = { version = "*", default-features = false, features = ["std"] }

libc = { version = "*", default-features = false }

size = { version = "*", default-features = false, features = ["std"] }

tempfile = { version = "*", default-features = false }

url = { version = "*", default-features = false }

humantime = { version = "*", default-features = false }

backtrace = { version = "*", default-features = false, features = ["std"] }

futures = { version = "*", default-features = false, features = ["std"] }

strfmt = { version = "*", default-features = false }

fuzzy-matcher = { version = "*", default-features = false }

# chrono/chrono-tz should match clickhouse-rs

chrono = { version = "0.4", default-features = false, features = ["std", "clock"] }

chrono-tz = { version = "0.8", default-features = false }

flexi_logger = { version = "0.27", default-features = false }

log = { version = "0.4", default-features = false }

futures-util = { version = "*", default-features = false }

semver = { version = "*", default-features = false }

serde = { version = "*", features = ["derive"] }

serde_json = { version = "*", default-features = false, features = ["std"] }

serde_yaml = { version = "*", default-features = false }

quick-xml = { version = "*", features = ["serialize"] }

percent-encoding = { version = "*", default-features = false }

regex = { version = "*", default-features = false, features = ["std"] }

# CLI

clap = { version = "*", default-features = false, features = ["derive", "env", "help", "usage", "std", "color", "error-context", "suggestions"] }

clap_complete = { version = "*", default-features = false }

# UI

cursive = { version = "*", default-features = false, features = ["crossterm-backend"] }

cursive-syntect = { version = "*", default-features = true }

unicode-width = "0.1"

cursive-flexi-logger-view = { git = "https://github.com/azat-rust/cursive-flexi-logger-view", branch = "next", default-features = false }

syntect = { version = "*", default-features = false, features = ["default-syntaxes", "default-themes"] }

arboard = { version = "*", default-features = false }

clickhouse-rs = { git = "https://github.com/azat-rust/clickhouse-rs", branch = "next", default-features = false, features = ["tokio_io"] }

tokio = { version = "*", default-features = false, features = ["macros"] }

console-subscriber = { version = "*", default-features = false, optional = true }

# Flamegraphs

flamelens = { git = "https://github.com/azat-rust/flamelens", branch = "diff-mode", default-features = false }

ratatui = { version = "0.29.0", features = ["unstable-rendered-line-info"] }

# Should be used **only** with flamelens, since cursive re-exports it, while flamelens does not

crossterm = { version = "0.28.1", features = ["use-dev-tty"] }

# Perfetto

perfetto_protos = { version = "*", default-features = false }

protobuf = { version = "3", default-features = false }

tiny_http = { version = "*", default-features = false }

# Sharing

aes-gcm = { version = "0.10", default-features = false, features = ["aes", "alloc"] }

rand = { version = "0.8", default-features = false, features = ["std", "std_rng"] }

base64 = { version = "0.22", default-features = false, features = ["std"] }

[dev-dependencies]

pretty_assertions = { version= "*", default-features = false, features = ["alloc"] }

[profile.release]

# Too slow and not worth it

lto = false

[lints.clippy]

needless_return = "allow"

type_complexity = "allow"

uninlined_format_args = "allow"

[lints.rust]

elided_lifetimes_in_paths = "deny"

================================================

FILE: Documentation/Actions.md

================================================

### Actions

`chdig` supports lots of actions. Some have shortcuts, while others are
available only through `Ctrl-P` (fuzzy search across all actions). There is
also `F8` for query actions and `F2` for global actions, if you prefer old school.

### Shortcuts

Here is a list of available shortcuts:

| Category | Shortcut | Description |

|-----------------|---------------|-----------------------------------------------|

| Global Shortcuts| **F1** | Show help |

| | **F2** | Views |

| | **F8** | Show actions |

| | **Ctrl-p** | Fuzzy actions |

| | **F** | CPU Server Flamegraph |

| | | Real Server Flamegraph |

| | | Memory Server Flamegraph |

| | | Memory Sample Server Flamegraph |

| | | Jemalloc Sample Server Flamegraph |

| | | Events Server Flamegraph |

| | | Live Server Flamegraph |

| | | CPU Server Flamegraph in speedscope |

| | | Real Server Flamegraph in speedscope |

| | | Memory Server Flamegraph in speedscope |

| | | Memory Sample Server Flamegraph in speedscope |

| | | Jemalloc Sample Server Flamegraph in speedscope|

| | | Events Server Flamegraph in speedscope |

| | | Live Server Flamegraph in speedscope |

| Actions | **<Space>** | Select |

| | **-** | Show all queries |

| | **+** | Show queries on shards |

| | **/** | Filter |

| | | Query details |

| | | Query profile events |

| | **P** | Query processors |

| | **v** | Query views |

| | **C** | Show CPU flamegraph |

| | **R** | Show Real flamegraph |

| | **M** | Show memory flamegraph |

| | | Show memory sample flamegraph |

| | | Show jemalloc sample flamegraph |

| | | Show events flamegraph |

| | **L** | Show live flamegraph |

| | | Show CPU flamegraph in speedscope |

| | | Show Real flamegraph in speedscope |

| | | Show memory flamegraph in speedscope |

| | | Show memory sample flamegraph in speedscope |

| | | Show jemalloc sample flamegraph in speedscope |

| | | Show events flamegraph in speedscope |

| | | Show live flamegraph in speedscope |

| | **Alt+E** | Edit query and execute |

| | **S** | Show query |

| | **y** | Copy query to clipboard |

| | **s** | `EXPLAIN SYNTAX` |

| | **e** | `EXPLAIN PLAN` |

| | **E** | `EXPLAIN PIPELINE` |

| | **G** | `EXPLAIN PIPELINE graph=1` (open in browser) |

| | **I** | `EXPLAIN INDEXES` |

| | **K** | `KILL` query |

| | **l** | Show query logs |

| | **(** | Increase number of queries to render to 20 |

| | **)** | Decrease number of queries to render to 20 |

| Logs | **-** | Turn ON/OFF options: |

| | | - `S` - toggle wrap mode |

| | **/** | Forward search |

| | **?** | Reverse search |

| | **s** | Save logs to file |

| | **n**/**N** | Move to next/previous match |

| Basic navigation| **j**/**k** | Down/Up |

| | **G**/**g** | Move to the end/Move to the beginning |

| | **PageDown**/**PageUp**| Move to the end/Move to the beginning|

| | **Home** | Reset selection/follow item in table |

| chdig controls | **Esc** | Back/Quit |

| | **q** | Back/Quit |

| | **Q** | Quit forcefully |

| | **Backspace** | Back |

| | **p** | Toggle pause |

| | **r** | Refresh |

| | **T** | Seek 10 mins backward |

| | **t** | Seek 10 mins forward |

| | **Alt+t** | Set time interval |

| | **~** | chdig debug console |

================================================

FILE: Documentation/Bugs.md

================================================

### `--history` is broken in some versions

The reason is that in some ClickHouse versions the `merge()` function ignores aliases.

================================================

FILE: Documentation/Developers.md

================================================

## Developer Documentation

### Debugging async code with tokio-console

chdig supports [tokio-console](https://github.com/tokio-rs/console) for debugging async tasks and runtime behavior.

To enable tokio console support:

1. Build with the `tokio-console` feature:

```bash

cargo build --features tokio-console

```

2. Run chdig:

```bash

cargo run --features tokio-console

```

3. In a separate terminal, start tokio-console:

```bash

# Install if needed

cargo install tokio-console

# Connect to the running application

tokio-console

```

================================================

FILE: Documentation/FAQ.md

================================================

### What is the format of the URL accepted by `chdig`?

The simplest form is just **`localhost`**.

For a secure connection with a user and password _(note: passing the password on
the command line is not safe)_, use:

```sh

chdig -u 'user:password@clickhouse-host.com/?secure=true'

```

A full list of supported connection options is available [here](https://github.com/azat-rust/clickhouse-rs/?tab=readme-ov-file#dns).

_Note: This link currently points to my fork, as some changes have not yet been accepted upstream._
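
To make the URL shape above concrete, here is a minimal Rust sketch that splits such a string into its parts. It is only an illustration under stated assumptions: `ConnUrl` and `parse_conn_url` are hypothetical names, the code uses just the standard library, and it is not how `chdig` or `clickhouse-rs` actually parse URLs. It assumes no scheme prefix, no percent-encoding, and no IPv6 literals.

```rust
/// Hypothetical holder for the pieces of a connection URL (not a chdig type).
#[derive(Debug, PartialEq)]
struct ConnUrl {
    user: Option<String>,
    password: Option<String>,
    host: String,
    port: Option<u16>,
    secure: bool,
}

/// Splits `[user[:password]@]host[:port][/?key=value&...]` into its parts.
/// Assumption: no scheme prefix, no percent-encoding, no IPv6 literals.
fn parse_conn_url(input: &str) -> Option<ConnUrl> {
    // Everything before the last '@' is treated as credentials.
    let (creds, rest) = match input.rsplit_once('@') {
        Some((c, r)) => (Some(c), r),
        None => (None, input),
    };
    let (user, password) = match creds {
        Some(c) => match c.split_once(':') {
            Some((u, p)) => (Some(u.to_string()), Some(p.to_string())),
            None => (Some(c.to_string()), None),
        },
        None => (None, None),
    };
    // Separate "host[:port]" from the optional "/?query" tail.
    let (authority, tail) = match rest.split_once('/') {
        Some((a, t)) => (a, t),
        None => (rest, ""),
    };
    let (host, port) = match authority.split_once(':') {
        // A port that is not a valid u16 makes the whole URL invalid.
        Some((h, p)) => (h, Some(p.parse::<u16>().ok()?)),
        None => (authority, None),
    };
    if host.is_empty() {
        return None;
    }
    let query = tail.strip_prefix('?').unwrap_or(tail);
    let secure = query.split('&').any(|kv| kv == "secure=true");
    Some(ConnUrl {
        user,
        password,
        host: host.to_string(),
        port,
        secure,
    })
}
```

With this sketch, `parse_conn_url("localhost")` yields a host with no credentials and `secure` set to `false`, while the secure example above yields the user, password, host, and `secure` set to `true`.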

### Environment variables

A safer way to pass the password is via environment variables:

```sh

export CLICKHOUSE_USER='user'

export CLICKHOUSE_PASSWORD='password'

chdig -u 'clickhouse-host.com/?secure=true'

# or specify the port explicitly

chdig -u 'clickhouse-host.com:9440/?secure=true'

```
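
As a rough illustration of the idea, the following Rust sketch resolves a credential from an explicit value or, failing that, from an environment variable. The function names are hypothetical, and the precedence shown (explicit value first, environment second) is an assumption of this sketch rather than a statement about what `chdig` does internally.

```rust
use std::env;

/// Picks a credential: an explicit value wins, otherwise `lookup` is consulted.
/// `lookup` is injected so the logic can be exercised without touching the
/// real process environment. The precedence is an assumption of this sketch.
fn resolve(
    explicit: Option<&str>,
    var: &str,
    lookup: impl Fn(&str) -> Option<String>,
) -> Option<String> {
    explicit.map(str::to_string).or_else(|| lookup(var))
}

/// Convenience wrapper that reads from the process environment,
/// e.g. `resolve_from_env(None, "CLICKHOUSE_PASSWORD")`.
fn resolve_from_env(explicit: Option<&str>, var: &str) -> Option<String> {
    resolve(explicit, var, |name| env::var(name).ok())
}
```

Keeping the password out of the command line this way means it does not show up in shell history or in the process list.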

### What is --config (`CLICKHOUSE_CONFIG`)?

This is the standard config for the [ClickHouse client](https://clickhouse.com/docs/interfaces/cli#configuration_files), i.e.

```yaml

user: foo

password: bar

host: play

secure: true

```

_See also some examples and possible advanced use cases [here](/tests/configs)_

### What is --connection?

`--connection` lets you use predefined connections, which are also supported by
`clickhouse-client` ([1], [2]).

Here is an example in `XML` format:

```xml

<clickhouse>

<connections_credentials>

<connection>

<name>prod</name>

<hostname>prod</hostname>

<user>default</user>

<password>secret</password>

<!-- <secure>false</secure> -->

<!-- <skip_verify>false</skip_verify> -->

<!-- <ca_certificate></ca_certificate> -->

<!-- <client_certificate></client_certificate> -->

<!-- <client_private_key></client_private_key> -->

</connection>

</connections_credentials>

</clickhouse>

```

Or in `YAML`:

```yaml

---

connections_credentials:

prod:

name: prod

hostname: prod

user: default

password: secret

# secure: false

# skip_verify: false

# ca_certificate:

# client_certificate:

# client_private_key:

```

Later, instead of specifying `--url` (with the password in plain text, which is
strongly discouraged), you can use `chdig --connection prod`.

[1]: https://github.com/ClickHouse/ClickHouse/pull/45715

[2]: https://github.com/ClickHouse/ClickHouse/pull/46480

### What is Perfetto export?

Pressing `X` in the queries view exports a timeline visualization to

[Perfetto UI](https://ui.perfetto.dev) — an open-source trace viewer that

provides a zoomable timeline, flamegraph visualization, and SQL-queryable trace

data. It runs entirely in the browser.

An embedded HTTP server starts on port 9001 (lazily, on first export) and serves

the binary protobuf trace. The browser opens automatically.

The export includes data from multiple ClickHouse system tables (when available):

| Source table | What it shows |

|---|---|

| In-memory queries | Query duration slices grouped by host/user |

| `system.opentelemetry_span_log` | Processor pipeline spans |

| `system.trace_log` (ProfileEvent) | Per-thread counter increments |

| `system.trace_log` (CPU/Real/Memory) | Stack trace samples (flamegraph in Perfetto) |

| `system.text_log` | Query log messages grouped by level |

| `system.query_metric_log` | Per-query metric snapshots |

| `system.part_log` | Part lifecycle events (NewPart, MergeParts, etc.) |

| `system.query_thread_log` | Per-thread execution with ProfileEvents |

Tables that don't exist are silently skipped — the export works with whatever

data is available.

When queries are selected with `Space`, only those queries are exported.

To get the richest traces, enable these ClickHouse settings for the queries you

want to analyze:

```sql

SET

opentelemetry_start_trace_probability = 1,

opentelemetry_trace_processors = 1,

opentelemetry_trace_cpu_scheduling = 1,

log_query_threads = 1,

trace_profile_events = 1,

query_metric_log_interval = 0

```

- `opentelemetry_start_trace_probability` / `opentelemetry_trace_processors` /

`opentelemetry_trace_cpu_scheduling` — enable OpenTelemetry spans for the

query execution pipeline (populates `system.opentelemetry_span_log`)

- `log_query_threads` — log per-thread execution info

(populates `system.query_thread_log`)

- `trace_profile_events` — record ProfileEvent counter increments with

timestamps into `system.trace_log`, giving precise per-event timelines

- `query_metric_log_interval` — controls periodic metric snapshots in

`system.query_metric_log` (sampled every N milliseconds). Set to `0` to

disable if you prefer the more accurate `trace_profile_events`. Set to e.g.

`1000` (1 second) if you want periodic snapshots — note that these are

sampled and less precise than `trace_profile_events`, but lighter on overhead

### What is a flamegraph?

It is best to start with [Brendan Gregg's site](https://www.brendangregg.com/flamegraphs.html) for a solid introduction to flamegraphs.

Below is a description of the various types of flamegraphs available in `chdig`:

- `Real` - Traces are captured at regular intervals (defined by [`query_profiler_real_time_period_ns`](https://clickhouse.com/docs/operations/settings/settings#query_profiler_real_time_period_ns)/[`global_profiler_real_time_period_ns`](https://clickhouse.com/docs/operations/server-configuration-parameters/settings#global_profiler_real_time_period_ns)) for each thread, regardless of whether the thread is actively running on the CPU

- `CPU` - Traces are captured only when a thread is actively executing on the CPU, based on the interval specified in [`query_profiler_cpu_time_period_ns`](https://clickhouse.com/docs/operations/settings/settings#query_profiler_cpu_time_period_ns)/[`global_profiler_cpu_time_period_ns`](https://clickhouse.com/docs/operations/server-configuration-parameters/settings#global_profiler_cpu_time_period_ns)

- `Memory` - Traces are captured after each [`memory_profiler_step`](https://clickhouse.com/docs/operations/settings/settings#memory_profiler_step)/[`total_memory_profiler_step`](https://clickhouse.com/docs/operations/server-configuration-parameters/settings#total_memory_profiler_step) bytes are allocated by the query or server

- `Live` - Real-time visualization of what the server is doing right now, based on [`system.stack_trace`](https://clickhouse.com/docs/operations/system-tables/stack_trace)

See also:

- [Sampling Query Profiler](https://clickhouse.com/docs/operations/optimizing-performance/sampling-query-profiler)

_Note: for `Memory`, `chdig` uses `memory_profiler_step` rather than `memory_profiler_sample_probability`, since the latter is disabled by default._
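
Whatever the trace type, a flamegraph is commonly built from "folded" stacks: each sampled stack trace is joined root-first with `;`, and identical stacks are counted. The Rust sketch below shows only that folding step. `fold_stacks` is a hypothetical name and the frame names in the usage note are invented; this is not `chdig`'s implementation.

```rust
use std::collections::BTreeMap;

/// Collapses sampled stack traces into folded lines: "root;...;leaf count".
/// Each sample lists frames from the outermost caller (root) to the
/// innermost frame (leaf).
fn fold_stacks(samples: &[Vec<&str>]) -> Vec<String> {
    let mut counts: BTreeMap<String, u64> = BTreeMap::new();
    for frames in samples {
        // Identical stacks share one key, so their samples add up.
        *counts.entry(frames.join(";")).or_insert(0) += 1;
    }
    // BTreeMap iterates in key order, so the output is deterministic.
    counts
        .into_iter()
        .map(|(stack, n)| format!("{stack} {n}"))
        .collect()
}
```

For example, two samples of `main -> execute -> read` and one of `main -> merge` fold into `main;execute;read 2` and `main;merge 1`. The width of a frame in the rendered flamegraph is proportional to such counts.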

### Why do I see IO wait reported as zero?

- You should ensure that ClickHouse uses one of taskstat gathering methods:

- procfs

- netlink

- On Linux 5.14 and newer, you should also enable the `kernel.task_delayacct` sysctl.
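
As a rough way to check the sysctl, the sketch below reads `/proc/sys/kernel/task_delayacct`, which is the procfs path of `kernel.task_delayacct`. The helper names are hypothetical and this is not part of `chdig`; it assumes the file contains `0` or `1`.

```rust
use std::fs;

/// Interprets the contents of the sysctl file: "1" means delay accounting is on.
/// NOTE: hypothetical helper, for illustration only.
fn delayacct_enabled(contents: &str) -> bool {
    contents.trim() == "1"
}

/// Reads the sysctl through procfs. Returns `None` if the file cannot be read
/// (for example on kernels older than 5.14, or on non-Linux systems).
fn read_delayacct() -> Option<bool> {
    fs::read_to_string("/proc/sys/kernel/task_delayacct")
        .ok()
        .map(|s| delayacct_enabled(&s))
}
```

If `read_delayacct()` returns `Some(false)`, the sysctl can be turned on with `sysctl kernel.task_delayacct=1` (root is required).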

### How to copy text from `chdig`

By default `chdig` starts with mouse mode enabled in the terminal, and you cannot
copy text while this mode is active. However, most terminals let you disable it
temporarily by pressing a modifier key (usually some combination of `Alt`,
`Shift` and/or `Ctrl`), so find the one for your terminal, press it, and copy.

---

See also [bugs list](Bugs.md)

================================================

FILE: LICENSE

================================================

Copyright 2023 Azat Khuzhin

Permission is hereby granted, free of charge, to any person obtaining a copy of

this software and associated documentation files (the “Software”), to deal in

the Software without restriction, including without limitation the rights to

use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies

of the Software, and to permit persons to whom the Software is furnished to do

so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: Makefile

================================================

debug ?=

target ?= $(shell rustc -vV | sed -n 's|host: ||p')

# Parse the target (i.e. aarch64-unknown-linux-musl)

target_os := $(shell echo $(target) | cut -d'-' -f3)

target_libc := $(shell echo $(target) | cut -d'-' -f4)

target_arch := $(shell echo $(target) | cut -d'-' -f1)

host_arch := $(shell uname -m)

# Version normalization for deb/rpm:

# - trim "v" prefix

# - first "-" replace with "+"

# - second "-" replace with "~"

#

# Refs: https://www.debian.org/doc/debian-policy/ch-controlfields.html

CHDIG_VERSION=$(shell git describe | sed -e 's/^v//' -e 's/-/+/' -e 's/-/~/')

# Refs: https://wiki.archlinux.org/title/Arch_package_guidelines#Package_versioning

CHDIG_VERSION_ARCH=$(shell git describe | sed -e 's/^v//' -e 's/-/./g')

$(info DESTDIR = $(DESTDIR))

$(info CHDIG_VERSION = $(CHDIG_VERSION))

$(info CHDIG_VERSION_ARCH = $(CHDIG_VERSION_ARCH))

$(info debug = $(debug))

$(info target = $(target))

$(info host_arch = $(host_arch))

ifdef debug

cargo_build_opts :=

target_type := debug

else

cargo_build_opts := --release

target_type = release

endif

ifneq ($(target),)

cargo_build_opts += --target $(target)

endif

# Normalize architecture names

norm_target_arch := $(shell echo $(target_arch) | sed -e 's/^aarch64$$/arm64/' -e 's/^x86_64$$/amd64/')

norm_host_arch := $(shell echo $(host_arch) | sed -e 's/^aarch64$$/arm64/' -e 's/^x86_64$$/amd64/')

$(info Normalized target arch: $(norm_target_arch))

$(info Normalized host arch: $(norm_host_arch))

# Cross compilation requires some tricks:

# - use lld linker

# - explicitly specify path for libstdc++

# (Also some packages, that you can find in the GitHub Actions manifests)

#

# TODO: allow to use clang/gcc from PATH

ifneq ($(norm_host_arch),$(norm_target_arch))

$(info Cross compilation for $(target_arch))

# Detect the latest lld

LLD := $(shell ls /usr/bin/ld.lld /usr/bin/ld.lld-* 2>/dev/null | sort -V | tail -n1)

$(info LLD = $(LLD))

# Detect the latest clang

CLANG := $(shell ls /usr/bin/clang /usr/bin/clang-* 2>/dev/null | grep -e '/clang$$' -e '/clang-[0-9]\+$$' | sort -V | tail -n1)

$(info CLANG = $(CLANG))

CLANG_CXX := $(shell ls /usr/bin/clang++ /usr/bin/clang++-* 2>/dev/null | grep -e '/clang++$$' -e '/clang++-[0-9]\+$$' | sort -V | tail -n1)

$(info CLANG_CXX = $(CLANG_CXX))

export CC := $(CLANG)

export CXX := $(CLANG_CXX)

export RUSTFLAGS := -C linker=$(LLD)

# /usr/aarch64-linux-gnu/lib64/ (archlinux aarch64-linux-gnu-gcc)

prefix := /usr/$(target_arch)-$(target_os)-gnu/lib

ifneq ($(wildcard $(prefix)),)

export RUSTFLAGS := $(RUSTFLAGS) -C link-args=-L$(prefix)

endif

prefix := /usr/$(target_arch)-$(target_os)-gnu/lib64

ifneq ($(wildcard $(prefix)),)

export RUSTFLAGS := $(RUSTFLAGS) -C link-args=-L$(prefix)

endif

# /usr/lib/gcc-cross/aarch64-linux-gnu/$gcc (ubuntu)

latest_gcc_cross_version := $(shell ls -d /usr/lib/gcc-cross/$(target_arch)-$(target_os)-gnu/* 2>/dev/null | sort -V | tail -n1 | xargs -I{} basename {})

prefix := /usr/lib/gcc-cross/$(target_arch)-$(target_os)-gnu/$(latest_gcc_cross_version)

ifneq ($(wildcard $(prefix)),)

export RUSTFLAGS := $(RUSTFLAGS) -C link-args=-L$(prefix)

endif

# NOTE: there is also https://musl.cc/aarch64-linux-musl-cross.tgz

$(info RUSTFLAGS = $(RUSTFLAGS))

endif

.PHONY: build build_completion deploy-binary chdig install run \

deb rpm archlinux tar packages

# This should be the first target (since ".DEFAULT_GOAL" is supported only since 3.80+)

default: build

.DEFAULT_GOAL: default

chdig:

cargo build $(cargo_build_opts)

run: chdig

cargo run $(cargo_build_opts)

build: chdig deploy-binary

test:

@if command -v cargo-nextest >/dev/null 2>&1; then \

cargo nextest run $(cargo_build_opts); \

else \

cargo test $(cargo_build_opts); \

fi

build_completion: chdig

cargo run $(cargo_build_opts) -- --completion bash > target/chdig.bash-completion

install: chdig build_completion

install -m755 -D -t $(DESTDIR)/bin target/$(target)/$(target_type)/chdig

install -m644 -D -t $(DESTDIR)/share/bash-completion/completions target/chdig.bash-completion

deploy-binary: chdig

cp target/$(target)/$(target_type)/chdig target/chdig

packages: build build_completion deb rpm archlinux tar

deb: build

CHDIG_VERSION=${CHDIG_VERSION} CHDIG_ARCH=${norm_target_arch} nfpm package --config chdig-nfpm.yaml --packager deb

rpm: build

CHDIG_VERSION=${CHDIG_VERSION} CHDIG_ARCH=${target_arch} nfpm package --config chdig-nfpm.yaml --packager rpm

archlinux: build

CHDIG_VERSION=${CHDIG_VERSION_ARCH} CHDIG_ARCH=${target_arch} nfpm package --config chdig-nfpm.yaml --packager archlinux

.ONESHELL:

tar: archlinux

CHDIG_VERSION=${CHDIG_VERSION_ARCH} CHDIG_ARCH=${target_arch} nfpm package --config chdig-nfpm.yaml --packager archlinux

tmp_dir=$(shell mktemp -d /tmp/chdig-${CHDIG_VERSION}.XXXXXX)

echo "Temporary directory for tar package: $$tmp_dir"

tar -C $$tmp_dir -vxf chdig-${CHDIG_VERSION_ARCH}-1-${target_arch}.pkg.tar.zst usr

# Strip /tmp/chdig-${CHDIG_VERSION}.XXXXXX and replace it with chdig-${CHDIG_VERSION}

# (and we need to remove leading slash)

tar --show-transformed-names --transform "s#^$${tmp_dir#/}#chdig-${CHDIG_VERSION}-${target_arch}#" -vczf chdig-${CHDIG_VERSION}-${target_arch}.tar.gz $$tmp_dir

echo rm -fr $$tmp_dir

help:

@echo "Usage: make [debug=1] [target=<TRIPLE>]"

================================================

FILE: README.md

================================================

### chdig

Dig into [ClickHouse](https://github.com/ClickHouse/ClickHouse/) with TUI interface.

### Installation

`chdig` is also available as part of `clickhouse` - `clickhouse chdig`, but

that version may be slightly outdated.

Pre-built packages (`.deb`, `.rpm`, `archlinux`, `.tar.gz`) and standalone

binaries for `Linux` and `macOS` are available for both `x86_64` and `aarch64`

architectures.

The latest [unstable release can be found on GitHub](https://github.com/azat/chdig/releases/tag/latest).

*See also the complete list of [releases](https://github.com/azat/chdig/releases).*

<details>

<summary>Package repositories (AUR, Scoop, Homebrew)</summary>

#### archlinux user repository (aur)

There are also AUR packages for Arch Linux:

- [**chdig-latest-bin**](https://aur.archlinux.org/packages/chdig-latest-bin) - binary artifact of the upstream

- [chdig-git](https://aur.archlinux.org/packages/chdig-git) - build from sources

- [chdig-bin](https://aur.archlinux.org/packages/chdig-bin) - binary of the latest stable version

*Note: `chdig-latest-bin` is recommended because it is the latest available version and you don't need a toolchain to compile it*

#### scoop (windows)

```

scoop bucket add extras

scoop install extras/chdig

```

#### brew (macos)

```

brew install chdig

```

</details>

### Demo

[Demo recording on asciinema](https://asciinema.org/a/OvQIBpQCAtFU8AyF)

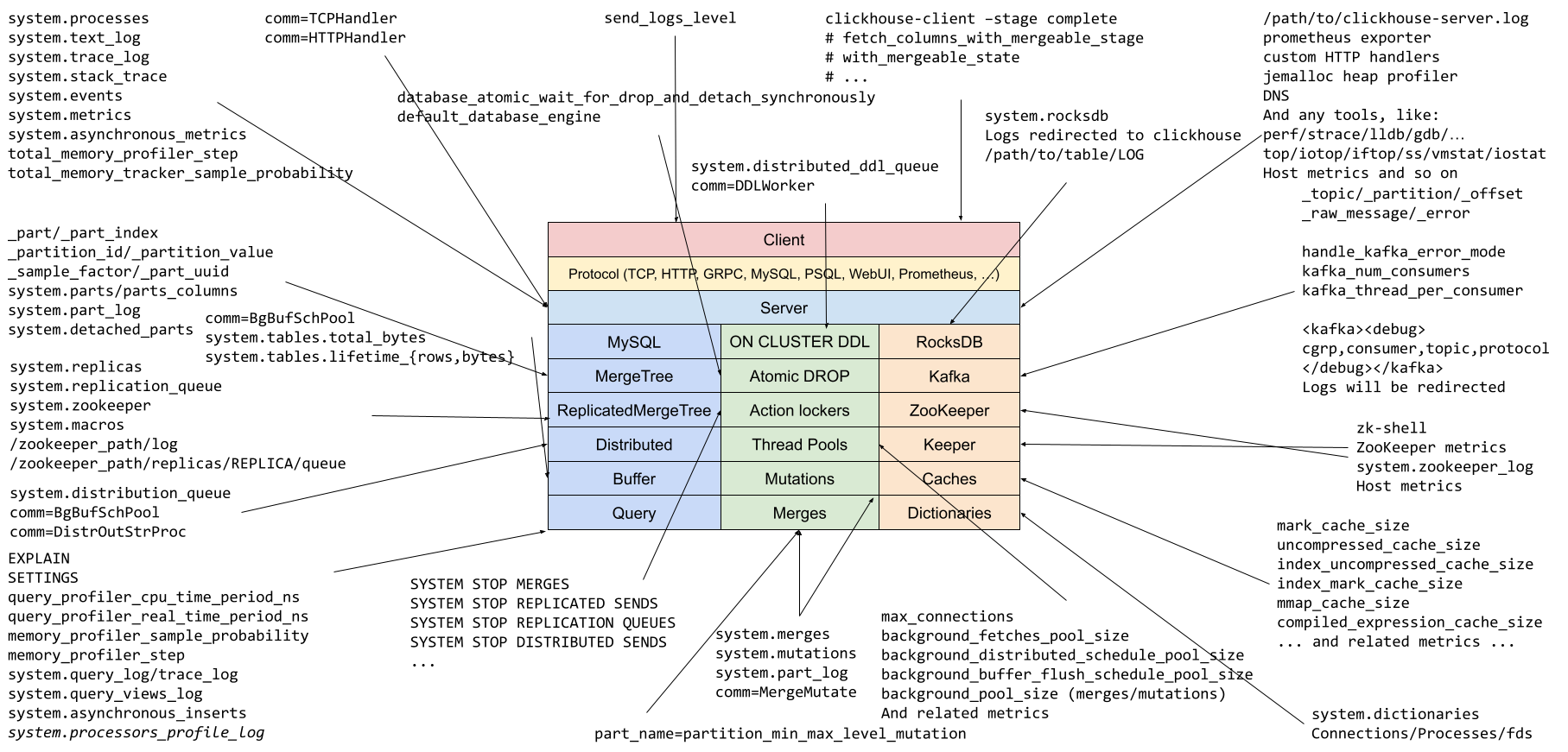

### Motivation

The idea came from everyday digging into various ClickHouse issues.

ClickHouse has a whole universe of introspection tools, and it is easy

to forget some of them. At first I came up with some

[slides](https://azat.sh/presentations/2022-know-your-clickhouse/) and a

picture (to attract your attention) by analogy to what [Brendan

Gregg](https://www.brendangregg.com/linuxperf.html) did for Linux:

[Know Your ClickHouse (picture)](https://azat.sh/presentations/2022-know-your-clickhouse/Know-Your-ClickHouse.png)

*Note: the picture and the presentation were made at the beginning of 2022,

so they may not include some newer introspection tools*.

But this requires you to dig into lots of places, and even though during this

process you will learn a lot, it does not solve the problem of forgetfulness.

So I came up with this simple TUI interface that tries to make this process

simpler.

`chdig` can be used not only to debug problems, but also for regular

introspection, like `top` for Linux.

### Features

- `top` like interface (or [`csysdig`](https://github.com/draios/sysdig) to be more precise)

- [Flamegraphs](Documentation/FAQ.md#what-is-flamegraph) (CPU/Real/Memory/Live) in TUI (thanks to [flamelens](https://github.com/ys-l/flamelens))

- [Perfetto support](Documentation/FAQ.md#what-is-perfetto-export)

- Share flamegraphs (using [pastila.nl](https://pastila.nl/) and [speedscope](https://www.speedscope.app/))

- Share logs via [pastila.nl](https://pastila.nl/)

- Share query pipelines (using [viz.js](https://github.com/mdaines/viz-js) and [pastila.nl](https://pastila.nl/))

- Cluster support (`--cluster`) - aggregate data from all hosts in the cluster

- Historical support (`--history`) - includes rotated `system.*_log_*` tables

- `clickhouse-client` compatibility (including `--connection`) for options and configuration files

And there is a huge bunch of [ideas](https://github.com/azat/chdig/issues).

**Note: this is in a pre-alpha stage, so everything can change (keyboard

shortcuts, views, color scheme and of course features)**

### Requirements

If something does not work, for example because your version of `ClickHouse` is too old, consider upgrading.

*Note: the oldest version that had been tested was 21.2 (at some point in time)*

### Build from sources

```

cargo build

```

> [!NOTE]

> If you see an error like `failed to authenticate when downloading repository: git@github.com:azat-rust/cursive`,

> it is likely because your local Git config is rewriting `https://github.com/` to `git@github.com:`:

>

> ```

> [url "git@github.com:"]

> insteadOf = https://github.com/

> ```

>

> Cargo's built-in Git library does not handle this case gracefully.

> You can either remove that config entry or tell Cargo to use the system Git client instead:

>

> ```toml

> # ~/.cargo/config.toml

> [net]

> git-fetch-with-cli = true

> ```

For development and debugging information, see [Documentation/Developers.md](Documentation/Developers.md).

## References

- [FAQ](Documentation/FAQ.md)

- [Bugs list](Documentation/Bugs.md)

- [Shortcuts](Documentation/Actions.md#shortcuts)

- [Developers](Documentation/Developers.md)

================================================

FILE: chdig-nfpm.yaml

================================================

---

name: "chdig"

arch: "${CHDIG_ARCH}"

platform: "linux"

version: "${CHDIG_VERSION}"

homepage: "https://github.com/azat/chdig"

license: "MIT"

priority: "optional"

maintainer: "Azat Khuzhin <a3at.mail@gmail.com>"

description: |

Dig into ClickHouse queries with TUI interface.

contents:

- src: target/chdig

dst: /usr/bin/chdig

file_info:

mode: 0755

- src: target/chdig.bash-completion

dst: /usr/share/bash-completion/completions/chdig

file_info:

mode: 0644

- src: README.md

dst: /usr/share/doc/chdig/README.md

file_info:

mode: 0644

================================================

FILE: rustfmt.toml

================================================

edition = "2018"

================================================

FILE: src/actions.rs

================================================

use cursive::{event::Event, theme::Effect, utils::markup::StyledString};

#[derive(Clone)]

pub struct ActionDescription {

pub text: &'static str,

pub event: Event,

}

impl ActionDescription {

pub fn event_string(&self) -> String {

match self.event {

Event::Char(c) => {

// - It is hard to understand that nothing is a space

// - And it overlaps with no shortcut actions

if c == ' ' {

return "<Space>".to_string();

} else {

return c.to_string();

}

}

Event::CtrlChar(c) => {

return format!("Ctrl+{}", c);

}

Event::AltChar(c) => {

return format!("Alt+{}", c);

}

Event::Key(k) => {

return format!("{:?}", k);

}

Event::Unknown(_) => {

return "".to_string();

}

_ => panic!("{:?} is not supported", self.event),

}

}

pub fn preview_styled(&self) -> StyledString {

let mut text = StyledString::default();

text.append_styled(format!("{:>10}", self.event_string()), Effect::Bold);

text.append_plain(format!(" - {}\n", self.text));

return text;

}

}

================================================

FILE: src/bin.rs

================================================

use anyhow::{Result, anyhow};

use backtrace::Backtrace;

use flexi_logger::{FileSpec, LogSpecification, Logger};

use std::ffi::OsString;

use std::panic::{self, PanicHookInfo};

use std::sync::Arc;

use cursive::view::Resizable;

use crate::{

interpreter::{ClickHouse, Context, ContextArc, options},

view::Navigation,

};

// NOTE: hyper also has trace_span() which will not be overwritten

//

// FIXME: should be initialized before options, but options prints completion that should be

// done before terminal switched to raw mode.

const DEFAULT_RUST_LOG: &str = "trace,cursive=info,clickhouse_rs=info,hyper=info,rustls=info";

fn panic_hook(info: &PanicHookInfo<'_>) {

let location = info.location().unwrap();

let msg = if let Some(s) = info.payload().downcast_ref::<&'static str>() {

*s

} else if let Some(s) = info.payload().downcast_ref::<String>() {

&s[..]

} else {

"Box<Any>"

};

// NOTE: we need to add \r since the terminal is in raw mode.

// (another option is to restore the terminal state with termios)

let stacktrace: String = format!("{:?}", Backtrace::new()).replace('\n', "\n\r");

print!(

"\n\rthread '<unnamed>' panicked at '{}', {}\n\r{}",

msg, location, stacktrace

);

}

pub async fn chdig_main_async<I, T>(itr: I) -> Result<()>

where

I: IntoIterator<Item = T>,

T: Into<OsString> + Clone,

{

let options = options::parse_from(itr)?;

let mut logger_handle = None;

// We start logging to file earlier for better introspection.

if let Some(log) = &options.service.log {

logger_handle = Some(

Logger::try_with_env_or_str(DEFAULT_RUST_LOG)?

.log_to_file(FileSpec::try_from(log)?)

.format(flexi_logger::with_thread)

.start()?,

);

}

// Initialize it before any backends (otherwise backend will prepare terminal for TUI app, and

// panic hook will clear the screen).

let clickhouse = Arc::new(ClickHouse::new(options.clickhouse.clone()).await?);

let server_warnings = match clickhouse.get_warnings().await {

Ok(w) => w,

Err(e) => {

log::warn!("Failed to fetch system.warnings: {}", e);

Vec::new()

}

};

panic::set_hook(Box::new(|info| {

panic_hook(info);

}));

let backend = cursive::backends::try_default().map_err(|e| anyhow!(e.to_string()))?;

let mut siv = cursive::CursiveRunner::new(cursive::Cursive::new(), backend);

if options.service.log.is_none() {

logger_handle = Some(

Logger::try_with_env_or_str(DEFAULT_RUST_LOG)?

.log_to_writer(cursive_flexi_logger_view::cursive_flexi_logger(&siv))

.format(flexi_logger::colored_with_thread)

.start()?,

);

}

// FIXME: should be initialized before cursive, otherwise on error it clears the terminal.

let context: ContextArc = Context::new(options, clickhouse, siv.cb_sink().clone()).await?;

siv.chdig(context.clone());

if !server_warnings.is_empty() {

let text = server_warnings.join("\n");

siv.add_layer(

cursive::views::Dialog::around(cursive::views::ScrollView::new(

cursive::views::TextView::new(text),

))

.title("Server warnings")

.button("OK", |s| {

s.pop_layer();

})

.max_width(80),

);

}

log::info!("chdig started");

siv.run();

if let Some(logger_handle) = logger_handle {

// Suppress error from the cursive_flexi_logger_view - "cursive callback sink is closed!"

// Note, cursive_flexi_logger_view does not implement shutdown() so it will not help.

logger_handle.set_new_spec(LogSpecification::parse("none")?);

}

return Ok(());

}

fn collect_args(argc: c_int, argv: *const *const c_char) -> Vec<OsString> {

use std::ffi::CStr;

unsafe {

std::slice::from_raw_parts(argv, argc as usize)

.iter()

.map(|&ptr| {

let c_str = CStr::from_ptr(ptr);

let string = c_str.to_string_lossy().into_owned();

OsString::from(string)

})

.collect()

}

}

use std::os::raw::{c_char, c_int};

#[unsafe(no_mangle)]

pub extern "C" fn chdig_main(argc: c_int, argv: *const *const c_char) -> c_int {

#[cfg(feature = "tokio-console")]

console_subscriber::init();

tokio::runtime::Builder::new_current_thread()

.enable_all()

.build()

.unwrap()

.block_on(chdig_main_async(collect_args(argc, argv)))

.unwrap_or_else(|e| {

eprintln!("{}", e);

std::process::exit(1);

});

return 0;

}

================================================

FILE: src/common/mod.rs

================================================

mod relative_date_time;

pub mod sparkline;

mod stopwatch;

pub use relative_date_time::RelativeDateTime;

pub use relative_date_time::parse_datetime_or_date;

pub use stopwatch::Stopwatch;

================================================

FILE: src/common/relative_date_time.rs

================================================

use chrono::{DateTime, Local, NaiveDate, NaiveDateTime, TimeDelta};

use std::{

fmt::Display,

ops::{AddAssign, SubAssign},

str::FromStr,

};

pub fn parse_datetime_or_date(value: &str) -> Result<DateTime<Local>, String> {

let mut errors = Vec::new();

// Parse without timezone

match value.parse::<NaiveDateTime>() {

Ok(datetime) => return Ok(datetime.and_local_timezone(Local).unwrap()),

Err(err) => errors.push(err),

}

// Parse *with* timezone

match value.parse::<DateTime<Local>>() {

Ok(datetime) => return Ok(datetime),

Err(err) => errors.push(err),

}

// Parse as date

match value.parse::<NaiveDate>() {

Ok(date) => {

return Ok(date

.and_hms_opt(0, 0, 0)

.unwrap()

.and_local_timezone(Local)

.unwrap());

}

Err(err) => errors.push(err),

}

return Err(format!(

"Valid RFC3339-formatted (YYYY-MM-DDTHH:MM:SS[.ssssss][±hh:mm|Z]) datetime or date while parsing '{}':\n{}",

value,

errors

.iter()

.map(|e| e.to_string())

.collect::<Vec<String>>()

.join("\n")

));

}

#[derive(Clone, Debug)]

pub struct RelativeDateTime {

date_time: Option<DateTime<Local>>,

// Always subtracted

offset: Option<TimeDelta>,

}

impl RelativeDateTime {

pub fn new(offset: Option<TimeDelta>) -> Self {

Self {

date_time: None,

offset,

}

}

pub fn get_date_time(&self) -> Option<DateTime<Local>> {

self.date_time

}

pub fn to_editable_string(&self) -> String {

match (&self.date_time, &self.offset) {

(None, Some(offset)) => {

humantime::format_duration(offset.to_std().unwrap_or_default()).to_string()

}

(Some(dt), _) => dt.format("%Y-%m-%dT%H:%M:%S").to_string(),

(None, None) => String::new(),

}

}

pub fn to_sql_datetime_64(&self) -> Option<String> {

match (self.date_time, self.offset) {

(Some(date_time), Some(offset)) => Some(format!(

"fromUnixTimestamp64Nano({}) - INTERVAL {} NANOSECOND",

date_time.timestamp_nanos_opt()?,

offset.num_nanoseconds()?

)),

(None, Some(offset)) => Some(format!(

"now() - INTERVAL {} NANOSECOND",

offset.num_nanoseconds()?

)),

(Some(date_time), None) => Some(format!(

"fromUnixTimestamp64Nano({})",

date_time.timestamp_nanos_opt()?

)),

(None, None) => Some("now()".to_string()),

}

}

}

impl From<DateTime<Local>> for RelativeDateTime {

fn from(value: DateTime<Local>) -> Self {

RelativeDateTime {

date_time: Some(value),

offset: None,

}

}

}

impl From<Option<DateTime<Local>>> for RelativeDateTime {

fn from(value: Option<DateTime<Local>>) -> Self {

RelativeDateTime {

date_time: value,

offset: None,

}

}

}

impl FromStr for RelativeDateTime {

type Err = anyhow::Error;

fn from_str(s: &str) -> std::result::Result<Self, Self::Err> {

// Empty string is a special case for relative "now"

// (i.e. it will be always calculated from current time)

if s.is_empty() {

Ok(RelativeDateTime {

date_time: None,

offset: None,

})

} else if let Ok(datetime) = parse_datetime_or_date(s) {

Ok(RelativeDateTime {

date_time: Some(datetime),

offset: None,

})

} else {

Ok(RelativeDateTime {

date_time: None,

offset: Some(TimeDelta::from_std(

s.parse::<humantime::Duration>()?.into(),

)?),

})

}

}

}

impl From<RelativeDateTime> for DateTime<Local> {

fn from(value: RelativeDateTime) -> Self {

let mut date_time = value.date_time.unwrap_or(Local::now());

if let Some(offset) = value.offset {

date_time -= offset;

}

return date_time;

}

}

impl Display for RelativeDateTime {

fn fmt(&self, f: &mut std::fmt::Formatter<'_>) -> std::fmt::Result {

f.write_fmt(format_args!(

"{:?} (offset={:?})",

self.date_time, self.offset

))

}

}

impl AddAssign<TimeDelta> for RelativeDateTime {

fn add_assign(&mut self, rhs: TimeDelta) {

self.offset = Some(rhs);

}

}

impl SubAssign<TimeDelta> for RelativeDateTime {

fn sub_assign(&mut self, rhs: TimeDelta) {

self.offset = Some(rhs);

}

}

================================================

FILE: src/common/sparkline.rs

================================================

use std::collections::VecDeque;

const BLOCKS: &[char] = &['▁', '▂', '▃', '▄', '▅', '▆', '▇', '█'];

pub struct SparklineBuffer {

data: VecDeque<f64>,

capacity: usize,

}

impl SparklineBuffer {

pub fn new(capacity: usize) -> Self {

Self {

data: VecDeque::with_capacity(capacity),

capacity,

}

}

pub fn push(&mut self, value: f64) {

if self.data.len() == self.capacity {

self.data.pop_front();

}

self.data.push_back(value);

}

pub fn render(&self, width: usize) -> String {

if self.data.is_empty() {

return String::new();

}

let samples: Vec<f64> = self

.data

.iter()

.rev()

.take(width)

.copied()

.collect::<Vec<_>>()

.into_iter()

.rev()

.collect();

let min = samples.iter().copied().fold(f64::INFINITY, f64::min);

let max = samples.iter().copied().fold(f64::NEG_INFINITY, f64::max);

let range = max - min;

samples

.iter()

.map(|&v| {

if range == 0.0 {

BLOCKS[BLOCKS.len() / 2]

} else {

let idx = ((v - min) / range * (BLOCKS.len() - 1) as f64).round() as usize;

BLOCKS[idx.min(BLOCKS.len() - 1)]

}

})

.collect()

}

}

================================================

FILE: src/common/stopwatch.rs

================================================

/// Stupid and simple implementation of stopwatch.

use std::time::{Duration, Instant};

pub struct Stopwatch {

start_time: Instant,

}

impl Stopwatch {

pub fn start_new() -> Stopwatch {

Stopwatch {

start_time: Instant::now(),

}

}

pub fn elapsed_ms(&self) -> u64 {

return self.elapsed().as_millis() as u64;

}

pub fn elapsed(&self) -> Duration {

return self.start_time.elapsed();

}

}

================================================

FILE: src/interpreter/background_runner.rs

================================================

use std::sync::{Arc, Condvar, Mutex, atomic};

use std::thread;

use std::time::Duration;

/// Runs periodic tasks in background thread.

///

/// It is OK to suppress unused warning for this code, since it joins the thread in drop()

/// correctly, example:

///

/// ``rust

/// pub struct SomeView {

/// #[allow(unused)]

/// bg_runner: BackgroundRunner,

/// }

/// ``

///

pub struct BackgroundRunner {

interval: Duration,

thread: Option<thread::JoinHandle<()>>,

force: Arc<atomic::AtomicBool>,

exit: Arc<Mutex<bool>>,

cv: Arc<(Mutex<()>, Condvar)>,

}

impl Drop for BackgroundRunner {

fn drop(&mut self) {

log::debug!("Stopping updates");

*self.exit.lock().unwrap() = true;

self.cv.1.notify_all();

self.thread.take().unwrap().join().unwrap();

log::debug!("Updates stopped");

}

}

impl BackgroundRunner {

pub fn new(

interval: Duration,

cv: Arc<(Mutex<()>, Condvar)>,

force: Arc<atomic::AtomicBool>,

) -> Self {

return Self {

interval,

thread: None,

force,

exit: Arc::new(Mutex::new(false)),

cv,

};

}

pub fn start<C: Fn(bool) + std::marker::Send + 'static>(&mut self, callback: C) {

let interval = self.interval;

let cv = self.cv.clone();

let exit = self.exit.clone();

let force = self.force.clone();

self.thread = Some(std::thread::spawn(move || {

loop {

let was_force = force.swap(false, atomic::Ordering::SeqCst);

callback(was_force);

if *exit.lock().unwrap() {

break;

}

let _ = cv.1.wait_timeout(cv.0.lock().unwrap(), interval).unwrap();

if *exit.lock().unwrap() {

break;

}

}

}));

// Explicitly trigger at least one update with force

self.schedule();

}

pub fn schedule(&mut self) {

self.force.store(true, atomic::Ordering::SeqCst);

self.cv.1.notify_all();

}

}

================================================

FILE: src/interpreter/clickhouse.rs

================================================

use crate::{

common::RelativeDateTime,

interpreter::{

ClickHouseAvailableQuirks, ClickHouseQuirks,

options::{ClickHouseOptions, LogsOrder},

},

};

use anyhow::{Error, Result};

use chrono::{DateTime, Local};

use chrono_tz::Tz;

use clickhouse_rs::{

Block, Options, Pool,

types::{Complex, FromSql},

};

use futures_util::StreamExt;

use std::collections::HashMap;

use std::str::FromStr;

// TODO:

// - implement parsing using serde

// - replace clickhouse_rs::client_info::write() (with extend crate) to change the client name

// - escape parameters

pub type Columns = Block<Complex>;

pub struct ClickHouse {

pub quirks: ClickHouseQuirks,

// Server has use_shared_merge_tree_log_pipeline enabled (SharedMergeTree-backed system.*_log).

// When true, system.*_log reads do not need clusterAllReplicas(): one replica sees all rows.

shared_log_pipeline: bool,

options: ClickHouseOptions,

pool: Pool,

}

#[derive(Debug, PartialEq, Clone)]

#[allow(clippy::upper_case_acronyms)]

pub enum TraceType {

CPU,

Real,

Memory,

MemorySample,

JemallocSample,

ProfileEvent,

MemoryAllocatedWithoutCheck,

}

#[derive(Debug, Clone)]

pub struct TextLogArguments {

pub query_ids: Option<Vec<String>>,

pub logger_names: Option<Vec<String>>,

pub hostname: Option<String>,

pub message_filter: Option<String>,

pub max_level: Option<String>,

pub start: DateTime<Local>,

pub end: RelativeDateTime,

}

#[derive(Default)]

pub struct ClickHouseServerCPU {

pub count: u64,

pub user: u64,

pub system: u64,

}

/// NOTE: Likely misses threads for IO

#[derive(Default)]

pub struct ClickHouseServerThreadPools {

pub merges_mutations: u64,

pub fetches: u64,

pub common: u64,

pub moves: u64,

pub schedule: u64,

pub buffer_flush: u64,

pub distributed: u64,

pub message_broker: u64,

pub backups: u64,

pub io: u64,

pub remote_io: u64,

pub queries: u64,

}

#[derive(Default)]

pub struct ClickHouseServerThreads {

pub os_total: u64,

pub os_runnable: u64,

pub tcp: u64,

pub http: u64,

pub interserver: u64,

pub pools: ClickHouseServerThreadPools,

}

#[derive(Default)]

pub struct ClickHouseServerMemory {

pub os_total: u64,

pub resident: u64,

pub tracked: u64,

pub tables: u64,

pub caches: u64,

pub queries: u64,

pub merges_mutations: u64,

pub active_merges: u64,

pub async_inserts: u64,

pub dictionaries: u64,

pub primary_keys: u64,

pub fragmentation: u64,

pub index_granularity: u64,

pub io: u64,

}

/// May have duplicated accounting (due to bridges and stuff)

#[derive(Default)]

pub struct ClickHouseServerNetwork {

pub send_bytes: u64,

pub receive_bytes: u64,

}

#[derive(Default)]

pub struct ClickHouseServerUptime {

pub _os: u64,

pub server: u64,

}

/// May not take into account some block devices (due to filter by sd*/nvme*/vd*)

#[derive(Default)]

pub struct ClickHouseServerBlockDevices {

pub read_bytes: u64,

pub write_bytes: u64,

}

#[derive(Default)]

pub struct ClickHouseServerStorages {

pub buffer_bytes: u64,

// Replace with bytes once [1] is merged.

//

// [1]: https://github.com/ClickHouse/ClickHouse/pull/50238

pub distributed_insert_files: u64,

pub total_rows: u64,

pub total_bytes: u64,

}

#[derive(Default)]

pub struct ClickHouseServerRows {

pub selected: u64,

pub inserted: u64,

}

#[derive(Default)]

pub struct ClickHouseServerSummary {

pub queries: u64,

pub merges: u64,

pub mutations: u64,

pub replication_queue: u64,

pub replication_queue_tries: u64,

pub fetches: u64,

pub servers: u64,

pub rows: ClickHouseServerRows,

pub storages: ClickHouseServerStorages,

pub uptime: ClickHouseServerUptime,

pub memory: ClickHouseServerMemory,

pub cpu: ClickHouseServerCPU,

pub threads: ClickHouseServerThreads,

pub network: ClickHouseServerNetwork,

pub blkdev: ClickHouseServerBlockDevices,

pub update_interval: u64,

}

pub struct QueryMetricRow {

pub host_name: String,

pub timestamp_ns: u64,

pub memory_usage: i64,

pub peak_memory_usage: i64,

pub profile_events: HashMap<String, u64>,

}

pub struct MetricLogRow {

pub timestamp_ns: u64,

pub profile_events: HashMap<String, u64>,

pub current_metrics: HashMap<String, i64>,

}

fn collect_values<'b, T: FromSql<'b>>(block: &'b Columns, column: &str) -> Vec<T> {

return (0..block.row_count())

.map(|i| block.get(i, column).unwrap())

.collect();

}

const CHDIG_CLIENT_NAME: [&str; 2] = ["chdig", env!("CARGO_PKG_VERSION")];

fn get_client_name() -> String {

return CHDIG_CLIENT_NAME.join("-");

}

impl ClickHouse {

pub async fn new(options: ClickHouseOptions) -> Result<Self> {

let url = format!(

"{}&client_name={}",

options.url.clone().unwrap(),

get_client_name()

);

let connect_options: Options = Options::from_str(&url)?

.with_setting(

"storage_system_stack_trace_pipe_read_timeout_ms",

1000,

/* is_important= */ false,

)

// FIXME: ClickHouse's analyzer does not handle ProfileEvents.Names (and similar), it throws:

//

// Invalid column type for ColumnUnique::insertRangeFrom. Expected String, got LowCardinality(String)

//

.with_setting("allow_experimental_analyzer", false, true)

// TODO: add support of Map type for LowCardinality in the driver

.with_setting("low_cardinality_allow_in_native_format", false, true);

let pool = Pool::new(connect_options);

let mut handle = pool.get_handle().await.map_err(|e| {

Error::msg(format!(

"Cannot connect to ClickHouse at {} ({})",

options.url_safe, e

))

})?;

let version = if let Some(override_version) = &options.server_version {

override_version.clone()

} else {

let version = handle

.query("SELECT version()")

.fetch_all()

.await?

.get::<String, _>(0, 0)?;

// Get VERSION_DESCRIBE from system.build_options for full version info (only build_options

// include version prefix, i.e. -stable/-testing)

handle

.query("SELECT value FROM system.build_options WHERE name = 'VERSION_DESCRIBE'")

.fetch_all()

.await?

.get::<String, _>(0, 0)

.unwrap_or_else(|_| version.clone())

};

let quirks = ClickHouseQuirks::new(version);

// SMT-backed system.*_log (ClickHouse Cloud) exposes all replicas' rows through any single

// replica, so clusterAllReplicas() is pure overhead there. The setting is off by default, as it

// is on self-hosted clusters; if it is absent or cannot be read we silently fall back to the

// cluster-wrapped path.

let shared_log_pipeline = handle

.query(

"SELECT value FROM system.server_settings \

WHERE name = 'use_shared_merge_tree_log_pipeline'",

)

.fetch_all()

.await

.ok()

.filter(|block| block.row_count() > 0)

.and_then(|block| block.get::<String, _>(0, 0).ok())

.map(|v| v == "1" || v.eq_ignore_ascii_case("true"))

.unwrap_or(false);

if shared_log_pipeline {

log::info!(

"SharedMergeTree log pipeline detected, skipping clusterAllReplicas() for system.*_log"

);

}

return Ok(ClickHouse {

quirks,

shared_log_pipeline,

options,

pool,

});

}
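
// Illustrative sketch, not part of chdig: the detection in `new()` above reduces to
// interpreting the string value of a server setting as a boolean. A hypothetical helper
// (the name `is_setting_enabled` is an assumption) that treats "1" and "true" in any ASCII
// case as enabled, and everything else, including a missing value, as disabled:
#[allow(dead_code)]
fn is_setting_enabled(value: Option<&str>) -> bool {
    return value
        .map(|v| v == "1" || v.eq_ignore_ascii_case("true"))
        .unwrap_or(false);
}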

pub fn version(&self) -> String {

return self.quirks.get_version();

}

pub async fn get_slow_query_log(

&self,

filter: &String,

start: RelativeDateTime,

end: RelativeDateTime,

limit: u64,

selected_host: Option<&String>,

) -> Result<Columns> {

let dbtable = self.get_log_table_name("system", "query_log");

let host_filter = self.get_log_host_filter_clause(selected_host);

return self

.execute(

format!(

r#"

WITH

{start} AS start_,

{end} AS end_,

slow_queries_ids AS (

SELECT DISTINCT initial_query_id

FROM {db_table}

WHERE

event_date BETWEEN toDate(start_) AND toDate(end_) AND

event_time BETWEEN toDateTime(start_) AND toDateTime(end_) AND

is_initial_query AND

/* To make the query faster: only consider queries longer than 1 second */

query_duration_ms > 1e3

{filter}

{internal}

{host_filter}

ORDER BY query_duration_ms DESC

LIMIT {limit}

)

SELECT

ProfileEvents.Names,

ProfileEvents.Values,

Settings.Names,

Settings.Values,

{peak_threads_usage} AS peak_threads_usage,

// Compatibility with system.processlist

memory_usage::Int64 AS peak_memory_usage,

query_duration_ms/1e3 AS elapsed,

user,

is_initial_query,

(exception_code = 394)::UInt8 AS is_cancelled,

initial_query_id,

query_id,

hostname as host_name,

current_database,

query_start_time_microseconds,

event_time_microseconds AS query_end_time_microseconds,

toValidUTF8(query) AS original_query,

normalizeQuery(query) AS normalized_query

FROM {db_table}

PREWHERE

event_date BETWEEN toDate(start_) AND toDate(end_) AND

event_time BETWEEN toDateTime(start_) AND toDateTime(end_) AND

type != 'QueryStart' AND

initial_query_id GLOBAL IN slow_queries_ids

"#,

start = start.to_sql_datetime_64().ok_or_else(|| Error::msg("Invalid start"))?,

end = end.to_sql_datetime_64().ok_or_else(|| Error::msg("Invalid end"))?,

db_table = dbtable,

peak_threads_usage = if self.quirks.has(ClickHouseAvailableQuirks::QueryLogPeakThreadsUsage) {

"peak_threads_usage"

} else {

"length(thread_ids)"

},

internal = if self.options.internal_queries {

"".to_string()

} else {

format!("AND client_name != '{}'", get_client_name())

},

filter = if !filter.is_empty() {

format!("AND (client_hostname LIKE '{0}' OR log_comment LIKE '{0}' OR os_user LIKE '{0}' OR user LIKE '{0}' OR initial_user LIKE '{0}' OR client_name LIKE '{0}' OR query_id LIKE '{0}' OR query LIKE '{0}')", &filter)

} else {

"".to_string()

},

host_filter = host_filter,

)

.as_str(),

)

.await;

}
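
// Illustrative sketch, not part of chdig: the query-log methods interpolate `filter`
// straight into single-quoted LIKE patterns, so a filter containing `'` would break the
// generated SQL. Assuming that is undesirable, a hypothetical helper (the name
// `escape_sql_string_literal` is an assumption) could escape the two characters that are
// special inside a ClickHouse single-quoted string literal. The LIKE wildcards `%` and `_`
// are left untouched so that patterns keep working.
#[allow(dead_code)]
fn escape_sql_string_literal(value: &str) -> String {
    let mut escaped = String::with_capacity(value.len());
    for ch in value.chars() {
        match ch {
            // A backslash starts an escape sequence inside the literal, so double it.
            '\\' => escaped.push_str("\\\\"),
            // A bare single quote would terminate the literal early.
            '\'' => escaped.push_str("\\'"),
            _ => escaped.push(ch),
        }
    }
    return escaped;
}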

pub async fn get_last_query_log(

&self,

filter: &String,

start: RelativeDateTime,

end: RelativeDateTime,

limit: u64,

selected_host: Option<&String>,

) -> Result<Columns> {

// TODO:

// - propagate sort order from the table

// - distributed_group_by_no_merge=2 is broken for this query with WINDOW function

let dbtable = self.get_log_table_name("system", "query_log");

let host_filter = self.get_log_host_filter_clause(selected_host);

return self

.execute(

format!(

r#"

WITH

{start} AS start_,

{end} AS end_,

last_queries_ids AS (

SELECT DISTINCT initial_query_id

FROM {db_table}

WHERE

event_date BETWEEN toDate(start_) AND toDate(end_) AND

event_time BETWEEN toDateTime(start_) AND toDateTime(end_) AND

is_initial_query

{filter}

{internal}

{host_filter}

ORDER BY event_date DESC, event_time DESC

LIMIT {limit}

)

SELECT

ProfileEvents.Names,

ProfileEvents.Values,

Settings.Names,

Settings.Values,

{peak_threads_usage} AS peak_threads_usage,

// Compatibility with system.processlist

memory_usage::Int64 AS peak_memory_usage,

query_duration_ms/1e3 AS elapsed,

user,

is_initial_query,

(exception_code = 394)::UInt8 AS is_cancelled,

initial_query_id,

query_id,

hostname as host_name,

current_database,

query_start_time_microseconds,

event_time_microseconds AS query_end_time_microseconds,

toValidUTF8(query) AS original_query,

normalizeQuery(query) AS normalized_query