[

{

"path": ".gitignore",

"content": "# Byte-compiled / optimized / DLL files\n__pycache__/\nvis/\napex/\ncocoapi/\ndemo/\nvis_xtion/\nMASK_R_*/\nold/\ncheckpoints/\nVOCdevkit/\n*.py[cod]\n*$py.class\npano_pred/\npano_pred.json\n# C extensions\n*.so\n*.log\n\n# Distribution / packaging\n.Python\nbuild/\ndevelop-eggs/\ndist/\ndownloads/\neggs/\n.eggs/\nlib/\nlib64/\nparts/\nsdist/\nvar/\nwheels/\npip-wheel-metadata/\nshare/python-wheels/\n*.egg-info/\n.installed.cfg\n*.egg\nMANIFEST\n\n# PyInstaller\n# Usually these files are written by a python script from a template\n# before PyInstaller builds the exe, so as to inject date/other infos into it.\n*.manifest\n*.spec\n\n# Installer logs\npip-log.txt\npip-delete-this-directory.txt\n\n# Unit test / coverage reports\nhtmlcov/\n.tox/\n.nox/\n.coverage\n.coverage.*\n.cache\nnosetests.xml\ncoverage.xml\n*.cover\n.hypothesis/\n.pytest_cache/\n\n# Translations\n*.mo\n*.pot\n\n# Django stuff:\n*.log\nlocal_settings.py\ndb.sqlite3\n\n# Flask stuff:\ninstance/\n.webassets-cache\n\n# Scrapy stuff:\n.scrapy\n\n# Sphinx documentation\ndocs/_build/\n\n# PyBuilder\ntarget/\n\n# Jupyter Notebook\n.ipynb_checkpoints\n\n# IPython\nprofile_default/\nipython_config.py\n\n# pyenv\n.python-version\n\n# pipenv\n# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.\n# However, in case of collaboration, if having platform-specific dependencies or dependencies\n# having no cross-platform support, pipenv may install dependencies that don’t work, or not\n# install all needed dependencies.\n#Pipfile.lock\n\n# celery beat schedule file\ncelerybeat-schedule\n\n# SageMath parsed files\n*.sage.py\n\n# Environments\n.env\n.venv\nenv/\nvenv/\nENV/\nenv.bak/\nvenv.bak/\n\n# Spyder project settings\n.spyderproject\n.spyproject\n\n# Rope project settings\n.ropeproject\n\n# mkdocs documentation\n/site\n\n# mypy\n.mypy_cache/\n.dmypy.json\ndmypy.json\n\n# Pyre type checker\n.pyre/\n"

},

{

"path": "LICENSE",

"content": "MIT License\n\nCopyright (c) 2019 Structured3D Group\n\n\nPermission is hereby granted, free of charge, to any person obtaining a copy\nof this software and associated documentation files (the \"Software\"), to deal\nin the Software without restriction, including without limitation the rights\nto use, copy, modify, merge, publish, distribute, sublicense, and/or sell\ncopies of the Software, and to permit persons to whom the Software is\nfurnished to do so, subject to the following conditions:\n\nThe above copyright notice and this permission notice shall be included in all\ncopies or substantial portions of the Software.\n\nTHE SOFTWARE IS PROVIDED \"AS IS\", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR\nIMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,\nFITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE\nAUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER\nLIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,\nOUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE\nSOFTWARE.\n"

},

{

"path": "README.md",

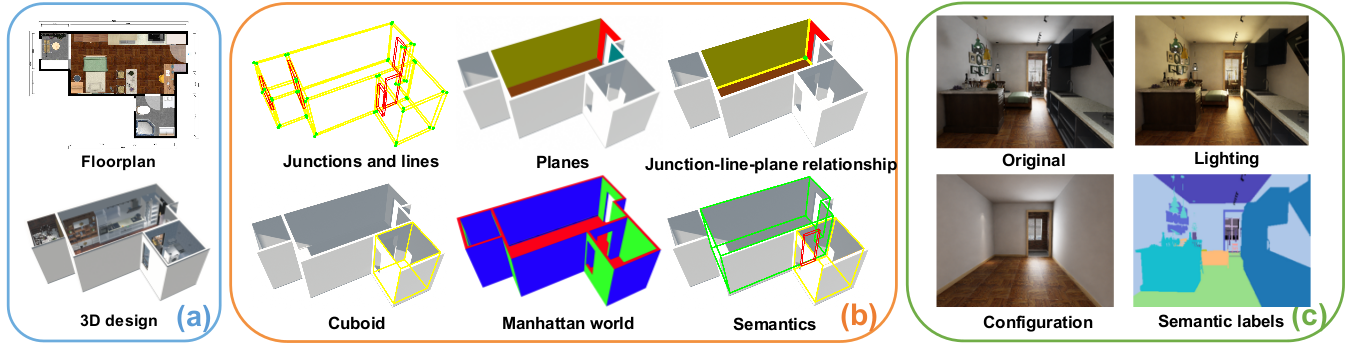

"content": "# Structured3D\n\n\n\nStructured3D is a large-scale photo-realistic dataset containing 3.5K house designs **(a)** created by professional designers with a variety of ground truth 3D structure annotations **(b)** and generate photo-realistic 2D images **(c)**.\n\n## Paper\n\n**Structured3D: A Large Photo-realistic Dataset for Structured 3D Modeling**\n\n[Jia Zheng](https://bertjiazheng.github.io/)\\*,\n[Junfei Zhang](https://www.linkedin.com/in/骏飞-张-1bb82691/?locale=en_US)\\*,\n[Jing Li](https://cn.linkedin.com/in/jing-li-253b26139),\n[Rui Tang](https://cn.linkedin.com/in/rui-tang-50973488),\n[Shenghua Gao](http://sist.shanghaitech.edu.cn/sist_en/2018/0820/c3846a31775/page.htm),\n[Zihan Zhou](https://faculty.ist.psu.edu/zzhou)\n\nEuropean Conference on Computer Vision (ECCV), 2020\n\n[[Preprint](https://arxiv.org/abs/1908.00222)] \n[[Paper](https://www.ecva.net/papers/eccv_2020/papers_ECCV/papers/123540494.pdf)] \n[[Supplementary Material](https://www.ecva.net/papers/eccv_2020/papers_ECCV/papers/123540494-supp.pdf)] \n[[Benchmark](https://competitions.codalab.org/competitions/24183)]\n\n(\\* Equal contribution)\n\n## Data\n\nThe dataset consists of rendering images and corresponding ground truth annotations (_e.g._, semantic, albedo, depth, surface normal, layout) under different lighting and furniture configurations. Please refer to [data organization](data_organization.md) for more details.\n\nTo download the dataset, please fill the [agreement form](https://forms.gle/LXg4bcjC2aEjrL9o8) that indicates you agree to the [Structured3D Terms of Use](https://drive.google.com/open?id=13ZwWpU_557ZQccwOUJ8H5lvXD7MeZFMa). 
After we receive your agreement form, we will provide download access to the dataset.\n\nFor fair comparison, we define standard training, validation, and testing splits as follows: _scene_00000_ to _scene_02999_ for training, _scene_03000_ to _scene_03249_ for validation, and _scene_03250_ to _scene_03499_ for testing.\n\n## Errata\n\n- 2020-04-06: We provide a list of invalid cases [here](metadata/errata.txt). You can ignore these cases when using our data.\n- 2020-03-26: Fix issue [#10](https://github.com/bertjiazheng/Structured3D/issues/10) about the basis of the bounding box annotations. Please re-download the annotations if you use them.\n\n## Tools\n\nWe provide the basic code for viewing the structure annotations of our dataset.\n\n### Installation\n\nClone repository:\n\n```bash\ngit clone git@github.com:bertjiazheng/Structured3D.git\n```\n\nPlease use Python 3, then follow [installation](https://pymesh.readthedocs.io/en/latest/installation.html) to install [PyMesh](https://github.com/PyMesh/PyMesh) (only for plane visualization) and the other dependencies:\n\n```bash\nconda install -y open3d -c open3d-admin\nconda install -y opencv -c conda-forge\nconda install -y descartes matplotlib numpy shapely\npip install panda3d\n```\n\n### Visualize 3D Annotation\n\nWe use [open3D](https://github.com/intel-isl/Open3D) for wireframe and plane visualization, please refer to interaction control [here](http://www.open3d.org/docs/tutorial/Basic/visualization.html#function-draw-geometries).\n\n```bash\npython visualize_3d.py --path /path/to/dataset --scene scene_id --type wireframe/plane/floorplan\n```\n\n| Wireframe | Plane | Floorplan |\n| ------------------------------------- | ----------------------------- | ------------------------------------- |\n|  |  |  |\n\n### Visualize 3D Textured Mesh\n\n```bash\npython visualize_mesh.py --path /path/to/dataset --scene scene_id --room room_id\n```\n\n\n \n


\n\n### Visualize 2D Layout\n\n```bash\npython visualize_layout.py --path /path/to/dataset --scene scene_id --type perspective/panorama\n```\n\n#### Panorama Layout\n\n\n \n


\n\nPlease refer to the [Supplementary Material](https://www.ecva.net/papers/eccv_2020/papers_ECCV/papers/123540494-supp.pdf) for more example ground truth room layouts.\n\n#### Perspective Layout\n\n\n \n


\n\n### Visualize 3D Bounding Box\n\n```bash\npython visualize_bbox.py --path /path/to/dataset --scene scene_id\n```\n\n\n \n


\n\n### Visualize Floorplan\n\n```bash\npython visualize_floorplan.py --path /path/to/dataset --scene scene_id\n```\n\n\n \n


\n\n## Citation\n\nPlease cite `Structured3D` in your publications if it helps your research:\n\n```bibtex\n@inproceedings{Structured3D,\n title = {Structured3D: A Large Photo-realistic Dataset for Structured 3D Modeling},\n author = {Jia Zheng and Junfei Zhang and Jing Li and Rui Tang and Shenghua Gao and Zihan Zhou},\n booktitle = {Proceedings of The European Conference on Computer Vision (ECCV)},\n year = {2020}\n}\n```\n\n## License\n\nThe data is released under the [Structured3D Terms of Use](https://drive.google.com/open?id=13ZwWpU_557ZQccwOUJ8H5lvXD7MeZFMa), and the code is released under the [MIT license](LICENSE).\n\n## Contact\n\nPlease contact us at [Structured3D Group](mailto:structured3d@googlegroups.com) if you have any questions.\n\n## Acknowledgement\n\nWe would like to thank Kujiale.com for providing the database of house designs and the rendering engine. We especially thank Qing Ye and Qi Wu from Kujiale.com for the help on the data rendering.\n"

},

{

"path": "data_organization.md",

"content": "# Data Organization\n\nThere is a separate subdirectory for every scene (_i.e._, house design), which is named by a unique ID. Within each scene directory, there are separate directories for different types of data as follows:\n\n```\nscene_\n├── 2D_rendering\n│ └── \n│ ├── panorama\n│ │ ├── \n│ │ │ ├── rgb_light.png\n│ │ │ ├── semantic.png\n│ │ │ ├── instance.png\n│ │ │ ├── albedo.png\n│ │ │ ├── depth.png\n│ │ │ └── normal.png\n│ │ ├── layout.txt\n│ │ └── camera_xyz.txt\n│ └── perspective\n│ └── \n│ └── \n│ ├── rgb_rawlight.png\n│ ├── semantic.png\n│ ├── instance.png\n│ ├── albedo.png\n│ ├── depth.png\n│ ├── normal.png\n│ ├── layout.json\n│ └── camera_pose.txt\n├── bbox_3d.json\n└── annotation_3d.json\n```\n\n# Annotation Format\n\nWe provide the primitive and relationship based structure annotation for each scene, and oriented bounding box for each object instance.\n\n**Structure annotation (`annotation_3d.json`)**: see all the room types [here](metadata/room_types.txt).\n\n```\n{\n // PRIMITVIES\n \"junctions\":[\n {\n \"ID\": : int,\n \"coordinate\" : List[float] // 3D vector\n }\n ],\n \"lines\": [\n {\n \"ID\": : int,\n \"point\" : List[float], // 3D vector\n \"direction\" : List[float] // 3D vector\n }\n ],\n \"planes\": [\n {\n \"ID\": : int,\n \"type\" : str, // ceiling, floor, wall\n \"normal\" : List[float], // 3D vector, the normal points to the empty space\n \"offset\" : float\n }\n ],\n // RELATIONSHIPS\n \"semantics\": [\n {\n \"ID\" : int,\n \"type\" : str, // room type, door, window\n \"planeID\" : List[int] // indices of the planes\n }\n ],\n \"planeLineMatrix\" : Matrix[int], // matrix W_1 where the ij-th entry is 1 iff l_i is on p_j\n \"lineJunctionMatrix\" : Matrix[int], // matrix W_2 here the mn-th entry is 1 iff x_m is on l_nj\n // OTHERS\n \"cuboids\": [\n {\n \"ID\": : int,\n \"planeID\" : List[int] // indices of the planes\n }\n ]\n \"manhattan\": [\n {\n \"ID\": : int,\n \"planeID\" : List[int] // indices of the planes\n }\n 
]\n}\n```\n\n**Bounding box (`bbox_3d.json`)**: the oriented bounding box annotation in world coordinates, same as the [SUN RGB-D Dataset](http://rgbd.cs.princeton.edu).\n\n```\n[\n {\n \"ID\" : int, // instance id\n \"basis\" : Matrix[float], // basis of the bounding box, one row is one basis\n \"coeffs\" : List[float], // radii in each dimension\n \"centroid\" : List[float], // 3D centroid of the bounding box\n }\n]\n```\n\nFor each image, we provide semantic, instance, albedo, depth, normal, and layout annotations, as well as the camera position. Please note that we have different layout and camera annotation formats for panoramic and perspective images.\n\n**Semantic annotation (`semantic.png`)**: unsigned 8-bit integers within a PNG. We use the [NYUv2](https://cs.nyu.edu/~silberman/datasets/nyu_depth_v2) 40-label set; see all the label ids [here](metadata/labelids.txt).\n\n**Instance annotation (`instance.png`)**: unsigned 16-bit integers within a PNG. We only provide instance annotation for the full configuration. The maximum value (65535) denotes _background_.\n\n**Albedo data (`albedo.png`)**: unsigned 8-bit integers within a PNG.\n\n**Depth data (`depth.png`)**: unsigned 16-bit integers within a PNG. The units are millimeters, so a value of 1000 is one meter. A zero value denotes _no reading_.\n\n**Normal data (`normal.png`)**: unsigned 8-bit integers within a PNG (x, y, z), where the integer values in the file are 128 \\* (1 + n), where n is a normal coordinate in the range [-1, 1].\n\n**Layout annotation for panorama (`layout.txt`)**: an ordered list of 2D positions of the junctions (same as [LayoutNet](https://github.com/zouchuhang/LayoutNet) and [HorizonNet](https://github.com/sunset1995/HorizonNet)). The order of the junctions is shown in the figure below. In our dataset, the cameras of the panoramas are aligned with the gravity direction, thus a pair of ceiling-wall and floor-wall junctions share the same x-axis coordinates.\n\n\n \n

\n
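As a rough illustration of the depth and normal encodings described above, the following sketch decodes both into floating-point arrays. The helper names `decode_depth` and `decode_normal` are hypothetical and not part of this repository, and mapping zero depth to NaN is only an assumption about how a caller might want to treat _no reading_ pixels.

```python
import numpy as np


def decode_depth(depth_png):
    # Hypothetical helper: depth.png stores unsigned 16-bit millimeters,
    # so dividing by 1000 gives meters.
    depth_m = depth_png.astype(np.float64) / 1000.0
    # Assumption: mark the zero (no reading) pixels as NaN.
    depth_m[depth_png == 0] = np.nan
    return depth_m


def decode_normal(normal_png):
    # Hypothetical helper: normal.png stores 128 * (1 + n) per channel,
    # so inverting the formula gives n = value / 128 - 1.
    return normal_png.astype(np.float64) / 128.0 - 1.0


# Tiny synthetic inputs standing in for arrays loaded from the PNG files.
depth = decode_depth(np.array([[0, 1000, 2500]], dtype=np.uint16))
normal = decode_normal(np.array([[[128, 128, 255]]], dtype=np.uint8))
```

Because the stored values are 8-bit, the decoded normals are quantized and may not have exactly unit length; renormalizing them afterwards is a choice left to the caller.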

\n\n**Layout annotation for perspective (`layout.json`)**: We also include the junctions formed by line segments intersecting with each other or with the image boundary. We consider the visible and invisible parts caused by the room structure instead of furniture.\n\n```\n{\n \"junctions\":[\n {\n \"ID\" : int, // corresponding 3D junction id, None denotes a fake junction with no 3D counterpart\n \"coordinate\" : List[int], // 2D location in camera coordinates\n \"isvisible\" : bool // whether this junction is visible, i.e., not occluded by other walls\n }\n ],\n \"planes\": [\n {\n \"ID\" : int, // corresponding 3D plane id\n \"visible_mask\" : List[List[int]], // visible segmentation mask, lists of junction ids\n \"amodal_mask\" : List[List[int]], // amodal segmentation mask, lists of junction ids\n \"normal\" : List[float], // normal in camera coordinates\n \"offset\" : float, // offset in camera coordinates\n \"type\" : str // ceiling, floor, wall\n }\n ]\n}\n```\n\n**Camera location for panorama (`camera_xyz.txt`)**: For each panoramic image, we only store the camera location in millimeters in global coordinates. The direction of the camera is always along the negative y-axis. The z-axis is upward.\n\n**Camera location for perspective (`camera_pose.txt`)**: For each perspective image, we store the camera location and pose in global coordinates.\n\n```\nvx vy vz tx ty tz ux uy uz xfov yfov 1\n```\n\nwhere `(vx, vy, vz)` is the eye viewpoint of the camera in millimeters, `(tx, ty, tz)` is the view direction, `(ux, uy, uz)` is the up direction, and `xfov` and `yfov` are the half-angles of the horizontal and vertical fields of view of the camera in radians (the angle from the central ray to the leftmost/bottommost ray in the field of view), same as the [Matterport3D Dataset](https://github.com/niessner/Matterport).\n"

},

{

"path": "metadata/errata.txt",

"content": "# invalid scene\nscene_01155\nscene_01714\nscene_01816\nscene_03398\nscene_01192\nscene_01852\n# a pair of junctions are not aligned along the x-axis\nscene_01778_room_858455\n# self-intersection layout\nscene_00010_room_846619\nscene_00043_room_1518\nscene_00043_room_3128\nscene_00043_room_474\nscene_00043_room_732\nscene_00043_room_856\nscene_00173_room_4722\nscene_00240_room_384\nscene_00325_room_970753\nscene_00335_room_686\nscene_00339_room_2193\nscene_00501_room_1840\nscene_00515_room_277475\nscene_00543_room_176\nscene_00587_room_9914\nscene_00703_room_762455\nscene_00703_room_771712\nscene_00728_room_5662\nscene_00828_room_607228\nscene_00865_room_1026\nscene_00865_room_1402\nscene_00875_room_739214\nscene_00917_room_188\nscene_00917_room_501284\nscene_00926_room_2290\nscene_00936_room_311\nscene_00937_room_1955\nscene_00986_room_141\nscene_01009_room_3234\nscene_01009_room_3571\nscene_01021_room_689126\nscene_01034_room_222021\nscene_01036_room_301\nscene_01043_room_2193\nscene_01104_room_875\nscene_01151_room_563\nscene_01165_room_204\nscene_01221_room_26619\nscene_01222_room_273364\nscene_01282_room_1917\nscene_01282_room_24057\nscene_01282_room_2631\nscene_01400_room_10576\nscene_01445_room_3495\nscene_01470_room_1413\nscene_01530_room_577\nscene_01670_room_291\nscene_01745_room_342\nscene_01759_room_3584\nscene_01759_room_3588\nscene_01772_room_897997\nscene_01774_room_143\nscene_01781_room_335\nscene_01781_room_878137\nscene_01786_room_5837\nscene_01916_room_2648\nscene_01993_room_849\nscene_01998_room_54762\nscene_02034_room_921879\nscene_02040_room_311\nscene_02046_room_1014\nscene_02046_room_834\nscene_02047_room_934954\nscene_02101_room_255228\nscene_02172_room_335\nscene_02235_room_799012\nscene_02274_room_4093\nscene_02326_room_836436\nscene_02334_room_869673\nscene_02357_room_118319\nscene_02484_room_43003\nscene_02499_room_1607\nscene_02499_room_977359\nscene_02509_room_687231\nscene_02542_room_671853\nscene_02564_room_702502\nscene
_02580_room_724891\nscene_02650_room_877946\nscene_02659_room_577142\nscene_02690_room_586296\nscene_02706_room_823368\nscene_02788_room_815473\nscene_02889_room_848271\nscene_03035_room_631066\nscene_03120_room_830640\nscene_03327_room_315045\nscene_03376_room_800900\nscene_03399_room_337\nscene_03478_room_2193"

},

{

"path": "metadata/labelids.txt",

"content": "1\twall\n2\tfloor\n3\tcabinet\n4\tbed\n5\tchair\n6\tsofa\n7\ttable\n8\tdoor\n9\twindow\n10\tbookshelf\n11\tpicture\n12\tcounter\n13\tblinds\n14\tdesk\n15\tshelves\n16\tcurtain\n17\tdresser\n18\tpillow\n19\tmirror\n20\tfloor mat\n21\tclothes\n22\tceiling\n23\tbooks\n24\trefrigerator\n25\ttelevision\n26\tpaper\n27\ttowel\n28\tshower curtain\n29\tbox\n30\twhiteboard\n31\tperson\n32\tnightstand\n33\ttoilet\n34\tsink\n35\tlamp\n36\tbathtub\n37\tbag\n38\totherstructure\n39\totherfurniture\n40\totherprop"

},

{

"path": "metadata/room_types.txt",

"content": "living room\nkitchen\nbedroom\nbathroom\nbalcony\ncorridor\ndining room\nstudy\nstudio\nstore room\ngarden\nlaundry room\noffice\nbasement\ngarage\nundefined\n"

},

{

"path": "misc/__init__.py",

"content": ""

},

{

"path": "misc/colors.py",

"content": "semantics_cmap = {\n 'living room': '#e6194b',\n 'kitchen': '#3cb44b',\n 'bedroom': '#ffe119',\n 'bathroom': '#0082c8',\n 'balcony': '#f58230',\n 'corridor': '#911eb4',\n 'dining room': '#46f0f0',\n 'study': '#f032e6',\n 'studio': '#d2f53c',\n 'store room': '#fabebe',\n 'garden': '#008080',\n 'laundry room': '#e6beff',\n 'office': '#aa6e28',\n 'basement': '#fffac8',\n 'garage': '#800000',\n 'undefined': '#aaffc3',\n 'door': '#808000',\n 'window': '#ffd7b4',\n 'outwall': '#000000',\n}\n\n\ncolormap_255 = [\n [230, 25, 75],\n [ 60, 180, 75],\n [255, 225, 25],\n [ 0, 130, 200],\n [245, 130, 48],\n [145, 30, 180],\n [ 70, 240, 240],\n [240, 50, 230],\n [210, 245, 60],\n [250, 190, 190],\n [ 0, 128, 128],\n [230, 190, 255],\n [170, 110, 40],\n [255, 250, 200],\n [128, 0, 0],\n [170, 255, 195],\n [128, 128, 0],\n [255, 215, 180],\n [ 0, 0, 128],\n [128, 128, 128],\n [255, 255, 255],\n [ 0, 0, 0]\n]\n"

},

{

"path": "misc/figures.py",

"content": "\"\"\"\nCopy from https://github.com/Toblerity/Shapely/blob/master/docs/code/figures.py\n\"\"\"\n\nfrom math import sqrt\nfrom shapely import affinity\n\nGM = (sqrt(5)-1.0)/2.0\nW = 8.0\nH = W*GM\nSIZE = (W, H)\n\nBLUE = '#6699cc'\nGRAY = '#999999'\nDARKGRAY = '#333333'\nYELLOW = '#ffcc33'\nGREEN = '#339933'\nRED = '#ff3333'\nBLACK = '#000000'\n\nCOLOR_ISVALID = {\n True: BLUE,\n False: RED,\n}\n\n\ndef plot_line(ax, ob, color=GRAY, zorder=1, linewidth=3, alpha=1):\n x, y = ob.xy\n ax.plot(x, y, color=color, linewidth=linewidth, solid_capstyle='round', zorder=zorder, alpha=alpha)\n\n\ndef plot_coords(ax, ob, color=BLACK, zorder=1, alpha=1):\n x, y = ob.xy\n ax.plot(x, y, color=color, zorder=zorder, alpha=alpha)\n\n\ndef color_isvalid(ob, valid=BLUE, invalid=RED):\n if ob.is_valid:\n return valid\n else:\n return invalid\n\n\ndef color_issimple(ob, simple=BLUE, complex=YELLOW):\n if ob.is_simple:\n return simple\n else:\n return complex\n\n\ndef plot_line_isvalid(ax, ob, **kwargs):\n kwargs[\"color\"] = color_isvalid(ob)\n plot_line(ax, ob, **kwargs)\n\n\ndef plot_line_issimple(ax, ob, **kwargs):\n kwargs[\"color\"] = color_issimple(ob)\n plot_line(ax, ob, **kwargs)\n\n\ndef plot_bounds(ax, ob, zorder=1, alpha=1):\n x, y = zip(*list((p.x, p.y) for p in ob.boundary))\n ax.plot(x, y, 'o', color=BLACK, zorder=zorder, alpha=alpha)\n\n\ndef add_origin(ax, geom, origin):\n x, y = xy = affinity.interpret_origin(geom, origin, 2)\n ax.plot(x, y, 'o', color=GRAY, zorder=1)\n ax.annotate(str(xy), xy=xy, ha='center',\n textcoords='offset points', xytext=(0, 8))\n\n\ndef set_limits(ax, x0, xN, y0, yN):\n ax.set_xlim(x0, xN)\n ax.set_xticks(range(x0, xN+1))\n ax.set_ylim(y0, yN)\n ax.set_yticks(range(y0, yN+1))\n ax.set_aspect(\"equal\")\n"

},

{

"path": "misc/panorama.py",

"content": "\"\"\"\nCopy from https://github.com/sunset1995/pytorch-layoutnet/blob/master/pano.py\n\"\"\"\nimport numpy as np\nimport numpy.matlib as matlib\n\n\ndef xyz_2_coorxy(xs, ys, zs, H=512, W=1024):\n us = np.arctan2(xs, ys)\n vs = -np.arctan(zs / np.sqrt(xs**2 + ys**2))\n coorx = (us / (2 * np.pi) + 0.5) * W\n coory = (vs / np.pi + 0.5) * H\n return coorx, coory\n\n\ndef coords2uv(coords, width, height):\n \"\"\"\n Image coordinates (xy) to uv\n \"\"\"\n middleX = width / 2 + 0.5\n middleY = height / 2 + 0.5\n uv = np.hstack([\n (coords[:, [0]] - middleX) / width * 2 * np.pi,\n -(coords[:, [1]] - middleY) / height * np.pi])\n return uv\n\n\ndef uv2xyzN(uv, planeID=1):\n ID1 = (int(planeID) - 1 + 0) % 3\n ID2 = (int(planeID) - 1 + 1) % 3\n ID3 = (int(planeID) - 1 + 2) % 3\n xyz = np.zeros((uv.shape[0], 3))\n xyz[:, ID1] = np.cos(uv[:, 1]) * np.sin(uv[:, 0])\n xyz[:, ID2] = np.cos(uv[:, 1]) * np.cos(uv[:, 0])\n xyz[:, ID3] = np.sin(uv[:, 1])\n return xyz\n\n\ndef uv2xyzN_vec(uv, planeID):\n \"\"\"\n vectorization version of uv2xyzN\n @uv N x 2\n @planeID N\n \"\"\"\n assert (planeID.astype(int) != planeID).sum() == 0\n planeID = planeID.astype(int)\n ID1 = (planeID - 1 + 0) % 3\n ID2 = (planeID - 1 + 1) % 3\n ID3 = (planeID - 1 + 2) % 3\n ID = np.arange(len(uv))\n xyz = np.zeros((len(uv), 3))\n xyz[ID, ID1] = np.cos(uv[:, 1]) * np.sin(uv[:, 0])\n xyz[ID, ID2] = np.cos(uv[:, 1]) * np.cos(uv[:, 0])\n xyz[ID, ID3] = np.sin(uv[:, 1])\n return xyz\n\n\ndef xyz2uvN(xyz, planeID=1):\n ID1 = (int(planeID) - 1 + 0) % 3\n ID2 = (int(planeID) - 1 + 1) % 3\n ID3 = (int(planeID) - 1 + 2) % 3\n normXY = np.sqrt(xyz[:, [ID1]] ** 2 + xyz[:, [ID2]] ** 2)\n normXY[normXY < 0.000001] = 0.000001\n normXYZ = np.sqrt(xyz[:, [ID1]] ** 2 + xyz[:, [ID2]] ** 2 + xyz[:, [ID3]] ** 2)\n v = np.arcsin(xyz[:, [ID3]] / normXYZ)\n u = np.arcsin(xyz[:, [ID1]] / normXY)\n valid = (xyz[:, [ID2]] < 0) & (u >= 0)\n u[valid] = np.pi - u[valid]\n valid = (xyz[:, [ID2]] < 0) & (u <= 0)\n u[valid] = 
-np.pi - u[valid]\n uv = np.hstack([u, v])\n uv[np.isnan(uv[:, 0]), 0] = 0\n return uv\n\n\ndef computeUVN(n, in_, planeID):\n \"\"\"\n compute v given u and normal.\n \"\"\"\n if planeID == 2:\n n = np.array([n[1], n[2], n[0]])\n elif planeID == 3:\n n = np.array([n[2], n[0], n[1]])\n bc = n[0] * np.sin(in_) + n[1] * np.cos(in_)\n bs = n[2]\n out = np.arctan(-bc / (bs + 1e-9))\n return out\n\n\ndef computeUVN_vec(n, in_, planeID):\n \"\"\"\n vectorization version of computeUVN\n @n N x 3\n @in_ MN x 1\n @planeID N\n \"\"\"\n n = n.copy()\n if (planeID == 2).sum():\n n[planeID == 2] = np.roll(n[planeID == 2], 2, axis=1)\n if (planeID == 3).sum():\n n[planeID == 3] = np.roll(n[planeID == 3], 1, axis=1)\n n = np.repeat(n, in_.shape[0] // n.shape[0], axis=0)\n assert n.shape[0] == in_.shape[0]\n bc = n[:, [0]] * np.sin(in_) + n[:, [1]] * np.cos(in_)\n bs = n[:, [2]]\n out = np.arctan(-bc / (bs + 1e-9))\n return out\n\n\ndef lineFromTwoPoint(pt1, pt2):\n \"\"\"\n Generate line segment based on two points on panorama\n pt1, pt2: two points on panorama\n line:\n 1~3-th dim: normal of the line\n 4-th dim: the projection dimension ID\n 5~6-th dim: the u of line segment endpoints in projection plane\n \"\"\"\n numLine = pt1.shape[0]\n lines = np.zeros((numLine, 6))\n n = np.cross(pt1, pt2)\n n = n / (matlib.repmat(np.sqrt(np.sum(n ** 2, 1, keepdims=True)), 1, 3) + 1e-9)\n lines[:, 0:3] = n\n\n areaXY = np.abs(np.sum(n * matlib.repmat([0, 0, 1], numLine, 1), 1, keepdims=True))\n areaYZ = np.abs(np.sum(n * matlib.repmat([1, 0, 0], numLine, 1), 1, keepdims=True))\n areaZX = np.abs(np.sum(n * matlib.repmat([0, 1, 0], numLine, 1), 1, keepdims=True))\n planeIDs = np.argmax(np.hstack([areaXY, areaYZ, areaZX]), axis=1) + 1\n lines[:, 3] = planeIDs\n\n for i in range(numLine):\n uv = xyz2uvN(np.vstack([pt1[i, :], pt2[i, :]]), lines[i, 3])\n umax = uv[:, 0].max() + np.pi\n umin = uv[:, 0].min() + np.pi\n if umax - umin > np.pi:\n lines[i, 4:6] = np.array([umax, umin]) / 2 / np.pi\n 
else:\n lines[i, 4:6] = np.array([umin, umax]) / 2 / np.pi\n\n return lines\n\n\ndef lineIdxFromCors(cor_all, im_w, im_h):\n assert len(cor_all) % 2 == 0\n uv = coords2uv(cor_all, im_w, im_h)\n xyz = uv2xyzN(uv)\n lines = lineFromTwoPoint(xyz[0::2], xyz[1::2])\n num_sample = max(im_h, im_w)\n\n cs, rs = [], []\n for i in range(lines.shape[0]):\n n = lines[i, 0:3]\n sid = lines[i, 4] * 2 * np.pi\n eid = lines[i, 5] * 2 * np.pi\n if eid < sid:\n x = np.linspace(sid, eid + 2 * np.pi, num_sample)\n x = x % (2 * np.pi)\n else:\n x = np.linspace(sid, eid, num_sample)\n\n u = -np.pi + x.reshape(-1, 1)\n v = computeUVN(n, u, lines[i, 3])\n xyz = uv2xyzN(np.hstack([u, v]), lines[i, 3])\n uv = xyz2uvN(xyz, 1)\n\n r = np.minimum(np.floor((uv[:, 0] + np.pi) / (2 * np.pi) * im_w) + 1,\n im_w).astype(np.int32)\n c = np.minimum(np.floor((np.pi / 2 - uv[:, 1]) / np.pi * im_h) + 1,\n im_h).astype(np.int32)\n cs.extend(r - 1)\n rs.extend(c - 1)\n return rs, cs\n\n\ndef draw_boundary_from_cor_id(cor_id, img_src):\n im_h, im_w = img_src.shape[:2]\n cor_all = [cor_id]\n for i in range(len(cor_id)):\n cor_all.append(cor_id[i, :])\n cor_all.append(cor_id[(i+2) % len(cor_id), :])\n cor_all = np.vstack(cor_all)\n\n rs, cs = lineIdxFromCors(cor_all, im_w, im_h)\n rs = np.array(rs)\n cs = np.array(cs)\n\n panoEdgeC = img_src.astype(np.uint8)\n for dx, dy in [[-1, 0], [1, 0], [0, 0], [0, 1], [0, -1]]:\n panoEdgeC[np.clip(rs + dx, 0, im_h - 1), np.clip(cs + dy, 0, im_w - 1), 0] = 0\n panoEdgeC[np.clip(rs + dx, 0, im_h - 1), np.clip(cs + dy, 0, im_w - 1), 1] = 0\n panoEdgeC[np.clip(rs + dx, 0, im_h - 1), np.clip(cs + dy, 0, im_w - 1), 2] = 255\n\n return panoEdgeC\n\n\ndef coorx2u(x, w=1024):\n return ((x + 0.5) / w - 0.5) * 2 * np.pi\n\n\ndef coory2v(y, h=512):\n return ((y + 0.5) / h - 0.5) * np.pi\n\n\ndef u2coorx(u, w=1024):\n return (u / (2 * np.pi) + 0.5) * w - 0.5\n\n\ndef v2coory(v, h=512):\n return (v / np.pi + 0.5) * h - 0.5\n\n\ndef uv2xy(u, v, z=-50):\n c = z / np.tan(v)\n x = c * 
np.cos(u)\n y = c * np.sin(u)\n return x, y\n\n\ndef pano_connect_points(p1, p2, z=-50, w=1024, h=512):\n u1 = coorx2u(p1[0], w)\n v1 = coory2v(p1[1], h)\n u2 = coorx2u(p2[0], w)\n v2 = coory2v(p2[1], h)\n\n x1, y1 = uv2xy(u1, v1, z)\n x2, y2 = uv2xy(u2, v2, z)\n\n if abs(p1[0] - p2[0]) < w / 2:\n pstart = np.ceil(min(p1[0], p2[0]))\n pend = np.floor(max(p1[0], p2[0]))\n else:\n pstart = np.ceil(max(p1[0], p2[0]))\n pend = np.floor(min(p1[0], p2[0]) + w)\n coorxs = (np.arange(pstart, pend + 1) % w).astype(np.float64)\n vx = x2 - x1\n vy = y2 - y1\n us = coorx2u(coorxs, w)\n ps = (np.tan(us) * x1 - y1) / (vy - np.tan(us) * vx)\n cs = np.sqrt((x1 + ps * vx) ** 2 + (y1 + ps * vy) ** 2)\n vs = np.arctan2(z, cs)\n coorys = v2coory(vs)\n\n return np.stack([coorxs, coorys], axis=-1)\n"

},

{

"path": "misc/utils.py",

"content": "\"\"\"\nAdapted from https://github.com/thusiyuan/cooperative_scene_parsing/blob/master/utils/sunrgbd_utils.py\n\"\"\"\nimport numpy as np\n\n\ndef normalize(vector):\n return vector / np.linalg.norm(vector)\n\n\ndef parse_camera_info(camera_info, height, width):\n \"\"\" extract intrinsic and extrinsic matrix\n \"\"\"\n lookat = normalize(camera_info[3:6])\n up = normalize(camera_info[6:9])\n\n W = lookat\n U = np.cross(W, up)\n V = -np.cross(W, U)\n\n rot = np.vstack((U, V, W))\n trans = camera_info[:3]\n\n xfov = camera_info[9]\n yfov = camera_info[10]\n\n K = np.diag([1, 1, 1])\n\n K[0, 2] = width / 2\n K[1, 2] = height / 2\n\n K[0, 0] = K[0, 2] / np.tan(xfov)\n K[1, 1] = K[1, 2] / np.tan(yfov)\n\n return rot, trans, K\n\n\ndef flip_towards_viewer(normals, points):\n points = points / np.linalg.norm(points)\n proj = points.dot(normals[:2, :].T)\n flip = np.where(proj > 0)\n normals[flip, :] = -normals[flip, :]\n return normals\n\n\ndef get_corners_of_bb3d(basis, coeffs, centroid):\n corners = np.zeros((8, 3))\n # order the basis\n index = np.argsort(np.abs(basis[:, 0]))[::-1]\n # the case that two same value appear the same time\n if index[2] != 2:\n index[1:] = index[1:][::-1]\n basis = basis[index, :]\n coeffs = coeffs[index]\n # Now, we know the basis vectors are orders X, Y, Z. 
Next, flip the basis vectors towards the viewer\n basis = flip_towards_viewer(basis, centroid)\n coeffs = np.abs(coeffs)\n corners[0, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n corners[1, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n corners[2, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n corners[3, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n\n corners[4, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners[5, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners[6, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners[7, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners = corners + np.tile(centroid, (8, 1))\n return corners\n\n\ndef get_corners_of_bb3d_no_index(basis, coeffs, centroid):\n corners = np.zeros((8, 3))\n coeffs = np.abs(coeffs)\n corners[0, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n corners[1, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n corners[2, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n corners[3, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]\n\n corners[4, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners[5, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners[6, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n corners[7, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]\n\n corners = corners + np.tile(centroid, (8, 1))\n return corners\n\n\ndef project_3d_points_to_2d(points3d, R_ex, K):\n \"\"\"\n Project 3d points from 
camera-centered coordinate to 2D image plane\n Parameters\n ----------\n points3d: numpy array\n 3d location of point\n R_ex: numpy array\n extrinsic camera parameter\n K: numpy array\n intrinsic camera parameter\n Returns\n -------\n points2d: numpy array\n 2d location of the point\n \"\"\"\n points3d = R_ex.dot(points3d.T).T\n x3 = points3d[:, 0]\n y3 = -points3d[:, 1]\n z3 = np.abs(points3d[:, 2])\n xx = x3 * K[0, 0] / z3 + K[0, 2]\n yy = y3 * K[1, 1] / z3 + K[1, 2]\n points2d = np.vstack((xx, yy))\n return points2d\n\n\ndef project_struct_bdb_to_2d(basis, coeffs, center, R_ex, K):\n \"\"\"\n Project 3d bounding box to 2d bounding box\n Parameters\n ----------\n basis, coeffs, center, R_ex, K\n : K is the intrinsic camera parameter matrix\n : Rtilt is the extrinsic camera parameter matrix in right hand coordinates\n Returns\n -------\n bdb2d: dict\n Keys: {'x1', 'x2', 'y1', 'y2'}\n The (x1, y1) position is at the top left corner,\n the (x2, y2) position is at the bottom right corner\n \"\"\"\n corners3d = get_corners_of_bb3d(basis, coeffs, center)\n corners = project_3d_points_to_2d(corners3d, R_ex, K)\n bdb2d = dict()\n bdb2d['x1'] = int(max(np.min(corners[0, :]), 1)) # x1\n bdb2d['y1'] = int(max(np.min(corners[1, :]), 1)) # y1\n bdb2d['x2'] = int(min(np.max(corners[0, :]), 2*K[0, 2])) # x2\n bdb2d['y2'] = int(min(np.max(corners[1, :]), 2*K[1, 2])) # y2\n # if not check_bdb(bdb2d, 2*K[0, 2], 2*K[1, 2]):\n # bdb2d = None\n return bdb2d\n"

},

{

"path": "visualize_3d.py",

"content": "import os\nimport json\nimport argparse\n\nimport open3d\nimport pymesh\nimport numpy as np\nimport matplotlib.pyplot as plt\nfrom shapely.geometry import Polygon\nfrom descartes.patch import PolygonPatch\n\nfrom misc.figures import plot_coords\nfrom misc.colors import colormap_255, semantics_cmap\n\n\ndef visualize_wireframe(annos):\n \"\"\"visualize wireframe\n \"\"\"\n colormap = np.array(colormap_255) / 255\n\n junctions = np.array([item['coordinate'] for item in annos['junctions']])\n _, junction_pairs = np.where(np.array(annos['lineJunctionMatrix']))\n junction_pairs = junction_pairs.reshape(-1, 2)\n\n # extract hole lines\n lines_holes = []\n for semantic in annos['semantics']:\n if semantic['type'] in ['window', 'door']:\n for planeID in semantic['planeID']:\n lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())\n lines_holes = np.unique(lines_holes)\n\n # extract cuboid lines\n cuboid_lines = []\n for cuboid in annos['cuboids']:\n for planeID in cuboid['planeID']:\n cuboid_lineID = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()\n cuboid_lines.extend(cuboid_lineID)\n cuboid_lines = np.unique(cuboid_lines)\n cuboid_lines = np.setdiff1d(cuboid_lines, lines_holes)\n\n # visualize junctions\n connected_junctions = junctions[np.unique(junction_pairs)]\n connected_colors = np.repeat(colormap[0].reshape(1, 3), len(connected_junctions), axis=0)\n\n junction_set = open3d.geometry.PointCloud()\n junction_set.points = open3d.utility.Vector3dVector(connected_junctions)\n junction_set.colors = open3d.utility.Vector3dVector(connected_colors)\n\n # visualize line segments\n line_colors = np.repeat(colormap[5].reshape(1, 3), len(junction_pairs), axis=0)\n\n # color holes\n if len(lines_holes) != 0:\n line_colors[lines_holes] = colormap[6]\n\n # color cuboids\n if len(cuboid_lines) != 0:\n line_colors[cuboid_lines] = colormap[2]\n\n line_set = open3d.geometry.LineSet()\n line_set.points = 
open3d.utility.Vector3dVector(junctions)\n line_set.lines = open3d.utility.Vector2iVector(junction_pairs)\n line_set.colors = open3d.utility.Vector3dVector(line_colors)\n\n open3d.visualization.draw_geometries([junction_set, line_set])\n\n\ndef project(x, meta):\n \"\"\" project 3D to 2D for polygon clipping\n \"\"\"\n proj_axis = max(range(3), key=lambda i: abs(meta['normal'][i]))\n\n return tuple(c for i, c in enumerate(x) if i != proj_axis)\n\n\ndef project_inv(x, meta):\n \"\"\" recover 3D points from 2D\n \"\"\"\n # Returns the vector w in the wall's plane such that project(w) equals x.\n proj_axis = max(range(3), key=lambda i: abs(meta['normal'][i]))\n\n w = list(x)\n w[proj_axis:proj_axis] = [0.0]\n c = -meta['offset']\n for i in range(3):\n c -= w[i] * meta['normal'][i]\n c /= meta['normal'][proj_axis]\n w[proj_axis] = c\n return tuple(w)\n\n\ndef triangulate(points):\n \"\"\" triangulate the plane for operation and visualization\n \"\"\"\n\n num_points = len(points)\n # np.int was removed in NumPy 1.24; the builtin int is equivalent here\n indices = np.arange(num_points, dtype=int)\n segments = np.vstack((indices, np.roll(indices, -1))).T\n\n tri = pymesh.triangle()\n tri.points = np.array(points)\n\n tri.segments = segments\n tri.verbosity = 0\n tri.run()\n\n return tri.mesh\n\n\ndef clip_polygon(polygons, vertices_hole, junctions, meta):\n \"\"\" clip the holes out of the polygon\n \"\"\"\n if len(polygons) == 1:\n junctions = [junctions[vertex] for vertex in polygons[0]]\n mesh_wall = triangulate(junctions)\n\n vertices = np.array(mesh_wall.vertices)\n faces = np.array(mesh_wall.faces)\n\n return vertices, faces\n\n else:\n wall = []\n holes = []\n for polygon in polygons:\n if np.any(np.intersect1d(polygon, vertices_hole)):\n holes.append(polygon)\n else:\n wall.append(polygon)\n\n # extract junctions on this plane\n indices = []\n junctions_wall = []\n for plane in wall:\n for vertex in plane:\n indices.append(vertex)\n junctions_wall.append(junctions[vertex])\n\n junctions_holes = []\n for plane in holes:\n junctions_hole = []\n 
for vertex in plane:\n indices.append(vertex)\n junctions_hole.append(junctions[vertex])\n junctions_holes.append(junctions_hole)\n\n junctions_wall = [project(x, meta) for x in junctions_wall]\n junctions_holes = [[project(x, meta) for x in junctions_hole] for junctions_hole in junctions_holes]\n\n mesh_wall = triangulate(junctions_wall)\n\n for hole in junctions_holes:\n mesh_hole = triangulate(hole)\n mesh_wall = pymesh.boolean(mesh_wall, mesh_hole, 'difference')\n\n vertices = [project_inv(vertex, meta) for vertex in mesh_wall.vertices]\n\n return vertices, np.array(mesh_wall.faces)\n\n\ndef draw_geometries_with_back_face(geometries):\n vis = open3d.visualization.Visualizer()\n vis.create_window()\n render_option = vis.get_render_option()\n render_option.mesh_show_back_face = True\n for geometry in geometries:\n vis.add_geometry(geometry)\n vis.run()\n vis.destroy_window()\n\n\ndef convert_lines_to_vertices(lines):\n \"\"\"convert line representation to polygon vertices\n \"\"\"\n polygons = []\n lines = np.array(lines)\n\n polygon = None\n while len(lines) != 0:\n if polygon is None:\n polygon = lines[0].tolist()\n lines = np.delete(lines, 0, 0)\n\n lineID, juncID = np.where(lines == polygon[-1])\n vertex = lines[lineID[0], 1 - juncID[0]]\n lines = np.delete(lines, lineID, 0)\n\n if vertex in polygon:\n polygons.append(polygon)\n polygon = None\n else:\n polygon.append(vertex)\n\n return polygons\n\n\ndef visualize_plane(annos, args, eps=0.9):\n \"\"\"visualize plane\n \"\"\"\n colormap = np.array(colormap_255) / 255\n junctions = [item['coordinate'] for item in annos['junctions']]\n\n if args.color == 'manhattan':\n manhattan = dict()\n for planes in annos['manhattan']:\n for planeID in planes['planeID']:\n manhattan[planeID] = planes['ID']\n\n # extract hole vertices\n lines_holes = []\n for semantic in annos['semantics']:\n if semantic['type'] in ['window', 'door']:\n for planeID in semantic['planeID']:\n 
lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())\n\n lines_holes = np.unique(lines_holes)\n _, vertices_holes = np.where(np.array(annos['lineJunctionMatrix'])[lines_holes])\n vertices_holes = np.unique(vertices_holes)\n\n # load polygons\n polygons = []\n for semantic in annos['semantics']:\n for planeID in semantic['planeID']:\n plane_anno = annos['planes'][planeID]\n lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n polygon = convert_lines_to_vertices(junction_pairs)\n vertices, faces = clip_polygon(polygon, vertices_holes, junctions, plane_anno)\n polygons.append([vertices, faces, planeID, plane_anno['normal'], plane_anno['type'], semantic['type']])\n\n plane_set = []\n for i, (vertices, faces, planeID, normal, plane_type, semantic_type) in enumerate(polygons):\n # ignore the room ceiling\n if plane_type == 'ceiling' and semantic_type not in ['door', 'window']:\n continue\n\n plane_vis = open3d.geometry.TriangleMesh()\n\n plane_vis.vertices = open3d.utility.Vector3dVector(vertices)\n plane_vis.triangles = open3d.utility.Vector3iVector(faces)\n\n if args.color == 'normal':\n if np.dot(normal, [1, 0, 0]) > eps:\n plane_vis.paint_uniform_color(colormap[0])\n elif np.dot(normal, [-1, 0, 0]) > eps:\n plane_vis.paint_uniform_color(colormap[1])\n elif np.dot(normal, [0, 1, 0]) > eps:\n plane_vis.paint_uniform_color(colormap[2])\n elif np.dot(normal, [0, -1, 0]) > eps:\n plane_vis.paint_uniform_color(colormap[3])\n elif np.dot(normal, [0, 0, 1]) > eps:\n plane_vis.paint_uniform_color(colormap[4])\n elif np.dot(normal, [0, 0, -1]) > eps:\n plane_vis.paint_uniform_color(colormap[5])\n else:\n plane_vis.paint_uniform_color(colormap[6])\n elif args.color == 'manhattan':\n # paint each plane with manhattan world\n if planeID not in manhattan.keys():\n plane_vis.paint_uniform_color(colormap[6])\n 
else:\n plane_vis.paint_uniform_color(colormap[manhattan[planeID]])\n\n plane_set.append(plane_vis)\n\n draw_geometries_with_back_face(plane_set)\n\n\ndef plot_floorplan(annos, polygons):\n \"\"\"plot floorplan\n \"\"\"\n fig = plt.figure()\n ax = fig.add_subplot(1, 1, 1)\n\n junctions = np.array([junc['coordinate'][:2] for junc in annos['junctions']])\n for (polygon, poly_type) in polygons:\n polygon = Polygon(junctions[np.array(polygon)])\n plot_coords(ax, polygon.exterior, alpha=0.5)\n if poly_type == 'outwall':\n patch = PolygonPatch(polygon, facecolor=semantics_cmap[poly_type], alpha=0)\n else:\n patch = PolygonPatch(polygon, facecolor=semantics_cmap[poly_type], alpha=0.5)\n ax.add_patch(patch)\n\n plt.axis('equal')\n plt.axis('off')\n plt.show()\n\n\ndef visualize_floorplan(annos):\n \"\"\"visualize floorplan\n \"\"\"\n # extract the floor in each semantic for floorplan visualization\n planes = []\n for semantic in annos['semantics']:\n for planeID in semantic['planeID']:\n if annos['planes'][planeID]['type'] == 'floor':\n planes.append({'planeID': planeID, 'type': semantic['type']})\n\n if semantic['type'] == 'outwall':\n outerwall_planes = semantic['planeID']\n\n # extract hole vertices\n lines_holes = []\n for semantic in annos['semantics']:\n if semantic['type'] in ['window', 'door']:\n for planeID in semantic['planeID']:\n lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())\n lines_holes = np.unique(lines_holes)\n\n # junctions on the floor\n junctions = np.array([junc['coordinate'] for junc in annos['junctions']])\n junction_floor = np.where(np.isclose(junctions[:, -1], 0))[0]\n\n # construct each polygon\n polygons = []\n for plane in planes:\n lineIDs = np.where(np.array(annos['planeLineMatrix'][plane['planeID']]))[0].tolist()\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n polygon = convert_lines_to_vertices(junction_pairs)\n 
polygons.append([polygon[0], plane['type']])\n\n outerwall_floor = []\n for planeID in outerwall_planes:\n lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()\n lineIDs = np.setdiff1d(lineIDs, lines_holes)\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n for start, end in junction_pairs:\n if start in junction_floor and end in junction_floor:\n outerwall_floor.append([start, end])\n\n outerwall_polygon = convert_lines_to_vertices(outerwall_floor)\n polygons.append([outerwall_polygon[0], 'outwall'])\n\n plot_floorplan(annos, polygons)\n\n\ndef parse_args():\n parser = argparse.ArgumentParser(description=\"Structured3D 3D Visualization\")\n parser.add_argument(\"--path\", required=True,\n help=\"dataset path\", metavar=\"DIR\")\n parser.add_argument(\"--scene\", required=True,\n help=\"scene id\", type=int)\n parser.add_argument(\"--type\", choices=(\"floorplan\", \"wireframe\", \"plane\"),\n default=\"plane\", type=str)\n parser.add_argument(\"--color\", choices=[\"normal\", \"manhattan\"],\n default=\"normal\", type=str)\n return parser.parse_args()\n\n\ndef main():\n args = parse_args()\n\n # load annotations from json\n with open(os.path.join(args.path, f\"scene_{args.scene:05d}\", \"annotation_3d.json\")) as file:\n annos = json.load(file)\n\n if args.type == \"wireframe\":\n visualize_wireframe(annos)\n elif args.type == \"plane\":\n visualize_plane(annos, args)\n elif args.type == \"floorplan\":\n visualize_floorplan(annos)\n\n\nif __name__ == \"__main__\":\n main()\n"

},

{

"path": "visualize_bbox.py",

"content": "import os\nimport json\nimport argparse\n\nimport cv2\nimport numpy as np\nimport matplotlib.pyplot as plt\n\nfrom misc.utils import get_corners_of_bb3d_no_index, project_3d_points_to_2d, parse_camera_info\n\n\ndef visualize_bbox(args):\n with open(os.path.join(args.path, f\"scene_{args.scene:05d}\", \"bbox_3d.json\")) as file:\n annos = json.load(file)\n\n id2index = dict()\n for index, object in enumerate(annos):\n id2index[object.get('ID')] = index\n\n scene_path = os.path.join(args.path, f\"scene_{args.scene:05d}\", \"2D_rendering\")\n\n for room_id in np.sort(os.listdir(scene_path)):\n room_path = os.path.join(scene_path, room_id, \"perspective\", \"full\")\n\n if not os.path.exists(room_path):\n continue\n\n for position_id in np.sort(os.listdir(room_path)):\n position_path = os.path.join(room_path, position_id)\n\n image = cv2.imread(os.path.join(position_path, 'rgb_rawlight.png'))\n image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)\n height, width, _ = image.shape\n\n instance = cv2.imread(os.path.join(position_path, 'instance.png'), cv2.IMREAD_UNCHANGED)\n\n camera_info = np.loadtxt(os.path.join(position_path, 'camera_pose.txt'))\n\n rot, trans, K = parse_camera_info(camera_info, height, width)\n\n plt.figure()\n plt.imshow(image)\n\n for index in np.unique(instance)[:-1]:\n # for each instance in current image\n bbox = annos[id2index[index]]\n\n basis = np.array(bbox['basis'])\n coeffs = np.array(bbox['coeffs'])\n centroid = np.array(bbox['centroid'])\n\n corners = get_corners_of_bb3d_no_index(basis, coeffs, centroid)\n corners = corners - trans\n\n gt2dcorners = project_3d_points_to_2d(corners, rot, K)\n\n num_corner = gt2dcorners.shape[1] // 2\n plt.plot(np.hstack((gt2dcorners[0, :num_corner], gt2dcorners[0, 0])),\n np.hstack((gt2dcorners[1, :num_corner], gt2dcorners[1, 0])), 'r')\n plt.plot(np.hstack((gt2dcorners[0, num_corner:], gt2dcorners[0, num_corner])),\n np.hstack((gt2dcorners[1, num_corner:], gt2dcorners[1, num_corner])), 'b')\n for i 
in range(num_corner):\n plt.plot(gt2dcorners[0, [i, i + num_corner]], gt2dcorners[1, [i, i + num_corner]], 'y')\n\n plt.axis('off')\n plt.axis([0, width, height, 0])\n plt.show()\n\n\ndef parse_args():\n parser = argparse.ArgumentParser(\n description=\"Structured3D 3D Bounding Box Visualization\")\n parser.add_argument(\"--path\", required=True,\n help=\"dataset path\", metavar=\"DIR\")\n parser.add_argument(\"--scene\", required=True,\n help=\"scene id\", type=int)\n return parser.parse_args()\n\n\ndef main():\n args = parse_args()\n\n visualize_bbox(args)\n\n\nif __name__ == \"__main__\":\n main()\n"

},

{

"path": "visualize_floorplan.py",

"content": "import argparse\nimport json\nimport os\n\nimport matplotlib.pyplot as plt\nimport numpy as np\nfrom matplotlib import colors\nfrom shapely.geometry import Polygon\nfrom shapely.plotting import plot_polygon\n\nfrom misc.colors import semantics_cmap\nfrom misc.utils import get_corners_of_bb3d_no_index\n\n\ndef convert_lines_to_vertices(lines):\n \"\"\"convert line representation to polygon vertices\n \"\"\"\n polygons = []\n lines = np.array(lines)\n\n polygon = None\n while len(lines) != 0:\n if polygon is None:\n polygon = lines[0].tolist()\n lines = np.delete(lines, 0, 0)\n\n lineID, juncID = np.where(lines == polygon[-1])\n vertex = lines[lineID[0], 1 - juncID[0]]\n lines = np.delete(lines, lineID, 0)\n\n if vertex in polygon:\n polygons.append(polygon)\n polygon = None\n else:\n polygon.append(vertex)\n\n return polygons\n\n\ndef visualize_floorplan(args):\n \"\"\"visualize floorplan\n \"\"\"\n with open(os.path.join(args.path, f\"scene_{args.scene:05d}\", \"annotation_3d.json\")) as file:\n annos = json.load(file)\n\n with open(os.path.join(args.path, f\"scene_{args.scene:05d}\", \"bbox_3d.json\")) as file:\n boxes = json.load(file)\n\n # extract the floor in each semantic for floorplan visualization\n planes = []\n for semantic in annos['semantics']:\n for planeID in semantic['planeID']:\n if annos['planes'][planeID]['type'] == 'floor':\n planes.append({'planeID': planeID, 'type': semantic['type']})\n\n if semantic['type'] == 'outwall':\n outerwall_planes = semantic['planeID']\n\n # extract hole vertices\n lines_holes = []\n for semantic in annos['semantics']:\n if semantic['type'] in ['window', 'door']:\n for planeID in semantic['planeID']:\n lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())\n lines_holes = np.unique(lines_holes)\n\n # junctions on the floor\n junctions = np.array([junc['coordinate'] for junc in annos['junctions']])\n junction_floor = np.where(np.isclose(junctions[:, -1], 0))[0]\n\n # construct 
each polygon\n polygons = []\n for plane in planes:\n lineIDs = np.where(np.array(annos['planeLineMatrix'][plane['planeID']]))[0].tolist()\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n polygon = convert_lines_to_vertices(junction_pairs)\n polygons.append([polygon[0], plane['type']])\n\n outerwall_floor = []\n for planeID in outerwall_planes:\n lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()\n lineIDs = np.setdiff1d(lineIDs, lines_holes)\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n for start, end in junction_pairs:\n if start in junction_floor and end in junction_floor:\n outerwall_floor.append([start, end])\n\n outerwall_polygon = convert_lines_to_vertices(outerwall_floor)\n polygons.append([outerwall_polygon[0], 'outwall'])\n\n fig = plt.figure()\n ax = fig.add_subplot(1, 1, 1)\n\n junctions = np.array([junc['coordinate'][:2] for junc in annos['junctions']])\n for (polygon, poly_type) in polygons:\n polygon = np.array(polygon + [polygon[0], ])\n polygon = Polygon(junctions[polygon])\n if poly_type == 'outwall':\n plot_polygon(polygon, ax=ax, add_points=False, facecolor=semantics_cmap[poly_type], alpha=0)\n else:\n plot_polygon(polygon, ax=ax, add_points=False, facecolor=semantics_cmap[poly_type], alpha=0.5)\n\n for bbox in boxes:\n basis = np.array(bbox['basis'])\n coeffs = np.array(bbox['coeffs'])\n centroid = np.array(bbox['centroid'])\n\n corners = get_corners_of_bb3d_no_index(basis, coeffs, centroid)\n corners = corners[[0, 1, 2, 3, 0], :2]\n\n polygon = Polygon(corners)\n plot_polygon(polygon, ax=ax, add_points=False, facecolor=colors.rgb2hex(np.random.rand(3)), alpha=0.5)\n\n plt.axis('equal')\n plt.axis('off')\n plt.show()\n\n\ndef parse_args():\n parser = argparse.ArgumentParser(\n description=\"Structured3D Floorplan Visualization\")\n parser.add_argument(\"--path\", required=True,\n 
help=\"dataset path\", metavar=\"DIR\")\n parser.add_argument(\"--scene\", required=True,\n help=\"scene id\", type=int)\n return parser.parse_args()\n\n\ndef main():\n args = parse_args()\n\n visualize_floorplan(args)\n\n\nif __name__ == \"__main__\":\n main()\n"

},

{

"path": "visualize_layout.py",

"content": "import os\nimport json\nimport argparse\n\nimport cv2\nimport numpy as np\nimport matplotlib.pyplot as plt\nfrom shapely.geometry import Polygon\nfrom descartes.patch import PolygonPatch\n\nfrom misc.panorama import draw_boundary_from_cor_id\nfrom misc.colors import colormap_255\n\n\ndef visualize_panorama(args):\n \"\"\"visualize panorama layout\n \"\"\"\n scene_path = os.path.join(args.path, f\"scene_{args.scene:05d}\", \"2D_rendering\")\n\n for room_id in np.sort(os.listdir(scene_path)):\n room_path = os.path.join(scene_path, room_id, \"panorama\")\n\n cor_id = np.loadtxt(os.path.join(room_path, \"layout.txt\"))\n img_src = cv2.imread(os.path.join(room_path, \"full\", \"rgb_rawlight.png\"))\n img_src = cv2.cvtColor(img_src, cv2.COLOR_BGR2RGB)\n img_viz = draw_boundary_from_cor_id(cor_id, img_src)\n\n plt.axis('off')\n plt.imshow(img_viz)\n plt.show()\n\n\ndef visualize_perspective(args):\n \"\"\"visualize perspective layout\n \"\"\"\n colors = np.array(colormap_255) / 255\n\n scene_path = os.path.join(args.path, f\"scene_{args.scene:05d}\", \"2D_rendering\")\n\n for room_id in np.sort(os.listdir(scene_path)):\n room_path = os.path.join(scene_path, room_id, \"perspective\", \"full\")\n\n if not os.path.exists(room_path):\n continue\n\n for position_id in np.sort(os.listdir(room_path)):\n position_path = os.path.join(room_path, position_id)\n\n image = cv2.imread(os.path.join(position_path, \"rgb_rawlight.png\"))\n image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)\n\n with open(os.path.join(position_path, \"layout.json\")) as f:\n annos = json.load(f)\n\n fig = plt.figure()\n for i, key in enumerate(['amodal_mask', 'visible_mask']):\n ax = fig.add_subplot(2, 1, i + 1)\n plt.axis('off')\n plt.imshow(image)\n\n for i, planes in enumerate(annos['planes']):\n if len(planes[key]):\n for plane in planes[key]:\n polygon = Polygon([annos['junctions'][id]['coordinate'] for id in plane])\n patch = PolygonPatch(polygon, facecolor=colors[i], alpha=0.5)\n 
ax.add_patch(patch)\n\n plt.title(key)\n plt.show()\n\n\ndef parse_args():\n parser = argparse.ArgumentParser(description=\"Structured3D 2D Layout Visualization\")\n parser.add_argument(\"--path\", required=True,\n help=\"dataset path\", metavar=\"DIR\")\n parser.add_argument(\"--scene\", required=True,\n help=\"scene id\", type=int)\n parser.add_argument(\"--type\", choices=[\"perspective\", \"panorama\"], required=True,\n help=\"type of camera\", type=str)\n return parser.parse_args()\n\n\ndef main():\n args = parse_args()\n\n if args.type == 'panorama':\n visualize_panorama(args)\n elif args.type == 'perspective':\n visualize_perspective(args)\n\n\nif __name__ == \"__main__\":\n main()\n"

},

{

"path": "visualize_mesh.py",

"content": "import os\nimport json\nimport argparse\n\nimport cv2\nimport open3d\nimport numpy as np\nfrom panda3d.core import Triangulator\n\nfrom misc.panorama import xyz_2_coorxy\nfrom visualize_3d import convert_lines_to_vertices\n\n\ndef E2P(image, corner_i, corner_j, wall_height, camera, resolution=512, is_wall=True):\n \"\"\"convert panorama to persepctive image\n \"\"\"\n corner_i = corner_i - camera\n corner_j = corner_j - camera\n\n if is_wall:\n xs = np.linspace(corner_i[0], corner_j[0], resolution)[None].repeat(resolution, 0)\n ys = np.linspace(corner_i[1], corner_j[1], resolution)[None].repeat(resolution, 0)\n zs = np.linspace(-camera[-1], wall_height - camera[-1], resolution)[:, None].repeat(resolution, 1)\n else:\n xs = np.linspace(corner_i[0], corner_j[0], resolution)[None].repeat(resolution, 0)\n ys = np.linspace(corner_i[1], corner_j[1], resolution)[:, None].repeat(resolution, 1)\n zs = np.zeros_like(xs) + wall_height - camera[-1]\n\n coorx, coory = xyz_2_coorxy(xs, ys, zs)\n\n persp = cv2.remap(image, coorx.astype(np.float32), coory.astype(np.float32), \n cv2.INTER_CUBIC, borderMode=cv2.BORDER_WRAP)\n\n return persp\n\n\ndef create_plane_mesh(vertices, vertices_floor, textures, texture_floor, texture_ceiling,\n delta_height, ignore_ceiling=False):\n # create mesh for 3D floorplan visualization\n triangles = []\n triangle_uvs = []\n\n # the number of vertical walls\n num_walls = len(vertices)\n\n # 1. vertical wall (always rectangle)\n num_vertices = 0\n for i in range(len(vertices)):\n # hardcode triangles for each vertical wall\n triangle = np.array([[0, 2, 1], [2, 0, 3]])\n triangles.append(triangle + num_vertices)\n num_vertices += 4\n\n triangle_uv = np.array(\n [\n [i / (num_walls + 2), 0], \n [i / (num_walls + 2), 1], \n [(i+1) / (num_walls + 2), 1], \n [(i+1) / (num_walls + 2), 0]\n ],\n dtype=np.float32\n )\n triangle_uvs.append(triangle_uv)\n\n # 2. 
floor and ceiling\n # Since the floor and ceiling may not be a rectangle, triangulate the polygon first.\n tri = Triangulator()\n for i in range(len(vertices_floor)):\n tri.add_vertex(vertices_floor[i, 0], vertices_floor[i, 1])\n\n for i in range(len(vertices_floor)):\n tri.add_polygon_vertex(i)\n\n tri.triangulate()\n\n # polygon triangulation\n triangle = []\n for i in range(tri.getNumTriangles()):\n triangle.append([tri.get_triangle_v0(i), tri.get_triangle_v1(i), tri.get_triangle_v2(i)])\n triangle = np.array(triangle)\n\n # add triangles for floor and ceiling\n triangles.append(triangle + num_vertices)\n num_vertices += len(np.unique(triangle))\n if not ignore_ceiling:\n triangles.append(triangle + num_vertices)\n\n # texture for floor and ceiling\n vertices_floor_min = np.min(vertices_floor[:, :2], axis=0)\n vertices_floor_max = np.max(vertices_floor[:, :2], axis=0)\n \n # normalize to [0, 1]\n triangle_uv = (vertices_floor[:, :2] - vertices_floor_min) / (vertices_floor_max - vertices_floor_min)\n triangle_uv[:, 0] = (triangle_uv[:, 0] + num_walls) / (num_walls + 2) \n\n triangle_uvs.append(triangle_uv)\n\n # normalize to [0, 1]\n triangle_uv = (vertices_floor[:, :2] - vertices_floor_min) / (vertices_floor_max - vertices_floor_min)\n triangle_uv[:, 0] = (triangle_uv[:, 0] + num_walls + 1) / (num_walls + 2)\n\n triangle_uvs.append(triangle_uv)\n\n # 3. 
Merge wall, floor, and ceiling\n vertices.append(vertices_floor)\n vertices.append(vertices_floor + delta_height)\n vertices = np.concatenate(vertices, axis=0)\n\n triangles = np.concatenate(triangles, axis=0)\n\n textures.append(texture_floor)\n textures.append(texture_ceiling)\n textures = np.concatenate(textures, axis=1)\n\n triangle_uvs = np.concatenate(triangle_uvs, axis=0)\n\n mesh = open3d.geometry.TriangleMesh(\n vertices=open3d.utility.Vector3dVector(vertices),\n triangles=open3d.utility.Vector3iVector(triangles)\n )\n mesh.compute_vertex_normals()\n\n mesh.texture = open3d.geometry.Image(textures)\n mesh.triangle_uvs = np.array(triangle_uvs[triangles.reshape(-1), :], dtype=np.float64)\n return mesh\n\n\ndef verify_normal(corner_i, corner_j, delta_height, plane_normal):\n edge_a = corner_j + delta_height - corner_i\n edge_b = delta_height\n\n normal = np.cross(edge_a, edge_b)\n normal /= np.linalg.norm(normal, ord=2)\n\n inner_product = normal.dot(plane_normal)\n \n if inner_product > 1e-8:\n return False\n else:\n return True\n \n\ndef visualize_mesh(args):\n \"\"\"visualize as water-tight mesh\n \"\"\"\n\n image = cv2.imread(os.path.join(args.path, f\"scene_{args.scene:05d}\", \"2D_rendering\", \n str(args.room), \"panorama/full/rgb_rawlight.png\"))\n image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)\n\n # load room annotations\n with open(os.path.join(args.path, f\"scene_{args.scene:05d}\" , \"annotation_3d.json\")) as f:\n annos = json.load(f)\n\n # load camera info\n camera_center = np.loadtxt(os.path.join(args.path, f\"scene_{args.scene:05d}\", \"2D_rendering\", \n str(args.room), \"panorama\", \"camera_xyz.txt\"))\n\n # parse corners\n junctions = np.array([item['coordinate'] for item in annos['junctions']])\n lines_holes = []\n for semantic in annos['semantics']:\n if semantic['type'] in ['window', 'door']:\n for planeID in semantic['planeID']:\n lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())\n\n lines_holes = 
np.unique(lines_holes)\n _, vertices_holes = np.where(np.array(annos['lineJunctionMatrix'])[lines_holes])\n vertices_holes = np.unique(vertices_holes)\n\n # parse annotations\n walls = dict()\n walls_normal = dict()\n for semantic in annos['semantics']:\n if semantic['ID'] != int(args.room):\n continue\n\n # find junctions of ceiling and floor \n for planeID in semantic['planeID']:\n plane_anno = annos['planes'][planeID]\n\n if plane_anno['type'] != 'wall':\n lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0]\n lineIDs = np.setdiff1d(lineIDs, lines_holes)\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n wall = convert_lines_to_vertices(junction_pairs)\n walls[plane_anno['type']] = wall[0]\n \n # save normal of the vertical walls\n for planeID in semantic['planeID']:\n plane_anno = annos['planes'][planeID]\n\n if plane_anno['type'] == 'wall':\n lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0]\n lineIDs = np.setdiff1d(lineIDs, lines_holes)\n junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]\n wall = convert_lines_to_vertices(junction_pairs)\n walls_normal[tuple(np.intersect1d(wall, walls['floor']))] = plane_anno['normal']\n\n # we assume that zs of floor equals 0, then the wall height is from the ceiling\n wall_height = np.mean(junctions[walls['ceiling']], axis=0)[-1]\n delta_height = np.array([0, 0, wall_height])\n\n # list of corner index\n wall_floor = walls['floor']\n\n corners = [] # 3D coordinate for each wall\n textures = [] # texture for each wall\n\n # wall\n for i, j in zip(wall_floor, np.roll(wall_floor, shift=-1)):\n corner_i, corner_j = junctions[i], junctions[j]\n\n flip = verify_normal(corner_i, corner_j, delta_height, walls_normal[tuple(sorted([i, j]))])\n \n if flip:\n corner_j, corner_i = corner_i, corner_j\n\n texture = E2P(image, corner_i, corner_j, wall_height, camera_center)\n\n corner = 
np.array([corner_i, corner_i + delta_height, corner_j + delta_height, corner_j])\n\n corners.append(corner)\n textures.append(texture)\n\n # floor and ceiling\n # the floor/ceiling texture is cropped by the maximum bounding box\n corner_floor = junctions[wall_floor]\n corner_min = np.min(corner_floor, axis=0)\n corner_max = np.max(corner_floor, axis=0)\n texture_floor = E2P(image, corner_min, corner_max, 0, camera_center, is_wall=False)\n texture_ceiling = E2P(image, corner_min, corner_max, wall_height, camera_center, is_wall=False)\n\n # create mesh\n mesh = create_plane_mesh(corners, corner_floor, textures, texture_floor, texture_ceiling,\n delta_height, ignore_ceiling=args.ignore_ceiling)\n\n # visualize mesh\n open3d.visualization.draw_geometries([mesh])\n\n\ndef parse_args():\n parser = argparse.ArgumentParser(description=\"Structured3D 3D Textured Mesh Visualization\")\n parser.add_argument(\"--path\", required=True,\n help=\"dataset path\", metavar=\"DIR\")\n parser.add_argument(\"--scene\", required=True,\n help=\"scene id\", type=int)\n parser.add_argument(\"--room\", required=True,\n help=\"room id\", type=int)\n parser.add_argument(\"--ignore_ceiling\", action='store_true',\n help=\"ignore ceiling for better visualization\")\n return parser.parse_args()\n\n\ndef main():\n args = parse_args()\n\n visualize_mesh(args)\n\n\nif __name__ == \"__main__\":\n main()\n"

}

]