Repository: bertjiazheng/Structured3D

Branch: master

Commit: a1aa97f761f8

Files: 17

Total size: 64.6 KB

Directory structure:

gitextract_zakvmfv2/

├── .gitignore

├── LICENSE

├── README.md

├── data_organization.md

├── metadata/

│ ├── errata.txt

│ ├── labelids.txt

│ └── room_types.txt

├── misc/

│ ├── __init__.py

│ ├── colors.py

│ ├── figures.py

│ ├── panorama.py

│ └── utils.py

├── visualize_3d.py

├── visualize_bbox.py

├── visualize_floorplan.py

├── visualize_layout.py

└── visualize_mesh.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

vis/

apex/

cocoapi/

demo/

vis_xtion/

MASK_R_*/

old/

checkpoints/

VOCdevkit/

*.py[cod]

*$py.class

pano_pred/

pano_pred.json

# C extensions

*.so

*.log

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don’t work, or not

# install all needed dependencies.

#Pipfile.lock

# celery beat schedule file

celerybeat-schedule

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2019 Structured3D Group

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

# Structured3D

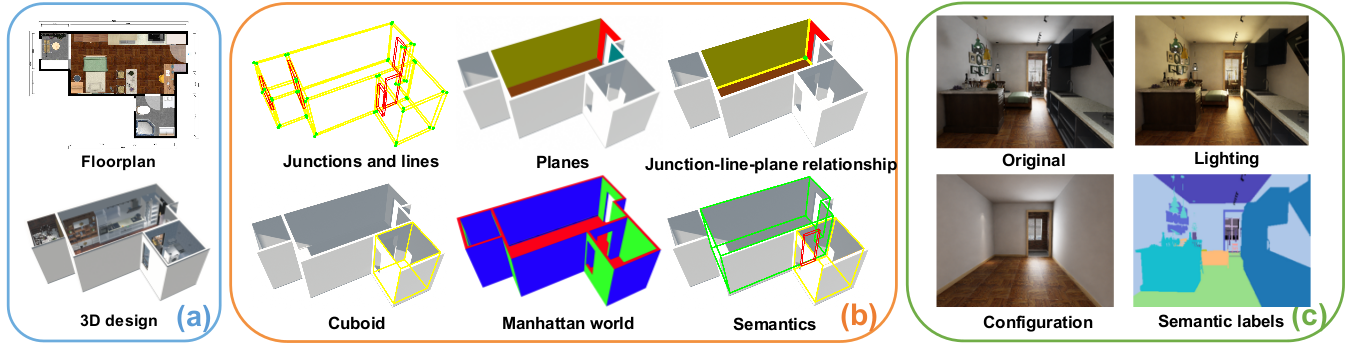

Structured3D is a large-scale photo-realistic dataset containing 3.5K house designs **(a)** created by professional designers, with a variety of ground truth 3D structure annotations **(b)** and generated photo-realistic 2D images **(c)**.

## Paper

**Structured3D: A Large Photo-realistic Dataset for Structured 3D Modeling**

[Jia Zheng](https://bertjiazheng.github.io/)\*,

[Junfei Zhang](https://www.linkedin.com/in/骏飞-张-1bb82691/?locale=en_US)\*,

[Jing Li](https://cn.linkedin.com/in/jing-li-253b26139),

[Rui Tang](https://cn.linkedin.com/in/rui-tang-50973488),

[Shenghua Gao](http://sist.shanghaitech.edu.cn/sist_en/2018/0820/c3846a31775/page.htm),

[Zihan Zhou](https://faculty.ist.psu.edu/zzhou)

European Conference on Computer Vision (ECCV), 2020

[[Preprint](https://arxiv.org/abs/1908.00222)]

[[Paper](https://www.ecva.net/papers/eccv_2020/papers_ECCV/papers/123540494.pdf)]

[[Supplementary Material](https://www.ecva.net/papers/eccv_2020/papers_ECCV/papers/123540494-supp.pdf)]

[[Benchmark](https://competitions.codalab.org/competitions/24183)]

(\* Equal contribution)

## Data

The dataset consists of rendering images and corresponding ground truth annotations (_e.g._, semantic, albedo, depth, surface normal, layout) under different lighting and furniture configurations. Please refer to [data organization](data_organization.md) for more details.

To download the dataset, please fill out the [agreement form](https://forms.gle/LXg4bcjC2aEjrL9o8), which indicates that you agree to the [Structured3D Terms of Use](https://drive.google.com/open?id=13ZwWpU_557ZQccwOUJ8H5lvXD7MeZFMa). After we receive your agreement form, we will provide download access to the dataset.

For fair comparison, we define standard training, validation, and testing splits as follows: _scene_00000_ to _scene_02999_ for training, _scene_03000_ to _scene_03249_ for validation, and _scene_03250_ to _scene_03499_ for testing.
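
The split boundaries above can be encoded in a small lookup. The following is a minimal sketch; the function name `split_of` is hypothetical and not part of the tools in this repository.

```python
def split_of(scene_name):
    """Return 'train', 'val', or 'test' for a scene name such as 'scene_03000'."""
    index = int(scene_name.split("_")[1])
    if 0 <= index <= 2999:
        return "train"
    if 3000 <= index <= 3249:
        return "val"
    if 3250 <= index <= 3499:
        return "test"
    raise ValueError(f"scene index outside the defined splits: {scene_name}")


print(split_of("scene_03120"))  # val
```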

## Errata

- 2020-04-06: We provide a list of invalid cases [here](metadata/errata.txt). You can ignore these cases when using our data.

- 2020-03-26: Fix issue [#10](https://github.com/bertjiazheng/Structured3D/issues/10) about the basis of the bounding box annotations. Please re-download the annotations if you use them.
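
To skip the invalid cases programmatically, the errata list can be parsed into a set of scenes and a set of rooms. This is a sketch only: `parse_errata` is a hypothetical helper, and it assumes the layout of `metadata/errata.txt` (lines starting with `#` are section comments, entries are either `scene_XXXXX` or `scene_XXXXX_room_YYY`).

```python
def parse_errata(text):
    """Split errata entries into invalid scenes and invalid (scene, room) pairs."""
    invalid_scenes, invalid_rooms = set(), set()
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and section comments
        if "_room_" in line:
            scene, room = line.split("_room_")
            invalid_rooms.add((scene, room))
        else:
            invalid_scenes.add(line)
    return invalid_scenes, invalid_rooms


sample = "# invalid scene\nscene_01155\n# self-intersection layout\nscene_00010_room_846619\n"
scenes, rooms = parse_errata(sample)
print(scenes, rooms)
```

In practice the text would be read from `metadata/errata.txt` instead of the inline sample.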

## Tools

We provide the basic code for viewing the structure annotations of our dataset.

### Installation

Clone repository:

```bash

git clone git@github.com:bertjiazheng/Structured3D.git

```

Please use Python 3, then follow [installation](https://pymesh.readthedocs.io/en/latest/installation.html) to install [PyMesh](https://github.com/PyMesh/PyMesh) (only for plane visualization) and the other dependencies:

```bash

conda install -y open3d -c open3d-admin

conda install -y opencv -c conda-forge

conda install -y descartes matplotlib numpy shapely

pip install panda3d

```

### Visualize 3D Annotation

We use [open3D](https://github.com/intel-isl/Open3D) for wireframe and plane visualization. Please refer to the interaction controls [here](http://www.open3d.org/docs/tutorial/Basic/visualization.html#function-draw-geometries).

```bash

python visualize_3d.py --path /path/to/dataset --scene scene_id --type wireframe/plane/floorplan

```

| Wireframe | Plane | Floorplan |

| ------------------------------------- | ----------------------------- | ------------------------------------- |

|  |  |  |

### Visualize 3D Textured Mesh

```bash

python visualize_mesh.py --path /path/to/dataset --scene scene_id --room room_id

```

### Visualize 2D Layout

```bash

python visualize_layout.py --path /path/to/dataset --scene scene_id --type perspective/panorama

```

#### Panorama Layout

Please refer to the [Supplementary Material](https://www.ecva.net/papers/eccv_2020/papers_ECCV/papers/123540494-supp.pdf) for more example ground truth room layouts.

#### Perspective Layout

### Visualize 3D Bounding Box

```bash

python visualize_bbox.py --path /path/to/dataset --scene scene_id

```

### Visualize Floorplan

```bash

python visualize_floorplan.py --path /path/to/dataset --scene scene_id

```

## Citation

Please cite `Structured3D` in your publications if it helps your research:

```bibtex

@inproceedings{Structured3D,

title = {Structured3D: A Large Photo-realistic Dataset for Structured 3D Modeling},

author = {Jia Zheng and Junfei Zhang and Jing Li and Rui Tang and Shenghua Gao and Zihan Zhou},

booktitle = {Proceedings of The European Conference on Computer Vision (ECCV)},

year = {2020}

}

```

## License

The data is released under the [Structured3D Terms of Use](https://drive.google.com/open?id=13ZwWpU_557ZQccwOUJ8H5lvXD7MeZFMa), and the code is released under the [MIT license](LICENSE).

## Contact

Please contact us at [Structured3D Group](mailto:structured3d@googlegroups.com) if you have any questions.

## Acknowledgement

We would like to thank Kujiale.com for providing the database of house designs and the rendering engine. We especially thank Qing Ye and Qi Wu from Kujiale.com for their help with the data rendering.

================================================

FILE: data_organization.md

================================================

# Data Organization

There is a separate subdirectory for every scene (_i.e._, house design), which is named by a unique ID. Within each scene directory, there are separate directories for different types of data as follows:

```

scene_

├── 2D_rendering

│ └──

│ ├── panorama

│ │ ├──

│ │ │ ├── rgb_light.png

│ │ │ ├── semantic.png

│ │ │ ├── instance.png

│ │ │ ├── albedo.png

│ │ │ ├── depth.png

│ │ │ └── normal.png

│ │ ├── layout.txt

│ │ └── camera_xyz.txt

│ └── perspective

│ └──

│ └──

│ ├── rgb_rawlight.png

│ ├── semantic.png

│ ├── instance.png

│ ├── albedo.png

│ ├── depth.png

│ ├── normal.png

│ ├── layout.json

│ └── camera_pose.txt

├── bbox_3d.json

└── annotation_3d.json

```

# Annotation Format

We provide primitive- and relationship-based structure annotations for each scene, and an oriented bounding box for each object instance.

**Structure annotation (`annotation_3d.json`)**: see all the room types [here](metadata/room_types.txt).

```

{
  // PRIMITIVES
  "junctions": [
    {
      "ID"         : int,
      "coordinate" : List[float]       // 3D vector
    }
  ],
  "lines": [
    {
      "ID"        : int,
      "point"     : List[float],       // 3D vector
      "direction" : List[float]        // 3D vector
    }
  ],
  "planes": [
    {
      "ID"     : int,
      "type"   : str,                  // ceiling, floor, wall
      "normal" : List[float],          // 3D vector, the normal points to the empty space
      "offset" : float
    }
  ],
  // RELATIONSHIPS
  "semantics": [
    {
      "ID"      : int,
      "type"    : str,                 // room type, door, window
      "planeID" : List[int]            // indices of the planes
    }
  ],
  "planeLineMatrix"    : Matrix[int],  // matrix W_1 where the ij-th entry is 1 iff l_i is on p_j
  "lineJunctionMatrix" : Matrix[int],  // matrix W_2 where the mn-th entry is 1 iff x_m is on l_n
  // OTHERS
  "cuboids": [
    {
      "ID"      : int,
      "planeID" : List[int]            // indices of the planes
    }
  ],
  "manhattan": [
    {
      "ID"      : int,
      "planeID" : List[int]            // indices of the planes
    }
  ]
}

```
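
The two relationship matrices can be used to walk from a plane to its lines and from a line to its junctions. The sketch below assumes that each row of `planeLineMatrix` corresponds to a plane and each row of `lineJunctionMatrix` to a line, which is how `visualize_3d.py` indexes them (`annos['planeLineMatrix'][planeID]`). The helper names and the toy values are made up for illustration.

```python
def lines_on_plane(plane_line_matrix, plane_id):
    """Indices of the lines lying on the given plane (row = plane, column = line)."""
    return [line_id for line_id, flag in enumerate(plane_line_matrix[plane_id]) if flag]


def junctions_on_line(line_junction_matrix, line_id):
    """Indices of the junctions lying on the given line (row = line, column = junction)."""
    return [j_id for j_id, flag in enumerate(line_junction_matrix[line_id]) if flag]


# Toy annotation with made-up values: 1 plane, 2 lines, 3 junctions.
toy = {
    "planeLineMatrix": [[1, 1]],
    "lineJunctionMatrix": [[1, 1, 0], [0, 1, 1]],
}

for line_id in lines_on_plane(toy["planeLineMatrix"], 0):
    print(line_id, junctions_on_line(toy["lineJunctionMatrix"], line_id))
```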

**Bounding box (`bbox_3d.json`)**: the oriented bounding box annotation in world coordinates, in the same format as the [SUN RGB-D Dataset](http://rgbd.cs.princeton.edu).

```

[
  {
    "ID"       : int,            // instance id
    "basis"    : Matrix[float],  // basis of the bounding box, one row is one basis
    "coeffs"   : List[float],    // radii in each dimension
    "centroid" : List[float]     // 3D centroid of the bounding box
  }
]

```
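
Given these three fields, the eight corners of a box are the centroid plus or minus each radius along its basis row. The following is a dependency-free sketch of that arithmetic; `bbox_corners` is a hypothetical name, and the corner order differs from the helpers in `misc/utils.py`.

```python
from itertools import product


def bbox_corners(basis, coeffs, centroid):
    """Return the 8 corners: centroid + sum_i (+/- coeffs[i]) * basis[i]."""
    corners = []
    for signs in product((-1.0, 1.0), repeat=3):
        corner = list(centroid)
        for sign, axis, radius in zip(signs, basis, coeffs):
            for k in range(3):
                corner[k] += sign * abs(radius) * axis[k]
        corners.append(tuple(corner))
    return corners


# Made-up axis-aligned example: identity basis, radii (1, 2, 3), centered at x = 10.
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
for corner in bbox_corners(identity, [1, 2, 3], [10, 0, 0]):
    print(corner)
```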

For each image, we provide semantic, instance, albedo, depth, normal, layout annotation and camera position. Please note that we have different layout and camera annotation formats for panoramic and perspective images.

**Semantic annotation (`semantic.png`)**: unsigned 8-bit integers within a PNG. We use [NYUv2](https://cs.nyu.edu/~silberman/datasets/nyu_depth_v2) 40-label set, see all the label ids [here](metadata/labelids.txt).

**Instance annotation (`instance.png`)**: unsigned 16-bit integers within a PNG. We only provide instance annotation for full configuration. The maximum value (65535) denotes _background_.

**Albedo data (`albedo.png`)**: unsigned 8-bit integers within a PNG.

**Depth data (`depth.png`)**: unsigned 16-bit integers within a PNG. The units are millimeters, so a value of 1000 is one meter. A zero value denotes _no reading_.
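
As a small illustration of the depth encoding, the sketch below converts a single raw pixel value to meters. The function name is hypothetical; on a whole image the same arithmetic would typically be applied to an array loaded with the 16-bit values preserved.

```python
def depth_to_meters(raw_value):
    """Convert a raw 16-bit depth value (millimeters) to meters; 0 means no reading."""
    if raw_value == 0:
        return None  # no reading at this pixel
    return raw_value / 1000.0


print(depth_to_meters(2500))  # 2.5
print(depth_to_meters(0))     # None
```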

**Normal data (`normal.png`)**: unsigned 8-bit integers within a PNG (x, y, z), where the integer values in the file are 128 \* (1 + n), and n is a normal coordinate in the range [-1, 1].
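
Inverting the encoding above gives n = value / 128 - 1 per channel. A minimal sketch for one pixel follows; `decode_normal` is a hypothetical name, and the result is not re-normalized to unit length.

```python
def decode_normal(pixel):
    """Map an (x, y, z) triple of 8-bit values back to normal coordinates in [-1, 1]."""
    return tuple(value / 128.0 - 1.0 for value in pixel)


print(decode_normal((128, 128, 255)))  # (0.0, 0.0, 0.9921875)
```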

**Layout annotation for panorama (`layout.txt`)**: an ordered list of 2D positions of the junctions (same as [LayoutNet](https://github.com/zouchuhang/LayoutNet) and [HorizonNet](https://github.com/sunset1995/HorizonNet)). The order of the junctions is shown in the figure below. In our dataset, the cameras of the panoramas are aligned with the gravity direction, thus a pair of ceiling-wall and floor-wall junctions share the same x-axis coordinates.

**Layout annotation for perspective (`layout.json`)**: We also include the junctions formed by line segments intersecting with each other or with the image boundary. We consider the visible and invisible parts caused by the room structure instead of furniture.

```

{
  "junctions": [
    {
      "ID"         : int,        // corresponding 3D junction id, none corresponds to fake 3D junction
      "coordinate" : List[int],  // 2D location in the camera coordinate
      "isvisible"  : bool        // whether this junction is visible (not occluded by other walls)
    }
  ],
  "planes": [
    {
      "ID"           : int,              // corresponding 3D plane id
      "visible_mask" : List[List[int]],  // visible segmentation mask, list of junctions ids
      "amodal_mask"  : List[List[int]],  // amodal segmentation mask, list of junctions ids
      "normal"       : List[float],      // normal in the camera coordinate
      "offset"       : float,            // offset in the camera coordinate
      "type"         : str               // ceiling, floor, wall
    }
  ]
}

```

**Camera location for panorama (`camera_xyz.txt`)**: For each panoramic image, we only store the camera location in millimeters in global coordinates. The direction of the camera is always along the negative y-axis. The z-axis is upward.

**Camera location for perspective (`camera_pose.txt`)**: For each perspective image, we store the camera location and pose in global coordinates.

```

vx vy vz tx ty tz ux uy uz xfov yfov 1

```

where `(vx, vy, vz)` is the eye viewpoint of the camera in millimeters, `(tx, ty, tz)` is the view direction, `(ux, uy, uz)` is the up direction, and `xfov` and `yfov` are the half-angles of the horizontal and vertical fields of view of the camera in radians (the angle from the central ray to the leftmost/bottommost ray in the field of view), same as the [Matterport3D Dataset](https://github.com/niessner/Matterport).
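
Because `xfov` and `yfov` are half-angles, the focal lengths in pixels follow from `f = (size / 2) / tan(fov)`, which is the same relation used by `parse_camera_info` in `misc/utils.py`. The sketch below parses one pose line with the standard library only. `parse_camera_pose` is a hypothetical helper, and the pose values and image size in the example are made up.

```python
import math


def parse_camera_pose(line, width, height):
    """Parse 'vx vy vz tx ty tz ux uy uz xfov yfov 1' into a dictionary."""
    values = [float(v) for v in line.split()]
    xfov, yfov = values[9], values[10]  # half-angles in radians
    return {
        "eye": values[0:3],        # camera position in millimeters
        "towards": values[3:6],    # view direction
        "up": values[6:9],         # up direction
        "fx": (width / 2) / math.tan(xfov),
        "fy": (height / 2) / math.tan(yfov),
    }


pose = parse_camera_pose(
    "0 0 1500 0 1 0 0 0 1 0.7853981633974483 0.7853981633974483 1", 1280, 720
)
print(pose["eye"], round(pose["fx"], 3), round(pose["fy"], 3))
```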

================================================

FILE: metadata/errata.txt

================================================

# invalid scene

scene_01155

scene_01714

scene_01816

scene_03398

scene_01192

scene_01852

# a pair of junctions are not aligned along the x-axis

scene_01778_room_858455

# self-intersection layout

scene_00010_room_846619

scene_00043_room_1518

scene_00043_room_3128

scene_00043_room_474

scene_00043_room_732

scene_00043_room_856

scene_00173_room_4722

scene_00240_room_384

scene_00325_room_970753

scene_00335_room_686

scene_00339_room_2193

scene_00501_room_1840

scene_00515_room_277475

scene_00543_room_176

scene_00587_room_9914

scene_00703_room_762455

scene_00703_room_771712

scene_00728_room_5662

scene_00828_room_607228

scene_00865_room_1026

scene_00865_room_1402

scene_00875_room_739214

scene_00917_room_188

scene_00917_room_501284

scene_00926_room_2290

scene_00936_room_311

scene_00937_room_1955

scene_00986_room_141

scene_01009_room_3234

scene_01009_room_3571

scene_01021_room_689126

scene_01034_room_222021

scene_01036_room_301

scene_01043_room_2193

scene_01104_room_875

scene_01151_room_563

scene_01165_room_204

scene_01221_room_26619

scene_01222_room_273364

scene_01282_room_1917

scene_01282_room_24057

scene_01282_room_2631

scene_01400_room_10576

scene_01445_room_3495

scene_01470_room_1413

scene_01530_room_577

scene_01670_room_291

scene_01745_room_342

scene_01759_room_3584

scene_01759_room_3588

scene_01772_room_897997

scene_01774_room_143

scene_01781_room_335

scene_01781_room_878137

scene_01786_room_5837

scene_01916_room_2648

scene_01993_room_849

scene_01998_room_54762

scene_02034_room_921879

scene_02040_room_311

scene_02046_room_1014

scene_02046_room_834

scene_02047_room_934954

scene_02101_room_255228

scene_02172_room_335

scene_02235_room_799012

scene_02274_room_4093

scene_02326_room_836436

scene_02334_room_869673

scene_02357_room_118319

scene_02484_room_43003

scene_02499_room_1607

scene_02499_room_977359

scene_02509_room_687231

scene_02542_room_671853

scene_02564_room_702502

scene_02580_room_724891

scene_02650_room_877946

scene_02659_room_577142

scene_02690_room_586296

scene_02706_room_823368

scene_02788_room_815473

scene_02889_room_848271

scene_03035_room_631066

scene_03120_room_830640

scene_03327_room_315045

scene_03376_room_800900

scene_03399_room_337

scene_03478_room_2193

================================================

FILE: metadata/labelids.txt

================================================

1 wall

2 floor

3 cabinet

4 bed

5 chair

6 sofa

7 table

8 door

9 window

10 bookshelf

11 picture

12 counter

13 blinds

14 desk

15 shelves

16 curtain

17 dresser

18 pillow

19 mirror

20 floor mat

21 clothes

22 ceiling

23 books

24 refrigerator

25 television

26 paper

27 towel

28 shower curtain

29 box

30 whiteboard

31 person

32 nightstand

33 toilet

34 sink

35 lamp

36 bathtub

37 bag

38 otherstructure

39 otherfurniture

40 otherprop

================================================

FILE: metadata/room_types.txt

================================================

living room

kitchen

bedroom

bathroom

balcony

corridor

dining room

study

studio

store room

garden

laundry room

office

basement

garage

undefined

================================================

FILE: misc/__init__.py

================================================

================================================

FILE: misc/colors.py

================================================

semantics_cmap = {

'living room': '#e6194b',

'kitchen': '#3cb44b',

'bedroom': '#ffe119',

'bathroom': '#0082c8',

'balcony': '#f58230',

'corridor': '#911eb4',

'dining room': '#46f0f0',

'study': '#f032e6',

'studio': '#d2f53c',

'store room': '#fabebe',

'garden': '#008080',

'laundry room': '#e6beff',

'office': '#aa6e28',

'basement': '#fffac8',

'garage': '#800000',

'undefined': '#aaffc3',

'door': '#808000',

'window': '#ffd7b4',

'outwall': '#000000',

}

colormap_255 = [

[230, 25, 75],

[ 60, 180, 75],

[255, 225, 25],

[ 0, 130, 200],

[245, 130, 48],

[145, 30, 180],

[ 70, 240, 240],

[240, 50, 230],

[210, 245, 60],

[250, 190, 190],

[ 0, 128, 128],

[230, 190, 255],

[170, 110, 40],

[255, 250, 200],

[128, 0, 0],

[170, 255, 195],

[128, 128, 0],

[255, 215, 180],

[ 0, 0, 128],

[128, 128, 128],

[255, 255, 255],

[ 0, 0, 0]

]

================================================

FILE: misc/figures.py

================================================

"""

Copy from https://github.com/Toblerity/Shapely/blob/master/docs/code/figures.py

"""

from math import sqrt

from shapely import affinity

GM = (sqrt(5)-1.0)/2.0

W = 8.0

H = W*GM

SIZE = (W, H)

BLUE = '#6699cc'

GRAY = '#999999'

DARKGRAY = '#333333'

YELLOW = '#ffcc33'

GREEN = '#339933'

RED = '#ff3333'

BLACK = '#000000'

COLOR_ISVALID = {

True: BLUE,

False: RED,

}

def plot_line(ax, ob, color=GRAY, zorder=1, linewidth=3, alpha=1):
    x, y = ob.xy
    ax.plot(x, y, color=color, linewidth=linewidth, solid_capstyle='round', zorder=zorder, alpha=alpha)


def plot_coords(ax, ob, color=BLACK, zorder=1, alpha=1):
    x, y = ob.xy
    ax.plot(x, y, color=color, zorder=zorder, alpha=alpha)


def color_isvalid(ob, valid=BLUE, invalid=RED):
    if ob.is_valid:
        return valid
    else:
        return invalid


def color_issimple(ob, simple=BLUE, complex=YELLOW):
    if ob.is_simple:
        return simple
    else:
        return complex


def plot_line_isvalid(ax, ob, **kwargs):
    kwargs["color"] = color_isvalid(ob)
    plot_line(ax, ob, **kwargs)


def plot_line_issimple(ax, ob, **kwargs):
    kwargs["color"] = color_issimple(ob)
    plot_line(ax, ob, **kwargs)


def plot_bounds(ax, ob, zorder=1, alpha=1):
    x, y = zip(*list((p.x, p.y) for p in ob.boundary))
    ax.plot(x, y, 'o', color=BLACK, zorder=zorder, alpha=alpha)


def add_origin(ax, geom, origin):
    x, y = xy = affinity.interpret_origin(geom, origin, 2)
    ax.plot(x, y, 'o', color=GRAY, zorder=1)
    ax.annotate(str(xy), xy=xy, ha='center',
                textcoords='offset points', xytext=(0, 8))


def set_limits(ax, x0, xN, y0, yN):
    ax.set_xlim(x0, xN)
    ax.set_xticks(range(x0, xN+1))
    ax.set_ylim(y0, yN)
    ax.set_yticks(range(y0, yN+1))
    ax.set_aspect("equal")

================================================

FILE: misc/panorama.py

================================================

"""

Copy from https://github.com/sunset1995/pytorch-layoutnet/blob/master/pano.py

"""

import numpy as np

import numpy.matlib as matlib

def xyz_2_coorxy(xs, ys, zs, H=512, W=1024):
    us = np.arctan2(xs, ys)
    vs = -np.arctan(zs / np.sqrt(xs**2 + ys**2))
    coorx = (us / (2 * np.pi) + 0.5) * W
    coory = (vs / np.pi + 0.5) * H
    return coorx, coory


def coords2uv(coords, width, height):
    """
    Image coordinates (xy) to uv
    """
    middleX = width / 2 + 0.5
    middleY = height / 2 + 0.5
    uv = np.hstack([
        (coords[:, [0]] - middleX) / width * 2 * np.pi,
        -(coords[:, [1]] - middleY) / height * np.pi])
    return uv


def uv2xyzN(uv, planeID=1):
    ID1 = (int(planeID) - 1 + 0) % 3
    ID2 = (int(planeID) - 1 + 1) % 3
    ID3 = (int(planeID) - 1 + 2) % 3
    xyz = np.zeros((uv.shape[0], 3))
    xyz[:, ID1] = np.cos(uv[:, 1]) * np.sin(uv[:, 0])
    xyz[:, ID2] = np.cos(uv[:, 1]) * np.cos(uv[:, 0])
    xyz[:, ID3] = np.sin(uv[:, 1])
    return xyz


def uv2xyzN_vec(uv, planeID):
    """
    vectorization version of uv2xyzN
    @uv       N x 2
    @planeID  N
    """
    assert (planeID.astype(int) != planeID).sum() == 0
    planeID = planeID.astype(int)
    ID1 = (planeID - 1 + 0) % 3
    ID2 = (planeID - 1 + 1) % 3
    ID3 = (planeID - 1 + 2) % 3
    ID = np.arange(len(uv))
    xyz = np.zeros((len(uv), 3))
    xyz[ID, ID1] = np.cos(uv[:, 1]) * np.sin(uv[:, 0])
    xyz[ID, ID2] = np.cos(uv[:, 1]) * np.cos(uv[:, 0])
    xyz[ID, ID3] = np.sin(uv[:, 1])
    return xyz

def xyz2uvN(xyz, planeID=1):
    ID1 = (int(planeID) - 1 + 0) % 3
    ID2 = (int(planeID) - 1 + 1) % 3
    ID3 = (int(planeID) - 1 + 2) % 3
    normXY = np.sqrt(xyz[:, [ID1]] ** 2 + xyz[:, [ID2]] ** 2)
    normXY[normXY < 0.000001] = 0.000001
    normXYZ = np.sqrt(xyz[:, [ID1]] ** 2 + xyz[:, [ID2]] ** 2 + xyz[:, [ID3]] ** 2)
    v = np.arcsin(xyz[:, [ID3]] / normXYZ)
    u = np.arcsin(xyz[:, [ID1]] / normXY)
    valid = (xyz[:, [ID2]] < 0) & (u >= 0)
    u[valid] = np.pi - u[valid]
    valid = (xyz[:, [ID2]] < 0) & (u <= 0)
    u[valid] = -np.pi - u[valid]
    uv = np.hstack([u, v])
    uv[np.isnan(uv[:, 0]), 0] = 0
    return uv


def computeUVN(n, in_, planeID):
    """
    compute v given u and normal.
    """
    if planeID == 2:
        n = np.array([n[1], n[2], n[0]])
    elif planeID == 3:
        n = np.array([n[2], n[0], n[1]])
    bc = n[0] * np.sin(in_) + n[1] * np.cos(in_)
    bs = n[2]
    out = np.arctan(-bc / (bs + 1e-9))
    return out


def computeUVN_vec(n, in_, planeID):
    """
    vectorization version of computeUVN
    @n         N x 3
    @in_      MN x 1
    @planeID   N
    """
    n = n.copy()
    if (planeID == 2).sum():
        n[planeID == 2] = np.roll(n[planeID == 2], 2, axis=1)
    if (planeID == 3).sum():
        n[planeID == 3] = np.roll(n[planeID == 3], 1, axis=1)
    n = np.repeat(n, in_.shape[0] // n.shape[0], axis=0)
    assert n.shape[0] == in_.shape[0]
    bc = n[:, [0]] * np.sin(in_) + n[:, [1]] * np.cos(in_)
    bs = n[:, [2]]
    out = np.arctan(-bc / (bs + 1e-9))
    return out

def lineFromTwoPoint(pt1, pt2):
    """
    Generate line segment based on two points on panorama
    pt1, pt2: two points on panorama
    line:
        1~3-th dim: normal of the line
        4-th dim: the projection dimension ID
        5~6-th dim: the u of line segment endpoints in projection plane
    """
    numLine = pt1.shape[0]
    lines = np.zeros((numLine, 6))
    n = np.cross(pt1, pt2)
    n = n / (matlib.repmat(np.sqrt(np.sum(n ** 2, 1, keepdims=True)), 1, 3) + 1e-9)
    lines[:, 0:3] = n

    areaXY = np.abs(np.sum(n * matlib.repmat([0, 0, 1], numLine, 1), 1, keepdims=True))
    areaYZ = np.abs(np.sum(n * matlib.repmat([1, 0, 0], numLine, 1), 1, keepdims=True))
    areaZX = np.abs(np.sum(n * matlib.repmat([0, 1, 0], numLine, 1), 1, keepdims=True))
    planeIDs = np.argmax(np.hstack([areaXY, areaYZ, areaZX]), axis=1) + 1
    lines[:, 3] = planeIDs

    for i in range(numLine):
        uv = xyz2uvN(np.vstack([pt1[i, :], pt2[i, :]]), lines[i, 3])
        umax = uv[:, 0].max() + np.pi
        umin = uv[:, 0].min() + np.pi
        if umax - umin > np.pi:
            lines[i, 4:6] = np.array([umax, umin]) / 2 / np.pi
        else:
            lines[i, 4:6] = np.array([umin, umax]) / 2 / np.pi

    return lines


def lineIdxFromCors(cor_all, im_w, im_h):
    assert len(cor_all) % 2 == 0
    uv = coords2uv(cor_all, im_w, im_h)
    xyz = uv2xyzN(uv)
    lines = lineFromTwoPoint(xyz[0::2], xyz[1::2])
    num_sample = max(im_h, im_w)

    cs, rs = [], []
    for i in range(lines.shape[0]):
        n = lines[i, 0:3]
        sid = lines[i, 4] * 2 * np.pi
        eid = lines[i, 5] * 2 * np.pi
        if eid < sid:
            x = np.linspace(sid, eid + 2 * np.pi, num_sample)
            x = x % (2 * np.pi)
        else:
            x = np.linspace(sid, eid, num_sample)

        u = -np.pi + x.reshape(-1, 1)
        v = computeUVN(n, u, lines[i, 3])
        xyz = uv2xyzN(np.hstack([u, v]), lines[i, 3])
        uv = xyz2uvN(xyz, 1)

        r = np.minimum(np.floor((uv[:, 0] + np.pi) / (2 * np.pi) * im_w) + 1,
                       im_w).astype(np.int32)
        c = np.minimum(np.floor((np.pi / 2 - uv[:, 1]) / np.pi * im_h) + 1,
                       im_h).astype(np.int32)
        cs.extend(r - 1)
        rs.extend(c - 1)
    return rs, cs

def draw_boundary_from_cor_id(cor_id, img_src):
    im_h, im_w = img_src.shape[:2]
    cor_all = [cor_id]
    for i in range(len(cor_id)):
        cor_all.append(cor_id[i, :])
        cor_all.append(cor_id[(i+2) % len(cor_id), :])
    cor_all = np.vstack(cor_all)

    rs, cs = lineIdxFromCors(cor_all, im_w, im_h)
    rs = np.array(rs)
    cs = np.array(cs)

    panoEdgeC = img_src.astype(np.uint8)
    for dx, dy in [[-1, 0], [1, 0], [0, 0], [0, 1], [0, -1]]:
        panoEdgeC[np.clip(rs + dx, 0, im_h - 1), np.clip(cs + dy, 0, im_w - 1), 0] = 0
        panoEdgeC[np.clip(rs + dx, 0, im_h - 1), np.clip(cs + dy, 0, im_w - 1), 1] = 0
        panoEdgeC[np.clip(rs + dx, 0, im_h - 1), np.clip(cs + dy, 0, im_w - 1), 2] = 255

    return panoEdgeC


def coorx2u(x, w=1024):
    return ((x + 0.5) / w - 0.5) * 2 * np.pi


def coory2v(y, h=512):
    return ((y + 0.5) / h - 0.5) * np.pi


def u2coorx(u, w=1024):
    return (u / (2 * np.pi) + 0.5) * w - 0.5


def v2coory(v, h=512):
    return (v / np.pi + 0.5) * h - 0.5


def uv2xy(u, v, z=-50):
    c = z / np.tan(v)
    x = c * np.cos(u)
    y = c * np.sin(u)
    return x, y

def pano_connect_points(p1, p2, z=-50, w=1024, h=512):
    u1 = coorx2u(p1[0], w)
    v1 = coory2v(p1[1], h)
    u2 = coorx2u(p2[0], w)
    v2 = coory2v(p2[1], h)

    x1, y1 = uv2xy(u1, v1, z)
    x2, y2 = uv2xy(u2, v2, z)

    # walk along the shorter way around the panorama
    if abs(p1[0] - p2[0]) < w / 2:
        pstart = np.ceil(min(p1[0], p2[0]))
        pend = np.floor(max(p1[0], p2[0]))
    else:
        pstart = np.ceil(max(p1[0], p2[0]))
        pend = np.floor(min(p1[0], p2[0]) + w)
    coorxs = (np.arange(pstart, pend + 1) % w).astype(np.float64)
    vx = x2 - x1
    vy = y2 - y1
    us = coorx2u(coorxs, w)
    ps = (np.tan(us) * x1 - y1) / (vy - np.tan(us) * vx)
    cs = np.sqrt((x1 + ps * vx) ** 2 + (y1 + ps * vy) ** 2)
    vs = np.arctan2(z, cs)
    # pass h so that image heights other than the default 512 are handled
    coorys = v2coory(vs, h)

    return np.stack([coorxs, coorys], axis=-1)

================================================

FILE: misc/utils.py

================================================

"""

Adapted from https://github.com/thusiyuan/cooperative_scene_parsing/blob/master/utils/sunrgbd_utils.py

"""

import numpy as np

def normalize(vector):
    return vector / np.linalg.norm(vector)


def parse_camera_info(camera_info, height, width):
    """ extract intrinsic and extrinsic matrix
    """
    lookat = normalize(camera_info[3:6])
    up = normalize(camera_info[6:9])

    W = lookat
    U = np.cross(W, up)
    V = -np.cross(W, U)

    rot = np.vstack((U, V, W))
    trans = camera_info[:3]

    # half-angles of the horizontal and vertical fields of view, in radians
    xfov = camera_info[9]
    yfov = camera_info[10]

    # float matrix, so that the focal lengths are not truncated to integers
    K = np.eye(3)

    K[0, 2] = width / 2
    K[1, 2] = height / 2

    K[0, 0] = K[0, 2] / np.tan(xfov)
    K[1, 1] = K[1, 2] / np.tan(yfov)

    return rot, trans, K

def flip_towards_viewer(normals, points):
    points = points / np.linalg.norm(points)
    proj = points.dot(normals[:2, :].T)
    flip = np.where(proj > 0)
    normals[flip, :] = -normals[flip, :]
    return normals


def get_corners_of_bb3d(basis, coeffs, centroid):
    corners = np.zeros((8, 3))
    # order the basis
    index = np.argsort(np.abs(basis[:, 0]))[::-1]
    # the case that two same value appear the same time
    if index[2] != 2:
        index[1:] = index[1:][::-1]
    basis = basis[index, :]
    coeffs = coeffs[index]
    # Now, we know the basis vectors are orders X, Y, Z. Next, flip the basis vectors towards the viewer
    basis = flip_towards_viewer(basis, centroid)
    coeffs = np.abs(coeffs)
    corners[0, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]
    corners[1, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]
    corners[2, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]
    corners[3, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]

    corners[4, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners[5, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners[6, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners[7, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners = corners + np.tile(centroid, (8, 1))
    return corners


def get_corners_of_bb3d_no_index(basis, coeffs, centroid):
    corners = np.zeros((8, 3))
    coeffs = np.abs(coeffs)
    corners[0, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]
    corners[1, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]
    corners[2, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]
    corners[3, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + basis[2, :] * coeffs[2]

    corners[4, :] = -basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners[5, :] = basis[0, :] * coeffs[0] + basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners[6, :] = basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]
    corners[7, :] = -basis[0, :] * coeffs[0] + -basis[1, :] * coeffs[1] + -basis[2, :] * coeffs[2]

    corners = corners + np.tile(centroid, (8, 1))
    return corners

def project_3d_points_to_2d(points3d, R_ex, K):
    """
    Project 3d points from camera-centered coordinate to 2D image plane
    Parameters
    ----------
    points3d: numpy array
        3d location of point
    R_ex: numpy array
        extrinsic camera parameter
    K: numpy array
        intrinsic camera parameter
    Returns
    -------
    points2d: numpy array
        2d location of the point
    """
    points3d = R_ex.dot(points3d.T).T
    x3 = points3d[:, 0]
    y3 = -points3d[:, 1]
    z3 = np.abs(points3d[:, 2])
    xx = x3 * K[0, 0] / z3 + K[0, 2]
    yy = y3 * K[1, 1] / z3 + K[1, 2]
    points2d = np.vstack((xx, yy))
    return points2d


def project_struct_bdb_to_2d(basis, coeffs, center, R_ex, K):
    """
    Project 3d bounding box to 2d bounding box
    Parameters
    ----------
    basis, coeffs, center, R_ex, K
        : K is the intrinsic camera parameter matrix
        : R_ex is the extrinsic camera parameter matrix in right hand coordinates
    Returns
    -------
    bdb2d: dict
        Keys: {'x1', 'x2', 'y1', 'y2'}
        The (x1, y1) position is at the top left corner,
        the (x2, y2) position is at the bottom right corner
    """
    corners3d = get_corners_of_bb3d(basis, coeffs, center)
    corners = project_3d_points_to_2d(corners3d, R_ex, K)
    bdb2d = dict()
    bdb2d['x1'] = int(max(np.min(corners[0, :]), 1))  # x1
    bdb2d['y1'] = int(max(np.min(corners[1, :]), 1))  # y1
    bdb2d['x2'] = int(min(np.max(corners[0, :]), 2*K[0, 2]))  # x2
    bdb2d['y2'] = int(min(np.max(corners[1, :]), 2*K[1, 2]))  # y2
    # if not check_bdb(bdb2d, 2*K[0, 2], 2*K[1, 2]):
    #     bdb2d = None
    return bdb2d

================================================

FILE: visualize_3d.py

================================================

import os

import json

import argparse

import open3d

import pymesh

import numpy as np

import matplotlib.pyplot as plt

from shapely.geometry import Polygon

from descartes.patch import PolygonPatch

from misc.figures import plot_coords

from misc.colors import colormap_255, semantics_cmap

def visualize_wireframe(annos):

"""visualize wireframe

"""

colormap = np.array(colormap_255) / 255

junctions = np.array([item['coordinate'] for item in annos['junctions']])

_, junction_pairs = np.where(np.array(annos['lineJunctionMatrix']))

junction_pairs = junction_pairs.reshape(-1, 2)

# extract hole lines

lines_holes = []

for semantic in annos['semantics']:

if semantic['type'] in ['window', 'door']:

for planeID in semantic['planeID']:

lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())

lines_holes = np.unique(lines_holes)

# extract cuboid lines

cuboid_lines = []

for cuboid in annos['cuboids']:

for planeID in cuboid['planeID']:

cuboid_lineID = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()

cuboid_lines.extend(cuboid_lineID)

cuboid_lines = np.unique(cuboid_lines)

cuboid_lines = np.setdiff1d(cuboid_lines, lines_holes)

# visualize junctions

connected_junctions = junctions[np.unique(junction_pairs)]

connected_colors = np.repeat(colormap[0].reshape(1, 3), len(connected_junctions), axis=0)

junction_set = open3d.geometry.PointCloud()

junction_set.points = open3d.utility.Vector3dVector(connected_junctions)

junction_set.colors = open3d.utility.Vector3dVector(connected_colors)

# visualize line segments

line_colors = np.repeat(colormap[5].reshape(1, 3), len(junction_pairs), axis=0)

# color holes

if len(lines_holes) != 0:

line_colors[lines_holes] = colormap[6]

# color cuboids

if len(cuboid_lines) != 0:

line_colors[cuboid_lines] = colormap[2]

line_set = open3d.geometry.LineSet()

line_set.points = open3d.utility.Vector3dVector(junctions)

line_set.lines = open3d.utility.Vector2iVector(junction_pairs)

line_set.colors = open3d.utility.Vector3dVector(line_colors)

open3d.visualization.draw_geometries([junction_set, line_set])

def project(x, meta):

""" project 3D to 2D for polygon clipping

"""

proj_axis = max(range(3), key=lambda i: abs(meta['normal'][i]))

return tuple(c for i, c in enumerate(x) if i != proj_axis)

def project_inv(x, meta):

""" recover 3D points from 2D

"""

# Returns the vector w in the walls' plane such that project(w) equals x.

proj_axis = max(range(3), key=lambda i: abs(meta['normal'][i]))

w = list(x)

w[proj_axis:proj_axis] = [0.0]

c = -meta['offset']

for i in range(3):

c -= w[i] * meta['normal'][i]

c /= meta['normal'][proj_axis]

w[proj_axis] = c

return tuple(w)
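
# A small round-trip sketch for project / project_inv, assuming the plane
# convention implied by the code above: normal . p + offset = 0. The *_demo
# names and the sample plane are hypothetical and only serve as illustration.

```python
def project_demo(x, normal):
    """Drop the coordinate along the dominant axis of the plane normal."""
    axis = max(range(3), key=lambda i: abs(normal[i]))
    return tuple(c for i, c in enumerate(x) if i != axis)

def project_inv_demo(x2d, normal, offset):
    """Recover the dropped coordinate from normal . p + offset = 0."""
    axis = max(range(3), key=lambda i: abs(normal[i]))
    w = list(x2d)
    w.insert(axis, 0.0)                                # placeholder for the unknown
    rest = sum(w[i] * normal[i] for i in range(3))     # contribution of the known coords
    w[axis] = (-offset - rest) / normal[axis]
    return tuple(w)

# Tilted plane 0.6*x + 0.8*z - 1 = 0; the dominant axis is z.
normal_demo, offset_demo = (0.6, 0.0, 0.8), -1.0
point_demo = (1.0, 2.0, 0.5)                           # lies on the plane
flat_demo = project_demo(point_demo, normal_demo)
recovered_demo = project_inv_demo(flat_demo, normal_demo, offset_demo)
```

# Dropping the dominant axis keeps the 2D polygon from degenerating, since the
# division by normal[axis] is then always by the largest component.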

def triangulate(points):

""" triangulate the plane for operation and visualization

"""

num_points = len(points)

indices = np.arange(num_points, dtype=int)

segments = np.vstack((indices, np.roll(indices, -1))).T

tri = pymesh.triangle()

tri.points = np.array(points)

tri.segments = segments

tri.verbosity = 0

tri.run()

return tri.mesh

def clip_polygon(polygons, vertices_hole, junctions, meta):

""" clip the holes (windows and doors) out of the wall polygon

"""

if len(polygons) == 1:

junctions = [junctions[vertex] for vertex in polygons[0]]

mesh_wall = triangulate(junctions)

vertices = np.array(mesh_wall.vertices)

faces = np.array(mesh_wall.faces)

return vertices, faces

else:

wall = []

holes = []

for polygon in polygons:

if np.any(np.intersect1d(polygon, vertices_hole)):

holes.append(polygon)

else:

wall.append(polygon)

# extract junctions on this plane

indices = []

junctions_wall = []

for plane in wall:

for vertex in plane:

indices.append(vertex)

junctions_wall.append(junctions[vertex])

junctions_holes = []

for plane in holes:

junctions_hole = []

for vertex in plane:

indices.append(vertex)

junctions_hole.append(junctions[vertex])

junctions_holes.append(junctions_hole)

junctions_wall = [project(x, meta) for x in junctions_wall]

junctions_holes = [[project(x, meta) for x in junctions_hole] for junctions_hole in junctions_holes]

mesh_wall = triangulate(junctions_wall)

for hole in junctions_holes:

mesh_hole = triangulate(hole)

mesh_wall = pymesh.boolean(mesh_wall, mesh_hole, 'difference')

vertices = [project_inv(vertex, meta) for vertex in mesh_wall.vertices]

return vertices, np.array(mesh_wall.faces)

def draw_geometries_with_back_face(geometries):

vis = open3d.visualization.Visualizer()

vis.create_window()

render_option = vis.get_render_option()

render_option.mesh_show_back_face = True

for geometry in geometries:

vis.add_geometry(geometry)

vis.run()

vis.destroy_window()

def convert_lines_to_vertices(lines):

"""convert line representation to polygon vertices

"""

polygons = []

lines = np.array(lines)

polygon = None

while len(lines) != 0:

if polygon is None:

polygon = lines[0].tolist()

lines = np.delete(lines, 0, 0)

lineID, juncID = np.where(lines == polygon[-1])

vertex = lines[lineID[0], 1 - juncID[0]]

lines = np.delete(lines, lineID, 0)

if vertex in polygon:

polygons.append(polygon)

polygon = None

else:

polygon.append(vertex)

return polygons
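
# An illustrative restatement of the chaining loop above with indentation
# restored, run on toy segments. chain_segments_demo, segments_demo and
# loops_demo are hypothetical names; the logic mirrors convert_lines_to_vertices.

```python
import numpy as np

def chain_segments_demo(lines):
    """Chain unordered junction pairs into closed vertex loops (sketch)."""
    polygons, polygon = [], None
    lines = np.array(lines)
    while len(lines) != 0:
        if polygon is None:
            polygon = lines[0].tolist()            # seed a new loop with the first segment
            lines = np.delete(lines, 0, 0)
        line_id, junc_id = np.where(lines == polygon[-1])
        vertex = int(lines[line_id[0], 1 - junc_id[0]])  # other endpoint of the first match
        lines = np.delete(lines, line_id, 0)       # remove every segment touching that junction
        if vertex in polygon:                      # back at a visited vertex: loop is closed
            polygons.append(polygon)
            polygon = None
        else:
            polygon.append(vertex)
    return polygons

# Two disjoint loops given as shuffled segments.
segments_demo = [[0, 1], [2, 3], [1, 2], [3, 0], [4, 5], [6, 4], [5, 6]]
loops_demo = chain_segments_demo(segments_demo)
```

# The loop assumes every chain closes: if no remaining segment touches the last
# vertex, line_id is empty and line_id[0] raises IndexError.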

def visualize_plane(annos, args, eps=0.9):

"""visualize plane

"""

colormap = np.array(colormap_255) / 255

junctions = [item['coordinate'] for item in annos['junctions']]

if args.color == 'manhattan':

manhattan = dict()

for planes in annos['manhattan']:

for planeID in planes['planeID']:

manhattan[planeID] = planes['ID']

# extract hole vertices

lines_holes = []

for semantic in annos['semantics']:

if semantic['type'] in ['window', 'door']:

for planeID in semantic['planeID']:

lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())

lines_holes = np.unique(lines_holes)

_, vertices_holes = np.where(np.array(annos['lineJunctionMatrix'])[lines_holes])

vertices_holes = np.unique(vertices_holes)

# load polygons

polygons = []

for semantic in annos['semantics']:

for planeID in semantic['planeID']:

plane_anno = annos['planes'][planeID]

lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

polygon = convert_lines_to_vertices(junction_pairs)

vertices, faces = clip_polygon(polygon, vertices_holes, junctions, plane_anno)

polygons.append([vertices, faces, planeID, plane_anno['normal'], plane_anno['type'], semantic['type']])

plane_set = []

for i, (vertices, faces, planeID, normal, plane_type, semantic_type) in enumerate(polygons):

# ignore the room ceiling

if plane_type == 'ceiling' and semantic_type not in ['door', 'window']:

continue

plane_vis = open3d.geometry.TriangleMesh()

plane_vis.vertices = open3d.utility.Vector3dVector(vertices)

plane_vis.triangles = open3d.utility.Vector3iVector(faces)

if args.color == 'normal':

if np.dot(normal, [1, 0, 0]) > eps:

plane_vis.paint_uniform_color(colormap[0])

elif np.dot(normal, [-1, 0, 0]) > eps:

plane_vis.paint_uniform_color(colormap[1])

elif np.dot(normal, [0, 1, 0]) > eps:

plane_vis.paint_uniform_color(colormap[2])

elif np.dot(normal, [0, -1, 0]) > eps:

plane_vis.paint_uniform_color(colormap[3])

elif np.dot(normal, [0, 0, 1]) > eps:

plane_vis.paint_uniform_color(colormap[4])

elif np.dot(normal, [0, 0, -1]) > eps:

plane_vis.paint_uniform_color(colormap[5])

else:

plane_vis.paint_uniform_color(colormap[6])

elif args.color == 'manhattan':

# paint each plane with manhattan world

if planeID not in manhattan.keys():

plane_vis.paint_uniform_color(colormap[6])

else:

plane_vis.paint_uniform_color(colormap[manhattan[planeID]])

plane_set.append(plane_vis)

draw_geometries_with_back_face(plane_set)

def plot_floorplan(annos, polygons):

"""plot floorplan

"""

fig = plt.figure()

ax = fig.add_subplot(1, 1, 1)

junctions = np.array([junc['coordinate'][:2] for junc in annos['junctions']])

for (polygon, poly_type) in polygons:

polygon = Polygon(junctions[np.array(polygon)])

plot_coords(ax, polygon.exterior, alpha=0.5)

if poly_type == 'outwall':

patch = PolygonPatch(polygon, facecolor=semantics_cmap[poly_type], alpha=0)

else:

patch = PolygonPatch(polygon, facecolor=semantics_cmap[poly_type], alpha=0.5)

ax.add_patch(patch)

plt.axis('equal')

plt.axis('off')

plt.show()

def visualize_floorplan(annos):

"""visualize floorplan

"""

# extract the floor in each semantic for floorplan visualization

planes = []

for semantic in annos['semantics']:

for planeID in semantic['planeID']:

if annos['planes'][planeID]['type'] == 'floor':

planes.append({'planeID': planeID, 'type': semantic['type']})

if semantic['type'] == 'outwall':

outerwall_planes = semantic['planeID']

# extract hole lines

lines_holes = []

for semantic in annos['semantics']:

if semantic['type'] in ['window', 'door']:

for planeID in semantic['planeID']:

lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())

lines_holes = np.unique(lines_holes)

# junctions on the floor

junctions = np.array([junc['coordinate'] for junc in annos['junctions']])

junction_floor = np.where(np.isclose(junctions[:, -1], 0))[0]

# construct each polygon

polygons = []

for plane in planes:

lineIDs = np.where(np.array(annos['planeLineMatrix'][plane['planeID']]))[0].tolist()

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

polygon = convert_lines_to_vertices(junction_pairs)

polygons.append([polygon[0], plane['type']])

outerwall_floor = []

for planeID in outerwall_planes:

lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()

lineIDs = np.setdiff1d(lineIDs, lines_holes)

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

for start, end in junction_pairs:

if start in junction_floor and end in junction_floor:

outerwall_floor.append([start, end])

outerwall_polygon = convert_lines_to_vertices(outerwall_floor)

polygons.append([outerwall_polygon[0], 'outwall'])

plot_floorplan(annos, polygons)

def parse_args():

parser = argparse.ArgumentParser(description="Structured3D 3D Visualization")

parser.add_argument("--path", required=True,

help="dataset path", metavar="DIR")

parser.add_argument("--scene", required=True,

help="scene id", type=int)

parser.add_argument("--type", choices=("floorplan", "wireframe", "plane"),

default="plane", type=str)

parser.add_argument("--color", choices=["normal", "manhattan"],

default="normal", type=str)

return parser.parse_args()

def main():

args = parse_args()

# load annotations from json

with open(os.path.join(args.path, f"scene_{args.scene:05d}", "annotation_3d.json")) as file:

annos = json.load(file)

if args.type == "wireframe":

visualize_wireframe(annos)

elif args.type == "plane":

visualize_plane(annos, args)

elif args.type == "floorplan":

visualize_floorplan(annos)

if __name__ == "__main__":

main()

================================================

FILE: visualize_bbox.py

================================================

import os

import json

import argparse

import cv2

import numpy as np

import matplotlib.pyplot as plt

from misc.utils import get_corners_of_bb3d_no_index, project_3d_points_to_2d, parse_camera_info

def visualize_bbox(args):

with open(os.path.join(args.path, f"scene_{args.scene:05d}", "bbox_3d.json")) as file:

annos = json.load(file)

id2index = dict()

for index, obj in enumerate(annos):

id2index[obj.get('ID')] = index

scene_path = os.path.join(args.path, f"scene_{args.scene:05d}", "2D_rendering")

for room_id in np.sort(os.listdir(scene_path)):

room_path = os.path.join(scene_path, room_id, "perspective", "full")

if not os.path.exists(room_path):

continue

for position_id in np.sort(os.listdir(room_path)):

position_path = os.path.join(room_path, position_id)

image = cv2.imread(os.path.join(position_path, 'rgb_rawlight.png'))

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

height, width, _ = image.shape

instance = cv2.imread(os.path.join(position_path, 'instance.png'), cv2.IMREAD_UNCHANGED)

camera_info = np.loadtxt(os.path.join(position_path, 'camera_pose.txt'))

rot, trans, K = parse_camera_info(camera_info, height, width)

plt.figure()

plt.imshow(image)

for index in np.unique(instance)[:-1]:

# for each instance in current image

bbox = annos[id2index[index]]

basis = np.array(bbox['basis'])

coeffs = np.array(bbox['coeffs'])

centroid = np.array(bbox['centroid'])

corners = get_corners_of_bb3d_no_index(basis, coeffs, centroid)

corners = corners - trans

gt2dcorners = project_3d_points_to_2d(corners, rot, K)

num_corner = gt2dcorners.shape[1] // 2

plt.plot(np.hstack((gt2dcorners[0, :num_corner], gt2dcorners[0, 0])),

np.hstack((gt2dcorners[1, :num_corner], gt2dcorners[1, 0])), 'r')

plt.plot(np.hstack((gt2dcorners[0, num_corner:], gt2dcorners[0, num_corner])),

np.hstack((gt2dcorners[1, num_corner:], gt2dcorners[1, num_corner])), 'b')

for i in range(num_corner):

plt.plot(gt2dcorners[0, [i, i + num_corner]], gt2dcorners[1, [i, i + num_corner]], 'y')

plt.axis('off')

plt.axis([0, width, height, 0])

plt.show()

def parse_args():

parser = argparse.ArgumentParser(

description="Structured3D 3D Bounding Box Visualization")

parser.add_argument("--path", required=True,

help="dataset path", metavar="DIR")

parser.add_argument("--scene", required=True,

help="scene id", type=int)

return parser.parse_args()

def main():

args = parse_args()

visualize_bbox(args)

if __name__ == "__main__":

main()

================================================

FILE: visualize_floorplan.py

================================================

import argparse

import json

import os

import matplotlib.pyplot as plt

import numpy as np

from matplotlib import colors

from shapely.geometry import Polygon

from shapely.plotting import plot_polygon

from misc.colors import semantics_cmap

from misc.utils import get_corners_of_bb3d_no_index

def convert_lines_to_vertices(lines):

"""convert line representation to polygon vertices

"""

polygons = []

lines = np.array(lines)

polygon = None

while len(lines) != 0:

if polygon is None:

polygon = lines[0].tolist()

lines = np.delete(lines, 0, 0)

lineID, juncID = np.where(lines == polygon[-1])

vertex = lines[lineID[0], 1 - juncID[0]]

lines = np.delete(lines, lineID, 0)

if vertex in polygon:

polygons.append(polygon)

polygon = None

else:

polygon.append(vertex)

return polygons

def visualize_floorplan(args):

"""visualize floorplan

"""

with open(os.path.join(args.path, f"scene_{args.scene:05d}", "annotation_3d.json")) as file:

annos = json.load(file)

with open(os.path.join(args.path, f"scene_{args.scene:05d}", "bbox_3d.json")) as file:

boxes = json.load(file)

# extract the floor in each semantic for floorplan visualization

planes = []

for semantic in annos['semantics']:

for planeID in semantic['planeID']:

if annos['planes'][planeID]['type'] == 'floor':

planes.append({'planeID': planeID, 'type': semantic['type']})

if semantic['type'] == 'outwall':

outerwall_planes = semantic['planeID']

# extract hole lines

lines_holes = []

for semantic in annos['semantics']:

if semantic['type'] in ['window', 'door']:

for planeID in semantic['planeID']:

lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())

lines_holes = np.unique(lines_holes)

# junctions on the floor

junctions = np.array([junc['coordinate'] for junc in annos['junctions']])

junction_floor = np.where(np.isclose(junctions[:, -1], 0))[0]

# construct each polygon

polygons = []

for plane in planes:

lineIDs = np.where(np.array(annos['planeLineMatrix'][plane['planeID']]))[0].tolist()

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

polygon = convert_lines_to_vertices(junction_pairs)

polygons.append([polygon[0], plane['type']])

outerwall_floor = []

for planeID in outerwall_planes:

lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist()

lineIDs = np.setdiff1d(lineIDs, lines_holes)

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

for start, end in junction_pairs:

if start in junction_floor and end in junction_floor:

outerwall_floor.append([start, end])

outerwall_polygon = convert_lines_to_vertices(outerwall_floor)

polygons.append([outerwall_polygon[0], 'outwall'])

fig = plt.figure()

ax = fig.add_subplot(1, 1, 1)

junctions = np.array([junc['coordinate'][:2] for junc in annos['junctions']])

for (polygon, poly_type) in polygons:

polygon = np.array(polygon + [polygon[0], ])

polygon = Polygon(junctions[polygon])

if poly_type == 'outwall':

plot_polygon(polygon, ax=ax, add_points=False, facecolor=semantics_cmap[poly_type], alpha=0)

else:

plot_polygon(polygon, ax=ax, add_points=False, facecolor=semantics_cmap[poly_type], alpha=0.5)

for bbox in boxes:

basis = np.array(bbox['basis'])

coeffs = np.array(bbox['coeffs'])

centroid = np.array(bbox['centroid'])

corners = get_corners_of_bb3d_no_index(basis, coeffs, centroid)

corners = corners[[0, 1, 2, 3, 0], :2]

polygon = Polygon(corners)

plot_polygon(polygon, ax=ax, add_points=False, facecolor=colors.rgb2hex(np.random.rand(3)), alpha=0.5)

plt.axis('equal')

plt.axis('off')

plt.show()

def parse_args():

parser = argparse.ArgumentParser(

description="Structured3D Floorplan Visualization")

parser.add_argument("--path", required=True,

help="dataset path", metavar="DIR")

parser.add_argument("--scene", required=True,

help="scene id", type=int)

return parser.parse_args()

def main():

args = parse_args()

visualize_floorplan(args)

if __name__ == "__main__":

main()

================================================

FILE: visualize_layout.py

================================================

import os

import json

import argparse

import cv2

import numpy as np

import matplotlib.pyplot as plt

from shapely.geometry import Polygon

from descartes.patch import PolygonPatch

from misc.panorama import draw_boundary_from_cor_id

from misc.colors import colormap_255

def visualize_panorama(args):

"""visualize panorama layout

"""

scene_path = os.path.join(args.path, f"scene_{args.scene:05d}", "2D_rendering")

for room_id in np.sort(os.listdir(scene_path)):

room_path = os.path.join(scene_path, room_id, "panorama")

cor_id = np.loadtxt(os.path.join(room_path, "layout.txt"))

img_src = cv2.imread(os.path.join(room_path, "full", "rgb_rawlight.png"))

img_src = cv2.cvtColor(img_src, cv2.COLOR_BGR2RGB)

img_viz = draw_boundary_from_cor_id(cor_id, img_src)

plt.axis('off')

plt.imshow(img_viz)

plt.show()

def visualize_perspective(args):

"""visualize perspective layout

"""

colors = np.array(colormap_255) / 255

scene_path = os.path.join(args.path, f"scene_{args.scene:05d}", "2D_rendering")

for room_id in np.sort(os.listdir(scene_path)):

room_path = os.path.join(scene_path, room_id, "perspective", "full")

if not os.path.exists(room_path):

continue

for position_id in np.sort(os.listdir(room_path)):

position_path = os.path.join(room_path, position_id)

image = cv2.imread(os.path.join(position_path, "rgb_rawlight.png"))

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

with open(os.path.join(position_path, "layout.json")) as f:

annos = json.load(f)

fig = plt.figure()

for i, key in enumerate(['amodal_mask', 'visible_mask']):

ax = fig.add_subplot(2, 1, i + 1)

plt.axis('off')

plt.imshow(image)

for plane_id, planes in enumerate(annos['planes']):

if len(planes[key]):

for plane in planes[key]:

polygon = Polygon([annos['junctions'][junc_id]['coordinate'] for junc_id in plane])

patch = PolygonPatch(polygon, facecolor=colors[plane_id], alpha=0.5)

ax.add_patch(patch)

plt.title(key)

plt.show()

def parse_args():

parser = argparse.ArgumentParser(description="Structured3D 2D Layout Visualization")

parser.add_argument("--path", required=True,

help="dataset path", metavar="DIR")

parser.add_argument("--scene", required=True,

help="scene id", type=int)

parser.add_argument("--type", choices=["perspective", "panorama"], required=True,

help="type of camera", type=str)

return parser.parse_args()

def main():

args = parse_args()

if args.type == 'panorama':

visualize_panorama(args)

elif args.type == 'perspective':

visualize_perspective(args)

if __name__ == "__main__":

main()

================================================

FILE: visualize_mesh.py

================================================

import os

import json

import argparse

import cv2

import open3d

import numpy as np

from panda3d.core import Triangulator

from misc.panorama import xyz_2_coorxy

from visualize_3d import convert_lines_to_vertices

def E2P(image, corner_i, corner_j, wall_height, camera, resolution=512, is_wall=True):

"""convert panorama to perspective image

"""

corner_i = corner_i - camera

corner_j = corner_j - camera

if is_wall:

xs = np.linspace(corner_i[0], corner_j[0], resolution)[None].repeat(resolution, 0)

ys = np.linspace(corner_i[1], corner_j[1], resolution)[None].repeat(resolution, 0)

zs = np.linspace(-camera[-1], wall_height - camera[-1], resolution)[:, None].repeat(resolution, 1)

else:

xs = np.linspace(corner_i[0], corner_j[0], resolution)[None].repeat(resolution, 0)

ys = np.linspace(corner_i[1], corner_j[1], resolution)[:, None].repeat(resolution, 1)

zs = np.zeros_like(xs) + wall_height - camera[-1]

coorx, coory = xyz_2_coorxy(xs, ys, zs)

persp = cv2.remap(image, coorx.astype(np.float32), coory.astype(np.float32),

cv2.INTER_CUBIC, borderMode=cv2.BORDER_WRAP)

return persp

def create_plane_mesh(vertices, vertices_floor, textures, texture_floor, texture_ceiling,

delta_height, ignore_ceiling=False):

# create mesh for 3D floorplan visualization

triangles = []

triangle_uvs = []

# the number of vertical walls

num_walls = len(vertices)

# 1. vertical wall (always rectangle)

num_vertices = 0

for i in range(len(vertices)):

# hardcode triangles for each vertical wall

triangle = np.array([[0, 2, 1], [2, 0, 3]])

triangles.append(triangle + num_vertices)

num_vertices += 4

triangle_uv = np.array(

[

[i / (num_walls + 2), 0],

[i / (num_walls + 2), 1],

[(i+1) / (num_walls + 2), 1],

[(i+1) / (num_walls + 2), 0]

],

dtype=np.float32

)

triangle_uvs.append(triangle_uv)

# 2. floor and ceiling

# Since the floor and ceiling may not be a rectangle, triangulate the polygon first.

tri = Triangulator()

for i in range(len(vertices_floor)):

tri.add_vertex(vertices_floor[i, 0], vertices_floor[i, 1])

for i in range(len(vertices_floor)):

tri.add_polygon_vertex(i)

tri.triangulate()

# polygon triangulation

triangle = []

for i in range(tri.getNumTriangles()):

triangle.append([tri.get_triangle_v0(i), tri.get_triangle_v1(i), tri.get_triangle_v2(i)])

triangle = np.array(triangle)

# add triangles for floor and ceiling

triangles.append(triangle + num_vertices)

num_vertices += len(np.unique(triangle))

if not ignore_ceiling:

triangles.append(triangle + num_vertices)

# texture for floor and ceiling

vertices_floor_min = np.min(vertices_floor[:, :2], axis=0)

vertices_floor_max = np.max(vertices_floor[:, :2], axis=0)

# normalize to [0, 1]

triangle_uv = (vertices_floor[:, :2] - vertices_floor_min) / (vertices_floor_max - vertices_floor_min)

triangle_uv[:, 0] = (triangle_uv[:, 0] + num_walls) / (num_walls + 2)

triangle_uvs.append(triangle_uv)

# normalize to [0, 1]

triangle_uv = (vertices_floor[:, :2] - vertices_floor_min) / (vertices_floor_max - vertices_floor_min)

triangle_uv[:, 0] = (triangle_uv[:, 0] + num_walls + 1) / (num_walls + 2)

triangle_uvs.append(triangle_uv)

# 3. Merge wall, floor, and ceiling

vertices.append(vertices_floor)

vertices.append(vertices_floor + delta_height)

vertices = np.concatenate(vertices, axis=0)

triangles = np.concatenate(triangles, axis=0)

textures.append(texture_floor)

textures.append(texture_ceiling)

textures = np.concatenate(textures, axis=1)

triangle_uvs = np.concatenate(triangle_uvs, axis=0)

mesh = open3d.geometry.TriangleMesh(

vertices=open3d.utility.Vector3dVector(vertices),

triangles=open3d.utility.Vector3iVector(triangles)

)

mesh.compute_vertex_normals()

mesh.texture = open3d.geometry.Image(textures)

mesh.triangle_uvs = np.array(triangle_uvs[triangles.reshape(-1), :], dtype=np.float64)

return mesh
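
# A sketch of the texture-atlas layout used above: textures are concatenated
# side by side (walls first, then floor, then ceiling), so each tile spans
# 1 / (num_walls + 2) of the u axis. wall_uv_strip_demo is a hypothetical helper
# and assumes every tile has the same pixel width, as with the default resolution.

```python
import numpy as np

def wall_uv_strip_demo(i, num_walls):
    """UV corners of wall i in an atlas of num_walls + 2 equal-width tiles."""
    n_tiles = num_walls + 2                      # walls, then floor, then ceiling
    u0, u1 = i / n_tiles, (i + 1) / n_tiles      # horizontal extent of tile i
    return np.array([[u0, 0], [u0, 1], [u1, 1], [u1, 0]], dtype=np.float32)

# With two walls the atlas has four tiles, so wall 0 covers u in [0, 0.25].
uv_wall0_demo = wall_uv_strip_demo(0, num_walls=2)
```

# The floor and ceiling reuse the same idea with tile indices num_walls and
# num_walls + 1, after normalizing their xy coordinates to [0, 1].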

def verify_normal(corner_i, corner_j, delta_height, plane_normal):

edge_a = corner_j + delta_height - corner_i

edge_b = delta_height

normal = np.cross(edge_a, edge_b)

normal /= np.linalg.norm(normal, ord=2)

inner_product = normal.dot(plane_normal)

if inner_product > 1e-8:

return False

else:

return True
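
# A worked check of the orientation test above on an axis-aligned wall. The
# name needs_flip_demo and the sample corners are hypothetical; the sketch
# returns True in the same case as verify_normal, i.e. when the normal built
# from the corner order does not point along the annotated plane normal.

```python
import numpy as np

def needs_flip_demo(corner_i, corner_j, delta_height, plane_normal):
    """True when the wall corners should be swapped (illustrative restatement)."""
    edge_a = corner_j + delta_height - corner_i      # diagonal of the wall rectangle
    normal = np.cross(edge_a, delta_height)          # perpendicular to the wall
    normal = normal / np.linalg.norm(normal)
    return not (normal.dot(plane_normal) > 1e-8)

corner_i_demo = np.array([0.0, 0.0, 0.0])
corner_j_demo = np.array([1.0, 0.0, 0.0])
delta_demo = np.array([0.0, 0.0, 3.0])

# cross((1, 0, 3), (0, 0, 3)) = (0, -3, 0), so the computed normal is -y.
keep_demo = needs_flip_demo(corner_i_demo, corner_j_demo, delta_demo, np.array([0.0, -1.0, 0.0]))
flip_demo = needs_flip_demo(corner_i_demo, corner_j_demo, delta_demo, np.array([0.0, 1.0, 0.0]))
```

# Float inputs matter here: the original normalizes in place with /=, which
# fails on integer arrays.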

def visualize_mesh(args):

"""visualize as water-tight mesh

"""

image = cv2.imread(os.path.join(args.path, f"scene_{args.scene:05d}", "2D_rendering",

str(args.room), "panorama/full/rgb_rawlight.png"))

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

# load room annotations

with open(os.path.join(args.path, f"scene_{args.scene:05d}", "annotation_3d.json")) as f:

annos = json.load(f)

# load camera info

camera_center = np.loadtxt(os.path.join(args.path, f"scene_{args.scene:05d}", "2D_rendering",

str(args.room), "panorama", "camera_xyz.txt"))

# parse corners

junctions = np.array([item['coordinate'] for item in annos['junctions']])

lines_holes = []

for semantic in annos['semantics']:

if semantic['type'] in ['window', 'door']:

for planeID in semantic['planeID']:

lines_holes.extend(np.where(np.array(annos['planeLineMatrix'][planeID]))[0].tolist())

lines_holes = np.unique(lines_holes)

_, vertices_holes = np.where(np.array(annos['lineJunctionMatrix'])[lines_holes])

vertices_holes = np.unique(vertices_holes)

# parse annotations

walls = dict()

walls_normal = dict()

for semantic in annos['semantics']:

if semantic['ID'] != int(args.room):

continue

# find junctions of ceiling and floor

for planeID in semantic['planeID']:

plane_anno = annos['planes'][planeID]

if plane_anno['type'] != 'wall':

lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0]

lineIDs = np.setdiff1d(lineIDs, lines_holes)

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

wall = convert_lines_to_vertices(junction_pairs)

walls[plane_anno['type']] = wall[0]

# save normal of the vertical walls

for planeID in semantic['planeID']:

plane_anno = annos['planes'][planeID]

if plane_anno['type'] == 'wall':

lineIDs = np.where(np.array(annos['planeLineMatrix'][planeID]))[0]

lineIDs = np.setdiff1d(lineIDs, lines_holes)

junction_pairs = [np.where(np.array(annos['lineJunctionMatrix'][lineID]))[0].tolist() for lineID in lineIDs]

wall = convert_lines_to_vertices(junction_pairs)

walls_normal[tuple(np.intersect1d(wall, walls['floor']))] = plane_anno['normal']

# assume the floor lies at z = 0, so the wall height is the mean z of the ceiling junctions

wall_height = np.mean(junctions[walls['ceiling']], axis=0)[-1]

delta_height = np.array([0, 0, wall_height])

# list of corner index

wall_floor = walls['floor']

corners = [] # 3D coordinate for each wall

textures = [] # texture for each wall

# wall

for i, j in zip(wall_floor, np.roll(wall_floor, shift=-1)):

corner_i, corner_j = junctions[i], junctions[j]

flip = verify_normal(corner_i, corner_j, delta_height, walls_normal[tuple(sorted([i, j]))])

if flip:

corner_j, corner_i = corner_i, corner_j

texture = E2P(image, corner_i, corner_j, wall_height, camera_center)

corner = np.array([corner_i, corner_i + delta_height, corner_j + delta_height, corner_j])

corners.append(corner)

textures.append(texture)

# floor and ceiling

# the floor/ceiling texture is cropped by the maximum bounding box

corner_floor = junctions[wall_floor]

corner_min = np.min(corner_floor, axis=0)

corner_max = np.max(corner_floor, axis=0)

texture_floor = E2P(image, corner_min, corner_max, 0, camera_center, is_wall=False)

texture_ceiling = E2P(image, corner_min, corner_max, wall_height, camera_center, is_wall=False)

# create mesh

mesh = create_plane_mesh(corners, corner_floor, textures, texture_floor, texture_ceiling,

delta_height, ignore_ceiling=args.ignore_ceiling)

# visualize mesh

open3d.visualization.draw_geometries([mesh])

def parse_args():

parser = argparse.ArgumentParser(description="Structured3D 3D Textured Mesh Visualization")

parser.add_argument("--path", required=True,

help="dataset path", metavar="DIR")

parser.add_argument("--scene", required=True,

help="scene id", type=int)

parser.add_argument("--room", required=True,

help="room id", type=int)

parser.add_argument("--ignore_ceiling", action='store_true',

help="ignore ceiling for better visualization")

return parser.parse_args()

def main():

args = parse_args()

visualize_mesh(args)

if __name__ == "__main__":

main()