Showing preview only (1,214K chars total). Download the full file or copy to clipboard to get everything.

Repository: disler/single-file-agents

Branch: main

Commit: ae5826a4165c

Files: 193

Total size: 1.1 MB

Directory structure:

gitextract_m5kdaf2m/

├── .gitignore

├── CLAUDE.md

├── README.md

├── ai_docs/

│ ├── anthropic-new-text-editor.md

│ ├── anthropic-token-efficient-tool-use.md

│ ├── building-eff-agents.md

│ ├── existing_anthropic_computer_use_code.md

│ ├── fc_openai_agents.md

│ ├── openai-function-calling.md

│ ├── python_anthropic.md

│ ├── python_genai.md

│ └── python_openai.md

├── codebase-architectures/

│ ├── .gitignore

│ ├── README.md

│ ├── atomic-composable-architecture/

│ │ ├── README.md

│ │ ├── atom/

│ │ │ ├── auth.py

│ │ │ ├── notifications.py

│ │ │ └── validation.py

│ │ ├── main.py

│ │ ├── molecule/

│ │ │ ├── alerting.py

│ │ │ └── user_management.py

│ │ └── organism/

│ │ ├── alerts_api.py

│ │ └── user_api.py

│ ├── layered-architecture/

│ │ ├── README.md

│ │ ├── api/

│ │ │ ├── category_api.py

│ │ │ └── product_api.py

│ │ ├── data/

│ │ │ └── database.py

│ │ ├── main.py

│ │ ├── models/

│ │ │ ├── category.py

│ │ │ └── product.py

│ │ ├── services/

│ │ │ ├── category_service.py

│ │ │ └── product_service.py

│ │ └── utils/

│ │ └── logger.py

│ ├── pipeline-architecture/

│ │ ├── README.md

│ │ ├── data/

│ │ │ ├── .gitkeep

│ │ │ └── sales_data.json

│ │ ├── main.py

│ │ ├── output/

│ │ │ ├── .gitkeep

│ │ │ └── sales_analysis.json

│ │ ├── pipeline_manager/

│ │ │ ├── data_pipeline.py

│ │ │ └── pipeline_manager.py

│ │ ├── shared/

│ │ │ └── utilities.py

│ │ └── steps/

│ │ ├── input_stage.py

│ │ ├── output_stage.py

│ │ └── processing_stage.py

│ └── vertical-slice-architecture/

│ ├── README.md

│ ├── features/

│ │ ├── projects/

│ │ │ ├── README.md

│ │ │ ├── api.py

│ │ │ ├── model.py

│ │ │ └── service.py

│ │ ├── tasks/

│ │ │ ├── README.md

│ │ │ ├── api.py

│ │ │ ├── model.py

│ │ │ └── service.py

│ │ └── users/

│ │ ├── README.md

│ │ ├── api.py

│ │ ├── model.py

│ │ └── service.py

│ └── main.py

├── data/

│ ├── analytics.csv

│ └── analytics.json

├── example-agent-codebase-arch/

│ ├── README.md

│ ├── __init__.py

│ ├── atomic-composable-architecture/

│ │ ├── __init__.py

│ │ ├── atom/

│ │ │ ├── __init__.py

│ │ │ ├── file_tools/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── insert_tool.py

│ │ │ │ ├── read_tool.py

│ │ │ │ ├── replace_tool.py

│ │ │ │ ├── result_tool.py

│ │ │ │ ├── undo_tool.py

│ │ │ │ └── write_tool.py

│ │ │ ├── logging/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── console.py

│ │ │ │ └── display.py

│ │ │ └── path_utils/

│ │ │ ├── __init__.py

│ │ │ ├── directory.py

│ │ │ ├── extension.py

│ │ │ ├── normalize.py

│ │ │ └── validation.py

│ │ ├── membrane/

│ │ │ ├── __init__.py

│ │ │ ├── main_file_agent.py

│ │ │ └── mcp_file_agent.py

│ │ ├── molecule/

│ │ │ ├── __init__.py

│ │ │ ├── file_crud.py

│ │ │ ├── file_reader.py

│ │ │ └── file_writer.py

│ │ └── organism/

│ │ ├── __init__.py

│ │ └── file_agent.py

│ └── vertical-slice-architecture/

│ ├── __init__.py

│ ├── features/

│ │ ├── __init__.py

│ │ ├── blog_agent/

│ │ │ ├── __init__.py

│ │ │ ├── blog_agent.py

│ │ │ ├── blog_manager.py

│ │ │ ├── create_tool.py

│ │ │ ├── delete_tool.py

│ │ │ ├── model_tools.py

│ │ │ ├── read_tool.py

│ │ │ ├── search_tool.py

│ │ │ ├── tool_handler.py

│ │ │ └── update_tool.py

│ │ ├── blog_agent_v2/

│ │ │ ├── __init__.py

│ │ │ ├── blog_agent.py

│ │ │ ├── blog_manager.py

│ │ │ ├── create_tool.py

│ │ │ ├── delete_tool.py

│ │ │ ├── model_tools.py

│ │ │ ├── read_tool.py

│ │ │ ├── search_tool.py

│ │ │ ├── tool_handler.py

│ │ │ └── update_tool.py

│ │ ├── file_agent/

│ │ │ ├── __init__.py

│ │ │ ├── api_tools.py

│ │ │ ├── create_tool.py

│ │ │ ├── file_agent.py

│ │ │ ├── file_editor.py

│ │ │ ├── file_writer.py

│ │ │ ├── insert_tool.py

│ │ │ ├── model_tools.py

│ │ │ ├── read_tool.py

│ │ │ ├── replace_tool.py

│ │ │ ├── service_tools.py

│ │ │ ├── tool_handler.py

│ │ │ └── write_tool.py

│ │ ├── file_agent_v2/

│ │ │ ├── __init__.py

│ │ │ ├── api_tools.py

│ │ │ ├── create_tool.py

│ │ │ ├── file_agent.py

│ │ │ ├── file_editor.py

│ │ │ ├── file_writer.py

│ │ │ ├── insert_tool.py

│ │ │ ├── model_tools.py

│ │ │ ├── read_tool.py

│ │ │ ├── replace_tool.py

│ │ │ ├── service_tools.py

│ │ │ ├── tool_handler.py

│ │ │ └── write_tool.py

│ │ └── file_agent_v2_gemini/

│ │ ├── __init__.py

│ │ ├── api_tools.py

│ │ ├── create_tool.py

│ │ ├── file_agent.py

│ │ ├── file_editor.py

│ │ ├── file_writer.py

│ │ ├── insert_tool.py

│ │ ├── model_tools.py

│ │ ├── read_tool.py

│ │ ├── replace_tool.py

│ │ ├── service_tools.py

│ │ ├── tool_handler.py

│ │ └── write_tool.py

│ └── main.py

├── extra/

│ ├── ai_code_basic.sh

│ ├── ai_code_reflect.sh

│ ├── create_db.py

│ ├── gist_poc.py

│ └── gist_poc.sh

├── openai-agents-examples/

│ ├── 01_basic_agent.py

│ ├── 02_multi_agent.py

│ ├── 03_sync_agent.py

│ ├── 04_agent_with_tracing.py

│ ├── 05_agent_with_function_tools.py

│ ├── 06_agent_with_custom_tools.py

│ ├── 07_agent_with_handoffs.py

│ ├── 08_agent_with_agent_as_tool.py

│ ├── 09_agent_with_context_management.py

│ ├── 10_agent_with_guardrails.py

│ ├── 11_agent_orchestration.py

│ ├── 12_anthropic_agent.py

│ ├── 13_research_blog_system.py

│ ├── README.md

│ ├── fix_imports.py

│ ├── install_dependencies.sh

│ ├── summary.md

│ ├── test_all_examples.sh

│ └── test_imports.py

├── sfa_bash_editor_agent_anthropic_v2.py

├── sfa_bash_editor_agent_anthropic_v3.py

├── sfa_codebase_context_agent_v3.py

├── sfa_codebase_context_agent_w_ripgrep_v3.py

├── sfa_duckdb_anthropic_v2.py

├── sfa_duckdb_gemini_v1.py

├── sfa_duckdb_gemini_v2.py

├── sfa_duckdb_openai_v2.py

├── sfa_file_editor_sonny37_v1.py

├── sfa_jq_gemini_v1.py

├── sfa_meta_prompt_openai_v1.py

├── sfa_openai_agent_sdk_v1.py

├── sfa_openai_agent_sdk_v1_minimal.py

├── sfa_poc.py

├── sfa_polars_csv_agent_anthropic_v3.py

├── sfa_polars_csv_agent_openai_v2.py

├── sfa_scrapper_agent_openai_v2.py

└── sfa_sqlite_openai_v2.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

.aider*

session_dir/

data/*

!data/mock.json

!data/mock.db

!data/mock.sqlite

!data/analytics.json

!data/analytics.db

!data/analytics.sqlite

!data/analytics.csv

specs/

patterns.log

paic-patterns.log

.env

relevant_files.json

output_relevant_files.json

package-lock.json

agent_workspace/

__pycache__/

*.pyc

*.pyo

*.pyd

================================================

FILE: CLAUDE.md

================================================

# CLAUDE.md - Single File Agents Repository

## Commands

- **Run agents**: `uv run <agent_filename.py> [options]`

## Environment

- Set API keys before running agents:

```bash

export GEMINI_API_KEY='your-api-key-here'

export OPENAI_API_KEY='your-api-key-here'

export ANTHROPIC_API_KEY='your-api-key-here'

export FIRECRAWL_API_KEY='your-api-key-here'

```

## Code Style

- Single file agents with embedded dependencies (using `uv`)

- Dependencies specified at top of file in `/// script` comments

- Include example usage in docstrings

- Detailed error handling with user-friendly messages

- Consistent format for command-line arguments

## Structure

- Each agent focuses on a single capability (DuckDB, SQLite, JQ, etc.)

- Command-line arguments use argparse with consistent patterns

- File naming: `sfa_<capability>_<provider>_v<version>.py`

## Usage

> We use astral `uv` as our python package manager.

>

> This enables us to run SINGLE FILE AGENTS with embedded dependencies.

To run an agent, use the following command:

```bash

uv run sfa_<capability>_<provider>_v<version>.py <arguments>

```

================================================

FILE: README.md

================================================

# Single File Agents (SFA)

> Premise: #1: What if we could pack single purpose, powerful AI Agents into a single python file?

>

> Premise: #2: What's the best structural pattern for building Agents that can improve in capability as compute and intelligence increases?

## What is this?

A collection of powerful single-file agents built on top of [uv](https://github.com/astral/uv) - the modern Python package installer and resolver.

These agents aim to do one thing and one thing only. They demonstrate precise prompt engineering and GenAI patterns for practical tasks many of which I share on the [IndyDevDan YouTube channel](https://www.youtube.com/@indydevdan). Watch us walk through the Single File Agent in [this video](https://youtu.be/YAIJV48QlXc).

You can also check out [this video](https://youtu.be/vq-vTsbSSZ0) where we use [Devin](https://devin.ai/), [Cursor](https://www.cursor.com/), [Aider](https://aider.chat/), and [PAIC-Patterns](https://agenticengineer.com/principled-ai-coding) to build three new agents with powerful spec (plan) prompts.

This repo contains a few agents built across the big 3 GenAI providers (Gemini, OpenAI, Anthropic).

## Quick Start

Export your API keys:

```bash

export GEMINI_API_KEY='your-api-key-here'

export OPENAI_API_KEY='your-api-key-here'

export ANTHROPIC_API_KEY='your-api-key-here'

export FIRECRAWL_API_KEY='your-api-key-here' # Get your API key from https://www.firecrawl.dev/

```

JQ Agent:

```bash

uv run sfa_jq_gemini_v1.py --exe "Filter scores above 80 from data/analytics.json and save to high_scores.json"

```

DuckDB Agent (OpenAI):

```bash

# Tip tier

uv run sfa_duckdb_openai_v2.py -d ./data/analytics.db -p "Show me all users with score above 80"

```

DuckDB Agent (Anthropic):

```bash

# Tip tier

uv run sfa_duckdb_anthropic_v2.py -d ./data/analytics.db -p "Show me all users with score above 80"

```

DuckDB Agent (Gemini):

```bash

# Buggy but usually works

uv run sfa_duckdb_gemini_v2.py -d ./data/analytics.db -p "Show me all users with score above 80"

```

SQLite Agent (OpenAI):

```bash

uv run sfa_sqlite_openai_v2.py -d ./data/analytics.sqlite -p "Show me all users with score above 80"

```

Meta Prompt Generator:

```bash

uv run sfa_meta_prompt_openai_v1.py \

--purpose "generate mermaid diagrams" \

--instructions "generate a mermaid valid chart, use diagram type specified or default flow, use examples to understand the structure of the output" \

--sections "user-prompt" \

--variables "user-prompt"

```

### Bash Editor Agent (Anthropic)

> (sfa_bash_editor_agent_anthropic_v2.py)

An AI-powered assistant that can both edit files and execute bash commands using Claude's tool use capabilities.

Example usage:

```bash

# View a file

uv run sfa_bash_editor_agent_anthropic_v2.py --prompt "Show me the first 10 lines of README.md"

# Create a new file

uv run sfa_bash_editor_agent_anthropic_v2.py --prompt "Create a new file called hello.txt with 'Hello World!' in it"

# Replace text in a file

uv run sfa_bash_editor_agent_anthropic_v2.py --prompt "Create a new file called hello.txt with 'Hello World!' in it. Then update hello.txt to say 'Hello AI Coding World'"

# Execute a bash command

uv run sfa_bash_editor_agent_anthropic_v2.py --prompt "List all Python files in the current directory sorted by size"

```

### Polars CSV Agent (OpenAI)

> (sfa_polars_csv_agent_openai_v2.py)

An AI-powered assistant that generates and executes Polars data transformations for CSV files using OpenAI's function calling capabilities.

Example usage:

```bash

# Run Polars CSV agent with default compute loops (10)

uv run sfa_polars_csv_agent_openai_v2.py -i "data/analytics.csv" -p "What is the average age of the users?"

# Run with custom compute loops

uv run sfa_polars_csv_agent_openai_v2.py -i "data/analytics.csv" -p "What is the average age of the users?" -c 5

```

### Web Scraper Agent (OpenAI)

> (sfa_scrapper_agent_openai_v2.py)

An AI-powered web scraping and content filtering assistant that uses OpenAI's function calling capabilities and the Firecrawl API for efficient web scraping.

Example usage:

```bash

# Basic scraping with markdown list output

uv run sfa_scrapper_agent_openai_v2.py -u "https://example.com" -p "Scrap and format each sentence as a separate line in a markdown list" -o "example.md"

# Advanced scraping with specific content extraction

uv run sfa_scrapper_agent_openai_v2.py \

--url https://agenticengineer.com/principled-ai-coding \

--prompt "What are the names and descriptions of each lesson?" \

--output-file-path paic-lessons.md \

-c 10

```

## Features

- **Self-contained**: Each agent is a single file with embedded dependencies

- **Minimal, Precise Agents**: Carefully crafted prompts for small agents that can do one thing really well

- **Modern Python**: Built on uv for fast, reliable dependency management

- **Run From The Cloud**: With uv, you can run these scripts from your server or right from a gist (see my gists commands)

- **Patternful**: Building effective agents is about setting up the right prompts, tools, and process for your use case. Once you setup a great pattern, you can re-use it over and over. That's part of the magic of these SFA's.

## Test Data

The project includes a test duckdb database (`data/analytics.db`), a sqlite database (`data/analytics.sqlite`), and a JSON file (`data/analytics.json`) for testing purposes. The database contains sample user data with the following characteristics:

### User Table

- 30 sample users with varied attributes

- Fields: id (UUID), name, age, city, score, is_active, status, created_at

- Test data includes:

- Names: Alice, Bob, Charlie, Diana, Eric, Fiona, Jane, John

- Cities: Berlin, London, New York, Paris, Singapore, Sydney, Tokyo, Toronto

- Status values: active, inactive, pending, archived

- Age range: 20-65

- Score range: 3.1-96.18

- Date range: 2023-2025

Perfect for testing filtering, sorting, and aggregation operations with realistic data variations.

## Agents

> Note: We're using the term 'agent' loosely for some of these SFA's. We have prompts, prompt chains, and a couple are official Agents.

### JQ Command Agent

> (sfa_jq_gemini_v1.py)

An AI-powered assistant that generates precise jq commands for JSON processing

Example usage:

```bash

# Generate and execute a jq command

uv run sfa_jq_gemini_v1.py --exe "Filter scores above 80 from data/analytics.json and save to high_scores.json"

# Generate command only

uv run sfa_jq_gemini_v1.py "Filter scores above 80 from data/analytics.json and save to high_scores.json"

```

### DuckDB Agents

> (sfa_duckdb_openai_v2.py, sfa_duckdb_anthropic_v2.py, sfa_duckdb_gemini_v2.py, sfa_duckdb_gemini_v1.py)

We have three DuckDB agents that demonstrate different approaches and capabilities across major AI providers:

#### DuckDB OpenAI Agent (sfa_duckdb_openai_v2.py, sfa_duckdb_openai_v1.py)

An AI-powered assistant that generates and executes DuckDB SQL queries using OpenAI's function calling capabilities.

Example usage:

```bash

# Run DuckDB agent with default compute loops (10)

uv run sfa_duckdb_openai_v2.py -d ./data/analytics.db -p "Show me all users with score above 80"

# Run with custom compute loops

uv run sfa_duckdb_openai_v2.py -d ./data/analytics.db -p "Show me all users with score above 80" -c 5

```

#### DuckDB Anthropic Agent (sfa_duckdb_anthropic_v2.py)

An AI-powered assistant that generates and executes DuckDB SQL queries using Claude's tool use capabilities.

Example usage:

```bash

# Run DuckDB agent with default compute loops (10)

uv run sfa_duckdb_anthropic_v2.py -d ./data/analytics.db -p "Show me all users with score above 80"

# Run with custom compute loops

uv run sfa_duckdb_anthropic_v2.py -d ./data/analytics.db -p "Show me all users with score above 80" -c 5

```

#### DuckDB Gemini Agent (sfa_duckdb_gemini_v2.py)

An AI-powered assistant that generates and executes DuckDB SQL queries using Gemini's function calling capabilities.

Example usage:

```bash

# Run DuckDB agent with default compute loops (10)

uv run sfa_duckdb_gemini_v2.py -d ./data/analytics.db -p "Show me all users with score above 80"

# Run with custom compute loops

uv run sfa_duckdb_gemini_v2.py -d ./data/analytics.db -p "Show me all users with score above 80" -c 5

```

### Meta Prompt Generator (sfa_meta_prompt_openai_v1.py)

An AI-powered assistant that generates comprehensive, structured prompts for language models.

Example usage:

```bash

# Generate a meta prompt using command-line arguments.

# Optional arguments are marked with a ?.

uv run sfa_meta_prompt_openai_v1.py \

--purpose "generate mermaid diagrams" \

--instructions "generate a mermaid valid chart, use diagram type specified or default flow, use examples to understand the structure of the output" \

--sections "examples, user-prompt" \

--examples "create examples of 3 basic mermaid charts with <user-chart-request> and <chart-response> blocks" \

--variables "user-prompt"

# Without optional arguments, the script will enter interactive mode.

uv run sfa_meta_prompt_openai_v1.py \

--purpose "generate mermaid diagrams" \

--instructions "generate a mermaid valid chart, use diagram type specified or default flow, use examples to understand the structure of the output"

# Interactive Mode

# Just run the script without any flags to enter interactive mode.

# You'll be prompted step by step for:

# - Purpose (required): The main goal of your prompt

# - Instructions (required): Detailed instructions for the model

# - Sections (optional): Additional sections to include

# - Examples (optional): Example inputs and outputs

# - Variables (optional): Placeholders for dynamic content

uv run sfa_meta_prompt_openai_v1.py

```

### Git Agent

> Up for a challenge?

## Requirements

- Python 3.8+

- uv package manager

- GEMINI_API_KEY (for Gemini-based agents)

- OPENAI_API_KEY (for OpenAI-based agents)

- ANTHROPIC_API_KEY (for Anthropic-based agents)

- jq command-line JSON processor (for JQ agent)

- DuckDB CLI (for DuckDB agents)

### Installing Required Tools

#### jq Installation

macOS:

```bash

brew install jq

```

Windows:

- Download from [stedolan.github.io/jq/download](https://stedolan.github.io/jq/download/)

- Or install with Chocolatey: `choco install jq`

#### DuckDB Installation

macOS:

```bash

brew install duckdb

```

Windows:

- Download the CLI executable from [duckdb.org/docs/installation](https://duckdb.org/docs/installation)

- Add the executable location to your system PATH

## Installation

1. Install uv:

```bash

curl -LsSf https://astral.sh/uv/install.sh | sh

```

2. Clone this repository:

```bash

git clone <repository-url>

```

3. Set your Gemini API key (for JQ generator):

```bash

export GEMINI_API_KEY='your-api-key-here'

# Set your OpenAI API key (for DuckDB agents):

export OPENAI_API_KEY='your-api-key-here'

# Set your Anthropic API key (for DuckDB agents):

export ANTHROPIC_API_KEY='your-api-key-here'

```

## Shout Outs + Resources for you

- [uv](https://github.com/astral/uv) - The engineers creating uv are built different. Thank you for fixing the python ecosystem.

- [Simon Willison](https://simonwillison.net) - Simon introduced me to the fact that you can [use uv to run single file python scripts](https://simonwillison.net/2024/Aug/20/uv-unified-python-packaging/) with dependencies. Massive thanks for all your work. He runs one of the most valuable blogs for engineers in the world.

- [Building Effective Agents](https://www.anthropic.com/research/building-effective-agents) - A proper breakdown of how to build useful units of value built on top of GenAI.

- [Part Time Larry](https://youtu.be/zm0Vo6Di3V8?si=oBetAgc5ifhBmK03) - Larry has a great breakdown on the new Python GenAI library and delivers great hands on, actionable GenAI x Finance information.

- [Aider](https://aider.chat/) - AI Coding done right. Maximum control over your AI Coding Experience. Enough said.

---

- [New Gemini Python SDK](https://github.com/google-gemini/generative-ai-python)

- [Anthropic Agent Chatbot Example](https://github.com/anthropics/courses/blob/master/tool_use/06_chatbot_with_multiple_tools.ipynb)

- [Anthropic Customer Service Agent](https://github.com/anthropics/anthropic-cookbook/blob/main/tool_use/customer_service_agent.ipynb)

## AI Coding

## Context Priming

Read README.md, CLAUDE.md, ai_docs/*, and run git ls-files to understand this codebase.

## License

MIT License - feel free to use this code in your own projects.

If you find value from my work: give a shout out and tag my YT channel [IndyDevDan](https://www.youtube.com/@indydevdan).

================================================

FILE: ai_docs/anthropic-new-text-editor.md

================================================

Claude can use an Anthropic-defined text editor tool to view and modify text files, helping you debug, fix, and improve your code or other text documents. This allows Claude to directly interact with your files, providing hands-on assistance rather than just suggesting changes.

## Before using the text editor tool

### Use a compatible model

Anthropic's text editor tool is only available for Claude 3.5 Sonnet and Claude 3.7 Sonnet:

* **Claude 3.7 Sonnet**: `text_editor_20250124`

* **Claude 3.5 Sonnet**: `text_editor_20241022`

Both versions provide identical capabilities - the version you use should match the model you're working with.

### Assess your use case fit

Some examples of when to use the text editor tool are:

* **Code debugging**: Have Claude identify and fix bugs in your code, from syntax errors to logic issues.

* **Code refactoring**: Let Claude improve your code structure, readability, and performance through targeted edits.

* **Documentation generation**: Ask Claude to add docstrings, comments, or README files to your codebase.

* **Test creation**: Have Claude create unit tests for your code based on its understanding of the implementation.

---

## Use the text editor tool

Provide the text editor tool (named `str_replace_editor`) to Claude using the Messages API:

The text editor tool can be used in the following way:

### Text editor tool commands

The text editor tool supports several commands for viewing and modifying files:

#### view

The `view` command allows Claude to examine the contents of a file. It can read the entire file or a specific range of lines.

Parameters:

* `command`: Must be "view"

* `path`: The path to the file to view

* `view_range` (optional): An array of two integers specifying the start and end line numbers to view. Line numbers are 1-indexed, and -1 for the end line means read to the end of the file.

#### str\_replace

The `str_replace` command allows Claude to replace a specific string in a file with a new string. This is used for making precise edits.

Parameters:

* `command`: Must be "str\_replace"

* `path`: The path to the file to modify

* `old_str`: The text to replace (must match exactly, including whitespace and indentation)

* `new_str`: The new text to insert in place of the old text

#### create

The `create` command allows Claude to create a new file with specified content.

Parameters:

* `command`: Must be "create"

* `path`: The path where the new file should be created

* `file_text`: The content to write to the new file

#### insert

The `insert` command allows Claude to insert text at a specific location in a file.

Parameters:

* `command`: Must be "insert"

* `path`: The path to the file to modify

* `insert_line`: The line number after which to insert the text (0 for beginning of file)

* `new_str`: The text to insert

#### undo\_edit

The `undo_edit` command allows Claude to revert the last edit made to a file.

Parameters:

* `command`: Must be "undo\_edit"

* `path`: The path to the file whose last edit should be undone

### Example: Fixing a syntax error with the text editor tool

This example demonstrates how Claude uses the text editor tool to fix a syntax error in a Python file.

First, your application provides Claude with the text editor tool and a prompt to fix a syntax error:

Claude will use the text editor tool first to view the file:

Your application should then read the file and return its contents to Claude:

Claude will identify the syntax error and use the `str_replace` command to fix it:

Your application should then make the edit and return the result:

Finally, Claude will provide a complete explanation of the fix:

---

## Implement the text editor tool

The text editor tool is implemented as a schema-less tool, identified by `type: "text_editor_20250124"`. When using this tool, you don't need to provide an input schema as with other tools; the schema is built into Claude's model and can't be modified.

### Handle errors

When using the text editor tool, various errors may occur. Here is guidance on how to handle them:

### Follow implementation best practices

---

## Pricing and token usage

The text editor tool uses the same pricing structure as other tools used with Claude. It follows the standard input and output token pricing based on the Claude model you're using.

In addition to the base tokens, the following additional input tokens are needed for the text editor tool:

| Tool | Additional input tokens |

| --- | --- |

| `text_editor_20241022` (Claude 3.5 Sonnet) | 700 tokens |

| `text_editor_20250124` (Claude 3.7 Sonnet) | 700 tokens |

For more detailed information about tool pricing, see [Tool use pricing](about:/en/docs/build-with-claude/tool-use#pricing).

## Integrate the text editor tool with computer use

The text editor tool can be used alongside the [computer use tool](/en/docs/agents-and-tools/computer-use) and other Anthropic-defined tools. When combining these tools, you'll need to:

1. Include the appropriate beta header (if using with computer use)

2. Match the tool version with the model you're using

3. Account for the additional token usage for all tools included in your request

For more information about using the text editor tool in a computer use context, see the [Computer use](/en/docs/agents-and-tools/computer-use).

## Change log

| Date | Version | Changes |

| --- | --- | --- |

| March 13, 2025 | `text_editor_20250124` | Introduction of standalone Text Editor Tool documentation. This version is optimized for Claude 3.7 Sonnet but has identical capabilities to the previous version. |

| October 22, 2024 | `text_editor_20241022` | Initial release of the Text Editor Tool with Claude 3.5 Sonnet. Provides capabilities for viewing, creating, and editing files through the `view`, `create`, `str_replace`, `insert`, and `undo_edit` commands. |

## Next steps

Here are some ideas for how to use the text editor tool in more convenient and powerful ways:

* **Integrate with your development workflow**: Build the text editor tool into your development tools or IDE

* **Create a code review system**: Have Claude review your code and make improvements

* **Build a debugging assistant**: Create a system where Claude can help you diagnose and fix issues in your code

* **Implement file format conversion**: Let Claude help you convert files from one format to another

* **Automate documentation**: Set up workflows for Claude to automatically document your code

As you build applications with the text editor tool, we're excited to see how you leverage Claude's capabilities to enhance your development workflow and productivity.

================================================

FILE: ai_docs/anthropic-token-efficient-tool-use.md

================================================

# Token-Efficient Tool Use

The upgraded Claude 3.7 Sonnet model is capable of calling tools in a token-efficient manner. Requests save an average of 14% in output tokens, up to 70%, which also reduces latency. Exact token reduction and latency improvements depend on the overall response shape and size.

To use this beta feature, simply add the beta header `token-efficient-tools-2025-02-19` to a tool use request with `claude-3-7-sonnet-20250219`. If you are using the SDK, ensure that you are using the beta SDK with `anthropic.beta.messages`.

Here's an example of how to use token-efficient tools with the API:

```python

# Sample code to demonstrate token-efficient tools

import anthropic

from anthropic.beta import messages as beta_messages

client = anthropic.Anthropic()

# Use the beta messages endpoint with token-efficient tools

response = beta_messages.create(

model="claude-3-7-sonnet-20250219",

max_tokens=1000,

beta_features=["token-efficient-tools-2025-02-19"],

tools=[{

"name": "get_weather",

"description": "Get the current weather for a location",

"input_schema": {

"type": "object",

"properties": {

"location": {

"type": "string",

"description": "The city and state"

}

},

"required": ["location"]

}

}],

messages=[{

"role": "user",

"content": "What's the weather in San Francisco?"

}]

)

```

The above request should, on average, use fewer input and output tokens than a normal request. To confirm this, try making the same request but remove `token-efficient-tools-2025-02-19` from the beta headers list.

# Text Editor Tool

Claude can use an Anthropic-defined text editor tool to view and modify text files, helping you debug, fix, and improve your code or other text documents. This allows Claude to directly interact with your files, providing hands-on assistance rather than just suggesting changes.

## Before using the text editor tool

### Use a compatible model

Anthropic's text editor tool is only available for Claude 3.5 Sonnet and Claude 3.7 Sonnet:

* **Claude 3.7 Sonnet**: `text_editor_20250124`

* **Claude 3.5 Sonnet**: `text_editor_20241022`

Both versions provide identical capabilities - the version you use should match the model you're working with.

### Assess your use case fit

Some examples of when to use the text editor tool are:

* **Code debugging**: Have Claude identify and fix bugs in your code, from syntax errors to logic issues.

* **Code refactoring**: Let Claude improve your code structure, readability, and performance through targeted edits.

* **Documentation generation**: Ask Claude to add docstrings, comments, or README files to your codebase.

* **Test creation**: Have Claude create unit tests for your code based on its understanding of the implementation.

## Text editor tool commands

The text editor tool supports several commands for viewing and modifying files:

### view

The `view` command allows Claude to examine the contents of a file. It can read the entire file or a specific range of lines.

Parameters:

* `command`: Must be "view"

* `path`: The path to the file to view

* `view_range` (optional): An array of two integers specifying the start and end line numbers to view. Line numbers are 1-indexed, and -1 for the end line means read to the end of the file.

### str_replace

The `str_replace` command allows Claude to replace a specific string in a file with a new string. This is used for making precise edits.

Parameters:

* `command`: Must be "str_replace"

* `path`: The path to the file to modify

* `old_str`: The text to replace (must match exactly, including whitespace and indentation)

* `new_str`: The new text to insert in place of the old text

### create

The `create` command allows Claude to create a new file with specified content.

Parameters:

* `command`: Must be "create"

* `path`: The path where the new file should be created

* `file_text`: The content to write to the new file

### insert

The `insert` command allows Claude to insert text at a specific location in a file.

Parameters:

* `command`: Must be "insert"

* `path`: The path to the file to modify

* `insert_line`: The line number after which to insert the text (0 for beginning of file)

* `new_str`: The text to insert

### undo_edit

The `undo_edit` command allows Claude to revert the last edit made to a file.

Parameters:

* `command`: Must be "undo_edit"

* `path`: The path to the file whose last edit should be undone

## Pricing and token usage

The text editor tool uses the same pricing structure as other tools used with Claude. It follows the standard input and output token pricing based on the Claude model you're using.

In addition to the base tokens, the following additional input tokens are needed for the text editor tool:

| Tool | Additional input tokens |

| --- | --- |

| `text_editor_20241022` (Claude 3.5 Sonnet) | 700 tokens |

| `text_editor_20250124` (Claude 3.7 Sonnet) | 700 tokens |

## Change log

| Date | Version | Changes |

| --- | --- | --- |

| March 13, 2025 | `text_editor_20250124` | Introduction of standalone Text Editor Tool documentation. This version is optimized for Claude 3.7 Sonnet but has identical capabilities to the previous version. |

| October 22, 2024 | `text_editor_20241022` | Initial release of the Text Editor Tool with Claude 3.5 Sonnet. Provides capabilities for viewing, creating, and editing files through the `view`, `create`, `str_replace`, `insert`, and `undo_edit` commands. |

================================================

FILE: ai_docs/building-eff-agents.md

================================================

Product

# Building effective agents

Dec 19, 2024

Over the past year, we've worked with dozens of teams building large language model (LLM) agents across industries. Consistently, the most successful implementations weren't using complex frameworks or specialized libraries. Instead, they were building with simple, composable patterns.

In this post, we share what we’ve learned from working with our customers and building agents ourselves, and give practical advice for developers on building effective agents.

## What are agents?

"Agent" can be defined in several ways. Some customers define agents as fully autonomous systems that operate independently over extended periods, using various tools to accomplish complex tasks. Others use the term to describe more prescriptive implementations that follow predefined workflows. At Anthropic, we categorize all these variations as **agentic systems**, but draw an important architectural distinction between **workflows** and **agents**:

- **Workflows** are systems where LLMs and tools are orchestrated through predefined code paths.

- **Agents**, on the other hand, are systems where LLMs dynamically direct their own processes and tool usage, maintaining control over how they accomplish tasks.

Below, we will explore both types of agentic systems in detail. In Appendix 1 (“Agents in Practice”), we describe two domains where customers have found particular value in using these kinds of systems.

## When (and when not) to use agents

When building applications with LLMs, we recommend finding the simplest solution possible, and only increasing complexity when needed. This might mean not building agentic systems at all. Agentic systems often trade latency and cost for better task performance, and you should consider when this tradeoff makes sense.

When more complexity is warranted, workflows offer predictability and consistency for well-defined tasks, whereas agents are the better option when flexibility and model-driven decision-making are needed at scale. For many applications, however, optimizing single LLM calls with retrieval and in-context examples is usually enough.

## When and how to use frameworks

There are many frameworks that make agentic systems easier to implement, including:

- [LangGraph](https://langchain-ai.github.io/langgraph/) from LangChain;

- Amazon Bedrock's [AI Agent framework](https://aws.amazon.com/bedrock/agents/);

- [Rivet](https://rivet.ironcladapp.com/), a drag and drop GUI LLM workflow builder; and

- [Vellum](https://www.vellum.ai/), another GUI tool for building and testing complex workflows.

These frameworks make it easy to get started by simplifying standard low-level tasks like calling LLMs, defining and parsing tools, and chaining calls together. However, they often create extra layers of abstraction that can obscure the underlying prompts and responses, making them harder to debug. They can also make it tempting to add complexity when a simpler setup would suffice.

We suggest that developers start by using LLM APIs directly: many patterns can be implemented in a few lines of code. If you do use a framework, ensure you understand the underlying code. Incorrect assumptions about what's under the hood are a common source of customer error.

See our [cookbook](https://github.com/anthropics/anthropic-cookbook/tree/main/patterns/agents) for some sample implementations.

## Building blocks, workflows, and agents

In this section, we’ll explore the common patterns for agentic systems we’ve seen in production. We'll start with our foundational building block—the augmented LLM—and progressively increase complexity, from simple compositional workflows to autonomous agents.

### Building block: The augmented LLM

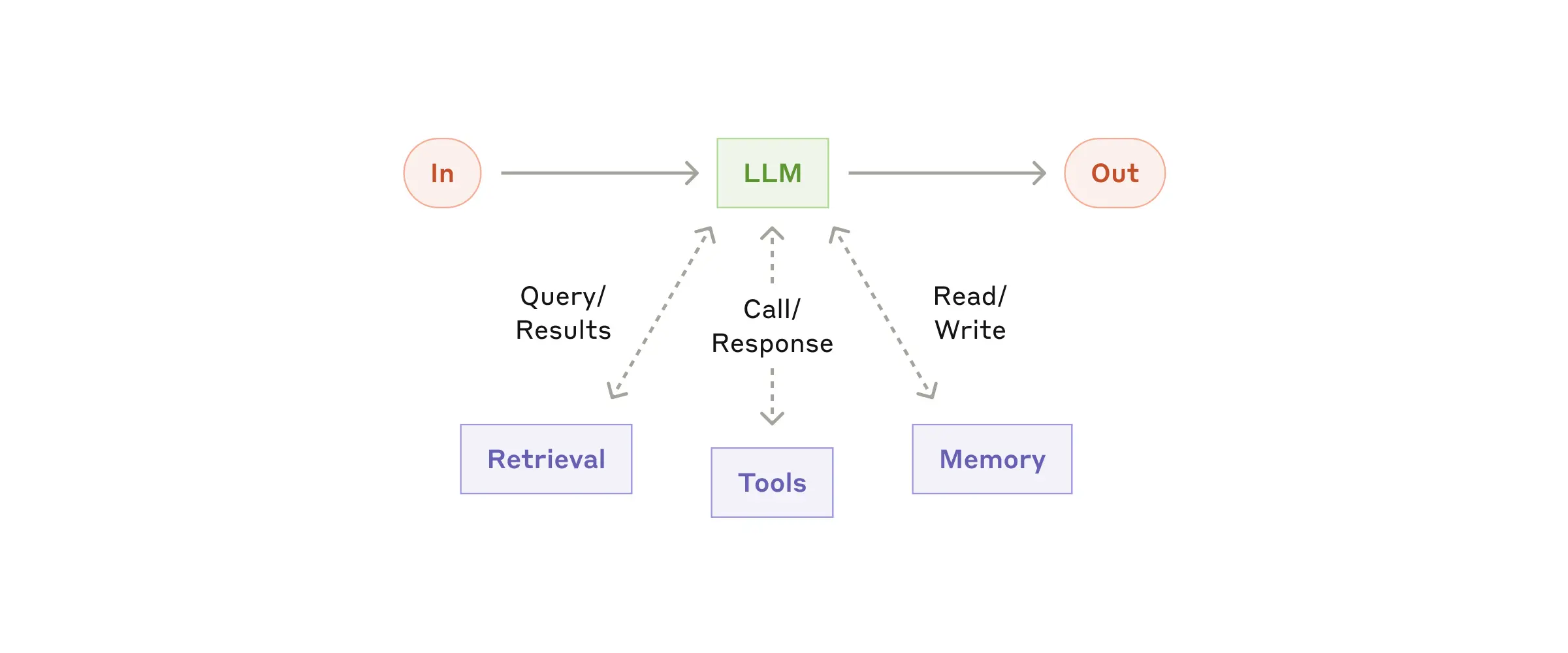

The basic building block of agentic systems is an LLM enhanced with augmentations such as retrieval, tools, and memory. Our current models can actively use these capabilities—generating their own search queries, selecting appropriate tools, and determining what information to retain.

The augmented LLM

We recommend focusing on two key aspects of the implementation: tailoring these capabilities to your specific use case and ensuring they provide an easy, well-documented interface for your LLM. While there are many ways to implement these augmentations, one approach is through our recently released [Model Context Protocol](https://www.anthropic.com/news/model-context-protocol), which allows developers to integrate with a growing ecosystem of third-party tools with a simple [client implementation](https://modelcontextprotocol.io/tutorials/building-a-client#building-mcp-clients).

For the remainder of this post, we'll assume each LLM call has access to these augmented capabilities.

### Workflow: Prompt chaining

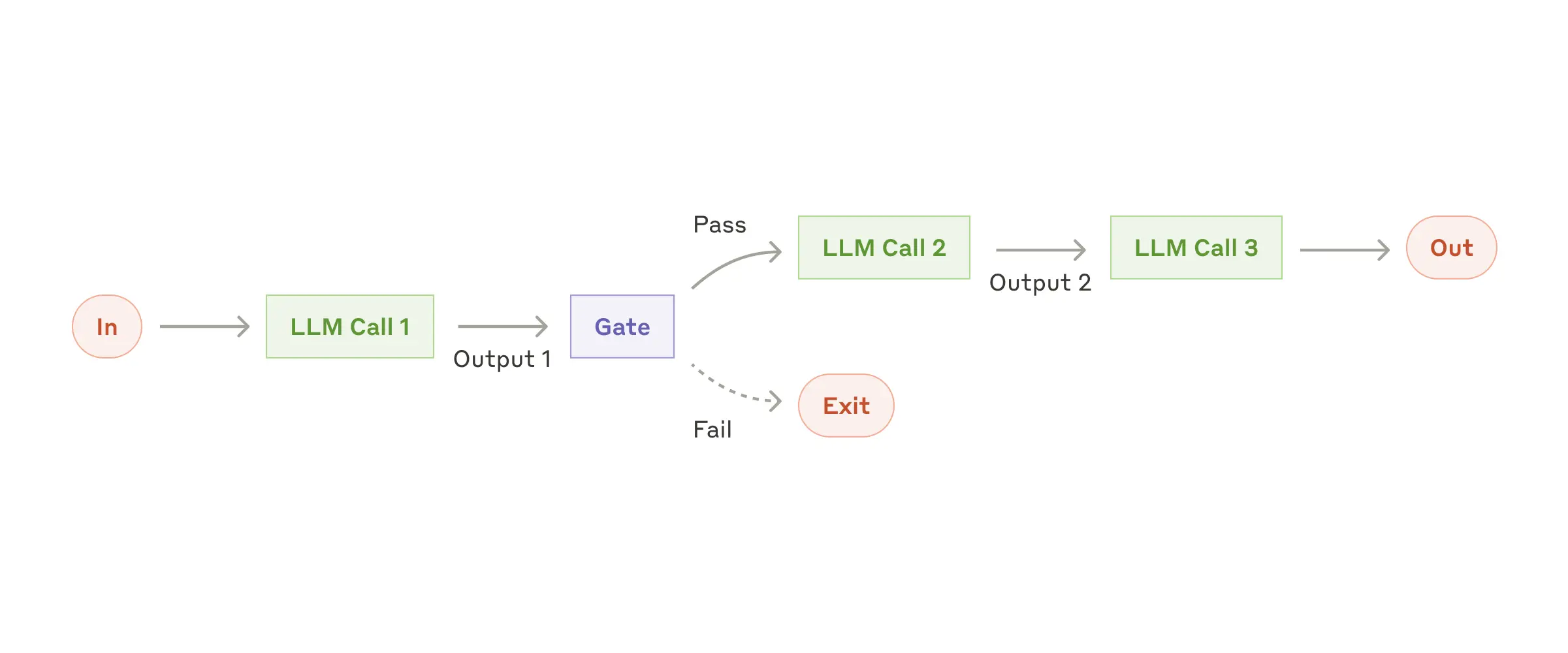

Prompt chaining decomposes a task into a sequence of steps, where each LLM call processes the output of the previous one. You can add programmatic checks (see "gate” in the diagram below) on any intermediate steps to ensure that the process is still on track.

The prompt chaining workflow

**When to use this workflow:** This workflow is ideal for situations where the task can be easily and cleanly decomposed into fixed subtasks. The main goal is to trade off latency for higher accuracy, by making each LLM call an easier task.

**Examples where prompt chaining is useful:**

- Generating Marketing copy, then translating it into a different language.

- Writing an outline of a document, checking that the outline meets certain criteria, then writing the document based on the outline.

### Workflow: Routing

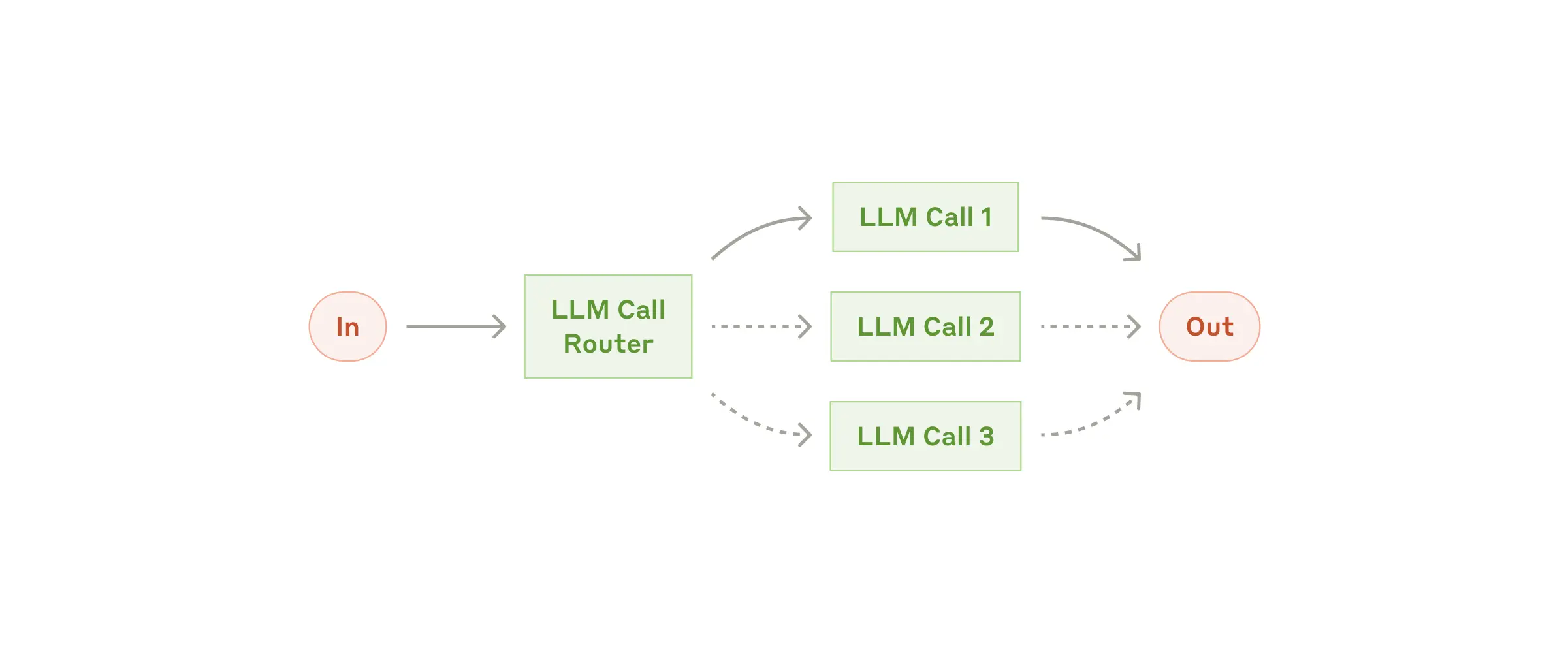

Routing classifies an input and directs it to a specialized followup task. This workflow allows for separation of concerns, and building more specialized prompts. Without this workflow, optimizing for one kind of input can hurt performance on other inputs.

The routing workflow

**When to use this workflow:** Routing works well for complex tasks where there are distinct categories that are better handled separately, and where classification can be handled accurately, either by an LLM or a more traditional classification model/algorithm.

**Examples where routing is useful:**

- Directing different types of customer service queries (general questions, refund requests, technical support) into different downstream processes, prompts, and tools.

- Routing easy/common questions to smaller models like Claude 3.5 Haiku and hard/unusual questions to more capable models like Claude 3.5 Sonnet to optimize cost and speed.

### Workflow: Parallelization

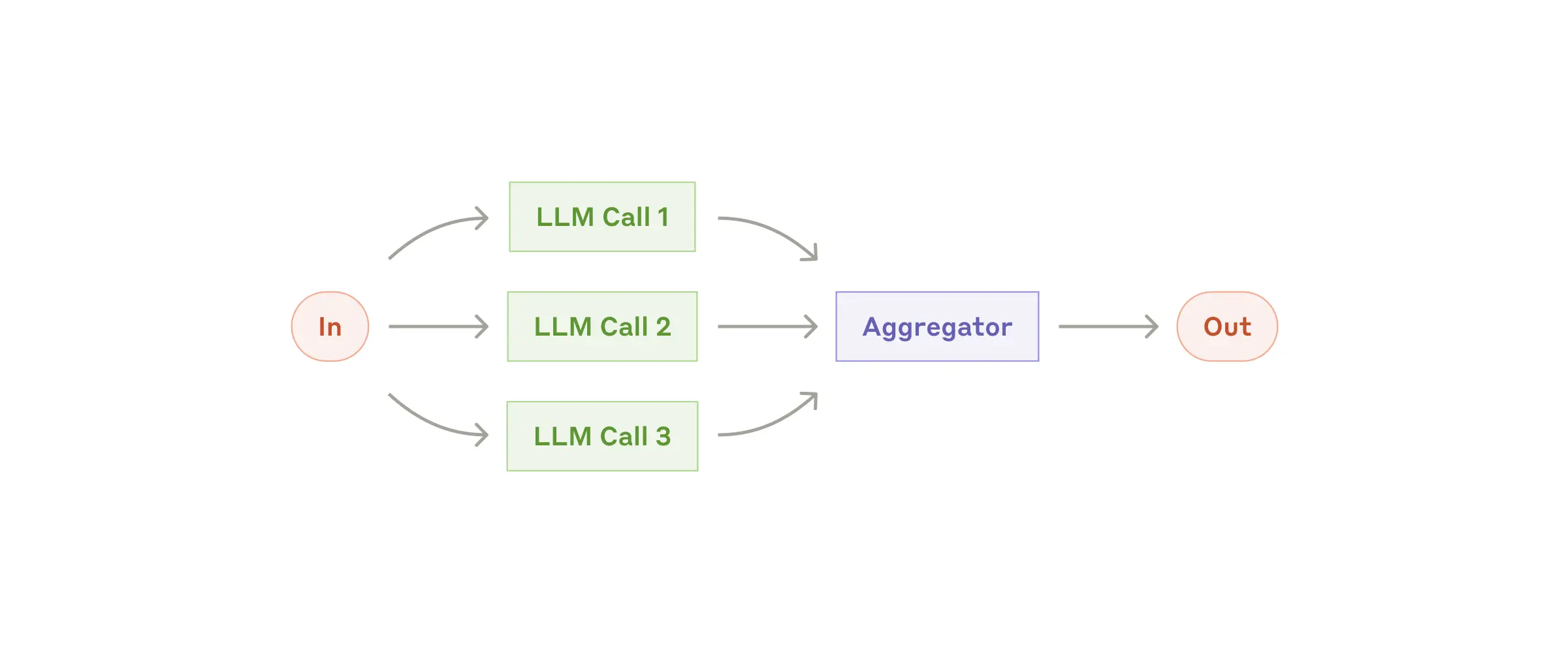

LLMs can sometimes work simultaneously on a task and have their outputs aggregated programmatically. This workflow, parallelization, manifests in two key variations:

- **Sectioning**: Breaking a task into independent subtasks run in parallel.

- **Voting:** Running the same task multiple times to get diverse outputs.

The parallelization workflow

**When to use this workflow:** Parallelization is effective when the divided subtasks can be parallelized for speed, or when multiple perspectives or attempts are needed for higher confidence results. For complex tasks with multiple considerations, LLMs generally perform better when each consideration is handled by a separate LLM call, allowing focused attention on each specific aspect.

**Examples where parallelization is useful:**

- **Sectioning**:

- Implementing guardrails where one model instance processes user queries while another screens them for inappropriate content or requests. This tends to perform better than having the same LLM call handle both guardrails and the core response.

- Automating evals for evaluating LLM performance, where each LLM call evaluates a different aspect of the model’s performance on a given prompt.

- **Voting**:

- Reviewing a piece of code for vulnerabilities, where several different prompts review and flag the code if they find a problem.

- Evaluating whether a given piece of content is inappropriate, with multiple prompts evaluating different aspects or requiring different vote thresholds to balance false positives and negatives.

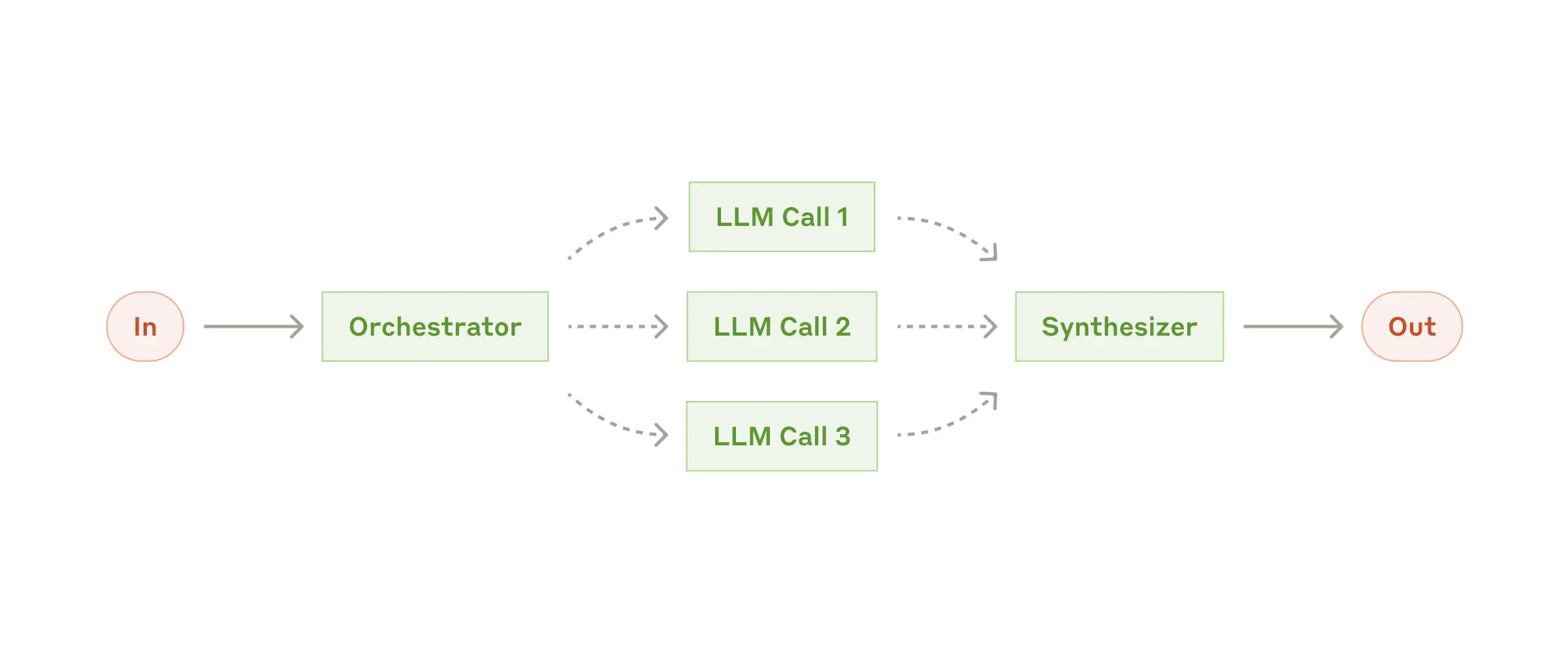

### Workflow: Orchestrator-workers

In the orchestrator-workers workflow, a central LLM dynamically breaks down tasks, delegates them to worker LLMs, and synthesizes their results.

The orchestrator-workers workflow

**When to use this workflow:** This workflow is well-suited for complex tasks where you can’t predict the subtasks needed (in coding, for example, the number of files that need to be changed and the nature of the change in each file likely depend on the task). Whereas it’s topographically similar, the key difference from parallelization is its flexibility—subtasks aren't pre-defined, but determined by the orchestrator based on the specific input.

**Example where orchestrator-workers is useful:**

- Coding products that make complex changes to multiple files each time.

- Search tasks that involve gathering and analyzing information from multiple sources for possible relevant information.

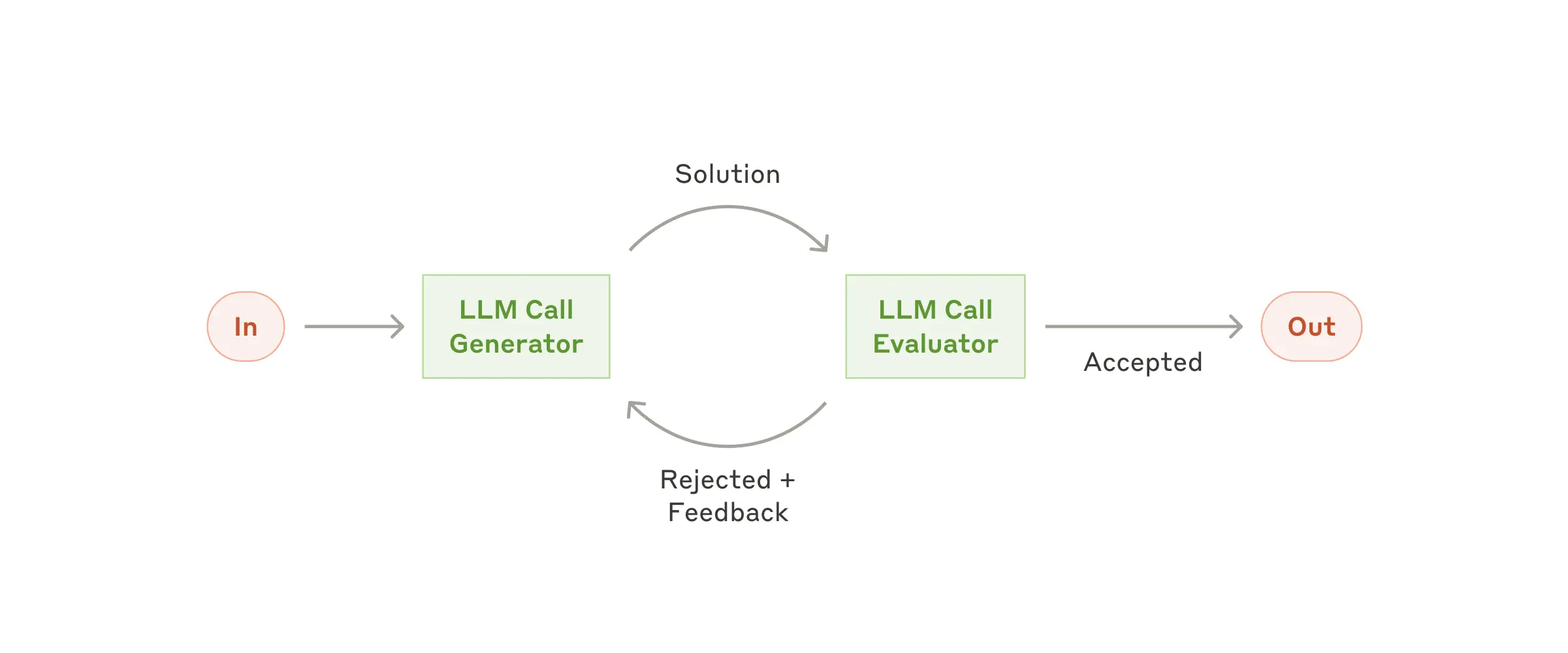

### Workflow: Evaluator-optimizer

In the evaluator-optimizer workflow, one LLM call generates a response while another provides evaluation and feedback in a loop.

The evaluator-optimizer workflow

**When to use this workflow:** This workflow is particularly effective when we have clear evaluation criteria, and when iterative refinement provides measurable value. The two signs of good fit are, first, that LLM responses can be demonstrably improved when a human articulates their feedback; and second, that the LLM can provide such feedback. This is analogous to the iterative writing process a human writer might go through when producing a polished document.

**Examples where evaluator-optimizer is useful:**

- Literary translation where there are nuances that the translator LLM might not capture initially, but where an evaluator LLM can provide useful critiques.

- Complex search tasks that require multiple rounds of searching and analysis to gather comprehensive information, where the evaluator decides whether further searches are warranted.

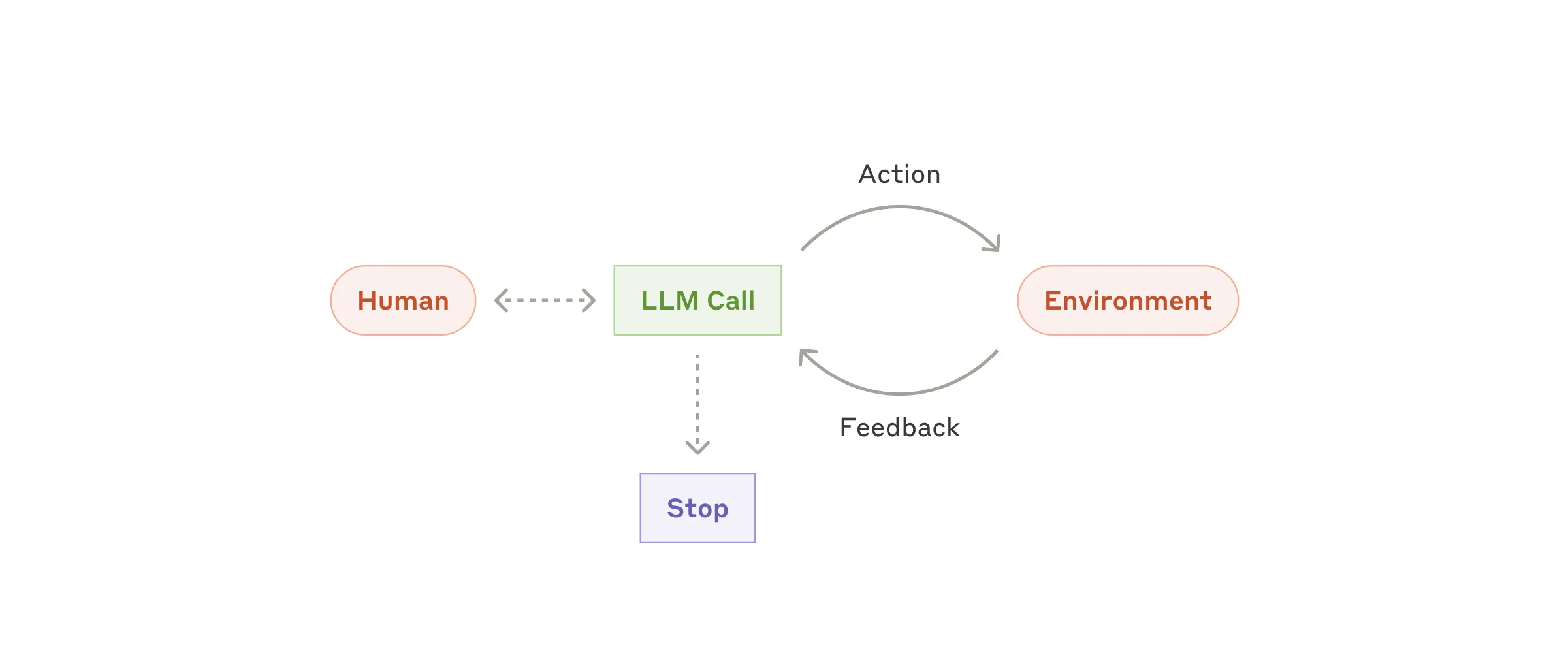

### Agents

Agents are emerging in production as LLMs mature in key capabilities—understanding complex inputs, engaging in reasoning and planning, using tools reliably, and recovering from errors. Agents begin their work with either a command from, or interactive discussion with, the human user. Once the task is clear, agents plan and operate independently, potentially returning to the human for further information or judgement. During execution, it's crucial for the agents to gain “ground truth” from the environment at each step (such as tool call results or code execution) to assess its progress. Agents can then pause for human feedback at checkpoints or when encountering blockers. The task often terminates upon completion, but it’s also common to include stopping conditions (such as a maximum number of iterations) to maintain control.

Agents can handle sophisticated tasks, but their implementation is often straightforward. They are typically just LLMs using tools based on environmental feedback in a loop. It is therefore crucial to design toolsets and their documentation clearly and thoughtfully. We expand on best practices for tool development in Appendix 2 ("Prompt Engineering your Tools").

Autonomous agent

**When to use agents:** Agents can be used for open-ended problems where it’s difficult or impossible to predict the required number of steps, and where you can’t hardcode a fixed path. The LLM will potentially operate for many turns, and you must have some level of trust in its decision-making. Agents' autonomy makes them ideal for scaling tasks in trusted environments.

The autonomous nature of agents means higher costs, and the potential for compounding errors. We recommend extensive testing in sandboxed environments, along with the appropriate guardrails.

**Examples where agents are useful:**

The following examples are from our own implementations:

- A coding Agent to resolve [SWE-bench tasks](https://www.anthropic.com/research/swe-bench-sonnet), which involve edits to many files based on a task description;

- Our [“computer use” reference implementation](https://github.com/anthropics/anthropic-quickstarts/tree/main/computer-use-demo), where Claude uses a computer to accomplish tasks.

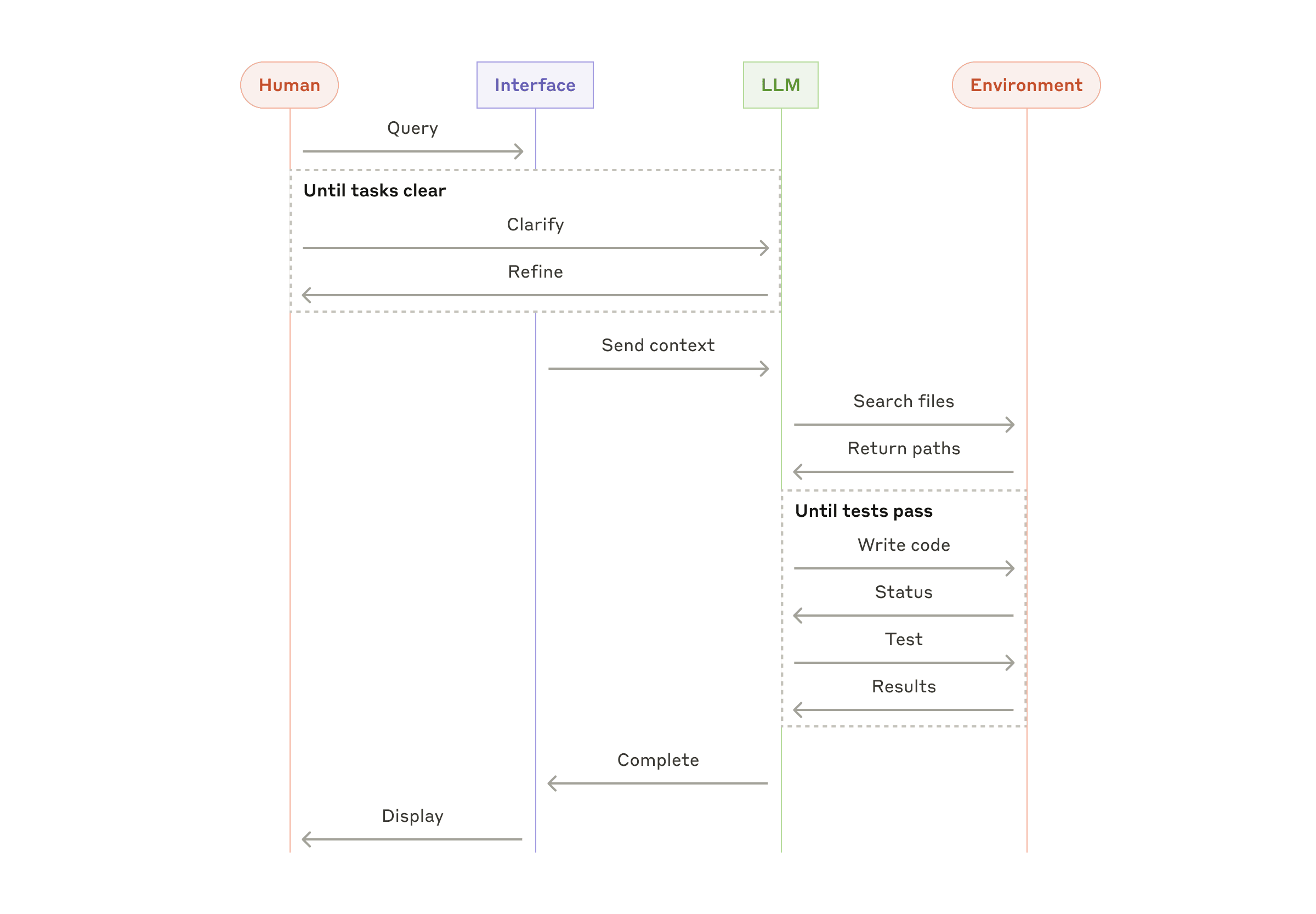

High-level flow of a coding agent

## Combining and customizing these patterns

These building blocks aren't prescriptive. They're common patterns that developers can shape and combine to fit different use cases. The key to success, as with any LLM features, is measuring performance and iterating on implementations. To repeat: you should consider adding complexity _only_ when it demonstrably improves outcomes.

## Summary

Success in the LLM space isn't about building the most sophisticated system. It's about building the _right_ system for your needs. Start with simple prompts, optimize them with comprehensive evaluation, and add multi-step agentic systems only when simpler solutions fall short.

When implementing agents, we try to follow three core principles:

1. Maintain **simplicity** in your agent's design.

2. Prioritize **transparency** by explicitly showing the agent’s planning steps.

3. Carefully craft your agent-computer interface (ACI) through thorough tool **documentation and testing**.

Frameworks can help you get started quickly, but don't hesitate to reduce abstraction layers and build with basic components as you move to production. By following these principles, you can create agents that are not only powerful but also reliable, maintainable, and trusted by their users.

### Acknowledgements

Written by Erik Schluntz and Barry Zhang. This work draws upon our experiences building agents at Anthropic and the valuable insights shared by our customers, for which we're deeply grateful.

## Appendix 1: Agents in practice

Our work with customers has revealed two particularly promising applications for AI agents that demonstrate the practical value of the patterns discussed above. Both applications illustrate how agents add the most value for tasks that require both conversation and action, have clear success criteria, enable feedback loops, and integrate meaningful human oversight.

### A. Customer support

Customer support combines familiar chatbot interfaces with enhanced capabilities through tool integration. This is a natural fit for more open-ended agents because:

- Support interactions naturally follow a conversation flow while requiring access to external information and actions;

- Tools can be integrated to pull customer data, order history, and knowledge base articles;

- Actions such as issuing refunds or updating tickets can be handled programmatically; and

- Success can be clearly measured through user-defined resolutions.

Several companies have demonstrated the viability of this approach through usage-based pricing models that charge only for successful resolutions, showing confidence in their agents' effectiveness.

### B. Coding agents

The software development space has shown remarkable potential for LLM features, with capabilities evolving from code completion to autonomous problem-solving. Agents are particularly effective because:

- Code solutions are verifiable through automated tests;

- Agents can iterate on solutions using test results as feedback;

- The problem space is well-defined and structured; and

- Output quality can be measured objectively.

In our own implementation, agents can now solve real GitHub issues in the [SWE-bench Verified](https://www.anthropic.com/research/swe-bench-sonnet) benchmark based on the pull request description alone. However, whereas automated testing helps verify functionality, human review remains crucial for ensuring solutions align with broader system requirements.

## Appendix 2: Prompt engineering your tools

No matter which agentic system you're building, tools will likely be an important part of your agent. [Tools](https://www.anthropic.com/news/tool-use-ga) enable Claude to interact with external services and APIs by specifying their exact structure and definition in our API. When Claude responds, it will include a [tool use block](https://docs.anthropic.com/en/docs/build-with-claude/tool-use#example-api-response-with-a-tool-use-content-block) in the API response if it plans to invoke a tool. Tool definitions and specifications should be given just as much prompt engineering attention as your overall prompts. In this brief appendix, we describe how to prompt engineer your tools.

There are often several ways to specify the same action. For instance, you can specify a file edit by writing a diff, or by rewriting the entire file. For structured output, you can return code inside markdown or inside JSON. In software engineering, differences like these are cosmetic and can be converted losslessly from one to the other. However, some formats are much more difficult for an LLM to write than others. Writing a diff requires knowing how many lines are changing in the chunk header before the new code is written. Writing code inside JSON (compared to markdown) requires extra escaping of newlines and quotes.

Our suggestions for deciding on tool formats are the following:

- Give the model enough tokens to "think" before it writes itself into a corner.

- Keep the format close to what the model has seen naturally occurring in text on the internet.

- Make sure there's no formatting "overhead" such as having to keep an accurate count of thousands of lines of code, or string-escaping any code it writes.

One rule of thumb is to think about how much effort goes into human-computer interfaces (HCI), and plan to invest just as much effort in creating good _agent_-computer interfaces (ACI). Here are some thoughts on how to do so:

- Put yourself in the model's shoes. Is it obvious how to use this tool, based on the description and parameters, or would you need to think carefully about it? If so, then it’s probably also true for the model. A good tool definition often includes example usage, edge cases, input format requirements, and clear boundaries from other tools.

- How can you change parameter names or descriptions to make things more obvious? Think of this as writing a great docstring for a junior developer on your team. This is especially important when using many similar tools.

- Test how the model uses your tools: Run many example inputs in our [workbench](https://console.anthropic.com/workbench) to see what mistakes the model makes, and iterate.

- [Poka-yoke](https://en.wikipedia.org/wiki/Poka-yoke) your tools. Change the arguments so that it is harder to make mistakes.

While building our agent for [SWE-bench](https://www.anthropic.com/research/swe-bench-sonnet), we actually spent more time optimizing our tools than the overall prompt. For example, we found that the model would make mistakes with tools using relative filepaths after the agent had moved out of the root directory. To fix this, we changed the tool to always require absolute filepaths—and we found that the model used this method flawlessly.

[Share on Twitter](https://twitter.com/intent/tweet?text=https://www.anthropic.com/research/building-effective-agents)[Share on LinkedIn](https://www.linkedin.com/shareArticle?mini=true&url=https://www.anthropic.com/research/building-effective-agents)

================================================

FILE: ai_docs/existing_anthropic_computer_use_code.md

================================================

```python

import os

import anthropic

import argparse

import yaml

import subprocess

from datetime import datetime

import uuid

from typing import Dict, Any, List, Optional, Union

import traceback

import sys

import logging

from logging.handlers import RotatingFileHandler

EDITOR_DIR = os.path.join(os.getcwd(), "editor_dir")

SESSIONS_DIR = os.path.join(os.getcwd(), "sessions")

os.makedirs(SESSIONS_DIR, exist_ok=True)

# Fetch system prompts from environment variables or use defaults

BASH_SYSTEM_PROMPT = os.environ.get(

"BASH_SYSTEM_PROMPT", "You are a helpful assistant that can execute bash commands."

)

EDITOR_SYSTEM_PROMPT = os.environ.get(

"EDITOR_SYSTEM_PROMPT",

"You are a helpful assistant that helps users edit text files.",

)

class SessionLogger:

def __init__(self, session_id: str, sessions_dir: str):

self.session_id = session_id

self.sessions_dir = sessions_dir

self.logger = self._setup_logging()

# Initialize token counters

self.total_input_tokens = 0

self.total_output_tokens = 0

def _setup_logging(self) -> logging.Logger:

"""Configure logging for the session"""

log_formatter = logging.Formatter(

"%(asctime)s - %(name)s - %(levelname)s - %(prefix)s - %(message)s"

)

log_file = os.path.join(self.sessions_dir, f"{self.session_id}.log")

file_handler = RotatingFileHandler(

log_file, maxBytes=1024 * 1024, backupCount=5

)

file_handler.setFormatter(log_formatter)

console_handler = logging.StreamHandler()

console_handler.setFormatter(log_formatter)

logger = logging.getLogger(self.session_id)

logger.addHandler(file_handler)

logger.addHandler(console_handler)

logger.setLevel(logging.DEBUG)

return logger

def update_token_usage(self, input_tokens: int, output_tokens: int):

"""Update the total token usage."""

self.total_input_tokens += input_tokens

self.total_output_tokens += output_tokens

def log_total_cost(self):

"""Calculate and log the total cost based on token usage."""

cost_per_million_input_tokens = 3.0 # $3.00 per million input tokens

cost_per_million_output_tokens = 15.0 # $15.00 per million output tokens

total_input_cost = (

self.total_input_tokens / 1_000_000

) * cost_per_million_input_tokens

total_output_cost = (

self.total_output_tokens / 1_000_000

) * cost_per_million_output_tokens

total_cost = total_input_cost + total_output_cost

prefix = "📊 session"

self.logger.info(

f"Total input tokens: {self.total_input_tokens}", extra={"prefix": prefix}

)

self.logger.info(

f"Total output tokens: {self.total_output_tokens}", extra={"prefix": prefix}

)

self.logger.info(

f"Total input cost: ${total_input_cost:.6f}", extra={"prefix": prefix}

)

self.logger.info(

f"Total output cost: ${total_output_cost:.6f}", extra={"prefix": prefix}

)

self.logger.info(f"Total cost: ${total_cost:.6f}", extra={"prefix": prefix})

class EditorSession:

def __init__(self, session_id: Optional[str] = None):

"""Initialize editor session with optional existing session ID"""

self.session_id = session_id or self._create_session_id()

self.sessions_dir = SESSIONS_DIR

self.editor_dir = EDITOR_DIR

self.client = anthropic.Anthropic(api_key=os.environ.get("ANTHROPIC_API_KEY"))

self.messages = []

# Create editor directory if needed

os.makedirs(self.editor_dir, exist_ok=True)

# Initialize logger placeholder

self.logger = None

# Set log prefix

self.log_prefix = "📝 file_editor"

def set_logger(self, session_logger: SessionLogger):

"""Set the logger for the session and store the SessionLogger instance."""

self.session_logger = session_logger

self.logger = logging.LoggerAdapter(

self.session_logger.logger, {"prefix": self.log_prefix}

)

def _create_session_id(self) -> str:

"""Create a new session ID"""

timestamp = datetime.now().strftime("%Y%m%d-%H%M%S")

return f"{timestamp}-{uuid.uuid4().hex[:6]}"

def _get_editor_path(self, path: str) -> str:

"""Convert API path to local editor directory path"""

# Strip any leading /repo/ from the path

clean_path = path.replace("/repo/", "", 1)

# Join with editor_dir

full_path = os.path.join(self.editor_dir, clean_path)

# Create the directory structure if it doesn't exist

os.makedirs(os.path.dirname(full_path), exist_ok=True)

return full_path

def _handle_view(self, path: str, _: Dict[str, Any]) -> Dict[str, Any]:

"""Handle view command"""

editor_path = self._get_editor_path(path)

if os.path.exists(editor_path):

with open(editor_path, "r") as f:

return {"content": f.read()}

return {"error": f"File {editor_path} does not exist"}

def _handle_create(self, path: str, tool_call: Dict[str, Any]) -> Dict[str, Any]:

"""Handle create command"""

os.makedirs(os.path.dirname(path), exist_ok=True)

with open(path, "w") as f:

f.write(tool_call["file_text"])

return {"content": f"File created at {path}"}

def _handle_str_replace(

self, path: str, tool_call: Dict[str, Any]

) -> Dict[str, Any]:

"""Handle str_replace command"""

with open(path, "r") as f:

content = f.read()

if tool_call["old_str"] not in content:

return {"error": "old_str not found in file"}

new_content = content.replace(

tool_call["old_str"], tool_call.get("new_str", "")

)

with open(path, "w") as f:

f.write(new_content)

return {"content": "File updated successfully"}

def _handle_insert(self, path: str, tool_call: Dict[str, Any]) -> Dict[str, Any]:

"""Handle insert command"""

with open(path, "r") as f:

lines = f.readlines()

insert_line = tool_call["insert_line"]

if insert_line > len(lines):

return {"error": "insert_line beyond file length"}

lines.insert(insert_line, tool_call["new_str"] + "\n")

with open(path, "w") as f:

f.writelines(lines)

return {"content": "Content inserted successfully"}

def log_to_session(self, data: Dict[str, Any], section: str) -> None:

"""Log data to session log file"""

self.logger.info(f"{section}: {data}")

def handle_text_editor_tool(self, tool_call: Dict[str, Any]) -> Dict[str, Any]:

"""Handle text editor tool calls"""

try:

command = tool_call["command"]

if not all(key in tool_call for key in ["command", "path"]):

return {"error": "Missing required fields"}

# Get path and ensure directory exists

path = self._get_editor_path(tool_call["path"])

handlers = {

"view": self._handle_view,

"create": self._handle_create,

"str_replace": self._handle_str_replace,

"insert": self._handle_insert,

}

handler = handlers.get(command)

if not handler:

return {"error": f"Unknown command {command}"}

return handler(path, tool_call)

except Exception as e:

self.logger.error(f"Error in handle_text_editor_tool: {str(e)}")

return {"error": str(e)}

def process_tool_calls(

self, tool_calls: List[anthropic.types.ContentBlock]

) -> List[Dict[str, Any]]:

"""Process tool calls and return results"""

results = []

for tool_call in tool_calls:

if tool_call.type == "tool_use" and tool_call.name == "str_replace_editor":

# Log the keys and first 20 characters of the values of the tool_call

for key, value in tool_call.input.items():

truncated_value = str(value)[:20] + (

"..." if len(str(value)) > 20 else ""

)

self.logger.info(

f"Tool call key: {key}, Value (truncated): {truncated_value}"

)

result = self.handle_text_editor_tool(tool_call.input)

# Convert result to match expected tool result format

is_error = False

if result.get("error"):

is_error = True

tool_result_content = [{"type": "text", "text": result["error"]}]

else:

tool_result_content = [

{"type": "text", "text": result.get("content", "")}

]

results.append(

{

"tool_call_id": tool_call.id,

"output": {

"type": "tool_result",

"content": tool_result_content,

"tool_use_id": tool_call.id,

"is_error": is_error,

},

}

)

return results

def process_edit(self, edit_prompt: str) -> None:

"""Main method to process editing prompts"""

try:

# Initial message with proper content structure

api_message = {

"role": "user",

"content": [{"type": "text", "text": edit_prompt}],

}

self.messages = [api_message]

self.logger.info(f"User input: {api_message}")

while True:

response = self.client.beta.messages.create(

model="claude-3-5-sonnet-20241022",

max_tokens=4096,

messages=self.messages,

tools=[

{"type": "text_editor_20241022", "name": "str_replace_editor"}

],

system=EDITOR_SYSTEM_PROMPT,

betas=["computer-use-2024-10-22"],

)

# Extract token usage from the response

input_tokens = getattr(response.usage, "input_tokens", 0)

output_tokens = getattr(response.usage, "output_tokens", 0)

self.logger.info(

f"API usage: input_tokens={input_tokens}, output_tokens={output_tokens}"

)

# Update token counts in SessionLogger

self.session_logger.update_token_usage(input_tokens, output_tokens)

self.logger.info(f"API response: {response.model_dump()}")

# Convert response content to message params

response_content = []

for block in response.content:

if block.type == "text":

response_content.append({"type": "text", "text": block.text})

else:

response_content.append(block.model_dump())

# Add assistant response to messages

self.messages.append({"role": "assistant", "content": response_content})

if response.stop_reason != "tool_use":

print(response.content[0].text)

break

tool_results = self.process_tool_calls(response.content)

# Add tool results as user message

if tool_results:

self.messages.append(

{"role": "user", "content": [tool_results[0]["output"]]}

)

if tool_results[0]["output"]["is_error"]:

self.logger.error(

f"Error: {tool_results[0]['output']['content']}"

)

break

# After the execution loop, log the total cost

self.session_logger.log_total_cost()

except Exception as e:

self.logger.error(f"Error in process_edit: {str(e)}")

self.logger.error(traceback.format_exc())

raise

class BashSession:

def __init__(self, session_id: Optional[str] = None, no_agi: bool = False):

"""Initialize Bash session with optional existing session ID"""

self.session_id = session_id or self._create_session_id()

self.sessions_dir = SESSIONS_DIR

self.client = anthropic.Anthropic(api_key=os.environ.get("ANTHROPIC_API_KEY"))

self.messages = []

# Initialize a persistent environment dictionary for subprocesses

self.environment = os.environ.copy()

# Initialize logger placeholder

self.logger = None

# Set log prefix

self.log_prefix = "🐚 bash"

# Store the no_agi flag

self.no_agi = no_agi

def set_logger(self, session_logger: SessionLogger):

"""Set the logger for the session and store the SessionLogger instance."""

self.session_logger = session_logger

self.logger = logging.LoggerAdapter(

session_logger.logger, {"prefix": self.log_prefix}

)

def _create_session_id(self) -> str:

"""Create a new session ID"""

timestamp = datetime.now().strftime("%Y%m%d-%H:%M:%S-%f")

# return f"{timestamp}-{uuid.uuid4().hex[:6]}"

return f"{timestamp}"

def _handle_bash_command(self, tool_call: Dict[str, Any]) -> Dict[str, Any]:

"""Handle bash command execution"""

try:

command = tool_call.get("command")

restart = tool_call.get("restart", False)

if restart:

self.environment = os.environ.copy() # Reset the environment

self.logger.info("Bash session restarted.")

return {"content": "Bash session restarted."}

if not command:

self.logger.error("No command provided to execute.")

return {"error": "No command provided to execute."}

# Check if no_agi is enabled

if self.no_agi:

self.logger.info(f"Mock executing bash command: {command}")

return {"content": "in mock mode, command did not run"}

# Log the command being executed

self.logger.info(f"Executing bash command: {command}")

# Execute the command in a subprocess

result = subprocess.run(

command,

shell=True,

stdout=subprocess.PIPE,

stderr=subprocess.PIPE,

env=self.environment,

text=True,

executable="/bin/bash",

)

output = result.stdout.strip()

error_output = result.stderr.strip()

# Log the outputs

if output:

self.logger.info(

f"Command output:\n\n```output for '{command[:20]}...'\n{output}\n```"

)

if error_output:

self.logger.error(

f"Command error output:\n\n```error for '{command}'\n{error_output}\n```"

)

if result.returncode != 0:

error_message = error_output or "Command execution failed."

return {"error": error_message}

return {"content": output}

except Exception as e:

self.logger.error(f"Error in _handle_bash_command: {str(e)}")

self.logger.error(traceback.format_exc())

return {"error": str(e)}

def process_tool_calls(

self, tool_calls: List[anthropic.types.ContentBlock]

) -> List[Dict[str, Any]]:

"""Process tool calls and return results"""

results = []

for tool_call in tool_calls:

if tool_call.type == "tool_use" and tool_call.name == "bash":

self.logger.info(f"Bash tool call input: {tool_call.input}")

result = self._handle_bash_command(tool_call.input)

# Convert result to match expected tool result format

is_error = False

if result.get("error"):

is_error = True

tool_result_content = [{"type": "text", "text": result["error"]}]

else:

tool_result_content = [

{"type": "text", "text": result.get("content", "")}

]

results.append(

{

"tool_call_id": tool_call.id,

"output": {

"type": "tool_result",

"content": tool_result_content,

"tool_use_id": tool_call.id,

"is_error": is_error,

},

}

)

return results

def process_bash_command(self, bash_prompt: str) -> None:

"""Main method to process bash commands via the assistant"""

try:

# Initial message with proper content structure

api_message = {

"role": "user",

"content": [{"type": "text", "text": bash_prompt}],

}

self.messages = [api_message]

self.logger.info(f"User input: {api_message}")

while True:

response = self.client.beta.messages.create(

model="claude-3-5-sonnet-20241022",

max_tokens=4096,

messages=self.messages,

tools=[{"type": "bash_20241022", "name": "bash"}],

system=BASH_SYSTEM_PROMPT,

betas=["computer-use-2024-10-22"],

)

# Extract token usage from the response

input_tokens = getattr(response.usage, "input_tokens", 0)

output_tokens = getattr(response.usage, "output_tokens", 0)

self.logger.info(

f"API usage: input_tokens={input_tokens}, output_tokens={output_tokens}"

)

# Update token counts in SessionLogger

self.session_logger.update_token_usage(input_tokens, output_tokens)

self.logger.info(f"API response: {response.model_dump()}")

# Convert response content to message params

response_content = []

for block in response.content:

if block.type == "text":

response_content.append({"type": "text", "text": block.text})

else:

response_content.append(block.model_dump())

# Add assistant response to messages

self.messages.append({"role": "assistant", "content": response_content})

if response.stop_reason != "tool_use":

# Print the assistant's final response

print(response.content[0].text)

break

tool_results = self.process_tool_calls(response.content)

# Add tool results as user message

if tool_results:

self.messages.append(

{"role": "user", "content": [tool_results[0]["output"]]}

)

if tool_results[0]["output"]["is_error"]:

self.logger.error(

f"Error: {tool_results[0]['output']['content']}"

)

break

# After the execution loop, log the total cost

self.session_logger.log_total_cost()

except Exception as e:

self.logger.error(f"Error in process_bash_command: {str(e)}")

self.logger.error(traceback.format_exc())

raise

def main():

"""Main entry point"""

parser = argparse.ArgumentParser()

parser.add_argument("prompt", help="The prompt for Claude", nargs="?")

parser.add_argument(

"--mode", choices=["editor", "bash"], default="editor", help="Mode to run"

)

parser.add_argument(

"--no-agi",

action="store_true",

help="When set, commands will not be executed, but will return 'command ran'.",

)

args = parser.parse_args()

# Create a shared session ID

session_id = datetime.now().strftime("%Y%m%d-%H%M%S") + "-" + uuid.uuid4().hex[:6]

# Create a single SessionLogger instance

session_logger = SessionLogger(session_id, SESSIONS_DIR)

if args.mode == "editor":

session = EditorSession(session_id=session_id)

# Pass the logger via setter method

session.set_logger(session_logger)

print(f"Session ID: {session.session_id}")

session.process_edit(args.prompt)

elif args.mode == "bash":

session = BashSession(session_id=session_id, no_agi=args.no_agi)

# Pass the logger via setter method

session.set_logger(session_logger)

print(f"Session ID: {session.session_id}")

session.process_bash_command(args.prompt)

if __name__ == "__main__":

main()

```

================================================

FILE: ai_docs/fc_openai_agents.md

================================================

# OpenAI Agents SDK Documentation

This file contains documentation for the OpenAI Agents SDK, scraped from the official documentation site.

## Overview

The [OpenAI Agents SDK](https://github.com/openai/openai-agents-python) enables you to build agentic AI apps in a lightweight, easy-to-use package with very few abstractions. It's a production-ready upgrade of the previous experimentation for agents, [Swarm](https://github.com/openai/swarm/tree/main). The Agents SDK has a very small set of primitives:

- **Agents**, which are LLMs equipped with instructions and tools

- **Handoffs**, which allow agents to delegate to other agents for specific tasks

- **Guardrails**, which enable the inputs to agents to be validated

In combination with Python, these primitives are powerful enough to express complex relationships between tools and agents, and allow you to build real-world applications without a steep learning curve. In addition, the SDK comes with built-in **tracing** that lets you visualize and debug your agentic flows, as well as evaluate them and even fine-tune models for your application.

### Why use the Agents SDK

The SDK has two driving design principles:

1. Enough features to be worth using, but few enough primitives to make it quick to learn.

2. Works great out of the box, but you can customize exactly what happens.

Here are the main features of the SDK:

- Agent loop: Built-in agent loop that handles calling tools, sending results to the LLM, and looping until the LLM is done.

- Python-first: Use built-in language features to orchestrate and chain agents, rather than needing to learn new abstractions.

- Handoffs: A powerful feature to coordinate and delegate between multiple agents.

- Guardrails: Run input validations and checks in parallel to your agents, breaking early if the checks fail.

- Function tools: Turn any Python function into a tool, with automatic schema generation and Pydantic-powered validation.

- Tracing: Built-in tracing that lets you visualize, debug and monitor your workflows, as well as use the OpenAI suite of evaluation, fine-tuning and distillation tools.

### Installation

```bash

pip install openai-agents

```

### Hello world example

```python

from agents import Agent, Runner

agent = Agent(name="Assistant", instructions="You are a helpful assistant")

result = Runner.run_sync(agent, "Write a haiku about recursion in programming.")

print(result.final_output)

# Code within the code,

# Functions calling themselves,

# Infinite loop's dance.

```

## Quickstart

### Create a project and virtual environment

```bash

mkdir my_project

cd my_project

python -m venv .venv

source .venv/bin/activate

pip install openai-agents

export OPENAI_API_KEY=sk-...

```

### Create your first agent

```python

from agents import Agent

agent = Agent(

name="Math Tutor",

instructions="You provide help with math problems. Explain your reasoning at each step and include examples",

)

```

### Add a few more agents

```python

from agents import Agent

history_tutor_agent = Agent(

name="History Tutor",

handoff_description="Specialist agent for historical questions",

instructions="You provide assistance with historical queries. Explain important events and context clearly.",

)

math_tutor_agent = Agent(

name="Math Tutor",

handoff_description="Specialist agent for math questions",

instructions="You provide help with math problems. Explain your reasoning at each step and include examples",

)

```