Repository: doubledaibo/drnet_cvpr2017

Branch: master

Commit: a83aa5768b91

Files: 22

Total size: 123.2 KB

Directory structure:

gitextract_pa_vjz81/

├── .gitignore

├── LICENSE

├── README.md

├── lib/

│ ├── customize_layers/

│ │ ├── __init__.py

│ │ └── concat_layer.py

│ ├── rel_data_layer/

│ │ ├── __init__.py

│ │ └── layer.py

│ └── utils/

│ ├── __init__.py

│ └── eval_utils.py

├── prototxts/

│ ├── drnet_8units_linear_shareweight.prototxt

│ ├── drnet_8units_relu_shareweight.prototxt

│ ├── drnet_8units_softmax.prototxt

│ ├── test_drnet_8units_linear_shareweight.prototxt

│ ├── test_drnet_8units_relu_shareweight.prototxt

│ └── test_drnet_8units_softmax.prototxt

├── snapshots/

│ └── README.md

└── tools/

├── _init_paths.py

├── eval_triplet_recall.py

├── eval_union_recall.py

├── prepare_data.py

├── test_predicate_recognition.py

└── test_triplet_detection.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

snapshots/*.caffemodel

svg

================================================

FILE: LICENSE

================================================

Copyright the Chinese University of Hong Kong. All rights reserved.

Contact persons:

Bo Dai (db014 [at] ie [dot] cuhk [dot] edu [dot] hk)

This software is being made available for research use only.

Any commercial use or redistribution of this software requires a license from

the Chinese University of Hong Kong.

You may use this work subject to the following conditions:

1. This work is provided "as is" by the copyright holder, with

absolutely no warranties of correctness, fitness, intellectual property

ownership, or anything else whatsoever. You use the work

entirely at your own risk. The copyright holder will not be liable for

any legal damages whatsoever connected with the use of this work.

2. The copyright holder retain all copyright to the work. All copies of

the work and all works derived from it must contain (1) this copyright

notice, and (2) additional notices describing the content, dates and

copyright holder of modifications or additions made to the work, if

any, including distribution and use conditions and intellectual property

claims. Derived works must be clearly distinguished from the original

work, both by name and by the prominent inclusion of explicit

descriptions of overlaps and differences.

3. The names and trademarks of the copyright holder may not be used in

advertising or publicity related to this work without specific prior

written permission.

4. In return for the free use of this work, you are requested, but not

legally required, to do the following:

* If you become aware of factors that may significantly affect other

users of the work, for example major bugs or

deficiencies or possible intellectual property issues, you are

requested to report them to the copyright holder, if possible

including redistributable fixes or workarounds.

* If you use the work in scientific research or as part of a larger

software system, you are requested to cite the use in any related

publications or technical documentation. The work is based upon:

"Detecting Visual Relationship with Deep Relational Networks"

Bo Dai, Yuqi Zhang, Dahua Lin, Computer Vision and Pattern Recognition,

(CVPR 2017), 2017 (oral).

this copyright notice must be retained with all copies of the software,

including any modified or derived versions.

================================================

FILE: README.md

================================================

# Code of [Detecting Visual Relationships with Deep Relational Networks](https://arxiv.org/abs/1704.03114)

The code is written in python, and all networks are implemented using [Caffe](https://github.com/BVLC/caffe).

## Datasets

* [VRD](http://cs.stanford.edu/people/ranjaykrishna/vrd/dataset.zip)

* sVG: subset of [Visual Genome](https://visualgenome.org/)

- [Link](https://drive.google.com/file/d/0B5RJWjAhdT04SXRfVHBKZ0dOTzQ/view?usp=sharing&resourcekey=0-bW_W0QVJOfaNs5NyGjDjbQ)

- Images can be downloaded from the website of Visual Genome

- Remarks: eventually I found no time to further clean it. This subset has a manually cleaned list for relationship predicates. The list for objects may need further cleaning, although Faster-RCNN can get a recall@20 around 50%.

- Using our method, you can get the corresponding results reported in the paper on this dataset.

## Networks

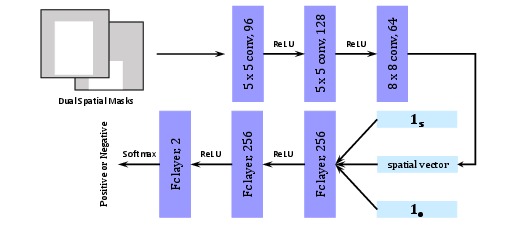

This repo contains three kinds of networks. And all of them get the raw response for predicate based on both appearance cues and spatial cues,

followed by a refinement according to responses of the subject, the object and the predicate.

The networks are designed for the task of predicate recognition,

where ground-truth labels of the subject and the object are provided as inputs.

Therefore, in these networks, responses of the subject and the object are replaced with indicator vectors,

and only response of the predicate will be refined.

In these networks, the subnet for appearance cues is VGG16, and the subnet for spatial cues consists of three conv layers.

And outputs of both subnets are combined via a customized concatenate layer,

followed by two fc layers to generate raw response for the predicate.

The customized concatenate layer is used for combining the output of a fc layer and channels of the output of a conv layer,

which can be replaced with caffe's Concat layer

if the last conv layer in spatial subnet (conv3_p) is equivalently replaced with a fc layer.

The details of these networks are

* drnet_8units_softmax: it has 8 inference units with softmax function as the activation function.

* drnet_8units_linear_shareweight: it has 8 inference units with no activation function, and the weights are shared across units.

* drnet_8units_relu_shareweight: it has 8 inference units with relu function as the activation function, and the weights are shared across units.

### Training

The training procedure is component-by-component.

Specifically, a network usually contains three components,

namely the subnet for appearance (A), the subnet for spatial cues (S), and the drnet for statistical dependencies (D).

In training, we train the network as follow:

* train A in isolation

* train S in isolation

* train A + S in isolation, with weights initialized from previous steps

* train A + S + D jointly, with weights initialized from previous steps

Each step we use the same loss, and we use dropout to avoid overfit.

### Recalls on Predicate Recognition

| Networks | Recall@50 | Recall@100 |

| --- | :---: | :---: |

| drnet_8units_softmax | 75.22 | 77.55 |

| drnet_8units_linear_shareweight | 78.57 | 79.94 |

| drnet_8units_relu_shareweight | 80.86 | 81.83 |

## Codes

* lib/: python layers, as well as auxiliary files for evaluation

* prototxts/: training and testing prototxts

* tools/: python codes for preparing data and evaluation

* snapshots/: pretrain models

## Finetune or Evaluate

1. Download the dataset [VRD](https://github.com/Prof-Lu-Cewu/Visual-Relationship-Detection)

2. Preprocess the dataset using tools/preprare_data.py

3. Download one pretrain model in snapshots/

4. Finetune or Evaluate using corresponding prototxts in prototxts/

## Pair Filter

### Structure

### Training

To train this network, we randomly sample pairs of bounding boxes (with labels) from

each training image, treating those with 0.5 IoU (or above) with any ground-truth pairs (with same labels)

as positive samples, and the rest as negative samples.

## Citation

If you use this code, please cite the following paper(s):

@article{dai2017detecting,

title={Detecting Visual Relationships with Deep Relational Networks},

author={Dai, Bo and Zhang, Yuqi and Lin, Dahua},

booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},

year={2017}

}

## License

This code is used for research only. See LICENSE for details.

================================================

FILE: lib/customize_layers/__init__.py

================================================

================================================

FILE: lib/customize_layers/concat_layer.py

================================================

import caffe

import numpy as np

import json

class Layer(caffe.Layer):

def setup(self, bottom, top):

self._sum = 0

for i in xrange(len(bottom)):

self._sum += bottom[i].data.shape[1]

def reshape(self, bottom, top):

top[0].reshape(bottom[0].data.shape[0], self._sum)

def forward(self, bottom, top):

offset = 0

for i in xrange(len(bottom)):

if len(bottom[i].data.shape) == 2:

top[0].data[:, offset : offset + bottom[i].data.shape[1]] = bottom[i].data

else:

top[0].data[:, offset : offset + bottom[i].data.shape[1]] = bottom[i].data[:, :, 0, 0]

offset += bottom[i].data.shape[1]

def backward(self, top, propagate_down, bottom):

offset = 0

for i in xrange(len(bottom)):

if len(bottom[i].data.shape) == 2:

bottom[i].diff[...] = top[0].diff[:, offset : offset + bottom[i].data.shape[1]]

else:

bottom[i].diff[:, :, 0, 0] = top[0].diff[:, offset : offset + bottom[i].data.shape[1]]

offset += bottom[i].data.shape[1]

================================================

FILE: lib/rel_data_layer/__init__.py

================================================

================================================

FILE: lib/rel_data_layer/layer.py

================================================

import caffe

import numpy as np

import json

import math

import os.path as osp

import os

import cv2

import h5py

class RelDataLayer(caffe.Layer):

def _shuffle_inds(self):

self._perm = np.random.permutation(np.arange(self._num_instance))

self._cur = 0

def _get_next_batch_ids(self):

if self._cur + self._batch_size > self._num_instance:

self._shuffle_inds()

ids = self._perm[self._cur : self._cur + self._batch_size]

self._cur += self._batch_size

return ids

def _getAppr(self, im, bb):

subim = im[bb[1] : bb[3], bb[0] : bb[2], :]

subim = cv2.resize(subim, None, None, 224.0 / subim.shape[1], 224.0 / subim.shape[0], interpolation=cv2.INTER_LINEAR)

pixel_means = np.array([[[103.939, 116.779, 123.68]]])

subim -= pixel_means

subim = subim.transpose((2, 0, 1))

return subim

def _getDualMask(self, ih, iw, bb):

rh = 32.0 / ih

rw = 32.0 / iw

x1 = max(0, int(math.floor(bb[0] * rw)))

x2 = min(32, int(math.ceil(bb[2] * rw)))

y1 = max(0, int(math.floor(bb[1] * rh)))

y2 = min(32, int(math.ceil(bb[3] * rh)))

mask = np.zeros((32, 32))

mask[y1 : y2, x1 : x2] = 1

assert(mask.sum() == (y2 - y1) * (x2 - x1))

return mask

def _get_next_batch(self):

ids = self._get_next_batch_ids()

qas = []

qbs = []

ims = []

poses = []

labels = []

for id in ids:

sample = self._samples[id]

im = cv2.imread(sample["imPath"]).astype(np.float32, copy=False)

ih = im.shape[0]

iw = im.shape[1]

qa = np.zeros(self._nclass)

qa[sample["aLabel"] - 1] = 1

qas.append(qa)

qb = np.zeros(self._nclass)

qb[sample["bLabel"] - 1] = 1

qbs.append(qb)

ims.append(self._getAppr(im, sample["rBBox"]))

poses.append([self._getDualMask(ih, iw, sample["aBBox"]), \

self._getDualMask(ih, iw, sample["bBBox"])])

labels.append(sample["rLabel"])

return {"qa": np.array(qas), "qb": np.array(qbs), "im": np.array(ims), "posdata": np.array(poses), "labels": np.array(labels)}

def setup(self, bottom, top):

layer_params = json.loads(self.param_str)

self._samples = json.load(open(layer_params["dataset"]))

self._num_instance = len(self._samples)

self._batch_size = layer_params["batch_size"]

self._nclass = layer_params["nclass"]

self._name_to_top_map = {"qa": 0, "qb": 1, "im": 2, "posdata": 3, "labels": 4}

self._shuffle_inds()

top[0].reshape(self._batch_size, self._nclass)

top[1].reshape(self._batch_size, self._nclass)

top[2].reshape(self._batch_size, 3, 224, 224)

top[3].reshape(self._batch_size, 2, 32, 32)

top[4].reshape(self._batch_size)

def forward(self, bottom, top):

batch = self._get_next_batch()

for blob_name, blob in batch.iteritems():

idx = self._name_to_top_map[blob_name]

top[idx].reshape(*(blob.shape))

top[idx].data[...] = blob.astype(np.float32, copy=False)

def backward(self, top, propagate_down, bottom):

pass

def reshape(self, bottom, top):

pass

================================================

FILE: lib/utils/__init__.py

================================================

================================================

FILE: lib/utils/eval_utils.py

================================================

def computeArea(bb):

return max(0, bb[2] - bb[0] + 1) * max(0, bb[3] - bb[1] + 1)

def computeIoU(bb1, bb2):

ibb = [max(bb1[0], bb2[0]), \

max(bb1[1], bb2[1]), \

min(bb1[2], bb2[2]), \

min(bb1[3], bb2[3])]

iArea = computeArea(ibb)

uArea = computeArea(bb1) + computeArea(bb2) - iArea

return (iArea + 0.0) / uArea

================================================

FILE: prototxts/drnet_8units_linear_shareweight.prototxt

================================================

layer {

name: "data"

type: "Python"

top: "qa"

top: "qb"

top: "im"

top: "posdata"

top: "labels"

include {

phase: TRAIN

}

python_param {

module: 'rel_data_layer.layer'

layer: 'RelDataLayer'

param_str: '{"dataset": "reltrain.json", "batch_size": 25, "nclass": 100}'

}

}

layer {

name: "data"

type: "Python"

top: "qa"

top: "qb"

top: "im"

top: "posdata"

top: "labels"

include {

phase: TEST

}

python_param {

module: 'rel_data_layer.layer'

layer: 'RelDataLayer'

param_str: '{"dataset": "reltest.json", "batch_size": 25, "nclass": 100}'

}

}

# Appearance Subnet

layer {

name: "conv1_1"

type: "Convolution"

bottom: "im"

top: "conv1_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

pad: 1

kernel_size: 3

}

}

layer {

name: "relu1_1"

type: "ReLU"

bottom: "conv1_1"

top: "conv1_1"

}

layer {

name: "conv1_2"

type: "Convolution"

bottom: "conv1_1"

top: "conv1_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

pad: 1

kernel_size: 3

}

}

layer {

name: "relu1_2"

type: "ReLU"

bottom: "conv1_2"

top: "conv1_2"

}

layer {

name: "pool1"

type: "Pooling"

bottom: "conv1_2"

top: "pool1"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv2_1"

type: "Convolution"

bottom: "pool1"

top: "conv2_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

pad: 1

kernel_size: 3

}

}

layer {

name: "relu2_1"

type: "ReLU"

bottom: "conv2_1"

top: "conv2_1"

}

layer {

name: "conv2_2"

type: "Convolution"

bottom: "conv2_1"

top: "conv2_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

pad: 1

kernel_size: 3

}

}

layer {

name: "relu2_2"

type: "ReLU"

bottom: "conv2_2"

top: "conv2_2"

}

layer {

name: "pool2"

type: "Pooling"

bottom: "conv2_2"

top: "pool2"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv3_1"

type: "Convolution"

bottom: "pool2"

top: "conv3_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_1"

type: "ReLU"

bottom: "conv3_1"

top: "conv3_1"

}

layer {

name: "conv3_2"

type: "Convolution"

bottom: "conv3_1"

top: "conv3_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 2

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_2"

type: "ReLU"

bottom: "conv3_2"

top: "conv3_2"

}

layer {

name: "conv3_3"

type: "Convolution"

bottom: "conv3_2"

top: "conv3_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_3"

type: "ReLU"

bottom: "conv3_3"

top: "conv3_3"

}

layer {

name: "pool3"

type: "Pooling"

bottom: "conv3_3"

top: "pool3"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv4_1"

type: "Convolution"

bottom: "pool3"

top: "conv4_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_1"

type: "ReLU"

bottom: "conv4_1"

top: "conv4_1"

}

layer {

name: "conv4_2"

type: "Convolution"

bottom: "conv4_1"

top: "conv4_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_2"

type: "ReLU"

bottom: "conv4_2"

top: "conv4_2"

}

layer {

name: "conv4_3"

type: "Convolution"

bottom: "conv4_2"

top: "conv4_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_3"

type: "ReLU"

bottom: "conv4_3"

top: "conv4_3"

}

layer {

name: "pool4"

type: "Pooling"

bottom: "conv4_3"

top: "pool4"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv5_1"

type: "Convolution"

bottom: "pool4"

top: "conv5_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_1"

type: "ReLU"

bottom: "conv5_1"

top: "conv5_1"

}

layer {

name: "conv5_2"

type: "Convolution"

bottom: "conv5_1"

top: "conv5_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_2"

type: "ReLU"

bottom: "conv5_2"

top: "conv5_2"

}

layer {

name: "conv5_3"

type: "Convolution"

bottom: "conv5_2"

top: "conv5_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_3"

type: "ReLU"

bottom: "conv5_3"

top: "conv5_3"

}

layer {

name: "pool5"

type: "Pooling"

bottom: "conv5_3"

top: "pool5"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "fc6"

type: "InnerProduct"

bottom: "pool5"

top: "fc6"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 4096

}

}

layer {

name: "relu6"

type: "ReLU"

bottom: "fc6"

top: "fc6"

}

layer {

name: "fc7"

type: "InnerProduct"

bottom: "fc6"

top: "fc7"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 4096

}

}

layer {

name: "relu7"

type: "ReLU"

bottom: "fc7"

top: "fc7"

}

layer {

name: "fc8"

type: "InnerProduct"

bottom: "fc7"

top: "fc8"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 256

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "relu8"

type: "ReLU"

bottom: "fc8"

top: "fc8"

}

# Spatial Cfg Subnet

layer {

name: "conv1_p"

type: "Convolution"

bottom: "posdata"

top: "conv1_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 96

kernel_size: 5

pad: 2

stride: 2

}

}

layer {

name: "relu1_p"

type: "ReLU"

bottom: "conv1_p"

top: "conv1_p"

}

layer {

name: "conv2_p"

type: "Convolution"

bottom: "conv1_p"

top: "conv2_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

kernel_size: 5

pad: 2

stride: 2

}

}

layer {

name: "conv3_p"

type: "Convolution"

bottom: "conv2_p"

top: "conv3_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

kernel_size: 8

}

}

layer {

name: "relu3_p"

type: "ReLU"

bottom: "conv3_p"

top: "conv3_p"

}

# Combine features from subnets

layer {

name: "concat1_c"

type: "Python"

bottom: "fc8"

bottom: "conv3_p"

top: "concat1_c"

python_param {

module: "customize_layers.concat_layer"

layer: "Layer"

}

}

layer {

name: "fc2_c"

type: "InnerProduct"

bottom: "concat1_c"

top: "fc2_c"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 128

}

}

layer {

name: "relu2_c"

type: "ReLU"

bottom: "fc2_c"

top: "fc2_c"

}

layer {

name: "PhiR_0"

type: "InnerProduct"

bottom: "fc2_c"

top: "qr0"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

#DR-Net

layer {

name: "PhiA_1"

type: "InnerProduct"

bottom: "qa"

top: "qar1"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "PhiB_1"

type: "InnerProduct"

bottom: "qb"

top: "qbr1"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "PhiR_1"

type: "InnerProduct"

bottom: "qr0"

top: "q1r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "QSum_1"

type: "Eltwise"

bottom: "qar1"

bottom: "qbr1"

bottom: "q1r"

top: "qr1"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_2"

type: "InnerProduct"

bottom: "qa"

top: "qar2"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_2"

type: "InnerProduct"

bottom: "qb"

top: "qbr2"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_2"

type: "InnerProduct"

bottom: "qr1"

top: "q2r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_2"

type: "Eltwise"

bottom: "qar2"

bottom: "qbr2"

bottom: "q2r"

top: "qr2"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_3"

type: "InnerProduct"

bottom: "qa"

top: "qar3"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_3"

type: "InnerProduct"

bottom: "qb"

top: "qbr3"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_3"

type: "InnerProduct"

bottom: "qr2"

top: "q3r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_3"

type: "Eltwise"

bottom: "qar3"

bottom: "qbr3"

bottom: "q3r"

top: "qr3"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_4"

type: "InnerProduct"

bottom: "qa"

top: "qar4"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_4"

type: "InnerProduct"

bottom: "qb"

top: "qbr4"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_4"

type: "InnerProduct"

bottom: "qr3"

top: "q4r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_4"

type: "Eltwise"

bottom: "qar4"

bottom: "qbr4"

bottom: "q4r"

top: "qr4"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_5"

type: "InnerProduct"

bottom: "qa"

top: "qar5"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_5"

type: "InnerProduct"

bottom: "qb"

top: "qbr5"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_5"

type: "InnerProduct"

bottom: "qr4"

top: "q5r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_5"

type: "Eltwise"

bottom: "qar5"

bottom: "qbr5"

bottom: "q5r"

top: "qr5"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_6"

type: "InnerProduct"

bottom: "qa"

top: "qar6"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_6"

type: "InnerProduct"

bottom: "qb"

top: "qbr6"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_6"

type: "InnerProduct"

bottom: "qr5"

top: "q6r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_6"

type: "Eltwise"

bottom: "qar6"

bottom: "qbr6"

bottom: "q6r"

top: "qr6"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_7"

type: "InnerProduct"

bottom: "qa"

top: "qar7"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_7"

type: "InnerProduct"

bottom: "qb"

top: "qbr7"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_7"

type: "InnerProduct"

bottom: "qr6"

top: "q7r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_7"

type: "Eltwise"

bottom: "qar7"

bottom: "qbr7"

bottom: "q7r"

top: "qr7"

eltwise_param { operation: SUM }

}

layer {

name: "PhiA_8"

type: "InnerProduct"

bottom: "qa"

top: "qar8"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_8"

type: "InnerProduct"

bottom: "qb"

top: "qbr8"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_8"

type: "InnerProduct"

bottom: "qr7"

top: "q8r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_8"

type: "Eltwise"

bottom: "qar8"

bottom: "qbr8"

bottom: "q8r"

top: "qr8"

eltwise_param { operation: SUM }

}

layer {

name: "loss3"

type: "SoftmaxWithLoss"

top: "loss3"

bottom: "qr8"

bottom: "labels"

include {

phase: TRAIN

}

loss_weight: 1

}

layer {

name: "softmax"

type: "Softmax"

bottom: "qr8"

top: "pred"

include {

phase: TEST

}

}

layer {

name: "accuracy"

type: "Accuracy"

bottom: "pred"

bottom: "labels"

top: "top-1"

include {

phase: TEST

}

accuracy_param {

top_k: 1

}

}

layer {

name: "accuracy_top5"

type: "Accuracy"

bottom: "pred"

bottom: "labels"

top: "top-5"

include {

phase: TEST

}

accuracy_param {

top_k: 5

}

}

================================================

FILE: prototxts/drnet_8units_relu_shareweight.prototxt

================================================

layer {

name: "data"

type: "Python"

top: "qa"

top: "qb"

top: "im"

top: "posdata"

top: "labels"

include {

phase: TRAIN

}

python_param {

module: 'rel_data_layer.layer'

layer: 'RelDataLayer'

param_str: '{"dataset": "reltrain.json", "batch_size": 25, "nclass": 100}'

}

}

layer {

name: "data"

type: "Python"

top: "qa"

top: "qb"

top: "im"

top: "posdata"

top: "labels"

include {

phase: TEST

}

python_param {

module: 'rel_data_layer.layer'

layer: 'RelDataLayer'

param_str: '{"dataset": "reltest.json", "batch_size": 25, "nclass": 100}'

}

}

# Appearance Subnet

layer {

name: "conv1_1"

type: "Convolution"

bottom: "im"

top: "conv1_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

pad: 1

kernel_size: 3

}

}

layer {

name: "relu1_1"

type: "ReLU"

bottom: "conv1_1"

top: "conv1_1"

}

layer {

name: "conv1_2"

type: "Convolution"

bottom: "conv1_1"

top: "conv1_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

pad: 1

kernel_size: 3

}

}

layer {

name: "relu1_2"

type: "ReLU"

bottom: "conv1_2"

top: "conv1_2"

}

layer {

name: "pool1"

type: "Pooling"

bottom: "conv1_2"

top: "pool1"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv2_1"

type: "Convolution"

bottom: "pool1"

top: "conv2_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

pad: 1

kernel_size: 3

}

}

layer {

name: "relu2_1"

type: "ReLU"

bottom: "conv2_1"

top: "conv2_1"

}

layer {

name: "conv2_2"

type: "Convolution"

bottom: "conv2_1"

top: "conv2_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

pad: 1

kernel_size: 3

}

}

layer {

name: "relu2_2"

type: "ReLU"

bottom: "conv2_2"

top: "conv2_2"

}

layer {

name: "pool2"

type: "Pooling"

bottom: "conv2_2"

top: "pool2"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv3_1"

type: "Convolution"

bottom: "pool2"

top: "conv3_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_1"

type: "ReLU"

bottom: "conv3_1"

top: "conv3_1"

}

layer {

name: "conv3_2"

type: "Convolution"

bottom: "conv3_1"

top: "conv3_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 2

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_2"

type: "ReLU"

bottom: "conv3_2"

top: "conv3_2"

}

layer {

name: "conv3_3"

type: "Convolution"

bottom: "conv3_2"

top: "conv3_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_3"

type: "ReLU"

bottom: "conv3_3"

top: "conv3_3"

}

layer {

name: "pool3"

type: "Pooling"

bottom: "conv3_3"

top: "pool3"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv4_1"

type: "Convolution"

bottom: "pool3"

top: "conv4_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_1"

type: "ReLU"

bottom: "conv4_1"

top: "conv4_1"

}

layer {

name: "conv4_2"

type: "Convolution"

bottom: "conv4_1"

top: "conv4_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_2"

type: "ReLU"

bottom: "conv4_2"

top: "conv4_2"

}

layer {

name: "conv4_3"

type: "Convolution"

bottom: "conv4_2"

top: "conv4_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_3"

type: "ReLU"

bottom: "conv4_3"

top: "conv4_3"

}

layer {

name: "pool4"

type: "Pooling"

bottom: "conv4_3"

top: "pool4"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv5_1"

type: "Convolution"

bottom: "pool4"

top: "conv5_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_1"

type: "ReLU"

bottom: "conv5_1"

top: "conv5_1"

}

layer {

name: "conv5_2"

type: "Convolution"

bottom: "conv5_1"

top: "conv5_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_2"

type: "ReLU"

bottom: "conv5_2"

top: "conv5_2"

}

layer {

name: "conv5_3"

type: "Convolution"

bottom: "conv5_2"

top: "conv5_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_3"

type: "ReLU"

bottom: "conv5_3"

top: "conv5_3"

}

layer {

name: "pool5"

type: "Pooling"

bottom: "conv5_3"

top: "pool5"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "fc6"

type: "InnerProduct"

bottom: "pool5"

top: "fc6"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 4096

}

}

layer {

name: "relu6"

type: "ReLU"

bottom: "fc6"

top: "fc6"

}

layer {

name: "fc7"

type: "InnerProduct"

bottom: "fc6"

top: "fc7"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 4096

}

}

layer {

name: "relu7"

type: "ReLU"

bottom: "fc7"

top: "fc7"

}

layer {

name: "fc8"

type: "InnerProduct"

bottom: "fc7"

top: "fc8"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 256

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "relu8"

type: "ReLU"

bottom: "fc8"

top: "fc8"

}

# Spatial Cfg Subnet

layer {

name: "conv1_p"

type: "Convolution"

bottom: "posdata"

top: "conv1_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 96

kernel_size: 5

pad: 2

stride: 2

}

}

layer {

name: "relu1_p"

type: "ReLU"

bottom: "conv1_p"

top: "conv1_p"

}

layer {

name: "conv2_p"

type: "Convolution"

bottom: "conv1_p"

top: "conv2_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

kernel_size: 5

pad: 2

stride: 2

}

}

layer {

name: "conv3_p"

type: "Convolution"

bottom: "conv2_p"

top: "conv3_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

kernel_size: 8

}

}

layer {

name: "relu3_p"

type: "ReLU"

bottom: "conv3_p"

top: "conv3_p"

}

# Combine features from subnets

layer {

name: "concat1_c"

type: "Python"

bottom: "fc8"

bottom: "conv3_p"

top: "concat1_c"

python_param {

module: "customize_layers.concat_layer"

layer: "Layer"

}

}

layer {

name: "fc2_c"

type: "InnerProduct"

bottom: "concat1_c"

top: "fc2_c"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 128

}

}

layer {

name: "relu2_c"

type: "ReLU"

bottom: "fc2_c"

top: "fc2_c"

}

layer {

name: "PhiR_0"

type: "InnerProduct"

bottom: "fc2_c"

top: "q0r"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "relu_0"

type: "ReLU"

bottom: "q0r"

top: "qr0"

}

#DR-Net

layer {

name: "PhiA_1"

type: "InnerProduct"

bottom: "qa"

top: "qar1"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "PhiB_1"

type: "InnerProduct"

bottom: "qb"

top: "qbr1"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "PhiR_1"

type: "InnerProduct"

bottom: "qr0"

top: "q1r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "QSum_1"

type: "Eltwise"

bottom: "qar1"

bottom: "qbr1"

bottom: "q1r"

top: "qr1un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_1"

type: "ReLU"

bottom: "qr1un"

top: "qr1"

}

layer {

name: "PhiA_2"

type: "InnerProduct"

bottom: "qa"

top: "qar2"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_2"

type: "InnerProduct"

bottom: "qb"

top: "qbr2"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_2"

type: "InnerProduct"

bottom: "qr1"

top: "q2r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_2"

type: "Eltwise"

bottom: "qar2"

bottom: "qbr2"

bottom: "q2r"

top: "qr2un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_2"

type: "ReLU"

bottom: "qr2un"

top: "qr2"

}

layer {

name: "PhiA_3"

type: "InnerProduct"

bottom: "qa"

top: "qar3"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_3"

type: "InnerProduct"

bottom: "qb"

top: "qbr3"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_3"

type: "InnerProduct"

bottom: "qr2"

top: "q3r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_3"

type: "Eltwise"

bottom: "qar3"

bottom: "qbr3"

bottom: "q3r"

top: "qr3un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_3"

type: "ReLU"

bottom: "qr3un"

top: "qr3"

}

layer {

name: "PhiA_4"

type: "InnerProduct"

bottom: "qa"

top: "qar4"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_4"

type: "InnerProduct"

bottom: "qb"

top: "qbr4"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_4"

type: "InnerProduct"

bottom: "qr3"

top: "q4r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_4"

type: "Eltwise"

bottom: "qar4"

bottom: "qbr4"

bottom: "q4r"

top: "qr4un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_4"

type: "ReLU"

bottom: "qr4un"

top: "qr4"

}

layer {

name: "PhiA_5"

type: "InnerProduct"

bottom: "qa"

top: "qar5"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_5"

type: "InnerProduct"

bottom: "qb"

top: "qbr5"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_5"

type: "InnerProduct"

bottom: "qr4"

top: "q5r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_5"

type: "Eltwise"

bottom: "qar5"

bottom: "qbr5"

bottom: "q5r"

top: "qr5un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_5"

type: "ReLU"

bottom: "qr5un"

top: "qr5"

}

layer {

name: "PhiA_6"

type: "InnerProduct"

bottom: "qa"

top: "qar6"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_6"

type: "InnerProduct"

bottom: "qb"

top: "qbr6"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_6"

type: "InnerProduct"

bottom: "qr5"

top: "q6r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_6"

type: "Eltwise"

bottom: "qar6"

bottom: "qbr6"

bottom: "q6r"

top: "qr6un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_6"

type: "ReLU"

bottom: "qr6un"

top: "qr6"

}

layer {

name: "PhiA_7"

type: "InnerProduct"

bottom: "qa"

top: "qar7"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_7"

type: "InnerProduct"

bottom: "qb"

top: "qbr7"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_7"

type: "InnerProduct"

bottom: "qr6"

top: "q7r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_7"

type: "Eltwise"

bottom: "qar7"

bottom: "qbr7"

bottom: "q7r"

top: "qr7un"

eltwise_param { operation: SUM }

}

layer {

name: "relu_7"

type: "ReLU"

bottom: "qr7un"

top: "qr7"

}

layer {

name: "PhiA_8"

type: "InnerProduct"

bottom: "qa"

top: "qar8"

param {

name: "qar_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qar_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_8"

type: "InnerProduct"

bottom: "qb"

top: "qbr8"

param {

name: "qbr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qbr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_8"

type: "InnerProduct"

bottom: "qr7"

top: "q8r"

param {

name: "qr_w"

lr_mult: 1

decay_mult: 1

}

param {

name: "qr_b"

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_8"

type: "Eltwise"

bottom: "qar8"

bottom: "qbr8"

bottom: "q8r"

top: "qr8"

eltwise_param { operation: SUM }

}

layer {

name: "loss3"

type: "SoftmaxWithLoss"

top: "loss3"

bottom: "qr8"

bottom: "labels"

include {

phase: TRAIN

}

loss_weight: 1

}

layer {

name: "softmax"

type: "Softmax"

bottom: "qr8"

top: "pred"

include {

phase: TEST

}

}

layer {

name: "accuracy"

type: "Accuracy"

bottom: "pred"

bottom: "labels"

top: "top-1"

include {

phase: TEST

}

accuracy_param {

top_k: 1

}

}

layer {

name: "accuracy_top5"

type: "Accuracy"

bottom: "pred"

bottom: "labels"

top: "top-5"

include {

phase: TEST

}

accuracy_param {

top_k: 5

}

}

================================================

FILE: prototxts/drnet_8units_softmax.prototxt

================================================

layer {

name: "data"

type: "Python"

top: "qa"

top: "qb"

top: "im"

top: "posdata"

top: "labels"

include {

phase: TRAIN

}

python_param {

module: 'rel_data_layer.layer'

layer: 'RelDataLayer'

param_str: '{"dataset": "reltrain.json", "batch_size": 25, "nclass": 100}'

}

}

layer {

name: "data"

type: "Python"

top: "qa"

top: "qb"

top: "im"

top: "posdata"

top: "labels"

include {

phase: TEST

}

python_param {

module: 'rel_data_layer.layer'

layer: 'RelDataLayer'

param_str: '{"dataset": "reltest.json", "batch_size": 25, "nclass": 100}'

}

}

# Appearance Subnet

layer {

name: "conv1_1"

type: "Convolution"

bottom: "im"

top: "conv1_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

pad: 1

kernel_size: 3

}

}

layer {

name: "relu1_1"

type: "ReLU"

bottom: "conv1_1"

top: "conv1_1"

}

layer {

name: "conv1_2"

type: "Convolution"

bottom: "conv1_1"

top: "conv1_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

pad: 1

kernel_size: 3

}

}

layer {

name: "relu1_2"

type: "ReLU"

bottom: "conv1_2"

top: "conv1_2"

}

layer {

name: "pool1"

type: "Pooling"

bottom: "conv1_2"

top: "pool1"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv2_1"

type: "Convolution"

bottom: "pool1"

top: "conv2_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

pad: 1

kernel_size: 3

}

}

layer {

name: "relu2_1"

type: "ReLU"

bottom: "conv2_1"

top: "conv2_1"

}

layer {

name: "conv2_2"

type: "Convolution"

bottom: "conv2_1"

top: "conv2_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

pad: 1

kernel_size: 3

}

}

layer {

name: "relu2_2"

type: "ReLU"

bottom: "conv2_2"

top: "conv2_2"

}

layer {

name: "pool2"

type: "Pooling"

bottom: "conv2_2"

top: "pool2"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv3_1"

type: "Convolution"

bottom: "pool2"

top: "conv3_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_1"

type: "ReLU"

bottom: "conv3_1"

top: "conv3_1"

}

layer {

name: "conv3_2"

type: "Convolution"

bottom: "conv3_1"

top: "conv3_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 2

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_2"

type: "ReLU"

bottom: "conv3_2"

top: "conv3_2"

}

layer {

name: "conv3_3"

type: "Convolution"

bottom: "conv3_2"

top: "conv3_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 256

pad: 1

kernel_size: 3

}

}

layer {

name: "relu3_3"

type: "ReLU"

bottom: "conv3_3"

top: "conv3_3"

}

layer {

name: "pool3"

type: "Pooling"

bottom: "conv3_3"

top: "pool3"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv4_1"

type: "Convolution"

bottom: "pool3"

top: "conv4_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_1"

type: "ReLU"

bottom: "conv4_1"

top: "conv4_1"

}

layer {

name: "conv4_2"

type: "Convolution"

bottom: "conv4_1"

top: "conv4_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_2"

type: "ReLU"

bottom: "conv4_2"

top: "conv4_2"

}

layer {

name: "conv4_3"

type: "Convolution"

bottom: "conv4_2"

top: "conv4_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu4_3"

type: "ReLU"

bottom: "conv4_3"

top: "conv4_3"

}

layer {

name: "pool4"

type: "Pooling"

bottom: "conv4_3"

top: "pool4"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "conv5_1"

type: "Convolution"

bottom: "pool4"

top: "conv5_1"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_1"

type: "ReLU"

bottom: "conv5_1"

top: "conv5_1"

}

layer {

name: "conv5_2"

type: "Convolution"

bottom: "conv5_1"

top: "conv5_2"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_2"

type: "ReLU"

bottom: "conv5_2"

top: "conv5_2"

}

layer {

name: "conv5_3"

type: "Convolution"

bottom: "conv5_2"

top: "conv5_3"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 512

pad: 1

kernel_size: 3

}

}

layer {

name: "relu5_3"

type: "ReLU"

bottom: "conv5_3"

top: "conv5_3"

}

layer {

name: "pool5"

type: "Pooling"

bottom: "conv5_3"

top: "pool5"

pooling_param {

pool: MAX

kernel_size: 2

stride: 2

}

}

layer {

name: "fc6"

type: "InnerProduct"

bottom: "pool5"

top: "fc6"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 4096

}

}

layer {

name: "relu6"

type: "ReLU"

bottom: "fc6"

top: "fc6"

}

layer {

name: "fc7"

type: "InnerProduct"

bottom: "fc6"

top: "fc7"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 4096

}

}

layer {

name: "relu7"

type: "ReLU"

bottom: "fc7"

top: "fc7"

}

layer {

name: "fc8"

type: "InnerProduct"

bottom: "fc7"

top: "fc8"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 256

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "relu8"

type: "ReLU"

bottom: "fc8"

top: "fc8"

}

# Spatial Cfg Subnet

layer {

name: "conv1_p"

type: "Convolution"

bottom: "posdata"

top: "conv1_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 96

kernel_size: 5

pad: 2

stride: 2

}

}

layer {

name: "relu1_p"

type: "ReLU"

bottom: "conv1_p"

top: "conv1_p"

}

layer {

name: "conv2_p"

type: "Convolution"

bottom: "conv1_p"

top: "conv2_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 128

kernel_size: 5

pad: 2

stride: 2

}

}

layer {

name: "conv3_p"

type: "Convolution"

bottom: "conv2_p"

top: "conv3_p"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

convolution_param {

num_output: 64

kernel_size: 8

}

}

layer {

name: "relu3_p"

type: "ReLU"

bottom: "conv3_p"

top: "conv3_p"

}

# Combine features from subnets

layer {

name: "concat1_c"

type: "Python"

bottom: "fc8"

bottom: "conv3_p"

top: "concat1_c"

python_param {

module: "customize_layers.concat_layer"

layer: "Layer"

}

}

layer {

name: "fc2_c"

type: "InnerProduct"

bottom: "concat1_c"

top: "fc2_c"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 128

}

}

layer {

name: "relu2_c"

type: "ReLU"

bottom: "fc2_c"

top: "fc2_c"

}

layer {

name: "PhiR_0"

type: "InnerProduct"

bottom: "fc2_c"

top: "q0r"

param {

lr_mult: 0

decay_mult: 0

}

param {

lr_mult: 0

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "Softmax_0"

type: "Softmax"

bottom: "q0r"

top: "qr0"

}

#DR-Net

layer {

name: "PhiA_1"

type: "InnerProduct"

bottom: "qa"

top: "qar1"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "PhiB_1"

type: "InnerProduct"

bottom: "qb"

top: "qbr1"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "PhiR_1"

type: "InnerProduct"

bottom: "qr0"

top: "q1r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

weight_filler {

type: "xavier"

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "QSum_1"

type: "Eltwise"

bottom: "qar1"

bottom: "qbr1"

bottom: "q1r"

top: "qr1un"

eltwise_param { operation: SUM }

}

layer {

name: "Softmax_1"

type: "Softmax"

bottom: "qr1un"

top: "qr1"

}

layer {

name: "PhiA_2"

type: "InnerProduct"

bottom: "qa"

top: "qar2"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_2"

type: "InnerProduct"

bottom: "qb"

top: "qbr2"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_2"

type: "InnerProduct"

bottom: "qr1"

top: "q2r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_2"

type: "Eltwise"

bottom: "qar2"

bottom: "qbr2"

bottom: "q2r"

top: "qr2un"

eltwise_param { operation: SUM }

}

layer {

name: "Softmax_2"

type: "Softmax"

bottom: "qr2un"

top: "qr2"

}

layer {

name: "PhiA_3"

type: "InnerProduct"

bottom: "qa"

top: "qar3"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_3"

type: "InnerProduct"

bottom: "qb"

top: "qbr3"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_3"

type: "InnerProduct"

bottom: "qr2"

top: "q3r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_3"

type: "Eltwise"

bottom: "qar3"

bottom: "qbr3"

bottom: "q3r"

top: "qr3un"

eltwise_param { operation: SUM }

}

layer {

name: "Softmax_3"

type: "Softmax"

bottom: "qr3un"

top: "qr3"

}

layer {

name: "PhiA_4"

type: "InnerProduct"

bottom: "qa"

top: "qar4"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_4"

type: "InnerProduct"

bottom: "qb"

top: "qbr4"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_4"

type: "InnerProduct"

bottom: "qr3"

top: "q4r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_4"

type: "Eltwise"

bottom: "qar4"

bottom: "qbr4"

bottom: "q4r"

top: "qr4un"

eltwise_param { operation: SUM }

}

layer {

name: "Softmax_4"

type: "Softmax"

bottom: "qr4un"

top: "qr4"

}

layer {

name: "PhiA_5"

type: "InnerProduct"

bottom: "qa"

top: "qar5"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_5"

type: "InnerProduct"

bottom: "qb"

top: "qbr5"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_5"

type: "InnerProduct"

bottom: "qr4"

top: "q5r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_5"

type: "Eltwise"

bottom: "qar5"

bottom: "qbr5"

bottom: "q5r"

top: "qr5un"

eltwise_param { operation: SUM }

}

layer {

name: "Softmax_5"

type: "Softmax"

bottom: "qr5un"

top: "qr5"

}

layer {

name: "PhiA_6"

type: "InnerProduct"

bottom: "qa"

top: "qar6"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_6"

type: "InnerProduct"

bottom: "qb"

top: "qbr6"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_6"

type: "InnerProduct"

bottom: "qr5"

top: "q6r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_6"

type: "Eltwise"

bottom: "qar6"

bottom: "qbr6"

bottom: "q6r"

top: "qr6un"

eltwise_param { operation: SUM }

}

layer {

name: "Softmax_6"

type: "Softmax"

bottom: "qr6un"

top: "qr6"

}

layer {

name: "PhiA_7"

type: "InnerProduct"

bottom: "qa"

top: "qar7"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiB_7"

type: "InnerProduct"

bottom: "qb"

top: "qbr7"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "PhiR_7"

type: "InnerProduct"

bottom: "qr6"

top: "q7r"

param {

lr_mult: 1

decay_mult: 1

}

param {

lr_mult: 2

decay_mult: 0

}

inner_product_param {

num_output: 70

}

}

layer {

name: "QSum_7"

type: "Eltwise"

bottom: "qar7"

bottom: "qbr7"

bottom: "q7r"

top: "qr7un"