Showing preview only (248K chars total). Download the full file or copy to clipboard to get everything.

Repository: elmoallistair/qwiklabs

Branch: master

Commit: 864109d1199b

Files: 82

Total size: 221.8 KB

Directory structure:

gitextract_6rdyb3hi/

├── CONTRIBUTING.md

├── labs/

│ ├── arc130_analyze-sentiment-with-natural-language-api-challenge-lab/

│ │ ├── code.gs

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp101_google-cloud-essential-skills-challenge-lab/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp1151_generative_ai_with_vertex_ai-prompt_design/

│ │ ├── intro_prompt_design.ipynb

│ │ └── readme.md

│ ├── gsp301_deploy-a-compute-instance-with-a-remote-startup-script/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp302_configure-a-firewall-and-a-startup-script-with-deployment-manager/

│ │ ├── guide.txt

│ │ ├── qwiklabs.jinja

│ │ ├── qwiklabs.yaml

│ │ └── readme.md

│ ├── gsp303_configure-secure-rdp-using-a-windows-bastion-host/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp304_build-and-deploy-a-docker-image-to-a-kubernetes/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp305_scale-out-and-update-a-containerized-application-on-a-kubernetes-cluster/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp306_migrate-a-mysql-database-to-google-cloud-sql/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp311_automate-interactions-with-contact-center-ai-challenge-lab/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp313_create-and-manage-cloud-resources/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp314_deploy-and-manage-cloud-environments-with-google-cloud-challenge-lab/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp315_perform-foundational-infrastructure-tasks-in-google-cloud/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp318_deploy-to-kubernetes-in-google-cloud-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp319_build-a-website-on-google-cloud-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp321_set-up-and-configure-a-cloud-environment-in-google-cloud-challenge-lab/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp322_build-and-secure-networks-in-google-cloud-challenge-lab/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp323_perform-foundation-data-ml-and-ai-task-challenge-lab/

│ │ ├── guide.txt

│ │ ├── lab.schema

│ │ ├── readme.md

│ │ └── request.json

│ ├── gsp324_explore-machine-learning-models-with-explainable-ai-challenge-lab/

│ │ ├── guide.txt

│ │ ├── readme.md

│ │ └── what-if-tool-challenge.ipynb

│ ├── gsp325_building-interactive-apps-with-google-assistant-challenge-lab/

│ │ ├── guide.txt

│ │ ├── main.py

│ │ ├── main_final.py

│ │ ├── readme.md

│ │ └── requirements.txt

│ ├── gsp327_enginner-data-in-google-cloud-challenge-lab/

│ │ ├── query.sql

│ │ └── readme.md

│ ├── gsp328_serverless-cloud-run-development-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp329_integrate-with-machine-learning-apis-challenge-lab/

│ │ ├── analyze-images.py

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp330_implement-devops-in-google-cloud-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp335_secure-workloads-in-google-kubernetes-engine-challenge-lab/

│ │ ├── guide.txt

│ │ └── readme.md

│ ├── gsp342_ensure-access-and-identity-in-google-cloud-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp343_optimize-costs-for-google-kubernetes-engine-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp344_serverless-firebase-development-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp345_automating-infrastructure-on-google-cloud-with-terraform-challenge-lab/

│ │ ├── readme.md

│ │ └── script.sh

│ ├── gsp361_build-lookml-objects-in-looker-challenge-lab/

│ │ ├── order_items.view

│ │ ├── part_1-3_training_ecommerce.model

│ │ ├── part_4_training_ecommerce.model

│ │ └── user_details.view

│ ├── gsp374_perform-predictive-data-analysis-in-bigquery-challenge-lab/

│ │ ├── query.sql

│ │ └── readme.md

│ ├── gsp388_monitor-and-log-with-google-cloud-operations-suite-challenge-lab/

│ │ ├── guide.txt

│ │ ├── readme.md

│ │ └── startup-script.sh

│ └── gsp787_insights-from-data-with-bigquery-challenge-lab/

│ ├── query.sql

│ └── readme.md

├── learning-resources.md

└── readme.md

================================================

FILE CONTENTS

================================================

================================================

FILE: CONTRIBUTING.md

================================================

# Contributing

## What you can contribute?

* Add new labs guide

* Improve/Fix/Update existing lab

* Add another lab resources

* Add another learning resources (README.md)

## Lab folder name format

`[lab_code]_lab-name`

example:

`gsp301_deploy-a-compute-instance-with-a-remote-startup-script`

Place the folder in `qwiklabs/labs/`folder in this repo

## Lab folder contents

* `readme.md` with:

* Lab name with Heading1 (`#`) style linked to qwiklabs lab url

* Lab overview

* Challenge scenario (if challenge lab)

* `guide.txt` or `script.sh` that contains step-by-step how to complete lab

* Other resources like notebook, script or file (Optional)

For example, have a look at this [folder](https://github.com/elmoallistair/qwiklabs/tree/master/labs/gsp325_building-interactive-apps-with-google-assistant-challenge-lab)

**Note**: This repo contains only challenge labs

================================================

FILE: labs/arc130_analyze-sentiment-with-natural-language-api-challenge-lab/code.gs

================================================

/**

* @OnlyCurrentDoc

*

* The above comment directs Apps Script to limit the scope of file

* access for this add-on. It specifies that this add-on will only

* attempt to read or modify the files in which the add-on is used,

* and not all of the user's files. The authorization request message

* presented to users will reflect this limited scope.

*/

/**

* Creates a menu entry in the Google Docs UI when the document is

* opened.

*

*/

function onOpen() {

var ui = DocumentApp.getUi();

ui.createMenu('Natural Language Tools')

.addItem('Mark Sentiment', 'markSentiment')

.addToUi();

}

/**

* Gets the user-selected text and highlights it based on sentiment

* with green for positive sentiment, red for negative, and yellow

* for neutral.

*

*/

function markSentiment() {

var POSITIVE_COLOR = '#00ff00'; // Colors for sentiments

var NEGATIVE_COLOR = '#ff0000';

var NEUTRAL_COLOR = '#ffff00';

var NEGATIVE_CUTOFF = -0.2; // Thresholds for sentiments

var POSITIVE_CUTOFF = 0.2;

var selection = DocumentApp.getActiveDocument().getSelection();

if (selection) {

var string = getSelectedText();

var sentiment = retrieveSentiment(string);

// Select the appropriate color

var color = NEUTRAL_COLOR;

if (sentiment <= NEGATIVE_CUTOFF) {

color = NEGATIVE_COLOR;

}

if (sentiment >= POSITIVE_CUTOFF) {

color = POSITIVE_COLOR;

}

// Highlight the text

var elements = selection.getSelectedElements();

for (var i = 0; i < elements.length; i++) {

if (elements[i].isPartial()) {

var element = elements[i].getElement().editAsText();

var startIndex = elements[i].getStartOffset();

var endIndex = elements[i].getEndOffsetInclusive();

element.setBackgroundColor(startIndex, endIndex, color);

} else {

var element = elements[i].getElement().editAsText();

foundText = elements[i].getElement().editAsText();

foundText.setBackgroundColor(color);

}

}

}

}

/**

* Returns a string with the contents of the selected text.

* If no text is selected, returns an empty string.

*/

function getSelectedText() {

var selection = DocumentApp.getActiveDocument().getSelection();

var string = "";

if (selection) {

var elements = selection.getSelectedElements();

for (var i = 0; i < elements.length; i++) {

if (elements[i].isPartial()) {

var element = elements[i].getElement().asText();

var startIndex = elements[i].getStartOffset();

var endIndex = elements[i].getEndOffsetInclusive() + 1;

var text = element.getText().substring(startIndex, endIndex);

string = string + text;

} else {

var element = elements[i].getElement();

// Only translate elements that can be edited as text; skip

// images and other non-text elements.

if (element.editAsText) {

string = string + element.asText().getText();

}

}

}

}

return string;

}

/** Given a string, will call the Natural Language API and retrieve

* the sentiment of the string. The sentiment will be a real

* number in the range -1 to 1, where -1 is highly negative

* sentiment and 1 is highly positive.

*/

function retrieveSentiment (line) {

var apiKey = "AIzaSyDjt9FLVPJbECuOxuU2Be4OYsQ0EC8RH8I";

var apiEndpoint = "https://language.googleapis.com/v1/documents:analyzeSentiment?key= + apiKey;

// Create a structure with the text, its language, its type,

// and its encoding

var docDetails = {

language: 'en-us',

type: 'PLAIN_TEXT',

content: line

};

var nlData = {

document: docDetails,

encodingType: 'UTF8'

};

// Package all of the options and the data together for the call

var nlOptions = {

method : 'post',

contentType: 'application/json',

payload : JSON.stringify(nlData)

};

// And make the call

var response = UrlFetchApp.fetch(apiEndpoint, nlOptions);

var data = JSON.parse(response);

var sentiment = 0.0;

// Ensure all pieces were in the returned value

if (data && data.documentSentiment

&& data.documentSentiment.score){

sentiment = data.documentSentiment.score;

}

return sentiment;

}

================================================

FILE: labs/arc130_analyze-sentiment-with-natural-language-api-challenge-lab/readme.md

================================================

# Analyze Sentiment with Natural Language API: Challenge Lab

## Challenge scenario

You recently joined an organization and are working as a junior cloud engineer as part of a team. You have been assigned machine learning (ML) projects and one of your client requirements is to use the Cloud Natural Language API service in Google Cloud to perform tasks for the completion of a project.

You are expected to have the skills and knowledge for the tasks that follow.

## Your challenge

For this challenge, you are asked to set up Google Docs and perform sentiment analysis on some reviews provided by customers, analyze syntax and parts of speech using the Natural language API, and create a Natural Language API request for a language other than English.

You need to:

- Create an API key.

- Set up Google Docs and call the Natural Language API.

- Analyze syntax and parts of speech with the Natural Language API.

- Perform multilingual natural language processing.

- For this challenge lab, a virtual machine (VM) instance named lab-vm has been configured for you to complete tasks 3 and 4.

Some standards you should follow:

- Ensure that any needed APIs (such as the Cloud Natural Language API) are successfully enabled.

================================================

FILE: labs/arc130_analyze-sentiment-with-natural-language-api-challenge-lab/script.sh

================================================

# Task 1. Create an API key

export API_KEY=AIzaSyDjt9FLVPJbECuOxuU2Be4OYsQ0EC8RH8I # your_generated_api_key

# Task 2. Set up Google Docs and call the Natural Language API

# Follow instruction written on lab

# Task 3. Analyze syntax and parts of speech with the Natural Language API

# Create a JSON file called analyze-request.json

cat > analyze-request.json <<EOF_END

{

"document":{

"type":"PLAIN_TEXT",

"content": "Google, headquartered in Mountain View, unveiled the new Android phone at the Consumer Electronic Show. Sundar Pichai said in his keynote that users love their new Android phones."

},

"encodingType": "UTF8"

}

EOF_END

# Pass the request and save it into file

curl -s -H "Content-Type: application/json" \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

"https://language.googleapis.com/v1/documents:analyzeSyntax" \

-d @analyze-request.json > analyze-response.txt

# Task 4. Perform multilingual natural language processing

# Create a JSON file called multi-nl-request.json

cat > multi-nl-request.json <<EOF_END

{

"document":{

"type":"PLAIN_TEXT",

"content":"Le bureau japonais de Google est situé à Roppongi Hills, Tokyo."

}

}

EOF_END

# Pass the request and save it into file

curl -s -H "Content-Type: application/json" \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

"https://language.googleapis.com/v1/documents:analyzeEntities" \

-d @multi-nl-request.json > multi-response.txt

================================================

FILE: labs/gsp101_google-cloud-essential-skills-challenge-lab/guide.txt

================================================

# Google Cloud Essential Skills: Challenge Lab

# https://www.qwiklabs.com/focuses/1734?parent=catalog

# Task 1: Create a Compute Engine instance, add necessary firewall rules.

- Navigation menu > Compute Engine > VM instances

- Create VM with name "apache" and enable "allow http traffic"

# Task 2: Configure Apache2 Web Server in your instance

- SSH into apache VM

- *in ssh*

- run: sudo apt-get update

- run: sudo apt install apache2

- exit ssh

# Task 3: Test your server

- Click apache VM External IP and verify Apache2 Debian Default Page showed up

================================================

FILE: labs/gsp101_google-cloud-essential-skills-challenge-lab/readme.md

================================================

# [Google Cloud Essential Skills: Challenge Lab](https://www.qwiklabs.com/focuses/1734?parent=catalog)

## Challenge scenario

Your company is ready to launch a brand new product! Because you are entering a totally new space, you have decided to deploy a new website as part of the product launch. The new site is complete, but the person who built the new site left the company before they could deploy it.

================================================

FILE: labs/gsp1151_generative_ai_with_vertex_ai-prompt_design/intro_prompt_design.ipynb

================================================

{

"cells": [

{

"cell_type": "code",

"execution_count": null,

"metadata": {

"id": "ur8xi4C7S06n"

},

"outputs": [],

"source": [

"# Copyright 2024 Google LLC\n",

"#\n",

"# Licensed under the Apache License, Version 2.0 (the \"License\");\n",

"# you may not use this file except in compliance with the License.\n",

"# You may obtain a copy of the License at\n",

"#\n",

"# https://www.apache.org/licenses/LICENSE-2.0\n",

"#\n",

"# Unless required by applicable law or agreed to in writing, software\n",

"# distributed under the License is distributed on an \"AS IS\" BASIS,\n",

"# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.\n",

"# See the License for the specific language governing permissions and\n",

"# limitations under the License."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "JAPoU8Sm5E6e"

},

"source": [

"# Prompt Design - Best Practices\n",

"\n",

"> **NOTE:** This notebook uses the PaLM generative model, which will reach its [discontinuation date in October 2024](https://cloud.google.com/vertex-ai/generative-ai/docs/model-reference/text#model_versions). Please refer to [this updated notebook](https://github.com/GoogleCloudPlatform/generative-ai/blob/main/gemini/prompts/intro_prompt_design.ipynb) for a version which uses the latest Gemini model.\n",

"\n",

"<table align=\"left\">\n",

" <td style=\"text-align: center\">\n",

" <a href=\"https://colab.research.google.com/github/GoogleCloudPlatform/generative-ai/blob/main/language/prompts/intro_prompt_design.ipynb\">\n",

" <img src=\"https://cloud.google.com/ml-engine/images/colab-logo-32px.png\" alt=\"Google Colaboratory logo\"><br> Run in Colab\n",

" </a>\n",

" </td>\n",

" <td style=\"text-align: center\">\n",

" <a href=\"https://github.com/GoogleCloudPlatform/generative-ai/blob/main/language/prompts/intro_prompt_design.ipynb\">\n",

" <img src=\"https://cloud.google.com/ml-engine/images/github-logo-32px.png\" alt=\"GitHub logo\"><br> View on GitHub\n",

" </a>\n",

" </td>\n",

" <td style=\"text-align: center\">\n",

" <a href=\"https://console.cloud.google.com/vertex-ai/workbench/deploy-notebook?download_url=https://raw.githubusercontent.com/GoogleCloudPlatform/generative-ai/main/language/prompts/intro_prompt_design.ipynb\">\n",

" <img src=\"https://lh3.googleusercontent.com/UiNooY4LUgW_oTvpsNhPpQzsstV5W8F7rYgxgGBD85cWJoLmrOzhVs_ksK_vgx40SHs7jCqkTkCk=e14-rj-sc0xffffff-h130-w32\" alt=\"Vertex AI logo\"><br> Open in Vertex AI Workbench\n",

" </a>\n",

" </td>\n",

"</table>\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"| | |\n",

"|-|-|\n",

"|Author(s) | [Polong Lin](https://github.com/polong-lin) |"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "tvgnzT1CKxrO"

},

"source": [

"## Overview\n",

"\n",

"This notebook covers the essentials of prompt engineering, including some best practices.\n",

"\n",

"Learn more about prompt design in the [official documentation](https://cloud.google.com/vertex-ai/docs/generative-ai/text/text-overview)."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "d975e698c9a4"

},

"source": [

"### Objective\n",

"\n",

"In this notebook, you learn best practices around prompt engineering -- how to design prompts to improve the quality of your responses.\n",

"\n",

"This notebook covers the following best practices for prompt engineering:\n",

"\n",

"- Be concise\n",

"- Be specific and well-defined\n",

"- Ask one task at a time\n",

"- Turn generative tasks into classification tasks\n",

"- Improve response quality by including examples"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "ea013f50403c"

},

"source": [

"### Costs\n",

"This tutorial uses billable components of Google Cloud:\n",

"\n",

"* Vertex AI Generative AI Studio\n",

"\n",

"Learn about [Vertex AI pricing](https://cloud.google.com/vertex-ai/pricing),\n",

"and use the [Pricing Calculator](https://cloud.google.com/products/calculator/)\n",

"to generate a cost estimate based on your projected usage."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "3e663cb43fa0"

},

"source": [

"### Install Vertex AI SDK"

]

},

{

"cell_type": "code",

"execution_count": 2,

"metadata": {

"id": "82ad0c445061",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"Note: you may need to restart the kernel to use updated packages.\n"

]

}

],

"source": [

"%pip install --upgrade google-cloud-aiplatform -q"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "cebd6983cbad"

},

"source": [

"**Note:** Kindly ignore the deprecation warnings and incompatibility errors related to pip dependencies.\n",

"\n",

"**Colab only:** Run the following cell to restart the kernel or use the button to restart the kernel. For **Vertex AI Workbench** you can restart the terminal using the button on top."

]

},

{

"cell_type": "code",

"execution_count": 3,

"metadata": {

"id": "bea801acf6b5",

"tags": []

},

"outputs": [

{

"data": {

"text/plain": [

"{'status': 'ok', 'restart': True}"

]

},

"execution_count": 3,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"# Automatically restart kernel after installs so that your environment can access the new packages\n",

"import IPython\n",

"\n",

"app = IPython.Application.instance()\n",

"app.kernel.do_shutdown(True)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "7a386d25fa8f"

},

"source": [

"### Authenticating your notebook environment\n",

"\n",

"- If you are using **Colab** to run this notebook, run the cell below and continue.\n",

"- If you are using **Vertex AI Workbench**, check out the setup instructions [here](https://github.com/GoogleCloudPlatform/generative-ai/tree/main/setup-env)."

]

},

{

"cell_type": "code",

"execution_count": 1,

"metadata": {

"id": "1bd1dca8e9a7",

"tags": []

},

"outputs": [],

"source": [

"import sys\n",

"\n",

"if \"google.colab\" in sys.modules:\n",

" from google.colab import auth\n",

"\n",

" auth.authenticate_user()"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"- If you are running this notebook in a local development environment:\n",

" - Install the [Google Cloud SDK](https://cloud.google.com/sdk).\n",

" - Obtain authentication credentials. Create local credentials by running the following command and following the oauth2 flow (read more about the command [here](https://cloud.google.com/sdk/gcloud/reference/beta/auth/application-default/login)):\n",

"\n",

" ```bash\n",

" gcloud auth application-default login\n",

" ```"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "960505627ddf"

},

"source": [

"### Import libraries"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "ue7q-YO3Scpp"

},

"source": [

"**Colab only:** Run the following cell to initialize the Vertex AI SDK. For Vertex AI Workbench, you don't need to run this."

]

},

{

"cell_type": "code",

"execution_count": 5,

"metadata": {

"id": "NGvWtLAyScpp",

"tags": []

},

"outputs": [],

"source": [

"import vertexai\n",

"\n",

"PROJECT_ID = \"qwiklabs-gcp-03-6dcee18ee273\" # @param {type:\"string\"}\n",

"REGION = \"us-central1\" # @param {type:\"string\"}\n",

"\n",

"vertexai.init(project=PROJECT_ID, location=REGION)"

]

},

{

"cell_type": "code",

"execution_count": 6,

"metadata": {

"id": "PyQmSRbKA8r-",

"tags": []

},

"outputs": [],

"source": [

"from vertexai.language_models import TextGenerationModel\n",

"from vertexai.language_models import ChatModel"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "UP76a2la7O-a"

},

"source": [

"### Load model"

]

},

{

"cell_type": "code",

"execution_count": 7,

"metadata": {

"id": "7isig7e07O-a",

"tags": []

},

"outputs": [

{

"name": "stderr",

"output_type": "stream",

"text": [

"WARNING: All log messages before absl::InitializeLog() is called are written to STDERR\n",

"I0000 00:00:1723731517.766789 12353 config.cc:230] gRPC experiments enabled: call_status_override_on_cancellation, event_engine_dns, event_engine_listener, http2_stats_fix, monitoring_experiment, pick_first_new, trace_record_callops, work_serializer_clears_time_cache\n"

]

}

],

"source": [

"generation_model = TextGenerationModel.from_pretrained(\"text-bison\")"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "fIPcn5dZ7O-b"

},

"source": [

"## Prompt engineering best practices"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "df7d153f4928"

},

"source": [

"Prompt engineering is all about how to design your prompts so that the response is what you were indeed hoping to see.\n",

"\n",

"The idea of using \"unfancy\" prompts is to minimize the noise in your prompt to reduce the possibility of the LLM misinterpreting the intent of the prompt. Below are a few guidelines on how to engineer \"unfancy\" prompts.\n",

"\n",

"In this section, you'll cover the following best practices when engineering prompts:\n",

"\n",

"* Be concise\n",

"* Be specific, and well-defined\n",

"* Ask one task at a time\n",

"* Improve response quality by including examples\n",

"* Turn generative tasks to classification tasks to improve safety"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "43c1169ac435"

},

"source": [

"### Be concise"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "d0f380f1620e"

},

"source": [

"🛑 Not recommended. The prompt below is unnecessarily verbose."

]

},

{

"cell_type": "code",

"execution_count": 8,

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "b6a1697c3603",

"outputId": "2f22ac3b-181c-4c8f-a7a3-82cd70e804fb",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" Here are some potential names for a flower shop that specializes in selling bouquets of dried flowers:\n",

"\n",

"- Everlasting Blooms\n",

"- Dried Flower Delights\n",

"- Nature's Treasures\n",

"- Rustic Florals\n",

"- Timeless Botanicals\n",

"- Floral Keepsakes\n",

"- Dried Flower Gallery\n",

"- Botanical Memories\n",

"- Forever Flowers\n",

"- Pressed Petals\n"

]

}

],

"source": [

"prompt = \"What do you think could be a good name for a flower shop that specializes in selling bouquets of dried flowers more than fresh flowers? Thank you!\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "2307f56a9b75"

},

"source": [

"✅ Recommended. The prompt below is to the point and concise."

]

},

{

"cell_type": "code",

"execution_count": 9,

"metadata": {

"id": "fc666404f47c",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" **The Everlasting Bloom**\n",

"**Dried Delights**\n",

"**Nature's Treasures**\n",

"**Rustic Blooms**\n",

"**Vintage Florals**\n",

"**Dried Floral Creations**\n",

"**Preserved Petals**\n",

"**Eternal Elegance**\n",

"**Botanical Beauties**\n",

"**Nature's Keepsakes**\n"

]

}

],

"source": [

"prompt = \"Suggest a name for a flower shop that sells bouquets of dried flowers\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "17f6c48bba91"

},

"source": [

"### Be specific, and well-defined"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "269b428e1563"

},

"source": [

"Suppose that you want to brainstorm creative ways to describe Earth."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "6436ee2ff406"

},

"source": [

"🛑 Not recommended. The prompt below is too generic."

]

},

{

"cell_type": "code",

"execution_count": 11,

"metadata": {

"id": "261b7f6e94c5",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" Earth is the third planet from the Sun and the only astronomical object known to harbor life. While larger than Mercury and Venus, Earth is smaller than Mars and the gas and ice giants of the outer Solar System. Earth is the only planet in our solar system not named after a Greek or Roman deity. Instead, its name comes from the Old English word \"erda,\" which means \"ground\" or \"soil.\"\n",

"\n",

"Earth is the only planet in the Solar System known to support life. It is the only planet with liquid water on its surface, and it has a breathable atmosphere. Earth is also the only planet with a magnetic field, which protects it from harmful solar radiation.\n",

"\n",

"Earth is a dynamic planet. Its climate is constantly changing, and its surface is constantly being reshaped by geological processes. Earth is also home to a wide variety of life forms, from microscopic bacteria to giant whales.\n",

"\n",

"Here are some of the key facts about Earth:\n",

"\n",

"* **Mass:** 5.972 × 10^24 kilograms\n",

"* **Volume:** 1.08321 × 10^12 cubic kilometers\n",

"* **Density:** 5,515 kilograms per cubic meter\n",

"* **Surface area:** 510\n"

]

}

],

"source": [

"prompt = \"Tell me about Earth\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "0bebfecd2912"

},

"source": [

"✅ Recommended. The prompt below is specific and well-defined."

]

},

{

"cell_type": "code",

"execution_count": null,

"metadata": {

"id": "242b1b3bae6e"

},

"outputs": [],

"source": [

"prompt = \"Generate a list of ways that makes Earth unique compared to other planets\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "20dca9a05eab"

},

"source": [

"### Ask one task at a time"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "f9019d443179"

},

"source": [

"🛑 Not recommended. The prompt below has two parts to the question that could be asked separately."

]

},

{

"cell_type": "code",

"execution_count": 12,

"metadata": {

"id": "70b3b5e5825d",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" **The best method of boiling water:**\n",

"\n",

"The best method of boiling water depends on the situation and the equipment available. Here are a few common methods:\n",

"\n",

"1. **Electric kettle:** An electric kettle is a convenient and efficient way to boil water. It is quick and easy to use, and it automatically turns off when the water reaches the desired temperature.\n",

"\n",

"2. **Stovetop kettle:** A stovetop kettle is a traditional method of boiling water. It is placed on a stove or cooktop and heated until the water reaches the desired temperature. Stovetop kettles can be made of various materials, such as stainless steel, aluminum, or copper.\n",

"\n",

"3. **Microwave:** A microwave can be used to boil water quickly and easily. Place a microwave-safe container filled with water in the microwave and heat it on high power for 2-3 minutes, or until the water reaches the desired temperature.\n",

"\n",

"4. **Campfire:** If you are outdoors and do not have access to electricity or a stove, you can boil water over a campfire. Fill a pot or kettle with water and place it over the fire. Keep an eye on the water and remove it from the fire once it reaches the desired temperature.\n",

"\n",

"**Why is the sky blue?\n"

]

}

],

"source": [

"prompt = \"What's the best method of boiling water and why is the sky blue?\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "7936fb58c16a"

},

"source": [

"✅ Recommended. The prompts below asks one task a time."

]

},

{

"cell_type": "code",

"execution_count": 13,

"metadata": {

"id": "2564dad6c8db",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" The best method of boiling water depends on the specific situation and available resources. Here are a few common methods:\n",

"\n",

"1. **Electric Kettle:**\n",

" - Electric kettles are designed specifically for boiling water and are very efficient.\n",

" - They heat water quickly and automatically turn off when the water reaches the boiling point.\n",

" - Electric kettles are convenient and safe to use.\n",

"\n",

"2. **Stovetop Kettle or Pot:**\n",

" - Traditional kettles or pots can be used to boil water on a stovetop.\n",

" - Fill the kettle or pot with water and place it on the stove over medium-high heat.\n",

" - Keep an eye on the water and remove it from the heat once it starts boiling.\n",

"\n",

"3. **Microwave:**\n",

" - Microwaves can be used to boil water quickly.\n",

" - Place a microwave-safe container filled with water in the microwave and heat it on high power for 2-3 minutes, depending on the amount of water.\n",

" - Be careful when handling the container as it will be hot.\n",

"\n",

"4. **Campfire or Outdoor Stove:**\n",

" - If you're outdoors, you can boil water over a campfire or using a portable outdoor stove.\n",

" -\n"

]

}

],

"source": [

"prompt = \"What's the best method of boiling water?\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "code",

"execution_count": 14,

"metadata": {

"id": "770c695ade92",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" The sky appears blue due to a phenomenon called Rayleigh scattering. This occurs when sunlight, which is composed of all colors of the visible spectrum, interacts with molecules in the Earth's atmosphere, primarily nitrogen and oxygen.\n",

"\n",

"When sunlight enters the atmosphere, it encounters these molecules and particles. The shorter wavelengths of light, such as blue and violet, are more likely to be scattered by these particles than the longer wavelengths, such as red and orange. This is because the shorter wavelengths have a higher frequency and interact more strongly with the molecules and particles in the atmosphere.\n",

"\n",

"As a result, the blue and violet light is scattered in all directions, creating the appearance of a blue sky. The other colors of the spectrum, such as red and orange, are less scattered and continue on their path towards the observer's eyes, contributing to the overall color of the sky.\n",

"\n",

"The amount of scattering depends on the wavelength of light and the density of the particles in the atmosphere. This is why the sky appears darker at night or during cloudy weather, as there are fewer particles to scatter the sunlight.\n",

"\n",

"Additionally, the position of the sun in the sky also affects the color of the sky. At sunrise and sunset, the sunlight has to travel through more of the atmosphere to reach our eyes\n"

]

}

],

"source": [

"prompt = \"Why is the sky blue?\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "ff606011aa86"

},

"source": [

"### Watch out for hallucinations"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "956ce45b06a7"

},

"source": [

"Although LLMs have been trained on a large amount of data, they can generate text containing statements not grounded in truth or reality; these responses from the LLM are often referred to as \"hallucinations\" due to their limited memorization capabilities. Note that simply prompting the LLM to provide a citation isn't a fix to this problem, as there are instances of LLMs providing false or inaccurate citations. Dealing with hallucinations is a fundamental challenge of LLMs and an ongoing research area, so it is important to be cognizant that LLMs may seem to give you confident, correct-sounding statements that are in fact incorrect. \n",

"\n",

"Note that if you intend to use LLMs for the creative use cases, hallucinating could actually be quite useful."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "0c9d5f66179a"

},

"source": [

"Try the prompt like the one below repeatedly. You may notice that sometimes it will confidently, but inaccurately, say \"The first elephant to visit the moon was Luna\"."

]

},

{

"cell_type": "code",

"execution_count": 15,

"metadata": {

"id": "d813b9061b08",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" No elephant has ever visited the moon.\n"

]

}

],

"source": [

"prompt = \"Who was the first elephant to visit the moon?\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Clearly the chatbot is hallucinating since no elephant has ever flown to the moon. But how do we prevent these kinds of inappropriate questions and more specifically, reduce hallucinations? \n",

"\n",

"There is one possible method called the Determine Appropriate Response (DARE) prompt, which cleverly uses the LLM itself to decide whether it should answer a question based on what its mission is.\n",

"\n",

"Let's see how it works by creating a chatbot for a travel website with a slight twist."

]

},

{

"cell_type": "code",

"execution_count": 17,

"metadata": {

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" Hello! I'm here to help you plan your next trip. Whether you're looking for a relaxing beach vacation or an adventurous city getaway, I can help you find the perfect destination and activities for your budget and interests.\n"

]

}

],

"source": [

"chat_model = ChatModel.from_pretrained(\"chat-bison@002\")\n",

"\n",

"chat = chat_model.start_chat()\n",

"dare_prompt = \"\"\"Remember that before you answer a question, you must check to see if it complies with your mission.\n",

"If not, you can say, Sorry I can't answer that question.\"\"\"\n",

"\n",

"print(\n",

" chat.send_message(\n",

" f\"\"\"\n",

"Hello! You are an AI chatbot for a travel web site.\n",

"Your mission is to provide helpful queries for travelers.\n",

"\n",

"{dare_prompt}\n",

"\"\"\"\n",

" ).text\n",

")"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Suppose we ask a simple question about one of Italy's most famous tourist spots."

]

},

{

"cell_type": "code",

"execution_count": 18,

"metadata": {

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" There are many great places for sightseeing in Milan, Italy. Some of the most popular include:\n",

"\n",

"* The Duomo: This stunning Gothic cathedral is one of the most iconic landmarks in Milan. It took nearly 600 years to complete, and its intricate details are truly breathtaking.\n",

"* The Galleria Vittorio Emanuele II: This beautiful shopping arcade is located in the heart of Milan. It's home to some of the most luxurious shops in the world, as well as several cafes and restaurants.\n",

"* The Sforza Castle: This historic castle was once the home of the ruling Sforza family. Today, it houses several museums, including the Pinacoteca di Brera, which features a collection of Renaissance and Baroque art.\n",

"* The Parco Sempione: This large park is located just outside the city center. It's a great place to relax and enjoy the outdoors. There are several playgrounds, a lake, and even a small zoo.\n",

"* The Navigli: This network of canals is a popular spot for locals and tourists alike. You can take a boat ride along the canals, or simply stroll along the banks and enjoy the scenery.\n"

]

}

],

"source": [

"prompt = \"What is the best place for sightseeing in Milan, Italy?\"\n",

"print(chat.send_message(prompt).text)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Now let us pretend to be a not-so-nice user and ask the chatbot a question that is unrelated to travel."

]

},

{

"cell_type": "code",

"execution_count": 19,

"metadata": {

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" Sorry, I can't answer that question. There have been no elephants on the moon.\n"

]

}

],

"source": [

"prompt = \"Who was the first elephant to visit the moon?\"\n",

"print(chat.send_message(prompt).text)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"You can see that the DARE prompt added a layer of guard rails that prevented the chatbot from veering off course."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "029e23abfd56"

},

"source": [

"### Turn generative tasks into classification tasks to reduce output variability"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "d943941d6e59"

},

"source": [

"#### Generative tasks lead to higher output variability"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "37528e6c9754"

},

"source": [

"The prompt below results in an open-ended response, useful for brainstorming, but response is highly variable."

]

},

{

"cell_type": "code",

"execution_count": 20,

"metadata": {

"id": "a8e2dc39e9ae",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" **Project: Build a Text-Based Adventure Game**\n",

"\n",

"**Overview:**\n",

"Create a text-based adventure game where the player navigates through different scenarios, interacts with characters, and solves puzzles to progress. This project will help you practice your programming skills, problem-solving abilities, and creativity.\n",

"\n",

"**Steps:**\n",

"\n",

"1. **Design the Game:**\n",

" - Plan the game's storyline, characters, and scenarios.\n",

" - Create a map or flowchart to visualize the game's structure.\n",

"\n",

"2. **Choose a Programming Language:**\n",

" - Select a programming language you're familiar with, such as Python or Java.\n",

"\n",

"3. **Implement the Game Logic:**\n",

" - Write code to handle player input, process commands, and update the game state.\n",

" - Use conditional statements and loops to control the game's flow.\n",

"\n",

"4. **Create the Game World:**\n",

" - Describe the different locations and scenarios in the game using text.\n",

" - Use descriptive language to immerse the player in the game world.\n",

"\n",

"5. **Add Characters and Interactions:**\n",

" - Introduce non-playable characters (NPCs) with unique personalities and dialogue.\n",

" - Allow players to interact with NPCs through text-based conversations.\n"

]

}

],

"source": [

"prompt = \"I'm a high school student. Recommend me a programming activity to improve my skills.\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "f71a6fa2b4bb"

},

"source": [

"#### Classification tasks reduces output variability"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "917517465dac"

},

"source": [

"The prompt below results in a choice and may be useful if you want the output to be easier to control."

]

},

{

"cell_type": "code",

"execution_count": 21,

"metadata": {

"id": "3feb93d9df81",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" As a high school student, I would suggest learning Python. Here are a few reasons why:\n",

"\n",

"**1. Versatility:** Python is a general-purpose programming language, which means it can be used for a wide variety of tasks, including web development, data science, machine learning, and artificial intelligence. This versatility makes it a valuable skill to have in today's job market.\n",

"\n",

"**2. Popularity:** Python is one of the most popular programming languages in the world, and its popularity is only growing. This means there are many resources available to help you learn Python, including online tutorials, courses, and books.\n",

"\n",

"**3. Ease of learning:** Python is known for being relatively easy to learn, even for beginners. Its syntax is simple and straightforward, and it has a large standard library that provides many built-in functions and modules.\n",

"\n",

"**4. Community support:** Python has a large and active community of developers who are willing to help beginners. There are many online forums and communities where you can ask questions and get help with your Python code.\n",

"\n",

"**5. Career opportunities:** Python is in high demand in the tech industry, and many jobs require Python skills. Learning Python can open up a wide range of career opportunities for you in the future.\n"

]

}

],

"source": [

"prompt = \"\"\"I'm a high school student. Which of these activities do you suggest and why:\n",

"a) learn Python\n",

"b) learn JavaScript\n",

"c) learn Fortran\n",

"\"\"\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "32290ac9fb2b"

},

"source": [

"### Improve response quality by including examples"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "132834f5db2c"

},

"source": [

"Another way to improve response quality is to add examples in your prompt. The LLM learns in-context from the examples on how to respond. Typically, one to five examples (shots) are enough to improve the quality of responses. Including too many examples can cause the model to over-fit the data and reduce the quality of responses.\n",

"\n",

"Similar to classical model training, the quality and distribution of the examples is very important. Pick examples that are representative of the scenarios that you need the model to learn, and keep the distribution of the examples (e.g. number of examples per class in the case of classification) aligned with your actual distribution."

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "46520d938b6a"

},

"source": [

"#### Zero-shot prompt"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "46d3b47e6cea"

},

"source": [

"Below is an example of zero-shot prompting, where you don't provide any examples to the LLM within the prompt itself."

]

},

{

"cell_type": "code",

"execution_count": 22,

"metadata": {

"id": "2cbe03eb0b71",

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

" positive\n"

]

}

],

"source": [

"prompt = \"\"\"Decide whether a Tweet's sentiment is positive, neutral, or negative.\n",

"\n",

"Tweet: I loved the new YouTube video you made!\n",

"Sentiment:\n",

"\"\"\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "b0daabca1359"

},

"source": [

"#### One-shot prompt"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "42c4652fc5c2"

},

"source": [

"Below is an example of one-shot prompting, where you provide one example to the LLM within the prompt to give some guidance on what type of response you want."

]

},

{

"cell_type": "code",

"execution_count": null,

"metadata": {

"id": "cfe584860787"

},

"outputs": [],

"source": [

"prompt = \"\"\"Decide whether a Tweet's sentiment is positive, neutral, or negative.\n",

"\n",

"Tweet: I loved the new YouTube video you made!\n",

"Sentiment: positive\n",

"\n",

"Tweet: That was awful. Super boring 😠\n",

"Sentiment:\n",

"\"\"\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "ef58c35005c0"

},

"source": [

"#### Few-shot prompt"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "b630e8947b60"

},

"source": [

"Below is an example of few-shot prompting, where you provide a few examples to the LLM within the prompt to give some guidance on what type of response you want."

]

},

{

"cell_type": "code",

"execution_count": null,

"metadata": {

"id": "fb3ba21bbd11"

},

"outputs": [],

"source": [

"prompt = \"\"\"Decide whether a Tweet's sentiment is positive, neutral, or negative.\n",

"\n",

"Tweet: I loved the new YouTube video you made!\n",

"Sentiment: positive\n",

"\n",

"Tweet: That was awful. Super boring 😠\n",

"Sentiment: negative\n",

"\n",

"Tweet: Something surprised me about this video - it was actually original. It was not the same old recycled stuff that I always see. Watch it - you will not regret it.\n",

"Sentiment:\n",

"\"\"\"\n",

"\n",

"print(generation_model.predict(prompt=prompt, max_output_tokens=256).text)"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "a4023be726eb"

},

"source": [

"#### Choosing between zero-shot, one-shot, few-shot prompting methods"

]

},

{

"cell_type": "markdown",

"metadata": {

"id": "6d7870ff75cc"

},

"source": [

"Which prompt technique to use will solely depends on your goal. The zero-shot prompts are more open-ended and can give you creative answers, while one-shot and few-shot prompts teach the model how to behave so you can get more predictable answers that are consistent with the examples provided."

]

}

],

"metadata": {

"colab": {

"name": "intro_prompt_design.ipynb",

"toc_visible": true

},

"environment": {

"kernel": "python3",

"name": "tf2-cpu.2-16.m124",

"type": "gcloud",

"uri": "us-docker.pkg.dev/deeplearning-platform-release/gcr.io/tf2-cpu.2-16:m124"

},

"kernelspec": {

"display_name": "Python 3 (Local)",

"language": "python",

"name": "python3"

},

"language_info": {

"codemirror_mode": {

"name": "ipython",

"version": 3

},

"file_extension": ".py",

"mimetype": "text/x-python",

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

"version": "3.10.14"

}

},

"nbformat": 4,

"nbformat_minor": 4

}

================================================

FILE: labs/gsp1151_generative_ai_with_vertex_ai-prompt_design/readme.md

================================================

### Generative AI with Vertex AI: Prompt Design

The Vertex AI SDK for text enables you to structure prompts however you like. You can add contextual information, instructions, examples, questions, lists, and any other types of text content that you can think of.

In this lab, you will learn about prompt design and various text generation use cases using the Vertex AI SDK.

#### What you will learn:

How to get started with prompt engineering with the Vertex AI SDK:

- Best practices

- Zero-, one- and few-shot prompting

How to explore some text generation use cases with the Vertex AI SDK:

- Ideation

- Q&A

- Text classification

- Text extraction

- Text summarization

================================================

FILE: labs/gsp301_deploy-a-compute-instance-with-a-remote-startup-script/readme.md

================================================

# [Deploy a Compute Instance with a Remote Startup Script](https://www.qwiklabs.com/focuses/1735?parent=catalog)

## Topics tested

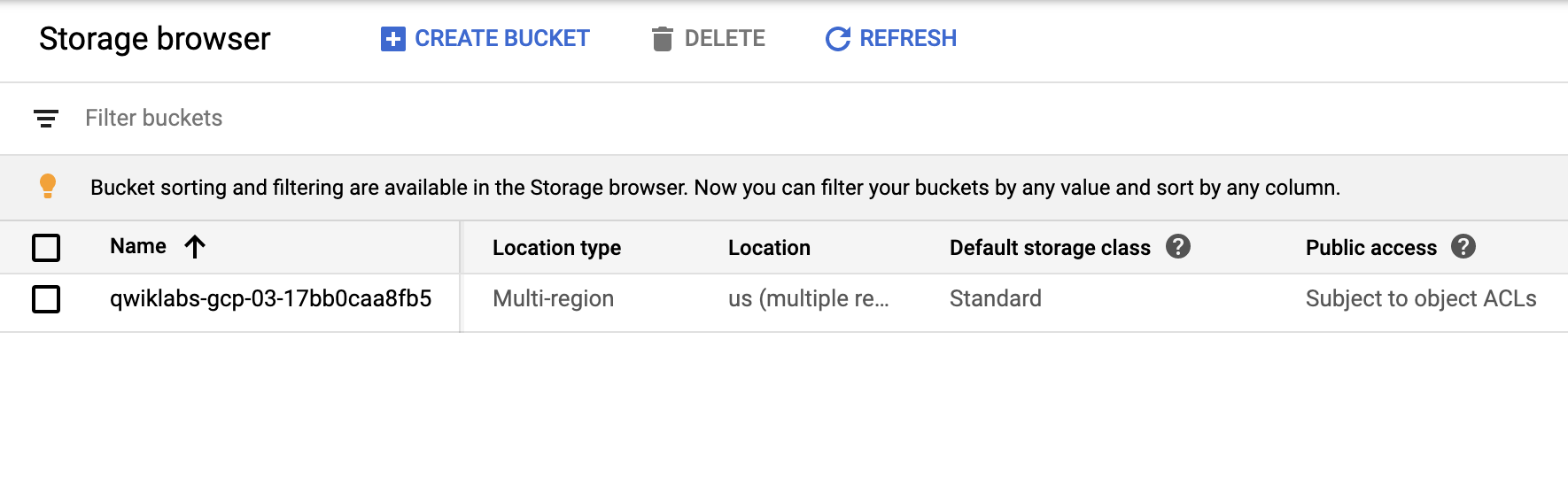

* Create a storage bucket for startup scripts.

* Create a virtual machine that runs a startup script from Cloud Storage.

* Configure HTTP access for the virtual machine.

* Deploy an application on an instance.

## Challenge scenario

You have been given the responsibility of managing the configuration of your organization's Google Cloud virtual machines. You have decided to make some changes to the framework used for managing the deployment and configuration machines - you want to make it easier to modify the startup scripts used to initialize a number of the compute instances. Instead of storing startup scripts directly in the instances' metadata, you have decided to store the scripts in a Cloud Storage bucket and then configure the virtual machines to point to the relevant script file in the bucket.

A basic bash script that installs the Apache web server software called install-web.sh has been provided for you as a sample startup script. You can download this from the Student Resources links on the left side of the page.

================================================

FILE: labs/gsp301_deploy-a-compute-instance-with-a-remote-startup-script/script.sh

================================================

# Deploy a Compute Instance with a Remote Startup Script

# https://google.qwiklabs.com/focuses/1735?parent=catalog

# Task 1: Confirm that a Google Cloud Storage bucket exists that contains a file

gsutil mb gs://$DEVSHELL_PROJECT_ID

gsutil cp gs://sureskills-ql/challenge-labs/ch01-startup-script/install-web.sh gs://$DEVSHELL_PROJECT_ID

# Task 2: Confirm that a compute instance has been created that has a remote startup script called install-web.sh configured

gcloud compute instances create example-instance --zone=us-central1-a --tags=http-server --metadata startup-script-url=gs://$DEVSHELL_PROJECT_ID/install-web.sh

# Task 3: Confirm that a HTTP access firewall rule exists with tag that applies to that virtual machine

gcloud compute firewall-rules create allow-http --target-tags http-server --source-ranges 0.0.0.0/0 --allow tcp:80

# Task 4: Connect to the server ip-address using HTTP and get a non-error response

# After firewall creation (Task 3) just wait and then check the score

================================================

FILE: labs/gsp302_configure-a-firewall-and-a-startup-script-with-deployment-manager/guide.txt

================================================

# Configure a Firewall and a Startup Script with Deployment Manager

# https://qwiklabs.com/focuses/1736?parent=catalog

# Open the Cloud Shell, run:

mkdir deployment_manager

cd deployment_manager

gsutil cp gs://spls/gsp302/* .

# use nano or open editor to edit qwiklabs.jinja and qwiklabs.yaml

# check the in this repo folder for reference

# back to cloud shell, run:

gcloud deployment-manager deployments create vm-test --config=qwiklabs.yaml

================================================

FILE: labs/gsp302_configure-a-firewall-and-a-startup-script-with-deployment-manager/qwiklabs.jinja

================================================

resources:

- type: compute.v1.instance

name: vm-{{ env["deployment"] }}

properties:

zone: {{ properties["zone"] }}

machineType: https://www.googleapis.com/compute/v1/projects/{{ env["project"] }}/zones/{{ properties["zone"] }}/machineTypes/f1-micro

tags:

items:

- 'http'

metadata:

items:

# For more ways to use startup scripts on an instance, see:

# https://cloud.google.com/compute/docs/startupscript

- key: startup-script

value: |

#!/bin/bash

apt-get update

apt-get install -y apache2

disks:

- deviceName: boot

type: PERSISTENT

boot: true

autoDelete: true

initializeParams:

diskName: disk-{{ env["deployment"] }}

sourceImage: https://www.googleapis.com/compute/v1/projects/debian-cloud/global/images/family/debian-9

networkInterfaces:

- network: https://www.googleapis.com/compute/v1/projects/{{ env["project"] }}/global/networks/default

# Access Config required to give the instance a public IP address

accessConfigs:

- name: External NAT

type: ONE_TO_ONE_NAT

- type: compute.v1.firewall

name: {{ env["project"] }}-allow-http

properties:

network: https://www.googleapis.com/compute/v1/projects/{{ env["project"] }}/global/networks/default

sourceRanges: [0.0.0.0/0]

targetTags: ['http']

allowed:

- IPProtocol: tcp

ports: ['80']

================================================

FILE: labs/gsp302_configure-a-firewall-and-a-startup-script-with-deployment-manager/qwiklabs.yaml

================================================

imports:

- path: qwiklabs.jinja

resources:

- name: qwiklabs

type: qwiklabs.jinja

properties:

zone: us-central1-a

================================================

FILE: labs/gsp302_configure-a-firewall-and-a-startup-script-with-deployment-manager/readme.md

================================================

# [Configure a Firewall and a Startup Script with Deployment Manager](https://www.qwiklabs.com/focuses/1736?parent=catalog)

## Topics tested

* Configure a deployment template to include a startup script

* Configure a deployment template to add a firewall rule allowing http traffic

* Configure a deployment template to add a networking tag to a compute instance

* Deploy a configuration using Deployment Manager

## Challenge scenario

Your company is ready to launch a brand new product and you have been asked to develop a Deployment Manager template to deploy and configure the Google Cloud environment that is required to support this product. To start off, you've been given an existing basic deployment manager template that just deploys a single compute instance.

================================================

FILE: labs/gsp303_configure-secure-rdp-using-a-windows-bastion-host/readme.md

================================================

# [Configure Secure RDP using a Windows Bastion Host](https://www.qwiklabs.com/focuses/1737?parent=catalog)

## Topics tested

* Create a new VPC to host secure production Windows services.

* Create a Windows host connected to a subnet in the new VPC with an internal only network interface.

* Create a Windows bastion host (jump box) in with an externally accessible network interface.

* Configure firewalls rules to enable management of the secure Windows host from the Internet using the bastion host as a jump box.

## Challenge scenario

Your company has decided to deploy new application services in the cloud and your assignment is developing a secure framework for managing the Windows services that will be deployed. You will need to create a new VPC network environment for the secure production Windows servers.

Production servers must initially be completely isolated from external networks and cannot be directly accessible from, or be able to connect directly to, the internet. In order to configure and manage your first server in this environment, you will also need to deploy a bastion host, or jump box, that can be accessed from the internet using the Microsoft Remote Desktop Protocol (RDP). The bastion host should only be accessible via RDP from the internet, and should only be able to communicate with the other compute instances inside the VPC network using RDP.

Your company also has a monitoring system running from the default VPC network, so all compute instances must have a second network interface with an internal only connection to the default VPC network.

================================================

FILE: labs/gsp303_configure-secure-rdp-using-a-windows-bastion-host/script.sh

================================================

# Configure Secure RDP using a Windows Bastion Host

# https://www.qwiklabs.com/focuses/1737?parent=catalog

# Task 1 : A new non-default VPC has been created

gcloud compute networks create securenetwork --subnet-mode=custom

# Task 2 : The new VPC contains a new non-default subnet within it

gcloud compute networks subnets create securenetwork --network=securenetwork --region=us-central1 --range=192.168.1.0/24

# Task 3 : A firewall rule exists that allows TCP port 3389 traffic ( for RDP )

gcloud compute firewall-rules create myfirewalls --network securenetwork --allow=tcp:3389 --target-tags=rdp

# Task 4 : A Windows compute instance called vm-bastionhost exists that has a public ip-address to which the TCP port 3389 firewall rule applies.

gcloud compute instances create vm-bastionhost --zone=us-central1-a --machine-type=n1-standard-2 --subnet=securenetwork --network-tier=PREMIUM --maintenance-policy=MIGRATE --scopes=https://www.googleapis.com/auth/devstorage.read_only,https://www.googleapis.com/auth/logging.write,https://www.googleapis.com/auth/monitoring.write,https://www.googleapis.com/auth/servicecontrol,https://www.googleapis.com/auth/service.management.readonly,https://www.googleapis.com/auth/trace.append --tags=rdp --image=windows-server-2016-dc-v20200211 --image-project=windows-cloud --boot-disk-size=50GB --boot-disk-type=pd-standard --boot-disk-device-name=vm-bastionhost --reservation-affinity=any

# Task 5 : A Windows compute instance called vm-securehost exists that does not have a public ip-address

gcloud compute instances create vm-securehost --zone=us-central1-a --machine-type=n1-standard-2 --subnet=securenetwork --no-address --maintenance-policy=MIGRATE --scopes=https://www.googleapis.com/auth/devstorage.read_only,https://www.googleapis.com/auth/logging.write,https://www.googleapis.com/auth/monitoring.write,https://www.googleapis.com/auth/servicecontrol,https://www.googleapis.com/auth/service.management.readonly,https://www.googleapis.com/auth/trace.append --tags=rdp --image=windows-server-2016-dc-v20200211 --image-project=windows-cloud --boot-disk-size=50GB --boot-disk-type=pd-standard --boot-disk-device-name=vm-securehost --reservation-affinity=any

# Task 6 : The vm-securehost is running Microsoft IIS web server software.

gcloud compute reset-windows-password vm-bastionhost --user app_admin --zone us-central1-a (choose Y and copy the password)

gcloud compute reset-windows-password vm-securehost --user app_admin --zone us-central1-a (choose Y and copy the password)

# Install Chrome RDP for Google Cloud Platform (https://chrome.google.com/webstore/detail/chrome-rdp-for-google-clo/mpbbnannobiobpnfblimoapbephgifkm)

# Go to Compute Engine > VM instances

# Click RDP on vm-bastionhost, fill username with app_admin and password with your copied vm-bastionhost's password

# Click Search, search for Remote Desktop Connection and run it

# Copy and paste the internal ip from vm-securehost, click Connect

# Fill username with app_admin and password with your copied vm-securehost's password

# Click Search, type Powershell, right click and Run as Administrator

# Run: Install-WindowsFeature -name Web-Server -IncludeManagementTools

================================================

FILE: labs/gsp304_build-and-deploy-a-docker-image-to-a-kubernetes/readme.md

================================================

# [Build and Deploy a Docker Image to a Kubernetes Cluster](https://www.qwiklabs.com/focuses/1738?parent=catalog)

## Topics tested

* Build and tag a Docker Image of a sample application

* Push the tagged image to Container Registry

* Create a Kubernetes cluster

* Deploy the application to the Kubernetes cluster

## Challenge scenario

Your development team is interested in adopting a containerized microservices approach to application architecture. You need to test a sample application they have provided for you to make sure that that it can be deployed to a Google Kubernetes container. The development group provided a simple Go application called echo-web with a Dockerfile and the associated context that allows you to build a Docker image immediately.

================================================

FILE: labs/gsp304_build-and-deploy-a-docker-image-to-a-kubernetes/script.sh

================================================

# Build and Deploy a Docker Image to a Kubernetes Cluster

# https://www.qwiklabs.com/focuses/1738?parent=catalog

# Task 1 : An application image with a v1 tag has been pushed to the gcr.io repository

mkdir echo-web && cd echo-web

gsutil cp -r gs://$DEVSHELL_PROJECT_ID/echo-web.tar.gz .

tar -xzf echo-web.tar.gz

rm echo-web.tar.gz

cd echo-web

docker build -t echo-app:v1 .

docker tag echo-app:v1 gcr.io/$DEVSHELL_PROJECT_ID/echo-app:v1

docker push gcr.io/$DEVSHELL_PROJECT_ID/echo-app:v1

# Task 2 : A new Kubernetes cluster exists (zone: us-central1-a)

gcloud config set compute/zone us-central1-a

gcloud container clusters create echo-cluster --num-nodes=2 --machine-type=n1-standard-2

# Task 3 : Check that an application has been deployed to the cluster

kubectl create deployment echo-web --image=gcr.io/$DEVSHELL_PROJECT_ID/echo-app:v1

# Task 4 : Test that a service exists that responds to requests like Echo-app

kubectl expose deployment echo-web --type=LoadBalancer --port 80 --target-port 8000

================================================

FILE: labs/gsp305_scale-out-and-update-a-containerized-application-on-a-kubernetes-cluster/readme.md

================================================

# [Scale Out and Update a Containerized Application on a Kubernetes Cluster](https://www.qwiklabs.com/focuses/1739?parent=catalog)

## Topics tested

* Update a docker application and push a new version to a container repository.

* Deploy the updated application version to a Kubernetes cluster.

* Scale out the application so that it is running 2 replicas.

## Challenge scenario

You are taking over ownership of a test environment and have been given an updated version of a containerized test application to deploy. Your systems' architecture team has started adopting a containerized microservice architecture. You are responsible for managing the containerized test web applications. You will first deploy the initial version of a test application, called echo-app to a Kubernetes cluster called echo-cluster in a deployment called echo-web.

Before you get started, open the navigation menu and select Storage. The last steps in the Deployment Manager script used to set up your environment creates a bucket.

Refresh the Storage browser until you see your bucket. You can move on once your Console resembles the following:

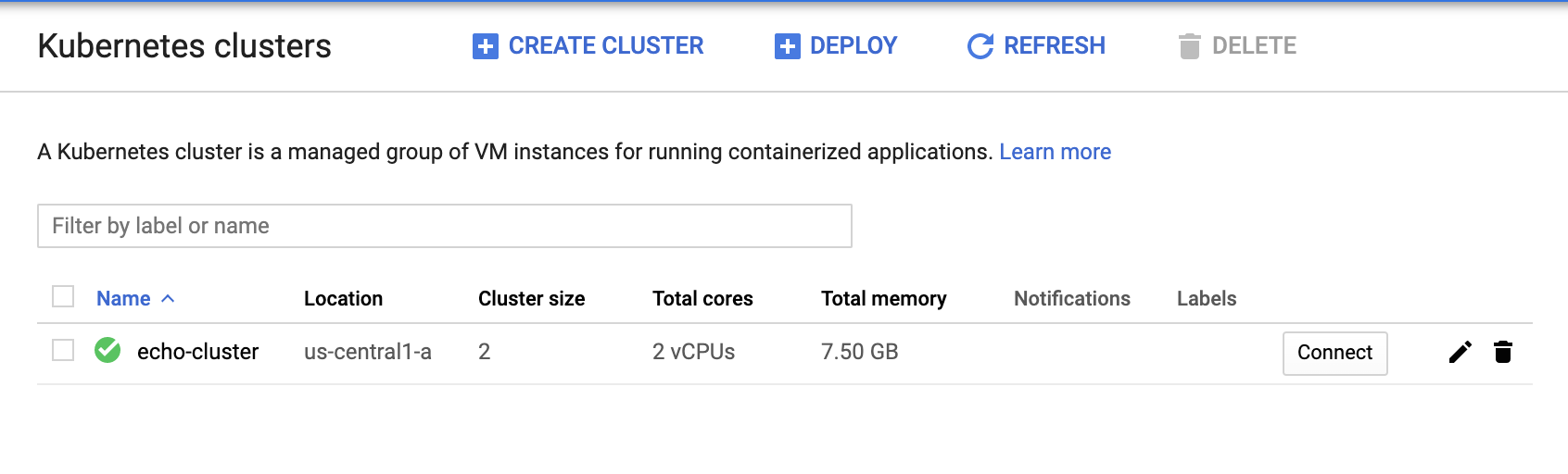

Check to make sure your GKE cluster has been created before continuing. Open the navigation menu and select Kuberntes Engine > Clusters.

Continue when you see a green checkmark next to echo-cluster:

To deploy your first version of the application, run the following commands in Cloud Shell to get up and running:

`gcloud container clusters get-credentials echo-cluster --zone=us-central1-a`

`kubectl create deployment echo-web --image=gcr.io/qwiklabs-resources/echo-app:v1`

`kubectl expose deployment echo-web --type=LoadBalancer --port 80 --target-port 8000`

================================================

FILE: labs/gsp305_scale-out-and-update-a-containerized-application-on-a-kubernetes-cluster/script.sh

================================================

# Scale Out and Update a Containerized Application on a Kubernetes Cluster

# https://www.qwiklabs.com/focuses/1739?parent=catalog

# Task 1 : Check that there is a tagged image in gcr.io for echo-app:v2

mkdir echo-web && cd echo-web

gsutil cp -r gs://$DEVSHELL_PROJECT_ID/echo-web-v2.tar.gz .

tar -xzf echo-web-v2.tar.gz

rm echo-web-v2.tar.gz

docker build -t echo-app:v2 .

docker tag echo-app:v2 gcr.io/$DEVSHELL_PROJECT_ID/echo-app:v2

docker push gcr.io/$DEVSHELL_PROJECT_ID/echo-app:v2

# Task 2 : Echo-app:v2 is running on the Kubernetes cluster

gcloud container clusters get-credentials echo-cluster --zone=us-central1-a

kubectl create deployment echo-web --image=gcr.io/qwiklabs-resources/echo-app:v2

# Task 3 : The Kubernetes cluster deployment reports 2 replicas.

kubectl scale deployment echo-web --replicas=2

# Task 4 : The application must respond to web requests with V2.0.0

kubectl expose deployment echo-web --type=LoadBalancer --port 80 --target-port 8000

================================================

FILE: labs/gsp306_migrate-a-mysql-database-to-google-cloud-sql/guide.txt

================================================

# Migrate a MySQL Database to Google Cloud SQL

# https://www.qwiklabs.com/focuses/1740?parent=catalog

# Task 1: Check that there is a Cloud SQL instance

- Go to SQL -> Create Instance -> MySQL -> fill the name with "lab" and fill the password

It will take a several times to create the instance, you can go to Task 2 without waiting here

# Task 2: Check that there is a user database on the Cloud SQL instance

- Go to Compute Engine, click SSH button on "blog" instance

- run mysqldump --databases wordpress -h localhost -u blogadmin -p --hex-blob --skip-triggers --single-transaction --default-character-set=utf8mb4 > wordpress.sql

- Enter the password with Password1*

- run export PROJECT_ID=$(gcloud info --format='value(config.project)')

- run gsutil mb gs://${PROJECT_ID}

- run gsutil cp ~/wordpress.sql gs://${PROJECT_ID}

- Back to Cloud Console -> SQL -> lab -> Databases -> Create Database

- Fill the database name with wordpress and on character set, choose utf8mb4 then click Create

- Click Overview -> Import -> Browse -> Select wordpress.sql from your bucket -> Select -> Import

- Check Your Progress

# Task 3: Check that the blog instance is authorized to access Cloud SQL

- On left panel, click Users -> Add User Account

- Fill the name field with blogadmin and password field with Password1* -> add

- On the left pannel, Click Connections

- Click Add Network Under the Authorized networks

- Fill the name with blog

- Fill the Network with IP Address from Demo Blog Site Field, Change the latest part of the IP address with 0 and add /24 ( example: If IP = 34.123.155.123, fill with 34.123.155.0/24 )

- Check Your Progress

# Task 4: Check that wp-config.php points to the Cloud SQL instance

- Go to SQL -> Copy the Public IP Address from lab SQL instance

- Go to VM Instances click SSH Shell at "blog"

- run cd /var/www/html/wordpress/

- run sudo nano wp-config.php

- Change localhost string on DB_HOST with Public IP Address of SQL Instance that has copied before

- Check Your Progress

# Task 5: Check that the blog still responds to requests

- Now You can open your Demo Blog Site in the new tab and verify that no error

- Check Your Progress

================================================

FILE: labs/gsp306_migrate-a-mysql-database-to-google-cloud-sql/readme.md

================================================

# [Migrate a MySQL Database to Google Cloud SQL](https://www.qwiklabs.com/focuses/1740?parent=catalog)

## Topics tested

* Create a Google Cloud SQL instance and create a database

* Import a MySQL database into Cloud SQL

* Reconfigure an application to use Cloud SQL instead of a local MySQL database

## Challenge Scenario

Your WordPress blog is running on a server that is no longer suitable. As the first part of a complete migration exercise, you are migrating the locally hosted database used by the blog to Cloud SQL.

The existing WordPress installation is installed in the `/var/www/html/wordpress` directory in the instance called `blog` that is already running in the lab. You can access the blog by opening a web browser and pointing to the external IP address of the blog instance.

The existing database for the blog is provided by MySQL running on the same server. The existing MySQL database is called `wordpress` and the user called __blogadmin__ with password __Password1*__ , which provides full access to that database.

================================================

FILE: labs/gsp311_automate-interactions-with-contact-center-ai-challenge-lab/guide.txt

================================================

# Automate Interactions with Contact Center AI: Challenge Lab

# https://www.qwiklabs.com/focuses/12008?parent=catalog

# Setup

git clone https://github.com/GoogleCloudPlatform/dataflow-contact-center-speech-analysis.git

# Task 1: Create a Regional Cloud Storage bucket

- Go to Storage > Bucket > Create bucket

- Use your project id as name

- LOCATION MUST BE IN us-central1

# Task 2: Create a Cloud Function

- Go to Cloud Function > Create Function

- name: use default

- Trigger: Cloud Storage

- Event Type: Finalize/Create

- Bucket: <your_bucket_name>

- Runtime: Node.js 8

- index.js: use this code https://github.com/GoogleCloudPlatform/dataflow-contact-center-speech-analysis/blob/master/saf-longrun-job-func/index.js

- package.json: use this code https://github.com/GoogleCloudPlatform/dataflow-contact-center-speech-analysis/blob/master/saf-longrun-job-func/package.json

- Entry point: safLongRunJobFunc

- Click Environment Variables, Networking, Timeouts and more. Make sure region is us-central1

- DEPLOY

# Task 3: Create a BigQuery dataset

- Go to BigQuery > Create dataset with Name: lab

# Task 4: Create a Pub/Sub topic

- Go to Pub/Sub > Topics > Create Topic with Topic ID: speech2text

# Task 5: Create a Regional Cloud Storage bucket with DFaudio folder

- Back to your cloud storage, click your bucket and create a folder called DFaudio

# Task 6: Deploy Dataflow pipeline

- Open cloud shell, run:

# make sure you already clone the repo in # Setup

cd dataflow-contact-center-speech-analysis/saf-longrun-job-dataflow

python -m virtualenv env -p python3

source env/bin/activate

pip install apache-beam[gcp]

pip install dateparser