Repository: eval-sys/mcpmark

Branch: main

Commit: adc5e6558f05

Files: 670

Total size: 3.1 MB

Directory structure:

gitextract_5znolca_/

├── .dockerignore

├── .editorconfig

├── .gitattributes

├── .github/

│ ├── ISSUE_TEMPLATE/

│ │ ├── 1_bug_report.yml

│ │ ├── 2_feature_request.yml

│ │ └── config.yml

│ ├── PULL_REQUEST_TEMPLATE.md

│ ├── scripts/

│ │ └── pr-comment.js

│ └── workflows/

│ └── publish-docker-image.yml

├── .gitignore

├── CHANGELOG.md

├── Dockerfile

├── LICENSE

├── README.md

├── build-docker.sh

├── cspell.config.yaml

├── docs/

│ ├── contributing/

│ │ └── make-contribution.md

│ ├── datasets/

│ │ └── task.md

│ ├── installation_and_docker_usage.md

│ ├── introduction.md

│ ├── mcp/

│ │ ├── filesystem.md

│ │ ├── github.md

│ │ ├── notion.md

│ │ ├── playwright.md

│ │ └── postgres.md

│ └── quickstart.md

├── pipeline.py

├── pyproject.toml

├── run-benchmark.sh

├── run-task.sh

├── src/

│ ├── agents/

│ │ ├── __init__.py

│ │ ├── base_agent.py

│ │ ├── mcp/

│ │ │ ├── __init__.py

│ │ │ ├── http_server.py

│ │ │ └── stdio_server.py

│ │ ├── mcpmark_agent.py

│ │ ├── react_agent.py

│ │ └── utils/

│ │ ├── __init__.py

│ │ └── token_usage.py

│ ├── aggregators/

│ │ ├── aggregate_results.py

│ │ ├── aggregate_specific_results.py

│ │ ├── aggregate_task_meta.py

│ │ └── pricing.py

│ ├── base/

│ │ ├── __init__.py

│ │ ├── login_helper.py

│ │ ├── state_manager.py

│ │ └── task_manager.py

│ ├── config/

│ │ ├── __init__.py

│ │ └── config_schema.py

│ ├── errors.py

│ ├── evaluator.py

│ ├── factory.py

│ ├── logger.py

│ ├── mcp_services/

│ │ ├── filesystem/

│ │ │ ├── __init__.py

│ │ │ ├── filesystem_login_helper.py

│ │ │ ├── filesystem_state_manager.py

│ │ │ └── filesystem_task_manager.py

│ │ ├── github/

│ │ │ ├── __init__.py

│ │ │ ├── github_login_helper.py

│ │ │ ├── github_state_manager.py

│ │ │ ├── github_task_manager.py

│ │ │ ├── repo_exporter.py

│ │ │ ├── repo_importer.py

│ │ │ └── token_pool.py

│ │ ├── insforge/

│ │ │ ├── __init__.py

│ │ │ ├── insforge_login_helper.py

│ │ │ ├── insforge_state_manager.py

│ │ │ └── insforge_task_manager.py

│ │ ├── notion/

│ │ │ ├── __init__.py

│ │ │ ├── notion_login_helper.py

│ │ │ ├── notion_state_manager.py

│ │ │ └── notion_task_manager.py

│ │ ├── playwright/

│ │ │ ├── __init__.py

│ │ │ ├── playwright_login_helper.py

│ │ │ ├── playwright_state_manager.py

│ │ │ └── playwright_task_manager.py

│ │ ├── playwright_webarena/

│ │ │ ├── playwright_login_helper.py

│ │ │ ├── playwright_state_manager.py

│ │ │ ├── playwright_task_manager.py

│ │ │ └── reddit_env_setup.md

│ │ ├── postgres/

│ │ │ ├── __init__.py

│ │ │ ├── postgres_login_helper.py

│ │ │ ├── postgres_state_manager.py

│ │ │ └── postgres_task_manager.py

│ │ └── supabase/

│ │ ├── __init__.py

│ │ ├── supabase_login_helper.py

│ │ ├── supabase_state_manager.py

│ │ └── supabase_task_manager.py

│ ├── model_config.py

│ ├── results_reporter.py

│ └── services.py

└── tasks/

├── __init__.py

├── filesystem/

│ ├── easy/

│ │ ├── .gitkeep

│ │ ├── file_context/

│ │ │ ├── file_splitting/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── pattern_matching/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── uppercase/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── file_property/

│ │ │ ├── largest_rename/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── txt_merging/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── folder_structure/

│ │ │ └── structure_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── legal_document/

│ │ │ └── file_reorganize/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── papers/

│ │ │ └── papers_counting/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── student_database/

│ │ ├── duplicate_name/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── recommender_name/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── standard/

│ ├── desktop/

│ │ ├── music_report/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── project_management/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── timeline_extraction/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── desktop_template/

│ │ ├── budget_computation/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── contact_information/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── file_arrangement/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── file_context/

│ │ ├── duplicates_searching/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── file_merging/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── file_splitting/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── pattern_matching/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── uppercase/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── file_property/

│ │ ├── size_classification/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── time_classification/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── folder_structure/

│ │ ├── structure_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── structure_mirror/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── legal_document/

│ │ ├── dispute_review/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── individual_comments/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── solution_tracing/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── papers/

│ │ ├── author_folders/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── find_math_paper/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── organize_legacy_papers/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── student_database/

│ │ ├── duplicate_name/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── english_talent/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── gradebased_score/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── threestudio/

│ │ ├── code_locating/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── output_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── requirements_completion/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── votenet/

│ ├── dataset_comparison/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── debugging/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── requirements_writing/

│ ├── description.md

│ ├── meta.json

│ └── verify.py

├── github/

│ ├── easy/

│ │ ├── build-your-own-x/

│ │ │ ├── close_commented_issues/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── record_recent_commits/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── claude-code/

│ │ │ ├── add_terminal_shortcuts_doc/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── thank_docker_pr_author/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── triage_missing_tool_result_issue/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── mcpmark-cicd/

│ │ │ ├── basic_ci_checks/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── issue_lint_guard/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── nightly_health_check/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── missing-semester/

│ │ ├── count_translations/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── find_ga_tracking_id/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── standard/

│ ├── build_your_own_x/

│ │ ├── find_commit_date/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── find_rag_commit/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── claude-code/

│ │ ├── automated_changelog_generation/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── claude_collaboration_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── critical_issue_hotfix_workflow/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── feature_commit_tracking/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── label_color_standardization/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── easyr1/

│ │ ├── advanced_branch_strategy/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── config_parameter_audit/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── performance_regression_investigation/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── qwen3_issue_management/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── harmony/

│ │ ├── fix_conflict/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── issue_pr_commit_workflow/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── issue_tagging_pr_closure/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── multi_branch_commit_aggregation/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── release_management_workflow/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── mcpmark-cicd/

│ │ ├── deployment_status_workflow/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── issue_management_workflow/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── linting_ci_workflow/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── pr_automation_workflow/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── missing-semester/

│ ├── assign_contributor_labels/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── find_legacy_name/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── find_salient_file/

│ ├── description.md

│ ├── meta.json

│ └── verify.py

├── notion/

│ ├── easy/

│ │ ├── .gitkeep

│ │ ├── computer_science_student_dashboard/

│ │ │ ├── simple__code_snippets_go/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── simple__study_session_tracker/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── it_trouble_shooting_hub/

│ │ │ └── simple__asset_retirement_migration/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── japan_travel_planner/

│ │ │ └── simple__remove_osaka_itinerary/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── online_resume/

│ │ │ └── simple__skills_development_tracker/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── python_roadmap/

│ │ │ └── simple__expert_level_lessons/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── self_assessment/

│ │ │ └── simple__faq_column_layout/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── standard_operating_procedure/

│ │ │ └── simple__section_organization/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── team_projects/

│ │ │ └── simple__swap_tasks/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── toronto_guide/

│ │ └── simple__change_color/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── standard/

│ ├── company_in_a_box/

│ │ ├── employee_onboarding/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── goals_restructure/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── quarterly_review_dashboard/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── computer_science_student_dashboard/

│ │ ├── code_snippets_go/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── courses_internships_relation/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── study_session_tracker/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── it_trouble_shooting_hub/

│ │ ├── asset_retirement_migration/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── security_audit_ticket/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── verification_expired_update/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── japan_travel_planner/

│ │ ├── daily_itinerary_overview/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── packing_progress_summary/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── remove_osaka_itinerary/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── restaurant_expenses_sync/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── online_resume/

│ │ ├── layout_adjustment/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── projects_section_update/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── skills_development_tracker/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── work_history_addition/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── python_roadmap/

│ │ ├── expert_level_lessons/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── learning_metrics_dashboard/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── self_assessment/

│ │ ├── faq_column_layout/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── hyperfocus_analysis_report/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── numbered_list_emojis/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── standard_operating_procedure/

│ │ ├── deployment_process_sop/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── section_organization/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── team_projects/

│ │ ├── priority_tasks_table/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── swap_tasks/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── toronto_guide/

│ ├── change_color/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── weekend_adventure_planner/

│ ├── description.md

│ ├── meta.json

│ └── verify.py

├── playwright/

│ ├── easy/

│ │ └── .gitkeep

│ └── standard/

│ ├── eval_web/

│ │ ├── cloudflare_turnstile_challenge/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── extraction_table/

│ │ ├── data.csv

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── web_search/

│ ├── birth_of_arvinxu/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── r1_arxiv/

│ ├── content.txt

│ ├── description.md

│ ├── meta.json

│ └── verify.py

├── playwright_webarena/

│ ├── easy/

│ │ ├── .gitkeep

│ │ ├── reddit/

│ │ │ ├── ai_data_analyst/

│ │ │ │ ├── description.md

│ │ │ │ ├── label.txt

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── llm_research_summary/

│ │ │ │ ├── description.md

│ │ │ │ ├── label.txt

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── movie_reviewer_analysis/

│ │ │ │ ├── description.md

│ │ │ │ ├── label.txt

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── nba_statistics_analysis/

│ │ │ │ ├── description.md

│ │ │ │ ├── label.txt

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── routine_tracker_forum/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── shopping_admin/

│ │ ├── fitness_promotion_strategy/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── ny_expansion_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── products_sales_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── sales_inventory_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── search_filtering_operations/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ └── standard/

│ ├── reddit/

│ │ ├── ai_data_analyst/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── budget_europe_travel/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── buyitforlife_research/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── llm_research_summary/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── movie_reviewer_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── nba_statistics_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── routine_tracker_forum/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── shopping/

│ │ ├── advanced_product_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── gaming_accessories_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── health_routine_optimization/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── holiday_baking_competition/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── multi_category_budget_analysis/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── printer_keyboard_search/

│ │ │ ├── description.md

│ │ │ ├── label.txt

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── running_shoes_purchase/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ └── shopping_admin/

│ ├── customer_segmentation_setup/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ ├── fitness_promotion_strategy/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ ├── marketing_customer_analysis/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ ├── ny_expansion_analysis/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ ├── products_sales_analysis/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ ├── sales_inventory_analysis/

│ │ ├── description.md

│ │ ├── label.txt

│ │ ├── meta.json

│ │ └── verify.py

│ └── search_filtering_operations/

│ ├── description.md

│ ├── label.txt

│ ├── meta.json

│ └── verify.py

├── postgres/

│ ├── easy/

│ │ ├── .gitkeep

│ │ ├── chinook/

│ │ │ ├── customer_data_migration_basic/

│ │ │ │ ├── customer_data.pkl

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── update_employee_info/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── dvdrental/

│ │ │ └── create_payment_index/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── employees/

│ │ │ ├── department_summary_view/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── employee_gender_statistics/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ ├── employee_projects_basic/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── hiring_year_summary/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── lego/

│ │ │ ├── basic_security_setup/

│ │ │ │ ├── description.md

│ │ │ │ ├── meta.json

│ │ │ │ └── verify.py

│ │ │ └── fix_data_inconsistencies/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── sports/

│ │ └── create_performance_indexes/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── standard/

│ ├── chinook/

│ │ ├── customer_data_migration/

│ │ │ ├── customer_data.pkl

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── employee_hierarchy_management/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── sales_and_music_charts/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── dvdrental/

│ │ ├── customer_analysis_fix/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── customer_analytics_optimization/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── film_inventory_management/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── employees/

│ │ ├── employee_demographics_report/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── employee_performance_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── employee_project_tracking/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── employee_retention_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── executive_dashboard_automation/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── management_structure_analysis/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── lego/

│ │ ├── consistency_enforcement/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── database_security_policies/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── transactional_inventory_transfer/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ ├── security/

│ │ ├── rls_business_access/

│ │ │ ├── description.md

│ │ │ ├── ground_truth.sql

│ │ │ ├── meta.json

│ │ │ ├── prepare_environment.py

│ │ │ └── verify.py

│ │ └── user_permission_audit/

│ │ ├── description.md

│ │ ├── ground_truth.sql

│ │ ├── meta.json

│ │ ├── prepare_environment.py

│ │ └── verify.py

│ ├── sports/

│ │ ├── baseball_player_analysis/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ ├── participant_report_optimization/

│ │ │ ├── description.md

│ │ │ ├── meta.json

│ │ │ └── verify.py

│ │ └── team_roster_management/

│ │ ├── description.md

│ │ ├── meta.json

│ │ └── verify.py

│ └── vectors/

│ ├── dba_vector_analysis/

│ │ ├── description.md

│ │ ├── ground_truth.sql

│ │ ├── meta.json

│ │ ├── prepare_environment.py

│ │ └── verify.py

│ └── vectors_setup.py

└── utils/

├── __init__.py

├── notion_utils.py

└── postgres_utils.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .dockerignore

================================================

# Git

.git

.gitignore

# Python

__pycache__

*.pyc

*.pyo

*.pyd

.Python

*.egg

*.egg-info/

dist/

build/

.eggs/

*.so

# Virtual environments

venv/

env/

ENV/

.venv/

# IDE

.vscode/

.idea/

*.swp

*.swo

*~

.DS_Store

# Environment files (contain secrets)

.env

.mcp_env

notion_state.json

# Test and development files

.pytest_cache/

.coverage

htmlcov/

.tox/

.mypy_cache/

.ruff_cache/

tests/

test_environments/

# Results and logs

results/

*.log

logs/

# PostgreSQL data

.postgres/

# Playwright

playwright-report/

test-results/

# Documentation images

asset/

# Temporary files

*.tmp

tmp/

temp/

# Docker

Dockerfile

docker-compose.yml

.dockerignore

# Node modules (if any locally installed)

node_modules/

# Pixi lock file

pixi.lock

.pixi/

# GitHub state files

github_state/

github_template_repo/

# Backup directories

.mcpbench_backups/

================================================

FILE: .editorconfig

================================================

root = true

; Always use Unix-style line endings, end every file with a newline, and trim trailing whitespace

[*]

end_of_line = lf

insert_final_newline = true

trim_trailing_whitespace = true

; Python: PEP8 defines 4 spaces for indentation

[*.py]

indent_style = space

indent_size = 4

================================================

FILE: .gitattributes

================================================

# SCM syntax highlighting & preventing 3-way merges

pixi.lock merge=binary linguist-language=YAML linguist-generated=true

================================================

FILE: .github/ISSUE_TEMPLATE/1_bug_report.yml

================================================

name: '🐛 Bug Report'
description: 'Report a bug'
labels: ['unconfirm']
type: Bug
body:
  - type: textarea
    attributes:
      label: '🐛 Bug Description'
      description: A clear and concise description of the bug. If the above option is `Other`, please also explain in detail.
    validations:
      required: true
  - type: textarea
    attributes:
      label: '📷 Reproduction Steps'
      description: A clear and concise description of how to reproduce the bug.
  - type: textarea
    attributes:
      label: '🚦 Expected Behavior'
      description: A clear and concise description of what you expected to happen.
  - type: textarea
    attributes:
      label: '📝 Additional Information'
      description: If your problem needs further explanation, or if the issue you're seeing cannot be reproduced in a gist, please add more information here.

================================================

FILE: .github/ISSUE_TEMPLATE/2_feature_request.yml

================================================

name: '🌠 Feature Request'
description: 'Suggest an idea'
title: '[Request] '
type: Feature
body:
  - type: textarea
    attributes:
      label: '🥰 Feature Description'
      description: Please add a clear and concise description of the problem you are seeking to solve with this feature request.
    validations:
      required: true
  - type: textarea
    attributes:
      label: '🧐 Proposed Solution'
      description: Describe the solution you'd like in a clear and concise manner.
    validations:
      required: true
  - type: textarea
    attributes:
      label: '📝 Additional Information'
      description: Add any other context about the problem here.

================================================

FILE: .github/ISSUE_TEMPLATE/config.yml

================================================

contact_links:
  - name: Questions and ideas
    url: https://github.com/eval-sys/mcpmark/discussions/new/choose
    about: Please post questions and ideas in Discussions.

================================================

FILE: .github/PULL_REQUEST_TEMPLATE.md

================================================

#### Change Type

<!-- For change type, change [ ] to [x]. -->

- [ ] ✨ feat

- [ ] 🐛 fix

- [ ] ♻️ refactor

- [ ] 💄 style

- [ ] 👷 build

- [ ] ⚡️ perf

- [ ] 📝 docs

- [ ] 🔨 chore

#### Description of Change

<!-- Thank you for your Pull Request. Please provide a description above. -->

#### Additional Information

<!-- Add any other context about the Pull Request here. -->

================================================

FILE: .github/scripts/pr-comment.js

================================================

/**
 * Generate or update PR comment with Docker build info
 */
module.exports = async ({ github, context, dockerMetaJson, image, version, dockerhubUrl, platforms }) => {
  const COMMENT_IDENTIFIER = '<!-- DOCKER-BUILD-COMMENT -->';

  const parseTags = () => {
    try {
      if (dockerMetaJson) {
        const parsed = JSON.parse(dockerMetaJson);
        if (Array.isArray(parsed.tags) && parsed.tags.length > 0) {
          return parsed.tags;
        }
      }
    } catch (e) {
      // ignore parsing error, fallback below
    }
    if (image && version) {
      return [`${image}:${version}`];
    }
    return [];
  };

  const generateCommentBody = () => {
    const tags = parseTags();
    const buildTime = new Date().toISOString();
    // Use the first tag as the main version
    const mainTag = tags.length > 0 ? tags[0] : `${image}:${version}`;
    const tagVersion = mainTag.includes(':') ? mainTag.split(':')[1] : version;

    return [
      COMMENT_IDENTIFIER,
      '',
      '### 🐳 Docker Build Completed!',
      `**Version**: \`${tagVersion || 'N/A'}\``,
      `**Build Time**: \`${buildTime}\``,
      '',
      dockerhubUrl ? `🔗 View all tags on Docker Hub: ${dockerhubUrl}` : null,
      '',
      '### Pull Image',
      'Download the Docker image to your local machine:',
      '',
      '```bash',
      `docker pull ${mainTag}`,
      '```',
      '',
      '### Run Eval',
      'Execute evaluation tasks using the built image:',
      '',
      '```bash',
      `DOCKER_IMAGE_VERSION=${tagVersion} ./run-task.sh --models gpt-4.1-mini --tasks file_context/uppercase`,
      '```',
      '',
      '> [!IMPORTANT]',
      '> This build is for testing and validation purposes.',
    ]
      // Drop only the optional Docker Hub line; keep '' entries as blank lines
      // (filter(Boolean) would also remove every intended blank line).
      .filter((line) => line !== null)
      .join('\n');
  };

  const body = generateCommentBody();

  // List comments on the PR
  const { data: comments } = await github.rest.issues.listComments({
    issue_number: context.issue.number,
    owner: context.repo.owner,
    repo: context.repo.repo,
  });

  const existing = comments.find((c) => c.body && c.body.includes(COMMENT_IDENTIFIER));

  if (existing) {
    await github.rest.issues.updateComment({
      comment_id: existing.id,
      owner: context.repo.owner,
      repo: context.repo.repo,
      body,
    });
    return { updated: true, id: existing.id };
  }

  const result = await github.rest.issues.createComment({
    issue_number: context.issue.number,
    owner: context.repo.owner,
    repo: context.repo.repo,
    body,
  });

  return { updated: false, id: result.data.id };
};
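The script's core pattern is an idempotent "upsert": it tags its comment with a hidden HTML marker, searches the PR's comments for that marker, and edits the match instead of posting a duplicate. Below is a minimal, self-contained sketch of that pattern; `upsertComment` and `createMockIssues` are hypothetical names, and the in-memory mock only imitates the `listComments`, `createComment`, and `updateComment` calls the script makes on `github.rest.issues`.

```javascript
// Minimal sketch of the marker-based "create or update" comment pattern.
// upsertComment and createMockIssues are hypothetical helpers; the mock only
// imitates the three github.rest.issues calls used by pr-comment.js.
const MARKER = '<!-- DOCKER-BUILD-COMMENT -->';

async function upsertComment(issues, { owner, repo, issueNumber }, body) {
  // Find a comment previously posted by this script (it carries the marker).
  const { data: comments } = await issues.listComments({ owner, repo, issue_number: issueNumber });
  const existing = comments.find((c) => c.body && c.body.includes(MARKER));

  if (existing) {
    // Edit in place so repeated builds do not pile up duplicate comments.
    await issues.updateComment({ owner, repo, comment_id: existing.id, body });
    return { updated: true, id: existing.id };
  }

  const result = await issues.createComment({ owner, repo, issue_number: issueNumber, body });
  return { updated: false, id: result.data.id };
}

// In-memory stand-in for github.rest.issues (illustration only, single issue).
function createMockIssues() {
  const store = [];
  let nextId = 1;
  return {
    store,
    async listComments() {
      return { data: store.map((c) => ({ ...c })) };
    },
    async createComment({ body }) {
      const comment = { id: nextId++, body };
      store.push(comment);
      return { data: { ...comment } };
    },
    async updateComment({ comment_id, body }) {
      const comment = store.find((c) => c.id === comment_id);
      if (!comment) throw new Error(`comment ${comment_id} not found`);
      comment.body = body;
      return { data: { ...comment } };
    },
  };
}

// Usage: two "builds" on the same PR leave a single, updated comment.
(async () => {
  const issues = createMockIssues();
  const target = { owner: 'eval-sys', repo: 'mcpmark', issueNumber: 1 };
  const first = await upsertComment(issues, target, `${MARKER}\nbuild 1`);
  const second = await upsertComment(issues, target, `${MARKER}\nbuild 2`);
  console.log(first.updated, second.updated, issues.store.length); // false true 1
})();
```

One caveat, offered as an observation rather than the authors' stated design: GitHub's list-comments endpoint is paginated (30 results per page by default), so on a PR with many comments a single `listComments` call may miss the marker comment; walking every page (for example with Octokit's `github.paginate`) would avoid posting a duplicate.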

================================================

FILE: .github/workflows/publish-docker-image.yml

================================================

name: Publish Docker Image

on:
  workflow_dispatch:
  release:
    types: [ published ]
  pull_request:
    types: [ synchronize, labeled, unlabeled ]

permissions:
  contents: read
  pull-requests: write

concurrency:
  group: ${{ github.ref }}-${{ github.workflow }}
  cancel-in-progress: true

env:
  REGISTRY_IMAGE: evalsysorg/mcpmark
  PR_TAG_PREFIX: pr-

jobs:
  build:
    if: |
      (github.event_name == 'pull_request' &&
      contains(github.event.pull_request.labels.*.name, 'Build Docker')) ||
      github.event_name != 'pull_request'
    strategy:
      matrix:
        include:
          - platform: linux/amd64
            os: ubuntu-latest
          - platform: linux/arm64
            os: ubuntu-24.04-arm
    runs-on: ${{ matrix.os }}
    name: Build ${{ matrix.platform }} Image
    steps:
      - name: Prepare
        run: |
          platform=${{ matrix.platform }}
          echo "PLATFORM_PAIR=${platform//\//-}" >> $GITHUB_ENV

      - name: Checkout base
        uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3

      - name: Generate PR metadata
        if: github.event_name == 'pull_request'
        id: pr_meta
        run: |
          branch_name="${{ github.head_ref }}"
          sanitized_branch=$(echo "${branch_name}" | sed -E 's/[^a-zA-Z0-9_.-]+/-/g')
          echo "pr_tag=${sanitized_branch}-$(git rev-parse --short HEAD)" >> $GITHUB_OUTPUT

      - name: Docker meta
        id: meta
        uses: docker/metadata-action@v5
        with:
          images: ${{ env.REGISTRY_IMAGE }}
          tags: |
            type=raw,value=${{ env.PR_TAG_PREFIX }}${{ steps.pr_meta.outputs.pr_tag }},enable=${{ github.event_name == 'pull_request' }}
            type=semver,pattern={{version}},enable=${{ github.event_name != 'pull_request' }}
            type=raw,value=latest,enable=${{ github.event_name != 'pull_request' }}

      - name: Docker login
        uses: docker/login-action@v3
        with:
          username: ${{ secrets.DOCKER_REGISTRY_USER }}
          password: ${{ secrets.DOCKER_REGISTRY_PASSWORD }}

      - name: Get commit SHA
        if: github.ref == 'refs/heads/main'
        id: vars
        run: echo "sha_short=$(git rev-parse --short HEAD)" >> $GITHUB_OUTPUT

      - name: Build and export
        id: build
        uses: docker/build-push-action@v6
        with:
          platforms: ${{ matrix.platform }}
          context: .
          file: ./Dockerfile
          labels: ${{ steps.meta.outputs.labels }}
          build-args: |
            SHA=${{ steps.vars.outputs.sha_short }}
          outputs: type=image,name=${{ env.REGISTRY_IMAGE }},push-by-digest=true,name-canonical=true,push=true

      - name: Export digest
        run: |
          rm -rf /tmp/digests
          mkdir -p /tmp/digests
          digest="${{ steps.build.outputs.digest }}"
          touch "/tmp/digests/${digest#sha256:}"

      - name: Upload artifact
        uses: actions/upload-artifact@v4
        with:
          name: digest-${{ env.PLATFORM_PAIR }}
          path: /tmp/digests/*
          if-no-files-found: error
          retention-days: 1

  merge:
    name: Merge
    needs: build
    runs-on: ubuntu-latest
    steps:
      - name: Checkout base
        uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Download digests
        uses: actions/download-artifact@v5
        with:
          path: /tmp/digests
          pattern: digest-*
          merge-multiple: true

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3

      - name: Generate PR metadata
        if: github.event_name == 'pull_request'
        id: pr_meta
        run: |
          branch_name="${{ github.head_ref }}"
          sanitized_branch=$(echo "${branch_name}" | sed -E 's/[^a-zA-Z0-9_.-]+/-/g')
          echo "pr_tag=${sanitized_branch}-$(git rev-parse --short HEAD)" >> $GITHUB_OUTPUT

      - name: Docker meta
        id: meta
        uses: docker/metadata-action@v5
        with:
          images: ${{ env.REGISTRY_IMAGE }}
          tags: |
            type=raw,value=${{ env.PR_TAG_PREFIX }}${{ steps.pr_meta.outputs.pr_tag }},enable=${{ github.event_name == 'pull_request' }}
            type=semver,pattern={{version}},enable=${{ github.event_name != 'pull_request' }}
            type=raw,value=latest,enable=${{ github.event_name != 'pull_request' }}

      - name: Docker login
        uses: docker/login-action@v3
        with:
          username: ${{ secrets.DOCKER_REGISTRY_USER }}
          password: ${{ secrets.DOCKER_REGISTRY_PASSWORD }}

      - name: Create manifest list and push
        working-directory: /tmp/digests
        run: |
          docker buildx imagetools create $(jq -cr '.tags | map("-t " + .) | join(" ")' <<< "$DOCKER_METADATA_OUTPUT_JSON") \
            $(printf '${{ env.REGISTRY_IMAGE }}@sha256:%s ' *)

      - name: Inspect image
        run: |
          docker buildx imagetools inspect ${{ env.REGISTRY_IMAGE }}:${{ steps.meta.outputs.version }}

      - name: Comment on PR with Docker build info
        if: github.event_name == 'pull_request'
        uses: actions/github-script@v7
        with:
          github-token: ${{ secrets.GITHUB_TOKEN }}
          script: |
            const prComment = require('${{ github.workspace }}/.github/scripts/pr-comment.js');
            const result = await prComment({
              github,
              context,
              dockerMetaJson: ${{ toJSON(steps.meta.outputs.json) }},
              image: "${{ env.REGISTRY_IMAGE }}",
              version: "${{ steps.meta.outputs.version }}",
              dockerhubUrl: "https://hub.docker.com/r/${{ env.REGISTRY_IMAGE }}/tags",
              platforms: "linux/amd64, linux/arm64",
            });
            core.info(`Status: ${result.updated ? 'Updated' : 'Created'}, ID: ${result.id}`);

================================================

FILE: .gitignore

================================================

logs

.claude

CLAUDE.md

.gemini

results

materials

scripts

!.github/scripts

.nfs*

.mcp_env

.idea

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[codz]

*$py.class

logs

logs/*

.DS_Store

notion-sdk-py/

github_state/*

# for playwright cookies

notion_state.json

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py.cover

.hypothesis/

.pytest_cache/

cover/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

.pybuilder/

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

# For a library or package, you might want to ignore these files since the code is

# intended to run in multiple environments; otherwise, check them in:

# .python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# UV

# Similar to Pipfile.lock, it is generally recommended to include uv.lock in version control.

# This is especially recommended for binary packages to ensure reproducibility, and is more

# commonly ignored for libraries.

#uv.lock

# poetry

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

# This is especially recommended for binary packages to ensure reproducibility, and is more

# commonly ignored for libraries.

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

#poetry.lock

#poetry.toml

# pdm

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

# pdm recommends including project-wide configuration in pdm.toml, but excluding .pdm-python.

# https://pdm-project.org/en/latest/usage/project/#working-with-version-control

#pdm.lock

#pdm.toml

.pdm-python

.pdm-build/

# pixi

# Similar to Pipfile.lock, it is generally recommended to include pixi.lock in version control.

#pixi.lock

# Pixi creates a virtual environment in the .pixi directory, just like venv module creates one

# in the .venv directory. It is recommended not to include this directory in version control.

.pixi

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.envrc

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

# pytype static type analyzer

.pytype/

# Cython debug symbols

cython_debug/

# PyCharm

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

# and can be added to the global gitignore or merged into this file. For a more nuclear

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

#.idea/

# Abstra

# Abstra is an AI-powered process automation framework.

# Ignore directories containing user credentials, local state, and settings.

# Learn more at https://abstra.io/docs

.abstra/

# Visual Studio Code

# Visual Studio Code specific template is maintained in a separate VisualStudioCode.gitignore

# that can be found at https://github.com/github/gitignore/blob/main/Global/VisualStudioCode.gitignore

# and can be added to the global gitignore or merged into this file. However, if you prefer,

# you could uncomment the following to ignore the entire vscode folder

# .vscode/

# Ruff stuff:

.ruff_cache/

# PyPI configuration file

.pypirc

# Cursor

# Cursor is an AI-powered code editor. `.cursorignore` specifies files/directories to

# exclude from AI features like autocomplete and code analysis. Recommended for sensitive data

# refer to https://docs.cursor.com/context/ignore-files

.cursorignore

.cursorindexingignore

# Marimo

marimo/_static/

marimo/_lsp/

__marimo__/

# pixi environments

.pixi

*.egg-info

.postgres

# MCPMark backup directories

.mcpmark_backups/*

test_environments/

postgres_state

================================================

FILE: CHANGELOG.md

================================================

# Changelog

All notable changes to this project will be documented in this file.

The format is based on [Keep a Changelog](https://keepachangelog.com/en/1.0.0/),

and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0.html).

## v1.2.0 - 2025-09-20

This version includes multiple important feature enhancements, particularly improvements in cost calculation, error handling, and Notion integration. Added per-model cost calculation, comprehensive aggregator functionality, and more robust error recovery mechanisms.

### ✨ Features

- **Add 1m parameter & improve log** (#198) - Added claude-1m-context option and enhanced logging functionality

- **Refine Notion parent resolution and duplicate recovery** (#197) - Improved Notion parent page resolution and duplicate content recovery mechanism

- **Comprehensive aggregator, enable push to new branch** (#185) - Implemented comprehensive aggregator functionality with support for pushing to new branches

- **Support price cost calculating per model** (#186) - Added per-model price cost calculation functionality

- **Improve agent end log** (#183) - Enhanced agent end logging

- **Improve litellm error handling** (#181) - Enhanced LiteLLM error handling mechanism

### ♻️ Refactoring

- **Use notion child block list to locate page** (#196) - Refactored page location logic to use Notion child block list approach

### 🐛 Bug Fixes

- **Fix verification in Notion task company_in_a_box/goals_restructure** (#194) - Fixed verification logic for specific Notion tasks

- **Improve claude error handling** (#195) - Improved error handling for Claude API interactions

- **Fix trailing slash issue for find_legacy_name** - Resolved trailing slash issues in find_legacy_name path handling

- **Recover when duplication lands on parent** (#189) - Fixed recovery mechanism when duplicate content affects parent pages

- **Correctly handle playwright parser** (#184) - Properly handle Playwright parser

- **Handle timeout error, add timeout error for resuming** (#182) - Handle timeout errors and add timeout error handling for resume operations

### 📝 Documentation

- **Better readme, notion language guide** (#190) - Improved README documentation and added comprehensive Notion language guide

### 🔨 Maintenance

- **Update price info** (#188) - Updated pricing information

- **Update desktop_template/file_arrangement/verify.py** (#187) - Maintenance updates to verification scripts

================================================

FILE: Dockerfile

================================================

# MCPMark Docker image with optimized layer caching

# Stage 1: Builder for Python dependencies only

FROM python:3.12-slim AS builder

RUN apt-get update && apt-get install -y --no-install-recommends \

gcc \

g++ \

libpq-dev \

&& rm -rf /var/lib/apt/lists/*

WORKDIR /build

# Copy project files needed for pip install

COPY pyproject.toml ./

COPY src/ ./src/

COPY tasks/ ./tasks/

# Install dependencies

RUN pip install --no-cache-dir --user .

# Stage 2: Final image with all runtime dependencies

FROM python:3.12-slim

# Layer 1: Core system dependencies (very stable, rarely changes)

RUN apt-get update && apt-get install -y --no-install-recommends \

ca-certificates \

&& rm -rf /var/lib/apt/lists/*

# Layer 2: PostgreSQL runtime and client tools (stable, only changes with postgres version)

RUN apt-get update && apt-get install -y --no-install-recommends \

libpq5 \

postgresql-client \

&& rm -rf /var/lib/apt/lists/*

# Layer 3: Git (stable)

RUN apt-get update && apt-get install -y --no-install-recommends \

git \

&& rm -rf /var/lib/apt/lists/*

# Layer 4: Playwright system dependencies (changes with browser requirements)

RUN apt-get update && apt-get install -y --no-install-recommends \

libnss3 \

libnspr4 \

libatk1.0-0 \

libatk-bridge2.0-0 \

libcups2 \

libdrm2 \

libxkbcommon0 \

libatspi2.0-0 \

libx11-6 \

libxcomposite1 \

libxdamage1 \

libxfixes3 \

libxrandr2 \

libgbm1 \

libxcb1 \

libpango-1.0-0 \

libcairo2 \

libasound2 \

&& rm -rf /var/lib/apt/lists/*

# Layer 5: Download tools and Node.js (changes with Node version)

RUN apt-get update && \

apt-get install -y --no-install-recommends curl wget unzip && \

curl -fsSL https://deb.nodesource.com/setup_20.x | bash - && \

apt-get install -y --no-install-recommends nodejs && \

apt-get autoremove -y && \

rm -rf /var/lib/apt/lists/*

# Layer 6: pipx (rarely changes)

RUN pip install --no-cache-dir pipx && \

pipx ensurepath

# Layer 7: Copy Python packages from builder (changes with dependencies)

COPY --from=builder /root/.local /root/.local

# Layer 8: Playwright browsers (changes with browser versions)

RUN python3 -m playwright install chromium && \

npx -y playwright install chromium

# Layer 9: Install PostgreSQL MCP server (Python, used via `pipx run postgres-mcp`)

RUN pipx install postgres-mcp

# Set working directory

WORKDIR /app

# Layer 10: Create directory structure (rarely changes)

RUN mkdir -p /app/results

# Layer 11: Application code (changes frequently)

COPY . .

# Set environment

# Docker does not expand globs in ENV, so pipx apps are reached via /root/.local/bin (pipx's default bin dir)

ENV PATH="/root/.local/bin:${PATH}"

ENV PYTHONPATH="/app"

ENV PLAYWRIGHT_BROWSERS_PATH=/root/.cache/ms-playwright

ENV PIPX_HOME=/root/.local/pipx

ENV PIPX_BIN_DIR=/root/.local/bin

# Default command

CMD ["python3", "-m", "pipeline", "--help"]

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

<div align="center">

# MCPMark: Stress-Testing Comprehensive MCP Use

[Website](https://mcpmark.ai) | [Paper](https://arxiv.org/abs/2509.24002) | [Discord](https://discord.gg/HrKkJAxDnA) | [Docs](https://mcpmark.ai/docs) | [Trajectory Logs](https://huggingface.co/datasets/Jakumetsu/mcpmark-trajectory-log)

</div>

An evaluation suite for agentic models in real MCP tool environments (Notion / GitHub / Filesystem / Postgres / Playwright).

MCPMark provides a reproducible, extensible benchmark for researchers and engineers: one-command tasks, isolated sandboxes, auto-resume for failures, unified metrics, and aggregated reports.

## News

- 📌 **21 Jan** — Pinned MCP server versions for reproducible benchmarks: GitHub MCP Server `v0.15.0` (switched to Docker for version control) and Notion MCP Server `@1.9.1` (Notion MCP Server 2.0 is out but has many bugs, so we do not recommend it). See [#246](https://github.com/eval-sys/mcpmark/pull/246).

- 🔥 **13 Dec** — Added auto-compaction support (`--compaction-token`) to summarize long conversations and avoid context overflow during evaluation ([#236](https://github.com/eval-sys/mcpmark/pull/236)).

- 🏅 **02 Dec** — Evaluated `gemini-3-pro-preview` (thinking: low): **Pass@1 50.6%** ± 2.3%, very close to `gpt-5-high` (51.6%)! Also evaluated `deepseek-v3.2-thinking` (36.8%) and `deepseek-v3.2-chat` (29.7%).

- 🔥 **02 Dec** — Obfuscate GitHub @mentions to prevent notification spam during evaluation ([#229](https://github.com/eval-sys/mcpmark/pull/229))

- 🏅 **01 Dec** — DeepSeek v3.2 uses MCPMark! Kudos on securing the best open-source model. [X Post](https://x.com/deepseek_ai/status/1995452650557763728) | [Technical Report](https://huggingface.co/deepseek-ai/DeepSeek-V3.2/resolve/main/assets/paper.pdf)

- 🔥 **17 Nov** — Added 50 easy tasks (10 per MCP server) for smaller open-source models ([#225](https://github.com/eval-sys/mcpmark/pull/225))

- 🤝 **31 Oct** — Community PR from insforge: better MCP servers achieve better results with fewer tokens! ([#214](https://github.com/eval-sys/mcpmark/pull/214))

- 🔥 **13 Oct** — Added ReAct agent support. PRs for new agent scaffolds welcome! ([#209](https://github.com/eval-sys/mcpmark/pull/209))

- 🏅 **10 Sep** — `qwen-3-coder-plus` is the best open-source model! Kudos to Qwen team. [X Post](https://x.com/Alibaba_Qwen/status/1965457023438651532)

---

## What you can do with MCPMark

- **Evaluate real tool usage** across multiple MCP services: `Notion`, `GitHub`, `Filesystem`, `Postgres`, `Playwright`.

- **Use ready-to-run tasks** covering practical workflows, each with strict automated verification.

- **Reliable and reproducible**: isolated environments that do not pollute your accounts/data; failed tasks auto-retry and resume.

- **Unified metrics and aggregation**: single/multi-run (pass@k, avg@k, etc.) with automated results aggregation.

- **Flexible deployment**: local or Docker; fully validated on macOS and Linux.

---

## Quickstart (5 minutes)

### 1) Clone the repository

```bash

git clone https://github.com/eval-sys/mcpmark.git

cd mcpmark

```

### 2) Set environment variables (create `.mcp_env` at repo root)

Only set what you need. Add service credentials when running tasks for that service.

```env

# Example: OpenAI

OPENAI_BASE_URL="https://api.openai.com/v1"

OPENAI_API_KEY="sk-..."

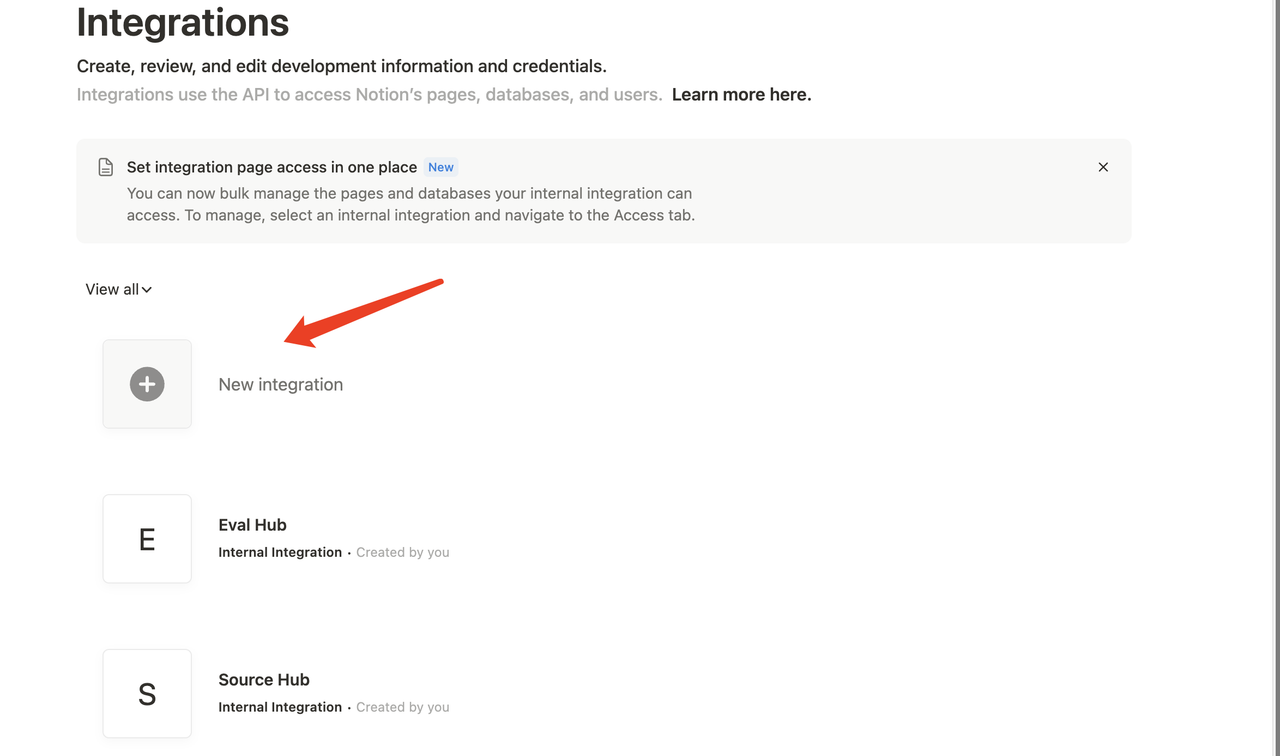

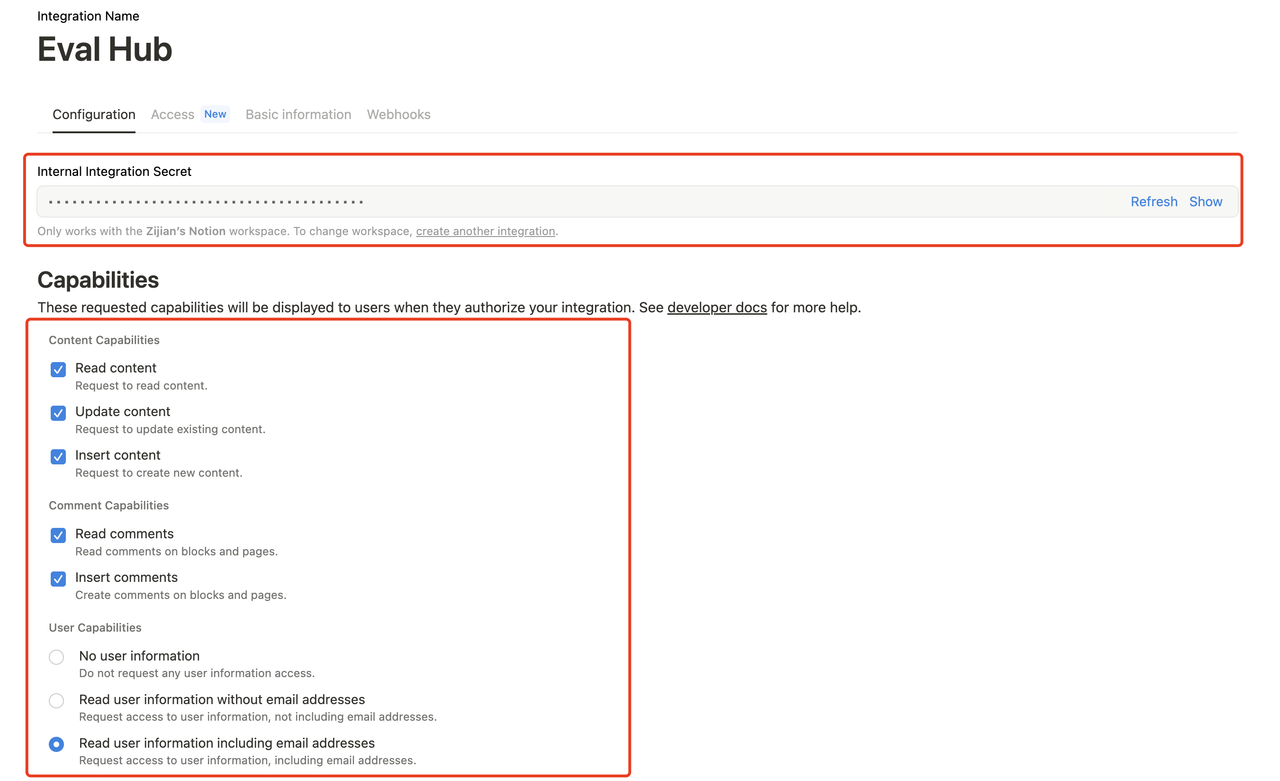

# Optional: Notion (only for Notion tasks)

SOURCE_NOTION_API_KEY="your-source-notion-api-key"

EVAL_NOTION_API_KEY="your-eval-notion-api-key"

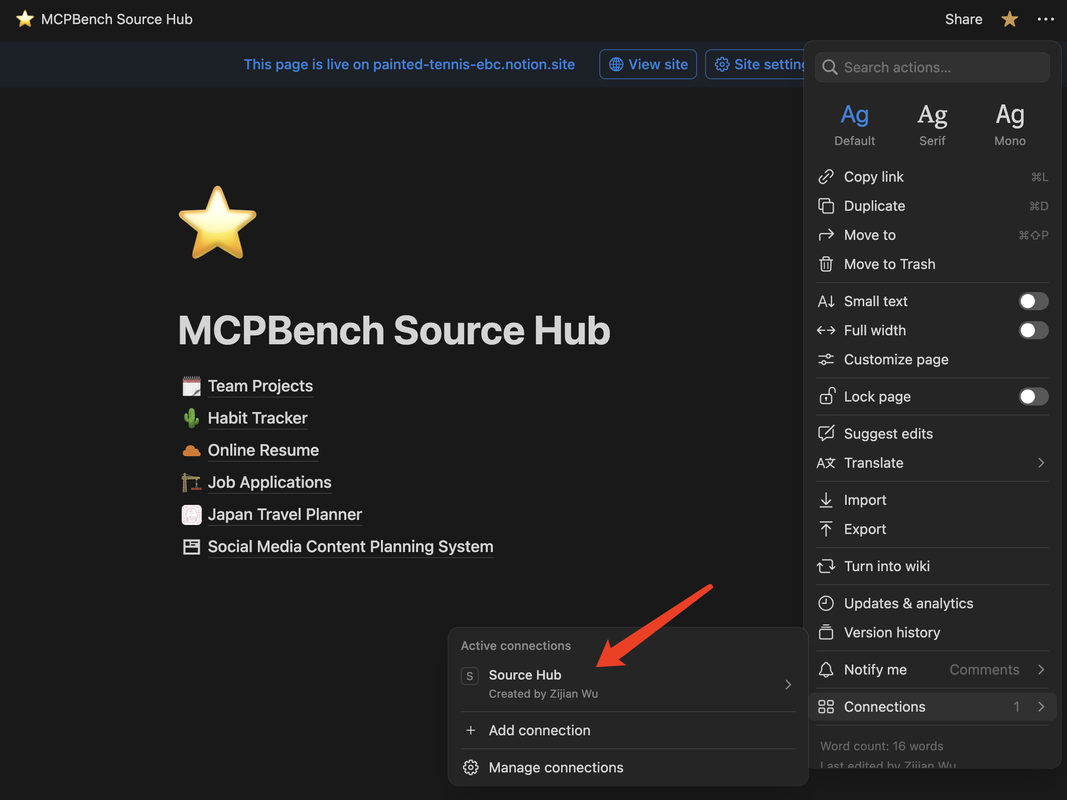

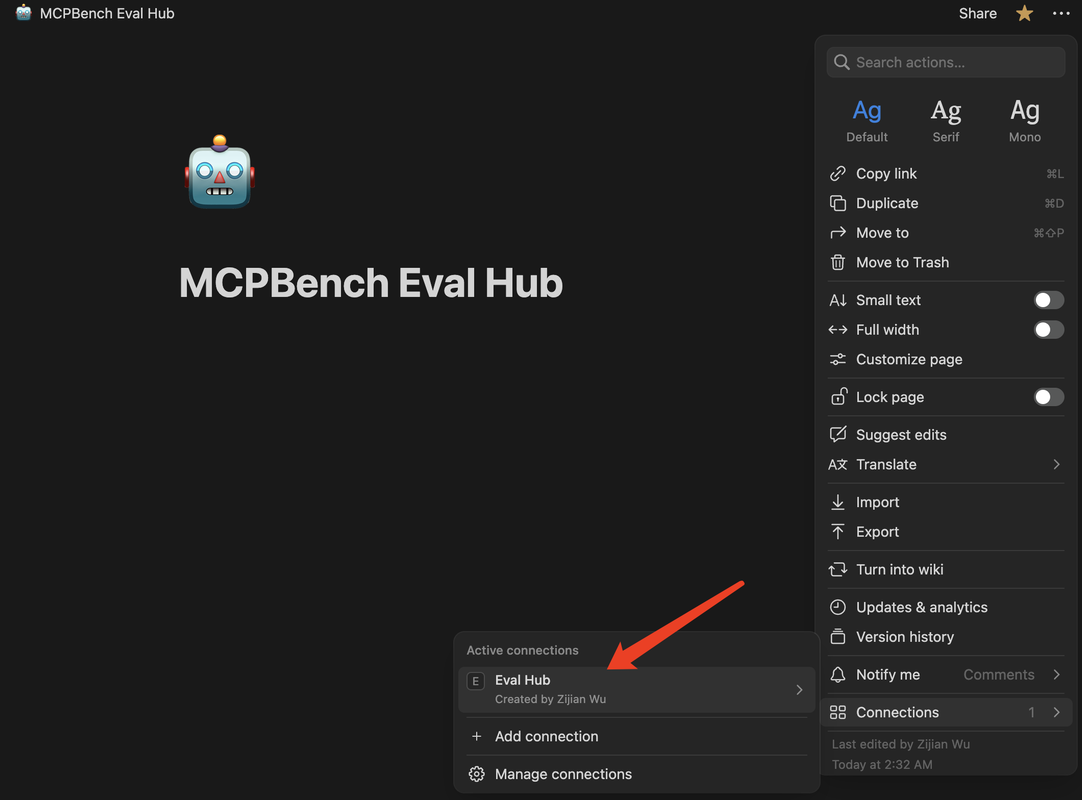

EVAL_PARENT_PAGE_TITLE="MCPMark Eval Hub"

PLAYWRIGHT_BROWSER="chromium" # chromium | firefox

PLAYWRIGHT_HEADLESS="True"

# Optional: GitHub (only for GitHub tasks)

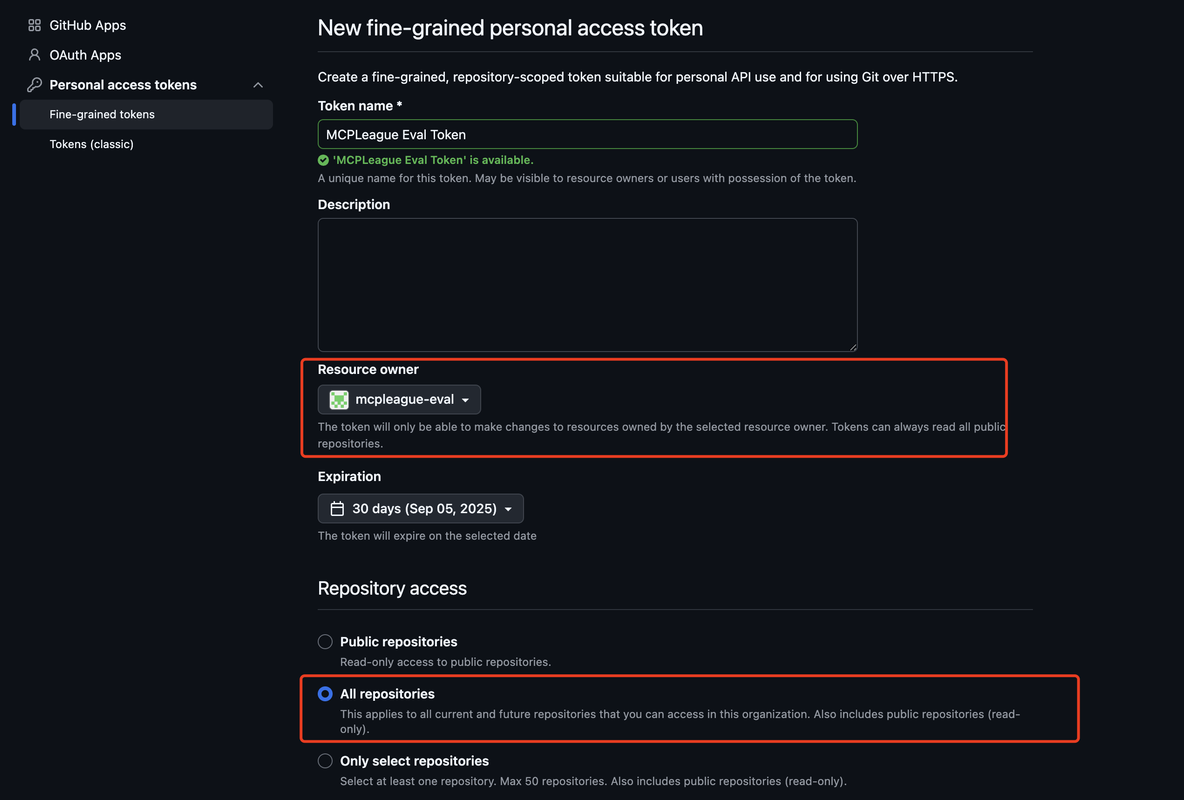

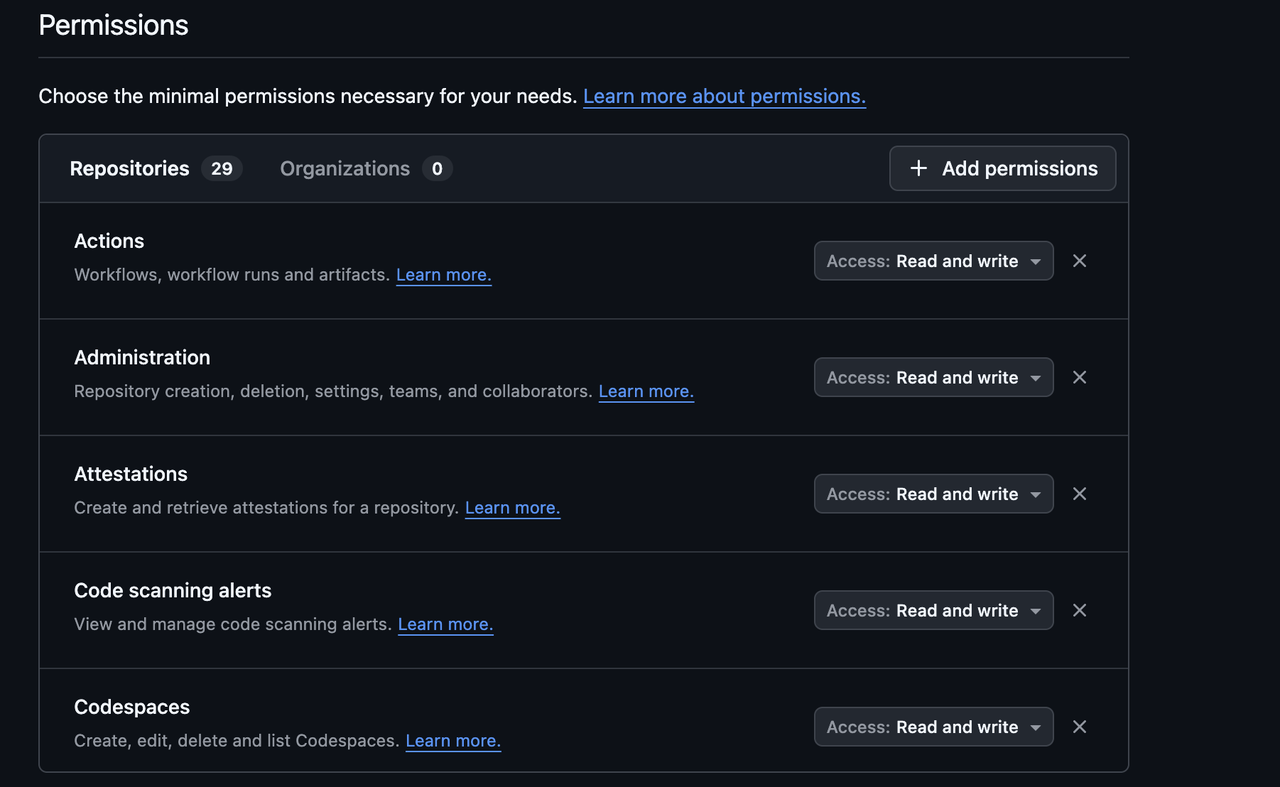

GITHUB_TOKENS="token1,token2" # token pooling for rate limits

GITHUB_EVAL_ORG="your-eval-org"

# Optional: Postgres (only for Postgres tasks)

POSTGRES_HOST="localhost"

POSTGRES_PORT="5432"

POSTGRES_USERNAME="postgres"

POSTGRES_PASSWORD="password"

```

See `docs/introduction.md` and the service guides below for more details.
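To check which services your `.mcp_env` currently covers, a small script can parse the file and compare it against the per-service variables listed above. This is a hypothetical helper, not part of MCPMark: `parse_env`, `configured_services`, and `SERVICE_VARS` are illustrative names, the mapping is copied from the example file above, and MCPMark's own loader may read the file differently.

```python
import re
from pathlib import Path

# Hypothetical mapping: variable names copied from the example .mcp_env above,
# not read from MCPMark's own configuration code.
SERVICE_VARS = {
    "filesystem": [],  # zero-configuration
    "notion": ["SOURCE_NOTION_API_KEY", "EVAL_NOTION_API_KEY", "EVAL_PARENT_PAGE_TITLE"],
    "github": ["GITHUB_TOKENS", "GITHUB_EVAL_ORG"],
    "postgres": ["POSTGRES_HOST", "POSTGRES_PORT", "POSTGRES_USERNAME", "POSTGRES_PASSWORD"],
}

# KEY="quoted value" or KEY=bare_value, optionally followed by a "# comment".
_LINE = re.compile(r'^\s*([A-Za-z_][A-Za-z0-9_]*)\s*=\s*(?:"([^"]*)"|([^#\s]*))')


def parse_env(text: str) -> dict[str, str]:
    """Parse .mcp_env-style lines, skipping blank lines and full-line comments."""
    env: dict[str, str] = {}
    for line in text.splitlines():
        if not line.strip() or line.lstrip().startswith("#"):
            continue
        match = _LINE.match(line)
        if match:
            key, quoted, bare = match.groups()
            env[key] = quoted if quoted is not None else bare
    return env


def configured_services(env: dict[str, str]) -> list[str]:
    """Services whose listed variables are all present and non-empty."""
    return [svc for svc, keys in SERVICE_VARS.items() if all(env.get(k) for k in keys)]


if __name__ == "__main__":
    env_file = Path(".mcp_env")
    if env_file.exists():
        print(configured_services(parse_env(env_file.read_text())))
```

Inline comments after a quoted value (as in `PLAYWRIGHT_BROWSER="chromium" # chromium | firefox`) are dropped because the regex stops at the closing quote.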

### 3) Install and run a minimal example

Local (Recommended)

```bash

pip install -e .

# If you'll use browser-based tasks, install Playwright browsers first

playwright install

```

MCPMark defaults to the built-in orchestration agent (`MCPMarkAgent`). To experiment with the ReAct-style agent, pass `--agent react` to `pipeline.py` (other settings stay the same).

Docker

```bash

./build-docker.sh

```

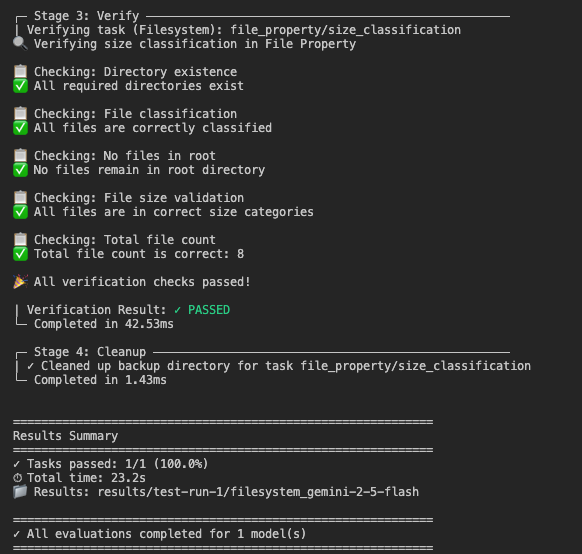

Run a filesystem task (no external accounts required):

```bash

# --k 1 runs each task once (quick start); replace gpt-5 with any model you configured
python -m pipeline \
  --mcp filesystem \
  --k 1 \
  --models gpt-5 \
  --tasks file_property/size_classification

# Add --task-suite easy to run the lightweight dataset (where available)

```

Results are saved to `./results/{exp_name}/{model}__{mcp}/run-*/...` for the standard suite and `./results/{exp_name}/{model}__{mcp}-easy/run-*/...` when you run `--task-suite easy` (e.g., `./results/test-run/gpt-5__filesystem/run-1/...` or `./results/test-run/gpt-5__github-easy/run-1/...`).
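The result path convention can be expressed as a small function. This is an illustrative sketch: `run_dir` is a hypothetical name, not an MCPMark API, and it assumes that only the `easy` suite adds a suffix, as the examples above suggest.

```python
from pathlib import Path


def run_dir(exp_name: str, model: str, mcp: str, run: int,
            task_suite: str = "standard", root: str = "./results") -> Path:
    """Directory for one run: ./results/{exp_name}/{model}__{mcp}[-easy]/run-N."""
    service_dir = f"{model}__{mcp}"
    if task_suite == "easy":  # only the easy suite adds a suffix in the documented examples
        service_dir += "-easy"
    return Path(root) / exp_name / service_dir / f"run-{run}"
```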

---

## Run your evaluations

### Task suites (standard vs easy)

- Each MCP service now stores tasks under `tasks/<mcp>/<task_suite>/<category>/<task>/`.

- `standard` (default) covers the full benchmark (127 tasks today).

- `easy` hosts 10 lightweight tasks per MCP, ideal for smoke tests and CI (GitHub’s are already available under `tasks/github/easy`).

- Switch suites with `--task-suite easy` (defaults to `--task-suite standard`).
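Given this layout, listing the tasks in a suite is a two-level directory walk. The following is a minimal sketch that assumes every subdirectory of a category is a task. `list_tasks` is a hypothetical helper, and MCPMark's task managers may apply additional checks.

```python
from pathlib import Path


def list_tasks(mcp: str, task_suite: str = "standard", root: str = "tasks") -> list[str]:
    """Return sorted "category/task" IDs found under tasks/<mcp>/<task_suite>/."""
    suite_dir = Path(root) / mcp / task_suite
    if not suite_dir.is_dir():
        return []  # e.g. a suite not yet available for this MCP
    return sorted(
        f"{category.name}/{task.name}"
        for category in suite_dir.iterdir() if category.is_dir()
        for task in category.iterdir() if task.is_dir()
    )
```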

### Single run (k=1)

```bash

# Run ALL tasks for a service

python -m pipeline --exp-name exp --mcp notion --tasks all --models MODEL --k 1

# Run a task group

python -m pipeline --exp-name exp --mcp notion --tasks online_resume --models MODEL --k 1

# Run a specific task

python -m pipeline --exp-name exp --mcp notion --tasks online_resume/daily_itinerary_overview --models MODEL --k 1

# Evaluate multiple models

python -m pipeline --exp-name exp --mcp notion --tasks all --models MODEL1,MODEL2,MODEL3 --k 1

```
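The `--tasks` examples above use three forms: `all`, a task group, or `group/task`. These can be modeled as a simple filter over `category/task` IDs. The matching rules in this sketch are inferred from the examples and are an assumption. `select_tasks` is hypothetical and does not reproduce the logic in `pipeline.py`.

```python
def select_tasks(selector: str, task_ids: list[str]) -> list[str]:
    """Filter "category/task" IDs by a --tasks-style selector (assumed semantics)."""
    if selector == "all":
        return list(task_ids)
    if "/" in selector:  # a specific task, e.g. online_resume/daily_itinerary_overview
        return [t for t in task_ids if t == selector]
    # otherwise a whole task group (category), e.g. online_resume
    return [t for t in task_ids if t.split("/", 1)[0] == selector]
```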

### Multiple runs (k>1) for pass@k

```bash

# Run k=4 to compute stability metrics (--exp-name is required to aggregate the final results)

python -m pipeline --exp-name exp --mcp notion --tasks all --models MODEL --k 4

# Aggregate results (pass@1 / pass@k / pass^k / avg@k)

python -m src.aggregators.aggregate_results --exp-name exp

```
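To illustrate, the metrics can be computed from per-task success flags across k runs. This sketch uses common definitions: pass@1 is the mean single-run success rate, pass@k is the share of tasks solved in at least one run, and pass^k is the share solved in every run. The exact formulas, including avg@k, are defined in `src/aggregators/aggregate_results.py` and may differ in detail. `summarize_runs` is a hypothetical name.

```python
def _rate(flags) -> float:
    """Fraction of truthy values in an iterable of bools or floats."""
    flags = list(flags)
    return sum(flags) / len(flags)


def summarize_runs(results: dict[str, list[bool]]) -> dict[str, float]:
    """results maps task id -> success flags, one flag per run (same k for every task)."""
    ks = {len(runs) for runs in results.values()}
    if len(ks) != 1:
        raise ValueError("every task needs the same, non-zero number of runs")
    k = ks.pop()
    # Success rate of each individual run across all tasks.
    per_run = [_rate(runs[i] for runs in results.values()) for i in range(k)]
    return {
        "pass@1": _rate(per_run),                                    # mean single-run success rate
        f"pass@{k}": _rate(any(runs) for runs in results.values()),  # solved in at least one run
        f"pass^{k}": _rate(all(runs) for runs in results.values()),  # solved in every run
    }
```

Under these definitions pass@k ≥ pass@1 ≥ pass^k always holds, so the gap between pass@k and pass^k gives a rough measure of run-to-run instability.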

### Run with Docker

```bash

# Run all tasks for a service

./run-task.sh --mcp notion --models MODEL --exp-name exp --tasks all

# Cross-service benchmark

./run-benchmark.sh --models MODEL --exp-name exp --docker

```

See `docs/introduction.md` for the supported values of *MODEL*.

Tip: MCPMark supports **auto-resume**. When re-running, only unfinished tasks will execute. Failures matching our retryable patterns (see [RETRYABLE_PATTERNS](src/errors.py)) are retried automatically. Models may emit different error strings—if you encounter a new resumable error, please open a PR or issue.
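In practice, a retry decision of this kind usually comes down to matching the error text against a list of patterns. This sketch is illustrative only: `EXAMPLE_RETRYABLE_PATTERNS` and `is_retryable` are hypothetical names, and the real `RETRYABLE_PATTERNS` in `src/errors.py` may contain different strings and use different matching rules.

```python
import re

# Illustrative patterns only; see RETRYABLE_PATTERNS in src/errors.py for the real list.
EXAMPLE_RETRYABLE_PATTERNS = (
    r"rate limit",
    r"timed? ?out",                 # "timeout" or "timed out"
    r"connection (reset|refused)",
    r"\b50[234]\b",                 # transient HTTP 502/503/504
)


def is_retryable(error_message: str, patterns=EXAMPLE_RETRYABLE_PATTERNS) -> bool:
    """Return True if any pattern matches the error text (case-insensitive)."""
    return any(re.search(p, error_message, re.IGNORECASE) for p in patterns)
```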

Tip: MCPMark supports **auto-compaction**; pass `--compaction-token N` to enable automatic context summarization when prompt tokens reach `N` (use `999999999` to disable).
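Conceptually, compaction replaces older turns with a single summary once the prompt size reaches the threshold. The sketch below assumes OpenAI-style `{"role", "content"}` messages with the system prompt first. `maybe_compact`, `keep_last`, and the `summarize` callback are hypothetical names, and MCPMark's agent may summarize differently.

```python
from typing import Callable

Message = dict[str, str]  # e.g. {"role": "user", "content": "..."}


def maybe_compact(
    messages: list[Message],
    prompt_tokens: int,
    threshold: int,
    summarize: Callable[[list[Message]], str],
    keep_last: int = 4,
) -> list[Message]:
    """Collapse all but the last `keep_last` turns into one summary once the threshold is hit."""
    history = messages[1:]  # messages[0] is assumed to be the system prompt
    if prompt_tokens < threshold or len(history) <= keep_last:
        return messages
    split = len(history) - keep_last
    older, recent = history[:split], history[split:]
    summary = {"role": "user", "content": "Summary of earlier steps: " + summarize(older)}
    return [messages[0], summary, *recent]
```

With `--compaction-token 999999999`, `prompt_tokens` will practically never reach the threshold, which is why that value effectively disables compaction.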

---

## Service setup and authentication

| Service | Setup summary | Docs |
|-------------|-----------------------------------------------------------------------------------------------------------------|---------------------------------------|
| Notion | Environment isolation (Source Hub / Eval Hub), integration creation and grants, browser login verification. | [Guide](docs/mcp/notion.md) |
| GitHub | Multi-account token pooling recommended; import pre-exported repo state if needed. | [Guide](docs/mcp/github.md) |
| Postgres | Start via Docker and import sample databases. | [Setup](docs/mcp/postgres.md) |
| Playwright | Install browsers before first run; defaults to `chromium`. | [Setup](docs/mcp/playwright.md) |
| Filesystem | Zero-configuration, run directly. | [Config](docs/mcp/filesystem.md) |

You can also follow [Quickstart](docs/quickstart.md) for the shortest end-to-end path.

### Important Notice: GitHub Repository Privacy

> **Please ensure your evaluation repositories are set to PRIVATE.**

GitHub state templates are now downloaded automatically from our CDN during evaluation, so no manual download is required. However, because these templates contain issues and pull requests from real open-source repositories, the recreation process includes `@username` mentions of the original authors.

**We have received feedback from original GitHub authors who were inadvertently notified** when evaluation repositories were created as public. To be a responsible member of the open-source community, we urge all users to:

1. **Always keep evaluation repositories private** during the evaluation process.

2. **In the latest version**, we have added random suffixes to all `@username` mentions (e.g., `@user` becomes `@user_x7k2`) and implemented a safety check that prevents importing templates to public repositories.

3. **If you are using an older version of MCPMark**, please either:
   - Pull the latest code immediately, or
   - Manually ensure all GitHub evaluation repositories are set to private.
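To show what suffixing mentions means in practice, here is a minimal sketch under stated assumptions. It assumes GitHub-style usernames (alphanumerics and hyphens, up to 39 characters, not starting with a hyphen) and a 4-character lowercase/digit suffix matching the `@user_x7k2` example. The regex and `neutralize_mentions` are hypothetical and are not MCPMark's implementation.

```python
import random
import re
import string

# Hypothetical mention pattern: '@' not preceded by a word character or '@'
# (so e-mail addresses are skipped), then a GitHub-style username.
MENTION_RE = re.compile(r"(?<![\w@])@([A-Za-z0-9][A-Za-z0-9-]{0,38})")


def neutralize_mentions(text: str, rng: random.Random) -> str:
    """Append a random '_xxxx' suffix to each @mention so it no longer names a real user."""
    alphabet = string.ascii_lowercase + string.digits

    def add_suffix(match: re.Match) -> str:
        suffix = "".join(rng.choice(alphabet) for _ in range(4))
        return f"@{match.group(1)}_{suffix}"

    return MENTION_RE.sub(add_suffix, text)


print(neutralize_mentions("Thanks @octocat and @some-user!", random.Random(0)))
```

The suffix is random rather than fixed so that a rewritten mention is unlikely to collide with another real account name.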

Thank you for helping us maintain a respectful relationship with the open-source community.

---

## Results and metrics

- Results are organized under `./results/{exp_name}/{model}__{mcp}/run-*/` (JSON + CSV per task).

- Generate a summary with:

```bash
# Basic usage
python -m src.aggregators.aggregate_results --exp-name exp

# For k-run experiments with single-run models
python -m src.aggregators.aggregate_results --exp-name exp --k 4 --single-run-models claude-opus-4-1
```

- Only models with complete results across all tasks and runs are included in the final summary.

- Includes multi-run metrics (pass@k, pass^k) for stability comparisons when k > 1.
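The multi-run metrics are named here but not defined. The sketch below assumes the common definitions: pass@k counts a task as solved if any of its k runs succeeds, pass^k counts it as solved only if all k runs succeed, and avg@k is the mean per-run success rate. `src/aggregators/aggregate_results.py` may compute or weight these differently, and `multi_run_metrics` is a hypothetical helper.

```python
def multi_run_metrics(results: dict[str, list[bool]]) -> dict[str, float]:
    """Compute pass@k, pass^k and avg@k from per-task success flags over k runs.

    Assumed definitions (hypothetical helper, not the aggregator's code):
      pass@k: fraction of tasks solved in at least one of the k runs
      pass^k: fraction of tasks solved in all k runs
      avg@k:  per-task success rate over the k runs, averaged over tasks
    """
    if not results:
        raise ValueError("no results to aggregate")
    run_counts = {len(runs) for runs in results.values()}
    if len(run_counts) != 1:
        # Mirrors the rule that only complete results are summarized.
        raise ValueError("every task must have the same number of runs")
    (k,) = run_counts
    n_tasks = len(results)
    return {
        f"pass@{k}": sum(any(runs) for runs in results.values()) / n_tasks,
        f"pass^{k}": sum(all(runs) for runs in results.values()) / n_tasks,
        f"avg@{k}": sum(sum(runs) / k for runs in results.values()) / n_tasks,
    }


results = {
    "task_a": [True, False, True, True],
    "task_b": [False, False, False, False],
    "task_c": [True, True, True, True],
}
print(multi_run_metrics(results))
```

By construction, pass^k never exceeds avg@k, and avg@k never exceeds pass@k. A wide gap between pass@k and pass^k points to a model that can solve tasks but does not do so consistently.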

---

## Models and tasks

- **Model support**: MCPMark calls models through LiteLLM (see the [LiteLLM docs](https://docs.litellm.ai/docs/)). For Anthropic (Claude) extended thinking mode, enabled via `--reasoning-effort`, MCPMark uses Anthropic’s native API instead.

- See `docs/introduction.md` for details and configuration of supported models in MCPMark.

- To add a new model, edit `src/model_config.py`. Before adding it, confirm that LiteLLM supports the model and its provider (see the [LiteLLM docs](https://docs.litellm.ai/docs/)).

- Each task ships with an automated `verify.py` for objective, reproducible evaluation. See `docs/datasets/task.md` for task design principles and details.

---

## Contributing

Contributions are welcome:

1. Add a new task under `tasks/<mcp>/<task_suite>/<category_id>/<task_id>/` with `meta.json`, `description.md` and `verify.py`.

2. Ensure local checks pass and open a PR.

3. See `docs/contributing/make-contribution.md` for the full contribution guide.
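Before opening a PR, you may want to confirm that every task directory contains the three required files. The sketch below assumes that every task sits exactly four levels below `tasks/` (`<mcp>/<task_suite>/<category_id>/<task_id>`), as the layout in step 1 describes. It is a hypothetical helper, not one of the repository's own checks.

```python
from pathlib import Path

REQUIRED_FILES = ("meta.json", "description.md", "verify.py")


def missing_task_files(task_dir: Path) -> list[str]:
    """Return the required files that are absent from one task directory."""
    return [name for name in REQUIRED_FILES if not (task_dir / name).is_file()]


def check_tasks(root: Path) -> dict[str, list[str]]:
    """Map each incomplete task directory (relative to root) to its missing files."""
    problems: dict[str, list[str]] = {}
    # Four levels below root: <mcp>/<task_suite>/<category_id>/<task_id>
    for task_dir in sorted(p for p in root.glob("*/*/*/*") if p.is_dir()):
        missing = missing_task_files(task_dir)
        if missing:
            problems[task_dir.relative_to(root).as_posix()] = missing
    return problems


if __name__ == "__main__":
    # An empty dict means every task directory has all required files.
    print(check_tasks(Path("tasks")))
```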

---

## Citation

If you find our work useful for your research, please consider citing:

```bibtex
@misc{wu2025mcpmark,
  title={MCPMark: A Benchmark for Stress-Testing Realistic and Comprehensive MCP Use},
  author={Zijian Wu and Xiangyan Liu and Xinyuan Zhang and Lingjun Chen and Fanqing Meng and Lingxiao Du and Yiran Zhao and Fanshi Zhang and Yaoqi Ye and Jiawei Wang and Zirui Wang and Jinjie Ni and Yufan Yang and Arvin Xu and Michael Qizhe Shieh},
  year={2025},
  eprint={2509.24002},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2509.24002},
}
```

## License

This project is licensed under the Apache License 2.0 — see `LICENSE`.

================================================

FILE: build-docker.sh

================================================

#!/bin/bash

# Build Docker image for MCPMark

set -e

# Color codes for output

GREEN='\033[0;32m'

YELLOW='\033[1;33m'

NC='\033[0m' # No Color

echo -e "${YELLOW}Building MCPMark Docker image locally...${NC}"

# Build the Docker image with the same tag as Docker Hub for local testing.
# Test the build command directly: under `set -e`, a failed build would exit
# the script before a separate `$?` check could ever run.
if docker build -t evalsysorg/mcpmark:latest . "$@"; then

echo -e "${GREEN}✓ Docker image built successfully${NC}"

echo " Tag: evalsysorg/mcpmark:latest"

# Show image info

echo ""

echo "Image details:"

docker images evalsysorg/mcpmark:latest --format "table {{.Repository}}\t{{.Tag}}\t{{.Size}}\t{{.CreatedAt}}"

echo ""

echo "You can now run tasks using:"