
Repository: google/audion

Branch: main

Commit: 11805e3151d3

Files: 101

Total size: 271.5 KB

Directory structure:

gitextract_0gd149fy/

├── .babelrc

├── .editorconfig

├── .eslintrc.json

├── .github/

│ └── workflows/

│ └── nodejs-ci.yml

├── .gitignore

├── .husky/

│ ├── .gitignore

│ └── pre-commit

├── .jsdoc.json

├── .prettierrc

├── LICENSE

├── README.md

├── fixtures/

│ └── oscillatorGainParam.ts

├── package.json

├── simulations/

│ ├── updateGraphRender.html

│ ├── updateGraphRender.ts

│ └── webpack.config.js

├── src/

│ ├── .jest.config.json

│ ├── build/

│ │ ├── make-chrome-extension.js

│ │ └── manifest.json.mustache

│ ├── chrome/

│ │ ├── API.js

│ │ ├── Debugger.js

│ │ ├── DebuggerPageDomain.ts

│ │ ├── DebuggerWebAudioDomain.ts

│ │ ├── DevTools.js

│ │ ├── Runtime.js

│ │ ├── Types.js

│ │ └── index.js

│ ├── custom.d.ts

│ ├── devtools/

│ │ ├── DebuggerAttachEventController.ts

│ │ ├── DebuggerEvents.ts

│ │ ├── DevtoolsGraphPanel.test.js

│ │ ├── DevtoolsGraphPanel.ts

│ │ ├── Types.ts

│ │ ├── WebAudioEventObserver.test.js

│ │ ├── WebAudioEventObserver.ts

│ │ ├── WebAudioGraphIntegrator.test.js

│ │ ├── WebAudioGraphIntegrator.ts

│ │ ├── WebAudioRealtimeData.ts

│ │ ├── deserializeGraphContext.ts

│ │ ├── layoutGraphContext.ts

│ │ ├── main.ts

│ │ ├── partitionMap.ts

│ │ ├── serializeGraphContext.js

│ │ └── setOptionsToGraphContext.ts

│ ├── devtools.html

│ ├── extraSettingPage/

│ │ ├── options.html

│ │ └── options.js

│ ├── panel/

│ │ ├── GraphSelector.ts

│ │ ├── Observer.runtime.ts

│ │ ├── Types.ts

│ │ ├── components/

│ │ │ ├── WholeGraphButton.css

│ │ │ ├── WholeGraphButton.ts

│ │ │ ├── collectGarbage.css

│ │ │ ├── collectGarbage.ts

│ │ │ ├── detailPanel.css

│ │ │ ├── detailPanel.ts

│ │ │ ├── domUtils.ts

│ │ │ ├── realtimeSummary.ts

│ │ │ ├── selectGraph.css

│ │ │ └── selectGraph.ts

│ │ ├── graph/

│ │ │ ├── AudioEdgeArrowGraphics.ts

│ │ │ ├── AudioEdgeCurvedLineGraphics.ts

│ │ │ ├── AudioEdgeRender.ts

│ │ │ ├── AudioGraphRender.ts

│ │ │ ├── AudioGraphText.ts

│ │ │ ├── AudioGraphTextCacheGroup.ts

│ │ │ ├── AudioNodeBackground.ts

│ │ │ ├── AudioNodeBackgroundRenderCacheGroup.ts

│ │ │ ├── AudioNodePort.ts

│ │ │ ├── AudioNodeRender.ts

│ │ │ ├── AudioPortCacheGroup.ts

│ │ │ ├── Camera.js

│ │ │ ├── GraphicsCache.ts

│ │ │ ├── graphStyle.js

│ │ │ └── graphStyle.ts

│ │ ├── main.ts

│ │ ├── updateGraphRender.ts

│ │ ├── updateGraphSizes.ts

│ │ └── worker.ts

│ ├── panel.html

│ ├── utils/

│ │ ├── Observer.emitter.js

│ │ ├── Observer.test.js

│ │ ├── Observer.ts

│ │ ├── Types.ts

│ │ ├── dlog.js

│ │ ├── error.js

│ │ ├── error.test.js

│ │ ├── index.js

│ │ ├── mapThruWorker.ts

│ │ ├── math.js

│ │ ├── retry.js

│ │ ├── retry.test.js

│ │ ├── rxChrome.ts

│ │ └── rxInterop.ts

│ └── webpack.config.js

├── test/

│ ├── .jest-puppeteer.config.json

│ ├── .jest.config.json

│ ├── README.md

│ ├── browserLaunch.js

│ └── updateGraphRender.js

└── tsconfig.json

================================================

FILE CONTENTS

================================================

================================================

FILE: .babelrc

================================================

{

"plugins": [[

"@babel/plugin-transform-modules-commonjs"

], [

"@babel/plugin-proposal-optional-chaining"

]],

"presets": ["@babel/preset-typescript"]

}

================================================

FILE: .editorconfig

================================================

# EditorConfig is awesome: https://EditorConfig.org

# top-most EditorConfig file

root = true

[*]

indent_style = space

indent_size = 2

end_of_line = lf

charset = utf-8

trim_trailing_whitespace = true

insert_final_newline = false

================================================

FILE: .eslintrc.json

================================================

{

"env": {

"browser": true,

"es2021": true,

"node": true

},

"extends": ["eslint:recommended", "google"],

"parserOptions": {

"ecmaVersion": 12,

"sourceType": "module"

},

"rules": {

// Indent files with prettier

"indent": ["off"],

// Allow triple slash comments

"spaced-comment": ["error", "always", {"markers": ["/"]}],

"operator-linebreak": ["off"]

}

}

================================================

FILE: .github/workflows/nodejs-ci.yml

================================================

name: Node.js CI

on: [push, pull_request]

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      - name: Use Node.js "18.x"
        uses: actions/setup-node@v3
        with:
          node-version: '18.x'
      - run: npm ci
      - name: Run npm test with xvfb
        uses: coactions/setup-xvfb@v1
        with:
          run: npm test

================================================

FILE: .gitignore

================================================

.DS_Store

# dependencies

node_modules

# build/test

.eslintcache

docs

coverage

/build

/simulations/build

!src/build

================================================

FILE: .husky/.gitignore

================================================

_

================================================

FILE: .husky/pre-commit

================================================

#!/bin/sh

. "$(dirname "$0")/_/husky.sh"

npx lint-staged

================================================

FILE: .jsdoc.json

================================================

{

"source": {

"include": ["./src/"],

"includePattern": ".+\\.js(doc)?$",

"excludePattern": "(^|\\/|\\\\)_|\\.test\\.js$"

},

"opts": {

"encoding": "utf8",

"recurse": true,

"private": false,

"lenient": true,

"destination": "./docs",

"template": "./node_modules/@pixi/jsdoc-template",

"readme": "README.md"

},

"plugins": ["plugins/markdown"]

}
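To make the `source` filters above concrete, here is a small TypeScript sketch that applies the same two regular expressions to a few paths. It assumes JSDoc keeps a file only when its path matches `includePattern` and does not match `excludePattern`. The `isDocumented` helper and the `_internal.js` path are hypothetical. One consequence worth noticing: `includePattern` only admits `.js` and `.jsdoc` files, so the `.ts` sources under `src/` are not picked up.

```typescript
// Patterns copied from .jsdoc.json. The JSON string "\\." becomes the regex
// escape \. once parsed, so these literals match what JSDoc receives.
const includePattern = /.+\.js(doc)?$/;
const excludePattern = /(^|\/|\\)_|\.test\.js$/;

// Hypothetical helper (not part of JSDoc): a path is documented when it
// matches includePattern and does not match excludePattern.
function isDocumented(path: string): boolean {
  return includePattern.test(path) && !excludePattern.test(path);
}

const samplePaths = [
  'src/utils/retry.js', // plain source file: included
  'src/utils/retry.test.js', // test file: excluded by \.test\.js$
  'src/utils/_internal.js', // hypothetical path; leading underscore: excluded
  'src/devtools/main.ts', // TypeScript: never matches includePattern
];

for (const path of samplePaths) {
  console.log(`${path}: ${isDocumented(path) ? 'documented' : 'skipped'}`);
}
```

Because `excludePattern` also accepts a backslash before the underscore, the same rule applies to Windows-style paths.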

================================================

FILE: .prettierrc

================================================

{

"tabWidth": 2,

"useTabs": false,

"trailingComma": "all",

"singleQuote": true,

"bracketSpacing": false

}

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright 2021 Google, Inc.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

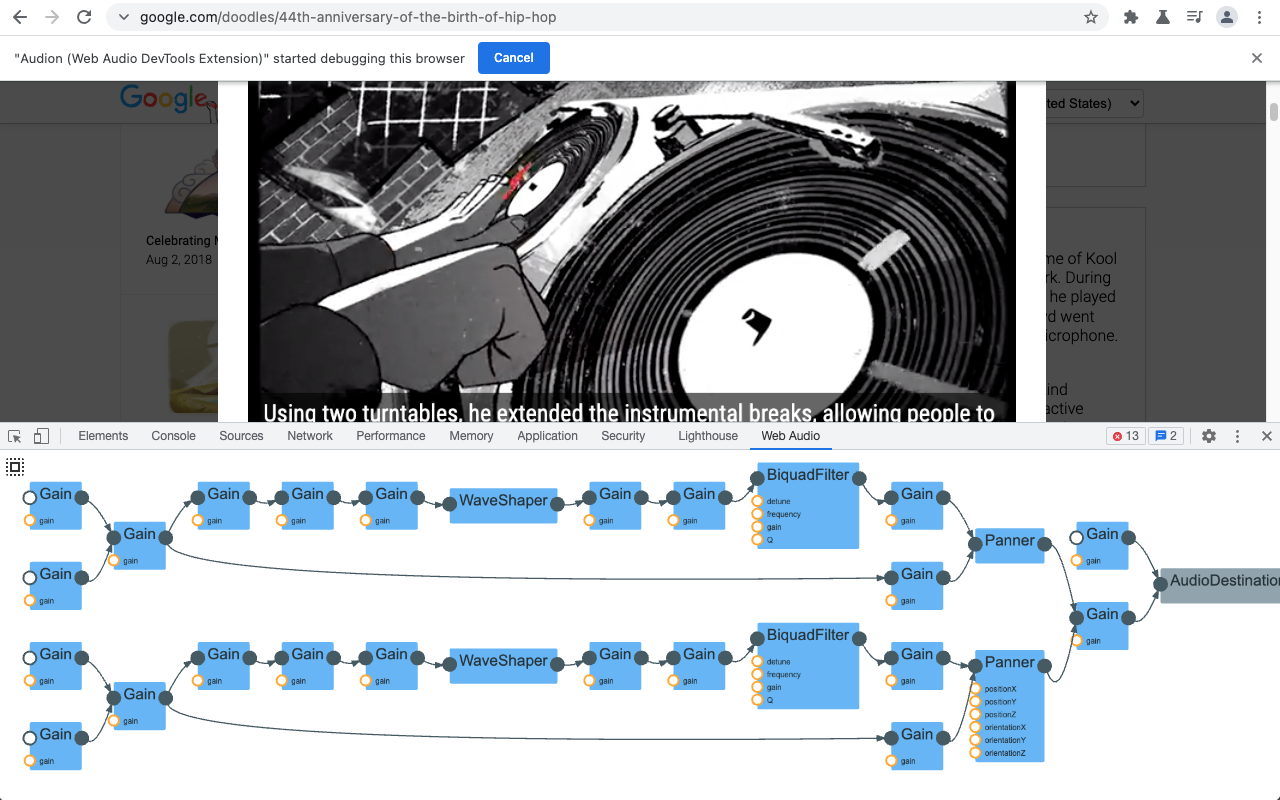

# Audion: Web Audio Graph Visualizer

[Node.js CI](https://github.com/GoogleChrome/audion/actions/workflows/nodejs-ci.yml)

Audion is a Chrome extension that adds a panel to DevTools. This panel

visualizes the audio graph (built with the Web Audio API) in real time.

You can install the extension from the Chrome Web Store.

## Usage

1. [Install the extension](https://chrome.google.com/webstore/detail/audion/cmhomipkklckpomafalojobppmmidlgl)

from the Chrome Web Store.

1. Alternatively, clone this repository and build the extension

locally. Follow

[these instructions](https://developer.chrome.com/docs/extensions/mv3/faq/#faq-dev-01)

to load the local build.

1. [Open Chrome Developer Tools](https://developer.chrome.com/docs/devtools/open/).

You should find the “Web Audio” panel among the tabs at the top. Select it.

1. Visit or reload a page that uses the Web Audio API. If the page was loaded

before Developer Tools was opened, reload it so that the extension works

correctly.

1. You can pan and zoom with the mouse and wheel. Click the “autofit” button to

fit the graph within the panel.

## Development

### Build and test the extension

1. Install Node.js 18 (the version required by `engines` in `package.json` and used by CI).

1. Install dependencies with `npm ci` or `npm install`.

1. Run `npm test` to build and test the extension.

#### Install the development copy of the extension

1. Open `chrome://extensions` in Chrome.

1. Turn on `Developer mode` if it is not already active.

1. Load an unpacked extension with the `Load unpacked` button. In the file

dialog that opens, select the `audion` directory inside the `build`

directory of your copy of this repository.

#### Use and make changes to the extension

1. Open the added `Web Audio` panel in an inspector window for a page that

uses the Web Audio API.

1. Make changes to the extension and rebuild with `npm test` or `npm run build`.

1. Open `chrome://extensions` and click `Update` to reload the rebuilt extension.

Then close and reopen the affected tabs and inspectors to load the rebuilt extension's panel.

### Use extra debugging information

1. Open the extension's options page and check "Click here to show more debug

info".

2. Right-click the visualizer panel and click "Inspect" to open DevTools for the

extension's panel, then check its console for the extra debugging information.

## Acknowledgments

Special thanks to [Chi Zeng](https://github.com/chihuahua) (Google),

[Gaoping Huang](https://github.com/gaopinghuang0),

[Michael "Z" Goddard](https://github.com/mzgoddard)

([Bocoup](https://bocoup.com/)) and

[Tenghui Zhang](https://github.com/TenghuiZhang) for their contributions to this

project.

## Contribution

If you have found an error in this library, please file an issue at:

https://github.com/GoogleChrome/audion/issues.

Patches are encouraged, and may be submitted by forking this project and

submitting a pull request through GitHub. See CONTRIBUTING for more detail.

## License

Copyright 2021 Google Inc. All Rights Reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use

this file except in compliance with the License. You may obtain a copy of the

License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed

under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR

CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

================================================

FILE: fixtures/oscillatorGainParam.ts

================================================

/**

* Event sequences that would be produced by an audio context with oscillator

* and gain nodes connecting outputs to params.

*

* @file

*/

import {WebAudioDebuggerEvent} from '../src/chrome/DebuggerWebAudioDomain';

import {Audion} from '../src/devtools/Types';

/**

* A sequence of events produced by WebAudioEventObservable from a context

* that connects oscillator, gain, and delay nodes, notably connecting a gain

* node's output to a delay node's delayTime param.

*

* @example

* // unit and integration tests can replace

* new WebAudioEventObservable()

* // with something like

* from(OSCILLATOR_GAIN_PARAM_EVENTS)

* // or something over time such as

* interval(50).pipe(take(OSCILLATOR_GAIN_PARAM_EVENTS.length),

* map((i) => OSCILLATOR_GAIN_PARAM_EVENTS[i]))

*

* @example

* // context that creates this sequence from

* // WebAudioEventObservable (delayTime, width, and speed are

* // arbitrary numeric settings)

* const audioContext = new AudioContext();

* const delayNode = new DelayNode(audioContext,

* {delayTime: delayTime});

* const inputNode = new GainNode(audioContext);

* const outputNode = new GainNode(audioContext);

* const depthNode = new GainNode(audioContext,

* {gain: width});

* const oscillatorNode = new OscillatorNode(audioContext,

* {type: "sine", frequency: speed});

* inputNode.connect(delayNode);

* delayNode.connect(outputNode);

* oscillatorNode.connect(depthNode);

* depthNode.connect(delayNode.delayTime);

*

* @see https://github.com/GoogleChrome/audion/issues/117

*/

export const OSCILLATOR_GAIN_PARAM_EVENTS: Audion.WebAudioEvent[] = [

{

method: WebAudioDebuggerEvent.contextCreated,

params: {

context: {

callbackBufferSize: 256,

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

contextState: 'suspended',

contextType: 'realtime',

maxOutputChannelCount: 2,

sampleRate: 48000,

},

},

},

{

method: WebAudioDebuggerEvent.audioNodeCreated,

params: {

node: {

channelCount: 2,

channelCountMode: 'explicit',

channelInterpretation: 'speakers',

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

nodeId: '57a4d84b-6165-495e-9ad7-2ad82497d423',

nodeType: 'AudioDestination',

numberOfInputs: 1,

numberOfOutputs: 0,

},

},

},

{

method: WebAudioDebuggerEvent.audioListenerCreated,

params: {

listener: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

listenerId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: '63a77a6c-1779-42df-bedc-c68c5171722f',

paramType: 'positionX',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: 'e15f2c0e-f466-4d2a-92a2-c3fe23e591f5',

paramType: 'positionY',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: 'bbabbcc8-91eb-4014-9351-43e1742644e9',

paramType: 'positionZ',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: '4e3f5c2d-6b59-4a69-ab4f-da62db30e7db',

paramType: 'forwardX',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: 'd2425aaa-dc91-4e60-ba57-22be7b26f941',

paramType: 'forwardY',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: -1,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: '1842fc18-6b51-402b-97f1-c56d4681866a',

paramType: 'forwardZ',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: '872a56b9-ed99-47ea-9957-bda9307fac5b',

paramType: 'upX',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 1,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: '4acf61c7-363f-44af-9857-c5e8c8ea5629',

paramType: 'upY',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'bb5255e5-5bd3-4290-b714-ecd3ff57be28',

paramId: '4b818074-5b96-42c3-b2e6-fcdd350e37bb',

paramType: 'upZ',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioNodeCreated,

params: {

node: {

channelCount: 2,

channelCountMode: 'max',

channelInterpretation: 'speakers',

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

nodeId: 'e5bd5ec5-abb8-426a-bad8-65f723970c76',

nodeType: 'Delay',

numberOfInputs: 1,

numberOfOutputs: 1,

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 1,

minValue: 0,

nodeId: 'e5bd5ec5-abb8-426a-bad8-65f723970c76',

paramId: 'a88ea483-fc15-4c2b-ab0c-597af8e069b9',

paramType: 'delayTime',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioNodeCreated,

params: {

node: {

channelCount: 2,

channelCountMode: 'max',

channelInterpretation: 'speakers',

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

nodeId: '61b107eb-24ad-4f11-b811-72b2c5e7e79f',

nodeType: 'Gain',

numberOfInputs: 1,

numberOfOutputs: 1,

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 1,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: '61b107eb-24ad-4f11-b811-72b2c5e7e79f',

paramId: '03e13b59-a58f-4883-8479-d7a048ebe80a',

paramType: 'gain',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioNodeCreated,

params: {

node: {

channelCount: 2,

channelCountMode: 'max',

channelInterpretation: 'speakers',

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

nodeId: '78b78fae-b32e-4993-a2b4-7523c08e16c0',

nodeType: 'Gain',

numberOfInputs: 1,

numberOfOutputs: 1,

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 1,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: '78b78fae-b32e-4993-a2b4-7523c08e16c0',

paramId: 'b6ea1b98-2dda-43d0-8a52-49492fcafdde',

paramType: 'gain',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioNodeCreated,

params: {

node: {

channelCount: 2,

channelCountMode: 'max',

channelInterpretation: 'speakers',

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

nodeId: 'd8ac44f0-f099-40ff-9cf4-949148fca53f',

nodeType: 'Gain',

numberOfInputs: 1,

numberOfOutputs: 1,

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 1,

maxValue: 3.4028234663852886e38,

minValue: -3.4028234663852886e38,

nodeId: 'd8ac44f0-f099-40ff-9cf4-949148fca53f',

paramId: '38ec329f-650c-4c35-805c-32c559b47ea7',

paramType: 'gain',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioNodeCreated,

params: {

node: {

channelCount: 2,

channelCountMode: 'max',

channelInterpretation: 'speakers',

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

nodeId: '59200b98-60e1-43cf-88f6-d0a33d5643cf',

nodeType: 'Oscillator',

numberOfInputs: 0,

numberOfOutputs: 1,

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 0,

maxValue: 153600,

minValue: -153600,

nodeId: '59200b98-60e1-43cf-88f6-d0a33d5643cf',

paramId: '0b2b73d2-bc98-423b-a19c-1a0651e06d20',

paramType: 'detune',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.audioParamCreated,

params: {

param: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

defaultValue: 440,

maxValue: 24000,

minValue: -24000,

nodeId: '59200b98-60e1-43cf-88f6-d0a33d5643cf',

paramId: '42dddc62-c058-473e-9f48-a678a708c001',

paramType: 'frequency',

rate: 'a-rate',

},

},

},

{

method: WebAudioDebuggerEvent.nodesConnected,

params: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

destinationId: 'e5bd5ec5-abb8-426a-bad8-65f723970c76',

destinationInputIndex: 0,

sourceId: '61b107eb-24ad-4f11-b811-72b2c5e7e79f',

sourceOutputIndex: 0,

},

},

{

method: WebAudioDebuggerEvent.nodesConnected,

params: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

destinationId: '78b78fae-b32e-4993-a2b4-7523c08e16c0',

destinationInputIndex: 0,

sourceId: 'e5bd5ec5-abb8-426a-bad8-65f723970c76',

sourceOutputIndex: 0,

},

},

{

method: WebAudioDebuggerEvent.nodesConnected,

params: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

destinationId: 'd8ac44f0-f099-40ff-9cf4-949148fca53f',

destinationInputIndex: 0,

sourceId: '59200b98-60e1-43cf-88f6-d0a33d5643cf',

sourceOutputIndex: 0,

},

},

{

method: WebAudioDebuggerEvent.nodeParamConnected,

params: {

contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62',

destinationId: 'a88ea483-fc15-4c2b-ab0c-597af8e069b9',

sourceId: 'd8ac44f0-f099-40ff-9cf4-949148fca53f',

sourceOutputIndex: 0,

},

},

{

method: WebAudioDebuggerEvent.contextWillBeDestroyed,

params: {contextId: '9d36b0e0-4251-41a6-89cb-876b0fbe1b62'},

},

];
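The last connection in the fixture targets a param ID (the Delay node's `delayTime`) rather than a node ID, so any consumer has to map params back to the nodes that own them. The TypeScript sketch below shows one way to fold such a sequence into nodes and edges. It is not the logic in `WebAudioGraphIntegrator.ts`; the simplified event shapes, the bare method strings, the `buildGraph` helper, and the short IDs are all hypothetical stand-ins.

```typescript
// Simplified, hypothetical event shapes modeled on the fixture above. The
// method strings stand in for WebAudioDebuggerEvent members; the real types
// live in src/chrome/DebuggerWebAudioDomain.ts and src/devtools/Types.ts.
type WebAudioEvent =
  | {method: 'audioNodeCreated'; params: {node: {nodeId: string; nodeType: string}}}
  | {
      method: 'audioParamCreated';
      params: {param: {nodeId: string; paramId: string; paramType: string}};
    }
  | {method: 'nodesConnected'; params: {sourceId: string; destinationId: string}}
  | {method: 'nodeParamConnected'; params: {sourceId: string; destinationId: string}};

interface Edge {
  from: string; // source node ID
  to: string; // destination node ID
  param?: string; // destination param type, only for node-to-param edges
}

// Hypothetical helper: fold an event sequence into nodes and edges.
function buildGraph(events: WebAudioEvent[]) {
  const nodes = new Map<string, string>(); // nodeId -> nodeType
  // paramId -> owning node, needed because nodeParamConnected names a param.
  const paramOwners = new Map<string, {nodeId: string; paramType: string}>();
  const edges: Edge[] = [];

  for (const event of events) {
    switch (event.method) {
      case 'audioNodeCreated':
        nodes.set(event.params.node.nodeId, event.params.node.nodeType);
        break;
      case 'audioParamCreated': {
        const {nodeId, paramId, paramType} = event.params.param;
        paramOwners.set(paramId, {nodeId, paramType});
        break;
      }
      case 'nodesConnected':
        edges.push({from: event.params.sourceId, to: event.params.destinationId});
        break;
      case 'nodeParamConnected': {
        // destinationId is a param ID; resolve it to the node that owns it.
        // Connections to params that were never announced are skipped here.
        const owner = paramOwners.get(event.params.destinationId);
        if (owner) {
          edges.push({from: event.params.sourceId, to: owner.nodeId, param: owner.paramType});
        }
        break;
      }
    }
  }
  return {nodes, edges};
}

// The fixture's graph, with short stand-in IDs instead of UUIDs.
const events: WebAudioEvent[] = [
  {method: 'audioNodeCreated', params: {node: {nodeId: 'delay', nodeType: 'Delay'}}},
  {
    method: 'audioParamCreated',
    params: {param: {nodeId: 'delay', paramId: 'delay.delayTime', paramType: 'delayTime'}},
  },
  {method: 'audioNodeCreated', params: {node: {nodeId: 'input', nodeType: 'Gain'}}},
  {method: 'audioNodeCreated', params: {node: {nodeId: 'output', nodeType: 'Gain'}}},
  {method: 'audioNodeCreated', params: {node: {nodeId: 'depth', nodeType: 'Gain'}}},
  {method: 'audioNodeCreated', params: {node: {nodeId: 'osc', nodeType: 'Oscillator'}}},
  {method: 'nodesConnected', params: {sourceId: 'input', destinationId: 'delay'}},
  {method: 'nodesConnected', params: {sourceId: 'delay', destinationId: 'output'}},
  {method: 'nodesConnected', params: {sourceId: 'osc', destinationId: 'depth'}},
  {method: 'nodeParamConnected', params: {sourceId: 'depth', destinationId: 'delay.delayTime'}},
];

const graph = buildGraph(events);
console.log(graph.nodes.size, graph.edges);
```

Keeping a separate `paramId` to owner map lets the param edge be drawn to its owning node even though the event only names the param. A fuller version would also need to handle disconnection and destruction events such as `contextWillBeDestroyed`, which this sketch ignores.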

================================================

FILE: package.json

================================================

{

"name": "audion",

"private": true,

"version": "3.0.9",

"description": "A Chrome DevTools extension that traces Web Audio API calls and visualizes them in DevTools.",

"repository": {

"type": "git",

"url": "git+https://github.com/GoogleChrome/audion.git"

},

"keywords": [],

"author": "",

"license": "Apache-2.0",

"bugs": {

"url": "https://github.com/GoogleChrome/audion/issues"

},

"homepage": "https://github.com/GoogleChrome/audion#readme",

"main": "index.js",

"engines": {

"node": "18"

},

"dependencies": {

"@pixi/unsafe-eval": "^7.2.4",

"dagre": "^0.8.5",

"pixi.js": "^7.2.4",

"rxjs": "^7.8.1",

"taffydb": "^2.7.3"

},

"devDependencies": {

"@babel/core": "^7.14.6",

"@babel/plugin-proposal-optional-chaining": "^7.16.7",

"@babel/plugin-transform-modules-commonjs": "^7.14.5",

"@babel/preset-typescript": "^7.16.7",

"@pixi/jsdoc-template": "^2.6.0",

"@types/dagre": "^0.7.46",

"@types/graphlib": "^2.1.8",

"babel-jest": "^29.5.0",

"copy-webpack-plugin": "^11.0.0",

"css-loader": "^6.6.0",

"devtools-protocol": "^0.0.924232",

"eslint": "^8.40.0",

"eslint-config-google": "^0.14.0",

"file-loader": "^6.2.0",

"husky": ">=6",

"jest": "^27.0.6",

"jest-puppeteer": "^5.0.4",

"jsdoc": "^4.0.2",

"lint-staged": ">=10",

"mustache": "^4.2.0",

"pinst": ">=2",

"prettier": "^2.3.2",

"puppeteer": "^9.1.1",

"raw-loader": "^4.0.2",

"rimraf": "^3.0.2",

"source-map-loader": "^3.0.0",

"style-loader": "^3.2.1",

"ts-loader": "^9.2.6",

"typescript": "^4.4.3",

"webpack": "^5.44.0",

"webpack-cli": "^4.7.2",

"yazl": "^2.5.1"

},

"scripts": {

"build:chrome-extension": "node src/build/make-chrome-extension.js",

"build:clean": "rimraf build",

"build:webpack": "webpack --mode production --config src/webpack.config.js",

"build": "npm run build:clean && npm run build:webpack && npm run build:chrome-extension",

"clean": "rimraf build docs src/coverage simulations/build",

"dev": "webpack --mode development --config src/webpack.config.js && npm run build:chrome-extension",

"postinstall": "husky install",

"postpublish": "pinst --enable",

"prepublishOnly": "pinst --disable",

"test:integration:build": "npm run test:integration:clean && npm run test:integration:webpack",

"test:integration:clean": "rimraf simulations/build",

"test:integration:webpack": "webpack --mode development --config simulations/webpack.config.js",

"test:integration:run": "JEST_PUPPETEER_CONFIG=test/.jest-puppeteer.config.json jest --config test/.jest.config.json",

"test:integration": "npm run build && npm run test:integration:build && npm run test:integration:run",

"test:jsdoc": "jsdoc -c .jsdoc.json",

"test:lint:eslint": "eslint src/**/*.js",

"test:lint:prettier": "prettier --check src/**/*.{js,ts}",

"test:lint": "npm run test:lint:eslint && npm run test:lint:prettier",

"test:unit": "jest --config src/.jest.config.json",

"test": "npm run test:lint && npm run test:jsdoc && npm run test:unit && npm run test:integration"

},

"lint-staged": {

"*.js": "eslint --cache --fix",

"*.{js,ts,json,css,md}": "prettier --write"

}

}

================================================

FILE: simulations/updateGraphRender.html

================================================

<div class="graph" style="height: 100%"></div>

<script src="./build/updateGraphRender.js"></script>

================================================

FILE: simulations/updateGraphRender.ts

================================================

import {

auditTime,

EMPTY,

filter,

finalize,

from,

interval,

map,

pipe,

switchMap,

take,

} from 'rxjs';

import {layoutGraphContext} from '../src/devtools/layoutGraphContext';

import {deserializeGraphContext} from '../src/devtools/deserializeGraphContext';

import {serializeGraphContext} from '../src/devtools/serializeGraphContext';

import {WebAudioRealtimeData} from '../src/devtools/WebAudioRealtimeData';

import {integrateWebAudioGraph} from '../src/devtools/WebAudioGraphIntegrator';

import {updateGraphRender} from '../src/panel/updateGraphRender';

import {AudioGraphRender} from '../src/panel/graph/AudioGraphRender';

import {OSCILLATOR_GAIN_PARAM_EVENTS} from '../fixtures/oscillatorGainParam';

import {updateGraphSizes} from '../src/panel/updateGraphSizes';

function main() {

const graphContainer = document.querySelector('.graph') as HTMLElement;

const graphRender = new AudioGraphRender({

elementContainer: graphContainer,

});

graphRender.init();

graphContainer.appendChild(graphRender.pixiView);

const simulation = () =>

pipe(

integrateWebAudioGraph({

pollContext() {

return EMPTY;

},

} as unknown as WebAudioRealtimeData),

auditTime(1),

map(serializeGraphContext),

filter((graphContext) => graphContext.graph !== null),

map(updateGraphSizes(graphRender)),

map(deserializeGraphContext),

map(layoutGraphContext),

map(serializeGraphContext),

map(updateGraphRender(graphRender)),

);

interval(50)

.pipe(

take(OSCILLATOR_GAIN_PARAM_EVENTS.length),

switchMap((_, i) =>

from(

OSCILLATOR_GAIN_PARAM_EVENTS.slice(-1).concat(

OSCILLATOR_GAIN_PARAM_EVENTS.slice(

0,

i % (OSCILLATOR_GAIN_PARAM_EVENTS.length - 1),

),

OSCILLATOR_GAIN_PARAM_EVENTS.slice(

(i + 1) % (OSCILLATOR_GAIN_PARAM_EVENTS.length - 1),

OSCILLATOR_GAIN_PARAM_EVENTS.length - 1,

),

),

),

),

simulation(),

finalize(() => graphContainer.classList.add('complete')),

)

.subscribe();

}

main();
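The `switchMap` above rebuilds the list of replayed events on every tick. The slicing is easier to follow as a pure function. The sketch below restates it as a hypothetical `replayBatch` helper, with placeholder strings standing in for the `OSCILLATOR_GAIN_PARAM_EVENTS` fixture. Each batch starts with the last recorded event (in the fixture, `contextWillBeDestroyed`) and then replays the other events with the one at index `i % (n - 1)` left out. The exception is when `i % (n - 1)` equals `n - 2`: there the second slice wraps to index 0, so that batch repeats the leading events instead of dropping one.

```typescript
// Restates the slicing inside the simulation's switchMap for tick `i`.
// `events` stands in for OSCILLATOR_GAIN_PARAM_EVENTS; strings are placeholders.
function replayBatch<T>(events: readonly T[], i: number): T[] {
  const n = events.length;
  return events.slice(-1).concat(
    events.slice(0, i % (n - 1)),
    events.slice((i + 1) % (n - 1), n - 1),
  );
}

const events = ['a', 'b', 'c', 'd', 'e'];
for (let i = 0; i < events.length; i++) {
  console.log(i, replayBatch(events, i).join(','));
}
// 0 e,b,c,d
// 1 e,a,c,d
// 2 e,a,b,d
// 3 e,a,b,c,a,b,c,d
// 4 e,b,c,d
```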

================================================

FILE: simulations/webpack.config.js

================================================

const {resolve} = require('path');

const srcConfig = require('../src/webpack.config');

module.exports = (env, argv) => ({

...srcConfig(env, argv),

entry: {

updateGraphRender: resolve(__dirname, './updateGraphRender'),

},

output: {

path: resolve(__dirname, './build'),

},

});
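Object spread is shallow, so the `output: {path}` key above replaces the whole `output` object returned by `srcConfig(env, argv)` rather than merging into it. Any other `output` settings from `src/webpack.config.js` (for example a custom `filename`) would not carry over. The contents of `src/webpack.config.js` are not shown here, so the sketch below uses made-up config values to contrast the shallow spread with an explicit nested merge.

```typescript
// Hypothetical stand-in for srcConfig(env, argv); the real values are not shown.
const base = {
  mode: 'development',
  output: {path: '/repo/build', filename: '[name].bundle.js'},
};

// Shallow spread: the nested `output` object is replaced wholesale.
const shallow = {...base, output: {path: '/repo/simulations/build'}};

// Nested merge: keep the base.output keys and override only `path`.
const merged = {
  ...base,
  output: {...base.output, path: '/repo/simulations/build'},
};

console.log(JSON.stringify(shallow.output));
// {"path":"/repo/simulations/build"}
console.log(JSON.stringify(merged.output));
// {"path":"/repo/simulations/build","filename":"[name].bundle.js"}
```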

================================================

FILE: src/.jest.config.json

================================================

{

"collectCoverage": true,

"injectGlobals": false,

"transform": {

"\\.[jt]sx?$": "babel-jest"

},

"coveragePathIgnorePatterns": ["<rootDir>/chrome/"]

}
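The `transform` key is a regular expression written as a JSON string, so `\\.` in the file is the regex `\.`. A quick check of which file names that pattern routes through `babel-jest` (the file names are arbitrary examples):

```typescript
// The JSON string "\\.[jt]sx?$" decodes to this regular expression.
const transformPattern = new RegExp('\\.[jt]sx?$');

const files = [
  'main.ts',
  'panel.tsx',
  'index.js',
  'view.jsx',
  'custom.d.ts',
  'package.json',
  'loader.mjs',
];
for (const file of files) {
  console.log(file, transformPattern.test(file));
}
// main.ts, panel.tsx, index.js, view.jsx, custom.d.ts -> true
// package.json, loader.mjs -> false
```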

================================================

FILE: src/build/make-chrome-extension.js

================================================

/**

* A Node.js script that copies files, writes an extension manifest, and zips it

* all up.

*

* @namespace makeChromeExtension

*/

const fs = require('fs').promises;

const {createWriteStream} = require('fs');

const path = require('path');

const mustache = require('mustache');

const {ZipFile} = require('yazl');

main();

/**

* Copy files, generate extension manifest, and zip the unpacked extension.

*

* Calls other methods in this script.

*

* @memberof makeChromeExtension

*/

async function main() {

await Promise.all([

copyFiles({

src: '..',

dest: '../../build/audion',

files: ['panel.html', 'devtools.html'],

}),

generateManifest({

view: {version: require('../../package.json').version},

dest: '../../build/audion',

}),

]);

await zipChromeExtension({

src: '../../build',

dir: 'audion',

});

}

/**

* Copy files, given as paths relative to `src`, into the `dest` directory.

*

* @param {object} options

* @memberof makeChromeExtension

*/

async function copyFiles({src, dest, files, cwd = __dirname}) {

await Promise.all(

files.map(async (file) => {

await mkdir(path.resolve(cwd, dest, path.dirname(file)));

await fs.copyFile(

path.resolve(cwd, src, file),

path.resolve(cwd, dest, file),

);

}),

);

}

/**

* Generate an extension manifest from a template file.

*

* @param {object} options

* @memberof makeChromeExtension

*/

async function generateManifest({

view,

dest,

file = 'manifest.json',

cwd = __dirname,

}) {

await mkdir(path.resolve(cwd, dest, path.dirname(file)));

await fs.writeFile(

path.resolve(cwd, dest, file),

mustache.render(

await fs.readFile(

path.resolve(__dirname, 'manifest.json.mustache'),

'utf8',

),

view,

),

);

}

/**

* Zip the unpacked chrome extension.

*

* @param {object} options

* @memberof makeChromeExtension

*/

async function zipChromeExtension({

src,

cwd = __dirname,

dir,

file = `${dir}.zip`,

}) {

await unlink(path.resolve(cwd, src, file));

const files = await readdirRecursive(path.resolve(cwd, src, dir));

const output = createWriteStream(path.resolve(cwd, src, file));

const zip = new ZipFile();

const zipDone = new Promise((resolve, reject) =>

zip.outputStream.pipe(output).on('close', resolve).on('error', reject),

);

for (const file of files) {

zip.addFile(path.resolve(cwd, src, dir, file), file);

}

zip.end();

await zipDone;

}

/**

* Read entry names in a directory recursively.

* @param {string} dir directory to recursively read

* @return {Promise<Array<string>>} array of paths relative to `dir`

* @memberof makeChromeExtension

*/

async function readdirRecursive(dir) {

return (

await Promise.all(

(

await fs.readdir(dir)

).map(async (file) => {

try {

return (await readdirRecursive(path.resolve(dir, file))).map(

(subfile) => path.join(file, subfile),

);

} catch (err) {

if (err.code === 'ENOTDIR') {

return file;

}

throw err;

}

}),

)

).flat();

}

/**

* Create a directory if it does not already exist.

*

* @param {string} dirpath directory to create

* @memberof makeChromeExtension

*/

async function mkdir(dirpath) {

try {

await fs.mkdir(dirpath, {recursive: true});

} catch (err) {

if (err.code === 'EEXIST') {

return;

}

throw err;

}

}

/**

* Unlink a file from the filesystem if it exists.

*

* @param {string} filepath file to unlink

* @memberof makeChromeExtension

*/

async function unlink(filepath) {

try {

await fs.unlink(filepath);

} catch (err) {

if (err.code === 'ENOENT') {

return;

}

throw err;

}

}
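`readdirRecursive` tries to recurse into every entry, treats an `ENOTDIR` error as the signal that the entry is a file, and flattens the per-entry arrays into paths relative to the starting directory. The same shape can be sketched without touching the filesystem. The sketch below uses a hypothetical in-memory `Tree` in which nested objects are directories and strings are file contents. Unlike the script, which uses `path.join` and therefore the platform separator, it always joins with `/`, and it flattens with `reduce` instead of `Array.prototype.flat`.

```typescript
// Hypothetical in-memory directory tree: objects are directories, strings are files.
type Tree = {[name: string]: Tree | string};

// Mirrors readdirRecursive: return file names as-is, recurse into directories
// and prefix their children with the directory name, then flatten one level.
function listRecursive(dir: Tree): string[] {
  return Object.keys(dir)
    .map((name) => {
      const entry = dir[name];
      if (typeof entry === 'string') {
        return [name]; // plays the role of the ENOTDIR branch
      }
      return listRecursive(entry).map((sub) => `${name}/${sub}`);
    })
    .reduce<string[]>((all, paths) => all.concat(paths), []);
}

const build: Tree = {
  'devtools.html': '<html></html>',
  'manifest.json': '{}',
  panel: {'panel.html': '', js: {'main.js': ''}},
};
console.log(listRecursive(build).join(', '));
// devtools.html, manifest.json, panel/panel.html, panel/js/main.js
```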

================================================

FILE: src/build/manifest.json.mustache

================================================

{

"manifest_version": 3,

"name": "Audion",

"version": "{{version}}",

"description": "Web Audio DevTools Extension (graph visualizer)",

"devtools_page": "devtools.html",

"options_ui": {

"page": "options.html",

"open_in_tab": false

},

"permissions": [

"debugger"

]

}

================================================

FILE: src/chrome/API.js

================================================

/// <reference path="./Debugger.js" />

/// <reference path="./DevTools.js" />

/// <reference path="./Runtime.js" />

/**

* Top level chrome extension API type. Contains references of each accessible

* extension api.

*

* @typedef Chrome.API

* @property {Chrome.Debugger} debugger

* @property {Chrome.DevTools} devtools

* @property {Chrome.Runtime} runtime

*/

================================================

FILE: src/chrome/Debugger.js

================================================

/// <reference path="Types.js" />

/**

* [Chrome extension api][1] to the [Chrome Debugger Protocol][2]. Used by this

* extension to access the [Web Audio domain][3].

*

* [1]: https://developer.chrome.com/docs/extensions/reference/debugger/

* [2]: https://chromedevtools.github.io/devtools-protocol/

* [3]: ChromeDebuggerWebAudioDomain.html

*

* @typedef Chrome.Debugger

* @property {function(

* Chrome.DebuggerDebuggee, string, function(): void

* ): void} attach

* @property {function(Chrome.DebuggerDebuggee, function(): void): void} detach

* @property {Chrome.Event<function(object, string): void>} onDetach

* @property {Chrome.Event<Chrome.DebuggerOnEventListener>} onEvent

* @property {Chrome.DebuggerSendCommand} sendCommand

* @see https://developer.chrome.com/docs/extensions/reference/debugger/

* @see https://chromedevtools.github.io/devtools-protocol/

*/

/**

* @callback Chrome.DebuggerSendCommand

* @param {Chrome.DebuggerDebuggee} target

* @param {string} method

* @param {*} [commandParams]

* @param {*} [callback]

*/

/**

* A debuggee identifier.

*

* Either tabId, extensionId, or targetId must be specified.

*

* @typedef Chrome.DebuggerDebuggee

* @property {string} [extensionId]

* @property {string} [tabId]

* @property {string} [targetId]

* @see https://developer.chrome.com/docs/extensions/reference/debugger/#type-Debuggee

*/

/**

* Arguments passed to Debugger onEvent listeners.

*

* @callback Chrome.DebuggerOnEventListener

* @param {Chrome.DebuggerDebuggee} source

* @param {string} method

* @param {*} [params]

* @return {void}

*/

================================================

FILE: src/chrome/DebuggerPageDomain.ts

================================================

/**

* @file

* Strings passed to `chrome.debugger.sendCommand` and received from

* `chrome.debugger.onEvent` callbacks.

*/

import {ProtocolMapping} from 'devtools-protocol/types/protocol-mapping';

/** @see https://chromedevtools.github.io/devtools-protocol/tot/Page/#methods */

export enum PageDebuggerMethod {

disable = 'Page.disable',

enable = 'Page.enable',

}

/** @see https://chromedevtools.github.io/devtools-protocol/tot/Page/#events */

export enum PageDebuggerEvent {

domContentEventFired = 'Page.domContentEventFired',

frameAttached = 'Page.frameAttached',

frameDetached = 'Page.frameDetached',

frameNavigated = 'Page.frameNavigated',

frameRequestedNavigation = 'Page.frameRequestedNavigation',

frameStartedLoading = 'Page.frameStartedLoading',

frameStoppedLoading = 'Page.frameStoppedLoading',

lifecycleEvent = 'Page.lifecycleEvent',

loadEventFired = 'Page.loadEventFired',

}

/** @see https://chromedevtools.github.io/devtools-protocol/tot/Page/#types */

export type PageDebuggerEventParams<Name extends PageDebuggerEvent> =

ProtocolMapping.Events[Name];

================================================

FILE: src/chrome/DebuggerWebAudioDomain.ts

================================================

/**

* @file

* Strings passed to `chrome.debugger.sendCommand` and received from

* `chrome.debugger.onEvent` callbacks.

*/

import {ProtocolMapping} from 'devtools-protocol/types/protocol-mapping';

/** @see https://chromedevtools.github.io/devtools-protocol/tot/WebAudio/#methods */

export enum WebAudioDebuggerMethod {

disable = 'WebAudio.disable',

enable = 'WebAudio.enable',

getRealtimeData = 'WebAudio.getRealtimeData',

}

/** @see https://chromedevtools.github.io/devtools-protocol/tot/WebAudio/#events */

export enum WebAudioDebuggerEvent {

audioListenerCreated = 'WebAudio.audioListenerCreated',

audioListenerWillBeDestroyed = 'WebAudio.audioListenerWillBeDestroyed',

audioNodeCreated = 'WebAudio.audioNodeCreated',

audioNodeWillBeDestroyed = 'WebAudio.audioNodeWillBeDestroyed',

audioParamCreated = 'WebAudio.audioParamCreated',

audioParamWillBeDestroyed = 'WebAudio.audioParamWillBeDestroyed',

contextChanged = 'WebAudio.contextChanged',

contextCreated = 'WebAudio.contextCreated',

contextWillBeDestroyed = 'WebAudio.contextWillBeDestroyed',

nodeParamConnected = 'WebAudio.nodeParamConnected',

nodeParamDisconnected = 'WebAudio.nodeParamDisconnected',

nodesConnected = 'WebAudio.nodesConnected',

nodesDisconnected = 'WebAudio.nodesDisconnected',

}

/** @see https://chromedevtools.github.io/devtools-protocol/tot/WebAudio/#types */

export type WebAudioDebuggerEventParams<Name extends WebAudioDebuggerEvent> =

ProtocolMapping.Events[Name];
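Because each enum member's value is the exact protocol method string, a `chrome.debugger.onEvent` listener can narrow its raw `method` argument by comparing it against the enum's values. The sketch below assumes a hypothetical `isWebAudioEvent` helper and re-declares only a few members of `WebAudioDebuggerEvent` so that it stands alone.

```typescript
// Subset of WebAudioDebuggerEvent, re-declared so the sketch is self-contained.
enum WebAudioDebuggerEvent {
  contextCreated = 'WebAudio.contextCreated',
  contextWillBeDestroyed = 'WebAudio.contextWillBeDestroyed',
  nodesConnected = 'WebAudio.nodesConnected',
}

// String enums have no reverse mapping, so Object.keys yields only member
// names, and indexing by them yields only the protocol method strings.
const webAudioEvents: string[] = Object.keys(WebAudioDebuggerEvent).map(
  (key) => WebAudioDebuggerEvent[key as keyof typeof WebAudioDebuggerEvent],
);

function isWebAudioEvent(method: string): method is WebAudioDebuggerEvent {
  return webAudioEvents.indexOf(method) !== -1;
}

console.log(isWebAudioEvent('WebAudio.nodesConnected')); // true
console.log(isWebAudioEvent('Page.loadEventFired')); // false
```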

================================================

FILE: src/chrome/DevTools.js

================================================

/// <reference path="Types.js" />

/**

* [Chrome extension api][1] to the DevTools inspector, available to an extension's

* devtools page specified by the extension manifest's `"devtools_page"`.

*

* [1]: https://developer.chrome.com/docs/extensions/mv3/devtools/

*

* @typedef Chrome.DevTools

* @property {Chrome.DevToolsInspectedWindow} inspectedWindow

* @property {Chrome.DevtoolsNetwork} network

* @property {Chrome.DevToolsPanels} panels

*/

/**

* [Extension api][1] for the tab inspected by this `"devtools_page"` instance.

*

* [1]: https://developer.chrome.com/docs/extensions/reference/devtools_inspectedWindow/

*

* @typedef Chrome.DevToolsInspectedWindow

* @property {string} tabId

*/

/**

* @typedef Chrome.DevtoolsNetwork

* @property {Chrome.Event<function(string): void>} onNavigated

*/

/**

* [Extension api][1] to manage panels this extension adds.

*

* [1]: https://developer.chrome.com/docs/extensions/reference/devtools_panels/

*

* @typedef Chrome.DevToolsPanels

* @property {Chrome.DevToolsPanelsCreateFunction} create

* @property {'default' | 'dark'} themeName

*/

/**

* [`chrome.devtools.panels.create(...)`][1]

*

* [1]: https://developer.chrome.com/docs/extensions/reference/devtools_panels/#method-create

*

* @callback Chrome.DevToolsPanelsCreateFunction

* @param {string} title

* @param {string} icon

* @param {string} pageUrl

* @param {Chrome.DevToolsPanelsCreateCallback} onPanelCreated

* @return {void}

*/

/**

* @callback Chrome.DevToolsPanelsCreateCallback

* @param {Chrome.DevToolsPanel} panel

* @return {void}

*/

/**

* [Panel][1] created by [`chrome.devtools.panels.create`][2].

*

* [1]: https://developer.chrome.com/docs/extensions/reference/devtools_panels/#type-ExtensionPanel

* [2]: Chrome.html#.DevToolsPanelsCreateFunction

*

* @typedef Chrome.DevToolsPanel

* @property {Chrome.Event<function(): void>} onHidden

* @property {Chrome.Event<function(): void>} onShown

*/

================================================

FILE: src/chrome/Runtime.js

================================================

/// <reference path="Types.js" />

/**

* [Chrome extension api][1] about the extension, the host platform, and

* communication between different extension contexts.

*

* [1]: https://developer.chrome.com/docs/extensions/reference/runtime/

*

* @typedef Chrome.Runtime

* @property {function(): Chrome.RuntimePort} connect

* @property {function(string): string} getURL

* @property {Chrome.RuntimeError} lastError

* @property {Chrome.Event<Chrome.RuntimeOnConnectCallback>} onConnect

*/

/**

* @typedef Chrome.RuntimeError

* @property {string} [message]

* @see https://developer.chrome.com/docs/extensions/reference/runtime/#property-lastError

*/

/**

* Callback passed to [`chrome.runtime.onConnect`][1].

*

* [1]: https://developer.chrome.com/docs/extensions/reference/runtime/#event-onConnect

*

* @callback Chrome.RuntimeOnConnectCallback

* @param {Chrome.RuntimePort} port

* @return {void}

*/

/**

* [Port][1] to another chrome extension runtime context.

*

* [1]: https://developer.chrome.com/docs/extensions/reference/runtime/#type-Port

*

* @typedef Chrome.RuntimePort

* @property {function(): void} disconnect

* @property {Chrome.Event<function(Chrome.RuntimePort): void>} onDisconnect

* @property {Chrome.Event<function(*, Chrome.RuntimePort): void>} onMessage

* @property {function(*): void} postMessage

*/

================================================

FILE: src/chrome/Types.js

================================================

/**

* Types provided by the [chrome extension api][1].

*

* [1]: https://developer.chrome.com/docs/extensions/reference/

*

* @namespace Chrome

*/

/**

* Generic [event emitter][1] in chrome extension types.

*

* [1]: https://developer.chrome.com/docs/extensions/reference/events/#type-Event

*

* @typedef Chrome.Event

* @property {Chrome.EventCallback<T>} addListener

* @property {Chrome.EventCallback<T>} removeListener

* @template {function} T

*/

/**

* Function taking an event listener passed to a {@link Chrome.Event} instance.

*

* @callback Chrome.EventCallback

* @param {T} callback

* @template {function} T

*/
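For unit tests, the `Chrome.Event` typedef above can be satisfied by a small in-memory emitter. Unlike the `noopEvent()` stubs in `src/chrome/index.js`, which discard listeners, the hypothetical `FakeChromeEvent` test double below keeps them. It is not part of the chrome extension api. It implements `addListener` and `removeListener`, ignores duplicate registrations (a choice made for this sketch), and adds a `dispatch` method so a test can fire events by hand.

```typescript
// Hypothetical test double shaped like the Chrome.Event typedef:
// addListener/removeListener plus a dispatch method for driving tests.
class FakeChromeEvent<T extends (...args: any[]) => void> {
  private listeners: T[] = [];

  addListener(callback: T): void {
    // Register each listener at most once.
    if (this.listeners.indexOf(callback) === -1) {
      this.listeners.push(callback);
    }
  }

  removeListener(callback: T): void {
    this.listeners = this.listeners.filter((listener) => listener !== callback);
  }

  // Not part of Chrome.Event. Iterates over a copy so listeners that remove
  // themselves during dispatch do not cause others to be skipped.
  dispatch(...args: Parameters<T>): void {
    for (const listener of this.listeners.slice()) {
      listener(...args);
    }
  }
}

const onDetach = new FakeChromeEvent<(source: object, reason: string) => void>();
const reasons: string[] = [];
const listener = (_source: object, reason: string) => {
  reasons.push(reason);
};
onDetach.addListener(listener);
onDetach.dispatch({}, 'canceled_by_user');
onDetach.removeListener(listener);
onDetach.dispatch({}, 'target_closed');
console.log(reasons.join(',')); // canceled_by_user
```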

================================================

FILE: src/chrome/index.js

================================================

/// <reference path="API.js" />

/// <reference path="Types.js" />

/**

* Global chrome extension api instance.

*

* Normally available on the global context `chrome` identifier. Use this export

* to assist in testing use of the chrome extension api from inside this

* extension.

*

* @type {Chrome.API}

* @memberof Chrome

* @alias chrome

*/

export const chrome = getChrome();

/**

* Return a no-operation implementation of Chrome.API. Used in testing.

*

* @return {Chrome.API}

* @memberof Chrome

*/

function noopChrome() {

/**

* @return {Chrome.Event<*>}

*/

function noopEvent() {

return {addListener() {}, removeListener() {}};

}

return {

debugger: {

attach() {},

detach() {},

onDetach: noopEvent(),

onEvent: noopEvent(),

sendCommand() {},

},

devtools: {

inspectedWindow: {tabId: 'tab'},

network: {onNavigated: noopEvent()},

panels: {create() {}},

},

runtime: {

connect() {

return {

onDisconnect: noopEvent(),

onMessage: noopEvent(),

disconnect() {},

postMessage(message) {},

};

},

getURL(url) {

return url;

},

/**

* If a called chrome api method errored, lastError is set to that error

* while the provided callback is run. Otherwise lastError is not set.

*/

lastError: undefined,

onConnect: noopEvent(),

},

};

}

/**

* Return the global scope.

*

* @return {*}

* @memberof Chrome

*/

function getGlobal() {

if (typeof window === 'object') return window;

if (typeof self === 'object') return self;

if (typeof globalThis === 'object') return globalThis;

if (typeof global === 'object') return global;

if (typeof process === 'object') return process;

throw new Error('Cannot find global object');

}

/**

* Return the global {@link Chrome.API} instance if it exposes

* `chrome.devtools`. Otherwise, as in a unit test environment, return a new

* instance from {@link Chrome.noopChrome}.

*

* @return {Chrome.API}

* @memberof Chrome

*/

function getChrome() {

const g = getGlobal();

if (

'chrome' in g &&

typeof g.chrome === 'object' &&

g.chrome !== null &&

typeof g.chrome.devtools === 'object'

) {

return g.chrome;

}

return noopChrome();

}
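`getChrome` picks the real api only when the global `chrome` is an object that exposes a `devtools` object. That is true inside the extension's devtools page and panel, but normally not under Jest. The dependency-free sketch below reproduces that decision with the global object passed in, so it can be exercised directly. `pickChrome` and `hasDevtools` are hypothetical names, and the sketch also guards against `null`, which `typeof` reports as `'object'`.

```typescript
// Minimal shape the check relies on; the real Chrome.API has many more members.
type ChromeLike = {devtools: unknown};

function hasDevtools(value: unknown): value is ChromeLike {
  return (
    typeof value === 'object' &&
    value !== null &&
    typeof (value as {devtools?: unknown}).devtools === 'object'
  );
}

// Return the injected global's `chrome` when it looks real, else the fallback.
function pickChrome<T>(g: {chrome?: unknown}, fallback: () => T): ChromeLike | T {
  const c = g.chrome;
  return hasDevtools(c) ? c : fallback();
}

const fallback = {noop: true};
const real = {devtools: {inspectedWindow: {tabId: 'tab'}}};
console.log(pickChrome({chrome: real}, () => fallback) === real); // true
console.log(pickChrome({}, () => fallback) === fallback); // true
console.log(pickChrome({chrome: null}, () => fallback) === fallback); // true
console.log(pickChrome({chrome: {}}, () => fallback) === fallback); // true
```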

================================================

FILE: src/custom.d.ts

================================================

declare module '*.svg' {

const content: any;

export default content;

}

declare module '*.css' {

const content: any;

export default content;

}

================================================

FILE: src/devtools/DebuggerAttachEventController.ts

================================================

import {

BehaviorSubject,

combineLatest,

concat,

defer,

EMPTY,

Observable,

of,

Subject,

Subscriber,

} from 'rxjs';

import {

catchError,

delay,

distinctUntilChanged,

exhaustMap,

filter,

finalize,

map,

share,

take,

} from 'rxjs/operators';

import {chrome} from '../chrome';

import {PageDebuggerMethod} from '../chrome/DebuggerPageDomain';

import {WebAudioDebuggerMethod} from '../chrome/DebuggerWebAudioDomain';

/**

* Permission value in regards to calling `chrome.debugger.attach`.

*

* When the extension calls `chrome.debugger.attach`, a notification is displayed

* in devtools stating that the extension is debugging the tab. Attaching when

* the user does not expect it, and having them see this notification, is

* undesirable. The user needs to grant the extension permission to attach, or

* reject a prior grant.

*

* Permission could be implied when the extension's panel is opened.

*

* Permission should be rejected when the debugging notification is canceled or

* dismissed.

*

* Permission could be granted more explicitly by a panel component when the

* panel is visible but the extension does not have permission.

*

* WebAudioEventObserver will be instructed with rules like the above by other

* functions outside of this file.

*/

enum AttachPermission {

/**

* Initial value.

*

* When WebAudioEventObserver is created, it does not know if permission has

* been granted or not and should treat this as **not having** permission.

*/

UNKNOWN,

/**

* Permission has been granted by a user action. WebAudioEventObserver may

* attach to `chrome.debugger`.

*/

TEMPORARY,

/**

* Permission has been rejected. WebAudioEventObserver must not attach to

* `chrome.debugger`.

*/

REJECTED,

}

/**

* Value used to indicate if the `chrome.debugger` attachment and

* receiving `chrome.debugger.onEvent` events are "active".

*/

enum BinaryTransition {

DEACTIVATING = 'deactivating',

IS_INACTIVE = 'isInactive',

ACTIVATING = 'activating',

IS_ACTIVE = 'isActive',

}

export interface DebuggerAttachEventState {

permission: AttachPermission;

attachInterest: number;

attachState: BinaryTransition;

pageEventInterest: number;

pageEventState: BinaryTransition;

webAudioEventInterest: number;

webAudioEventState: BinaryTransition;

}

/** Chrome Devtools Protocol version to attach to. */

const debuggerVersion = '1.3';

/** Chrome tab to attach the debugger to. */

const {tabId} = chrome.devtools.inspectedWindow;

export enum ChromeDebuggerAPIEventName {

detached = 'ChromeDebuggerAPI.detached',

}

export interface ChromeDebuggerAPIDetachEventParams {

reason: 'canceled_by_user' | 'target_closed';

}

export interface ChromeDebuggerAPIDetachEvent {

method: ChromeDebuggerAPIEventName.detached;

params: ChromeDebuggerAPIDetachEventParams;

}

export type ChromeDebuggerAPIEvent = ChromeDebuggerAPIDetachEvent;

export type ChromeDebuggerAPIEventParams = ChromeDebuggerAPIEvent['params'];

/**

* Control attachment to chrome.debugger depending on whether the user has given

* permission and how many parts of the extension need attachment.

*

* @memberof Audion

* @alias DebuggerAttachEventController

*/

export class DebuggerAttachEventController {

/** Does user permit extension to use `chrome.debugger`. */

permission$: PermissionSubject;

/** How many subscriptions want to attach to `chrome.debugger`. */

attachInterest$: CounterSubject;

attachState$: Observable<BinaryTransition>;

/**

* How many subscriptions want to receive page events through

* `chrome.debugger.onEvent`.

*/

pageEventInterest$: CounterSubject;

pageEventState$: Observable<BinaryTransition>;

/**

* How many subscriptions want to receive web audio events through

* `chrome.debugger.onEvent`.

*/

webAudioEventInterest$: CounterSubject;

webAudioEventState$: Observable<BinaryTransition>;

combinedState$: Observable<DebuggerAttachEventState>;

debuggerEvent$: Observable<ChromeDebuggerAPIEvent>;

constructor() {

// Create an interface of subjects to track changes in state with the

// `chrome.debugger` api.

const debuggerSubject = {

// Does the extension have permission from the user to use `chrome.debugger` api.

permission: new PermissionSubject(),

// How many entities want to attach to the debugger to call `sendCommand`

// or listen to `onEvent`.

attachInterest: new CounterSubject(0),

// attachState must be IS_ACTIVE for `chrome.debugger.sendCommand` to be used.

attachState: new BinaryTransitionSubject({

initialState: BinaryTransition.IS_INACTIVE,

activateAction: () => attach({tabId}, debuggerVersion),

deactivateAction: () => detach({tabId}),

}),

// How many entities want to listen to page events through `onEvent`.

pageEventInterest: new CounterSubject(0),

// pageEventState must be IS_ACTIVE for `onEvent` to receive events.

pageEventState: new BinaryTransitionSubject({

initialState: BinaryTransition.IS_INACTIVE,

activateAction: () => sendCommand({tabId}, PageDebuggerMethod.enable),

deactivateAction: () =>

sendCommand({tabId}, PageDebuggerMethod.disable),

}),

// How many entities want to listen to web audio events through `onEvent`.

webAudioEventInterest: new CounterSubject(0),

// webAudioEventState must be IS_ACTIVE for `onEvent` to receive events.

webAudioEventState: new BinaryTransitionSubject({

initialState: BinaryTransition.IS_INACTIVE,

activateAction: () =>

sendCommand({tabId}, WebAudioDebuggerMethod.enable),

deactivateAction: () =>

sendCommand({tabId}, WebAudioDebuggerMethod.disable),

}),

};

this.permission$ = debuggerSubject.permission;

this.attachInterest$ = debuggerSubject.attachInterest;

this.attachState$ = debuggerSubject.attachState;

this.pageEventInterest$ = debuggerSubject.pageEventInterest;

this.pageEventState$ = debuggerSubject.pageEventState;

this.webAudioEventInterest$ = debuggerSubject.webAudioEventInterest;

this.webAudioEventState$ = debuggerSubject.webAudioEventState;

// Observable of changes to state derived from debuggerSubject.

const debuggerState$ = (this.combinedState$ =

// Push objects mapping each key in debuggerSubject to the latest value

// pushed from that debuggerSubject member.

combineLatest(debuggerSubject).pipe(

// Filter out combined state that is not different from the last value.

distinctUntilChanged(

(previous, current) =>

previous.permission === current.permission &&

previous.attachInterest === current.attachInterest &&

previous.attachState === current.attachState &&

previous.pageEventInterest === current.pageEventInterest &&

previous.pageEventState === current.pageEventState &&

previous.webAudioEventInterest === current.webAudioEventInterest &&

previous.webAudioEventState === current.webAudioEventState,

),

// Subscribe to debuggerSubject once and share that subscription among many subscribers.

share(),

));

// The following subscriptions govern debuggerSubject.

// Govern attachment to `chrome.debugger`.

debuggerState$.subscribe({

next: (state) => {

// When debugger state has permission to attach to `chrome.debugger` and

// something wants to use `chrome.debugger`, activate the attachment.

// Otherwise deactivate the attachment.

if (

state.permission === AttachPermission.TEMPORARY &&

state.attachInterest > 0

) {

debuggerSubject.attachState.activate();

} else {

debuggerSubject.attachState.deactivate();

}

},

});

this.debuggerEvent$ = onDebuggerDetach$.pipe(

map(([, reason]) => {

return {

method: ChromeDebuggerAPIEventName.detached,

params: {reason},

} as ChromeDebuggerAPIDetachEvent;

}),

);

// Govern permission rejection and externally induced detachment.

onDebuggerDetach$.subscribe({

next([, reason]) {

if (reason === 'canceled_by_user') {

// Reject permission to use `chrome.debugger` in this extension. We

// understand this event to be an explicit rejection from the

// extension's user.

debuggerSubject.permission.reject();

}

// Immediately go to the inactive state. Detachment was initiated

// outside the extension and does not need to be requested.

debuggerSubject.attachState.next(BinaryTransition.IS_INACTIVE);

},

});

// Govern receiving events through `chrome.debugger.onEvent`.

debuggerState$.subscribe(

activateEventWhileAttached(

debuggerSubject.pageEventState,

({pageEventInterest}) => pageEventInterest > 0,

),

);

debuggerState$.subscribe(

activateEventWhileAttached(

debuggerSubject.webAudioEventState,

({webAudioEventInterest}) => webAudioEventInterest > 0,

),

);

}

/**

* Attach to the debugger if not already attached, and call

* `chrome.debugger.sendCommand`.

* @param method Chrome devtools protocol method like 'HeapProfiler.collectGarbage'.

* @returns observable that completes once done without pushing any values

*/

sendCommand(method: string): Observable<never> {

this.attachInterest$.increment();

return this.attachState$.pipe(

filter((state) => state === BinaryTransition.IS_ACTIVE),

take(1),

exhaustMap(() => sendCommand({tabId}, method)),

finalize(() => this.attachInterest$.decrement()),

);

}

}

function activateEventWhileAttached(

eventState: BinaryTransitionSubject,

interestExists: (state: DebuggerAttachEventState) => boolean,

): Partial<Subscriber<DebuggerAttachEventState>> {

return {

next(state) {

if (

state.attachState === BinaryTransition.IS_ACTIVE &&

interestExists(state)

) {

// Start receiving events. The attachment is active and some entities

// are listening for events.

eventState.activate();

} else {

if (state.attachState === BinaryTransition.IS_ACTIVE) {

// Stop receiving events. The attachment is still active but no

// entities are listening for events.

eventState.deactivate();

} else {

// "Skip" deactivation of receiving events and immediately go to the

// inactive state. The process of detachment either requested by the

// extension or initiated otherwise has implicitly stopped reception

// of events.

eventState.next(BinaryTransition.IS_INACTIVE);

}

}

},

};

}

/**

* Create a function that returns an observable that completes when the api

* calls back, or errors with `chrome.runtime.lastError` if the call failed.

* @param method `chrome` api method whose last argument is a callback

* @param thisArg `this` inside of the method

* @returns observable that completes when the method is done

*/

function bindChromeCallback<P extends any[]>(

method: (...args: [...params: P, callback: () => void]) => void,

thisArg = null,

) {

return (...args: P) =>

new Observable<never>((subscriber) => {

method.call(thisArg, ...args, () => {

if (chrome.runtime.lastError) {

subscriber.error(chrome.runtime.lastError);

} else {

subscriber.complete();

}

});

});

}

/**

* Return an observable that pushes events from a `chrome` api event.

* @param event `chrome` api event

* @returns observable of `chrome` api events

*/

function fromChromeEvent<A extends any[]>(

event: Chrome.Event<(...args: A) => any>,

) {

return new Observable<A>((subscriber) => {

const listener = (...args: A) => {

subscriber.next(args);

};

event.addListener(listener);

return () => {

event.removeListener(listener);

};

});

}
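/*
Illustrative sketch, not used by the extension: the subscribe/teardown
contract of `fromChromeEvent` without rxjs. `FakeChromeEvent` is a hypothetical
stand-in for a `chrome` event object that models only `addListener` and
`removeListener` (plus a `dispatch` helper to fire it by hand), and
`subscribeToEvent` is a hypothetical name for the Observable's subscribe
function.

```typescript
class FakeChromeEvent<A extends unknown[]> {
  private readonly listeners = new Set<(...args: A) => void>();

  addListener(listener: (...args: A) => void): void {
    this.listeners.add(listener);
  }

  removeListener(listener: (...args: A) => void): void {
    this.listeners.delete(listener);
  }

  dispatch(...args: A): void {
    for (const listener of Array.from(this.listeners)) {
      listener(...args);
    }
  }

  get listenerCount(): number {
    return this.listeners.size;
  }
}

// Register a listener that forwards the argument tuple, and return a
// teardown that removes exactly that listener.
function subscribeToEvent<A extends unknown[]>(
  event: FakeChromeEvent<A>,
  next: (args: A) => void,
): () => void {
  const listener = (...args: A) => next(args);
  event.addListener(listener);
  return () => event.removeListener(listener);
}

const onDetachSketch = new FakeChromeEvent<
  [target: {tabId: number}, reason: string]
>();
const reasons: string[] = [];
const unsubscribe = subscribeToEvent(onDetachSketch, ([, reason]) => {
  reasons.push(reason);
});
onDetachSketch.dispatch({tabId: 1}, 'target_closed');
unsubscribe();
onDetachSketch.dispatch({tabId: 1}, 'canceled_by_user'); // not received
console.log(reasons); // [ 'target_closed' ]
```

Keeping a reference to the exact `listener` closure is what lets the teardown
remove it; passing a fresh arrow function to `removeListener` would not work.
*/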

/**

* Call `chrome.debugger.attach`.

*

* @see

* https://developer.chrome.com/docs/extensions/reference/debugger/#method-attach

*/

const attach = bindChromeCallback(chrome.debugger.attach, chrome.debugger);

/**

* Call `chrome.debugger.detach`.

*

* @see

* https://developer.chrome.com/docs/extensions/reference/debugger/#method-detach

*/

const detach = bindChromeCallback(chrome.debugger.detach, chrome.debugger);

/**

* Call `chrome.debugger.sendCommand`.

*

* @see

* https://developer.chrome.com/docs/extensions/reference/debugger/#method-sendCommand

*/

const sendCommand = bindChromeCallback(

chrome.debugger.sendCommand as (

target: Chrome.DebuggerDebuggee,

method: string,

params?,

callback?,

) => void,

chrome.debugger,

);

/**

* Observable of `chrome.debugger.onDetach` events.

*/

const onDebuggerDetach$ = fromChromeEvent<

[target: Chrome.DebuggerDebuggee, reason: string]

>(chrome.debugger.onDetach);

/**

* Store whether the user allows the extension to use the `chrome.debugger` API.

*/

export class PermissionSubject extends BehaviorSubject<AttachPermission> {

constructor() {

super(AttachPermission.UNKNOWN);

}

/**

* Temporarily permit use of `chrome.debugger`. Has no effect unless the

* permission is still unknown.

*/

grantTemporary() {

if (this.value === AttachPermission.UNKNOWN) {

this.next(AttachPermission.TEMPORARY);

}

}

/**

* Reject use of `chrome.debugger`.

*/

reject() {

if (this.value !== AttachPermission.REJECTED) {

this.next(AttachPermission.REJECTED);

}

}

}
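/*
Illustrative sketch, not used by the extension: the `PermissionSubject`
transitions modelled with a plain class that records every emitted value, to
show that the guards prevent a rejection from being overridden and avoid
duplicate emissions. `PermissionSketch` and `PermissionStateSketch` are
hypothetical; only the three `AttachPermission` members used above are
modelled, and their string values are assumptions.

```typescript
const PermissionSketch = {
  UNKNOWN: 'unknown',
  TEMPORARY: 'temporary',
  REJECTED: 'rejected',
} as const;
type PermissionSketch =
  (typeof PermissionSketch)[keyof typeof PermissionSketch];

class PermissionStateSketch {
  value: PermissionSketch = PermissionSketch.UNKNOWN;
  // Values a subscriber of the real BehaviorSubject would see from the start.
  readonly emitted: PermissionSketch[] = [this.value];

  private next(value: PermissionSketch): void {
    this.value = value;
    this.emitted.push(value);
  }

  // Only upgrades UNKNOWN; never overrides an earlier rejection.
  grantTemporary(): void {
    if (this.value === PermissionSketch.UNKNOWN) {
      this.next(PermissionSketch.TEMPORARY);
    }
  }

  // Wins from any state; the guard avoids re-emitting REJECTED.
  reject(): void {
    if (this.value !== PermissionSketch.REJECTED) {
      this.next(PermissionSketch.REJECTED);
    }
  }
}

const permission = new PermissionStateSketch();
permission.grantTemporary();
permission.reject();
permission.reject();
permission.grantTemporary();
console.log(permission.emitted); // [ 'unknown', 'temporary', 'rejected' ]
```
*/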

/**

* Description of a transition in BinaryTransitionSubject.

*/

interface BinaryTransitionDescription {

/** The state the Subject must start in to perform this transition. */

beginningState: BinaryTransition;

/** The state the Subject is in while performing this transition. */

intermediateState: BinaryTransition;

/** The state the Subject is in after the action succeeds. */

successState: BinaryTransition;

/** The state the Subject is in after the action fails. */

errorState: BinaryTransition;

/**

* Delegate that moves other application state to the desired state.

*/

action: () => Observable<void>;

}

/**

* Control a transition between the inactive and active states. To perform a

* transition, the subject enters an intermediate state and calls a delegate to

* do some action. After the action completes successfully, the subject enters

* the desired state.

*/

class BinaryTransitionSubject extends BehaviorSubject<BinaryTransition> {

private readonly activateTransition: BinaryTransitionDescription;

private readonly deactivateTransition: BinaryTransitionDescription;

constructor({

initialState,

activateAction,

deactivateAction,

}: {

initialState: BinaryTransition;

activateAction: () => Observable<void>;

deactivateAction: () => Observable<void>;

}) {

super(initialState);

this.activateTransition = {

beginningState: BinaryTransition.IS_INACTIVE,

intermediateState: BinaryTransition.ACTIVATING,

successState: BinaryTransition.IS_ACTIVE,

errorState: BinaryTransition.IS_INACTIVE,

action: activateAction,

};

this.deactivateTransition = {

beginningState: BinaryTransition.IS_ACTIVE,

intermediateState: BinaryTransition.DEACTIVATING,

successState: BinaryTransition.IS_INACTIVE,

errorState: BinaryTransition.IS_INACTIVE,

action: deactivateAction,

};

}

/**

* Transition to a desired state.

*

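* If the subject's value is `beginningState`, set it to `intermediateState`

* and run the action. Once the action completes successfully, set it to

* `successState`. If the action fails, set it to `errorState`, except that

* an "Another debugger is already attached" error is treated as success.

*

* @param description the transition to perform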

*/

transition(description: BinaryTransitionDescription) {

if (this.value === description.beginningState) {

concat(

of(description.intermediateState),

description.action(),

defer(() =>

this.value === description.intermediateState

? of(description.successState)

: EMPTY,

),

)

.pipe(

catchError((err) => {

console.error(err);

if (

err?.message?.startsWith('Another debugger is already attached')

) {

return this.value === description.intermediateState

? of(description.successState)

: EMPTY;

}

return of(

this.value === description.intermediateState

? description.errorState

: description.beginningState,

);

}),

)

.subscribe({next: this.next.bind(this)});

}

}
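/*
Illustrative sketch, not used by the extension: the `transition` logic above
restated with async/await instead of rxjs `concat`/`defer`/`catchError`, so the
state sequence can be traced step by step. All names ending in `Sketch`,
`activation`, and `demo` are hypothetical; the string values stand in for
`BinaryTransition` members, and thrown errors are assumed to be `Error`
instances (the real `chrome.runtime.lastError` is a plain object with a
`message`).

```typescript
const TransitionSketch = {
  IS_INACTIVE: 'isInactive',
  ACTIVATING: 'activating',
  IS_ACTIVE: 'isActive',
  DEACTIVATING: 'deactivating',
} as const;
type TransitionSketch =
  (typeof TransitionSketch)[keyof typeof TransitionSketch];

interface TransitionDescriptionSketch {
  beginningState: TransitionSketch;
  intermediateState: TransitionSketch;
  successState: TransitionSketch;
  errorState: TransitionSketch;
  action: () => Promise<void>;
}

class TransitionMachineSketch {
  value: TransitionSketch;
  readonly history: TransitionSketch[] = [];

  constructor(initialState: TransitionSketch) {
    this.value = initialState;
  }

  private next(state: TransitionSketch): void {
    this.value = state;
    this.history.push(state);
  }

  async transition(d: TransitionDescriptionSketch): Promise<void> {
    // Requests that do not start from the beginning state are ignored.
    if (this.value !== d.beginningState) return;
    this.next(d.intermediateState);
    try {
      await d.action();
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err);
      if (!message.startsWith('Another debugger is already attached')) {
        // Same fallback as the catchError branch in `transition`.
        this.next(
          this.value === d.intermediateState ? d.errorState : d.beginningState,
        );
        return;
      }
      // An already-attached debugger counts as success; fall through.
    }
    // Finish only if nothing else moved the state while the action ran.
    if (this.value === d.intermediateState) this.next(d.successState);
  }
}

// Mirrors the `activateTransition` description built in the constructor.
const activation = (
  action: () => Promise<void>,
): TransitionDescriptionSketch => ({
  beginningState: TransitionSketch.IS_INACTIVE,
  intermediateState: TransitionSketch.ACTIVATING,
  successState: TransitionSketch.IS_ACTIVE,
  errorState: TransitionSketch.IS_INACTIVE,
  action,
});

async function demo() {
  const ok = new TransitionMachineSketch(TransitionSketch.IS_INACTIVE);
  await ok.transition(activation(async () => {}));

  const busy = new TransitionMachineSketch(TransitionSketch.IS_INACTIVE);
  await busy.transition(
    activation(async () => {
      throw new Error('Another debugger is already attached to the tab');
    }),
  );

  const failed = new TransitionMachineSketch(TransitionSketch.IS_INACTIVE);
  await failed.transition(
    activation(async () => {
      throw new Error('Simulated failure');
    }),
  );
  return {ok, busy, failed};
}

demo().then(({ok, busy, failed}) => {
  console.log(ok.history); // [ 'activating', 'isActive' ]
  console.log(busy.value); // isActive
  console.log(failed.history); // [ 'activating', 'isInactive' ]
});
```
*/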

/**

* If subject is inactive, transition to active.

*/

activate() {

this.transition(this.activateTransition);

}

/**