Repository: ibestvina/datasloth

Branch: main

Commit: 6bb41fa7629a

Files: 10

Total size: 55.3 KB

Directory structure:

gitextract_hgdrt7b0/

├── .gitignore

├── LICENSE

├── MANIFEST.in

├── README.md

├── README.rst

├── datasloth/

│ └── __init__.py

├── examples/

│ ├── datasloth_detailed_example.ipynb

│ └── datasloth_quick_example.ipynb

├── setup.cfg

└── setup.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

# C extensions

*.so

# Distribution / packaging

.Python

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

env/

venv/

ENV/

env.bak/

venv.bak/

# Spyder project settings

.spyderproject

.spyproject

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# mypy

.mypy_cache/

.dmypy.json

dmypy.json

# Pyre type checker

.pyre/

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2022 Ivan Bestvina

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: MANIFEST.in

================================================

include README.rst

================================================

FILE: README.md

================================================

# DataSloth

_Natural language Pandas queries and data generation powered by GPT-3_

## Installation

`pip install datasloth`

## Usage

In order for DataSloth to work, you must have a working [OpenAI API key](https://beta.openai.com/account/api-keys) set in your environment variable, or provide it to the DataSloth object. For more info, refer to this [guide](https://help.openai.com/en/articles/5112595-best-practices-for-api-key-safety).

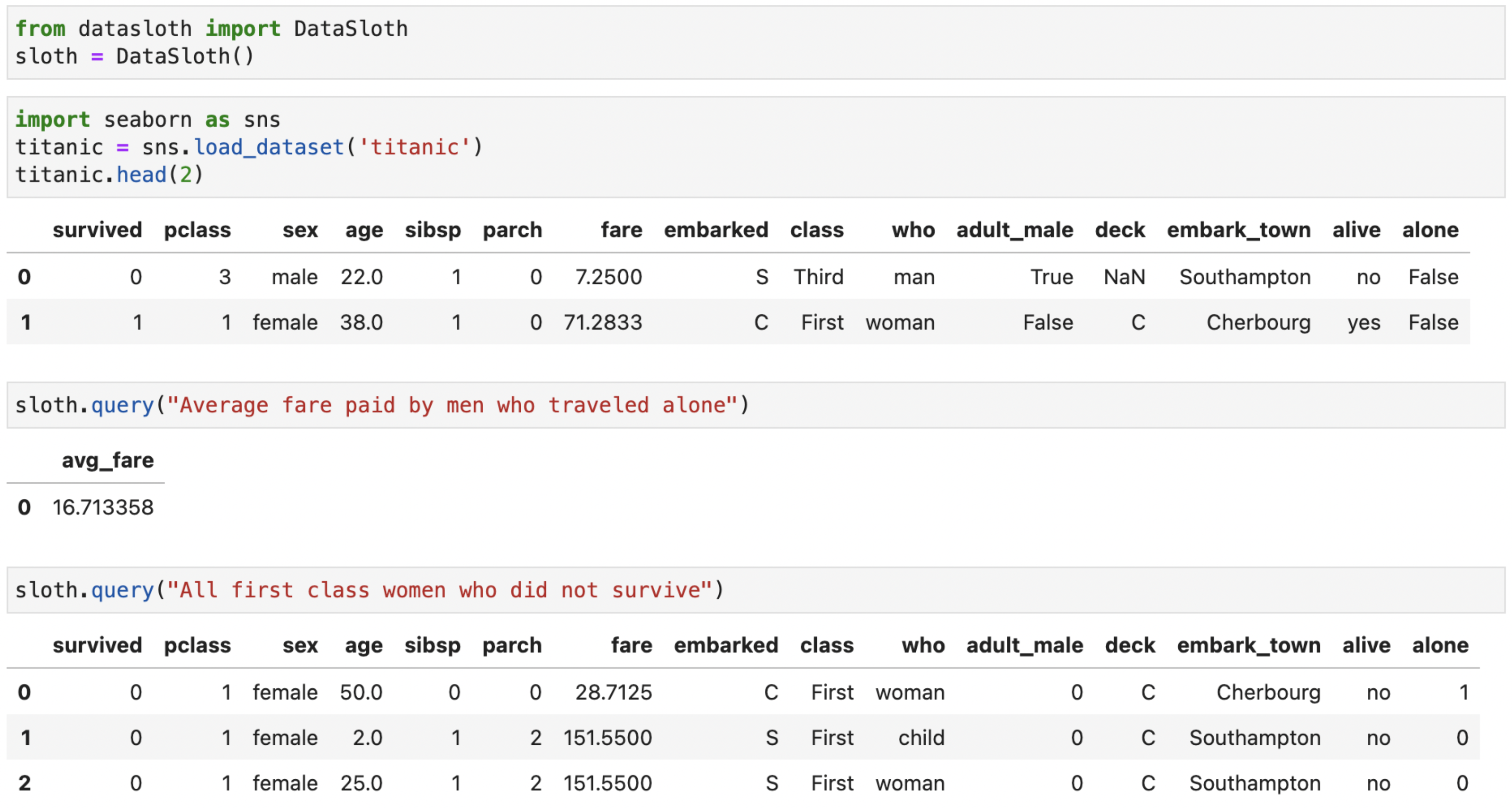

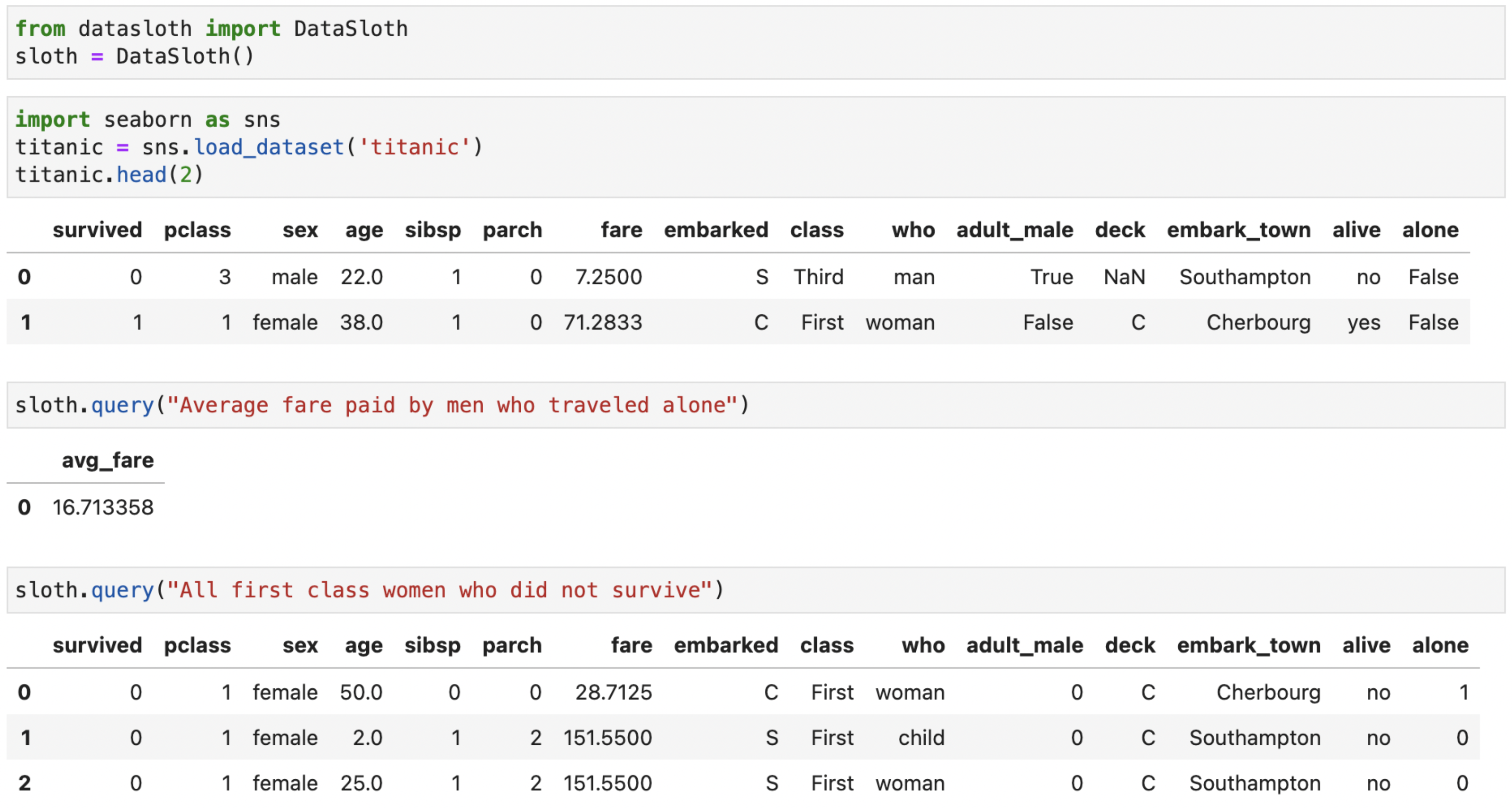

DataSloth automatically discovers all Pandas dataframes in your namespace (filtering out names starting with an underscode). Before you load any data, import DataSloth and create the `sloth`:

```python

from datasloth import DataSloth

sloth = DataSloth()

```

Next, load any data you want to use. Try naming your dataframes and columns in a meaningful way, as DataSloth uses these names to understand what the data is about.

Once your data is loaded, simply run

`sloth.query('...')`

to query the data.

### Improving results

To improve the results, you can set custom descriptions of your tables:

`df.sloth.description = 'Verbose description of the table'`

By default, table descriptions consist of information about each column in the table. You can include this default description in your custom one by adding a `{COLUMNS_SUMMARY}` placeholder. See the detailed example notebook in the examples folder for more information.

### Solving issues

A lot of times, if the returned data is not correct, or not fully formatted the way you want, it helps to rephrase the question or give specific pointers to how the final data should look like. To better understand where things might have gone wrong, use `show_query=True` in the `sloth.query()`, or run `sloth.show_last_query()` after the prompt has finished to print out the SQL query used (whithout rerunning the engine).

## Data generation

DataSloth is also able to generate random data with the `generate` function. For example, running:

```python

sloth.generate(

description="people from Mars, with very space-sounding names, and strange taste in ice cream",

columns=['First Name', 'Last Name', 'Date Of Birth', 'Country', 'City', 'Favourite Ice Cream'],

n_rows=15

)

```

Produces something like this:

| First Name | Last Name | Date Of Birth | Country | City | Favourite Ice Cream |

|-----------:|----------:|--------------:|--------:|-----------------:|--------------------:|

| Glorza | Mangal | 06/12/2079 | Mars | Pryus Mater | Celestial Delight |

| Yalza | Krang | 09/21/2084 | Mars | Valles Marineris | Moon Mist |

| Tralza | Vomar | 04/17/2074 | Mars | Syrtis Major | Mars Mud Pie |

| Dalza | Ralad | 01/02/2088 | Mars | Hellas Planitia | Alien Abduction |

| Halza | Wular | 11/04/2092 | Mars | Olympus Mons | Martian Sunrise |

Note that the results of the `generate` function are random, and different on each call.

================================================

FILE: README.rst

================================================

DataSloth

=========

*Natural language Pandas queries and data generation powered by GPT-3*

Installation

------------

``pip install datasloth``

Usage

-----

In order for DataSloth to work, you must have a working `OpenAI API

key `__ set in your

environment variable, or provide it to the DataSloth object. For more

info, refer to this

`guide `__.

DataSloth automatically discovers all Pandas dataframes in your

namespace (filtering out names starting with an underscode). Before you

load any data, import DataSloth and create the ``sloth``:

.. code:: python

from datasloth import DataSloth

sloth = DataSloth()

Next, load any data you want to use. Try naming your dataframes and

columns in a meaningful way, as DataSloth uses these names to understand

what the data is about.

Once your data is loaded, simply run

``sloth.query('...')``

to query the data.

================================================

FILE: datasloth/__init__.py

================================================

import os

import inspect

import re

import pandas as pd

from pandas.api.extensions import register_dataframe_accessor

from pandas.api.types import is_string_dtype, is_numeric_dtype, is_datetime64_any_dtype

from sqlalchemy import desc

from pandasql import sqldf, PandaSQLException

import openai

@pd.api.extensions.register_dataframe_accessor("sloth")

class SlothAccessor:

"""

Pandas Dataframe accessor to add '.sloth.description' field to dataframes,

and manage column summaries used by DataSloth.

"""

def __init__(self, pandas_obj: pd.DataFrame) -> None:

self._validate(pandas_obj)

self._obj = pandas_obj

self._description = '{COLUMNS_SUMMARY}'

@staticmethod

def _validate(obj):

pass

@property

def description(self) -> str:

return self._description.format(COLUMNS_SUMMARY=self.columns_summary())

@description.setter

def description(self, value: str) -> None:

"""

Set additional description manually to inform the language engine about this table.

Use '{COLUMNS_SUMMARY}' to include the default column summary in the description.

By default, description is set only to this summary. To reset it, set description to None.

"""

if value is None:

self._description = '{COLUMNS_SUMMARY}'

else:

self._description = value

def columns_summary(self) -> str:

"""

Returns columns summary of the dataframe, in the "table" format containing

column names, data types and additional info about columns.

"""

summary_lines = ['|column name|data type|info|']

for col_name in self._obj:

col = self._obj[col_name]

summary_lines.append(f'|{col_name}|{col.dtype}|{column_info(col)}|')

return '\n'.join(summary_lines)

class DataSloth():

prompt_format = """

Make sure to join in tables if information from multiple tables is needed for a task.

Task: percentage of True values of column X in table Y

```

SQL query for SQLite:

SELECT (SUM(CASE WHEN X = 'True' THEN 1.0 END) / COUNT(*)) * 100 AS percentage

FROM Y

```

Task: count of rows in table T where date is equal to 11th of August 1993

```

SQL query for SQLite:

SELECT COUNT(*) AS row_count

FROM T

WHERE date(date) = date('1993-08-11')

```

Task: {QUERY}

SQL query for SQLite:

```

"""

def __init__(self, openai_api_key=None) -> None:

if openai_api_key:

openai.api_key = openai_api_key

else:

openai.api_key = os.getenv("OPENAI_API_KEY")

if not openai.api_key:

raise Exception(

"OpenAI API key is not set. Either provide it to DataSloth(openai_api_key='...') "\

"run openai.api_key('...'), or set it as an env variable OPENAI_API_KEY."

)

self.last_prompt = None

self.last_gpt_response = None

@staticmethod

def dataframes_summary(env=None, ignore='^_') -> str:

"""

Summary of all DataFrames available in the namespace, ignoring those matching the 'ignore' regex.

"""

summary_lines = ['Tables available in the database, with their additional information, are:']

table_count = 0

for name, value in env.items():

if isinstance(value, pd.DataFrame) and (not ignore or not re.match(ignore, name)):

summary_lines += [

f"\n\nTable name: {name}",

value.sloth.description

]

table_count += 1

if not table_count:

return None

return '\n'.join(summary_lines)

def query(self, query, env=None, show_query=False):

"""

Query all Pandas DataFrames available in the namespace with a natural language query.

To limit the tables used in the query, set the 'env' variable to a dict of tables

(keys are table names, and values are table objects), or set it to globals() or locals().

To learn more, check pandasql docs.

"""

env = env or get_outer_frame_variables()

query = query[0].lower() + query[1:]

prompt = self.dataframes_summary(env)

if not prompt:

print('No dataframes found')

return

prompt += DataSloth.prompt_format.format(QUERY=query)

response = openai.Completion.create(

model="gpt-3.5-turbo-instruct", # as per OpenAI deprecations guide: https://platform.openai.com/docs/deprecations/instructgpt-models

prompt=prompt,

temperature=0,

max_tokens=1000,

top_p=1,

frequency_penalty=0,

presence_penalty=0,

stop=["\n```\n"]

)

sql_query = response['choices'][0]['text']

sql_query = sql_query.replace('```', '')

self.last_prompt = (prompt, sql_query)

if show_query:

print(sql_query)

try:

result = sqldf(sql_query, env)

except PandaSQLException:

result = None

print('Unsuccessful. Try rephrasing your query, or add additional table descriptions in df.sloth.description.')

print('You can inspect the generated prompt and GPT response in sloth.show_last_prompt().')

return result

def generate(self, description, columns, n_rows=10):

"""

Generates a random dataset based on the description and a list of columns.

"""

rows = []

while len(rows) < n_rows:

prompt = f'Fill the table below with {min(n_rows - len(rows) + 5, 30)} random rows about {description}\n\n'

prompt += f"|{'|'.join(columns)}|\n"

prompt += f"|{'|'.join(['-'*len(col) for col in columns])}|\n|"

response = openai.Completion.create(

model="gpt-3.5-turbo-instruct", # as per OpenAI deprecations guide: https://platform.openai.com/docs/deprecations/instructgpt-models

prompt=prompt,

temperature=0.8,

max_tokens=1000,

top_p=1,

frequency_penalty=0,

presence_penalty=0,

)

response = '|' + response['choices'][0]['text']

new_rows = [row[1:-1].split('|') for row in response.split('\n') if not re.match('^[- |]*$', row)]

new_rows = [row for row in new_rows if len(row) == len(columns)]

rows += new_rows

prompt = response + prompt

df = pd.DataFrame(rows, columns=columns).head(n_rows)

return df

def _last_prompt(self):

if self.last_prompt:

print(self.last_prompt[0])

print(f'[->]\n{self.last_prompt[1]}')

def show_last_query(self):

"""Print the SQL query generated in the last sloth.query() call."""

if self.last_prompt:

print(self.last_prompt[1])

# Code copied from pandasql

def get_outer_frame_variables():

""" Get a dict of local and global variables of the first outer frame from another file. """

cur_filename = inspect.getframeinfo(inspect.currentframe()).filename

outer_frame = next(f

for f in inspect.getouterframes(inspect.currentframe())

if f.filename != cur_filename)

variables = {}

variables.update(outer_frame.frame.f_globals)

variables.update(outer_frame.frame.f_locals)

return variables

def column_info(col):

"""Info about a specific column, different depending on its type"""

if is_string_dtype(col) or col.dtype == 'category':

unique = col.unique().tolist()

summary = 'unique values: ' + ', '.join(map(str, unique[:30]))

if len(unique) > 30:

summary += '...'

elif col.dtype == 'bool':

summary = f"values: 0, 1"

elif is_numeric_dtype(col):

summary = f"min={col.min()}, max={col.max()}"

elif is_datetime64_any_dtype(col):

summary = f"first={col.min()}, last={col.max()}"

else:

summary = ''

return summary

================================================

FILE: examples/datasloth_detailed_example.ipynb

================================================

{

"cells": [

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# DataSloth"

]

},

{

"cell_type": "code",

"execution_count": 1,

"metadata": {},

"outputs": [],

"source": [

"from datasloth import DataSloth\n",

"import pandas as pd\n",

"import seaborn as sns"

]

},

{

"cell_type": "code",

"execution_count": 2,

"metadata": {},

"outputs": [],

"source": [

"# Make sure your OpenAI API key is set in the OPENAI_API_KEY env variable, or provide it as an argument to DataSloth()\n",

"sloth = DataSloth()"

]

},

{

"cell_type": "code",

"execution_count": 2,

"metadata": {},

"outputs": [

{

"data": {

"text/html": [

"\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" survived | \n",

" pclass | \n",

" sex | \n",

" age | \n",

" sibsp | \n",

" parch | \n",

" fare | \n",

" embarked | \n",

" class | \n",

" who | \n",

" adult_male | \n",

" deck | \n",

" embark_town | \n",

" alive | \n",

" alone | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 0 | \n",

" 3 | \n",

" male | \n",

" 22.0 | \n",

" 1 | \n",

" 0 | \n",

" 7.2500 | \n",

" S | \n",

" Third | \n",

" man | \n",

" True | \n",

" NaN | \n",

" Southampton | \n",

" no | \n",

" False | \n",

"

\n",

" \n",

" | 1 | \n",

" 1 | \n",

" 1 | \n",

" female | \n",

" 38.0 | \n",

" 1 | \n",

" 0 | \n",

" 71.2833 | \n",

" C | \n",

" First | \n",

" woman | \n",

" False | \n",

" C | \n",

" Cherbourg | \n",

" yes | \n",

" False | \n",

"

\n",

" \n",

" | 2 | \n",

" 1 | \n",

" 3 | \n",

" female | \n",

" 26.0 | \n",

" 0 | \n",

" 0 | \n",

" 7.9250 | \n",

" S | \n",

" Third | \n",

" woman | \n",

" False | \n",

" NaN | \n",

" Southampton | \n",

" yes | \n",

" True | \n",

"

\n",

" \n",

" | 3 | \n",

" 1 | \n",

" 1 | \n",

" female | \n",

" 35.0 | \n",

" 1 | \n",

" 0 | \n",

" 53.1000 | \n",

" S | \n",

" First | \n",

" woman | \n",

" False | \n",

" C | \n",

" Southampton | \n",

" yes | \n",

" False | \n",

"

\n",

" \n",

" | 4 | \n",

" 0 | \n",

" 3 | \n",

" male | \n",

" 35.0 | \n",

" 0 | \n",

" 0 | \n",

" 8.0500 | \n",

" S | \n",

" Third | \n",

" man | \n",

" True | \n",

" NaN | \n",

" Southampton | \n",

" no | \n",

" True | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" survived_men | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 109 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" avg_fare | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 16.713358 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" percentage | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 12.233446 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" sex | \n",

" percentage | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" female | \n",

" 74.203822 | \n",

"

\n",

" \n",

" | 1 | \n",

" male | \n",

" 18.890815 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" pclass | \n",

" meal_type | \n",

" n_courses | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 1 | \n",

" breakfast | \n",

" 10 | \n",

"

\n",

" \n",

" | 1 | \n",

" 1 | \n",

" lunch | \n",

" 15 | \n",

"

\n",

" \n",

" | 2 | \n",

" 1 | \n",

" dinner | \n",

" 20 | \n",

"

\n",

" \n",

" | 3 | \n",

" 2 | \n",

" breakfast | \n",

" 5 | \n",

"

\n",

" \n",

" | 4 | \n",

" 2 | \n",

" lunch | \n",

" 6 | \n",

"

\n",

" \n",

" | 5 | \n",

" 2 | \n",

" dinner | \n",

" 7 | \n",

"

\n",

" \n",

" | 6 | \n",

" 3 | \n",

" breakfast | \n",

" 1 | \n",

"

\n",

" \n",

" | 7 | \n",

" 3 | \n",

" lunch | \n",

" 2 | \n",

"

\n",

" \n",

" | 8 | \n",

" 3 | \n",

" dinner | \n",

" 3 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" sex | \n",

" percentage | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" female | \n",

" 96.808511 | \n",

"

\n",

" \n",

" | 1 | \n",

" male | \n",

" 36.885246 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" code | \n",

" date | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" S | \n",

" 1912-04-10 | \n",

"

\n",

" \n",

" | 1 | \n",

" C | \n",

" 1912-04-10 | \n",

"

\n",

" \n",

" | 2 | \n",

" Q | \n",

" 1912-04-11 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" female_passengers | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 0 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" female_passengers | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 36 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" First Name | \n",

" Last Name | \n",

" Date Of Birth | \n",

" Country | \n",

" City | \n",

" Favourite Ice Cream | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" Glorza | \n",

" Mangal | \n",

" 06/12/2079 | \n",

" Mars | \n",

" Pryus Mater | \n",

" Celestial Delight | \n",

"

\n",

" \n",

" | 1 | \n",

" Yalza | \n",

" Krang | \n",

" 09/21/2084 | \n",

" Mars | \n",

" Valles Marineris | \n",

" Moon Mist | \n",

"

\n",

" \n",

" | 2 | \n",

" Tralza | \n",

" Vomar | \n",

" 04/17/2074 | \n",

" Mars | \n",

" Syrtis Major | \n",

" Mars Mud Pie | \n",

"

\n",

" \n",

" | 3 | \n",

" Dalza | \n",

" Ralad | \n",

" 01/02/2088 | \n",

" Mars | \n",

" Hellas Planitia | \n",

" Alien Abduction | \n",

"

\n",

" \n",

" | 4 | \n",

" Halza | \n",

" Wular | \n",

" 11/04/2092 | \n",

" Mars | \n",

" Olympus Mons | \n",

" Martian Sunrise | \n",

"

\n",

" \n",

" | 5 | \n",

" Kalza | \n",

" Lopal | \n",

" 03/09/2073 | \n",

" Mars | \n",

" Ares Vallis | \n",

" Red Planet | \n",

"

\n",

" \n",

" | 6 | \n",

" Malza | \n",

" Bomar | \n",

" 07/14/2081 | \n",

" Mars | \n",

" Terra Cimmeria | \n",

" Mars Bar | \n",

"

\n",

" \n",

" | 7 | \n",

" Nalza | \n",

" Kamar | \n",

" 12/25/2085 | \n",

" Mars | \n",

" Utopia Planitia | \n",

" Espresso crunch | \n",

"

\n",

" \n",

" | 8 | \n",

" Ralza | \n",

" Fomar | \n",

" 02/11/2070 | \n",

" Mars | \n",

" Arsia Mons | \n",

" Cotton candy | \n",

"

\n",

" \n",

" | 9 | \n",

" Salza | \n",

" Soldar | \n",

" 05/16/2078 | \n",

" Mars | \n",

" Tharsis Montes | \n",

" Butterscotch | \n",

"

\n",

" \n",

" | 10 | \n",

" Talza | \n",

" Womar | \n",

" 10/28/2080 | \n",

" Mars | \n",

" Mangala Valles | \n",

" Cookies and Cream | \n",

"

\n",

" \n",

" | 11 | \n",

" Ulza | \n",

" Dalad | \n",

" 06/01/2072 | \n",

" Mars | \n",

" Elysium Planitia | \n",

" Green Tea | \n",

"

\n",

" \n",

" | 12 | \n",

" Vulza | \n",

" Ropal | \n",

" 04/14/2087 | \n",

" Mars | \n",

" Cydonia Mensae | \n",

" Mint chocolate chip | \n",

"

\n",

" \n",

" | 13 | \n",

" Zalza | \n",

" Bular | \n",

" 07/11/2089 | \n",

" Mars | \n",

" Isidis Planitia | \n",

" Rocky Road | \n",

"

\n",

" \n",

" | 14 | \n",

" Blorza | \n",

" Fomar | \n",

" 09/08/2076 | \n",

" Mars | \n",

" Tempe Terra | \n",

" Vanilla | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" survived | \n",

" pclass | \n",

" sex | \n",

" age | \n",

" sibsp | \n",

" parch | \n",

" fare | \n",

" embarked | \n",

" class | \n",

" who | \n",

" adult_male | \n",

" deck | \n",

" embark_town | \n",

" alive | \n",

" alone | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 0 | \n",

" 3 | \n",

" male | \n",

" 22.0 | \n",

" 1 | \n",

" 0 | \n",

" 7.2500 | \n",

" S | \n",

" Third | \n",

" man | \n",

" True | \n",

" NaN | \n",

" Southampton | \n",

" no | \n",

" False | \n",

"

\n",

" \n",

" | 1 | \n",

" 1 | \n",

" 1 | \n",

" female | \n",

" 38.0 | \n",

" 1 | \n",

" 0 | \n",

" 71.2833 | \n",

" C | \n",

" First | \n",

" woman | \n",

" False | \n",

" C | \n",

" Cherbourg | \n",

" yes | \n",

" False | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" avg_fare | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 16.713358 | \n",

"

\n",

" \n",

"

\n",

"

\n",

"\n",

"

\n",

" \n",

" \n",

" | \n",

" survived | \n",

" pclass | \n",

" sex | \n",

" age | \n",

" sibsp | \n",

" parch | \n",

" fare | \n",

" embarked | \n",

" class | \n",

" who | \n",

" adult_male | \n",

" deck | \n",

" embark_town | \n",

" alive | \n",

" alone | \n",

"

\n",

" \n",

" \n",

" \n",

" | 0 | \n",

" 0 | \n",

" 1 | \n",

" female | \n",

" 50.0 | \n",

" 0 | \n",

" 0 | \n",

" 28.7125 | \n",

" C | \n",

" First | \n",

" woman | \n",

" 0 | \n",

" C | \n",

" Cherbourg | \n",

" no | \n",

" 1 | \n",

"

\n",

" \n",

" | 1 | \n",

" 0 | \n",

" 1 | \n",

" female | \n",

" 2.0 | \n",

" 1 | \n",

" 2 | \n",

" 151.5500 | \n",

" S | \n",

" First | \n",

" child | \n",

" 0 | \n",

" C | \n",

" Southampton | \n",

" no | \n",

" 0 | \n",

"

\n",

" \n",

" | 2 | \n",

" 0 | \n",

" 1 | \n",

" female | \n",

" 25.0 | \n",

" 1 | \n",

" 2 | \n",

" 151.5500 | \n",

" S | \n",

" First | \n",

" woman | \n",

" 0 | \n",

" C | \n",

" Southampton | \n",

" no | \n",

" 0 | \n",

"

\n",

" \n",

"

\n",

"