Repository: isaacus-dev/semchunk

Branch: main

Commit: 5a4797893d3a

Files: 6

Total size: 35.4 KB

Directory structure:

gitextract_7fjk9v29/

├── .gitignore

├── .isort.cfg

├── CHANGELOG.md

├── LICENCE

├── README.md

└── pyproject.toml

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Exclude everything.

*

# Then, include just the folders and files we want.

!README.md

!LICENCE

!CHANGELOG.md

!pyproject.toml

!src/

!src/**/*

!tests/

!tests/**/*

!.github/

!.github/**/*

!.gitignore

!.isort.cfg

# Finally, exclude anything in the above inclusions that we don't want.

*.pyc

*.pyo

*.ipynb

*.parquet

__pycache__/

.pytest_cache/

================================================

FILE: .isort.cfg

================================================

[settings]

skip_gitignore = True

length_sort = True

line_length = 180

sections = FUTURE,STDLIB,FIRSTPARTY,THIRDPARTY,LOCALFOLDER

lines_between_types = 1

order_by_type = False

================================================

FILE: CHANGELOG.md

================================================

## Changelog 🔄

All notable changes to semchunk will be documented here. This project adheres to [Keep a Changelog](https://keepachangelog.com/en/1.1.0/) and [Semantic Versioning](https://semver.org/spec/v2.0.0.html).

## [4.0.0] - 2026-03-23

### Added

- Added a new AI chunking mode to semchunk that leverages Isaacus enrichment models to hierarchically segment texts.

- Made it possible to chunk [Isaacus Legal Graph Schema (ILGS) Documents](https://docs.isaacus.com/ilgs/introduction) instead of just strings.

- Added a new `tokenizer_kwargs` argument to `chunkerify()` allowing users to specify custom keyword arguments to their tokenizers and token counters. `tokenizer_kwargs` can be used to override the default behavior of treating any encountered special tokens as if they are normal text when using a `tiktoken` or `transformers` tokenizer.

- Where a `tiktoken` or `transformers` tokenizer is used, started treating special tokens as normal text instead of, in the case of `tiktoken`, raising an error and, in the case of `transformers`, treating them as special tokens.

- Added support for Python 3.14.

### Changed

- Demoted asterisks in the hierarchy of splitters from sentence terminators to clause separators to better reflect their typical syntactic function.

- Dramatically improved performance when handling extremely long sequences of punctuation characters.

- All arguments to `chunkerify()` except for the first two arguments, `tokenizer_or_token_counter` and `chunk_size`, are now keyword-only arguments.

- All arguments to `chunk()` except for the first three, `text`, `chunk_size`, and `token_counter`, are now keyword-only arguments.

- Significantly improved performance in cases where `merge_splits()` was the biggest bottleneck by switching from joining splits with splitters to indexing into the original text.

- Slightly sped up `merge_splits()` by switching to the standard library's `bisect_left()` function which is now faster than the previous implementation.

### Removed

- Dropped support for Python 3.9.

## [3.2.5] - 2025-10-28

### Changed

- Switched to more accurate monthly download counts from [pypistats.org](https://pypistats.org/) rather than the less accurate counts from [pepy.tech](https://pepy.tech/).

## [3.2.4] - 2025-10-26

### Fixed

- Fixed splitters being sorted lexicographically rather than by length, which should improve the meaningfulness of chunks.

- Fixed broken Python download count shield ([crflynn/pypistats.org#82](https://github.com/crflynn/pypistats.org/issues/82#issue-3285911460)).

## [3.2.3] - 2025-08-13

### Fixed

- Fixed broken Python download count shield ([crflynn/pypistats.org#82](https://github.com/crflynn/pypistats.org/issues/82#issue-3285911460)).

## [3.2.2] - 2025-06-09

### Fixed

- Fixed `IndexError` being raised when chunking whitespace only texts with overlapping enabled ([#18](https://github.com/isaacus-dev/semchunk/issues/18)).

## [3.2.1] - 2025-03-27

### Fixed

- Fixed minor typos in the README and docstrings.

## [3.2.0] - 2025-03-20

### Changed

- Significantly improved the quality of chunks produced when chunking with low chunk sizes or documents with minimal varying levels of whitespace by adding a new rule to the semchunk algorithm that prioritizes splitting at the occurrence of single whitespace characters preceded by hierarchically meaningful non-whitespace characters over splitting at all single whitespace characters in general ([#17](https://github.com/isaacus-dev/semchunk/issues/17)).

## [3.1.3] - 2025-03-10

### Changed

- Added mention of Isaacus to the README.

## [3.1.2] - 2025-03-06

### Changed

- Changed test model from `isaacus/emubert` to `isaacus/kanon-tokenizer`.

## [3.1.1] - 2025-02-18

### Added

- Added a note to the quickstart section of the README advising users to deduct the number of special tokens automatically added by their tokenizer from their chunk size. This note had been removed in version 3.0.0 but has been re-added as it is unlikely to be obvious to users.

## [3.1.0] - 2025-02-16

### Added

- Introduced a new `cache_maxsize` argument to `chunkerify()` and `chunk()` that specifies the maximum number of text-token count pairs that can be stored in a token counter's cache. The argument defaults to `None`, in which case the cache is unbounded.

## [3.0.4] - 2025-02-14

### Fixed

- Fixed bug where attempting to chunk only whitespace characters would raise `ValueError: not enough values to unpack (expected 2, got 0)` ([ScrapeGraphAI/Scrapegraph-ai#893](https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/893)).

## [3.0.3] - 2025-02-13

### Fixed

- Fixed `isaacus/emubert` mistakenly being set to `isaacus-dev/emubert` in the README and tests.

## [3.0.2] - 2025-02-13

### Fixed

- Significantly sped up chunking very long texts with little to no variation in levels of whitespace used (fixing [#8](https://github.com/isaacus-dev/semchunk/issues/8)) and, in the process, also slightly improved overall performance.

### Changed

- Transferred semchunk to [Isaacus](https://isaacus.com/).

- Began formatting with Ruff.

## [3.0.1] - 2025-01-10

### Fixed

- Fixed a bug where attempting to chunk an empty text would raise a `ValueError`.

## [3.0.0] - 2024-12-31

### Added

- Added an `offsets` argument to `chunk()` and `Chunker.__call__()` that specifies whether to return the start and end offsets of each chunk ([#9](https://github.com/isaacus-dev/semchunk/issues/9)). The argument defaults to `False`.

- Added an `overlap` argument to `chunk()` and `Chunker.__call__()` that specifies the proportion of the chunk size, or, if >=1, the number of tokens, by which chunks should overlap ([#1](https://github.com/isaacus-dev/semchunk/issues/1)). The argument defaults to `None`, in which case no overlapping occurs.

- Added an undocumented, private `_make_chunk_function()` method to the `Chunker` class that constructs chunking functions with call-level arguments passed.

- Added more unit tests for new features as well as for multiple token counters and for ensuring there are no chunks comprised entirely of whitespace characters.

### Changed

- Began removing chunks comprised entirely of whitespace characters from the output of `chunk()`.

- Updated semchunk's description from 'A fast and lightweight Python library for splitting text into semantically meaningful chunks.' to 'A fast, lightweight and easy-to-use Python library for splitting text into semantically meaningful chunks.'.

### Fixed

- Fixed a typo in the docstring for the `__call__()` method of the `Chunker` class returned by `chunkerify()` where most of the documentation for the arguments was listed under the section for the method's returns.

### Removed

- Removed undocumented, private `chunk()` method from the `Chunker` class returned by `chunkerify()`.

- Removed undocumented, private `_reattach_whitespace_splitters` argument of `chunk()` that was introduced to experiment with potentially adding support for overlap ratios.

## [2.2.2] - 2024-12-18

### Fixed

- Ensured `hatch` does not include irrelevant files in the distribution.

## [2.2.1] - 2024-12-17

### Changed

- Started benchmarking [`semantic-text-splitter`](https://pypi.org/project/semantic-text-splitter/) in parallel to ensure a fair comparison, courtesy of [@benbrandt](https://github.com/benbrandt) ([#12](https://github.com/isaacus-dev/semchunk/pull/12)).

## [2.2.0] - 2024-07-12

### Changed

- Switched from having `chunkerify()` output a function to having it return an instance of the new `Chunker()` class which should not alter functionality in any way but will allow for the preservation of type hints, fixing [#7](https://github.com/isaacus-dev/semchunk/pull/7).

## [2.1.0] - 2024-06-20

### Fixed

- Ceased memoizing `chunk()` (but not token counters) due to the fact that cached outputs of memoized functions are shallow rather than deep copies of original outputs, meaning that if one were to chunk a text and then chunk that same text again and then modify one of the chunks outputted by the first call, the chunks outputted by the second call would also be modified. This behaviour is not expected and therefore undesirable. The memoization of token counters is not impacted as they output immutable objects, namely, integers.

## [2.0.0] - 2024-06-19

### Added

- Added support for multiprocessing through the `processes` argument passable to chunkers constructed by `chunkerify()`.

### Removed

- No longer guaranteed that semchunk is pure Python.

## [1.0.1] - 2024-06-02

### Fixed

- Documented the `progress` argument in the docstring for `chunkerify()` and its type hint in the README.

## [1.0.0] - 2024-06-02

### Added

- Added a `progress` argument to the chunker returned by `chunkerify()` that, when set to `True` and multiple texts are passed, displays a progress bar.

## [0.3.2] - 2024-06-01

### Fixed

- Fixed a bug where a `ZeroDivisionError` would be raised when a token counter returned zero tokens when called from `merge_splits()`, courtesy of [@jcobol](https://github.com/jcobol) ([#5](https://github.com/isaacus-dev/semchunk/pull/5)) ([7fd64eb](https://github.com/isaacus-dev/semchunk/pull/5/commits/7fd64eb8cf51f45702c59f43795be9a00c7d0d17)), fixing [#4](https://github.com/isaacus-dev/semchunk/issues/4).

## [0.3.1] - 2024-05-18

### Fixed

- Fixed typo in error messages in `chunkerify()` where it was referred to as `make_chunker()`.

## [0.3.0] - 2024-05-18

### Added

- Introduced the `chunkerify()` function, which constructs a chunker from a tokenizer or token counter that can be reused and can also chunk multiple texts in a single call. The resulting chunker speeds up chunking by 40.4% thanks, in large part, to a token counter that avoids having to count the number of tokens in a text when the number of characters in the text exceeds a certain threshold, courtesy of [@R0bk](https://github.com/R0bk) ([#3](https://github.com/isaacus-dev/semchunk/pull/3)) ([337a186](https://github.com/isaacus-dev/semchunk/pull/3/commits/337a18615f991076b076262288b0408cb162b48c)).

## [0.2.4] - 2024-05-13

### Changed

- Improved chunking performance with larger chunk sizes by switching from linear to binary search for the identification of optimal chunk boundaries, courtesy of [@R0bk](https://github.com/R0bk) ([#3](https://github.com/isaacus-dev/semchunk/pull/3)) ([337a186](https://github.com/isaacus-dev/semchunk/pull/3/commits/337a18615f991076b076262288b0408cb162b48c)).

## [0.2.3] - 2024-03-11

### Fixed

- Ensured that memoization does not overwrite `chunk()`'s function signature.

## [0.2.2] - 2024-02-05

### Fixed

- Ensured that the `memoize` argument is passed back to `chunk()` in recursive calls.

## [0.2.1] - 2023-11-09

### Added

- Memoized `chunk()`.

### Fixed

- Fixed typos in README.

## [0.2.0] - 2023-11-07

### Added

- Added the `memoize` argument to `chunk()`, which memoizes token counters by default to significantly improve performance.

### Changed

- Improved chunking performance.

## [0.1.2] - 2023-11-07

### Fixed

- Fixed links in the README.

## [0.1.1] - 2023-11-07

### Added

- Added new test samples.

- Added benchmarks.

### Changed

- Improved chunking performance.

- Improved test coverage.

## [0.1.0] - 2023-11-05

### Added

- Added the `chunk()` function, which splits text into semantically meaningful chunks of a specified size as determined by a provided token counter.

[4.0.0]: https://github.com/isaacus-dev/semchunk/compare/v3.2.5...v4.0.0

[3.2.5]: https://github.com/isaacus-dev/semchunk/compare/v3.2.4...v3.2.5

[3.2.4]: https://github.com/isaacus-dev/semchunk/compare/v3.2.3...v3.2.4

[3.2.3]: https://github.com/isaacus-dev/semchunk/compare/v3.2.2...v3.2.3

[3.2.2]: https://github.com/isaacus-dev/semchunk/compare/v3.2.1...v3.2.2

[3.2.1]: https://github.com/isaacus-dev/semchunk/compare/v3.2.0...v3.2.1

[3.2.0]: https://github.com/isaacus-dev/semchunk/compare/v3.1.3...v3.2.0

[3.1.3]: https://github.com/isaacus-dev/semchunk/compare/v3.1.2...v3.1.3

[3.1.2]: https://github.com/isaacus-dev/semchunk/compare/v3.1.1...v3.1.2

[3.1.1]: https://github.com/isaacus-dev/semchunk/compare/v3.1.0...v3.1.1

[3.1.0]: https://github.com/isaacus-dev/semchunk/compare/v3.0.4...v3.1.0

[3.0.4]: https://github.com/isaacus-dev/semchunk/compare/v3.0.3...v3.0.4

[3.0.3]: https://github.com/isaacus-dev/semchunk/compare/v3.0.2...v3.0.3

[3.0.2]: https://github.com/isaacus-dev/semchunk/compare/v3.0.1...v3.0.2

[3.0.1]: https://github.com/isaacus-dev/semchunk/compare/v3.0.0...v3.0.1

[3.0.0]: https://github.com/isaacus-dev/semchunk/compare/v2.2.2...v3.0.0

[2.2.2]: https://github.com/isaacus-dev/semchunk/compare/v2.2.1...v2.2.2

[2.2.1]: https://github.com/isaacus-dev/semchunk/compare/v2.2.0...v2.2.1

[2.2.0]: https://github.com/isaacus-dev/semchunk/compare/v2.1.0...v2.2.0

[2.1.0]: https://github.com/isaacus-dev/semchunk/compare/v2.0.0...v2.1.0

[2.0.0]: https://github.com/isaacus-dev/semchunk/compare/v1.0.1...v2.0.0

[1.0.1]: https://github.com/isaacus-dev/semchunk/compare/v1.0.0...v1.0.1

[1.0.0]: https://github.com/isaacus-dev/semchunk/compare/v0.3.2...v1.0.0

[0.3.2]: https://github.com/isaacus-dev/semchunk/compare/v0.3.1...v0.3.2

[0.3.1]: https://github.com/isaacus-dev/semchunk/compare/v0.3.0...v0.3.1

[0.3.0]: https://github.com/isaacus-dev/semchunk/compare/v0.2.4...v0.3.0

[0.2.4]: https://github.com/isaacus-dev/semchunk/compare/v0.2.3...v0.2.4

[0.2.3]: https://github.com/isaacus-dev/semchunk/compare/v0.2.2...v0.2.3

[0.2.2]: https://github.com/isaacus-dev/semchunk/compare/v0.2.1...v0.2.2

[0.2.1]: https://github.com/isaacus-dev/semchunk/compare/v0.2.0...v0.2.1

[0.2.0]: https://github.com/isaacus-dev/semchunk/compare/v0.1.2...v0.2.0

[0.1.2]: https://github.com/isaacus-dev/semchunk/compare/v0.1.1...v0.1.2

[0.1.1]: https://github.com/isaacus-dev/semchunk/compare/v0.1.0...v0.1.1

[0.1.0]: https://github.com/isaacus-dev/semchunk/releases/tag/v0.1.0

================================================

FILE: LICENCE

================================================

Copyright (c) 2024-2026 Isaacus Pty Ltd and Umar Butler

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

**semchunk** is a Python library for splitting text into smaller chunks while preserving as much local semantic context as possible.

semchunk supports AI-powered chunking, chunk overlapping, and chunk offsets, and works with any tokenizer or token counter, including those from Tiktoken and Transformers.

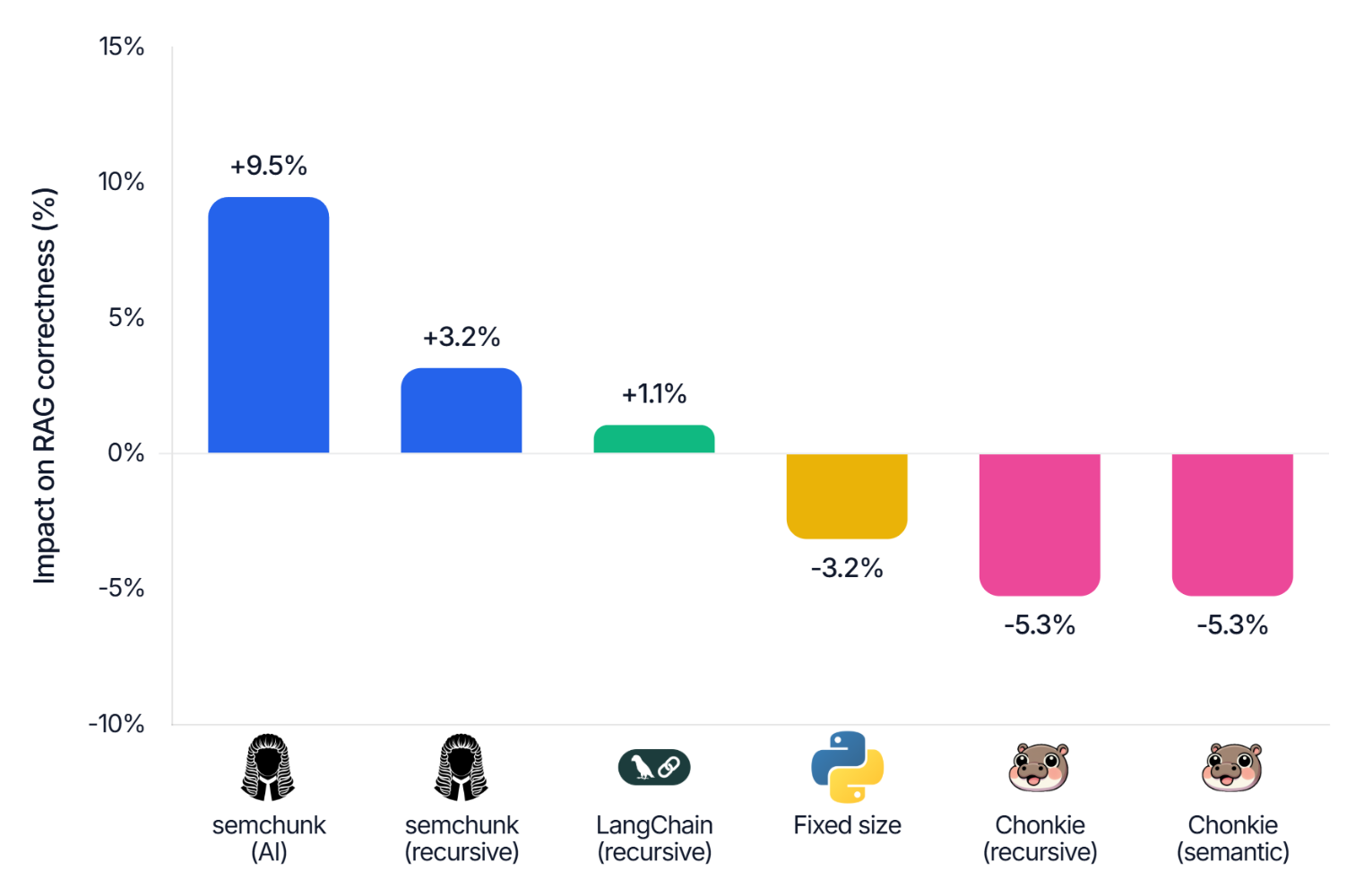

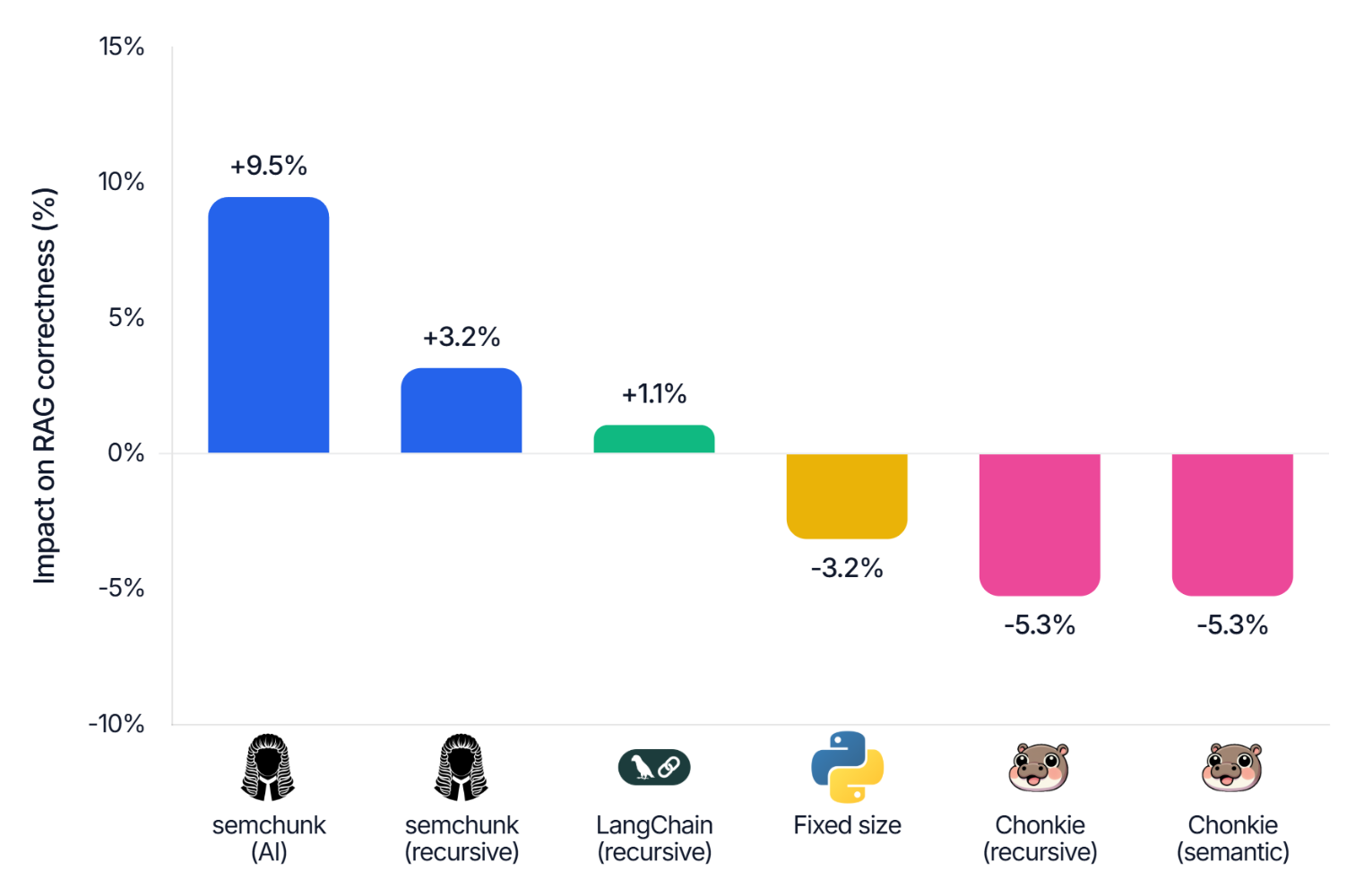

Powered by a novel hierarchical chunking algorithm, semchunk delivers 15% better RAG performance than its closest competitors (see [Benchmarks 📊](https://github.com/isaacus-dev/semchunk#benchmarks-)).

semchunk is production-ready. It is downloaded millions of times per month and is used in Docling, the Microsoft Intelligence Toolkit, and the Isaacus API.

## Setup 📦

semchunk can be installed with `pip` (or `uv`):

```bash

pip install semchunk

```

If you're using AI-powered chunking, you'll also want to install the [Isaacus SDK](https://github.com/isaacus-dev/isaacus-python) and obtain an [Isaacus API key](https://platform.isaacus.com/accounts/signup/):

```bash

pip install isaacus

```

semchunk is also available on `conda-forge`:

```bash

conda install conda-forge::semchunk

# or

conda install -c conda-forge semchunk

```

[@dominictarro](https://github.com/dominictarro) maintains a Rust port of semchunk named [`semchunk-rs`](https://crates.io/crates/semchunk-rs).

## Quickstart 👩‍💻

The code snippet below demonstrates how to chunk text with semchunk:

```python
import semchunk
import tiktoken                        # Transformers and Tiktoken are not dependencies,
from transformers import AutoTokenizer # they're just here for demonstration purposes.

chunk_size = 4 # A low chunk size is used here for demonstration purposes. Keep in mind, semchunk
               # does not know how many special tokens, if any, your tokenizer adds to every input,
               # so you may want to deduct the number of special tokens added from your chunk size.
text = 'The quick brown fox jumps over the lazy dog.'

# You can construct a chunker with `semchunk.chunkerify()` by passing the name of an OpenAI model,
# OpenAI Tiktoken encoding or Hugging Face model, or a custom tokenizer that has an `encode()` method
# (like a Tiktoken or Transformers tokenizer) or a custom token counting function that takes a text and
# returns the number of tokens in it.
chunker = semchunk.chunkerify('isaacus/kanon-2-tokenizer', chunk_size) or \
          semchunk.chunkerify('gpt-4', chunk_size) or \
          semchunk.chunkerify('cl100k_base', chunk_size) or \
          semchunk.chunkerify(AutoTokenizer.from_pretrained('isaacus/kanon-2-tokenizer'), chunk_size) or \
          semchunk.chunkerify(tiktoken.encoding_for_model('gpt-4'), chunk_size) or \
          semchunk.chunkerify(lambda text: len(text.split()), chunk_size)

# If you give the resulting chunker a single text, it'll return a list of chunks. If you give it a
# list of texts, it'll return a list of lists of chunks.
assert chunker(text) == ['The quick brown fox', 'jumps over the', 'lazy dog.']
assert chunker([text], progress=True) == [['The quick brown fox', 'jumps over the', 'lazy dog.']]

# If you have a lot of texts and you want to speed things up, you can enable multiprocessing by
# setting `processes` to a number greater than 1.
assert chunker([text], processes=2) == [['The quick brown fox', 'jumps over the', 'lazy dog.']]

# You can also pass an `offsets` argument to return the offsets of chunks, as well as an `overlap`
# argument to overlap chunks by a ratio (if < 1) or an absolute number of tokens (if >= 1).
chunks, offsets = chunker(text, offsets=True, overlap=0.5)
```

To leverage AI-powered chunking, ensure the [Isaacus SDK](https://github.com/isaacus-dev/isaacus-python) is installed and your `ISAACUS_API_KEY` environment variable is set, and then simply pass the name of an [Isaacus enrichment model](https://docs.isaacus.com/models/introduction#enrichment) like `kanon-2-enricher` as the `chunking_model` argument of `chunkerify()` or `chunk()`, like so:

```python

import requests # For demonstration purposes, we'll use `requests` to download a long document.

import semchunk

from os import environ

# Set your `ISAACUS_API_KEY` environment variable to your Isaacus API key.

environ["ISAACUS_API_KEY"] = "INSERT_YOUR_API_KEY_HERE"

# Download a very long document to chunk.

text = requests.get("https://examples.isaacus.com/dred-scott-v-sandford.txt").text

# Construct a chunker that uses `kanon-2-enricher` for AI-powered chunking.

# NOTE: Because we're using a Hugging Face Transformers tokenizer, the `transformers` library is required here.
# However, you can use any tokenizer or token counter you like.

chunker = semchunk.chunkerify("isaacus/kanon-2-tokenizer", 512, chunking_model="kanon-2-enricher")

# Chunk the document with AI-powered chunking.

chunks = chunker(text)

```

## Usage 🕹️

### `chunkerify()`

```python
def chunkerify(
    tokenizer_or_token_counter: str | tiktoken.Encoding | transformers.PreTrainedTokenizer | \
                                tokenizers.Tokenizer | Callable[[str], int],
    chunk_size: int | None = None,
    *,
    chunking_model: str | None = None,
    isaacus_client: isaacus.Isaacus | None = None,
    tokenizer_kwargs: dict | None = None,
    max_token_chars: int | None = None,
    memoize: bool = True,
    cache_maxsize: int | None = None,
) -> Callable[[str | ILGSDocument | Sequence[str | ILGSDocument], int, bool, bool, int | float | None], list[str] | tuple[list[str], list[tuple[int, int]]] | list[list[str]] | tuple[list[list[str]], list[list[tuple[int, int]]]]]:
```

`chunkerify()` constructs a chunker that splits one or more texts into semantically meaningful chunks of a specified size as determined by the provided tokenizer or token counter.

`tokenizer_or_token_counter` is either: the name of a Tiktoken or Transformers tokenizer (with priority given to the former); a tokenizer with an `encode()` method (e.g., a Tiktoken or Transformers tokenizer); or a token counter that returns the number of tokens in an input.

`chunk_size` is the maximum number of tokens a chunk may contain. It defaults to `None`, in which case it will be set to the same value as the tokenizer's `model_max_length` attribute (minus the number of tokens returned by attempting to tokenize an empty string) if possible, otherwise a `ValueError` will be raised.
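As a rough illustration of the two paragraphs above, the sketch below shows one way a tokenizer with an `encode()` method could be turned into a token counter, and how a default chunk size could be derived from `model_max_length`. `ToyTokenizer`, `as_token_counter()` and `default_chunk_size()` are hypothetical names invented for this example; they are not part of semchunk, and semchunk's own logic may differ.

```python
from typing import Callable


class ToyTokenizer:
    """Hypothetical tokenizer that wraps every input in two special tokens."""

    model_max_length = 512

    def encode(self, text: str) -> list:
        return ["[CLS]", *text.split(), "[SEP]"]


def as_token_counter(tokenizer_or_counter) -> Callable[[str], int]:
    # Check for `encode()` first, since tokenizer objects may also be callable.
    if hasattr(tokenizer_or_counter, "encode"):
        return lambda text: len(tokenizer_or_counter.encode(text))
    return tokenizer_or_counter


def default_chunk_size(tokenizer) -> int:
    # Tokenizing an empty string reveals how many special tokens are always added.
    return tokenizer.model_max_length - len(tokenizer.encode(""))


tokenizer = ToyTokenizer()
count = as_token_counter(tokenizer)
print(count("The quick brown fox"))   # 6: four words plus two special tokens
print(default_chunk_size(tokenizer))  # 510
```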

`chunking_model` is the name of the [Isaacus enrichment model](https://docs.isaacus.com/models/introduction#enrichment) to use for AI chunking. This argument defaults to `None`, in which case, unless you provide an [Isaacus Legal Graph Schema (ILGS) Document](https://docs.isaacus.com/ilgs/introduction) as input, AI chunking will be disabled.

`isaacus_client` is an instance of the `isaacus.Isaacus` API client to use for AI chunking instead of a client constructed with default parameters. This argument defaults to `None`, in which case a client will be constructed with default parameters if AI chunking is enabled.

`tokenizer_kwargs` is an optional dictionary of keyword arguments to be passed to the tokenizer or token counter whenever it is called. This can be used to disable the current default behavior of treating any encountered special tokens as if they are normal text when using a Tiktoken or Transformers tokenizer. This argument defaults to `None`, in which case no additional keyword arguments will be passed to the tokenizer or token counter.

`max_token_chars` is the maximum number of characters a token may contain. It is used to significantly speed up the token counting of long inputs. It defaults to `None` in which case it will either not be used or will, if possible, be set to the number of characters in the longest token in the tokenizer's vocabulary as determined by the `token_byte_values` or `get_vocab` methods.
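The reasoning behind `max_token_chars` can be sketched as follows. If every character of a text belongs to some token and no token is longer than `max_token_chars` characters, then a text with more than `chunk_size * max_token_chars` characters must contain more than `chunk_size` tokens, so the expensive count can be skipped. The helper below is a hypothetical illustration of that idea, not semchunk's actual implementation, and `toy_counter` is an assumed stand-in tokenizer whose tokens are at most three characters long.

```python
import math
from typing import Callable


def make_fast_counter(
    token_counter: Callable[[str], int], chunk_size: int, max_token_chars: int
) -> Callable[[str], int]:
    def fast_counter(text: str) -> int:
        # Too many characters to possibly fit: report "over the limit" without counting.
        # Note that this is a signal, not the exact token count.
        if len(text) > chunk_size * max_token_chars:
            return chunk_size + 1
        return token_counter(text)

    return fast_counter


calls = []  # Records every text the real counter is asked to tokenize.


def toy_counter(text: str) -> int:
    calls.append(text)
    return math.ceil(len(text) / 3)  # Every token covers at most 3 characters.


fast = make_fast_counter(toy_counter, chunk_size=4, max_token_chars=3)
short_count = fast("abcdef")   # 6 characters <= 12, so the real counter runs.
long_count = fast("a" * 1000)  # 1000 characters > 12, so the real counter is skipped.
print(short_count, long_count, len(calls))  # 2 5 1
```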

`memoize` flags whether to memoize the token counter. It defaults to `True`.

`cache_maxsize` is the maximum number of text-token count pairs that can be stored in the token counter's cache. It defaults to `None`, which makes the cache unbounded. This argument is only used if `memoize` is `True`.
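To make the interaction between `memoize` and `cache_maxsize` concrete, here is a minimal sketch that memoizes a token counter with the standard library's `functools.lru_cache`. Using `lru_cache` is an assumption made for illustration (semchunk's caching mechanism may differ), and `memoize_counter()` and `word_counter()` are hypothetical names.

```python
from functools import lru_cache
from typing import Callable, Optional


def memoize_counter(token_counter: Callable[[str], int], cache_maxsize: Optional[int] = None):
    # `maxsize=None` makes the cache unbounded; an integer caps the number of cached texts.
    return lru_cache(maxsize=cache_maxsize)(token_counter)


calls = []  # Records every text that actually reaches the underlying counter.


def word_counter(text: str) -> int:
    calls.append(text)
    return len(text.split())


cached = memoize_counter(word_counter, cache_maxsize=2)
first = cached("a b c")
second = cached("a b c")  # Served from the cache; `word_counter` is not called again.
print(first, second, len(calls))  # 3 3 1
```

Caching is safe here because token counts are immutable integers, so a cached result cannot be altered by a caller.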

This function returns a chunker that takes either a single input or a sequence of inputs and returns, depending on whether multiple inputs have been provided, a list or list of lists of chunks up to `chunk_size`-tokens-long with the whitespace used to split the input removed, and, if the optional `offsets` argument to the chunker is `True`, a list or lists of tuples of the form `(start, end)` where `start` is the index of the first character of a chunk in an input and `end` is the index of the character succeeding the last character of the chunk such that `chunks[i] == text[offsets[i][0]:offsets[i][1]]`.

The resulting chunker can be passed a `processes` argument that specifies the number of processes to be used when chunking multiple inputs.

It is also possible to pass a `progress` argument which, if set to `True` and multiple inputs are passed, will display a progress bar.

As described above, the `offsets` argument, if set to `True`, will cause the chunker to return the start and end offsets of each chunk.

The chunker accepts an `overlap` argument that specifies the proportion of the chunk size, or, if >=1, the number of tokens, by which chunks should overlap. It defaults to `None`, in which case no overlapping occurs.

### `chunk()`

```python
def chunk(
    text: str | ILGSDocument,
    chunk_size: int,
    token_counter: Callable[[str], int],
    *,
    memoize: bool = True,
    offsets: bool = False,
    overlap: float | int | None = None,
    chunking_model: str | None = None,
    isaacus_client: "isaacus.Isaacus | None" = None,
    cache_maxsize: int | None = None,
) -> list[str] | tuple[list[str], list[tuple[int, int]]]:
```

`chunk()` splits a text into semantically meaningful chunks of a specified size as determined by the provided token counter.

`text` is the input to be chunked. If you pass an Isaacus Legal Graph Schema (ILGS) Document, AI chunking will occur automatically without re-enriching the document.

`chunk_size` is the maximum number of tokens a chunk may contain.

`token_counter` is a callable that takes a string and returns the number of tokens in it.

`memoize` flags whether to memoize the token counter. It defaults to `True`.

`offsets` flags whether to return the start and end offsets of each chunk. It defaults to `False`.

`overlap` specifies the proportion of the chunk size, or, if >=1, the number of tokens, by which chunks should overlap. It defaults to `None`, in which case no overlapping occurs.

`chunking_model` is the name of the [Isaacus enrichment model](https://docs.isaacus.com/models/introduction#enrichment) to use for AI chunking. This argument defaults to `None`, in which case, unless you provide an Isaacus Legal Graph Schema (ILGS) Document as input, AI chunking will be disabled.

`isaacus_client` is an instance of the `isaacus.Isaacus` API client to use for AI chunking instead of a client constructed with default parameters. This argument defaults to `None`, in which case a client will be constructed with default parameters if AI chunking is enabled.

`cache_maxsize` is the maximum number of text-token count pairs that can be stored in the token counter's cache. It defaults to `None`, which makes the cache unbounded. This argument is only used if `memoize` is `True`.

This function returns a list of chunks up to `chunk_size`-tokens-long, with any whitespace used to split the text removed, and, if `offsets` is `True`, a list of tuples of the form `(start, end)` where `start` is the index of the first character of the chunk in the original text and `end` is the index of the character after the last character of the chunk such that `chunks[i] == text[offsets[i][0]:offsets[i][1]]`.
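The offsets invariant can be checked without calling semchunk at all. The sketch below takes the chunks shown in the quickstart and recovers their offsets with a hypothetical helper, `find_offsets()`, which assumes the chunks are non-overlapping and appear in order. In practice you would simply pass `offsets=True` rather than recomputing them.

```python
def find_offsets(text: str, chunks: list) -> list:
    """Locate each chunk in `text`, searching forward from the end of the previous chunk."""
    offsets = []
    cursor = 0
    for chunk in chunks:
        start = text.index(chunk, cursor)
        end = start + len(chunk)  # `end` is the index just past the chunk's last character.
        offsets.append((start, end))
        cursor = end
    return offsets


text = 'The quick brown fox jumps over the lazy dog.'
chunks = ['The quick brown fox', 'jumps over the', 'lazy dog.']
offsets = find_offsets(text, chunks)

# The invariant described above: slicing the text by an offset yields the chunk.
assert all(chunks[i] == text[start:end] for i, (start, end) in enumerate(offsets))
print(offsets)  # [(0, 19), (20, 34), (35, 44)]
```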

## How It Works 🔍

semchunk works by recursively splitting texts until all resulting chunks are less than or equal to a specified chunk size.

In particular, it:

1. splits text using the most structurally meaningful splitter possible;

2. recursively splits the resulting chunks until a set of chunks less than or equal to the specified chunk size is produced;

3. merges any chunks that are under the chunk size back together until the chunk size is reached;

4. reattaches any non-whitespace splitters back to the ends of chunks except for the last chunk if doing so does not bring chunks over the chunk size, otherwise adds non-whitespace splitters as their own chunks; and

5. since version 3.0.0, excludes chunks consisting entirely of whitespace characters.
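Steps 1 to 3 and 5 above can be sketched in a few lines of Python. This is a deliberately simplified illustration, not semchunk's implementation: `simple_chunk()` is a hypothetical name, it only knows three whitespace splitters before falling back to individual characters, and it omits step 4 (reattaching non-whitespace splitters) entirely.

```python
from typing import Callable


def simple_chunk(text: str, chunk_size: int, token_counter: Callable[[str], int]) -> list:
    # Base case: the text already fits (or cannot be split any further).
    if token_counter(text) <= chunk_size or len(text) <= 1:
        return [text] if text.strip() else []

    # Step 1: pick the coarsest splitter present; an empty splitter means "split into characters".
    splitter = next((s for s in ("\n\n", "\n", " ") if s in text), "")
    parts = text.split(splitter) if splitter else list(text)

    chunks = []
    pending = []  # Consecutive parts being merged into one chunk.

    def flush() -> None:
        merged = splitter.join(pending)
        if merged.strip():  # Step 5: drop whitespace-only chunks.
            chunks.append(merged)
        pending.clear()

    for part in parts:
        if token_counter(part) > chunk_size:
            # Step 2: this part is too big on its own, so split it recursively.
            flush()
            chunks.extend(simple_chunk(part, chunk_size, token_counter))
        elif token_counter(splitter.join(pending + [part])) <= chunk_size:
            # Step 3: keep merging while the chunk size is not exceeded.
            pending.append(part)
        else:
            flush()
            pending.append(part)
    flush()
    return chunks


def count_words(text: str) -> int:
    return len(text.split())


print(simple_chunk("a b c\n\nd e f g h", 3, count_words))  # ['a b c', 'd e f', 'g h']
```

Because the sketch uses a whitespace word counter and a much shorter splitter list, its output will generally differ from what semchunk produces with a real tokenizer.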

To ensure that chunks are as semantically meaningful as possible, semchunk uses the following splitters, in order of precedence:

1. the largest sequence of newlines (`\n`) and/or carriage returns (`\r`);

2. the largest sequence of tabs;

3. the largest sequence of whitespace characters (as defined by regex's `\s` character class) or, since version 3.2.0, if the largest sequence of whitespace characters is only a single character and there exist whitespace characters preceded by any of the structurally meaningful non-whitespace characters listed below (in the same order of precedence), then only those specific whitespace characters;

4. sentence terminators (`.`, `?`, and `!`);

5. clause separators (`;`, `,`, `(`, `)`, `[`, `]`, `“`, `”`, `‘`, `’`, `'`, `"`, `` ` ``, and `*`);

6. sentence interrupters (`:`, `—` and `…`);

7. word joiners (`/`, `\`, `–`, `&` and `-`); and

8. all other characters.
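The order of precedence above can be illustrated with a small splitter-selection function. This is a simplified sketch under stated assumptions: `pick_splitter()` is a hypothetical name, the refinement introduced in version 3.2.0 (preferring whitespace preceded by meaningful punctuation) is left out, and an empty string is used to mean "split into individual characters".

```python
import re

# Non-whitespace splitters grouped in the order of precedence listed above.
NON_WHITESPACE_SPLITTERS = (
    ".?!",                 # sentence terminators
    ';,()[]“”‘’\'"`*',     # clause separators
    ":—…",                 # sentence interrupters
    "/\\–&-",              # word joiners
)


def pick_splitter(text: str) -> str:
    # Whitespace comes first: newlines and carriage returns, then tabs, then any whitespace.
    for pattern in (r"[\r\n]+", r"\t+", r"\s+"):
        matches = re.findall(pattern, text)
        if matches:
            return max(matches, key=len)  # The longest sequence found in the text.

    # Otherwise, use the first non-whitespace splitter that occurs in the text.
    for group in NON_WHITESPACE_SPLITTERS:
        for char in group:
            if char in text:
                return char

    return ""  # No splitter found: fall back to splitting into characters.


print(repr(pick_splitter("a\n\nb\nc")))  # '\n\n'
print(repr(pick_splitter("a  b c")))     # '  '
print(repr(pick_splitter("a.b,c")))      # '.'
```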

Where AI-powered chunking is enabled, semchunk:

1. splits text into chunks up to 1,000,000-characters-long using the above algorithm in order to avoid sending excessively long inputs to an enrichment model;

2. enriches the resulting chunks with the enrichment model, pooling all unique spans extracted from each enriched chunk together;

3. for each level of depth, creates new spans where necessary to ensure that all content at that level of depth is covered by a span;

4. constructs a tree of spans based on containment;

5. iterates through the span tree:

    1. adding spans to chunks until the chunk size is reached;

    2. discarding whitespace-only chunks;

    3. removing leading and trailing whitespace from chunks;

    4. entering into the children of spans where a span exceeds the chunk size; and

    5. falling back to the above algorithm where a span has no children.
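Step 4 above, building a tree of spans based on containment, can be sketched with a stack. The `Span` class and `build_span_tree()` function are hypothetical and exist only for this illustration; the sketch assumes every span is a `(start, end)` character range inside the text and that spans are either nested or disjoint, which may not match how semchunk represents enriched spans internally.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    start: int
    end: int
    children: list = field(default_factory=list)


def build_span_tree(spans: list, text_length: int) -> Span:
    root = Span(0, text_length)  # A synthetic root covering the whole text.
    stack = [root]
    # Sort by start ascending and end descending so that parents come before their children.
    for start, end in sorted(set(spans), key=lambda span: (span[0], -span[1])):
        # Pop until the span on top of the stack fully contains this one.
        while not (stack[-1].start <= start and end <= stack[-1].end):
            stack.pop()
        node = Span(start, end)
        stack[-1].children.append(node)
        stack.append(node)
    return root


def outline(node: Span, depth: int = 0) -> list:
    """Render the tree as indented `[start:end]` lines for inspection."""
    lines = ["  " * depth + f"[{node.start}:{node.end}]"]
    for child in node.children:
        lines.extend(outline(child, depth + 1))
    return lines


tree = build_span_tree([(0, 4), (5, 10), (5, 7), (8, 10)], text_length=10)
print("\n".join(outline(tree)))
```

Walking such a tree from the root then amounts to packing sibling spans into chunks and descending into any span that is too large.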

If overlapping chunks have been requested, semchunk also:

1. internally reduces the chunk size to `min(overlap, chunk_size - overlap)` (`overlap` being computed as `floor(chunk_size * overlap)` for relative overlaps and `min(overlap, chunk_size - 1)` for absolute overlaps); and

2. merges every `floor(original_chunk_size / reduced_chunk_size)` chunks starting from the first chunk and then jumping by `floor((original_chunk_size - overlap) / reduced_chunk_size)` chunks until the last chunk is reached.
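The arithmetic in the two steps above is easier to follow with concrete numbers. The sketch below computes the reduced chunk size, the number of subchunks merged per chunk and the stride between merges. `overlap_plan()` and `merge_windows()` are hypothetical helpers; treating an overlap that rounds down to zero tokens as "no overlap" and stopping once a window reaches the last subchunk are assumptions of this example.

```python
import math


def overlap_plan(chunk_size: int, overlap: float) -> tuple:
    # Relative overlaps (< 1) are converted to tokens; absolute overlaps are capped.
    if overlap < 1:
        overlap_tokens = math.floor(chunk_size * overlap)
    else:
        overlap_tokens = min(int(overlap), chunk_size - 1)
    if overlap_tokens == 0:
        return 0, chunk_size, 1, 1  # Assumption: nothing to overlap, so chunk as usual.

    reduced = min(overlap_tokens, chunk_size - overlap_tokens)
    window = chunk_size // reduced                     # Subchunks merged into each chunk.
    stride = (chunk_size - overlap_tokens) // reduced  # Subchunks skipped between chunks.
    return overlap_tokens, reduced, window, stride


def merge_windows(num_subchunks: int, window: int, stride: int) -> list:
    windows = []
    for start in range(0, num_subchunks, stride):
        end = min(start + window, num_subchunks)
        windows.append((start, end))
        if end == num_subchunks:  # The last subchunk has been included.
            break
    return windows


print(overlap_plan(4, 0.5))       # (2, 2, 2, 1)
print(overlap_plan(512, 0.25))    # (128, 128, 4, 3)
print(overlap_plan(512, 100))     # (100, 100, 5, 4)
print(merge_windows(10, 4, 3))    # [(0, 4), (3, 7), (6, 10)]
```

In the second example, each final chunk is built from four 128-token subchunks and consecutive chunks share one subchunk, which gives the requested 25% overlap.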

## Benchmarks 📊

On [Legal RAG QA](https://huggingface.co/datasets/isaacus/legal-rag-qa), semchunk's AI chunking mode achieves the highest RAG correctness score of 37.7%, followed by semchunk's non-AI chunking mode at 35.5%. In comparison, LangChain's recursive chunker achieves correctness of 34.8% while fixed-size chunking achieves 33.3% correctness. Chonkie's semantic and recursive chunkers achieve the lowest correctness score of 32.6%. That is 15% lower than semchunk's AI chunking mode and 8% lower than semchunk's non-AI chunking mode.
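Relative differences depend on which score is taken as the baseline. The short sketch below computes both directions from the rounded scores quoted above, so its results may differ slightly from figures derived from unrounded scores. The dictionary keys are labels chosen for this example.

```python
# Correctness scores (in percent) as quoted in the paragraph above.
scores = {
    "semchunk (AI)": 37.7,
    "semchunk (non-AI)": 35.5,
    "LangChain recursive": 34.8,
    "fixed-size": 33.3,
    "Chonkie": 32.6,
}


def percent_lower(low: float, high: float) -> float:
    """How much lower `low` is than `high`, relative to `high`."""
    return round((high - low) / high * 100, 1)


def percent_higher(high: float, low: float) -> float:
    """How much higher `high` is than `low`, relative to `low`."""
    return round((high - low) / low * 100, 1)


baseline = scores["Chonkie"]
for name in ("semchunk (AI)", "semchunk (non-AI)"):
    print(
        f"{name}: Chonkie is {percent_lower(baseline, scores[name])}% lower; "
        f"{name} is {percent_higher(scores[name], baseline)}% higher"
    )
```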

A full write up of our evaluation methodology and findings may be found on our [blog](https://isaacus.com/blog/introducing-ai-chunking-to-semchunk).

## Citation 📝

If you use semchunk for research, please cite it as follows:

```bibtex

@software{butler2023semchunk,

author = {Butler, Umar},

title = {semchunk: a Python library for semantic chunking},

year = {2023},

url = {https://github.com/isaacus-dev/semchunk},

version = {4.0.0},

publisher = {Isaacus}

}

```

## License 📜

This library is licensed under the [MIT License](https://github.com/isaacus-dev/semchunk/blob/main/LICENCE).

================================================

FILE: pyproject.toml

================================================

[build-system]

requires = ["hatchling"]

build-backend = "hatchling.build"

[project]

name = "semchunk"

version = "4.0.0"

authors = [

{name="Isaacus", email="hello@isaacus.com"},

{name="Umar Butler", email="umar@isaacus.com"},

]

description = "A Python library for splitting text into smaller chunks while preserving as much local semantic context as possible."

readme = "README.md"

requires-python = ">=3.10"

license = {text="MIT"}

keywords = [

"chunking",

"splitting",

"text",

"split",

"splits",

"chunks",

"chunk",

"splitter",

"chunker",

"nlp",

"ai",

]

classifiers = [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"Intended Audience :: Information Technology",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14",

"Programming Language :: Python :: Implementation :: CPython",

"Topic :: Scientific/Engineering :: Artificial Intelligence",

"Topic :: Software Development :: Libraries :: Python Modules",

"Topic :: Text Processing :: General",

"Topic :: Utilities",

"Typing :: Typed"

]

dependencies = [

"tqdm",

"mpire[dill]",

]

[project.urls]

Homepage = "https://github.com/isaacus-dev/semchunk"

Documentation = "https://github.com/isaacus-dev/semchunk/blob/main/README.md"

Issues = "https://github.com/isaacus-dev/semchunk/issues"

Source = "https://github.com/isaacus-dev/semchunk"

[tool.hatch.build.targets.sdist]

only-include = ['src/semchunk/__init__.py', 'src/semchunk/py.typed', 'src/semchunk/semchunk.py', 'pyproject.toml', 'README.md', 'LICENCE', 'CHANGELOG.md', 'tests/bench.py', 'tests/test_semchunk.py', '.github/workflows/ci.yml', 'tests/helpers.py']

[tool.ruff]

exclude = [

"__pycache__",

"develop-eggs",

"eggs",

".eggs",

"wheels",

"htmlcov",

".tox",

".nox",

".coverage",

".cache",

".pytest_cache",

".ipynb_checkpoints",

".mypy_cache",

".pybuilder",

"__pypackages__",

".env",

".venv",

"venv",

"env",

"ENV",

"env.bak",

"venv.bak",

".archive",

".persist_cache",

"site-packages",

"node_modules",

"dist",

"build",

"dist-info",

"egg-info",

".hatchling",

".bzr",

".direnv",

".git",

".git-rewrite",

".hg",

".pants.d",

".pytype",

".ruff_cache",

".svn",

".vscode",

"_build",

"buck-out",

"migrations",

"target",

"bin",

"lib",

"lib64",

"include",

"share",

"var",

"tmp",

"temp",

"logs",

]

line-length = 120

indent-width = 4

target-version = "py312"

[tool.ruff.lint]

select = ["E4", "E7", "E9", "F", "I"]

fixable = [

"ALL",

]

unfixable = []

ignore = ["E741"]

[tool.ruff.lint.isort]

length-sort = true

section-order = [

"future",

"standard-library",

"third-party",

"first-party",

"local-folder",

]

lines-between-types = 1

order-by-type = false

combine-as-imports = true

[tool.ruff.lint.per-file-ignores]

"__init__.py" = ["F401"]

[tool.ruff.format]

quote-style = "double"

indent-style = "space"

skip-magic-trailing-comma = false

line-ending = "auto"

[dependency-groups]

dev = [

"build>=1.2.2.post1",

"datasets>=4.5.0",

"hatch>=1.14.1",

"ipykernel>=6.31.0",

"isaacus>=0.19.0",

"isort>=6.1.0",

"nltk>=3.9.1",

"pytest>=8.4.0",

"pytest-cov>=6.1.1",

"semantic-text-splitter>=0.27.0",

"tiktoken>=0.9.0",

"tqdm>=4.67.1",

"transformers>=4.52.4",

"twine>=6.1.0",

]
