Repository: jamesseanwright/wax

Branch: master

Commit: 44f406b7c0f9

Files: 55

Total size: 58.4 KB

Directory structure:

gitextract__3x_qwi4/
├── .babelrc
├── .editorconfig
├── .eslintrc.js
├── .gitignore
├── .npmignore
├── .nvmrc
├── .travis.yml
├── README.md
├── docs/
│   ├── 000-introduction.md
│   ├── 001-getting-started.md
│   ├── 002-audio-parameters.md
│   ├── 003-aggregations.md
│   ├── 004-updating-audio-graphs.md
│   ├── 005-interop-with-react.md
│   ├── 006-api-reference.md
│   └── 007-local-development.md
├── example/
│   ├── README.md
│   ├── devServer.js
│   └── src/
│       ├── aggregation.jsx
│       ├── combineElementCreators.js
│       ├── index.html
│       ├── simple.jsx
│       └── withReact.jsx
├── jest.config.js
├── package.json
├── rollup.config.js
└── src/
    ├── __tests__/
    │   ├── connectNodes.test.js
    │   ├── createAudioElement.test.js
    │   └── helpers.js
    ├── components/
    │   ├── Aggregation.jsx
    │   ├── AudioBufferSource.js
    │   ├── AudioGraph.js
    │   ├── ChannelMerger.js
    │   ├── Destination.js
    │   ├── Gain.js
    │   ├── NoOp.js
    │   ├── Oscillator.js
    │   ├── StereoPanner.js
    │   ├── __tests__/
    │   │   ├── AudioBufferSource.test.js
    │   │   ├── Gain.test.js
    │   │   ├── Oscillator.test.js
    │   │   ├── StereoPanner.test.js
    │   │   └── asSourceNode.test.jsx
    │   ├── asSourceNode.jsx
    │   └── index.js
    ├── connectNodes.js
    ├── createAudioElement.js
    ├── index.js
    ├── isWaxComponent.js
    ├── paramMutations/
    │   ├── __tests__/
    │   │   ├── assignAudioParam.test.js
    │   │   └── createParamMutator.test.js
    │   ├── assignAudioParam.js
    │   ├── createParamMutator.js
    │   └── index.js
    └── renderAudioGraph.js

================================================

FILE CONTENTS

================================================

================================================

FILE: .babelrc

================================================

{

"plugins": [

"@babel/proposal-object-rest-spread",

["@babel/transform-react-jsx", {

"pragma": "createAudioElement"

}]

],

"env": {

"test": {

"plugins": [

"@babel/transform-modules-commonjs"

]

}

}

}

================================================

FILE: .editorconfig

================================================

root = true

# Unix-style newlines with a newline ending every file

[*]

end_of_line = lf

insert_final_newline = true

# Matches multiple files with brace expansion notation

# Set default charset

[*.{js,jsx,html,scss}]

charset = utf-8

indent_style = space

indent_size = 4

trim_trailing_whitespace = true

================================================

FILE: .eslintrc.js

================================================

module.exports = {

"globals": {

"onAudioContextResumed": "readable",

},

"env": {

"browser": true,

"es6": true,

"jest": true,

"node": true,

},

"extends": "eslint:recommended",

"parserOptions": {

"ecmaFeatures": {

"jsx": true

},

"ecmaVersion": 2018,

"sourceType": "module"

},

"plugins": [

"react"

],

"settings": {

"react": {

"pragma": "createAudioElement"

}

},

"rules": {

"indent": [

"error",

4

],

"linebreak-style": [

"error",

"unix"

],

"quotes": [

"error",

"single"

],

"semi": [

"error",

"always"

],

"react/jsx-uses-react": 1,

"react/jsx-uses-vars": 1,

}

};

================================================

FILE: .gitignore

================================================

dist

node_modules

coverage

.nyc_output

.DS_Store

================================================

FILE: .npmignore

================================================

src

example

.babelrc

.eslintignore

.eslintrc.js

.nvmrc

README.md

rollup.config.js

.travis.yml

================================================

FILE: .nvmrc

================================================

v8.11.0

================================================

FILE: .travis.yml

================================================

language: node_js

node_js:

- "8"

- "10"

script: npm run test:ci

================================================

FILE: README.md

================================================

# Wax

[Build status](https://travis-ci.org/jamesseanwright/wax) [Coverage](https://coveralls.io/github/jamesseanwright/wax?branch=master) [npm package](https://www.npmjs.com/package/wax-core)

An experimental, JSX-compatible renderer for the Web Audio API. I wrote Wax for my [Manchester Web Meetup](https://www.meetup.com/Manchester-Web-Meetup) talk, [_Manipulating the Web Audio API with JSX and Custom Renderers_](https://www.youtube.com/watch?v=IeuuBKBb4Wg).

While it has decent test coverage and is stable, I still deem this to be a work-in-progress. **Use in production at your own risk!**

```jsx

/** @jsx createAudioElement */

import {

createAudioElement,

renderAudioGraph,

AudioGraph,

Oscillator,

Gain,

StereoPanner,

Destination,

setValueAtTime,

exponentialRampToValueAtTime,

} from 'wax-core';

renderAudioGraph(

<AudioGraph>

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.2} />

<StereoPanner pan={-1} />

<Destination />

</AudioGraph>

);

```

## Example Apps

Consult the [example](https://github.com/jamesseanwright/wax/tree/master/example) directory for a few small example apps that use Wax. The included [`README`](https://github.com/jamesseanwright/wax/blob/master/example/README.md) summarises them and details how they can be built and run.

## Documentation

* [Introduction](https://github.com/jamesseanwright/wax/blob/master/docs/000-introduction.md)

* [Getting Started](https://github.com/jamesseanwright/wax/blob/master/docs/001-getting-started.md)

* [Manipulating Audio Parameters](https://github.com/jamesseanwright/wax/blob/master/docs/002-audio-parameters.md)

* [Building Complex Graphs with `<Aggregation />`s](https://github.com/jamesseanwright/wax/blob/master/docs/003-aggregations.md)

* [Updating Rendered `<AudioGraph />`s](https://github.com/jamesseanwright/wax/blob/master/docs/004-updating-audio-graphs.md)

* [Interop with React](https://github.com/jamesseanwright/wax/blob/master/docs/005-interop-with-react.md)

* [API Reference](https://github.com/jamesseanwright/wax/blob/master/docs/006-api-reference.md)

* [Local Development](https://github.com/jamesseanwright/wax/blob/master/docs/007-local-development.md)

================================================

FILE: docs/000-introduction.md

================================================

# Introduction

[Web Audio](https://developer.mozilla.org/en-US/docs/Web/API/Web_Audio_API) is an exciting capability that allows developers to generate and manipulate sound in real-time (and to [render it for later use](https://developer.mozilla.org/en-US/docs/Web/API/OfflineAudioContext)), requiring nothing beyond JavaScript and a built-in browser API. Its audio graph model is conceptually logical, but writing imperative connection code can prove tedious, especially for larger graphs:

```js

oscillator.connect(gain);

gain.connect(stereoPanner);

bufferSource.connect(stereoPanner);

stereoPanner.connect(context.destination);

```

[There are ways of mitigating this "fatigue"](https://github.com/learnable-content/web-audio-api-mini-course/blob/lesson1.3/complete/index.js#L66), but what if we could declare our audio graph, and its components, as a tree of elements using [JSX](https://reactjs.org/docs/introducing-jsx.html)? Can we thus avoid directly specifying this connection code? Wax is an attempt at answering these questions.

Take the example found in the main README:

```jsx

renderAudioGraph(

<AudioGraph>

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.2} />

<StereoPanner pan={-1} />

<Destination />

</AudioGraph>

);

```

This is analogous to:

```js

const context = new AudioContext();

const oscillator = context.createOscillator();

const gain = context.createGain();

const stereoPanner = context.createStereoPanner();

const getTime = time => context.currentTime + time;

oscillator.type = 'square';

oscillator.frequency.value = 200;

oscillator.frequency.exponentialRampToValueAtTime(800, getTime(3));

gain.gain.value = 0.2;

stereoPanner.pan.value = -1;

oscillator.connect(gain);

gain.connect(stereoPanner);

stereoPanner.connect(context.destination);

oscillator.start();

oscillator.stop(getTime(3));

```

As React abstracts manual, imperative DOM operations, Wax abstracts manual, imperative Web Audio operations.

But how does Wax connect these nodes? The children of the root `<AudioGraph />` element **will be connected to one another in the order in which they're declared**. In our case:

1. `<Oscillator />` will be rendered and connected to the rendered `<Gain />`

2. `<Gain />` will be connected to `<StereoPanner />`

3. `<StereoPanner />` will be connected to `<Destination />` (`Destination` is a convenience component to consistently handle connections to the audio context's `destination` node)
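
The ordering rule above can be sketched as a reduction over an array of nodes. This is only an illustration using stub nodes: `createStubNode` and `connectInOrder` are hypothetical names rather than part of Wax's API, and Wax's own connection logic may differ.

```javascript
// Hypothetical stand-in for an AudioNode: records what it connects to.
const createStubNode = name => ({
    name,
    connections: [],
    connect(target) {
        this.connections.push(target.name);
        return target;
    },
});

// Connects each node to the next one, in declaration order,
// and returns the last node in the chain.
const connectInOrder = nodes =>
    nodes.reduce((source, target) => {
        source.connect(target);
        return target;
    });

const chain = ['oscillator', 'gain', 'stereoPanner', 'destination']
    .map(createStubNode);

const last = connectInOrder(chain);
```

Each stub ends up connected only to its immediate successor, and `last` is the destination stub, which has no outgoing connections.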

================================================

FILE: docs/001-getting-started.md

================================================

# Getting Started

The entirety of Wax is available in a single package from npm, named `wax-core`. Install it into your project with:

```shell

npm i --save wax-core

```

Create a single entry point, `simple.jsx`, and replicate the following imports and audio graph.

```js

import {

createAudioElement,

renderAudioGraph,

AudioGraph,

Oscillator,

Gain,

StereoPanner,

Destination,

setValueAtTime,

exponentialRampToValueAtTime,

} from 'wax-core';

renderAudioGraph(

<AudioGraph>

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.2} />

<StereoPanner pan={-0.2} />

<Destination />

</AudioGraph>

);

```

While `<AudioGraph />` does nothing special at present, it may manipulate its children in future versions of Wax. Please ensure you always specify an `AudioGraph` as the root element of the tree.

But how do we actually build this? How can we instruct a transpiler that these JSX constructs should specifically target Wax? Firstly, let's look at the first binding we import from `wax-core`:

```js

import {

createAudioElement,

...

} from 'wax-core';

```

Why are we importing this if we aren't calling it anywhere? Oh, but we are; when our JSX is transpiled, it'll resolve to invocations of `createAudioElement`. It is the Wax equivalent of `React.createElement`, and follows the exact same signature!

```js

renderAudioGraph(

createAudioElement(

AudioGraph,

null,

createAudioElement(

Oscillator,

{

frequency: [

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3)

],

type: 'square',

endTime: 3,

},

),

createAudioElement(Gain, { gain: 0.2 }),

createAudioElement(StereoPanner, { pan: -0.2 }),

createAudioElement(Destination, null),

)

);

```

To achieve this transformation, we can use [Babel](https://babeljs.io) and the [`transform-react-jsx`](https://babeljs.io/docs/en/babel-plugin-transform-react-jsx) plugin; the latter exposes a `pragma` option that we can configure to transform JSX to `createAudioElement` calls:

```json

{

"plugins": [

["@babel/transform-react-jsx", {

"pragma": "createAudioElement"

}]

]

}

```

Despite the name, this plugin performs general JSX transformations, defaulting to `React.createElement`. You do not need React to use Wax!
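
To make the `(Component, props, ...children)` signature concrete, here is a minimal sketch of an element creator with that shape. `createElementSketch`, the placeholder components, and the fields of the returned object are assumptions for illustration; the value that `createAudioElement` really returns is not described in this guide.

```javascript
// Hypothetical element creator following the
// (Component, props, ...children) signature used by JSX pragmas.
const createElementSketch = (Component, props, ...children) => ({
    Component,
    props: props || {},
    children,
});

// Placeholder components; in a real app these would come from 'wax-core'.
const AudioGraph = () => {};
const Gain = () => {};

// What <AudioGraph><Gain gain={0.2} /></AudioGraph> would compile to
// if the pragma were set to createElementSketch.
const element = createElementSketch(
    AudioGraph,
    null,
    createElementSketch(Gain, { gain: 0.2 }),
);
```

Because Babel only needs a function with this call shape, any such function can be named as the `pragma`.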

To create a bundle containing Wax and our app's code, we'll need a build tool **that supports ES Modules**. For the example apps, we use [Rollup](https://rollupjs.org/) and [`rollup-plugin-babel`](https://github.com/rollup/rollup-plugin-babel) to respect JSX transpilation ([config](https://github.com/jamesseanwright/wax/blob/master/rollup.config.js)).

Once we have our bundle, we can load it into an HTML document using a `<script>` element:

```html

<script src="/index.js"></script>

```

## `createAudioElement` and ESLint

If you are using ESLint to analyse your code, you may receive this error:

```

'createAudioElement' is defined but never used.

```

This is because `eslint-plugin-react` expects the pragma to be `React.createElement`. To suppress this error, one should explicitly configure the React plugin in the ESLint config's `settings` property:

```json

{

"settings": {

"react": {

"pragma": "createAudioElement"

}

}

}

```

Alternatively, one can specify an `@jsx` directive at the beginning of the module:

```js

/** @jsx createAudioElement */

```

================================================

FILE: docs/002-audio-parameters.md

================================================

# Manipulating Audio Parameters

The [`AudioParam`](https://developer.mozilla.org/en-US/docs/Web/API/AudioParam) interface provides a means of changing audio properties, such as `OscillatorNode.prototype.frequency` and `GainNode.prototype.gain`, via direct values or scheduled events.

Looking at our app, we can observe that a few parameter changes will occur:

```jsx

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.2} />

<StereoPanner pan={-0.2} />

```

* `<Oscillator />`'s frequency will immediately be set to 200 Hz, then ramped to 800 Hz over a duration of 3 seconds

* `<Gain />`'s gain will be a constant value of `0.2`

* `<StereoPanner />`'s pan will be a constant value of `-0.2`

With this in mind, Wax components support param changes with various props, whose values can be:

* a single, constant value

* a single parameter mutation e.g. `frequency={setValueAtTime(200, 0)}`

* an array of parameter mutations (as above), which will be applied in order of declaration

## What Are Parameter Mutations?

Parameter mutations are functions that conform to those exposed by the `AudioParam` interface; if an audio parameter supports it, then Wax will export a mutation for it! All of them are exported for consumption; for transparency, they are:

* `setValueAtTime`

* `linearRampToValueAtTime`

* `exponentialRampToValueAtTime`

* `setTargetAtTime`

* `setValueCurveAtTime`
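
The three accepted prop shapes (constant, single mutation, array of mutations) could be handled by a small dispatcher. The sketch below is an assumption about how such logic might look, not Wax's source: `assignParamSketch`, `setValueAtTimeSketch`, and the stub param are hypothetical, and a mutation is modelled here as a function that receives the param and the context's current time.

```javascript
// Hypothetical mutation creator: returns a function that schedules
// a value on a param, relative to the supplied current time.
const setValueAtTimeSketch = (value, time) => (param, currentTime) => {
    param.setValueAtTime(value, currentTime + time);
};

// Applies a constant, a single mutation, or an array of mutations.
const assignParamSketch = (param, value, currentTime) => {
    if (typeof value === 'function') {
        value(param, currentTime);
    } else if (Array.isArray(value)) {
        // Mutations are applied in order of declaration.
        value.forEach(mutation => mutation(param, currentTime));
    } else {
        param.value = value;
    }
};

// Stub AudioParam that records scheduled events.
const createStubParam = () => ({
    value: 0,
    events: [],
    setValueAtTime(value, time) {
        this.events.push(['setValueAtTime', value, time]);
    },
});

const gain = createStubParam();
const frequency = createStubParam();

assignParamSketch(gain, 0.2, 10);
assignParamSketch(
    frequency,
    [setValueAtTimeSketch(200, 0), setValueAtTimeSketch(800, 3)],
    10,
);
```

Scheduling times are offset by the current time in this sketch, mirroring the `getTime` helper in the introduction's imperative example.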

================================================

FILE: docs/003-aggregations.md

================================================

# Building Complex Graphs with `<Aggregation />`s

Thus far, we have built a simple, linear audio graph. What if we want to build more complex graphs in which multiple sources connect to common nodes? In Wax, we can achieve this with the `<Aggregation />` component.

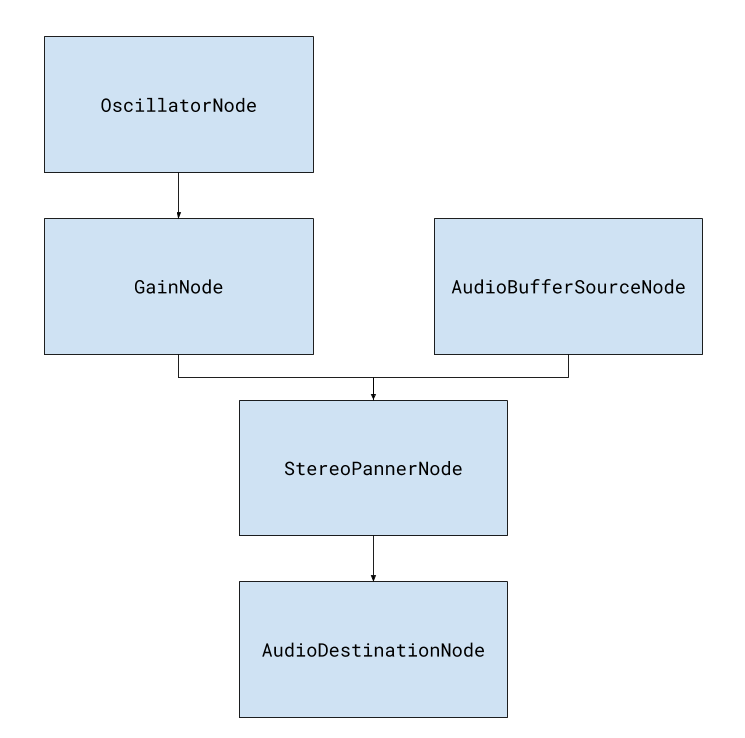

Say we wish to build the following graph:

The Web Audio API is built for this, demonstrating how the audio node model is a pragmatic fit:

```js

// Node instantiation code assumed

oscillator.connect(gain);

gain.connect(stereoPanner);

bufferSource.connect(stereoPanner);

stereoPanner.connect(context.destination);

```

Great! But how can we achieve this with Wax? With the `<Aggregation />` component, that's how:

```js

import {

Aggregation,

...

} from 'wax-core';

// [...]

const yodel = await fetchAsAudioBuffer('/yodel.mp3', audioContext);

const stereoPanner = <StereoPanner pan={0.4} />;

renderAudioGraph(

<AudioGraph>

<Aggregation>

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.1} />

{stereoPanner}

</Aggregation>

<Aggregation>

<AudioBufferSource

buffer={yodel}

/>

{stereoPanner}

</Aggregation>

{stereoPanner}

<Destination />

</AudioGraph>,

audioContext,

);

```

You can think of an aggregation as a nestable audio graph; it will connect its children sequentially. When the root `<AudioGraph />` is rendered, any inner `<Aggregation />` elements will be respected, avoiding double rendering and connection issues.

Typically, you'll declare a shared element in a single place, to which the other children of an aggregation can connect; said shared element can then be specified again in the main audio graph to ensure it is ultimately connected, directly or indirectly, to the destination node.

Let's clarify this within the above example. We declare a single `<StereoPanner />` element, which will create a single `StereoPannerNode` to which a `GainNode` and an `AudioBufferSourceNode` will respectively connect. Outside of the two `<Aggregation />` elements, we specify the same element instance again in the root audio graph, so that it will be connected to `audioContext.destination`. As we can reuse existing elements by declaration name within a set of curly braces in React, we can achieve the same in Wax; to summarise, these sharable elements can be used within inner aggregations and the root audio graph to generate more complex sounds.
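
One way to picture the behaviour described above is with stub nodes, where nested arrays stand in for `<Aggregation />` elements. This is a sketch under that assumption only: `createRecordingNode` and `connectTree` are hypothetical names, and Wax's real handling of aggregations may work differently.

```javascript
// Hypothetical stand-in for an AudioNode: records what it connects to.
const createRecordingNode = name => ({
    name,
    connections: [],
    connect(target) {
        this.connections.push(target.name);
    },
});

// Nested arrays (standing in for aggregations) connect their own
// children sequentially; the remaining nodes form the outer chain.
const connectTree = nodes => {
    nodes.filter(Array.isArray).forEach(inner => connectTree(inner));

    const chain = nodes.filter(node => !Array.isArray(node));

    for (let i = 0; i < chain.length - 1; i++) {
        chain[i].connect(chain[i + 1]);
    }
};

const oscillator = createRecordingNode('oscillator');
const gain = createRecordingNode('gain');
const bufferSource = createRecordingNode('bufferSource');
const stereoPanner = createRecordingNode('stereoPanner');
const destination = createRecordingNode('destination');

connectTree([
    [oscillator, gain, stereoPanner],
    [bufferSource, stereoPanner],
    stereoPanner,
    destination,
]);
```

The resulting connections match the imperative version shown earlier: both sources reach the single shared panner, which is connected to the destination exactly once.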

================================================

FILE: docs/004-updating-audio-graphs.md

================================================

# Updating Rendered `<AudioGraph />`s

Thus far, we have been rendering static audio graphs with the `renderAudioGraph` function. Say we now want to update a graph that creates an oscillator, whose frequency is dictated by a slider (`<input type="range" />`):

```js

import {

renderAudioGraph,

...

} from 'wax-core';

const slider = document.body.querySelector('#slider');

slider.addEventListener('change', ({ target }) => {

renderAudioGraph(

<AudioGraph>

<Oscillator frequency={target.value} />

</AudioGraph>

);

});

```

The problem with this approach is that we'll be creating a new audio node for each element whenever the slider's value changes! While `AudioNode`s are cheap to create, the above code will result in many frequencies being played at once; try at your own peril, but I can assure you that it sounds horrible.

To update a tree that already exists, one can replace `renderAudioGraph` with `renderPersistentAudioGraph`:

```js

import {

renderPersistentAudioGraph,

...

} from 'wax-core';

const slider = document.body.querySelector('#slider');

let value = 40;

slider.value = value;

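const createAudioGraph = frequency => (

<AudioGraph>

<Oscillator frequency={frequency} />

</AudioGraph>

);

// Render once; the returned function updates the already-created nodes

const updateAudioGraph = renderPersistentAudioGraph(createAudioGraph(value));

slider.addEventListener('change', ({ target }) => {

value = target.value;

// Build a fresh element tree so that the new frequency is picked up

updateAudioGraph(createAudioGraph(value));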

});

```

By invoking the `updateAudioGraph` function returned by calling `renderPersistentAudioGraph`, we can update our existing tree of audio elements to reflect the latest property values; this internally reinvokes component logic across the tree, but against the already-created nodes. It's analogous to React's [reconciliation](https://reactjs.org/docs/reconciliation.html) algorithm, albeit infinitely less sophisticated.
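
The idea of reinvoking component logic against already-created nodes can be sketched with a closure. Everything here is hypothetical (`renderPersistentSketch`, the stub oscillator, and the shape of `props`); it only illustrates creating a node once and reusing it on each update, not how Wax implements `renderPersistentAudioGraph`.

```javascript
// Hypothetical persistent renderer: creates the node once, then returns
// a function that applies new props to that same node.
const renderPersistentSketch = (props, createNode) => {
    const node = createNode();

    const apply = nextProps => {
        node.frequency.value = Number(nextProps.frequency);
        return node;
    };

    apply(props);

    return apply;
};

// Stub factory that counts how many nodes have been created.
let created = 0;

const createStubOscillator = () => {
    created += 1;
    return { frequency: { value: 440 } };
};

const update = renderPersistentSketch({ frequency: 40 }, createStubOscillator);

// Slider values arrive as strings, hence the Number() conversion above.
const node = update({ frequency: '220' });
```

However many times `update` is called, `created` stays at 1, which is the property that avoids the stacked oscillators described earlier.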

## A Note on "Reconciliation"

At present, Wax will not diff element trees between renders to determine if nodes have been added or removed; it assumes that their structures are identical, and that only respective properties have changed. This is certainly a big limitation and will be addressed properly if this project evolves from its experimental stage; for the time being, conditionally specifying elements will not work:

```js

<AudioGraph>

{/* This won't work... yet. */}

{makeNoise && <Oscillator frequency={frequency} />}

</AudioGraph>

```

================================================

FILE: docs/005-interop-with-react.md

================================================

# Interop with React

In the prior chapter, we learned how to update an existing audio graph whenever an `HTMLInputElement` fires a `change` event. Can we handle the visual UI with React while supporting JSX-declared audio graphs with Wax?

The problem is that we need to be able to use `React.createElement` and `createAudioElement` at the same time. What if we could compose a single pragma that can select whether to use the former or the latter at runtime? The [`withReact` example app](https://github.com/jamesseanwright/wax/blob/master/example/src/withReact.jsx) has a solution:

```js

/** @jsx createElement */

import {

isWaxComponent,

...

} from 'wax-core';

import combineElementCreators from './combineElementCreators';

const createElement = combineElementCreators(

[isWaxComponent, createAudioElement],

[() => true, React.createElement],

);

```

`combineElementCreators` is a function that takes a mapping between predicates and pragmas, and returns a new pragma to be targeted by our transpiler. In our example, if an element belongs to Wax (determined using the exposed `isWaxComponent` binding), then the `createAudioElement` pragma will be invoked; otherwise, we'll default to `React.createElement`. `combineElementCreators` isn't provided by Wax but can be implemented with a few lines of code:

```js

const getCreator = (map, Component) =>

[...map.entries()]

.find(([predicate]) => predicate(Component))[1];

const combineElementCreators = (...creatorBindings) => {

const map = new Map(creatorBindings);

return (Component, props, ...children) => {

const creator = getCreator(map, Component);

return creator(Component, props, ...children);

};

};

```

We can then instruct Babel to target this pragma in the usual way:

```json

{

"presets": [

["@babel/react", {

"pragma": "createElement"

}]

]

}

```

The aforementioned `withReact` example demonstrates how `ReactDOM.render` and `renderPersistentAudioGraph` can be used across a single app.
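
To see the dispatch in isolation, the sketch below runs a compact equivalent of `combineElementCreators` against stubs. The stub predicate and creators (`isAudioComponentStub`, `createAudioElementStub`, `createDomElementStub`) are hypothetical stand-ins for `isWaxComponent`, `createAudioElement`, and `React.createElement`, so that the snippet runs without either library.

```javascript
// Compact equivalent of the combineElementCreators approach shown above,
// repeated here so that the snippet is self-contained.
const combineElementCreators = (...creatorBindings) =>
    (Component, props, ...children) => {
        const [, creator] = creatorBindings
            .find(([predicate]) => predicate(Component));

        return creator(Component, props, ...children);
    };

// Hypothetical stand-ins for the real predicate and pragmas.
const audioComponents = new Set();
const isAudioComponentStub = Component => audioComponents.has(Component);
const createAudioElementStub = Component => ({ renderer: 'audio', Component });
const createDomElementStub = Component => ({ renderer: 'dom', Component });

const Oscillator = () => {};
audioComponents.add(Oscillator);

const createElement = combineElementCreators(
    [isAudioComponentStub, createAudioElementStub],
    [() => true, createDomElementStub],
);

const audioElement = createElement(Oscillator, { frequency: 440 });
const domElement = createElement('input', { type: 'range' });
```

The first matching predicate wins, so the catch-all `() => true` binding needs to come last.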

================================================

FILE: docs/006-api-reference.md

================================================

# API Reference

Coming soon. I'm going to find a nice way of autogenerating this!

================================================

FILE: docs/007-local-development.md

================================================

# Local Development

To build Wax and to run the example apps locally, you'll first need to run these commands in your terminal:

```shell

git clone https://github.com/jamesseanwright/wax.git # or fork and use SSH if submitting a PR

cd wax

npm i

```

Then you can run one of the following scripts:

* `npm run build` - builds the library and outputs it to the `dist` dir, ready for publishing

* `npm run build-example` - builds the example app specified in the `ENTRY` environment variable, defaulting to `simple`

* `npm run dev` - builds the library, then builds and runs the example app specified in the `ENTRY` environment variable, defaulting to `simple`

* `npm test` - lints the source code (including `example`) and runs the unit tests. Append ` -- --watch` to enter Jest's watch mode

For more information on the example apps, consult the [README in the `example` folder](https://github.com/jamesseanwright/wax/blob/master/example/README.md).

================================================

FILE: example/README.md

================================================

# Example Apps

The `src` directory contains three example Wax applications, each bootstrapped by the same HTML document (index.html):

* `simple.jsx` - declares an oscillator, with a couple of scheduled frequency changes, whose gain and pan are altered

* `aggregation.jsx` - demonstrates how one can use the `<Aggregation />` component to build more complex audio graphs

* `withReact.jsx` - renders a slider element using React, which updates an `<AudioGraph />` whenever its value is changed. This calls `renderPersistentAudioGraph()`

## Running the Examples

After following the setup guide in the [local development documentation](https://github.com/jamesseanwright/wax/blob/master/docs/007-local-development.md), run `npm run dev` from the root of the repository. You can specify the `ENTRY` environment variable to select which app to run; this is the name of the app **without** the `.jsx` extension e.g. `ENTRY=withReact npm run dev`. If omitted, `simple` will be built and started.

The example apps are [built using rollup](https://github.com/jamesseanwright/wax/blob/master/rollup.config.js).

## Mitigating Chrome's Autoplay Policy

As of December 2018, Chrome instantiates `AudioContext`s in the `'suspended'` state, [requiring an explicit user interaction before they can be resumed](https://developers.google.com/web/updates/2017/09/autoplay-policy-changes#webaudio). To mitigate this, there is some [JavaScript in the HTML bootstrapper (index.html) which will create, resume, and forward a context to the current app](https://github.com/jamesseanwright/wax/blob/master/example/src/index.html#L14) via a callback. This will potentially become a requirement for other browsers as they adopt similar policies in the future.

================================================

FILE: example/devServer.js

================================================

'use strict';

/* node-static unfortunately doesn't provide

* the correct Content-Type header for non-HTML

* files, breaking the decoding of yodel.mp3.

* Thus, I'm overriding this via this abstraction */

const PORT = 8080;

const DEFAULT_MIME_TYPE = 'text/html';

const http = require('http');

const path = require('path');

const url = require('url');

const nodeStatic = require('node-static');

const file = new nodeStatic.Server(

path.join(__dirname, 'dist'),

{ cache: 0 },

);

const contentTypes = new Map([

// No `g` flag: a global regex keeps lastIndex between test() calls

[/\.mp3$/i, 'audio/mp3'],

]);

const getContentType = req => {

const { pathname } = url.parse(req.url);

for (let [expression, mimeType] of contentTypes) {

if (expression.test(pathname)) {

return mimeType;

}

}

return DEFAULT_MIME_TYPE;

};

const server = http.createServer((req, res) => {

req.on('end', () => {

res.setHeader('Content-Type', getContentType(req));

file.serve(req, res);

}).resume();

});

// eslint-disable-next-line no-console

server.listen(PORT, () => console.log('Dev server listening on', PORT));

================================================

FILE: example/src/aggregation.jsx

================================================

import {

createAudioElement,

renderAudioGraph,

AudioGraph,

Aggregation,

AudioBufferSource,

Oscillator,

Gain,

StereoPanner,

Destination,

setValueAtTime,

exponentialRampToValueAtTime,

} from 'wax-core';

const fetchAsAudioBuffer = async (url, audioContext) => {

const response = await fetch(url);

const arrayBuffer = await response.arrayBuffer();

return await audioContext.decodeAudioData(arrayBuffer);

};

onAudioContextResumed(async context => {

const yodel = await fetchAsAudioBuffer('/yodel.mp3', context);

const stereoPanner = <StereoPanner pan={0.4} />;

renderAudioGraph(

<AudioGraph>

<Aggregation>

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.1} />

{stereoPanner}

</Aggregation>

<Aggregation>

<AudioBufferSource

buffer={yodel}

/>

<Gain gain={1.4} />

{stereoPanner}

</Aggregation>

{stereoPanner}

<Destination />

</AudioGraph>,

context,

);

});

================================================

FILE: example/src/combineElementCreators.js

================================================

const getCreator = (map, Component) =>

[...map.entries()]

.find(([predicate]) => predicate(Component))[1];

const combineElementCreators = (...creatorBindings) => {

const map = new Map(creatorBindings);

return (Component, props, ...children) => {

const creator = getCreator(map, Component);

return creator(Component, props, ...children);

};

};

export default combineElementCreators;

================================================

FILE: example/src/index.html

================================================

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8" />

<title>Wax Example</title>

</head>

<body>

<main>

<h1>Wax Example</h1>

<p>Turn your speakers up!</p>

<button id="start">Resume audio context and start!</button>

<section id="react-target"></section>

<script>

'use strict';

/* Workaround for Chrome's Web Audio autoplay

* policy. See the README for more info. */

const button = document.body.querySelector('#start');

const audioContext = new AudioContext();

let resumptionCallback = () => undefined;

const onAudioContextResumed = callback => {

resumptionCallback = callback;

};

button.onclick = async () => {

await audioContext.resume();

resumptionCallback(audioContext);

};

</script>

<script src="/index.js"></script>

</main>

</body>

</html>

================================================

FILE: example/src/simple.jsx

================================================

import {

createAudioElement,

renderAudioGraph,

AudioGraph,

Oscillator,

Gain,

StereoPanner,

Destination,

setValueAtTime,

exponentialRampToValueAtTime,

} from 'wax-core';

onAudioContextResumed(context => {

renderAudioGraph(

<AudioGraph>

<Oscillator

frequency={[

setValueAtTime(200, 0),

exponentialRampToValueAtTime(800, 3),

]}

type="square"

endTime={3}

/>

<Gain gain={0.2} />

<StereoPanner pan={-0.2} />

<Destination />

</AudioGraph>,

context,

);

});

================================================

FILE: example/src/withReact.jsx

================================================

/** @jsx createElement */

import React from 'react';

import ReactDOM from 'react-dom';

import {

isWaxComponent,

createAudioElement,

renderPersistentAudioGraph,

AudioGraph,

Oscillator,

Gain,

Destination,

} from 'wax-core';

import combineElementCreators from './combineElementCreators';

const createElement = combineElementCreators(

[isWaxComponent, createAudioElement],

[() => true, React.createElement],

);

class Slider extends React.Component {

constructor(props) {

super(props);

this.onChange = this.onChange.bind(this);

}

componentDidMount() {

const { children, min, audioContext } = this.props;

this.updateAudioGraph = renderPersistentAudioGraph(

children(min),

audioContext,

);

}

onChange({ target }) {

this.updateAudioGraph(

this.props.children(target.value),

);

}

render() {

return (

<input

type="range"

min={this.props.min}

max={this.props.max}

onChange={this.onChange}

/>

);

}

}

onAudioContextResumed(context => {

ReactDOM.render(

<Slider

audioContext={context}

min={40}

max={800}

>

{value =>

<AudioGraph>

<Oscillator

frequency={value}

type="square"

/>

<Gain gain={0.2} />

<Destination />

</AudioGraph>

}

</Slider>,

document.querySelector('#react-target'),

);

});

================================================

FILE: jest.config.js

================================================

module.exports = {

testRegex: 'src\\/(.*\\/)*__tests__\\/.*\\.test\\.jsx?$',

transform: {

'.*\\.jsx?$': 'babel-jest',

},

};

================================================

FILE: package.json

================================================

{

"name": "wax-core",

"version": "0.1.1",

"description": "An experimental, JSX-compatible renderer for the Web Audio API",

"main": "dist/index.js",

"scripts": {

"build": "babel src --out-dir dist",

"build-example": "mkdir -p example/dist && rm -rf example/dist/* && rollup -c && bash -c 'cp -r example/src/*.{html,mp3} example/dist'",

"dev": "npm run build && npm run build-example && node example/devServer",

"test": "eslint --ext js --ext jsx src example/src && jest",

"test:ci": "npm test -- --coverage --coverageReporters=text-lcov | coveralls"

},

"repository": {

"type": "git",

"url": "git+https://github.com/jamesseanwright/wax.git"

},

"keywords": [

"web",

"audio",

"api",

"components",

"jsx",

"react"

],

"author": "James Wright <james@jamesswright.co.uk>",

"license": "ISC",

"bugs": {

"url": "https://github.com/jamesseanwright/wax/issues"

},

"homepage": "https://github.com/jamesseanwright/wax#readme",

"devDependencies": {

"@babel/cli": "7.0.0",

"@babel/core": "7.0.0",

"@babel/plugin-proposal-object-rest-spread": "7.0.0",

"@babel/plugin-transform-modules-commonjs": "7.1.0",

"@babel/plugin-transform-react-jsx": "7.0.0",

"@babel/preset-react": "7.0.0",

"babel-core": "7.0.0-bridge.0",

"babel-jest": "23.6.0",

"babel-plugin-transform-inline-environment-variables": "0.4.3",

"coveralls": "3.0.2",

"eslint": "5.5.0",

"eslint-plugin-react": "7.11.1",

"jest": "23.6.0",

"node-static": "0.7.10",

"nyc": "^13.0.1",

"react": "16.5.0",

"react-dom": "16.5.0",

"remove": "^0.1.5",

"rollup": "0.65.0",

"rollup-plugin-alias": "1.4.0",

"rollup-plugin-babel": "4.0.2",

"rollup-plugin-commonjs": "9.1.6",

"rollup-plugin-node-resolve": "3.3.0"

}

}

================================================

FILE: rollup.config.js

================================================

/* TODO: move this and dependencies to

* separate package in example directory?

*/

import { resolve as resolvePath } from 'path';

import alias from 'rollup-plugin-alias';

import commonjs from 'rollup-plugin-commonjs';

import resolve from 'rollup-plugin-node-resolve';

import babel from 'rollup-plugin-babel';

const entry = process.env.ENTRY || 'simple';

export default {

input: `example/src/${entry}.jsx`,

output: {

file: 'example/dist/index.js',

format: 'iife',

},

plugins: [

resolve(),

commonjs(), // for React and ReactDOM

alias({

'wax-core': resolvePath(__dirname, 'dist', 'index.js'),

}),

babel(

entry === 'withReact'

&& {

babelrc: false,

presets: [

['@babel/react', {

pragma: 'createElement',

pragmaFrag: 'React.Fragment',

}],

],

plugins: ['transform-inline-environment-variables'],

}

),

],

};

================================================

FILE: src/__tests__/connectNodes.test.js

================================================

import { NO_OP } from '../components/NoOp';

import connectNodes from '../connectNodes';

import { createArrayWith } from './helpers';

const createStubAudioNode = () => ({

connect: jest.fn(),

});

/* we concat item[item.length - 1] if item

* is an array to match the reducing nature

* of the connectNodes function. */

const flatten = array => array.reduce(

(arr, item) => (

arr.concat(Array.isArray(item) ? item[item.length - 1] : item)

), []);

describe('connectNodes', () => {

it('should sequentially connect an array of audio nodes and return the last', () => {

const nodes = createArrayWith(5, createStubAudioNode);

const result = connectNodes(nodes);

expect(result).toBe(nodes[nodes.length - 1]);

nodes.reduce((previousNode, currentNode) => {

expect(previousNode.connect).toHaveBeenCalledTimes(1);

expect(previousNode.connect).toHaveBeenCalledWith(currentNode);

return currentNode;

});

});

it('should not connect NO_OP nodes', () => {

const noOpIndex = 3;

const nodes = createArrayWith(

5,

(_, i) => i === noOpIndex ? NO_OP : createStubAudioNode(),

);

connectNodes(nodes);

nodes.reduce((previousNode, currentNode) => {

if (currentNode === NO_OP) {

expect(previousNode.connect).not.toHaveBeenCalled();

} else if (previousNode !== NO_OP) {

expect(previousNode.connect).toHaveBeenCalledTimes(1);

expect(previousNode.connect).toHaveBeenCalledWith(currentNode);

}

return currentNode;

});

});

it('should reduce multidimensional arrays of AudioNodes', () => {

const nodes = [

...createArrayWith(3, createStubAudioNode),

createArrayWith(4, createStubAudioNode),

...createArrayWith(2, createStubAudioNode),

];

connectNodes(nodes);

flatten(nodes).reduce((previousNode, currentNode) => {

expect(previousNode.connect).toHaveBeenCalledTimes(1);

expect(previousNode.connect).toHaveBeenCalledWith(currentNode);

return currentNode;

});

});

});

================================================

FILE: src/__tests__/createAudioElement.test.js

================================================

import { createArrayWith } from './helpers';

import createAudioElement from '../createAudioElement';

const createElementCreator = node => {

const creator = jest.fn().mockReturnValue(node);

creator.isElementCreator = true;

return creator;

};

const createAudioGraph = nodeTree =>

nodeTree.map(node =>

Array.isArray(node)

? createElementCreator(createAudioGraph(node))

: createElementCreator(node)

);

const assertNestedAudioGraph = (graph, nodeTree, audioContext) => {

graph.forEach((creator, i) => {

if (Array.isArray(creator)) {

assertNestedAudioGraph(creator, nodeTree[i], audioContext);

} else {

expect(creator).toHaveBeenCalledTimes(1);

expect(creator).toHaveBeenCalledWith(audioContext, nodeTree[i]);

}

});

};

describe('createAudioElement', () => {

it('should conform to the JSX pragma signature and return a creator function', () => {

const node = {};

const audioContext = {};

const Component = jest.fn().mockReturnValue(node);

const props = { foo: 'bar', bar: 'baz' };

const children = [{}, {}, {}];

const creator = createAudioElement(Component, props, ...children);

const result = creator(audioContext);

expect(result).toBe(node);

expect(Component).toHaveBeenCalledTimes(1);

expect(Component).toHaveBeenCalledWith({

children,

audioContext,

...props,

});

});

it('should render a creator when it is returned from a component', () => {

const innerNode = {};

const innerCreator = createElementCreator(innerNode);

const audioContext = {};

const Component = jest.fn().mockReturnValue(innerCreator);

const creator = createAudioElement(Component, {});

const result = creator(audioContext);

expect(result).toBe(innerNode);

expect(innerCreator).toHaveBeenCalledTimes(1);

expect(innerCreator).toHaveBeenCalledWith(audioContext, undefined);

});

it('should invoke child creators when setting the parent`s `children` prop', () => {

const children = createArrayWith(10, (_, id) => {

const node = { id };

const creator = createElementCreator(node);

return { node, creator };

});

const audioContext = {};

const Component = jest.fn().mockReturnValue({});

const creator = createAudioElement(

Component,

{},

...children.map(({ creator }) => creator),

);

creator(audioContext);

expect(Component).toHaveBeenCalledWith({

audioContext,

children: children.map(({ node }) => node),

});

});

it('should reconcile existing nodes for the graph from the provided array', () => {

const nodeTree = [

{ id: 0 },

{ id: 1 },

[

{ id: 2 },

{ id: 3 },

[

{ id: 4 },

{ id: 5 },

],

{ id: 6 },

],

{ id: 7 },

{ id: 8 },

];

const audioContext = { isAudioContext: true };

const graph = createAudioGraph(nodeTree);

const Component = jest.fn().mockReturnValue(nodeTree);

const audioGraphCreator = createAudioElement(

Component,

{},

...graph,

);

audioGraphCreator(audioContext, nodeTree);

assertNestedAudioGraph(graph, nodeTree, audioContext);

});

it('should cache creator results', () => {

const Component = jest.fn().mockReturnValue({});

const creator = createAudioElement(

Component,

{},

);

creator({});

creator({});

expect(Component).toHaveBeenCalledTimes(1);

});

});

================================================

FILE: src/__tests__/helpers.js

================================================

export const createStubAudioContext = (currentTime = 0) => ({

currentTime,

createGain() {

return {

gain: {

value: 0,

},

};

},

createStereoPanner() {

return {

pan: {

value: 0,

},

};

},

createBufferSource() {

return {

detune: {

value: 0,

},

playbackRate: {

value: 0,

},

};

},

createOscillator() {

return {

detune: {

value: 0,

},

frequency: {

value: 0,

},

};

}

});

export const createArrayWith = (length, creator) =>

Array(length).fill(null).map(creator);

================================================

FILE: src/components/Aggregation.jsx

================================================

import createAudioElement from '../createAudioElement';

import NoOp from './NoOp';

import AudioGraph from './AudioGraph';

const Aggregation = ({ children }) => (

<AudioGraph>

{children}

<NoOp />

</AudioGraph>

);

export default Aggregation;

================================================

FILE: src/components/AudioBufferSource.js

================================================

import asSourceNode from './asSourceNode';

import assignAudioParam from '../paramMutations/assignAudioParam';

export const createAudioBufferSource = assignParam =>

({

audioContext,

buffer,

detune,

loop = false,

loopStart = 0,

loopEnd = 0,

playbackRate,

enqueue,

node = audioContext.createBufferSource(),

}) => {

node.buffer = buffer;

node.loop = loop;

node.loopStart = loopStart;

node.loopEnd = loopEnd;

assignParam(node.detune, detune, audioContext.currentTime);

assignParam(node.playbackRate, playbackRate, audioContext.currentTime);

enqueue(node);

return node;

};

export default asSourceNode(

createAudioBufferSource(

assignAudioParam

)

);

================================================

FILE: src/components/AudioGraph.js

================================================

/* This does little right now,

* but might be used in the future

* to make optimisations and to

* invoke other operations. */

const AudioGraph = ({ children }) => children;

export default AudioGraph;

================================================

FILE: src/components/ChannelMerger.js

================================================

const ChannelMerger = ({

audioContext,

inputs,

children,

node = audioContext.createChannelMerger(inputs),

}) => {

const [setupConnections] = children;

const connectToChannel = (childNode, channel) => {

// assumption here that all nodes have 1 output. Extra param?

childNode.connect(node, 0, channel);

};

setupConnections(connectToChannel);

return node;

};

export default ChannelMerger;

================================================

FILE: src/components/Destination.js

================================================

/* A convenience wrapper to expose

* the audio context's destination

* as a Web Audio X Component. */

const Destination = ({ audioContext }) => audioContext.destination;

export default Destination;

================================================

FILE: src/components/Gain.js

================================================

import assignAudioParam from '../paramMutations/assignAudioParam';

export const createGain = assignParam =>

({

audioContext,

gain,

node = audioContext.createGain(),

}) => {

assignParam(node.gain, gain, audioContext.currentTime);

return node;

};

export default createGain(assignAudioParam);

================================================

FILE: src/components/NoOp.js

================================================

/* An internal, workaround component

* to inform the node connector that

* no connection is required at this

* point in the current subtree.

* Used by Aggregation. */

export const NO_OP = 'NO_OP';

const NoOp = () => NO_OP;

export default NoOp;

================================================

FILE: src/components/Oscillator.js

================================================

import asSourceNode from './asSourceNode';

import assignAudioParam from '../paramMutations/assignAudioParam';

export const createOscillator = assignParam =>

({

audioContext,

detune = 0,

frequency,

type,

onended,

enqueue,

node = audioContext.createOscillator(),

}) => {

assignParam(node.detune, detune, audioContext.currentTime);

assignParam(node.frequency, frequency, audioContext.currentTime);

node.type = type;

node.onended = onended;

enqueue(node);

return node;

};

export default asSourceNode(

createOscillator(assignAudioParam)

);

================================================

FILE: src/components/StereoPanner.js

================================================

import assignAudioParam from '../paramMutations/assignAudioParam';

export const createStereoPanner = assignParam =>

({

audioContext,

pan,

node = audioContext.createStereoPanner(),

}) => {

assignParam(node.pan, pan, audioContext.currentTime);

return node;

};

export default createStereoPanner(assignAudioParam);

================================================

FILE: src/components/__tests__/AudioBufferSource.test.js

================================================

import { createAudioBufferSource } from '../AudioBufferSource';

import { createStubAudioContext } from '../../__tests__/helpers';

describe('AudioBufferSource', () => {

let AudioBufferSource;

let assignAudioParam;

beforeEach(() => {

assignAudioParam = jest.fn().mockImplementation((param, value) => param.value = value); // invoked twice per render (detune and playbackRate)

AudioBufferSource = createAudioBufferSource(assignAudioParam);

});

it('should create an AudioBufferSourceNode, assign its props, enqueue, and return said AudioBufferSourceNode', () => {

const audioContext = createStubAudioContext();

const buffer = {};

const loop = true;

const loopStart = 1;

const loopEnd = 3;

const detune = 1;

const playbackRate = 1;

const enqueue = jest.fn();

const node = AudioBufferSource({

audioContext,

buffer,

loop,

loopStart,

loopEnd,

detune,

playbackRate,

enqueue,

});

expect(node.buffer).toBe(buffer);

expect(node.loop).toEqual(true);

expect(node.loopStart).toEqual(1);

expect(node.loopEnd).toEqual(3);

expect(assignAudioParam).toHaveBeenCalledTimes(2);

expect(assignAudioParam).toHaveBeenCalledWith(node.detune, detune, audioContext.currentTime);

expect(assignAudioParam).toHaveBeenCalledWith(node.playbackRate, playbackRate, audioContext.currentTime);

expect(enqueue).toHaveBeenCalledTimes(1);

expect(enqueue).toHaveBeenCalledWith(node);

});

it('should respect and mutate an existing node if provided', () => {

const audioContext = createStubAudioContext();

const node = audioContext.createBufferSource();

const buffer = {};

const result = AudioBufferSource({

audioContext,

buffer,

node,

enqueue: jest.fn(),

});

expect(node).toBe(result);

expect(node.buffer).toBe(buffer);

});

});

================================================

FILE: src/components/__tests__/Gain.test.js

================================================

import { createGain } from '../Gain';

import { createStubAudioContext } from '../../__tests__/helpers';

describe('Gain', () => {

let Gain;

let assignAudioParam;

beforeEach(() => {

assignAudioParam = jest.fn().mockImplementation((param, value) => param.value = value);

Gain = createGain(assignAudioParam);

});

it('should create a GainNode, assign its gain, and return said GainNode', () => {

const audioContext = createStubAudioContext();

const gain = 0.4;

const node = Gain({ audioContext, gain });

expect(assignAudioParam).toHaveBeenCalledTimes(1);

expect(assignAudioParam).toHaveBeenCalledWith(node.gain, gain, audioContext.currentTime);

expect(node.gain.value).toEqual(gain);

});

it('should mutate an existing GainNode when provided', () => {

const audioContext = createStubAudioContext();

const node = audioContext.createGain();

const gain = 0.7;

const result = Gain({ audioContext, gain, node });

expect(result).toBe(node);

expect(assignAudioParam).toHaveBeenCalledTimes(1);

expect(assignAudioParam).toHaveBeenCalledWith(node.gain, gain, audioContext.currentTime);

expect(node.gain.value).toEqual(gain);

});

});

================================================

FILE: src/components/__tests__/Oscillator.test.js

================================================

import { createOscillator } from '../Oscillator';

import { createStubAudioContext } from '../../__tests__/helpers';

describe('Oscillator', () => {

let assignAudioParam;

let Oscillator;

beforeEach(() => {

assignAudioParam = jest.fn().mockImplementation((param, value) => param.value = value);

Oscillator = createOscillator(assignAudioParam);

});

it('should create an OscillatorNode, assign its props, and return said OscillatorNode', () => {

const audioContext = createStubAudioContext();

const detune = 7;

const frequency = 300;

const type = 'square';

const onended = jest.fn();

const enqueue = jest.fn();

const node = Oscillator({

audioContext,

detune,

frequency,

type,

onended,

enqueue,

});

expect(assignAudioParam).toHaveBeenCalledTimes(2);

expect(assignAudioParam).toHaveBeenCalledWith(node.detune, detune, audioContext.currentTime);

expect(assignAudioParam).toHaveBeenCalledWith(node.frequency, frequency, audioContext.currentTime);

expect(enqueue).toHaveBeenCalledTimes(1);

expect(enqueue).toHaveBeenCalledWith(node);

expect(node.frequency.value).toEqual(frequency);

expect(node.detune.value).toEqual(detune);

expect(node.onended).toBe(onended);

});

it('should mutate an existing OscillatorNode when provided', () => {

const audioContext = createStubAudioContext();

const node = audioContext.createOscillator();

const frequency = 20;

const enqueue = jest.fn();

const result = Oscillator({

audioContext,

frequency,

enqueue,

node,

});

expect(result).toBe(node);

expect(result.frequency.value).toEqual(20);

});

});

================================================

FILE: src/components/__tests__/StereoPanner.test.js

================================================

import { createStereoPanner } from '../StereoPanner';

import { createStubAudioContext } from '../../__tests__/helpers';

describe('StereoPanner', () => {

let assignAudioParam;

let StereoPanner;

beforeEach(() => {

assignAudioParam = jest.fn().mockImplementationOnce((param, value) => param.value = value);

StereoPanner = createStereoPanner(assignAudioParam);

});

afterEach(() => {

assignAudioParam.mockReset();

});

it('should create a StereoPannerNode, assign its pan, and return said StereoPannerNode', () => {

const audioContext = createStubAudioContext();

const pan = 0.4;

const node = StereoPanner({ audioContext, pan });

expect(assignAudioParam).toHaveBeenCalledTimes(1);

expect(assignAudioParam).toHaveBeenCalledWith(node.pan, pan, audioContext.currentTime);

expect(node.pan.value).toEqual(pan);

});

it('should mutate an existing StereoPannerNode when provided', () => {

const audioContext = createStubAudioContext();

const node = audioContext.createStereoPanner();

const pan = 0.7;

const result = StereoPanner({ audioContext, pan, node });

expect(result).toBe(node);

expect(assignAudioParam).toHaveBeenCalledTimes(1);

expect(assignAudioParam).toHaveBeenCalledWith(node.pan, pan, audioContext.currentTime);

expect(node.pan.value).toEqual(pan);

});

});

================================================

FILE: src/components/__tests__/asSourceNode.test.jsx

================================================

import { createAsSourceNode } from '../asSourceNode';

import { createStubAudioContext } from '../../__tests__/helpers';

const createStubSourceNode = () => ({

start: jest.fn(),

stop: jest.fn(),

});

describe('asSourceNode HOC', () => {

// used by both asSourceNode and JSX below

const createAudioElement = jest.fn().mockImplementation(

(Component, props, ...children) =>

Component({

children,

...props,

})

);

let asSourceNode;

beforeEach(() => {

asSourceNode = createAsSourceNode(createAudioElement);

});

it('should create a new component that proxies incoming props and provides an enqueue prop', () => {

const MyComponent = props => ({ props }); // TODO: common, reusable pattern across tests?

const SourceComponent = asSourceNode(MyComponent);

const sourceElement = <SourceComponent foo="bar" />;

expect(sourceElement.props.foo).toEqual('bar');

expect(sourceElement.props.enqueue).toBeDefined();

});

it('should schedule playback of the source node based upon the startTime prop and context`s current time', () => {

const audioContext = createStubAudioContext(3);

const audioNode = createStubSourceNode();

const MyComponent = ({ enqueue, node }) => enqueue(node);

const SourceComponent = asSourceNode(MyComponent);

<SourceComponent

startTime={1}

audioContext={audioContext}

node={audioNode}

/>;

expect(audioNode.start).toHaveBeenCalledTimes(1);

expect(audioNode.start).toHaveBeenCalledWith(4);

expect(audioNode.stop).not.toHaveBeenCalled();

});

it('should schedule the stopping of the source node when endTime is provided', () => {

const audioContext = createStubAudioContext(3);

const audioNode = createStubSourceNode();

const MyComponent = ({ enqueue, node }) => enqueue(node);

const SourceComponent = asSourceNode(MyComponent);

<SourceComponent

startTime={1}

endTime={5}

audioContext={audioContext}

node={audioNode}

/>;

expect(audioNode.start).toHaveBeenCalledTimes(1);

expect(audioNode.start).toHaveBeenCalledWith(4);

expect(audioNode.stop).toHaveBeenCalledTimes(1);

expect(audioNode.stop).toHaveBeenCalledWith(8);

});

it('should not schedule node playback or stopping when it has already been scheduled', () => {

const audioContext = createStubAudioContext(3);

const audioNode = createStubSourceNode();

const MyComponent = ({ enqueue, node }) => enqueue(node);

const SourceComponent = asSourceNode(MyComponent);

audioNode.isScheduled = true;

<SourceComponent

startTime={1}

endTime={5}

audioContext={audioContext}

node={audioNode}

/>;

expect(audioNode.start).not.toHaveBeenCalled();

expect(audioNode.stop).not.toHaveBeenCalled();

});

});

================================================

FILE: src/components/asSourceNode.jsx

================================================

/* A higher-order component that

* abstracts and centralises logic

* for enqueuing the starting and

* stopping of source nodes */

import elementCreator from '../createAudioElement';

const createEnqueuer = ({ audioContext, startTime = 0, endTime }) =>

node => {

if (node.isScheduled) {

return;

}

node.start(audioContext.currentTime + startTime);

if (endTime) {

node.stop(audioContext.currentTime + endTime);

}

node.isScheduled = true;

};

/* thunk to create HOC with cAE

* as injectable dependency */

export const createAsSourceNode = createAudioElement =>

Component =>

props =>

<Component

enqueue={createEnqueuer(props)}

{...props}

/>;

export default createAsSourceNode(elementCreator);
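
The scheduling rule that the enqueuer applies can be sketched in isolation. The block below is a minimal illustration, not part of the library: `createEnqueuer` is a local copy of the function above, while `stubContext`, `stubSource`, and `scheduled` are hypothetical stand-ins for a real `AudioContext` and source node.

```javascript
// Local copy of createEnqueuer from src/components/asSourceNode.jsx,
// reproduced so this sketch runs without the rest of the library.
const createEnqueuer = ({ audioContext, startTime = 0, endTime }) =>
    node => {
        if (node.isScheduled) {
            return;
        }

        node.start(audioContext.currentTime + startTime);

        if (endTime) {
            node.stop(audioContext.currentTime + endTime);
        }

        node.isScheduled = true;
    };

// Hypothetical stand-ins for an AudioContext and a source node.
const stubContext = { currentTime: 3 };
const scheduled = [];

const stubSource = {
    start: time => scheduled.push(['start', time]),
    stop: time => scheduled.push(['stop', time]),
};

const enqueue = createEnqueuer({
    audioContext: stubContext,
    startTime: 1,
    endTime: 5,
});

enqueue(stubSource); // schedules start at 3 + 1 and stop at 3 + 5
enqueue(stubSource); // ignored: the node is already flagged as scheduled
```

Both times are offsets from the context's current time, and the `isScheduled` flag is what lets a persistent graph be re-rendered without restarting a source that is already playing.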

================================================

FILE: src/components/index.js

================================================

export { default as Aggregation } from './Aggregation';

export { default as AudioGraph } from './AudioGraph';

export { default as AudioBufferSource } from './AudioBufferSource';

export { default as ChannelMerger } from './ChannelMerger';

export { default as Gain } from './Gain';

export { default as Oscillator } from './Oscillator';

export { default as StereoPanner } from './StereoPanner';

export { default as Destination } from './Destination';

================================================

FILE: src/connectNodes.js

================================================

import { NO_OP } from './components/NoOp';

const connectNodes = nodes =>

nodes.reduce((sourceNode, targetNode) => {

const source = Array.isArray(sourceNode)

? connectNodes(sourceNode)

: sourceNode;

const target = Array.isArray(targetNode)

? connectNodes(targetNode)

: targetNode;

if (source !== NO_OP && target !== NO_OP) {

source.connect(target);

}

return target;

});

export default connectNodes;
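
To see how the reducer treats nested arrays and the `NO_OP` marker, here is a small standalone sketch. It is an illustration under assumptions, not library code: `connectNodes` and `NO_OP` are local copies of the definitions above, and `createStubNode` is a hypothetical helper that records the names of the nodes it is connected to.

```javascript
// Local copies so the sketch runs standalone.
const NO_OP = 'NO_OP';

const connectNodes = nodes =>
    nodes.reduce((sourceNode, targetNode) => {
        const source = Array.isArray(sourceNode)
            ? connectNodes(sourceNode)
            : sourceNode;

        const target = Array.isArray(targetNode)
            ? connectNodes(targetNode)
            : targetNode;

        if (source !== NO_OP && target !== NO_OP) {
            source.connect(target);
        }

        return target;
    });

// Hypothetical stub node that records what it was connected to.
const createStubNode = name => ({
    name,
    connections: [],
    connect(target) {
        this.connections.push(target.name);
    },
});

const lfo = createStubNode('lfo');
const lfoGain = createStubNode('lfoGain');
const osc = createStubNode('osc');
const gain = createStubNode('gain');
const destination = createStubNode('destination');

// The nested array stands in for an aggregated subtree. Its trailing
// NO_OP prevents the subtree's last node from being connected to the
// next sibling in the outer chain.
const last = connectNodes([
    [lfo, lfoGain, NO_OP],
    osc,
    gain,
    destination,
]);
```

In this sketch the inner chain is connected on its own (`lfo` to `lfoGain`), the outer chain runs from `osc` through `gain` to `destination`, and nothing bridges the two.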

================================================

FILE: src/createAudioElement.js

================================================

/* I chose "cache" over "memoise" here,

* as we don't cache by the inner

* arguments. We just want to avoid

* recomputing the node for a certain

* creator func reference. */

const cache = func => {

let result;

return (...args) => {

if (!result) {

result = func(...args);

}

return result;

};

};

/* decoration is required to differentiate

* between element creators and other function

* children e.g. render props */

const asCachedCreator = creator => {

const cachedCreator = cache(creator);

cachedCreator.isElementCreator = true;

return cachedCreator;

};

const getNodeFromTree = tree =>

!Array.isArray(tree)

? tree

: undefined; // undefined lets a component's default `node` value apply when destructuring

const createAudioElement = (Component, props, ...children) =>

asCachedCreator((audioContext, nodeTree = []) => {

const mapResult = (result, i) =>

result.isElementCreator

? result(audioContext, nodeTree[i])

: result;

/* we want to render children first so the nodes

* can be directly consumed by their parents */

const createChildren = children => children.map(mapResult);

const existingNode = getNodeFromTree(nodeTree);

return mapResult(

Component({

children: createChildren(children),

audioContext,

node: existingNode,

...props,

})

);

});

export default createAudioElement;

================================================

FILE: src/index.js

================================================

export { default as createAudioElement } from './createAudioElement';

export * from './renderAudioGraph';

export { default as isWaxComponent } from './isWaxComponent';

export * from './components';

export * from './paramMutations';

export { default as assignAudioParam } from './paramMutations/assignAudioParam';

================================================

FILE: src/isWaxComponent.js

================================================

import * as components from './components';

const componentsArray = Object.values(components);

const isWaxComponent = Component => componentsArray.includes(Component);

export default isWaxComponent;

================================================

FILE: src/paramMutations/__tests__/assignAudioParam.test.js

================================================

import assignAudioParam from '../assignAudioParam';

describe('assignAudioParam', () => {

it('should do nothing if a value is not provided', () => {

const param = {};

assignAudioParam(param, null, 1);

expect(param).toEqual({});

});

it('should invoke the value with the param and current time when it`s a function', () => {

const param = {};

const value = jest.fn();

const currentTime = 6;

assignAudioParam(param, value, currentTime);

expect(value).toHaveBeenCalledTimes(1);

expect(value).toHaveBeenCalledWith(param, currentTime);

});

it('should invoke each value with the param and current time when it`s an array of functions', () => {

const param = {};

const value = [jest.fn(), jest.fn(), jest.fn()];

const currentTime = 9;

assignAudioParam(param, value, currentTime);

value.forEach(mutator => {

expect(mutator).toHaveBeenCalledTimes(1);

expect(mutator).toHaveBeenCalledWith(param, currentTime);

});

});

it('should assign the value to the param`s value property if it is not a function', () => {

const param = {};

const value = 5;

assignAudioParam(param, value);

expect(param.value).toEqual(value);

});

});

================================================

FILE: src/paramMutations/__tests__/createParamMutator.test.js

================================================

import createParamMutator from '../createParamMutator';

describe('createParamMutator', () => {

it('should create a func for public use which returns an inner function to manipulate AudioParams', () => {

const audioParam = { setValueAtTime: jest.fn() };

const setValueAtTime = createParamMutator('setValueAtTime');

const mutateAudioParam = setValueAtTime(300, 7);

mutateAudioParam(audioParam, 3);

expect(audioParam.setValueAtTime).toHaveBeenCalledTimes(1);

expect(audioParam.setValueAtTime).toHaveBeenCalledWith(300, 10);

});

});

================================================

FILE: src/paramMutations/assignAudioParam.js

================================================

const isMutation = value => typeof value === 'function';

const isMutationSequence = value =>

Array.isArray(value) && value.every(isMutation);

const assignAudioParam = (param, value, currentTime) => {

if (value === undefined || value === null) { // a bare falsy check would also skip a legitimate value of 0

return;

}

if (isMutation(value)) {

value(param, currentTime);

} else if (isMutationSequence(value)) {

value.forEach(paramMutation => paramMutation(param, currentTime));

} else {

param.value = value;

}

};

export default assignAudioParam;
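
The three value shapes that the function distinguishes (a plain value, a single mutation, and a sequence of mutations) can be exercised with plain objects. This is a minimal sketch under assumptions: `assignAudioParam` here is a local stand-in modelled on the function above, its guard checks only for `null` and `undefined` (a choice made for this sketch), and `record` and `log` are hypothetical helpers.

```javascript
const isMutation = value => typeof value === 'function';

const isMutationSequence = value =>
    Array.isArray(value) && value.every(isMutation);

// Local stand-in modelled on src/paramMutations/assignAudioParam.js.
// Assumption: only null and undefined count as "no value provided".
const assignAudioParam = (param, value, currentTime) => {
    if (value === undefined || value === null) {
        return;
    }

    if (isMutation(value)) {
        value(param, currentTime);
    } else if (isMutationSequence(value)) {
        value.forEach(mutation => mutation(param, currentTime));
    } else {
        param.value = value;
    }
};

// Hypothetical mutation factory that logs when it is invoked.
const log = [];

const record = label => (param, currentTime) => {
    log.push(`${label}@${currentTime}`);
};

const plain = {};

assignAudioParam(plain, 440, 2); // plain value: written to param.value
assignAudioParam({}, record('single'), 2); // single mutation: invoked once
assignAudioParam({}, [record('first'), record('second')], 2); // sequence: invoked in order
assignAudioParam(plain, undefined, 2); // nothing provided: param left untouched
```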

================================================

FILE: src/paramMutations/createParamMutator.js

================================================

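/* Most AudioParam scheduling methods take
 * (value, time). setTargetAtTime and
 * setValueCurveAtTime also take a third
 * argument (timeConstant and duration
 * respectively), which is forwarded via
 * ...rest. This function creates the
 * functions that users and components
 * consume to schedule value changes. */

const createParamMutator = name =>
    (value, time, ...rest) =>
        (param, currentTime) => {
            // time is an offset from the context's current time
            param[name](value, currentTime + time, ...rest);
        };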

export default createParamMutator;
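
The currying above is easiest to follow with a stub parameter. The following is a minimal sketch, not library code: `createParamMutator` is a simplified two-argument local copy, and `stubParam` and `calls` are hypothetical stand-ins for a real `AudioParam`.

```javascript
// Simplified local copy so the sketch runs standalone.
const createParamMutator = name =>
    (value, time) =>
        (param, currentTime) => {
            param[name](value, currentTime + time);
        };

// Step 1: bind the AudioParam method name once, at module level.
const setValueAtTime = createParamMutator('setValueAtTime');

// Hypothetical stub AudioParam that records the calls it receives.
const calls = [];

const stubParam = {
    setValueAtTime(value, time) {
        calls.push({ value, time });
    },
};

// Step 2: the user supplies a value and a time offset, e.g. as a prop.
const mutate = setValueAtTime(300, 7);

// Step 3: the component applies it with the context's current time.
mutate(stubParam, 3);
```

The offset (7) is added to the current time (3), so the stub receives an absolute time of 10, matching the assertion in `createParamMutator.test.js`.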

================================================

FILE: src/paramMutations/index.js

================================================

import createParamMutator from './createParamMutator';

export const setValueAtTime = createParamMutator('setValueAtTime');

export const linearRampToValueAtTime = createParamMutator('linearRampToValueAtTime');

export const exponentialRampToValueAtTime = createParamMutator('exponentialRampToValueAtTime');

export const setTargetAtTime = createParamMutator('setTargetAtTime');

export const setValueCurveAtTime = createParamMutator('setValueCurveAtTime');

================================================

FILE: src/renderAudioGraph.js

================================================

import connectNodes from './connectNodes';

export const renderAudioGraph = (

createGraphElement,

context = new AudioContext(),

) => {

const nodes = createGraphElement(context);

connectNodes(nodes);

return nodes;

};

export const renderPersistentAudioGraph = (

createGraphElement,

context = new AudioContext(),

) => {

let nodes = renderAudioGraph(createGraphElement, context);

return createNewGraphElement => {

nodes = createNewGraphElement(context, nodes);

};

};

SYMBOL INDEX (12 symbols across 4 files)

FILE: example/devServer.js

constant PORT (line 8) | const PORT = 8080;

constant DEFAULT_MIME_TYPE (line 9) | const DEFAULT_MIME_TYPE = 'application/html';

FILE: example/src/withReact.jsx

class Slider (line 23) | class Slider extends React.Component {

method constructor (line 24) | constructor(props) {

method componentDidMount (line 29) | componentDidMount() {

method onChange (line 38) | onChange({ target }) {

method render (line 44) | render() {

FILE: src/__tests__/helpers.js

method createGain (line 3) | createGain() {

method createStereoPanner (line 10) | createStereoPanner() {

method createBufferSource (line 17) | createBufferSource() {

method createOscillator (line 27) | createOscillator() {

FILE: src/components/NoOp.js

constant NO_OP (line 7) | const NO_OP = 'NO_OP';

Condensed preview: 55 files, each showing path, character count, and a content snippet.

[

{

"path": ".babelrc",

"chars": 307,

"preview": "{\n \"plugins\": [\n \"@babel/proposal-object-rest-spread\",\n [\"@babel/transform-react-jsx\", {\n \"p"

},

{

"path": ".editorconfig",

"chars": 303,

"preview": "root = true\n\n# Unix-style newlines with a newline ending every file\n[*]\nend_of_line = lf\ninsert_final_newline = true\n\n# "

},

{

"path": ".eslintrc.js",

"chars": 906,

"preview": "module.exports = {\n \"globals\": {\n \"onAudioContextResumed\": \"readable\",\n },\n \"env\": {\n \"browser\": "

},

{

"path": ".gitignore",

"chars": 48,

"preview": "dist\nnode_modules\ncoverage\n.nyc_output\n.DS_Store"

},

{

"path": ".npmignore",

"chars": 94,

"preview": "src\nexample\n.babelrc\n.eslintignore\n.eslintrc.js\n.nvmrc\nREADME.md\nrollup.config.js\n.travis.yml\n"

},

{

"path": ".nvmrc",

"chars": 8,

"preview": "v8.11.0\n"

},

{

"path": ".travis.yml",

"chars": 69,

"preview": "language: node_js\nnode_js:\n - \"8\"\n - \"10\"\n\nscript: npm run test:ci\n"

},

{

"path": "README.md",

"chars": 2608,

"preview": "# Wax\n\n[](https://travis-ci.org/jame"

},

{

"path": "docs/000-introduction.md",

"chars": 2643,

"preview": "# Introduction\n\n[Web Audio](https://developer.mozilla.org/en-US/docs/Web/API/Web_Audio_API) is a exciting capability tha"

},

{

"path": "docs/001-getting-started.md",

"chars": 3763,

"preview": "# Getting Started\n\nThe entirety of Wax is available in a single package from npm, named `wax-core`. Install it into your"

},

{

"path": "docs/002-audio-parameters.md",

"chars": 1509,

"preview": "# Manipulating Audio Parameters\n\nThe [`AudioParam`](https://developer.mozilla.org/en-US/docs/Web/API/AudioParam) interfa"

},

{

"path": "docs/003-aggregations.md",

"chars": 2872,

"preview": "# Building Complex Graphs with `<Aggregation />`s\n\nThus far, we have built a simple, linear audio graph. What if we want"

},

{

"path": "docs/004-updating-audio-graphs.md",

"chars": 2419,

"preview": "# Updating Rendered `<AudioGraph />`s\n\nThus far, we have been rendering static audio graphs with the `renderAudioGraph` "

},

{

"path": "docs/005-interop-with-react.md",

"chars": 2049,

"preview": "# Interop with React\n\nIn the prior chapter, we learned how to update an existing audio graph whenever a `HTMLInputElemen"

},

{

"path": "docs/006-api-reference.md",

"chars": 83,

"preview": "# API Reference\n\nComing soon. I'm going to find a nice way of autogenerating this!\n"

},

{

"path": "docs/007-local-development.md",

"chars": 949,

"preview": "# Local Development\n\nTo build Wax and to run the example apps locally, you'll first need to run these commands in your t"

},

{

"path": "example/README.md",

"chars": 1739,

"preview": "# Example Apps\n\nThe `src` directory contains three example Wax applications, each bootstrapped by the same HTML document"

},

{

"path": "example/devServer.js",

"chars": 1120,

"preview": "'use strict';\n\n/* node-static unfortunately doesn't provide\n * the correct Content-Type header for non-HTML\n * files, br"

},

{

"path": "example/src/aggregation.jsx",

"chars": 1380,

"preview": "import {\n createAudioElement,\n renderAudioGraph,\n AudioGraph,\n Aggregation,\n AudioBufferSource,\n Oscil"

},

{

"path": "example/src/combineElementCreators.js",

"chars": 425,

"preview": "const getCreator = (map, Component) =>\n [...map.entries()]\n .find(([predicate]) => predicate(Component))[1];\n\n"

},

{

"path": "example/src/index.html",

"chars": 1102,

"preview": "<!DOCTYPE html>\n<html>\n <head>\n <meta charset=\"utf-8\" />\n <title>Wax Example</title>\n </head>\n <b"

},

{

"path": "example/src/simple.jsx",

"chars": 682,

"preview": "import {\n createAudioElement,\n renderAudioGraph,\n AudioGraph,\n Oscillator,\n Gain,\n StereoPanner,\n D"

},

{

"path": "example/src/withReact.jsx",

"chars": 1707,

"preview": "/** @jsx createElement */\n\nimport React from 'react';\nimport ReactDOM from 'react-dom';\n\nimport {\n isWaxComponent,\n "

},

{

"path": "jest.config.js",

"chars": 144,

"preview": "module.exports = {\n testRegex: 'src\\\\/(.*\\\\/)*__tests__\\\\/.*\\\\.test\\\\.jsx?$',\n transform: {\n '.*\\\\.jsx?$': "

},

{

"path": "package.json",

"chars": 1824,

"preview": "{\n \"name\": \"wax-core\",\n \"version\": \"0.1.1\",\n \"description\": \"An experimental, JSX-compatible renderer for the Web Aud"

},

{

"path": "rollup.config.js",

"chars": 1129,

"preview": "/* TODO: move this and dependencies to\n * separate package in example directory?\n */\n\nimport { resolve as resolvePath } "

},

{

"path": "src/__tests__/connectNodes.test.js",

"chars": 2244,

"preview": "import { NO_OP } from '../components/NoOp';\nimport connectNodes from '../connectNodes';\nimport { createArrayWith } from "

},

{

"path": "src/__tests__/createAudioElement.test.js",

"chars": 3946,

"preview": "import { createArrayWith } from './helpers';\nimport createAudioElement from '../createAudioElement';\n\nconst createElemen"

},

{

"path": "src/__tests__/helpers.js",

"chars": 798,

"preview": "export const createStubAudioContext = (currentTime = 0) => ({\n currentTime,\n createGain() {\n return {\n "

},

{

"path": "src/components/Aggregation.jsx",

"chars": 266,

"preview": "import createAudioElement from '../createAudioElement';\nimport NoOp from './NoOp';\nimport AudioGraph from './AudioGraph'"

},

{

"path": "src/components/AudioBufferSource.js",

"chars": 811,