Repository: jindongwang/Pytorch-CapsuleNet

Branch: master

Commit: c5031b719dcd

Files: 5

Total size: 15.3 KB

Directory structure:

gitextract_4t2pogpk/

├── LICENSE

├── README.md

├── capsnet.py

├── data_loader.py

└── test_capsnet.py

================================================

FILE CONTENTS

================================================

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2018 jindongwang

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

# Pytorch-CapsuleNet

A flexible and easy-to-follow Pytorch implementation of Hinton's Capsule Network.

There are already many repos containing the code for CapsNet. However, most of them are too tight to customize. And as we all know, Hinton's original paper is only tested on *MNIST* datasets. We clearly want to do more.

This repo is designed to hold other datasets and configurations. And the most important thing is, we want to make the code **flexible**. Then, we can *tailor* the network according to our needs.

Currently, the code supports both **MNIST and CIFAR-10** datasets.

## Requirements

- Python 3.x

- Pytorch 0.3.0 or above

- Numpy

- tqdm (to make display better, of course you can replace it with 'print')

## Run

Just run `Python test_capsnet.py` in your terminal. That's all. If you want to change the dataset (MNIST or CIFAR-10), you can easily set the `dataset` variable.

It is better to run the code on a server with GPUs. Capsule network demands good computing devices. For instance, on my device (Nvidia K80), it will take about 5 minutes for one epoch of the MNIST datasets (batch size = 100).

## More details

There are 3 `.py` files:

- `capsnet.py`: the main class for capsule network

- `data_loader.py`: the class to hold many classes

- `test_capsnet.py`: the training and testing code

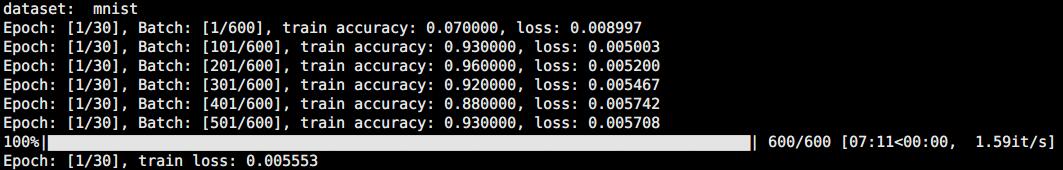

The results on your device may look like the following picture:

## Acknowledgements

- [Capsule-Network-Tutorial](https://github.com/higgsfield/Capsule-Network-Tutorial)

- The original paper of Capsule Net by Geoffrey Hinton: [Dynamic routing between capsules](http://papers.nips.cc/paper/6975-dynamic-routing-between-capsules)

================================================

FILE: capsnet.py

================================================

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.autograd import Variable

USE_CUDA = True if torch.cuda.is_available() else False

class ConvLayer(nn.Module):

def __init__(self, in_channels=1, out_channels=256, kernel_size=9):

super(ConvLayer, self).__init__()

self.conv = nn.Conv2d(in_channels=in_channels,

out_channels=out_channels,

kernel_size=kernel_size,

stride=1

)

def forward(self, x):

return F.relu(self.conv(x))

class PrimaryCaps(nn.Module):

def __init__(self, num_capsules=8, in_channels=256, out_channels=32, kernel_size=9, num_routes=32 * 6 * 6):

super(PrimaryCaps, self).__init__()

self.num_routes = num_routes

self.capsules = nn.ModuleList([

nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size, stride=2, padding=0)

for _ in range(num_capsules)])

def forward(self, x):

u = [capsule(x) for capsule in self.capsules]

u = torch.stack(u, dim=1)

u = u.view(x.size(0), self.num_routes, -1)

return self.squash(u)

def squash(self, input_tensor):

squared_norm = (input_tensor ** 2).sum(-1, keepdim=True)

output_tensor = squared_norm * input_tensor / ((1. + squared_norm) * torch.sqrt(squared_norm))

return output_tensor

class DigitCaps(nn.Module):

def __init__(self, num_capsules=10, num_routes=32 * 6 * 6, in_channels=8, out_channels=16):

super(DigitCaps, self).__init__()

self.in_channels = in_channels

self.num_routes = num_routes

self.num_capsules = num_capsules

self.W = nn.Parameter(torch.randn(1, num_routes, num_capsules, out_channels, in_channels))

def forward(self, x):

batch_size = x.size(0)

x = torch.stack([x] * self.num_capsules, dim=2).unsqueeze(4)

W = torch.cat([self.W] * batch_size, dim=0)

u_hat = torch.matmul(W, x)

b_ij = Variable(torch.zeros(1, self.num_routes, self.num_capsules, 1))

if USE_CUDA:

b_ij = b_ij.cuda()

num_iterations = 3

for iteration in range(num_iterations):

c_ij = F.softmax(b_ij, dim=1)

c_ij = torch.cat([c_ij] * batch_size, dim=0).unsqueeze(4)

s_j = (c_ij * u_hat).sum(dim=1, keepdim=True)

v_j = self.squash(s_j)

if iteration < num_iterations - 1:

a_ij = torch.matmul(u_hat.transpose(3, 4), torch.cat([v_j] * self.num_routes, dim=1))

b_ij = b_ij + a_ij.squeeze(4).mean(dim=0, keepdim=True)

return v_j.squeeze(1)

def squash(self, input_tensor):

squared_norm = (input_tensor ** 2).sum(-1, keepdim=True)

output_tensor = squared_norm * input_tensor / ((1. + squared_norm) * torch.sqrt(squared_norm))

return output_tensor

class Decoder(nn.Module):

def __init__(self, input_width=28, input_height=28, input_channel=1):

super(Decoder, self).__init__()

self.input_width = input_width

self.input_height = input_height

self.input_channel = input_channel

self.reconstraction_layers = nn.Sequential(

nn.Linear(16 * 10, 512),

nn.ReLU(inplace=True),

nn.Linear(512, 1024),

nn.ReLU(inplace=True),

nn.Linear(1024, self.input_height * self.input_width * self.input_channel),

nn.Sigmoid()

)

def forward(self, x, data):

classes = torch.sqrt((x ** 2).sum(2))

classes = F.softmax(classes, dim=0)

_, max_length_indices = classes.max(dim=1)

masked = Variable(torch.sparse.torch.eye(10))

if USE_CUDA:

masked = masked.cuda()

masked = masked.index_select(dim=0, index=Variable(max_length_indices.squeeze(1).data))

t = (x * masked[:, :, None, None]).view(x.size(0), -1)

reconstructions = self.reconstraction_layers(t)

reconstructions = reconstructions.view(-1, self.input_channel, self.input_width, self.input_height)

return reconstructions, masked

class CapsNet(nn.Module):

def __init__(self, config=None):

super(CapsNet, self).__init__()

if config:

self.conv_layer = ConvLayer(config.cnn_in_channels, config.cnn_out_channels, config.cnn_kernel_size)

self.primary_capsules = PrimaryCaps(config.pc_num_capsules, config.pc_in_channels, config.pc_out_channels,

config.pc_kernel_size, config.pc_num_routes)

self.digit_capsules = DigitCaps(config.dc_num_capsules, config.dc_num_routes, config.dc_in_channels,

config.dc_out_channels)

self.decoder = Decoder(config.input_width, config.input_height, config.cnn_in_channels)

else:

self.conv_layer = ConvLayer()

self.primary_capsules = PrimaryCaps()

self.digit_capsules = DigitCaps()

self.decoder = Decoder()

self.mse_loss = nn.MSELoss()

def forward(self, data):

output = self.digit_capsules(self.primary_capsules(self.conv_layer(data)))

reconstructions, masked = self.decoder(output, data)

return output, reconstructions, masked

def loss(self, data, x, target, reconstructions):

return self.margin_loss(x, target) + self.reconstruction_loss(data, reconstructions)

def margin_loss(self, x, labels, size_average=True):

batch_size = x.size(0)

v_c = torch.sqrt((x ** 2).sum(dim=2, keepdim=True))

left = F.relu(0.9 - v_c).view(batch_size, -1)

right = F.relu(v_c - 0.1).view(batch_size, -1)

loss = labels * left + 0.5 * (1.0 - labels) * right

loss = loss.sum(dim=1).mean()

return loss

def reconstruction_loss(self, data, reconstructions):

loss = self.mse_loss(reconstructions.view(reconstructions.size(0), -1), data.view(reconstructions.size(0), -1))

return loss * 0.0005

================================================

FILE: data_loader.py

================================================

import torch

from torchvision import datasets, transforms

class Dataset:

def __init__(self, dataset, _batch_size):

super(Dataset, self).__init__()

if dataset == 'mnist':

dataset_transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

train_dataset = datasets.MNIST('/data/mnist', train=True, download=True,

transform=dataset_transform)

test_dataset = datasets.MNIST('/data/mnist', train=False, download=True,

transform=dataset_transform)

self.train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=_batch_size, shuffle=True)

self.test_loader = torch.utils.data.DataLoader(test_dataset, batch_size=_batch_size, shuffle=False)

elif dataset == 'cifar10':

data_transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])

train_dataset = datasets.CIFAR10(

'/data/cifar', train=True, download=True, transform=data_transform)

test_dataset = datasets.CIFAR10(

'/data/cifar', train=False, download=True, transform=data_transform)

self.train_loader = torch.utils.data.DataLoader(

train_dataset, batch_size=_batch_size, shuffle=True)

self.test_loader = torch.utils.data.DataLoader(

test_dataset, batch_size=_batch_size, shuffle=False)

elif dataset == 'office-caltech':

pass

elif dataset == 'office31':

pass

================================================

FILE: test_capsnet.py

================================================

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.autograd import Variable

from torchvision import datasets, transforms

from capsnet import CapsNet

from data_loader import Dataset

from tqdm import tqdm

USE_CUDA = True if torch.cuda.is_available() else False

BATCH_SIZE = 100

N_EPOCHS = 30

LEARNING_RATE = 0.01

MOMENTUM = 0.9

'''

Config class to determine the parameters for capsule net

'''

class Config:

def __init__(self, dataset='mnist'):

if dataset == 'mnist':

# CNN (cnn)

self.cnn_in_channels = 1

self.cnn_out_channels = 256

self.cnn_kernel_size = 9

# Primary Capsule (pc)

self.pc_num_capsules = 8

self.pc_in_channels = 256

self.pc_out_channels = 32

self.pc_kernel_size = 9

self.pc_num_routes = 32 * 6 * 6

# Digit Capsule (dc)

self.dc_num_capsules = 10

self.dc_num_routes = 32 * 6 * 6

self.dc_in_channels = 8

self.dc_out_channels = 16

# Decoder

self.input_width = 28

self.input_height = 28

elif dataset == 'cifar10':

# CNN (cnn)

self.cnn_in_channels = 3

self.cnn_out_channels = 256

self.cnn_kernel_size = 9

# Primary Capsule (pc)

self.pc_num_capsules = 8

self.pc_in_channels = 256

self.pc_out_channels = 32

self.pc_kernel_size = 9

self.pc_num_routes = 32 * 8 * 8

# Digit Capsule (dc)

self.dc_num_capsules = 10

self.dc_num_routes = 32 * 8 * 8

self.dc_in_channels = 8

self.dc_out_channels = 16

# Decoder

self.input_width = 32

self.input_height = 32

elif dataset == 'your own dataset':

pass

def train(model, optimizer, train_loader, epoch):

capsule_net = model

capsule_net.train()

n_batch = len(list(enumerate(train_loader)))

total_loss = 0

for batch_id, (data, target) in enumerate(tqdm(train_loader)):

target = torch.sparse.torch.eye(10).index_select(dim=0, index=target)

data, target = Variable(data), Variable(target)

if USE_CUDA:

data, target = data.cuda(), target.cuda()

optimizer.zero_grad()

output, reconstructions, masked = capsule_net(data)

loss = capsule_net.loss(data, output, target, reconstructions)

loss.backward()

optimizer.step()

correct = sum(np.argmax(masked.data.cpu().numpy(), 1) == np.argmax(target.data.cpu().numpy(), 1))

train_loss = loss.item()

total_loss += train_loss

if batch_id % 100 == 0:

tqdm.write("Epoch: [{}/{}], Batch: [{}/{}], train accuracy: {:.6f}, loss: {:.6f}".format(

epoch,

N_EPOCHS,

batch_id + 1,

n_batch,

correct / float(BATCH_SIZE),

train_loss / float(BATCH_SIZE)

))

tqdm.write('Epoch: [{}/{}], train loss: {:.6f}'.format(epoch,N_EPOCHS,total_loss / len(train_loader.dataset)))

def test(capsule_net, test_loader, epoch):

capsule_net.eval()

test_loss = 0

correct = 0

for batch_id, (data, target) in enumerate(test_loader):

target = torch.sparse.torch.eye(10).index_select(dim=0, index=target)

data, target = Variable(data), Variable(target)

if USE_CUDA:

data, target = data.cuda(), target.cuda()

output, reconstructions, masked = capsule_net(data)

loss = capsule_net.loss(data, output, target, reconstructions)

test_loss += loss.item()

correct += sum(np.argmax(masked.data.cpu().numpy(), 1) ==

np.argmax(target.data.cpu().numpy(), 1))

tqdm.write(

"Epoch: [{}/{}], test accuracy: {:.6f}, loss: {:.6f}".format(epoch, N_EPOCHS, correct / len(test_loader.dataset),

test_loss / len(test_loader)))

if __name__ == '__main__':

torch.manual_seed(1)

dataset = 'cifar10'

# dataset = 'mnist'

config = Config(dataset)

mnist = Dataset(dataset, BATCH_SIZE)

capsule_net = CapsNet(config)

capsule_net = torch.nn.DataParallel(capsule_net)

if USE_CUDA:

capsule_net = capsule_net.cuda()

capsule_net = capsule_net.module

optimizer = torch.optim.Adam(capsule_net.parameters())

for e in range(1, N_EPOCHS + 1):

train(capsule_net, optimizer, mnist.train_loader, e)

test(capsule_net, mnist.test_loader, e)

gitextract_4t2pogpk/ ├── LICENSE ├── README.md ├── capsnet.py ├── data_loader.py └── test_capsnet.py

SYMBOL INDEX (26 symbols across 3 files)

FILE: capsnet.py

class ConvLayer (line 9) | class ConvLayer(nn.Module):

method __init__ (line 10) | def __init__(self, in_channels=1, out_channels=256, kernel_size=9):

method forward (line 19) | def forward(self, x):

class PrimaryCaps (line 23) | class PrimaryCaps(nn.Module):

method __init__ (line 24) | def __init__(self, num_capsules=8, in_channels=256, out_channels=32, k...

method forward (line 31) | def forward(self, x):

method squash (line 37) | def squash(self, input_tensor):

class DigitCaps (line 43) | class DigitCaps(nn.Module):

method __init__ (line 44) | def __init__(self, num_capsules=10, num_routes=32 * 6 * 6, in_channels...

method forward (line 53) | def forward(self, x):

method squash (line 78) | def squash(self, input_tensor):

class Decoder (line 84) | class Decoder(nn.Module):

method __init__ (line 85) | def __init__(self, input_width=28, input_height=28, input_channel=1):

method forward (line 99) | def forward(self, x, data):

class CapsNet (line 114) | class CapsNet(nn.Module):

method __init__ (line 115) | def __init__(self, config=None):

method forward (line 132) | def forward(self, data):

method loss (line 137) | def loss(self, data, x, target, reconstructions):

method margin_loss (line 140) | def margin_loss(self, x, labels, size_average=True):

method reconstruction_loss (line 153) | def reconstruction_loss(self, data, reconstructions):

FILE: data_loader.py

class Dataset (line 5) | class Dataset:

method __init__ (line 6) | def __init__(self, dataset, _batch_size):

FILE: test_capsnet.py

class Config (line 22) | class Config:

method __init__ (line 23) | def __init__(self, dataset='mnist'):

function train (line 74) | def train(model, optimizer, train_loader, epoch):

function test (line 107) | def test(capsule_net, test_loader, epoch):

Condensed preview — 5 files, each showing path, character count, and a content snippet. Download the .json file or copy for the full structured content (17K chars).

[

{

"path": "LICENSE",

"chars": 1068,

"preview": "MIT License\n\nCopyright (c) 2018 jindongwang\n\nPermission is hereby granted, free of charge, to any person obtaining a cop"

},

{

"path": "README.md",

"chars": 1741,

"preview": "# Pytorch-CapsuleNet\n\nA flexible and easy-to-follow Pytorch implementation of Hinton's Capsule Network.\n\nThere are alrea"

},

{

"path": "capsnet.py",

"chars": 6263,

"preview": "import torch\r\nimport torch.nn as nn\r\nimport torch.nn.functional as F\r\nfrom torch.autograd import Variable\r\n\r\nUSE_CUDA = "

},

{

"path": "data_loader.py",

"chars": 1782,

"preview": "import torch\r\nfrom torchvision import datasets, transforms\r\n\r\n\r\nclass Dataset:\r\n def __init__(self, dataset, _batch_s"

},

{

"path": "test_capsnet.py",

"chars": 4831,

"preview": "import numpy as np\r\nimport torch\r\nimport torch.nn as nn\r\nimport torch.nn.functional as F\r\nfrom torch.autograd import Var"

}

]

About this extraction

This page contains the full source code of the jindongwang/Pytorch-CapsuleNet GitHub repository, extracted and formatted as plain text for AI agents and large language models (LLMs). The extraction includes 5 files (15.3 KB), approximately 3.8k tokens, and a symbol index with 26 extracted functions, classes, methods, constants, and types. Use this with OpenClaw, Claude, ChatGPT, Cursor, Windsurf, or any other AI tool that accepts text input. You can copy the full output to your clipboard or download it as a .txt file.

Extracted by GitExtract — free GitHub repo to text converter for AI. Built by Nikandr Surkov.