Repository: jupyter-server/enterprise_gateway

Branch: main

Commit: 56a80a112385

Files: 295

Total size: 1.6 MB

Directory structure:

gitextract_mwzi65qv/

├── .git-blame-ignore-revs

├── .gitattributes

├── .github/

│ ├── ISSUE_TEMPLATE.md

│ ├── codeql/

│ │ └── codeql-config.yml

│ ├── dependabot.yml

│ └── workflows/

│ ├── build.yml

│ └── codeql-analysis.yml

├── .gitignore

├── .pre-commit-config.yaml

├── .readthedocs.yaml

├── LICENSE.md

├── Makefile

├── README.md

├── codecov.yml

├── conftest.py

├── docs/

│ ├── Makefile

│ ├── doc-requirements.txt

│ ├── environment.yml

│ ├── make.bat

│ └── source/

│ ├── _static/

│ │ └── custom.css

│ ├── conf.py

│ ├── contributors/

│ │ ├── contrib.md

│ │ ├── debug.md

│ │ ├── devinstall.md

│ │ ├── docker.md

│ │ ├── index.rst

│ │ ├── roadmap.md

│ │ ├── sequence-diagrams.md

│ │ └── system-architecture.md

│ ├── developers/

│ │ ├── custom-images.md

│ │ ├── dev-process-proxy.md

│ │ ├── index.rst

│ │ ├── kernel-launcher.md

│ │ ├── kernel-library.md

│ │ ├── kernel-manager.md

│ │ ├── kernel-specification.md

│ │ └── rest-api.rst

│ ├── index.rst

│ ├── operators/

│ │ ├── config-add-env.md

│ │ ├── config-availability.md

│ │ ├── config-cli.md

│ │ ├── config-culling.md

│ │ ├── config-dynamic.md

│ │ ├── config-env-debug.md

│ │ ├── config-file.md

│ │ ├── config-kernel-override.md

│ │ ├── config-security.md

│ │ ├── config-sys-env.md

│ │ ├── deploy-conductor.md

│ │ ├── deploy-distributed.md

│ │ ├── deploy-docker.md

│ │ ├── deploy-kubernetes.md

│ │ ├── deploy-single.md

│ │ ├── deploy-yarn-cluster.md

│ │ ├── index.rst

│ │ ├── installing-eg.md

│ │ ├── installing-kernels.md

│ │ └── launching-eg.md

│ ├── other/

│ │ ├── index.rst

│ │ ├── related-resources.md

│ │ └── troubleshooting.md

│ └── users/

│ ├── client-config.md

│ ├── connecting-to-eg.md

│ ├── index.rst

│ ├── installation.md

│ └── kernel-envs.md

├── enterprise_gateway/

│ ├── __init__.py

│ ├── __main__.py

│ ├── _version.py

│ ├── base/

│ │ ├── __init__.py

│ │ └── handlers.py

│ ├── client/

│ │ ├── __init__.py

│ │ └── gateway_client.py

│ ├── enterprisegatewayapp.py

│ ├── itests/

│ │ ├── __init__.py

│ │ ├── kernels/

│ │ │ └── authorization_test/

│ │ │ └── kernel.json

│ │ ├── test_authorization.py

│ │ ├── test_base.py

│ │ ├── test_python_kernel.py

│ │ ├── test_r_kernel.py

│ │ └── test_scala_kernel.py

│ ├── mixins.py

│ ├── services/

│ │ ├── __init__.py

│ │ ├── api/

│ │ │ ├── __init__.py

│ │ │ ├── handlers.py

│ │ │ ├── swagger.json

│ │ │ └── swagger.yaml

│ │ ├── kernels/

│ │ │ ├── __init__.py

│ │ │ ├── handlers.py

│ │ │ └── remotemanager.py

│ │ ├── kernelspecs/

│ │ │ ├── __init__.py

│ │ │ ├── handlers.py

│ │ │ └── kernelspec_cache.py

│ │ ├── processproxies/

│ │ │ ├── __init__.py

│ │ │ ├── conductor.py

│ │ │ ├── container.py

│ │ │ ├── crd.py

│ │ │ ├── distributed.py

│ │ │ ├── docker_swarm.py

│ │ │ ├── k8s.py

│ │ │ ├── processproxy.py

│ │ │ ├── spark_operator.py

│ │ │ └── yarn.py

│ │ └── sessions/

│ │ ├── __init__.py

│ │ ├── handlers.py

│ │ ├── kernelsessionmanager.py

│ │ └── sessionmanager.py

│ └── tests/

│ ├── __init__.py

│ ├── resources/

│ │ ├── failing_code2.ipynb

│ │ ├── failing_code3.ipynb

│ │ ├── kernel_api2.ipynb

│ │ ├── kernel_api3.ipynb

│ │ ├── kernels/

│ │ │ └── kernel_defaults_test/

│ │ │ └── kernel.json

│ │ ├── public/

│ │ │ └── index.html

│ │ ├── responses_2.ipynb

│ │ ├── responses_3.ipynb

│ │ ├── simple_api2.ipynb

│ │ ├── simple_api3.ipynb

│ │ ├── unknown_kernel.ipynb

│ │ ├── zen2.ipynb

│ │ └── zen3.ipynb

│ ├── test_enterprise_gateway.py

│ ├── test_gatewayapp.py

│ ├── test_handlers.py

│ ├── test_kernelspec_cache.py

│ ├── test_mixins.py

│ ├── test_process_proxy.py

│ └── test_yaml_injection.py

├── etc/

│ ├── Makefile

│ ├── docker/

│ │ ├── demo-base/

│ │ │ ├── Dockerfile

│ │ │ ├── README.md

│ │ │ ├── bootstrap-yarn-spark.sh

│ │ │ ├── core-site.xml.template

│ │ │ ├── fix-permissions

│ │ │ ├── hdfs-site.xml

│ │ │ ├── mapred-site.xml

│ │ │ ├── ssh_config

│ │ │ └── yarn-site.xml.template

│ │ ├── docker-compose.yml

│ │ ├── enterprise-gateway/

│ │ │ ├── Dockerfile

│ │ │ ├── README.md

│ │ │ └── start-enterprise-gateway.sh

│ │ ├── enterprise-gateway-demo/

│ │ │ ├── Dockerfile

│ │ │ ├── README.md

│ │ │ ├── bootstrap-enterprise-gateway.sh

│ │ │ └── start-enterprise-gateway.sh.template

│ │ ├── kernel-image-puller/

│ │ │ ├── Dockerfile

│ │ │ ├── README.md

│ │ │ ├── image_fetcher.py

│ │ │ ├── kernel_image_puller.py

│ │ │ └── requirements.txt

│ │ ├── kernel-py/

│ │ │ ├── Dockerfile

│ │ │ └── README.md

│ │ ├── kernel-r/

│ │ │ ├── Dockerfile

│ │ │ └── README.md

│ │ ├── kernel-scala/

│ │ │ ├── Dockerfile

│ │ │ └── README.md

│ │ ├── kernel-spark-py/

│ │ │ ├── Dockerfile

│ │ │ └── README.md

│ │ ├── kernel-spark-r/

│ │ │ ├── Dockerfile

│ │ │ └── README.md

│ │ ├── kernel-tf-gpu-py/

│ │ │ ├── Dockerfile

│ │ │ └── README.md

│ │ └── kernel-tf-py/

│ │ ├── Dockerfile

│ │ └── README.md

│ ├── kernel-launchers/

│ │ ├── R/

│ │ │ └── scripts/

│ │ │ ├── launch_IRkernel.R

│ │ │ └── server_listener.py

│ │ ├── bootstrap/

│ │ │ └── bootstrap-kernel.sh

│ │ ├── docker/

│ │ │ └── scripts/

│ │ │ └── launch_docker.py

│ │ ├── kubernetes/

│ │ │ └── scripts/

│ │ │ ├── kernel-pod.yaml.j2

│ │ │ └── launch_kubernetes.py

│ │ ├── operators/

│ │ │ └── scripts/

│ │ │ ├── launch_custom_resource.py

│ │ │ └── sparkoperator.k8s.io-v1beta2.yaml.j2

│ │ ├── python/

│ │ │ └── scripts/

│ │ │ └── launch_ipykernel.py

│ │ └── scala/

│ │ └── toree-launcher/

│ │ ├── build.sbt

│ │ ├── project/

│ │ │ ├── build.properties

│ │ │ ├── plugins.sbt

│ │ │ └── scalastyle-config.xml

│ │ └── src/

│ │ └── main/

│ │ └── scala/

│ │ └── launcher/

│ │ ├── KernelProfile.scala

│ │ ├── ToreeLauncher.scala

│ │ └── utils/

│ │ ├── SecurityUtils.scala

│ │ └── SocketUtils.scala

│ ├── kernel-resources/

│ │ └── ir/

│ │ └── kernel.js

│ ├── kernelspecs/

│ │ ├── R_docker/

│ │ │ └── kernel.json

│ │ ├── R_kubernetes/

│ │ │ └── kernel.json

│ │ ├── dask_python_yarn_remote/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── python_distributed/

│ │ │ └── kernel.json

│ │ ├── python_docker/

│ │ │ └── kernel.json

│ │ ├── python_kubernetes/

│ │ │ └── kernel.json

│ │ ├── python_tf_docker/

│ │ │ └── kernel.json

│ │ ├── python_tf_gpu_docker/

│ │ │ └── kernel.json

│ │ ├── python_tf_gpu_kubernetes/

│ │ │ └── kernel.json

│ │ ├── python_tf_kubernetes/

│ │ │ └── kernel.json

│ │ ├── scala_docker/

│ │ │ └── kernel.json

│ │ ├── scala_kubernetes/

│ │ │ └── kernel.json

│ │ ├── spark_R_conductor_cluster/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_R_kubernetes/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_R_yarn_client/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_R_yarn_cluster/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_python_conductor_cluster/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_python_kubernetes/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_python_operator/

│ │ │ └── kernel.json

│ │ ├── spark_python_yarn_client/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_python_yarn_cluster/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_scala_conductor_cluster/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_scala_kubernetes/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ ├── spark_scala_yarn_client/

│ │ │ ├── bin/

│ │ │ │ └── run.sh

│ │ │ └── kernel.json

│ │ └── spark_scala_yarn_cluster/

│ │ ├── bin/

│ │ │ └── run.sh

│ │ └── kernel.json

│ └── kubernetes/

│ └── helm/

│ └── enterprise-gateway/

│ ├── Chart.yaml

│ ├── templates/

│ │ ├── daemonset.yaml

│ │ ├── deployment.yaml

│ │ ├── eg-clusterrole.yaml

│ │ ├── eg-clusterrolebinding.yaml

│ │ ├── eg-serviceaccount.yaml

│ │ ├── imagepullSecret.yaml

│ │ ├── ingress.yaml

│ │ ├── kip-clusterrole.yaml

│ │ ├── kip-clusterrolebinding.yaml

│ │ ├── kip-serviceaccount.yaml

│ │ ├── psp.yaml

│ │ └── service.yaml

│ └── values.yaml

├── pyproject.toml

├── release.sh

├── requirements.yml

└── website/

├── .gitignore

├── README.md

├── _config.yml

├── _data/

│ └── navigation.yml

├── _includes/

│ ├── call-to-action.html

│ ├── contact.html

│ ├── features.html

│ ├── head.html

│ ├── header.html

│ ├── nav.html

│ ├── platforms.html

│ └── scripts.html

├── _layouts/

│ ├── home.html

│ └── page.html

├── _sass/

│ ├── _base.scss

│ └── _mixins.scss

├── css/

│ ├── bootstrap.css

│ └── main.scss

├── font-awesome/

│ ├── css/

│ │ └── font-awesome.css

│ ├── fonts/

│ │ └── FontAwesome.otf

│ ├── less/

│ │ ├── animated.less

│ │ ├── bordered-pulled.less

│ │ ├── core.less

│ │ ├── fixed-width.less

│ │ ├── font-awesome.less

│ │ ├── icons.less

│ │ ├── larger.less

│ │ ├── list.less

│ │ ├── mixins.less

│ │ ├── path.less

│ │ ├── rotated-flipped.less

│ │ ├── stacked.less

│ │ └── variables.less

│ └── scss/

│ ├── _animated.scss

│ ├── _bordered-pulled.scss

│ ├── _core.scss

│ ├── _fixed-width.scss

│ ├── _icons.scss

│ ├── _larger.scss

│ ├── _list.scss

│ ├── _mixins.scss

│ ├── _path.scss

│ ├── _rotated-flipped.scss

│ ├── _stacked.scss

│ ├── _variables.scss

│ └── font-awesome.scss

├── index.md

├── js/

│ ├── bootstrap.js

│ ├── cbpAnimatedHeader.js

│ ├── classie.js

│ ├── creative.js

│ ├── jquery.fittext.js

│ └── jquery.js

├── platform-kubernetes.md

├── platform-spark.md

├── privacy-policy.md

└── publish.sh

================================================

FILE CONTENTS

================================================

================================================

FILE: .git-blame-ignore-revs

================================================

# Initial pre-commit reformat

df811d0deacebfd6cc77e8bf501d9b87ff006fb5

================================================

FILE: .gitattributes

================================================

# Set the default behavior to have all files normalized to Unix-style

# line endings upon check-in.

* text=auto

# Declare files that will always have CRLF line endings on checkout.

*.bat text eol=crlf

# Denote all files that are truly binary and should not be modified.

*.dll binary

*.exp binary

*.lib binary

*.pdb binary

*.exe binary

================================================

FILE: .github/ISSUE_TEMPLATE.md

================================================

Help us improve the Jupyter Enterprise Gateway project by reporting issues

or asking questions.

## Description

## Screenshots / Logs

If applicable, add screenshots and/or logs to help explain your problem.

To generate better logs, please run the gateway with the `--debug` command-line parameter.

## Environment

- Enterprise Gateway Version \[e.g. 1.x, 2.x, ...\]

- Platform: \[e.g. YARN, Kubernetes ...\]

- Others \[e.g. Jupyter Server 5.7, JupyterHub 1.0, etc\]

================================================

FILE: .github/codeql/codeql-config.yml

================================================

name: "Enterprise Gateway CodeQL config"

queries:

- uses: security-and-quality

paths-ignore:

- enterprise_gateway/tests

================================================

FILE: .github/dependabot.yml

================================================

version: 2

updates:

# Set update schedule for GitHub Actions

- package-ecosystem: "github-actions"

directory: "/"

schedule:

# Check for updates to GitHub Actions once a week (Mondays by default)

interval: "weekly"

# Set update schedule for pip

- package-ecosystem: "pip"

directory: "/"

schedule:

# Check for updates to Python deps once a week (Mondays by default)

interval: "weekly"

================================================

FILE: .github/workflows/build.yml

================================================

name: Builds

on:

push:

pull_request:

jobs:

build:

runs-on: ${{ matrix.os }}

env:

ASYNC_TEST_TIMEOUT: 60

KERNEL_LAUNCH_TIMEOUT: 120

CONDA_HOME: /usr/share/miniconda

strategy:

fail-fast: false

matrix:

os: [ubuntu-latest]

python-version: ["3.10", "3.11"]

steps:

- name: Checkout

uses: actions/checkout@v4

with:

clean: true

- uses: jupyterlab/maintainer-tools/.github/actions/base-setup@v1

- name: Display dependency info

run: |

python --version

pip --version

conda --version

- name: Add SBT launcher

uses: sbt/setup-sbt@v1

- name: Install Python dependencies

run: |

pip install ".[test]"

- name: Build and install Jupyter Enterprise Gateway

uses: nick-invision/retry@v3.0.0

with:

timeout_minutes: 10

max_attempts: 2

command: |

make clean dist enterprise-gateway-demo test-install-wheel

- name: Log current Python dependencies version

run: |

pip freeze

- name: Run unit tests

uses: nick-invision/retry@v3.0.0

with:

timeout_minutes: 3

max_attempts: 1

command: |

make test

- name: Run integration tests

run: |

# Run integration tests with debug output

make itest-yarn-debug

- name: Collect logs

if: success() || failure()

run: |

python --version

pip --version

pip list

echo "==== Docker Container Logs ===="

docker logs itest-yarn

echo "==== Docker Container Status ===="

docker ps -a

echo "==== Enterprise Gateway Log ===="

docker exec itest-yarn cat /usr/local/share/jupyter/enterprise-gateway.log || true

- name: Run linters

run: |

make lint

- name: Bump versions

run: |

pipx run tbump --dry-run --no-tag --no-push 100.100.100rc0

link_check:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- uses: jupyterlab/maintainer-tools/.github/actions/base-setup@v1

with:

python_version: "3.11"

- name: Install Python dependencies

run: |

pip install ".[test]"

- uses: jupyterlab/maintainer-tools/.github/actions/check-links@v1

with:

ignore_links: |-

http://my-gateway-server\.com:8888|https://docs\.openshift\.com/.*|https://docs\.redhat\.com/.*

build_docs:

runs-on: windows-latest

steps:

- name: Checkout

uses: actions/checkout@v4

- name: Base Setup

uses: jupyterlab/maintainer-tools/.github/actions/base-setup@v1

with:

python_version: "3.11"

- name: Build Docs

run: make docs

test_minimum_versions:

name: Test Minimum Versions

timeout-minutes: 20

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- uses: jupyterlab/maintainer-tools/.github/actions/base-setup@v1

with:

python_version: "3.11"

- name: Install dependencies with minimum versions

run: |

pip install ".[test]"

- name: Run the unit tests

run: |

pytest -vv -W default || pytest -vv -W default --lf

make_sdist:

name: Make SDist

runs-on: ubuntu-latest

timeout-minutes: 10

steps:

- uses: actions/checkout@v4

- uses: jupyterlab/maintainer-tools/.github/actions/base-setup@v1

with:

python_version: "3.11"

- uses: jupyterlab/maintainer-tools/.github/actions/make-sdist@v1

test_sdist:

runs-on: ubuntu-latest

needs: [make_sdist]

name: Install from SDist and Test

timeout-minutes: 20

steps:

- uses: jupyterlab/maintainer-tools/.github/actions/base-setup@v1

with:

python_version: "3.11"

- uses: jupyterlab/maintainer-tools/.github/actions/test-sdist@v1

python_tests_check: # This job does nothing and is only used for the branch protection

if: always()

needs:

- build

- link_check

- test_minimum_versions

- build_docs

- test_sdist

runs-on: ubuntu-latest

steps:

- name: Decide whether the needed jobs succeeded or failed

uses: re-actors/alls-green@release/v1

with:

jobs: ${{ toJSON(needs) }}

================================================

FILE: .github/workflows/codeql-analysis.yml

================================================

# For most projects, this workflow file will not need changing; you simply need

# to commit it to your repository.

#

# You may wish to alter this file to override the set of languages analyzed,

# or to provide custom queries or build logic.

#

# ******** NOTE ********

# We have attempted to detect the languages in your repository. Please check

# the `language` matrix defined below to confirm you have the correct set of

# supported CodeQL languages.

#

name: "CodeQL Checks"

on:

push:

branches: [main]

pull_request:

# The branches below must be a subset of the branches above

branches: [main]

schedule:

- cron: "24 7 * * 1"

jobs:

analyze:

name: Analyze

runs-on: ubuntu-latest

permissions:

actions: read

contents: read

security-events: write

strategy:

fail-fast: false

matrix:

language: ["python"]

# CodeQL supports [ 'cpp', 'csharp', 'go', 'java', 'javascript', 'python', 'ruby' ]

# Learn more about CodeQL language support at https://aka.ms/codeql-docs/language-support

steps:

- name: Checkout repository

uses: actions/checkout@v4

with:

# We must fetch at least the immediate parents so that if this is

# a pull request then we can checkout the head.

fetch-depth: 2

# Initializes the CodeQL tools for scanning.

- name: Initialize CodeQL

uses: github/codeql-action/init@v3

with:

languages: ${{ matrix.language }}

config-file: ./.github/codeql/codeql-config.yml

# If you wish to specify custom queries, you can do so here or in a config file.

# By default, queries listed here will override any specified in a config file.

# Prefix the list here with "+" to use these queries and those in the config file.

# For details on CodeQL's query packs, refer to: https://docs.github.com/en/code-security/code-scanning/automatically-scanning-your-code-for-vulnerabilities-and-errors/configuring-code-scanning#using-queries-in-ql-packs

# queries: security-extended,security-and-quality

# Autobuild attempts to build any compiled languages (C/C++, C#, or Java).

# If this step fails, then you should remove it and run the build manually (see below)

- name: Autobuild

uses: github/codeql-action/autobuild@v3

# ℹ️ Command-line programs to run using the OS shell.

# 📚 See https://docs.github.com/en/actions/using-workflows/workflow-syntax-for-github-actions#jobsjob_idstepsrun

# If the Autobuild fails above, remove it and uncomment the following three lines.

# Modify them (or add more) to build your code; refer to the EXAMPLE below for guidance.

# - run: |

# echo "Run, Build Application using script"

# ./location_of_script_within_repo/buildscript.sh

- name: Perform CodeQL Analysis

uses: github/codeql-action/analyze@v3

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

# C extensions

*.so

# Distribution / packaging

.Python

env/

build/

develop-eggs/

dist/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

*.egg-info/

.installed.cfg

*.egg

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*,cover

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

# Sphinx documentation

docs/_build/

# PyBuilder

target/

.DS_Store

.ipynb_checkpoints/

# PyCharm

.idea/

*.iml

# Build-related

.image-*

# Jekyll

_site/

.sass-cache/

# Debug-related

.kube/

# vscode ide stuff

*.code-workspace

.history/

.vscode/

# jetbrains ide stuff

*.iml

.idea/

================================================

FILE: .pre-commit-config.yaml

================================================

ci:

autoupdate_schedule: monthly

repos:

- repo: https://github.com/pre-commit/pre-commit-hooks

rev: v4.5.0

hooks:

- id: check-case-conflict

- id: check-ast

- id: check-docstring-first

- id: check-executables-have-shebangs

- id: check-added-large-files

- id: check-merge-conflict

- id: check-json

- id: check-toml

- id: check-yaml

exclude: etc/kubernetes/.*.yaml

- id: end-of-file-fixer

- id: trailing-whitespace

- repo: https://github.com/python-jsonschema/check-jsonschema

rev: 0.27.4

hooks:

- id: check-github-workflows

- repo: https://github.com/executablebooks/mdformat

rev: 0.7.17

hooks:

- id: mdformat

additional_dependencies:

[mdformat-gfm, mdformat-frontmatter, mdformat-footnote]

- repo: https://github.com/psf/black

rev: 24.2.0

hooks:

- id: black

- repo: https://github.com/charliermarsh/ruff-pre-commit

rev: v0.3.0

hooks:

- id: ruff

args: ["--fix"]

================================================

FILE: .readthedocs.yaml

================================================

version: 2

build:

os: "ubuntu-22.04"

tools:

python: "mambaforge-22.9"

sphinx:

configuration: docs/source/conf.py

conda:

environment: docs/environment.yml

================================================

FILE: LICENSE.md

================================================

# Licensing terms

This project is licensed under the terms of the Modified BSD License

(also known as New or Revised or 3-Clause BSD), as follows:

- Copyright (c) 2001-2015, IPython Development Team

- Copyright (c) 2015-, Jupyter Development Team

All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions are met:

Redistributions of source code must retain the above copyright notice, this

list of conditions and the following disclaimer.

Redistributions in binary form must reproduce the above copyright notice, this

list of conditions and the following disclaimer in the documentation and/or

other materials provided with the distribution.

Neither the name of the Jupyter Development Team nor the names of its

contributors may be used to endorse or promote products derived from this

software without specific prior written permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND

ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED

WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE

FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL

DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR

SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,

OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

## About the Jupyter Development Team

The Jupyter Development Team is the set of all contributors to the Jupyter project.

This includes all of the Jupyter Subprojects, which are the different repositories

under the [jupyter](https://github.com/jupyter/) GitHub organization.

The core team that coordinates development on GitHub can be found here:

https://github.com/jupyter/.

## Our copyright policy

Jupyter uses a shared copyright model. Each contributor maintains copyright

over their contributions to Jupyter. But, it is important to note that these

contributions are typically only changes to the repositories. Thus, the Jupyter

source code, in its entirety is not the copyright of any single person or

institution. Instead, it is the collective copyright of the entire Jupyter

Development Team. If individual contributors want to maintain a record of what

changes/contributions they have specific copyright on, they should indicate

their copyright in the commit message of the change, when they commit the

change to one of the Jupyter repositories.

With this in mind, the following banner should be used in any source code file

to indicate the copyright and license terms:

```

# Copyright (c) Jupyter Development Team.

# Distributed under the terms of the Modified BSD License.

```

================================================

FILE: Makefile

================================================

# Copyright (c) Jupyter Development Team.

# Distributed under the terms of the Modified BSD License.

.PHONY: help clean clean-env dev dev-http docs install bdist sdist test release check_dists \

clean-images clean-enterprise-gateway-demo clean-demo-base clean-kernel-images clean-enterprise-gateway \

clean-kernel-py clean-kernel-spark-py clean-kernel-r clean-kernel-spark-r clean-kernel-scala clean-kernel-tf-py \

clean-kernel-tf-gpu-py clean-kernel-image-puller push-images push-enterprise-gateway-demo push-demo-base \

push-kernel-images push-enterprise-gateway push-kernel-py push-kernel-spark-py push-kernel-r push-kernel-spark-r \

push-kernel-scala push-kernel-tf-py push-kernel-tf-gpu-py push-kernel-image-puller publish helm-chart

SA?=source activate

ENV:=enterprise-gateway-dev

SHELL:=/bin/bash

MULTIARCH_BUILD?=

TARGET_ARCH?=undefined

VERSION?=3.3.0.dev0

SPARK_VERSION?=3.2.1

ifeq (dev, $(findstring dev, $(VERSION)))

TAG:=dev

else

TAG:=$(VERSION)

endif

WHEEL_FILES:=$(shell find . -type f ! -path "./build/*" ! -path "./etc/*" ! -path "./docs/*" ! -path "./.git/*" ! -path "./.idea/*" ! -path "./dist/*" ! -path "./.image-*" ! -path "*/__pycache__/*" )

WHEEL_FILE:=dist/jupyter_enterprise_gateway-$(VERSION)-py3-none-any.whl

SDIST_FILE:=dist/jupyter_enterprise_gateway-$(VERSION).tar.gz

DIST_FILES=$(WHEEL_FILE) $(SDIST_FILE)

HELM_DESIRED_VERSION:=v3.18.3 # Pin the version of helm to use (v3.18.3 is latest as of 6/21/25)

HELM_CHART_VERSION:=$(shell grep version: etc/kubernetes/helm/enterprise-gateway/Chart.yaml | sed 's/version: //')

HELM_CHART_PACKAGE:=dist/enterprise-gateway-$(HELM_CHART_VERSION).tgz

HELM_CHART:=dist/jupyter_enterprise_gateway_helm-$(VERSION).tar.gz

HELM_CHART_DIR:=etc/kubernetes/helm/enterprise-gateway

HELM_CHART_FILES:=$(shell find $(HELM_CHART_DIR) -type f ! -name .DS_Store)

HELM_INSTALL_DIR?=/usr/local/bin

help:

# http://marmelab.com/blog/2016/02/29/auto-documented-makefile.html

@grep -E '^[a-zA-Z0-9_-]+:.*?## .*$$' $(MAKEFILE_LIST) | sort | awk 'BEGIN {FS = ":.*?## "}; {printf "\033[36m%-30s\033[0m %s\n", $$1, $$2}'

env: ## Make a dev environment

-conda env create --file requirements.yml --name $(ENV)

-conda env config vars set PYTHONPATH=$(PWD) --name $(ENV)

activate: ## Print instructions to activate the virtualenv (default: enterprise-gateway-dev)

@echo "Run \`$(SA) $(ENV)\` to activate the environment."

clean: ## Make a clean source tree

-rm -rf dist

-rm -rf build

-rm -rf *.egg-info

-find . -name target -type d -exec rm -fr {} +

-find . -name __pycache__ -type d -exec rm -fr {} +

-find enterprise_gateway -name '*.pyc' -exec rm -fr {} +

-find website -name '.sass-cache' -type d -exec rm -fr {} +

-find website -name '_site' -type d -exec rm -fr {} +

-find website -name 'build' -type d -exec rm -fr {} +

-make -C docs clean

-make -C etc clean

clean-env: ## Remove conda env

-conda env remove -n $(ENV) -y

lint: ## Check code style

@pip install -q -e ".[lint]"

@pip install -q pipx

ruff check .

black --check --diff --color .

mdformat --check *.md

pipx run 'validate-pyproject[all]' pyproject.toml

pipx run interrogate -v .

run-dev: test-install-wheel ## Make a server in jupyter_websocket mode

python enterprise_gateway

docs: ## Make HTML documentation

make -C docs requirements html SPHINXOPTS="-W"

kernelspecs: kernelspecs_all kernelspecs_yarn kernelspecs_conductor kernelspecs_kubernetes kernelspecs_docker kernel_image_files ## Create archives with sample kernelspecs

kernelspecs_all kernelspecs_yarn kernelspecs_conductor kernelspecs_kubernetes kernelspecs_docker kernel_image_files:

make VERSION=$(VERSION) TAG=$(TAG) SPARK_VERSION=$(SPARK_VERSION) -C etc $@

test-install: dist test-install-wheel test-install-tar ## Install and minimally run EG with the wheel and tar distributions

test-install-wheel:

pip uninstall -y jupyter_enterprise_gateway

pip install dist/jupyter_enterprise_gateway-*.whl && \

jupyter enterprisegateway --help

test-install-tar:

pip uninstall -y jupyter_enterprise_gateway

pip install dist/jupyter_enterprise_gateway-*.tar.gz && \

jupyter enterprisegateway --help

bdist: $(WHEEL_FILE)

$(WHEEL_FILE): $(WHEEL_FILES)

pip install build && python -m build --wheel . \

&& rm -rf *.egg-info && chmod 0755 dist/*.*

sdist: $(SDIST_FILE)

$(SDIST_FILE): $(WHEEL_FILES)

pip install build && python -m build --sdist . \

&& rm -rf *.egg-info && chmod 0755 dist/*.*

helm-chart: helm-install $(HELM_CHART) ## Make helm chart distribution

helm-install: $(HELM_INSTALL_DIR)/helm

$(HELM_INSTALL_DIR)/helm: # Download and install helm

curl https://raw.githubusercontent.com/helm/helm/master/scripts/get-helm-3 -o /tmp/get_helm.sh \

&& chmod +x /tmp/get_helm.sh \

&& DESIRED_VERSION=$(HELM_DESIRED_VERSION) /tmp/get_helm.sh \

&& rm -f /tmp/get_helm.sh

helm-lint: helm-clean

helm lint $(HELM_CHART_DIR)

helm-clean: # Remove any .DS_Store files that might wind up in the package

$(shell find etc/kubernetes/helm -type f -name '.DS_Store' -exec rm -f {} \;)

$(HELM_CHART): $(HELM_CHART_FILES)

make helm-lint

helm package $(HELM_CHART_DIR) -d dist

mv $(HELM_CHART_PACKAGE) $(HELM_CHART) # Rename output to match other assets

dist: lint bdist sdist kernelspecs helm-chart ## Make source, binary, kernelspecs and helm chart distributions to dist folder

TEST_DEBUG_OPTS:=

test-debug:

make TEST_DEBUG_OPTS="--capture=no --log-level=10" test

test: TEST?=

test: ## Run unit tests

ifeq ($(TEST),)

pytest -vv $(TEST_DEBUG_OPTS)

else

# e.g., make test TEST="test_gatewayapp.py::TestGatewayAppConfig"

pytest -vv $(TEST_DEBUG_OPTS) enterprise_gateway/tests/$(TEST)

endif

release: dist check_dists ## Make a wheel + source release on PyPI

twine upload $(DIST_FILES)

check_dists:

pip install twine && twine check --strict $(DIST_FILES)

# Here for doc purposes

docker-images: ## Build docker images (includes kernel-based images)

kernel-images: ## Build kernel-based docker images

# Actual working targets...

docker-images: demo-base enterprise-gateway-demo kernel-images enterprise-gateway kernel-py kernel-spark-py kernel-r kernel-spark-r kernel-scala kernel-tf-py kernel-tf-gpu-py kernel-image-puller

enterprise-gateway-demo kernel-images enterprise-gateway kernel-py kernel-spark-py kernel-r kernel-spark-r kernel-scala kernel-tf-py kernel-tf-gpu-py kernel-image-puller:

make WHEEL_FILE=$(WHEEL_FILE) VERSION=$(VERSION) NO_CACHE=$(NO_CACHE) TAG=$(TAG) SPARK_VERSION=$(SPARK_VERSION) MULTIARCH_BUILD=$(MULTIARCH_BUILD) TARGET_ARCH=$(TARGET_ARCH) -C etc $@

demo-base:

make WHEEL_FILE=$(WHEEL_FILE) VERSION=$(VERSION) NO_CACHE=$(NO_CACHE) TAG=$(SPARK_VERSION) SPARK_VERSION=$(SPARK_VERSION) MULTIARCH_BUILD=$(MULTIARCH_BUILD) TARGET_ARCH=$(TARGET_ARCH) -C etc $@

# Here for doc purposes

clean-images: clean-demo-base ## Remove docker images (includes kernel-based images)

clean-kernel-images: ## Remove kernel-based images

clean-images clean-enterprise-gateway-demo clean-kernel-images clean-enterprise-gateway clean-kernel-py clean-kernel-spark-py clean-kernel-r clean-kernel-spark-r clean-kernel-scala clean-kernel-tf-py clean-kernel-tf-gpu-py clean-kernel-image-puller:

make WHEEL_FILE=$(WHEEL_FILE) VERSION=$(VERSION) TAG=$(TAG) -C etc $@

clean-demo-base:

make WHEEL_FILE=$(WHEEL_FILE) VERSION=$(VERSION) TAG=$(SPARK_VERSION) -C etc $@

push-images: push-demo-base

push-images push-enterprise-gateway-demo push-kernel-images push-enterprise-gateway push-kernel-py push-kernel-spark-py push-kernel-r push-kernel-spark-r push-kernel-scala push-kernel-tf-py push-kernel-tf-gpu-py push-kernel-image-puller:

make WHEEL_FILE=$(WHEEL_FILE) VERSION=$(VERSION) TAG=$(TAG) -C etc $@

push-demo-base:

make WHEEL_FILE=$(WHEEL_FILE) VERSION=$(VERSION) TAG=$(SPARK_VERSION) -C etc $@

publish: NO_CACHE=--no-cache

publish: clean clean-images dist docker-images push-images

# itest should have these targets up to date: bdist kernelspecs docker-enterprise-gateway

itest: itest-docker itest-yarn

# itest configurable settings

# indicates two things:

# this prefix is used by itest to determine hostname to test against, in addition,

# if itests will be run locally with docker-prep target, this will set the hostname within that container as well

ITEST_HOSTNAME_PREFIX?=itest

# indicates the user to emulate. This equates to 'KERNEL_USERNAME'...

ITEST_USER?=bob

# indicates the other set of options to use. At this time, only the python notebooks succeed, so we're skipping R and Scala.

ITEST_OPTIONS?=

# here's an example of the options (besides host and user) with their expected values ...

# ITEST_OPTIONS=--impersonation < True | False >

ITEST_YARN_PORT?=8888

ITEST_YARN_HOST?=localhost:$(ITEST_YARN_PORT)

ITEST_YARN_TESTS?=enterprise_gateway/itests

ITEST_KERNEL_LAUNCH_TIMEOUT=120

LOG_LEVEL=INFO

itest-yarn-debug: ## Run integration tests (optionally) against docker demo (YARN) container with print statements

make LOG_LEVEL=DEBUG TEST_DEBUG_OPTS="--log-level=10" itest-yarn

PREP_ITEST_YARN?=1

itest-yarn: ## Run integration tests (optionally) against docker demo (YARN) container

ifeq (1, $(PREP_ITEST_YARN))

make itest-yarn-prep

endif

(GATEWAY_HOST=$(ITEST_YARN_HOST) LOG_LEVEL=$(LOG_LEVEL) KERNEL_USERNAME=$(ITEST_USER) KERNEL_LAUNCH_TIMEOUT=$(ITEST_KERNEL_LAUNCH_TIMEOUT) SPARK_VERSION=$(SPARK_VERSION) ITEST_HOSTNAME_PREFIX=$(ITEST_HOSTNAME_PREFIX) pytest -vv $(TEST_DEBUG_OPTS) $(ITEST_YARN_TESTS))

@echo "Run \`docker logs itest-yarn\` to see enterprise-gateway log."

PREP_TIMEOUT?=60

itest-yarn-prep:

@-docker rm -f itest-yarn >> /dev/null

@echo "Starting enterprise-gateway container (run \`docker logs itest-yarn\` to see container log)..."

@-docker run -itd -p $(ITEST_YARN_PORT):$(ITEST_YARN_PORT) -p 8088:8088 -p 8042:8042 -h itest-yarn --name itest-yarn -v `pwd`/enterprise_gateway/itests:/tmp/byok elyra/enterprise-gateway-demo:$(TAG) --gateway

@(r="1"; attempts=0; while [ "$$r" == "1" -a $$attempts -lt $(PREP_TIMEOUT) ]; do echo "Waiting for enterprise-gateway to start..."; sleep 2; ((attempts++)); docker logs itest-yarn |grep --regexp "Jupyter Enterprise Gateway .* is available at http"; r=$$?; done; if [ $$attempts -ge $(PREP_TIMEOUT) ]; then echo "Wait for startup timed out!"; exit 1; fi;)

# This should get cleaned up once docker support is more mature

ITEST_DOCKER_PORT?=8889

ITEST_DOCKER_HOST?=localhost:$(ITEST_DOCKER_PORT)

ITEST_DOCKER_TESTS?=enterprise_gateway/itests/test_r_kernel.py::TestRKernelLocal enterprise_gateway/itests/test_python_kernel.py::TestPythonKernelLocal enterprise_gateway/itests/test_scala_kernel.py::TestScalaKernelLocal

ITEST_DOCKER_KERNELS=PYTHON_KERNEL_LOCAL_NAME=python_docker SCALA_KERNEL_LOCAL_NAME=scala_docker R_KERNEL_LOCAL_NAME=R_docker

itest-docker-debug: ## Run integration tests (optionally) against docker container with print statements

make LOG_LEVEL=DEBUG TEST_DEBUG_OPTS="-s --log-level=10" itest-docker

PREP_ITEST_DOCKER?=1

itest-docker: ## Run integration tests (optionally) against docker swarm

ifeq (1, $(PREP_ITEST_DOCKER))

make itest-docker-prep

endif

(GATEWAY_HOST=$(ITEST_DOCKER_HOST) LOG_LEVEL=$(LOG_LEVEL) KERNEL_USERNAME=$(ITEST_USER) KERNEL_LAUNCH_TIMEOUT=$(ITEST_KERNEL_LAUNCH_TIMEOUT) $(ITEST_DOCKER_KERNELS) ITEST_HOSTNAME_PREFIX=$(ITEST_USER) pytest -vv $(TEST_DEBUG_OPTS) $(ITEST_DOCKER_TESTS))

@echo "Run \`docker service logs itest-docker\` to see enterprise-gateway log."

PREP_TIMEOUT?=180

itest-docker-prep:

@-docker service rm enterprise-gateway_enterprise-gateway enterprise-gateway_enterprise-gateway-proxy

@-docker swarm leave --force

# Check if swarm mode is active, if not attempt to create the swarm

@(docker info | grep -q 'Swarm: active'; if [ $$? -eq 1 ]; then docker swarm init; fi;)

@echo "Starting enterprise-gateway swarm service (run \`docker service logs enterprise-gateway_enterprise-gateway\` to see service log)..."

@KG_PORT=${ITEST_DOCKER_PORT} EG_DOCKER_NETWORK=enterprise-gateway docker stack deploy -c etc/docker/docker-compose.yml enterprise-gateway

@(r="1"; attempts=0; while [ "$$r" == "1" -a $$attempts -lt $(PREP_TIMEOUT) ]; do echo "Waiting for enterprise-gateway to start..."; sleep 2; ((attempts++)); docker service logs enterprise-gateway_enterprise-gateway 2>&1 |grep --regexp "Jupyter Enterprise Gateway .* is available at http"; r=$$?; done; if [ $$attempts -ge $(PREP_TIMEOUT) ]; then echo "Wait for startup timed out!"; exit 1; fi;)

================================================

FILE: README.md

================================================

**[Website](https://jupyter-enterprise-gateway.readthedocs.io/)** |

**[Technical Overview](#technical-overview)** |

**[Installation](#installation)** |

**[System Architecture](#system-architecture)** |

**[Contributing](#contributing)**

# Jupyter Enterprise Gateway

[Actions Status](https://github.com/jupyter-server/enterprise_gateway/actions)
[PyPI version](https://badge.fury.io/py/jupyter-enterprise-gateway)
[Downloads](https://pepy.tech/project/jupyter-enterprise-gateway)
[Documentation Status](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/?badge=latest)
[Google Group](https://groups.google.com/forum/#!forum/jupyter)

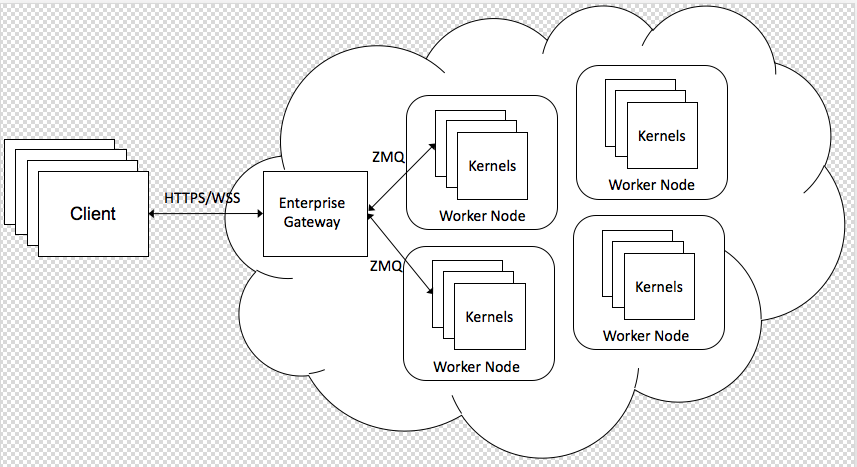

Jupyter Enterprise Gateway enables Jupyter Notebook to launch remote kernels in a distributed cluster,

including Apache Spark managed by YARN, IBM Spectrum Conductor, Kubernetes or Docker Swarm.

It provides out-of-the-box support for the following kernels:

- Python using IPython kernel

- R using IRkernel

- Scala using Apache Toree kernel

Full documentation for Jupyter Enterprise Gateway can be found [here](https://jupyter-enterprise-gateway.readthedocs.io/en/latest).

Jupyter Enterprise Gateway does not manage multiple Jupyter Notebook deployments. For that, use [JupyterHub](https://github.com/jupyterhub/jupyterhub).

## Technical Overview

Jupyter Enterprise Gateway is a web server that provides headless access to Jupyter kernels within

an enterprise. Inspired by Jupyter Kernel Gateway, Jupyter Enterprise Gateway provides feature parity with Kernel Gateway's [jupyter-websocket mode](https://jupyter-kernel-gateway.readthedocs.io/en/latest/websocket-mode.html) in addition to the following:

- Adds support for remote kernels hosted throughout the enterprise, where kernels can be launched in
  the following ways:
  - Local to the Enterprise Gateway server (today's Kernel Gateway behavior)
  - On specific nodes of the cluster utilizing a round-robin algorithm
  - On nodes identified by an associated resource manager

- Provides support for Apache Spark managed by YARN, IBM Spectrum Conductor, Kubernetes or Docker Swarm out of the box. Others can be configured via Enterprise Gateway's extensible framework.

- Secure communication from the client, through the Enterprise Gateway server, to the kernels

- Multi-tenant capabilities

- Persistent kernel sessions

- Ability to associate profiles consisting of configuration settings to a kernel for a given user (see [Project Roadmap](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/contributors/roadmap.html))
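
The round-robin placement mentioned in the list above can be illustrated with a minimal sketch. This is not Enterprise Gateway's implementation; the function name `make_host_selector` and the host names are hypothetical and serve only to show the idea of cycling through a fixed set of nodes.

```python
from itertools import cycle


def make_host_selector(hosts):
    """Return a callable that hands out hosts in round-robin order.

    Hypothetical helper for illustration only; not part of Enterprise Gateway.
    """
    hosts = list(hosts)
    if not hosts:
        raise ValueError("at least one host is required")
    rotation = cycle(hosts)  # endlessly repeats the hosts in their given order
    return lambda: next(rotation)


# Hypothetical node names; each kernel launch takes the next host in turn.
select_host = make_host_selector(["node-1", "node-2", "node-3"])
launches = [select_host() for _ in range(5)]
print(launches)
```

With three hosts, the fourth launch wraps around to the first host again, so load is spread evenly regardless of how busy each node actually is. Placement by a resource manager, the third option in the list, exists to take actual load into account.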

## Installation

Detailed installation instructions are located in the

[Users Guide](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/users/index.html)

of the project docs. Here's a quick start using `pip`:

```bash

# install from pypi

pip install --upgrade jupyter_enterprise_gateway

# show all config options

jupyter enterprisegateway --help-all

# run it with default options

jupyter enterprisegateway

```

Please check the [configuration options within the Operators Guide](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/operators/index.html#configuring-enterprise-gateway)

for information about the supported options.
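
Once the server is running, a quick way to confirm it is reachable is to list its kernel specifications over the Jupyter REST API. The following is a minimal sketch that assumes the gateway listens on `localhost:8888` without authentication; the helper names are hypothetical and the host should be adjusted to match your deployment.

```python
import json
from urllib.request import urlopen


def kernelspecs_url(host="localhost:8888"):
    """Build the kernelspecs endpoint URL (host and port are assumptions)."""
    return f"http://{host}/api/kernelspecs"


def kernel_names(payload):
    """Extract the sorted kernelspec names from a decoded kernelspecs response."""
    return sorted(payload.get("kernelspecs", {}))


def list_kernels(host="localhost:8888", timeout=10):
    """Fetch the kernelspecs from a running gateway and return their names."""
    with urlopen(kernelspecs_url(host), timeout=timeout) as response:
        return kernel_names(json.load(response))
```

Calling `list_kernels()` against a running gateway should return the names of the kernels installed on it. A connection error usually means the server is not listening on the assumed host and port.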

## System Architecture

The [System Architecture page](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/contributors/system-architecture.html)

includes information about Enterprise Gateway's remote kernel, process proxy, and launcher frameworks.

## Contributing

The [Contribution page](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/contributors/contrib.html) includes

information about how to contribute to Enterprise Gateway along with our roadmap. While there, you'll want to

[set up a development environment](https://jupyter-enterprise-gateway.readthedocs.io/en/latest/contributors/devinstall.html) and check out typical developer tasks.

================================================

FILE: codecov.yml

================================================

codecov:
  notify:
    require_ci_to_pass: yes

coverage:
  precision: 2
  round: down
  range: "70...100"

  status:
    project: no
    patch: no
    changes: no

parsers:
  gcov:
    branch_detection:
      conditional: yes
      loop: yes
      method: no
      macro: no

comment: off

================================================

FILE: conftest.py

================================================

def pytest_addoption(parser):
    parser.addoption("--host", action="store", default="localhost:8888")
    parser.addoption("--username", action="store", default="elyra")
    parser.addoption("--impersonation", action="store", default="false")


def pytest_generate_tests(metafunc):
    # This is called for every test. Only get/set command line arguments
    # if the argument is specified in the list of test "fixturenames".
    if "host" in metafunc.fixturenames:
        metafunc.parametrize("host", [metafunc.config.option.host])
    if "username" in metafunc.fixturenames:
        metafunc.parametrize("username", [metafunc.config.option.username])
    if "impersonation" in metafunc.fixturenames:
        metafunc.parametrize("impersonation", [metafunc.config.option.impersonation])

================================================

FILE: docs/Makefile

================================================

# Makefile for Sphinx documentation

#

# You can set these variables from the command line.

SPHINXOPTS = -n

SPHINXBUILD = sphinx-build

PAPER =

BUILDDIR = build

# Internal variables.

PAPEROPT_a4 = -D latex_paper_size=a4

PAPEROPT_letter = -D latex_paper_size=letter

ALLSPHINXOPTS = -d $(BUILDDIR)/doctrees $(PAPEROPT_$(PAPER)) $(SPHINXOPTS) source

# the i18n builder cannot share the environment and doctrees with the others

I18NSPHINXOPTS = $(PAPEROPT_$(PAPER)) $(SPHINXOPTS) source

DOC_REQUIREMENTS = doc-requirements.txt

.PHONY: help clean html dirhtml singlehtml pickle json htmlhelp qthelp devhelp epub latex latexpdf text man changes linkcheck doctest coverage gettext requirements

help:

@echo "Please use \`make <target>' where <target> is one of"

@echo " requirements to install required packages"

@echo " html to make standalone HTML files"

@echo " dirhtml to make HTML files named index.html in directories"

@echo " singlehtml to make a single large HTML file"

@echo " pickle to make pickle files"

@echo " json to make JSON files"

@echo " htmlhelp to make HTML files and a HTML help project"

@echo " qthelp to make HTML files and a qthelp project"

@echo " applehelp to make an Apple Help Book"

@echo " devhelp to make HTML files and a Devhelp project"

@echo " epub to make an epub"

@echo " latex to make LaTeX files, you can set PAPER=a4 or PAPER=letter"

@echo " latexpdf to make LaTeX files and run them through pdflatex"

@echo " latexpdfja to make LaTeX files and run them through platex/dvipdfmx"

@echo " text to make text files"

@echo " man to make manual pages"

@echo " texinfo to make Texinfo files"

@echo " info to make Texinfo files and run them through makeinfo"

@echo " gettext to make PO message catalogs"

@echo " changes to make an overview of all changed/added/deprecated items"

@echo " xml to make Docutils-native XML files"

@echo " pseudoxml to make pseudoxml-XML files for display purposes"

@echo " linkcheck to check all external links for integrity"

@echo " doctest to run all doctests embedded in the documentation (if enabled)"

@echo " coverage to run coverage check of the documentation (if enabled)"

clean:

rm -rf $(BUILDDIR)/*

requirements:

pip install -q -r $(DOC_REQUIREMENTS)

html:

$(SPHINXBUILD) -b html $(ALLSPHINXOPTS) $(BUILDDIR)/html

@echo

@echo "Build finished. The HTML pages are in $(BUILDDIR)/html."

dirhtml:

$(SPHINXBUILD) -b dirhtml $(ALLSPHINXOPTS) $(BUILDDIR)/dirhtml

@echo

@echo "Build finished. The HTML pages are in $(BUILDDIR)/dirhtml."

singlehtml:

$(SPHINXBUILD) -b singlehtml $(ALLSPHINXOPTS) $(BUILDDIR)/singlehtml

@echo

@echo "Build finished. The HTML page is in $(BUILDDIR)/singlehtml."

pickle:

$(SPHINXBUILD) -b pickle $(ALLSPHINXOPTS) $(BUILDDIR)/pickle

@echo

@echo "Build finished; now you can process the pickle files."

json:

$(SPHINXBUILD) -b json $(ALLSPHINXOPTS) $(BUILDDIR)/json

@echo

@echo "Build finished; now you can process the JSON files."

htmlhelp:

$(SPHINXBUILD) -b htmlhelp $(ALLSPHINXOPTS) $(BUILDDIR)/htmlhelp

@echo

@echo "Build finished; now you can run HTML Help Workshop with the" \

".hhp project file in $(BUILDDIR)/htmlhelp."

qthelp:

$(SPHINXBUILD) -b qthelp $(ALLSPHINXOPTS) $(BUILDDIR)/qthelp

@echo

@echo "Build finished; now you can run "qcollectiongenerator" with the" \

".qhcp project file in $(BUILDDIR)/qthelp, like this:"

@echo "# qcollectiongenerator $(BUILDDIR)/qthelp/JupyterHub.qhcp"

@echo "To view the help file:"

@echo "# assistant -collectionFile $(BUILDDIR)/qthelp/JupyterHub.qhc"

applehelp:

$(SPHINXBUILD) -b applehelp $(ALLSPHINXOPTS) $(BUILDDIR)/applehelp

@echo

@echo "Build finished. The help book is in $(BUILDDIR)/applehelp."

@echo "N.B. You won't be able to view it unless you put it in" \

"~/Library/Documentation/Help or install it in your application" \

"bundle."

devhelp:

$(SPHINXBUILD) -b devhelp $(ALLSPHINXOPTS) $(BUILDDIR)/devhelp

@echo

@echo "Build finished."

@echo "To view the help file:"

@echo "# mkdir -p $$HOME/.local/share/devhelp/JupyterHub"

@echo "# ln -s $(BUILDDIR)/devhelp $$HOME/.local/share/devhelp/JupyterHub"

@echo "# devhelp"

epub:

$(SPHINXBUILD) -b epub $(ALLSPHINXOPTS) $(BUILDDIR)/epub

@echo

@echo "Build finished. The epub file is in $(BUILDDIR)/epub."

latex:

$(SPHINXBUILD) -b latex $(ALLSPHINXOPTS) $(BUILDDIR)/latex

@echo

@echo "Build finished; the LaTeX files are in $(BUILDDIR)/latex."

@echo "Run \`make' in that directory to run these through (pdf)latex" \

"(use \`make latexpdf' here to do that automatically)."

latexpdf:

$(SPHINXBUILD) -b latex $(ALLSPHINXOPTS) $(BUILDDIR)/latex

@echo "Running LaTeX files through pdflatex..."

$(MAKE) -C $(BUILDDIR)/latex all-pdf

@echo "pdflatex finished; the PDF files are in $(BUILDDIR)/latex."

latexpdfja:

$(SPHINXBUILD) -b latex $(ALLSPHINXOPTS) $(BUILDDIR)/latex

@echo "Running LaTeX files through platex and dvipdfmx..."

$(MAKE) -C $(BUILDDIR)/latex all-pdf-ja

@echo "pdflatex finished; the PDF files are in $(BUILDDIR)/latex."

text:

$(SPHINXBUILD) -b text $(ALLSPHINXOPTS) $(BUILDDIR)/text

@echo

@echo "Build finished. The text files are in $(BUILDDIR)/text."

man:

$(SPHINXBUILD) -b man $(ALLSPHINXOPTS) $(BUILDDIR)/man

@echo

@echo "Build finished. The manual pages are in $(BUILDDIR)/man."

texinfo:

$(SPHINXBUILD) -b texinfo $(ALLSPHINXOPTS) $(BUILDDIR)/texinfo

@echo

@echo "Build finished. The Texinfo files are in $(BUILDDIR)/texinfo."

@echo "Run \`make' in that directory to run these through makeinfo" \

"(use \`make info' here to do that automatically)."

info:

$(SPHINXBUILD) -b texinfo $(ALLSPHINXOPTS) $(BUILDDIR)/texinfo

@echo "Running Texinfo files through makeinfo..."

make -C $(BUILDDIR)/texinfo info

@echo "makeinfo finished; the Info files are in $(BUILDDIR)/texinfo."

gettext:

$(SPHINXBUILD) -b gettext $(I18NSPHINXOPTS) $(BUILDDIR)/locale

@echo

@echo "Build finished. The message catalogs are in $(BUILDDIR)/locale."

changes:

$(SPHINXBUILD) -b changes $(ALLSPHINXOPTS) $(BUILDDIR)/changes

@echo

@echo "The overview file is in $(BUILDDIR)/changes."

linkcheck:

$(SPHINXBUILD) -b linkcheck $(ALLSPHINXOPTS) $(BUILDDIR)/linkcheck

@echo

@echo "Link check complete; look for any errors in the above output " \

"or in $(BUILDDIR)/linkcheck/output.txt."

doctest:

$(SPHINXBUILD) -b doctest $(ALLSPHINXOPTS) $(BUILDDIR)/doctest

@echo "Testing of doctests in the sources finished, look at the " \

"results in $(BUILDDIR)/doctest/output.txt."

coverage:

$(SPHINXBUILD) -b coverage $(ALLSPHINXOPTS) $(BUILDDIR)/coverage

@echo "Testing of coverage in the sources finished, look at the " \

"results in $(BUILDDIR)/coverage/python.txt."

xml:

$(SPHINXBUILD) -b xml $(ALLSPHINXOPTS) $(BUILDDIR)/xml

@echo

@echo "Build finished. The XML files are in $(BUILDDIR)/xml."

pseudoxml:

$(SPHINXBUILD) -b pseudoxml $(ALLSPHINXOPTS) $(BUILDDIR)/pseudoxml

@echo

@echo "Build finished. The pseudo-XML files are in $(BUILDDIR)/pseudoxml."

================================================

FILE: docs/doc-requirements.txt

================================================

# https://github.com/miyakogi/m2r/issues/66

mistune<4

myst-parser

pydata_sphinx_theme

sphinx

sphinx-markdown-tables

sphinx_book_theme

sphinxcontrib-mermaid

sphinxcontrib-openapi

sphinxcontrib_github_alt

sphinxcontrib_spelling

sphinxemoji

tornado

================================================

FILE: docs/environment.yml

================================================

name: enterprise_gateway_docs
channels:
  - conda-forge
  - defaults
  - free
dependencies:
  - pip
  - python=3.11
  - pip:
      - -r doc-requirements.txt

================================================

FILE: docs/make.bat

================================================

@ECHO OFF

REM Command file for Sphinx documentation

if "%SPHINXBUILD%" == "" (

set SPHINXBUILD=sphinx-build

)

set BUILDDIR=build

set ALLSPHINXOPTS=-d %BUILDDIR%/doctrees %SPHINXOPTS% source

set I18NSPHINXOPTS=%SPHINXOPTS% source

if NOT "%PAPER%" == "" (

set ALLSPHINXOPTS=-D latex_paper_size=%PAPER% %ALLSPHINXOPTS%

set I18NSPHINXOPTS=-D latex_paper_size=%PAPER% %I18NSPHINXOPTS%

)

if "%1" == "" goto help

if "%1" == "help" (

:help

echo.Please use `make ^<target^>` where ^<target^> is one of

echo. html to make standalone HTML files

echo. dirhtml to make HTML files named index.html in directories

echo. singlehtml to make a single large HTML file

echo. pickle to make pickle files

echo. json to make JSON files

echo. htmlhelp to make HTML files and a HTML help project

echo. qthelp to make HTML files and a qthelp project

echo. devhelp to make HTML files and a Devhelp project

echo. epub to make an epub

echo. latex to make LaTeX files, you can set PAPER=a4 or PAPER=letter

echo. text to make text files

echo. man to make manual pages

echo. texinfo to make Texinfo files

echo. gettext to make PO message catalogs

echo. changes to make an overview of all changed/added/deprecated items

echo. xml to make Docutils-native XML files

echo. pseudoxml to make pseudoxml-XML files for display purposes

echo. linkcheck to check all external links for integrity

echo. doctest to run all doctests embedded in the documentation if enabled

echo. coverage to run coverage check of the documentation if enabled

goto end

)

if "%1" == "clean" (

for /d %%i in (%BUILDDIR%\*) do rmdir /q /s %%i

del /q /s %BUILDDIR%\*

goto end

)

REM Check if sphinx-build is available and fallback to Python version if any

%SPHINXBUILD% 1>NUL 2>NUL

if errorlevel 9009 goto sphinx_python

goto sphinx_ok

:sphinx_python

set SPHINXBUILD=python -m sphinx.__init__

%SPHINXBUILD% 2> nul

if errorlevel 9009 (

echo.

echo.The 'sphinx-build' command was not found. Make sure you have Sphinx

echo.installed, then set the SPHINXBUILD environment variable to point

echo.to the full path of the 'sphinx-build' executable. Alternatively you

echo.may add the Sphinx directory to PATH.

echo.

echo.If you don't have Sphinx installed, grab it from

echo.https://sphinx-doc.org/

exit /b 1

)

:sphinx_ok

if "%1" == "html" (

%SPHINXBUILD% -b html %ALLSPHINXOPTS% %BUILDDIR%/html

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The HTML pages are in %BUILDDIR%/html.

goto end

)

if "%1" == "dirhtml" (

%SPHINXBUILD% -b dirhtml %ALLSPHINXOPTS% %BUILDDIR%/dirhtml

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The HTML pages are in %BUILDDIR%/dirhtml.

goto end

)

if "%1" == "singlehtml" (

%SPHINXBUILD% -b singlehtml %ALLSPHINXOPTS% %BUILDDIR%/singlehtml

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The HTML pages are in %BUILDDIR%/singlehtml.

goto end

)

if "%1" == "pickle" (

%SPHINXBUILD% -b pickle %ALLSPHINXOPTS% %BUILDDIR%/pickle

if errorlevel 1 exit /b 1

echo.

echo.Build finished; now you can process the pickle files.

goto end

)

if "%1" == "json" (

%SPHINXBUILD% -b json %ALLSPHINXOPTS% %BUILDDIR%/json

if errorlevel 1 exit /b 1

echo.

echo.Build finished; now you can process the JSON files.

goto end

)

if "%1" == "htmlhelp" (

%SPHINXBUILD% -b htmlhelp %ALLSPHINXOPTS% %BUILDDIR%/htmlhelp

if errorlevel 1 exit /b 1

echo.

echo.Build finished; now you can run HTML Help Workshop with the ^

.hhp project file in %BUILDDIR%/htmlhelp.

goto end

)

if "%1" == "qthelp" (

%SPHINXBUILD% -b qthelp %ALLSPHINXOPTS% %BUILDDIR%/qthelp

if errorlevel 1 exit /b 1

echo.

echo.Build finished; now you can run "qcollectiongenerator" with the ^

.qhcp project file in %BUILDDIR%/qthelp, like this:

echo.^> qcollectiongenerator %BUILDDIR%\qthelp\JupyterHub.qhcp

echo.To view the help file:

echo.^> assistant -collectionFile %BUILDDIR%\qthelp\JupyterHub.qhc

goto end

)

if "%1" == "devhelp" (

%SPHINXBUILD% -b devhelp %ALLSPHINXOPTS% %BUILDDIR%/devhelp

if errorlevel 1 exit /b 1

echo.

echo.Build finished.

goto end

)

if "%1" == "epub" (

%SPHINXBUILD% -b epub %ALLSPHINXOPTS% %BUILDDIR%/epub

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The epub file is in %BUILDDIR%/epub.

goto end

)

if "%1" == "latex" (

%SPHINXBUILD% -b latex %ALLSPHINXOPTS% %BUILDDIR%/latex

if errorlevel 1 exit /b 1

echo.

echo.Build finished; the LaTeX files are in %BUILDDIR%/latex.

goto end

)

if "%1" == "latexpdf" (

%SPHINXBUILD% -b latex %ALLSPHINXOPTS% %BUILDDIR%/latex

cd %BUILDDIR%/latex

make all-pdf

cd %~dp0

echo.

echo.Build finished; the PDF files are in %BUILDDIR%/latex.

goto end

)

if "%1" == "latexpdfja" (

%SPHINXBUILD% -b latex %ALLSPHINXOPTS% %BUILDDIR%/latex

cd %BUILDDIR%/latex

make all-pdf-ja

cd %~dp0

echo.

echo.Build finished; the PDF files are in %BUILDDIR%/latex.

goto end

)

if "%1" == "text" (

%SPHINXBUILD% -b text %ALLSPHINXOPTS% %BUILDDIR%/text

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The text files are in %BUILDDIR%/text.

goto end

)

if "%1" == "man" (

%SPHINXBUILD% -b man %ALLSPHINXOPTS% %BUILDDIR%/man

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The manual pages are in %BUILDDIR%/man.

goto end

)

if "%1" == "texinfo" (

%SPHINXBUILD% -b texinfo %ALLSPHINXOPTS% %BUILDDIR%/texinfo

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The Texinfo files are in %BUILDDIR%/texinfo.

goto end

)

if "%1" == "gettext" (

%SPHINXBUILD% -b gettext %I18NSPHINXOPTS% %BUILDDIR%/locale

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The message catalogs are in %BUILDDIR%/locale.

goto end

)

if "%1" == "changes" (

%SPHINXBUILD% -b changes %ALLSPHINXOPTS% %BUILDDIR%/changes

if errorlevel 1 exit /b 1

echo.

echo.The overview file is in %BUILDDIR%/changes.

goto end

)

if "%1" == "linkcheck" (

%SPHINXBUILD% -b linkcheck %ALLSPHINXOPTS% %BUILDDIR%/linkcheck

if errorlevel 1 exit /b 1

echo.

echo.Link check complete; look for any errors in the above output ^

or in %BUILDDIR%/linkcheck/output.txt.

goto end

)

if "%1" == "doctest" (

%SPHINXBUILD% -b doctest %ALLSPHINXOPTS% %BUILDDIR%/doctest

if errorlevel 1 exit /b 1

echo.

echo.Testing of doctests in the sources finished, look at the ^

results in %BUILDDIR%/doctest/output.txt.

goto end

)

if "%1" == "coverage" (

%SPHINXBUILD% -b coverage %ALLSPHINXOPTS% %BUILDDIR%/coverage

if errorlevel 1 exit /b 1

echo.

echo.Testing of coverage in the sources finished, look at the ^

results in %BUILDDIR%/coverage/python.txt.

goto end

)

if "%1" == "xml" (

%SPHINXBUILD% -b xml %ALLSPHINXOPTS% %BUILDDIR%/xml

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The XML files are in %BUILDDIR%/xml.

goto end

)

if "%1" == "pseudoxml" (

%SPHINXBUILD% -b pseudoxml %ALLSPHINXOPTS% %BUILDDIR%/pseudoxml

if errorlevel 1 exit /b 1

echo.

echo.Build finished. The pseudo-XML files are in %BUILDDIR%/pseudoxml.

goto end

)

:end

================================================

FILE: docs/source/_static/custom.css

================================================

body div.sphinxsidebarwrapper p.logo {

text-align: left;

}

.mermaid svg {

height: 100%;

}

================================================

FILE: docs/source/conf.py

================================================

#

# This file is execfile()d with the current directory set to its

# containing dir.

#

# Note that not all possible configuration values are present in this

# autogenerated file.

#

# All configuration values have a default; values that are commented out

# serve to show the default.

import os

# If extensions (or modules to document with autodoc) are in another directory,

# add these directories to sys.path here. If the directory is relative to the

# documentation root, use os.path.abspath to make it absolute, like shown here.

# sys.path.insert(0, os.path.abspath('.'))

# -- General configuration ------------------------------------------------

# If your documentation needs a minimal Sphinx version, state it here.

needs_sphinx = "3.0"

# Add any Sphinx extension module names here, as strings. They can be

# extensions coming with Sphinx (named 'sphinx.ext.*') or your custom

# ones.

extensions = [

"myst_parser",

"sphinx.ext.autodoc",

"sphinx.ext.doctest",

"sphinx.ext.intersphinx",

"sphinx.ext.autosummary",

"sphinx.ext.mathjax",

"sphinxcontrib_github_alt",

"sphinxcontrib.mermaid",

"sphinxcontrib.openapi",

"sphinxemoji.sphinxemoji",

]

try:
    import enchant  # noqa

    extensions += ["sphinxcontrib.spelling"]
except ImportError:
    pass

myst_enable_extensions = ["html_image"]

myst_heading_anchors = 4 # Needs to be 4 or higher

# Add any paths that contain templates here, relative to this directory.

templates_path = ["_templates"]

# The suffix(es) of source filenames.

source_suffix = {

".rst": "restructuredtext",

".txt": "markdown",

".md": "markdown",

}

# The encoding of source files.

# source_encoding = 'utf-8-sig'

# The master toctree document.

master_doc = "index"

# General information about the project.

project = "Jupyter Enterprise Gateway"

copyright = "2022, Project Jupyter" # noqa

author = "Jupyter Server Team"

# The version info for the project you're documenting, acts as replacement for

# |version| and |release|, also used in various other places throughout the

# built documents.

#

_version_py = os.path.join("..", "..", "enterprise_gateway", "_version.py")

version_ns = {}

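with open(_version_py) as version_file:
    exec(version_file.read(), version_ns)  # noqa

# The short X.Y version. Split on "." rather than slicing characters so that
# multi-digit components (e.g. "3.10.0") are not truncated.
version = ".".join(version_ns["__version__"].split(".")[:2])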

# The full version, including alpha/beta/rc tags.

release = version_ns["__version__"]

# The language for content autogenerated by Sphinx. Refer to documentation

# for a list of supported languages.

#

# This is also used if you do content translation via gettext catalogs.

# Usually you set "language" from the command line for these cases.

language = 'en'

# There are two options for replacing |today|: either, you set today to some

# non-false value, then it is used:

# today = ''

# Else, today_fmt is used as the format for a strftime call.

# today_fmt = '%B %d, %Y'

# List of patterns, relative to source directory, that match files and

# directories to ignore when looking for source files.

exclude_patterns = ["_build", "Thumbs.db", ".DS_Store"]

# The reST default role (used for this markup: `text`) to use for all

# documents.

# default_role = None

# If true, '()' will be appended to :func: etc. cross-reference text.

# add_function_parentheses = True

# If true, the current module name will be prepended to all description

# unit titles (such as .. function::).

# add_module_names = True

# If true, sectionauthor and moduleauthor directives will be shown in the

# output. They are ignored by default.

# show_authors = False

# The name of the Pygments (syntax highlighting) style to use.

pygments_style = "default"

# A list of ignored prefixes for module index sorting.

# modindex_common_prefix = []

# If true, keep warnings as "system message" paragraphs in the built documents.

# keep_warnings = False

# If true, `todo` and `todoList` produce output, else they produce nothing.

todo_include_todos = False

# -- Options for HTML output ----------------------------------------------

# The theme to use for HTML and HTML Help pages. See the documentation for

# a list of builtin themes.

html_theme = "pydata_sphinx_theme"

# html_theme = "sphinx_book_theme"

html_logo = "_static/jupyter-logo.png"

# Theme options are theme-specific and customize the look and feel of a theme

# further. For a list of options available for each theme, see the

# documentation.

# html_theme_options = {}

# 'logo_only': html_logo

# 'description': "Enterprise Gateway",

# 'fixed_sidebar': False,

# 'show_relbars': True,

# 'github_user': 'jupyter',

# 'github_repo': 'enterprise_gateway',

# 'github_type': 'star',

# 'logo': 'jupyter-logo.png',

# 'logo_text_align': 'left',

# 'analytics_id': 'UA-130853690-1',

# Add any paths that contain custom themes here, relative to this directory.

# html_theme_path = []

# The name for this set of Sphinx documents. If None, it defaults to

# "<project> v<release> documentation".

# html_title = None

# A shorter title for the navigation bar. Default is the same as html_title.

# html_short_title = None

# The name of an image file (relative to this directory) to place at the top

# of the sidebar.

# html_logo = None

# The name of an image file (within the static path) to use as favicon of the

# docs. This file should be a Windows icon file (.ico) being 16x16 or 32x32

# pixels large.

# html_favicon = None

# Add any paths that contain custom static files (such as style sheets) here,

# relative to this directory. They are copied after the builtin static files,

# so a file named "default.css" will overwrite the builtin "default.css".

html_static_path = ["_static"]

# These paths are either relative to html_static_path

# or fully qualified paths (eg. https://...)

html_css_files = [

"custom.css",

]

# Add any extra paths that contain custom files (such as robots.txt or

# .htaccess) here, relative to this directory. These files are copied

# directly to the root of the documentation.

# html_extra_path = []

# If not '', a 'Last updated on:' timestamp is inserted at every page bottom,

# using the given strftime format.

# html_last_updated_fmt = '%b %d, %Y'

# If true, SmartyPants will be used to convert quotes and dashes to

# typographically correct entities.

# html_use_smartypants = True

# Custom sidebar templates, maps document names to template names.

# html_sidebars = {}

# Additional templates that should be rendered to pages, maps page names to

# template names.

# html_additional_pages = {}

# If false, no module index is generated.

# html_domain_indices = True

# If false, no index is generated.

# html_use_index = True

# If true, the index is split into individual pages for each letter.

# html_split_index = False

# If true, links to the reST sources are added to the pages.

# html_show_sourcelink = True

# If true, "Created using Sphinx" is shown in the HTML footer. Default is True.

# html_show_sphinx = True

# If true, "(C) Copyright ..." is shown in the HTML footer. Default is True.

# html_show_copyright = True

# If true, an OpenSearch description file will be output, and all pages will

# contain a <link> tag referring to it. The value of this option must be the

# base URL from which the finished HTML is served.

# html_use_opensearch = ''

# This is the file name suffix for HTML files (e.g. ".xhtml").

# html_file_suffix = None

# Language to be used for generating the HTML full-text search index.

# Sphinx supports the following languages:

# 'da', 'de', 'en', 'es', 'fi', 'fr', 'hu', 'it', 'ja'

# 'nl', 'no', 'pt', 'ro', 'ru', 'sv', 'tr'

# html_search_language = 'en'

# A dictionary with options for the search language support, empty by default.

# Currently only 'ja' uses this config value.

# html_search_options = {'type': 'default'}

# The name of a javascript file (relative to the configuration directory) that

# implements a search results scorer. If empty, the default will be used.

# html_search_scorer = 'scorer.js'

# Output file base name for HTML help builder.

htmlhelp_basename = "EnterpriseGatewaydoc"

# -- Options for LaTeX output ---------------------------------------------

latex_elements = {

# The paper size ('letterpaper' or 'a4paper').

# 'papersize': 'letterpaper',

# The font size ('10pt', '11pt' or '12pt').

# 'pointsize': '10pt',

# Additional stuff for the LaTeX preamble.

# 'preamble': '',

# LaTeX figure (float) alignment

# 'figure_align': 'htbp',

}

# Grouping the document tree into LaTeX files. List of tuples

# (source start file, target name, title,

# author, documentclass [howto, manual, or own class]).

latex_documents = [

(

master_doc,

"EnterpriseGateway.tex",

"Enterprise Gateway Documentation",

"https://jupyter.org",

"manual",

),

]

# The name of an image file (relative to this directory) to place at the top of

# the title page.

# latex_logo = None

# For "manual" documents, if this is true, then toplevel headings are parts,

# not chapters.

# latex_use_parts = False

# If true, show page references after internal links.

# latex_show_pagerefs = False

# If true, show URL addresses after external links.

# latex_show_urls = False

# Documents to append as an appendix to all manuals.

# latex_appendices = []

# If false, no module index is generated.

# latex_domain_indices = True

# -- Options for manual page output ---------------------------------------

# One entry per manual page. List of tuples

# (source start file, name, description, authors, manual section).

man_pages = [(master_doc, "enterprise_gateway", "Enterprise Gateway Documentation", [author], 1)]

# If true, show URL addresses after external links.

# man_show_urls = False

# -- Options for Texinfo output -------------------------------------------

# Grouping the document tree into Texinfo files. List of tuples

# (source start file, target name, title, author,

# dir menu entry, description, category)

texinfo_documents = [

(

master_doc,

"enterprise_gateway",

"Enterprise Gateway Documentation",

author,

"EnterpriseGateway",

"One line description of project.",

"Miscellaneous",

),

]

# Documents to append as an appendix to all manuals.

# texinfo_appendices = []

# If false, no module index is generated.

# texinfo_domain_indices = True

# How to display URL addresses: 'footnote', 'no', or 'inline'.

# texinfo_show_urls = 'footnote'

# If true, do not generate a @detailmenu in the "Top" node's menu.

# texinfo_no_detailmenu = False

# -- Options for Epub output ----------------------------------------------

# Bibliographic Dublin Core info.

epub_title = project

epub_author = author

epub_publisher = author

epub_copyright = copyright

# The basename for the epub file. It defaults to the project name.

# epub_basename = project

# The HTML theme for the epub output. Since the default themes are not optimized

# for small screen space, using the same theme for HTML and epub output is

# usually not wise. This defaults to 'epub', a theme designed to save visual

# space.

# epub_theme = 'epub'

# The language of the text. It defaults to the language option

# or 'en' if the language is not set.

# epub_language = ''

# The scheme of the identifier. Typical schemes are ISBN or URL.

# epub_scheme = ''

# The unique identifier of the text. This can be an ISBN number

# or the project homepage.

# epub_identifier = ''

# A unique identification for the text.

# epub_uid = ''

# A tuple containing the cover image and cover page html template filenames.

# epub_cover = ()

# A sequence of (type, uri, title) tuples for the guide element of content.opf.

# epub_guide = ()

# HTML files that should be inserted before the pages created by sphinx.

# The format is a list of tuples containing the path and title.

# epub_pre_files = []

# HTML files that should be inserted after the pages created by sphinx.

# The format is a list of tuples containing the path and title.

# epub_post_files = []

# A list of files that should not be packed into the epub file.

epub_exclude_files = ["search.html"]

# The depth of the table of contents in toc.ncx.

# epub_tocdepth = 3

# Allow duplicate toc entries.

# epub_tocdup = True

# Choose between 'default' and 'includehidden'.

# epub_tocscope = 'default'

# Fix unsupported image types using Pillow.

# epub_fix_images = False

# Scale large images.

# epub_max_image_width = 0

# How to display URL addresses: 'footnote', 'no', or 'inline'.

# epub_show_urls = 'inline'

# If false, no index is generated.

# epub_use_index = True