Repository: linuxserver/davos

Branch: master

Commit: fd3a46bffa8d

Files: 184

Total size: 424.6 KB

Directory structure:

gitextract_1q48vioe/

├── .gitignore

├── LICENSE

├── README.md

├── build.gradle

├── conf/

│ ├── local/

│ │ ├── application.properties

│ │ └── log4j2.xml

│ └── release/

│ ├── application.properties

│ └── log4j2.xml

├── docs/

│ ├── Makefile

│ ├── make.bat

│ └── source/

│ ├── conf.py

│ ├── developers/

│ │ └── index.rst

│ ├── faq/

│ │ └── index.rst

│ ├── guides/

│ │ ├── appsettings.rst

│ │ ├── gettingstarted/

│ │ │ ├── hosts.rst

│ │ │ ├── index.rst

│ │ │ └── schedules.rst

│ │ ├── index.rst

│ │ └── installation.rst

│ ├── index.rst

│ ├── reference/

│ │ ├── api.rst

│ │ └── index.rst

│ └── requirements.txt

├── gradle/

│ └── wrapper/

│ ├── gradle-wrapper.jar

│ └── gradle-wrapper.properties

├── gradlew

├── gradlew.bat

├── src/

│ ├── cucumber/

│ │ ├── java/

│ │ │ └── io/

│ │ │ └── linuxserver/

│ │ │ └── davos/

│ │ │ └── bdd/

│ │ │ ├── ClientStepDefs.java

│ │ │ ├── ScheduleStepDefs.java

│ │ │ ├── ServerStepDefs.java

│ │ │ └── helpers/

│ │ │ ├── FakeFTPServerFactory.java

│ │ │ ├── FakeSFTPServerFactory.java

│ │ │ └── Logging.java

│ │ └── resources/

│ │ ├── Client.feature

│ │ └── Schedule.feature

│ ├── main/

│ │ ├── java/

│ │ │ └── io/

│ │ │ └── linuxserver/

│ │ │ └── davos/

│ │ │ ├── DavosApplication.java

│ │ │ ├── Version.java

│ │ │ ├── converters/

│ │ │ │ ├── Converter.java

│ │ │ │ ├── HostConverter.java

│ │ │ │ └── ScheduleConverter.java

│ │ │ ├── delegation/

│ │ │ │ └── services/

│ │ │ │ ├── HostService.java

│ │ │ │ ├── HostServiceImpl.java

│ │ │ │ ├── ScheduleService.java

│ │ │ │ ├── ScheduleServiceImpl.java

│ │ │ │ ├── SettingsService.java

│ │ │ │ └── SettingsServiceImpl.java

│ │ │ ├── dto/

│ │ │ │ ├── ActionDTO.java

│ │ │ │ ├── FTPFileDTO.java

│ │ │ │ ├── FilterDTO.java

│ │ │ │ ├── HostDTO.java

│ │ │ │ ├── ScheduleDTO.java

│ │ │ │ └── ScheduleProcessResponse.java

│ │ │ ├── exception/

│ │ │ │ ├── HostInUseException.java

│ │ │ │ ├── ScheduleAlreadyRunningException.java

│ │ │ │ └── ScheduleNotRunningException.java

│ │ │ ├── logging/

│ │ │ │ └── LoggingManager.java

│ │ │ ├── persistence/

│ │ │ │ ├── dao/

│ │ │ │ │ ├── DefaultHostDAO.java

│ │ │ │ │ ├── DefaultScheduleDAO.java

│ │ │ │ │ ├── HostDAO.java

│ │ │ │ │ └── ScheduleDAO.java

│ │ │ │ ├── model/

│ │ │ │ │ ├── ActionModel.java

│ │ │ │ │ ├── FilterModel.java

│ │ │ │ │ ├── HostModel.java

│ │ │ │ │ ├── ScannedFileModel.java

│ │ │ │ │ └── ScheduleModel.java

│ │ │ │ └── repository/

│ │ │ │ ├── HostRepository.java

│ │ │ │ └── ScheduleRepository.java

│ │ │ ├── schedule/

│ │ │ │ ├── RunnableSchedule.java

│ │ │ │ ├── RunningSchedule.java

│ │ │ │ ├── ScheduleConfiguration.java

│ │ │ │ ├── ScheduleConfigurationFactory.java

│ │ │ │ ├── ScheduleExecutor.java

│ │ │ │ └── workflow/

│ │ │ │ ├── ConnectWorkflowStep.java

│ │ │ │ ├── DisconnectWorkflowStep.java

│ │ │ │ ├── DownloadFilesWorkflowStep.java

│ │ │ │ ├── FilterFilesWorkflowStep.java

│ │ │ │ ├── ScheduleWorkflow.java

│ │ │ │ ├── WorkflowStep.java

│ │ │ │ ├── actions/

│ │ │ │ │ ├── HttpAPICallAction.java

│ │ │ │ │ ├── MoveFileAction.java

│ │ │ │ │ ├── PostDownloadAction.java

│ │ │ │ │ ├── PostDownloadExecution.java

│ │ │ │ │ ├── PushbulletNotifyAction.java

│ │ │ │ │ └── SNSNotifyAction.java

│ │ │ │ ├── filter/

│ │ │ │ │ ├── FileFilter.java

│ │ │ │ │ ├── ReferentialFileFilter.java

│ │ │ │ │ └── TemporalFileFilter.java

│ │ │ │ └── transfer/

│ │ │ │ ├── FTPTransfer.java

│ │ │ │ ├── FilesAndFoldersTranferStrategy.java

│ │ │ │ ├── FilesOnlyTransferStrategy.java

│ │ │ │ ├── TransferStrategy.java

│ │ │ │ └── TransferStrategyFactory.java

│ │ │ ├── transfer/

│ │ │ │ └── ftp/

│ │ │ │ ├── FTPFile.java

│ │ │ │ ├── FileTransferType.java

│ │ │ │ ├── TransferProtocol.java

│ │ │ │ ├── client/

│ │ │ │ │ ├── Client.java

│ │ │ │ │ ├── ClientFactory.java

│ │ │ │ │ ├── FTPClient.java

│ │ │ │ │ ├── FTPSClient.java

│ │ │ │ │ ├── SFTPClient.java

│ │ │ │ │ └── UserCredentials.java

│ │ │ │ ├── connection/

│ │ │ │ │ ├── Connection.java

│ │ │ │ │ ├── ConnectionFactory.java

│ │ │ │ │ ├── FTPConnection.java

│ │ │ │ │ ├── SFTPConnection.java

│ │ │ │ │ └── progress/

│ │ │ │ │ ├── ListenerFactory.java

│ │ │ │ │ ├── ProgressListener.java

│ │ │ │ │ └── SFTPProgressListener.java

│ │ │ │ └── exception/

│ │ │ │ ├── ClientConnectionException.java

│ │ │ │ ├── ClientDisconnectException.java

│ │ │ │ ├── DeleteFileException.java

│ │ │ │ ├── DownloadFailedException.java

│ │ │ │ ├── FTPException.java

│ │ │ │ └── FileListingException.java

│ │ │ ├── util/

│ │ │ │ ├── FileStreamFactory.java

│ │ │ │ ├── FileUtils.java

│ │ │ │ └── PatternBuilder.java

│ │ │ └── web/

│ │ │ ├── API.java

│ │ │ ├── Filter.java

│ │ │ ├── Host.java

│ │ │ ├── Notifications.java

│ │ │ ├── Pushbullet.java

│ │ │ ├── SNS.java

│ │ │ ├── Schedule.java

│ │ │ ├── ScheduleCommand.java

│ │ │ ├── Settings.java

│ │ │ ├── Transfer.java

│ │ │ ├── VersionChecker.java

│ │ │ ├── controller/

│ │ │ │ ├── APIController.java

│ │ │ │ ├── FragmentController.java

│ │ │ │ ├── ViewController.java

│ │ │ │ └── response/

│ │ │ │ ├── APIResponse.java

│ │ │ │ └── APIResponseBuilder.java

│ │ │ └── selectors/

│ │ │ ├── IntervalSelector.java

│ │ │ ├── LogLevelSelector.java

│ │ │ ├── MethodSelector.java

│ │ │ ├── ProtocolSelector.java

│ │ │ └── TransferSelector.java

│ │ └── resources/

│ │ ├── static/

│ │ │ ├── browserconfig.xml

│ │ │ ├── css/

│ │ │ │ └── davos.css

│ │ │ ├── js/

│ │ │ │ └── davos.js

│ │ │ └── manifest.json

│ │ └── templates/

│ │ ├── fragments/

│ │ │ ├── api.html

│ │ │ ├── filter.html

│ │ │ ├── header.html

│ │ │ ├── pushbullet.html

│ │ │ ├── sns.html

│ │ │ └── transfers.html

│ │ └── v2/

│ │ ├── edit-host.html

│ │ ├── edit-schedule.html

│ │ ├── hosts.html

│ │ ├── schedules.html

│ │ └── settings.html

│ └── test/

│ └── java/

│ └── io/

│ └── linuxserver/

│ └── davos/

│ ├── VersionTest.java

│ ├── delegation/

│ │ └── services/

│ │ ├── ScheduleServiceImplTest.java

│ │ └── SettingsServiceImplTest.java

│ ├── persistence/

│ │ └── dao/

│ │ └── DefaultScheduleDAOTest.java

│ ├── schedule/

│ │ ├── ScheduleConfigurationFactoryTest.java

│ │ ├── ScheduleExecutorTest.java

│ │ └── workflow/

│ │ ├── ConnectWorkflowStepTest.java

│ │ ├── DisconnectWorkflowStepTest.java

│ │ ├── DownloadFilesWorkflowStepTest.java

│ │ ├── FilterFilesWorkflowStepTest.java

│ │ ├── actions/

│ │ │ ├── HttpAPICallActionTest.java

│ │ │ ├── MoveFileActionTest.java

│ │ │ └── PushbulletNotifyActionTest.java

│ │ ├── filter/

│ │ │ └── ReferentialFileFilterTest.java

│ │ └── transfer/

│ │ ├── FilesAndFoldersTranferStrategyTest.java

│ │ ├── FilesOnlyTransferStrategyTest.java

│ │ ├── TransferStrategyFactoryTest.java

│ │ └── TransferStrategyTest.java

│ ├── transfer/

│ │ └── ftp/

│ │ ├── client/

│ │ │ ├── ClientFactoryTest.java

│ │ │ ├── FTPClientTest.java

│ │ │ ├── FTPSClientTest.java

│ │ │ └── SFTPClientTest.java

│ │ └── connection/

│ │ ├── FTPConnectionTest.java

│ │ ├── SFTPConnectionTest.java

│ │ └── progress/

│ │ ├── ListenerFactoryTest.java

│ │ ├── ProgressListenerTest.java

│ │ └── SFTPProgressListenerTest.java

│ ├── util/

│ │ └── PatternBuilderTest.java

│ └── web/

│ └── controller/

│ ├── APIControllerTest.java

│ └── ViewControllerTest.java

└── version.txt

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

.gradle

*.sw?

.#*

*#

*~

/build

/code

.classpath

.project

.settings

.metadata

.factorypath

.recommenders

bin

build

lib/

target

.factorypath

.springBeans

interpolated*.xml

dependency-reduced-pom.xml

build.log

_site/

.*.md.html

manifest.yml

MANIFEST.MF

settings.xml

activemq-data

overridedb.*

*.iml

*.ipr

*.iws

.idea

.DS_Store

.factorypath

davos.log

src/main/resources/application.properties

src/main/resources/log4j2.xml

.vscode/

================================================

FILE: LICENSE

================================================

The MIT License (MIT)

Copyright (c) 2015 LinuxServer.io

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

# davos


davos is an FTP download automation tool that allows you to scan various FTP servers for files you are interested in. This can be used to configure file feeds as part of a wider workflow.

# Why use davos?

A fair number of services still rely on "file-drops" to transport data from place to place. A common practice is to configure a cron job to periodically trigger FTP/SFTP programs to download those files. _davos_ is relatively similar, only it also adds a web UI to the whole process, making the management of these schedules easier.

# How it works

## Hosts

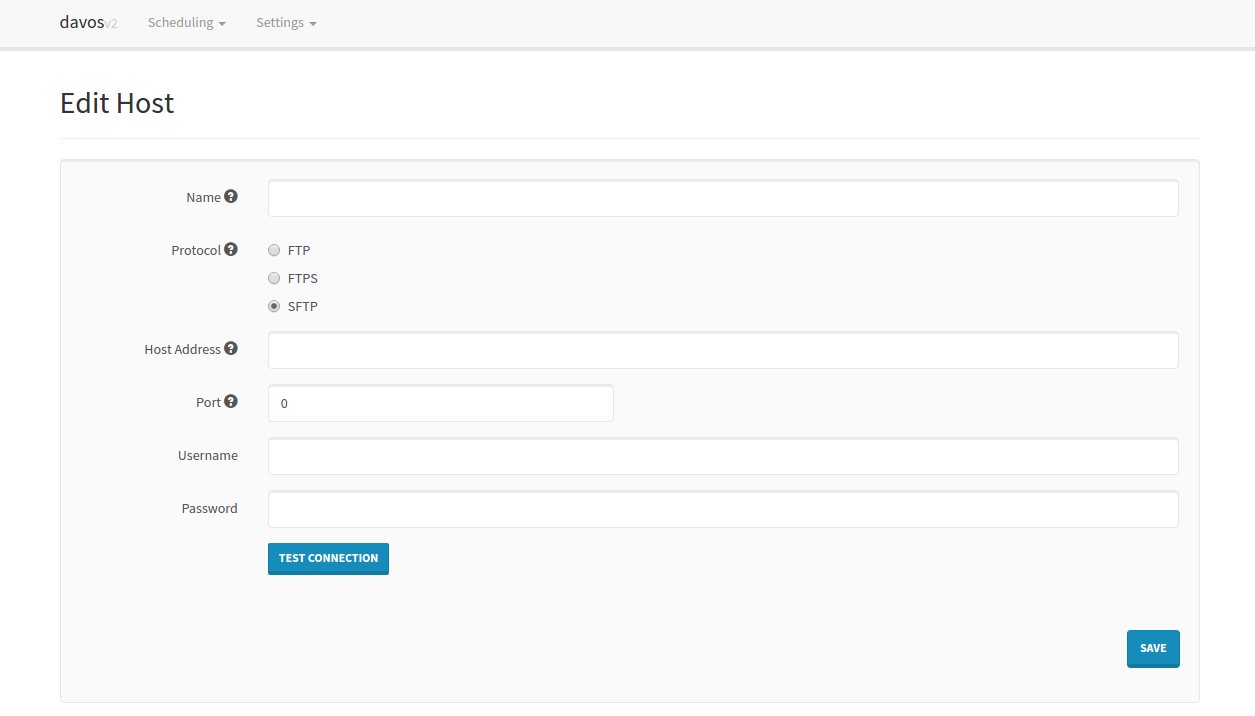

All periodic scans (Schedules) require a host to connect to. These can be added individually:

## Schedules

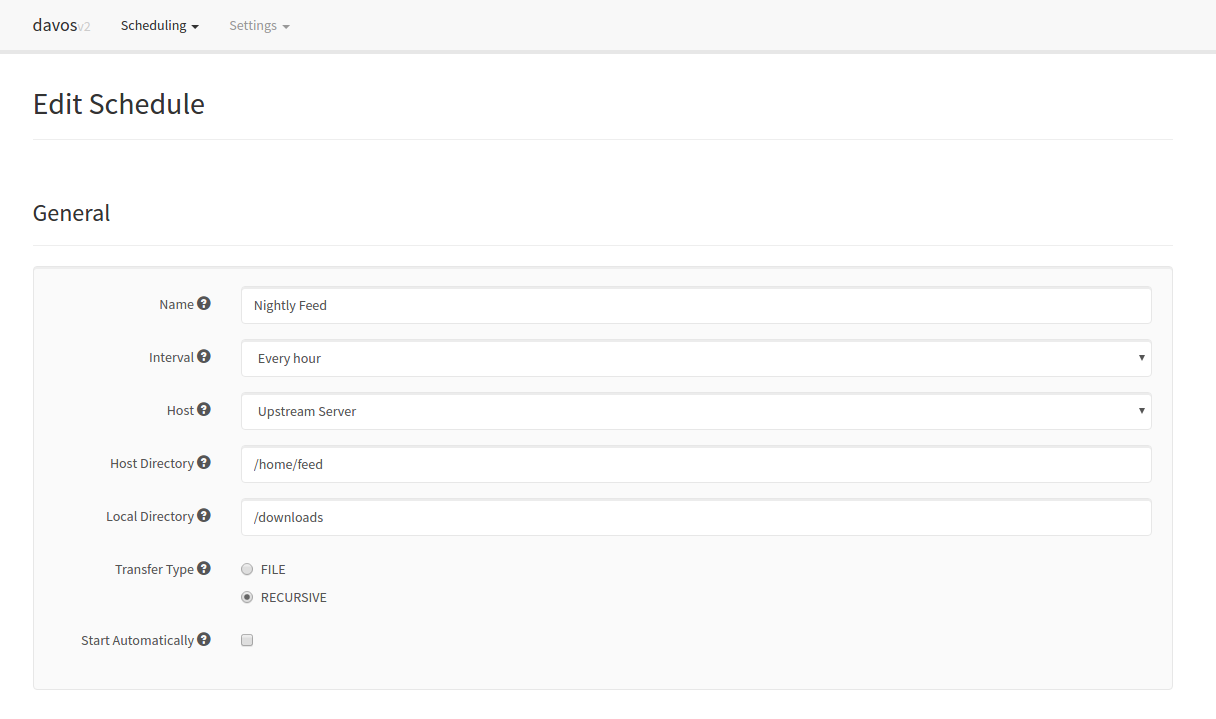

Each schedule contains all of the required information pertaining to the files it is interested in. This includes the host it needs to connect to, where to look for the files, where to download them, and how often:

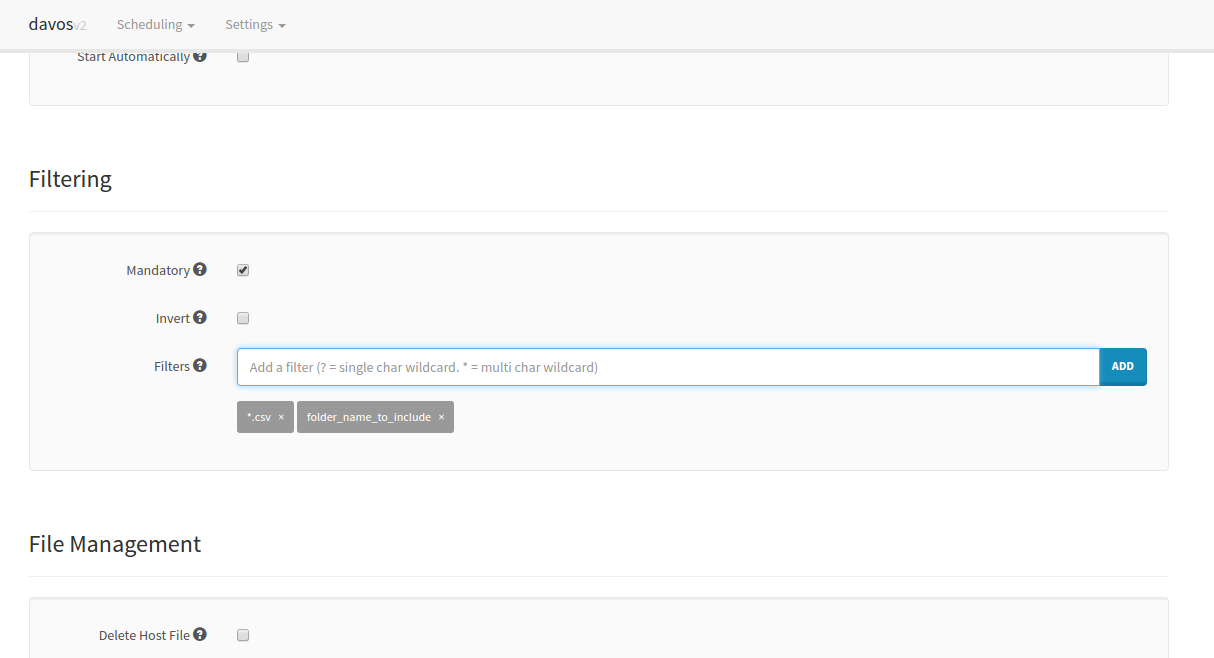

It is also possible to limit what the schedule downloads by applying filters to each scan. _davos_ will only download files that match its list of given filters. If no filters are applied to a schedule, all files will be downloaded. Each schedule also keeps an internal record of what it scanned in the previous run, so it won't download the same file twice.
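The selection rules described above can be sketched roughly as follows. This is an illustrative sketch only, not davos's actual implementation: the class and method names (`FilterSketch`, `toPattern`, `select`) are hypothetical, and it assumes that `*` is the only wildcard and that it matches zero or more characters, as noted in the 2.2.0 changelog entry.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.regex.Pattern;

// Hypothetical sketch; names are not taken from the davos code base.
final class FilterSketch {

    // Convert a filter such as "report*.csv" into a regex.
    // '*' becomes ".*" (zero or more characters); everything else is literal.
    static Pattern toPattern(String filter) {
        String[] parts = filter.split("\\*", -1);
        StringBuilder regex = new StringBuilder();
        for (int i = 0; i < parts.length; i++) {
            if (i > 0) {
                regex.append(".*");
            }
            regex.append(Pattern.quote(parts[i]));
        }
        return Pattern.compile(regex.toString());
    }

    // Pick the files to download: skip anything recorded in the previous scan,
    // and download everything else if no filters are set, or only matches otherwise.
    static List<String> select(List<String> remoteFiles, List<String> filters, Set<String> previouslyScanned) {
        List<String> toDownload = new ArrayList<>();
        for (String name : remoteFiles) {
            if (previouslyScanned.contains(name)) {
                continue;
            }
            if (filters.isEmpty() || matchesAny(name, filters)) {
                toDownload.add(name);
            }
        }
        return toDownload;
    }

    private static boolean matchesAny(String name, List<String> filters) {
        for (String filter : filters) {
            if (toPattern(filter).matcher(name).matches()) {
                return true;
            }
        }
        return false;
    }
}
```

Under these assumptions, a schedule with the single filter `*.csv` that already scanned `a.csv` in its previous run would pick up `report.csv` but skip both `a.csv` and `b.txt`.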

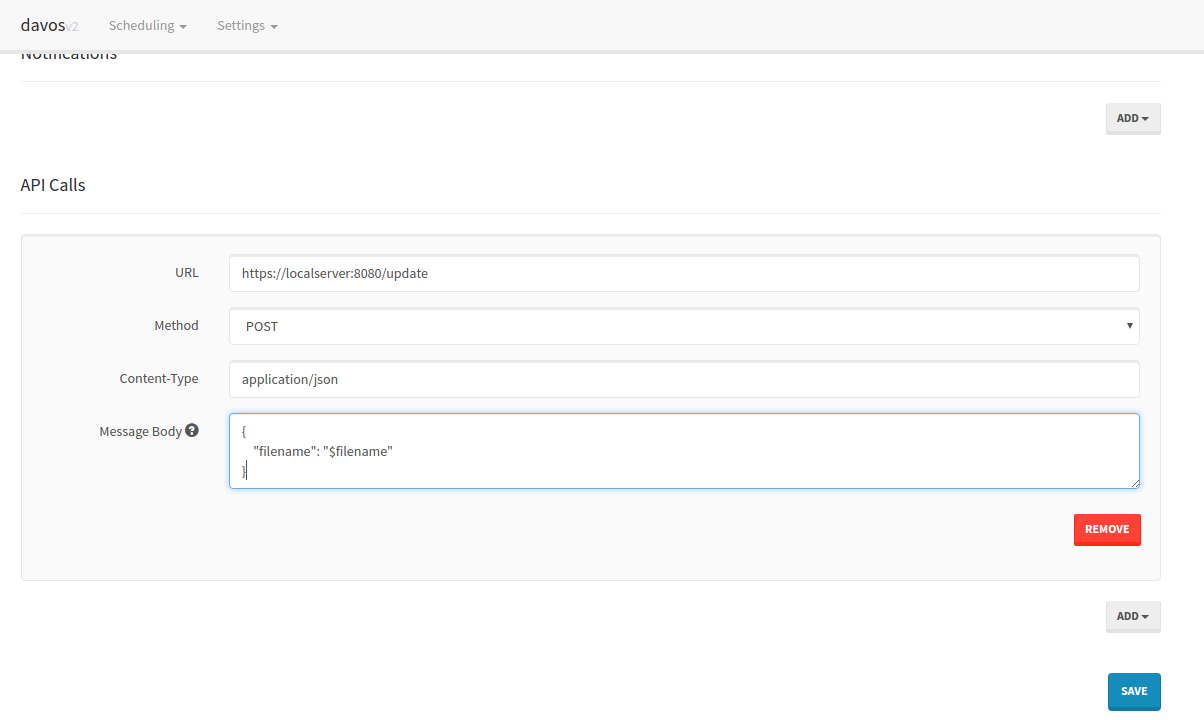

Once each file has been downloaded, _davos_ can also notify you via Pushbullet, as well as sending downstream requests to other services. This is particularly useful if another service makes use of the file _davos_ has just downloaded.
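As a rough illustration of a downstream request, the changelog mentions that `$filename` can be resolved in the URLs of API calls. The sketch below shows one way such a substitution could work. The class name, method name, and example URL are hypothetical, and whether davos URL-encodes the file name is not stated here, so this sketch performs a plain literal replacement.

```java
// Hypothetical sketch; not the davos implementation.
final class FilenameTokenSketch {

    static final String TOKEN = "$filename";

    // Replace every literal "$filename" in the URL template with the downloaded file's name.
    // String.replace works on literal text, so '$' needs no escaping.
    static String resolve(String urlTemplate, String fileName) {
        return urlTemplate.replace(TOKEN, fileName);
    }

    public static void main(String[] args) {
        // The URL below is an assumed example endpoint.
        System.out.println(resolve("http://localhost:8080/import?file=$filename", "feed.csv"));
        // prints "http://localhost:8080/import?file=feed.csv"
    }
}
```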

## Running

Finally, schedules can be started or stopped at any point, using the schedules listing UI:

# Changelog

- **2.2.2**

- Updated log4j dependency to 2.16.0, accounting for CVE-2021-44228

- **2.2.1**

- Fixed bug where lastRunTime got reset whenever a change was made to a schedule.

- General refactoring of code, plus added unit tests.

- Allow $filename resolution in URLs of API calls.

- **2.2.0**

- The filter pattern matcher now resolves '*' to zero or more characters, rather than one or more.

- The scanned items list can now be cleared.

- Added a Last Run field to the scanned items modal.

- Included [readthedocs](https://davos.readthedocs.io) documentation!

- Added SNS capability to notifications area

- Updated FTPS connections to run over Explicit TLS, rather than Implicit SSL

- This may or may not break existing schedules that use FTPS prior to 2.2.0.

- Improved some areas of DEBUG logging

- Schedules page now automatically updates when files are downloading

- Added identity file authentication for SFTP connections

- Included a version checker to help prompt users when a new version is available

- **Full disclosure**: This makes a GET request to GitHub to ascertain the latest release version.

- **2.1.2**

- Fixed NaN bug caused by empty files (Div/0)

- Fixed recursive delete issue for directories in FTP and SFTP connections.

- **2.1.1**

- Fixed primitive issue on Schedule model for new fields

- **2.1.0**

- Mandatory filtering allows schedules to only download files when at least one filter has been set.

- Form validation on Hosts and Schedule pages

- New theme

- Inverse filtering allows schedules to download files that DO NOT match provided filters.

- "Test Connection" button added to Hosts page

- Schedules can now delete the remote copy of each file once the download has completed. This is separate to the Post-download actions.

- New intervals: "Every minute" and "Every 5 minutes"

================================================

FILE: build.gradle

================================================

import java.util.regex.Matcher;

buildscript {

ext {

springBootVersion = '1.4.2.RELEASE'

}

repositories {

mavenCentral()

}

dependencies {

classpath("org.springframework.boot:spring-boot-gradle-plugin:${springBootVersion}")

classpath('io.spring.gradle:dependency-management-plugin:0.6.1.RELEASE')

}

}

plugins {

id "com.github.samueltbrown.cucumber" version "0.9"

}

apply plugin: 'java'

apply plugin: 'eclipse'

apply plugin: 'idea'

apply plugin: 'org.springframework.boot'

apply plugin: 'io.spring.dependency-management'

jar {

baseName = 'davos'

version = file('version.txt').text.trim()

}

sourceCompatibility = 1.8

targetCompatibility = 1.8

repositories {

mavenCentral()

}

configurations {

all*.exclude module : 'spring-boot-starter-logging'

bddTestCompile

testCompile.extendsFrom bddTestCompile

}

sourceSets {

bbdTest {

java { srcDir 'src/cucumber/java' }

resources { srcDir 'src/cucumber/resources' }

compileClasspath += test.output

}

}

dependencies {

compile 'org.springframework.boot:spring-boot-starter-web'

compile 'org.springframework.boot:spring-boot-starter-thymeleaf'

compile 'org.springframework.boot:spring-boot-starter-data-jpa'

compile 'org.springframework.boot:spring-boot-starter-jdbc'

compile 'org.apache.logging.log4j:log4j-api:2.16.0'

compile 'org.apache.logging.log4j:log4j-core:2.16.0'

compile 'org.apache.logging.log4j:log4j-slf4j-impl:2.16.0'

compile 'com.jcraft:jsch:0.1.50'

compile 'joda-time:joda-time:2.3'

compile 'commons-net:commons-net:3.3'

compile 'commons-io:commons-io:2.4'

compile 'org.apache.commons:commons-lang3:3.4'

compile 'com.amazonaws:aws-java-sdk-sns:1.11.167'

runtime 'com.h2database:h2'

testCompile 'org.springframework.boot:spring-boot-starter-test'

testCompile 'org.assertj:assertj-core:3.2.0'

testCompile 'org.mockito:mockito-all:1.9.5'

testCompile 'junit:junit:4.11'

bddTestCompile 'org.mockftpserver:MockFtpServer:2.6'

bddTestCompile 'org.apache.sshd:sshd-core:1.4.0'

bddTestCompile 'info.cukes:cucumber-java:1.2.4'

cucumberCompile 'info.cukes:cucumber-java:1.2.4'

}

eclipse {

classpath {

containers.remove('org.eclipse.jdt.launching.JRE_CONTAINER')

containers 'org.eclipse.jdt.launching.JRE_CONTAINER/org.eclipse.jdt.internal.debug.ui.launcher.StandardVMType/JavaSE-1.8'

}

}

task wrapper(type: Wrapper) {

gradleVersion = '2.14'

}

task updateBuildVersion << {

Matcher matcher = file('version.txt').text.trim() =~ /(.+)\.(.+)\.(.+)$/

if (matcher.find()) {

String major = matcher.group(1)

String minor = matcher.group(2)

String patch = matcher.group(3).trim()

int nextBuild = Integer.valueOf(patch) + 1

String updatedVersion = "${major}.${minor}.${nextBuild}"

file('version.txt').text = "${updatedVersion}\n"

}

}

task copyConfig(type: Copy) {

def location = project.hasProperty('env') ? "$env" : 'local'

from "conf/${location}"

into "src/main/resources/"

}

cucumber {

jvmOptions {

maxHeapSize = '512m'

environment 'ENV', 'staging'

}

}

build.dependsOn copyConfig

build.mustRunAfter copyConfig

================================================

FILE: conf/local/application.properties

================================================

davos.version=2.2.2

================================================

FILE: conf/local/log4j2.xml

================================================

================================================

FILE: conf/release/application.properties

================================================

spring.datasource.url=jdbc:h2:file:/config/db/davos2

spring.datasource.username=sa

spring.datasource.password=sa

spring.datasource.driverClassName=org.h2.Driver

spring.jpa.hibernate.ddl-auto=update

davos.version=2.2.2

================================================

FILE: conf/release/log4j2.xml

================================================

================================================

FILE: docs/Makefile

================================================

# Minimal makefile for Sphinx documentation

#

# You can set these variables from the command line.

SPHINXOPTS =

SPHINXBUILD = python -msphinx

SPHINXPROJ = davos

SOURCEDIR = source

BUILDDIR = build

# Put it first so that "make" without argument is like "make help".

help:

@$(SPHINXBUILD) -M help "$(SOURCEDIR)" "$(BUILDDIR)" $(SPHINXOPTS) $(O)

.PHONY: help Makefile

# Catch-all target: route all unknown targets to Sphinx using the new

# "make mode" option. $(O) is meant as a shortcut for $(SPHINXOPTS).

%: Makefile

@$(SPHINXBUILD) -M $@ "$(SOURCEDIR)" "$(BUILDDIR)" $(SPHINXOPTS) $(O)

================================================

FILE: docs/make.bat

================================================

@ECHO OFF

pushd %~dp0

REM Command file for Sphinx documentation

if "%SPHINXBUILD%" == "" (

set SPHINXBUILD=python -msphinx

)

set SOURCEDIR=source

set BUILDDIR=build

set SPHINXPROJ=davos

if "%1" == "" goto help

%SPHINXBUILD% >NUL 2>NUL

if errorlevel 9009 (

echo.

echo.The Sphinx module was not found. Make sure you have Sphinx installed,

echo.then set the SPHINXBUILD environment variable to point to the full

echo.path of the 'sphinx-build' executable. Alternatively you may add the

echo.Sphinx directory to PATH.

echo.

echo.If you don't have Sphinx installed, grab it from

echo.http://sphinx-doc.org/

exit /b 1

)

%SPHINXBUILD% -M %1 %SOURCEDIR% %BUILDDIR% %SPHINXOPTS%

goto end

:help

%SPHINXBUILD% -M help %SOURCEDIR% %BUILDDIR% %SPHINXOPTS%

:end

popd

================================================

FILE: docs/source/conf.py

================================================

# -*- coding: utf-8 -*-

#

# davos documentation build configuration file, created by

# sphinx-quickstart on Sat Jul 29 08:01:32 2017.

#

# This file is execfile()d with the current directory set to its

# containing dir.

#

# Note that not all possible configuration values are present in this

# autogenerated file.

#

# All configuration values have a default; values that are commented out

# serve to show the default.

# If extensions (or modules to document with autodoc) are in another directory,

# add these directories to sys.path here. If the directory is relative to the

# documentation root, use os.path.abspath to make it absolute, like shown here.

#

# import os

# import sys

# sys.path.insert(0, os.path.abspath('.'))

# -- General configuration ------------------------------------------------

# If your documentation needs a minimal Sphinx version, state it here.

#

# needs_sphinx = '1.0'

# Add any Sphinx extension module names here, as strings. They can be

# extensions coming with Sphinx (named 'sphinx.ext.*') or your custom

# ones.

extensions = []

# Add any paths that contain templates here, relative to this directory.

templates_path = ['_templates']

# The suffix(es) of source filenames.

# You can specify multiple suffix as a list of string:

#

# source_suffix = ['.rst', '.md']

source_suffix = '.rst'

# The master toctree document.

master_doc = 'index'

# General information about the project.

project = u'davos'

copyright = u'2017, Josh Stark'

author = u'Josh Stark'

# The version info for the project you're documenting, acts as replacement for

# |version| and |release|, also used in various other places throughout the

# built documents.

#

# The short X.Y version.

version = u'2.2'

# The full version, including alpha/beta/rc tags.

release = u'2.2.0'

# The language for content autogenerated by Sphinx. Refer to documentation

# for a list of supported languages.

#

# This is also used if you do content translation via gettext catalogs.

# Usually you set "language" from the command line for these cases.

language = None

# List of patterns, relative to source directory, that match files and

# directories to ignore when looking for source files.

# These patterns also affect html_static_path and html_extra_path

exclude_patterns = []

# The name of the Pygments (syntax highlighting) style to use.

pygments_style = 'sphinx'

# If true, `todo` and `todoList` produce output, else they produce nothing.

todo_include_todos = False

# -- Options for HTML output ----------------------------------------------

# The theme to use for HTML and HTML Help pages. See the documentation for

# a list of builtin themes.

#

#html_theme = 'alabaster'

import sphinx_rtd_theme

html_theme = "sphinx_rtd_theme"

html_theme_path = [sphinx_rtd_theme.get_html_theme_path()]

# Theme options are theme-specific and customize the look and feel of a theme

# further. For a list of options available for each theme, see the

# documentation.

#

# html_theme_options = {}

# Add any paths that contain custom static files (such as style sheets) here,

# relative to this directory. They are copied after the builtin static files,

# so a file named "default.css" will overwrite the builtin "default.css".

html_static_path = ['_static']

# Custom sidebar templates, must be a dictionary that maps document names

# to template names.

#

# This is required for the alabaster theme

# refs: http://alabaster.readthedocs.io/en/latest/installation.html#sidebars

html_sidebars = {

'**': [

'about.html',

'navigation.html',

'relations.html', # needs 'show_related': True theme option to display

'searchbox.html',

'donate.html',

]

}

# -- Options for HTMLHelp output ------------------------------------------

# Output file base name for HTML help builder.

htmlhelp_basename = 'davosdoc'

# -- Options for LaTeX output ---------------------------------------------

latex_elements = {

# The paper size ('letterpaper' or 'a4paper').

#

# 'papersize': 'letterpaper',

# The font size ('10pt', '11pt' or '12pt').

#

# 'pointsize': '10pt',

# Additional stuff for the LaTeX preamble.

#

# 'preamble': '',

# Latex figure (float) alignment

#

# 'figure_align': 'htbp',

}

# Grouping the document tree into LaTeX files. List of tuples

# (source start file, target name, title,

# author, documentclass [howto, manual, or own class]).

latex_documents = [

(master_doc, 'davos.tex', u'davos Documentation',

u'Josh Stark', 'manual'),

]

# -- Options for manual page output ---------------------------------------

# One entry per manual page. List of tuples

# (source start file, name, description, authors, manual section).

man_pages = [

(master_doc, 'davos', u'davos Documentation',

[author], 1)

]

# -- Options for Texinfo output -------------------------------------------

# Grouping the document tree into Texinfo files. List of tuples

# (source start file, target name, title, author,

# dir menu entry, description, category)

texinfo_documents = [

(master_doc, 'davos', u'davos Documentation',

author, 'davos', 'One line description of project.',

'Miscellaneous'),

]

================================================

FILE: docs/source/developers/index.rst

================================================

##########

Developers

##########

If you wish to contribute to davos (and help me tidy up some of its rather messy code!), you

will need to be able to build and run it locally. davos is written almost completely in

Java using the Spring Framework, utilising the Thymeleaf rendering engine. The project is

unit and integration tested using jUnit and Cucumber JVM, respectively.

*****

Setup

*****

Download and install the `Java 8 JDK `_.

I'd also recommend using `Spring Tool Suite (STS) `_ as it is a prebuilt version of Eclipse IDE with all of the necessary

plugins installed for working with a Spring application.

********

Building

********

.. note:: You do not need to pre-install Gradle for this application as it comes with Gradle Wrapper, which does all the work for you.

To build the application, use Gradle:

.. code-block:: text

./gradlew clean build -Penv={release|local}

This will download all necessary dependencies, run tests, then package up the application.

The resulting .jar file will be in ``build/libs``. If you pass through a ``-Penv=release`` when

running this command, the packaged application will use the config under ``conf/release``, which

tells davos to use a file-based database. By default (i.e. if you do not pass this switch

through), it will use the ``conf/local`` configuration, which makes use of an in-memory database.

***************

Running the app

***************

It is recommended to build the app first before running, so you know your latest

changes are built:

.. code-block:: text

./gradlew clean build && java -jar build/libs/davos-2.2.2.jar

***********

Development

***********

Classpath

---------

When using Eclipse (or STS), a separate Gradle command is required in order to update

the project's classpath files so Eclipse is aware of the downloaded dependencies:

.. code-block:: text

./gradlew cleanEclipse eclipse

Code Structure

--------------

The code of davos is split into four main sections:

``src/main/java``

The core functional code. This contains all logic for the workflow, API,

connectivity, and object persistence (database).

``src/main/resources``

The front-end code, including all JavaScript, CSS, images, and Thymeleaf

templates.

``src/test/java``

All unit tests for the core code.

``src/cucumber/java``

Integration test code. This is separate from the main project code and

does not get packaged into the released application.

Running Tests

-------------

To run all unit tests, use Gradle:

.. code-block:: text

./gradlew test

To run all integration tests:

.. code-block:: text

./gradlew cucumber

Managing the version

--------------------

The version of the application is referenced in three files:

* ``version.txt`` in the project root directory

* ``conf/local/application.properties`` as a property called ``davos.version``

* ``conf/release/application.properties`` as a property called ``davos.version``

All three of these need to be updated if you are changing the version number.
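A small check along these lines could confirm that the three values agree. It is a sketch under stated assumptions: the class and method names are hypothetical, and the caller is assumed to have already read the contents of ``version.txt`` and the two ``application.properties`` files into strings.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper; not part of the davos build.
final class VersionCheckSketch {

    // True only if version.txt and both davos.version properties hold the same value.
    static boolean inSync(String versionTxt, String localProperties, String releaseProperties) {
        String expected = versionTxt.trim();
        return expected.equals(readVersion(localProperties))
                && expected.equals(readVersion(releaseProperties));
    }

    // Parse properties text and return the davos.version value (null if absent).
    private static String readVersion(String propertiesText) {
        Properties properties = new Properties();
        try {
            properties.load(new StringReader(propertiesText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return properties.getProperty("davos.version");
    }
}
```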

================================================

FILE: docs/source/faq/index.rst

================================================

###

FAQ

###

**********************************

Can davos be used to upload files?

**********************************

No, davos only downloads files. There are currently no plans to implement the ability

to upload files as this will require a rework of the schedule workflow engine.

******************************

How many schedules can I have?

******************************

There is no theoretical limit to the number of schedules you can have. davos creates

an initial thread pool of 10 worker threads, but this gets extended if more than 10

schedules are created.
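The growth behaviour described above might look something like the following. This is only a sketch: it assumes a ``ScheduledThreadPoolExecutor`` whose core size is raised as schedules are added, which may differ from how davos actually manages its pool, and the class and method names are hypothetical.

```java
import java.util.concurrent.ScheduledThreadPoolExecutor;

// Hypothetical sketch of a pool that starts at 10 workers and grows with the schedule count.
final class SchedulePoolSketch {

    static final int INITIAL_POOL_SIZE = 10;

    private final ScheduledThreadPoolExecutor pool =
            new ScheduledThreadPoolExecutor(INITIAL_POOL_SIZE);
    private int scheduleCount = 0;

    // Register one more schedule and return the resulting core pool size.
    synchronized int addSchedule() {
        scheduleCount++;
        if (scheduleCount > pool.getCorePoolSize()) {
            // More schedules than workers: extend the pool by raising its core size.
            pool.setCorePoolSize(scheduleCount);
        }
        return pool.getCorePoolSize();
    }

    // Stop the pool so the JVM can exit.
    void shutdown() {
        pool.shutdownNow();
    }
}
```

With this sketch, the first ten schedules leave the pool at 10 workers, and the eleventh raises it to 11.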

**************************

How many hosts can I have?

**************************

Unlimited.

********************************************

Are host credentials hashed in the database?

********************************************

No, all host usernames and passwords are stored in plain text in the H2 database. This

is because the application needs to query the hosts table every time a schedule runs,

and would have no way to compare a hash with a valid password.

***************************************************

How do I use an identity file for SFTP connections?

***************************************************

On the Host configuration page for your Host, make sure **Use Identity File** is checked. Then

enter the absolute path of the identity file. If you're running davos in a Docker container (recommended),

the value of this should be something like "/config/id_rsa", assuming you are using an SSH private key called

"id_rsa" and have placed it in your mapped host directory on your machine.

Any form of private identity is applicable, for example if your host server uses .pem files

for authentication, use "/config/my_identity.pem".

.. note:: When running in a Docker container, davos can't see files outside of its ``/download`` and ``/config`` directories, so place your identity file(s) in the mapped directory on the host (e.g. ``/home/user/davos``).

***************************************************************

I've just updated davos. The application is behaving strangely.

***************************************************************

Some version updates include changes to the JavaScript sources for the website side

of the application. Modern browsers like Chrome tend to cache these types of sources for

the sake of performance. It is likely your browser has not re-cached the latest version of

the JavaScript code.

To remedy this, hard-refresh the app: ``CTRL`` + ``F5``.

****************************************

How can I use SNS to notify me by email?

****************************************

To use SNS, you'll need an Amazon AWS Account. Once set up, you should go to **Services -> Simple Notification Service**,

then **Create topic**. For Topic name, enter something like "davos-notifications", and click **Create topic**. The first

thing you'll notice is that it has generated a **Topic ARN**. You'll need this for the notification configuration later.

Now create a subscription to your topic by clicking on **Create subscription**, choosing "Email" as the Protocol, and your

preferred email address as the Endpoint. Click **Create subscription**. You'll receive an email asking you to confirm

the subscription request.

Once your topic has been configured, you should create an IAM User that can publish messages to it. It is this user's

credentials that davos needs to perform the publish.

Go to **Services -> IAM**, then **Users**. Click **Add user**. For User name, enter something sensible, then select "Programmatic access"

as the Access Type. Click **Next: Permissions**. This user should only have permission to publish to this topic,

nothing more. So, under "Add user to group", click **Create group**, and then **Create policy**.

.. note:: A user can be in many groups. Groups can have many policies. A policy is a set of permissions for access to various things in AWS.

You should be directed to the policy creation tool. Select the Policy Generator and set the following:

Effect

Allow

AWS Service

Amazon SNS

Actions

Publish

Amazon Resource Name (ARN)

{YOUR_TOPIC_ARN}

Then click **Add Statement**. You should see it added underneath. Click **Next Step**. The generated policy will be shown

to you on screen (it's formatted as JSON, and contains a ``Statement`` array). Update the Policy Name to something

sensible (e.g. "DavosTopicPublishAccess") then click **Create Policy**. You'll be redirected back to the IAM

console, but you can close this.

Go back to the previous tab and under the Filter, type in the name of the policy you just created. Select it. Now, for the

Group name, give it a sensible name (e.g. DavosNotifications), and click **Create group**. The group should now be selected under

the IAM user console. Click **Next: Review**, make sure you're happy, and then click **Create user**.

You should see a table showing the user's Access key ID and Secret access key. You'll need these for the SNS configuration

in davos, so keep them safe somewhere (you can download a .csv with the credentials in).

.. warning:: The Secret access key will only be shown once in the console, so make sure you store it somewhere safe.

================================================

FILE: docs/source/guides/appsettings.rst

================================================

############

App Settings

############

Under **Settings -> App Settings**, you can configure the log level that davos

will output to its log file.

*******

Logging

*******

All logs are written to ``davos.log``, located in the ``/config/logs`` directory.

When mapping the ``/config`` directory in the container to a host directory, logs

will be made available in that host directory.

The log level can be changed at any time while the application is running. The available

levels are:

* DEBUG

* INFO

* WARN

* ERROR

The higher the level (``DEBUG`` is lowest, ``ERROR`` is highest), the fewer logs will be

written. By default, davos logs at ``INFO`` level. If you are experiencing issues

with davos and wish to understand the area of failure, change the level to ``DEBUG``.

Under this setting, the most logs will be written.

.. warning:: When setting the log level to ``DEBUG``, any secure credentials used in connections to the FTP host, or notification systems **will** be logged.
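The relationship between the configured level and what gets written can be pictured as a simple threshold. The sketch below is not davos's logging code; it only models the ordering described above, and ``is_written`` is a hypothetical helper.

```python
# Levels in ascending order of severity, as listed above.
LEVELS = ["DEBUG", "INFO", "WARN", "ERROR"]


def is_written(message_level, configured_level="INFO"):
    """Return True if a message at message_level passes the configured threshold."""
    return LEVELS.index(message_level) >= LEVELS.index(configured_level)


# With the default INFO level, only DEBUG messages are dropped.
for level in LEVELS:
    print(level, is_written(level))
```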

================================================

FILE: docs/source/guides/gettingstarted/hosts.rst

================================================

#####

Hosts

#####

A Host configuration provides one or more Schedules with information pertaining

to the FTP server to connect to when scanning for files. Hosts are kept separate from the

Schedule configuration to allow multiple Schedules to use the same Host configuration

without having to enter the same data multiple times.

Under **Settings -> New Host**, you will be prompted to enter all of the relevant

information.

Name *[REQUIRED]*

The friendly name for this Host. This is what will be visible when creating a

schedule, so make it indicative of the Host you're making.

Protocol

Which type of connection to be made. This has no bearing on how you configure

the host, but will direct davos to build the specific client when connecting.

Host Address *[REQUIRED]*

The IP address (or hostname) of the server.

Port

FTP and FTPS are usually on ``21``, while SFTP is usually on ``22``. If your

server has been configured to run on a separate port, this is where you

reference it.

Username *[REQUIRED]*

Name of the user to connect as.

Password

Password of the user to connect as.

Use Identity File

Only available when ``SFTP`` is selected. Choose this if the SFTP server

requires an identity file to authenticate the user.

Identity File

Displayed when ``Use Identity File`` is checked, replacing the ``Password`` field. Enter

the location of the file.

.. note:: The location of the identity file will be relative to the container's filesystem, so should ideally be under ``/config`` as this is the directory exposed by the Docker volume mapping.

It is also possible to create, manage, and delete a Host via the HTTP API. See :doc:`../../reference/api` for more details.

================================================

FILE: docs/source/guides/gettingstarted/index.rst

================================================

###############

Getting Started

###############

This section aims to help you understand how davos is pieced together, and shows

you how it can be configured to meet your needs. It is recommended that you follow

the below guides.

.. toctree::

:maxdepth: 1

hosts

schedules

************

How it works

************

The Schedules in davos are powered by a basic workflow engine that runs a series

of steps to ensure each run processes files properly. The order of this

workflow is as follows:

1. Connect to the host.

2. List all files in the provided remote directory.

3. Filter all files in the remote directory so only the relevant ones remain.

4. Remove any files that have been previously scanned.

5. For each matched file, download it. Once downloaded, run any actions required by the schedule.

6. Store the list of scanned files against the Schedule.

7. Disconnect.
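The seven steps above can be sketched as a single function. This is a simplified model, not the workflow engine itself: ``FakeClient``, ``run_schedule`` and the ``is_relevant`` callback are hypothetical names, and the sketch assumes that the list stored in step 6 is the filtered list from the current run.

```python
class FakeClient:
    """Stand-in for an FTP/SFTP client; records what the workflow asks of it."""

    def __init__(self, files):
        self.files = files
        self.connected = False
        self.downloaded = []

    def connect(self):
        self.connected = True

    def list_files(self, remote_dir):
        return list(self.files)

    def download(self, name):
        self.downloaded.append(name)

    def disconnect(self):
        self.connected = False


def run_schedule(client, remote_dir, is_relevant, previously_scanned, actions):
    """Run one pass of the workflow and return the list to store as scanned."""
    client.connect()                                       # 1. connect
    try:
        listed = client.list_files(remote_dir)             # 2. list
        relevant = [f for f in listed if is_relevant(f)]   # 3. filter
        new_files = [f for f in relevant
                     if f not in previously_scanned]       # 4. drop already scanned
        for name in new_files:                             # 5. download, then actions
            client.download(name)
            for action in actions:
                action(name)
        return relevant                                    # 6. caller stores this list
    finally:
        client.disconnect()                                # 7. disconnect


client = FakeClient(["a.txt", "b.txt", "notes.md"])
notified = []
scanned = run_schedule(
    client,
    "/remote",
    is_relevant=lambda name: name.endswith(".txt"),
    previously_scanned={"a.txt"},
    actions=[notified.append],
)
print(client.downloaded, scanned)
```

Because ``a.txt`` was scanned on an earlier run, only ``b.txt`` is downloaded and passed to the action.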

There is no theoretical limit to the number of schedules you can have running at

the same time; however, it is advised that you keep it below 10, as memory usage can

become quite high.

================================================

FILE: docs/source/guides/gettingstarted/schedules.rst

================================================

#########

Schedules

#########

A Schedule is the configuration that tells davos when to run, where to connect, what

to look for, and what to do once it has finished downloading. Schedules are the heart

of davos and are powered by its workflow engine.

To create a new Schedule, go to **Settings -> New Schedule**. Schedules are split into

multiple sections, each with their own part to play in the process.

*******

General

*******

This defines the metadata and connection information of the Schedule. The **General** section

allows you to name the Schedule, as well as define how often it should run, and where files

should be managed.

Name *[REQUIRED]*

The name of the Schedule. This should be relevant to the task this schedule

is performing. E.g. "Nightly Feed"

Interval

How often the schedule should run. The rate at which the schedule runs begins

when the schedule is started for the first time. So, if it is started at 14:05,

with an interval of "Every 30 minutes", it will run again at 14:35, then 15:05, and

so on.

.. note:: If you change the interval for an already running Schedule, you'll need to restart it before the change takes effect.

..

Host

The Host configuration to use for this Schedule. It will default to the first

Host in the list. You cannot create a Schedule if no Hosts have been created.

Host Directory *[REQUIRED]*

This is the directory on the host (relative to the connection entry point) that

the Schedule should use for file scanning. Absolute paths are also compliant.

Local Directory *[REQUIRED]*

The directory where this schedule should place file downloads.

.. note :: The local directory must be relative to the container's filesystem, so should be under ``/download``.

..

Transfer Type

This setting will inform the Schedule whether or not it should only download

matching files (``FILE``), or if it should also scan matching directories (``RECURSIVE``). This can be useful

if the server contains sub-directories that may match in a scan, but should not be

downloaded.

Start Automatically

If checked, the Schedule will automatically start when davos is started. Useful if

you have a restart policy enabled in Docker and your machine requires a restart.
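To make the Interval timing concrete, the sketch below reproduces the 14:05 example given for the Interval setting. It only illustrates fixed-rate scheduling measured from the start time; ``next_runs`` is a hypothetical helper, not part of davos, and the date is arbitrary.

```python
from datetime import datetime, timedelta


def next_runs(started_at, interval_minutes, count):
    """Return the next `count` run times after the initial start."""
    step = timedelta(minutes=interval_minutes)
    return [started_at + step * n for n in range(1, count + 1)]


start = datetime(2017, 1, 1, 14, 5)  # arbitrary date, started at 14:05
for run in next_runs(start, 30, 3):
    print(run.strftime("%H:%M"))
```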

*********

Filtering

*********

This is a process that allows you to narrow down file scanning so only relevant

files are processed. Filters can be exceptionally useful for host directories that

are used by multiple processes or contain large numbers of files.

Mandatory

If checked, the Schedule will only consider scanning files if at least one filter has been

defined. If checked and no filters are defined, nothing will be scanned, so nothing

will be downloaded.

Invert

The default behaviour is to match all files on the host with the defined filters. Checking

this option will invert that behaviour, so all files *not* matching the defined filters

will be downloaded.

Filters

A list of strings that will be used to scan the host directory. Each file on the host is compared to

this list - if it matches at least one filter, it will be downloaded. Filters can also be wildcarded

using ``?`` (single character) and ``*`` (multiple characters).

For example, for a file called "my_file_name.txt":

.. code-block:: text

my?file?name.txt = MATCH

my*name.txt = MATCH

my_file.name.txt = NO MATCH

*file_name* = MATCH

*file_name = NO MATCH
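The matching rules above can be reproduced with Python's ``fnmatch`` module, which uses the same ``?`` and ``*`` wildcards (it also treats ``[...]`` specially, which davos may not). This is a sketch of the documented behaviour, not davos's own matcher. ``should_download`` is a hypothetical helper, and its handling of an empty filter list when **Mandatory** is unchecked (every file passes) is an assumption.

```python
from fnmatch import fnmatchcase


def matches_any(file_name, filters):
    """True if the file name matches at least one wildcard filter."""
    return any(fnmatchcase(file_name, pattern) for pattern in filters)


def should_download(file_name, filters, mandatory=False, invert=False):
    """Combine the Filters list with the Mandatory and Invert options."""
    if not filters:
        # Mandatory with no filters means nothing is scanned; otherwise we
        # assume every file passes (an assumption, not confirmed behaviour).
        return not mandatory
    matched = matches_any(file_name, filters)
    return not matched if invert else matched


name = "my_file_name.txt"
for pattern in ["my?file?name.txt", "my*name.txt", "my_file.name.txt",
                "*file_name*", "*file_name"]:
    print(pattern, "MATCH" if matches_any(name, [pattern]) else "NO MATCH")
```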

***************

File Management

***************

davos also provides a way to tidy processed files upon completion. You can choose to

delete the file remotely once downloaded (effectively making it a *move* operation),

move the file locally, or both.

Delete from Host

If checked, all matched and downloaded files will be deleted from the Host. This

logic will run after each individual download has completed.

.. warning :: If the FTP user does not have permission to delete files on the Host, this step will fail and the Schedule will cancel the current run. A future run of the Schedule will skip all files previously scanned.

..

Move Downloaded File

The location to move each successfully downloaded file. This will occur after each individual

download has completed. A common use-case for this feature is to separate in-progress files from

completed files (i.e. ``/download/doing`` and ``/download/done``).

.. note :: The "move to" directory must be relative to the container's filesystem, so should be under ``/download``. Advanced users may create additional volume mappings if need be.

.. note :: If davos is unable to move the file, it will remain in its originating directory, and will continue on to the Schedule's next step without failure.

******************

Downstream Actions

******************

One of the unique aspects of davos in respect to FTP management is its ability to create hooks in to other

applications that may be interested in the downloaded files. This may be useful when

the download action is part of a wider workflow that must be continued outside of the scope

of davos.

Actions defined against a Schedule will run for each individually downloaded file *after*

the File Management step previously mentioned has run.

There are two types of Downstream Action: *Notifications* and *API Calls*.

Notifications

=============

Notifications are useful if you'd like to know whenever davos has successfully downloaded

a file. Generally speaking, no further action is taken after a notification is sent,

but SNS may be configured to include a subscriber to a topic that performs a further action.

.. note:: There is no limit to the number of notifications you can have.

Pushbullet

----------

You will need an account with `Pushbullet `_ in order to use this feature.

In your Pushbullet account, create an Access Token.

Access Token

Your Pushbullet account's access token. This will be used to authenticate

notification push requests to the Pushbullet API.

Amazon SNS

-------------------------

You will need an `Amazon AWS `_ account to use this feature.

Topic Arn

The Amazon Resource Name for an SNS Topic created under your AWS account. This

will be the topic that notifications are sent to.

Region

The region that the topic was created under. While regions are not mandatory for

Topic Arns, this will be used to authenticate your account and create an SNS

client in the correct region.

Access Key

The access key for an IAM User under your AWS account.

Secret Access Key

The second half of authentication with AWS. This is the secret key for the same

IAM User.

.. warning:: Be careful with IAM User permissions! You should create a new IAM User with permissions only to publish messages to your notification topic, nothing more! See :doc:`../../faq/index` for more details on best practice regarding IAM Users.

API Calls

=========

API Calls are a great way to create hooks in to other applications via their own HTTP API.

URL

The URL of the API you wish to call

Method

Available options are *GET*, *POST*, *PUT* and *DELETE*

Content-Type

Informs the target API what type of body you're sending (if any), e.g. "application/json"

Message Body

The request payload being sent to the target API

.. note:: If you need to reference the downloaded file in an HTTP request, use **$filename**. This will resolve to the file or folder that was matched and subsequently downloaded.
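As an illustration of how the **$filename** token resolves, the sketch below performs a plain text substitution. It is not davos's implementation: ``resolve_placeholders`` is a hypothetical helper, the target URL is invented, and whether davos substitutes in both the URL and the body, or escapes the value, is not stated here and is assumed for the example.

```python
def resolve_placeholders(template, file_name):
    """Replace every $filename token in the template with the downloaded name."""
    return template.replace("$filename", file_name)


# Hypothetical target API and payload, for illustration only.
url = resolve_placeholders("http://localhost:9000/import?file=$filename", "report.csv")
body = resolve_placeholders('{"path": "/download/$filename"}', "report.csv")
print(url)
print(body)
```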

================================================

FILE: docs/source/guides/index.rst

================================================

######

Guides

######

This section will run you through the aspects of the application itself, including installation,

first time use, and the concept of schedules (what they consist of), hosts, and how they tie together.

.. toctree::

:maxdepth: 1

installation

gettingstarted/index

appsettings

================================================

FILE: docs/source/guides/installation.rst

================================================

############

Installation

############

.. note :: davos has been written with `Docker `_ at the forefront regarding installation and deployment. This means that you should consider using the pre-built Docker image that `LinuxServer have provided `_ for this application.

***********

With Docker

***********

This is the recommended method of installation and deployment.

Install Docker

--------------

Firstly, you'll need to install `Docker `_, a container engine that is used to

fire up user-space virtual containers. I recommend using `Docker's official guide `_ on installing the latest version of Docker CE on your machine,

as the steps differ depending on your platform.

Build the container

===================

Create a new container from LinuxServer's image.

.. code-block:: text

docker create \

--name=davos \

-v :/config \

-v :/download \

-e PGID= -e PUID= \

-p 8080:8080 \

linuxserver/davos

Params

------

* ````

The folder on your machine where davos will place its configuration and log files.

Typically this will be somewhere like ``/home/me/davos``, but it can be anywhere.

* ````

The folder on your machine that davos can download files to. This is the volume mount

point that davos is aware of for all file downloads.

* ````

The id of the user you'd like davos to run as. All files downloaded by davos will be owned by this user.

* ````

The id of the group you'd like to attribute to the user davos runs as. All files downloaded by davos will be owned by this group.

.. warning:: Docker will run all containers as ``root`` by default. Omitting ``PUID`` and ``PGID`` is not recommended.

Run the container

=================

Once the container has been created, you can run it.

.. code-block:: text

docker start davos

After about 30 seconds, the application will be running and will be accessible on ``http://localhost:8080``. If you are running

davos on a remote server, substitute ``localhost`` with the server's IP address.

**************

Without Docker

**************

This is not the recommended method of installation and deployment, but has the potential for being the most configurable and flexible.

Davos does not have any prebuilt binaries, so you'll need to get the source and build it yourself (another reason to use Docker instead).

Get the source

--------------

.. code-block:: text

wget https://github.com/linuxserver/davos/archive/LatestRelease.zip

unzip LatestRelease.zip -d davos

Configure the application

-------------------------

By default, davos is configured to place all of its configuration in ``/config``, which may

not be preferable if you're running the application on bare metal. Firstly, reconfigure davos

to use your own defined directory for its database.

In ``conf/release/application.properties``, change ``spring.datasource.url``, e.g.:

.. code-block:: text

spring.datasource.url=jdbc:h2:file:/home/me/davos

You'll also need to do the same in ``conf/release/log4j2.xml``, this time for the appender:

.. code-block:: xml

Build davos

-----------

.. note:: davos requires the `Java 8 SDK `_ to build.

Once you've updated the configuration locations, you can build the binary.

.. code-block:: text

./gradlew build -Penv=release

This will create "davos-|release|.jar" in ``build/libs``. You should move this somewhere more fitting for an executable (``/var/lib``, for example).

It may also be worth renaming the .jar to "davos.jar", although this is not necessary.

Run davos

---------

.. note:: davos requires the `Java 8 JRE `_ to run. This is not required if you already have the SDK installed.

To run the application, run the following command:

.. code-block:: text

java -jar davos.jar

================================================

FILE: docs/source/index.rst

================================================

##############################

davos: FTP Download Automation

##############################

This is the documentation for `davos `_, a web-based tool for

automating and managing file downloads over FTP, FTPS and SFTP. Davos was born from the idea that

even today, FTP still has relevance in many different markets, but there weren't many web-based

solutions that provided an easy way to manage the movement of files (outside of a command line cron job)

from one place to another.

For those new to davos, look through the :doc:`guides/installation` and :doc:`guides/gettingstarted/index` guides.

They will run you through how to get and set up davos for the first time.

davos also provides a basic HTTP API that can be used to hook in to the application to manage things like

schedules, hosts, filters, and even to stop or start individual schedules.

.. toctree::

:maxdepth: 2

:caption: Contents

guides/index

reference/index

faq/index

developers/index

================================================

FILE: docs/source/reference/api.rst

================================================

###

API

###

davos provides an HTTP API that exposes Schedules and Hosts so they can be managed

outside the scope of the web application. This API is also used by the web application's

AJAX calls.

.. warning:: This API is completely unauthenticated, so anyone on your network can use it.

*********

/schedule

*********

POST

----

Creates a single Schedule.

.. code-block:: text

POST /api/v2/schedule HTTP 1.0

Host: localhost:8080

Content-Type: application/json

Accept: application/json

{

"name": String,

"interval": Integer,

"host": Integer,

"hostDirectory": String,

"localDirectory": String,

"transferType": String [ FILE | RECURSIVE ],

"automatic": Boolean,

"moveFileTo": String,

"filtersMandatory": Boolean,

"invertFilters": Boolean,

"deleteHostFile": Boolean,

"filters": [

{

"value": String

}

],

"notifications": {

"pushbullet": [

{

"apiKey": String

}

],

"sns": [

{

"topicArn": String,

"region": String,

"accessKey": String,

"secretAccessKey": String

}

]

},

"apis": [

{

"url": String,

"method": String [ POST | GET | PUT | DELETE ],

"contentType": String,

"body": String

}

]

}

For more information regarding what each field represents, see the :doc:`../guides/gettingstarted/schedules` documentation

in :doc:`../guides/gettingstarted/index`.
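A client could assemble this request as in the following sketch, which uses only Python's standard library and builds the request without sending it. The field values are illustrative: ``host`` must be the ``id`` of a Host that already exists, the unit of ``interval`` is not stated in this reference, and ``build_create_schedule_request`` is a hypothetical helper.

```python
import json
import urllib.request


def build_create_schedule_request(base_url, schedule):
    """Build (but do not send) the POST request that creates a Schedule."""
    return urllib.request.Request(
        url=base_url + "/api/v2/schedule",
        data=json.dumps(schedule).encode("utf-8"),
        headers={"Content-Type": "application/json", "Accept": "application/json"},
        method="POST",
    )


# Illustrative values only.
schedule = {
    "name": "Nightly Feed",
    "interval": 30,
    "host": 1,
    "hostDirectory": "/feeds",
    "localDirectory": "/download",
    "transferType": "FILE",
    "automatic": False,
    "moveFileTo": "",
    "filtersMandatory": False,
    "invertFilters": False,
    "deleteHostFile": False,
    "filters": [{"value": "*.txt"}],
    "notifications": {"pushbullet": [], "sns": []},
    "apis": [],
}

request = build_create_schedule_request("http://localhost:8080", schedule)
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request)
```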

Response

========

See: :ref:`Schedule Response Syntax <schedule-response>`.

**************

/schedule/{id}

**************

GET

---

Retrieves a single Schedule based on the supplied ``{id}``.

.. code-block:: text

GET /api/v2/schedule/{id} HTTP 1.0

Host: localhost:8080

Accept: application/json

Response

========

See: :ref:`Schedule Response Syntax <schedule-response>`.

PUT

---

Updates a single Schedule based on the given ``{id}``. All fields must be supplied, even if only a subset is

being updated. Use a GET to first obtain the most up-to-date payload before performing

a PUT.

.. code-block:: text

PUT /api/v2/schedule/{id} HTTP 1.0

Host: localhost:8080

Content-Type: application/json

Accept: application/json

{

"name": String,

"interval": Integer,

"host": Integer,

"hostDirectory": String,

"localDirectory": String,

"transferType": String [ FILE | RECURSIVE ],

"automatic": Boolean,

"moveFileTo": String,

"filtersMandatory": Boolean,

"invertFilters": Boolean,

"deleteHostFile": Boolean,

"filters": [

{

"id": Integer,

"value": String

}

],

"notifications": {

"pushbullet": [

{

"id": Integer,

"apiKey": String

}

],

"sns": [

{

"id": Integer,

"topicArn": String,

"region": String,

"accessKey": String,

"secretAccessKey": String

}

]

},

"apis": [

{

"url": String,

"method": String [ POST | GET | PUT | DELETE ],

"contentType": String,

"body": String

}

]

}

.. note:: If you are updating a listed object, you must provide the object's ``id``. If you do not, the API will remove the old reference and create a new one. To add a new item to the list, provide the new item (without an ``id``) alongside the existing one.
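The GET-then-PUT pattern described above might look like the sketch below. It is an assumption-laden illustration: ``prepare_update`` is a hypothetical helper, the ``current`` dictionary is a trimmed, made-up example of the ``body`` returned by a GET, and the top-level ``id`` is dropped because the documented PUT body does not include one (it is carried in the URL).

```python
import copy

# Metadata fields the reference describes as immutable.
READ_ONLY_FIELDS = ("running", "lastScannedFiles", "lastRunTime", "transfers")


def prepare_update(current, changes):
    """Return a full PUT payload: the current Schedule with `changes` applied."""
    payload = copy.deepcopy(current)
    for field in READ_ONLY_FIELDS:
        payload.pop(field, None)  # ignored by the API anyway; dropped for clarity
    payload.pop("id", None)       # the Schedule id travels in the URL path
    payload.update(changes)
    return payload


# Trimmed, made-up example of the "body" object returned by a GET.
current = {
    "id": 3,
    "name": "Nightly Feed",
    "interval": 30,
    "running": False,
    "lastScannedFiles": ["a.txt"],
    "filters": [{"id": 7, "value": "*.txt"}],
}

# Keep filter 7 (with its id) and add a new one (without an id).
payload = prepare_update(current, {
    "filters": current["filters"] + [{"value": "*.csv"}],
})
print(payload)
```

Sending the existing filter with its ``id`` keeps it in place, while the entry without an ``id`` is created as a new filter.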

Response

========

See: :ref:`Schedule Response Syntax <schedule-response>`.

DELETE

------

Deletes a single Schedule with the given ``{id}``.

.. code-block:: text

DELETE /api/v2/schedule/{id} HTTP 1.0

Host: localhost:8080

Accept: application/json

Response

========

.. code-block:: javascript

{

"status": String [ OK | Failed ],

"body": String

}

***************************

/schedule/{id}/scannedFiles

***************************

DELETE

------

Clears all items in the given Schedule's ``lastScannedFiles``.

.. code-block:: text

DELETE /api/v2/schedule/{id}/scannedFiles HTTP 1.0

Host: localhost:8080

Accept: application/json

Response

========

.. code-block:: javascript

{

"status": String [ OK | Failed ],

"body": String

}

**********************

/schedule/{id}/execute

**********************

POST

----

Starts/Stops an existing Schedule.

.. code-block:: text

POST /api/v2/schedule/{id}/execute

Host: localhost:8080

Content-Type: application/json

Accept: application/json

{

"command": String [ START | STOP ]

}

Response

========

.. code-block:: javascript

{

"status": String [ OK | Failed ],

"body": String

}
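As a rough sketch, a client could build the start/stop request as follows. Nothing is sent over the network here; ``build_execute_request`` is a hypothetical helper, and the Schedule id ``3`` is made up.

```python
import json
import urllib.request

VALID_COMMANDS = ("START", "STOP")


def build_execute_request(base_url, schedule_id, command):
    """Build (but do not send) the request that starts or stops a Schedule."""
    if command not in VALID_COMMANDS:
        raise ValueError("command must be START or STOP")
    return urllib.request.Request(
        url="{}/api/v2/schedule/{}/execute".format(base_url, schedule_id),
        data=json.dumps({"command": command}).encode("utf-8"),
        headers={"Content-Type": "application/json", "Accept": "application/json"},
        method="POST",
    )


request = build_execute_request("http://localhost:8080", 3, "START")
print(request.get_method(), request.full_url, request.data.decode("utf-8"))
```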

*****

/host

*****

POST

----

Creates a new Host.

.. code-block:: text

POST /api/v2/host

Host: localhost:8080

Content-Type: application/json

Accept: application/json

{

"name": String,

"address": String,

"port": Integer,

"protocol": String [ FTP | FTPS | SFTP ],

"username": String,

"password": String,

"identityFile": String,

"identityFileEnabled": Boolean

}

.. note:: If ``identityFileEnabled`` is set to TRUE, you must also provide ``identityFile``, otherwise provide ``password``.
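The credential rule in the note above can be checked on the client before the request is sent. The sketch below is illustrative only: ``missing_host_fields`` is a hypothetical helper, the example values are invented, and the list of always-required fields is taken from the Hosts guide (name, address and username).

```python
def missing_host_fields(host):
    """Return the names of required fields that are absent or empty."""
    required = ["name", "address", "username"]
    # The credential that must be present depends on identityFileEnabled.
    required.append("identityFile" if host.get("identityFileEnabled") else "password")
    return [field for field in required if not host.get(field)]


# Made-up SFTP host that enables an identity file but forgets to supply it.
host = {
    "name": "Feed server",
    "address": "ftp.example.com",
    "port": 22,
    "protocol": "SFTP",
    "username": "davos",
    "identityFileEnabled": True,
}
print(missing_host_fields(host))
```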

**********

/host/{id}

**********

GET

---

Retrieves a single Host based on the given ``{id}``.

.. code-block:: text

GET /api/v2/host/{id}

Host: localhost:8080

Accept: application/json

Response

========

See: :ref:`Host Response Syntax <host-response>`.

PUT

---

Updates a Host with the given ``{id}``.

.. code-block:: text

PUT /api/v2/host/{id}

Host: localhost:8080

Content-Type: application/json

Accept: application/json

{

"name": String,

"address": String,

"port": Integer,

"protocol": String [ FTP | FTPS | SFTP ],

"username": String,

"password": String,

"identityFile": String,

"identityFileEnabled": Boolean

}

.. note:: If ``identityFileEnabled`` is set to TRUE, you must also provide ``identityFile``, otherwise provide ``password``.

Response

========

See: :ref:`Host Response Syntax <host-response>`.

DELETE

------

Deletes a single Host with the given ``{id}``.

.. code-block:: text

DELETE /api/v2/host/{id} HTTP 1.0

Host: localhost:8080

Accept: application/json

Response

========

.. code-block:: javascript

{

"status": String [ OK | Failed ],

"body": String

}

.. warning:: If the Host you are attempting to delete is being used by an active Schedule, the DELETE call will fail.

***************

/testConnection

***************

POST

----

Allows you to assert whether or not the provided payload contains valid Host information.

.. code-block:: text

POST /api/v2/testConnection

Host: localhost:8080

Content-Type: application/json

{

"id": Integer,

"name": String,

"address": String,

"port": Integer,

"protocol": String [ FTP | FTPS | SFTP ],

"username": String,

"password": String,

"identityFile": String,

"identityFileEnabled": Boolean

}

Response

========

.. code-block:: javascript

{

"status": String [ OK | Failed ],

"body": String

}

*************

/settings/log

*************

POST

----

Changes the logging level of the application's core code. Unlike other POST calls,

there is no payload body. The level is passed in as a request parameter.

level

The level to change the logging to. Available options are DEBUG, INFO, WARN, ERROR, FATAL

.. code-block:: text

POST /api/v2/settings/log?level={LEVEL}

Host: localhost:8080

Accept: application/json

Response

========

.. code-block:: javascript

{

"status": String [ OK | Failed ],

"body": String

}
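Because the level travels as a request parameter rather than in a body, the request can be built as in this sketch. It constructs the request without sending it; ``build_log_level_request`` is a hypothetical helper.

```python
import urllib.parse
import urllib.request


def build_log_level_request(base_url, level):
    """Build (but do not send) the body-less POST that changes the log level."""
    query = urllib.parse.urlencode({"level": level})
    return urllib.request.Request(
        url="{}/api/v2/settings/log?{}".format(base_url, query),
        headers={"Accept": "application/json"},
        method="POST",
    )


request = build_log_level_request("http://localhost:8080", "DEBUG")
print(request.get_method(), request.full_url)
```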

*********

Responses

*********

.. _schedule-response:

Schedule Response Syntax

------------------------

.. code-block:: javascript

{

"status": String [ OK ],

"body": {

"id": Integer,

"name": String,

"interval": Integer,

"host": Integer,

"hostDirectory": String,

"localDirectory": String,

"transferType": String [ FILE | RECURSIVE ],

"automatic": Boolean,

"moveFileTo": String,

"running": Boolean,

"filtersMandatory": Boolean,

"invertFilters": Boolean,

"lastRunTime": String,

"deleteHostFile": Boolean,

"lastScannedFiles": [

String

],

"filters": [

{

"id": Integer,

"value": String

}

],

"notifications": {

"pushbullet": [

{

"id": Integer,

"apiKey": String

}

],

"sns": [

{

"id": Integer,

"topicArn": String,

"region": String,

"accessKey": String,

"secretAccessKey": String

}

]

},

"transfers": [

{

"fileName": String,

"fileSize": Integer,

"directory": Boolean,

"progress": {

"percentageComplete": Double,

"transferSpeed": Double

},

"status": String [ DOWNLOADING | SKIPPED | PENDING | FINISHED ]

}

],

"apis": [

{

"id": Integer,

"url": String,

"method": String [ POST | GET | PUT | DELETE ],

"contentType": String,

"body": String

}

]

}

}

.. note:: ``running``, ``lastScannedFiles``, ``lastRunTime`` and ``transfers`` are immutable metadata fields and can't be used in PUT or POST requests. If supplied, they will be ignored.

..

host

References the ``id`` of the linked host.

running

Describes whether or not the Schedule is running.

lastRunTime

The time recorded when the Schedule last *finished* running.

lastScannedFiles

A list of Strings that represent the files/folders found in the last run of the

schedule.

transfers

A list of transfer objects that describe all files being actioned. This list

will only be populated when the Schedule is running and is actively downloading.

.. _host-response:

Host Response Syntax

--------------------

Success

=======

.. code-block:: javascript

{

"status": String [ OK ],

"body": {

"id": Integer,

"name": String,

"address": String,

"port": Integer,

"protocol": String [ FTP | FTPS | SFTP ],

"username": String,

"password": String,

"identityFile": String,

"identityFileEnabled": Boolean

}

}

Failure

=======

.. code-block:: javascript

{

"status": String [ Failed ],

"body": String

}
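Since every response shares the same ``status``/``body`` envelope, a client can unwrap it in one place. The sketch below is one possible approach, not part of davos: ``DavosApiError`` and ``unwrap`` are hypothetical names, and the sample payloads are invented.

```python
import json


class DavosApiError(Exception):
    """Hypothetical exception type for a response whose status is not OK."""


def unwrap(raw_response):
    """Return the body of a successful response, or raise with the failure text."""
    envelope = json.loads(raw_response)
    if envelope.get("status") != "OK":
        raise DavosApiError(envelope.get("body"))
    return envelope["body"]


host = unwrap('{"status": "OK", "body": {"id": 1, "name": "Feed server"}}')
print(host["name"])
```

On a failure the ``body`` is a plain string, so it is passed through as the exception message.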

================================================

FILE: docs/source/reference/index.rst

================================================

#########

Reference

#########

.. toctree::

:maxdepth: 1

api

================================================

FILE: docs/source/requirements.txt

================================================

sphinx_rtd_theme

================================================

FILE: gradle/wrapper/gradle-wrapper.properties

================================================

#Fri Nov 11 19:22:20 GMT 2016

distributionBase=GRADLE_USER_HOME

distributionPath=wrapper/dists

zipStoreBase=GRADLE_USER_HOME

zipStorePath=wrapper/dists

distributionUrl=https\://services.gradle.org/distributions/gradle-2.14-bin.zip

================================================

FILE: gradlew

================================================

#!/usr/bin/env bash

##############################################################################

##

## Gradle start up script for UN*X

##

##############################################################################

# Attempt to set APP_HOME

# Resolve links: $0 may be a link

PRG="$0"

# Need this for relative symlinks.

while [ -h "$PRG" ] ; do

ls=`ls -ld "$PRG"`

link=`expr "$ls" : '.*-> \(.*\)$'`

if expr "$link" : '/.*' > /dev/null; then

PRG="$link"

else

PRG=`dirname "$PRG"`"/$link"

fi

done

SAVED="`pwd`"

cd "`dirname \"$PRG\"`/" >/dev/null

APP_HOME="`pwd -P`"

cd "$SAVED" >/dev/null

APP_NAME="Gradle"

APP_BASE_NAME=`basename "$0"`

# Add default JVM options here. You can also use JAVA_OPTS and GRADLE_OPTS to pass JVM options to this script.

DEFAULT_JVM_OPTS=""

# Use the maximum available, or set MAX_FD != -1 to use that value.

MAX_FD="maximum"

warn ( ) {

echo "$*"

}

die ( ) {

echo

echo "$*"

echo

exit 1

}

# OS specific support (must be 'true' or 'false').

cygwin=false

msys=false

darwin=false

nonstop=false

case "`uname`" in

CYGWIN* )

cygwin=true

;;

Darwin* )

darwin=true

;;

MINGW* )

msys=true

;;

NONSTOP* )

nonstop=true

;;

esac

CLASSPATH=$APP_HOME/gradle/wrapper/gradle-wrapper.jar

# Determine the Java command to use to start the JVM.

if [ -n "$JAVA_HOME" ] ; then

if [ -x "$JAVA_HOME/jre/sh/java" ] ; then

# IBM's JDK on AIX uses strange locations for the executables

JAVACMD="$JAVA_HOME/jre/sh/java"

else

JAVACMD="$JAVA_HOME/bin/java"

fi

if [ ! -x "$JAVACMD" ] ; then

die "ERROR: JAVA_HOME is set to an invalid directory: $JAVA_HOME

Please set the JAVA_HOME variable in your environment to match the

location of your Java installation."

fi

else

JAVACMD="java"

which java >/dev/null 2>&1 || die "ERROR: JAVA_HOME is not set and no 'java' command could be found in your PATH.

Please set the JAVA_HOME variable in your environment to match the

location of your Java installation."

fi

# Increase the maximum file descriptors if we can.

if [ "$cygwin" = "false" -a "$darwin" = "false" -a "$nonstop" = "false" ] ; then

MAX_FD_LIMIT=`ulimit -H -n`

if [ $? -eq 0 ] ; then

if [ "$MAX_FD" = "maximum" -o "$MAX_FD" = "max" ] ; then

MAX_FD="$MAX_FD_LIMIT"

fi

ulimit -n $MAX_FD

if [ $? -ne 0 ] ; then

warn "Could not set maximum file descriptor limit: $MAX_FD"

fi

else

warn "Could not query maximum file descriptor limit: $MAX_FD_LIMIT"

fi

fi

# For Darwin, add options to specify how the application appears in the dock

if $darwin; then

GRADLE_OPTS="$GRADLE_OPTS \"-Xdock:name=$APP_NAME\" \"-Xdock:icon=$APP_HOME/media/gradle.icns\""

fi

# For Cygwin, switch paths to Windows format before running java

if $cygwin ; then

APP_HOME=`cygpath --path --mixed "$APP_HOME"`

CLASSPATH=`cygpath --path --mixed "$CLASSPATH"`

JAVACMD=`cygpath --unix "$JAVACMD"`

# We build the pattern for arguments to be converted via cygpath

ROOTDIRSRAW=`find -L / -maxdepth 1 -mindepth 1 -type d 2>/dev/null`

SEP=""

for dir in $ROOTDIRSRAW ; do

ROOTDIRS="$ROOTDIRS$SEP$dir"

SEP="|"

done

OURCYGPATTERN="(^($ROOTDIRS))"

# Add a user-defined pattern to the cygpath arguments

if [ "$GRADLE_CYGPATTERN" != "" ] ; then

OURCYGPATTERN="$OURCYGPATTERN|($GRADLE_CYGPATTERN)"

fi

# Now convert the arguments - kludge to limit ourselves to /bin/sh

i=0

for arg in "$@" ; do

CHECK=`echo "$arg"|egrep -c "$OURCYGPATTERN" -`

CHECK2=`echo "$arg"|egrep -c "^-"` ### Determine if an option

if [ $CHECK -ne 0 ] && [ $CHECK2 -eq 0 ] ; then ### Added a condition

eval `echo args$i`=`cygpath --path --ignore --mixed "$arg"`

else

eval `echo args$i`="\"$arg\""

fi

i=$((i+1))

done

case $i in

(0) set -- ;;

(1) set -- "$args0" ;;

(2) set -- "$args0" "$args1" ;;

(3) set -- "$args0" "$args1" "$args2" ;;

(4) set -- "$args0" "$args1" "$args2" "$args3" ;;

(5) set -- "$args0" "$args1" "$args2" "$args3" "$args4" ;;

(6) set -- "$args0" "$args1" "$args2" "$args3" "$args4" "$args5" ;;

(7) set -- "$args0" "$args1" "$args2" "$args3" "$args4" "$args5" "$args6" ;;

(8) set -- "$args0" "$args1" "$args2" "$args3" "$args4" "$args5" "$args6" "$args7" ;;

(9) set -- "$args0" "$args1" "$args2" "$args3" "$args4" "$args5" "$args6" "$args7" "$args8" ;;

esac

fi

# Split up the JVM_OPTS and GRADLE_OPTS values into an array, following the shell quoting and substitution rules

function splitJvmOpts() {

JVM_OPTS=("$@")

}

eval splitJvmOpts $DEFAULT_JVM_OPTS $JAVA_OPTS $GRADLE_OPTS

JVM_OPTS[${#JVM_OPTS[*]}]="-Dorg.gradle.appname=$APP_BASE_NAME"

exec "$JAVACMD" "${JVM_OPTS[@]}" -classpath "$CLASSPATH" org.gradle.wrapper.GradleWrapperMain "$@"

================================================

FILE: gradlew.bat

================================================

@if "%DEBUG%" == "" @echo off

@rem ##########################################################################

@rem

@rem Gradle startup script for Windows

@rem

@rem ##########################################################################

@rem Set local scope for the variables with Windows NT shell

if "%OS%"=="Windows_NT" setlocal

set DIRNAME=%~dp0

if "%DIRNAME%" == "" set DIRNAME=.

set APP_BASE_NAME=%~n0

set APP_HOME=%DIRNAME%

@rem Add default JVM options here. You can also use JAVA_OPTS and GRADLE_OPTS to pass JVM options to this script.

set DEFAULT_JVM_OPTS=

@rem Find java.exe

if defined JAVA_HOME goto findJavaFromJavaHome

set JAVA_EXE=java.exe

%JAVA_EXE% -version >NUL 2>&1

if "%ERRORLEVEL%" == "0" goto init

echo.

echo ERROR: JAVA_HOME is not set and no 'java' command could be found in your PATH.

echo.

echo Please set the JAVA_HOME variable in your environment to match the

echo location of your Java installation.

goto fail

:findJavaFromJavaHome

set JAVA_HOME=%JAVA_HOME:"=%

set JAVA_EXE=%JAVA_HOME%/bin/java.exe

if exist "%JAVA_EXE%" goto init

echo.

echo ERROR: JAVA_HOME is set to an invalid directory: %JAVA_HOME%

echo.

echo Please set the JAVA_HOME variable in your environment to match the

echo location of your Java installation.

goto fail

:init

@rem Get command-line arguments, handling Windows variants

if not "%OS%" == "Windows_NT" goto win9xME_args

if "%@eval[2+2]" == "4" goto 4NT_args

:win9xME_args

@rem Slurp the command line arguments.

set CMD_LINE_ARGS=

set _SKIP=2

:win9xME_args_slurp

if "x%~1" == "x" goto execute

set CMD_LINE_ARGS=%*

goto execute

:4NT_args

@rem Get arguments from the 4NT Shell from JP Software

set CMD_LINE_ARGS=%$

:execute

@rem Setup the command line

set CLASSPATH=%APP_HOME%\gradle\wrapper\gradle-wrapper.jar

@rem Execute Gradle

"%JAVA_EXE%" %DEFAULT_JVM_OPTS% %JAVA_OPTS% %GRADLE_OPTS% "-Dorg.gradle.appname=%APP_BASE_NAME%" -classpath "%CLASSPATH%" org.gradle.wrapper.GradleWrapperMain %CMD_LINE_ARGS%

:end

@rem End local scope for the variables with Windows NT shell

if "%ERRORLEVEL%"=="0" goto mainEnd

:fail

rem Set variable GRADLE_EXIT_CONSOLE if you need the _script_ return code instead of

rem the _cmd.exe /c_ return code!

if not "" == "%GRADLE_EXIT_CONSOLE%" exit 1

exit /b 1

:mainEnd

if "%OS%"=="Windows_NT" endlocal

:omega

================================================

FILE: src/cucumber/java/io/linuxserver/davos/bdd/ClientStepDefs.java

================================================

package io.linuxserver.davos.bdd;

import static org.assertj.core.api.Assertions.assertThat;

import java.io.File;

import java.util.List;

import org.apache.commons.io.FileUtils;

import cucumber.api.java.After;

import cucumber.api.java.en.Then;

import cucumber.api.java.en.When;

import io.linuxserver.davos.bdd.helpers.FakeFTPServerFactory;

import io.linuxserver.davos.bdd.helpers.FakeSFTPServerFactory;

import io.linuxserver.davos.transfer.ftp.FTPFile;

import io.linuxserver.davos.transfer.ftp.client.Client;

import io.linuxserver.davos.transfer.ftp.client.FTPClient;

import io.linuxserver.davos.transfer.ftp.client.SFTPClient;

import io.linuxserver.davos.transfer.ftp.client.UserCredentials;

import io.linuxserver.davos.transfer.ftp.connection.Connection;

import io.linuxserver.davos.transfer.ftp.connection.progress.ProgressListener;

public class ClientStepDefs {

private static final String TMP = FileUtils.getTempDirectoryPath();

private Connection connection;

private Client client;

private ProgressListener progressListener;

@After("@Client")

public void after() {

// Guard against scenarios that fail before a client has been created

if (client != null) {

client.disconnect();

}

}

@When("^davos connects to the server$")

public void davos_connects_to_the_server() throws Throwable {

client = new FTPClient();

client.setCredentials(new UserCredentials("user", "password"));

client.setHost("localhost");

client.setPort(FakeFTPServerFactory.getPort());

connection = client.connect();

}

@When("^davos connects to the SFTP server$")

public void davos_connects_to_the_SFTP_server() throws Throwable {

client = new SFTPClient();

client.setCredentials(new UserCredentials("user", "password"));

client.setHost("localhost");

client.setPort(FakeSFTPServerFactory.getPort());

connection = client.connect();

}

@When("^deletes an SFTP directory$")

public void deletes_an_SFTP_directory() throws Throwable {

connection.deleteRemoteFile(new FTPFile("toDelete", 0, "/", 0, true));

}

@Then("^the SFTP directory is deleted on the server$")

public void the_SFTP_directory_is_deleted_on_the_server() throws Throwable {

assertThat(new File(TMP + "/toDelete").exists()).isFalse();

}

@Then("^listing the files will show the correct files$")

public void listing_the_files_will_show_the_correct_files() throws Throwable {

List<FTPFile> files = connection.listFiles("/tmp");

assertThat(files).hasSize(3);

assertThat(files.get(0).getName()).isEqualTo("file3.txt");

assertThat(files.get(1).getName()).isEqualTo("file2.txt");

assertThat(files.get(2).getName()).isEqualTo("file1.txt");

}

@When("^downloads a file$")