Repository: lmnt-com/haste

Branch: master

Commit: ceba32ecd373

Files: 102

Total size: 762.7 KB

Directory structure:

gitextract_l70fejx2/

├── .gitignore

├── CHANGELOG.md

├── LICENSE

├── Makefile

├── README.md

├── benchmarks/

│ ├── benchmark_gru.cc

│ ├── benchmark_lstm.cc

│ ├── cudnn_wrappers.h

│ └── report.py

├── build/

│ ├── MANIFEST.in

│ ├── common.py

│ ├── setup.pytorch.py

│ └── setup.tf.py

├── docs/

│ ├── pytorch/

│ │ ├── haste_pytorch/

│ │ │ ├── GRU.md

│ │ │ ├── IndRNN.md

│ │ │ ├── LSTM.md

│ │ │ ├── LayerNormGRU.md

│ │ │ └── LayerNormLSTM.md

│ │ └── haste_pytorch.md

│ └── tf/

│ ├── haste_tf/

│ │ ├── GRU.md

│ │ ├── GRUCell.md

│ │ ├── IndRNN.md

│ │ ├── LSTM.md

│ │ ├── LayerNorm.md

│ │ ├── LayerNormGRU.md

│ │ ├── LayerNormGRUCell.md

│ │ ├── LayerNormLSTM.md

│ │ ├── LayerNormLSTMCell.md

│ │ └── ZoneoutWrapper.md

│ └── haste_tf.md

├── examples/

│ ├── device_ptr.h

│ ├── gru.cc

│ └── lstm.cc

├── frameworks/

│ ├── pytorch/

│ │ ├── __init__.py

│ │ ├── base_rnn.py

│ │ ├── gru.cc

│ │ ├── gru.py

│ │ ├── indrnn.cc

│ │ ├── indrnn.py

│ │ ├── layer_norm_gru.cc

│ │ ├── layer_norm_gru.py

│ │ ├── layer_norm_indrnn.cc

│ │ ├── layer_norm_indrnn.py

│ │ ├── layer_norm_lstm.cc

│ │ ├── layer_norm_lstm.py

│ │ ├── lstm.cc

│ │ ├── lstm.py

│ │ ├── support.cc

│ │ └── support.h

│ └── tf/

│ ├── __init__.py

│ ├── arena.h

│ ├── base_rnn.py

│ ├── gru.cc

│ ├── gru.py

│ ├── gru_cell.py

│ ├── indrnn.cc

│ ├── indrnn.py

│ ├── layer_norm.cc

│ ├── layer_norm.py

│ ├── layer_norm_gru.cc

│ ├── layer_norm_gru.py

│ ├── layer_norm_gru_cell.py

│ ├── layer_norm_indrnn.cc

│ ├── layer_norm_indrnn.py

│ ├── layer_norm_lstm.cc

│ ├── layer_norm_lstm.py

│ ├── layer_norm_lstm_cell.py

│ ├── lstm.cc

│ ├── lstm.py

│ ├── support.cc

│ ├── support.h

│ ├── weight_config.py

│ └── zoneout_wrapper.py

├── lib/

│ ├── blas.h

│ ├── device_assert.h

│ ├── gru_backward_gpu.cu.cc

│ ├── gru_forward_gpu.cu.cc

│ ├── haste/

│ │ ├── gru.h

│ │ ├── indrnn.h

│ │ ├── layer_norm.h

│ │ ├── layer_norm_gru.h

│ │ ├── layer_norm_indrnn.h

│ │ ├── layer_norm_lstm.h

│ │ └── lstm.h

│ ├── haste.h

│ ├── indrnn_backward_gpu.cu.cc

│ ├── indrnn_forward_gpu.cu.cc

│ ├── inline_ops.h

│ ├── layer_norm_backward_gpu.cu.cc

│ ├── layer_norm_forward_gpu.cu.cc

│ ├── layer_norm_gru_backward_gpu.cu.cc

│ ├── layer_norm_gru_forward_gpu.cu.cc

│ ├── layer_norm_indrnn_backward_gpu.cu.cc

│ ├── layer_norm_indrnn_forward_gpu.cu.cc

│ ├── layer_norm_lstm_backward_gpu.cu.cc

│ ├── layer_norm_lstm_forward_gpu.cu.cc

│ ├── lstm_backward_gpu.cu.cc

│ └── lstm_forward_gpu.cu.cc

└── validation/

├── pytorch.py

├── pytorch_speed.py

├── tf.py

└── tf_pytorch.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

*.a

*.o

*.so

*.whl

benchmark_lstm

benchmark_gru

haste_lstm

haste_gru

================================================

FILE: CHANGELOG.md

================================================

# ChangeLog

## 0.4.0 (2020-04-13)

### Added

- New layer normalized GRU layer (`LayerNormGRU`).

- New IndRNN layer.

- CPU support for all PyTorch layers.

- Support for building PyTorch API on Windows.

- Added `state` argument to PyTorch layers to specify initial state.

- Added weight transforms to TensorFlow API (see docs for details).

- Added `get_weights` method to extract weights from RNN layers (TensorFlow).

- Added `to_native_weights` and `from_native_weights` to PyTorch API for `LSTM` and `GRU` layers.

- Validation tests to check for correctness.

### Changed

- Performance improvements to GRU layer.

- BREAKING CHANGE: PyTorch layers default to CPU instead of GPU.

- BREAKING CHANGE: `h` must not be transposed before passing it to `gru::BackwardPass::Iterate`.

### Fixed

- Multi-GPU training with TensorFlow caused by invalid sharing of `cublasHandle_t`.

## 0.3.0 (2020-03-09)

### Added

- PyTorch support.

- New layer normalized LSTM layer (`LayerNormLSTM`).

- New fused layer normalization layer.

### Fixed

- Occasional uninitialized memory use in TensorFlow LSTM implementation.

## 0.2.0 (2020-02-12)

### Added

- New time-fused API for LSTM (`lstm::ForwardPass::Run`, `lstm::BackwardPass::Run`).

- Benchmarking code to evaluate the performance of an implementation.

### Changed

- Performance improvements to existing iterative LSTM API.

- BREAKING CHANGE: `h` must not be transposed before passing it to `lstm::BackwardPass::Iterate`.

- BREAKING CHANGE: `dv` does not need to be allocated and `v` must be passed instead to `lstm::BackwardPass::Iterate`.

## 0.1.0 (2020-01-29)

### Added

- Initial release of Haste.

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright 2020 LMNT, Inc.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: Makefile

================================================

AR ?= ar

CXX ?= g++

NVCC ?= nvcc -ccbin $(CXX)

PYTHON ?= python

ifeq ($(OS),Windows_NT)

LIBHASTE := haste.lib

CUDA_HOME ?= $(CUDA_PATH)

AR := lib

AR_FLAGS := /nologo /out:$(LIBHASTE)

NVCC_FLAGS := -x cu -Xcompiler "/MD"

else

LIBHASTE := libhaste.a

CUDA_HOME ?= /usr/local/cuda

AR ?= ar

AR_FLAGS := -crv $(LIBHASTE)

NVCC_FLAGS := -std=c++11 -x cu -Xcompiler -fPIC

endif

LOCAL_CFLAGS := -I/usr/include/eigen3 -I$(CUDA_HOME)/include -Ilib -O3

LOCAL_LDFLAGS := -L$(CUDA_HOME)/lib64 -L. -lcudart -lcublas

GPU_ARCH_FLAGS := -gencode arch=compute_37,code=compute_37 -gencode arch=compute_60,code=compute_60 -gencode arch=compute_70,code=compute_70

# Small enough project that we can just recompile all the time.

.PHONY: all haste haste_tf haste_pytorch libhaste_tf examples benchmarks clean

all: haste haste_tf haste_pytorch examples benchmarks

haste:

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/lstm_forward_gpu.cu.cc -o lib/lstm_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/lstm_backward_gpu.cu.cc -o lib/lstm_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/gru_forward_gpu.cu.cc -o lib/gru_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/gru_backward_gpu.cu.cc -o lib/gru_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_forward_gpu.cu.cc -o lib/layer_norm_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_backward_gpu.cu.cc -o lib/layer_norm_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_lstm_forward_gpu.cu.cc -o lib/layer_norm_lstm_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_lstm_backward_gpu.cu.cc -o lib/layer_norm_lstm_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_gru_forward_gpu.cu.cc -o lib/layer_norm_gru_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_gru_backward_gpu.cu.cc -o lib/layer_norm_gru_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/indrnn_backward_gpu.cu.cc -o lib/indrnn_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/indrnn_forward_gpu.cu.cc -o lib/indrnn_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_indrnn_forward_gpu.cu.cc -o lib/layer_norm_indrnn_forward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(NVCC) $(GPU_ARCH_FLAGS) -c lib/layer_norm_indrnn_backward_gpu.cu.cc -o lib/layer_norm_indrnn_backward_gpu.o $(NVCC_FLAGS) $(LOCAL_CFLAGS)

$(AR) $(AR_FLAGS) lib/*.o

libhaste_tf: haste

$(eval TF_CFLAGS := $(shell $(PYTHON) -c 'import tensorflow as tf; print(" ".join(tf.sysconfig.get_compile_flags()))'))

$(eval TF_LDFLAGS := $(shell $(PYTHON) -c 'import tensorflow as tf; print(" ".join(tf.sysconfig.get_link_flags()))'))

$(CXX) -std=c++11 -c frameworks/tf/lstm.cc -o frameworks/tf/lstm.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/gru.cc -o frameworks/tf/gru.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/layer_norm.cc -o frameworks/tf/layer_norm.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/layer_norm_gru.cc -o frameworks/tf/layer_norm_gru.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/layer_norm_indrnn.cc -o frameworks/tf/layer_norm_indrnn.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/layer_norm_lstm.cc -o frameworks/tf/layer_norm_lstm.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/indrnn.cc -o frameworks/tf/indrnn.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -std=c++11 -c frameworks/tf/support.cc -o frameworks/tf/support.o $(LOCAL_CFLAGS) $(TF_CFLAGS) -fPIC

$(CXX) -shared frameworks/tf/*.o libhaste.a -o frameworks/tf/libhaste_tf.so $(LOCAL_LDFLAGS) $(TF_LDFLAGS) -fPIC

# Dependencies handled by setup.py

haste_tf:

@$(eval TMP := $(shell mktemp -d))

@cp -r . $(TMP)

@cat build/common.py build/setup.tf.py > $(TMP)/setup.py

@(cd $(TMP); $(PYTHON) setup.py -q bdist_wheel)

@cp $(TMP)/dist/*.whl .

@rm -rf $(TMP)

# Dependencies handled by setup.py

haste_pytorch:

@$(eval TMP := $(shell mktemp -d))

@cp -r . $(TMP)

@cat build/common.py build/setup.pytorch.py > $(TMP)/setup.py

@(cd $(TMP); $(PYTHON) setup.py -q bdist_wheel)

@cp $(TMP)/dist/*.whl .

@rm -rf $(TMP)

dist:

@$(eval TMP := $(shell mktemp -d))

@cp -r . $(TMP)

@cp build/MANIFEST.in $(TMP)

@cat build/common.py build/setup.tf.py > $(TMP)/setup.py

@(cd $(TMP); $(PYTHON) setup.py -q sdist)

@cp $(TMP)/dist/*.tar.gz .

@rm -rf $(TMP)

@$(eval TMP := $(shell mktemp -d))

@cp -r . $(TMP)

@cp build/MANIFEST.in $(TMP)

@cat build/common.py build/setup.pytorch.py > $(TMP)/setup.py

@(cd $(TMP); $(PYTHON) setup.py -q sdist)

@cp $(TMP)/dist/*.tar.gz .

@rm -rf $(TMP)

examples: haste

$(CXX) -std=c++11 examples/lstm.cc $(LIBHASTE) $(LOCAL_CFLAGS) $(LOCAL_LDFLAGS) -o haste_lstm -Wno-ignored-attributes

$(CXX) -std=c++11 examples/gru.cc $(LIBHASTE) $(LOCAL_CFLAGS) $(LOCAL_LDFLAGS) -o haste_gru -Wno-ignored-attributes

benchmarks: haste

$(CXX) -std=c++11 benchmarks/benchmark_lstm.cc $(LIBHASTE) $(LOCAL_CFLAGS) $(LOCAL_LDFLAGS) -o benchmark_lstm -Wno-ignored-attributes -lcudnn

$(CXX) -std=c++11 benchmarks/benchmark_gru.cc $(LIBHASTE) $(LOCAL_CFLAGS) $(LOCAL_LDFLAGS) -o benchmark_gru -Wno-ignored-attributes -lcudnn

clean:

rm -fr benchmark_lstm benchmark_gru haste_lstm haste_gru haste_*.whl haste_*.tar.gz

find . \( -iname '*.o' -o -iname '*.so' -o -iname '*.a' -o -iname '*.lib' \) -delete

================================================

FILE: README.md

================================================

--------------------------------------------------------------------------------

[](https://github.com/lmnt-com/haste/releases) [](https://colab.research.google.com/drive/1hzYhcyvbXYMAUwa3515BszSkhx1UUFSt) [](LICENSE)

**We're hiring!**

If you like what we're building here, [come join us at LMNT](https://explore.lmnt.com).

Haste is a CUDA implementation of fused RNN layers with built-in [DropConnect](http://proceedings.mlr.press/v28/wan13.html) and [Zoneout](https://arxiv.org/abs/1606.01305) regularization. These layers are exposed through C++ and Python APIs for easy integration into your own projects or machine learning frameworks.

Which RNN types are supported?

- [GRU](https://en.wikipedia.org/wiki/Gated_recurrent_unit)

- [IndRNN](http://arxiv.org/abs/1803.04831)

- [LSTM](https://en.wikipedia.org/wiki/Long_short-term_memory)

- [Layer Normalized GRU](https://arxiv.org/abs/1607.06450)

- [Layer Normalized LSTM](https://arxiv.org/abs/1607.06450)

What's included in this project?

- a standalone C++ API (`libhaste`)

- a TensorFlow Python API (`haste_tf`)

- a PyTorch API (`haste_pytorch`)

- examples for writing your own custom C++ inference / training code using `libhaste`

- benchmarking programs to evaluate the performance of RNN implementations

For questions or feedback about Haste, please open an issue on GitHub or send us an email at [haste@lmnt.com](mailto:haste@lmnt.com).

## Install

Here's what you'll need to get started:

- a [CUDA Compute Capability](https://developer.nvidia.com/cuda-gpus) 3.7+ GPU (required)

- [CUDA Toolkit](https://developer.nvidia.com/cuda-toolkit) 10.0+ (required)

- [TensorFlow GPU](https://www.tensorflow.org/install/gpu) 1.14+ or 2.0+ for TensorFlow integration (optional)

- [PyTorch](https://pytorch.org) 1.3+ for PyTorch integration (optional)

- [Eigen 3](http://eigen.tuxfamily.org/) to build the C++ examples (optional)

- [cuDNN Developer Library](https://developer.nvidia.com/rdp/cudnn-archive) to build benchmarking programs (optional)

Once you have the prerequisites, you can install with pip or by building the source code.

### Using pip

```

pip install haste_pytorch

pip install haste_tf

```

### Building from source

```

make # Build everything

make haste # ;) Build C++ API

make haste_tf # Build TensorFlow API

make haste_pytorch # Build PyTorch API

make examples

make benchmarks

```

If you built the TensorFlow or PyTorch API, install it with `pip`:

```

pip install haste_tf-*.whl

pip install haste_pytorch-*.whl

```

If the CUDA Toolkit that you're building against is not in `/usr/local/cuda`, you must specify the

`$CUDA_HOME` environment variable before running make:

```

CUDA_HOME=/usr/local/cuda-10.2 make

```

## Performance

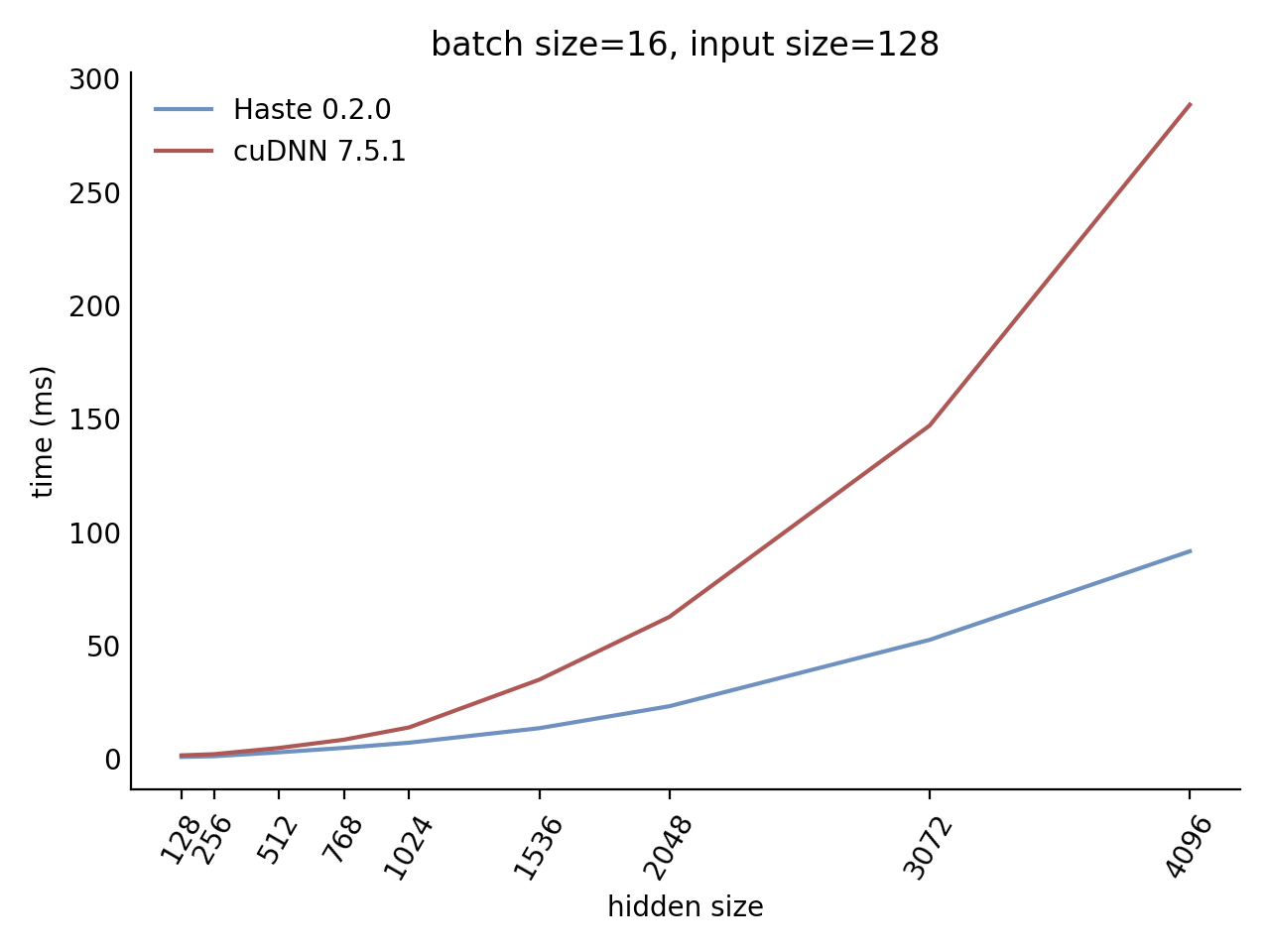

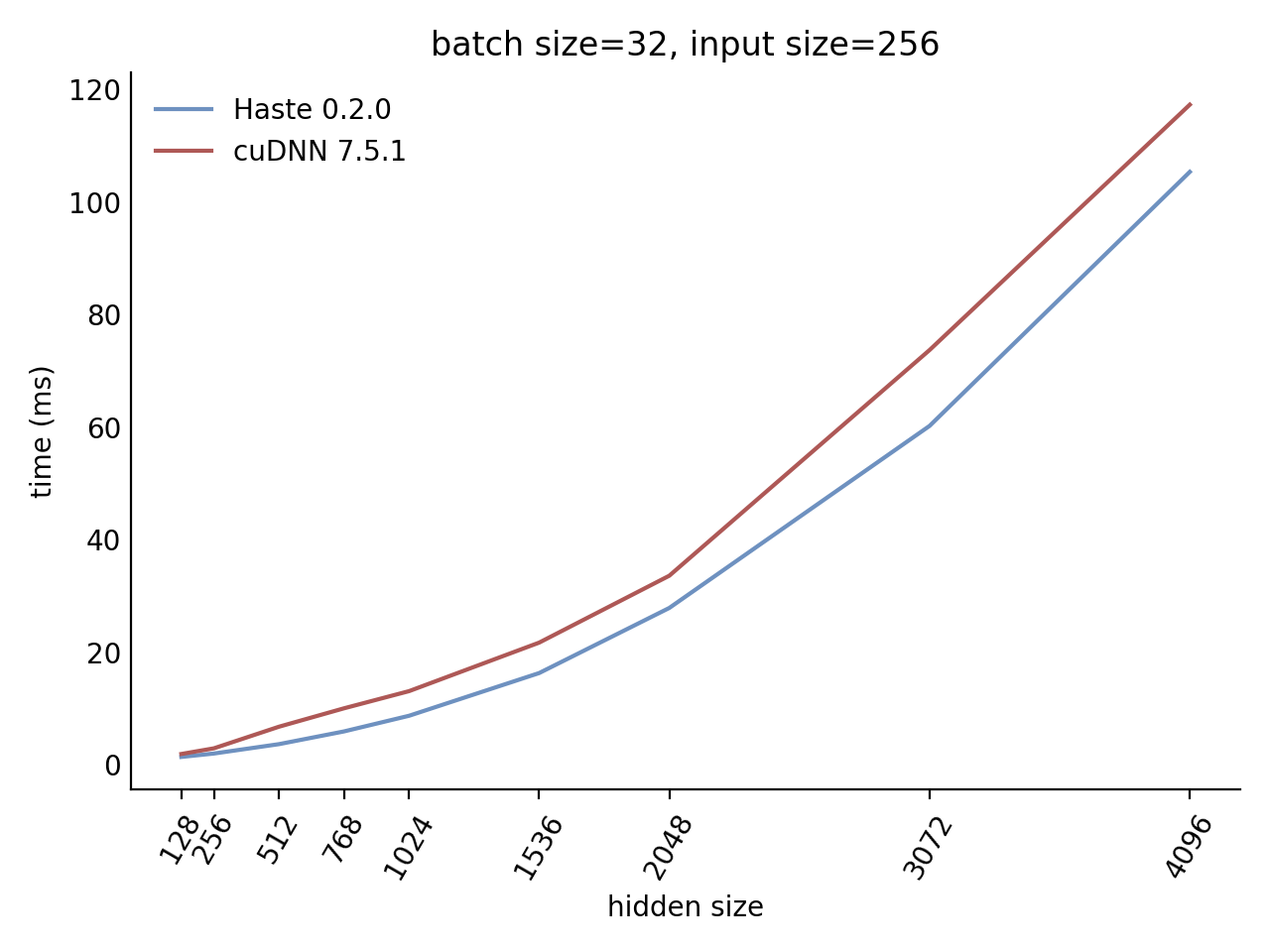

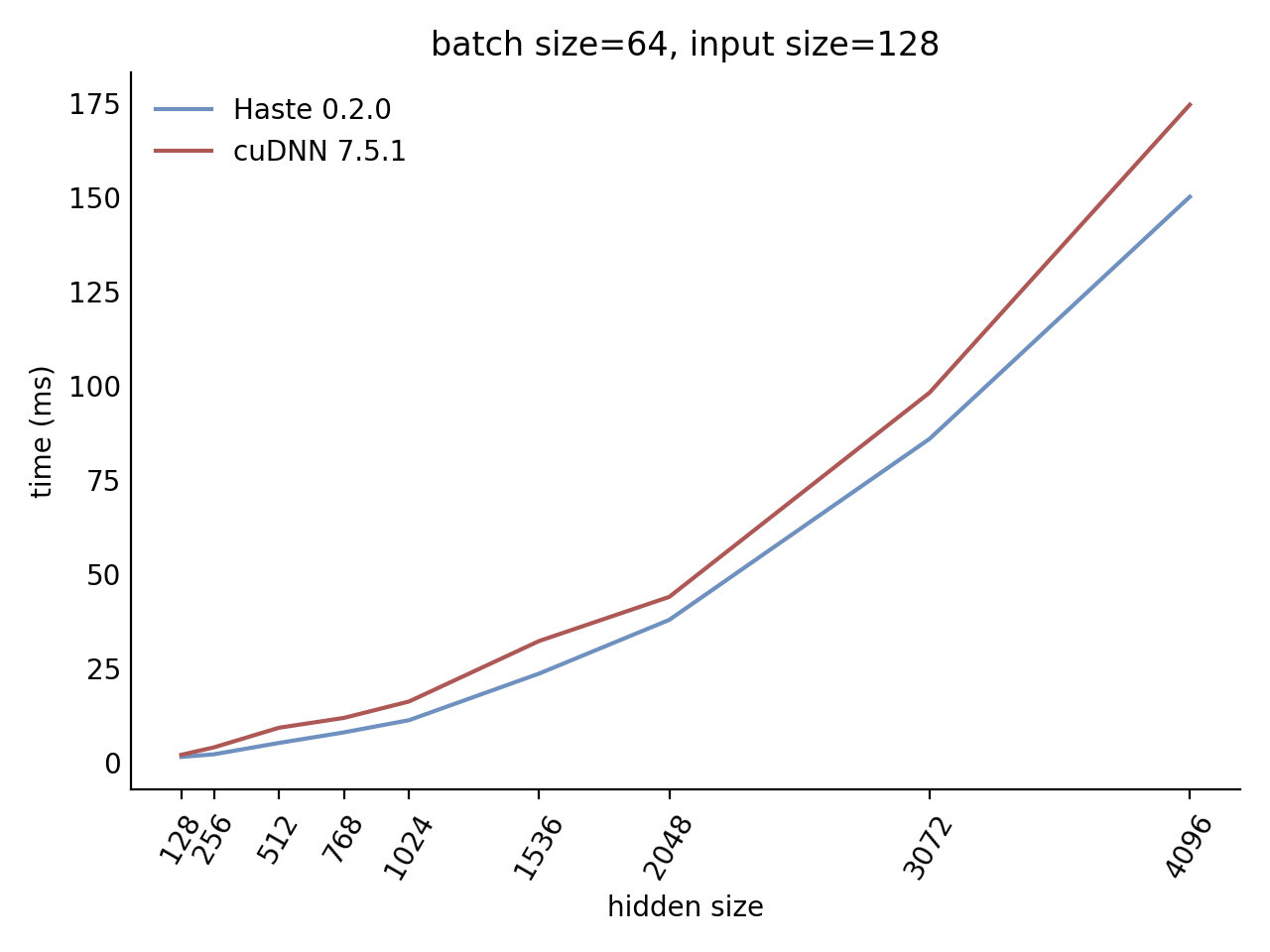

Our LSTM and GRU benchmarks indicate that Haste has the fastest publicly available implementation for nearly all problem sizes. The following charts show our LSTM results, but the GRU results are qualitatively similar.

Here is our complete LSTM benchmark result grid:

[`N=1 C=64`](https://lmnt.com/assets/haste/benchmark/report_n=1_c=64.png)

[`N=1 C=128`](https://lmnt.com/assets/haste/benchmark/report_n=1_c=128.png)

[`N=1 C=256`](https://lmnt.com/assets/haste/benchmark/report_n=1_c=256.png)

[`N=1 C=512`](https://lmnt.com/assets/haste/benchmark/report_n=1_c=512.png)

[`N=32 C=64`](https://lmnt.com/assets/haste/benchmark/report_n=32_c=64.png)

[`N=32 C=128`](https://lmnt.com/assets/haste/benchmark/report_n=32_c=128.png)

[`N=32 C=256`](https://lmnt.com/assets/haste/benchmark/report_n=32_c=256.png)

[`N=32 C=512`](https://lmnt.com/assets/haste/benchmark/report_n=32_c=512.png)

[`N=64 C=64`](https://lmnt.com/assets/haste/benchmark/report_n=64_c=64.png)

[`N=64 C=128`](https://lmnt.com/assets/haste/benchmark/report_n=64_c=128.png)

[`N=64 C=256`](https://lmnt.com/assets/haste/benchmark/report_n=64_c=256.png)

[`N=64 C=512`](https://lmnt.com/assets/haste/benchmark/report_n=64_c=512.png)

[`N=128 C=64`](https://lmnt.com/assets/haste/benchmark/report_n=128_c=64.png)

[`N=128 C=128`](https://lmnt.com/assets/haste/benchmark/report_n=128_c=128.png)

[`N=128 C=256`](https://lmnt.com/assets/haste/benchmark/report_n=128_c=256.png)

[`N=128 C=512`](https://lmnt.com/assets/haste/benchmark/report_n=128_c=512.png)

## Documentation

### TensorFlow API

```python

import haste_tf as haste

gru_layer = haste.GRU(num_units=256, direction='bidirectional', zoneout=0.1, dropout=0.05)

indrnn_layer = haste.IndRNN(num_units=256, direction='bidirectional', zoneout=0.1)

lstm_layer = haste.LSTM(num_units=256, direction='bidirectional', zoneout=0.1, dropout=0.05)

norm_gru_layer = haste.LayerNormGRU(num_units=256, direction='bidirectional', zoneout=0.1, dropout=0.05)

norm_lstm_layer = haste.LayerNormLSTM(num_units=256, direction='bidirectional', zoneout=0.1, dropout=0.05)

# `x` is a tensor with shape [N,T,C]

x = tf.random.normal([5, 25, 128])

y, state = gru_layer(x, training=True)

y, state = indrnn_layer(x, training=True)

y, state = lstm_layer(x, training=True)

y, state = norm_gru_layer(x, training=True)

y, state = norm_lstm_layer(x, training=True)

```

The TensorFlow Python API is documented in [`docs/tf/haste_tf.md`](docs/tf/haste_tf.md).

### PyTorch API

```python

import torch

import haste_pytorch as haste

gru_layer = haste.GRU(input_size=128, hidden_size=256, zoneout=0.1, dropout=0.05)

indrnn_layer = haste.IndRNN(input_size=128, hidden_size=256, zoneout=0.1)

lstm_layer = haste.LSTM(input_size=128, hidden_size=256, zoneout=0.1, dropout=0.05)

norm_gru_layer = haste.LayerNormGRU(input_size=128, hidden_size=256, zoneout=0.1, dropout=0.05)

norm_lstm_layer = haste.LayerNormLSTM(input_size=128, hidden_size=256, zoneout=0.1, dropout=0.05)

gru_layer.cuda()

indrnn_layer.cuda()

lstm_layer.cuda()

norm_gru_layer.cuda()

norm_lstm_layer.cuda()

# `x` is a CUDA tensor with shape [T,N,C]

x = torch.rand([25, 5, 128]).cuda()

y, state = gru_layer(x)

y, state = indrnn_layer(x)

y, state = lstm_layer(x)

y, state = norm_gru_layer(x)

y, state = norm_lstm_layer(x)

```

The PyTorch API is documented in [`docs/pytorch/haste_pytorch.md`](docs/pytorch/haste_pytorch.md).

### C++ API

The C++ API is documented in [`lib/haste/*.h`](lib/haste/) and there are code samples in [`examples/`](examples/).

## Code layout

- [`benchmarks/`](benchmarks): programs to evaluate performance of RNN implementations

- [`docs/tf/`](docs/tf): API reference documentation for `haste_tf`

- [`docs/pytorch/`](docs/pytorch): API reference documentation for `haste_pytorch`

- [`examples/`](examples): examples for writing your own C++ inference / training code using `libhaste`

- [`frameworks/tf/`](frameworks/tf): TensorFlow Python API and custom op code

- [`frameworks/pytorch/`](frameworks/pytorch): PyTorch API and custom op code

- [`lib/`](lib): CUDA kernels and C++ API

- [`validation/`](validation): scripts to validate output and gradients of RNN layers

## Implementation notes

- the GRU implementation is based on `1406.1078v1` (same as cuDNN) rather than `1406.1078v3`

- Zoneout on LSTM cells is applied to the hidden state only, and not the cell state

- the layer normalized LSTM implementation uses [these equations](https://github.com/lmnt-com/haste/issues/1)

## References

1. Hochreiter, S., & Schmidhuber, J. (1997). Long Short-Term Memory. _Neural Computation_, _9_(8), 1735–1780. https://doi.org/10.1162/neco.1997.9.8.1735

1. Cho, K., van Merrienboer, B., Gulcehre, C., Bahdanau, D., Bougares, F., Schwenk, H., & Bengio, Y. (2014). Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation. _arXiv:1406.1078 [cs, stat]_. http://arxiv.org/abs/1406.1078.

1. Wan, L., Zeiler, M., Zhang, S., Cun, Y. L., & Fergus, R. (2013). Regularization of Neural Networks using DropConnect. In _International Conference on Machine Learning_ (pp. 1058–1066). Presented at the International Conference on Machine Learning. http://proceedings.mlr.press/v28/wan13.html.

1. Krueger, D., Maharaj, T., Kramár, J., Pezeshki, M., Ballas, N., Ke, N. R., et al. (2017). Zoneout: Regularizing RNNs by Randomly Preserving Hidden Activations. _arXiv:1606.01305 [cs]_. http://arxiv.org/abs/1606.01305.

1. Ba, J., Kiros, J.R., & Hinton, G.E. (2016). Layer Normalization. _arXiv:1607.06450 [cs, stat]_. https://arxiv.org/abs/1607.06450.

1. Li, S., Li, W., Cook, C., Zhu, C., & Gao, Y. (2018). Independently Recurrent Neural Network (IndRNN): Building A Longer and Deeper RNN. _arXiv:1803.04831 [cs]_. http://arxiv.org/abs/1803.04831.

## Citing this work

To cite this work, please use the following BibTeX entry:

```

@misc{haste2020,

title = {Haste: a fast, simple, and open RNN library},

author = {Sharvil Nanavati},

year = 2020,

month = "Jan",

howpublished = {\url{https://github.com/lmnt-com/haste/}},

}

```

## License

[Apache 2.0](LICENSE)

================================================

FILE: benchmarks/benchmark_gru.cc

================================================

// Copyright 2020 LMNT, Inc. All Rights Reserved.

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writing, software

// distributed under the License is distributed on an "AS IS" BASIS,

// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

// See the License for the specific language governing permissions and

// limitations under the License.

// ==============================================================================

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include "../examples/device_ptr.h"

#include "cudnn_wrappers.h"

#include "haste.h"

using haste::v0::gru::BackwardPass;

using haste::v0::gru::ForwardPass;

using std::string;

using Tensor1 = Eigen::Tensor;

using Tensor2 = Eigen::Tensor;

using Tensor3 = Eigen::Tensor;

static constexpr int DEFAULT_SAMPLE_SIZE = 10;

static constexpr int DEFAULT_TIME_STEPS = 50;

static cudnnHandle_t g_cudnn_handle;

static cublasHandle_t g_blas_handle;

float TimeLoop(std::function fn, int iterations) {

cudaEvent_t start, stop;

cudaEventCreate(&start);

cudaEventCreate(&stop);

cudaEventRecord(start);

for (int i = 0; i < iterations; ++i)

fn();

float elapsed_ms;

cudaEventRecord(stop);

cudaEventSynchronize(stop);

cudaEventElapsedTime(&elapsed_ms, start, stop);

cudaEventDestroy(start);

cudaEventDestroy(stop);

return elapsed_ms / iterations;

}

float CudnnInference(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& bx,

const Tensor1& br,

const Tensor3& x) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

device_ptr x_dev(x);

device_ptr h_dev(batch_size * hidden_size);

device_ptr c_dev(batch_size * hidden_size);

device_ptr y_dev(time_steps * batch_size * hidden_size);

device_ptr h_out_dev(batch_size * hidden_size);

device_ptr c_out_dev(batch_size * hidden_size);

h_dev.zero();

c_dev.zero();

// Descriptors all the way down. Nice.

RnnDescriptor rnn_descriptor(g_cudnn_handle, hidden_size, CUDNN_GRU);

TensorDescriptorArray x_descriptors(time_steps, { batch_size, input_size, 1 });

TensorDescriptorArray y_descriptors(time_steps, { batch_size, hidden_size, 1 });

auto h_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

auto c_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

auto h_out_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

auto c_out_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

size_t workspace_size;

cudnnGetRNNWorkspaceSize(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&x_descriptors,

&workspace_size);

auto workspace_dev = device_ptr::NewByteSized(workspace_size);

size_t w_count;

cudnnGetRNNParamsSize(

g_cudnn_handle,

*rnn_descriptor,

*&x_descriptors,

&w_count,

CUDNN_DATA_FLOAT);

auto w_dev = device_ptr::NewByteSized(w_count);

FilterDescriptor w_descriptor(w_dev.Size());

float ms = TimeLoop([&]() {

cudnnRNNForwardInference(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&x_descriptors,

x_dev.data,

*h_descriptor,

h_dev.data,

*c_descriptor,

c_dev.data,

*w_descriptor,

w_dev.data,

&y_descriptors,

y_dev.data,

*h_out_descriptor,

h_out_dev.data,

*c_out_descriptor,

c_out_dev.data,

workspace_dev.data,

workspace_size);

}, sample_size);

return ms;

}

float CudnnTrain(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& bx,

const Tensor1& br,

const Tensor3& x,

const Tensor3& dh) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

device_ptr y_dev(time_steps * batch_size * hidden_size);

device_ptr dy_dev(time_steps * batch_size * hidden_size);

device_ptr dhy_dev(batch_size * hidden_size);

device_ptr dcy_dev(batch_size * hidden_size);

device_ptr hx_dev(batch_size * hidden_size);

device_ptr cx_dev(batch_size * hidden_size);

device_ptr dx_dev(time_steps * batch_size * input_size);

device_ptr dhx_dev(batch_size * hidden_size);

device_ptr dcx_dev(batch_size * hidden_size);

RnnDescriptor rnn_descriptor(g_cudnn_handle, hidden_size, CUDNN_GRU);

TensorDescriptorArray y_descriptors(time_steps, { batch_size, hidden_size, 1 });

TensorDescriptorArray dy_descriptors(time_steps, { batch_size, hidden_size, 1 });

TensorDescriptorArray dx_descriptors(time_steps, { batch_size, input_size, 1 });

TensorDescriptor dhy_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor dcy_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor hx_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor cx_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor dhx_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor dcx_descriptor({ 1, batch_size, hidden_size });

size_t workspace_size = 0;

cudnnGetRNNWorkspaceSize(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

&workspace_size);

auto workspace_dev = device_ptr::NewByteSized(workspace_size);

size_t w_count = 0;

cudnnGetRNNParamsSize(

g_cudnn_handle,

*rnn_descriptor,

*&dx_descriptors,

&w_count,

CUDNN_DATA_FLOAT);

auto w_dev = device_ptr::NewByteSized(w_count);

FilterDescriptor w_descriptor(w_dev.Size());

size_t reserve_size = 0;

cudnnGetRNNTrainingReserveSize(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

&reserve_size);

auto reserve_dev = device_ptr::NewByteSized(reserve_size);

float ms = TimeLoop([&]() {

cudnnRNNForwardTraining(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

dx_dev.data,

*hx_descriptor,

hx_dev.data,

*cx_descriptor,

cx_dev.data,

*w_descriptor,

w_dev.data,

&y_descriptors,

y_dev.data,

*dhy_descriptor,

dhy_dev.data,

*dcy_descriptor,

dcy_dev.data,

workspace_dev.data,

workspace_size,

reserve_dev.data,

reserve_size);

cudnnRNNBackwardData(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&y_descriptors,

y_dev.data,

&dy_descriptors,

dy_dev.data,

*dhy_descriptor,

dhy_dev.data,

*dcy_descriptor,

dcy_dev.data,

*w_descriptor,

w_dev.data,

*hx_descriptor,

hx_dev.data,

*cx_descriptor,

cx_dev.data,

&dx_descriptors,

dx_dev.data,

*dhx_descriptor,

dhx_dev.data,

*dcx_descriptor,

dcx_dev.data,

workspace_dev.data,

workspace_size,

reserve_dev.data,

reserve_size);

cudnnRNNBackwardWeights(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

dx_dev.data,

*hx_descriptor,

hx_dev.data,

&y_descriptors,

y_dev.data,

workspace_dev.data,

workspace_size,

*w_descriptor,

w_dev.data,

reserve_dev.data,

reserve_size);

}, sample_size);

return ms;

}

float HasteInference(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& bx,

const Tensor1& br,

const Tensor3& x) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

// Copy weights over to GPU.

device_ptr W_dev(W);

device_ptr R_dev(R);

device_ptr bx_dev(bx);

device_ptr br_dev(br);

device_ptr x_dev(x);

device_ptr h_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr tmp_Wx_dev(time_steps * batch_size * hidden_size * 3);

device_ptr tmp_Rh_dev(batch_size * hidden_size * 3);

h_dev.zero();

// Settle down the GPU and off we go!

cudaDeviceSynchronize();

float ms = TimeLoop([&]() {

ForwardPass forward(

false,

batch_size,

input_size,

hidden_size,

g_blas_handle);

forward.Run(

time_steps,

W_dev.data,

R_dev.data,

bx_dev.data,

br_dev.data,

x_dev.data,

h_dev.data,

nullptr,

tmp_Wx_dev.data,

tmp_Rh_dev.data,

0.0f,

nullptr);

}, sample_size);

return ms;

}

float HasteTrain(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& bx,

const Tensor1& br,

const Tensor3& x,

const Tensor3& dh) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

device_ptr W_dev(W);

device_ptr R_dev(R);

device_ptr x_dev(x);

device_ptr h_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr v_dev(time_steps * batch_size * hidden_size * 4);

device_ptr tmp_Wx_dev(time_steps * batch_size * hidden_size * 3);

device_ptr tmp_Rh_dev(batch_size * hidden_size * 3);

device_ptr W_t_dev(W);

device_ptr R_t_dev(R);

device_ptr bx_dev(bx);

device_ptr br_dev(br);

device_ptr x_t_dev(x);

// These gradients should actually come "from above" but we're just allocating

// a bunch of uninitialized memory and passing it in.

device_ptr dh_new_dev(dh);

device_ptr dx_dev(time_steps * batch_size * input_size);

device_ptr dW_dev(input_size * hidden_size * 3);

device_ptr dR_dev(hidden_size * hidden_size * 3);

device_ptr dbx_dev(hidden_size * 3);

device_ptr dbr_dev(hidden_size * 3);

device_ptr dh_dev(batch_size * hidden_size);

device_ptr dp_dev(time_steps * batch_size * hidden_size * 3);

device_ptr dq_dev(time_steps * batch_size * hidden_size * 3);

ForwardPass forward(

true,

batch_size,

input_size,

hidden_size,

g_blas_handle);

BackwardPass backward(

batch_size,

input_size,

hidden_size,

g_blas_handle);

static const float alpha = 1.0f;

static const float beta = 0.0f;

cudaDeviceSynchronize();

float ms = TimeLoop([&]() {

forward.Run(

time_steps,

W_dev.data,

R_dev.data,

bx_dev.data,

br_dev.data,

x_dev.data,

h_dev.data,

v_dev.data,

tmp_Wx_dev.data,

tmp_Rh_dev.data,

0.0f,

nullptr);

// Haste needs `x`, `W`, and `R` to be transposed between the forward

// pass and backward pass. Add these transposes in here to get a fair

// measurement of the overall time it takes to run an entire training

// loop.

cublasSgeam(

g_blas_handle,

CUBLAS_OP_T, CUBLAS_OP_N,

batch_size * time_steps, input_size,

&alpha,

x_dev.data, input_size,

&beta,

x_dev.data, batch_size * time_steps,

x_t_dev.data, batch_size * time_steps);

cublasSgeam(

g_blas_handle,

CUBLAS_OP_T, CUBLAS_OP_N,

input_size, hidden_size * 3,

&alpha,

W_dev.data, hidden_size * 3,

&beta,

W_dev.data, input_size,

W_t_dev.data, input_size);

cublasSgeam(

g_blas_handle,

CUBLAS_OP_T, CUBLAS_OP_N,

hidden_size, hidden_size * 3,

&alpha,

R_dev.data, hidden_size * 3,

&beta,

R_dev.data, hidden_size,

R_t_dev.data, hidden_size);

backward.Run(

time_steps,

W_t_dev.data,

R_t_dev.data,

bx_dev.data,

br_dev.data,

x_t_dev.data,

h_dev.data,

v_dev.data,

dh_new_dev.data,

dx_dev.data,

dW_dev.data,

dR_dev.data,

dbx_dev.data,

dbr_dev.data,

dh_dev.data,

dp_dev.data,

dq_dev.data,

nullptr);

}, sample_size);

return ms;

}

void usage(const char* name) {

printf("Usage: %s [OPTION]...\n", name);

printf(" -h, --help\n");

printf(" -i, --implementation IMPL (default: haste)\n");

printf(" -m, --mode MODE (default: training)\n");

printf(" -s, --sample_size NUM number of runs to average over (default: %d)\n",

DEFAULT_SAMPLE_SIZE);

printf(" -t, --time_steps NUM number of time steps in RNN (default: %d)\n",

DEFAULT_TIME_STEPS);

}

int main(int argc, char* const* argv) {

srand(time(0));

cudnnCreate(&g_cudnn_handle);

cublasCreate(&g_blas_handle);

static struct option long_options[] = {

{ "help", no_argument, 0, 'h' },

{ "implementation", required_argument, 0, 'i' },

{ "mode", required_argument, 0, 'm' },

{ "sample_size", required_argument, 0, 's' },

{ "time_steps", required_argument, 0, 't' },

{ 0, 0, 0, 0 }

};

int c;

int opt_index;

bool inference_flag = false;

bool haste_flag = true;

int sample_size = DEFAULT_SAMPLE_SIZE;

int time_steps = DEFAULT_TIME_STEPS;

while ((c = getopt_long(argc, argv, "hi:m:s:t:", long_options, &opt_index)) != -1)

switch (c) {

case 'h':

usage(argv[0]);

return 0;

case 'i':

if (optarg[0] == 'c' || optarg[0] == 'C')

haste_flag = false;

break;

case 'm':

if (optarg[0] == 'i' || optarg[0] == 'I')

inference_flag = true;

break;

case 's':

sscanf(optarg, "%d", &sample_size);

break;

case 't':

sscanf(optarg, "%d", &time_steps);

break;

}

printf("# Benchmark configuration:\n");

printf("# Mode: %s\n", inference_flag ? "inference" : "training");

printf("# Implementation: %s\n", haste_flag ? "Haste" : "cuDNN");

printf("# Sample size: %d\n", sample_size);

printf("# Time steps: %d\n", time_steps);

printf("#\n");

printf("# batch_size,hidden_size,input_size,time_ms\n");

for (const int N : { 1, 16, 32, 64, 128 }) {

for (const int H : { 128, 256, 512, 768, 1024, 1536, 2048, 3072, 4096 }) {

for (const int C : { 64, 128, 256, 512 }) {

Tensor2 W(H * 3, C);

Tensor2 R(H * 3, H);

Tensor1 bx(H * 3);

Tensor1 br(H * 3);

Tensor3 x(C, N, time_steps);

Tensor3 dh(H, N, time_steps + 1);

float ms;

if (inference_flag) {

if (haste_flag)

ms = HasteInference(sample_size, W, R, bx, br, x);

else

ms = CudnnInference(sample_size, W, R, bx, br, x);

} else {

if (haste_flag)

ms = HasteTrain(sample_size, W, R, bx, br, x, dh);

else

ms = CudnnTrain(sample_size, W, R, bx, br, x, dh);

}

printf("%d,%d,%d,%f\n", N, H, C, ms);

}

}

}

cublasDestroy(g_blas_handle);

cudnnDestroy(g_cudnn_handle);

return 0;

}

================================================

FILE: benchmarks/benchmark_lstm.cc

================================================

// Copyright 2020 LMNT, Inc. All Rights Reserved.

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writing, software

// distributed under the License is distributed on an "AS IS" BASIS,

// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

// See the License for the specific language governing permissions and

// limitations under the License.

// ==============================================================================

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include

#include "../examples/device_ptr.h"

#include "cudnn_wrappers.h"

#include "haste.h"

using haste::v0::lstm::BackwardPass;

using haste::v0::lstm::ForwardPass;

using std::string;

using Tensor1 = Eigen::Tensor;

using Tensor2 = Eigen::Tensor;

using Tensor3 = Eigen::Tensor;

static constexpr int DEFAULT_SAMPLE_SIZE = 10;

static constexpr int DEFAULT_TIME_STEPS = 50;

static cudnnHandle_t g_cudnn_handle;

static cublasHandle_t g_blas_handle;

float TimeLoop(std::function fn, int iterations) {

cudaEvent_t start, stop;

cudaEventCreate(&start);

cudaEventCreate(&stop);

cudaEventRecord(start);

for (int i = 0; i < iterations; ++i)

fn();

float elapsed_ms;

cudaEventRecord(stop);

cudaEventSynchronize(stop);

cudaEventElapsedTime(&elapsed_ms, start, stop);

cudaEventDestroy(start);

cudaEventDestroy(stop);

return elapsed_ms / iterations;

}

float CudnnInference(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& b,

const Tensor3& x) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

device_ptr x_dev(x);

device_ptr h_dev(batch_size * hidden_size);

device_ptr c_dev(batch_size * hidden_size);

device_ptr y_dev(time_steps * batch_size * hidden_size);

device_ptr h_out_dev(batch_size * hidden_size);

device_ptr c_out_dev(batch_size * hidden_size);

h_dev.zero();

c_dev.zero();

// Descriptors all the way down. Nice.

RnnDescriptor rnn_descriptor(g_cudnn_handle, hidden_size, CUDNN_LSTM);

TensorDescriptorArray x_descriptors(time_steps, { batch_size, input_size, 1 });

TensorDescriptorArray y_descriptors(time_steps, { batch_size, hidden_size, 1 });

auto h_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

auto c_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

auto h_out_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

auto c_out_descriptor = TensorDescriptor({ 1, batch_size, hidden_size });

size_t workspace_size;

cudnnGetRNNWorkspaceSize(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&x_descriptors,

&workspace_size);

auto workspace_dev = device_ptr::NewByteSized(workspace_size);

size_t w_count;

cudnnGetRNNParamsSize(

g_cudnn_handle,

*rnn_descriptor,

*&x_descriptors,

&w_count,

CUDNN_DATA_FLOAT);

auto w_dev = device_ptr::NewByteSized(w_count);

FilterDescriptor w_descriptor(w_dev.Size());

float ms = TimeLoop([&]() {

cudnnRNNForwardInference(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&x_descriptors,

x_dev.data,

*h_descriptor,

h_dev.data,

*c_descriptor,

c_dev.data,

*w_descriptor,

w_dev.data,

&y_descriptors,

y_dev.data,

*h_out_descriptor,

h_out_dev.data,

*c_out_descriptor,

c_out_dev.data,

workspace_dev.data,

workspace_size);

}, sample_size);

return ms;

}

float CudnnTrain(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& b,

const Tensor3& x,

const Tensor3& dh,

const Tensor3& dc) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

device_ptr y_dev(time_steps * batch_size * hidden_size);

device_ptr dy_dev(time_steps * batch_size * hidden_size);

device_ptr dhy_dev(batch_size * hidden_size);

device_ptr dcy_dev(batch_size * hidden_size);

device_ptr hx_dev(batch_size * hidden_size);

device_ptr cx_dev(batch_size * hidden_size);

device_ptr dx_dev(time_steps * batch_size * input_size);

device_ptr dhx_dev(batch_size * hidden_size);

device_ptr dcx_dev(batch_size * hidden_size);

RnnDescriptor rnn_descriptor(g_cudnn_handle, hidden_size, CUDNN_LSTM);

TensorDescriptorArray y_descriptors(time_steps, { batch_size, hidden_size, 1 });

TensorDescriptorArray dy_descriptors(time_steps, { batch_size, hidden_size, 1 });

TensorDescriptorArray dx_descriptors(time_steps, { batch_size, input_size, 1 });

TensorDescriptor dhy_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor dcy_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor hx_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor cx_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor dhx_descriptor({ 1, batch_size, hidden_size });

TensorDescriptor dcx_descriptor({ 1, batch_size, hidden_size });

size_t workspace_size = 0;

cudnnGetRNNWorkspaceSize(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

&workspace_size);

auto workspace_dev = device_ptr::NewByteSized(workspace_size);

size_t w_count = 0;

cudnnGetRNNParamsSize(

g_cudnn_handle,

*rnn_descriptor,

*&dx_descriptors,

&w_count,

CUDNN_DATA_FLOAT);

auto w_dev = device_ptr::NewByteSized(w_count);

FilterDescriptor w_descriptor(w_dev.Size());

size_t reserve_size = 0;

cudnnGetRNNTrainingReserveSize(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

&reserve_size);

auto reserve_dev = device_ptr::NewByteSized(reserve_size);

float ms = TimeLoop([&]() {

cudnnRNNForwardTraining(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

dx_dev.data,

*hx_descriptor,

hx_dev.data,

*cx_descriptor,

cx_dev.data,

*w_descriptor,

w_dev.data,

&y_descriptors,

y_dev.data,

*dhy_descriptor,

dhy_dev.data,

*dcy_descriptor,

dcy_dev.data,

workspace_dev.data,

workspace_size,

reserve_dev.data,

reserve_size);

cudnnRNNBackwardData(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&y_descriptors,

y_dev.data,

&dy_descriptors,

dy_dev.data,

*dhy_descriptor,

dhy_dev.data,

*dcy_descriptor,

dcy_dev.data,

*w_descriptor,

w_dev.data,

*hx_descriptor,

hx_dev.data,

*cx_descriptor,

cx_dev.data,

&dx_descriptors,

dx_dev.data,

*dhx_descriptor,

dhx_dev.data,

*dcx_descriptor,

dcx_dev.data,

workspace_dev.data,

workspace_size,

reserve_dev.data,

reserve_size);

cudnnRNNBackwardWeights(

g_cudnn_handle,

*rnn_descriptor,

time_steps,

&dx_descriptors,

dx_dev.data,

*hx_descriptor,

hx_dev.data,

&y_descriptors,

y_dev.data,

workspace_dev.data,

workspace_size,

*w_descriptor,

w_dev.data,

reserve_dev.data,

reserve_size);

}, sample_size);

return ms;

}

float HasteInference(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& b,

const Tensor3& x) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

// Copy weights over to GPU.

device_ptr W_dev(W);

device_ptr R_dev(R);

device_ptr b_dev(b);

device_ptr x_dev(x);

device_ptr h_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr c_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr v_dev(time_steps * batch_size * hidden_size * 4);

device_ptr tmp_Rh_dev(batch_size * hidden_size * 4);

h_dev.zero();

c_dev.zero();

// Settle down the GPU and off we go!

cudaDeviceSynchronize();

float ms = TimeLoop([&]() {

ForwardPass forward(

false,

batch_size,

input_size,

hidden_size,

g_blas_handle);

forward.Run(

time_steps,

W_dev.data,

R_dev.data,

b_dev.data,

x_dev.data,

h_dev.data,

c_dev.data,

v_dev.data,

tmp_Rh_dev.data,

0.0f,

nullptr);

}, sample_size);

return ms;

}

float HasteTrain(

int sample_size,

const Tensor2& W,

const Tensor2& R,

const Tensor1& b,

const Tensor3& x,

const Tensor3& dh,

const Tensor3& dc) {

const int time_steps = x.dimension(2);

const int batch_size = x.dimension(1);

const int input_size = x.dimension(0);

const int hidden_size = R.dimension(1);

Eigen::array transpose_x({ 1, 2, 0 });

Tensor3 x_t = x.shuffle(transpose_x);

Eigen::array transpose({ 1, 0 });

Tensor2 W_t = W.shuffle(transpose);

Tensor2 R_t = R.shuffle(transpose);

device_ptr W_dev(W);

device_ptr R_dev(R);

device_ptr x_dev(x);

device_ptr h_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr c_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr v_dev(time_steps * batch_size * hidden_size * 4);

device_ptr tmp_Rh_dev(batch_size * hidden_size * 4);

device_ptr W_t_dev(W_t);

device_ptr R_t_dev(R_t);

device_ptr b_dev(b);

device_ptr x_t_dev(x_t);

// These gradients should actually come "from above" but we're just allocating

// a bunch of uninitialized memory and passing it in.

device_ptr dh_new_dev(dh);

device_ptr dc_new_dev(dc);

device_ptr dx_dev(time_steps * batch_size * input_size);

device_ptr dW_dev(input_size * hidden_size * 4);

device_ptr dR_dev(hidden_size * hidden_size * 4);

device_ptr db_dev(hidden_size * 4);

device_ptr dh_dev((time_steps + 1) * batch_size * hidden_size);

device_ptr dc_dev((time_steps + 1) * batch_size * hidden_size);

dW_dev.zero();

dR_dev.zero();

db_dev.zero();

dh_dev.zero();

dc_dev.zero();

ForwardPass forward(

true,

batch_size,

input_size,

hidden_size,

g_blas_handle);

BackwardPass backward(

batch_size,

input_size,

hidden_size,

g_blas_handle);

static const float alpha = 1.0f;

static const float beta = 0.0f;

cudaDeviceSynchronize();

float ms = TimeLoop([&]() {

forward.Run(

time_steps,

W_dev.data,

R_dev.data,

b_dev.data,

x_dev.data,

h_dev.data,

c_dev.data,

v_dev.data,

tmp_Rh_dev.data,

0.0f,

nullptr);

// Haste needs `x`, `W`, and `R` to be transposed between the forward

// pass and backward pass. Add these transposes in here to get a fair

// measurement of the overall time it takes to run an entire training

// loop.

cublasSgeam(

g_blas_handle,

CUBLAS_OP_T, CUBLAS_OP_N,

batch_size * time_steps, input_size,

&alpha,

x_dev.data, input_size,

&beta,

x_dev.data, batch_size * time_steps,

x_t_dev.data, batch_size * time_steps);

cublasSgeam(

g_blas_handle,

CUBLAS_OP_T, CUBLAS_OP_N,

input_size, hidden_size * 4,

&alpha,

W_dev.data, hidden_size * 4,

&beta,

W_dev.data, input_size,

W_t_dev.data, input_size);

cublasSgeam(

g_blas_handle,

CUBLAS_OP_T, CUBLAS_OP_N,

hidden_size, hidden_size * 4,

&alpha,

R_dev.data, hidden_size * 4,

&beta,

R_dev.data, hidden_size,

R_t_dev.data, hidden_size);

backward.Run(

time_steps,

W_t_dev.data,

R_t_dev.data,

b_dev.data,

x_t_dev.data,

h_dev.data,

c_dev.data,

dh_new_dev.data,

dc_new_dev.data,

dx_dev.data,

dW_dev.data,

dR_dev.data,

db_dev.data,

dh_dev.data,

dc_dev.data,

v_dev.data,

nullptr);

}, sample_size);

return ms;

}

void usage(const char* name) {

printf("Usage: %s [OPTION]...\n", name);

printf(" -h, --help\n");

printf(" -i, --implementation IMPL (default: haste)\n");

printf(" -m, --mode MODE (default: training)\n");

printf(" -s, --sample_size NUM number of runs to average over (default: %d)\n",

DEFAULT_SAMPLE_SIZE);

printf(" -t, --time_steps NUM number of time steps in RNN (default: %d)\n",

DEFAULT_TIME_STEPS);

}

int main(int argc, char* const* argv) {

srand(time(0));

cudnnCreate(&g_cudnn_handle);

cublasCreate(&g_blas_handle);

static struct option long_options[] = {

{ "help", no_argument, 0, 'h' },

{ "implementation", required_argument, 0, 'i' },

{ "mode", required_argument, 0, 'm' },

{ "sample_size", required_argument, 0, 's' },

{ "time_steps", required_argument, 0, 't' },

{ 0, 0, 0, 0 }

};

int c;

int opt_index;

bool inference_flag = false;

bool haste_flag = true;

int sample_size = DEFAULT_SAMPLE_SIZE;

int time_steps = DEFAULT_TIME_STEPS;

while ((c = getopt_long(argc, argv, "hi:m:s:t:", long_options, &opt_index)) != -1)

switch (c) {

case 'h':

usage(argv[0]);

return 0;

case 'i':

if (optarg[0] == 'c' || optarg[0] == 'C')

haste_flag = false;

break;

case 'm':

if (optarg[0] == 'i' || optarg[0] == 'I')

inference_flag = true;

break;

case 's':

sscanf(optarg, "%d", &sample_size);

break;

case 't':

sscanf(optarg, "%d", &time_steps);

break;

}

printf("# Benchmark configuration:\n");

printf("# Mode: %s\n", inference_flag ? "inference" : "training");

printf("# Implementation: %s\n", haste_flag ? "Haste" : "cuDNN");

printf("# Sample size: %d\n", sample_size);

printf("# Time steps: %d\n", time_steps);

printf("#\n");

printf("# batch_size,hidden_size,input_size,time_ms\n");

for (const int N : { 1, 16, 32, 64, 128 }) {

for (const int H : { 128, 256, 512, 768, 1024, 1536, 2048, 3072, 4096 }) {

for (const int C : { 64, 128, 256, 512 }) {

Tensor2 W(H * 4, C);

Tensor2 R(H * 4, H);

Tensor1 b(H * 4);

Tensor3 x(C, N, time_steps);

Tensor3 dh(H, N, time_steps + 1);

Tensor3 dc(H, N, time_steps + 1);

float ms;

if (inference_flag) {

if (haste_flag)

ms = HasteInference(sample_size, W, R, b, x);

else

ms = CudnnInference(sample_size, W, R, b, x);

} else {

if (haste_flag)

ms = HasteTrain(sample_size, W, R, b, x, dh, dc);

else

ms = CudnnTrain(sample_size, W, R, b, x, dh, dc);

}

printf("%d,%d,%d,%f\n", N, H, C, ms);

}

}

}

cublasDestroy(g_blas_handle);

cudnnDestroy(g_cudnn_handle);

return 0;

}

================================================

FILE: benchmarks/cudnn_wrappers.h

================================================

// Copyright 2020 LMNT, Inc. All Rights Reserved.

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writing, software

// distributed under the License is distributed on an "AS IS" BASIS,

// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

// See the License for the specific language governing permissions and

// limitations under the License.

// ==============================================================================

#pragma once

#include

#include

#include

template

struct CudnnDataType {};

template<>

struct CudnnDataType {

static constexpr auto value = CUDNN_DATA_FLOAT;

};

template<>

struct CudnnDataType {

static constexpr auto value = CUDNN_DATA_DOUBLE;

};

template

class TensorDescriptor {

public:

TensorDescriptor(const std::vector& dims) {

std::vector strides;

int stride = 1;

for (int i = dims.size() - 1; i >= 0; --i) {

strides.insert(strides.begin(), stride);

stride *= dims[i];

}

cudnnCreateTensorDescriptor(&descriptor_);

cudnnSetTensorNdDescriptor(descriptor_, CudnnDataType::value, dims.size(), &dims[0], &strides[0]);

}

~TensorDescriptor() {

cudnnDestroyTensorDescriptor(descriptor_);

}

cudnnTensorDescriptor_t& operator*() {

return descriptor_;

}

private:

cudnnTensorDescriptor_t descriptor_;

};

template

class TensorDescriptorArray {

public:

TensorDescriptorArray(int count, const std::vector& dims) {

std::vector strides;

int stride = 1;

for (int i = dims.size() - 1; i >= 0; --i) {

strides.insert(strides.begin(), stride);

stride *= dims[i];

}

for (int i = 0; i < count; ++i) {

cudnnTensorDescriptor_t descriptor;

cudnnCreateTensorDescriptor(&descriptor);

cudnnSetTensorNdDescriptor(descriptor, CudnnDataType::value, dims.size(), &dims[0], &strides[0]);

descriptors_.push_back(descriptor);

}

}

~TensorDescriptorArray() {

for (auto& desc : descriptors_)

cudnnDestroyTensorDescriptor(desc);

}

cudnnTensorDescriptor_t* operator&() {

return &descriptors_[0];

}

private:

std::vector descriptors_;

};

class DropoutDescriptor {

public:

DropoutDescriptor(const cudnnHandle_t& handle) {

cudnnCreateDropoutDescriptor(&descriptor_);

cudnnSetDropoutDescriptor(descriptor_, handle, 0.0f, nullptr, 0, 0LL);

}

~DropoutDescriptor() {

cudnnDestroyDropoutDescriptor(descriptor_);

}

cudnnDropoutDescriptor_t& operator*() {

return descriptor_;

}

private:

cudnnDropoutDescriptor_t descriptor_;

};

template

class RnnDescriptor {

public:

RnnDescriptor(const cudnnHandle_t& handle, int size, cudnnRNNMode_t algorithm) : dropout_(handle) {

cudnnCreateRNNDescriptor(&descriptor_);

cudnnSetRNNDescriptor(

handle,

descriptor_,

size,

1,

*dropout_,

CUDNN_LINEAR_INPUT,

CUDNN_UNIDIRECTIONAL,

algorithm,

CUDNN_RNN_ALGO_STANDARD,

CudnnDataType::value);

}

~RnnDescriptor() {

cudnnDestroyRNNDescriptor(descriptor_);

}

cudnnRNNDescriptor_t& operator*() {

return descriptor_;

}

private:

cudnnRNNDescriptor_t descriptor_;

DropoutDescriptor dropout_;

};

template

class FilterDescriptor {

public:

FilterDescriptor(const size_t size) {

int filter_dim[] = { (int)size, 1, 1 };

cudnnCreateFilterDescriptor(&descriptor_);

cudnnSetFilterNdDescriptor(descriptor_, CudnnDataType::value, CUDNN_TENSOR_NCHW, 3, filter_dim);

}

~FilterDescriptor() {

cudnnDestroyFilterDescriptor(descriptor_);

}

cudnnFilterDescriptor_t& operator*() {

return descriptor_;

}

private:

cudnnFilterDescriptor_t descriptor_;

};

================================================

FILE: benchmarks/report.py

================================================

# Copyright 2020 LMNT, Inc. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# ==============================================================================

import argparse

import matplotlib.pyplot as plt

import numpy as np

import os

def extract(x, predicate):

return np.array(list(filter(predicate, x)))

def main(args):

np.set_printoptions(suppress=True)

A = np.loadtxt(args.A, delimiter=',')

B = np.loadtxt(args.B, delimiter=',')

faster = 1.0 - A[:,-1] / B[:,-1]

print(f'A is faster than B by:')

print(f' mean: {np.mean(faster)*100:7.4}%')

print(f' std: {np.std(faster)*100:7.4}%')

print(f' median: {np.median(faster)*100:7.4}%')

print(f' min: {np.min(faster)*100:7.4}%')

print(f' max: {np.max(faster)*100:7.4}%')

for batch_size in np.unique(A[:,0]):

for input_size in np.unique(A[:,2]):

a = extract(A, lambda x: x[0] == batch_size and x[2] == input_size)

b = extract(B, lambda x: x[0] == batch_size and x[2] == input_size)

fig, ax = plt.subplots(dpi=200)

ax.set_xticks(a[:,1])

ax.set_xticklabels(a[:,1].astype(np.int32), rotation=60)

ax.tick_params(axis='y', which='both', length=0)

ax.spines['top'].set_visible(False)

ax.spines['right'].set_visible(False)

plt.title(f'batch size={int(batch_size)}, input size={int(input_size)}')

plt.plot(a[:,1], a[:,-1], color=args.color[0])

plt.plot(a[:,1], b[:,-1], color=args.color[1])

plt.xlabel('hidden size')

plt.ylabel('time (ms)')

plt.legend(args.name, frameon=False)

plt.tight_layout()

if args.save:

os.makedirs(args.save[0], exist_ok=True)

plt.savefig(f'{args.save[0]}/report_n={int(batch_size)}_c={int(input_size)}.png', dpi=200)

else:

plt.show()

if __name__ == '__main__':

parser = argparse.ArgumentParser()

parser.add_argument('--name', nargs=2, default=['A', 'B'])

parser.add_argument('--color', nargs=2, default=['#1f77b4', '#2ca02c'])

parser.add_argument('--save', nargs=1, default=None)

parser.add_argument('A')

parser.add_argument('B')

main(parser.parse_args())

================================================

FILE: build/MANIFEST.in

================================================

include Makefile

include frameworks/tf/*.h

include frameworks/tf/*.cc

include frameworks/pytorch/*.h

include frameworks/pytorch/*.cc

include lib/*.cc

include lib/*.h

include lib/haste/*.h

================================================

FILE: build/common.py

================================================

# Copyright 2020 LMNT, Inc. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# ==============================================================================

VERSION = '0.5.0-rc0'

DESCRIPTION = 'Haste: a fast, simple, and open RNN library.'

AUTHOR = 'LMNT, Inc.'

AUTHOR_EMAIL = 'haste@lmnt.com'

URL = 'https://haste.lmnt.com'

LICENSE = 'Apache 2.0'

CLASSIFIERS = [

'Development Status :: 4 - Beta',

'Intended Audience :: Developers',

'Intended Audience :: Education',

'Intended Audience :: Science/Research',

'License :: OSI Approved :: Apache Software License',

'Programming Language :: Python :: 3.6',

'Programming Language :: Python :: 3.7',

'Programming Language :: Python :: 3.8',

'Topic :: Scientific/Engineering :: Mathematics',

'Topic :: Software Development :: Libraries :: Python Modules',

'Topic :: Software Development :: Libraries',

]

================================================

FILE: build/setup.pytorch.py

================================================

# Copyright 2020 LMNT, Inc. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# ==============================================================================

import os

import sys

from glob import glob

from platform import platform

from torch.utils import cpp_extension

from setuptools import setup

from setuptools.dist import Distribution

class BuildHaste(cpp_extension.BuildExtension):

def run(self):

os.system('make haste')

super().run()

base_path = os.path.dirname(os.path.realpath(__file__))

if 'Windows' in platform():

CUDA_HOME = os.environ.get('CUDA_HOME', os.environ.get('CUDA_PATH'))

extra_args = []

else:

CUDA_HOME = os.environ.get('CUDA_HOME', '/usr/local/cuda')

extra_args = ['-Wno-sign-compare']

with open(f'frameworks/pytorch/_version.py', 'wt') as f:

f.write(f'__version__ = "{VERSION}"')

extension = cpp_extension.CUDAExtension(

'haste_pytorch_lib',

sources = glob('frameworks/pytorch/*.cc'),

extra_compile_args = extra_args,

include_dirs = [os.path.join(base_path, 'lib'), os.path.join(CUDA_HOME, 'include')],

libraries = ['haste'],

library_dirs = ['.'])

setup(name = 'haste_pytorch',

version = VERSION,

description = DESCRIPTION,

long_description = open('README.md', 'r',encoding='utf-8').read(),

long_description_content_type = 'text/markdown',

author = AUTHOR,

author_email = AUTHOR_EMAIL,

url = URL,

license = LICENSE,

keywords = 'pytorch machine learning rnn lstm gru custom op',

packages = ['haste_pytorch'],

package_dir = { 'haste_pytorch': 'frameworks/pytorch' },

install_requires = [],

ext_modules = [extension],

cmdclass = { 'build_ext': BuildHaste },

classifiers = CLASSIFIERS)

================================================

FILE: build/setup.tf.py

================================================

# Copyright 2020 LMNT, Inc. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# ==============================================================================

import os

import sys

from setuptools import setup

from setuptools.dist import Distribution

from distutils.command.build import build as _build

class BinaryDistribution(Distribution):

"""This class is needed in order to create OS specific wheels."""

def has_ext_modules(self):

return True

class BuildHaste(_build):

def run(self):

os.system('make libhaste_tf')

super().run()

with open(f'frameworks/tf/_version.py', 'wt') as f:

f.write(f'__version__ = "{VERSION}"')

setup(name = 'haste_tf',

version = VERSION,

description = DESCRIPTION,

long_description = open('README.md', 'r').read(),

long_description_content_type = 'text/markdown',

author = AUTHOR,

author_email = AUTHOR_EMAIL,

url = URL,

license = LICENSE,

keywords = 'tensorflow machine learning rnn lstm gru custom op',

packages = ['haste_tf'],

package_dir = { 'haste_tf': 'frameworks/tf' },