Repository: openai/whisper

Branch: main

Commit: c0d2f624c09d

Files: 43

Total size: 7.6 MB

Directory structure:

gitextract_mtgfm16e/
├── .flake8
├── .gitattributes
├── .github/
│   ├── dependabot.yml
│   └── workflows/
│       ├── python-publish.yml
│       └── test.yml
├── .gitignore
├── .pre-commit-config.yaml
├── CHANGELOG.md
├── LICENSE
├── MANIFEST.in
├── README.md
├── data/
│   ├── README.md
│   └── meanwhile.json
├── model-card.md
├── notebooks/
│   ├── LibriSpeech.ipynb
│   └── Multilingual_ASR.ipynb
├── pyproject.toml
├── requirements.txt
├── tests/
│   ├── conftest.py
│   ├── jfk.flac
│   ├── test_audio.py
│   ├── test_normalizer.py
│   ├── test_timing.py
│   ├── test_tokenizer.py
│   └── test_transcribe.py
└── whisper/
    ├── __init__.py
    ├── __main__.py
    ├── assets/
    │   ├── gpt2.tiktoken
    │   ├── mel_filters.npz
    │   └── multilingual.tiktoken
    ├── audio.py
    ├── decoding.py
    ├── model.py
    ├── normalizers/
    │   ├── __init__.py
    │   ├── basic.py
    │   ├── english.json
    │   └── english.py
    ├── timing.py
    ├── tokenizer.py
    ├── transcribe.py
    ├── triton_ops.py
    ├── utils.py
    └── version.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .flake8

================================================

[flake8]
per-file-ignores =
    */__init__.py: F401

================================================

FILE: .gitattributes

================================================

# Override jupyter in Github language stats for more accurate estimate of repo code languages

# reference: https://github.com/github/linguist/blob/master/docs/overrides.md#generated-code

*.ipynb linguist-generated

================================================

FILE: .github/dependabot.yml

================================================

# Keep GitHub Actions up to date with GitHub's Dependabot...

# https://docs.github.com/en/code-security/dependabot/working-with-dependabot/keeping-your-actions-up-to-date-with-dependabot

# https://docs.github.com/en/code-security/dependabot/dependabot-version-updates/configuration-options-for-the-dependabot.yml-file#package-ecosystem

version: 2
updates:
  - package-ecosystem: github-actions
    directory: /
    groups:
      github-actions:
        patterns:
          - "*" # Group all Actions updates into a single larger pull request
    schedule:
      interval: weekly

================================================

FILE: .github/workflows/python-publish.yml

================================================

name: Release

on:
  push:
    branches:
      - main

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions-ecosystem/action-regex-match@v2
        id: regex-match
        with:
          text: ${{ github.event.head_commit.message }}
          regex: '^Release ([^ ]+)'
      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: '3.12'
      - name: Install dependencies
        run: |
          python -m pip install --upgrade pip
          pip install setuptools wheel twine build
      - name: Release
        if: ${{ steps.regex-match.outputs.match != '' }}
        uses: softprops/action-gh-release@v2
        with:
          tag_name: v${{ steps.regex-match.outputs.group1 }}
      - name: Build and publish
        if: ${{ steps.regex-match.outputs.match != '' }}
        env:
          TWINE_USERNAME: __token__
          TWINE_PASSWORD: ${{ secrets.PYPI_API_TOKEN }}
        run: |
          python -m build --sdist
          twine upload dist/*

================================================

FILE: .github/workflows/test.yml

================================================

name: test

on:
  push:
    branches:
      - main
  pull_request:
    branches:
      - main

jobs:
  pre-commit:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Fetch base branch
        run: git fetch origin ${{ github.base_ref }}
      - uses: actions/setup-python@v5
        with:
          python-version: "3.9"
          architecture: x64
      - name: Get pip cache dir
        id: pip-cache
        run: |
          echo "dir=$(pip cache dir)" >> $GITHUB_OUTPUT
      - name: pip/pre-commit cache
        uses: actions/cache@v4
        with:
          path: |
            ${{ steps.pip-cache.outputs.dir }}
            ~/.cache/pre-commit
          key: ${{ runner.os }}-pip-pre-commit-${{ hashFiles('**/.pre-commit-config.yaml') }}
          restore-keys: |
            ${{ runner.os }}-pip-pre-commit
      - name: pre-commit
        run: |
          pip install --upgrade pre-commit
          pre-commit install --install-hooks
          pre-commit run --all-files

  whisper-test:
    needs: pre-commit
    runs-on: ubuntu-latest
    strategy:
      fail-fast: false
      matrix:
        include:
          - python-version: '3.8'
            pytorch-version: 1.10.1
            numpy-requirement: "'numpy<2'"
          - python-version: '3.8'
            pytorch-version: 1.13.1
            numpy-requirement: "'numpy<2'"
          - python-version: '3.8'
            pytorch-version: 2.0.1
            numpy-requirement: "'numpy<2'"
          - python-version: '3.9'
            pytorch-version: 2.1.2
            numpy-requirement: "'numpy<2'"
          - python-version: '3.10'
            pytorch-version: 2.2.2
            numpy-requirement: "'numpy<2'"
          - python-version: '3.11'
            pytorch-version: 2.3.1
            numpy-requirement: "'numpy'"
          - python-version: '3.12'
            pytorch-version: 2.4.1
            numpy-requirement: "'numpy'"
          - python-version: '3.12'
            pytorch-version: 2.5.1
            numpy-requirement: "'numpy'"
          - python-version: '3.13'
            pytorch-version: 2.5.1
            numpy-requirement: "'numpy'"
    steps:
      - uses: conda-incubator/setup-miniconda@v3
      - run: conda install -n test ffmpeg python=${{ matrix.python-version }}
      - uses: actions/checkout@v4
      - run: echo "$CONDA/envs/test/bin" >> $GITHUB_PATH
      - run: pip3 install .["dev"] ${{ matrix.numpy-requirement }} torch==${{ matrix.pytorch-version }}+cpu --index-url https://download.pytorch.org/whl/cpu --extra-index-url https://pypi.org/simple
      - run: pytest --durations=0 -vv -k 'not test_transcribe or test_transcribe[tiny] or test_transcribe[tiny.en]' -m 'not requires_cuda'

================================================

FILE: .gitignore

================================================

__pycache__/

*.py[cod]

*$py.class

*.egg-info

.pytest_cache

.ipynb_checkpoints

thumbs.db

.DS_Store

.idea

================================================

FILE: .pre-commit-config.yaml

================================================

repos:
  - repo: https://github.com/pre-commit/pre-commit-hooks
    rev: v5.0.0
    hooks:
      - id: check-json
      - id: end-of-file-fixer
        types: [file, python]
      - id: trailing-whitespace
        types: [file, python]
      - id: mixed-line-ending
      - id: check-added-large-files
        args: [--maxkb=4096]
  - repo: https://github.com/psf/black
    rev: 25.1.0
    hooks:
      - id: black
  - repo: https://github.com/pycqa/isort
    rev: 6.0.0
    hooks:
      - id: isort
        name: isort (python)
        args: ["--profile", "black", "-l", "88", "--trailing-comma", "--multi-line", "3"]
  - repo: https://github.com/pycqa/flake8.git
    rev: 7.1.1
    hooks:
      - id: flake8
        types: [python]
        args: ["--max-line-length", "88", "--ignore", "E203,E501,W503,W504"]

================================================

FILE: CHANGELOG.md

================================================

# CHANGELOG

## [v20250625](https://github.com/openai/whisper/releases/tag/v20250625)

* Fix: Update torch.load to use weights_only=True to prevent security w… ([#2451](https://github.com/openai/whisper/pull/2451))

* Fix: Ensure DTW cost tensor is on the same device as input tensor ([#2561](https://github.com/openai/whisper/pull/2561))

* docs: updated README to specify translation model limitation ([#2547](https://github.com/openai/whisper/pull/2547))

* Fixed triton kernel update to support latest triton versions ([#2588](https://github.com/openai/whisper/pull/2588))

* Fix: GitHub display errors for Jupyter notebooks ([#2589](https://github.com/openai/whisper/pull/2589))

* Bump the github-actions group with 3 updates ([#2592](https://github.com/openai/whisper/pull/2592))

* Keep GitHub Actions up to date with GitHub's Dependabot ([#2486](https://github.com/openai/whisper/pull/2486))

* pre-commit: Upgrade black v25.1.0 and isort v6.0.0 ([#2514](https://github.com/openai/whisper/pull/2514))

* GitHub Actions: Add Python 3.13 to the testing ([#2487](https://github.com/openai/whisper/pull/2487))

* PEP 621: Migrate from setup.py to pyproject.toml ([#2435](https://github.com/openai/whisper/pull/2435))

* pre-commit autoupdate && pre-commit run --all-files ([#2484](https://github.com/openai/whisper/pull/2484))

* Upgrade GitHub Actions ([#2430](https://github.com/openai/whisper/pull/2430))

* Bugfix: Illogical "Avoid computing higher temperatures on no_speech" ([#1903](https://github.com/openai/whisper/pull/1903))

* Updating README and doc strings to reflect that n_mels can now be 128 ([#2049](https://github.com/openai/whisper/pull/2049))

* fix typo data/README.md ([#2433](https://github.com/openai/whisper/pull/2433))

* Update README.md ([#2379](https://github.com/openai/whisper/pull/2379))

* Add option to carry initial_prompt with the sliding window ([#2343](https://github.com/openai/whisper/pull/2343))

* more pytorch versions in tests ([#2408](https://github.com/openai/whisper/pull/2408))

## [v20240930](https://github.com/openai/whisper/releases/tag/v20240930)

* allowing numpy 2 in tests ([#2362](https://github.com/openai/whisper/pull/2362))

* large-v3-turbo model ([#2361](https://github.com/openai/whisper/pull/2361))

* test on python/pytorch versions up to 3.12 and 2.4.1 ([#2360](https://github.com/openai/whisper/pull/2360))

* using sdpa if available ([#2359](https://github.com/openai/whisper/pull/2359))

## [v20240927](https://github.com/openai/whisper/releases/tag/v20240927)

* pinning numpy<2 in tests ([#2332](https://github.com/openai/whisper/pull/2332))

* Relax triton requirements for compatibility with pytorch 2.4 and newer ([#2307](https://github.com/openai/whisper/pull/2307))

* Skip silence around hallucinations ([#1838](https://github.com/openai/whisper/pull/1838))

* Fix triton env marker ([#1887](https://github.com/openai/whisper/pull/1887))

## [v20231117](https://github.com/openai/whisper/releases/tag/v20231117)

* Relax triton requirements for compatibility with pytorch 2.1 and newer ([#1802](https://github.com/openai/whisper/pull/1802))

## [v20231106](https://github.com/openai/whisper/releases/tag/v20231106)

* large-v3 ([#1761](https://github.com/openai/whisper/pull/1761))

## [v20231105](https://github.com/openai/whisper/releases/tag/v20231105)

* remove tiktoken pin ([#1759](https://github.com/openai/whisper/pull/1759))

* docs: Disambiguation of the term "relative speed" in the README ([#1751](https://github.com/openai/whisper/pull/1751))

* allow_pickle=False while loading of mel matrix IN audio.py ([#1511](https://github.com/openai/whisper/pull/1511))

* handling transcribe exceptions. ([#1682](https://github.com/openai/whisper/pull/1682))

* Add new option to generate subtitles by a specific number of words ([#1729](https://github.com/openai/whisper/pull/1729))

* Fix exception when an audio file with no speech is provided ([#1396](https://github.com/openai/whisper/pull/1396))

## [v20230918](https://github.com/openai/whisper/releases/tag/v20230918)

* Add .pre-commit-config.yaml ([#1528](https://github.com/openai/whisper/pull/1528))

* fix doc of TextDecoder ([#1526](https://github.com/openai/whisper/pull/1526))

* Update model-card.md ([#1643](https://github.com/openai/whisper/pull/1643))

* word timing tweaks ([#1559](https://github.com/openai/whisper/pull/1559))

* Avoid rearranging all caches ([#1483](https://github.com/openai/whisper/pull/1483))

* Improve timestamp heuristics. ([#1461](https://github.com/openai/whisper/pull/1461))

* fix condition_on_previous_text ([#1224](https://github.com/openai/whisper/pull/1224))

* Fix numba depreceation notice ([#1233](https://github.com/openai/whisper/pull/1233))

* Updated README.md to provide more insight on BLEU and specific appendices ([#1236](https://github.com/openai/whisper/pull/1236))

* Avoid computing higher temperatures on no_speech segments ([#1279](https://github.com/openai/whisper/pull/1279))

* Dropped unused execute bit from mel_filters.npz. ([#1254](https://github.com/openai/whisper/pull/1254))

* Drop ffmpeg-python dependency and call ffmpeg directly. ([#1242](https://github.com/openai/whisper/pull/1242))

* Python 3.11 ([#1171](https://github.com/openai/whisper/pull/1171))

* Update decoding.py ([#1219](https://github.com/openai/whisper/pull/1219))

* Update decoding.py ([#1155](https://github.com/openai/whisper/pull/1155))

* Update README.md to reference tiktoken ([#1105](https://github.com/openai/whisper/pull/1105))

* Implement max line width and max line count, and make word highlighting optional ([#1184](https://github.com/openai/whisper/pull/1184))

* Squash long words at window and sentence boundaries. ([#1114](https://github.com/openai/whisper/pull/1114))

* python-publish.yml: bump actions version to fix node warning ([#1211](https://github.com/openai/whisper/pull/1211))

* Update tokenizer.py ([#1163](https://github.com/openai/whisper/pull/1163))

## [v20230314](https://github.com/openai/whisper/releases/tag/v20230314)

* abort find_alignment on empty input ([#1090](https://github.com/openai/whisper/pull/1090))

* Fix truncated words list when the replacement character is decoded ([#1089](https://github.com/openai/whisper/pull/1089))

* fix github language stats getting dominated by jupyter notebook ([#1076](https://github.com/openai/whisper/pull/1076))

* Fix alignment between the segments and the list of words ([#1087](https://github.com/openai/whisper/pull/1087))

* Use tiktoken ([#1044](https://github.com/openai/whisper/pull/1044))

## [v20230308](https://github.com/openai/whisper/releases/tag/v20230308)

* kwargs in decode() for convenience ([#1061](https://github.com/openai/whisper/pull/1061))

* fix all_tokens handling that caused more repetitions and discrepancy in JSON ([#1060](https://github.com/openai/whisper/pull/1060))

* fix typo in CHANGELOG.md

## [v20230307](https://github.com/openai/whisper/releases/tag/v20230307)

* Fix the repetition/hallucination issue identified in #1046 ([#1052](https://github.com/openai/whisper/pull/1052))

* Use triton==2.0.0 ([#1053](https://github.com/openai/whisper/pull/1053))

* Install triton in x86_64 linux only ([#1051](https://github.com/openai/whisper/pull/1051))

* update setup.py to specify python >= 3.8 requirement

## [v20230306](https://github.com/openai/whisper/releases/tag/v20230306)

* remove auxiliary audio extension ([#1021](https://github.com/openai/whisper/pull/1021))

* apply formatting with `black`, `isort`, and `flake8` ([#1038](https://github.com/openai/whisper/pull/1038))

* word-level timestamps in `transcribe()` ([#869](https://github.com/openai/whisper/pull/869))

* Decoding improvements ([#1033](https://github.com/openai/whisper/pull/1033))

* Update README.md ([#894](https://github.com/openai/whisper/pull/894))

* Fix infinite loop caused by incorrect timestamp tokens prediction ([#914](https://github.com/openai/whisper/pull/914))

* drop python 3.7 support ([#889](https://github.com/openai/whisper/pull/889))

## [v20230124](https://github.com/openai/whisper/releases/tag/v20230124)

* handle printing even if sys.stdout.buffer is not available ([#887](https://github.com/openai/whisper/pull/887))

* Add TSV formatted output in transcript, using integer start/end time in milliseconds ([#228](https://github.com/openai/whisper/pull/228))

* Added `--output_format` option ([#333](https://github.com/openai/whisper/pull/333))

* Handle `XDG_CACHE_HOME` properly for `download_root` ([#864](https://github.com/openai/whisper/pull/864))

* use stdout for printing transcription progress ([#867](https://github.com/openai/whisper/pull/867))

* Fix bug where mm is mistakenly replaced with hmm in e.g. 20mm ([#659](https://github.com/openai/whisper/pull/659))

* print '?' if a letter can't be encoded using the system default encoding ([#859](https://github.com/openai/whisper/pull/859))

## [v20230117](https://github.com/openai/whisper/releases/tag/v20230117)

The first versioned release available on [PyPI](https://pypi.org/project/openai-whisper/)

================================================

FILE: LICENSE

================================================

MIT License

Copyright (c) 2022 OpenAI

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: MANIFEST.in

================================================

include requirements.txt

include README.md

include LICENSE

include whisper/assets/*

include whisper/normalizers/english.json

================================================

FILE: README.md

================================================

# Whisper

[[Blog]](https://openai.com/blog/whisper)

[[Paper]](https://arxiv.org/abs/2212.04356)

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

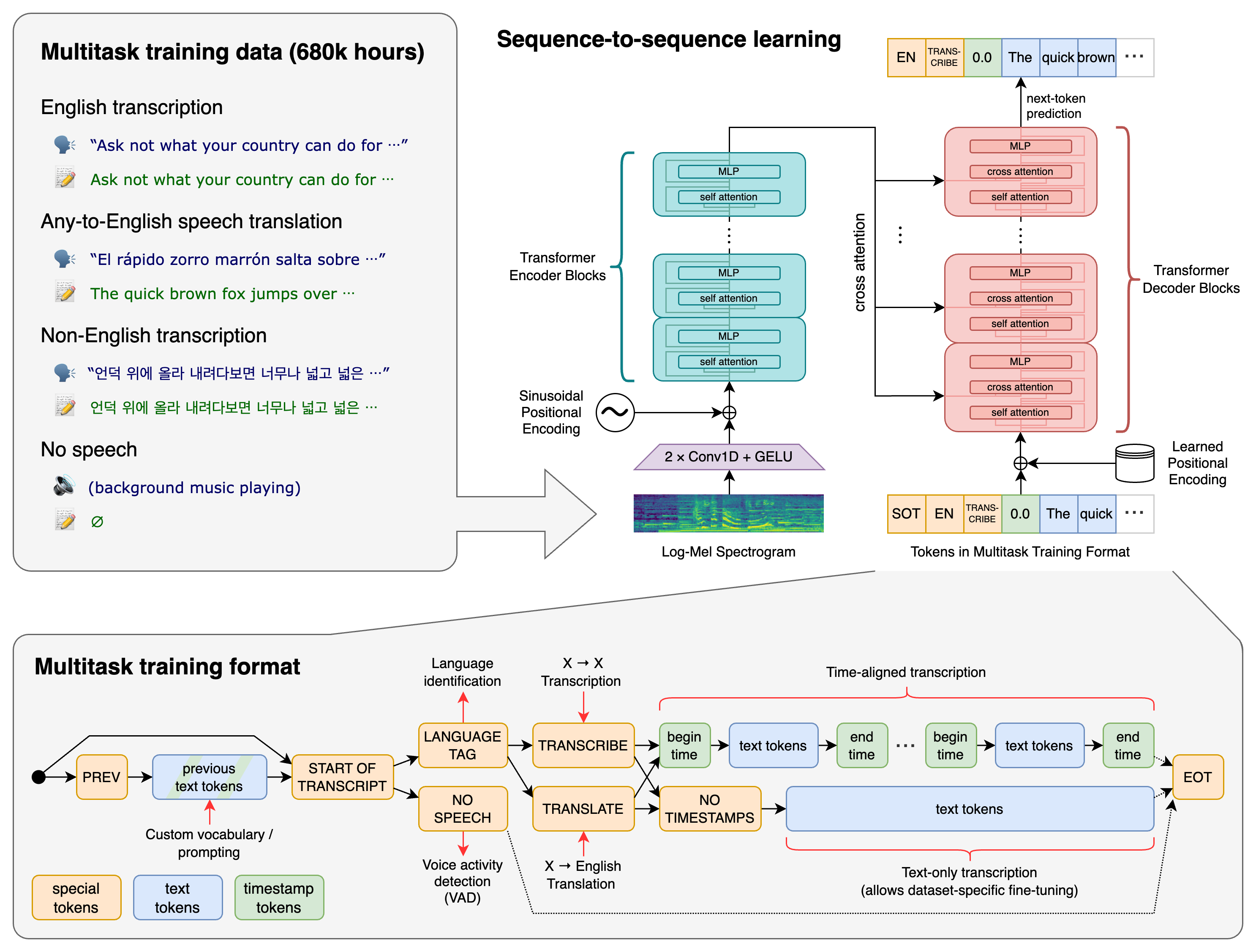

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

## Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.

## Setup

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

    pip install -U openai-whisper

Alternatively, the following command will pull and install the latest commit from this repository, along with its Python dependencies:

    pip install git+https://github.com/openai/whisper.git

To update the package to the latest version of this repository, please run:

    pip install --upgrade --no-deps --force-reinstall git+https://github.com/openai/whisper.git

It also requires the command-line tool [`ffmpeg`](https://ffmpeg.org/) to be installed on your system, which is available from most package managers:

```bash

# on Ubuntu or Debian

sudo apt update && sudo apt install ffmpeg

# on Arch Linux

sudo pacman -S ffmpeg

# on MacOS using Homebrew (https://brew.sh/)

brew install ffmpeg

# on Windows using Chocolatey (https://chocolatey.org/)

choco install ffmpeg

# on Windows using Scoop (https://scoop.sh/)

scoop install ffmpeg

```

You may need [`rust`](http://rust-lang.org) installed as well, in case [tiktoken](https://github.com/openai/tiktoken) does not provide a pre-built wheel for your platform. If you see installation errors during the `pip install` command above, please follow the [Getting started page](https://www.rust-lang.org/learn/get-started) to install the Rust development environment. Additionally, you may need to configure the `PATH` environment variable, e.g. `export PATH="$HOME/.cargo/bin:$PATH"`. If the installation fails with `No module named 'setuptools_rust'`, you need to install `setuptools_rust`, e.g. by running:

```bash

pip install setuptools-rust

```

## Available models and languages

There are six model sizes, four with English-only versions, offering speed and accuracy tradeoffs.

Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model.

The relative speeds below are measured by transcribing English speech on an A100, and the real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

|  Size  | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |
|:------:|:----------:|:------------------:|:------------------:|:-------------:|:--------------:|
|  tiny  |    39 M    |     `tiny.en`      |       `tiny`       |     ~1 GB     |      ~10x      |
|  base  |    74 M    |     `base.en`      |       `base`       |     ~1 GB     |      ~7x       |
| small  |   244 M    |     `small.en`     |      `small`       |     ~2 GB     |      ~4x       |
| medium |   769 M    |    `medium.en`     |      `medium`      |     ~5 GB     |      ~2x       |
| large  |   1550 M   |        N/A         |      `large`       |    ~10 GB     |       1x       |
| turbo  |   809 M    |        N/A         |      `turbo`       |     ~6 GB     |      ~8x       |

The `.en` models for English-only applications tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models.

Additionally, the `turbo` model is an optimized version of `large-v3` that offers faster transcription speed with minimal degradation in accuracy.

Whisper's performance varies widely depending on the language. The figure below shows a performance breakdown of `large-v3` and `large-v2` models by language, using WERs (word error rates) or CER (character error rates, shown in *Italic*) evaluated on the Common Voice 15 and Fleurs datasets. Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of [the paper](https://arxiv.org/abs/2212.04356), as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.
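
As a rough illustration of the WER metric mentioned above (not the evaluation code behind the reported numbers), WER can be computed as the word-level edit distance between a reference and a hypothesis, divided by the number of reference words. The `wer` helper below is hypothetical and skips any text normalization; it assumes a non-empty reference.

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level Levenshtein distance / reference length.

    Hypothetical helper for illustration; assumes a non-empty reference
    and whitespace tokenization with no text normalization.
    """
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        cur = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cur.append(
                min(
                    prev[j] + 1,  # deletion
                    cur[j - 1] + 1,  # insertion
                    prev[j - 1] + (ref_word != hyp_word),  # substitution or match
                )
            )
        prev = cur
    return prev[-1] / len(ref)


# One deleted word out of six reference words.
print(f"{wer('the cat sat on the mat', 'the cat sat on mat'):.3f}")  # 0.167
```

CER follows the same recipe with characters in place of words.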

## Command-line usage

The following command will transcribe speech in audio files, using the `turbo` model:

```bash

whisper audio.flac audio.mp3 audio.wav --model turbo

```

The default setting (which selects the `turbo` model) works well for transcribing English. However, **the `turbo` model is not trained for translation tasks**. If you need to **translate non-English speech into English**, use one of the **multilingual models** (`tiny`, `base`, `small`, `medium`, `large`) instead of `turbo`.

For example, to transcribe an audio file containing non-English speech, you can specify the language:

```bash

whisper japanese.wav --language Japanese

```

To **translate** speech into English, use:

```bash

whisper japanese.wav --model medium --language Japanese --task translate

```

> **Note:** The `turbo` model will return the original language even if `--task translate` is specified. Use `medium` or `large` for the best translation results.

Run the following to view all available options:

```bash

whisper --help

```

See [tokenizer.py](https://github.com/openai/whisper/blob/main/whisper/tokenizer.py) for the list of all available languages.

## Python usage

Transcription can also be performed within Python:

```python

import whisper

model = whisper.load_model("turbo")

result = model.transcribe("audio.mp3")

print(result["text"])

```

Internally, the `transcribe()` method reads the entire file and processes the audio with a sliding 30-second window, performing autoregressive sequence-to-sequence predictions on each window.
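
The windowing idea can be sketched without the model. The snippet below is a simplified illustration that assumes 16 kHz audio and a fixed 30-second stride; `window_bounds` is a hypothetical helper, and the actual `transcribe()` implementation may advance the window differently (for example, based on predicted timestamps).

```python
SAMPLE_RATE = 16000  # assumption: audio resampled to 16 kHz
CHUNK_SECONDS = 30  # window length described above
N_SAMPLES = SAMPLE_RATE * CHUNK_SECONDS


def window_bounds(n_samples: int, window: int = N_SAMPLES):
    """Return (start, end) sample indices of consecutive windows.

    Hypothetical helper: the last window is simply shorter; a real
    pipeline would pad it to the full window length before decoding.
    """
    return [
        (start, min(start + window, n_samples))
        for start in range(0, n_samples, window)
    ]


# A 70-second clip is covered by three windows: 30 s, 30 s, and 10 s.
for start, end in window_bounds(70 * SAMPLE_RATE):
    print(start / SAMPLE_RATE, end / SAMPLE_RATE)
```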

Below is an example usage of `whisper.detect_language()` and `whisper.decode()` which provide lower-level access to the model.

```python

import whisper

model = whisper.load_model("turbo")

# load audio and pad/trim it to fit 30 seconds

audio = whisper.load_audio("audio.mp3")

audio = whisper.pad_or_trim(audio)

# make log-Mel spectrogram and move to the same device as the model

mel = whisper.log_mel_spectrogram(audio, n_mels=model.dims.n_mels).to(model.device)

# detect the spoken language

_, probs = model.detect_language(mel)

print(f"Detected language: {max(probs, key=probs.get)}")

# decode the audio

options = whisper.DecodingOptions()

result = whisper.decode(model, mel, options)

# print the recognized text

print(result.text)

```

## More examples

Please use the [🙌 Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

## License

Whisper's code and model weights are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details.

================================================

FILE: data/README.md

================================================

This directory supplements the paper with more details on how we prepared the data for evaluation, to help replicate our experiments.

## Short-form English-only datasets

### LibriSpeech

We used the test-clean and test-other splits from the [LibriSpeech ASR corpus](https://www.openslr.org/12).

### TED-LIUM 3

We used the test split of [TED-LIUM Release 3](https://www.openslr.org/51/), using the segmented manual transcripts included in the release.

### Common Voice 5.1

We downloaded the English subset of Common Voice Corpus 5.1 from [the official website](https://commonvoice.mozilla.org/en/datasets).

### Artie

We used the [Artie bias corpus](https://github.com/artie-inc/artie-bias-corpus). This is a subset of the Common Voice dataset.

### CallHome & Switchboard

We used the two corpora from [LDC2002S09](https://catalog.ldc.upenn.edu/LDC2002S09) and [LDC2002T43](https://catalog.ldc.upenn.edu/LDC2002T43) and followed the [eval2000_data_prep.sh](https://github.com/kaldi-asr/kaldi/blob/master/egs/fisher_swbd/s5/local/eval2000_data_prep.sh) script for preprocessing. The `wav.scp` files can be converted to WAV files with the following bash commands:

```bash

mkdir -p wav
while read name cmd; do
    echo $name
    echo ${cmd/\|/} wav/$name.wav | bash
done < wav.scp

```
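
To make the transformation in the loop explicit, here is a small Python sketch of what happens to each `wav.scp` line: the first `|` in the command is dropped and the output path `wav/<name>.wav` is appended. The helper name and the sample line are hypothetical; real `wav.scp` entries depend on the Kaldi recipe output.

```python
def scp_line_to_command(line: str, out_dir: str = "wav"):
    """Turn one 'name command |' line into (name, command writing a WAV file).

    Hypothetical helper mirroring the bash loop: remove the first pipe
    character from the command and append the output path.
    """
    name, cmd = line.strip().split(maxsplit=1)
    cmd = cmd.replace("|", "", 1).strip()
    return name, f"{cmd} {out_dir}/{name}.wav"


# Illustrative line only; the command and paths are made up.
name, command = scp_line_to_command("utt1 sph2pipe -f wav -p in.sph |")
print(name)
print(command)
```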

### WSJ

We used [LDC93S6B](https://catalog.ldc.upenn.edu/LDC93S6B) and [LDC94S13B](https://catalog.ldc.upenn.edu/LDC94S13B) and followed the [s5 recipe](https://github.com/kaldi-asr/kaldi/tree/master/egs/wsj/s5) to preprocess the dataset.

### CORAAL

We used the 231 interviews from [CORAAL (v. 2021.07)](https://oraal.uoregon.edu/coraal) and used the segmentations from [the FairSpeech project](https://github.com/stanford-policylab/asr-disparities/blob/master/input/CORAAL_transcripts.csv).

### CHiME-6

We downloaded the [CHiME-5 dataset](https://spandh.dcs.shef.ac.uk//chime_challenge/CHiME5/download.html) and followed stage 0 of the [s5_track1 recipe](https://github.com/kaldi-asr/kaldi/tree/master/egs/chime6/s5_track1) to create the CHiME-6 dataset, which fixes synchronization. We then used the binaural recordings (`*_P??.wav`) and the corresponding transcripts.

### AMI-IHM, AMI-SDM1

We preprocessed the [AMI Corpus](https://groups.inf.ed.ac.uk/ami/corpus/overview.shtml) by following stages 0 and 2 of the [s5b recipe](https://github.com/kaldi-asr/kaldi/tree/master/egs/ami/s5b).

## Long-form English-only datasets

### TED-LIUM 3

To create a long-form transcription dataset from the [TED-LIUM3](https://www.openslr.org/51/) dataset, we sliced the audio between the beginning of the first labeled segment and the end of the last labeled segment of each talk, and we used the concatenated text as the label. Below are the timestamps used for slicing each of the 11 TED talks in the test split.

| Filename            | Begin time (s) | End time (s) |
|---------------------|----------------|--------------|
| DanBarber_2010      | 16.09          | 1116.24      |
| JaneMcGonigal_2010  | 15.476         | 1187.61      |
| BillGates_2010      | 15.861         | 1656.94      |
| TomWujec_2010U      | 16.26          | 402.17       |
| GaryFlake_2010      | 16.06          | 367.14       |
| EricMead_2009P      | 18.434         | 536.44       |
| MichaelSpecter_2010 | 16.11          | 979.312      |
| DanielKahneman_2010 | 15.8           | 1199.44      |
| AimeeMullins_2009P  | 17.82          | 1296.59      |
| JamesCameron_2010   | 16.75          | 1010.65      |
| RobertGupta_2010U   | 16.8           | 387.03       |
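
The slicing described above amounts to converting each begin/end time into sample indices. The sketch below assumes 16 kHz audio and uses two rows from the table; `slice_bounds` is a hypothetical helper, not part of the released code.

```python
SAMPLE_RATE = 16000  # assumption: audio resampled to 16 kHz

# Two rows copied from the table above: filename -> (begin, end) in seconds.
SLICES = {
    "DanBarber_2010": (16.09, 1116.24),
    "TomWujec_2010U": (16.26, 402.17),
}


def slice_bounds(begin_s: float, end_s: float, sample_rate: int = SAMPLE_RATE):
    """Convert begin/end times in seconds to integer sample indices."""
    return round(begin_s * sample_rate), round(end_s * sample_rate)


for name, (begin_s, end_s) in SLICES.items():
    start, stop = slice_bounds(begin_s, end_s)
    # For a loaded waveform array, the long-form clip would be audio[start:stop].
    print(name, start, stop, f"{(stop - start) / SAMPLE_RATE:.2f} s")
```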

### Meanwhile

This dataset consists of 64 segments from The Late Show with Stephen Colbert. The YouTube video ID, start and end timestamps, and the labels can be found in [meanwhile.json](meanwhile.json). The labels are collected from the closed-caption data for each video and corrected with manual inspection.
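
A minimal sketch for reading the timestamps follows, assuming every `begin`/`end` value uses the `minutes:seconds` form seen in the first entries of `meanwhile.json` (for example `"1:04.0"`). The `to_seconds` helper is hypothetical.

```python
def to_seconds(timestamp: str) -> float:
    """Convert a 'M:SS.s' timestamp string to seconds.

    Assumption: exactly one colon, separating minutes from seconds.
    """
    minutes, seconds = timestamp.split(":")
    return int(minutes) * 60 + float(seconds)


# Values from the first entry of meanwhile.json (text omitted).
entry = {"begin": "1:04.0", "end": "2:11.0"}
begin_s, end_s = to_seconds(entry["begin"]), to_seconds(entry["end"])
print(begin_s, end_s, end_s - begin_s)  # 64.0 131.0 67.0
```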

### Rev16

We used a subset of 16 files from the 30 podcast episodes in [Rev.AI's Podcast Transcription Benchmark](https://www.rev.ai/blog/podcast-transcription-benchmark-part-1/), after finding multiple cases where a significant portion of the audio and the labels did not match, mostly in the parts introducing the sponsors. We selected 16 episodes that do not have this error, whose "file number" values are:

3 4 9 10 11 14 17 18 20 21 23 24 26 27 29 32

### Kincaid46

This dataset consists of 46 audio files and the corresponding transcripts compiled in the blog article [Which automatic transcription service is the most accurate - 2018](https://medium.com/descript/which-automatic-transcription-service-is-the-most-accurate-2018-2e859b23ed19) by Jason Kincaid. We used the 46 audio files and reference transcripts from the Airtable widget in the article.

For the human transcription benchmark in the paper, we used a subset of 25 examples from this data, whose "Ref ID" values are:

2 4 5 8 9 10 12 13 14 16 19 21 23 25 26 28 29 30 33 35 36 37 42 43 45

### Earnings-21, Earnings-22

For these datasets, we used the files available in [the speech-datasets repository](https://github.com/revdotcom/speech-datasets), as of their `202206` version.

### CORAAL

We used the 231 interviews from [CORAAL (v. 2021.07)](https://oraal.uoregon.edu/coraal) and used the full-length interview files and transcripts.

## Multilingual datasets

### Multilingual LibriSpeech

We used the test splits from each language in [the Multilingual LibriSpeech (MLS) corpus](https://www.openslr.org/94/).

### Fleurs

We collected audio files and transcripts using the implementation available as [HuggingFace datasets](https://huggingface.co/datasets/google/fleurs/blob/main/fleurs.py). To use as a translation dataset, we matched the numerical utterance IDs to find the corresponding transcript in English.
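
The ID matching can be sketched as a dictionary lookup. The row layout below (dicts with `id` and `transcription` keys) and the sample rows are assumptions made for illustration; the actual fields exposed by the dataset loader may differ.

```python
def pair_with_english(source_rows, english_rows):
    """Pair each source-language row with the English transcript sharing its ID.

    Hypothetical helper: rows without an English counterpart are skipped.
    """
    english_by_id = {row["id"]: row["transcription"] for row in english_rows}
    return [
        (row["id"], row["transcription"], english_by_id[row["id"]])
        for row in source_rows
        if row["id"] in english_by_id
    ]


# Made-up rows for illustration only.
french = [
    {"id": 101, "transcription": "bonjour le monde"},
    {"id": 205, "transcription": "merci beaucoup"},
]
english = [
    {"id": 101, "transcription": "hello world"},
    {"id": 300, "transcription": "good night"},
]
pairs = pair_with_english(french, english)
print(pairs)
```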

### VoxPopuli

We used the `get_asr_data.py` script from [the official repository](https://github.com/facebookresearch/voxpopuli) to collect the ASR data in 14 languages.

### Common Voice 9

We downloaded the Common Voice Corpus 9 from [the official website](https://commonvoice.mozilla.org/en/datasets).

### CoVOST 2

We collected the `X into English` data using [the official repository](https://github.com/facebookresearch/covost).

================================================

FILE: data/meanwhile.json

================================================

{

"1YOmY-Vjy-o": {

"begin": "1:04.0",

"end": "2:11.0",

"text": "FOLKS, IF YOU WATCH THE SHOW,\nYOU KNOW I SPEND A LOT OF TIME\nRIGHT OVER THERE, PATIENTLY AND\nASTUTELY SCRUTINIZING THE\nBOXWOOD AND MAHOGANY CHESS SET\nOF THE DAY'S BIGGEST STORIES,\nDEVELOPING THE CENTRAL\nHEADLINE-PAWNS, DEFTLY\nMANEUVERING AN OH-SO-TOPICAL\nKNIGHT TO F-6, FEIGNING A\nCLASSIC SICILIAN-NAJDORF\nVARIATION ON THE NEWS, ALL THE\nWHILE, SEEING EIGHT MOVES DEEP\nAND PATIENTLY MARSHALING THE\nLATEST PRESS RELEASES INTO A\nFISCHER SOZIN LIPNITZKY ATTACK\nTHAT CULMINATES IN THE ELEGANT,\nLETHAL, SLOW-PLAYED EN PASSANT\nCHECKMATE THAT IS MY NIGHTLY\nMONOLOGUE.\nBUT SOMETIMES, SOMETIMES,\nFOLKS-- I,\nSOMETIMES,\nI STARTLE AWAKE UPSIDE DOWN ON\nTHE MONKEY BARS OF A CONDEMNED\nPLAYGROUND ON A SUPERFUND SITE,\nGET ALL HEPPED UP ON GOOFBALLS,\nRUMMAGE THROUGH A DISCARDED TAG\nBAG OF DEFECTIVE TOYS, YANK\nOUT A FISTFUL OF DISEMBODIED\nDOLL LIMBS, TOSS THEM ON A\nSTAINED KID'S PLACEMAT FROM A\nDEFUNCT DENNY'S, SET UP A TABLE\nINSIDE A RUSTY CARGO CONTAINER\nDOWN BY THE WHARF, AND CHALLENGE\nTOOTHLESS DRIFTERS TO THE\nGODLESS BUGHOUSE BLITZ\nOF TOURNAMENT OF NEWS THAT IS MY\nSEGMENT:\nMEANWHILE!\n"

},

"3P_XnxdlXu0": {

"begin": "2:08.3",

"end": "3:02.3",

"text": "FOLKS, I SPEND A LOT OF TIME\nRIGHT OVER THERE, NIGHT AFTER NIGHT ACTUALLY, CAREFULLY\nSELECTING FOR YOU THE DAY'S NEWSIEST,\nMOST AERODYNAMIC HEADLINES,\nSTRESS TESTING THE MOST TOPICAL\nANTILOCK BRAKES AND POWER\nSTEERING, PAINSTAKINGLY\nSTITCHING LEATHER SEATING SO\nSOFT, IT WOULD MAKE J.D. POWER\nAND HER ASSOCIATES BLUSH, TO\nCREATE THE LUXURY SEDAN THAT IS\nMY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES,\nFOLKS, I LURCH TO CONSCIOUSNESS\nIN THE BACK OF AN ABANDONED\nSCHOOL BUS AND SLAP MYSELF AWAKE\nWITH A CRUSTY FLOOR MAT BEFORE\nUSING A MOUSE-BITTEN TIMING BELT\nTO STRAP SOME OLD PLYWOOD TO A\nCOUPLE OF DISCARDED OIL DRUMS.\nTHEN, BY THE LIGHT OF A HEATHEN\nMOON, RENDER A GAS TANK OUT OF\nAN EMPTY BIG GULP, FILL IT WITH\nWHITE CLAW AND DENATURED\nALCOHOL, THEN LIGHT A MATCH AND\nLET HER RIP, IN THE DEMENTED\nONE-MAN SOAP BOX DERBY OF NEWS\nTHAT IS MY SEGMENT: MEANWHILE!"

},

"3elIlQzJEQ0": {

"begin": "1:08.5",

"end": "1:58.5",

"text": "LADIES AND GENTLEMEN, YOU KNOW, I SPEND A\nLOT OF TIME RIGHT OVER THERE,\nRAISING THE FINEST HOLSTEIN NEWS\nCATTLE, FIRMLY, YET TENDERLY,\nMILKING THE LATEST HEADLINES\nFROM THEIR JOKE-SWOLLEN TEATS,\nCHURNING THE DAILY STORIES INTO\nTHE DECADENT, PROVENCAL-STYLE\nTRIPLE CREME BRIE THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES, FOLKS,\nI STAGGER HOME HUNGRY AFTER\nBEING RELEASED BY THE POLICE,\nAND ROOT AROUND IN THE NEIGHBOR'S\nTRASH CAN FOR AN OLD MILK\nCARTON, SCRAPE OUT THE BLOOMING\nDAIRY RESIDUE ONTO THE REMAINS\nOF A WET CHEESE RIND I WON\nFROM A RAT IN A PRE-DAWN STREET\nFIGHT, PUT IT IN A DISCARDED\nPAINT CAN, AND LEAVE IT TO\nFERMENT NEXT TO A TRASH FIRE,\nTHEN HUNKER DOWN AND HALLUCINATE\nWHILE EATING THE LISTERIA-LADEN\nDEMON CUSTARD OF NEWS THAT IS\nMY SEGMENT: MEANWHILE!"

},

"43P4q1KGKEU": {

"begin": "0:29.3",

"end": "1:58.3",

"text": "FOLKS, IF YOU WATCH THE SHOW, YOU KNOW I SPEND MOST\nOF MY TIME, RIGHT OVER THERE.\nCAREFULLY SORTING THROUGH THE\nDAY'S BIGGEST STORIES, AND\nSELECTING ONLY THE MOST\nSUPPLE AND UNBLEMISHED OSTRICH\nAND CROCODILE NEWS LEATHER,\nWHICH I THEN ENTRUST TO ARTISAN\nGRADUATES OF THE \"ECOLE\nGREGOIRE-FERRANDI,\" WHO\nCAREFULLY DYE THEM IN A PALETTE\nOF BRIGHT, ZESTY SHADES, AND\nADORN THEM WITH THE FINEST, MOST\nTOPICAL INLAY WORK USING HAND\nTOOLS AND DOUBLE MAGNIFYING\nGLASSES, THEN ASSEMBLE THEM\nACCORDING TO NOW CLASSIC AND\nELEGANT GEOMETRY USING OUR\nSIGNATURE SADDLE STITCHING, AND\nLINE IT WITH BEESWAX-COATED\nLINEN, AND FINALLY ATTACH A\nMALLET-HAMMERED STRAP, PEARLED\nHARDWARE, AND A CLOCHETTE TO\nCREATE FOR YOU THE ONE-OF-A-KIND\nHAUTE COUTURE HERMES BIRKIN BAG\nTHAT IS MY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES, FOLKS,\nSOMETIMES, SOMETIMES I WAKE UP IN THE\nLAST CAR OF AN ABANDONED ROLLER\nCOASTER AT CONEY ISLAND, WHERE\nI'M HIDING FROM THE TRIADS, I\nHUFF SOME ENGINE LUBRICANTS OUT\nOF A SAFEWAY BAG AND STAGGER\nDOWN THE SHORE TO TEAR THE SAIL\nOFF A BEACHED SCHOONER, THEN I\nRIP THE CO-AXIAL CABLE OUT OF\nTHE R.V. OF AN ELDERLY COUPLE\nFROM UTAH, HANK AND MABEL,\nLOVELY FOLKS, AND USE IT TO\nSTITCH THE SAIL INTO A LOOSE,\nPOUCH-LIKE RUCKSACK, THEN I\nSTOW AWAY IN THE BACK OF A\nGARBAGE TRUCK TO THE JUNK YARD\nWHERE I PICK THROUGH THE DEBRIS\nFOR ONLY THE BROKEN TOYS THAT\nMAKE ME THE SADDEST UNTIL I HAVE\nLOADED, FOR YOU, THE HOBO\nFUGITIVE'S BUG-OUT BINDLE OF\nNEWS THAT IS MY SEGMENT:\nMEANWHILE!"

},

"4ktyaJkLMfo": {

"begin": "0:42.5",

"end": "1:26.5",

"text": "YOU KNOW, FOLKS, I SPEND A LOT\nOF TIME CRAFTING FOR YOU A\nBESPOKE PLAYLIST OF THE DAY'S\nBIGGEST STORIES, RIGHT OVER THERE, METICULOUSLY\nSELECTING THE MOST TOPICAL\nCHAKRA-AFFIRMING SCENTED\nCANDLES, AND USING FENG SHUI TO\nPERFECTLY ALIGN THE JOKE ENERGY\nIN THE EXCLUSIVE BOUTIQUE YOGA\nRETREAT THAT IS MY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES,\nI GO TO THE DUMPSTER BEHIND THE\nWAFFLE HOUSE AT 3:00 IN THE\nMORNING, TAKE OFF MY SHIRT,\nCOVER MYSELF IN USED FRY OIL,\nWRAP MY HANDS IN SOME OLD DUCT\nTAPE I STOLE FROM A BROKEN CAR\nWINDOW, THEN POUND A SIX-PACK OF\nBLUEBERRY HARD SELTZER AND A\nSACK OF PILLS I STOLE FROM A\nPARKED AMBULANCE, THEN\nARM-WRESTLE A RACCOON IN THE\nBACK ALLEY VISION QUEST OF NEWS\nTHAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"5Dsh9AgqRG0": {

"begin": "1:06.0",

"end": "2:34.0",

"text": "YOU KNOW, FOLKS, I SPEND MOST OF\nMY TIME RIGHT OVER THERE, MINING\nTHE DAY'S BIGGEST, MOST\nIMPORTANT STORIES, COLLECTING\nTHE FINEST, MOST TOPICAL IRON\nORE, HAND HAMMERING IT INTO JOKE\nPANELS.\nTHEN I CRAFT SHEETS OF BRONZE\nEMBLAZONED WITH PATTERNS THAT\nTELL AN EPIC TALE OF CONQUEST\nAND GLORY.\nTHEN, USING THE GERMANIC\nTRADITIONAL PRESSBLECH\nPROCESS, I PLACE THIN SHEETS OF\nFOIL AGAINST THE SCENES, AND BY\nHAMMERING OR OTHERWISE,\nAPPLYING PRESSURE FROM THE BACK,\nI PROJECT THESE SCENES INTO A\nPAIR OF CHEEK GUARDS AND A\nFACEPLATE.\nAND, FINALLY, USING FLUTED\nSTRIPS OF WHITE ALLOYED\nMOULDING, I DIVIDE THE DESIGNS\nINTO FRAMED PANELS AND HOLD IT\nALL TOGETHER USING BRONZE RIVETS\nTO CREATE THE BEAUTIFUL AND\nINTIMIDATING ANGLO-SAXON\nBATTLE HELM THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES, FOLKS,\nSOMETIMES, JUST SOMETIMES, I COME TO MY SENSES FULLY NAKED\nON THE DECK OF A PIRATE-BESIEGED\nMELEE CONTAINER SHIP THAT PICKED\nME UP FLOATING ON THE DETACHED\nDOOR OF A PORT-A-POTTY IN THE\nINDIAN OCEAN.\nTHEN, AFTER A SUNSTROKE-INDUCED\nREALIZATION THAT THE CREW OF\nTHIS SHIP PLANS TO SELL ME IN\nEXCHANGE FOR A BAG OF ORANGES TO\nFIGHT OFF SCURVY, I LEAD A\nMUTINY USING ONLY A P.V.C. PIPE\nAND A POOL CHAIN.\nTHEN, ACCEPTING MY NEW ROLE AS\nCAPTAIN, AND DECLARING MYSELF\nKING OF THE WINE-DARK SEAS, I\nGRAB A DIRTY MOP BUCKET COVERED\nIN BARNACLES AND ADORN IT WITH\nTHE TEETH OF THE VANQUISHED, TO\nCREATE THE SOPPING WET PIRATE\nCROWN OF NEWS THAT IS MY\nSEGMENT:\n\"MEANWHILE!\" "

},

"748OyesQy84": {

"begin": "0:40.0",

"end": "1:41.0",

"text": "FOLKS, IF YOU WATCH THE SHOW, YOU KNOW, I SPEND MOST OF\nMY TIME, RIGHT OVER THERE,\nCAREFULLY BLENDING FOR YOU THE\nDAY'S NEWSIEST, MOST TOPICAL\nFLOUR, EGGS, MILK, AND BUTTER,\nAND STRAINING IT INTO A FINE\nBATTER TO MAKE DELICATE, YET\nINFORMATIVE COMEDY PANCAKES.\nTHEN I GLAZE THEM IN THE JUICE\nAND ZEST OF THE MOST RELEVANT\nMIDNIGHT VALENCIA ORANGES, AND\nDOUSE IT ALL IN A FINE DELAMAIN\nDE VOYAGE COGNAC, BEFORE\nFLAMBEING AND BASTING THEM TABLE\nSIDE, TO SERVE FOR YOU THE JAMES\nBEARD AWARD-WORTHY CREPES\nSUZETTE THAT IS MY NIGHTLY,\nMONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES FOLKS, I WAKE UP IN THE\nBAGGAGE HOLD OF A GREYHOUND BUS\nAS ITS BEING HOISTED BY THE\nSCRAPYARD CLAW TOWARD THE BURN\nPIT, ESCAPE TO A NEARBY\nABANDONED PRICE CHOPPER, WHERE I\nSCROUNGE FOR OLD BREAD SCRAPS,\nBUSTED OPEN BAGS OF STAR FRUIT\nCANDIES, AND EXPIRED EGGS,\nCHUCK IT ALL IN A DIRTY HUBCAP\nAND SLAP IT OVER A TIRE FIRE\nBEFORE USING THE LEGS OF A\nSTAINED PAIR OF SWEATPANTS AS\nOVEN MITTS TO EXTRACT AND SERVE\nTHE DEMENTED TRANSIENT'S POUND\nCAKE OF NEWS THAT IS MY SEGMENT:\n\"MEANWHILE.\""

},

"8prs9Pq5Xhk": {

"begin": "1:18.5",

"end": "2:17.5",

"text": "FOLKS, IF YOU WATCH THE SHOW,\nAND I HOPE YOU DO, I SPEND A\nLOT OF TIME RIGHT OVER THERE,\nTIRELESSLY STUDYING THE LINEAGE\nOF THE DAY'S MOST IMPORTANT\nTHOROUGHBRED STORIES AND\nHOLSTEINER HEADLINES, WORKING\nWITH THE BEST TRAINERS MONEY CAN\nBUY TO REAR THEIR COMEDY\nOFFSPRING WITH A HAND THAT IS\nSTERN, YET GENTLE, INTO THE\nTRIPLE-CROWN-WINNING EQUINE\nSPECIMEN THAT IS MY NIGHTLY\nMONOLOGUE.\nBUT SOMETIMES, SOMETIMES, FOLKS\nI BREAK INTO AN UNINCORPORATED\nVETERINARY GENETICS LAB AND GRAB\nWHATEVER TEST TUBES I CAN FIND.\nAND THEN, UNDER A GROW LIGHT I GOT\nFROM A DISCARDED CHIA PET, I MIX\nTHE PILFERED D.N.A. OF A HORSE\nAND WHATEVER WAS IN A TUBE\nLABELED \"KEITH-COLON-EXTRA,\"\nSLURRYING THE CONCOCTION WITH\nCAFFEINE PILLS AND A MICROWAVED\nRED BULL, I SCREAM-SING A PRAYER\nTO JANUS, INITIATOR OF HUMAN\nLIFE AND GOD OF TRANSFORMATION\nAS A HALF-HORSE, HALF-MAN FREAK,\nSEIZES TO LIFE BEFORE ME IN THE\nHIDEOUS COLLECTION OF LOOSE\nANIMAL PARTS AND CORRUPTED MAN\nTISSUE THAT IS MY SEGMENT:\nMEANWHILE!"

},

"9gX4kdFajqE": {

"begin": "0:44.0",

"end": "1:08.0",

"text": "FOLKS, IF YOU WATCH THE SHOW,\nYOU KNOW SOMETIMES I'M OVER\nTHERE DOING THE MONOLOGUE.\nAND THEN THERE'S A COMMERCIAL\nBREAK, AND THEN I'M SITTING\nHERE.\nAND I DO A REALLY LONG\nDESCRIPTION OF A DIFFERENT\nSEGMENT ON THE SHOW, A SEGMENT\nWE CALL... \"MEANWHILE!\""

},

"9ssGpE9oem8": {

"begin": "0:00.0",

"end": "0:58.0",

"text": "WELCOME BACK, EVERYBODY.\nYOU KNOW, FOLKS, I SPEND MOST OF\nMY TIME RIGHT OVER THERE,\nCOMBING OVER THE DAY'S NEWS,\nSELECTING ONLY THE HIGHEST\nQUALITY AND MOST TOPICAL BONDED\nCALFSKIN-LEATHER STORIES,\nCAREFULLY TANNING THEM AND CUTTING\nTHEM WITH MILLIMETER PRECISION,\nTHEN WEAVING IT TOGETHER IN\nA DOUBLE-FACED INTRECCIATO\nPATTERN TO CREATE FOR YOU THE\nEXQUISITE BOTTEGA VENETA CLUTCH\nTHAT IS MY MONOLOGUE.\nBUT SOMETIMES, WHILE AT A RAVE\nIN A CONDEMNED CEMENT FACTORY, I\nGET INJECTED WITH A MYSTERY\nCOCKTAIL OF HALLUCINOGENS AND\nPAINT SOLVENTS, THEN, OBEYING\nTHE VOICES WHO WILL STEAL MY\nTEETH IF I DON'T, I STUMBLE INTO\nA SHIPYARD WHERE I RIP THE\nCANVAS TARP FROM A GRAVEL TRUCK,\nAND TIE IT OFF WITH THE ROPE FROM A\nROTTING FISHING NET, THEN WANDER\nA FOOD COURT, FILLING IT WITH\nWHAT I THINK ARE GOLD COINS BUT\nARE, IN FACT, OTHER PEOPLE'S CAR\nKEYS, TO DRAG AROUND THE\nROOTLESS TRANSIENT'S CLUSTER\nSACK OF NEWS THAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"ARw4K9BRCAE": {

"begin": "0:26.0",

"end": "1:16.0",

"text": "YOU KNOW, FOLKS, I SPEND\nMOST OF MY TIME STANDING RIGHT OVER\nTHERE,\nGOING OVER THE DAY'S NEWS\nAND SELECTING THE FINEST,\nMOST\nTOPICAL CARBON FIBER\nSTORIES, SHAPING THEM IN DRY\nDOCK INTO A\nSLEEK AND SEXY HULL, KITTING\nIT OUT WITH THE MOST TOPICAL\nFIBERGLASS AND TEAK FITTINGS\nBRASS RAILINGS, HOT TUBS,\nAND\nNEWS HELIPADS, TO CREATE THE\nCUSTOM DESIGNED, GLEAMING\nMEGA-YACHT THAT IS MY NIGHTLY\nMONOLOGUE. BUT SOMETIMES, JUST SOMETIMES FOLKS, I\nWASH ASHORE AT\nAN ABANDONED BEACH RESORT\nAFTER A NIGHT OF BATH SALTS\nAND\nSCOPOLAMINE, LASH SOME\nROTTING PICNIC TABLES\nTOGETHER, THEN\nDREDGE THE NEWS POND TO\nHAUL UP WHATEVER DISCARDED\nTRICYCLES AND BROKEN\nFRISBEES I CAN FIND, STEAL\nAN EYE PATCH\nFROM A HOBO, STAPLE A DEAD\nPIGEON TO MY SHOULDER, AND\nSAIL\nINTO INTERNATIONAL WATERS\nON THE PIRATE GARBAGE SCOW\nOF\nNEWS THAT IS MY SEGMENT:\nMEANWHILE!"

},

"B1DRmrOlKtY": {

"begin": "1:34.0",

"end": "2:17.0",

"text": "FOLKS, I SPEND A LOT OF TIME\nSTANDING RIGHT OVER THERE, OKAY,\nHANDPICKING ONLY THE RIPEST\nMOST TOPICAL DAILY HEADLINES,\nSEEKING OUT THE SWEETEST, MOST\nREFINED OF JEST-JAMS AND\nJOKE-JELLIES, CURATING A PLATE\nOF LOCAL COMEDY-FED MEATS AND\nSATIRICAL CHEESES TO LAY OUT THE\nARTISANAL CHARCUTERIE BOARD\nA-NEWS BOUCHE THAT IS MY NIGHTLY\nMONOLOGUE.\nBUT SOMETIMES, I WAKE UP IN THE\nGREASE TRAP OF AN\nUNLICENSED SLAUGHTERHOUSE,\nSPLASH MY FACE WITH SOME BEEF TALLOW,\nRENDER A RUDIMENTARY PRESERVE\nFROM BONE MARROW AND MELTED\nGUMMY WORMS, AND THROW IT\nTOGETHER WITH SOME SWEETBREADS\nAND TRIPE TO PRESENT THE\nPLOWMAN'S PLATTER OF UNCLAIMED\nOFFAL THAT IS MY SEGMENT:\nMEANWHILE!"

},

"BT950jqCCUY": {

"begin": "1:00.0",

"end": "2:09.0",

"text": "YOU KNOW, FOLKS,\nI SPEND SO MUCH OF MY TIME RIGHT\nOVER THERE, SIFTING THROUGH THE\nDAY'S BIGGEST STORIES, HAND\nSELECTING ONLY THE FINEST, MOST\nPERFECTLY AGED BURMESE NEWS\nTEAK.\nTHEN CAREFULLY CARVING AND\nSHAPING IT INTO A REFINED\nBUDDHA, DEPICTED IN THE \"CALLING\nTHE EARTH TO WITNESS\" GESTURE,\nWITH CHARACTERISTIC CIRCULAR\nPATTERNS ON THE ROBE, SHINS, AND\nKNEES, OF COURSE, WHICH I\nTHEN CAREFULLY GILD WITH THE\nMOST TOPICAL GOLD LEAF.\nTHEN I HARVEST THE SAP OF A\nUSITATA TREE TO APPLY THE DELICATE\nTAI O LACQUER, AND FINALLY HAND\nDECORATE IT WITH THE WHITE GLASS\nINLAYS TO CREATE FOR YOU THE\nGLORIOUS AMARA-PURA PERIOD\nSCULPTURE THAT IS MY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES FOLKS,\nI WASH UP ON A GALVESTON\nBEACH, NAKED ON A RAFT OF GAS\nCANS AFTER ESCAPING FROM A FIGHT CLUB\nIN INTERNATIONAL WATERS.\nTHEN, STILL DERANGED ON A\nCOCKTAIL OF MESCALINE AND COUGH\nSYRUP, I STEAL A CINDER BLOCK\nFROM UNDER A STRIPPED '87 FORD\nTAURUS, AND CHIP AWAY AT IT\nWITH A BROKEN UMBRELLA HANDLE I\nSCAVENGED FROM A GOODWILL\nDUMPSTER UNTIL IT VAGUELY\nRESEMBLES THE HUNGERING WOLF\nTHAT SCRATCHES AT THE DOOR\nOF MY DREAMS AND PRESENT TO YOU\nTHE TORMENTED DREAD-EFFIGY OF\nNEWS THAT IS MY SEGMENT:\nMEANWHILE!"

},

"C0e8XM30tQI": {

"begin": "0:57.0",

"end": "2:40.0",

"text": "YOU KNOW, IF YOU WATCH THE SHOW, YOU'RE AWARE THAT I SPEND MOST OF\nMY TIME RIGHT OVER THERE,\nWANDERING THE NEWS FOREST FOR\nYOU, FELLING ONLY THE BIGGEST\nAND HEARTIEST WHITE STORY OAKS,\nCUTTING AND SHAPING THEM INTO\nTHE NEWSIEST, MOST TOPICAL\nCLEATS, CLAMPS AND PLANKS,\nKEEPING THEM AT A CONSTANT\nANGLE, GRADUALLY CREATING A\nSHELL-SHAPED, SHALLOW BOWL HULL\nUSING THE FIRE-BENDING TECHNIQUE\nINSTEAD OF STEAM-BENDING\nOBVIOUSLY.\nTHEN I LAY OUT ALL THE KEEL\nBLOCKS TO CAREFULLY SET UP THE\nSTEM, STERN AND, GARBOARD,\nATTACH THE BILGE FUTTOCKS TO THE\nTIMBER AND LOVINGLY CRAFT A FLAT\nTRANSOM STERN OUT OF\nNATURALLY-CURVED QUARTER\nCIRCLES.\nTHEN SECURE ALL THE PLANKS WITH\nTRUNNELS HANDMADE FROM THE\nFINEST LOCUST WOOD AND, FINALLY,\nADORN IT WITH A PROUD BOWSPRIT,\nFOREPEAK, AND CUSTOM GILDED\nFIGUREHEAD TO PRESENT TO YOU THE\nDUTCH GOLDEN AGE \"SPIEGEL-JACHT\"\nTHAT IS MY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES,\nFOLKS-- YOU GOT TO HYDRATE\nAFTER THAT.\n\"SPIEGEL-JACHT\"\nBUT SOMETIMES, I\nAWAKEN FROM A MEAT-SWEAT-\nINDUCED FEVER STRAPPED TO A\nBASKET ON THE WONDER WHEEL AT\nCONEY ISLAND, STUMBLE ACROSS THE\nGARBAGE-FLECKED BEACH TO THE\nSOUND OF A TERRIFYING RAGGED\nBELLOW I REALIZE IS COMING FROM\nMY OWN LUNGS, WHICH THEN SUMMONS AN\nARMY OF SEAGULLS WHOM I INSTRUCT\nTO GATHER THE HALF-EMPTIED CANS\nOF BUSCH LIGHT LEFT BY A MOB OF\nBELGIAN TOURISTS, ALL OF WHICH\nI GATHER INTO A SACK I FASHIONED\nOUT OF PANTS I STOLE FROM A\nSLEEPING COP. THEN I SWIPE A\nGIANT INFLATABLE BABY YODA FROM\nA CARNY GAME, STRAP IT TO THE\nMAST I MADE BY RIPPING A B-68\nBUS STOP SIGN OUT OF THE GROUND\nON THE CORNER OF STILLWELL, AND\nLAUNCH THE VESSEL AS CAPTAIN OF\nTHE UNREGULATED PIRATE BOOZE\nCRUISE OF NEWS THAT IS MY\nSEGMENT:\n\"MEANWHILE\"!"

},

"CKsASCGr_4A": {

"begin": "1:48.3",

"end": "2:37.3",

"text": "FOLKS, YOU KNOW, I SPEND A LOT\nOF TIME RIGHT OVER THERE, CARVING THE\nFINEST, MOST-TOPICAL JAPANESE\nNEWS CYPRESS INTO AN EXPRESSIVE\nHANNYA DEMON MASK, DONNING MY\nBLACK AND GOLD SHOZOKU ROBES\nMADE OF THE SMOOTHEST STORY\nSILK, AND MASTERING THE ELABORATE\nCHOREOGRAPHY OF STILLNESS AND\nMOVEMENT, TO CREATE THE JAPANESE\nNOH THEATER PRODUCTION THAT IS\nMY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES,\nFOLKS, I ENTER A FUGUE STATE IN\nTHE MIDDLE OF THE NIGHT, SIPHON\nA BUCKETFUL OF GASOLINE OUT OF MY\nNEIGHBOR'S MAZDA, RUN BAREFOOT\nFROM MY HOUSE TO A HOVEL UNDER\nTHE TURNPIKE WHERE I ASK A HOBO\nFOR A LIGHT AND SET A GARBAGE\nCAN ABLAZE, THEN PLACE MY\nFROSTBITTEN HANDS IN FRONT OF\nTHE DUMPSTER TO PROJECT THE\nSHADOW PUPPET WINO OPERA OF NEWS\nTHAT IS MY SEGMENT, \"MEANWHILE.\""

},

"DSc26qAJp_g": {

"begin": "0:28.0",

"end": "1:46.0",

"text": "FOLKS, I SPEND MOST OF MY TIME\nRIGHT OVER THERE, ASSEMBLING THE\nDAY'S BIGGEST, MOST-IMPORTANT\nSTORIES, THEN HAND-SHAPING THEM\nINTO SLEEK, ELEGANT BODYWORK,\nWHICH I LINE WITH ONLY THE\nFINEST, MOST TOPICAL POLISHED\nMACASSAR EBONY AND OPEN-PORE\nPALDAO VENEER, ADDING LIGHT\nMOCCASIN AND DARK SPICE LEATHER\nSEATS, AND MALABAR TEAK WOOD TO\nSET OFF A SCULPTED MINIMALIST\nSWITCHGEAR, ACCOMPANIED BY A\nSTERLING SILVER HUMIDOR AND\nCHAMPAGNE CELLARETTE, THEN\nHAND-SET 1,600 FIBER OPTIC\nLIGHTS ALIGNED WITH PINPOINT\nPERFORATIONS IN THE ROOF-LINING,\nTO CREATE FOR YOU THE BESPOKE\nCOACH BUILT ROLLS-ROYCE SWEPTAIL\nTHAT IS MY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES, FOLKS,\nSOMETIMES, I JUST, I JUST SHRIEK AWAKE IN THE\nSCRAPYARD OF A DERELICT MACHINE\nSHOP, SCAVENGE A\n3.6-HORSEPOWER BRIGGS AND STRATTON\nLAWN MOWER ENGINE, BULLY A\nDOG INTO GIVING ME ITS FRISBEE\nTO USE AS A STEERING WHEEL, THEN\nI BRIEFLY CONSIDER-- BUT DECIDE\nAGAINST-- SWIPING THE BRAKE PADS\nOFF AN UNATTENDED HEARSE,\nBECAUSE WHERE I'M GOING, WE DON'T\nNEED BRAKES.\nI HOOK IT ALL UP TO A RUSTY\nDOLLAR TREE SHOPPING CART,\nSHOTGUN A WHITE CLAW, NO LAW ON THE CLAW, SPRAY\nPAINT MY TEETH, AND BLAST OFF IN\nTHE \"FURY ROAD\" THUG-BUGGY OF\nNEWS THAT IS MY SEGMENT:\n\"MEANWHILE\"!"

},

"DhuCyncmFgM": {

"begin": "0:43.5",

"end": "1:30.5",

"text": "YOU KNOW, FOLKS, I\nSPEND A LOT OF TIME STANDING RIGHT\nOVER THERE, COMBING THROUGH\nTHE DAY'S\nSTORIES TO FIND AND ERECT\nFOR YOU THE FINEST GRECIAN\nNEWS\nCOLUMNS, ADORNING THEM WITH\nTHE MOST UP-TO-THE-MINUTE\nBAS-RELIEF.\nAND THEN I IMPART MY MOST\nTOPICAL\nTEACHINGS TO BE ABSORBED BY\nEAGER, SPONGE-LIKE\nMINDS IN THE\nAUGUST ATHENIAN ACADEMY THAT\nIS MY MONOLOGUE.\nBUT SOMETIMES, JUST\nSOMETIMES, FOLKS, I COME TO IN A\nDRIED-OUT BABY POOL\nON AN ABANDONED RANCH I WON\nIN A RUSSIAN ROULETTE GAME\nOFF THE COAST OF MOZAMBIQUE\nI GATHER TUMBLEWEEDS\nAND I LASH THEM TOGETHER WITH\nSOME TWINE I FOUND IN A DUMPSTER\nBY A BURNED-OUT REST STOP,\nTHEN I\nSHOTGUN A HOT MONSTER ENERGY\nDRINK AND CHEW ON MACA ROOT\nAS I\nHALLUCINATE THROUGH THE\nNIGHT IN THE VAGRANT'S HOT\n-BOX YURT OF\nNEWS THAT IS MY SEGMENT:\n\"MEANWILE!\""

},

"EnGHyZS4f-8": {

"begin": "1:33.0",

"end": "2:12.0",

"text": "YOU KNOW, FOLKS, I'VE SPENT\nDECADES CULTIVATING THE MOST\nRELEVANT RED OAK, FELLING THEM\nWITH TOPICAL HUSQVARNAS,\nMULTIGRADING THEM INTO THE MOST\nBUZZWORTHY THREE-QUARTER INCH\nFLOORSTRIPS, AND FINISHING THEM\nWITH UP-TO-THE-MINUTE HIGH-GLOSS\nPOLYURETHANE, TO LAY FOR YOU THE\nFLAWLESS PARQUET N.B.A.-QUALITY\nBASKETBALL COURT THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, I SCARE THE GEESE\nOUT OF THE BOG BEHIND MY UNCLE'S\nSHED, FILL IT WITH SAND I STOLE\nFROM AN ABANDONED PLAYGROUND,\nTHEN BLANKET IT WITH WET TRASH\nAND DISCARDED AMAZON BOXES, TO\nCREATE FOR YOU THE\nMUSKRAT-RIDDLED BOUNCELESS\nBACKYARD WALLBALL PIT OF NEWS\nTHAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"G8ajua4Mb5I": {

"begin": "1:39.0",

"end": "2:24.0",

"text": "YOU KNOW, FOLKS,\nIF YOU WATCH THIS SHOW AND I\nHOPE YOU DO,\nI SPEND A LOT OF TIME RIGHT OVER\nTHERE, CAREFULLY ERECTING THE\nNEWSIEST, MOST TOPICAL\nCORINTHIAN COLUMNS, FOLLOWING\nTHE GOLDEN RATIO, POLISHING THE\nFINEST CARRARA MARBLE, AND\nENGRAVING ONTO IT MYTHIC TALES\nWITH FLOURISHES OF HUMOR AND\nPATHOS, TO CREATE THE\nGRECO-ROMAN ACROPOLIS THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES, FOLKS,\nI GRAB A COUPLE OF OFF-BRAND\nLEGOS THAT HAVE BEEN JAMMED\nBETWEEN MY TOES SINCE I STEPPED\nON THEM IN 2003, FISH THE STICKS\nOUT OF SOME MELTED POPSICLES IN\nA BROKEN FREEZER, COLLECT THE\nPRE-SOAKED SAND FROM A\nPLAYGROUND I BROKE INTO IN THE\nDEAD OF NIGHT, AND THROW IT ALL\nTOGETHER TO CONSTRUCT FOR YOU\nTHE RAMSHACKLE PARTHENON OF NEWS\nTHAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"I4s-44cPYVE": {

"begin": "1:10.0",

"end": "2:59.0",

"text": "FOLKS, YOU KNOW, IF YOU WATCH THE SHOW, YOU KNOW, I SPEND\nMOST OF MY TIME\nRIGHT OVER THERE, SURVEYING THE\nNEWS MARKET FOR THE\nBIGGEST STORIES, THEN CAREFULLY\nSELECTING THE FINEST, MOST\nTOPICAL BUFFALO NEWS HIDE WHICH\nI THEN SOAK USING NATURAL SPRING\nAND LIMEWATER-- ONLY DURING\nCOLDER MONTHS-- AND SCRAPE IT\nUNTIL IT IS EVENLY TRANSLUCENT\nAND SUPPLE.\nAND THEN, USING THE TRADITIONAL\nPUSH-KNIFE METHOD, I DELICATELY\nMAKE MORE THAN 3,000 CUTS TO\nCREATE THE EMOTIVE AND POWERFUL\nFIGURINE WHICH I DECORATE WITH\nGRADATIONS AND CONTRAST,\nEMPLOYING THE SHAANXI REGION\nFIVE-COLOR SYSTEM.\nTHEN I CAREFULLY CONNECT THE\nFIGURINE'S JOINTS WITH COTTON\nTHREADS SO THEY CAN BE OPERATED\nFREELY, AND FIRE UP A PAPER\nLANTERN BEHIND A FINE HUANGZHOU\nSILK SCREEN, AND, BACKED BY A\nSUONA HORN, AND YUEQIN, AND\nBANHU FIDDLE, I OPERATE NO LESS\nTHAN FIVE OF THESE FIGURINES AT\nONCE, BECOMING THE LIVING\nEMBODIMENT OF THE 1,000-HAND\nGWAN-YIN, TO MOUNT FOR YOU THE\nEPIC AND MOVING TONG DYNASTY\nPI-YING SHADOW PLAY THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, FOLKS,\nSOMETIMES,\nIT'S CRAFTSMANSHIP.\nIT GOES LIKE THAT, RIGHT\nTHERE.\nSOMETIMES, FOLKS,\nI AM PECKED\nAWAKE BY A DIRTY SEAGULL ON THE\nINTRACOASTAL WATERWAY, WHILE\nSTILL LYING ON THE BACK OF A\nMANATEE WHO RESCUED ME FROM SOME\nARMS DEALERS I DOUBLE-CROSSED\nOFF THE COAST OF CAPE FEAR, AND WHO THEN\nDUMPS ME ON AN ABANDONED WHARF\nWHERE I SLIP THEIR DIRTY SOCK OFF\nA SEVERED FOOT I FISHED OUT OF A\nSTORM DRAIN, AND SLAP GOOGLY\nEYES ON IT MADE FROM TWO MENTOS\nI PRIED OUT OF THE MOUTH OF A\nMANGY COYOTE.\nTHEN I FASHION A KAZOO OUT OF A\nPOCKET COMB I STOLE FROM A\nFISHERMAN AND THE WAX PAPER FROM\nHIS MEATBALL SUB, TO HONK OUT A\nDIRGE WHILE YAMMERING A TONE\nPOEM ABOUT THE DEMONS INFESTING\nMY BEARD IN THE UNBALANCED MANIC\nSOCK PUPPET SHOW OF NEWS THAT IS\nMY SEGMENT:\n\"MEANWHILE!\""

},

"JAfAApqOeFU": {

"begin": "1:49.5",

"end": "2:42.5",

"text": "FOLKS.\nI SPEND A LOT OF MY TIME GATHERING FOR YOU\nTHE FINEST, MOST TOPICAL STORIES\nABOUT NATIONAL STUDIES,\nSCIENTIFIC BREAKTHROUGHS, AND\nDRUNK MOOSE BREAKING INTO ICE\nCREAM SHOPS, ONLY TO HAVE A\nPANDEMIC HIT, DURING WHICH I\nTAKE THEM INTO A SAD, EMPTY\nLITTLE ROOM WHERE MY ONLY\nFRIENDS ARE ROBOT CAMERAS AND A\nPURELL BOTTLE, AND I LET MYSELF\nGO WHILE SLOWLY DESCENDING INTO\nMADNESS AS I SHOVE MY SWEET\nINNOCENT LITTLE JOKES INTO A\nSEGMENT THAT I AM FORCED TO\nRENAME \"QUARANTINE-WHILE.\"\nBUT SOMETIMES, I CRAWL OUT OF\nTHE BROOM CLOSET AFTER 15\nMONTHS, POUR MYSELF BACK INTO A\nSUIT, ASSEMBLE THE TOP TEAM IN\nTHE BUSINESS, THE SWINGINGEST\nBAND IN LATE NIGHT, AND THE\nBEST DAMN AUDIENCE IN THE WORLD.\nSO I CAN RETURN TO YOUR LOVING\nARMS IN THE KICK-ASS,\nPROPERLY-PRESENTED\nCELEBRATION OF MARGINAL NEWS\nTHAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"JfT59wBSQME": {

"begin": "0:52.0",

"end": "1:37.0",

"text": "YOU KNOW, FOLKS, I SPEND A\nLOT OF TIME HARVESTING THE DAY'S\nMOST TOPICAL MATCHA POWDER,\nCAREFULLY POLISHING THE NEWSIEST\nCHAWAN TEA BOWL WITH A HEMP\nCLOTH, AND ADDING THE PUREST\nBOILED WATER COLLECTED FROM THE\nRIVER OF STORIES, TO STAGE THE\nELEGANT JAPANESE TEA CEREMONY\nTHAT IS MY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES, I CONVINCE A\nTRUCK DRIVER TO GIVE ME A RIDE\nIN EXCHANGE FOR AN UNREGISTERED\nHAND GUN AND A HALF-EATEN CAN OF\nBEANS, HITCHHIKE TO THE SONORA\nDESERT WITH NOTHING BUT AN OLD\nPOT THAT I FILL WITH THE\nNEWSPAPER I'VE BEEN USING FOR A\nBLANKET, AND THE SALVAGED\nTOBACCO FROM A SIDEWALK CIGARETTE\nBUTT, TO BREW FOR YOU, THE\nNIGHTMARE HALLUCINATION-INDUCING\nFERMENTED AYAHUASCA SLURRY OF\nNEWS THAT IS MY SEGMENT:\nMEANWHILE!"

},

"KT8pCZ5Xw9I": {

"begin": "0:37.6",

"end": "1:26.6",

"text": "FOLKS, YOU KNOW,\nIF YOU WATCH THE SHOW,\nTHAT I SPEND A LOT OF TIME RIGHT OVER\nTHERE, GATHERING THE FRESHEST,\nNEWSIEST HEADLINE FLOWERS,\nSCOURING THE FIELDS AND FORESTS\nFOR THE MOST TOPICAL AND\nFRAGRANT SYLVAN NEWS BOUGHS, THE\nJOKIEST FESTIVE GOURDS, AND THEN\nCAREFULLY ASSEMBLING AND\nARRANGING THEM ALL INTO THE\nGRAND YET TASTEFUL STATE\nDINNER-WORTHY CENTERPIECE THAT\nIS MY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES, NOW, SOMETIMES,\nI RUB SOME LEAD PAINT CHIPS ONTO\nMY GUMS, STAGGER INTO THE WOODS\nWITH NOTHING BUT A STAPLE GUN\nAND SOME EMPTY CANS OF SPRAY\nPAINT, AND THEN, BY THE LIGHT OF THE\nTIRE-FIRE, USING SMASHED LARVAE\nAND MY OWN SALIVA AS GLUE, I\nCOBBLE TOGETHER A CRUDE PILE OF\nPUNKY WOOD AND ANIMAL SKULLS TO\nPRESENT TO YOU THE UNHINGED\nLONER'S CORNUCOPIA OF NEWS THAT\nIS MY SEGMENT:\n\"MEANWHILE!\""

},

"L-kR7UCzhTU": {

"begin": "1:36.0",

"end": "2:19.0",

"text": "YOU KNOW, I SPEND A LOT OF TIME\nRIGHT OVER THERE HAND-RAISING\nAND SELECTING THE NEWEST,\nMOST-TOPICAL SEVILLE ORANGES,\nCAREFULLY SIMMERING THEM WITH\nTURBINADO SUGAR AND PRISTINE\nFILTERED WATER TO CREATE FOR YOU\nA DOUBLE-GOLD-MEDAL-WINNING\nBITTERSWEET ENGLISH MARMALADE TO\nSPREAD ON THE PERFECTLY TOASTED\nARTISANAL BRIOCHE THAT IS\nMY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES, FOLKS,\nI GRAB AN EXPIRED TUB OF\nWHIPPING CREAM, TOSS IT IN A\nBLENDER WITH THE HALF OF A\nGOGURT I FOUND IN A SCHOOLYARD,\nAND LET THAT FERMENT BEHIND THE\nFURNACE WHILE I FISH A DRIED\nPITA OUT OF THE GUTTER\nUNDERNEATH THE CORN-- CORNER KEBAB\nSTAND, THEN SLATHER IT WITH THE\nUNPRESSURIZED NIGHTMARE\nDAIRY OF NEWS THAT IS MY\nSEGMENT:\n\"MEANWHILE!\""

},

"Lf-LkJhKVhk": {

"begin": "1:14.0",

"end": "1:53.0",

"text": "YOU KNOW, I SPEND A LOT OF TIME\nOVER THERE CAREFULLY ASSEMBLING\nTHE MOST TOPICAL VIRTUOSO WIND\nSECTION, TUNING THE VIOLAS,\nCELLOS, AND CONTRABASS TO THE\nCOUNTRY'S ZEITGEIST, AND WAVING\nMY CONDUCTOR'S BATON TO THE\nTEMPLE OF HUMOR TO PRESENT FOR\nYOU THE SPECTACULAR BRAHMS\nCONCERTO IN SATIRE MAJOR THAT IS\nMY MONOLOGUE.\nBUT SOMETIMES, I WAKE UP IN A\nFUGUE STATE BEHIND THE ABANDONED\nVIDEO STORE, STEAL A GARBAGE CAN\nLID AND A BROKEN UMBRELLA\nHANDLE, AND THEN GRAB AN EMPTY CAN\nOF P.B.R. I'VE BEEN USING AS AN\nASHTRAY TO FASHION A RUSTED\nKAZOO, ALL TO CREATE THE\n2-IN-THE-MORNING ONE-MAN-STOMP\nSHOW OF NEWS THAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"P72uFdrkaVA": {

"begin": "0:49.3",

"end": "1:48.3",

"text": "FOLKS, IF YOU WATCH THE SHOW, YOU KNOW I SPEND A LOT OF\nTIME RIGHT OVER THERE, TENDERLY\nCLIPPING THE NEWSIEST, MOST\nFRAGRANT TEA-LEAF BUDS OF THE\nDAY, GINGERLY LAYING THEM TO DRY\nBY THE LIGHT OF THE\nROSY-FINGERED DAWN,\nPAINSTAKINGLY STEEPING THEM TO\nPERFECTION IN THE MOST TOPICAL\nOF FRESH WATER GATHERED FROM THE\nNATION'S NEWS RESERVOIR, BEFORE\nCEREMONIOUSLY SERVING TO YOU THE\nANTIOXIDANT-RICH ELIXIR OF\nBESPOKE TEA SHAN THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES FOLKS, AFTER\nA BENDER ON ABSINTHE AND\nMOUNTAIN DEW CODE RED, I SWEAT\nMYSELF AWAKE HOVERED OVER A VAT\nOF BLISTERING SEWER RUNOFF.\nI ADD TO THE GURGLING POT OF\nNIGHTMARES WHATEVER THE VOICES\nDEMAND: SCRAPS OF WET TRASHCAN\nLETTUCE AND BAND-AIDS FROM THE\nCOMMUNITY POOL.\nTHEN, USING AN OLD GYM SOCK I\nFOUND AT THE HOBOKEN Y.M.C.A., I\nSTRAIN IT ALL INTO A DISUSED\nGASOLINE CONTAINER TO OFFER YOU\nTHE SCALDING-HOT DEMENTED DEMON\nTONIC OF NEWS THAT IS MY\nSEGMENT: \"MEANWHILE!\""

},

"PT5_00Bld_8": {

"begin": "0:57.0",

"end": "2:02.0",

"text": "FOLKS, YOU KNOW, I SPEND A LOT OF MY TIME,\nRIGHT OVER THERE, CRUISING THE\nVAST TSUKIJI FISH MARKET OF THE\nDAY'S BIGGEST STORIES, CAREFULLY\nSURVEYING THE FRESHEST, MOST\nTOPICAL CATCH OF THE DAY,\nCHOOSING ONLY THE HIGHEST GRADE\nAHI NEWS TUNA, AWABI, AND UNAGI,\nTHEN DELICATELY PICKING THROUGH\nTHE RICE GRAINS OF THE\nHEADLINES, GENTLY WRAPPING THE\nINGREDIENTS IN THE FRESHEST\nHAND-PICKED NORI, AND CAREFULLY\nLAYING IT ALL OUT TO PRESENT TO\nYOU THE YAMANAKA LACQUERED\nTHREE-TIER JUBAKO BENTO BOX THAT\nIS MY NIGHTLY MONOLOGUE.\nBUT -- YOU KNOW WHAT I'M SAYING.\nYOU KNOW WHAT I'M SAYING.\nYOU KNOW WHAT'S COMING.\nBUT SOMETIMES, JUST SOMETIMES FOLKS, I AM SLAPPED\nAWAKE BY A POSSUM ON A BED OF\nWET TIRES NEAR A LONG-ABANDONED\nWHARF, SELL MY LAST REMAINING\nADULT TEETH TO A VAGRANT\nFISHMONGER FOR AN EXPIRED SALMON\nCARCASS, AND GRIND THAT INTO A\nSOFT PASTE, THEN ROLL IT IN ENOUGH\nSTALE RICE KRISPIES TO MAKE\nSNAP, CRACKLE, AND POP TAKE A\nHARD LOOK IN THE MIRROR, AND SERVE\nYOU THE NIGHT-SCREAM INDUCING\nHOBO HAND-ROLL OF NEWS THAT IS\nMY SEGMENT:\n\"MEANWHILE!\""

},

"QPDZbNEhsuw": {

"begin": "1:31.0",

"end": "2:15.0",

"text": "YOU KNOW, FOLKS, I SPEND MOST\nOF MY TIME RIGHT OVER THERE,\nDIGGING DOWN INTO THE NEWS\nPIT AND MINING FOR YOU THE\nDAY'S\nCLEAREST STORY DIAMONDS,\nCLEAVING THEM INTO THE MOST\nTOPICAL CUTS, FACETING THEM,\nPOLISHING THEM TO A HIGH\nFINISH,\nTHEN SETTING THEM ALL IN A\nDELICATE 24 KARAT GOLD CHAIN\nTO\nCREATE THE BESPOKE CARTIER\nNECKLACE THAT IS MY NIGHTLY MONOLOGUE,\nBUT SOMETIMES, SOMETIMES FOLKS,\nI JUST HUFF A LITTLE TOO MUCH\nEPOXY\nAND STUMBLE DOWN TO AN\nABANDONED PIER, WHERE I FIND\nA PIECE OF\nDISUSED FISHING LINE AND\nSTRING IT WITH OLD BOTTLE\nCAPS, RUSTY\nPADLOCKS, AND BABY TEETH,\nTHEN RIP THE SEAT BELT OUT\nOF A\nBURNED-OUT POLICE CAR TO\nMAKE A CLASP, AND PARADE\nNAKED THROUGH\nA CHI-CHI'S WEARING THE\nPSYCHO CHOKER OF NEWS THAT\nIS MY\nSEGMENT:\n\"MEANWHILE.\""

},

"QjQbQlN9Ev8": {

"begin": "3:53.0",

"end": "4:35.0",

"text": "YOU KNOW, FOLKS, I SPEND A LOT OF\nMY TIME RIGHT OVER THERE,\nHANGING FOR YOU THE DAY'S\nHOTTEST, MOST TOPICAL NEWS\nDECORATIONS, BOOKING THE SEXIEST\nBAND, CURATING THE MOST RELEVANT\nDRINKS MENU, DISTRIBUTING\nPLAYFUL, YET TASTEFUL PARTY\nFAVORS, AND GLITTER HATS THAT\nSAY \"2022,\" AND THEN SETTING THE\nMOST AU-COURANT LIGHTING TO\nTHROW FOR YOU THE UPSCALE,\n\"PITCH PERFECT\" NEW YEAR'S EVE\nPARTY THAT IS MY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES,\nFOLKS, I WAKE UP IN THE RAFTERS\nAFTER LOSING A BET TO A CROW.\nAND THEN I SPIKE THE PUNCH BOWL WITH\nCHLOROFORM AND MILITARY-GRADE\nHELICOPTER LUBRICANTS, MAKE A\nBUNCH OF RESOLUTIONS TO QUIT\nHUFFING WD-40, AND PUNCH A\nPOLICE HORSE DURING THE FUGITIVE\nNEW YEAR'S HO-DOWN OF NEWS THAT\nIS MY SEGMENT:\nMEANWHILE!"

},

"R6JV_I36It8": {

"begin": "0:38.0",

"end": "1:18.0",

"text": "YOU KNOW, FOLKS, I SPEND\nA LOT OF TIME\nSHUCKING FOR YOU THE DAY'S MOST\nTOPICAL CLAMS, BONING THE\nFINEST, MOST CURRENT NEWS\nCHICKENS, AND COLLECTING THE\nHIGHEST QUALITY STORY SHRIMP\nAND SAFFRON RICE, THEN GENTLY\nSIMMERING IT ALL IN A CAST-IRON\nCOMEDY PAI-YERA, TO CREATE\nTHE FRAGRANT SEAFOOD PAELLA THAT\nIS MY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES FOLKS, I\nSHAMBLE DOWN TO THE DOCKS WITH A\nRUSTY CROWBAR, MANEUVER THROUGH\nTHE POLLUTED CANAL USING A\nMCDONALD'S STRAW AS A SNORKEL,\nAND SCRAPE THE BARNACLES OFF A\nPASSING GARBAGE SCOW, TOSS THEM\nIN A POT WITH SOME HALF-USED\nRAMEN FLAVORED PACKETS AND\nMOUNTAIN DEW, TO BREW FOR YOU\nTHE CHUNKY STEW OF NEWS THAT IS\nMY SEGMENT:\n\"MEANWHILE!\""

},

"RFVggCw58lo": {

"begin": "1:00.0",

"end": "1:43.0",

"text": "YOU KNOW FOLKS, I SPEND A LOT OF TIME\nSTANDING RIGHT OVER THERE, PAINSTAKINGLY\nPENCILING THE DAY'S MOST TOPICAL\nAND HEROIC STORIES, STAGING THEM\nIN METICULOUSLY PLANNED PANELS,\nHAND-INKING THEM WITH THE\nPITHIEST DIALOGUE, THEN COLOR\nBALANCING THE FINISHED PAGES TO\nCREATE FOR YOU THE GENRE-BENDING\nONCE-IN-A-GENERATION GRAPHIC\nNOVEL THAT IS MY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES FOLKS, I JUST TEAR A PAGE OUT\nOF A WET NEWSPAPER I PEELED OFF\nA SUBWAY SEAT, GRAB A BROKEN\nCRAYON I FOUND IN MY COUCH,\nCRUDELY DRAW SOME FILTHY\nCARTOONS, SCRIBBLE IN AS\nMANY CURSE WORDS AS I CAN IN THE\nPORNOGRAPHIC DRIFTER ZINE OF\nNEWS THAT IS MY SEGMENT:\nMEANWHILE!"

},

"RHDQpOVLKeM": {

"begin": "0:39.0",

"end": "2:11.0",

"text": "YOU KNOW, FOLKS, I\nSPEND MOST OF MY TIME, RIGHT\nOVER THERE, PORING OVER THE\nDAY'S BIGGEST STORIES,\nCOLLECTING THE FINEST,\nMOST-TOPICAL NEWS CALFSKINS AND\nPAINSTAKINGLY WASHING THEM IN A\nCALCIUM HYDROXIDE SOLUTION, THEN\nSOAKING THEM IN LIME FOR DAYS TO\nREMOVE ALL NARRATIVE IMPURITIES\nAND CREATE A PALE VELLUM THAT I\nLATER PLACE ON MY SCRIPTORIUM IN\nA MONASTERY ON THE CLIFFS OF\nDOVER.\nTHERE, USING A PEN CUT FROM THE\nWING FEATHER OF A SWAN OF THE\nRIVER AVON, I DESIGN COPTIC AND\nSYRIAC ILLUSTRATIONS, ADORNED\nWITH WHIMSICAL CELTIC SPIRALS\nAND USE GERMANIC ZOOMORPHIC\nDESIGNS TO CREATE THE MARGINALIA\nSURROUNDING THE PAGES OF ELEGANT\nHALF-UNCIAL INSULAR SCRIPT THAT\nTELL THE HOLIEST OF STORIES,\nWHICH I THEN BIND WITH GOLDEN\nTHREAD UNDER A PROTECTIVE CASE\nOF CARVED OAK TO CREATE FOR YOU\nTHE GLORIOUS LATE\nANGLO-SAXON PERIOD ILLUMINATED\nMANUSCRIPT THAT IS MY MONOLOGUE.\nBUT SOMETIMES, FOLKS, SOMETIMES,\nTHESE PEOPLE KNOW, THEY KNOW, THEY KNOW\nBUT SOMETIMES, FOLKS,\nI COME TO UNDER A RAMP IN THE\nMIDDLE OF A DEMOLITION DERBY,\nHOTWIRE THE TRUCKASAURUS\nAND LEAD THE POLICE ON A CHASE\nBEFORE CRASHING INTO A SWAMP\nGATHERING JUST AS, WHAT I ASSUME\nIS A PRIEST SAYS, \"YOU MAY KISS\nTHE BRIDE,\" RIP A LEECH OFF MY\nASS, AND USE IT TO HASTILY\nDOODLE A SKETCH OF THE SCENE IN\nMY OWN BLOOD ON AN OLD DAVE AND\nBUSTERS RECEIPT, THEN STAGGER\nTOWARD THE HAPPY COUPLE\nCLUTCHING THE NIGHTMARE\nSTALKER'S WEDDING ALBUM OF NEWS\nTHAT IS MY SEGMENT:\nMEANWHILE!"

},

"TZSw9iRk03E": {

"begin": "0:22.5",

"end": "1:08.5",

"text": "FOLKS, YOU KNOW I\nSPEND A LOT\nOF RIGHT TIME OVER THERE, CAREFULLY\nSTUDYING THE LATEST, NEWSIEST\nCLINICAL STUDIES, PRACTICING AND\nTRAINING UNDER THE BEST, MOST\nTOPICAL DOCTORS, CAREFULLY\nSTERILIZING ALL MY EQUIPMENT,\nAND ASSEMBLING THE WORLD'S GREATEST\nSURGICAL TEAM TO PERFORM FOR YOU THE\nDAZZLINGLY COMPLEX AND\nGROUNDBREAKING THORACIC AORTIC\nDISSECTION REPAIR THAT IS MY\nMONOLOGUE.\nBUT SOMETIMES, SOMETIMES,\nI GET KICKED OUT OF MY HEALTH CARE\nPLAN FOR LISTING MY DOG BENNY AS\nA GASTROENTEROLOGIST, SO I\nSTIPPLE SOME INCISION MARKS ON\nMY ABDOMEN WITH A DRIED-OUT\nSHARPIE, SLAM A COUPLE OF RED BULLS\nIN FRONT OF A SHATTERED MIRROR,\nAND FISH A RUSTY BONING KNIFE\nOUT OF A STOLEN CRAB BOAT TO\nPERFORM THE EXPLORATORY HOBO\nAPPENDECTOMY OF NEWS THAT IS MY\nSEGMENT:\n\"MEANWHILE!\""

},

"VV3UJmb8kHw": {

"begin": "2:04.0",

"end": "2:54.0",

"text": "YOU KNOW, FOLKS,\nIF YOU WATCH THE SHOW, YOU KNOW\nI SPEND A LOT OF MY TIME RIGHT\nOVER THERE.\nCAREFULLY COMBING THROUGH THE\nBIGGEST STORIES OF THE DAY,\nSOURCING FOR YOU THE NEWSIEST\nMIKADO ORGANZA IN A HIGH SHEEN,\nADDING THE MOST TOPICAL IVORY\nFEATHER FRINGE AND A DIPPED\nBACK, THEN THROWING ON A DEMURE\nBUT KICKY FLORAL EMBROIDERED\nTULLE SHRUG WITH STATEMENT PEARL\nACCENTS TO PRESENT TO YOU THE\nGLORIOUS \"VOGUE\" COVER-READY\nWEDDING GOWN THAT IS MY\nMONOLOGUE.\nBUT SOMETIMES, WHILE ON A\nGLUE-HUFFING BINGE, I CRASH A\nSTOLEN HEARSE INTO AN ABANDONED\nCHILDREN'S HOSPITAL WHERE I USE\nMY TEETH TO TEAR UP SOME OLD\nCURTAINS AND STAINED CARPETING,\nAND STEAL A BUTTON OFF AN OLD\nSURGICAL APRON, AND STITCH IT\nALL TOGETHER WITH A NEEDLE MADE\nFROM A CHICKEN BONE TO THROW\nTOGETHER THE SHRIEKING CAT\nLADY'S SACK DRESS OF NEWS THAT\nIS MY SEGMENT: \"MEANWHILE.\""

},

"VYVbTzoggKc": {

"begin": "0:00.0",

"end": "0:49.0",

"text": "FOLKS, YOU KNOW, I\nSPEND A LOT OF\nMY TIME ON THE SHOW-- IF YOU\nWATCH THE SHOW YOU'D FIGURE\nTHIS OUT,\nRIGHT OVER THERE, STANDING RIGHT OVER THERE IN THE MONOLOGUE SPELUNKING\nTHROUGH THE DAY'S STORIES TO\nSELECT AND SOURCE THE\nNEWSIEST MARBLE, CHISELING\nIT INTO A\nPEDESTAL OF HUMOR AS WIDE AS\nTWO GREEK ISLES.\nTHEN I CAST THE MOST TOPICAL\nCURRENT-EVENTS-BRONZE INTO A\nFINELY CRAFTED MOULD TO\nERECT FOR YOU THE TOWERING\nGRECIAN\nCOLOSSUS THAT IS MY NIGHTLY\nMONOLOGUE.\nBUT SOMETIMES, JUST\nSOMETIMES, FOLKS, I JOLT\nAWAKE INSIDE\nWHAT'S LEFT OF A RUSTED\nMAZDA MIATA IN A WHITE CLAW\nAND\nOVEN-CLEANER-INDUCED FUGUE\nSTATE, SHAMBLE THROUGH THE\nJUNKYARD, RANSACKING THE\nDEBRIS FOR OLD FISHING RODS,\nMELTED\nBATTERIES AND THE SHOVEL OF\nA DERELICT BACKHOE, AND THEN\nBOOST AN\nACETYLENE TORCH TO HASTILY\nWELD TOGETHER THE BOOTLEG\nTRUCKASAURUS OF NEWS THAT\nIS MY SEGMENT:\nMEANWHILE!"

},

"WWWeV8xVNtI": {

"begin": "2:00.0",

"end": "2:35.0",

"text": "YOU KNOW, FOLKS, I SPENT A LOT\nOF TIME CAREFULLY RESEARCHING\nTHE DAY'S MOST CULTURALLY\nPRECIOUS STORIES,\nCROSS-REFERENCING HISTORICAL\nACCOUNTS WITH TOPOGRAPHICAL\nMAPS, AND ASSEMBLING THE FINEST\nTEAM OF ARCHAEOLOGISTS TO\nUNEARTH THE UNESCO WORLD\nHERITAGE EXCAVATION SITE OF\nHUMOR THAT IS MY MONOLOGUE.\nBUT SOMETIMES, I DISTRACT AN\nORPHAN WITH A PIECE OF\nLINT-COVERED CANDY AND STEAL\nTHEIR BUCKET AND PAIL, THEN\nSNEAK INTO THE POTTER'S FIELD IN\nTHE DEAD OF NIGHT WITH TWO\nDRIFTERS I PICKED UP ON THE\nCOMMUTER TRAIN, AND FORCE THEM\nTO DIG FOR THE ABANDONED\nPAUPER'S GRAVE\nOF NEWS THAT IS MY SEGMENT:\nMEANWHILE!"

},

"XzJAtzdrY_w": {

"begin": "2:57.0",

"end": "4:32.0",

"text": "FOLKS, IF YOU WATCH THIS SHOW, YOU KNOW I SPEND MOST\nOF MY TIME, RIGHT OVER THERE,\nCAREFULLY COMBING THE NEWS\nLANDSCAPE AND HARVESTING THE\nFINEST, MOST BEAUTIFUL STORY\nPIGMENTS LIKE MALACHITE,\nAZURITE, AND CINNABAR, WHICH I\nSLOWLY GRIND UNDER A GLASS\nOF MULLER WITH ONLY THE MOST\nTOPICAL LINSEED OIL, WORKING\nTHEM INTO SMOOTH, BUTTERY\nVERMILLIONS, VERDIGRIS, AND NEW\nGAMBOGES, WHICH I THEN APPLY TO\nA GRISAILLE PREPARED ON A CANVAS\nOF FLAX, TOW, AND JUTE, SLOWLY\nWORKING UP THE SHADOW\nSHAPES AND MAJOR MASSES, THEN\nDELICATELY RENDERING THE\nINTERPLAY OF LIGHT AND FORM,\nBEFORE APPLYING THE FINE DAMMAR\nAND MASTIC VARNISH TO UNVEIL FOR\nYOU THE GLORIOUS REMBRANDT\nPORTRAIT OF THE DAY'S EVENTS\nTHAT IS MY NIGHTLY MONOLOGUE.\nBUT SOMETIMES--\nSOMETIMES, FOLKS, SOMETIMES\nI'M SHAKEN AWAKE INSIDE THE\nDARKENED TRUNK OF A BULGARIAN\nMOBSTER'S VOLVO 940, I QUIETLY\nRELEASE THE SAFETY CATCH AND\nTUMBLE ONTO THE SIDE OF A DIRT\nROAD, BREAKING BOTH CLAVICLES,\nWHICH I DO NOT FEEL BECAUSE OF\nALL THE ANGEL DUST.\nI STAGGER INTO AN ABANDONED\nTANNERY WHERE I BEFRIEND AN OWL\nWHO TELLS ME TO I HAVE TO LET\nHIM SPEAK THROUGH ME OR HE'LL\nMURDER THE CLOUDS.\nAND IN HIS DIRECTION, I MIX THE\nFUN DIP I FOUND IN MY POCKET\nWITH THE FISTFULS OF HEXAVALENT\nCHROMIUM I SCOOP UP FROM THE\nDISUSED TANNING PITS, THEN HURL\nIT AT THE SIDE OF A NEARBY\nDEFUNCT DAIRY QUEEN IN A FUGUE\nSTATE OF LASHING OUT AT LIGHT\nAND COLOR, TO UNLEASH FOR YOU\nTHE ABSTRACT EXPRESSIONIST\nSPLATTER FRESCO OF NEWS THAT IS\nMY SEGMENT:\nMEANWHILE!"

},

"YyV6l8HPmdQ": {

"begin": "0:48.0",

"end": "1:32.0",

"text": "FOLKS, LADIES AND\nGENTLEMEN, YOU KNOW I SPEND A\nLOT\nOF TIME DELICATELY WHITTLING A\nMELANGE OF THE DAY'S MOST\nPRESSING STORY TIMBERS,\nPRECISELY MEASURING THE NECKS,\nRIBS, AND BACKS OF THE NEWS,\nEMPLOYING ONLY THE MOST\nSOPHISTICATED AND TOPICAL\nPURFLING, THEN LAYING 15\nEXQUISITE COATS OF INSIGHT ONTO\nTHE ORNATE YET ROBUST\nSTRADIVARIUS VIOLIN THAT IS MY\nNIGHTLY MONOLOGUE.\nBUT SOMETIMES, SOMETIMES, FOLKS,\nI GATHER UP FRAYED ELECTRICAL\nWIRE FROM A BURNT-OUT BOWLING\nALLEY, TAPE IT TO A\nTERMITE-INFESTED 2-by-4, THEN SHOVE\nONE END TO A DISCARDED CHUM\nBUCKET TO MAKE FOR YOU THE APPALACHIAN\nDRIFTER'S BANJO OF NEWS THAT IS\nMY SEGMENT:\n\"MEANWHILE!\""

},

"a8DD__mRtPk": {

"begin": "1:13.0",

"end": "1:58.0",

"text": "FOLKS, YOU KNOW, I SPEND MOST OF\nMY TIME GATHERING FOR YOU THE LATEST,\nMOST CUTTING-EDGE NEWS STORIES,\nCAREFULLY EXAMINING THE DAY'S\nCT SCAN, THEN ASSEMBLING\nAMERICA'S CRACK MEDICAL TEAM,\nAND MAKING PRECISE INCISIONS\nWITH THE AID OF A STRYKER 1588\nLAPAROSCOPE IN THE\nGROUNDBREAKING SURGICAL ARTISTRY\nTHAT IS MY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES,\nFOLKS, WHEN I NEED A LITTLE EXTRA CASH TO\nPAY OFF MY COCK-FIGHTING DEBTS,\nI SET UP A RUSTY COT UNDER A\nTARP IN WASHINGTON SQUARE PARK,\nWHERE I PLY CURIOUS PASSERSBY\nWITH BATHTUB COUGH SYRUP TO HELP\nDULL THE PAIN WHILE I USE GARDEN\nSHEARS TO CUT OUT ANYTHING THAT\nLOOKS SUPERFLUOUS IN THE AMATEUR\nAPPENDECTOMY TENT OF NEWS\nTHAT IS MY SEGMENT...\nMEANWHILE!"

},

"cHhomJMwY1I": {

"begin": "2:42.0",

"end": "3:24.0",

"text": "FOLKS, I SPEND A LOT OF TIME\nSTANDING RIGHT OVER THERE,\nCOMBING THROUGH HOURS UPON HOURS\nOF GAME TAPE ON THE MOST PROMISING\nHEADLINES, METICULOUSLY CRAFTING\nMY BIG BOARD TO RANK STORIES\nBASED ON THEIR RAW TALENT AND\nINTANGIBLES, AND CUT DEALS FOR\nTHE MOST TOPICAL TRADES TO DRAFT\nTHE ONCE-IN-A-GENERATION,\nHEISMAN-WINNING QUARTERBACK THAT\nIS MY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES I\nWAKE UP IN AN ICE BATH AFTER\nDOING RAILS OF GATORADE POWDER,\nREALIZE IT'S DRAFT DAY, AND I\nHAVE 15 SECONDS LEFT TO MAKE A\nCHOICE AND BLURT OUT THE FIRST\nNAME I SEE TO WASTE THE NUMBER\nONE OVERALL PICK ON THE SCRAWNY,\nUNPOLISHED THIRD-STRING PUNTER\nOF NEWS THAT IS MY SEGMENT,\n\"MEANWHILE!\""

},

"hhwTiwUAaf8": {

"begin": "1:06.5",

"end": "1:54.5",

"text": "YOU KNOW, FOLKS, I SPEND MOST\nOF MY TIME SOURCING FOR YOU THE DAY'S\nFINEST HANGZHOU SILK NEWS\nSTORIES, MOUNTING THEM ON THE\nMOST TOPICAL, PREMIUM,\nPOLYHEDRAL BAMBOO JOKE FRAME,\nDECORATING IT WITH ARTISANAL ASH\nINK, INSERTING A HAND-POURED\nBEESWAX CANDLE, FILLING IT WITH\nINTENTION AND THEN SENDING IT ALOFT\nON THE UPDRAFT OF AUDIENCE\nLAUGHTER IN THE SPECTACULAR\nCHINESE LANTERN FESTIVAL THAT IS\nMY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES, FOLKS, I GO VISIT MY\nBUDDY, BARRACUDA AT THE\nABANDONED MALL, DROP A COUPLE OF\nHUNDOS ON SOME WET ROMAN\nCANDLES, BENT SPARKLERS SMUGGLED\nIN FROM THE PHILIPPINES, AND A\nFLARE GUN STOLEN FROM A CRASHED\nCOAST GUARD BOAT, SET IT\nALL OFF IN THE DERANGED,\nUNREGULATED FIREWORKS ACCIDENT\nOF NEWS THAT IS MY SEGMENT:\n\"MEANWHILE!\""

},

"iB6diOGE8y4": {

"begin": "0:52.8",

"end": "1:50.8",

"text": "YOU KNOW, IF YOU WATCH THE SHOW, YOU KNOW I SPEND MOST OF MY TIME\nRIGHT OVER THERE, COMBING\nTHROUGH THE DAY'S BIGGEST NEWS,\nAND SELECTING FOR YOU THE\nFINEST, MOST TOPICAL INDIAN\nROSEWOOD, SPRUCE, AND MAHOGANY\nSTORIES.\nI THEN HAND-SHAPE AND COMBINE\nTHEM WITH AN ABALONE\nMULTI-STRIPE BACK INLAY, AND\nFORWARD-SHIFTED SCALLOPED\nBRACES, ANTIQUE WHITE BINDING,\nAND A HIGH-PERFORMANCE NECK WITH\nA HEXAGON FINGERBOARD, AND\nFINALLY LAY IN A\nTORTOISE-PATTERN PEARL PICK\nGUARD, AND A COMPENSATED\nBONE SADDLE, TO CRAFT FOR YOU\nTHE EXQUISITE MARTIN D-45\nDREADNOUGHT ACOUSTIC GUITAR\nTHAT IS MY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, JUST SOMETIMES, FOLKS, I SNAP\nAWAKE IN A RUSTY COFFIN FREEZER\nBEHIND AN ABANDONED DAIRY QUEEN\nOUTSIDE OF GALVESTON.\nTHEN I NAIL A 2-BY-4 TO A CEDAR URN\nI STOLE FROM A FUNERAL PARLOR, STRING\nON SOME BRAKE CABLES I RIPPED\nOUT OF A COP CAR, THEN CUT EYE\nHOLES IN A GOODWILL BAG FOR A MASK,\nHIT A WHIPPET, AND TERRORIZE\nTHE LOCALS ON THE TEXAS CHAINSAW\nBANJO OF NEWS THAT IS MY\nSEGMENT:\n\"MEANWHILE!\""

},

"iQFwGF0aW-o": {

"begin": "2:10.7",

"end": "3:33.7",

"text": "I SPEND A LOT OF MY TIME, RIGHT\nOVER THERE, CULTIVATING FOR YOU THE\nDAY'S BIGGEST STORIES,\nPLUCKING THE MOST BEAUTIFUL AND\nTOPICAL NEWS VIOLETS AND\nMARIGOLDS, STRIPPING THE FRENCH\nLAVENDER FROM THE STEM AND\nLOVINGLY PRESSING THEM ALL\nBETWEEN THE PAGES OF A GILDED\nFIRST EDITION OF \"PRIDE AND\nPREJUDICE.\"\nTHEN I FOLD THEM INTO A DOUGH I\nHAND ROLLED FROM PASINI BAKERY\nFLOUR, BORDIER BUTTER, AND\nCHILLED SOMERDALE DEVON DOUBLE\nCREAM, SPRINKLE THEM WITH A\nPINCH OF MUSCOVADO SUGAR, AND\nBAKE THEM IN A \"LA CORNUE GRAND\nPALAIS\" RANGE TO PERFECTLY PREP\nTHE GOURMET FLORAL SHORTBREAD\nCOOKIE THAT IS MY NIGHTLY MONOLOGUE.\nBUT SOMETIMES, FOLKS, SOMETIMES,\nSOMETIMES, I AM NIBBLED AWAKE BY AN AMOROUS\nRACCOON IN THE ABANDONED WALK-IN\nFREEZER OF A HAUNTED BAKERY IN\nWHICH I HAVE ESTABLISHED\nSQUATTER'S RIGHTS, I SLIP INTO\nTHE TWISTED KITCHENAID PADDLES I\nCALL SHOES, AND KNIFE FIGHT A\nPOSSUM FOR AN EXPIRED BAG OF\nCRUSHED BREAKFAST CEREAL DUST\nAND A BROKEN EGG, WHICH I MIX\nWITH THREE SMUSHED RESTAURANT\nBUTTER PACKETS I STOLE FROM A\nNAPPING RETIREE'S PURSE, POUR\nTHE REST OF A SHATTERED BOTTLE\nOF RUBBING ALCOHOL I FOUND IN\nTHE DUMPSTER OUT BACK INTO A\nRUSTY BARREL TO IGNITE THE HOBO\nFIRE OVER WHICH I BAKE MY\nSLUDGE, THEN DISPLAY IT IN A\nFILTHY CHEF'S HAT TO SERVE YOU\nTHE DERANGED RAT KING BISCUIT OF\nNEWS THAT IS MY SEGMENT:\n\"MEANWHILE\"!"

},

"jIL7kvG7d10": {

"begin": "1:55.3",

"end": "2:40.3",