
Repository: s-zx/edgeFlow.js

Branch: main

Commit: ba87394114d1

Files: 179

Total size: 1.7 MB

Directory structure:

gitextract_2xh90dxw/
├── .github/
│   ├── ISSUE_TEMPLATE/
│   │   ├── bug_report.md
│   │   └── feature_request.md
│   ├── pull_request_template.md
│   └── workflows/
│       ├── ci.yml
│       └── publish.yml
├── .gitignore
├── CLAUDE.md
├── CONTRIBUTING.md
├── README.md
├── README_CN.md
├── benchmarks/
│   └── README.md
├── demo/
│   ├── demo.js
│   ├── index.html
│   ├── server.js
│   └── styles.css
├── dist/
│   ├── backends/
│   │   ├── index.d.ts
│   │   ├── index.js
│   │   ├── onnx.d.ts
│   │   ├── onnx.js
│   │   ├── transformers-adapter.d.ts
│   │   ├── transformers-adapter.js
│   │   ├── wasm.d.ts
│   │   ├── wasm.js
│   │   ├── webgpu.d.ts
│   │   ├── webgpu.js
│   │   ├── webnn.d.ts
│   │   └── webnn.js
│   ├── core/
│   │   ├── composer.d.ts
│   │   ├── composer.js
│   │   ├── device-profiler.d.ts
│   │   ├── device-profiler.js
│   │   ├── index.d.ts
│   │   ├── index.js
│   │   ├── memory.d.ts
│   │   ├── memory.js
│   │   ├── plugin.d.ts
│   │   ├── plugin.js
│   │   ├── runtime.d.ts
│   │   ├── runtime.js
│   │   ├── scheduler.d.ts
│   │   ├── scheduler.js
│   │   ├── tensor.d.ts
│   │   ├── tensor.js
│   │   ├── types.d.ts
│   │   ├── types.js
│   │   ├── worker.d.ts
│   │   └── worker.js
│   ├── edgeflow.browser.js
│   ├── index.d.ts
│   ├── index.js
│   ├── pipelines/
│   │   ├── automatic-speech-recognition.d.ts
│   │   ├── automatic-speech-recognition.js
│   │   ├── base.d.ts
│   │   ├── base.js
│   │   ├── feature-extraction.d.ts
│   │   ├── feature-extraction.js
│   │   ├── image-classification.d.ts
│   │   ├── image-classification.js
│   │   ├── image-segmentation.d.ts
│   │   ├── image-segmentation.js
│   │   ├── index.d.ts
│   │   ├── index.js
│   │   ├── object-detection.d.ts
│   │   ├── object-detection.js
│   │   ├── question-answering.d.ts
│   │   ├── question-answering.js
│   │   ├── text-classification.d.ts
│   │   ├── text-classification.js
│   │   ├── text-generation.d.ts
│   │   ├── text-generation.js
│   │   ├── zero-shot-classification.d.ts
│   │   └── zero-shot-classification.js
│   ├── tools/
│   │   ├── benchmark.d.ts
│   │   ├── benchmark.js
│   │   ├── debugger.d.ts
│   │   ├── debugger.js
│   │   ├── index.d.ts
│   │   ├── index.js
│   │   ├── monitor.d.ts
│   │   ├── monitor.js
│   │   ├── quantization.d.ts
│   │   └── quantization.js
│   └── utils/
│       ├── cache.d.ts
│       ├── cache.js
│       ├── hub.d.ts
│       ├── hub.js
│       ├── index.d.ts
│       ├── index.js
│       ├── model-loader.d.ts
│       ├── model-loader.js
│       ├── offline.d.ts
│       ├── offline.js
│       ├── preprocessor.d.ts
│       ├── preprocessor.js
│       ├── tokenizer.d.ts
│       └── tokenizer.js
├── docs/
│   ├── .vitepress/
│   │   └── config.ts
│   ├── api/
│   │   ├── model-loader.md
│   │   ├── pipeline.md
│   │   ├── tensor.md
│   │   └── tokenizer.md
│   ├── cookbook/
│   │   ├── composition.md
│   │   └── transformers-adapter.md
│   ├── guide/
│   │   ├── architecture.md
│   │   ├── concepts.md
│   │   ├── device-profiling.md
│   │   ├── installation.md
│   │   ├── plugins.md
│   │   └── quickstart.md
│   ├── index.md
│   └── tutorials/
│       └── text-classification.md
├── examples/
│   ├── basic-usage.ts
│   ├── multi-model-dashboard/
│   │   └── index.html
│   ├── offline-notepad/
│   │   └── index.html
│   └── orchestration.ts
├── package.json
├── playwright.config.ts
├── scripts/
│   └── build-browser.js
├── src/
│   ├── backends/
│   │   ├── index.ts
│   │   ├── onnx.ts
│   │   ├── transformers-adapter.ts
│   │   ├── wasm.ts
│   │   ├── webgpu.ts
│   │   └── webnn.ts
│   ├── core/
│   │   ├── composer.ts
│   │   ├── device-profiler.ts
│   │   ├── index.ts
│   │   ├── memory.ts
│   │   ├── plugin.ts
│   │   ├── runtime.ts
│   │   ├── scheduler.ts
│   │   ├── tensor.ts
│   │   ├── types.ts
│   │   └── worker.ts
│   ├── index.ts
│   ├── pipelines/
│   │   ├── automatic-speech-recognition.ts
│   │   ├── base.ts
│   │   ├── feature-extraction.ts
│   │   ├── image-classification.ts
│   │   ├── image-segmentation.ts
│   │   ├── index.ts
│   │   ├── object-detection.ts
│   │   ├── question-answering.ts
│   │   ├── text-classification.ts
│   │   ├── text-generation.ts
│   │   └── zero-shot-classification.ts
│   ├── tools/
│   │   ├── benchmark.ts
│   │   ├── debugger.ts
│   │   ├── index.ts
│   │   ├── monitor.ts
│   │   └── quantization.ts
│   └── utils/
│       ├── cache.ts
│       ├── hub.ts
│       ├── index.ts
│       ├── model-loader.ts
│       ├── offline.ts
│       ├── preprocessor.ts
│       └── tokenizer.ts
├── tests/
│   ├── e2e/
│   │   ├── browser.spec.ts
│   │   ├── browser.test.ts
│   │   ├── localai-10s-check.spec.ts
│   │   ├── localai-clear-cache-load.spec.ts
│   │   ├── localai-knowledge-base.spec.ts
│   │   ├── localai-load-models.spec.ts
│   │   ├── localai-loading-check.spec.ts
│   │   ├── localai-network-audit.spec.ts
│   │   ├── localai-network-failures.spec.ts
│   │   └── localai-network-full.spec.ts
│   ├── integration/
│   │   └── pipeline.test.ts
│   └── unit/
│       ├── memory.test.ts
│       ├── model-loader.test.ts
│       ├── runtime.test.ts
│       ├── scheduler.test.ts
│       ├── tensor.test.ts
│       ├── tokenizer.test.ts
│       └── worker.test.ts
├── tsconfig.json
├── vercel.json
└── vitest.config.ts

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/ISSUE_TEMPLATE/bug_report.md

================================================

---

name: Bug report

about: Report a bug to help improve edgeFlow.js

title: '[Bug] '

labels: bug

assignees: ''

---

**Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Steps to reproduce the behavior:

1. Import '...'

2. Call '...'

3. See error

**Expected behavior**

A clear description of what you expected to happen.

**Code sample**

```typescript

// Minimal reproduction

```

**Environment**

- Browser: [e.g. Chrome 120, Firefox 118]

- OS: [e.g. macOS 14, Windows 11]

- edgeFlow.js version: [e.g. 0.1.0]

- Runtime: [e.g. WebGPU, WASM]

**Additional context**

Any other context about the problem.

================================================

FILE: .github/ISSUE_TEMPLATE/feature_request.md

================================================

---

name: Feature request

about: Suggest a feature for edgeFlow.js

title: '[Feature] '

labels: enhancement

assignees: ''

---

**Is your feature request related to a problem?**

A clear description of the problem. Ex. "I'm always frustrated when..."

**Describe the solution you'd like**

A clear description of what you want to happen.

**Describe alternatives you've considered**

Any alternative solutions or features you've considered.

**Additional context**

Any other context, code examples, or screenshots.

================================================

FILE: .github/pull_request_template.md

================================================

## Summary

Brief description of the changes.

## Motivation

Why is this change needed?

## Changes

- Change 1

- Change 2

## Testing

- [ ] Unit tests pass (`npm run test:unit`)

- [ ] TypeScript compiles (`npx tsc --noEmit`)

- [ ] Lint passes (`npm run lint`)

- [ ] Tested in browser (if applicable)

## Breaking Changes

List any breaking changes, or "None".

================================================

FILE: .github/workflows/ci.yml

================================================

name: CI

on:
  push:
    branches: [main]
  pull_request:
    branches: [main]

jobs:
  lint-and-typecheck:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
          cache: npm
      - run: npm ci
      - run: npm run lint
      - run: npx tsc --noEmit

  test:
    runs-on: ubuntu-latest
    needs: lint-and-typecheck
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
          cache: npm
      - run: npm ci
      - run: npm run test:unit
      - run: npm run test:coverage
      - uses: actions/upload-artifact@v4
        if: always()
        with:
          name: coverage-report
          path: coverage/

  build:
    runs-on: ubuntu-latest
    needs: test
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
          cache: npm
      - run: npm ci
      - run: npm run build
      - uses: actions/upload-artifact@v4
        with:
          name: dist
          path: dist/

================================================

FILE: .github/workflows/publish.yml

================================================

name: Publish to npm

on:
  release:
    types: [published]

jobs:
  publish:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      id-token: write
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
          cache: npm
          registry-url: https://registry.npmjs.org
      - run: npm ci
      - run: npm run build
      - run: npm run test:unit
      - run: npm publish --provenance --access public
        env:
          NODE_AUTH_TOKEN: ${{ secrets.NPM_TOKEN }}

================================================

FILE: .gitignore

================================================

# Dependencies

node_modules/

# Build outputs (keep dist/ for npm publishing)

# dist/

# IDE

.idea/

.vscode/

*.swp

*.swo

.DS_Store

# Logs

*.log

npm-debug.log*

yarn-debug.log*

yarn-error.log*

# Test coverage

coverage/

# Playwright / E2E test output

test-results/

# Environment

.env

.env.local

.env.*.local

# TypeScript cache

*.tsbuildinfo

# Temporary files

tmp/

temp/

.tmp/

.temp/

.vercel

.env*.local

# Personal docs (not for public repo)

INTERVIEW_PREP.md

================================================

FILE: CLAUDE.md

================================================

# CLAUDE.md

This file provides guidance to Claude Code (claude.ai/code) when working with code in this repository.

## Commands

- **Build:** `npm run build` (runs `tsc` then `scripts/build-browser.js` which produces `dist/edgeflow.browser.js` via esbuild; `onnxruntime-web` is marked external).

- **Watch compile:** `npm run dev`

- **Lint:** `npm run lint` (ESLint on `src/**/*.ts`)

- **Unit/integration tests (vitest, happy-dom):** `npm test` / `npm run test:unit` / `npm run test:integration`

- Single test file: `npx vitest run tests/unit/tokenizer.test.ts`

- Single test by name: `npx vitest run -t "test name pattern"`

- **E2E (Playwright, Chromium):** `npm run test:e2e`

- Uses `playwright.config.ts` by default; alternate configs exist for `localai`, `network`, `privatedoc` scenarios (run with `npx playwright test -c playwright.localai.config.ts`).

- Playwright auto-starts `npm run demo:server` on `localhost:3000`.

- **Demo app:** `npm run demo` (builds then serves `demo/server.js` on port 3000). Load a Hugging Face ONNX URL in the browser UI to exercise pipelines.

- **Docs (VitePress):** `npm run docs:dev` / `npm run docs:build`

## Architecture

edgeFlow.js is a browser-first ML inference framework. The runtime graph is: **Pipeline → BasePipeline → RuntimeManager → Runtime backend (ONNX/WebGPU/WebNN/WASM) → Scheduler → MemoryManager**. All public exports flow through `src/index.ts`.

### Layered structure (`src/`)

- **`core/`** — framework internals. `types.ts` is the canonical type/error surface (`EdgeFlowError`, `ErrorCodes`, all `Tensor`/`Runtime`/`Pipeline` interfaces — most other files import from here). `runtime.ts` holds the `RuntimeManager` singleton, runtime factory registry, and priority-based automatic backend selection (webgpu > webnn > wasm). `scheduler.ts` implements the global priority queue / concurrency-limited `InferenceScheduler` that every runtime dispatches through. `memory.ts` provides `MemoryManager`, `MemoryScope`, and `ModelCache` with reference-counted cleanup. `composer.ts` enables `compose()`/`parallel()` multi-stage pipelines. `plugin.ts` is the extension point for third-party pipelines/backends/middleware. `device-profiler.ts` recommends quantization/model variant based on device tier. `worker.ts` runs inference in a Web Worker.

- **`backends/`** — concrete `Runtime` implementations: `onnx.ts` (onnxruntime-web, peer dep), `webgpu.ts`, `webnn.ts`, `wasm.ts`, plus `transformers-adapter.ts` for interop with transformers.js. `registerAllBackends()` wires factories into `RuntimeManager`.

- **`pipelines/`** — task-specific wrappers extending `base.ts`'s `BasePipeline`. The `pipeline(task, options?)` factory in `index.ts` looks up a registered pipeline factory (built-in or plugin) and returns a ready-to-run instance. Each pipeline owns its own tokenizer/preprocessor, model loading, and result formatting.

- **`utils/`** — `tokenizer.ts` (BPE, WordPiece, Unigram — loads `tokenizer.json` directly), `preprocessor.ts` (image/audio/text), `model-loader.ts` (preloading, sharding, resumable downloads), `cache.ts` (`InferenceCache`, `ModelDownloadCache` — IndexedDB-backed), `hub.ts` (HuggingFace Hub download helpers + `POPULAR_MODELS`), `offline.ts`.

- **`tools/`** — developer tooling surface: `quantization.ts` (int8/uint8/float16 quant + dequant), `debugger.ts` (tensor inspection, histograms, heatmaps, trace events), `monitor.ts` (`PerformanceMonitor` + dashboard generators), `benchmark.ts`.
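
The priority-based backend selection described for `runtime.ts` (webgpu > webnn > wasm) can be pictured with a small standalone sketch. The names `RuntimeType`, `RUNTIME_PRIORITY`, and `selectRuntime` are assumptions made for illustration and are not taken from the repository; the real `RuntimeManager` may probe availability and report errors differently.

```typescript
// Hypothetical sketch; not the repository's RuntimeManager.
type RuntimeType = 'webgpu' | 'webnn' | 'wasm';

// Preference order stated in the architecture notes: webgpu > webnn > wasm.
const RUNTIME_PRIORITY: readonly RuntimeType[] = ['webgpu', 'webnn', 'wasm'];

// Return the first runtime, in priority order, that the caller reports as available.
function selectRuntime(available: ReadonlySet<RuntimeType>): RuntimeType {
  for (const candidate of RUNTIME_PRIORITY) {
    if (available.has(candidate)) return candidate;
  }
  // Library code would raise its own error type here; a plain Error keeps the sketch standalone.
  throw new Error('No supported runtime available');
}

const chosen = selectRuntime(new Set<RuntimeType>(['wasm', 'webnn']));
console.log(chosen); // webnn
```

Keeping the order in one array means adding a backend is a one-line change, at the cost of a fixed global preference.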

### Cross-cutting conventions

- **ESM only** (`"type": "module"`, `sideEffects: false`). All intra-repo imports use `.js` extensions even from `.ts` source — required for Node ESM resolution after `tsc` emits.

- **`onnxruntime-web` is an optional peer dep** marked `external` in the browser bundle; consumer bundlers resolve it. Do not import it eagerly in code paths that should run without ONNX.

- **Errors always use `EdgeFlowError` + `ErrorCodes`** from `core/types.ts` — do not throw bare `Error` from library code.

- **Scheduling is mandatory:** runtime inference paths go through `getScheduler()`. New backends should dispatch via the scheduler rather than calling model.run directly, so priority/concurrency controls are honored.

- **Tests:** unit/integration run under happy-dom (no real WebGPU/WebNN); those backends are exercised via Playwright E2E against the demo server. Test timeout is 30s.
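
As a rough illustration of the "error class plus code table" convention above, the sketch below shows one common way to structure it. The real `EdgeFlowError` and `ErrorCodes` live in `core/types.ts` and their fields are not shown in this document, so `CodedError`, `SketchErrorCodes`, and `describeFailure` are hypothetical stand-ins.

```typescript
// Hypothetical stand-ins; the actual EdgeFlowError/ErrorCodes may differ.
const SketchErrorCodes = {
  MODEL_NOT_FOUND: 'MODEL_NOT_FOUND',
  INFERENCE_FAILED: 'INFERENCE_FAILED',
} as const;

type SketchErrorCode = (typeof SketchErrorCodes)[keyof typeof SketchErrorCodes];

class CodedError extends Error {
  readonly code: SketchErrorCode;

  constructor(message: string, code: SketchErrorCode) {
    super(message);
    // Restore the prototype chain so `instanceof` also works on older compile targets.
    Object.setPrototypeOf(this, new.target.prototype);
    this.name = 'CodedError';
    this.code = code;
  }
}

// Callers can branch on a stable code instead of parsing message strings.
function describeFailure(err: unknown): string {
  if (err instanceof CodedError) return `${err.code}: ${err.message}`;
  return `UNKNOWN: ${String(err)}`;
}

console.log(describeFailure(new CodedError('model.onnx missing', SketchErrorCodes.MODEL_NOT_FOUND)));
// MODEL_NOT_FOUND: model.onnx missing
```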

================================================

FILE: CONTRIBUTING.md

================================================

# Contributing to edgeFlow.js

Thank you for your interest in contributing to edgeFlow.js! This guide will help you get started.

## Development Setup

```bash

# Clone the repository

git clone https://github.com/s-zx/edgeflow.js.git

cd edgeflow.js

# Install dependencies

npm install

# Build the project

npm run build

# Run tests

npm run test:unit

# Start development mode (watch)

npm run dev

```

## Project Structure

```

src/

├── core/ # Runtime, scheduler, memory, tensor, types

├── backends/ # ONNX Runtime (production), WebGPU/WebNN (planned)

├── pipelines/ # Task pipelines (text-generation, image-segmentation, etc.)

├── utils/ # Tokenizer, preprocessor, cache, model-loader, hub

└── tools/ # Quantization, benchmark, debugger, monitor

```

## How to Contribute

### Reporting Bugs

Open an issue using the bug report template. Include:

- A minimal code reproduction

- Browser and OS information

- edgeFlow.js version

### Suggesting Features

Open an issue using the feature request template describing:

- The problem you're trying to solve

- Your proposed solution

- Alternatives you've considered

### Submitting Code

1. Fork the repository

2. Create a feature branch: `git checkout -b feature/my-feature`

3. Make your changes

4. Run checks: `npm run lint && npx tsc --noEmit && npm run test:unit`

5. Commit with a descriptive message

6. Push and open a pull request

### Good First Issues

Look for issues labeled `good first issue`. These are scoped tasks ideal for newcomers:

- Adding tests for uncovered modules

- Improving error messages

- Adding examples

- Documentation improvements

## Code Standards

- **TypeScript strict mode** — all strict options are enabled

- **No `any`** — use proper types; `unknown` if truly dynamic

- **ESM only** — use `.js` extensions in imports

- **No console.log in library code** — use the event system or `console.warn` for important warnings

- **Dispose pattern** — all resources must be disposable to prevent memory leaks

## Testing

```bash

npm run test:unit # Run unit tests

npm run test:integration # Run integration tests

npm run test:coverage # Generate coverage report

npm run test:watch # Watch mode

```

Tests use [Vitest](https://vitest.dev/). Place tests in:

- `tests/unit/` — for isolated unit tests

- `tests/integration/` — for pipeline/backend integration tests

- `tests/e2e/` — for browser-based tests

## Architecture Decisions

edgeFlow.js is designed as an **orchestration layer**, not an inference engine. Key principles:

1. **Backend agnostic** — work with any inference engine (ONNX Runtime, transformers.js, custom)

2. **Production-first** — scheduling, memory management, error recovery matter more than model count

3. **Honest API** — experimental features are clearly labeled, not presented as production-ready

4. **Plugin-friendly** — custom pipelines and backends can be registered at runtime

## License

By contributing, you agree that your contributions will be licensed under the MIT License.

================================================

FILE: README.md

================================================

# edgeFlow.js

<div align="center">

**Browser ML inference framework with task scheduling and smart caching.**

[npm](https://www.npmjs.com/package/edgeflowjs) · [install size](https://packagephobia.com/result?p=edgeflowjs) · [license](LICENSE)

[Documentation](https://edgeflow.js.org) · [Examples](examples/) · [API Reference](https://edgeflow.js.org/api) · [English](README.md) | [中文](README_CN.md)

</div>

---

## ✨ Features

- 📋 **Task Scheduler** - Priority queue, concurrency control, task cancellation

- 🔄 **Batch Processing** - Efficient batch inference out of the box

- 💾 **Memory Management** - Automatic memory tracking and cleanup with scopes

- 📥 **Smart Model Loading** - Preloading, sharding, resume download support

- 💿 **Offline Caching** - IndexedDB-based model caching for offline use

- ⚡ **Multi-Backend** - ONNX Runtime with WebGPU/WASM execution providers, automatic fallback

- 🤗 **HuggingFace Hub** - Direct model download with one line

- 🔤 **Real Tokenizers** - BPE & WordPiece tokenizers, load tokenizer.json directly

- 👷 **Web Worker Support** - Run inference in background threads

- 📦 **Batteries Included** - ONNX Runtime bundled, zero configuration needed

- 🎯 **TypeScript First** - Full type support with intuitive APIs

## 📦 Installation

```bash

npm install edgeflowjs

```

```bash

yarn add edgeflowjs

```

```bash

pnpm add edgeflowjs

```

> **Note**: ONNX Runtime is included as a dependency. No additional setup required.

## 🚀 Quick Start

### Try the Demo

Run the interactive demo locally to test all features:

```bash

# Clone and install

git clone https://github.com/s-zx/edgeflow.js.git

cd edgeflow.js

npm install

# Build and start demo server

npm run demo

```

Open **http://localhost:3000** in your browser:

1. **Load Model** - Enter a Hugging Face ONNX model URL and click "Load Model"

```

https://huggingface.co/Xenova/distilbert-base-uncased-finetuned-sst-2-english/resolve/main/onnx/model_quantized.onnx

```

2. **Test Features**:

- 🧮 **Tensor Operations** - Test tensor creation, math ops, softmax, relu

- 📝 **Text Classification** - Run sentiment analysis on text

- 🔍 **Feature Extraction** - Extract embeddings from text

- 📋 **Task Scheduler** - Test priority-based task scheduling

- 💾 **Memory Management** - Test allocation and cleanup

### Basic Usage

```typescript

import { pipeline } from 'edgeflowjs';

// Create a sentiment analysis pipeline

const sentiment = await pipeline('sentiment-analysis');

// Run inference

const result = await sentiment.run('I love this product!');

console.log(result);

// { label: 'positive', score: 0.98, processingTime: 12.5 }

```

### Batch Processing

```typescript

// Native batch processing support

const results = await sentiment.run([

'This is amazing!',

'This is terrible.',

'It\'s okay I guess.'

]);

console.log(results);

// [

// { label: 'positive', score: 0.95 },

// { label: 'negative', score: 0.92 },

// { label: 'neutral', score: 0.68 }

// ]

```

### Multiple Pipelines

```typescript

import { pipeline } from 'edgeflowjs';

// Create multiple pipelines

const classifier = await pipeline('text-classification');

const extractor = await pipeline('feature-extraction');

// Run in parallel with Promise.all

const [classification, features] = await Promise.all([

classifier.run('Sample text'),

extractor.run('Sample text')

]);

```

### Image Classification

```typescript

import { pipeline } from 'edgeflowjs';

const classifier = await pipeline('image-classification');

// From URL
const fromUrl = await classifier.run('https://example.com/image.jpg');

// From an HTMLImageElement already in the page
const img = document.getElementById('myImage') as HTMLImageElement;
const fromElement = await classifier.run(img);

// Batch (img1, img2, img3 stand for other images you have already loaded)
const results = await classifier.run([img1, img2, img3]);

```

### Text Generation (Streaming)

```typescript

import { pipeline } from 'edgeflowjs';

const generator = await pipeline('text-generation');

// Simple generation

const result = await generator.run('Once upon a time', {

maxNewTokens: 50,

temperature: 0.8,

});

console.log(result.generatedText);

// Streaming output

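// Accumulate tokens as they arrive (process.stdout is not available in browsers)
let streamed = '';
for await (const event of generator.stream('Hello, ')) {
  streamed += event.token;
  if (event.done) break;
}
console.log(streamed);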

```

### Zero-shot Classification

```typescript

import { pipeline } from 'edgeflowjs';

const classifier = await pipeline('zero-shot-classification');

const result = await classifier.classify(

'I love playing soccer on weekends',

['sports', 'politics', 'technology', 'entertainment']

);

console.log(result.labels[0], result.scores[0]);

// 'sports', 0.92

```

### Question Answering

```typescript

import { pipeline } from 'edgeflowjs';

const qa = await pipeline('question-answering');

const result = await qa.run({

question: 'What is the capital of France?',

context: 'Paris is the capital and largest city of France.'

});

console.log(result.answer); // 'Paris'

```

### Load from HuggingFace Hub

```typescript

import { fromHub, fromTask } from 'edgeflowjs';

// Load by model ID (auto-downloads model, tokenizer, config)

const bundle = await fromHub('Xenova/distilbert-base-uncased-finetuned-sst-2-english');

console.log(bundle.tokenizer); // Tokenizer instance

console.log(bundle.config); // Model config

// Load by task name (uses recommended model)

const sentimentBundle = await fromTask('sentiment-analysis');

```

### Web Workers (Background Inference)

```typescript

import { runInWorker, WorkerPool, isWorkerSupported } from 'edgeflowjs';

// Simple: run inference in background thread

if (isWorkerSupported()) {

const outputs = await runInWorker(modelUrl, inputs);

}

// Advanced: use worker pool for parallel processing

const pool = new WorkerPool({ numWorkers: 4 });

await pool.init();

const modelId = await pool.loadModel(modelUrl);

const results = await pool.runBatch(modelId, batchInputs);

pool.terminate();

```

## 🎯 Supported Tasks

| Task | Pipeline | Status |

|------|----------|--------|

| Text Generation | `text-generation` | ✅ Production (TinyLlama, streaming, KV cache) |

| Image Segmentation | `image-segmentation` | ✅ Production (SlimSAM, interactive prompts) |

| Text Classification | `text-classification` | ⚠️ Experimental (heuristic, provide own model) |

| Sentiment Analysis | `sentiment-analysis` | ⚠️ Experimental (heuristic, provide own model) |

| Feature Extraction | `feature-extraction` | ⚠️ Experimental (mock embeddings, provide own model) |

| Image Classification | `image-classification` | ⚠️ Experimental (heuristic, provide own model) |

| Object Detection | `object-detection` | ⚠️ Experimental (real NMS/IoU, needs own model) |

| Speech Recognition | `automatic-speech-recognition` | ⚠️ Experimental (preprocessing only, needs model) |

| Zero-shot Classification | `zero-shot-classification` | ⚠️ Experimental (random scoring, needs NLI model) |

| Question Answering | `question-answering` | ⚠️ Experimental (word overlap heuristic, needs model) |

> **Note:** Experimental pipelines work for demos and testing the API surface. For production accuracy, provide a real ONNX model via `options.model` or use the **transformers.js adapter backend** to leverage HuggingFace's model ecosystem.
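
The object-detection row above mentions NMS and IoU. For readers unfamiliar with the terms, here is a minimal sketch of greedy non-maximum suppression over axis-aligned boxes. The `Box` shape, the function names, and the 0.5 default threshold are assumptions for illustration and are not taken from `object-detection.ts`.

```typescript
// Hypothetical box format: corner coordinates plus a confidence score.
interface Box { x1: number; y1: number; x2: number; y2: number; score: number; }

// Intersection over Union: overlap area divided by the area covered by either box.
function iou(a: Box, b: Box): number {
  const overlapW = Math.max(0, Math.min(a.x2, b.x2) - Math.max(a.x1, b.x1));
  const overlapH = Math.max(0, Math.min(a.y2, b.y2) - Math.max(a.y1, b.y1));
  const intersection = overlapW * overlapH;
  const areaA = (a.x2 - a.x1) * (a.y2 - a.y1);
  const areaB = (b.x2 - b.x1) * (b.y2 - b.y1);
  const union = areaA + areaB - intersection;
  return union > 0 ? intersection / union : 0;
}

// Greedy NMS: visit boxes by descending score, keep a box only if it does not
// overlap an already-kept box by more than the threshold.
function nonMaxSuppression(boxes: Box[], iouThreshold = 0.5): Box[] {
  const sorted = [...boxes].sort((a, b) => b.score - a.score);
  const kept: Box[] = [];
  for (const candidate of sorted) {
    if (kept.every((k) => iou(k, candidate) <= iouThreshold)) kept.push(candidate);
  }
  return kept;
}

const detections: Box[] = [
  { x1: 0, y1: 0, x2: 10, y2: 10, score: 0.9 },
  { x1: 1, y1: 1, x2: 11, y2: 11, score: 0.8 }, // overlaps the first box heavily
  { x1: 20, y1: 20, x2: 30, y2: 30, score: 0.7 },
];
console.log(nonMaxSuppression(detections).length); // 2
```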

## ⚡ Key Differentiators

edgeFlow.js is not a replacement for transformers.js — it is a **production orchestration layer** that can wrap any inference engine (including transformers.js) and add the features real apps need.

### What edgeFlow.js adds on top of inference engines

| Feature | Inference engines alone | With edgeFlow.js |

|---------|------------------------|------------------|

| Task Scheduling | None — run and hope | Priority queue with concurrency limits |

| Task Cancellation | Not possible | Cancel pending/queued tasks |

| Batch Processing | Manual | Built-in batching with configurable size |

| Memory Management | Manual cleanup | Automatic scopes, leak detection, GC hints |

| Model Preloading | Manual | Background preloading with priority queue |

| Resume Download | Start over on failure | Chunked download with automatic resume |

| Model Caching | Basic or none | IndexedDB cache with stats and eviction |

| Pipeline Composition | Not available | Chain multiple models (ASR → translate → TTS) |

| Device Adaptation | Manual model selection | Auto-select model variant by device capability |

| Performance Monitoring | External tooling needed | Built-in dashboard and alerting |

## 🔌 transformers.js Adapter (Recommended)

Use edgeFlow.js as an orchestration layer on top of [transformers.js](https://huggingface.co/docs/transformers.js) to get access to 1000+ HuggingFace models with scheduling, caching, and memory management:

```typescript

import { pipeline as tfPipeline } from '@xenova/transformers';

import { useTransformersBackend, pipeline } from 'edgeflowjs';

// Register transformers.js as the inference backend

useTransformersBackend({

pipelineFactory: tfPipeline,

device: 'webgpu', // GPU acceleration

dtype: 'fp16', // Half precision

});

// Use edgeFlow.js API — scheduling, caching, memory management included

const classifier = await pipeline('text-classification', {

model: 'Xenova/distilbert-base-uncased-finetuned-sst-2-english',

});

const result = await classifier.run('I love this product!');

```

> **Why?** transformers.js is excellent at loading and running single models. edgeFlow.js adds the production features you need when running multiple models, managing memory on constrained devices, caching for offline use, and scheduling concurrent inference.

## 🔧 Configuration

### Runtime Selection

```typescript

import { pipeline } from 'edgeflowjs';

// Automatic (recommended)
const autoModel = await pipeline('text-classification');

// Specify runtime
const gpuModel = await pipeline('text-classification', {
  runtime: 'webgpu' // or 'webnn', 'wasm', 'auto'
});

```

### Memory Management

```typescript

import { pipeline, getMemoryStats, gc } from 'edgeflowjs';

const model = await pipeline('text-classification');

// Use the model

await model.run('text');

// Check memory usage

console.log(getMemoryStats());

// { allocated: 50MB, used: 45MB, peak: 52MB, tensorCount: 12 }

// Explicit cleanup

model.dispose();

// Force garbage collection

gc();

```

### Scheduler Configuration

```typescript

import { configureScheduler } from 'edgeflowjs';

configureScheduler({

maxConcurrentTasks: 4,

maxConcurrentPerModel: 1,

defaultTimeout: 30000,

enableBatching: true,

maxBatchSize: 32,

});

```
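
To make the effect of `maxConcurrentTasks` more concrete, the following standalone sketch shows a priority queue with a concurrency limit. It is an assumption-laden illustration: `TinyScheduler` and its `schedule` method are hypothetical, and timeouts, per-model limits, batching, and cancellation are left out.

```typescript
// Hypothetical sketch; not the library's InferenceScheduler.
interface QueuedJob {
  priority: number;
  start: () => Promise<void>;
}

class TinyScheduler {
  private readonly maxConcurrentTasks: number;
  private queue: QueuedJob[] = [];
  private active = 0;

  constructor(maxConcurrentTasks: number) {
    this.maxConcurrentTasks = maxConcurrentTasks;
  }

  // Higher priority values run first; equal priorities keep submission order.
  schedule<T>(task: () => Promise<T>, priority = 0): Promise<T> {
    return new Promise<T>((resolve, reject) => {
      const start = async (): Promise<void> => {
        try {
          resolve(await task());
        } catch (error) {
          reject(error);
        }
      };
      this.queue.push({ priority, start });
      this.queue.sort((a, b) => b.priority - a.priority); // Array.prototype.sort is stable
      this.drain();
    });
  }

  // Start queued jobs while there is spare capacity.
  private drain(): void {
    while (this.active < this.maxConcurrentTasks && this.queue.length > 0) {
      const job = this.queue.shift()!;
      this.active++;
      void job.start().finally(() => {
        this.active--;
        this.drain();
      });
    }
  }
}

const scheduler = new TinyScheduler(1);
void scheduler.schedule(async () => 'background work', 0);
void scheduler.schedule(async () => 'urgent work', 10).then((value) => console.log(value));
```

With a limit of 1, a task that is already running is never preempted; priority only decides which waiting task starts next.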

### Caching

```typescript

import { pipeline, Cache } from 'edgeflowjs';

// Create a cache

const cache = new Cache({

strategy: 'lru',

maxSize: 100 * 1024 * 1024, // 100MB

persistent: true, // Use IndexedDB

});

const model = await pipeline('text-classification', {

cache: true

});

```
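
The `'lru'` strategy evicts the least recently used entry once the cache is full. The sketch below illustrates the idea with an entry-count limit rather than the byte-size `maxSize` shown above; `LruCache` is a hypothetical class for illustration and does not reflect how the library's `Cache` is implemented.

```typescript
// Hypothetical sketch of least-recently-used eviction, bounded by entry count.
class LruCache<K, V> {
  private readonly capacity: number;
  // Map preserves insertion order, so the first key is always the least recently used.
  private entries = new Map<K, V>();

  constructor(capacity: number) {
    this.capacity = capacity;
  }

  has(key: K): boolean {
    return this.entries.has(key);
  }

  get(key: K): V | undefined {
    if (!this.entries.has(key)) return undefined;
    const value = this.entries.get(key) as V;
    // Re-insert to mark this key as most recently used.
    this.entries.delete(key);
    this.entries.set(key, value);
    return value;
  }

  set(key: K, value: V): void {
    this.entries.delete(key);
    this.entries.set(key, value);
    if (this.entries.size > this.capacity) {
      const oldest = this.entries.keys().next().value as K;
      this.entries.delete(oldest);
    }
  }
}

const lru = new LruCache<string, number>(2);
lru.set('a', 1);
lru.set('b', 2);
lru.get('a');    // 'a' becomes most recently used
lru.set('c', 3); // evicts 'b'
console.log(lru.has('b')); // false
```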

## 🛠️ Advanced Usage

### Custom Model Loading

```typescript

import { loadModel, runInference } from 'edgeflowjs';

// Load from URL with caching, sharding, and resume support

const model = await loadModel('https://example.com/model.bin', {

runtime: 'webgpu',

quantization: 'int8',

cache: true, // Enable IndexedDB caching (default: true)

resumable: true, // Enable resume download (default: true)

chunkSize: 5 * 1024 * 1024, // 5MB chunks for large models

onProgress: (progress) => console.log(`Loading: ${progress * 100}%`)

});

// Run inference

const outputs = await runInference(model, inputs);

// Cleanup

model.dispose();

```

### Preloading Models

```typescript

import { preloadModel, preloadModels, getPreloadStatus } from 'edgeflowjs';

// Preload a single model in background (with priority)

preloadModel('https://example.com/model1.onnx', { priority: 10 });

// Preload multiple models

preloadModels([

{ url: 'https://example.com/model1.onnx', priority: 10 },

{ url: 'https://example.com/model2.onnx', priority: 5 },

]);

// Check preload status

const status = getPreloadStatus('https://example.com/model1.onnx');

// 'pending' | 'loading' | 'complete' | 'error' | 'not_found'

```

### Model Caching

```typescript

import {

isModelCached,

getCachedModel,

deleteCachedModel,

clearModelCache,

getModelCacheStats

} from 'edgeflowjs';

// Check if model is cached

if (await isModelCached('https://example.com/model.onnx')) {

console.log('Model is cached!');

}

// Get cached model data directly

const modelData = await getCachedModel('https://example.com/model.onnx');

// Delete a specific cached model

await deleteCachedModel('https://example.com/model.onnx');

// Clear all cached models

await clearModelCache();

// Get cache statistics

const stats = await getModelCacheStats();

console.log(`${stats.models} models cached, ${stats.totalSize} bytes total`);

```

### Resume Downloads

Large model downloads automatically support resuming from where they left off:

```typescript

import { loadModelData } from 'edgeflowjs';

// Download with progress and resume support

const modelData = await loadModelData('https://example.com/large-model.onnx', {

resumable: true,

chunkSize: 10 * 1024 * 1024, // 10MB chunks

parallelConnections: 4, // Download 4 chunks in parallel

onProgress: (progress) => {

console.log(`${progress.percent.toFixed(1)}% downloaded`);

console.log(`Speed: ${(progress.speed / 1024 / 1024).toFixed(2)} MB/s`);

console.log(`ETA: ${(progress.eta / 1000).toFixed(0)}s`);

console.log(`Chunk ${progress.currentChunk}/${progress.totalChunks}`);

}

});

```
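
One way to picture chunked, resumable downloading is as a plan of byte ranges, where only the ranges not yet completed are requested again. The helper names below (`planChunks`, `remainingChunks`, `rangeHeader`) are assumptions for illustration; the library's own bookkeeping is not shown in this document. The only external fact relied on is the standard HTTP `Range: bytes=start-end` syntax with an inclusive end.

```typescript
// Hypothetical sketch of chunk planning for a resumable download.
interface ByteRange {
  index: number;
  start: number;
  end: number; // inclusive, matching HTTP Range semantics
}

// Split a file of `totalBytes` into consecutive ranges of at most `chunkSize` bytes.
function planChunks(totalBytes: number, chunkSize: number): ByteRange[] {
  if (chunkSize <= 0) throw new RangeError('chunkSize must be positive');
  const ranges: ByteRange[] = [];
  for (let start = 0, index = 0; start < totalBytes; start += chunkSize, index++) {
    ranges.push({ index, start, end: Math.min(start + chunkSize, totalBytes) - 1 });
  }
  return ranges;
}

// After an interruption, only chunks that were not completed need to be fetched.
function remainingChunks(plan: ByteRange[], completed: ReadonlySet<number>): ByteRange[] {
  return plan.filter((range) => !completed.has(range.index));
}

// Value for the HTTP Range request header.
function rangeHeader(range: ByteRange): string {
  return `bytes=${range.start}-${range.end}`;
}

const plan = planChunks(25, 10);
const remaining = remainingChunks(plan, new Set([0]));
console.log(remaining.map(rangeHeader)); // [ 'bytes=10-19', 'bytes=20-24' ]
```

Resuming this way assumes the server honors range requests; a server that ignores the header would return the whole file for each request.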

### Model Quantization

```typescript

import { quantize } from 'edgeflowjs/tools';

const quantized = await quantize(model, {

method: 'int8',

calibrationData: samples,

});

console.log(`Compression: ${quantized.compressionRatio}x`);

// Compression: 3.8x

```
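
For intuition about what `int8` quantization does to weights, here is a minimal sketch of symmetric per-tensor quantization and dequantization. This is a generic textbook scheme chosen for illustration, not necessarily what `quantization.ts` implements; `quantizeInt8` and `dequantizeInt8` are hypothetical names and no calibration data is used.

```typescript
// Hypothetical sketch: symmetric per-tensor int8 quantization.
interface QuantizedTensor {
  data: Int8Array;
  scale: number; // real value is approximately data[i] * scale
}

function quantizeInt8(values: Float32Array): QuantizedTensor {
  let maxAbs = 0;
  for (let i = 0; i < values.length; i++) maxAbs = Math.max(maxAbs, Math.abs(values[i]));
  // Map the largest magnitude to 127; fall back to 1 for an all-zero tensor.
  const scale = maxAbs > 0 ? maxAbs / 127 : 1;
  const data = new Int8Array(values.length);
  for (let i = 0; i < values.length; i++) {
    data[i] = Math.max(-127, Math.min(127, Math.round(values[i] / scale)));
  }
  return { data, scale };
}

function dequantizeInt8(q: QuantizedTensor): Float32Array {
  const out = new Float32Array(q.data.length);
  for (let i = 0; i < q.data.length; i++) out[i] = q.data[i] * q.scale;
  return out;
}

const weights = new Float32Array([-1, 0, 0.5, 1]);
const quantized = quantizeInt8(weights);
// Each float32 (4 bytes) becomes one int8 (1 byte).
console.log(weights.byteLength / quantized.data.byteLength); // 4
```

The raw storage ratio for float32 to int8 is 4x; a reported ratio below that would be consistent with scales and other metadata being stored alongside the weights.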

### Benchmarking

```typescript

import { benchmark } from 'edgeflowjs/tools';

const result = await benchmark(

() => model.run('sample text'),

{ warmupRuns: 5, runs: 100 }

);

console.log(result);

// {

// avgTime: 12.5,

// minTime: 10.2,

// maxTime: 18.3,

// throughput: 80 // inferences/sec

// }

```
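
The fields in the sample output relate to each other in a simple way: in the example above, a throughput of 80 inferences/sec is 1000 ms divided by the 12.5 ms average. The sketch below reproduces that arithmetic under the assumption that timings are collected with `performance.now()` after a few warmup runs; `summarizeTimings` and `measure` are hypothetical helpers, not the library's `benchmark`.

```typescript
// Hypothetical sketch of how benchmark statistics can be derived.
interface BenchmarkSummary {
  avgTime: number;    // milliseconds
  minTime: number;    // milliseconds
  maxTime: number;    // milliseconds
  throughput: number; // runs per second
}

function summarizeTimings(samplesMs: number[]): BenchmarkSummary {
  if (samplesMs.length === 0) throw new RangeError('At least one sample is required');
  const total = samplesMs.reduce((sum, t) => sum + t, 0);
  const avgTime = total / samplesMs.length;
  return {
    avgTime,
    minTime: Math.min(...samplesMs),
    maxTime: Math.max(...samplesMs),
    throughput: avgTime > 0 ? 1000 / avgTime : Infinity,
  };
}

// Warmup runs are executed but not timed, so one-off setup costs do not skew the average.
async function measure(
  fn: () => unknown,
  options: { warmupRuns: number; runs: number },
): Promise<BenchmarkSummary> {
  for (let i = 0; i < options.warmupRuns; i++) await fn();
  const samples: number[] = [];
  for (let i = 0; i < options.runs; i++) {
    const startedAt = performance.now();
    await fn();
    samples.push(performance.now() - startedAt);
  }
  return summarizeTimings(samples);
}

console.log(summarizeTimings([10, 20, 30]).throughput); // 50
```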

### Memory Scope

```typescript

import { withMemoryScope, tensor } from 'edgeflowjs';

const result = await withMemoryScope(async (scope) => {

// Tensors tracked in scope

const a = scope.track(tensor([1, 2, 3]));

const b = scope.track(tensor([4, 5, 6]));

// Process...

const output = process(a, b);

// Keep result, dispose others

return scope.keep(output);

});

// a and b automatically disposed

```
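
The scope pattern boils down to "dispose everything tracked, except what was explicitly kept". A synchronous, simplified sketch of that idea follows; `SketchScope`, `withScope`, and `FakeTensor` are hypothetical names, and the library's `withMemoryScope` is asynchronous and may differ in detail.

```typescript
// Hypothetical sketch of scope-based cleanup.
interface DisposableResource {
  dispose(): void;
}

class SketchScope {
  private tracked = new Set<DisposableResource>();

  // Register a resource for disposal when the scope ends.
  track<T extends DisposableResource>(resource: T): T {
    this.tracked.add(resource);
    return resource;
  }

  // Exempt a resource from disposal so it can outlive the scope.
  keep<T extends DisposableResource>(resource: T): T {
    this.tracked.delete(resource);
    return resource;
  }

  disposeAll(): void {
    for (const resource of this.tracked) resource.dispose();
    this.tracked.clear();
  }
}

// Run `fn`, then dispose whatever is still tracked, even if `fn` throws.
function withScope<R>(fn: (scope: SketchScope) => R): R {
  const scope = new SketchScope();
  try {
    return fn(scope);
  } finally {
    scope.disposeAll();
  }
}

// Stand-in for a tensor: only records whether it has been disposed.
class FakeTensor implements DisposableResource {
  disposed = false;
  dispose(): void {
    this.disposed = true;
  }
}

const temp = new FakeTensor();
const kept = withScope((scope) => {
  scope.track(temp);
  return scope.keep(scope.track(new FakeTensor()));
});
console.log(temp.disposed, kept.disposed); // true false
```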

## 🔌 Tensor Operations

```typescript

import { tensor, zeros, ones, matmul, softmax, relu } from 'edgeflowjs';

// Create tensors

const a = tensor([[1, 2], [3, 4]]);

const b = zeros([2, 2]);

const c = ones([2, 2]);

// Operations

const d = matmul(a, c);

const probs = softmax(d);

const activated = relu(d);

// Cleanup

a.dispose();

b.dispose();

c.dispose();

```
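
For reference, the math behind the three operations used above can be written on plain arrays. This is a standalone sketch with hypothetical function names (`matmul2d`, `softmaxRow`, `reluAll`); the library's tensor versions operate on its own `Tensor` type and may handle shapes and axes differently.

```typescript
// Hypothetical plain-array versions of matmul, softmax, and relu.
type Matrix = number[][];

// Matrix product: out[i][j] = sum over k of a[i][k] * b[k][j].
function matmul2d(a: Matrix, b: Matrix): Matrix {
  const inner = b.length;
  if (a.some((row) => row.length !== inner)) throw new RangeError('Inner dimensions must match');
  const cols = inner > 0 ? b[0].length : 0;
  return a.map((row) =>
    Array.from({ length: cols }, (_, j) => row.reduce((sum, value, k) => sum + value * b[k][j], 0)),
  );
}

// Softmax with the maximum subtracted first, which avoids overflow in exp().
function softmaxRow(values: number[]): number[] {
  const max = Math.max(...values);
  const exps = values.map((v) => Math.exp(v - max));
  const total = exps.reduce((sum, e) => sum + e, 0);
  return exps.map((e) => e / total);
}

// ReLU: negative values become 0, everything else passes through.
function reluAll(values: number[]): number[] {
  return values.map((v) => Math.max(0, v));
}

const product = matmul2d([[1, 2], [3, 4]], [[1, 1], [1, 1]]);
console.log(product);                // [ [ 3, 3 ], [ 7, 7 ] ]
console.log(softmaxRow(product[0])); // [ 0.5, 0.5 ]
```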

## 🌐 Browser Support

| Browser | WebGPU | WebNN | WASM |

|---------|--------|-------|------|

| Chrome 113+ | ✅ | ✅ | ✅ |

| Edge 113+ | ✅ | ✅ | ✅ |

| Firefox 118+ | ⚠️ Flag | ❌ | ✅ |

| Safari 17+ | ⚠️ Preview | ❌ | ✅ |

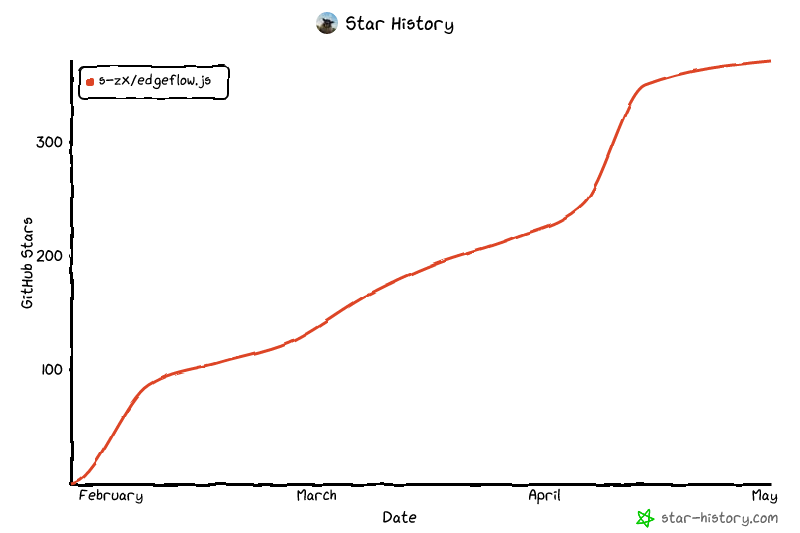

## Star History

[Star History Chart](https://www.star-history.com/?repos=s-zx%2FedgeFlow.js&type=date&legend=top-left)

## 📖 API Reference

### Core

- `pipeline(task, options?)` - Create a pipeline for a task

- `loadModel(url, options?)` - Load a model from URL

- `runInference(model, inputs)` - Run model inference

- `getScheduler()` - Get the global scheduler

- `getMemoryManager()` - Get the memory manager

- `runInWorker(url, inputs)` - Run inference in a Web Worker

- `WorkerPool` - Manage multiple workers for parallel inference

### Pipelines

- `TextClassificationPipeline` - Text/sentiment classification

- `SentimentAnalysisPipeline` - Sentiment analysis

- `FeatureExtractionPipeline` - Text embeddings

- `ImageClassificationPipeline` - Image classification

- `TextGenerationPipeline` - Text generation with streaming

- `ObjectDetectionPipeline` - Object detection with bounding boxes

- `AutomaticSpeechRecognitionPipeline` - Speech to text

- `ZeroShotClassificationPipeline` - Classify without training

- `QuestionAnsweringPipeline` - Extractive QA

### HuggingFace Hub

- `fromHub(modelId, options?)` - Load model bundle from HuggingFace

- `fromTask(task, options?)` - Load recommended model for task

- `downloadTokenizer(modelId)` - Download tokenizer only

- `downloadConfig(modelId)` - Download config only

- `POPULAR_MODELS` - Registry of popular models by task

### Utilities

- `Tokenizer` - BPE/WordPiece tokenization with HuggingFace support

- `ImagePreprocessor` - Image preprocessing with HuggingFace config support

- `AudioPreprocessor` - Audio preprocessing for Whisper/wav2vec

- `Cache` - LRU caching utilities

### Tools

- `quantize(model, options)` - Quantize a model

- `prune(model, options)` - Prune model weights

- `benchmark(fn, options)` - Benchmark inference

- `analyzeModel(model)` - Analyze model structure

## 🤝 Contributing

We welcome contributions! Please see our [Contributing Guide](CONTRIBUTING.md) for details.

1. Fork the repository

2. Create your feature branch (`git checkout -b feature/amazing-feature`)

3. Commit your changes (`git commit -m 'Add amazing feature'`)

4. Push to the branch (`git push origin feature/amazing-feature`)

5. Open a Pull Request

## 📄 License

MIT © edgeFlow.js Contributors

---

<div align="center">

**[Get Started](https://edgeflow.js.org/getting-started) · [API Docs](https://edgeflow.js.org/api) · [Examples](examples/)**

Made with ❤️ for the edge AI community

</div>

================================================

FILE: README_CN.md

================================================

# edgeFlow.js

<div align="center">

**Browser-side machine learning inference framework with built-in task scheduling and smart caching**

[](https://www.npmjs.com/package/edgeflowjs)

[](https://packagephobia.com/result?p=edgeflowjs)

[](LICENSE)

[Documentation](https://edgeflow.js.org) · [Examples](examples/) · [API Reference](https://edgeflow.js.org/api) · [English](README.md) | [中文](README_CN.md)

</div>

---

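## ✨ Features

- 📋 **Task Scheduler** - Priority queue, concurrency control, and task cancellation

- 🔄 **Batch Processing** - Efficient batch inference out of the box

- 💾 **Memory Management** - Automatic memory tracking and scoped cleanup

- 📥 **Smart Model Loading** - Preloading, chunked downloads, and resumable transfers

- 💿 **Offline Caching** - IndexedDB-based model cache for offline use

- ⚡ **Multi-Backend Support** - WebGPU, WebNN, and WASM with automatic fallback

- 🤗 **HuggingFace Hub** - Download models from HuggingFace with one line of code

- 🔤 **Real Tokenizers** - BPE and WordPiece tokenizers that load tokenizer.json directly

- 👷 **Web Worker Support** - Run inference on a background thread

- 📦 **Batteries Included** - ONNX Runtime is bundled; works with zero configuration

- 🎯 **TypeScript First** - Full type support and an intuitive API

## 📦 Installation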

```bash

npm install edgeflowjs

```

```bash

yarn add edgeflowjs

```

```bash

pnpm add edgeflowjs

```

> **Note**: ONNX Runtime is included as a dependency; no extra configuration is needed.

## 🚀 Quick Start

### Try the Demo

Run the interactive demo locally to test all features:

```bash

# Clone and install

git clone https://github.com/s-zx/edgeFlow.js.git

cd edgeFlow.js

npm install

# Build and start the demo server

npm run demo

```

Open **http://localhost:3000** in your browser:

1. **Load a model** - Enter a Hugging Face ONNX model URL and click "Load Model"

```

https://huggingface.co/Xenova/distilbert-base-uncased-finetuned-sst-2-english/resolve/main/onnx/model_quantized.onnx

```

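2. **Test the features**:

- 🧮 **Tensor Operations** - Test tensor creation, math operations, softmax, and relu

- 📝 **Text Classification** - Run sentiment analysis on text

- 🔍 **Feature Extraction** - Extract embedding vectors from text

- 📋 **Task Scheduling** - Test priority-based task scheduling

- 💾 **Memory Management** - Test memory allocation and cleanup

### Basic Usage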

```typescript

import { pipeline } from 'edgeflowjs';

// Create a sentiment analysis pipeline

const sentiment = await pipeline('sentiment-analysis');

// Run inference

const result = await sentiment.run('I love this product!');

console.log(result);

// { label: 'positive', score: 0.98, processingTime: 12.5 }

```

### Batch Processing

```typescript

// Native batch processing support

const results = await sentiment.run([

'This is amazing!',

'This is terrible.',

'It\'s okay I guess.'

]);

console.log(results);

// [

// { label: 'positive', score: 0.95 },

// { label: 'negative', score: 0.92 },

// { label: 'neutral', score: 0.68 }

// ]

```

### Multiple Pipelines

```typescript

import { pipeline } from 'edgeflowjs';

// Create multiple pipelines

const classifier = await pipeline('text-classification');

const extractor = await pipeline('feature-extraction');

// Run them in parallel with Promise.all

const [classification, features] = await Promise.all([

classifier.run('Sample text'),

extractor.run('Sample text')

]);

```

### Image Classification

```typescript

import { pipeline } from 'edgeflowjs';

const classifier = await pipeline('image-classification');

// Load from a URL

const resultFromUrl = await classifier.run('https://example.com/image.jpg');

// Load from an HTMLImageElement

const img = document.getElementById('myImage');

const resultFromElement = await classifier.run(img);

// Batch processing

const results = await classifier.run([img1, img2, img3]);

```

### Text Generation (Streaming)

```typescript

import { pipeline } from 'edgeflowjs';

const generator = await pipeline('text-generation');

// Simple generation

const result = await generator.run('Once upon a time', {

maxNewTokens: 50,

temperature: 0.8,

});

console.log(result.generatedText);

// Streaming output

for await (const event of generator.stream('Hello, ')) {

process.stdout.write(event.token);

if (event.done) break;

}

```

### Zero-Shot Classification

```typescript

import { pipeline } from 'edgeflowjs';

const classifier = await pipeline('zero-shot-classification');

const result = await classifier.classify(

'I enjoy playing soccer on weekends',

['sports', 'politics', 'technology', 'entertainment']

);

console.log(result.labels[0], result.scores[0]);

// 'sports', 0.92

```

### Question Answering

```typescript

import { pipeline } from 'edgeflowjs';

const qa = await pipeline('question-answering');

const result = await qa.run({

question: 'What is the capital of France?',

context: 'Paris is the capital and largest city of France.'

});

console.log(result.answer); // 'Paris'

```

### Loading from HuggingFace Hub

```typescript

import { fromHub, fromTask } from 'edgeflowjs';

// Load by model ID (downloads the model, tokenizer, and config automatically)

const bundle = await fromHub('Xenova/distilbert-base-uncased-finetuned-sst-2-english');

console.log(bundle.tokenizer); // Tokenizer instance

console.log(bundle.config); // Model config

// Load by task name (uses the recommended model)

const sentimentBundle = await fromTask('sentiment-analysis');

```

### Web Workers (Background Inference)

```typescript

import { runInWorker, WorkerPool, isWorkerSupported } from 'edgeflowjs';

// Simple: run inference on a background thread

if (isWorkerSupported()) {

const outputs = await runInWorker(modelUrl, inputs);

}

// Advanced: use a worker pool for parallel processing

const pool = new WorkerPool({ numWorkers: 4 });

await pool.init();

const modelId = await pool.loadModel(modelUrl);

const results = await pool.runBatch(modelId, batchInputs);

pool.terminate();

```

## 🎯 Supported Tasks

| Task | Pipeline | Status |

|------|----------|--------|

| Text Classification | `text-classification` | ✅ |

| Sentiment Analysis | `sentiment-analysis` | ✅ |

| Feature Extraction | `feature-extraction` | ✅ |

| Image Classification | `image-classification` | ✅ |

| Text Generation | `text-generation` | ✅ |

| Object Detection | `object-detection` | ✅ |

| Speech Recognition | `automatic-speech-recognition` | ✅ |

| Zero-Shot Classification | `zero-shot-classification` | ✅ |

| Question Answering | `question-answering` | ✅ |

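## ⚡ Key Differences

### Comparison with transformers.js

| Feature | transformers.js | edgeFlow.js |

|---------|-----------------|-------------|

| Task Scheduler | ❌ None | ✅ Priority queue + concurrency limits |

| Task Cancellation | ❌ None | ✅ Cancel queued tasks |

| Batch Processing | ⚠️ Manual | ✅ Built-in batching |

| Memory Scopes | ❌ None | ✅ Automatic scoped cleanup |

| Model Preloading | ❌ None | ✅ Background loading |

| Resumable Downloads | ❌ None | ✅ Chunked + resumable |

| Model Caching | ⚠️ Basic | ✅ IndexedDB + statistics |

| TypeScript | ✅ Full | ✅ Full |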

## 🔧 Configuration

### Runtime Selection

```typescript

import { pipeline } from 'edgeflowjs';

// Automatic selection (recommended)

const model = await pipeline('text-classification');

// Pin a specific runtime

const gpuModel = await pipeline('text-classification', {

runtime: 'webgpu' // or 'webnn', 'wasm', 'auto'

});

```
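
How `'auto'` chooses a backend is not spelled out here. A plausible sketch, assuming the preference order WebGPU, then WebNN, then WASM from the feature list, is to detect what the environment exposes and take the first match. `detectRuntimes` and `pickRuntime` are hypothetical names, and the presence of `navigator.gpu` or `navigator.ml` only indicates that the API exists, not that a usable device is available:

```typescript
// Illustrative sketch of backend fallback; not the edgeFlow.js implementation.
type Runtime = 'webgpu' | 'webnn' | 'wasm';

// Assumed preference order, fastest backend first.
const PREFERENCE: Runtime[] = ['webgpu', 'webnn', 'wasm'];

// Detect which backend entry points exist in the current environment.
function detectRuntimes(): Set<Runtime> {
  const found = new Set<Runtime>();
  const nav = (globalThis as { navigator?: object }).navigator;
  if (nav && 'gpu' in nav) found.add('webgpu'); // WebGPU entry point
  if (nav && 'ml' in nav) found.add('webnn');   // WebNN entry point
  if ('WebAssembly' in globalThis) found.add('wasm');
  return found;
}

// Honor an explicit request when possible, otherwise fall back in preference order.
function pickRuntime(available: Set<Runtime>, requested: Runtime | 'auto' = 'auto'): Runtime {
  if (requested !== 'auto' && available.has(requested)) return requested;
  const fallback = PREFERENCE.find((runtime) => available.has(runtime));
  if (!fallback) throw new Error('No supported runtime found');
  return fallback;
}
```

In this sketch a request for an unavailable backend degrades silently; a stricter design could throw instead so that callers notice the mismatch.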

### Memory Management

```typescript

import { pipeline, getMemoryStats, gc } from 'edgeflowjs';

const model = await pipeline('text-classification');

// Use the model

await model.run('text');

// Check memory usage

console.log(getMemoryStats());

// { allocated: 50MB, used: 45MB, peak: 52MB, tensorCount: 12 }

// Explicit cleanup

model.dispose();

// Force garbage collection

gc();

```

### Scheduler Configuration

```typescript

import { configureScheduler } from 'edgeflowjs';

configureScheduler({

maxConcurrentTasks: 4,

maxConcurrentPerModel: 1,

defaultTimeout: 30000,

enableBatching: true,

maxBatchSize: 32,

});

```
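
The scheduler's internals are not documented in this README. As a sketch of what priority ordering with a concurrency cap can look like, the queue below always hands out the highest-priority task first, breaks ties by age, supports cancelling queued tasks, and releases at most `limit` tasks per batch (standing in for `maxConcurrentTasks`). `MiniTaskQueue` and its methods are hypothetical, and the priority names follow the `'high'`, `'normal'`, and `'low'` values used by the demo:

```typescript
// Illustrative sketch of a priority task queue; not the edgeFlow.js scheduler.
type Priority = 'high' | 'normal' | 'low';

const WEIGHT: Record<Priority, number> = { high: 2, normal: 1, low: 0 };

interface QueuedTask<T> {
  id: number;
  priority: Priority;
  run: () => T;
}

class MiniTaskQueue<T> {
  private tasks: QueuedTask<T>[] = [];
  private nextId = 0;

  // Add a task and return an id that can be used to cancel it.
  enqueue(run: () => T, priority: Priority = 'normal'): number {
    const id = this.nextId++;
    this.tasks.push({ id, priority, run });
    return id;
  }

  // Cancel a task that has not been handed out yet; false if it is unknown.
  cancel(id: number): boolean {
    const index = this.tasks.findIndex((task) => task.id === id);
    if (index === -1) return false;
    this.tasks.splice(index, 1);
    return true;
  }

  // Remove and return the highest-priority task; ties go to the oldest task.
  dequeue(): QueuedTask<T> | undefined {
    if (this.tasks.length === 0) return undefined;
    let best = 0;
    for (let i = 1; i < this.tasks.length; i++) {
      if (WEIGHT[this.tasks[i].priority] > WEIGHT[this.tasks[best].priority]) best = i;
    }
    return this.tasks.splice(best, 1)[0];
  }

  // Hand out at most `limit` tasks, mimicking a cap on concurrent work.
  takeBatch(limit: number): QueuedTask<T>[] {
    const batch: QueuedTask<T>[] = [];
    while (batch.length < limit) {
      const next = this.dequeue();
      if (next === undefined) break;
      batch.push(next);
    }
    return batch;
  }
}
```

A linear scan keeps the sketch short; a binary heap would be the usual choice once queues grow large.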

### Caching

```typescript

import { pipeline, Cache } from 'edgeflowjs';

// Create a cache

const cache = new Cache({

strategy: 'lru',

maxSize: 100 * 1024 * 1024, // 100MB

persistent: true, // Use IndexedDB

});

const model = await pipeline('text-classification', {

cache: true

});

```

## 🛠️ Advanced Usage

### Custom Model Loading

```typescript

import { loadModel, runInference } from 'edgeflowjs';

// Load from a URL with caching, chunking, and resumable download support

const model = await loadModel('https://example.com/model.bin', {

runtime: 'webgpu',

quantization: 'int8',

cache: true, // Enable IndexedDB caching (default: true)

resumable: true, // Enable resumable downloads (default: true)

chunkSize: 5 * 1024 * 1024, // 5MB chunks for large models

onProgress: (progress) => console.log(`Loading: ${progress * 100}%`)

});

// Run inference

const outputs = await runInference(model, inputs);

// Cleanup

model.dispose();

```

### Model Preloading

```typescript

import { preloadModel, preloadModels, getPreloadStatus } from 'edgeflowjs';

// Preload a single model in the background (priority supported)

preloadModel('https://example.com/model1.onnx', { priority: 10 });

// Preload multiple models

preloadModels([

{ url: 'https://example.com/model1.onnx', priority: 10 },

{ url: 'https://example.com/model2.onnx', priority: 5 },

]);

// Check preload status

const status = getPreloadStatus('https://example.com/model1.onnx');

// 'pending' | 'loading' | 'complete' | 'error' | 'not_found'

```

### Model Caching

```typescript

import {

isModelCached,

getCachedModel,

deleteCachedModel,

clearModelCache,

getModelCacheStats

} from 'edgeflowjs';

// Check whether a model is cached

if (await isModelCached('https://example.com/model.onnx')) {

console.log('Model is cached!');

}

// Get cached model data directly

const modelData = await getCachedModel('https://example.com/model.onnx');

// Delete a specific cached model

await deleteCachedModel('https://example.com/model.onnx');

// Clear all cached models

await clearModelCache();

// Get cache statistics

const stats = await getModelCacheStats();

console.log(`${stats.models} models cached, ${stats.totalSize} bytes total`);

```

### Resumable Downloads

Large model downloads automatically resume from where they were interrupted:

```typescript

import { loadModelData } from 'edgeflowjs';

// Download with progress and resume support

const modelData = await loadModelData('https://example.com/large-model.onnx', {

resumable: true,

chunkSize: 10 * 1024 * 1024, // 10MB chunks

parallelConnections: 4, // Download 4 chunks in parallel

onProgress: (progress) => {

console.log(`${progress.percent.toFixed(1)}% downloaded`);

console.log(`Speed: ${(progress.speed / 1024 / 1024).toFixed(2)} MB/s`);

console.log(`ETA: ${(progress.eta / 1000).toFixed(0)}s`);

console.log(`Chunk ${progress.currentChunk}/${progress.totalChunks}`);

}

});

```

### Model Quantization

```typescript

import { quantize } from 'edgeflowjs/tools';

const quantized = await quantize(model, {

method: 'int8',

calibrationData: samples,

});

console.log(`Compression: ${quantized.compressionRatio}x`);

// Compression: 3.8x

```

### Benchmarking

```typescript

import { benchmark } from 'edgeflowjs/tools';

const result = await benchmark(

() => model.run('sample text'),

{ warmupRuns: 5, runs: 100 }

);

console.log(result);

// {

// avgTime: 12.5,

// minTime: 10.2,

// maxTime: 18.3,

// throughput: 80 // inferences/sec

// }

```

### Memory Scope

```typescript

import { withMemoryScope, tensor } from 'edgeflowjs';

const result = await withMemoryScope(async (scope) => {

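// Tensors tracked in scope

const a = scope.track(tensor([1, 2, 3]));

const b = scope.track(tensor([4, 5, 6]));

// Process... (`process` stands in for your own tensor computation)

const output = process(a, b);

// Keep result, dispose others

return scope.keep(output);

});

// a and b automatically disposed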

```

## 🔌 Tensor Operations

```typescript

import { tensor, zeros, ones, matmul, softmax, relu } from 'edgeflowjs';

// Create tensors

const a = tensor([[1, 2], [3, 4]]);

const b = zeros([2, 2]);

const c = ones([2, 2]);

// Operations

const d = matmul(a, c);

const probs = softmax(d);

const activated = relu(d);

// Cleanup: results of operations are tensors too

a.dispose();

b.dispose();

c.dispose();

d.dispose();

probs.dispose();

activated.dispose();

```

## 🌐 Browser Support

| Browser | WebGPU | WebNN | WASM |

|---------|--------|-------|------|

| Chrome 113+ | ✅ | ✅ | ✅ |

| Edge 113+ | ✅ | ✅ | ✅ |

| Firefox 118+ | ⚠️ Flag | ❌ | ✅ |

| Safari 17+ | ⚠️ Preview | ❌ | ✅ |

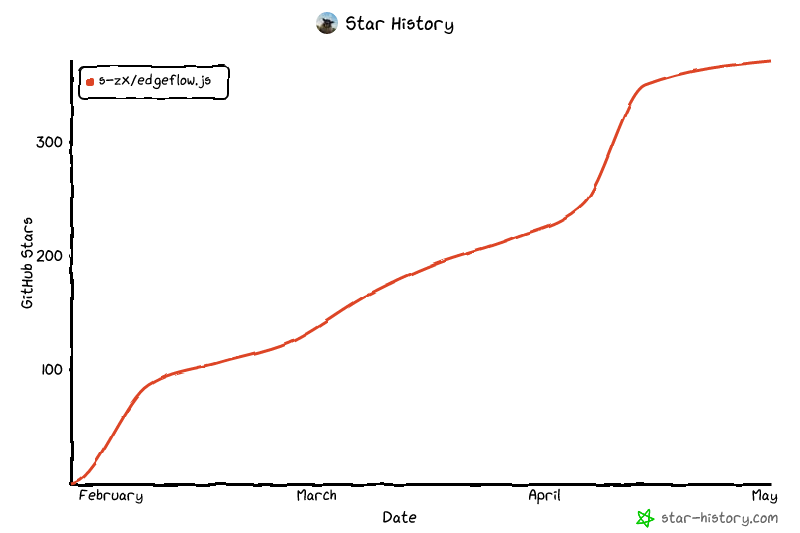

## Star History

[Star History Chart](https://www.star-history.com/?repos=s-zx%2FedgeFlow.js&type=date&legend=top-left)

## 📖 API Reference

### Core

- `pipeline(task, options?)` - Create a pipeline for a task

- `loadModel(url, options?)` - Load a model from URL

- `runInference(model, inputs)` - Run model inference

- `getScheduler()` - Get the global scheduler

- `getMemoryManager()` - Get the memory manager

- `runInWorker(url, inputs)` - Run inference in a Web Worker

- `WorkerPool` - Manage multiple workers for parallel inference

### Pipelines

- `TextClassificationPipeline` - Text/sentiment classification

- `SentimentAnalysisPipeline` - Sentiment analysis

- `FeatureExtractionPipeline` - Text embeddings

- `ImageClassificationPipeline` - Image classification

- `TextGenerationPipeline` - Text generation with streaming

- `ObjectDetectionPipeline` - Object detection with bounding boxes

- `AutomaticSpeechRecognitionPipeline` - Speech to text

- `ZeroShotClassificationPipeline` - Zero-shot classification

- `QuestionAnsweringPipeline` - Extractive QA

### HuggingFace Hub

- `fromHub(modelId, options?)` - Load model bundle from HuggingFace

- `fromTask(task, options?)` - Load recommended model for task

- `downloadTokenizer(modelId)` - Download tokenizer only

- `downloadConfig(modelId)` - Download config only

- `POPULAR_MODELS` - Registry of popular models by task

### Utilities

- `Tokenizer` - BPE/WordPiece tokenization with HuggingFace format support

- `ImagePreprocessor` - Image preprocessing with HuggingFace config support

- `AudioPreprocessor` - Audio preprocessing for Whisper/wav2vec

- `Cache` - LRU caching utilities

### Tools

- `quantize(model, options)` - Quantize a model

- `prune(model, options)` - Prune model weights

- `benchmark(fn, options)` - Benchmark inference

- `analyzeModel(model)` - Analyze model structure

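## 🤝 Contributing

We welcome contributions! Please see our [Contributing Guide](CONTRIBUTING.md) for details.

1. Fork the repository

2. Create your feature branch (`git checkout -b feature/amazing-feature`)

3. Commit your changes (`git commit -m 'Add amazing feature'`)

4. Push to the branch (`git push origin feature/amazing-feature`)

5. Open a Pull Request

## 📄 License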

MIT © edgeFlow.js Contributors

---

<div align="center">

**[Get Started](https://edgeflow.js.org/getting-started) · [API Docs](https://edgeflow.js.org/api) · [Examples](examples/)**

Made with ❤️ for the edge AI community

</div>

================================================

FILE: benchmarks/README.md

================================================

# edgeFlow.js Benchmarks

This directory contains performance benchmarks for edgeFlow.js.

## Running Benchmarks

```bash

npm install

npm run build

npm run test -- --run tests/unit/

```

> **Note:** A dedicated `npm run benchmark` script with browser-based benchmarks is planned. The unit tests include basic tensor and scheduler performance validation.

## Benchmark Types

### 1. Tensor Operations

- Tensor creation and disposal

- Shape transformation (reshape, transpose)

- Math operations (add, matmul, softmax)

### 2. Scheduler Throughput

- Priority queue ordering under load

- Concurrent task execution

- Task cancellation overhead

### 3. Model Loading

- Cached vs uncached loads (IndexedDB)

- Chunked download with resume

- Preloading pipeline

### 4. Inference Latency

- Text generation (TinyLlama) end-to-end

- Image segmentation (SlimSAM) encode + decode

## How edgeFlow.js Adds Value

edgeFlow.js is not a replacement for inference engines like ONNX Runtime or transformers.js. It is an **orchestration layer** that adds production features on top of them:

| Scenario | Without edgeFlow.js | With edgeFlow.js |

|----------|---------------------|------------------|

| 5 concurrent model calls | Uncontrolled, may OOM | Scheduled with concurrency limits |

| Repeated inference on same input | Recomputed every time | Cached results (LRU/TTL) |

| Large model download interrupted | Start from scratch | Resume from last chunk |

| Memory leak from undisposed tensors | Silent leak | Detected and warned |

> All benchmark claims will be backed by reproducible scripts before the 1.0 release.
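
To illustrate the "Cached results (LRU/TTL)" row, here is a minimal sketch of a cache that evicts the least recently used entry when full and expires entries after a fixed lifetime. `LruTtlCache` is a hypothetical class written for this example; the library's own `Cache` may behave differently:

```typescript
// Illustrative sketch of an LRU cache with time-to-live; not the edgeFlow.js Cache.
class LruTtlCache<V> {
  private entries = new Map<string, { value: V; expiresAt: number }>();
  private maxEntries: number;
  private ttlMs: number;

  constructor(maxEntries: number, ttlMs: number) {
    this.maxEntries = maxEntries;
    this.ttlMs = ttlMs;
  }

  // Return a live entry and mark it as most recently used.
  get(key: string, now: number = Date.now()): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (entry.expiresAt <= now) {
      this.entries.delete(key); // expired
      return undefined;
    }
    // Re-insert so the key moves to the end of the Map's insertion order.
    this.entries.delete(key);
    this.entries.set(key, entry);
    return entry.value;
  }

  // Store a value, evicting the least recently used entry if over capacity.
  set(key: string, value: V, now: number = Date.now()): void {
    this.entries.delete(key);
    this.entries.set(key, { value, expiresAt: now + this.ttlMs });
    if (this.entries.size > this.maxEntries) {
      // A Map iterates in insertion order, so the first key is the oldest.
      const oldest = this.entries.keys().next().value;
      if (oldest !== undefined) this.entries.delete(oldest);
    }
  }

  get size(): number {
    return this.entries.size;
  }
}
```

Keying such a cache by a hash of the model id and input lets repeated inference on the same input skip the model entirely, at the cost of holding results in memory until they expire or are evicted.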

## Custom Benchmarks

```typescript

import { runBenchmark, benchmarkSuite } from 'edgeflowjs/tools';

const result = await runBenchmark(

async () => {

await model.run(input);

},

{

warmupRuns: 5,

runs: 20,

verbose: true,

}

);

console.log(`Average: ${result.avgTime.toFixed(2)}ms`);

console.log(`Throughput: ${result.throughput.toFixed(2)} ops/sec`);

const results = await benchmarkSuite({

'small-model': async () => smallModel.run(input),

'large-model': async () => largeModel.run(input),

});

```

================================================

FILE: demo/demo.js

================================================

/**

* edgeFlow.js Interactive Demo

*

* Organized into modules:

* 1. State & Config

* 2. Utilities

* 3. UI Helpers

* 4. Core Features

* 5. SAM Interactive Segmentation (Real Model)

* 6. AI Chat (Real Model)

* 7. Demo Class (Public API)

* 8. Initialization

*/

import * as edgeFlow from '/dist/edgeflow.browser.js';

// Expose edgeFlow globally for debugging

window.edgeFlow = edgeFlow;

/* ==========================================================================

1. State & Config

========================================================================== */

const state = {

model: null,

testTensors: [],

monitor: null,

// SAM state

samPipeline: null,

samModelLoaded: false,

samImage: null,

samPoints: [],

samCanvas: null,

samMaskCanvas: null,

samCtx: null,

samMaskCtx: null,

// Chat state

chatPipeline: null,

chatModelLoaded: false,

chatHistory: [],

chatGenerating: false,

};

const config = {

defaultSeqLen: 128,

monitorSampleInterval: 500,

monitorHistorySize: 30,

};

/* ==========================================================================

2. Utilities

========================================================================== */

const utils = {

/**

* Format bytes to human readable string

*/

formatBytes(bytes) {

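if (!bytes || bytes < 1) return '0 B';

const k = 1024;

const sizes = ['B', 'KB', 'MB', 'GB', 'TB'];

// Clamp the unit index so values beyond the last unit cannot index past the table

const i = Math.min(Math.floor(Math.log(bytes) / Math.log(k)), sizes.length - 1);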

return parseFloat((bytes / Math.pow(k, i)).toFixed(1)) + ' ' + sizes[i];

},

/**

* Sleep for given milliseconds

*/

sleep(ms) {

return new Promise(resolve => setTimeout(resolve, ms));

},

/**

* Generate placeholder model inputs based on model metadata

*/

createModelInputs(model, seqLen = config.defaultSeqLen) {

return model.metadata.inputs.map(spec => {

const data = new Array(seqLen).fill(0);

if (spec.name.includes('input')) {

data[0] = 101; // [CLS]

data[1] = 2054; // sample token

data[2] = 102; // [SEP]

} else if (spec.name.includes('mask')) {

data[0] = 1;

data[1] = 1;

data[2] = 1;

}

return edgeFlow.tensor(data, [1, seqLen], 'int64');

});

},

/**

* Simple tokenization and inference

*/

async inferText(text) {

if (!state.model) throw new Error('Model not loaded');

const tokens = text.toLowerCase().split(/\s+/);

const maxLen = config.defaultSeqLen;

const numTokens = Math.min(tokens.length + 2, maxLen);

const inputs = state.model.metadata.inputs.map(spec => {

const data = new Array(maxLen).fill(0);

if (spec.name.includes('input')) {

data[0] = 101; // [CLS]

tokens.slice(0, maxLen - 2).forEach((t, i) => {

// Simple hash-based token ID (demo only)

data[i + 1] = Math.abs(t.split('').reduce((a, c) => a + c.charCodeAt(0), 0)) % 30000;

});

data[numTokens - 1] = 102; // [SEP]

} else if (spec.name.includes('mask')) {

for (let i = 0; i < numTokens; i++) data[i] = 1;

}

return edgeFlow.tensor(data, [1, maxLen], 'int64');

});

let outputs = [];

try {

outputs = await edgeFlow.runInference(state.model, inputs);

const outputData = outputs[0].toArray();

// Probability of the positive class. For two logits this is softmax,

// written as a sigmoid of the logit difference so large logits cannot overflow.

const positive = outputData.length >= 2

? 1 / (1 + Math.exp(outputData[0] - outputData[1]))

: outputData[0];

return {

label: positive > 0.5 ? 'positive' : 'negative',

// Report the confidence of the chosen label

score: positive > 0.5 ? positive : 1 - positive,

};

} finally {

// Release tensors even if inference throws

inputs.forEach(t => t.dispose());

outputs.forEach(t => t.dispose());

}

},

};

/* ==========================================================================

3. UI Helpers

========================================================================== */

const ui = {

/**

* Get element by ID

*/

$(id) {

return document.getElementById(id);

},

/**

* Set output content

*/

setOutput(id, content, type = '') {

const el = this.$(id);

if (!el) return;

const className = type ? `class="${type}"` : '';

el.innerHTML = `<pre><span ${className}>${content}</span></pre>`;

},

/**

* Show loading state

*/

showLoading(id, message = 'Loading...') {

this.setOutput(id, `<span class="loader"></span>${message}`);

},

/**

* Show success message

*/

showSuccess(id, message) {

this.setOutput(id, `✓ ${message}`, 'success');

},

/**

* Show error message

*/

showError(id, error) {

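const message = error instanceof Error ? error.message : String(error);

// Escape the message because setOutput writes through innerHTML

const safe = message.replace(/&/g, '&amp;').replace(/</g, '&lt;').replace(/>/g, '&gt;');

this.setOutput(id, `Error: ${safe}`, 'error');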

},

/**

* Render status list

*/

renderStatusList(id, items) {

const el = this.$(id);

if (!el) return;

el.innerHTML = items.map(({ label, value, status }) => `

<div class="status-item">

<span>${label}</span>

<span class="${status ? 'status-badge status-' + status : ''}">${value}</span>

</div>

`).join('');

},

/**

* Render metrics

*/

renderMetrics(id, metrics) {

const el = this.$(id);

if (!el) return;

el.innerHTML = metrics.map(({ value, label }) => `

<div class="metric">

<div class="metric-value">${value}</div>

<div class="metric-label">${label}</div>

</div>

`).join('');

el.classList.remove('hidden');

},

/**

* Update runtime status

*/

async updateRuntimeStatus() {

try {

const runtimes = await edgeFlow.getAvailableRuntimes();

this.renderStatusList('runtime-status', [

{ label: 'WebGPU', value: runtimes.get('webgpu') ? 'Ready' : 'N/A', status: runtimes.get('webgpu') ? 'success' : 'error' },

{ label: 'WebNN', value: runtimes.get('webnn') ? 'Ready' : 'N/A', status: runtimes.get('webnn') ? 'success' : 'error' },

{ label: 'WASM', value: runtimes.get('wasm') ? 'Ready' : 'N/A', status: runtimes.get('wasm') ? 'success' : 'error' },

]);

} catch {

this.renderStatusList('runtime-status', [

{ label: 'WebGPU', value: 'N/A', status: 'error' },

{ label: 'WebNN', value: 'N/A', status: 'error' },

{ label: 'WASM', value: 'N/A', status: 'error' },

]);

}

},

/**

* Update memory status

*/

updateMemoryStatus() {

try {

const stats = edgeFlow.getMemoryStats();

this.renderStatusList('memory-status', [

{ label: 'Allocated', value: utils.formatBytes(stats.allocated || 0) },

{ label: 'Peak', value: utils.formatBytes(stats.peak || 0) },

{ label: 'Tensors', value: String(stats.tensorCount || 0) },

]);

} catch {

this.renderStatusList('memory-status', [

{ label: 'Allocated', value: '0 B' },

{ label: 'Peak', value: '0 B' },

{ label: 'Tensors', value: '0' },

]);

}

},

/**

* Update monitor metrics

*/

updateMonitorMetrics(sample) {

this.renderMetrics('monitor-metrics', [

{ value: sample.inference.count, label: 'Inferences' },

{ value: sample.inference.avgTime.toFixed(1) + 'ms', label: 'Avg Time' },

{ value: sample.inference.throughput.toFixed(1), label: 'Ops/sec' },

{ value: utils.formatBytes(sample.memory.usedHeap), label: 'Memory' },

{ value: sample.system.fps || '-', label: 'FPS' },

]);

},

/**

* Initialize default outputs

*/

initOutputs() {

const defaults = {

'model-output': ['Click "Load Model" to download an ONNX model', 'info'],

'tensor-output': ['Click "Run Tests" to test tensor operations...', ''],

'text-output': ['Load model first, then classify text...', ''],

'feature-output': ['Enter text and extract features...', ''],

'quant-output': ['Test in-browser quantization...', ''],

'debugger-output': ['Inspect tensor values and statistics...', ''],

'benchmark-output': ['Benchmark tensor operations...', ''],

'scheduler-output': ['Test task scheduling with priorities...', ''],

'memory-output': ['Test memory allocation and cleanup...', ''],

'concurrency-output': ['Test concurrent inference...', ''],

};

for (const [id, [msg, type]] of Object.entries(defaults)) {

this.setOutput(id, msg, type);

}

// Initialize monitor metrics

this.renderMetrics('monitor-metrics', [

{ value: '0', label: 'Inferences' },

{ value: '0ms', label: 'Avg Time' },

{ value: '0', label: 'Ops/sec' },

{ value: '0 B', label: 'Memory' },

{ value: '-', label: 'FPS' },

]);

},

};

/* ==========================================================================

4. Core Features

========================================================================== */

const features = {

/**

* Load ONNX model

*/

async loadModel() {

const url = ui.$('model-url')?.value;

if (!url) {

ui.setOutput('model-output', 'Enter a model URL', 'warn');

return;

}

ui.showLoading('model-output', 'Loading model...');

try {

const start = performance.now();

state.model = await edgeFlow.loadModel(url, { runtime: 'wasm' });

const time = ((performance.now() - start) / 1000).toFixed(2);

const info = [

`<span class="success">✓ Model loaded in ${time}s</span>`,

`Name: ${state.model.metadata.name}`,

`Size: ${utils.formatBytes(state.model.metadata.sizeBytes)}`,

`Inputs: ${state.model.metadata.inputs.map(i => i.name).join(', ')}`,

].join('\n');

ui.$('model-output').innerHTML = `<pre>${info}</pre>`;

ui.updateMemoryStatus();

} catch (e) {

ui.showError('model-output', e);

}

},

/**

* Test model inference

*/

async testModel() {

if (!state.model) {

ui.setOutput('model-output', 'Load model first', 'warn');

return;

}

ui.showLoading('model-output', 'Running inference...');

try {

const inputs = utils.createModelInputs(state.model);

const start = performance.now();

const outputs = await edgeFlow.runInference(state.model, inputs);

const time = (performance.now() - start).toFixed(2);

const data = outputs[0].toArray();

const info = [

`<span class="success">✓ Inference: ${time}ms</span>`,

`Output: [${data.slice(0, 5).map(x => x.toFixed(4)).join(', ')}...]`,

].join('\n');

ui.$('model-output').innerHTML = `<pre>${info}</pre>`;

inputs.forEach(t => t.dispose());

outputs.forEach(t => t.dispose());

} catch (e) {

ui.showError('model-output', e);

}

},

/**

* Run tensor operation tests

*/

testTensors() {

try {

const a = edgeFlow.tensor([[1, 2], [3, 4]]);

const b = edgeFlow.tensor([[5, 6], [7, 8]]);

const sum = edgeFlow.add(a, b);

const rand = edgeFlow.random([10]);

const probs = edgeFlow.softmax(edgeFlow.tensor([1, 2, 3, 4]));

const info = [

`<span class="success">✓ All tensor tests passed</span>`,

`• Created 2x2 tensor`,

`• Addition: [${sum.toArray()}]`,

`• Random: [${rand.toArray().slice(0, 5).map(x => x.toFixed(2))}...]`,

`• Softmax: [${probs.toArray().map(x => x.toFixed(3))}]`,

].join('\n');

ui.$('tensor-output').innerHTML = `<pre>${info}</pre>`;

[a, b, sum, rand, probs].forEach(t => t.dispose());

ui.updateMemoryStatus();

} catch (e) {

ui.showError('tensor-output', e);

}

},

/**

* Classify single text

*/

async classifyText() {

if (!state.model) {

ui.setOutput('text-output', 'Load model first', 'warn');

return;

}

const text = ui.$('text-input')?.value;

if (!text) return;

ui.showLoading('text-output', 'Classifying...');

try {

const result = await utils.inferText(text);

const emoji = result.label === 'positive' ? '😊' : '😞';

const pct = (result.score * 100).toFixed(1);

ui.$('text-output').innerHTML = `<pre><span class="success">${emoji} ${result.label.toUpperCase()}</span> (${pct}%)</pre>`;

} catch (e) {

ui.showError('text-output', e);

}

},

/**

* Batch classification

*/

async classifyBatch() {

if (!state.model) {

ui.setOutput('text-output', 'Load model first', 'warn');

return;

}

const texts = ['I love this!', 'This is terrible.', 'Amazing!', 'Worst ever.', 'Pretty good.'];

ui.showLoading('text-output', 'Processing batch...');

try {

const start = performance.now();

const results = await Promise.all(texts.map(t => utils.inferText(t)));

const time = (performance.now() - start).toFixed(0);

const lines = results.map((r, i) => {

const emoji = r.label === 'positive' ? '😊' : '😞';

return `${emoji} "${texts[i]}" → ${r.label}`;

});

lines.push('', `<span class="success">Total: ${time}ms</span>`);

ui.$('text-output').innerHTML = `<pre>${lines.join('\n')}</pre>`;

} catch (e) {

ui.showError('text-output', e);

}

},

/**

* Extract features

*/

async extractFeatures() {

if (!state.model) {

ui.setOutput('feature-output', 'Load model first', 'warn');

return;

}

const text = ui.$('feature-input')?.value;

if (!text) return;

ui.showLoading('feature-output', 'Extracting...');

try {

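// Demo limitation: placeholder inputs are used here, so the entered text does not affect the output

const inputs = utils.createModelInputs(state.model);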

const start = performance.now();

const outputs = await edgeFlow.runInference(state.model, inputs);

const time = (performance.now() - start).toFixed(2);

const embeddings = outputs[0].toArray();

const norm = Math.sqrt(embeddings.reduce((a, b) => a + b * b, 0));

const info = [

`<span class="success">✓ Features extracted in ${time}ms</span>`,

`Dimension: ${embeddings.length}`,

`L2 Norm: ${norm.toFixed(4)}`,

`Sample: [${embeddings.slice(0, 5).map(x => x.toFixed(4)).join(', ')}...]`,

].join('\n');

ui.$('feature-output').innerHTML = `<pre>${info}</pre>`;

inputs.forEach(t => t.dispose());

outputs.forEach(t => t.dispose());

} catch (e) {

ui.showError('feature-output', e);

}

},

/**

* Quantization demo

*/

quantize() {

try {

const weights = edgeFlow.tensor([0.5, -0.3, 0.8, -0.1, 0.9, -0.7, 0.2, -0.4], [2, 4], 'float32');

const { tensor: quantized, scale, zeroPoint } = edgeFlow.quantizeTensor(weights, 'int8');

const dequantized = edgeFlow.dequantizeTensor(quantized, scale, zeroPoint, 'int8');

const original = weights.toArray();

const recovered = dequantized.toArray();

const maxError = Math.max(...original.map((v, i) => Math.abs(v - recovered[i])));

const info = [

`<span class="success">✓ Int8 Quantization</span>`,

`Original: [${original.map(v => v.toFixed(3)).join(', ')}]`,

`Quantized: [${quantized.toArray().join(', ')}]`,

`Dequantized: [${recovered.map(v => v.toFixed(3)).join(', ')}]`,

`Scale: ${scale.toFixed(6)}, Max Error: ${maxError.toFixed(6)}`,

].join('\n');

ui.$('quant-output').innerHTML = `<pre>${info}</pre>`;

[weights, quantized, dequantized].forEach(t => t.dispose());

} catch (e) {

ui.showError('quant-output', e);

}

},

/**

* Pruning demo

*/

prune() {

try {

const weights = edgeFlow.tensor([0.5, -0.1, 0.8, -0.05, 0.9, -0.02, 0.2, -0.4], [2, 4], 'float32');

const { tensor: pruned, sparsity } = edgeFlow.pruneTensor(weights, { ratio: 0.5 });

const info = [

`<span class="success">✓ Magnitude Pruning (50%)</span>`,

`Original: [${weights.toArray().map(v => v.toFixed(2)).join(', ')}]`,

`Pruned: [${pruned.toArray().map(v => v.toFixed(2)).join(', ')}]`,

`Sparsity: ${(sparsity * 100).toFixed(1)}%`,

].join('\n');

ui.$('quant-output').innerHTML = `<pre>${info}</pre>`;

[weights, pruned].forEach(t => t.dispose());

} catch (e) {

ui.showError('quant-output', e);

}

},

/**

* Debugger demo

*/

debug() {

let tensor;

try {

const data = Array.from({ length: 100 }, () => Math.random() * 2 - 1);

tensor = edgeFlow.tensor(data, [10, 10], 'float32');

const inspection = edgeFlow.inspectTensor(tensor, 'random_weights');

const histogram = edgeFlow.createAsciiHistogram(inspection.histogram, 25, 4);

const info = [

`<span class="success">Tensor: ${inspection.name}</span>`,

`Shape: [${inspection.shape}], Size: ${inspection.size}`,

`<span class="info">Statistics:</span>`,

` Min: ${inspection.stats.min.toFixed(4)}`,

` Max: ${inspection.stats.max.toFixed(4)}`,

` Mean: ${inspection.stats.mean.toFixed(4)}`,

` Std: ${inspection.stats.std.toFixed(4)}`,

'',

histogram,

].join('\n');

ui.$('debugger-output').innerHTML = `<pre>${info}</pre>`;

} catch (e) {

ui.showError('debugger-output', e);

} finally {

// Release the tensor even when inspection or rendering throws.

tensor?.dispose();

}

},

/**

* Benchmark demo: times creating and summing a 1000-element tensor (2 warm-up runs, 5 measured runs).

*/

async benchmark() {

ui.showLoading('benchmark-output', 'Running benchmark...');

try {

const result = await edgeFlow.runBenchmark(async () => {

const t = edgeFlow.tensor(Array.from({ length: 1000 }, () => Math.random()), [1000], 'float32');

const sum = t.toArray().reduce((a, b) => a + b, 0);

t.dispose();

return sum;

}, { warmupRuns: 2, runs: 5, name: 'Tensor Sum (1000)' });

const info = [

`<span class="success">Benchmark: ${result.name}</span>`,

`Avg: ${result.avgTime.toFixed(2)}ms`,

`Min: ${result.minTime.toFixed(2)}ms`,

`Max: ${result.maxTime.toFixed(2)}ms`,

`Throughput: ${result.throughput.toFixed(0)} ops/sec`,

].join('\n');

ui.$('benchmark-output').innerHTML = `<pre>${info}</pre>`;

} catch (e) {

ui.showError('benchmark-output', e);

}

},

/**

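* Scheduler test: schedules three tasks at high, normal, and low priority across two model IDs and waits for all of them.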

*/

async testScheduler() {

ui.showLoading('scheduler-output', 'Testing scheduler...');

try {

const scheduler = edgeFlow.getScheduler();

const task1 = scheduler.schedule('model-a', async () => { await utils.sleep(100); return 'Task 1'; }, 'high');

const task2 = scheduler.schedule('model-b', async () => { await utils.sleep(50); return 'Task 2'; }, 'normal');

const task3 = scheduler.schedule('model-a', async () => { await utils.sleep(75); return 'Task 3'; }, 'low');

const [r1, r2, r3] = await Promise.all([task1.wait(), task2.wait(), task3.wait()]);

const info = [

`<span class="success">✓ Scheduler Test Passed</span>`,

`• ${r1} (high priority)`,

`• ${r2} (normal priority)`,

`• ${r3} (low priority)`,

].join('\n');

ui.$('scheduler-output').innerHTML = `<pre>${info}</pre>`;

} catch (e) {

ui.showError('scheduler-output', e);

}

},

/**

* Memory allocation test: allocates ten 100x100 random tensors and reports memory stats before and after.

*/

allocateMemory() {

try {

const before = edgeFlow.getMemoryStats();

for (let i = 0; i < 10; i++) {

state.testTensors.push(edgeFlow.random([100, 100]));

}

const after = edgeFlow.getMemoryStats();

const info = [

`<span class="success">✓ Allocated 10 tensors (100x100)</span>`,

`Before: ${utils.formatBytes(before.allocated || 0)}, ${before.tensorCount || 0} tensors`,

`After: ${utils.formatBytes(after.allocated || 0)}, ${after.tensorCount || 0} tensors`,

].join('\n');

ui.$('memory-output').innerHTML = `<pre>${info}</pre>`;

ui.updateMemoryStatus();

} catch (e) {

ui.showError('memory-output', e);

}

},

/**

* Memory cleanup: disposes every test tensor, runs edgeFlow.gc(), and refreshes the memory status.

*/

cleanupMemory() {

state.testTensors.forEach(t => {

if (!t.isDisposed) t.dispose();

});

state.testTensors = [];

edgeFlow.gc();

ui.showSuccess('memory-output', 'Memory cleaned up');

ui.updateMemoryStatus();

},

/**

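* Concurrency test: runs eight text inferences in parallel and reports total time and total time divided by task count. Requires a loaded model.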

*/

async testConcurrency() {

if (!state.model) {

ui.setOutput('concurrency-output', 'Load model first', 'warn');

ui.$('concurrency-metrics')?.classList.add('hidden');

return;

}

ui.showLoading('concurrency-output', 'Running concurrent tasks...');

try {

const texts = ['Great!', 'Terrible!', 'Amazing!', 'Awful!', 'Good!', 'Bad!', 'Nice!', 'Horrible!'];

const start = performance.now();

const results = await Promise.all(texts.map(t => utils.inferText(t)));

const total = performance.now() - start;

const lines = [

`<span class="success">✓ Concurrent execution complete</span>`,

...results.map((r, i) => `${r.label === 'positive' ? '😊' : '😞'} "${texts[i]}"`),

];

ui.$('concurrency-output').innerHTML = `<pre>${lines.join('\n')}</pre>`;

ui.renderMetrics('concurrency-metrics', [

{ value: total.toFixed(0) + 'ms', label: 'Total' },

{ value: String(texts.length), label: 'Tasks' },

{ value: (total / texts.length).toFixed(0) + 'ms', label: 'Avg' },

]);

} catch (e) {

ui.showError('concurrency-output', e);

}

},

/**

* Start performance monitor, creating it on first use.

*/

startMonitor() {

if (!state.monitor) {

state.monitor = new edgeFlow.PerformanceMonitor({

sampleInterval: config.monitorSampleInterval,

historySize: config.monitorHistorySize,

});

state.monitor.onSample(sample => ui.updateMonitorMetrics(sample));

}

state.monitor.start();

},

/**

* Stop performance monitor (no-op if it was never started).

*/

stopMonitor() {

if (state.monitor) {

state.monitor.stop();

}

},

/**

* Simulate five inferences (30-100 ms each, recorded 100 ms apart) for the monitor, starting it if needed.

*/

simulateInferences() {

if (!state.monitor) {

this.startMonitor();

}

for (let i = 0; i < 5; i++) {

setTimeout(() => {

state.monitor?.recordInference(30 + Math.random() * 70);

}, i * 100);

}

},

/**

* Open dashboard modal

*/

openDashboard() {

if (!state.monitor) {

this.startMonitor();

this.simulateInferences();

}