Repository: skrusche63/spark-elastic

Branch: master

Commit: 43163e14780e

Files: 39

Total size: 109.6 KB

Directory structure:

gitextract_mstyggjy/

├── .classpath

├── .project

├── README.md

├── pom.xml

└── src/

└── main/

├── resources/

│ ├── goals.xml

│ ├── pageview.xml

│ └── server.conf

└── scala/

└── de/

└── kp/

└── spark/

└── elastic/

├── Configuration.scala

├── EsClient.scala

├── EsContext.scala

├── EsEvents.scala

├── EsService.scala

├── KafkaReader.scala

├── KafkaService.scala

├── SparkBase.scala

├── actor/

│ ├── EsMaster.scala

│ └── KafkaMaster.scala

├── apps/

│ ├── GoalApp.scala

│ ├── InsightApp.scala

│ └── SegmentApp.scala

├── bayes/

│ └── ClickPredictor.scala

├── enron/

│ ├── EnronApp.scala

│ ├── EnronEngine.scala

│ └── EnronUtils.scala

├── ml/

│ ├── EsKMeans.scala

│ ├── EsNPref.scala

│ └── EsSimilarity.scala

├── samples/

│ ├── EsCountMinSktech.scala

│ ├── EsHyperLogLog.scala

│ ├── KafkaEngine.scala

│ ├── KafkaSerializer.scala

│ ├── MessageApp.scala

│ ├── MessageGenerator.scala

│ └── MessageUtils.scala

├── specs/

│ ├── FieldSpec.scala

│ ├── GoalSpec.scala

│ └── PageViewSpec.scala

└── stream/

├── EsHistogram.scala

└── EsStream.scala

================================================

FILE CONTENTS

================================================

================================================

FILE: .classpath

================================================

================================================

FILE: .project

================================================

spark-elastic

org.eclipse.m2e.core.maven2Builder

org.scala-ide.sdt.core.scalabuilder

org.scala-ide.sdt.core.scalanature

org.eclipse.jdt.core.javanature

org.eclipse.m2e.core.maven2Nature

================================================

FILE: README.md

================================================

## Integration of Elasticsearch with Spark

This project shows how to easily integrate [Apache Spark](http://spark.apache.org), a fast and general purpose engine for

large-scale data processing, with [Elasticsearch](http://elasticsearch.org), a real-time distributed search and analytics

engine.

Spark is an in-memory processing framework and outperforms Hadoop up to a factor of 100. Spark is accompanied by

* [MLlib](https://spark.apache.org/mllib/), a scalable machine learning library,

* [Spark SQL](https://spark.apache.org/sql/), a unified access platform for structured big data,

* [Spark Streaming](https://spark.apache.org/streaming/), a library to build scalable fault-tolerant streaming applications.

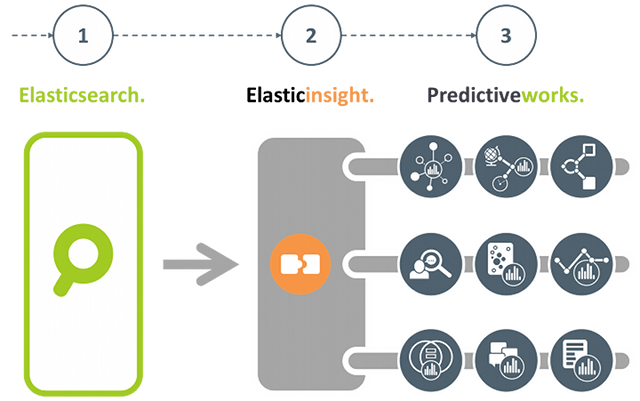

If you are more interested in an Elasticsearch plugin-in that brings the power of [Predictiveworks.](http://predictiveworks.eu) to Elasticsearch,

then please refer to [Elasticinsight.](http://elasticinsight.eu)

[Predictiveworks.](http://predictiveworks.eu) is an ensemble of dedicated predictive engines that covers a wide range of today's analytics requirements from Association Analysis,

to Context-Aware Recommendations up to Text Analysis. Elasticinsight. empowers Elasticsearch to seamlessly uses these multiple engines.

---

### Machine Learning with Elasticsearch

Besides linguistic and semantic enrichment, for data in a search index there is an increasing demand to apply knowledge discovery and

data mining techniques, and even predictive analytics to gain deeper insights into the data and further increase their business value.

One of the key prerequisites is to easily connect existing data sources to state-of-the art machine learning and predictive analytics

frameworks.

In this project, we give advice how to connect Elasticsearch, a powerful distributed search engine, to Apache Spark and profit from the increasing number of existing machine learning algorithms.

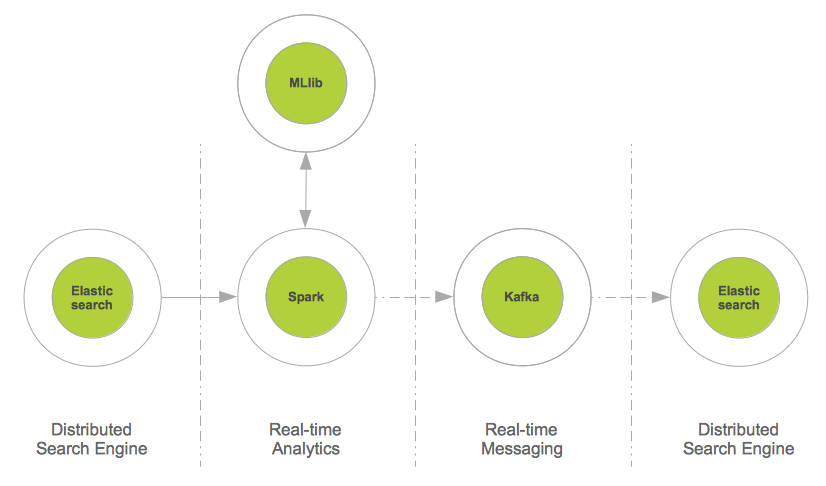

The figure shows the integration pattern for Elasticsearch and Spark from an architectural persepctive and also indicates how to proceed with the enriched content (i.e. the way back to the search index).

The source code below describes a few lines of Scala, that are sufficient to read from Elasticsearch and provide data for further mining

and prediction tasks:

```

val source = sc.newAPIHadoopRDD(conf, classOf[EsInputFormat[Text, MapWritable]], classOf[Text], classOf[MapWritable])

val docs = source.map(hit => {

new EsDocument(hit._1.toString,toMap(hit._2))

})

```

#### Document Segmentation with KMeans

From the data format extracted from Elasticsearch `RDD[EsDocument]` it is just a few lines of Scala to segment these documents with respect to their geo location (latitude,longitude).

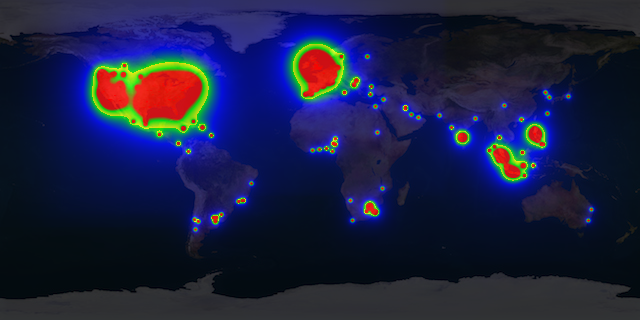

From these data a heatmap can be drawn to visualize from which region of world most of the documents come from. The image below shows a multi-colored heatmap, where the colors red, yellow, green and blue indicate different heat ranges.

Segmenting documents into specific target groups is not restricted their geo location. Time of the day, product or service categories, total revenue, and other parameters may be used.

For segmentation, the [K-Means clustering](http://http://en.wikipedia.org/wiki/K-means_clustering) implementation

of [MLlib](https://spark.apache.org/mllib/) is used:

```

def cluster(documents:RDD[EsDocument],esConf:Configuration):RDD[(Int,EsDocument)] = {

val fields = esConf.get("es.fields").split(",")

val vectors = documents.map(doc => toVector(doc.data,fields))

val clusters = esConf.get("es.clusters").toInt

val iterations = esConf.get("es.iterations").toInt

/* Train model */

val model = KMeans.train(vectors, clusters, iterations)

/* Apply model */

documents.map(doc => (model.predict(toVector(doc.data,fields)),doc))

}

```

Clustering Elasticsearch data with K-Means is a first and simple example of how to immediately benefit from the integration with Spark. Other business cases may cover recommendations:

Suppose Elasticsearch is used to index e-commerce transactions on a per user basis, then it is also straightforward to build a recommendation system in just two steps:

* **First**, implicit user-item ratings have to be derived from the e-commerce transactions, and

* **Second**, from this item similarities are calculated to provide a recommendation model.

For more information, please read [here](https://github.com/skrusche63/spark-elastic/wiki/Item-Similarity-with-Spark).

#### Insights from Elasticsearch with SQL

[Spark SQL](https://spark.apache.org/sql/) allows relational queries expressed in SQL to be executed using Spark. This enables to apply queries to Spark data structures and also to Spark data streams (see below).

As SQL queries generate Spark data structures, a mixture of SQL and native Spark operations is also possible, thus providing a sophisticated mechanism to compute valuable insight from data in real-time.

The code example below illustrates how to apply SQL queries on a Spark data structure (RDD) and provide further insight by mixing with native Spark operations.

```

/*

* Elasticsearch specific configuration

*/

val esConf = new Configuration()

esConf.set("es.nodes","localhost")

esConf.set("es.port","9200")

esConf.set("es.resource", "enron/mails")

esConf.set("es.query", "?q=*:*")

esConf.set("es.table", "docs")

esConf.set("es.sql", "select subject from docs")

...

/*

* Read from ES and provide some insight with Spark & SparkSQL,

* thereby mixing SQL and other Spark operations

*/

val documents = es.documentsAsJson(esConf)

val subjects = es.query(documents, esConf).filter(row => row.getString(0).contains("Re"))

...

def query(documents:RDD[String], esConfig:Configuration):SchemaRDD = {

val query = esConfig.get("es.sql")

val name = esConfig.get("es.table")

val table = sqlc.jsonRDD(documents)

table.registerAsTable(name)

sqlc.sql(query)

}

```

---

### Real-Time Stream Processing and Elasticsearch

Real-time analytics is a very popular topic with a wide range of application areas:

* High frequency trading (finance),

* Real-time bidding (adtech),

* Real-time social activity (social networks),

* Real-time sensoring (Internet of things),

* Real-time user behavior,

and more, gain tremendous business value from real-time analytics. There exist a lot of popular frameworks to aggregate data in real-time, such as Apache Storm,

Apache S4, Apache Samza, Akka Streams, SQLStream to name just a few.

Spark Streaming, which is capable to process about 400,000 records per node per second for simple aggregations on small records, significantly outperforms other popular

streaming systems. This is mainly because Spark Streaming groups messages in small batches which are then processed together.

Moreover in case of failure, Spark Streaming batches are only processed once which greatly simplifies the logic (e.g. to make sure some values are not counted multiple times).

Spark Streaming is a layer on top of Spark and transforms and batches data streams from various sources, such as Kafka, Twitter or ZeroMQ into a sequence of

Spark RDDs (Resilient Distributed DataSets) using a sliding window. These RDDs can then be manipulated using normal Spark operations.

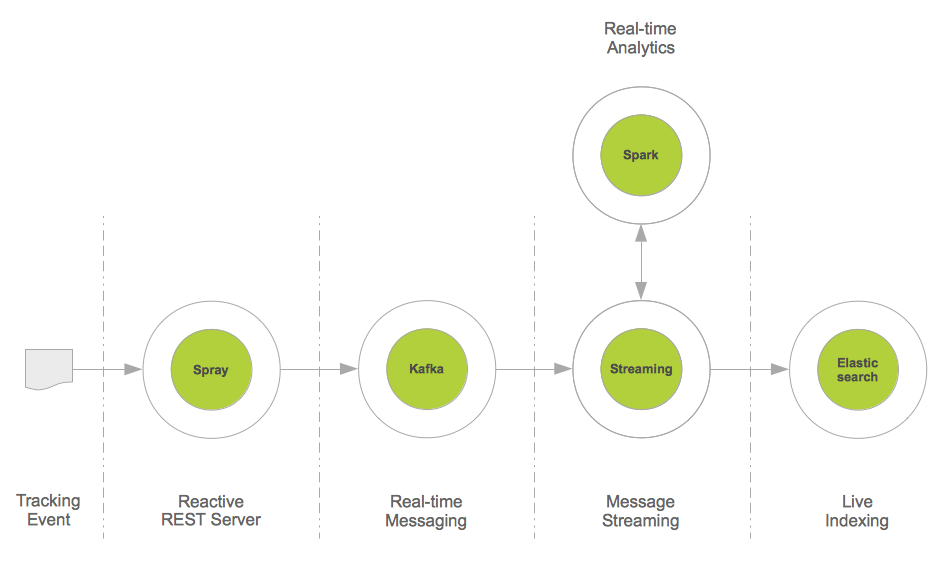

This project provides a real-time data integration pattern based on Apache Kafka, Spark Streaming and Elasticsearch:

[Apache Kafka](http://kafka.apache.org/) is a distributed publish-subscribe messaging system, that may also be seen as a real-time integration system. For example, Web tracking events are easily sent to Kafka,

and may then be consumed by a set of different consumers.

In this project, we use Spark Streaming as a consumer and aggregator of e.g. such tracking data streams, and perform a live indexing. As Spark Streaming is also able to directly

compute new insights from data streams, this data integration pattern may be used as a starting point for real-time data analytics and enrichment before search indexing.

The figure below illustrates the architecture of this pattern. For completeness reasons, [Spray](http://spray.io/) has been introduced. Spray is an open-source toolkit for

building REST/HTTP-based integration layers on top of Scala and Akka. As it is asynchronous, actor-based, fast, lightweight, and modular, it is an easy way to connect Scala

applications to the Web.

The code example below illustrates that such an integration pattern may be implemented with just a few lines of Scala code:

```

val stream = KafkaUtils.createStream[String,Message,StringDecoder,MessageDecoder](ssc, kafkaConfig, kafkaTopics, StorageLevel.MEMORY_AND_DISK).map(_._2)

stream.foreachRDD(messageRDD => {

/**

* Live indexing of Kafka messages; note, that this is also

* an appropriate place to integrate further message analysis

*/

val messages = messageRDD.map(prepare)

messages.saveAsNewAPIHadoopFile("-",classOf[NullWritable],classOf[MapWritable],classOf[EsOutputFormat],esConfig)

})

```

#### Most Frequent Items from Streams

Using the architecture as illustrated above not only enables to apply Spark to data streams. It also opens real-time streams to other data processing libraries such as [Algebird](https://github.com/twitter/algebird) from

Twitter.

Algebird brings, as the name indicates, algebraic algorithms to streaming data. An important representative is [Count-Min Sketch](http://en.wikipedia.org/wiki/Count%E2%80%93min_sketch) which enables to compute the most

frequent items from streams in a certain time window. The code example below describes how to apply the CountMinSketchMonoid (Algebird) to compute the most frequent messages from a Kafka Stream with respect to the messages' classification:

```

object EsCountMinSktech {

def findTopK(stream:DStream[Message]):Seq[(Long,Long)] = {

val DELTA = 1E-3

val EPS = 0.01

val SEED = 1

val PERC = 0.001

val k = 5

var globalCMS = new CountMinSketchMonoid(DELTA, EPS, SEED, PERC).zero

val clases = stream.map(message => message.clas)

val approxTopClases = clases.mapPartitions(clases => {

val localCMS = new CountMinSketchMonoid(DELTA, EPS, SEED, PERC)

clases.map(clas => localCMS.create(clas))

}).reduce(_ ++ _)

approxTopClases.foreach(rdd => {

if (rdd.count() != 0) globalCMS ++= rdd.first()

})

/**

* Retrieve approximate TopK classifiers from the provided messages

*/

val globalTopK = globalCMS.heavyHitters.map(clas => (clas, globalCMS.frequency(clas).estimate))

/*

* Retrieve the top k message classifiers: it may also be interesting to

* return the classifier frequency from this method, ignoring the line below

*/

.toSeq.sortBy(_._2).reverse.slice(0, k)

globalTopK

}

}

```

***

### Technology Stack

* [Scala](http://scala-lang.org)

* [Apache Kafka](http://kafka.apache.org/)

* [Apache Spark](http://spark.apache.org)

* [Spark SQL](https://spark.apache.org/sql/)

* [Spark Streaming](https://spark.apache.org/streaming/)

* [Twitter Algebird](https://github.com/twitter/algebird)

* [Elasticsearch](http://elasticsearch.org)

* [Elasticsearch Hadoop](http://elasticsearch.org/overview/hadoop/)

* [Spray](http://spray.io/)

================================================

FILE: pom.xml

================================================

4.0.0

spark-elastic

spark-elastic

0.0.1-SNAPSHOT

Spark-ELASTIC

This project combines Apache Spark and Elasticsearch to enable mining & prediction for Elasticsearch.

2010

GPL v3

http://....

repo

1.6

1.6

UTF-8

2.10

2.10.0

org.scala-lang

scala-library

${scala.version}

junit

junit

4.11

test

org.specs2

specs2_${scala.tools.version}

1.13

test

org.scalatest

scalatest_${scala.tools.version}

2.0.M6-SNAP8

test

org.apache.spark

spark-core_2.10

1.0.2

org.codehaus.jackson

jackson-mapper-asl

org.apache.spark

spark-mllib_2.10

1.0.2

org.apache.spark

spark-sql_2.10

1.0.2

org.apache.spark

spark-streaming_2.10

1.0.2

org.apache.spark

spark-streaming-twitter_2.10

1.0.2

org.apache.spark

spark-streaming-kafka_2.10

1.0.2

com.twitter

algebird-core_2.10

0.7.0

org.elasticsearch

elasticsearch-hadoop

2.0.0

org.elasticsearch

elasticsearch

1.3.0

org.json4s

json4s-native_2.10

3.2.10

io.spray

spray-client

1.2.0

io.spray

spray-httpx

1.2.0

com.typesafe.akka

akka-actor_2.10

2.2.3

com.typesafe.akka

akka-contrib_2.10

2.2.3

com.typesafe.akka

akka-remote_2.10

2.2.3

org.apache.kafka

kafka_2.10

0.8.1.1

com.sun.jmx

jmxri

com.sun.jdmk

jmxtools

javax.jms

jms

com.twitter

twitter-text

1.9.9

spray repo

Spray Repository

http://repo.spray.io/

conjars.org

http://conjars.org/repo

src/main/scala

src/test/scala

net.alchim31.maven

scala-maven-plugin

3.1.3

compile

testCompile

-make:transitive

-dependencyfile

${project.build.directory}/.scala_dependencies

org.apache.maven.plugins

maven-surefire-plugin

2.13

false

true

**/*Test.*

**/*Suite.*

Dr. Krusche & Partner PartG

http://dr-kruscheundpartner.de

================================================

FILE: src/main/resources/goals.xml

================================================

/shoppingCart,/checkOut,/signin,/signup,/billing,/confirmShipping,/placeOrder

================================================

FILE: src/main/resources/pageview.xml

================================================

sessionid

timestamp

userid

pageurl

visittime

referrer

================================================

FILE: src/main/resources/server.conf

================================================

akka {

actor {

provider = "akka.remote.RemoteActorRefProvider"

}

remote {

enabled-transports = ["akka.remote.netty.tcp"]

netty.tcp {

hostname = "127.0.0.1"

port = 2600

}

log-sent-messages = on

log-received-messages = on

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/Configuration.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import com.typesafe.config.ConfigFactory

import java.util.Properties

object Configuration {

/* Load configuration for router */

val path = "application.conf"

val config = ConfigFactory.load(path)

def elastic():(String,String,String,String) = {

val cfg = config.getConfig("elastic")

val host = cfg.getString("host")

val port = cfg.getString("port")

val index = cfg.getString("index")

val mapping = cfg.getString("mapping")

(host,port,index,mapping)

}

def kafka():Properties = {

val cfg = config.getConfig("kafka")

val host = cfg.getString("zk.connect.host")

val port = cfg.getString("zk.connect.port")

val gid = config.getString("consumer.groupid")

val ctimeout = cfg.getString("consumer.timeout.ms")

val stimeout = cfg.getString("consumer.socket.timeout.ms")

val ccommit = cfg.getString("consumer.commit.ms")

val aoffset = cfg.getString("auto.offset.reset")

val params = Map(

"zookeeper.connect" -> (host + ":" + port),

"group.id" -> gid,

"socket.timeout.ms" -> stimeout,

"consumer.timeout.ms" -> ctimeout,

"autocommit.interval.ms" -> ccommit,

"auto.offset.reset" -> aoffset

)

val props = new Properties()

params.map(kv => {

props.put(kv._1,kv._2)

})

props

}

def router():(Int,Int,Int) = {

val cfg = config.getConfig("router")

val time = cfg.getInt("time")

val retries = cfg.getInt("retries")

val workers = cfg.getInt("workers")

(time,retries,workers)

}

def topic() = config.getString("topic")

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/EsClient.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import akka.actor.ActorSystem

import spray.http.{HttpRequest,HttpResponse}

import spray.client.pipelining.{Get,Post,sendReceive }

import org.elasticsearch.client.transport.TransportClient

import org.elasticsearch.common.transport.InetSocketTransportAddress

import org.elasticsearch.transport.ConnectTransportException

import scala.concurrent.Future

import scala.util.{Success,Failure}

case class EsConfig(

hosts:Seq[String],ports:Seq[Int]

)

/**

* A Http client implementation based on Akka & Spray

*/

class EsHttpClient {

import concurrent.ExecutionContext.Implicits._

implicit val system = ActorSystem("EsClient")

val pipeline: HttpRequest => Future[HttpResponse] = sendReceive

def get(url:String):Future[HttpResponse] = pipeline(Get(url))

def post(url:String,payload:String):Future[HttpResponse] = pipeline(Post(url, payload))

def shutdown = system.shutdown

}

object EsTransportClient {

def apply(config:EsConfig):TransportClient = {

val client = try {

val transportClient = new TransportClient()

(config.hosts zip config.ports) foreach { hp =>

transportClient.addTransportAddress(

new InetSocketTransportAddress(hp._1, hp._2))

}

transportClient

} catch {

case e: ConnectTransportException =>

throw new Exception(e.getMessage)

}

client

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/EsContext.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.SparkContext

import org.apache.spark.SparkContext._

import org.apache.spark.rdd.RDD

import org.apache.spark.sql.{SchemaRDD,SQLContext}

import org.apache.spark.mllib.clustering.KMeans

import org.apache.spark.mllib.linalg.{Vector,Vectors}

import org.apache.hadoop.conf.{Configuration => HadoopConfig}

import org.apache.hadoop.io.{ArrayWritable,MapWritable,NullWritable,Text}

import org.elasticsearch.hadoop.mr.EsInputFormat

import org.json4s.native.Serialization.write

import org.json4s.DefaultFormats

import scala.collection.JavaConversions._

case class EsDocument(id:String,data:Map[String,String])

/**

* ElasticContext supports access to Elasticsearch from Apache Spark using the library

* from org.elasticsearch.hadoop. For read requests, the [Text] specifies the _id field

* from Elasticsearch, and [MapWritable] specifies a (field,value) map

*

*/

class EsContext(sparkConf:HadoopConfig) extends SparkBase {

private val sc = createSCLocal("ElasticContext",sparkConf)

private val sqlc = new SQLContext(sc)

/**

* EsDocument is the common format to be used if machine learning algorithms

* have to be applied to the extracted content of an Elasticseach index

*/

def documents(esConf:HadoopConfig):RDD[EsDocument] = {

val source = sc.newAPIHadoopRDD(esConf, classOf[EsInputFormat[Text, MapWritable]], classOf[Text], classOf[MapWritable])

source.map(hit => new EsDocument(hit._1.toString,toMap(hit._2)))

}

/**

* Json format is the common format to be used if SQL queries have to be applied

* to the extracted content of an Elasticsearch index

*/

def documentsAsJson(esConf:HadoopConfig):RDD[String] = {

implicit val formats = DefaultFormats

val source = sc.newAPIHadoopRDD(esConf, classOf[EsInputFormat[Text, MapWritable]], classOf[Text], classOf[MapWritable])

val docs = source.map(hit => {

val doc = Map("ident" -> hit._1.toString()) ++ toMap(hit._2)

write(doc)

})

docs

}

def documentsFromSpec(conf:HadoopConfig):RDD[EsDocument] = {

val fields = sc.broadcast(conf.get("es.fields").split(","))

val source = sc.newAPIHadoopRDD(conf, classOf[EsInputFormat[Text, MapWritable]], classOf[Text], classOf[MapWritable])

source.map(hit => new EsDocument(hit._1.toString,toMap(hit._2,fields.value)))

}

/**

* Cluster extracted content from an Elasticsearch index by applying KMeans

* clustering algorithm from MLLib

*/

def cluster(documents:RDD[EsDocument],conf:HadoopConfig):RDD[(Int,EsDocument)] = {

val fields = sc.broadcast(conf.get("es.fields").split(","))

val vectors = documents.map(doc => toVector(doc.data,fields.value))

val clusters = conf.get("es.clusters").toInt

val iterations = conf.get("es.iterations").toInt

/* Train model */

val model = KMeans.train(vectors, clusters, iterations)

/* Apply model */

documents.map(doc => (model.predict(toVector(doc.data,fields.value)),doc))

}

/**

* Apply SQL statement to extracted content from an Elasticsearch index

*/

def query(documents:RDD[String], esConfig:HadoopConfig):SchemaRDD = {

val query = esConfig.get("es.sql")

val name = esConfig.get("es.table")

val table = sqlc.jsonRDD(documents)

table.registerAsTable(name)

sqlc.sql(query)

}

/**

* Wrapper to stop SparkContext

*/

def shutdown = sc.stop

/**

* Wrapper to get SparkContext from ElasticContext

*/

def sparkContext = sc

/**

* A helper method to convert a MapWritable into a Map

*/

private def toMap(mw:MapWritable):Map[String,String] = {

val m = mw.map(e => {

val k = e._1.toString

val v = (if (e._2.isInstanceOf[Text]) e._2.toString()

else if (e._2.isInstanceOf[ArrayWritable]) {

val array = e._2.asInstanceOf[ArrayWritable].get()

array.map(item => {

(if (item.isInstanceOf[NullWritable]) "" else item.asInstanceOf[Text].toString)}).mkString(",")

}

else "")

k -> v

})

m.toMap

}

/**

* A helper method to convert a MapWritable into a Map

* thereby selecting predefined fields

*/

private def toMap(mw:MapWritable,fields:Array[String]):Map[String,String] = {

val m = mw.map(e => {

val k = e._1.toString

val v = (if (e._2.isInstanceOf[Text]) e._2.toString()

else if (e._2.isInstanceOf[ArrayWritable]) {

val array = e._2.asInstanceOf[ArrayWritable].get()

array.map(item => {

(if (item.isInstanceOf[NullWritable]) "" else item.asInstanceOf[Text].toString)}).mkString(",")

}

else "")

k -> v

})

m.filter(kv => fields.contains(kv._1)).toMap

}

private def toVector(data:Map[String,String], fields:Array[String]):Vector = {

val features = data.filter(kv => fields.contains(kv._1)).map(_._2.toDouble)

Vectors.dense(features.toArray)

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/EsEvents.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.elasticsearch.client.Client

import org.elasticsearch.action.index.IndexResponse

import org.elasticsearch.action.ActionListener

import scala.concurrent.{ExecutionContext,Future,Promise}

/**

* EsEvents indexes trackable events retrieved from Apache Kafka

*/

class EsEvents(client:Client,index:String,mapping:String) {

def insert(event:String)(implicit ec:ExecutionContext): Future[Either[String,String]] = {

val response = Promise[IndexResponse]

/* index/mapping = enron/mails */

client.prepareIndex(index,mapping).setSource(event)

.execute(new EsActionListener(response))

response.future

.map(r => Right(r.getId()))

.recover {

case e: Exception => Left(e.toString)

}

}

}

class EsActionListener[T](val p: Promise[T]) extends ActionListener[T]{

override def onResponse(r: T) = {

p.success(r)

}

override def onFailure(e: Throwable) = {

p.failure(e)

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/EsService.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import akka.actor.{ActorSystem,Props}

import com.typesafe.config.ConfigFactory

import de.kp.spark.elastic.actor.EsMaster

object EsService {

def main(args: Array[String]) {

val name:String = "elastic-server"

val conf:String = "server.conf"

val server = new EsService(conf, name)

while (true) {}

server.shutdown

}

}

class EsService(conf:String, name:String) {

val system = ActorSystem(name, ConfigFactory.load(conf))

sys.addShutdownHook(system.shutdown)

val master = system.actorOf(Props[EsMaster], name="elastic-master")

def shutdown = system.shutdown()

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/KafkaReader.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import akka.actor.ActorRef

import kafka.consumer.{Consumer,ConsumerConfig,Whitelist}

import kafka.serializer.DefaultDecoder

class KafkaReader(topic:String,actor:ActorRef) {

private val props = Configuration.kafka

private val connector = Consumer.create(new ConsumerConfig(props))

private val stream = connector.createMessageStreamsByFilter(new Whitelist(topic),1,new DefaultDecoder(),new DefaultDecoder())(0)

def shutdown {

connector.shutdown()

}

def read {

consume(execute)

}

private def consume(write:(Array[Byte]) => Unit) = {

for (compose <- stream) {

try {

write(compose.message)

} catch {

case e: Throwable =>

if (true) { //this is objective even how to conditionalize on it

//error("Error processing message, skipping this message: ", e)

} else {

throw e

}

}

}

}

private def execute(bytes:Array[Byte]) {

actor ! new String(bytes)

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/KafkaService.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import akka.actor.{ActorSystem,Props}

import de.kp.spark.elastic.actor.KafkaMaster

object KafkaService {

val topic = Configuration.topic

val system = ActorSystem("elastic-kafka")

sys.addShutdownHook(system.shutdown)

def main(args: Array[String]) {

val master = system.actorOf(Props(new KafkaMaster()))

val reader = new KafkaReader(topic, master)

while (true) reader.read

reader.shutdown

system.shutdown

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/SparkBase.scala

================================================

package de.kp.spark.elastic

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.{SparkConf,SparkContext}

import org.apache.spark.serializer.KryoSerializer

import org.apache.spark.streaming.{Seconds,StreamingContext}

import org.apache.hadoop.conf.{Configuration => HadoopConfig}

import scala.collection.JavaConversions._

trait SparkBase {

protected def createSSCLocal(name:String,config:HadoopConfig):StreamingContext = {

val sc = createSCLocal(name,config)

/*

* Batch duration is the time duration spark streaming uses to

* collect spark RDDs; with a duration of 5 seconds, for example

* spark streaming collects RDDs every 5 seconds, which then are

* gathered int RDDs

*/

val batch = config.get("spark.batch.duration").toInt

new StreamingContext(sc, Seconds(batch))

}

protected def createSCLocal(name:String,config:HadoopConfig):SparkContext = {

/* Extract Spark related properties from the Hadoop configuration */

val iterator = config.iterator()

for (prop <- iterator) {

val k = prop.getKey()

val v = prop.getValue()

if (k.startsWith("spark."))System.setProperty(k,v)

}

val runtime = Runtime.getRuntime()

runtime.gc()

val cores = runtime.availableProcessors()

val conf = new SparkConf()

conf.setMaster("local["+cores+"]")

conf.setAppName(name);

conf.set("spark.serializer", classOf[KryoSerializer].getName)

/* Set the Jetty port to 0 to find a random port */

conf.set("spark.ui.port", "0")

new SparkContext(conf)

}

protected def createSSCRemote(name:String,config:HadoopConfig):SparkContext = {

/* Not implemented yet */

null

}

protected def createSCRemote(name:String,config:HadoopConfig):SparkContext = {

/* Not implemented yet */

null

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/actor/EsMaster.scala

================================================

package de.kp.spark.elastic.actor

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import akka.actor.{Actor,ActorLogging,ActorRef,Props}

import akka.pattern.ask

import akka.util.Timeout

import akka.actor.{OneForOneStrategy, SupervisorStrategy}

import akka.routing.RoundRobinRouter

import com.typesafe.config.ConfigFactory

import scala.concurrent.duration.DurationInt

/**

* EsMaster handles remote search requests based on Akka Remoting

* feature; also see: EsService (remote service)

*/

class EsMaster extends Actor with ActorLogging {

def receive = {

case _ => log.info("Unknown request")

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/actor/KafkaMaster.scala

================================================

package de.kp.spark.elastic.actor

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import akka.actor.{Actor,ActorLogging,ActorRef,Props}

import akka.actor.{OneForOneStrategy, SupervisorStrategy}

import akka.routing.RoundRobinRouter

import de.kp.spark.elastic.{Configuration,EsConfig,EsEvents,EsTransportClient}

import scala.concurrent.duration._

import scala.concurrent.duration.Duration._

import scala.concurrent.duration.DurationInt

class KafkaMaster extends Actor with ActorLogging {

private val (esHost,esPort,esIndex,esType) = Configuration.elastic

private val esClient = EsTransportClient(EsConfig(Seq(esHost),Seq(esPort.toInt)))

private val esEvents = new EsEvents(esClient,esIndex,esType)

import concurrent.ExecutionContext.Implicits._

/* Load configuration for routers */

val (time,retries,workers) = Configuration.router

override val supervisorStrategy = OneForOneStrategy(maxNrOfRetries=retries,withinTimeRange = DurationInt(time).minutes) {

case _ : Exception => SupervisorStrategy.Restart

}

def receive = {

case req:String => {

val response = esEvents.insert(req)

}

case _ => {}

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/apps/GoalApp.scala

================================================

package de.kp.spark.elastic.apps

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.rdd.RDD

import org.apache.hadoop.conf.Configuration

import de.kp.spark.elastic.{EsContext,EsDocument}

import de.kp.spark.elastic.bayes.{ClickModel,ClickTrainer}

import de.kp.spark.elastic.specs.{GoalSpec,PageViewSpec}

object GoalApp {

def run(clicks:Int,goal:String) {

val start = System.currentTimeMillis()

/* Configure Apache Spark */

val sparkConf = new Configuration()

sparkConf.set("spark.executor.memory","1g")

sparkConf.set("spark.kryoserializer.buffer.mb","256")

val es = new EsContext(sparkConf)

/* Configure Elasticsearch */

val esConf = new Configuration()

esConf.set("es.nodes","localhost")

esConf.set("es.port","9200")

esConf.set("es.resource", "visits/pageview")

esConf.set("es.query", "?q=*:*")

val fields = PageViewSpec.get.map(_._2._1).mkString(",")

esConf.set("es.fields", fields)

/*

* Read from Elasticsearch and restrict to those document fields

* specified by PageViewSpec

*/

val documents = es.documentsFromSpec(esConf)

/*

* Extract dataset: (sessionid,timestamp,userid,pageurl,visittime,referrer)

*/

val extracted = extract(documents,PageViewSpec.get)

/*

* Evaluate extracted dataset and determine whether the conversion goal provided matches the

* page urls within a session

*

* Evaluated dataset: (sessid,userid,total,starttime,timespent,referrer,exitpage,flowstatus)

*/

val evaluated = evaluate(extracted,goal)

/*

* Train a Bayes model from the evaluated dataset

*/

val model = ClickTrainer.train(evaluated)

println("Conversion Probability: " + model.predict(clicks))

val end = System.currentTimeMillis()

println("Total time: " + (end-start) + " ms")

es.shutdown

}

def evaluate(source:RDD[(String,Long,String,String,String,String)],goal:String):RDD[(String,String,Int,Long,Long,String,String,Int)] = {

/* Group source by sessionid */

val dataset = source.groupBy(group => group._1)

dataset.map(valu => {

/* Sort single session data by timestamp */

val data = valu._2.toList.sortBy(_._2)

val pages = data.map(_._4)

/* Total number of page clicks */

val total = pages.size

val (sessid,starttime,userid,pageurl,visittime,referrer) = data.head

val endtime = data.last._2

/* Total time spent for session */

val timespent = (if (total > 1) (endtime - starttime) / 1000 else 0)

val exitpage = pages(total - 1)

/*

* This is a simple session evaluation to determine whether the sequence of

* pages per session matches with a predefined page flow

*/

val flowstatus = GoalSpec.checkFlow(goal,pages)

(sessid,userid,total,starttime,timespent,referrer,exitpage,flowstatus)

})

}

private def extract(documents:RDD[EsDocument],spec:Map[String,(String,String)]):RDD[(String,Long,String,String,String,String)] = {

val sc = documents.context

val bspec = sc.broadcast(spec)

documents.map(document => {

/* sessionid */

val sessionid = document.data(bspec.value("sessionid")._1)

/* timestamp */

val timestamp = document.data(bspec.value("timestamp")._1).toLong

/* userid */

val userid = document.data(bspec.value("userid")._1)

/* pageurl */

val pageurl = document.data(bspec.value("pageurl")._1)

/* visittime */

val visittime = document.data(bspec.value("visittime")._1)

/* referrer */

val referrer = document.data(bspec.value("referrer")._1)

/* Format: (sessionid,timestamp,userid,pageurl,visittime,referrer) */

(sessionid,timestamp,userid,pageurl,visittime,referrer)

})

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/apps/InsightApp.scala

================================================

package de.kp.spark.elastic.apps

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.hadoop.conf.Configuration

import de.kp.spark.elastic.EsContext

/**

* An example of how to extract documents from Elasticsearch

* and apply a simple SQL statement to the documents

*/

object InsightApp {

def run() {

val start = System.currentTimeMillis()

/*

* Spark specific configuration

*/

val sparkConf = new Configuration()

sparkConf.set("spark.executor.memory","1g")

sparkConf.set("spark.kryoserializer.buffer.mb","256")

val es = new EsContext(sparkConf)

/*

* Elasticsearch specific configuration

*/

val esConf = new Configuration()

esConf.set("es.nodes","localhost")

esConf.set("es.port","9200")

esConf.set("es.resource", "enron/mails")

esConf.set("es.query", "?q=*:*")

esConf.set("es.table", "docs")

esConf.set("es.sql", "select subject from docs")

/*

* Read from ES and provide some insight with Spark & SparkSQL,

* thereby mixing SQL and other Spark operations

*/

val documents = es.documentsAsJson(esConf)

val subjects = es.query(documents, esConf).filter(row => row.getString(0).contains("Re"))

subjects.foreach(subject => println(subject))

val end = System.currentTimeMillis()

println("Total time: " + (end-start) + " ms")

es.shutdown

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/apps/SegmentApp.scala

================================================

package de.kp.spark.elastic.apps

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.hadoop.conf.Configuration

import de.kp.spark.elastic.EsContext

/**

* An example of how to extract documents from Elasticsearch

* and apply KMeans clustering algorithm to group documents

* by similar features

*/

object SegmentApp {

def run() {

val start = System.currentTimeMillis()

/*

* Spark specific configuration

*/

val sparkConf = new Configuration()

sparkConf.set("spark.executor.memory","1g")

sparkConf.set("spark.kryoserializer.buffer.mb","256")

val es = new EsContext(sparkConf)

/*

* Elasticsearch specific configuration

*/

val esConf = new Configuration()

esConf.set("es.nodes","localhost")

esConf.set("es.port","9200")

esConf.set("es.resource", "visits/pageview")

esConf.set("es.query", "?q=*:*")

esConf.set("es.fields", "lat,lon")

esConf.set("es.clusters", "10")

esConf.set("es.iterations", "100")

/*

* Read from Elasticsearch and apply KMeans clustering

* to the extracted documents

*/

val documents = es.documents(esConf)

val clustered = es.cluster(documents, esConf)

val end = System.currentTimeMillis()

println("Total time: " + (end-start) + " ms")

es.shutdown

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/bayes/ClickPredictor.scala

================================================

package de.kp.spark.elastic.bayes

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.SparkContext._

import org.apache.spark.rdd.RDD

import de.kp.spark.elastic.specs.GoalSpec

class ClickModel(probabilities:Map[Int,Double]) {

def predict(clicks:Int):Double = {

val nearest = probabilities.map(valu => {

val k = valu._1

val d = Math.abs(k - clicks)

(k,d)

}).toList.sortBy(_._2).take(1)(0)._1

probabilities(nearest)

}

}

/**

* This Predictor is backed by the Bayesian Discriminant method

* to determine the conversion probability given a number of clicks

* within a certain web session; in this context, a web session is

* considered to be converted, if a certain sequence of page views

* appeared

*/

object ClickTrainer {

/**

* Input = (sessid,userid,total,starttime,timespent,referrer,exiturl,flowstatus)

*/

def train(dataset:RDD[(String,String,Int,Long,Long,String,String,Int)]):ClickModel = {

val histo = histogram(dataset)

/*

* p(c|v=1): probability of clicks per session, given the visitor converted in the session

*/

val prob1 = histo.filter(valu => {valu._1._2 == 1}).map(valu => {

val (clicks,converted) = valu._1

val support = valu._2

val prop = 1.toDouble / support

(clicks,prop)

}).collect().toMap

/*

* (p(c|v=0): probability of clicks per session, given the visitor did not convert in the session

*/

val prob2 = histo.filter(valu => {valu._1._2 == 0}).map(valu => {

val (clicks,converted) = valu._1

val support = valu._2

val prop = 1.toDouble / support

(clicks,prop)

}).collect().toMap

val counts = conversions(dataset)

/*

* p(v=1): unconditional probability of visitor converted in a session

*/

val prob3 = 1.toDouble / counts.filter(valu => valu._1 == 1).map(valu => valu._2).collect()(0)

/*

* p(v=0): unconditional probability of visitor did not convert in a session

*/

val prob4 = 1.toDouble / counts.filter(valu => valu._1 == 0).map(valu => valu._2).collect()(0)

/*

* p(v=1|c) = p(c|v=1) * p(v=1) / (p(c|v=0) * p(v=0) + p(c|v=1) * p(v=1))

*/

val clickProbs = prob1.map(valu => {

val (clicks,prop) = valu

val numerator = prop * prob3

val denominator = numerator + prob4 * prob2(clicks)

val res = (if (denominator > 0) numerator / denominator else 0)

(clicks,res)

})

new ClickModel(clickProbs)

}

/**

* Input = (sessid,userid,total,starttime,timespent,referrer,exiturl,flowstatus)

*

*/

private def conversions(dataset:RDD[(String,String,Int,Long,Long,String,String,Int)]):RDD[(Int,Int)] = {

val counts = dataset.map(valu => {

val userConvertedPerSession = if (valu._8 == GoalSpec.FLOW_COMPLETED) 1 else 0

val k = userConvertedPerSession

val v = 1

(k,v)

}).reduceByKey(_ + _)

/*

* The output shows the session counts

* for conversion and no conversion

*/

counts

}

/**

* Input = (sessid,userid,total,starttime,timespent,referrer,exiturl,flowstatus)

*

*/

private def histogram(dataset:RDD[(String,String,Int,Long,Long,String,String,Int)]):RDD[((Int,Int),Int)] = {

/*

* The input contains one row per session. Each row contains the number of clicks

* in the session, time spent in the session and a boolean indicating whether the

* user converted during the session.

*/

val histogram = dataset.map(valu => {

val clicksPerSession = valu._3

val userConvertedPerSession = if (valu._8 == GoalSpec.FLOW_COMPLETED) 1 else 0

val k = (clicksPerSession,userConvertedPerSession)

val v = 1

(k,v)

}).reduceByKey(_ + _)

/*

* Each row of the output contains the conversion flag, click count

* per session and the number of sessions with those click counts.

*/

histogram

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/enron/EnronApp.scala

================================================

package de.kp.spark.elastic.enron

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

/**

* EnronApp is a helper to prepare and index data in ES

*/

object EnronApp {

def main(args : Array[String]) {

val settings = Map(

"dir" -> "/Work/tmp/enron/20110402/mails/allen-p",

"index" -> "enron",

"mapping" -> "mails",

"server" -> "http://localhost:9200"

)

val action = "index" // or prepare

EnronEngine.execute(action, settings)

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/enron/EnronEngine.scala

================================================

package de.kp.spark.elastic.enron

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import java.io.File

import scala.io.Source

import scala.concurrent.Future

import spray.http.HttpResponse

import org.json4s._

import org.json4s.native.Serialization

import org.json4s.native.Serialization.{read,write}

import de.kp.spark.elastic.EsHttpClient

/**

* Please note, that part of the functionality below is taken from

* the code base assigned to this blog entry:

*

* http://sujitpal.blogspot.de/2012/11/indexing-into-elasticsearch-with-akka.html

*/

object EnronEngine {

import concurrent.ExecutionContext.Implicits._

private val client = new EsHttpClient()

private val shards:Int = 1

private val replicas:Int = 1

private val es_CreateIndex:String = """

{"settings": {"index": {"number_of_shards": %s, "number_of_replicas": %s}}}""".format(shards, replicas)

private val es_CreateSchema:String = """{ "%s" : { "properties" : %s } }"""

private val parser = new EnronParser()

private val schema = new EnronSchema()

def execute(action:String,settings:Map[String,String]) {

action match {

case "index" =>

index(settings)

client.shutdown

case "prepare" =>

prepare(settings)

client.shutdown

case _ => {}

}

}

private def prepare(settings:Map[String,String]) {

/**

* Create new index

*/

val server0 = List(settings("server"), settings("index")).foldRight("")(_ + "/" + _)

client.post(server0, es_CreateIndex)

/**

* Create new schema

*/

val server1 = List(settings("server"), settings("index"), settings("mapping")).foldRight("")(_ + "/" + _)

client.post(server1 + "_mapping", es_CreateSchema.format("enron", schema.mappings))

}

private def index(settings:Map[String,String]) {

val dir = settings.get("dir").get

val filefilter = new EnronFilter()

val files = walk(new File(dir)).filter(f => filefilter.accept(f))

val server1 = List(settings("server"), settings("index"), settings("mapping")).foldRight("")(_ + "/" + _)

for (file <- files) {

val path = file.getAbsolutePath()

val doc = parser.parse(Source.fromFile(path))

val response = addDocument(doc,server1)

response.map(result => println("RESPONSE: " + result.entity.asString))

}

}

private def getProps(path:String): Map[String,String] = {

val file:File = new File(path)

Map() ++ Source.fromFile(file).getLines().toList.

filter(line => (! (line.isEmpty || line.startsWith("#")))).

map(line => (line.split("=")(0) -> line.split("=")(1)))

}

private def walk(root: File): Stream[File] = {

if (root.isDirectory) {

root #:: root.listFiles.toStream.flatMap(walk(_))

} else root #:: Stream.empty

}

private def addDocument(doc:EnronDoc, server:String):Future[HttpResponse] = {

implicit val formats = Serialization.formats(NoTypeHints)

val json = write(doc)

client.post(server, json)

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/enron/EnronUtils.scala

================================================

package de.kp.spark.elastic.enron

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import scala.io.Source

import scala.collection.immutable.HashMap

import java.io.{File,FileFilter}

import java.util.Locale

import java.text.SimpleDateFormat

/**

* Please note, that part of the functionality below is taken from

* the code base assigned to this blog entry:

*

* http://sujitpal.blogspot.de/2012/11/indexing-into-elasticsearch-with-akka.html

*/

case class EnronDoc (

message_id: String,

from: String,

to: Seq[String],

x_cc: Seq[String],

x_bcc: Seq[String],

date: String,

subject: String,

body:String

)

class EnronSchema {

def mappings(): String = """{

"message_id": {"type": "string", "index": "not_analyzed", "store": "yes"},

"from": {"type": "string", "index": "not_analyzed", "store": "yes"},

"to": {"type": "string", "index": "not_analyzed", "store": "yes", "multi_field": "yes"},

"x_cc": {"type": "string", "index": "not_analyzed", "store": "yes", "multi_field": "yes"},

"x_bcc": {"type": "string", "index": "not_analyzed", "store": "yes", "multi_field": "yes"},

"date": {"type": "date", "index": "not_analyzed", "store": "yes"},

"subject": {"type": "string", "index": "analyzed", "store": "yes"},

"body": {"type": "string", "index": "analyzed", "store": "yes"}

}"""

}

class EnronParser {

def parse(source: Source):EnronDoc = {

val map = parse(source.getLines(), HashMap[String,String](), false)

/**

* Convert map into case class

*/

val message_id = map.get("message_id").get

val from = map.get("from").get

val to = map.get("to") match {

case None => Seq()

case Some(to) => to.split(",").toSeq

}

val x_cc = map.get("x_cc").get.split(",").toSeq

val x_bcc = map.get("x_bcc").get.split(",").toSeq

val date = map.get("date").get

val subject = map.get("subject").get

val body = map.get("body").get

new EnronDoc(message_id,from,to,x_cc,x_bcc,date,subject,body)

}

private def parse(lines: Iterator[String], map: Map[String,String], startBody: Boolean): Map[String,String] = {

if (lines.isEmpty) map

else {

val head = lines.next()

if (head.trim.length == 0) parse(lines, map, true)

else if (startBody) {

val body = map.getOrElse("body", "") + "\n" + head

parse(lines, map + ("body" -> body), startBody)

} else {

val split = head.indexOf(':')

if (split > 0) {

val kv = (head.substring(0, split), head.substring(split + 1))

val key = kv._1.map(c => if (c == '-') '_' else c).trim.toLowerCase

val value = kv._1 match {

case "Date" => formatDate(kv._2.trim)

case _ => kv._2.trim

}

parse(lines, map + (key -> value), startBody)

} else parse(lines, map, startBody)

}

}

}

private def formatDate(date: String): String = {

lazy val parser = new SimpleDateFormat("EEE, dd MMM yyyy HH:mm:ss", Locale.US)

lazy val formatter = new SimpleDateFormat("yyyy-MM-dd'T'HH:mm:ss")

formatter.format(parser.parse(date.substring(0, date.lastIndexOf('-') - 1)))

}

}

/**

* We restrict to the /sent/ folders of the Enron dataset

*/

class EnronFilter extends FileFilter {

override def accept(file: File): Boolean = {

file.getAbsolutePath().contains("/sent/")

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/ml/EsKMeans.scala

================================================

package de.kp.spark.elastic.ml

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.rdd.RDD

import org.apache.spark.mllib.clustering.KMeans

import org.apache.spark.mllib.linalg.{Vector,Vectors}

object EsKMeans {

/**

* This method segments an RDD of documents clustering the assigned (lat,lon) geo coordinates.

* The field parameter specifies the names of the lat & lon coordinate fields

*/

def segmentByLocation(docs:RDD[(String,Map[String,String])],fields:Array[String],clusters:Int,iterations:Int):RDD[(Int,String,Map[String,String])] = {

/**

* Train model

*/

val vectors = docs.map(doc => toVector(doc._2,fields))

val model = KMeans.train(vectors, clusters, iterations)

/**

* Apply model

*/

docs.map(doc => {

val vector = toVector(doc._2,fields)

(model.predict(vector),doc._1,doc._2)

})

}

private def toVector(data:Map[String,String], fields:Array[String]):Vector = {

val lat = data(fields(0)).toDouble

val lon = data(fields(1)).toDouble

Vectors.dense(Array(lat,lon))

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/ml/EsNPref.scala

================================================

package de.kp.spark.cf

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.rdd.RDD

import org.apache.spark.SparkContext._

object EsNPref {

def build(docs:RDD[(String,Map[String,String])],fields:Array[String]):RDD[(String,String,Int)] = {

val transactions = docs.map(doc => {

/**

* Each document (doc) represents an ecommerce transaction per user

*/

val user = doc._2(fields(0))

val line = doc._2(fields(1))

(user,line)

})

build(transactions)

}

def build(transactions:RDD[(String,String)]):RDD[(String,String,Int)] = {

/**

* STEP #1

*

* Compute the total number of transactions per user. The transactions are

* grouped by user (_._1) and then mapped onto number of transactions

* per user

*/

val total = transactions.groupBy(_._1).map(grouped => (grouped._1, grouped._2.size))

/**

* STEP #2

*

* Computer the item support per user. Each transaction (text line) is split

* into an Array[String] and all items are made unique. The result is mapped

* into (user,item,support) tuples

*/

val userItemSupport = transactions.flatMap(valu => List.fromArray(valu._2.split(" ")).distinct.map(item => (valu._1,item)))

.groupBy(valu => (valu._1,valu._2))

.map(grouped => (grouped._1,grouped._2.size)).map(valu => (valu._1._1,valu._1._2,valu._2))

/**

* STEP #3

*

* Compute item preference per user. Item support and total transactions per user

* are used to compute the respective item preference:

*

* pref = Math.log(1 + supp.toDouble / total.toDouble)

*/

val userItemPref = userItemSupport.keyBy(value => value._1).join(total)

.map(valu => {

val user = valu._1

val data = valu._2 // ((user,item,support),total)

val item = data._1._2

val supp = data._1._3

val total = data._2

/**

* Math.log means natural logarithm in Scala

*/

val pref = Math.log(1 + supp.toDouble / total.toDouble)

(user, item, pref)

})

/**

* The user-item preferences are solely based on the purchase data of a

* particluar user; the respective value, however, is far from representing

* a real-life value, as it only takes the purchase frequency into account.

*

* The frequency is quite different depending on the item price, item lifetime,

* and the like. For example, since expensive items or items with long lifespan,

* such as jewelry or electronic home appliances, are purchased infrequently.

*

* So the preferences of users form them cannot be higher that cheap items or

* those with a short lifespan such as hand creams or tissues. Also, when a

* user u purchase item i four times out of ten transactions, we may think that

* he does not prefer item i if other users purchased the same item eight times

* out of ten transactions.

*

* It is therefore necessary to define a relative preference so it is comparable

* among all users. We therefore proceed to compute the maximum item preference

* for all users and use this value to normalize the user-item preference derived

* above.

*/

/**

* STEP #4

*

* Compute the maximum preference per item (independent of the user)

*/

val itemMaxPref = userItemPref.map(valu => (valu._2,valu._3)).groupBy(valu => valu._1)

.map(grouped => {

def max(pref1:Double, pref2:Double):Double = if (pref1 > pref2) pref1 else pref2

val item = grouped._1

val mpref = grouped._2.map(valu => valu._2).reduceLeft(max)

(item,mpref)

})

/**

* STEP #5

*

* Finally compute the user-item rating with scores from 1..5

*/

val userItemRating = userItemPref.keyBy(valu => valu._2).join(itemMaxPref)

.map(valu => {

val item = valu._1

val data = valu._2

val uid = data._1._1

val pref = data._1._3

val mpref = data._2

val npref = Math.round( 5* (pref.toDouble / mpref.toDouble) ).toInt

(uid,item,npref)

})

userItemRating

}

}

================================================

FILE: src/main/scala/de/kp/spark/elastic/ml/EsSimilarity.scala

================================================

package de.kp.spark.elastic.ml

/* Copyright (c) 2014 Dr. Krusche & Partner PartG

*

* This file is part of the Spark-ELASTIC project

* (https://github.com/skrusche63/spark-elastic).

*

* Spark-ELASTIC is free software: you can redistribute it and/or modify it under the

* terms of the GNU General Public License as published by the Free Software

* Foundation, either version 3 of the License, or (at your option) any later

* version.

*

* Spark-ELASTIC is distributed in the hope that it will be useful, but WITHOUT ANY

* WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR

* A PARTICULAR PURPOSE. See the GNU General Public License for more details.

* You should have received a copy of the GNU General Public License along with

* Spark-ELASTIC.

*

* If not, see .

*/

import org.apache.spark.rdd.RDD

import org.apache.spark.SparkContext._

class EsSimilarity(ratings:RDD[(String,String,Int)]) {

/**

* Parameters to regularize correlation.

*/

val PRIOR_COUNT = 10

val PRIOR_CORRELATION = 0

val model = build()

def recommend(item:String, k:Int):Array[(String,Double,Double,Double,Double)] = {

/**

* Retrieve all similarities where the first item

* is equal to the provided one

*/

val similarities = model.filter(valu => {

val pair = valu._1

pair._1 == item

})

val result = similarities.map(valu => {

val (item1,item2) = valu._1

val (corr,rcorr,cos,jac) = valu._2

(item2, corr, rcorr, cos, jac)

}).collect().filter(valu => (valu._3 equals Double.NaN) == false)

.sortBy(valu => valu._4).take(k)

result

}

private def build():RDD[((String,String),(Double,Double,Double,Double))] = {

/**

* Compute the number of raters per item

*/

val itemSupport = ratings.groupBy(valu => valu._2)

.map(grouped => (grouped._1, grouped._2.size))

/**

* Join rating with item support: the result contains

* the following data (user,item,rating,support)

*/

val ratingsSupport = ratings.groupBy(valu => valu._2).join(itemSupport)

.flatMap(joined => joined._2._1.map(valu => (valu._1, valu._2, valu._3, joined._2._2)))

/**

* Clone data, join on user and filter pairs to make sure

* that we do not double count and exclude self pairs

*/

val ratingsSupportClone = ratingsSupport.keyBy(valu => valu._1)

val ratingsPairs = ratingsSupportClone.join(ratingsSupportClone).filter(valu => valu._2._1._2 < valu._2._2._2)

/**

* Compute raw inputs to similarity metrics

*/

val vectorCalcs = ratingsPairs.map(valu => {

val (user1,item1,rating1,support1) = valu._2._1

val (user2,item2,rating2,support2) = valu._2._2

val key = (item1, item2)

val stats = (

rating1 * rating2,

rating1,

rating2,

math.pow(rating1, 2),