Repository: tensorflow/fairness-indicators

Branch: master

Commit: 6c970e0ec6c5

Files: 64

Total size: 442.6 KB

Directory structure:

gitextract_8nbht4qq/
├── .github/
│   ├── ISSUE_TEMPLATE/
│   │   ├── 00-bug-issue.md
│   │   ├── 10-build-installation-issue.md
│   │   ├── 20-documentation-issue.md
│   │   ├── 30-feature-request.md
│   │   ├── 40-performance-issue.md
│   │   └── 50-other-issues.md
│   ├── actions/
│   │   └── setup-env/
│   │       └── action.yml
│   └── workflows/
│       ├── build.yml
│       ├── ci-lint.yml
│       ├── docs.yml
│       └── test.yml
├── .pre-commit-config.yaml
├── CONTRIBUTING.md
├── LICENSE
├── README.md
├── RELEASE.md
├── docs/
│   ├── __init__.py
│   ├── guide/
│   │   ├── _index.yaml
│   │   ├── _toc.yaml
│   │   └── guidance.md
│   ├── index.md
│   ├── javascripts/
│   │   └── mathjax.js
│   ├── stylesheets/
│   │   └── extra.css
│   └── tutorials/
│       ├── Facessd_Fairness_Indicators_Example_Colab.ipynb
│       ├── Fairness_Indicators_Example_Colab.ipynb
│       ├── Fairness_Indicators_Pandas_Case_Study.ipynb
│       ├── Fairness_Indicators_TFCO_CelebA_Case_Study.ipynb
│       ├── Fairness_Indicators_TFCO_Wiki_Case_Study.ipynb
│       ├── Fairness_Indicators_TensorBoard_Plugin_Example_Colab.ipynb
│       ├── Fairness_Indicators_on_TF_Hub_Text_Embeddings.ipynb
│       ├── README.md
│       ├── _Deprecated_Fairness_Indicators_Lineage_Case_Study.ipynb
│       └── _toc.yaml
├── fairness_indicators/
│   ├── __init__.py
│   ├── example_model.py
│   ├── example_model_test.py
│   ├── fairness_indicators_metrics.py
│   ├── remediation/
│   │   ├── __init__.py
│   │   ├── weight_utils.py
│   │   └── weight_utils_test.py
│   ├── test_cases/
│   │   └── dlvm/
│   │       ├── fairness_indicators_dlvm_test_case.ipynb
│   │       └── fi_test_installed.sh
│   ├── tutorial_utils/
│   │   ├── __init__.py
│   │   ├── util.py
│   │   └── util_test.py
│   └── version.py
├── mkdocs.yml
├── pyproject.toml
├── requirements-docs.txt
├── setup.py
└── tensorboard_plugin/
    ├── README.md
    ├── pytest.ini
    ├── setup.py
    └── tensorboard_plugin_fairness_indicators/
        ├── RELEASE.md
        ├── __init__.py
        ├── demo.py
        ├── metadata.py
        ├── metadata_test.py
        ├── plugin.py
        ├── plugin_test.py
        ├── static/
        │   └── index.js
        ├── summary_v2.py
        ├── summary_v2_test.py
        └── version.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/ISSUE_TEMPLATE/00-bug-issue.md

================================================

---

name: Bug Issue

about: Use this template for reporting a bug

labels: 'type:bug'

---

**System information**

- Have I written custom code (as opposed to using stock example code provided):

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04):

- Fairness Indicators version:

- TensorFlow version:

- Python version:

**Describe the current behavior**

**Describe the expected behavior**

**Standalone code to reproduce the issue**

Provide a reproducible test case that is the bare minimum necessary to generate

the problem. If possible, please share a link to Colab/Jupyter/any notebook.

**Other info / logs** Include any logs or source code that would be helpful to

diagnose the problem. If including tracebacks, please include the full

traceback. Large logs and files should be attached.

================================================

FILE: .github/ISSUE_TEMPLATE/10-build-installation-issue.md

================================================

---

name: Build/Installation Issue

about: Use this template for build/installation issues

labels: 'type:build/install'

---

**System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04):

- Fairness Indicators version:

- Python version:

- Pip version:

**Describe the problem**

**Provide the exact sequence of commands / steps that you executed before running into the problem**

**Any other info / logs**

Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached.

================================================

FILE: .github/ISSUE_TEMPLATE/20-documentation-issue.md

================================================

---

name: Documentation Issue

about: Use this template for documentation related issues

labels: 'type:docs'

---

The Fairness Indicators docs are open source! To get involved, read the

documentation contributor guide:

https://github.com/tensorflow/fairness-indicators/blob/master/CONTRIBUTING.md

## URL(s) with the issue:

Please provide a link to the documentation entry.

## Description of issue (what needs changing):

### Clear description

For example, why should someone use this method? How is it useful?

### Correct links

Is the link to the source code correct?

### Parameters defined

Are all parameters defined and formatted correctly?

### Returns defined

Are return values defined?

### Raises listed and defined

Are the errors defined?

### Usage example

Is there currently a usage example for this method?

### Request visuals, if applicable

Are there currently visuals? If not, will it clarify the content?

### Submit a pull request?

Are you planning to also submit a pull request to fix the issue?

================================================

FILE: .github/ISSUE_TEMPLATE/30-feature-request.md

================================================

---

name: Feature Request

about: Use this template for raising a feature request

labels: 'type:feature'

---

**Describe the feature and the current behavior/state.**

**Will this change the current API? How?**

**Who will benefit from this feature?**

**Are you willing to contribute it (Yes/No).**

**Any Other info.**

================================================

FILE: .github/ISSUE_TEMPLATE/40-performance-issue.md

================================================

---

name: Performance Issue

about: Use this template for reporting a performance issue

labels: 'type:performance'

---

**System information**

- Have I written custom code (as opposed to using stock example code provided):

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04):

- Fairness Indicators version:

- TensorFlow version:

- Python version:

**Describe the current behavior**

**Describe the expected behavior**

**Standalone code to reproduce the issue**

Provide a reproducible test case that is the bare minimum necessary to generate

the problem. If possible, please share a link to Colab/Jupyter/any notebook.

**Other info / logs** Include any logs or source code that would be helpful to

diagnose the problem. If including tracebacks, please include the full

traceback. Large logs and files should be attached.

================================================

FILE: .github/ISSUE_TEMPLATE/50-other-issues.md

================================================

---

name: Other Issues

about: Use this template for any other non-support related issues

labels: 'type:others'

---

This template is for miscellaneous issues not covered by the other categories.

================================================

FILE: .github/actions/setup-env/action.yml

================================================

name: Set up environment
description: Set up environment and install package

inputs:
  python-version:
    default: "3.10"
    required: true
  package-root-dir:
    default: "./"
    required: true

runs:
  using: composite
  steps:
    - name: Set up Python ${{ matrix.python-version }}
      uses: actions/setup-python@v5
      with:
        python-version: ${{ inputs.python-version }}
        cache-dependency-path: |
          ${{ inputs.package-root-dir }}/setup.py

    - name: Install dependencies
      shell: bash
      run: |
        python -m pip install --upgrade pip
        pip install ${{ inputs.package-root-dir }}[test]

================================================

FILE: .github/workflows/build.yml

================================================

name: Build

on:
  push:
    branches:
      - master
  pull_request:
    branches:
      - master
  workflow_dispatch:

jobs:
  build:
    runs-on: ubuntu-latest
    strategy:
      matrix:
        python-version: ["3.9", "3.10"]

    steps:
      - name: Checkout
        uses: actions/checkout@v4

      - name: Set up python
        uses: actions/setup-python@v5
        with:
          python-version: ${{ matrix.python-version }}

      - name: Install python build dependencies
        run: |
          python -m pip install --upgrade pip build

      - name: Build wheels
        run: |
          python -m build --wheel --sdist
          mkdir wheelhouse
          mv dist/* wheelhouse/

      - name: List and check wheels
        run: |
          # Quote the requirement so the shell does not treat ">" as an output redirect.
          pip install twine "pkginfo>=1.11.0"
          ${{ matrix.ls || 'ls -lh' }} wheelhouse/
          twine check wheelhouse/*

      - name: Upload wheels
        uses: actions/upload-artifact@v4
        with:
          name: wheels-${{ matrix.python-version }}
          path: ./wheelhouse/*

  upload_to_pypi:
    name: Upload to PyPI
    runs-on: ubuntu-latest
    if: (github.event_name == 'release' && startsWith(github.ref, 'refs/tags')) || (github.event_name == 'workflow_dispatch')
    needs: [build]
    environment:
      name: pypi
      url: https://pypi.org/p/fairness-indicators
    permissions:
      id-token: write
    steps:
      - name: Retrieve wheels
        uses: actions/download-artifact@v4.1.8
        with:
          merge-multiple: true
          path: wheels

      - name: List the build artifacts
        run: |
          ls -lAs wheels/

      - name: Upload to PyPI
        uses: pypa/gh-action-pypi-publish@release/v1.12
        with:
          packages_dir: wheels/
          repository_url: https://pypi.org/legacy/
          verify_metadata: false
          verbose: true

================================================

FILE: .github/workflows/ci-lint.yml

================================================

name: pre-commit

on:
  pull_request:
  push:
    branches: [master]

jobs:
  pre-commit:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4.1.7
        with:
          # Ensure the full history is fetched
          # This is required to run pre-commit on a specific set of commits
          # TODO: Remove this when all the pre-commit issues are fixed
          fetch-depth: 0
      - uses: actions/setup-python@v5.1.1
        with:
          python-version: 3.13
      - uses: pre-commit/action@v3.0.1

================================================

FILE: .github/workflows/docs.yml

================================================

name: Deploy docs

on:
  workflow_dispatch:
  push:
    branches:
      - 'master'
  pull_request:

permissions:
  contents: write

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout repo
        uses: actions/checkout@v4

      - name: Configure Git Credentials
        run: |
          git config user.name github-actions[bot]
          git config user.email 41898282+github-actions[bot]@users.noreply.github.com
        if: (github.event_name != 'pull_request')

      - name: Set up Python 3.9
        uses: actions/setup-python@v5
        with:
          python-version: '3.9'
          cache: 'pip'
          cache-dependency-path: |
            setup.py
            requirements-docs.txt

      - name: Save time for cache for mkdocs
        run: echo "cache_id=$(date --utc '+%V')" >> $GITHUB_ENV

      - name: Caching
        uses: actions/cache@v4
        with:
          key: mkdocs-material-${{ env.cache_id }}
          path: .cache
          restore-keys: |
            mkdocs-material-

      - name: Install Dependencies
        run: pip install -r requirements-docs.txt

      - name: Deploy to GitHub Pages
        run: mkdocs gh-deploy --force
        if: (github.event_name != 'pull_request')

      - name: Build docs to check for errors
        run: mkdocs build
        if: (github.event_name == 'pull_request')

================================================

FILE: .github/workflows/test.yml

================================================

name: Tests

on:
  push:
    paths-ignore:
      - '**.md'
      - 'docs/**'
  pull_request:
    branches: [ master ]
    paths-ignore:
      - '**.md'
      - 'docs/**'
  workflow_dispatch:

jobs:
  tests:
    if: github.actor != 'copybara-service[bot]'
    runs-on: ubuntu-latest
    strategy:
      matrix:
        python-version: ['3.9', '3.10']
        package-root-dir: ['./', './tensorboard_plugin']

    steps:
      - name: Checkout repo
        uses: actions/checkout@v4

      - name: Set up environment
        uses: ./.github/actions/setup-env
        with:
          python-version: ${{ matrix.python-version }}
          package-root-dir: ${{ matrix.package-root-dir }}

      - name: Run tests
        shell: bash
        run: |
          cd ${{ matrix.package-root-dir }}
          pytest

================================================

FILE: .pre-commit-config.yaml

================================================

# pre-commit is a tool to perform a predefined set of tasks manually and/or

# automatically before git commits are made.

#

# Config reference: https://pre-commit.com/#pre-commit-configyaml---top-level

#

# Common tasks

#

# - Register git hooks: pre-commit install --install-hooks

# - Run on all files: pre-commit run --all-files

#

# These pre-commit hooks are run as CI.

#

# NOTE: if it can be avoided, add configs/args in pyproject.toml or below instead of creating a new `.config.file`.

# https://pre-commit.ci/#configuration

ci:
  autoupdate_schedule: monthly
  autofix_commit_msg: |
    [pre-commit.ci] Apply automatic pre-commit fixes

repos:
  # general
  - repo: https://github.com/pre-commit/pre-commit-hooks
    rev: v4.6.0
    hooks:
      - id: end-of-file-fixer
        exclude: '\.svg$'
      - id: trailing-whitespace
        exclude: '\.svg$'
      - id: check-json
      - id: check-yaml
        args: [--allow-multiple-documents, --unsafe]
      - id: check-toml

  - repo: https://github.com/astral-sh/ruff-pre-commit
    rev: v0.5.6
    hooks:
      - id: ruff
        args: ["--fix"]
      - id: ruff-format

================================================

FILE: CONTRIBUTING.md

================================================

# How to Contribute

We'd love to accept your patches and contributions to this project. There are

just a few small guidelines you need to follow.

## Contributor License Agreement

Contributions to this project must be accompanied by a Contributor License

Agreement. You (or your employer) retain the copyright to your contribution;

this simply gives us permission to use and redistribute your contributions as

part of the project. Head over to to see

your current agreements on file or to sign a new one.

You generally only need to submit a CLA once, so if you've already submitted one

(even if it was for a different project), you probably don't need to do it

again.

## Code reviews

All submissions, including submissions by project members, require review. We

use GitHub pull requests for this purpose. Consult

[GitHub Help](https://help.github.com/articles/about-pull-requests/) for more

information on using pull requests.

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright 2017, The TensorFlow Authors.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

--------------------------------------------------------------------------------

MIT

The MIT License (MIT)

Copyright (c) 2014-2015, Jon Schlinkert.

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in

all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

THE SOFTWARE.

--------------------------------------------------------------------------------

BSD-3-Clause

Copyright (c) 2016, Daniel Wirtz All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions are

met:

* Redistributions of source code must retain the above copyright

notice, this list of conditions and the following disclaimer.

* Redistributions in binary form must reproduce the above copyright

notice, this list of conditions and the following disclaimer in the

documentation and/or other materials provided with the distribution.

* Neither the name of its author, nor the names of its contributors

may be used to endorse or promote products derived from this software

without specific prior written permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

"AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR

A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT

OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL,

SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT

LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE,

DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY

THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT

(INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

================================================

FILE: README.md

================================================

# Fairness Indicators

Fairness Indicators is designed to support teams in evaluating, improving, and comparing models for fairness concerns in partnership with the broader TensorFlow toolkit.

The tool is currently actively used internally by many of our products. We would love to partner with you to understand where Fairness Indicators is most useful, and where added functionality would be valuable. Please reach out at tfx@tensorflow.org. You can provide feedback and feature requests [here](https://github.com/tensorflow/fairness-indicators/issues/new/choose).

## Key links

* [Introductory Video](https://www.youtube.com/watch?v=pHT-ImFXPQo)

* [Fairness Indicators Case Study](https://developers.google.com/machine-learning/practica/fairness-indicators?utm_source=github&utm_medium=github&utm_campaign=fi-practicum&utm_term=&utm_content=repo-body)

* [Fairness Indicators Example Colab](https://colab.research.google.com/github/tensorflow/fairness-indicators/blob/master/g3doc/tutorials/Fairness_Indicators_Example_Colab.ipynb)

* [Pandas DataFrame to Fairness Indicators Case Study](https://colab.research.google.com/github/tensorflow/fairness-indicators/blob/master/g3doc/tutorials/Fairness_Indicators_Pandas_Case_Study.ipynb)

* [Fairness Indicators: Thinking about Fairness Evaluation](https://github.com/tensorflow/fairness-indicators/blob/master/g3doc/guide/guidance.md)

## What is Fairness Indicators?

Fairness Indicators enables easy computation of commonly-identified fairness metrics for **binary** and **multiclass** classifiers.

Many existing tools for evaluating fairness concerns don’t work well on large-scale datasets and models. At Google, it is important for us to have tools that can work on billion-user systems. Fairness Indicators will allow you to evaluate fairness metrics across use cases of any size.

In particular, Fairness Indicators includes the ability to:

* Evaluate the distribution of datasets

* Evaluate model performance, sliced across defined groups of users

* Feel confident about your results with confidence intervals and evals at multiple thresholds

* Dive deep into individual slices to explore root causes and opportunities for improvement
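
The sliced, multi-threshold evaluation listed above can be illustrated with a small, library-free sketch. This is not the Fairness Indicators or TFMA implementation; the function name `false_positive_rate_by_slice`, the group names, and the toy data are all hypothetical and exist only to show what "a metric per slice at several thresholds" means.

```python
from collections import defaultdict


def false_positive_rate_by_slice(examples, thresholds):
    """Return {slice: {threshold: false positive rate}}.

    `examples` is an iterable of (slice_name, label, score) tuples, where
    label is 0 or 1 and score is the predicted probability of class 1.
    Slices that contain no negative examples are omitted from the result.
    """
    # counts[slice][threshold] = [false_positives, negatives]
    counts = defaultdict(lambda: {t: [0, 0] for t in thresholds})
    for slice_name, label, score in examples:
        if label != 0:
            continue  # FPR is computed over negative-label examples only
        for t in thresholds:
            counts[slice_name][t][1] += 1
            if score >= t:  # predicted positive at this threshold
                counts[slice_name][t][0] += 1
    return {
        s: {t: fp / n for t, (fp, n) in per_threshold.items()}
        for s, per_threshold in counts.items()
    }


# Hypothetical toy data: (slice, true label, model score).
examples = [
    ("group_a", 0, 0.2), ("group_a", 0, 0.6), ("group_a", 1, 0.9),
    ("group_b", 0, 0.7), ("group_b", 0, 0.8), ("group_b", 1, 0.4),
]
rates = false_positive_rate_by_slice(examples, thresholds=[0.5, 0.75])
for slice_name, per_threshold in sorted(rates.items()):
    print(slice_name, per_threshold)
```

On this toy data the false positive rate for `group_b` is higher than for `group_a` at both thresholds, which is the kind of gap a sliced view is meant to surface.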

This [case study](https://developers.google.com/machine-learning/practica/fairness-indicators?utm_source=github&utm_medium=github&utm_campaign=fi-practicum&utm_term=&utm_content=repo-body), complete with [videos](https://www.youtube.com/watch?v=pHT-ImFXPQo) and programming exercises, demonstrates how Fairness Indicators can be used on one of your own products to evaluate fairness concerns over time.


## [Installation](https://pypi.org/project/fairness-indicators/)

`pip install fairness-indicators`

The pip package includes:

* [**Tensorflow Data Validation (TFDV)**](https://github.com/tensorflow/data-validation) - analyze the distribution of your dataset

* [**Tensorflow Model Analysis (TFMA)**](https://github.com/tensorflow/model-analysis) - analyze model performance

* **Fairness Indicators** - an addition to TFMA that adds fairness metrics and easy performance comparison across slices

* [**The What-If Tool (WIT)**](https://github.com/PAIR-code/what-if-tool) - an interactive visual interface designed to probe your models better
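
To check which of these packages are present after installation, a minimal sketch using only the standard library might look like the following. The distribution names in `PACKAGES` are assumptions based on the projects' PyPI names, and `installed_versions` is a hypothetical helper, not part of Fairness Indicators.

```python
from importlib import metadata

# Assumed PyPI distribution names; adjust if your environment differs.
PACKAGES = [
    "fairness-indicators",
    "tensorflow-data-validation",
    "tensorflow-model-analysis",
    "witwidget",
]


def installed_versions(names):
    """Map each distribution name to its installed version, or None."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # distribution is not installed
    return versions


for name, version in installed_versions(PACKAGES).items():
    print(f"{name}: {version or 'not installed'}")
```

Reading package metadata avoids importing TensorFlow itself, so the check stays fast even when the packages are installed.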

### Nightly Packages

Fairness Indicators also hosts nightly packages at

https://pypi-nightly.tensorflow.org on Google Cloud. To install the latest

nightly package, please use the following command:

```bash

pip install --extra-index-url https://pypi-nightly.tensorflow.org/simple fairness-indicators

```

This will install the nightly packages for the major dependencies of Fairness

Indicators, such as TensorFlow Data Validation (TFDV) and TensorFlow Model

Analysis (TFMA).

## How can I use Fairness Indicators?

Tensorflow Models

* Access Fairness Indicators as part of the Evaluator component in Tensorflow Extended \[[docs](https://www.tensorflow.org/tfx/guide/evaluator)]

* Access Fairness Indicators in Tensorboard when evaluating other real-time metrics \[[docs](https://github.com/tensorflow/tensorboard/blob/master/docs/fairness-indicators.md)]

Not using existing Tensorflow tools? No worries!

* Download the Fairness Indicators pip package, and use Tensorflow Model Analysis as a standalone tool \[[docs](https://www.tensorflow.org/tfx/guide/fairness_indicators)]

* Model Agnostic TFMA enables you to compute Fairness Indicators based on the output of any model \[[docs](https://www.tensorflow.org/tfx/guide/fairness_indicators)]

## Examples

The [Examples](https://github.com/tensorflow/fairness-indicators/tree/master/g3doc/tutorials) directory contains several example notebooks:

* [Fairness_Indicators_Example_Colab.ipynb](https://github.com/tensorflow/fairness-indicators/blob/master/g3doc/tutorials/Fairness_Indicators_Example_Colab.ipynb) gives an overview of Fairness Indicators in [TensorFlow Model Analysis](https://www.tensorflow.org/tfx/guide/tfma) and how to use it with a real dataset. This notebook also goes over [TensorFlow Data Validation](https://www.tensorflow.org/tfx/data_validation/get_started) and [What-If Tool](https://pair-code.github.io/what-if-tool/), two tools for analyzing TensorFlow models that are packaged with Fairness Indicators.

* [Fairness_Indicators_on_TF_Hub_Text_Embeddings.ipynb](https://github.com/tensorflow/fairness-indicators/blob/master/g3doc/tutorials/Fairness_Indicators_on_TF_Hub_Text_Embeddings.ipynb) demonstrates how to use Fairness Indicators to compare models trained on different [text embeddings](https://en.wikipedia.org/wiki/Word_embedding). This notebook uses text embeddings from [TensorFlow Hub](https://www.tensorflow.org/hub), TensorFlow's library to publish, discover, and reuse model components.

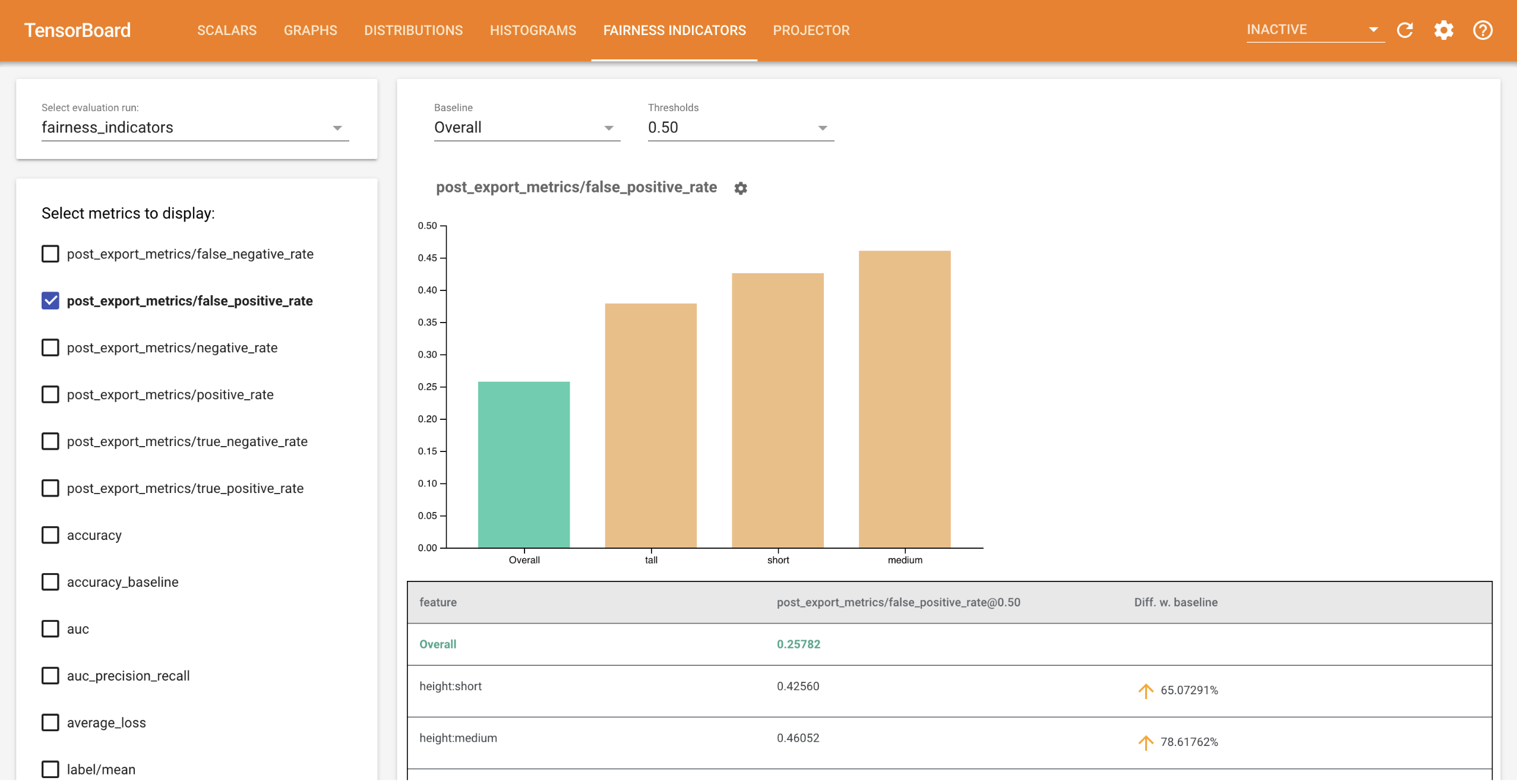

* [Fairness_Indicators_TensorBoard_Plugin_Example_Colab.ipynb](https://github.com/tensorflow/fairness-indicators/blob/master/g3doc/tutorials/Fairness_Indicators_TensorBoard_Plugin_Example_Colab.ipynb) demonstrates how to visualize Fairness Indicators in TensorBoard.

## More questions?

For more information on how to think about fairness evaluation in the context of your use case, see the [fairness evaluation guidance](https://github.com/tensorflow/fairness-indicators/blob/master/g3doc/guide/guidance.md).

If you have found a bug in Fairness Indicators, please file a [GitHub issue](https://github.com/tensorflow/fairness-indicators/issues/new/choose) with as much supporting information as you can provide.

## Compatible versions

The following table shows the package versions that are

compatible with each other. This is determined by our testing framework, but

other *untested* combinations may also work.

| fairness-indicators | tensorflow | tensorflow-data-validation | tensorflow-model-analysis |
|---------------------|------------|----------------------------|---------------------------|
| [GitHub master](https://github.com/tensorflow/fairness-indicators/blob/master/RELEASE.md) | nightly (1.x/2.x) | 1.17.0 | 0.48.0 |
| [v0.48.0](https://github.com/tensorflow/fairness-indicators/blob/v0.48.0/RELEASE.md) | 2.17 | 1.17.0 | 0.48.0 |
| [v0.47.0](https://github.com/tensorflow/fairness-indicators/blob/v0.47.0/RELEASE.md) | 2.16 | 1.16.1 | 0.47.1 |
| [v0.46.0](https://github.com/tensorflow/fairness-indicators/blob/v0.46.0/RELEASE.md) | 2.15 | 1.15.1 | 0.46.0 |
| [v0.44.0](https://github.com/tensorflow/fairness-indicators/blob/v0.44.0/RELEASE.md) | 2.12 | 1.13.0 | 0.44.0 |
| [v0.43.0](https://github.com/tensorflow/fairness-indicators/blob/v0.43.0/RELEASE.md) | 2.11 | 1.12.0 | 0.43.0 |
| [v0.42.0](https://github.com/tensorflow/fairness-indicators/blob/v0.42.0/RELEASE.md) | 1.15.5 / 2.10 | 1.11.0 | 0.42.0 |
| [v0.41.0](https://github.com/tensorflow/fairness-indicators/blob/v0.41.0/RELEASE.md) | 1.15.5 / 2.9 | 1.10.0 | 0.41.0 |
| [v0.40.0](https://github.com/tensorflow/fairness-indicators/blob/v0.40.0/RELEASE.md) | 1.15.5 / 2.9 | 1.9.0 | 0.40.0 |
| [v0.39.0](https://github.com/tensorflow/fairness-indicators/blob/v0.39.0/RELEASE.md) | 1.15.5 / 2.8 | 1.8.0 | 0.39.0 |
| [v0.38.0](https://github.com/tensorflow/fairness-indicators/blob/v0.38.0/RELEASE.md) | 1.15.5 / 2.8 | 1.7.0 | 0.38.0 |
| [v0.37.0](https://github.com/tensorflow/fairness-indicators/blob/v0.37.0/RELEASE.md) | 1.15.5 / 2.7 | 1.6.0 | 0.37.0 |
| [v0.36.0](https://github.com/tensorflow/fairness-indicators/blob/v0.36.0/RELEASE.md) | 1.15.2 / 2.7 | 1.5.0 | 0.36.0 |
| [v0.35.0](https://github.com/tensorflow/fairness-indicators/blob/v0.35.0/RELEASE.md) | 1.15.2 / 2.6 | 1.4.0 | 0.35.0 |
| [v0.34.0](https://github.com/tensorflow/fairness-indicators/blob/v0.34.0/RELEASE.md) | 1.15.2 / 2.6 | 1.3.0 | 0.34.0 |
| [v0.33.0](https://github.com/tensorflow/fairness-indicators/blob/v0.33.0/RELEASE.md) | 1.15.2 / 2.5 | 1.2.0 | 0.33.0 |
| [v0.30.0](https://github.com/tensorflow/fairness-indicators/blob/v0.30.0/RELEASE.md) | 1.15.2 / 2.4 | 0.30.0 | 0.30.0 |
| [v0.29.0](https://github.com/tensorflow/fairness-indicators/blob/v0.29.0/RELEASE.md) | 1.15.2 / 2.4 | 0.29.0 | 0.29.0 |
| [v0.28.0](https://github.com/tensorflow/fairness-indicators/blob/v0.28.0/RELEASE.md) | 1.15.2 / 2.4 | 0.28.0 | 0.28.0 |
| [v0.27.0](https://github.com/tensorflow/fairness-indicators/blob/v0.27.0/RELEASE.md) | 1.15.2 / 2.4 | 0.27.0 | 0.27.0 |
| [v0.26.0](https://github.com/tensorflow/fairness-indicators/blob/v0.26.0/RELEASE.md) | 1.15.2 / 2.3 | 0.26.0 | 0.26.0 |
| [v0.25.0](https://github.com/tensorflow/fairness-indicators/blob/v0.25.0/RELEASE.md) | 1.15.2 / 2.3 | 0.25.0 | 0.25.0 |
| [v0.24.0](https://github.com/tensorflow/fairness-indicators/blob/v0.24.0/RELEASE.md) | 1.15.2 / 2.3 | 0.24.0 | 0.24.0 |
| [v0.23.0](https://github.com/tensorflow/fairness-indicators/blob/v0.23.0/RELEASE.md) | 1.15.2 / 2.3 | 0.23.0 | 0.23.0 |
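
If you want to pin one tested combination explicitly, the table can be turned into requirement strings. This is only a sketch: the dictionary copies the three most recent release rows from the table above, and the helper name `pip_requirements` is hypothetical. TensorFlow is listed by minor release, so the sketch assumes any patch release of that minor version is acceptable.

```python
# Rows copied from the compatibility table above (three most recent releases).
COMPATIBLE_VERSIONS = {
    "0.48.0": {
        "tensorflow": "2.17",
        "tensorflow-data-validation": "1.17.0",
        "tensorflow-model-analysis": "0.48.0",
    },
    "0.47.0": {
        "tensorflow": "2.16",
        "tensorflow-data-validation": "1.16.1",
        "tensorflow-model-analysis": "0.47.1",
    },
    "0.46.0": {
        "tensorflow": "2.15",
        "tensorflow-data-validation": "1.15.1",
        "tensorflow-model-analysis": "0.46.0",
    },
}


def pip_requirements(fi_version):
    """Build pinned requirement strings for one tested combination."""
    row = COMPATIBLE_VERSIONS[fi_version]
    requirements = [f"fairness-indicators=={fi_version}"]
    for package, version in row.items():
        # Assumption: any patch release of the listed TensorFlow minor is fine.
        pin = f"{version}.*" if package == "tensorflow" else version
        requirements.append(f"{package}=={pin}")
    return requirements


if __name__ == "__main__":
    print("\n".join(pip_requirements("0.48.0")))
```

The resulting lines can be pasted into a `requirements.txt` file.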

================================================

FILE: RELEASE.md

================================================

# Current Version (Still in Development)

## Major Features and Improvements

## Bug Fixes and Other Changes

## Breaking Changes

## Deprecations

# Version 0.48.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow>=2.17,<2.18`.

* Depends on `tensorflow-data-validation>=1.17.0,<1.18.0`.

* Depends on `tensorflow-model-analysis>=0.48,<0.49`.

* Depends on `protobuf>=4.21.6,<6.0.0`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.47.0

## Major Features and Improvements

* Add fairness indicator metrics in the third_party library.

## Bug Fixes and Other Changes

* Depends on `tensorflow>=2.16,<2.17`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.46.0

## Major Features and Improvements

* Update example model to use Keras models instead of estimators.

## Bug Fixes and Other Changes

* N/A

## Breaking Changes

* N/A

## Deprecations

* Deprecated Python 3.8 support.

# Version 0.44.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow>=2.12.0,<2.13`.

* Depends on `tensorflow-data-validation>=1.13.0,<1.14.0`.

* Depends on `tensorflow-model-analysis>=0.44,<0.45`.

* Depends on `protobuf>=3.20.3,<5`.

## Breaking Changes

* N/A

## Deprecations

* Deprecating Python 3.7 support.

# Version 0.43.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow>=2.11,<2.12`.

* Depends on `tensorflow-data-validation>=1.11.0,<1.12.0`.

* Depends on `tensorflow-model-analysis>=0.42,<0.43`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.42.0

## Major Features and Improvements

* This is the last version that supports TensorFlow 1.15.x. TF 1.15.x support

will be removed in the next version. Please check the

[TF2 migration guide](https://www.tensorflow.org/guide/migrate) to migrate

to TF2.

## Bug Fixes and Other Changes

* N/A

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.41.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=1.10.0,<1.11.0`.

* Depends on `tensorflow-model-analysis>=0.41,<0.42`.

* Depends on `tensorflow>=1.15.5,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,!=2.6.*,!=2.7.*,!=2.8.*,!=2.9.*,<3`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.40.0

## Major Features and Improvements

* Allow counterfactual metrics to be calculated from predictions instead of

only features.

* Add precision and recall to the set of fairness indicators metrics.

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=1.9.0,<1.10.0`.

* Depends on `tensorflow-model-analysis>=0.40,<0.41`.

* Depends on `tensorflow>=1.15.5,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,!=2.6.*,!=2.7.*,!=2.8.*,<3`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.39.0

## Major Features and Improvements

* Allow counterfactual metrics to be calculated from predictions instead of

only features.

* Add precision and recall to the set of fairness indicators metrics.

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=1.8.0,<1.9.0`.

* Depends on `tensorflow-model-analysis>=0.39,<0.40`.

* Depends on `tensorflow>=1.15.5,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,!=2.6.*,!=2.7.*,<3`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.38.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=1.7.0,<1.8.0`.

* Depends on `tensorflow-model-analysis>=0.38,<0.39`.

* Depends on `tensorflow>=1.15.5,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,!=2.6.*,!=2.7.*,<3`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.37.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Fix Fairness Indicators UI bug with overlapping charts when comparing EvalResults

* Depends on `tensorflow-data-validation>=1.6.0,<1.7.0`.

* Depends on `tensorflow-model-analysis>=0.37,<0.38`.

* Depends on `tensorflow>=1.15.5,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,!=2.6.*,<3`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.36.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=1.5.0,<1.6.0`.

* Depends on `tensorflow-model-analysis>=0.36,<0.37`.

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,!=2.6.*,<3`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.35.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=1.4.0,<1.5.0`.

* Depends on `tensorflow-model-analysis>=0.35,<0.36`.

## Breaking Changes

* N/A

## Deprecations

* Deprecating Python 3.6 support.

# Version 0.34.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3`.

* Depends on `tensorflow-data-validation>=1.3.0,<1.4.0`.

* Depends on `tensorflow-model-analysis>=0.34,<0.35`.

## Breaking Changes

* Drop Py2 support.

## Deprecations

* N/A

# Version 0.33.0

## Major Features and Improvements

* Porting Counterfactual Fairness metrics into FI UI.

## Bug Fixes and Other Changes

* Improve rendering of HTML stubs for Fairness Indicators UI

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,<3`.

* Depends on `protobuf>=3.13,<4`.

* Depends on `tensorflow-data-validation>=1.2.0,<1.3.0`.

* Depends on `tensorflow-model-analysis>=0.33,<0.34`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.30.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.5.*,<3`.

* Depends on `tensorflow-data-validation>=0.30,<0.31`.

* Depends on `tensorflow-model-analysis>=0.30,<0.31`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.29.0

## Major Features and Improvements

* N/A

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=0.29,<0.30`.

* Depends on `tensorflow-model-analysis>=0.29,<0.30`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.28.0

## Major Features and Improvements

* In Fairness Indicators UI, sort metrics list to show common metrics first

* For lift, support negative values in bar chart.

* Adding two new metrics - Flip Count and Flip Rate to evaluate Counterfactual

Fairness.

* Add Lift metrics under addons/fairness.

* Porting Lift metrics into FI UI.

## Bug Fixes and Other Changes

* Depends on `tensorflow-data-validation>=0.28,<0.29`.

* Depends on `tensorflow-model-analysis>=0.28,<0.29`.

## Breaking Changes

* N/A

## Deprecations

* N/A

# Version 0.27.0

## Major Features and Improvements

* N/A

## Bug fixes and other changes

* Added test cases for DLVM testing.

* Move the util files to a separate folder.

* Add `tensorflow-hub` as a dependency because it's used inside the

example_model.py.

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,<3`.

* Depends on `tensorflow-data-validation>=0.27,<0.28`.

* Depends on `tensorflow-model-analysis>=0.27,<0.28`.

## Breaking changes

* N/A

## Deprecations

* N/A

# Version 0.26.0

## Major Features and Improvements

* Sorting fairness metrics table rows to keep slices in order with slice drop

down in the UI.

## Bug fixes and other changes

* Update fairness_indicators.documentation.examples.util to TensorFlow 2.0.

* Table now displays 3 decimal places instead of 2.

* Fix a bug where the metric list did not refresh when the input eval result changed.

* Remove d3-tip dependency.

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,!=2.4.*,<3`.

* Depends on `tensorflow-data-validation>=0.26,<0.27`.

* Depends on `tensorflow-model-analysis>=0.26,<0.27`.

## Breaking changes

* N/A

## Deprecations

* N/A

# Version 0.25.0

## Major Features and Improvements

* Add workflow buttons to Fairness Indicators UI, providing tutorial on how to

configure metrics and parameters, and how to interpret the results.

* Add metric definitions as tooltips in the metric selector UI

* Removing prefix from metric names in graph titles in UI.

* From this release Fairness Indicators will also be hosting nightly packages

on https://pypi-nightly.tensorflow.org. To install the nightly package use

the following command:

```

pip install --extra-index-url https://pypi-nightly.tensorflow.org/simple fairness-indicators

```

Note: These nightly packages are unstable and breakages are likely to

happen. The fix could often take a week or more depending on the complexity

involved for the wheels to be available on the PyPI cloud service. You can

always use the stable version of Fairness Indicators available on PyPI by

running the command `pip install fairness-indicators`.

## Bug fixes and other changes

* Update table colors.

* Modify privacy note in Fairness Indicators UI.

* Depends on `tensorflow-data-validation>=0.25,<0.26`.

* Depends on `tensorflow-model-analysis>=0.25,<0.26`.

## Breaking changes

* N/A

## Deprecations

* N/A

# Version 0.24.0

## Major Features and Improvements

* Made the Fairness Indicators UI thresholds drop down list sorted.

## Bug fixes and other changes

* Fix the issue where the Sort menu is not hidden when there is no model

comparison.

* Depends on `tensorflow-data-validation>=0.24,<0.25`.

* Depends on `tensorflow-model-analysis>=0.24,<0.25`.

## Breaking changes

* N/A

## Deprecations

* Deprecated Py3.5 support.

# Version 0.23.1

## Major Features and Improvements

* N/A

## Bug fixes and other changes

* Fix broken import path in Fairness_Indicators_Example_Colab and Fairness_Indicators_on_TF_Hub_Text_Embeddings.

## Breaking changes

* N/A

## Deprecations

* N/A

# Version 0.23.0

## Major Features and Improvements

* N/A

## Bug fixes and other changes

* Depends on `tensorflow>=1.15.2,!=2.0.*,!=2.1.*,!=2.2.*,<3`.

* Depends on `tensorflow-data-validation>=0.23,<0.24`.

* Depends on `tensorflow-model-analysis>=0.23,<0.24`.

## Breaking changes

* N/A

## Deprecations

* Deprecating Py2 support.

* Note: We plan to drop py3.5 support in the next release.

================================================

FILE: docs/__init__.py

================================================

# Copyright 2019 Google LLC. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

================================================

FILE: docs/guide/_index.yaml

================================================

book_path: /responsible_ai/_book.yaml

project_path: /responsible_ai/_project.yaml

title: Fairness Indicators

landing_page:

custom_css_path: /site-assets/css/style.css

nav: left

meta_tags:

- name: description

content: >

Fairness Indicators tool suite for TensorFlow.

rows:

- classname: devsite-landing-row-100

- heading: Fairness Indicators

options:

- description-50

items:

- description: >

Fairness Indicators is a library that enables easy computation of commonly-identified

fairness metrics for binary and multiclass classifiers. With the Fairness Indicators tool

suite, you can:

Compute commonly-identified fairness metrics for classification models

Compare model performance across subgroups to a baseline, or to other models

Use confidence intervals to surface statistically significant disparities

- classname: devsite-landing-row-cards

items:

- heading: "ML Practicum: Fairness in Perspective API using Fairness Indicators"

image_path: /responsible_ai/fairness_indicators/images/mlpracticum.png

path: "https://developers.google.com/machine-learning/practica/fairness-indicators?utm_source=github&utm_medium=github&utm_campaign=fi-practicum&utm_term=&utm_content=repo-body"

buttons:

- label: "Try the Case Study"

path: "https://developers.google.com/machine-learning/practica/fairness-indicators?utm_source=github&utm_medium=github&utm_campaign=fi-practicum&utm_term=&utm_content=repo-body"

- heading: "Fairness Indicators on the TensorFlow blog"

image_path: /resources/images/tf-logo-card-16x9.png

path: https://blog.tensorflow.org/2019/12/fairness-indicators-fair-ML-systems.html

buttons:

- label: "Read on the TensorFlow blog"

path: https://blog.tensorflow.org/2019/12/fairness-indicators-fair-ML-systems.html

- heading: "Fairness Indicators on GitHub"

image_path: /resources/images/github-card-16x9.png

path: https://github.com/tensorflow/fairness-indicators

buttons:

- label: "View on GitHub"

path: https://github.com/tensorflow/fairness-indicators

- classname: devsite-landing-row-cards

items:

- heading: "Fairness Indicators on the Google AI Blog"

image_path: /responsible_ai/fairness_indicators/images/googleai.png

path: https://ai.googleblog.com/2019/12/fairness-indicators-scalable.html

buttons:

- label: "Read on Google AI blog"

path: https://ai.googleblog.com/2019/12/fairness-indicators-scalable.html

- heading: "Fairness Indicators at Google I/O"

path: https://www.youtube.com/watch?v=6CwzDoE8J4M

youtube_id: 6CwzDoE8J4M?rel=0&show_info=0

buttons:

- label: "Watch the video"

path: https://www.youtube.com/watch?v=6CwzDoE8J4M

================================================

FILE: docs/guide/_toc.yaml

================================================

toc:
- title: Overview
  path: /responsible_ai/fairness_indicators/guide/
- title: Thinking about fairness evaluation
  path: /responsible_ai/fairness_indicators/guide/guidance

================================================

FILE: docs/guide/guidance.md

================================================

# Fairness Indicators: Thinking about Fairness Evaluation

Fairness Indicators is a useful tool for evaluating _binary_ and _multi-class_

classifiers for fairness. Eventually, we hope to expand this tool, in

partnership with all of you, to evaluate even more considerations.

Keep in mind that quantitative evaluation is only one part of evaluating a

broader user experience. Start by thinking about the different _contexts_

through which a user may experience your product. Who are the different types of

users your product is expected to serve? Who else may be affected by the

experience?

When considering AI's impact on people, it is important to always remember that

human societies are extremely complex! Understanding people, and their social

identities, social structures and cultural systems are each huge fields of open

research in their own right. Throw in the complexities of cross-cultural

differences around the globe, and getting even a foothold on understanding

societal impact can be challenging. Whenever possible, it is recommended you

consult with appropriate domain experts, which may include social scientists,

sociolinguists, and cultural anthropologists, as well as with members of the

populations on which technology will be deployed.

A single model, for example, the toxicity model that we leverage in the

[example colab](../../tutorials/Fairness_Indicators_Example_Colab),

can be used in many different contexts. A toxicity model deployed on a website

to filter offensive comments, for example, is a very different use case than the

model being deployed in an example web UI where users can type in a sentence and

see what score the model gives. Depending on the use case, and how users

experience the model prediction, your product will have different risks,

effects, and opportunities and you may want to evaluate for different fairness

concerns.

The questions above are the foundation of what ethical considerations, including

fairness, you may want to take into account when designing and developing your

ML-based product. These questions also motivate which metrics and which groups

of users you should use the tool to evaluate.

Before diving in further, here are three recommended resources for getting

started:

* **[The People + AI Guidebook](https://pair.withgoogle.com/) for

Human-centered AI design:** This guidebook is a great resource for the

questions and aspects to keep in mind when designing a machine-learning

based product. While we created this guidebook with designers in mind, many

of the principles will help answer questions like the one posed above.

* **[Our Fairness Lessons Learned](https://www.youtube.com/watch?v=6CwzDoE8J4M):**

This talk at Google I/O discusses lessons we have learned in our goal to

build and design inclusive products.

* **[ML Crash Course: Fairness](https://developers.google.com/machine-learning/crash-course/fairness/video-lecture):**

The ML Crash Course has a 70-minute section dedicated to identifying and

evaluating fairness concerns.

So, why look at individual slices? Evaluation over individual slices is

important as strong overall metrics can obscure poor performance for certain

groups. Similarly, performing well for a certain metric (accuracy, AUC) doesn’t

always translate to acceptable performance for other metrics (false positive

rate, false negative rate) that are equally important in assessing opportunity

and harm for users.
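
To make the point about slices concrete, here is a small worked sketch in plain Python. The data is synthetic and invented purely for illustration, and the helper names are our own, not part of Fairness Indicators or TFMA. It shows a strong overall accuracy hiding a high false positive rate for the smaller group.

```python
from collections import defaultdict

# Synthetic (group, true_label, predicted_label) triples, invented for illustration.
EXAMPLES = (
    [("A", 0, 0)] * 80 + [("A", 1, 1)] * 10
    + [("B", 0, 1)] * 4 + [("B", 0, 0)] * 4 + [("B", 1, 1)] * 2
)


def accuracy(examples):
    """Fraction of examples whose prediction matches the label."""
    return sum(label == pred for _, label, pred in examples) / len(examples)


def false_positive_rate(examples):
    """Fraction of ground-truth negatives that were predicted positive."""
    negative_preds = [pred for _, label, pred in examples if label == 0]
    return sum(negative_preds) / len(negative_preds)


def split_by_group(examples):
    """Group examples by their slice key (the first tuple element)."""
    slices = defaultdict(list)
    for example in examples:
        slices[example[0]].append(example)
    return dict(slices)


print(f"overall accuracy: {accuracy(EXAMPLES):.2f}")
for group, rows in sorted(split_by_group(EXAMPLES).items()):
    print(f"{group}: accuracy={accuracy(rows):.2f} fpr={false_positive_rate(rows):.2f}")
```

With these invented numbers, overall accuracy is 0.96, while the false positive rate is 0.00 for group A and 0.50 for group B.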

The sections below walk through some of the aspects to consider.

## Which groups should I slice by?

In general, a good practice is to slice by as many groups as may be affected by

your product, since you never know when performance might differ for one group or

another. However, if you aren’t sure, think about the different users who may be

engaging with your product, and how they might be affected. Consider,

especially, slices related to sensitive characteristics such as race, ethnicity,

gender, nationality, income, sexual orientation, and disability status.

**What if I don’t have data labeled for the slices I want to investigate?**

Good question. We know that many datasets don’t have ground-truth labels for

individual identity attributes.

If you find yourself in this position, we recommend a few approaches:

1. Identify if there _are_ attributes that you have that may give you some

insight into the performance across groups. For example, _geography_, while

not equivalent to ethnicity & race, may help you uncover any disparate

patterns in performance.

1. Identify if there are representative public datasets that might map well to

your problem. You can find a range of diverse and inclusive datasets on the

[Google AI site](https://ai.google/responsibilities/responsible-ai-practices/?category=fairness),

which include

[Project Respect](https://www.blog.google/technology/ai/fairness-matters-promoting-pride-and-respect-ai/),

[Inclusive Images](https://www.kaggle.com/c/inclusive-images-challenge), and

[Open Images Extended](https://ai.google/tools/datasets/open-images-extended-crowdsourced/),

among others.

1. Leverage rules or classifiers, when relevant, to label your data with

objective surface-level attributes. For example, you can label text as to

whether or not there is an identity term _in_ the sentence. Keep in mind

that classifiers have their own challenges, and if you’re not careful, may

introduce another layer of bias as well. Be clear about what your classifier

is actually classifying. For

example, an age classifier on images is in fact classifying _perceived age_.

Additionally, when possible, leverage surface-level attributes that _can_ be

objectively identified in the data. For example, it is ill-advised to build

an image classifier for race or ethnicity, because these are not visual

traits that can be defined in an image. A classifier would likely pick up on

proxies or stereotypes. Instead, building a classifier for skin tone may be

a more appropriate way to label and evaluate an image. Lastly, ensure high

accuracy for classifiers labeling such attributes.

1. Find more representative data that is labeled.
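
As a sketch of the rule-based labeling approach in the list above, the snippet below tags text with the identity terms it mentions. The term list is a deliberately tiny, hypothetical example and the function name is our own; a real list would need review by domain experts and members of the affected communities. Note that it labels only whether a term *appears* in the text, not anything about the author or subject.

```python
import re

# Hypothetical, deliberately tiny term list for illustration only.
IDENTITY_TERMS = ("gay", "lesbian", "muslim", "christian", "blind", "deaf")

# Match whole words only, so "blindfold" does not count as "blind".
_TERM_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, IDENTITY_TERMS)) + r")\b",
    re.IGNORECASE,
)


def identity_terms_in(text):
    """Return the sorted, lower-cased listed identity terms mentioned in `text`."""
    return sorted({match.group(1).lower() for match in _TERM_PATTERN.finditer(text)})


if __name__ == "__main__":
    for sentence in ("I am a Deaf Muslim woman.", "He wore a blindfold."):
        print(sentence, "->", identity_terms_in(sentence))
```

The returned terms can be stored as an extra feature column and used as a slice key during evaluation.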

**Always make sure to evaluate on multiple, diverse datasets.**

If your evaluation data is not adequately representative of your user base, or

the types of data likely to be encountered, you may end up with deceptively good

fairness metrics. Similarly, high model performance on one dataset doesn’t

guarantee high performance on others.

**Keep in mind subgroups aren’t always the best way to classify individuals.**

People are multidimensional and belong to more than one group, even within a

single dimension -- consider someone who is multiracial, or belongs to multiple

racial groups. Also, while overall metrics for a given racial group may look

equitable, particular interactions, such as race and gender together may show

unintended bias. Moreover, many subgroups have fuzzy boundaries which are

constantly being redrawn.
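
The interaction effect described above can be sketched with synthetic data. In the invented records below (placeholder attribute values, hypothetical helper names), the false positive rate looks identical when slicing by either attribute alone, and the disparity only appears when the two attributes are crossed.

```python
from collections import defaultdict


def make_negatives(race, gender, false_positives, total=10):
    """Build `total` ground-truth-negative records, the first few predicted positive."""
    return [
        {"race": race, "gender": gender, "label": 0, "prediction": int(i < false_positives)}
        for i in range(total)
    ]


# Synthetic records with placeholder attribute values, invented for illustration.
RECORDS = (
    make_negatives("r1", "g1", 4) + make_negatives("r1", "g2", 0)
    + make_negatives("r2", "g1", 0) + make_negatives("r2", "g2", 4)
)


def fpr_by(records, keys):
    """False positive rate for every combination of the given attribute keys."""
    counts = defaultdict(lambda: [0, 0])  # slice -> [false positives, negatives]
    for record in records:
        if record["label"] == 0:
            slice_key = tuple(record[key] for key in keys)
            counts[slice_key][0] += record["prediction"]
            counts[slice_key][1] += 1
    return {key: fp / n for key, (fp, n) in counts.items()}


print(fpr_by(RECORDS, ["race"]))
print(fpr_by(RECORDS, ["gender"]))
print(fpr_by(RECORDS, ["race", "gender"]))
```

Here every single-attribute slice has a false positive rate of 0.2, while the crossed slices range from 0.0 to 0.4.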

**When have I tested enough slices, and how do I know which slices to test?**

We acknowledge that there are a vast number of groups or slices that may be

relevant to test, and when possible, we recommend slicing and evaluating a

diverse and wide range of slices and then deep-diving where you spot

opportunities for improvement. It is also important to acknowledge that even

though you may not see concerns on slices you have tested, that doesn’t imply

that your product works for _all_ users, and getting diverse user feedback and

testing is important to ensure that you are continually identifying new

opportunities.

To get started, we recommend thinking through your particular use case and the

different ways users may engage with your product. How might different users

have different experiences? What does that mean for slices you should evaluate?

Collecting feedback from diverse users may also highlight potential slices to

prioritize.

## Which metrics should I choose?

When selecting which metrics to evaluate for your system, consider who will be

experiencing your model, how it will be experienced, and the effects of that

experience.

For example, how does your model give people more dignity or autonomy, or

positively impact their emotional, physical or financial wellbeing? In contrast,

how could your model’s predictions reduce people's dignity or autonomy, or

negatively impact their emotional, physical or financial wellbeing?

**In general, we recommend slicing _all your existing performance metrics as

good practice. We also recommend evaluating your metrics across

multiple thresholds_** in order

to understand how the threshold can affect the performance for different groups.

In addition, if there is a predicted label which is uniformly “good” or “bad”,

then consider reporting (for each subgroup) the rate at which that label is

predicted. For example, a “good” label would be a label whose prediction grants

a person access to some resource, or enables them to perform some action.
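
The effect of the threshold can be illustrated with a few invented scores. The sketch below (synthetic data, hypothetical helper names) reports the rate at which the positive label is predicted for each group at several thresholds. The two groups look identical at two of the thresholds and diverge at the third.

```python
def positive_rate(scores, threshold):
    """Fraction of scores at or above the threshold, i.e. predicted positive."""
    return sum(score >= threshold for score in scores) / len(scores)


def positive_rates_by_threshold(scores_by_group, thresholds):
    """Positive rate for every (group, threshold) pair."""
    return {
        group: {t: positive_rate(scores, t) for t in thresholds}
        for group, scores in scores_by_group.items()
    }


# Synthetic model scores, invented for illustration.
SCORES_BY_GROUP = {
    "group_1": [0.1, 0.4, 0.6, 0.9],
    "group_2": [0.2, 0.45, 0.55, 0.65],
}

rates = positive_rates_by_threshold(SCORES_BY_GROUP, thresholds=(0.3, 0.5, 0.7))
for group, by_threshold in rates.items():
    print(group, by_threshold)
```

Evaluating at a single threshold of 0.3 or 0.5 would miss the gap that opens at 0.7 (0.25 versus 0.0).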

## Critical fairness metrics for classification

When thinking about a classification model, think about the effects of _errors_

(the differences between the actual “ground truth” label, and the label from the

model). If some errors may pose more opportunity or harm to your users, make

sure you evaluate the rates of these errors across groups of users. These error

rates are defined below, in the metrics currently supported by the Fairness

Indicators beta.

**Over the course of the next year, we hope to release case studies of different

use cases and the metrics associated with these so that we can better highlight

when different metrics might be most appropriate.**

**Metrics available today in Fairness Indicators**

Note: There are many valuable fairness metrics that are not currently supported

in the Fairness Indicators beta. As we continue to add more metrics, we will

continue to add guidance for these metrics here. Below, you can access

instructions to add your own metrics to Fairness Indicators. Additionally,

please reach out to [tfx@tensorflow.org](mailto:tfx@tensorflow.org) if there are

metrics that you would like to see. We hope to partner with you to build this

out further.

**Positive Rate / Negative Rate**

* _Definition:_ The percentage

of data points that are classified as positive or negative, independent of

ground truth

* _Relates to:_ Demographic

Parity and Equality of Outcomes, when equal across subgroups

* _When to use this metric:_

Fairness use cases where having equal final percentages of groups is

important

**True Positive Rate / False Negative Rate**

* _Definition:_ The percentage

of positive data points (as labeled in the ground truth) that are

_correctly_ classified as positive, or the percentage of positive data

points that are _incorrectly_ classified as negative

* _Relates to:_ Equality of

Opportunity (for the positive class), when equal across subgroups

* _When to use this metric:_

Fairness use cases where it is important that the same % of qualified

candidates are rated positive in each group. This is most commonly

recommended in cases of classifying positive outcomes, such as loan

applications, school admissions, or whether content is kid-friendly

**True Negative Rate / False Positive Rate**

* _Definition:_ The percentage

of negative data points (as labeled in the ground truth) that are correctly

classified as negative, or the percentage of negative data points that are

incorrectly classified as positive

* _Relates to:_ Equality of

Opportunity (for the negative class), when equal across subgroups

* _When to use this metric:_

Fairness use cases where error rates (or misclassifying something as

positive) are more concerning than classifying the positives. This is most

common in abuse cases, where _positives_ often lead to negative actions.

These are also important for Facial Analysis Technologies such as face

detection or face attributes

Note: When both “positive” and “negative” mistakes are equally important, the

metric is called “equality of

odds”. This can be measured by

evaluating and aiming for equality across both the TNR & FNR, or both the TPR &

FPR. For example, an app that counts how many cars go past a stop sign is

roughly equally bad whether it accidentally includes an extra car (a

false positive) or accidentally excludes a car (a false negative).
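
The rate definitions above follow directly from confusion-matrix counts. The following is a minimal sketch in plain Python, not the Fairness Indicators implementation; the class and function names are our own, the counts are invented, and all denominators are assumed to be non-zero.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Confusion-matrix counts for one slice; denominators assumed non-zero."""

    tp: int
    fp: int
    tn: int
    fn: int

    @property
    def positive_rate(self):
        # Share of all data points predicted positive, independent of ground truth.
        return (self.tp + self.fp) / (self.tp + self.fp + self.tn + self.fn)

    @property
    def tpr(self):
        return self.tp / (self.tp + self.fn)

    @property
    def fnr(self):
        return self.fn / (self.tp + self.fn)

    @property
    def tnr(self):
        return self.tn / (self.tn + self.fp)

    @property
    def fpr(self):
        return self.fp / (self.tn + self.fp)


def equalized_odds_gaps(a, b):
    """Absolute TPR and FPR differences between two slices; (0, 0) means equal odds."""
    return abs(a.tpr - b.tpr), abs(a.fpr - b.fpr)


# Invented counts for two slices.
slice_a = ConfusionCounts(tp=40, fp=10, tn=40, fn=10)
slice_b = ConfusionCounts(tp=30, fp=20, tn=30, fn=20)
tpr_gap, fpr_gap = equalized_odds_gaps(slice_a, slice_b)
print(f"TPR gap={tpr_gap:.2f}, FPR gap={fpr_gap:.2f}")
```

Because TPR + FNR = 1 and TNR + FPR = 1 within a slice, comparing TPR and FPR across slices is equivalent to comparing FNR and TNR.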

**Accuracy & AUC**

* _Relates to:_ Predictive

Parity, when equal across subgroups

* _When to use these metrics:_

Cases where precision of the task is most critical (not necessarily in a

given direction), such as face identification or face clustering

**False Discovery Rate**

* _Definition:_ The percentage

of negative data points (as labeled in the ground truth) that are

incorrectly classified as positive out of all data points classified as

positive. This is also the complement of PPV (FDR = 1 - PPV)

* _Relates to:_ Predictive

Parity (also known as Calibration), when equal across subgroups

* _When to use this metric:_

Cases where the fraction of correct positive predictions should be equal

across subgroups

**False Omission Rate**

* _Definition:_ The percentage

of positive data points (as labeled in the ground truth) that are

incorrectly classified as negative out of all data points classified as

negative. This is also the complement of NPV (FOR = 1 - NPV)

* _Relates to:_ Predictive

Parity (also known as Calibration), when equal across subgroups

* _When to use this metric:_

Cases where the fraction of correct negative predictions should be equal

across subgroups

Note: When used together, False Discovery Rate and False Omission Rate relate to
Conditional Use Accuracy Equality, when FDR and FOR are both equal across
subgroups. FDR and FOR are also similar to FPR and FNR: FDR/FOR compare
FP/FN to predicted positive/negative data points, while FPR/FNR compare FP/FN to
ground truth negative/positive data points. FDR/FOR can be used instead of
FPR/FNR when predictive parity is more critical than equality of opportunity.
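
The difference in denominators can be made concrete with a small sketch. The function name `predictive_rates` and the confusion counts below are hypothetical and only illustrate the definitions given above.

```python
def _ratio(numerator, denominator):
    """Return numerator / denominator, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def predictive_rates(tp, fp, tn, fn):
    """Compute FDR/FOR (and PPV/NPV) next to FPR/FNR from confusion counts."""
    predicted_pos = tp + fp  # everything the model called positive
    predicted_neg = tn + fn  # everything the model called negative
    actual_pos = tp + fn     # ground-truth positives
    actual_neg = tn + fp     # ground-truth negatives
    return {
        # Normalized by what the model PREDICTED.
        "fdr": _ratio(fp, predicted_pos),
        "ppv": _ratio(tp, predicted_pos),
        "for": _ratio(fn, predicted_neg),
        "npv": _ratio(tn, predicted_neg),
        # Normalized by the GROUND TRUTH.
        "fpr": _ratio(fp, actual_neg),
        "fnr": _ratio(fn, actual_pos),
    }


# Hypothetical counts for one subgroup.
rates = predictive_rates(tp=40, fp=10, tn=45, fn=5)
print(rates)
```

With these counts FDR is 10/50 while FPR is 10/55: the same false positives are divided by different totals, which is why the two families of metrics can disagree about which subgroup is worse off.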

**Overall Flip Rate / Positive to Negative Prediction Flip Rate / Negative to
Positive Prediction Flip Rate**

* *Definition:* The

probability that the classifier gives a different prediction if the identity

attribute in a given feature were changed.

* *Relates to:* Counterfactual

fairness

* *When to use this metric:*

When determining whether the model’s prediction changes when the sensitive
attributes referenced in the example are removed or replaced. If it does,
consider using the Counterfactual Logit Pairing technique within the
TensorFlow Model Remediation library.

**Flip Count / Positive to Negative Prediction Flip Count / Negative to Positive
Prediction Flip Count**

* *Definition:* The number of

times the classifier gives a different prediction if the identity term in a

given example were changed.

* *Relates to:* Counterfactual

fairness

* *When to use this metric:*

When determining whether the model’s prediction changes when the sensitive
attributes referenced in the example are removed or replaced. If it does,
consider using the Counterfactual Logit Pairing technique within the
TensorFlow Model Remediation library.
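
As a rough illustration of flip counts and flip rates, the sketch below compares model scores on original examples with scores on counterfactual versions of the same examples (identity term removed or replaced). The function name `flip_metrics`, the 0.5 threshold, and the choice to divide every rate by the total number of examples are assumptions made for this sketch; the library's own normalization may differ.

```python
def flip_metrics(original_scores, counterfactual_scores, threshold=0.5):
    """Count prediction flips between original and counterfactual scores."""
    if len(original_scores) != len(counterfactual_scores):
        raise ValueError("score lists must have the same length")

    pos_to_neg = 0
    neg_to_pos = 0
    for original, counterfactual in zip(original_scores, counterfactual_scores):
        original_positive = original > threshold
        counterfactual_positive = counterfactual > threshold
        if original_positive and not counterfactual_positive:
            pos_to_neg += 1
        elif not original_positive and counterfactual_positive:
            neg_to_pos += 1

    total = len(original_scores)
    flips = pos_to_neg + neg_to_pos

    def rate(count):
        # Assumption: every rate is normalized by the total example count.
        return count / total if total else float("nan")

    return {
        "flip_count": flips,
        "pos_to_neg_flip_count": pos_to_neg,
        "neg_to_pos_flip_count": neg_to_pos,
        "flip_rate": rate(flips),
        "pos_to_neg_flip_rate": rate(pos_to_neg),
        "neg_to_pos_flip_rate": rate(neg_to_pos),
    }


# Hypothetical scores for five examples and their counterfactual versions.
original = [0.9, 0.8, 0.2, 0.4, 0.7]
counterfactual = [0.3, 0.85, 0.6, 0.1, 0.75]
result = flip_metrics(original, counterfactual)
print(result)
```

Here the first example flips from positive to negative and the third from negative to positive, so two of the five predictions depend on the identity term.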

**Examples of which metrics to select**

* _Systematically failing to detect faces in a camera app can lead to a
  negative user experience for certain user groups._ In this case, false
  negatives in a face detection system may lead to product failure, while a
  false positive (detecting a face when there isn’t one) may pose a slight
  annoyance to the user. Thus, evaluating and minimizing the false negative
  rate is important for this use case.

* _Unfairly marking text comments from certain people as “spam” or “high
  toxicity” in a moderation system leads to certain voices being silenced._ On
  one hand, a high false positive rate leads to unfair censorship. On the
  other, a high false negative rate could lead to a proliferation of toxic
  content from certain groups, which may both harm the user and constitute a
  representational harm for those groups. Thus, both metrics are important to
  consider, in addition to metrics which take into account all types of errors
  such as accuracy or AUC.

**Don’t see the metrics you’re looking for?**

Follow the documentation
[here](https://tensorflow.github.io/model-analysis/post_export_metrics/)
to add your own custom metric.

## Final notes

**A gap in a metric between two groups can be a sign that your model may have
unfair skews**. You should interpret your results according to your use case.
However, the first sign that you may be treating one set of users _unfairly_ is
when the metrics for that set of users differ significantly from your overall
metrics. Make sure to account for confidence intervals when looking at these
differences. When you have too few samples in a particular slice, the difference
between metrics may not be accurate.
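
To see why small slices need care, the sketch below computes a 95% Wilson score interval for an observed error rate. This is an illustration that is independent of the library: the Wilson interval is one standard choice for a proportion and is not necessarily the method Fairness Indicators uses for its own confidence intervals. The function names and the counts are hypothetical.

```python
import math


def wilson_interval(errors, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = errors / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = (
        z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    )
    return center - half_width, center + half_width


def intervals_overlap(a, b):
    """True when two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical false positive counts.
overall = wilson_interval(errors=150, n=1000)      # observed rate 0.15
small_slice = wilson_interval(errors=3, n=10)      # observed rate 0.30
large_slice = wilson_interval(errors=300, n=1000)  # observed rate 0.30

print(overall, small_slice, large_slice)
print(intervals_overlap(small_slice, overall))  # small slice: inconclusive
print(intervals_overlap(large_slice, overall))  # large slice: clear gap
```

Both slices show the same observed rate, but only the large slice has an interval that is clearly separated from the overall one. Overlapping intervals are a conservative heuristic rather than a formal significance test, so treat them as a prompt to collect more data for the slice.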

**Achieving equality across groups on Fairness Indicators doesn’t mean the model
is fair.** Systems are highly complex, and achieving equality on one (or even
all) of the provided metrics can’t guarantee fairness.

**Fairness evaluations should be run throughout the development process and
post-launch (not the day before launch).** Just like improving your product is
an ongoing process and subject to adjustment based on user and market feedback,
making your product fair and equitable requires ongoing attention. As different
aspects of the model change, such as training data, inputs from other models,
or the design itself, fairness metrics are likely to change. “Clearing the bar”
once isn’t enough to ensure that all of the interacting components have remained
intact over time.

**Adversarial testing should be performed for rare, malicious examples.**

Fairness evaluations aren’t meant to replace adversarial testing. Additional

defense against rare, targeted examples is crucial as these examples probably

will not manifest in training or evaluation data.

================================================

FILE: docs/index.md

================================================

# Fairness Indicators

/// html | div[style='float: left; width: 50%;']

Fairness Indicators is a library that enables easy computation of commonly-identified fairness metrics for binary and multiclass classifiers. With the Fairness Indicators tool suite, you can:

- Compute commonly-identified fairness metrics for classification models

- Compare model performance across subgroups to a baseline, or to other models

- Use confidence intervals to surface statistically significant disparities

- Perform evaluation over multiple thresholds

Use Fairness Indicators via the:

- [Evaluator component](https://tensorflow.github.io/tfx/guide/evaluator/) in a [TFX pipeline](https://tensorflow.github.io/tfx/)

- [TensorBoard plugin](https://github.com/tensorflow/tensorboard/blob/master/docs/fairness-indicators.md)

- [TensorFlow Model Analysis library](https://tensorflow.github.io/tfx/guide/fairness_indicators/)

- [Model Agnostic TFMA library](https://tensorflow.github.io/tfx/guide/fairness_indicators/#using-fairness-indicators-with-non-tensorflow-models)

///

/// html | div[style='float: right;width: 50%;']

```python

eval_config_pbtxt = """
model_specs {
  label_key: "%s"
}
metrics_specs {
  metrics {
    class_name: "FairnessIndicators"
    config: '{ "thresholds": [0.25, 0.5, 0.75] }'
  }
  metrics {
    class_name: "ExampleCount"
  }
}
slicing_specs {}
slicing_specs {
  feature_keys: "%s"
}
options {
  compute_confidence_intervals { value: False }
  disabled_outputs{values: "analysis"}
}
""" % (LABEL_KEY, GROUP_KEY)

```

///

/// html | div[style='clear: both;']

///

-

### [ML Practicum: Fairness in Perspective API using Fairness Indicators](https://developers.google.com/machine-learning/practica/fairness-indicators?utm_source=github&utm_medium=github&utm_campaign=fi-practicum&utm_term=&utm_content=repo-body)

---

[Try the Case Study](https://developers.google.com/machine-learning/practica/fairness-indicators?utm_source=github&utm_medium=github&utm_campaign=fi-practicum&utm_term=&utm_content=repo-body)

-

### [Fairness Indicators on the TensorFlow blog](https://blog.tensorflow.org/2019/12/fairness-indicators-fair-ML-systems.html)

---