## Updates

* 11/26/2020 v1.0 release

* 02/25/2021 improve camera and lidar calibration parameter

* 03/04/2021 update ROS bag with new tf (v1.1 release)

* 06/14/2021 fix missing labels of point cloud and fix wrong poses

* 01/24/2022 add Velodyne point clouds in kitti format and labels transfered from Ouster

## Overview

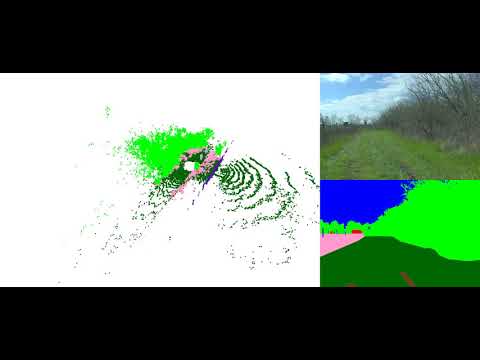

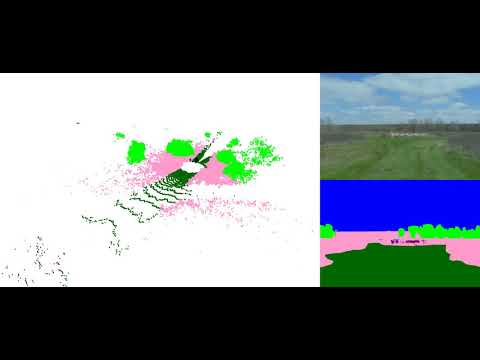

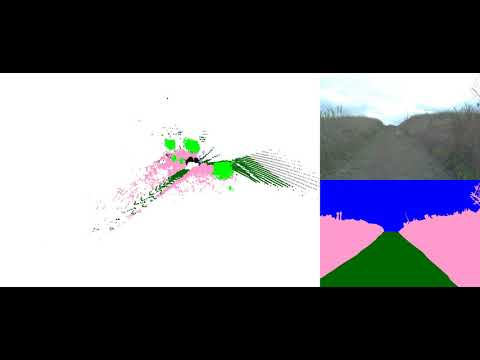

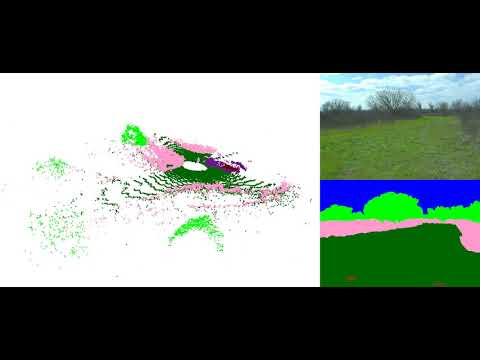

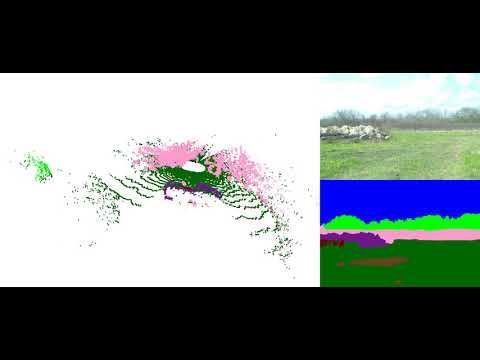

Semantic scene understanding is crucial for robust and safe autonomous navigation, particularly so in off-road environments. Recent deep learning advances for 3D semantic segmentation rely heavily on large sets of training data; however, existing autonomy datasets represent urban environments or lack multimodal off-road data. We fill this gap with RELLIS-3D, a multimodal dataset collected in an off-road environment containing annotations for **13,556 LiDAR scans** and **6,235 images**. The data was collected on the Rellis Campus of Texas A\&M University and presents challenges to existing algorithms related to class imbalance and environmental topography. Additionally, we evaluate the current state of the art deep learning semantic segmentation models on this dataset. Experimental results show that RELLIS-3D presents challenges for algorithms designed for segmentation in urban environments. Except for the annotated data, the dataset also provides full-stack sensor data in ROS bag format, including **RGB camera images**, **LiDAR point clouds**, **a pair of stereo images**, **high-precision GPS measurement**, and **IMU data**. This novel dataset provides the resources needed by researchers to develop more advanced algorithms and investigate new research directions to enhance autonomous navigation in off-road environments.

### Recording Platform

* [Clearpath Robobtics Warthog](https://clearpathrobotics.com/warthog-unmanned-ground-vehicle-robot/)

### Sensor Setup

* 64 channels Lidar: [Ouster OS1](https://ouster.com/products/os1-lidar-sensor)

* 32 Channels Lidar: [Velodyne Ultra Puck](https://velodynelidar.com/vlp-32c.html)

* 3D Stereo Camera: [Nerian Karmin2](https://nerian.com/products/karmin2-3d-stereo-camera/) + [Nerian SceneScan](https://nerian.com/products/scenescan-stereo-vision/) [(Sensor Configuration)](https://nerian.com/support/calculator/?1,10,0,6,2,1600,1200,1,1,1,800,592,1,6,2,0,61.4,0,0,1,1,0,5,0,0,0,0.66,0,1,25,1,0,1,256,0.25,256,4.0,5.0,0,1.5,1,#results)

* RGB Camera: [Basler acA1920-50gc](https://www.baslerweb.com/en/products/cameras/area-scan-cameras/ace/aca1920-50gc/) + [Edmund Optics 16mm/F1.8 86-571](https://www.edmundoptics.com/p/16mm-focal-length-hp-series-fixed-focal-length-lens/28990/)

* Inertial Navigation System (GPS/IMU): [Vectornav VN-300 Dual Antenna GNSS/INS](https://www.vectornav.com/products/vn-300)

## Folder structure

Rellis-3D

├── pt_test.lst

├── pt_val.lst

├── pt_train.lst

├── pt_test.lst

├── pt_train.lst

├── pt_val.lst

├── 00000

├── os1_cloud_node_kitti_bin/ -- directory containing ".bin" files with Ouster 64-Channels point clouds.

├── os1_cloud_node_semantickitti_label_id/ -- containing, ".label" files for Ouster Lidar point cloud with manually labelled semantics label

├── vel_cloud_node_kitti_bin/ -- directory containing ".bin" files with Velodyne 32-Channels point clouds.

├── vel_cloud_node_semantickitti_label_id/ -- containing, ".label" files for Velodyne Lidar point cloud transfered from Ouster point cloud.

├── pylon_camera_node/ -- directory containing ".png" files from the color camera.

├── pylon_camera_node_label_color -- color image lable

├── pylon_camera_node_label_id -- id image lable

├── calib.txt -- calibration of velodyne vs. camera. needed for projection of point cloud into camera.

└── poses.txt -- file containing the poses of every scan.

## Download Link on BaiDu Pan:

链接: https://pan.baidu.com/s/1akqSm7mpIMyUJhn_qwg3-w?pwd=4gk3 提取码: 4gk3 复制这段内容后打开百度网盘手机App,操作更方便哦

## Annotated Data:

### Ontology:

With the goal of providing multi-modal data to enhance autonomous off-road navigation, we defined an ontology of object and terrain classes, which largely derives from [the RUGD dataset](http://rugd.vision/) but also includes unique terrain and object classes not present in RUGD. Specifically, sequences from this dataset includes classes such as mud, man-made barriers, and rubble piles. Additionally, this dataset provides a finer-grained class structure for water sources, i.e., puddle and deep water, as these two classes present different traversability scenarios for most robotic platforms. Overall, 20 classes (including void class) are present in the data.

**Ontology Definition** ([Download 18KB](https://drive.google.com/file/d/1K8Zf0ju_xI5lnx3NTDLJpVTs59wmGPI6/view?usp=sharing))

### Images Statics:

Note: Due to the limitation of Google Drive, the downloads might be constrained. Please wait for 24h and try again. If you still can't access the file, please email maskjp@tamu.edu with the title "RELLIS-3D Access Request"..

### Image Download:

**Image with Annotation Examples** ([Download 3MB](https://drive.google.com/file/d/1wIig-LCie571DnK72p2zNAYYWeclEz1D/view?usp=sharing))

**Full Images** ([Download 11GB](https://drive.google.com/file/d/1F3Leu0H_m6aPVpZITragfreO_SGtL2yV/view?usp=sharing))

**Full Image Annotations Color Format** ([Download 119MB](https://drive.google.com/file/d/1HJl8Fi5nAjOr41DPUFmkeKWtDXhCZDke/view?usp=sharing))

**Full Image Annotations ID Format** ([Download 94MB](https://drive.google.com/file/d/16URBUQn_VOGvUqfms-0I8HHKMtjPHsu5/view?usp=sharing))

**Image Split File** ([44KB](https://drive.google.com/file/d/1zHmnVaItcYJAWat3Yti1W_5Nfux194WQ/view?usp=sharing))

### LiDAR Scans Statics:

### LiDAR Download:

**Ouster LiDAR with Annotation Examples** ([Download 24MB](https://drive.google.com/file/d/1QikPnpmxneyCuwefr6m50fBOSB2ny4LC/view?usp=sharing))

**Ouster LiDAR with Color Annotation PLY Format** ([Download 26GB](https://drive.google.com/file/d/1BZWrPOeLhbVItdN0xhzolfsABr6ymsRr/view?usp=sharing))

The header of the PLY file is described as followed:

```

element vertex

property float x

property float y

property float z

property float intensity

property uint t

property ushort reflectivity

property uchar ring

property ushort noise

property uint range

property uchar label

property uchar red

property uchar green

property uchar blue

```

To visualize the color of the ply file, please use [CloudCompare](https://www.danielgm.net/cc/) or [Open3D](http://www.open3d.org/). Meshlab has problem to visualize the color.

**Ouster LiDAR SemanticKITTI Format** ([Download 14GB](https://drive.google.com/file/d/1lDSVRf_kZrD0zHHMsKJ0V1GN9QATR4wH/view?usp=sharing))

To visualize the datasets using the SemanticKITTI tools, please use this fork: [https://github.com/unmannedlab/point_labeler](https://github.com/unmannedlab/point_labeler)

**Ouster LiDAR Annotation SemanticKITTI Format** ([Download 174MB](https://drive.google.com/file/d/12bsblHXtob60KrjV7lGXUQTdC5PhV8Er/view?usp=sharing))

**Ouster LiDAR Scan Poses files** ([Download 174MB](https://drive.google.com/file/d/1V3PT_NJhA41N7TBLp5AbW31d0ztQDQOX/view?usp=sharing))

**Ouster LiDAR Split File** ([75KB](https://drive.google.com/file/d/1raQJPySyqDaHpc53KPnJVl3Bln6HlcVS/view?usp=sharing))

**Velodyne LiDAR SemanticKITTI Format** ([Download 5.58GB](https://drive.google.com/file/d/1PiQgPQtJJZIpXumuHSig5Y6kxhAzz1cz/view?usp=sharing))

**Velodyne LiDAR Annotation SemanticKITTI Format** ([Download 143.6MB](https://drive.google.com/file/d/1n-9FkpiH4QUP7n0PnQBp-s7nzbSzmxp8/view?usp=sharing))

### Calibration Download:

**Camera Instrinsic** ([Download 2KB](https://drive.google.com/file/d/1NAigZTJYocRSOTfgFBddZYnDsI_CSpwK/view?usp=sharing))

**Basler Camera to Ouster LiDAR** ([Download 3KB](https://drive.google.com/file/d/19EOqWS9fDUFp4nsBrMCa69xs9LgIlS2e/view?usp=sharing))

**Velodyne LiDAR to Ouster LiDAR** ([Download 3KB](https://drive.google.com/file/d/1T6yPwcdzJoU-ifFRelLtDLPuPQswIQwf/view?usp=sharing))

**Stereo Calibration** ([Download 3KB](https://drive.google.com/file/d/1cP5-l_nYt3kZ4hZhEAHEdpt2fzToar0R/view?usp=sharing))

**Calibration Raw Data** ([Download 774MB](https://drive.google.com/drive/folders/1VAb-98lh6HWEe_EKLhUC1Xle0jkpp2Fl?usp=sharing

))

## Benchmarks

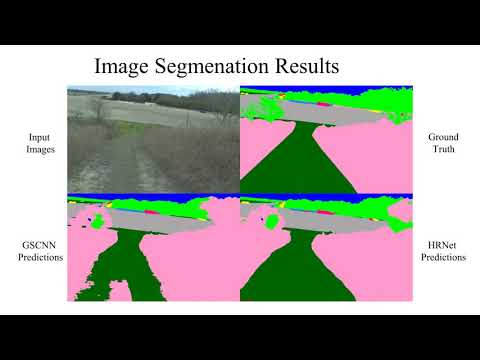

### Image Semantic Segmenation

models | sky | grass |tr ee | bush | concrete | mud | person | puddle | rubble | barrier | log | fence | vehicle | object | pole | water | asphalt | building | mean

-------| ----| ------|------|------|----------|-----| -------| -------|--------|---------|-----|-------| --------| -------|------|-------|---------|----------| ----

[HRNet+OCR](https://github.com/HRNet/HRNet-Semantic-Segmentation/tree/HRNet-OCR) | 96.94 | 90.20 | 80.53 | 76.76 | 84.22 | 43.29 | 89.48 | 73.94 | 62.03 | 54.86 | 0.00 | 39.52 | 41.54 | 46.44 | 9.51 | 0.72 | 33.25 | 4.60 | 48.83

[GSCNN](https://github.com/nv-tlabs/GSCNN) | 97.02 | 84.95 | 78.52 | 70.33 | 83.82 | 45.52 | 90.31 | 71.49 | 66.03 | 55.12 | 2.92 | 41.86 | 46.51 | 54.64 | 6.90 | 0.94 | 44.18 | 11.47 | 50.13

[](https://www.youtube.com/watch?v=vr3g6lCTKRM)

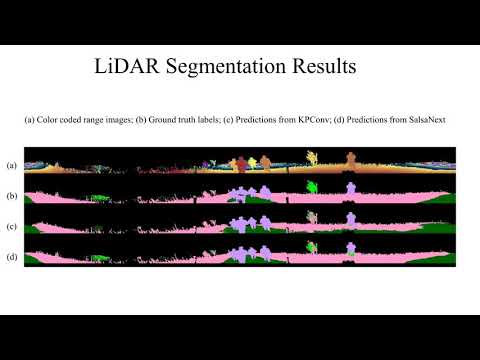

### LiDAR Semantic Segmenation

models | sky | grass |tr ee | bush | concrete | mud | person | puddle | rubble | barrier | log | fence | vehicle | object | pole | water | asphalt | building | mean

-------| ----| ------|------|------|----------|-----| -------| -------|--------|---------|-----|-------| --------| -------|------|-------|---------|----------| ----

[SalsaNext](https://github.com/Halmstad-University/SalsaNext) | - | 64.74 | 79.04 | 72.90 | 75.27 | 9.58 | 83.17 | 23.20 | 5.01 | 75.89 | 18.76 | 16.13| 23.12 | - | 56.26 | 0.00 | - | - | 40.20

[KPConv](https://github.com/HuguesTHOMAS/KPConv) | - | 56.41 | 49.25 | 58.45 | 33.91 | 0.00 | 81.20 | 0.00 | 0.00 | 0.00 | 0.00 | 0.40 | 0.00 | - | 0.00 | 0.00 | - | - | 18.64

[](https://www.youtube.com/watch?v=wkm8UiVNGao)

### Benchmark Reproduction

To reproduce the results, please refer to [here](./benchmarks/README.md)

## ROS Bag Raw Data

Data included in raw ROS bagfiles:

Topic Name | Message Tpye | Message Descriptison

------------ | ------------- | ---------------------------------

/img_node/intensity_image | sensor_msgs/Image | Intensity image generated by ouster Lidar

/img_node/noise_image | sensor_msgs/Image | Noise image generated by ouster Lidar

/img_node/range_image | sensor_msgs/Image | Range image generated by ouster Lidar

/imu/data | sensor_msgs/Imu | Filtered imu data from embeded imu of Warthog

/imu/data_raw | sensor_msgs/Imu | Raw imu data from embeded imu of Warthog

/imu/mag | sensor_msgs/MagneticField | Raw magnetic field data from embeded imu of Warthog

/left_drive/status/battery_current | std_msgs/Float64 |

/left_drive/status/battery_voltage | std_msgs/Float64 |

/mcu/status | warthog_msgs/Status |

/nerian/left/camera_info | sensor_msgs/CameraInfo |

/nerian/left/image_raw | sensor_msgs/Image | Left image from Nerian Karmin2

/nerian/right/camera_info | sensor_msgs/CameraInfo |

/nerian/right/image_raw | sensor_msgs/Image | Right image from Nerian Karmin2

/odometry/filtered | nav_msgs/Odometry | A filtered local-ization estimate based on wheel odometry (en-coders) and integrated IMU from Warthog

/os1_cloud_node/imu | sensor_msgs/Imu | Raw imu data from embeded imu of Ouster Lidar

/os1_cloud_node/points | sensor_msgs/PointCloud2 | Point cloud data from Ouster Lidar

/os1_node/imu_packets | ouster_ros/PacketMsg | Raw imu data from Ouster Lidar

/os1_node/lidar_packets | ouster_ros/PacketMsg | Raw lidar data from Ouster Lidar

/pylon_camera_node/camera_info | sensor_msgs/CameraInfo |

/pylon_camera_node/image_raw | sensor_msgs/Image |

/right_drive/status/battery_current | std_msgs/Float64 |

/right_drive/status/battery_voltage | std_msgs/Float64 |

/tf | tf2_msgs/TFMessage |

/tf_static | tf2_msgs/TFMessage

/vectornav/GPS | sensor_msgs/NavSatFix | INS data from VectorNav-VN300

/vectornav/IMU | sensor_msgs/Imu | Imu data from VectorNav-VN300

/vectornav/Mag | sensor_msgs/MagneticField | Raw magnetic field data from VectorNav-VN300

/vectornav/Odom | nav_msgs/Odometry | Odometry from VectorNav-VN300

/vectornav/Pres | sensor_msgs/FluidPressure |

/vectornav/Temp | sensor_msgs/Temperature |

/velodyne_points | sensor_msgs/PointCloud2 | PointCloud produced by the Velodyne Lidar

/warthog_velocity_controller/cmd_vel | geometry_msgs/Twist |

/warthog_velocity_controller/odom | nav_msgs/Odometry |

### ROS Bag Download

The following are the links for the ROS Bag files.

* Synced data (60 seconds example [2 GB](https://drive.google.com/file/d/13EHwiJtU0aAWBQn-ZJhTJwC1Yx2zDVUv/view?usp=sharing)): includes synced */os1_cloud_node/points*, */pylon_camera_node/camera_info* and */pylon_camera_node/image_raw*

* Full-stack Merged data:(60 seconds example [4.2 GB](https://drive.google.com/file/d/1qSeOoY6xbQGjcrZycgPM8Ty37eKDjpJL/view?usp=sharing)): includes all data in above table and extrinsic calibration info data embedded in the tf tree.

* Full-stack Split Raw data:(60 seconds example [4.3 GB](https://drive.google.com/file/d/1-TDpelP4wKTWUDTIn0dNuZIT3JkBoZ_R/view?usp=sharing)): is orignal data recorded by ```rosbag record``` command.

**Sequence 00000**: Synced data: ([12GB](https://drive.google.com/file/d/1bIb-6fWbaiI9Q8Pq9paANQwXWn7GJDtl/view?usp=sharing)) Full-stack Merged data: ([23GB](https://drive.google.com/file/d/1grcYRvtAijiA0Kzu-AV_9K4k2C1Kc3Tn/view?usp=sharing)) Full-stack Split Raw data: ([29GB](https://drive.google.com/drive/folders/1IZ-Tn_kzkp82mNbOL_4sNAniunD7tsYU?usp=sharing))

[](https://www.youtube.com/watch?v=Qc7IepWGKr8)

**Sequence 00001**: Synced data: ([8GB](https://drive.google.com/file/d/1xNjAFE3cv6X8n046irm8Bo5QMerNbwP1/view?usp=sharing)) Full-stack Merged data: ([16GB](https://drive.google.com/file/d/1geoU45pPavnabQ0arm4ILeHSsG3cU6ti/view?usp=sharing)) Full-stack Split Raw data: ([22GB](https://drive.google.com/drive/folders/1hf-vF5zyTKcCLqIiddIGdemzKT742T1t?usp=sharing))

[](https://www.youtube.com/watch?v=nO5JADjDWQ0)

**Sequence 00002**: Synced data: ([14GB](https://drive.google.com/file/d/1gy0ehP9Buj-VkpfvU9Qwyz1euqXXQ_mj/view?usp=sharing)) Full-stack Merged data: ([28GB](https://drive.google.com/file/d/1h0CVg62jTXiJ91LnR6md-WrUBDxT543n/view?usp=sharing)) Full-stack Split Raw data: ([37GB](https://drive.google.com/drive/folders/1R8jP5Qo7Z6uKPoG9XUvFCStwJu6rtliu?usp=sharing))

[](https://www.youtube.com/watch?v=aXaOmzjHmNE)

**Sequence 00003**:Synced data: ([8GB](https://drive.google.com/file/d/1vCeZusijzyn1ZrZbg4JaHKYSc2th7GEt/view?usp=sharing)) Full-stack Merged data: ([15GB](https://drive.google.com/file/d/1glJzgnTYLIB_ar3CgHpc_MBp5AafQpy9/view?usp=sharing)) Full-stack Split Raw data: ([19GB](https://drive.google.com/drive/folders/1iP0k6dbmPdAH9kkxs6ugi6-JbrkGhm5o?usp=sharing))

[](https://www.youtube.com/watch?v=Kjo3tGDSbtU)

**Sequence 00004**:Synced data: ([7GB](https://drive.google.com/file/d/1gxODhAd8CBM5AGvsoyuqN7yGpWazzmVy/view?usp=sharing)) Full-stack Merged data: ([14GB](https://drive.google.com/file/d/1AuEjX0do3jGZhGKPszSEUNoj85YswNya/view?usp=sharing)) Full-stack Split Raw data: ([17GB](https://drive.google.com/drive/folders/1WV9pecF2beESyM7N29W-nhi-JaoKvEqc?usp=sharing))

[](https://www.youtube.com/watch?v=lLLYTI4TCD4)

### ROS Environment Installment

The ROS workspace includes a plaftform description package which can provide rough tf tree for running the rosbag.

To run cartographer on RELLIS-3D please refer to [here](https://github.com/unmannedlab/cartographer)

## Full Data Download:

[Access Link](https://drive.google.com/drive/folders/1aZ1tJ3YYcWuL3oWKnrTIC5gq46zx1bMc?usp=sharing)

## Citation

```

@misc{jiang2020rellis3d,

title={RELLIS-3D Dataset: Data, Benchmarks and Analysis},

author={Peng Jiang and Philip Osteen and Maggie Wigness and Srikanth Saripalli},

year={2020},

eprint={2011.12954},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

```

## Collaborator

## License

All datasets and code on this page are copyright by us and published under the Creative Commons Attribution-NonCommercial-ShareAlike 3.0 License.

## Related Work

[SemanticUSL: A Dataset for Semantic Segmentation Domain Adatpation](https://unmannedlab.github.io/research/SemanticUSL)

[LiDARNet: A Boundary-Aware Domain Adaptation Model for Lidar Point Cloud Semantic Segmentation](https://unmannedlab.github.io/research/LiDARNet)

[A RUGD Dataset for Autonomous Navigation and Visual Perception inUnstructured Outdoor Environments](http://rugd.vision/)

================================================

FILE: benchmarks/GSCNN-master/.gitignore

================================================

logs/

network/pretrained_models/

__pycache__/

================================================

FILE: benchmarks/GSCNN-master/Dockerfile

================================================

FROM pytorch/pytorch:1.0-cuda10.0-cudnn7-devel

RUN apt-get -y update

RUN apt-get -y upgrade

RUN apt-get update \

&& apt-get install -y software-properties-common wget \

&& add-apt-repository -y ppa:ubuntu-toolchain-r/test \

&& apt-get update \

&& apt-get install -y make git curl vim vim-gnome

# Install apt-get

RUN apt-get install -y python3-pip python3-dev vim htop python3-tk pkg-config

RUN pip3 install --upgrade pip==9.0.1

# Install from pip

RUN pip3 install pyyaml \

scipy==1.1.0 \

numpy \

tensorflow \

scikit-learn \

scikit-image \

matplotlib \

opencv-python \

torch==1.0.0 \

torchvision==0.2.0 \

torch-encoding==1.0.1 \

tensorboardX \

tqdm

================================================

FILE: benchmarks/GSCNN-master/LICENSE

================================================

Copyright (C) 2019 NVIDIA Corporation. Towaki Takikawa, David Acuna, Varun Jampani, Sanja Fidler

All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

Permission to use, copy, modify, and distribute this software and its documentation

for any non-commercial purpose is hereby granted without fee, provided that the above

copyright notice appear in all copies and that both that copyright notice and this

permission notice appear in supporting documentation, and that the name of the author

not be used in advertising or publicity pertaining to distribution of the software

without specific, written prior permission.

THE AUTHOR DISCLAIMS ALL WARRANTIES WITH REGARD TO THIS SOFTWARE, INCLUDING ALL

IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR ANY PARTICULAR PURPOSE.

IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR ANY SPECIAL, INDIRECT OR CONSEQUENTIAL

DAMAGES OR ANY DAMAGES WHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS,

WHETHER IN AN ACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING

OUT OF OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.

================================================

FILE: benchmarks/GSCNN-master/README.md

================================================

# GSCNN

This is the official code for:

#### Gated-SCNN: Gated Shape CNNs for Semantic Segmentation

[Towaki Takikawa](https://tovacinni.github.io), [David Acuna](http://www.cs.toronto.edu/~davidj/), [Varun Jampani](https://varunjampani.github.io), [Sanja Fidler](http://www.cs.toronto.edu/~fidler/)

ICCV 2019

**[[Paper](https://arxiv.org/abs/1907.05740)] [[Project Page](https://nv-tlabs.github.io/GSCNN/)]**

Based on based on https://github.com/NVIDIA/semantic-segmentation.

## License

```

Copyright (C) 2019 NVIDIA Corporation. Towaki Takikawa, David Acuna, Varun Jampani, Sanja Fidler

All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

Permission to use, copy, modify, and distribute this software and its documentation

for any non-commercial purpose is hereby granted without fee, provided that the above

copyright notice appear in all copies and that both that copyright notice and this

permission notice appear in supporting documentation, and that the name of the author

not be used in advertising or publicity pertaining to distribution of the software

without specific, written prior permission.

THE AUTHOR DISCLAIMS ALL WARRANTIES WITH REGARD TO THIS SOFTWARE, INCLUDING ALL

IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR ANY PARTICULAR PURPOSE.

IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR ANY SPECIAL, INDIRECT OR CONSEQUENTIAL

DAMAGES OR ANY DAMAGES WHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS,

WHETHER IN AN ACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING

OUT OF OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.

~

```

## Usage

##### Clone this repo

```bash

git clone https://github.com/nv-tlabs/GSCNN

cd GSCNN

```

#### Python requirements

Currently, the code supports Python 3

* numpy

* PyTorch (>=1.1.0)

* torchvision

* scipy

* scikit-image

* tensorboardX

* tqdm

* torch-encoding

* opencv

* PyYAML

#### Download pretrained models

Download the pretrained model from the [Google Drive Folder](https://drive.google.com/file/d/1wlhAXg-PfoUM-rFy2cksk43Ng3PpsK2c/view), and save it in 'checkpoints/'

#### Download inferred images

Download (if needed) the inferred images from the [Google Drive Folder](https://drive.google.com/file/d/105WYnpSagdlf5-ZlSKWkRVeq-MyKLYOV/view)

#### Evaluation (Cityscapes)

```bash

python train.py --evaluate --snapshot checkpoints/best_cityscapes_checkpoint.pth

```

#### Training

A note on training- we train on 8 NVIDIA GPUs, and as such, training will be an issue with WiderResNet38 if you try to train on a single GPU.

If you use this code, please cite:

```

@article{takikawa2019gated,

title={Gated-SCNN: Gated Shape CNNs for Semantic Segmentation},

author={Takikawa, Towaki and Acuna, David and Jampani, Varun and Fidler, Sanja},

journal={ICCV},

year={2019}

}

```

================================================

FILE: benchmarks/GSCNN-master/config.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

# Code adapted from:

# https://github.com/facebookresearch/Detectron/blob/master/detectron/core/config.py

Source License

# Copyright (c) 2017-present, Facebook, Inc.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

##############################################################################

#

# Based on:

# --------------------------------------------------------

# Fast R-CNN

# Copyright (c) 2015 Microsoft

# Licensed under The MIT License [see LICENSE for details]

# Written by Ross Girshick

# --------------------------------------------------------

"""

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

from __future__ import unicode_literals

import copy

import six

import os.path as osp

from ast import literal_eval

import numpy as np

import yaml

import torch

import torch.nn as nn

from torch.nn import init

from utils.AttrDict import AttrDict

__C = AttrDict()

# Consumers can get config by:

# from fast_rcnn_config import cfg

cfg = __C

__C.EPOCH = 0

__C.CLASS_UNIFORM_PCT=0.0

__C.BATCH_WEIGHTING=False

__C.BORDER_WINDOW=1

__C.REDUCE_BORDER_EPOCH= -1

__C.STRICTBORDERCLASS= None

__C.DATASET =AttrDict()

__C.DATASET.CITYSCAPES_DIR='/home/usl/Datasets/cityscapes/'

__C.DATASET.RELLIS_DIR='/path/to/RELLIS-3D/'

__C.DATASET.CV_SPLITS=3

__C.MODEL = AttrDict()

__C.MODEL.BN = 'regularnorm'

__C.MODEL.BNFUNC = torch.nn.BatchNorm2d

__C.MODEL.BIGMEMORY = False

def assert_and_infer_cfg(args, make_immutable=True):

"""Call this function in your script after you have finished setting all cfg

values that are necessary (e.g., merging a config from a file, merging

command line config options, etc.). By default, this function will also

mark the global cfg as immutable to prevent changing the global cfg settings

during script execution (which can lead to hard to debug errors or code

that's harder to understand than is necessary).

"""

if args.batch_weighting:

__C.BATCH_WEIGHTING=True

if args.syncbn:

import encoding

__C.MODEL.BN = 'syncnorm'

__C.MODEL.BNFUNC = encoding.nn.BatchNorm2d

else:

__C.MODEL.BNFUNC = torch.nn.BatchNorm2d

print('Using regular batch norm')

if make_immutable:

cfg.immutable(True)

================================================

FILE: benchmarks/GSCNN-master/datasets/__init__.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

"""

from datasets import cityscapes, rellis

import torchvision.transforms as standard_transforms

import torchvision.utils as vutils

import transforms.joint_transforms as joint_transforms

import transforms.transforms as extended_transforms

from torch.utils.data import DataLoader

def setup_loaders(args):

'''

input: argument passed by the user

return: training data loader, validation data loader loader, train_set

'''

if args.dataset == 'cityscapes':

args.dataset_cls = cityscapes

args.train_batch_size = args.bs_mult * args.ngpu

if args.bs_mult_val > 0:

args.val_batch_size = args.bs_mult_val * args.ngpu

else:

args.val_batch_size = args.bs_mult * args.ngpu

mean_std = ([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

elif args.dataset == "rellis":

args.dataset_cls = rellis

args.train_batch_size = args.bs_mult * args.ngpu

if args.bs_mult_val > 0:

args.val_batch_size = args.bs_mult_val * args.ngpu

else:

args.val_batch_size = args.bs_mult * args.ngpu

mean_std = ([0.496588, 0.59493099, 0.53358843], [0.496588, 0.59493099, 0.53358843])

else:

raise

args.num_workers = 4 * args.ngpu

if args.test_mode:

args.num_workers = 0 #1

# Geometric image transformations

train_joint_transform_list = [

joint_transforms.RandomSizeAndCrop(args.crop_size,

False,

pre_size=args.pre_size,

scale_min=args.scale_min,

scale_max=args.scale_max,

ignore_index=args.dataset_cls.ignore_label),

joint_transforms.Resize(args.crop_size),

joint_transforms.RandomHorizontallyFlip()]

#if args.rotate:

# train_joint_transform_list += [joint_transforms.RandomRotate(args.rotate)]

train_joint_transform = joint_transforms.Compose(train_joint_transform_list)

# Image appearance transformations

train_input_transform = []

if args.color_aug:

train_input_transform += [extended_transforms.ColorJitter(

brightness=args.color_aug,

contrast=args.color_aug,

saturation=args.color_aug,

hue=args.color_aug)]

if args.bblur:

train_input_transform += [extended_transforms.RandomBilateralBlur()]

elif args.gblur:

train_input_transform += [extended_transforms.RandomGaussianBlur()]

else:

pass

train_input_transform += [standard_transforms.ToTensor(),

standard_transforms.Normalize(*mean_std)]

train_input_transform = standard_transforms.Compose(train_input_transform)

val_input_transform = standard_transforms.Compose([

standard_transforms.ToTensor(),

standard_transforms.Normalize(*mean_std)

])

target_transform = extended_transforms.MaskToTensor()

target_train_transform = extended_transforms.MaskToTensor()

if args.dataset == 'cityscapes':

city_mode = 'train' ## Can be trainval

city_quality = 'fine'

train_set = args.dataset_cls.CityScapes(

city_quality, city_mode, 0,

joint_transform=train_joint_transform,

transform=train_input_transform,

target_transform=target_train_transform,

dump_images=args.dump_augmentation_images,

cv_split=args.cv)

val_set = args.dataset_cls.CityScapes('fine', 'val', 0,

transform=val_input_transform,

target_transform=target_transform,

cv_split=args.cv)

elif args.dataset == 'rellis':

if args.mode != "test":

city_mode = 'train'

train_set = args.dataset_cls.Rellis(

city_mode,

joint_transform=train_joint_transform,

transform=train_input_transform,

target_transform=target_train_transform,

dump_images=args.dump_augmentation_images,

cv_split=args.cv)

val_set = args.dataset_cls.Rellis('val',

transform=val_input_transform,

target_transform=target_transform,

cv_split=args.cv)

else:

city_mode = 'test'

train_set = args.dataset_cls.Rellis('test',

transform=val_input_transform,

target_transform=target_transform,

cv_split=args.cv)

val_set = args.dataset_cls.Rellis('test',

transform=val_input_transform,

target_transform=target_transform,

cv_split=args.cv)

else:

raise

train_sampler = None

val_sampler = None

train_loader = DataLoader(train_set, batch_size=args.train_batch_size,

num_workers=args.num_workers, shuffle=(train_sampler is None), drop_last=True, sampler = train_sampler)

val_loader = DataLoader(val_set, batch_size=args.val_batch_size,

num_workers=args.num_workers // 2 , shuffle=False, drop_last=False, sampler = val_sampler)

return train_loader, val_loader, train_set

================================================

FILE: benchmarks/GSCNN-master/datasets/cityscapes.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

"""

import os

import numpy as np

import torch

from PIL import Image

from torch.utils import data

from collections import defaultdict

import math

import logging

import datasets.cityscapes_labels as cityscapes_labels

import json

from config import cfg

import torchvision.transforms as transforms

import datasets.edge_utils as edge_utils

trainid_to_name = cityscapes_labels.trainId2name

id_to_trainid = cityscapes_labels.label2trainid

num_classes = 19

ignore_label = 255

root = cfg.DATASET.CITYSCAPES_DIR

palette = [128, 64, 128, 244, 35, 232, 70, 70, 70, 102, 102, 156, 190, 153, 153,

153, 153, 153, 250, 170, 30,

220, 220, 0, 107, 142, 35, 152, 251, 152, 70, 130, 180, 220, 20, 60,

255, 0, 0, 0, 0, 142, 0, 0, 70,

0, 60, 100, 0, 80, 100, 0, 0, 230, 119, 11, 32]

zero_pad = 256 * 3 - len(palette)

for i in range(zero_pad):

palette.append(0)

def colorize_mask(mask):

# mask: numpy array of the mask

new_mask = Image.fromarray(mask.astype(np.uint8)).convert('P')

new_mask.putpalette(palette)

return new_mask

def add_items(items, aug_items, cities, img_path, mask_path, mask_postfix, mode, maxSkip):

for c in cities:

c_items = [name.split('_leftImg8bit.png')[0] for name in

os.listdir(os.path.join(img_path, c))]

for it in c_items:

item = (os.path.join(img_path, c, it + '_leftImg8bit.png'),

os.path.join(mask_path, c, it + mask_postfix))

items.append(item)

def make_cv_splits(img_dir_name):

'''

Create splits of train/val data.

A split is a lists of cities.

split0 is aligned with the default Cityscapes train/val.

'''

trn_path = os.path.join(root, img_dir_name, 'leftImg8bit', 'train')

val_path = os.path.join(root, img_dir_name, 'leftImg8bit', 'val')

trn_cities = ['train/' + c for c in os.listdir(trn_path)]

val_cities = ['val/' + c for c in os.listdir(val_path)]

# want reproducible randomly shuffled

trn_cities = sorted(trn_cities)

all_cities = val_cities + trn_cities

num_val_cities = len(val_cities)

num_cities = len(all_cities)

cv_splits = []

for split_idx in range(cfg.DATASET.CV_SPLITS):

split = {}

split['train'] = []

split['val'] = []

offset = split_idx * num_cities // cfg.DATASET.CV_SPLITS

for j in range(num_cities):

if j >= offset and j < (offset + num_val_cities):

split['val'].append(all_cities[j])

else:

split['train'].append(all_cities[j])

cv_splits.append(split)

return cv_splits

def make_split_coarse(img_path):

'''

Create a train/val split for coarse

return: city split in train

'''

all_cities = os.listdir(img_path)

all_cities = sorted(all_cities) # needs to always be the same

val_cities = [] # Can manually set cities to not be included into train split

split = {}

split['val'] = val_cities

split['train'] = [c for c in all_cities if c not in val_cities]

return split

def make_test_split(img_dir_name):

test_path = os.path.join(root, img_dir_name, 'leftImg8bit', 'test')

test_cities = ['test/' + c for c in os.listdir(test_path)]

return test_cities

def make_dataset(quality, mode, maxSkip=0, fine_coarse_mult=6, cv_split=0):

'''

Assemble list of images + mask files

fine - modes: train/val/test/trainval cv:0,1,2

coarse - modes: train/val cv:na

path examples:

leftImg8bit_trainextra/leftImg8bit/train_extra/augsburg

gtCoarse/gtCoarse/train_extra/augsburg

'''

items = []

aug_items = []

if quality == 'fine':

assert mode in ['train', 'val', 'test', 'trainval']

img_dir_name = 'leftImg8bit_trainvaltest'

img_path = os.path.join(root, img_dir_name, 'leftImg8bit')

mask_path = os.path.join(root, 'gtFine_trainvaltest', 'gtFine')

mask_postfix = '_gtFine_labelIds.png'

cv_splits = make_cv_splits(img_dir_name)

if mode == 'trainval':

modes = ['train', 'val']

else:

modes = [mode]

for mode in modes:

if mode == 'test':

cv_splits = make_test_split(img_dir_name)

add_items(items, cv_splits, img_path, mask_path,

mask_postfix)

else:

logging.info('{} fine cities: '.format(mode) + str(cv_splits[cv_split][mode]))

add_items(items, aug_items, cv_splits[cv_split][mode], img_path, mask_path,

mask_postfix, mode, maxSkip)

else:

raise 'unknown cityscapes quality {}'.format(quality)

logging.info('Cityscapes-{}: {} images'.format(mode, len(items)+len(aug_items)))

return items, aug_items

class CityScapes(data.Dataset):

def __init__(self, quality, mode, maxSkip=0, joint_transform=None, sliding_crop=None,

transform=None, target_transform=None, dump_images=False,

cv_split=None, eval_mode=False,

eval_scales=None, eval_flip=False):

self.quality = quality

self.mode = mode

self.maxSkip = maxSkip

self.joint_transform = joint_transform

self.sliding_crop = sliding_crop

self.transform = transform

self.target_transform = target_transform

self.dump_images = dump_images

self.eval_mode = eval_mode

self.eval_flip = eval_flip

self.eval_scales = None

if eval_scales != None:

self.eval_scales = [float(scale) for scale in eval_scales.split(",")]

if cv_split:

self.cv_split = cv_split

assert cv_split < cfg.DATASET.CV_SPLITS, \

'expected cv_split {} to be < CV_SPLITS {}'.format(

cv_split, cfg.DATASET.CV_SPLITS)

else:

self.cv_split = 0

self.imgs, _ = make_dataset(quality, mode, self.maxSkip, cv_split=self.cv_split)

if len(self.imgs) == 0:

raise RuntimeError('Found 0 images, please check the data set')

self.mean_std = ([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

def _eval_get_item(self, img, mask, scales, flip_bool):

return_imgs = []

for flip in range(int(flip_bool)+1):

imgs = []

if flip :

img = img.transpose(Image.FLIP_LEFT_RIGHT)

for scale in scales:

w,h = img.size

target_w, target_h = int(w * scale), int(h * scale)

resize_img =img.resize((target_w, target_h))

tensor_img = transforms.ToTensor()(resize_img)

final_tensor = transforms.Normalize(*self.mean_std)(tensor_img)

imgs.append(tensor_img)

return_imgs.append(imgs)

return return_imgs, mask

def __getitem__(self, index):

img_path, mask_path = self.imgs[index]

img, mask = Image.open(img_path).convert('RGB'), Image.open(mask_path)

img_name = os.path.splitext(os.path.basename(img_path))[0]

mask = np.array(mask)

mask_copy = mask.copy()

for k, v in id_to_trainid.items():

mask_copy[mask == k] = v

if self.eval_mode:

return self._eval_get_item(img, mask_copy, self.eval_scales, self.eval_flip), img_name

mask = Image.fromarray(mask_copy.astype(np.uint8))

# Image Transformations

if self.joint_transform is not None:

img, mask = self.joint_transform(img, mask)

if self.transform is not None:

img = self.transform(img)

if self.target_transform is not None:

mask = self.target_transform(mask)

_edgemap = mask.numpy()

_edgemap = edge_utils.mask_to_onehot(_edgemap, num_classes)

_edgemap = edge_utils.onehot_to_binary_edges(_edgemap, 2, num_classes)

edgemap = torch.from_numpy(_edgemap).float()

# Debug

if self.dump_images:

outdir = '../../dump_imgs_{}'.format(self.mode)

os.makedirs(outdir, exist_ok=True)

out_img_fn = os.path.join(outdir, img_name + '.png')

out_msk_fn = os.path.join(outdir, img_name + '_mask.png')

mask_img = colorize_mask(np.array(mask))

img.save(out_img_fn)

mask_img.save(out_msk_fn)

return img, mask, edgemap, img_name

def __len__(self):

return len(self.imgs)

def make_dataset_video():

img_dir_name = 'leftImg8bit_demoVideo'

img_path = os.path.join(root, img_dir_name, 'leftImg8bit/demoVideo')

items = []

categories = os.listdir(img_path)

for c in categories[1:]:

c_items = [name.split('_leftImg8bit.png')[0] for name in

os.listdir(os.path.join(img_path, c))]

for it in c_items:

item = os.path.join(img_path, c, it + '_leftImg8bit.png')

items.append(item)

return items

class CityScapesVideo(data.Dataset):

def __init__(self, transform=None):

self.imgs = make_dataset_video()

if len(self.imgs) == 0:

raise RuntimeError('Found 0 images, please check the data set')

self.transform = transform

def __getitem__(self, index):

img_path = self.imgs[index]

img = Image.open(img_path).convert('RGB')

img_name = os.path.splitext(os.path.basename(img_path))[0]

if self.transform is not None:

img = self.transform(img)

return img, img_name

def __len__(self):

return len(self.imgs)

================================================

FILE: benchmarks/GSCNN-master/datasets/cityscapes_labels.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

# File taken from https://github.com/mcordts/cityscapesScripts/

# License File Available at:

# https://github.com/mcordts/cityscapesScripts/blob/master/license.txt

# ----------------------

# The Cityscapes Dataset

# ----------------------

#

#

# License agreement

# -----------------

#

# This dataset is made freely available to academic and non-academic entities for non-commercial purposes such as academic research, teaching, scientific publications, or personal experimentation. Permission is granted to use the data given that you agree:

#

# 1. That the dataset comes "AS IS", without express or implied warranty. Although every effort has been made to ensure accuracy, we (Daimler AG, MPI Informatics, TU Darmstadt) do not accept any responsibility for errors or omissions.

# 2. That you include a reference to the Cityscapes Dataset in any work that makes use of the dataset. For research papers, cite our preferred publication as listed on our website; for other media cite our preferred publication as listed on our website or link to the Cityscapes website.

# 3. That you do not distribute this dataset or modified versions. It is permissible to distribute derivative works in as far as they are abstract representations of this dataset (such as models trained on it or additional annotations that do not directly include any of our data) and do not allow to recover the dataset or something similar in character.

# 4. That you may not use the dataset or any derivative work for commercial purposes as, for example, licensing or selling the data, or using the data with a purpose to procure a commercial gain.

# 5. That all rights not expressly granted to you are reserved by us (Daimler AG, MPI Informatics, TU Darmstadt).

#

#

# Contact

# -------

#

# Marius Cordts, Mohamed Omran

# www.cityscapes-dataset.net

"""

from collections import namedtuple

#--------------------------------------------------------------------------------

# Definitions

#--------------------------------------------------------------------------------

# a label and all meta information

Label = namedtuple( 'Label' , [

'name' , # The identifier of this label, e.g. 'car', 'person', ... .

# We use them to uniquely name a class

'id' , # An integer ID that is associated with this label.

# The IDs are used to represent the label in ground truth images

# An ID of -1 means that this label does not have an ID and thus

# is ignored when creating ground truth images (e.g. license plate).

# Do not modify these IDs, since exactly these IDs are expected by the

# evaluation server.

'trainId' , # Feel free to modify these IDs as suitable for your method. Then create

# ground truth images with train IDs, using the tools provided in the

# 'preparation' folder. However, make sure to validate or submit results

# to our evaluation server using the regular IDs above!

# For trainIds, multiple labels might have the same ID. Then, these labels

# are mapped to the same class in the ground truth images. For the inverse

# mapping, we use the label that is defined first in the list below.

# For example, mapping all void-type classes to the same ID in training,

# might make sense for some approaches.

# Max value is 255!

'category' , # The name of the category that this label belongs to

'categoryId' , # The ID of this category. Used to create ground truth images

# on category level.

'hasInstances', # Whether this label distinguishes between single instances or not

'ignoreInEval', # Whether pixels having this class as ground truth label are ignored

# during evaluations or not

'color' , # The color of this label

] )

#--------------------------------------------------------------------------------

# A list of all labels

#--------------------------------------------------------------------------------

# Please adapt the train IDs as appropriate for you approach.

# Note that you might want to ignore labels with ID 255 during training.

# Further note that the current train IDs are only a suggestion. You can use whatever you like.

# Make sure to provide your results using the original IDs and not the training IDs.

# Note that many IDs are ignored in evaluation and thus you never need to predict these!

labels = [

# name id trainId category catId hasInstances ignoreInEval color

Label( 'unlabeled' , 0 , 255 , 'void' , 0 , False , True , ( 0, 0, 0) ),

Label( 'ego vehicle' , 1 , 255 , 'void' , 0 , False , True , ( 0, 0, 0) ),

Label( 'rectification border' , 2 , 255 , 'void' , 0 , False , True , ( 0, 0, 0) ),

Label( 'out of roi' , 3 , 255 , 'void' , 0 , False , True , ( 0, 0, 0) ),

Label( 'static' , 4 , 255 , 'void' , 0 , False , True , ( 0, 0, 0) ),

Label( 'dynamic' , 5 , 255 , 'void' , 0 , False , True , (111, 74, 0) ),

Label( 'ground' , 6 , 255 , 'void' , 0 , False , True , ( 81, 0, 81) ),

Label( 'road' , 7 , 0 , 'flat' , 1 , False , False , (128, 64,128) ),

Label( 'sidewalk' , 8 , 1 , 'flat' , 1 , False , False , (244, 35,232) ),

Label( 'parking' , 9 , 255 , 'flat' , 1 , False , True , (250,170,160) ),

Label( 'rail track' , 10 , 255 , 'flat' , 1 , False , True , (230,150,140) ),

Label( 'building' , 11 , 2 , 'construction' , 2 , False , False , ( 70, 70, 70) ),

Label( 'wall' , 12 , 3 , 'construction' , 2 , False , False , (102,102,156) ),

Label( 'fence' , 13 , 4 , 'construction' , 2 , False , False , (190,153,153) ),

Label( 'guard rail' , 14 , 255 , 'construction' , 2 , False , True , (180,165,180) ),

Label( 'bridge' , 15 , 255 , 'construction' , 2 , False , True , (150,100,100) ),

Label( 'tunnel' , 16 , 255 , 'construction' , 2 , False , True , (150,120, 90) ),

Label( 'pole' , 17 , 5 , 'object' , 3 , False , False , (153,153,153) ),

Label( 'polegroup' , 18 , 255 , 'object' , 3 , False , True , (153,153,153) ),

Label( 'traffic light' , 19 , 6 , 'object' , 3 , False , False , (250,170, 30) ),

Label( 'traffic sign' , 20 , 7 , 'object' , 3 , False , False , (220,220, 0) ),

Label( 'vegetation' , 21 , 8 , 'nature' , 4 , False , False , (107,142, 35) ),

Label( 'terrain' , 22 , 9 , 'nature' , 4 , False , False , (152,251,152) ),

Label( 'sky' , 23 , 10 , 'sky' , 5 , False , False , ( 70,130,180) ),

Label( 'person' , 24 , 11 , 'human' , 6 , True , False , (220, 20, 60) ),

Label( 'rider' , 25 , 12 , 'human' , 6 , True , False , (255, 0, 0) ),

Label( 'car' , 26 , 13 , 'vehicle' , 7 , True , False , ( 0, 0,142) ),

Label( 'truck' , 27 , 14 , 'vehicle' , 7 , True , False , ( 0, 0, 70) ),

Label( 'bus' , 28 , 15 , 'vehicle' , 7 , True , False , ( 0, 60,100) ),

Label( 'caravan' , 29 , 255 , 'vehicle' , 7 , True , True , ( 0, 0, 90) ),

Label( 'trailer' , 30 , 255 , 'vehicle' , 7 , True , True , ( 0, 0,110) ),

Label( 'train' , 31 , 16 , 'vehicle' , 7 , True , False , ( 0, 80,100) ),

Label( 'motorcycle' , 32 , 17 , 'vehicle' , 7 , True , False , ( 0, 0,230) ),

Label( 'bicycle' , 33 , 18 , 'vehicle' , 7 , True , False , (119, 11, 32) ),

Label( 'license plate' , -1 , -1 , 'vehicle' , 7 , False , True , ( 0, 0,142) ),

Label( 'license plate' , 34 , 255 , 'vehicle' , 7 , False , True , ( 0, 0,142) ),

]

#--------------------------------------------------------------------------------

# Create dictionaries for a fast lookup

#--------------------------------------------------------------------------------

# Please refer to the main method below for example usages!

# name to label object

name2label = { label.name : label for label in labels }

# id to label object

id2label = { label.id : label for label in labels }

# trainId to label object

trainId2label = { label.trainId : label for label in reversed(labels) }

# label2trainid

label2trainid = { label.id : label.trainId for label in labels }

# trainId to label object

trainId2name = { label.trainId : label.name for label in labels }

trainId2color = { label.trainId : label.color for label in labels }

# category to list of label objects

category2labels = {}

for label in labels:

category = label.category

if category in category2labels:

category2labels[category].append(label)

else:

category2labels[category] = [label]

#--------------------------------------------------------------------------------

# Assure single instance name

#--------------------------------------------------------------------------------

# returns the label name that describes a single instance (if possible)

# e.g. input | output

# ----------------------

# car | car

# cargroup | car

# foo | None

# foogroup | None

# skygroup | None

def assureSingleInstanceName( name ):

# if the name is known, it is not a group

if name in name2label:

return name

# test if the name actually denotes a group

if not name.endswith("group"):

return None

# remove group

name = name[:-len("group")]

# test if the new name exists

if not name in name2label:

return None

# test if the new name denotes a label that actually has instances

if not name2label[name].hasInstances:

return None

# all good then

return name

#--------------------------------------------------------------------------------

# Main for testing

#--------------------------------------------------------------------------------

# just a dummy main

if __name__ == "__main__":

# Print all the labels

print("List of cityscapes labels:")

print("")

print((" {:>21} | {:>3} | {:>7} | {:>14} | {:>10} | {:>12} | {:>12}".format( 'name', 'id', 'trainId', 'category', 'categoryId', 'hasInstances', 'ignoreInEval' )))

print((" " + ('-' * 98)))

for label in labels:

print((" {:>21} | {:>3} | {:>7} | {:>14} | {:>10} | {:>12} | {:>12}".format( label.name, label.id, label.trainId, label.category, label.categoryId, label.hasInstances, label.ignoreInEval )))

print("")

print("Example usages:")

# Map from name to label

name = 'car'

id = name2label[name].id

print(("ID of label '{name}': {id}".format( name=name, id=id )))

# Map from ID to label

category = id2label[id].category

print(("Category of label with ID '{id}': {category}".format( id=id, category=category )))

# Map from trainID to label

trainId = 0

name = trainId2label[trainId].name

print(("Name of label with trainID '{id}': {name}".format( id=trainId, name=name )))

================================================

FILE: benchmarks/GSCNN-master/datasets/edge_utils.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

"""

import os

import numpy as np

from PIL import Image

from scipy.ndimage.morphology import distance_transform_edt

def mask_to_onehot(mask, num_classes):

"""

Converts a segmentation mask (H,W) to (K,H,W) where the last dim is a one

hot encoding vector

"""

_mask = [mask == (i + 1) for i in range(num_classes)]

return np.array(_mask).astype(np.uint8)

def onehot_to_mask(mask):

"""

Converts a mask (K,H,W) to (H,W)

"""

_mask = np.argmax(mask, axis=0)

_mask[_mask != 0] += 1

return _mask

def onehot_to_multiclass_edges(mask, radius, num_classes):

"""

Converts a segmentation mask (K,H,W) to an edgemap (K,H,W)

"""

if radius < 0:

return mask

# We need to pad the borders for boundary conditions

mask_pad = np.pad(mask, ((0, 0), (1, 1), (1, 1)), mode='constant', constant_values=0)

channels = []

for i in range(num_classes):

dist = distance_transform_edt(mask_pad[i, :])+distance_transform_edt(1.0-mask_pad[i, :])

dist = dist[1:-1, 1:-1]

dist[dist > radius] = 0

dist = (dist > 0).astype(np.uint8)

channels.append(dist)

return np.array(channels)

def onehot_to_binary_edges(mask, radius, num_classes):

"""

Converts a segmentation mask (K,H,W) to a binary edgemap (H,W)

"""

if radius < 0:

return mask

# We need to pad the borders for boundary conditions

mask_pad = np.pad(mask, ((0, 0), (1, 1), (1, 1)), mode='constant', constant_values=0)

edgemap = np.zeros(mask.shape[1:])

for i in range(num_classes):

dist = distance_transform_edt(mask_pad[i, :])+distance_transform_edt(1.0-mask_pad[i, :])

dist = dist[1:-1, 1:-1]

dist[dist > radius] = 0

edgemap += dist

edgemap = np.expand_dims(edgemap, axis=0)

edgemap = (edgemap > 0).astype(np.uint8)

return edgemap

================================================

FILE: benchmarks/GSCNN-master/datasets/rellis.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

"""

import os

import numpy as np

import torch

from PIL import Image

from torch.utils import data

from collections import defaultdict

import math

import logging

import datasets.cityscapes_labels as cityscapes_labels

import json

from config import cfg

import torchvision.transforms as transforms

import datasets.edge_utils as edge_utils

trainid_to_name = cityscapes_labels.trainId2name

id_to_trainid = cityscapes_labels.label2trainid

num_classes = 19

ignore_label = 0

root = cfg.DATASET.RELLIS_DIR

list_paths = {'train':'train.lst','val':"val.lst",'test':'test.lst'}

palette = [128, 64, 128, 244, 35, 232, 70, 70, 70, 102, 102, 156, 190, 153, 153,

153, 153, 153, 250, 170, 30,

220, 220, 0, 107, 142, 35, 152, 251, 152, 70, 130, 180, 220, 20, 60,

255, 0, 0, 0, 0, 142, 0, 0, 70,

0, 60, 100, 0, 80, 100, 0, 0, 230, 119, 11, 32]

zero_pad = 256 * 3 - len(palette)

for i in range(zero_pad):

palette.append(0)

def colorize_mask(mask):

# mask: numpy array of the mask

new_mask = Image.fromarray(mask.astype(np.uint8)).convert('P')

new_mask.putpalette(palette)

return new_mask

class Rellis(data.Dataset):

def __init__(self, mode, joint_transform=None, sliding_crop=None,

transform=None, target_transform=None, dump_images=False,

cv_split=None, eval_mode=False,

eval_scales=None, eval_flip=False):

self.mode = mode

self.joint_transform = joint_transform

self.sliding_crop = sliding_crop

self.transform = transform

self.target_transform = target_transform

self.dump_images = dump_images

self.eval_mode = eval_mode

self.eval_flip = eval_flip

self.eval_scales = None

self.root = root

if eval_scales != None:

self.eval_scales = [float(scale) for scale in eval_scales.split(",")]

self.list_path = list_paths[mode]

self.img_list = [line.strip().split() for line in open(root+self.list_path)]

self.files = self.read_files()

if len(self.files) == 0:

raise RuntimeError('Found 0 images, please check the data set')

self.mean_std = ([0.54218053, 0.64250553, 0.56620195], [0.54218052, 0.64250552, 0.56620194])

self.label_mapping = {0: 0,

1: 0,

3: 1,

4: 2,

5: 3,

6: 4,

7: 5,

8: 6,

9: 7,

10: 8,

12: 9,

15: 10,

17: 11,

18: 12,

19: 13,

23: 14,

27: 15,

29: 1,

30: 1,

31: 16,

32: 4,

33: 17,

34: 18}

def _eval_get_item(self, img, mask, scales, flip_bool):

return_imgs = []

for flip in range(int(flip_bool)+1):

imgs = []

if flip :

img = img.transpose(Image.FLIP_LEFT_RIGHT)

for scale in scales:

w,h = img.size

target_w, target_h = int(w * scale), int(h * scale)

resize_img =img.resize((target_w, target_h))

tensor_img = transforms.ToTensor()(resize_img)

final_tensor = transforms.Normalize(*self.mean_std)(tensor_img)

imgs.append(tensor_img)

return_imgs.append(imgs)

return return_imgs, mask

def read_files(self):

files = []

# if 'test' in self.mode:

# for item in self.img_list:

# image_path = item

# name = os.path.splitext(os.path.basename(image_path[0]))[0]

# files.append({

# "img": image_path[0],

# "name": name,

# })

# else:

for item in self.img_list:

image_path, label_path = item

name = os.path.splitext(os.path.basename(label_path))[0]

files.append({

"img": image_path,

"label": label_path,

"name": name,

"weight": 1

})

return files

def convert_label(self, label, inverse=False):

temp = label.copy()

if inverse:

for v, k in self.label_mapping.items():

label[temp == k] = v

else:

for k, v in self.label_mapping.items():

label[temp == k] = v

return label

def __getitem__(self, index):

item = self.files[index]

img_name = item["name"]

img_path = self.root + item['img']

label_path = self.root + item["label"]

img = Image.open(img_path).convert('RGB')

mask = np.array(Image.open(label_path))

mask = mask[:, :]

mask_copy = self.convert_label(mask)

if self.eval_mode:

return self._eval_get_item(img, mask_copy, self.eval_scales, self.eval_flip), img_name

mask = Image.fromarray(mask_copy.astype(np.uint8))

# Image Transformations

if self.joint_transform is not None:

img, mask = self.joint_transform(img, mask)

if self.transform is not None:

img = self.transform(img)

if self.target_transform is not None:

mask = self.target_transform(mask)

if self.mode == 'test':

return img, mask, img_name, item['img']

_edgemap = mask.numpy()

_edgemap = edge_utils.mask_to_onehot(_edgemap, num_classes)

_edgemap = edge_utils.onehot_to_binary_edges(_edgemap, 2, num_classes)

edgemap = torch.from_numpy(_edgemap).float()

# Debug

if self.dump_images:

outdir = '../../dump_imgs_{}'.format(self.mode)

os.makedirs(outdir, exist_ok=True)

out_img_fn = os.path.join(outdir, img_name + '.png')

out_msk_fn = os.path.join(outdir, img_name + '_mask.png')

mask_img = colorize_mask(np.array(mask))

img.save(out_img_fn)

mask_img.save(out_msk_fn)

return img, mask, edgemap, img_name

def __len__(self):

return len(self.files)

def make_dataset_video():

img_dir_name = 'leftImg8bit_demoVideo'

img_path = os.path.join(root, img_dir_name, 'leftImg8bit/demoVideo')

items = []

categories = os.listdir(img_path)

for c in categories[1:]:

c_items = [name.split('_leftImg8bit.png')[0] for name in

os.listdir(os.path.join(img_path, c))]

for it in c_items:

item = os.path.join(img_path, c, it + '_leftImg8bit.png')

items.append(item)

return items

class CityScapesVideo(data.Dataset):

def __init__(self, transform=None):

self.imgs = make_dataset_video()

if len(self.imgs) == 0:

raise RuntimeError('Found 0 images, please check the data set')

self.transform = transform

def __getitem__(self, index):

img_path = self.imgs[index]

img = Image.open(img_path).convert('RGB')

img_name = os.path.splitext(os.path.basename(img_path))[0]

if self.transform is not None:

img = self.transform(img)

return img, img_name

def __len__(self):

return len(self.imgs)

================================================

FILE: benchmarks/GSCNN-master/docs/index.html

================================================

Gated Shape CNN

Gated-SCNN Gated Shape CNNs for Semantic Segmentation

Current state-of-the-art methods for image segmentation form a dense image representation where the color, shape and texture information are all processed together inside a deep CNN. This however may not be ideal as they contain very different type of information relevant for recognition. We propose a new architecture that adds a shape stream to the classical CNN architecture. The two streams process the image in parallel, and their information gets fused in the very top layers. Key to this architecture is a new type of gates that connect the intermediate layers of the two streams. Specifically, we use the higher-level activations in the classical stream to gate the lower-level activations in the shape stream, effectively removing noise and helping the shape stream to only focus on processing the relevant boundary-related information. This enables us to use a very shallow architecture for the shape stream that operates on the image-level resolution. Our experiments show that this leads to a highly effective architecture that produces sharper predictions around object boundaries and significantly boosts performance on thinner and smaller objects. Our method achieves state-of-the-art performance on the Cityscapes benchmark, in terms of both mask (mIoU) and boundary (F-score) quality, improving by 2% and 4% over strong baselines.

Evaluation at different distances, measured by crop factor.

================================================

FILE: benchmarks/GSCNN-master/docs/resources/bibtex.txt

================================================

@inproceedings{Takikawa2019GatedSCNNGS,

title={Gated-SCNN: Gated Shape CNNs for Semantic Segmentation},

author={Towaki Takikawa and David Acuna and Varun Jampani and Sanja Fidler},

year={2019}

}

================================================

FILE: benchmarks/GSCNN-master/gscnn.txt

================================================

absl-py==0.10.0

aiohttp==3.6.2

astor==0.8.1

astunparse==1.6.3

async-timeout==3.0.1

attrs==20.2.0

cachetools==4.1.1

certifi==2020.6.20

chardet==3.0.4

cycler==0.10.0

decorator==4.4.2

future==0.18.2

gast==0.2.2

google-auth==1.22.0

google-auth-oauthlib==0.4.1

google-pasta==0.2.0

grpcio==1.32.0

h5py==2.10.0

idna==2.10

idna-ssl==1.1.0

imageio==2.9.0

imageio-ffmpeg==0.4.2

importlib-metadata==2.0.0

joblib==0.16.0

Keras-Applications==1.0.8

Keras-Preprocessing==1.1.2

kiwisolver==1.2.0

Markdown==3.2.2

matplotlib==3.3.2

multidict==4.7.6

networkx==2.5

ninja==1.10.0.post2

nose==1.3.7

numpy==1.18.5

oauthlib==3.1.0

opencv-python==4.4.0.44

opt-einsum==3.3.0

Pillow==7.2.0

portalocker==2.0.0

protobuf==3.13.0

pyasn1==0.4.8

pyasn1-modules==0.2.8

pyparsing==2.4.7

python-dateutil==2.8.1

PyWavelets==1.1.1

PyYAML==5.3.1

requests==2.24.0

requests-oauthlib==1.3.0

rsa==4.6

scikit-image==0.17.2

scikit-learn==0.23.2

scipy==1.5.2

six==1.15.0

tensorboard==1.15.0

tensorboard-plugin-wit==1.7.0

tensorboardX==2.1

tensorflow-estimator==1.15.1

tensorflow-gpu==1.15.0

termcolor==1.1.0

threadpoolctl==2.1.0

tifffile==2020.9.3

torch==1.4.0

torch-encoding @ git+https://github.com/zhanghang1989/PyTorch-Encoding/@ced288d6fa10d4780fa5205a2f239c84022e71a3

torchvision==0.5.0

tqdm==4.49.0

typing-extensions==3.7.4.3

urllib3==1.25.10

Werkzeug==1.0.1

wrapt==1.12.1

yacs==0.1.8

yarl==1.6.0

zipp==3.2.0

================================================

FILE: benchmarks/GSCNN-master/loss.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

"""

import torch

import torch.nn as nn

import torch.nn.functional as F

import logging

import numpy as np

from config import cfg

from my_functionals.DualTaskLoss import DualTaskLoss

def get_loss(args):

'''

Get the criterion based on the loss function

args:

return: criterion

'''

if args.img_wt_loss:

criterion = ImageBasedCrossEntropyLoss2d(

classes=args.dataset_cls.num_classes, size_average=True,

ignore_index=args.dataset_cls.ignore_label,

upper_bound=args.wt_bound).cuda()

elif args.joint_edgeseg_loss:

criterion = JointEdgeSegLoss(classes=args.dataset_cls.num_classes,

ignore_index=args.dataset_cls.ignore_label, upper_bound=args.wt_bound,

edge_weight=args.edge_weight, seg_weight=args.seg_weight, att_weight=args.att_weight, dual_weight=args.dual_weight).cuda()

else:

criterion = CrossEntropyLoss2d(size_average=True,

ignore_index=args.dataset_cls.ignore_label).cuda()

criterion_val = JointEdgeSegLoss(classes=args.dataset_cls.num_classes, mode='val',

ignore_index=args.dataset_cls.ignore_label, upper_bound=args.wt_bound,

edge_weight=args.edge_weight, seg_weight=args.seg_weight).cuda()

return criterion, criterion_val

class JointEdgeSegLoss(nn.Module):

def __init__(self, classes, weight=None, reduction='mean', ignore_index=255,

norm=False, upper_bound=1.0, mode='train',

edge_weight=1, seg_weight=1, att_weight=1, dual_weight=1, edge='none'):

super(JointEdgeSegLoss, self).__init__()

self.num_classes = classes

if mode == 'train':

self.seg_loss = ImageBasedCrossEntropyLoss2d(

classes=classes, ignore_index=ignore_index, upper_bound=upper_bound).cuda()

elif mode == 'val':

self.seg_loss = CrossEntropyLoss2d(size_average=True,

ignore_index=ignore_index).cuda()

self.ignore_index = ignore_index

self.edge_weight = edge_weight

self.seg_weight = seg_weight

self.att_weight = att_weight

self.dual_weight = dual_weight

self.dual_task = DualTaskLoss()

def bce2d(self, input, target):

n, c, h, w = input.size()

log_p = input.transpose(1, 2).transpose(2, 3).contiguous().view(1, -1)

target_t = target.transpose(1, 2).transpose(2, 3).contiguous().view(1, -1)

target_trans = target_t.clone()

pos_index = (target_t ==1)

neg_index = (target_t ==0)

ignore_index=(target_t >1)

target_trans[pos_index] = 1

target_trans[neg_index] = 0

pos_index = pos_index.data.cpu().numpy().astype(bool)

neg_index = neg_index.data.cpu().numpy().astype(bool)

ignore_index=ignore_index.data.cpu().numpy().astype(bool)

weight = torch.Tensor(log_p.size()).fill_(0)

weight = weight.numpy()

pos_num = pos_index.sum()

neg_num = neg_index.sum()

sum_num = pos_num + neg_num

weight[pos_index] = neg_num*1.0 / sum_num

weight[neg_index] = pos_num*1.0 / sum_num

weight[ignore_index] = 0

weight = torch.from_numpy(weight)

weight = weight.cuda()

loss = F.binary_cross_entropy_with_logits(log_p, target_t, weight, size_average=True)

return loss

def edge_attention(self, input, target, edge):

n, c, h, w = input.size()

filler = torch.ones_like(target) * self.ignore_index

return self.seg_loss(input,

torch.where(edge.max(1)[0] > 0.8, target, filler))

def forward(self, inputs, targets):

segin, edgein = inputs

segmask, edgemask = targets

losses = {}

losses['seg_loss'] = self.seg_weight * self.seg_loss(segin, segmask)

losses['edge_loss'] = self.edge_weight * 20 * self.bce2d(edgein, edgemask)

losses['att_loss'] = self.att_weight * self.edge_attention(segin, segmask, edgein)

losses['dual_loss'] = self.dual_weight * self.dual_task(segin, segmask,ignore_pixel=self.ignore_index)

return losses

#Img Weighted Loss

class ImageBasedCrossEntropyLoss2d(nn.Module):

def __init__(self, classes, weight=None, size_average=True, ignore_index=255,

norm=False, upper_bound=1.0):

super(ImageBasedCrossEntropyLoss2d, self).__init__()

logging.info("Using Per Image based weighted loss")

self.num_classes = classes

self.nll_loss = nn.NLLLoss2d(weight, size_average, ignore_index)

self.norm = norm

self.upper_bound = upper_bound

self.batch_weights = cfg.BATCH_WEIGHTING

def calculateWeights(self, target):

hist = np.histogram(target.flatten(), range(

self.num_classes + 1), normed=True)[0]

if self.norm:

hist = ((hist != 0) * self.upper_bound * (1 / hist)) + 1

else:

hist = ((hist != 0) * self.upper_bound * (1 - hist)) + 1

return hist

def forward(self, inputs, targets):

target_cpu = targets.data.cpu().numpy()

#print("loss",np.unique(target_cpu))

if self.batch_weights:

weights = self.calculateWeights(target_cpu)

self.nll_loss.weight = torch.Tensor(weights).cuda()

loss = 0.0

for i in range(0, inputs.shape[0]):

if not self.batch_weights:

weights = self.calculateWeights(target_cpu[i])

self.nll_loss.weight = torch.Tensor(weights).cuda()

loss += self.nll_loss(F.log_softmax(inputs[i].unsqueeze(0)),

targets[i].unsqueeze(0))

return loss

#Cross Entroply NLL Loss

class CrossEntropyLoss2d(nn.Module):

def __init__(self, weight=None, size_average=True, ignore_index=255):

super(CrossEntropyLoss2d, self).__init__()

logging.info("Using Cross Entropy Loss")

self.nll_loss = nn.NLLLoss2d(weight, size_average, ignore_index)

def forward(self, inputs, targets):

return self.nll_loss(F.log_softmax(inputs), targets)

================================================

FILE: benchmarks/GSCNN-master/my_functionals/DualTaskLoss.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

# Code adapted from:

# https://github.com/ericjang/gumbel-softmax/blob/3c8584924603869e90ca74ac20a6a03d99a91ef9/Categorical%20VAE.ipynb

#

# MIT License

#

# Copyright (c) 2016 Eric Jang

#

# Permission is hereby granted, free of charge, to any person obtaining a copy

# of this software and associated documentation files (the "Software"), to deal

# in the Software without restriction, including without limitation the rights

# to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

# copies of the Software, and to permit persons to whom the Software is

# furnished to do so, subject to the following conditions:

#

# The above copyright notice and this permission notice shall be included in all

# copies or substantial portions of the Software.

#

# THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

# IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

# FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

# AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

# LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

# OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

# SOFTWARE.

"""

import torch

import torch.nn as nn

import torch.nn.functional as F

import numpy as np

from my_functionals.custom_functional import compute_grad_mag

def perturbate_input_(input, n_elements=200):

N, C, H, W = input.shape

assert N == 1

c_ = np.random.random_integers(0, C - 1, n_elements)

h_ = np.random.random_integers(0, H - 1, n_elements)

w_ = np.random.random_integers(0, W - 1, n_elements)

for c_idx in c_:

for h_idx in h_:

for w_idx in w_:

input[0, c_idx, h_idx, w_idx] = 1

return input

def _sample_gumbel(shape, eps=1e-10):

"""

Sample from Gumbel(0, 1)

based on

https://github.com/ericjang/gumbel-softmax/blob/3c8584924603869e90ca74ac20a6a03d99a91ef9/Categorical%20VAE.ipynb ,

(MIT license)

"""

U = torch.rand(shape).cuda()

return - torch.log(eps - torch.log(U + eps))

def _gumbel_softmax_sample(logits, tau=1, eps=1e-10):

"""

Draw a sample from the Gumbel-Softmax distribution

based on

https://github.com/ericjang/gumbel-softmax/blob/3c8584924603869e90ca74ac20a6a03d99a91ef9/Categorical%20VAE.ipynb

(MIT license)

"""

assert logits.dim() == 3

gumbel_noise = _sample_gumbel(logits.size(), eps=eps)

y = logits + gumbel_noise

return F.softmax(y / tau, 1)

def _one_hot_embedding(labels, num_classes):

"""Embedding labels to one-hot form.

Args:

labels: (LongTensor) class labels, sized [N,].

num_classes: (int) number of classes.

Returns:

(tensor) encoded labels, sized [N, #classes].

"""

y = torch.eye(num_classes).cuda()

return y[labels].permute(0,3,1,2)

class DualTaskLoss(nn.Module):

def __init__(self, cuda=False):

super(DualTaskLoss, self).__init__()

self._cuda = cuda

return

def forward(self, input_logits, gts, ignore_pixel=255):

"""

:param input_logits: NxCxHxW

:param gt_semantic_masks: NxCxHxW

:return: final loss

"""

N, C, H, W = input_logits.shape

th = 1e-8 # 1e-10

eps = 1e-10

ignore_mask = (gts == ignore_pixel).detach()

input_logits = torch.where(ignore_mask.view(N, 1, H, W).expand(N, C, H, W),

torch.zeros(N,C,H,W).cuda(),

input_logits)

gt_semantic_masks = gts.detach()

gt_semantic_masks = torch.where(ignore_mask, torch.zeros(N,H,W).long().cuda(), gt_semantic_masks)

gt_semantic_masks = _one_hot_embedding(gt_semantic_masks, C).detach()

g = _gumbel_softmax_sample(input_logits.view(N, C, -1), tau=0.5)

g = g.reshape((N, C, H, W))

g = compute_grad_mag(g, cuda=self._cuda)

g_hat = compute_grad_mag(gt_semantic_masks, cuda=self._cuda)

g = g.view(N, -1)

#g_hat = g_hat.view(N, -1)

g_hat = g_hat.reshape(N, -1)

loss_ewise = F.l1_loss(g, g_hat, reduction='none', reduce=False)

p_plus_g_mask = (g >= th).detach().float()

loss_p_plus_g = torch.sum(loss_ewise * p_plus_g_mask) / (torch.sum(p_plus_g_mask) + eps)

p_plus_g_hat_mask = (g_hat >= th).detach().float()

loss_p_plus_g_hat = torch.sum(loss_ewise * p_plus_g_hat_mask) / (torch.sum(p_plus_g_hat_mask) + eps)

total_loss = 0.5 * loss_p_plus_g + 0.5 * loss_p_plus_g_hat

return total_loss

================================================

FILE: benchmarks/GSCNN-master/my_functionals/GatedSpatialConv.py

================================================

"""

Copyright (C) 2019 NVIDIA Corporation. All rights reserved.

Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

"""

import torch.nn as nn

import torch

import torch.nn.functional as F

from torch.nn.modules.conv import _ConvNd

from torch.nn.modules.utils import _pair

import numpy as np

import math

import network.mynn as mynn

import my_functionals.custom_functional as myF

class GatedSpatialConv2d(_ConvNd):

def __init__(self, in_channels, out_channels, kernel_size=1, stride=1,

padding=0, dilation=1, groups=1, bias=False):

"""

:param in_channels:

:param out_channels:

:param kernel_size:

:param stride:

:param padding:

:param dilation:

:param groups:

:param bias:

"""

kernel_size = _pair(kernel_size)

stride = _pair(stride)

padding = _pair(padding)

dilation = _pair(dilation)

super(GatedSpatialConv2d, self).__init__(

in_channels, out_channels, kernel_size, stride, padding, dilation,

False, _pair(0), groups, bias, 'zeros')

self._gate_conv = nn.Sequential(

mynn.Norm2d(in_channels+1),

nn.Conv2d(in_channels+1, in_channels+1, 1),

nn.ReLU(),

nn.Conv2d(in_channels+1, 1, 1),

mynn.Norm2d(1),

nn.Sigmoid()

)

def forward(self, input_features, gating_features):

"""

:param input_features: [NxCxHxW] featuers comming from the shape branch (canny branch).

:param gating_features: [Nx1xHxW] features comming from the texture branch (resnet). Only one channel feature map.

:return:

"""

alphas = self._gate_conv(torch.cat([input_features, gating_features], dim=1))

input_features = (input_features * (alphas + 1))