Repository: wyysf-98/GenMM

Branch: main

Commit: aee9bec5e1b5

Files: 32

Total size: 193.8 KB

Directory structure:

gitextract_jk8oqutc/

├── .gitignore

├── GenMM.py

├── LICENSE

├── README.md

├── __init__.py

├── configs/

│ ├── default.yaml

│ └── ganimator.yaml

├── dataset/

│ ├── blender_motion.py

│ ├── bvh/

│ │ ├── Quaternions.py

│ │ ├── bvh_io.py

│ │ ├── bvh_parser.py

│ │ └── bvh_writer.py

│ ├── bvh_motion.py

│ ├── motion.py

│ └── tracks_motion.py

├── demo.blend

├── docker/

│ ├── Dockerfile

│ ├── README.md

│ ├── apt-sources.list

│ ├── requirements.txt

│ └── requirements_blender.txt

├── fix_contact.py

├── nearest_neighbor/

│ ├── losses.py

│ └── utils.py

├── run_random_generation.py

├── run_web_server.py

└── utils/

    ├── base.py

    ├── contact.py

    ├── kinematics.py

    ├── rename_mixamo_rig.py

    ├── skeleton.py

    └── transforms.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

*.json

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

*$py.class

out/

# C extensions

*.so

*.pkl

# Distribution / packaging

.Python

build/

develop-eggs/

distf/

downloads/

eggs/

.eggs/

lib/

lib64/

parts/

sdist/

var/

wheels/

wandb/

pip-wheel-metadata/

share/python-wheels/

*.egg-info/

.installed.cfg

*.egg

MANIFEST

.vscode/*

.vscode/settings.json

# PyInstaller

# Usually these files are written by a python script from a template

# before PyInstaller builds the exe, so as to inject date/other infos into it.

*.manifest

*.spec

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.nox/

.coverage

.coverage.*

.cache

nosetests.xml

coverage.xml

*.cover

*.py,cover

.hypothesis/

.pytest_cache/

# Translations

*.mo

*.pot

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

# Flask stuff:

instance/

.webassets-cache

# Scrapy stuff:

.scrapy

# Sphinx documentation

docs/_build/

# PyBuilder

# target/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# pipenv

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

# However, in case of collaboration, if having platform-specific dependencies or dependencies

# having no cross-platform support, pipenv may install dependencies that don't work, or not

# install all needed dependencies.

#Pipfile.lock

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

__pypackages__/

# Celery stuff

celerybeat-schedule

celerybeat.pid

# SageMath parsed files

*.sage.py

# Environments

.env

.venv

venv/

env.bak/

venv.bak/

# Rope project settings

.ropeproject

# mkdocs documentation

/site

# Pyre type checker

.pyre/

checkpoints/

data/*

output/

log/

runs/

*.png

*.jpg

*.mp4

*.gif

*.pkl

*.pt

================================================

FILE: GenMM.py

================================================

import os

import os.path as osp

import numpy as np

import torch

import torch.nn.functional as F

from utils.base import logger

class GenMM:

def __init__(self, mode = 'random_synthesis', noise_sigma = 1.0, coarse_ratio = 0.2, coarse_ratio_factor = 6, pyr_factor = 0.75, num_stages_limit = -1, device = 'cuda:0', silent = False):

'''

GenMM main constructor

Args:

device : str = 'cuda:0', default device.

silent : bool = False, whether to mute the output.

'''

self.device = torch.device(device)

self.silent = silent

def _get_pyramid_lengths(self, final_len, coarse_ratio, pyr_factor):

'''

Get a list of pyramid lengths using given target length and ratio

'''

lengths = [int(np.round(final_len * coarse_ratio))]

while lengths[-1] < final_len:

lengths.append(int(np.round(lengths[-1] / pyr_factor)))

if lengths[-1] == lengths[-2]:

lengths[-1] += 1

lengths[-1] = final_len

return lengths

def _get_target_pyramid(self, target, coarse_ratio, pyr_factor, num_stages_limit=-1):

'''

Reads the target motion(s) and creates a pyramid out of them, ordered by increasing size

'''

self.num_target = len(target)

lengths = []

min_len = 10000

for i in range(len(target)):

new_length = self._get_pyramid_lengths(len(target[i].motion_data), coarse_ratio, pyr_factor)

min_len = min(min_len, len(new_length))

if num_stages_limit != -1:

new_length = new_length[:num_stages_limit]

lengths.append(new_length)

for i in range(len(target)):

lengths[i] = lengths[i][-min_len:]

self.pyraimd_lengths = lengths

target_pyramid = [[] for _ in range(len(lengths[0]))]

for step in range(len(lengths[0])):

for i in range(len(target)):

length = lengths[i][step]

target_pyramid[step].append(target[i].sample(size=length).to(self.device))

if not self.silent:

print('Levels:', lengths)

for i in range(len(target_pyramid)):

print(f'Number of clips in target pyramid {i} is {len(target_pyramid[i])}, ranging {[[tgt.min(), tgt.max()] for tgt in target_pyramid[i]]}')

return target_pyramid

def _get_initial_motion(self, init_length, noise_sigma):

'''

Prepare the initial motion for optimization

'''

initial_motion = F.interpolate(torch.cat([self.target_pyramid[0][i] for i in range(self.num_target)], dim=-1),

size=init_length, mode='linear', align_corners=True)

if noise_sigma > 0:

initial_motion_w_noise = initial_motion + torch.randn_like(initial_motion) * noise_sigma

initial_motion_w_noise = torch.fmod(initial_motion_w_noise, 1.0)

else:

initial_motion_w_noise = initial_motion

if not self.silent:

print('Initial motion:', initial_motion.min(), initial_motion.max())

print('Initial motion with noise:', initial_motion_w_noise.min(), initial_motion_w_noise.max())

return initial_motion_w_noise

def run(self, target, criteria, num_frames, num_steps, noise_sigma, patch_size, coarse_ratio, pyr_factor, ext=None, debug_dir=None):

'''

generation function

Args:

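num_frames : str, '<x>x_nframes' to generate x times the total target length, or a digit string giving the number of frames.

noise_sigma : float, scale of the random noise added to the initial motion.

patch_size : int, patch size, used when coarse_ratio is given as '<x>x_patchsize'.

coarse_ratio : str, '<x>x_patchsize' or '<x>x_nframes', ratio at the coarsest level.

pyr_factor : float, pyramid factor.

debug_dir : str or None, if set, losses are logged there with tensorboardX.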

'''

if debug_dir is not None:

from tensorboardX import SummaryWriter

writer = SummaryWriter(log_dir=debug_dir)

# build target pyramid

if 'patchsize' in coarse_ratio:

coarse_ratio = patch_size * float(coarse_ratio.split('x_')[0]) / max([len(t.motion_data) for t in target])

elif 'nframes' in coarse_ratio:

coarse_ratio = float(coarse_ratio.split('x_')[0])

else:

raise ValueError('Unsupported coarse ratio specified')

self.target_pyramid = self._get_target_pyramid(target, coarse_ratio, pyr_factor)

# get the initial motion data

if 'nframes' in num_frames:

syn_length = int(sum([i[-1] for i in self.pyraimd_lengths]) * float(num_frames.split('x_')[0]))

elif num_frames.isdigit():

syn_length = int(num_frames)

else:

raise ValueError(f'Unsupported num_frames {num_frames}')

self.synthesized_lengths = self._get_pyramid_lengths(syn_length, coarse_ratio, pyr_factor)

if not self.silent:

print('Synthesized lengths:', self.synthesized_lengths)

self.synthesized = self._get_initial_motion(self.synthesized_lengths[0], noise_sigma)

# perform the optimization

self.synthesized.requires_grad_(False)

self.pbar = logger(num_steps, len(self.target_pyramid))

for lvl, lvl_target in enumerate(self.target_pyramid):

self.pbar.new_lvl()

if lvl > 0:

with torch.no_grad():

self.synthesized = F.interpolate(self.synthesized.detach(), size=self.synthesized_lengths[lvl], mode='linear')

self.synthesized, losses = GenMM.match_and_blend(self.synthesized, lvl_target, criteria, num_steps, self.pbar, ext=ext)

criteria.clean_cache()

if debug_dir is not None:

for itr in range(len(losses)):

writer.add_scalar(f'optimize/losses_lvl{lvl}', losses[itr], itr)

self.pbar.pbar.close()

return self.synthesized.detach()

@staticmethod

@torch.no_grad()

def match_and_blend(synthesized, targets, criteria, n_steps, pbar, ext=None):

'''

Minimizes criteria between synthesized and target

Args:

synthesized : torch.Tensor, optimized motion data

targets : torch.Tensor, target motion data

criteria : optimize target function

n_steps : int, number of steps to optimize

pbar : logger

ext : extra configurations or constraints (optional)

'''

losses = []

keyframe_motion = targets[0] if isinstance(targets, list) else targets

syn_length = synthesized.shape[-1]

km_length = keyframe_motion.shape[-1]

print("Synthesized shape:", synthesized.shape)

print("Keyframe_motion shape:", keyframe_motion.shape)

# Use the class-level KEYFRAME_INDICES

keyframe_indices = GenMM.KEYFRAME_INDICES

for _i in range(n_steps):

synthesized, loss = criteria(synthesized, targets, ext=ext, return_blended_results=True)

# Manually set the keyframes in synthesized motion to be the ones from the input motion

if syn_length >= keyframe_indices.stop and km_length >= keyframe_indices.stop:

synthesized[..., keyframe_indices] = keyframe_motion[..., keyframe_indices]

# Update status

losses.append(loss.item())

pbar.step()

pbar.print()

return synthesized, losses

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

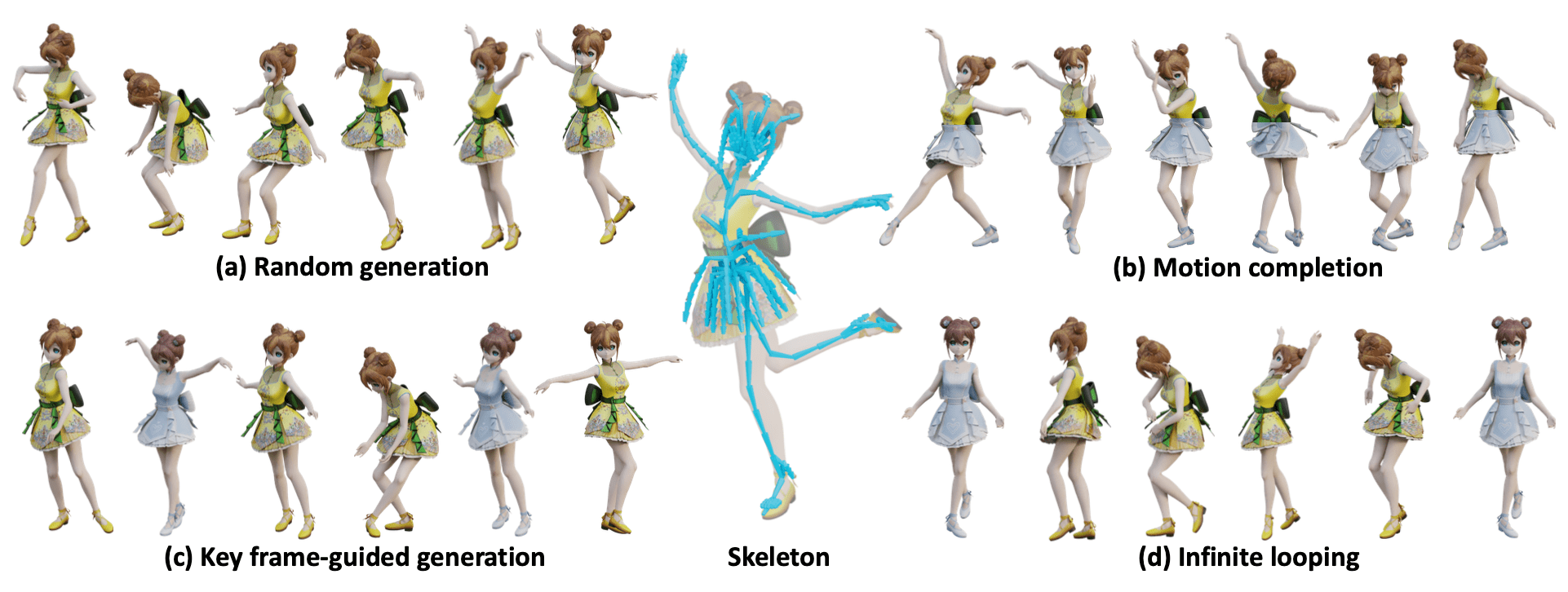

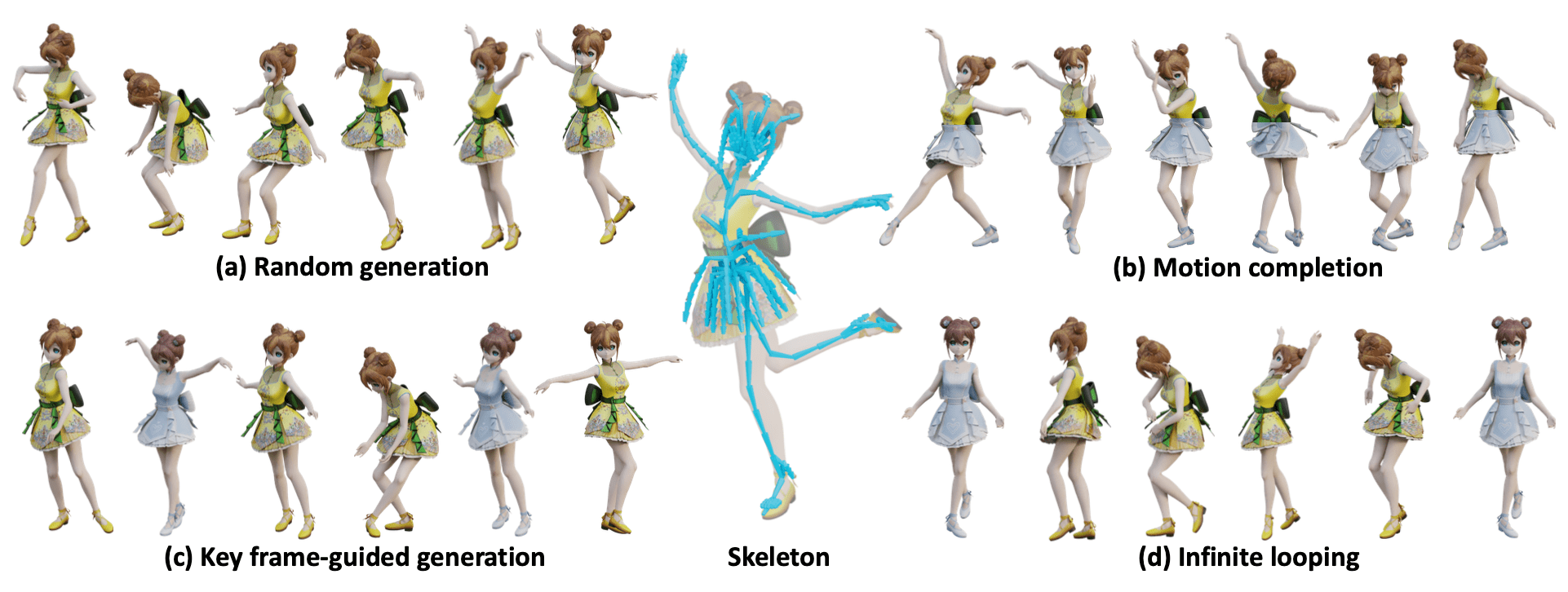

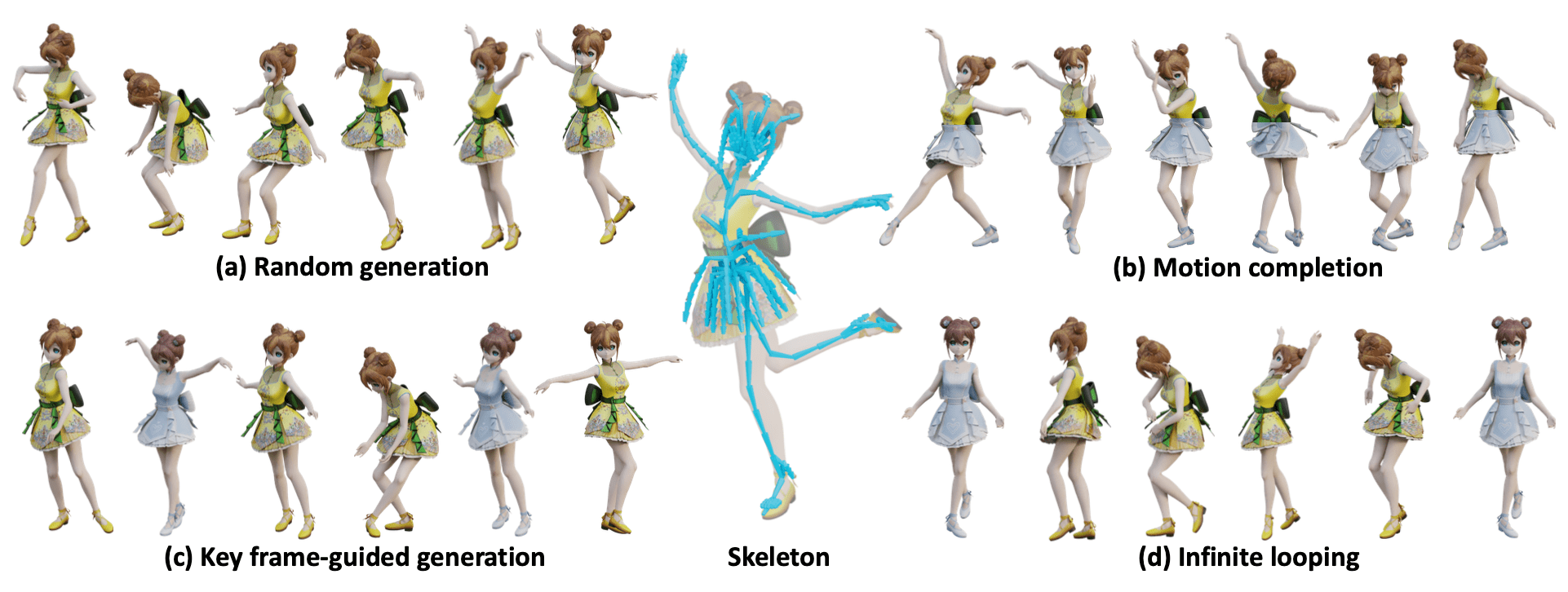

# Example-based Motion Synthesis via Generative Motion Matching, ACM Transactions on Graphics (Proceedings of SIGGRAPH 2023)

##### [Weiyu Li*](https://wyysf-98.github.io/), [Xuelin Chen*†](https://xuelin-chen.github.io/), [Peizhuo Li](https://peizhuoli.github.io/), [Olga Sorkine-Hornung](https://igl.ethz.ch/people/sorkine/), [Baoquan Chen](https://cfcs.pku.edu.cn/baoquan/)

#### [Project Page](https://wyysf-98.github.io/GenMM) | [ArXiv](https://arxiv.org/abs/2306.00378) | [Paper](https://wyysf-98.github.io/GenMM/paper/Paper_high_res.pdf) | [Video](https://youtu.be/lehnxcade4I)

All code and demos will be released this week (still ongoing...) 🏗️ 🚧 🔨

- [x] Release main code

- [x] Release blender addon

- [x] Detailed README and installation guide

- [ ] Release skeleton-aware component, WIP as we need to split the joints into groups manually.

- [ ] Release codes for evaluation

## Prerequisite

Setup environment

:smiley: We also provide a Dockerfile for easy installation, see [Setup using Docker](./docker/README.md).

- Python 3.8

- PyTorch 1.12.1

- [unfoldNd](https://github.com/f-dangel/unfoldNd)

Clone this repository.

```sh

git clone git@github.com:wyysf-98/GenMM.git

```

Install the required packages.

```sh

conda create -n GenMM python=3.8

conda activate GenMM

conda install -c pytorch pytorch=1.12.1 torchvision=0.13.1 cudatoolkit=11.3 && \

pip install -r docker/requirements.txt

pip install torch-scatter==2.1.1

```

## Quick inference demo

For a quick local inference demo using a .bvh file, run

```sh

python run_random_generation.py -i './data/Malcolm/Gangnam-Style.bvh'

```

More configuration options can be found in `run_random_generation.py`.
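As a rough guide to the string-valued options, `GenMM.run` in this repository parses `coarse_ratio` and `num_frames` from specs of the form `<x>x_patchsize` or `<x>x_nframes` (and, for `num_frames`, a plain digit string). The sketch below mirrors that parsing in standalone form. The helper names `resolve_coarse_ratio` and `resolve_num_frames` and all sample values are hypothetical, chosen only for illustration.

```python
def resolve_coarse_ratio(spec, patch_size, target_lengths):
    """Turn a coarse-ratio spec string into a float ratio (hypothetical helper)."""
    if 'patchsize' in spec:
        # '<x>x_patchsize': x patches relative to the longest target clip
        return patch_size * float(spec.split('x_')[0]) / max(target_lengths)
    if 'nframes' in spec:
        # '<x>x_nframes': the ratio is given directly
        return float(spec.split('x_')[0])
    raise ValueError('Unsupported coarse ratio specified')


def resolve_num_frames(spec, target_lengths):
    """Turn a num_frames spec string into a frame count (hypothetical helper)."""
    if 'nframes' in spec:
        # '<x>x_nframes': x times the summed length of all target clips
        return int(sum(target_lengths) * float(spec.split('x_')[0]))
    if spec.isdigit():
        # a plain digit string is an absolute frame count
        return int(spec)
    raise ValueError(f'Unsupported num_frames {spec}')


# Illustrative values only: two target clips of 400 and 200 frames.
clip_lengths = [400, 200]
print(resolve_coarse_ratio('5x_patchsize', 11, clip_lengths))
print(resolve_num_frames('2x_nframes', clip_lengths))
print(resolve_num_frames('300', clip_lengths))
```

Note that both options are strings, so an absolute frame count is passed as `'300'`, not `300`.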

We use an Apple M1 and an NVIDIA Tesla V100 with 32 GB RAM to generate each motion, which takes about 0.2s and 0.05s respectively, as mentioned in our paper.
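For orientation, generation in `GenMM.run` proceeds coarse-to-fine over a list of sequence lengths. The schedule computed by `_get_pyramid_lengths` in `GenMM.py` can be reproduced standalone as sketched below. This version uses Python's built-in `round` in place of `np.round` so it has no dependencies, and the function name `pyramid_lengths` and the length of 100 frames are illustrative assumptions; `0.2` and `0.75` are the constructor defaults for `coarse_ratio` and `pyr_factor`.

```python
def pyramid_lengths(final_len, coarse_ratio, pyr_factor):
    """Return sequence lengths from the coarsest level up to final_len."""
    # Coarsest level: a fixed fraction of the final length.
    lengths = [int(round(final_len * coarse_ratio))]
    while lengths[-1] < final_len:
        # Each finer level is longer by a factor of 1 / pyr_factor.
        lengths.append(int(round(lengths[-1] / pyr_factor)))
        # Guard against rounding producing two identical levels.
        if lengths[-1] == lengths[-2]:
            lengths[-1] += 1
    # Clamp the last level to exactly the requested length.
    lengths[-1] = final_len
    return lengths


print(pyramid_lengths(100, 0.2, 0.75))  # [20, 27, 36, 48, 64, 85, 100]
```

A smaller `coarse_ratio` or a `pyr_factor` closer to 1 yields more levels.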

## Blender add-on

The Blender add-on is easy to install and use: our method is efficient, and you do not need to install the CUDA Toolkit.

We tested our code with Blender 3.2.0, and will support 2.8.0 in the future.

Step 1: Find your Blender Python path. Common paths are as follows:

```sh

(Windows) 'C:\Program Files\Blender Foundation\Blender 3.2\3.2\python\bin'

(Linux) '/path/to/blender/blender-path/3.2/python/bin'

(macOS) '/Applications/Blender.app/Contents/Resources/3.2/python/bin'

```
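If none of these paths match, one way to find the directory is to ask the interpreter itself. This is a minimal sketch, assuming it is run from Blender's Python console and that your Blender version exposes its bundled interpreter through `sys.executable` (please verify this on your installation):

```python
import os
import sys

# Directory that contains the Python executable currently running this code.
python_bin_dir = os.path.dirname(sys.executable)
print(python_bin_dir)
```

Run outside Blender, the same snippet simply prints the directory of whichever Python is on your path.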

Step 2: Install the required packages. Open your shell (Linux) or PowerShell (Windows) and run:

```sh

cd {your python path} && pip3 install -r docker/requirements.txt && pip3 install torch-scatter==2.1.0 -f https://data.pyg.org/whl/torch-1.12.0+${CUDA}.html

```

where `${CUDA}` should be replaced by either `cpu`, `cu117`, or `cu118`, depending on your PyTorch installation.

On my macOS machine with an M1 CPU:

```sh

cd /Applications/Blender.app/Contents/Resources/3.2/python/bin && pip3 install -r docker/requirements_blender.txt && pip3 install torch-scatter==2.1.0 -f https://data.pyg.org/whl/torch-1.12.0+cpu.html

```

Step 3: Install the add-on in Blender (see the [Blender Add-ons Official Tutorial](https://docs.blender.org/manual/en/latest/editors/preferences/addons.html)): `Edit -> Preferences -> Add-ons -> Install -> Select the downloaded .zip file`

Step 4: Have fun! Click the armature and you will find a `GenMM` tag.

(GPU support) If you have a GPU and the CUDA Toolkit installed, we automatically detect the running device.
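The add-on's own detection code is not reproduced in this README. The sketch below only illustrates the usual PyTorch pattern for such a check. `pick_device` is a hypothetical helper, and the `'cuda:0'` string matches the default `device` argument of the `GenMM` constructor.

```python
def pick_device():
    """Return 'cuda:0' when PyTorch can see a CUDA GPU, otherwise 'cpu'."""
    try:
        import torch
    except ImportError:
        # Without PyTorch nothing can run on the GPU anyway.
        return 'cpu'
    return 'cuda:0' if torch.cuda.is_available() else 'cpu'


device = pick_device()
print('Running on', device)
```

The resulting string could then be passed as `GenMM(device=device)`.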

Feel free to submit an issue if you run into any issues during the installation :)

## Acknowledgement

We thank [@stefanonuvoli](https://github.com/stefanonuvoli/skinmixer) for the helpful discussion about implementing the `Motion Reassembly` part (we eventually merged the meshes of different characters manually), and [@Radamés Ajna](https://github.com/radames) for help with a better Hugging Face demo.

## Citation

If you find our work useful for your research, please consider citing using the following BibTeX entry.

```BibTeX

@article{10.1145/weiyu23GenMM,

author = {Li, Weiyu and Chen, Xuelin and Li, Peizhuo and Sorkine-Hornung, Olga and Chen, Baoquan},

title = {Example-Based Motion Synthesis via Generative Motion Matching},

journal = {ACM Transactions on Graphics (TOG)},

volume = {42},

number = {4},

year = {2023},

articleno = {94},

doi = {10.1145/3592395},

publisher = {Association for Computing Machinery},

}

```

================================================

FILE: __init__.py

================================================

# This program is free software; you can redistribute it and/or modify

# it under the terms of the GNU General Public License as published by

# the Free Software Foundation; either version 3 of the License, or

# (at your option) any later version.

#

# This program is distributed in the hope that it will be useful, but

# WITHOUT ANY WARRANTY; without even the implied warranty of

# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU

# General Public License for more details.

#

# You should have received a copy of the GNU General Public License

# along with this program. If not, see <http://www.gnu.org/licenses/>.

import os

import sys

import bpy

import torch

import mathutils

import numpy as np

from math import degrees, radians, ceil

from mathutils import Vector, Matrix, Euler

from typing import List, Iterable, Tuple, Any, Dict

abs_path = os.path.abspath(__file__)

sys.path.append(os.path.dirname(abs_path))

from GenMM import GenMM

from nearest_neighbor.losses import PatchCoherentLoss

from dataset.blender_motion import BlenderMotion

bl_info = {

"name" : "GenMM",

"author" : "Weiyu Li",

"description" : "Blender addon for SIGGRAPH paper 'Example-Based Motion Synthesis via Generative Motion Matching'",

"blender" : (3, 2, 0),

"version" : (0, 0, 1),

"location": "3D View",

"description": "Synthesize novel motions from a few exemplars.",

"location" : "",

"support": "TESTING",

"warning" : "",

"category" : "Generic"

}

def capture_rest_pose(armature_obj):

"""Capture the rest pose bone data (head, tail, roll) from an armature."""

rest_pose_data = {}

bpy.ops.object.mode_set(mode='EDIT')

arm_data = armature_obj.data

for bone in arm_data.edit_bones:

rest_pose_data[bone.name] = {

'head': bone.head.copy(),

'tail': bone.tail.copy(),

'roll': bone.roll,

'matrix_local': bone.matrix.copy()

}

bpy.ops.object.mode_set(mode='OBJECT')

return rest_pose_data

# This function is modified from

# https://github.com/bwrsandman/blender-addons/blob/master/io_anim_bvh

def get_bvh_data(context,

frame_end,

frame_start,

global_scale=1.0,

rotate_mode='NATIVE',

root_transform_only=False,

):

def ensure_rot_order(rot_order_str):

if set(rot_order_str) != {'X', 'Y', 'Z'}:

rot_order_str = "XYZ"

return rot_order_str

file_str = []

obj = context.object

arm = obj.data

# Build a dictionary of children.

# None for parentless

children = {None: []}

# initialize with blank lists

for bone in arm.bones:

children[bone.name] = []

# keep bone order from armature, no sorting, not essential but means

# we can maintain order from import -> export which secondlife incorrectly expects.

for bone in arm.bones:

children[getattr(bone.parent, "name", None)].append(bone.name)

# bone name list in the order that the bones are written

serialized_names = []

node_locations = {}

file_str.append("HIERARCHY\n")

def write_recursive_nodes(bone_name, indent):

my_children = children[bone_name]

indent_str = "\t" * indent

bone = arm.bones[bone_name]

pose_bone = obj.pose.bones[bone_name]

loc = bone.head_local

node_locations[bone_name] = loc

if rotate_mode == "NATIVE":

rot_order_str = ensure_rot_order(pose_bone.rotation_mode)

else:

rot_order_str = rotate_mode

# make relative if we can

if bone.parent:

loc = loc - node_locations[bone.parent.name]

if indent:

file_str.append("%sJOINT %s\n" % (indent_str, bone_name))

else:

file_str.append("%sROOT %s\n" % (indent_str, bone_name))

file_str.append("%s{\n" % indent_str)

file_str.append("%s\tOFFSET %.6f %.6f %.6f\n" % (indent_str, loc.x * global_scale, loc.y * global_scale, loc.z * global_scale))

if (bone.use_connect or root_transform_only) and bone.parent:

file_str.append("%s\tCHANNELS 3 %srotation %srotation %srotation\n" % (indent_str, rot_order_str[0], rot_order_str[1], rot_order_str[2]))

else:

file_str.append("%s\tCHANNELS 6 Xposition Yposition Zposition %srotation %srotation %srotation\n" % (indent_str, rot_order_str[0], rot_order_str[1], rot_order_str[2]))

if my_children:

# store the location for the children

# to get their relative offset

# Write children

for child_bone in my_children:

serialized_names.append(child_bone)

write_recursive_nodes(child_bone, indent + 1)

else:

# Write the bone end.

file_str.append("%s\tEnd Site\n" % indent_str)

file_str.append("%s\t{\n" % indent_str)

loc = bone.tail_local - node_locations[bone_name]

file_str.append("%s\t\tOFFSET %.6f %.6f %.6f\n" % (indent_str, loc.x * global_scale, loc.y * global_scale, loc.z * global_scale))

file_str.append("%s\t}\n" % indent_str)

file_str.append("%s}\n" % indent_str)

if len(children[None]) == 1:

key = children[None][0]

serialized_names.append(key)

indent = 0

write_recursive_nodes(key, indent)

else:

# Write a dummy parent node, with a dummy key name

# Just be sure it's not used by another bone!

i = 0

key = "__%d" % i

while key in children:

i += 1

key = "__%d" % i

file_str.append("ROOT %s\n" % key)

file_str.append("{\n")

file_str.append("\tOFFSET 0.0 0.0 0.0\n")

file_str.append("\tCHANNELS 0\n") # Xposition Yposition Zposition Xrotation Yrotation Zrotation

indent = 1

# Write children

for child_bone in children[None]:

serialized_names.append(child_bone)

write_recursive_nodes(child_bone, indent)

file_str.append("}\n")

file_str = ''.join(file_str)

# redefine bones as sorted by serialized_names

# so we can write motion

class DecoratedBone:

__slots__ = (

# Bone name, used as key in many places.

"name",

"parent", # decorated bone parent, set in a later loop

# Blender armature bone.

"rest_bone",

# Blender pose bone.

"pose_bone",

# Blender pose matrix.

"pose_mat",

# Blender rest matrix (armature space).

"rest_arm_mat",

# Blender rest matrix (local space).

"rest_local_mat",

# Pose_mat inverted.

"pose_imat",

# Rest_arm_mat inverted.

"rest_arm_imat",

# Rest_local_mat inverted.

"rest_local_imat",

# Last used euler to preserve euler compatibility in between keyframes.

"prev_euler",

# Is the bone disconnected to the parent bone?

"skip_position",

"rot_order",

"rot_order_str",

# Needed for the euler order when converting from a matrix.

"rot_order_str_reverse",

)

_eul_order_lookup = {

'XYZ': (0, 1, 2),

'XZY': (0, 2, 1),

'YXZ': (1, 0, 2),

'YZX': (1, 2, 0),

'ZXY': (2, 0, 1),

'ZYX': (2, 1, 0),

}

def __init__(self, bone_name):

self.name = bone_name

self.rest_bone = arm.bones[bone_name]

self.pose_bone = obj.pose.bones[bone_name]

if rotate_mode == "NATIVE":

self.rot_order_str = ensure_rot_order(self.pose_bone.rotation_mode)

else:

self.rot_order_str = rotate_mode

self.rot_order_str_reverse = self.rot_order_str[::-1]

self.rot_order = DecoratedBone._eul_order_lookup[self.rot_order_str]

self.pose_mat = self.pose_bone.matrix

# mat = self.rest_bone.matrix # UNUSED

self.rest_arm_mat = self.rest_bone.matrix_local

self.rest_local_mat = self.rest_bone.matrix

# inverted mats

self.pose_imat = self.pose_mat.inverted()

self.rest_arm_imat = self.rest_arm_mat.inverted()

self.rest_local_imat = self.rest_local_mat.inverted()

self.parent = None

self.prev_euler = Euler((0.0, 0.0, 0.0), self.rot_order_str_reverse)

self.skip_position = ((self.rest_bone.use_connect or root_transform_only) and self.rest_bone.parent)

def update_posedata(self):

self.pose_mat = self.pose_bone.matrix

self.pose_imat = self.pose_mat.inverted()

def __repr__(self):

if self.parent:

return "[\"%s\" child on \"%s\"]\n" % (self.name, self.parent.name)

else:

return "[\"%s\" root bone]\n" % (self.name)

bones_decorated = [DecoratedBone(bone_name) for bone_name in serialized_names]

# Assign parents

bones_decorated_dict = {dbone.name: dbone for dbone in bones_decorated}

for dbone in bones_decorated:

parent = dbone.rest_bone.parent

if parent:

dbone.parent = bones_decorated_dict[parent.name]

del bones_decorated_dict

# finish assigning parents

scene = context.scene

frame_current = scene.frame_current

file_str += "MOTION\n"

file_str += "Frames: %d\n" % (frame_end - frame_start + 1)

file_str += "Frame Time: %.6f\n" % (1.0 / (scene.render.fps / scene.render.fps_base))

for frame in range(frame_start, frame_end + 1):

scene.frame_set(frame)

for dbone in bones_decorated:

dbone.update_posedata()

for dbone in bones_decorated:

trans = Matrix.Translation(dbone.rest_bone.head_local)

itrans = Matrix.Translation(-dbone.rest_bone.head_local)

if dbone.parent:

mat_final = dbone.parent.rest_arm_mat @ dbone.parent.pose_imat @ dbone.pose_mat @ dbone.rest_arm_imat

mat_final = itrans @ mat_final @ trans

loc = mat_final.to_translation() + (dbone.rest_bone.head_local - dbone.parent.rest_bone.head_local)

else:

mat_final = dbone.pose_mat @ dbone.rest_arm_imat

mat_final = itrans @ mat_final @ trans

loc = mat_final.to_translation() + dbone.rest_bone.head

# keep eulers compatible, no jumping on interpolation.

rot = mat_final.to_euler(dbone.rot_order_str_reverse, dbone.prev_euler)

if not dbone.skip_position:

file_str += "%.6f %.6f %.6f " % (loc * global_scale)[:]

file_str += "%.6f %.6f %.6f " % (degrees(rot[dbone.rot_order[0]]), degrees(rot[dbone.rot_order[1]]), degrees(rot[dbone.rot_order[2]]))

dbone.prev_euler = rot

file_str += "\n"

scene.frame_set(frame_current)

return file_str

class BVH_Node:

__slots__ = (

# Bvh joint name.

'name',

# BVH_Node type or None for no parent.

'parent',

# A list of children of this type.

'children',

# Worldspace rest location for the head of this node.

'rest_head_world',

# Localspace rest location for the head of this node.

'rest_head_local',

# Worldspace rest location for the tail of this node.

'rest_tail_world',

# Localspace rest location for the tail of this node.

'rest_tail_local',

# List of 6 ints, -1 for an unused channel,

# otherwise an index for the BVH motion data lines,

# loc triple then rot triple.

'channels',

# A triple of indices as to the order rotation is applied.

# [0,1,2] is x/y/z - [None, None, None] if no rotation.

'rot_order',

# Same as above, but as a string in 'XYZ' format.

'rot_order_str',

# A list of tuples, one for each frame: (locx, locy, locz, rotx, roty, rotz),

# euler rotation ALWAYS stored xyz order, even when native used.

'anim_data',

# Convenience flag (bool), same as: (channels[0] != -1 or channels[1] != -1 or channels[2] != -1).

'has_loc',

# Convenience flag (bool), same as: (channels[3] != -1 or channels[4] != -1 or channels[5] != -1).

'has_rot',

# Index from the file, not strictly needed but nice to maintain order.

'index',

# Use this for whatever you want.

'temp',

)

_eul_order_lookup = {

(None, None, None): 'XYZ', # XXX Dummy one, no rotation anyway!

(0, 1, 2): 'XYZ',

(0, 2, 1): 'XZY',

(1, 0, 2): 'YXZ',

(1, 2, 0): 'YZX',

(2, 0, 1): 'ZXY',

(2, 1, 0): 'ZYX',

}

def __init__(self, name, rest_head_world, rest_head_local, parent, channels, rot_order, index):

self.name = name

self.rest_head_world = rest_head_world

self.rest_head_local = rest_head_local

self.rest_tail_world = None

self.rest_tail_local = None

self.parent = parent

self.channels = channels

self.rot_order = tuple(rot_order)

self.rot_order_str = BVH_Node._eul_order_lookup[self.rot_order]

self.index = index

# convenience flags

self.has_loc = channels[0] != -1 or channels[1] != -1 or channels[2] != -1

self.has_rot = channels[3] != -1 or channels[4] != -1 or channels[5] != -1

self.children = []

# List of 6 length tuples: (lx, ly, lz, rx, ry, rz)

# even if the channels aren't used they will just be zero.

self.anim_data = [(0, 0, 0, 0, 0, 0)]

def __repr__(self):

return (

"BVH name: '%s', rest_loc:(%.3f,%.3f,%.3f), rest_tail:(%.3f,%.3f,%.3f)" % (

self.name,

*self.rest_head_world,

*self.rest_head_world,

)

)

def sorted_nodes(bvh_nodes):

bvh_nodes_list = list(bvh_nodes.values())

bvh_nodes_list.sort(key=lambda bvh_node: bvh_node.index)

return bvh_nodes_list

def read_bvh(context, bvh_str, rotate_mode='XYZ', global_scale=1.0):

# Separate into a list of lists, each line a list of words.

file_lines = bvh_str

# Non standard carriage returns?

if len(file_lines) == 1:

file_lines = file_lines[0].split('\r')

# Split by whitespace.

file_lines = [ll for ll in [l.split() for l in file_lines] if ll]

# Create hierarchy as empties

if file_lines[0][0].lower() == 'hierarchy':

# print 'Importing the BVH Hierarchy for:', file_path

pass

else:

raise Exception("This is not a BVH file")

bvh_nodes = {None: None}

bvh_nodes_serial = [None]

bvh_frame_count = None

bvh_frame_time = None

channelIndex = -1

lineIdx = 0 # An index for the file.

while lineIdx < len(file_lines) - 1:

if file_lines[lineIdx][0].lower() in {'root', 'joint'}:

# Join spaces into 1 word with underscores joining it.

if len(file_lines[lineIdx]) > 2:

file_lines[lineIdx][1] = '_'.join(file_lines[lineIdx][1:])

file_lines[lineIdx] = file_lines[lineIdx][:2]

# MAY NEED TO SUPPORT MULTIPLE ROOTS HERE! Still unsure whether multiple roots are possible?

# Make sure the names are unique - Object names will match joint names exactly and both will be unique.

name = file_lines[lineIdx][1]

# print '%snode: %s, parent: %s' % (len(bvh_nodes_serial) * ' ', name, bvh_nodes_serial[-1])

lineIdx += 2 # Increment to the next line (Offset)

rest_head_local = global_scale * Vector((

float(file_lines[lineIdx][1]),

float(file_lines[lineIdx][2]),

float(file_lines[lineIdx][3]),

))

lineIdx += 1 # Increment to the next line (Channels)

# newChannel[Xposition, Yposition, Zposition, Xrotation, Yrotation, Zrotation]

# newChannel references indices to the motiondata,

# if not assigned then -1 refers to the last value that will be added on loading at a value of zero, this is appended

# We'll add a zero value onto the end of the MotionDATA so this always refers to a value.

my_channel = [-1, -1, -1, -1, -1, -1]

my_rot_order = [None, None, None]

rot_count = 0

for channel in file_lines[lineIdx][2:]:

channel = channel.lower()

channelIndex += 1 # So the index points to the right channel

if channel == 'xposition':

my_channel[0] = channelIndex

elif channel == 'yposition':

my_channel[1] = channelIndex

elif channel == 'zposition':

my_channel[2] = channelIndex

elif channel == 'xrotation':

my_channel[3] = channelIndex

my_rot_order[rot_count] = 0

rot_count += 1

elif channel == 'yrotation':

my_channel[4] = channelIndex

my_rot_order[rot_count] = 1

rot_count += 1

elif channel == 'zrotation':

my_channel[5] = channelIndex

my_rot_order[rot_count] = 2

rot_count += 1

channels = file_lines[lineIdx][2:]

my_parent = bvh_nodes_serial[-1] # account for none

# Apply the parents offset accumulatively

if my_parent is None:

rest_head_world = Vector(rest_head_local)

else:

rest_head_world = my_parent.rest_head_world + rest_head_local

bvh_node = bvh_nodes[name] = BVH_Node(

name,

rest_head_world,

rest_head_local,

my_parent,

my_channel,

my_rot_order,

len(bvh_nodes) - 1,

)

# If we have another child then we can call ourselves a parent, else

bvh_nodes_serial.append(bvh_node)

# Account for an end node.

# There is sometimes a name after 'End Site' but we will ignore it.

if file_lines[lineIdx][0].lower() == 'end' and file_lines[lineIdx][1].lower() == 'site':

# Increment to the next line (Offset)

lineIdx += 2

rest_tail = global_scale * Vector((

float(file_lines[lineIdx][1]),

float(file_lines[lineIdx][2]),

float(file_lines[lineIdx][3]),

))

bvh_nodes_serial[-1].rest_tail_world = bvh_nodes_serial[-1].rest_head_world + rest_tail

bvh_nodes_serial[-1].rest_tail_local = bvh_nodes_serial[-1].rest_head_local + rest_tail

# Just so we can remove the parents in a uniform way,

# the end has kids so this is a placeholder.

bvh_nodes_serial.append(None)

if len(file_lines[lineIdx]) == 1 and file_lines[lineIdx][0] == '}': # == ['}']

bvh_nodes_serial.pop() # Remove the last item

# End of the hierarchy. Begin the animation section of the file with

# the following header.

# MOTION

# Frames: n

# Frame Time: dt

if len(file_lines[lineIdx]) == 1 and file_lines[lineIdx][0].lower() == 'motion':

lineIdx += 1 # Read frame count.

if (

len(file_lines[lineIdx]) == 2 and

file_lines[lineIdx][0].lower() == 'frames:'

):

bvh_frame_count = int(file_lines[lineIdx][1])

lineIdx += 1 # Read frame rate.

if (

len(file_lines[lineIdx]) == 3 and

file_lines[lineIdx][0].lower() == 'frame' and

file_lines[lineIdx][1].lower() == 'time:'

):

bvh_frame_time = float(file_lines[lineIdx][2])

lineIdx += 1 # Set the cursor to the first frame

break

lineIdx += 1

# Remove the None value used for easy parent reference

del bvh_nodes[None]

# Don't use anymore

del bvh_nodes_serial

# Importing would work with any order, but it is nicer to maintain order;

# Second Life expects it, which isn't to spec.

bvh_nodes_list = sorted_nodes(bvh_nodes)

while lineIdx < len(file_lines):

line = file_lines[lineIdx]

for bvh_node in bvh_nodes_list:

# for bvh_node in bvh_nodes_serial:

lx = ly = lz = rx = ry = rz = 0.0

channels = bvh_node.channels

anim_data = bvh_node.anim_data

if channels[0] != -1:

lx = global_scale * float(line[channels[0]])

if channels[1] != -1:

ly = global_scale * float(line[channels[1]])

if channels[2] != -1:

lz = global_scale * float(line[channels[2]])

if channels[3] != -1 or channels[4] != -1 or channels[5] != -1:

rx = radians(float(line[channels[3]]))

ry = radians(float(line[channels[4]]))

rz = radians(float(line[channels[5]]))

# Done importing motion data #

anim_data.append((lx, ly, lz, rx, ry, rz))

lineIdx += 1

# Assign children

for bvh_node in bvh_nodes_list:

bvh_node_parent = bvh_node.parent

if bvh_node_parent:

bvh_node_parent.children.append(bvh_node)

# Now set the tip of each bvh_node

for bvh_node in bvh_nodes_list:

if not bvh_node.rest_tail_world:

if len(bvh_node.children) == 0:

# could just fail here, but rare BVH files have childless nodes

bvh_node.rest_tail_world = Vector(bvh_node.rest_head_world)

bvh_node.rest_tail_local = Vector(bvh_node.rest_head_local)

elif len(bvh_node.children) == 1:

bvh_node.rest_tail_world = Vector(bvh_node.children[0].rest_head_world)

bvh_node.rest_tail_local = bvh_node.rest_head_local + bvh_node.children[0].rest_head_local

else:

# allow this, see above

# if not bvh_node.children:

# raise Exception("bvh node has no end and no children. bad file")

# Removed temp for now

rest_tail_world = Vector((0.0, 0.0, 0.0))

rest_tail_local = Vector((0.0, 0.0, 0.0))

for bvh_node_child in bvh_node.children:

rest_tail_world += bvh_node_child.rest_head_world

rest_tail_local += bvh_node_child.rest_head_local

bvh_node.rest_tail_world = rest_tail_world * (1.0 / len(bvh_node.children))

bvh_node.rest_tail_local = rest_tail_local * (1.0 / len(bvh_node.children))

# Make sure tail isn't the same location as the head.

if (bvh_node.rest_tail_local - bvh_node.rest_head_local).length <= 0.001 * global_scale:

print("\tzero length node found:", bvh_node.name)

bvh_node.rest_tail_local.y = bvh_node.rest_tail_local.y + global_scale / 10

bvh_node.rest_tail_world.y = bvh_node.rest_tail_world.y + global_scale / 10

return bvh_nodes, bvh_frame_time, bvh_frame_count

def bvh_node_dict2objects(context, bvh_name, bvh_nodes, rotate_mode='NATIVE', frame_start=1, IMPORT_LOOP=False):

if frame_start < 1:

frame_start = 1

scene = context.scene

for obj in scene.objects:

obj.select_set(False)

objects = []

def add_ob(name):

obj = bpy.data.objects.new(name, None)

context.collection.objects.link(obj)

objects.append(obj)

obj.select_set(True)

# nicer drawing.

obj.empty_display_type = 'CUBE'

obj.empty_display_size = 0.1

return obj

# Add objects

for name, bvh_node in bvh_nodes.items():

bvh_node.temp = add_ob(name)

bvh_node.temp.rotation_mode = bvh_node.rot_order_str[::-1]

# Parent the objects

for bvh_node in bvh_nodes.values():

for bvh_node_child in bvh_node.children:

bvh_node_child.temp.parent = bvh_node.temp

# Offset

for bvh_node in bvh_nodes.values():

# Make relative to parents offset

bvh_node.temp.location = bvh_node.rest_head_local

# Add tail objects

for name, bvh_node in bvh_nodes.items():

if not bvh_node.children:

ob_end = add_ob(name + '_end')

ob_end.parent = bvh_node.temp

ob_end.location = bvh_node.rest_tail_world - bvh_node.rest_head_world

for name, bvh_node in bvh_nodes.items():

obj = bvh_node.temp

for frame_current in range(len(bvh_node.anim_data)):

lx, ly, lz, rx, ry, rz = bvh_node.anim_data[frame_current]

if bvh_node.has_loc:

obj.delta_location = Vector((lx, ly, lz)) - bvh_node.rest_head_world

obj.keyframe_insert("delta_location", index=-1, frame=frame_start + frame_current)

if bvh_node.has_rot:

obj.delta_rotation_euler = rx, ry, rz

obj.keyframe_insert("delta_rotation_euler", index=-1, frame=frame_start + frame_current)

return objects

def bvh_node_dict2armature(

context,

bvh_name,

bvh_nodes,

bvh_frame_time,

rotate_mode='XYZ',

frame_start=1,

IMPORT_LOOP=False,

global_matrix=None,

use_fps_scale=False,

original_rest_pose=None # New parameter for the original rest pose

):

if frame_start < 1:

frame_start = 1

scene = context.scene

for obj in scene.objects:

obj.select_set(False)

arm_data = bpy.data.armatures.new(bvh_name)

arm_ob = bpy.data.objects.new(bvh_name, arm_data)

context.collection.objects.link(arm_ob)

arm_ob.select_set(True)

context.view_layer.objects.active = arm_ob

bpy.ops.object.mode_set(mode='EDIT', toggle=False)

bvh_nodes_list = sorted_nodes(bvh_nodes)

# Get the average bone length for zero length bones

average_bone_length = 0.0

nonzero_count = 0

for bvh_node in bvh_nodes_list:

l = (bvh_node.rest_head_local - bvh_node.rest_tail_local).length

if l:

average_bone_length += l

nonzero_count += 1

if not average_bone_length:

average_bone_length = 0.1

else:

average_bone_length = average_bone_length / nonzero_count

while arm_data.edit_bones:

# edit_bones lives on the armature data block, not on the object.

arm_data.edit_bones.remove(arm_data.edit_bones[-1])

ZERO_AREA_BONES = []

# First pass: Create all bones and assign to temp

for bvh_node in bvh_nodes_list:

bone = arm_data.edit_bones.new(bvh_node.name)

# Use the original rest pose if provided, otherwise fall back to BVH data

if original_rest_pose and bvh_node.name in original_rest_pose:

bone.head = original_rest_pose[bvh_node.name]['head']

bone.tail = original_rest_pose[bvh_node.name]['tail']

bone.roll = original_rest_pose[bvh_node.name]['roll']

else:

bone.head = bvh_node.rest_head_world

bone.tail = bvh_node.rest_tail_world

# Handle zero-length bones

if (bone.head - bone.tail).length < 0.001:

print("\tzero length bone found:", bone.name)

if bvh_node.parent:

ofs = bvh_node.parent.rest_head_local - bvh_node.parent.rest_tail_local

if ofs.length:

bone.tail = bone.tail - ofs

else:

bone.tail.y = bone.tail.y + average_bone_length

else:

bone.tail.y = bone.tail.y + average_bone_length

ZERO_AREA_BONES.append(bvh_node.name)

# Assign the edit bone to the temp attribute

bvh_node.temp = bone

# Second pass: Set parenting and connection

for bvh_node in bvh_nodes_list:

if bvh_node.parent:

# Now bvh_node.temp and bvh_node.parent.temp should both be valid

bvh_node.temp.parent = bvh_node.parent.temp

if (

(not bvh_node.has_loc) and

(bvh_node.parent.temp.name not in ZERO_AREA_BONES) and

(bvh_node.parent.rest_tail_local == bvh_node.rest_head_local)

):

bvh_node.temp.use_connect = True

# Replace temp with bone name for later use

for bvh_node in bvh_nodes_list:

bvh_node.temp = bvh_node.temp.name

bpy.ops.object.mode_set(mode='OBJECT', toggle=False)

pose = arm_ob.pose

pose_bones = pose.bones

if rotate_mode == 'NATIVE':

for bvh_node in bvh_nodes_list:

bone_name = bvh_node.temp

pose_bone = pose_bones[bone_name]

pose_bone.rotation_mode = bvh_node.rot_order_str

elif rotate_mode != 'QUATERNION':

for pose_bone in pose_bones:

pose_bone.rotation_mode = rotate_mode

context.view_layer.update()

arm_ob.animation_data_create()

action = bpy.data.actions.new(name=bvh_name)

arm_ob.animation_data.action = action

num_frame = 0

for bvh_node in bvh_nodes_list:

bone_name = bvh_node.temp

pose_bone = pose_bones[bone_name]

rest_bone = arm_data.bones[bone_name]

bone_rest_matrix = rest_bone.matrix_local.to_3x3()

bone_rest_matrix_inv = Matrix(bone_rest_matrix)

bone_rest_matrix_inv.invert()

bone_rest_matrix_inv.resize_4x4()

bone_rest_matrix.resize_4x4()

bvh_node.temp = (pose_bone, rest_bone, bone_rest_matrix, bone_rest_matrix_inv)

if 0 == num_frame:

num_frame = len(bvh_node.anim_data)

skip_frame = 1

if num_frame > skip_frame:

num_frame = num_frame - skip_frame

time = [float(frame_start)] * num_frame

if use_fps_scale:

dt = scene.render.fps * bvh_frame_time

for frame_i in range(1, num_frame):

time[frame_i] += float(frame_i) * dt

else:

for frame_i in range(1, num_frame):

time[frame_i] += float(frame_i)

for i, bvh_node in enumerate(bvh_nodes_list):

pose_bone, bone, bone_rest_matrix, bone_rest_matrix_inv = bvh_node.temp

if bvh_node.has_loc:

data_path = f'pose.bones["{pose_bone.name}"].location'

location = [(0.0, 0.0, 0.0)] * num_frame

for frame_i in range(num_frame):

bvh_loc = bvh_node.anim_data[frame_i + skip_frame][:3]

bone_translate_matrix = Matrix.Translation(

Vector(bvh_loc) - bvh_node.rest_head_local)

location[frame_i] = (bone_rest_matrix_inv @

bone_translate_matrix).to_translation()

for axis_i in range(3):

curve = action.fcurves.new(data_path=data_path, index=axis_i, action_group=bvh_node.name)

keyframe_points = curve.keyframe_points

keyframe_points.add(num_frame)

for frame_i in range(num_frame):

keyframe_points[frame_i].co = (

time[frame_i],

location[frame_i][axis_i],

)

if bvh_node.has_rot:

data_path = None

rotate = None

if 'QUATERNION' == rotate_mode:

rotate = [(1.0, 0.0, 0.0, 0.0)] * num_frame

data_path = f'pose.bones["{pose_bone.name}"].rotation_quaternion'

else:

rotate = [(0.0, 0.0, 0.0)] * num_frame

data_path = f'pose.bones["{pose_bone.name}"].rotation_euler'

prev_euler = Euler((0.0, 0.0, 0.0))

for frame_i in range(num_frame):

bvh_rot = bvh_node.anim_data[frame_i + skip_frame][3:]

euler = Euler(bvh_rot, bvh_node.rot_order_str[::-1])

bone_rotation_matrix = euler.to_matrix().to_4x4()

bone_rotation_matrix = (

bone_rest_matrix_inv @

bone_rotation_matrix @

bone_rest_matrix

)

if len(rotate[frame_i]) == 4:

rotate[frame_i] = bone_rotation_matrix.to_quaternion()

else:

rotate[frame_i] = bone_rotation_matrix.to_euler(

pose_bone.rotation_mode, prev_euler)

prev_euler = rotate[frame_i]

for axis_i in range(len(rotate[0])):

curve = action.fcurves.new(data_path=data_path, index=axis_i, action_group=bvh_node.name)

keyframe_points = curve.keyframe_points

keyframe_points.add(num_frame)

for frame_i in range(num_frame):

keyframe_points[frame_i].co = (

time[frame_i],

rotate[frame_i][axis_i],

)

for cu in action.fcurves:

if IMPORT_LOOP:

pass

for bez in cu.keyframe_points:

bez.interpolation = 'LINEAR'

try:

arm_ob.matrix_world = global_matrix

except Exception:

pass

bpy.ops.object.transform_apply(location=False, rotation=True, scale=False)

return arm_ob

def load(

context,

bvh_str,

*,

target='ARMATURE',

rotate_mode='NATIVE',

global_scale=1.0,

use_cyclic=False,

frame_start=1,

global_matrix=None,

use_fps_scale=False,

update_scene_fps=False,

update_scene_duration=False,

original_rest_pose=None,

bvh_name='synthesized', # Added parameter

report=print,

):

import time

t1 = time.time()

bvh_nodes, bvh_frame_time, bvh_frame_count = read_bvh(

context, bvh_str,

rotate_mode=rotate_mode,

global_scale=global_scale,

)

print("%.4f" % (time.time() - t1))

scene = context.scene

frame_orig = scene.frame_current

if bvh_frame_time is None:

report(

{'WARNING'},

"The BVH file does not contain frame duration in its MOTION "

"section, assuming the BVH and Blender scene have the same "

"frame rate"

)

bvh_frame_time = scene.render.fps_base / scene.render.fps

use_fps_scale = False

if update_scene_fps:

_update_scene_fps(context, report, bvh_frame_time)

use_fps_scale = False

if update_scene_duration:

_update_scene_duration(context, report, bvh_frame_count, bvh_frame_time, frame_start, use_fps_scale)

t1 = time.time()

print("\timporting to blender...", end="")

if target == 'ARMATURE':

bvh_node_dict2armature(

context, bvh_name, bvh_nodes, bvh_frame_time,

rotate_mode=rotate_mode,

frame_start=frame_start,

IMPORT_LOOP=use_cyclic,

global_matrix=global_matrix,

use_fps_scale=use_fps_scale,

original_rest_pose=original_rest_pose

)

elif target == 'OBJECT':

bvh_node_dict2objects(

context, bvh_name, bvh_nodes,

rotate_mode=rotate_mode,

frame_start=frame_start,

IMPORT_LOOP=use_cyclic,

)

else:

report({'ERROR'}, tip_("Invalid target %r (must be 'ARMATURE' or 'OBJECT')") % target)

return {'CANCELLED'}

print('Done in %.4f\n' % (time.time() - t1))

context.scene.frame_set(frame_orig)

return {'FINISHED'}

def _update_scene_fps(context, report, bvh_frame_time):

"""Update the scene's FPS settings from the BVH, but only if the BVH contains enough info."""

# Broken BVH handling: prevent division by zero.

if bvh_frame_time == 0.0:

report(

{'WARNING'},

"Unable to update scene frame rate, as the BVH file "

"contains a zero frame duration in its MOTION section",

)

return

scene = context.scene

scene_fps = scene.render.fps / scene.render.fps_base

new_fps = 1.0 / bvh_frame_time

if scene.render.fps != new_fps or scene.render.fps_base != 1.0:

print("\tupdating scene FPS (was %f) to BVH FPS (%f)" % (scene_fps, new_fps))

scene.render.fps = int(round(new_fps))

scene.render.fps_base = scene.render.fps / new_fps

def _update_scene_duration(

context, report, bvh_frame_count, bvh_frame_time, frame_start,

use_fps_scale):

"""Extend the scene's duration so that the BVH file fits in its entirety."""

if bvh_frame_count is None:

report(

{'WARNING'},

"Unable to extend the scene duration, as the BVH file does not "

"contain the number of frames in its MOTION section",

)

return

# Not likely, but it can happen when a BVH is just used to store an armature.

if bvh_frame_count == 0:

return

if use_fps_scale:

scene_fps = context.scene.render.fps / context.scene.render.fps_base

scaled_frame_count = int(ceil(bvh_frame_count * bvh_frame_time * scene_fps))

bvh_last_frame = frame_start + scaled_frame_count

else:

bvh_last_frame = frame_start + bvh_frame_count

# Only extend the scene, never shorten it.

if context.scene.frame_end < bvh_last_frame:

context.scene.frame_end = bvh_last_frame

# This function is from

# https://github.com/yuki-koyama/blender-cli-rendering

def set_smooth_shading(mesh: bpy.types.Mesh) -> None:

for polygon in mesh.polygons:

polygon.use_smooth = True

# This function is from

# https://github.com/yuki-koyama/blender-cli-rendering

def create_mesh_from_pydata(scene: bpy.types.Scene,

vertices: Iterable[Iterable[float]],

faces: Iterable[Iterable[int]],

mesh_name: str,

object_name: str,

use_smooth: bool = True) -> bpy.types.Object:

# Add a new mesh and set vertices and faces

# Note: In this case, it does not require to set edges.

# Note: After manipulating mesh data, update() needs to be called.

new_mesh: bpy.types.Mesh = bpy.data.meshes.new(mesh_name)

new_mesh.from_pydata(vertices, [], faces)

new_mesh.update()

if use_smooth:

set_smooth_shading(new_mesh)

new_object: bpy.types.Object = bpy.data.objects.new(object_name, new_mesh)

scene.collection.objects.link(new_object)

return new_object

# This function is from

# https://github.com/yuki-koyama/blender-cli-rendering

def add_subdivision_surface_modifier(mesh_object: bpy.types.Object, level: int, is_simple: bool = False) -> None:

'''

https://docs.blender.org/api/current/bpy.types.SubsurfModifier.html

'''

modifier: bpy.types.SubsurfModifier = mesh_object.modifiers.new(name="Subsurf", type='SUBSURF')

modifier.levels = level

modifier.render_levels = level

modifier.subdivision_type = 'SIMPLE' if is_simple else 'CATMULL_CLARK'

# This function is from

# https://github.com/yuki-koyama/blender-cli-rendering

def create_armature_mesh(scene: bpy.types.Scene, armature_object: bpy.types.Object, mesh_name: str) -> bpy.types.Object:

assert armature_object.type == 'ARMATURE', 'armature_object must be an object of type ARMATURE'

assert len(armature_object.data.bones) != 0, 'armature_object must contain at least one bone'

def add_rigid_vertex_group(target_object: bpy.types.Object, name: str, vertex_indices: Iterable[int]) -> None:

new_vertex_group = target_object.vertex_groups.new(name=name)

for vertex_index in vertex_indices:

new_vertex_group.add([vertex_index], 1.0, 'REPLACE')

def generate_bone_mesh_pydata(radius: float, length: float) -> Tuple[List[mathutils.Vector], List[List[int]]]:

base_radius = radius

top_radius = 0.5 * radius

vertices = [

# Cross section of the base part

mathutils.Vector((-base_radius, 0.0, +base_radius)),

mathutils.Vector((+base_radius, 0.0, +base_radius)),

mathutils.Vector((+base_radius, 0.0, -base_radius)),

mathutils.Vector((-base_radius, 0.0, -base_radius)),

# Cross section of the top part

mathutils.Vector((-top_radius, length, +top_radius)),

mathutils.Vector((+top_radius, length, +top_radius)),

mathutils.Vector((+top_radius, length, -top_radius)),

mathutils.Vector((-top_radius, length, -top_radius)),

# End points

mathutils.Vector((0.0, -base_radius, 0.0)),

mathutils.Vector((0.0, length + top_radius, 0.0))

]

faces = [

# End point for the base part

[8, 1, 0],

[8, 2, 1],

[8, 3, 2],

[8, 0, 3],

# End point for the top part

[9, 4, 5],

[9, 5, 6],

[9, 6, 7],

[9, 7, 4],

# Side faces

[0, 1, 5, 4],

[1, 2, 6, 5],

[2, 3, 7, 6],

[3, 0, 4, 7],

]

return vertices, faces

armature_data: bpy.types.Armature = armature_object.data

vertices: List[mathutils.Vector] = []

faces: List[List[int]] = []

vertex_groups: List[Dict[str, Any]] = []

for bone in armature_data.bones:

radius = 0.10 * (0.10 + bone.length)

temp_vertices, temp_faces = generate_bone_mesh_pydata(radius, bone.length)

vertex_index_offset = len(vertices)

temp_vertex_group = {'name': bone.name, 'vertex_indices': []}

for local_index, vertex in enumerate(temp_vertices):

vertices.append(bone.matrix_local @ vertex)

temp_vertex_group['vertex_indices'].append(local_index + vertex_index_offset)

vertex_groups.append(temp_vertex_group)

for face in temp_faces:

if len(face) == 3:

faces.append([

face[0] + vertex_index_offset,

face[1] + vertex_index_offset,

face[2] + vertex_index_offset,

])

else:

faces.append([

face[0] + vertex_index_offset,

face[1] + vertex_index_offset,

face[2] + vertex_index_offset,

face[3] + vertex_index_offset,

])

new_object = create_mesh_from_pydata(scene, vertices, faces, mesh_name, mesh_name)

new_object.matrix_world = armature_object.matrix_world

for vertex_group in vertex_groups:

add_rigid_vertex_group(new_object, vertex_group['name'], vertex_group['vertex_indices'])

armature_modifier = new_object.modifiers.new('Armature', 'ARMATURE')

armature_modifier.object = armature_object

armature_modifier.use_vertex_groups = True

add_subdivision_surface_modifier(new_object, 1, is_simple=True)

add_subdivision_surface_modifier(new_object, 2, is_simple=False)

# Set the armature as the parent of the new object

bpy.ops.object.select_all(action='DESELECT')

new_object.select_set(True)

armature_object.select_set(True)

bpy.context.view_layer.objects.active = armature_object

bpy.ops.object.parent_set(type='OBJECT')

return new_object

class OP_AddMesh(bpy.types.Operator):

bl_idname = "genmm.add_mesh"

bl_label = "Add mesh"

bl_description = ""

bl_options = {"REGISTER", "UNDO"}

def __init__(self) -> None:

super().__init__()

def execute(self, context: bpy.types.Context):

name = bpy.context.object.name + "_proxy"

create_armature_mesh(bpy.context.scene, bpy.context.object, name)

return {'FINISHED'}

class OP_RunSynthesis(bpy.types.Operator):

bl_idname = "genmm.run_synthesis"

bl_label = "Run synthesis"

bl_description = ""

bl_options = {"REGISTER", "UNDO"}

def execute(self, context: bpy.types.Context):

setting = context.scene.setting

original_armature = context.object

rest_pose_data = capture_rest_pose(original_armature)

anim = original_armature.animation_data.action

start_frame, end_frame = map(int, anim.frame_range)

start_frame = start_frame if setting.start_frame == -1 else setting.start_frame

end_frame = end_frame if setting.end_frame == -1 else setting.end_frame

bvh_str = get_bvh_data(context,

frame_start=start_frame,

frame_end=end_frame)

frames_str, frame_time_str = bvh_str.split('MOTION\n')[1].split('\n')[:2]

motion_data_str = bvh_str.split('MOTION\n')[1].split('\n')[2:-1]

motion_data = np.array([item.strip().split(' ') for item in motion_data_str], dtype=np.float32)

model = GenMM(device='cuda' if torch.cuda.is_available() else 'cpu', silent=True)

criteria = PatchCoherentLoss(patch_size=setting.patch_size,

alpha=setting.alpha,

loop=setting.loop, cache=True)

for i in range(setting.num_output):

print(f"Generating motion {i+1} of {setting.num_output}")

# Create a new BlenderMotion instance for each iteration

motion = [BlenderMotion(motion_data.copy(), repr='repr6d', use_velo=True,

keep_up_pos=True, up_axis=setting.up_axis, padding_last=False)]

syn = model.run(motion, criteria,

num_frames=str(setting.num_syn_frames),

num_steps=setting.num_steps,

noise_sigma=setting.noise,

patch_size=setting.patch_size,

coarse_ratio=f'{setting.coarse_ratio}x_nframes',

pyr_factor=setting.pyr_factor)

motion_data_str = [' '.join(str(x) for x in item) for item in motion[0].parse(syn)]

bvh_name = f"synthesized_{i+1}"

load(context,

bvh_str.split('MOTION\n')[0].split('\n') + ['MOTION'] + [frames_str] + [frame_time_str] + motion_data_str,

rotate_mode='QUATERNION',

global_matrix=original_armature.matrix_world,

original_rest_pose=rest_pose_data,

target='ARMATURE',

use_fps_scale=False,

bvh_name=bvh_name)

return {'FINISHED'}

class GENMM_PT_ControlPanel(bpy.types.Panel):

bl_label = "GenMM"

bl_space_type = 'VIEW_3D'

bl_region_type = 'UI'

bl_category = "GenMM"

@classmethod

def poll(cls, context: bpy.types.Context):

return True

def draw_header(self, context: bpy.types.Context):

layout = self.layout

layout.label(text="", icon='PLUGIN')

def draw(self, context: bpy.types.Context):

layout = self.layout

scene = bpy.context.scene

ops: List[bpy.types.Operator] = [

OP_AddMesh,

]

for op in ops:

layout.operator(op.bl_idname, text=op.bl_label)

box = layout.box()

box.label(text="Exemplar config:")

exemplar_row = box.row()

exemplar_row.prop(scene.setting, "start_frame")

exemplar_row.prop(scene.setting, "end_frame")

exemplar_row = box.row()

exemplar_row.prop(scene.setting, "up_axis")

box = layout.box()

box.label(text="Synthesis config:")

box.prop(scene.setting, "loop")

box.prop(scene.setting, "noise")

box.prop(scene.setting, "num_syn_frames")

box.prop(scene.setting, "patch_size")

box.prop(scene.setting, "coarse_ratio")

box.prop(scene.setting, "pyr_factor")

box.prop(scene.setting, "alpha")

box.prop(scene.setting, "num_steps")

box.prop(scene.setting, "num_output") # New parameter

ops: List[bpy.types.Operator] = [

OP_RunSynthesis,

]

for op in ops:

layout.operator(op.bl_idname, text=op.bl_label)

class PropertyGroup(bpy.types.PropertyGroup):

'''Property container for options and paths of GenMM'''

start_frame: bpy.props.IntProperty(

name="Start Frame",

description="Start Frame of the Exemplar Motion.",

default=1)

end_frame: bpy.props.IntProperty(

name="End Frame",

description="End Frame of the Exemplar Motion.",

default=-1)

up_axis: bpy.props.EnumProperty(

name="Up Axis",

default='Z_UP',

description="Up axis of the Exemplar Motion",

items=[('Z_UP', "Z-Up", 'Z Up'),

('Y_UP', "Y-Up", 'Y Up'),

('X_UP', "X-Up", 'X Up'),

]

)

noise: bpy.props.FloatProperty(

name="Noise Intensity",

description="Intensity of Noise Added to the Synthesized Motion.",

default=10)

num_syn_frames: bpy.props.IntProperty(

name="Num. of Frames",

description="Number of Frames of the Synthesized Motion.",

default=600)

patch_size: bpy.props.IntProperty(

name="Patch Size",

description="Size for Patch Extraction.",

min=7,

default=15)

coarse_ratio: bpy.props.FloatProperty(

name="Coarse Ratio",

description="Ratio of the Coarsest Pyramid Level.",

min=0.0,

default=0.2)

pyr_factor: bpy.props.FloatProperty(

name="Pyramid Factor",

description="Pyramid Downsample Factor.",

min=0.1,

default=0.75)

alpha: bpy.props.FloatProperty(

name="Completeness Alpha",

description="Alpha Value for Completeness/Diversity Trade-off.",

default=0.05)

loop: bpy.props.BoolProperty(

name="Endless Loop",

description="Whether to Use the Loop Constraint.",

default=False)

num_steps: bpy.props.IntProperty(

name="Num of Steps",

description="Number of Optimization Steps.",

default=5)

num_output: bpy.props.IntProperty(

name="Num. of Output",

description="Number of different motions to generate.",

min=1,

default=1)

classes = [

OP_AddMesh,

OP_RunSynthesis,

GENMM_PT_ControlPanel,

]

def register():

bpy.utils.register_class(PropertyGroup)

bpy.types.Scene.setting = bpy.props.PointerProperty(type=PropertyGroup)

for cls in classes:

bpy.utils.register_class(cls)

def unregister():

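    # Unregister in reverse order and drop the Scene pointer before its PropertyGroup.
    for cls in reversed(classes):
        bpy.utils.unregister_class(cls)
    del bpy.types.Scene.setting
    bpy.utils.unregister_class(PropertyGroup)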

if __name__ == "__main__":

register()

================================================

FILE: configs/default.yaml

================================================

# motion data config

repr: 'repr6d'

skeleton_name: null

use_velo: true

keep_up_pos: true

up_axis: 'Y_UP'

padding_last: false

requires_contact: false

joint_reduction: false

skeleton_aware: false

joints_group: null

# generate parameters

num_frames: '2x_nframes'

alpha: 0.01

num_steps: 3

noise_sigma: 10.0

coarse_ratio: '5x_patchsize'

# coarse_ratio: '0.2x_nframes'

pyr_factor: 0.75

num_stages_limit: -1

patch_size: 11

loop: false

================================================

FILE: configs/ganimator.yaml

================================================

################################################################

# This configuration uses the same input format as GANimator for generation

################################################################

outout_dir: './output/ganimator_format'

# for GANimator BVH data

repr: 'repr6d'

skeleton_name: 'mixamo'

use_velo: true

keep_up_pos: true

up_axis: 'Y_UP'

padding_last: true

requires_contact: true

joint_reduction: true

skeleton_aware: false

joints_group: null

# generate parameters

num_frames: '2x_nframes'

alpha: 0.01

num_steps: 3

noise_sigma: 10.0

coarse_ratio: '3x_patchsize'

# coarse_ratio: '0.1x_nframes'

pyr_factor: 0.75

num_stages_limit: -1

patch_size: 11

loop: false

================================================

FILE: dataset/blender_motion.py

================================================

import os

import os.path as osp

import torch

import numpy as np

import torch.nn.functional as F

from .motion import MotionData

from utils.transforms import quat2repr6d, euler2mat, mat2quat, repr6d2quat, quat2euler

class BlenderMotion:

def __init__(self, motion_data, repr='quat', use_velo=True, keep_up_pos=True, up_axis=None, padding_last=False):

'''

BlenderMotion constructor

Args:

motion_data : np.array, per-frame data exported from Blender: root position (3 values) followed by per-joint Euler rotations

repr : string, rotation representation, support ['quat', 'repr6d', 'euler']

use_velo : bool, whether to transform the joint positions to velocities

keep_up_pos : bool, whether to keep the up-axis position when converting to velocity

up_axis : string, up axis of the motion data

padding_last : bool, whether to pad the last position

requires_contact : bool, whether to concatenate contact information

'''

self.motion_data = motion_data

def to_tensor(motion_data, repr='euler', rot_only=False):

if repr not in ['euler', 'quat', 'quaternion', 'repr6d']:

raise ValueError(f'Unknown rotation representation: {repr}')

# Blender data stores Euler angles; always load them so `rotations` is defined for every repr, then convert below if requested.
rotations = torch.tensor(motion_data[:, 3:], dtype=torch.float).view(motion_data.shape[0], -1, 3)

if repr == 'quat':

rotations = euler2mat(rotations)

rotations = mat2quat(rotations)

if repr == 'repr6d':

rotations = euler2mat(rotations)

rotations = mat2quat(rotations)

rotations = quat2repr6d(rotations)

positions = torch.tensor(motion_data[:, :3], dtype=torch.float32)

if rot_only:

return rotations.reshape(rotations.shape[0], -1)

rotations = rotations.reshape(rotations.shape[0], -1)

return torch.cat((rotations, positions), dim=-1)

self.motion_data = MotionData(to_tensor(motion_data, repr=repr).permute(1, 0).unsqueeze(0), repr=repr, use_velo=use_velo,

keep_up_pos=keep_up_pos, up_axis=up_axis, padding_last=padding_last, contact_id=None)

@property

def repr(self):

return self.motion_data.repr

@property

def use_velo(self):

return self.motion_data.use_velo

@property

def keep_up_pos(self):

return self.motion_data.keep_up_pos

@property

def padding_last(self):

return self.motion_data.padding_last

@property

def concat_id(self):

return self.motion_data.contact_id

@property

def n_pad(self):

return self.motion_data.n_pad

@property

def n_contact(self):

return self.motion_data.n_contact

@property

def n_rot(self):

return self.motion_data.n_rot

def sample(self, size=None, slerp=False):

'''

Sample motion data, support slerp

'''

return self.motion_data.sample(size, slerp)

def parse(self, motion, keep_velo=False):

"""

No batch support here!!!

:returns tracks_json

"""

motion = motion.clone()

if self.use_velo and not keep_velo:

motion = self.motion_data.to_position(motion)

if self.n_pad:

motion = motion[:, :-self.n_pad]

motion = motion.squeeze().permute(1, 0)

pos = motion[..., -3:]

rot = motion[..., :-3].reshape(motion.shape[0], -1, self.n_rot)

if self.repr == 'quat':

rot = quat2euler(rot)

elif self.repr == 'repr6d':

rot = repr6d2quat(rot)

rot = quat2euler(rot)