Repository: yahoo/HaloDB

Branch: master

Commit: 767d2357a4c9

Files: 117

Total size: 575.4 KB

Directory structure:

gitextract_fajmuc1o/
├── .github/
│   └── workflows/
│       ├── maven-publish.yml
│       └── maven.yml
├── .gitignore
├── .travis.yml
├── CHANGELOG.md
├── CONTRIBUTING.md
├── CONTRIBUTORS.md
├── Code-of-Conduct.md
├── LICENSE
├── NOTICE
├── README.md
├── benchmarks/
│   ├── README.md
│   ├── pom.xml
│   └── src/
│       └── main/
│           └── java/
│               └── com/
│                   └── oath/
│                       └── halodb/
│                           └── benchmarks/
│                               ├── BenchmarkTool.java
│                               ├── Benchmarks.java
│                               ├── HaloDBStorageEngine.java
│                               ├── KyotoStorageEngine.java
│                               ├── RandomDataGenerator.java
│                               ├── RocksDBStorageEngine.java
│                               └── StorageEngine.java
├── docs/
│   ├── WhyHaloDB.md
│   └── benchmarks.md
├── pom.xml
└── src/
    ├── main/
    │   └── java/
    │       └── com/
    │           └── oath/
    │               └── halodb/
    │                   ├── CompactionManager.java
    │                   ├── Constants.java
    │                   ├── DBDirectory.java
    │                   ├── DBMetaData.java
    │                   ├── FileUtils.java
    │                   ├── HaloDB.java
    │                   ├── HaloDBException.java
    │                   ├── HaloDBFile.java
    │                   ├── HaloDBInternal.java
    │                   ├── HaloDBIterator.java
    │                   ├── HaloDBKeyIterator.java
    │                   ├── HaloDBOptions.java
    │                   ├── HaloDBStats.java
    │                   ├── HashAlgorithm.java
    │                   ├── HashTableUtil.java
    │                   ├── HashTableValueSerializer.java
    │                   ├── Hasher.java
    │                   ├── InMemoryIndex.java
    │                   ├── InMemoryIndexMetaData.java
    │                   ├── InMemoryIndexMetaDataSerializer.java
    │                   ├── IndexFile.java
    │                   ├── IndexFileEntry.java
    │                   ├── JNANativeAllocator.java
    │                   ├── KeyBuffer.java
    │                   ├── LongArrayList.java
    │                   ├── MemoryPoolAddress.java
    │                   ├── MemoryPoolChunk.java
    │                   ├── MemoryPoolHashEntries.java
    │                   ├── NativeMemoryAllocator.java
    │                   ├── NonMemoryPoolHashEntries.java
    │                   ├── OffHeapHashTable.java
    │                   ├── OffHeapHashTableBuilder.java
    │                   ├── OffHeapHashTableImpl.java
    │                   ├── OffHeapHashTableStats.java
    │                   ├── Record.java
    │                   ├── RecordKey.java
    │                   ├── Segment.java
    │                   ├── SegmentNonMemoryPool.java
    │                   ├── SegmentStats.java
    │                   ├── SegmentWithMemoryPool.java
    │                   ├── TombstoneEntry.java
    │                   ├── TombstoneFile.java
    │                   ├── Uns.java
    │                   ├── UnsExt.java
    │                   ├── UnsExt8.java
    │                   ├── UnsafeAllocator.java
    │                   ├── Utils.java
    │                   ├── Versions.java
    │                   └── histo/
    │                       └── EstimatedHistogram.java
    └── test/
        ├── java/
        │   └── com/
        │       └── oath/
        │           └── halodb/
        │               ├── CheckOffHeapHashTable.java
        │               ├── CheckSegment.java
        │               ├── CompactionWithErrorsTest.java
        │               ├── CrossCheckTest.java
        │               ├── DBDirectoryTest.java
        │               ├── DBMetaDataTest.java
        │               ├── DBRepairTest.java
        │               ├── DataConsistencyDB.java
        │               ├── DataConsistencyTest.java
        │               ├── DoubleCheckOffHeapHashTableImpl.java
        │               ├── FileUtilsTest.java
        │               ├── HaloDBCompactionTest.java
        │               ├── HaloDBDeletionTest.java
        │               ├── HaloDBFileCompactionTest.java
        │               ├── HaloDBFileTest.java
        │               ├── HaloDBIteratorTest.java
        │               ├── HaloDBKeyIteratorTest.java
        │               ├── HaloDBOptionsTest.java
        │               ├── HaloDBStatsTest.java
        │               ├── HaloDBTest.java
        │               ├── HashTableTestUtils.java
        │               ├── HashTableUtilTest.java
        │               ├── HashTableValueSerializerTest.java
        │               ├── HasherTest.java
        │               ├── IndexFileEntryTest.java
        │               ├── KeyBufferTest.java
        │               ├── LinkedImplTest.java
        │               ├── LongArrayListTest.java
        │               ├── MemoryPoolChunkTest.java
        │               ├── NonMemoryPoolHashEntriesTest.java
        │               ├── OffHeapHashTableBuilderTest.java
        │               ├── RandomDataGenerator.java
        │               ├── RecordTest.java
        │               ├── RehashTest.java
        │               ├── SegmentWithMemoryPoolTest.java
        │               ├── SequenceNumberTest.java
        │               ├── SyncWriteTest.java
        │               ├── TestBase.java
        │               ├── TestListener.java
        │               ├── TestUtils.java
        │               ├── TombstoneFileCleanUpTest.java
        │               ├── TombstoneFileTest.java
        │               ├── UnsTest.java
        │               └── histo/
        │                   └── EstimatedHistogramTest.java
        └── resources/
            └── log4j2-test.xml

================================================

FILE CONTENTS

================================================

================================================

FILE: .github/workflows/maven-publish.yml

================================================

# This workflow will build a package using Maven and then publish it to GitHub packages when a release is created

# For more information see: https://github.com/actions/setup-java/blob/main/docs/advanced-usage.md#apache-maven-with-a-settings-path

name: Maven Package

on:
  release:
    types: [created]

jobs:
  build:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      packages: write

    steps:
      - uses: actions/checkout@v2
      - name: Set up JDK 8
        uses: actions/setup-java@v2
        with:
          java-version: '8'
          distribution: 'adopt'
          server-id: github # Value of the distributionManagement/repository/id field of the pom.xml
          settings-path: ${{ github.workspace }} # location for the settings.xml file

      - name: Publish to GitHub Packages Apache Maven
        run: mvn deploy -s $GITHUB_WORKSPACE/settings.xml
        env:
          GITHUB_TOKEN: ${{ github.token }}

================================================

FILE: .github/workflows/maven.yml

================================================

# This workflow will build a Java project with Maven

# For more information see: https://help.github.com/actions/language-and-framework-guides/building-and-testing-java-with-maven

name: Java CI with Maven

on:
  push:
    branches: [ master ]
  pull_request:
    branches: [ master ]

jobs:
  build:
    runs-on: ubuntu-latest

    steps:
      - uses: actions/checkout@v2
      - name: Set up JDK 8
        uses: actions/setup-java@v2
        with:
          java-version: '8'
          distribution: 'adopt'
      - name: Build with Maven
        run: mvn -B package --file pom.xml

================================================

FILE: .gitignore

================================================

target

.idea

halodb.iml

tmp/

================================================

FILE: .travis.yml

================================================

language: java

dist: trusty

jdk:

- oraclejdk8

================================================

FILE: CHANGELOG.md

================================================

# HaloDB Change Log

## 0.4.3 (08/20/2018)

* Sequence number, instead of relying on system time, is now a number incremented for each write operation.

* Include compaction rate in stats.

## 0.4.2 (08/06/2018)

* Handle the case where db crashes while it is being repaired due to error from a previous crash.

* _put_ operation in _HaloDB_ now returns a boolean value indicating the status of the operation.

## 0.4.1 (7/16/2018)

* Include version, checksum and max file size in META file.

* _maxFileSize_ in _HaloDBOptions_ now accepts only int values.

## 0.4.0 (7/11/2018)

* Implemented memory pool for in-memory index.

================================================

FILE: CONTRIBUTING.md

================================================

# How to contribute

First, thanks for taking the time to contribute to our project! The following information provides a guide for making contributions.

## Code of Conduct

By participating in this project, you agree to abide by the [Oath Code of Conduct](Code-of-Conduct.md). Everyone is welcome to submit a pull request or open an issue to improve the documentation, add improvements, or report bugs.

## How to Ask a Question

If you simply have a question that needs an answer, [create an issue](https://help.github.com/articles/creating-an-issue/), and label it as a question.

## How To Contribute

### Report a Bug or Request a Feature

If you encounter any bugs while using this software, or want to request a new feature or enhancement, feel free to [create an issue](https://help.github.com/articles/creating-an-issue/) to report it. Make sure you add a label to indicate what type of issue it is.

### Contribute Code

Pull requests are welcome for bug fixes. If you want to implement something new, please [request a feature first](#report-a-bug-or-request-a-feature) so we can discuss it.

#### Creating a Pull Request

Please follow [best practices](https://github.com/trein/dev-best-practices/wiki/Git-Commit-Best-Practices) for creating git commits.

When your code is ready to be submitted, you can [submit a pull request](https://help.github.com/articles/creating-a-pull-request/) to begin the code review process.

================================================

FILE: CONTRIBUTORS.md

================================================

HaloDB was designed and implemented by [Arjun Mannaly](https://github.com/amannaly)

================================================

FILE: Code-of-Conduct.md

================================================

# Oath Open Source Code of Conduct

## Summary

This Code of Conduct is our way to encourage good behavior and discourage bad behavior in our open source community. We invite participation from many people to bring different perspectives to support this project. We pledge to do our part to foster a welcoming and professional environment free of harassment. We expect participants to communicate professionally and thoughtfully during their involvement with this project.

Participants may lose their good standing by engaging in misconduct. For example: insulting, threatening, or conveying unwelcome sexual content. We ask participants who observe conduct issues to report the incident directly to the project's Response Team at opensource-conduct@oath.com. Oath will assign a respondent to address the issue. We may remove harassers from this project.

This code does not replace the terms of service or acceptable use policies of the websites used to support this project. We acknowledge that participants may be subject to additional conduct terms based on their employment which may govern their online expressions.

## Details

This Code of Conduct makes our expectations of participants in this community explicit.

* We forbid harassment and abusive speech within this community.

* We request participants to report misconduct to the project’s Response Team.

* We urge participants to refrain from using discussion forums to play out a fight.

### Expected Behaviors

We expect participants in this community to conduct themselves professionally. Since our primary mode of communication is text on an online forum (e.g. issues, pull requests, comments, emails, or chats) devoid of vocal tone, gestures, or other context that is often vital to understanding, it is important that participants are attentive to their interaction style.

* **Assume positive intent.** We ask community members to assume positive intent on the part of other people’s communications. We may disagree on details, but we expect all suggestions to be supportive of the community goals.

* **Respect participants.** We expect participants will occasionally disagree. Even if we reject an idea, we welcome everyone’s participation. Open Source projects are learning experiences. Ask, explore, challenge, and then respectfully assert if you agree or disagree. If your idea is rejected, be more persuasive not bitter.

* **Welcoming to new members.** New members bring new perspectives. Some may raise questions that have been addressed before. Kindly point them to existing discussions. Everyone is new to every project once.

* **Be kind to beginners.** Beginners use open source projects to get experience. They might not be talented coders yet, and projects should not accept poor quality code. But we were all beginners once, and we need to engage kindly.

* **Consider your impact on others.** Your work will be used by others, and you depend on the work of others. We expect community members to be considerate and to balance their self-interest with communal interest.

* **Use words carefully.** We may not understand intent when you say something ironic. Poe’s Law suggests that without an emoticon people will misinterpret sarcasm. We ask community members to communicate plainly.

* **Leave with class.** When you wish to resign from participating in this project for any reason, you are free to fork the code and create a competitive project. Open Source explicitly allows this. Your exit should not be dramatic or bitter.

### Unacceptable Behaviors

Participants remain in good standing when they do not engage in misconduct or harassment. To elaborate:

* **Don't be a bigot.** Calling out project members by their identity or background in a negative or insulting manner. This includes, but is not limited to, slurs or insinuations related to protected or suspect classes e.g. race, color, citizenship, national origin, political belief, religion, sexual orientation, gender identity and expression, age, size, culture, ethnicity, genetic features, language, profession, national minority status, mental or physical ability.

* **Don't insult.** Insulting remarks about a person’s lifestyle practices.

* **Don't dox.** Revealing private information about other participants without explicit permission.

* **Don't intimidate.** Threats of violence or intimidation of any project member.

* **Don't creep.** Unwanted sexual attention or content unsuited for the subject of this project.

* **Don't disrupt.** Sustained disruptions in a discussion.

* **Let us help.** Refusal to assist the Response Team to resolve an issue in the community.

We do not list all forms of harassment, nor imply some forms of harassment are not worthy of action. Any participant who *feels* harassed or *observes* harassment should report the incident. Victims of harassment should not address grievances in the public forum, as this often intensifies the problem. Report it, and let us address it off-line.

### Reporting Issues

If you experience or witness misconduct, or have any other concerns about the conduct of members of this project, please report it by contacting our Response Team at opensource-conduct@oath.com who will handle your report with discretion. Your report should include:

* Your preferred contact information. We cannot process anonymous reports.

* Names (real or usernames) of those involved in the incident.

* Your account of what occurred, and if the incident is ongoing. Please provide links to or transcripts of the publicly available records (e.g. a mailing list archive or a public IRC logger), so that we can review it.

* Any additional information that may be helpful to achieve resolution.

After filing a report, a representative will contact you directly to review the incident and ask additional questions. If a member of the Oath Response Team is named in an incident report, that member will be recused from handling your incident. If the complaint originates from a member of the Response Team, it will be addressed by a different member of the Response Team. We will consider reports to be confidential for the purpose of protecting victims of abuse.

### Scope

Oath will assign a Response Team member with admin rights on the project and legal rights on the project copyright. The Response Team is empowered to restrict some privileges to the project as needed. Since this project is governed by an open source license, any participant may fork the code under the terms of the project license. The Response Team’s goal is to preserve the project if possible, and will restrict or remove participation from those who disrupt the project.

This code does not replace the terms of service or acceptable use policies that are provided by the websites used to support this community. Nor does this code apply to communications or actions that take place outside of the context of this community. Many participants in this project are also subject to codes of conduct based on their employment. This code is a social-contract that informs participants of our social expectations. It is not a terms of service or legal contract.

## License and Acknowledgment.

This text is shared under the [CC-BY-4.0 license](https://creativecommons.org/licenses/by/4.0/). This code is based on a study conducted by the [TODO Group](https://todogroup.org/) of many codes used in the open source community. If you have feedback about this code, contact our Response Team at the address listed above.

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction, and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all other entities that control, are controlled by, or are under common control with that entity. For the purposes of this definition, "control" means (i) the power, direct or indirect, to cause the direction or management of such entity, whether by contract or otherwise, or (ii) ownership of fifty percent (50%) or more of the outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications, including but not limited to software source code, documentation source, and configuration files.

"Object" form shall mean any form resulting from mechanical transformation or translation of a Source form, including but not limited to compiled object code, generated documentation, and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or Object form, made available under the License, as indicated by a copyright notice that is included in or attached to the work (an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object form, that is based on (or derived from) the Work and for which the editorial revisions, annotations, elaborations, or other modifications represent, as a whole, an original work of authorship. For the purposes of this License, Derivative Works shall not include works that remain separable from, or merely link (or bind by name) to the interfaces of, the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including the original version of the Work and any modifications or additions to that Work or Derivative Works thereof, that is intentionally submitted to Licensor for inclusion in the Work by the copyright owner or by an individual or Legal Entity authorized to submit on behalf of the copyright owner. For the purposes of this definition, "submitted" means any form of electronic, verbal, or written communication sent to the Licensor or its representatives, including but not limited to communication on electronic mailing lists, source code control systems, and issue tracking systems that are managed by, or on behalf of, the Licensor for the purpose of discussing and improving the Work, but excluding communication that is conspicuously marked or otherwise designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity on behalf of whom a Contribution has been received by Licensor and subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of this License, each Contributor hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable copyright license to reproduce, prepare Derivative Works of, publicly display, publicly perform, sublicense, and distribute the Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of this License, each Contributor hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable (except as stated in this section) patent license to make, have made, use, offer to sell, sell, import, and otherwise transfer the Work, where such license applies only to those patent claims licensable by such Contributor that are necessarily infringed by their Contribution(s) alone or by combination of their Contribution(s) with the Work to which such Contribution(s) was submitted. If You institute patent litigation against any entity (including a cross-claim or counterclaim in a lawsuit) alleging that the Work or a Contribution incorporated within the Work constitutes direct or contributory patent infringement, then any patent licenses granted to You under this License for that Work shall terminate as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the Work or Derivative Works thereof in any medium, with or without modifications, and in Source or Object form, provided that You meet the following conditions:

You must give any other recipients of the Work or Derivative Works a copy of this License; and

You must cause any modified files to carry prominent notices stating that You changed the files; and

You must retain, in the Source form of any Derivative Works that You distribute, all copyright, patent, trademark, and attribution notices from the Source form of the Work, excluding those notices that do not pertain to any part of the Derivative Works; and

If the Work includes a "NOTICE" text file as part of its distribution, then any Derivative Works that You distribute must include a readable copy of the attribution notices contained within such NOTICE file, excluding those notices that do not pertain to any part of the Derivative Works, in at least one of the following places: within a NOTICE text file distributed as part of the Derivative Works; within the Source form or documentation, if provided along with the Derivative Works; or, within a display generated by the Derivative Works, if and wherever such third-party notices normally appear. The contents of the NOTICE file are for informational purposes only and do not modify the License. You may add Your own attribution notices within Derivative Works that You distribute, alongside or as an addendum to the NOTICE text from the Work, provided that such additional attribution notices cannot be construed as modifying the License.

You may add Your own copyright statement to Your modifications and may provide additional or different license terms and conditions for use, reproduction, or distribution of Your modifications, or for any such Derivative Works as a whole, provided Your use, reproduction, and distribution of the Work otherwise complies with the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise, any Contribution intentionally submitted for inclusion in the Work by You to the Licensor shall be under the terms and conditions of this License, without any additional terms or conditions. Notwithstanding the above, nothing herein shall supersede or modify the terms of any separate license agreement you may have executed with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade names, trademarks, service marks, or product names of the Licensor, except as required for reasonable and customary use in describing the origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or agreed to in writing, Licensor provides the Work (and each Contributor provides its Contributions) on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied, including, without limitation, any warranties or conditions of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A PARTICULAR PURPOSE. You are solely responsible for determining the appropriateness of using or redistributing the Work and assume any risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory, whether in tort (including negligence), contract, or otherwise, unless required by applicable law (such as deliberate and grossly negligent acts) or agreed to in writing, shall any Contributor be liable to You for damages, including any direct, indirect, special, incidental, or consequential damages of any character arising as a result of this License or out of the use or inability to use the Work (including but not limited to damages for loss of goodwill, work stoppage, computer failure or malfunction, or any and all other commercial damages or losses), even if such Contributor has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing the Work or Derivative Works thereof, You may choose to offer, and charge a fee for, acceptance of support, warranty, indemnity, or other liability obligations and/or rights consistent with this License. However, in accepting such obligations, You may act only on Your own behalf and on Your sole responsibility, not on behalf of any other Contributor, and only if You agree to indemnify, defend, and hold each Contributor harmless for any liability incurred by, or claims asserted against, such Contributor by reason of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

================================================

FILE: NOTICE

================================================

=========================================================================

NOTICE file for use with, and corresponding to Section 4 of,

the Apache License, Version 2.0 (http://www.apache.org/licenses/LICENSE-2.0)

in this case for the HaloDB project

=========================================================================

This project contains software developed by Robert Stupp.

OHC (https://github.com/snazy/ohc)

Java Off-Heap-Cache, licensed under APLv2

Copyright (C) 2014 Robert Stupp, Koeln, Germany, robert-stupp.de

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

# HaloDB

[](https://travis-ci.org/yahoo/HaloDB)

[ ](https://bintray.com/yahoo/maven/halodb/_latestVersion)

HaloDB is a fast and simple embedded key-value store written in Java. HaloDB is suitable for IO bound workloads, and is capable of handling high throughput reads and writes at submillisecond latencies.

HaloDB was written for a high-throughput, low-latency distributed key-value database that powers multiple ad platforms at Yahoo; all of its design choices and optimizations were therefore made primarily for this use case.

Basic design principles employed in HaloDB are not new. Refer to this [document](docs/WhyHaloDB.md) for more details about the motivation for HaloDB and its inspirations.

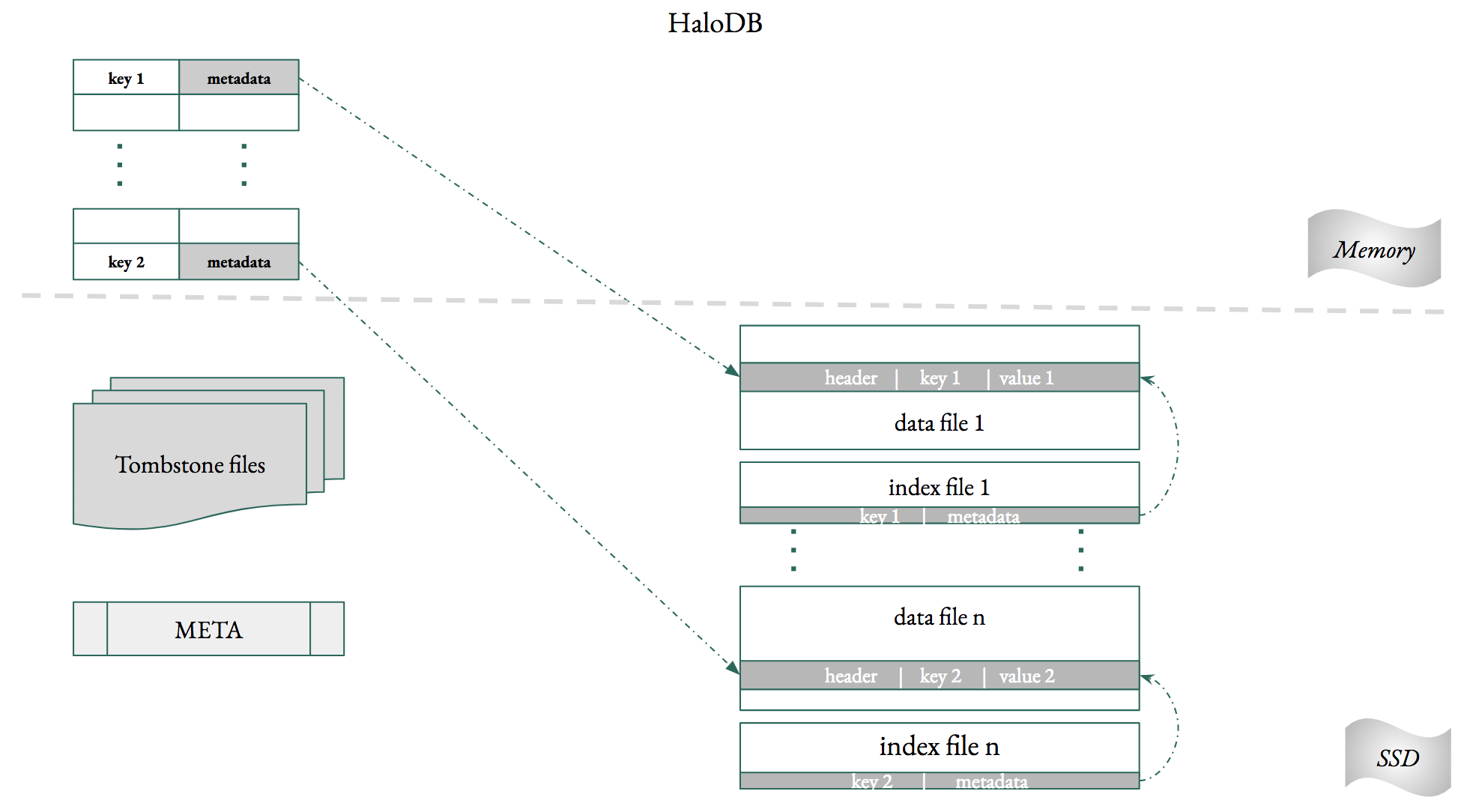

HaloDB comprises two main components: an in-memory index that stores all the keys, and append-only log files on the persistent layer that store all the data. To reduce Java garbage collection pressure, the index is allocated in native memory, outside the Java heap.
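The two-component layout described above can be illustrated with a deliberately simplified toy model. This is a sketch only, not HaloDB's implementation: the class `ToyLogStore` and its methods are hypothetical names, the "log" is an in-memory buffer rather than files on disk, and the index is an ordinary on-heap `HashMap` rather than an off-heap hash table.

```java
import java.io.ByteArrayOutputStream;
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

// Toy model (hypothetical, for illustration): an in-memory index over an append-only log.
final class ToyLogStore {

    // Stand-in for the append-only data files; a real store would write to disk.
    private final ByteArrayOutputStream log = new ByteArrayOutputStream();

    // Index: key -> {offset, length} of the latest value in the log.
    private final Map<String, int[]> index = new HashMap<>();

    void put(String key, byte[] value) {
        int offset = log.size();
        // Values are only ever appended; an older version of the key stays in the log as stale data.
        log.write(value, 0, value.length);
        index.put(key, new int[] {offset, value.length});
    }

    byte[] get(String key) {
        int[] location = index.get(key);
        if (location == null) {
            return null;
        }
        // The index gives the exact position, so a read needs a single lookup in the log.
        // (toByteArray copies the whole buffer; acceptable only because this is a toy.)
        byte[] data = log.toByteArray();
        return Arrays.copyOfRange(data, location[0], location[0] + location[1]);
    }

    void delete(String key) {
        // Only the index entry is dropped here; the bytes remain in the log as stale data.
        index.remove(key);
    }

    int logSize() {
        return log.size();
    }
}
```

Overwriting a key in this model grows the log while the index keeps pointing at the newest version only, which is why a store built this way needs some form of background cleanup of stale data.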

### Basic Operations.

```java

// Open a db with default options.

HaloDBOptions options = new HaloDBOptions();

// Size of each data file will be 1GB.

options.setMaxFileSize(1024 * 1024 * 1024);

// Size of each tombstone file will be 64MB

// A larger file size means fewer files but slows down db open time, while a file size

// that is too small results in a large number of tombstone files in the db folder.

options.setMaxTombstoneFileSize(64 * 1024 * 1024);

// Set the number of threads used to scan index and tombstone files in parallel

// to build in-memory index during db open. It must be a positive number which is

// not greater than Runtime.getRuntime().availableProcessors().

// It is used to speed up db open time.

options.setBuildIndexThreads(8);

// The threshold at which page cache is synced to disk.

// data will be durable only if it is flushed to disk, therefore

// more data will be lost if this value is set too high. Setting

// this value too low might interfere with read and write performance.

options.setFlushDataSizeBytes(10 * 1024 * 1024);

// The percentage of stale data in a data file at which the file will be compacted.

// This value helps control write and space amplification. Increasing this value will

// reduce write amplification but will increase space amplification.

// This along with the compactionJobRate below is the most important setting

// for tuning HaloDB performance. If this is set to x then write amplification

// will be approximately 1/x.

options.setCompactionThresholdPerFile(0.7);

// Controls how fast the compaction job should run.

// This is the amount of data which will be copied by the compaction thread per second.

// Optimal value depends on the compactionThresholdPerFile option.

options.setCompactionJobRate(50 * 1024 * 1024);

// Setting this value is important as it helps to preallocate enough

// memory for the off-heap cache. If the value is too low the db might

// need to rehash the cache. For a db of size n set this value to 2*n.

options.setNumberOfRecords(100_000_000);

// Delete operation for a key will write a tombstone record to a tombstone file.

// the tombstone record can be removed only when all previous versions of that key

// have been deleted by the compaction job.

// enabling this option will delete during startup all tombstone records whose previous

// versions were removed from the data file.

options.setCleanUpTombstonesDuringOpen(true);

// HaloDB does native memory allocation for the in-memory index.

// Enabling this option will release all allocated memory back to the kernel when the db is closed.

// This option is not necessary if the JVM is shutdown when the db is closed, as in that case

// allocated memory is released automatically by the kernel.

// If the in-memory index is used without the memory pool, this option,

// depending on the number of records in the database,

// could be slow as we need to call _free_ for each record.

options.setCleanUpInMemoryIndexOnClose(false);

// ** settings for memory pool **

options.setUseMemoryPool(true);

// Hash table implementation in HaloDB is similar to that of ConcurrentHashMap in Java 7.

// Hash table is divided into segments and each segment manages its own native memory.

// The number of segments is twice the number of cores in the machine.

// A segment's memory is further divided into chunks whose size can be configured here.

options.setMemoryPoolChunkSize(2 * 1024 * 1024);

// using a memory pool requires us to declare the size of keys in advance.

// Any write request with key length greater than the declared value will fail, but it

// is still possible to store keys smaller than this declared size.

options.setFixedKeySize(8);

// Represents a database instance and provides all methods for operating on the database.

HaloDB db = null;

// The directory will be created if it doesn't exist and all database files will be stored in this directory

String directory = "directory";

// Open the database. Directory will be created if it doesn't exist.

// If we are opening an existing database HaloDB needs to scan all the

// index files to create the in-memory index, which, depending on the db size, might take a few minutes.

db = HaloDB.open(directory, options);

// Keys and values are byte arrays. Key size is restricted to 128 bytes.

// Ints used below is Guava's com.google.common.primitives.Ints.

byte[] key1 = Ints.toByteArray(200);

byte[] value1 = "Value for key 1".getBytes();

byte[] key2 = Ints.toByteArray(300);

byte[] value2 = "Value for key 2".getBytes();

// add the key-value pair to the database.

db.put(key1, value1);

db.put(key2, value2);

// read the value from the database.

value1 = db.get(key1);

value2 = db.get(key2);

// delete a key from the database.

db.delete(key1);

// Open an iterator and iterate through all the key-value records.

HaloDBIterator iterator = db.newIterator();

while (iterator.hasNext()) {

Record record = iterator.next();

System.out.println(Ints.fromByteArray(record.getKey()));

System.out.println(new String(record.getValue()));

}

// get stats and print it.

HaloDBStats stats = db.stats();

System.out.println(stats.toString());

// reset stats

db.resetStats();

// pause background compaction thread.

// if a file is being compacted the thread

// will block until the compaction is complete.

db.pauseCompaction();

// resume background compaction thread.

db.resumeCompaction();

// repeatedly calling pause/resume compaction methods will have no effect.

// Close the database.

db.close();

```

Binaries for HaloDB are hosted on [Bintray](https://bintray.com/yahoo).

``` xml

<dependency>

<groupId>com.oath.halodb</groupId>

<artifactId>halodb</artifactId>

<version>x.y.x</version>

</dependency>

<repository>

<id>yahoo-bintray</id>

<name>yahoo-bintray</name>

<url>https://yahoo.bintray.com/maven</url>

</repository>

```

### Read, Write and Space amplification.

Read amplification in HaloDB is always 1—for a read request it needs to do at most one disk lookup—hence it is well suited for

read latency critical workloads. HaloDB provides a configuration which can be tuned to control write amplification

and space amplification, both of which trade-off with each other; HaloDB has a background compaction thread which removes stale data

from the DB. The percentage of stale data at which a file is compacted can be controlled. Increasing this value will increase space amplification

but will reduce write amplification. For example, if the value is set to 50%, then write amplification will be approximately 2.
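
The trade-off can be made concrete with a little arithmetic. The sketch below assumes a simple steady-state model that is not taken from the HaloDB source: a file is compacted as soon as its stale fraction reaches the threshold `s`, so for every `s` bytes of user writes the compactor copies `1 - s` live bytes, giving a write amplification of about `1 / s`. Space amplification is bounded by `1 / (1 - s)`, since every file may sit just under the threshold. The class and method names are hypothetical.

```java
/** Rough steady-state model of compaction cost; an illustration, not HaloDB code. */
final class AmplificationModel {

    /** User bytes plus bytes copied by compaction, per user byte: 1 + (1 - s) / s = 1 / s. */
    static double writeAmplification(double staleThreshold) {
        requireFraction(staleThreshold);
        return 1.0 / staleThreshold;
    }

    /** Worst case: every file is just below the threshold, so only (1 - s) of it is live. */
    static double maxSpaceAmplification(double staleThreshold) {
        requireFraction(staleThreshold);
        return 1.0 / (1.0 - staleThreshold);
    }

    private static void requireFraction(double s) {
        if (!(s > 0.0 && s < 1.0)) {
            throw new IllegalArgumentException("threshold must be in (0, 1): " + s);
        }
    }

    public static void main(String[] args) {
        for (double s : new double[] {0.25, 0.5, 0.75}) {
            System.out.printf("threshold=%.2f write-amp~%.2f space-amp<=%.2f%n",
                    s, writeAmplification(s), maxSpaceAmplification(s));
        }
    }
}
```

Under this model a threshold of 0.5 gives a write amplification of 2, matching the figure quoted above. Raising the threshold lowers the write cost and raises the space bound.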

### Durability and Crash recovery.

Write Ahead Logs (WAL) are usually used by databases for crash recovery. Since for HaloDB the WAL _is the_ database, crash recovery

is easier and faster.

HaloDB does not flush writes to disk immediately, but, for performance reasons, writes only to the OS page cache. The cache is synced to

disk once a configurable size is reached. In the event of a power loss, the data not flushed to disk will be lost. This compromise

between performance and durability is a necessary one.
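
The flush-after-a-configurable-size idea can be sketched with plain `java.nio`. This is an illustration of the general technique, not HaloDB's implementation: `ThresholdFlushWriter` and its members are hypothetical names. `FileChannel.write` hands bytes to the OS page cache, and `FileChannel.force` asks the OS to sync them to the device.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

/** Appends records and syncs to disk only after a byte threshold is reached. */
final class ThresholdFlushWriter implements AutoCloseable {
    private final FileChannel channel;
    private final long flushThresholdBytes;
    private long unflushedBytes = 0;
    private int flushCount = 0;

    ThresholdFlushWriter(Path file, long flushThresholdBytes) throws IOException {
        this.channel = FileChannel.open(file, StandardOpenOption.WRITE, StandardOpenOption.APPEND);
        this.flushThresholdBytes = flushThresholdBytes;
    }

    void append(byte[] record) throws IOException {
        ByteBuffer buffer = ByteBuffer.wrap(record);
        while (buffer.hasRemaining()) {
            channel.write(buffer);            // lands in the OS page cache
        }
        unflushedBytes += record.length;
        if (unflushedBytes >= flushThresholdBytes) {
            channel.force(false);             // sync file content to the device
            unflushedBytes = 0;
            flushCount++;
        }
    }

    int flushCount() {
        return flushCount;
    }

    @Override
    public void close() throws IOException {
        channel.close();
    }

    /** Writes three 4-byte records with an 8-byte threshold and returns the number of syncs. */
    static int demo() {
        try {
            Path file = Files.createTempFile("flush-demo", ".log");
            try (ThresholdFlushWriter writer = new ThresholdFlushWriter(file, 8)) {
                for (int i = 0; i < 3; i++) {
                    writer.append(new byte[4]);
                }
                return writer.flushCount();
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

In the demo the third record is still unflushed when the writer is closed. That is the window of data a power loss could discard: a larger threshold means fewer syncs but a larger window.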

In the event of a power loss and data corruption, HaloDB will scan and discard corrupted records. Since the write thread and compaction

thread could be writing to at most two files at a time, only those files need to be repaired, and hence recovery times are very short.

In the event of a power loss HaloDB offers the following consistency guarantees:

* Writes are atomic.

* Inserts and updates are committed to disk in the same order they are received.

* When inserts/updates and deletes are interleaved total ordering is not guaranteed, but partial ordering is guaranteed for inserts/updates and deletes.

### In-memory index.

HaloDB stores all keys and their associated metadata in an index in memory. The size of this index, depending on the

number and length of keys, can be quite big. Therefore, storing this in the Java Heap is a non-starter for a

performance critical storage engine. HaloDB solves this problem by storing the index in native memory,

outside the heap. There are two variants of the index; one with a memory pool and the other

without it. Using the memory pool helps to reduce the memory footprint of the index and reduce

fragmentation, but requires fixed size keys. A billion 8 byte keys

currently take around 44GB of memory with memory pool and around 64GB without memory pool.
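
For capacity planning, the figures above can be scaled to other key counts. The sketch below assumes that index memory grows linearly with the number of 8 byte keys, which the text does not state, so treat the result as a first estimate only. `IndexMemoryEstimate` is a hypothetical helper, not part of HaloDB.

```java
/** Back-of-the-envelope index sizing from the quoted one-billion-key figures. */
final class IndexMemoryEstimate {
    // Reference point quoted in the text: one billion 8 byte keys.
    static final long REFERENCE_KEYS = 1_000_000_000L;
    static final double GB_WITH_POOL = 44.0;
    static final double GB_WITHOUT_POOL = 64.0;

    /** Assumes memory use is proportional to the number of keys (an assumption). */
    static double estimateGb(long keys, boolean useMemoryPool) {
        if (keys < 0) {
            throw new IllegalArgumentException("keys must be non-negative: " + keys);
        }
        double referenceGb = useMemoryPool ? GB_WITH_POOL : GB_WITHOUT_POOL;
        return referenceGb * keys / REFERENCE_KEYS;
    }

    public static void main(String[] args) {
        long keys = 250_000_000L;
        System.out.printf("with pool: ~%.1f GB, without pool: ~%.1f GB%n",
                estimateGb(keys, true), estimateGb(keys, false));
    }
}
```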

The size of the keys when using a memory pool should be declared in advance, and although this imposes an

upper limit on the size of the keys it is still possible to store keys smaller than this declared size.

Without the memory pool, HaloDB needs to allocate native memory for every write request. Therefore,

memory fragmentation could be an issue. Using [jemalloc](http://jemalloc.net/) is highly recommended as it

provides a significant reduction in the cache's memory footprint and fragmentation.

### Delete operations.

Delete operation for a key will add a tombstone record to a tombstone file, which is distinct from the data files.

This design has the advantage that the tombstone record once written need not be copied again during compaction, but

the drawback is that in case of a power loss HaloDB cannot guarantee total ordering when put and delete operations are

interleaved (although partial ordering for both is guaranteed).

### DB open time

Opening the db could take a few minutes, depending on the number of records and tombstones. If the db open time is critical to your

use case, keep the tombstone file size relatively small and increase the number of threads used to build the index.

See the option settings in the example code above. As a best practice, set the tombstone file size to 64MB and set the number of build

index threads to the number of available processors divided by the number of dbs being opened simultaneously.
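
The rule of thumb above can be computed as follows. `OpenTimeTuning` is a hypothetical helper. The two option setters named in the comment are an assumption about `HaloDBOptions` and should be checked against the option settings in the example code.

```java
/** Computes the suggested open-time settings; an illustration, not HaloDB code. */
final class OpenTimeTuning {
    // Suggested tombstone file size from the text: 64MB.
    static final int TOMBSTONE_FILE_SIZE_BYTES = 64 * 1024 * 1024;

    /** Available processors divided by the number of dbs opened at once, never below 1. */
    static int buildIndexThreads(int availableProcessors, int dbsOpenedSimultaneously) {
        if (availableProcessors < 1 || dbsOpenedSimultaneously < 1) {
            throw new IllegalArgumentException("both arguments must be at least 1");
        }
        return Math.max(1, availableProcessors / dbsOpenedSimultaneously);
    }

    public static void main(String[] args) {
        int processors = Runtime.getRuntime().availableProcessors();
        int threads = buildIndexThreads(processors, 4);
        System.out.println("build index threads: " + threads);
        // Assumed setter names (verify against your HaloDB version):
        //   options.setMaxTombstoneFileSize(TOMBSTONE_FILE_SIZE_BYTES);
        //   options.setBuildIndexThreads(threads);
    }
}
```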

### System requirements.

* HaloDB requires Java 8 to run, but has not yet been tested with newer Java versions.

* HaloDB has been tested on Linux running on x86 and on MacOS. It may run on other platforms, but this hasn't been verified yet.

* For performance disable Transparent Huge Pages and swapping (vm.swappiness=0).

* If a thread is interrupted, the JVM will close the file channels the thread was operating on.

Therefore, don't interrupt threads while they are doing IO operations.
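
The last requirement follows from how `java.nio` interruptible channels behave. The sketch below uses only the JDK and does not touch HaloDB. It sets the interrupt flag before a `FileChannel` read and shows that the channel is closed afterwards. `InterruptClosesChannelDemo` is a hypothetical name.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.ClosedByInterruptException;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

/** Shows that an interrupted thread loses the FileChannel it was using. */
final class InterruptClosesChannelDemo {

    /** Returns whether the channel is still open after a read by an interrupted thread. */
    static boolean channelOpenAfterInterruptedRead() {
        Path file = null;
        try {
            file = Files.createTempFile("interrupt-demo", ".data");
            try (FileChannel channel = FileChannel.open(file, StandardOpenOption.READ)) {
                Thread.currentThread().interrupt();          // set the interrupt flag
                try {
                    channel.read(ByteBuffer.allocate(16));   // interruptible I/O call
                } catch (ClosedByInterruptException expected) {
                    // the channel was closed on behalf of the interrupted thread
                }
                return channel.isOpen();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } finally {
            Thread.interrupted();                            // clear the flag again
            if (file != null) {
                file.toFile().delete();
            }
        }
    }

    public static void main(String[] args) {
        System.out.println("channel open after interrupt: " + channelOpenAfterInterruptedRead());
    }
}
```

Once a channel is closed this way, every other thread sharing it fails as well, which is why interrupting threads in the middle of database IO is best avoided.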

### Restrictions.

* Size of keys is restricted to 128 bytes.

* HaloDB doesn't support range scans or ordered access.

# Benchmarks.

[Benchmarks](docs/benchmarks.md).

# Contributing

Contributions are most welcome. Please refer to the [CONTRIBUTING](https://github.com/yahoo/HaloDB/blob/master/CONTRIBUTING.md) guide.

# Credits

HaloDB was written by [Arjun Mannaly](https://github.com/amannaly).

# License

HaloDB is released under the Apache License, Version 2.0

================================================

FILE: benchmarks/README.md

================================================

# Storage Engine Benchmark Tool.

Build the package using **mvn clean package**. This will create a fat jar *target/storage-engine-benchmark-1.0.jar*.

Different benchmarks can be run using:

`java -jar target/storage-engine-benchmark-1.0.jar <db directory> <benchmark type>`

Different benchmark types are defined [here](https://github.com/yahoo/HaloDB/blob/master/benchmarks/src/main/java/com/oath/halodb/benchmarks/Benchmarks.java).

================================================

FILE: benchmarks/pom.xml

================================================

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>mannaly</groupId>

<artifactId>storage-engine-benchmark</artifactId>

<version>1.0</version>

<packaging>jar</packaging>

<name>storage-engine-benchmark</name>

<url>http://maven.apache.org</url>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

</properties>

<dependencies>

<dependency>

<groupId>org.rocksdb</groupId>

<artifactId>rocksdbjni</artifactId>

<version>5.7.2</version>

</dependency>

<dependency>

<groupId>com.oath.halodb</groupId>

<artifactId>halodb</artifactId>

<version>0.4.2</version>

</dependency>

<dependency>

<groupId>com.fallabs</groupId>

<artifactId>kyotocabinet-java</artifactId>

<version>1.16</version>

</dependency>

<dependency>

<groupId>com.google.guava</groupId>

<artifactId>guava</artifactId>

<version>19.0</version>

</dependency>

<dependency>

<groupId>org.hdrhistogram</groupId>

<artifactId>HdrHistogram</artifactId>

<version>2.1.9</version>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-simple</artifactId>

<version>1.8.0-alpha2</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.5.1</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<!-- shade plugin to create a fat jar. -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.3</version>

<executions>

<!-- Run shade goal on package phase -->

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<transformers>

<!-- add Main-Class to manifest file -->

<transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass>com.oath.halodb.benchmarks.BenchmarkTool</mainClass>

</transformer>

</transformers>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

<repositories>

<repository>

<id>halodb-bintray</id>

<name>halodb-bintray</name>

<url>https://yahoo.bintray.com/maven</url>

<releases>

<enabled>true</enabled>

</releases>

<snapshots>

<enabled>false</enabled>

</snapshots>

</repository>

</repositories>

</project>

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/BenchmarkTool.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

import com.google.common.primitives.Longs;

import com.google.common.util.concurrent.RateLimiter;

import org.HdrHistogram.Histogram;

import java.io.File;

import java.text.DateFormat;

import java.text.SimpleDateFormat;

import java.util.Arrays;

import java.util.Date;

import java.util.Random;

import java.util.concurrent.TimeUnit;

public class BenchmarkTool {

private static SimpleDateFormat sdf = new SimpleDateFormat("HH:mm:ss");

// adjust HaloDB number of records accordingly.

private final static int numberOfRecords = 500_000_000;

private static volatile boolean isReadComplete = false;

private static final int numberOfReads = 640_000_000;

private static final int numberOfReadThreads = 32;

private static final int noOfReadsPerThread = numberOfReads / numberOfReadThreads; // 20 million.

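// 20 MB per second, expressed in bytes: the rate limiter below hands out one permit per byte written.

private static final int writeMBPerSecond = 20 * 1024 * 1024;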

private static final RateLimiter writeRateLimiter = RateLimiter.create(writeMBPerSecond);

private static final int recordSize = 1024;

private static final int seed = 100;

private static final Random random = new Random(seed);

private static RandomDataGenerator randomDataGenerator = new RandomDataGenerator(seed);

public static void main(String[] args) throws Exception {

String directoryName = args[0];

String benchmarkType = args[1];

Benchmarks benchmark = null;

try {

benchmark = Benchmarks.valueOf(benchmarkType);

}

catch (IllegalArgumentException e) {

System.out.println("Benchmarks should be one of " + Arrays.toString(Benchmarks.values()));

System.exit(1);

}

System.out.println("Running benchmark " + benchmark);

File dir = new File(directoryName);

// select different storage engines here.

final StorageEngine db = new HaloDBStorageEngine(dir, numberOfRecords);

//final StorageEngine db = new RocksDBStorageEngine(dir, numberOfRecords);

//final StorageEngine db = new KyotoStorageEngine(dir, numberOfRecords);

db.open();

System.out.println("Opened the database.");

switch (benchmark) {

case FILL_SEQUENCE: createDB(db, true);break;

case FILL_RANDOM: createDB(db, false);break;

case READ_RANDOM: readRandom(db, numberOfReadThreads);break;

case RANDOM_UPDATE: update(db);break;

case READ_AND_UPDATE: updateWithReads(db);break;

}

db.close();

}

private static void createDB(StorageEngine db, boolean isSequential) {

long start = System.currentTimeMillis();

byte[] value;

long dataSize = 0;

for (int i = 0; i < numberOfRecords; i++) {

value = randomDataGenerator.getData(recordSize);

dataSize += (long)value.length;

byte[] key = isSequential ? longToBytes(i) : longToBytes(random.nextInt(numberOfRecords));

db.put(key, value);

if (i % 1_000_000 == 0) {

System.out.printf("%s: Wrote %d records\n", DateFormat.getTimeInstance().format(new Date()), i);

}

}

long end = System.currentTimeMillis();

long time = (end - start) / 1000;

System.out.println("Completed writing data in " + time);

System.out.printf("Write rate %d MB/sec\n", dataSize / time / 1024l / 1024l);

System.out.println("Size of database " + db.size());

}

private static void update(StorageEngine db) {

long start = System.currentTimeMillis();

byte[] value;

long dataSize = 0;

for (int i = 0; i < numberOfRecords; i++) {

value = randomDataGenerator.getData(recordSize);

writeRateLimiter.acquire(value.length);

dataSize += (long)value.length;

byte[] key = longToBytes(random.nextInt(numberOfRecords));

db.put(key, value);

if (i % 1_000_000 == 0) {

System.out.printf("%s: Wrote %d records\n", DateFormat.getTimeInstance().format(new Date()), i);

}

}

long end = System.currentTimeMillis();

long time = (end - start) / 1000;

System.out.println("Completed over writing data in " + time);

System.out.printf("Write rate %d MB/sec\n", dataSize / time / 1024l / 1024l);

System.out.println("Size of database " + db.size());

}

private static void readRandom(StorageEngine db, int threads) {

Read[] reads = new Read[numberOfReadThreads];

long start = System.currentTimeMillis();

for (int i = 0; i < reads.length; i++) {

reads[i] = new Read(db, i);

reads[i].start();

}

for (Read r : reads) {

try {

r.join();

} catch (InterruptedException e) {

e.printStackTrace();

}

}

long time = (System.currentTimeMillis() - start) / 1000;

System.out.printf("Completed %d reads with %d threads in %d seconds\n", numberOfReads, numberOfReadThreads, time);

System.out.println("Operations per second - " + numberOfReads/time);

Histogram latencyHistogram = new Histogram(TimeUnit.SECONDS.toNanos(10), 3);

for(Read r : reads) {

latencyHistogram.add(r.latencyHistogram);

}

System.out.printf("Max value - %d\n", latencyHistogram.getMaxValue());

System.out.printf("Average value - %f\n", latencyHistogram.getMean());

System.out.printf("95th percentile - %d\n", latencyHistogram.getValueAtPercentile(95.0));

System.out.printf("99th percentile - %d\n", latencyHistogram.getValueAtPercentile(99.0));

System.out.printf("99.9th percentile - %d\n", latencyHistogram.getValueAtPercentile(99.9));

System.out.printf("99.99th percentile - %d\n", latencyHistogram.getValueAtPercentile(99.99));

}

private static void updateWithReads(StorageEngine db) {

Read[] reads = new Read[numberOfReadThreads];

Thread update = new Thread(new Runnable() {

@Override

public void run() {

long start = System.currentTimeMillis();

byte[] value;

long dataSize = 0, count = 0;

while (!isReadComplete) {

value = randomDataGenerator.getData(recordSize);

writeRateLimiter.acquire(value.length);

dataSize += (long)value.length;

byte[] key = longToBytes(random.nextInt(numberOfRecords));

db.put(key, value);

if (count++ % 1_000_000 == 0) {

System.out.printf("%s: Wrote %d records\n", DateFormat.getTimeInstance().format(new Date()), count);

}

}

long end = System.currentTimeMillis();

long time = (end - start) / 1000;

System.out.println("Completed over writing data in " + time);

System.out.println("Write operations per second - " + count/time);

System.out.printf("Write rate %d MB/sec\n", dataSize / time / 1024l / 1024l);

System.out.println("Size of database " + db.size());

}

});

long start = System.currentTimeMillis();

for (int i = 0; i < reads.length; i++) {

reads[i] = new Read(db, i);

reads[i].start();

}

update.start();

for(Read r : reads) {

try {

r.join();

} catch (InterruptedException e) {

e.printStackTrace();

}

}

long time = (System.currentTimeMillis() - start) / 1000;

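isReadComplete = true;

// wait for the update thread to observe the flag and finish before the caller closes the db.

try {

update.join();

} catch (InterruptedException e) {

e.printStackTrace();

}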

long maxTime = -1;

for (Read r : reads) {

maxTime = Math.max(maxTime, r.time);

}

maxTime = maxTime / 1000;

System.out.println("Maximum time taken by a read thread to complete - " + maxTime);

System.out.printf("Completed %d reads with %d threads in %d seconds\n", numberOfReads, numberOfReadThreads, time);

System.out.println("Read operations per second - " + numberOfReads/time);

Histogram latencyHistogram = new Histogram(TimeUnit.SECONDS.toNanos(10), 3);

for(Read r : reads) {

latencyHistogram.add(r.latencyHistogram);

}

System.out.printf("Max value - %d\n", latencyHistogram.getMaxValue());

System.out.printf("Average value - %f\n", latencyHistogram.getMean());

System.out.printf("95th percentile - %d\n", latencyHistogram.getValueAtPercentile(95.0));

System.out.printf("99th percentile - %d\n", latencyHistogram.getValueAtPercentile(99.0));

System.out.printf("99.9th percentile - %d\n", latencyHistogram.getValueAtPercentile(99.9));

System.out.printf("99.99th percentile - %d\n", latencyHistogram.getValueAtPercentile(99.99));

}

static class Read extends Thread {

final int id;

final Random rand;

final StorageEngine db;

long time;

Histogram latencyHistogram = new Histogram(TimeUnit.SECONDS.toNanos(10), 3);

Read(StorageEngine db, int id) {

this.db = db;

this.id = id;

rand = new Random(seed + id);

}

@Override

public void run() {

long sum = 0, count = 0;

long start = System.currentTimeMillis();

while (count < noOfReadsPerThread) {

long id = (long)rand.nextInt(numberOfRecords);

long s = System.nanoTime();

byte[] value = db.get(longToBytes(id));

latencyHistogram.recordValue(System.nanoTime()-s);

count++;

if (value == null) {

System.out.println("NO value for key " +id);

continue;

}

if (count % 1_000_000 == 0) {

System.out.printf(printDate() + "Read: %d Completed %d reads\n", this.id, count);

}

sum += value.length;

}

time = (System.currentTimeMillis() - start);

System.out.printf("Read: %d Completed in time %d\n", id, time);

}

}

public static byte[] longToBytes(long value) {

return Longs.toByteArray(value);

}

public static String printDate() {

return sdf.format(new Date()) + ": ";

}

}

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/Benchmarks.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

public enum Benchmarks {

FILL_SEQUENCE,

FILL_RANDOM,

READ_RANDOM,

RANDOM_UPDATE,

READ_AND_UPDATE;

}

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/HaloDBStorageEngine.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

import com.google.common.primitives.Ints;

import com.oath.halodb.HaloDB;

import com.oath.halodb.HaloDBException;

import com.oath.halodb.HaloDBOptions;

import java.io.File;

public class HaloDBStorageEngine implements StorageEngine {

private final File dbDirectory;

private HaloDB db;

private final long noOfRecords;

public HaloDBStorageEngine(File dbDirectory, long noOfRecords) {

this.dbDirectory = dbDirectory;

this.noOfRecords = noOfRecords;

}

@Override

public void put(byte[] key, byte[] value) {

try {

db.put(key, value);

} catch (HaloDBException e) {

e.printStackTrace();

}

}

@Override

public byte[] get(byte[] key) {

try {

return db.get(key);

} catch (HaloDBException e) {

e.printStackTrace();

}

return new byte[0];

}

@Override

public void delete(byte[] key) {

try {

db.delete(key);

} catch (HaloDBException e) {

e.printStackTrace();

}

}

@Override

public void open() {

HaloDBOptions opts = new HaloDBOptions();

opts.setMaxFileSize(1024*1024*1024);

opts.setCompactionThresholdPerFile(0.50);

opts.setFlushDataSizeBytes(10 * 1024 * 1024);

opts.setNumberOfRecords(Ints.checkedCast(2 * noOfRecords));

opts.setCompactionJobRate(135 * 1024 * 1024);

opts.setUseMemoryPool(true);

opts.setFixedKeySize(8);

try {

db = HaloDB.open(dbDirectory, opts);

} catch (HaloDBException e) {

e.printStackTrace();

}

}

@Override

public void close() {

if (db != null){

try {

db.close();

} catch (HaloDBException e) {

e.printStackTrace();

}

}

}

@Override

public long size() {

return db.size();

}

@Override

public void printStats() {

}

@Override

public String stats() {

return db.stats().toString();

}

}

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/KyotoStorageEngine.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

import java.io.File;

import kyotocabinet.DB;

public class KyotoStorageEngine implements StorageEngine {

private final File dbDirectory;

private final int noOfRecords;

private final DB db = new DB(2);

public KyotoStorageEngine(File dbDirectory, int noOfRecords) {

this.dbDirectory = dbDirectory;

this.noOfRecords = noOfRecords;

}

@Override

public void open() {

int mode = DB.OWRITER | DB.OCREATE | DB.ONOREPAIR;

StringBuilder fileNameBuilder = new StringBuilder();

fileNameBuilder.append(dbDirectory.getPath()).append("/kyoto.kch");

// specifies the power of the alignment of record size

fileNameBuilder.append("#apow=").append(8);

// specifies the number of buckets of the hash table

fileNameBuilder.append("#bnum=").append(noOfRecords * 4L);

// specifies the mapped memory size

fileNameBuilder.append("#msiz=").append(2_500_000_000l);

// specifies the unit step number of auto defragmentation

fileNameBuilder.append("#dfunit=").append(8);

System.out.printf("Creating %s\n", fileNameBuilder.toString());

if (!db.open(fileNameBuilder.toString(), mode)) {

throw new IllegalArgumentException(String.format("KC db %s open error: " + db.error(),

fileNameBuilder.toString()));

}

}

@Override

public void put(byte[] key, byte[] value) {

db.set(key, value);

}

@Override

public byte[] get(byte[] key) {

return db.get(key);

}

@Override

public void close() {

db.close();

}

@Override

public long size() {

return db.size();

}

}

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/RandomDataGenerator.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

import java.util.Random;

public class RandomDataGenerator {

private final byte[] data;

private static final int size = 1003087;

private int position = 0;

public RandomDataGenerator(int seed) {

this.data = new byte[size];

Random random = new Random(seed);

random.nextBytes(data);

}

public byte[] getData(int length) {

byte[] b = new byte[length];

for (int i = 0; i < length; i++) {

if (position >= size) {

position = 0;

}

b[i] = data[position++];

}

return b;

}

}

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/RocksDBStorageEngine.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

import org.rocksdb.CompressionType;

import org.rocksdb.Env;

import org.rocksdb.Options;

import org.rocksdb.RocksDB;

import org.rocksdb.RocksDBException;

import org.rocksdb.Statistics;

import org.rocksdb.WriteOptions;

import java.io.File;

import java.util.Arrays;

import java.util.List;

public class RocksDBStorageEngine implements StorageEngine {

private RocksDB db;

private Options options;

private Statistics statistics;

private WriteOptions writeOptions;

private final File dbDirectory;

public RocksDBStorageEngine(File dbDirectory, int noOfRecords) {

this.dbDirectory = dbDirectory;

}

@Override

public void put(byte[] key, byte[] value) {

try {

db.put(writeOptions, key, value);

} catch (RocksDBException e) {

e.printStackTrace();

}

}

@Override

public byte[] get(byte[] key) {

byte[] value = null;

try {

value = db.get(key);

} catch (RocksDBException e) {

e.printStackTrace();

}

return value;

}

@Override

public void open() {

options = new Options().setCreateIfMissing(true);

options.setStatsDumpPeriodSec(1000000);

options.setWriteBufferSize(128l * 1024 * 1024);

options.setMaxWriteBufferNumber(3);

options.setMaxBackgroundCompactions(20);

Env env = Env.getDefault();

env.setBackgroundThreads(20, Env.COMPACTION_POOL);

options.setEnv(env);

// max size of L1 10 MB.

options.setMaxBytesForLevelBase(10485760);

options.setTargetFileSizeBase(67108864);

options.setLevel0FileNumCompactionTrigger(4);

options.setLevel0SlowdownWritesTrigger(6);

options.setLevel0StopWritesTrigger(12);

options.setNumLevels(6);

options.setDeleteObsoleteFilesPeriodMicros(300000000);

options.setAllowMmapReads(false);

options.setCompressionType(CompressionType.SNAPPY_COMPRESSION);

System.out.printf("maxBackgroundCompactions %d \n", options.maxBackgroundCompactions());

System.out.printf("minWriteBufferNumberToMerge %d \n", options.minWriteBufferNumberToMerge());

System.out.printf("maxWriteBufferNumberToMaintain %d \n", options.maxWriteBufferNumberToMaintain());

System.out.printf("level0FileNumCompactionTrigger %d \n", options.level0FileNumCompactionTrigger());

System.out.printf("maxBytesForLevelBase %d \n", options.maxBytesForLevelBase());

System.out.printf("maxBytesForLevelMultiplier %f \n", options.maxBytesForLevelMultiplier());

System.out.printf("targetFileSizeBase %d \n", options.targetFileSizeBase());

System.out.printf("targetFileSizeMultiplier %d \n", options.targetFileSizeMultiplier());

List<CompressionType> compressionLevels =

Arrays.asList(

CompressionType.NO_COMPRESSION,

CompressionType.NO_COMPRESSION,

CompressionType.SNAPPY_COMPRESSION,

CompressionType.SNAPPY_COMPRESSION,

CompressionType.SNAPPY_COMPRESSION,

CompressionType.SNAPPY_COMPRESSION

);

options.setCompressionPerLevel(compressionLevels);

System.out.printf("compressionPerLevel %s \n", options.compressionPerLevel());

System.out.printf("numLevels %s \n", options.numLevels());

writeOptions = new WriteOptions();

writeOptions.setDisableWAL(true);

System.out.printf("WAL is disabled - %s \n", writeOptions.disableWAL());

try {

db = RocksDB.open(options, dbDirectory.getPath());

} catch (RocksDBException e) {

e.printStackTrace();

}

}

@Override

public void close() {

//statistics.close();

// close the database before releasing the option objects it was opened with.

if (db != null) {

db.close();

}

writeOptions.close();

options.close();

}

}

================================================

FILE: benchmarks/src/main/java/com/oath/halodb/benchmarks/StorageEngine.java

================================================

/*

* Copyright 2018, Oath Inc

* Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

*/

package com.oath.halodb.benchmarks;

public interface StorageEngine {

void put(byte[] key, byte[] value);

default String stats() { return "";}

byte[] get(byte[] key);

default void delete(byte[] key) {};

void open();

void close();

default long size() {return 0;}

default void printStats() {

}

}

================================================

FILE: docs/WhyHaloDB.md

================================================

# HaloDB at Yahoo.

At Yahoo, we built this high throughput, low latency distributed key-value database that runs in multiple data centers in different parts of the world.

The database stores billions of records and handles millions of read and write requests per second with an SLA of 1 millisecond at the 99th percentile.

The data we have in this database must be persistent, and the working set is larger than what we can fit in memory.

Therefore, a key component of the database’s performance is a fast storage engine, for which we have relied on Kyoto Cabinet. Although Kyoto Cabinet has served us well,

it was designed primarily for a read-heavy workload and its write throughput started to be a bottleneck as we took on more write traffic.

There were also other issues we faced with Kyoto Cabinet; it takes up to an hour to repair a corrupted db, and takes hours to iterate over and update/delete records (which we have to do every night).

It also doesn't expose enough operational metrics or logs which makes resolving issues challenging. However, our primary concern was Kyoto Cabinet’s write performance,

which based on our projections, would have been a major obstacle for scaling the database; therefore, it was a good time to look for alternatives.

**These are the salient features of the database’s workload for which the storage engine will be used:**

* Small keys (8 bytes) and large values (10KB average)

* Both read and write throughput are high.

* Submillisecond read latency at the 99th percentile.

* Single writer thread.

* No need for ordered access or range scans.

* Working set is much larger than available memory, hence workload is IO bound.

* Database is written in Java.

## Why a new storage engine?

Although there are umpteen storage engines publicly available, almost all use a variation of the following data structures to organize data on disk for fast lookup:

* __Hash table__: Kyoto Cabinet.

* __Log-structured merge tree__: LevelDB, RocksDB.

* __B-Tree/B+ Tree__: Berkeley DB, InnoDB.

Since our workload requires very high write throughput, Hash table and B-Tree based storage engines were not suitable as they need to do random writes.

Although modern SSDs have narrowed the gap between sequential and random write performance, sequential writes still have higher throughput, primarily due

to the reduced internal garbage collection load within the SSD. LSM trees also turned out to be unsuitable; benchmarking RocksDB on our workload showed

a write amplification of 10-12; writing 100MB/sec to RocksDB therefore meant that it would write more than 1 GB/sec to the SSD, clearly too high.

High write amplification of RocksDB is a property of the LSM data structure itself, thereby ruling out storage engines based on LSM trees.

LSM tree and B-Tree also maintain an ordering of keys to support efficient range scans, but the cost they pay is a read amplification greater than 1,

and for LSM tree, very high write amplification. Since our workload only does point lookups, we don’t want to pay the cost associated with storing data

in a format suitable for range scans.

These problems ruled out most of the publicly available and well maintained storage engines. Looking at alternate storage engine data structures led us to

explore ideas used in Log-structured storage systems. Here was a potential good fit; log-structured system only does sequential writes, an efficient

garbage collection implementation can keep write amplification low, and having an index in memory for the keys can give us a read amplification of one,

and we get transactional updates, snapshots, and quick crash recovery almost for free. Also in this scheme, there is no ordering of data and hence its

associated costs are not paid. We found that similar ideas have been used in [BitCask](https://github.com/basho/bitcask/blob/develop/doc/bitcask-intro.pdf)

and [Haystack](https://code.facebook.com/posts/685565858139515/needle-in-a-haystack-efficient-storage-of-billions-of-photos/).

But BitCask is written in Erlang, and since our database runs on the JVM, running an Erlang VM on the same box and talking to it from the JVM was something

that we didn’t want to do. Haystack, on the other hand, is a full-fledged distributed database optimized for storing photos, and its storage engine hasn’t been open sourced.

Therefore it was decided to write a new storage engine from scratch; thus the HaloDB project was initiated.
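To make the log-structured idea concrete, here is a minimal sketch of an append-only log paired with an in-memory index. It is a toy written for this document, not HaloDB's actual implementation: the class name `ToyLogStore` and its methods are hypothetical, and a byte array stands in for the data file on disk.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

// Toy illustration of the log-structured idea; not HaloDB's actual implementation.
// A byte array stands in for the append-only data file on disk.
final class ToyLogStore {
    // Where the latest value for a key lives inside the log.
    private static final class Location {
        final int offset;
        final int length;
        Location(int offset, int length) { this.offset = offset; this.length = length; }
    }

    private final ByteArrayOutputStream log = new ByteArrayOutputStream();
    private final Map<String, Location> index = new HashMap<>();

    // Every write is an append; an update just repoints the index at the new copy.
    void put(String key, byte[] value) {
        int offset = log.size();
        log.write(value, 0, value.length);
        index.put(key, new Location(offset, value.length));
    }

    // One lookup in the in-memory index, then one contiguous read from the log.
    byte[] get(String key) {
        Location loc = index.get(key);
        if (loc == null) {
            return null;
        }
        byte[] data = log.toByteArray(); // a real engine would issue a positioned file read here
        return Arrays.copyOfRange(data, loc.offset, loc.offset + loc.length);
    }

    // Total bytes appended so far, including stale versions awaiting garbage collection.
    int logSize() {
        return log.size();
    }

    public static void main(String[] args) {
        ToyLogStore store = new ToyLogStore();
        store.put("k", "v1".getBytes(StandardCharsets.UTF_8));
        store.put("k", "v2".getBytes(StandardCharsets.UTF_8)); // the old copy becomes stale
        System.out.println(new String(store.get("k"), StandardCharsets.UTF_8));
    }
}
```

Note that the stale copy of `k` stays in the log until something reclaims it, which is why garbage collection efficiency determines write amplification in this kind of design.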

## Performance test results on our production workload.

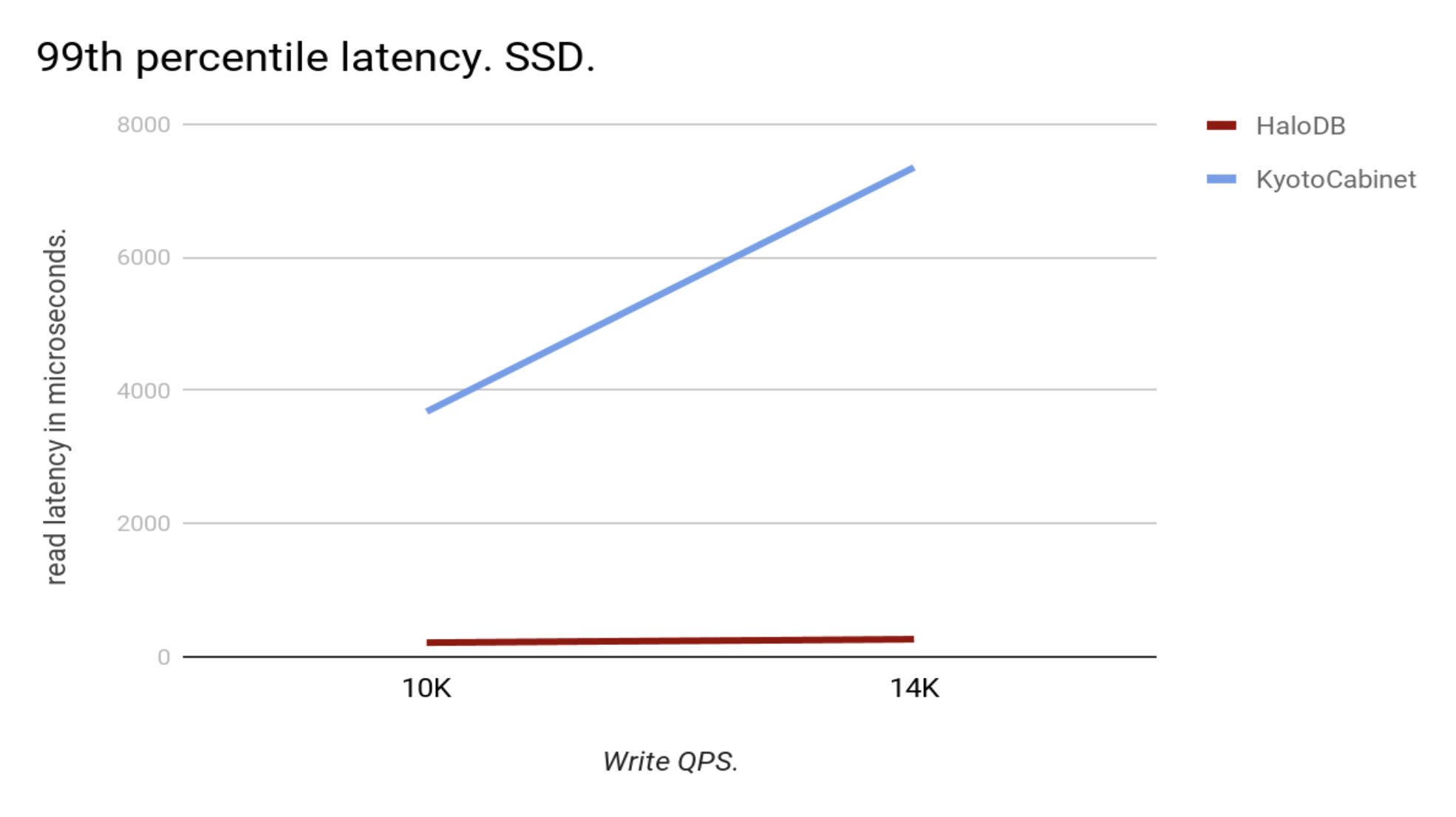

The following chart shows the results of performance tests that we ran with production data against a performance test box with the same hardware as production boxes. The read requests were kept at 50,000 QPS while the write QPS was increased.

As you can see, at the 99th percentile HaloDB's read latency is an order of magnitude better than that of Kyoto Cabinet.

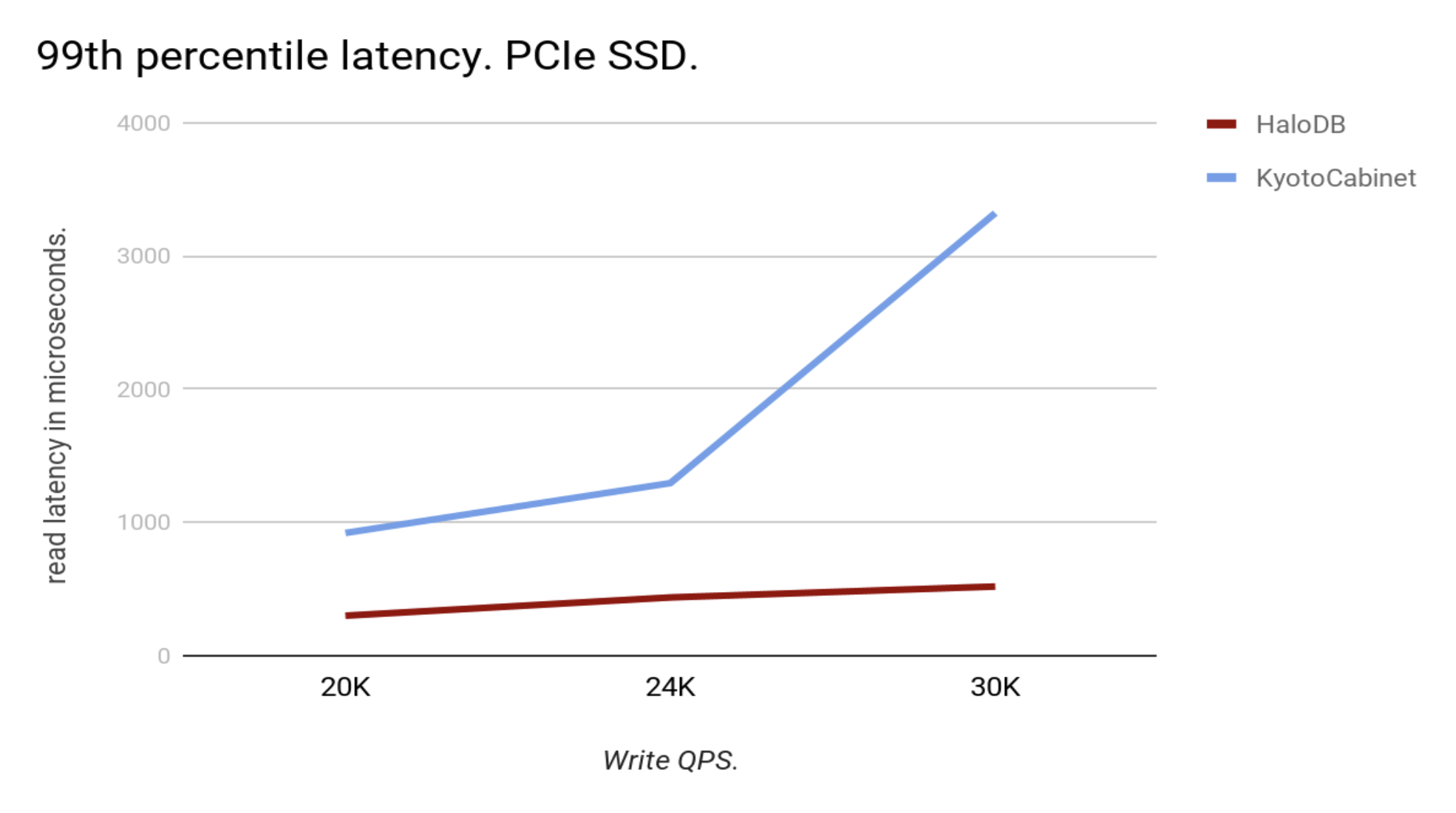

We recently upgraded our SSDs to PCIe NVMe SSDs. This has given us a significant performance boost and has narrowed the gap between HaloDB and Kyoto Cabinet,

but the difference is still significant:

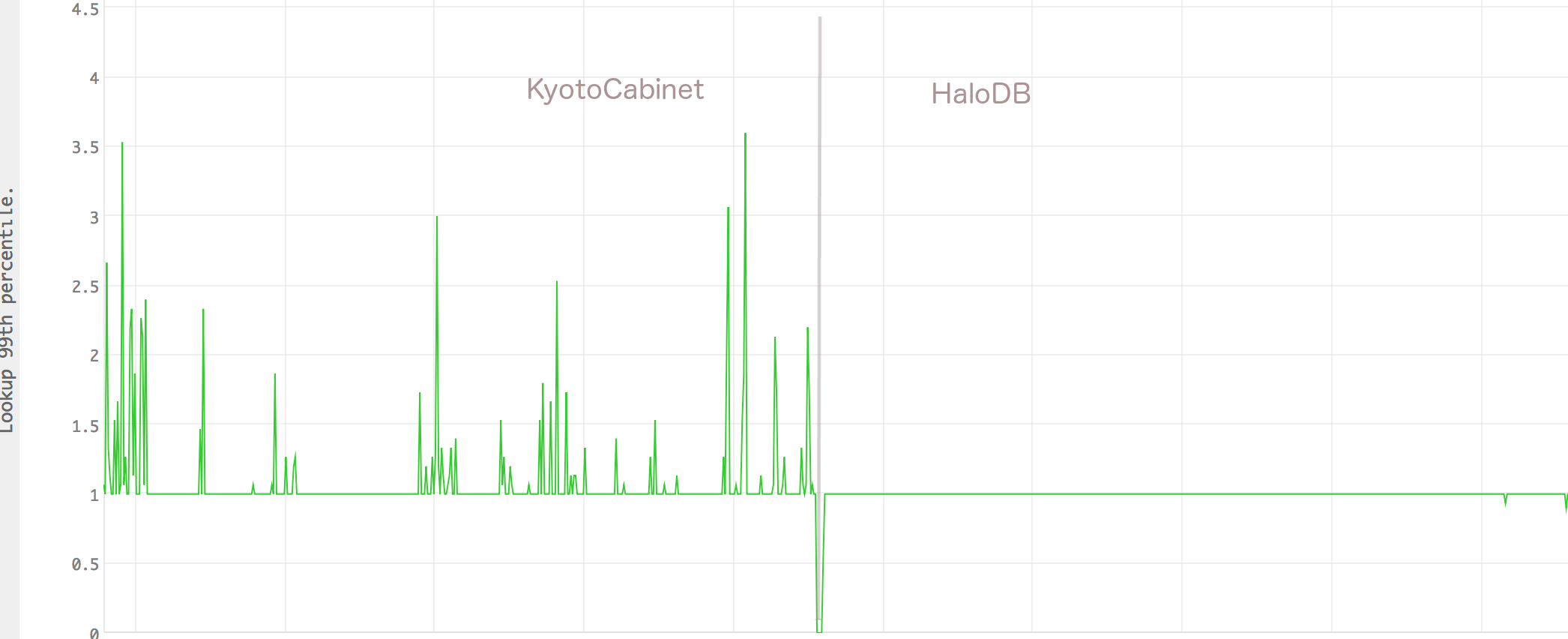

Of course, these are results from performance tests, but nothing beats real data from hosts running in production.

The following chart shows the 99th percentile latency from a production server before and after migration to HaloDB.

HaloDB has thus given our production boxes a 50% improvement in capacity while consistently maintaining a sub-millisecond latency at the 99th percentile.

HaloDB has also fixed a few other problems that we had with Kyoto Cabinet. The daily cleanup job that used to take up to 5 hours in Kyoto Cabinet now completes in 90 minutes

with HaloDB due to its improved write throughput. Also, HaloDB takes only a few seconds to recover from a crash due to the fact that all log files,

once they are rolled over, are immutable. Hence, in the event of a crash, only the last file that was being written to needs to be repaired.

With Kyoto Cabinet, by contrast, crash recovery used to take more than an hour to complete. The metrics that HaloDB exposes also give us good insight into its internal state,

which was missing with Kyoto Cabinet.

================================================

FILE: docs/benchmarks.md

================================================

# Benchmarks

Benchmarks were run to compare HaloDB against RocksDB and KyotoCabinet.

KyotoCabinet was chosen as we were using it in production. RocksDB was chosen as it is a well known storage engine

with good documentation and a large community. HaloDB and KyotoCabinet support only a subset of RocksDB's features, therefore the comparison is not exactly fair to RocksDB.

All benchmarks were run on a bare-metal box with the following specifications:

* 2 x Xeon E5-2680 2.50GHz (HT enabled, 24 cores, 48 threads)

* 128 GB of RAM.

* 1 Samsung PM863 960 GB SSD with XFS file system.

* RHEL 6 with kernel 2.6.32.

Key size was 8 bytes and value size 1024 bytes. Tests created a db with 500 million records with a total size of approximately

500 GB. Since this is significantly bigger than the available memory, the workload is IO bound, which is what HaloDB was primarily designed for.

The benchmark tool can be found [here](../benchmarks).

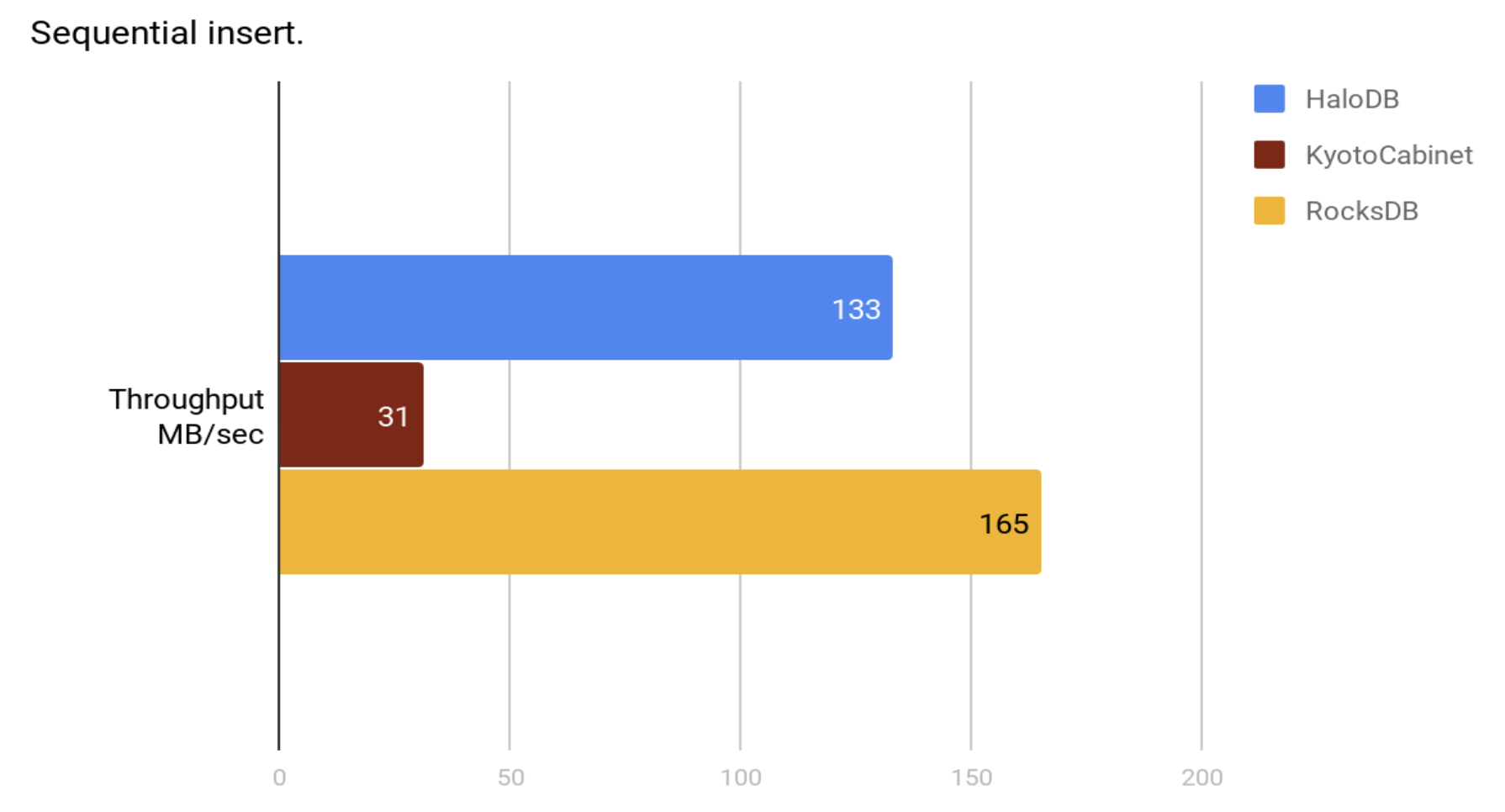

## Test 1: Fill Sequential.

Create a new db by inserting 500 million records in sorted key order.

DB size at the end of the test run.

| Storage Engine | GB |

| ------------- | --------- |

| HaloDB | 503 |

| KyotoCabinet | 609 |

| RocksDB | 487 |

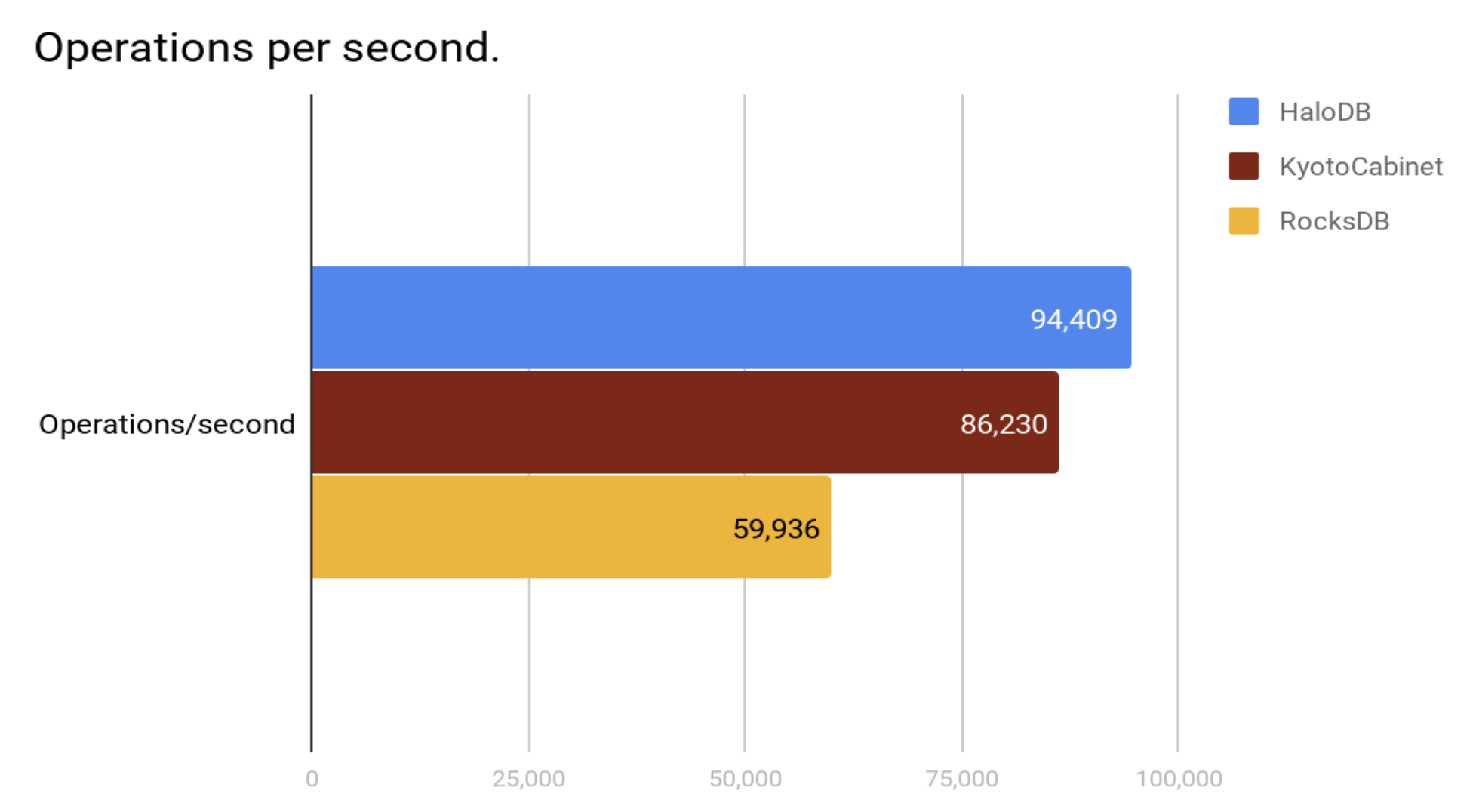

## Test 2: Random Read

Measure random read performance with 32 threads doing _640 million reads_ in total. Read ahead was disabled for this test.

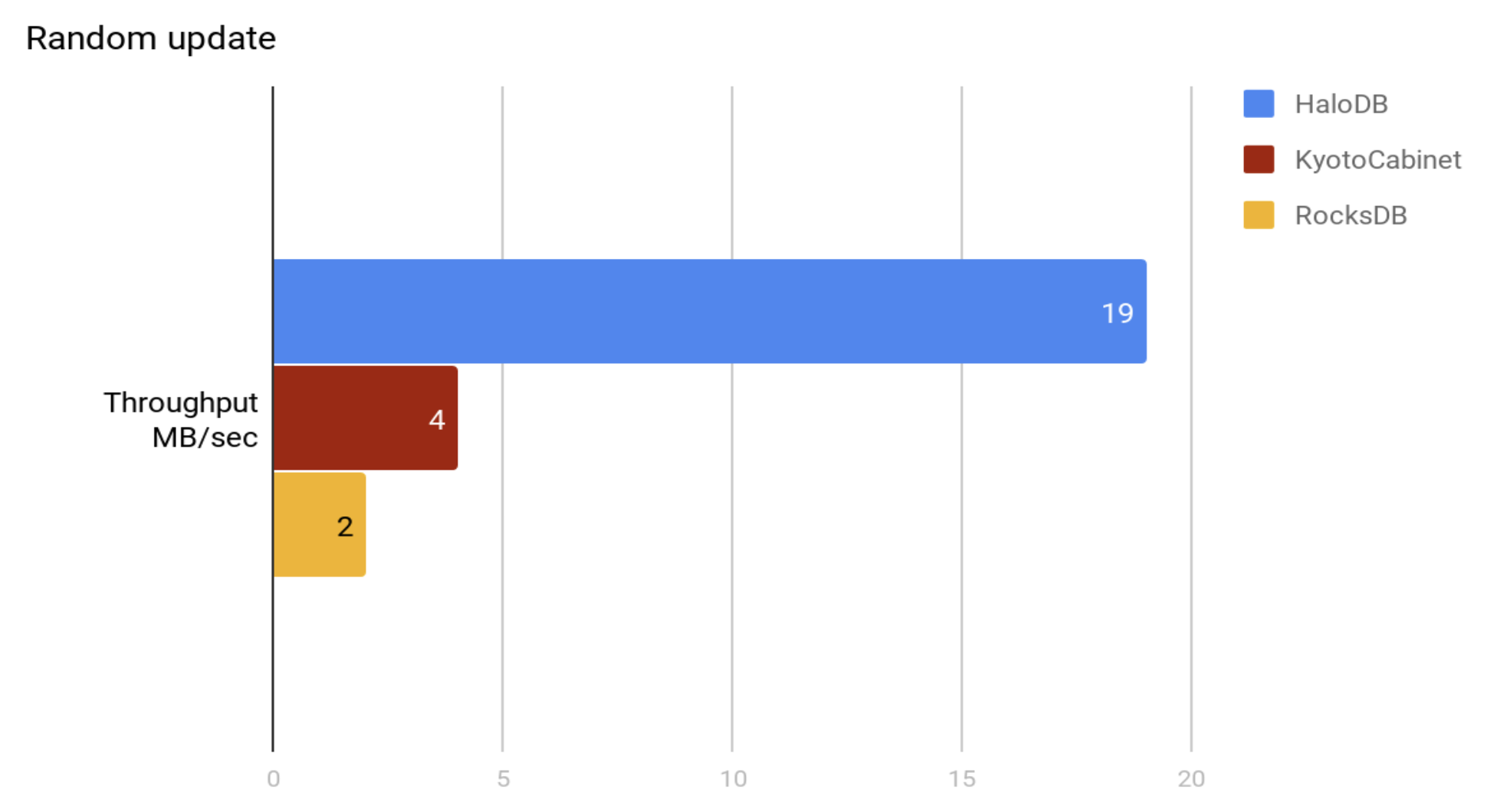

## Test 3: Random Update.

Perform 500 million updates to randomly selected records.

DB size at the end of the test run.

| Storage Engine | GB |

| ------------- | --------- |

| HaloDB | 556 |

| KyotoCabinet | 609 |

| RocksDB | 504 |

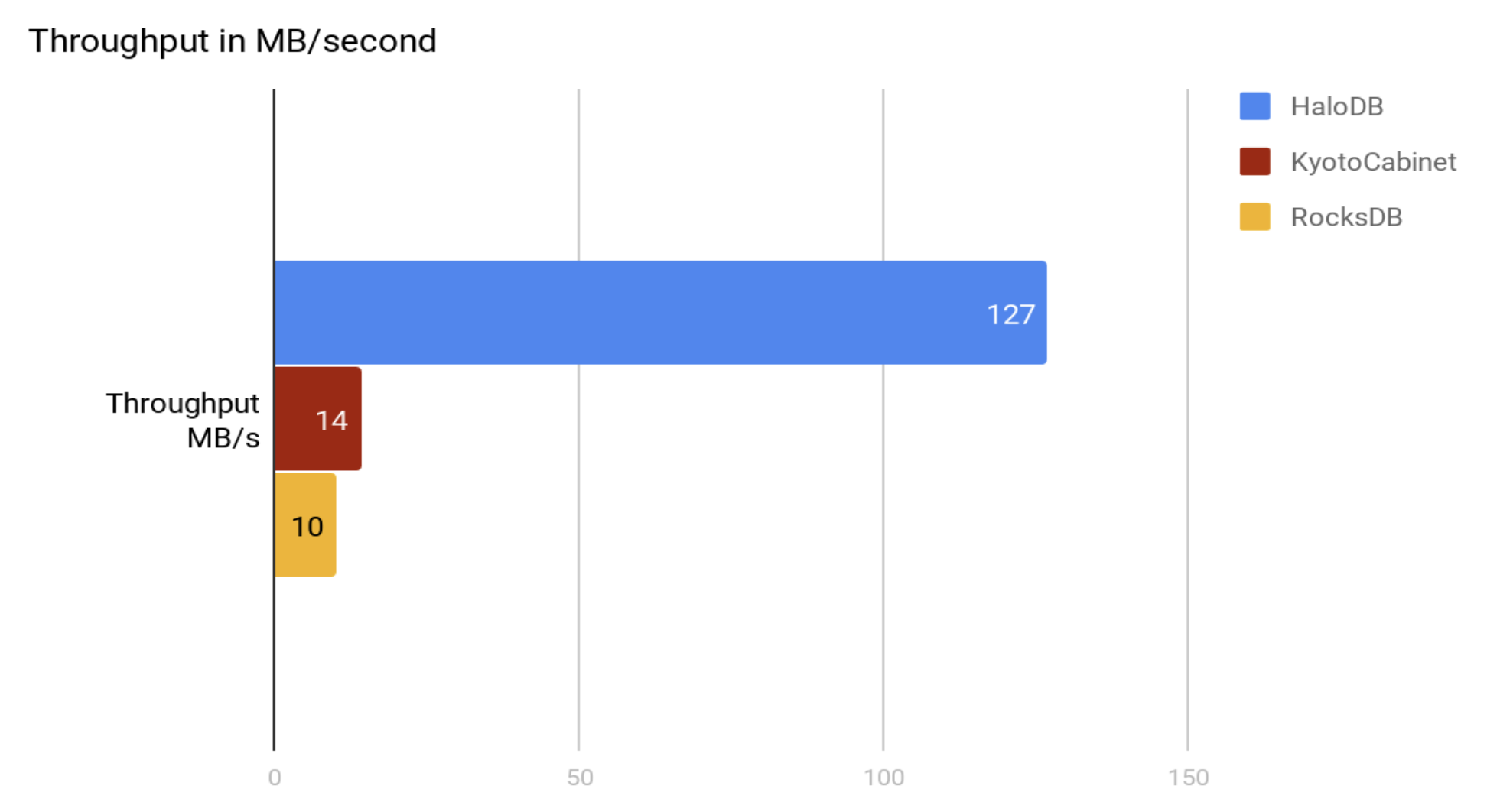

## Test 4: Fill Random.

Insert 500 million records into an empty db in random order.

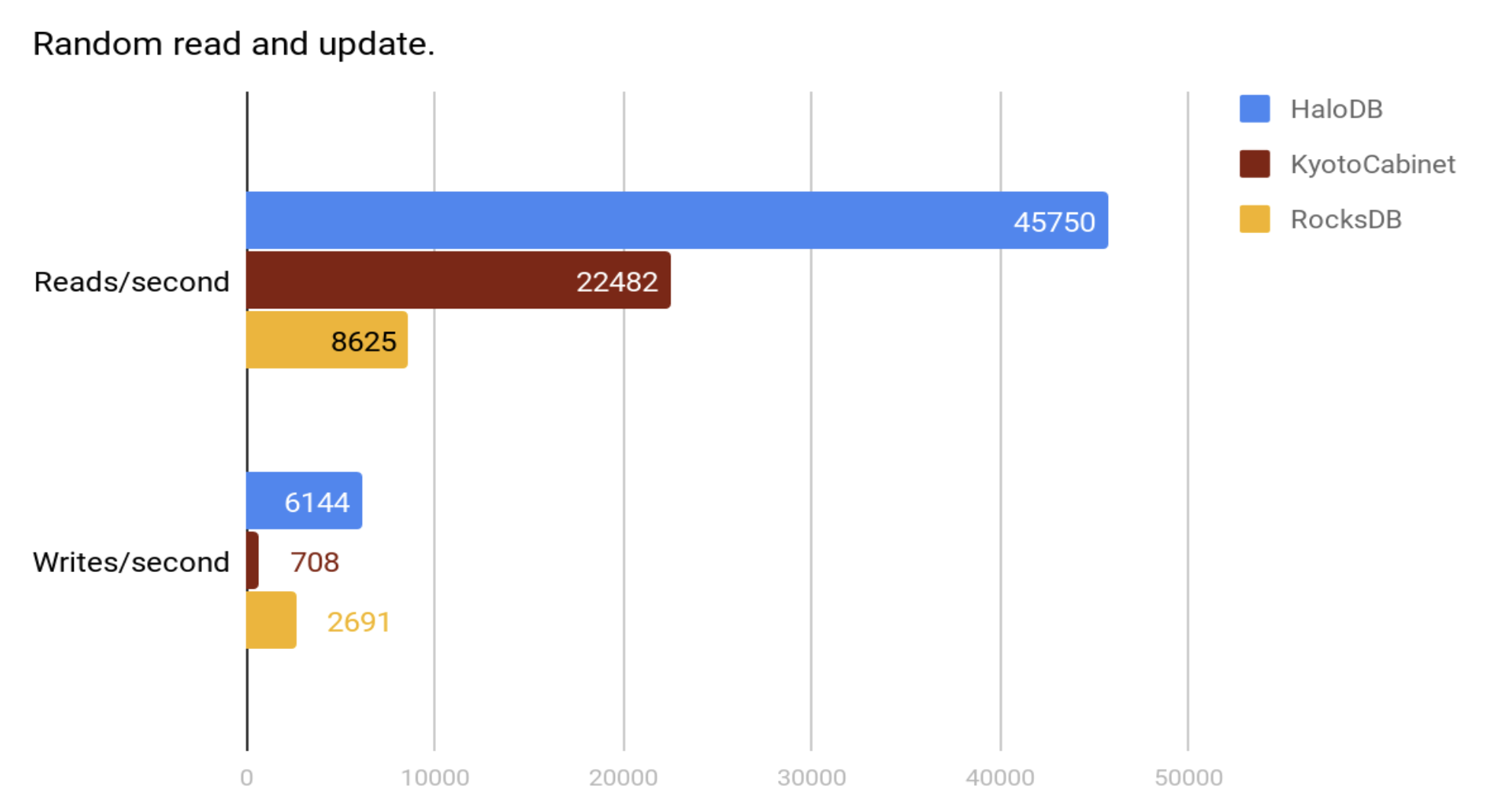

## Test 5: Read and update.

32 threads doing a total of 640 million random reads and one thread doing random updates as fast as possible.

## Why HaloDB is fast.

HaloDB doesn't claim to be always better than RocksDB or KyotoCabinet. HaloDB was written for a specific type of workload, and therefore had

the advantage of optimizing for that workload; the trade-offs that HaloDB makes might make it sub-optimal for other workloads (best to run benchmarks to verify).

HaloDB also offers only a small subset of features compared to other storage engines like RocksDB.

All writes to HaloDB are sequential writes to append-only log files. HaloDB uses a background compaction job to clean up stale data.

The threshold at which a file is compacted can be tuned and this determines HaloDB's write amplification and space amplification.

A compaction threshold of 50% gives a write amplification of only 2; this, coupled with the fact that we do only sequential writes,

is the primary reason for HaloDB’s high write throughput. Additionally, the only metadata that HaloDB needs to modify during writes is

that of the in-memory index. The trade-off here is that HaloDB will occupy more space on disk.
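One simplified way to see where the factor of 2 comes from is to assume that a file is compacted exactly when the fraction of stale data in it reaches the threshold `t`. Reclaiming one stale byte then costs `(1 - t) / t` bytes of copying live data, so each byte written by the application results in `1 + (1 - t) / t = 1 / t` bytes written in total. The sketch below encodes this model; the model itself, the worst-case space estimate, and all names are assumptions made for illustration and are not taken from HaloDB's code.

```java
// Simplified steady-state model of threshold-based compaction (an assumption, not HaloDB's code).
final class CompactionModel {
    private static void checkThreshold(double threshold) {
        if (threshold <= 0.0 || threshold >= 1.0) {
            throw new IllegalArgumentException("threshold must be in (0, 1)");
        }
    }

    /** Bytes written to disk per byte written by the application. */
    static double writeAmplification(double threshold) {
        checkThreshold(threshold);
        // Live bytes that compaction must copy to reclaim one stale byte.
        double liveCopiedPerStaleByte = (1.0 - threshold) / threshold;
        return 1.0 + liveCopiedPerStaleByte;
    }

    /** Upper bound on disk usage per live byte if every file sits just below the threshold. */
    static double worstCaseSpaceAmplification(double threshold) {
        checkThreshold(threshold);
        return 1.0 / (1.0 - threshold);
    }

    public static void main(String[] args) {
        System.out.println(writeAmplification(0.5));          // threshold of 50%
        System.out.println(worstCaseSpaceAmplification(0.5)); // worst case under this model
    }
}
```

Under this model, raising the threshold lowers write amplification but lets more stale data accumulate on disk, which is the trade-off described above.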

To look up the value for a key, its corresponding metadata is first read from the in-memory index and then the value is read from disk.

Therefore, each lookup request requires at most a single read from disk, giving us a read amplification of 1, which is primarily responsible

for HaloDB’s low read latencies. The trade-off here is that we need to store all the keys and their associated metadata in memory. HaloDB

also needs to scan all the keys during startup to build the in-memory index. Depending on the number of keys, this might take a few minutes.
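The startup scan can be pictured with a small sketch. The record layout used here (`[int keyLength][int valueLength][key][value]`) and the class `ToyIndexRebuild` are hypothetical and chosen only to keep the example short; they do not describe HaloDB's on-disk format. The point is that the index is rebuilt by reading keys and skipping over values, with later records overriding earlier ones.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.Map;

// Toy illustration of rebuilding an in-memory index by scanning a log at startup.
// The record layout is a made-up assumption, not HaloDB's file format.
final class ToyIndexRebuild {
    // Hypothetical record layout: [int keyLength][int valueLength][key bytes][value bytes].
    static void appendRecord(ByteBuffer log, String key, byte[] value) {
        byte[] k = key.getBytes(StandardCharsets.UTF_8);
        log.putInt(k.length).putInt(value.length).put(k).put(value);
    }

    // Scans the log from the start and returns key -> offset of its latest value.
    static Map<String, Integer> rebuildIndex(ByteBuffer log) {
        ByteBuffer view = log.duplicate(); // leaves the writer's position untouched
        view.flip();                       // limit = bytes written so far, position = 0
        Map<String, Integer> index = new HashMap<>();
        while (view.remaining() > 0) {
            int keyLength = view.getInt();
            int valueLength = view.getInt();
            byte[] k = new byte[keyLength];
            view.get(k);
            // A later record for the same key replaces the earlier entry.
            index.put(new String(k, StandardCharsets.UTF_8), view.position());
            view.position(view.position() + valueLength); // skip the value without reading it
        }
        return index;
    }
}
```

The cost of this scan grows with the number of records in the log, which is consistent with startup time depending on the number of keys.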

HaloDB avoids doing in-place updates and doesn't need record level locks. A type of MVCC is inherent in the design of all log-structured storage systems. This also helps with performance even under high read and write throughput.

HaloDB also doesn't support range scans and therefore doesn't pay the cost associated with storing data in a format suitable for efficient range scans.

================================================

FILE: pom.xml

================================================

<!--

~ Copyright 2018, Oath Inc

~ Licensed under the terms of the Apache License 2.0. Please refer to accompanying LICENSE file for terms.

-->

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.oath.halodb</groupId>

<artifactId>halodb</artifactId>

<version>0.5.6</version>

<packaging>jar</packaging>

<name>HaloDB</name>

<description>A fast, embedded, persistent key-value storage engine.</description>

<url>http://maven.apache.org</url>

<developers>

<developer>

<name>Arjun Mannaly</name>

</developer>

</developers>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

</properties>

<dependencies>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-api</artifactId>

<version>1.7.12</version>

</dependency>

<dependency>

<groupId>com.google.guava</groupId>

<artifactId>guava</artifactId>

<version>18.0</version>

</dependency>

<dependency>

<groupId>net.java.dev.jna</groupId>

<artifactId>jna</artifactId>

<version>4.1.0</version>

</dependency>

<dependency>

<groupId>net.jpountz.lz4</groupId>

<artifactId>lz4</artifactId>

<optional>true</optional>

<version>1.3</version>

</dependency>

<dependency>

<groupId>org.hamcrest</groupId>

<artifactId>hamcrest-all</artifactId>

<version>1.3</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>2.3</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-slf4j-impl</artifactId>

<version>2.3</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.testng</groupId>

<artifactId>testng</artifactId>

<version>6.9.10</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.jmockit</groupId>

<artifactId>jmockit</artifactId>

<version>1.38</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.assertj</groupId>

<artifactId>assertj-core</artifactId>

<version>3.8.0</version>

<scope>test</scope>

</dependency>

</dependencies>

<scm>

<url>https://github.com/yahoo/HaloDB</url>

<developerConnection>scm:git:git@github.com:yahoo/HaloDB.git</developerConnection>

<tag>HEAD</tag>

</scm>

<build>