Showing preview only (428K chars total). Download the full file or copy to clipboard to get everything.

Repository: yeyupiaoling/LearnPaddle2

Branch: master

Commit: 7c53fa82cd64

Files: 215

Total size: 322.8 KB

Directory structure:

gitextract_qo9m5qrb/

├── .gitignore

├── LICENSE

├── README.md

├── note1/

│ ├── README.md

│ └── test_paddle.py

├── note10/

│ ├── README.md

│ ├── mobilenet_v2.py

│ ├── test_visualdl.py

│ └── train.py

├── note11/

│ ├── README.md

│ ├── create_data_list.py

│ ├── download_image.py

│ ├── infer.py

│ ├── mobilenet_v1.py

│ ├── reader.py

│ └── train.py

├── note12/

│ ├── README.md

│ ├── bilstm_net.py

│ ├── create_data.py

│ ├── download_text_data.py

│ ├── infer.py

│ ├── text_reader.py

│ └── train.py

├── note13/

│ ├── README.md

│ ├── image_reader.py

│ ├── infer.py

│ └── train.py

├── note14/

│ ├── README.md

│ ├── index.html

│ └── paddle_server.py

├── note15/

│ ├── .gitignore

│ ├── README.md

│ ├── app/

│ │ ├── .gitignore

│ │ ├── build.gradle

│ │ ├── proguard-rules.pro

│ │ └── src/

│ │ └── main/

│ │ ├── AndroidManifest.xml

│ │ ├── assets/

│ │ │ └── infer_model/

│ │ │ ├── __model__

│ │ │ ├── batch_norm_0.b_0

│ │ │ ├── batch_norm_0.w_0

│ │ │ ├── batch_norm_0.w_1

│ │ │ ├── batch_norm_0.w_2

│ │ │ ├── batch_norm_1.b_0

│ │ │ ├── batch_norm_1.w_0

│ │ │ ├── batch_norm_1.w_1

│ │ │ ├── batch_norm_1.w_2

│ │ │ ├── batch_norm_10.b_0

│ │ │ ├── batch_norm_10.w_0

│ │ │ ├── batch_norm_10.w_1

│ │ │ ├── batch_norm_10.w_2

│ │ │ ├── batch_norm_11.b_0

│ │ │ ├── batch_norm_11.w_0

│ │ │ ├── batch_norm_11.w_1

│ │ │ ├── batch_norm_11.w_2

│ │ │ ├── batch_norm_12.b_0

│ │ │ ├── batch_norm_12.w_0

│ │ │ ├── batch_norm_12.w_1

│ │ │ ├── batch_norm_12.w_2

│ │ │ ├── batch_norm_13.b_0

│ │ │ ├── batch_norm_13.w_0

│ │ │ ├── batch_norm_13.w_1

│ │ │ ├── batch_norm_13.w_2

│ │ │ ├── batch_norm_14.b_0

│ │ │ ├── batch_norm_14.w_0

│ │ │ ├── batch_norm_14.w_1

│ │ │ ├── batch_norm_14.w_2

│ │ │ ├── batch_norm_15.b_0

│ │ │ ├── batch_norm_15.w_0

│ │ │ ├── batch_norm_15.w_1

│ │ │ ├── batch_norm_15.w_2

│ │ │ ├── batch_norm_16.b_0

│ │ │ ├── batch_norm_16.w_0

│ │ │ ├── batch_norm_16.w_1

│ │ │ ├── batch_norm_16.w_2

│ │ │ ├── batch_norm_17.b_0

│ │ │ ├── batch_norm_17.w_0

│ │ │ ├── batch_norm_17.w_1

│ │ │ ├── batch_norm_17.w_2

│ │ │ ├── batch_norm_18.b_0

│ │ │ ├── batch_norm_18.w_0

│ │ │ ├── batch_norm_18.w_1

│ │ │ ├── batch_norm_18.w_2

│ │ │ ├── batch_norm_19.b_0

│ │ │ ├── batch_norm_19.w_0

│ │ │ ├── batch_norm_19.w_1

│ │ │ ├── batch_norm_19.w_2

│ │ │ ├── batch_norm_2.b_0

│ │ │ ├── batch_norm_2.w_0

│ │ │ ├── batch_norm_2.w_1

│ │ │ ├── batch_norm_2.w_2

│ │ │ ├── batch_norm_20.b_0

│ │ │ ├── batch_norm_20.w_0

│ │ │ ├── batch_norm_20.w_1

│ │ │ ├── batch_norm_20.w_2

│ │ │ ├── batch_norm_21.b_0

│ │ │ ├── batch_norm_21.w_0

│ │ │ ├── batch_norm_21.w_1

│ │ │ ├── batch_norm_21.w_2

│ │ │ ├── batch_norm_22.b_0

│ │ │ ├── batch_norm_22.w_0

│ │ │ ├── batch_norm_22.w_1

│ │ │ ├── batch_norm_22.w_2

│ │ │ ├── batch_norm_23.b_0

│ │ │ ├── batch_norm_23.w_0

│ │ │ ├── batch_norm_23.w_1

│ │ │ ├── batch_norm_23.w_2

│ │ │ ├── batch_norm_24.b_0

│ │ │ ├── batch_norm_24.w_0

│ │ │ ├── batch_norm_24.w_1

│ │ │ ├── batch_norm_24.w_2

│ │ │ ├── batch_norm_25.b_0

│ │ │ ├── batch_norm_25.w_0

│ │ │ ├── batch_norm_25.w_1

│ │ │ ├── batch_norm_25.w_2

│ │ │ ├── batch_norm_26.b_0

│ │ │ ├── batch_norm_26.w_0

│ │ │ ├── batch_norm_26.w_1

│ │ │ ├── batch_norm_26.w_2

│ │ │ ├── batch_norm_3.b_0

│ │ │ ├── batch_norm_3.w_0

│ │ │ ├── batch_norm_3.w_1

│ │ │ ├── batch_norm_3.w_2

│ │ │ ├── batch_norm_4.b_0

│ │ │ ├── batch_norm_4.w_0

│ │ │ ├── batch_norm_4.w_1

│ │ │ ├── batch_norm_4.w_2

│ │ │ ├── batch_norm_5.b_0

│ │ │ ├── batch_norm_5.w_0

│ │ │ ├── batch_norm_5.w_1

│ │ │ ├── batch_norm_5.w_2

│ │ │ ├── batch_norm_6.b_0

│ │ │ ├── batch_norm_6.w_0

│ │ │ ├── batch_norm_6.w_1

│ │ │ ├── batch_norm_6.w_2

│ │ │ ├── batch_norm_7.b_0

│ │ │ ├── batch_norm_7.w_0

│ │ │ ├── batch_norm_7.w_1

│ │ │ ├── batch_norm_7.w_2

│ │ │ ├── batch_norm_8.b_0

│ │ │ ├── batch_norm_8.w_0

│ │ │ ├── batch_norm_8.w_1

│ │ │ ├── batch_norm_8.w_2

│ │ │ ├── batch_norm_9.b_0

│ │ │ ├── batch_norm_9.w_0

│ │ │ ├── batch_norm_9.w_1

│ │ │ ├── batch_norm_9.w_2

│ │ │ ├── conv2d_0.w_0

│ │ │ ├── conv2d_1.w_0

│ │ │ ├── conv2d_10.w_0

│ │ │ ├── conv2d_11.w_0

│ │ │ ├── conv2d_12.w_0

│ │ │ ├── conv2d_13.w_0

│ │ │ ├── conv2d_2.w_0

│ │ │ ├── conv2d_3.w_0

│ │ │ ├── conv2d_4.w_0

│ │ │ ├── conv2d_5.w_0

│ │ │ ├── conv2d_6.w_0

│ │ │ ├── conv2d_7.w_0

│ │ │ ├── conv2d_8.w_0

│ │ │ ├── conv2d_9.w_0

│ │ │ ├── depthwise_conv2d_0.w_0

│ │ │ ├── depthwise_conv2d_1.w_0

│ │ │ ├── depthwise_conv2d_10.w_0

│ │ │ ├── depthwise_conv2d_11.w_0

│ │ │ ├── depthwise_conv2d_12.w_0

│ │ │ ├── depthwise_conv2d_2.w_0

│ │ │ ├── depthwise_conv2d_3.w_0

│ │ │ ├── depthwise_conv2d_4.w_0

│ │ │ ├── depthwise_conv2d_5.w_0

│ │ │ ├── depthwise_conv2d_6.w_0

│ │ │ ├── depthwise_conv2d_7.w_0

│ │ │ ├── depthwise_conv2d_8.w_0

│ │ │ ├── depthwise_conv2d_9.w_0

│ │ │ ├── fc_0.b_0

│ │ │ └── fc_0.w_0

│ │ ├── java/

│ │ │ └── com/

│ │ │ ├── baidu/

│ │ │ │ └── paddle/

│ │ │ │ └── PML.java

│ │ │ └── yeyupiaoling/

│ │ │ └── note15/

│ │ │ ├── MainActivity.java

│ │ │ └── Utils.java

│ │ └── res/

│ │ ├── drawable/

│ │ │ └── ic_launcher_background.xml

│ │ ├── drawable-v24/

│ │ │ └── ic_launcher_foreground.xml

│ │ ├── layout/

│ │ │ └── activity_main.xml

│ │ ├── mipmap-anydpi-v26/

│ │ │ ├── ic_launcher.xml

│ │ │ └── ic_launcher_round.xml

│ │ └── values/

│ │ ├── colors.xml

│ │ ├── strings.xml

│ │ └── styles.xml

│ ├── build.gradle

│ ├── gradle/

│ │ └── wrapper/

│ │ ├── gradle-wrapper.jar

│ │ └── gradle-wrapper.properties

│ ├── gradle.properties

│ ├── gradlew

│ ├── gradlew.bat

│ └── settings.gradle

├── note2/

│ ├── README.md

│ ├── constant_sum.py

│ └── variable_sum.py

├── note3/

│ ├── README.md

│ ├── linear_regression.py

│ └── uci_housing_linear.py

├── note4/

│ ├── README.md

│ └── mnist_classification.py

├── note5/

│ ├── README.md

│ └── text_classification.py

├── note6/

│ ├── GAN.py

│ └── README.md

├── note7/

│ ├── DQN.py

│ └── README.md

├── note8/

│ ├── README.md

│ ├── save_infer_model.py

│ ├── save_use_params_model.py

│ ├── save_use_persistables_model.py

│ └── use_infer_model.py

├── note9/

│ ├── README.md

│ ├── pretrain_model.py

│ └── train.py

└── requirements.txt

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

.idea/

note1/fit_a_line.inference.model

note3/image/

note8/models/

note9/models/

note10/log/

note11/images/

note11/infer_model/

note12/datasets/

note12/infer_model/

note13/train_image/

note13/infer_image/

note13/infer_model/

note13/datasets/

note14/infer_model/

note14/images/

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

# LearnPaddle2

PaddlePaddle新版本Fluid教程,使用的PaddlePaddle版本为1.2.0,Python版本为3.5。

# 文章博客地址

* [第一章 新版本PaddlePaddle的安装](https://blog.doiduoyi.com/articles/1584974303857.html)

* [第二章 计算1+1](https://blog.doiduoyi.com/articles/1584974387872.html)

* [第三章 线性回归](https://blog.doiduoyi.com/articles/1584974471592.html)

* [第四章 卷积神经网络](https://blog.doiduoyi.com/articles/1584974540988.html)

* [第五章 循环神经网络](https://blog.doiduoyi.com/articles/1584974601202.html)

* [第六章 生成对抗网络](https://blog.doiduoyi.com/articles/1584974661687.html)

* [第七章 强化学习](https://blog.doiduoyi.com/articles/1584974728689.html)

* [第八章 模型的保存与使用](https://blog.doiduoyi.com/articles/1584974792165.html)

* [第九章 迁移学习](https://blog.doiduoyi.com/articles/1584974849177.html)

* [第十章 VisualDL 训练可视化](https://blog.doiduoyi.com/articles/1584974915236.html)

* [第十一章 自定义图像数据集识别](https://blog.doiduoyi.com/articles/1584974968544.html)

* [第十二章 自定义文本数据集分类](https://blog.doiduoyi.com/articles/1584975038292.html)

* [第十三章 自定义图像数生成](https://blog.doiduoyi.com/articles/1584975142214.html)

* [第十四章 把预测模型部署在服务器](https://blog.doiduoyi.com/articles/1584975208040.html)

* [第十五章 把预测模型部署到Android手机上](https://blog.doiduoyi.com/articles/1584975263604.html)

# 补充

来都来了,觉得不错的话,给个star吧。

================================================

FILE: note1/README.md

================================================

@[TOC]

# 前言

这一章我们介绍如何安装新版本的PaddlePaddle,这里说的新版本主要是说Fluid版本。Fluid 是设计用来让用户像Pytorch和Tensorflow Eager Execution一样执行程序。在这些系统中,不再有模型这个概念,应用也不再包含一个用于描述Operator图或者一系列层的符号描述,而是像通用程序那样描述训练或者预测的过程。也就是说PaddlePaddle从Fluid版本开始使用动态图机制,所以我们这个系列也是使用Fluid版本编写的教程。

# 环境

- 系统:64位Windows 10专业版,64位Ubuntu 16.04

- Python环境:Python 3.5

- 内存:8G

# Windows下安装

PaddlePaddle在1.2版本之后开始支持Windows,也就是说使用Windows的用户不需要再安装Docker容器,或者使用Windows的Liunx子系统,直接可以在Windows系统本身安装PaddlePaddle。下面我们就介绍如何在Windows安装PaddlePaddle,分为两个部分介绍,首先安装Python 3.5环境,然后再使用命令安装PaddlePaddle。

## 安装Python

1、本系列使用的是Python 3.5,官方在Windows上支持Python2.7.15,Python3.5.x,Python3.6.x,Python3.7.x。读者根据自己的实际情况安装自己喜欢的版本。官网下载页面:https://www.python.org/downloads/windows/ ,官网下载地址:https://www.python.org/ftp/python/3.5.4/python-3.5.4-amd64.exe

2、双击运行Python 3.5安装包开始安装,记住要选上添加环境变量,这很重要,之后使用命令都要依赖这个环境变量,要不每次都要进入到`pip`的目录比较麻烦。然后点击`Install Now`开始安装。

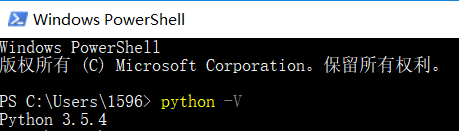

3、安装完成之后,测试安装是否成功,打开`Windows PowerShell`或者`cmd`,笔者的系统是Windows 10,可以使用`Windows PowerShell`,如果读者是其他系统,可以使用`cmd`。用命令`python -V`查看是否安装成功。正常安装之后可以显示安装Python的版本。

## 安装PaddlePaddle

PaddlePaddle支持Windows之后,安装起来非常简单,只需要一条命令就可以完成安装。

- 安装CPU版本,打开`Windows PowerShell`,输入以下命令。可以使用`==`指定安装PaddlePaddle的版本,如没有指定版本,默认安装是最新版本。`-i`后面是镜像源地址,使用国内镜像源可以大大提高下载速度:

```

pip3 install paddlepaddle==1.2.0 -i https://mirrors.aliyun.com/pypi/simple/

```

- 安装GPU版本,目前不支持Windows的GPU版本,支持后会更新。

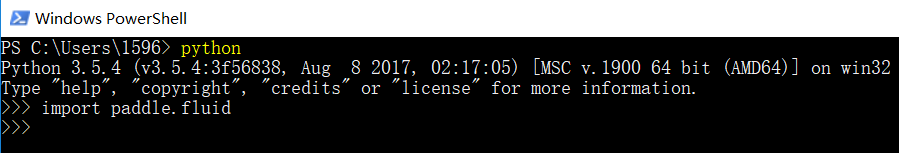

- 测试安装是否成功,在`Windows PowerShell`中输入命令`python`,进入到Python 编辑环境,并输入以下代码,导没有保存证明安装成功:

```

import paddle.fluid

```

# Ubuntu下安装

下面介绍在Ubuntu系统下安装PaddlePaddle,PaddlePaddle支持64位的Ubuntu 14.04 /16.04 /18.04系统,Python支持Python2.7.15,Python3.5.x,Python3.6.x,Python3.7.x。

- 安装Python 3.5(通常不需要执行)。通常情况下Ubuntu 16.04自带的就是Python 3.5,其他Ubuntu的版本自带的可能是其他版本,不过没有关系,PaddlePaddle基本都支持,所以不必专门安装Python3.5。

```

sudo apt install python3.5

sudo apt install python3.5-dev

```

- 安装CPU版本,打开Ubuntu的终端,快捷键是`Ctrl+Alt+T`,输入以下命令。可以使用`==`指定安装PaddlePaddle的版本,如没有指定版本,默认安装是最新版本。`-i`后面是镜像源地址,使用国内镜像源可以大大提高下载速度:

```

pip3 install paddlepaddle==1.2.0 -i https://mirrors.aliyun.com/pypi/simple/

```

- 安装GPU版本,安装GPU版本之前,要先安装CUDA,可以查看笔者之前的文章[《Ubuntu安装和卸载CUDA和CUDNN》](https://blog.csdn.net/qq_33200967/article/details/80689543),安装完成 CUDA 9 和 CUDNN 7 之后,再安装PaddlePaddle的GPU版本,安装命令如下。可以使用`==`指定安装PaddlePaddle的版本和CUDA、CUDNN的版本,这必须要跟读者系统本身安装的CUDA版本对应,比如以下命令就是安装支持CUDA 9.0和CUDNN 7的PaddlePaddle版本。`-i`后面是镜像源地址,使用国内镜像源可以大大提高下载速度:

```

pip3 install paddlepaddle-gpu==1.2.0.post97 -i https://mirrors.aliyun.com/pypi/simple/

```

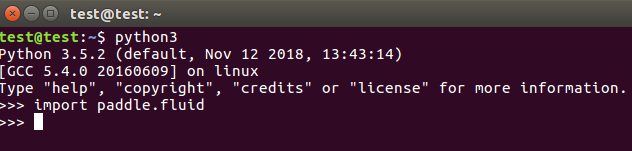

- 测试安装是否成功,在终端中输入命令`python3`,进入到Python 编辑环境,并输入以下代码,正确情况下如图所示:

```

import paddle.fluid

```

# 源码编译

这部分我们将介绍使用源码编译PaddlePaddle,可以通过这种方式安装符合读者需求的PaddlePaddle,比如笔者的电脑安装的是CUDA 10 和 CUDNN 7,而目前官方提供的没有支持CUDA 10 和 CUDNN 7的PaddlePaddle版本,所以笔者就可以通过源码编译的方式编译PaddlePaddle安装包,当然也要PaddlePaddle支持才行。

## Windows下源码编译

下面我们将介绍在Windows系统下进行源码编译PaddlePaddle。目前支持使用的系统是64位的Windows 10 家庭版/专业版/企业版。

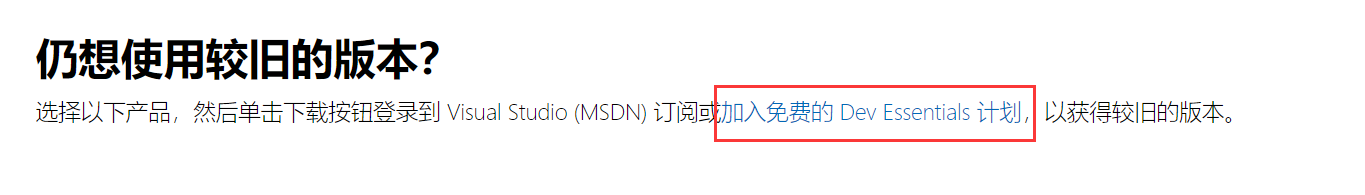

1. 安装`Visual Studio 2015 Update3`。下载地址:https://visualstudio.microsoft.com/zh-hans/vs/older-downloads/ ,因为是旧版本,还有`加入免费的 Dev Essentials 计划`才能正常下载。

2. 安装`cmake 3.13`,下载cmake的安装包,下载地址:https://cmake.org/download/ ,一路默认,只需要在添加环境变量的时候注意添加环境变量就可以了。如何存在环境变量问题,可以重启系统。

3. 安装Python的依赖库,只要执行以下命令。关于Windows安装Python,在“Windows下安装”部分已经介绍过,这里就不介绍了。

```

pip3 install numpy

pip3 install protobuf

pip3 install wheel

```

4. 安装 git 工具。git的下载地址:https://git-scm.com/downloads ,下载git的安装包,安装的时候一路默认就可以了。

5. 右键打开`Git Bash Here`,执行以下两条命令。将PaddlePaddle的源码clone在当下目录下的Paddle的文件夹中,并进入Padde目录下,操作如下图所示,之后的命令也是在这个终端操作:

```

git clone https://github.com/PaddlePaddle/Paddle.git

cd Paddle

```

6. 切换到较稳定release分支下进行编译,入笔者选择1.2版本的代码:

```

git checkout release/1.2

```

7. 创建名为build的目录并进入:

```

mkdir build

cd build

```

8. 执行编译

- 编译**CPU版本**命令如下:

```

cmake .. -G "Visual Studio 14 2015 Win64" -DPY_VERSION=3.5 -DPYTHON_INCLUDE_DIR=${PYTHON_INCLUDE_DIRS} -DPYTHON_LIBRARY=${PYTHON_LIBRARY} -DPYTHON_EXECUTABLE=${PYTHON_EXECUTABLE} -DWITH_FLUID_ONLY=ON -DWITH_GPU=OFF -DWITH_TESTING=OFF -DCMAKE_BUILD_TYPE=Release

```

- 编译**GPU版本**,目前Windows还不支持GPU,支持后会更新。

9. 下载第三方依赖包(openblas,snappystream),下载地址:https://github.com/wopeizl/Paddle_deps ,将整个`third_party`文件夹放到上面第7步创建的`build`目录下。

10. 使用`Blend for Visual Studio 2015` 打开`paddle.sln`文件,选择平台为`x64`,配置为`Release`,开始编译

11. 编译成功后进入`\paddle\build\python\dist`目录下找到生成的`.whl`包

12. 执行以下命令安装编译好的PaddlePaddle包:

```

pip3 install (whl包的名字)

```

## Ubuntu本地下源码编译

下面介绍的是使用Ubuntu编译PaddlePaddle源码,笔者的系统是64位的Ubuntu 16.04,Python环境是Python 3.5。

### 安装openCV

1. 更新apt的源,命令如下:

```

sudo apt update

```

2. 下载openCV源码,官方地址:https://opencv.org/releases.html , 笔者下载的是3.4.5版本,选择的是`Sources`点击下载。

3. 解压openCV源码,命令如下:

```

unzip opencv-3.4.5.zip

```

4. 安装可能需要的依赖库,命令如下:

```

sudo apt-get install cmake

sudo apt-get install build-essential libgtk2.0-dev libavcodec-dev libavformat-dev libjpeg.dev libtiff4.dev libswscale-dev libjasper-dev

```

5. 开始执行cmake。

```

cd opencv-3.4.5/

mkdir my_build_dir

cd my_build_dir

cmake -D CMAKE_BUILD_TYPE=Release -D CMAKE_INSTALL_PREFIX=/usr/local ..

```

6. 开始执行编译

```

make -j$(nproc)

```

7. 执行安装命令

```

sudo make install

```

### 安装依赖环境

编译PaddlePaddle源码之前,还需要安装以下的一些依赖环境。

```

sudo apt install python3.5-dev

sudo apt-get udpate

sudo apt-get install -y software-properties-common

sudo add-apt-repository ppa:deadsnakes/ppa

sudo apt install curl

sudo curl https://bootstrap.pypa.io/get-pip.py -o - | python3.5

sudo easy_install pip

sudo apt install swig

sudo apt install wget

sudo pip install numpy==1.14.0

sudo pip install protobuf==3.1.0

sudo pip install wheel

sudo apt install patchelf

```

### 编译PaddlePaddle

1. 将PaddlePaddle的源码clone在当下目录下的Paddle的文件夹中,并进入Padde目录下,命令如下:

```

git clone https://github.com/PaddlePaddle/Paddle.git

cd Paddle

```

2. 切换到较稳定release分支下进行编译,比如笔者使用的是1.2版本,读者可以根据自己的情况选择其他版本:

```

git checkout release/1.2

```

3. 创建并进入一个叫build的目录下:

```

mkdir build && cd build

```

4. 执行cmake,这里分为CPU版本和GPU版本。

- 编译**CPU版本**,命令如下。使用参数`-DPY_VERSION`指定编译的PaddlePaddle支持的Python版本,笔者这里选择的是Python 3.5。并且使用参数`-DWITH_FLUID_ONLY`指定不编译V2版本的PaddlePaddle代码。使用参数`-DWITH_GPU`指定不使用GPU,也就是只编译CPU版本:

```

cmake .. -DPY_VERSION=3.5 -DWITH_FLUID_ONLY=ON -DWITH_GPU=OFF -DWITH_TESTING=OFF -DCMAKE_BUILD_TYPE=Release

```

- 编译**GPU版本**,还要安装一下依赖环境,如下:

1. 安装 CUDA 和 CUDNN,可以查看笔者之前的文章[《Ubuntu安装和卸载CUDA和CUDNN》](https://blog.csdn.net/qq_33200967/article/details/80689543)

2. 安装nccl2,命令如下

```

wget http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu1604/x86_64/nvidia-machine-learning-repo-ubuntu1604_1.0.0-1_amd64.deb

dpkg -i nvidia-machine-learning-repo-ubuntu1604_1.0.0-1_amd64.deb

sudo apt-get install -y libnccl2=2.2.13-1+cuda9.0 libnccl-dev=2.2.13-1+cuda9.0

```

3. 执行cmake。使用参数`-DPY_VERSION`指定编译的PaddlePaddle支持的Python版本,笔者这里选择的是Python 3.5。并且使用参数`-DWITH_FLUID_ONLY`指定不编译V2版本的PaddlePaddle代码。使用参数`-DWITH_GPU`指定使用GPU,同时编译支持CPU和GPU版本的PaddlePaddle。

```

cmake .. -DPY_VERSION=3.5 -DWITH_FLUID_ONLY=ON -DWITH_GPU=ON -DWITH_TESTING=OFF -DCMAKE_BUILD_TYPE=Release

```

5. 使用以下命令正式编译,编译时间比较长:

```

make -j$(nproc)

```

6. 编译成功后进入`/paddle/build/python/dist`目录下找到生成的PaddlePaddle`.whl`包,可以使用这个命令进入到指定目录。

```

cd /paddle/build/python/dist

```

7. 在当前机器或目标机器安装编译好的`.whl`包:

```

pip3 install (whl包的名字)

```

## Ubuntu使用Docker源码编译

使用docker编译的安装包只能支持Ubuntu的PaddlePaddle,因为下载docker镜像也是Ubuntu系统的。通过使用docker编译PaddlePaddle得到的安装包,可以在docker本身使用,之后可以使用docker执行PaddlePaddle。也可以本地的Ubuntu上安装使用,不过要注意的是docker中的系统是Ubuntu 16.04。

### 安装Docker

1. 安装前准备

```python

# 卸载系统原有docker

sudo apt-get remove docker docker-engine docker.io

# 更新apt-get源

sudo apt-get update

# 安装docker的依赖

sudo apt-get install apt-transport-https ca-certificates curl software-properties-common

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -

sudo apt-key fingerprint 0EBFCD88

sudo add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu && $(lsb_release -cs) && stable"

```

2. 安装Docker,编译**CPU版本**使用。

```python

# 再次更新apt-get源

sudo apt-get update

# 开始安装docker

sudo apt-get install docker-ce

# 加载docker

sudo apt-cache madison docker-ce

# 验证docker是否安装成功

sudo docker run hello-world

```

正常情况下输出如下图所示。

3. 安装nvidia-docker,编译**GPU版本**使用(根据情况安装)。安装之前要确认本地有独立显卡并安装的显卡驱动。

```

wget -P /tmp https://github.com/NVIDIA/nvidia-docker/releases/download/v1.0.1/nvidia-docker_1.0.1-1_amd64.deb

sudo dpkg -i /tmp/nvidia-docker*.deb && rm /tmp/nvidia-docker*.deb

```

### 编译PaddlePaddle

1. 克隆PaddlePaddle源码:

```

git clone https://github.com/PaddlePaddle/Paddle.git

```

2. 进入Paddle目录下:

```

cd Paddle

```

3. 启动docker镜像

- 编译**CPU版本**,使用命令

```

sudo docker run --name paddle-test -v $PWD:/paddle --network=host -it hub.baidubce.com/paddlepaddle/paddle:latest-dev /bin/bash

```

- 编译**GPU版本**,使用命令

```

sudo nvidia-docker run --name paddle-test -v $PWD:/paddle --network=host -it hub.baidubce.com/paddlepaddle/paddle:latest-dev /bin/bash

```

4. 进入Docker后进入paddle目录下:

```

cd paddle

```

5. 切换到较稳定release分支下进行编译,读者可以根据自己的情况选择其他版本:

```

git checkout release/1.2

```

6. 创建并进入`/paddle/build`路径下:

```

mkdir -p /paddle/build && cd /paddle/build

```

7. 使用以下命令安装相关依赖:

```

pip3 install protobuf==3.1.0

apt install patchelf

```

8. 执行cmake:

- 编译**CPU版本**PaddlePaddle的命令。使用参数`-DPY_VERSION`指定编译的PaddlePaddle支持的Python版本,笔者这里选择的是Python 3.5。并且使用参数`-DWITH_FLUID_ONLY`指定不编译V2版本的PaddlePaddle代码。使用参数`-DWITH_GPU`指定不使用GPU,只编译支持CPU的PaddlePaddle:

```

cmake .. -DPY_VERSION=3.5 -DWITH_FLUID_ONLY=ON -DWITH_GPU=OFF -DWITH_TESTING=OFF -DCMAKE_BUILD_TYPE=Release

```

- 编译**GPU版本**PaddlePaddle的命令。使用参数`-DPY_VERSION`指定编译的PaddlePaddle支持的Python版本,笔者这里选择的是Python 3.5。并且使用参数`-DWITH_FLUID_ONLY`指定不编译V2版本的PaddlePaddle代码。使用参数`-DWITH_GPU`指定使用GPU,同时编译支持CPU和GPU版本的PaddlePaddle。这里要注意一下,我们拉取的这个镜像是CUDA 8.0的,不一定跟读者本地的CUDA版本对应,这可能导致编译的安装包在本地不可用:

```

cmake .. -DPY_VERSION=3.5 -DWITH_FLUID_ONLY=ON -DWITH_GPU=ON -DWITH_TESTING=OFF -DCMAKE_BUILD_TYPE=Release

```

9. 执行编译:

```

make -j$(nproc)

```

10. 编译成功后,生成的安装包存放在`/paddle/build/python/dist`目录下,如果是想在docker中安装PaddlePaddle,可以直接在docker中打开这个目录。如果要在本地安装的话,还有先退出docker,并进入到这个目录:

```python

# 在docker镜像中安装

cd /paddle/build/python/dist

# 在Ubuntu本地安装】

exit

cd build/python/dist

```

11. 安装PaddlePaddle,执行以下命令:

```

pip3.5 install (whl包的名字)

```

# 测试环境

下面介绍在Windows测试PaddlePaddle的安装情况,Ubuntu环境类似。

1. 开发工具笔者喜欢使用PyCharm,下载地址:https://www.jetbrains.com/pycharm/download/#section=windows , 笔者使用的是社区版本的PyCharm,因为这个是免费的[坏笑]。

2. 创建一个新项目,并选择系统的Python环境,第一个是创建一个Python的虚拟环境,这里选择第二个外部的Python环境,点击`...`选择外部Python环境。

3. 这里选择系统的Python环境,选择的路径是之前安装Python的路径。

3. 创建一个Python程序文件,并命名为`test_paddle.py`,编写并执行以下测试代码,现在看不懂没有关系,跟着这个系列教程来学,我们会熟悉使用PaddlePaddle的:

```python

# Include libraries.

import paddle

import paddle.fluid as fluid

import numpy

import six

# Configure the neural network.

def net(x, y):

y_predict = fluid.layers.fc(input=x, size=1, act=None)

cost = fluid.layers.square_error_cost(input=y_predict, label=y)

avg_cost = fluid.layers.mean(cost)

return y_predict, avg_cost

# Define train function.

def train(save_dirname):

x = fluid.layers.data(name='x', shape=[13], dtype='float32')

y = fluid.layers.data(name='y', shape=[1], dtype='float32')

y_predict, avg_cost = net(x, y)

sgd_optimizer = fluid.optimizer.SGD(learning_rate=0.001)

sgd_optimizer.minimize(avg_cost)

train_reader = paddle.batch(

paddle.reader.shuffle(paddle.dataset.uci_housing.train(), buf_size=500),

batch_size=20)

place = fluid.CPUPlace()

exe = fluid.Executor(place)

def train_loop(main_program):

feeder = fluid.DataFeeder(place=place, feed_list=[x, y])

exe.run(fluid.default_startup_program())

PASS_NUM = 1000

for pass_id in range(PASS_NUM):

total_loss_pass = 0

for data in train_reader():

avg_loss_value, = exe.run(

main_program, feed=feeder.feed(data), fetch_list=[avg_cost])

total_loss_pass += avg_loss_value

if avg_loss_value < 5.0:

if save_dirname is not None:

fluid.io.save_inference_model(

save_dirname, ['x'], [y_predict], exe)

return

print("Pass %d, total avg cost = %f" % (pass_id, total_loss_pass))

train_loop(fluid.default_main_program())

# Infer by using provided test data.

def infer(save_dirname=None):

place = fluid.CPUPlace()

exe = fluid.Executor(place)

inference_scope = fluid.core.Scope()

with fluid.scope_guard(inference_scope):

[inference_program, feed_target_names, fetch_targets] = (

fluid.io.load_inference_model(save_dirname, exe))

test_reader = paddle.batch(paddle.dataset.uci_housing.test(), batch_size=20)

test_data = six.next(test_reader())

test_feat = numpy.array(list(map(lambda x: x[0], test_data))).astype("float32")

test_label = numpy.array(list(map(lambda x: x[1], test_data))).astype("float32")

results = exe.run(inference_program,

feed={feed_target_names[0]: numpy.array(test_feat)},

fetch_list=fetch_targets)

print("infer results: ", results[0])

print("ground truth: ", test_label)

# Run train and infer.

if __name__ == "__main__":

save_dirname = "fit_a_line.inference.model"

train(save_dirname)

infer(save_dirname)

```

正常情况下会输出:

```

Pass 0, total avg cost = 13527.760742

Pass 1, total avg cost = 12497.969727

Pass 2, total avg cost = 11737.727539

Pass 3, total avg cost = 11017.893555

Pass 4, total avg cost = 9801.554688

Pass 5, total avg cost = 9150.510742

Pass 6, total avg cost = 8611.593750

Pass 7, total avg cost = 7924.654297

......

```

PaddlePaddle的安装已经介绍完成,那我们开始进入深度学习的大门吧。本系列教程将会一步步介绍如何使用PaddlePaddle,并使用PaddlePaddle应用到实际项目中。

项目代码GitHub地址:https://github.com/yeyupiaoling/LearnPaddle2/tree/master/note1

**注意:** 最新代码以GitHub上的为准

# 参考资料

1. http://www.paddlepaddle.org/documentation/docs/zh/1.2/beginners_guide/install/install_Ubuntu.html

2. http://www.paddlepaddle.org/documentation/docs/zh/1.2/beginners_guide/install/install_Windows.html

3. https://blog.csdn.net/cocoaqin/article/details/78163171

================================================

FILE: note1/test_paddle.py

================================================

# Include libraries.

import paddle

import paddle.fluid as fluid

import numpy

import six

# Configure the neural network.

def net(x, y):

y_predict = fluid.layers.fc(input=x, size=1, act=None)

cost = fluid.layers.square_error_cost(input=y_predict, label=y)

avg_cost = fluid.layers.mean(cost)

return y_predict, avg_cost

# Define train function.

def train(save_dirname):

x = fluid.layers.data(name='x', shape=[13], dtype='float32')

y = fluid.layers.data(name='y', shape=[1], dtype='float32')

y_predict, avg_cost = net(x, y)

sgd_optimizer = fluid.optimizer.SGD(learning_rate=0.001)

sgd_optimizer.minimize(avg_cost)

train_reader = paddle.batch(

paddle.reader.shuffle(paddle.dataset.uci_housing.train(), buf_size=500),

batch_size=20)

place = fluid.CPUPlace()

exe = fluid.Executor(place)

def train_loop(main_program):

feeder = fluid.DataFeeder(place=place, feed_list=[x, y])

exe.run(fluid.default_startup_program())

PASS_NUM = 1000

for pass_id in range(PASS_NUM):

total_loss_pass = 0

for data in train_reader():

avg_loss_value, = exe.run(

main_program, feed=feeder.feed(data), fetch_list=[avg_cost])

total_loss_pass += avg_loss_value

if avg_loss_value < 5.0:

if save_dirname is not None:

fluid.io.save_inference_model(

save_dirname, ['x'], [y_predict], exe)

return

print("Pass %d, total avg cost = %f" % (pass_id, total_loss_pass))

train_loop(fluid.default_main_program())

# Infer by using provided test data.

def infer(save_dirname=None):

place = fluid.CPUPlace()

exe = fluid.Executor(place)

inference_scope = fluid.core.Scope()

with fluid.scope_guard(inference_scope):

[inference_program, feed_target_names, fetch_targets] = (

fluid.io.load_inference_model(save_dirname, exe))

test_reader = paddle.batch(paddle.dataset.uci_housing.test(), batch_size=20)

test_data = six.next(test_reader())

test_feat = numpy.array(list(map(lambda x: x[0], test_data))).astype("float32")

test_label = numpy.array(list(map(lambda x: x[1], test_data))).astype("float32")

results = exe.run(inference_program,

feed={feed_target_names[0]: numpy.array(test_feat)},

fetch_list=fetch_targets)

print("infer results: ", results[0])

print("ground truth: ", test_label)

# Run train and infer.

if __name__ == "__main__":

save_dirname = "fit_a_line.inference.model"

train(save_dirname)

infer(save_dirname)

================================================

FILE: note10/README.md

================================================

@[TOC]

# 前言

VisualDL是一个面向深度学习任务设计的可视化工具,包含了scalar、参数分布、模型结构、图像可视化等功能。可以这样说:“所见即所得”。我们可以借助VisualDL来观察我们训练的情况,方便我们对训练的模型进行分析,改善模型的收敛情况。

1. `scalar`,趋势图,可用于训练测试误差的展示

2. `image`, 图片的可视化,可用于卷积层或者其他参数的图形化展示

3. `histogram`, 用于参数分布及变化趋势的展示

4. `graph`,用于训练模型结构的可视化

以上的图像来自[VisualDL的Github](https://github.com/PaddlePaddle/VisualDL)

既然那么方便,那么我们就来尝试一下吧。VisualDL底层采用C++编写,但是它在提供C++ SDK的同时,也支持Python SDK,我们主要是使用Python的SDK。顺便说一下,VisualDL除了支持PaddlePaddle,之外,还支持pytorch, mxnet在内的大部分主流DNN平台。

# VisualDL的安装

本章只讲述在Ubuntu系统上的安装和使用,Mac的操作应该也差不多。

## 使用pip安装

使用pip安装非常简单,只要一条命令就够了,如下:

```shell

pip3 install --upgrade visualdl

```

测试一下是否安装成功了,运行一个例子下载日志文件:

```shell

# 在当前位置下载一个日志

vdl_create_scratch_log

```

然后再输入,启动VisualDL并加载这个日志信息:

```shell

visualdl --logdir=scratch_log/ --port=8080

```

这里说明一下,visualDL的参数:

- `host` 设定IP

- `port` 设定端口

- `model_pb` 指定 ONNX 格式的模型文件,这木方我们还没要用到

**注意:** 如果是报以下的错误,那是因为protobuf版本过低的原因。

```

root@test:/home/test/VisualDL# visualdl --logdir ./scratch_log --port 8080

Traceback (most recent call last):

File "/usr/local/bin/visualdl", line 29, in <module>

import visualdl.server.graph as vdl_graph

File "/usr/local/lib/python2.7/dist-packages/visualdl/server/graph.py", line 23, in <module>

from . import onnx

File "/usr/local/lib/python2.7/dist-packages/visualdl/server/onnx/__init__.py", line 8, in <module>

from .onnx_pb2 import ModelProto

File "/usr/local/lib/python2.7/dist-packages/visualdl/server/onnx/onnx_pb2.py", line 213, in <module>

options=None, file=DESCRIPTOR),

TypeError: __init__() got an unexpected keyword argument 'file'

```

protobuf的版本要不小于3.5.0,如何小于这个版本可以使用以下命令升级:

```

pip3 install protobuf -U

```

然后在浏览器上输入:

```

http://127.0.0.1:8080

```

即可看到一个可视化的界面,如下:

## 使用源码安装

如果读者出于各种情况,使用pip安装不能满足需求,那可以考虑使用源码安装VisualDL,操作如下:

首先要安装依赖库:

```shell

# 安装npm

apt install npm

# 安装node

apt install nodejs-legacy

# 安装cmake

apt install cmake

# 安装unzip

apt install unzip

```

然后在GitHub上clone最新的源码并打开:

```shell

git clone https://github.com/PaddlePaddle/VisualDL.git

cd VisualDL

```

之后是编译生成`whl`安装包:

```shell

python3 setup.py bdist_wheel

```

生成`whl`安装包之后,就可以使用pip命令安装这个安装包了,`*`号对应的是visualdl版本号,读者要根据实际情况来安装:

```shell

pip3 install --upgrade dist/visualdl-*.whl

```

安装完成之后,同样可以使用在上一部分的[使用pip安装](http://mp.csdn.net/mdeditor/79127175#%E4%BD%BF%E7%94%A8pip%E5%AE%89%E8%A3%85)的测试方法测试安装是否成功。

# 简单使用VisualDL

我们编写下面这一小段的代码来学习VisualDL的使用,`test_visualdl.py`的代码如下:

```python

# 导入VisualDL的包

from visualdl import LogWriter

# 创建一个LogWriter,第一个参数是指定存放数据的路径,

# 第二个参数是指定多少次写操作执行一次内存到磁盘的数据持久化

logw = LogWriter("./random_log", sync_cycle=10000)

# 创建训练和测试的scalar图,

# mode是标注线条的名称,

# scalar标注的是指定这个组件的tag

with logw.mode('train') as logger:

scalar0 = logger.scalar("scratch/scalar")

with logw.mode('test') as logger:

scalar1 = logger.scalar("scratch/scalar")

# 读取数据

for step in range(1000):

scalar0.add_record(step, step * 1. / 1000)

scalar1.add_record(step, 1. - step * 1. / 1000)

```

运行Python代码之后,在终端上输入,从上面的代码可以看到我们定义的路径是`./random_log`:

```shell

visualdl --logdir=random_log/ --port=8080

```

然后在浏览器上输入:

```

http://127.0.0.1:8080

```

然后就可以看到刚才编写Python代码生成的图像了:

经过这个例子,读者对VisualDL有了进一步的了解了,那么在接下来的我们就在实际的PaddlePaddle例子中使用我们的VisualDL。

# 在PaddlePaddle使用VisualDL

下面就介绍在PaddlePaddle训练中使用VisualDL,通过在训练的时候使用VisualDL不断收集训练的数据集,最终通过可视化展示出来。

## 定义MobileNet V2神经网络

创建一个`mobilenet_v2.py`来定义一个MobileNet V2神经网络。MobileNet V2是MobileNet V1的升级版,从名字可以看出这个网络是为例移动设备而诞生的,它最大的特点就是模型小,预测速度快,适合部署在移动设备上。MobileNet V2是将MobileNet V1和残差网络ResNet的残差单元结合起来,用Depthwise Convolutions代替残差单元的bottleneck,最重要的是与residuals block相反,通常的residuals block是先经过1×1的卷积,降低feature map通道数,然后再通过3×3卷积,最后重新经过1×1卷积将feature map通道数扩张回去;而且为了避免ReLU对特征的破坏,用线性层替换channel数较少层后的ReLU非线性激活。

```python

import paddle.fluid as fluid

def conv_bn_layer(input, filter_size, num_filters, stride, padding, num_groups=1, if_act=True, use_cudnn=True):

conv = fluid.layers.conv2d(input=input,

num_filters=num_filters,

filter_size=filter_size,

stride=stride,

padding=padding,

groups=num_groups,

use_cudnn=use_cudnn,

bias_attr=False)

bn = fluid.layers.batch_norm(input=conv)

if if_act:

return fluid.layers.relu6(bn)

else:

return bn

def shortcut(input, data_residual):

return fluid.layers.elementwise_add(input, data_residual)

def inverted_residual_unit(input,

num_in_filter,

num_filters,

ifshortcut,

stride,

filter_size,

padding,

expansion_factor):

num_expfilter = int(round(num_in_filter * expansion_factor))

channel_expand = conv_bn_layer(input=input,

num_filters=num_expfilter,

filter_size=1,

stride=1,

padding=0,

num_groups=1,

if_act=True)

bottleneck_conv = conv_bn_layer(input=channel_expand,

num_filters=num_expfilter,

filter_size=filter_size,

stride=stride,

padding=padding,

num_groups=num_expfilter,

if_act=True,

use_cudnn=False)

linear_out = conv_bn_layer(input=bottleneck_conv,

num_filters=num_filters,

filter_size=1,

stride=1,

padding=0,

num_groups=1,

if_act=False)

if ifshortcut:

out = shortcut(input=input, data_residual=linear_out)

return out

else:

return linear_out

def invresi_blocks(input, in_c, t, c, n, s, name=None):

first_block = inverted_residual_unit(input=input,

num_in_filter=in_c,

num_filters=c,

ifshortcut=False,

stride=s,

filter_size=3,

padding=1,

expansion_factor=t)

last_residual_block = first_block

last_c = c

for i in range(1, n):

last_residual_block = inverted_residual_unit(input=last_residual_block,

num_in_filter=last_c,

num_filters=c,

ifshortcut=True,

stride=1,

filter_size=3,

padding=1,

expansion_factor=t)

return last_residual_block

def net(input, class_dim, scale=1.0):

bottleneck_params_list = [

(1, 16, 1, 1),

(6, 24, 2, 2),

(6, 32, 3, 2),

(6, 64, 4, 2),

(6, 96, 3, 1),

(6, 160, 3, 2),

(6, 320, 1, 1),

]

# conv1

input = conv_bn_layer(input,

num_filters=int(32 * scale),

filter_size=3,

stride=2,

padding=1,

if_act=True)

# bottleneck sequences

i = 1

in_c = int(32 * scale)

for layer_setting in bottleneck_params_list:

t, c, n, s = layer_setting

i += 1

input = invresi_blocks(input=input,

in_c=in_c,

t=t,

c=int(c * scale),

n=n,

s=s,

name='conv' + str(i))

in_c = int(c * scale)

# last_conv

input = conv_bn_layer(input=input,

num_filters=int(1280 * scale) if scale > 1.0 else 1280,

filter_size=1,

stride=1,

padding=0,

if_act=True)

feature = fluid.layers.pool2d(input=input,

pool_size=7,

pool_stride=1,

pool_type='avg',

global_pooling=True)

net = fluid.layers.fc(input=feature,

size=class_dim,

act='softmax')

return net

```

创建一个`train.py`开始训练。首先导入相关的依赖包。

```python

import paddle as paddle

import paddle.dataset.cifar as cifar

import paddle.fluid as fluid

import mobilenet_v2

from visualdl import LogWriter

```

创建VisualDL的记录器,通过这个记录器可以记录每次训练的数据,并存储在`log/`目录下。

```python

# 创建记录器

log_writer = LogWriter(dir='log/', sync_cycle=10)

# 创建训练和测试记录数据工具

with log_writer.mode('train') as writer:

train_cost_writer = writer.scalar('cost')

train_acc_writer = writer.scalar('accuracy')

histogram = writer.histogram('histogram', num_buckets=50)

with log_writer.mode('test') as writer:

test_cost_writer = writer.scalar('cost')

test_acc_writer = writer.scalar('accuracy')

```

这里是定义一系列的操作,如定义输入层,获取MobileNet V2的分类器,克隆预测程序,定义优化方法。

```python

# 定义输入层

image = fluid.layers.data(name='image', shape=[3, 32, 32], dtype='float32')

label = fluid.layers.data(name='label', shape=[1], dtype='int64')

# 获取分类器

model = mobilenet_v2.net(image, 10)

# 获取损失函数和准确率函数

cost = fluid.layers.cross_entropy(input=model, label=label)

avg_cost = fluid.layers.mean(cost)

acc = fluid.layers.accuracy(input=model, label=label)

# 获取训练和测试程序

test_program = fluid.default_main_program().clone(for_test=True)

# 定义优化方法

optimizer = fluid.optimizer.AdamOptimizer(learning_rate=1e-3)

opts = optimizer.minimize(avg_cost)

```

获取CIFAR的训练数据和测试数据,并创建一个执行器,MobileNet V2这个模型虽然使用在手机上的,但是在训练起来却不是那么快,最好使用GPU进行训练,要不是相当的慢。

```python

# 获取CIFAR数据

train_reader = paddle.batch(cifar.train10(), batch_size=32)

test_reader = paddle.batch(cifar.test10(), batch_size=32)

# 定义一个使用CPU的执行器

place = fluid.CUDAPlace(0)

# place = fluid.CPUPlace()

exe = fluid.Executor(place)

# 进行参数初始化

exe.run(fluid.default_startup_program())

# 定义输入数据维度

feeder = fluid.DataFeeder(place=place, feed_list=[image, label])

```

这里从初始化程序中获取全部参数的名称,用于之后训练过程中输出参数的值,并记录到VisualDL中。

```python

# 定义日志的开始位置和获取参数名称

train_step = 0

test_step = 0

params_name = fluid.default_startup_program().global_block().all_parameters()[0].name

```

开始训练模型,在训练过程中,把训练时的损失值保存到`train_cost_writer`中,把训练时的准确率保存到`train_acc_writer`中,把训练过程中的参数变化保存到`histogram`中。把测试时的损失值保存到`test_cost_writer`中,把测试时的准确率保存到`test_acc_writer`中。

```python

# 训练10次

for pass_id in range(10):

# 进行训练

for batch_id, data in enumerate(train_reader()):

train_cost, train_acc, params = exe.run(program=fluid.default_main_program(),

feed=feeder.feed(data),

fetch_list=[avg_cost, acc, params_name])

# 保存训练的日志数据

train_step += 1

train_cost_writer.add_record(train_step, train_cost[0])

train_acc_writer.add_record(train_step, train_acc[0])

histogram.add_record(train_step, params.flatten())

# 每100个batch打印一次信息

if batch_id % 100 == 0:

print('Pass:%d, Batch:%d, Cost:%0.5f, Accuracy:%0.5f' %

(pass_id, batch_id, train_cost[0], train_acc[0]))

# 进行测试

test_accs = []

test_costs = []

for batch_id, data in enumerate(test_reader()):

test_cost, test_acc = exe.run(program=test_program,

feed=feeder.feed(data),

fetch_list=[avg_cost, acc])

# 保存测试的日志数据

test_step += 1

test_cost_writer.add_record(test_step, test_cost[0])

test_acc_writer.add_record(test_step, test_acc[0])

test_accs.append(test_acc[0])

test_costs.append(test_cost[0])

# 求测试结果的平均值

test_cost = (sum(test_costs) / len(test_costs))

test_acc = (sum(test_accs) / len(test_accs))

print('Test:%d, Cost:%0.5f, Accuracy:%0.5f' % (pass_id, test_cost, test_acc))

```

训练时输出的信息:

```

Pass:0, Batch:0, Cost:2.79566, Accuracy:0.03125

Pass:0, Batch:100, Cost:2.48199, Accuracy:0.15625

Pass:0, Batch:200, Cost:2.49757, Accuracy:0.18750

Pass:0, Batch:300, Cost:2.10605, Accuracy:0.28125

Pass:0, Batch:400, Cost:2.24151, Accuracy:0.15625

Pass:0, Batch:500, Cost:1.99807, Accuracy:0.21875

Pass:0, Batch:600, Cost:1.92178, Accuracy:0.34375

Pass:0, Batch:700, Cost:1.81583, Accuracy:0.28125

Pass:0, Batch:800, Cost:2.22559, Accuracy:0.25000

Pass:0, Batch:900, Cost:1.79611, Accuracy:0.34375

Pass:0, Batch:1000, Cost:2.00520, Accuracy:0.25000

```

训练结束之后,启动VisualDL工具,指定日志文件的目录和端口号。

```

visualdl --logdir=log/ --port=8080

```

访问网页地址:`http://localhost:8080/`,我们会得到以下的图片。

- 训练时的准确率和损失值的变化,从这些图片可以看到模型正在收敛,准确率在不断提升。

- 下图是使用测试集的准确率和损失值,从图中可以看出后期的测试情况准确率在下降,损失值在增大,也对比上图训练的准确率还在上升,证明模型出现过拟合的情况。

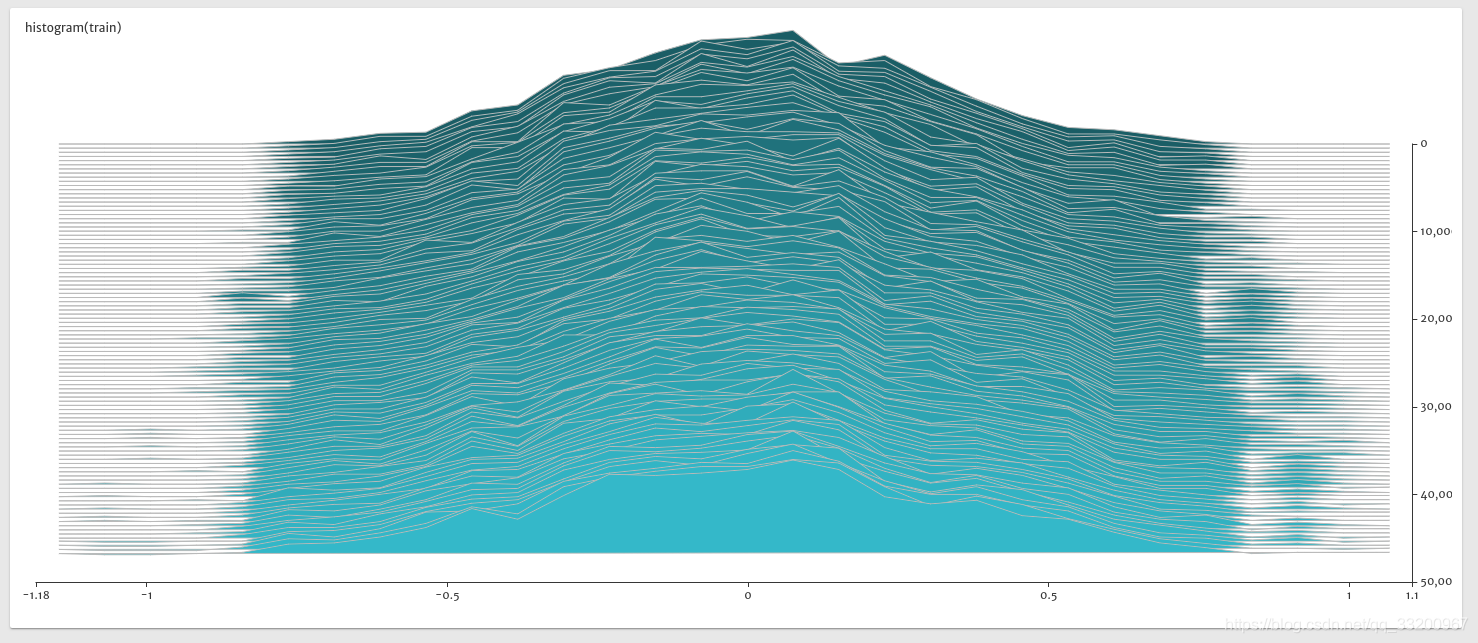

- 下图是训练是参数的histogram图,从图中可以看出参数正在趋于稳定,同时的没有出现异常值,如极大值或者极小值。

本章关于VisualDL的使用就介绍到这里,读者在实际开发中可以使用VisualDL,通过利用VisualDL给予的训练可视化,不断优化模型。

同步到百度AI Studio平台:http://aistudio.baidu.com/#/projectdetail/38856

同步到科赛网K-Lab平台:https://www.kesci.com/home/project/5c3f495589f4aa002b845d6b

项目代码GitHub地址:https://github.com/yeyupiaoling/LearnPaddle2/tree/master/note10

**注意:** 最新代码以GitHub上的为准

# 参考资料

1. https://blog.csdn.net/qq_33200967/article/details/79127175

2. https://github.com/PaddlePaddle/VisualDL

3. https://www.jianshu.com/p/4c9404d4998c

================================================

FILE: note10/mobilenet_v2.py

================================================

import paddle.fluid as fluid

def conv_bn_layer(input, filter_size, num_filters, stride, padding, num_groups=1, if_act=True, use_cudnn=True):

conv = fluid.layers.conv2d(input=input,

num_filters=num_filters,

filter_size=filter_size,

stride=stride,

padding=padding,

groups=num_groups,

use_cudnn=use_cudnn,

bias_attr=False)

bn = fluid.layers.batch_norm(input=conv)

if if_act:

return fluid.layers.relu6(bn)

else:

return bn

def shortcut(input, data_residual):

return fluid.layers.elementwise_add(input, data_residual)

def inverted_residual_unit(input,

num_in_filter,

num_filters,

ifshortcut,

stride,

filter_size,

padding,

expansion_factor):

num_expfilter = int(round(num_in_filter * expansion_factor))

channel_expand = conv_bn_layer(input=input,

num_filters=num_expfilter,

filter_size=1,

stride=1,

padding=0,

num_groups=1,

if_act=True)

bottleneck_conv = conv_bn_layer(input=channel_expand,

num_filters=num_expfilter,

filter_size=filter_size,

stride=stride,

padding=padding,

num_groups=num_expfilter,

if_act=True,

use_cudnn=False)

linear_out = conv_bn_layer(input=bottleneck_conv,

num_filters=num_filters,

filter_size=1,

stride=1,

padding=0,

num_groups=1,

if_act=False)

if ifshortcut:

out = shortcut(input=input, data_residual=linear_out)

return out

else:

return linear_out

def invresi_blocks(input, in_c, t, c, n, s, name=None):

first_block = inverted_residual_unit(input=input,

num_in_filter=in_c,

num_filters=c,

ifshortcut=False,

stride=s,

filter_size=3,

padding=1,

expansion_factor=t)

last_residual_block = first_block

last_c = c

for i in range(1, n):

last_residual_block = inverted_residual_unit(input=last_residual_block,

num_in_filter=last_c,

num_filters=c,

ifshortcut=True,

stride=1,

filter_size=3,

padding=1,

expansion_factor=t)

return last_residual_block

def net(input, class_dim, scale=1.0):

bottleneck_params_list = [

(1, 16, 1, 1),

(6, 24, 2, 2),

(6, 32, 3, 2),

(6, 64, 4, 2),

(6, 96, 3, 1),

(6, 160, 3, 2),

(6, 320, 1, 1),

]

# conv1

input = conv_bn_layer(input,

num_filters=int(32 * scale),

filter_size=3,

stride=2,

padding=1,

if_act=True)

# bottleneck sequences

i = 1

in_c = int(32 * scale)

for layer_setting in bottleneck_params_list:

t, c, n, s = layer_setting

i += 1

input = invresi_blocks(input=input,

in_c=in_c,

t=t,

c=int(c * scale),

n=n,

s=s,

name='conv' + str(i))

in_c = int(c * scale)

# last_conv

input = conv_bn_layer(input=input,

num_filters=int(1280 * scale) if scale > 1.0 else 1280,

filter_size=1,

stride=1,

padding=0,

if_act=True)

feature = fluid.layers.pool2d(input=input,

pool_size=7,

pool_stride=1,

pool_type='avg',

global_pooling=True)

net = fluid.layers.fc(input=feature,

size=class_dim,

act='softmax')

return net

================================================

FILE: note10/test_visualdl.py

================================================

# 导入VisualDL的包

from visualdl import LogWriter

# 创建一个LogWriter,第一个参数是指定存放数据的路径,

# 第二个参数是指定多少次写操作执行一次内存到磁盘的数据持久化

logw = LogWriter("./random_log", sync_cycle=10000)

# 创建训练和测试的scalar图,

# mode是标注线条的名称,

# scalar标注的是指定这个组件的tag

with logw.mode('train') as logger:

scalar0 = logger.scalar("scratch/scalar")

with logw.mode('test') as logger:

scalar1 = logger.scalar("scratch/scalar")

# 读取数据

for step in range(1000):

scalar0.add_record(step, step * 1. / 1000)

scalar1.add_record(step, 1. - step * 1. / 1000)

================================================

FILE: note10/train.py

================================================

import mobilenet_v2

import paddle as paddle

import paddle.dataset.cifar as cifar

import paddle.fluid as fluid

from visualdl import LogWriter

# 创建记录器

log_writer = LogWriter(dir='log/', sync_cycle=10)

# 创建训练和测试记录数据工具

with log_writer.mode('train') as writer:

train_cost_writer = writer.scalar('cost')

train_acc_writer = writer.scalar('accuracy')

histogram = writer.histogram('histogram', num_buckets=50)

with log_writer.mode('test') as writer:

test_cost_writer = writer.scalar('cost')

test_acc_writer = writer.scalar('accuracy')

# 定义输入层

image = fluid.layers.data(name='image', shape=[3, 32, 32], dtype='float32')

label = fluid.layers.data(name='label', shape=[1], dtype='int64')

# 获取分类器

model = mobilenet_v2.net(image, 10)

# 获取损失函数和准确率函数

cost = fluid.layers.cross_entropy(input=model, label=label)

avg_cost = fluid.layers.mean(cost)

acc = fluid.layers.accuracy(input=model, label=label)

# 获取训练和测试程序

test_program = fluid.default_main_program().clone(for_test=True)

# 定义优化方法

optimizer = fluid.optimizer.AdamOptimizer(learning_rate=1e-3)

opts = optimizer.minimize(avg_cost)

# 获取CIFAR数据

train_reader = paddle.batch(cifar.train10(), batch_size=32)

test_reader = paddle.batch(cifar.test10(), batch_size=32)

# 定义一个使用CPU的执行器

place = fluid.CUDAPlace(0)

# place = fluid.CPUPlace()

exe = fluid.Executor(place)

# 进行参数初始化

exe.run(fluid.default_startup_program())

# 定义输入数据维度

feeder = fluid.DataFeeder(place=place, feed_list=[image, label])

# 定义日志的开始位置和获取参数名称

train_step = 0

test_step = 0

params_name = fluid.default_startup_program().global_block().all_parameters()[0].name

# 训练10次

for pass_id in range(10):

# 进行训练

for batch_id, data in enumerate(train_reader()):

train_cost, train_acc, params = exe.run(program=fluid.default_main_program(),

feed=feeder.feed(data),

fetch_list=[avg_cost, acc, params_name])

# 保存训练的日志数据

train_step += 1

train_cost_writer.add_record(train_step, train_cost[0])

train_acc_writer.add_record(train_step, train_acc[0])

histogram.add_record(train_step, params.flatten())

# 每100个batch打印一次信息

if batch_id % 100 == 0:

print('Pass:%d, Batch:%d, Cost:%0.5f, Accuracy:%0.5f' %

(pass_id, batch_id, train_cost[0], train_acc[0]))

# 进行测试

test_accs = []

test_costs = []

for batch_id, data in enumerate(test_reader()):

test_cost, test_acc = exe.run(program=test_program,

feed=feeder.feed(data),

fetch_list=[avg_cost, acc])

# 保存测试的日志数据

test_step += 1

test_cost_writer.add_record(test_step, test_cost[0])

test_acc_writer.add_record(test_step, test_acc[0])

test_accs.append(test_acc[0])

test_costs.append(test_cost[0])

# 求测试结果的平均值

test_cost = (sum(test_costs) / len(test_costs))

test_acc = (sum(test_accs) / len(test_accs))

print('Test:%d, Cost:%0.5f, Accuracy:%0.5f' % (pass_id, test_cost, test_acc))

================================================

FILE: note11/README.md

================================================

@[TOC]

GitHub地址:https://github.com/yeyupiaoling/LearnPaddle2/tree/master/note11

# 前言

本章将介绍如何使用PaddlePaddle训练自己的图片数据集,在之前的图像数据集中,我们都是使用PaddlePaddle自带的数据集,本章我们就来学习如何让PaddlePaddle训练我们自己的图片数据集。

# 爬取图像

在本章中,我们使用的是自己的图片数据集,所以我们需要弄一堆图像来制作训练的数据集。下面我们就编写一个爬虫程序,让其帮我们从百度图片中爬取相应类别的图片。

创建一个`download_image.py`文件用于编写爬取图片程序。首先导入所需的依赖包。

```python

import re

import uuid

import requests

import os

import numpy

import imghdr

from PIL import Image

```

然后编写一个下载图片的函数,这个是程序核心代码。参数是下载图片的关键、保存的名字、下载图片的数量。关键字是百度搜索图片的关键。

```python

# 获取百度图片下载图片

def download_image(key_word, save_name, download_max):

download_sum = 0

str_gsm = '80'

# 把每个类别的图片存放在单独一个文件夹中

save_path = 'images' + '/' + save_name

if not os.path.exists(save_path):

os.makedirs(save_path)

while download_sum < download_max:

# 下载次数超过指定值就停止下载

if download_sum >= download_max:

break

str_pn = str(download_sum)

# 定义百度图片的路径

url = 'http://image.baidu.com/search/flip?tn=baiduimage&ie=utf-8&' \

'word=' + key_word + '&pn=' + str_pn + '&gsm=' + str_gsm + '&ct=&ic=0&lm=-1&width=0&height=0'

print('正在下载 %s 的第 %d 张图片.....' % (key_word, download_sum))

try:

# 获取当前页面的源码

result = requests.get(url, timeout=30).text

# 获取当前页面的图片URL

img_urls = re.findall('"objURL":"(.*?)",', result, re.S)

if len(img_urls) < 1:

break

# 把这些图片URL一个个下载

for img_url in img_urls:

# 获取图片内容

img = requests.get(img_url, timeout=30)

img_name = save_path + '/' + str(uuid.uuid1()) + '.jpg'

# 保存图片

with open(img_name, 'wb') as f:

f.write(img.content)

download_sum += 1

if download_sum >= download_max:

break

except Exception as e:

print('【错误】当前图片无法下载,%s' % e)

download_sum += 1

continue

print('下载完成')

```

图片下载完成之后,需要删除一家损坏的图片,因为在下载的过程中,由于图片本身的问题或者下载过程造成的图片损坏,需要把这些已经损坏的图片上传。下面的函数就是删除所有损坏的图片,根据图像数据集的目录读取获取所有图片文件的路径,然后使用`imghdr`工具获取图片的类型是否为`png`或者`jpg`来判断图片文件是否完整,最后再删除根据图片的通道数据来删除灰度图片。

```python

# 删除不是JPEG或者PNG格式的图片

def delete_error_image(father_path):

# 获取父级目录的所有文件以及文件夹

try:

image_dirs = os.listdir(father_path)

for image_dir in image_dirs:

image_dir = os.path.join(father_path, image_dir)

# 如果是文件夹就继续获取文件夹中的图片

if os.path.isdir(image_dir):

images = os.listdir(image_dir)

for image in images:

image = os.path.join(image_dir, image)

try:

# 获取图片的类型

image_type = imghdr.what(image)

# 如果图片格式不是JPEG同时也不是PNG就删除图片

if image_type is not 'jpeg' and image_type is not 'png':

os.remove(image)

print('已删除:%s' % image)

continue

# 删除灰度图

img = numpy.array(Image.open(image))

if len(img.shape) is 2:

os.remove(image)

print('已删除:%s' % image)

except:

os.remove(image)

print('已删除:%s' % image)

except:

pass

```

最后在main入口中通过调用两个函数来完成下载图像数据集,使用中文进行百度搜索图片,使用英文是为了出现中文路径导致图片读取错误。

```python

if __name__ == '__main__':

# 定义要下载的图片中文名称和英文名称,ps:英文名称主要是为了设置文件夹名

key_words = {'西瓜': 'watermelon', '哈密瓜': 'cantaloupe',

'樱桃': 'cherry', '苹果': 'apple', '黄瓜': 'cucumber', '胡萝卜': 'carrot'}

# 每个类别下载一千个

max_sum = 500

for key_word in key_words:

save_name = key_words[key_word]

download_image(key_word, save_name, max_sum)

# 删除错误图片

delete_error_image('images/')

```

输出信息:

```

正在下载 哈密瓜 的第 0 张图片.....

【错误】当前图片无法下载,HTTPConnectionPool(host='www.boyingsj.com', port=80): Read timed out.

正在下载 哈密瓜 的第 10 张图片.....

```

**注意:** 下载处理完成之后,还可能存在其他杂乱的图片,所以还需要我们手动删除这些不属于这个类别的图片,这才算完成图像数据集的制作。

# 创建图像列表

创建一个`create_data_list.py`文件,在这个程序中,我们只要把爬取保存图片的路径的文件夹路径传进去就可以了,生成固定格式的列表,格式为`图片的路径 <Tab> 图片类别的标签`:

```python

import json

import os

def create_data_list(data_root_path):

with open(data_root_path + "test.list", 'w') as f:

pass

with open(data_root_path + "train.list", 'w') as f:

pass

# 所有类别的信息

class_detail = []

# 获取所有类别

class_dirs = os.listdir(data_root_path)

# 类别标签

class_label = 0

# 获取总类别的名称

father_paths = data_root_path.split('/')

while True:

if father_paths[len(father_paths) - 1] == '':

del father_paths[len(father_paths) - 1]

else:

break

father_path = father_paths[len(father_paths) - 1]

all_class_images = 0

other_file = 0

# 读取每个类别

for class_dir in class_dirs:

if class_dir == 'test.list' or class_dir == "train.list" or class_dir == 'readme.json':

other_file += 1

continue

print('正在读取类别:%s' % class_dir)

# 每个类别的信息

class_detail_list = {}

test_sum = 0

trainer_sum = 0

# 统计每个类别有多少张图片

class_sum = 0

# 获取类别路径

path = data_root_path + "/" + class_dir

# 获取所有图片

img_paths = os.listdir(path)

for img_path in img_paths:

# 每张图片的路径

name_path = class_dir + '/' + img_path

# 如果不存在这个文件夹,就创建

if not os.path.exists(data_root_path):

os.makedirs(data_root_path)

# 每10张图片取一个做测试数据

if class_sum % 10 == 0:

test_sum += 1

with open(data_root_path + "test.list", 'a') as f:

f.write(name_path + "\t%d" % class_label + "\n")

else:

trainer_sum += 1

with open(data_root_path + "train.list", 'a') as f:

f.write(name_path + "\t%d" % class_label + "\n")

class_sum += 1

all_class_images += 1

# 说明的json文件的class_detail数据

class_detail_list['class_name'] = class_dir

class_detail_list['class_label'] = class_label

class_detail_list['class_test_images'] = test_sum

class_detail_list['class_trainer_images'] = trainer_sum

class_detail.append(class_detail_list)

class_label += 1

# 获取类别数量

all_class_sum = len(class_dirs) - other_file

# 说明的json文件信息

readjson = {}

readjson['all_class_name'] = father_path

readjson['all_class_sum'] = all_class_sum

readjson['all_class_images'] = all_class_images

readjson['class_detail'] = class_detail

jsons = json.dumps(readjson, sort_keys=True, indent=4, separators=(',', ': '))

with open(data_root_path + "readme.json", 'w') as f:

f.write(jsons)

print('图像列表已生成')

```

最后执行就可以生成图像的列表。

```python

if __name__ == '__main__':

# 把生产的数据列表都放在自己的总类别文件夹中

data_root_path = "images/"

create_data_list(data_root_path)

```

输出信息:

```

正在读取类别:apple

正在读取类别:cantaloupe

正在读取类别:carrot

正在读取类别:cherry

正在读取类别:cucumber

正在读取类别:watermelon

图像列表已生成

```

运行这个程序之后,会生成在data文件夹中生成一个单独的大类文件夹,比如我们这次是使用到蔬菜类,所以我生成一个`vegetables`文件夹,在这个文件夹下有3个文件:

|文件名|作用|

|:---:|:---:|

|trainer.list|用于训练的图像列表|

|test.list|用于测试的图像列表|

|readme.json|该数据集的json格式的说明,方便以后使用|

`readme.json`文件的格式如下,可以很清楚看到整个数据的图像数量,总类别名称和类别数量,还有每个类对应的标签,类别的名字,该类别的测试数据和训练数据的数量:

```json

{

"all_class_images": 2200,

"all_class_name": "images",

"all_class_sum": 2,

"class_detail": [

{

"class_label": 1,

"class_name": "watermelon",

"class_test_images": 110,

"class_trainer_images": 990

},

{

"class_label": 2,

"class_name": "cantaloupe",

"class_test_images": 110,

"class_trainer_images": 990

}

]

}

```

# 定义模型

创建一个`mobilenet_v1.py`文件,在本章我们使用的是MobileNet神经网络,MobileNet是Google针对手机等嵌入式设备提出的一种轻量级的深层神经网络,它的核心思想就是卷积核的巧妙分解,可以有效减少网络参数,从而达到减小训练时网络的模型。因为太大的模型模型文件是不利于移植到移动设备上的,比如我们把模型文件迁移到Android手机应用上,那么模型文件的大小就直接影响应用安装包的大小。以下就是使用PaddlePaddle定义的MobileNet神经网络:

```python

import paddle.fluid as fluid

def conv_bn_layer(input, filter_size, num_filters, stride,

padding, channels=None, num_groups=1, act='relu', use_cudnn=True):

conv = fluid.layers.conv2d(input=input,

num_filters=num_filters,

filter_size=filter_size,

stride=stride,

padding=padding,

groups=num_groups,

act=None,

use_cudnn=use_cudnn,

bias_attr=False)

return fluid.layers.batch_norm(input=conv, act=act)

```

```python

def depthwise_separable(input, num_filters1, num_filters2, num_groups, stride, scale):

depthwise_conv = conv_bn_layer(input=input,

filter_size=3,

num_filters=int(num_filters1 * scale),

stride=stride,

padding=1,

num_groups=int(num_groups * scale),

use_cudnn=False)

pointwise_conv = conv_bn_layer(input=depthwise_conv,

filter_size=1,

num_filters=int(num_filters2 * scale),

stride=1,

padding=0)

return pointwise_conv

```

```python

def net(input, class_dim, scale=1.0):

# conv1: 112x112

input = conv_bn_layer(input=input,

filter_size=3,

channels=3,

num_filters=int(32 * scale),

stride=2,

padding=1)

# 56x56

input = depthwise_separable(input=input,

num_filters1=32,

num_filters2=64,

num_groups=32,

stride=1,

scale=scale)

input = depthwise_separable(input=input,

num_filters1=64,

num_filters2=128,

num_groups=64,

stride=2,

scale=scale)

# 28x28

input = depthwise_separable(input=input,

num_filters1=128,

num_filters2=128,

num_groups=128,

stride=1,

scale=scale)

input = depthwise_separable(input=input,

num_filters1=128,

num_filters2=256,

num_groups=128,

stride=2,

scale=scale)

# 14x14

input = depthwise_separable(input=input,

num_filters1=256,

num_filters2=256,

num_groups=256,

stride=1,

scale=scale)

input = depthwise_separable(input=input,

num_filters1=256,

num_filters2=512,

num_groups=256,

stride=2,

scale=scale)

# 14x14

for i in range(5):

input = depthwise_separable(input=input,

num_filters1=512,

num_filters2=512,

num_groups=512,

stride=1,

scale=scale)

# 7x7

input = depthwise_separable(input=input,

num_filters1=512,

num_filters2=1024,

num_groups=512,

stride=2,

scale=scale)

input = depthwise_separable(input=input,

num_filters1=1024,

num_filters2=1024,

num_groups=1024,

stride=1,

scale=scale)

feature = fluid.layers.pool2d(input=input,

pool_size=0,

pool_stride=1,

pool_type='avg',

global_pooling=True)

net = fluid.layers.fc(input=feature,

size=class_dim,

act='softmax')

return net

```

# 定义数据读取

创建一个`reader.py`文件,这个程序就是用户训练和测试的使用读取数据的。训练的时候,通过这个程序从本地读取图片,然后通过一系列的预处理操作,最后转换成训练所需的Numpy数组。

首先导入所需的包,其中`cpu_count`是获取当前计算机有多少个CPU,然后使用多线程读取数据。

```python

import os

import random

from multiprocessing import cpu_count

import numpy as np

import paddle

from PIL import Image

```

首先定义一个`train_mapper()`函数,这个函数是根据传入进来的图片路径来对图片进行预处理,比如训练的时候需要统一图片的大小,同时也使用多种的数据增强的方式,如水平翻转、垂直翻转、角度翻转、随机裁剪,这些方式都可以让有限的图片数据集在训练的时候成倍的增加。最后因为PIL打开图片存储顺序为H(高度),W(宽度),C(通道),PaddlePaddle要求数据顺序为CHW,所以需要转换顺序。最后返回的是处理后的图片数据和其对应的标签。

```python

# 训练图片的预处理

def train_mapper(sample):

img_path, label, crop_size, resize_size = sample

try:

img = Image.open(img_path)

# 统一图片大小

img = img.resize((resize_size, resize_size), Image.ANTIALIAS)

# 随机水平翻转

r1 = random.random()

if r1 > 0.5:

img = img.transpose(Image.FLIP_LEFT_RIGHT)

# 随机垂直翻转

r2 = random.random()

if r2 > 0.5:

img = img.transpose(Image.FLIP_TOP_BOTTOM)

# 随机角度翻转

r3 = random.randint(-3, 3)

img = img.rotate(r3, expand=False)

# 随机裁剪

r4 = random.randint(0, int(resize_size - crop_size))

r5 = random.randint(0, int(resize_size - crop_size))

box = (r4, r5, r4 + crop_size, r5 + crop_size)

img = img.crop(box)

# 把图片转换成numpy值

img = np.array(img).astype(np.float32)

# 转换成CHW

img = img.transpose((2, 0, 1))

# 转换成BGR

img = img[(2, 1, 0), :, :] / 255.0

return img, int(label)

except:

print("%s 该图片错误,请删除该图片并重新创建图像数据列表" % img_path)

```

这个`train_reader()`函数是根据已经创建的图像列表解析得到每张图片的路径和其他对应的标签,然后使用`paddle.reader.xmap_readers()`把数据传递给上面定义的`train_mapper()`函数进行处理,最后得到一个训练所需的reader。

```python

# 获取训练的reader

def train_reader(train_list_path, crop_size, resize_size):

father_path = os.path.dirname(train_list_path)

def reader():

with open(train_list_path, 'r') as f:

lines = f.readlines()

# 打乱图像列表

np.random.shuffle(lines)

# 开始获取每张图像和标签

for line in lines:

img, label = line.split('\t')

img = os.path.join(father_path, img)

yield img, label, crop_size, resize_size

return paddle.reader.xmap_readers(train_mapper, reader, cpu_count(), 102400)

```

这是一个测试数据的预处理函数`test_mapper()`,这个没有做太多处理,因为测试的数据不需要数据增强操作,只需统一图片大小和设置好图片的通过顺序和数据类型即可。

```python

# 测试图片的预处理

def test_mapper(sample):

img, label, crop_size = sample

img = Image.open(img)

# 统一图像大小

img = img.resize((crop_size, crop_size), Image.ANTIALIAS)

# 转换成numpy值

img = np.array(img).astype(np.float32)

# 转换成CHW

img = img.transpose((2, 0, 1))

# 转换成BGR

img = img[(2, 1, 0), :, :] / 255.0

return img, int(label)

```

这个是测试的reader函数`test_reader()`,这个跟训练的reader函数定义一样。

```python

# 测试的图片reader

def test_reader(test_list_path, crop_size):

father_path = os.path.dirname(test_list_path)

def reader():

with open(test_list_path, 'r') as f:

lines = f.readlines()

for line in lines:

img, label = line.split('\t')

img = os.path.join(father_path, img)

yield img, label, crop_size

return paddle.reader.xmap_readers(test_mapper, reader, cpu_count(), 1024)

```

# 训练模型

万事俱备,只等训练了。关于PaddlePaddle训练流程,我们已经非常熟悉了,那么我们就简单地过一遍。

创建`train.py`文件,首先导入所需的包,其中包括我们定义的MobileNet模型和数据读取程序:

```python

import os

import shutil

import mobilenet_v1

import paddle as paddle

import reader

import paddle.fluid as fluid

```

然后定义数据输入层,这次我们使用的是图片大小是224,这比之前使用的CIFAR数据集的32大小要大很多,所以训练其他会慢不少。至于`resize_size`是用于统一缩放到这个大小,然后再随机裁剪成`crop_size`大小,`crop_size`才是最终训练图片的大小。

```python

crop_size = 224

resize_size = 250

# 定义输入层

image = fluid.layers.data(name='image', shape=[3, crop_size, crop_size], dtype='float32')

label = fluid.layers.data(name='label', shape=[1], dtype='int64')

```

接着获取MobileNet网络的分类器,传入的第一个参数就是上面定义的输入层,第二个是分类的类别大小,比如我们这次爬取的图像类别数量是6个。

```python

# 获取分类器,因为这次只爬取了6个类别的图片,所以分类器的类别大小为6

model = mobilenet_v1.net(image, 6)

```

再接着是获取损失函数和平均准确率函数,还有测试程序和优化方法,这个优化方法我加了正则,因为爬取的图片数量太少,在训练容易过拟合,所以加上正则一定程度上可以抑制过拟合。

```python

# 获取损失函数和准确率函数

cost = fluid.layers.cross_entropy(input=model, label=label)

avg_cost = fluid.layers.mean(cost)

acc = fluid.layers.accuracy(input=model, label=label)

# 获取训练和测试程序

test_program = fluid.default_main_program().clone(for_test=True)

# 定义优化方法

optimizer = fluid.optimizer.AdamOptimizer(learning_rate=1e-3,

regularization=fluid.regularizer.L2DecayRegularizer(1e-4))

opts = optimizer.minimize(avg_cost)

```

这里就是获取训练测试是所以想的数据读取reader,通过使用`paddle.batch()`函数可以把多条数据打包成一个批次,训练的时候是按照一个个批次训练的。

```python

# 获取自定义数据

train_reader = paddle.batch(reader=reader.train_reader('images/train.list', crop_size, resize_size), batch_size=32)

test_reader = paddle.batch(reader=reader.test_reader('images/test.list', crop_size), batch_size=32)

```

执行训练之前,还需要创建一个执行器,建议使用GPU进行训练,因为我们训练的图片比较大,所以使用CPU训练速度会相当的慢。

```python

# 定义一个使用GPU的执行器

place = fluid.CUDAPlace(0)

# place = fluid.CPUPlace()

exe = fluid.Executor(place)

# 进行参数初始化

exe.run(fluid.default_startup_program())

# 定义输入数据维度

feeder = fluid.DataFeeder(place=place, feed_list=[image, label])

```

最后终于可以执行训练了,这里跟在前些章节都几乎一样,就不重复介绍了。

```python

# 训练100次

for pass_id in range(100):

# 进行训练

for batch_id, data in enumerate(train_reader()):

train_cost, train_acc = exe.run(program=fluid.default_main_program(),

feed=feeder.feed(data),

fetch_list=[avg_cost, acc])

# 每100个batch打印一次信息

if batch_id % 100 == 0:

print('Pass:%d, Batch:%d, Cost:%0.5f, Accuracy:%0.5f' %

(pass_id, batch_id, train_cost[0], train_acc[0]))

# 进行测试

test_accs = []

test_costs = []

for batch_id, data in enumerate(test_reader()):

test_cost, test_acc = exe.run(program=test_program,

feed=feeder.feed(data),

fetch_list=[avg_cost, acc])

test_accs.append(test_acc[0])

test_costs.append(test_cost[0])

# 求测试结果的平均值

test_cost = (sum(test_costs) / len(test_costs))

test_acc = (sum(test_accs) / len(test_accs))

print('Test:%d, Cost:%0.5f, Accuracy:%0.5f' % (pass_id, test_cost, test_acc))

```

训练的过程中可以保存预测模型,用于之后的预测。笔者一般是每一个pass保存一次模型。

```python

# 保存预测模型

save_path = 'infer_model/'

# 删除旧的模型文件

shutil.rmtree(save_path, ignore_errors=True)

# 创建保持模型文件目录

os.makedirs(save_path)

# 保存预测模型

fluid.io.save_inference_model(save_path, feeded_var_names=[image.name], target_vars=[model], executor=exe)

```

训练输出的信息:

```

Pass:0, Batch:0, Cost:1.84754, Accuracy:0.15625

Test:0, Cost:4.66276, Accuracy:0.17857

Pass:1, Batch:0, Cost:1.04008, Accuracy:0.59375

Test:1, Cost:1.23828, Accuracy:0.54464

Pass:2, Batch:0, Cost:1.04778, Accuracy:0.65625

Test:2, Cost:0.99189, Accuracy:0.64286

Pass:3, Batch:0, Cost:1.21555, Accuracy:0.65625

Test:3, Cost:1.01552, Accuracy:0.57589

Pass:4, Batch:0, Cost:0.64620, Accuracy:0.81250

Test:4, Cost:1.19264, Accuracy:0.63393

```

# 预测图片

经过上面训练后,得到了一个预测模型,下面我们就使用一个预测模型来预测一些图片。

创建一个`infer.py`文件作为预测程序。首先导入所需的依赖包。

```python

import paddle.fluid as fluid

from PIL import Image

import numpy as np

```

创建一个执行器,这些不需要训练,所以可以使用CPU进行预测,速度不会太慢,当然,使用GPU的预测速度会更快一些。

```python

# 创建执行器

place = fluid.CPUPlace()

exe = fluid.Executor(place)

exe.run(fluid.default_startup_program())

```