Repository: yz93/LAVT-RIS

Branch: main

Commit: 1da0af9f21b6

Files: 59

Total size: 1.1 MB

Directory structure:

gitextract_240sk8kr/

├── LICENSE

├── README.md

├── args.py

├── bert/

│ ├── activations.py

│ ├── configuration_bert.py

│ ├── configuration_utils.py

│ ├── file_utils.py

│ ├── generation_utils.py

│ ├── modeling_bert.py

│ ├── modeling_utils.py

│ ├── tokenization_bert.py

│ ├── tokenization_utils.py

│ └── tokenization_utils_base.py

├── data/

│ └── dataset_refer_bert.py

├── demo_inference.py

├── lib/

│ ├── _utils.py

│ ├── backbone.py

│ ├── mask_predictor.py

│ ├── mmcv_custom/

│ │ ├── __init__.py

│ │ └── checkpoint.py

│ └── segmentation.py

├── refer/

│ ├── LICENSE

│ ├── Makefile

│ ├── README.md

│ ├── data/

│ │ └── README.md

│ ├── evaluation/

│ │ ├── __init__.py

│ │ ├── bleu/

│ │ │ ├── LICENSE

│ │ │ ├── __init__.py

│ │ │ ├── bleu.py

│ │ │ └── bleu_scorer.py

│ │ ├── cider/

│ │ │ ├── __init__.py

│ │ │ ├── cider.py

│ │ │ └── cider_scorer.py

│ │ ├── meteor/

│ │ │ ├── __init__.py

│ │ │ └── meteor.py

│ │ ├── readme.txt

│ │ ├── refEvaluation.py

│ │ ├── rouge/

│ │ │ ├── __init__.py

│ │ │ └── rouge.py

│ │ └── tokenizer/

│ │   ├── __init__.py

│ │   ├── ptbtokenizer.py

│ │   └── stanford-corenlp-3.4.1.jar

│ ├── external/

│ │ ├── README.md

│ │ ├── __init__.py

│ │ ├── _mask.pyx

│ │ ├── mask.py

│ │ ├── maskApi.c

│ │ └── maskApi.h

│ ├── pyEvalDemo.ipynb

│ ├── pyReferDemo.ipynb

│ ├── refer.py

│ ├── setup.py

│ └── test/

│   ├── sample_expressions_testA.json

│   └── sample_expressions_testB.json

├── requirements.txt

├── test.py

├── train.py

├── transforms.py

└── utils.py

================================================

FILE CONTENTS

================================================

================================================

FILE: LICENSE

================================================

GNU GENERAL PUBLIC LICENSE

Version 3, 29 June 2007

Copyright (C) 2007 Free Software Foundation, Inc.

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU General Public License is a free, copyleft license for

software and other kinds of works.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

the GNU General Public License is intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users. We, the Free Software Foundation, use the

GNU General Public License for most of our software; it applies also to

any other work released this way by its authors. You can apply it to

your programs, too.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

To protect your rights, we need to prevent others from denying you

these rights or asking you to surrender the rights. Therefore, you have

certain responsibilities if you distribute copies of the software, or if

you modify it: responsibilities to respect the freedom of others.

For example, if you distribute copies of such a program, whether

gratis or for a fee, you must pass on to the recipients the same

freedoms that you received. You must make sure that they, too, receive

or can get the source code. And you must show them these terms so they

know their rights.

Developers that use the GNU GPL protect your rights with two steps:

(1) assert copyright on the software, and (2) offer you this License

giving you legal permission to copy, distribute and/or modify it.

For the developers' and authors' protection, the GPL clearly explains

that there is no warranty for this free software. For both users' and

authors' sake, the GPL requires that modified versions be marked as

changed, so that their problems will not be attributed erroneously to

authors of previous versions.

Some devices are designed to deny users access to install or run

modified versions of the software inside them, although the manufacturer

can do so. This is fundamentally incompatible with the aim of

protecting users' freedom to change the software. The systematic

pattern of such abuse occurs in the area of products for individuals to

use, which is precisely where it is most unacceptable. Therefore, we

have designed this version of the GPL to prohibit the practice for those

products. If such problems arise substantially in other domains, we

stand ready to extend this provision to those domains in future versions

of the GPL, as needed to protect the freedom of users.

Finally, every program is threatened constantly by software patents.

States should not allow patents to restrict development and use of

software on general-purpose computers, but in those that do, we wish to

avoid the special danger that patents applied to a free program could

make it effectively proprietary. To prevent this, the GPL assures that

patents cannot be used to render the program non-free.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,

procedures, authorization keys, or other information required to install

and execute modified versions of a covered work in that User Product from

a modified version of its Corresponding Source. The information must

suffice to ensure that the continued functioning of the modified object

code is in no case prevented or interfered with solely because

modification has been made.

If you convey an object code work under this section in, or with, or

specifically for use in, a User Product, and the conveying occurs as

part of a transaction in which the right of possession and use of the

User Product is transferred to the recipient in perpetuity or for a

fixed term (regardless of how the transaction is characterized), the

Corresponding Source conveyed under this section must be accompanied

by the Installation Information. But this requirement does not apply

if neither you nor any third party retains the ability to install

modified object code on the User Product (for example, the work has

been installed in ROM).

The requirement to provide Installation Information does not include a

requirement to continue to provide support service, warranty, or updates

for a work that has been modified or installed by the recipient, or for

the User Product in which it has been modified or installed. Access to a

network may be denied when the modification itself materially and

adversely affects the operation of the network or violates the rules and

protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,

in accord with this section must be in a format that is publicly

documented (and with an implementation available to the public in

source code form), and must require no special password or key for

unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this

License by making exceptions from one or more of its conditions.

Additional permissions that are applicable to the entire Program shall

be treated as though they were included in this License, to the extent

that they are valid under applicable law. If additional permissions

apply only to part of the Program, that part may be used separately

under those permissions, but the entire Program remains governed by

this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option

remove any additional permissions from that copy, or from any part of

it. (Additional permissions may be written to require their own

removal in certain cases when you modify the work.) You may place

additional permissions on material, added by you to a covered work,

for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you

add to a covered work, you may (if authorized by the copyright holders of

that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the

terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or

author attributions in that material or in the Appropriate Legal

Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or

requiring that modified versions of such material be marked in

reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or

authors of the material; or

e) Declining to grant rights under trademark law for use of some

trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that

material by anyone who conveys the material (or modified versions of

it) with contractual assumptions of liability to the recipient, for

any liability that these contractual assumptions directly impose on

those licensors and authors.

All other non-permissive additional terms are considered "further

restrictions" within the meaning of section 10. If the Program as you

received it, or any part of it, contains a notice stating that it is

governed by this License along with a term that is a further

restriction, you may remove that term. If a license document contains

a further restriction but permits relicensing or conveying under this

License, you may add to a covered work material governed by the terms

of that license document, provided that the further restriction does

not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you

must place, in the relevant source files, a statement of the

additional terms that apply to those files, or a notice indicating

where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the

form of a separately written license, or stated as exceptions;

the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly

provided under this License. Any attempt otherwise to propagate or

modify it is void, and will automatically terminate your rights under

this License (including any patent licenses granted under the third

paragraph of section 11).

However, if you cease all violation of this License, then your

license from a particular copyright holder is reinstated (a)

provisionally, unless and until the copyright holder explicitly and

finally terminates your license, and (b) permanently, if the copyright

holder fails to notify you of the violation by some reasonable means

prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is

reinstated permanently if the copyright holder notifies you of the

violation by some reasonable means, this is the first time you have

received notice of violation of this License (for any work) from that

copyright holder, and you cure the violation prior to 30 days after

your receipt of the notice.

Termination of your rights under this section does not terminate the

licenses of parties who have received copies or rights from you under

this License. If your rights have been terminated and not permanently

reinstated, you do not qualify to receive new licenses for the same

material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or

run a copy of the Program. Ancillary propagation of a covered work

occurring solely as a consequence of using peer-to-peer transmission

to receive a copy likewise does not require acceptance. However,

nothing other than this License grants you permission to propagate or

modify any covered work. These actions infringe copyright if you do

not accept this License. Therefore, by modifying or propagating a

covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically

receives a license from the original licensors, to run, modify and

propagate that work, subject to this License. You are not responsible

for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an

organization, or substantially all assets of one, or subdividing an

organization, or merging organizations. If propagation of a covered

work results from an entity transaction, each party to that

transaction who receives a copy of the work also receives whatever

licenses to the work the party's predecessor in interest had or could

give under the previous paragraph, plus a right to possession of the

Corresponding Source of the work from the predecessor in interest, if

the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that

any patent claim is infringed by making, using, selling, offering for

sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this

License of the Program or a work on which the Program is based. The

work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims

owned or controlled by the contributor, whether already acquired or

hereafter acquired, that would be infringed by some manner, permitted

by this License, of making, using, or selling its contributor version,

but do not include claims that would be infringed only as a

consequence of further modification of the contributor version. For

purposes of this definition, "control" includes the right to grant

patent sublicenses in a manner consistent with the requirements of

this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free

patent license under the contributor's essential patent claims, to

make, use, sell, offer for sale, import and otherwise run, modify and

propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express

agreement or commitment, however denominated, not to enforce a patent

(such as an express permission to practice a patent or covenant not to

sue for patent infringement). To "grant" such a patent license to a

party means to make such an agreement or commitment not to enforce a

patent against the party.

If you convey a covered work, knowingly relying on a patent license,

and the Corresponding Source of the work is not available for anyone

to copy, free of charge and under the terms of this License, through a

publicly available network server or other readily accessible means,

then you must either (1) cause the Corresponding Source to be so

available, or (2) arrange to deprive yourself of the benefit of the

patent license for this particular work, or (3) arrange, in a manner

consistent with the requirements of this License, to extend the patent

license to downstream recipients. "Knowingly relying" means you have

actual knowledge that, but for the patent license, your conveying the

covered work in a country, or your recipient's use of the covered work

in a country, would infringe one or more identifiable patents in that

country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or

arrangement, you convey, or propagate by procuring conveyance of, a

covered work, and grant a patent license to some of the parties

receiving the covered work authorizing them to use, propagate, modify

or convey a specific copy of the covered work, then the patent license

you grant is automatically extended to all recipients of the covered

work and works based on it.

A patent license is "discriminatory" if it does not include within

the scope of its coverage, prohibits the exercise of, or is

conditioned on the non-exercise of one or more of the rights that are

specifically granted under this License. You may not convey a covered

work if you are a party to an arrangement with a third party that is

in the business of distributing software, under which you make payment

to the third party based on the extent of your activity of conveying

the work, and under which the third party grants, to any of the

parties who would receive the covered work from you, a discriminatory

patent license (a) in connection with copies of the covered work

conveyed by you (or copies made from those copies), or (b) primarily

for and in connection with specific products or compilations that

contain the covered work, unless you entered into that arrangement,

or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting

any implied license or other defenses to infringement that may

otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot convey a

covered work so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you may

not convey it at all. For example, if you agree to terms that obligate you

to collect a royalty for further conveying from those to whom you convey

the Program, the only way you could satisfy both those terms and this

License would be to refrain entirely from conveying the Program.

13. Use with the GNU Affero General Public License.

Notwithstanding any other provision of this License, you have

permission to link or combine any covered work with a work licensed

under version 3 of the GNU Affero General Public License into a single

combined work, and to convey the resulting work. The terms of this

License will continue to apply to the part which is the covered work,

but the special requirements of the GNU Affero General Public License,

section 13, concerning interaction through a network will apply to the

combination as such.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of

the GNU General Public License from time to time. Such new versions will

be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the

Program specifies that a certain numbered version of the GNU General

Public License "or any later version" applies to it, you have the

option of following the terms and conditions either of that numbered

version or of any later version published by the Free Software

Foundation. If the Program does not specify a version number of the

GNU General Public License, you may choose any version ever published

by the Free Software Foundation.

If the Program specifies that a proxy can decide which future

versions of the GNU General Public License can be used, that proxy's

public statement of acceptance of a version permanently authorizes you

to choose that version for the Program.

Later license versions may give you additional or different

permissions. However, no additional obligations are imposed on any

author or copyright holder as a result of your choosing to follow a

later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided

above cannot be given local legal effect according to their terms,

reviewing courts shall apply local law that most closely approximates

an absolute waiver of all civil liability in connection with the

Program, unless a warranty or assumption of liability accompanies a

copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

state the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

<one line to give the program's name and a brief idea of what it does.>

Copyright (C) <year> <name of author>

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see <https://www.gnu.org/licenses/>.

Also add information on how to contact you by electronic and paper mail.

If the program does terminal interaction, make it output a short

notice like this when it starts in an interactive mode:

<program> Copyright (C) <year> <name of author>

This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

This is free software, and you are welcome to redistribute it

under certain conditions; type `show c' for details.

The hypothetical commands `show w' and `show c' should show the appropriate

parts of the General Public License. Of course, your program's commands

might be different; for a GUI interface, you would use an "about box".

You should also get your employer (if you work as a programmer) or school,

if any, to sign a "copyright disclaimer" for the program, if necessary.

For more information on this, and how to apply and follow the GNU GPL, see

<https://www.gnu.org/licenses/>.

The GNU General Public License does not permit incorporating your program

into proprietary programs. If your program is a subroutine library, you

may consider it more useful to permit linking proprietary applications with

the library. If this is what you want to do, use the GNU Lesser General

Public License instead of this License. But first, please read

<https://www.gnu.org/licenses/why-not-lgpl.html>.

================================================

FILE: README.md

================================================

# LAVT: Language-Aware Vision Transformer for Referring Image Segmentation

Welcome to the official repository for the method presented in

"LAVT: Language-Aware Vision Transformer for Referring Image Segmentation."

Code in this repository is written using [PyTorch](https://pytorch.org/) and is organized in the following way (assuming the working directory is the root directory of this repository):

* `./lib` contains files implementing the main network.

* Inside `./lib`, `_utils.py` defines the highest-level model, which incorporates the backbone network

defined in `backbone.py` and the simple mask decoder defined in `mask_predictor.py`.

`segmentation.py` provides the model interface and initialization functions.

* `./bert` contains files migrated from [Hugging Face Transformers v3.0.2](https://huggingface.co/transformers/v3.0.2/quicktour.html),

which implement the BERT language model.

We used Transformers v3.0.2 during development but it had a bug that would appear when using `DistributedDataParallel`.

Therefore we maintain a copy of the relevant source files in this repository.

This way, the bug is fixed and code in this repository is self-contained.

* `./train.py` is invoked to train the model.

* `./test.py` is invoked to run inference on the evaluation subsets after training.

* `./refer` contains data pre-processing code and is also where data should be placed, including the images and all annotations.

It is cloned from [refer](https://github.com/lichengunc/refer).

* `./data/dataset_refer_bert.py` is where the dataset class is defined.

* `./utils.py` defines functions that track training statistics and setup

functions for `DistributedDataParallel`.

## Updates

**April 13th, 2023**. Using the Dice loss instead of the cross-entropy loss can improve results. We will add the code and release the weights when we get a chance.
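
For reference, a soft Dice loss over a binary foreground mask can be sketched as follows. This is a generic, minimal illustration in plain Python, not the code behind the reported results; the function name `soft_dice_loss` and the smoothing term `eps` are assumptions made for this example.

```python
def soft_dice_loss(probs, targets, eps=1.0):
    """Generic soft Dice loss over flattened foreground probabilities.

    probs:   predicted foreground probabilities in [0, 1]
    targets: ground-truth labels (0 or 1), same length as probs
    eps:     smoothing term (an assumption) that avoids division by zero
    """
    intersection = sum(p * t for p, t in zip(probs, targets))
    denominator = sum(probs) + sum(targets)
    dice = (2.0 * intersection + eps) / (denominator + eps)
    return 1.0 - dice


# A perfect prediction gives a loss of 0; a fully wrong one gives a larger loss.
perfect = soft_dice_loss([1.0, 0.0, 1.0], [1, 0, 1])
wrong = soft_dice_loss([0.0, 1.0, 0.0], [1, 0, 1])
```

Unlike a per-pixel cross-entropy, this loss is computed from the overlap between the predicted and ground-truth masks as a whole, which is closer to the IoU-based metrics used for evaluation.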

**June 21st, 2022**. Uploaded the training logs and trained

model weights of lavt_one.

**June 9th, 2022**.

Added a more efficient implementation of LAVT.

* To train this new model, specify `--model` as `lavt_one`

(and `lavt` is still valid for specifying the old model).

The rest of the configuration stays unchanged.

* The difference between this version and the previous one

is that the language model has been moved inside the overall model,

so that `DistributedDataParallel` needs to be applied only once.

Applying it twice (on the standalone language model and the main branch)

as done in the old implementation led to low GPU utilization,

which slowed down training.

We recommend training this model on 8 GPUs
(with the same batch size of 32 as before).
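
The batch-size arithmetic can be sketched as below. It assumes that `--batch-size` is a per-process (per-GPU) value, which is consistent with the 4-GPU commands in the Training section using `--batch-size 8` for a batch size of 32; the helper name `per_gpu_batch_size` is hypothetical.

```python
def per_gpu_batch_size(total_batch_size, num_gpus):
    """Split a total batch size evenly across GPUs (one process per GPU)."""
    if total_batch_size % num_gpus != 0:
        raise ValueError("total batch size must be divisible by the number of GPUs")
    return total_batch_size // num_gpus


# 4 GPUs: matches `--batch-size 8` in the 4-GPU training commands.
lavt_batch = per_gpu_batch_size(32, 4)
# 8 GPUs: the per-GPU value under the same assumption.
lavt_one_batch = per_gpu_batch_size(32, 8)
```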

## Setting Up

### Preliminaries

The code has been verified to work with PyTorch v1.7.1 and Python 3.7.

1. Clone this repository.

2. Change directory to the root of this repository.

### Package Dependencies

1. Create a new Conda environment with Python 3.7 then activate it:

```shell

conda create -n lavt python==3.7

conda activate lavt

```

2. Install PyTorch v1.7.1 with a CUDA version that works on your cluster/machine (CUDA 10.2 is used in this example):

```shell

conda install pytorch==1.7.1 torchvision==0.8.2 torchaudio==0.7.2 cudatoolkit=10.2 -c pytorch

```

3. Install the packages in `requirements.txt` via `pip`:

```shell

pip install -r requirements.txt

```

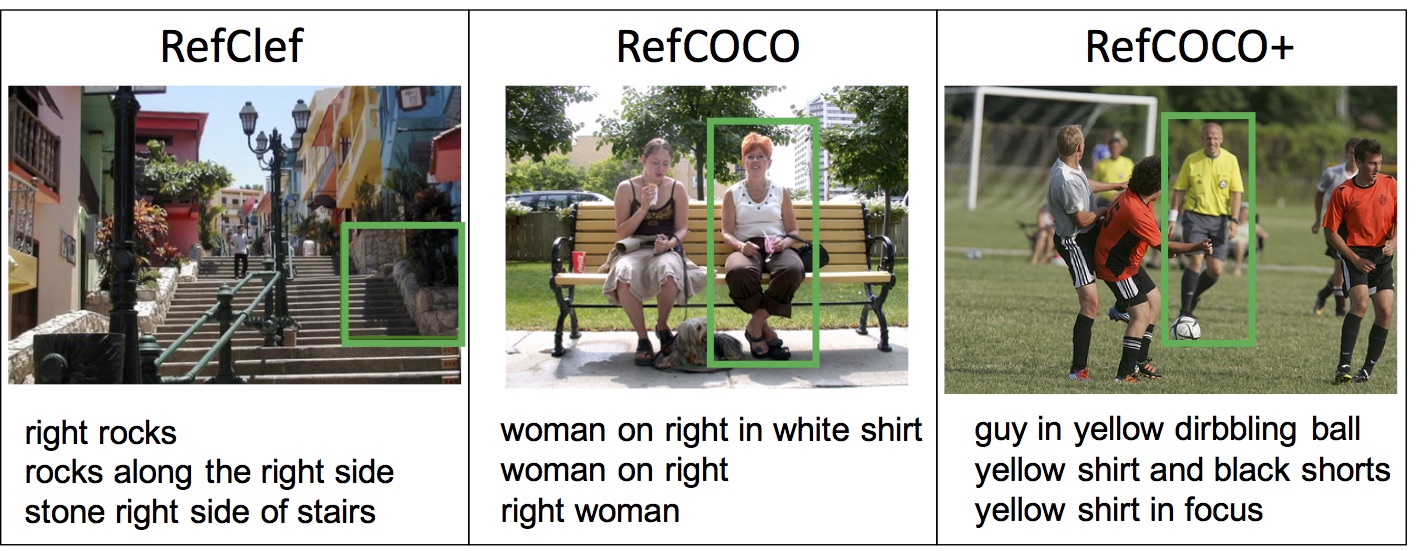

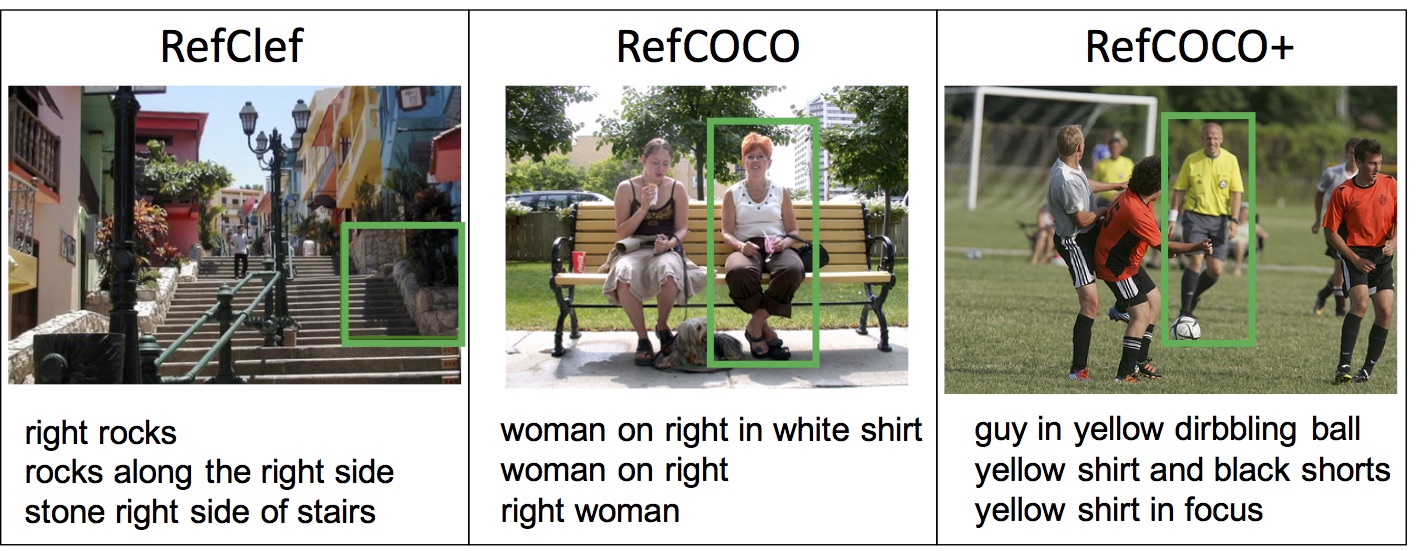

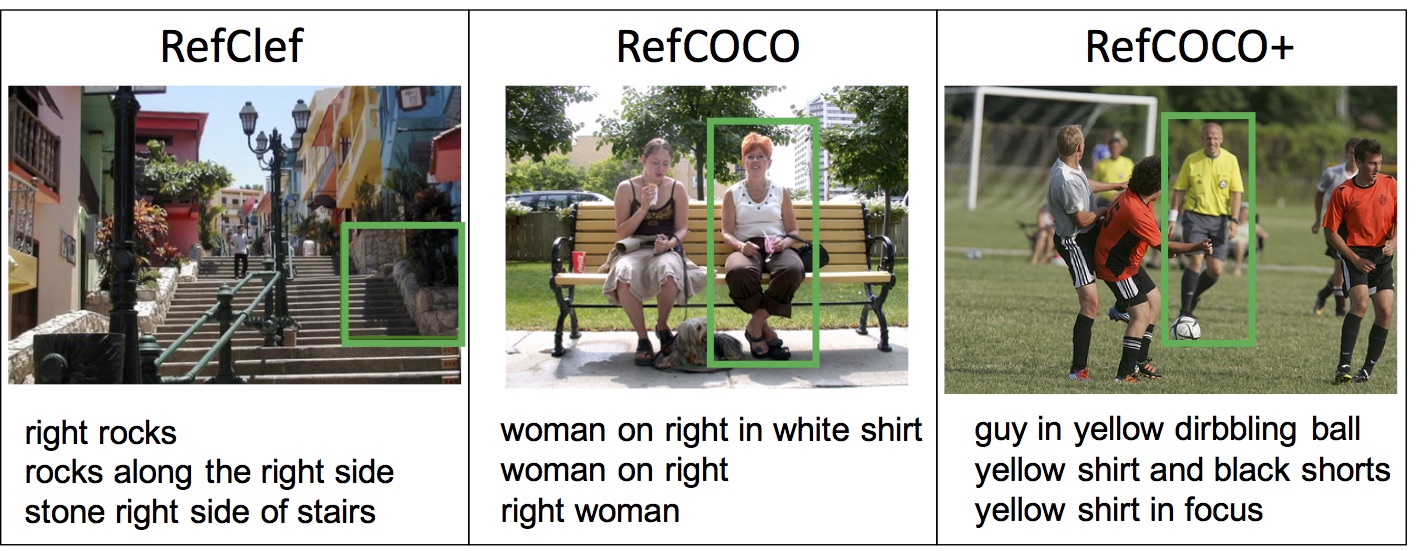

### Datasets

1. Follow instructions in the `./refer` directory to set up subdirectories

and download annotations.

This directory is a git clone (minus two data files that we do not need)

from the [refer](https://github.com/lichengunc/refer) public API.

2. Download images from [COCO](https://cocodataset.org/#download).

Please use the first download link, *2014 Train images [83K/13GB]*, and extract
the downloaded `train2014.zip` file to `./refer/data/images/mscoco/images`.
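
Before training, it can help to confirm that the directories named above exist. The following is a minimal sketch; the helper name `missing_data_dirs` is hypothetical, and it checks only the two paths mentioned in this README, not the annotation files themselves.

```python
from pathlib import Path


def missing_data_dirs(repo_root="."):
    """Return the expected data directories that do not exist yet."""
    root = Path(repo_root)
    expected = [
        root / "refer" / "data",
        root / "refer" / "data" / "images" / "mscoco" / "images",
    ]
    return [str(path) for path in expected if not path.is_dir()]


if __name__ == "__main__":
    for path in missing_data_dirs():
        print(f"missing: {path}")
```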

### The Initialization Weights for Training

1. Create the `./pretrained_weights` directory where we will be storing the weights.

```shell

mkdir ./pretrained_weights

```

2. Download [pre-trained classification weights of

the Swin Transformer](https://github.com/SwinTransformer/storage/releases/download/v1.0.0/swin_base_patch4_window12_384_22k.pth),

and put the `pth` file in `./pretrained_weights`.

These weights are needed for training to initialize the model.

### Trained Weights of LAVT for Testing

1. Create the `./checkpoints` directory where we will be storing the weights.

```shell

mkdir ./checkpoints

```

2. Download LAVT model weights (which are stored on Google Drive) using links below and put them in `./checkpoints`.

| [RefCOCO](https://drive.google.com/file/d/13D-OeEOijV8KTC3BkFP-gOJymc6DLwVT/view?usp=sharing) | [RefCOCO+](https://drive.google.com/file/d/1B8Q44ZWsc8Pva2xD_M-KFh7-LgzeH2-2/view?usp=sharing) | [G-Ref (UMD)](https://drive.google.com/file/d/1BjUnPVpALurkGl7RXXvQiAHhA-gQYKvK/view?usp=sharing) | [G-Ref (Google)](https://drive.google.com/file/d/1weiw5UjbPfo3tCBPfB8tu6xFXCUG16yS/view?usp=sharing) |
|---|---|---|---|

3. Model weights and training logs of the new lavt_one implementation are below.

| RefCOCO | RefCOCO+ | G-Ref (UMD) | G-Ref (Google) |
|:-----:|:-----:|:-----:|:-----:|
| [log](https://drive.google.com/file/d/1YIojIHqe3bxxsWOltifa2U9jH67hPHLM/view?usp=sharing) / [weights](https://drive.google.com/file/d/1xFMEXr6AGU97Ypj1yr8oo00uObbeIQvJ/view?usp=sharing) | [log](https://drive.google.com/file/d/1Z34T4gEnWlvcSUQya7txOuM0zdLK7MRT/view?usp=sharing) / [weights](https://drive.google.com/file/d/1HS8ZnGaiPJr-OmoUn4-4LVnVtD_zHY6w/view?usp=sharing) | [log](https://drive.google.com/file/d/14VAgahngOV8NA6noLZCqDoqaUrlW14v8/view?usp=sharing) / [weights](https://drive.google.com/file/d/14g8NzgZn6HzC6tP_bsQuWmh5LnOcovsE/view?usp=sharing) | [log](https://drive.google.com/file/d/1JBXfmlwemWSvs92Rky0TlHcVuuLpt4Da/view?usp=sharing) / [weights](https://drive.google.com/file/d/1IJeahFVLgKxu_BVmWacZs3oUzgTCeWcz/view?usp=sharing) |

* The Prec@K, overall IoU and mean IoU numbers in the training logs will differ

from the final results obtained by running `test.py`,

because only one out of multiple annotated expressions is

randomly selected and evaluated for each object during training.

But these numbers give a good idea about the test performance.

The two should be fairly close.

## Training

We use `DistributedDataParallel` from PyTorch.

The released `lavt` weights were trained using 4 x 32G V100 cards (max mem on each card was about 26G).

The released `lavt_one` weights were trained using 8 x 32G V100 cards (max mem on each card was about 13G).

The additional cards were used to accelerate training.

To run on 4 GPUs (with IDs 0, 1, 2, and 3) on a single node:

```shell

mkdir ./models

mkdir ./models/refcoco

CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node 4 --master_port 12345 train.py --model lavt --dataset refcoco --model_id refcoco --batch-size 8 --lr 0.00005 --wd 1e-2 --swin_type base --pretrained_swin_weights ./pretrained_weights/swin_base_patch4_window12_384_22k.pth --epochs 40 --img_size 480 2>&1 | tee ./models/refcoco/output

mkdir ./models/refcoco+

CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node 4 --master_port 12345 train.py --model lavt --dataset refcoco+ --model_id refcoco+ --batch-size 8 --lr 0.00005 --wd 1e-2 --swin_type base --pretrained_swin_weights ./pretrained_weights/swin_base_patch4_window12_384_22k.pth --epochs 40 --img_size 480 2>&1 | tee ./models/refcoco+/output

mkdir ./models/gref_umd

CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node 4 --master_port 12345 train.py --model lavt --dataset refcocog --splitBy umd --model_id gref_umd --batch-size 8 --lr 0.00005 --wd 1e-2 --swin_type base --pretrained_swin_weights ./pretrained_weights/swin_base_patch4_window12_384_22k.pth --epochs 40 --img_size 480 2>&1 | tee ./models/gref_umd/output

mkdir ./models/gref_google

CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node 4 --master_port 12345 train.py --model lavt --dataset refcocog --splitBy google --model_id gref_google --batch-size 8 --lr 0.00005 --wd 1e-2 --swin_type base --pretrained_swin_weights ./pretrained_weights/swin_base_patch4_window12_384_22k.pth --epochs 40 --img_size 480 2>&1 | tee ./models/gref_google/output

```

* *--model* is a pre-defined model name. Options include `lavt` and `lavt_one`. See [Updates](#updates).

* *--dataset* is the dataset name. One can choose from `refcoco`, `refcoco+`, and `refcocog`.

* *--splitBy* needs to be specified if and only if the dataset is G-Ref (which is also called RefCOCOg).

`umd` identifies the UMD partition and `google` identifies the Google partition.

* *--model_id* is the model name one should define oneself (*e.g.*, customize it to contain training/model configurations, dataset information, experiment IDs, *etc*.).

It is used in two ways: the training log is saved as `./models/[args.model_id]/output`, and the best checkpoint is saved as `./checkpoints/model_best_[args.model_id].pth`.

* *--swin_type* specifies the version of the Swin Transformer.

One can choose from `tiny`, `small`, `base`, and `large`. The default is `base`.

* *--pretrained_swin_weights* specifies the path to pre-trained Swin Transformer weights used for model initialization.

* Note that currently we need to manually create the `./models/[args.model_id]` directory via `mkdir` before running `train.py`.

This is because we use `tee` to redirect `stdout` and `stderr` to `./models/[args.model_id]/output` for logging.

This is a nuisance and should be resolved in the future, *e.g.*, by using a proper logger or a bash script for initiating training.
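
Until that is resolved, a small launcher can create the log directory and assemble the command. The sketch below is one possible approach, not part of this repository: the helper `build_train_command` and its `models_root` parameter are hypothetical, while the flags and values are copied from the commands above. It leaves out `CUDA_VISIBLE_DEVICES`, which would still be set in the shell.

```python
import os
import shlex


def build_train_command(model_id, dataset, split_by=None, num_gpus=4,
                        model="lavt", models_root="./models"):
    """Create <models_root>/<model_id> and return (command, log_path)."""
    log_dir = os.path.join(models_root, model_id)
    os.makedirs(log_dir, exist_ok=True)  # replaces the manual `mkdir`
    cmd = [
        "python", "-m", "torch.distributed.launch",
        "--nproc_per_node", str(num_gpus), "--master_port", "12345",
        "train.py", "--model", model, "--dataset", dataset,
        "--model_id", model_id, "--batch-size", "8", "--lr", "0.00005",
        "--wd", "1e-2", "--swin_type", "base",
        "--pretrained_swin_weights",
        "./pretrained_weights/swin_base_patch4_window12_384_22k.pth",
        "--epochs", "40", "--img_size", "480",
    ]
    if split_by is not None:  # only needed for G-Ref (refcocog)
        cmd += ["--splitBy", split_by]
    return cmd, os.path.join(log_dir, "output")


if __name__ == "__main__":
    cmd, log_path = build_train_command("gref_umd", "refcocog", split_by="umd")
    # Print a shell line that mirrors the `tee`-based logging used above.
    print(" ".join(shlex.quote(c) for c in cmd), "2>&1 | tee", shlex.quote(log_path))
```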

## Testing

For RefCOCO/RefCOCO+, run one of

```shell

python test.py --model lavt --swin_type base --dataset refcoco --split val --resume ./checkpoints/refcoco.pth --workers 4 --ddp_trained_weights --window12 --img_size 480

python test.py --model lavt --swin_type base --dataset refcoco+ --split val --resume ./checkpoints/refcoco+.pth --workers 4 --ddp_trained_weights --window12 --img_size 480

```

* *--split* is the subset to evaluate, and one can choose from `val`, `testA`, and `testB`.

* *--resume* is the path to the weights of a trained model.

For G-Ref (UMD)/G-Ref (Google), run one of

```shell

python test.py --model lavt --swin_type base --dataset refcocog --splitBy umd --split val --resume ./checkpoints/gref_umd.pth --workers 4 --ddp_trained_weights --window12 --img_size 480

python test.py --model lavt --swin_type base --dataset refcocog --splitBy google --split val --resume ./checkpoints/gref_google.pth --workers 4 --ddp_trained_weights --window12 --img_size 480

```

* *--splitBy* specifies the partition to evaluate.

One can choose from `umd` or `google`.

* *--split* is the subset (according to the specified partition) to evaluate, and one can choose from `val` and `test` for the UMD partition, and only `val` for the Google partition.

* *--resume* is the path to the weights of a trained model.
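
The test commands above pass `--window12` explicitly. According to the help text in `args.py`, the flag is only needed when testing, because during training the window size is inferred from the file name of the pre-trained weights (12 if it contains `window12`, otherwise the default 7). A sketch of that rule, with the hypothetical helper name `infer_window_size` (the actual check in `train.py` may be written differently):

```python
import os


def infer_window_size(weights_path, default=7):
    """Return 12 if the weights file name contains 'window12', else the default."""
    file_name = os.path.basename(weights_path)
    return 12 if "window12" in file_name else default


size = infer_window_size("./pretrained_weights/swin_base_patch4_window12_384_22k.pth")
```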

## Results

1. The evaluation results (those reported in the paper) of LAVT trained with a cross-entropy loss and based on our original implementation are summarized as follows:

| Dataset | P@0.5 | P@0.6 | P@0.7 | P@0.8 | P@0.9 | Overall IoU | Mean IoU |
|:---------------:|:-----:|:-----:|:-----:|:-----:|:-----:|:-----------:|:--------:|
| RefCOCO val | 84.46 | 80.90 | 75.28 | 64.71 | 34.30 | 72.73 | 74.46 |
| RefCOCO test A | 88.07 | 85.17 | 79.90 | 68.52 | 35.69 | 75.82 | 76.89 |
| RefCOCO test B | 79.12 | 74.94 | 69.17 | 59.37 | 34.45 | 68.79 | 70.94 |
| RefCOCO+ val | 74.44 | 70.91 | 65.58 | 56.34 | 30.23 | 62.14 | 65.81 |
| RefCOCO+ test A | 80.68 | 77.96 | 72.90 | 62.21 | 32.36 | 68.38 | 70.97 |
| RefCOCO+ test B | 65.66 | 61.85 | 55.94 | 47.56 | 27.24 | 55.10 | 59.23 |
| G-Ref val (UMD) | 70.81 | 65.28 | 58.60 | 47.49 | 22.73 | 61.24 | 63.34 |
| G-Ref test (UMD)| 71.54 | 66.38 | 59.00 | 48.21 | 23.10 | 62.09 | 63.62 |
|G-Ref val (Goog.)| 71.16 | 67.21 | 61.76 | 51.98 | 27.30 | 60.50 | 63.66 |

- We have validated LAVT on RefCOCO with multiple runs. The overall IoU on the val set generally lies in the range of 72.73±0.5%.

2. In the following, we report the results of LAVT trained with a multi-class Dice loss and based on the new implementation (`lavt_one`).

| Dataset | P@0.5 | P@0.6 | P@0.7 | P@0.8 | P@0.9 | Overall IoU | Mean IoU |
|:---------------:|:-----:|:-----:|:-----:|:-----:|:-----:|:-----------:|:--------:|
| RefCOCO val | 85.87 | 82.13 | 76.64 | 65.45 | 35.30 | 73.50 | 75.41 |
| RefCOCO test A | 88.47 | 85.63 | 80.57 | 68.84 | 35.71 | 75.97 | 77.31 |
| RefCOCO test B | 80.20 | 76.49 | 70.34 | 60.12 | 34.94 | 69.33 | 71.86 |
| RefCOCO+ val | 76.19 | 72.27 | 66.82 | 56.87 | 30.15 | 63.79 | 67.65 |
| RefCOCO+ test A | 82.50 | 79.44 | 74.00 | 63.27 | 31.99 | 69.79 | 72.53 |
| RefCOCO+ test B | 68.03 | 63.35 | 57.29 | 47.92 | 26.98 | 56.49 | 61.22 |
| G-Ref val (UMD) | 75.82 | 71.06 | 63.99 | 52.98 | 27.31 | 64.02 | 67.41 |
| G-Ref test (UMD)| 76.12 | 71.13 | 64.58 | 53.62 | 28.03 | 64.49 | 67.45 |
|G-Ref val (Goog.)| 72.57 | 68.65 | 63.09 | 53.33 | 28.14 | 61.31 | 64.84 |
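
The three kinds of metrics in these tables can be illustrated with a short sketch. It uses the definitions commonly adopted in referring image segmentation, which are assumed here rather than taken from `test.py`: P@K is the percentage of samples whose IoU is at least K, overall IoU is the total intersection divided by the total union over all samples, and mean IoU is the average of the per-sample IoUs. The function name `summarize` and the toy pixel counts are made up for illustration.

```python
def summarize(intersections, unions, thresholds=(0.5, 0.6, 0.7, 0.8, 0.9)):
    """Compute P@K, overall IoU, and mean IoU (all in %) from pixel counts."""
    ious = [i / u if u > 0 else 0.0 for i, u in zip(intersections, unions)]
    n = len(ious)
    # P@K: share of samples whose IoU reaches the threshold (">=" is an assumption).
    precision = {k: 100.0 * sum(iou >= k for iou in ious) / n for k in thresholds}
    # Overall IoU pools pixels across samples, so large objects weigh more.
    overall_iou = 100.0 * sum(intersections) / sum(unions)
    # Mean IoU weighs every sample equally.
    mean_iou = 100.0 * sum(ious) / n
    return precision, overall_iou, mean_iou


# Three toy samples given as (intersection, union) pixel counts.
precision, overall_iou, mean_iou = summarize([95, 30, 400], [100, 60, 1000])
```

In this toy case the large, poorly segmented third sample pulls the overall IoU well below the mean IoU, which is why the two columns can differ.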

## Demo: Try LAVT on Your Own Image-Text Pairs

You can run inference on any image-text pair

and visualize the result by running the script `./demo_inference.py`.

Have fun!

## Citing LAVT

```

@inproceedings{yang2022lavt,

title={LAVT: Language-Aware Vision Transformer for Referring Image Segmentation},

author={Yang, Zhao and Wang, Jiaqi and Tang, Yansong and Chen, Kai and Zhao, Hengshuang and Torr, Philip HS},

booktitle={CVPR},

year={2022}

}

```

## Contributing

We appreciate all contributions.

It helps the project if you could

- report issues you are facing,

- give a :+1: on issues reported by others that are relevant to you,

- answer issues reported by others for which you have found solutions,

- and implement helpful new features or improve the code otherwise with pull requests.

## Acknowledgements

Code in this repository is built upon several public repositories.

Specifically,

* data pre-processing leverages the [refer](https://github.com/lichengunc/refer) repository,

* the backbone model is implemented based on code from [Swin Transformer for Semantic Segmentation](https://github.com/SwinTransformer/Swin-Transformer-Semantic-Segmentation),

* the training and testing pipelines are adapted from [RefVOS](https://github.com/miriambellver/refvos),

* and the implementation of the BERT model (files in the `bert` directory) is from [Hugging Face Transformers v3.0.2](https://github.com/huggingface/transformers/tree/v3.0.2)

(we migrated over the relevant code to fix a bug and simplify the installation process).

Some of these repositories in turn adapt code from [OpenMMLab](https://github.com/open-mmlab) and [TorchVision](https://github.com/pytorch/vision).

We'd like to thank the authors/organizations of these repositories for open sourcing their projects.

## License

GNU GPLv3

================================================

FILE: args.py

================================================

import argparse


def get_parser():
    """Build the argument parser shared by train.py and test.py."""
    parser = argparse.ArgumentParser(description='LAVT training and testing')
    parser.add_argument('--amsgrad', action='store_true',
                        help='if true, set amsgrad to True in an Adam or AdamW optimizer.')
    parser.add_argument('-b', '--batch-size', default=8, type=int)
    parser.add_argument('--bert_tokenizer', default='bert-base-uncased', help='BERT tokenizer')
    parser.add_argument('--ck_bert', default='bert-base-uncased', help='pre-trained BERT weights')
    parser.add_argument('--dataset', default='refcoco', help='refcoco, refcoco+, or refcocog')
    # Adjacent string literals are concatenated, so each piece ends with a space.
    parser.add_argument('--ddp_trained_weights', action='store_true',
                        help='Only needs specified when testing, '
                             'whether the weights to be loaded are from a DDP-trained model')
    parser.add_argument('--device', default='cuda:0', help='device')  # only used when testing on a single machine
    parser.add_argument('--epochs', default=40, type=int, metavar='N', help='number of total epochs to run')
    parser.add_argument('--fusion_drop', default=0.0, type=float, help='dropout rate for PWAMs')
    parser.add_argument('--img_size', default=480, type=int, help='input image size')
    parser.add_argument("--local_rank", type=int, help='local rank for DistributedDataParallel')
    parser.add_argument('--lr', default=0.00005, type=float, help='the initial learning rate')
    parser.add_argument('--mha', default='', help='If specified, should be in the format of a-b-c-d, e.g., 4-4-4-4, '
                                                  'where a, b, c, and d refer to the numbers of heads in stage-1, '
                                                  'stage-2, stage-3, and stage-4 PWAMs')
    parser.add_argument('--model', default='lavt', help='model: lavt, lavt_one')
    parser.add_argument('--model_id', default='lavt', help='name to identify the model')
    parser.add_argument('--output-dir', default='./checkpoints/', help='path where to save checkpoint weights')
    parser.add_argument('--pin_mem', action='store_true',
                        help='If true, pin memory when using the data loader.')
    parser.add_argument('--pretrained_swin_weights', default='',
                        help='path to pre-trained Swin backbone weights')
    parser.add_argument('--print-freq', default=10, type=int, help='print frequency')
    parser.add_argument('--refer_data_root', default='./refer/data/', help='REFER dataset root directory')
    parser.add_argument('--resume', default='', help='resume from checkpoint')
    parser.add_argument('--split', default='test', help='only used when testing')
    parser.add_argument('--splitBy', default='unc', help='change to umd or google when the dataset is G-Ref (RefCOCOg)')
    parser.add_argument('--swin_type', default='base',
                        help='tiny, small, base, or large variants of the Swin Transformer')
    parser.add_argument('--wd', '--weight-decay', default=1e-2, type=float, metavar='W', help='weight decay',
                        dest='weight_decay')
    parser.add_argument('--window12', action='store_true',
                        help='only needs specified when testing, '
                             'when training, window size is inferred from pre-trained weights file name '
                             '(containing \'window12\'). Initialize Swin with window size 12 instead of the default 7.')
    parser.add_argument('-j', '--workers', default=8, type=int, metavar='N', help='number of data loading workers')

    return parser


if __name__ == "__main__":
    parser = get_parser()
    args_dict = parser.parse_args()

================================================

FILE: bert/activations.py

================================================

import logging
import math

import torch
import torch.nn.functional as F


logger = logging.getLogger(__name__)


def swish(x):
    return x * torch.sigmoid(x)


def _gelu_python(x):
    """ Original Implementation of the gelu activation function in Google Bert repo when initially created.
        For information: OpenAI GPT's gelu is slightly different (and gives slightly different results):
        0.5 * x * (1 + torch.tanh(math.sqrt(2 / math.pi) * (x + 0.044715 * torch.pow(x, 3))))
        This is now written in C in torch.nn.functional
        Also see https://arxiv.org/abs/1606.08415
    """
    return x * 0.5 * (1.0 + torch.erf(x / math.sqrt(2.0)))


def gelu_new(x):
    """ Implementation of the gelu activation function currently in Google Bert repo (identical to OpenAI GPT).
        Also see https://arxiv.org/abs/1606.08415
    """
    return 0.5 * x * (1.0 + torch.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * torch.pow(x, 3.0))))


# Compare (major, minor) numerically: a plain string comparison would
# wrongly order "1.10.0" before "1.4.0".
_torch_version = tuple(int(part) for part in torch.__version__.split("+")[0].split(".")[:2])
if _torch_version < (1, 4):
    gelu = _gelu_python
else:
    gelu = F.gelu


def gelu_fast(x):
    # tanh approximation of gelu; 0.7978845608 approximates sqrt(2 / pi)
    return 0.5 * x * (1.0 + torch.tanh(x * 0.7978845608 * (1.0 + 0.044715 * x * x)))


ACT2FN = {
    "relu": F.relu,
    "swish": swish,
    "gelu": gelu,
    "tanh": torch.tanh,
    "gelu_new": gelu_new,
    "gelu_fast": gelu_fast,
}


def get_activation(activation_string):
    if activation_string in ACT2FN:
        return ACT2FN[activation_string]
    else:
        raise KeyError("function {} not found in ACT2FN mapping {}".format(activation_string, list(ACT2FN.keys())))

================================================

FILE: bert/configuration_bert.py

================================================

# coding=utf-8

# Copyright 2018 The Google AI Language Team Authors and The HuggingFace Inc. team.

# Copyright (c) 2018, NVIDIA CORPORATION. All rights reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

""" BERT model configuration """

import logging

from .configuration_utils import PretrainedConfig

logger = logging.getLogger(__name__)

BERT_PRETRAINED_CONFIG_ARCHIVE_MAP = {

"bert-base-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-uncased-config.json",

"bert-large-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-uncased-config.json",

"bert-base-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-cased-config.json",

"bert-large-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-cased-config.json",

"bert-base-multilingual-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-multilingual-uncased-config.json",

"bert-base-multilingual-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-multilingual-cased-config.json",

"bert-base-chinese": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-chinese-config.json",

"bert-base-german-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-german-cased-config.json",

"bert-large-uncased-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-uncased-whole-word-masking-config.json",

"bert-large-cased-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-cased-whole-word-masking-config.json",

"bert-large-uncased-whole-word-masking-finetuned-squad": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-uncased-whole-word-masking-finetuned-squad-config.json",

"bert-large-cased-whole-word-masking-finetuned-squad": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-large-cased-whole-word-masking-finetuned-squad-config.json",

"bert-base-cased-finetuned-mrpc": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-cased-finetuned-mrpc-config.json",

"bert-base-german-dbmdz-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-german-dbmdz-cased-config.json",

"bert-base-german-dbmdz-uncased": "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-german-dbmdz-uncased-config.json",

"cl-tohoku/bert-base-japanese": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese/config.json",

"cl-tohoku/bert-base-japanese-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-whole-word-masking/config.json",

"cl-tohoku/bert-base-japanese-char": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-char/config.json",

"cl-tohoku/bert-base-japanese-char-whole-word-masking": "https://s3.amazonaws.com/models.huggingface.co/bert/cl-tohoku/bert-base-japanese-char-whole-word-masking/config.json",

"TurkuNLP/bert-base-finnish-cased-v1": "https://s3.amazonaws.com/models.huggingface.co/bert/TurkuNLP/bert-base-finnish-cased-v1/config.json",

"TurkuNLP/bert-base-finnish-uncased-v1": "https://s3.amazonaws.com/models.huggingface.co/bert/TurkuNLP/bert-base-finnish-uncased-v1/config.json",

"wietsedv/bert-base-dutch-cased": "https://s3.amazonaws.com/models.huggingface.co/bert/wietsedv/bert-base-dutch-cased/config.json",

# See all BERT models at https://huggingface.co/models?filter=bert

}

class BertConfig(PretrainedConfig):
    r"""
        This is the configuration class to store the configuration of a :class:`~transformers.BertModel`.
        It is used to instantiate a BERT model according to the specified arguments, defining the model
        architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of
        the BERT `bert-base-uncased <https://huggingface.co/bert-base-uncased>`__ architecture.

        Configuration objects inherit from :class:`~transformers.PretrainedConfig` and can be used
        to control the model outputs. Read the documentation from :class:`~transformers.PretrainedConfig`
        for more information.

        Args:
            vocab_size (:obj:`int`, optional, defaults to 30522):
                Vocabulary size of the BERT model. Defines the different tokens that
                can be represented by the `inputs_ids` passed to the forward method of :class:`~transformers.BertModel`.
            hidden_size (:obj:`int`, optional, defaults to 768):
                Dimensionality of the encoder layers and the pooler layer.
            num_hidden_layers (:obj:`int`, optional, defaults to 12):
                Number of hidden layers in the Transformer encoder.
            num_attention_heads (:obj:`int`, optional, defaults to 12):
                Number of attention heads for each attention layer in the Transformer encoder.
            intermediate_size (:obj:`int`, optional, defaults to 3072):
                Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.
            hidden_act (:obj:`str` or :obj:`function`, optional, defaults to "gelu"):
                The non-linear activation function (function or string) in the encoder and pooler.
                If string, "gelu", "relu", "swish" and "gelu_new" are supported.
            hidden_dropout_prob (:obj:`float`, optional, defaults to 0.1):
                The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.
            attention_probs_dropout_prob (:obj:`float`, optional, defaults to 0.1):
                The dropout ratio for the attention probabilities.
            max_position_embeddings (:obj:`int`, optional, defaults to 512):
                The maximum sequence length that this model might ever be used with.
                Typically set this to something large just in case (e.g., 512 or 1024 or 2048).
            type_vocab_size (:obj:`int`, optional, defaults to 2):
                The vocabulary size of the `token_type_ids` passed into :class:`~transformers.BertModel`.
            initializer_range (:obj:`float`, optional, defaults to 0.02):
                The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
            layer_norm_eps (:obj:`float`, optional, defaults to 1e-12):
                The epsilon used by the layer normalization layers.
            gradient_checkpointing (:obj:`bool`, optional, defaults to False):
                If True, use gradient checkpointing to save memory at the expense of slower backward pass.

        Example::

            >>> from transformers import BertModel, BertConfig

            >>> # Initializing a BERT bert-base-uncased style configuration
            >>> configuration = BertConfig()

            >>> # Initializing a model from the bert-base-uncased style configuration
            >>> model = BertModel(configuration)

            >>> # Accessing the model configuration
            >>> configuration = model.config
    """
    model_type = "bert"

    def __init__(
        self,
        vocab_size=30522,
        hidden_size=768,
        num_hidden_layers=12,
        num_attention_heads=12,
        intermediate_size=3072,
        hidden_act="gelu",
        hidden_dropout_prob=0.1,
        attention_probs_dropout_prob=0.1,
        max_position_embeddings=512,
        type_vocab_size=2,
        initializer_range=0.02,
        layer_norm_eps=1e-12,
        pad_token_id=0,
        gradient_checkpointing=False,
        **kwargs
    ):
        super().__init__(pad_token_id=pad_token_id, **kwargs)

        self.vocab_size = vocab_size
        self.hidden_size = hidden_size
        self.num_hidden_layers = num_hidden_layers
        self.num_attention_heads = num_attention_heads
        self.hidden_act = hidden_act
        self.intermediate_size = intermediate_size
        self.hidden_dropout_prob = hidden_dropout_prob
        self.attention_probs_dropout_prob = attention_probs_dropout_prob
        self.max_position_embeddings = max_position_embeddings
        self.type_vocab_size = type_vocab_size
        self.initializer_range = initializer_range
        self.layer_norm_eps = layer_norm_eps
        self.gradient_checkpointing = gradient_checkpointing

================================================

FILE: bert/configuration_utils.py

================================================

# coding=utf-8

# Copyright 2018 The Google AI Language Team Authors and The HuggingFace Inc. team.

# Copyright (c) 2018, NVIDIA CORPORATION. All rights reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

""" Configuration base class and utilities."""

import copy

import json

import logging

import os

from typing import Dict, Tuple

from .file_utils import CONFIG_NAME, cached_path, hf_bucket_url, is_remote_url

logger = logging.getLogger(__name__)

class PretrainedConfig(object):

r""" Base class for all configuration classes.

Handles a few parameters common to all models' configurations as well as methods for loading/downloading/saving configurations.

Note:

A configuration file can be loaded and saved to disk. Loading the configuration file and using this file to initialize a model does **not** load the model weights.

It only affects the model's configuration.

Class attributes (overridden by derived classes):

- ``model_type``: a string that identifies the model type, that we serialize into the JSON file, and that we use to recreate the correct object in :class:`~transformers.AutoConfig`.

Args:

finetuning_task (:obj:`string` or :obj:`None`, `optional`, defaults to :obj:`None`):

Name of the task used to fine-tune the model. This can be used when converting from an original (TensorFlow or PyTorch) checkpoint.

num_labels (:obj:`int`, `optional`, defaults to `2`):

Number of classes to use when the model is a classification model (sequences/tokens).

output_hidden_states (:obj:`bool`, `optional`, defaults to :obj:`False`):

Whether the model should return all hidden states.

output_attentions (:obj:`bool`, `optional`, defaults to :obj:`False`):

Whether the model should return all attentions.

torchscript (:obj:`bool`, `optional`, defaults to :obj:`False`):

Whether the model is used with TorchScript (PyTorch models only).

"""

model_type: str = ""

def __init__(self, **kwargs):

# Attributes with defaults

self.output_hidden_states = kwargs.pop("output_hidden_states", False)

self.output_attentions = kwargs.pop("output_attentions", False)

self.use_cache = kwargs.pop("use_cache", True) # Not used by all models

self.torchscript = kwargs.pop("torchscript", False) # Only used by PyTorch models

self.use_bfloat16 = kwargs.pop("use_bfloat16", False)

self.pruned_heads = kwargs.pop("pruned_heads", {})

# `is_decoder` is used in encoder-decoder models to differentiate the encoder from the decoder

self.is_encoder_decoder = kwargs.pop("is_encoder_decoder", False)

self.is_decoder = kwargs.pop("is_decoder", False)

# Parameters for sequence generation

self.max_length = kwargs.pop("max_length", 20)

self.min_length = kwargs.pop("min_length", 0)

self.do_sample = kwargs.pop("do_sample", False)

self.early_stopping = kwargs.pop("early_stopping", False)

self.num_beams = kwargs.pop("num_beams", 1)

self.temperature = kwargs.pop("temperature", 1.0)

self.top_k = kwargs.pop("top_k", 50)

self.top_p = kwargs.pop("top_p", 1.0)

self.repetition_penalty = kwargs.pop("repetition_penalty", 1.0)

self.length_penalty = kwargs.pop("length_penalty", 1.0)

self.no_repeat_ngram_size = kwargs.pop("no_repeat_ngram_size", 0)

self.bad_words_ids = kwargs.pop("bad_words_ids", None)

self.num_return_sequences = kwargs.pop("num_return_sequences", 1)

# Fine-tuning task arguments

self.architectures = kwargs.pop("architectures", None)

self.finetuning_task = kwargs.pop("finetuning_task", None)

self.id2label = kwargs.pop("id2label", None)

self.label2id = kwargs.pop("label2id", None)

if self.id2label is not None:

kwargs.pop("num_labels", None)

self.id2label = dict((int(key), value) for key, value in self.id2label.items())

# Keys are always strings in JSON so convert ids to int here.

else:

self.num_labels = kwargs.pop("num_labels", 2)

# Tokenizer arguments TODO: eventually tokenizer and models should share the same config

self.prefix = kwargs.pop("prefix", None)

self.bos_token_id = kwargs.pop("bos_token_id", None)

self.pad_token_id = kwargs.pop("pad_token_id", None)

self.eos_token_id = kwargs.pop("eos_token_id", None)

self.decoder_start_token_id = kwargs.pop("decoder_start_token_id", None)

# task specific arguments

self.task_specific_params = kwargs.pop("task_specific_params", None)

# TPU arguments

self.xla_device = kwargs.pop("xla_device", None)

# Additional attributes without default values

for key, value in kwargs.items():

try:

setattr(self, key, value)

except AttributeError as err:

logger.error("Can't set {} with value {} for {}".format(key, value, self))

raise err

@property

def num_labels(self):

return len(self.id2label)

@num_labels.setter

def num_labels(self, num_labels):

self.id2label = {i: "LABEL_{}".format(i) for i in range(num_labels)}

self.label2id = dict(zip(self.id2label.values(), self.id2label.keys()))

def save_pretrained(self, save_directory):

"""

Save a configuration object to the directory `save_directory`, so that it

can be re-loaded using the :func:`~transformers.PretrainedConfig.from_pretrained` class method.

Args:

save_directory (:obj:`string`):

Directory where the configuration JSON file will be saved.

"""

if os.path.isfile(save_directory):

raise AssertionError("Provided path ({}) should be a directory, not a file".format(save_directory))

os.makedirs(save_directory, exist_ok=True)

# If we save using the predefined names, we can load using `from_pretrained`

output_config_file = os.path.join(save_directory, CONFIG_NAME)

self.to_json_file(output_config_file, use_diff=True)

logger.info("Configuration saved in {}".format(output_config_file))

@classmethod

def from_pretrained(cls, pretrained_model_name_or_path, **kwargs) -> "PretrainedConfig":

r"""

Instantiate a :class:`~transformers.PretrainedConfig` (or a derived class) from a pre-trained model configuration.

Args:

pretrained_model_name_or_path (:obj:`string`):

either:

- a string with the `shortcut name` of a pre-trained model configuration to load from cache or

download, e.g.: ``bert-base-uncased``.

- a string with the `identifier name` of a pre-trained model configuration that was user-uploaded to

our S3, e.g.: ``dbmdz/bert-base-german-cased``.

- a path to a `directory` containing a configuration file saved using the

:func:`~transformers.PretrainedConfig.save_pretrained` method, e.g.: ``./my_model_directory/``.

- a path or url to a saved configuration JSON `file`, e.g.:

``./my_model_directory/configuration.json``.

cache_dir (:obj:`string`, `optional`):

Path to a directory in which a downloaded pre-trained model

configuration should be cached if the standard cache should not be used.

kwargs (:obj:`Dict[str, any]`, `optional`):

The values in kwargs of any keys which are configuration attributes will be used to override the loaded

values. Behavior concerning key/value pairs whose keys are *not* configuration attributes is

controlled by the `return_unused_kwargs` keyword parameter.

force_download (:obj:`bool`, `optional`, defaults to :obj:`False`):

Force to (re-)download the model weights and configuration files and override the cached versions if they exist.

resume_download (:obj:`bool`, `optional`, defaults to :obj:`False`):

Do not delete an incompletely received file. Attempt to resume the download if such a file exists.

proxies (:obj:`Dict`, `optional`):

A dictionary of proxy servers to use by protocol or endpoint, e.g.:

:obj:`{'http': 'foo.bar:3128', 'http://hostname': 'foo.bar:4012'}`.

The proxies are used on each request.

return_unused_kwargs (:obj:`bool`, `optional`):

If False, then this function returns just the final configuration object.

If True, then this function returns a :obj:`Tuple(config, unused_kwargs)` where `unused_kwargs` is a

dictionary consisting of the key/value pairs whose keys are not configuration attributes, i.e. the part

of kwargs which has not been used to update `config` and is otherwise ignored.

Returns:

:class:`PretrainedConfig`: An instance of a configuration object

Examples::

# We can't directly instantiate the base class `PretrainedConfig`, so let's show the examples on a

# derived class: BertConfig

config = BertConfig.from_pretrained('bert-base-uncased') # Download configuration from S3 and cache.

config = BertConfig.from_pretrained('./test/saved_model/') # E.g. config (or model) was saved using `save_pretrained('./test/saved_model/')`

config = BertConfig.from_pretrained('./test/saved_model/my_configuration.json')

config = BertConfig.from_pretrained('bert-base-uncased', output_attentions=True, foo=False)

assert config.output_attentions == True