")

img.show()

elif reply.type == ReplyType.IMAGE_URL: # 从网络下载图片

import io

import requests

from PIL import Image

img_url = reply.content

pic_res = requests.get(img_url, stream=True)

image_storage = io.BytesIO()

for block in pic_res.iter_content(1024):

image_storage.write(block)

image_storage.seek(0)

img = Image.open(image_storage)

print(img_url)

img.show()

else:

print(reply.content)

print("\nUser:", end="")

sys.stdout.flush()

return

def startup(self):

context = Context()

logger.setLevel("WARN")

print("\nPlease input your question:\nUser:", end="")

sys.stdout.flush()

msg_id = 0

while True:

try:

prompt = self.get_input()

except KeyboardInterrupt:

print("\nExiting...")

sys.exit()

msg_id += 1

trigger_prefixs = conf().get("single_chat_prefix", [""])

if check_prefix(prompt, trigger_prefixs) is None:

prompt = trigger_prefixs[0] + prompt # 给没触发的消息加上触发前缀

context = self._compose_context(ContextType.TEXT, prompt, msg=TerminalMessage(msg_id, prompt))

context["isgroup"] = False

if context:

self.produce(context)

else:

raise Exception("context is None")

def get_input(self):

"""

Multi-line input function

"""

sys.stdout.flush()

line = input()

return line

================================================

FILE: channel/web/README.md

================================================

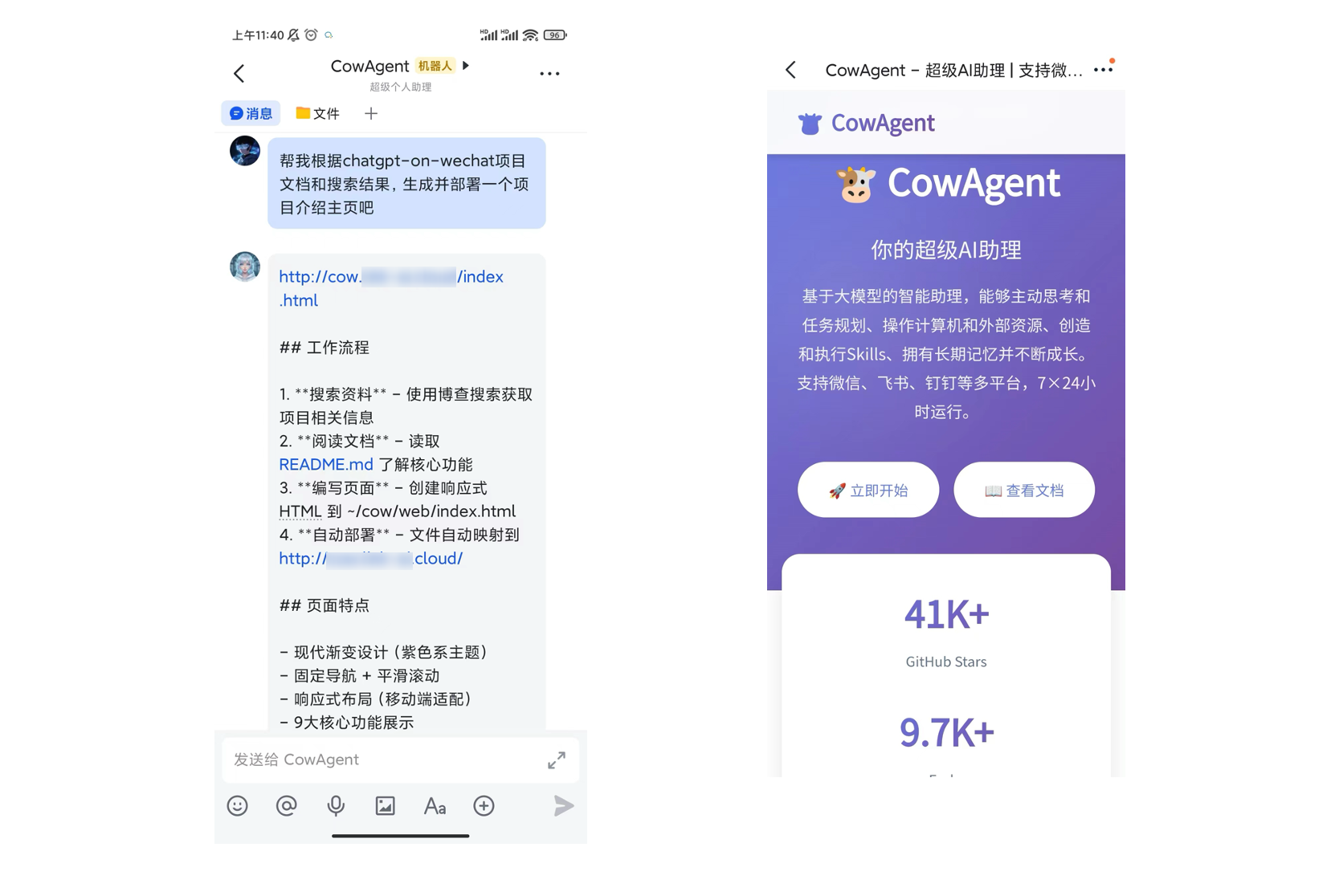

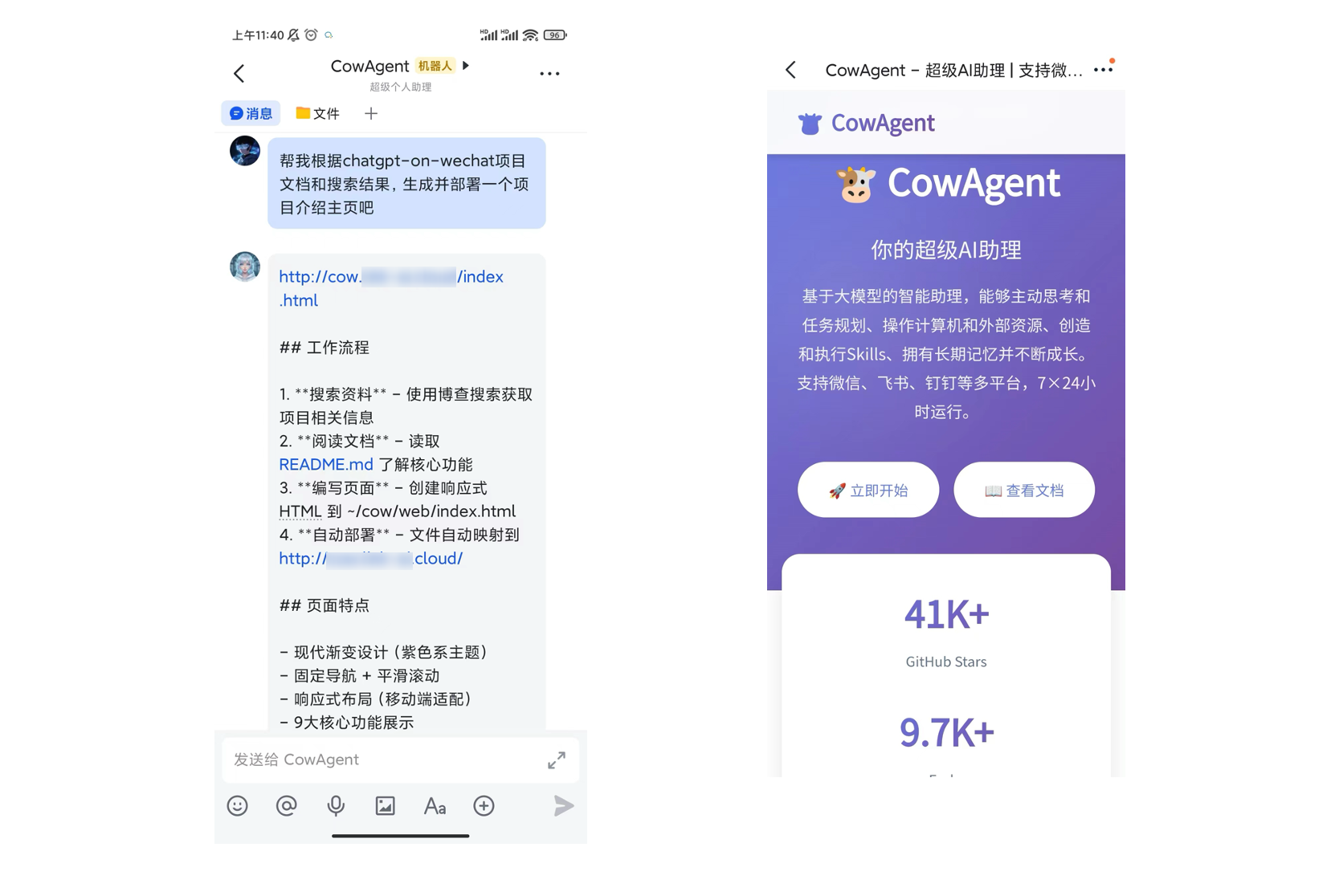

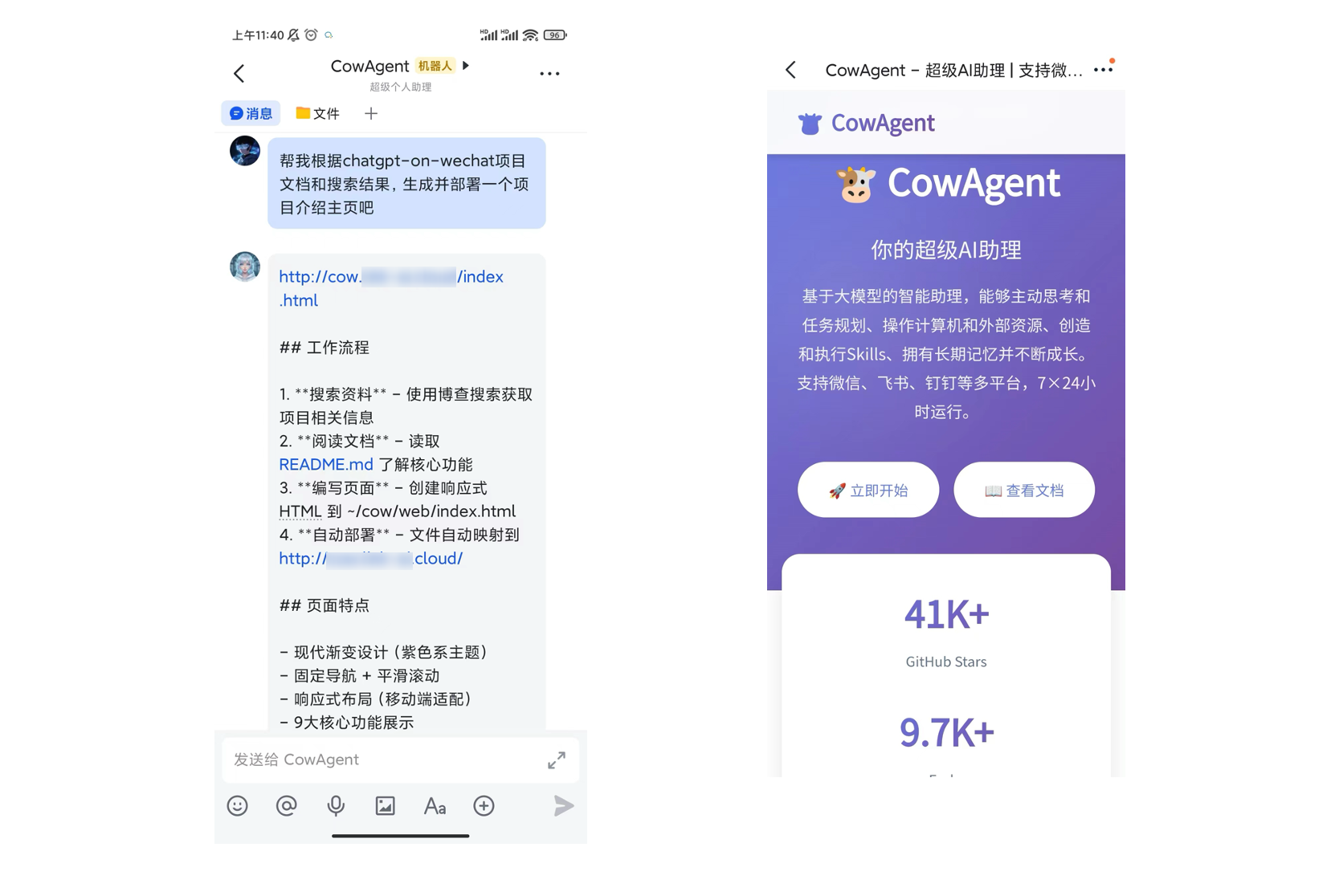

# Web Channel

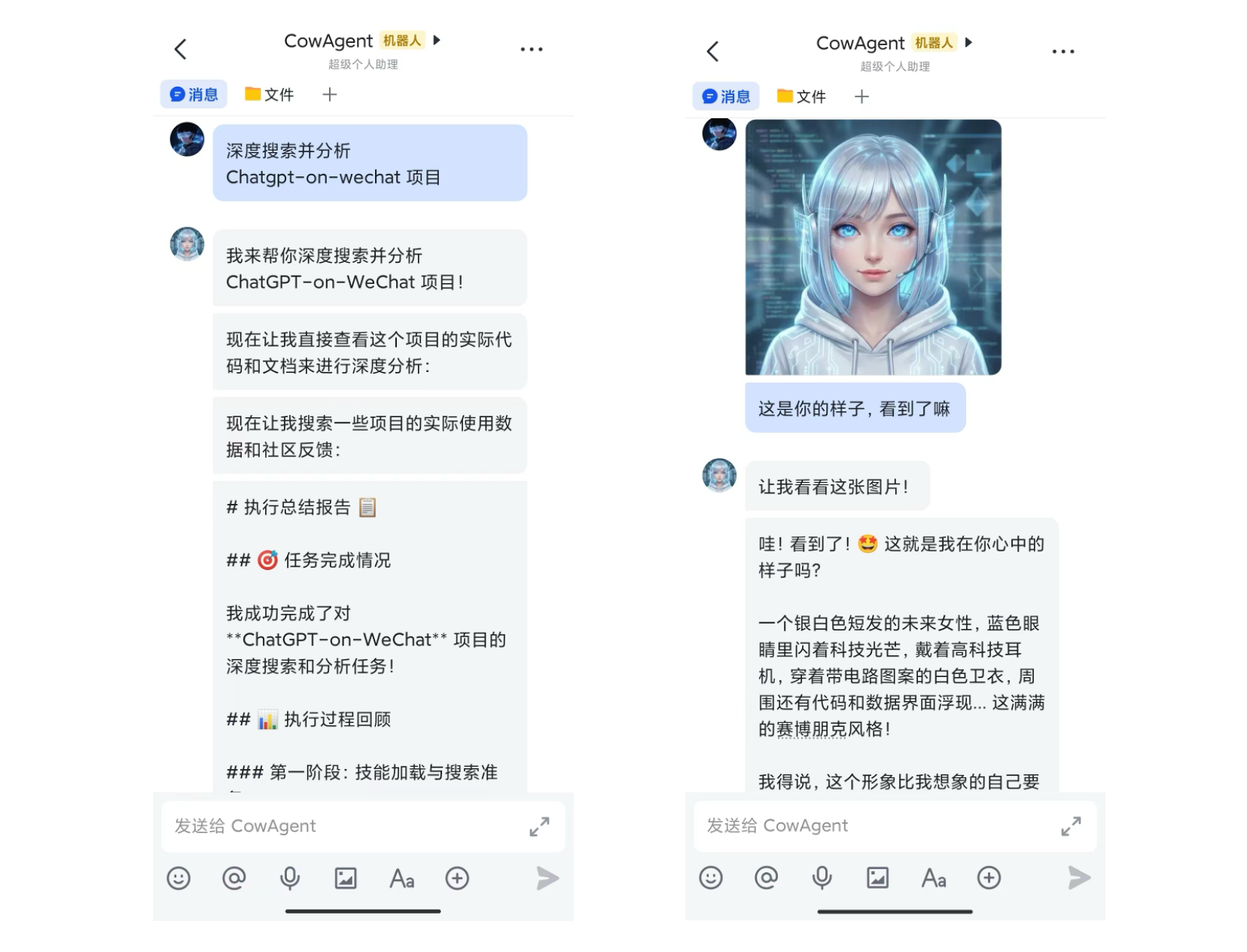

提供了一个默认的AI对话页面,可展示文本、图片等消息交互,支持markdown语法渲染,兼容插件执行。

# 使用说明

- 在 `config.json` 配置文件中的 `channel_type` 字段填入 `web`

- 程序运行后将监听9899端口,浏览器访问 http://localhost:9899/chat 即可使用

- 监听端口可以在配置文件 `web_port` 中自定义

- 对于Docker运行方式,如果需要外部访问,需要在 `docker-compose.yml` 中通过 ports配置将端口监听映射到宿主机

================================================

FILE: channel/web/chat.html

================================================

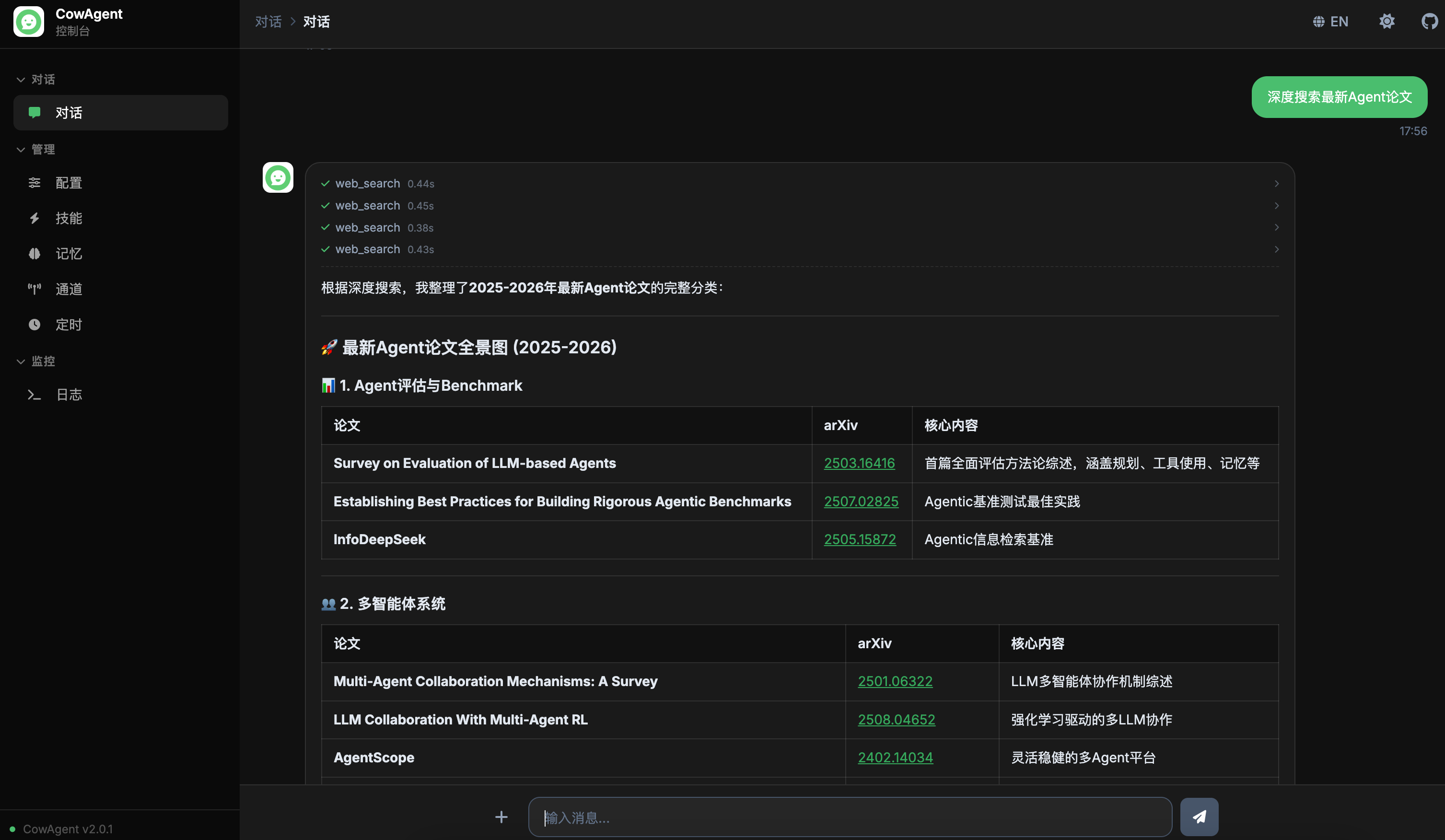

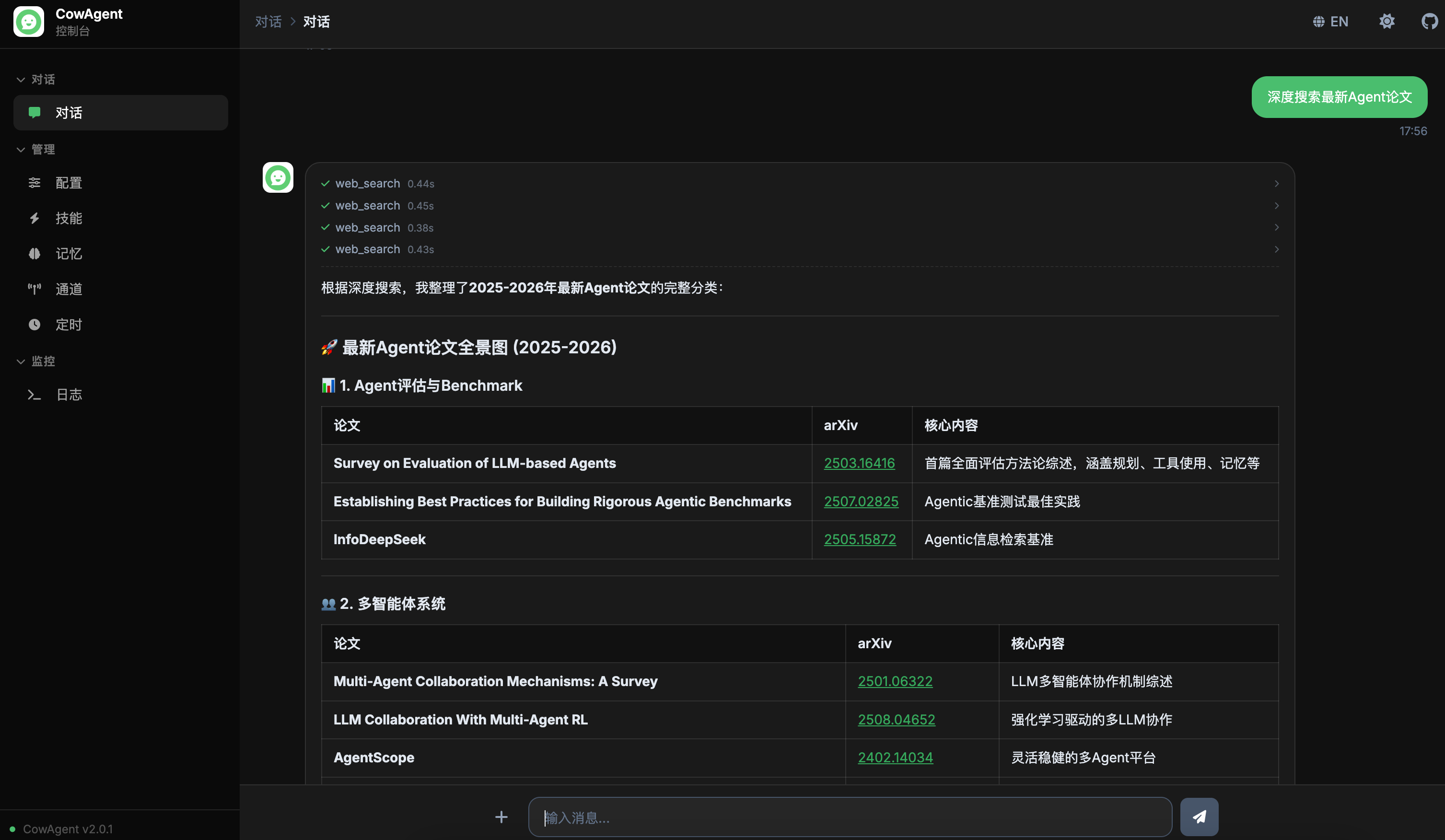

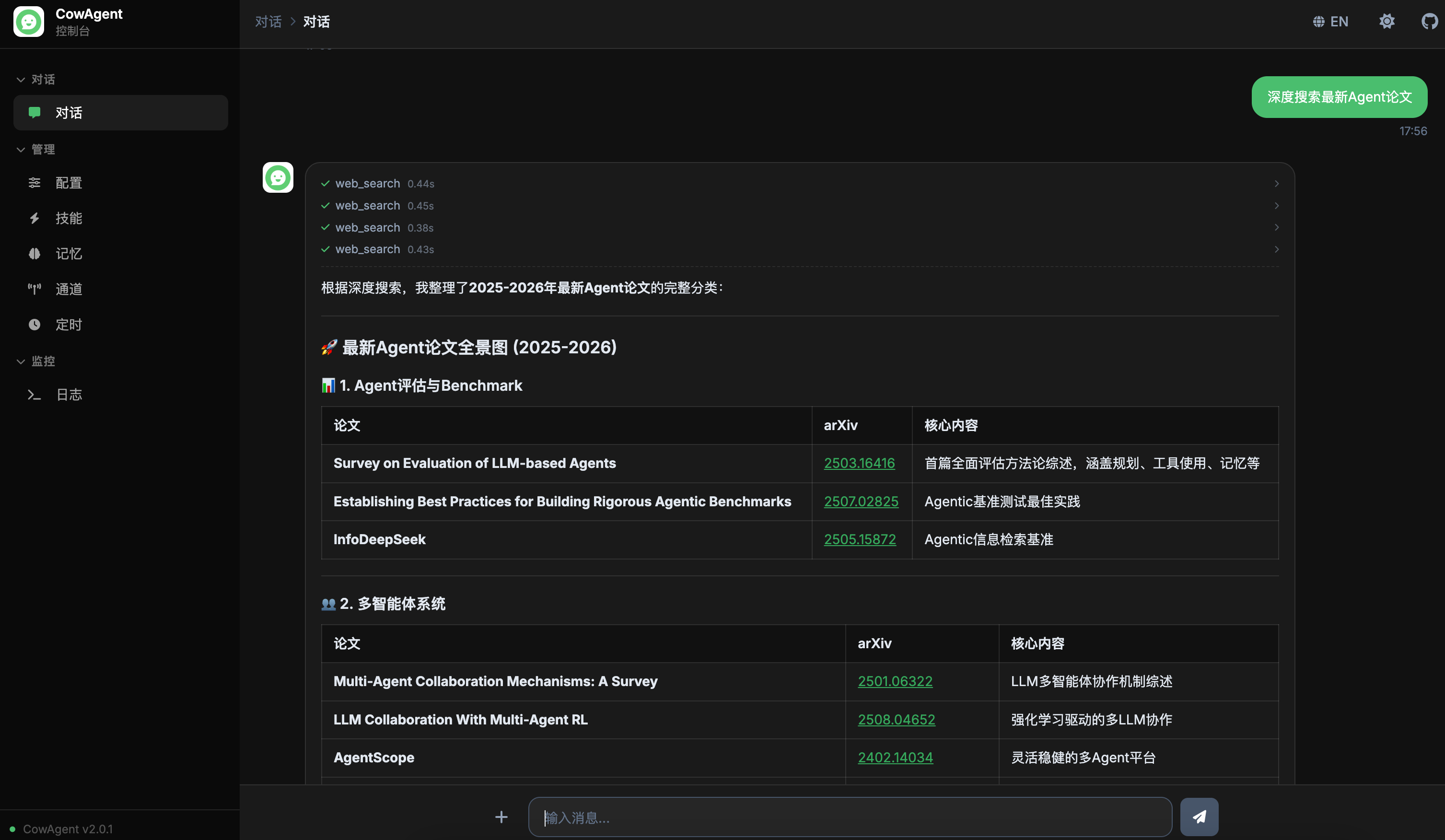

CowAgent Console

CowAgent

I can help you answer questions, manage your computer, create and execute skills,

and keep growing through long-term memory.

Show me the files in the workspace

Remind me to check the server in 5 minutes

Write a Python web scraper script

Configuration

Manage model and agent settings

Skills

View, enable, or disable agent skills

Built-in Tools

Loading tools...

Skills

Loading skills...

Skills will be displayed here after loading

Memory

View agent memory files and contents

Loading memory files...

Memory files will be displayed here

| Filename |

Type |

Size |

Updated |

Channels

View and manage messaging channels

Scheduled Tasks

View and manage scheduled tasks

Logs

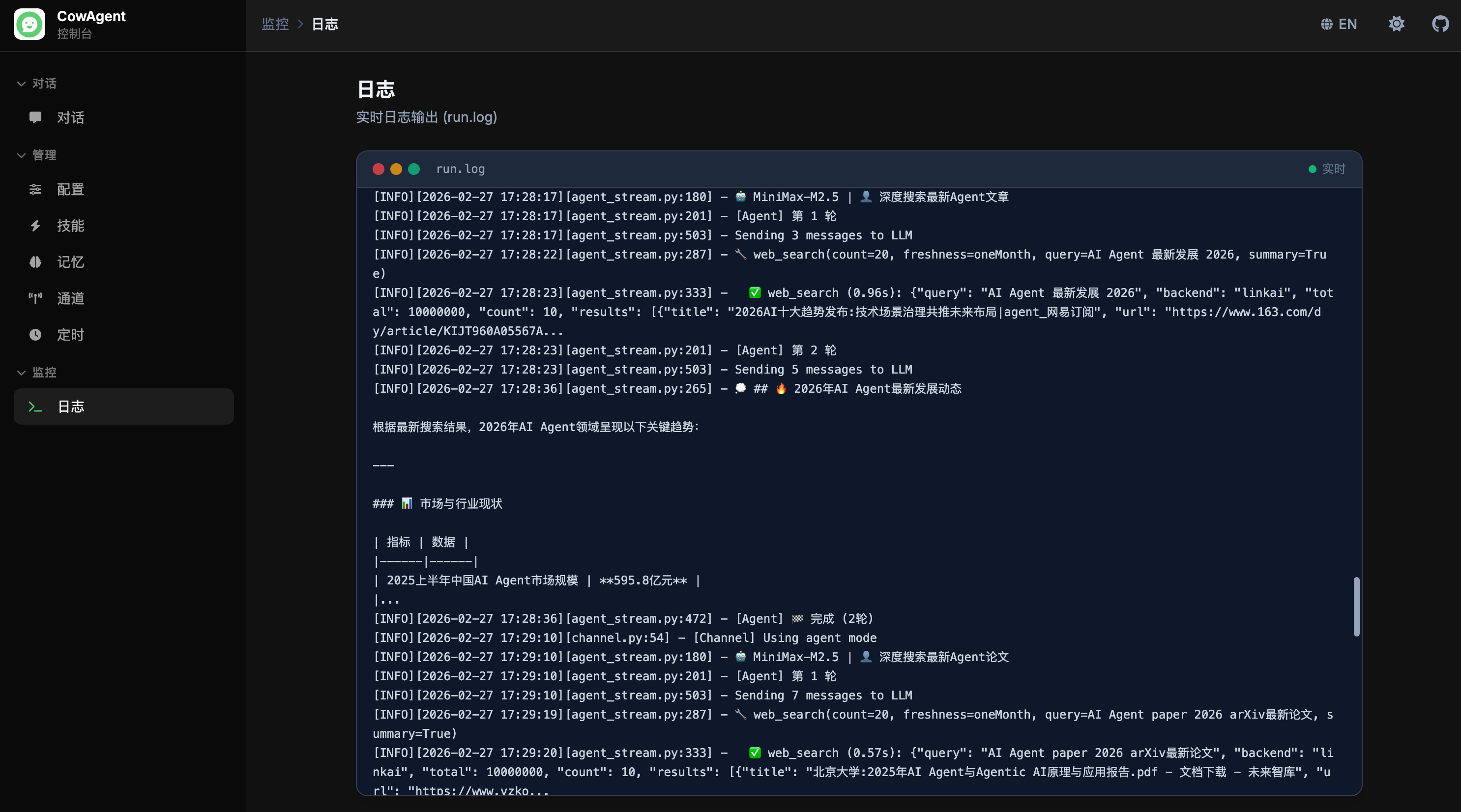

Real-time log output (run.log)

Log streaming will be available here. Connects to run.log for real-time output similar to tail -f.

================================================

FILE: channel/web/static/css/console.css

================================================

/* =====================================================================

CowAgent Console Styles

===================================================================== */

/* Animations */

@keyframes pulseDot {

0%, 80%, 100% { transform: scale(0.6); opacity: 0.4; }

40% { transform: scale(1); opacity: 1; }

}

/* Scrollbar */

* { scrollbar-width: thin; scrollbar-color: #94a3b8 transparent; }

::-webkit-scrollbar { width: 6px; height: 6px; }

::-webkit-scrollbar-track { background: transparent; }

::-webkit-scrollbar-thumb { background: #94a3b8; border-radius: 3px; }

::-webkit-scrollbar-thumb:hover { background: #64748b; }

.dark ::-webkit-scrollbar-thumb { background: #475569; }

.dark ::-webkit-scrollbar-thumb:hover { background: #64748b; }

/* Sidebar */

.sidebar-item.active {

background: rgba(255, 255, 255, 0.08);

color: #FFFFFF;

}

.sidebar-item.active .item-icon { color: #4ABE6E; }

/* Menu Groups */

.menu-group-items { max-height: 0; overflow: hidden; transition: max-height 0.25s ease-out; }

.menu-group.open .menu-group-items { max-height: 500px; transition: max-height 0.35s ease-in; }

.menu-group .chevron { transition: transform 0.25s ease; }

.menu-group.open .chevron { transform: rotate(90deg); }

/* View Switching */

.view { display: none; height: 100%; }

.view.active { display: flex; flex-direction: column; }

/* Markdown Content */

.msg-content p { margin: 0.5em 0; line-height: 1.7; }

.msg-content p:first-child { margin-top: 0; }

.msg-content p:last-child { margin-bottom: 0; }

.msg-content h1, .msg-content h2, .msg-content h3,

.msg-content h4, .msg-content h5, .msg-content h6 {

margin-top: 1.2em; margin-bottom: 0.6em; font-weight: 600; line-height: 1.3;

}

.msg-content h1 { font-size: 1.4em; }

.msg-content h2 { font-size: 1.25em; }

.msg-content h3 { font-size: 1.1em; }

.msg-content ul, .msg-content ol { margin: 0.5em 0; padding-left: 1.8em; }

.msg-content li { margin: 0.25em 0; }

.msg-content pre {

border-radius: 8px; overflow-x: auto; margin: 0.8em 0;

background: #f1f5f9; padding: 1em;

}

.dark .msg-content pre { background: #111111; }

.msg-content code {

font-family: 'JetBrains Mono', 'Fira Code', Consolas, monospace;

font-size: 0.875em;

}

.msg-content :not(pre) > code {

background: rgba(74, 190, 110, 0.1); color: #1C6B3B;

padding: 2px 6px; border-radius: 4px;

}

.dark .msg-content :not(pre) > code {

background: rgba(74, 190, 110, 0.15); color: #74E9A4;

}

.msg-content pre code { background: transparent; padding: 0; color: inherit; }

.msg-content blockquote {

border-left: 3px solid #4ABE6E; padding: 0.5em 1em;

margin: 0.8em 0; background: rgba(74, 190, 110, 0.05); border-radius: 0 6px 6px 0;

}

.dark .msg-content blockquote { background: rgba(74, 190, 110, 0.08); }

.msg-content table { border-collapse: collapse; width: 100%; margin: 0.8em 0; }

.msg-content th, .msg-content td {

border: 1px solid #e2e8f0; padding: 8px 12px; text-align: left;

}

.dark .msg-content th, .dark .msg-content td { border-color: rgba(255,255,255,0.1); }

.msg-content th { background: #f1f5f9; font-weight: 600; }

.dark .msg-content th { background: #111111; }

.msg-content img { max-width: 100%; height: auto; border-radius: 8px; margin: 0.5em 0; }

.msg-content a { color: #35A85B; text-decoration: underline; }

.msg-content a:hover { color: #228547; }

.msg-content hr { border: none; height: 1px; background: #e2e8f0; margin: 1.2em 0; }

.dark .msg-content hr { background: rgba(255,255,255,0.1); }

/* SSE Streaming cursor */

@keyframes blink { 0%, 100% { opacity: 1; } 50% { opacity: 0; } }

.sse-streaming::after {

content: '▋';

display: inline-block;

margin-left: 2px;

color: #4ABE6E;

animation: blink 0.9s step-end infinite;

font-size: 0.85em;

vertical-align: middle;

}

/* Agent steps (thinking summaries + tool indicators) */

.agent-steps:empty { display: none; }

.agent-steps:not(:empty) {

margin-bottom: 0.625rem;

padding-bottom: 0.5rem;

border-bottom: 1px dashed rgba(0, 0, 0, 0.08);

}

.dark .agent-steps:not(:empty) { border-bottom-color: rgba(255, 255, 255, 0.08); }

.agent-step {

font-size: 0.75rem;

line-height: 1.4;

color: #94a3b8;

margin-bottom: 0.25rem;

}

.agent-step:last-child { margin-bottom: 0; }

/* Thinking step - collapsible */

.agent-thinking-step .thinking-header {

display: flex;

align-items: center;

gap: 0.375rem;

cursor: pointer;

user-select: none;

}

.agent-thinking-step .thinking-header.no-toggle { cursor: default; }

.agent-thinking-step .thinking-header:not(.no-toggle):hover { color: #64748b; }

.dark .agent-thinking-step .thinking-header:not(.no-toggle):hover { color: #cbd5e1; }

.agent-thinking-step .thinking-header i:first-child { font-size: 0.625rem; margin-top: 1px; }

.agent-thinking-step .thinking-chevron {

font-size: 0.5rem;

margin-left: auto;

transition: transform 0.2s ease;

opacity: 0.5;

}

.agent-thinking-step.expanded .thinking-chevron { transform: rotate(90deg); }

.agent-thinking-step .thinking-full {

display: none;

margin-top: 0.375rem;

margin-left: 1rem;

padding: 0.5rem;

background: rgba(0, 0, 0, 0.02);

border-radius: 6px;

border: 1px solid rgba(0, 0, 0, 0.04);

font-size: 0.75rem;

line-height: 1.5;

color: #94a3b8;

max-height: 200px;

overflow-y: auto;

}

.dark .agent-thinking-step .thinking-full {

background: rgba(255, 255, 255, 0.02);

border-color: rgba(255, 255, 255, 0.04);

}

.agent-thinking-step.expanded .thinking-full { display: block; }

.agent-thinking-step .thinking-full p { margin: 0.25em 0; }

.agent-thinking-step .thinking-full p:first-child { margin-top: 0; }

.agent-thinking-step .thinking-full p:last-child { margin-bottom: 0; }

/* Tool step - collapsible */

.agent-tool-step .tool-header {

display: flex;

align-items: center;

gap: 0.375rem;

cursor: pointer;

user-select: none;

padding: 1px 0;

border-radius: 4px;

}

.agent-tool-step .tool-header:hover { color: #64748b; }

.dark .agent-tool-step .tool-header:hover { color: #cbd5e1; }

.agent-tool-step .tool-icon { font-size: 0.625rem; }

.agent-tool-step .tool-chevron {

font-size: 0.5rem;

margin-left: auto;

transition: transform 0.2s ease;

opacity: 0.5;

}

.agent-tool-step.expanded .tool-chevron { transform: rotate(90deg); }

.agent-tool-step .tool-time {

font-size: 0.65rem;

opacity: 0.6;

margin-left: 0.25rem;

}

/* Tool detail panel */

.agent-tool-step .tool-detail {

display: none;

margin-top: 0.375rem;

margin-left: 1rem;

padding: 0.5rem;

background: rgba(0, 0, 0, 0.02);

border-radius: 6px;

border: 1px solid rgba(0, 0, 0, 0.04);

}

.dark .agent-tool-step .tool-detail {

background: rgba(255, 255, 255, 0.02);

border-color: rgba(255, 255, 255, 0.04);

}

.agent-tool-step.expanded .tool-detail { display: block; }

.tool-detail-section { margin-bottom: 0.375rem; }

.tool-detail-section:last-child { margin-bottom: 0; }

.tool-detail-label {

font-size: 0.625rem;

font-weight: 600;

text-transform: uppercase;

letter-spacing: 0.05em;

opacity: 0.6;

margin-bottom: 0.125rem;

}

.tool-detail-content {

font-family: 'JetBrains Mono', 'Fira Code', Consolas, monospace;

font-size: 0.7rem;

line-height: 1.5;

white-space: pre-wrap;

word-break: break-all;

max-height: 200px;

overflow-y: auto;

margin: 0;

padding: 0.25rem 0;

background: transparent;

color: inherit;

}

.tool-error-text { color: #f87171; }

/* Tool failed state */

.agent-tool-step.tool-failed .tool-name { color: #f87171; }

/* Config form controls */

#view-config input[type="text"],

#view-config input[type="number"],

#view-config input[type="password"] {

height: 40px;

transition: border-color 0.2s ease, box-shadow 0.2s ease;

}

#view-config input:focus {

border-color: #4ABE6E;

box-shadow: 0 0 0 3px rgba(74, 190, 110, 0.12);

}

#view-config input[type="text"]:hover,

#view-config input[type="number"]:hover,

#view-config input[type="password"]:hover {

border-color: #94a3b8;

}

.dark #view-config input[type="text"]:hover,

.dark #view-config input[type="number"]:hover,

.dark #view-config input[type="password"]:hover {

border-color: #64748b;

}

/* Custom dropdown */

.cfg-dropdown {

position: relative;

outline: none;

}

.cfg-dropdown-selected {

display: flex;

align-items: center;

justify-content: space-between;

height: 40px;

padding: 0 0.75rem;

border-radius: 0.5rem;

border: 1px solid #e2e8f0;

background: #f8fafc;

font-size: 0.875rem;

color: #1e293b;

cursor: pointer;

transition: border-color 0.2s ease, box-shadow 0.2s ease;

user-select: none;

}

.dark .cfg-dropdown-selected {

border-color: #475569;

background: rgba(255, 255, 255, 0.05);

color: #f1f5f9;

}

.cfg-dropdown-selected:hover { border-color: #94a3b8; }

.dark .cfg-dropdown-selected:hover { border-color: #64748b; }

.cfg-dropdown.open .cfg-dropdown-selected,

.cfg-dropdown:focus .cfg-dropdown-selected {

border-color: #4ABE6E;

box-shadow: 0 0 0 3px rgba(74, 190, 110, 0.12);

}

.cfg-dropdown-arrow {

font-size: 0.625rem;

color: #94a3b8;

transition: transform 0.2s ease;

flex-shrink: 0;

margin-left: 0.5rem;

}

.cfg-dropdown.open .cfg-dropdown-arrow { transform: rotate(180deg); }

.cfg-dropdown-menu {

display: none;

position: absolute;

top: calc(100% + 4px);

left: 0;

right: 0;

z-index: 50;

max-height: 240px;

overflow-y: auto;

border-radius: 0.5rem;

border: 1px solid #e2e8f0;

background: #ffffff;

box-shadow: 0 10px 25px -5px rgba(0, 0, 0, 0.1), 0 4px 10px -5px rgba(0, 0, 0, 0.04);

padding: 4px;

}

.dark .cfg-dropdown-menu {

border-color: #334155;

background: #1e1e1e;

box-shadow: 0 10px 25px -5px rgba(0, 0, 0, 0.4);

}

.cfg-dropdown.open .cfg-dropdown-menu { display: block; }

.cfg-dropdown-item {

display: flex;

align-items: center;

padding: 8px 10px;

border-radius: 6px;

font-size: 0.875rem;

color: #334155;

cursor: pointer;

transition: background 0.15s ease;

white-space: nowrap;

overflow: hidden;

text-overflow: ellipsis;

}

.dark .cfg-dropdown-item { color: #cbd5e1; }

.cfg-dropdown-item:hover { background: #f1f5f9; }

.dark .cfg-dropdown-item:hover { background: rgba(255, 255, 255, 0.08); }

.cfg-dropdown-item.active {

background: rgba(74, 190, 110, 0.1);

color: #228547;

font-weight: 500;

}

.dark .cfg-dropdown-item.active {

background: rgba(74, 190, 110, 0.15);

color: #74E9A4;

}

/* API Key masking via CSS (avoids browser password prompts) */

.cfg-key-masked {

-webkit-text-security: disc;

text-security: disc;

}

/* Chat Input */

#chat-input {

resize: none; height: 42px; max-height: 180px;

overflow-y: hidden;

transition: border-color 0.2s ease;

}

/* Attachment Preview Bar */

.attachment-preview {

display: flex;

flex-wrap: wrap;

gap: 8px;

padding: 8px 0;

}

.attachment-preview.hidden { display: none; }

.att-thumb {

position: relative;

width: 64px; height: 64px;

border-radius: 8px;

overflow: hidden;

border: 1px solid #e2e8f0;

flex-shrink: 0;

}

.dark .att-thumb { border-color: rgba(255,255,255,0.1); }

.att-thumb img {

width: 100%; height: 100%;

object-fit: cover;

}

.att-chip {

position: relative;

display: flex;

align-items: center;

gap: 6px;

padding: 6px 28px 6px 10px;

border-radius: 8px;

background: #f1f5f9;

border: 1px solid #e2e8f0;

font-size: 12px;

color: #475569;

max-width: 180px;

}

.dark .att-chip { background: rgba(255,255,255,0.05); border-color: rgba(255,255,255,0.1); color: #94a3b8; }

.att-uploading { opacity: 0.6; pointer-events: none; }

.att-name {

overflow: hidden;

text-overflow: ellipsis;

white-space: nowrap;

}

.att-remove {

position: absolute;

top: -4px; right: -4px;

width: 18px; height: 18px;

border-radius: 50%;

background: #ef4444;

color: #fff;

border: none;

font-size: 12px;

line-height: 18px;

text-align: center;

cursor: pointer;

padding: 0;

opacity: 0;

transition: opacity 0.15s;

}

.att-thumb:hover .att-remove,

.att-chip:hover .att-remove { opacity: 1; }

/* Drag-over highlight */

.drag-over {

background: rgba(74, 190, 110, 0.08) !important;

border-color: #4ABE6E !important;

}

/* User message attachments */

.user-msg-attachments {

display: flex;

flex-wrap: wrap;

gap: 6px;

margin-bottom: 6px;

}

.user-msg-image {

max-width: 200px;

max-height: 160px;

border-radius: 8px;

object-fit: cover;

cursor: pointer;

}

.user-msg-image:hover { opacity: 0.9; }

.user-msg-file {

display: flex;

align-items: center;

gap: 6px;

padding: 4px 10px;

border-radius: 6px;

background: rgba(255,255,255,0.15);

font-size: 12px;

}

/* Placeholder Cards */

.placeholder-card {

transition: transform 0.2s ease, box-shadow 0.2s ease;

}

.placeholder-card:hover {

transform: translateY(-2px);

box-shadow: 0 8px 25px -5px rgba(0, 0, 0, 0.1);

}

================================================

FILE: channel/web/static/js/console.js

================================================

/* =====================================================================

CowAgent Console - Main Application Script

===================================================================== */

// =====================================================================

// Version — update this before each release

// =====================================================================

const APP_VERSION = 'v2.0.3';

// =====================================================================

// i18n

// =====================================================================

const I18N = {

zh: {

console: '控制台',

nav_chat: '对话', nav_manage: '管理', nav_monitor: '监控',

menu_chat: '对话', menu_config: '配置', menu_skills: '技能',

menu_memory: '记忆', menu_channels: '通道', menu_tasks: '定时',

menu_logs: '日志',

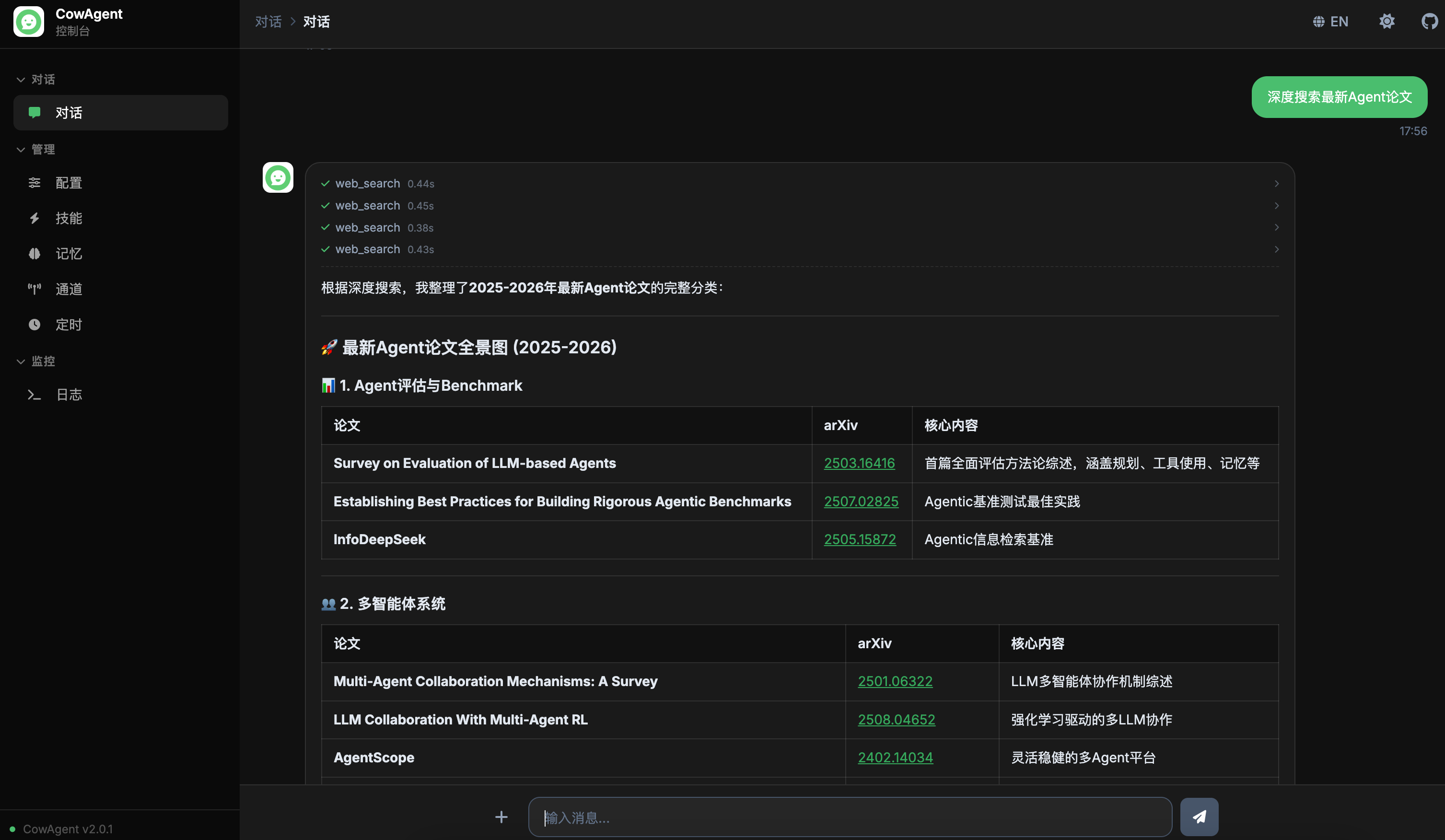

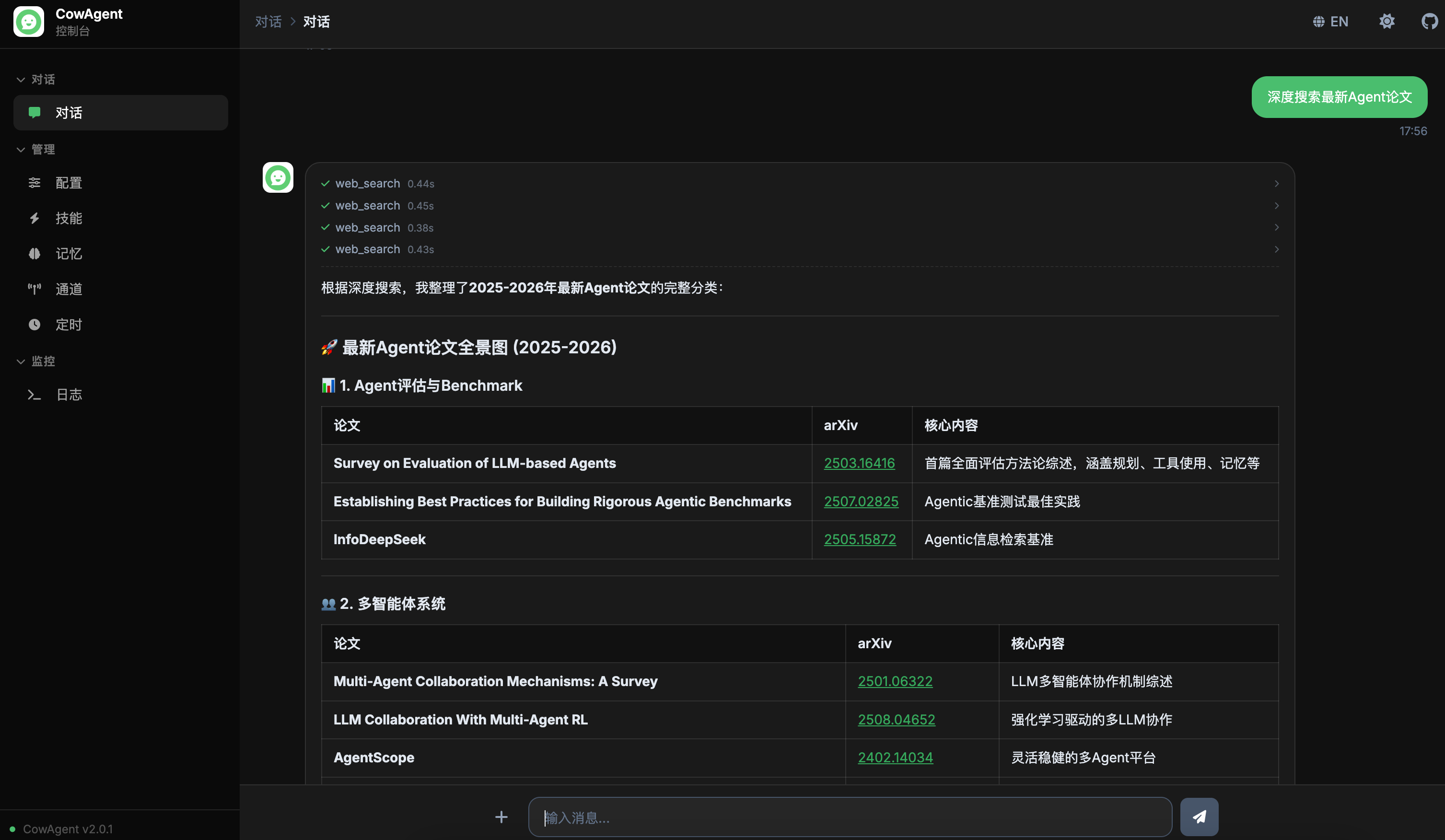

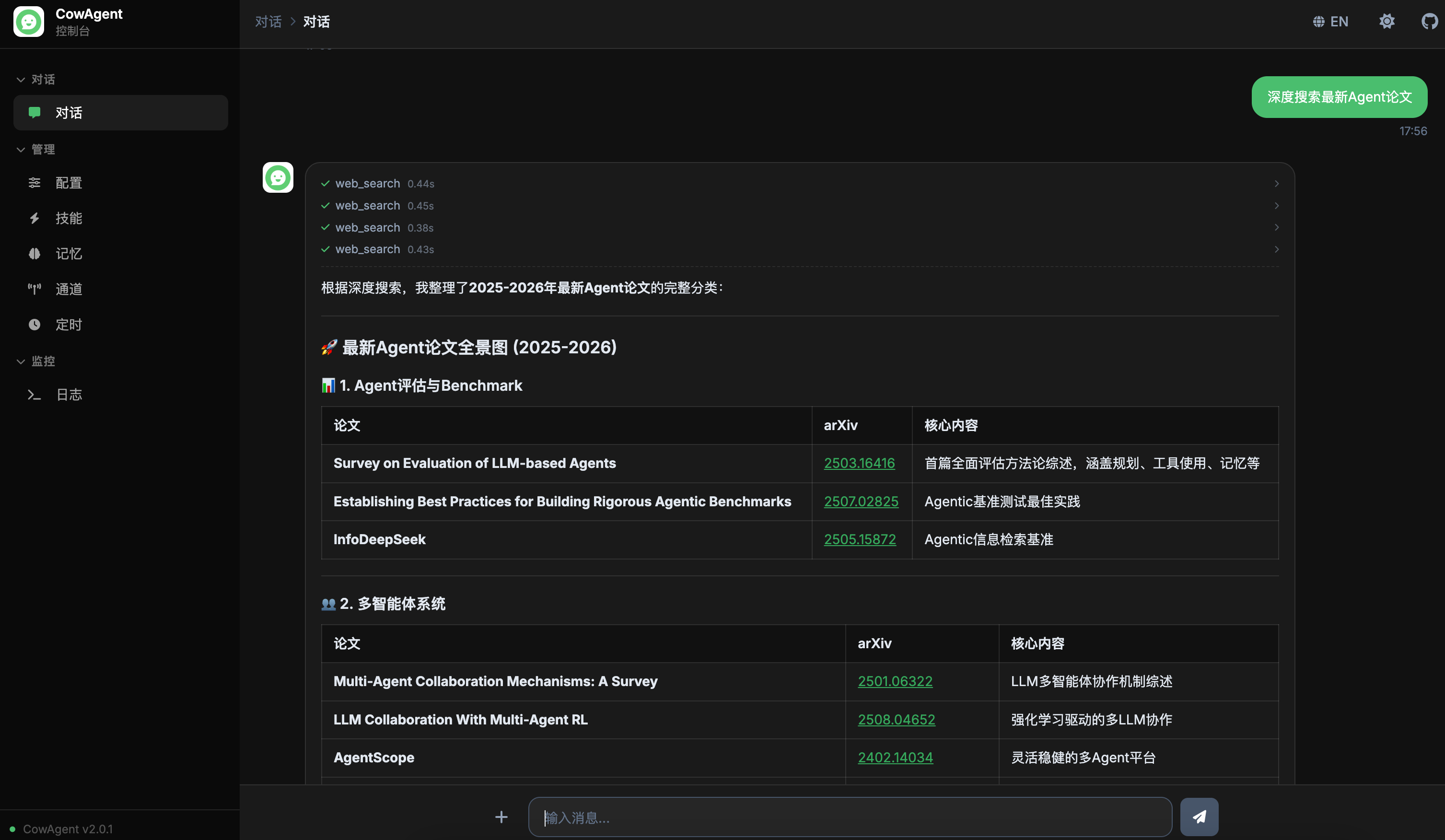

welcome_subtitle: '我可以帮你解答问题、管理计算机、创造和执行技能,并通过长期记忆

不断成长',

example_sys_title: '系统管理', example_sys_text: '帮我查看工作空间里有哪些文件',

example_task_title: '技能系统', example_task_text: '查看所有支持的工具和技能',

example_code_title: '编程助手', example_code_text: '帮我编写一个Python爬虫脚本',

input_placeholder: '输入消息...',

config_title: '配置管理', config_desc: '管理模型和 Agent 配置',

config_model: '模型配置', config_agent: 'Agent 配置',

config_channel: '通道配置',

config_agent_enabled: 'Agent 模式', config_max_tokens: '最大 Token',

config_max_turns: '最大轮次', config_max_steps: '最大步数',

config_channel_type: '通道类型',

config_provider: '模型厂商', config_model_name: '模型',

config_custom_model_hint: '输入自定义模型名称',

config_save: '保存', config_saved: '已保存',

config_save_error: '保存失败',

config_custom_option: '自定义...',

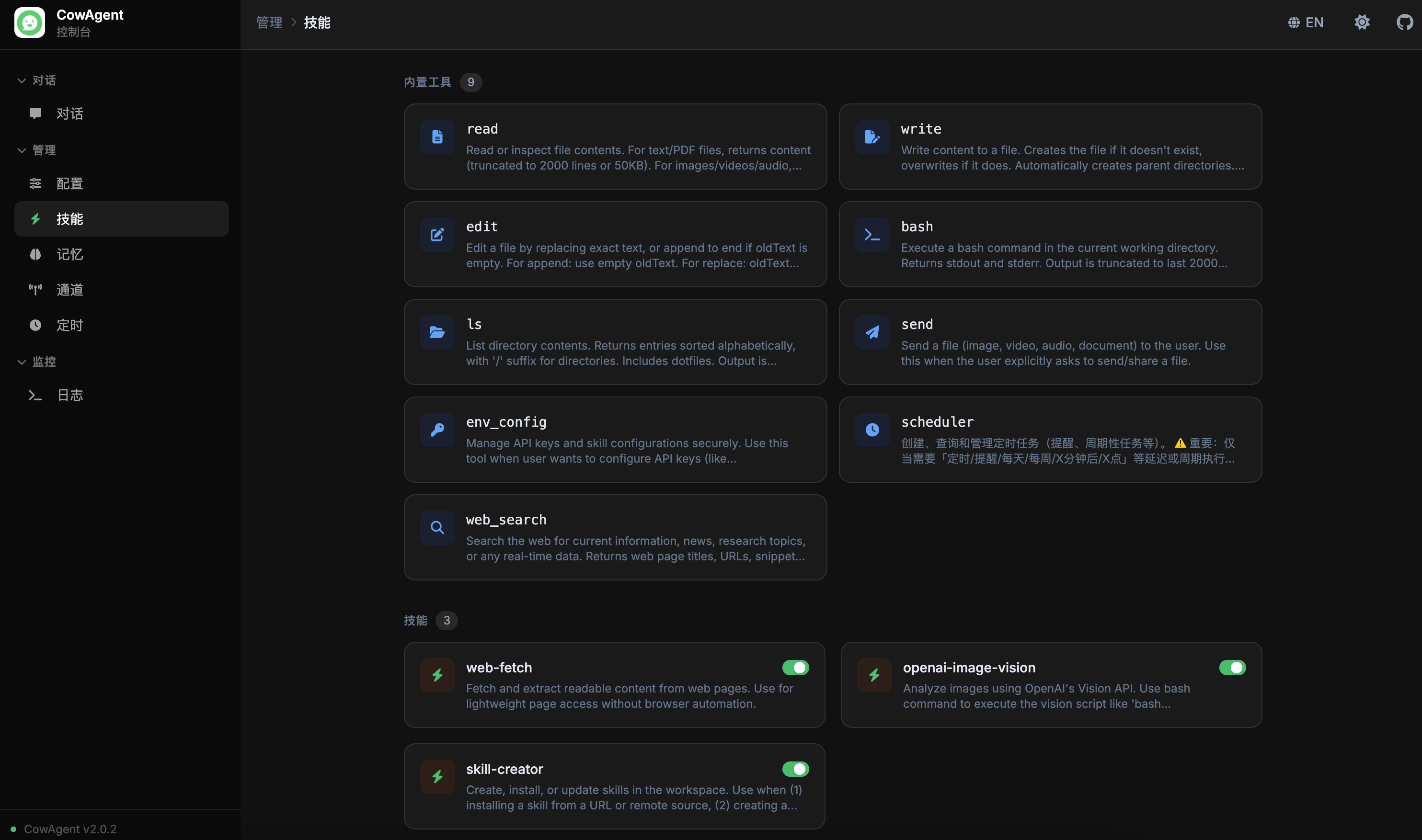

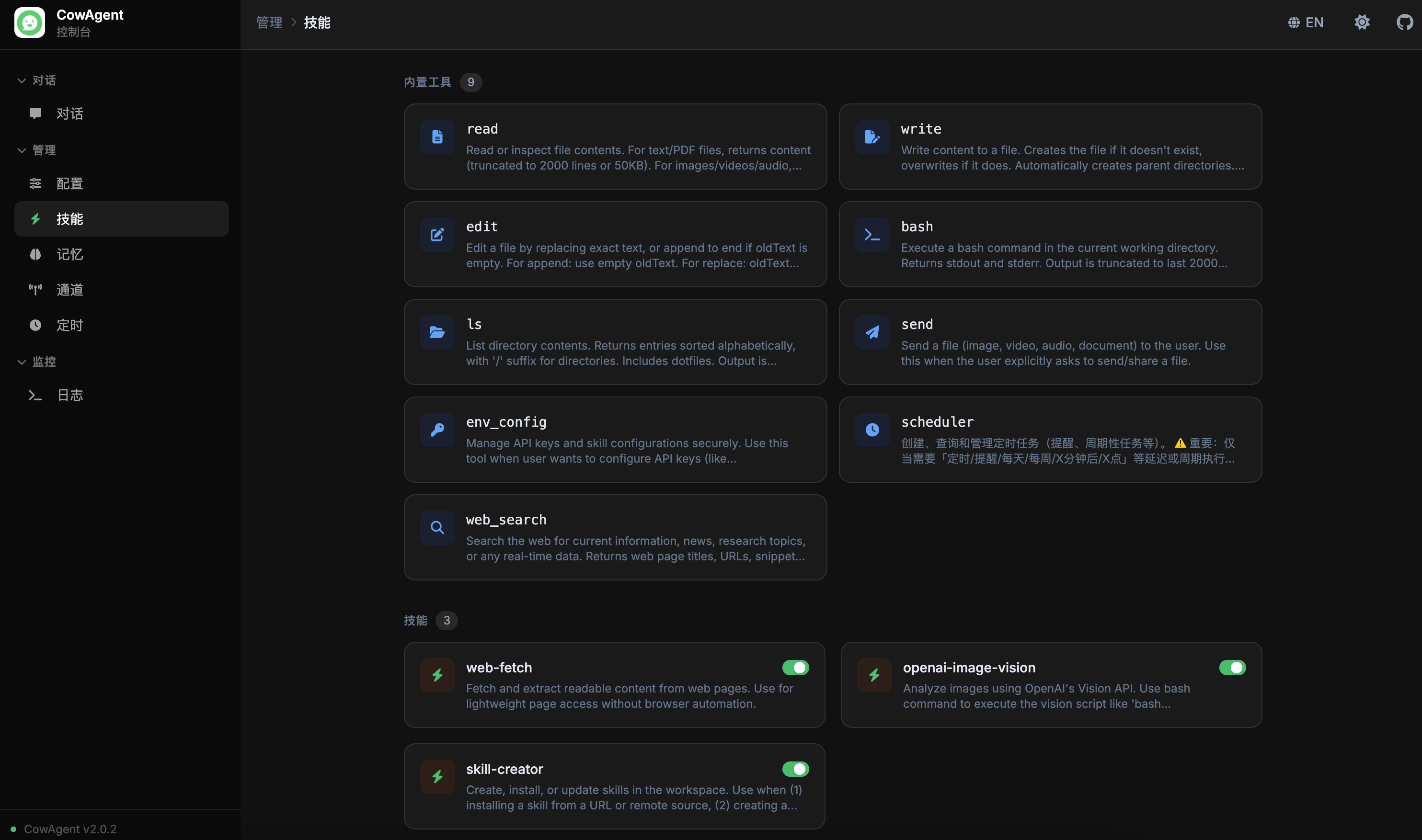

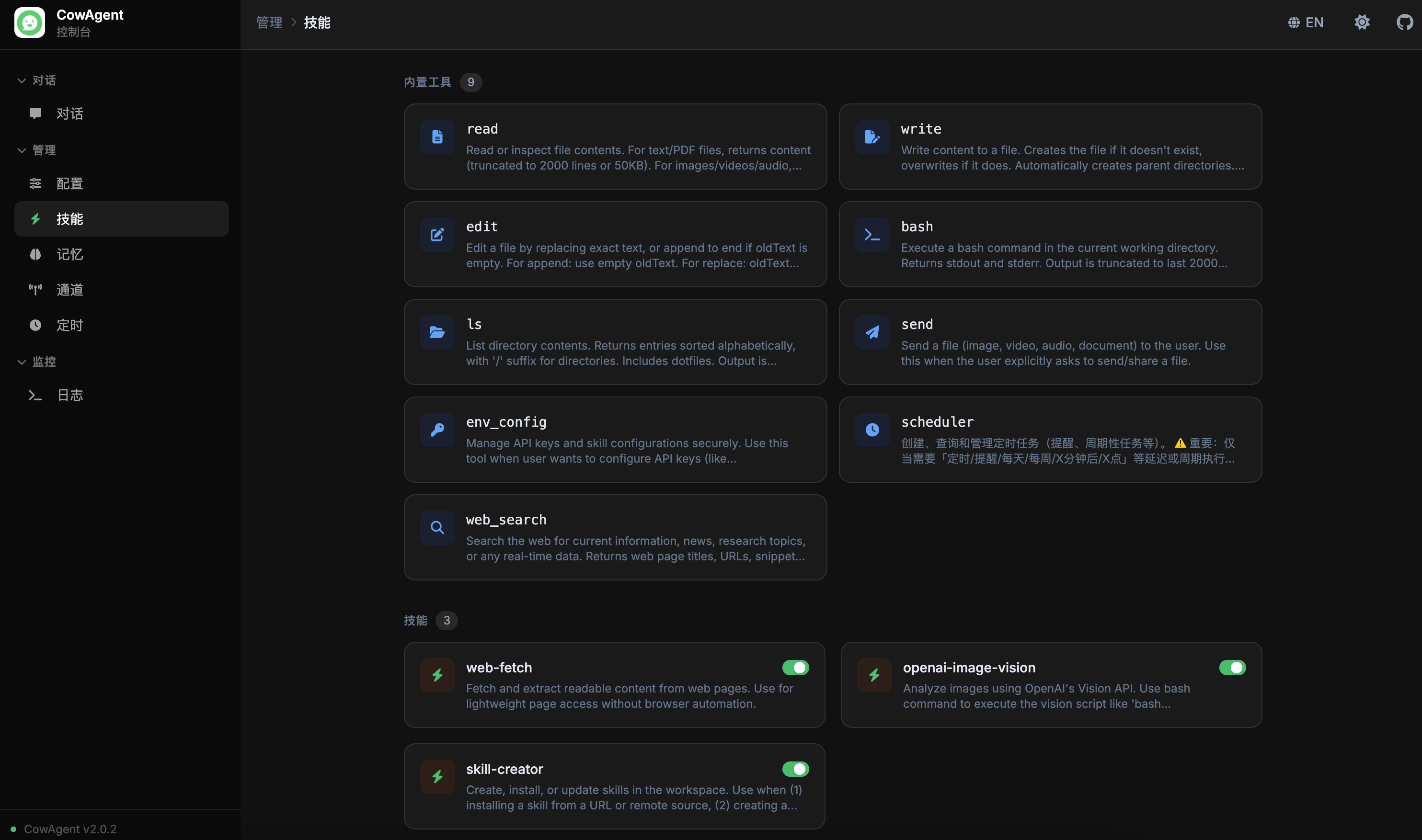

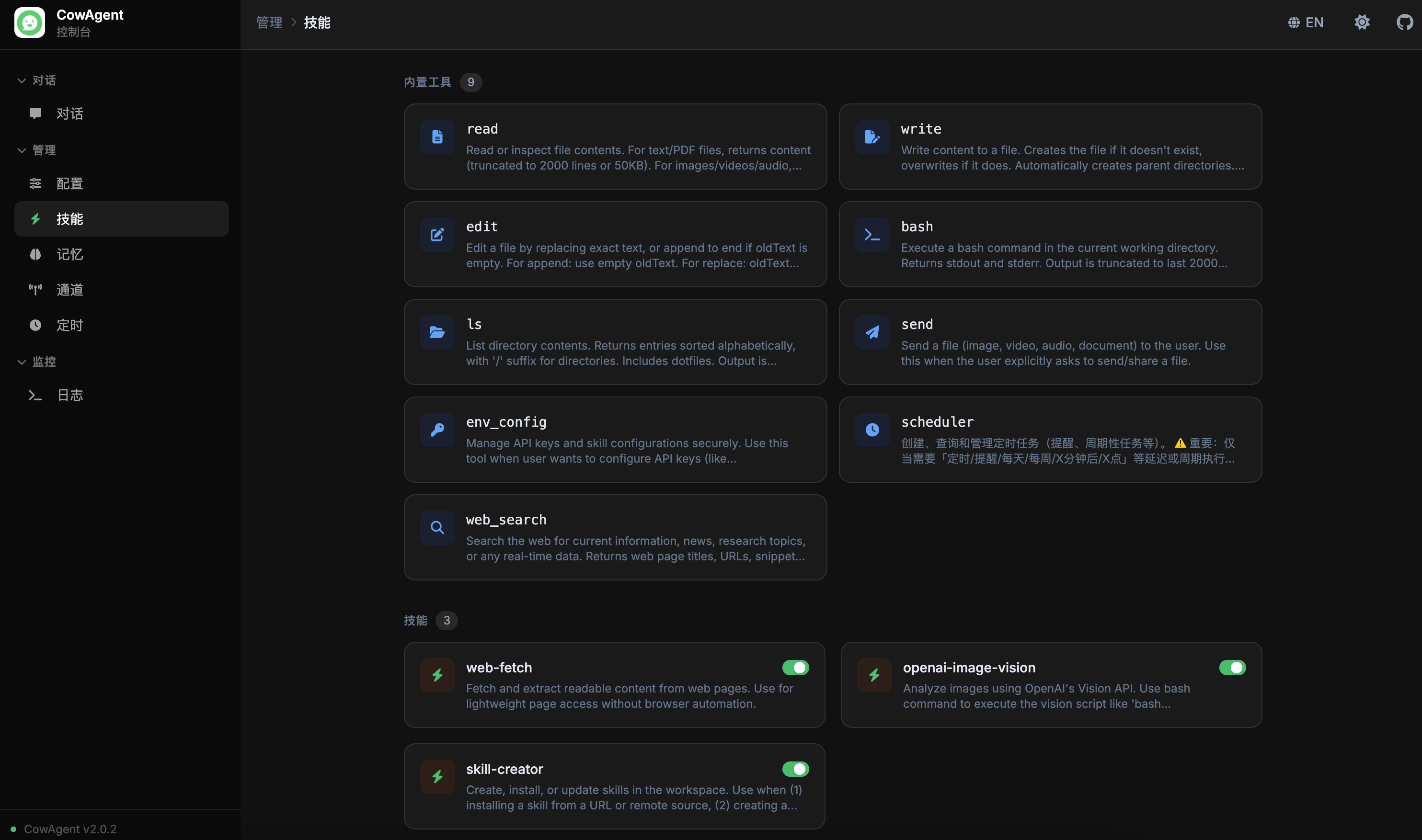

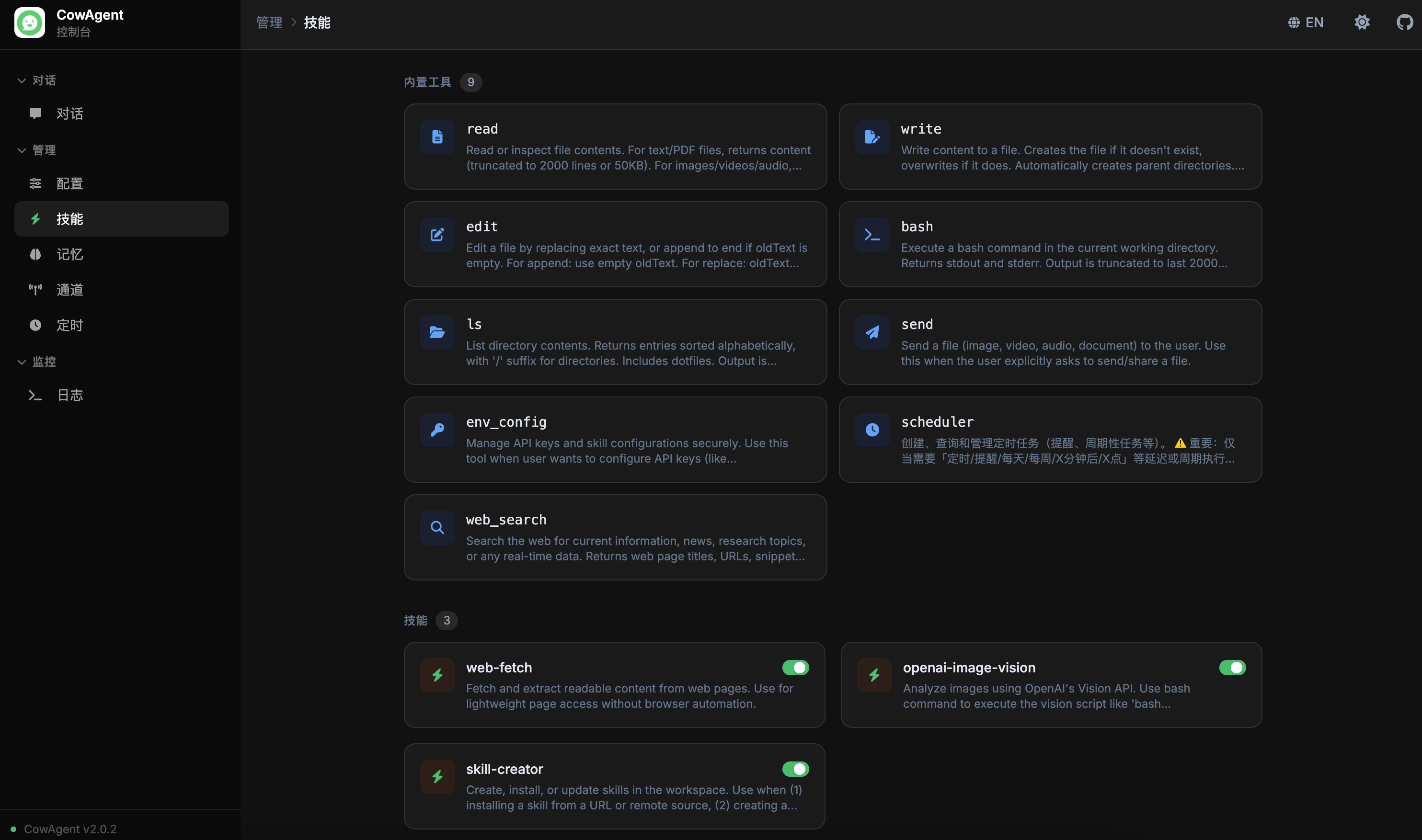

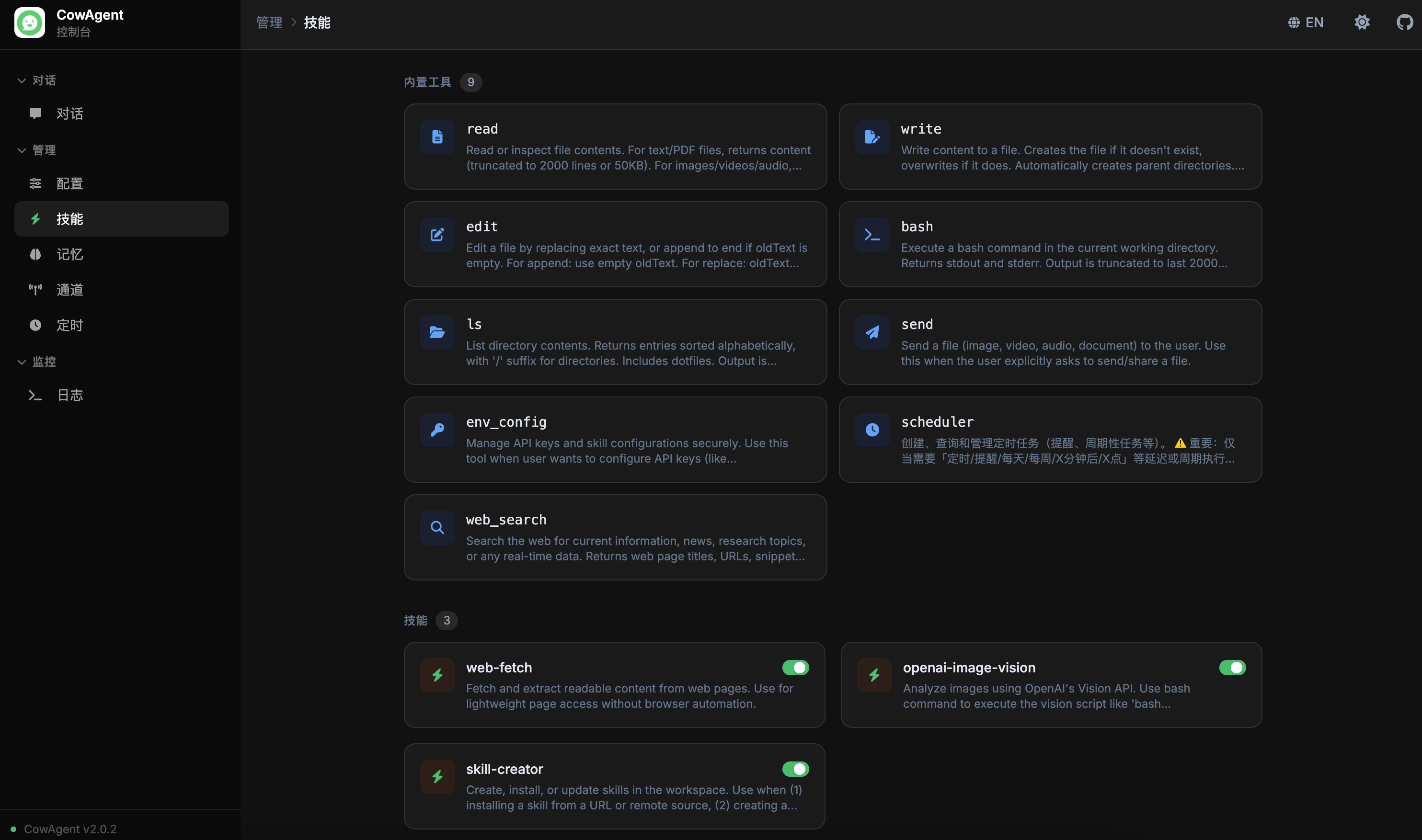

skills_title: '技能管理', skills_desc: '查看、启用或禁用 Agent 技能',

skills_loading: '加载技能中...', skills_loading_desc: '技能加载后将显示在此处',

tools_section_title: '内置工具', tools_loading: '加载工具中...',

skills_section_title: '技能', skill_enable: '启用', skill_disable: '禁用',

skill_toggle_error: '操作失败,请稍后再试',

memory_title: '记忆管理', memory_desc: '查看 Agent 记忆文件和内容',

memory_loading: '加载记忆文件中...', memory_loading_desc: '记忆文件将显示在此处',

memory_back: '返回列表',

memory_col_name: '文件名', memory_col_type: '类型', memory_col_size: '大小', memory_col_updated: '更新时间',

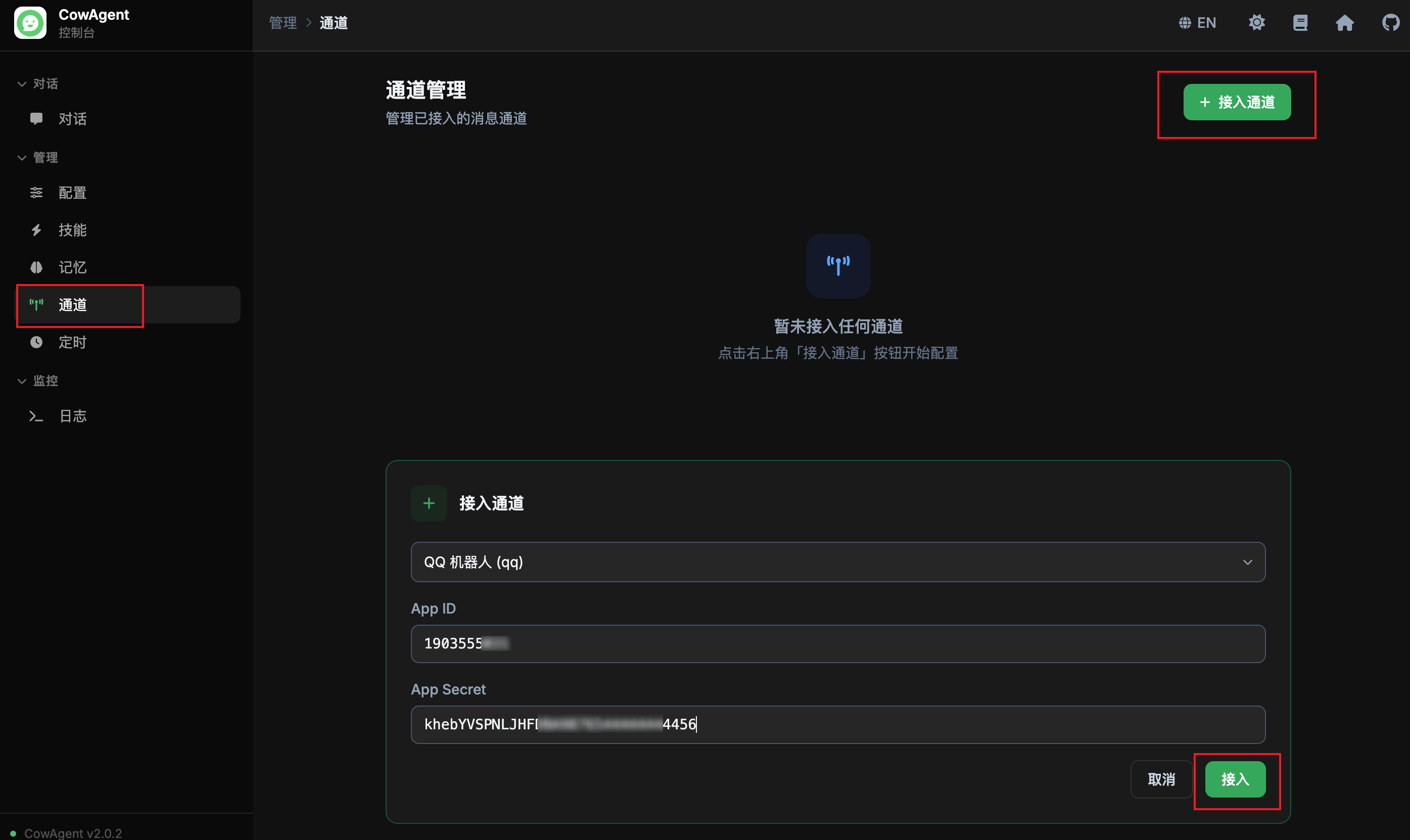

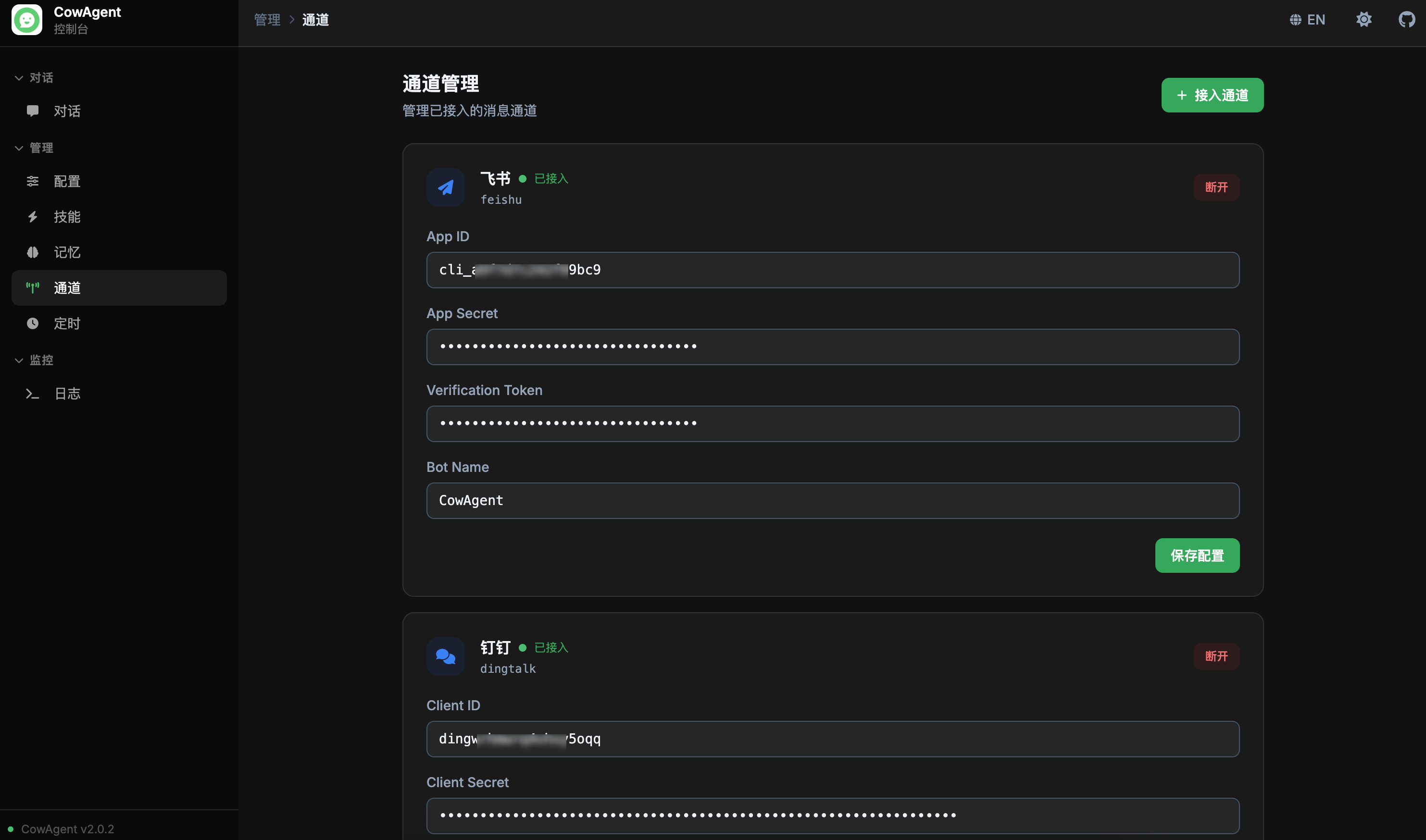

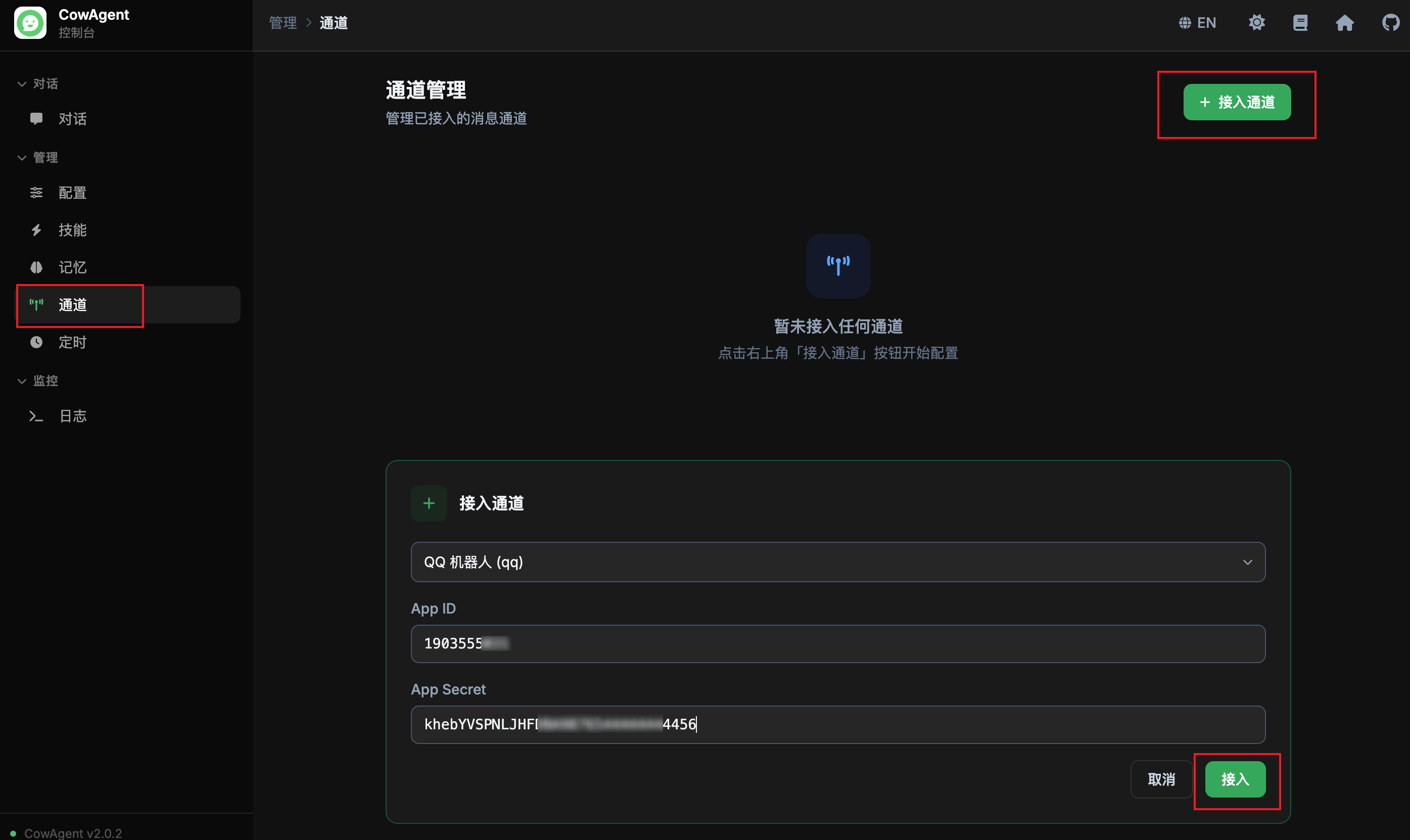

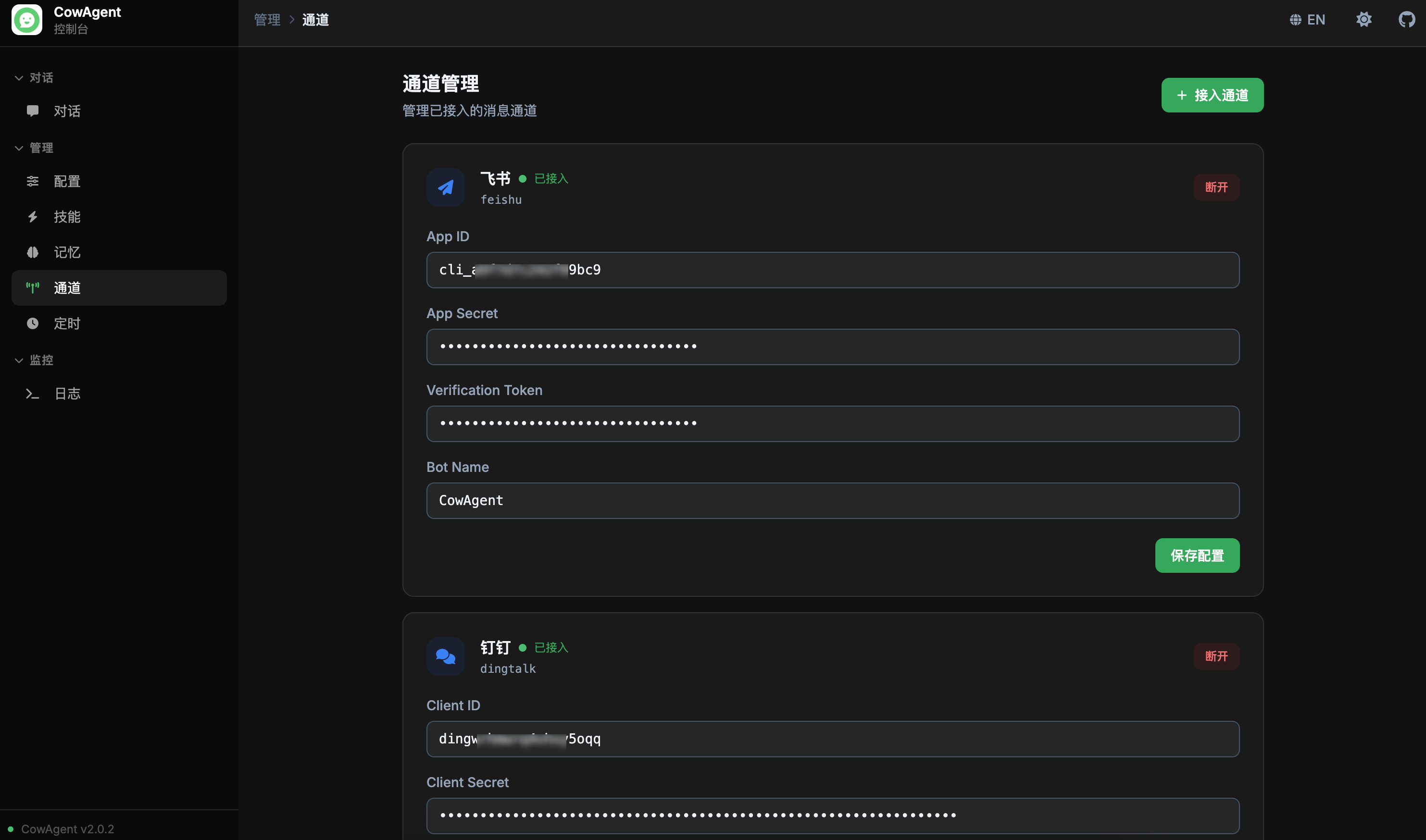

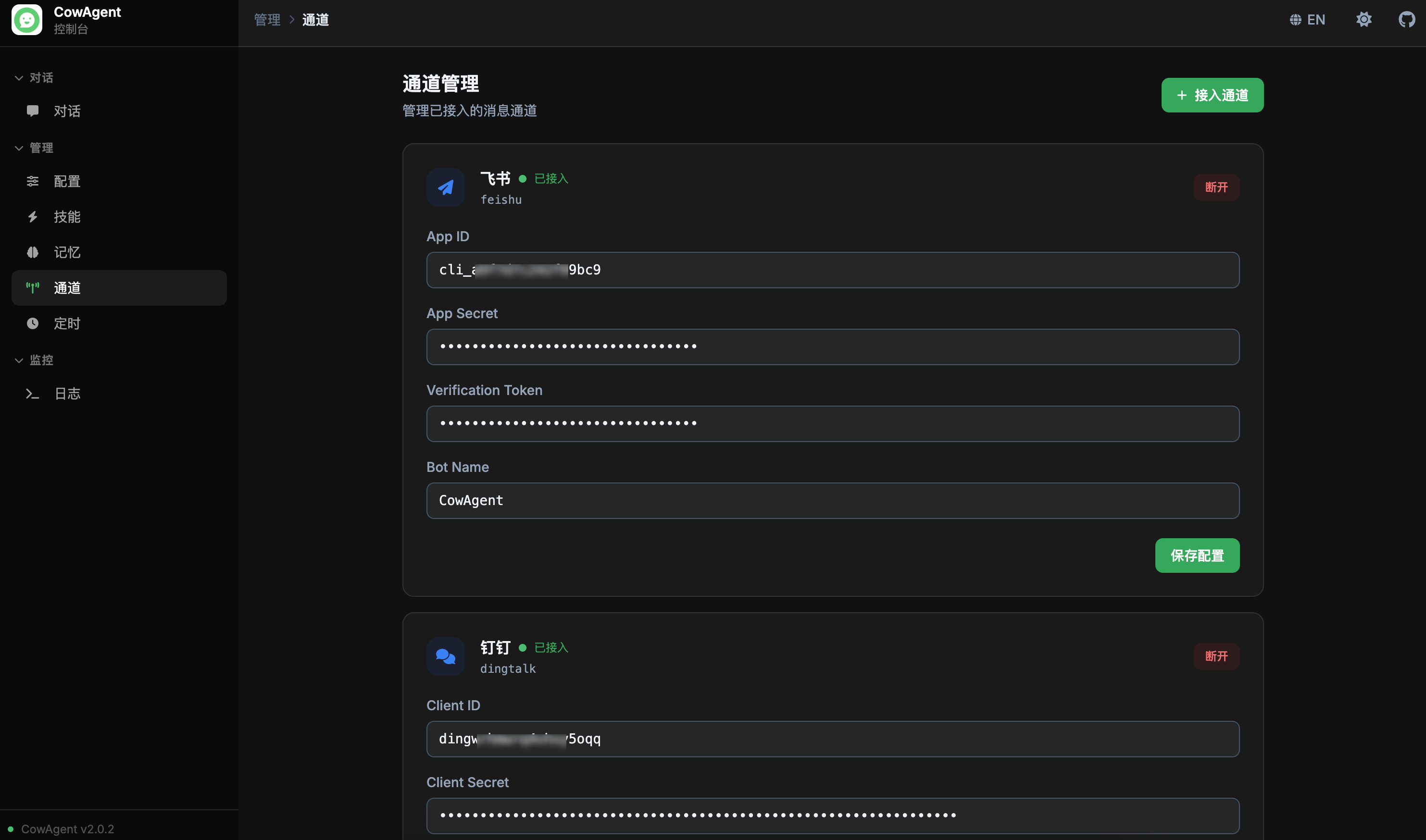

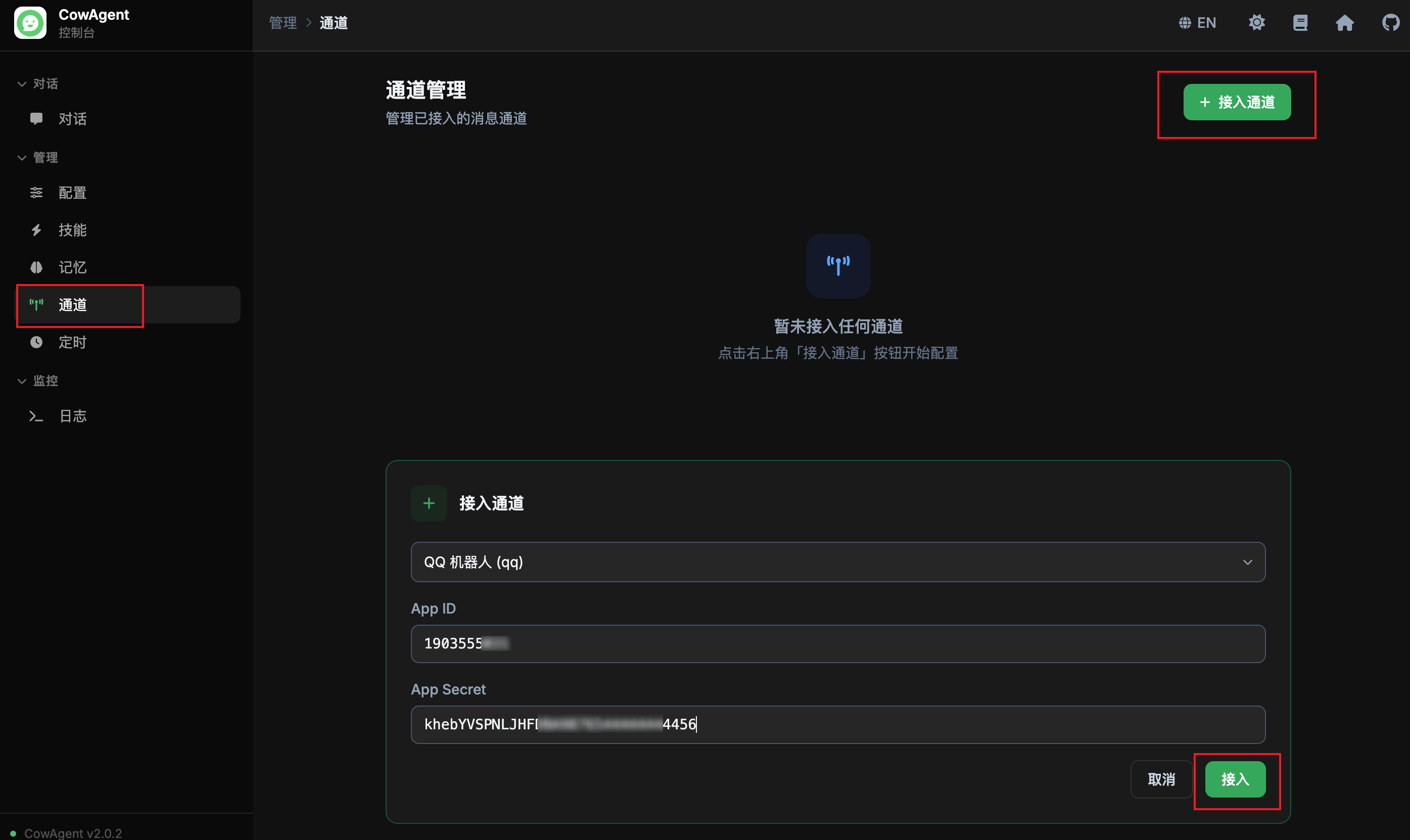

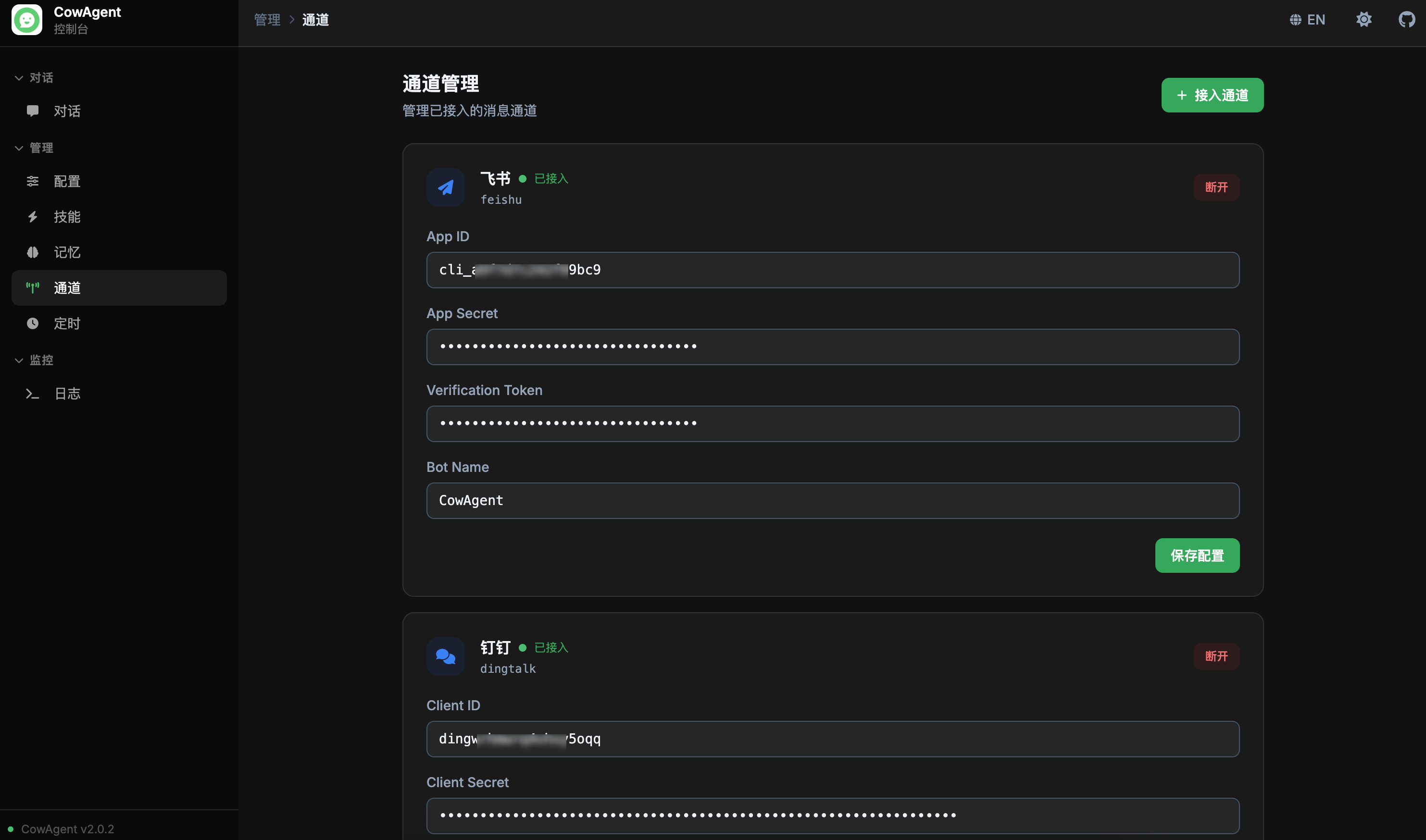

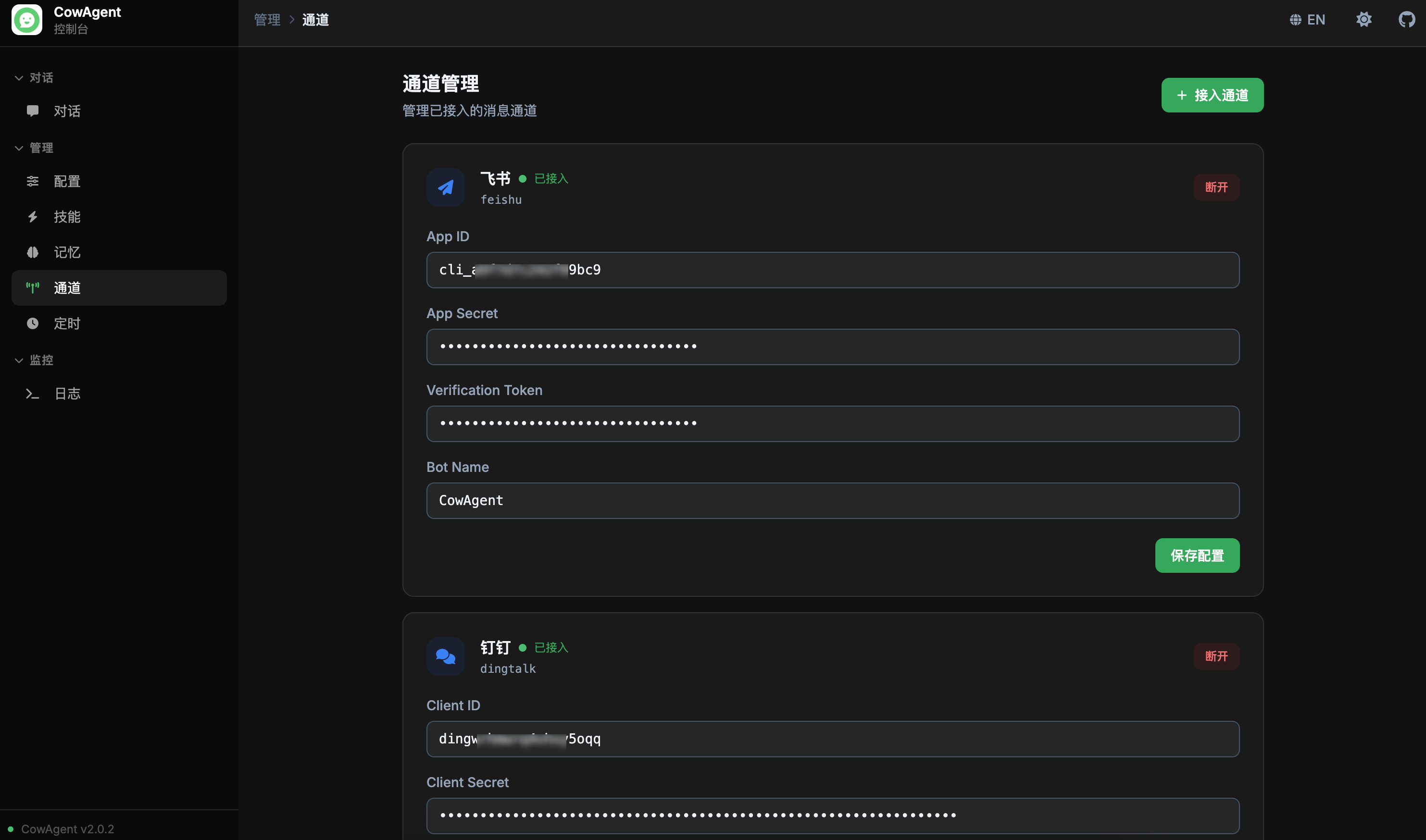

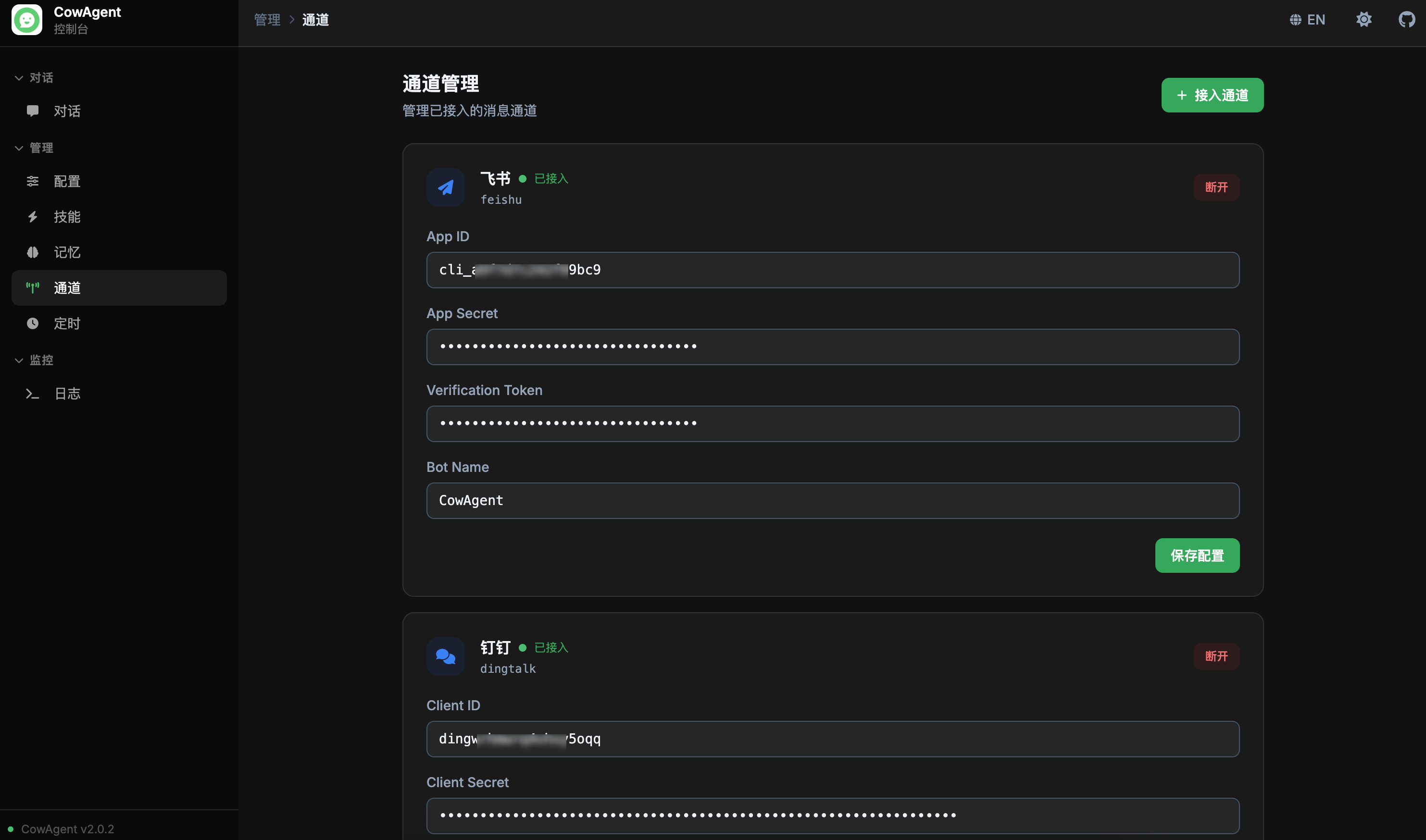

channels_title: '通道管理', channels_desc: '管理已接入的消息通道',

channels_add: '接入通道', channels_disconnect: '断开',

channels_save: '保存配置', channels_saved: '已保存', channels_save_error: '保存失败',

channels_restarted: '已保存并重启',

channels_connect_btn: '接入', channels_cancel: '取消',

channels_select_placeholder: '选择要接入的通道...',

channels_empty: '暂未接入任何通道', channels_empty_desc: '点击右上角「接入通道」按钮开始配置',

channels_disconnect_confirm: '确认断开该通道?配置将保留但通道会停止运行。',

channels_connected: '已接入', channels_connecting: '接入中...',

tasks_title: '定时任务', tasks_desc: '查看和管理定时任务',

tasks_coming: '即将推出', tasks_coming_desc: '定时任务管理功能即将在此提供',

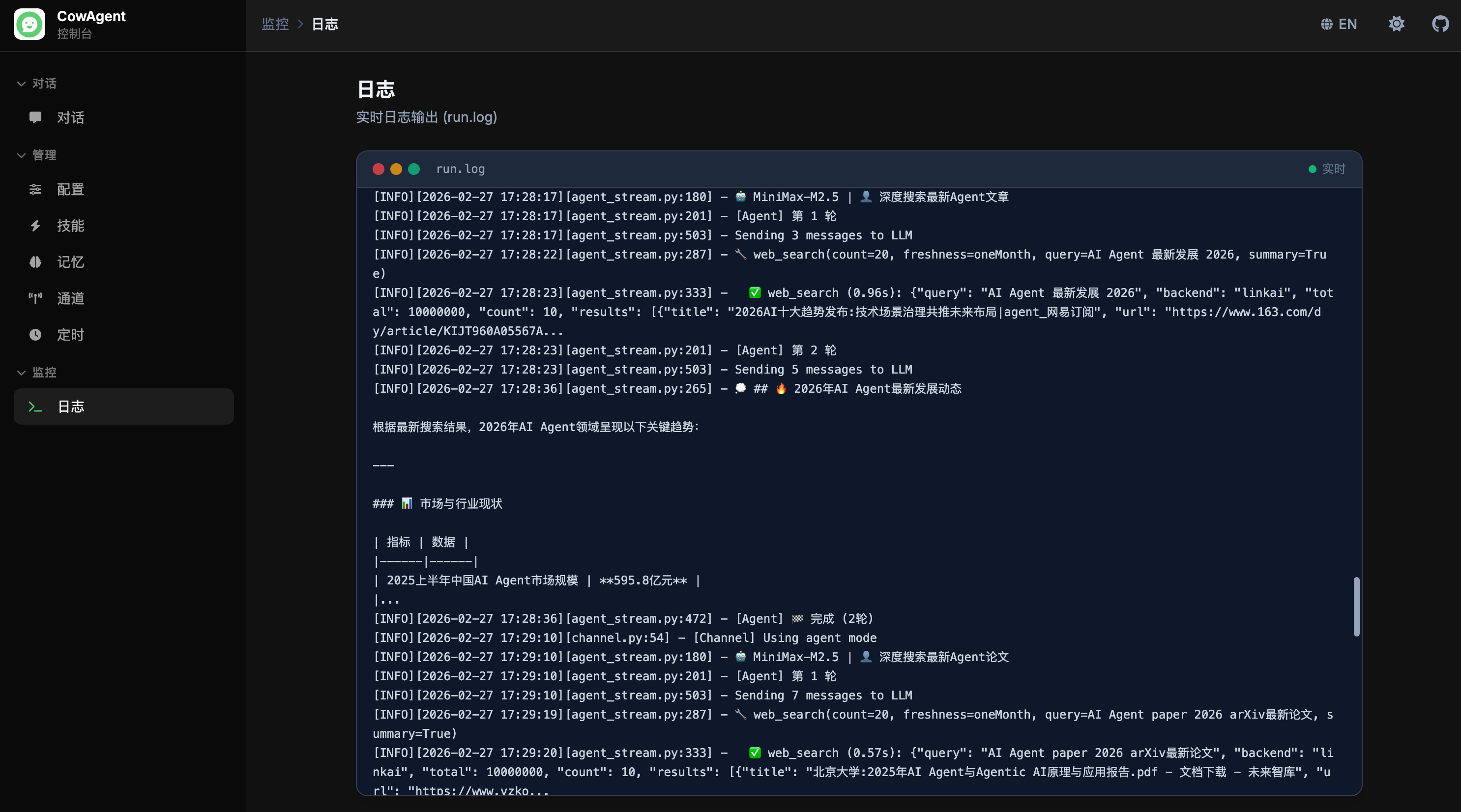

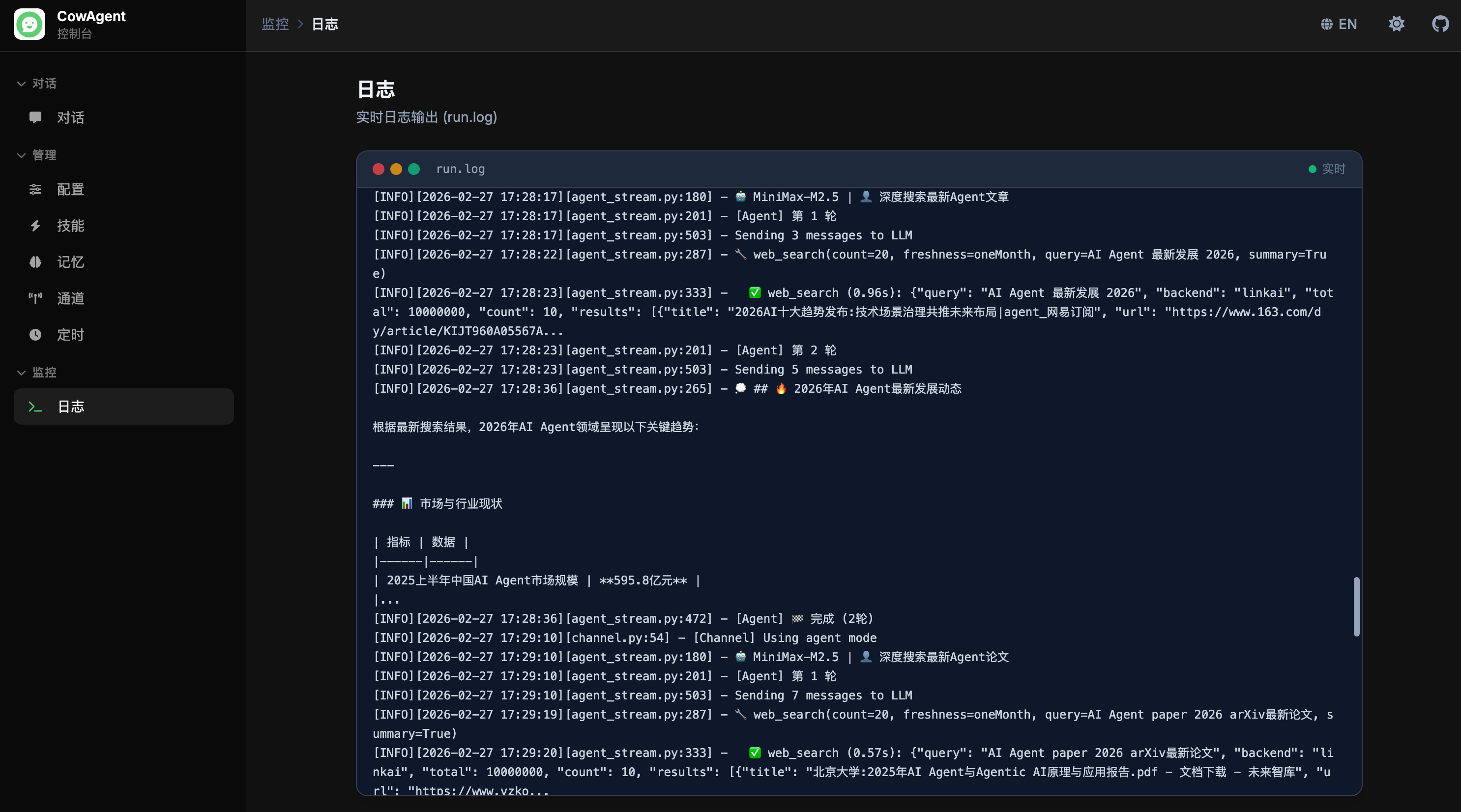

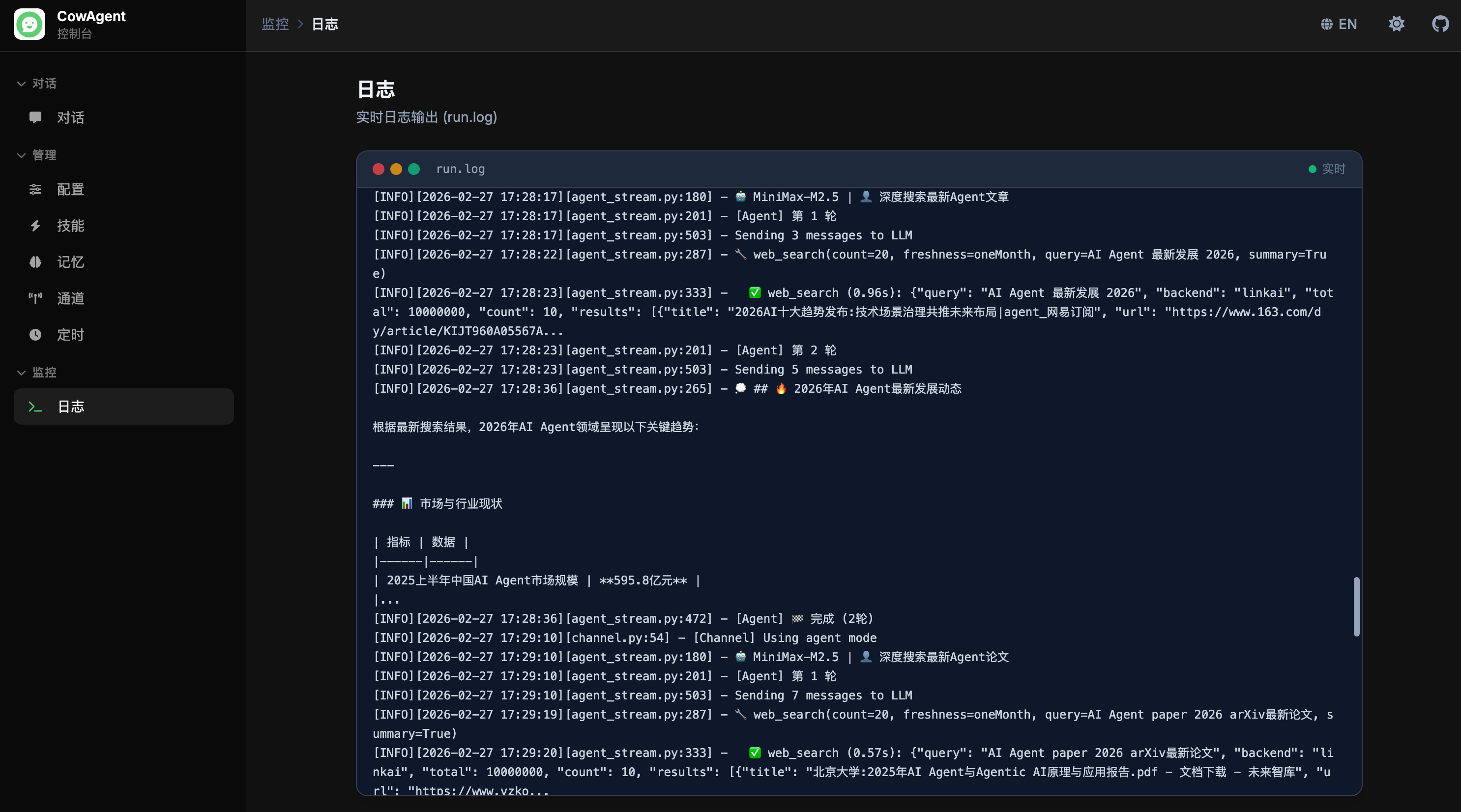

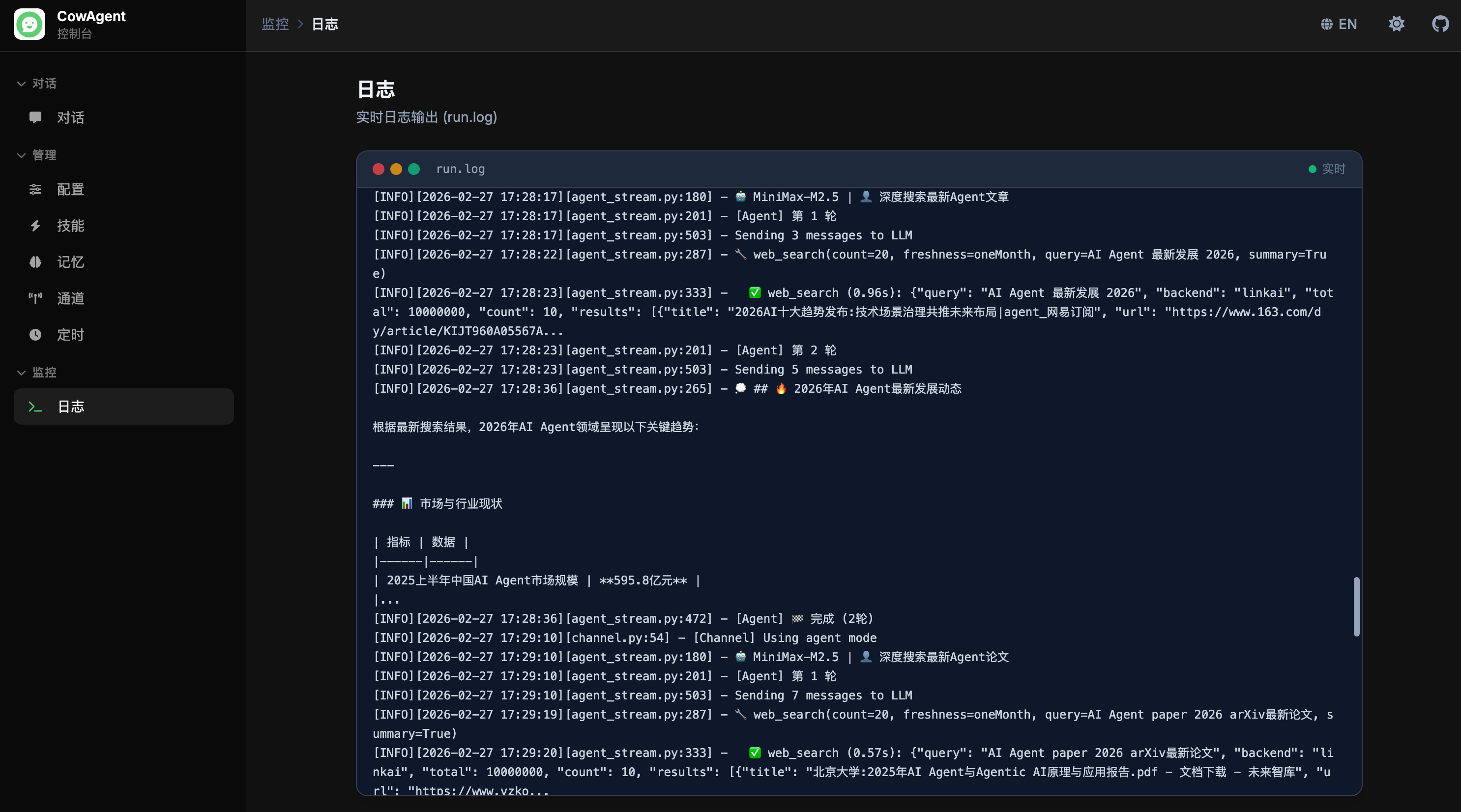

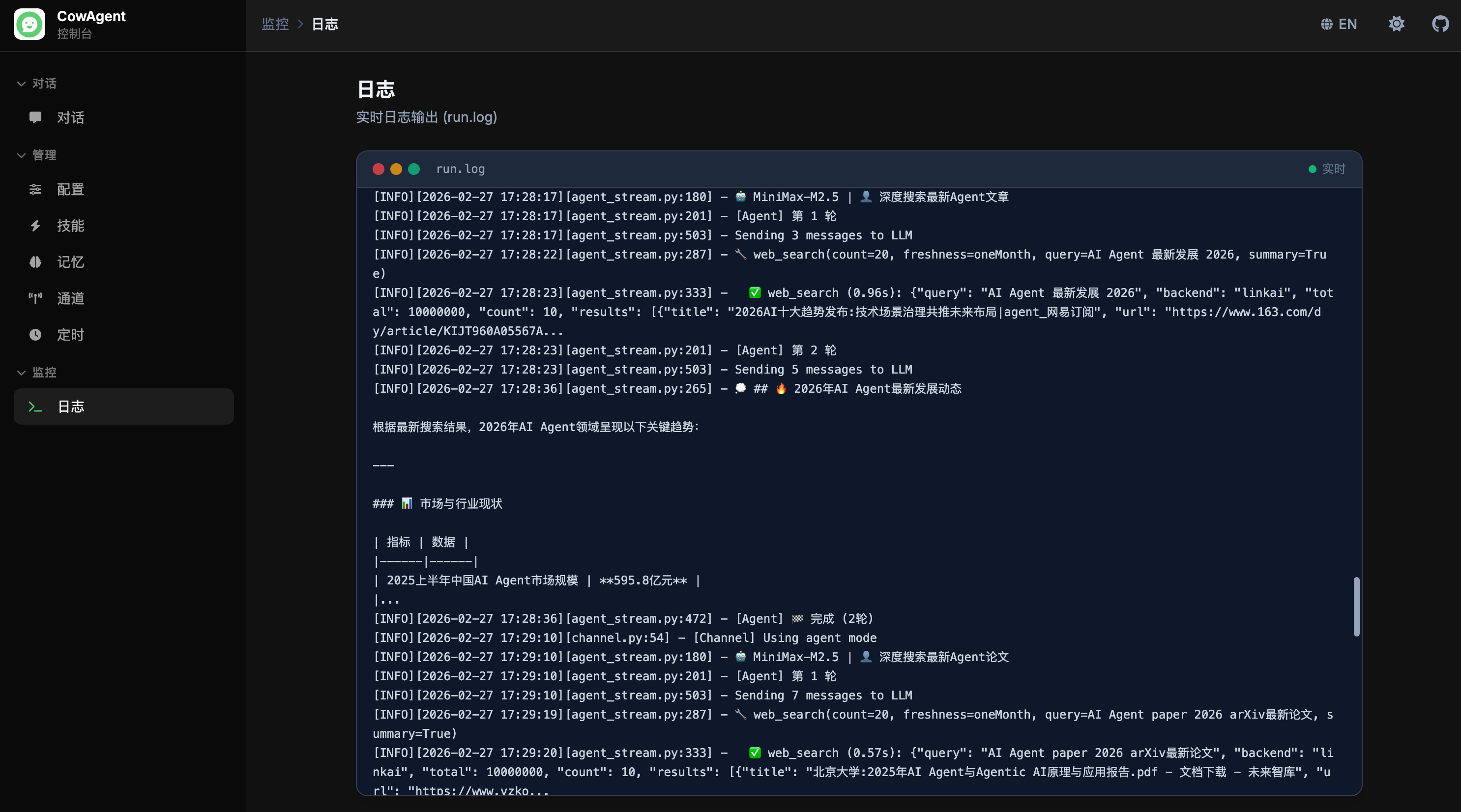

logs_title: '日志', logs_desc: '实时日志输出 (run.log)',

logs_live: '实时', logs_coming_msg: '日志流即将在此提供。将连接 run.log 实现类似 tail -f 的实时输出。',

error_send: '发送失败,请稍后再试。', error_timeout: '请求超时,请再试一次。',

},

en: {

console: 'Console',

nav_chat: 'Chat', nav_manage: 'Management', nav_monitor: 'Monitor',

menu_chat: 'Chat', menu_config: 'Config', menu_skills: 'Skills',

menu_memory: 'Memory', menu_channels: 'Channels', menu_tasks: 'Tasks',

menu_logs: 'Logs',

welcome_subtitle: 'I can help you answer questions, manage your computer, create and execute skills, and keep growing through

long-term memory.',

example_sys_title: 'System', example_sys_text: 'Show me the files in the workspace',

example_task_title: 'Skills', example_task_text: 'Show current tools and skills',

example_code_title: 'Coding', example_code_text: 'Write a Python web scraper script',

input_placeholder: 'Type a message...',

config_title: 'Configuration', config_desc: 'Manage model and agent settings',

config_model: 'Model Configuration', config_agent: 'Agent Configuration',

config_channel: 'Channel Configuration',

config_agent_enabled: 'Agent Mode', config_max_tokens: 'Max Tokens',

config_max_turns: 'Max Turns', config_max_steps: 'Max Steps',

config_channel_type: 'Channel Type',

config_provider: 'Provider', config_model_name: 'Model',

config_custom_model_hint: 'Enter custom model name',

config_save: 'Save', config_saved: 'Saved',

config_save_error: 'Save failed',

config_custom_option: 'Custom...',

skills_title: 'Skills', skills_desc: 'View, enable, or disable agent skills',

skills_loading: 'Loading skills...', skills_loading_desc: 'Skills will be displayed here after loading',

tools_section_title: 'Built-in Tools', tools_loading: 'Loading tools...',

skills_section_title: 'Skills', skill_enable: 'Enable', skill_disable: 'Disable',

skill_toggle_error: 'Operation failed, please try again',

memory_title: 'Memory', memory_desc: 'View agent memory files and contents',

memory_loading: 'Loading memory files...', memory_loading_desc: 'Memory files will be displayed here',

memory_back: 'Back to list',

memory_col_name: 'Filename', memory_col_type: 'Type', memory_col_size: 'Size', memory_col_updated: 'Updated',

channels_title: 'Channels', channels_desc: 'Manage connected messaging channels',

channels_add: 'Connect', channels_disconnect: 'Disconnect',

channels_save: 'Save', channels_saved: 'Saved', channels_save_error: 'Save failed',

channels_restarted: 'Saved & Restarted',

channels_connect_btn: 'Connect', channels_cancel: 'Cancel',

channels_select_placeholder: 'Select a channel to connect...',

channels_empty: 'No channels connected', channels_empty_desc: 'Click the "Connect" button above to get started',

channels_disconnect_confirm: 'Disconnect this channel? Config will be preserved but the channel will stop.',

channels_connected: 'Connected', channels_connecting: 'Connecting...',

tasks_title: 'Scheduled Tasks', tasks_desc: 'View and manage scheduled tasks',

tasks_coming: 'Coming Soon', tasks_coming_desc: 'Scheduled task management will be available here',

logs_title: 'Logs', logs_desc: 'Real-time log output (run.log)',

logs_live: 'Live', logs_coming_msg: 'Log streaming will be available here. Connects to run.log for real-time output similar to tail -f.',

error_send: 'Failed to send. Please try again.', error_timeout: 'Request timeout. Please try again.',

}

};

let currentLang = localStorage.getItem('cow_lang') || 'zh';

function t(key) {

return (I18N[currentLang] && I18N[currentLang][key]) || (I18N.en[key]) || key;

}

function applyI18n() {

document.querySelectorAll('[data-i18n]').forEach(el => {

el.textContent = t(el.dataset.i18n);

});

document.querySelectorAll('[data-i18n-html]').forEach(el => {

el.innerHTML = t(el.dataset.i18nHtml);

});

document.querySelectorAll('[data-i18n-placeholder]').forEach(el => {

el.placeholder = t(el.dataset['i18nPlaceholder']);

});

document.getElementById('lang-label').textContent = currentLang === 'zh' ? 'EN' : '中文';

}

function toggleLanguage() {

currentLang = currentLang === 'zh' ? 'en' : 'zh';

localStorage.setItem('cow_lang', currentLang);

applyI18n();

}

// =====================================================================

// Theme

// =====================================================================

let currentTheme = localStorage.getItem('cow_theme') || 'dark';

function applyTheme() {

const root = document.documentElement;

if (currentTheme === 'dark') {

root.classList.add('dark');

document.getElementById('theme-icon').className = 'fas fa-sun';

document.getElementById('hljs-light').disabled = true;

document.getElementById('hljs-dark').disabled = false;

} else {

root.classList.remove('dark');

document.getElementById('theme-icon').className = 'fas fa-moon';

document.getElementById('hljs-light').disabled = false;

document.getElementById('hljs-dark').disabled = true;

}

}

function toggleTheme() {

currentTheme = currentTheme === 'dark' ? 'light' : 'dark';

localStorage.setItem('cow_theme', currentTheme);

applyTheme();

}

// =====================================================================

// Sidebar & Navigation

// =====================================================================

const VIEW_META = {

chat: { group: 'nav_chat', page: 'menu_chat' },

config: { group: 'nav_manage', page: 'menu_config' },

skills: { group: 'nav_manage', page: 'menu_skills' },

memory: { group: 'nav_manage', page: 'menu_memory' },

channels: { group: 'nav_manage', page: 'menu_channels' },

tasks: { group: 'nav_manage', page: 'menu_tasks' },

logs: { group: 'nav_monitor', page: 'menu_logs' },

};

let currentView = 'chat';

function navigateTo(viewId) {

if (!VIEW_META[viewId]) return;

document.querySelectorAll('.view').forEach(v => v.classList.remove('active'));

const target = document.getElementById('view-' + viewId);

if (target) target.classList.add('active');

document.querySelectorAll('.sidebar-item').forEach(item => {

item.classList.toggle('active', item.dataset.view === viewId);

});

const meta = VIEW_META[viewId];

document.getElementById('breadcrumb-group').textContent = t(meta.group);

document.getElementById('breadcrumb-group').dataset.i18n = meta.group;

document.getElementById('breadcrumb-page').textContent = t(meta.page);

document.getElementById('breadcrumb-page').dataset.i18n = meta.page;

currentView = viewId;

if (window.innerWidth < 1024) closeSidebar();

}

function toggleSidebar() {

const sidebar = document.getElementById('sidebar');

const overlay = document.getElementById('sidebar-overlay');

const isOpen = !sidebar.classList.contains('-translate-x-full');

if (isOpen) {

closeSidebar();

} else {

sidebar.classList.remove('-translate-x-full');

overlay.classList.remove('hidden');

}

}

function closeSidebar() {

document.getElementById('sidebar').classList.add('-translate-x-full');

document.getElementById('sidebar-overlay').classList.add('hidden');

}

document.querySelectorAll('.menu-group > button').forEach(btn => {

btn.addEventListener('click', () => {

btn.parentElement.classList.toggle('open');

});

});

document.querySelectorAll('.sidebar-item').forEach(item => {

item.addEventListener('click', () => navigateTo(item.dataset.view));

});

window.addEventListener('resize', () => {

if (window.innerWidth >= 1024) {

document.getElementById('sidebar').classList.remove('-translate-x-full');

document.getElementById('sidebar-overlay').classList.add('hidden');

} else {

if (!document.getElementById('sidebar').classList.contains('-translate-x-full')) {

closeSidebar();

}

}

});

// =====================================================================

// Markdown Renderer

// =====================================================================

function createMd() {

const md = window.markdownit({

html: false, breaks: true, linkify: true, typographer: true,

highlight: function(str, lang) {

if (lang && hljs.getLanguage(lang)) {

try { return hljs.highlight(str, { language: lang }).value; } catch (_) {}

}

return hljs.highlightAuto(str).value;

}

});

const defaultLinkOpen = md.renderer.rules.link_open || function(tokens, idx, options, env, self) {

return self.renderToken(tokens, idx, options);

};

md.renderer.rules.link_open = function(tokens, idx, options, env, self) {

tokens[idx].attrPush(['target', '_blank']);

tokens[idx].attrPush(['rel', 'noopener noreferrer']);

return defaultLinkOpen(tokens, idx, options, env, self);

};

return md;

}

const md = createMd();

function renderMarkdown(text) {

try { return md.render(text); }

catch (e) { return text.replace(/\n/g, '

'); }

}

// =====================================================================

// Chat Module

// =====================================================================

let isPolling = false;

let loadingContainers = {};

let activeStreams = {}; // request_id -> EventSource

let isComposing = false;

let appConfig = { use_agent: false, title: 'CowAgent', subtitle: '', providers: {}, api_bases: {} };

const SESSION_ID_KEY = 'cow_session_id';

function generateSessionId() {

return 'session_' + ([1e7]+-1e3+-4e3+-8e3+-1e11).replace(/[018]/g, c =>

(c ^ crypto.getRandomValues(new Uint8Array(1))[0] & 15 >> c / 4).toString(16)

);

}

// Restore session_id from localStorage so conversation history survives page refresh.

// A new id is only generated when the user explicitly starts a new chat.

function loadOrCreateSessionId() {

const stored = localStorage.getItem(SESSION_ID_KEY);

if (stored) return stored;

const fresh = generateSessionId();

localStorage.setItem(SESSION_ID_KEY, fresh);

return fresh;

}

let sessionId = loadOrCreateSessionId();

// ---- Conversation history state ----

let historyPage = 0; // last page fetched (0 = nothing fetched yet)

let historyHasMore = false;

let historyLoading = false;

fetch('/config').then(r => r.json()).then(data => {

if (data.status === 'success') {

appConfig = data;

const title = data.title || 'CowAgent';

document.getElementById('welcome-title').textContent = title;

initConfigView(data);

}

loadHistory(1);

}).catch(() => { loadHistory(1); });

const chatInput = document.getElementById('chat-input');

const sendBtn = document.getElementById('send-btn');

const messagesDiv = document.getElementById('chat-messages');

const fileInput = document.getElementById('file-input');

const attachmentPreview = document.getElementById('attachment-preview');

// Pending attachments: [{file_path, file_name, file_type, preview_url}]

// Items with _uploading=true are still in flight.

let pendingAttachments = [];

let uploadingCount = 0;

function updateSendBtnState() {

sendBtn.disabled = uploadingCount > 0 || (!chatInput.value.trim() && pendingAttachments.length === 0);

}

function renderAttachmentPreview() {

if (pendingAttachments.length === 0) {

attachmentPreview.classList.add('hidden');

attachmentPreview.innerHTML = '';

updateSendBtnState();

return;

}

attachmentPreview.classList.remove('hidden');

attachmentPreview.innerHTML = pendingAttachments.map((att, idx) => {

if (att._uploading) {

return `

${escapeHtml(att.file_name)}

`;

}

if (att.file_type === 'image') {

return `

`;

}

const icon = att.file_type === 'video' ? 'fa-film' : 'fa-file-alt';

return `

${escapeHtml(att.file_name)}

`;

}).join('');

updateSendBtnState();

}

function removeAttachment(idx) {

if (pendingAttachments[idx]?._uploading) return;

pendingAttachments.splice(idx, 1);

renderAttachmentPreview();

}

async function handleFileSelect(files) {

if (!files || files.length === 0) return;

const tasks = [];

for (const file of files) {

const placeholder = { file_name: file.name, file_type: 'file', _uploading: true };

pendingAttachments.push(placeholder);

uploadingCount++;

renderAttachmentPreview();

tasks.push((async () => {

const formData = new FormData();

formData.append('file', file);

formData.append('session_id', sessionId);

try {

const resp = await fetch('/upload', { method: 'POST', body: formData });

const data = await resp.json();

if (data.status === 'success') {

placeholder.file_path = data.file_path;

placeholder.file_name = data.file_name;

placeholder.file_type = data.file_type;

placeholder.preview_url = data.preview_url;

delete placeholder._uploading;

} else {

const i = pendingAttachments.indexOf(placeholder);

if (i !== -1) pendingAttachments.splice(i, 1);

}

} catch (e) {

console.error('Upload failed:', e);

const i = pendingAttachments.indexOf(placeholder);

if (i !== -1) pendingAttachments.splice(i, 1);

}

uploadingCount--;

renderAttachmentPreview();

})());

}

await Promise.all(tasks);

}

fileInput.addEventListener('change', function() {

handleFileSelect(this.files);

this.value = '';

});

// Drag-and-drop support on chat input area

const chatInputArea = chatInput.closest('.flex-shrink-0');

chatInputArea.addEventListener('dragover', (e) => { e.preventDefault(); e.stopPropagation(); chatInputArea.classList.add('drag-over'); });

chatInputArea.addEventListener('dragleave', (e) => { e.preventDefault(); e.stopPropagation(); chatInputArea.classList.remove('drag-over'); });

chatInputArea.addEventListener('drop', (e) => {

e.preventDefault(); e.stopPropagation();

chatInputArea.classList.remove('drag-over');

if (e.dataTransfer.files.length) handleFileSelect(e.dataTransfer.files);

});

// Paste image support

chatInput.addEventListener('paste', (e) => {

const items = e.clipboardData?.items;

if (!items) return;

const files = [];

for (const item of items) {

if (item.kind === 'file') {

files.push(item.getAsFile());

}

}

if (files.length) {

e.preventDefault();

handleFileSelect(files);

}

});

chatInput.addEventListener('compositionstart', () => { isComposing = true; });

chatInput.addEventListener('compositionend', () => { setTimeout(() => { isComposing = false; }, 100); });

chatInput.addEventListener('input', function() {

this.style.height = '42px';

const scrollH = this.scrollHeight;

const newH = Math.min(scrollH, 180);

this.style.height = newH + 'px';

this.style.overflowY = scrollH > 180 ? 'auto' : 'hidden';

updateSendBtnState();

});

chatInput.addEventListener('keydown', function(e) {

// keyCode 229 indicates an IME is processing the keystroke (reliable across browsers)

if (e.keyCode === 229 || e.isComposing || isComposing) return;

if ((e.ctrlKey || e.shiftKey) && e.key === 'Enter') {

const start = this.selectionStart;

const end = this.selectionEnd;

this.value = this.value.substring(0, start) + '\n' + this.value.substring(end);

this.selectionStart = this.selectionEnd = start + 1;

this.dispatchEvent(new Event('input'));

e.preventDefault();

} else if (e.key === 'Enter' && !e.shiftKey && !e.ctrlKey) {

sendMessage();

e.preventDefault();

}

});

document.querySelectorAll('.example-card').forEach(card => {

card.addEventListener('click', () => {

const textEl = card.querySelector('[data-i18n*="text"]');

if (textEl) {

chatInput.value = textEl.textContent;

chatInput.dispatchEvent(new Event('input'));

chatInput.focus();

}

});

});

function sendMessage() {

const text = chatInput.value.trim();

if (!text && pendingAttachments.length === 0) return;

const ws = document.getElementById('welcome-screen');

if (ws) ws.remove();

const timestamp = new Date();

const attachments = [...pendingAttachments];

addUserMessage(text, timestamp, attachments);

const loadingEl = addLoadingIndicator();

chatInput.value = '';

chatInput.style.height = '42px';

chatInput.style.overflowY = 'hidden';

pendingAttachments = [];

renderAttachmentPreview();

sendBtn.disabled = true;

const body = { session_id: sessionId, message: text, stream: true, timestamp: timestamp.toISOString() };

if (attachments.length > 0) {

body.attachments = attachments.map(a => ({

file_path: a.file_path,

file_name: a.file_name,

file_type: a.file_type,

}));

}

fetch('/message', {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify(body)

})

.then(r => r.json())

.then(data => {

if (data.status === 'success') {

if (data.stream) {

startSSE(data.request_id, loadingEl, timestamp);

} else {

loadingContainers[data.request_id] = loadingEl;

if (!isPolling) startPolling();

}

} else {

loadingEl.remove();

addBotMessage(t('error_send'), new Date());

}

})

.catch(err => {

loadingEl.remove();

addBotMessage(err.name === 'AbortError' ? t('error_timeout') : t('error_send'), new Date());

});

}

function startSSE(requestId, loadingEl, timestamp) {

const es = new EventSource(`/stream?request_id=${encodeURIComponent(requestId)}`);

activeStreams[requestId] = es;

let botEl = null;

let stepsEl = null; // .agent-steps (thinking summaries + tool indicators)

let contentEl = null; // .answer-content (final streaming answer)

let accumulatedText = '';

let currentToolEl = null;

function ensureBotEl() {

if (botEl) return;

if (loadingEl) { loadingEl.remove(); loadingEl = null; }

botEl = document.createElement('div');

botEl.className = 'flex gap-3 px-4 sm:px-6 py-3';

botEl.dataset.requestId = requestId;

botEl.innerHTML = `

`;

messagesDiv.appendChild(botEl);

stepsEl = botEl.querySelector('.agent-steps');

contentEl = botEl.querySelector('.answer-content');

}

es.onmessage = function(e) {

let item;

try { item = JSON.parse(e.data); } catch (_) { return; }

if (item.type === 'delta') {

ensureBotEl();

accumulatedText += item.content;

contentEl.innerHTML = renderMarkdown(accumulatedText);

scrollChatToBottom();

} else if (item.type === 'tool_start') {

ensureBotEl();

// Save current thinking as a collapsible step

if (accumulatedText.trim()) {

const fullText = accumulatedText.trim();

const oneLine = fullText.replace(/\n+/g, ' ');

const needsTruncate = oneLine.length > 80;

const stepEl = document.createElement('div');

stepEl.className = 'agent-step agent-thinking-step' + (needsTruncate ? '' : ' no-expand');

if (needsTruncate) {

const truncated = oneLine.substring(0, 80) + '…';

stepEl.innerHTML = `

`;

messagesDiv.appendChild(botEl);

stepsEl = botEl.querySelector('.agent-steps');

contentEl = botEl.querySelector('.answer-content');

}

es.onmessage = function(e) {

let item;

try { item = JSON.parse(e.data); } catch (_) { return; }

if (item.type === 'delta') {

ensureBotEl();

accumulatedText += item.content;

contentEl.innerHTML = renderMarkdown(accumulatedText);

scrollChatToBottom();

} else if (item.type === 'tool_start') {

ensureBotEl();

// Save current thinking as a collapsible step

if (accumulatedText.trim()) {

const fullText = accumulatedText.trim();

const oneLine = fullText.replace(/\n+/g, ' ');

const needsTruncate = oneLine.length > 80;

const stepEl = document.createElement('div');

stepEl.className = 'agent-step agent-thinking-step' + (needsTruncate ? '' : ' no-expand');

if (needsTruncate) {

const truncated = oneLine.substring(0, 80) + '…';

stepEl.innerHTML = `

${renderMarkdown(fullText)}

`;

} else {

stepEl.innerHTML = `

`;

}

stepsEl.appendChild(stepEl);

}

accumulatedText = '';

contentEl.innerHTML = '';

// Add tool execution indicator (collapsible)

currentToolEl = document.createElement('div');

currentToolEl.className = 'agent-step agent-tool-step';

const argsStr = formatToolArgs(item.arguments || {});

currentToolEl.innerHTML = `

`;

stepsEl.appendChild(currentToolEl);

scrollChatToBottom();

} else if (item.type === 'tool_end') {

if (currentToolEl) {

const isError = item.status !== 'success';

const icon = currentToolEl.querySelector('.tool-icon');

icon.className = isError

? 'fas fa-times text-red-400 flex-shrink-0 tool-icon'

: 'fas fa-check text-primary-400 flex-shrink-0 tool-icon';

// Show execution time

const nameEl = currentToolEl.querySelector('.tool-name');

if (item.execution_time !== undefined) {

nameEl.innerHTML += ` ${item.execution_time}s`;

}

// Fill output section

const outputSection = currentToolEl.querySelector('.tool-output-section');

if (outputSection && item.result) {

outputSection.innerHTML = `

${isError ? 'Error' : 'Output'}

${escapeHtml(String(item.result))}`;

}

if (isError) currentToolEl.classList.add('tool-failed');

currentToolEl = null;

}

} else if (item.type === 'done') {

es.close();

delete activeStreams[requestId];

const finalText = item.content || accumulatedText;

if (!botEl && finalText) {

if (loadingEl) { loadingEl.remove(); loadingEl = null; }

addBotMessage(finalText, new Date((item.timestamp || Date.now() / 1000) * 1000), requestId);

} else if (botEl) {

contentEl.classList.remove('sse-streaming');

if (finalText) contentEl.innerHTML = renderMarkdown(finalText);

applyHighlighting(botEl);

}

scrollChatToBottom();

} else if (item.type === 'error') {

es.close();

delete activeStreams[requestId];

if (loadingEl) { loadingEl.remove(); loadingEl = null; }

addBotMessage(t('error_send'), new Date());

}

};

es.onerror = function() {

es.close();

delete activeStreams[requestId];

if (loadingEl) { loadingEl.remove(); loadingEl = null; }

if (!botEl) {

addBotMessage(t('error_send'), new Date());

} else if (accumulatedText) {

contentEl.classList.remove('sse-streaming');

contentEl.innerHTML = renderMarkdown(accumulatedText);

applyHighlighting(botEl);

}

};

}

function startPolling() {

if (isPolling) return;

isPolling = true;

function poll() {

if (!isPolling) return;

if (document.hidden) { setTimeout(poll, 5000); return; }

fetch('/poll', {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify({ session_id: sessionId })

})

.then(r => r.json())

.then(data => {

if (data.status === 'success' && data.has_content) {

const rid = data.request_id;

if (loadingContainers[rid]) {

loadingContainers[rid].remove();

delete loadingContainers[rid];

}

addBotMessage(data.content, new Date(data.timestamp * 1000), rid);

scrollChatToBottom();

}

setTimeout(poll, 2000);

})

.catch(() => { setTimeout(poll, 3000); });

}

poll();

}

function createUserMessageEl(content, timestamp, attachments) {

const el = document.createElement('div');

el.className = 'flex justify-end px-4 sm:px-6 py-3';

let attachHtml = '';

if (attachments && attachments.length > 0) {

const items = attachments.map(a => {

if (a.file_type === 'image') {

return ` `;

}

const icon = a.file_type === 'video' ? 'fa-film' : 'fa-file-alt';

return `

`;

}

const icon = a.file_type === 'video' ? 'fa-film' : 'fa-file-alt';

return ` ${escapeHtml(a.file_name)}

`;

}).join('');

attachHtml = `${items}

`;

}

const textHtml = content ? renderMarkdown(content) : '';

el.innerHTML = `

${attachHtml}${textHtml}

${formatTime(timestamp)}

`;

return el;

}

function renderToolCallsHtml(toolCalls) {

if (!toolCalls || toolCalls.length === 0) return '';

return toolCalls.map(tc => {

const argsStr = formatToolArgs(tc.arguments || {});

const resultStr = tc.result ? escapeHtml(String(tc.result)) : '';

const hasResult = !!resultStr;

return `

`;

}).join('');

}

function createBotMessageEl(content, timestamp, requestId, toolCalls) {

const el = document.createElement('div');

el.className = 'flex gap-3 px-4 sm:px-6 py-3';

if (requestId) el.dataset.requestId = requestId;

const toolsHtml = renderToolCallsHtml(toolCalls);

el.innerHTML = `

${toolsHtml ? `

${toolsHtml}

` : ''}

${renderMarkdown(content)}

${formatTime(timestamp)}

`;

applyHighlighting(el);

return el;

}

function addUserMessage(content, timestamp, attachments) {

const el = createUserMessageEl(content, timestamp, attachments);

messagesDiv.appendChild(el);

scrollChatToBottom();

}

function addBotMessage(content, timestamp, requestId) {

const el = createBotMessageEl(content, timestamp, requestId);

messagesDiv.appendChild(el);

scrollChatToBottom();

}

// Load conversation history from the server (page 1 = most recent messages).

// Subsequent pages prepend older messages when the user scrolls to the top.

function loadHistory(page) {

if (historyLoading) return;

historyLoading = true;

fetch(`/api/history?session_id=${encodeURIComponent(sessionId)}&page=${page}&page_size=20`)

.then(r => r.json())

.then(data => {

if (data.status !== 'success' || data.messages.length === 0) return;

const prevScrollHeight = messagesDiv.scrollHeight;

const isFirstLoad = page === 1;

// On first load, remove the welcome screen if history exists

if (isFirstLoad) {

const ws = document.getElementById('welcome-screen');

if (ws) ws.remove();

}

// Build a fragment of history message elements in chronological order

const fragment = document.createDocumentFragment();

if (data.has_more && page > 1) {

// Keep the "load more" sentinel in place (inserted below)

}

data.messages.forEach(msg => {

const hasContent = msg.content && msg.content.trim();

const hasToolCalls = msg.role === 'assistant' && msg.tool_calls && msg.tool_calls.length > 0;

if (!hasContent && !hasToolCalls) return;

const ts = new Date(msg.created_at * 1000);

const el = msg.role === 'user'

? createUserMessageEl(msg.content, ts)

: createBotMessageEl(msg.content || '', ts, null, msg.tool_calls);

fragment.appendChild(el);

});

// Prepend history above any existing messages

const sentinel = document.getElementById('history-load-more');

const insertBefore = sentinel ? sentinel.nextSibling : messagesDiv.firstChild;

messagesDiv.insertBefore(fragment, insertBefore);

// Manage the "load more" sentinel at the very top

if (data.has_more) {

if (!document.getElementById('history-load-more')) {

const btn = document.createElement('div');

btn.id = 'history-load-more';

btn.className = 'flex justify-center py-3';

btn.innerHTML = ``;

messagesDiv.insertBefore(btn, messagesDiv.firstChild);

}

} else {

const sentinel = document.getElementById('history-load-more');

if (sentinel) sentinel.remove();

}

historyHasMore = data.has_more;

historyPage = page;

if (isFirstLoad) {

// Use requestAnimationFrame to ensure the DOM has fully rendered

// before scrolling, otherwise scrollHeight may not reflect new content.

requestAnimationFrame(() => scrollChatToBottom());

} else {

// Restore scroll position so loading older messages doesn't jump the view

messagesDiv.scrollTop = messagesDiv.scrollHeight - prevScrollHeight;

}

})

.catch(() => {})

.finally(() => { historyLoading = false; });

}

function addLoadingIndicator() {

const el = document.createElement('div');

el.className = 'flex gap-3 px-4 sm:px-6 py-3';

el.innerHTML = `

`;

messagesDiv.appendChild(el);

scrollChatToBottom();

return el;

}

function newChat() {

// Close all active SSE connections for the current session

Object.values(activeStreams).forEach(es => { try { es.close(); } catch (_) {} });

activeStreams = {};

// Generate a fresh session and persist it so the next page load also starts clean

sessionId = generateSessionId();

localStorage.setItem(SESSION_ID_KEY, sessionId);

isPolling = false;

loadingContainers = {};

messagesDiv.innerHTML = '';

const ws = document.createElement('div');

ws.id = 'welcome-screen';

ws.className = 'flex flex-col items-center justify-center h-full px-6 py-12';

ws.innerHTML = `

`;

messagesDiv.appendChild(el);

scrollChatToBottom();

return el;

}

function newChat() {

// Close all active SSE connections for the current session

Object.values(activeStreams).forEach(es => { try { es.close(); } catch (_) {} });

activeStreams = {};

// Generate a fresh session and persist it so the next page load also starts clean

sessionId = generateSessionId();

localStorage.setItem(SESSION_ID_KEY, sessionId);

isPolling = false;

loadingContainers = {};

messagesDiv.innerHTML = '';

const ws = document.createElement('div');

ws.id = 'welcome-screen';

ws.className = 'flex flex-col items-center justify-center h-full px-6 py-12';

ws.innerHTML = `

${appConfig.title || 'CowAgent'}

${t('welcome_subtitle')}

${t('example_sys_title')}

${t('example_sys_text')}

${t('example_task_title')}

${t('example_task_text')}

${t('example_code_title')}

${t('example_code_text')}

`;

messagesDiv.appendChild(ws);

ws.querySelectorAll('.example-card').forEach(card => {

card.addEventListener('click', () => {

const textEl = card.querySelector('[data-i18n*="text"]');

if (textEl) {

chatInput.value = textEl.textContent;

chatInput.dispatchEvent(new Event('input'));

chatInput.focus();

}

});

});

if (currentView !== 'chat') navigateTo('chat');

}

// =====================================================================

// Utilities

// =====================================================================

function formatTime(date) {

return date.toLocaleTimeString([], { hour: '2-digit', minute: '2-digit' });

}

function escapeHtml(str) {

const div = document.createElement('div');

div.appendChild(document.createTextNode(str));

return div.innerHTML;

}

function ChannelsHandler_maskSecret(val) {

if (!val || val.length <= 8) return val;

return val.slice(0, 4) + '*'.repeat(val.length - 8) + val.slice(-4);

}

function formatToolArgs(args) {

if (!args || Object.keys(args).length === 0) return '(none)';

try {

return escapeHtml(JSON.stringify(args, null, 2));

} catch (_) {

return escapeHtml(String(args));

}

}

function scrollChatToBottom() {

messagesDiv.scrollTop = messagesDiv.scrollHeight;

}

function applyHighlighting(container) {

const root = container || document;

setTimeout(() => {

root.querySelectorAll('pre code').forEach(block => {

if (!block.classList.contains('hljs')) {

hljs.highlightElement(block);

}

});

}, 0);

}

// =====================================================================

// Config View

// =====================================================================

let configProviders = {};

let configApiBases = {};

let configApiKeys = {};

let configCurrentModel = '';

let cfgProviderValue = '';

let cfgModelValue = '';

// --- Custom dropdown helper ---

function initDropdown(el, options, selectedValue, onChange) {

const textEl = el.querySelector('.cfg-dropdown-text');

const menuEl = el.querySelector('.cfg-dropdown-menu');

const selEl = el.querySelector('.cfg-dropdown-selected');

el._ddValue = selectedValue || '';

el._ddOnChange = onChange;

function render() {

menuEl.innerHTML = '';

options.forEach(opt => {

const item = document.createElement('div');

item.className = 'cfg-dropdown-item' + (opt.value === el._ddValue ? ' active' : '');

item.textContent = opt.label;

item.dataset.value = opt.value;

item.addEventListener('click', (e) => {

e.stopPropagation();

el._ddValue = opt.value;

textEl.textContent = opt.label;

menuEl.querySelectorAll('.cfg-dropdown-item').forEach(i => i.classList.remove('active'));

item.classList.add('active');

el.classList.remove('open');

if (el._ddOnChange) el._ddOnChange(opt.value);

});

menuEl.appendChild(item);

});

const sel = options.find(o => o.value === el._ddValue);

textEl.textContent = sel ? sel.label : (options[0] ? options[0].label : '--');

if (!sel && options[0]) el._ddValue = options[0].value;

}

render();

if (!el._ddBound) {

selEl.addEventListener('click', (e) => {

e.stopPropagation();

document.querySelectorAll('.cfg-dropdown.open').forEach(d => { if (d !== el) d.classList.remove('open'); });

el.classList.toggle('open');

});

el._ddBound = true;

}

}

document.addEventListener('click', () => {

document.querySelectorAll('.cfg-dropdown.open').forEach(d => d.classList.remove('open'));

});

function getDropdownValue(el) { return el._ddValue || ''; }

// --- Config init ---

function initConfigView(data) {

configProviders = data.providers || {};

configApiBases = data.api_bases || {};

configApiKeys = data.api_keys || {};

configCurrentModel = data.model || '';

const providerEl = document.getElementById('cfg-provider');

const providerOpts = Object.entries(configProviders).map(([pid, p]) => ({ value: pid, label: p.label }));

// if use_linkai is enabled, always select linkai as the provider

// Otherwise prefer bot_type from config, fall back to model-based detection

const detected = data.use_linkai ? 'linkai'

: (data.bot_type && configProviders[data.bot_type] ? data.bot_type : detectProvider(configCurrentModel));

cfgProviderValue = detected || (providerOpts[0] ? providerOpts[0].value : '');

initDropdown(providerEl, providerOpts, cfgProviderValue, onProviderChange);

onProviderChange(cfgProviderValue);

syncModelSelection(configCurrentModel);

document.getElementById('cfg-max-tokens').value = data.agent_max_context_tokens || 50000;

document.getElementById('cfg-max-turns').value = data.agent_max_context_turns || 30;

document.getElementById('cfg-max-steps').value = data.agent_max_steps || 15;

}

function detectProvider(model) {

if (!model) return Object.keys(configProviders)[0] || '';

for (const [pid, p] of Object.entries(configProviders)) {

if (pid === 'linkai') continue;

if (p.models && p.models.includes(model)) return pid;

}

return Object.keys(configProviders)[0] || '';

}

function onProviderChange(pid) {

cfgProviderValue = pid || getDropdownValue(document.getElementById('cfg-provider'));

const p = configProviders[cfgProviderValue];

if (!p) return;

const modelEl = document.getElementById('cfg-model-select');

const modelOpts = (p.models || []).map(m => ({ value: m, label: m }));

modelOpts.push({ value: '__custom__', label: t('config_custom_option') });

initDropdown(modelEl, modelOpts, modelOpts[0] ? modelOpts[0].value : '', onModelSelectChange);

// API Key

const keyField = p.api_key_field;

const keyWrap = document.getElementById('cfg-api-key-wrap');

const keyInput = document.getElementById('cfg-api-key');

if (keyField) {

keyWrap.classList.remove('hidden');

keyInput.classList.add('cfg-key-masked');

const maskedVal = configApiKeys[keyField] || '';

keyInput.value = maskedVal;

keyInput.dataset.field = keyField;

keyInput.dataset.masked = maskedVal ? '1' : '';

keyInput.dataset.maskedVal = maskedVal;

const toggleIcon = document.querySelector('#cfg-api-key-toggle i');

if (toggleIcon) toggleIcon.className = 'fas fa-eye text-xs';

if (!keyInput._cfgBound) {

keyInput.addEventListener('focus', function() {

if (this.dataset.masked === '1') {

this.value = '';

this.dataset.masked = '';

this.classList.remove('cfg-key-masked');

}

});

keyInput.addEventListener('blur', function() {

if (!this.value.trim() && this.dataset.maskedVal) {

this.value = this.dataset.maskedVal;

this.dataset.masked = '1';

this.classList.add('cfg-key-masked');

}

});

keyInput.addEventListener('input', function() {

this.dataset.masked = '';

});

keyInput._cfgBound = true;

}

} else {

keyWrap.classList.add('hidden');

keyInput.value = '';

keyInput.dataset.field = '';

}

// API Base

if (p.api_base_key) {

document.getElementById('cfg-api-base-wrap').classList.remove('hidden');

document.getElementById('cfg-api-base').value = configApiBases[p.api_base_key] || p.api_base_default || '';

} else {

document.getElementById('cfg-api-base-wrap').classList.add('hidden');

document.getElementById('cfg-api-base').value = '';

}

onModelSelectChange(modelOpts[0] ? modelOpts[0].value : '');

}

function onModelSelectChange(val) {

cfgModelValue = val || getDropdownValue(document.getElementById('cfg-model-select'));

const customWrap = document.getElementById('cfg-model-custom-wrap');

if (cfgModelValue === '__custom__') {

customWrap.classList.remove('hidden');

document.getElementById('cfg-model-custom').focus();

} else {

customWrap.classList.add('hidden');

document.getElementById('cfg-model-custom').value = '';

}

}

function syncModelSelection(model) {

const p = configProviders[cfgProviderValue];

if (!p) return;

const modelEl = document.getElementById('cfg-model-select');

if (p.models && p.models.includes(model)) {

const modelOpts = (p.models || []).map(m => ({ value: m, label: m }));

modelOpts.push({ value: '__custom__', label: t('config_custom_option') });

initDropdown(modelEl, modelOpts, model, onModelSelectChange);

cfgModelValue = model;

document.getElementById('cfg-model-custom-wrap').classList.add('hidden');

} else {

cfgModelValue = '__custom__';

const modelOpts = (p.models || []).map(m => ({ value: m, label: m }));

modelOpts.push({ value: '__custom__', label: t('config_custom_option') });

initDropdown(modelEl, modelOpts, '__custom__', onModelSelectChange);

document.getElementById('cfg-model-custom-wrap').classList.remove('hidden');

document.getElementById('cfg-model-custom').value = model;

}

}

function getSelectedModel() {

if (cfgModelValue === '__custom__') {

return document.getElementById('cfg-model-custom').value.trim();

}

return cfgModelValue;

}

function toggleApiKeyVisibility() {

const input = document.getElementById('cfg-api-key');

const icon = document.querySelector('#cfg-api-key-toggle i');

if (input.classList.contains('cfg-key-masked')) {

input.classList.remove('cfg-key-masked');

icon.className = 'fas fa-eye-slash text-xs';

} else {

input.classList.add('cfg-key-masked');

icon.className = 'fas fa-eye text-xs';

}

}

function showStatus(elId, msgKey, isError) {

const el = document.getElementById(elId);

el.textContent = t(msgKey);

el.classList.toggle('text-red-500', !!isError);

el.classList.toggle('text-primary-500', !isError);

el.classList.remove('opacity-0');

setTimeout(() => el.classList.add('opacity-0'), 2500);

}

function saveModelConfig() {

const model = getSelectedModel();

if (!model) return;

const updates = { model: model };

const p = configProviders[cfgProviderValue];

updates.use_linkai = (cfgProviderValue === 'linkai');

if (cfgProviderValue === 'linkai') {

updates.bot_type = '';

} else {

updates.bot_type = cfgProviderValue;

}

if (p && p.api_base_key) {

const base = document.getElementById('cfg-api-base').value.trim();

if (base) updates[p.api_base_key] = base;

}

if (p && p.api_key_field) {

const keyInput = document.getElementById('cfg-api-key');

const rawVal = keyInput.value.trim();

if (rawVal && keyInput.dataset.masked !== '1') {

updates[p.api_key_field] = rawVal;

}

}

const btn = document.getElementById('cfg-model-save');

btn.disabled = true;

fetch('/config', {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify({ updates })

})

.then(r => r.json())

.then(data => {

if (data.status === 'success') {

configCurrentModel = model;

if (data.applied) {

const keyInput = document.getElementById('cfg-api-key');

Object.entries(data.applied).forEach(([k, v]) => {

if (k === 'model') return;

if (k.includes('api_key')) {

const masked = v.length > 8

? v.substring(0, 4) + '*'.repeat(v.length - 8) + v.substring(v.length - 4)

: v;

configApiKeys[k] = masked;

if (keyInput.dataset.field === k) {

keyInput.value = masked;

keyInput.dataset.masked = '1';

keyInput.dataset.maskedVal = masked;

keyInput.classList.add('cfg-key-masked');

const toggleIcon = document.querySelector('#cfg-api-key-toggle i');

if (toggleIcon) toggleIcon.className = 'fas fa-eye text-xs';

}

} else {

configApiBases[k] = v;

}

});

}

showStatus('cfg-model-status', 'config_saved', false);

} else {

showStatus('cfg-model-status', 'config_save_error', true);

}

})

.catch(() => showStatus('cfg-model-status', 'config_save_error', true))

.finally(() => { btn.disabled = false; });

}

function saveAgentConfig() {

const updates = {

agent_max_context_tokens: parseInt(document.getElementById('cfg-max-tokens').value) || 50000,

agent_max_context_turns: parseInt(document.getElementById('cfg-max-turns').value) || 30,

agent_max_steps: parseInt(document.getElementById('cfg-max-steps').value) || 15,

};

const btn = document.getElementById('cfg-agent-save');

btn.disabled = true;

fetch('/config', {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify({ updates })

})

.then(r => r.json())

.then(data => {

if (data.status === 'success') {

showStatus('cfg-agent-status', 'config_saved', false);

} else {

showStatus('cfg-agent-status', 'config_save_error', true);

}

})

.catch(() => showStatus('cfg-agent-status', 'config_save_error', true))

.finally(() => { btn.disabled = false; });

}

function loadConfigView() {

fetch('/config').then(r => r.json()).then(data => {

if (data.status !== 'success') return;

appConfig = data;

initConfigView(data);

}).catch(() => {});

}

// =====================================================================

// Skills View

// =====================================================================

let toolsLoaded = false;

const TOOL_ICONS = {

bash: 'fa-terminal',

edit: 'fa-pen-to-square',

read: 'fa-file-lines',

write: 'fa-file-pen',

ls: 'fa-folder-open',

send: 'fa-paper-plane',

web_search: 'fa-magnifying-glass',

browser: 'fa-globe',

env_config: 'fa-key',

scheduler: 'fa-clock',

memory_get: 'fa-brain',

memory_search: 'fa-brain',

};

function getToolIcon(name) {

return TOOL_ICONS[name] || 'fa-wrench';

}

function loadSkillsView() {

loadToolsSection();

loadSkillsSection();

}

function loadToolsSection() {

if (toolsLoaded) return;

const emptyEl = document.getElementById('tools-empty');

const listEl = document.getElementById('tools-list');

const badge = document.getElementById('tools-count-badge');

fetch('/api/tools').then(r => r.json()).then(data => {

if (data.status !== 'success') return;

const tools = data.tools || [];

emptyEl.classList.add('hidden');

if (tools.length === 0) {

emptyEl.classList.remove('hidden');

emptyEl.innerHTML = `${currentLang === 'zh' ? '暂无内置工具' : 'No built-in tools'}`;

return;

}

badge.textContent = tools.length;

badge.classList.remove('hidden');

listEl.innerHTML = '';

tools.forEach(tool => {

const card = document.createElement('div');

card.className = 'bg-white dark:bg-[#1A1A1A] rounded-xl border border-slate-200 dark:border-white/10 p-4 flex items-start gap-3';

card.innerHTML = `

${escapeHtml(tool.name)}

${escapeHtml(tool.description || '--')}

`;

listEl.appendChild(card);

});

listEl.classList.remove('hidden');

toolsLoaded = true;

}).catch(() => {

emptyEl.classList.remove('hidden');

emptyEl.innerHTML = `${currentLang === 'zh' ? '加载失败' : 'Failed to load'}`;

});

}

function loadSkillsSection() {

const emptyEl = document.getElementById('skills-empty');

const listEl = document.getElementById('skills-list');

const badge = document.getElementById('skills-count-badge');

fetch('/api/skills').then(r => r.json()).then(data => {

if (data.status !== 'success') return;

const skills = data.skills || [];

if (skills.length === 0) {

const p = emptyEl.querySelector('p');

if (p) p.textContent = currentLang === 'zh' ? '暂无技能' : 'No skills found';

return;

}

badge.textContent = skills.length;

badge.classList.remove('hidden');

emptyEl.classList.add('hidden');

listEl.innerHTML = '';

skills.forEach(sk => {

const card = document.createElement('div');

card.className = 'bg-white dark:bg-[#1A1A1A] rounded-xl border border-slate-200 dark:border-white/10 p-4 flex items-start gap-3 transition-opacity';

card.dataset.skillName = sk.name;

card.dataset.skillDesc = sk.description || '';

card.dataset.enabled = sk.enabled ? '1' : '0';

renderSkillCard(card, sk);

listEl.appendChild(card);

});

}).catch(() => {});

}

function renderSkillCard(card, sk) {

const enabled = sk.enabled;

const iconColor = enabled ? 'text-primary-400' : 'text-slate-300 dark:text-slate-600';

const trackClass = enabled

? 'bg-primary-400'

: 'bg-slate-200 dark:bg-slate-700';

const thumbTranslate = enabled ? 'translate-x-3' : 'translate-x-0.5';

card.innerHTML = `

${escapeHtml(sk.name)}

${escapeHtml(sk.description || '--')}

`;

}

function toggleSkill(name, currentlyEnabled) {

const action = currentlyEnabled ? 'close' : 'open';

const card = document.querySelector(`[data-skill-name="${CSS.escape(name)}"]`);

if (card) card.style.opacity = '0.5';

fetch('/api/skills', {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify({ action, name })

})

.then(r => r.json())

.then(data => {

if (data.status === 'success') {

if (card) {

const desc = card.dataset.skillDesc || '';

card.dataset.enabled = currentlyEnabled ? '0' : '1';

card.style.opacity = '1';

renderSkillCard(card, { name, description: desc, enabled: !currentlyEnabled });

}

} else {

if (card) card.style.opacity = '1';

alert(currentLang === 'zh' ? '操作失败,请稍后再试' : 'Operation failed, please try again');

}

})

.catch(() => {

if (card) card.style.opacity = '1';

alert(currentLang === 'zh' ? '操作失败,请稍后再试' : 'Operation failed, please try again');

});

}

// =====================================================================

// Memory View

// =====================================================================

let memoryPage = 1;

const memoryPageSize = 10;

function loadMemoryView(page) {

page = page || 1;

memoryPage = page;

fetch(`/api/memory?page=${page}&page_size=${memoryPageSize}`).then(r => r.json()).then(data => {

if (data.status !== 'success') return;

const emptyEl = document.getElementById('memory-empty');

const listEl = document.getElementById('memory-list');

const files = data.list || [];

const total = data.total || 0;

if (total === 0) {

emptyEl.querySelector('p').textContent = currentLang === 'zh' ? '暂无记忆文件' : 'No memory files';

emptyEl.classList.remove('hidden');

listEl.classList.add('hidden');

return;

}

emptyEl.classList.add('hidden');

listEl.classList.remove('hidden');

const tbody = document.getElementById('memory-table-body');

tbody.innerHTML = '';

files.forEach(f => {

const tr = document.createElement('tr');

tr.className = 'border-b border-slate-100 dark:border-white/5 hover:bg-slate-50 dark:hover:bg-white/5 cursor-pointer transition-colors';

tr.onclick = () => openMemoryFile(f.filename);

const typeLabel = f.type === 'global'

? 'Global'

: 'Daily';

const sizeStr = f.size < 1024 ? f.size + ' B' : (f.size / 1024).toFixed(1) + ' KB';

tr.innerHTML = `

${escapeHtml(f.filename)} |

${typeLabel} |

${sizeStr} |

${escapeHtml(f.updated_at)} | `;

tbody.appendChild(tr);

});

// Pagination

const totalPages = Math.ceil(total / memoryPageSize);

const pagEl = document.getElementById('memory-pagination');

if (totalPages <= 1) { pagEl.innerHTML = ''; return; }

let pagHtml = `${page} / ${totalPages}`;

if (page > 1) pagHtml += ``;

if (page < totalPages) pagHtml += ``;

pagHtml += '

';

pagEl.innerHTML = pagHtml;

}).catch(() => {});

}

function openMemoryFile(filename) {

fetch(`/api/memory/content?filename=${encodeURIComponent(filename)}`).then(r => r.json()).then(data => {

if (data.status !== 'success') return;

document.getElementById('memory-panel-list').classList.add('hidden');

const panel = document.getElementById('memory-panel-viewer');

document.getElementById('memory-viewer-title').textContent = filename;

document.getElementById('memory-viewer-content').innerHTML = renderMarkdown(data.content || '');

panel.classList.remove('hidden');

applyHighlighting(panel);

}).catch(() => {});

}

function closeMemoryViewer() {

document.getElementById('memory-panel-viewer').classList.add('hidden');

document.getElementById('memory-panel-list').classList.remove('hidden');

}

// =====================================================================

// Custom Confirm Dialog

// =====================================================================

function showConfirmDialog({ title, message, okText, cancelText, onConfirm }) {

const overlay = document.getElementById('confirm-dialog-overlay');

document.getElementById('confirm-dialog-title').textContent = title || '';

document.getElementById('confirm-dialog-message').textContent = message || '';

document.getElementById('confirm-dialog-ok').textContent = okText || 'OK';

document.getElementById('confirm-dialog-cancel').textContent = cancelText || t('channels_cancel');

function cleanup() {

overlay.classList.add('hidden');

okBtn.removeEventListener('click', onOk);

cancelBtn.removeEventListener('click', onCancel);

overlay.removeEventListener('click', onOverlayClick);

}

function onOk() { cleanup(); if (onConfirm) onConfirm(); }

function onCancel() { cleanup(); }

function onOverlayClick(e) { if (e.target === overlay) cleanup(); }

const okBtn = document.getElementById('confirm-dialog-ok');

const cancelBtn = document.getElementById('confirm-dialog-cancel');

okBtn.addEventListener('click', onOk);

cancelBtn.addEventListener('click', onCancel);

overlay.addEventListener('click', onOverlayClick);

overlay.classList.remove('hidden');

}

// =====================================================================

// Channels View

// =====================================================================

let channelsData = [];

function loadChannelsView() {

const container = document.getElementById('channels-content');

container.innerHTML = `

Loading...

`;

fetch('/api/channels').then(r => r.json()).then(data => {

if (data.status !== 'success') return;

channelsData = data.channels || [];

renderActiveChannels();

}).catch(() => {

container.innerHTML = 'Failed to load channels

';

});

}

function renderActiveChannels() {

const container = document.getElementById('channels-content');

container.innerHTML = '';

closeAddChannelPanel();

const activeChannels = channelsData.filter(ch => ch.active);

if (activeChannels.length === 0) {

container.innerHTML = `

${t('channels_empty')}

${t('channels_empty_desc')}

`;

return;

}

activeChannels.forEach(ch => {

const label = (typeof ch.label === 'object') ? (ch.label[currentLang] || ch.label.en) : ch.label;

const card = document.createElement('div');

card.className = 'bg-white dark:bg-[#1A1A1A] rounded-xl border border-slate-200 dark:border-white/10 p-6';

card.id = `channel-card-${ch.name}`;

const fieldsHtml = buildChannelFieldsHtml(ch.name, ch.fields || []);

card.innerHTML = `

${escapeHtml(label)}

${t('channels_connected')}

${escapeHtml(ch.name)}

${fieldsHtml}

${inputHtml}

`;

});

return html;

}

function bindSecretFieldEvents(container) {

container.querySelectorAll('input[data-masked="1"]').forEach(inp => {

inp.addEventListener('focus', function() {

if (this.dataset.masked === '1') {

this.value = '';

this.dataset.masked = '';

this.classList.remove('cfg-key-masked');

}

});

});

}

function showChannelStatus(chName, msgKey, isError) {

const el = document.getElementById(`ch-status-${chName}`);

if (!el) return;

el.textContent = t(msgKey);

el.classList.toggle('text-red-500', !!isError);

el.classList.toggle('text-primary-500', !isError);

el.classList.remove('opacity-0');

setTimeout(() => el.classList.add('opacity-0'), 2500);

}

function saveChannelConfig(chName) {

const card = document.getElementById(`channel-card-${chName}`);

if (!card) return;

const updates = {};

card.querySelectorAll('input[data-ch="' + chName + '"]').forEach(inp => {

const key = inp.dataset.field;

if (inp.type === 'checkbox') {

updates[key] = inp.checked;

} else {

if (inp.dataset.masked === '1') return;

updates[key] = inp.value;

}

});

const btn = document.getElementById(`ch-save-${chName}`);

if (btn) btn.disabled = true;

fetch('/api/channels', {

method: 'POST',

headers: { 'Content-Type': 'application/json' },