Showing preview only (4,907K chars total). Download the full file or copy to clipboard to get everything.

Repository: BrainCog-X/Brain-Cog

Branch: main

Commit: f9b879f75da2

Files: 685

Total size: 4.5 MB

Directory structure:

gitextract_qe2qoke6/

├── .gitignore

├── LICENSE

├── README.md

├── braincog/

│ ├── __init__.py

│ ├── base/

│ │ ├── __init__.py

│ │ ├── brainarea/

│ │ │ ├── BrainArea.py

│ │ │ ├── IPL.py

│ │ │ ├── Insula.py

│ │ │ ├── PFC.py

│ │ │ ├── __init__.py

│ │ │ ├── basalganglia.py

│ │ │ └── dACC.py

│ │ ├── connection/

│ │ │ ├── CustomLinear.py

│ │ │ ├── __init__.py

│ │ │ └── layer.py

│ │ ├── conversion/

│ │ │ ├── __init__.py

│ │ │ ├── convertor.py

│ │ │ ├── merge.py

│ │ │ └── spicalib.py

│ │ ├── encoder/

│ │ │ ├── __init__.py

│ │ │ ├── encoder.py

│ │ │ ├── population_coding.py

│ │ │ └── qs_coding.py

│ │ ├── learningrule/

│ │ │ ├── BCM.py

│ │ │ ├── Hebb.py

│ │ │ ├── RSTDP.py

│ │ │ ├── STDP.py

│ │ │ ├── STP.py

│ │ │ └── __init__.py

│ │ ├── node/

│ │ │ ├── __init__.py

│ │ │ └── node.py

│ │ ├── strategy/

│ │ │ ├── LateralInhibition.py

│ │ │ ├── __init__.py

│ │ │ └── surrogate.py

│ │ └── utils/

│ │ ├── __init__.py

│ │ ├── criterions.py

│ │ └── visualization.py

│ ├── datasets/

│ │ ├── CUB2002011.py

│ │ ├── ESimagenet/

│ │ │ ├── ES_imagenet.py

│ │ │ ├── __init__.py

│ │ │ └── reconstructed_ES_imagenet.py

│ │ ├── NOmniglot/

│ │ │ ├── NOmniglot.py

│ │ │ ├── __init__.py

│ │ │ ├── nomniglot_full.py

│ │ │ ├── nomniglot_nw_ks.py

│ │ │ ├── nomniglot_pair.py

│ │ │ └── utils.py

│ │ ├── StanfordDogs.py

│ │ ├── TinyImageNet.py

│ │ ├── __init__.py

│ │ ├── bullying10k/

│ │ │ ├── __init__.py

│ │ │ └── bullying10k.py

│ │ ├── cut_mix.py

│ │ ├── datasets.py

│ │ ├── gen_input_signal.py

│ │ ├── hmdb_dvs/

│ │ │ ├── __init__.py

│ │ │ └── hmdb_dvs.py

│ │ ├── ncaltech101/

│ │ │ ├── __init__.py

│ │ │ └── ncaltech101.py

│ │ ├── rand_aug.py

│ │ ├── scripts/

│ │ │ ├── testlist01.txt

│ │ │ └── ucf101_dvs_preprocessing.py

│ │ ├── ucf101_dvs/

│ │ │ ├── __init__.py

│ │ │ └── ucf101_dvs.py

│ │ └── utils.py

│ ├── model_zoo/

│ │ ├── NeuEvo/

│ │ │ ├── __init__.py

│ │ │ ├── architect.py

│ │ │ ├── genotypes.py

│ │ │ ├── model.py

│ │ │ ├── model_search.py

│ │ │ ├── operations.py

│ │ │ └── others.py

│ │ ├── __init__.py

│ │ ├── backeinet.py

│ │ ├── base_module.py

│ │ ├── bdmsnn.py

│ │ ├── convnet.py

│ │ ├── fc_snn.py

│ │ ├── glsnn.py

│ │ ├── linearNet.py

│ │ ├── nonlinearNet.py

│ │ ├── qsnn.py

│ │ ├── resnet.py

│ │ ├── resnet19_snn.py

│ │ ├── rsnn.py

│ │ ├── sew_resnet.py

│ │ └── vgg_snn.py

│ └── utils.py

├── docs/

│ ├── Makefile

│ ├── make.bat

│ └── source/

│ ├── conf.py

│ ├── examples/

│ │ ├── Brain_Cognitive_Function_Simulation/

│ │ │ ├── drosophila.md

│ │ │ └── index.rst

│ │ ├── Decision_Making/

│ │ │ ├── BDM_SNN.md

│ │ │ ├── RL.md

│ │ │ └── index.rst

│ │ ├── Knowledge_Representation_and_Reasoning/

│ │ │ ├── CKRGSNN.md

│ │ │ ├── CRSNN.md

│ │ │ ├── SPSNN.md

│ │ │ ├── index.rst

│ │ │ └── musicMemory.md

│ │ ├── Multi-scale_Brain_Structure_Simulation/

│ │ │ ├── Corticothalamic_minicolumn.md

│ │ │ ├── HumanBrain.md

│ │ │ ├── Human_PFC.md

│ │ │ ├── MacaqueBrain.md

│ │ │ ├── index.rst

│ │ │ └── mouse_brain.md

│ │ ├── Perception_and_Learning/

│ │ │ ├── Conversion.md

│ │ │ ├── MultisensoryIntegration.md

│ │ │ ├── QSNN.md

│ │ │ ├── UnsupervisedSTDP.md

│ │ │ ├── img_cls/

│ │ │ │ ├── bp.md

│ │ │ │ ├── glsnn.md

│ │ │ │ └── index.rst

│ │ │ └── index.rst

│ │ ├── Social_Cognition/

│ │ │ ├── Mirror_Test.md

│ │ │ ├── ToM.md

│ │ │ └── index.rst

│ │ └── index.rst

│ ├── index.rst

│ ├── modules.rst

│ └── setup.rst

├── docs.md

├── documents/

│ ├── Data_engine.md

│ ├── Lectures.md

│ ├── Pub_brain_inspired_AI.md

│ ├── Pub_brain_simulation.md

│ ├── Pub_sh_codesign.md

│ ├── Publication.md

│ └── Tutorial.md

├── examples/

│ ├── Brain_Cognitive_Function_Simulation/

│ │ └── drosophila/

│ │ ├── README.md

│ │ └── drosophila.py

│ ├── Embodied_Cognition/

│ │ └── RHI/

│ │ ├── RHI_Test.py

│ │ ├── RHI_Train.py

│ │ └── ReadMe.md

│ ├── Hardware_acceleration/

│ │ ├── README.md

│ │ ├── firefly_v1_schedule_on_pynq.py

│ │ ├── standalone_utils.py

│ │ ├── ultra96_test.py

│ │ └── zcu104_test.py

│ ├── Knowledge_Representation_and_Reasoning/

│ │ ├── CKRGSNN/

│ │ │ ├── README.md

│ │ │ ├── main.py

│ │ │ └── sub_Conceptnet.csv

│ │ ├── CRSNN/

│ │ │ ├── README.md

│ │ │ └── main.py

│ │ ├── SPSNN/

│ │ │ ├── README.md

│ │ │ └── main.py

│ │ └── musicMemory/

│ │ ├── Areas/

│ │ │ ├── apac.py

│ │ │ ├── cortex.py

│ │ │ ├── pac.py

│ │ │ └── pfc.py

│ │ ├── Modal/

│ │ │ ├── PAC.py

│ │ │ ├── cluster.py

│ │ │ ├── composercluster.py

│ │ │ ├── composerlayer.py

│ │ │ ├── composerlifneuron.py

│ │ │ ├── genrecluster.py

│ │ │ ├── genrelayer.py

│ │ │ ├── genrelifneuron.py

│ │ │ ├── izhikevichneuron.py

│ │ │ ├── layer.py

│ │ │ ├── lifneuron.py

│ │ │ ├── note.py

│ │ │ ├── notecluster.py

│ │ │ ├── notelifneuron.py

│ │ │ ├── notesequencelayer.py

│ │ │ ├── pitch.py

│ │ │ ├── sequencelayer.py

│ │ │ ├── sequencememory.py

│ │ │ ├── synapse.py

│ │ │ ├── tempocluster.py

│ │ │ ├── tempolifneuron.py

│ │ │ ├── temposequencelayer.py

│ │ │ ├── titlecluster.py

│ │ │ ├── titlelayer.py

│ │ │ └── titlelifneuron.py

│ │ ├── README.md

│ │ ├── api/

│ │ │ └── music_engine_api.py

│ │ ├── conf/

│ │ │ ├── GenreData.txt

│ │ │ ├── MIDIData.txt

│ │ │ └── conf.py

│ │ ├── inputs/

│ │ │ ├── 1.txt

│ │ │ ├── Data.txt

│ │ │ ├── GenreData.txt

│ │ │ ├── MIDIData.txt

│ │ │ ├── chords.csv

│ │ │ ├── chords.xlsx

│ │ │ ├── information.csv

│ │ │ ├── keyIndex.csv

│ │ │ ├── keys.csv

│ │ │ ├── keys.xlsx

│ │ │ ├── modeindex.csv

│ │ │ ├── modeindex.xlsx

│ │ │ ├── pitch2midi.csv

│ │ │ └── tones2.csv

│ │ ├── result_output/

│ │ │ └── tone learning/

│ │ │ ├── C major_20241121155522.mid

│ │ │ ├── C major_20241122093822.mid

│ │ │ ├── C major_20241122094000.mid

│ │ │ ├── C major_20241122094419.mid

│ │ │ └── C major_20241122094736.mid

│ │ ├── task/

│ │ │ ├── Bach_generated.mid

│ │ │ ├── Classical_generated.mid

│ │ │ ├── Sonate C Major.Mid_recall.mid

│ │ │ ├── melody_generated.mid

│ │ │ ├── mode-conditioned learning.py

│ │ │ ├── musicGeneration.py

│ │ │ └── musicMemory.py

│ │ ├── testData/

│ │ │ ├── Bach/

│ │ │ │ └── prelude C major.mid

│ │ │ ├── JayZhou/

│ │ │ │ └── rainbow.mid

│ │ │ └── Mozart/

│ │ │ └── Sonate C major.mid

│ │ └── tools/

│ │ ├── __init__.py

│ │ ├── generateData.py

│ │ ├── hamonydataset_test.py

│ │ ├── msg.py

│ │ ├── msgq.py

│ │ ├── oscillations.py

│ │ ├── position.txt

│ │ ├── readjson.py

│ │ ├── testSound.py

│ │ ├── testmusic21.py

│ │ ├── testopengl.py

│ │ ├── testwave.py

│ │ └── xmlParser.py

│ ├── MotorControl/

│ │ └── experimental/

│ │ ├── README.md

│ │ ├── brain_area.py

│ │ ├── main.py

│ │ └── model.py

│ ├── Multiscale_Brain_Structure_Simulation/

│ │ ├── CorticothalamicColumn/

│ │ │ ├── README.md

│ │ │ ├── data/

│ │ │ │ ├── __init__.py

│ │ │ │ └── globaldata.py

│ │ │ ├── main.py

│ │ │ ├── model/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── cortex.py

│ │ │ │ ├── cortex_thalamus.py

│ │ │ │ ├── dendrite.py

│ │ │ │ ├── fire.csv

│ │ │ │ ├── layer.py

│ │ │ │ ├── synapse.py

│ │ │ │ └── thalamus.py

│ │ │ └── tools/

│ │ │ ├── __init__.py

│ │ │ ├── cortical.csv

│ │ │ ├── exdata.py

│ │ │ ├── layer.csv

│ │ │ ├── neuron.csv

│ │ │ └── synapse.csv

│ │ ├── Corticothalamic_Brain_Model/

│ │ │ ├── Bioinformatics_propofol_circle.py

│ │ │ ├── Readme.md

│ │ │ └── spectrogram.py

│ │ ├── HumanBrain/

│ │ │ ├── README.md

│ │ │ ├── human_brain.py

│ │ │ └── human_multi.py

│ │ ├── Human_Brain_Model/

│ │ │ ├── NA.py

│ │ │ ├── Readme.md

│ │ │ ├── gc.py

│ │ │ ├── main_246.py

│ │ │ ├── main_84.py

│ │ │ ├── pci.py

│ │ │ ├── pci_246.py

│ │ │ └── spectrogram.py

│ │ ├── Human_PFC_Model/

│ │ │ ├── README.md

│ │ │ └── Six_Layer_PFC.py

│ │ ├── MacaqueBrain/

│ │ │ ├── README.md

│ │ │ └── macaque_brain.py

│ │ └── MouseBrain/

│ │ ├── README.md

│ │ └── mouse_brain.py

│ ├── Perception_and_Learning/

│ │ ├── Conversion/

│ │ │ ├── burst_conversion/

│ │ │ │ ├── CIFAR10_VGG16.py

│ │ │ │ ├── README.md

│ │ │ │ └── converted_CIFAR10.py

│ │ │ └── msat_conversion/

│ │ │ ├── CIFAR10_VGG16.py

│ │ │ ├── README.md

│ │ │ ├── converted_CIFAR10.py

│ │ │ └── convertor.py

│ │ ├── IllusionPerception/

│ │ │ └── AbuttingGratingIllusion/

│ │ │ ├── distortion/

│ │ │ │ ├── __init__.py

│ │ │ │ └── abutting_grating_illusion/

│ │ │ │ ├── __init__.py

│ │ │ │ └── abutting_grating_distortion.py

│ │ │ └── main.py

│ │ ├── MultisensoryIntegration/

│ │ │ ├── README.md

│ │ │ └── code/

│ │ │ ├── MultisensoryIntegrationDEMO_AM.py

│ │ │ ├── MultisensoryIntegrationDEMO_IM.py

│ │ │ └── measure_and_visualization.py

│ │ ├── NeuEvo/

│ │ │ ├── auto_augment.py

│ │ │ ├── main.py

│ │ │ ├── separate_loss.py

│ │ │ ├── train.py

│ │ │ ├── train_search.py

│ │ │ └── utils.py

│ │ ├── QSNN/

│ │ │ ├── README.md

│ │ │ └── main.py

│ │ ├── UnsupervisedSTDP/

│ │ │ ├── Readme.md

│ │ │ └── codef.py

│ │ └── img_cls/

│ │ ├── bp/

│ │ │ ├── README.md

│ │ │ ├── main.py

│ │ │ ├── main_backei.py

│ │ │ └── main_simplified.py

│ │ ├── glsnn/

│ │ │ ├── README.md

│ │ │ └── cls_glsnn.py

│ │ ├── spiking_capsnet/

│ │ │ ├── README.md

│ │ │ └── spikingcaps.py

│ │ └── transfer_for_dvs/

│ │ ├── GradCAM_visualization.py

│ │ ├── README.md

│ │ ├── datasets.py

│ │ ├── main.py

│ │ ├── main_transfer.py

│ │ └── main_visual_losslandscape.py

│ ├── Snn_safety/

│ │ ├── DPSNN/

│ │ │ ├── Readme.txt

│ │ │ ├── load_data.py

│ │ │ ├── main_dpsnn.py

│ │ │ └── model.py

│ │ └── RandHet-SNN/

│ │ ├── README.md

│ │ ├── evaluate.py

│ │ ├── my_node.py

│ │ ├── sew_resnet.py

│ │ ├── train.py

│ │ └── utils.py

│ ├── Social_Cognition/

│ │ ├── FOToM/

│ │ │ ├── algorithms/

│ │ │ │ ├── ToM_class.py

│ │ │ │ ├── __init__.py

│ │ │ │ ├── maddpg.py

│ │ │ │ └── tom11.py

│ │ │ ├── common/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── distributions.py

│ │ │ │ ├── tile_images.py

│ │ │ │ └── vec_env/

│ │ │ │ ├── __init__.py

│ │ │ │ └── vec_env.py

│ │ │ ├── evaluate.py

│ │ │ ├── main.py

│ │ │ ├── multiagent/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── core.py

│ │ │ │ ├── environment.py

│ │ │ │ ├── multi_discrete.py

│ │ │ │ ├── policy.py

│ │ │ │ ├── rendering.py

│ │ │ │ ├── scenario.py

│ │ │ │ └── scenarios/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── hetero_spread.py

│ │ │ │ ├── simple.py

│ │ │ │ ├── simple_adversary.py

│ │ │ │ ├── simple_crypto.py

│ │ │ │ ├── simple_push.py

│ │ │ │ ├── simple_reference.py

│ │ │ │ ├── simple_speaker_listener.py

│ │ │ │ ├── simple_spread.py

│ │ │ │ ├── simple_tag.py

│ │ │ │ └── simple_world_comm.py

│ │ │ ├── readme.md

│ │ │ └── utils/

│ │ │ ├── __init__.py

│ │ │ ├── agents.py

│ │ │ ├── buffer.py

│ │ │ ├── env_wrappers.py

│ │ │ ├── make_env.py

│ │ │ ├── misc.py

│ │ │ ├── multiprocessing.py

│ │ │ ├── networks.py

│ │ │ └── noise.py

│ │ ├── Intention_Prediction/

│ │ │ └── Intention_Prediction.py

│ │ ├── MAToM-SNN/

│ │ │ ├── LICENSE

│ │ │ ├── MPE/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── agents/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ └── agents.py

│ │ │ │ ├── common/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── distributions.py

│ │ │ │ │ ├── tile_images.py

│ │ │ │ │ └── vec_env/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ └── vec_env.py

│ │ │ │ ├── main.py

│ │ │ │ ├── multiagent/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ └── scenarios/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── simple.py

│ │ │ │ │ ├── simple_crypto.py

│ │ │ │ │ ├── simple_push.py

│ │ │ │ │ ├── simple_reference.py

│ │ │ │ │ ├── simple_speaker_listener.py

│ │ │ │ │ ├── simple_spread.py

│ │ │ │ │ └── simple_world_comm.py

│ │ │ │ ├── policy/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ └── maddpg.py

│ │ │ │ └── utils/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── buffer.py

│ │ │ │ ├── env_wrappers.py

│ │ │ │ ├── make_env.py

│ │ │ │ ├── misc.py

│ │ │ │ ├── multiprocessing.py

│ │ │ │ ├── networks.py

│ │ │ │ └── noise.py

│ │ │ ├── README.md

│ │ │ └── STAG/

│ │ │ ├── agents/

│ │ │ │ ├── __init__.py

│ │ │ │ └── sagent.py

│ │ │ ├── common_sr/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── arguments.py

│ │ │ │ ├── dummy_vec_env.py

│ │ │ │ ├── multiprocessing_env.py

│ │ │ │ ├── replay_buffer.py

│ │ │ │ └── srollout.py

│ │ │ ├── envs/

│ │ │ │ ├── Stag_Hunt_env.py

│ │ │ │ ├── __init__.py

│ │ │ │ ├── abstract.py

│ │ │ │ └── constants.py

│ │ │ ├── main_spiking.py

│ │ │ ├── network/

│ │ │ │ ├── __init__.py

│ │ │ │ └── spiking_net.py

│ │ │ ├── policy/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── dqn.py

│ │ │ │ ├── stomvdn.py

│ │ │ │ └── svdn.py

│ │ │ ├── preprocessoing/

│ │ │ │ ├── __init__.py

│ │ │ │ └── common.py

│ │ │ └── runner.py

│ │ ├── ReadMe.md

│ │ ├── SmashVat/

│ │ │ ├── dqn.py

│ │ │ ├── environment.py

│ │ │ ├── main.py

│ │ │ ├── manual_control.py

│ │ │ ├── qnets.py

│ │ │ ├── side_effect_eval.py

│ │ │ └── window.py

│ │ ├── ToCM/

│ │ │ ├── README.md

│ │ │ ├── agent/

│ │ │ │ ├── controllers/

│ │ │ │ │ └── ToCMController.py

│ │ │ │ ├── learners/

│ │ │ │ │ └── ToCMLearner.py

│ │ │ │ ├── memory/

│ │ │ │ │ └── ToCMMemory.py

│ │ │ │ ├── models/

│ │ │ │ │ └── ToCMModel.py

│ │ │ │ ├── optim/

│ │ │ │ │ ├── loss.py

│ │ │ │ │ └── utils.py

│ │ │ │ ├── runners/

│ │ │ │ │ └── ToCMRunner.py

│ │ │ │ ├── utils/

│ │ │ │ │ └── params.py

│ │ │ │ └── workers/

│ │ │ │ └── ToCMWorker.py

│ │ │ ├── configs/

│ │ │ │ ├── Config.py

│ │ │ │ ├── EnvConfigs.py

│ │ │ │ ├── Experiment.py

│ │ │ │ ├── ToCM/

│ │ │ │ │ ├── ToCMAgentConfig.py

│ │ │ │ │ ├── ToCMControllerConfig.py

│ │ │ │ │ ├── ToCMLearnerConfig.py

│ │ │ │ │ └── optimal/

│ │ │ │ │ └── starcraft/

│ │ │ │ │ ├── AgentConfig.py

│ │ │ │ │ └── LearnerConfig.py

│ │ │ │ └── __init__.py

│ │ │ ├── env/

│ │ │ │ ├── mpe/

│ │ │ │ │ └── MPE.py

│ │ │ │ └── starcraft/

│ │ │ │ └── StarCraft.py

│ │ │ ├── environments.py

│ │ │ ├── mpe/

│ │ │ │ ├── MPE_Env.py

│ │ │ │ ├── __init__.py

│ │ │ │ ├── core.py

│ │ │ │ ├── environment.py

│ │ │ │ ├── multi_discrete.py

│ │ │ │ ├── rendering.py

│ │ │ │ ├── scenario.py

│ │ │ │ └── scenarios/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── hetero_spread.py

│ │ │ │ ├── simple_adversary.py

│ │ │ │ ├── simple_crypto.py

│ │ │ │ ├── simple_crypto_display.py

│ │ │ │ ├── simple_push.py

│ │ │ │ ├── simple_reference.py

│ │ │ │ ├── simple_speaker_listener.py

│ │ │ │ ├── simple_spread.py

│ │ │ │ ├── simple_tag.py

│ │ │ │ └── simple_world_comm.py

│ │ │ ├── networks/

│ │ │ │ ├── ToCM/

│ │ │ │ │ ├── action.py

│ │ │ │ │ ├── critic.py

│ │ │ │ │ ├── dense.py

│ │ │ │ │ ├── rnns.py

│ │ │ │ │ ├── utils.py

│ │ │ │ │ └── vae.py

│ │ │ │ └── transformer/

│ │ │ │ └── layers.py

│ │ │ ├── requirements.txt

│ │ │ ├── run.sh

│ │ │ ├── smac/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── bin/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ └── map_list.py

│ │ │ │ ├── env/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── multiagentenv.py

│ │ │ │ │ ├── pettingzoo/

│ │ │ │ │ │ ├── StarCraft2PZEnv.py

│ │ │ │ │ │ ├── __init__.py

│ │ │ │ │ │ └── test/

│ │ │ │ │ │ ├── __init__.py

│ │ │ │ │ │ ├── all_test.py

│ │ │ │ │ │ └── smac_pettingzoo_test.py

│ │ │ │ │ └── starcraft2/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── maps/

│ │ │ │ │ │ ├── SMAC_Maps/

│ │ │ │ │ │ │ └── 2s_vs_1sc.SC2Map

│ │ │ │ │ │ ├── __init__.py

│ │ │ │ │ │ └── smac_maps.py

│ │ │ │ │ ├── render.py

│ │ │ │ │ └── starcraft2.py

│ │ │ │ └── examples/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── pettingzoo/

│ │ │ │ │ ├── README.rst

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ └── pettingzoo_demo.py

│ │ │ │ ├── random_agents.py

│ │ │ │ └── rllib/

│ │ │ │ ├── README.rst

│ │ │ │ ├── __init__.py

│ │ │ │ ├── env.py

│ │ │ │ ├── model.py

│ │ │ │ ├── run_ppo.py

│ │ │ │ └── run_qmix.py

│ │ │ ├── train.py

│ │ │ └── utils/

│ │ │ ├── __init__.py

│ │ │ ├── mlp_buffer.py

│ │ │ ├── mlp_nstep_buffer.py

│ │ │ ├── popart.py

│ │ │ ├── rec_buffer.py

│ │ │ ├── segment_tree.py

│ │ │ └── util.py

│ │ ├── ToM/

│ │ │ ├── BrainArea/

│ │ │ │ ├── PFC_ToM.py

│ │ │ │ ├── TPJ.py

│ │ │ │ ├── __init__.py

│ │ │ │ ├── dACC.py

│ │ │ │ ├── one_hot.py

│ │ │ │ └── test.py

│ │ │ ├── README.md

│ │ │ ├── __init__.py

│ │ │ ├── data/

│ │ │ │ ├── NPC_assessment.csv

│ │ │ │ ├── agent_assessment.csv

│ │ │ │ ├── injury_memory.txt

│ │ │ │ ├── injury_value.txt

│ │ │ │ └── one_hot.py

│ │ │ ├── env/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── env.py

│ │ │ │ ├── env3_train_env00.py

│ │ │ │ └── env3_train_env01.py

│ │ │ ├── main_ToM.py

│ │ │ ├── main_both.py

│ │ │ ├── rulebasedpolicy/

│ │ │ │ ├── Find_a_way.py

│ │ │ │ ├── __init__.py

│ │ │ │ ├── a_star.py

│ │ │ │ ├── load_statedata.py

│ │ │ │ ├── point.py

│ │ │ │ ├── random_map.py

│ │ │ │ ├── statedata_pre.py

│ │ │ │ ├── train.txt

│ │ │ │ └── world_model.py

│ │ │ └── utils/

│ │ │ ├── Encoder.py

│ │ │ └── one_hot.py

│ │ ├── affective_empathy/

│ │ │ ├── BAE-SNN/

│ │ │ │ ├── BAESNN.py

│ │ │ │ ├── README.md

│ │ │ │ ├── env_poly.py

│ │ │ │ └── env_two_poly.py

│ │ │ ├── BEEAD-SNN/

│ │ │ │ ├── BEEAD-SNN.py

│ │ │ │ ├── README.md

│ │ │ │ ├── RL_Brain.py

│ │ │ │ ├── env.py

│ │ │ │ ├── env_poly_SNN.py

│ │ │ │ ├── rsnn.py

│ │ │ │ ├── sd_env.py

│ │ │ │ └── snowdrift_main.py

│ │ │ └── BRP-SNN/

│ │ │ ├── BRP-SNN.py

│ │ │ ├── README.md

│ │ │ ├── env_poly_SNN.py

│ │ │ └── env_two_poly_SNN.py

│ │ └── mirror_test/

│ │ ├── README.md

│ │ └── mirror_test.py

│ ├── Spiking-Transformers/

│ │ ├── LIFNode.py

│ │ ├── README.md

│ │ ├── datasets.py

│ │ ├── main.py

│ │ └── models/

│ │ ├── spike_driven_transformer.py

│ │ ├── spike_driven_transformer_dvs.py

│ │ ├── spike_driven_transformer_v2.py

│ │ ├── spike_driven_transformer_v2_dvs.py

│ │ ├── spikformer.py

│ │ └── spikformer_dvs.py

│ ├── Structural_Development/

│ │ ├── DPAP/

│ │ │ ├── README.md

│ │ │ ├── mask_model.py

│ │ │ ├── prun_main.py

│ │ │ └── utils.py

│ │ ├── DSD-SNN/

│ │ │ ├── README.md

│ │ │ └── cifar100/

│ │ │ ├── available.py

│ │ │ ├── main_simplified.py

│ │ │ ├── manipulate.py

│ │ │ ├── maskcl2.py

│ │ │ └── vgg_snn.py

│ │ ├── ELSM/

│ │ │ ├── evolve.py

│ │ │ ├── lsm.py

│ │ │ ├── model.py

│ │ │ ├── nsganet.py

│ │ │ └── spikes.py

│ │ ├── SCA-SNN/

│ │ │ ├── README.md

│ │ │ ├── configs/

│ │ │ │ └── train.yaml

│ │ │ ├── inclearn/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── convnet/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── classifier.py

│ │ │ │ │ ├── imbalance.py

│ │ │ │ │ ├── maskcl2.py

│ │ │ │ │ ├── network.py

│ │ │ │ │ ├── resnet.py

│ │ │ │ │ ├── sew_resnet.py

│ │ │ │ │ └── utils.py

│ │ │ │ ├── datasets/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── data.py

│ │ │ │ │ └── dataset.py

│ │ │ │ ├── models/

│ │ │ │ │ ├── __init__.py

│ │ │ │ │ ├── base.py

│ │ │ │ │ └── incmodel.py

│ │ │ │ └── tools/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── autoaugment_extra.py

│ │ │ │ ├── cutout.py

│ │ │ │ ├── data_utils.py

│ │ │ │ ├── factory.py

│ │ │ │ ├── memory.py

│ │ │ │ ├── metrics.py

│ │ │ │ ├── results_utils.py

│ │ │ │ ├── scheduler.py

│ │ │ │ ├── similar.py

│ │ │ │ └── utils.py

│ │ │ └── main.py

│ │ └── SD-SNN/

│ │ ├── README.md

│ │ ├── main.py

│ │ ├── prun_and_generation.py

│ │ ├── snn_model.py

│ │ └── utils.py

│ ├── Structure_Evolution/

│ │ ├── Adaptive_lsm/

│ │ │ ├── BrainCog-Version/

│ │ │ │ ├── README.md

│ │ │ │ ├── brid.py

│ │ │ │ ├── lsmmodel.py

│ │ │ │ ├── maze.py

│ │ │ │ └── tools/

│ │ │ │ ├── EnuGlobalNetwork.py

│ │ │ │ ├── ExperimentEnvGlobalNetworkSurvival.py

│ │ │ │ ├── MazeTurnEnvVec.py

│ │ │ │ └── nsganet.py

│ │ │ └── raw/

│ │ │ ├── BCM.py

│ │ │ ├── README.md

│ │ │ ├── lstm.py

│ │ │ ├── main.py

│ │ │ ├── pltbcm.py

│ │ │ ├── pltrank.py

│ │ │ ├── q_l.py

│ │ │ └── tools/

│ │ │ ├── EnuGlobalNetwork.py

│ │ │ ├── ExperimentEnvGlobalNetworkSurvival.py

│ │ │ └── MazeTurnEnvVec.py

│ │ ├── EB-NAS/

│ │ │ ├── acc_predictor/

│ │ │ │ ├── adaptive_switching.py

│ │ │ │ ├── carts.py

│ │ │ │ ├── factory.py

│ │ │ │ ├── gp.py

│ │ │ │ ├── mlp.py

│ │ │ │ └── rbf.py

│ │ │ ├── cellmodel.py

│ │ │ ├── ebnas.py

│ │ │ ├── micro_encoding.py

│ │ │ ├── motifs.py

│ │ │ ├── nsganet.py

│ │ │ ├── operations.py

│ │ │ ├── readme.md

│ │ │ ├── single_genome.py

│ │ │ └── tm.py

│ │ ├── ELSM/

│ │ │ ├── README.md

│ │ │ ├── evolve.py

│ │ │ ├── lsm.py

│ │ │ ├── model.py

│ │ │ ├── nsganet.py

│ │ │ └── spikes.py

│ │ └── MSE-NAS/

│ │ ├── auto_augment.py

│ │ ├── cellmodel.py

│ │ ├── evolution.py

│ │ ├── loss_f.py

│ │ ├── micro_encoding.py

│ │ ├── motifs.py

│ │ ├── nsganet.py

│ │ ├── obj.py

│ │ ├── operations.py

│ │ ├── readme.md

│ │ ├── tm.py

│ │ └── utils.py

│ ├── TIM/

│ │ ├── README.md

│ │ ├── main.py

│ │ ├── models/

│ │ │ ├── TIM.py

│ │ │ ├── spikformer_braincog_DVS.py

│ │ │ └── spikformer_braincog_SHD.py

│ │ └── utils/

│ │ ├── MyGrad.py

│ │ ├── MyNode.py

│ │ └── datasets.py

│ └── decision_making/

│ ├── BDM-SNN/

│ │ ├── BDM-SNN-UAV.py

│ │ ├── BDM-SNN-hh.py

│ │ ├── BDM-SNN.py

│ │ ├── README.md

│ │ └── decisionmaking.py

│ ├── RL/

│ │ ├── README.md

│ │ ├── atari/

│ │ │ ├── __init__.py

│ │ │ └── atari_wrapper.py

│ │ ├── mcs-fqf/

│ │ │ ├── discrete.py

│ │ │ ├── main.py

│ │ │ ├── network.py

│ │ │ └── policy.py

│ │ ├── requirements.txt

│ │ ├── sdqn/

│ │ │ ├── main.py

│ │ │ └── network.py

│ │ └── utils/

│ │ ├── __init__.py

│ │ └── normalization.py

│ └── swarm/

│ ├── Collision-Avoidance.py

│ └── README.md

├── requirements.txt

└── setup.py

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

.idea

*.egg-info/

eggs/

.eggs/

*.exe

*.pyc

/.vscode/

*.code-workspace

__pycache__

# Sphinx documentation

docs/_build/

docs/build/

# Jupyter Notebook

.ipynb_checkpoints

# IPython

profile_default/

ipython_config.py

# pyenv

.python-version

# event data

*.bin

*.dat

*.pt

# Django stuff:

*.log

local_settings.py

db.sqlite3

db.sqlite3-journal

================================================

FILE: LICENSE

================================================

Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

================================================

FILE: README.md

================================================

# BrainCog

---

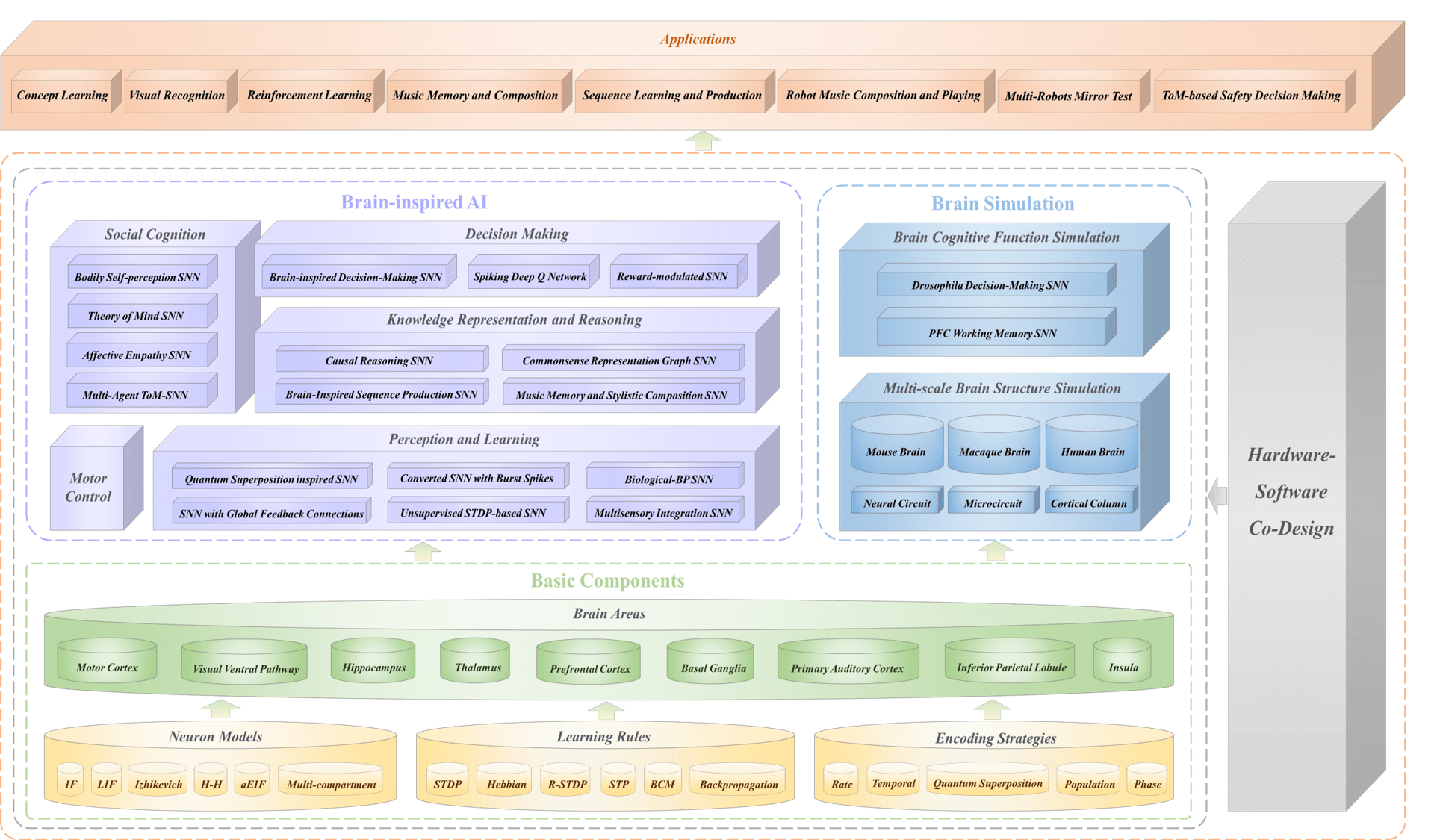

BrainCog is an open source spiking neural network based brain-inspired

cognitive intelligence engine for Brain-inspired Artificial Intelligence, Brain-inspired Embodied AI, and brain simulation. More information on BrainCog can be found on its homepage http://www.brain-cog.network/

The current version of BrainCog contains at least 50 functional spiking neural network algorithms (including but not limited to perception and learning, decision making, knowledge representation and reasoning, motor control, social cognition, etc.) built based on BrainCog infrastructures, and BrainCog also provide brain simulations to drosophila, rodent, monkey, and human brains at multiple scales based on spiking neural networks at multiple scales. More detail in http://www.brain-cog.network/docs/

BrainCog is a community based effort for spiking neural network based artificial intelligence, and we welcome any forms of contributions, from contributing to the development of core components, to contributing for applications.

<img src="http://braincog.ai/static_index/image/github_readme/logo.jpg" alt="./figures/logo.jpg" width="70%" />

BrainCog provides essential and fundamental components to model biological and artificial intelligence.

Our paper is published in [Patterns](https://www.cell.com/patterns/fulltext/S2666-3899(23)00144-7?_returnURL=https%3A%2F%2Flinkinghub.elsevier.com%2Fretrieve%2Fpii%2FS2666389923001447%3Fshowall%3Dtrue). If you use BrainCog in your research, the following paper can be cited as the source for BrainCog.

```bib

@article{Zeng2023,

doi = {10.1016/j.patter.2023.100789},

url = {https://doi.org/10.1016/j.patter.2023.100789},

year = {2023},

month = jul,

publisher = {Cell Press},

pages = {100789},

author = {Yi Zeng and Dongcheng Zhao and Feifei Zhao and Guobin Shen and Yiting Dong and Enmeng Lu and Qian Zhang and Yinqian Sun and Qian Liang and Yuxuan Zhao and Zhuoya Zhao and Hongjian Fang and Yuwei Wang and Yang Li and Xin Liu and Chengcheng Du and Qingqun Kong and Zizhe Ruan and Weida Bi},

title = {{BrainCog}: A spiking neural network based, brain-inspired cognitive intelligence engine for brain-inspired {AI} and brain simulation},

journal = {Patterns}

}

```

## Brain-Inspired AI

BrainCog currently provides cognitive functions components that can be classified

into five categories:

* Perception and Learning

* Knowledge Representation and Reasoning

* Decision Making

* Motor Control

* Social Cognition

* Development and Evolution

* Safety and Security

<img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/mirror-test.gif" alt="mt" width="55%" />

<img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/joy.gif" alt="mt" width="55%" />

## Brain Simulation

BrainCog currently include two parts for brain simulation:

* Brain Cognitive Function Simulation

* Multi-scale Brain Structure Simulation

<img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/braincog-mouse-brain-model-10s.gif" alt="bmbm10s" width="55%" />

<img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/braincog-macaque-10s.gif" alt="bm10s" width="55%" />

<img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/braincog-humanbrain-10s.gif" alt="bh10s" width="55%" />

The anatomical and imaging data is used to support our simulation from various aspects.

## Software-Hardware Codesign (BrainCog Firefly)

<img src="http://www.brain-cog.network/static/image/github_readme/firefly_logo.jpg" alt="bh10s" width="25%" />

BrainCog currently provides `hardware acceleration` for spiking neural network based brain-inspired AI.

<img src="http://braincog.ai/static_index/image/github_readme/firefly.jpg" alt="bh10s" width="55%" />

The following papers are most recent advancement of BrainCog Firefly series for Software-Hardware Codesign for Brain-inspired AI.

* Tenglong Li, Jindong Li, Guobin Shen, Dongcheng Zhao, Qian Zhang, Yi Zeng. FireFly-S: Exploiting Dual-Side Sparsity for Spiking Neural Networks Acceleration With Reconfigurable Spatial Architecture. IEEE Transactions on Circuits and Systems I (TCAS-I), 2024.(https://doi.org/10.1109/TCSI.2024.3496554)

* Jindong Li, Guobin Shen, Dongcheng Zhao, Qian Zhang, Yi Zeng. Firefly v2: Advancing hardware support for high-performance spiking neural network with a spatiotemporal fpga accelerator. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, 2024. (https://ieeexplore.ieee.org/abstract/document/10478105/)

* Jindong Li, Guobin Shen, Dongcheng Zhao, Qian Zhang, Yi Zeng. FireFly: A High-Throughput Hardware Accelerator for Spiking Neural Networks With Efficient DSP and Memory Optimization. IEEE Transactions on Very Large Scale Integration (VLSI) Systems, 2023. (https://ieeexplore.ieee.org/document/10143752)

## Embodied AI and Robotics (BrainCog Embot)

<img src="http://www.brain-cog.network/static/image/github_readme/Embot_logo/%E5%B8%A6%E4%B8%8A%E6%A0%87%E9%A2%98-Embot%20logo%20%E9%80%8F%E6%98%8E%E8%83%8C%E6%99%AF.png" alt="bh10s" width="25%" />

<img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/PushT.gif" alt="bm10s" width="10%" /><img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/Can.gif" alt="bh10s" width="10%" /> <img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/左数第三个.gif" alt="bh10s" width="10%" /> <img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/Square.gif" alt="bm10s" width="10%" /><img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/ToolHang.gif" alt="bh10s" width="10%" /> <img src="https://raw.githubusercontent.com/Brain-Cog-Lab/Brain-Cog/main/figures/左数第六个.gif" alt="bh10s" width="10%" />

BrainCog Embot is an Embodied AI platform under the Brain-inspired Cognitive Intelligence Engine (BrainCog) framework, which is an open-source Brain-inspired AI platform based on Spiking Neural Network.

The following papers are most recent advancement of BrainCog Embot:

* Qianhao Wang, Yinqian Sun, Enmeng Lu, Qian Zhang, Yi Zeng. Brain-Inspired Action Generation with Spiking Transformer Diffusion Policy Model. Advances in Brain Inspired Cognitive Systems (BICS), 2024.(https://link.springer.com/chapter/10.1007/978-981-96-2882-7_23)

* Yinqian Sun, Feifei Zhao, Mingyang Lv, Yi Zeng. Implementing Spiking World Model with Multi-Compartment Neurons for Model-based Reinforcement Learning, 2025. (https://arxiv.org/abs/2503.00713)

* Qianhao Wang, Yinqian Sun, Enmeng Lu, Qian Zhang, Yi Zeng. MTDP: Modulated Transformer Diffusion Policy Model, 2025. (https://arxiv.org/abs/2502.09029)

## Resources

### [[Lectures]](https://github.com/BrainCog-X/Brain-Cog/blob/main/documents/Lectures.md) | [[Tutorial]](https://github.com/BrainCog-X/Brain-Cog/blob/main/documents/Tutorial.md)

## Publications using BrainCog

### [[Brain Inspired AI]](https://github.com/BrainCog-X/Brain-Cog/blob/main/documents/Publication.md) | [[Brain Simulation]](https://github.com/BrainCog-X/Brain-Cog/blob/main/documents/Pub_brain_simulation.md) | [[Software-Hardware Co-design]](https://github.com/BrainCog-X/Brain-Cog/blob/main/documents/Pub_sh_codesign.md)

## BrainCog Data Engine

### [BrainCog Data Engine](https://github.com/BrainCog-X/Brain-Cog/blob/main/documents/Data_engine.md)

## Requirements:

* numpy

* scipy

* h5py

* torch

* torchvision

* torchaudio

* timm == 0.6.13

* scikit-learn

* einops

* thop

* pyyaml

* matplotlib

* seaborn

* pygame

* dv

* tensorboard

* tonic

## Install

### Install Online

1. You can install braincog by running:

> `pip install braincog`

2. Also, install from github by running:

> `pip install git+https://github.com/braincog-X/Brain-Cog.git`

### Install locally

1. If you are a developer, it is recommanded to download or clone

braincog from github.

> `git clone https://github.com/braincog-X/Brain-Cog.git`

2. Enter the folder of braincog

> `cd Brain-Cog`

3. Install braincog locally

> `pip install -e .`

## Example

1. Examples for Image Classification

```shell

cd ./examples/Perception_and_Learning/img_cls/bp

python main.py --model cifar_convnet --dataset cifar10 --node-type LIFNode --step 8 --device 0

```

2. Examples for Event Classification

```shell

cd ./examples/Perception_and_Learning/img_cls/bp

python main.py --model dvs_convnet --node-type LIFNode --dataset dvsc10 --step 10 --batch-size 128 --act-fun QGateGrad --device 0

```

Other BrainCog features and tutorials can be found at http://www.brain-cog.network/docs/

## BrainCog Assistant

Please add our BrainCog Assitant via wechat and we will invite you to our wechat developer group.

## Maintenance

This project is led by

**1.Brain-inspired Cognitive Intelligence Lab, Institute of Automation, Chinese Academy of Sciences http://www.braincog.ai/**

**2.Center for Long-term Artificial Intelligence (CLAI) http://long-term-ai.center/**

================================================

FILE: braincog/__init__.py

================================================

# __all__ = ['base', 'datasets', 'model_zoo', 'utils']

#

# from . import (

# base,

# datasets,

# model_zoo,

# utils

# )

================================================

FILE: braincog/base/__init__.py

================================================

__all__ = ['node', 'connection', 'learningrule', 'brainarea', 'encoder', 'utils', 'conversion']

from . import (

node,

strategy,

connection,

conversion,

learningrule,

brainarea,

utils,

encoder

)

================================================

FILE: braincog/base/brainarea/BrainArea.py

================================================

import numpy as np

import torch, os, sys

from torch import nn

from torch.nn import Parameter

import abc

import math

from abc import ABC

import numpy as np

import torch

from torch import nn

from torch.nn import Parameter

import torch.nn.functional as F

from braincog.base.node.node import *

from braincog.base.learningrule.STDP import *

from braincog.base.connection.CustomLinear import *

class BrainArea(nn.Module, abc.ABC):

"""

脑区基类

"""

@abc.abstractmethod

def __init__(self):

"""

"""

super().__init__()

@abc.abstractmethod

def forward(self, x):

"""

计算前向传播过程

:return:x是脉冲

"""

return x

def reset(self):

"""

计算前向传播过程

:return:x是脉冲

"""

pass

class ThreePointForward(BrainArea):

"""

三点前馈脑区

"""

def __init__(self, w1, w2, w3):

"""

"""

super().__init__()

self.node = [IFNode(), IFNode(), IFNode()]

self.connection = [CustomLinear(w1), CustomLinear(w2), CustomLinear(w3)]

self.stdp = []

self.stdp.append(STDP(self.node[0], self.connection[0]))

self.stdp.append(STDP(self.node[1], self.connection[1]))

self.stdp.append(STDP(self.node[2], self.connection[2]))

def forward(self, x):

"""

计算前向传播过程

:return:x是脉冲

"""

x, dw1 = self.stdp[0](x)

x, dw2 = self.stdp[1](x)

x, dw3 = self.stdp[2](x)

return x, (*dw1, *dw2, *dw3)

class Feedback(BrainArea):

"""

反馈网络

"""

def __init__(self, w1, w2, w3):

"""

"""

super().__init__()

self.node = [IFNode(), IFNode()]

self.connection = [CustomLinear(w1), CustomLinear(w2), CustomLinear(w3)]

self.stdp = []

self.stdp.append(MutliInputSTDP(self.node[0], [self.connection[0], self.connection[2]]))

self.stdp.append(STDP(self.node[1], self.connection[1]))

self.x1 = torch.zeros(1, w3.shape[0])

def forward(self, x):

"""

计算前向传播过程

:return:x是脉冲

"""

x, dw1 = self.stdp[0](x, self.x1)

self.x1, dw2 = self.stdp[1](x)

return self.x1, (*dw1, *dw2)

def reset(self):

self.x1 *= 0

class TwoInOneOut(BrainArea):

"""

反馈网络

"""

def __init__(self, w1, w2):

"""

"""

super().__init__()

self.node = [IFNode()]

self.connection = [CustomLinear(w1), CustomLinear(w2)]

self.stdp = []

self.stdp.append(MutliInputSTDP(self.node[0], [self.connection[0], self.connection[1]]))

def forward(self, x1, x2):

"""

计算前向传播过程

:return:x是脉冲

"""

x, dw1 = self.stdp[0](x1, x2)

return x, dw1

class SelfConnectionArea(BrainArea):

"""

反馈网络

"""

def __init__(self, w1, w2 ):

"""

"""

super().__init__()

self.node = [IFNode() ]

self.connection = [CustomLinear(w1), CustomLinear(w2) ]

self.stdp = []

self.stdp.append(MutliInputSTDP(self.node[0], [self.connection[0], self.connection[1]]))

self.x1 = torch.zeros(1, w2.shape[0])

def forward(self, x):

"""

计算前向传播过程

:return:x是脉冲

"""

self.x1, dw1 = self.stdp[0](x, self.x1)

return self.x1, dw1

def reset(self):

self.x1 *= 0

if __name__ == "__main__":

T = 20

w1 = torch.tensor([[1., 1], [1, 1]])

w2 = torch.tensor([[1., 1], [1, 1]])

w3 = torch.tensor([[0.4, 0.4], [0.4, 0.4]])

ba = TwoInOneOut(w1, w2)

for i in range(T):

x = ba(torch.tensor([[0.1, 0.1]]), torch.tensor([[0.1, 0.1]]))

print(x[0])

================================================

FILE: braincog/base/brainarea/IPL.py

================================================

from braincog.base.learningrule.STDP import *

from braincog.base.node.node import *

from braincog.base.connection.CustomLinear import *

import random

import numpy as np

import torch

import os

import sys

from torch import nn

from torch.nn import Parameter

import abc

import math

from abc import ABC

import numpy as np

import torch

from torch import nn

from torch.nn import Parameter

import torch.nn.functional as F

import matplotlib.pyplot as plt

from braincog.base.strategy.surrogate import *

import os

os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

class IPLNet(nn.Module):

"""

inferior parietal lobule (IPL)

"""

def __init__(self, connection):

"""

Setting the network structure of IPL

"""

super().__init__()

# IPLM, IPLV

self.num_subMB = 2

self.node = [IzhNodeMU(threshold=30., a=0.02, b=0.2, c=-65., d=6., mem=-70.) for i in range(self.num_subMB)]

self.connection = connection

self.learning_rule = []

self.learning_rule.append(STDP(self.node[0], self.connection[0])) # vPMC_input-IPLM

self.learning_rule.append(MutliInputSTDP(self.node[1], [self.connection[1], self.connection[2]])) # STS_input-IPLV, IPLM-IPLV

self.out_IPLM = torch.zeros((self.connection[0].weight.shape[1]), dtype=torch.float)

self.out_IPLV = torch.zeros((self.connection[1].weight.shape[1]), dtype=torch.float)

def forward(self, input1, input2): # input from vPMC and STS

"""

Calculate the output of IPLv and the weight update between IPLm and IPLv

:param input1: input from vPMC

:param input2: input from STS

:return: output of IPLv, weight update between IPLm and IPLv

"""

self.out_IPLM = self.node[0](self.connection[0](input1))

self.out_IPLV, dw_IPLv = self.learning_rule[1](input2, self.out_IPLM)

if sum(sum(self.out_IPLV)) == 1:

dw_IPLv = dw_IPLv[0][torch.nonzero(dw_IPLv[1])[0][1]][torch.nonzero(dw_IPLv[1])[0][1]] * dw_IPLv[1]

else:

dw_IPLv = dw_IPLv[0]

return self.out_IPLV, dw_IPLv

def UpdateWeight(self, i, dw):

"""

Update the weight

:param i: index of the connection to update

:param dw: weight update

:return: None

"""

self.connection[i].update(dw)

def reset(self):

"""

reset the network

:return: None

"""

for i in range(self.num_subMB):

self.node[i].n_reset()

for i in range(len(self.learning_rule)):

self.learning_rule[i].reset()

def getweight(self):

"""

Get the connection and weight in IPL

:return: connection

"""

return self.connection

================================================

FILE: braincog/base/brainarea/Insula.py

================================================

import numpy as np

import torch,os,sys

from torch import nn

from torch.nn import Parameter

import abc

import math

from abc import ABC

import numpy as np

import torch

from torch import nn

from torch.nn import Parameter

import torch.nn.functional as F

import matplotlib.pyplot as plt

from braincog.base.strategy.surrogate import *

import os

os.environ["KMP_DUPLICATE_LIB_OK"]="TRUE"

import random

from braincog.base.connection.CustomLinear import *

from braincog.base.node.node import *

from braincog.base.learningrule.STDP import *

class InsulaNet(nn.Module):

"""

Insula

"""

def __init__(self,connection):

"""

Setting the network structure of Insula

"""

super().__init__()

# Insula

self.num_subMB = 1

self.node = [IzhNodeMU(threshold=30., a=0.02, b=0.2, c=-65., d=6., mem=-70.) for i in range(self.num_subMB)]

self.connection = connection

self.learning_rule = []

self.learning_rule.append(MutliInputSTDP(self.node[0], [self.connection[0],self.connection[1]]))# IPLv-Insula, STS-Insula

self.Insula=torch.zeros((self.connection[1].weight.shape[1]), dtype=torch.float)

def forward(self, input1, input2): # input from IPLv and STS

"""

Calculate the output of Insula

:param input1: input from IPLv

:param input2: input from STS

:return: output of Insula, weight update (unused)

"""

self.out_Insula, dw_Insula = self.learning_rule[0](input1, input2)

return self.out_Insula

def UpdateWeight(self,i,dw):

"""

Update the weight

:param i: index of the connection to update

:param dw: weight update

:return: None

"""

self.connection[i].update(dw)

def reset(self):

"""

reset the network

:return: None

"""

for i in range(self.num_subMB):

self.node[i].n_reset()

for i in range(len(self.learning_rule)):

self.learning_rule[i].reset()

def getweight(self):

"""

Get the connection and weight in Insula

:return: connection

"""

return self.connection

================================================

FILE: braincog/base/brainarea/PFC.py

================================================

import torch

from torch import nn

from braincog.base.brainarea import BrainArea

from braincog.model_zoo.base_module import BaseLinearModule, BaseModule

class PFC:

"""

PFC

"""

def __init__(self):

"""

"""

super().__init__()

def forward(self, x):

"""

:return:x

"""

return x

def reset(self):

"""

:return:x

"""

pass

class dlPFC(BaseModule, PFC):

"""

SNNLinear

"""

def __init__(self,

step,

encode_type,

in_features:int,

out_features:int,

bias,

*args,

**kwargs):

super().__init__(step, encode_type, *args, **kwargs)

self.bias = bias

self.in_features = in_features

self.out_features = out_features

self.fc = self._create_fc()

self.c = self._rest_c()

def _rest_c(self):

c = torch.rand((self.out_features, self.in_features)) # eligibility trace

return c

def _create_fc(self):

"""

the connection of the SNN linear

@return: nn.Linear

"""

fc = nn.Linear(in_features=self.in_features,

out_features=self.out_features, bias=self.bias)

return fc

================================================

FILE: braincog/base/brainarea/__init__.py

================================================

from .basalganglia import basalganglia

from .BrainArea import BrainArea, ThreePointForward, Feedback, TwoInOneOut, SelfConnectionArea

from .Insula import InsulaNet

from .IPL import IPLNet

from .PFC import PFC, dlPFC

__all__ = [

'basalganglia',

'BrainArea', 'ThreePointForward', 'Feedback', 'TwoInOneOut', 'SelfConnectionArea',

'InsulaNet',

'IPLNet',

'PFC', 'dlPFC'

]

================================================

FILE: braincog/base/brainarea/basalganglia.py

================================================

import numpy as np

import torch

import os

import sys

from torch import nn

from torch.nn import Parameter

import abc

import math

from abc import ABC

import numpy as np

import torch

import torch.nn.functional as F

from braincog.base.strategy.surrogate import *

from braincog.base.node.node import IFNode, SimHHNode

from braincog.base.learningrule.STDP import STDP, MutliInputSTDP

from braincog.base.connection.CustomLinear import CustomLinear

class basalganglia(nn.Module):

"""

Basal Ganglia

"""

def __init__(self, ns, na, we, wi, node_type):

super().__init__()

"""

:param ns: 状态个数

:param na:动作个数

:param we:兴奋性连接权重

:param wi:抑制性连接权重

"""

num_state = ns

num_action = na

num_STN = 2

weight_exc = we

weight_inh = wi

# connetions: 0DLPFC-StrD1 1DLPFC-StrD2 2DLPFC-STN 3StrD1-GPi 4StrD2-GPe 5Gpe-Gpi 6STN-Gpi 7STN-Gpe 8Gpe-STN

bg_connection = []

bg_con_mask = []

# DLPFC-StrD1

con_matrix1 = torch.zeros((num_state, num_state * num_action), dtype=torch.float)

for i in range(num_state):

for j in range(num_action):

con_matrix1[i, i * num_action + j] = 1

bg_con_mask.append(con_matrix1)

bg_connection.append(CustomLinear(weight_exc * con_matrix1, con_matrix1))

# DLPFC-StrD2

bg_connection.append(CustomLinear(weight_exc * con_matrix1, con_matrix1))

bg_con_mask.append(con_matrix1)

# DLPFC-STN

con_matrix3 = torch.ones((num_state, num_STN), dtype=torch.float)

bg_con_mask.append(con_matrix3)

bg_connection.append(CustomLinear(weight_exc * con_matrix3, con_matrix3))

# StrD1-GPi

con_matrix4 = torch.zeros((num_state * num_action, num_action), dtype=torch.float)

for i in range(num_state):

for j in range(num_action):

con_matrix4[i * num_action + j, j] = 1

bg_con_mask.append(con_matrix4)

bg_connection.append(CustomLinear(weight_inh * con_matrix4, con_matrix4))

# StrD2-GPe

bg_con_mask.append(con_matrix4)

bg_connection.append(CustomLinear(weight_inh * con_matrix4, con_matrix4))

# Gpe-Gpi

con_matrix5 = torch.eye((num_action), dtype=torch.float)

bg_con_mask.append(con_matrix5)

bg_connection.append(CustomLinear(weight_inh * con_matrix5, con_matrix5))

# STN-Gpi

con_matrix6 = torch.ones((num_STN, num_action), dtype=torch.float)

bg_con_mask.append(con_matrix6)

bg_connection.append(CustomLinear(0.5 * weight_exc * con_matrix6, con_matrix6))

# STN-Gpe

bg_con_mask.append(con_matrix6)

bg_connection.append(CustomLinear(0.5 * weight_exc * con_matrix6, con_matrix6))

# Gpe-STN

con_matrix7 = torch.ones((num_action, num_STN), dtype=torch.float)

bg_con_mask.append(con_matrix7)

bg_connection.append(CustomLinear(0.5 * weight_inh * con_matrix7, con_matrix7))

self.num_subBG = 5

self.node_type = node_type

if self.node_type == "hh":

self.node = [SimHHNode() for i in range(self.num_subBG)]

if self.node_type == "lif":

self.node = [IFNode() for i in range(self.num_subBG)]

self.connection = bg_connection

self.mask = bg_con_mask

self.learning_rule = []

trace_stdp = 0.99

self.learning_rule.append(STDP(self.node[0], self.connection[0], trace_stdp)) # DLPFC-StrD1

self.learning_rule.append(STDP(self.node[1], self.connection[1], trace_stdp)) # DLPFC-StrD2

self.learning_rule.append(MutliInputSTDP(self.node[2], [self.connection[2], self.connection[8]])) # DLPFC-STN

self.learning_rule.append(MutliInputSTDP(self.node[3], [self.connection[4], self.connection[7]])) # StrD2-GPe STN-Gpe

self.learning_rule.append(MutliInputSTDP(self.node[4], [self.connection[3], self.connection[5], self.connection[6]])) # StrD1-GPi Gpe-Gpi STN-Gpi

self.out_StrD1 = torch.zeros((self.connection[0].weight.shape[1]), dtype=torch.float)

self.out_StrD2 = torch.zeros((self.connection[1].weight.shape[1]), dtype=torch.float)

self.out_STN = torch.zeros((self.connection[2].weight.shape[1]), dtype=torch.float)

self.out_Gpi = torch.zeros((self.connection[3].weight.shape[1]), dtype=torch.float)

self.out_Gpe = torch.zeros((self.connection[4].weight.shape[1]), dtype=torch.float)

def forward(self, input):

"""

计算由当前输入基底节网络的输出

:param input: 输入电流

:return: 输出脉冲

"""

self.out_StrD1, dw_strd1 = self.learning_rule[0](input)

self.out_StrD2, dw_strd2 = self.learning_rule[1](input)

self.out_STN, dw_stn = self.learning_rule[2](input, self.out_Gpe)

self.out_Gpe, dw_gpe = self.learning_rule[3](self.out_StrD2, self.out_STN)

self.out_Gpi, dw_gpi = self.learning_rule[4](self.out_StrD1, self.out_Gpe, self.out_STN)

return self.out_Gpi

def UpdateWeight(self, i, dw):

"""

更新基底节内第i组连接的权重 根据传入的dw值

:param i: 要更新的连接的索引

:param dw: 更新的量

:return: None

"""

self.connection[i].update(dw)

self.connection[i].weight.data = F.normalize(self.connection[i].weight.data.float(), p=1, dim=1)

def reset(self):

"""

reset神经元或学习法则的中间量

:return: None

"""

for i in range(self.num_subMB):

self.node[i].n_reset()

for i in range(len(self.learning_rule)):

self.learning_rule[i].reset()

def getweight(self):

"""

获取基底节网络的连接(包括权值等)

:return: 基底节网络的连接

"""

return self.connection

def getmask(self):

"""

获取基底节网络的连接(仅连接矩阵)

:return: 基底节网络的连接矩阵

"""

return self.mask

if __name__ == "__main__":

BG = basalganglia(4, 2, 0.2, -4)

con = BG.getweight()

print(con)

================================================

FILE: braincog/base/brainarea/dACC.py

================================================

import torch

import matplotlib.pyplot as plt

import numpy as np

np.set_printoptions(threshold=np.inf)

from utils.one_hot import *

import os

import time

import sys

from tqdm import tqdm

from braincog.base.encoder.population_coding import *

from braincog.model_zoo.base_module import BaseLinearModule, BaseModule

from braincog.base.learningrule.STDP import *

import sys

sys.path.append("..")

class dACC(BaseModule):

"""

SNNLinear

"""

def __init__(self,

step,

encode_type,

in_features:int,

out_features:int,

bias,

node,

*args,

**kwargs):

super().__init__(step, encode_type, *args, **kwargs)

self.bias = bias

self.in_features = in_features

self.out_features = out_features

self.node1 = node(threshold=0.5, tau=2.)

self.node_name1 = node

self.node2 = node(threshold=0.1, tau=2.)

self.node_name2 = node

self.fc = self._create_fc()

self.c = self._rest_c()

def _rest_c(self):

c = torch.rand((self.out_features, self.in_features)) # eligibility trace

return c

def _create_fc(self):

"""

the connection of the SNN linear

@return: nn.Linear

"""

fc = nn.Linear(in_features=self.in_features,

out_features=self.out_features, bias=self.bias)

return fc

def update_c(self, c, STDP, tau_c=0.2):

"""

update the trace of eligibility

@param c: a tensor to record eligibility

@param STDP: the results of STDP

@param tau_c: the parameter of trace decay

@return: a update tensor to record eligibility

Equation:

delta_c = (-(c / tau_c) + STDP) * dela_t

c = c + delta_c

reference:<Solving the Distal Reward Problem through ...>

"""

c = c + tau_c * STDP

return c

def forward(self, inputs, epoch):

"""

decision

@param inputs: state

@return: action

"""

output = []

stdp = STDP(self.node2, self.fc, decay=0.80)

self.c = self._rest_c()

# stdp.connection.weight.data = torch.rand((self.out_features, self.in_features))

for i in range(inputs.shape[0]):

for t in range(self.step):

l1_in = torch.tensor(inputs[i, :])

l1_out = self.node1(l1_in).unsqueeze(0) #pre : l1_out

l2_out, dw = stdp(l1_out) #dw -- STDP

self.c = self.update_c(self.c, dw[0])

output.append(torch.min(l2_out))

# output.append((l2_out.any() == 0).cpu().detach().numpy().tolist())

return output

# if __name__ == '__main__':

# np.random.seed(6)

# T = 5

# num_popneurons = 2

# safety = 2

# epoch = 50

# file_name = "/home/zhaozhuoya/braincog/examples/ToM/data/injury_value.txt"

# state = []

# with open(file_name) as f:

# data = []

# data_split = f.readlines() #

# for i in data_split:

# state.append(one_hot(int(i[0])))

#

# output = np.array(state)

# train_y = output

# test_y = output[79:82]#output[12].reshape(1,2)

#

# file_name = "/home/zhaozhuoya/braincog/examples/ToM/data/injury_memory.txt"

# state = []

# with open(file_name) as f:

# data_split = f.readlines()

# for i in data_split:

# data = []

# data.append(int(bool(abs(int(i[2]) - int(i[18]))))*10)

# data.append(int(bool(abs(int(i[5]) - int(i[21]))))*10)

# state.append(data)

# input = np.array(state)

# train_x = input

# test_x = input[79:82]

# dACC_net = dACC(step=T, encode_type='rate', bias=True,

# in_features=num_popneurons, out_features=safety,

# node=node.LIFNode)

# dACC_net.fc.weight.data = torch.rand((safety, num_popneurons))

# dACC_net.load_state_dict(torch.load('./checkpoint/dACC_net.pth')['dacc'])

# output = dACC_net(inputs=train_x, epoch=50)

# for i in range(len(output)):

# print(output[i], train_x[i])

# torch.save({'dacc': dACC_net.state_dict()}, os.path.join('./checkpoint', 'dACC_net.pth'))

# dACC_net.load_state_dict(torch.load('./checkpoint/dACC_net.pth')['dacc'])

# output = dACC_net(inputs=test_x, epoch=50)

# for i in range(len(test_x)):

#

# print(output[i],test_x[i])

================================================

FILE: braincog/base/connection/CustomLinear.py

================================================

import os

import sys

import numpy as np

import torch

from torch import nn

from torch import einsum

import torch.nn.functional as F

class CustomLinear(nn.Module):

"""

用户自定义连接 通常stdp的计算

"""

def __init__(self, weight, mask=None):

super().__init__()

self.weight = nn.Parameter(weight, requires_grad=True)

self.mask = mask

def forward(self, x: torch.Tensor):

"""

:param x:输入 x.shape = [N ]

"""

#

# ret.shape = [C]

return x.matmul(self.weight)

def update(self, dw):

"""

:param dw:权重更新量

"""

with torch.no_grad():

if self.mask is not None:

dw *= self.mask

self.weight.data += dw

================================================

FILE: braincog/base/connection/__init__.py

================================================

from .CustomLinear import CustomLinear

from .layer import VotingLayer, WTALayer, NDropout, ThresholdDependentBatchNorm2d, LayerNorm, SMaxPool, LIPool

__all__ = [

'CustomLinear',

'VotingLayer', 'WTALayer', 'NDropout', 'ThresholdDependentBatchNorm2d', 'LayerNorm', 'SMaxPool', 'LIPool'

]

================================================

FILE: braincog/base/connection/layer.py

================================================

import warnings

import math

import numpy as np

import torch

from torch import nn

from torch import einsum

from torch.nn.modules.batchnorm import _BatchNorm

import torch.nn.functional as F

from torch.nn import Parameter

from einops import rearrange

class VotingLayer(nn.Module):

"""

用于SNNs的输出层, 几个神经元投票选出最终的类

:param voter_num: 投票的神经元的数量, 例如 ``voter_num = 10``, 则表明会对这10个神经元取平均

"""

def __init__(self, voter_num: int):

super().__init__()

self.voting = nn.AvgPool1d(voter_num, voter_num)

def forward(self, x: torch.Tensor):

# x.shape = [N, voter_num * C]

# ret.shape = [N, C]

return self.voting(x.unsqueeze(1)).squeeze(1)

class WTALayer(nn.Module):

"""

winner take all用于SNNs的每层后,将随机选取一个或者多个输出

:param k: X选取的输出数目 k默认等于1

"""

def __init__(self, k=1):

super().__init__()

self.k = k

def forward(self, x: torch.Tensor):

# x.shape = [N, C,W,H]

# ret.shape = [N, C,W,H]

pos = x * torch.rand(x.shape, device=x.device)

if self.k > 1:

x = x * (pos >= pos.topk(self.k, dim=1)[0][:, -1:]).float()

else:

x = x * (pos >= pos.max(1, True)[0]).float()

return x

class NDropout(nn.Module):

"""

与Drop功能相同, 但是会保证同一个样本不同时刻的mask相同.

"""

def __init__(self, p):

super(NDropout, self).__init__()

self.p = p

self.mask = None

def n_reset(self):

"""

重置, 能够生成新的mask

:return:

"""

self.mask = None

def create_mask(self, x):

"""

生成新的mask

:param x: 输入Tensor, 生成与之形状相同的mask

:return:

"""

self.mask = F.dropout(torch.ones_like(x.data), self.p, training=True)

def forward(self, x):

if self.training:

if self.mask is None:

self.create_mask(x)

return self.mask * x

else:

return x

class WSConv2d(nn.Conv2d):

def __init__(self, in_channels, out_channels, kernel_size, stride=1,

padding=0, dilation=1, groups=1, bias=True, gain=True):

super(WSConv2d, self).__init__(in_channels, out_channels, kernel_size, stride,

padding, dilation, groups, bias)

if gain:

self.gain = nn.Parameter(torch.ones(self.out_channels, 1, 1, 1))

else:

self.gain = 1.

def forward(self, x):

weight = self.weight

weight_mean = weight.mean(dim=1, keepdim=True).mean(dim=2,

keepdim=True).mean(dim=3, keepdim=True)

weight = weight - weight_mean

std = weight.view(weight.size(0), -1).std(dim=1).view(-1, 1, 1, 1) + 1e-5

weight = self.gain * weight / std.expand_as(weight)

return F.conv2d(x, weight, self.bias, self.stride,

self.padding, self.dilation, self.groups)

class ThresholdDependentBatchNorm2d(_BatchNorm):

"""

tdBN

https://ojs.aaai.org/index.php/AAAI/article/view/17320

"""

def __init__(self, num_features, alpha: float, threshold: float = .5, layer_by_layer: bool = True, affine: bool = True,**kwargs):

self.alpha = alpha

self.threshold = threshold

super().__init__(num_features=num_features, affine=affine)

assert layer_by_layer, \

'tdBN may works in step-by-step mode, which will not take temporal dimension into batch norm'

assert self.affine, 'ThresholdDependentBatchNorm needs to set `affine = True`!'

torch.nn.init.constant_(self.weight, alpha * threshold)

def _check_input_dim(self, input):

if input.dim() != 4:

raise ValueError("expected 4D input (got {}D input)".format(input.dim()))

def forward(self, input):

# input = rearrange(input, '(t b) c w h -> b (t c) w h', t=self.step)

output = super().forward(input)

return output

# return rearrange(output, 'b (t c) w h -> (t b) c w h', t=self.step)

class TEBN(nn.Module):

def __init__(self, num_features,step, eps=1e-5, momentum=0.1,**kwargs):

super(TEBN, self).__init__()

self.bn = nn.BatchNorm3d(num_features)

self.p = nn.Parameter(torch.ones(4, 1, 1, 1, 1))

self.step=step

def forward(self, input):

#y = input.transpose(1, 2).contiguous() # N T C H W , N C T H W

y = rearrange(input,"(t b) c w h -> t c b w h",t=self.step)

y = self.bn(y)

# y = y.contiguous().transpose(1, 2)

# y = y.transpose(0, 1).contiguous() # NTCHW TNCHW

y = rearrange(y,"t c b w h -> t b c w h")

y = y * self.p

#y = y.contiguous().transpose(0, 1) # TNCHW NTCHW

y = rearrange(y, "t b c w h -> (t b) c w h")

return y

class LayerNorm(nn.Module):

""" LayerNorm that supports two data formats: channels_last (default) or channels_first.

The ordering of the dimensions in the inputs. channels_last corresponds to inputs with

shape (batch_size, height, width, channels) while channels_first corresponds to inputs

with shape (batch_size, channels, height, width).

"""

def __init__(self, normalized_shape, eps=1e-6, data_format="channels_last"):

super().__init__()

self.weight = nn.Parameter(torch.ones(normalized_shape))

self.bias = nn.Parameter(torch.zeros(normalized_shape))

self.eps = eps

self.data_format = data_format

if self.data_format not in ["channels_last", "channels_first"]:

raise NotImplementedError

self.normalized_shape = (normalized_shape,)

def forward(self, x):

if self.data_format == "channels_last":

return F.layer_norm(x, self.normalized_shape, self.weight, self.bias, self.eps)

elif self.data_format == "channels_first":

u = x.mean(1, keepdim=True)

s = (x - u).pow(2).mean(1, keepdim=True)

x = (x - u) / torch.sqrt(s + self.eps)

x = self.weight[:, None, None] * x + self.bias[:, None, None]

return x

class SMaxPool(nn.Module):

"""用于转换方法的最大池化层的常规替换

选用具有最大脉冲发放率的神经元的脉冲通过,能够满足一般性最大池化层的需要

Reference:

https://arxiv.org/abs/1612.04052

"""

def __init__(self, child):

super(SMaxPool, self).__init__()

self.opration = child

self.sumspike = 0

def forward(self, x):

self.sumspike += x

single = self.opration(self.sumspike * 1000)

sum_plus_spike = self.opration(x + self.sumspike * 1000)

return sum_plus_spike - single

def reset(self):

self.sumspike = 0

class LIPool(nn.Module):

r"""用于转换方法的最大池化层的精准替换

LIPooling通过引入侧向抑制机制保证在转换后的SNN中输出的最大值与期望值相同。

Reference:

https://arxiv.org/abs/2204.13271

"""

def __init__(self, child=None):

super(LIPool, self).__init__()

if child is None:

raise NotImplementedError("child should be Pooling operation with torch.")

self.opration = child

self.sumspike = 0

def forward(self, x):

self.sumspike += x

out = self.opration(self.sumspike)

self.sumspike -= F.interpolate(out, scale_factor=2, mode='nearest')

return out

def reset(self):

self.sumspike = 0

class CustomLinear(nn.Module):

def __init__(self, in_channels, out_channels, bias=True):

super(CustomLinear, self).__init__()

self.in_channels = in_channels

self.out_channels = out_channels

# self.weight = Parameter(torch.tensor([

# [1., .5, .25, .125],