Repository: bgruening/docker-galaxy-stable

Branch: main

Commit: 5488282c3e3e

Files: 188

Total size: 506.3 KB

Directory structure:

gitextract__x213t1e/

├── .dive-ci

├── .editorconfig

├── .github/

│ └── workflows/

│ ├── compose.yml

│ ├── cvmfs.yml

│ ├── lint.yml

│ ├── pull-request.yml

│ ├── release.yml

│ ├── single.sh

│ ├── single_container.yml

│ └── update-site.yml

├── .gitignore

├── .travis.yml

├── Changelog.md

├── LICENSE

├── README.md

├── compose/

│ ├── README.md

│ ├── base-images/

│ │ ├── galaxy-cluster-base/

│ │ │ ├── Dockerfile

│ │ │ └── files/

│ │ │ ├── common_cleanup.sh

│ │ │ └── cvmfs/

│ │ │ ├── default.local

│ │ │ ├── domain.d/

│ │ │ │ └── galaxyproject.org.conf

│ │ │ └── keys/

│ │ │ └── galaxyproject.org/

│ │ │ ├── data.galaxyproject.org.pub

│ │ │ └── singularity.galaxyproject.org.pub

│ │ └── galaxy-container-base/

│ │ ├── Dockerfile

│ │ └── files/

│ │ └── common_cleanup.sh

│ ├── base_config.yml

│ ├── docker-compose.htcondor.yml

│ ├── docker-compose.k8s.yml

│ ├── docker-compose.pulsar.mq.yml

│ ├── docker-compose.pulsar.yml

│ ├── docker-compose.singularity.yml

│ ├── docker-compose.slurm.yml

│ ├── docker-compose.yml

│ ├── galaxy-configurator/

│ │ ├── Dockerfile

│ │ ├── customize.py

│ │ ├── run.sh

│ │ └── templates/

│ │ ├── galaxy/

│ │ │ ├── GALAXY_PROXY_PREFIX.txt.j2

│ │ │ ├── container_resolvers_conf.yml.j2

│ │ │ ├── dependency_resolvers_conf.xml.j2

│ │ │ ├── galaxy.yml.j2

│ │ │ ├── job_conf.xml.j2

│ │ │ └── job_metrics.xml.j2

│ │ ├── htcondor/

│ │ │ ├── executor.conf.j2

│ │ │ ├── galaxy.conf.j2

│ │ │ └── master.conf.j2

│ │ ├── kind/

│ │ │ ├── k8s_config/

│ │ │ │ ├── persistent_volumes.yml.j2

│ │ │ │ └── pv_claims.yml.j2

│ │ │ └── kind_config.yml.j2

│ │ ├── nginx/

│ │ │ └── nginx.conf.j2

│ │ ├── pulsar/

│ │ │ ├── app.yml.j2

│ │ │ └── server.ini.j2

│ │ └── slurm/

│ │ └── slurm.conf.j2

│ ├── galaxy-htcondor/

│ │ ├── Dockerfile

│ │ ├── start.sh

│ │ └── supervisord.conf

│ ├── galaxy-kind/

│ │ ├── Dockerfile

│ │ └── docker-entrypoint.sh

│ ├── galaxy-nginx/

│ │ ├── Dockerfile

│ │ └── start.sh

│ ├── galaxy-server/

│ │ ├── Dockerfile

│ │ └── files/

│ │ ├── common_cleanup.sh

│ │ ├── create_galaxy_user.py

│ │ └── start.sh

│ ├── galaxy-slurm/

│ │ ├── Dockerfile

│ │ └── start.sh

│ ├── galaxy-slurm-node-discovery/

│ │ ├── Dockerfile

│ │ └── run.sh

│ ├── pulsar/

│ │ ├── Dockerfile

│ │ ├── docker-entrypoint.sh

│ │ └── files/

│ │ └── common_cleanup.sh

│ └── tests/

│ ├── docker-compose.test.bioblend.yml

│ ├── docker-compose.test.selenium.yml

│ ├── docker-compose.test.workflows.yml

│ ├── docker-compose.test.yml

│ ├── galaxy-bioblend-test/

│ │ ├── Dockerfile

│ │ └── run.sh

│ ├── galaxy-selenium-test/

│ │ ├── Dockerfile

│ │ └── run.sh

│ └── galaxy-workflow-test/

│ ├── Dockerfile

│ └── run.sh

├── cvmfs/

│ ├── Dockerfile

│ ├── README.md

│ ├── ansible/

│ │ ├── playbook.yml

│ │ └── requirements.yml

│ └── docker-entrypoint.sh

├── docs/

│ ├── README.md

│ ├── Running_jobs_outside_of_the_container.md

│ ├── css/

│ │ └── landing_page.css

│ ├── js/

│ │ └── landing_page.js

│ └── src/

│ ├── generate_docs.py

│ └── requirements.txt

├── galaxy/

│ ├── Dockerfile

│ ├── ansible/

│ │ ├── condor.yml

│ │ ├── cvmfs_client.yml

│ │ ├── docker.yml

│ │ ├── files/

│ │ │ ├── 413.html

│ │ │ ├── 500.html

│ │ │ ├── 502.html

│ │ │ ├── nginx_sample.crt

│ │ │ ├── nginx_sample.key

│ │ │ └── production_b2drop.yml

│ │ ├── flower.yml

│ │ ├── galaxy_file_source_templates.yml

│ │ ├── galaxy_job_conf.yml

│ │ ├── galaxy_job_metrics.yml

│ │ ├── galaxy_object_store_templates.yml

│ │ ├── galaxy_scripts.yml

│ │ ├── galaxy_vault_config.yml

│ │ ├── gravity.yml

│ │ ├── group_vars/

│ │ │ └── all.yml

│ │ ├── k8s.yml

│ │ ├── nginx.yml

│ │ ├── pbs.yml

│ │ ├── postgresql.yml

│ │ ├── proftpd.yml

│ │ ├── provision.yml

│ │ ├── rabbitmq.yml

│ │ ├── redis.yml

│ │ ├── requirements.yml

│ │ ├── slurm.yml

│ │ ├── supervisor.yml

│ │ ├── templates/

│ │ │ ├── add_tool_shed.py.j2

│ │ │ ├── cgroupfs_mount.sh.j2

│ │ │ ├── check_database.py.j2

│ │ │ ├── configure_rabbitmq_users.yml.j2

│ │ │ ├── configure_slurm.py.j2

│ │ │ ├── container_resolvers_conf.yml.j2

│ │ │ ├── create_galaxy_user.py.j2

│ │ │ ├── export_user_files.py.j2

│ │ │ ├── file_source_templates.yml.j2

│ │ │ ├── gravity.yml.j2

│ │ │ ├── job_conf.xml.j2

│ │ │ ├── job_metrics_conf.yml.j2

│ │ │ ├── macros.xml.j2

│ │ │ ├── nginx/

│ │ │ │ ├── delegated_uploads.conf.j2

│ │ │ │ ├── flower_auth.conf.j2

│ │ │ │ ├── galaxy_common.conf.j2

│ │ │ │ ├── galaxy_http.j2

│ │ │ │ ├── galaxy_https.j2

│ │ │ │ ├── galaxy_redirect_ssl.j2

│ │ │ │ ├── htpasswd.j2

│ │ │ │ ├── interactive_tools_common.conf.j2

│ │ │ │ ├── interactive_tools_http.j2

│ │ │ │ ├── interactive_tools_https.j2

│ │ │ │ └── interactive_tools_redirect_ssl.j2

│ │ │ ├── object_store_templates.yml.j2

│ │ │ ├── rabbitmq.sh.j2

│ │ │ ├── startup_lite.sh.j2

│ │ │ ├── supervisor.conf.j2

│ │ │ ├── update_yaml_value.py.j2

│ │ │ └── vault_conf.yml.j2

│ │ └── tusd.yml

│ ├── bashrc

│ ├── cgroupfs_mount.sh

│ ├── common_cleanup.sh

│ ├── docker-compose.yaml

│ ├── install_tools_wrapper.sh

│ ├── run.sh

│ ├── sample_tool_list.yaml

│ ├── setup_postgresql.py

│ ├── startup.sh

│ ├── startup2.sh

│ ├── tool_conf_interactive.xml.sample

│ ├── tool_sheds_conf.xml

│ └── welcome.html

├── skills/

│ └── galaxy-docker/

│ ├── SKILL.md

│ └── references/

│ └── upgrade-25.1.md

└── test/

├── bioblend/

│ ├── Dockerfile

│ └── test.sh

├── container_resolvers_conf.ci.yml

├── cvmfs/

│ └── test.sh

├── gridengine/

│ ├── Dockerfile

│ ├── act_qmaster

│ ├── job_conf.xml.sge

│ ├── master_script.sh

│ ├── outputhostname/

│ │ └── outputhostname.xml

│ ├── outputhostname.tool.xml

│ ├── setup_gridengine.sh

│ ├── setup_tool.sh

│ ├── test.sh

│ ├── test_outputhostname.py

│ └── tool_conf.xml

└── slurm/

├── Dockerfile

├── configure_slurm.py

├── job_conf.xml

├── munge.conf

├── startup.sh

├── supervisor_slurm.conf

└── test.sh

================================================

FILE CONTENTS

================================================

================================================

FILE: .dive-ci

================================================

rules:

# If the efficiency is measured below X%, mark as failed.

# Expressed as a ratio between 0-1.

lowestEfficiency: 0.95

# If the amount of wasted space is at least X or larger than X, mark as failed.

# Expressed in B, KB, MB, and GB.

# highestWastedBytes: 20MB

# If the amount of wasted space makes up for X% or more of the image, mark as failed.

# Note: the base image layer is NOT included in the total image size.

# Expressed as a ratio between 0-1; fails if the threshold is met or crossed.

highestUserWastedPercent: 0.10
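
The two active rules above can be read as a pair of threshold checks. The following is a minimal sketch of that reading, not dive's own implementation: the function name `check_dive_rules` and its two arguments (a measured efficiency and a user-wasted ratio, both between 0 and 1) are hypothetical and exist only for illustration.

```shell
# Hypothetical helper, not part of dive: mirrors the two active rules.
# $1 = measured image efficiency (ratio 0-1)
# $2 = wasted space as a share of the user layers (ratio 0-1)
check_dive_rules() {
  awk -v e="$1" -v w="$2" -v min_e=0.95 -v max_w=0.10 'BEGIN {
    # lowestEfficiency: fail when the efficiency is below the threshold.
    if (e < min_e)  { print "FAIL lowestEfficiency"; exit 1 }
    # highestUserWastedPercent: fail when the threshold is met or crossed.
    if (w >= max_w) { print "FAIL highestUserWastedPercent"; exit 1 }
    print "PASS"
  }'
}
```

For example, `check_dive_rules 0.97 0.04` prints `PASS`, while an efficiency of 0.90 or a wasted ratio of exactly 0.10 is reported as a failure.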

================================================

FILE: .editorconfig

================================================

root = true

[*]

indent_style = space

indent_size = 2

charset = utf-8

trim_trailing_whitespace = true

insert_final_newline = true

[*.py]

indent_size = 4

================================================

FILE: .github/workflows/compose.yml

================================================

name: build-and-test

on: [push]

jobs:

build_container_base:

if: false # Temporarily disable workflow

runs-on: ubuntu-22.04

steps:

- name: Checkout

uses: actions/checkout@v6

- name: Set image tag

id: image_tag

run: |

if [ "${GITHUB_REF#refs/heads/}" = "master" ]; then

echo "image_tag=latest" >> $GITHUB_OUTPUT;

else

echo "image_tag=${GITHUB_REF#refs/heads/}" >> $GITHUB_OUTPUT;

fi

- name: Docker Login

run: echo "${{ secrets.docker_registry_password }}" | docker login -u ${{ secrets.docker_registry_username }} --password-stdin ${{ secrets.docker_registry }}

- name: Set up Docker Buildx

id: buildx

uses: docker/setup-buildx-action@v3

with:

version: v0.17.1

- name: Run Buildx

env:

image_name: galaxy-container-base

run: |

for i in {1..4}; do

set +e

docker buildx build \

--output "type=image,name=${{ secrets.docker_registry }}/${{ secrets.docker_registry_username }}/$image_name:${{ steps.image_tag.outputs.image_tag }},push=true" \

--cache-from type=gha \

--cache-to type=gha,mode=max \

--build-arg IMAGE_TAG=${{ steps.image_tag.outputs.image_tag }} \

--build-arg DOCKER_REGISTRY=${{ secrets.docker_registry }} \

--build-arg DOCKER_REGISTRY_USERNAME=${{ secrets.docker_registry_username }} \

$image_name && break || echo "Fail.. Retrying"

done;

shell: bash

working-directory: ./compose/base-images

build_cluster_base:

needs: build_container_base

runs-on: ubuntu-latest

steps:

- name: Checkout

uses: actions/checkout@v6

- name: Set image tag

id: image_tag

run: |

if [ "${GITHUB_REF#refs/heads/}" = "master" ]; then

echo "image_tag=latest" >> $GITHUB_OUTPUT;

else

echo "image_tag=${GITHUB_REF#refs/heads/}" >> $GITHUB_OUTPUT;

fi

- name: Docker Login

run: echo "${{ secrets.docker_registry_password }}" | docker login -u ${{ secrets.docker_registry_username }} --password-stdin ${{ secrets.docker_registry }}

- name: Set up Docker Buildx

id: buildx

uses: docker/setup-buildx-action@v3

with:

version: v0.17.1

- name: Run Buildx

env:

image_name: galaxy-cluster-base

run: |

for i in {1..4}; do

set +e

docker buildx build \

--output "type=image,name=${{ secrets.docker_registry }}/${{ secrets.docker_registry_username }}/$image_name:${{ steps.image_tag.outputs.image_tag }},push=true" \

--cache-from type=gha \

--cache-to type=gha,mode=max \

--build-arg IMAGE_TAG=${{ steps.image_tag.outputs.image_tag }} \

--build-arg DOCKER_REGISTRY=${{ secrets.docker_registry }} \

--build-arg DOCKER_REGISTRY_USERNAME=${{ secrets.docker_registry_username }} \

$image_name && break || echo "Fail.. Retrying"

done;

shell: bash

working-directory: ./compose/base-images

build:

needs: build_cluster_base

runs-on: ubuntu-latest

strategy:

matrix:

image:

- name: galaxy-server

- name: galaxy-nginx

- name: galaxy-htcondor

- name: galaxy-slurm

- name: galaxy-slurm-node-discovery

- name: galaxy-kind

- name: pulsar

- name: galaxy-configurator

- name: galaxy-bioblend-test

subdir: tests/

- name: galaxy-workflow-test

subdir: tests/

- name: galaxy-selenium-test

subdir: tests/

fail-fast: false

steps:

- name: Checkout

uses: actions/checkout@v6

- name: Set image tag

id: image_tag

run: |

if [ "${GITHUB_REF#refs/heads/}" = "master" ]; then

echo "image_tag=latest" >> $GITHUB_OUTPUT;

else

echo "image_tag=${GITHUB_REF#refs/heads/}" >> $GITHUB_OUTPUT;

fi

- name: Docker Login

run: echo "${{ secrets.docker_registry_password }}" | docker login -u ${{ secrets.docker_registry_username }} --password-stdin ${{ secrets.docker_registry }}

- name: Set up Docker Buildx

id: buildx

uses: docker/setup-buildx-action@v3

with:

version: v0.17.1

- name: Run Buildx

run: |

for i in {1..4}; do

set +e

docker buildx build \

--output "type=image,name=${{ secrets.docker_registry }}/${{ secrets.docker_registry_username }}/${{ matrix.image.name }}:${{ steps.image_tag.outputs.image_tag }},push=true" \

--cache-from type=gha \

--cache-to type=gha,mode=max \

--build-arg IMAGE_TAG=${{ steps.image_tag.outputs.image_tag }} \

--build-arg DOCKER_REGISTRY=${{ secrets.docker_registry }} \

--build-arg DOCKER_REGISTRY_USERNAME=${{ secrets.docker_registry_username }} \

--build-arg GALAXY_REPO=https://github.com/galaxyproject/galaxy \

${{ matrix.image.subdir }}${{ matrix.image.name }} && break || echo "Fail.. Retrying"

done;

shell: bash

working-directory: ./compose

test:

needs: [build]

runs-on: ubuntu-latest

strategy:

matrix:

infrastructure:

- name: galaxy-base

files: -f docker-compose.yml

- name: galaxy-proxy-prefix

files: -f docker-compose.yml

env: GALAXY_PROXY_PREFIX=/arbitrary_Galaxy-prefix GALAXY_CONFIG_GALAXY_INFRASTRUCTURE_URL=http://localhost/arbitrary_Galaxy-prefix EXTRA_SKIP_TESTS_BIOBLEND="not test_import_export_workflow_dict and not test_import_export_workflow_from_local_path"

exclude_test:

- selenium

- name: galaxy-htcondor

files: -f docker-compose.yml -f docker-compose.htcondor.yml

- name: galaxy-slurm

files: -f docker-compose.yml -f docker-compose.slurm.yml

env: SLURM_NODE_COUNT=3

options: --scale slurm_node=3

- name: galaxy-pulsar

files: -f docker-compose.yml -f docker-compose.pulsar.yml

exclude_test:

- workflow_quality_control

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_wait_for_job"

- name: galaxy-pulsar-mq

files: -f docker-compose.yml -f docker-compose.pulsar.yml -f docker-compose.pulsar.mq.yml

exclude_test:

- workflow_quality_control

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_wait_for_job"

- name: galaxy-k8s

files: -f docker-compose.yml -f docker-compose.k8s.yml

- name: galaxy-singularity

files: -f docker-compose.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_get_container_resolvers and not test_show_container_resolver"

- name: galaxy-pulsar-mq-singularity

files: -f docker-compose.yml -f docker-compose.pulsar.yml -f docker-compose.pulsar.mq.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_wait_for_job and not test_get_container_resolvers and not test_show_container_resolver"

exclude_test:

- workflow_quality_control

- name: galaxy-slurm-singularity

files: -f docker-compose.yml -f docker-compose.slurm.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_get_container_resolvers and not test_show_container_resolver"

- name: galaxy-htcondor-singularity

files: -f docker-compose.yml -f docker-compose.htcondor.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_get_container_resolvers and not test_show_container_resolver"

test:

- name: bioblend

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.bioblend.yml

exit-from: galaxy-bioblend-test

timeout: 60

second_run: "true"

- name: workflow_ard

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.workflows.yml

exit-from: galaxy-workflow-test

workflow: sklearn/ard/ard.ga

timeout: 60

second_run: "true"

- name: workflow_quality_control

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.workflows.yml

exit-from: galaxy-workflow-test

workflow: training/sequence-analysis/quality-control/quality_control.ga

timeout: 60

- name: workflow_example1

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.workflows.yml

exit-from: galaxy-workflow-test

workflow: example1/wf3-shed-tools.ga

timeout: 60

- name: selenium

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.selenium.yml

exit-from: galaxy-selenium-test

timeout: 60

fail-fast: false

steps:

# Hand-rolled `exclude`, since GitHub Actions currently does not support

# excluding or including dicts in matrices

- name: Check if test should be run

id: run_check

if: contains(matrix.infrastructure.exclude_test, matrix.test.name) != true

run: echo "run=true" >> $GITHUB_OUTPUT

- name: Checkout

uses: actions/checkout@v6

- name: Set image tag in env

run: echo "IMAGE_TAG=${GITHUB_REF#refs/heads/}" >> $GITHUB_ENV

- name: Master branch - Set image tag to 'latest'

if: github.ref == 'refs/heads/master'

run: echo "IMAGE_TAG=latest" >> $GITHUB_ENV

- name: Set WORKFLOWS env for workflows-test

if: matrix.test.workflow

run: echo "WORKFLOWS=${{ matrix.test.workflow }}" >> $GITHUB_ENV

- name: Install Docker Compose

run: |

sudo apt-get update -qq && sudo apt-get install docker-compose -y

- name: Run tests for the first time

if: steps.run_check.outputs.run

run: |

export DOCKER_REGISTRY=${{ secrets.docker_registry }}

export DOCKER_REGISTRY_USERNAME=${{ secrets.docker_registry_username }}

export ${{ matrix.infrastructure.env }}

export TIMEOUT=${{ matrix.test.timeout }}

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} config

env

for i in {1..4}; do

echo "Running test - try #$i"

echo "Removing export directory if existent";

sudo rm -rf export

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} pull

set +e

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} up ${{ matrix.infrastructure.options }} --exit-code-from ${{ matrix.test.exit-from }}

test_exit_code=$?

error_exit_codes_count=$(expr $(docker ps -a --filter exited=1 | wc -l) - 1)

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} down

if [ $error_exit_codes_count != 0 ] || [ $test_exit_code != 0 ] ; then

echo "Test failed..";

continue;

else

exit $test_exit_code;

fi

done;

exit 1

shell: bash

working-directory: ./compose

continue-on-error: false

- name: Fix file names before saving artifacts

if: failure()

run: |

sudo find ./compose/export/galaxy/database -depth -name '*:*' -execdir bash -c 'mv "$1" "${1//:/-}"' bash {} \;

- name: Allow upload-artifact read access

if: failure()

run: sudo chmod -R +r ./compose/export/galaxy/database

- name: Save artifacts for debugging a failed test

uses: actions/upload-artifact@v6

if: failure()

with:

name: ${{ matrix.infrastructure.name }}_${{ matrix.test.name }}_first-run

path: ./compose/export/galaxy/database

- name: Clean up after first run

if: matrix.test.second_run == 'true'

run: |

sudo rm -rf export/postgres

sudo rm -rf export/galaxy/database

working-directory: ./compose

- name: Run tests a second time

if: matrix.test.second_run == 'true' && steps.run_check.outputs.run

run: |

export DOCKER_REGISTRY=${{ secrets.docker_registry }}

export DOCKER_REGISTRY_USERNAME=${{ secrets.docker_registry_username }}

export ${{ matrix.infrastructure.env }}

export TIMEOUT=${{ matrix.test.timeout }}

for i in {1..4}; do

echo "Running test - try #$i"

echo "Removing export directory if existent";

sudo rm -rf export

set +e

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} up ${{ matrix.infrastructure.options }} --exit-code-from ${{ matrix.test.exit-from }}

test_exit_code=$?

error_exit_codes_count=$(expr $(docker ps -a --filter exited=1 | wc -l) - 1)

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} down

if [ $error_exit_codes_count != 0 ] || [ $test_exit_code != 0 ] ; then

echo "Test failed..";

continue;

else

exit $test_exit_code;

fi

done;

exit 1

shell: bash

working-directory: ./compose

continue-on-error: false

- name: Fix file names before saving artifacts

if: failure() && matrix.test.second_run == 'true'

run: |

sudo find ./compose/export/galaxy/database -depth -name '*:*' -execdir bash -c 'mv "$1" "${1//:/-}"' bash {} \;

- name: Allow upload-artifact read access

if: failure() && matrix.test.second_run == 'true'

run: sudo chmod -R +r ./compose/export/galaxy/database

- name: Save artifacts for debugging a failed test

uses: actions/upload-artifact@v6

if: failure() && matrix.test.second_run == 'true'

with:

name: ${{ matrix.infrastructure.name }}_${{ matrix.test.name }}_second-run

path: ./compose/export/galaxy/database
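
The "Set image tag" steps in this workflow all derive the tag from `GITHUB_REF` in the same way. As a minimal sketch, that logic can be pulled into a standalone function; the name `image_tag_for_ref` is hypothetical, since the workflow inlines the logic in each job.

```shell
# Hypothetical function name; the workflow repeats this logic inline.
# $1 = full Git ref, e.g. refs/heads/master
image_tag_for_ref() {
  # Strip the refs/heads/ prefix to get the bare branch name.
  branch="${1#refs/heads/}"
  if [ "$branch" = "master" ]; then
    # Only a branch literally named "master" maps to "latest".
    echo "latest"
  else
    echo "$branch"
  fi
}
```

Under this logic `image_tag_for_ref refs/heads/master` prints `latest`, whereas a branch with any other name, including `main`, is tagged with its own name.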

================================================

FILE: .github/workflows/cvmfs.yml

================================================

name: cvmfs-sidecar

on:

push:

branches:

- '**'

tags:

- '*'

pull_request:

paths:

- 'cvmfs/**'

- 'test/cvmfs/**'

- '.github/workflows/cvmfs.yml'

jobs:

build_test_publish:

runs-on: ubuntu-latest

steps:

- name: Checkout

uses: actions/checkout@v6

- name: Detect CVMFS changes

id: changes

uses: dorny/paths-filter@v3

with:

filters: |

cvmfs:

- 'cvmfs/**'

- 'test/cvmfs/**'

- '.github/workflows/cvmfs.yml'

- name: Run CVMFS sidecar tests

if: github.event_name == 'pull_request' || steps.changes.outputs.cvmfs == 'true' || startsWith(github.ref, 'refs/tags/')

run: bash test/cvmfs/test.sh

- name: Set image version

id: version

if: github.event_name == 'push' && (steps.changes.outputs.cvmfs == 'true' || startsWith(github.ref, 'refs/tags/'))

run: |

set -euo pipefail

if [[ "${GITHUB_REF}" == refs/tags/* ]]; then

version="${GITHUB_REF_NAME}"

else

ref="${GITHUB_REF_NAME//\//-}"

version="${ref}-${GITHUB_SHA::7}"

fi

echo "version=$version" >> "$GITHUB_OUTPUT"

- name: Set up Docker Buildx

if: github.event_name == 'push' && (steps.changes.outputs.cvmfs == 'true' || startsWith(github.ref, 'refs/tags/'))

uses: docker/setup-buildx-action@v3

- name: Login to Quay IO

if: github.event_name == 'push' && (steps.changes.outputs.cvmfs == 'true' || startsWith(github.ref, 'refs/tags/'))

uses: docker/login-action@v3

with:

registry: quay.io

username: '$oauthtoken'

password: ${{ secrets.QUAY_OAUTH_TOKEN }}

- name: Build and push CVMFS image

if: github.event_name == 'push' && (steps.changes.outputs.cvmfs == 'true' || startsWith(github.ref, 'refs/tags/'))

uses: docker/build-push-action@v6

with:

context: "{{defaultContext}}:cvmfs"

push: true

tags: quay.io/bgruening/cvmfs:${{ steps.version.outputs.version }}

cache-from: type=gha

cache-to: type=gha,mode=max
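
The "Set image version" step above picks the image version from the pushed ref. The sketch below restates that rule as a function, assuming the three values are passed in as arguments instead of being read from `GITHUB_REF`, `GITHUB_REF_NAME` and `GITHUB_SHA`. The name `cvmfs_image_version` is hypothetical, and the body uses `case`, `tr` and `cut` in place of the Bash-only expansions in the workflow.

```shell
# Hypothetical function; arguments stand in for the GitHub-provided variables.
# $1 = full ref (GITHUB_REF), $2 = short ref name (GITHUB_REF_NAME), $3 = commit SHA
cvmfs_image_version() {
  case "$1" in
    refs/tags/*)
      # Tag pushes use the tag name unchanged.
      printf '%s\n' "$2"
      ;;
    *)
      # Branch pushes: replace slashes and append the first 7 SHA characters.
      safe=$(printf '%s' "$2" | tr '/' '-')
      short=$(printf '%s' "$3" | cut -c1-7)
      printf '%s-%s\n' "$safe" "$short"
      ;;
  esac
}
```

A tag push such as `refs/tags/v1.2` therefore yields `v1.2`, while a push to a branch named `feature/x` yields `feature-x-` followed by the short commit hash.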

================================================

FILE: .github/workflows/lint.yml

================================================

name: Lint

on: [push]

jobs:

lint:

runs-on: ubuntu-latest

steps:

- name: Checkout

uses: actions/checkout@v6

# - name: Cleanup to only use compose

# run: rm -R docs galaxy test

- name: Run shellcheck with reviewdog

uses: reviewdog/action-shellcheck@v1.27.0

with:

github_token: ${{ secrets.GITHUB_TOKEN }}

reporter: github-check

level: warning

pattern: "*.sh"

- name: Run hadolint with reviewdog

uses: reviewdog/action-hadolint@v1.46.0

with:

github_token: ${{ secrets.GITHUB_TOKEN }}

reporter: github-check

================================================

FILE: .github/workflows/pull-request.yml

================================================

name: pr-test

on: pull_request

jobs:

test:

if: false # Temporarily disable workflow

runs-on: ubuntu-22.04

strategy:

matrix:

infrastructure:

- name: galaxy-base

files: -f docker-compose.yml

- name: galaxy-proxy-prefix

files: -f docker-compose.yml

env: GALAXY_PROXY_PREFIX=/arbitrary_Galaxy-prefix GALAXY_CONFIG_GALAXY_INFRASTRUCTURE_URL=http://localhost/arbitrary_Galaxy-prefix EXTRA_SKIP_TESTS_BIOBLEND="not test_import_export_workflow_dict and not test_import_export_workflow_from_local_path"

exclude_test:

- selenium

- name: galaxy-htcondor

files: -f docker-compose.yml -f docker-compose.htcondor.yml

- name: galaxy-slurm

files: -f docker-compose.yml -f docker-compose.slurm.yml

env: SLURM_NODE_COUNT=3

options: --scale slurm_node=3

- name: galaxy-pulsar

files: -f docker-compose.yml -f docker-compose.pulsar.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_wait_for_job"

exclude_test:

- workflow_quality_control

- name: galaxy-pulsar-mq

files: -f docker-compose.yml -f docker-compose.pulsar.yml -f docker-compose.pulsar.mq.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_wait_for_job"

exclude_test:

- workflow_quality_control

- name: galaxy-k8s

files: -f docker-compose.yml -f docker-compose.k8s.yml

- name: galaxy-singularity

files: -f docker-compose.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_get_container_resolvers and not test_show_container_resolver"

- name: galaxy-pulsar-mq-singularity

files: -f docker-compose.yml -f docker-compose.pulsar.yml -f docker-compose.pulsar.mq.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_wait_for_job and not test_get_container_resolvers and not test_show_container_resolver"

exclude_test:

- workflow_quality_control

- name: galaxy-slurm-singularity

files: -f docker-compose.yml -f docker-compose.slurm.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_get_container_resolvers and not test_show_container_resolver"

- name: galaxy-htcondor-singularity

files: -f docker-compose.yml -f docker-compose.htcondor.yml -f docker-compose.singularity.yml

env: EXTRA_SKIP_TESTS_BIOBLEND="not test_get_container_resolvers and not test_show_container_resolver"

test:

- name: bioblend

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.bioblend.yml

exit-from: galaxy-bioblend-test

timeout: 60

second_run: "true"

- name: workflow_ard

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.workflows.yml

exit-from: galaxy-workflow-test

workflow: sklearn/ard/ard.ga

timeout: 60

second_run: "true"

- name: workflow_quality_control

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.workflows.yml

exit-from: galaxy-workflow-test

workflow: training/sequence-analysis/quality-control/quality_control.ga

timeout: 60

- name: workflow_example1

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.workflows.yml

exit-from: galaxy-workflow-test

workflow: example1/wf3-shed-tools.ga

timeout: 60

- name: selenium

files: -f tests/docker-compose.test.yml -f tests/docker-compose.test.selenium.yml

exit-from: galaxy-selenium-test

timeout: 60

fail-fast: false

steps:

# Hand-rolled `exclude`, since GitHub Actions currently does not support

# excluding or including dicts in matrices

- name: Check if test should be run

id: run_check

if: contains(matrix.infrastructure.exclude_test, matrix.test.name) != true

run: echo "run=true" >> $GITHUB_OUTPUT

- name: Checkout

uses: actions/checkout@v6

- name: Set WORKFLOWS env for workflows-test

if: matrix.test.workflow

run: echo "WORKFLOWS=${{ matrix.test.workflow }}" >> $GITHUB_ENV

- name: Build galaxy-container-base

env:

image_name: galaxy-container-base

run: |

docker buildx build \

--output "type=image,name=quay.io/bgruening/$image_name:ci-testing" \

--cache-from type=gha \

--cache-to type=gha,mode=max \

--build-arg IMAGE_TAG=ci-testing \

$image_name

working-directory: ./compose/base-images

- name: Build galaxy-cluster-base

env:

image_name: galaxy-cluster-base

run: |

docker buildx build \

--output "type=image,name=quay.io/bgruening/$image_name:ci-testing" \

--cache-from type=gha \

--cache-to type=gha,mode=max \

--build-arg IMAGE_TAG=ci-testing \

$image_name

working-directory: ./compose/base-images

- name: Install Docker Compose

run: |

sudo apt-get update -qq && sudo apt-get install docker-compose -y

- name: Run tests for the first time

if: steps.run_check.outputs.run

run: |

export IMAGE_TAG=ci-testing

export COMPOSE_DOCKER_CLI_BUILD=1

export DOCKER_BUILDKIT=1

export ${{ matrix.infrastructure.env }}

export TIMEOUT=${{ matrix.test.timeout }}

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} config

env

for i in {1..4}; do

echo "Running test - try #$i"

echo "Removing export directory if existent";

sudo rm -rf export

set +e

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} build --build-arg IMAGE_TAG=ci-testing --build-arg GALAXY_REPO=https://github.com/galaxyproject/galaxy

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} up ${{ matrix.infrastructure.options }} --exit-code-from ${{ matrix.test.exit-from }}

test_exit_code=$?

error_exit_codes_count=$(expr $(docker ps -a --filter exited=1 | wc -l) - 1)

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} down

if [ $error_exit_codes_count != 0 ] || [ $test_exit_code != 0 ] ; then

echo "Test failed..";

continue;

else

exit $test_exit_code;

fi

done;

exit 1

shell: bash

working-directory: ./compose

continue-on-error: false

- name: Fix file names before saving artifacts

if: failure()

run: |

sudo find ./compose/export/galaxy/database -depth -name '*:*' -execdir bash -c 'mv "$1" "${1//:/-}"' bash {} \;

- name: Allow upload-artifact read access

if: failure()

run: sudo chmod -R +r ./compose/export/galaxy/database

- name: Save artifacts for debugging a failed test

uses: actions/upload-artifact@v6

if: failure()

with:

name: ${{ matrix.infrastructure.name }}_${{ matrix.test.name }}_first-run

path: ./compose/export/galaxy/database

- name: Clean up after first run

if: matrix.test.second_run == 'true'

run: |

sudo rm -rf export/postgres

sudo rm -rf export/galaxy/database

working-directory: ./compose

- name: Run tests a second time

if: matrix.test.second_run == 'true' && steps.run_check.outputs.run

run: |

export IMAGE_TAG=ci-testing

export COMPOSE_DOCKER_CLI_BUILD=1

export DOCKER_BUILDKIT=1

export ${{ matrix.infrastructure.env }}

export TIMEOUT=${{ matrix.test.timeout }}

for i in {1..4}; do

echo "Running test - try #$i"

echo "Removing export directory if existent";

sudo rm -rf export

set +e

docker-compose ${{ matrix.infrastructure.files }} ${{ matrix.test.files }} up ${{ matrix.infrastructure.options }} --exit-code-from ${{ matrix.test.exit-from }}

test_exit_code=$?

error_exit_codes_count=$(expr $(docker ps -a --filter exited=1 | wc -l) - 1)

if [ $error_exit_codes_count != 0 ] || [ $test_exit_code != 0 ] ; then

echo "Test failed..";

continue;

else

exit $test_exit_code;

fi

done;

exit 1

shell: bash

working-directory: ./compose

continue-on-error: false

- name: Fix file names before saving artifacts

if: failure() && matrix.test.second_run == 'true'

run: |

sudo find ./compose/export/galaxy/database -depth -name '*:*' -execdir bash -c 'mv "$1" "${1//:/-}"' bash {} \;

- name: Allow upload-artifact read access

if: failure() && matrix.test.second_run == 'true'

run: sudo chmod -R +r ./compose/export/galaxy/database

- name: Save artifacts for debugging a failed test

uses: actions/upload-artifact@v6

if: failure() && matrix.test.second_run == 'true'

with:

name: ${{ matrix.infrastructure.name }}_${{ matrix.test.name }}_second-run

path: ./compose/export/galaxy/database
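
Both test steps in this workflow wrap the compose run in a loop that tries up to four times and exits with an error only if every attempt fails. A minimal sketch of that retry pattern follows; the `retry` function and its arguments are hypothetical. It looks only at the exit status of the command and leaves out the workflow's extra check for containers that exited with code 1.

```shell
# Hypothetical helper: retry <max_attempts> <command> [args...]
# Returns 0 on the first successful attempt, 1 if all attempts fail.
retry() {
  max="$1"
  shift
  attempt=1
  while [ "$attempt" -le "$max" ]; do
    echo "Running test - try #$attempt"
    if "$@"; then
      return 0
    fi
    echo "Test failed.."
    attempt=$((attempt + 1))
  done
  return 1
}
```

A call such as `retry 4 some_test_command` (where `some_test_command` is a placeholder) would mirror the `for i in {1..4}` loops above.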

================================================

FILE: .github/workflows/release.yml

================================================

name: release-CI

on:

release:

types: [published]

# Allows you to run this workflow manually from the Actions tab

workflow_dispatch:

jobs:

build_and_publish:

runs-on: ubuntu-latest

steps:

# Checks-out your repository under $GITHUB_WORKSPACE, so your job can access it

- uses: actions/checkout@v6

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v3

- name: Login to Quay IO

uses: docker/login-action@v3

with:

registry: quay.io

username: '$oauthtoken'

password: ${{ secrets.QUAY_OAUTH_TOKEN }}

- name: Build docker image and push to quay.io

uses: docker/build-push-action@v6

with:

context: "{{defaultContext}}:galaxy"

push: true

tags: quay.io/bgruening/galaxy:${{ github.event.release.tag_name }}

cache-from: type=gha

cache-to: type=gha,mode=max

================================================

FILE: .github/workflows/single.sh

================================================

#!/bin/bash

set -ex

docker --version

docker info

export GALAXY_HOME=/home/galaxy

export GALAXY_USER=admin@example.org

export GALAXY_USER_EMAIL=admin@example.org

export GALAXY_USER_PASSWD=password

export BIOBLEND_GALAXY_API_KEY=fakekey

export BIOBLEND_GALAXY_URL=http://localhost:8080

export EPHEMERIS_IMAGE=${EPHEMERIS_IMAGE:-quay.io/biocontainers/ephemeris:0.10.11--pyhdfd78af_0}

export GALAXY_WAIT_TIMEOUT=${GALAXY_WAIT_TIMEOUT:-600}

SKIP_SFTP=false

SKIP_DIVE=false

if [[ "${CI:-}" == "true" ]]; then

sudo apt-get update -qq

#sudo apt-get install docker-ce --no-install-recommends -y -o Dpkg::Options::="--force-confmiss" -o Dpkg::Options::="--force-confdef" -o Dpkg::Options::="--force-confnew"

sudo apt-get install sshpass --no-install-recommends -y

else

if ! command -v sshpass >/dev/null 2>&1; then

echo "sshpass not found; skipping SFTP test."

SKIP_SFTP=true

fi

fi

if [[ "${CI:-}" == "true" ]]; then

DIVE_VERSION=$(curl -sL "https://api.github.com/repos/wagoodman/dive/releases/latest" | grep '"tag_name":' | sed -E 's/.*"v([^"]+)".*/\1/')

curl -OL https://github.com/wagoodman/dive/releases/download/v${DIVE_VERSION}/dive_${DIVE_VERSION}_linux_amd64.deb

sudo apt install ./dive_${DIVE_VERSION}_linux_amd64.deb

rm ./dive_${DIVE_VERSION}_linux_amd64.deb

else

if ! command -v dive >/dev/null 2>&1; then

echo "dive not found; skipping image analysis."

SKIP_DIVE=true

fi

fi

galaxy_wait() {

docker run --rm --link galaxy:galaxy \

"${EPHEMERIS_IMAGE}" galaxy-wait -g http://galaxy --timeout "${1:-$GALAXY_WAIT_TIMEOUT}"

}

# start building this repo

if [[ "${CI:-}" == "true" ]]; then

sudo chown 1450 /tmp && sudo chmod a=rwx /tmp

fi

## define a container size check function, first parameter is the container name, second the max allowed size in MB

container_size_check () {

# check that the image size is not growing too much between releases

# the 19.05 monolithic image was around 1.500 MB

size="$(docker image inspect "$1" --format='{{.Size}}')"

size_in_mb=$(($size/(1024*1024)))

if [[ $size_in_mb -ge $2 ]]

then

echo "The new compiled image ($1) is larger than allowed. $size_in_mb vs. $2"

sleep 2

#exit

fi

}

export WORKING_DIR=${GITHUB_WORKSPACE:-$PWD}

export DOCKER_RUN_CONTAINER="quay.io/bgruening/galaxy"

SAMPLE_TOOLS=$GALAXY_HOME/ephemeris/sample_tool_list.yaml

GALAXY_EXTRA_MOUNTS=()

if [ -f "$WORKING_DIR/test/container_resolvers_conf.ci.yml" ]; then

GALAXY_EXTRA_MOUNTS+=(-v "$WORKING_DIR/test/container_resolvers_conf.ci.yml:/etc/galaxy/container_resolvers_conf.yml:ro")

fi

cd "$WORKING_DIR"

docker buildx build \

--load \

--cache-from type=gha \

--cache-to type=gha,mode=max \

-t quay.io/bgruening/galaxy \

galaxy/

#container_size_check quay.io/bgruening/galaxy 1500

docker rm -f galaxy httpstest || true

mkdir -p local_folder

docker run -d -p 8080:80 -p 8021:21 -p 8022:22 \

--name galaxy \

--privileged=true \

-v "$(pwd)/local_folder:/export/" \

"${GALAXY_EXTRA_MOUNTS[@]}" \

-e GALAXY_CONFIG_ALLOW_USER_DATASET_PURGE=True \

-e GALAXY_CONFIG_ALLOW_PATH_PASTE=True \

-e GALAXY_CONFIG_ALLOW_USER_DELETION=True \

-e GALAXY_CONFIG_ENABLE_BETA_WORKFLOW_MODULES=True \

-v /tmp/:/tmp/ \

quay.io/bgruening/galaxy

sleep 30

docker logs galaxy

# Define start functions

docker_exec() {

cd "$WORKING_DIR"

docker exec galaxy "$@"

}

docker_exec_run() {

cd "$WORKING_DIR"

docker run quay.io/bgruening/galaxy "$@"

}

docker_run() {

cd "$WORKING_DIR"

docker run "$@"

}

docker ps

# Test submitting jobs to an external slurm cluster

cd "${WORKING_DIR}/test/slurm/" && bash test.sh && cd "$WORKING_DIR"

# Test submitting jobs to an external gridengine cluster

cd $WORKING_DIR/test/gridengine/ && bash test.sh || exit 1 && cd $WORKING_DIR

echo "SLURM and SGE tests have finished."

docker ps

echo 'Waiting for Galaxy to come up.'

galaxy_wait_timeout=$GALAXY_WAIT_TIMEOUT

galaxy_wait_interval=30

galaxy_wait_end=$((SECONDS + galaxy_wait_timeout))

while [ $SECONDS -lt $galaxy_wait_end ]; do

if galaxy_wait 30; then

break

fi

echo "Galaxy still starting, tailing logs..."

docker logs --tail 200 galaxy || true

sleep $galaxy_wait_interval

done

if [ $SECONDS -ge $galaxy_wait_end ]; then

echo "Galaxy did not become ready within ${galaxy_wait_timeout}s."

docker logs --tail 400 galaxy || true

exit 1

fi

curl -v --fail $BIOBLEND_GALAXY_URL/api/version

# Test self-signed HTTPS

docker_run -d --name httpstest -p 443:443 -e "USE_HTTPS=True" $DOCKER_RUN_CONTAINER

sleep 30

docker logs httpstest

sleep 180s && curl -v -k --fail https://127.0.0.1:443/api/version

echo | openssl s_client -connect 127.0.0.1:443 2>/dev/null | openssl x509 -issuer -noout| grep localhost

docker rm -f httpstest || true

# Test FTP Server upload

date > time.txt

# FIXME passive mode does not work, it would require the container to run with --net=host

#curl -v --fail -T time.txt ftp://localhost:8021 --user $GALAXY_USER:$GALAXY_USER_PASSWD || true

# Test FTP Server get

#curl -v --fail ftp://localhost:8021 --user $GALAXY_USER:$GALAXY_USER_PASSWD

# Test SFTP Server

if [[ "$SKIP_SFTP" != "true" ]]; then

sshpass -p $GALAXY_USER_PASSWD sftp -v -P 8022 -o User=$GALAXY_USER -o "StrictHostKeyChecking no" localhost <<< $'put time.txt'

fi

# Test FTP Server from within the container (avoids host NAT/passive issues)

docker_exec python - <<'PY'

import ftplib

ftp = ftplib.FTP()

ftp.connect("localhost", 21, timeout=30)

ftp.login("admin@example.org", "password")

ftp.retrlines("LIST")

ftp.quit()

PY

# Test CVMFS

docker_exec bash -c "service autofs start"

docker_exec bash -c "cvmfs_config chksetup"

docker_exec bash -c "ls /cvmfs/data.galaxyproject.org/byhand"

# Run a ton of BioBlend tests against our servers.

cd "$WORKING_DIR/test/bioblend/" && . ./test.sh && cd "$WORKING_DIR/"

# Test without install-repository wrapper

curl -v --fail -X POST -H "Content-Type: application/json" -H "x-api-key: fakekey" -d \

'{

"tool_shed_url": "https://toolshed.g2.bx.psu.edu",

"name": "cut_columns",

"owner": "devteam",

"changeset_revision": "cec635fab700",

"new_tool_panel_section_label": "BEDTools"

}' \

"http://localhost:8080/api/tool_shed_repositories"

# Test the 'new' tool installation script

docker_exec install-tools "$SAMPLE_TOOLS"

# Test the Conda installation

docker_exec_run bash -c 'export PATH=$GALAXY_CONFIG_TOOL_DEPENDENCY_DIR/_conda/bin/:$PATH && conda --version && conda install samtools -c bioconda --yes'

# Test if data persistence works

docker stop galaxy

docker rm -f galaxy

cd "$WORKING_DIR"

docker run -d -p 8080:80 \

--name galaxy \

--privileged=true \

-v "$(pwd)/local_folder:/export/" \

"${GALAXY_EXTRA_MOUNTS[@]}" \

-e GALAXY_CONFIG_ALLOW_USER_DATASET_PURGE=True \

-e GALAXY_CONFIG_ALLOW_PATH_PASTE=True \

-e GALAXY_CONFIG_ALLOW_USER_DELETION=True \

-e GALAXY_CONFIG_ENABLE_BETA_WORKFLOW_MODULES=True \

-v /tmp/:/tmp/ \

quay.io/bgruening/galaxy

echo 'Waiting for Galaxy to come up.'

galaxy_wait "$GALAXY_WAIT_TIMEOUT"

# Test if the tool installed previously is available

curl -v --fail 'http://localhost:8080/api/tools/toolshed.g2.bx.psu.edu/repos/devteam/cut_columns/Cut1/1.0.2'

# analyze image using dive tool

if [[ "$SKIP_DIVE" == "true" ]]; then

echo "Skipping dive image analysis (dive not installed)."

else

CI=true dive quay.io/bgruening/galaxy

fi

docker stop galaxy

docker rm -f galaxy

docker rmi -f $DOCKER_RUN_CONTAINER || true

================================================

FILE: .github/workflows/single_container.yml

================================================

name: Single Container Test

on: [push, pull_request]

jobs:

build_and_test:

runs-on: ubuntu-latest

strategy:

matrix:

python-version: ['3.10']

steps:

- name: Checkout

uses: actions/checkout@v6

- name: Configure Docker data-root

run: |

sudo mkdir -p /mnt/docker

if [ ! -f /etc/docker/daemon.json ]; then

echo '{}' | sudo tee /etc/docker/daemon.json

fi

sudo jq '."data-root"="/mnt/docker"' /etc/docker/daemon.json > /tmp/docker_daemon.json

sudo mv /tmp/docker_daemon.json /etc/docker/daemon.json

sudo systemctl daemon-reload

sudo systemctl restart docker

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v3

- uses: actions/setup-python@v6

with:

python-version: ${{ matrix.python-version }}

- name: Build and Test

run: bash .github/workflows/single.sh

================================================

FILE: .github/workflows/update-site.yml

================================================

name: Deploy Documentation

on:

push:

branches:

- main

paths:

- 'README.md'

jobs:

deploy_docs:

runs-on: ubuntu-latest

steps:

- name: Check out the repository

uses: actions/checkout@v6

with:

persist-credentials: false

- name: Set up Python

uses: actions/setup-python@v6

with:

python-version: "3.12"

cache: "pip"

- name: Install python dependencies

run: pip install -r docs/src/requirements.txt

- name: Generate documentation

run: python docs/src/generate_docs.py

- name: Deploy to GitHub Pages

uses: peaceiris/actions-gh-pages@v4

with:

github_token: ${{ secrets.GITHUB_TOKEN }}

publish_dir: ./docs

================================================

FILE: .gitignore

================================================

# Byte-compiled / optimized / DLL files

__pycache__/

*.py[cod]

# C extensions

*.so

# Distribution / packaging

.Python

env/

bin/

build/

develop-eggs/

dist/

eggs/

lib/

lib64/

parts/

sdist/

var/

*.egg-info/

.installed.cfg

*.egg

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

# Unit test / coverage reports

htmlcov/

.tox/

.coverage

.cache

nosetests.xml

coverage.xml

# Translations

*.mo

# Mr Developer

.mr.developer.cfg

.project

.pydevproject

# Rope

.ropeproject

# Django stuff:

*.log

*.pot

# Sphinx documentation

docs/_build/

# Export folder for docker-compose setup

compose/export

compose-v2/export

.DS_Store

================================================

FILE: .travis.yml

================================================

sudo: required

language: python

python: "3.10"

services:

- docker

env:

matrix:

- TOX_ENV=py310

global:

- secure: "SEjcKJQ0NGXdpFxFhLVlyJmiBvgiLtR5Uufg90Vm3owKlMy0NSfIrOR+2dwNniqOp7QI3eVepnqjid/Ka0QStzVqMCe55OLkJ/TbTHnMLpbtY63mpGfogVRvxMMAVpzLpcQqtJFORZmO/MIWSLlBiXMMzOg3+tbXvQXmL17Rbmw="

matrix:

allow_failures:

- env: KUBE=True

git:

submodules: false

before_install:

- set -e

- export GALAXY_HOME=/home/galaxy

- export GALAXY_USER=admin@example.org

- export GALAXY_USER_EMAIL=admin@example.org

- export GALAXY_USER_PASSWD=password

- export BIOBLEND_GALAXY_API_KEY=fakekey

- export BIOBLEND_GALAXY_URL=http://localhost:8080

- sudo apt-get update -qq

- sudo apt-get install docker-ce --no-install-recommends -y -o Dpkg::Options::="--force-confmiss" -o Dpkg::Options::="--force-confdef" -o Dpkg::Options::="--force-confnew"

- sudo apt-get install sshpass --no-install-recommends -y

- pip install ephemeris

- docker --version

- docker info

# start building this repo

- sudo chown 1450 /tmp && sudo chmod a=rwx /tmp

- export WORKING_DIR="$TRAVIS_BUILD_DIR"

- export DOCKER_RUN_CONTAINER="quay.io/bgruening/galaxy"

- export INSTALL_REPO_ARG=""

- export SAMPLE_TOOLS=$GALAXY_HOME/ephemeris/sample_tool_list.yaml

- cd "$WORKING_DIR" && travis_wait 30 docker build -t quay.io/bgruening/galaxy galaxy/

- |

## define a container size check function, first parameter is the container name, second the max allowed size in MB

container_size_check () {

# check that the image size is not growing too much between releases

# the 19.05 monolithic image was around 1.500 MB

size=`docker image inspect $1 --format='{{.Size}}'`

size_in_mb=$(($size/(1024*1024)))

if [[ $size_in_mb -ge $2 ]]

then

echo "The new compiled image ($1) is larger than allowed. $size_in_mb vs. $2"

sleep 2

#exit

fi

}

container_size_check quay.io/bgruening/galaxy 1500

mkdir local_folder

docker run -d -p 8080:80 -p 8021:21 -p 8022:22 \

--name galaxy \

--privileged=true \

-v `pwd`/local_folder:/export/ \

-e GALAXY_CONFIG_ALLOW_USER_DATASET_PURGE=True \

-e GALAXY_CONFIG_ALLOW_PATH_PASTE=True \

-e GALAXY_CONFIG_ALLOW_USER_DELETION=True \

-e GALAXY_CONFIG_ENABLE_BETA_WORKFLOW_MODULES=True \

-v /tmp/:/tmp/ \

quay.io/bgruening/galaxy

sleep 30

docker logs galaxy

# Define start functions

docker_exec() {

cd $WORKING_DIR

docker exec -t -i galaxy "$@"

}

docker_exec_run() {

cd $WORKING_DIR

docker run quay.io/bgruening/galaxy "$@"

}

docker_run() {

cd $WORKING_DIR

docker run "$@"

}

- docker ps

script:

- set -e

# Test submitting jobs to an external slurm cluster

- cd $TRAVIS_BUILD_DIR/test/slurm/ && bash test.sh && cd $WORKING_DIR

# Test submitting jobs to an external gridengine cluster

# TODO 19.05, need to enable this again!

# - cd $TRAVIS_BUILD_DIR/test/gridengine/ && bash test.sh && cd $WORKING_DIR

- echo 'Waiting for Galaxy to come up.'

- galaxy-wait -g $BIOBLEND_GALAXY_URL --timeout 600

- curl -v --fail $BIOBLEND_GALAXY_URL/api/version

# Test self-signed HTTPS

- docker_run -d --name httpstest -p 443:443 -e "USE_HTTPS=True" $DOCKER_RUN_CONTAINER

- sleep 180s && curl -v -k --fail https://127.0.0.1:443/api/version

- echo | openssl s_client -connect 127.0.0.1:443 2>/dev/null | openssl x509 -issuer -noout| grep localhost

- docker logs httpstest && docker stop httpstest && docker rm httpstest

# Test FTP Server upload

- date > time.txt && curl -v --fail -T time.txt ftp://localhost:8021 --user $GALAXY_USER:$GALAXY_USER_PASSWD || true

# Test FTP Server get

- curl -v --fail ftp://localhost:8021 --user $GALAXY_USER:$GALAXY_USER_PASSWD

# Test CVMFS

- docker_exec bash -c "service autofs start"

- docker_exec bash -c "cvmfs_config chksetup"

- docker_exec bash -c "ls /cvmfs/data.galaxyproject.org/byhand"

# Test SFTP Server

- sshpass -p $GALAXY_USER_PASSWD sftp -v -P 8022 -o User=$GALAXY_USER -o "StrictHostKeyChecking no" localhost <<< $'put time.txt'

# Run a ton of BioBlend tests against our servers.

- cd $TRAVIS_BUILD_DIR/test/bioblend/ && . ./test.sh && cd $WORKING_DIR/

# not working anymore in 18.01

# executing: /galaxy_venv/bin/uwsgi --yaml /etc/galaxy/galaxy.yml --master --daemonize2 galaxy.log --pidfile2 galaxy.pid --log-file=galaxy_install.log --pid-file=galaxy_install.pid

# [uWSGI] getting YAML configuration from /etc/galaxy/galaxy.yml

# /galaxy_venv/bin/python: unrecognized option '--log-file=galaxy_install.log'

# getopt_long() error

# cat: galaxy_install.pid: No such file or directory

# tail: cannot open ‘galaxy_install.log’ for reading: No such file or directory

#- |

# if [ "${COMPOSE_SLURM}" ] || [ "${KUBE}" ] || [ "${COMPOSE_CONDOR_DOCKER}" ] || [ "${COMPOSE_SLURM_SINGULARITY}" ]

# then

# # Test without install-repository wrapper

# sleep 10

# docker_exec_run bash -c 'cd $GALAXY_ROOT_DIR && python ./scripts/api/install_tool_shed_repositories.py --api admin -l http://localhost:80 --url https://toolshed.g2.bx.psu.edu -o devteam --name cut_columns --panel-section-name BEDTools'

# fi

# Test the 'new' tool installation script

- docker_exec install-tools "$SAMPLE_TOOLS"

# Test the Conda installation

- docker_exec_run bash -c 'export PATH=$GALAXY_CONFIG_TOOL_DEPENDENCY_DIR/_conda/bin/:$PATH && conda --version && conda install samtools -c bioconda --yes'

after_success:

- |

if [ "$TRAVIS_PULL_REQUEST" == "false" -a "$TRAVIS_BRANCH" == "master" ]

then

cd ${TRAVIS_BUILD_DIR}

echo "Generate and deploy html documentation"

./docs/bin/deploy_docs

fi

notifications:

webhooks:

urls:

- https://webhooks.gitter.im/e/559f5480ac7a4ef238af

on_success: change

on_failure: always

on_start: never

================================================

FILE: Changelog.md

================================================

# Changelog

## 0.1: Initial release!

- with Apache2, PostgreSQL and Tool Shed integration

## 0.2: complete new Galaxy stack.

- with nginx, uwsgi, proftpd, docker, supervisord and SLURM

## 0.3: Add Interactive Environments

- IPython in docker in Galaxy in docker

- advanced logging

## 0.4:

- base the image on toolshed/requirements with all required Galaxy dependencies

- use Ansible roles to build large parts of the image

- export the supervisord web interface on port 9002

- enable Galaxy reports webapp

## 15.07:

- `install-biojs` can install BioJS visualisations into Galaxy

- `add-tool-shed` can be used to activate third party Tool Sheds in child Dockerfiles

- many documentation improvements

- RStudio is now part of Galaxy and this Image

- configurable postgres UID/GID by @chambm

- smarter starting of postgres during Tool installations by @shiltemann

## 15.10:

- new Galaxy 15.10 release

- fix https://github.com/bgruening/docker-galaxy-stable/issues/94

## 16.01:

- enable Travis testing for all builds and PR

- offer new [yaml based tool installations](https://github.com/galaxyproject/ansible-galaxy-tools/blob/master/files/tool_list.yaml.sample)

- enable dynamic UWSGI processes and threads with `-e UWSGI_PROCESSES=2` and `-e UWSGI_THREADS=4`

- enable dynamic Galaxy handlers `-e GALAXY_HANDLER_NUMPROCS=2`

- Addition of a new `lite` mode contributed by @kellrott

- first release with Jupyter integration

## 16.04:

- include a Galaxy-bare mode, enable with `-e BARE=True`

- first release with [HTCondor](https://research.cs.wisc.edu/htcondor/) installed and pre-configured

## 16.07:

- documentation and tests updates for SLURM integration by @mvdbeek

- first version with initial Docker compose support (proftpd ✔️)

- SFTP support by @zfrenchee

## 16.10:

- [HTTPS support](https://github.com/bgruening/docker-galaxy-stable/pull/240) by @zfrenchee and @mvdbeek

## 17.01:

- enable Conda dependency resolution by default

- [new Galaxy version](https://docs.galaxyproject.org/en/master/releases/17.01_announce.html)

- more compose work (slurm, postgresql)

## 17.05:

- add PROXY_PREFIX variable to enable automatic configuration of Galaxy running under some prefix (@abretaud)

- enable quota by default (just the functionality, not any specific value)

- HT-Condor is now supported in compose with semi-autoscaling and BioContainers

- Galaxy Docker Compose is completely under Travis testing and available with SLURM and HT-Condor

- using Docker `build-arg`s for GALAXY_RELEASE and GALAXY_REPO

## 17.09:

- much improved documentation about using Galaxy Docker and an external cluster (@rhpvorderman)

- CVMFS support - mounting in 4TB of pre-built reference data (@chambm)

- Singularity support and tests (compose only)

- more work on K8s support and testing (@jmchilton)

- using .env files to configure the compose setup for SLURM, Condor, K8s, SLURM-Singularity, Condor-Docker

## 18.01:

- tracking the Galaxy release_18.01 branch

- uwsgi work to adapt to changes for 18.01

- remove nodejs-legacy & npm from Dockerfile and install latest version from ansible-extras

- initial galaxy.ini → galaxy.yml integration

- grafana and influxdb container (compose)

- Galaxy telegraf integration to push to influxdb (compose)

- added some documentation (compose)

## 18.05:

- Nothing very special, but an awesome Galaxy release as usual

## 18.09:

- new and more powerful orchestration build script (build-orchestration-images.sh) by @pcm32

- a lot of bug-fixes to the compose setup by @abretaud

## 19.01:

- This is featuring the latest and greatest from the Galaxy community

- Please note that this release will be the last release which is based on `ubuntu:14.04` and PostgreSQL 9.3.

We will migrate to `ubuntu:18.04` and a newer PostgreSQL version in `19.05`. Furthermore, we will not

support old Galaxy tool dependencies.

## 19.05:

- The image is now based on `ubuntu:18.04` (instead of ubuntu:14.04 previously) and PostgreSQL 11.5 (9.3 previously).

See [migration documentation](#Postgresql-migration) to migrate the postgresql database from 9.3 to 11.5.

- We no longer support old Galaxy tool dependencies.

## 20.05:

- Featuring Galaxy 20.05

- Completely reworked compose setup

- The default admin password and apikey (`GALAXY_DEFAULT_ADMIN_PASSWORD` and `GALAXY_DEFAULT_ADMIN_KEY`) have changed: the password is now `password` (instead of `admin`) and the apikey `fakekey` (instead of `admin`).

## 20.09:

- Featuring Galaxy 20.09

## 24.1:

- Deprecating the `compose` setup.

- Complete new setup, adjusting to the latest Galaxy stack.

- Base Ubuntu Image: Upgraded from version 18.04 to 22.04

- Galaxy: Upgraded from version 20.09 to 24.1

- PostgreSQL: Upgraded from version 11 to 15

- Python3: Upgraded from version 3.7 to 3.10 (Python 3.10 is set as the default interpreter)

- The Dockerfile now uses a multi-stage build to reduce the final image size and include only necessary files.

- New Service Support:

- Gunicorn: Replaces uWSGI as the web server for Galaxy. Installed by default inside Galaxy's virtual environment. Configured Nginx to proxy Gunicorn enabled on port 4001.

- Celery: Installed by default inside Galaxy's virtual environment. Enabled Celery for distributed task queues and Celery Beat for periodic task running. RabbitMQ serves as the broker for Celery (if RabbitMQ is disabled, it defaults to PostgreSQL database connection).

- Redis is used as the backend for Celery (if Redis is disabled, it defaults to a SQLite database). Flower service is added for monitoring and debugging Celery.

- RabbitMQ Management: Enabled the RabbitMQ management plugin on port 15672 for managing and monitoring the RabbitMQ server. The dashboard is exposed via Nginx and is accessible at the /rabbitmq/ path. The default access credentials are admin:admin.

- Flower: Added Flower service on port 5555 for monitoring and debugging Celery. The dashboard is exposed via Nginx and is available at the /flower/ path. The default access credentials are admin:admin.

- TUSd: Added TUSd server on port 1080 to support fault-tolerant uploads; Nginx is configured to proxy TUSd.

- gx-it-proxy: Added gx-it-proxy service on port 4002 to support Interactive Tools.

- Ansible Playbooks:

- Migrated from the galaxyextras git submodule to using maintained Ansible roles.

- Added configure_rabbitmq_users.yml Ansible playbook, which removes the default guest user and adds admin, galaxy, and flower users for RabbitMQ during container startup.

- Environment Variables:

- Added `GUNICORN_WORKERS` and `CELERY_WORKERS` magic environment variables to set the number of Gunicorn and Celery workers, respectively, during container startup.

- Configuration Changes:

- Replaced the Galaxy Reports sample configuration file.

- Removed galaxy_web, handlers, reports, and ie_proxy services from Supervisor.

- Added Gravity for managing Galaxy services such as Gunicorn, Celery, gx-it-proxy, TUSd, reports, and handlers. It uses Supervisor as the process manager, with the configuration file located at /etc/galaxy/gravity.yml.

- Added support for dynamic handlers (set as the default handler type).

- Redis and Flower services are now managed by Supervisor.

- Since Galaxy Interactive Environments are deprecated, they have been replaced by Interactive Tools (ITs). The sample configuration file tools_conf_interactive.xml.sample is placed inside GALAXY_CONFIG_DIR. Nginx is also configured to support both domain and path-based ITs.

- Switched to using the cvmfs-config.galaxyproject.org repository for automatic configuration and updates of Galaxy project CVMFS repositories. Updated tool data table config path to include CVMFS locations from data.galaxyproject.org in --privileged mode.

- Enabled IPv6 support in Nginx for ports 80 and 443.

- Added Subject Alternative Name (SAN) extension (DNS:localhost and IP:127.0.0.1) while generating a self-signed SSL certificate.

- Ensured the Nginx SSL certificate is trusted system-wide by adding it to the CA store.

- Updated Galaxy extra dependencies.

- Added docker_net, docker_auto_rm, and docker_set_user parameters for Docker-enabled job destinations.

- Added update_yaml_value.py script to update nested key values in a YAML file.

- Replaced ie_proxy with gx-it-proxy.

- Replaced nginx_upload_module with TUSd for delegated uploads.

- CI Tests

- Added the dive tool for analyzing the Docker image

- Added a test to check data persistence

================================================

FILE: LICENSE

================================================

The MIT License (MIT)

Copyright (c) 2014 Björn Grüning

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

================================================

FILE: README.md

================================================

[](https://zenodo.org/badge/latestdoi/5466/bgruening/docker-galaxy-stable)

[](https://travis-ci.org/bgruening/docker-galaxy-stable)

[](https://quay.io/repository/bgruening/galaxy)

[](https://gitter.im/bgruening/docker-galaxy-stable?utm_source=badge&utm_medium=badge&utm_campaign=pr-badge)

[](https://microbadger.com/images/bgruening/galaxy-stable "Get your own image badge on microbadger.com")

Galaxy Docker Image

===================

The [Galaxy](http://www.galaxyproject.org) [Docker](http://www.docker.io) Image is an easily distributable, full-fledged Galaxy installation that can be used for testing, teaching and presenting new tools and features.

One of the main goals is to make access to entire tool suites as easy as possible. Usually, this requires either setting up a publicly available web service that needs to be maintained, or the tool user has to set up a Galaxy server on their own or needs admin access to a local Galaxy server.

With docker, tool developers can create their own Image with all dependencies and the user only needs to run it within docker.

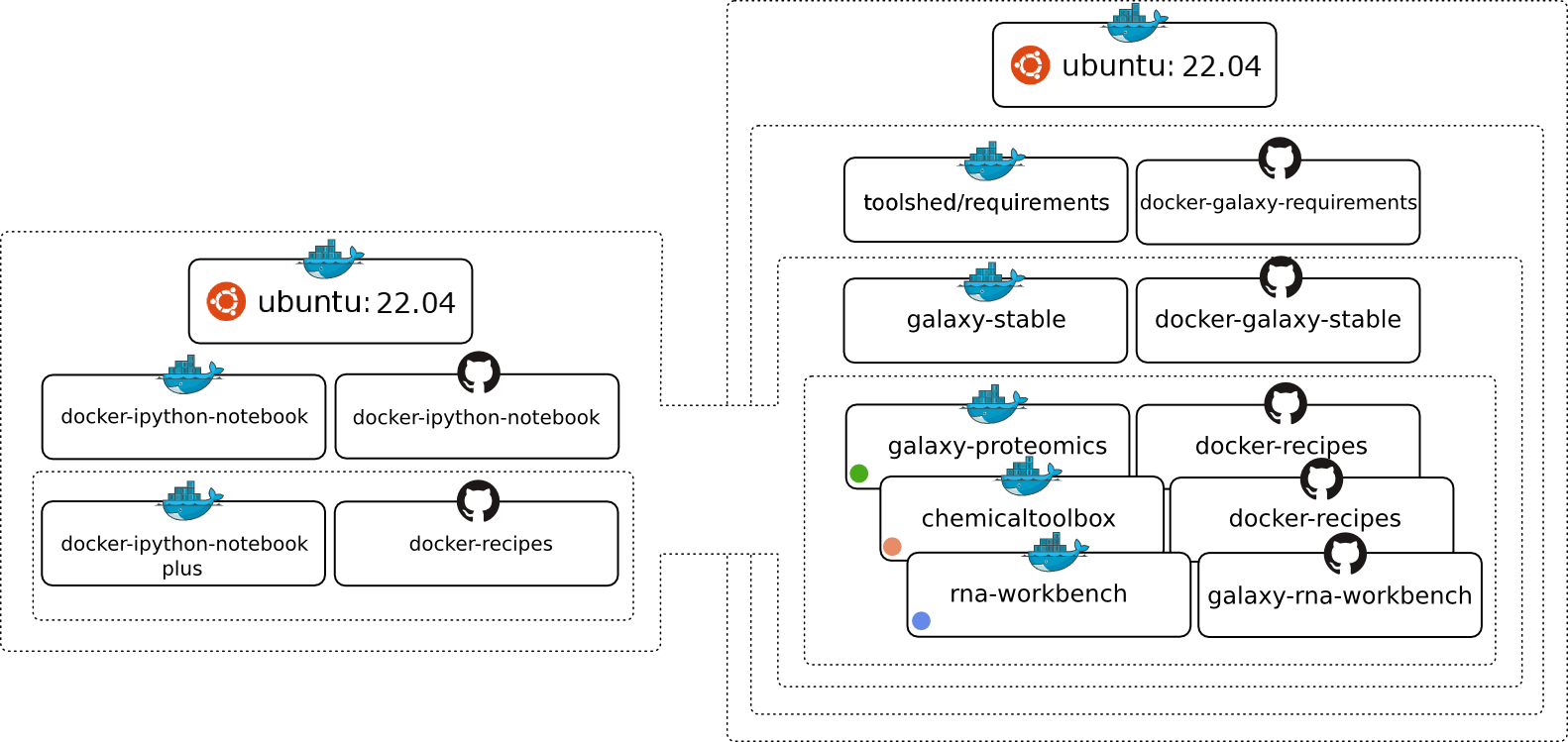

The Image is based on [Ubuntu 24.04 LTS](http://releases.ubuntu.com/24.04/) and all recommended Galaxy requirements are installed. The following chart illustrates the [Docker](http://www.docker.io) image hierarchy we have built to make it as easy as possible to build on different layers of our stack and create many exciting Galaxy flavors.

Breaking changes

================

:information_source: After a long pause, due to interesting times at the beginning of the "golden 2020s", we are finally back with release `24.1`. Many things have changed in Galaxy.

It is deployed completely differently and gained many new features with many new dependencies. We recommend starting with a fresh `/export` folder and contacting us if you encounter any problems.

# Table of Contents <a name="toc" />

- [Usage](#Usage)

- [Upgrading images](#Upgrading-images)

- [PostgreSQL migration](#Postgresql-migration)

- [Enabling Interactive Tools in Galaxy](#Enabling-Interactive-Tools-in-Galaxy)

- [Using passive mode FTP or SFTP](#Using-passive-mode-FTP-or-SFTP)

- [Using Parent docker](#Using-Parent-docker)

- [RabbitMQ Management](#RabbitMQ-Management)

- [Flower Webapp](#Flower-Webapp)

- [Galaxy's config settings](#Galaxys-config-settings)

- [Configuring Galaxy behind a proxy](#Galaxy-behind-proxy)

- [On-demand reference data with CVMFS](#cvmfs)

- [Personalize your Galaxy](#Personalize-your-Galaxy)

- [Deactivating services](#Deactivating-services)

- [Restarting Galaxy](#Restarting-Galaxy)

- [Advanced Logging](#Advanced-Logging)

- [Running on an external cluster (DRM)](#Running-on-an-external-cluster-(DRM))

- [Basic setup for the filesystem](#Basic-setup-for-the-filesystem)

- [Using an external Slurm cluster](#Using-an-external-Slurm-cluster)

- [Using an external Grid Engine cluster](#Using-an-external-Grid-Engine-cluster)

- [Tips for Running Jobs Outside the Container](#Tips-for-Running-Jobs-Outside-the-Container)

- [Enable Galaxy to use BioContainers (Docker)](#auto-exec-tools-in-docker)

- [Magic Environment variables](#Magic-Environment-variables)

- [HTTPS Support](#HTTPS-Support)

- [Lite Mode](#Lite-Mode)

- [Extending the Docker Image](#Extending-the-Docker-Image)

- [List of Galaxy flavours](#List-of-Galaxy-flavours)

- [Integrating non-Tool Shed tools into the container](#Integrating-non-Tool-Shed-tools-into-the-container)

- [Users & Passwords](#Users-Passwords)

- [Development](#Development)

- [Requirements](#Requirements)

- [Changelog](./Changelog.md)

- [Support & Bug Reports](#Support-Bug-Reports)

# Usage <a name="Usage" /> [[toc]](#toc)

This chapter explains how to launch the container manually.

First, you need to install Docker. Please follow the [very good instructions](https://docs.docker.com/installation/) from the Docker project.

After the successful installation, all you need to do is:

```sh

docker run -d -p 8080:80 -p 8021:21 -p 8022:22 quay.io/bgruening/galaxy

```

I will briefly explain the meaning of all the parameters. For a more detailed description please consult the [docker manual](http://docs.docker.io/); it's really worth reading.

Let's start:

- `docker run` will run the Image/Container for you.

In case you do not have the Container stored locally, docker will download it for you.

- `-p 8080:80` will make port 80 (inside of the container) available on port 8080 on your host. The same holds for ports 8021 and 8022, which can be used to transfer data via the FTP or SFTP protocol, respectively.

Inside the container an nginx web server is running on port 80 and that port can be bound to a local port on your host computer. With this parameter you can access your Galaxy

instance via `http://localhost:8080` immediately after executing the command above. If you work with the [Docker Toolbox](https://www.docker.com/products/docker-toolbox) on Mac or Windows,

you need to connect to the machine generated by 'Docker Quickstart'. You get its IP address from `docker-machine ls` or from the first line in the terminal, e.g.: `docker is configured to use the default machine with IP 192.168.99.100`.

- `quay.io/bgruening/galaxy` is the Image/Container name, that directs docker to the correct path in the [docker index](https://quay.io/repository/bgruening/galaxy?tab=tags).

- `-d` will start the docker container in daemon mode.
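Galaxy needs some time to boot after the container has started. The following is a minimal sketch for waiting until the API answers, assuming bash, the `-p 8080:80` mapping from above and the `/api/version` endpoint. The function name `wait_for_galaxy` and its default values are illustrative and not part of the image:

```bash
# Hypothetical helper: poll a URL until it responds or a timeout expires.
# Usage: wait_for_galaxy [url] [timeout_seconds] [interval_seconds]
wait_for_galaxy() {
    local url="${1:-http://localhost:8080/api/version}"
    local timeout="${2:-600}"
    local interval="${3:-10}"
    local deadline=$((SECONDS + timeout))

    while [ "$SECONDS" -lt "$deadline" ]; do
        # --fail makes curl return non-zero on HTTP errors while Galaxy is still starting
        if curl --silent --fail --output /dev/null "$url"; then
            echo "Galaxy is up at $url"
            return 0
        fi
        sleep "$interval"
    done

    echo "Galaxy did not respond at $url within ${timeout}s" >&2
    return 1
}
```

Calling `wait_for_galaxy` without arguments polls `http://localhost:8080/api/version` for up to ten minutes; pass a different URL if you mapped another host port.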

For an interactive session, you can execute:

```sh

docker run -i -t -p 8080:80 \

quay.io/bgruening/galaxy \

/bin/bash

```

and run the `startup` script yourself to start PostgreSQL, nginx and Galaxy.

Docker images are "read-only": all changes made inside a session are lost after a restart. This mode is useful for presenting Galaxy to your colleagues or for running workshops. To install Tool Shed repositories or to keep your data, you need to export it to the host computer.

Fortunately, this is as easy as:

```sh

docker run -d -p 8080:80 \

-v /home/user/galaxy_storage/:/export/ \

quay.io/bgruening/galaxy

```

With the additional `-v /home/user/galaxy_storage/:/export/` parameter, Docker will mount the local folder `/home/user/galaxy_storage` into the Container under `/export/`. A `startup.sh` script, which usually starts nginx, PostgreSQL and Galaxy, will recognize the export directory with one of the following outcomes:

- In case of an empty `/export/` directory, it will move the [PostgreSQL](http://www.postgresql.org/) database, the Galaxy database directory, Shed Tools and Tool Dependencies and various config scripts to /export/ and symlink back to the original location.

- In case of a non-empty `/export/`, for example if you continue a previous session within the same folder, nothing will be moved, but the symlinks will be created.

This enables you to have different export folders for different sessions, which gives you real separation of your different projects.
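The two outcomes above depend on whether the export directory is empty. The following is only a sketch of that decision, not the actual startup script; the function name `export_dir_state` is hypothetical:

```bash
# Hypothetical helper: report whether an export directory is missing, empty or populated.
export_dir_state() {
    local dir="$1"
    if [ ! -d "$dir" ]; then
        echo "missing"
    elif [ -z "$(ls -A "$dir")" ]; then
        echo "empty"       # first run: data would be moved here and symlinked back
    else
        echo "populated"   # continued session: only the symlinks would be recreated
    fi
}
```

Running `export_dir_state /home/user/galaxy_storage` on the host before starting the container tells you which of the two cases to expect.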

To detect when the Galaxy distribution in the image changes, the container writes a marker at

`/export/.galaxy_export_marker`. You can override the marker value with `GALAXY_EXPORT_MARKER` if you

need deterministic export refresh behavior.

You can also collect and store `/export/` data of Galaxy instances in a dedicated docker [Data volume Container](https://docs.docker.com/engine/userguide/dockervolumes/) created by:

```sh

docker create -v /export \

--name galaxy-store \

quay.io/bgruening/galaxy \

/bin/true

```

To mount this data volume in a Galaxy container, use the `--volumes-from` parameter:

```sh

docker run -d -p 8080:80 \

--volumes-from galaxy-store \

quay.io/bgruening/galaxy

```

This also allows for data separation, but keeps everything encapsulated within the docker engine (e.g. on OS X within your `$HOME/.docker` folder), which makes it easy to back up, archive and restore. This approach, albeit at the expense of disk space, avoids the problems with permissions [reported](https://github.com/bgruening/docker-galaxy-stable/issues/68) for data export on non-Linux hosts.
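One possible way to archive such a data volume is to run a short-lived container that mounts it and writes a tarball to the host. This is a sketch under assumptions: it reuses the Galaxy image only because it is already available locally, it assumes `tar` is present in the image, and the function name `backup_galaxy_store` and the archive name are hypothetical:

```bash
# Hypothetical helper: archive /export from the data volume container into a
# tarball in the current directory.
# Usage: backup_galaxy_store [volume_container] [archive_name]
backup_galaxy_store() {
    local store="${1:-galaxy-store}"
    local archive="${2:-galaxy-export.tar.gz}"
    docker run --rm \
        --volumes-from "$store" \
        -v "$(pwd):/backup" \
        quay.io/bgruening/galaxy \
        tar czf "/backup/$archive" -C / export
}
```

Stop any Galaxy container that uses the volume before creating the archive, so that the database files are in a consistent state.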

## Upgrading images <a name="Upgrading-images" /> [[toc]](#toc)

We will release a new version of this image concurrently with every new Galaxy release. We have assembled a few hints for upgrading to a new image version. Please take into account that the procedure may vary depending on your Galaxy installation and on the changes in the new version. Use these examples carefully!

* Create a test instance with only the database and configuration files. This will allow testing to ensure that things run but won't require copying all of the data.

* New unmodified configuration files are always stored in a hidden directory called `.distribution_config`. Use this folder to diff your configuration against the new configuration files shipped with Galaxy. This saves you from going through the change logs to find out which files were added or which new features you can activate.

Here are two suggested upgrade methods: a quick one and a safer one.
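The comparison against `.distribution_config` can also be run without prompts, which is handy for a first overview. This is a minimal sketch, not something shipped with the image: `compare_configs` is a hypothetical helper, and it assumes the layout used in the upgrade steps of this section (`.distribution_config` and `galaxy/config` inside the export folder).

```sh
# Sketch: list shipped config files that are new or differ from your own.
# Usage: compare_configs <export_dir>
compare_configs() {
    export_dir="$1"
    dist_dir="$export_dir/.distribution_config"
    conf_dir="$export_dir/galaxy/config"

    for f in "$dist_dir"/*; do
        [ -f "$f" ] || continue          # skip subdirectories and empty globs
        name="$(basename "$f")"
        if [ ! -e "$conf_dir/$name" ]; then
            echo "NEW $name"             # shipped file you do not have yet
        elif ! cmp -s "$f" "$conf_dir/$name"; then
            echo "CHANGED $name"         # your copy differs from the shipped one
        fi
    done
}
```

Running `compare_configs /data/galaxy-data` prints one line per file worth inspecting with `diff`.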

### The quick upgrade method

This method involves less data copying, which makes the process quicker, but makes it impossible to downgrade in case of problems.

If you are upgrading from <19.05 to >=19.05, you need to migrate the PostgreSQL database; see [PostgreSQL migration](#Postgresql-migration).

1. Stop the old Galaxy container and pull the new image

```sh

docker stop <old_container_name>

docker pull quay.io/bgruening/galaxy

```

2. Run the container with the updated image

```sh

docker run -p 8080:80 -v /data/galaxy-data:/export --name <new_container_name> quay.io/bgruening/galaxy

```

3. Use diff to find changes in the config files (only if you changed any config file).

```sh

cd /data/galaxy-data/.distribution_config

for f in *; do echo "$f"; diff "$f" "../galaxy/config/$f"; read -r _; done

```

4. Upgrade the database schema

```sh

docker exec -it <new_container_name> bash

galaxyctl stop

sh manage_db.sh upgrade

exit

```

5. Restart Galaxy

```sh

docker exec -it <new_container_name> galaxyctl start

```

(Alternatively, restart the whole container)

### The safe upgrade method

With this method, you keep a backup in case you decide to downgrade, but it requires some potentially lengthy data copying.

* Note that copying the database and datasets can be expensive if you have many GB of data.

* If you are upgrading from <19.05 to >=19.05, you need to migrate the PostgreSQL database; see [PostgreSQL migration](#Postgresql-migration).

1. Download the newer version of the Galaxy image

```

$ sudo docker pull quay.io/bgruening/galaxy

```

2. Stop and rename the current Galaxy container

```

$ sudo docker stop galaxy-instance

$ sudo docker rename galaxy-instance galaxy-instance-old

```

3. Rename the data directory (the one mounted at `/export` in the container)

```

$ sudo mv /data/galaxy-data /data/galaxy-data-old

```

4. Run a new Galaxy container using the newer image and wait while Galaxy generates the default content for `/export`

```

$ sudo docker run -p 8080:80 -v /data/galaxy-data:/export --name galaxy-instance quay.io/bgruening/galaxy

```

5. Stop the Galaxy container

```

$ sudo docker stop galaxy-instance

```

6. Replace the contents of the new PostgreSQL database directory with the old database data

```

$ sudo rm -r /data/galaxy-data/postgresql/

$ sudo rsync -var /data/galaxy-data-old/postgresql/ /data/galaxy-data/postgresql/

```

7. Use diff to find changes in the config files (only if you changed any config file).

```

$ cd /data/galaxy-data/.distribution_config

$ for f in *; do echo "$f"; diff "$f" "../../galaxy-data-old/galaxy/config/$f"; read -r _; done

```

8. Copy all the users' datasets to the new instance

```

$ sudo rsync -var /data/galaxy-data-old/galaxy/database/files/* /data/galaxy-data/galaxy/database/files/

```

9. Copy all the installed tools

```

$ sudo rsync -var /data/galaxy-data-old/tool_deps/* /data/galaxy-data/tool_deps/

$ sudo rsync -var /data/galaxy-data-old/galaxy/database/shed_tools/* /data/galaxy-data/galaxy/database/shed_tools/

$ sudo rsync -var /data/galaxy-data-old/galaxy/database/config/* /data/galaxy-data/galaxy/database/config/

```

10. Copy the welcome page and all its files.

```

$ sudo rsync -var /data/galaxy-data-old/welcome* /data/galaxy-data/

```

11. Create an auxiliary container in interactive mode and upgrade the database.

```

$ sudo docker run -it --rm -v /data/galaxy-data:/export quay.io/bgruening/galaxy /bin/bash

# Start up all processes

> startup &

# Upgrade the database to the most recent version

> sh manage_db.sh upgrade

# Log out

> exit

```

12. Start the container and test

```

$ sudo docker start galaxy-instance

```

13. Clean up the old container and image
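The cleanup in the last step could look like the sketch below. It assumes the container name `galaxy-instance-old` from step 2 and that the previous image lost its tag when the newer one was pulled; `cleanup_old_galaxy` is a hypothetical helper name. Only run it once you are sure you will not downgrade.

```sh
# Sketch: remove the renamed old container and untagged (dangling) images.
cleanup_old_galaxy() {
    # Remove the stopped container kept as a fallback
    docker rm galaxy-instance-old || return 1

    # Remove images that no longer carry a tag; -f skips the confirmation prompt
    docker image prune -f
}
```

The old data directory (`/data/galaxy-data-old` in this example) is not touched by this sketch; delete it separately when you no longer need the backup.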

### PostgreSQL migration <a name="Postgresql-migration" /> [[toc]](#toc)

In the 19.05 version, PostgreSQL was updated from version 9.3 to version 11.5. If you are upgrading from a version <19.05, you will need to migrate the database.
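If you are unsure which PostgreSQL version created your exported database, you can read the `PG_VERSION` file that PostgreSQL keeps in every data directory. This is a minimal sketch: `pg_data_version` is a hypothetical helper, and where the data directory sits below your export folder is an assumption you need to check on your own system.

```sh
# Sketch: print the PostgreSQL major version recorded in a data directory.
# Usage: pg_data_version <path-to-postgresql-data-directory>
pg_data_version() {
    data_dir="$1"

    if [ -f "$data_dir/PG_VERSION" ]; then
        cat "$data_dir/PG_VERSION"
    else
        echo "no PG_VERSION in $data_dir" >&2
        return 1
    fi
}
```

A result of `9.3` would indicate that the data were created by the old PostgreSQL version mentioned above.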

You can migrate the database as follows (based on "The quick upgrade method" above):

1. Stop Galaxy in the old container

```sh

docker exec -it <old_container_name> galaxyctl stop

```

2. Dump the old database

```sh

docker exec -it <old_container_name> bash

su postgres

pg_dumpall --clean > /export/postgresql/9.3dump.sql

exit

exit

```

3. Stop the old container and pull the new image (= step 1 of "The quick upgrade method" above)

```sh

docker stop <old_container_name>

docker pull quay.io/bgruening/galaxy

```

4. Run the container with the updated image (= step 2 of "The quick upgrade method" above)

```sh

docker run -p 8080:80 -v /data/galaxy-data:/export --name <new_container_name> quay.io/bgruening/galaxy

```

5. Restore the dump into the new PostgreSQL version

Wait for the startup process to finish first (Galaxy should be accessible).

```sh

docker exec -it <new_container_name> bash

galaxyctl stop

su postgres

psql -f /export/postgresql/9.3dump.sql postgres

exit

exit

```

6. Use diff to find changes in the config files (only if you changed any config file). (= step 3 of "The quick upgrade method" above)

```sh

cd /data/galaxy-data/.distribution_config

for f in *; do echo "$f"; diff "$f" "../galaxy/config/$f"; read -r _; done

```