Showing preview only (274K chars total). Download the full file or copy to clipboard to get everything.

Repository: concurrencylabs/aws-pricing-tools

Branch: master

Commit: f03a296e0b69

Files: 41

Total size: 260.4 KB

Directory structure:

gitextract_2lg3vbht/

├── .gitignore

├── LICENSE.md

├── MANIFEST.in

├── README.md

├── awspricecalculator/

│ ├── __init__.py

│ ├── awslambda/

│ │ ├── __init__.py

│ │ └── pricing.py

│ ├── common/

│ │ ├── __init__.py

│ │ ├── consts.py

│ │ ├── errors.py

│ │ ├── models.py

│ │ └── phelper.py

│ ├── datatransfer/

│ │ ├── __init__.py

│ │ └── pricing.py

│ ├── dynamodb/

│ │ ├── __init__.py

│ │ └── pricing.py

│ ├── ec2/

│ │ ├── __init__.py

│ │ └── pricing.py

│ ├── emr/

│ │ ├── __init__.py

│ │ └── pricing.py

│ ├── kinesis/

│ │ ├── __init__.py

│ │ └── pricing.py

│ ├── rds/

│ │ ├── __init__.py

│ │ └── pricing.py

│ └── redshift/

│ ├── __init__.py

│ └── pricing.py

├── cloudformation/

│ ├── function-plus-schedule.json

│ └── lambda-metric-filters.yml

├── functions/

│ └── calculate-near-realtime.py

├── install.sh

├── requirements-dev.txt

├── requirements.txt

├── scripts/

│ ├── README.md

│ ├── emr-pricing.py

│ ├── get-latest-index.py

│ ├── lambda-optimization.py

│ └── redshift-pricing.py

├── serverless.env.yml

├── serverless.yml

├── setup.py

└── test/

└── events/

└── constant-tag.json

================================================

FILE CONTENTS

================================================

================================================

FILE: .gitignore

================================================

# Don't include anything installed into the virtualenv by pip

.Python

bin

lib

include

pip-selfcheck.json

# We don't need compiled Python artifacts in the repo

__pycache__

*egg-info

*.pyc

*.pyo

.idea/*

.serverless/*

awspricecalculator/data/*

# ignore vendored files

vendored

#temporarily out

awspricecalculator/s3*

awspricecalculator/common/utils.py

scripts/ec2-pricing.py

scripts/rds-pricing.py

scripts/s3-pricing.py

scripts/lambda-pricing.py

scripts/dynamodb-pricing.py

scripts/kinesis-pricing.py

scripts/context.py

scripts/propagate-lambda-code.py

================================================

FILE: LICENSE.md

================================================

GNU GENERAL PUBLIC LICENSE

Version 3, 29 June 2007

Copyright (C) 2007 Free Software Foundation, Inc. <http://fsf.org/>

Everyone is permitted to copy and distribute verbatim copies of this license document, but changing it is not allowed.

Preamble

The GNU General Public License is a free, copyleft license for software and other kinds of works.

The licenses for most software and other practical works are designed to take away your freedom to share and change the works. By contrast, the GNU General Public License is intended to guarantee your freedom to share and change all versions of a program--to make sure it remains free software for all its users. We, the Free Software Foundation, use the GNU General Public License for most of our software; it applies also to any other work released this way by its authors. You can apply it to your programs, too.

When we speak of free software, we are referring to freedom, not price. Our General Public Licenses are designed to make sure that you have the freedom to distribute copies of free software (and charge for them if you wish), that you receive source code or can get it if you want it, that you can change the software or use pieces of it in new free programs, and that you know you can do these things.

To protect your rights, we need to prevent others from denying you these rights or asking you to surrender the rights. Therefore, you have certain responsibilities if you distribute copies of the software, or if you modify it: responsibilities to respect the freedom of others.

For example, if you distribute copies of such a program, whether gratis or for a fee, you must pass on to the recipients the same freedoms that you received. You must make sure that they, too, receive or can get the source code. And you must show them these terms so they know their rights.

Developers that use the GNU GPL protect your rights with two steps: (1) assert copyright on the software, and (2) offer you this License giving you legal permission to copy, distribute and/or modify it.

For the developers' and authors' protection, the GPL clearly explains that there is no warranty for this free software. For both users' and authors' sake, the GPL requires that modified versions be marked as changed, so that their problems will not be attributed erroneously to authors of previous versions.

Some devices are designed to deny users access to install or run modified versions of the software inside them, although the manufacturer can do so. This is fundamentally incompatible with the aim of protecting users' freedom to change the software. The systematic pattern of such abuse occurs in the area of products for individuals to use, which is precisely where it is most unacceptable. Therefore, we have designed this version of the GPL to prohibit the practice for those products. If such problems arise substantially in other domains, we stand ready to extend this provision to those domains in future versions of the GPL, as needed to protect the freedom of users.

Finally, every program is threatened constantly by software patents. States should not allow patents to restrict development and use of software on general-purpose computers, but in those that do, we wish to avoid the special danger that patents applied to a free program could make it effectively proprietary. To prevent this, the GPL assures that patents cannot be used to render the program non-free.

The precise terms and conditions for copying, distribution and modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this License. Each licensee is addressed as "you". "Licensees" and "recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work in a fashion requiring copyright permission, other than the making of an exact copy. The resulting work is called a "modified version" of the earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based on the Program.

To "propagate" a work means to do anything with it that, without permission, would make you directly or secondarily liable for infringement under applicable copyright law, except executing it on a computer or modifying a private copy. Propagation includes copying, distribution (with or without modification), making available to the public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other parties to make or receive copies. Mere interaction with a user through a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices" to the extent that it includes a convenient and prominently visible feature that (1) displays an appropriate copyright notice, and (2) tells the user that there is no warranty for the work (except to the extent that warranties are provided), that licensees may convey the work under this License, and how to view a copy of this License. If the interface presents a list of user commands or options, such as a menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work for making modifications to it. "Object code" means any non-source form of a work.

A "Standard Interface" means an interface that either is an official standard defined by a recognized standards body, or, in the case of interfaces specified for a particular programming language, one that is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other than the work as a whole, that (a) is included in the normal form of packaging a Major Component, but which is not part of that Major Component, and (b) serves only to enable use of the work with that Major Component, or to implement a Standard Interface for which an implementation is available to the public in source code form. A "Major Component", in this context, means a major essential component (kernel, window system, and so on) of the specific operating system (if any) on which the executable work runs, or a compiler used to produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all the source code needed to generate, install, and (for an executable work) run the object code and to modify the work, including scripts to control those activities. However, it does not include the work's System Libraries, or general-purpose tools or generally available free programs which are used unmodified in performing those activities but which are not part of the work. For example, Corresponding Source includes interface definition files associated with source files for the work, and the source code for shared libraries and dynamically linked subprograms that the work is specifically designed to require, such as by intimate data communication or control flow between those subprograms and other parts of the work.

The Corresponding Source need not include anything that users can regenerate automatically from other parts of the Corresponding Source.

The Corresponding Source for a work in source code form is that same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of copyright on the Program, and are irrevocable provided the stated conditions are met. This License explicitly affirms your unlimited permission to run the unmodified Program. The output from running a covered work is covered by this License only if the output, given its content, constitutes a covered work. This License acknowledges your rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not convey, without conditions so long as your license otherwise remains in force. You may convey covered works to others for the sole purpose of having them make modifications exclusively for you, or provide you with facilities for running those works, provided that you comply with the terms of this License in conveying all material for which you do not control copyright. Those thus making or running the covered works for you must do so exclusively on your behalf, under your direction and control, on terms that prohibit them from making any copies of your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under the conditions stated below. Sublicensing is not allowed; section 10 makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological measure under any applicable law fulfilling obligations under article 11 of the WIPO copyright treaty adopted on 20 December 1996, or similar laws prohibiting or restricting circumvention of such measures.

When you convey a covered work, you waive any legal power to forbid circumvention of technological measures to the extent such circumvention is effected by exercising rights under this License with respect to the covered work, and you disclaim any intention to limit operation or modification of the work as a means of enforcing, against the work's users, your or third parties' legal rights to forbid circumvention of technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you receive it, in any medium, provided that you conspicuously and appropriately publish on each copy an appropriate copyright notice; keep intact all notices stating that this License and any non-permissive terms added in accord with section 7 apply to the code; keep intact all notices of the absence of any warranty; and give all recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey, and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to produce it from the Program, in the form of source code under the terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified it, and giving a relevant date.

b) The work must carry prominent notices stating that it is released under this License and any conditions added under section 7. This requirement modifies the requirement in section 4 to "keep intact all notices".

c) You must license the entire work, as a whole, under this License to anyone who comes into possession of a copy. This License will therefore apply, along with any applicable section 7 additional terms, to the whole of the work, and all its parts, regardless of how they are packaged. This License gives no permission to license the work in any other way, but it does not invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display Appropriate Legal Notices; however, if the Program has interactive interfaces that do not display Appropriate Legal Notices, your work need not make them do so.

A compilation of a covered work with other separate and independent works, which are not by their nature extensions of the covered work, and which are not combined with it such as to form a larger program, in or on a volume of a storage or distribution medium, is called an "aggregate" if the compilation and its resulting copyright are not used to limit the access or legal rights of the compilation's users beyond what the individual works permit. Inclusion of a covered work in an aggregate does not cause this License to apply to the other parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms of sections 4 and 5, provided that you also convey the machine-readable Corresponding Source under the terms of this License, in one of these ways:

a) Convey the object code in, or embodied in, a physical product (including a physical distribution medium), accompanied by the Corresponding Source fixed on a durable physical medium customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product (including a physical distribution medium), accompanied by a written offer, valid for at least three years and valid for as long as you offer spare parts or customer support for that product model, to give anyone who possesses the object code either (1) a copy of the Corresponding Source for all the software in the product that is covered by this License, on a durable physical medium customarily used for software interchange, for a price no more than your reasonable cost of physically performing this conveying of source, or (2) access to copy the Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the written offer to provide the Corresponding Source. This alternative is allowed only occasionally and noncommercially, and only if you received the object code with such an offer, in accord with subsection 6b.

d) Convey the object code by offering access from a designated place (gratis or for a charge), and offer equivalent access to the Corresponding Source in the same way through the same place at no further charge. You need not require recipients to copy the Corresponding Source along with the object code. If the place to copy the object code is a network server, the Corresponding Source may be on a different server (operated by you or a third party) that supports equivalent copying facilities, provided you maintain clear directions next to the object code saying where to find the Corresponding Source. Regardless of what server hosts the Corresponding Source, you remain obligated to ensure that it is available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided you inform other peers where the object code and Corresponding Source of the work are being offered to the general public at no charge under subsection 6d.

A separable portion of the object code, whose source code is excluded from the Corresponding Source as a System Library, need not be included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any tangible personal property which is normally used for personal, family, or household purposes, or (2) anything designed or sold for incorporation into a dwelling. In determining whether a product is a consumer product, doubtful cases shall be resolved in favor of coverage. For a particular product received by a particular user, "normally used" refers to a typical or common use of that class of product, regardless of the status of the particular user or of the way in which the particular user actually uses, or expects or is expected to use, the product. A product is a consumer product regardless of whether the product has substantial commercial, industrial or non-consumer uses, unless such uses represent the only significant mode of use of the product.

"Installation Information" for a User Product means any methods, procedures, authorization keys, or other information required to install and execute modified versions of a covered work in that User Product from a modified version of its Corresponding Source. The information must suffice to ensure that the continued functioning of the modified object code is in no case prevented or interfered with solely because modification has been made.

If you convey an object code work under this section in, or with, or specifically for use in, a User Product, and the conveying occurs as part of a transaction in which the right of possession and use of the User Product is transferred to the recipient in perpetuity or for a fixed term (regardless of how the transaction is characterized), the Corresponding Source conveyed under this section must be accompanied by the Installation Information. But this requirement does not apply if neither you nor any third party retains the ability to install modified object code on the User Product (for example, the work has been installed in ROM).

The requirement to provide Installation Information does not include a requirement to continue to provide support service, warranty, or updates for a work that has been modified or installed by the recipient, or for the User Product in which it has been modified or installed. Access to a network may be denied when the modification itself materially and adversely affects the operation of the network or violates the rules and protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided, in accord with this section must be in a format that is publicly documented (and with an implementation available to the public in source code form), and must require no special password or key for unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this License by making exceptions from one or more of its conditions. Additional permissions that are applicable to the entire Program shall be treated as though they were included in this License, to the extent that they are valid under applicable law. If additional permissions apply only to part of the Program, that part may be used separately under those permissions, but the entire Program remains governed by this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option remove any additional permissions from that copy, or from any part of it. (Additional permissions may be written to require their own removal in certain cases when you modify the work.) You may place additional permissions on material, added by you to a covered work, for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you add to a covered work, you may (if authorized by the copyright holders of that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or author attributions in that material or in the Appropriate Legal Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or requiring that modified versions of such material be marked in reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or authors of the material; or

e) Declining to grant rights under trademark law for use of some trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that material by anyone who conveys the material (or modified versions of it) with contractual assumptions of liability to the recipient, for any liability that these contractual assumptions directly impose on those licensors and authors.

All other non-permissive additional terms are considered "further restrictions" within the meaning of section 10. If the Program as you received it, or any part of it, contains a notice stating that it is governed by this License along with a term that is a further restriction, you may remove that term. If a license document contains a further restriction but permits relicensing or conveying under this License, you may add to a covered work material governed by the terms of that license document, provided that the further restriction does not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you must place, in the relevant source files, a statement of the additional terms that apply to those files, or a notice indicating where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the form of a separately written license, or stated as exceptions; the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly provided under this License. Any attempt otherwise to propagate or modify it is void, and will automatically terminate your rights under this License (including any patent licenses granted under the third paragraph of section 11).

However, if you cease all violation of this License, then your license from a particular copyright holder is reinstated (a) provisionally, unless and until the copyright holder explicitly and finally terminates your license, and (b) permanently, if the copyright holder fails to notify you of the violation by some reasonable means prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is reinstated permanently if the copyright holder notifies you of the violation by some reasonable means, this is the first time you have received notice of violation of this License (for any work) from that copyright holder, and you cure the violation prior to 30 days after your receipt of the notice.

Termination of your rights under this section does not terminate the licenses of parties who have received copies or rights from you under this License. If your rights have been terminated and not permanently reinstated, you do not qualify to receive new licenses for the same material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or run a copy of the Program. Ancillary propagation of a covered work occurring solely as a consequence of using peer-to-peer transmission to receive a copy likewise does not require acceptance. However, nothing other than this License grants you permission to propagate or modify any covered work. These actions infringe copyright if you do not accept this License. Therefore, by modifying or propagating a covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically receives a license from the original licensors, to run, modify and propagate that work, subject to this License. You are not responsible for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an organization, or substantially all assets of one, or subdividing an organization, or merging organizations. If propagation of a covered work results from an entity transaction, each party to that transaction who receives a copy of the work also receives whatever licenses to the work the party's predecessor in interest had or could give under the previous paragraph, plus a right to possession of the Corresponding Source of the work from the predecessor in interest, if the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the rights granted or affirmed under this License. For example, you may not impose a license fee, royalty, or other charge for exercise of rights granted under this License, and you may not initiate litigation (including a cross-claim or counterclaim in a lawsuit) alleging that any patent claim is infringed by making, using, selling, offering for sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this License of the Program or a work on which the Program is based. The work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims owned or controlled by the contributor, whether already acquired or hereafter acquired, that would be infringed by some manner, permitted by this License, of making, using, or selling its contributor version, but do not include claims that would be infringed only as a consequence of further modification of the contributor version. For purposes of this definition, "control" includes the right to grant patent sublicenses in a manner consistent with the requirements of this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free patent license under the contributor's essential patent claims, to make, use, sell, offer for sale, import and otherwise run, modify and propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express agreement or commitment, however denominated, not to enforce a patent (such as an express permission to practice a patent or covenant not to sue for patent infringement). To "grant" such a patent license to a party means to make such an agreement or commitment not to enforce a patent against the party.

If you convey a covered work, knowingly relying on a patent license, and the Corresponding Source of the work is not available for anyone to copy, free of charge and under the terms of this License, through a publicly available network server or other readily accessible means, then you must either (1) cause the Corresponding Source to be so available, or (2) arrange to deprive yourself of the benefit of the patent license for this particular work, or (3) arrange, in a manner consistent with the requirements of this License, to extend the patent license to downstream recipients. "Knowingly relying" means you have actual knowledge that, but for the patent license, your conveying the covered work in a country, or your recipient's use of the covered work in a country, would infringe one or more identifiable patents in that country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or arrangement, you convey, or propagate by procuring conveyance of, a covered work, and grant a patent license to some of the parties receiving the covered work authorizing them to use, propagate, modify or convey a specific copy of the covered work, then the patent license you grant is automatically extended to all recipients of the covered work and works based on it.

A patent license is "discriminatory" if it does not include within the scope of its coverage, prohibits the exercise of, or is conditioned on the non-exercise of one or more of the rights that are specifically granted under this License. You may not convey a covered work if you are a party to an arrangement with a third party that is in the business of distributing software, under which you make payment to the third party based on the extent of your activity of conveying the work, and under which the third party grants, to any of the parties who would receive the covered work from you, a discriminatory patent license (a) in connection with copies of the covered work conveyed by you (or copies made from those copies), or (b) primarily for and in connection with specific products or compilations that contain the covered work, unless you entered into that arrangement, or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting any implied license or other defenses to infringement that may otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or otherwise) that contradict the conditions of this License, they do not excuse you from the conditions of this License. If you cannot convey a covered work so as to satisfy simultaneously your obligations under this License and any other pertinent obligations, then as a consequence you may not convey it at all. For example, if you agree to terms that obligate you to collect a royalty for further conveying from those to whom you convey the Program, the only way you could satisfy both those terms and this License would be to refrain entirely from conveying the Program.

13. Use with the GNU Affero General Public License.

Notwithstanding any other provision of this License, you have permission to link or combine any covered work with a work licensed under version 3 of the GNU Affero General Public License into a single combined work, and to convey the resulting work. The terms of this License will continue to apply to the part which is the covered work, but the special requirements of the GNU Affero General Public License, section 13, concerning interaction through a network will apply to the combination as such.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of the GNU General Public License from time to time. Such new versions will be similar in spirit to the present version, but may differ in detail to address new problems or concerns.

Each version is given a distinguishing version number. If the Program specifies that a certain numbered version of the GNU General Public License "or any later version" applies to it, you have the option of following the terms and conditions either of that numbered version or of any later version published by the Free Software Foundation. If the Program does not specify a version number of the GNU General Public License, you may choose any version ever published by the Free Software Foundation.

If the Program specifies that a proxy can decide which future versions of the GNU General Public License can be used, that proxy's public statement of acceptance of a version permanently authorizes you to choose that version for the Program.

Later license versions may give you additional or different permissions. However, no additional obligations are imposed on any author or copyright holder as a result of your choosing to follow a later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS), EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided above cannot be given local legal effect according to their terms, reviewing courts shall apply local law that most closely approximates an absolute waiver of all civil liability in connection with the Program, unless a warranty or assumption of liability accompanies a copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

================================================

FILE: MANIFEST.in

================================================

recursive-include awspricecalculator/data *.csv *.json

================================================

FILE: README.md

================================================

## Concurrency Labs - AWS Price Calculator tool

This repository uses the AWS Price List API to implement price calculation utilities.

Supported services:

* EC2

* ELB

* EBS

* RDS

* Lambda

* Dynamo DB

* Kinesis

Visit the following URLs for more details:

https://www.concurrencylabs.com/blog/aws-pricing-lambda-realtime-calculation-function/

https://www.concurrencylabs.com/blog/aws-lambda-cost-optimization-tools/

https://www.concurrencylabs.com/blog/calculate-near-realtime-pricing-serverless-applications/

The code is structured in the following way:

**awspricecalculator**. The modules in this package search data within the AWS Price List API index files.

They take price dimension parameters as inputs and return results in JSON format. This package

is called by Lambda functions or other Python scripts.

**functions**. This is where our Lambda functions live. Functions are packaged using the Serverless framework.

**scripts**. Here are some Python scripts to help with management and price optimizations. See README.md in the scripts

folder for more details.

## Available Lambda functions:

### calculate-near-realtime

This function is called by a schedule configured using CloudWatch Events.

The function receives a JSON object configured in the schedule. The JSON object supports the following format:

Tag-based: ```{"tag":{"key":"mykey","value":"myvalue"}}```.

The function finds resources with the corresponding tag, gets current usage using CloudWatch metrics,

projects usage into a longer time period (a month), calls pricecalculator to calculate price

and puts results in CloudWatch metrics under the namespace ```ConcurrencyLabs/Pricing/NearRealTimeForecast```.

Supported services are EC2, EBS, ELB, RDS and Lambda. Not all price dimensions are supported for all services, though.

You can configure as many CloudWatch Events as you want, each one with a different tag.

**Rules:**

* The function only considers for price calculation those resources that are tagged. For example, if there is an untagged ELB

with tagged EC2 instances, the function will only consider the EC2 instances for the calculation.

If there is a tagged ELB with untagged EC2 instances, the function will only calculate price

for the ELB.

* The behavior described above is intended for simplicity, otherwise the function would have to

cover a number of combinations that might or might not be suitable to all users of the function.

* To keep it simple, if you want a resource to be included in the calculation, then tag it. Otherwise

leave it untagged.

**Limitations**

The function doesn't support cost estimations for the following:

* EC2 data transfer for instances not registered to an ELB

* EC2 Reserved Instances

* EBS Snapshots

* RDS data trasfer

* Lambda data transfer

* Kinesis PUT Payload Units are partially calculated based on CloudWatch metrics (there's no 100% accuracy for this price dimension)

* Dynamo DB storage

* Dynamo DB data transfer

## Install - using CloudFormation (recommended)

I created a CloudFormation template that deploys the Lambda function, as well as the CloudWatch Events

schedule. All you have to do is specify the tag key and value you want to calculate pricing for.

For example: TagKey:stack, TagValue:mywebapp

Click here to get started:

<a href="https://console.aws.amazon.com/cloudformation/home?region=us-east-1#/stacks/new?stackName=near-realtime-pricing-calculator&templateURL=http://s3.amazonaws.com/concurrencylabs-cfn-templates/lambda-near-realtime-pricing/function-plus-schedule.json" target="new"><img src="https://s3.amazonaws.com/cloudformation-examples/cloudformation-launch-stack.png" alt="Launch Stack"></a>

### Metrics

The function publishes a metric named `EstimatedCharges` to CloudWatch, under namespace `ConcurrencyLabs/Pricing/NearRealTimeForecast` and it uses

the following dimensions:

* Currency: USD

* ForecastPeriod: monthly

* ServiceName: ec2, rds, lambda, kinesis, dynamodb

* Tag: mykey=myvalue

### Updating to the latest version using CloudFormation

This function will be updated regularly in order to fix bugs, update AWS Price List data and also to add more functionality.

This means you will likely have to update the function at some point. I recommend installing

the function using the CloudFormation template, since it will simplify the update process.

To update the function, just go to the CloudFormation console, select the stack you've created

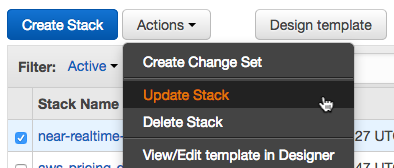

and click on Actions -> Update Stack:

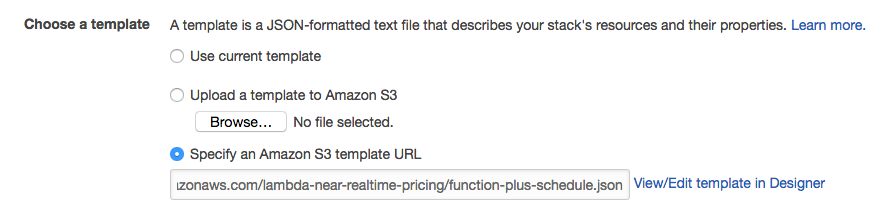

Then select "Specify an Amazon S3 template URL" and enter the following value:

```

http://concurrencylabs-cfn-templates.s3.amazonaws.com/lambda-near-realtime-pricing/function-plus-schedule.json

```

And that's it. CloudFormation will update the function with the latest code.

## Install Locally (if you want to modify it) - Manual steps

If you only want to install the Lambda function, you don't need to follow the steps below, just follow

the instructions in the "Install - Using CloudFormation" section above.

If you want to setup a dev environment, run a local copy, make some modifications and then install in your AWS account, then keep reading...

### Clone the repo

```

git clone https://github.com/concurrencylabs/aws-pricing-tools aws-pricing-tools

```

### (Optional, but recommended) Create an isolated Python environment using virtualenv

It's always a good practice to create an isolated environment so we have greater control over

the dependencies in our project, including the Python runtime.

If you don't have virtualenv installed, run:

```

pip install virtualenv

```

For more details on virtualenv, <a href="https://virtualenv.pypa.io/en/stable/installation/" target="new">click here.</a>

Now, create a Python 2.7 virtual environment in the location where you cloned the repo into. If you want to name your project

aws-pricing-tools, then run (one level up from the dir, use the same local name you used when you cloned

the repo):

```

virtualenv aws-pricing-tools -p python2.7

```

After your environment is created, it's time to activate it. Go to the recently created

folder of your project (i.e. aws-pricing-tools) and from there run:

```

source bin/activate

```

### Install Requirements

From your project root folder, run:

```

./install.sh

```

This will install the following dependencies to the ```vendored``` directory:

* **tinydb** - The code in this repo queries the Price List API csv records using the tinydb library.

* **numpy** - Used for statistics in the lambda optimization script

... and the following dependencies in your default site-packages location:

* **python-local-lambda** - lets me test my Lambda functions locally using test events in my workstation.

* **boto3** - AWS Python SDK to call AWS APIs.

### Install the Serverless Framework

Since the pricing tool runs on AWS Lambda, I decided to use the <a href="http://serverless.com/" target="new">Serverless Framework</a>.

This framework enormously simplifies the development, configuration and deployment of Function as a Service (a.k.a. FaaS, or "serverless")

code into AWS Lambda.

You should follow the instructions described <a href="https://github.com/serverless/serverless/blob/master/docs/guide/installation.md" target="new">here</a>,

which can be summarized in the following steps:

1. Make sure you have Node.js <a href="https://nodejs.org/en/download/" target="new">installed</a> in your workstation.

```

node --version

```

2. Install the Serverless Framework

```

npm install -g serverless

```

3. Confirm Serverless has been installed

```

serverless --version

```

The steps in this post were tested using version ```1.6.1```

4. Serverless needs access to your AWS account, so it can create and update AWS Lambda

functions, among other operations. Therefore, you have to make sure Serverless can access

a set of IAM User credentials. Follow <a href="https://github.com/serverless/serverless/blob/master/docs/guide/provider-account-setup.md" target="new">these instructions</a>.

In the long term, you should make sure these credentials are limited to only the API operations

Serverless requires - avoid Administrator access, which is a bad security and operational practice.

5. Checkout the code from this repo into your virtualenv folder.

### Set environment variables

```

export AWS_DEFAULT_PROFILE=<your-aws-cli-profile>

export AWS_DEFAULT_REGION=<us-east-1|us-west-2|etc.>

```

### How to test the function locally

**Download the latest AWS Price List API Index file**

The code needs a local copy of the the AWS Price List API index file.

The GitHub repo doesn't come with the index file, therefore you have to

download it the first time you run your code and every time AWS publishes a new

Price List API index.

Also, this index file is constantly updated by AWS. I recommend subscribing to the AWS Price List API

change notifications.

In order to download the latest index file, go to the ```scripts```` folder and run:

```

python get-latest-index.py --service=all

```

The script takes a few seconds to execute since some index files are a little heavy (like the EC2 one).

**Run a test**

Once you have the virtualenv activated, all dependencies installed, environment

variables set and the latest AWS Price List index file, it's time to run a test.

Update ```test/events/constant-tag.json``` with a tag key/value pair that exists in your AWS account.

Then run, from the **root** location in the local repo and replace <your-region> and <your-aws-account-id> with actual values:

```

python-lambda-local functions/calculate-near-realtime.py test/events/constant-tag.json -l lib/ -l . -f handler -t 30 -a arn:aws:lambda:<your-region>:<your-aws-account-id>:function:calculate-near-realtime

```

### Deploy the Serverless Project

From your project root folder, run:

```

serverless deploy

```

================================================

FILE: awspricecalculator/__init__.py

================================================

import os, sys

__location__ = os.path.dirname(os.path.realpath(__file__))

sys.path.append(os.path.join(__location__, "../"))

sys.path.append(os.path.join(__location__, "../vendored"))

================================================

FILE: awspricecalculator/awslambda/__init__.py

================================================

================================================

FILE: awspricecalculator/awslambda/pricing.py

================================================

import json

import logging

from ..common import consts, phelper

from ..common.models import PricingResult

import tinydb

log = logging.getLogger()

regiondbs = {}

indexMetadata = {}

def calculate(pdim):

log.info("Calculating Lambda pricing with the following inputs: {}".format(str(pdim.__dict__)))

global regiondbs

global indexMetadata

ts = phelper.Timestamp()

ts.start('totalCalculationAwsLambda')

#Load On-Demand DB

dbs = regiondbs.get(consts.SERVICE_LAMBDA+pdim.region+pdim.termType,{})

if not dbs:

dbs, indexMetadata = phelper.loadDBs(consts.SERVICE_LAMBDA, phelper.get_partition_keys(consts.SERVICE_LAMBDA, pdim.region, consts.SCRIPT_TERM_TYPE_ON_DEMAND))

regiondbs[consts.SERVICE_LAMBDA+pdim.region+pdim.termType]=dbs

cost = 0

pricing_records = []

awsPriceListApiVersion = indexMetadata['Version']

priceQuery = tinydb.Query()

#TODO: add support to include/ignore free-tier (include a flag)

serverlessDb = dbs[phelper.create_file_key([consts.REGION_MAP[pdim.region], consts.TERM_TYPE_MAP[pdim.termType], consts.PRODUCT_FAMILY_SERVERLESS])]

#Requests

if pdim.requestCount:

query = ((priceQuery['Group'] == 'AWS-Lambda-Requests'))

pricing_records, cost = phelper.calculate_price(consts.SERVICE_LAMBDA, serverlessDb, query, pdim.requestCount, pricing_records, cost)

#GB-s (aka compute time)

if pdim.avgDurationMs:

query = ((priceQuery['Group'] == 'AWS-Lambda-Duration'))

usageUnits = pdim.GBs

pricing_records, cost = phelper.calculate_price(consts.SERVICE_LAMBDA, serverlessDb, query, usageUnits, pricing_records, cost)

#Data Transfer

dataTransferDb = dbs[phelper.create_file_key([consts.REGION_MAP[pdim.region], consts.TERM_TYPE_MAP[pdim.termType], consts.PRODUCT_FAMILY_DATA_TRANSFER])]

#To internet

if pdim.dataTransferOutInternetGb:

query = ((priceQuery['To Location'] == 'External') & (priceQuery['Transfer Type'] == 'AWS Outbound'))

pricing_records, cost = phelper.calculate_price(consts.SERVICE_LAMBDA, dataTransferDb, query, pdim.dataTransferOutInternetGb, pricing_records, cost)

#Intra-regional data transfer - in/out/between EC2 AZs or using IPs or ELB

if pdim.dataTransferOutIntraRegionGb:

query = ((priceQuery['Transfer Type'] == 'IntraRegion'))

pricing_records, cost = phelper.calculate_price(consts.SERVICE_LAMBDA, dataTransferDb, query, pdim.dataTransferOutIntraRegionGb, pricing_records, cost)

#Inter-regional data transfer - out to other AWS regions

if pdim.dataTransferOutInterRegionGb:

query = ((priceQuery['Transfer Type'] == 'InterRegion Outbound') & (priceQuery['To Location'] == consts.REGION_MAP[pdim.toRegion]))

pricing_records, cost = phelper.calculate_price(consts.SERVICE_LAMBDA, dataTransferDb, query, pdim.dataTransferOutInterRegionGb, pricing_records, cost)

extraargs = {'priceDimensions':pdim}

pricing_result = PricingResult(awsPriceListApiVersion, pdim.region, cost, pricing_records, **extraargs)

log.debug(json.dumps(vars(pricing_result),sort_keys=False,indent=4))

log.debug("Total time to compute: [{}]".format(ts.finish('totalCalculationAwsLambda')))

return pricing_result.__dict__

================================================

FILE: awspricecalculator/common/__init__.py

================================================

================================================

FILE: awspricecalculator/common/consts.py

================================================

import os, logging

# COMMON

#_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/

AWS_PRICE_CALCULATOR_VERSION = "v2.0"

LOG_LEVEL = os.environ.get('LOG_LEVEL',logging.INFO)

DEFAULT_CURRENCY = "USD"

FORECAST_PERIOD_MONTHLY = "monthly"

FORECAST_PERIOD_YEARLY = "yearly"

HOURS_IN_MONTH = 720

SERVICE_CODE_AWS_DATA_TRANSFER = 'AWSDataTransfer'

REGION_MAP = {'us-east-1':'US East (N. Virginia)',

'us-east-2':'US East (Ohio)',

'us-west-1':'US West (N. California)',

'us-west-2':'US West (Oregon)',

'ca-central-1':'Canada (Central)',

'eu-west-1':'EU (Ireland)',

'eu-west-2':'EU (London)',

'eu-west-3':'EU (Paris)',

'eu-north-1':'EU (Stockholm)',

'eu-central-1':'EU (Frankfurt)',

'ap-northeast-1':'Asia Pacific (Tokyo)',

'ap-northeast-2':'Asia Pacific (Seoul)',

'ap-northeast-3':'Asia Pacific (Osaka-Local)',

'ap-southeast-1':'Asia Pacific (Singapore)',

'ap-southeast-2':'Asia Pacific (Sydney)',

'sa-east-1':'South America (Sao Paulo)',

'ap-south-1':'Asia Pacific (Mumbai)',

'cn-northwest-1':'China (Ningxia)',

'ap-east-1':'Asia Pacific (Hong Kong)'

}

#TODO: update for China region

REGION_PREFIX_MAP = {'us-east-1':'',

'us-east-2':'USE2-',

'us-west-1':'USW1-',

'us-west-2':'USW2-',

'ca-central-1':'CAN1-',

'eu-west-1':'EU-',

'eu-west-2':'EUW2-',

'eu-west-3':'EUW3-',

'eu-north-1':'EUN1-',

'eu-central-1':'EUC1-',

'ap-east-1':'APE1-' ,

'ap-northeast-1':'APN1-',

'ap-northeast-2':'APN2-',

'ap-northeast-3':'APN3-',

'ap-southeast-1':'APS1-',

'ap-southeast-2':'APS2-',

'sa-east-1':'SAE1-',

'ap-south-1':'APS3-',

'cn-northwest-1':'',

'US East (N. Virginia)':'',

'US East (Ohio)':'USE2-',

'US West (N. California)':'USW1-',

'US West (Oregon)':'USW2-',

'Canada (Central)':'CAN1-',

'EU (Ireland)':'EU-',

'EU (London)':'EUW2-',

'EU (Paris)':'EUW3-',

'EU (Stockholm)':'EUN1-',

'EU (Frankfurt)':'EUC1-',

'Asia Pacific (Tokyo)':'APN1-',

'Asia Pacific (Seoul)':'APN2-',

'Asia Pacific (Singapore)':'APS1-',

'Asia Pacific (Sydney)':'APS2-',

'South America (Sao Paulo)':'SAE1-',

'Asia Pacific (Mumbai)':'APS3-',

'AWS GovCloud (US)':'UGW1-',

'External':'',

'Any': ''

}

REGION_REPORT_MAP = {'us-east-1':'N. Virginia',

'us-east-2':'Ohio',

'us-west-1':'N. California',

'us-west-2':'Oregon',

'ca-central-1':'Canada',

'eu-west-1':'Ireland',

'eu-west-2':'London',

'eu-north-1':'Stockholm',

'eu-central-1':'Frankfurt',

'ap-east-1':'Hong Kong',

'ap-northeast-1':'Tokyo',

'ap-northeast-2':'Seoul',

'ap-northeast-3':'Osaka',

'ap-southeast-1':'Singapore',

'ap-southeast-2':'Sydney',

'sa-east-1':'Sao Paulo',

'ap-south-1':'Mumbai',

'cn-northwest-1':'Ningxia',

'eu-west-3':'Paris'

}

SERVICE_EC2 = 'ec2'

SERVICE_ELB = 'elb'

SERVICE_EBS = 'ebs'

SERVICE_S3 = 's3'

SERVICE_RDS = 'rds'

SERVICE_LAMBDA = 'lambda'

SERVICE_DYNAMODB= 'dynamodb'

SERVICE_KINESIS = 'kinesis'

SERVICE_DATA_TRANSFER = 'datatransfer'

SERVICE_EMR = 'emr'

SERVICE_REDSHIFT = 'redshift'

SERVICE_ALL = 'all'

NOT_APPLICABLE = 'NA'

SUPPORTED_SERVICES = (SERVICE_S3, SERVICE_EC2, SERVICE_RDS, SERVICE_LAMBDA, SERVICE_DYNAMODB, SERVICE_KINESIS,

SERVICE_EMR, SERVICE_REDSHIFT)

SUPPORTED_REGIONS = ('us-east-1','us-east-2', 'us-west-1', 'us-west-2','ca-central-1', 'eu-west-1','eu-west-2',

'eu-central-1', 'ap-east-1', 'ap-northeast-1', 'ap-northeast-2', 'ap-northeast-3', 'ap-southeast-1', 'ap-southeast-2',

'sa-east-1','ap-south-1', 'eu-west-3', 'eu-north-1'

)

SUPPORTED_EC2_INSTANCE_TYPES = ('a1.2xlarge','a1.4xlarge','a1.large','a1.medium','a1.xlarge','c1.medium','c1.xlarge','c3.2xlarge',

'c3.4xlarge','c3.8xlarge','c3.large','c3.xlarge','c4.2xlarge','c4.4xlarge','c4.8xlarge','c4.large',

'c4.xlarge','c5.18xlarge','c5.2xlarge','c5.4xlarge','c5.9xlarge','c5.large','c5.xlarge','c5d.18xlarge',

'c5d.2xlarge','c5d.4xlarge','c5d.9xlarge','c5d.large','c5d.xlarge','c5n.18xlarge','c5n.2xlarge',

'c5n.4xlarge','c5n.9xlarge','c5n.large','c5n.xlarge','cc2.8xlarge','cr1.8xlarge','d2.2xlarge',

'd2.4xlarge','d2.8xlarge','d2.xlarge','f1.16xlarge','f1.2xlarge','f1.4xlarge','g2.2xlarge',

'g2.8xlarge','g3.16xlarge','g3.4xlarge','g3.8xlarge','g3s.xlarge','h1.16xlarge','h1.2xlarge',

'h1.4xlarge','h1.8xlarge','hs1.8xlarge','i2.2xlarge','i2.4xlarge','i2.8xlarge','i2.xlarge',

'i3.16xlarge','i3.2xlarge','i3.4xlarge','i3.8xlarge','i3.large','i3.xlarge','m1.large',

'm1.medium','m1.small','m1.xlarge','m2.2xlarge','m2.4xlarge','m2.xlarge','m3.2xlarge',

'm3.large','m3.medium','m3.xlarge','m4.10xlarge','m4.16xlarge','m4.2xlarge','m4.4xlarge',

'm4.large','m4.xlarge','m5.12xlarge','m5.24xlarge','m5.2xlarge','m5.4xlarge','m5.large',

'm5.metal','m5.xlarge','m5a.12xlarge','m5a.24xlarge','m5a.2xlarge','m5a.4xlarge','m5a.large',

'm5a.xlarge','m5d.12xlarge','m5d.24xlarge','m5d.2xlarge','m5d.4xlarge','m5d.large','m5d.metal',

'm5d.xlarge','p2.16xlarge','p2.8xlarge','p2.xlarge','p3.16xlarge','p3.2xlarge','p3.8xlarge',

'p3dn.24xlarge','r3.2xlarge','r3.4xlarge','r3.8xlarge','r3.large','r3.xlarge','r4.16xlarge',

'r4.2xlarge','r4.4xlarge','r4.8xlarge','r4.large','r4.xlarge',

'r5.12xlarge','r5.24xlarge', 'r5.8xlarge',

'r5.2xlarge','r5.4xlarge','r5.large','r5.xlarge','r5a.12xlarge','r5a.24xlarge','r5a.2xlarge',

'r5a.4xlarge','r5a.large','r5a.xlarge','r5d.12xlarge','r5d.24xlarge','r5d.2xlarge','r5d.4xlarge',

'r5d.large','r5d.xlarge','t1.micro','t2.2xlarge','t2.large','t2.medium','t2.micro','t2.nano',

't2.small','t2.xlarge',

't3.2xlarge','t3.large','t3.medium','t3.micro','t3.nano','t3.small','t3.xlarge',

't3a.nano', 't3a.micro','t3a.small','t3a.medium','t3a.large','t3a.xlarge','t3a.2xlarge',

'x1.16xlarge','x1.32xlarge','x1e.16xlarge','x1e.2xlarge','x1e.32xlarge','x1e.4xlarge',

'x1e.8xlarge','x1e.xlarge','z1d.12xlarge','z1d.2xlarge','z1d.3xlarge','z1d.6xlarge','z1d.large','z1d.xlarge')

SUPPORTED_EMR_INSTANCE_TYPES = ('c1.medium','c1.xlarge','c3.2xlarge','c3.4xlarge','c3.8xlarge','c3.large','c3.xlarge','c4.2xlarge',

'c4.4xlarge','c4.8xlarge','c4.large','c4.xlarge','c5.18xlarge','c5.2xlarge','c5.4xlarge',

'c5.9xlarge','c5.xlarge','c5d.18xlarge','c5d.2xlarge','c5d.4xlarge','c5d.9xlarge','c5d.xlarge',

'c5n.18xlarge','c5n.2xlarge','c5n.4xlarge','c5n.9xlarge','c5n.xlarge',

'cc2.8xlarge',

'cr1.8xlarge','d2.2xlarge','d2.4xlarge','d2.8xlarge','d2.xlarge','g2.2xlarge','g3.16xlarge',

'g3.4xlarge','g3.8xlarge','g3s.xlarge','h1.16xlarge','h1.2xlarge','h1.4xlarge','h1.8xlarge',

'hs1.8xlarge','i2.2xlarge','i2.4xlarge','i2.8xlarge','i2.xlarge','i3.16xlarge',

'i3.2xlarge','i3.4xlarge','i3.8xlarge','i3.xlarge','m1.large','m1.medium','m1.small','m1.xlarge',

'm2.2xlarge','m2.4xlarge','m2.xlarge','m3.2xlarge','m3.large','m3.medium','m3.xlarge','m4.10xlarge',

'm4.16xlarge','m4.2xlarge','m4.4xlarge','m4.large','m4.xlarge','m5.12xlarge','m5.24xlarge',

'm5.2xlarge','m5.4xlarge','m5.xlarge','m5a.12xlarge','m5a.24xlarge','m5a.2xlarge','m5a.4xlarge',

'm5a.xlarge',

'm5d.12xlarge','m5d.24xlarge','m5d.2xlarge','m5d.4xlarge','m5d.xlarge','p2.16xlarge','p2.8xlarge',

'p2.xlarge','p3.16xlarge','p3.2xlarge','p3.8xlarge','r3.2xlarge','r3.4xlarge','r3.8xlarge',

'r3.xlarge','r4.16xlarge','r4.2xlarge','r4.4xlarge','r4.8xlarge','r4.large','r4.xlarge',

'r5.12xlarge','r5.24xlarge','r5.2xlarge','r5.4xlarge','r5.xlarge','r5a.12xlarge','r5a.24xlarge',

'r5a.2xlarge','r5a.4xlarge','r5a.xlarge',

'r5d.2xlarge','r5d.4xlarge','r5d.xlarge','z1d.12xlarge','z1d.2xlarge','z1d.3xlarge',

'z1d.6xlarge','z1d.xlarge')

SUPPORTED_REDSHIFT_INSTANCE_TYPES = ('ds1.xlarge','dc1.8xlarge','dc1.large','ds2.8xlarge',

'ds1.8xlarge','ds2.xlarge','dc2.8xlarge','dc2.large')

SUPPORTED_INSTANCE_TYPES_MAP = {SERVICE_EC2:SUPPORTED_EC2_INSTANCE_TYPES, SERVICE_EMR:SUPPORTED_EMR_INSTANCE_TYPES ,

SERVICE_REDSHIFT:SUPPORTED_REDSHIFT_INSTANCE_TYPES}

SERVICE_INDEX_MAP = {SERVICE_S3:'AmazonS3', SERVICE_EC2:'AmazonEC2', SERVICE_RDS:'AmazonRDS',

SERVICE_LAMBDA:'AWSLambda', SERVICE_DYNAMODB:'AmazonDynamoDB',

SERVICE_KINESIS:'AmazonKinesis', SERVICE_EMR:'ElasticMapReduce', SERVICE_REDSHIFT:'AmazonRedshift',

SERVICE_DATA_TRANSFER:'AWSDataTransfer'}

SCRIPT_TERM_TYPE_ON_DEMAND = 'on-demand'

SCRIPT_TERM_TYPE_RESERVED = 'reserved'

TERM_TYPE_RESERVED = 'Reserved'

TERM_TYPE_ON_DEMAND = 'OnDemand'

SUPPORTED_TERM_TYPES = (SCRIPT_TERM_TYPE_ON_DEMAND, SCRIPT_TERM_TYPE_RESERVED)

TERM_TYPE_MAP = {SCRIPT_TERM_TYPE_ON_DEMAND:'OnDemand', SCRIPT_TERM_TYPE_RESERVED:'Reserved'}

PRODUCT_FAMILY_COMPUTE_INSTANCE = 'Compute Instance'

PRODUCT_FAMILY_DATABASE_INSTANCE = 'Database Instance'

PRODUCT_FAMILY_DATA_TRANSFER = 'Data Transfer'

PRODUCT_FAMILY_FEE = 'Fee'

PRODUCT_FAMILY_API_REQUEST = 'API Request'

PRODUCT_FAMILY_STORAGE = 'Storage'

PRODUCT_FAMILY_SYSTEM_OPERATION = 'System Operation'

PRODUCT_FAMILY_LOAD_BALANCER = 'Load Balancer'

PRODUCT_FAMILY_APPLICATION_LOAD_BALANCER = 'Load Balancer-Application'

PRODUCT_FAMILY_NETWORK_LOAD_BALANCER = 'Load Balancer-Network'

PRODUCT_FAMILY_SNAPSHOT = "Storage Snapshot"

PRODUCT_FAMILY_SERVERLESS = "Serverless"

PRODUCT_FAMILY_DB_STORAGE = "Database Storage"

PRODUCT_FAMILY_DB_PIOPS = "Provisioned IOPS"

PRODUCT_FAMILY_KINESIS_STREAMS = "Kinesis Streams"

PRODUCT_FAMILY_EMR_INSTANCE = "Elastic Map Reduce Instance"

PRODUCT_FAMILIY_BUNDLE = 'Bundle'

PRODUCT_FAMILIY_REDSHIFT_CONCURRENCY_SCALING = 'Redshift Concurrency Scaling'

PRODUCT_FAMILIY_REDSHIFT_DATA_SCAN = 'Redshift Data Scan'

PRODUCT_FAMILIY_STORAGE_SNAPSHOT = 'Storage Snapshot'

SUPPORTED_PRODUCT_FAMILIES = (PRODUCT_FAMILY_COMPUTE_INSTANCE, PRODUCT_FAMILY_DATABASE_INSTANCE,

PRODUCT_FAMILY_DATA_TRANSFER,PRODUCT_FAMILY_FEE, PRODUCT_FAMILY_API_REQUEST,

PRODUCT_FAMILY_STORAGE, PRODUCT_FAMILY_SYSTEM_OPERATION, PRODUCT_FAMILY_LOAD_BALANCER,

PRODUCT_FAMILY_APPLICATION_LOAD_BALANCER, PRODUCT_FAMILY_NETWORK_LOAD_BALANCER,

PRODUCT_FAMILY_SNAPSHOT,PRODUCT_FAMILY_SERVERLESS,PRODUCT_FAMILY_DB_STORAGE,

PRODUCT_FAMILY_DB_PIOPS,PRODUCT_FAMILY_KINESIS_STREAMS, PRODUCT_FAMILY_EMR_INSTANCE,

PRODUCT_FAMILIY_BUNDLE, PRODUCT_FAMILIY_REDSHIFT_CONCURRENCY_SCALING, PRODUCT_FAMILIY_REDSHIFT_DATA_SCAN,

PRODUCT_FAMILIY_STORAGE_SNAPSHOT

)

SUPPORTED_RESERVED_PRODUCT_FAMILIES = (PRODUCT_FAMILY_COMPUTE_INSTANCE, PRODUCT_FAMILY_DATABASE_INSTANCE)

SUPPORTED_PRODUCT_FAMILIES_BY_SERVICE_DICT = {

SERVICE_EC2:[PRODUCT_FAMILY_COMPUTE_INSTANCE,PRODUCT_FAMILY_DATA_TRANSFER, PRODUCT_FAMILY_FEE,

PRODUCT_FAMILY_STORAGE,PRODUCT_FAMILY_SYSTEM_OPERATION,PRODUCT_FAMILY_LOAD_BALANCER,

PRODUCT_FAMILY_APPLICATION_LOAD_BALANCER,PRODUCT_FAMILY_NETWORK_LOAD_BALANCER,

PRODUCT_FAMILY_SNAPSHOT],

SERVICE_RDS:[PRODUCT_FAMILY_DATABASE_INSTANCE, PRODUCT_FAMILY_DATA_TRANSFER,PRODUCT_FAMILY_FEE,

PRODUCT_FAMILY_DB_STORAGE,PRODUCT_FAMILY_DB_PIOPS,PRODUCT_FAMILY_SNAPSHOT ],

SERVICE_S3:[PRODUCT_FAMILY_STORAGE, PRODUCT_FAMILY_FEE,PRODUCT_FAMILY_API_REQUEST,PRODUCT_FAMILY_SYSTEM_OPERATION, PRODUCT_FAMILY_DATA_TRANSFER ],

SERVICE_LAMBDA:[PRODUCT_FAMILY_SERVERLESS, PRODUCT_FAMILY_DATA_TRANSFER, PRODUCT_FAMILY_FEE,

PRODUCT_FAMILY_API_REQUEST],

SERVICE_KINESIS:[PRODUCT_FAMILY_KINESIS_STREAMS],

SERVICE_DYNAMODB:[PRODUCT_FAMILY_DB_STORAGE, PRODUCT_FAMILY_DB_PIOPS, PRODUCT_FAMILY_FEE ],

SERVICE_EMR:[PRODUCT_FAMILY_EMR_INSTANCE],

SERVICE_REDSHIFT:[PRODUCT_FAMILY_COMPUTE_INSTANCE, PRODUCT_FAMILIY_BUNDLE, PRODUCT_FAMILIY_REDSHIFT_CONCURRENCY_SCALING,

PRODUCT_FAMILIY_REDSHIFT_DATA_SCAN, PRODUCT_FAMILIY_STORAGE_SNAPSHOT],

SERVICE_DATA_TRANSFER:[PRODUCT_FAMILY_DATA_TRANSFER]

}

INFINITY = 'Inf'

SORT_CRITERIA_REGION = 'region'

SORT_CRITERIA_INSTANCE_TYPE = 'instance-type'

SORT_CRITERIA_OS = 'os'

SORT_CRITERIA_DB_INSTANCE_CLASS = 'db-instance-class'

SORT_CRITERIA_DB_ENGINE = 'engine'

SORT_CRITERIA_S3_STORAGE_CLASS = 'storage-class'

SORT_CRITERIA_S3_STORAGE_SIZE_GB = 'storage-size-gb'

SORT_CRITERIA_S3_DATA_RETRIEVAL_GB = 'data-retrieval-gb'

SORT_CRITERIA_S3_STORAGE_CLASS_DATA_RETRIEVAL_GB = 'storage-class-data-retrieval-gb'

SORT_CRITERIA_TO_REGION = 'to-region'

SORT_CRITERIA_LAMBDA_MEMORY = 'memory'

SORT_CRITERIA_TERM_TYPE = 'term-type'

SORT_CRITERIA_TERM_TYPE_REGION = 'term-type-region'

SORT_CRITERIA_VALUE_SEPARATOR = ','

#_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/

#EC2

EC2_OPERATING_SYSTEM_LINUX = 'Linux'

EC2_OPERATING_SYSTEM_BYOL = 'Windows BYOL'

EC2_OPERATING_SYSTEM_WINDOWS = 'Windows'

EC2_OPERATING_SYSTEM_SUSE = 'Suse'

#EC2_OPERATING_SYSTEM_SQL_WEB = 'SQL Web'

EC2_OPERATING_SYSTEM_RHEL = 'RHEL'

SCRIPT_EC2_TENANCY_SHARED = 'shared'

SCRIPT_EC2_TENANCY_DEDICATED = 'dedicated'

SCRIPT_EC2_TENANCY_HOST = 'host'

EC2_TENANCY_SHARED = 'Shared'

EC2_TENANCY_DEDICATED = 'Dedicated'

EC2_TENANCY_HOST = 'Host'

EC2_TENANCY_MAP = {SCRIPT_EC2_TENANCY_SHARED:EC2_TENANCY_SHARED,

SCRIPT_EC2_TENANCY_DEDICATED:EC2_TENANCY_DEDICATED,

SCRIPT_EC2_TENANCY_HOST:EC2_TENANCY_HOST}

SCRIPT_EC2_CAPACITY_RESERVATION_STATUS_USED = 'used'

SCRIPT_EC2_CAPACITY_RESERVATION_STATUS_UNUSED = 'unused'

SCRIPT_EC2_CAPACITY_RESERVATION_STATUS_ALLOCATED = 'allocated'

EC2_CAPACITY_RESERVATION_STATUS_USED = 'Used'

EC2_CAPACITY_RESERVATION_STATUS_UNUSED = 'UnusedCapacityReservation'

EC2_CAPACITY_RESERVATION_STATUS_ALLOCATED = 'AllocatedCapacityReservation'

EC2_CAPACITY_RESERVATION_STATUS_MAP = {SCRIPT_EC2_CAPACITY_RESERVATION_STATUS_USED: EC2_CAPACITY_RESERVATION_STATUS_USED,

SCRIPT_EC2_CAPACITY_RESERVATION_STATUS_UNUSED: EC2_CAPACITY_RESERVATION_STATUS_UNUSED,

SCRIPT_EC2_CAPACITY_RESERVATION_STATUS_ALLOCATED: EC2_CAPACITY_RESERVATION_STATUS_ALLOCATED}

STORAGE_MEDIA_SSD = "SSD-backed"

STORAGE_MEDIA_HDD = "HDD-backed"

STORAGE_MEDIA_S3 = "AmazonS3"

EBS_VOLUME_TYPE_MAGNETIC = "Magnetic"

EBS_VOLUME_TYPE_GENERAL_PURPOSE = "General Purpose"

EBS_VOLUME_TYPE_PIOPS = "Provisioned IOPS"

EBS_VOLUME_TYPE_THROUGHPUT_OPTIMIZED = "Throughput Optimized HDD"

EBS_VOLUME_TYPE_COLD_HDD = "Cold HDD"

#Values that are valid in the calling script (which could be a Lambda function or any Python module)

#OS

SCRIPT_OPERATING_SYSTEM_LINUX = 'linux'

SCRIPT_OPERATING_SYSTEM_WINDOWS_BYOL = 'windowsbyol'

SCRIPT_OPERATING_SYSTEM_WINDOWS = 'windows'

SCRIPT_OPERATING_SYSTEM_SUSE = 'suse'

#SCRIPT_OPERATING_SYSTEM_SQL_WEB = 'sqlweb'

SCRIPT_OPERATING_SYSTEM_RHEL = 'rhel'

#License Model

SCRIPT_EC2_LICENSE_MODEL_BYOL = 'byol'

SCRIPT_EC2_LICENSE_MODEL_INCLUDED = 'included'

SCRIPT_EC2_LICENSE_MODEL_NONE_REQUIRED = 'none-required'

#EBS

SCRIPT_EBS_VOLUME_TYPE_STANDARD = 'standard'

SCRIPT_EBS_VOLUME_TYPE_IO1 = 'io1'

SCRIPT_EBS_VOLUME_TYPE_GP2 = 'gp2'

SCRIPT_EBS_VOLUME_TYPE_SC1 = 'sc1'

SCRIPT_EBS_VOLUME_TYPE_ST1 = 'st1'

#Reserved Instances

SCRIPT_EC2_OFFERING_CLASS_STANDARD = 'standard'

SCRIPT_EC2_OFFERING_CLASS_CONVERTIBLE = 'convertible'

EC2_OFFERING_CLASS_STANDARD = 'standard'

EC2_OFFERING_CLASS_CONVERTIBLE = 'convertible'

SUPPORTED_EC2_OFFERING_CLASSES = [SCRIPT_EC2_OFFERING_CLASS_STANDARD, SCRIPT_EC2_OFFERING_CLASS_CONVERTIBLE]

SUPPORTED_RDS_OFFERING_CLASSES = [SCRIPT_EC2_OFFERING_CLASS_STANDARD]

SUPPORTED_EMR_OFFERING_CLASSES = [SCRIPT_EC2_OFFERING_CLASS_STANDARD, SCRIPT_EC2_OFFERING_CLASS_CONVERTIBLE]

SUPPORTED_REDSHIFT_OFFERING_CLASSES = [SCRIPT_EC2_OFFERING_CLASS_STANDARD]

SUPPORTED_OFFERING_CLASSES_MAP = {SERVICE_EC2:SUPPORTED_EC2_OFFERING_CLASSES, SERVICE_RDS: SUPPORTED_RDS_OFFERING_CLASSES,

SERVICE_EMR:SUPPORTED_EMR_OFFERING_CLASSES,

SERVICE_REDSHIFT: SUPPORTED_REDSHIFT_OFFERING_CLASSES }

EC2_OFFERING_CLASS_MAP = {SCRIPT_EC2_OFFERING_CLASS_STANDARD:EC2_OFFERING_CLASS_STANDARD,

SCRIPT_EC2_OFFERING_CLASS_CONVERTIBLE: EC2_OFFERING_CLASS_CONVERTIBLE}

SCRIPT_EC2_PURCHASE_OPTION_PARTIAL_UPFRONT = 'partial-upfront'

SCRIPT_EC2_PURCHASE_OPTION_ALL_UPFRONT = 'all-upfront'

SCRIPT_EC2_PURCHASE_OPTION_NO_UPFRONT = 'no-upfront'

EC2_PURCHASE_OPTION_PARTIAL_UPFRONT = 'Partial Upfront'

EC2_PURCHASE_OPTION_ALL_UPFRONT = 'All Upfront'

EC2_PURCHASE_OPTION_NO_UPFRONT = 'No Upfront'

SCRIPT_EC2_RESERVED_YEARS_1 = '1'

SCRIPT_EC2_RESERVED_YEARS_3 = '3'

EC2_SUPPORTED_RESERVED_YEARS = (SCRIPT_EC2_RESERVED_YEARS_1, SCRIPT_EC2_RESERVED_YEARS_3)

EC2_RESERVED_YEAR_MAP = {SCRIPT_EC2_RESERVED_YEARS_1:'1yr', SCRIPT_EC2_RESERVED_YEARS_3:'3yr'}

EC2_SUPPORTED_PURCHASE_OPTIONS = (SCRIPT_EC2_PURCHASE_OPTION_ALL_UPFRONT, SCRIPT_EC2_PURCHASE_OPTION_NO_UPFRONT, SCRIPT_EC2_PURCHASE_OPTION_PARTIAL_UPFRONT)

EC2_PURCHASE_OPTION_MAP = {SCRIPT_EC2_PURCHASE_OPTION_PARTIAL_UPFRONT:EC2_PURCHASE_OPTION_PARTIAL_UPFRONT,

SCRIPT_EC2_PURCHASE_OPTION_ALL_UPFRONT: EC2_PURCHASE_OPTION_ALL_UPFRONT, SCRIPT_EC2_PURCHASE_OPTION_NO_UPFRONT: EC2_PURCHASE_OPTION_NO_UPFRONT

}

SUPPORTED_EC2_OPERATING_SYSTEMS = (SCRIPT_OPERATING_SYSTEM_LINUX,

SCRIPT_OPERATING_SYSTEM_WINDOWS,

SCRIPT_OPERATING_SYSTEM_WINDOWS_BYOL,

SCRIPT_OPERATING_SYSTEM_SUSE,

#SCRIPT_OPERATING_SYSTEM_SQL_WEB,

SCRIPT_OPERATING_SYSTEM_RHEL)

SUPPORTED_EC2_LICENSE_MODELS = (SCRIPT_EC2_LICENSE_MODEL_BYOL, SCRIPT_EC2_LICENSE_MODEL_INCLUDED, SCRIPT_EC2_LICENSE_MODEL_NONE_REQUIRED)

EC2_LICENSE_MODEL_MAP = {SCRIPT_EC2_LICENSE_MODEL_BYOL: 'Bring your own license',

SCRIPT_EC2_LICENSE_MODEL_INCLUDED: 'License Included',

SCRIPT_EC2_LICENSE_MODEL_NONE_REQUIRED: 'No License required'

}

EC2_OPERATING_SYSTEMS_MAP = {SCRIPT_OPERATING_SYSTEM_LINUX:'Linux',

SCRIPT_OPERATING_SYSTEM_WINDOWS_BYOL:'Windows',

SCRIPT_OPERATING_SYSTEM_WINDOWS:'Windows',

SCRIPT_OPERATING_SYSTEM_SUSE:'SUSE',

#SCRIPT_OPERATING_SYSTEM_SQL_WEB:'SQL Web',

SCRIPT_OPERATING_SYSTEM_RHEL:'RHEL'}

SUPPORTED_EBS_VOLUME_TYPES = (SCRIPT_EBS_VOLUME_TYPE_STANDARD,

SCRIPT_EBS_VOLUME_TYPE_IO1,

SCRIPT_EBS_VOLUME_TYPE_GP2,

SCRIPT_EBS_VOLUME_TYPE_SC1,

SCRIPT_EBS_VOLUME_TYPE_ST1

)

EBS_VOLUME_TYPES_MAP = {

SCRIPT_EBS_VOLUME_TYPE_STANDARD : {'storageMedia':STORAGE_MEDIA_HDD , 'volumeType':EBS_VOLUME_TYPE_MAGNETIC},

SCRIPT_EBS_VOLUME_TYPE_IO1 : {'storageMedia':STORAGE_MEDIA_SSD , 'volumeType':EBS_VOLUME_TYPE_PIOPS},

SCRIPT_EBS_VOLUME_TYPE_GP2 : {'storageMedia':STORAGE_MEDIA_SSD , 'volumeType':EBS_VOLUME_TYPE_GENERAL_PURPOSE},

SCRIPT_EBS_VOLUME_TYPE_SC1 : {'storageMedia':STORAGE_MEDIA_HDD , 'volumeType':EBS_VOLUME_TYPE_COLD_HDD},

SCRIPT_EBS_VOLUME_TYPE_ST1 : {'storageMedia':STORAGE_MEDIA_HDD , 'volumeType':EBS_VOLUME_TYPE_THROUGHPUT_OPTIMIZED}

}

#_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/

#RDS

SUPPORTED_RDS_INSTANCE_CLASSES = ('db.t1.micro', 'db.m1.small', 'db.m1.medium', 'db.m1.large', 'db.m1.xlarge',

'db.m2.xlarge', 'db.m2.2xlarge', 'db.m2.4xlarge',

'db.m3.medium', 'db.m3.large', 'db.m3.xlarge', 'db.m3.2xlarge',

'db.m4.large', 'db.m4.xlarge', 'db.m4.2xlarge', 'db.m4.4xlarge', 'db.m4.10xlarge', 'db.m4.16xlarge',

'db.m5.large', 'db.m5.xlarge', 'db.m5.2xlarge', 'db.m5.4xlarge', 'db.m5.12xlarge', 'db.m5.24xlarge',

'db.r3.large', 'db.r3.xlarge', 'db.r3.2xlarge', 'db.r3.4xlarge', 'db.r3.8xlarge',

'db.r4.large', 'db.r4.xlarge', 'db.r4.2xlarge', 'db.r4.4xlarge', 'db.r4.8xlarge', 'db.r4.16xlarge',

'db.r5.large', 'db.r5.xlarge', 'db.r5.2xlarge', 'db.r5.4xlarge', 'db.r5.12xlarge', 'db.r5.24xlarge',

'db.t2.micro', 'db.t2.small', 'db.t2.2xlarge', 'db.t2.large', 'db.t2.xlarge', 'db.t2.medium',

'db.t3.micro', 'db.t3.small', 'db.t3.medium', 'db.t3.large', 'db.t3.xlarge', 'db.t3.2xlarge',

'db.x1.16xlarge', 'db.x1.32xlarge', 'db.x1e.16xlarge', 'db.x1e.2xlarge', 'db.x1e.32xlarge', 'db.x1e.4xlarge', 'db.x1e.8xlarge', 'db.x1e.xlarge'

)

SCRIPT_RDS_STORAGE_TYPE_STANDARD = 'standard'

SCRIPT_RDS_STORAGE_TYPE_AURORA = 'aurora' #Aurora has its own type of storage, which is billed by IO operations and size

SCRIPT_RDS_STORAGE_TYPE_GP2 = 'gp2'

SCRIPT_RDS_STORAGE_TYPE_IO1 = 'io1'

RDS_VOLUME_TYPE_MAGNETIC = 'Magnetic'

RDS_VOLUME_TYPE_AURORA = 'General Purpose-Aurora'

RDS_VOLUME_TYPE_GP2 = 'General Purpose'

RDS_VOLUME_TYPE_IO1 = 'Provisioned IOPS'

RDS_VOLUME_TYPES_MAP = {

SCRIPT_RDS_STORAGE_TYPE_STANDARD : RDS_VOLUME_TYPE_MAGNETIC,

SCRIPT_RDS_STORAGE_TYPE_AURORA : RDS_VOLUME_TYPE_AURORA,

SCRIPT_RDS_STORAGE_TYPE_GP2 : RDS_VOLUME_TYPE_GP2,

SCRIPT_RDS_STORAGE_TYPE_IO1 : RDS_VOLUME_TYPE_IO1

}

SUPPORTED_RDS_STORAGE_TYPES = (SCRIPT_RDS_STORAGE_TYPE_STANDARD, SCRIPT_RDS_STORAGE_TYPE_AURORA, SCRIPT_RDS_STORAGE_TYPE_GP2, SCRIPT_RDS_STORAGE_TYPE_IO1)

RDS_DEPLOYMENT_OPTION_SINGLE_AZ = 'Single-AZ'

RDS_DEPLOYMENT_OPTION_MULTI_AZ = 'Multi-AZ'

RDS_DEPLOYMENT_OPTION_MULTI_AZ_MIRROR = 'Multi-AZ (SQL Server Mirror)'

RDS_DB_ENGINE_MYSQL = 'MySQL'

RDS_DB_ENGINE_MARIADB = 'MariaDB'

RDS_DB_ENGINE_ORACLE = 'Oracle'

RDS_DB_ENGINE_SQL_SERVER = 'SQL Server'

RDS_DB_ENGINE_POSTGRESQL = 'PostgreSQL'

RDS_DB_ENGINE_AURORA_MYSQL = 'Aurora MySQL'

RDS_DB_ENGINE_AURORA_POSTGRESQL = 'Aurora PostgreSQL'

RDS_DB_EDITION_ENTERPRISE = 'Enterprise'

RDS_DB_EDITION_STANDARD = 'Standard'

RDS_DB_EDITION_STANDARD_ONE = 'Standard One'

RDS_DB_EDITION_STANDARD_TWO = 'Standard Two'

RDS_DB_EDITION_EXPRESS = 'Express'

RDS_DB_EDITION_WEB = 'Web'

SCRIPT_RDS_DATABASE_ENGINE_MYSQL = 'mysql'

SCRIPT_RDS_DATABASE_ENGINE_MARIADB = 'mariadb'

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD = 'oracle-se'

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD_ONE = 'oracle-se1'

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD_TWO = 'oracle-se2'

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_ENTERPRISE = 'oracle-ee'

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_ENTERPRISE = 'sqlserver-ee'

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_STANDARD = 'sqlserver-se'

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_EXPRESS = 'sqlserver-ex'

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_WEB = 'sqlserver-web'

SCRIPT_RDS_DATABASE_ENGINE_POSTGRESQL = 'postgres' #to be consistent with RDS API - https://docs.aws.amazon.com/AmazonRDS/latest/APIReference/API_CreateDBInstance.html

SCRIPT_RDS_DATABASE_ENGINE_AURORA_MYSQL = 'aurora'

SCRIPT_RDS_DATABASE_ENGINE_AURORA_MYSQL_LONG = 'aurora-mysql' #some items in the RDS API now return aurora-mysql as a valid engine (instead of just aurora)

SCRIPT_RDS_DATABASE_ENGINE_AURORA_POSTGRESQL = 'aurora-postgresql'

RDS_SUPPORTED_DB_ENGINES = (SCRIPT_RDS_DATABASE_ENGINE_MYSQL,SCRIPT_RDS_DATABASE_ENGINE_MARIADB,

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD, SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD_ONE,

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD_TWO,SCRIPT_RDS_DATABASE_ENGINE_ORACLE_ENTERPRISE,

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_ENTERPRISE, SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_STANDARD,

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_EXPRESS, SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_WEB,

SCRIPT_RDS_DATABASE_ENGINE_POSTGRESQL, SCRIPT_RDS_DATABASE_ENGINE_AURORA_POSTGRESQL,

SCRIPT_RDS_DATABASE_ENGINE_AURORA_MYSQL, SCRIPT_RDS_DATABASE_ENGINE_AURORA_MYSQL_LONG

)

SCRIPT_RDS_LICENSE_MODEL_INCLUDED = 'license-included'

SCRIPT_RDS_LICENSE_MODEL_BYOL = 'bring-your-own-license'

SCRIPT_RDS_LICENSE_MODEL_PUBLIC = 'general-public-license'

RDS_SUPPORTED_LICENSE_MODELS = (SCRIPT_RDS_LICENSE_MODEL_INCLUDED, SCRIPT_RDS_LICENSE_MODEL_BYOL, SCRIPT_RDS_LICENSE_MODEL_PUBLIC)

RDS_LICENSE_MODEL_MAP = {SCRIPT_RDS_LICENSE_MODEL_INCLUDED:'License included',

SCRIPT_RDS_LICENSE_MODEL_BYOL:'Bring your own license',

SCRIPT_RDS_LICENSE_MODEL_PUBLIC:'No license required'}

RDS_ENGINE_MAP = {SCRIPT_RDS_DATABASE_ENGINE_MYSQL:{'engine':RDS_DB_ENGINE_MYSQL,'edition':''},

SCRIPT_RDS_DATABASE_ENGINE_MARIADB:{'engine':RDS_DB_ENGINE_MARIADB ,'edition':''},

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD:{'engine':RDS_DB_ENGINE_ORACLE ,'edition':RDS_DB_EDITION_STANDARD},

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD_ONE:{'engine':RDS_DB_ENGINE_ORACLE ,'edition':RDS_DB_EDITION_STANDARD_ONE},

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_STANDARD_TWO:{'engine':RDS_DB_ENGINE_ORACLE ,'edition':RDS_DB_EDITION_STANDARD_TWO},

SCRIPT_RDS_DATABASE_ENGINE_ORACLE_ENTERPRISE:{'engine':RDS_DB_ENGINE_ORACLE ,'edition':RDS_DB_EDITION_ENTERPRISE},

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_ENTERPRISE:{'engine':RDS_DB_ENGINE_SQL_SERVER ,'edition':RDS_DB_EDITION_ENTERPRISE},

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_STANDARD:{'engine':RDS_DB_ENGINE_SQL_SERVER ,'edition':RDS_DB_EDITION_STANDARD},

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_EXPRESS:{'engine':RDS_DB_ENGINE_SQL_SERVER ,'edition':RDS_DB_EDITION_EXPRESS},

SCRIPT_RDS_DATABASE_ENGINE_SQL_SERVER_WEB:{'engine':RDS_DB_ENGINE_SQL_SERVER ,'edition':RDS_DB_EDITION_WEB},

SCRIPT_RDS_DATABASE_ENGINE_POSTGRESQL:{'engine':RDS_DB_ENGINE_POSTGRESQL ,'edition':''},

SCRIPT_RDS_DATABASE_ENGINE_AURORA_MYSQL:{'engine':RDS_DB_ENGINE_AURORA_MYSQL ,'edition':''},

SCRIPT_RDS_DATABASE_ENGINE_AURORA_MYSQL_LONG:{'engine':RDS_DB_ENGINE_AURORA_MYSQL ,'edition':''},

SCRIPT_RDS_DATABASE_ENGINE_AURORA_POSTGRESQL:{'engine':RDS_DB_ENGINE_AURORA_POSTGRESQL ,'edition':''}

}

#_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/

#S3

S3_USAGE_GROUP_REQUESTS_TIER_1 = 'S3-API-Tier1'

S3_USAGE_GROUP_REQUESTS_TIER_2 = 'S3-API-Tier2'

S3_USAGE_GROUP_REQUESTS_TIER_3 = 'S3-API-Tier3'

S3_USAGE_GROUP_REQUESTS_SIA_TIER1 = 'S3-API-SIA-Tier1'

S3_USAGE_GROUP_REQUESTS_SIA_TIER2 = 'S3-API-SIA-Tier2'

S3_USAGE_GROUP_REQUESTS_SIA_RETRIEVAL = 'S3-API-SIA-Retrieval'

S3_USAGE_GROUP_REQUESTS_ZIA_TIER1 = 'S3-API-ZIA-Tier1'

S3_USAGE_GROUP_REQUESTS_ZIA_TIER2 = 'S3-API-ZIA-Tier2'

S3_USAGE_GROUP_REQUESTS_ZIA_RETRIEVAL = 'S3-API-ZIA-Retrieval'

S3_STORAGE_CLASS_STANDARD = 'General Purpose'

S3_STORAGE_CLASS_SIA = 'Infrequent Access'

S3_STORAGE_CLASS_ZIA = 'Infrequent Access'

S3_STORAGE_CLASS_GLACIER = 'Archive'

S3_STORAGE_CLASS_REDUCED_REDUNDANCY = 'Non-Critical Data'

SUPPORTED_REQUEST_TYPES = ('PUT','COPY','POST','LIST','GET')

SCRIPT_STORAGE_CLASS_INFREQUENT_ACCESS = 'STANDARD_IA'

SCRIPT_STORAGE_CLASS_ONE_ZONE_INFREQUENT_ACCESS = 'ONEZONE_IA'

SCRIPT_STORAGE_CLASS_STANDARD = 'STANDARD'

SCRIPT_STORAGE_CLASS_GLACIER = 'GLACIER'

SCRIPT_STORAGE_CLASS_REDUCED_REDUNDANCY = 'REDUCED_REDUNDANCY'

SUPPORTED_S3_STORAGE_CLASSES = (SCRIPT_STORAGE_CLASS_STANDARD,

SCRIPT_STORAGE_CLASS_INFREQUENT_ACCESS,

SCRIPT_STORAGE_CLASS_ONE_ZONE_INFREQUENT_ACCESS,

SCRIPT_STORAGE_CLASS_GLACIER,

SCRIPT_STORAGE_CLASS_REDUCED_REDUNDANCY)

S3_STORAGE_CLASS_MAP = {SCRIPT_STORAGE_CLASS_INFREQUENT_ACCESS:S3_STORAGE_CLASS_SIA,

SCRIPT_STORAGE_CLASS_ONE_ZONE_INFREQUENT_ACCESS:S3_STORAGE_CLASS_ZIA,

SCRIPT_STORAGE_CLASS_STANDARD:S3_STORAGE_CLASS_STANDARD,

SCRIPT_STORAGE_CLASS_GLACIER:S3_STORAGE_CLASS_GLACIER,

SCRIPT_STORAGE_CLASS_REDUCED_REDUNDANCY:S3_STORAGE_CLASS_REDUCED_REDUNDANCY}

S3_USAGE_TYPE_DICT = {

SCRIPT_STORAGE_CLASS_STANDARD:'TimedStorage-ByteHrs',

SCRIPT_STORAGE_CLASS_INFREQUENT_ACCESS:'TimedStorage-SIA-ByteHrs',

SCRIPT_STORAGE_CLASS_ONE_ZONE_INFREQUENT_ACCESS:'TimedStorage-ZIA-ByteHrs',

SCRIPT_STORAGE_CLASS_GLACIER:'TimedStorage-GlacierByteHrs',

SCRIPT_STORAGE_CLASS_REDUCED_REDUNDANCY:'TimedStorage-RRS-ByteHrs'

}

S3_VOLUME_TYPE_DICT = {

SCRIPT_STORAGE_CLASS_STANDARD:'Standard',

SCRIPT_STORAGE_CLASS_INFREQUENT_ACCESS:'Standard - Infrequent Access',

SCRIPT_STORAGE_CLASS_ONE_ZONE_INFREQUENT_ACCESS:'One Zone - Infrequent Access',

SCRIPT_STORAGE_CLASS_GLACIER:'Amazon Glacier',

SCRIPT_STORAGE_CLASS_REDUCED_REDUNDANCY:'Reduced Redundancy'

}

#_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/_/

#LAMBDA

LAMBDA_MEM_SIZES = [64,128,192,256,320,384,448,512,576,640,704,768,832,896,960,1024,1088,1152,1216,1280,1344,1408,

1472,1536,1600,1664,1728,1792,1856,1920,1984,2048,2112,2176,2240,2304,2368,2432,2496,2560,2624,2688,

2752,2816,2880,2944,3008]

================================================

FILE: awspricecalculator/common/errors.py