Repository: reiniscimurs/DRL-robot-navigation-IR-SIM

Branch: master

Commit: 31e1a4d511bb

Files: 81

Total size: 44.5 MB

Directory structure:

gitextract_12_7tajc/

├── .devin/

│ └── wiki.json

├── .github/

│ └── workflows/

│   └── main.yml

├── .gitignore

├── README.md

├── docs/

│ ├── api/

│ │ ├── IR-SIM/

│ │ │ ├── ir-marl-sim.md

│ │ │ └── ir-sim.md

│ │ ├── Testing/

│ │ │ ├── test.md

│ │ │ └── testrnn.md

│ │ ├── Training/

│ │ │ ├── train.md

│ │ │ └── trainrnn.md

│ │ ├── Utils/

│ │ │ ├── replay_buffer.md

│ │ │ └── utils.md

│ │ ├── models/

│ │ │ ├── DDPG.md

│ │ │ ├── HCM.md

│ │ │ ├── MARL/

│ │ │ │ ├── Attention.md

│ │ │ │ └── TD3.md

│ │ │ ├── PPO.md

│ │ │ ├── RCPG.md

│ │ │ ├── SAC.md

│ │ │ ├── TD3.md

│ │ │ ├── __init__.md

│ │ │ └── cnntd3.md

│ │ └── robot_nav.md

│ └── index.md

├── mkdocs.yml

├── pyproject.toml

├── robot_nav/

│ ├── SIM_ENV/

│ │ ├── __init__.py

│ │ ├── marl_sim.py

│ │ ├── sim.py

│ │ └── sim_env.py

│ ├── __init__.py

│ ├── assets/

│ │ └── data.yml

│ ├── eval_points.yaml

│ ├── marl_test_random.py

│ ├── marl_test_single.py

│ ├── marl_train.py

│ ├── models/

│ │ ├── CNNTD3/

│ │ │ ├── CNNTD3.py

│ │ │ └── __init__.py

│ │ ├── DDPG/

│ │ │ ├── DDPG.py

│ │ │ └── __init__.py

│ │ ├── HCM/

│ │ │ ├── __init__.py

│ │ │ └── hardcoded_model.py

│ │ ├── MARL/

│ │ │ ├── Attention/

│ │ │ │ ├── __init__.py

│ │ │ │ ├── g2anet.py

│ │ │ │ └── iga.py

│ │ │ ├── __init__.py

│ │ │ └── marlTD3/

│ │ │   ├── __init__.py

│ │ │   └── marlTD3.py

│ │ ├── PPO/

│ │ │ ├── PPO.py

│ │ │ └── __init__.py

│ │ ├── RCPG/

│ │ │ ├── RCPG.py

│ │ │ └── __init__.py

│ │ ├── SAC/

│ │ │ ├── SAC.py

│ │ │ ├── SAC_actor.py

│ │ │ ├── SAC_critic.py

│ │ │ ├── SAC_utils.py

│ │ │ └── __init__.py

│ │ ├── TD3/

│ │ │ ├── TD3.py

│ │ │ └── __init__.py

│ │ └── __init__.py

│ ├── replay_buffer.py

│ ├── rl_test.py

│ ├── rl_test_random.py

│ ├── rl_train.py

│ ├── rnn_test.py

│ ├── rnn_train.py

│ ├── rvo_test_random.py

│ ├── rvo_test_single.py

│ ├── utils.py

│ └── worlds/

│   ├── circle_world.yaml

│   ├── cross_world.yaml

│   ├── eval_world.yaml

│   ├── multi_robot_world.yaml

│   └── robot_world.yaml

└── tests/

  ├── __init__.py

  ├── test_data.yml

  ├── test_marl_world.yaml

  ├── test_model.py

  ├── test_sim.py

  ├── test_utils.py

  └── test_world.yaml

================================================

FILE CONTENTS

================================================

================================================

FILE: .devin/wiki.json

================================================

{

"repo_notes": [

{

"content": ""

}

],

"pages": [

{

"title": "Overview",

"purpose": "Introduce the DRL-robot-navigation-IR-SIM project, explaining its purpose as a Deep Reinforcement Learning system for robot navigation using the IR-SIM simulator, and providing a high-level architecture overview",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Getting Started",

"purpose": "Guide users through installation using Poetry, running the main training script, and monitoring with TensorBoard",

"parent": "Overview",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Core Training System",

"purpose": "Explain the main training orchestration, including the training loop structure (epochs, episodes, steps), state-action-reward cycle, and integration with models and environments",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Simulation Environments",

"purpose": "Document the SIM (single-agent) and MARL_SIM (multi-agent) environment wrappers around IR-SIM, including state observation processing, reward functions, and reset mechanics",

"parent": "Core Training System",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Data Management and Replay Buffers",

"purpose": "Describe the replay buffer implementations (ReplayBuffer, RolloutReplayBuffer, RolloutBuffer), the get_buffer factory function, and experience storage/sampling strategies for different algorithms",

"parent": "Core Training System",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Single-Agent Models",

"purpose": "Overview of the single-agent DRL model library, explaining the model taxonomy, evolutionary relationships (DDPG→TD3→CNNTD3→RCPG), and shared interfaces",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "TD3 and DDPG",

"purpose": "Document the Twin Delayed DDPG algorithm and base DDPG, including network architectures, training loop with target smoothing and delayed updates, and state preparation for 10-dimensional states",

"parent": "Single-Agent Models",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "CNNTD3",

"purpose": "Explain the CNN-enhanced TD3 model designed for 185-dimensional laser scan data, detailing the 1D convolutional layers for spatial feature extraction and its role as the primary model in rl_train.py",

"parent": "Single-Agent Models",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "RCPG",

"purpose": "Document the Recurrent Convolutional Policy Gradient model that adds LSTM/GRU/RNN layers to CNNTD3 for temporal modeling, including its specialized RolloutReplayBuffer for sequence sampling",

"parent": "Single-Agent Models",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "SAC",

"purpose": "Describe the Soft Actor-Critic algorithm with entropy regularization, including the DiagGaussianActor with SquashedNormal distribution and DoubleQCritic architecture",

"parent": "Single-Agent Models",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "PPO",

"purpose": "Explain the Proximal Policy Optimization on-policy algorithm, its clipped objective, RolloutBuffer for episodic collection, and action standard deviation decay mechanism",

"parent": "Single-Agent Models",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "HCM (Hardcoded Model)",

"purpose": "Document the hard-coded navigation model used for collecting collision data and pre-training datasets, including its action computation logic and sample saving functionality",

"parent": "Single-Agent Models",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Multi-Agent Reinforcement Learning",

"purpose": "Introduce the MARL capabilities of the system, explaining the multi-agent simulation environment, attention-based coordination mechanisms, and training differences from single-agent approaches",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "MARL Simulation Environment",

"purpose": "Detail the MARL_SIM class for multi-robot scenarios, including per-agent state processing, two-phase reward functions, connection matrices for communication topology, and conflict-free reset logic",

"parent": "Multi-Agent Reinforcement Learning",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "marlTD3 with Attention Mechanisms",

"purpose": "Explain the multi-agent TD3 implementation with hard-soft attention, the Actor and Critic architectures with attention encoders, In-Graph Attention and G2ANET, GoalAttentionLayer message passing, and auxiliary losses (BCE, entropy)",

"parent": "Multi-Agent Reinforcement Learning",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Environment Configuration",

"purpose": "Document the YAML configuration system for world setup, robot properties, sensor specifications (lidar2d), obstacle definitions, and evaluation point configurations",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Testing and Validation",

"purpose": "Overview of the comprehensive testing infrastructure using pytest, including unit tests for models, environments, and utilities, with CI/CD integration via GitHub Actions",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Model Testing",

"purpose": "Explain the test suite for validating model training capabilities, including parameterized tests for all algorithms, prefilled test data usage, and max bound variant testing",

"parent": "Testing and Validation",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Simulation and Environment Testing",

"purpose": "Document tests for SIM and MARL_SIM environments, including state retrieval, step execution, reset functionality, and the cossin utility function",

"parent": "Testing and Validation",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Buffer and Utility Testing",

"purpose": "Detail the testing framework for the get_buffer factory function, validating correct buffer type instantiation (ReplayBuffer, RolloutReplayBuffer, RolloutBuffer) based on model type",

"parent": "Testing and Validation",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Advanced Features",

"purpose": "Document advanced capabilities including max upper bound loss for Q-value regularization, pretraining from offline datasets, and model-specific architectural enhancements",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Max Upper Bound Loss",

"purpose": "Explain the optional Q-value bounding mechanism available for TD3, DDPG, and CNNTD3 to address overestimation bias, including the theoretical maximum calculation and loss formulation",

"parent": "Advanced Features",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Pretraining and Offline Learning",

"purpose": "Document the Pretraining class for loading offline experience data from YAML files, the get_max_bound utility, and integration with the get_buffer factory for model initialization",

"parent": "Advanced Features",

"page_notes": [

{

"content": ""

}

]

},

{

"title": "Development and Deployment",

"purpose": "Cover the development workflow including Poetry dependency management, project structure with pyproject.toml, CI/CD pipeline with GitHub Actions, and TensorBoard integration for training monitoring",

"page_notes": [

{

"content": ""

}

]

}

]

}

================================================

FILE: .github/workflows/main.yml

================================================

name: Run Tests

on:

push:

branches: [master]

pull_request:

branches: [master]

jobs:

test:

runs-on: ubuntu-latest

steps:

- name: Checkout code

uses: actions/checkout@v3

- name: Set up Python

uses: actions/setup-python@v4

with:

python-version: '3.10'

- name: Install Poetry

run: |

curl -sSL https://install.python-poetry.org | python3 -

echo "$HOME/.local/bin" >> $GITHUB_PATH

- name: Install dependencies

run: poetry install

- name: Run tests

run: poetry run pytest

================================================

FILE: .gitignore

================================================

/.venv/

/runs/

/site/

================================================

FILE: README.md

================================================

[](https://deepwiki.com/reiniscimurs/DRL-robot-navigation-IR-SIM)

**DRL Robot navigation in IR-SIM**

Deep Reinforcement Learning algorithm implementation for simulated robot navigation in IR-SIM. Using 2D laser sensor data

and information about the goal point, a robot learns to navigate to a specified point in the environment.

**Installation**

* Package versioning is managed with poetry \

`pip install poetry`

* Clone the repository \

`git clone https://github.com/reiniscimurs/DRL-robot-navigation-IR-SIM.git`

* Navigate to the cloned location and install using poetry \

`poetry install`

**Training the model**

* Run the training by executing the rl_train.py file \

`poetry run python robot_nav/rl_train.py`

* To open TensorBoard, in a new terminal execute \

`tensorboard --logdir runs`

**Sources**

| Package | Description | Source |

|:--------|:-------------------------------------------------------------:|------------------------------------:|

| IR-SIM | Light-weight robot simulator | https://github.com/hanruihua/ir-sim |

| PythonRobotics | Python code collection of robotics algorithms (Path planning) | https://github.com/AtsushiSakai/PythonRobotics |

**Models**

| Model | Description | Model Source |

|:-----------------|:-----------------------------------------------------------------------------------------------:|----------------------------------------------------------:|

| TD3 | Twin Delayed Deep Deterministic Policy Gradient model | https://github.com/reiniscimurs/DRL-Robot-Navigation-ROS2 |

| SAC | Soft Actor-Critic model | https://github.com/denisyarats/pytorch_sac |

| PPO | Proximal Policy Optimization model | https://github.com/nikhilbarhate99/PPO-PyTorch |

| DDPG | Deep Deterministic Policy Gradient model | Updated from TD3 |

| CNNTD3 | TD3 model with 1D CNN encoding of laser state | - |

| RCPG | Recurrent Convolution Policy Gradient - adding recurrence layers (lstm/gru/rnn) to CNNTD3 model | - |

| MARL: TD3-G2ANet | G2ANet attention encoder for TD3 model in MARL setting | G2ANet adapted from https://github.com/starry-sky6688/MARL-Algorithms |

| MARL: TD3-IGS | In-Graph Softmax attention model for TD3 model in MARL setting | - |

**Max Upper Bound Models**

The following models support an additional loss on Q values that exceed the maximal possible Q value in the episode. Q values above this upper bound are used to calculate an extra loss term for the model, which helps to control the overestimation of Q values in off-policy actor-critic networks.

To enable max upper bound loss set `use_max_bound = True` when initializing a model.

| Model |

|:-------|

| TD3 |

| DDPG |

| CNNTD3 |
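
A minimal sketch of how such an upper-bound penalty could be computed, written in plain Python for illustration. The function names (`max_return_bound`, `upper_bound_loss`), the reward values, and the best-case discounting scheme are assumptions made for this example and are not taken from the repository's models.

```python
def max_return_bound(goal_reward, step_reward, steps_remaining, gamma):
    """Hypothetical upper bound on the discounted return.

    Assumes the best case: the agent collects `step_reward` on every
    remaining step and then receives `goal_reward` on arrival.
    """
    discounted_steps = sum(step_reward * gamma**t for t in range(steps_remaining))
    return discounted_steps + goal_reward * gamma**steps_remaining


def upper_bound_loss(q_values, bounds):
    """Mean squared excess of Q estimates above their upper bounds.

    Q values at or below the bound contribute zero loss.
    """
    excess = [max(q - b, 0.0) for q, b in zip(q_values, bounds)]
    return sum(e * e for e in excess) / len(excess)


# Illustrative numbers only (assumed, not the repository's settings).
bound = max_return_bound(goal_reward=100.0, step_reward=1.0, steps_remaining=2, gamma=0.5)
loss = upper_bound_loss(q_values=[20.0, 30.0], bounds=[bound, bound])
print(bound, loss)
```

In a training loop, a term of this kind would typically be added to the regular critic loss, so that only Q estimates above the bound are pulled down while estimates below it are left to the usual temporal-difference update.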

**MARL Models**

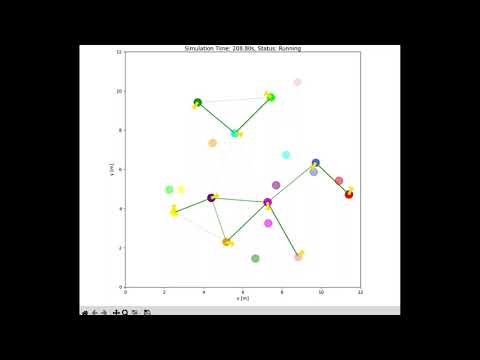

Multi-agent RL setting in which multiple robots are trained at the same time with a single policy. The implementation does not use sensor information; only exchanged graph messages are used for navigation.

Read more about the In-Graph Softmax for MARL implementation [here](https://medium.com/@reinis_86651/learning-graphs-for-marl-on-the-fly-9fe44c356808).

Video of results:

[](https://www.youtube.com/watch?v=SGl7sil_dpo)

================================================

FILE: docs/api/IR-SIM/ir-marl-sim.md

================================================

# MARL-IR-SIM

::: robot_nav.SIM_ENV.marl_sim

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/IR-SIM/ir-sim.md

================================================

# IR-SIM

::: robot_nav.SIM_ENV.sim

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/Testing/test.md

================================================

# Testing

::: robot_nav.rl_test

options:

show_root_heading: true

show_source: true

::: robot_nav.rl_test_random

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/Testing/testrnn.md

================================================

# Testing RNN

::: robot_nav.rnn_test

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/Training/train.md

================================================

# Training

::: robot_nav.rl_train

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/Training/trainrnn.md

================================================

# Training RNN

::: robot_nav.rnn_train

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/Utils/replay_buffer.md

================================================

# Replay/Rollout Buffer

::: robot_nav.replay_buffer

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/Utils/utils.md

================================================

# Utils

::: robot_nav.utils

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/DDPG.md

================================================

# DDPG

::: robot_nav.models.DDPG.DDPG

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/HCM.md

================================================

# Hardcoded Model

::: robot_nav.models.HCM.hardcoded_model

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/MARL/Attention.md

================================================

# Hard-Soft Attention

::: robot_nav.models.MARL.hardsoftAttention

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/MARL/TD3.md

================================================

# MARL TD3

::: robot_nav.models.MARL.marlTD3

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/PPO.md

================================================

# PPO

::: robot_nav.models.PPO.PPO

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/RCPG.md

================================================

# RCPG

::: robot_nav.models.RCPG.RCPG

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/SAC.md

================================================

# SAC

::: robot_nav.models.SAC.SAC

options:

show_root_heading: true

show_source: true

::: robot_nav.models.SAC.SAC_actor

options:

show_root_heading: true

show_source: true

::: robot_nav.models.SAC.SAC_critic

options:

show_root_heading: true

show_source: true

::: robot_nav.models.SAC.SAC_utils

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/TD3.md

================================================

# TD3

::: robot_nav.models.TD3.TD3

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/models/__init__.md

================================================

# __init__

## Documentation

```

No documentation available for __init__.

```

================================================

FILE: docs/api/models/cnntd3.md

================================================

# CNNTD3

::: robot_nav.models.CNNTD3.CNNTD3

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/api/robot_nav.md

================================================

# Robot Navigation

Will be updated

::: robot_nav

options:

show_root_heading: true

show_source: true

================================================

FILE: docs/index.md

================================================

# Welcome to DRL-robot-navigation-IR-SIM

**DRL Robot navigation in IR-SIM**

Deep Reinforcement Learning algorithm implementation for simulated robot navigation in IR-SIM. Using 2D laser sensor data

and information about the goal point, a robot learns to navigate to a specified point in the environment.

**Installation**

* Package versioning is managed with poetry \

`pip install poetry`

* Clone the repository \

`git clone https://github.com/reiniscimurs/DRL-robot-navigation-IR-SIM.git`

* Navigate to the cloned location and install using poetry \

`poetry install`

**Training the model**

* Run the training by executing the rl_train.py file \

`poetry run python robot_nav/rl_train.py`

* To open TensorBoard, in a new terminal execute \

`tensorboard --logdir runs`

**Sources**

| Package | Description | Source |

|:--------|:-------------------------------------------------------------:|------------------------------------:|

| IR-SIM | Light-weight robot simulator | https://github.com/hanruihua/ir-sim |

| PythonRobotics | Python code collection of robotics algorithms (Path planning) | https://github.com/AtsushiSakai/PythonRobotics |

**Models**

| Model | Description | Model Source |

|:----------|:-----------------------------------------------------------------------------------------------:|----------------------------------------------------------:|

| TD3 | Twin Delayed Deep Deterministic Policy Gradient model | https://github.com/reiniscimurs/DRL-Robot-Navigation-ROS2 |

| SAC | Soft Actor-Critic model | https://github.com/denisyarats/pytorch_sac |

| PPO | Proximal Policy Optimization model | https://github.com/nikhilbarhate99/PPO-PyTorch |

| DDPG | Deep Deterministic Policy Gradient model | Updated from TD3 |

| CNNTD3 | TD3 model with 1D CNN encoding of laser state | - |

| RCPG | Recurrent Convolution Policy Gradient - adding recurrence layers (lstm/gru/rnn) to CNNTD3 model | - |

**Max Upper Bound Models**

The following models support an additional loss on Q values that exceed the maximal possible Q value in the episode. Q values above this upper bound are used to calculate an extra loss term for the model, which helps to control the overestimation of Q values in off-policy actor-critic networks.

To enable max upper bound loss set `use_max_bound = True` when initializing a model.

| Model |

|:-------|

| TD3 |

| DDPG |

| CNNTD3 |

================================================

FILE: mkdocs.yml

================================================

extra:

python_path:

- ./robot_nav

nav:

- Home: index.md

- API Reference:

IR-SIM:

- SIM: api/IR-SIM/ir-sim.md

- MARL SIM: api/IR-SIM/ir-marl-sim.md

Models:

- DDPG: api/models/DDPG.md

- TD3: api/models/TD3.md

- CNNTD3: api/models/cnntd3.md

- RCPG: api/models/RCPG.md

- HCM: api/models/HCM.md

- PPO: api/models/PPO.md

- SAC: api/models/SAC.md

- MARL:

- HardSoft Attention: api/models/MARL/Attention.md

- TD3: api/models/MARL/TD3.md

Training:

- Train: api/Training/train.md

- Train RNN: api/Training/trainrnn.md

Testing:

- Test: api/Testing/test.md

- Test RNN: api/Testing/testrnn.md

Utils:

- Replay Buffer: api/Utils/replay_buffer.md

- Utils: api/Utils/utils.md

plugins:

- search

- mkdocstrings

site_name: DRL-robot-navigation-IR-SIM

theme:

name: material

================================================

FILE: pyproject.toml

================================================

[project]

name = "robot-nav"

version = "0.1.0"

description = ""

authors = [

{name = "reinis",email = "ireinisi@gmail.com"}

]

readme = "README.md"

requires-python = ">=3.10"

dependencies = [

"ir-sim[all] (>=2.3.0,<3.0.0)",

"torch (>=2.5.1,<3.0.0)",

"tensorboard (>=2.18.0,<3.0.0)",

"tqdm (>=4.67.1,<5.0.0)",

"pytest (>=8.3.4,<9.0.0)",

"mkdocs (>=1.6.1,<2.0.0)",

"mkdocstrings[python] (>=0.29.1,<0.30.0)",

"mkdocs-material (>=9.6.11,<10.0.0)",

"scipy (>=1.15.2,<2.0.0)",

"torch-geometric (>=2.6.1,<3.0.0)",

]

[tool.pytest.ini_options]

testpaths = ["tests"]

[build-system]

requires = ["poetry-core>=2.0.0,<3.0.0"]

build-backend = "poetry.core.masonry.api"

================================================

FILE: robot_nav/SIM_ENV/__init__.py

================================================

================================================

FILE: robot_nav/SIM_ENV/marl_sim.py

================================================

import irsim

import numpy as np

import random

import torch

from robot_nav.SIM_ENV.sim_env import SIM_ENV

class MARL_SIM(SIM_ENV):

"""

Simulation environment for multi-agent robot navigation using IRSim.

This class extends the SIM_ENV and provides a wrapper for multi-robot

simulation and interaction, supporting reward computation and custom reset logic.

Attributes:

env (object): IRSim simulation environment instance.

robot_goal (np.ndarray): Current goal position(s) for the robots.

num_robots (int): Number of robots in the environment.

x_range (tuple): World x-range.

y_range (tuple): World y-range.

"""

def __init__(

self,

world_file="multi_robot_world.yaml",

disable_plotting=False,

reward_phase=1,

):

"""

Initialize the MARL_SIM environment.

Args:

world_file (str, optional):

Path to an IRSim YAML world configuration. Defaults to

"multi_robot_world.yaml".

disable_plotting (bool, optional):

If True, disables all IRSim plotting and display windows.

Useful for headless training. Defaults to False.

reward_phase (int, optional):

Selects the reward function variant used by `get_reward`.

Supported values: {1, 2}. Defaults to 1.

"""

display = not disable_plotting

self.env = irsim.make(

world_file, disable_all_plot=disable_plotting, display=display

)

robot_info = self.env.get_robot_info(0)

self.robot_goal = robot_info.goal

self.num_robots = len(self.env.robot_list)

self.x_range = self.env._world.x_range

self.y_range = self.env._world.y_range

self.reward_phase = reward_phase

def step(self, action, connection, combined_weights=None):

"""

Perform a simulation step for all robots using the provided actions and connections.

Args:

action (list):

A list of length `num_robots`. Each element is

`[linear_velocity, angular_velocity]` to be applied to the

corresponding robot for this step. Units follow IRSim's

kinematics setup (typically m/s and rad/s).

connection (Tensor):

A torch.Tensor of shape `(num_robots, num_robots-1)` containing

*logits* that indicate pairwise connections per robot *without*

the self-connection column. This is not used for logic here,

but preserved for compatibility (see commented code); you may

use it upstream to form `combined_weights`.

combined_weights (Tensor or None, optional):

A torch.Tensor of shape `(num_robots, num_robots)` **or**

`(num_robots, num_robots-1)` that encodes visualization weights

for robot-to-robot edges. When provided, edges from robot *i* to

*j* are drawn with line width `weight * 2`. If you pass the

`(num_robots, num_robots-1)` form, ensure indexing aligns with

how you constructed it (self-column typically omitted).

Returns:

tuple:

(

poses (list[list[float]]):

`[x, y, theta]` for each robot after the step.

distances (list[float]):

Euclidean distance from each robot to its goal.

coss (list[float]):

Cosine of the angle between robot heading and goal vector.

sins (list[float]):

Sine of the angle between robot heading and goal vector.

collisions (list[bool]):

Collision flags for each robot, as reported by IRSim.

goals (list[bool]):

Arrival flags for each robot. If True this step, a new

random goal was scheduled for that robot.

action (list):

Echo of the input `action`.

rewards (list[float]):

Per-robot rewards computed by `get_reward(...)`.

positions (list[list[float]]):

`[x, y]` positions for each robot after the step.

goal_positions (list[list[float]]):

`[x, y]` goal coordinates for each robot after any

arrival updates this step.

)

"""

self.env.step(action_id=list(range(self.num_robots)), action=action)

self.env.render()

poses = []

distances = []

coss = []

sins = []

collisions = []

goals = []

rewards = []

positions = []

goal_positions = []

robot_states = [

[self.env.robot_list[i].state[0], self.env.robot_list[i].state[1]]

for i in range(self.num_robots)

]

for i in range(self.num_robots):

robot_state = self.env.robot_list[i].state

closest_robots = [

np.linalg.norm(

[

robot_states[j][0] - robot_state[0],

robot_states[j][1] - robot_state[1],

]

)

for j in range(self.num_robots)

if j != i

]

robot_goal = self.env.robot_list[i].goal

goal_vector = [

robot_goal[0].item() - robot_state[0].item(),

robot_goal[1].item() - robot_state[1].item(),

]

distance = np.linalg.norm(goal_vector)

goal = self.env.robot_list[i].arrive

pose_vector = [np.cos(robot_state[2]).item(), np.sin(robot_state[2]).item()]

cos, sin = self.cossin(pose_vector, goal_vector)

collision = self.env.robot_list[i].collision

action_i = action[i]

reward = self.get_reward(

goal, collision, action_i, closest_robots, distance, self.reward_phase

)

position = [robot_state[0].item(), robot_state[1].item()]

goal_position = [robot_goal[0].item(), robot_goal[1].item()]

distances.append(distance)

coss.append(cos)

sins.append(sin)

collisions.append(collision)

goals.append(goal)

rewards.append(reward)

positions.append(position)

poses.append(

[robot_state[0].item(), robot_state[1].item(), robot_state[2].item()]

)

goal_positions.append(goal_position)

if combined_weights is not None:

i_weights = combined_weights[i].tolist()

for j in range(self.num_robots):

if combined_weights is not None:

weight = i_weights[j]

# else:

# weight = 1

other_robot_state = self.env.robot_list[j].state

other_pos = [

other_robot_state[0].item(),

other_robot_state[1].item(),

]

rx = [position[0], other_pos[0]]

ry = [position[1], other_pos[1]]

self.env.draw_trajectory(

np.array([rx, ry]), refresh=True, linewidth=weight * 2

)

if goal:

self.env.robot_list[i].set_random_goal(

obstacle_list=self.env.obstacle_list,

init=True,

range_limits=[

[self.x_range[0] + 1, self.y_range[0] + 1, -3.141592653589793],

[self.x_range[1] - 1, self.y_range[1] - 1, 3.141592653589793],

],

)

return (

poses,

distances,

coss,

sins,

collisions,

goals,

action,

rewards,

positions,

goal_positions,

)

def reset(

self,

robot_state=None,

robot_goal=None,

random_obstacles=False,

random_obstacle_ids=None,

):

"""

Reset the simulation environment and optionally set robot and obstacle positions.

Args:

robot_state (list or None, optional): Initial robot state(s) as [[x], [y], [theta]].

If None, random states are assigned ensuring minimum spacing between robots.

robot_goal (list or None, optional): Fixed goal position(s) for robots. If None, random

goals are generated within the environment boundaries.

random_obstacles (bool, optional): If True, reposition obstacles randomly within bounds.

random_obstacle_ids (list or None, optional): IDs of obstacles to randomize. Defaults to

seven obstacles starting from index equal to the number of robots.

Returns:

tuple: (

poses (list): List of [x, y, theta] for each robot,

distances (list): Distance to goal for each robot,

coss (list): Cosine of angle to goal for each robot,

sins (list): Sine of angle to goal for each robot,

collisions (list): All False after reset,

goals (list): All False after reset,

action (list): Initial action ([[0.0, 0.0], ...]),

rewards (list): Rewards for initial state,

positions (list): Initial [x, y] for each robot,

goal_positions (list): Initial goal [x, y] for each robot,

)

"""

if robot_state is None:

robot_state = [[random.uniform(3, 9)], [random.uniform(3, 9)], [0]]

init_states = []

for robot in self.env.robot_list:

conflict = True

while conflict:

conflict = False

robot_state = [

[random.uniform(3, 9)],

[random.uniform(3, 9)],

[random.uniform(-3.14, 3.14)],

]

pos = [robot_state[0][0], robot_state[1][0]]

for loc in init_states:

vector = [

pos[0] - loc[0],

pos[1] - loc[1],

]

if np.linalg.norm(vector) < 0.6:

conflict = True

init_states.append(pos)

robot.set_state(

state=np.array(robot_state),

init=True,

)

if random_obstacles:

if random_obstacle_ids is None:

random_obstacle_ids = [i + self.num_robots for i in range(7)]

self.env.random_obstacle_position(

range_low=[self.x_range[0], self.y_range[0], -3.14],

range_high=[self.x_range[1], self.y_range[1], 3.14],

ids=random_obstacle_ids,

non_overlapping=True,

)

for robot in self.env.robot_list:

if robot_goal is None:

robot.set_random_goal(

obstacle_list=self.env.obstacle_list,

init=True,

range_limits=[

[self.x_range[0] + 1, self.y_range[0] + 1, -3.141592653589793],

[self.x_range[1] - 1, self.y_range[1] - 1, 3.141592653589793],

],

)

else:

self.env.robot.set_goal(np.array(robot_goal), init=True)

self.env.reset()

self.robot_goal = self.env.robot.goal

action = [[0.0, 0.0] for _ in range(self.num_robots)]

con = torch.tensor(

[[0.0 for _ in range(self.num_robots - 1)] for _ in range(self.num_robots)]

)

poses, distance, cos, sin, _, _, action, reward, positions, goal_positions = (

self.step(action, con)

)

return (

poses,

distance,

cos,

sin,

[False] * self.num_robots,

[False] * self.num_robots,

action,

reward,

positions,

goal_positions,

)

@staticmethod

def get_reward(goal, collision, action, closest_robots, distance, phase=1):

"""

Compute the reward for a single robot based on goal progress, collisions,

control effort, and proximity to other robots.

Args:

goal (bool): Whether the robot reached its goal.

collision (bool): Whether the robot collided with an obstacle or another robot.

action (list): [linear_velocity, angular_velocity] applied by the robot.

closest_robots (list): Distances to other robots.

distance (float): Current distance to the goal.

phase (int, optional): Reward configuration (1 or 2). Default is 1.

Returns:

float: Computed scalar reward.

Raises:

ValueError: If an unknown reward phase is specified.

"""

match phase:

case 1:

if goal:

return 100.0

elif collision:

return -100.0 * 3 * action[0]

else:

r_dist = 1.5 / distance

cl_pen = 0

for rob in closest_robots:

add = (1.25 - rob) ** 2 if rob < 1.25 else 0

cl_pen += add

return action[0] - 0.5 * abs(action[1]) - cl_pen + r_dist

case 2:

if goal:

return 70.0

elif collision:

return -100.0 * 3 * action[0]

else:

cl_pen = 0

for rob in closest_robots:

add = (3 - rob) ** 2 if rob < 3 else 0

cl_pen += add

return -0.5 * abs(action[1]) - cl_pen

case _:

raise ValueError("Unknown reward phase")
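Note on the spawn logic in `reset` above: each robot's pose is resampled until its position is at least 0.6 units from every robot already placed. The sketch below restates that rejection-sampling idea in isolation, using only the standard library. The helper name `sample_spawn_positions` and its parameters are hypothetical and not part of the repository; the bounds (3 to 9) and the 0.6 gap are taken from the code above.

```python
import math
import random


def sample_spawn_positions(num_robots, low=3.0, high=9.0, min_gap=0.6, rng=random):
    """Rejection-sample [x, y] positions that are pairwise at least `min_gap` apart.

    Hypothetical helper for illustration; it mirrors the conflict loop in reset().
    """
    placed = []
    for _ in range(num_robots):
        while True:
            candidate = [rng.uniform(low, high), rng.uniform(low, high)]
            # Accept the candidate only if it clears every robot placed so far.
            if all(math.dist(candidate, other) >= min_gap for other in placed):
                placed.append(candidate)
                break
    return placed


positions = sample_spawn_positions(5, rng=random.Random(0))
for x, y in positions:
    print(f"{x:.2f}, {y:.2f}")
```

Rejection sampling like this terminates quickly only while the robots are sparse relative to the sampling area; with many robots or a larger gap the retry loop can run long.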

================================================

FILE: robot_nav/SIM_ENV/sim.py

================================================

import irsim

import numpy as np

import random

from robot_nav.SIM_ENV.sim_env import SIM_ENV

class SIM(SIM_ENV):

"""

A simulation environment interface for robot navigation using IRSim.

This class wraps around the IRSim environment and provides methods for stepping,

resetting, and interacting with a mobile robot, including reward computation.

Attributes:

env (object): The simulation environment instance from IRSim.

robot_goal (np.ndarray): The goal position of the robot.

"""

def __init__(self, world_file="robot_world.yaml", disable_plotting=False):

"""

Initialize the simulation environment.

Args:

world_file (str): Path to the world configuration YAML file.

disable_plotting (bool): If True, disables rendering and plotting.

"""

display = not disable_plotting

self.env = irsim.make(

world_file, disable_all_plot=disable_plotting, display=display

)

robot_info = self.env.get_robot_info(0)

self.robot_goal = robot_info.goal

def step(self, lin_velocity=0.0, ang_velocity=0.1):

"""

Perform one step in the simulation using the given control commands.

Args:

lin_velocity (float): Linear velocity to apply to the robot.

ang_velocity (float): Angular velocity to apply to the robot.

Returns:

(tuple): Contains the latest LIDAR scan, distance to goal, cosine and sine of angle to goal,

collision flag, goal reached flag, applied action, and computed reward.

"""

self.env.step(action_id=0, action=np.array([[lin_velocity], [ang_velocity]]))

self.env.render()

scan = self.env.get_lidar_scan()

latest_scan = scan["ranges"]

robot_state = self.env.get_robot_state()

goal_vector = [

self.robot_goal[0].item() - robot_state[0].item(),

self.robot_goal[1].item() - robot_state[1].item(),

]

distance = np.linalg.norm(goal_vector)

goal = self.env.robot.arrive

pose_vector = [np.cos(robot_state[2]).item(), np.sin(robot_state[2]).item()]

cos, sin = self.cossin(pose_vector, goal_vector)

collision = self.env.robot.collision

action = [lin_velocity, ang_velocity]

reward = self.get_reward(goal, collision, action, latest_scan)

return latest_scan, distance, cos, sin, collision, goal, action, reward

def reset(

self,

robot_state=None,

robot_goal=None,

random_obstacles=True,

random_obstacle_ids=None,

):

"""

Reset the simulation environment, optionally setting robot and obstacle states.

Args:

robot_state (list or None): Initial state of the robot as a list of [x, y, theta, speed].

robot_goal (list or None): Goal state for the robot.

random_obstacles (bool): Whether to randomly reposition obstacles.

random_obstacle_ids (list or None): Specific obstacle IDs to randomize.

Returns:

(tuple): Initial observation after reset, including LIDAR scan, distance, cos/sin,

and reward-related flags and values.

"""

if robot_state is None:

robot_state = [[random.uniform(1, 9)], [random.uniform(1, 9)], [0]]

self.env.robot.set_state(

state=np.array(robot_state),

init=True,

)

if random_obstacles:

if random_obstacle_ids is None:

random_obstacle_ids = [i + 1 for i in range(7)]

self.env.random_obstacle_position(

range_low=[0, 0, -3.14],

range_high=[10, 10, 3.14],

ids=random_obstacle_ids,

non_overlapping=True,

)

if robot_goal is None:

self.env.robot.set_random_goal(

obstacle_list=self.env.obstacle_list,

init=True,

range_limits=[[1, 1, -3.141592653589793], [9, 9, 3.141592653589793]],

)

else:

self.env.robot.set_goal(np.array(robot_goal), init=True)

self.env.reset()

self.robot_goal = self.env.robot.goal

action = [0.0, 0.0]

latest_scan, distance, cos, sin, _, _, action, reward = self.step(

lin_velocity=action[0], ang_velocity=action[1]

)

return latest_scan, distance, cos, sin, False, False, action, reward

@staticmethod

def get_reward(goal, collision, action, laser_scan):

"""

Calculate the reward for the current step.

Args:

goal (bool): Whether the goal has been reached.

collision (bool): Whether a collision occurred.

action (list): The action taken [linear velocity, angular velocity].

laser_scan (list): The LIDAR scan readings.

Returns:

(float): Computed reward for the current state.

"""

if goal:

return 100.0

elif collision:

return -100.0

else:

r3 = lambda x: 1.35 - x if x < 1.35 else 0.0

return action[0] - abs(action[1]) / 2 - r3(min(laser_scan)) / 2
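Worked example for the non-terminal branch of `get_reward` above: the reward favors forward speed, charges half the absolute angular velocity, and charges half of the shortfall when the closest LIDAR return is nearer than 1.35. The standalone functions below are a sketch for checking the arithmetic by hand; the names `lidar_penalty` and `step_reward` are hypothetical and do not exist in the repository.

```python
def lidar_penalty(min_range, threshold=1.35):
    """Linear penalty that grows as the closest obstacle gets nearer than `threshold`."""
    return threshold - min_range if min_range < threshold else 0.0


def step_reward(action, laser_scan):
    """Non-terminal reward: forward speed minus turning cost minus obstacle proximity cost."""
    lin_vel, ang_vel = action
    return lin_vel - abs(ang_vel) / 2 - lidar_penalty(min(laser_scan)) / 2


# Driving at 0.5 while turning at 0.5, with the closest obstacle 0.85 away:
# 0.5 - 0.25 - (1.35 - 0.85) / 2 is approximately 0.0
near = step_reward([0.5, 0.5], [2.0, 0.85, 3.0])

# Same action in open space: no proximity cost, so 0.5 - 0.25 = 0.25
clear = step_reward([0.5, 0.5], [2.0, 2.5, 3.0])
print(near, clear)
```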

================================================

FILE: robot_nav/SIM_ENV/sim_env.py

================================================

from abc import ABC, abstractmethod

import numpy as np

class SIM_ENV(ABC):

@abstractmethod

def step(self):

raise NotImplementedError("step method must be implemented by subclass.")

@abstractmethod

def reset(self):

raise NotImplementedError("reset method must be implemented by subclass.")

@staticmethod

def cossin(vec1, vec2):

"""

Compute the cosine and sine of the angle between two 2D vectors.

Args:

vec1 (list): First 2D vector.

vec2 (list): Second 2D vector.

Returns:

(tuple): (cosine, sine) of the angle between the vectors.

"""

vec1 = vec1 / np.linalg.norm(vec1)

vec2 = vec2 / np.linalg.norm(vec2)

cos = np.dot(vec1, vec2)

sin = vec1[0] * vec2[1] - vec1[1] * vec2[0]

return cos, sin

@staticmethod

@abstractmethod

def get_reward():

raise NotImplementedError("get_reward method must be implemented by subclass.")
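Note on `cossin` above: after normalization, the dot product gives the cosine of the angle between the vectors, and the 2D cross product gives the signed sine, so the pair encodes both the size and the direction of the heading error. The sketch below restates the same formula with only the standard library (it uses `math` instead of NumPy, which is a substitution made for this example) and recovers the signed angle with `atan2`.

```python
import math


def cossin(vec1, vec2):
    """Cosine and signed sine of the angle from vec1 to vec2 (2D vectors)."""
    n1 = math.hypot(vec1[0], vec1[1])
    n2 = math.hypot(vec2[0], vec2[1])
    u = (vec1[0] / n1, vec1[1] / n1)
    v = (vec2[0] / n2, vec2[1] / n2)
    cos = u[0] * v[0] + u[1] * v[1]  # dot product
    sin = u[0] * v[1] - u[1] * v[0]  # z-component of the cross product
    return cos, sin


heading = [1.0, 0.0]    # robot facing along +x
goal_left = [0.0, 2.0]  # goal directly to the robot's left (+y)
cos, sin = cossin(heading, goal_left)
angle = math.atan2(sin, cos)  # signed angle from heading to goal, in radians
print(cos, sin, angle)
```

A positive sine means the goal lies counterclockwise from the heading; a negative sine means clockwise. Note that, like the original, this divides by the vector norm, so a zero-length goal vector (robot exactly on the goal) is not handled.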

================================================

FILE: robot_nav/__init__.py

================================================

================================================

FILE: robot_nav/assets/data.yml

================================================

[File too large to display: 40.2 MB]

================================================

FILE: robot_nav/eval_points.yaml

================================================

robot:

poses:

- [[3], [4], [0], [0]]

- [[8], [1], [1], [0]]

- [[2], [6], [1], [0]]

- [[7], [1], [0], [0]]

- [[7], [6.5], [2], [0]]

- [[9], [9], [3], [0]]

- [[2], [9], [1], [0]]

- [[3], [6], [3], [0]]

- [[1], [7], [0], [0]]

- [[5], [7], [3], [0]]

goals:

- [[8], [8], [0]]

- [[2], [9], [0]]

- [[7], [1], [0]]

- [[7.2], [9], [0]]

- [[1], [1], [0]]

- [[5], [1], [0]]

- [[7], [4], [0]]

- [[9], [4], [0]]

- [[1], [9], [0]]

- [[5], [1], [0]]

#robot_states = [np.array([[3],[4],[0],[0]]),

# np.array([[8],[1],[1],[0]]),

# np.array([[2],[6],[1],[0]]),

# np.array([[7],[1],[0],[0]]),

# np.array([[7],[6.5],[2],[0]]),

# np.array([[9],[9],[3],[0]]),

# np.array([[2], [9], [1], [0]]),

# np.array([[3], [6], [3], [0]]),

# np.array([[1], [7], [0], [0]]),

# np.array([[5], [7], [3], [0]]),

# ]

# robot_goals = [

# np.array([[8], [8], [0]]),

# np.array([[2], [9], [0]]),

# np.array([[7], [1], [0]]),

# np.array([[7.2], [9], [0]]),

# np.array([[1], [1], [0]]),

# np.array([[5], [1], [0]]),

# np.array([[7], [4], [0]]),

# np.array([[9], [4], [0]]),

# np.array([[1], [9], [0]]),

# np.array([[5], [1], [0]]),

# ]

================================================

FILE: robot_nav/marl_test_random.py

================================================

import statistics

from pathlib import Path

from tqdm import tqdm

import matplotlib.pyplot as plt

from robot_nav.models.MARL.marlTD3.marlTD3 import TD3

import torch

import numpy as np

from robot_nav.SIM_ENV.marl_sim import MARL_SIM

def outside_of_bounds(poses):

"""

Check if any robot is outside the defined world boundaries.

Args:

poses (list): List of [x, y, theta] poses for each robot.

Returns:

bool: True if any robot is outside the 21x21 area centered at (6, 6), else False.

"""

outside = False

for pose in poses:

norm_x = pose[0] - 6

norm_y = pose[1] - 6

if abs(norm_x) > 10.5 or abs(norm_y) > 10.5:

outside = True

break

return outside

def main(args=None):

"""Main testing function"""

# ---- Hyperparameters and setup ----

action_dim = 2 # number of actions produced by the model

max_action = 1 # maximum absolute value of output actions

state_dim = 11 # number of input values in the neural network (vector length of state input)

device = torch.device(

"cuda" if torch.cuda.is_available() else "cpu"

) # using cuda if it is available, cpu otherwise

epoch = 1 # starting epoch number

episode = 0

max_steps = 600 # maximum number of steps in single episode

steps = 0 # starting step number

save_every = 5 # save the model every n training cycles

test_scenarios = 1000

# ---- Instantiate simulation environment and model ----

sim = MARL_SIM(

world_file="worlds/multi_robot_world.yaml",

disable_plotting=True,

reward_phase=2,

) # instantiate environment

model = TD3(

state_dim=state_dim,

action_dim=action_dim,

max_action=max_action,

num_robots=sim.num_robots,

device=device,

save_every=save_every,

load_model=True,

model_name="TDR-MARL-test",

load_model_name="TDR-MARL-train",

load_directory=Path("robot_nav/models/MARL/marlTD3/checkpoint"),

attention="igs",

) # instantiate a model

connections = torch.tensor(

[[0.0 for _ in range(sim.num_robots - 1)] for _ in range(sim.num_robots)]

)

# ---- Take initial step in environment ----

poses, distance, cos, sin, collision, goal, a, reward, positions, goal_positions = (

sim.step([[0, 0] for _ in range(sim.num_robots)], connections)

) # get the initial step state

running_goals = 0

running_reward = 0

running_collisions = 0

running_timesteps = 0

reward_per_ep = []

goals_per_ep = []

col_per_ep = []

lin_actions = []

ang_actions = []

entropy_list = []

epsilon = 1e-6

pbar = tqdm(total=test_scenarios)

while episode < test_scenarios:

state, terminal = model.prepare_state(

poses, distance, cos, sin, collision, a, goal_positions

)

action, connection, combined_weights = model.get_action(

np.array(state), False

) # get an action from the model

combined_weights_norm = combined_weights / (

combined_weights.sum(dim=-1, keepdim=True) + epsilon

)

# Entropy for analysis/logging

entropy = (

(

-(combined_weights_norm * (combined_weights_norm + epsilon).log())

.sum(dim=-1)

.mean()

)

.data.cpu()

.numpy()

)

entropy_list.append(entropy)

a_in = [

[(a[0] + 1) / 4, a[1]] for a in action

] # map linear velocity from [-1, 1] to the [0, 0.5] m/s range

(

poses,

distance,

cos,

sin,

collision,

goal,

a,

reward,

positions,

goal_positions,

) = sim.step(

a_in, connection, combined_weights

) # get data from the environment

running_goals += sum(goal)

running_collisions += sum(collision) / 2

running_reward += sum(reward)

running_timesteps += 1

for j in range(len(a_in)):

lin_actions.append(a_in[j][0].item())

ang_actions.append(a_in[j][1].item())

outside = outside_of_bounds(poses)

if (

sum(collision) > 0.5 or steps == max_steps or outside

): # reset the environment if a collision occurred, max_steps were taken, or a robot left the bounds

(

poses,

distance,

cos,

sin,

collision,

goal,

a,

reward,

positions,

goal_positions,

) = sim.reset()

reward_per_ep.append(running_reward)

running_reward = 0

goals_per_ep.append(running_goals)

running_goals = 0

col_per_ep.append(running_collisions)

running_collisions = 0

steps = 0

episode += 1

pbar.update(1)

else:

steps += 1

reward_per_ep = np.array(reward_per_ep, dtype=np.float32)

goals_per_ep = np.array(goals_per_ep, dtype=np.float32)

col_per_ep = np.array(col_per_ep, dtype=np.float32)

lin_actions = np.array(lin_actions, dtype=np.float32)

ang_actions = np.array(ang_actions, dtype=np.float32)

entropy = np.array(entropy_list, dtype=np.float32)

avg_ep_reward = statistics.mean(reward_per_ep)

avg_ep_reward_std = statistics.stdev(reward_per_ep)

avg_ep_col = statistics.mean(col_per_ep)

avg_ep_col_std = statistics.stdev(col_per_ep)

avg_ep_goals = statistics.mean(goals_per_ep)

avg_ep_goals_std = statistics.stdev(goals_per_ep)

mean_lin_action = statistics.mean(lin_actions)

lin_actions_std = statistics.stdev(lin_actions)

mean_ang_action = statistics.mean(ang_actions)

ang_actions_std = statistics.stdev(ang_actions)

mean_entropy = statistics.mean(entropy)

mean_entropy_std = statistics.stdev(entropy)

print(f"avg_ep_reward: {avg_ep_reward}")

print(f"avg_ep_reward_std: {avg_ep_reward_std}")

print(f"avg_ep_col: {avg_ep_col}")

print(f"avg_ep_col_std: {avg_ep_col_std}")

print(f"avg_ep_goals: {avg_ep_goals}")

print(f"avg_ep_goals_std: {avg_ep_goals_std}")

print(f"mean_lin_action: {mean_lin_action}")

print(f"lin_actions_std: {lin_actions_std}")

print(f"mean_ang_action: {mean_ang_action}")

print(f"ang_actions_std: {ang_actions_std}")

print(f"mean_entropy: {mean_entropy}")

print(f"mean_entropy_std: {mean_entropy_std}")

print("..............................................")

model.writer.add_scalar("test/avg_ep_reward", avg_ep_reward, epoch)

model.writer.add_scalar("test/avg_ep_reward_std", avg_ep_reward_std, epoch)

model.writer.add_scalar("test/avg_ep_col", avg_ep_col, epoch)

model.writer.add_scalar("test/avg_ep_col_std", avg_ep_col_std, epoch)

model.writer.add_scalar("test/avg_ep_goals", avg_ep_goals, epoch)

model.writer.add_scalar("test/avg_ep_goals_std", avg_ep_goals_std, epoch)

model.writer.add_scalar("test/mean_lin_action", mean_lin_action, epoch)

model.writer.add_scalar("test/lin_actions_std", lin_actions_std, epoch)

model.writer.add_scalar("test/mean_ang_action", mean_ang_action, epoch)

model.writer.add_scalar("test/ang_actions_std", ang_actions_std, epoch)

model.writer.add_scalar("test/mean_entropy", mean_entropy, epoch)

model.writer.add_scalar("test/mean_entropy_std", mean_entropy_std, epoch)

bins = 100

model.writer.add_histogram("test/lin_actions", lin_actions, epoch, max_bins=bins)

model.writer.add_histogram("test/ang_actions", ang_actions, epoch, max_bins=bins)

counts, bin_edges = np.histogram(lin_actions, bins=bins)

fig, ax = plt.subplots()

ax.bar(

bin_edges[:-1], counts, width=np.diff(bin_edges), align="edge", log=True

) # Log scale on y-axis

ax.set_xlabel("Value")

ax.set_ylabel("Frequency (Log Scale)")

ax.set_title("Histogram with Log Scale")

model.writer.add_figure("test/lin_actions_hist", fig)

counts, bin_edges = np.histogram(ang_actions, bins=bins)

fig, ax = plt.subplots()

ax.bar(

bin_edges[:-1], counts, width=np.diff(bin_edges), align="edge", log=True

) # Log scale on y-axis

ax.set_xlabel("Value")

ax.set_ylabel("Frequency (Log Scale)")

ax.set_title("Histogram with Log Scale")

model.writer.add_figure("test/ang_actions_hist", fig)

if __name__ == "__main__":

main()
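Note on the entropy logged in the test loop above: each robot's attention weights are normalized to sum to one, the Shannon entropy of each row is computed, and the rows are averaged. The original does this on torch tensors; the sketch below restates the same arithmetic in pure Python so the numbers can be checked by hand. The function name `attention_entropy` is hypothetical, and the `epsilon` value matches the script.

```python
import math


def attention_entropy(weights, epsilon=1e-6):
    """Mean Shannon entropy (in nats) over rows of non-negative attention weights."""
    row_entropies = []
    for row in weights:
        total = sum(row) + epsilon  # epsilon guards against an all-zero row
        probs = [w / total for w in row]
        # epsilon inside the log avoids log(0) for weights that are exactly zero
        row_entropies.append(-sum(p * math.log(p + epsilon) for p in probs))
    return sum(row_entropies) / len(row_entropies)


uniform = attention_entropy([[0.5, 0.5], [0.5, 0.5]])  # close to ln(2)
peaked = attention_entropy([[1.0, 0.0], [0.0, 1.0]])   # close to 0
print(uniform, peaked)
```

High entropy means a robot spreads its attention evenly over the others; entropy near zero means it attends almost entirely to one neighbor.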

================================================

FILE: robot_nav/marl_test_single.py

================================================

import statistics

from pathlib import Path

import irsim

from tqdm import tqdm

from robot_nav.SIM_ENV.sim_env import SIM_ENV

from robot_nav.models.MARL.marlTD3.marlTD3 import TD3

import torch

import numpy as np

class SINGLE_SIM(SIM_ENV):

"""

Simulation environment for multi-agent robot navigation using IRSim.

This class extends the SIM_ENV and provides a wrapper for multi-robot

simulation and interaction, supporting reward computation and custom reset logic.

Attributes:

env (object): IRSim simulation environment instance.

robot_goal (np.ndarray): Current goal position(s) for the robots.

num_robots (int): Number of robots in the environment.

x_range (tuple): World x-range.

y_range (tuple): World y-range.

"""

def __init__(

self,

world_file="worlds/circle_world.yaml",

disable_plotting=False,

reward_phase=2,

):

"""

Initialize the SINGLE_SIM environment.

Args:

world_file (str, optional): Path to the world configuration YAML file.

disable_plotting (bool, optional): If True, disables IRSim rendering and plotting.

reward_phase (int, optional): Reward configuration (1 or 2) passed to get_reward. Default is 2.

"""

display = not disable_plotting

self.env = irsim.make(

world_file, disable_all_plot=disable_plotting, display=display

)

self.num_robots = len(self.env.robot_list)

self.x_range = self.env._world.x_range

self.y_range = self.env._world.y_range

self.reward_phase = reward_phase

def step(self, action, connection, combined_weights=None):

"""

Perform a simulation step for all robots using the provided actions and connections.

Args:

action (list): List of actions for each robot [[lin_vel, ang_vel], ...].

connection (Tensor): Tensor of shape (num_robots, num_robots-1) containing logits indicating connections between robots.

combined_weights (Tensor or None, optional): Optional weights for each connection, shape (num_robots, num_robots-1).

Returns:

tuple: (

poses (list): List of [x, y, theta] for each robot,

distances (list): Distance to goal for each robot,

coss (list): Cosine of angle to goal for each robot,

sins (list): Sine of angle to goal for each robot,

collisions (list): Collision status for each robot,

goals (list): Goal reached status for each robot,

action (list): Actions applied,

rewards (list): Rewards computed,

positions (list): Current [x, y] for each robot,

goal_positions (list): Goal [x, y] for each robot,

)

"""

act = [

[0, 0] if self.env.robot_list[i].arrive else action[i]

for i in range(len(action))

]

self.env.step(action_id=[i for i in range(self.num_robots)], action=act)

self.env.render()

poses = []

distances = []

coss = []

sins = []

collisions = []

goals = []

rewards = []

positions = []

goal_positions = []

robot_states = [

[self.env.robot_list[i].state[0], self.env.robot_list[i].state[1]]

for i in range(self.num_robots)

]

for i in range(self.num_robots):

robot_state = self.env.robot_list[i].state

closest_robots = [

np.linalg.norm(

[

robot_states[j][0] - robot_state[0],

robot_states[j][1] - robot_state[1],

]

)

for j in range(self.num_robots)

if j != i

]

robot_goal = self.env.robot_list[i].goal

goal_vector = [

robot_goal[0].item() - robot_state[0].item(),

robot_goal[1].item() - robot_state[1].item(),

]

distance = np.linalg.norm(goal_vector)

goal = self.env.robot_list[i].arrive

pose_vector = [np.cos(robot_state[2]).item(), np.sin(robot_state[2]).item()]

cos, sin = self.cossin(pose_vector, goal_vector)

collision = self.env.robot_list[i].collision

action_i = action[i]

reward = self.get_reward(

goal, collision, action_i, closest_robots, distance, self.reward_phase

)

position = [robot_state[0].item(), robot_state[1].item()]

goal_position = [robot_goal[0].item(), robot_goal[1].item()]

distances.append(distance)

coss.append(cos)

sins.append(sin)

collisions.append(collision)

goals.append(goal)

rewards.append(reward)

positions.append(position)

poses.append(

[robot_state[0].item(), robot_state[1].item(), robot_state[2].item()]

)

goal_positions.append(goal_position)

i_probs = torch.sigmoid(

connection[i]

) # connection[i] is logits for "connect" per pair

i_connections = i_probs.tolist()

i_connections.insert(i, 0)

if combined_weights is not None:

i_weights = combined_weights[i].tolist()

i_weights.insert(i, 0)

for j in range(self.num_robots):

if i_connections[j] > 0.5:

if combined_weights is not None:

weight = i_weights[j]

else:

weight = 1

other_robot_state = self.env.robot_list[j].state

other_pos = [

other_robot_state[0].item(),

other_robot_state[1].item(),

]

rx = [position[0], other_pos[0]]

ry = [position[1], other_pos[1]]

self.env.draw_trajectory(

np.array([rx, ry]), refresh=True, linewidth=weight

)

return (

poses,

distances,

coss,

sins,

collisions,

goals,

action,

rewards,

positions,

goal_positions,

)

def reset(

self,

robot_state=None,

robot_goal=None,

random_obstacles=False,

random_obstacle_ids=None,

):

"""

Reset the simulation environment and optionally set robot and obstacle positions.

Args:

robot_state (list or None, optional): Initial state for robots as [x, y, theta, speed].

robot_goal (list or None, optional): Goal position(s) for the robots.

random_obstacles (bool, optional): If True, randomly position obstacles.

random_obstacle_ids (list or None, optional): IDs of obstacles to randomize.

Returns:

tuple: (

poses (list): List of [x, y, theta] for each robot,

distances (list): Distance to goal for each robot,

coss (list): Cosine of angle to goal for each robot,

sins (list): Sine of angle to goal for each robot,

collisions (list): All False after reset,

goals (list): All False after reset,

action (list): Initial action ([[0.0, 0.0], ...]),

rewards (list): Rewards for initial state,

positions (list): Initial [x, y] for each robot,

goal_positions (list): Initial goal [x, y] for each robot,

)

"""

self.env.reset()

self.robot_goal = self.env.robot.goal

action = [[0.0, 0.0] for _ in range(self.num_robots)]

con = torch.tensor(

[[0.0 for _ in range(self.num_robots - 1)] for _ in range(self.num_robots)]

)

poses, distance, cos, sin, _, _, action, reward, positions, goal_positions = (

self.step(action, con)

)

return (

poses,

distance,

cos,

sin,

[False] * self.num_robots,

[False] * self.num_robots,

action,

reward,

positions,

goal_positions,

)

@staticmethod

def get_reward(goal, collision, action, closest_robots, distance, phase=2):

"""

Calculate the reward for a robot given the current state and action.

Args:

goal (bool): Whether the robot reached its goal.

collision (bool): Whether a collision occurred.

action (list): [linear_velocity, angular_velocity] applied.

closest_robots (list): Distances to the closest other robots.

distance (float): Distance to the goal.

phase (int, optional): Reward phase/function selector (default: 2).

Returns:

float: Computed reward.

"""

match phase:

case 1:

if goal:

return 100.0

elif collision:

return -100.0 * 3 * action[0]

else:

r_dist = 1.5 / distance

cl_pen = 0

for rob in closest_robots:

add = 1.5 - rob if rob < 1.5 else 0

cl_pen += add

return action[0] - 0.5 * abs(action[1]) - cl_pen + r_dist

case 2:

if goal:

return 70.0

elif collision:

return -100.0 * 3 * action[0]

else:

cl_pen = 0

for rob in closest_robots:

add = (3 - rob) ** 2 if rob < 3 else 0

cl_pen += add

return -0.5 * abs(action[1]) - cl_pen

case _:

raise ValueError("Unknown reward phase")

def outside_of_bounds(poses):

"""

Check if any robot is outside the defined world boundaries.

Args:

poses (list): List of [x, y, theta] poses for each robot.

Returns:

bool: True if any robot is outside the 21x21 area centered at (6, 6), else False.

"""

outside = False

for pose in poses:

norm_x = pose[0] - 6

norm_y = pose[1] - 6

if abs(norm_x) > 10.5 or abs(norm_y) > 10.5:

outside = True

break

return outside

def main(args=None):

"""Main testing function"""

# ---- Hyperparameters and setup ----

action_dim = 2 # number of actions produced by the model

max_action = 1 # maximum absolute value of output actions

state_dim = 11 # number of input values in the neural network (vector length of state input)

device = torch.device(

"cuda" if torch.cuda.is_available() else "cpu"

) # using cuda if it is available, cpu otherwise

epoch = 1 # starting epoch number

episode = 0

max_steps = 600 # maximum number of steps in single episode

steps = 0 # starting step number

save_every = 5 # save the model every n training cycles

test_scenarios = 100

# ---- Instantiate simulation environment and model ----

sim = SINGLE_SIM(

world_file="worlds/circle_world.yaml", disable_plotting=True, reward_phase=2

) # instantiate environment

model = TD3(

state_dim=state_dim,

action_dim=action_dim,

max_action=max_action,

num_robots=sim.num_robots,

device=device,

save_every=save_every,

load_model=True,

model_name="TDR-MARL-test",

load_model_name="TDR-MARL-train",

load_directory=Path("robot_nav/models/MARL/marlTD3/checkpoint"),

attention="igs",

) # instantiate a model

connections = torch.tensor(

[[0.0 for _ in range(sim.num_robots - 1)] for _ in range(sim.num_robots)]

)

# ---- Take initial step in environment ----

poses, distance, cos, sin, collision, goal, a, reward, positions, goal_positions = (

sim.step([[0, 0] for _ in range(sim.num_robots)], connections)

) # get the initial step state

running_goals = 0

running_reward = 0

running_collisions = 0

running_timesteps = 0

ran_out_of_time = 0

reward_per_ep = []

goals_per_ep = []

col_per_ep = []

lin_actions = []

ang_actions = []

entropy_list = []

timesteps_per_ep = []

epsilon = 1e-6

pbar = tqdm(total=test_scenarios)

while episode < test_scenarios:

state, terminal = model.prepare_state(

poses, distance, cos, sin, collision, a, goal_positions

)

action, connection, combined_weights = model.get_action(

np.array(state), False

) # get an action from the model

combined_weights_norm = combined_weights / (

combined_weights.sum(dim=-1, keepdim=True) + epsilon

)

entropy = (

(

-(combined_weights_norm * (combined_weights_norm + epsilon).log())

.sum(dim=-1)

.mean()

)

.data.cpu()

.numpy()

)

entropy_list.append(entropy)

a_in = [[(a[0] + 1) / 4, a[1]] for a in action]

(

poses,

distance,

cos,

sin,

collision,

goal,

a,

reward,

positions,

goal_positions,

) = sim.step(

a_in, connection, combined_weights

) # get data from the environment

running_goals += sum(goal)

running_collisions += sum(collision)

running_reward += sum(reward)

running_timesteps += 1

for j in range(len(a_in)):

lin_actions.append(a_in[j][0].item())

ang_actions.append(a_in[j][1].item())

outside = outside_of_bounds(poses)

if (

sum(collision) > 0.5 or steps == max_steps or int(sum(goal)) == len(goal)

): # reset the environment if a collision occurred, max_steps were taken, or all robots reached their goals

(

poses,

distance,

cos,

sin,

collision,

goal,

a,

reward,

positions,

goal_positions,

) = sim.reset()

reward_per_ep.append(running_reward)

running_reward = 0

goals_per_ep.append(running_goals)

running_goals = 0

col_per_ep.append(running_collisions)

running_collisions = 0

timesteps_per_ep.append(running_timesteps)

running_timesteps = 0

if steps == max_steps:

ran_out_of_time += 1

steps = 0

episode += 1

pbar.update(1)

else:

steps += 1

cols = sum(col_per_ep) / 2

goals_per_ep = np.array(goals_per_ep, dtype=np.float32)

col_per_ep = np.array(col_per_ep, dtype=np.float32)

avg_ep_col = statistics.mean(col_per_ep)

avg_ep_col_std = statistics.stdev(col_per_ep)

avg_ep_goals = statistics.mean(goals_per_ep)

avg_ep_goals_std = statistics.stdev(goals_per_ep)

t_per_ep = np.array(timesteps_per_ep, dtype=np.float32)

avg_ep_t = statistics.mean(t_per_ep)

avg_ep_t_std = statistics.stdev(t_per_ep)

print(f"avg_ep_col: {avg_ep_col}")

print(f"avg_ep_col_std: {avg_ep_col_std}")

print(f"success rate: {test_scenarios - cols}")

print(f"avg_ep_goals: {avg_ep_goals}")

print(f"avg_ep_goals_std: {avg_ep_goals_std}")

print(f"avg_ep_t: {avg_ep_t}")

print(f"avg_ep_t_std: {avg_ep_t_std}")

print(f"ran out of time: {ran_out_of_time}")

print("..............................................")

if __name__ == "__main__":

main()

================================================

FILE: robot_nav/marl_train.py

================================================

from pathlib import Path

from robot_nav.models.MARL.marlTD3.marlTD3 import TD3

import torch

import numpy as np

from robot_nav.SIM_ENV.marl_sim import MARL_SIM

from utils import get_buffer

def outside_of_bounds(poses):

"""

Check if any robot is outside the defined world boundaries.

Args:

poses (list): List of [x, y, theta] poses for each robot.

Returns:

bool: True if any robot is outside the 21x21 area centered at (6, 6), else False.

"""

outside = False

for pose in poses:

norm_x = pose[0] - 6

norm_y = pose[1] - 6

if abs(norm_x) > 10.5 or abs(norm_y) > 10.5:

outside = True

break

return outside

def main(args=None):

"""Main training function"""

# ---- Hyperparameters and setup ----

action_dim = 2 # number of actions produced by the model

max_action = 1 # maximum absolute value of output actions

state_dim = 11 # number of input values in the neural network (vector length of state input)

device = torch.device(

"cuda" if torch.cuda.is_available() else "cpu"

) # using cuda if it is available, cpu otherwise

max_epochs = 160 # max number of epochs

epoch = 1 # starting epoch number

episode = 0 # starting episode number

train_every_n = 10 # train and update network parameters every n episodes

training_iterations = 80 # how many batches to use for single training cycle

batch_size = 16 # batch size for each training iteration

max_steps = 300 # maximum number of steps in single episode

steps = 0 # starting step number

load_saved_buffer = False # whether to load experiences from assets/data.yml

pretrain = False # whether to use the loaded experiences to pre-train the model (load_saved_buffer must be True)

pretraining_iterations = (

10 # number of training iterations to run during pre-training

)

save_every = 5 # save the model every n training cycles

# ---- Instantiate simulation environment and model ----

sim = MARL_SIM(

world_file="worlds/multi_robot_world.yaml",

disable_plotting=True,

reward_phase=1,

) # instantiate environment

model = TD3(

state_dim=state_dim,

action_dim=action_dim,

max_action=max_action,

num_robots=sim.num_robots,

device=device,

save_every=save_every,

load_model=False,

model_name="TDR-MARL-train",

load_model_name="saved_model",

load_directory=Path("robot_nav/models/MARL/marlTD3/checkpoint"),

attention="iga",

) # instantiate a model

# ---- Setup replay buffer and initial connections ----

replay_buffer = get_buffer(

model,

sim,

load_saved_buffer,

pretrain,

pretraining_iterations,

training_iterations,

batch_size,

)

connections = torch.tensor(

[[0.0 for _ in range(sim.num_robots - 1)] for _ in range(sim.num_robots)]

)

# ---- Take initial step in environment ----

poses, distance, cos, sin, collision, goal, a, reward, positions, goal_positions = (

sim.step([[0, 0] for _ in range(sim.num_robots)], connections)

) # get the initial step state

running_goals = 0

running_collisions = 0

running_timesteps = 0

# ---- Main training loop ----

while epoch < max_epochs: # train until max_epochs is reached

state, terminal = model.prepare_state(

poses, distance, cos, sin, collision, a, goal_positions

) # get a state representation from the data returned by the environment

action, connection, combined_weights = model.get_action(

np.array(state), True

) # get an action from the model

a_in = [

[(a[0] + 1) / 4, a[1]] for a in action

] # map linear velocity from [-1, 1] to the [0, 0.5] m/s range

(

poses,

distance,

cos,

sin,

collision,

goal,

a,

reward,

positions,

goal_positions,

) = sim.step(

a_in, connection, combined_weights

) # get data from the environment

running_goals += sum(goal)

running_collisions += sum(collision)

running_timesteps += 1

next_state, terminal = model.prepare_state(

poses, distance, cos, sin, collision, a, goal_positions

) # get a next state representation

replay_buffer.add(

state, action, reward, terminal, next_state

) # add experience to the replay buffer

outside = outside_of_bounds(poses)

if (

any(terminal) or steps == max_steps or outside

): # reset the environment if a terminal state was reached, max_steps were taken, or a robot left the bounds

(

poses,

distance,

cos,

sin,

collision,

goal,

a,

reward,

positions,

goal_positions,

) = sim.reset()

episode += 1

if episode % train_every_n == 0:

model.writer.add_scalar(

"run/avg_goal", running_goals / running_timesteps, epoch

)

model.writer.add_scalar(

"run/avg_collision", running_collisions / running_timesteps, epoch

)

running_goals = 0

running_collisions = 0

running_timesteps = 0

epoch += 1

model.train(

replay_buffer=replay_buffer,

iterations=training_iterations,

batch_size=batch_size,

) # train the model and update its parameters

steps = 0

else:

steps += 1

if __name__ == "__main__":

main()
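# Illustrative sketch (not part of the original script): the comprehension in the
# training loop maps the actor's tanh output for linear velocity from [-1, 1]
# onto [0, 0.5] m/s via (v + 1) / 4 and passes angular velocity through unchanged.
# `scale_action` is a hypothetical helper name used only for this example.
def scale_action(action):
    """Return [linear, angular] pairs with linear velocity rescaled to [0, 0.5]."""
    return [[(a[0] + 1) / 4, a[1]] for a in action]


# Endpoints and midpoint of the tanh range for three hypothetical robots.
example_actions = [[-1.0, 0.3], [0.0, -0.2], [1.0, 0.0]]
scaled_actions = scale_action(example_actions)
# scaled_actions == [[0.0, 0.3], [0.25, -0.2], [0.5, 0.0]]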

================================================

FILE: robot_nav/models/CNNTD3/CNNTD3.py

================================================

from pathlib import Path

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

from numpy import inf

from torch.utils.tensorboard import SummaryWriter

from robot_nav.utils import get_max_bound

class Actor(nn.Module):

"""

Actor network for the CNNTD3 agent.

This network takes as input a state composed of laser scan data, goal position encoding,

and previous action. It processes the scan through a 1D CNN stack and embeds the other

inputs before merging all features through fully connected layers to output a continuous

action vector.

Args:

action_dim (int): The dimension of the action space.

Architecture:

- 1D CNN layers process the laser scan data.

- Fully connected layers embed the goal vector (distance, cos, sin) and last action.

- Combined features are passed through two fully connected layers with LeakyReLU.

- Final action output is scaled with Tanh to bound the values.

"""

def __init__(self, action_dim):

super(Actor, self).__init__()

self.cnn1 = nn.Conv1d(1, 4, kernel_size=8, stride=4)

self.cnn2 = nn.Conv1d(4, 8, kernel_size=8, stride=4)

self.cnn3 = nn.Conv1d(8, 4, kernel_size=4, stride=2)

self.goal_embed = nn.Linear(3, 10)

self.action_embed = nn.Linear(2, 10)

self.layer_1 = nn.Linear(36, 400)

torch.nn.init.kaiming_uniform_(self.layer_1.weight, nonlinearity="leaky_relu")

self.layer_2 = nn.Linear(400, 300)

torch.nn.init.kaiming_uniform_(self.layer_2.weight, nonlinearity="leaky_relu")

self.layer_3 = nn.Linear(300, action_dim)

self.tanh = nn.Tanh()

def forward(self, s):

"""

Forward pass through the Actor network.

Args:

s (torch.Tensor): Input state tensor of shape (batch_size, state_dim).

The last 5 elements are [distance, cos, sin, lin_vel, ang_vel].

Returns:

(torch.Tensor): Action tensor of shape (batch_size, action_dim),

with values in range [-1, 1] due to tanh activation.

"""

if len(s.shape) == 1:

s = s.unsqueeze(0)

laser = s[:, :-5]

goal = s[:, -5:-2]

act = s[:, -2:]

laser = laser.unsqueeze(1)

l = F.leaky_relu(self.cnn1(laser))

l = F.leaky_relu(self.cnn2(l))

l = F.leaky_relu(self.cnn3(l))

l = l.flatten(start_dim=1)

g = F.leaky_relu(self.goal_embed(goal))

a = F.leaky_relu(self.action_embed(act))

s = torch.concat((l, g, a), dim=-1)

s = F.leaky_relu(self.layer_1(s))

s = F.leaky_relu(self.layer_2(s))

a = self.tanh(self.layer_3(s))

return a
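# Illustrative sketch (not part of the original module): why layer_1 takes 36 inputs.
# A Conv1d layer without padding or dilation maps length L to
# floor((L - kernel_size) / stride) + 1. The 180-beam scan length is an assumption
# made for this example; it is one scan length for which the three layers above
# leave 4 channels x 4 positions = 16 features, plus 10 goal and 10 action features.
# `conv1d_out_len` and `actor_feature_size` are hypothetical helper names.
def conv1d_out_len(length, kernel_size, stride):
    """Output length of an unpadded, undilated 1D convolution."""
    return (length - kernel_size) // stride + 1


def actor_feature_size(scan_len, out_channels=4, goal_feats=10, action_feats=10):
    """Size of the concatenated feature vector fed to layer_1."""
    length = scan_len
    for kernel_size, stride in ((8, 4), (8, 4), (4, 2)):  # cnn1, cnn2, cnn3
        length = conv1d_out_len(length, kernel_size, stride)
    return out_channels * length + goal_feats + action_feats


# actor_feature_size(180) == 36, matching nn.Linear(36, 400)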

class Critic(nn.Module):

"""

Critic network for the CNNTD3 agent.

The Critic estimates Q-values for state-action pairs using two separate sub-networks

(Q1 and Q2), as required by the TD3 algorithm. Each sub-network uses a combination of

CNN-extracted features, embedded goal and previous action features, and the current action.

Args:

action_dim (int): The dimension of the action space.

Architecture:

- Shared CNN layers process the laser scan input.

- Goal and previous action are embedded and concatenated.

- Each Q-network uses separate fully connected layers to produce scalar Q-values.

- Both Q-networks receive the full state and current action.

- Outputs two Q-value tensors (Q1, Q2), as used by TD3's clipped double-Q learning.

"""

def __init__(self, action_dim):

super(Critic, self).__init__()

self.cnn1 = nn.Conv1d(1, 4, kernel_size=8, stride=4)

self.cnn2 = nn.Conv1d(4, 8, kernel_size=8, stride=4)

self.cnn3 = nn.Conv1d(8, 4, kernel_size=4, stride=2)

self.goal_embed = nn.Linear(3, 10)