
Repository: datawhalechina/smoothly-vslam

Branch: main

Commit: b267b9fc6260

Files: 58

Total size: 344.1 KB

Directory structure:

gitextract_pi78t8bo/

├── LICENSE

├── README.md

├── TASK.md

├── code/

│ ├── ch10/

│ │ ├── CMakeLists.txt

│ │ ├── cmake_modules/

│ │ │ └── FindDBoW3.cmake

│ │ ├── feature_training.cpp

│ │ ├── gen_vocab_large.cpp

│ │ └── loop_closure.cpp

│ ├── ch11/

│ │ └── dense_mono/

│ │ ├── CMakeLists.txt

│ │ └── dense_mapping.cpp

│ └── ch12/

│ └── myslam/

│ ├── CMakeLists.txt

│ ├── app/

│ │ ├── CMakeLists.txt

│ │ └── run_kitti_stereo.cpp

│ ├── bin/

│ │ └── run_kitti_stereo

│ ├── cmake_modules/

│ │ ├── FindCSparse.cmake

│ │ ├── FindG2O.cmake

│ │ └── FindGlog.cmake

│ ├── config/

│ │ └── default.yaml

│ ├── include/

│ │ └── myslam/

│ │ ├── algorithm.h

│ │ ├── backend.h

│ │ ├── camera.h

│ │ ├── common_include.h

│ │ ├── config.h

│ │ ├── dataset.h

│ │ ├── feature.h

│ │ ├── frame.h

│ │ ├── frontend.h

│ │ ├── g2o_types.h

│ │ ├── map.h

│ │ ├── mappoint.h

│ │ ├── viewer.h

│ │ └── visual_odometry.h

│ └── src/

│ ├── CMakeLists.txt

│ ├── backend.cpp

│ ├── camera.cpp

│ ├── config.cpp

│ ├── dataset.cpp

│ ├── feature.cpp

│ ├── frame.cpp

│ ├── frontend.cpp

│ ├── map.cpp

│ ├── mappoint.cpp

│ ├── viewer.cpp

│ └── visual_odometry.cpp

└── docs/

├── _sidebar.md

├── chapter1/

│ └── chapter1.md

├── chapter10/

│ └── chapter10.md

├── chapter11/

│ └── chapter11.md

├── chapter12/

│ └── chapter12.md

├── chapter2/

│ └── chapter2.md

├── chapter3/

│ └── chapter3.md

├── chapter4/

│ └── chapter4.md

├── chapter5/

│ └── chapter5.md

├── chapter6/

│ └── chapter6.md

├── chapter7/

│ └── chapter7.md

├── chapter8/

│ └── chapter8.md

├── chapter9/

│ └── chapter9.md

└── reference_answers_for_each_chapter/

└── reference_answers_for_each_chapter.md

================================================

FILE CONTENTS

================================================

================================================

FILE: LICENSE

================================================

GNU GENERAL PUBLIC LICENSE

Version 2, June 1991

Copyright (C) 1989, 1991 Free Software Foundation, Inc.,

51 Franklin Street, Fifth Floor, Boston, MA 02110-1301 USA

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The licenses for most software are designed to take away your

freedom to share and change it. By contrast, the GNU General Public

License is intended to guarantee your freedom to share and change free

software--to make sure the software is free for all its users. This

General Public License applies to most of the Free Software

Foundation's software and to any other program whose authors commit to

using it. (Some other Free Software Foundation software is covered by

the GNU Lesser General Public License instead.) You can apply it to

your programs, too.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

this service if you wish), that you receive source code or can get it

if you want it, that you can change the software or use pieces of it

in new free programs; and that you know you can do these things.

To protect your rights, we need to make restrictions that forbid

anyone to deny you these rights or to ask you to surrender the rights.

These restrictions translate to certain responsibilities for you if you

distribute copies of the software, or if you modify it.

For example, if you distribute copies of such a program, whether

gratis or for a fee, you must give the recipients all the rights that

you have. You must make sure that they, too, receive or can get the

source code. And you must show them these terms so they know their

rights.

We protect your rights with two steps: (1) copyright the software, and

(2) offer you this license which gives you legal permission to copy,

distribute and/or modify the software.

Also, for each author's protection and ours, we want to make certain

that everyone understands that there is no warranty for this free

software. If the software is modified by someone else and passed on, we

want its recipients to know that what they have is not the original, so

that any problems introduced by others will not reflect on the original

authors' reputations.

Finally, any free program is threatened constantly by software

patents. We wish to avoid the danger that redistributors of a free

program will individually obtain patent licenses, in effect making the

program proprietary. To prevent this, we have made it clear that any

patent must be licensed for everyone's free use or not licensed at all.

The precise terms and conditions for copying, distribution and

modification follow.

GNU GENERAL PUBLIC LICENSE

TERMS AND CONDITIONS FOR COPYING, DISTRIBUTION AND MODIFICATION

0. This License applies to any program or other work which contains

a notice placed by the copyright holder saying it may be distributed

under the terms of this General Public License. The "Program", below,

refers to any such program or work, and a "work based on the Program"

means either the Program or any derivative work under copyright law:

that is to say, a work containing the Program or a portion of it,

either verbatim or with modifications and/or translated into another

language. (Hereinafter, translation is included without limitation in

the term "modification".) Each licensee is addressed as "you".

Activities other than copying, distribution and modification are not

covered by this License; they are outside its scope. The act of

running the Program is not restricted, and the output from the Program

is covered only if its contents constitute a work based on the

Program (independent of having been made by running the Program).

Whether that is true depends on what the Program does.

1. You may copy and distribute verbatim copies of the Program's

source code as you receive it, in any medium, provided that you

conspicuously and appropriately publish on each copy an appropriate

copyright notice and disclaimer of warranty; keep intact all the

notices that refer to this License and to the absence of any warranty;

and give any other recipients of the Program a copy of this License

along with the Program.

You may charge a fee for the physical act of transferring a copy, and

you may at your option offer warranty protection in exchange for a fee.

2. You may modify your copy or copies of the Program or any portion

of it, thus forming a work based on the Program, and copy and

distribute such modifications or work under the terms of Section 1

above, provided that you also meet all of these conditions:

a) You must cause the modified files to carry prominent notices

stating that you changed the files and the date of any change.

b) You must cause any work that you distribute or publish, that in

whole or in part contains or is derived from the Program or any

part thereof, to be licensed as a whole at no charge to all third

parties under the terms of this License.

c) If the modified program normally reads commands interactively

when run, you must cause it, when started running for such

interactive use in the most ordinary way, to print or display an

announcement including an appropriate copyright notice and a

notice that there is no warranty (or else, saying that you provide

a warranty) and that users may redistribute the program under

these conditions, and telling the user how to view a copy of this

License. (Exception: if the Program itself is interactive but

does not normally print such an announcement, your work based on

the Program is not required to print an announcement.)

These requirements apply to the modified work as a whole. If

identifiable sections of that work are not derived from the Program,

and can be reasonably considered independent and separate works in

themselves, then this License, and its terms, do not apply to those

sections when you distribute them as separate works. But when you

distribute the same sections as part of a whole which is a work based

on the Program, the distribution of the whole must be on the terms of

this License, whose permissions for other licensees extend to the

entire whole, and thus to each and every part regardless of who wrote it.

Thus, it is not the intent of this section to claim rights or contest

your rights to work written entirely by you; rather, the intent is to

exercise the right to control the distribution of derivative or

collective works based on the Program.

In addition, mere aggregation of another work not based on the Program

with the Program (or with a work based on the Program) on a volume of

a storage or distribution medium does not bring the other work under

the scope of this License.

3. You may copy and distribute the Program (or a work based on it,

under Section 2) in object code or executable form under the terms of

Sections 1 and 2 above provided that you also do one of the following:

a) Accompany it with the complete corresponding machine-readable

source code, which must be distributed under the terms of Sections

1 and 2 above on a medium customarily used for software interchange; or,

b) Accompany it with a written offer, valid for at least three

years, to give any third party, for a charge no more than your

cost of physically performing source distribution, a complete

machine-readable copy of the corresponding source code, to be

distributed under the terms of Sections 1 and 2 above on a medium

customarily used for software interchange; or,

c) Accompany it with the information you received as to the offer

to distribute corresponding source code. (This alternative is

allowed only for noncommercial distribution and only if you

received the program in object code or executable form with such

an offer, in accord with Subsection b above.)

The source code for a work means the preferred form of the work for

making modifications to it. For an executable work, complete source

code means all the source code for all modules it contains, plus any

associated interface definition files, plus the scripts used to

control compilation and installation of the executable. However, as a

special exception, the source code distributed need not include

anything that is normally distributed (in either source or binary

form) with the major components (compiler, kernel, and so on) of the

operating system on which the executable runs, unless that component

itself accompanies the executable.

If distribution of executable or object code is made by offering

access to copy from a designated place, then offering equivalent

access to copy the source code from the same place counts as

distribution of the source code, even though third parties are not

compelled to copy the source along with the object code.

4. You may not copy, modify, sublicense, or distribute the Program

except as expressly provided under this License. Any attempt

otherwise to copy, modify, sublicense or distribute the Program is

void, and will automatically terminate your rights under this License.

However, parties who have received copies, or rights, from you under

this License will not have their licenses terminated so long as such

parties remain in full compliance.

5. You are not required to accept this License, since you have not

signed it. However, nothing else grants you permission to modify or

distribute the Program or its derivative works. These actions are

prohibited by law if you do not accept this License. Therefore, by

modifying or distributing the Program (or any work based on the

Program), you indicate your acceptance of this License to do so, and

all its terms and conditions for copying, distributing or modifying

the Program or works based on it.

6. Each time you redistribute the Program (or any work based on the

Program), the recipient automatically receives a license from the

original licensor to copy, distribute or modify the Program subject to

these terms and conditions. You may not impose any further

restrictions on the recipients' exercise of the rights granted herein.

You are not responsible for enforcing compliance by third parties to

this License.

7. If, as a consequence of a court judgment or allegation of patent

infringement or for any other reason (not limited to patent issues),

conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot

distribute so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you

may not distribute the Program at all. For example, if a patent

license would not permit royalty-free redistribution of the Program by

all those who receive copies directly or indirectly through you, then

the only way you could satisfy both it and this License would be to

refrain entirely from distribution of the Program.

If any portion of this section is held invalid or unenforceable under

any particular circumstance, the balance of the section is intended to

apply and the section as a whole is intended to apply in other

circumstances.

It is not the purpose of this section to induce you to infringe any

patents or other property right claims or to contest validity of any

such claims; this section has the sole purpose of protecting the

integrity of the free software distribution system, which is

implemented by public license practices. Many people have made

generous contributions to the wide range of software distributed

through that system in reliance on consistent application of that

system; it is up to the author/donor to decide if he or she is willing

to distribute software through any other system and a licensee cannot

impose that choice.

This section is intended to make thoroughly clear what is believed to

be a consequence of the rest of this License.

8. If the distribution and/or use of the Program is restricted in

certain countries either by patents or by copyrighted interfaces, the

original copyright holder who places the Program under this License

may add an explicit geographical distribution limitation excluding

those countries, so that distribution is permitted only in or among

countries not thus excluded. In such case, this License incorporates

the limitation as if written in the body of this License.

9. The Free Software Foundation may publish revised and/or new versions

of the General Public License from time to time. Such new versions will

be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the Program

specifies a version number of this License which applies to it and "any

later version", you have the option of following the terms and conditions

either of that version or of any later version published by the Free

Software Foundation. If the Program does not specify a version number of

this License, you may choose any version ever published by the Free Software

Foundation.

10. If you wish to incorporate parts of the Program into other free

programs whose distribution conditions are different, write to the author

to ask for permission. For software which is copyrighted by the Free

Software Foundation, write to the Free Software Foundation; we sometimes

make exceptions for this. Our decision will be guided by the two goals

of preserving the free status of all derivatives of our free software and

of promoting the sharing and reuse of software generally.

NO WARRANTY

11. BECAUSE THE PROGRAM IS LICENSED FREE OF CHARGE, THERE IS NO WARRANTY

FOR THE PROGRAM, TO THE EXTENT PERMITTED BY APPLICABLE LAW. EXCEPT WHEN

OTHERWISE STATED IN WRITING THE COPYRIGHT HOLDERS AND/OR OTHER PARTIES

PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY OF ANY KIND, EITHER EXPRESSED

OR IMPLIED, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF

MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE. THE ENTIRE RISK AS

TO THE QUALITY AND PERFORMANCE OF THE PROGRAM IS WITH YOU. SHOULD THE

PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF ALL NECESSARY SERVICING,

REPAIR OR CORRECTION.

12. IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MAY MODIFY AND/OR

REDISTRIBUTE THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES,

INCLUDING ANY GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING

OUT OF THE USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED

TO LOSS OF DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY

YOU OR THIRD PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER

PROGRAMS), EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE

POSSIBILITY OF SUCH DAMAGES.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

convey the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

<one line to give the program's name and a brief idea of what it does.>

Copyright (C) <year> <name of author>

This program is free software; you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation; either version 2 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License along

with this program; if not, write to the Free Software Foundation, Inc.,

51 Franklin Street, Fifth Floor, Boston, MA 02110-1301 USA.

Also add information on how to contact you by electronic and paper mail.

If the program is interactive, make it output a short notice like this

when it starts in an interactive mode:

Gnomovision version 69, Copyright (C) year name of author

Gnomovision comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

This is free software, and you are welcome to redistribute it

under certain conditions; type `show c' for details.

The hypothetical commands `show w' and `show c' should show the appropriate

parts of the General Public License. Of course, the commands you use may

be called something other than `show w' and `show c'; they could even be

mouse-clicks or menu items--whatever suits your program.

You should also get your employer (if you work as a programmer) or your

school, if any, to sign a "copyright disclaimer" for the program, if

necessary. Here is a sample; alter the names:

Yoyodyne, Inc., hereby disclaims all copyright interest in the program

`Gnomovision' (which makes passes at compilers) written by James Hacker.

<signature of Ty Coon>, 1 April 1989

Ty Coon, President of Vice

This General Public License does not permit incorporating your program into

proprietary programs. If your program is a subroutine library, you may

consider it more useful to permit linking proprietary applications with the

library. If this is what you want to do, use the GNU Lesser General

Public License instead of this License.

================================================

FILE: README.md

================================================

# smoothly-vslam

- **Project introduction**

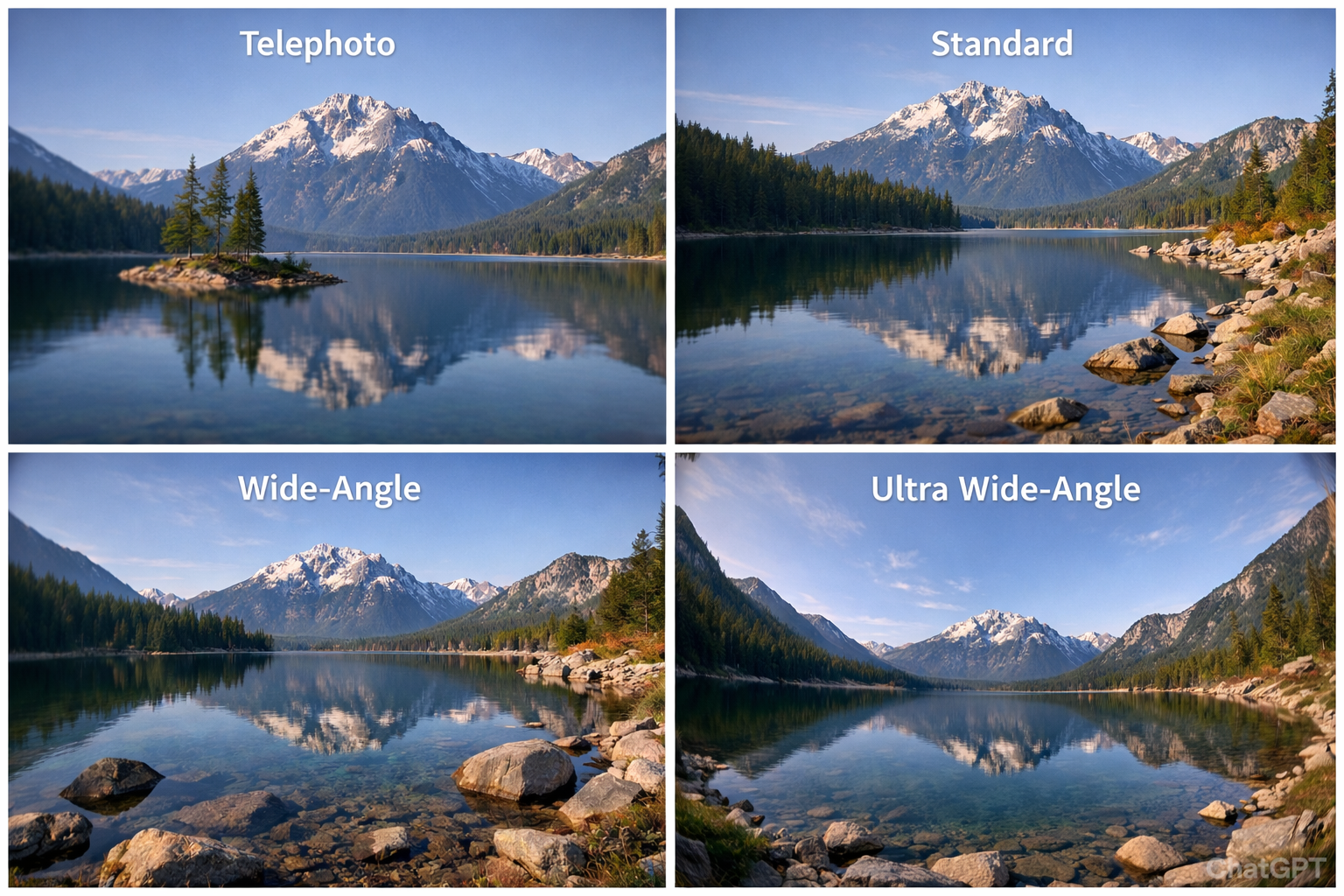

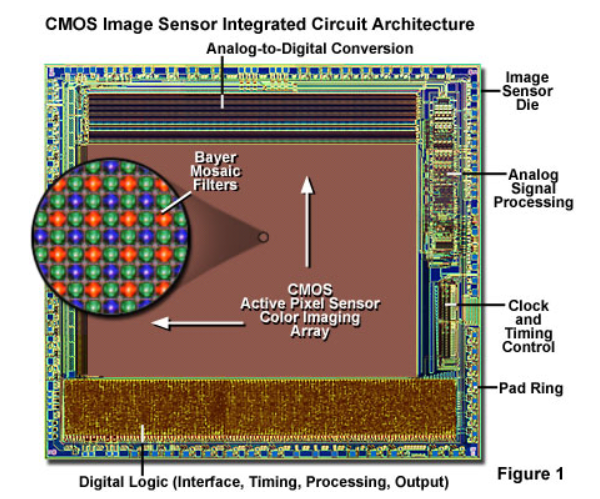

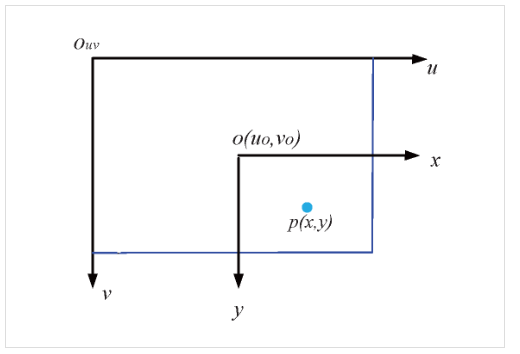

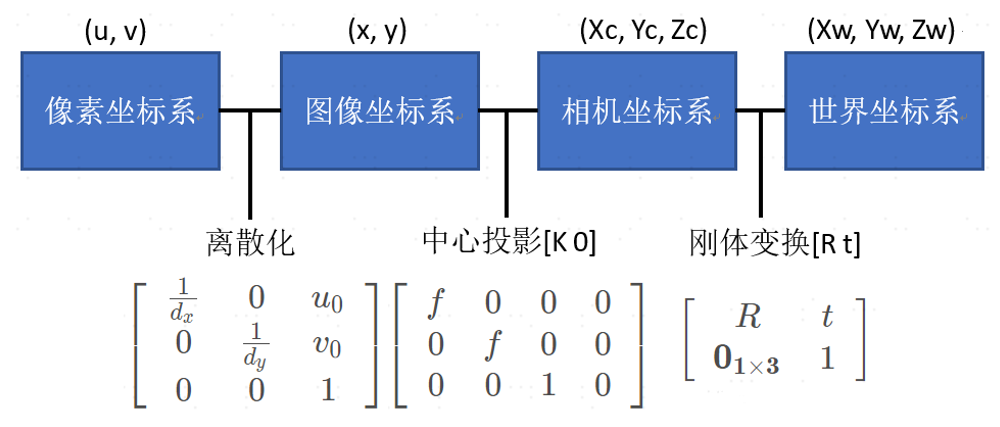

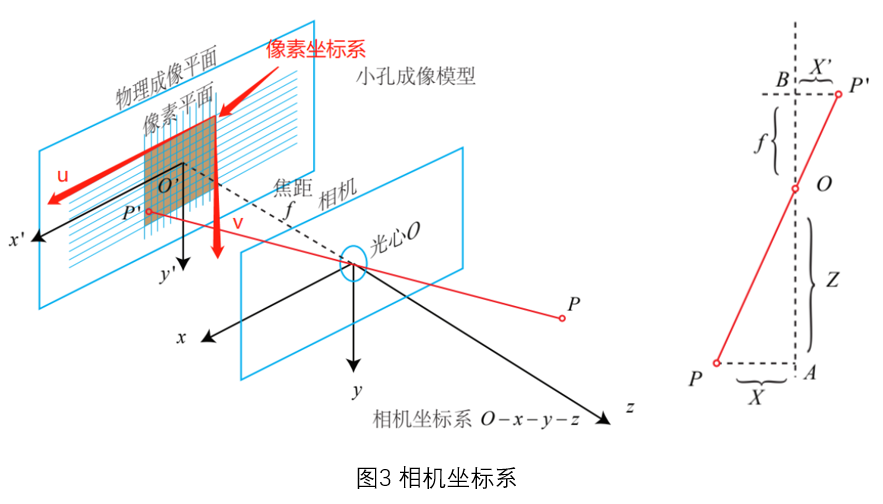

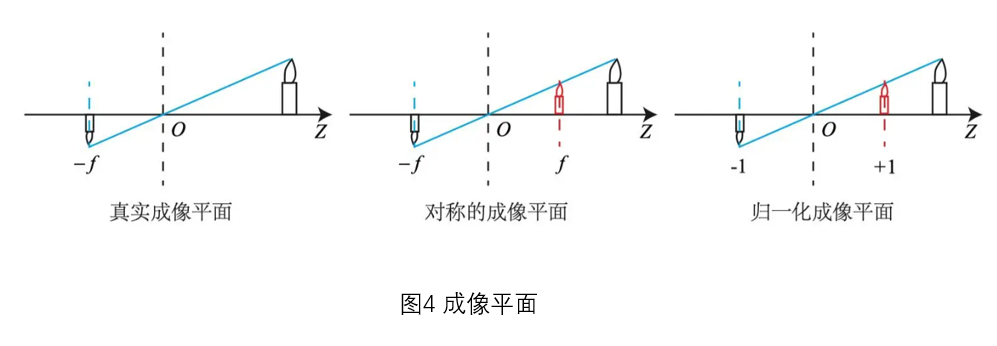

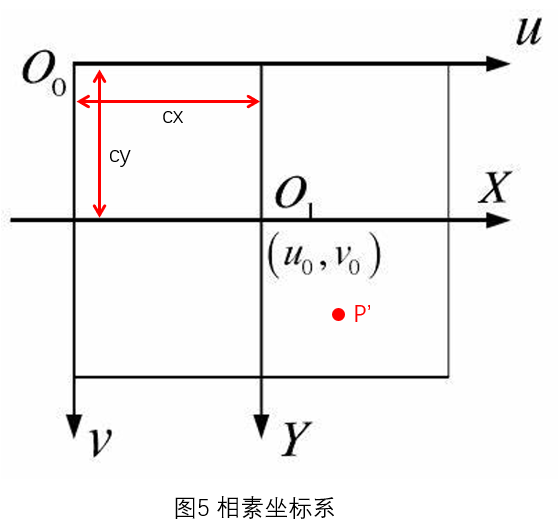

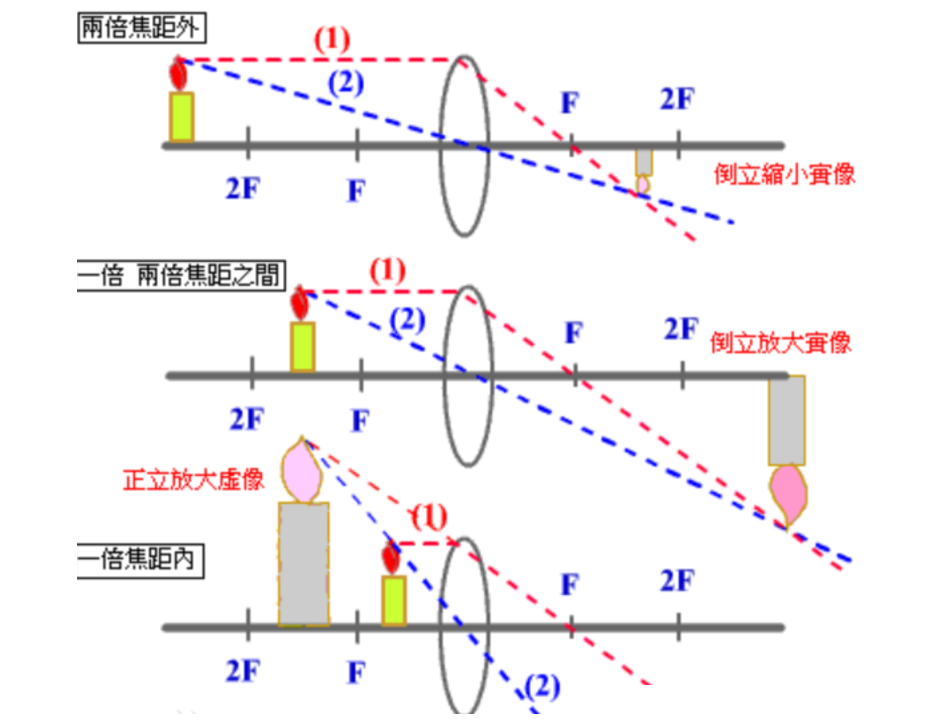

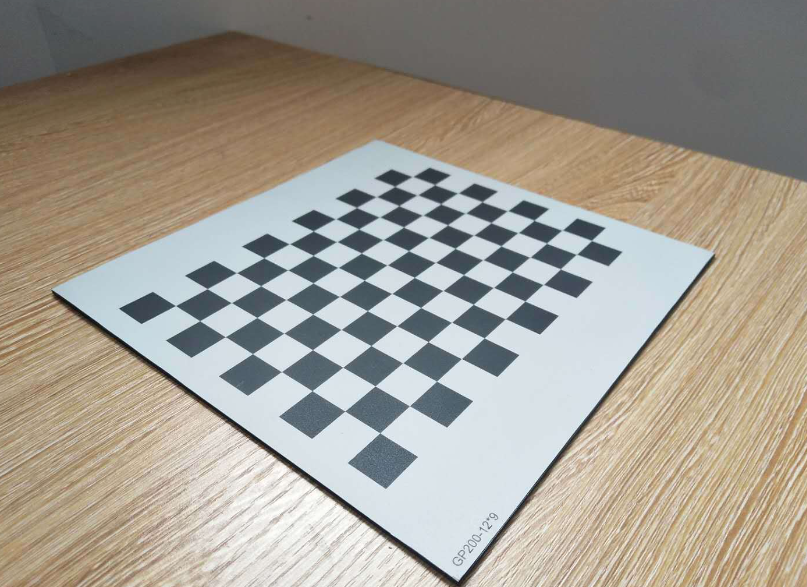

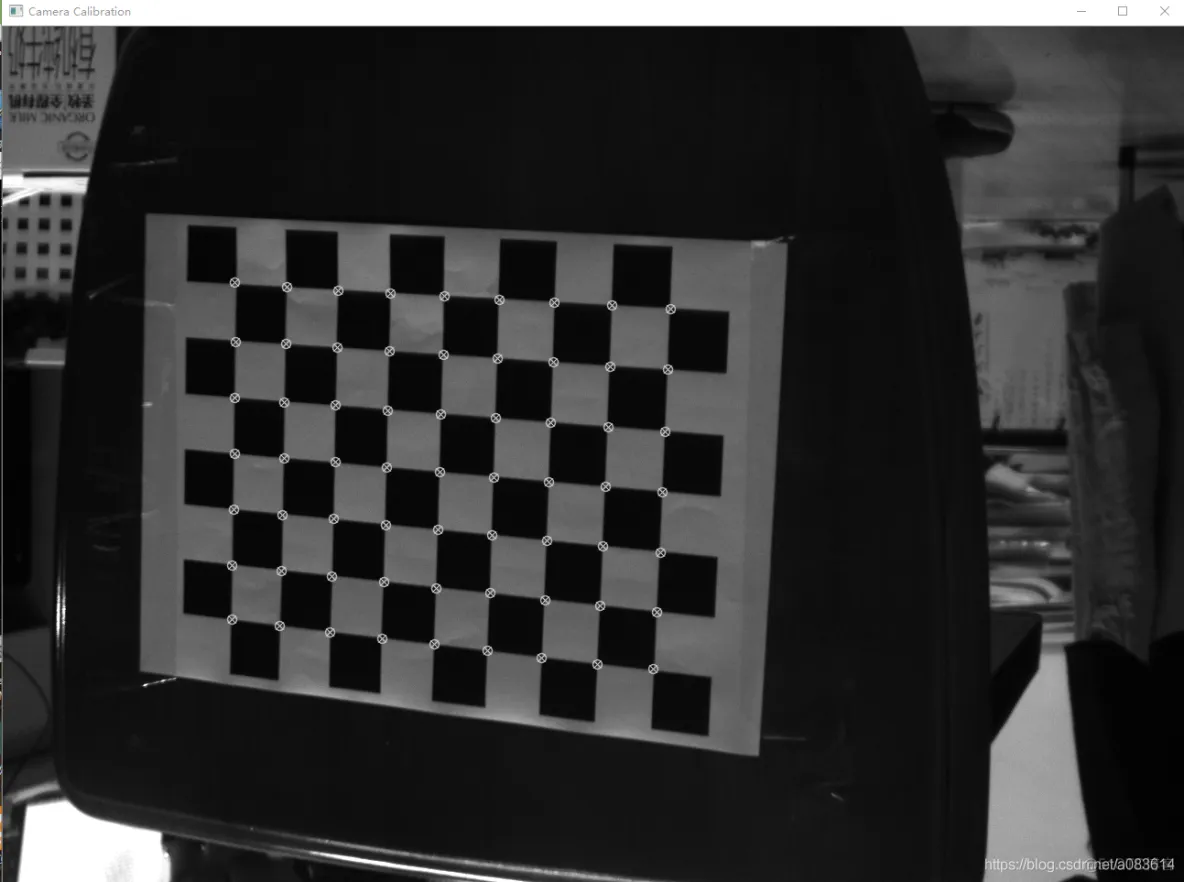

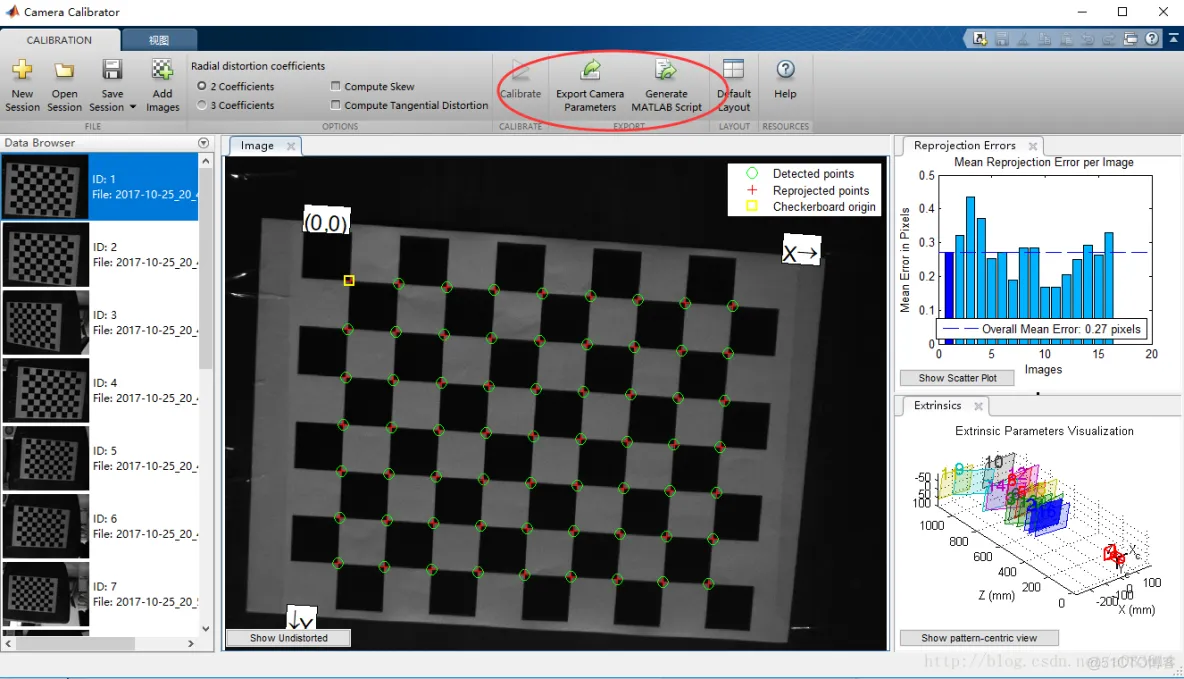

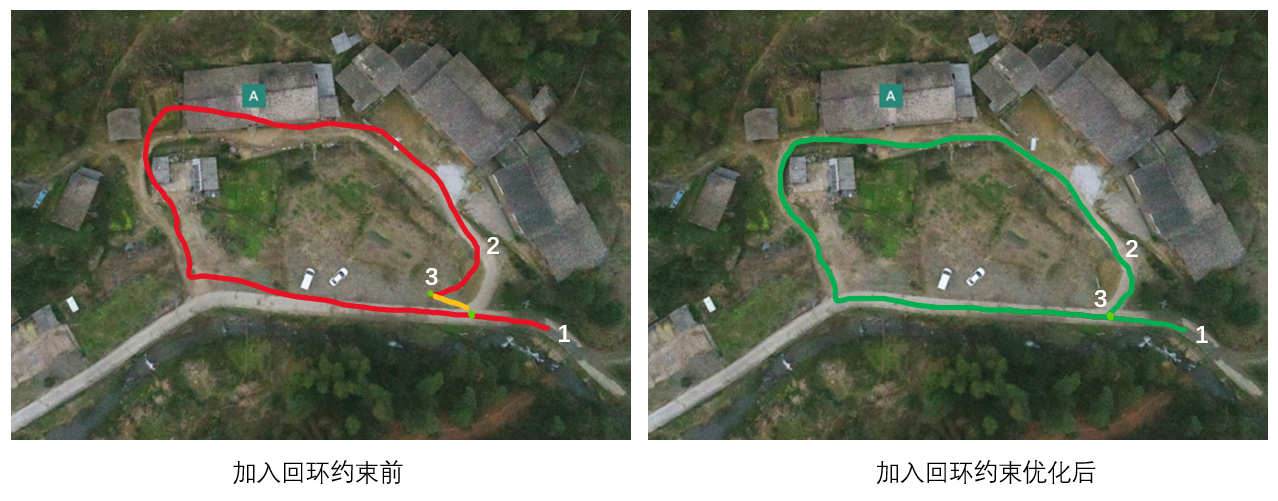

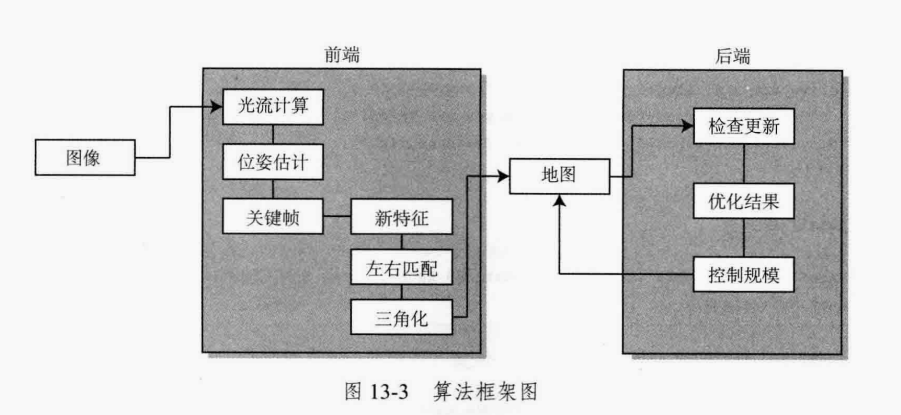

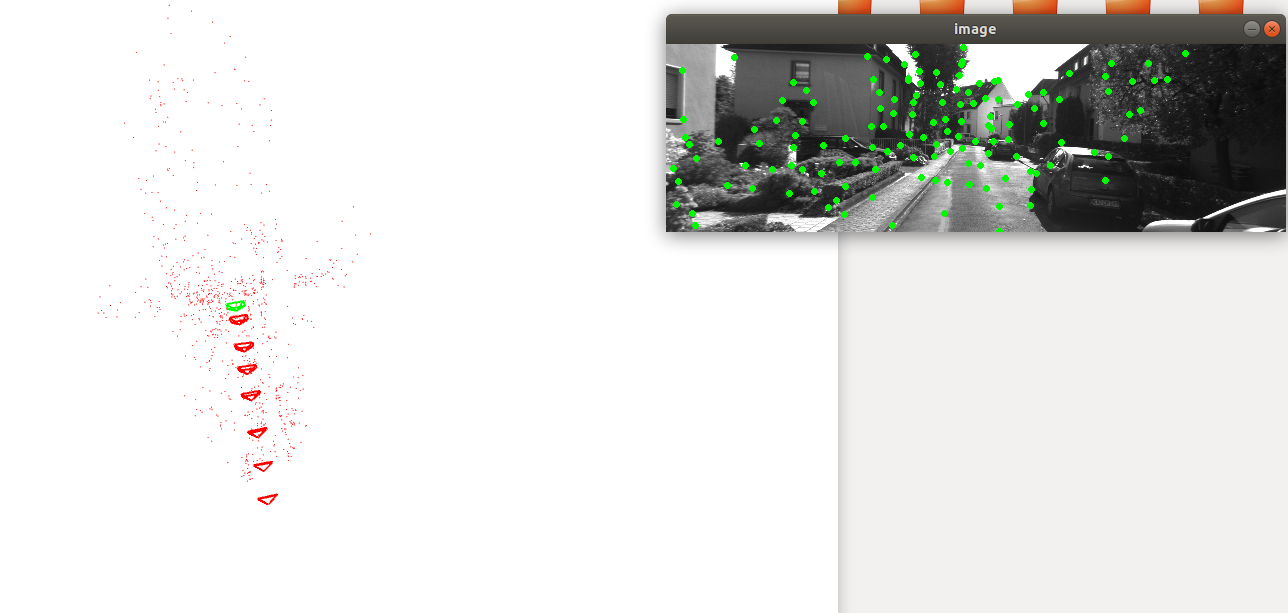

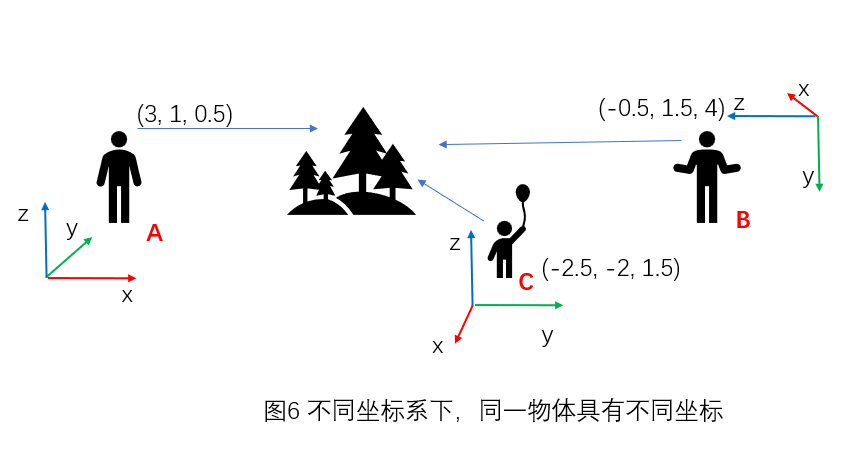

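👋 **Welcome to the world of VSLAM**<br />SLAM is a system closely tied to its sensors, and the sensor used in VSLAM is the camera. Processing camera data requires understanding how a camera forms an image, which is a mapping from the real world to data a computer can process. In this part you will learn the camera imaging model and the coordinate systems involved in that mapping, together with the related topics of lens distortion and intrinsic calibration. This corresponds to Chapter 1.<br />Observations are expressed in the sensor coordinate frame, so they are local. We need to convert these local observations into global ones, which involves transformations between coordinate frames. Converting observations from the sensor frame to the carrier (body) frame requires the extrinsic parameters, and computing the carrier frame's pose in the world frame requires rigid-body transformations in 3D space. These two parts correspond to Chapters 2 and 3.<br />With these three parts you will have the background for the VSLAM front end. Picture a VSLAM system as a camera mounted on a carrier that keeps moving through an unknown environment while simultaneously estimating the environment and its own pose. So what exactly is VSLAM? That is introduced in Chapter 4.<br />Chapter 4 shows that mature VSLAM frameworks mainly consist of four modules: the front end, the back end, loop closure detection, and mapping. These are covered in order in the following chapters.<br />The front end is visual odometry (VO). We focus on the widely used feature-based VO, i.e. the indirect method. Traditional feature-based methods rely on hand-crafted visual features, which are introduced in Chapter 5.<br />Based on feature extraction and matching, we can estimate the camera pose and the 3D positions of the feature points. This involves two processes: initialization and inter-frame motion estimation. Initialization must determine the 3D point coordinates, the world frame, and the scale, which makes it fairly complex; once it is done, the camera motion between frames can be obtained relatively easily through feature matching. These two processes are covered in Chapters 6 and 7.<br />After the visual front end comes the back end, which, depending on the technique used, divides into filter-based methods and nonlinear-optimization methods. These correspond to Chapters 8 and 9.<br />Next is the loop closure detection module, explained in Chapter 10.<br />The last major VSLAM module, mapping, corresponds to Chapter 11, which is also the final chapter of the main tutorial.<br />If you have time and energy to spare, work through the hands-on Chapter 12 and design a VSLAM system yourself. Completing it will give you a great sense of accomplishment and will help a lot with later engineering work.<br />Finally, about pace: a little each day is enough. The secret to learning a lot is not to take in too much at once.\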
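To make the chain of mappings above concrete (world frame to carrier frame via the carrier pose, carrier frame to camera frame via the extrinsics, camera frame to pixel via the intrinsics), here is a minimal C++ sketch. It is not taken from this repository's code: the `Pose`, `Intrinsics`, and `projectWorldPoint` names are hypothetical, lens distortion is ignored, and each pose is assumed to map points from its own frame into its parent frame (`p_parent = R * p_child + t`).

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Rigid-body transform, assumed convention: p_parent = R * p_child + t.
struct Pose {
    Mat3 R;
    Vec3 t;

    // child frame -> parent frame
    Vec3 apply(const Vec3& p) const {
        Vec3 out{};
        for (int i = 0; i < 3; ++i)
            out[i] = R[i][0] * p[0] + R[i][1] * p[1] + R[i][2] * p[2] + t[i];
        return out;
    }

    // parent frame -> child frame: p_child = R^T * (p_parent - t)
    Vec3 applyInverse(const Vec3& p) const {
        const Vec3 d{p[0] - t[0], p[1] - t[1], p[2] - t[2]};
        Vec3 out{};
        for (int i = 0; i < 3; ++i)
            out[i] = R[0][i] * d[0] + R[1][i] * d[1] + R[2][i] * d[2];
        return out;
    }
};

// Pinhole intrinsics (lens distortion ignored in this sketch).
struct Intrinsics {
    double fx, fy, cx, cy;
};

// Projects world point p_w to pixel (u, v).
// T_wb: carrier (body) pose in the world frame; T_bc: camera extrinsics in the body frame.
// Returns false when the point is not in front of the camera.
bool projectWorldPoint(const Vec3& p_w, const Pose& T_wb, const Pose& T_bc,
                       const Intrinsics& K, double& u, double& v) {
    assert(K.fx > 0.0 && K.fy > 0.0);
    const Vec3 p_b = T_wb.applyInverse(p_w);  // world frame -> body frame
    const Vec3 p_c = T_bc.applyInverse(p_b);  // body frame -> camera frame
    if (p_c[2] <= 0.0) return false;
    const double x = p_c[0] / p_c[2];         // normalized image plane
    const double y = p_c[1] / p_c[2];
    u = K.fx * x + K.cx;                      // pixel coordinates
    v = K.fy * y + K.cy;
    return true;
}
```

With identity rotations, a carrier pose translated by (1, 0, 0), identity extrinsics and fx = fy = 500, cx = 320, cy = 240, the world point (1.2, 0.4, 2.0) lands at about pixel (370, 340). The inverse transforms use the transpose of R instead of a general matrix inverse, since a rotation matrix is orthogonal.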

Read the tutorial blog online (address 1): [smoothly-vslam](https://www.yuque.com/u1507140/vslam-hmh)

<a name="lKFny"></a>

### Recommended books

VSLAM is an interdisciplinary field that draws on many areas, such as multiple view geometry, state estimation and optimization. Some recommended books:<br />1. Multiple view geometry

- *Multiple View Geometry in Computer Vision*

- [*Mathematical Methods in Computer Vision* (《计算机视觉中的数学方法》)](http://in.ruc.edu.cn/wp-content/uploads/2021/01/Maths-in-3D-computer-vision.pdf)

2. Rigid-body motion

- Lie groups and Lie algebras: [Quaternion kinematics for the error-state Kalman filter](http://www.iri.upc.edu/people/jsola/JoanSola/objectes/notes/kinematics.pdf)

- *State Estimation for Robotics* by Timothy D. Barfoot (Chinese edition translated by Gao Xiang)

3. Introduction to VSLAM

- *14 Lectures on Visual SLAM: From Theory to Practice* (《视觉SLAM十四讲:从理论到实践》)

- [Dr. Gao Xiang's blog](https://www.cnblogs.com/gaoxiang12/p/3695962.html)

- D. Scaramuzza and F. Fraundorfer, "Visual Odometry: Part I: The First 30 Years and Fundamentals," IEEE Robotics & Automation Magazine, vol. 18, no. 4, 2011.

- F. Fraundorfer and D. Scaramuzza, "Visual Odometry: Part II: Matching, Robustness, Optimization, and Applications," IEEE Robotics & Automation Magazine, vol. 19, no. 2, pp. 78-90, June 2012.

4. Probability theory

- *Probabilistic Robotics*

5. Optimization theory

- [*Numerical Optimization* (2nd edition), Jorge Nocedal and Stephen J. Wright](https://www.math.uci.edu/~qnie/Publications/NumericalOptimization.pdf)

- Convex optimization: [Convex Optimization](https://web.stanford.edu/~boyd/cvxbook/bv_cvxbook.pdf)

- Convex optimization: [Introductory Lectures on Convex Programming](https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.693.855&rep=rep1&type=pdf)

- [g2o](http://ais.informatik.uni-freiburg.de/publications/papers/kuemmerle11icra.pdf)

- *Factor Graphs for Robot Perception* (Chinese edition: 《机器人感知:因子图在SLAM中的应用》), by Frank Dellaert, Georgia Tech professor and author of GTSAM

Advanced optimization theory

- *Nonlinear Programming*

6. Some tools

- Ubuntu, CMake, bash, Vim, Qt (optional).

- OpenCV: install it, then read the OpenCV reference manual and tutorials.

- ROS installation: [ROS/Installation - ROS Wiki](https://link.zhihu.com/?target=http%3A//wiki.ros.org/ROS/Installation)

- ROS tutorials: [ROS/Tutorials - ROS Wiki](https://link.zhihu.com/?target=http%3A//wiki.ros.org/ROS/Tutorials) \

If you would like to contribute to this tutorial, please contact us by email: 530019431@qq.com

Finally, hope you enjoy it!

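- **Table of contents** \

**0. Preface** \

**1. The birth of an image: camera projection and camera distortion** \

**2. Difference and unity: coordinate transformations and extrinsic calibration** \

**3. Describing state is not simple: rigid-body motion in 3D space** \

**4. Seeing the forest as well: an introduction to visual SLAM** \

**5. Meeting endless change with the unchanging: front end, visual odometry and feature points** \

**6. Seeing the world from another perspective: front end, visual odometry and epipolar geometry** \

**7. Small steps add up to a thousand miles: front end, visual odometry and relative pose estimation** \

**8. Finding certainty in uncertainty: back end and the Kalman filter** \

**9. A little better each time is the best: back end and nonlinear optimization** \

**10. Back to a place once visited: loop closure detection** \

**11. Finally seeing the true face of Mount Lu: mapping** \

**12. Practice is the sole criterion for testing truth: designing a VSLAM system**

- **Read the project online**\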

https://www.yuque.com/u1507140/vslam-hmh

## Roadmap

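Some parts that still need work:

### 1. Further improving each chapter

Fill in the fundamentals of each chapter, expanding everything from the background and history to the details of the algorithms.

For example, Chapter 8 (Kalman filtering) could be extended with:

- 1. More on Bayesian filtering

- 2. Introductions to other filters

### 2. More advanced content, beyond VSLAM

- 1. More general theoretical foundations of SLAM, such as observability and degeneracy analysis

- 2. SLAM application scenarios, and so on

**This tutorial is an introductory VSLAM course covering background knowledge and the principles of basic algorithms. A separate advanced tutorial is planned for more in-depth topics.**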

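## Contributing

- If you would like to get involved, check the project's [Issue]() list for unassigned tasks.

- If you find a problem, please report it in [Issue]() 🐛.

- If you are interested in this project and want to join in, you can talk with us in [Discussion]() 💬.

If you are interested in Datawhale and want to start a new project, please see the [Datawhale contribution guide](https://github.com/datawhalechina/DOPMC#%E4%B8%BA-datawhale-%E5%81%9A%E5%87%BA%E8%B4%A1%E7%8C%AE).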

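## Contributors

| Name | Role | Bio | Contact |
| :---- | :---- | :---- | :---- |
| 胡明豪 | Project lead, author of the first version of the tutorial | Datawhale member, VSLAM algorithm engineer | 530019431@qq.com |
| 王洲烽 | Contributor to Chapters 1 and 2 | Datawhale member, incoming graduate student at the National University of Defense Technology | wangzhoufeng7346@gmail.com |
| 乔建森 | Contributor to Chapters 3, 5, 8 and 9 | Algorithm engineer at the Third Academy of China Aerospace Science and Industry Corporation | 2719008838@qq.com |
| 林俊强 | Contributor to Chapter 5 | Algorithm engineer | hayasijq@gmail.com |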

## Follow us

<div align=center>

<p>Scan the QR code below to follow the Datawhale WeChat official account</p>

<img src="https://raw.githubusercontent.com/datawhalechina/pumpkin-book/master/res/qrcode.jpeg" width = "180" height = "180">

</div>

## LICENSE

<a rel="license" href="http://creativecommons.org/licenses/by-nc-sa/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://img.shields.io/badge/license-CC%20BY--NC--SA%204.0-lightgrey" /></a><br />This work is licensed under a <a rel="license" href="http://creativecommons.org/licenses/by-nc-sa/4.0/">Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License</a>.

================================================

FILE: TASK.md

================================================

# smoothly-vslam

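Basic information \

This project covers the basic concepts and algorithmic principles of VSLAM. It aims to make it easier to go on to study open-source VSLAM algorithms, smooth the learning curve for beginners, and lay a foundation for mastering complex VSLAM algorithms later.

Study period: 20 days, roughly 2 to 4 hours per day on average, varying with each learner's pace

Format: tutorials plus hands-on practice

Target audience: learners with some programming experience who want to study and organize their understanding of VSLAM algorithms

Prerequisites: none

Difficulty: medium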

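## Task schedule

### Task 1: Camera projection, intrinsics and extrinsics (3 days)

Theory

1.1 The pinhole camera model

1.2 The coordinate systems involved in camera imaging

1.3 The intrinsic matrix and undistortion methods

2.1 Coordinate transformations

2.2 Camera extrinsic calibration

Tutorial links:

[1. The birth of an image: camera projection and camera distortion](https://www.yuque.com/u1507140/vslam-hmh/ogc8v31hbzb6efy8) \

[2. Difference and unity: coordinate transformations and extrinsic calibration](https://www.yuque.com/u1507140/vslam-hmh/csqub9k4nax99i19)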
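The undistortion topic in item 1.3 can be sketched as follows. This assumes the radial-tangential (Brown-Conrady) model with coefficients k1, k2, p1, p2, which is the form OpenCV uses for its first four distortion coefficients; the chapter itself may present a different model. Points live on the normalized image plane (before the intrinsic matrix is applied), and the fixed-point inversion with its iteration count is an illustrative choice, not a prescribed method.

```cpp
#include <cassert>
#include <cmath>

// Radial-tangential distortion coefficients (OpenCV order: k1, k2, p1, p2).
struct Distortion {
    double k1, k2, p1, p2;
};

// Maps an undistorted normalized point (x, y) to its distorted position (xd, yd).
void distort(const Distortion& d, double x, double y, double& xd, double& yd) {
    const double r2 = x * x + y * y;
    const double radial = 1.0 + d.k1 * r2 + d.k2 * r2 * r2;
    xd = x * radial + 2.0 * d.p1 * x * y + d.p2 * (r2 + 2.0 * x * x);
    yd = y * radial + d.p1 * (r2 + 2.0 * y * y) + 2.0 * d.p2 * x * y;
}

// Inverts distort() by fixed-point iteration, starting from the distorted point itself.
void undistort(const Distortion& d, double xd, double yd, double& x, double& y,
               int iterations = 20) {
    assert(iterations > 0);
    x = xd;
    y = yd;
    for (int i = 0; i < iterations; ++i) {
        const double r2 = x * x + y * y;
        const double radial = 1.0 + d.k1 * r2 + d.k2 * r2 * r2;
        const double dx = 2.0 * d.p1 * x * y + d.p2 * (r2 + 2.0 * x * x);  // tangential part
        const double dy = d.p1 * (r2 + 2.0 * y * y) + 2.0 * d.p2 * x * y;
        x = (xd - dx) / radial;  // solve xd = x * radial + dx for x
        y = (yd - dy) / radial;
    }
}
```

Pixel coordinates follow by applying the intrinsics to the distorted point: u = fx * xd + cx, v = fy * yd + cy. The fixed-point iteration converges quickly for the mild distortion of typical lenses, but may fail for strong distortion far from the image center.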

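### Task 2: Rigid-body motion in 3D space (2 days)

Theory

The world frame and the odometry frame

Rigid-body motion in 3D

Parameterizations of rotation

Tutorial link:

[3. Describing state is not simple: rigid-body motion in 3D space](https://www.yuque.com/u1507140/vslam-hmh/pn5az0nwigy51f25)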
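Two common rotation parameterizations from this task, axis-angle (turned into a matrix with Rodrigues' formula) and the unit quaternion, can be compared with the sketch below. The function names and the (w, x, y, z) storage order are assumptions for illustration; in real projects a library such as Eigen provides these conversions.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Unit quaternion stored as (w, x, y, z).
struct Quat {
    double w, x, y, z;
};

// Rodrigues' formula: R = cos(theta) I + (1 - cos(theta)) n n^T + sin(theta) [n]_x
Mat3 rotationFromAxisAngle(const Vec3& n, double theta) {
    assert(std::fabs(n[0] * n[0] + n[1] * n[1] + n[2] * n[2] - 1.0) < 1e-9);  // unit axis
    const double c = std::cos(theta), s = std::sin(theta);
    const Mat3 skew{{{0.0, -n[2], n[1]}, {n[2], 0.0, -n[0]}, {-n[1], n[0], 0.0}}};
    Mat3 R{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            R[i][j] = (i == j ? c : 0.0) + (1.0 - c) * n[i] * n[j] + s * skew[i][j];
    return R;
}

// The same rotation as a quaternion: q = (cos(theta/2), n * sin(theta/2))
Quat quatFromAxisAngle(const Vec3& n, double theta) {
    const double s = std::sin(0.5 * theta);
    return {std::cos(0.5 * theta), n[0] * s, n[1] * s, n[2] * s};
}

// Hamilton product a * b
Quat multiply(const Quat& a, const Quat& b) {
    return {a.w * b.w - a.x * b.x - a.y * b.y - a.z * b.z,
            a.w * b.x + a.x * b.w + a.y * b.z - a.z * b.y,
            a.w * b.y - a.x * b.z + a.y * b.w + a.z * b.x,
            a.w * b.z + a.x * b.y - a.y * b.x + a.z * b.w};
}

Vec3 rotateByMatrix(const Mat3& R, const Vec3& v) {
    Vec3 out{};
    for (int i = 0; i < 3; ++i)
        out[i] = R[i][0] * v[0] + R[i][1] * v[1] + R[i][2] * v[2];
    return out;
}

// v' = q * (0, v) * conj(q), valid for unit quaternions
Vec3 rotateByQuaternion(const Quat& q, const Vec3& v) {
    const Quat p{0.0, v[0], v[1], v[2]};
    const Quat conj{q.w, -q.x, -q.y, -q.z};
    const Quat r = multiply(multiply(q, p), conj);
    return {r.x, r.y, r.z};
}
```

Both representations rotate a vector identically: rotating (1, 0, 0) by π/2 about the z axis gives (0, 1, 0) up to rounding. The quaternion uses four numbers instead of nine and avoids the singularities of Euler angles, which is one reason it is popular in SLAM code.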

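### Task 3: An introduction to visual SLAM (1 day)

Theory

What visual SLAM is

Mainstream visual SLAM frameworks and their classification

Tutorial link:

[4. Seeing the forest as well: an introduction to visual SLAM](https://www.yuque.com/u1507140/vslam-hmh/xkqtacgm3gg6crk5)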

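### Task 4: Front end, visual odometry and feature points (3 days)

Theory

An overview of image features

Commonly used feature points

Tutorial link:

[5. Meeting endless change with the unchanging: front end, visual odometry and feature points](https://www.yuque.com/u1507140/vslam-hmh/rcvyw38lhgchkb6g)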

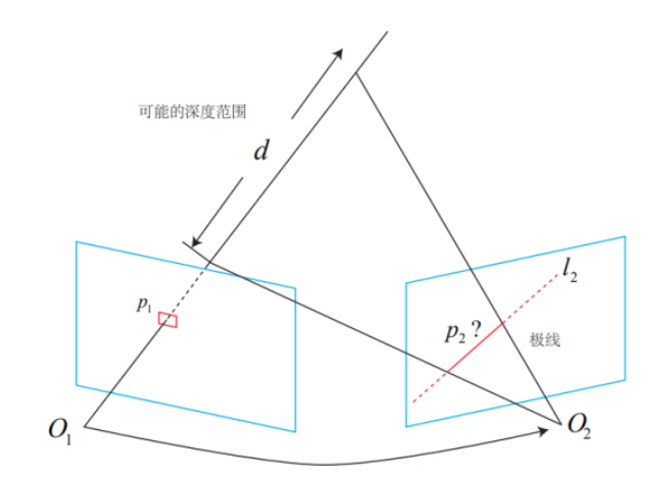
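Binary feature points such as ORB are matched by the Hamming distance between their descriptors. The sketch below assumes 256-bit (32-byte) descriptors, the size OpenCV's ORB produces by default, and uses brute-force nearest-neighbour search with a caller-chosen distance threshold; the function names are hypothetical, and in practice OpenCV's `BFMatcher` with `NORM_HAMMING` does the same job.

```cpp
#include <array>
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// 256-bit binary descriptor stored as 32 bytes (assumed ORB layout).
using OrbDescriptor = std::array<std::uint8_t, 32>;

// Number of differing bits between two descriptors.
int hammingDistance(const OrbDescriptor& a, const OrbDescriptor& b) {
    int dist = 0;
    for (std::size_t i = 0; i < a.size(); ++i)
        dist += static_cast<int>(std::bitset<8>(a[i] ^ b[i]).count());
    return dist;
}

// Brute-force nearest neighbour of `query` among `train`.
// Returns the index of the best match, or -1 if none is within maxDistance bits.
int matchDescriptor(const OrbDescriptor& query, const std::vector<OrbDescriptor>& train,
                    int maxDistance) {
    assert(maxDistance >= 0);
    int bestIndex = -1;
    int bestDistance = std::numeric_limits<int>::max();
    for (std::size_t j = 0; j < train.size(); ++j) {
        const int d = hammingDistance(query, train[j]);
        if (d < bestDistance) {
            bestDistance = d;
            bestIndex = static_cast<int>(j);
        }
    }
    return bestDistance <= maxDistance ? bestIndex : -1;
}
```

XOR followed by a bit count is why binary descriptors match so much faster than floating-point ones such as SIFT. The threshold value is application-dependent; rejecting matches above it is a simple way to drop obvious outliers before geometric verification.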

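### Task 5: Front end, epipolar geometry and relative pose estimation (3 days)

Theory

1.1 Epipolar geometry

1.2 The essential matrix and how to solve for it

1.3 The homography matrix and how to solve for it

1.4 Triangulation

2.1 Common algorithms for estimating relative camera pose from known 3D-2D correspondences

Tutorial links:

[6. Seeing the world from another perspective: front end, visual odometry and epipolar geometry](https://www.yuque.com/u1507140/vslam-hmh/yu9032oczfnbnr2y) \

[7. Small steps add up to a thousand miles: front end, visual odometry and relative pose estimation](https://www.yuque.com/u1507140/vslam-hmh/lusyrekv9r5g0q4n)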
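The epipolar constraint behind items 1.1 and 1.2 can be checked numerically. The sketch assumes the convention `p2 = R * p1 + t` between the two camera frames, builds the essential matrix E = [t]x R, and evaluates x2^T E x1 on normalized homogeneous image coordinates; a correct correspondence gives (nearly) zero. Estimating E from matches (for example with the eight-point algorithm) is not shown, and the function names are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Skew-symmetric matrix [t]_x, so that [t]_x * v equals the cross product t x v.
Mat3 skew(const Vec3& t) {
    return Mat3{{{0.0, -t[2], t[1]}, {t[2], 0.0, -t[0]}, {-t[1], t[0], 0.0}}};
}

Mat3 multiply(const Mat3& A, const Mat3& B) {
    Mat3 C{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                C[i][j] += A[i][k] * B[k][j];
    return C;
}

// Essential matrix for the convention p2 = R * p1 + t: E = [t]_x R.
Mat3 essentialMatrix(const Mat3& R, const Vec3& t) {
    return multiply(skew(t), R);
}

// Epipolar residual x2^T * E * x1 for normalized homogeneous points (x, y, 1).
double epipolarResidual(const Mat3& E, const Vec3& x1, const Vec3& x2) {
    assert(x1[2] == 1.0 && x2[2] == 1.0);
    double r = 0.0;
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            r += x2[i] * E[i][j] * x1[j];
    return r;
}
```

The residual vanishes because p2 = R p1 + t is orthogonal to t x (R p1), and dividing by depth only rescales it. Since E is only defined up to scale, the residual magnitude is useful for ranking matches (for example inside RANSAC) rather than as an absolute distance.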

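### Task 6: Back end, Kalman filtering and nonlinear optimization (4 days)

Theory

1.1 Principles of the Kalman filter

2.1 Least-squares problems

2.2 Newton's method

2.3 The Gauss-Newton method

2.4 The Levenberg-Marquardt (LM) method

Tutorial links:

[8. Finding certainty in uncertainty: back end and the Kalman filter](https://www.yuque.com/u1507140/vslam-hmh/fsblfmf5te9egulq) \

[9. A little better each time is the best: back end and nonlinear optimization](https://www.yuque.com/u1507140/vslam-hmh/swh0g4a0t452pwux)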
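The Gauss-Newton method in item 2.3 can be sketched on a small least-squares curve-fitting problem. The example assumes the model y = exp(a * x + b) with only two parameters, so the 2x2 normal equations H * dx = g can be solved in closed form; here H = sum J^T J approximates the Hessian and g = -sum J^T e. The model, stopping tolerance and iteration limit are illustrative choices.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Fits y = exp(a * x + b) by Gauss-Newton.
// a and b hold the initial guess on entry and the estimate on return.
// Returns the number of iterations performed.
int gaussNewtonExpFit(const std::vector<double>& xs, const std::vector<double>& ys,
                      double& a, double& b, int maxIterations = 50) {
    assert(xs.size() == ys.size() && xs.size() >= 2);
    int iter = 0;
    for (; iter < maxIterations; ++iter) {
        double H00 = 0.0, H01 = 0.0, H11 = 0.0, g0 = 0.0, g1 = 0.0;
        for (std::size_t i = 0; i < xs.size(); ++i) {
            const double f = std::exp(a * xs[i] + b);
            const double e = ys[i] - f;      // residual
            const double J0 = -xs[i] * f;    // de/da
            const double J1 = -f;            // de/db
            H00 += J0 * J0;                  // H = sum J^T J
            H01 += J0 * J1;
            H11 += J1 * J1;
            g0 -= J0 * e;                    // g = -sum J^T e
            g1 -= J1 * e;
        }
        const double det = H00 * H11 - H01 * H01;
        if (std::fabs(det) < 1e-15) break;   // H is (nearly) singular
        const double da = (H11 * g0 - H01 * g1) / det;   // solve H * dx = g
        const double db = (-H01 * g0 + H00 * g1) / det;
        a += da;
        b += db;
        if (std::fabs(da) + std::fabs(db) < 1e-12) {
            ++iter;
            break;
        }
    }
    return iter;
}
```

Like any Gauss-Newton solver, this needs a reasonable initial guess. When H is ill-conditioned or the guess is poor, the Levenberg-Marquardt method (item 2.4) adds a damping term to H to keep the steps stable.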

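### Task 7: Loop closure detection (2 days)

Theory

The role of loop closure detection

Common visual loop closure detection methods

Loop closure detection with the bag-of-words model

Tutorial link:

[10. Back to a place once visited: loop closure detection](https://www.yuque.com/u1507140/vslam-hmh/mrx3a5aotqr412qe)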

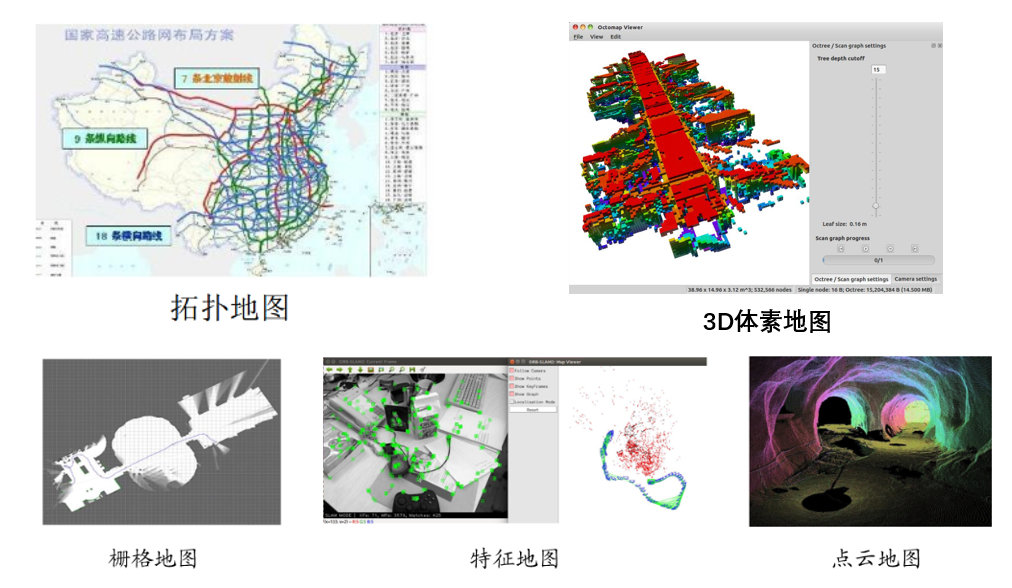

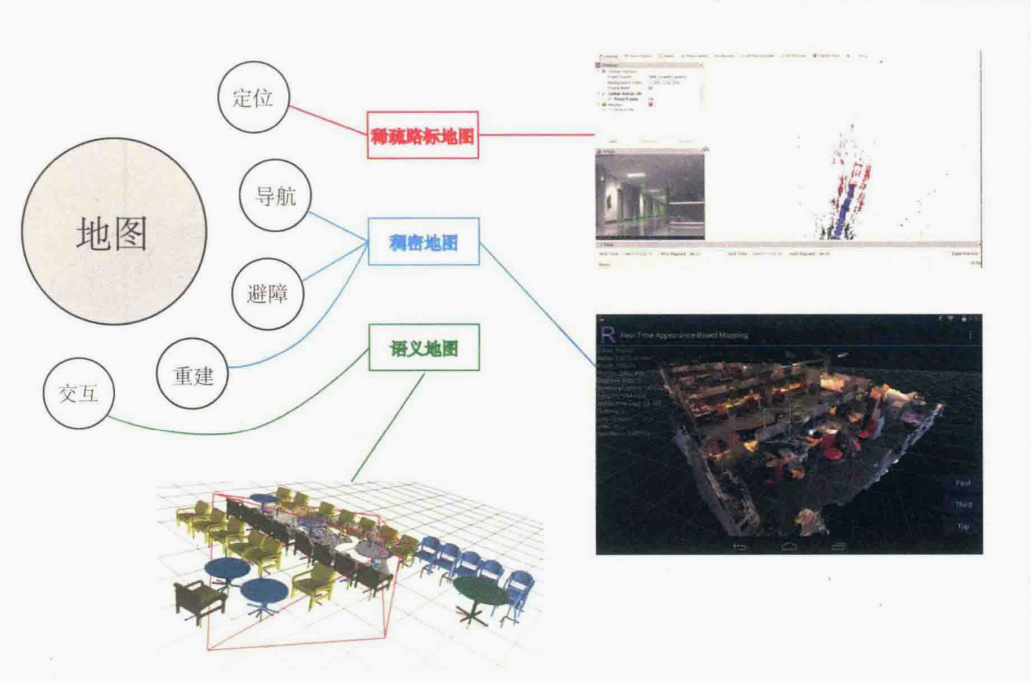

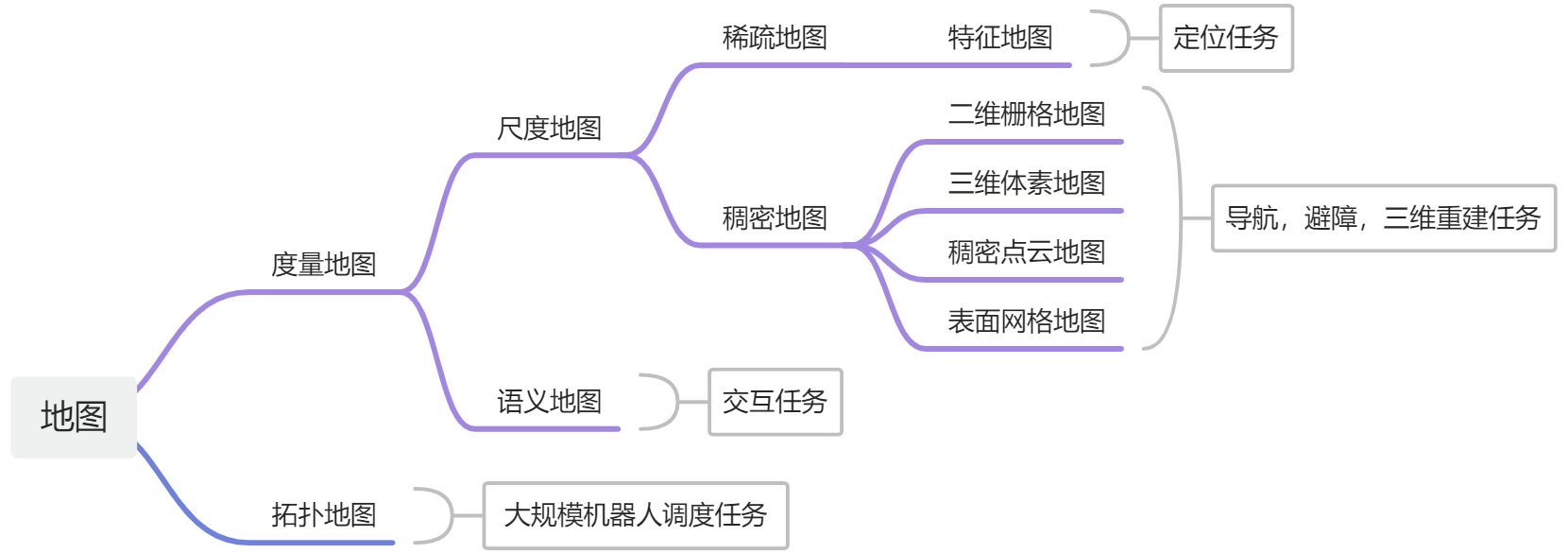

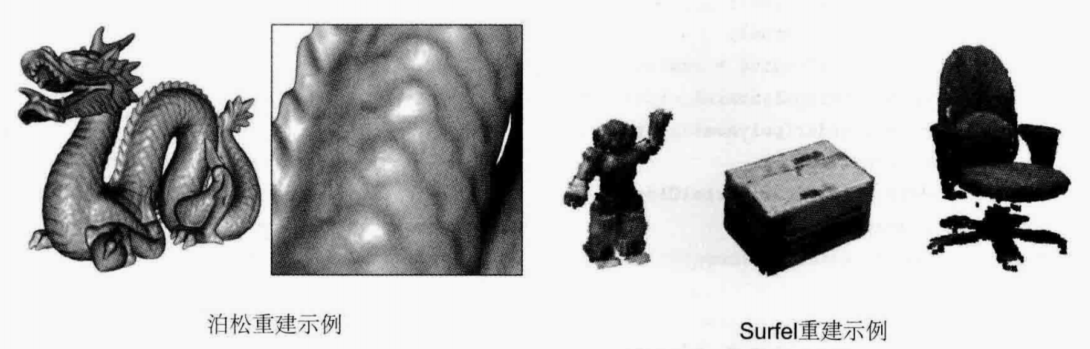

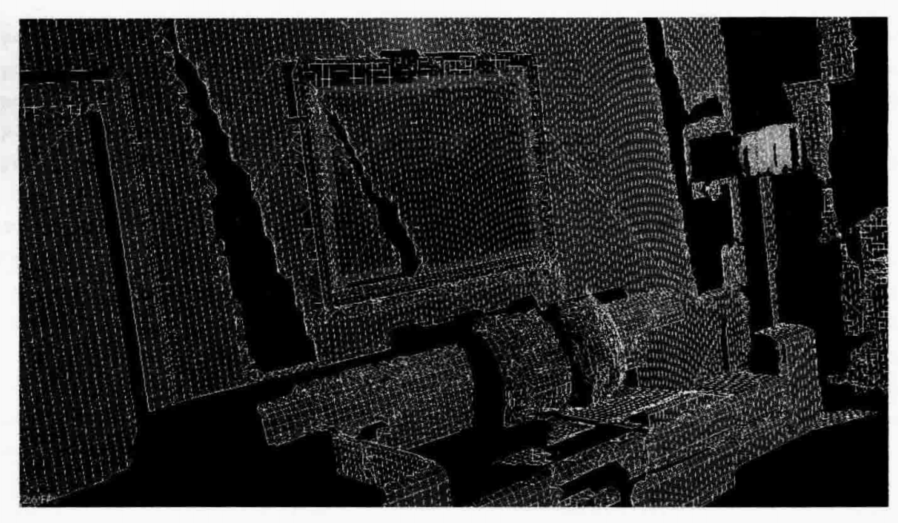

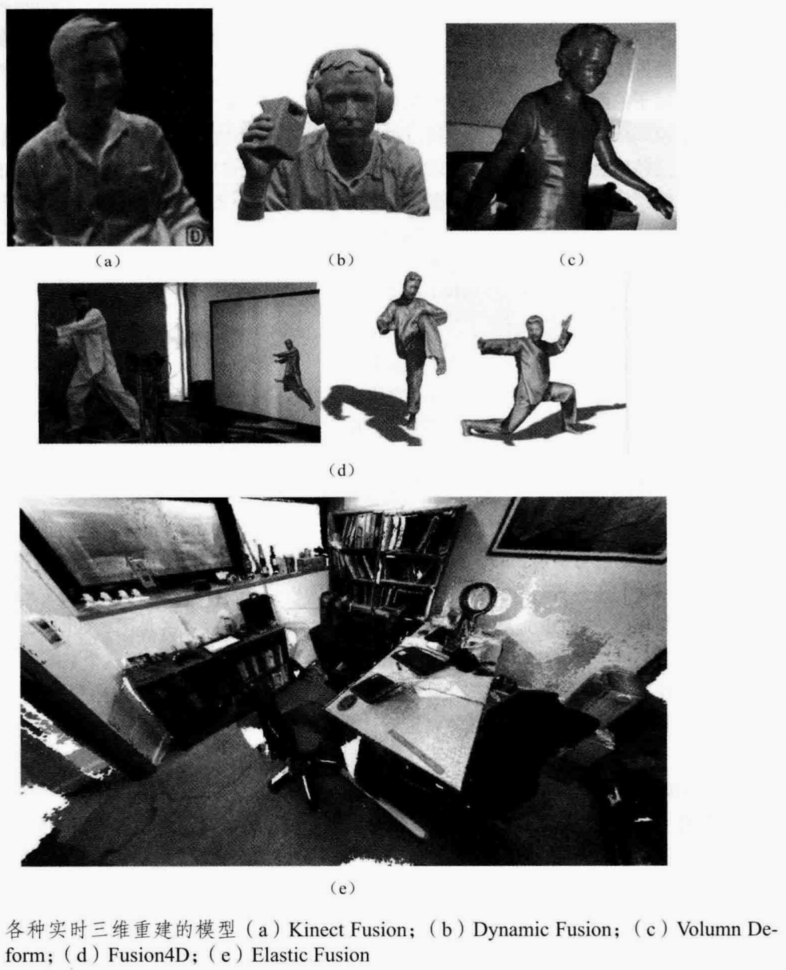
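Loop closure with a bag-of-words model compares two images through their word histograms. The sketch below implements the L1 similarity score commonly used with DBoW-style vocabularies, s = 1 - 0.5 * || v1/|v1| - v2/|v2| ||_1, on sparse vectors stored as word-id-to-weight maps. The `BowVec` type is a hypothetical stand-in and this is not DBoW3's own code; in the repository, `vocab.score(v1, v2)` computes the score.

```cpp
#include <cassert>
#include <cmath>
#include <map>

// Sparse bag-of-words vector: word id -> weight (hypothetical stand-in for a library type).
using BowVec = std::map<unsigned int, double>;

// Scales v so that the absolute values of its weights sum to 1.
BowVec normalizeL1(const BowVec& v) {
    double norm = 0.0;
    for (const auto& kv : v) norm += std::fabs(kv.second);
    assert(norm > 0.0);
    BowVec out;
    for (const auto& kv : v) out[kv.first] = kv.second / norm;
    return out;
}

// L1 score s = 1 - 0.5 * ||a/|a| - b/|b|||_1.
// For non-negative weights: 1 means identical, 0 means no shared words.
double l1Score(const BowVec& a, const BowVec& b) {
    const BowVec na = normalizeL1(a), nb = normalizeL1(b);
    double diff = 0.0;
    auto ia = na.begin();
    auto ib = nb.begin();
    // Walk both sorted maps together; a word missing from one vector counts as weight 0.
    while (ia != na.end() || ib != nb.end()) {
        if (ib == nb.end() || (ia != na.end() && ia->first < ib->first)) {
            diff += std::fabs(ia->second);
            ++ia;
        } else if (ia == na.end() || ib->first < ia->first) {
            diff += std::fabs(ib->second);
            ++ib;
        } else {  // the same word appears in both vectors
            diff += std::fabs(ia->second - ib->second);
            ++ia;
            ++ib;
        }
    }
    return 1.0 - 0.5 * diff;
}
```

Raw scores depend on the vocabulary and the scene, so in practice a candidate's score is usually compared against a reference such as the score between adjacent frames rather than against a fixed threshold.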

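### Task 8: Mapping (2 days)

Theory

Types of maps

Dense reconstruction

Point cloud maps

TSDF maps

Real-time 3D reconstruction

Tutorial link:

[11. Finally seeing the true face of Mount Lu: mapping](https://www.yuque.com/u1507140/vslam-hmh/ow0woydmogl8wc6n)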
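TSDF maps are commonly built by fusing each depth measurement into voxels with a weighted running average, an approach popularized by KinectFusion. The sketch below shows only the per-voxel update along one camera ray. Projecting voxels into the depth image is left to the caller, the truncation distance and weight cap are assumed parameters, and the names are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Voxel {
    double tsdf = 1.0;    // truncated signed distance, normalized to [-1, 1]
    double weight = 0.0;  // accumulated confidence
};

// Fuses one depth measurement into a voxel.
// measuredDepth: depth observed along the pixel ray; voxelDepth: the voxel's depth on that ray.
// truncation: truncation distance mu; maxWeight caps the running weight (assumed values).
// Returns false if the voxel lies too far behind the observed surface to be updated.
bool integrate(Voxel& v, double measuredDepth, double voxelDepth,
               double truncation, double maxWeight = 100.0) {
    assert(truncation > 0.0);
    const double sdf = measuredDepth - voxelDepth;  // > 0: voxel is in front of the surface
    if (sdf < -truncation) return false;            // occluded region, skip
    const double d = std::min(1.0, sdf / truncation);
    const double w = 1.0;                           // uniform per-measurement weight
    v.tsdf = (v.tsdf * v.weight + d * w) / (v.weight + w);
    v.weight = std::min(v.weight + w, maxWeight);
    return true;
}
```

The zero crossing of the fused TSDF marks the estimated surface, which can later be extracted as a mesh (for example with marching cubes). Capping the weight lets the map keep adapting when the scene changes.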

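### Task 9: Designing a VSLAM system (2 days, optional)

Theory

C++ project structure

System design methods

Exercise: design a VSLAM system

Tutorial link:

[*12. Practice is the sole criterion for testing truth: designing a VSLAM system](https://www.yuque.com/u1507140/vslam-hmh/cgl78erifda00hge)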

================================================

FILE: code/ch10/CMakeLists.txt

================================================

cmake_minimum_required( VERSION 2.8 )

project( loop_closure )

set( CMAKE_BUILD_TYPE "Release" )

set( CMAKE_CXX_FLAGS "-std=c++11 -O3" )

# opencv

find_package( OpenCV 3.1 REQUIRED )

include_directories( ${OpenCV_INCLUDE_DIRS} )

# dbow3

# dbow3 is a simple lib so I assume you installed it in default directory

set( DBoW3_INCLUDE_DIRS "/usr/local/include" )

#set( DBoW3_LIBS "/usr/local/lib/libDBoW3.a" )

set( DBoW3_LIBS "/usr/local/lib/libDBoW3.so" )

add_executable( feature_training feature_training.cpp )

target_link_libraries( feature_training ${OpenCV_LIBS} ${DBoW3_LIBS} )

add_executable( loop_closure loop_closure.cpp )

target_link_libraries( loop_closure ${OpenCV_LIBS} ${DBoW3_LIBS} )

add_executable( gen_vocab gen_vocab_large.cpp )

target_link_libraries( gen_vocab ${OpenCV_LIBS} ${DBoW3_LIBS} )

================================================

FILE: code/ch10/cmake_modules/FindDBoW3.cmake

================================================

================================================

FILE: code/ch10/feature_training.cpp

================================================

#include "DBoW3/DBoW3.h"
#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/features2d/features2d.hpp>
#include <iostream>
#include <vector>
#include <string>

using namespace cv;
using namespace std;

/***************************************************
 * This example shows how to train a vocabulary from the ten images in the data/ directory
 * ************************************************/

int main( int argc, char** argv ) {
    // read the images
    cout<<"reading images... "<<endl;
    vector<Mat> images;
    for ( int i=0; i<10; i++ )
    {
        string path = "../data/"+to_string(i+1)+".png";
        Mat image = imread(path);
        if ( image.empty() )   // stop early instead of training on missing images
        {
            cerr<<"cannot read image "<<path<<endl;
            return 1;
        }
        images.push_back( image );
    }

    // detect ORB features
    cout<<"detecting ORB features ... "<<endl;
    Ptr< Feature2D > detector = ORB::create();
    vector<Mat> descriptors;
    for ( Mat& image:images )
    {
        vector<KeyPoint> keypoints;
        Mat descriptor;
        detector->detectAndCompute( image, Mat(), keypoints, descriptor );
        descriptors.push_back( descriptor );
    }

    // create vocabulary
    cout<<"creating vocabulary ... "<<endl;
    DBoW3::Vocabulary vocab;
    vocab.create( descriptors );
    cout<<"vocabulary info: "<<vocab<<endl;
    vocab.save( "vocabulary.yml.gz" );
    cout<<"done"<<endl;
    return 0;
}

================================================

FILE: code/ch10/gen_vocab_large.cpp

================================================

#include "DBoW3/DBoW3.h"

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <iostream>

#include <vector>

#include <string>

using namespace cv;

using namespace std;

int main( int argc, char** argv )

{

string dataset_dir = argv[1];

ifstream fin ( dataset_dir+"/associate.txt" );

if ( !fin )

{

cout<<"please generate the associate file called associate.txt!"<<endl;

return 1;

}

vector<string> rgb_files, depth_files;

vector<double> rgb_times, depth_times;

while ( !fin.eof() )

{

string rgb_time, rgb_file, depth_time, depth_file;

fin>>rgb_time>>rgb_file>>depth_time>>depth_file;

rgb_times.push_back ( atof ( rgb_time.c_str() ) );

depth_times.push_back ( atof ( depth_time.c_str() ) );

rgb_files.push_back ( dataset_dir+"/"+rgb_file );

depth_files.push_back ( dataset_dir+"/"+depth_file );

if ( fin.good() == false )

break;

}

fin.close();

cout<<"generating features ... "<<endl;

vector<Mat> descriptors;

Ptr< Feature2D > detector = ORB::create();

int index = 1;

for ( string rgb_file:rgb_files )

{

Mat image = imread(rgb_file);

vector<KeyPoint> keypoints;

Mat descriptor;

detector->detectAndCompute( image, Mat(), keypoints, descriptor );

descriptors.push_back( descriptor );

cout<<"extracting features from image " << index++ <<endl;

}

cout<<"extract total "<<descriptors.size()*500<<" features."<<endl;

// create vocabulary

cout<<"creating vocabulary, please wait ... "<<endl;

DBoW3::Vocabulary vocab;

vocab.create( descriptors );

cout<<"vocabulary info: "<<vocab<<endl;

vocab.save( "vocab_larger.yml.gz" );

cout<<"done"<<endl;

return 0;

}
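The loop above expects each line of associate.txt to hold four whitespace-separated fields: RGB timestamp, RGB file, depth timestamp and depth file (the layout produced by the TUM RGB-D association tool, as far as we know). A minimal sketch of a line-based parser under that assumption; `AssociatedFrame` and `parseAssociations` are hypothetical names that do not exist in the repository. Keeping a record only when all four fields parse means a trailing newline or blank line never produces an empty entry.

```cpp
#include <cassert>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical record for one line of associate.txt.
struct AssociatedFrame {
    double rgb_time = 0.0;
    std::string rgb_file;
    double depth_time = 0.0;
    std::string depth_file;
};

// Parses associate.txt content line by line. Lines that do not contain
// all four fields (e.g. an empty trailing line) are skipped.
std::vector<AssociatedFrame> parseAssociations(std::istream &in) {
    std::vector<AssociatedFrame> frames;
    std::string line;
    while (std::getline(in, line)) {
        std::istringstream ss(line);
        AssociatedFrame f;
        if (ss >> f.rgb_time >> f.rgb_file >> f.depth_time >> f.depth_file)
            frames.push_back(f);
    }
    return frames;
}
```

With the `ifstream fin` opened above, `parseAssociations(fin)` would return one record per complete line; the dataset directory prefix is then added by the caller.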

================================================

FILE: code/ch10/loop_closure.cpp

================================================

#include "DBoW3/DBoW3.h"

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <iostream>

#include <vector>

#include <string>

using namespace cv;

using namespace std;

/***************************************************

* This example shows how to compute similarity scores with the vocabulary trained earlier

* ************************************************/

int main(int argc, char **argv) {

// read the images and database

cout << "reading database" << endl;

DBoW3::Vocabulary vocab("./vocabulary.yml.gz");

// DBoW3::Vocabulary vocab("./vocab_larger.yml.gz"); // use large vocab if you want:

if (vocab.empty()) {

cerr << "Vocabulary does not exist." << endl;

return 1;

}

cout << "reading images... " << endl;

vector<Mat> images;

for (int i = 0; i < 10; i++) {

string path = "../data/" + to_string(i + 1) + ".png";

images.push_back(imread(path));

}

// NOTE: here the images are compared with a vocabulary trained on the same images, which may lead to overfitting.

// detect ORB features

cout << "detecting ORB features ... " << endl;

Ptr<Feature2D> detector = ORB::create();

vector<Mat> descriptors;

for (Mat &image:images) {

vector<KeyPoint> keypoints;

Mat descriptor;

detector->detectAndCompute(image, Mat(), keypoints, descriptor);

descriptors.push_back(descriptor);

}

// we can compare the images with each other directly, or compare each image against a database

// first, image vs. image:

cout << "comparing images with images " << endl;

for (int i = 0; i < images.size(); i++) {

DBoW3::BowVector v1;

vocab.transform(descriptors[i], v1);

for (int j = i; j < images.size(); j++) {

DBoW3::BowVector v2;

vocab.transform(descriptors[j], v2);

double score = vocab.score(v1, v2);

cout << "image " << i << " vs image " << j << " : " << score << endl;

}

cout << endl;

}

// or with database

cout << "comparing images with database " << endl;

DBoW3::Database db(vocab, false, 0);

for (int i = 0; i < descriptors.size(); i++)

db.add(descriptors[i]);

cout << "database info: " << db << endl;

for (int i = 0; i < descriptors.size(); i++) {

DBoW3::QueryResults ret;

db.query(descriptors[i], ret, 4); // max result=4

cout << "searching for image " << i << " returns " << ret << endl << endl;

}

cout << "done." << endl;

}
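`vocab.score(v1, v2)` compares two bag-of-words vectors. Assuming the vocabulary uses DBoW3's default L1-norm scoring, the score is 1 - 0.5 * || v1/|v1|_1 - v2/|v2|_1 ||_1, which lies in [0, 1]: 1 for identical word histograms, 0 when the images share no words. The sketch below evaluates that formula on a plain `std::map` standing in for `DBoW3::BowVector`; `SparseBow`, `l1Normalize` and `l1Score` are hypothetical helpers, not DBoW3 API.

```cpp
#include <cassert>
#include <cmath>
#include <map>

// Sparse bag-of-words vector: word id -> weight (stand-in for DBoW3::BowVector).
using SparseBow = std::map<unsigned, double>;

// Returns a copy of v scaled to unit L1 norm (empty if v is all zeros).
SparseBow l1Normalize(const SparseBow &v) {
    double norm = 0.0;
    for (const auto &kv : v) norm += std::fabs(kv.second);
    SparseBow out;
    if (norm == 0.0) return out;
    for (const auto &kv : v) out[kv.first] = kv.second / norm;
    return out;
}

// L1 similarity 1 - 0.5 * ||a/|a| - b/|b|||_1, computed with a merge over
// the two sorted word lists.
double l1Score(const SparseBow &va, const SparseBow &vb) {
    SparseBow a = l1Normalize(va), b = l1Normalize(vb);
    double dist = 0.0;
    auto ia = a.begin(), ib = b.begin();
    while (ia != a.end() || ib != b.end()) {
        if (ib == b.end() || (ia != a.end() && ia->first < ib->first)) {
            dist += std::fabs(ia->second);  // word only in a
            ++ia;
        } else if (ia == a.end() || ib->first < ia->first) {
            dist += std::fabs(ib->second);  // word only in b
            ++ib;
        } else {
            dist += std::fabs(ia->second - ib->second);  // shared word
            ++ia;
            ++ib;
        }
    }
    return 1.0 - 0.5 * dist;
}
```

Because both vectors are normalized first, scaling all weights of one image by a constant does not change its score.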

================================================

FILE: code/ch11/dense_mono/CMakeLists.txt

================================================

cmake_minimum_required(VERSION 2.8)

project(dense_monocular)

set(CMAKE_BUILD_TYPE "Release")

set(CMAKE_CXX_FLAGS "-std=c++11 -march=native -O3")

############### dependencies ######################

# Eigen

find_package(Eigen3 REQUIRED)

include_directories(${EIGEN3_INCLUDE_DIR})

# fmt: find the package before using its variables

find_package(FMT REQUIRED)

include_directories(${FMT_INCLUDE_DIRS})

# OpenCV

find_package(OpenCV 3.1 REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# Sophus

find_package(Sophus REQUIRED)

include_directories(${Sophus_INCLUDE_DIRS})

set(THIRD_PARTY_LIBS

${OpenCV_LIBS}

${Sophus_LIBRARIES})

add_executable(dense_mapping dense_mapping.cpp)

target_link_libraries(dense_mapping ${THIRD_PARTY_LIBS} fmt::fmt)
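The dense_mapping.cpp file that follows samples the current image at sub-pixel positions with getBilinearInterpolatedValue, which weights the four neighbouring pixels by the fractional parts of x and y. A dependency-free sketch of the same weighting on a row-major 8-bit buffer; `bilinear` is a hypothetical name, and unlike the original it asserts that the sample stays at least one pixel away from the right and bottom edges (the original relies on the `boarder` margin instead).

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Bilinear interpolation on a row-major 8-bit grayscale buffer, returning a
// value in [0, 1] like getBilinearInterpolatedValue.
// Precondition: 0 <= x < width - 1 and 0 <= y < height - 1.
double bilinear(const std::vector<uint8_t> &img, int width, int height,
                double x, double y) {
    assert(x >= 0 && y >= 0 && x < width - 1 && y < height - 1);
    const int x0 = static_cast<int>(std::floor(x));
    const int y0 = static_cast<int>(std::floor(y));
    const double xx = x - x0, yy = y - y0;       // fractional parts
    const uint8_t *d = &img[y0 * width + x0];     // top-left neighbour
    return ((1 - xx) * (1 - yy) * d[0] +
            xx * (1 - yy) * d[1] +
            (1 - xx) * yy * d[width] +            // pixel below
            xx * yy * d[width + 1]) / 255.0;      // pixel below-right
}
```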

================================================

FILE: code/ch11/dense_mono/dense_mapping.cpp

================================================

#include <iostream>

#include <vector>

#include <fstream>

using namespace std;

// for sophus

#include <sophus/se3.hpp>

using Sophus::SE3d;

// for eigen

#include <Eigen/Core>

#include <Eigen/Geometry>

using namespace Eigen;

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

using namespace cv;

/**********************************************

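* This program demonstrates dense depth estimation for a monocular camera with a known trajectory,

* using epipolar search + NCC matching; it corresponds to Section 12.2 of the book.

* Note that this program is not perfect and you can certainly improve it. I am actually exposing some problems on purpose (that is my excuse).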

***********************************************/

// ------------------------------------------------------------------

// parameters

const int boarder = 20; // border width in pixels

const int width = 640; // image width

const int height = 480; // image height

const double fx = 481.2f; // camera intrinsics

const double fy = -480.0f;

const double cx = 319.5f;

const double cy = 239.5f;

const int ncc_window_size = 3; // half-width of the NCC window

const int ncc_area = (2 * ncc_window_size + 1) * (2 * ncc_window_size + 1); // area of the NCC window

const double min_cov = 0.1; // convergence test: minimum variance

const double max_cov = 10; // divergence test: maximum variance

// ------------------------------------------------------------------

// important functions

/// read data from the REMODE dataset

bool readDatasetFiles(

const string &path,

vector<string> &color_image_files,

vector<SE3d> &poses,

cv::Mat &ref_depth

);

/**

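* Update the depth estimate with a new image

* @param ref reference image

* @param curr current image

* @param T_C_R pose from the reference image to the current image

* @param depth depth

* @param depth_cov2 depth variance

* @return whether the update succeeded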

*/

bool update(

const Mat &ref,

const Mat &curr,

const SE3d &T_C_R,

Mat &depth,

Mat &depth_cov2

);

/**

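* Epipolar search

* @param ref reference image

* @param curr current image

* @param T_C_R pose

* @param pt_ref pixel position in the reference image

* @param depth_mu mean depth

* @param depth_cov standard deviation of the depth (the caller passes the square root of the variance)

* @param pt_curr matched point in the current image

* @param epipolar_direction epipolar line direction

* @return whether the search succeeded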

*/

bool epipolarSearch(

const Mat &ref,

const Mat &curr,

const SE3d &T_C_R,

const Vector2d &pt_ref,

const double &depth_mu,

const double &depth_cov,

Vector2d &pt_curr,

Vector2d &epipolar_direction

);

/**

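* Update the depth filter

* @param pt_ref point in the reference image

* @param pt_curr point in the current image

* @param T_C_R pose

* @param epipolar_direction epipolar line direction

* @param depth mean depth

* @param depth_cov2 depth variance

* @return whether the update succeeded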

*/

bool updateDepthFilter(

const Vector2d &pt_ref,

const Vector2d &pt_curr,

const SE3d &T_C_R,

const Vector2d &epipolar_direction,

Mat &depth,

Mat &depth_cov2

);

/**

* Compute the NCC score

* @param ref reference image

* @param curr current image

* @param pt_ref reference point

* @param pt_curr current point

* @return NCC score

*/

double NCC(const Mat &ref, const Mat &curr, const Vector2d &pt_ref, const Vector2d &pt_curr);

// bilinear grayscale interpolation (returns a value normalized to [0, 1])

inline double getBilinearInterpolatedValue(const Mat &img, const Vector2d &pt) {

uchar *d = &img.data[int(pt(1, 0)) * img.step + int(pt(0, 0))];

double xx = pt(0, 0) - floor(pt(0, 0));

double yy = pt(1, 0) - floor(pt(1, 0));

return ((1 - xx) * (1 - yy) * double(d[0]) +

xx * (1 - yy) * double(d[1]) +

(1 - xx) * yy * double(d[img.step]) +

xx * yy * double(d[img.step + 1])) / 255.0;

}

// ------------------------------------------------------------------

// some small utilities

// display the estimated depth map

void plotDepth(const Mat &depth_truth, const Mat &depth_estimate);

// pixel to camera coordinates (normalized plane, z = 1)

inline Vector3d px2cam(const Vector2d px) {

return Vector3d(

(px(0, 0) - cx) / fx,

(px(1, 0) - cy) / fy,

1

);

}

// camera coordinates to pixel

inline Vector2d cam2px(const Vector3d p_cam) {

return Vector2d(

p_cam(0, 0) * fx / p_cam(2, 0) + cx,

p_cam(1, 0) * fy / p_cam(2, 0) + cy

);

}

// check whether a point lies inside the image, excluding the border

inline bool inside(const Vector2d &pt) {

return pt(0, 0) >= boarder && pt(1, 0) >= boarder

&& pt(0, 0) + boarder < width && pt(1, 0) + boarder < height;

}

// display the epipolar match

void showEpipolarMatch(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_curr);

// display the epipolar line

void showEpipolarLine(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_min_curr,

const Vector2d &px_max_curr);

/// evaluate the depth estimate

void evaludateDepth(const Mat &depth_truth, const Mat &depth_estimate);

// ------------------------------------------------------------------

int main(int argc, char **argv) {

if (argc != 2) {

cout << "Usage: dense_mapping path_to_test_dataset" << endl;

return -1;

}

// read data from the dataset

vector<string> color_image_files;

vector<SE3d> poses_TWC;

Mat ref_depth;

bool ret = readDatasetFiles(argv[1], color_image_files, poses_TWC, ref_depth);

if (ret == false) {

cout << "Reading image files failed!" << endl;

return -1;

}

cout << "read total " << color_image_files.size() << " files." << endl;

// first image

Mat ref = imread(color_image_files[0], 0); // gray-scale image

SE3d pose_ref_TWC = poses_TWC[0];

double init_depth = 3.0; // initial depth value

double init_cov2 = 3.0; // initial variance value

Mat depth(height, width, CV_64F, init_depth); // depth map

Mat depth_cov2(height, width, CV_64F, init_cov2); // depth variance map

for (int index = 1; index < color_image_files.size(); index++) {

cout << "*** loop " << index << " ***" << endl;

Mat curr = imread(color_image_files[index], 0);

if (curr.data == nullptr) continue;

SE3d pose_curr_TWC = poses_TWC[index];

SE3d pose_T_C_R = pose_curr_TWC.inverse() * pose_ref_TWC; // coordinate transform: T_C_W * T_W_R = T_C_R

update(ref, curr, pose_T_C_R, depth, depth_cov2);

evaludateDepth(ref_depth, depth);

plotDepth(ref_depth, depth);

// imshow("image", curr);

waitKey();

}

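cout << "estimation finished, saving depth map ..." << endl;

// depth is CV_64F in meters; the PNG encoder only stores 8/16-bit data, so save it as 16-bit millimeters

Mat depth_mm;

depth.convertTo(depth_mm, CV_16U, 1000.0);

imwrite("depth.png", depth_mm);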

cout << "done." << endl;

return 0;

}

bool readDatasetFiles(

const string &path,

vector<string> &color_image_files,

std::vector<SE3d> &poses,

cv::Mat &ref_depth) {

ifstream fin(path + "/first_200_frames_traj_over_table_input_sequence.txt");

if (!fin) return false;

while (true) {

// data format: image file name, tx, ty, tz, qx, qy, qz, qw; note that these are T_WC rather than T_CW

string image;

double data[7];

fin >> image;

for (double &d:data) fin >> d;

if (!fin) break; // end of file: do not push an incomplete (uninitialized) record

color_image_files.push_back(path + string("/images/") + image);

poses.push_back(

SE3d(Quaterniond(data[6], data[3], data[4], data[5]),

Vector3d(data[0], data[1], data[2]))

);

}

fin.close();

// load reference depth

fin.open(path + "/depthmaps/scene_000.depth");

ref_depth = cv::Mat(height, width, CV_64F);

if (!fin) return false;

for (int y = 0; y < height; y++)

for (int x = 0; x < width; x++) {

double depth = 0;

fin >> depth;

ref_depth.ptr<double>(y)[x] = depth / 100.0;

}

return true;

}

// update the whole depth map

bool update(const Mat &ref, const Mat &curr, const SE3d &T_C_R, Mat &depth, Mat &depth_cov2) {

for (int x = boarder; x < width - boarder; x++)

for (int y = boarder; y < height - boarder; y++) {

// iterate over every pixel

if (depth_cov2.ptr<double>(y)[x] < min_cov || depth_cov2.ptr<double>(y)[x] > max_cov) // depth has converged or diverged

continue;

// search along the epipolar line for the match of (x, y)

Vector2d pt_curr;

Vector2d epipolar_direction;

bool ret = epipolarSearch(

ref,

curr,

T_C_R,

Vector2d(x, y),

depth.ptr<double>(y)[x],

sqrt(depth_cov2.ptr<double>(y)[x]),

pt_curr,

epipolar_direction

);

if (ret == false) // match failed

continue;

// uncomment to display the match

// showEpipolarMatch(ref, curr, Vector2d(x, y), pt_curr);

// match succeeded, update the depth map

updateDepthFilter(Vector2d(x, y), pt_curr, T_C_R, epipolar_direction, depth, depth_cov2);

}

return true;

}

// epipolar search

// see Sections 12.2 and 12.3 of the book for the method

bool epipolarSearch(

const Mat &ref, const Mat &curr,

const SE3d &T_C_R, const Vector2d &pt_ref,

const double &depth_mu, const double &depth_cov,

Vector2d &pt_curr, Vector2d &epipolar_direction) {

Vector3d f_ref = px2cam(pt_ref);

f_ref.normalize();

Vector3d P_ref = f_ref * depth_mu; // point P in the reference frame

Vector2d px_mean_curr = cam2px(T_C_R * P_ref); // pixel projected with the mean depth

double d_min = depth_mu - 3 * depth_cov, d_max = depth_mu + 3 * depth_cov;

if (d_min < 0.1) d_min = 0.1;

Vector2d px_min_curr = cam2px(T_C_R * (f_ref * d_min)); // pixel projected with the minimum depth

Vector2d px_max_curr = cam2px(T_C_R * (f_ref * d_max)); // pixel projected with the maximum depth

Vector2d epipolar_line = px_max_curr - px_min_curr; // epipolar line (as a segment)

epipolar_direction = epipolar_line; // epipolar direction

epipolar_direction.normalize();

double half_length = 0.5 * epipolar_line.norm(); // half length of the epipolar segment

if (half_length > 100) half_length = 100; // we do not want to search too many points

// uncomment this line to display the epipolar line (segment)

// showEpipolarLine( ref, curr, pt_ref, px_min_curr, px_max_curr );

// search along the epipolar line, centered on the mean-depth pixel, half_length on each side

double best_ncc = -1.0;

Vector2d best_px_curr;

for (double l = -half_length; l <= half_length; l += 0.7) { // step of 0.7 px, roughly sqrt(2)/2

Vector2d px_curr = px_mean_curr + l * epipolar_direction; // candidate match

if (!inside(px_curr))

continue;

// compute the NCC between the candidate and the reference pixel

double ncc = NCC(ref, curr, pt_ref, px_curr);

if (ncc > best_ncc) {

best_ncc = ncc;

best_px_curr = px_curr;

}

}

if (best_ncc < 0.85f) // only trust matches with a high NCC score

return false;

pt_curr = best_px_curr;

return true;

}

double NCC(

const Mat &ref, const Mat &curr,

const Vector2d &pt_ref, const Vector2d &pt_curr) {

// zero-mean normalized cross-correlation (ZNCC)

// first compute the means

double mean_ref = 0, mean_curr = 0;

vector<double> values_ref, values_curr; // pixel values of the reference and current patches

for (int x = -ncc_window_size; x <= ncc_window_size; x++)

for (int y = -ncc_window_size; y <= ncc_window_size; y++) {

double value_ref = double(ref.ptr<uchar>(int(y + pt_ref(1, 0)))[int(x + pt_ref(0, 0))]) / 255.0;

mean_ref += value_ref;

double value_curr = getBilinearInterpolatedValue(curr, pt_curr + Vector2d(x, y));

mean_curr += value_curr;

values_ref.push_back(value_ref);

values_curr.push_back(value_curr);

}

mean_ref /= ncc_area;

mean_curr /= ncc_area;

// compute the zero-mean NCC

double numerator = 0, denominator1 = 0, denominator2 = 0;

for (size_t i = 0; i < values_ref.size(); i++) {

double n = (values_ref[i] - mean_ref) * (values_curr[i] - mean_curr);

numerator += n;

denominator1 += (values_ref[i] - mean_ref) * (values_ref[i] - mean_ref);

denominator2 += (values_curr[i] - mean_curr) * (values_curr[i] - mean_curr);

}

return numerator / sqrt(denominator1 * denominator2 + 1e-10); // avoid a zero denominator

}

bool updateDepthFilter(

const Vector2d &pt_ref,

const Vector2d &pt_curr,

const SE3d &T_C_R,

const Vector2d &epipolar_direction,

Mat &depth,

Mat &depth_cov2) {

// (I wonder whether anyone still reads this part)

// compute the depth by triangulation

SE3d T_R_C = T_C_R.inverse();

Vector3d f_ref = px2cam(pt_ref);

f_ref.normalize();

Vector3d f_curr = px2cam(pt_curr);

f_curr.normalize();

// equation:

// d_ref * f_ref = d_cur * ( R_RC * f_cur ) + t_RC

// f2 = R_RC * f_cur

// taking the dot product of both sides with f_ref and with f2 gives the linear system

// [ f_ref^T f_ref, -f_ref^T f2 ] [d_ref] = [f_ref^T t]

// [ f2^T f_ref,    -f2^T f2    ] [d_cur]   [f2^T t   ]

Vector3d t = T_R_C.translation();

Vector3d f2 = T_R_C.so3() * f_curr;

Vector2d b = Vector2d(t.dot(f_ref), t.dot(f2));

Matrix2d A;

A(0, 0) = f_ref.dot(f_ref);

A(0, 1) = -f_ref.dot(f2);

A(1, 0) = -A(0, 1);

A(1, 1) = -f2.dot(f2);

Vector2d ans = A.inverse() * b;

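Vector3d xm = ans[0] * f_ref; // result on the ref side

Vector3d xn = t + ans[1] * f2; // result on the cur side

Vector3d p_esti = (xm + xn) / 2.0; // position of P, the average of the two

double depth_estimation = p_esti.norm(); // depth value

// compute the uncertainty (assuming a one-pixel matching error)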

Vector3d p = f_ref * depth_estimation;

Vector3d a = p - t;

double t_norm = t.norm();

double a_norm = a.norm();

double alpha = acos(f_ref.dot(t) / t_norm);

double beta = acos(-a.dot(t) / (a_norm * t_norm));

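// bearing of the match shifted by one pixel along the epipolar line, rotated into the reference frame so it can be compared with t

Vector3d f_curr_prime = T_R_C.so3() * px2cam(pt_curr + epipolar_direction);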

f_curr_prime.normalize();

double beta_prime = acos(f_curr_prime.dot(-t) / t_norm);

double gamma = M_PI - alpha - beta_prime;

double p_prime = t_norm * sin(beta_prime) / sin(gamma);

double d_cov = p_prime - depth_estimation;

double d_cov2 = d_cov * d_cov;

// Gaussian fusion of the prior and the new observation

double mu = depth.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))];

double sigma2 = depth_cov2.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))];

double mu_fuse = (d_cov2 * mu + sigma2 * depth_estimation) / (sigma2 + d_cov2);

double sigma_fuse2 = (sigma2 * d_cov2) / (sigma2 + d_cov2);

depth.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))] = mu_fuse;

depth_cov2.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))] = sigma_fuse2;

return true;

}

// the functions below are too simple to need comments (honestly, I am just lazy)

void plotDepth(const Mat &depth_truth, const Mat &depth_estimate) {

imshow("depth_truth", depth_truth * 0.4);

imshow("depth_estimate", depth_estimate * 0.4);

imshow("depth_error", depth_truth - depth_estimate);

// waitKey(1);

}

void evaludateDepth(const Mat &depth_truth, const Mat &depth_estimate) {

double ave_depth_error = 0; // mean error

double ave_depth_error_sq = 0; // mean squared error

int cnt_depth_data = 0;

for (int y = boarder; y < depth_truth.rows - boarder; y++)

for (int x = boarder; x < depth_truth.cols - boarder; x++) {

double error = depth_truth.ptr<double>(y)[x] - depth_estimate.ptr<double>(y)[x];

ave_depth_error += error;

ave_depth_error_sq += error * error;

cnt_depth_data++;

}

ave_depth_error /= cnt_depth_data;

ave_depth_error_sq /= cnt_depth_data;

cout << "Average squared error = " << ave_depth_error_sq << ", average error: " << ave_depth_error << endl;

}

void showEpipolarMatch(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_curr) {

Mat ref_show, curr_show;

cv::cvtColor(ref, ref_show, cv::COLOR_GRAY2BGR);

cv::cvtColor(curr, curr_show, cv::COLOR_GRAY2BGR);

cv::circle(ref_show, cv::Point2f(px_ref(0, 0), px_ref(1, 0)), 5, cv::Scalar(0, 0, 250), 2);

cv::circle(curr_show, cv::Point2f(px_curr(0, 0), px_curr(1, 0)), 5, cv::Scalar(0, 0, 250), 2);

imshow("ref", ref_show);

imshow("curr", curr_show);

waitKey(1);

}

void showEpipolarLine(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_min_curr,

const Vector2d &px_max_curr) {

Mat ref_show, curr_show;

cv::cvtColor(ref, ref_show, cv::COLOR_GRAY2BGR);

cv::cvtColor(curr, curr_show, cv::COLOR_GRAY2BGR);

cv::circle(ref_show, cv::Point2f(px_ref(0, 0), px_ref(1, 0)), 5, cv::Scalar(0, 255, 0), 2);

cv::circle(curr_show, cv::Point2f(px_min_curr(0, 0), px_min_curr(1, 0)), 5, cv::Scalar(0, 255, 0), 2);

cv::circle(curr_show, cv::Point2f(px_max_curr(0, 0), px_max_curr(1, 0)), 5, cv::Scalar(0, 255, 0), 2);

cv::line(curr_show, Point2f(px_min_curr(0, 0), px_min_curr(1, 0)), Point2f(px_max_curr(0, 0), px_max_curr(1, 0)),

Scalar(0, 255, 0), 1);

imshow("ref", ref_show);

imshow("curr", curr_show);

waitKey(1);

}
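NCC() above computes a zero-mean normalized cross-correlation: subtracting each patch mean and dividing by the patch energies makes the score invariant to an affine brightness change a * I + b (with a > 0), which is why a fixed threshold such as 0.85 can be used across frames. A standalone sketch on flattened patches, assuming both patches have the same size; `zncc` is a hypothetical helper, and the 1e-10 term mirrors the guard used in NCC().

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Zero-mean normalized cross-correlation of two equally sized patches,
// flattened into vectors. Returns a value in [-1, 1].
double zncc(const std::vector<double> &a, const std::vector<double> &b) {
    assert(a.size() == b.size() && !a.empty());
    double mean_a = 0, mean_b = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        mean_a += a[i];
        mean_b += b[i];
    }
    mean_a /= a.size();
    mean_b /= b.size();
    double num = 0, den_a = 0, den_b = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const double da = a[i] - mean_a, db = b[i] - mean_b;
        num += da * db;      // cross term
        den_a += da * da;    // energy of patch a
        den_b += db * db;    // energy of patch b
    }
    return num / std::sqrt(den_a * den_b + 1e-10);  // guard against flat patches
}
```

A patch compared with a brighter, higher-contrast copy of itself scores close to 1, while a mirrored intensity ramp scores close to -1.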

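The "Gaussian fusion" step in updateDepthFilter() multiplies the prior N(mu, sigma2) with the new observation N(depth_estimation, d_cov2). The sketch below isolates that update; `DepthGaussian` and `fuse` are hypothetical names. Because the fused variance sigma2 * d_cov2 / (sigma2 + d_cov2) is smaller than either input, repeated successful matches drive the variance below min_cov, at which point update() stops refining that pixel.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical helper type: a 1-D Gaussian depth estimate.
struct DepthGaussian {
    double mu;      // mean depth
    double sigma2;  // variance
};

// Product of the prior and the observation Gaussians, using the same
// formulas as mu_fuse and sigma_fuse2 in updateDepthFilter().
DepthGaussian fuse(const DepthGaussian &prior, const DepthGaussian &obs) {
    const double s = prior.sigma2 + obs.sigma2;
    return {(obs.sigma2 * prior.mu + prior.sigma2 * obs.mu) / s,
            (prior.sigma2 * obs.sigma2) / s};
}
```

For example, fusing the program's initial prior N(3.0, 3.0) with an observation N(2.0, 1.0) moves the mean to 2.25 and shrinks the variance to 0.75: the more certain observation dominates.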
================================================

FILE: code/ch12/myslam/CMakeLists.txt

================================================

cmake_minimum_required(VERSION 2.8)

project(myslam)

set(CMAKE_BUILD_TYPE Release)

set(CMAKE_CXX_FLAGS "-std=c++11 -Wall")

set(CMAKE_CXX_FLAGS_RELEASE "-std=c++11 -O3 -fopenmp -pthread")

list(APPEND CMAKE_MODULE_PATH ${PROJECT_SOURCE_DIR}/cmake_modules)

set(EXECUTABLE_OUTPUT_PATH ${PROJECT_SOURCE_DIR}/bin)

set(LIBRARY_OUTPUT_PATH ${PROJECT_SOURCE_DIR}/lib)

############### dependencies ######################

# Eigen

include_directories("/usr/include/eigen3")

# OpenCV

find_package(OpenCV 3.1 REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# pangolin

find_package(Pangolin REQUIRED)

include_directories(${Pangolin_INCLUDE_DIRS})

# Sophus

find_package(Sophus REQUIRED)

include_directories(${Sophus_INCLUDE_DIRS})

# G2O

find_package(G2O REQUIRED)

include_directories(${G2O_INCLUDE_DIRS})

# glog

find_package(Glog REQUIRED)

include_directories(${GLOG_INCLUDE_DIRS})

# gflags

find_package(GFlags REQUIRED)

include_directories(${GFLAGS_INCLUDE_DIRS})

# csparse

find_package(CSparse REQUIRED)

include_directories(${CSPARSE_INCLUDE_DIR})

set(THIRD_PARTY_LIBS

${OpenCV_LIBS}

${Sophus_LIBRARIES}

${Pangolin_LIBRARIES} GL GLU GLEW glut

g2o_core g2o_stuff g2o_types_sba g2o_solver_csparse g2o_csparse_extension

${GTEST_BOTH_LIBRARIES}

${GLOG_LIBRARIES}

${GFLAGS_LIBRARIES}

pthread

${CSPARSE_LIBRARY}

)

enable_testing()

############### source and test ######################

include_directories(${PROJECT_SOURCE_DIR}/include)

add_subdirectory(src)

add_subdirectory(app)

================================================

FILE: code/ch12/myslam/app/CMakeLists.txt

================================================

add_executable(run_kitti_stereo run_kitti_stereo.cpp)

target_link_libraries(run_kitti_stereo myslam ${THIRD_PARTY_LIBS})

================================================

FILE: code/ch12/myslam/app/run_kitti_stereo.cpp

================================================

//

// Created by gaoxiang on 19-5-4.

//

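#include <gflags/gflags.h>

#include <iostream>

#include "myslam/visual_odometry.h"

DEFINE_string(config_file, "./config/default.yaml", "config file path");

int main(int argc, char **argv) {

google::ParseCommandLineFlags(&argc, &argv, true);

myslam::VisualOdometry::Ptr vo(

new myslam::VisualOdometry(FLAGS_config_file));

// call Init() outside assert(): if NDEBUG is defined, assert() would skip the call entirely

if (!vo->Init()) {

std::cerr << "VisualOdometry initialization failed" << std::endl;

return 1;

}

vo->Run();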

return 0;

}

================================================

FILE: code/ch12/myslam/cmake_modules/FindCSparse.cmake

================================================

# Look for csparse; note the difference in the directory specifications!

find_path(CSPARSE_INCLUDE_DIR NAMES cs.h

PATHS

/usr/include/suitesparse

/usr/include

/opt/local/include

/usr/local/include

/sw/include

/usr/include/ufsparse

/opt/local/include/ufsparse

/usr/local/include/ufsparse

/sw/include/ufsparse

PATH_SUFFIXES

suitesparse

)

find_library(CSPARSE_LIBRARY NAMES cxsparse libcxsparse

PATHS

/usr/lib

/usr/local/lib

/opt/local/lib

/sw/lib

)

include(FindPackageHandleStandardArgs)

find_package_handle_standard_args(CSPARSE DEFAULT_MSG

CSPARSE_INCLUDE_DIR CSPARSE_LIBRARY)

================================================

FILE: code/ch12/myslam/cmake_modules/FindG2O.cmake

================================================

# Find the header files

FIND_PATH(G2O_INCLUDE_DIR g2o/core/base_vertex.h

${G2O_ROOT}/include

$ENV{G2O_ROOT}/include

$ENV{G2O_ROOT}

/usr/local/include

/usr/include

/opt/local/include

/sw/local/include

/sw/include

NO_DEFAULT_PATH

)

# Macro to unify finding both the debug and release versions of the

# libraries; this is adapted from the OpenSceneGraph FIND_LIBRARY

# macro.

MACRO(FIND_G2O_LIBRARY MYLIBRARY MYLIBRARYNAME)

FIND_LIBRARY("${MYLIBRARY}_DEBUG"

NAMES "g2o_${MYLIBRARYNAME}_d"

PATHS

${G2O_ROOT}/lib/Debug

${G2O_ROOT}/lib

$ENV{G2O_ROOT}/lib/Debug

$ENV{G2O_ROOT}/lib

NO_DEFAULT_PATH

)

FIND_LIBRARY("${MYLIBRARY}_DEBUG"

NAMES "g2o_${MYLIBRARYNAME}_d"

PATHS

~/Library/Frameworks

/Library/Frameworks

/usr/local/lib

/usr/local/lib64

/usr/lib

/usr/lib64

/opt/local/lib

/sw/local/lib

/sw/lib

)

FIND_LIBRARY(${MYLIBRARY}

NAMES "g2o_${MYLIBRARYNAME}"

PATHS

${G2O_ROOT}/lib/Release

${G2O_ROOT}/lib

$ENV{G2O_ROOT}/lib/Release

$ENV{G2O_ROOT}/lib

NO_DEFAULT_PATH

)

FIND_LIBRARY(${MYLIBRARY}

NAMES "g2o_${MYLIBRARYNAME}"

PATHS

~/Library/Frameworks

/Library/Frameworks

/usr/local/lib

/usr/local/lib64

/usr/lib

/usr/lib64

/opt/local/lib

/sw/local/lib

/sw/lib

)

IF(NOT ${MYLIBRARY}_DEBUG)

IF(${MYLIBRARY})

SET(${MYLIBRARY}_DEBUG ${${MYLIBRARY}})

ENDIF(${MYLIBRARY})

ENDIF( NOT ${MYLIBRARY}_DEBUG)

ENDMACRO(FIND_G2O_LIBRARY LIBRARY LIBRARYNAME)

# Find the core elements

FIND_G2O_LIBRARY(G2O_STUFF_LIBRARY stuff)

FIND_G2O_LIBRARY(G2O_CORE_LIBRARY core)

# Find the CLI library

FIND_G2O_LIBRARY(G2O_CLI_LIBRARY cli)

# Find the pluggable solvers

FIND_G2O_LIBRARY(G2O_SOLVER_CHOLMOD solver_cholmod)

FIND_G2O_LIBRARY(G2O_SOLVER_CSPARSE solver_csparse)

FIND_G2O_LIBRARY(G2O_SOLVER_CSPARSE_EXTENSION csparse_extension)

FIND_G2O_LIBRARY(G2O_SOLVER_DENSE solver_dense)

FIND_G2O_LIBRARY(G2O_SOLVER_PCG solver_pcg)

FIND_G2O_LIBRARY(G2O_SOLVER_SLAM2D_LINEAR solver_slam2d_linear)

FIND_G2O_LIBRARY(G2O_SOLVER_STRUCTURE_ONLY solver_structure_only)

FIND_G2O_LIBRARY(G2O_SOLVER_EIGEN solver_eigen)

# Find the predefined types

FIND_G2O_LIBRARY(G2O_TYPES_DATA types_data)

FIND_G2O_LIBRARY(G2O_TYPES_ICP types_icp)

FIND_G2O_LIBRARY(G2O_TYPES_SBA types_sba)

FIND_G2O_LIBRARY(G2O_TYPES_SCLAM2D types_sclam2d)

FIND_G2O_LIBRARY(G2O_TYPES_SIM3 types_sim3)

FIND_G2O_LIBRARY(G2O_TYPES_SLAM2D types_slam2d)

FIND_G2O_LIBRARY(G2O_TYPES_SLAM3D types_slam3d)

# G2O solvers declared found if we found at least one solver

SET(G2O_SOLVERS_FOUND "NO")

IF(G2O_SOLVER_CHOLMOD OR G2O_SOLVER_CSPARSE OR G2O_SOLVER_DENSE OR G2O_SOLVER_PCG OR G2O_SOLVER_SLAM2D_LINEAR OR G2O_SOLVER_STRUCTURE_ONLY OR G2O_SOLVER_EIGEN)

SET(G2O_SOLVERS_FOUND "YES")

ENDIF(G2O_SOLVER_CHOLMOD OR G2O_SOLVER_CSPARSE OR G2O_SOLVER_DENSE OR G2O_SOLVER_PCG OR G2O_SOLVER_SLAM2D_LINEAR OR G2O_SOLVER_STRUCTURE_ONLY OR G2O_SOLVER_EIGEN)

# G2O itself declared found if we found the core libraries and at least one solver

SET(G2O_FOUND "NO")

IF(G2O_STUFF_LIBRARY AND G2O_CORE_LIBRARY AND G2O_INCLUDE_DIR AND G2O_SOLVERS_FOUND)

SET(G2O_FOUND "YES")

ENDIF(G2O_STUFF_LIBRARY AND G2O_CORE_LIBRARY AND G2O_INCLUDE_DIR AND G2O_SOLVERS_FOUND)

================================================

FILE: code/ch12/myslam/cmake_modules/FindGlog.cmake

================================================

# Ceres Solver - A fast non-linear least squares minimizer

# Copyright 2015 Google Inc. All rights reserved.

# http://ceres-solver.org/

#

# Redistribution and use in source and binary forms, with or without

# modification, are permitted provided that the following conditions are met:

#

# * Redistributions of source code must retain the above copyright notice,

# this list of conditions and the following disclaimer.

# * Redistributions in binary form must reproduce the above copyright notice,

# this list of conditions and the following disclaimer in the documentation

# and/or other materials provided with the distribution.

# * Neither the name of Google Inc. nor the names of its contributors may be

# used to endorse or promote products derived from this software without

# specific prior written permission.

#

# THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS"

# AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE

# IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE

# ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT OWNER OR CONTRIBUTORS BE

# LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR

# CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF

# SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS

# INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN

# CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE)

# ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE

# POSSIBILITY OF SUCH DAMAGE.

#

# Author: alexs.mac@gmail.com (Alex Stewart)

#

# FindGlog.cmake - Find Google glog logging library.

#

# This module defines the following variables:

#

# GLOG_FOUND: TRUE iff glog is found.

# GLOG_INCLUDE_DIRS: Include directories for glog.

# GLOG_LIBRARIES: Libraries required to link glog.

#

# The following variables control the behaviour of this module:

#

# GLOG_INCLUDE_DIR_HINTS: List of additional directories in which to

# search for glog includes, e.g: /timbuktu/include.

# GLOG_LIBRARY_DIR_HINTS: List of additional directories in which to

# search for glog libraries, e.g: /timbuktu/lib.

#

# The following variables are also defined by this module, but in line with

# CMake recommended FindPackage() module style should NOT be referenced directly

# by callers (use the plural variables detailed above instead). These variables

# do however affect the behaviour of the module via FIND_[PATH/LIBRARY]() which

# are NOT re-called (i.e. search for library is not repeated) if these variables

# are set with valid values _in the CMake cache_. This means that if these

# variables are set directly in the cache, either by the user in the CMake GUI,

# or by the user passing -DVAR=VALUE directives to CMake when called (which

# explicitly defines a cache variable), then they will be used verbatim,

# bypassing the HINTS variables and other hard-coded search locations.

#

# GLOG_INCLUDE_DIR: Include directory for glog, not including the

# include directory of any dependencies.

# GLOG_LIBRARY: glog library, not including the libraries of any

# dependencies.

# Reset CALLERS_CMAKE_FIND_LIBRARY_PREFIXES to its value when

# FindGlog was invoked.

macro(GLOG_RESET_FIND_LIBRARY_PREFIX)

if (MSVC)

set(CMAKE_FIND_LIBRARY_PREFIXES "${CALLERS_CMAKE_FIND_LIBRARY_PREFIXES}")

endif (MSVC)

endmacro(GLOG_RESET_FIND_LIBRARY_PREFIX)

# Called if we failed to find glog or any of its required dependencies,

# unsets all public (designed to be used externally) variables and reports

# error message at priority depending upon [REQUIRED/QUIET/<NONE>] argument.

macro(GLOG_REPORT_NOT_FOUND REASON_MSG)

unset(GLOG_FOUND)

unset(GLOG_INCLUDE_DIRS)

unset(GLOG_LIBRARIES)

# Make results of search visible in the CMake GUI if glog has not

# been found so that user does not have to toggle to advanced view.

mark_as_advanced(CLEAR GLOG_INCLUDE_DIR

GLOG_LIBRARY)

glog_reset_find_library_prefix()

# Note <package>_FIND_[REQUIRED/QUIETLY] variables defined by FindPackage()

# use the camelcase library name, not uppercase.

if (Glog_FIND_QUIETLY)

message(STATUS "Failed to find glog - " ${REASON_MSG} ${ARGN})

elseif (Glog_FIND_REQUIRED)

message(FATAL_ERROR "Failed to find glog - " ${REASON_MSG} ${ARGN})

else ()

# Neither QUIETLY nor REQUIRED, use no priority which emits a message

# but continues configuration and allows generation.

message("-- Failed to find glog - " ${REASON_MSG} ${ARGN})

endif ()

endmacro(GLOG_REPORT_NOT_FOUND)

# Handle possible presence of lib prefix for libraries on MSVC, see

# also GLOG_RESET_FIND_LIBRARY_PREFIX().

if (MSVC)

# Preserve the caller's original values for CMAKE_FIND_LIBRARY_PREFIXES

# s/t we can set it back before returning.

set(CALLERS_CMAKE_FIND_LIBRARY_PREFIXES "${CMAKE_FIND_LIBRARY_PREFIXES}")

# The empty string in this list is important, it represents the case when

# the libraries have no prefix (shared libraries / DLLs).

set(CMAKE_FIND_LIBRARY_PREFIXES "lib" "" "${CMAKE_FIND_LIBRARY_PREFIXES}")

endif (MSVC)

# Search user-installed locations first, so that we prefer user installs

# to system installs where both exist.

list(APPEND GLOG_CHECK_INCLUDE_DIRS

/usr/local/include

/usr/local/homebrew/include # Mac OS X

/opt/local/var/macports/software # Mac OS X.

/opt/local/include

/usr/include)

# Windows (for C:/Program Files prefix).

list(APPEND GLOG_CHECK_PATH_SUFFIXES

glog/include

glog/Include

Glog/include

Glog/Include)

list(APPEND GLOG_CHECK_LIBRARY_DIRS

/usr/local/lib

/usr/local/homebrew/lib # Mac OS X.

/opt/local/lib

/usr/lib)

# Windows (for C:/Program Files prefix).

list(APPEND GLOG_CHECK_LIBRARY_SUFFIXES

glog/lib

glog/Lib

Glog/lib

Glog/Lib)

# Search supplied hint directories first if supplied.
find_path(GLOG_INCLUDE_DIR
  NAMES glog/logging.h
  PATHS ${GLOG_INCLUDE_DIR_HINTS}
  ${GLOG_CHECK_INCLUDE_DIRS}
  PATH_SUFFIXES ${GLOG_CHECK_PATH_SUFFIXES})
if (NOT GLOG_INCLUDE_DIR OR
    NOT EXISTS ${GLOG_INCLUDE_DIR})
  glog_report_not_found(
    "Could not find glog include directory, set GLOG_INCLUDE_DIR "
    "to directory containing glog/logging.h")
endif (NOT GLOG_INCLUDE_DIR OR
       NOT EXISTS ${GLOG_INCLUDE_DIR})

find_library(GLOG_LIBRARY NAMES glog
  PATHS ${GLOG_LIBRARY_DIR_HINTS}
  ${GLOG_CHECK_LIBRARY_DIRS}
  PATH_SUFFIXES ${GLOG_CHECK_LIBRARY_SUFFIXES})
if (NOT GLOG_LIBRARY OR
    NOT EXISTS ${GLOG_LIBRARY})
  glog_report_not_found(
    "Could not find glog library, set GLOG_LIBRARY "
    "to full path to libglog.")
endif (NOT GLOG_LIBRARY OR
       NOT EXISTS ${GLOG_LIBRARY})

# Mark internally as found, then verify. GLOG_REPORT_NOT_FOUND() unsets
# if called.
set(GLOG_FOUND TRUE)

# Glog does not seem to provide any record of the version in its
# source tree, thus cannot extract version.

# Catch case when caller has set GLOG_INCLUDE_DIR in the cache / GUI and
# thus FIND_[PATH/LIBRARY] are not called, but specified locations are
# invalid, otherwise we would report the library as found.
if (GLOG_INCLUDE_DIR AND
    NOT EXISTS ${GLOG_INCLUDE_DIR}/glog/logging.h)
  glog_report_not_found(
    "Caller defined GLOG_INCLUDE_DIR:"
    " ${GLOG_INCLUDE_DIR} does not contain glog/logging.h header.")
endif (GLOG_INCLUDE_DIR AND
       NOT EXISTS ${GLOG_INCLUDE_DIR}/glog/logging.h)

# TODO: This regex for glog library is pretty primitive, we use lowercase
# for comparison to handle Windows using CamelCase library names, could
# this check be better?
string(TOLOWER "${GLOG_LIBRARY}" LOWERCASE_GLOG_LIBRARY)
if (GLOG_LIBRARY AND
    NOT "${LOWERCASE_GLOG_LIBRARY}" MATCHES ".*glog[^/]*")
  glog_report_not_found(
    "Caller defined GLOG_LIBRARY: "
    "${GLOG_LIBRARY} does not match glog.")
endif (GLOG_LIBRARY AND
       NOT "${LOWERCASE_GLOG_LIBRARY}" MATCHES ".*glog[^/]*")

# Set standard CMake FindPackage variables if found.
if (GLOG_FOUND)
  set(GLOG_INCLUDE_DIRS ${GLOG_INCLUDE_DIR})
  set(GLOG_LIBRARIES ${GLOG_LIBRARY})
endif (GLOG_FOUND)

glog_reset_find_library_prefix()

# Handle REQUIRED / QUIET optional arguments.
include(FindPackageHandleStandardArgs)
find_package_handle_standard_args(Glog DEFAULT_MSG
  GLOG_INCLUDE_DIRS GLOG_LIBRARIES)

# Only mark internal variables as advanced if we found glog, otherwise
# leave them visible in the standard GUI for the user to set manually.
if (GLOG_FOUND)
  mark_as_advanced(FORCE GLOG_INCLUDE_DIR
                         GLOG_LIBRARY)
endif (GLOG_FOUND)

================================================
FILE: code/ch12/myslam/config/default.yaml
================================================
%YAML:1.0
# data
# the KITTI sequence directory, change it to yours!
# dataset_dir: /media/xiang/Data/Dataset/Kitti/dataset/sequences/00
dataset_dir: /home/huminghao/hmh/KITTI/00

# camera intrinsics
camera.fx: 517.3
camera.fy: 516.5
camera.cx: 325.1
camera.cy: 249.7

num_features: 150
num_features_init: 50
num_features_tracking: 50

================================================
FILE: code/ch12/myslam/include/myslam/algorithm.h
================================================
//
// Created by gaoxiang on 19-5-4.
//

#ifndef MYSLAM_ALGORITHM_H
#define MYSLAM_ALGORITHM_H

// algorithms used in myslam
#include "myslam/common_include.h"

namespace myslam {

/**
 * linear triangulation with SVD
 * @param poses     poses that map a world point into each camera frame (e.g. T_c_w)
 * @param points    observations on the normalized image plane, one per pose
 * @param pt_world  triangulated point in the world
 * @return true if the solution is well conditioned
 */
inline bool triangulation(const std::vector<SE3> &poses,
                          const std::vector<Vec3> &points, Vec3 &pt_world) {
    // Each observation (u, v) contributes two rows of A; the point is the
    // (homogeneous) null vector of A.
    MatXX A(2 * poses.size(), 4);
    for (size_t i = 0; i < poses.size(); ++i) {
        Mat34 m = poses[i].matrix3x4();
        A.block<1, 4>(2 * i, 0) = points[i][0] * m.row(2) - m.row(0);
        A.block<1, 4>(2 * i + 1, 0) = points[i][1] * m.row(2) - m.row(1);
    }
    auto svd = A.bdcSvd(Eigen::ComputeThinU | Eigen::ComputeThinV);
    pt_world = (svd.matrixV().col(3) / svd.matrixV()(3, 3)).head<3>();

    if (svd.singularValues()[3] / svd.singularValues()[2] < 1e-2) {
        // The smallest singular value is much smaller than the next one, so
        // the null space of A is well defined: accept the solution.
        return true;
    }
    // Poorly conditioned solution: reject it.
    return false;
}

// converters
inline Vec2 toVec2(const cv::Point2f p) { return Vec2(p.x, p.y); }

}  // namespace myslam

#endif  // MYSLAM_ALGORITHM_H
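To make the row construction in `triangulation` concrete without Eigen or Sophus, here is a minimal, dependency-free sketch. It builds the same rows `u * m.row(2) - m.row(0)` and `v * m.row(2) - m.row(1)` from 3x4 pose matrices, but it swaps the SVD null vector for a different technique: it fixes the homogeneous coordinate to w = 1 and solves the resulting 3x3 normal equations with Cramer's rule (inhomogeneous linear least squares). The names `Pose34`, `Det3` and `TriangulateLS` are hypothetical and do not exist in myslam.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical 3x4 pose matrix [R | t] that maps world points into a camera frame.
using Pose34 = std::array<std::array<double, 4>, 3>;
using Vec3d = std::array<double, 3>;

// Determinant of a 3x3 matrix (cofactor expansion along the first row).
inline double Det3(const std::array<Vec3d, 3> &m) {
    return m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1]) -
           m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0]) +
           m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]);
}

// Linear triangulation with w fixed to 1 (not the SVD variant used in myslam).
// With A = [A3 | a4], solve A3 * x = -a4 in the least-squares sense via the
// normal equations (A3^T A3) x = -A3^T a4. Returns false if they are singular.
inline bool TriangulateLS(const std::vector<Pose34> &poses,
                          const std::vector<std::array<double, 2>> &uv,
                          Vec3d &pt_world) {
    std::array<Vec3d, 3> N{};  // N = A3^T A3
    Vec3d r{};                 // r = -A3^T a4
    for (std::size_t i = 0; i < poses.size(); ++i) {
        const Pose34 &m = poses[i];
        for (int k = 0; k < 2; ++k) {
            // Same row as triangulation(): coord * m.row(2) - m.row(k).
            std::array<double, 4> row;
            for (int c = 0; c < 4; ++c) row[c] = uv[i][k] * m[2][c] - m[k][c];
            for (int a = 0; a < 3; ++a) {
                for (int b = 0; b < 3; ++b) N[a][b] += row[a] * row[b];
                r[a] -= row[a] * row[3];
            }
        }
    }
    const double det = Det3(N);
    if (std::fabs(det) < 1e-12) return false;
    for (int col = 0; col < 3; ++col) {
        std::array<Vec3d, 3> Nc = N;  // Cramer's rule: replace column `col` by r
        for (int a = 0; a < 3; ++a) Nc[a][col] = r[a];
        pt_world[col] = Det3(Nc) / det;
    }
    return true;
}
```

For example, with two cameras that both have identity rotation, the second translated by t = (-0.5, 0, 0), observing (0.1, 0.2) and (0.05, 0.2) on the normalized plane, the sketch recovers the world point (1, 2, 10). The SVD formulation in `triangulation` avoids fixing w, which is why it can also report how well conditioned the null space is.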

================================================
FILE: code/ch12/myslam/include/myslam/backend.h
================================================
//
// Created by gaoxiang on 19-5-2.
//

#ifndef MYSLAM_BACKEND_H
#define MYSLAM_BACKEND_H

#include "myslam/common_include.h"
#include "myslam/frame.h"
#include "myslam/map.h"

namespace myslam {
class Map;

/**
 * Backend
 * Runs a separate optimization thread that starts optimizing when the Map is updated.
 * Map updates are triggered by the frontend.
 */
class Backend {
   public:
    EIGEN_MAKE_ALIGNED_OPERATOR_NEW;
    typedef std::shared_ptr<Backend> Ptr;

    /// The constructor starts the optimization thread, which then waits for map updates
    Backend();

    // Set the left and right cameras, used to obtain intrinsics and extrinsics
    void SetCameras(Camera::Ptr left, Camera::Ptr right) {
        cam_left_ = left;
        cam_right_ = right;
    }

    /// Set the map
    void SetMap(std::shared_ptr<Map> map) { map_ = map; }

    /// Trigger a map update and start optimization
    void UpdateMap();

    /// Shut down the backend thread
    void Stop();

   private:
    /// Backend thread loop
    void BackendLoop();

    /// Optimize the given keyframes and landmarks
    void Optimize(Map::KeyframesType& keyframes, Map::LandmarksType& landmarks);

    std::shared_ptr<Map> map_;
    std::thread backend_thread_;
    std::mutex data_mutex_;

    std::condition_variable map_update_;
    std::atomic<bool> backend_running_;

    Camera::Ptr cam_left_ = nullptr, cam_right_ = nullptr;
};

}  // namespace myslam

#endif  // MYSLAM_BACKEND_H
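backend.h only declares the threading members (`backend_thread_`, `data_mutex_`, `map_update_`, `backend_running_`); the loop itself lives in backend.cpp, which is not shown here. The sketch below is a hypothetical, self-contained illustration of one common way to wire such members: `UpdateMap()` notifies the condition variable, the loop wakes and runs one optimization, and `Stop()` clears the running flag and joins the thread. It adds a `pending_` flag that is not in the header above, so that a notification sent before the loop starts waiting is not lost; `MiniBackend` and `optimize_count_` are placeholder names, and the real `Optimize(keyframes, landmarks)` call is replaced by a counter.

```cpp
#include <atomic>
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <thread>

// Hypothetical stand-in for myslam::Backend: same threading members, with the
// g2o optimization replaced by a counter.
class MiniBackend {
   public:
    MiniBackend() : backend_running_(true) {
        backend_thread_ = std::thread([this] { BackendLoop(); });
    }
    ~MiniBackend() { Stop(); }

    // Called by the frontend after the map changes (e.g. a new keyframe).
    void UpdateMap() {
        std::lock_guard<std::mutex> lock(data_mutex_);
        pending_ = true;
        map_update_.notify_one();
    }

    // Ask the loop to exit and wait for it; safe to call more than once.
    void Stop() {
        {
            std::lock_guard<std::mutex> lock(data_mutex_);
            backend_running_.store(false);
        }
        map_update_.notify_one();
        if (backend_thread_.joinable()) backend_thread_.join();
    }

    int optimize_count() const { return optimize_count_.load(); }

   private:
    void BackendLoop() {
        std::unique_lock<std::mutex> lock(data_mutex_);
        while (true) {
            // The predicate protects against spurious and lost wake-ups.
            map_update_.wait(lock, [this] {
                return pending_ || !backend_running_.load();
            });
            if (pending_) {
                pending_ = false;
                ++optimize_count_;  // placeholder for Optimize(keyframes, landmarks)
            } else {
                break;  // stopped and no update left to process
            }
        }
    }

    std::thread backend_thread_;
    std::mutex data_mutex_;
    std::condition_variable map_update_;
    std::atomic<bool> backend_running_;
    bool pending_ = false;  // guarded by data_mutex_
    std::atomic<int> optimize_count_{0};
};
```

Several `UpdateMap()` calls that arrive while an optimization is running collapse into one pending run, which is usually acceptable for a backend that always optimizes the current active window.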

================================================
FILE: code/ch12/myslam/include/myslam/camera.h
================================================
#pragma once

#ifndef MYSLAM_CAMERA_H
#define MYSLAM_CAMERA_H

#include "myslam/common_include.h"

namespace myslam {

// Pinhole stereo camera model
class Camera {
   public:
    EIGEN_MAKE_ALIGNED_OPERATOR_NEW;
    typedef std::shared_ptr<Camera> Ptr;

    double fx_ = 0, fy_ = 0, cx_ = 0, cy_ = 0,
           baseline_ = 0;  // Camera intrinsics
    SE3 pose_;             // extrinsic, from stereo camera to single camera
    SE3 pose_inv_;         // inverse of extrinsics

    Camera();

    Camera(double fx, double fy, double cx, double cy, double baseline,
           const SE3 &pose)
        : fx_(fx), fy_(fy), cx_(cx), cy_(cy), baseline_(baseline), pose_(pose) {
        pose_inv_ = pose_.inverse();
    }

    SE3 pose() const { return pose_; }

    // return intrinsic matrix
    Mat33 K() const {
        Mat33 k;
        k << fx_, 0, cx_, 0, fy_, cy_, 0, 0, 1;
        return k;
    }

    // coordinate transform: world, camera, pixel
    Vec3 world2camera(const Vec3 &p_w, const SE3 &T_c_w);

    Vec3 camera2world(const Vec3 &p_c, const SE3 &T_c_w);

    Vec2 camera2pixel(const Vec3 &p_c);

    Vec3 pixel2camera(const Vec2 &p_p, double depth = 1);

    Vec3 pixel2world(const Vec2 &p_p, const SE3 &T_c_w, double depth = 1);

    Vec2 world2pixel(const Vec3 &p_w, const SE3 &T_c_w);
};

}  // namespace myslam
#endif  // MYSLAM_CAMERA_H
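camera.h only declares the world/camera/pixel conversions. Assuming the standard pinhole model, `camera2pixel` amounts to u = fx * X / Z + cx, v = fy * Y / Z + cy, and `pixel2camera` inverts it at a given depth. For a rectified stereo pair with baseline b, depth follows from the horizontal disparity d = u_left - u_right as Z = fx * b / d. The sketch below is a dependency-free illustration of those formulas, not a copy of camera.cpp; `Pinhole` and `DepthFromDisparity` are hypothetical names.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec2d = std::array<double, 2>;
using Vec3d = std::array<double, 3>;

// Hypothetical pinhole intrinsics, mirroring fx_, fy_, cx_, cy_ in Camera.
struct Pinhole {
    double fx, fy, cx, cy;

    // Camera frame -> pixel: perspective division, then scale and shift.
    Vec2d CameraToPixel(const Vec3d &p_c) const {
        return {fx * p_c[0] / p_c[2] + cx, fy * p_c[1] / p_c[2] + cy};
    }

    // Pixel -> camera frame at a known depth (inverse of CameraToPixel).
    Vec3d PixelToCamera(const Vec2d &p_p, double depth = 1.0) const {
        return {(p_p[0] - cx) * depth / fx, (p_p[1] - cy) * depth / fy, depth};
    }
};

// Rectified stereo: depth Z = fx * baseline / disparity (disparity in pixels).
inline double DepthFromDisparity(double fx, double baseline, double disparity) {
    return fx * baseline / disparity;
}
```

With the intrinsics from default.yaml (`Pinhole cam{517.3, 516.5, 325.1, 249.7}`), projecting (0.2, -0.1, 2.0) and back-projecting the pixel at depth 2.0 returns the original point up to floating-point rounding, and `DepthFromDisparity(500.0, 0.5, 25.0)` gives a depth of 10.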

================================================
FILE: code/ch12/myslam/include/myslam/common_include.h
================================================
#pragma once

#ifndef MYSLAM_COMMON_INCLUDE_H
#define MYSLAM_COMMON_INCLUDE_H

// std
#include <atomic>
#include <condition_variable>
#include <iostream>
#include <list>
#include <map>
#include <memory>
#include <mutex>
#include <set>
#include <string>
#include <thread>
#include <typeinfo>
#include <unordered_map>
#include <vector>

// define the commonly included file to avoid a long include list
#include <Eigen/Core>
#include <Eigen/Geometry>

// typedefs for eigen
// double matrices
typedef Eigen::Matrix<double, Eigen::Dynamic, Eigen::Dynamic> MatXX;
typedef Eigen::Matrix<double, 10, 10> Mat1010;
typedef Eigen::Matrix<double, 13, 13> Mat1313;
typedef Eigen::Matrix<double, 8, 10> Mat810;
typedef Eigen::Matrix<double, 8, 3> Mat83;
typedef Eigen::Matrix<double, 6, 6> Mat66;
typedef Eigen::Matrix<double, 5, 3> Mat53;
typedef Eigen::Matrix<double, 4, 3> Mat43;
typedef Eigen::Matrix<double, 4, 2> Mat42;
typedef Eigen::Matrix<double, 3, 3> Mat33;
typedef Eigen::Matrix<double, 2, 2> Mat22;
typedef Eigen::Matrix<double, 8, 8> Mat88;
typedef Eigen::Matrix<double, 7, 7> Mat77;
typedef Eigen::Matrix<double, 4, 9> Mat49;
typedef Eigen::Matrix<double, 8, 9> Mat89;
typedef Eigen::Matrix<double, 9, 4> Mat94;
typedef Eigen::Matrix<double, 9, 8> Mat98;
typedef Eigen::Matrix<double, 8, 1> Mat81;
typedef Eigen::Matrix<double, 1, 8> Mat18;
typedef Eigen::Matrix<double, 9, 1> Mat91;
typedef Eigen::Matrix<double, 1, 9> Mat19;
typedef Eigen::Matrix<double, 8, 4> Mat84;
typedef Eigen::Matrix<double, 4, 8> Mat48;
typedef Eigen::Matrix<double, 4, 4> Mat44;
typedef Eigen::Matrix<double, 3, 4> Mat34;
typedef Eigen::Matrix<double, 14, 14> Mat1414;

// float matrices
typedef Eigen::Matrix<float, 3, 3> Mat33f;
typedef Eigen::Matrix<float, 10, 3> Mat103f;